text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

# 6. Challenge Solution - A2C

So far, we managed to implement the REINFORCE agent version for the Cartpole environment. Now let's try to do the A2C one. As both models have a pretty similar structure and functioning mechanism, this notebook will contain more missing parts.

### Theory

As mentioned in the Actor-Critic methods notebook, the A2C policy gradient has almost the same form as the REINFORCE one. The only difference is that the return is replaced by the advantage function.

$$

\nabla_{\theta}J(\theta)=\sum_{t = 0}^T\nabla_{\theta}\log\pi_{\theta}(a_t|s_t)A(s_t, a_t)

$$

$$

A(s_t, a_t) = Q(s_t, a_t) - V(s_t)

$$

Structurally, an A2C model has two parts: an actor and a critic. The actor takes a state as input and outputs a probability distribution over actions, while the critic estimates the value $V(s_t)$ of that state.

Your implementation should take the following form:

1. Extracting environment information (state, action, etc.)

2. Passing the state through the model to generate the actor and critic outputs

3. Sampling an action from the probability distribution

4. Calculating rewards

5. Comparing the returns actually obtained along the trajectory with the critic's value estimates to compute the loss
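Steps 4 and 5 can be sketched in plain Python as follows. This is a minimal illustration rather than the notebook's solution: the helper names `discounted_returns` and `advantages` and the toy reward and value numbers are assumptions made for the example, and the Monte Carlo return $G_t$ is used as the estimate of $Q(s_t, a_t)$.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * r_{t+1} + ... for every timestep t."""
    returns = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def advantages(returns, values):
    """Advantage estimate A_t = G_t - V(s_t), with G_t standing in for Q."""
    return [g - v for g, v in zip(returns, values)]


# Toy episode: three steps with reward 1 each (hypothetical numbers).
rewards = [1.0, 1.0, 1.0]
values = [2.5, 1.5, 0.5]  # hypothetical critic outputs V(s_t)

returns = discounted_returns(rewards, gamma=0.5)
adv = advantages(returns, values)
print(returns)  # [1.75, 1.5, 1.0]
print(adv)      # [-0.75, 0.0, 0.5]
```

A positive advantage means the action did better than the critic expected, so its log-probability is pushed up; a negative one pushes it down.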

### Building model

```

import gym

import numpy as np

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

seed = 42

# Discount value

gamma = 0.99

max_steps_per_episode = 10000

# Create the environment

env = gym.make("CartPole-v0")

env.seed(seed)

eps = np.finfo(np.float32).eps.item() # Smallest number such that 1.0 + eps != 1.0

```

### Defining model

As mentioned above, the A2C algorithm has two parts, an actor and a critic, each of which can be modeled by its own neural network. In our implementation the two share the input and hidden layers and differ only in their output heads.

```

num_states = env.observation_space.shape[0]

num_hidden = 128

num_inputs = 4

num_actions = env.action_space.n

inputs = layers.Input(shape=(num_inputs,))

common = layers.Dense(num_hidden, activation="relu")(inputs)

action = layers.Dense(num_actions, activation="softmax")(common)

critic = layers.Dense(1)(common)

model = keras.Model(inputs=inputs, outputs=[action, critic])

optimizer = tf.keras.optimizers.Adam(learning_rate=0.01)

huber_loss = keras.losses.Huber()

action_probs_history = []

critic_value_history = []

rewards_history = []

running_reward = 0

episode_count = 0

while True:  # Run until solved
    state = env.reset()
    episode_reward = 0
    with tf.GradientTape() as tape:
        for timestep in range(1, max_steps_per_episode):
            # show the attempts of the agent
            env.render()

            state = tf.convert_to_tensor(state)
            state = tf.expand_dims(state, 0)

            # Predict action probabilities and estimated future rewards
            # from environment state
            action_probs, critic_value = model(state)
            critic_value_history.append(critic_value[0, 0])

            # Sample action from action probability distribution
            action = np.random.choice(num_actions, p=np.squeeze(action_probs))
            action_probs_history.append(tf.math.log(action_probs[0, action]))

            # Apply the sampled action in our environment
            state, reward, done, _ = env.step(action)
            rewards_history.append(reward)
            episode_reward += reward

            if done:
                break

        # Update running reward to check condition for solving
        running_reward = 0.05 * episode_reward + (1 - 0.05) * running_reward

        # Calculate expected value from rewards
        # - At each timestep what was the total reward received after that timestep
        # - Rewards in the past are discounted by multiplying them with gamma
        # - These are the labels for our critic
        returns = []
        discounted_sum = 0
        for r in rewards_history[::-1]:
            discounted_sum = r + gamma * discounted_sum
            returns.insert(0, discounted_sum)

        # Normalize
        returns = np.array(returns)
        returns = (returns - np.mean(returns)) / (np.std(returns) + eps)
        returns = returns.tolist()

        # Calculating loss values to update our network
        history = zip(action_probs_history, critic_value_history, returns)
        actor_losses = []
        critic_losses = []
        for log_prob, value, ret in history:
            # At this point in history, the critic estimated that we would get a
            # total reward = `value` in the future. We took an action with log probability
            # of `log_prob` and ended up receiving a total reward = `ret`.
            # The actor must be updated so that it predicts an action that leads to
            # high rewards (compared to critic's estimate) with high probability.
            diff = ret - value
            actor_losses.append(-log_prob * diff)  # actor loss

            # The critic must be updated so that it predicts a better estimate of
            # the future rewards.
            critic_losses.append(
                huber_loss(tf.expand_dims(value, 0), tf.expand_dims(ret, 0))
            )

        # Backpropagation
        loss_value = sum(actor_losses) + sum(critic_losses)
        grads = tape.gradient(loss_value, model.trainable_variables)
        optimizer.apply_gradients(zip(grads, model.trainable_variables))

        # Clear the loss and reward history
        action_probs_history.clear()
        critic_value_history.clear()
        rewards_history.clear()

    # Log details
    episode_count += 1
    if episode_count % 10 == 0:
        template = "running reward: {:.2f} at episode {}"
        print(template.format(running_reward, episode_count))

    if running_reward > 195:  # Condition to consider the task solved
        print("Solved at episode {}!".format(episode_count))
        break

```

| github_jupyter |

# Drawing Bloch vector of a density matrix

Creating matplotlib software to draw Bloch vector given a density matrix for single qubit state.

From Exercise 2.72 in *Quantum Computation and Quantum Information* by Nielsen and Chuang, an arbitrary density matrix for a single qubit can be written as

$$ \rho = \frac{I + \vec{r} \cdot \vec{\sigma}}{2}$$

where $\vec{\sigma}$ is the vector of Pauli $X$, $Y$, and $Z$ matrices. The $3$-d real vector is the Bloch vector.

A state $\rho$ is pure if and only if $\vec{r}$ is a unit vector.
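Expanding the formula above gives $\rho = \frac{1}{2}\begin{bmatrix}1 + r_z & r_x - i r_y\\ r_x + i r_y & 1 - r_z\end{bmatrix}$, so the components of $\vec{r}$ can be read directly off the matrix entries. The sketch below illustrates this with the standard library only; the helper names `bloch_vector` and `is_pure` are hypothetical and are not part of the notebook's code.

```python
import math


def bloch_vector(rho):
    """Read the Bloch vector off a 2x2 density matrix given as nested lists.

    From rho = (I + r . sigma) / 2:
      r_x = 2 * Re(rho[0][1]), r_y = 2 * Im(rho[1][0]), r_z = rho[0][0] - rho[1][1]
    """
    r_x = 2 * complex(rho[0][1]).real
    r_y = 2 * complex(rho[1][0]).imag
    r_z = (complex(rho[0][0]) - complex(rho[1][1])).real
    return (r_x, r_y, r_z)


def is_pure(rho, tol=1e-9):
    """A single-qubit state is pure exactly when its Bloch vector has length 1."""
    length = math.sqrt(sum(c * c for c in bloch_vector(rho)))
    return abs(length - 1.0) < tol


plus = [[0.5, 0.5], [0.5, 0.5]]    # |+><+|, a pure state
mixed = [[0.5, 0.0], [0.0, 0.5]]   # maximally mixed state I/2

print(bloch_vector(plus), is_pure(plus))    # (1.0, 0.0, 0.0) True
print(bloch_vector(mixed), is_pure(mixed))  # (0.0, 0.0, 0.0) False
```

The maximally mixed state sits at the centre of the Bloch sphere, which is why its vector has length zero.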

```

import numpy as np

# Global variables

eps = 0.0001

def gen_density_matrix(states, probs):
    """
    Generate a density matrix from an array of 1-qubit states with an array of their corresponding probabilities.
    """
    if len(states) != len(probs):
        raise ValueError('Size of `states` and `probs` arrays must be the same.')
    # Compare with a tolerance: floating-point probabilities rarely sum to exactly 1
    if abs(np.sum(probs) - 1) > eps:
        raise ValueError('Probabilities must sum to 1.')
    for state in states:
        if np.shape(state) != (2,):
            raise ValueError('Each state must be a 2-dimensional vector. ')
        if np.linalg.norm(state) < 1-eps or np.linalg.norm(state) > 1+eps:
            raise ValueError('Each state must have norm 1. ')

    # Complex dtype so that the phases of off-diagonal entries are not discarded
    rho = np.zeros((2, 2), dtype=complex)
    for i in range(len(states)):
        conj = np.conj(states[i])
        for j in range(len(conj)):
            # Add probs[i] * conj[j] * states[i] to column j of rho
            # We use np.dot because otherwise 0 multiplication results in scalar
            rho[:, j] = rho[:, j] + np.dot(probs[i], np.dot(conj[j], states[i]))
    return rho


def validate(rho):
    """
    Validate rho as a valid single-qubit density matrix.
    Raise error if rho fails.
    """
    # Verify rho is 2x2
    if np.shape(rho) != (2, 2):
        raise ValueError('Rho must be a 2x2 matrix.')

    # Verify rho has trace 1 (the trace of a density matrix is real)
    trace = np.real(np.trace(rho))
    if trace < 1-eps or trace > 1+eps:
        raise ValueError('Rho must have trace 1.')

    # Verify rho is a positive operator. We do this by using the generalized Sylvester's criterion for
    # positive semidefiniteness: check that the determinant of all principal minors of rho are >= 0
    # (up to a round-off tolerance of eps, since pure states have determinant 0)
    if (np.real(rho[0][0]) < -eps or np.real(rho[1][1]) < -eps
            or np.real(np.linalg.det(rho)) < -eps):
        raise ValueError('Rho must be a positive operator. ')


def bloch_vector_from_density(rho):
    """
    Returns r vector.
    """
    # Check to make sure rho is a legit density matrix
    validate(rho)

    # Simplify to get r x sigma on one side of equation
    pauli_weighted = np.dot(2, rho) - np.eye(2)

    # Define Pauli matrices
    X = np.array([[0, 1], [1, 0]])
    Y = np.array([[0, -1j], [1j, 0]])
    Z = np.array([[1, 0], [0, -1]])

    # Do inner product with Pauli X, Y, Z to get r vector coefficients
    # (the coefficients are real for a Hermitian rho, so keep the real part)
    r = np.zeros(3)
    r[0] = np.real(HS_inner_product(X, pauli_weighted) / HS_inner_product(X, X))
    r[1] = np.real(HS_inner_product(Y, pauli_weighted) / HS_inner_product(Y, Y))
    r[2] = np.real(HS_inner_product(Z, pauli_weighted) / HS_inner_product(Z, Z))
    return r


def HS_inner_product(M1, M2):
    # Hilbert-Schmidt inner product is defined as Tr(M1^dagger M2)
    return np.trace(np.dot(np.transpose(np.conjugate(M1)), M2))


bloch_vector_from_density(gen_density_matrix([[1/np.sqrt(2), 1/np.sqrt(2)]], [1]))

```

## Plotting Bloch vector

Using [this guide](https://jakevdp.github.io/PythonDataScienceHandbook/04.12-three-dimensional-plotting.html).

```

from mpl_toolkits import mplot3d

%matplotlib inline

#%matplotlib notebook

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(10,10))

ax = plt.axes(projection='3d')


# Data for a three-dimensional line

zline = np.linspace(0, 15, 1000)

xline = np.sin(zline)

yline = np.cos(zline)

ax.plot3D(xline, yline, zline, 'gray')

# Data for three-dimensional scattered points

zdata = 15 * np.random.random(100)

xdata = np.sin(zdata) + 0.1 * np.random.randn(100)

ydata = np.cos(zdata) + 0.1 * np.random.randn(100)

ax.scatter3D(xdata, ydata, zdata, c=zdata, cmap='Greens');

from mpl_toolkits.mplot3d import Axes3D

import numpy as np

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(10,10))

ax = fig.gca(projection='3d')

# theta = np.linspace(-4 * np.pi, 4 * np.pi, 100)

# z = np.linspace(-2, 2, 100)

# r = z**2 + 1

# x = r * np.sin(theta)

# y = r * np.cos(theta)

# ax.plot(x, y, z)

ax.plot([0, 1], [0, 0], [0, 1])

plt.savefig('foo.png', bbox_inches='tight')

from mpl_toolkits.mplot3d import Axes3D

import matplotlib.pyplot as plt

import numpy as np

from itertools import product, combinations

fig = plt.figure(figsize=(10,10))

ax = fig.gca(projection='3d')

#ax.set_aspect("equal")

# draw sphere

u, v = np.mgrid[0:2*np.pi:20j, 0:np.pi:10j]

x = np.cos(u)*np.sin(v)

y = np.sin(u)*np.sin(v)

z = np.cos(v)

ax.plot_wireframe(x, y, z, color="r")

# draw a point

ax.scatter([0], [0], [0], color="g", s=100)

# draw a vector

from matplotlib.patches import FancyArrowPatch

from mpl_toolkits.mplot3d import proj3d

class Arrow3D(FancyArrowPatch):
    def __init__(self, xs, ys, zs, *args, **kwargs):
        FancyArrowPatch.__init__(self, (0, 0), (0, 0), *args, **kwargs)
        self._verts3d = xs, ys, zs

    def draw(self, renderer):
        xs3d, ys3d, zs3d = self._verts3d
        xs, ys, zs = proj3d.proj_transform(xs3d, ys3d, zs3d, renderer.M)
        self.set_positions((xs[0], ys[0]), (xs[1], ys[1]))
        FancyArrowPatch.draw(self, renderer)

a = Arrow3D([0, 1], [0, 1], [0, 1], mutation_scale=20,
            lw=1, arrowstyle="-|>", color="k")

ax.add_artist(a)

plt.savefig('foo.png', bbox_inches='tight')

```

## Visualizing expressibility of different ansatze

```

import qutip as qtp

from qiskit import *

thetas = [i * 2 * np.pi / 500 for i in range(500)]

statevectors = []

for theta in thetas:
    qc = QuantumCircuit(1)
    qc.h(0)
    qc.rz(theta, 0)
    backend = Aer.get_backend('statevector_simulator')
    statevectors.append(execute(qc,backend).result().get_statevector())

rhos = [gen_density_matrix([statevector], [1]) for statevector in statevectors]

vecs = [bloch_vector_from_density(rho) for rho in rhos]

print(len(vecs))

b = qtp.Bloch()

b.clear()

b.add_points(vecs)

b.show()

```

| github_jupyter |

##### Copyright 2018 The TensorFlow Authors.

Licensed under the Apache License, Version 2.0 (the "License");

```

#@title Licensed under the Apache License, Version 2.0 (the "License"); { display-mode: "form" }

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

```

# Copulas Primer

<table class="tfo-notebook-buttons" align="left">

<td>

<a target="_blank" href="https://colab.research.google.com/github/tensorflow/probability/blob/master/tensorflow_probability/examples/jupyter_notebooks/Gaussian_Copula.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

</td>

<td>

<a target="_blank" href="https://github.com/tensorflow/probability/blob/master/tensorflow_probability/examples/jupyter_notebooks/Gaussian_Copula.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

</td>

</table>

```

!pip install -q tensorflow-probability

import numpy as np

import matplotlib.pyplot as plt

import tensorflow as tf

import tensorflow_probability as tfp

tfd = tfp.distributions

tfb = tfp.bijectors

```

A [copula](https://en.wikipedia.org/wiki/Copula_(probability_theory%29) is a classical approach for capturing the dependence between random variables. More formally, a copula is a multivariate distribution $C(U_1, U_2, ...., U_n)$ such that marginalizing gives $U_i \sim \text{Uniform}(0, 1)$.

Copulas are interesting because we can use them to create multivariate distributions with arbitrary marginals. This is the recipe:

* The [Probability Integral Transform](https://en.wikipedia.org/wiki/Probability_integral_transform) turns an arbitrary continuous R.V. $X$ into a uniform one $F_X(X)$, where $F_X$ is the CDF of $X$.

* Given a copula (say bivariate) $C(U, V)$, we have that $U$ and $V$ have uniform marginal distributions.

* Now given our R.V's of interest $X, Y$, create a new distribution $C'(X, Y) = C(F_X(X), F_Y(Y))$. The marginals for $X$ and $Y$ are the ones we desired.

Marginals are univariate and thus may be easier to measure and/or model. A copula enables starting from marginals yet also achieving arbitrary correlation between dimensions.
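The first step of the recipe can be checked numerically. The sketch below is an illustration using only the standard library, with an Exponential(1) variable chosen as an arbitrary example (it is not one of the distributions used later in this notebook): pushing samples through their own CDF should give values whose mean and variance are close to those of Uniform(0, 1), namely 1/2 and 1/12.

```python
import math
import random


def exponential_cdf(x, rate=1.0):
    """CDF of the Exponential(rate) distribution: F(x) = 1 - exp(-rate * x)."""
    return 1.0 - math.exp(-rate * x)


rng = random.Random(0)
samples = [rng.expovariate(1.0) for _ in range(20000)]

# Probability Integral Transform: U = F_X(X) should be Uniform(0, 1).
uniforms = [exponential_cdf(x) for x in samples]

mean = sum(uniforms) / len(uniforms)
var = sum((u - mean) ** 2 for u in uniforms) / len(uniforms)
print(round(mean, 2), round(var, 3))
```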

# Gaussian Copula

To illustrate how copulas are constructed, consider the case of capturing dependence according to multivariate Gaussian correlations. A Gaussian Copula is one given by $C(u_1, u_2, ...u_n) = \Phi_\Sigma(\Phi^{-1}(u_1), \Phi^{-1}(u_2), ... \Phi^{-1}(u_n))$ where $\Phi_\Sigma$ represents the CDF of a MultivariateNormal, with covariance $\Sigma$ and mean 0, and $\Phi^{-1}$ is the inverse CDF for the standard normal.

Applying the normal's inverse CDF warps the uniform dimensions to be normally distributed. Applying the multivariate normal's CDF then squashes the distribution to be marginally uniform and with Gaussian correlations.

Thus, what we get is that the Gaussian Copula is a distribution over the unit hypercube $[0, 1]^n$ with uniform marginals.
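One way to see this concretely is by sampling: draw from a correlated bivariate normal and push each coordinate through the standard normal CDF. The sketch below does this with the standard library only; `standard_normal_cdf` and `sample_gaussian_copula` are hypothetical helper names for illustration and are separate from the TensorFlow Probability implementation that follows.

```python
import math
import random


def standard_normal_cdf(z):
    """Phi(z) for the standard normal, written with the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def sample_gaussian_copula(rho, n, seed=0):
    """Draw n points (u, v) in [0, 1]^2 from a bivariate Gaussian copula."""
    rng = random.Random(seed)
    points = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        # Correlate the two normals: corr(x1, x2) = rho, both with variance 1.
        x1 = z1
        x2 = rho * z1 + math.sqrt(1.0 - rho ** 2) * z2
        # Pushing each coordinate through Phi makes its marginal uniform.
        points.append((standard_normal_cdf(x1), standard_normal_cdf(x2)))
    return points


points = sample_gaussian_copula(rho=0.8, n=20000)
mean_u = sum(u for u, _ in points) / len(points)
mean_v = sum(v for _, v in points) / len(points)
cov_uv = sum((u - mean_u) * (v - mean_v) for u, v in points) / len(points)
print(round(mean_u, 2), round(mean_v, 2), cov_uv > 0)
```

Both marginal means should land near 1/2, as expected for uniform marginals, while the positive covariance shows that the dependence between the two coordinates survives the transformation.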

Defined as such, the Gaussian Copula can be implemented with `tfd.TransformedDistribution` and appropriate `Bijector`. That is, we are transforming a MultivariateNormal, via the use of the Normal distribution's inverse CDF. A `Bijector` modelling this is listed below.

```

class NormalCDF(tfb.Bijector):
  """Bijector that encodes normal CDF and inverse CDF functions.

  We follow the convention that the `inverse` represents the CDF
  and `forward` the inverse CDF (the reason for this convention is
  that inverse CDF methods for sampling are expressed a little more
  tersely this way).

  """
  def __init__(self):
    self.normal_dist = tfd.Normal(loc=0., scale=1.)
    super(NormalCDF, self).__init__(
        forward_min_event_ndims=0,
        validate_args=False,
        name="NormalCDF")

  def _forward(self, y):
    # Inverse CDF of normal distribution.
    return self.normal_dist.quantile(y)

  def _inverse(self, x):
    # CDF of normal distribution.
    return self.normal_dist.cdf(x)

  def _inverse_log_det_jacobian(self, x):
    # Log PDF of the normal distribution.
    return self.normal_dist.log_prob(x)

```

Below, we implement a Gaussian Copula with one simplifying assumption: that the covariance is parameterized by a Cholesky factor (hence a covariance for `MultivariateNormalTriL`). (One could use other `tf.linalg.LinearOperators` to encode different matrix-free assumptions.)

```

class GaussianCopulaTriL(tfd.TransformedDistribution):
  """Takes a location, and lower triangular matrix for the Cholesky factor."""
  def __init__(self, loc, scale_tril):
    super(GaussianCopulaTriL, self).__init__(
        distribution=tfd.MultivariateNormalTriL(
            loc=loc,
            scale_tril=scale_tril),
        bijector=tfb.Invert(NormalCDF()),
        validate_args=False,
        name="GaussianCopulaTriLUniform")


# Plot an example of this.
unit_interval = np.linspace(0.01, 0.99, num=200, dtype=np.float32)
x_grid, y_grid = np.meshgrid(unit_interval, unit_interval)
coordinates = np.concatenate(
    [x_grid[..., np.newaxis],
     y_grid[..., np.newaxis]], axis=-1)

pdf = GaussianCopulaTriL(
    loc=[0., 0.],
    scale_tril=[[1., 0.8], [0., 0.6]],
).prob(coordinates)

# Plot its density.
with tf.Session() as sess:
  pdf_eval = sess.run(pdf)
  plt.contour(x_grid, y_grid, pdf_eval, 100, cmap=plt.cm.jet);

```

The real power of such a model, however, comes from using the Probability Integral Transform to apply the copula to arbitrary R.V.s. In this way, we can specify arbitrary marginals and use the copula to stitch them together.

We start with a model:

$$\begin{align*}

X &\sim \text{Kumaraswamy}(a, b) \\

Y &\sim \text{Gumbel}(\mu, \beta)

\end{align*}$$

and use the copula to get a bivariate R.V. $Z$, which has marginals [Kumaraswamy](https://en.wikipedia.org/wiki/Kumaraswamy_distribution) and [Gumbel](https://en.wikipedia.org/wiki/Gumbel_distribution).

We'll start by plotting the product distribution generated by those two R.V.s. This is just to serve as a comparison point to when we apply the Copula.

```

a = 2.0

b = 2.0

gloc = 0.

gscale = 1.

x = tfd.Kumaraswamy(a, b)

y = tfd.Gumbel(loc=gloc, scale=gscale)

# Plot the distributions, assuming independence

x_axis_interval = np.linspace(0.01, 0.99, num=200, dtype=np.float32)

y_axis_interval = np.linspace(-2., 3., num=200, dtype=np.float32)

x_grid, y_grid = np.meshgrid(x_axis_interval, y_axis_interval)

pdf = x.prob(x_grid) * y.prob(y_grid)

# Plot its density

with tf.Session() as sess:
  pdf_eval = sess.run(pdf)
  plt.contour(x_grid, y_grid, pdf_eval, 100, cmap=plt.cm.jet);

```

Now we use a Gaussian copula to couple the distributions together, and plot that. Again our tool of choice is `TransformedDistribution` applying the appropriate `Bijector`.

Specifically, we define a `Concat` bijector which applies different bijectors at different parts of the vector (which is still a bijective transformation).

```

class Concat(tfb.Bijector):
  """This bijector concatenates bijectors who act on scalars.

  More specifically, given [F_0, F_1, ... F_n] which are scalar transformations,
  this bijector creates a transformation which operates on the vector
  [x_0, ... x_n] with the transformation [F_0(x_0), F_1(x_1) ..., F_n(x_n)].

  NOTE: This class does no error checking, so use with caution.

  """
  def __init__(self, bijectors):
    self._bijectors = bijectors
    super(Concat, self).__init__(
        forward_min_event_ndims=1,
        validate_args=False,
        name="ConcatBijector")

  @property
  def bijectors(self):
    return self._bijectors

  def _forward(self, x):
    split_xs = tf.split(x, len(self.bijectors), -1)
    transformed_xs = [b_i.forward(x_i) for b_i, x_i in zip(
        self.bijectors, split_xs)]
    return tf.concat(transformed_xs, -1)

  def _inverse(self, y):
    split_ys = tf.split(y, len(self.bijectors), -1)
    transformed_ys = [b_i.inverse(y_i) for b_i, y_i in zip(
        self.bijectors, split_ys)]
    return tf.concat(transformed_ys, -1)

  def _forward_log_det_jacobian(self, x):
    split_xs = tf.split(x, len(self.bijectors), -1)
    fldjs = [
        b_i.forward_log_det_jacobian(x_i, event_ndims=0) for b_i, x_i in zip(
            self.bijectors, split_xs)]
    return tf.squeeze(sum(fldjs), axis=-1)

  def _inverse_log_det_jacobian(self, y):
    split_ys = tf.split(y, len(self.bijectors), -1)
    ildjs = [
        b_i.inverse_log_det_jacobian(y_i, event_ndims=0) for b_i, y_i in zip(
            self.bijectors, split_ys)]
    return tf.squeeze(sum(ildjs), axis=-1)

```

Now we can define the Copula we want. Given a list of target marginals (encoded as bijectors), we can easily construct

a new distribution that uses the copula and has the specified marginals.

Note that $C'(X, Y) = C(F_X(X), F_Y(Y))$. This is mathematically equivalent to using `Concat([F_X, F_Y])`.

```

class WarpedGaussianCopula(tfd.TransformedDistribution):
  """Application of a Gaussian Copula on a list of target marginals.

  This implements an application of a Gaussian Copula. Given [x_0, ... x_n]
  which are distributed marginally (with CDF) [F_0, ... F_n],
  `GaussianCopula` represents an application of the Copula, such that the
  resulting multivariate distribution has the above specified marginals.

  The marginals are specified by `marginal_bijectors`: These are
  bijectors whose `inverse` encodes the CDF and `forward` the inverse CDF.
  """
  def __init__(self, loc, scale_tril, marginal_bijectors):
    super(WarpedGaussianCopula, self).__init__(
        distribution=GaussianCopulaTriL(loc=loc, scale_tril=scale_tril),
        bijector=Concat(marginal_bijectors),
        validate_args=False,
        name="GaussianCopula")

```

Finally, let's actually use this Gaussian Copula. We'll use a Cholesky of $\begin{bmatrix}1 & 0\\\rho & \sqrt{(1-\rho^2)}\end{bmatrix}$, which will correspond to variances 1, and correlation $\rho$ for the multivariate normal.
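The claim about this Cholesky factor is easy to verify by multiplying it by its own transpose. The sketch below is a plain-Python check with hypothetical helper names (`cholesky_factor`, `times_own_transpose`); it does not use TensorFlow.

```python
import math


def cholesky_factor(rho):
    """Lower-triangular L with L @ L^T = [[1, rho], [rho, 1]]."""
    return [[1.0, 0.0], [rho, math.sqrt(1.0 - rho ** 2)]]


def times_own_transpose(L):
    """Compute L @ L^T for a 2x2 matrix stored as nested lists."""
    return [[sum(L[i][k] * L[j][k] for k in range(2)) for j in range(2)]
            for i in range(2)]


cov = times_own_transpose(cholesky_factor(0.8))
print(cov)
```

Up to floating-point round-off, the diagonal entries (variances) are 1 and the off-diagonal entries equal $\rho$, so for unit variances the covariance and the correlation coincide.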

We'll look at a few cases:

```

# Create our coordinates:

coordinates = np.concatenate(

[x_grid[..., np.newaxis], y_grid[..., np.newaxis]], -1)

def create_gaussian_copula(correlation):
  # Use Gaussian Copula to add dependence.
  return WarpedGaussianCopula(
      loc=[0., 0.],
      scale_tril=[[1., 0.], [correlation, tf.sqrt(1. - correlation ** 2)]],
      # These encode the marginals we want. In this case we want X_0 has
      # Kumaraswamy marginal, and X_1 has Gumbel marginal.
      marginal_bijectors=[
          tfb.Kumaraswamy(a, b),
          # Kumaraswamy follows the above convention, while
          # Gumbel does not, and has to be inverted.
          tfb.Invert(tfb.Gumbel(loc=0., scale=1.))])


# Note that the zero case will correspond to independent marginals!
correlations = [0., -0.8, 0.8]
copulas = []
probs = []
for correlation in correlations:
  copula = create_gaussian_copula(correlation)
  copulas.append(copula)
  probs.append(copula.prob(coordinates))

# Plot its density
with tf.Session() as sess:
  copula_evals = sess.run(probs)
  for correlation, copula_eval in zip(correlations, copula_evals):
    plt.figure()
    plt.contour(x_grid, y_grid, copula_eval, 100, cmap=plt.cm.jet)
    plt.title('Correlation {}'.format(correlation))

```

Finally, let's verify that we actually get the marginals we want.

```

def kumaraswamy_pdf(x):
  return tfd.Kumaraswamy(a, b).prob(np.float32(x)).eval()

def gumbel_pdf(x):
  return tfd.Gumbel(gloc, gscale).prob(np.float32(x)).eval()


samples = []
for copula in copulas:
  samples.append(copula.sample(10000))

with tf.Session() as sess:
  copula_evals = sess.run(samples)
  # Let's marginalize out on each, and plot the samples.
  for correlation, copula_eval in zip(correlations, copula_evals):
    k = copula_eval[..., 0]
    g = copula_eval[..., 1]
    plt.figure()
    # `density=True` replaces the `normed=True` argument of older Matplotlib
    _, bins, _ = plt.hist(k, bins=100, density=True)
    plt.plot(bins, kumaraswamy_pdf(bins), 'r--')
    plt.title('Kumaraswamy from Copula with correlation {}'.format(correlation))
    plt.figure();
    _, bins, _ = plt.hist(g, bins=100, density=True)
    plt.plot(bins, gumbel_pdf(bins), 'r--')
    plt.title('Gumbel from Copula with correlation {}'.format(correlation))

```

# Conclusion

And there we go! We've demonstrated that we can construct Gaussian Copulas using the `Bijector` API.

More generally, writing bijectors with the `Bijector` API and composing them with a distribution can create rich families of distributions for flexible modelling.

| github_jupyter |

# Attribute access methods

https://github.com/alexopryshko/advancedpython/tree/master/1

The previous topic covered descriptors, which let you override access to class attributes from inside the attribute itself. Python also has a group of magic methods that are called when attributes are accessed from the side of the object that owns them:

- `__getattribute__(self, name)` is called on every attempt to read an attribute. If this method is overridden, the standard attribute lookup mechanism is not used. The default implementation is what looks in the object's `__dict__` and, on failure, calls `__getattr__`.

- `__getattr__(self, name)` is called when the requested attribute is not found by the normal mechanism (in the `__dict__` of the instance, the class, and so on).

- `__setattr__(self, name, value)` is called on an attempt to set the value of an instance attribute. If it is overridden, the standard mechanism for setting the value is not used.

- `__delattr__(self, name)` is used when an attribute is deleted.

The following example shows that `__getattr__` is called only when the attribute cannot be found by standard means (by looking in the `__dict__` of the object and of the class). In our case the method fires for any name and never raises `AttributeError`.

```

class A:
    def __getattr__(self, attr):
        print('__getattr__')
        return 42

    field = 'field'

a = A()
a.name = 'name'

print(a.__dict__, A.__dict__, end='\n\n\n')
print('a.name', a.name, end='\n\n')
print('a.field', a.field, end='\n\n')
print('a.random', a.random, end='\n\n')

a.asdlubaslifubasfuib

```

And if we override `__getattribute__`, we cannot even look at `__dict__`.

```

class A:
    def __getattribute__(self, item):
        # NOTE: reading `self.__dict__` here calls __getattribute__ again,
        # so as written every attribute access on an instance recurses.
        if item in self.__dict__:
            return self.__dict__[item]
        if item in self.__class__.__dict__:
            return self.__class__.__dict__[item]
        if item in object.__dict__:
            return object.__dict__[item]
        if '__getattr__' in self.__class__.__dict__:
            return self.__getattr__(self, item)
        raise AttributeError
        print('__getattribute__')
        return 42

    def __len__(self):
        return 0

    def test(self):
        print('test', self)

    field = 'field'

a = A()
a.name = 'name'

print('__dict__', getattr(a, "__dict__"), end='\n\n')
print('a.name', a.name, end='\n\n')
print('a.field', a.field, end='\n\n')
print('a.random', a.random, end='\n\n')
print('a.__len__', a.__len__, end='\n\n')
print('len(a)', len(a), end='\n\n')
print('type(a)...', type(a).__dict__['test'](a), end='\n\n')
print('A.field', A.field, end='\n\n')

a.test()

```

By overriding `__setattr__`, we risk not seeing the attributes we add to the object in `__dict__`.

```

class A:
    def __setattr__(self, key, value):
        print('__setattr__')

    field = 'field'

a = A()
a.field = 1
a.a = 1

print('a.__dict__', a.__dict__, end='\n\n')

A.field = 'new'
print('A.field', A.field, end='\n\n')

A.__dict__
dir(a)
a.a

```

And this way we can allow our object to return only those attributes whose names begin with the word test. In theory this trick can be used to implement truly private attributes, but why?

```

class A:
    def __getattribute__(self, item):
        if 'test' in item or '__dict__' == item:
            return super().__getattribute__(item)
        else:
            raise AttributeError

a = A()
a.test_name = 1
a.name = 1

print('a.__dict__', a.__dict__)
print('a.test_name', a.test_name)
print('a.name', a.name)

# DataDescriptor and NonDataDescriptor are not defined here; they are assumed
# to come from the previous topic on descriptors.
class A:
    def __init__(self):
        self.obj_field = 4

    class_field = 5
    data_descr = DataDescriptor()
    nondata_descr = NonDataDescriptor()

a = A()
a.liunyiuynliun

```

## General algorithm for getting an attribute

To get the value of the attribute attrname:

- If the method `a.__class__.__getattribute__()` is defined, it is called and its result is returned.

- If attrname is a special (Python-defined) attribute such as `__class__` or `__doc__`, its value is returned.

- `a.__class__.__dict__` is checked for an entry named attrname. If it exists and its value is a data descriptor, the result of calling the descriptor's `__get__()` method is returned. All base classes are checked as well.

- If `a.__dict__` contains an entry named attrname, the value of that entry is returned.

- `a.__class__.__dict__` is checked: if it contains an entry named attrname and it is a non-data descriptor, the result of the descriptor's `__get__()` is returned; if the entry exists and is not a descriptor, the value of the entry is returned. Base classes are searched as well.

- If the method `a.__class__.__getattr__()` exists, it is called and its result is returned. If there is no such method, `AttributeError` is raised.
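The priority order in this list can be observed directly. The sketch below is an illustration only: the classes `DataDescr`, `NonDataDescr` and `Demo` are hypothetical names made up for the example. Name clashes are created by writing straight into the instance `__dict__`, and each lookup then shows which step of the algorithm wins.

```python
class DataDescr:
    """Data descriptor: defines both __get__ and __set__."""

    def __get__(self, obj, objtype=None):
        return 'data descriptor'

    def __set__(self, obj, value):
        raise AttributeError('read-only')


class NonDataDescr:
    """Non-data descriptor: defines only __get__."""

    def __get__(self, obj, objtype=None):
        return 'non-data descriptor'


class Demo:
    data = DataDescr()
    nondata = NonDataDescr()
    plain = 'class attribute'

    def __getattr__(self, name):
        # Reached only when every earlier step failed.
        return 'fallback for ' + name


d = Demo()
# Write straight into the instance __dict__ to create name clashes.
d.__dict__.update(data='instance', nondata='instance', plain='instance')

print(d.data)     # data descriptor
print(d.nondata)  # instance
print(d.plain)    # instance
print(d.missing)  # fallback for missing
```

Only the data descriptor beats the instance `__dict__`; non-data descriptors and plain class attributes are shadowed by it, and `__getattr__` runs last.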

## General algorithm for setting an attribute

To set the value value of the attribute attrname of the instance a:

- If the method `a.__class__.__setattr__()` exists, it is called.

- `a.__class__.__dict__` is checked: if it contains an entry named attrname and it is a data descriptor, the descriptor's `__set__()` method is called. Base classes are checked as well.

- An entry value with the key attrname is added to `a.__dict__`.
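A short sketch of the last two steps, using a hypothetical validating descriptor `Positive` and a class `Account` invented for this example: an assignment to a name backed by a data descriptor is routed to `__set__()`, while any other name goes straight into the instance `__dict__`.

```python
class Positive:
    """Data descriptor that validates values before storing them."""

    def __set_name__(self, owner, name):
        self.name = name

    def __get__(self, obj, objtype=None):
        if obj is None:
            return self
        return obj.__dict__[self.name]

    def __set__(self, obj, value):
        if value <= 0:
            raise ValueError(self.name + ' must be positive')
        obj.__dict__[self.name] = value


class Account:
    balance = Positive()


acc = Account()
acc.balance = 10   # routed to Positive.__set__
acc.note = 'hi'    # no descriptor, so stored in acc.__dict__
print(acc.__dict__)  # {'balance': 10, 'note': 'hi'}
```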

## Exercise

The pandas library is designed for working with tabular data. It has the entities DataFrame (essentially the table itself) and Series (a column or a row of the table). Columns inside a table have names, and a column can be obtained in two ways:

- `dataframe.colname`

- `dataframe['colname']`

Exercise: implement a key-value data structure in which both assignment and retrieval of elements can be done in either of these two ways.

| github_jupyter |

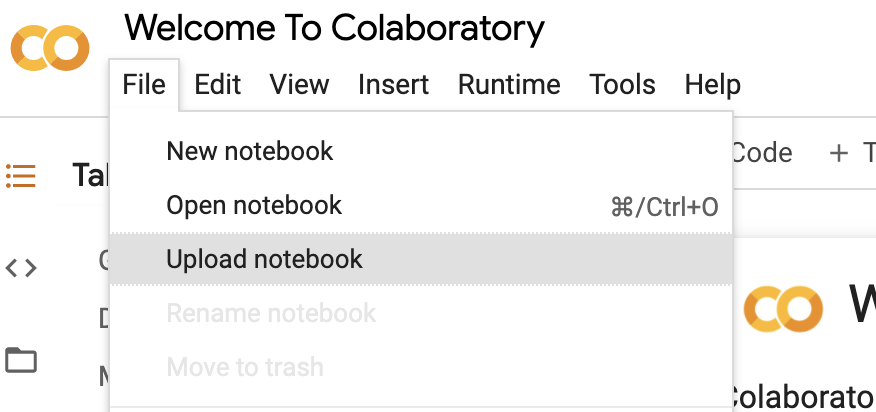

# What's this TensorFlow business?

You've written a lot of code in this assignment to provide a whole host of neural network functionality. Dropout, Batch Norm, and 2D convolutions are some of the workhorses of deep learning in computer vision. You've also worked hard to make your code efficient and vectorized.

For the last part of this assignment, though, we're going to leave behind your beautiful codebase and instead migrate to one of two popular deep learning frameworks: in this instance, TensorFlow (or PyTorch, if you switch over to that notebook)

#### What is it?

TensorFlow is a system for executing computational graphs over Tensor objects, with native support for performing backpropagation for its Variables. In it, we work with Tensors, which are n-dimensional arrays analogous to the numpy ndarray.

#### Why?

* Our code will now run on GPUs! Much faster training. Writing your own modules to run on GPUs is beyond the scope of this class, unfortunately.

* We want you to be ready to use one of these frameworks for your project so you can experiment more efficiently than if you were writing every feature you want to use by hand.

* We want you to stand on the shoulders of giants! TensorFlow and PyTorch are both excellent frameworks that will make your lives a lot easier, and now that you understand their guts, you are free to use them :)

* We want you to be exposed to the sort of deep learning code you might run into in academia or industry.

## How will I learn TensorFlow?

TensorFlow has many excellent tutorials available, including those from [Google themselves](https://www.tensorflow.org/get_started/get_started).

Otherwise, this notebook will walk you through much of what you need to do to train models in TensorFlow. See the end of the notebook for some links to helpful tutorials if you want to learn more or need further clarification on topics that aren't fully explained here.

# Table of Contents

This notebook has 5 parts. We will walk through TensorFlow at three different levels of abstraction, which should help you better understand it and prepare you for working on your project.

1. Preparation: load the CIFAR-10 dataset.

2. Barebone TensorFlow: we will work directly with low-level TensorFlow graphs.

3. Keras Model API: we will use `tf.keras.Model` to define arbitrary neural network architecture.

4. Keras Sequential API: we will use `tf.keras.Sequential` to define a linear feed-forward network very conveniently.

5. CIFAR-10 open-ended challenge: please implement your own network to get as high accuracy as possible on CIFAR-10. You can experiment with any layer, optimizer, hyperparameters or other advanced features.

Here is a table of comparison:

| API | Flexibility | Convenience |
|---------------|-------------|-------------|
| Barebone | High | Low |
| `tf.keras.Model` | High | Medium |
| `tf.keras.Sequential` | Low | High |

# Part I: Preparation

First, we load the CIFAR-10 dataset. This might take a few minutes to download the first time you run it, but after that the files should be cached on disk and loading should be faster.

In previous parts of the assignment we used CS231N-specific code to download and read the CIFAR-10 dataset; however the `tf.keras.datasets` package in TensorFlow provides prebuilt utility functions for loading many common datasets.

For the purposes of this assignment we will still write our own code to preprocess the data and iterate through it in minibatches. The `tf.data` package in TensorFlow provides tools for automating this process, but working with this package adds extra complication and is beyond the scope of this notebook. However using `tf.data` can be much more efficient than the simple approach used in this notebook, so you should consider using it for your project.

```

import os
import tensorflow as tf
import numpy as np
import math
import timeit
import matplotlib.pyplot as plt
%matplotlib inline

def load_cifar10(num_training=49000, num_validation=1000, num_test=10000):
    """
    Fetch the CIFAR-10 dataset from the web and perform preprocessing to prepare
    it for the two-layer neural net classifier. These are the same steps as
    we used for the SVM, but condensed to a single function.
    """
    # Load the raw CIFAR-10 dataset and use appropriate data types and shapes
    cifar10 = tf.keras.datasets.cifar10.load_data()
    (X_train, y_train), (X_test, y_test) = cifar10
    X_train = np.asarray(X_train, dtype=np.float32)
    y_train = np.asarray(y_train, dtype=np.int32).flatten()
    X_test = np.asarray(X_test, dtype=np.float32)
    y_test = np.asarray(y_test, dtype=np.int32).flatten()

    # Subsample the data
    mask = range(num_training, num_training + num_validation)
    X_val = X_train[mask]
    y_val = y_train[mask]
    mask = range(num_training)
    X_train = X_train[mask]
    y_train = y_train[mask]
    mask = range(num_test)
    X_test = X_test[mask]
    y_test = y_test[mask]

    # Normalize the data: subtract the mean pixel and divide by std
    mean_pixel = X_train.mean(axis=(0, 1, 2), keepdims=True)
    std_pixel = X_train.std(axis=(0, 1, 2), keepdims=True)
    X_train = (X_train - mean_pixel) / std_pixel
    X_val = (X_val - mean_pixel) / std_pixel
    X_test = (X_test - mean_pixel) / std_pixel

    return X_train, y_train, X_val, y_val, X_test, y_test

# Invoke the above function to get our data.
NHW = (0, 1, 2)
X_train, y_train, X_val, y_val, X_test, y_test = load_cifar10()
print('Train data shape: ', X_train.shape)
print('Train labels shape: ', y_train.shape, y_train.dtype)
print('Validation data shape: ', X_val.shape)
print('Validation labels shape: ', y_val.shape)
print('Test data shape: ', X_test.shape)
print('Test labels shape: ', y_test.shape)

```

### Preparation: Dataset object

For our own convenience we'll define a lightweight `Dataset` class which lets us iterate over data and labels. This is not the most flexible or most efficient way to iterate through data, but it will serve our purposes.

```

class Dataset(object):
    def __init__(self, X, y, batch_size, shuffle=False):
        """
        Construct a Dataset object to iterate over data X and labels y

        Inputs:
        - X: Numpy array of data, of any shape
        - y: Numpy array of labels, of any shape but with y.shape[0] == X.shape[0]
        - batch_size: Integer giving number of elements per minibatch
        - shuffle: (optional) Boolean, whether to shuffle the data on each epoch
        """
        assert X.shape[0] == y.shape[0], 'Got different numbers of data and labels'
        self.X, self.y = X, y
        self.batch_size, self.shuffle = batch_size, shuffle

    def __iter__(self):
        N, B = self.X.shape[0], self.batch_size
        idxs = np.arange(N)
        if self.shuffle:
            np.random.shuffle(idxs)
        # Index through idxs so that shuffling actually reorders the minibatches
        return iter((self.X[idxs[i:i+B]], self.y[idxs[i:i+B]]) for i in range(0, N, B))


train_dset = Dataset(X_train, y_train, batch_size=64, shuffle=True)
val_dset = Dataset(X_val, y_val, batch_size=64, shuffle=False)
test_dset = Dataset(X_test, y_test, batch_size=64)

# We can iterate through a dataset like this:
for t, (x, y) in enumerate(train_dset):
    print(t, x.shape, y.shape)
    if t > 5: break

```

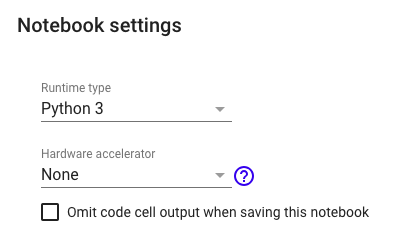

You can optionally **use GPU by setting the flag to True below**. It's not necessary to use a GPU for this assignment; if you are working on Google Cloud then we recommend that you do not use a GPU, as it will be significantly more expensive.

```

# Set up some global variables
USE_GPU = True

if USE_GPU:
    device = '/device:GPU:0'
else:
    device = '/cpu:0'

# Constant to control how often we print when training models
print_every = 100

print('Using device: ', device)

```

# Part II: Barebone TensorFlow

TensorFlow ships with various high-level APIs which make it very convenient to define and train neural networks; we will cover some of these constructs in Part III and Part IV of this notebook. In this section we will start by building a model with basic TensorFlow constructs to help you better understand what's going on under the hood of the higher-level APIs.

TensorFlow is primarily a framework for working with **static computational graphs**. Nodes in the computational graph are Tensors which will hold n-dimensional arrays when the graph is run; edges in the graph represent functions that will operate on Tensors when the graph is run to actually perform useful computation.

This means that a typical TensorFlow program is written in two distinct phases:

1. Build a computational graph that describes the computation that you want to perform. This stage doesn't actually perform any computation; it just builds up a symbolic representation of your computation. This stage will typically define one or more `placeholder` objects that represent inputs to the computational graph.

2. Run the computational graph many times. Each time the graph is run you will specify which parts of the graph you want to compute, and pass a `feed_dict` dictionary that will give concrete values to any `placeholder`s in the graph.
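The two phases above can be illustrated with a toy deferred-execution graph in plain Python. This is only a sketch of the idea, not how TensorFlow is implemented; the names `Node`, `placeholder`, and `run` are hypothetical stand-ins for `tf.placeholder` and `sess.run`.

```python
# Toy build-then-run graph; an illustration only, not TensorFlow's implementation.
class Node:
    """A graph node: a placeholder (fn is None) or an op applied to inputs."""
    def __init__(self, fn=None, inputs=()):
        self.fn = fn
        self.inputs = inputs

def placeholder():
    """Create an input node whose value is supplied at run time."""
    return Node()

def run(fetch, feed_dict):
    """Evaluate `fetch`, reading placeholder values from `feed_dict`."""
    if fetch in feed_dict:
        return feed_dict[fetch]
    if fetch.fn is None:
        raise ValueError('no value was fed for a placeholder')
    args = [run(inp, feed_dict) for inp in fetch.inputs]
    return fetch.fn(*args)

# Phase 1: build the graph. Nothing is computed here.
a = placeholder()
b = placeholder()
total = Node(lambda u, v: u + v, (a, b))
scaled = Node(lambda t: 2 * t, (total,))

# Phase 2: run the same graph several times with different inputs.
first = run(scaled, {a: 1, b: 2})
second = run(scaled, {a: 10, b: 20})
print(first, second)
```

Building `scaled` only records which operations to perform; the arithmetic happens inside `run`, once per set of fed values.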

### TensorFlow warmup: Flatten Function

We can see this in action by defining a simple `flatten` function that will reshape image data for use in a fully-connected network.

In TensorFlow, data for convolutional feature maps is typically stored in a Tensor of shape N x H x W x C where:

- N is the number of datapoints (minibatch size)

- H is the height of the feature map

- W is the width of the feature map

- C is the number of channels in the feature map

This is the right way to represent the data when we are doing something like a 2D convolution, which needs spatial understanding of where the intermediate features are relative to each other. When we use fully-connected affine layers to process the image, however, we want each datapoint to be represented by a single vector -- it's no longer useful to segregate the different channels, rows, and columns of the data. So, we use a "flatten" operation to collapse the `H x W x C` values per datapoint into a single long vector. The flatten function below first reads in the value of N from a given batch of data, and then returns a reshaped version of that data. `tf.reshape` is analogous to numpy's `reshape` method: it reshapes x's dimensions to be N x ??, where ?? is allowed to be anything (in this case, it will be H x W x C, but we don't need to specify that explicitly).

**NOTE**: TensorFlow and PyTorch differ on the default Tensor layout; TensorFlow uses N x H x W x C but PyTorch uses N x C x H x W.
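As a quick illustration of the flatten operation and the two layouts, here is a small NumPy sketch (NumPy rather than TensorFlow, so it can be run without a graph or session). The array sizes are arbitrary values chosen for the example.

```python
import numpy as np

# A batch of N=2 feature maps with H=3, W=4, C=5 in TensorFlow's N x H x W x C layout.
x_nhwc = np.arange(2 * 3 * 4 * 5).reshape(2, 3, 4, 5)

# Flatten: keep the batch dimension and let -1 infer H * W * C = 60.
x_flat = x_nhwc.reshape(x_nhwc.shape[0], -1)

# Convert to PyTorch's N x C x H x W layout by moving the channel axis forward.
x_nchw = np.transpose(x_nhwc, (0, 3, 1, 2))

print(x_flat.shape, x_nchw.shape)
```

The element at position `(n, h, w, c)` in the first layout ends up at `(n, c, h, w)` in the second; no values change, only the order of the axes.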

```

def flatten(x):
    """
    Input:
    - TensorFlow Tensor of shape (N, D1, ..., DM)

    Output:
    - TensorFlow Tensor of shape (N, D1 * ... * DM)
    """
    N = tf.shape(x)[0]
    return tf.reshape(x, (N, -1))

def test_flatten():
    # Clear the current TensorFlow graph.
    tf.reset_default_graph()

    # Stage I: Define the TensorFlow graph describing our computation.
    # In this case the computation is trivial: we just want to flatten
    # a Tensor using the flatten function defined above.

    # Our computation will have a single input, x. We don't know its
    # value yet, so we define a placeholder which will hold the value
    # when the graph is run. We then pass this placeholder Tensor to
    # the flatten function; this gives us a new Tensor which will hold
    # a flattened view of x when the graph is run. The tf.device
    # context manager tells TensorFlow whether to place these Tensors
    # on CPU or GPU.
    with tf.device(device):
        x = tf.placeholder(tf.float32)
        x_flat = flatten(x)

    # At this point we have just built the graph describing our computation,
    # but we haven't actually computed anything yet. If we print x and x_flat
    # we see that they don't hold any data; they are just TensorFlow Tensors
    # representing values that will be computed when the graph is run.
    print('x: ', type(x), x)
    print('x_flat: ', type(x_flat), x_flat)
    print()

    # We need to use a TensorFlow Session object to actually run the graph.
    with tf.Session() as sess:
        # Construct concrete values of the input data x using numpy
        x_np = np.arange(24).reshape((2, 3, 4))
        print('x_np:\n', x_np, '\n')

        # Run our computational graph to compute a concrete output value.
        # The first argument to sess.run tells TensorFlow which Tensor
        # we want it to compute the value of; the feed_dict specifies
        # values to plug into all placeholder nodes in the graph. The
        # resulting value of x_flat is returned from sess.run as a
        # numpy array.
        x_flat_np = sess.run(x_flat, feed_dict={x: x_np})
        print('x_flat_np:\n', x_flat_np, '\n')

        # We can reuse the same graph to perform the same computation
        # with different input data
        x_np = np.arange(12).reshape((2, 3, 2))
        print('x_np:\n', x_np, '\n')
        x_flat_np = sess.run(x_flat, feed_dict={x: x_np})
        print('x_flat_np:\n', x_flat_np)

test_flatten()

```

### Barebones TensorFlow: Two-Layer Network

We will now implement our first neural network with TensorFlow: a fully-connected ReLU network with two layers (one hidden layer) and no biases, trained on the CIFAR-10 dataset. For now we will use only low-level TensorFlow operators to define the network; later we will see how to use the higher-level abstractions provided by `tf.keras` to simplify the process.

We will define the forward pass of the network in the function `two_layer_fc`; this will accept TensorFlow Tensors for the inputs and weights of the network, and return a TensorFlow Tensor for the scores. It's important to keep in mind that calling the `two_layer_fc` function **does not** perform any computation; instead it just sets up the computational graph for the forward computation. To actually run the network we need to enter a TensorFlow Session and feed data to the computational graph.

After defining the network architecture in the `two_layer_fc` function, we will test the implementation by setting up and running a computational graph, feeding zeros to the network and checking the shape of the output.

It's important that you read and understand this implementation.

```

def two_layer_fc(x, params):
    """
    A fully-connected neural network; the architecture is:
    fully-connected layer -> ReLU -> fully connected layer.
    Note that we only need to define the forward pass here; TensorFlow will take
    care of computing the gradients for us.

    The input to the network will be a minibatch of data, of shape
    (N, d1, ..., dM) where d1 * ... * dM = D. The hidden layer will have H units,
    and the output layer will produce scores for C classes.

    Inputs:
    - x: A TensorFlow Tensor of shape (N, d1, ..., dM) giving a minibatch of
      input data.
    - params: A list [w1, w2] of TensorFlow Tensors giving weights for the
      network, where w1 has shape (D, H) and w2 has shape (H, C).

    Returns:
    - scores: A TensorFlow Tensor of shape (N, C) giving classification scores
      for the input data x.
    """
    w1, w2 = params                   # Unpack the parameters
    x = flatten(x)                    # Flatten the input; now x has shape (N, D)
    h = tf.nn.relu(tf.matmul(x, w1))  # Hidden layer: h has shape (N, H)
    scores = tf.matmul(h, w2)         # Compute scores of shape (N, C)
    return scores

def two_layer_fc_test():
    # TensorFlow's default computational graph is essentially a hidden global
    # variable. To avoid adding to this default graph when you rerun this cell,
    # we clear the default graph before constructing the graph we care about.
    tf.reset_default_graph()
    hidden_layer_size = 42

    # Scoping our computational graph setup code under a tf.device context
    # manager lets us tell TensorFlow where we want these Tensors to be
    # placed.
    with tf.device(device):
        # Set up a placeholder for the input of the network, and constant
        # zero Tensors for the network weights. Here we declare w1 and w2
        # using tf.zeros instead of tf.placeholder as we've seen before - this
        # means that the values of w1 and w2 will be stored in the computational
        # graph itself and will persist across multiple runs of the graph; in
        # particular this means that we don't have to pass values for w1 and w2
        # using a feed_dict when we eventually run the graph.
        x = tf.placeholder(tf.float32)
        w1 = tf.zeros((32 * 32 * 3, hidden_layer_size))
        w2 = tf.zeros((hidden_layer_size, 10))

        # Call our two_layer_fc function to set up the computational
        # graph for the forward pass of the network.
        scores = two_layer_fc(x, [w1, w2])

    # Use numpy to create some concrete data that we will pass to the
    # computational graph for the x placeholder.
    x_np = np.zeros((64, 32, 32, 3))
    with tf.Session() as sess:
        # The calls to tf.zeros above do not actually instantiate the values
        # for w1 and w2; the following line tells TensorFlow to instantiate
        # the values of all Tensors (like w1 and w2) that live in the graph.
        sess.run(tf.global_variables_initializer())

        # Here we actually run the graph, using the feed_dict to pass the
        # value to bind to the placeholder for x; we ask TensorFlow to compute
        # the value of the scores Tensor, which it returns as a numpy array.
        scores_np = sess.run(scores, feed_dict={x: x_np})
        print(scores_np.shape)

two_layer_fc_test()

```

### Barebones TensorFlow: Three-Layer ConvNet

Here you will complete the implementation of the function `three_layer_convnet` which will perform the forward pass of a three-layer convolutional network. The network should have the following architecture:

1. A convolutional layer (with bias) with `channel_1` filters, each with shape `KW1 x KH1`, and zero-padding of two

2. ReLU nonlinearity

3. A convolutional layer (with bias) with `channel_2` filters, each with shape `KW2 x KH2`, and zero-padding of one

4. ReLU nonlinearity

5. Fully-connected layer with bias, producing scores for `C` classes.

**HINT**: For convolutions: https://www.tensorflow.org/api_docs/python/tf/nn/conv2d; be careful with padding!

**HINT**: For biases: https://www.tensorflow.org/performance/xla/broadcasting
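To see why the padding matters, it can help to work out the spatial sizes by hand. The sketch below uses the standard convolution output-size formula; `conv_output_size` is a hypothetical helper written for this example, and the 32x32 input, 5x5 and 3x3 filters, and 9 output channels are assumed values.

```python
def conv_output_size(in_size, kernel_size, pad, stride=1):
    """Spatial output size of a convolution along one dimension."""
    return (in_size + 2 * pad - kernel_size) // stride + 1

# Layer 1: 32x32 input, 5x5 filters, zero-padding of two.
h1 = conv_output_size(32, kernel_size=5, pad=2)

# Layer 2: 3x3 filters, zero-padding of one.
h2 = conv_output_size(h1, kernel_size=3, pad=1)

# Number of inputs to the fully-connected layer when channel_2 = 9.
channel_2 = 9
fc_in = h2 * h2 * channel_2

print(h1, h2, fc_in)
```

With these paddings both convolutions preserve the 32x32 spatial size, so the flattened input to the fully-connected layer has `32 * 32 * channel_2` features.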

```

def three_layer_convnet(x, params):
    """
    A three-layer convolutional network with the architecture described above.

    Inputs:
    - x: A TensorFlow Tensor of shape (N, H, W, 3) giving a minibatch of images
    - params: A list of TensorFlow Tensors giving the weights and biases for the
      network; should contain the following:
      - conv_w1: TensorFlow Tensor of shape (KH1, KW1, 3, channel_1) giving
        weights for the first convolutional layer.
      - conv_b1: TensorFlow Tensor of shape (channel_1,) giving biases for the
        first convolutional layer.
      - conv_w2: TensorFlow Tensor of shape (KH2, KW2, channel_1, channel_2)
        giving weights for the second convolutional layer
      - conv_b2: TensorFlow Tensor of shape (channel_2,) giving biases for the
        second convolutional layer.
      - fc_w: TensorFlow Tensor giving weights for the fully-connected layer.
        Can you figure out what the shape should be?
      - fc_b: TensorFlow Tensor giving biases for the fully-connected layer.
        Can you figure out what the shape should be?
    """
    conv_w1, conv_b1, conv_w2, conv_b2, fc_w, fc_b = params
    scores = None
    ############################################################################
    # TODO: Implement the forward pass for the three-layer ConvNet.            #
    ############################################################################
    # Zero-pad height and width by two, then convolve without extra padding
    paddings = tf.constant([[0, 0], [2, 2], [2, 2], [0, 0]])
    x = tf.pad(x, paddings, 'CONSTANT')
    conv1 = tf.nn.conv2d(x, conv_w1, strides=[1, 1, 1, 1], padding="VALID") + conv_b1
    relu1 = tf.nn.relu(conv1)

    # Zero-pad the ReLU output (not the pre-activation) by one for the second conv
    paddings = tf.constant([[0, 0], [1, 1], [1, 1], [0, 0]])
    relu1 = tf.pad(relu1, paddings, 'CONSTANT')
    conv2 = tf.nn.conv2d(relu1, conv_w2, strides=[1, 1, 1, 1], padding="VALID") + conv_b2
    relu2 = tf.nn.relu(conv2)

    # Flatten to (N, H * W * channel_2) and apply the fully-connected layer
    relu2 = flatten(relu2)
    scores = tf.matmul(relu2, fc_w) + fc_b
    ############################################################################
    #                              END OF YOUR CODE                            #
    ############################################################################
    return scores

```

After defining the forward pass of the three-layer ConvNet above, run the following cell to test your implementation. As with the two-layer network, we use the `three_layer_convnet` function to set up the computational graph, then run the graph on a batch of zeros to make sure the function doesn't crash and produces outputs of the correct shape.

When you run this function, `scores_np` should have shape `(64, 10)`.

```

def three_layer_convnet_test():
    tf.reset_default_graph()

    with tf.device(device):
        x = tf.placeholder(tf.float32)
        conv_w1 = tf.zeros((5, 5, 3, 6))
        conv_b1 = tf.zeros((6,))
        conv_w2 = tf.zeros((3, 3, 6, 9))
        conv_b2 = tf.zeros((9,))
        fc_w = tf.zeros((32 * 32 * 9, 10))
        fc_b = tf.zeros((10,))
        params = [conv_w1, conv_b1, conv_w2, conv_b2, fc_w, fc_b]
        scores = three_layer_convnet(x, params)

    # Inputs to convolutional layers are 4-dimensional arrays with shape
    # [batch_size, height, width, channels]
    x_np = np.zeros((64, 32, 32, 3))

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        scores_np = sess.run(scores, feed_dict={x: x_np})
        print('scores_np has shape: ', scores_np.shape)

with tf.device('/cpu:0'):
    three_layer_convnet_test()

```

### Barebones TensorFlow: Training Step

We now define the `training_step` function which sets up the part of the computational graph that performs a single training step. This will take three basic steps:

1. Compute the loss

2. Compute the gradient of the loss with respect to all network weights

3. Make a weight update step using (stochastic) gradient descent.

Note that the step of updating the weights is itself an operation in the computational graph - the calls to `tf.assign_sub` in `training_step` return TensorFlow operations that mutate the weights when they are executed. There is an important bit of subtlety here - when we call `sess.run`, TensorFlow does not execute all operations in the computational graph; it only executes the minimal subset of the graph necessary to compute the outputs that we ask TensorFlow to produce. As a result, naively computing the loss would not cause the weight update operations to execute, since the operations needed to compute the loss do not depend on the output of the weight update. To fix this problem, we insert a **control dependency** into the graph, adding a duplicate `loss` node to the graph that does depend on the outputs of the weight update operations; this is the object that we actually return from the `training_step` function. As a result, asking TensorFlow to evaluate the value of the `loss` returned from `training_step` will also implicitly update the weights of the network using that minibatch of data.
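The effect of the control dependency can be mimicked with a toy graph in plain Python. This is a sketch of the idea only, not TensorFlow's execution engine; `Op`, `run`, and `apply_update` are hypothetical names, and the loss value and step size are made up.

```python
class Op:
    """Toy graph node with data inputs and optional control inputs."""
    def __init__(self, name, fn, inputs=(), control_inputs=()):
        self.name, self.fn = name, fn
        self.inputs, self.control_inputs = inputs, control_inputs

def run(fetch, results):
    """Evaluate `fetch`; `results` records the value of every op that executed."""
    if fetch.name in results:
        return results[fetch.name]
    for dep in fetch.control_inputs:   # control dependencies must run first
        run(dep, results)
    args = [run(inp, results) for inp in fetch.inputs]
    results[fetch.name] = fetch.fn(*args)
    return results[fetch.name]

state = {'w': 1.0}                     # stands in for a mutable weight

def apply_update(loss_value):
    state['w'] -= 0.1 * loss_value     # pretend gradient step
    return state['w']

loss = Op('loss', lambda: 2.0)
update = Op('update', apply_update, inputs=(loss,))
# Analogue of tf.identity(loss) inside tf.control_dependencies([update]).
loss_with_update = Op('loss_with_update', lambda v: v,
                      inputs=(loss,), control_inputs=(update,))

plain = {}
run(loss, plain)                       # only 'loss' executes; w is untouched
w_after_plain = state['w']

train = {}
run(loss_with_update, train)           # 'update' executes as well
w_after_train = state['w']

print(sorted(plain), sorted(train), w_after_plain, w_after_train)
```

Fetching the plain `loss` never touches the weight, because nothing it needs depends on `update`; fetching the duplicate node forces the update to run first.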

We need to use a few new TensorFlow functions to do all of this:

- For computing the cross-entropy loss we'll use `tf.nn.sparse_softmax_cross_entropy_with_logits`: https://www.tensorflow.org/api_docs/python/tf/nn/sparse_softmax_cross_entropy_with_logits

- For averaging the loss across a minibatch of data we'll use `tf.reduce_mean`: https://www.tensorflow.org/api_docs/python/tf/reduce_mean

- For computing gradients of the loss with respect to the weights we'll use `tf.gradients`: https://www.tensorflow.org/api_docs/python/tf/gradients

- We'll mutate the weight values stored in a TensorFlow Tensor using `tf.assign_sub`: https://www.tensorflow.org/api_docs/python/tf/assign_sub

- We'll add a control dependency to the graph using `tf.control_dependencies`: https://www.tensorflow.org/api_docs/python/tf/control_dependencies
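To make the arithmetic behind these functions concrete, here is a NumPy sketch of a single training step for a linear classifier: per-example softmax cross-entropy, the mean over the minibatch, a hand-derived gradient, and an in-place weight update. It illustrates the math only and is not how TensorFlow computes it; the data is random and names such as `sparse_softmax_cross_entropy` and `grad_w` are hypothetical.

```python
import numpy as np

def sparse_softmax_cross_entropy(scores, y):
    """Per-example cross-entropy from raw scores (N, C) and integer labels (N,)."""
    shifted = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(scores.shape[0]), y]

rng = np.random.default_rng(0)
N, D, C = 8, 5, 3
x = rng.normal(size=(N, D))       # made-up minibatch of inputs
y = rng.integers(0, C, size=N)    # made-up integer class labels
w = np.zeros((D, C))              # weights of a linear classifier
learning_rate = 0.1

# 1. Compute the loss (mean over the minibatch, as with tf.reduce_mean).
scores = x @ w
loss_before = sparse_softmax_cross_entropy(scores, y).mean()

# 2. Gradient of the mean loss w.r.t. w: x^T (softmax - one_hot) / N.
probs = np.exp(scores - scores.max(axis=1, keepdims=True))
probs /= probs.sum(axis=1, keepdims=True)
probs[np.arange(N), y] -= 1.0
grad_w = x.T @ (probs / N)

# 3. Gradient descent step, the update that tf.assign_sub performs in the graph.
w -= learning_rate * grad_w
loss_after = sparse_softmax_cross_entropy(x @ w, y).mean()

print(loss_before, loss_after)
```

With all-zero weights every class is equally likely, so the initial loss is `log(C)`; after one small step the loss on the same minibatch should be lower.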

```

def training_step(scores, y, params, learning_rate):
    """
    Set up the part of the computational graph which makes a training step.

    Inputs:
    - scores: TensorFlow Tensor of shape (N, C) giving classification scores for
      the model.
    - y: TensorFlow Tensor of shape (N,) giving ground-truth labels for scores;
      y[i] == c means that c is the correct class for scores[i].
    - params: List of TensorFlow Tensors giving the weights of the model
    - learning_rate: Python scalar giving the learning rate to use for gradient
      descent step.

    Returns:
    - loss: A TensorFlow Tensor of shape () (scalar) giving the loss for this
      batch of data; evaluating the loss also performs a gradient descent step
      on params (see above).
    """
    # First compute the loss; the first line gives losses for each example in
    # the minibatch, and the second averages the losses across the batch
    losses = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=scores)
    loss = tf.reduce_mean(losses)

    # Compute the gradient of the loss with respect to each parameter of the
    # network. This is a very magical function call: TensorFlow internally
    # traverses the computational graph starting at loss backward to each element
    # of params, and uses backpropagation to figure out how to compute gradients;
    # it then adds new operations to the computational graph which compute the
    # requested gradients, and returns a list of TensorFlow Tensors that will
    # contain the requested gradients when evaluated.
    grad_params = tf.gradients(loss, params)

    # Make a gradient descent step on all of the model parameters.
    new_weights = []
    for w, grad_w in zip(params, grad_params):
        new_w = tf.assign_sub(w, learning_rate * grad_w)
        new_weights.append(new_w)

    # Insert a control dependency so that evaluating the loss causes a weight
    # update to happen; see the discussion above.
    with tf.control_dependencies(new_weights):
        return tf.identity(loss)

```

### Barebones TensorFlow: Training Loop

Now we set up a basic training loop using low-level TensorFlow operations. We will train the model using stochastic gradient descent without momentum. The `training_step` function sets up the part of the computational graph that performs the training step, and the function `train_part2` iterates through the training data, making training steps on each minibatch, and periodically evaluates accuracy on the validation set.

```

def train_part2(model_fn, init_fn, learning_rate):
    """
    Train a model on CIFAR-10.

    Inputs:
    - model_fn: A Python function that performs the forward pass of the model
      using TensorFlow; it should have the following signature:
      scores = model_fn(x, params) where x is a TensorFlow Tensor giving a
      minibatch of image data, params is a list of TensorFlow Tensors holding
      the model weights, and scores is a TensorFlow Tensor of shape (N, C)
      giving scores for all elements of x.
    - init_fn: A Python function that initializes the parameters of the model.
      It should have the signature params = init_fn() where params is a list
      of TensorFlow Tensors holding the (randomly initialized) weights of the
      model.
    - learning_rate: Python float giving the learning rate to use for SGD.
    """
    # First clear the default graph
    tf.reset_default_graph()
    is_training = tf.placeholder(tf.bool, name='is_training')
    # Set up the computational graph for performing forward and backward passes,
    # and weight updates.
    with tf.device(device):
        # Set up placeholders for the data and labels
        x = tf.placeholder(tf.float32, [None, 32, 32, 3])
        y = tf.placeholder(tf.int32, [None])
        params = init_fn()            # Initialize the model parameters
        scores = model_fn(x, params)  # Forward pass of the model
        loss = training_step(scores, y, params, learning_rate)

    # Now we actually run the graph many times using the training data
    with tf.Session() as sess:
        # Initialize variables that will live in the graph
        sess.run(tf.global_variables_initializer())
        for t, (x_np, y_np) in enumerate(train_dset):
            # Run the graph on a batch of training data; recall that asking
            # TensorFlow to evaluate loss will cause an SGD step to happen.
            feed_dict = {x: x_np, y: y_np}
            loss_np = sess.run(loss, feed_dict=feed_dict)

            # Periodically print the loss and check accuracy on the val set
            if t % print_every == 0:
                print('Iteration %d, loss = %.4f' % (t, loss_np))
                check_accuracy(sess, val_dset, x, scores, is_training)

```

### Barebones TensorFlow: Check Accuracy

When training the model we will use the following function to check the accuracy of our model on the training or validation sets. Note that this function accepts a TensorFlow Session object as one of its arguments; this is needed since the function must actually run the computational graph many times on the data that it loads from the dataset `dset`.

Also note that we reuse the same computational graph both for taking training steps and for evaluating the model; however, since the `check_accuracy` function never evaluates the `loss` value in the computational graph, the part of the graph that updates the weights does not execute on the validation data.

```

def check_accuracy(sess, dset, x, scores, is_training=None):
    """
    Check accuracy on a classification model.

    Inputs:
    - sess: A TensorFlow Session that will be used to run the graph
    - dset: A Dataset object on which to check accuracy
    - x: A TensorFlow placeholder Tensor where input images should be fed
    - scores: A TensorFlow Tensor representing the scores output from the
      model; this is the Tensor we will ask TensorFlow to evaluate.
    - is_training: (optional) A TensorFlow boolean placeholder; if given, it is
      fed False so that the model runs in inference mode.

    Returns: Nothing, but prints the accuracy of the model
    """
    num_correct, num_samples = 0, 0
    for x_batch, y_batch in dset:
        feed_dict = {x: x_batch}
        # Only feed is_training when a placeholder was actually passed in;
        # feeding the default None as a key would raise an error.
        if is_training is not None:
            feed_dict[is_training] = False
        scores_np = sess.run(scores, feed_dict=feed_dict)
        y_pred = scores_np.argmax(axis=1)
        num_samples += x_batch.shape[0]
        num_correct += (y_pred == y_batch).sum()
    acc = float(num_correct) / num_samples
    print('Got %d / %d correct (%.2f%%)' % (num_correct, num_samples, 100 * acc))

```

### Barebones TensorFlow: Initialization

We'll use the following utility method to initialize the weight matrices for our models using Kaiming's initialization method [1].

[1] He et al, *Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification*, ICCV 2015, https://arxiv.org/abs/1502.01852

```

def kaiming_normal(shape):
    if len(shape) == 2:
        fan_in, fan_out = shape[0], shape[1]
    elif len(shape) == 4:
        fan_in, fan_out = np.prod(shape[:3]), shape[3]
    return tf.random_normal(shape) * np.sqrt(2.0 / fan_in)

```
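The `sqrt(2.0 / fan_in)` scaling can be checked numerically. The NumPy sketch below (not TensorFlow, with sizes chosen arbitrarily) pushes unit-variance inputs through one randomly initialized layer and a ReLU, then measures the scale of the result.

```python
import numpy as np

rng = np.random.default_rng(0)
fan_in, fan_out = 512, 512

x = rng.normal(size=(1000, fan_in))  # inputs with unit second moment
w = rng.normal(size=(fan_in, fan_out)) * np.sqrt(2.0 / fan_in)  # Kaiming scaling

pre = x @ w               # pre-activations: variance is about 2
h = np.maximum(pre, 0)    # ReLU zeroes roughly half of them

pre_var = pre.var()
second_moment = np.mean(h ** 2)

print(pre_var, second_moment)
```

The pre-activation variance comes out close to 2, and because the ReLU discards the negative half, the mean squared activation is close to 1, the same as the input. This is the property that keeps signal magnitudes roughly stable from layer to layer.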

### Barebones TensorFlow: Train a Two-Layer Network

We are finally ready to use all of the pieces defined above to train a two-layer fully-connected network on CIFAR-10.

We just need to define a function to initialize the weights of the model, and call `train_part2`.

Defining the weights of the network introduces another important piece of TensorFlow API: `tf.Variable`. A TensorFlow Variable is a Tensor whose value is stored in the graph and persists across runs of the computational graph; however unlike constants defined with `tf.zeros` or `tf.random_normal`, the values of a Variable can be mutated as the graph runs; these mutations will persist across graph runs. Learnable parameters of the network are usually stored in Variables.

You don't need to tune any hyperparameters, but you should achieve accuracies above 40% after one epoch of training.

```

def two_layer_fc_init():
    """
    Initialize the weights of a two-layer network, for use with the
    two_layer_fc function defined above.

    Inputs: None

    Returns: A list of:
    - w1: TensorFlow Variable giving the weights for the first layer
    - w2: TensorFlow Variable giving the weights for the second layer
    """
    hidden_layer_size = 4000
    w1 = tf.Variable(kaiming_normal((3 * 32 * 32, hidden_layer_size)))
    w2 = tf.Variable(kaiming_normal((hidden_layer_size, 10)))
    return [w1, w2]

learning_rate = 1e-2
train_part2(two_layer_fc, two_layer_fc_init, learning_rate)

```

### Barebones TensorFlow: Train a three-layer ConvNet

We will now use TensorFlow to train a three-layer ConvNet on CIFAR-10.

You need to implement the `three_layer_convnet_init` function. Recall that the architecture of the network is:

1. Convolutional layer (with bias) with 32 5x5 filters, with zero-padding 2

2. ReLU

3. Convolutional layer (with bias) with 16 3x3 filters, with zero-padding 1

4. ReLU

5. Fully-connected layer (with bias) to compute scores for 10 classes

You don't need to do any hyperparameter tuning, but you should see accuracies above 43% after one epoch of training.

```

def three_layer_convnet_init():
    """
    Initialize the weights of a Three-Layer ConvNet, for use with the
    three_layer_convnet function defined above.

    Inputs: None

    Returns a list containing:
    - conv_w1: TensorFlow Variable giving weights for the first conv layer
    - conv_b1: TensorFlow Variable giving biases for the first conv layer
    - conv_w2: TensorFlow Variable giving weights for the second conv layer
    - conv_b2: TensorFlow Variable giving biases for the second conv layer
    - fc_w: TensorFlow Variable giving weights for the fully-connected layer
    - fc_b: TensorFlow Variable giving biases for the fully-connected layer
    """
    params = None
    ############################################################################
    # TODO: Initialize the parameters of the three-layer network.              #
    ############################################################################
    # Conv layer 1: 32 filters of size 5x5 over 3 input channels
    conv_w1 = tf.Variable(kaiming_normal([5, 5, 3, 32]))
    conv_b1 = tf.Variable(tf.zeros((32,)))
    # Conv layer 2: 16 filters of size 3x3 over 32 input channels
    conv_w2 = tf.Variable(kaiming_normal([3, 3, 32, 16]))
    conv_b2 = tf.Variable(tf.zeros((16,)))
    # Fully-connected layer: the padded convs keep the 32x32 spatial size
    fc_w = tf.Variable(kaiming_normal([32 * 32 * 16, 10]))
    fc_b = tf.Variable(tf.zeros((10,)))
    params = [conv_w1, conv_b1, conv_w2, conv_b2, fc_w, fc_b]
    ############################################################################
    #                             END OF YOUR CODE                             #
    ############################################################################
    return params

learning_rate = 3e-3
train_part2(three_layer_convnet, three_layer_convnet_init, learning_rate)

```

# Part III: Keras Model API

Implementing a neural network using the low-level TensorFlow API is a good way to understand how TensorFlow works, but it's a little inconvenient - we had to manually keep track of all Tensors holding learnable parameters, and we had to use a control dependency to implement the gradient descent update step. This was fine for a small network, but could quickly become unwieldy for a large complex model.

Fortunately TensorFlow provides higher-level packages such as `tf.keras` and `tf.layers` which make it easy to build models out of modular, object-oriented layers; `tf.train` allows you to easily train these models using a variety of different optimization algorithms.

In this part of the notebook we will define neural network models using the `tf.keras.Model` API. To implement your own model, you need to do the following:

1. Define a new class which subclasses `tf.keras.Model`. Give your class an intuitive name that describes it, like `TwoLayerFC` or `ThreeLayerConvNet`.

2. In the initializer `__init__()` for your new class, define all the layers you need as class attributes. The `tf.layers` package provides many common neural-network layers, like `tf.layers.Dense` for fully-connected layers and `tf.layers.Conv2D` for convolutional layers. Under the hood, these layers will construct `Variable` Tensors for any learnable parameters. **Warning**: Don't forget to call `super().__init__()` as the first line in your initializer!

3. Implement the `call()` method for your class; this implements the forward pass of your model, and defines the *connectivity* of your network. Layers defined in `__init__()` implement `__call__()` so they can be used as function objects that transform input Tensors into output Tensors. Don't define any new layers in `call()`; any layers you want to use in the forward pass should be defined in `__init__()`.

After you define your `tf.keras.Model` subclass, you can instantiate it and use it like the model functions from Part II.
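
The division of labor between `__init__()`, `call()` and `__call__()` described in the steps above can be illustrated without TensorFlow. The following is a minimal plain-Python sketch, not the Keras implementation: `ToyLayer`, `Scale`, `Shift` and `ToyModel` are hypothetical names invented for this illustration, and the real `tf.keras.Model.__call__()` does considerably more bookkeeping before it invokes `call()`.

```python
class ToyLayer:
    """Hypothetical stand-in for a layer: calling the object runs call()."""

    def __call__(self, x):
        # Using the object like a function dispatches to the forward pass.
        return self.call(x)

    def call(self, x):
        raise NotImplementedError


class Scale(ToyLayer):
    def __init__(self, factor):
        self.factor = factor  # plays the role of a learnable parameter

    def call(self, x):
        return [self.factor * v for v in x]


class Shift(ToyLayer):
    def __init__(self, offset):
        self.offset = offset  # plays the role of a learnable parameter

    def call(self, x):
        return [v + self.offset for v in x]


class ToyModel(ToyLayer):
    def __init__(self):
        # Layers are created once, here, so their parameters persist
        # across every forward pass.
        self.scale = Scale(2.0)
        self.shift = Shift(1.0)

    def call(self, x):
        # The forward pass only wires existing layers together.
        return self.shift(self.scale(x))


toy_model = ToyModel()
toy_output = toy_model([1.0, 2.0, 3.0])
print(toy_output)
```

If a layer were constructed inside `call()` instead, every forward pass would create a fresh set of parameters, which is one way to see why layers belong in `__init__()`.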

### Keras Model API: Two-Layer Network

Here is a concrete example of using the `tf.keras.Model` API to define a two-layer network. There are a few new bits of API to be aware of here:

We use an `Initializer` object to set up the initial values of the learnable parameters of the layers; in particular `tf.variance_scaling_initializer` gives behavior similar to the Kaiming initialization method we used in Part II. You can read more about it here: https://www.tensorflow.org/api_docs/python/tf/variance_scaling_initializer

We construct `tf.layers.Dense` objects to represent the two fully-connected layers of the model. In addition to multiplying their input by a weight matrix and adding a bias vector, these layers can also apply a nonlinearity for you. For the first layer we specify a ReLU activation function by passing `activation=tf.nn.relu` to the constructor; the second layer does not apply any activation function.

Unfortunately the `flatten` function we defined in Part II is not compatible with the `tf.keras.Model` API; fortunately we can use `tf.layers.flatten` to perform the same operation. The issue with our `flatten` function from Part II has to do with static vs dynamic shapes for Tensors, which is beyond the scope of this notebook; you can read more about the distinction [in the documentation](https://www.tensorflow.org/programmers_guide/faq#tensor_shapes).

```

class TwoLayerFC(tf.keras.Model):
    def __init__(self, hidden_size, num_classes):
        super().__init__()
        initializer = tf.variance_scaling_initializer(scale=2.0)
        self.fc1 = tf.layers.Dense(hidden_size, activation=tf.nn.relu,
                                   kernel_initializer=initializer)
        self.fc2 = tf.layers.Dense(num_classes,
                                   kernel_initializer=initializer)

    def call(self, x, training=None):
        x = tf.layers.flatten(x)
        x = self.fc1(x)
        x = self.fc2(x)
        return x


def test_TwoLayerFC():
    """ A small unit test to exercise the TwoLayerFC model above. """
    tf.reset_default_graph()
    input_size, hidden_size, num_classes = 50, 42, 10

    # As usual in TensorFlow, we first need to define our computational graph.
    # To this end we first construct a TwoLayerFC object, then use it to construct
    # the scores Tensor.
    model = TwoLayerFC(hidden_size, num_classes)
    with tf.device(device):
        x = tf.zeros((64, input_size))
        scores = model(x)

    # Now that our computational graph has been defined we can run the graph
    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        scores_np = sess.run(scores)
        print(scores_np.shape)

test_TwoLayerFC()

```
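
To make the `scale=2.0` setting more concrete: with its default fan-in mode, variance scaling draws weights with standard deviation `sqrt(scale / fan_in)`, which for `scale=2.0` matches the Kaiming rule from Part II. The sketch below works through that arithmetic in plain Python for the 50-input layer used in the test above. It is an illustration under assumptions, not TensorFlow's code: the helper names are made up, and it samples from an ordinary Gaussian, whereas TensorFlow's normal variant is truncated.

```python
import math
import random


def variance_scaling_stddev(fan_in, scale=2.0):
    """Target standard deviation under fan-in variance scaling."""
    return math.sqrt(scale / fan_in)


def sample_weights(fan_in, fan_out, scale=2.0, seed=0):
    """Draw a fan_in x fan_out weight matrix (assumption: untruncated Gaussian)."""
    rng = random.Random(seed)
    std = variance_scaling_stddev(fan_in, scale)
    return [[rng.gauss(0.0, std) for _ in range(fan_out)]
            for _ in range(fan_in)]


fan_in, fan_out = 50, 400
target_std = variance_scaling_stddev(fan_in)

# Measure the empirical standard deviation of the sampled weights.
flat = [w for row in sample_weights(fan_in, fan_out) for w in row]
mean = sum(flat) / len(flat)
sample_std = math.sqrt(sum((w - mean) ** 2 for w in flat) / len(flat))

print(target_std, sample_std)
```

The target value for `fan_in = 50` is `sqrt(2 / 50) = 0.2`, and the empirical value should land close to it.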

### Functional API: Two-Layer Network

The `tf.layers` package provides two different higher-level APIs for defining neural network models. In the example above we used the **object-oriented API**, where each layer of the neural network is represented as a Python object (like `tf.layers.Dense`). Here we showcase the **functional API**, where each layer is a Python function (like `tf.layers.dense`) which inputs and outputs TensorFlow Tensors, and which internally sets up Tensors in the computational graph to hold any learnable weights.

To construct a network, pass the input tensor to the first layer, then construct the subsequent layers sequentially, feeding each layer's output to the next. Here's an example of how to construct the same two-layer network with the functional API.

```

def two_layer_fc_functional(inputs, hidden_size, num_classes):
    initializer = tf.variance_scaling_initializer(scale=2.0)
    flattened_inputs = tf.layers.flatten(inputs)
    fc1_output = tf.layers.dense(flattened_inputs, hidden_size, activation=tf.nn.relu,
                                 kernel_initializer=initializer)
    scores = tf.layers.dense(fc1_output, num_classes,
                             kernel_initializer=initializer)
    return scores


def test_two_layer_fc_functional():
    """ A small unit test to exercise the two_layer_fc_functional function above. """
    tf.reset_default_graph()
    input_size, hidden_size, num_classes = 50, 42, 10

    # As usual in TensorFlow, we first need to define our computational graph.
    # To this end we first construct a two layer network graph by calling the
    # two_layer_fc_functional() function. This function constructs the computation
    # graph and outputs the score tensor.
    with tf.device(device):
        x = tf.zeros((64, input_size))
        scores = two_layer_fc_functional(x, hidden_size, num_classes)

    # Now that our computational graph has been defined we can run the graph
    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        scores_np = sess.run(scores)
        print(scores_np.shape)

test_two_layer_fc_functional()

```

### Keras Model API: Three-Layer ConvNet

Now it's your turn to implement a three-layer ConvNet using the `tf.keras.Model` API. Your model should have the same architecture used in Part II:

1. Convolutional layer with 5 x 5 kernels, with zero-padding of 2

2. ReLU nonlinearity

3. Convolutional layer with 3 x 3 kernels, with zero-padding of 1

4. ReLU nonlinearity

5. Fully-connected layer to give class scores

You should initialize the weights of your network using the same initialization method as was used in the two-layer network above.
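
The padding values in the list above are chosen so that neither convolution changes the spatial size of the feature map. A short worked check of that arithmetic follows, using the standard output-size formula `(input + 2 * padding - kernel) // stride + 1`. The helper name `conv_output_size` is made up for this sketch, and the channel count of 16 is taken from the Part II initializer (`conv_w2` has shape `[3, 3, 32, 16]`).

```python
def conv_output_size(input_size, kernel_size, padding, stride=1):
    """Spatial output size of a convolution along one dimension."""
    return (input_size + 2 * padding - kernel_size) // stride + 1


image_size = 32   # CIFAR-10 images are 32 x 32
channel_2 = 16    # output channels of the second conv layer in Part II

after_conv1 = conv_output_size(image_size, kernel_size=5, padding=2)
after_conv2 = conv_output_size(after_conv1, kernel_size=3, padding=1)
unpadded_conv1 = conv_output_size(image_size, kernel_size=5, padding=0)

# Number of features fed to the fully-connected layer after flattening.
flattened_features = after_conv2 * after_conv2 * channel_2

print(after_conv1, after_conv2, unpadded_conv1, flattened_features)
```

Both convolutions keep the map at 32 x 32, so the flattened feature count is `32 * 32 * 16`, the same number of rows as `fc_w` in Part II. Without the padding of 2, the first convolution would shrink the map to 28 x 28.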

**Hint**: Refer to the documentation for `tf.layers.Conv2D` and `tf.layers.Dense`:

https://www.tensorflow.org/api_docs/python/tf/layers/Conv2D

https://www.tensorflow.org/api_docs/python/tf/layers/Dense

```

class ThreeLayerConvNet(tf.keras.Model):
    def __init__(self, channel_1, channel_2, num_classes):
        super().__init__()
        ########################################################################
        # TODO: Implement the __init__ method for a three-layer ConvNet. You   #
        # should instantiate layer objects to be used in the forward pass.     #
        ########################################################################
        initializer = tf.variance_scaling_initializer(scale=2.0)
        self.conv1 = tf.layers.Conv2D(channel_1, [5,5], [1,1], padding='valid',
                                      kernel_initializer=initializer,
                                      activation=tf.nn.relu)
        self.conv2 = tf.layers.Conv2D(channel_2, [3,3], [1,1], padding='valid',
                                      kernel_initializer=initializer,
                                      activation=tf.nn.relu)
        self.fc = tf.layers.Dense(num_classes, kernel_initializer=initializer)
        ########################################################################
        #                           END OF YOUR CODE                           #
        ########################################################################

    def call(self, x, training=None):
        scores = None
        ########################################################################
        # TODO: Implement the forward pass for a three-layer ConvNet. You      #
        # should use the layer objects defined in the __init__ method.         #
        ########################################################################
        # Zero-pad height and width by 2 so the 5x5 'valid' conv keeps the size.
        padding = tf.constant([[0,0],[2,2],[2,2],[0,0]])
        x = tf.pad(x, padding, 'CONSTANT')
        x = self.conv1(x)
        # Zero-pad height and width by 1 so the 3x3 'valid' conv keeps the size.
        padding = tf.constant([[0,0],[1,1],[1,1],[0,0]])
        x = tf.pad(x, padding, 'CONSTANT')
        x = self.conv2(x)
        x = tf.layers.flatten(x)
        scores = self.fc(x)
        ########################################################################
        #                           END OF YOUR CODE                           #
        ########################################################################
        return scores

```

Once you complete the implementation of the `ThreeLayerConvNet` above you can run the following to ensure that your implementation does not crash and produces outputs of the expected shape.

```

def test_ThreeLayerConvNet():
    tf.reset_default_graph()
    channel_1, channel_2, num_classes = 12, 8, 10
    model = ThreeLayerConvNet(channel_1, channel_2, num_classes)
    with tf.device(device):
        # Channels-last layout (N, H, W, C), matching the padding in call().
        x = tf.zeros((64, 32, 32, 3))
        scores = model(x)

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        scores_np = sess.run(scores)
        print(scores_np.shape)

test_ThreeLayerConvNet()

```

### Keras Model API: Training Loop

We need to implement a slightly different training loop when using the `tf.keras.Model` API. Instead of computing gradients and updating the weights of the model manually, we use an `Optimizer` object from the `tf.train` package which takes care of these details for us. You can read more about `Optimizer`s here: https://www.tensorflow.org/api_docs/python/tf/train/Optimizer
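
To give a rough sense of what an optimizer object takes off our hands, here is a toy plain-Python sketch of the plain gradient descent update that we previously wrote by hand. `ToySGD` is a hypothetical class invented for this illustration; its `apply_gradients` method only loosely mirrors the method of the same name on `tf.train.Optimizer`, and the quadratic loss is an arbitrary example.

```python
class ToySGD:
    """Hypothetical minimal optimizer: owns the update rule, not the model."""

    def __init__(self, learning_rate):
        self.learning_rate = learning_rate

    def apply_gradients(self, grads_and_params):
        # Vanilla gradient descent: param <- param - learning_rate * grad.
        return [param - self.learning_rate * grad
                for grad, param in grads_and_params]


def loss_fn(w):
    return (w - 3.0) ** 2      # minimized at w = 3


def grad_fn(w):
    return 2.0 * (w - 3.0)     # derivative of loss_fn


optimizer = ToySGD(learning_rate=0.1)
w = 0.0
for step in range(100):
    # The training loop only supplies gradients; the optimizer updates w.
    (w,) = optimizer.apply_gradients([(grad_fn(w), w)])

print(w, loss_fn(w))
```

Because the update rule lives inside the optimizer, switching to a different optimization algorithm means constructing a different optimizer object rather than rewriting the training loop.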

```

def train_part34(model_init_fn, optimizer_init_fn, num_epochs=1):

"""

Simple training loop for use with models defined using tf.keras. It trains

a model for num_epochs epochs on the CIFAR-10 training set and periodically checks

accuracy on the CIFAR-10 validation set.

Inputs:

- model_init_fn: A function that takes no parameters; when called it

constructs the model we want to train: model = model_init_fn()

- optimizer_init_fn: A function which takes no parameters; when called it

constructs the Optimizer object we will use to optimize the model:

optimizer = optimizer_init_fn()

- num_epochs: The number of epochs to train for