
## RIHAD VARIAWA, Data Scientist - Who has fun LEARNING, EXPLORING & GROWING

In workspaces like this one, you will be able to practice visualization techniques you've seen in the course materials. In this particular workspace, you'll practice creating single-variable plots for categorical data.

```

# prerequisite package imports

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sb

%matplotlib inline

# solution script imports

from solutions_univ import bar_chart_solution_1, bar_chart_solution_2

```

In this workspace, you'll be working with this dataset comprised of attributes of creatures in the video game series Pokémon. The data was assembled from the database of information found in [this GitHub repository](https://github.com/veekun/pokedex/tree/master/pokedex/data/csv).

```

pokemon = pd.read_csv('./data/pokemon.csv')

pokemon.head()

```

**Task 1**: There have been quite a few Pokémon introduced over the series' history. How many were introduced in each generation? Create a _bar chart_ of these frequencies using the 'generation_id' column.

```

base_color = sb.color_palette()[0]

sb.countplot(data = pokemon, x = 'generation_id', color = base_color);

```

Once you've created your chart, run the cell below to check the output from our solution. Your visualization does not need to be exactly the same as ours, but it should be able to come up with the same conclusions.

```

bar_chart_solution_1()

```

**Task 2**: Each Pokémon species has one or two 'types' that play a part in its offensive and defensive capabilities. How frequent is each type? The code below creates a new dataframe that puts all of the type counts in a single column.

```

pkmn_types = pokemon.melt(id_vars = ['id','species'],

value_vars = ['type_1', 'type_2'],

var_name = 'type_level', value_name = 'type').dropna()

pkmn_types.head()

```

Your task is to use this dataframe to create a _relative frequency_ plot of the proportion of Pokémon with each type, _sorted_ from most frequent to least. **Hint**: The sum across bars should be greater than 100%, since many Pokémon have two types. Keep this in mind when considering a denominator to compute relative frequencies.

```

pkmn_cnts = pkmn_types['type'].value_counts(sort=True)

pkmn_labels = pkmn_cnts.index

pkmn_max = max(pkmn_cnts) / pokemon.shape[0]

ticks=np.arange(0,pkmn_max,.02)

ticks_names = ['{:.2f}'.format(x) for x in ticks]

sb.countplot(data = pkmn_types, y = 'type',color = base_color, order = pkmn_labels);

plt.xticks(ticks * pokemon.shape[0],ticks_names);

bar_chart_solution_2()

```
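The denominator choice from the hint can be checked on a small table. The sketch below uses a made-up four-row stand-in for the Pokémon data (the values are hypothetical, not taken from `pokemon.csv`) and divides the type counts by the number of creatures rather than by the number of type entries, so the proportions can sum to more than 1.

```python
import pandas as pd

# Toy stand-in for the Pokémon table (hypothetical values, not the real data)
toy = pd.DataFrame({
    'id': [1, 2, 3, 4],
    'species': ['a', 'b', 'c', 'd'],
    'type_1': ['grass', 'fire', 'water', 'grass'],
    'type_2': ['poison', None, None, 'flying'],
})

# Same reshaping as above: one row per (creature, type) pair, missing types dropped
toy_types = toy.melt(id_vars=['id', 'species'],
                     value_vars=['type_1', 'type_2'],
                     var_name='type_level', value_name='type').dropna()

# Denominator is the number of creatures, not the number of type entries
type_props = toy_types['type'].value_counts() / toy.shape[0]
total = type_props.sum()

print(type_props)
print("Sum of proportions:", total)
```

Dividing by `toy_types.shape[0]` instead would force the bars to sum to exactly 1 and would understate how common each type is among creatures.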

If you're interested in seeing the code used to generate the solution plots, you can find it in the `solutions_univ.py` script in the workspace folder. You can navigate there by clicking on the Jupyter icon in the upper left corner of the workspace. Spoiler warning: the script contains solutions for all of the workspace exercises in this lesson, so take care not to spoil your practice!

| github_jupyter |

```

import panel as pn

import numpy as np

import pandas as pd

pn.extension()

```

The ``Bokeh`` pane allows displaying any displayable [Bokeh](http://bokeh.org) model inside a Panel app. Since Panel is built on Bokeh internally, the Bokeh model is simply inserted into the plot. Since Bokeh models are ordinarily only displayed once, some Panel-related functionality such as syncing multiple views of the same model may not work. Nonetheless this pane type is very useful for combining raw Bokeh code with the higher-level Panel API.

When working in a notebook, changes to a Bokeh object may not be synced automatically; sync them with an explicit call to `pn.io.push_notebook`, passing the Panel component that contains the Bokeh object.

#### Parameters:

For the ``theme`` parameter, see the [bokeh themes docs](https://docs.bokeh.org/en/latest/docs/reference/themes.html).

* **``object``** (bokeh.layouts.LayoutDOM): The Bokeh model to be displayed

* **``theme``** (bokeh.themes.Theme): The Bokeh theme to apply

___

```

from math import pi

from bokeh.palettes import Category20c, Category20

from bokeh.plotting import figure

from bokeh.transform import cumsum

x = {

'United States': 157,

'United Kingdom': 93,

'Japan': 89,

'China': 63,

'Germany': 44,

'India': 42,

'Italy': 40,

'Australia': 35,

'Brazil': 32,

'France': 31,

'Taiwan': 31,

'Spain': 29

}

data = pd.Series(x).reset_index(name='value').rename(columns={'index':'country'})

data['angle'] = data['value']/data['value'].sum() * 2*pi

data['color'] = Category20c[len(x)]

p = figure(plot_height=350, title="Pie Chart", toolbar_location=None,

tools="hover", tooltips="@country: @value", x_range=(-0.5, 1.0))

r = p.wedge(x=0, y=1, radius=0.4,

start_angle=cumsum('angle', include_zero=True), end_angle=cumsum('angle'),

line_color="white", fill_color='color', legend_field='country', source=data)

p.axis.axis_label=None

p.axis.visible=False

p.grid.grid_line_color = None

bokeh_pane = pn.pane.Bokeh(p, theme="dark_minimal")

bokeh_pane

```

To update a plot with a live server, we can simply modify the underlying model. If we are working in a Jupyter notebook we also have to call the `pn.io.push_notebook` helper function on the component or explicitly trigger an event with `bokeh_pane.param.trigger('object')`:

```

r.data_source.data['color'] = Category20[len(x)]

pn.io.push_notebook(bokeh_pane)

```

Alternatively the model may also be replaced entirely, in a live server:

```

from bokeh.models import Div

bokeh_pane.object = Div(text='<h2>This text replaced the pie chart</h2>')

```

The other nice feature when using Panel to render bokeh objects is that callbacks will work just like they would on the server. So you can simply wrap your existing bokeh app in Panel and it will render and work out of the box both in the notebook and when served as a standalone app:

```

from bokeh.layouts import column, row

from bokeh.models import ColumnDataSource, Slider, TextInput

# Set up data

N = 200

x = np.linspace(0, 4*np.pi, N)

y = np.sin(x)

source = ColumnDataSource(data=dict(x=x, y=y))

# Set up plot

plot = figure(plot_height=400, plot_width=400, title="my sine wave",

tools="crosshair,pan,reset,save,wheel_zoom",

x_range=[0, 4*np.pi], y_range=[-2.5, 2.5])

plot.line('x', 'y', source=source, line_width=3, line_alpha=0.6)

# Set up widgets

text = TextInput(title="title", value='my sine wave')

offset = Slider(title="offset", value=0.0, start=-5.0, end=5.0, step=0.1)

amplitude = Slider(title="amplitude", value=1.0, start=-5.0, end=5.0, step=0.1)

phase = Slider(title="phase", value=0.0, start=0.0, end=2*np.pi)

freq = Slider(title="frequency", value=1.0, start=0.1, end=5.1, step=0.1)

# Set up callbacks

def update_title(attrname, old, new):

    plot.title.text = text.value

text.on_change('value', update_title)

def update_data(attrname, old, new):

    # Get the current slider values

    a = amplitude.value

    b = offset.value

    w = phase.value

    k = freq.value

    # Generate the new curve

    x = np.linspace(0, 4*np.pi, N)

    y = a*np.sin(k*x + w) + b

    source.data = dict(x=x, y=y)

for w in [offset, amplitude, phase, freq]:

    w.on_change('value', update_data)

# Set up layouts and add to document

inputs = column(text, offset, amplitude, phase, freq)

bokeh_app = pn.pane.Bokeh(row(inputs, plot, width=800))

bokeh_app

```

### Controls

The `Bokeh` pane exposes a number of options which can be changed from both Python and Javascript. Try out the effect of these parameters interactively:

```

pn.Row(bokeh_app.controls(jslink=True), bokeh_app)

```

| github_jupyter |

<a href="https://colab.research.google.com/github/finlytics-hub/LTV_predictions/blob/master/LTV_Analysis.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Environment Setup

```

# import and install all required libraries

import pandas as pd

import seaborn as sns

import datetime as dt

import matplotlib.pyplot as plt

import numpy as np

! pip install lifetimes==0.11.3

from lifetimes.plotting import *

from lifetimes.utils import *

from lifetimes import BetaGeoFitter

from lifetimes import GammaGammaFitter

# Load data

data = pd.read_csv('.../OnlineRetail_2yrs.csv')

```

# EDA and Data Preprocessing

```

data.head(10)

data.info()

# Convert InvoiceDate into DateTime format and extract the date values

data['InvoiceDate'] = pd.to_datetime(data['InvoiceDate']).dt.date

# drop rows with missing CustomerID as our analysis will be at the individual customer level

data.dropna(axis = 0, subset = ['Customer ID'], inplace = True)

# filter out the negative values from Quantity field as these could relate to returns that are not relevant to LTV predictions

data = data[(data['Quantity'] > 0)]

# create a new column for Sales per invoice and filter out only the required columns for the Lifetimes package

data['Sales'] = data['Quantity'] * data['Price']

data_final = data[['Customer ID', 'InvoiceDate', 'Sales']]

data_final.head()

data_final.info()

# transform our transaction level data into the required summary form for Lifetimes

data_summary = summary_data_from_transaction_data(data_final, customer_id_col = 'Customer ID', datetime_col = 'InvoiceDate', monetary_value_col = 'Sales', freq = 'D')

# used freq = 'D' since we have a daily transactions log

data_summary.head(10)

# retain only those customers with frequency > 0

data_summary = data_summary[data_summary['frequency'] > 0]

# Some descriptive statistics of the summary data

data_summary.describe()

```
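To make the summary columns concrete, here is a minimal pandas sketch of how `frequency`, `recency` and `T` are commonly defined for daily data. It is an illustration under stated assumptions, not the Lifetimes implementation: the toy transactions and the `observation_end` date are hypothetical, and monetary value is left out.

```python
import pandas as pd

# Hypothetical transaction log: customer 1 buys twice on the same day, then once more
tx = pd.DataFrame({
    'Customer ID': [1, 1, 1, 2],
    'InvoiceDate': pd.to_datetime(
        ['2011-01-01', '2011-01-01', '2011-01-11', '2011-01-05']),
})
observation_end = pd.Timestamp('2011-01-31')  # assumed end of the observation window

# Several purchases on one day count as a single purchase period
days = tx.drop_duplicates()
grouped = days.groupby('Customer ID')['InvoiceDate'].agg(['min', 'max', 'count'])

toy_summary = pd.DataFrame({
    # repeat purchases: distinct purchase days minus the first one
    'frequency': grouped['count'] - 1,
    # days between the first and the last purchase
    'recency': (grouped['max'] - grouped['min']).dt.days,
    # days between the first purchase and the end of the observation window
    'T': (observation_end - grouped['min']).dt.days,
})
print(toy_summary)
```

Customer 2 ends up with a frequency of 0, which is the kind of row the `frequency > 0` filter above removes.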

# BG/NBD Model Training & Visualisation

```

# fit the BG/NBD model to our data_summary

bgf = BetaGeoFitter()

bgf.fit(data_summary['frequency'], data_summary['recency'], data_summary['T'])

# plot the estimated gamma distribution of λ (customers' propensities to purchase)

plot_transaction_rate_heterogeneity(bgf);

# plot the estimated beta distribution of p, a customers' probability of dropping out immediately after a transaction

plot_dropout_rate_heterogeneity(bgf);

# visualize our frequency/recency matrix

fig = plt.figure(figsize=(12,8))

plot_frequency_recency_matrix(bgf, T = 7);

# Now let's visualise the probability of a customer being alive

fig = plt.figure(figsize=(12,8))

plot_probability_alive_matrix(bgf);

```

# Model Validation

```

# partition the dataset into a calibration and a holdout dataset

summary_cal_holdout = calibration_and_holdout_data(data_final, 'Customer ID', 'InvoiceDate', freq = "D", monetary_value_col = 'Sales', calibration_period_end='2011-06-30')

summary_cal_holdout.head()

# again, retain only the +ve frequency_cal values

summary_cal_holdout = summary_cal_holdout[summary_cal_holdout['frequency_cal'] > 0]

# compare the predicted # of repeat purchases with actual repeat purchases during the holdout period

bgf_cal = BetaGeoFitter()

bgf_cal.fit(summary_cal_holdout['frequency_cal'], summary_cal_holdout['recency_cal'], summary_cal_holdout['T_cal'])

plot_calibration_purchases_vs_holdout_purchases(bgf_cal, summary_cal_holdout, kind = 'frequency_cal', n = int(summary_cal_holdout['frequency_holdout'].max()), figsize = (12,8));

```

# Gamma-Gamma Model Fitting

```

# Let's fit the Gamma-Gamma model to our data_summary

ggf = GammaGammaFitter()

ggf.fit(frequency = data_summary['frequency'], monetary_value = data_summary['monetary_value'])

```

# Prediction Time!

```

# Calculate the expected number of repeat purchases up to time t for a randomly chosen individual from the population

t = 30 # to calculate the number of expected repeat purchases over the next 30 days

data_summary['predicted_purchases'] = bgf.conditional_expected_number_of_purchases_up_to_time(t, data_summary['frequency'], data_summary['recency'], data_summary['T'])

data_summary.sort_values(by='predicted_purchases').tail(5)

# Calculate probability of being currently alive and assign to each CustomerID

data_summary['p_alive'] = bgf.conditional_probability_alive(data_summary['frequency'], data_summary['recency'], data_summary['T'])

data_summary.sort_values(by='predicted_purchases').tail(5)

sns.distplot(data_summary['p_alive']);

data_summary['churn'] = ['churned' if p_alive < 0.5 else 'not churned' for p_alive in data_summary['p_alive']]

data_summary.loc[(data_summary['p_alive'] >= 0.5) & (data_summary['p_alive'] < 0.75), 'churn'] = "high risk"

data_summary['churn'].value_counts()

# After applying Gamma-Gamma model, now we can estimate average transaction value for each customer over his/her lifetime

data_summary['predicted_Sales'] = ggf.conditional_expected_average_profit(data_summary['frequency'], data_summary['monetary_value'])

data_summary.head()

# Final piece of the puzzle - calculate LTV for each customer over the next 12 months with an assumed monthly discount rate of 1%

data_summary['LTV'] = ggf.customer_lifetime_value(

bgf, #the model to use to predict the number of future transactions

data_summary['frequency'], data_summary['recency'], data_summary['T'], data_summary['monetary_value'],

time = 12, # number of months to predict LTV for

discount_rate = 0.01 # monthly discount rate ~ 12.7% annually

)

data_summary.head()

# Let's identify our top 20 customers based on LTV

best_projected_cust_LTV = data_summary.sort_values('LTV').tail(20)

best_projected_cust_LTV

```
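The comment on `discount_rate` equates a 1% monthly rate with roughly 12.7% annually. A short arithmetic sketch confirms that figure by compounding the monthly rate over twelve months; the 100-unit cash flow is a hypothetical value used only to show the effect of discounting, and the variable names are illustrative.

```python
monthly_rate = 0.01  # same value as the discount_rate passed above

# Compound the monthly rate over 12 months to get the equivalent annual rate
annual_rate = (1 + monthly_rate) ** 12 - 1
print("Equivalent annual rate: {:.2%}".format(annual_rate))  # prints 12.68%

# Present value of a hypothetical 100 units of profit received in month 12
present_value = 100 / (1 + monthly_rate) ** 12
print("Present value of 100 in month 12: {:.2f}".format(present_value))
```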

| github_jupyter |

```

#hide

from nbdev.showdoc import *

```

# 1 - SC2 Training Grounds Computer Model

In this deliverable, I focus on developing the computer model of _SC2 Training Grounds_. This model is based on the application's conceptual model defined in the project's design brief.

> Note: see this project's dissertation document (Chapter 2, Human-Computer Interaction section). There, I explore the idea of design as the process of connecting an artifact's computer and user models.

To this end, I first use the activity diagrams shown below, which illustrate the application's three primary processes. I separate the processes, given that they are meant to be triggered by different events and run parallel to each other. However, they also interact with each other in different ways at different moments.

## Primary User Interaction

First, the following diagram shows the actions that define the users' primary interaction with the application. Through these actions, the application responds to two goals: handling the player profile classification process and offering recommendations based on the progress and results of that process.

The profile classification process, in turn, has three goals:

1. Check if the player's profile has been classified into one of the categories built in the application's clustering process (see below).

2. Remind and update the player about their progress in this process.

3. Trigger the reclassification process once a player completes a training regime.

> Note: The process proposed in the diagram asks players to play five classification online matches every time a season starts or when they complete a training regime. I settled on five matches since it's the number of matches that the game already asks players to complete to rank them in the online competitive ladders every season.

Meanwhile, _SC2 Training Grounds_ centres its recommendation process on two tasks. First, it maintains a set of similarity matrices that it uses to generate recommendations based on Item-to-Item and User-to-User comparisons. Second, it determines what recommendations to offer to the players based on their classification and current training regime status.

It maintains the similarity matrices by assigning positive or negative points to recommendations, similarly to how other recommender systems award points through ranking or rating systems. In this case, however, the system rewards regimes and challenges that players choose, regimes that players carry out to completion, and regimes that elicit profile changes according to the reclassification. Meanwhile, the process punishes regimes that players abandon.

> Note: see dissertation document Section 4.3 for a review of collaborative filtering recommendation systems.
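The point-based scoring described above can be sketched as follows. This is a minimal illustration rather than the project's implementation: the point values in `POINTS`, the event names, and the toy event log are all assumptions, and cosine similarity is swapped in as one common choice for building Item-to-Item and User-to-User matrices.

```python
import numpy as np

# Hypothetical point values; the design brief does not fix them
POINTS = {'chosen': 1.0, 'completed': 2.0, 'profile_changed': 3.0, 'abandoned': -2.0}

# Hypothetical event log: (player index, regime index, event)
events = [
    (0, 0, 'chosen'), (0, 0, 'completed'),
    (0, 1, 'chosen'), (0, 1, 'abandoned'),
    (1, 0, 'chosen'), (1, 0, 'completed'), (1, 0, 'profile_changed'),
    (1, 2, 'chosen'),
    (2, 1, 'chosen'), (2, 1, 'completed'),
    (2, 2, 'chosen'), (2, 2, 'completed'),
]

# Rows are players, columns are training regimes
scores = np.zeros((3, 3))
for player, regime, event in events:
    scores[player, regime] += POINTS[event]


def cosine_similarity_matrix(matrix):
    """Pairwise cosine similarity between the columns of `matrix`."""
    norms = np.linalg.norm(matrix, axis=0)
    norms[norms == 0] = 1.0  # avoid division by zero for empty columns
    unit = matrix / norms
    return unit.T @ unit


item_similarity = cosine_similarity_matrix(scores)    # regime vs. regime
user_similarity = cosine_similarity_matrix(scores.T)  # player vs. player
print(np.round(item_similarity, 2))
```

In this toy log, regimes 0 and 1 come out negatively related because the player who completed regime 0 abandoned regime 1.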

<img alt="Primary UX Diagram" caption="SC2 Training Grounds' Primary User Interaction Diagram" id="interaction_diagram" height="900" src="images/Final_Activity_diagram.png">

## Clustering and Classification Model

The next diagram shows the _clustering_ and _classification model training_ process that runs parallel and in the background of the users' interaction.

This process is conceptualised to match StarCraft 2's ranked ladder season cycles. In other words, since players are being asked to get ranked every season, I propose that _SC2 Training Grounds_ recalculates its classification clusters at that same pace.

In this case, the clustering is meant as a form of **Player Experience Modelling** (see Dissertation Document Chapters 2.1.2, 2.3.5 and 3.3.3). Here, the system responds to the changing tactics and strategies of the game by dividing players into clusters based on their performance indicators every season. In addition, these cluster features can also be used as a source of information to communicate the state of the population's characteristics (see Dissertation Document Chapter 3.3.6).

Clustering also tags the replay batch it uses to create its classification. These tags can then be used to train an efficient classification model that can be used during the Primary Interaction Loop described above.

Since all other processes in the system are contingent upon this classification process, I develop the components that run this process in Section 1 of this deliverable.

<img alt="Clustering process diagram" caption="Clustering and classification model training process diagram" width="300" id="cluster_diagram" src="images/Clustering_diagram.png">

## Collaborative Filtering

> Warning: To be continued

| github_jupyter |

# Analyzing the flexible path length simulation

```

from __future__ import print_function

%matplotlib inline

import openpathsampling as paths

import numpy as np

import matplotlib.pyplot as plt

import os

import openpathsampling.visualize as ops_vis

from IPython.display import SVG

```

Load the file, and from the file pull out the engine (which tells us what the timestep was) and the move scheme (which gives us a starting point for much of the analysis).

```

# note that this log will overwrite the log from the previous notebook

#import logging.config

#logging.config.fileConfig("logging.conf", disable_existing_loggers=False)

%%time

flexible = paths.AnalysisStorage("ad_tps.nc")

# opening as AnalysisStorage is a little slower, but speeds up the move_summary

engine = flexible.engines[0]

flex_scheme = flexible.schemes[0]

print("File size: {0} for {1} steps, {2} snapshots".format(

flexible.file_size_str,

len(flexible.steps),

len(flexible.snapshots)

))

```

That tells us a little about the file we're dealing with. Now we'll start analyzing the contents of that file. We used a very simple move scheme (only shooting), so the main information that the `move_summary` gives us is the acceptance of the only kind of move in that scheme. See the MSTIS examples for more complicated move schemes, where you want to make sure that the frequency at which each move runs is close to what was expected.

```

flex_scheme.move_summary(flexible.steps)

```

## Replica history tree and decorrelated trajectories

The `PathTree` object, built here with a `ReplicaEvolution` generator, gives us both the history tree (often called the "move tree") and the number of decorrelated trajectories.

A `PathTree` is made for a certain set of Monte Carlo steps. First, we make a tree of only the first 25 steps in order to visualize it. (All 10000 steps would be unwieldy.)

After the visualization, we make a second `PathTree` of all the steps. We won't visualize that; instead we use it to count the number of decorrelated trajectories.

```

replica_history = ops_vis.ReplicaEvolution(replica=0)

tree = ops_vis.PathTree(

flexible.steps[0:25],

replica_history

)

tree.options.css['scale_x'] = 3

SVG(tree.svg())

# can write to svg file and open with programs that can read SVG

with open("flex_tps_tree.svg", 'w') as f:

    f.write(tree.svg())

print("Decorrelated trajectories:", len(tree.generator.decorrelated_trajectories))

%%time

full_history = ops_vis.PathTree(

flexible.steps,

ops_vis.ReplicaEvolution(

replica=0

)

)

n_decorrelated = len(full_history.generator.decorrelated_trajectories)

print("All decorrelated trajectories:", n_decorrelated)

```

## Path length distribution

Flexible length TPS gives a distribution of path lengths. Here we calculate the length of every accepted trajectory, then histogram those lengths, and calculate the maximum and average path lengths.

We also use `engine.snapshot_timestep` to convert the count of frames to time, including correct units.

```

path_lengths = [len(step.active[0].trajectory) for step in flexible.steps]

plt.hist(path_lengths, bins=40, alpha=0.5);

print("Maximum:", max(path_lengths),

"("+(max(path_lengths)*engine.snapshot_timestep).format("%.3f")+")")

print("Average:", "{0:.2f}".format(np.mean(path_lengths)),

"("+(np.mean(path_lengths)*engine.snapshot_timestep).format("%.3f")+")")

```

## Path density histogram

Next we will create a path density histogram. Calculating the histogram itself is quite easy: first we reload the collective variables we want to plot it in (we choose the phi and psi angles). Then we create the empty path density histogram, by telling it which CVs to use and how to make the histogram (bin sizes, etc). Finally, we build the histogram by giving it the list of active trajectories to histogram.

```

from openpathsampling.numerics import HistogramPlotter2D

psi = flexible.cvs['psi']

phi = flexible.cvs['phi']

deg = 180.0 / np.pi

path_density = paths.PathDensityHistogram(cvs=[phi, psi],

left_bin_edges=(-180/deg,-180/deg),

bin_widths=(2.0/deg,2.0/deg))

path_dens_counter = path_density.histogram([s.active[0].trajectory for s in flexible.steps])

```
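The idea behind a path density histogram can be sketched in plain NumPy. The version below assumes the usual definition, in which each trajectory contributes at most once to every bin it visits; it skips any interpolation between frames that the library may perform, and the function name `toy_path_density` and the toy trajectories are hypothetical.

```python
from collections import Counter

import numpy as np


def toy_path_density(trajectories, left_bin_edges, bin_widths):
    """Count, for each 2D bin, how many trajectories visit it at least once."""
    left = np.asarray(left_bin_edges, dtype=float)
    widths = np.asarray(bin_widths, dtype=float)
    counts = Counter()
    for traj in trajectories:
        # Map every frame's (cv1, cv2) pair to integer bin indices
        bins = np.floor((np.asarray(traj, dtype=float) - left) / widths).astype(int)
        # A set keeps each visited bin only once per trajectory
        visited = {tuple(b) for b in bins.tolist()}
        counts.update(visited)
    return counts


# Two hypothetical trajectories in a 2D collective-variable space
traj_a = [(0.1, 0.1), (0.2, 0.3), (1.5, 0.2)]
traj_b = [(0.4, 0.4), (2.5, 2.5)]
density = toy_path_density([traj_a, traj_b],
                           left_bin_edges=(0.0, 0.0),
                           bin_widths=(1.0, 1.0))
print(density)
```

Counting each bin once per trajectory keeps a path that lingers in a stable state from dominating the histogram.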

Now we've built the path density histogram, and we want to visualize it. The `HistogramPlotter2D` class works well in this case: it takes the histogram along with the desired plot tick labels and limits, and its `plot` method passes additional `matplotlib` named arguments on to `plt.pcolormesh`.

```

tick_labels = np.arange(-np.pi, np.pi+0.01, np.pi/4)

plotter = HistogramPlotter2D(path_density,

xticklabels=tick_labels,

yticklabels=tick_labels,

label_format="{:4.2f}")

ax = plotter.plot(cmap="Blues")

```

## Convert to MDTraj for analysis by external tools

The trajectory can be converted to an MDTraj trajectory, and then used anywhere that MDTraj can be used. This includes writing it to a file (in any number of file formats) or visualizing the trajectory using, e.g., NGLView.

```

ops_traj = flexible.steps[1000].active[0].trajectory

traj = ops_traj.to_mdtraj()

traj

# Here's how you would then use NGLView:

#import nglview as nv

#view = nv.show_mdtraj(traj)

#view

flexible.close()

```

| github_jupyter |

# OpenACC Interoperability

This lab is intended for C/C++ programmers. If you prefer to use Fortran, click [this link.](../Fortran/README.ipynb)

---

## Introduction

The primary goal of this lab is to cover how to write an OpenACC code to work alongside other CUDA codes and accelerated libraries. There are several ways to make an OpenACC/CUDA interoperable code, and we will go through them one-by-one, with a short exercise for each.

When programming in OpenACC, the distinction between CPU/GPU memory is abstracted. For the most part, you do not need to worry about explicitly differentiating between CPU and GPU pointers; the OpenACC runtime handles this for you. However, in CUDA, you do need to differentiate between these two types of pointers. Let's start with using CUDA allocated GPU data in our OpenACC code.

---

## OpenACC Deviceptr Clause

The OpenACC `deviceptr` clause is used with the `data`, `parallel`, or `kernels` directives. It can be used in the same way as other data clauses such as `copyin`, `copyout`, `copy`, or `present`. The `deviceptr` clause is used to specify that a pointer is not a host pointer but rather a device pointer.

This clause is important when working with OpenACC + CUDA interoperability because it is one way we can operate on CUDA allocated device data within an OpenACC code. Take the following example:

**Allocation with CUDA**

```c++

double *cuda_allocate(int size) {

double *ptr;

cudaMalloc((void**) &ptr, size * sizeof(double));

return ptr;

}

```

**Parallel Loop with OpenACC**

```c++

int main() {

double *cuda_ptr = cuda_allocate(100); // Allocated on the device, but not the host!

#pragma acc parallel loop deviceptr(cuda_ptr)

for(int i = 0; i < 100; i++) {

cuda_ptr[i] = 0.0;

}

}

```

Normally, the OpenACC runtime expects to be given a host pointer, which will then be translated to some associated device pointer. However, when using CUDA to do our data management, we do not have that connection between host and device. The `deviceptr` clause is a way to tell the OpenACC runtime that a given pointer should not be translated since it is already a device pointer.

To practice using the `deviceptr` clause, we have a short exercise. We will examine two functions that both compute a dot product. The first is in [dot.c](/edit/C/deviceptr/dot.c), which is a serial dot product. The second is in [dot_acc.c](/edit/C/deviceptr/dot_acc.c), which is an OpenACC parallelized version of dot. Both dot and dot_acc are called from [main.cu](/edit/C/deviceptr/main.cu) (*note: .cu is the conventional extension for a CUDA C++ source file*). In main.cu, we use host pointers to call dot and device pointers to call dot_acc. Let's quickly run the code; it will produce an error.

```

!make -C deviceptr

```

To fix the error, we must tell the OpenACC runtime in the dot_acc function that our pointers are device pointers. Edit the [dot_acc.c](/edit/C/deviceptr/dot_acc.c) file using the deviceptr clause to get the code working. When you think you have it, run the code below and see if the error is fixed.

```

!make -C deviceptr

```

Next, let's do the opposite. Let's take data that was allocated with OpenACC, and use it in a CUDA function.

---

## OpenACC host_data directive

The `host_data` directive is used to make the OpenACC mapped device address available to the host. There are a few clauses that can be used with host_data, but the one that we are interested in using is `use_device`. We will use the `host_data` directive with the `use_device` clause to grab the underlying device pointer that OpenACC usually abstracts for us. Then we can use this device pointer to pass to CUDA kernels or to use accelerated libraries. Let's look at a code example:

**Inside CUDA Code**

```c++

__global__

void example_kernel(int *A, int size) {

// Kernel Code

}

extern "C" void example_cuda(int *A, int size) {

example_kernel<<<512,128>>>(A, size);

}

```

**Inside OpenACC Code**

```c++

extern void example_cuda(int*, int);

int main() {

int *A = (int*) malloc(100*sizeof(int));

#pragma acc data create(A[0:100])

{

#pragma acc host_data use_device(A)

{

example_cuda(A, 100);

}

}

}

```

A brief rundown of what is actually happening under the hood: the `data` directive creates a device copy of the array `A`, and the host pointer of `A` is linked to the device pointer of `A`. This is typical OpenACC behavior. Next, the `host_data use_device` directive translates the `A` variable on the host to the device pointer so that we can pass it to our CUDA function.

To practice this, let's work on another code. We still have [dot.c](/edit/C/host_data/dot.c) for our serial code. But instead of an OpenACC version of dot, we have a CUDA version in [dot_kernel.cu](/edit/C/host_data/dot_kernel.cu). Both of these functions are called in [main.c](/edit/C/host_data/main.c). First, let's run the code and see the error.

```

!make -C host_data

```

Now edit [main.c](/edit/C/host_data/main.c) and use the `host_data` and `use_device` to pass device pointers when calling our CUDA function. When you're ready, rerun the code below, and see if the error is fixed.

```

!make -C host_data

```

---

## Using cuBLAS with OpenACC

We can also use accelerated libraries with `host_data` and `use_device`. Just like in the previous section, we can allocate the data with OpenACC using either the `data` or `enter data` directives and then pass that data to a cuBLAS call with `host_data`. This code is slightly different than before; we will be working on a matrix multiplication code. The serial code is found in [matmult.c](/edit/C/cublas/matmult.c). The cuBLAS code is in [matmult_cublas.cu](/edit/C/cublas/matmult_cublas.cu). Both of these are called from [main.c](/edit/C/cublas/main.c). Let's try running the code and seeing the error.

```

!make -C cublas

```

Now, edit [main.c](/edit/C/cublas/main.c) and use host_data/use_device on the cublas call (similar to what you did in the previous exercise). Rerun the code below when you're ready, and see if the error is fixed.

```

!make -C cublas

```

Next we will learn how to make CUDA allocated memory behave like OpenACC allocated memory.

---

## OpenACC map_data

We briefly mentioned earlier how OpenACC creates a mapping between host and device memory. When using CUDA allocated memory within OpenACC, that mapping is not created automatically, but it can be created manually. We are able to map a host pointer to a device pointer by using the OpenACC `acc_map_data` function. Then, before the data is deallocated, you will use `acc_unmap_data` to undo the mapping. Let's look at a quick example.

**Inside CUDA Code**

```c++

int *cuda_allocate(int size) {

int *ptr;

cudaMalloc((void**) &ptr, size*sizeof(int));

return ptr;

}

void cuda_deallocate(int* ptr) {

cudaFree(ptr);

}

```

**Inside OpenACC Code**

```c++

int main() {

int *A = (int*) malloc(100 * sizeof(int));

int *A_device = cuda_allocate(100);

acc_map_data(A, A_device, 100*sizeof(int));

#pragma acc parallel loop present(A[0:100])

for(int i = 0; i < 100; i++) {

// Computation

}

acc_unmap_data(A);

cuda_deallocate(A_device);

free(A);

}

```

To practice, we have another example code which uses the `dot` functions again. Serial `dot` is in [dot.c](/edit/C/map/dot.c). OpenACC `dot` is in [dot_acc.c](/edit/C/map/dot_acc.c). Both of them are called from [main.cu](/edit/C/map/main.cu). Since main is a CUDA code, we have placed the OpenACC map/unmap in a separate file [map.c](/edit/C/map/map.c). Try running the code and seeing the error.

```

!make -C map

```

Now, edit [map.c](/edit/C/map/map.c) and add the OpenACC mapping functions. When you're ready, rerun the code below and see if the error is fixed.

```

!make -C map

```

---

## Routine

The last topic to discuss is using CUDA device functions within OpenACC `parallel` and `kernels` regions. These are functions that are compiled to be called from the accelerator within a GPU kernel or OpenACC region.

If you want to compile an OpenACC function to be used on the device, you will use the `routine` directive with the following syntax:

```c++

#pragma acc routine seq

int func() {

return 0;

}

```

You can also have a function with a loop you want to parallelize like so:

```c++

#pragma acc routine vector

int func() {

int sum = 0;

#pragma acc loop vector

for(int i = 0; i < 100; i++) {

sum += i;

}

return sum;

}

```

To use CUDA `__device__` functions within our OpenACC loops, we can also use the `routine` directive. See the following example:

**In CUDA Code**

```c++

extern "C" __device__

int cuda_func(int x) {

return x*x;

}

```

**In OpenACC Code**

```c++

#pragma acc routine seq

extern int cuda_func(int);

...

int main() {

int *A = (int*) malloc(100 * sizeof(int));

#pragma acc parallel loop copyout(A[:100])

for(int i = 0; i < 100; i++) {

A[i] = cuda_func(i);

}

}

```

To practice, we have one last code to try out. Our main function is in [main.c](/edit/C/routine/main.c), and our serial code is in [distance_map.c](/edit/C/routine/distance_map.c). Our parallel loop is in [distance_map_acc.c](/edit/C/routine/distance_map_acc.c). Note that the parallel loop is trying to use a CUDA `__device__` function without including any routine information. The CUDA function is in [dist_cuda.cu](/edit/C/routine/dist_cuda.cu). Let's run the code and see the error.

```

!make -C routine

```

Now, edit [distance_map_acc.c](/edit/C/routine/distance_map_acc.c) and include the routine directive. When you're ready, rerun the code below and see if the error is fixed.

```

!make -C routine

```

---

## Bonus Task

Here are some additional resources for OpenACC/CUDA interoperability:

[This is an NVIDIA devblog about some common techniques for implementing OpenACC + CUDA](https://devblogs.nvidia.com/3-versatile-openacc-interoperability-techniques/)

[This is a github repo with some additional code examples demonstrating the lessons covered in this lab](https://github.com/jefflarkin/openacc-interoperability)

---

## Post-Lab Summary

If you would like to download this lab for later viewing, it is recommended that you go to your browser's File menu (not the Jupyter notebook file menu) and save the complete web page. This will ensure the images are copied down as well.

You can also execute the following cell block to create a zip-file of the files you've been working on, and download it with the link below.

```

%%bash

rm -f openacc_files.zip

zip -r openacc_files.zip *

```

**After** executing the above zip command, you should be able to download the zip file [here](files/openacc_files.zip)

| github_jupyter |

*This notebook contains an excerpt from the [Python Data Science Handbook](http://shop.oreilly.com/product/0636920034919.do) by Jake VanderPlas; the content is available [on GitHub](https://github.com/jakevdp/PythonDataScienceHandbook).*

# Introduction to machine learning

*image from Deep Learning with Javascript*

# Act 1

```

def decide(income, criminal_record, years_job, credit_payments):

if income < 30000:

if criminal_record:

return 1

else:

return 0

elif income <= 70000:

if years_job < 1:

return 0

elif years_job <= 5:

if credit_payments:

return 1

else:

return 0

else:

return 1

else:

if criminal_record:

return 0

else:

return 1

decide(income=20000, criminal_record=1, years_job=3, credit_payments=1)

import random

import pandas as pd

random.seed(333)

data = []

for i in range(100):

income = random.randint(0, 100000)

criminal_record = random.randint(0, 1)

years_job = random.randint(0, 10)

credit_payments = random.randint(0, 1)

decision = decide(income, criminal_record, years_job, credit_payments)

data.append({'income':income, 'criminal_record':criminal_record, 'years_job':years_job,

'credit_payments':credit_payments, 'decision':decision})

df = pd.DataFrame(data)

df.head(20)

```

# Act 2

```

import pandas as pd

from sklearn import tree

from sklearn.tree import export_text

dtree = tree.DecisionTreeClassifier().fit(

df[['income','criminal_record','years_job','credit_payments']], df['decision'])

print(export_text(dtree, feature_names=['income','criminal_record','years_job','credit_payments']))

```

# What Is Machine Learning?

*building models of data*

Fundamentally, machine learning involves building mathematical models to help understand data.

"Learning" enters the fray when we give these models *tunable parameters* that can be adapted to observed data; in this way the program can be considered to be "learning" from the data.

Once these models have been fit to previously seen data, they can be used to predict and understand aspects of newly observed data.
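As a minimal sketch of what "tunable parameters adapted to observed data" can mean, the snippet below fits the two parameters of a straight line to a handful of points and then uses them on an unseen input. The data values and the helper name `fit_line` are made up for illustration and are not part of the original text.

```python
# Hypothetical toy data: y is roughly 2*x + 1 (values chosen for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.0]

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

# "Learning": the two parameters are adapted to the observed data.
slope, intercept = fit_line(xs, ys)

# "Prediction": the fitted model is applied to a previously unseen x.
prediction = slope * 5.0 + intercept
print(slope, intercept, prediction)
```

Here the slope and intercept play the role of the tunable parameters; everything else about the model (that it is a line) is fixed in advance.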

## Categories of Machine Learning

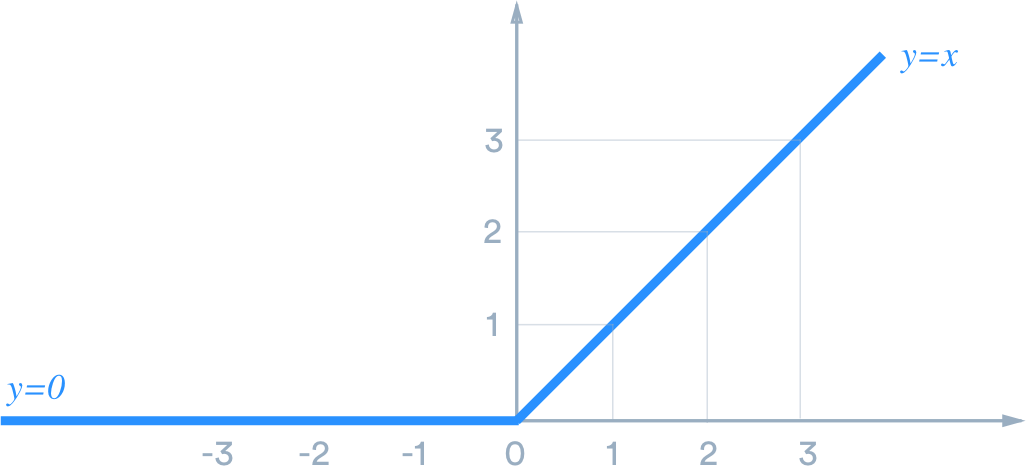

- *Supervised learning*: Models that can predict labels based on labeled training data

- *Classification*: Models that predict labels as two or more discrete categories

- *Regression*: Models that predict continuous labels

- *Unsupervised learning*: Models that identify structure in unlabeled data

- *Clustering*: Models that detect and identify distinct groups in the data

- *Dimensionality reduction*: Models that detect and identify lower-dimensional structure in higher-dimensional data

### Classification: Predicting discrete labels

We will first take a look at a simple *classification* task, in which you are given a set of labeled points and want to use these to classify some unlabeled points.

Imagine that we have the data shown in this figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Classification-Example-Figure-1)

[figure source in Appendix](06.00-Figure-Code.ipynb#Classification-Example-Figure-2)

Now that this model has been trained, it can be generalized to new, unlabeled data.

In other words, we can take a new set of data, draw this model line through it, and assign labels to the new points based on this model.

This stage is usually called *prediction*. See the following figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Classification-Example-Figure-3)
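The train-then-predict workflow can be sketched without any library. The example below swaps in a nearest-centroid classifier, which is not necessarily the model behind the figures; for two classes it also yields a straight-line decision boundary. All data points and function names are hypothetical.

```python
# Hypothetical labeled 2D training points: ((feature_1, feature_2), label).
train = [((1.0, 1.0), 0), ((1.5, 2.0), 0), ((2.0, 1.5), 0),
         ((5.0, 5.0), 1), ((6.0, 5.5), 1), ((5.5, 6.5), 1)]

def fit_centroids(samples):
    """Training: compute the mean point of each class."""
    sums, counts = {}, {}
    for (x, y), label in samples:
        sx, sy = sums.get(label, (0.0, 0.0))
        sums[label] = (sx + x, sy + y)
        counts[label] = counts.get(label, 0) + 1
    return {label: (sx / counts[label], sy / counts[label])
            for label, (sx, sy) in sums.items()}

def predict(centroids, point):
    """Prediction: assign the label of the closest class centroid."""
    px, py = point
    return min(centroids,
               key=lambda label: (px - centroids[label][0]) ** 2
                               + (py - centroids[label][1]) ** 2)

centroids = fit_centroids(train)
new_points = [(1.2, 1.8), (5.8, 5.1)]   # unlabeled points to classify
labels = [predict(centroids, p) for p in new_points]
print(labels)
```

The boundary implied by this sketch is the perpendicular bisector of the segment joining the two centroids, so new points are labeled by which side of that line they fall on.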

### Regression: Predicting continuous labels

In contrast with the discrete labels of a classification algorithm, we will next look at a simple *regression* task in which the labels are continuous quantities.

Consider the data shown in the following figure, which consists of a set of points each with a continuous label:

[figure source in Appendix](06.00-Figure-Code.ipynb#Regression-Example-Figure-1)

As with the classification example, we have two-dimensional data: that is, there are two features describing each data point.

The color of each point represents the continuous label for that point.

There are a number of possible regression models we might use for this type of data, but here we will use a simple linear regression to predict the points.

This simple linear regression model assumes that if we treat the label as a third spatial dimension, we can fit a plane to the data.

This is a higher-level generalization of the well-known problem of fitting a line to data with two coordinates.

We can visualize this setup as shown in the following figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Regression-Example-Figure-2)

This plane of fit gives us what we need to predict labels for new points.

Visually, we find the results shown in the following figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Regression-Example-Figure-4)
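A plane fit of this kind can be sketched with NumPy's least-squares solver. The data below is synthetic and generated from a known plane so the recovered coefficients are easy to check; it is an illustration under those assumptions, not the code behind the figures.

```python
import numpy as np

# Hypothetical data: two features per point, with labels generated from a
# known plane (label = 2*x1 - 1*x2 + 0.5) so the fit can be verified.
features = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 3.0]])
labels = 2.0 * features[:, 0] - 1.0 * features[:, 1] + 0.5

# Append a column of ones so the intercept is fitted along with the slopes.
design = np.column_stack([features, np.ones(len(features))])
coeffs, *_ = np.linalg.lstsq(design, labels, rcond=None)

# Predict the label of a new point by evaluating the fitted plane there.
new_point = np.array([3.0, 2.0, 1.0])   # (x1, x2, constant term)
predicted = float(new_point @ coeffs)
print(coeffs, predicted)
```

With noisy real data the fitted plane would not pass through every point; the solver then returns the plane that minimizes the sum of squared vertical distances.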

### Clustering: Inferring labels on unlabeled data

The classification and regression illustrations we just looked at are examples of supervised learning algorithms, in which we are trying to build a model that will predict labels for new data.

Unsupervised learning involves models that describe data without reference to any known labels.

One common case of unsupervised learning is "clustering," in which data is automatically assigned to some number of discrete groups.

For example, we might have some two-dimensional data like that shown in the following figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Clustering-Example-Figure-2)

By eye, it is clear that each of these points is part of a distinct group.

Given this input, a clustering model will use the intrinsic structure of the data to determine which points are related.

Using the very fast and intuitive *k*-means algorithm (see [In Depth: K-Means Clustering](05.11-K-Means.ipynb)), we find the clusters shown in the following figure:

[figure source in Appendix](06.00-Figure-Code.ipynb#Clustering-Example-Figure-2)
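The core loop of *k*-means is short enough to sketch in plain Python. The version below is a simplified illustration: the points and starting centers are made up, and it runs a fixed number of iterations instead of testing for convergence.

```python
def kmeans(points, centers, iterations=10):
    """Plain k-means: alternate an assignment step and a center update."""
    groups = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center.
        groups = [[] for _ in centers]
        for x, y in points:
            nearest = min(range(len(centers)),
                          key=lambda i: (x - centers[i][0]) ** 2
                                      + (y - centers[i][1]) ** 2)
            groups[nearest].append((x, y))
        # Update step: move each center to the mean of its group
        # (a center that lost all of its points stays where it is).
        centers = [
            (sum(p[0] for p in g) / len(g), sum(p[1] for p in g) / len(g))
            if g else c
            for g, c in zip(groups, centers)
        ]
    return centers, groups

# Hypothetical data with two visually obvious groups.
points = [(0, 0), (0, 1), (1, 0), (8, 8), (9, 8), (8, 9)]
centers, groups = kmeans(points, centers=[points[0], points[3]])
print(centers)
print(groups)
```

No labels are supplied anywhere: the grouping emerges only from the distances between points, which is what distinguishes this from the supervised examples above.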

| github_jupyter |

<a href="https://colab.research.google.com/github/jeffheaton/t81_558_deep_learning/blob/master/t81_558_class_02_5_pandas_features.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# T81-558: Applications of Deep Neural Networks

**Module 2: Python for Machine Learning**

* Instructor: [Jeff Heaton](https://sites.wustl.edu/jeffheaton/), McKelvey School of Engineering, [Washington University in St. Louis](https://engineering.wustl.edu/Programs/Pages/default.aspx)

* For more information visit the [class website](https://sites.wustl.edu/jeffheaton/t81-558/).

# Module 2 Material

Main video lecture:

* Part 2.1: Introduction to Pandas [[Video]](https://www.youtube.com/watch?v=bN4UuCBdpZc&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_02_1_python_pandas.ipynb)

* Part 2.2: Categorical Values [[Video]](https://www.youtube.com/watch?v=4a1odDpG0Ho&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_02_2_pandas_cat.ipynb)

* Part 2.3: Grouping, Sorting, and Shuffling in Python Pandas [[Video]](https://www.youtube.com/watch?v=YS4wm5gD8DM&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_02_3_pandas_grouping.ipynb)

* Part 2.4: Using Apply and Map in Pandas for Keras [[Video]](https://www.youtube.com/watch?v=XNCEZ4WaPBY&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_02_4_pandas_functional.ipynb)

* **Part 2.5: Feature Engineering in Pandas for Deep Learning in Keras** [[Video]](https://www.youtube.com/watch?v=BWPTj4_Mi9E&list=PLjy4p-07OYzulelvJ5KVaT2pDlxivl_BN) [[Notebook]](t81_558_class_02_5_pandas_features.ipynb)

# Google CoLab Instructions

The following code ensures that Google CoLab is running the correct version of TensorFlow.

```

try:

%tensorflow_version 2.x

COLAB = True

print("Note: using Google CoLab")

except:

print("Note: not using Google CoLab")

COLAB = False

```

# Part 2.5: Feature Engineering

Feature engineering is an essential part of machine learning. For now, we will manually engineer features. However, later in this course, we will see some techniques for automatic feature engineering.

## Calculated Fields

It is possible to add new fields to the data frame that your program calculates from the other fields. We can create a new column that gives the weight in kilograms. The equation to calculate a metric weight, given weight in pounds, is:

$ m_{(kg)} = m_{(lb)} \times 0.45359237 $

The following Python code performs this transformation:

```

import os

import pandas as pd

df = pd.read_csv(

"https://data.heatonresearch.com/data/t81-558/auto-mpg.csv",

na_values=['NA', '?'])

df.insert(1, 'weight_kg', (df['weight'] * 0.45359237).astype(int))

pd.set_option('display.max_columns', 6)

pd.set_option('display.max_rows', 5)

df

```
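One detail worth noticing in the cell above is that `astype(int)` truncates toward zero instead of rounding. The plain-Python sketch below shows the difference on a few illustrative weights (the values are chosen for the example and are not read from the dataset).

```python
LB_TO_KG = 0.45359237  # kilograms per pound, as in the formula above

weights_lb = [3504, 3436, 2130]  # illustrative values only

# int() drops the fractional part, which matches what astype(int) does.
truncated = [int(w * LB_TO_KG) for w in weights_lb]

# round() picks the nearest whole kilogram instead.
rounded = [round(w * LB_TO_KG) for w in weights_lb]

print(truncated)
print(rounded)
```

Whether truncation or rounding is preferable depends on the application; for a neural network input the difference of at most one kilogram is unlikely to matter.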

## Google API Keys

Sometimes you will use external APIs to obtain data. The following examples show how to use a Google API key to encode addresses for use with neural networks. To use these, you will need your own Google API key. The key I have below is not a real key; you need to put your own in there. Google will ask for a credit card, but unless you use a huge number of lookups, there will be no actual cost. YOU ARE NOT required to get a Google API key for this class; this only shows you how. If you would like to get a Google API key, visit this site and obtain one for **geocode**.

[Google API Keys](https://developers.google.com/maps/documentation/embed/get-api-key)

```

GOOGLE_KEY = 'REPLACE WITH YOUR GOOGLE API KEY'

```

# Other Examples: Dealing with Addresses

Addresses can be difficult to encode into a neural network. There are many different approaches, and you must consider how you can transform the address into something more meaningful. Map coordinates can be a good approach: [latitude and longitude](https://en.wikipedia.org/wiki/Geographic_coordinate_system) give a useful numeric encoding. Thanks to the power of the Internet, it is relatively easy to transform an address into its latitude and longitude values. The following code determines the coordinates of [Washington University](https://wustl.edu/):

```

import requests

address = "1 Brookings Dr, St. Louis, MO 63130"

response = requests.get(

'https://maps.googleapis.com/maps/api/geocode/json?key={}&address={}' \

.format(GOOGLE_KEY,address))

resp_json_payload = response.json()

if 'error_message' in resp_json_payload:

print(resp_json_payload['error_message'])

else:

print(resp_json_payload['results'][0]['geometry']['location'])

```
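Addresses contain spaces and commas, so it can be safer to percent-encode the query string explicitly than to paste the address into the URL with `format`. The sketch below builds the same request URL with the standard library and makes no network call; `build_geocode_url` and the placeholder key are hypothetical names used only here.

```python
from urllib.parse import urlencode

# Same endpoint as in the request above.
GEOCODE_URL = "https://maps.googleapis.com/maps/api/geocode/json"

def build_geocode_url(key, address):
    """Return the request URL with the key and address percent-encoded."""
    return GEOCODE_URL + "?" + urlencode({"key": key, "address": address})

url = build_geocode_url("YOUR_KEY", "1 Brookings Dr, St. Louis, MO 63130")
print(url)
```

An equivalent option is to pass the same dictionary through the `params` argument of `requests.get`, which performs this encoding itself.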

If latitude and longitude are fed into the neural network as two features, they might not be overly helpful. These two values would allow your neural network to cluster locations on a map. Sometimes clustering locations on a map can be useful. Figure 2.SMK shows the percentage of the population that smokes in the USA by state.

**Figure 2.SMK: Smokers by State**

The above map shows that certain behaviors, like smoking, can cluster by geographic region.

However, often you will want to transform the coordinates into distances. It is reasonably easy to estimate the distance between any two points on Earth by using the [great circle distance](https://en.wikipedia.org/wiki/Great-circle_distance) between any two points on a sphere:

$\Delta\sigma=\arccos\bigl(\sin\phi_1\cdot\sin\phi_2+\cos\phi_1\cdot\cos\phi_2\cdot\cos(\Delta\lambda)\bigr)$

$d = r \, \Delta\sigma$

Here $\phi_1$ and $\phi_2$ are the two latitudes, $\Delta\lambda$ is the difference in longitude, and $r$ is the radius of the sphere. The following code computes this distance, using the equivalent haversine form of the central angle $\Delta\sigma$:

```

from math import sin, cos, sqrt, atan2, radians

# Distance function

def distance_lat_lng(lat1,lng1,lat2,lng2):

# approximate radius of earth in km

R = 6373.0

# degrees to radians (lat/lon are in degrees)

lat1 = radians(lat1)

lng1 = radians(lng1)

lat2 = radians(lat2)

lng2 = radians(lng2)

dlng = lng2 - lng1

dlat = lat2 - lat1

a = sin(dlat / 2)**2 + cos(lat1) * cos(lat2) * sin(dlng / 2)**2

c = 2 * atan2(sqrt(a), sqrt(1 - a))

return R * c

# Find lat lon for address

def lookup_lat_lng(address):

response = requests.get(

'https://maps.googleapis.com/maps/api/geocode/json?key={}&address={}' \

.format(GOOGLE_KEY,address))

json = response.json()

if len(json['results']) == 0:

print("Can't find: {}".format(address))

return 0,0

map = json['results'][0]['geometry']['location']

return map['lat'],map['lng']

# Distance between two locations

import requests

address1 = "1 Brookings Dr, St. Louis, MO 63130"

address2 = "3301 College Ave, Fort Lauderdale, FL 33314"

lat1, lng1 = lookup_lat_lng(address1)

lat2, lng2 = lookup_lat_lng(address2)

print("Distance, St. Louis, MO to Ft. Lauderdale, FL: {} km".format(

distance_lat_lng(lat1,lng1,lat2,lng2)))

```
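The function above uses the haversine form, while the formula in the text is written with an arccosine. The offline sketch below checks that the two give the same central angle. The coordinates are rough values assumed for illustration (they are not results returned by the geocoding API), and the function names are hypothetical.

```python
from math import radians, sin, cos, acos, atan2, sqrt

R_KM = 6373.0  # same approximate Earth radius as in the function above

def central_angle_arccos(lat1, lng1, lat2, lng2):
    """Central angle from the spherical law of cosines (arccos form)."""
    p1, p2 = radians(lat1), radians(lat2)
    dl = radians(lng2 - lng1)
    return acos(sin(p1) * sin(p2) + cos(p1) * cos(p2) * cos(dl))

def central_angle_haversine(lat1, lng1, lat2, lng2):
    """Central angle from the haversine form."""
    p1, p2 = radians(lat1), radians(lat2)
    dp, dl = p2 - p1, radians(lng2 - lng1)
    a = sin(dp / 2) ** 2 + cos(p1) * cos(p2) * sin(dl / 2) ** 2
    return 2 * atan2(sqrt(a), sqrt(1 - a))

# Approximate coordinates, assumed for illustration only.
st_louis = (38.648, -90.305)
fort_lauderdale = (26.080, -80.240)

d_arccos = R_KM * central_angle_arccos(*st_louis, *fort_lauderdale)
d_haversine = R_KM * central_angle_haversine(*st_louis, *fort_lauderdale)
print(d_arccos, d_haversine)
```

The haversine form is usually preferred in code because the arccosine loses precision when the two points are very close together.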

Distances can be a useful means to encode addresses. Think about which distances are likely to be meaningful for your dataset. Consider:

* Distance to a major metropolitan area

* Distance to a competitor

* Distance to a distribution center

* Distance to a retail outlet

The following code calculates the distance between 10 universities and Washington University in St. Louis:

```

# Encoding other universities by their distance to Washington University

schools = [

["Princeton University, Princeton, NJ 08544", 'Princeton'],

["Massachusetts Hall, Cambridge, MA 02138", 'Harvard'],

["5801 S Ellis Ave, Chicago, IL 60637", 'University of Chicago'],

["Yale, New Haven, CT 06520", 'Yale'],

["116th St & Broadway, New York, NY 10027", 'Columbia University'],

["450 Serra Mall, Stanford, CA 94305", 'Stanford'],

["77 Massachusetts Ave, Cambridge, MA 02139", 'MIT'],

["Duke University, Durham, NC 27708", 'Duke University'],

["University of Pennsylvania, Philadelphia, PA 19104",

'University of Pennsylvania'],

["Johns Hopkins University, Baltimore, MD 21218", 'Johns Hopkins']

]

lat1, lng1 = lookup_lat_lng("1 Brookings Dr, St. Louis, MO 63130")

for address, name in schools:

lat2,lng2 = lookup_lat_lng(address)

dist = distance_lat_lng(lat1,lng1,lat2,lng2)

print("School '{}', distance to wustl is: {}".format(name,dist))

```

| github_jupyter |

# SSNet Predictions

This notebook is meant for hands-on interaction with the code and data used in `SSNet_predictions.py`. Annotations explaining the general functioning of each section and the other modules they reference are provided. Similar notebooks may be added for individual models and combiners in the future. Note that the code shown here does not necessarily reflect the content of the script version.

This cell can be run to easily convert this notebook to a Python script:

```

!jupyter nbconvert --to script SSNet_predictions_notebook.ipynb

```

## License

```

'''

==================================================LICENSING TERMS==================================================

This code and data was developed by employees of the National Institute of Standards and Technology (NIST), an agency of the Federal Government. Pursuant to title 17 United States Code Section 105, works of NIST employees are not subject to copyright protection in the United States and are considered to be in the public domain. The code and data is provided by NIST as a public service and is expressly provided "AS IS." NIST MAKES NO WARRANTY OF ANY KIND, EXPRESS, IMPLIED OR STATUTORY, INCLUDING, WITHOUT LIMITATION, THE IMPLIED WARRANTY OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE, NON-INFRINGEMENT AND DATA ACCURACY. NIST does not warrant or make any representations regarding the use of the data or the results thereof, including but not limited to the correctness, accuracy, reliability or usefulness of the data. NIST SHALL NOT BE LIABLE AND YOU HEREBY RELEASE NIST FROM LIABILITY FOR ANY INDIRECT, CONSEQUENTIAL, SPECIAL, OR INCIDENTAL DAMAGES (INCLUDING DAMAGES FOR LOSS OF BUSINESS PROFITS, BUSINESS INTERRUPTION, LOSS OF BUSINESS INFORMATION, AND THE LIKE), WHETHER ARISING IN TORT, CONTRACT, OR OTHERWISE, ARISING FROM OR RELATING TO THE DATA (OR THE USE OF OR INABILITY TO USE THIS DATA), EVEN IF NIST HAS BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

To the extent that NIST may hold copyright in countries other than the United States, you are hereby granted the non-exclusive irrevocable and unconditional right to print, publish, prepare derivative works and distribute the NIST data, in any medium, or authorize others to do so on your behalf, on a royalty-free basis throughout the world.

You may improve, modify, and create derivative works of the code or the data or any portion of the code or the data, and you may copy and distribute such modifications or works. Modified works should carry a notice stating that you changed the code or the data and should note the date and nature of any such change. Please explicitly acknowledge the National Institute of Standards and Technology as the source of the code or the data: Citation recommendations are provided below. Permission to use this code and data is contingent upon your acceptance of the terms of this agreement and upon your providing appropriate acknowledgments of NIST's creation of the code and data.

Paper Title:

SSNet: a Sagittal Stratum-inspired Neural Network Framework for Sentiment Analysis

SSNet authors and developers:

Apostol Vassilev:

Affiliation: National Institute of Standards and Technology

Email: apostol.vassilev@nist.gov

Munawar Hasan:

Affiliation: National Institute of Standards and Technology

Email: munawar.hasan@nist.gov

Jin Honglan

Affiliation: National Institute of Standards and Technology

Email: honglan.jin@nist.gov

====================================================================================================================

'''

'''

This is master file that runs all the three combiners proposed in the paper.

Use following snippet to run all the three combiners: python SSNet_predictions.py

Please note that this code has tensorflow dependencies.

'''

```

## Imports/Dependencies

TensorFlow is the main machine learning framework used to implement, train, and apply the models. Pandas and NumPy are used for general data preprocessing and manipulation. Components from Matplotlib/Pyplot and IPython which are absent from `SSNet_predictions.py` are utilized here to provide enhanced interactivity and visualization. Functions from the following scripts (corresponding to the combiner models described in the paper) are imported:

- `SSNet_Neural_Network.py`

- `SSNet_Bayesian_Decision.py`

- `SSNet_Heuristic_Hybrid.py`

```

import tensorflow as tf

import math

import re

import pandas as pd

import numpy as np

import random

import os

import csv

import matplotlib.pyplot as plt

from IPython.display import JSON

import itertools

from SSNet_Neural_Network import nn

from SSNet_Bayesian_Decision import bayesian_decision

from SSNet_Heuristic_Hybrid import heuristic_hybrid

imdb_5ktr = 'imdb_train_5k.csv'

model_a_tr = 'model_1_5ktrain.csv'

model_b_tr = 'model_2_5ktrain.csv'

# model_c_tr = 'model_3_bert_result_train_5k.csv'

# model_d_tr = 'model_4_use_result_train_5k.csv'

model_c_tr = 'model_3_5ktrain.csv'

model_d_tr = 'model_4_5ktrain.csv'

model_a_te = 'model_1_25ktest.csv'

model_b_te = 'model_2_25ktest.csv'

# model_c_te = 'model_3_bert_result_test_25k.csv'

# model_d_te = 'model_4_use_result_test_25k.csv'

model_c_te = 'model_3_25ktest.csv'

model_d_te = 'model_4_25ktest.csv'

```

## Utilities

### Training Dict Threshold

```

def get_training_dict_threshold(split):

training_dict = dict()

if split == "5K":

training_dict["5K"] = [

[model_a_tr, model_b_tr, model_c_tr, model_d_tr], [

model_a_te, model_b_te, model_c_te, model_d_te]

]

return training_dict

list(itertools.combinations('1234', 2))

```

### Training Dictionary

```

def get_training_dict(split):

training_dict = dict()

# Store a running list of model combinations

# included_models = []

if split == "5K":

# Loop through integers 2 to 4 (inclusive); the number of component models in each combination

for r in range(2, 5):

# Generate combinations of model indices (without repetition)

for c in itertools.combinations(map(str, range(1, 5)), r):

training_dict['model_{{{}}}'.format(','.join(c))] = [

# Generate the file names corresponding to each model's output on both the training and testing data

[f'model_{m}_{n}{t}.csv' for m in c] for n, t in [

('5k', 'train'),

('25k', 'test')

]

]

return training_dict

# Test the function

JSON(get_training_dict("5K"))

# Compile a list of text files containing reviews and their corresponding sentiment labels

imdb_25k_list = list()

data_dir = 'models/train'

for file_name in os.listdir(f'../{data_dir}/pos'):

if file_name != '.DS_Store':

imdb_25k_list.append([file_name, str(1)])

for file_name in os.listdir(f'../{data_dir}/neg'):

if file_name != '.DS_Store':

imdb_25k_list.append([file_name, str(0)])

SAMPLE_SPLIT = ["5K"]

len(imdb_25k_list)

model_weights = []

```
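The key and file naming scheme used by `get_training_dict` can be checked in isolation. The sketch below reproduces only the naming logic; `combination_keys` is a hypothetical helper written for this illustration and is not part of the SSNet scripts.

```python
import itertools

def combination_keys(n_models=4):
    """Build one key per subset of 2..n_models model indices."""
    keys = []
    for r in range(2, n_models + 1):
        indices = map(str, range(1, n_models + 1))
        for combo in itertools.combinations(indices, r):
            # '{{' and '}}' are literal braces; '{}' receives the joined indices.
            keys.append('model_{{{}}}'.format(','.join(combo)))
    return keys

keys = combination_keys()
print(len(keys))          # 6 pairs + 4 triples + 1 quadruple
print(keys[0], keys[-1])

# File names for one combination, following the same f-string pattern.
train_files = [f'model_{m}_5ktrain.csv' for m in ('1', '2')]
print(train_files)
```

With four component models this gives eleven combinations, so the training loop that iterates over the dictionary fits the neural network combiner at least eleven times.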

## Train Predictors

Train the predictors and return the results.

```

def train_predictor():

for split in SAMPLE_SPLIT:

print("Sample Split: ", split)

imdb_list = list()

training_dict = None

training_dict_threshold = None

if split == "5K":

df_imdb_tr = pd.read_csv(imdb_5ktr)

for index in df_imdb_tr.index:

file_name = str(df_imdb_tr['file'][index])

label = int(df_imdb_tr['label'][index])

imdb_list.append([file_name, str(label)])

random.shuffle(imdb_list)

training_dict = get_training_dict(split)

training_dict_threshold = get_training_dict_threshold(split)

random.shuffle(imdb_list)

training_dict = get_training_dict(split)

training_dict_threshold = get_training_dict_threshold(split)

acc_dict_nn = dict()

acc_dict_bdc = dict()

for k, v in training_dict.items():

tr_list = list()

te_list = list()

for i in range(len(v[0])):

df = pd.read_csv(v[0][i])

df_dict = dict()

for idx in df.index:

file_name = str(df['file'][idx])

proba = float(df['prob'][idx])

df_dict[file_name] = proba

tr_list.append(df_dict)

for i in range(len(v[1])):

df = pd.read_csv(v[1][i])

df_dict = dict()

for idx in df.index:

file_name = str(df['file'][idx])

proba = float(df['prob'][idx])

df_dict[file_name] = proba

te_list.append(df_dict)

assert len(tr_list) == len(te_list), "train and test samples mismatch ...."

tr_acc = -1.

te_acc = -1.

while True:

tr_acc, te_acc, weights = nn(tr_list=tr_list, imdb_tr_list=imdb_list,

te_list=te_list, imdb_te_list=imdb_25k_list)

model_weights.append(weights)

if weights[0][0] == 0. or weights[0][1] == 0.:

print("bad event ...., training again")

print("\t" +k)

else:

break

acc_dict_nn[k] = [tr_acc, te_acc]

acc_dict_bdc[k] = bayesian_decision(tr_list=tr_list, imdb_tr_list=imdb_list,

te_list=te_list, imdb_te_list=imdb_25k_list)

for k, v in training_dict_threshold.items():

tr_list = list()

te_list = list()

for i in range(len(v[0])):

df = pd.read_csv(v[0][i])

df_dict = dict()

for idx in df.index:

file_name = str(df['file'][idx])

proba = float(df['prob'][idx])

df_dict[file_name] = proba

tr_list.append(df_dict)

for i in range(len(v[1])):

df = pd.read_csv(v[1][i])

df_dict = dict()

for idx in df.index:

file_name = str(df['file'][idx])

proba = float(df['prob'][idx])

df_dict[file_name] = proba

te_list.append(df_dict)

hh_dict = heuristic_hybrid(tr_list=tr_list, imdb_tr_list=imdb_list,

te_list=te_list, imdb_te_list=imdb_25k_list)

nn_metrics = []

# Print summaries of the results for each combiner

#print("Training Complete: ")

print("Neural Network Combiner: ")

for k, v in acc_dict_nn.items():

print("\t" +k +": training accuracy = " +str(v[0]) + ", test accuracy = " +str(v[1]))

nn_metrics.append(v)

bdr_metrics = []

print("\n")

print("Bayesian Decision Rule Combiner: ")

for k, v in acc_dict_bdc.items():

print("\t" +k)

for i, j in v.items():

print("\t\t" +i +": training accuracy = " +str(j[0]) +", test accuracy = " +str(j[1]))

bdr_metrics.append(v)

hh_metrics = []

print("\n")

print("Heuristic-Hybrid Combiner: ")

for k, v in hh_dict.items():

print("Base:", k)

for index in range(len(v)):

print("\t\t", v[index])

print("\n")

hh_metrics.append(v)

return nn_metrics, bdr_metrics, hh_metrics

W = [m[0] for m in model_weights[:7]]

print(W)

# plt.pcolormesh(model_weights)

```
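The four loops in `train_predictor` that turn a CSV file into a `{file name: probability}` dictionary all follow the same pattern, so they could be factored into one helper. The sketch below assumes the files have `file` and `prob` columns, as the cell above suggests; `load_probabilities` is a hypothetical name, and the sample contents are made up.

```python
import csv
import io

def load_probabilities(handle):
    """Map each 'file' entry to its float 'prob' from an open CSV handle."""
    return {row['file']: float(row['prob']) for row in csv.DictReader(handle)}

# In-memory stand-in for one of the model_*.csv files (contents are made up).
sample_csv = io.StringIO("file,prob\n0_9.txt,0.91\n1_2.txt,0.08\n")
probs = load_probabilities(sample_csv)
print(probs)
```

With a real file the call would look like `with open(path, newline='') as f: probs = load_probabilities(f)`, and `tr_list` or `te_list` could then be built with a single list comprehension over the file names.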

## Result Aggregation

Runs the predictor training script and displays the results

```

trials = []

for i in range(1):

results = train_predictor()

trials.append(results)

def to_list(d):

L = [v for k, v in d.items()]

return L

# Convert result dictionaries to lists

for k, t in enumerate(trials):

for i in range(len(trials[k])):

for j, m in enumerate(trials[k][i]):

# for k, v in m.items():

if type(m) is dict:

trials[k][i][j] = to_list(m)

for j2, m2 in enumerate(m):

if type(m2) is dict:

trials[k][i][j][j2] = to_list(m2)

JSON(list(trials))

```

## Visualization of Results

```

use_scienceplots = True

b = trials[0][0]

# print(trials[0][0])

l = ['Train', 'Test']

combiner_names = [

'Neural Network',

'Bayesian Decision Rule',

'Heuristic-Hybrid'

]

bdr_props = ['Max', 'Avg', 'Sum', 'Majority']

hh_props = l + ['Threshold']

label_data = [l, bdr_props, hh_props]

# plot_style = 'ggplot'

if use_scienceplots:

try:

plt.style.use('science')

plt.style.use(['science','no-latex'])

except:

pass

plt.style.use('ggplot')

def grouped_plot(C=0, sections=2, reduce=0):

labels = [x.split('_')[-1] for x in get_training_dict('5K').keys()]

values = trials[0][C]

# print(values)

# valshape = np.array(values).shape

# if len(valshape) == 3:

# values

reduced = False

combiner_type = combiner_names[C]

if 'Bayesian' in combiner_type:

for i, v in enumerate(values):

for j, vi in enumerate(v):

if type(vi) in [list, tuple]:

values[i][j] = vi[reduce]

elif 'Heuristic' in combiner_type:

# print(values)

values = values[reduce]

# print(values)

if any(g in combiner_type for g in ['Bayesian', 'Heuristic']):

reduced = True

# plt.bar(labels, trials[0][0])

pos = np.arange(len(labels))

fig, ax = plt.subplots(figsize=(10, 5))

# sections = min(len(values[0]), sections)

sections = min(len(label_data[C]), sections)

for n in range(sections):

sections_ = min(len(values[0]), sections)

barwidth = (0.5/sections_)

# V = [x[n] if n <= len(x) else None for x in values]

V = []

for x in values:

try:

V.append(x[n])

except:

V.append(0)

# print(C, n, min(len(label_data[C]), n))

section_labels = label_data[C][min(len(label_data[C])-1, n)]

section_pos = pos+(barwidth*n)

# print(V, section_labels, section_pos, labels)

ax.bar(section_pos, V, barwidth, label=section_labels)

# ticks = pos-(barwidth*sections/4)

ticks = pos+(barwidth*round((sections-2)/2))

# print(ticks)

ax.set_xticks(ticks)

ax.set_xticklabels(labels)

ax.set_ylabel('Accuracy (%)')

ax.set_xlabel('Contributing Models')

s = l[reduce] if reduced else ''

title = f'{combiner_names[C]} Combiner | {s} Accuracy by Model'

# title += ' - '+l[reduce]

ax.set_title(title, pad=30)

# ax.margins(0.5)

# plt.subplots_adjust(left=0.3)

ax.legend(loc='upper right', bbox_to_anchor=(1.2, 1))

# fig.tight_layout(pad=1.5)

return ax

```

### Performance

Visualizes each combiner's accuracy on the training and testing datasets for every combination of component models

```

# Loop through the combiner indices and generate the graph for each one

for i in range(2):

grouped_plot(C=i, sections=4, reduce=1)

# Read a CSV file and return a (nested) list of its rows (split on commas)

# Params:

# filename: the name of the CSV file

# *or* [a and b]; the model number (e.g., "2") and dataset (e.g., "5ktrain")

def read_csv(filename=None, a=None, b=None, s=1):

if not filename:

filename = f'./model_{int(a)}_{b}.csv'

# If file extension is missing, assume CSV

if not filename.endswith('.csv'):

filename += '.csv'

with open(filename) as predictions:

reader = csv.reader(predictions)

data = list(reader)

data.sort(key=lambda d: d[s])

return data

imdb_data = []

for f in [5]:

imdb_data.extend(read_csv(f'imdb_train_{f}k.csv', s=2))

imdb_data.sort(key=lambda d: d[2])

print([d[2] for d in imdb_data[:3]])

imdb_data = [d[1:] for d in imdb_data]

```

### Predictions

```

p = []

# for f in range(2):

dataset = '5ktrain'

plot_size = (12, 6)

fig = plt.figure(figsize=plot_size)

plt.rcParams["figure.figsize"] = plot_size

fig, ax = plt.subplots()

# ax.set_xscale('log')

# ax.set_yscale('log')

# Load predictions for each model

for f in list('1234'):

p.append(read_csv(a=f, b=dataset))

# p.append(read_csv('imdb_train_5k'))

p.append(imdb_data)

# p.append(read_csv(a='2', b=dataset))

# Convert strings in data to floating-point numbers

def prep_data(n):

return [float(m[0]) for m in n if '.' in m[0]]

# Prepare the data to graph

points = [prep_data(n) for n in p]

# ax.scatter(*points)

# Draw the plot

ax.scatter(*points[:2], alpha=0.5, s=5, c=points[4], cmap='inferno')

ax.axis('off')

```

### Losses

Plot the distribution of loss values (absolute value of difference between predicted and actual value) for each model.

```

target = [0, 1, 2, 3]

A = 1 / len(target)

# losses = np.abs(np.array(points[1]) - np.array(points[2]))

losses = []

for t in target:

ys = np.array(prep_data(imdb_data))

# ys = np.random.randint(0, 2, ys.shape)

loss = np.abs(np.array(prep_data(p[t])) - np.array(ys))

losses.append(loss)

x = plt.hist(losses, bins=15, alpha=1)

np.array(prep_data(p[0])).shape

```
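The loss used here is the absolute difference between a predicted probability and the 0/1 label. The sketch below computes it on made-up values and assumes that predictions and labels are listed in the same order, which the real data must also satisfy for the histogram to be meaningful. `absolute_losses` is a hypothetical helper.

```python
def absolute_losses(predictions, labels):
    """Per-sample loss |prediction - label| for two aligned sequences."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    return [abs(p - y) for p, y in zip(predictions, labels)]

# Made-up probabilities and binary labels, for illustration only.
losses = absolute_losses([0.9, 0.2, 0.6, 0.4], [1, 0, 0, 1])

# A loss above 0.5 means the prediction falls on the wrong side of 0.5.
misclassified = sum(loss > 0.5 for loss in losses)
print(losses, misclassified)
```

Under this definition a histogram concentrated near 0 indicates confident correct predictions, while mass near 1 indicates confident mistakes.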

### Prediction Distribution

Plot a histogram of the models' predictions.

```

target = [0, 1, 2, 3]

A = 1 / len(target)

# for t in target:

# plt.hist(points[t], bins=50, alpha=A)

plt.style.use('seaborn-deep')

x = plt.hist([points[t] for t in target], bins=15)

p[3][:10]

a = {

'a': 5,

'b': 35,

'c': 2.8

}

# plt.plot(a)

```

| github_jupyter |

<a href="https://colab.research.google.com/gist/justheuristic/4c82ef4d448ce62cb5459484f66f56aa/practice.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

### Practice 1: Parallel GloVe

In this assignment we'll build parallel GloVe training from scratch. Well, almost from scratch:

* we'll use python's builtin [`multiprocessing`](https://docs.python.org/3/library/multiprocessing.html) library

* and learn to access numpy arrays from multiple processes!

```

%env MKL_NUM_THREADS=1

%env NUMEXPR_NUM_THREADS=1

%env OMP_NUM_THREADS=1

# set numpy to single-threaded mode for benchmarking

!pip install --upgrade nltk datasets tqdm

!wget https://raw.githubusercontent.com/mryab/efficient-dl-systems/main/week02_distributed/utils.py -O utils.py

import time, random

import multiprocessing as mp

import numpy as np

from tqdm import tqdm, trange

from IPython.display import clear_output

import matplotlib.pyplot as plt

%matplotlib inline

```

### Multiprocessing basics

```

def foo(i):

    """ Imagine a particularly computation-heavy function... """

    print(end=f"Began foo({i})...\n")

    result = np.sin(i)

    time.sleep(abs(result))

    print(end=f"Finished foo({i}) = {result:.3f}.\n")

    return result

%%time

results_naive = [foo(i) for i in range(10)]

```

Same, but with multiple processes

```

%%time

processes = []

for i in range(10):

    proc = mp.Process(target=foo, args=[i])

    processes.append(proc)

print(f"Created {len(processes)} processes!")

# start in parallel

for proc in processes:

    proc.start()

# wait for everyone to finish

for proc in processes:

    proc.join()  # wait until proc terminates

```


Great! But how do we collect the values?

__Solution 1:__ with pipes!

A pipe has two "sides", with __one__ process on each side:

* `pipe_side.send(data)` - throw data into the pipe (do not wait for it to be read)

* `data = pipe_side.recv()` - read data. If there is none, wait for someone to send data

__Rules:__

* each side should be controlled by __one__ process

* data transferred through pipes must be serializable

* if `duplex=True`, processes can communicate both ways

* if `duplex=False`, the pipe is one-way: the first ("left") connection can only receive and the second ("right") connection can only send
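The duplex rules above can be checked without spawning any processes. The snippet below is a minimal sketch that keeps both ends of each pipe in a single process purely for illustration; the variable names are made up for this example.

```python
import multiprocessing as mp

# duplex=True (the default): both ends can send and receive
end_a, end_b = mp.Pipe()
end_a.send("ping")
received_by_b = end_b.recv()
end_b.send("pong")
received_by_a = end_a.recv()

# duplex=False: the first connection only receives, the second only sends
receiver, sender = mp.Pipe(duplex=False)
sender.send([1, 2, 3])
payload = receiver.recv()

# Using a one-way end in the wrong direction raises OSError
try:
    receiver.send("wrong way")
    wrong_direction_failed = False
except OSError:
    wrong_direction_failed = True

print(received_by_b, received_by_a, payload, wrong_direction_failed)
```

In real code each end would be owned by a different process, as the rules above require.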

```

side_A, side_B = mp.Pipe()

side_A.send(123)

side_A.send({'ololo': np.random.randn(3)})

print("side_B.recv() -> ", side_B.recv())

print("side_B.recv() -> ", side_B.recv())

# note: calling recv a third time will hang the process (waiting for someone to send data)

def compute_and_send(i, output_pipe):

    print(end=f"Began compute_and_send({i})...\n")

    result = np.sin(i)

    time.sleep(abs(result))

    print(end=f"Finished compute_and_send({i}) = {result:.3f}.\n")

    output_pipe.send(result)

%%time

result_pipes = []

for i in range(10):

    side_A, side_B = mp.Pipe(duplex=False)

    # note: duplex=False means that side_B can only send

    # and side_A can only recv. Otherwise it's bidirectional

    result_pipes.append(side_A)

    proc = mp.Process(target=compute_and_send, args=[i, side_B])

    proc.start()

print("MAIN PROCESS: awaiting results...")

for pipe in result_pipes:

    print(f"MAIN_PROCESS: received {pipe.recv()}")

print("MAIN PROCESS: done!")

```

__Solution 2:__ with multiprocessing templates

Multiprocessing contains some template data structures that help you communicate between processes.

One such structure is `mp.Queue`, a queue that can be accessed by multiple processes in parallel.

* `queue.put` adds the value to the queue, accessible by all other processes

* `queue.get` returns the earliest added value and removes it from queue
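As a quick illustration of the two methods above, here is a minimal single-process sketch showing first-in, first-out order with one producer. It also shows that `get` accepts a `timeout` and raises `queue.Empty` instead of waiting forever. The name `shared_queue` is only illustrative.

```python
import multiprocessing as mp
from queue import Empty  # mp.Queue raises the standard queue.Empty on timeout

shared_queue = mp.Queue()

# put() three values, then get() them back in the same order
for item in ["first", "second", "third"]:
    shared_queue.put(item)
received = [shared_queue.get() for _ in range(3)]

# The queue is now empty: a plain get() would block,
# so pass a timeout to fail fast instead
try:
    shared_queue.get(timeout=0.1)
    timed_out = False
except Empty:
    timed_out = True

print(received, timed_out)
```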

```

queue = mp.Queue()

def func_A(queue):

    print("A: awaiting queue...")

    print("A: retrieved from queue:", queue.get())

    print("A: awaiting queue...")

    print("A: retrieved from queue:", queue.get())

    print("A: done!")

def func_B(i, queue):

    value = np.random.rand()

    time.sleep(value)

    print(f"proc_B{i}: putting more stuff into queue!")

    queue.put(value)

proc_A = mp.Process(target=func_A, args=[queue])

proc_A.start();

proc_B1 = mp.Process(target=func_B, args=[1, queue])

proc_B2 = mp.Process(target=func_B, args=[2, queue])

proc_B1.start(), proc_B2.start();

```

__Important note:__ you can see that the two values above are identical.

This is because proc_B1 and proc_B2 were forked (cloned) with __the same random state!__

To mitigate this issue, run `np.random.seed()` in each process (same for torch, tensorflow).

<details>

<summary>In fact, please go and do that <b>right now!</b></summary>

<img src='https://media.tenor.com/images/32c950f36a61ec7e5060f5eee9140396/tenor.gif' height=200px>

</details>
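The effect of re-seeding can be sketched as follows. This example assumes a Unix-like system where the `fork` start method is available, which is the behaviour the note above describes. The helper names `draw` and `sample_from_children` are made up for this illustration.

```python
import multiprocessing as mp
import numpy as np

ctx = mp.get_context("fork")  # assumption: fork is available (Linux / macOS)

def draw(reseed, queue):
    """Child process: optionally re-seed, then report one random number."""
    if reseed:
        np.random.seed()  # no argument: fresh seed from OS entropy
    queue.put(np.random.rand())

def sample_from_children(reseed, n_children=2):
    """Fork n_children processes and collect one random number from each."""
    queue = ctx.Queue()
    procs = [ctx.Process(target=draw, args=(reseed, queue))
             for _ in range(n_children)]
    for proc in procs:
        proc.start()
    values = [queue.get() for _ in procs]  # read results before joining
    for proc in procs:
        proc.join()
    return values

inherited = sample_from_children(reseed=False)  # children share the parent's state
reseeded = sample_from_children(reseed=True)    # each child draws its own seed

print("inherited state:", inherited)
print("after np.random.seed():", reseeded)
```

Without re-seeding, both children print the same number; with it, the numbers differ.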

```

```

__Less important note:__ `mp.Queue vs mp.Pipe`

- pipes are much faster for 1v1 communication

- queues support arbitrary number of processes

- queues are implemented with pipes

### GloVe preprocessing

Before we can train GloVe, we must first construct the co-occurrence matrix.

```

import datasets

data = datasets.load_dataset('wikitext', 'wikitext-103-raw-v1')

# for fast debugging, you can temporarily use smaller data: 'wikitext-2-raw-v1'

print("Example:", data['train']['text'][5])

```

__First,__ let's build a vocabulary:

```

from collections import Counter

from nltk.tokenize import NLTKWordTokenizer

tokenizer = NLTKWordTokenizer()

def count_tokens(lines, top_k=None):

    """ Tokenize lines and return top_k most frequent tokens and their counts """

    sent_tokens = tokenizer.tokenize_sents(map(str.lower, lines))

    token_counts = Counter([token for sent in sent_tokens for token in sent])

    return Counter(dict(token_counts.most_common(top_k)))

count_tokens(data['train']['text'][:100], top_k=10)

# sequential algorithm

texts = data['train']['text'][:100_000]

vocabulary_size = 32_000

batch_size = 10_000

token_counts = Counter()

for batch_start in trange(0, len(texts), batch_size):

    batch_texts = texts[batch_start: batch_start + batch_size]

    batch_counts = count_tokens(batch_texts, top_k=vocabulary_size)

    token_counts += Counter(batch_counts)

# save for later

token_counts_reference = Counter(token_counts)

```

### Let's parallelize (20% points)

__Your task__ is to speed up the code above using multiprocessing with queues and/or pipes _(or [shared memory](https://docs.python.org/3/library/multiprocessing.shared_memory.html) if you're up to that)_.

__Kudos__ for implementing some form of global progress tracker (like progressbar above)

Please do **not** use task executors (e.g. `mp.Pool`, joblib, `ProcessPoolExecutor`); we'll get to them soon!
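One possible shape for a queue-based solution, sketched on toy data only: workers pull batches from a task queue, count words, and push partial `Counter`s to a result queue that the main process merges. This is a hedged illustration, not the reference solution. `count_words` is a whitespace-splitting stand-in for `count_tokens`, and the `fork` start method is assumed (Unix-like systems).

```python
import multiprocessing as mp
from collections import Counter

def count_words(lines):
    """Toy stand-in for count_tokens: lowercase and split on whitespace."""
    return Counter(word for line in lines for word in line.lower().split())

def worker(task_queue, result_queue):
    """Count words in batches until a None sentinel arrives."""
    while True:
        batch = task_queue.get()
        if batch is None:
            break
        result_queue.put(count_words(batch))

def parallel_count(lines, batch_size, n_workers):
    ctx = mp.get_context("fork")  # assumption: fork is available
    task_queue, result_queue = ctx.Queue(), ctx.Queue()
    workers = [ctx.Process(target=worker, args=(task_queue, result_queue))
               for _ in range(n_workers)]
    for proc in workers:
        proc.start()
    batches = [lines[i: i + batch_size] for i in range(0, len(lines), batch_size)]
    for batch in batches:
        task_queue.put(batch)
    for _ in workers:
        task_queue.put(None)  # one sentinel per worker
    total = Counter()
    for _ in batches:  # drain results before joining to avoid deadlock
        total += result_queue.get()
    for proc in workers:
        proc.join()
    return total

toy_lines = ["the cat sat", "The dog sat", "a cat ran"] * 4
parallel_counts = parallel_count(toy_lines, batch_size=5, n_workers=2)
print(parallel_counts.most_common(3))
```

The number of results received so far doubles as a simple global progress counter.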

```

texts = data['train']['text'][:100_000]

vocabulary_size = 32_000

batch_size = 10_000

<YOUR CODE HERE>

token_counts = <...>

assert len(token_counts) == len(token_counts_reference)

for token, ref_count in token_counts_reference.items():

    assert token in token_counts, token

    assert token_counts[token] == ref_count, token

token_counts = Counter(dict(token_counts.most_common(vocabulary_size)))

vocabulary = sorted(token_counts.keys())

token_to_index = {token: i for i, token in enumerate(vocabulary)}

assert len(vocabulary) == vocabulary_size, len(vocabulary)

print("Well done!")

```

### Part 2: Construct co-occurrence matrix (10% points)

__Your task__ is to count co-occurrences of all words in a 5-token window. Please use the same preprocessing and tokenizer as above.

__Also:__ please only count words that are in the vocabulary defined above.

__Note:__ this task and everything below has no instructions/interfaces. We will design those interfaces __together at the seminar.__

The detailed instructions will appear later tonight, after the seminar is over.

However, if you want to write the code from scratch, feel free to ignore these instructions.

```

import scipy.sparse  # import the submodule explicitly so scipy.sparse is always available

def count_token_cooccurences(lines, vocabulary_size: int, window_size: int):

    """ Tokenize lines and return a sparse matrix of distance-weighted co-occurrence counts """

    cooc = Counter()

    for line in lines:

        tokens = tokenizer.tokenize(line.lower())

        # keep only in-vocabulary tokens, mapped to their indices

        token_ix = [token_to_index[token] for token in tokens

                    if token in token_to_index]

        for i in range(len(token_ix)):

            for j in range(max(i - window_size, 0),

                           min(i + window_size + 1, len(token_ix))):

                if i != j:

                    cooc[token_ix[i], token_ix[j]] += 1 / abs(i - j)

    return counter_to_matrix(cooc, vocabulary_size)

def counter_to_matrix(counter, vocabulary_size):

    keys, values = zip(*counter.items())

    ii, jj = zip(*keys)

    return scipy.sparse.csr_matrix((values, (ii, jj)), dtype='float32',

                                   shape=(vocabulary_size, vocabulary_size))

texts = data['train']['text'][:100_000]

batch_size = 10_000

window_size = 5

cooc = scipy.sparse.csr_matrix((vocabulary_size, vocabulary_size), dtype='float32')

for batch_start in trange(0, len(texts), batch_size):

    batch_texts = texts[batch_start: batch_start + batch_size]

    batch_cooc = count_token_cooccurences(batch_texts, vocabulary_size, window_size)

    cooc += batch_cooc

# This cell will run for a couple minutes, go get some tea!

reference_cooc = cooc

```
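To see what the counting loop above produces, here is a small self-contained sketch of the same distance-weighted window on a three-token toy sequence. `weighted_pairs` is a hypothetical helper that mirrors the inner loops of `count_token_cooccurences` without the tokenizer or the sparse matrix.

```python
from collections import Counter

def weighted_pairs(token_ids, window_size):
    """Accumulate 1 / distance for every ordered pair within the window."""
    cooc = Counter()
    for i in range(len(token_ids)):
        lo = max(i - window_size, 0)
        hi = min(i + window_size + 1, len(token_ids))
        for j in range(lo, hi):
            if i != j:
                cooc[token_ids[i], token_ids[j]] += 1 / abs(i - j)
    return cooc

pairs = weighted_pairs([0, 1, 2], window_size=2)
for (a, b), weight in sorted(pairs.items()):
    print(f"({a}, {b}) -> {weight}")
```

Adjacent tokens contribute a weight of 1 and tokens two positions apart contribute 0.5. Each pair is counted in both directions, so the resulting counts are symmetric.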

__Simple parallelism with `mp.Pool`__