| code (string, lengths 38 to 801k) | repo_path (string, lengths 6 to 263) |
|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # `satn_to_seq`

# Converts values of invasion saturation into sequence numbers

import numpy as np

import porespy as ps

import matplotlib.pyplot as plt

from edt import edt

# The arguments and default values for this function are:

import inspect

inspect.signature(ps.filters.satn_to_seq)

# Generate an image containing invasion saturations using the ``drainage`` simulation:

np.random.seed(0)

im = ps.generators.blobs([200, 200], porosity=0.5)

inv = ps.simulations.drainage(im=im, voxel_size=1, g=0)

# ## `satn`

seq = ps.filters.satn_to_seq(satn=inv.im_satn)

fig, ax = plt.subplots(1, 2, figsize=[12, 6])

ax[0].imshow(inv.im_satn/im, origin='lower', interpolation='none')

ax[0].set_title('Invasion map by saturation')

ax[0].axis(False)

ax[1].imshow(seq/im, origin='lower', interpolation='none')

ax[1].set_title('Invasion map by sequence')

ax[1].axis(False);

# ## `im`

# Passing the boolean image lets the function correctly determine voxels that are solid vs uninvaded, which are both labelled 0.

# +

fig, ax = plt.subplots(1, 2, figsize=[12, 6])

seq = ps.filters.satn_to_seq(satn=inv.im_satn)

ax[0].imshow(seq, origin='lower', interpolation='none')

ax[0].set_title('Invasion sequence without im')

ax[0].axis(False)

seq = ps.filters.satn_to_seq(satn=inv.im_satn, im=im)

ax[1].imshow(seq, origin='lower', interpolation='none')

ax[1].set_title('Invasion sequence with im')

ax[1].axis(False);
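# As a rough illustration of what the conversion does, here is a minimal NumPy sketch. It is not PoreSpy's implementation: `satn_to_seq_sketch`, `satn_demo` and `im_demo` are hypothetical names, and marking uninvaded void voxels with -1 when `im` is supplied is an assumed convention. Each distinct saturation value is ranked, so voxels invaded at the same saturation share a sequence number.

```python
import numpy as np


def satn_to_seq_sketch(satn, im=None):
    """Rank saturation values into sequence numbers (illustrative only)."""
    satn = np.asarray(satn, dtype=float)
    seq = np.zeros(satn.shape, dtype=int)
    invaded = satn > 0
    # np.unique sorts the values; return_inverse gives each voxel's rank
    _, ranks = np.unique(satn[invaded], return_inverse=True)
    seq[invaded] = ranks + 1
    if im is not None:
        # Assumed convention: void voxels that were never invaded become -1,
        # so they can be told apart from solid voxels (which stay 0)
        im = np.asarray(im, dtype=bool)
        seq[im & ~invaded] = -1
    return seq


satn_demo = np.array([[0.0, 0.2, 0.2], [0.5, 1.0, 0.0]])
im_demo = np.array([[False, True, True], [True, True, True]])
seq_no_im = satn_to_seq_sketch(satn_demo)
seq_with_im = satn_to_seq_sketch(satn_demo, im=im_demo)
```

# Without `im`, both zero-saturation voxels in the demo are indistinguishable; with `im`, only the void one is flagged as uninvaded.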

|

examples/filters/reference/satn_to_seq.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/yohanesnuwara/reservoir-engineering/blob/master/docs/testing_matbal.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="2i6PesJ8vnoY" colab_type="code" colab={}

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# + id="qSp2JQhqu7I3" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 134} outputId="3d42bbfa-45dd-4ef5-e9a4-6f6f72bed675"

# !git clone https://github.com/yohanesnuwara/reservoir-engineering

# + id="h165vBcPwIZU" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 118} outputId="d897c0f5-83a3-41ed-bed2-977328bccdd6"

# !git clone https://github.com/yohanesnuwara/pyreservoir

# + [markdown] id="Q2uflxWwvUHz" colab_type="text"

# ## Gas Condensate Material Balance

# + id="b5AwM0TZ-38Z" colab_type="code" colab={}

import sys

sys.path.append('/content/pyreservoir/pvt')

import gascorrelation

# + id="bFYi7m8FwQf8" colab_type="code" colab={}

import sys

sys.path.append('/content/pyreservoir/matbal')

from plot import *

# + [markdown] id="wH-_ax7-vwyf" colab_type="text"

# ### Data 1. Ideal data (Rs and Bo are known, measured)

# + id="GKSN4_tAvufu" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 462} outputId="2af5e914-24bd-4979-c004-0e2734277e11"

columns = ['p', 'Np', 'Gp', 'Bg', 'Bo', 'Rs', 'Rv']

data = pd.read_csv('/content/reservoir-engineering/Unit 10 Gas-Condensate Reservoirs/data/Table 10.15-PVT and Production Data for Problem 10.5.csv', names=columns)

data

# + [markdown] id="qtuN_X60BfMG" colab_type="text"

# #### Plot 1

# + id="N-28O-O2AqaA" colab_type="code" colab={}

Pdp = 4400 # psia

p = data['p'].values # psia

Np = data['Np'].values # STB

Gp = data['Gp'].values # scf

Bg = data['Bg'].values # RB/scf

Bo = data['Bo'].values # RB/STB

Rs = data['Rs'].values # scf/STB

Rv = data['Rv'].values # STB/scf

# + id="O312aJF5wGx_" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 295} outputId="311a65f5-5dda-493f-b690-f0b4abb830e5"

x = condensate()

F, Eg = condensate.plot1(x, Pdp, p, Rs, Rv, Rv[0], Bo, Bg, Bg[0], Np, Gp, Gp[0], Rs[0])

|

docs/testing_matbal.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: env37

# language: python

# name: env37

# ---

# +

# default_exp models.wavenet

# +

# hide

import sys

sys.path.append("..")

# -

# # Wavenet model

#

# > The `WaveNet` architecture for time series forecasting. <https://arxiv.org/pdf/1609.03499.pdf>

#

# Mostly copied from <https://github.com/MSRDL/Deep4Cast>

# hide

from nbdev.showdoc import *

from fastcore.test import *

#export

from fastcore.utils import *

from fastcore.imports import *

from fastai.basics import *

# # Model

# +

# export

class ConcreteDropout(torch.nn.Module):
    """Applies Dropout to the input, even at prediction time and learns dropout probability
    from the data.

    In convolutional neural networks, we can use dropout to drop entire channels using
    the 'channel_wise' argument.

    Arguments:
        * dropout_regularizer (float): Should be set to 2 / N, where N is the number of training examples.
        * init_range (tuple): Initial range for dropout probabilities.
        * channel_wise (boolean): apply dropout over all input or across convolutional channels.

    """
    def __init__(self,
                 dropout_regularizer=1e-5,
                 init_range=(0.1, 0.3),
                 channel_wise=False):
        super(ConcreteDropout, self).__init__()
        self.dropout_regularizer = dropout_regularizer
        self.init_range = init_range
        self.channel_wise = channel_wise

        # Initialize dropout probability
        init_min = np.log(init_range[0]) - np.log(1. - init_range[0])
        init_max = np.log(init_range[1]) - np.log(1. - init_range[1])
        self.p_logit = torch.nn.Parameter(
            torch.empty(1).uniform_(init_min, init_max))

    def forward(self, x):
        """Returns input but with randomly dropped out values."""
        # Get the dropout probability
        p = torch.sigmoid(self.p_logit)

        # Apply Concrete Dropout to input
        out = self._concrete_dropout(x, p)

        # Regularization term for dropout parameters
        dropout_regularizer = p * torch.log(p)
        dropout_regularizer += (1. - p) * torch.log(1. - p)

        # The size of the dropout regularization depends on the kind of input
        if self.channel_wise:
            # Dropout only applied to channel dimension
            input_dim = x.shape[1]
        else:
            # Dropout applied to all dimensions
            input_dim = np.prod(x.shape[1:])
        dropout_regularizer *= self.dropout_regularizer * input_dim

        return out, dropout_regularizer.mean()

    def _concrete_dropout(self, x, p):
        # Empirical parameters for the concrete distribution
        eps = 1e-7
        temp = 0.1

        # Apply Concrete dropout channel wise or across all input
        if self.channel_wise:
            unif_noise = torch.rand_like(x[:, :, [0]])
        else:
            unif_noise = torch.rand_like(x)

        drop_prob = (torch.log(p + eps)
                     - torch.log(1 - p + eps)
                     + torch.log(unif_noise + eps)
                     - torch.log(1 - unif_noise + eps))
        drop_prob = torch.sigmoid(drop_prob / temp)
        random_tensor = 1 - drop_prob

        # Need to make sure we have the right shape for the Dropout mask
        if self.channel_wise:
            random_tensor = random_tensor.repeat([1, 1, x.shape[2]])

        # Drop weights
        retain_prob = 1 - p
        x = torch.mul(x, random_tensor)
        x /= retain_prob

        return x

# -

# export

class WaveNet(torch.nn.Module):
    """Implements `WaveNet` architecture for time series forecasting. Inherits
    from pytorch `Module <https://pytorch.org/docs/stable/nn.html#torch.nn.Module>`_.
    Vector forecasts are made via a fully-connected linear layer.

    References:
        - `WaveNet: A Generative Model for Raw Audio <https://arxiv.org/pdf/1609.03499.pdf>`_

    Arguments:
        * input_channels (int): Number of covariates in input time series.
        * output_channels (int): Number of target time series.
        * horizon (int): Number of time steps to forecast.
        * hidden_channels (int): Number of channels in convolutional hidden layers.
        * skip_channels (int): Number of channels in convolutional layers for skip connections.
        * n_layers (int): Number of layers per Wavenet block (determines receptive field size).
        * n_blocks (int): Number of Wavenet blocks.
        * dilation (int): Dilation factor for temporal convolution.

    """
    def __init__(self,
                 input_channels,
                 output_channels,
                 horizon,
                 hidden_channels=64,
                 skip_channels=64,
                 n_layers=7,
                 n_blocks=1,
                 dilation=2):
        """Initialize variables."""
        super(WaveNet, self).__init__()
        self.output_channels = output_channels
        self.horizon = horizon
        self.hidden_channels = hidden_channels
        self.skip_channels = skip_channels
        self.n_layers = n_layers
        self.n_blocks = n_blocks
        self.dilation = dilation
        self.dilations = [dilation**i for i in range(n_layers)] * n_blocks

        # Set up first layer for input
        self.do_conv_input = ConcreteDropout(channel_wise=True)
        self.conv_input = torch.nn.Conv1d(
            in_channels=input_channels,
            out_channels=hidden_channels,
            kernel_size=1
        )

        # Set up main WaveNet layers
        self.do, self.conv, self.skip, self.resi = [], [], [], []
        for d in self.dilations:
            self.do.append(ConcreteDropout(channel_wise=True))
            self.conv.append(torch.nn.Conv1d(in_channels=hidden_channels,
                                             out_channels=hidden_channels,
                                             kernel_size=2,
                                             dilation=d))
            self.skip.append(torch.nn.Conv1d(in_channels=hidden_channels,
                                             out_channels=skip_channels,
                                             kernel_size=1))
            self.resi.append(torch.nn.Conv1d(in_channels=hidden_channels,
                                             out_channels=hidden_channels,
                                             kernel_size=1))
        self.do = torch.nn.ModuleList(self.do)
        self.conv = torch.nn.ModuleList(self.conv)
        self.skip = torch.nn.ModuleList(self.skip)
        self.resi = torch.nn.ModuleList(self.resi)

        # Set up nonlinear output layers
        self.do_conv_post = ConcreteDropout(channel_wise=True)
        self.conv_post = torch.nn.Conv1d(
            in_channels=skip_channels,
            out_channels=skip_channels,
            kernel_size=1
        )
        self.do_linear_mean = ConcreteDropout()
        self.do_linear_std = ConcreteDropout()
        self.do_linear_df = ConcreteDropout()
        self.linear_mean = torch.nn.Linear(
            skip_channels, horizon*output_channels)
        self.linear_std = torch.nn.Linear(
            skip_channels, horizon*output_channels)
        self.linear_df = torch.nn.Linear(
            skip_channels, horizon*output_channels)

    def forward(self, inputs):
        """Forward function."""
        output, reg_e = self.encode(inputs)
        output_df, reg_d = self.decode(output)

        # Regularization (computed here but not returned)
        regularizer = reg_e + reg_d

        return output_df

    def encode(self, inputs: torch.Tensor):
        """Returns embedding vectors.

        Arguments:
            * inputs: time series input to make forecasts for

        """
        # Input layer
        output, res_conv_input = self.do_conv_input(inputs)
        output = self.conv_input(output)

        # Loop over WaveNet layers and blocks
        regs, skip_connections = [], []
        for do, conv, skip, resi in zip(self.do, self.conv, self.skip, self.resi):
            layer_in = output
            output, reg = do(layer_in)
            output = conv(output)
            output = torch.nn.functional.relu(output)
            skip = skip(output)
            output = resi(output)
            output = output + layer_in[:, :, -output.size(2):]
            regs.append(reg)
            skip_connections.append(skip)

        # Sum up regularizer terms and skip connections
        regs = sum(r for r in regs)
        output = sum([s[:, :, -output.size(2):] for s in skip_connections])

        # Nonlinear output layers
        output, res_conv_post = self.do_conv_post(output)
        output = torch.nn.functional.relu(output)
        output = self.conv_post(output)
        output = torch.nn.functional.relu(output)
        output = output[:, :, [-1]]
        output = output.transpose(1, 2)

        # Regularization terms
        regularizer = res_conv_input \
            + regs \
            + res_conv_post

        return output, regularizer

    def decode(self, inputs: torch.Tensor):
        """Returns forecasts based on embedding vectors.

        Arguments:
            * inputs: embedding vectors to generate forecasts for

        """
        # Apply dense layer to match output length
        output_mean, res_linear_mean = self.do_linear_mean(inputs)
        output_std, res_linear_std = self.do_linear_std(inputs)
        output_df, res_linear_df = self.do_linear_df(inputs)
        output_mean = self.linear_mean(output_mean)
        output_std = self.linear_std(output_std).exp()
        output_df = self.linear_df(output_df).exp()

        # Reshape the layer output to match targets
        # Shape is (batch_size, output_channels, horizon)
        batch_size = inputs.shape[0]
        output_mean = output_mean.reshape(
            (batch_size, self.output_channels, self.horizon)
        )
        output_std = output_std.reshape(
            (batch_size, self.output_channels, self.horizon)
        )
        output_df = output_df.reshape(
            (batch_size, self.output_channels, self.horizon)
        )

        # Regularization terms
        regularizer = res_linear_mean + res_linear_std + res_linear_df

        return output_df, regularizer

    @property
    def n_parameters(self):
        """Returns the number of model parameters."""
        par = list(self.parameters())
        s = sum([np.prod(list(d.size())) for d in par])
        return s

    @property
    def receptive_field_size(self):
        """Returns the length of the receptive field."""
        return self.dilation * max(self.dilations)
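
# The receptive field of a stack of unpadded dilated convolutions can also be counted layer by layer. The sketch below uses the textbook formula `1 + sum((kernel_size - 1) * d)`; the helper names are hypothetical and the formula is a general result, not something stated in this notebook. With the default single block (`n_layers=7`, `dilation=2`, `kernel_size=2`) it gives the same value as `dilation * max(dilations)`; with more than one block the two expressions differ.

```python
def dilations_for(n_layers, n_blocks, dilation=2):
    """Same dilation schedule as WaveNet.__init__ builds."""
    return [dilation ** i for i in range(n_layers)] * n_blocks


def receptive_field(dilations, kernel_size=2):
    """Input steps seen by one output step of stacked, unpadded dilated convs."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


rf_one_block = receptive_field(dilations_for(n_layers=7, n_blocks=1))
rf_two_blocks = receptive_field(dilations_for(n_layers=7, n_blocks=2))
property_value_one_block = 2 * max(dilations_for(n_layers=7, n_blocks=1))
```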

# +

class LogTransform(Transform):
    r"""Natural logarithm of target covariate + `offset`.

    .. math:: y_i = log_e ( x_i + \mbox{offset} )

    Args:
        * target_dim (list): list of indices to transform.
        * offset (float): amount to add before taking the natural logarithm

    Example:
        >>> LogTransform(target_dim=[0], offset=1.0)

    """
    def __init__(self, target_dim=None, offset=0.0):
        self.offset = offset
        self.target_dim = target_dim

    def encodes(self, sample):
        X = sample[0]
        y = sample[1]
        if self.target_dim:
            X[:, self.target_dim, :] = torch.log(self.offset + X[:, self.target_dim, :])
            y[:, self.target_dim, :] = torch.log(self.offset + y[:, self.target_dim, :])
        else:
            X = torch.log(self.offset + X)
            y = torch.log(self.offset + y)
        return X, y

    def decodes(self, sample):
        X, y = sample[0], sample[1]
        if self.target_dim:
            X[:, self.target_dim, :] = torch.exp(X[:, self.target_dim, :]) - self.offset
            # Decode y as well, mirroring what `encodes` does to it
            y[:, self.target_dim, :] = torch.exp(y[:, self.target_dim, :]) - self.offset
        else:
            X = torch.exp(X) - self.offset
            y = torch.exp(y) - self.offset
        return X, y

# -

tmf = LogTransform([0], offset=1.0)

x, y = tensor([[[0.0,1.0,2.1]]]), torch.randn(1,1,3)

_x,_y = tmf((x,y))

test_eq(_x, torch.log(1.0 + tensor([[[0.0,1.0,2.1]]])))

__a,_b = tmf.decode((_x,_y))
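
# The round trip that `encodes` and `decodes` are meant to perform can be checked without torch. This is a minimal NumPy sketch with hypothetical helper names (`log_encode`, `log_decode`); it only illustrates that `exp(log(x + offset)) - offset` recovers `x` when `x + offset > 0`.

```python
import numpy as np


def log_encode(x, offset=1.0):
    """Forward transform: log of the shifted values."""
    return np.log(offset + np.asarray(x, dtype=float))


def log_decode(z, offset=1.0):
    """Inverse transform: undo the log, then remove the shift."""
    return np.exp(np.asarray(z, dtype=float)) - offset


x_demo = np.array([0.0, 1.0, 2.1])
z_demo = log_encode(x_demo)
x_back = log_decode(z_demo)
```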

# # Learner

# +

#export

from fastai.callback.all import *

@delegates(WaveNet.__init__)
def wavelet_learner(dbunch, output_channels=None, metrics=None, hidden_channels=89, skip_channels=199, **kwargs):
    "Build a dnn style learner"
    output_channels = ifnone(output_channels, dbunch.train[0][0].shape[0])
    model = WaveNet(input_channels=dbunch.train[0][0].shape[0],
                    output_channels=output_channels,
                    horizon=dbunch.train_dl.horizon,
                    hidden_channels=hidden_channels,
                    skip_channels=skip_channels,
                    **kwargs
                    )
    dbunch.after_batch.add(LogTransform([0], offset=1.0))
    learn = Learner(dbunch, model, loss_func=F.mse_loss, opt_func=Adam, metrics=L(metrics)+L(mae, smape), cbs=[ShowGraphCallback()])
    return learn

# -

from fastseq.all import *

from fastseq.core import *

from fastai.basics import *

# +

# hide

path = untar_data(URLs.m4_daily)

data = TSDataLoaders.from_folder(path, horizon = 14, lookback = 128, bs=16, nrows=100, device='cpu')

test_eq(data.train_dl.one_batch()[0].is_cuda,False)

for o in data.valid_dl:
    print(o[0].shape)

# -

path = untar_data(URLs.m4_daily)

data = TSDataLoaders.from_folder(path, horizon = 14, lookback = 128, bs=64, nrows=1000, device = 'cpu')

learn = wavelet_learner(data)

from fastai.callback.all import *

learn.lr_find()

learn.fit_one_cycle(1, 5e-4)

from fastai.callback.all import *

learn.lr_find()

learn.fit_one_cycle(3, 1e-3)

learn.validate()

# +

# hide

from nbdev.export import *

notebook2script()

# -

|

nbs/archive/_05_models.wavenet.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: venv_pirel_test

# language: python

# name: venv_pirel_test

# ---

# # ```pirel``` Tutorial : introduction and ```pcells```

#

# This is a tutorial for the [```pirel```](https://github.com/giumc/pirel) Python3 module.

#

# ```pirel``` stands for PIezoelectric REsonator Layout, and it is based on the module [```phidl```](https://github.com/amccaugh/phidl)

#

# There are ***four*** packages within ```pirel```: ```pcells``` , ```modifiers``` , ```sweeps``` and ```tools``` . Let's start with ```pcells```.

import pirel.pcells as pc

# ```pcells``` is a collection of classes that are commonly required in piezoelectric resonator design.

# All the cells defined here derive from ```pirel.tools.LayoutPart``` and share:

#

# * ```name``` attribute

# * ```set_params``` method

# * ```get_params``` method

# * ```view``` method

# * ```draw``` method

# * ```get_components``` method

#

# In general, these modules are growing pretty fast in size, so it wouldn't make sense to go through all the pcells/modifiers/sweeps/tools.

#

# Instead, try ```help(pirel.pcells)``` or ```help(pirel.tools)``` when you are looking for information!

#

# An example of a layout class is the InterDigiTated fingers ```pc.IDT```.

idt=pc.IDT(name='TutorialIDT')

# + [markdown] tags=[]

# You can get the parameters available to ```idt``` by just printing it!

# -

idt

# You can get these parameters in a ```dict``` by using ```get_params```.

#

# You can modify any of the parameters and then import them back into the object with ```set_params```.

# + tags=[]

idt_params=idt.get_params()

idt_params["N"]=4

idt.set_params(idt_params)

idt

# -

# At any point, you can visualize the layout cell by calling ```view()```

# + tags=[]

import matplotlib.pyplot as plt

idt.view(blocking=True)

# -

# The output shows a ```phidl.Device```. These ```Device``` instances are powered-up versions of ```gdspy.Cell``` instances.

#

# Refer to [```phidl```](https://github.com/amccaugh/phidl) if you want to learn how many cool things you can do with them.

#

# You can explicitly get this ```Device``` instance by calling the ```draw()``` method.

#

# At that point, you can play around with the cells by using the powerful tools in ```phidl```.

#

# In this example, we will align and distribute two ```idt``` Devices using the ```phidl``` module.

# +

idt.coverage=0.3

cell1=idt.draw()

idt.coverage=0.8 ### yes you can set attributes like this, but you will have to find variable names from typing help(idt)

cell2=idt.draw()

import phidl

import phidl.device_layout as dl

from phidl import quickplot as qp

g=dl.Group([cell1,cell2])

g.distribute(direction='x',spacing=30)

g.align(alignment='y')

cell_tot=phidl.Device()

cell_tot<<cell1

cell_tot<<cell2

qp(cell_tot)

# -

# Feel free to look at ```help(pc)``` to figure out all the classes implemented in this module.

#

# Some classes in ``pc`` are created by subclassing, others by composition of *unit* classes.

# For example, a Lateral Field Excitation RESonator (```pc.LFERes```) is built starting from some components:

#

# * ```pc.IDT```

# * ```pc.Bus```

# * ```pc.EtchPit```

# * ```pc.Anchor```

#

# For any class in ```pc```, you can find components by querying the ```get_components()``` method:

via=pc.Via(name='TutorialVia')

res=pc.LFERes(name='TutorialLFERes')

via.get_components()

res.get_components()

# Note that ```via``` has no components, ```res``` has four.

#

# All layout parameters of each component are also layout parameters of the composed class.

#

# For example, this is the list of parameters that define ```LFERes```:

lfe_params=res.get_params()

res.view()

lfe_params

# Classes built from ```components``` can also have parameters of their own:

# The class ```FBERes``` (Floating Bottom Electrode Resonators) has a parameter that sets the margin of the floating bottom electrode:

# +

fbe=pc.FBERes(name="TutorialFBE")

params=fbe.get_params()

params["PlatePosition"]='in, long'

fbe.set_params(params)

fbe.view()

# -

# A useful feature of ```set_params``` is that functions can be passed.

#

# For example, when setting a resonator anchor, it might happen that some dimensions have to be derived from others.

#

# The resonator overall width can be found by querying the ```active_area``` attribute of the ```idt``` component of ```fbe```:

fbe.idt.active_area

# If you want to set a pitch, ```active area``` will be updated automatically.

# If you want to keep the anchor size a third of the active area, you can simply write

# +

params['AnchorSizeX']=lambda x : x.idt.active_area.x/3

params['AnchorMetalizedX'] = lambda x : x.anchor.size.x*0.8

fbe.set_params(params)

fbe.view()

# -

# Note that ```idt.active_area``` is a ```pt.Point``` instance. To check out what you can do with ```pt.Point```, try ```help(pt.Point)```!

#

# Now, scaling ```IDTPitch``` will scale ```AnchorSizeX``` and ```AnchorMetalizedX``` accordingly...

#

params['IDTPitch']=40

fbe.set_params(params)

fbe.view()
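
# To make the idea of callable parameters concrete, here is a minimal sketch in plain Python. It is not how ```pirel``` implements ```set_params```: `PartSketch`, its attributes and `params_demo` are hypothetical names. The assumption is that plain values are assigned first and callables are then evaluated against the updated object, so derived dimensions follow the ones they depend on each time the parameters are applied.

```python
class PartSketch:
    """Hypothetical stand-in for a layout class; not part of pirel."""

    def __init__(self):
        self.pitch = 20.0
        self.n_fingers = 4
        self.anchor_size_x = 10.0

    @property
    def active_width(self):
        # Derived dimension that changes whenever the pitch changes
        return self.pitch * self.n_fingers

    def set_params(self, params):
        # Assign plain values first so callables see the updated dimensions
        deferred = {}
        for key, value in params.items():
            if callable(value):
                deferred[key] = value
            else:
                setattr(self, key, value)
        # Evaluate each callable against the object itself
        for key, func in deferred.items():
            setattr(self, key, func(self))


part = PartSketch()
params_demo = {"pitch": 20.0, "anchor_size_x": lambda p: p.active_width / 3}
part.set_params(params_demo)
first_anchor = part.anchor_size_x

params_demo["pitch"] = 40.0
part.set_params(params_demo)
second_anchor = part.anchor_size_x
```

# Doubling the pitch in this sketch doubles the derived anchor size, which mirrors the behaviour described for ```IDTPitch``` and ```AnchorSizeX```.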

# If you are bothered by the ```phidl.Port``` labels, just pass the optional ```joined=True``` to the ```check``` method in ```pirel.tools```

# +

import pirel.tools as pt

fbe_cell=fbe.draw()

pt.check(fbe_cell,joined=True)

# -

# Check out the next tutorial on how to power up the ```LayoutPart``` classes using [```pirel.modifiers```](./Tutorial_modifiers.ipynb)

|

tutorials/Tutorial.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernel_info:

# name: python3

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Long-term Investment in SPY

# https://finance.yahoo.com/quote/SPY?p=SPY

# If you have time, it is good to invest in SPY for the long term.

# ## SPY Market

# + outputHidden=false inputHidden=false

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import matplotlib.mlab as mlab

import seaborn as sns

from tabulate import tabulate

import math

from scipy.stats import norm

import warnings

warnings.filterwarnings("ignore")

# yfinance is used to fetch data

import yfinance as yf

yf.pdr_override()

# + outputHidden=false inputHidden=false

# input

symbol = 'SPY'

start = '2007-01-01'

end = '2019-01-01'

# Read data

df = yf.download(symbol,start,end)['Adj Close']

# View Columns

df.head()

# + outputHidden=false inputHidden=false

df.tail()

# + outputHidden=false inputHidden=false

df.min()

# + outputHidden=false inputHidden=false

df.max()

# + outputHidden=false inputHidden=false

from datetime import datetime

from dateutil import relativedelta

d1 = datetime.strptime(start, "%Y-%m-%d")

d2 = datetime.strptime(end, "%Y-%m-%d")

delta = relativedelta.relativedelta(d2,d1)

print('How many years of investing?')

print('%s years' % delta.years)

# -

# ### Starting Cash of 100k to invest in SPY

# + outputHidden=false inputHidden=false

Cash = 100000

# + outputHidden=false inputHidden=false

print('Number of Shares:')

shares = int(Cash/df.iloc[0])

print('{}: {}'.format(symbol, shares))

# + outputHidden=false inputHidden=false

print('Beginning Value:')

shares = int(Cash/df.iloc[0])

Begin_Value = round(shares * df.iloc[0], 2)

print('{}: ${}'.format(symbol, Begin_Value))

# + outputHidden=false inputHidden=false

print('Current Value:')

shares = int(Cash/df.iloc[0])

Current_Value = round(shares * df.iloc[-1], 2)

print('{}: ${}'.format(symbol, Current_Value))

# + outputHidden=false inputHidden=false

returns = df.pct_change().dropna()

# + outputHidden=false inputHidden=false

returns.head()

# + outputHidden=false inputHidden=false

returns.tail()

# + outputHidden=false inputHidden=false

# Calculate cumulative returns

daily_cum_ret=(1+returns).cumprod()

print(daily_cum_ret.tail())

# + outputHidden=false inputHidden=false

# Print the mean

print("mean : ", returns.mean()*100)

# Print the standard deviation

print("Std. dev: ", returns.std()*100)

# Print the skewness

print("skew: ", returns.skew())

# Print the kurtosis

print("kurt: ", returns.kurtosis())

# + outputHidden=false inputHidden=false

# Calculate total return from price data (first and last adjusted close)

total_return = (df.iloc[-1] - df.iloc[0]) / df.iloc[0]

print(total_return)

# + outputHidden=false inputHidden=false

# Annualize the total return over 12 years

annualized_return = ((1+total_return)**(1/12))-1
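
# As a worked example of the two formulas above, the sketch below computes total and annualized return from a first and last price. The function name and the demo prices are hypothetical, chosen only so the arithmetic is easy to follow: a price that doubles over 12 years has a total return of 100% and an annualized return of 2**(1/12) - 1.

```python
def total_and_annualized_return(first_price, last_price, years):
    """Total return over the period and its compound annual equivalent."""
    total = (last_price - first_price) / first_price
    annualized = (1.0 + total) ** (1.0 / years) - 1.0
    return total, annualized


total_demo, annual_demo = total_and_annualized_return(100.0, 200.0, 12)
```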

# + outputHidden=false inputHidden=false

# Calculate annualized volatility from the standard deviation

vol_port = returns.std() * np.sqrt(252)

# + outputHidden=false inputHidden=false

# Calculate the Sharpe ratio

rf = 0.001

sharpe_ratio = (annualized_return - rf) / vol_port

print(sharpe_ratio)

# + outputHidden=false inputHidden=false

# Create a downside return column with the negative returns only

target = 0

downside_returns = returns.loc[returns < target]

# Calculate expected return and std dev of downside

expected_return = returns.mean()

down_stdev = downside_returns.std()

# Calculate the sortino ratio

rf = 0.01

sortino_ratio = (expected_return - rf)/down_stdev

# Print the results

print("Expected return: ", expected_return*100)

print('-' * 50)

print("Downside risk:")

print(down_stdev*100)

print('-' * 50)

print("Sortino ratio:")

print(sortino_ratio)
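
# The Sortino calculation above can be written as a small function. This sketch follows the same definition as the cell (standard deviation of the returns below the target), which is one of several conventions in use; the function name and demo returns are hypothetical. The risk-free rate is assumed to be expressed per period, in the same units as the returns.

```python
import numpy as np


def sortino_ratio_sketch(returns, rf_per_period=0.0, target=0.0):
    """Mean excess return divided by the std of below-target returns."""
    returns = np.asarray(returns, dtype=float)
    downside = returns[returns < target]
    # ddof=1 gives the sample standard deviation, matching pandas' default
    down_stdev = downside.std(ddof=1)
    return (returns.mean() - rf_per_period) / down_stdev


demo_returns = np.array([0.02, -0.01, 0.03, -0.03, 0.01])
sortino_demo = sortino_ratio_sketch(demo_returns)
```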

# + outputHidden=false inputHidden=false

# Calculate the rolling maximum price over a 252-day window

roll_max = df.rolling(center=False,min_periods=1,window=252).max()

# Calculate the daily draw-down relative to the rolling max

daily_draw_down = df/roll_max - 1.0

# Calculate the minimum (negative) daily draw-down

max_daily_draw_down = daily_draw_down.rolling(center=False,min_periods=1,window=252).min()

# Plot the results

plt.figure(figsize=(15,15))

plt.plot(df.index, daily_draw_down, label='Daily drawdown')

plt.plot(df.index, max_daily_draw_down, label='Maximum daily drawdown in time-window')

plt.legend()

plt.show()
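
# Drawdown is defined on prices: how far the current price sits below its running peak. The sketch below shows the idea on a toy series; it uses an expanding (all-time) maximum via `np.maximum.accumulate` rather than a 252-day rolling window, and the function name and prices are hypothetical.

```python
import numpy as np


def drawdown_sketch(prices):
    """Fractional drop of each price from the running peak (0 at new highs)."""
    prices = np.asarray(prices, dtype=float)
    running_max = np.maximum.accumulate(prices)
    return prices / running_max - 1.0


dd_demo = drawdown_sketch([100.0, 120.0, 90.0, 110.0, 130.0])
max_dd_demo = dd_demo.min()  # most negative value is the maximum drawdown
```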

# + outputHidden=false inputHidden=false

# Box plot

returns.plot(kind='box')

# + outputHidden=false inputHidden=false

print("Stock returns: ")

print(returns.mean())

print('-' * 50)

print("Stock risk:")

print(returns.std())

# + outputHidden=false inputHidden=false

rf = 0.001

Sharpe_Ratio = ((returns.mean() - rf) / returns.std()) * np.sqrt(252)

print('Sharpe Ratio: ', Sharpe_Ratio)

# -

# ### Value-at-Risk 99% Confidence

# + outputHidden=false inputHidden=false

# 99% confidence interval

# 0.01 empirical quantile of daily returns

var99 = round((returns).quantile(0.01), 3)

# + outputHidden=false inputHidden=false

print('Value at Risk (99% confidence)')

print(var99)

# + outputHidden=false inputHidden=false

# the percent value of the 1st percentile

print('Percent Value-at-Risk of the 1st percentile')

var_1_perc = var99

print("{:.1f}%".format(-var_1_perc*100))

# + outputHidden=false inputHidden=false

print('Value-at-Risk of 99% for 100,000 investment')

print("${}".format(int(-var99 * 100000)))
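
# Historical Value-at-Risk is simply an empirical quantile of the return series, scaled by the position size. The sketch below shows this on synthetic, evenly spaced returns; the function name and demo data are hypothetical and are not market data.

```python
import numpy as np


def historical_var(returns, confidence=0.99, investment=100_000):
    """Empirical (1 - confidence) quantile and the matching currency loss."""
    q = np.quantile(np.asarray(returns, dtype=float), 1.0 - confidence)
    return q, -q * investment


# 100 evenly spaced synthetic daily returns from -5.0% to +4.9%
demo_rets = np.arange(-50, 50) / 1000.0
q95, loss95 = historical_var(demo_rets, confidence=0.95)
```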

# -

# ### Value-at-Risk 95% Confidence

# + outputHidden=false inputHidden=false

# 95% confidence interval

# 0.05 empirical quantile of daily returns

var95 = round((returns).quantile(0.05), 3)

# + outputHidden=false inputHidden=false

print('Value at Risk (95% confidence)')

print(var95)

# + outputHidden=false inputHidden=false

print('Percent Value-at-Risk of the 5th percentile')

print("{:.1f}%".format(-var95*100))

# + outputHidden=false inputHidden=false

# VaR for 100,000 investment

print('Value-at-Risk of 95% for 100,000 investment')

var_100k = "${}".format(int(-var95 * 100000))

print("${}".format(int(-var95 * 100000)))

# + outputHidden=false inputHidden=false

mean = np.mean(returns)

std_dev = np.std(returns)

# + outputHidden=false inputHidden=false

returns.hist(bins=50, density=True, histtype='stepfilled', alpha=0.5)

x = np.linspace(mean - 3*std_dev, mean + 3*std_dev, 100)

plt.plot(x, norm.pdf(x, mean, std_dev), "r")

plt.title('Histogram of Returns')

plt.show()

# + outputHidden=false inputHidden=false

VaR_90 = norm.ppf(1-0.9, mean, std_dev)

VaR_95 = norm.ppf(1-0.95, mean, std_dev)

VaR_99 = norm.ppf(1-0.99, mean, std_dev)

# + outputHidden=false inputHidden=false

print(tabulate([['90%', VaR_90], ['95%', VaR_95], ['99%', VaR_99]], headers=['Confidence Level', 'Value at Risk']))
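
# Parametric VaR assumes returns are normally distributed and reads the loss threshold off the inverse CDF. The sketch below reproduces the calculation with the standard library's `statistics.NormalDist` instead of `scipy.stats.norm`; the function name and the demo mean and standard deviation are hypothetical.

```python
from statistics import NormalDist


def parametric_var(mean, std_dev, confidence):
    """Return threshold exceeded on the downside with probability 1 - confidence."""
    return NormalDist(mu=mean, sigma=std_dev).inv_cdf(1.0 - confidence)


# Demo: zero mean daily return with 1% daily standard deviation
var_table = {c: parametric_var(0.0, 0.01, c) for c in (0.90, 0.95, 0.99)}
```

# Higher confidence levels give more negative thresholds, as in the table printed above.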

|

Python_Stock/Portfolio_Strategies/Long_term_SPY.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# The notebook is meant to help the user experiment with different models and features. This notebook assumes that there is a saved csv called 'filteredAggregateData.csv' somewhere on your local hard drive. The location must be specified below.

# The following cell imports all of the relevant packages.

# +

############## imports

# general

import statistics

import datetime

import joblib # save and load models (sklearn.externals.joblib has been removed from scikit-learn)

import random

# data manipulation and exploration

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import matplotlib

## machine learning stuff

# preprocessing

from sklearn import preprocessing

# feature selection

from sklearn.feature_selection import SelectKBest, SelectPercentile

from sklearn.feature_selection import f_regression

# pipeline

from sklearn.pipeline import Pipeline

# train/testing

from sklearn.model_selection import train_test_split, KFold, GridSearchCV, cross_val_score

# error calculations

from sklearn.metrics import mean_squared_error, mean_absolute_error, r2_score

# models

from sklearn.linear_model import LinearRegression # linear regression

from sklearn.linear_model import BayesianRidge # Bayesian ridge regression

from sklearn.svm import SVR # support vector machines regression

from sklearn.gaussian_process import GaussianProcessRegressor # import GaussianProcessRegressor

from sklearn.neighbors import KNeighborsRegressor # k-nearest neighbors for regression

from sklearn.neural_network import MLPRegressor # neural network for regression

from sklearn.tree import DecisionTreeRegressor # decision tree regressor

from sklearn.ensemble import RandomForestRegressor # random forest regression

from sklearn.ensemble import AdaBoostRegressor # adaboost for regression

# saving models

# from sklearn.externals import joblib

import joblib

# -

# Imports the API. 'APILoc' is the location of 'API.py' on your local hard drive.

# +

# import the API

APILoc = r"C:\Users\thejo\Documents\school\AI in AG research\API"

import sys

sys.path.insert(0, APILoc)

from API import *

# -

# Load the dataset. Note that the location of the dataset must be specified.

# +

# get aggregate data

aggDataLoc = r'C:\Users\thejo\Documents\school\AI in AG research\experiment\aggregateData_RockSprings_PA.csv'

#aggDataLoc = r'C:\Users\thejo\Documents\school\AI in AG research\experiment\aggregateDataWithVariety.csv'

targetDataLoc = r'C:\Users\thejo\Documents\school\AI in AG research\experiment\aggregateData_GAonly_Annual_final.csv'

aggDf = pd.read_csv(aggDataLoc)

#aggDf = aggDf.drop("Unnamed: 0",axis=1)

targetDf = pd.read_csv(targetDataLoc)

#targetDf = targetDf.drop("Unnamed: 0",axis=1)

# -

# Test to see if the dataset was loaded properly. A table of the first 5 datapoints should appear.

aggDf.head()

#targetDf.head()

# Filter out features that will not be made available for feature selection. All of the features in the list 'XColumnsToKeep' will be made available for feature selection. The features to include are: <br>

# "Julian Day" <br>

# "Time Since Sown (Days)" <br>

# "Time Since Last Harvest (Days)" <br>

# "Total Radiation (MJ/m^2)" <br>

# "Total Rainfall (mm)" <br>

# "Avg Air Temp (C)" <br>

# "Avg Min Temp (C)" <br>

# "Avg Max Temp (C)"<br>

# "Avg Soil Moisture (%)"<br>

# "Day Length (hrs)"<br>

# "Percent Cover (%)"<br>

# +

# filter out the features that will not be used by the machine learning models

# the features to keep:

# xColumnsToKeep = ["Julian Day", "Time Since Sown (Days)", "Time Since Last Harvest (Days)", "Total Radiation (MJ/m^2)",

# "Total Rainfall (mm)", "Avg Air Temp (C)", "Avg Min Temp (C)", "Avg Max Temp (C)",

# "Avg Soil Moisture (%)", "Day Length (hrs)", "Percent Cover (%)"]

xColumnsToKeep = ["Julian Day", "Time Since Sown (Days)", "Total Radiation (MJ/m^2)",

"Total Rainfall (mm)", "Avg Air Temp (C)", "Avg Min Temp (C)", "Avg Max Temp (C)",

"Avg Soil Moisture (%)"]

#xColumnsToKeep = ["Julian Day", "Time Since Sown (Days)", "Total Radiation (MJ/m^2)", "Total Rainfall (mm)"]

# the target to keep

yColumnsToKeep = ["Yield (tons/acre)"]

# get a dataframe containing the features and the targets

xDf = aggDf[xColumnsToKeep]

test_xDf = targetDf[xColumnsToKeep]

yDf = aggDf[yColumnsToKeep]

test_yDf = targetDf[yColumnsToKeep]

# reset the index

xDf = xDf.reset_index(drop=True)

yDf = yDf.reset_index(drop=True)

test_xDf = test_xDf.reset_index(drop=True)

test_yDf = test_yDf.reset_index(drop=True)

pd.set_option('display.max_rows', 2500)

pd.set_option('display.max_columns', 500)

xCols = list(xDf)

# -

# Test to see if the features dataframe and the target dataframe were successfully made.

xDf.head()

yDf.head()

# Let's now define the parameters that will be used to run the machine learning experiments. Parameter grids can be defined so that scikit-learn runs a 5-fold grid search to find each model's best hyperparameters. The parameter grids defined here specify the candidate values for that grid search. <br>

# <br>

# Once the parameter grids are defined, a list of tuples must also be defined. The tuples must take the form of: <br>

# (scikit-learn model, appropriate parameter grid, name of the file to be saved). <br>

# <br>

# Then the number of iterations should be set. This is represented by the variable 'N'. Each model will be evaluated N times (via N-fold cross validation), and the average results of the models over those N iterations will be returned. <br>

# <br>

# 'workingDir' is the directory in which all of the results will be saved. <br>

# <br>

# 'numFeatures' is the number of features that will be selected (via feature selection).
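# As a rough illustration of the N-fold evaluation described above, the sketch below splits sample indices into N folds and averages one score per fold. It is a minimal stand-in written for this note, not the code in `API.py`: the helper names `k_fold_indices` and `mean_score` are hypothetical, the per-fold "score" is a placeholder, and no shuffling is done.

```python
def k_fold_indices(n_samples, n_folds):
    """Yield (train_idx, test_idx) lists that partition range(n_samples)."""
    base, extra = divmod(n_samples, n_folds)
    start = 0
    for fold in range(n_folds):
        # the first `extra` folds receive one additional sample
        size = base + (1 if fold < extra else 0)
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def mean_score(scores):
    """Average the per-fold scores into a single number."""
    return sum(scores) / len(scores)


N = 10
n_samples = 23  # hypothetical dataset size
folds = list(k_fold_indices(n_samples=n_samples, n_folds=N))

# placeholder "score": the fraction of samples held out in each fold
fold_scores = [len(test_idx) / n_samples for _, test_idx in folds]
print(len(folds), mean_score(fold_scores))
```

# Every sample lands in exactly one test fold, so each model is scored once on every data point before the N scores are averaged.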

# +

# hide the warnings because training the neural network causes lots of warnings.

import warnings

warnings.filterwarnings('ignore')

# make the parameter grids for sklearn's gridsearchcv

rfParamGrid = {

'model__n_estimators': [5, 10, 25, 50, 100], # Number of estimators

'model__max_depth': [5, 10, 15, 20], # Maximum depth of the tree

'model__criterion': ["mae"]

}

knnParamGrid ={

'model__n_neighbors':[2,5,10],

'model__weights': ['uniform', 'distance'],

'model__leaf_size': [5, 10, 30, 50]

}

svrParamGrid = {

'model__kernel': ['linear', 'poly', 'rbf', 'sigmoid'],

'model__C': [0.1, 1.0, 5.0, 10.0],

'model__gamma': ["scale", "auto"],

'model__degree': [2,3,4,5]

}

nnParamGrid = {

'model__hidden_layer_sizes':[(3,), (5,), (10,), (3,3), (5,5), (7,7)],

'model__solver': ['sgd', 'adam'],

'model__learning_rate' : ['constant', 'invscaling', 'adaptive'],

'model__learning_rate_init': [0.1, 0.01, 0.001]

}

linRegParamGrid = {}

bayesParamGrid={

'model__n_iter':[100,300,500]

}

dtParamGrid = {

'model__criterion': ['mae'],

'model__max_depth': [5,10,25,50,100]

}

aModelList = [(MLPRegressor(), nnParamGrid, "nnTup.pkl")]

N = 10

workingDir = r"C:\Users\thejo\Documents\school\AI in AG research\experiment"

numFeatures = 8 # 11

# -
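# To get a feel for the cost of the grid search, the sketch below expands a grid shaped like the neural-network grid defined above and counts the fits needed for one 5-fold search. It uses only the standard library; `expand_grid` is a hypothetical helper, and the `model__` prefix is assumed to address a pipeline step named `model` inside `API.py`, which is not shown here.

```python
from itertools import product


def expand_grid(param_grid):
    """Yield one dict per combination of values in a scikit-learn style grid."""
    names = sorted(param_grid)
    for values in product(*(param_grid[name] for name in names)):
        yield dict(zip(names, values))


nn_param_grid = {
    "model__hidden_layer_sizes": [(3,), (5,), (10,), (3, 3), (5, 5), (7, 7)],
    "model__solver": ["sgd", "adam"],
    "model__learning_rate": ["constant", "invscaling", "adaptive"],
    "model__learning_rate_init": [0.1, 0.01, 0.001],
}

candidates = list(expand_grid(nn_param_grid))
n_candidates = len(candidates)                # 6 * 2 * 3 * 3 combinations
inner_folds = 5                               # 5-fold grid search, as described above
fits_per_search = n_candidates * inner_folds  # model fits for a single grid search
print(n_candidates, fits_per_search)
```

# Adding one more value to any list multiplies the number of candidates, so small grids keep the experiment tractable.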

# This cell will run the tests and save the results.

saveMLResults(test_xDf, test_yDf, N, xDf, yDf, aModelList, workingDir, numFeatures, printResults=True)

|

notebooks/modelExperiments112320_PA_to_GA-NN.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:python37]

# language: python

# name: conda-env-python37-py

# ---

# +

# Millions to miss out on the net

# aust addresses un security council over iraq

# -

import requests

import os

from tqdm import tqdm

import spacy

nlp = spacy.load("en")

# +

host = "http://0.0.0.0:8890/correct"

def call_gec(data):
    resp = requests.post(host, json=data)
    res = resp.json()
    return res

# -

data_path = '/home/citao/github/BBC-Dataset-News-Classification/dataset/data_files'

domains = ['business', 'entertainment', 'politics', 'sport', 'tech']

# +

corpus = {d: [] for d in domains}
for domain in domains:
    domain_path = os.path.join(data_path, domain)
    for f in os.listdir(domain_path):
        full_path = os.path.join(domain_path, f)
        try:
            # read each article and split it into sentences with spaCy
            with open(full_path) as fh:
                doc = nlp(fh.read())
            corpus[domain].extend([i.text for i in doc.sents])
        except Exception:
            # report files that could not be read or parsed (e.g. encoding errors)
            print(full_path)
    print(domain, len(corpus[domain]))

# +

import json

json.dump(corpus, open('./bbc_news.json', 'w'))

# +

# text = "England coach <NAME> is already without centre <NAME> and flanker <NAME> while fly-half <NAME> is certain to miss at least the first two games."

# text = "Hi, Guibin! My namme is Citao. The marked was closed yestreday. (This email are sent from OnMail.)"

# text = "Millions to miss out on the net"

# text = "The marked is closed today."

text = """

Hi,

My namme is <NAME> (EID: cw39729) and I do admitted into the Computer Online Master prograssm 2021 spring. In my status panel, I saw a requirement about "Required immunizations".

My question is whether I still need to meets this requirement or not, even thoxugh my program is fully online.

If no, could you please help me to remove this requirements from my application?

Thanks,

"""

# text = "I want go school"

# text = "I I want go to school"

data = {

'text': text,

'iterations': 3,

'min_probability': 0.5,

'min_error_probability': 0.7,

'case_sensitive': True,

'languagetool_post_process': True,

'languagetool_call_thres': 0.7,

'whitelist': ['Citao', 'Guibin', 'Onmail'],

'with_debug_info': True,

}

result = call_gec(data)

print(result['debug_info'])

print(result['input'])

print(result['output'])

print(result['corrections'])

# -

for domain in domains:
    print(domain)
    right_data = record[domain]['pass']
    wrong_data = record[domain]['wrong']
    print(len(right_data), len(wrong_data))

for sent in record['tech']['wrong']:
    print([sent])
    break

# +

import json

total = json.load(open('./bbc_news_result_2_0.8_0.9_False.json'))

i = 0

for min_probability in [0.5, 0.6, 0.7, 0.8]:
    for min_error_probability in [0.7, 0.8, 0.9]:
        for add_spell_check in [True, False]:
            fn = './result/bbc_news_result_2_{}_{}_{}.json'.format(min_probability, min_error_probability, str(add_spell_check))
            tmp = {domain: total[domain][5000*i:5000*(i+1)] for domain in domains}
            print(fn)
            json.dump(tmp, open(fn, 'w'))
            i+=1

# +

import pandas as pd

source_rows = []

for min_probability in [0.5, 0.6, 0.7, 0.8]:
    for min_error_probability in [0.7, 0.8, 0.9]:
        for add_spell_check in [True, False]:
            fn = './result/bbc_news_result_2_{}_{}_{}.json'.format(min_probability, min_error_probability, str(add_spell_check))
            tmp = json.load(open(fn))
            result = {
                'cased': {domain: {'right':[], 'wrong':[]} for domain in domains},
                'uncased': {domain: {'right':[], 'wrong':[]} for domain in domains}
            }
            print(fn)
            for domain in domains:
                for ori_sent, cor_sent, correction in tmp[domain]:
                    if correction == []:
                        result['cased'][domain]['right'].append(ori_sent)
                        result['uncased'][domain]['right'].append(ori_sent)
                    else:
                        result['cased'][domain]['wrong'].append(ori_sent)
                        uncased_correction = [c for c in correction if c[0].lower()!=c[1].lower()]
                        if uncased_correction:
                            result['uncased'][domain]['wrong'].append(ori_sent)
                        else:
                            result['uncased'][domain]['right'].append(ori_sent)
            for case_type in ['cased', 'uncased']:
                row = {
                    'min_probability': min_probability,
                    'min_error_probability': min_error_probability,
                    'add_spell_check': add_spell_check,
                    'cased_type': case_type,
                }
                row.update({d: len(result[case_type][d]['right'])/5000.0 for d in domains})
                source_rows.append(row)

# -
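# The cased/uncased bookkeeping above can be checked on a few toy sentences. The sketch below is a condensed restatement of the same rule, not a replacement for the loop: `classify_sentence` is a hypothetical helper, and the example corrections are made up for illustration.

```python
def classify_sentence(corrections):
    """Return (cased_label, uncased_label) for one sentence.

    `corrections` is a list of [original, corrected, span] triples,
    mirroring the structure unpacked in the loop above.
    """
    if not corrections:
        return "right", "right"
    # keep only corrections that change more than letter case
    substantive = [c for c in corrections if c[0].lower() != c[1].lower()]
    return "wrong", ("wrong" if substantive else "right")


examples = {
    "no corrections": [],
    "case-only change": [["onmail", "OnMail", [0, 6]]],
    "real change": [["are", "is", [10, 13]]],
}
for name, corrections in examples.items():
    print(name, classify_sentence(corrections))
```

# A sentence whose corrections only change capitalisation counts against the model in the cased setting but not in the uncased one.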

df = pd.DataFrame(source_rows)

df

df.to_csv('~/eval_pos.csv', index=False)

for domain in domains:
    df[df['min_probability']==0.]

import numpy as np

1-np.average(df[df['cased_type']=='uncased'].iloc[:, 4:].to_numpy())

# +

path='/home/citao/github/gector/dataset/'

fn = 'wil.ABCN.dev.gold.bea19.0'

source_list = [i.strip() for i in open(path+fn+'.source').readlines()]

target_list = [i.strip() for i in open(path+fn+'.target').readlines()]

diff = 0

for s, t in zip(source_list, target_list):
    if s!=t:
        diff+=1
print(diff, len(source_list), len(target_list))

# -

4384-2819

for s, t in zip(source_list[:10], target_list):
    print(s)
    print(t)
    print()

25000+1565

2819 / (2819+26565)

# +

import Levenshtein

from difflib import SequenceMatcher

from nltk.corpus import words

NLTK_COMMON_WORDS = {w:1 for w in words.words()}

# 1: spelling error

# 2: grammar error

def spell_or_grammer_error(correction):
    ori, cor, _ = correction
    if len(ori.split()) != len(cor.split()):
        return 2
    # dist = Levenshtein.distance(ori, cor)
    # print(dist)
    seq = SequenceMatcher(None, ori, cor)
    ratio = seq.ratio()
    print(ratio)
    # if dist <= 3:
    if ratio > 0.8 and ori not in NLTK_COMMON_WORDS:
        return 1
    else:
        return 2

correction = ['are', 'is', [10, 15]]

spell_or_grammer_error(correction)

# -
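# To see how the similarity threshold behaves, the sketch below applies the same rule to a few word pairs. It is only an illustration: `KNOWN_WORDS` is a small hypothetical stand-in for the NLTK word list (so nothing needs to be downloaded), and `similarity` / `looks_like_spelling_error` are helper names introduced here.

```python
from difflib import SequenceMatcher

# Hypothetical stand-in for the NLTK vocabulary used above.
KNOWN_WORDS = {"are", "is", "name", "market", "marked"}


def similarity(ori, cor):
    """Character-level similarity in [0, 1] as computed by difflib."""
    return SequenceMatcher(None, ori, cor).ratio()


def looks_like_spelling_error(ori, cor, threshold=0.8):
    """Same rule as above: very similar strings and an original that is not a known word."""
    return similarity(ori, cor) > threshold and ori not in KNOWN_WORDS


for ori, cor in [("namme", "name"), ("are", "is"), ("marked", "market")]:
    print(ori, cor, round(similarity(ori, cor), 3), looks_like_spelling_error(ori, cor))
```

# The last pair shows why the vocabulary check matters: "marked" is close to "market" in spelling, but because it is itself a valid word the change is treated as a grammar (word-choice) error rather than a typo.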

|

eval_pos.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

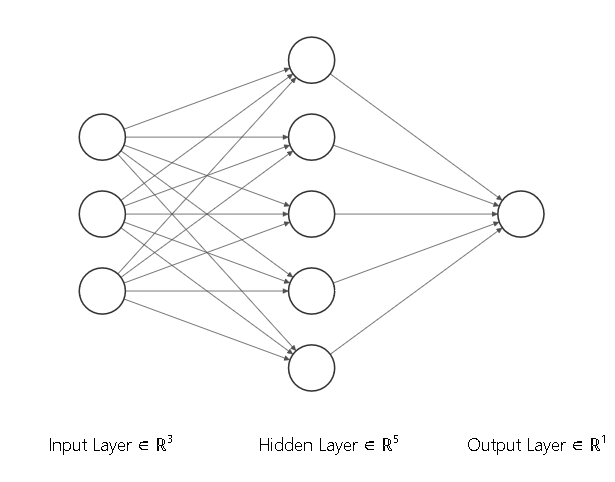

# # Building simple layers of a neural net

#

# ### Dr. <NAME><br><br>Fremont, CA 94536 <br><br>Nov 2019

import torch

import numpy as np

torch.manual_seed(7)

# ### Simple feature vector of dimension 3

features = torch.randn((1,3))

print(features)

# ### Define the size of each layer of the neural network

n_input = features.shape[1] # Must match the shape of the features

n_hidden = 5 # Number of hidden units

n_output = 1 # Number of output units (for example 1 for binary classification)

# ### Weights for the input layer to the hidden layer

W1 = torch.randn(n_input,n_hidden)

# ### Weights for the hidden layer to the output layer

W2 = torch.randn(n_hidden,n_output)

# ### Bias terms for the hidden and the output layer

B1 = torch.randn((1,n_hidden))

B2 = torch.randn((1,n_output))

# ### Define the activation function - sigmoid

# $$\sigma(x)=\frac{1}{1+\exp(-x)}$$

def activation(x):
    """
    Sigmoid activation function
    """
    return 1/(1+torch.exp(-x))

# ### Check the shape of all the tensors

print("Shape of the input features: ",features.shape)

print("Shape of the first tensor of weights (between input and hidden layers): ",W1.shape)

print("Shape of the second tensor of weights (between hidden and output layers): ",W2.shape)

print("Shape of the bias tensor added to the hidden layer: ",B1.shape)

print("Shape of the bias tensor added to the output layer: ",B2.shape)

#

# ### First layer output

# $$\mathbf{h_1} = sigmoid(\mathbf{feature}\times\mathbf{W_1}+\mathbf{B_1})$$

h1 = activation(torch.mm(features,W1)+B1)

print("Shape of the output of the hidden layer",h1.shape)

# ### Second layer output

# $$\mathbf{h_2} = sigmoid(\mathbf{h_1}\times\mathbf{W_2}+\mathbf{B_2})$$

h2 = activation(torch.mm(h1,W2)+B2)

print("Shape of the output layer",h2.shape)

print(h2)
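# The shape bookkeeping of this two-layer forward pass can also be checked without PyTorch. The sketch below is a NumPy stand-in written for illustration (the random values differ from the `torch.manual_seed(7)` run above); it also confirms that the row-vector form used in the code and the column-vector form often seen in textbooks give the same numbers.

```python
import numpy as np

rng = np.random.default_rng(7)


def sigmoid(x):
    """Element-wise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))


features = rng.standard_normal((1, 3))
n_input, n_hidden, n_output = features.shape[1], 5, 1

W1 = rng.standard_normal((n_input, n_hidden))
W2 = rng.standard_normal((n_hidden, n_output))
B1 = rng.standard_normal((1, n_hidden))
B2 = rng.standard_normal((1, n_output))

h1 = sigmoid(features @ W1 + B1)  # (1, 3) @ (3, 5) -> (1, 5)
h2 = sigmoid(h1 @ W2 + B2)        # (1, 5) @ (5, 1) -> (1, 1)

# column-vector convention: transpose everything, multiply on the left
h1_col = sigmoid(W1.T @ features.T + B1.T)

print(h1.shape, h2.shape, np.allclose(h1, h1_col.T))
```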

|

Building a simple NN.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <img src="../../../images/qiskit-heading.gif" alt="Note: In order for images to show up in this jupyter notebook you need to select File => Trusted Notebook" width="500 px" align="left">

# ## _*Superposition*_

#

#

# The latest version of this notebook is available on https://github.com/qiskit/qiskit-tutorial.

#

# ***

# ### Contributors

# <NAME>, <NAME>, <NAME>, <NAME>

#

# ### Qiskit Package Versions

import qiskit

qiskit.__qiskit_version__

# ## Introduction

# Many people tend to think quantum physics is hard math, but this is not actually true. Quantum concepts are very similar to those seen in the linear algebra classes you may have taken as a freshman in college, or even in high school. The challenge of quantum physics is the necessity to accept counter-intuitive ideas, and its lack of a simple underlying theory. We believe that if you can grasp the following two Principles, you will have a good start:

# 1. A physical system in a definite state can still behave randomly.

# 2. Two systems that are too far apart to influence each other can nevertheless behave in ways that, though individually random, are somehow strongly correlated.

#

# In this tutorial, we will discuss the first of these Principles; the second is discussed in [this other tutorial](entanglement_introduction.ipynb).

# +

# useful additional packages

import matplotlib.pyplot as plt

# %matplotlib inline

import numpy as np

# importing Qiskit

from qiskit import QuantumCircuit, QuantumRegister, ClassicalRegister, execute

from qiskit import BasicAer, IBMQ

# import basic plot tools

from qiskit.tools.visualization import plot_histogram

# +

backend = BasicAer.get_backend('qasm_simulator') # run on local simulator by default

# Uncomment the following lines to run on a real device

#IBMQ.load_accounts()

#from qiskit.providers.ibmq import least_busy

#backend = least_busy(IBMQ.backends(operational=True, simulator=False))

#print("the best backend is " + backend.name())

# -

# ## Quantum States - Basis States and Superpositions<a id='section1'></a>

#

# The first Principle above tells us that the results of measuring a quantum state may be random or deterministic, depending on what basis is used. To demonstrate, we will first introduce the computational (or standard) basis for a qubit.

#

# The computational basis is the set containing the ground and excited state $\{|0\rangle,|1\rangle\}$, which also corresponds to the following vectors:

#

# $$|0\rangle =\begin{pmatrix} 1 \\ 0 \end{pmatrix}$$

# $$|1\rangle =\begin{pmatrix} 0 \\ 1 \end{pmatrix}$$

#

# In Python these are represented by

zero = np.array([[1],[0]])

one = np.array([[0],[1]])

# In our quantum processor system (and many other physical quantum processors) it is natural for all qubits to start in the $|0\rangle$ state, known as the ground state. To make the $|1\rangle$ (or excited) state, we use the operator

#

# $$ X =\begin{pmatrix} 0 & 1 \\ 1 & 0 \end{pmatrix}.$$

#

# This $X$ operator is often called a bit-flip because it exactly implements the following:

#

# $$X: |0\rangle \rightarrow |1\rangle$$

# $$X: |1\rangle \rightarrow |0\rangle.$$

#

# In Python this can be represented by the following:

X = np.array([[0,1],[1,0]])

print(np.dot(X,zero))

print(np.dot(X,one))

# Next, we give the two quantum circuits for preparing and measuring a single qubit in the ground and excited states using Qiskit.

# +

# Creating registers

qr = QuantumRegister(1)

cr = ClassicalRegister(1)

# Quantum circuit ground

qc_ground = QuantumCircuit(qr, cr)

qc_ground.measure(qr[0], cr[0])

# Quantum circuit excited

qc_excited = QuantumCircuit(qr, cr)

qc_excited.x(qr)

qc_excited.measure(qr[0], cr[0])

# -

qc_ground.draw(output='mpl')

qc_excited.draw(output='mpl')

# Here we have created two jobs with different quantum circuits; the first to prepare the ground state, and the second to prepare the excited state. Now we can run the prepared jobs.

circuits = [qc_ground, qc_excited]

job = execute(circuits, backend)

result = job.result()

# After the run has been completed, the data can be extracted from the API output and plotted.

plot_histogram(result.get_counts(qc_ground))

plot_histogram(result.get_counts(qc_excited))

# Here we see that the qubit is in the $|0\rangle$ state with 100% probability for the first circuit and in the $|1\rangle$ state with 100% probability for the second circuit. If we had run on a quantum processor rather than the simulator, there would be a difference from the ideal perfect answer due to a combination of measurement error, preparation error, and gate error (for the $|1\rangle$ state).

#

# Up to this point, nothing is different from a classical system of a bit. To go beyond, we must explore what it means to make a superposition. The operation in the quantum circuit language for generating a superposition is the Hadamard gate, $H$. Let's assume for now that this gate is like flipping a fair coin. The result of a flip has two possible outcomes, heads or tails, each occurring with equal probability. If we repeat this simple thought experiment many times, we would expect that on average we will measure as many heads as we do tails. Let heads be $|0\rangle$ and tails be $|1\rangle$.

#

# Let's run the quantum version of this experiment. First we prepare the qubit in the ground state $|0\rangle$. We then apply the Hadamard gate (coin flip). Finally, we measure the state of the qubit. We repeat the experiment 1024 times (shots). As you likely predicted, roughly half the outcomes will be in the $|0\rangle$ state and roughly half will be in the $|1\rangle$ state.

#

# Try the program below.

# +

# Quantum circuit superposition

qc_superposition = QuantumCircuit(qr, cr)

qc_superposition.h(qr)

qc_superposition.measure(qr[0], cr[0])

qc_superposition.draw()

# +

job = execute(qc_superposition, backend, shots = 1024)

result = job.result()

plot_histogram(result.get_counts(qc_superposition))

# -

# Indeed, much like a coin flip, the results are close to 50/50. On the simulator the small deviation comes from the finite number of shots; on a real device, state preparation, measurement, and gate errors add to it. So far, this is still not unexpected. Let's run the experiment again, but this time with two $H$ gates in succession. If we consider the $H$ gate to be analogous to a coin flip, here we would be flipping it twice, and still expecting a 50/50 distribution.

# +

# Quantum circuit two Hadamards

qc_twohadamard = QuantumCircuit(qr, cr)

qc_twohadamard.h(qr)

qc_twohadamard.barrier()

qc_twohadamard.h(qr)

qc_twohadamard.measure(qr[0], cr[0])

qc_twohadamard.draw(output='mpl')

# +

job = execute(qc_twohadamard, backend)

result = job.result()

plot_histogram(result.get_counts(qc_twohadamard))

# -

# This time, the results are surprising. Unlike the classical case, with high probability the outcome is not random, but in the $|0\rangle$ state. *Quantum randomness* is not simply like a classical random coin flip. In both of the above experiments, the system (without noise) is in a definite state, but only in the first case does it behave randomly. This is because, in the first case, via the $H$ gate, we make a uniform superposition of the ground and excited state, $(|0\rangle+|1\rangle)/\sqrt{2}$, but then follow it with a measurement in the computational basis. The act of measurement in the computational basis forces the system to be in either the $|0\rangle$ state or the $|1\rangle$ state with an equal probability (due to the uniformity of the superposition). In the second case, we can think of the second $H$ gate as being a part of the final measurement operation; it changes the measurement basis from the computational basis to a *superposition* basis. The following equations illustrate the action of the $H$ gate on the computational basis states:

# $$H: |0\rangle \rightarrow |+\rangle=\frac{|0\rangle+|1\rangle}{\sqrt{2}}$$

# $$H: |1\rangle \rightarrow |-\rangle=\frac{|0\rangle-|1\rangle}{\sqrt{2}}.$$

# We can redefine this new transformed basis, the superposition basis, as the set {$|+\rangle$, $|-\rangle$}. We now have a different way of looking at the second experiment above. The first $H$ gate prepares the system into a superposition state, namely the $|+\rangle$ state. The second $H$ gate followed by the standard measurement changes it into a measurement in the superposition basis. If the measurement gives 0, we can conclude that the system was in the $|+\rangle$ state before the second $H$ gate, and if we obtain 1, it means the system was in the $|-\rangle$ state. In the above experiment we see that the outcome is mainly 0, suggesting that our system was in the $|+\rangle$ superposition state before the second $H$ gate.

#

#

# The math is best understood if we represent the quantum superposition states $|+\rangle$ and $|-\rangle$ by:

#

# $$|+\rangle =\frac{1}{\sqrt{2}}\begin{pmatrix} 1 \\ 1 \end{pmatrix}$$

# $$|-\rangle =\frac{1}{\sqrt{2}}\begin{pmatrix} 1 \\ -1 \end{pmatrix}$$

#

# A standard measurement, known in quantum mechanics as a projective or von Neumann measurement, takes any superposition state of the qubit and projects it to either the state $|0\rangle$ or the state $|1\rangle$ with a probability determined by:

#

# $$P(i|\psi) = |\langle i|\psi\rangle|^2$$

#

# where $P(i|\psi)$ is the probability of measuring the system in state $i$ given preparation $\psi$.

#

# We have written the Python function ```state_overlap``` to return this:

state_overlap = lambda state1, state2: np.absolute(np.dot(state1.conj().T,state2))**2
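# As a quick numerical check of this rule, the sketch below evaluates $P(i|\psi)$ for the prepared state $|+\rangle$ in both the computational and the superposition basis. It is a self-contained illustration: `overlap_probability` is a name introduced here and plays the same role as `state_overlap`, but returns a plain float.

```python
import numpy as np

zero = np.array([[1], [0]], dtype=complex)
one = np.array([[0], [1]], dtype=complex)
plus = (zero + one) / np.sqrt(2)
minus = (zero - one) / np.sqrt(2)


def overlap_probability(basis_state, prepared_state):
    """Born rule: |<basis|prepared>|^2 for column-vector states."""
    # np.vdot flattens both arguments and conjugates the first one
    amplitude = np.vdot(basis_state, prepared_state)
    return float(np.abs(amplitude) ** 2)


for label, basis in [("0", zero), ("1", one), ("+", plus), ("-", minus)]:
    print(label, round(overlap_probability(basis, plus), 6))
```

# The same state is maximally random in the computational basis yet fully determined in the superposition basis, which is the content of the first Principle.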

# Now that we have a simple way of going from a state to the probability distribution of a standard measurement, we can go back to the case of a superposition made from the Hadamard gate. The Hadamard gate is defined by the matrix:

#

# $$ H =\frac{1}{\sqrt{2}}\begin{pmatrix} 1 & 1 \\ 1 & -1 \end{pmatrix}$$

#

# The $H$ gate acting on the state $|0\rangle$ gives:

Hadamard = np.array([[1,1],[1,-1]],dtype=complex)/np.sqrt(2)

psi1 = np.dot(Hadamard,zero)

P0 = state_overlap(zero,psi1)

P1 = state_overlap(one,psi1)

plot_histogram({'0' : P0.item(0), '1' : P1.item(0)})

# which is the ideal version of the first superposition experiment.

#

# The second experiment involves applying the Hadamard gate twice. While matrix multiplication shows that the product of two Hadamards is the identity operator (meaning that the state $|0\rangle$ remains unchanged), here (as previously mentioned) we prefer to interpret this as doing a measurement in the superposition basis. Using the above definitions, you can show that $H$ transforms the computational basis to the superposition basis.

print(np.dot(Hadamard,zero))

print(np.dot(Hadamard,one))
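# The statement that two Hadamards multiply to the identity can be verified directly. The following is a small self-contained check (the variable names are chosen here for illustration) that also reproduces the ideal outcome of the two-Hadamard experiment.

```python
import numpy as np

H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
zero = np.array([[1], [0]], dtype=complex)
one = np.array([[0], [1]], dtype=complex)

HH = H @ H                # product of two Hadamard gates
final_state = HH @ zero   # state after H, H applied to |0>

# ideal measurement probabilities in the computational basis
p0 = float(np.abs(np.vdot(zero, final_state)) ** 2)
p1 = float(np.abs(np.vdot(one, final_state)) ** 2)

print(np.allclose(HH, np.eye(2)), round(p0, 6), round(p1, 6))
```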

# This is just the beginning of how a quantum state differs from a classical state. Please continue to [Amplitude and Phase](amplitude_and_phase.ipynb) to explore further!

|

terra/qis_intro/superposition.ipynb

|

/ -*- coding: utf-8 -*-

/ ---

/ jupyter:

/ jupytext:

/ text_representation:

/ extension: .q

/ format_name: light

/ format_version: '1.5'

/ jupytext_version: 1.14.4

/ kernelspec:

/ display_name: SQL

/ language: sql

/ name: SQL

/ ---

/ + [markdown] azdata_cell_guid="a2782576-c8ad-483e-bd03-289dd656844c" extensions={"azuredatastudio": {"views": []}}

/ # Set up Azure SQL Database for catching the bus application

/

/ This is a SQL Notebook, which allows you to separate text and code blocks and save code results. Azure Data Studio supports several languages, referred to as kernels, including SQL, PowerShell, Python, and more.

/

/ In this activity, you'll learn how to import data into Azure SQL Database and create tables to store the route data, geofence data, and real-time bus information.

/

/ ## Connect to `bus-db`

/

/ At the top of the window, select **Select Connection** \> **Change Connection** next to "Attach to".

/

/ Under _Recent Connections_ select your `bus-db` connection.

/

/ You should now see it listed next to _Attach to_.

/ + [markdown] azdata_cell_guid="f348fdae-e69a-4271-907d-e6b4e0619151" extensions={"azuredatastudio": {"views": []}}

/ ## Part 1: Import the bus route data from Azure Blob Storage

/

/ The first step in configuring the database for the scenario is to import a CSV file that contains route information data. The following script will walk you through that process. Full documentation on "Accessing data in a CSV file referencing an Azure blob storage location" here: [https://docs.microsoft.com/sql/relational-databases/import-export/examples-of-bulk-access-to-data-in-azure-blob-storage](https://docs.microsoft.com/sql/relational-databases/import-export/examples-of-bulk-access-to-data-in-azure-blob-storage).

/

/ You need to first create a table and schema for data to be loaded into.

/ + azdata_cell_guid="c14329fd-fee4-4014-a77d-ed5b59785685" extensions={"azuredatastudio": {"views": []}}

CREATE TABLE [dbo].[Routes]

(

[Id] [int] NOT NULL,

[AgencyId] [varchar](100) NULL,

[ShortName] [varchar](100) NULL,

[Description] [varchar](1000) NULL,

[Type] [int] NULL

)

GO

ALTER TABLE [dbo].[Routes] ADD PRIMARY KEY CLUSTERED

(

[Id] ASC

)

GO

/ + [markdown] azdata_cell_guid="962dcc3f-be18-4cc3-bdfc-d670c962a0dc" extensions={"azuredatastudio": {"views": []}}

/ The next step is to create a master key.

/ + azdata_cell_guid="5a690353-b1f9-43b2-85e1-2a7467f7b3ad" extensions={"azuredatastudio": {"views": []}}

CREATE MASTER KEY ENCRYPTION BY PASSWORD = '<PASSWORD>!'

/ + [markdown] azdata_cell_guid="3a30a8e0-5054-471c-84f3-6e28dc47c694" extensions={"azuredatastudio": {"views": []}}

/ A master key is required to create a `DATABASE SCOPED CREDENTIAL` value because Blob storage is not configured to allow public (anonymous) access. The credential refers to the Blob storage account, and the data portion specifies the container for the store return data.

/

/ We use a shared access signature as the identity that Azure SQL knows how to interpret. The secret is the SAS token that you can generate from the Blob storage account. In this example, the SAS token for a storage account that you don't have access to is provided so you can access only the store return data.

/ + azdata_cell_guid="92c0d495-d239-4dd1-b095-05f8fa0a6cef" extensions={"azuredatastudio": {"views": []}}

CREATE DATABASE SCOPED CREDENTIAL AzureBlobCredentials

WITH IDENTITY = 'SHARED ACCESS SIGNATURE',

SECRET = 'sp=r&st=2021-03-12T00:47:24Z&se=2025-03-11T07:47:24Z&spr=https&sv=2020-02-10&sr=c&sig=BmuxFevKhWgbvo%2Bj8TlLYObjbB7gbvWzQaAgvGcg50c%3D' -- Omit any leading question mark

/ + [markdown] azdata_cell_guid="bca17886-bcfa-4eaa-87b1-94e94e801ce7" extensions={"azuredatastudio": {"views": []}}

/ Next, create an external data source to the container.

/ + azdata_cell_guid="aea81643-4d8a-44b2-a915-8d797f036be5" extensions={"azuredatastudio": {"views": []}}

CREATE EXTERNAL DATA SOURCE RouteData

WITH (

TYPE = blob_storage,

LOCATION = 'https://azuresqlworkshopsa.blob.core.windows.net/bus',

CREDENTIAL = AzureBlobCredentials

)

/ + [markdown] azdata_cell_guid="7658b21b-c9a0-4ffd-a2dc-7af3d0f87842" extensions={"azuredatastudio": {"views": []}}

/ Now you are ready to bring in the data.

/ + azdata_cell_guid="3f642673-43ec-4215-91da-a3a37776fb2f" extensions={"azuredatastudio": {"views": []}} tags=[]

DELETE FROM dbo.[Routes];

INSERT INTO dbo.[Routes]

([Id], [AgencyId], [ShortName], [Description], [Type])

SELECT

[Id], [AgencyId], [ShortName], [Description], [Type]

FROM

OPENROWSET

(

BULK 'routes.txt',

DATA_SOURCE = 'RouteData',

FORMATFILE = 'routes.fmt',

FORMATFILE_DATA_SOURCE = 'RouteData',

FIRSTROW=2,

FORMAT='csv'

) t;

/ + [markdown] azdata_cell_guid="622d6f84-6ece-4b52-a2a1-8666cec71128" extensions={"azuredatastudio": {"views": []}}

/ Finally, let's look at what's been inserted relative to the route we'll be tracking.

/ + azdata_cell_guid="48fa0ba2-64cb-4230-a9b2-e7264994d7d7" extensions={"azuredatastudio": {"views": []}}

SELECT * FROM dbo.[Routes] WHERE [Description] LIKE '%Education Hill%'

/ + [markdown] azdata_cell_guid="102d8657-f5ab-4921-809d-d77fc9b41ad2" extensions={"azuredatastudio": {"views": []}}

/ ## Part 2: Create necessary tables

/

/ ### Select a route to monitor

/

/ Now that you've added the route information, you can select the route to be a "Monitored Route". This will come in handy if you later choose to monitor multiple routes. For now, you will just add the "Education Hill - Crossroads - Eastgate" route.

/ + azdata_cell_guid="b8d596bf-0540-49fa-b206-ce52397c0459" extensions={"azuredatastudio": {"views": []}}

-- Create MonitoredRoutes table

CREATE TABLE [dbo].[MonitoredRoutes]

(

[RouteId] [int] NOT NULL

)

GO

ALTER TABLE [dbo].[MonitoredRoutes] ADD PRIMARY KEY CLUSTERED

(

[RouteId] ASC

)

GO

ALTER TABLE [dbo].[MonitoredRoutes]

ADD CONSTRAINT [FK__MonitoredRoutes__Router]

FOREIGN KEY ([RouteId]) REFERENCES [dbo].[Routes] ([Id])

GO

-- Monitor the "Education Hill - Crossroads - Eastgate" route

INSERT INTO dbo.[MonitoredRoutes] (RouteId) VALUES (100113);

/ + [markdown] azdata_cell_guid="a53ad031-a7c3-40a9-bf5b-695a148dbea9" extensions={"azuredatastudio": {"views": []}}

/ ### Select a GeoFence to monitor

/

/ In addition to monitoring specific bus routes, you will want to monitor certain GeoFences so you can ultimately get notified when your bus enters or exits where you are (i.e. the GeoFence). For now, you will add a small GeoFence that represents the area near the "Crossroads" bus stop.

/ + azdata_cell_guid="1cc9823a-4b83-4e91-a36f-8b503abf0347" extensions={"azuredatastudio": {"views": []}}

-- Create GeoFences table

CREATE SEQUENCE [dbo].[global]

AS INT

START WITH 1

INCREMENT BY 1

GO

CREATE TABLE [dbo].[GeoFences](

[Id] [int] NOT NULL,

[Name] [nvarchar](100) NOT NULL,

[GeoFence] [geography] NOT NULL

)

GO

ALTER TABLE [dbo].[GeoFences] ADD PRIMARY KEY CLUSTERED

(

[Id] ASC

)

GO

ALTER TABLE [dbo].[GeoFences] ADD DEFAULT (NEXT VALUE FOR [dbo].[global]) FOR [Id]

GO

CREATE SPATIAL INDEX [ixsp] ON [dbo].[GeoFences]

(

[GeoFence]

) USING GEOGRAPHY_AUTO_GRID

GO

-- Create a GeoFence

INSERT INTO dbo.[GeoFences]

([Name], [GeoFence])

VALUES

('Crossroads', 0xE6100000010407000000B4A78EA822CF4740E8D7539530895EC03837D51CEACE4740E80BFBE630895EC0ECD7DF53EACE4740E81B2C50F0885EC020389F0D03CF4740E99BD2A1F0885EC00CB8BEB203CF4740E9DB04FC23895EC068C132B920CF4740E9DB04FC23895EC0B4A78EA822CF4740E8D7539530895EC001000000020000000001000000FFFFFFFF0000000003);

GO

/ + [markdown] azdata_cell_guid="46ac87ea-ab53-4c87-9b41-57a3b96924a0" extensions={"azuredatastudio": {"views": []}}

/ ### Create table to track activity in the GeoFence

/

/ Next, create a system-versioned table to keep track of what activity is currently happening within the GeoFence. This means tracking buses entering, exiting, and staying within a given GeoFence. The history table attached to it (`GeoFencesActiveHistory`) will serve as a historical log of all activity.

/ + azdata_cell_guid="3e3da57b-2132-4788-b46c-20374d080b2f" extensions={"azuredatastudio": {"views": []}}

CREATE TABLE [dbo].[GeoFencesActive]

(

[Id] [int] IDENTITY(1,1) NOT NULL PRIMARY KEY CLUSTERED,

[VehicleId] [int] NOT NULL,

[DirectionId] [int] NOT NULL,

[GeoFenceId] [int] NOT NULL,

[SysStartTime] [datetime2](7) GENERATED ALWAYS AS ROW START NOT NULL,

[SysEndTime] [datetime2](7) GENERATED ALWAYS AS ROW END NOT NULL,

PERIOD FOR SYSTEM_TIME ([SysStartTime], [SysEndTime])

)

WITH ( SYSTEM_VERSIONING = ON ( HISTORY_TABLE = [dbo].[GeoFencesActiveHistory] ) )

GO

/ + [markdown] azdata_cell_guid="c8d2f9b4-af0e-48c2-a594-e21bb7e297a7" extensions={"azuredatastudio": {"views": []}}

/ ### Create a table to store real-time bus data

/

/ You'll need one last table to store the real-time bus data as it comes in.

/ + azdata_cell_guid="59797569-2711-4675-ab8a-532e0e2a7c22" extensions={"azuredatastudio": {"views": []}}

CREATE TABLE [dbo].[BusData](

[Id] [int] IDENTITY(1,1) NOT NULL,

[DirectionId] [int] NOT NULL,

[RouteId] [int] NOT NULL,

[VehicleId] [int] NOT NULL,

[Location] [geography] NOT NULL,

[TimestampUTC] [datetime2](7) NOT NULL,

[ReceivedAtUTC] [datetime2](7) NOT NULL

)

GO

ALTER TABLE [dbo].[BusData] ADD DEFAULT (SYSUTCDATETIME()) FOR [ReceivedAtUTC]

GO

ALTER TABLE [dbo].[BusData] ADD PRIMARY KEY CLUSTERED

(

[Id] ASC

)

GO

CREATE NONCLUSTERED INDEX [ix1] ON [dbo].[BusData]

(

[ReceivedAtUTC] DESC

)

GO

CREATE SPATIAL INDEX [ixsp] ON [dbo].[BusData]

(

[Location]

) USING GEOGRAPHY_AUTO_GRID

GO

/ + [markdown] azdata_cell_guid="48c43e3d-b0d0-462a-aaab-da6ca728b2de" extensions={"azuredatastudio": {"views": []}}

/ Confirm you've created the tables with the following.

/ + azdata_cell_guid="5619e4aa-306c-46e5-9779-699bb29e387a" extensions={"azuredatastudio": {"views": []}}

SELECT * FROM INFORMATION_SCHEMA.TABLES WHERE TABLE_TYPE = 'BASE TABLE' AND TABLE_SCHEMA = 'dbo'

|

database/notebooks/01-set-up-database.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.8.11 64-bit (''hinton'': conda)'

# name: python3

# ---

# # Breast cancer detection from thermal imaging

# The main purpose of this project is to develop a comprehensive decision support system for breast cancer screening.

# ## Library import

# This section imports the libraries used throughout the notebook. Note that some of them are declared in the files found in `src/scripts/*.py`.

# %reload_ext autoreload

# %autoreload 2

from scripts import *

computer.check_available_devices() # Check available devices

# ## Data selection

# To make this model work correctly it will be necessary to extract and save the images found in the `data` folder.

#

# In this folder there are two labeled folders that contain all the images to be used:

# ```

# data

# ├── healthy

# └── sick

# ```

# +

data = Data("./data/") # Data imported into a table

data.images.head(3) # Display first 3 rows

# -

# ## Transformation

# In the transformation stage, the data is prepared for the classification task.

# First, the data loaded above is split into a training set and a test set, so that the test set can be held out to check the results.

data.training, data.test = data.train_test_split(test_size=0.15, shuffle=True, stratify=True) # Split data into train and test
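# The stratified split above can be illustrated with a minimal, standard-library sketch. This is not the implementation behind `data.train_test_split`; the helper `stratified_split` and the example label counts are hypothetical and only show the idea of keeping each label's share roughly equal in both subsets.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_size=0.15, seed=0):
    """Return (train_idx, test_idx) so each label keeps roughly the same share.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)

    # Group sample indices by their label.
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)

    train_idx, test_idx = [], []
    for indices in by_label.values():
        rng.shuffle(indices)  # shuffle within each label
        n_test = max(1, round(len(indices) * test_size))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


# Made-up label counts, used only to exercise the function.
labels = ["healthy"] * 60 + ["sick"] * 40
train_idx, test_idx = stratified_split(labels, test_size=0.15)
```

# Splitting per label, rather than over the whole dataset at once, is what keeps a minority class from being under-represented in the test set.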

# The category distribution is shown for the original, training, and test data

data.count_labels(data.images, "Original")

data.count_labels(data.training, "Training")

data.count_labels(data.test, "Test")

# Once the data is divided, data augmentation transformations are applied to it to expand the size of the dataset in real time while training the model.

train_generator, validation_generator, test_generator = data.image_generator(shuffle=False) # Image generation

# +

filters = {

"original": lambda x: x,

"red": lambda x: data.getImageTensor(x, (330, 0, 0), (360, 255, 255)) + data.getImageTensor(x, (0, 0, 0), (50, 255, 255)),

"green": lambda x: data.getImageTensor(x, (60, 0, 0), (130, 255, 255)),

"blue": lambda x: data.getImageTensor(x, (180, 0, 0), (270, 255, 255)),

}

data.show_images(train_generator, filters, "Training") # Show some images from the training generator

# -
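# The "red" filter above combines two ranges because red sits at both ends of the hue circle. The sketch below illustrates that wrap-around for a single pixel using only the standard library. It assumes the first component of each bound is a hue in degrees (0 to 360); how `data.getImageTensor` actually interprets its bounds is not shown here, and `in_hue_range` and `is_red` are hypothetical names.

```python
import colorsys


def in_hue_range(rgb, low_deg, high_deg):
    """Return True if the pixel's hue, in degrees, lies in [low_deg, high_deg]."""
    r, g, b = (channel / 255.0 for channel in rgb)
    hue_deg = colorsys.rgb_to_hsv(r, g, b)[0] * 360.0
    return low_deg <= hue_deg <= high_deg


def is_red(rgb):
    # Red wraps around 0 degrees, so two ranges are combined,
    # mirroring the two terms of the "red" filter.
    return in_hue_range(rgb, 330, 360) or in_hue_range(rgb, 0, 50)


pure_red = (255, 0, 0)      # hue 0 degrees
pinkish_red = (255, 0, 64)  # hue close to 345 degrees
pure_green = (0, 255, 0)    # hue 120 degrees
pure_blue = (0, 0, 255)     # hue 240 degrees
```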

# ## Data Mining

# This section seeks to apply techniques that are capable of extracting useful patterns and then evaluate them.

# ### Model creation

# One model per color filter (red, green, and blue) is created for the training step that follows.

red_model = Model("red", filter=filters["red"], new=True, summary=False, plot=False) # Red model creation

green_model = Model("green", filter=filters["green"], new=True, summary=False, plot=False) # Green model creation

blue_model = Model("blue", filter=filters["blue"], new=True, summary=False, plot=False) # Blue model creation

red_model.compile() # Compile the red model

green_model.compile() # Compile the green model

blue_model.compile() # Compile the blue model

# ### Model training

# Each model is trained for the specified number of epochs.

red_model.fit(train_generator, validation_generator, epochs=600, verbose=False, plot=False) # Train the red model

green_model.fit(train_generator, validation_generator, epochs=600, verbose=False, plot=False) # Train the green model

blue_model.fit(train_generator, validation_generator, epochs=600, verbose=False, plot=False) # Train the blue model

# ### Model evaluation

# Each trained model is evaluated on the test generator created earlier. In this case, the best weights obtained during training are used.

red_model.evaluate(test_generator, path=None) # Evaluate the red model

green_model.evaluate(test_generator, path=None) # Evaluate the green model

blue_model.evaluate(test_generator, path=None) # Evaluate the blue model

# ### Grad-CAM

# An activation map of the predictions obtained by the convolutional network is displayed.

# +

join_models = Join(red_model, green_model, blue_model)

# The activation map is displayed

for index, image in data.test.iterrows():

join_models.visualize_heatmap(image)

|

src/model.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

#default_exp vision.core

#default_cls_lvl 3

# +

#export

from local.torch_basics import *

from local.test import *

from local.data.all import *

from local.notebook.showdoc import show_doc

from PIL import Image

# -

#export

_all_ = ['Image','ToTensor']

# +

#It didn't use to be necessary to add ToTensor in all but we don't have the encodes methods defined here otherwise.

#TODO: investigate

# -

# # Core vision

# > Basic image opening/processing functionality

# ## Helpers

im = Image.open(TEST_IMAGE).resize((30,20))

#export

@patch_property

def n_px(x: Image.Image): return x.size[0] * x.size[1]

# #### `Image.n_px`

#

# > `Image.n_px` (property)

#

# Number of pixels in image

test_eq(im.n_px, 30*20)

#export

@patch_property

def shape(x: Image.Image): return x.size[1],x.size[0]

# #### `Image.shape`

#

# > `Image.shape` (property)

#

# Image (height,width) tuple (NB: opposite order of `Image.size`, same order as numpy array and pytorch tensor)

test_eq(im.shape, (20,30))

#export

@patch_property

def aspect(x: Image.Image): return x.size[0]/x.size[1]

# #### `Image.aspect`

#

# > `Image.aspect` (property)

#

# Aspect ratio of the image, i.e. `width/height`

test_eq(im.aspect, 30/20)
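# The properties above are attached to an existing class through the type annotation of the first argument. The sketch below shows one way such a decorator could work; it is not necessarily how `patch_property` is implemented. `patch_property_sketch` and `FakeImage` are hypothetical stand-ins so the example runs without PIL.

```python
import inspect


def patch_property_sketch(func):
    """Attach `func` as a read-only property to the class annotated on its first parameter."""
    first_param = next(iter(inspect.signature(func).parameters.values()))
    cls = first_param.annotation
    setattr(cls, func.__name__, property(func))
    return func


class FakeImage:
    """Hypothetical stand-in for an image class whose `size` is (width, height)."""

    def __init__(self, width, height):
        self.size = (width, height)


@patch_property_sketch
def shape(x: FakeImage):
    # (height, width): the reverse of `size`, matching array conventions.
    return x.size[1], x.size[0]


@patch_property_sketch
def aspect(x: FakeImage):
    # width / height
    return x.size[0] / x.size[1]


img = FakeImage(30, 20)
```

# After the decorators run, `img.shape` and `img.aspect` behave like ordinary attributes even though `FakeImage` never defined them.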

#export

@patch

def reshape(x: Image.Image, h, w, resample=0):

"`resize` `x` to `(w,h)`"

return x.resize((w,h), resample=resample)

show_doc(Image.Image.reshape)

test_eq(im.reshape(12,10).shape, (12,10))

#export

@patch