| code | repo_path |
|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

# %load_ext autoreload

# %autoreload 2

import cPickle as pickle

import os; import sys; sys.path.append('..')

import gp

import gp.nets as nets

from nolearn.lasagne.visualize import plot_loss

from nolearn.lasagne.visualize import plot_conv_weights

from nolearn.lasagne.visualize import plot_conv_activity

from nolearn.lasagne.visualize import plot_occlusion

from matplotlib.pyplot import imshow

import matplotlib.pyplot as plt

# %matplotlib inline

# -

PATCH_PATH = ('cylinder2_rgba_small')

X_train, y_train, X_test, y_test = gp.Patch.load_rgba(PATCH_PATH)

gp.Util.view_rgba(X_train[100], y_train[100])

cnn = nets.RGBANet()

cnn = cnn.fit(X_train, y_train)

cnn = cnn.fit(X_train, y_train)

test_accuracy = cnn.score(X_test, y_test)

test_accuracy

plot_loss(cnn)

plot_conv_weights(cnn.layers_['conv2'])

# store CNN

sys.setrecursionlimit(1000000000)

with open(os.path.expanduser('~/Projects/gp/nets/RGBA.p'), 'wb') as f:
    pickle.dump(cnn, f, -1)

with open(os.path.expanduser('~/Projects/gp/nets/RGBA.p'), 'rb') as f:
    net = pickle.load(f)

from sklearn.metrics import classification_report, accuracy_score, roc_curve, auc, precision_recall_fscore_support, f1_score, precision_recall_curve, average_precision_score, zero_one_loss

test_prediction = net.predict(X_test)

test_prediction_prob = net.predict_proba(X_test)

print

print 'Precision/Recall:'

print classification_report(y_test, test_prediction)

|

ipy_train/train_RGBA.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Assignment 1.1 - Python 101

#

# Python is an easy to learn, powerful programming language with efficient high-level data structures and object-oriented programming. Python’s elegant syntax and dynamic typing, together with its interpreted nature, make it an ideal language for scripting and rapid application development in many areas on most platforms.

# ### Jupyter Notebook

# What you are reading now is an example of a Jupyter Notebook. The basic concept is that of a "notebook" containing text and programming code. You can easily edit the notebook using your web browser, run the programs in the ipython server in the background and see the output of the programs within the notebook. This is a powerful paradigm that is well suited to machine learning research, particularly when collaborating with other people.

# For Reference:

# - https://ipython.org/notebook.html

# - https://jupyter.org/

# Python also works as your basic calculator directly through the interpreter

5**7/5*4+3-6**5 # ** is exponentiation

# Declare a few variables. Set <code style="background-color:#dddddd">newnum</code> as integer, <code style="background-color:#dddddd">newstring</code> as string, and <code style="background-color:#dddddd">mylist</code> as a list of integers (using list comprehension).

# +

#Your code here

# SHIFT + ENTER to execute a cell

# -

# <h2>Exercise 1</h2><br>

# Iterate through the values in the list and run those numbers through a function that produces their squares.

# +

#Your code here

# -

# ## Exercise 2

# Find the sum of the largest 10 numbers. You may find the `sorted` and `sum` functions useful.

L =[20, 22, 18, 100, 40, 71, 34, 76, 94, 7, 6, 82, 3, 86, 46, 5, 36, 70, 54, 56, 57, 21, 99, 87, 40, 15, 100,

87, 97, 45, 87, 11, 37, 100, 46, 21, 44, 60, 32, 88, 46, 38, 31, 65, 78, 47, 20, 30, 3, 65, 14, 3, 3, 100,

3, 97, 42, 44, 46, 94, 64, 29, 79, 70, 27, 83, 85, 47, 98, 27, 48, 58, 51, 7, 96, 31, 79, 87, 80, 8, 96, 88,

4, 79, 52, 15, 57, 83, 21, 59, 25, 28, 74, 75, 70, 79, 73, 11, 7, 42]

ans = None# your code here

# ## Exercise 3

# Convert given integer input of seconds into hours, minutes, and seconds.

# +

#Your code here

# -

# ## Exercise 4

# Write a function to generate a Fibonacci series up to n terms.

# +

#Your code here

# -

# ## Exercise 5

# Calculate the sum of all prime numbers between 3000 and 42000

# +

#Your code here

# -

# ## Exercise 6

# Use the split and join functions to produce the required string.

dum_str = "A few gems, diamonds, rubies, and I'm rich."

string_to_be_produced = "A few gems, rubies, diamonds, and I'm rich."

# ## Exercise 7

# Deal four sets of cards using the given dictionary.

# The solution looks something like this

#

# ><code style="background-color:#eeeeee;border-radius:5px;padding:5px">Deal 1: Ace of Spades, Queen of Hearts, 2 of Clubs and 7 of Clubs<br> Deal 2: 4 of Hearts, 5 of Clubs, Jack of Spades and Queen of Clubs<br> Deal 3: 7 of Clubs, 8 of Diamonds, 8 of Spades and 10 of Diamonds</code>

#

# The deals must be random. You will find the documentation of the [Random Module](https://docs.python.org/2/library/random.html) helpful

ranks = ["Ace"] + [str(x) for x in range(2,11)] + ["Jack","Queen","King"]

cards = {

"Spades":ranks,

"Diamonds":ranks,

"Clubs":ranks,

"Hearts":ranks

}

# ans str(cards[list(cards.keys())[1]][1])+" of "+str(list(cards.keys())[1])

# ## Exercise 8

# Create a Function to transpose a matrix.

def transpose(A):
    #Your code here
    pass

A = [

[1,2,1],

[4,2,7],

[6,4,5],

[7,8,9]

]

transpose(A)

|

Session1/Assignments/Assignment 1.1 - Python 101.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# This notebook is designed to allow you to tweak how you might like your walkers initialized. Edit the cells as you see fit and then proceed to evaluate each cell and save the final `pos0.npy` file.

import numpy as np

# Generally, you want at least a few walkers for each dimension you may be exploring. For the **truncated** model, there are 13 parameters.

nparam = 13

nwalkers = 4 * nparam

print(nwalkers)

# If you are fixing distance, then you should change the previous line to

#

# nparam = 12

#

# and then comment out the `dpc` row below. Below, we create an array of starting walker positions, similar to how `emcee` is initialized. You should tweak the `low` and `high` ranges to correspond to a small guess around your starting position.

p0 = np.array([np.random.uniform(1.03, 1.05, nwalkers), # mass [M_sun]
               np.random.uniform(30., 50.0, nwalkers), #r_c [AU]
               np.random.uniform(110., 115, nwalkers), #T_10 [K]
               np.random.uniform(0.70, 0.71, nwalkers), # q
               np.random.uniform(0.0, 1.5, nwalkers), # gamma_e
               np.random.uniform(-3.5, -3.4, nwalkers), #log10 Sigma_c [log10 g/cm^2]
               np.random.uniform(0.17, 0.18, nwalkers), #xi [km/s]
               np.random.uniform(144.0, 145.0, nwalkers), #dpc [pc]
               np.random.uniform(159.0, 160.0, nwalkers), #inc [degrees]
               np.random.uniform(40.0, 41.0, nwalkers), #PA [degrees]
               np.random.uniform(-0.1, 0.1, nwalkers), #vz [km/s]
               np.random.uniform(-0.1, 0.1, nwalkers), #mu_a [arcsec]
               np.random.uniform(-0.1, 0.1, nwalkers)]) #mu_d [arcsec]

# Just to check we have the right shape

p0.shape

# Save the new position file to disk

np.save("pos0.npy", p0)

# Just to check that we have written the file, you can read it back in and check that it has the proper shape.

np.load("pos0.npy").shape
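# The per-parameter `low`/`high` guesses can also be kept in a single list, which makes it harder to mix up an ordering or swap the bounds. The following is only a minimal sketch under assumptions: `ranges` and `draw_walkers` are hypothetical names that are not part of this notebook, and only three of the 13 parameters are shown. The result has shape `(nparam, nwalkers)`, like `p0` above.

```python
import numpy as np

# Hypothetical (low, high) guesses for three of the parameters; not fitted values.
ranges = [
    (1.03, 1.05),   # mass [M_sun]
    (30.0, 50.0),   # r_c [AU]
    (-3.5, -3.4),   # log10 Sigma_c [log10 g/cm^2]
]


def draw_walkers(ranges, nwalkers, seed=None):
    """Return an array of shape (nparam, nwalkers) with uniform draws per row."""
    rng = np.random.default_rng(seed)
    low = np.array([lo for lo, hi in ranges])
    high = np.array([hi for lo, hi in ranges])
    if np.any(low >= high):
        raise ValueError("each range must satisfy low < high")
    # Broadcasting: one (low, high) pair per row, nwalkers draws per column.
    return rng.uniform(low[:, None], high[:, None], size=(len(ranges), nwalkers))


p0_demo = draw_walkers(ranges, nwalkers=4 * len(ranges), seed=0)
print(p0_demo.shape)
```

# The explicit `low < high` check catches swapped bounds early instead of silently sampling from an unintended interval.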

|

assets/InitializeWalkers.truncated.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

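# # Basic Programming

# - Always use an English input method when typing code

print('123')

# ## Writing a simple program

# - Area of a circle: area = radius \* radius \* 3.1415

# ### Python does not require you to declare data types

# ## Reading input from the console

# - input returns whatever is typed as a string

# - eval

bianchang = int(input('Enter the side length of the square: '))
area = bianchang * bianchang
print(area)

import os
input_=input('good')
# The space after 'say' separates the command from its argument.
os.system('say '+ input_)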

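# - In Jupyter, press Shift + Tab to bring up the documentation tooltip

# ## Rules for naming variables

# - Names consist of letters, digits, and underscores

# - Names cannot start with a digit \*

# - An identifier cannot be a keyword (built-in names such as `print` can technically be rebound, but doing so is very poor coding practice)

# - Names can be of any length

# - Use camelCase naming

# ## Variables, assignment statements, and assignment expressions

# - Variable: informally, a quantity whose value can change

# - x = 2 \* x + 1 is an equation in mathematics, but in a programming language it is an assignment statement

# - test = test + 1 \* a variable must already have a value before it is used on the right-hand side

# ## Simultaneous assignment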

# var1, var2,var3... = exp1,exp2,exp3...
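# A short illustration of simultaneous assignment, using the hypothetical variable names `width` and `height`:

```python
# Assign several variables in one statement.
width, height = 3, 4

# The whole right-hand side is evaluated first, so this swaps the two
# values without needing a temporary variable.
width, height = height, width
print(width, height)
```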

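# ## Defining constants

# - Constant: an identifier for a fixed value, suited to values that are used many times, such as PI

# - Note: in many other languages a constant cannot be changed once it is defined, but in Python everything is an object and a constant can still be reassigned

# ## Numeric data types and operators

# - Python has two commonly used numeric types (int and float), which support addition, subtraction, multiplication, division, modulo, and exponentiation

# <img src = "../Photo/01.jpg"></img>

# ## Operators /, //, **

# ## Operator %

# ## EP:

# - What is 25/4? How would you rewrite it to get an integer result?

# - Read a number and determine whether it is odd or even

# - Advanced: read a number of seconds and write a program that converts it to minutes and seconds; for example, 500 seconds equals 8 minutes 20 seconds

# - Advanced: if today is Saturday, what day of the week is it 10 days from now? Hint: day 0 of each week is Sunday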

int(25/4)

num = eval(input('Enter a number: '))
if num % 2 == 0:
    print('even')
else:
    print('odd')

shu = int(input('Enter a number of seconds: '))
fen = shu // 60
miao = shu % 60
print(fen,'min' ,miao,'s')

shu = eval(input('Enter a number: '))
if shu % 2 == 0:
    print('even')

week = eval(input('..'))
se = (week + 10 ) % 7
print('Today is weekday '+str(week),'and in 10 days it will be weekday '+str(se))

# ## Scientific notation

# - 1.234e+2

# - 1.234e-2
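# A brief sketch of what these two literals mean; the variable names `a` and `b` are only for illustration:

```python
a = 1.234e+2   # 1.234 * 10**2
b = 1.234e-2   # 1.234 * 10**-2
print(a, b)

# A literal written with an exponent is always a float, even if the value is whole.
print(type(1e3).__name__)
```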

# ## Evaluating expressions and operator precedence

# <img src = "../Photo/02.png"></img>

# <img src = "../Photo/03.png"></img>

x = 10

y = 6

a = 0

b = 1

c = 1

yi = (3+4*x)/5

er = 10*(y-5)*(a+b+c)/x

san = 9*((4/x)+(9+x)/y)

sum= yi-er+san

print(yi)

print(er)

print(san)

print(sum)

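# ## Augmented assignment operators

# <img src = "../Photo/04.png"></img>

# ## Type conversion

# - float -> int

# - Rounding: round

# ## EP:

# - If the annual business tax rate is 0.06%, how much tax is due on an annual income of 197.55e+2? (Round the result to 2 decimal places)

# - You must use scientific notation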

round((0.06e-2)*(197.55e+2),2)

# # Project

# - Write a loan calculator in Python: the input is the monthly payment (monthlyPayment) and the output is the total repayment (totalpayment)
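# One possible starting point for the loan calculator, as a sketch only. It assumes the loan term in years is a second input, which the task statement does not specify, and the function name `total_payment` is hypothetical.

```python
def total_payment(monthly_payment, number_of_years):
    """Total amount repaid when the same payment is made every month."""
    return monthly_payment * number_of_years * 12


# Example: 100 per month for 2 years.
print(total_payment(100, 2))
```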

#

# # Homework

# - 1

# <img src="../Photo/06.png"></img>

celsius = eval(input('Temperature in Celsius: '))
fahrenheit = (9 / 5) * celsius + 32
print('Temperature in Fahrenheit: '+str(fahrenheit))

# - 2

# <img src="../Photo/07.png"></img>

r = eval(input('Radius: '))
h = eval(input('Height: '))
PI = 3.14
area = r * r * PI
volume = area * h
print('Base area of the cylinder:', area)
print('Volume of the cylinder:', volume)

# - 3

# <img src="../Photo/08.png"></img>

feet = eval(input('Length in feet: '))
meters = 0.305 * feet
print(str(feet)+' feet equals '+str(meters)+' meters')

# - 4

# <img src="../Photo/10.png"></img>

water = eval(input('Amount of water: '))
initial = eval(input('Initial temperature: '))
final = eval(input('Final temperature: '))
Q = water * (final - initial) * 4184
print('Energy required: '+str(Q)+' joules')

# - 5

# <img src="../Photo/11.png"></img>

balance,rate = eval(input('Balance, annual interest rate: '))
interest = balance * (rate / 1200)
print('Interest: '+str(interest))

# - 6

# <img src="../Photo/12.png"></img>

v0,v1,t = eval(input('Initial velocity, final velocity, elapsed time: '))
a = (v1 - v0) / t
print('Average acceleration: '+str(a))

# - 7 Advanced

# <img src="../Photo/13.png"></img>

# +
x = eval(input('Deposit amount: '))
mon = 0.00417
s = 0
for y in range (6):
    s = x * (1 + mon)
    # s = s
    x = s + 100
print(s,x)

# -

# - 8 Advanced

# <img src="../Photo/14.png"></img>

import random
x = random.randint(0,1000)
a = x % 10
b = (x // 10) % 10
c = x // 100
s = a + b + c
print('Random number:',x)
print('Sum of digits:',s)

|

910.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import cv2

import numpy as np

from matplotlib import pyplot as plt

import os

import xlsxwriter

import pandas as pd # Excel

import struct # Binary writing

import scipy.io as sio # Read .mat files

import h5py

from grading import *

# -

# Convert .mat arrays to binary files

path = r'V:\Tuomas\PTASurfaceImages'

savepath = r'V:\Tuomas\PTASurfaceImages_binary'

filelist = os.listdir(path)

for k in range(len(filelist)):
    #Load file
    file = os.path.join(path,filelist[k])
    try:
        file = sio.loadmat(file)
        Mz = file['Mz']
        sz = file['sz']
    except NotImplementedError:
        # scipy cannot read MATLAB v7.3 files; these are HDF5, so fall back to h5py
        file = h5py.File(file)
        Mz = file['Mz'][()]
        sz = file['sz'][()]

    # Save file
    dtype = 'double'
    Mz = np.float64(Mz)
    sz = np.float64(sz)
    name = filelist[k]
    print(filelist[k])
    writebinaryimage(savepath + '\\' + name[:-4] + '_mean.dat', Mz, dtype)
    writebinaryimage(savepath + '\\' + name[:-4] + '_std.dat', sz, dtype)

# Convert .mat arrays to .png files

path = r'V:\Tuomas\PTASurfaceImages'

savepath = r'V:\Tuomas\PTASurfaceImages_png'

filelist = os.listdir(path)

for k in range(len(filelist)):
    #Load file
    file = os.path.join(path,filelist[k])
    try:
        file = sio.loadmat(file)
        Mz = file['Mz']
        sz = file['sz']
    except NotImplementedError:
        file = h5py.File(file)
        Mz = file['Mz'][()]
        sz = file['sz'][()]

    # Save file
    dtype = 'double'
    mx = np.amax(np.float64(Mz))
    mn = np.amin(np.float64(Mz))
    Mbmp = (np.float64(Mz) - mn) * (255 / (mx - mn))
    sx = np.amax(np.float64(sz))
    sn = np.amin(np.float64(sz))
    sbmp = (np.float64(sz) - sn) * (255 / (sx - sn))
    name = filelist[k]
    print(filelist[k])
    #print(savepath + '\\' + name[:-4] +'_mean.png')
    cv2.imwrite(savepath + '\\' + name[:-4] +'_mean.png', Mbmp)
    cv2.imwrite(savepath + '\\' + name[:-4] +'_std.png', sbmp)
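# The min-max scaling used above divides by `mx - mn`, which is zero for a constant image, and it leaves the result as float64. A minimal sketch of a more defensive variant follows; the helper name `to_uint8` is hypothetical, and casting to 8-bit before writing is an assumption about what the image writer expects rather than something this notebook states.

```python
import numpy as np


def to_uint8(arr):
    """Scale an array linearly to the range 0..255 and return it as uint8."""
    arr = np.asarray(arr, dtype=np.float64)
    mn, mx = arr.min(), arr.max()
    if mx == mn:
        # Constant input: avoid dividing by zero and return an all-zero image.
        return np.zeros(arr.shape, dtype=np.uint8)
    scaled = (arr - mn) * (255.0 / (mx - mn))
    return np.round(scaled).astype(np.uint8)


demo = to_uint8([[0.0, 0.5], [1.0, 2.0]])
print(demo.dtype, demo.min(), demo.max())
```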

|

training/notebooks/Conversions.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# <a href="https://colab.research.google.com/github/DingLi23/s2search/blob/pipelining/pipelining/exp-csse/exp-csse_csse_1w_ale_plotting.ipynb" target="_blank"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# ### Experiment Description

#

#

#

# > This notebook is for experiment \<exp-csse\> and data sample \<csse\>.

# ### Initialization

# +

# %load_ext autoreload

# %autoreload 2

import numpy as np, sys, os

in_colab = 'google.colab' in sys.modules

# fetching code and data (if you are using Colab)
if in_colab:
    # !rm -rf s2search
    # !git clone --branch pipelining https://github.com/youyinnn/s2search.git
    sys.path.insert(1, './s2search')
    # %cd s2search/pipelining/exp-csse/

pic_dir = os.path.join('.', 'plot')
if not os.path.exists(pic_dir):
    os.mkdir(pic_dir)

# -

# ### Loading data

# +

sys.path.insert(1, '../../')

import numpy as np, sys, os, pandas as pd

from getting_data import read_conf

from s2search_score_pdp import pdp_based_importance

sample_name = 'csse'

f_list = [

'title', 'abstract', 'venue', 'authors',

'year',

'n_citations'

]

ale_xy = {}

ale_metric = pd.DataFrame(columns=['feature_name', 'ale_range', 'ale_importance', 'absolute mean'])

for f in f_list:
    file = os.path.join('.', 'scores', f'{sample_name}_1w_ale_{f}.npz')
    if os.path.exists(file):
        nparr = np.load(file)
        quantile = nparr['quantile']
        ale_result = nparr['ale_result']
        values_for_rug = nparr.get('values_for_rug')

        ale_xy[f] = {
            'x': quantile,
            'y': ale_result,
            'rug': values_for_rug,
            'weird': ale_result[len(ale_result) - 1] > 20
        }

        if f != 'year' and f != 'n_citations':
            ale_xy[f]['x'] = list(range(len(quantile)))
            ale_xy[f]['numerical'] = False
        else:
            ale_xy[f]['xticks'] = quantile
            ale_xy[f]['numerical'] = True

        ale_metric.loc[len(ale_metric.index)] = [f, np.max(ale_result) - np.min(ale_result), pdp_based_importance(ale_result, f), np.mean(np.abs(ale_result))]

        # print(len(ale_result))

print(ale_metric.sort_values(by=['ale_importance'], ascending=False))
print()

# -
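# Two of the summary columns in `ale_metric` are plain array statistics. The sketch below recomputes them on made-up ALE values; `ale_summary` is a hypothetical helper, and the `ale_importance` column is left out because it comes from the project's own `pdp_based_importance` function.

```python
import numpy as np


def ale_summary(ale_values):
    """Return the range and the mean absolute value of an ALE curve."""
    ale_values = np.asarray(ale_values, dtype=float)
    return {
        'ale_range': float(ale_values.max() - ale_values.min()),
        'absolute_mean': float(np.mean(np.abs(ale_values))),
    }


# Made-up ALE values, for illustration only.
summary = ale_summary([-1.0, 0.0, 0.5, 2.0])
print(summary)
```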

# ### ALE Plots

# +

import matplotlib.pyplot as plt

import seaborn as sns

from matplotlib.ticker import MaxNLocator

categorical_plot_conf = [

{

'xlabel': 'Title',

'ylabel': 'ALE',

'ale_xy': ale_xy['title']

},

{

'xlabel': 'Abstract',

'ale_xy': ale_xy['abstract']

},

{

'xlabel': 'Authors',

'ale_xy': ale_xy['authors'],

# 'zoom': {

# 'inset_axes': [0.3, 0.3, 0.47, 0.47],

# 'x_limit': [89, 93],

# 'y_limit': [-1, 14],

# }

},

{

'xlabel': 'Venue',

'ale_xy': ale_xy['venue'],

# 'zoom': {

# 'inset_axes': [0.3, 0.3, 0.47, 0.47],

# 'x_limit': [89, 93],

# 'y_limit': [-1, 13],

# }

},

]

numerical_plot_conf = [

{

'xlabel': 'Year',

'ylabel': 'ALE',

'ale_xy': ale_xy['year'],

# 'zoom': {

# 'inset_axes': [0.15, 0.4, 0.4, 0.4],

# 'x_limit': [2019, 2023],

# 'y_limit': [1.9, 2.1],

# },

},

{

'xlabel': 'Citations',

'ale_xy': ale_xy['n_citations'],

# 'zoom': {

# 'inset_axes': [0.4, 0.65, 0.47, 0.3],

# 'x_limit': [-1000.0, 12000],

# 'y_limit': [-0.1, 1.2],

# },

},

]

def pdp_plot(confs, title):
    fig, axes_list = plt.subplots(nrows=1, ncols=len(confs), figsize=(20, 5), dpi=100)
    subplot_idx = 0
    plt.suptitle(title, fontsize=20, fontweight='bold')
    # plt.autoscale(False)
    for conf in confs:
        # plt.subplots returns a single Axes (not an array) when there is only one subplot
        axes = axes_list if len(confs) == 1 else axes_list[subplot_idx]

        sns.rugplot(conf['ale_xy']['rug'], ax=axes, height=0.02)

        axes.axhline(y=0, color='k', linestyle='-', lw=0.8)
        axes.plot(conf['ale_xy']['x'], conf['ale_xy']['y'])
        axes.grid(alpha = 0.4)

        # axes.set_ylim([-2, 20])
        axes.xaxis.set_major_locator(MaxNLocator(integer=True))
        axes.yaxis.set_major_locator(MaxNLocator(integer=True))

        if ('ylabel' in conf):
            axes.set_ylabel(conf.get('ylabel'), fontsize=20, labelpad=10)

        # if ('xticks' not in conf['ale_xy'].keys()):
        #     xAxis.set_ticklabels([])

        axes.set_xlabel(conf['xlabel'], fontsize=16, labelpad=10)

        if not (conf['ale_xy']['weird']):
            if (conf['ale_xy']['numerical']):
                axes.set_ylim([-1.5, 1.5])
                pass
            else:
                axes.set_ylim([-8, 15])
                pass

        if 'zoom' in conf:
            axins = axes.inset_axes(conf['zoom']['inset_axes'])
            axins.xaxis.set_major_locator(MaxNLocator(integer=True))
            axins.yaxis.set_major_locator(MaxNLocator(integer=True))
            axins.plot(conf['ale_xy']['x'], conf['ale_xy']['y'])
            axins.set_xlim(conf['zoom']['x_limit'])
            axins.set_ylim(conf['zoom']['y_limit'])
            axins.grid(alpha=0.3)
            rectpatch, connects = axes.indicate_inset_zoom(axins)
            connects[0].set_visible(False)
            connects[1].set_visible(False)
            connects[2].set_visible(True)
            connects[3].set_visible(True)

        subplot_idx += 1

pdp_plot(categorical_plot_conf, f"ALE for {len(categorical_plot_conf)} categorical features")

# plt.savefig(os.path.join('.', 'plot', f'{sample_name}-1wale-categorical.png'), facecolor='white', transparent=False, bbox_inches='tight')

pdp_plot(numerical_plot_conf, f"ALE for {len(numerical_plot_conf)} numerical features")

# plt.savefig(os.path.join('.', 'plot', f'{sample_name}-1wale-numerical.png'), facecolor='white', transparent=False, bbox_inches='tight')

|

pipelining/exp-csse/exp-csse_csse_1w_ale_plotting.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Corpus and BGRF Composition

#

# We describe and analyse the status quo of the corpus with respect to criteria like author gender, year of first publication, narrative form etc. Then we compare it to the "baseline" of the Bibliographie du genre romanesque français, 1751-1800 (BGRF).

#

# **Table of Contents**

# * [Prerequisites](#Prerequisites)

# * [Corpus Metadata](#Corpus-Metadata)

# - [Author Gender](#Author-Gender)

# - [Text Length](#Text-Length)

# - [Publication Date](#Year-of-first-publication)

# - [Narrative Form](#Narrative-Form)

# * [BGRF Metadata from Wikibase](#BGRF-Metadata-from-Wikibase)

# - [Configuration](#Configuration)

# - [Data Loading](#Data-Loading)

# - [Author Gender](#Author-Gender-(BGRF))

# - [Text Length](#Text-Length-(BGRF))

# - [Publication Date](#Publication-Date-(BGRF))

# - [Narrative Form](#Narrative-Form-(BGRF))

# * [Comparison](#Comparison)

# - [Publication Date](#Publication-Date-(Corpus-vs-BGRF))

#

# ## Prerequisites

# +

import pandas as pd

import matplotlib.pyplot as plt

import numpy as np

import seaborn as sns

sns.set()

# Install with e.g. `pip install sparqlwrapper`

from SPARQLWrapper import SPARQLWrapper, JSON

# Make plots appear directly in the notebook.

# %matplotlib inline

from pprint import pprint

# -

# The later parts also require access to a Wikibase instance, which is only accessible in/over the university network.

# ## Corpus Metadata

# Adjust the URL to the .tsv file as needed.

DATA_URL = 'https://raw.githubusercontent.com/MiMoText/roman18/master/XML-TEI/xml-tei_metadata.tsv'

corpus = pd.read_csv(DATA_URL, sep='\t')

print('Available column names:', corpus.columns.values)

# ### Author Gender

#

# Data is in the column 'au-gender'. Possible values are 'F', 'M' and 'U'.

gender = corpus['au-gender'].astype('category')

print('Set of all occurring values:', set(gender.values))

ratio_female = (gender == 'F').sum() / gender.count()

ratio_male = (gender == 'M').sum() / gender.count()

ratio_other = 1 - ratio_female - ratio_male

print(

f'% of female authors: \t{ratio_female:.3f}\n'

f'% of male authors: \t{ratio_male:.3f}\n'

f'% of unknown/other: \t{ratio_other:.3f}'

)

sns.countplot(x=gender)

plt.xlabel('Author gender')

plt.ylabel('Count in corpus')

# ### Text Length

# Data is in the column 'size', possible values are 'short', 'medium', 'long'.

size = corpus['size']

ratio_size_short = (size == 'short').sum() / size.count()

ratio_size_med = (size == 'medium').sum() / size.count()

ratio_size_long = (size == 'long').sum() / size.count()

print(

f'% of short texts: \t{ratio_size_short:.3f}\n'

f'% of medium texts: \t{ratio_size_med:.3f}\n'

f'% of long texts: \t{ratio_size_long:.3f}'

)

sns.countplot(x=size)

plt.xlabel('Text length')

plt.ylabel('Count in corpus')

# ### Year of first publication

# Data is in the column 'firsted-yr'. However, possible values can be single years `(yyyy)`, year spans `(yyyy-yyyy)`, the floating point number value `NaN`, or even a string like `'unknown'`. Therefore, we need to clean up a bit before we can use it. In case of year ranges, we simply use the first year.
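# One way the clean-up described above could look, as a minimal sketch. The helper name `first_year` and the sample values are hypothetical; the rule "take the first four-digit year and treat everything else as missing" is an assumption about what cleaning is wanted here.

```python
import pandas as pd


def first_year(values):
    """Extract the first four-digit year from each entry; other entries become <NA>."""
    as_text = pd.Series(values).astype(str)
    # With a single capture group, column 0 holds the match (NaN if there is none).
    years = as_text.str.extract(r'(\d{4})')[0]
    return pd.to_numeric(years, errors='coerce').astype('Int64')


# Made-up entries covering the cases listed above.
raw = ['1751', '1760-1765', float('nan'), 'unknown', 1788]
clean = first_year(raw)
print(clean)
```

# The nullable `Int64` dtype keeps the years as integers while still allowing missing values.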

# + raw_mimetype="text/x-python"

pubyear = pd.to_datetime(corpus['firsted-yr'], format='%Y')

time_range = pubyear.max().year - pubyear.min().year

plot = sns.displot(x=pubyear, bins=time_range, height=5, aspect=16/8)

plot.set_xticklabels(rotation=90)

pubyear.head()

# -

pd.to_datetime(corpus['firsted-yr'])

# ### Narrative Form

# Data is in the column 'form'. Possible values include `'mixed'`, `'autodiegetic'`, `'heterodiegetic'`, `'homodiegetic'`, `'epistolary'`, `'dialogue novel'` and also `NaN`.

form = corpus['form'].astype('category')

print('Set of all values: ', set(form.values))

print('\n'.join([

f'% of {kind}: \t{((form==kind).sum()/form.count()):.3f}'

for kind in [

'mixed', 'autodiegetic', 'heterodiegetic', 'homodiegetic',

'epistolary', 'dialogue novel'

]

]))

plot = sns.countplot(x=form)

_ = plt.xticks(rotation=30, horizontalalignment='right')

# ## BGRF Metadata from Wikibase

# Data is pulled from Wikibase. For the moment, our instance on port 53100 is used. This may change in the future, which then will not only affect the URL but also the IDs of the items and predicates. Adjust these accordingly in the [Configuration Section](#Configuration).

# ### Configuration

# Adjust these values whenever another Wikibase instance is to be used.

WB_URL = 'http://zora.uni-trier.de:11100'

ITEM_IDS = {

'publication_date': 'P7',

'publication_date_str': 'P42', # hard to use, since not normalized

'sex_or_gender': 'P28',

'narrative_form': 'P54', # the wikibase label is "narrative perspective"

'narrative_form_str': 'P46', # the wikibase label is "narrative perspective_string"

'page_count': 'P34', # the wikibase label is "number of pages"

'page_count_str': 'P44', # the wikibase label is "number of pages_string"

'distribution_format_str': 'P45',

'distribution_format': 'P37',

}

# ### Data Loading

# We use the SPARQL endpoint to query the bibliography metadata. Each metadatum gets its own query for simplicity's sake.

# +

bgrf = pd.DataFrame()

wb_endpoint = f'{WB_URL}/proxy/wdqs/bigdata/namespace/wdq/sparql'

def get_data(endpoint, query):
    '''Given an endpoint URL and a SPARQL query, return
    the result bindings as a list of dictionaries.
    '''
    user_agent = 'jupyter notebook'
    sparql = SPARQLWrapper(endpoint, agent=user_agent)
    sparql.setQuery(query)
    sparql.setReturnFormat(JSON)
    return sparql.query().convert()["results"]["bindings"]

# -

# This wrapper function conveniently provides the data as python dictionaries.

# For example, to get all the data values for the property `narrative_form_str`,

# we can use the following:

ex_query = 'SELECT DISTINCT ?form WHERE { ?item wdt:P46 ?form. }'

results = get_data(wb_endpoint, ex_query)

print('Number of distinct values:', len(results))

print('Each entry has the following form (NPI):', results[0])

# The key `'form'` corresponds to us choosing `?form` as the output variable in our SPARQL query.
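# Each binding nests the actual value under a `'value'` key, so a small helper can flatten a result list into a plain dictionary. This is only a sketch: `flatten_bindings` is a hypothetical helper, and `sample_bindings` is made-up data shaped like the bindings described above, not output from the Wikibase instance.

```python
# Made-up bindings with the same nesting as the SPARQL JSON results.
sample_bindings = [
    {'item': {'type': 'uri', 'value': 'http://example.org/entity/Q1'},
     'form': {'type': 'literal', 'value': 'epistolary'}},
    {'item': {'type': 'uri', 'value': 'http://example.org/entity/Q2'},
     'form': {'type': 'literal', 'value': 'autodiegetic'}},
]


def flatten_bindings(bindings, key_var, value_var):
    """Map the value of `key_var` to the value of `value_var` for every binding."""
    return {b[key_var]['value']: b[value_var]['value'] for b in bindings}


forms = flatten_bindings(sample_bindings, 'item', 'form')
print(forms)
```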

# ### Author Gender (BGRF)

# Author gender

# NOTE: currently no data in Wikibase at :44100.

query = ''.join([

'SELECT ?item ?gender ',

'WHERE { ?item wdt:',

ITEM_IDS['sex_or_gender'],

' ?gender. }'

])

gender = get_data(wb_endpoint, query)

# ### Text Length (BGRF)

#

# For the bibliography we do not have e.g. the word count. We do however have both a page count (as custom string, in 'page_count_str'/P44) and information about the page format (in 'distribution_format_str'/P45). We can use this to estimate a text length.

#

# Interestingly though, historically formats vary substantially (see [Wikipedia](https://fr.wikipedia.org/wiki/Reliure#Formats_des_feuilles_et_des_reliures) and [other source](http://home.page.ch/pub/reliurebcapt@vtx.ch/format.htm)).

# Formats are, in order, 'in-plano', 'in-folio', 'in-4', 'in-8', 'in-12', 'in-16', 'in-18'.

# Taken from https://fr.wikipedia.org/wiki/Reliure#Formats_des_feuilles_et_des_reliures and

# http://home.page.ch/pub/reliurebcapt@vtx.ch/format.htm

# Note, that both tables are NOT in complete agreement with each other.

formats = {

'colombier': [(90, 63), (63,45), (45,31.5), (30,21), (21, 14), (22.5,15.7), (21,15)],

'jesus': [(70,54), (54,35), (35,27), (27,18), (23, 9), (17.5,13.7), (18.3, 11.6)],

'raisin': [(65,50), (49,32), (32,24), (24,16), (21, 8), (16.2,12.5), (16.6, 10.8)],

}

areas = {

key: [w*h for (w, h) in value]

for key, value in formats.items()

}

# Ratio of one format and the next smaller one, for each convention.

ratios = {

key: [f'{a/b:.2f}' for a, b in zip(values[1:], values[:-1])]

for key, values in areas.items()

}

from pprint import pprint

pprint(list(ratios.values()))

# As we can see, the ratio from one format to another is, if not identical, still pretty consistent for each column (as it should be, considering how they are derived). If we assume that the entries in the bibliography are at least internally consistent, we can use _any_ of the conventions and multiply by the number of pages to get a "combined page area" for each text. This can of course not simply be mapped to the actual text length. But as a heuristic, maybe we can assume that the combined area is roughly proportional to the text length. If this is the case, we can use this value to categorize texts into 'short', 'medium' and 'long' (although these labels are independent of, and can differ from, the ones used for the corpus, which directly use word count).

# First, let's query both `page_count_string` and `distribution_format_string`.

# Page count

query = ''.join([

'SELECT ?item ?page_count ?page_format ',

'WHERE { ?item wdt:',

ITEM_IDS['page_count_str'],

' ?page_count;',

' wdt:',

ITEM_IDS['distribution_format_str'],

' ?page_format.',

' }'

])

print(query)

results = get_data(wb_endpoint, query)

pprint(results[0])

# Unsurprisingly, both `page_count_string` and `distribution_format_string` need some cleaning up and normalization. To keep this notebook tidy and allow for both easier re-use and easier testing, the corresponding parsing functions have been outsourced into their own `utils.py` module in the same folder as this notebook.

# +

# Have a look at ./utils.py if you are interested in the implementation details.

from utils import parse_distribution_format

from utils import parse_page_count

# Note: parse_page_count() ignores page numbers given in roman numerals by default.

# To include them in the sum, call parse_page_count() with `count_preface=True`.

# For more info about these functions use help():

#help(parse_page_count)

#help(parse_distribution_format)

# Adjust as needed:

INCLUDE_PREFACE = False

bgrf['page_count'] = pd.Series(

data=[sum(parse_page_count(entry['page_count']['value'], count_preface=INCLUDE_PREFACE))

for entry in results],

index=[entry['item']['value'] for entry in results],

dtype='Int64'

)

bgrf['dist_format'] = pd.Series(

data=[parse_distribution_format(entry['page_format']['value'])

for entry in results],

index=[entry['item']['value'] for entry in results],

)

print('"page_count" column:\n', bgrf['page_count'].head(2))

print('"dist_format" column:\n', bgrf['dist_format'].head(2))

sns.countplot(x=bgrf['dist_format'], order=['in-1', 'in-2', 'in-4', 'in-8', 'in-12', 'in-16', 'in-18', 'in-24', 'in-32'])

plt.show()

sns.displot(x=bgrf['page_count'])

plt.show()

# -

# In order to estimate the cumulative page area of each text, we need to choose one of the conventions listed at the start of this section.

# **However, for some of the formats like 'in-24' we do not have any estimate!** The following code simply uses NaN in these cases, but this is obviously not a real solution.

page_areas = {

format: area

for format, area

in zip(['in-1', 'in-2', 'in-4', 'in-8', 'in-12', 'in-16', 'in-18'], areas['jesus'])

}

pprint(page_areas)

bgrf['page_area'] = bgrf['dist_format'].apply(lambda f: page_areas.get(f, np.nan))

# +

# Calculate an estimated 'cumulative page area' for each work.

bgrf['cumm_area'] = bgrf['page_area'] * bgrf['page_count']

sns.displot(x=bgrf['cumm_area'])

plt.show()

bgrf['estimated_length'] = pd.cut(bgrf['cumm_area'], bins=3, labels=['short', 'medium', 'long'])

sns.countplot(x=bgrf['estimated_length'])

plt.show()

# There is only one work described as 'long' by this procedure:

only_long = bgrf['estimated_length'][bgrf['estimated_length'] == 'long']

print('The only work categorized as "long" is actually a collection of novels:\n', only_long)

# -

# The only work which is categorized as long by this procedure is actually a collection of novels. This probably means that our binning is rather meaningless. Domain-specific knowledge would be necessary to choose adequate limits for the three bins.
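# To illustrate why equal-width bins behave this way, the sketch below compares `pd.cut` with quantile-based `pd.qcut` on made-up numbers containing one extreme value. Quantile binning is shown only as one possible alternative; it is not a substitute for the domain knowledge mentioned above, and the values are not taken from the bibliography.

```python
import pandas as pd

# Made-up cumulative areas with a single very large outlier.
areas_demo = pd.Series([1, 2, 3, 4, 5, 6, 7, 8, 1000])
labels = ['short', 'medium', 'long']

# Equal-width bins: the outlier stretches the range, so nearly everything is 'short'.
equal_width = pd.cut(areas_demo, bins=3, labels=labels)

# Quantile bins: each bin receives roughly the same number of items.
equal_count = pd.qcut(areas_demo, q=3, labels=labels)

print(equal_width.value_counts().sort_index())
print(equal_count.value_counts().sort_index())
```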

# ### Publication Date (BGRF)

# +

# Publication date

import datetime as dt

query = ''.join([

'SELECT ?item ?pubdate ',

'WHERE { ?item wdt:',

ITEM_IDS['publication_date'],

' ?pubdate. }'

])

pubdate = get_data(wb_endpoint, query)

bgrf['pubyear'] = pd.Series(

data=[dt.date.fromisoformat(entry['pubdate']['value'].split('T')[0]) for entry in pubdate],

index=[entry['item']['value'] for entry in pubdate],

dtype='datetime64[ns]'

)

print('The new data Series looks like this:\n', bgrf['pubyear'].head(3))

year_range = bgrf['pubyear'].max().year - bgrf['pubyear'].min().year

plot = sns.displot(x=bgrf['pubyear'], bins=year_range, height=5, aspect=16/8)

plot.set_xticklabels(rotation=90)

# -

# ### Narrative Form (BGRF)

# +

# Narrative form

query = ''.join([

'SELECT ?item ?form ?label ',

'WHERE { ?item wdt:',

ITEM_IDS['narrative_form'],

' ?form.',

' ?form rdfs:label ?label .',

' FILTER(LANG(?label) = "en") }'

])

form = get_data(wb_endpoint, query)

form = pd.Series(

data=[entry['label']['value'] for entry in form],

index=[entry['item']['value'] for entry in form],

dtype='category'

)

#print('\n'.join([i for i in set(bgrf['form'].values) ]))

bgrf['form'] = form

plot = sns.countplot(x=bgrf['form'])

_ = plt.xticks(rotation=30, horizontalalignment='right')

# -

# ## Comparison

# ### Publication Date (Corpus vs BGRF)

# +

# Publication year of corpus texts:

year_corpus = pd.to_datetime(corpus['firsted-yr'], format='%Y')

# Publication year of BGRF items:

year_bgrf = bgrf['pubyear']

# Create a date index which includes the whole data range

# so that we can fill in missing data points.

idx = pd.date_range(start='1730', end='1800', freq='YS')

# In previous visualizations we have used absolute value counts.

# For comparison we obviously need to use relative frequencies instead.

df = pd.DataFrame(index=idx)

df['freq_corpus'] = year_corpus.value_counts(normalize=True)

df['freq_bgrf'] = year_bgrf.value_counts(normalize=True)

df['year'] = df.index.year

print('The data in "wide form"\n', df.head(4), '\n')

# For the visualization we need the data in "long form", i.e. all the

# relative frequencies are in one single column, with another column

# specifying whether it stems from the corpus or the bibliography.

long = pd.melt(

df, id_vars=['year'], value_vars=['freq_corpus', 'freq_bgrf'],

var_name='origin', value_name='rel_freq')

print('The data in "long form"\n', long.head(4))

# -

sns.catplot(x='year', y='rel_freq', hue='origin', kind='bar', data=long, height=5, aspect=3, legend_out=False)

plt.xlabel('Publication Year')

plt.ylabel('Share of all texts in collection')

_ = plt.xticks(rotation=90)

# The outlier with publication year 1731 makes the above chart a bit harder to read than necessary. So let's create the same graph with data starting at 1751.

sns.catplot(x='year', y='rel_freq', hue='origin', kind='bar', data=long[long['year'] > 1750], height=5, aspect=3,

legend_out=False)

plt.xlabel('Publication Year')

plt.ylabel('Share of all texts\nin resp. collection')

_ = plt.xticks(rotation=90)

|

balance_analysis.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: conda_braket

# language: python

# name: conda_braket

# ---

# # Testing the tensor network simulator with 2-local Hayden-Preskill circuits

#

# **Abstract:** We study a class of random quantum circuits known as Hayden-Preskill circuits using the tensor network simulator backend in Amazon Braket. The goal is to understand the degree to which the tensor network simulator is capable of detecting a hidden local structure in a quantum circuit, while simultaneously building experience with the Amazon Braket service and SDK. We find that the TN1 tensor network simulator can efficiently simulate local random quantum circuits, even when the local structure is obfuscated by permuting the qubit indices. Conversely, when running genuinely non-local versions of the quantum circuits, the simulator's performance is significantly degraded.

#

# This notebook is aimed at users who are familiar with Amazon Braket and have a working knowledge of quantum computing and quantum circuits.

# <div class="alert alert-block alert-warning">

# <b>NOTE:</b> Remember to update your S3 bucket.

# </div>

# +

from braket.circuits import Circuit

from braket.aws import AwsDevice

from braket.devices import LocalSimulator

import numpy as np

import random

import matplotlib.pyplot as plt

import time

import os

# Please enter the S3 bucket you created during onboarding

# (or any other S3 bucket starting with 'amazon-braket-' in your account) in the code below

my_bucket = f"amazon-braket-Your-Bucket-Name" # the name of the bucket

my_prefix = "Your-Folder-Name" # the name of the folder in the bucket

s3_folder = (my_bucket, my_prefix)

# -

# #### Set up the tensor network simulator:

# In this notebook we will use the TN1 simulator on Amazon Braket [[1]](#References):

device = AwsDevice("arn:aws:braket:::device/quantum-simulator/amazon/tn1")

# ## Local Hayden-Preskill Circuits

# Hayden-Preskill circuits are a class of unstructured, random quantum circuits. To produce a Hayden-Preskill circuit, one chooses a gate at random from some universal gate set at each time step and applies this gate to random target qubits. For example, one can choose to either apply a random single qubit rotation to a random qubit, or a CZ gate to a random pair of qubits at each time step. As a concrete example, consider the following pseudocode:

# ```

# Choose either {single qubit, two qubit} gate w/ prob. {1/2, 1/2}

#

# If single qubit:

# Choose either {Rx, Ry, Rz, H} randomly w/ prob. {1/4, 1/4, 1/4, 1/4}

# Apply the chosen gate to a randomly chosen qubit

# If the gate is Rx, Ry, or Rz, rotate by a randomly chosen angle

#

# If two qubit gate:

# Choose (qubit 1, qubit 2) to be two randomly chosen qubits out of the set of N qubits

# Apply CZ(qubit 1, qubit 2) # This means the couplings are long range, all-to-all

# ```

#

# Using the strategy above, one can quickly generate random circuits with all-to-all, long-range couplings. These circuits generate unitaries that rapidly converge to Haar random unitaries, and they are difficult to simulate.

#

# A much simpler class of random circuits, which we call **local Hayden-Preskill circuits**, can be generated using the same strategy as above, but in which the two qubit CZ gates are applied to nearest neighbour qubits instead of random pairs:

# ```

# Choose a random qubit j from [0, N-2]

# Apply CZ(qubit j, qubit j+1)

# ```
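# The two sampling rules above can be sketched without any SDK as plain Python that emits gate descriptions. This is only an illustration: the function name `random_gate_sequence`, the tuple format, and the choice of angles between 0 and 2π are assumptions made here, not part of this notebook or of Amazon Braket.

```python
import math
import random


def random_gate_sequence(num_qubits, num_gates, local=True, seed=None):
    """Return a list of (name, qubits, angle) tuples following the pseudocode.

    Hypothetical helper for illustration; angle is None for H and CZ.
    """
    rng = random.Random(seed)
    gates = []
    for _ in range(num_gates):
        if rng.random() < 0.5:
            # Single-qubit gate: Rx, Ry, Rz, or H with equal probability.
            name = rng.choice(["rx", "ry", "rz", "h"])
            qubit = rng.randrange(num_qubits)
            angle = None if name == "h" else rng.uniform(0.0, 2.0 * math.pi)
            gates.append((name, (qubit,), angle))
        elif local:
            # Local variant: CZ on nearest neighbours (j, j + 1).
            j = rng.randrange(num_qubits - 1)
            gates.append(("cz", (j, j + 1), None))
        else:
            # Non-local variant: CZ on any two distinct qubits.
            a, b = rng.sample(range(num_qubits), 2)
            gates.append(("cz", (a, b), None))
    return gates


local_gates = random_gate_sequence(6, 200, local=True, seed=0)
nonlocal_gates = random_gate_sequence(6, 200, local=False, seed=0)
```

# The only difference between the two variants is how the pair of qubits for the CZ gate is drawn, which is exactly the distinction the rest of this notebook explores.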

# #### In this notebook, we will focus on both local and non-local Hayden-Preskill circuits, defined using the helper functions below.

# +

def CZtuple_generator(qubits):
    """Yields a CZ between a random qubit and its nearest neighbor.

    For simplicity, we choose a random qubit from the first N-1 qubits for
    the control and we set the target to be qubit i+1, where i is the control."""
    a = np.random.choice(range(len(qubits)-1), 1, replace=True)[0]
    yield Circuit().cz(qubits[a],qubits[a+1])

def local_Hayden_Preskill_generator(qubits,numgates):
    """Yields the circuit elements for a scrambling unitary.

    Generates a circuit with numgates gates by laying down a
    random gate at each time step. Gates are chosen from single
    qubit unitary rotations by a random angle, Hadamard, or a
    controlled-Z between a qubit and its nearest neighbor (i.e.,
    incremented by 1)."""
    for i in range(numgates):
        yield np.random.choice([
            Circuit().rx(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().ry(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().rz(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().h(np.random.choice(qubits,1,replace=True)),
            CZtuple_generator(qubits), # For all-to-all: Circuit().cz(*np.random.choice(qubits,2,replace=False)),
        ],1,replace=True,p=[1/8,1/8,1/8,1/8,1/2])

def non_local_Hayden_Preskill_generator(qubits,numgates):
    """Yields the circuit elements for a scrambling unitary.

    Generates a circuit with numgates gates by laying down a
    random gate at each time step. Gates are chosen from single
    qubit unitary rotations by a random angle, Hadamard, or a
    controlled-Z between a random pair of distinct qubits (i.e.,
    all-to-all, long-range coupling)."""
    for i in range(numgates):
        yield np.random.choice([
            Circuit().rx(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().ry(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().rz(np.random.choice(qubits,1,replace=True),np.random.ranf()),
            Circuit().h(np.random.choice(qubits,1,replace=True)),
            Circuit().cz(*np.random.choice(qubits,2,replace=False)),
        ],1,replace=True,p=[1/8,1/8,1/8,1/8,1/2])

# -

# We use the helper functions above to generate local Hayden-Preskill (random) quantum circuits. For example:

# Generate an example of a local Hayden Preskill circuit

test_circuit = Circuit()

test_circuit.add(local_Hayden_Preskill_generator(range(5),20))

print(test_circuit)

# Generate an example of a non-local Hayden Preskill circuit

test_circuit = Circuit()

test_circuit.add(non_local_Hayden_Preskill_generator(range(5),20))

print(test_circuit)

# ## Simulating _local_ random circuits using the TN1 tensor network simulator

# ### Testing and timing

# Let's start with a reasonably sized circuit

num_qubits = 20 # Number of qubits

num_layers = 10 # Number of layers. A layer consists of num_qubits gates.

numgates = num_qubits * num_layers # Total number of gates.

print(f"{num_qubits} qubits, {num_layers} layers = {numgates} total gates")

circ = Circuit()

circ.add(local_Hayden_Preskill_generator(range(num_qubits), numgates)); # Create the circuit with numgates gates.

# Time this circuit using TN1. It should take about a minute or so.

# +

# %%time

# define task

print(f"Running: {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

task = device.run(circ, s3_folder, shots=1000, poll_timeout_seconds = 1000)

# get id and status of submitted task

task_id = task.id

status = task.state()

print('ID of task:', task_id)

print('Status of task:', status)

# wait for job to complete

terminal_states = ['COMPLETED', 'FAILED', 'CANCELLED']

while status not in terminal_states:
    time.sleep(20) # Update this for shorter circuits.
    status = task.state()
    print('Status:', status)

# get results of task

result = task.result()

# get measurement shots

counts = result.measurement_counts

plt.bar(counts.keys(), counts.values());

# -

# ### The importance of locality in circuits

# The goal of this section is to understand the importance of a local structure in quantum circuits being simulated in the tensor network simulator. We will first generate and benchmark a local Hayden-Preskill circuit, and then we will re-run the exact same circuit with the qubits randomly permuted. By permuting the qubits, we produce a circuit that appears to have non-local, long-range coupling, but for which we know that there exists an underlying local structure.

#

# An example of a circuit and its permuted version is shown below. A local Hayden-Preskill circuit is generated, and then a version of the same circuit is created in which the qubits are randomly permuted, according to the permutation [0,1,2,3,4,5]$\mapsto$[5,2,4,1,0,3].

from IPython.display import Image

Image(filename='permuted_circuit.png', width=400)

# With these two circuits (that seem to have vastly different locality, but which are "secretly" the same), we can explore the tensor network simulator's ability to discern structure in a given circuit.

# First generate a modest sized local Hayden-Preskill circuit. Then make a copy of that circuit by permuting the qubit indices randomly. We'll compare the runtime to sample from the outputs of these two circuits.
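# Why the permuted circuit is "secretly" local can be seen on a toy gate list. The sketch below is SDK-free; `relabel` and `max_cz_distance` are hypothetical helpers introduced only for this illustration, the five-gate circuit is made up, and the permutation is the one quoted in the text above.

```python
def relabel(gates, mapping):
    """Apply a qubit relabelling to a list of (name, qubits) gate tuples."""
    return [(name, tuple(mapping[q] for q in qubits)) for name, qubits in gates]


def max_cz_distance(gates):
    """Largest index distance |a - b| over all two-qubit gates."""
    return max(abs(q[0] - q[1]) for _, q in gates if len(q) == 2)


# A manifestly local toy circuit: CZ gates act only on neighbouring qubits.
local = [("cz", (0, 1)), ("h", (2,)), ("cz", (2, 3)), ("cz", (4, 5)), ("cz", (3, 4))]

# The permutation [0,1,2,3,4,5] -> [5,2,4,1,0,3] from the text.
permutation = dict(zip(range(6), [5, 2, 4, 1, 0, 3]))
inverse = {new: old for old, new in permutation.items()}

permuted = relabel(local, permutation)
restored = relabel(permuted, inverse)

print(max_cz_distance(local), max_cz_distance(permuted), max_cz_distance(restored))
```

# Measured by qubit index, the permuted gate list looks long-range, yet a relabelling restores the nearest-neighbour structure. The connectivity graph of the circuit is unchanged by the permutation; only the labels differ.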

# +

num_qubits = 50 # Number of qubits

num_layers = 10 # Number of layers. A layer consists of num_qubits gates.

numgates = num_qubits * num_layers # Total number of gates.

qubits=range(num_qubits) # Generate the (1D) qubits

print(f"{num_qubits} qubits, {num_layers} layers = {numgates} total gates")

# Generate the circuit with numgates gates acting on qubits.

circ = Circuit()

circ.add(local_Hayden_Preskill_generator(qubits,numgates));

# Choose a random permutation of the qubits

permuted_qubits=np.random.permutation(qubits)

# Copy the circuit circ acting on the permuted qubits

perm = Circuit().add_circuit(circ, target_mapping=dict(zip(qubits, permuted_qubits)))

##Uncomment for testing:

# print(permuted_qubits)

# print(circ)

# print(perm)

# -

# Time both circuits using the tensor network simulator for a **single shot**.

# +

# %%time

# define task

task = device.run(circ, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the local circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

counts = result.measurement_counts

print(f"The sample was: {next(iter(counts))}.")

# +

# %%time

# define task

task = device.run(perm, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the permuted circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

counts = result.measurement_counts

print(f"The sample was: {next(iter(counts))}.")

# -

# If you repeat these experiments, you'll find that the two runtimes are (typically) very similar! Even though the permuted circuit seems to be highly non-local at first glance, the simulator discovers the underlying local structure, and the total runtime is comparable to the manifestly local circuit. This similarity is due to the rehearsal phase of the tensor network simulation [[1]](#References). A sophisticated algorithm works behind the scenes to find an efficient path for contracting the tensor network. Thus, when the tensor network has an underlying structure, the tensor network simulator can often tease it out.
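# The effect of the contraction order on cost can be illustrated with a deliberately tiny example: multiplying three matrices. This is a toy calculation and not TN1's algorithm; the shapes and the helper `matmul_cost` are made up for illustration.

```python
def matmul_cost(rows, inner, cols):
    """Scalar multiplications needed for a (rows x inner) @ (inner x cols) product."""
    return rows * inner * cols


# Hypothetical shapes: A is 10x1000, B is 1000x10, C is 10x1000.
a, b, c = (10, 1000), (1000, 10), (10, 1000)

# (A @ B) @ C: contract the large shared index first, leaving a 10x10 intermediate.
left_first = matmul_cost(a[0], a[1], b[1]) + matmul_cost(a[0], b[1], c[1])

# A @ (B @ C): build a 1000x1000 intermediate first.
right_first = matmul_cost(b[0], b[1], c[1]) + matmul_cost(a[0], a[1], c[1])

print(left_first, right_first, right_first // left_first)
```

# Both orders give the same result, but their costs differ by two orders of magnitude. With many tensors the number of possible orders grows very quickly, which is why a dedicated path-finding phase matters.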

# ## Simulating _non-local_ random circuits using the TN1 tensor network simulator

# Let us now compare the efficiency of simulating local and genuinely non-local random quantum circuits. When using the non-local Hayden Preskill circuits above, the circuits we generate have no underlying structure, making them especially difficult for the tensor network simulator.

# We will generate one local random circuit and one non-local quantum circuit of the same size, and we will compare their runtimes. In this section, we will not be comparing identical quantum circuits as we were above, so our results are best understood by repeating these experiments several times and noting that our claims hold on average.

# +

num_qubits = 50 # Number of qubits

num_layers = 8 # Number of layers. A layer consists of num_qubits gates.

numgates = num_qubits * num_layers # Total number of gates.

qubits=range(num_qubits) # Generate the (1D) qubits

print(f"{num_qubits} qubits, {num_layers} layers = {numgates} total gates")

# Generate the local circuit with numgates gates acting on qubits.

localcirc = Circuit()

localcirc.add(local_Hayden_Preskill_generator(qubits,numgates));

# Generate the non-local circuit with numgates gates acting on qubits.

nonlocalcirc = Circuit()

nonlocalcirc.add(non_local_Hayden_Preskill_generator(qubits,numgates));

##Uncomment for testing:

# print(localcirc)

# print(nonlocalcirc)

# -

# Run the local circuit:

# +

# %%time

# define task

task = device.run(localcirc, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the local circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

counts = result.measurement_counts

print(f"The sample was: {next(iter(counts))}.")

# -

# Run the non-local circuit:

# +

# %%time

# define task

task = device.run(nonlocalcirc, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the non-local circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

counts = result.measurement_counts

print(f"The sample was: {next(iter(counts))}.")

# -

# When running this notebook several times, we find that the non-local circuit generally takes 2-3 times longer to run than the local circuit. However, on occasion the non-local circuit fails to run, for a reason we will explore below.

# ## Non-local circuits quickly become too difficult for tensor network methods

# In this section, we will compare larger circuits with and without locality. We will see that the local circuits execute very efficiently on the tensor network simulator, whereas the non-local circuits actually fail in the rehearsal phase.

#

# We start by generating these larger circuits:

# +

num_qubits = 50 # Number of qubits

num_layers = 20 # Number of layers. A layer consists of num_qubits gates.

numgates = num_qubits * num_layers # Total number of gates.

qubits=range(num_qubits) # Generate the (1D) qubits

print(f"{num_qubits} qubits, {num_layers} layers = {numgates} total gates")

# Generate the local circuit with numgates gates acting on qubits.

localcirc = Circuit()

localcirc.add(local_Hayden_Preskill_generator(qubits,numgates));

# Generate the non-local circuit with numgates gates acting on qubits.

nonlocalcirc = Circuit()

nonlocalcirc.add(non_local_Hayden_Preskill_generator(qubits,numgates));

##Uncomment for testing:

# print(localcirc)

# print(nonlocalcirc)

# -

# The local Hayden Preskill circuit executes in a reasonable amount of time, generally about a minute or so:

# +

# %%time

# define task

task = device.run(localcirc, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the local circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

counts = result.measurement_counts

print(f"The sample was: {next(iter(counts))}.")

# -

# Conversely, the non-local Hayden Preskill circuit actually fails to execute:

#

# <div class="alert alert-block alert-info">

# <b>Note:</b> The following cell can take several minutes to run on TN1. It is only present to illustrate a task that will result in a FAILED state.

# </div>

# +

# %%time

# define task

task = device.run(nonlocalcirc, s3_folder, shots=1, poll_timeout_seconds = 1000)

# get results of task

result = task.result()

# get measurement shots

print(f"Running the non-local circuit with {num_qubits} qubits, {num_layers} layers = {numgates} total gates")

# counts = result.measurement_counts

# print(f"The sample was: {next(iter(counts))}.")

# -

# To see why this circuit `FAILED` to run, we can check the `failureReason` in the task's `_metadata`:

print(task._metadata['failureReason'])

# Evidently, without any structure to exploit, this tensor network would take too long to simulate, and the simulator returns with a `FAILED` state.

# ## Conclusions

# We saw that structured quantum circuits can be simulated much more efficiently than unstructured random quantum circuits. That said, structure in a quantum circuit may not be immediately evident, as the tensor network simulator was able to discover the hidden structure in our permuted quantum circuits, leading to efficiency on-par with their unpermuted, local counterparts. Note, however, that discovering this underlying structure is analogous to the graph isomorphism problem, and finding an efficient contraction path for a tensor network is a hard problem.

# ## Appendix

# Check SDK version

# alternative: braket.__version__

# !pip show amazon-braket-sdk | grep Version

# ## References

# [1] [Amazon Braket Documentation: Tensor Network Simulator](https://docs.aws.amazon.com/braket/latest/developerguide/braket-devices.html#braket-simulator-tn1)

|

examples/braket_features/TN1_demo_local_vs_non-local_random_circuits.ipynb

|

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 0.6.3

# language: julia

# name: julia-0.6

# ---

# + [markdown] slideshow={"slide_type": "slide"}

# # Solving Partial Differential Equations in Julia

#

# ## JuliaCon 2018

#

# ## <NAME>

#

# ## University of Maryland, Baltimore

# ## University of California, Irvine

# + [markdown] slideshow={"slide_type": "slide"}

# ## The goal of this workshop is to show how very disparate parts of the package ecosystem can be joined together to solve PDEs

#

# How are finite elements, multigrid methods, ODE solvers, etc. all the same topic?

#

# Teach a man to fish: we won't be going over pre-built domain specific PDE solvers, instead we will be going over the tools which are used to build PDE solvers.

#

# #### While the basics of numerically solving PDEs are usually taught in mathematics courses, the way they are described is not suitable for high performance scientific computing. Instead, we will describe the field in a very practical "I want to compute things fast and accurately" style.

# + [markdown] slideshow={"slide_type": "slide"}

# ## What is a PDE?

#

# A partial differential equation (PDE) is a differential equation which has partial derivatives. Let's unpack that.

#

# A differential equation describes a value (function) by how it changes. `u' = f(u,p,t)` gives you a solution `u(t)` by you inputting / describing its derivative. Scientific equations are encoded in differential equations since experiments uncover laws about what happens when entities change.

#

# A partial differential equation describes a value by how it changes in multiple directions: how it changes in the `x` vs `y` physical directions, and how it changes in time.

#

# Thus spatial properties, like the heat in a 3D room at a given time, `u(x,y,z,t)`, are described by physical equations which are PDEs. Values like how a drug is distributed throughout the liver, or how stress propagates through an airplane hull, are examples of phenomena described by PDEs.

#

# You are interested in PDEs.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Part 1: Representations of PDEs as other mathematical problems

#

# This will either be an overview where I will reframe the most common method of solution, or your first walkthrough of a PDE solver!

# + [markdown] slideshow={"slide_type": "slide"}

# ## The best way to solve a PDE is...

# + [markdown] slideshow={"slide_type": "slide"}

# ## By converting it into another problem!

#

# Generally, PDEs are converted into:

#

# - Linear systems: `Ax = b` find `x`.

# - Nonlinear systems: `G(x) = 0` find `x`.

# - ODEs: `u' = f(u,p,t)`, find `u`.

#

# There are others:

#

# - SDEs: `du = f(u,p,t)dt + g(u,p,t)dW`, find `mean(u)`.

#

# ... Yes experts, there are more but we will stick to the usual stuff.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Learning by example: the Poisson Equation

#

# Let's introduce some shorthand: $u_x = \frac{\partial u}{\partial x}$. The Poisson Equation is the PDE:

#

# $$ \Delta u(x) = b(x) $$

#

# for $ x \in [0,1]$. In one dimension:

#

# $$u_{xx} = b$$

#

# $b(x)$ is some known function (known as "the data"). Given the data (the second derivative), find $u$.

#

# ### How do we solve this PDE?

# + [markdown] slideshow={"slide_type": "slide"}

# ## First Choice: Computational Representation of a Function

#

# First we have to choose how to computationally represent our continuous function $u(x)$ by finitely many numbers. Let's start with the most basic way (and we'll revisit the others later!). Let $\Delta x$ be some constant discretization size and let $x_i = i\Delta x$. Then we represent our function $u(x) \approx \{u_i\} = \{u(x_i)\}$, i.e. we represent a continuous function by values on a grid (and we can assume some interpolation, like linear interpolation).

# + slideshow={"slide_type": "slide"}

Δx = 0.1

x = 0:Δx:1

u(x) = sin(2π*x)

using Plots

plot(u,0,1,lw=3)

scatter!(x,u.(x))

plot!(x,u.(x),lw=3)

# + [markdown] slideshow={"slide_type": "slide"}

# ## Second Choice: Discretization of Derivatives

#

# Forward difference: $ u_x \approx \delta^+ u = \frac{u_{i+1} - u_{i}}{\Delta x} $

#

# Backward difference: $ u_x \approx \delta^- u = \frac{u_{i} - u_{i-1}}{\Delta x} $

#

# $$ u_{xx} = \frac{\partial u_x}{\partial x} $$

#

# Central difference for 2nd derivative: $ u_{xx} \approx \frac{\delta^+ u - \delta^- u}{\Delta x} $

#

# This gives the well-known central difference formula:

#

# $$ u_{xx} \approx \frac{u_{i+1} - 2u_i + u_{i-1}}{\Delta x^2} $$
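#
# As a quick check of the accuracy of this formula (a standard Taylor-series argument, assuming $u$ is smooth enough to have four continuous derivatives), expand $u$ around $x_i$:
#
# $$ u_{i\pm 1} = u_i \pm \Delta x\, u_x + \frac{\Delta x^2}{2} u_{xx} \pm \frac{\Delta x^3}{6} u_{xxx} + \mathcal{O}(\Delta x^4) $$
#
# Adding the two expansions cancels the odd terms:
#
# $$ u_{i+1} + u_{i-1} = 2u_i + \Delta x^2 u_{xx} + \mathcal{O}(\Delta x^4) \quad\Longrightarrow\quad \frac{u_{i+1} - 2u_i + u_{i-1}}{\Delta x^2} = u_{xx} + \mathcal{O}(\Delta x^2) $$
#
# so halving $\Delta x$ should reduce the discretization error by roughly a factor of four.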

# + [markdown] slideshow={"slide_type": "slide"}

# ## Quick Recap

#

# We just made **two choices**:

#

# - Represent our function by an evenly spaced grid of points

# - Represent the derivative by the central difference formula

#

# Given these two choices, how can we re-write our PDE?

# + [markdown] slideshow={"slide_type": "slide"}

# ## The Representation of Our PDE

#

# Remember we want to solve $ \Delta u = b $ on $ x \in [0,1] $

#

# - $u(x)$ is now a vector of points $u_i = u(x_i)$

# - $b(x)$ is now a vector of points $b_i = b(x_i)$

# - The second derivative is now the function $\frac{\partial^2 u}{\partial x^2}(x_i) \approx \frac{u_{i+1} - 2u_i + u_{i-1}}{\Delta x^2}$

#

# Thus we have a system of equations, one for each grid index $i$:

#

# $$ \frac{u_{i+1} - 2u_i + u_{i-1}}{\Delta x^2} = b_i $$

# + [markdown] slideshow={"slide_type": "slide"}

# ## But Wait...

#

# What happens at $i=0$?

#

# $$ \frac{u_1 - 2u_0 + u_{-1}}{\Delta x^2} = b_0 $$

#

# Translating back to $u_i = u(x_i) = u(i\Delta x)$:

#

# $$ u(\Delta x) - 2u(0) + u(-\Delta x)$$

#

# The last point is out of the domain! In order to solve this problem we have to impose **boundary conditions**. For example, let's add the following condition to our problem: $u(0) = u(1) = 0$. Then the $0$th point is determined: $u_0 = 0$, and the 1st point is:

#

# $$ b_1 = \frac{u_2 - 2u_1 + u_0}{\Delta x^2} = \frac{u_2 - 2u_1}{\Delta x^2} $$

#

# so we can solve it!

# + [markdown] slideshow={"slide_type": "slide"}

# ## The Linear Representation of Our Derivative

#

# Notice that if $$ U=\left[\begin{array}{c}

# u_{1}\\

# u_{2}\\

# \vdots\\

# u_{N-1}\\

# u_{N}

# \end{array}\right], $$ then

#

# $$ AU=\frac{1}{\Delta x^{2}}\left[\begin{array}{ccccc}

# -2 & 1\\

# 1 & -2 & 1\\

# & \ddots & \ddots & \ddots\\

# & & 1 & -2 & 1\\

# & & & 1 & -2

# \end{array}\right]\left[\begin{array}{c}

# u_{1}\\

# u_{2}\\

# \vdots\\

# u_{N-1}\\

# u_{N}

# \end{array}\right]=\frac{1}{\Delta x^{2}}\left[\begin{array}{c}

# u_{2}-2u_{1}\\

# u_{3}-2u_{2}+u_{1}\\

# \vdots\\

# u_{N}-2u_{N-1}+u_{N-2}\\

# -2u_{N}+u_{N-1}

# \end{array}\right]=\left[\begin{array}{c}

# b_{1}\\

# b_{2}\\

# \vdots\\

# b_{N-1}\\

# b_{N}

# \end{array}\right]=B $$

# + [markdown] slideshow={"slide_type": "slide"}

# ## This is a linear equation!

#

# We know $A$, $B$ is given to us, find $U$.

#

# Let's walk through a concrete version with some Julia code now. Let's solve:

#

# $$ u_{xx} = \sin(2\pi x) $$

# + slideshow={"slide_type": "slide"}

Δx = 0.1

x = Δx:Δx:1-Δx # Solve only for the interior: the endpoints are known to be zero!

N = length(x)

B = sin.(2π*x)

A = zeros(N,N)

for i in 1:N, j in 1:N
    abs(i-j)<=1 && (A[i,j]+=1) # 1 on the diagonal and on its two neighbours
    i==j && (A[i,j]-=3)        # the diagonal becomes 1 - 3 = -2
end

A = A/(Δx^2)

# + slideshow={"slide_type": "slide"}

# Now we want to solve AU=B, so we use backslash:

U = A\B

plot([0;x;1],[0;U;0],label="U")

# + [markdown] slideshow={"slide_type": "slide"}

# ## Did we do that correctly?

#

# This equation is simple enough we can check via the analytical solution.

#

# $$ u_{xx} = \sin(2\pi x) $$

#

# Integrate it twice:

#

# $$ u(x) = -\frac{\sin(2\pi x)}{4\pi^2} $$
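#
# Spelled out, with the boundary conditions $u(0) = u(1) = 0$ imposed earlier, the two integrations give
#
# $$ u_x = -\frac{\cos(2\pi x)}{2\pi} + C_1, \qquad u = -\frac{\sin(2\pi x)}{4\pi^2} + C_1 x + C_2 $$
#
# and the boundary conditions force $u(0) = C_2 = 0$ and $u(1) = C_1 = 0$, so both integration constants vanish.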

# + slideshow={"slide_type": "slide"}

# Compare the numerical solution U with the analytical solution:

plot([0;x;1],[0;U;0],label="U")

plot!([0;x;1],-sin.(2π*[0;x;1])/4(π^2),label="Analytical Solution")

# + [markdown] slideshow={"slide_type": "slide"}

# ## Recap

#

# We solved the PDE $$\Delta u = b $$ by transforming our functions into vectors of numbers $U$ and $B$, transforming the second derivative into a linear operator (a matrix) $A$, and solving $AU = B$ using backslash.

#

# Does this method generally apply?

#

# Pretty much.

#

# #### Because derivatives are linear, when you discretize a function, the derivative operators become linear operators = matrices

# + [markdown] slideshow={"slide_type": "slide"}

# ## Semilinear Poisson Equation

#

# $$ \Delta u = f(u) $$

#

# Now the right hand side is dependent on $u$! Let's choose the same discretization and the same representation of the derivatives. Then once again $ \Delta u = AU$ for the same matrix $A$. Now we get the equation:

#

# $$ AU = f(U) $$

#

# Find the vector $U$ which satisfies this nonlinear system! If we redefine:

#

# $$ G(U) = f(U) - AU $$

#

# then we are looking for the vector $U$ which causes $G(U) = 0$.

#

# #### tl;dr: Semilinear equations convert into nonlinear rootfinding problems
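#
# One standard way to attack $G(U) = 0$ is Newton's method (a sketch, assuming $f$ is applied elementwise and is differentiable; it is not the only option). With the Jacobian
#
# $$ J(U) = \operatorname{diag}\big(f'(U_1),\dots,f'(U_N)\big) - A $$
#
# each iteration solves a linear system:
#
# $$ J(U^k)\,\delta^k = -G(U^k), \qquad U^{k+1} = U^k + \delta^k $$
#
# so the nonlinear rootfinding problem reduces to a sequence of linear solves with the same sparsity pattern as $A$.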

# + [markdown] slideshow={"slide_type": "slide"}

# ## Semilinear Heat Equation

#

# $$ u_t = u_{xx} + f(u,t) $$

#

# Discretize the function the same way as before. This once again makes $u_{xx} = AU$. Thus letting $U_t$ be the time derivative of each point in the vector, we get:

#

# $$ U_t = AU + f(U,t) $$

#

# Since $x$ has been discretized away, time is the only remaining continuous variable, so we can write the time derivative with a prime:

#

# $$ U' = AU + f(U,t) $$

#

# This is an ODE!

#

# #### tl;dr: Time-dependent PDEs (can) convert into ODE problems!
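#
# As the simplest illustration (explicit Euler, chosen here only for clarity and not as a recommendation), take a time step $\Delta t$ and write $U^n \approx U(t_n)$:
#
# $$ U^{n+1} = U^n + \Delta t\,\big(AU^n + f(U^n,t_n)\big) $$
#
# For the linear part with the second-difference matrix $A$, this explicit scheme is only stable when $\Delta t \le \Delta x^2/2$, which is one reason implicit or stiff ODE solvers are commonly used for discretized PDEs.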

# + [markdown] slideshow={"slide_type": "slide"}

# ## Loose End: Higher Dimensions Do The Same Thing

#

# Now let's look at $$ \Delta u = u_{xx} + u_{yy} = b(x,y) $$ on $x \in [0,1], y \in [0,1]$.

#

# In this case, you can let $u_{i,j} = u(x_i,y_j)$ where $x_i = i\Delta x$ and $y_j = j\Delta y$. You can list out all of the $u_{i,j}$ into a vector $U$ by lexicographic ordering:

#

# $$ U=\left[\begin{array}{c}

# u_{1,1}\\

# u_{2,1}\\

# \vdots\\

# u_{N-1,1}\\

# u_{N,1}\\

# u_{1,2}\\

# \vdots\\

# u_{N,M}

# \end{array}\right] $$

#

# $$ \Delta u = u_{xx} + u_{yy} \approx \frac{u_{i+1,j} - 2u_{i,j} + u_{i-1,j}}{\Delta x^2} + \frac{u_{i,j+1} -2 u_{i,j} + u_{i,j-1}}{\Delta y^2} = AU$$

#

# for some A. Now solve $AU=B$
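#
# One way to write this $A$ explicitly (a sketch, assuming the ordering above with $i$ running fastest, zero Dirichlet boundaries, $N$ interior points in $x$ and $M$ in $y$) uses Kronecker products of the 1D second-difference matrices $A_x \in \mathbb{R}^{N\times N}$ and $A_y \in \mathbb{R}^{M\times M}$:
#
# $$ A = I_M \otimes A_x + A_y \otimes I_N $$
#
# Here $I_M \otimes A_x$ applies the $x$-stencil within each block of fixed $j$, and $A_y \otimes I_N$ couples the neighbouring blocks $j-1$, $j$, and $j+1$.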

# + [markdown] slideshow={"slide_type": "slide"}

# ## Part 1 Summary

#

# To solve a PDE,

#

# - You choose a way to represent functions.

# - You choose a way to represent your derivative (and on the function representation, your derivative representation is a matrix!)

#

# Then when you write out your PDE, you get one of the following problems:

#

# - Linear systems: `Ax = b` find `x`.

# - Nonlinear systems: `G(x) = 0` find `x`.

# - ODEs: `u' = f(u,p,t)`, find `u`.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Part 2: The Many Ways to Discretize

#

# There are thus 4 types of packages in the PDE solver pipeline:

#

# - Packages with ways to represent functions as vectors of numbers and their derivatives as matrices

# - Packages which solve linear systems

# - Packages which solve nonlinear rootfinding problems

# - Packages which solve ODEs

#

# In this part we will look at the many ways you can discretize a PDE.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Part 2.1: Packages to Represent Functions and Derivatives

#

# There are four main ways to represent functions and derivatives as vectors:

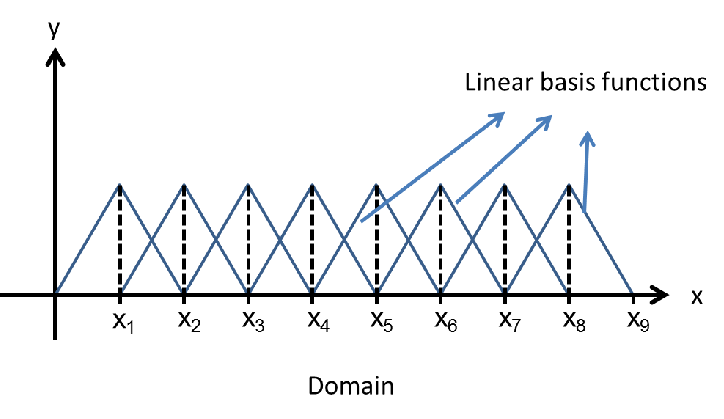

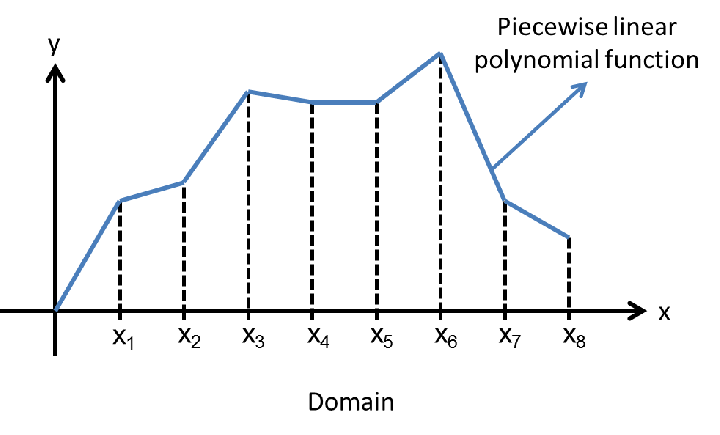

#

# - Finite difference method (FDM): functions are represented on a grid. Packages: DiffEqOperators.jl (still developing)

# - Finite volume method (FVM): functions are represented by a discretization of their integrals. Currently no strong generic package support.

# - Finite element method (FEM): functions are represented by a local basis. FEniCS.jl, JuliaFEM and JuAFEM.jl

# - Spectral methods: functions are represented by a global basis. FFTW.jl and ApproxFun.jl

# + [markdown] slideshow={"slide_type": "slide"}

# ## Finite Difference method: DiffEqOperators.jl

#

# DiffEqOperators.jl is part of the JuliaDiffEq ecosystem. It automatically develops lazy operators for finite difference discretizations (functions represented on a grid). For example, to represent $u_{xx}$, we'd do:

# + slideshow={"slide_type": "fragment"}

using DiffEqOperators

# Second order approximation to the second derivative

order = 2

deriv = 2

Δx = 0.1

N = 9

A = DerivativeOperator{Float64}(order,deriv,Δx,N,:Dirichlet0,:Dirichlet0)

# + [markdown] slideshow={"slide_type": "fragment"}

# This `A` is lazy: `A*u` behaves like a matrix-vector product, but the matrix is never built. Instead, `*` is overloaded to compute and apply the stencil coefficients directly. This makes it efficient, using $\mathcal{O}(1)$ memory while not having the overhead of sparse matrices!

# + [markdown] slideshow={"slide_type": "slide"}

# This package also makes it easy to generate the matrices without much work. For example, let's get a 2nd order discretization of $u_{xxxx}$:

# + slideshow={"slide_type": "fragment"}

full(DerivativeOperator{Float64}(4,2,Δx,N,:Dirichlet0,:Dirichlet0))

# + [markdown] slideshow={"slide_type": "fragment"}

# #### This package is still in heavy development: improved boundary condition handling and irregular grid support are coming

# + [markdown] slideshow={"slide_type": "slide"}

# ## Brief brief brief overview of finite element methods

#

# Represent your function $u(x) = \sum_i c_i \varphi_i(x) $ with some chosen basis $\varphi_i(x)$.

#

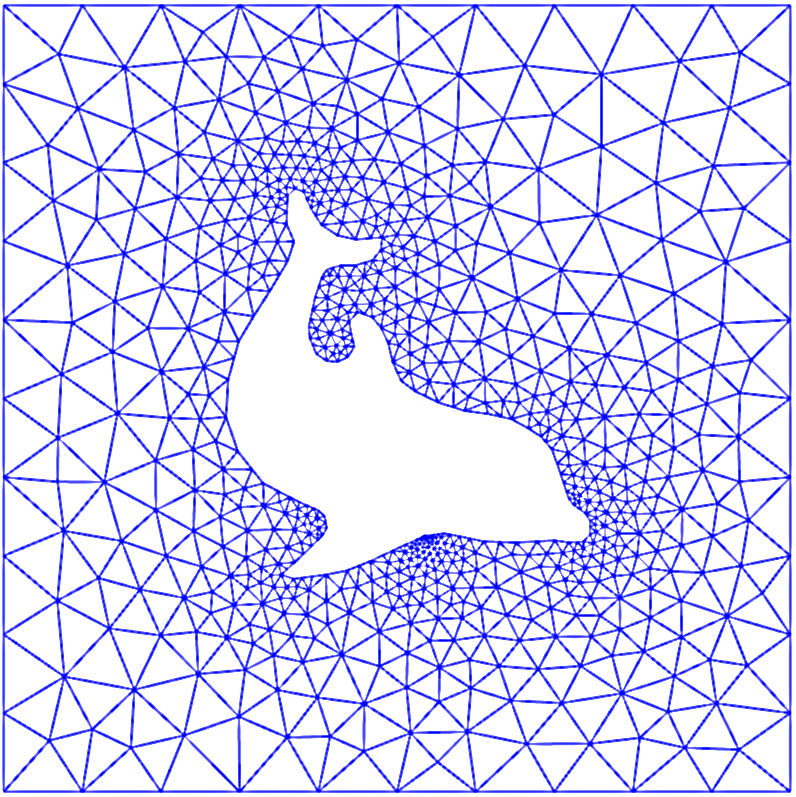

# "Matrix Assembly" = calculate the matrix representations of the derivatives in this function representation. The core of an FEM package is its matrix assembly tools.

#

# #### Finite difference methods work well if your domain is a square / hypercube. Finite element methods can solve PDEs on more complicated domains
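
# + [markdown] slideshow={"slide_type": "fragment"}
# As a rough illustration of what "matrix assembly" means, the sketch below builds the stiffness matrix $K_{ij} = \int \varphi_i'(x)\varphi_j'(x)\,dx$ for piecewise-linear "hat" basis functions on a uniform grid of $[0,1]$. The function name `assemble_stiffness_1d` is hypothetical, no boundary conditions are applied, and a real FEM package handles general meshes, quadrature and sparsity for you.

# + slideshow={"slide_type": "fragment"}
# Hypothetical helper for illustration: loop over elements and add each
# element's local 2x2 contribution into the global matrix.
function assemble_stiffness_1d(n_el)
    h = 1 / n_el                          # uniform element width
    K = zeros(n_el + 1, n_el + 1)         # one basis function per node
    Ke = [1.0 -1.0; -1.0 1.0] ./ h        # ∫ φ_a' φ_b' dx over a single element
    for e in 1:n_el
        dofs = (e, e + 1)                 # the two nodes touching element e
        for a in 1:2, b in 1:2
            K[dofs[a], dofs[b]] += Ke[a, b]
        end
    end
    return K
end

K_hat = assemble_stiffness_1d(4)
K_hat[2, 1:3]    # interior row: [-1, 2, -1] / h

# + [markdown] slideshow={"slide_type": "fragment"}
# On this simple grid the interior rows are the familiar $[-1, 2, -1]$ pattern scaled by $1/h$, so the two viewpoints agree where both apply.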

# + [markdown] slideshow={"slide_type": "slide"}

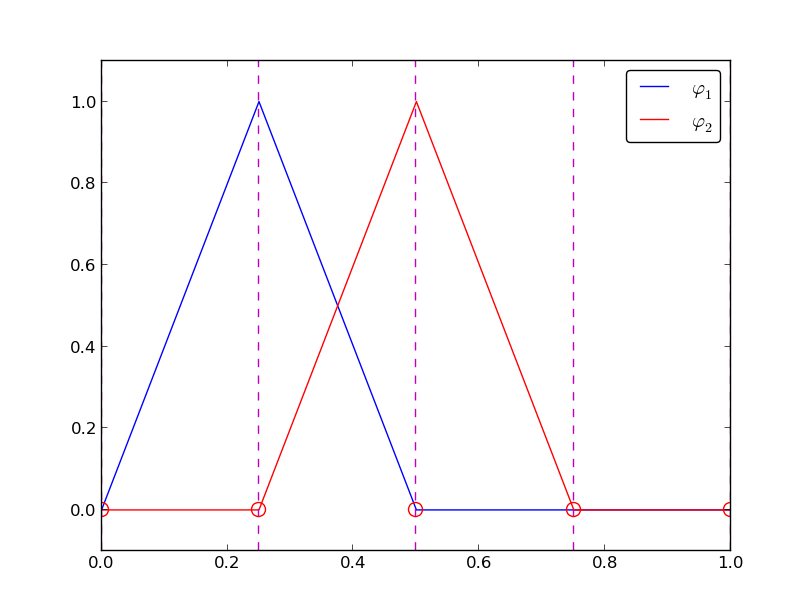

# ## 1D Basis Example

#

#

# + [markdown] slideshow={"slide_type": "slide"}

#

# + [markdown] slideshow={"slide_type": "slide"}

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## 2D Basis Elements

#

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## FEM Easily Handles Difficult Domains

#

#

# + [markdown] slideshow={"slide_type": "slide"}

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## FEM Package 1: FEniCS.jl

#

# FEniCS is a well-known finite element package for Python (fellow NumFOCUS project!). It lets you describe the kind of PDE you want to solve and what elements (basis functions) you want to discretize with in a DSL, and it handles the rest.

#

# FEniCS.jl is a wrapper over FEniCS which is maintained by the JuliaDiffEq organization.

#

# - Pro: very full featured (since it's wrapping an existing package). Linear solvers are built in.

# - Con: not Julia-based, so it's missing a lot of the fancy Julia features (generic programming, arbitrary number types, etc.)

# + slideshow={"slide_type": "slide"}

using FEniCS

mesh = UnitSquareMesh(8,8)

V = FunctionSpace(mesh,"P",1)

u_D = Expression("1+x[0]*x[0]+2*x[1]*x[1]", degree=2)

u = TrialFunction(V)

bc1 = DirichletBC(V,u_D, "on_boundary")

v = TestFunction(V)

f = Constant(-6.0)

a = dot(grad(u),grad(v))*dx

L = f*v*dx

U = FEniCS.Function(V)

lvsolve(a,L,U,bc1) #linear variational solver

# + [markdown] slideshow={"slide_type": "slide"}

# ## FEM Package 2: JuliaFEM

#

# JuliaFEM is an organization with a suite of packages for performing finite element discretizations. It focuses on FEM discretizations of physical PDEs and integrates with Julia's linear solver, nonlinear rootfinding, and DifferentialEquations.jl libraries to ease the full PDE solving process.

# + [markdown] slideshow={"slide_type": "slide"}

# ## FEM Package 3: JuAFEM.jl

#

# JuAFEM.jl is a FEM toolbox. It gives you functionality that makes it easier to write matrix assembly routines.

# + slideshow={"slide_type": "slide"}

using JuAFEM

# Assemble the global stiffness matrix K and load vector f cell by cell
function doassemble(cellvalues::CellScalarValues{dim}, K::SparseMatrixCSC, dh::DofHandler) where {dim}
    n_basefuncs = getnbasefunctions(cellvalues)
    Ke = zeros(n_basefuncs, n_basefuncs)   # element stiffness matrix
    fe = zeros(n_basefuncs)                # element load vector
    f = zeros(ndofs(dh))
    assembler = start_assemble(K, f)
    @inbounds for cell in CellIterator(dh)
        fill!(Ke, 0)
        fill!(fe, 0)
        reinit!(cellvalues, cell)
        for q_point in 1:getnquadpoints(cellvalues)
            dΩ = getdetJdV(cellvalues, q_point)   # quadrature weight times Jacobian determinant
            for i in 1:n_basefuncs
                v = shape_value(cellvalues, q_point, i)
                ∇v = shape_gradient(cellvalues, q_point, i)
                fe[i] += v * dΩ
                for j in 1:n_basefuncs
                    ∇u = shape_gradient(cellvalues, q_point, j)
                    Ke[i, j] += (∇v ⋅ ∇u) * dΩ
                end
            end
        end
        # Add the element contributions into the global K and f
        assemble!(assembler, celldofs(cell), fe, Ke)
    end
    return K, f
end

# `cellvalues`, `K`, `dh` and `ch` are assumed to have been set up beforehand
K, f = doassemble(cellvalues, K, dh);
apply!(K, f, ch)
u = K \ f;

# + [markdown] slideshow={"slide_type": "slide"}

# ## Spectral Methods

#

# Like finite element methods, spectral methods represent a function in a basis: $u(x) = \sum_i c_i \varphi_i(x) $ with some chosen basis $\varphi_i(x)$.

#

# "Spectral" usually refers to global basis functions. For example, the Fourier basis of sines and cosines.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Spectral Packages 1: FFTW.jl

#

# Okay, this is not strictly a spectral discretization package. However, it converts a pointwise representation of a function into a Fourier representation via a Fast Fourier Transform (FFT). Thus if you want to find the coefficients $c_k$ for

#

# $$ u(x) = \sum_k c_k \sin(kx) $$

#

# you'd use:

# + slideshow={"slide_type": "slide"}

using FFTW

x = range(0, stop=2π, length=100)  # `linspace(0,2π,100)` on Julia 0.6

u(x) = sin(x)

freqs = fft(u.(x))[1:length(x)÷2 + 1]

c = 2*abs.(freqs/length(x))'

# + [markdown] slideshow={"slide_type": "fragment"}

# This says, roughly, that $\sin(x)$ is represented in the Fourier basis as:

#

# $$ \sin(x) \approx 5\times 10^{-18} \sin(0x) + 0.9948\sin(x) + 0.014\sin(2x) + 0.0076\sin(3x) + \ldots $$

#

# It almost got it, and you can see the slight discretization error. This goes away as you add more points. But now you can represent any periodic function!
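
# + [markdown] slideshow={"slide_type": "fragment"}
# For comparison, here is a small sketch that computes a sine coefficient by direct projection instead of an FFT. It assumes a periodic grid that leaves out the endpoint $2\pi$ (which is the same point as $0$ for a periodic function); on such a grid the sampled sines are exactly orthogonal. The helper name `sine_coefficient` is hypothetical and exists only for this illustration.

# + slideshow={"slide_type": "fragment"}
# Hypothetical helper: c_k ≈ (2/N) * Σ_j u(x_j) * sin(k * x_j) on a periodic grid
function sine_coefficient(u, k, N)
    xs = [2π * j / N for j in 0:N-1]    # N points, endpoint 2π left out
    return 2 / N * sum(u(x) * sin(k * x) for x in xs)
end

c1 = sine_coefficient(sin, 1, 100)   # equals 1 up to rounding
c2 = sine_coefficient(sin, 2, 100)   # equals 0 up to rounding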

# + [markdown] slideshow={"slide_type": "slide"}

# ## Spectral Packages 2: ApproxFun.jl

#

# ApproxFun.jl is a package for easily approximating functions and their derivatives in a given basis, making it an ideal package for building spectral discretizations of PDEs. It utilizes lazy representations of infinite matrices to be efficient and save memory. It uses a type system to make the same code easy to translate between different basis choices.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Representing a Function and its Derivative in the Fourier Basis

#

# Let's represent

#

# $$ r(x) = \cos(\cos(x-0.1)), $$

#

# in the Fourier basis, and build a matrix representation of the second derivative in this basis:

# + slideshow={"slide_type": "slide"}

using ApproxFun

S = Fourier()

n = 100

T = ApproxFun.plan_transform(S, n)

Ti = ApproxFun.plan_itransform(S, n)

x = points(S, n)

r = (T*cos.(cos.(x .- 0.1)))'  # broadcast the subtraction over the grid points

# + slideshow={"slide_type": "slide"}

D2 = Derivative(S,2)
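
# + [markdown] slideshow={"slide_type": "fragment"}
# A short worked equation shows why this operator is so simple in the Fourier basis. Every basis function is an eigenfunction of the second derivative:
#
# $$ \frac{d^2}{dx^2}\sin(kx) = -k^2 \sin(kx), \qquad \frac{d^2}{dx^2}\cos(kx) = -k^2 \cos(kx) $$
#
# So if $u(x) = a_0 + \sum_{k \ge 1} \left( a_k \cos(kx) + b_k \sin(kx) \right)$, then $u_{xx}$ has coefficients $-k^2 a_k$ and $-k^2 b_k$, and the constant term maps to zero. The matrix representation is therefore diagonal. The order in which the entries $0, -1, -1, -4, -4, \ldots$ appear depends on how the package orders its basis functions, which is an assumption to check against the displayed `D2`.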

# + [markdown] slideshow={"slide_type": "slide"}

# ## Now let's change to the Chebyshev basis:

#

# This basis represents functions as $$ \sum_k c_k T_k(x) $$ for $T_k$ the Chebyshev polynomials $(1, x, 2x^2-1, 4x^3 - 3x, \ldots)$
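
# + [markdown] slideshow={"slide_type": "fragment"}
# The polynomials listed above follow the three-term recurrence $T_{k+1}(x) = 2x\,T_k(x) - T_{k-1}(x)$ with $T_0 = 1$ and $T_1 = x$. A minimal sketch of evaluating them this way is given below; the function name `chebyshev_T` is hypothetical and is not part of ApproxFun.jl, which handles this internally.

# + slideshow={"slide_type": "fragment"}
# Hypothetical helper: evaluate T_k(x) via T_{k+1}(x) = 2x*T_k(x) - T_{k-1}(x)
function chebyshev_T(k, x)
    k == 0 && return one(x)
    Tprev, Tcur = one(x), x               # T_0 and T_1
    for _ in 2:k
        Tprev, Tcur = Tcur, 2x * Tcur - Tprev
    end
    return Tcur
end

chebyshev_T(3, 0.5)    # 4(0.5)^3 - 3(0.5) = -1.0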

# + slideshow={"slide_type": "slide"}

S = Chebyshev()

n = 100

T = ApproxFun.plan_transform(S, n)

Ti = ApproxFun.plan_itransform(S, n)

x = points(S, n)

r = (T*cos.(cos.(x .- 0.1)))'  # broadcast the subtraction over the grid points

# + slideshow={"slide_type": "slide"}

D2 = Derivative(S,2)

# + [markdown] slideshow={"slide_type": "slide"}

# ## Part 2 Summary

#

# Using these packages, you can easily translate your PDE functions to coefficient vectors and your derivatives to matrices:

#

# - Use spectral methods or finite difference methods for cases with "simple enough" boundary conditions and on simple (square) domains