code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Dependencies

# + _cell_guid="b1076dfc-b9ad-4769-8c92-a6c4dae69d19" _kg_hide-input=true _uuid="8f2839f25d086af736a60e9eeb907d3b93b6e0e5"

import json, warnings, shutil

from scripts_step_lr_schedulers import *

from tweet_utility_scripts import *

from tweet_utility_preprocess_roberta_scripts import *

from transformers import TFRobertaModel, RobertaConfig

from tokenizers import ByteLevelBPETokenizer

from tensorflow.keras.models import Model

from tensorflow.keras import optimizers, metrics, losses, layers

from tensorflow.keras.callbacks import EarlyStopping, ModelCheckpoint, LearningRateScheduler

SEED = 0

seed_everything(SEED)

warnings.filterwarnings("ignore")

# -

# # Load data

# + _cell_guid="79c7e3d0-c299-4dcb-8224-4455121ee9b0" _kg_hide-input=true _uuid="d629ff2d2480ee46fbb7e2d37f6b5fab8052498a"

database_base_path = '/kaggle/input/tweet-dataset-7fold-roberta-64-clean/'

k_fold = pd.read_csv(database_base_path + '5-fold.csv')

display(k_fold.head())

# Unzip files

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_1.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_2.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_3.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_4.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_5.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_6.tar.gz

# !tar -xf /kaggle/input/tweet-dataset-7fold-roberta-64-clean/fold_7.tar.gz

# -

# # Model parameters

# +

vocab_path = database_base_path + 'vocab.json'

merges_path = database_base_path + 'merges.txt'

base_path = '/kaggle/input/qa-transformers/roberta/'

config = {

"MAX_LEN": 64,

"BATCH_SIZE": 32,

"EPOCHS": 2,

"LEARNING_RATE": 1e-4,

"ES_PATIENCE": 2,

"N_FOLDS": 7,

"question_size": 4,

"base_model_path": base_path + 'roberta-base-tf_model.h5',

"config_path": base_path + 'roberta-base-config.json'

}

with open('config.json', 'w') as json_file:
    # config already holds only JSON-serializable values, so it can be dumped directly
    json.dump(config, json_file)

# -

# # Tokenizer

# + _kg_hide-output=true

tokenizer = ByteLevelBPETokenizer(vocab_file=vocab_path, merges_file=merges_path,

lowercase=True, add_prefix_space=True)

tokenizer.save('./')

# + _kg_hide-input=true

# pre-process

k_fold['jaccard'] = k_fold.apply(lambda x: jaccard(x['text'], x['selected_text']), axis=1)

k_fold['text_tokenCnt'] = k_fold['text'].apply(lambda x : len(tokenizer.encode(x).ids))

k_fold['selected_text_tokenCnt'] = k_fold['selected_text'].apply(lambda x : len(tokenizer.encode(x).ids))

# -
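# The `jaccard` helper used above is imported from `tweet_utility_scripts` and is not shown in this notebook. The sketch below is an assumption about what such a helper typically computes: a word-level Jaccard similarity between two strings. The name `jaccard_sketch` and the handling of two empty strings are hypothetical choices made only for illustration.

```python
def jaccard_sketch(str1, str2):
    """Word-level Jaccard similarity (hypothetical stand-in for the imported `jaccard`)."""
    a = set(str1.lower().split())
    b = set(str2.lower().split())
    union = a | b
    if not union:
        # Assumption: two empty strings are treated as identical.
        return 1.0
    return len(a & b) / len(union)


# Two of the three distinct words are shared.
score = jaccard_sketch("I love this", "love this")
print(round(score, 3))  # 0.667
```

# A score of 1.0 means the selected text and the full tweet contain exactly the same set of words.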

# ## Learning rate schedule

# + _kg_hide-input=true

lr_min = 1e-6

lr_start = 0

lr_max = config['LEARNING_RATE']

train_size = len(k_fold[k_fold['fold_1'] == 'train'])

step_size = train_size // config['BATCH_SIZE']

total_steps = config['EPOCHS'] * step_size

rng = [i for i in range(0, total_steps, config['BATCH_SIZE'])]

y = [one_cycle_schedule(tf.cast(x, tf.float32), total_steps=total_steps,

lr_start=lr_min, lr_max=lr_max) for x in rng]

sns.set(style="whitegrid")

fig, ax = plt.subplots(figsize=(20, 6))

plt.plot(rng, y)

print("Learning rate schedule: {:.3g} to {:.3g} to {:.3g}".format(y[0], max(y), y[-1]))

# -
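# `one_cycle_schedule` comes from `scripts_step_lr_schedulers`, whose source is not included here. As a rough illustration of the idea only, the sketch below ramps the learning rate linearly from `lr_start` to `lr_max` and then linearly back down. The function name `one_cycle_sketch`, the `warmup_frac` parameter and the linear shape are all assumptions, not the imported implementation.

```python
def one_cycle_sketch(step, total_steps, lr_start=1e-6, lr_max=1e-4, warmup_frac=0.3):
    """Hypothetical one-cycle schedule: linear warm-up, then linear decay."""
    warmup_steps = max(1, int(total_steps * warmup_frac))
    if step < warmup_steps:
        # Warm-up phase: move from lr_start towards lr_max.
        progress = step / warmup_steps
        return lr_start + (lr_max - lr_start) * progress
    # Decay phase: move from lr_max back down to lr_start.
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / decay_steps)
    return lr_max - (lr_max - lr_start) * progress


total = 100
schedule = [one_cycle_sketch(s, total) for s in range(total + 1)]
print("Learning rate schedule: {:.3g} to {:.3g} to {:.3g}".format(
    schedule[0], max(schedule), schedule[-1]))
```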

# # Model

# +

module_config = RobertaConfig.from_pretrained(config['config_path'], output_hidden_states=False)

def model_fn(MAX_LEN):
    input_ids = layers.Input(shape=(MAX_LEN,), dtype=tf.int32, name='input_ids')
    attention_mask = layers.Input(shape=(MAX_LEN,), dtype=tf.int32, name='attention_mask')

    base_model = TFRobertaModel.from_pretrained(config['base_model_path'], config=module_config, name="base_model")
    last_hidden_state, _ = base_model({'input_ids': input_ids, 'attention_mask': attention_mask})

    # One logit per token for the span start and one for the span end
    logits = layers.Dense(2, name="qa_outputs", use_bias=False)(last_hidden_state)
    start_logits, end_logits = tf.split(logits, 2, axis=-1)
    start_logits = tf.squeeze(start_logits, axis=-1, name='y_start')
    end_logits = tf.squeeze(end_logits, axis=-1, name='y_end')

    model = Model(inputs=[input_ids, attention_mask], outputs=[start_logits, end_logits])

    return model

# -

# # Train

# + _kg_hide-input=true

def get_training_dataset(x_train, y_train, batch_size, buffer_size, seed=0):
    dataset = tf.data.Dataset.from_tensor_slices(({'input_ids': x_train[0], 'attention_mask': x_train[1]},
                                                  (y_train[0], y_train[1])))
    dataset = dataset.repeat()
    dataset = dataset.shuffle(2048, seed=seed)
    dataset = dataset.batch(batch_size, drop_remainder=True)
    dataset = dataset.prefetch(buffer_size)
    return dataset

def get_validation_dataset(x_valid, y_valid, batch_size, buffer_size, repeated=False, seed=0):
    dataset = tf.data.Dataset.from_tensor_slices(({'input_ids': x_valid[0], 'attention_mask': x_valid[1]},
                                                  (y_valid[0], y_valid[1])))
    if repeated:
        dataset = dataset.repeat()
        dataset = dataset.shuffle(2048, seed=seed)
    dataset = dataset.batch(batch_size, drop_remainder=True)
    dataset = dataset.cache()
    dataset = dataset.prefetch(buffer_size)
    return dataset

# + _kg_hide-input=true _kg_hide-output=true

AUTO = tf.data.experimental.AUTOTUNE

history_list = []

for n_fold in range(config['N_FOLDS']):
    n_fold += 1
    print('\nFOLD: %d' % (n_fold))

    # Load data
    base_data_path = 'fold_%d/' % (n_fold)
    x_train = np.load(base_data_path + 'x_train.npy')
    y_train = np.load(base_data_path + 'y_train.npy')
    x_valid = np.load(base_data_path + 'x_valid.npy')
    y_valid = np.load(base_data_path + 'y_valid.npy')

    step_size = x_train.shape[1] // config['BATCH_SIZE']

    # Train model
    model_path = 'model_fold_%d.h5' % (n_fold)
    model = model_fn(config['MAX_LEN'])

    es = EarlyStopping(monitor='val_loss', mode='min', patience=config['ES_PATIENCE'],
                       restore_best_weights=True, verbose=1)
    checkpoint = ModelCheckpoint(model_path, monitor='val_loss', mode='min',
                                 save_best_only=True, save_weights_only=True)

    # The lambda is evaluated lazily, so it can refer to the optimizer created on the next line
    lr = lambda: one_cycle_schedule(tf.cast(optimizer.iterations, tf.float32),
                                    total_steps=total_steps, lr_start=lr_min,
                                    lr_max=lr_max)
    optimizer = optimizers.Adam(learning_rate=lr)

    model.compile(optimizer, loss=[losses.CategoricalCrossentropy(label_smoothing=0.2, from_logits=True),
                                   losses.CategoricalCrossentropy(label_smoothing=0.2, from_logits=True)])

    history = model.fit(get_training_dataset(x_train, y_train, config['BATCH_SIZE'], AUTO, seed=SEED),
                        validation_data=(get_validation_dataset(x_valid, y_valid, config['BATCH_SIZE'], AUTO, repeated=False, seed=SEED)),
                        epochs=config['EPOCHS'],
                        steps_per_epoch=step_size,
                        callbacks=[checkpoint, es],
                        verbose=2).history

    history_list.append(history)

    # Make predictions
    predict_eval_df(k_fold, model, x_train, x_valid, get_test_dataset, decode, n_fold, tokenizer, config, config['question_size'])

    ### Delete data dir
    shutil.rmtree(base_data_path)

# -

# # Model loss graph

# + _kg_hide-input=true

for n_fold in range(config['N_FOLDS']):
    print('Fold: %d' % (n_fold+1))
    plot_metrics(history_list[n_fold])

# -

# # Model evaluation

# + _kg_hide-input=true

display(evaluate_model_kfold(k_fold, config['N_FOLDS']).style.applymap(color_map))

# -

# # Visualize predictions

# + _kg_hide-input=true

k_fold['jaccard_mean'] = 0

for n in range(config['N_FOLDS']):
    k_fold['jaccard_mean'] += k_fold[f'jaccard_fold_{n+1}'] / config['N_FOLDS']

display(k_fold[['text', 'selected_text', 'sentiment', 'text_tokenCnt',

'selected_text_tokenCnt', 'jaccard', 'jaccard_mean'] + [c for c in k_fold.columns if (c.startswith('prediction_fold'))]].head(15))

|

Model backlog/Train/281-tweet-train-7fold-roberta-onecycle.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# ## Strings

# ### Creating a String

# To create a string in Python you can use single or double quotes. For example:

# A single word

'Oi'

# A sentence

'Criando uma string em Python'

# We can use double quotes

"Podemos usar aspas duplas ou simples para strings em Python"

# You can combine double and single quotes

"Testando strings em 'Python'"

# ### Printing a String

print ('Testando Strings em Python')

print ('Testando \nStrings \nem \nPython')

print ('\n')

# ### Indexing Strings

# Assigning a string

s = 'Data Science Academy'

print(s)

# First element of the string.

s[0]

s[1]

s[2]

# We can use a : to perform slicing, which reads everything up to a designated point. For example:

# Returns all elements of the string starting at position 1 (remember that Python starts indexing at position 0),

# through the end of the string.

s[1:]

# The original string remains unchanged

s

# Returns everything up to (but not including) position 3

s[:3]

s[:]

# We can also use negative indexing and read from back to front.

s[-1]

# Return everything except the last letter

s[:-1]

# We can also use index notation to slice the string into specific pieces with a step (the default is 1). For example, we can use two colons in a row followed by a number that specifies the step between the returned elements:

s[::1]

s[::2]

s[::-1]

# ### String Properties

s

# Changing a character (strings are immutable, so this assignment raises a TypeError)

s[0] = 'x'
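
# Since item assignment fails on a string, the usual workaround is to build a new string from slices. The short sketch below first catches the error and then creates a modified copy; the variable name `changed` is only illustrative.

```python
s = 'Data Science Academy'

# Item assignment on a str raises TypeError because strings are immutable.
try:
    s[0] = 'x'
except TypeError as error:
    print('TypeError:', error)

# To "change" a character, build a new string from slices instead.
changed = 'x' + s[1:]
print(changed)  # xata Science Academy
print(s)        # Data Science Academy (the original is unchanged)
```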

# Concatenating strings

s + ' é a melhor maneira de estar preparado para o mercado de trabalho em Ciência de Dados!'

s = s + ' é a melhor maneira de estar preparado para o mercado de trabalho em Ciência de Dados!'

print(s)

# We can use the multiplication symbol to create repetition!

letra = 'w'

letra * 3

# ### Built-in String Functions

s

# Upper Case

s.upper()

# Lower case

s.lower()

# Split a string on whitespace (default)

s.split()

# Split a string on a specific element

s.split('y')

# ### String Functions

s = 'seja bem vindo ao universo de python'

s.capitalize()

s.count('a')

s.find('p')

s.center(20, 'z')

s.isalnum()

s.isalpha()

s.islower()

s.isspace()

s.endswith('o')

s.partition('!')

# ### Comparing Strings

print("Python" == "R")

print("Python" == "Python")

# # End

|

001-Curso-De-Python/001-DSA/001-Variaveis-Tipos-Estrutura-De-Dados/005-Strings.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import matplotlib.pyplot as plt

import os

import os.path as path

from sklearn.model_selection import train_test_split

base_path = 'data'

original_data = path.join(base_path, 'AM_test_original.npz')

data = np.load(original_data)

x_data = data['trainimg']

y_data = data['trainlabel']

img_shape = (341, 341, 3)

x_2d_data = x_data.reshape((len(x_data), *img_shape))

y_label = np.argmax(y_data, axis=1)

y_text = ['bed', 'bird', 'cat', 'dog', 'house', 'tree']

y_table = {i:text for i, text in enumerate(y_text)}

y_table_array = np.array([(i, text) for i, text in enumerate(y_text)])

# +

x_train_temp, x_test, y_train_temp, y_test = train_test_split(

x_2d_data, y_label, test_size=0.2, random_state=42, stratify=y_label)

x_train, x_val, y_train, y_val = train_test_split(

x_train_temp, y_train_temp, test_size=0.25, random_state=42, stratify=y_train_temp)

x_train.shape, y_train.shape, x_val.shape, y_val.shape, x_test.shape, y_test.shape

# -
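
# The two chained splits above yield a 60/20/20 train/validation/test partition: the second `test_size=0.25` applies to the 80% left over after the first split. The arithmetic can be checked with exact fractions (the variable names below are illustrative only):

```python
from fractions import Fraction

test_frac = Fraction(1, 5)                   # test_size=0.2 of the full data set
val_frac = Fraction(1, 4) * (1 - test_frac)  # test_size=0.25 of the remaining 80%
train_frac = 1 - test_frac - val_frac        # whatever is left for training

print(train_frac, val_frac, test_frac)  # 3/5 1/5 1/5
```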

np.savez_compressed(path.join(base_path, 'imagenet_6_class_train_data.npz'),

x_data=x_train, y_data=y_train, y_table_array=y_table_array)

np.savez_compressed(path.join(base_path, 'imagenet_6_class_val_data.npz'),

x_data=x_val, y_data=y_val, y_table_array=y_table_array)

np.savez_compressed(path.join(base_path, 'imagenet_6_class_test_data.npz'),

x_data=x_test, y_data=y_test, y_table_array=y_table_array)

x_data = data['valimg']

y_data = data['vallabel']

img_shape = (341, 341, 3)

x_2d_data = x_data.reshape((len(x_data), *img_shape))

np.savez_compressed(path.join(base_path, 'imagenet_6_class_vis_data.npz'),

x_data=x_2d_data, y_data=y_data, y_table_array=y_table_array)

|

make_imagenet_6_class_data.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/aparna2903/letsupgreade-Python-Batch-7/blob/master/Assignment1_and_Assignment2_Day6_Batch7.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="16a1D5XMaOPQ" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 221} outputId="87be08f0-596b-4bdf-af84-1887ee76db22"

#Question 1 : for this challenge, create a bank account class that has two attributes:

#owner_name

#balance

#and two methods

#deposit

#withdraw

#As an added requirement, withdrawals may not exceed the available balance

#Instantiate your class, make several deposits and withdrawals, and test to make sure the account can't be overdrawn.

#Solution :

class Bank_Account:
    def __init__(self, Owner_name):
        self.balance = 0
        self.Owner_name = Owner_name
        print(f"Hello!!! {self.Owner_name} Welcome to the Deposit & Withdrawal Machine")

    def deposit(self):
        amount = float(input("Enter amount to be Deposited: "))
        self.balance += amount
        print("\n Amount Deposited:", amount)

    def withdraw(self):
        amount = float(input("Enter amount to be Withdrawn: "))
        # Only allow the withdrawal when enough balance is available
        if self.balance >= amount:
            self.balance -= amount
            print("\n You Withdrew:", amount)
        else:
            print("\n Insufficient balance ")

    def display(self):
        print("\n Net Available Balance=", self.balance)

# creating an object of class

s = Bank_Account(input('Enter Your name'))

# Calling functions with that class object

s.deposit()

s.withdraw()

s.display()
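
# The class above reads amounts with `input()`, which makes the overdraft rule hard to test automatically. The variant below is a sketch under the assumption that amounts are passed as arguments instead; `BankAccountSketch` and its return-value convention are hypothetical and not part of the original solution.

```python
class BankAccountSketch:
    """Hypothetical non-interactive variant of the bank account class."""

    def __init__(self, owner_name, balance=0.0):
        self.owner_name = owner_name
        self.balance = balance

    def deposit(self, amount):
        if amount <= 0:
            raise ValueError("deposit amount must be positive")
        self.balance += amount

    def withdraw(self, amount):
        """Return True on success, False if the withdrawal would overdraw."""
        if amount <= 0:
            raise ValueError("withdrawal amount must be positive")
        if amount > self.balance:
            return False
        self.balance -= amount
        return True


account = BankAccountSketch("Test Owner")
account.deposit(100)
print(account.withdraw(30))   # True
print(account.withdraw(500))  # False, the balance is left untouched
print(account.balance)        # 70.0
```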

#Question 2 : for this challenge, create a cone class that has two attributes:

#R=radius,

#h=height

#and two method

#volume = pi * r*r*(h/3)

#surface area : base : pi*r*r, side : pi*r*sqrt(r**2+h**2)

#Make only one class containing both methods; import math where required

#solution :

# Importing Math library for value Of PI

import math

pi = math.pi

class Cone:
    def __init__(self, r, h):
        self.r = r
        self.h = h

    # Function to calculate Volume of Cone
    def volume(self):
        return (1 / 3) * pi * self.r**2 * self.h

    # Function To Calculate Surface Area of Cone (base circle plus lateral side)
    def surfacearea(self):
        base = pi * self.r**2
        side = pi * self.r * math.sqrt(self.r**2 + self.h**2)
        return base + side

calc_volume = Cone(5,12)

print(f"Volume Of Cone : {calc_volume.volume()}")

calc_surface = Cone(5,12)

print(f"Surface Area Of Cone : {calc_surface.surfacearea()}")
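
# For r = 5 and h = 12 the slant height is exactly 13 (a 5-12-13 right triangle), so the expected results have simple closed forms. The check below recomputes the formulas independently of the class as a sanity test; the variable names are illustrative.

```python
import math

r, h = 5, 12
slant = math.sqrt(r**2 + h**2)                  # 13.0 for the 5-12-13 triangle
volume = math.pi * r**2 * h / 3                 # equals 100 * pi
surface = math.pi * r**2 + math.pi * r * slant  # 25 * pi + 65 * pi = 90 * pi

assert math.isclose(volume, 100 * math.pi)
assert math.isclose(surface, 90 * math.pi)
print(volume, surface)
```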

|

Assignment1_and_Assignment2_Day6_Batch7.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/ahmadhajmosa/3d-force-graph/blob/master/Session_1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="rmSUExzC-ZBV" colab_type="text"

#

# + [markdown] id="wgWZldyi-Zef" colab_type="text"

# # Lab on Machine Learning and Applications in Intelligent Vehicles

# ## Session 1: Introduction

#

# + [markdown] id="j1T4XAd1_fJk" colab_type="text"

# ### Course Plan:

#

# This lab is a continuation of the Machine Learning lecture. The objective of this course is to learn how to model and implement neural networks using Python deep learning frameworks:

# 1. Tensorflow

# 2. Keras

# 3. Pytorch

#

# Using these frameworks, we will go through the following topics and use cases:

#

# #### Session 1: 05.06 - 09:00 - 11:00 :

#

# >#### Presentation: 05.06 - 09:00 - 10:30:

# >1. Introduction to deep learning frameworks

# 2. Deep learning in Numpy

#

# >#### Break: 05.06 - 10:30 - 10:45

# >#### Assignment: 05.06 - 10:45 - 11:45 :

# >* Implementation of backprop using numpy

#

# >1. Tensorflow background

# 2. Implementation of feedforward neural networks using Tensorflow

#

# >#### Break: 05.06 - 10:00 - 10:15

# >#### Assignment: 05.06 - 10:15 - 10:45 :

#

# >* play around with tensorflow and build your first neural network

#

#

#

# #### Session 2: 05.06 - 11:00 - 12:00 :

#

# >#### Presentation: 05.06 - 11:00 - 12:00:

#

#

# >3. Implementation of CNN using Tensorflow

# 4. Tensorboard

#

# >#### Break: 05.06 - 12:00 - 13:00

# >#### Assignment: 05.06 - 13:00 - 13:30 :

#

#

# #### Session 3: 05.06 - 13:30 - 15:00 :

#

# >#### Presentation: 05.06 - 13:00 - 14:00:

#

# >5. Introduction to Keras

# 6. CNN using Keras

# >#### Break: 05.06 - 14:00 - 14:15

# >#### Assignment: 05.06 - 14:15 - 14:45 :

#

# #### Session 4: 05.06 - 15:00 - 16:30 :

#

# >#### Presentation: 05.06 - 15:00 - 15:45:

#

# >7. LSTM using Keras

#

# >#### Break: 05.06 - 15:45 - 16:00

# >#### Assignment: 05.06 - 16:00 - 16:30 :

#

#

# 8. VGG, Inception, ResNet models using Keras

#

# 9. Autoencoders using Keras

#

# 10. Sequence to Sequence Models using Keras

#

# 11. Attention Mechanism using Keras

# 12. GANS

# 13. Introduction to Pytorch

# 14. Machine translation using Pytorch

# 15. Introduction to Allennlp

# 16. Deep Reinforcement Learning using Keras

# 17. Use case: building self driving car using Unity and tensorflow

#

#

#

#

#

#

#

#

#

# + [markdown] id="a75NLOuwKXSs" colab_type="text"

# # Session 1: 05.06 - 09:00 - 11:00 :

#

# + [markdown] id="ciUtGM-W_ehP" colab_type="text"

#

#

# ## Deep learning frameworks:

#

# In the past decade, many deep learning frameworks have been developed to ease and scale the research and development of AI. Many big technology providers, including Google, IBM, Microsoft and Facebook, have entered the race to provide the best and most popular frameworks. To compete in this race, a framework mainly needs four features:

#

# 1. Uses a popular language for data scientists (Python, Scala, C++ or R)

# 2. Flexible in creating and adjusting deep learning architectures -> Functional programming

# 3. Easy for computing gradients

# 4. Interface with GPUs for parallel processing

#

#

# Below are the most popular deep learning frameworks and their providers:

# https://towardsdatascience.com/deep-learning-framework-power-scores-2018-23607ddf297a

#

#

#

# ## Tensorflow

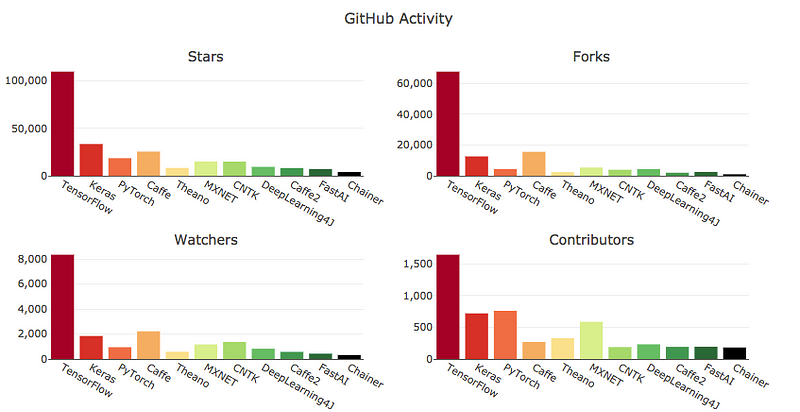

# TensorFlow is the undisputed heavyweight champion. It has the most GitHub activity, Google searches, Medium articles, books on Amazon and ArXiv articles. It also has the most developers using it and is listed in the most online job descriptions. TensorFlow is backed by Google.

#

# ## Keras

# Keras has an “API designed for human beings, not machines.” It is the second most popular framework in nearly all evaluation areas. Keras sits on top of TensorFlow, Theano, or CNTK. Start with Keras if you are new to deep learning.

#

# ## Pytorch

#

# PyTorch is the third most popular overall framework and the second most popular stand-alone framework. It is younger than TensorFlow and has grown rapidly in popularity. It allows customization that TensorFlow does not. It has the backing of Facebook.

#

# ## Theano

#

# Theano was developed at the University of Montreal in 2007 and is the oldest significant Python deep learning framework. It has lost much of its popularity and its leader stated that major releases were no longer on the roadmap. However, updates continue to be made. Theano is still the fifth highest scoring framework.

#

# # Comparison:

#

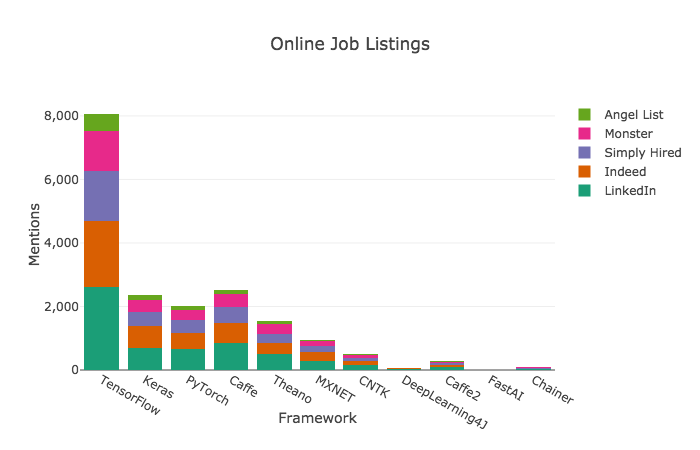

# ## Criteria 1: Online Job Listings

#

# TensorFlow is the clear winner when it comes to frameworks mentioned in job listings. Learn it if you want a job doing deep learning.

#

# >>

#

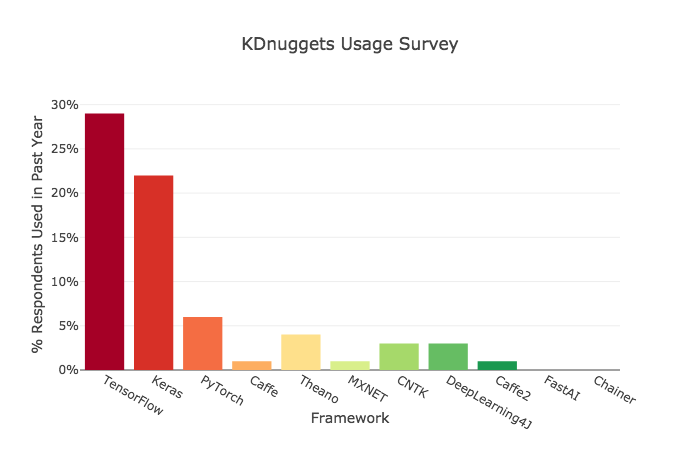

# ## Criteria 2: Usage

#

#

# Keras showed a surprising amount of use — nearly as much as TensorFlow. It’s interesting that US employers are overwhelmingly looking for TensorFlow skills, when — at least internationally — Keras is used almost as frequently.

#

# >>

#

#

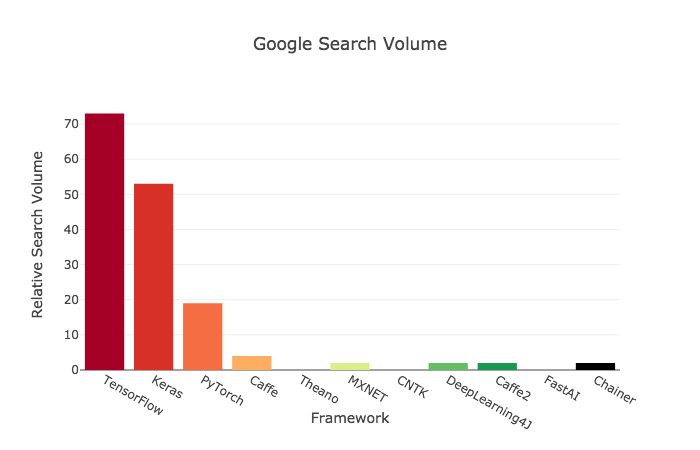

# ## Criteria 4: Google Search Activity

#

# >>

#

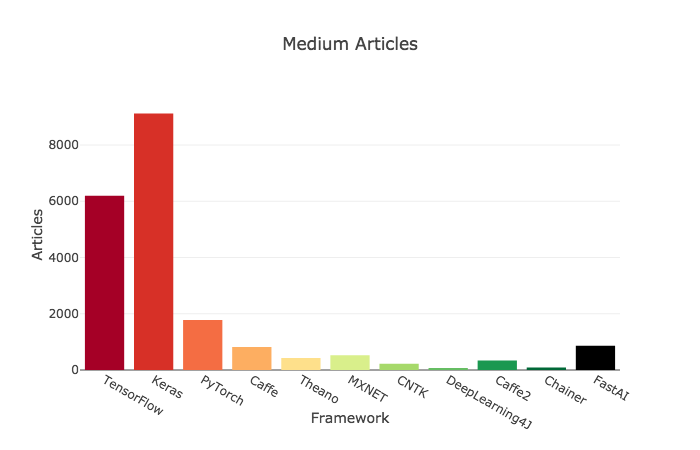

# ## Criteria 5: Medium Articles

#

# >>

#

#

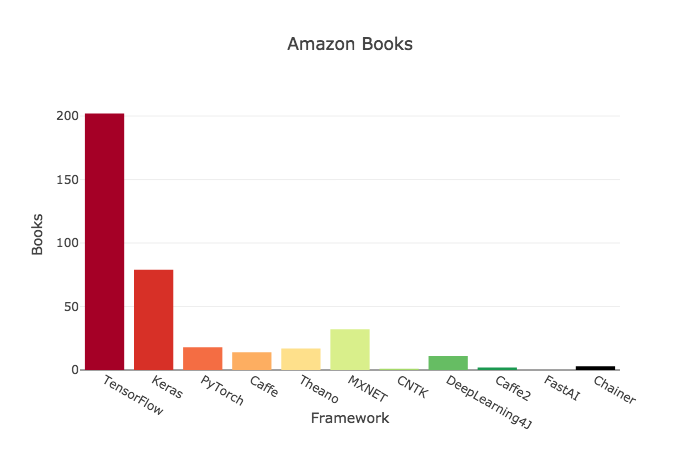

# ## Criteria 6: Amazon Books

#

# >>

#

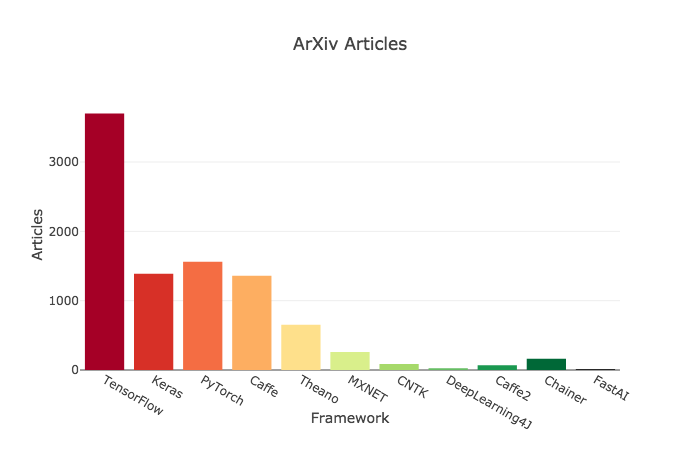

# ## Criteria 7 : ArXiv Articles

#

# >>

#

# ## Criteria 8: GitHub Activity

#

# >>

#

# --------------------------------

#

#

#

#

#

#

#

#

#

#

# + [markdown] id="9v3V8ztAKDCg" colab_type="text"

#

#


#

#

# Before we jump into Tensorflow, we will implement our first neural network model using the Python NumPy package. NumPy is the fundamental package for scientific computing with Python, supporting areas such as:

#

# 1. Linear Algebra

# 2. Statistics

# 3. Calculus

#

# ## A brief intro to Numpy operations:

#

# 1. Creating a Vector:

# Here we use Numpy to create a 1-D Array which we then call a vector.

#

#

#

#

# + id="GTf7M4r7Lgj9" colab_type="code" colab={}

#Load Library

import numpy as np

#Create a vector as a Row

vector_row = np.array([1,2,3])

#Create vector as a Column

vector_column = np.array([[1],[2],[3]])

# + [markdown] id="JYFjSo0OLqA3" colab_type="text"

# 2. Creating a Matrix

# We Create a 2-D Array in Numpy and call it a Matrix. It contains 2 rows and 3 columns.

# + id="fJlDBq5rLmA-" colab_type="code" colab={}

#Load Library

import numpy as np

#Create a Matrix

matrix = np.array([[1,2,3],[4,5,6]])

print(matrix)

# + [markdown] id="wv99hZqULygH" colab_type="text"

# 3. Selecting Elements

#

# + id="ZLQlxFzkPrKM" colab_type="code" colab={}

#Load Library

import numpy as np

#Create a vector as a Row

vector_row = np.array([ 1,2,3,4,5,6 ])

#Create a Matrix

matrix = np.array([[1,2,3],[4,5,6],[7,8,9]])

print(matrix)

#Select 3rd element of Vector

print(vector_row[2])

#Select 2nd row 2nd column

print(matrix[1,1])

#Select all elements of a vector

print(vector_row[:])

#Select everything up to and including the 3rd element

print(vector_row[:3])

#Select everything after the 3rd element

print(vector_row[3:])

#Select the last element

print(vector_row[-1])

#Select the first 2 rows and all the columns of the matrix

print(matrix[:2,:])

#Select all rows and the 2nd column of the matrix

print(matrix[:,1:2])

# + [markdown] id="QO3vTGEKQhm7" colab_type="text"

# 4. Describing a Matrix

# + id="q8bDjBhhQpg5" colab_type="code" colab={}

import numpy as np

#Create a Matrix

matrix = np.array([[1,2,3],[4,5,6],[7,8,9]])

#View the Number of Rows and Columns

print(matrix.shape)

#View the number of elements (rows*columns)

print(matrix.size)

#View the number of Dimensions(2 in this case)

print(matrix.ndim)

# + [markdown] id="eKISvY8kQtA0" colab_type="text"

# 5. Finding the max and min values

# + id="abPJd0JrQ4mM" colab_type="code" colab={}

#Load Library

import numpy as np

#Create a Matrix

matrix = np.array([[1,2,3],[4,5,6],[7,8,9]])

print(matrix)

#Return the max element

print(np.max(matrix))

#Return the min element

print(np.min(matrix))

#To find the max element in each column

print(np.max(matrix,axis=0))

#To find the max element in each row

print(np.max(matrix,axis=1))

# + [markdown] id="3Qm64s_eR0zQ" colab_type="text"

# 6. Reshaping Arrays

#

# + id="Pwepq7h_SBBD" colab_type="code" colab={}

#Load Library

import numpy as np

#Create a Matrix

matrix = np.array([[1,2,3],[4,5,6],[7,8,9]])

print(matrix)

#Reshape

print(matrix.reshape(9,1))

#Here -1 says as many columns as needed and 1 row

print(matrix.reshape(1,-1))

#If we provide only 1 value Reshape would return a 1-d array of that length

print(matrix.reshape(9))

#We can also use the Flatten method to convert a matrix to 1-d array

print(matrix.flatten())

# + [markdown] id="cJU3xABuVem_" colab_type="text"

# 7. Calculating Dot Products

# + id="cPKg382VVivy" colab_type="code" colab={}

#Load Library

import numpy as np

#Create vector-1

vector_1 = np.array([ 1,2,3 ])

#Create vector-2

vector_2 = np.array([ 4,5,6 ])

#Calculate Dot Product

print(np.dot(vector_1,vector_2))

#Alternatively you can use @ to calculate dot products

print(vector_1 @ vector_2)

# + [markdown] id="cB-jK7jEXY7F" colab_type="text"

# ## Linear regression in Numpy:

#

# ---

#

#

#

# Write the numpy code for the following model:

#

# $Y=WX+b$

#

# where $X$ is 3x10 matrix: 10 samples and 3 features

#

# $Y$ is 4x10 matrix: 10 samples and 4 outputs

#

# $W$ is the weights matrix with the shape 4x3: connecting 3 inputs to 4 outputs

#

# $b$ is a vector with a size 4 ( one bias per output)

#

# + id="_EtM5LVtWCpm" colab_type="code" colab={}

#Load Library

import numpy as np

# Generate a random X (we do not have a real data)

X = np.random.rand(3,10)

display(X.shape)

# Generate a random weights vector

W = np.random.rand(4,3)

# Generate a random bias

b = np.random.rand(4,1)

# Calculate Y

Y= np.dot(W,X) + b

display(Y.shape)

# + [markdown] id="hMIoucH9hFfr" colab_type="text"

# ## One neuron model in numpy:

#

# A single neuron has multiple inputs and one output. In addition to the linear regression model, we need to add non-linearity through an activation function:

#

# $Y= f(WX+b)$

#

# where $X$ is an n x m matrix: m samples and n features/inputs

#

# $f(g)= \frac{1}{1+\exp(-g)}$ is the sigmoid activation function

#

# $Y$ is an ny x m matrix: m samples and ny outputs (ny = 1 for a single neuron)

#

# $W$ is the weights matrix with the shape ny x n: connecting n inputs to ny outputs

#

# $b$ is a vector of size ny (one bias per output)

#

#

#

#

# + id="Qry1JDGEiLmx" colab_type="code" colab={}

# load Library

import numpy as np

f = lambda x: 1.0/(1.0 + np.exp(-x)) # activation function (use sigmoid)

# Generate a random X (we do not have a real data)

X = np.random.rand(3,10)

# Generate a random weights vector

W = np.random.rand(1,3)

# Generate a random bias

b = np.random.rand()

# Calculate Y

Y= f(np.dot(W,X) + b)

display(Y)

# + [markdown] id="aSnbti9ooIIs" colab_type="text"

# ## One hidden layer model in numpy:

#

# The difference from the one neuron model is simple: we only need to change the number of outputs "ny" of each layer

# + id="ZAY3o6zBnpA0" colab_type="code" colab={}

# load Library

import numpy as np

#Suppose we have the following NN architecture

m = 10 # Number of samples

ni= 3 # Number of input neurons

h = 1 # Number of hidden layers

nh1 = 4 # Number of neurons in the hidden layer 1

no =1 # Number of neurons in the output layer

f = lambda x: 1.0/(1.0 + np.exp(-x)) # activation function (use sigmoid)

# Generate a random X (we do not have a real data)

X = np.random.rand(ni,m)

# Generate a random weights vector for the first hidden layer

W1 = np.random.rand(nh1,ni)

# Generate a random bias for the first hidden layer

b1 = np.random.rand(nh1,1)

# Generate a random weights vector for the output layer

W2 = np.random.rand(no,nh1)

# Generate a random bias for the output layer

b2 = np.random.rand(no,1)

# Calculate output of the first hidden layer

Yh1= f(np.dot(W1,X) + b1)

# Calculate output of the output layer

Y= f(np.dot(W2,Yh1) + b2)

display(Yh1.shape)

display(Y.shape)

# + [markdown] id="11fqb_bQvIEi" colab_type="text"

# ## Gradient descent in Numpy:

# Let us now start training a neural network

# We start by implementing a simple gradient descent for linear regression

# + id="mzBJxwb7FFZ2" colab_type="code" colab={}

# + id="QaQyLoxk2FyG" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 70} outputId="d50165d2-7fa1-48af-8d81-7e1da9c47783"

import numpy as np

converged = False

iter = 0

m = 10 # Number of samples

ni= 1 # Number of input neurons

h = 1 # Number of hidden layers

no =1 # Number of neurons in the output layer

# Generate a random X (we do not have a real data)

X = np.random.rand(m)

display(X)

# learning rate

alpha =0.01

# early stop criteria

ep=0.001

# maximum number of training iterations

max_iter=100

# Generate a random weights vector for the output layer

W1 = np.random.rand()

# Generate a random bias for the output layer

b1 = np.random.rand()

# Generate a random ground truth

Y_gr = np.random.rand(m)

J = sum([(b1 + W1*X[i] - Y_gr[i])**2 for i in range(m)])

while not converged:
    # compute the gradients of the cost, averaged over the training samples:
    # grad0 is the derivative with respect to the bias b1, grad1 with respect to the weight W1
    grad0 = 1.0/m * sum([(b1 + W1*X[i] - Y_gr[i]) for i in range(m)])
    grad1 = 1.0/m * sum([(b1 + W1*X[i] - Y_gr[i])*X[i] for i in range(m)])

    # compute both updates before assigning, so each one uses the old parameters
    temp0 = b1 - alpha * grad0
    temp1 = W1 - alpha * grad1

    # update the parameters
    b1 = temp0
    W1 = temp1

    # sum squared error
    e = sum([(b1 + W1*X[i] - Y_gr[i])**2 for i in range(m)])

    if abs(J-e) <= ep:
        print('Converged, iterations: ', iter, '!!!')
        converged = True

    J = e # update error
    iter += 1 # update iter

    if iter == max_iter:
        print('Max iterations exceeded!')
        converged = True
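
# The per-sample Python loops above can be replaced by NumPy array operations. The sketch below is one possible vectorized version, not the lecture's reference solution: it uses a noiseless synthetic target (`Y_gr = 2 * X + 1`), a fixed seed, a larger learning rate and a fixed number of iterations, all of which are assumptions chosen so that the run is reproducible.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 10                      # number of samples
X = rng.random(m)           # one input feature per sample
Y_gr = 2.0 * X + 1.0        # assumed ground truth: weight 2, bias 1

W1, b1 = 0.0, 0.0           # start from zero instead of random values
alpha = 0.5                 # learning rate (assumed; larger than in the cell above)
costs = []

for _ in range(2000):
    residual = b1 + W1 * X - Y_gr        # prediction error for every sample at once
    costs.append(np.sum(residual ** 2))  # sum of squared errors before the update
    grad_b = residual.mean()             # gradient with respect to the bias
    grad_W = (residual * X).mean()       # gradient with respect to the weight
    b1 -= alpha * grad_b
    W1 -= alpha * grad_W

print('W1:', W1, 'b1:', b1)
print('first cost:', costs[0], 'last cost:', costs[-1])
```

# Because the target is an exact line, the cost should shrink towards zero and the parameters should approach the assumed weight and bias.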

# + [markdown] id="jRqCCVlmFIZl" colab_type="text"

# ## Assignment 1

# ### Backpropagation in Numpy:

#

# + id="7sW8ZoEVMb9I" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 7181} outputId="1186687f-26f8-43a5-a229-d84a4eb74d51"

num_inputs = 4

hidden_layer_1_outputs = 4

hidden_layer_2_outputs = 3

num_samples = 10

f = lambda x: 1.0/(1.0 + np.exp(-x)) # activation function (use sigmoid)

X = np.random.rand(num_samples,num_inputs-1) # 10x3; the bias column added below makes it 10x4

X = np.hstack((np.ones((X.shape[0], 1)), X))

display(X.shape)

# Generate a random weights vector for the first hidden layer

W_h1 = np.random.rand(num_inputs,hidden_layer_1_outputs) # 4x4

# Generate a random weights vector for the second hidden layer

W_h2 = np.random.rand(hidden_layer_1_outputs+1,hidden_layer_2_outputs)

# Calculate output of hidden layer 1

h1= f(np.dot(X,W_h1))

display(h1.shape)

# Calculate output of hidden layer 2

#h2= f(np.dot(h1,W_h2))

#display(h2.shape)

# learning rate

alpha =0.01

# early stop criteria

ep=0.001

# maximum number of training iterations

max_iter=100

# Generate a random ground truth

Y_gr = np.random.rand(hidden_layer_2_outputs,num_samples)

#J = sum([(h2 - Y_gr[:,i])**2 for i in range(num_samples)]) #cost function sum of squared error

# The cost J is computed inside the training loop below.

#while not converged:

for i in range(100):
    # forward prop
    # Calculate output of hidden layer 1
    h1 = f(np.dot(X, W_h1))
    # prepend a column of ones representing the bias inputs
    h1 = np.hstack((np.ones((h1.shape[0], 1)), h1))
    # Calculate output of hidden layer 2
    h2 = f(np.dot(h1, W_h2))

    # cost: sum of squared errors
    J = np.sum(np.square(h2 - Y_gr.T))

    # error signal of the output layer: dJ/dh2 times the sigmoid derivative h2*(1-h2)
    delta_h2 = 2 * (h2 - Y_gr.T) * h2 * (1 - h2)

    # error signal of hidden layer 1, back-propagated through W_h2 (bias row skipped)
    hidden_error = (h1[:, 1:] * (1 - h1[:, 1:])) * np.dot(delta_h2, W_h2.T[:, 1:])

    # weight gradients, averaged over the samples
    grad_W_h2 = np.dot(h1.T, delta_h2) / num_samples    # same shape as W_h2
    grad_W_h1 = np.dot(X.T, hidden_error) / num_samples  # same shape as W_h1

    # update weights
    W_h2 += - alpha * grad_W_h2
    W_h1 += - alpha * grad_W_h1

    print('iter', i)
    print('cost', J)
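
# A common way to validate a backpropagation implementation is to compare its gradients with finite-difference estimates. The sketch below does this for a network with the same layer sizes as above (4 inputs including the bias, 4 hidden units, 3 outputs, sigmoid activations, sum-of-squares cost). The helper names `forward_cost`, `analytic_grads` and `numeric_grad`, as well as the fixed seed, are assumptions introduced only for this check.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward_cost(X, W_h1, W_h2, Y):
    """Forward pass; returns the sum-of-squares cost and both layer outputs."""
    h1 = sigmoid(X @ W_h1)
    h1 = np.hstack((np.ones((h1.shape[0], 1)), h1))  # prepend the bias column
    h2 = sigmoid(h1 @ W_h2)
    return np.sum((h2 - Y) ** 2), h1, h2

def analytic_grads(X, W_h1, W_h2, Y):
    """Backpropagation gradients of the cost with respect to W_h1 and W_h2."""
    _, h1, h2 = forward_cost(X, W_h1, W_h2, Y)
    delta2 = 2.0 * (h2 - Y) * h2 * (1.0 - h2)
    delta1 = (delta2 @ W_h2[1:, :].T) * h1[:, 1:] * (1.0 - h1[:, 1:])
    return X.T @ delta1, h1.T @ delta2

def numeric_grad(cost_fn, W, eps=1e-6):
    """Central finite differences; perturbs W in place and restores each entry."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(W.shape):
        original = W[idx]
        W[idx] = original + eps
        plus = cost_fn()
        W[idx] = original - eps
        minus = cost_fn()
        W[idx] = original
        grad[idx] = (plus - minus) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
X = np.hstack((np.ones((10, 1)), rng.random((10, 3))))  # 10 samples, bias + 3 features
Y = rng.random((10, 3))
W_h1 = rng.random((4, 4))
W_h2 = rng.random((5, 3))

grad_W_h1, grad_W_h2 = analytic_grads(X, W_h1, W_h2, Y)
cost = lambda: forward_cost(X, W_h1, W_h2, Y)[0]
max_diff = max(np.abs(grad_W_h1 - numeric_grad(cost, W_h1)).max(),
               np.abs(grad_W_h2 - numeric_grad(cost, W_h2)).max())
print('largest gradient mismatch:', max_diff)
```

# A mismatch many orders of magnitude smaller than the gradients themselves suggests the analytic derivation is consistent with the cost function.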

|

Session_1.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Online Retails Purchase

# ### Introduction:

#

#

#

# ### Step 1. Import the necessary libraries

# ### Step 2. Import the dataset from this [address](https://raw.githubusercontent.com/guipsamora/pandas_exercises/master/07_Visualization/Online_Retail/Online_Retail.csv).

# ### Step 3. Assign it to a variable called online_rt

# Note: if you receive a utf-8 decode error, set `encoding = 'latin1'` in `pd.read_csv()`.

# ### Step 4. Create a histogram with the 10 countries that have the most 'Quantity' ordered except UK

# ### Step 5. Exclude negative Quantity entries

# ### Step 6. Create a scatterplot with the Quantity per UnitPrice by CustomerID for the top 3 Countries (except UK)

# ### Step 7. Investigate why the previous results look so uninformative.

#

# This section might seem a bit tedious to go through. But I've thought of it as some kind of a simulation of problems one might encounter when dealing with data and other people. Besides there is a prize at the end (i.e. Section 8).

#

# (But feel free to jump right ahead into Section 8 if you want; it doesn't require that you finish this section.)

#

# #### Step 7.1 Look at the first line of code in Step 6. And try to figure out if it leads to any kind of problem.

# ##### Step 7.1.1 Display the first few rows of that DataFrame.

# ##### Step 7.1.2 Think about what that piece of code does and display the dtype of `UnitPrice`

# ##### Step 7.1.3 Pull data from `online_rt` for `CustomerID`s 12346.0 and 12347.0.

# #### Step 7.2 Reinterpreting the initial problem.

#

# To reiterate the question that we were dealing with:

# "Create a scatterplot with the Quantity per UnitPrice by CustomerID for the top 3 Countries"

#

# The question is open to a set of different interpretations.

# We need to disambiguate.

#

# We could do a single plot by looking at all the data from the top 3 countries.

# Or we could do one plot per country. To keep things consistent with the rest of the exercise,

# let's stick to the latter option. So that's settled.

#

# But "top 3 countries" with respect to what? Two answers suggest themselves:

# Total sales volume (i.e. total quantity sold) or total sales (i.e. revenue).

# This exercise goes for sales volume, so let's stick to that.

#

# ##### Step 7.2.1 Find out the top 3 countries in terms of sales volume.

# ##### Step 7.2.2

#

# Now that we have the top 3 countries, we can focus on the rest of the problem:

# "Quantity per UnitPrice by CustomerID".

# We need to unpack that.

#

# The "by CustomerID" part is easy. That means we're going to plot one dot per CustomerID. In other words, we're going to be grouping by CustomerID.

#

# "Quantity per UnitPrice" is trickier. Here's what we know:

# * One axis will represent a Quantity assigned to a given customer. This is easy; we can just plot the total Quantity for each customer.

# * The other axis will represent a UnitPrice assigned to a given customer. Remember that a single customer can have any number of orders with different prices, so summing up prices isn't helpful. Besides, it's not clear what "unit price per customer" means; it sounds like the price of the customer! A reasonable alternative is to assign each customer the average amount they paid per item, i.e. their total revenue divided by their total quantity. So let's settle the question that way.

#

# #### Step 7.3 Modify, select and plot data

# ##### Step 7.3.1 Add a column to online_rt called `Revenue` and calculate the revenue (Quantity * UnitPrice) from each sale.

# We will use this later to figure out an average price per customer.

# ##### Step 7.3.2 Group by `CustomerID` and `Country` and find out the average price (`AvgPrice`) each customer spends per unit.
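# One possible reading of "average price per unit", sketched on a tiny made-up frame rather than the real `online_rt`: sum `Quantity` and `Revenue` per customer, then divide. The frame `toy` and its values are invented for illustration.

```python
import pandas as pd

# Tiny stand-in for online_rt; the values are made up for illustration.
toy = pd.DataFrame({
    "CustomerID": [1, 1, 2, 2],
    "Country": ["Netherlands", "Netherlands", "EIRE", "EIRE"],
    "Quantity": [10, 30, 5, 5],
    "UnitPrice": [2.0, 1.0, 4.0, 6.0],
})
toy["Revenue"] = toy["Quantity"] * toy["UnitPrice"]

# Total quantity and revenue per customer, then revenue per unit bought.
per_customer = toy.groupby(["CustomerID", "Country"])[["Quantity", "Revenue"]].sum()
per_customer["AvgPrice"] = per_customer["Revenue"] / per_customer["Quantity"]
print(per_customer)
```

# Dividing the summed revenue by the summed quantity weights each order by its size, which differs from simply averaging the `UnitPrice` column.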

# ##### Step 7.3.3 Plot

# #### Step 7.4 What to do now?

# We aren't much better off than when we started. The data are still extremely scattered and don't seem very informative.

#

# But we shouldn't despair!

# There are two things to realize:

# 1) The data seem to be skewed towards the axes (e.g. we don't have any values where Quantity = 50000 and AvgPrice = 5). So that might suggest a trend.

# 2) We have more data! We've only been looking at the data from 3 different countries and they are plotted on different graphs.

#

# So: we should plot the data regardless of `Country` and hopefully see a less scattered graph.

#

# ##### Step 7.4.1 Plot the data for each `CustomerID` on a single graph

# ##### Step 7.4.2 Zoom in so we can see that curve more clearly

# ### 8. Plot a line chart showing revenue (y) per UnitPrice (x).

#

# Did Step 7 give us any insights about the data? Sure! As average price increases, the quantity ordered decreases. But that's hardly surprising. It would be surprising if that wasn't the case!

#

# Nevertheless, the rate of drop in quantity is so drastic that it makes me wonder how our revenue changes with respect to item price. It would not be that surprising if it didn't change much. But it would be interesting to know whether most of our revenue comes from expensive or inexpensive items, and what that relation looks like.

#

# That is what we are going to do now.

#

# #### 8.1 Group `UnitPrice` by intervals of 1 for prices [0,50), and sum `Quantity` and `Revenue`.
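# A minimal sketch of this kind of binning, using `pd.cut` on a made-up frame (`toy` and its values are invented, not taken from the data set). With `right=False` each bin is closed on the left, so the bins are [0,1), [1,2), ..., [49,50); prices outside [0,50) get no bin and drop out of the group-by.

```python
import numpy as np
import pandas as pd

# Made-up sales rows; 60.0 lies outside [0, 50) on purpose.
toy = pd.DataFrame({
    "UnitPrice": [0.5, 0.9, 1.0, 1.5, 49.99, 60.0],
    "Quantity": [10, 20, 5, 5, 1, 1],
})
toy["Revenue"] = toy["Quantity"] * toy["UnitPrice"]

price_bins = np.arange(0, 51, 1)  # bin edges 0, 1, ..., 50
toy["PriceBin"] = pd.cut(toy["UnitPrice"], bins=price_bins, right=False)

# observed=True keeps only the bins that actually contain rows
binned = toy.groupby("PriceBin", observed=True)[["Quantity", "Revenue"]].sum()
print(binned)
```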

# #### 8.3 Plot.

# #### 8.4 Make it look nicer.

# x-axis needs values.

# y-axis isn't that easy to read; show in terms of millions.

# ### BONUS: Create your own question and answer it.

|

07_Visualization/Online_Retail/Exercises.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/Tyred/TimeSeries_OCC-PUL/blob/main/Notebooks/OC_SVM.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="Emi8F7ZFdSrK"

# ## Imports

# + id="nnoW8j3yNpuZ"

# %matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

from sklearn.svm import OneClassSVM

from sklearn.metrics import precision_score, accuracy_score, recall_score, f1_score

import tensorflow as tf

from tensorflow import keras

from sklearn.decomposition import PCA

from sklearn.manifold import MDS

# + [markdown] id="6y-sBkgCb8GM"

# ## Reading the dataset from Google Drive

#

# + id="hn5JbVRONu1T" colab={"base_uri": "https://localhost:8080/"} outputId="fa9ddcce-1268-42b6-e4d7-2138b5957b8d"

path = 'drive/My Drive/UFSCar/FAPESP/IC/Data/UCRArchive_2018'

dataset = input('Dataset: ')

tr_data = np.genfromtxt(path + "/" + dataset + "/" + dataset + "_TRAIN.tsv", delimiter="\t",)

te_data = np.genfromtxt(path + "/" + dataset + "/" + dataset + "_TEST.tsv", delimiter="\t",)

labels = te_data[:, 0]

print("Labels:", np.unique(labels))

# + [markdown] id="R0l2RpHUcHaB"

# ## Splitting in Train-Test data

# + id="xLaE6_vANwQL" colab={"base_uri": "https://localhost:8080/"} outputId="95f1f3a8-219b-401c-cdfa-09a245794887"

class_label = int(input('Positive class label: '))

train_data = tr_data[tr_data[:, 0] == class_label, 1:] # train

test_data = te_data[:, 1:] # test

print("Train data shape:", train_data.shape)

print("Test data shape:", test_data.shape)

# + [markdown] id="UcC1Ru1McR5b"

# ## Labeling for OCC Task

# <li> Label 1 for positive class </li>

# <li> Label -1 for other class(es) </li>

# + id="wEFEYrdWOHl_" colab={"base_uri": "https://localhost:8080/"} outputId="8d26296a-004c-40fe-d7a8-df263ceefa78"

occ_labels = [1 if x == class_label else -1 for x in labels]

print("Positive samples:", occ_labels.count(1))

print("Negative samples:", occ_labels.count(-1))

# + [markdown] id="G-Pi8UleecbW"

# # MDS Plot

# + colab={"base_uri": "https://localhost:8080/"} id="gVJivoUqHHer" outputId="e8121822-002d-4877-a080-01dbc5ea994e"

embedding = MDS(n_components=2, random_state=42)

mds_data = embedding.fit_transform(train_data)

mds_test = embedding.fit_transform(test_data)

print(mds_data.shape)

print(mds_test.shape)

# + [markdown] id="HuY7WPf3egU0"

# ## Train

# + colab={"base_uri": "https://localhost:8080/", "height": 281} id="3f3Wx0XRGqM3" outputId="c1ad80a0-0f6a-4fb4-860e-ab830db3d731"

x = [row[0] for row in mds_data]

y = [row[1] for row in mds_data]

plt.plot(x, y, 'x',label='train data')

plt.title('MDS Training Data')

plt.legend()

plt.show()

# + [markdown] id="ddWCsjXBeih3"

# ## Test

# + id="TzXgqXdHP639"

negative_mds_test = mds_test[labels != class_label]

positive_mds_test = mds_test[labels == class_label]

# + colab={"base_uri": "https://localhost:8080/", "height": 281} id="K52-1KVCNPtR" outputId="38a375b6-4e74-4195-8710-438ef485a2a3"

x_positive = [row[0] for row in positive_mds_test]

y_positive = [row[1] for row in positive_mds_test]

x_negative = [row[0] for row in negative_mds_test]

y_negative = [row[1] for row in negative_mds_test]

plt.plot(x_positive, y_positive, 'x', label='positive class', c = 'blue')

plt.plot(x_negative, y_negative, 'o', label='negative class', c = 'red')

plt.title('MDS Test Data')

plt.legend()

plt.show()

# + [markdown] id="vF69fFp98esV"

# # Feature extraction

#

# + [markdown] id="0to8-3vv8gn-"

# ## PCA

# + id="scNbHiyg_0qM" colab={"base_uri": "https://localhost:8080/", "height": 35} outputId="1c92bf05-bb29-4b8c-a90d-33c4bede521a"

"""pca = PCA(svd_solver='full')

train_data = pca.fit_transform(train_data)

test_data = pca.transform(test_data)

print(train_data.shape)"""

# + [markdown] id="BWmsaj478iVD"

# ## Convolutional Autoencoder

#

# + id="hQ68Z_Yn8nXy"

# Convolutional Autoencoder with MaxPooling:
class ConvAutoencoder(tf.keras.Model):
    def __init__(self, serie_length):
        super(ConvAutoencoder, self).__init__()
        self.conv_1 = keras.layers.Conv1D(serie_length[0]//16, 3, activation='swish', padding='same', input_shape=(serie_length))
        self.max_1 = keras.layers.MaxPooling1D(2, padding='same')
        self.conv_2 = keras.layers.Conv1D(serie_length[0]//8, 3, activation='swish', padding='same')
        self.max_2 = keras.layers.MaxPooling1D(2, padding='same')
        self.conv_3 = keras.layers.Conv1D(1, 3, activation='swish', padding='same')
        # encoded representation
        self.encoded = keras.layers.MaxPooling1D(2, padding='same')

        # decoder layers
        self.conv_4 = keras.layers.Conv1D(1, 3, activation='swish', padding='same')
        self.up_1 = keras.layers.UpSampling1D(2)
        self.conv_5 = keras.layers.Conv1D(serie_length[0]//8, 3, activation='swish', padding='same')
        self.up_2 = keras.layers.UpSampling1D(2)
        self.conv_6 = keras.layers.Conv1D(serie_length[0], 3, activation='swish', padding='same')
        self.up_3 = keras.layers.UpSampling1D(2)
        # decoded output
        self.decoded = keras.layers.Conv1D(1, 3, activation='linear', padding='same')

    def encode(self, inputs):
        if self.padding != 0:
            inputs = keras.layers.ZeroPadding1D(padding=(8 + 8-self.padding, 0))(inputs)
        x = self.conv_1(inputs)
        x = self.max_1(x)
        x = self.conv_2(x)
        x = self.max_2(x)
        x = self.conv_3(x)
        return self.encoded(x)

    def call(self, inputs):
        self.padding = inputs.shape[1] % 8
        x = self.encode(inputs)
        x = self.conv_4(x)
        x = self.up_1(x)
        x = self.conv_5(x)
        x = self.up_2(x)
        x = self.conv_6(x)
        x = self.up_3(x)
        if self.padding != 0:
            x = keras.layers.Cropping1D(cropping=(8 + 8-self.padding, 0))(x)
        return self.decoded(x)

    def model(self):
        x = keras.layers.Input(shape=(serie_length, 1))
        return tf.keras.Model(inputs=[x], outputs=self.call(x))

# + [markdown] id="YFq9IKqQ-S9T"

# ### Initializing and training the Conv Autoencoder

# + colab={"base_uri": "https://localhost:8080/"} id="SgM_5LPm-ceG" outputId="15c9c6a5-d9ab-4b29-effe-21c39bfc3ca5"

serie_length = train_data.shape[1]

model = ConvAutoencoder((serie_length, 1))

model.compile(optimizer='adam', loss='mse')

# Train

batch_size = 16

epochs = 50

train_data = train_data[..., np.newaxis]

test_data = test_data[..., np.newaxis]

model.fit(train_data, train_data, epochs=epochs, batch_size=batch_size)

# + colab={"base_uri": "https://localhost:8080/"} id="Z8MD8TRkZJW7" outputId="d32e1f0b-11b5-467e-f2b2-431fe245a419"

model.model().summary()

# + [markdown] id="Tuia1i6pc63Q"

# # Results

# + [markdown] id="fkrvRx9oeC6V"

# ## Data extracted by the ConvAutoencoder

# + [markdown] id="q9gnUq6pfdZj"

# ### OC-SVM Fitting

# + id="F7U2LY8YPbLW"

train_data_encoded = np.array(model.encode(train_data))

train_data_encoded = np.squeeze(train_data_encoded)

clf_cae = OneClassSVM(gamma='scale', nu=0.2, kernel='rbf').fit(train_data_encoded)
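# `OneClassSVM.predict` returns +1 for samples it considers inliers and -1 for outliers, which is why the OCC labels above use 1 / -1. The sketch below shows this on made-up 2-D data; `inliers`, `far_point` and `toy_clf` are illustrative names and are not part of this notebook's pipeline.

```python
import numpy as np
from sklearn.svm import OneClassSVM

rng = np.random.default_rng(0)
# made-up "positive class": a Gaussian blob around the origin
inliers = rng.normal(loc=0.0, scale=1.0, size=(200, 2))

# same hyperparameters as the notebook's classifier
toy_clf = OneClassSVM(gamma='scale', nu=0.2, kernel='rbf').fit(inliers)

# a point far away from every training sample
far_point = np.array([[10.0, 10.0]])
print("prediction for far point:", toy_clf.predict(far_point))

# nu is an upper bound on the fraction of training errors
print("training rows flagged as outliers:", (toy_clf.predict(inliers) == -1).mean())
```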

# + [markdown] id="4EXqf35HfoZu"

# ### Scores

# + id="wXfI9NmGQKAw" colab={"base_uri": "https://localhost:8080/"} outputId="94e11d22-7039-4259-dc99-e1dc329bfef2"

test_data_encoded = np.array(model.encode(test_data))

test_data_encoded = np.squeeze(test_data_encoded)

result_labels = clf_cae.predict(test_data_encoded)

acc = accuracy_score(occ_labels, result_labels)

precision = precision_score(occ_labels, result_labels)

recall = recall_score(occ_labels, result_labels)

f1 = f1_score(occ_labels, result_labels)

print("Accuracy: %.2f" % (acc*100) + "%")

print("Precision: %.2f" % (precision*100) + "%")

print("Recall: %.2f" % (recall*100) + "%")

print("F1-Score: %.2f" % (f1*100) + "%")

# + [markdown] id="JOpjLAoMdc8W"

# ## Raw Data

# + [markdown] id="8bhO6UkmchYB"

# ### OC-SVM Fitting

# + id="Yml7N8ElfiVF"

train_data_raw = np.squeeze(train_data)

clf = OneClassSVM(gamma='scale', nu=0.2, kernel='rbf').fit(train_data_raw)

# + [markdown] id="1jMGult_fkL6"

# ### Scores

# + colab={"base_uri": "https://localhost:8080/"} id="Hr8onWKieQ6U" outputId="1eadf556-23c6-4c7d-f271-a53e3bf1c061"

test_data_raw = np.squeeze(test_data)

result_labels = clf.predict(test_data_raw)

acc = accuracy_score(occ_labels, result_labels)

precision = precision_score(occ_labels, result_labels)

recall = recall_score(occ_labels, result_labels)

f1 = f1_score(occ_labels, result_labels)

print("Accuracy: %.2f" % (acc*100) + "%")

print("Precision: %.2f" % (precision*100) + "%")

print("Recall: %.2f" % (recall*100) + "%")

print("F1-Score: %.2f" % (f1*100) + "%")

|

Notebooks/algorithms/OC_SVM.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import scipy

from scipy import stats, signal

import numpy as np

import matplotlib

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (15,10)

# ## **HW 4**

# #### **Problem 0**

# Making 'fake' data

# +

#Generating a time scale

t = np.linspace(0,np.pi*100,int(1e5))

#Creating an offset sin wave

N = 10+np.sin(t)

#Creating a background distribution that depends on N

bkgd = stats.norm.rvs(size = int(1e5))*np.sqrt(N)+N

# -

# #### **Problem 1**

# ##### **A)** Make a scatter plot of the first 1000 data points

plt.plot(t[0:1000],bkgd[0:1000],'o')

plt.xlabel('Time')

plt.title('First 1000 Data Points')

plt.show()

# ##### **B)** Generalize your code so you can make a plot of any X contiguous points and produce an example plot of a set of data somewhere in the middle of your array

def slice_plt(x,y,start,length):
    # plot `length` contiguous points of the arrays passed in, beginning at index `start`
    stop = start + length
    plt.plot(x[start:stop],y[start:stop],'o')
    plt.title('Slice Plot from ' + str(np.round(x[start],4)) + ' to ' + str(np.round(x[stop-1],4)))
    plt.show()

slice_plt(t,bkgd,500,2000)

# ##### **C)** Sometimes you want to sample the data, such as plotting every 100th point. Make a plot of the full data range, but only every 100th point.

index = np.arange(0,int(1e5),100)

plt.plot(t[index],bkgd[index],'o')

plt.title('Entire Range Sampling every 100th Point')

plt.show()

# #### **Problem 2**

# ##### **A)** Make a 2d histogram plot

plt.hist2d(t,bkgd,bins = [100,50], density = True)

plt.colorbar()

plt.show()

# ##### **B)** Clearly explain what is being plotted in your plot

#

# The plot above shows the probability density of getting a certain range of values in a certain range of time. The closer to yellow a region is the more likely that measurement is to occur. The higher probability regions are mostly localized about the center of the plot at 10. They follow a roughly wavelike path about this center.

#

# #### **Problem 3**

# ##### **A)** Make a scatter plot of all your data, but now folded.

t2 = t%(2*np.pi)

plt.plot(t2,bkgd,'o',alpha=0.4)

plt.show()

# ##### **B)** Make a 2D histogram plot of your folded data

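# use the folded time t2 (not t) so the histogram covers a single period
blocks = plt.hist2d(t2,bkgd,bins = [100,50], density = True)

plt.colorbar()

plt.show()

# ##### **C)** Calculate the average as a function of the folded variable. You can then overplot this on the 2d histogram to show the average as a function of folded time.

# bin centers along the folded-time (x) and value (y) axes
x_centers = 0.5*(blocks[1][:-1] + blocks[1][1:])
y_centers = 0.5*(blocks[2][:-1] + blocks[2][1:])

mean = np.zeros(100)

for i in range(0,100):
    # density-weighted average of the value bins within folded-time bin i
    mean[i] = np.sum(y_centers*blocks[0][i,:])/np.sum(blocks[0][i,:])

plt.hist2d(t2,bkgd,bins = [100,50], density = True)

plt.plot(x_centers,mean, linewidth = 2, color = 'black')

plt.colorbar()

plt.show()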
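# As a cross-check, the folded average can also be computed directly from the samples instead of from histogram counts. The sketch below uses `scipy.stats.binned_statistic` on freshly generated data built the same way as in Problem 0; the `_demo` names and the fixed seed are assumptions made for illustration.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# regenerate data like Problem 0 (offset sine wave with signal-dependent noise)
t_demo = np.linspace(0, np.pi * 100, int(1e5))
n_demo = 10 + np.sin(t_demo)
data_demo = rng.standard_normal(t_demo.size) * np.sqrt(n_demo) + n_demo

# fold time onto one period and average the samples in 100 phase bins
phase = t_demo % (2 * np.pi)
folded_mean, edges, _ = stats.binned_statistic(phase, data_demo, statistic='mean', bins=100)
centers = 0.5 * (edges[:-1] + edges[1:])

# the folded mean should follow 10 + sin(phase) up to noise
print(np.max(np.abs(folded_mean - (10 + np.sin(centers)))))
```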

|

Homework/.ipynb_checkpoints/HW4-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] _uuid="93d7b4b8f5f6e5289cfc0312d650744e64905bc7"

#

# + [markdown] _cell_guid="b1076dfc-b9ad-4769-8c92-a6c4dae69d19" _uuid="8f2839f25d086af736a60e9eeb907d3b93b6e0e5"

# # The purpose of this notebook

#

# **UPDATE 1:** *In version 5 of this notebook, I demonstrated that the model is capable of reaching the LB score of 0.896. Now, I would like to see if the augmentation idea from [this kernel](https://www.kaggle.com/jiweiliu/lgb-2-leaves-augment) would help us to reach an even better score.*

#

# **UPDATE 2:** *Version 10 of this notebook shows that the augmentation idea does not work very well for the logistic regression -- the CV score clearly went down to 0.892. Good to know -- no more digging in this direction.*

#

# I have run across [this nice script](https://www.kaggle.com/ymatioun/santander-linear-model-with-additional-features) by Youri Matiounine in which a number of new features are added and linear regression is performed on the resulting data set. I was surprised by the high performance of this simple model: the LB score is about 0.894, which is close to what you can get using heavy artillery like LightGBM. At the same time, I felt there was room for improvement -- after all, this is a classification rather than a regression problem, so I was wondering what would happen if we performed a logistic regression on Matiounine's data set. This notebook is my humble attempt to answer this question.

#

# Matiounine's features can be used in other models as well. To avoid the necessity of re-computing them every time we switch from one model to another, I show how to store the processed data in [feather files](https://pypi.org/project/feather-format/), so that next time they can be loaded into memory very quickly. This is much faster and safer than using the CSV format.

#

# # Computing the new features

#

# Importing libraries.

# + _uuid="319c9748ad2d9b82cc875000f58afa2129aeb9c3"

import os

import gc

import sys

import time

import shutil

import feather

import numpy as np

import pandas as pd

from scipy.stats import norm, rankdata

from sklearn.pipeline import Pipeline

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import StratifiedKFold

from sklearn.metrics import roc_curve, auc, roc_auc_score

# + [markdown] _uuid="31a0c430046df842333652c410b3181d800f0551"

# Now, let's read the CSV files containing the training and testing data and measure how long it takes.

#

# Train:

# + _uuid="0d080b4a0bf27808a316196c71948a96280ef177"

path_train = '../input/train.feather'

path_test = '../input/test.feather'

print("Reading train data...")

start = time.time()

train = pd.read_csv('../input/train.csv')

end = time.time()

print("It takes {0:.2f} seconds to read 'train.csv'.".format(end - start))

# + [markdown] _uuid="1e6904f34859901e764adde45ed0bb3bc13e4f58"

# Test:

# + _uuid="0fca1a0b7f595147cc5c3641b1a45c9d7f8e2340"

start = time.time()

print("Reading test data...")

test = pd.read_csv('../input/test.csv')

end = time.time()

print("It takes {0:.2f} seconds to read 'test.csv'.".format(end - start))

# + [markdown] _uuid="9c74d587203855a0a8eb7da6b2f6abb3090bb60d"

# Saving the 'target' and 'ID_code' data.

# + _uuid="74a87959eb66d371c314180f4877d1afdde136b7"

target = train.pop('target')

train_ids = train.pop('ID_code')

test_ids = test.pop('ID_code')

# + [markdown] _uuid="8c2c537288b4915a1f860065a2046e47cae19459"

# Saving the number of rows in 'train' for future use.

# + _uuid="b1026519541d70d9206f9941fc29d19005fa1dcd"

len_train = len(train)

# + [markdown] _uuid="af2947142503c41f3c26e9c805e14e033fceb955"

# Merging test and train.

# + _uuid="fc7bb057b85c4a8b12b102e7432e261ff6a92954"

merged = pd.concat([train, test])

# + [markdown] _uuid="5b29b8bd47b43d76ee650e12e063c34c3c1ad189"

# Removing data we no longer need.

# + _uuid="bca8a00d9d62f3a4479c524b66d6e906ac155b7e"

del test, train

gc.collect()

# + [markdown] _uuid="ef8301089d9bfd8880ad0165e3d1c248a5fb1fde"

# Saving the list of original features in a new list `original_features`.

# + _uuid="134f8d281a4fafdbbbd51fb3429015d271d895ac"

original_features = merged.columns

# + [markdown] _uuid="8787d83673d27fe9529524257c660933af610ab2"

# Adding more features.

# + _uuid="06df646dee338e944955dd6059df57cd6c73afa0"

for col in merged.columns:
    # Normalize the data, so that it can be used in norm.cdf(),
    # as though it is a standard normal variable
    merged[col] = ((merged[col] - merged[col].mean())
                   / merged[col].std()).astype('float32')

    # Square
    merged[col+'^2'] = merged[col] * merged[col]

    # Cube
    merged[col+'^3'] = merged[col] * merged[col] * merged[col]

    # 4th power
    merged[col+'^4'] = merged[col] * merged[col] * merged[col] * merged[col]

    # Cumulative percentile (not normalized)
    merged[col+'_cp'] = rankdata(merged[col]).astype('float32')

    # Cumulative normal percentile
    merged[col+'_cnp'] = norm.cdf(merged[col]).astype('float32')
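# To make the two "percentile" features concrete, here is a small sketch on a made-up column (`col`, `z`, `ranks` and `cnp` are illustrative names). `rankdata` gives each value its rank within the column, while `norm.cdf` maps the standardized value to the probability a standard normal variable falls below it.

```python
import numpy as np
from scipy.stats import norm, rankdata

col = np.array([3.0, 1.0, 2.0, 5.0, 4.0])  # made-up feature values

# standardize; ddof=1 mirrors the default of pandas' .std()
z = (col - col.mean()) / col.std(ddof=1)

ranks = rankdata(z)   # 1-based ranks, ties would share the average rank
cnp = norm.cdf(z)     # standard normal CDF of the standardized values

print(ranks)
print(cnp)
```

# Both transforms are monotonic in the original value, so they preserve ordering while changing the shape of the distribution the model sees.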

# + [markdown] _uuid="d5fd487e4440606deb9e936346e982513f0718c9"

# Getting the list of names of the added features.

# + _uuid="456a64b4d2c1ada1b6db546a1d004537df4bd238"

new_features = set(merged.columns) - set(original_features)

# + [markdown] _uuid="8188eb856e421905972cc6f34ab4b43e87dd41f8"

# Normalize the data. Again.

# + _uuid="7180731459fe9ce60f95b94b77f3d7f9a565823d"

for col in new_features:
    merged[col] = ((merged[col] - merged[col].mean())
                   / merged[col].std()).astype('float32')

# + [markdown] _uuid="3f1039a0b002c1db092a9b3d590759531facc3e6"

# Saving the data to feather files.

# + _uuid="9f04f23ad704daa0207a03c9c6e5d680ac0caed8"

path_target = 'target.feather'

path_train_ids = 'train_ids_extra_features.feather'

path_test_ids = 'test_ids_extra_features.feather'

path_train = 'train_extra_features.feather'

path_test = 'test_extra_features.feather'

print("Writing target to a feather file...")

pd.DataFrame({'target' : target.values}).to_feather(path_target)

print("Writing train_ids to a feather file...")

pd.DataFrame({'ID_code' : train_ids.values}).to_feather(path_train_ids)

print("Writing test_ids to a feather file...")

pd.DataFrame({'ID_code' : test_ids.values}).to_feather(path_test_ids)

print("Writing train to a feather file...")

feather.write_dataframe(merged.iloc[:len_train], path_train)

print("Writing test to a feather file...")

feather.write_dataframe(merged.iloc[len_train:], path_test)

# + [markdown] _uuid="640948a1a36e2d3d73f18ceb9cfb816be6d11d7b"

# Removing data we no longer need.

# + _cell_guid="79c7e3d0-c299-4dcb-8224-4455121ee9b0" _uuid="d629ff2d2480ee46fbb7e2d37f6b5fab8052498a"

del target, train_ids, test_ids, merged

gc.collect()

# + [markdown] _uuid="837f988316528d5c3d4530043448fe5849be3fa5"

# # Loading the data from feather files

#

# Now let's load all of these data back into memory. This will help us to illustrate the advantage of using the feather file format.

# + _uuid="60b26db1cf85167b14f9223af995a8656bdaa316"

path_target = 'target.feather'

path_train_ids = 'train_ids_extra_features.feather'

path_test_ids = 'test_ids_extra_features.feather'

path_train = 'train_extra_features.feather'

path_test = 'test_extra_features.feather'

print("Reading target")

start = time.time()

y = feather.read_dataframe(path_target).values.ravel()

end = time.time()

print("{0:5f} sec".format(end - start))

# + _uuid="2f60516cb907e9e62f97eb99ebb00db079edc6e3"

print("Reading train_ids")

start = time.time()

train_ids = feather.read_dataframe(path_train_ids).values.ravel()

end = time.time()

print("{0:5f} sec".format(end - start))

# + _uuid="4c8ad8191f0a4cd976645e7d7b59f7c16c48311f"

print("Reading test_ids")

start = time.time()

test_ids = feather.read_dataframe(path_test_ids).values.ravel()

end = time.time()

print("{0:5f} sec".format(end - start))

# + _uuid="afe5ba0c48d46a05e09c2de00b094a5a479fded6"

print("Reading training data")

start = time.time()

train = feather.read_dataframe(path_train)

end = time.time()

print("{0:5f} sec".format(end - start))

# + _uuid="4764997b330eb79e2962c6ea207b2bf43d75b7a0"

print("Reading testing data")

start = time.time()

test = feather.read_dataframe(path_test)

end = time.time()

print("{0:5f} sec".format(end - start))

# + [markdown] _uuid="d3d1c00f01bdcc40525a6d59cf3bc463bdbcef11"

# Hopefully you can now see the great advantage of using feather files: they are blazing fast. Just compare the timings shown above with those measured for the original CSV files: the processed data sets (stored in the feather file format) that we have just loaded are much bigger than the original ones (stored in the CSV files), but we can load them in almost no time!

#

# # Logistic regression with the added features.

#

# Now let's finally do some modeling! More specifically, we will build a straightforward logistic regression model to see whether or not we can improve on the linear regression result (LB 0.894).

#

# Setting things up for the modeling phase.

# + _uuid="72ddd6eee811099caba7f2cc610e7f099d8fa84f"

NFOLDS = 5

RANDOM_STATE = 871972

feature_list = train.columns

test = test[feature_list]

X = train.values.astype('float32')

X_test = test.values.astype('float32')

folds = StratifiedKFold(n_splits=NFOLDS, shuffle=True,

random_state=RANDOM_STATE)

oof_preds = np.zeros((len(train), 1))

test_preds = np.zeros((len(test), 1))

roc_cv =[]

del train, test

gc.collect()

# + [markdown] _uuid="6e5750e889c0aab08e0230a00641bb589a723d04"

# Defining a function for the augmentation procedure (for details, see [this kernel](https://www.kaggle.com/jiweiliu/lgb-2-leaves-augment)):

# + _uuid="8bdee398862caef3ddcfeaabadfc025e2fea280a"

def augment(x,y,t=2):
    if t==0:
        return x, y

    xs,xn = [],[]

    # t shuffled copies of the positive rows (each column shuffled independently)
    for i in range(t):
        mask = y>0
        x1 = x[mask].copy()
        ids = np.arange(x1.shape[0])
        for c in range(x1.shape[1]):
            np.random.shuffle(ids)
            x1[:,c] = x1[ids][:,c]
        xs.append(x1)
        del x1
        gc.collect()

    # t//2 shuffled copies of the negative rows
    for i in range(t//2):
        mask = y==0
        x1 = x[mask].copy()
        ids = np.arange(x1.shape[0])
        for c in range(x1.shape[1]):
            np.random.shuffle(ids)
            x1[:,c] = x1[ids][:,c]
        xn.append(x1)
        del x1
        gc.collect()

    print("The sizes of x, xn, and xs are {}, {}, {}, respectively.".format(
        sys.getsizeof(x), sys.getsizeof(xn), sys.getsizeof(xs)))

    xs = np.vstack(xs)
    xn = np.vstack(xn)

    print("The sizes of x, xn, and xs are {}, {}, {}, respectively.".format(
        sys.getsizeof(x)/1024**3, sys.getsizeof(xn), sys.getsizeof(xs)))

    ys = np.ones(xs.shape[0])
    yn = np.zeros(xn.shape[0])
    y = np.concatenate([y,ys,yn])

    print("The sizes of y, yn, and ys are {}, {}, {}, respectively.".format(
        sys.getsizeof(y), sys.getsizeof(yn), sys.getsizeof(ys)))

    gc.collect()

    return np.vstack([x,xs, xn]), y

# + [markdown] _uuid="0f8952de31eb35a24d805e2f05234419a787c2b5"

# Modeling.

# + _uuid="bac555a0224df2ec57edea0d9efc2bea6087a1b9"

for fold_, (trn_, val_) in enumerate(folds.split(X, y)):
    print("Current Fold: {}".format(fold_))

    trn_x, trn_y = X[trn_, :], y[trn_]
    val_x, val_y = X[val_, :], y[val_]

    NAUGMENTATIONS=1#5
    NSHUFFLES=0#2 # turning off the augmentation by shuffling since it did not help

    val_pred, test_fold_pred = 0, 0

    for i in range(NAUGMENTATIONS):
        print("\nFold {}, Augmentation {}".format(fold_, i+1))

        trn_aug_x, trn_aug_y = augment(trn_x, trn_y, NSHUFFLES)
        trn_aug_x = pd.DataFrame(trn_aug_x)
        trn_aug_x = trn_aug_x.add_prefix('var_')

        clf = Pipeline([
            #('scaler', StandardScaler()),
            #('qt', QuantileTransformer(output_distribution='normal')),
            ('lr_clf', LogisticRegression(solver='lbfgs', max_iter=1500, C=10))
        ])

        clf.fit(trn_aug_x, trn_aug_y)

        print("Making predictions for the validation data")
        val_pred += clf.predict_proba(val_x)[:,1]

        print("Making predictions for the test data")
        test_fold_pred += clf.predict_proba(X_test)[:,1]

    # average the predictions over the augmentation rounds
    val_pred /= NAUGMENTATIONS
    test_fold_pred /= NAUGMENTATIONS

    roc_cv.append(roc_auc_score(val_y, val_pred))
    print("AUC = {}".format(roc_auc_score(val_y, val_pred)))

    oof_preds[val_, :] = val_pred.reshape((-1, 1))
    test_preds += test_fold_pred.reshape((-1, 1))

# + [markdown] _uuid="bdaeb55ef0787d12809ef93cb039f20a9ea48420"

# Predicting.

# + _uuid="4f9c059d80cd7f3a88ec54c6981d5bf61175372c"

test_preds /= NFOLDS

# + [markdown] _uuid="01b3796195161127820576b0bf6874a0c2730b3b"

# Evaluating the cross-validation AUC score (we compute both the average AUC for all folds and the AUC for combined folds).

# + _uuid="2a717d9ff79b7d7debb7cfc12a01437925fa659d"

roc_score_1 = round(roc_auc_score(y, oof_preds.ravel()), 5)

roc_score = round(sum(roc_cv)/len(roc_cv), 5)

st_dev = round(np.array(roc_cv).std(), 5)

print("Average of the folds' AUCs = {}".format(roc_score))

print("Combined folds' AUC = {}".format(roc_score_1))

print("The standard deviation = {}".format(st_dev))

# + [markdown] _uuid="6f8f29301f1a46851bbd8d73b53b42e3cf1b78b2"

# Creating the submission file.

# + _uuid="cf48c73f9a06e7396c8a34dff4e80ba1b21fc59b"

print("Saving submission file")

sample = pd.read_csv('../input/sample_submission.csv')

sample.target = test_preds.astype(float)

sample.ID_code = test_ids

sample.to_csv('submission.csv', index=False)

# + [markdown] _uuid="6ae9818982ca118b293d82ef58e8bdc5e11370e1"

# The LB score is now 0.896 versus 0.894 for linear regression. The improvement of 0.002 is obviously very small. It looks like for this data linear and logistic regression work equally well! Moving forward, I think it would be interesting to see how the feature engineering presented here would affect other classification models (e.g. Gaussian Naive Bayes, LDA, LightGBM, XGBoost, CatBoost).

# + _uuid="e3b88b41d876338362d22fbeb552bf3ec6db964b"

|

12 customer prediction/logistic-regression-with-new-features-feather.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.8.8 64-bit (''base'': conda)'

# name: python3

# ---

# ## Gateways reached by a Sensor

from datetime import datetime, timedelta

import subprocess

import pandas as pd

from dateutil.parser import parse

from dateutil import tz

from label_map import dev_id_lbls, gtw_lbls

# +

DEVICES = [

'3148 E 19th, Anc',

'3414 E 16th, Anc',

'3424 E 18th, Anc',

'122 N Bliss',

'122 N Bliss Unit',

'1826 Columbine, Anc',

'Phil ELT-2 3692',

'Phil LT22222 436E',

'Phil CO2 26D8',

]

# Days of Data to Show

DAYS = 4

GATEWAY_FILE = '~/gateways.tsv'

# -

# Make DateTime objects for the time period to analyze

tz_ak = tz.gettz('US/Alaska')

start_ts = (datetime.now(tz_ak) - timedelta(days=DAYS)).replace(

tzinfo=None, minute=0, second=0, microsecond=0)

end_ts = datetime.now(tz_ak).replace(

tzinfo=None, minute=0, second=0, microsecond=0)

start_ts, end_ts
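
# The window calculation above can be sketched as a small function. This is an illustrative variant, not the notebook's own code: `hour_window` is a hypothetical helper, and it takes a single `now` value so that the start and end cannot straddle an hour boundary between two separate `datetime.now()` calls. A fixed UTC-9 offset stands in for the Alaska time zone here so the example is reproducible.

```python
from datetime import datetime, timedelta, timezone

def hour_window(now, days):
    """Return (start, end) as naive datetimes truncated to the hour."""
    end = now.replace(tzinfo=None, minute=0, second=0, microsecond=0)
    start = end - timedelta(days=days)
    return start, end

# Fixed, timezone-aware "now" (hypothetical value) so the result is reproducible.
demo_now = datetime(2021, 3, 5, 14, 37, 12, tzinfo=timezone(timedelta(hours=-9)))
demo_start, demo_end = hour_window(demo_now, days=4)
print(demo_start, demo_end)
```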

# +

# Pick a device to use for testing the script

device = DEVICES[3]

df = pd.read_csv(GATEWAY_FILE,

sep='\t',

parse_dates=['ts', 'ts_hour'],

index_col='ts',

low_memory=False)

df = df.loc[str(start_ts):]

df['dev_id'] = df.dev_id.map(dev_id_lbls)

df.query('dev_id == @device', inplace=True)

def gtw_map(gtw_eui):

    return gtw_lbls.get(gtw_eui, gtw_eui)

df['gateway'] = df.gateway.map(gtw_map)

df.head()

# -

# Determine the "Any" gateway counts by dropping duplicate readings

df_any = df[['ts_hour', 'counter']].drop_duplicates(subset=['counter'])

df_any_count = df_any.groupby('ts_hour').count()

df_any_count.columns = ['Any']

df_any_count

# Determine counts for individual gateways

df_cts = pd.pivot_table(df, index='ts_hour', columns='gateway', values='counter', aggfunc='count')

df_cts
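
# To make the two counting rules concrete, here is a pure-Python sketch on made-up readings (the gateway names and counter values are hypothetical). The "Any" count keeps only the first row seen for each counter value and then counts rows per hour, which mirrors `drop_duplicates(subset=['counter'])` followed by `groupby('ts_hour').count()`. The per-gateway count treats every row as a hit for its (hour, gateway) pair, like the pivot table.

```python
from collections import Counter

# Hypothetical readings: (ts_hour, gateway, counter). A transmission heard by
# two gateways appears twice with the same counter value.
readings = [
    ("2021-03-01 10:00", "gw-a", 101),
    ("2021-03-01 10:00", "gw-b", 101),
    ("2021-03-01 10:00", "gw-a", 102),
    ("2021-03-01 11:00", "gw-b", 103),
]

# "Any" count: keep the first row for each counter, then count per hour.
seen = set()
any_counts = Counter()
for ts_hour, _gateway, counter in readings:
    if counter not in seen:
        seen.add(counter)
        any_counts[ts_hour] += 1

# Per-gateway count: every row counts toward its (hour, gateway) pair.
gateway_counts = Counter((ts_hour, gateway) for ts_hour, gateway, _ in readings)

print(dict(any_counts))
print(dict(gateway_counts))
```

# One consequence of deduplicating on the counter alone: if a counter value repeats within the window (for example after a device reset), the later reading is dropped from the "Any" column.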

# +

# Combine the two DataFrames, horizontally (combine columns)

df_final = pd.concat([df_any_count, df_cts], axis=1)

# Make a new index that fills in any missing hours

new_ix = pd.date_range(start_ts, end_ts, freq='1H')

df_final = df_final.reindex(new_ix)

# Replace NaN values with zero and then convert values to integers

df_final.fillna(0, inplace=True)

df_final = df_final.astype('int32')

df_final = df_final[:-1] # drop last hour because likely incomplete

# Convert the index into strings so we can drop the seconds from the display

df_final.index = df_final.index.strftime("%Y-%m-%d %H:%M")

df_final
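
# The reindex-and-fill step above can be sketched without pandas. `fill_missing_hours` is a hypothetical helper, and the counts are made up; it walks every hour from the start up to (but not including) the end hour, substitutes zero where no reading exists, and formats the label without seconds. Excluding the end hour corresponds to dropping the last, likely incomplete, row.

```python
from datetime import datetime, timedelta

def fill_missing_hours(counts, start, end):
    """Return [(hour_label, count)] for each hour in [start, end), using 0 where absent."""
    rows = []
    hour = start
    while hour < end:  # the end hour is excluded because it is likely incomplete
        label = hour.strftime("%Y-%m-%d %H:%M")
        rows.append((label, int(counts.get(hour, 0))))
        hour += timedelta(hours=1)
    return rows

# Hypothetical hourly counts with a gap at 11:00.
demo_counts = {datetime(2021, 3, 1, 10): 3, datetime(2021, 3, 1, 12): 1}
demo_rows = fill_missing_hours(demo_counts,
                               datetime(2021, 3, 1, 10),
                               datetime(2021, 3, 1, 13))
print(demo_rows)
```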

# +

def color_cells(val):

    color_scale = {

        0: '#FF3131',

        1: '#FFFF00',

        2: '#FFD822',

    }

    color = color_scale.get(val, '#EEEEEE')

    return 'background: %s' % color

s = df_final.style

s.applymap(color_cells)

s.set_properties(**{'width': '70px', 'text-align': 'center'})

styles = [

dict(selector="td", props=[('padding', "0px")]),

]

s.set_table_styles(styles)

s

|

sensor-gateway.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] colab_type="text" id="mHDxn9VHjxKn"

# ##### Copyright 2019 The TensorFlow Authors.

# + cellView="form" colab={} colab_type="code" id="3x19oys5j89H"

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# + [markdown] colab_type="text" id="hFDUpbtvv_3u"

# # Save and serialize models with Keras

# + [markdown] colab_type="text" id="V94_3U2k9rWV"

# <table class="tfo-notebook-buttons" align="left">

# <td>

# <a target="_blank" href="https://www.tensorflow.org/guide/keras/save_and_serialize"><img src="https://www.tensorflow.org/images/tf_logo_32px.png" />View on TensorFlow.org</a>

# </td>

# <td>

# <a target="_blank" href="https://colab.research.google.com/github/tensorflow/docs/blob/master/site/en/guide/keras/save_and_serialize.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

# </td>

# <td>

# <a target="_blank" href="https://github.com/tensorflow/docs/blob/master/site/en/guide/keras/save_and_serialize.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

# </td>

# <td>

# <a href="https://storage.googleapis.com/tensorflow_docs/docs/site/en/guide/keras/save_and_serialize.ipynb"><img src="https://www.tensorflow.org/images/download_logo_32px.png" />Download notebook</a>

# </td>

# </table>

# + [markdown] colab_type="text" id="ZwiVWAQc9tk7"

# The first part of this guide covers saving and serialization for Keras models built using the Functional and Sequential APIs. Saving and serialization are exactly the same for both of these model APIs.

#

# The second part of this guide covers "[saving and loading subclassed models](save_and_serialize.ipynb#saving-subclassed-models)". The subclassing API differs from the Keras Sequential and Functional APIs.

# + [markdown] colab_type="text" id="uqSgPMHguAAs"

# ## Setup

# + colab={} colab_type="code" id="bx5w4U5muDAo"

from __future__ import absolute_import, division, print_function, unicode_literals

try:

  # # %tensorflow_version only exists in Colab.

  # %tensorflow_version 2.x

except Exception:

  pass

import tensorflow as tf

tf.keras.backend.clear_session() # For easy reset of notebook state.

# + [markdown] colab_type="text" id="wwCxkE6RyyPy"

# ## Part I: Saving Sequential models or Functional models

#

# Let's consider the following model:

# + colab={} colab_type="code" id="ILmySACTvSA9"

from tensorflow import keras

from tensorflow.keras import layers

inputs = keras.Input(shape=(784,), name='digits')

x = layers.Dense(64, activation='relu', name='dense_1')(inputs)

x = layers.Dense(64, activation='relu', name='dense_2')(x)

outputs = layers.Dense(10, name='predictions')(x)

model = keras.Model(inputs=inputs, outputs=outputs, name='3_layer_mlp')

model.summary()

# + [markdown] colab_type="text" id="xPRqbd0yw8hY"

# Optionally, let's train this model, just so it has weight values to save, as well as an optimizer state.

# Of course, you can save models you've never trained, too, but obviously that's less interesting.

# + colab={} colab_type="code" id="gCygTeGQw74g"

(x_train, y_train), (x_test, y_test) = keras.datasets.mnist.load_data()

x_train = x_train.reshape(60000, 784).astype('float32') / 255

x_test = x_test.reshape(10000, 784).astype('float32') / 255

model.compile(loss=keras.losses.SparseCategoricalCrossentropy(from_logits=True),

optimizer=keras.optimizers.RMSprop())

history = model.fit(x_train, y_train,

batch_size=64,

epochs=1)

# Reset metrics before saving so that loaded model has same state,

# since metric states are not preserved by Model.save_weights

model.reset_metrics()