code | repo_path |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: torch

# language: python

# name: torch

# ---

# # Optimal Transport

# +

import os, sys, random, argparse, pickle
import numpy as np
import pandas as pd
import seaborn as sns
import matplotlib as mpl
import matplotlib.pyplot as plt

from sklearn.metrics import accuracy_score, recall_score, f1_score

sys.path.insert(0, '../')

from models.classifier import classifier

from dataProcessing.dataModule import SingleDatasetModule, CrossDatasetModule

from Utils.trainerTL_pl import TLmodel

from pytorch_lightning.loggers import MLFlowLogger

from pytorch_lightning import LightningDataModule, LightningModule, Trainer

from pytorch_lightning.callbacks import EarlyStopping,ModelCheckpoint

from sklearn.manifold import TSNE

from sklearn.decomposition import PCA

DATA_DIR = 'C:\\Users\\gcram\\Documents\\Smart Sense\\Datasets\\frankDataset\\'

datasetList = ['Dsads','Ucihar','Uschad','Pamap2']

n_classes = 4

# -

|

experiments/OptimalTransport.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="Tce3stUlHN0L"

# ##### Copyright 2019 The TensorFlow Authors.

# + cellView="form" id="tuOe1ymfHZPu"

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# + [markdown] id="qFdPvlXBOdUN"

# # Mixed precision

# + [markdown] id="MfBg1C5NB3X0"

# <table class="tfo-notebook-buttons" align="left">

# <td>

# <a target="_blank" href="https://www.tensorflow.org/guide/mixed_precision"><img src="https://www.tensorflow.org/images/tf_logo_32px.png" />View on TensorFlow.org</a>

# </td>

# <td>

# <a target="_blank" href="https://colab.research.google.com/github/tensorflow/docs/blob/master/site/en/guide/mixed_precision.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

# </td>

# <td>

# <a target="_blank" href="https://github.com/tensorflow/docs/blob/master/site/en/guide/mixed_precision.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

# </td>

# <td>

# <a href="https://storage.googleapis.com/tensorflow_docs/docs/site/en/guide/mixed_precision.ipynb"><img src="https://www.tensorflow.org/images/download_logo_32px.png" />Download notebook</a>

# </td>

# </table>

# + [markdown] id="xHxb-dlhMIzW"

# ## Overview

#

# Mixed precision is the use of both 16-bit and 32-bit floating-point types in a model during training to make it run faster and use less memory. By keeping certain parts of the model in the 32-bit types for numeric stability, the model will have a lower step time and train equally well in terms of evaluation metrics such as accuracy. This guide describes how to use the Keras mixed precision API to speed up your models. Using this API can improve performance by more than 3 times on modern GPUs and 60% on TPUs.

# + [markdown] id="3vsYi_bv7gS_"

# Today, most models use the float32 dtype, which takes 32 bits of memory. However, there are two lower-precision dtypes, float16 and bfloat16, each of which takes 16 bits of memory instead. Modern accelerators can run operations faster in the 16-bit dtypes, as they have specialized hardware to run 16-bit computations and 16-bit dtypes can be read from memory faster.

#

# NVIDIA GPUs can run operations in float16 faster than in float32, and TPUs can run operations in bfloat16 faster than float32. Therefore, these lower-precision dtypes should be used whenever possible on those devices. However, variables and a few computations should still be in float32 for numeric reasons so that the model trains to the same quality. The Keras mixed precision API allows you to use a mix of either float16 or bfloat16 with float32, to get the performance benefits from float16/bfloat16 and the numeric stability benefits from float32.

#

# Note: In this guide, the term "numeric stability" refers to how a model's quality is affected by the use of a lower-precision dtype instead of a higher precision dtype. An operation is "numerically unstable" in float16 or bfloat16 if running it in one of those dtypes causes the model to have worse evaluation accuracy or other metrics compared to running the operation in float32.
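# As a rough illustration of the trade-off between the two 16-bit dtypes, the sketch below emulates them with the standard `struct` module rather than TensorFlow. The helper names `to_float16` and `to_bfloat16` are hypothetical, and the bfloat16 emulation simply truncates a float32 to its top 16 bits (real hardware typically rounds), so treat the results as approximate.

```python
import struct


def to_float16(x: float) -> float:
    """Round a Python float to the nearest IEEE half-precision (float16) value."""
    try:
        return struct.unpack('<e', struct.pack('<e', x))[0]
    except OverflowError:  # magnitude is too large for float16
        return float('inf') if x > 0 else float('-inf')


def to_bfloat16(x: float) -> float:
    """Emulate bfloat16 by keeping only the top 16 bits of the float32 encoding.

    Assumption: truncation is used here for simplicity; hardware usually rounds.
    """
    bits = struct.unpack('<I', struct.pack('<f', x))[0]
    return struct.unpack('<f', struct.pack('<I', bits & 0xFFFF0000))[0]


# Both 16-bit formats use half the storage of float32.
print(struct.calcsize('<e'), struct.calcsize('<f'))

# float16 keeps more significand bits, so it is more precise near 1.0 ...
print(to_float16(1.001), to_bfloat16(1.001))

# ... while bfloat16 keeps float32's exponent range, so large values survive.
print(to_float16(1.0e5), to_bfloat16(1.0e5))
```

# In this emulation float16 preserves a small offset from 1.0 that bfloat16 drops, while bfloat16 keeps a finite value where float16 overflows.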

# + [markdown] id="MUXex9ctTuDB"

# ## Setup

# + id="IqR2PQG4ZaZ0"

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

from tensorflow.keras import mixed_precision

# + [markdown] id="814VXqdh8Q0r"

# ## Supported hardware

#

# While mixed precision will run on most hardware, it will only speed up models on recent NVIDIA GPUs and Cloud TPUs. NVIDIA GPUs support using a mix of float16 and float32, while TPUs support a mix of bfloat16 and float32.

#

# Among NVIDIA GPUs, those with compute capability 7.0 or higher will see the greatest performance benefit from mixed precision because they have special hardware units, called Tensor Cores, to accelerate float16 matrix multiplications and convolutions. Older GPUs offer no math performance benefit from mixed precision; however, memory and bandwidth savings can enable some speedups. You can look up the compute capability for your GPU at NVIDIA's [CUDA GPU web page](https://developer.nvidia.com/cuda-gpus). Examples of GPUs that will benefit most from mixed precision include RTX GPUs, the V100, and the A100.

# + [markdown] id="-q2hisD60F0_"

# Note: If running this guide in Google Colab, the GPU runtime typically has a P100 connected. The P100 has compute capability 6.0 and is not expected to show a significant speedup.

#

# You can check your GPU type with the following. The command only exists if the

# NVIDIA drivers are installed, so the following will raise an error otherwise.

# + id="j-Yzg_lfkoa_"

# !nvidia-smi -L

# + [markdown] id="hu_pvZDN0El3"

# All Cloud TPUs support bfloat16.

#

# Even on CPUs and older GPUs, where no speedup is expected, mixed precision APIs can still be used for unit testing, debugging, or just to try out the API. On CPUs, mixed precision will run significantly slower, however.

# + [markdown] id="HNOmvumB-orT"

# ## Setting the dtype policy

# + [markdown] id="54ecYY2Hn16E"

# To use mixed precision in Keras, you need to create a `tf.keras.mixed_precision.Policy`, typically referred to as a *dtype policy*. Dtype policies specify the dtypes layers will run in. In this guide, you will construct a policy from the string `'mixed_float16'` and set it as the global policy. This will cause subsequently created layers to use mixed precision with a mix of float16 and float32.

# + id="x3kElPVH-siO"

policy = mixed_precision.Policy('mixed_float16')

mixed_precision.set_global_policy(policy)

# + [markdown] id="6ids1rT_UM5q"

# For short, you can directly pass a string to `set_global_policy`, which is typically done in practice.

# + id="6a8iNFoBUSqR"

# Equivalent to the two lines above

mixed_precision.set_global_policy('mixed_float16')

# + [markdown] id="oGAMaa0Ho3yk"

# The policy specifies two important aspects of a layer: the dtype the layer's computations are done in, and the dtype of a layer's variables. Above, you created a `mixed_float16` policy (i.e., a `mixed_precision.Policy` created by passing the string `'mixed_float16'` to its constructor). With this policy, layers use float16 computations and float32 variables. Computations are done in float16 for performance, but variables must be kept in float32 for numeric stability. You can directly query these properties of the policy.

# + id="GQRbYm4f8p-k"

print('Compute dtype: %s' % policy.compute_dtype)

print('Variable dtype: %s' % policy.variable_dtype)
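# To see why variables are kept in float32, the following sketch (plain Python with the standard `struct` module, not TensorFlow) applies a small update to a weight of 1.0 in each precision. The helper names and the update size `1e-4` are hypothetical values chosen for illustration.

```python
import struct


def to_float16(x: float) -> float:
    """Round to the nearest float16 value."""
    return struct.unpack('<e', struct.pack('<e', x))[0]


def to_float32(x: float) -> float:
    """Round to the nearest float32 value."""
    return struct.unpack('<f', struct.pack('<f', x))[0]


weight = 1.0
update = 1e-4  # hypothetical learning_rate * gradient

# Emulate "store the variable in float16": every result is rounded to float16.
w16 = to_float16(to_float16(weight) + to_float16(update))

# Emulate "store the variable in float32".
w32 = to_float32(to_float32(weight) + to_float32(update))

print('float16 weight after update:', w16)
print('float32 weight after update:', w32)
```

# Near 1.0, adjacent float16 values are about 0.001 apart, so the update is rounded away entirely, whereas the float32 variable retains it.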

# + [markdown] id="MOFEcna28o4T"

# As mentioned before, the `mixed_float16` policy will most significantly improve performance on NVIDIA GPUs with compute capability of at least 7.0. The policy will run on other GPUs and CPUs but may not improve performance. For TPUs, the `mixed_bfloat16` policy should be used instead.

# + [markdown] id="cAHpt128tVpK"

# ## Building the model

# + [markdown] id="nB6ujaR8qMAy"

# Next, let's start building a simple model. Very small toy models typically do not benefit from mixed precision, because overhead from the TensorFlow runtime typically dominates the execution time, making any performance improvement on the GPU negligible. Therefore, let's build two large `Dense` layers with 4096 units each if a GPU is used.

# + id="0DQM24hL_14Q"

inputs = keras.Input(shape=(784,), name='digits')

if tf.config.list_physical_devices('GPU'):
    print('The model will run with 4096 units on a GPU')
    num_units = 4096
else:
    # Use fewer units on CPUs so the model finishes in a reasonable amount of time
    print('The model will run with 64 units on a CPU')
    num_units = 64

dense1 = layers.Dense(num_units, activation='relu', name='dense_1')

x = dense1(inputs)

dense2 = layers.Dense(num_units, activation='relu', name='dense_2')

x = dense2(x)

# + [markdown] id="2dezdcqnOXHk"

# Each layer has a policy and uses the global policy by default. Each of the `Dense` layers therefore has the `mixed_float16` policy because you set the global policy to `mixed_float16` previously. This will cause the dense layers to do float16 computations and have float32 variables. They cast their inputs to float16 in order to do float16 computations, which causes their outputs to be float16 as a result. Their variables are float32 and will be cast to float16 when the layers are called to avoid errors from dtype mismatches.

# + id="kC58MzP4PEcC"

print(dense1.dtype_policy)

print('x.dtype: %s' % x.dtype.name)

# 'kernel' is dense1's variable

print('dense1.kernel.dtype: %s' % dense1.kernel.dtype.name)

# + [markdown] id="_WAZeqDyqZcb"

# Next, create the output predictions. Normally, you can create the output predictions as follows, but this is not always numerically stable with float16.

# + id="ybBq1JDwNIbz"

# INCORRECT: softmax and model output will be float16, when it should be float32

outputs = layers.Dense(10, activation='softmax', name='predictions')(x)

print('Outputs dtype: %s' % outputs.dtype.name)

# + [markdown] id="D0gSWxc9NN7q"

# A softmax activation at the end of the model should be float32. Because the dtype policy is `mixed_float16`, the softmax activation would normally have a float16 compute dtype and output float16 tensors.

#

# This can be fixed by separating the Dense and softmax layers, and by passing `dtype='float32'` to the softmax layer:

# + id="IGqCGn4BsODw"

# CORRECT: softmax and model output are float32

x = layers.Dense(10, name='dense_logits')(x)

outputs = layers.Activation('softmax', dtype='float32', name='predictions')(x)

print('Outputs dtype: %s' % outputs.dtype.name)

# + [markdown] id="tUdkY_DHsP8i"

# Passing `dtype='float32'` to the softmax layer constructor overrides the layer's dtype policy to be the `float32` policy, which does computations and keeps variables in float32. Equivalently, you could have instead passed `dtype=mixed_precision.Policy('float32')`; layers always convert the dtype argument to a policy. Because the `Activation` layer has no variables, the policy's variable dtype is ignored, but the policy's compute dtype of float32 causes softmax and the model output to be float32.

#

#

# Adding a float16 softmax in the middle of a model is fine, but a softmax at the end of the model should be in float32. The reason is that if the intermediate tensor flowing from the softmax to the loss is float16 or bfloat16, numeric issues may occur.

#

# You can override the dtype of any layer to be float32 by passing `dtype='float32'` if you think it will not be numerically stable with float16 computations. But typically, this is only necessary on the last layer of the model, as most layers have sufficient precision with `mixed_float16` and `mixed_bfloat16`.

#

# Even if the model does not end in a softmax, the outputs should still be float32. While unnecessary for this specific model, the model outputs can be cast to float32 with the following:

# + id="dzVAoLI56jR8"

# The linear activation is an identity function. So this simply casts 'outputs'

# to float32. In this particular case, 'outputs' is already float32 so this is a

# no-op.

outputs = layers.Activation('linear', dtype='float32')(outputs)
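# The numeric issue with a float16 softmax feeding the loss can be sketched without TensorFlow. Below, a small probability is rounded to float16 using the standard `struct` module and then passed to a hand-written cross-entropy. The logits are hypothetical, Python's double-precision floats stand in for float32, and real loss implementations may clip probabilities or work from logits, so this only illustrates the loss of precision.

```python
import math
import struct


def to_float16(x: float) -> float:
    """Round to the nearest float16 value."""
    return struct.unpack('<e', struct.pack('<e', x))[0]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(prob_of_true_class: float) -> float:
    """Negative log-likelihood; infinite if the probability rounded to zero."""
    return -math.log(prob_of_true_class) if prob_of_true_class > 0 else math.inf


logits = [20.0, 0.0]  # hypothetical logits; the true class is index 1
probs_hi = softmax(logits)                   # higher-precision softmax output
probs_16 = [to_float16(p) for p in probs_hi]  # same output stored as float16

print('higher-precision loss:', cross_entropy(probs_hi[1]))
print('float16 loss:', cross_entropy(probs_16[1]))
```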

# + [markdown] id="tpY4ZP7us5hA"

# Next, finish and compile the model, and generate input data:

# + id="g4OT3Z6kqYAL"

model = keras.Model(inputs=inputs, outputs=outputs)

model.compile(loss='sparse_categorical_crossentropy',
              optimizer=keras.optimizers.RMSprop(),
              metrics=['accuracy'])

(x_train, y_train), (x_test, y_test) = keras.datasets.mnist.load_data()

x_train = x_train.reshape(60000, 784).astype('float32') / 255

x_test = x_test.reshape(10000, 784).astype('float32') / 255

# + [markdown] id="0Sm8FJHegVRN"

# This example casts the input data from uint8 to float32. You don't cast to float16 since the division by 255 is on the CPU, which runs float16 operations slower than float32 operations. In this case, the performance difference is negligible, but in general you should run input processing math in float32 if it runs on the CPU. The first layer of the model will cast the inputs to float16, as each layer casts floating-point inputs to its compute dtype.

#

# The initial weights of the model are retrieved. This will allow training from scratch again by loading the weights.

# + id="0UYs-u_DgiA5"

initial_weights = model.get_weights()

# + [markdown] id="zlqz6eVKs9aU"

# ## Training the model with Model.fit

#

# Next, train the model:

# + id="hxI7-0ewmC0A"

history = model.fit(x_train, y_train,
                    batch_size=8192,
                    epochs=5,
                    validation_split=0.2)

test_scores = model.evaluate(x_test, y_test, verbose=2)

print('Test loss:', test_scores[0])

print('Test accuracy:', test_scores[1])

# + [markdown] id="MPhJ9OPWt4x5"

# Notice the model prints the time per step in the logs: for example, "25ms/step". The first epoch may be slower as TensorFlow spends some time optimizing the model, but afterwards the time per step should stabilize.

#

# If you are running this guide in Colab, you can compare the performance of mixed precision with float32. To do so, change the policy from `mixed_float16` to `float32` in the "Setting the dtype policy" section, then rerun all the cells up to this point. On GPUs with compute capability 7.X, you should see the time per step significantly increase, indicating mixed precision sped up the model. Make sure to change the policy back to `mixed_float16` and rerun the cells before continuing with the guide.

#

# On GPUs with compute capability of at least 8.0 (Ampere GPUs and above), you likely will see no performance improvement in the toy model in this guide when using mixed precision compared to float32. This is due to the use of [TensorFloat-32](https://www.tensorflow.org/api_docs/python/tf/config/experimental/enable_tensor_float_32_execution), which automatically uses lower precision math in certain float32 ops such as `tf.linalg.matmul`. TensorFloat-32 gives some of the performance advantages of mixed precision when using float32. However, in real-world models, you will still typically see significant performance improvements from mixed precision due to memory bandwidth savings and ops which TensorFloat-32 does not support.

#

# If running mixed precision on a TPU, you will not see as much of a performance gain compared to running mixed precision on GPUs, especially pre-Ampere GPUs. This is because TPUs do certain ops in bfloat16 under the hood even with the default dtype policy of float32. This is similar to how Ampere GPUs use TensorFloat-32 by default. Compared to Ampere GPUs, TPUs typically see smaller performance gains with mixed precision on real-world models.

#

# For many real-world models, mixed precision also allows you to double the batch size without running out of memory, as float16 tensors take half the memory. This does not apply however to this toy model, as you can likely run the model in any dtype where each batch consists of the entire MNIST dataset of 60,000 images.

# + [markdown] id="mNKMXlCvHgHb"

# ## Loss scaling

#

# Loss scaling is a technique which `tf.keras.Model.fit` automatically performs with the `mixed_float16` policy to avoid numeric underflow. This section describes what loss scaling is and the next section describes how to use it with a custom training loop.

# + [markdown] id="1xQX62t2ow0g"

# ### Underflow and Overflow

#

# The float16 data type has a narrow dynamic range compared to float32. This means values above $65504$ will overflow to infinity and values below $6.0 \times 10^{-8}$ will underflow to zero. float32 and bfloat16 have a much higher dynamic range so that overflow and underflow are not a problem.

#

# For example:

# + id="CHmXRb-yRWbE"

x = tf.constant(256, dtype='float16')

(x ** 2).numpy() # Overflow

# + id="5unZLhN0RfQM"

x = tf.constant(1e-5, dtype='float16')

(x ** 2).numpy() # Underflow
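# The same two cases can be checked without TensorFlow. The sketch below uses the standard `struct` module, whose `'e'` format is IEEE half precision, to round values to float16. The helper `to_float16` and the two constants are names introduced here for illustration.

```python
import struct


def to_float16(x: float) -> float:
    """Round to the nearest float16 value, mapping overflow to infinity."""
    try:
        return struct.unpack('<e', struct.pack('<e', x))[0]
    except OverflowError:  # struct refuses values beyond the float16 range
        return float('inf') if x > 0 else float('-inf')


FLOAT16_MAX = 65504.0                # largest finite float16 value
FLOAT16_MIN_SUBNORMAL = 2.0 ** -24   # smallest positive float16, about 6.0e-8

print(to_float16(256.0 ** 2))   # 65536 exceeds FLOAT16_MAX: overflow
print(to_float16(1e-5 ** 2))    # 1e-10 is far below the smallest subnormal: underflow
print(to_float16(FLOAT16_MAX), to_float16(FLOAT16_MIN_SUBNORMAL))  # both representable
```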

# + [markdown] id="pUIbhQypRVe_"

# In practice, overflow with float16 rarely occurs. Additionally, underflow also rarely occurs during the forward pass. However, during the backward pass, gradients can underflow to zero. Loss scaling is a technique to prevent this underflow.

# + [markdown] id="FAL5qij_oNqJ"

# ### Loss scaling overview

#

# The basic concept of loss scaling is simple: multiply the loss by some large number, say $1024$; this number is called the *loss scale*. This will cause the gradients to scale by $1024$ as well, greatly reducing the chance of underflow. Once the final gradients are computed, divide them by $1024$ to bring them back to their correct values.

#

# The pseudocode for this process is:

#

# ```

# loss_scale = 1024

# loss = model(inputs)

# loss *= loss_scale

# # Assume `grads` are float32. You do not want to divide float16 gradients.

# grads = compute_gradient(loss, model.trainable_variables)

# grads /= loss_scale

# ```

#

# Choosing a loss scale can be tricky. If the loss scale is too low, gradients may still underflow to zero. If too high, the opposite problem occurs: the gradients may overflow to infinity.

#

# To solve this, TensorFlow dynamically determines the loss scale so you do not have to choose one manually. If you use `tf.keras.Model.fit`, loss scaling is done for you so you do not have to do any extra work. If you use a custom training loop, you must explicitly use the special optimizer wrapper `tf.keras.mixed_precision.LossScaleOptimizer` in order to use loss scaling. This is described in the next section.

#
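# The effect of a fixed loss scale can be worked through numerically. The sketch below is plain Python (the standard `struct` module emulates float16, and Python floats stand in for float32); the gradient magnitude `1e-8` is a hypothetical value picked because it lies below the float16 range.

```python
import struct


def to_float16(x: float) -> float:
    """Round to the nearest float16 value."""
    return struct.unpack('<e', struct.pack('<e', x))[0]


loss_scale = 1024.0
true_grad = 1e-8  # hypothetical gradient, too small for float16

# Without loss scaling the float16 gradient underflows to zero.
unscaled_grad16 = to_float16(true_grad)

# Scaling the loss scales the gradient by the same factor, so the
# float16 value is now representable (as a subnormal number).
scaled_grad16 = to_float16(true_grad * loss_scale)

# Unscale in higher precision, as the pseudocode above recommends.
recovered_grad = scaled_grad16 / loss_scale

print('without scaling:', unscaled_grad16)
print('with scaling, after unscaling:', recovered_grad)
```

# The recovered value is not exact, because the scaled gradient was still rounded to float16, but it is close to the true gradient instead of being zero.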

# + [markdown] id="yqzbn8Ks9Q98"

# ## Training the model with a custom training loop

# + [markdown] id="CRANRZZ69nA7"

# So far, you have trained a Keras model with mixed precision using `tf.keras.Model.fit`. Next, you will use mixed precision with a custom training loop. If you do not already know what a custom training loop is, please read the [Custom training guide](../tutorials/customization/custom_training_walkthrough.ipynb) first.

# + [markdown] id="wXTaM8EEyEuo"

# Running a custom training loop with mixed precision requires two changes over running it in float32:

#

# 1. Build the model with mixed precision (you already did this)

# 2. Explicitly use loss scaling if `mixed_float16` is used.

#

# + [markdown] id="M2zpp7_65mTZ"

# For step (2), you will use the `tf.keras.mixed_precision.LossScaleOptimizer` class, which wraps an optimizer and applies loss scaling. By default, it dynamically determines the loss scale so you do not have to choose one. Construct a `LossScaleOptimizer` as follows.

# + id="ogZN3rIH0vpj"

optimizer = keras.optimizers.RMSprop()

optimizer = mixed_precision.LossScaleOptimizer(optimizer)

# + [markdown] id="FVy5gnBqTE9z"

# If you want, it is possible to choose an explicit loss scale or otherwise customize the loss scaling behavior, but it is highly recommended to keep the default loss scaling behavior, as it has been found to work well on all known models. See the `tf.keras.mixed_precision.LossScaleOptimizer` documentation if you want to customize the loss scaling behavior.

# + [markdown] id="JZYEr5hA3MXZ"

# Next, define the loss object and the `tf.data.Dataset`s:

# + id="9cE7Mm533hxe"

loss_object = tf.keras.losses.SparseCategoricalCrossentropy()

train_dataset = (tf.data.Dataset.from_tensor_slices((x_train, y_train))
                 .shuffle(10000).batch(8192))

test_dataset = tf.data.Dataset.from_tensor_slices((x_test, y_test)).batch(8192)

# + [markdown] id="4W0zxrxC3nww"

# Next, define the training step function. You will use two new methods from the loss scale optimizer to scale the loss and unscale the gradients:

#

# - `get_scaled_loss(loss)`: Multiplies the loss by the loss scale

# - `get_unscaled_gradients(gradients)`: Takes in a list of scaled gradients as inputs, and divides each one by the loss scale to unscale them

#

# These functions must be used in order to prevent underflow in the gradients. `LossScaleOptimizer.apply_gradients` will then apply gradients if none of them have `Inf`s or `NaN`s. It will also update the loss scale, halving it if the gradients had `Inf`s or `NaN`s and potentially increasing it otherwise.

# + id="V0vHlust4Rug"

@tf.function
def train_step(x, y):
    with tf.GradientTape() as tape:
        predictions = model(x)
        loss = loss_object(y, predictions)
        scaled_loss = optimizer.get_scaled_loss(loss)
    scaled_gradients = tape.gradient(scaled_loss, model.trainable_variables)
    gradients = optimizer.get_unscaled_gradients(scaled_gradients)
    optimizer.apply_gradients(zip(gradients, model.trainable_variables))
    return loss

# + [markdown] id="rcFxEjia6YPQ"

# The `LossScaleOptimizer` will likely skip the first few steps at the start of training. The loss scale starts out high so that the optimal loss scale can quickly be determined. After a few steps, the loss scale will stabilize and very few steps will be skipped. This process happens automatically and does not affect training quality.
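# The skip-and-adjust behavior described above can be sketched in plain Python. This is a toy model of dynamic loss scaling, not TensorFlow's implementation: the class `DynamicLossScale`, its parameters, and the numbers used (an initial scale of `2 ** 15`, growth after 3 clean steps) are assumptions made for illustration.

```python
import math


class DynamicLossScale:
    """Toy dynamic loss scale: halve on non-finite gradients, double after a run of good steps."""

    def __init__(self, initial_scale=2.0 ** 15, growth_steps=2000):
        self.scale = initial_scale
        self.growth_steps = growth_steps
        self.good_steps = 0

    def update(self, grads):
        """Return True if the step should be applied, False if it is skipped."""
        if all(math.isfinite(g) for g in grads):
            self.good_steps += 1
            if self.good_steps >= self.growth_steps:
                self.scale *= 2.0      # try a larger scale after enough clean steps
                self.good_steps = 0
            return True
        self.scale = max(self.scale / 2.0, 1.0)  # overflow: back off and skip the step
        self.good_steps = 0
        return False


scaler = DynamicLossScale(initial_scale=2.0 ** 15, growth_steps=3)
steps = ([float('inf')], [float('nan')], [0.1], [0.2], [0.3])
applied = [scaler.update(g) for g in steps]
print(applied, scaler.scale)
```

# In this run the first two steps are skipped and halve the scale twice; the three finite steps that follow are applied and trigger one doubling.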

# + [markdown] id="IHIvKKhg4Y-G"

# Now, define the test step:

#

# + id="nyk_xiZf42Tt"

@tf.function
def test_step(x):
    return model(x, training=False)

# + [markdown] id="hBs98MZyhBOB"

# Load the initial weights of the model, so you can retrain from scratch:

# + id="jpzOe3WEhFUJ"

model.set_weights(initial_weights)

# + [markdown] id="s9Pi1ADM47Ud"

# Finally, run the custom training loop:

# + id="N274tJ3e4_6t"

for epoch in range(5):
    epoch_loss_avg = tf.keras.metrics.Mean()
    test_accuracy = tf.keras.metrics.SparseCategoricalAccuracy(
        name='test_accuracy')
    for x, y in train_dataset:
        loss = train_step(x, y)
        epoch_loss_avg(loss)
    for x, y in test_dataset:
        predictions = test_step(x)
        test_accuracy.update_state(y, predictions)
    print('Epoch {}: loss={}, test accuracy={}'.format(epoch, epoch_loss_avg.result(), test_accuracy.result()))

# + [markdown] id="d7daQKGerOFE"

# ## GPU performance tips

#

# Here are some performance tips when using mixed precision on GPUs.

#

# ### Increasing your batch size

#

# If it doesn't affect model quality, try running with double the batch size when using mixed precision. As float16 tensors use half the memory, this often allows you to double your batch size without running out of memory. Increasing batch size typically increases training throughput, i.e. the training elements per second your model can run on.

#

# ### Ensuring GPU Tensor Cores are used

#

# As mentioned previously, modern NVIDIA GPUs use a special hardware unit called Tensor Cores that can multiply float16 matrices very quickly. However, Tensor Cores require certain dimensions of tensors to be a multiple of 8. In the examples below, an argument is bold if and only if it needs to be a multiple of 8 for Tensor Cores to be used.
#
# - tf.keras.layers.Dense(**units=64**)
# - tf.keras.layers.Conv2D(**filters=48**, kernel_size=7, strides=3)
#     - And similarly for other convolutional layers, such as tf.keras.layers.Conv3D
# - tf.keras.layers.LSTM(**units=64**)
#     - And similarly for other RNNs, such as tf.keras.layers.GRU
# - tf.keras.Model.fit(epochs=2, **batch_size=128**)

#

# You should try to use Tensor Cores when possible. If you want to learn more, [NVIDIA deep learning performance guide](https://docs.nvidia.com/deeplearning/sdk/dl-performance-guide/index.html) describes the exact requirements for using Tensor Cores as well as other Tensor Core-related performance information.
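# One simple way to satisfy the multiple-of-8 requirement is to round a size up when choosing layer widths or batch sizes. The helper below is a hypothetical convenience function, not part of TensorFlow, and whether padding a dimension is acceptable depends on your model.

```python
def round_up_to_multiple(value: int, multiple: int = 8) -> int:
    """Return the smallest multiple of `multiple` that is >= `value`."""
    if value <= 0 or multiple <= 0:
        raise ValueError('value and multiple must be positive')
    return ((value + multiple - 1) // multiple) * multiple


# Sizes that are already multiples of 8 are unchanged; others are padded up.
for size in (64, 50, 10, 1):
    print(size, '->', round_up_to_multiple(size))
```

# For example, a hidden layer planned with 50 units could be built with 56 units instead.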

#

# ### XLA

#

# XLA is a compiler that can further increase mixed precision performance, as well as float32 performance to a lesser extent. Refer to the [XLA guide](https://www.tensorflow.org/xla) for details.

# + [markdown] id="2tFDX8fm6o_3"

# ## Cloud TPU performance tips

#

# As with GPUs, you should try doubling your batch size when using Cloud TPUs because bfloat16 tensors use half the memory. Doubling batch size may increase training throughput.

#

# TPUs do not require any other mixed precision-specific tuning to get optimal performance. They already require the use of XLA. TPUs benefit from having certain dimensions being multiples of $128$, but this applies equally to the float32 type as it does for mixed precision. Check the [Cloud TPU performance guide](https://cloud.google.com/tpu/docs/performance-guide) for general TPU performance tips, which apply to mixed precision as well as float32 tensors.

# + [markdown] id="--wSEU91wO9w"

# ## Summary

#

# - You should use mixed precision if you use TPUs or NVIDIA GPUs with at least compute capability 7.0, as it will improve performance by up to 3x.

# - You can use mixed precision with the following lines:

#

# ```python

# # On TPUs, use 'mixed_bfloat16' instead

# mixed_precision.set_global_policy('mixed_float16')

# ```

#

# * If your model ends in softmax, make sure it is float32. And regardless of what your model ends in, make sure the output is float32.

# * If you use a custom training loop with `mixed_float16`, in addition to the above lines, you need to wrap your optimizer with a `tf.keras.mixed_precision.LossScaleOptimizer`. Then call `optimizer.get_scaled_loss` to scale the loss, and `optimizer.get_unscaled_gradients` to unscale the gradients.

# * Double the training batch size if it does not reduce evaluation accuracy

# * On GPUs, ensure most tensor dimensions are a multiple of $8$ to maximize performance

#

# For an example of mixed precision using the `tf.keras.mixed_precision` API, check [functions and classes related to training performance](https://github.com/tensorflow/models/blob/master/official/modeling/performance.py). Check out the official models, such as [Transformer](https://github.com/tensorflow/models/blob/master/official/nlp/modeling/layers/transformer_encoder_block.py), for details.

#

|

site/en/guide/mixed_precision.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Naive Bayes part-2

# ### Email Spam Detection using Naive Bayes

import pandas as pd

df=pd.read_csv("spam.csv",engine='python',encoding='utf-8',on_bad_lines='skip') # on_bad_lines (pandas >= 1.3) replaces error_bad_lines, which was removed in pandas 2.0

df.head()

df.groupby('Category').describe()

df['spam']=df['Category'].apply(lambda x: 1 if x=='spam' else 0)

df.head()

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(df.Message,df.spam,test_size=0.2)

# ## Vectorizer

# #### Assigning a number to each unique word

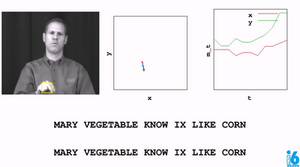

# <img src=nb11.png height=400 width=800>

from sklearn.feature_extraction.text import CountVectorizer

v = CountVectorizer()

X_train_count = v.fit_transform(X_train.values)

X_train_count.toarray()[:2]
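# To make the idea of a count matrix concrete, here is a minimal bag-of-words sketch in plain Python. It is only an approximation of what `CountVectorizer` does: the functions `fit_vocabulary` and `transform_counts` are hypothetical, and tokenization is a simple lower-case whitespace split, which differs from scikit-learn's default tokenizer.

```python
from collections import Counter


def fit_vocabulary(docs):
    """Map each unique lower-cased token to a column index (sorted for determinism)."""
    tokens = sorted({tok for doc in docs for tok in doc.lower().split()})
    return {tok: i for i, tok in enumerate(tokens)}


def transform_counts(docs, vocab):
    """Turn each document into a row of per-token counts, one column per vocabulary entry."""
    rows = []
    for doc in docs:
        counts = Counter(doc.lower().split())
        rows.append([counts.get(tok, 0) for tok in vocab])
    return rows


toy_docs = ['free prize now', 'call me now now']  # toy documents, not from spam.csv
vocab = fit_vocabulary(toy_docs)
matrix = transform_counts(toy_docs, vocab)
print(vocab)
print(matrix)
```

# Each row has one column per vocabulary word, and a word that appears twice in a document gets a count of 2.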

# <img src=nb12.png height=800 width=800>

from sklearn.naive_bayes import MultinomialNB

model = MultinomialNB()

model.fit(X_train_count,y_train)

emails = [

'Hey mohan, can we get together to watch footbal game tomorrow?',

'Upto 20% discount on parking, exclusive offer just for you. Dont miss this reward!'

]

emails_count = v.transform(emails) # the model expects numeric count features, so vectorize the raw text first

model.predict(emails_count)

X_test_count = v.transform(X_test)

model.score(X_test_count, y_test)
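# For intuition about what the classifier computes from those counts, the following is a small hand-written multinomial Naive Bayes with add-one (Laplace) smoothing. It is a sketch under simplifying assumptions, not scikit-learn's implementation: `train_nb` and `predict_nb` are hypothetical names, tokens come from a whitespace split, and the four training messages are made up.

```python
import math
from collections import Counter, defaultdict


def train_nb(docs, labels, alpha=1.0):
    """Estimate log priors and smoothed log likelihoods per class."""
    vocab = {tok for doc in docs for tok in doc.lower().split()}
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    for doc, label in zip(docs, labels):
        word_counts[label].update(doc.lower().split())
    model = {}
    for label in class_counts:
        total = sum(word_counts[label].values())
        log_prior = math.log(class_counts[label] / len(labels))
        log_like = {
            tok: math.log((word_counts[label][tok] + alpha) / (total + alpha * len(vocab)))
            for tok in vocab
        }
        model[label] = (log_prior, log_like)
    return model


def predict_nb(model, doc):
    """Pick the class with the highest log posterior; unseen words are ignored."""
    scores = {}
    for label, (log_prior, log_like) in model.items():
        scores[label] = log_prior + sum(
            log_like[tok] for tok in doc.lower().split() if tok in log_like)
    return max(scores, key=scores.get)


toy_docs = ['win free prize now', 'free offer click now',
            'lunch at noon tomorrow', 'see you at the game']
toy_labels = [1, 1, 0, 0]  # 1 = spam, 0 = ham
nb = train_nb(toy_docs, toy_labels)
print(predict_nb(nb, 'free prize offer'), predict_nb(nb, 'lunch tomorrow'))
```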

# ## sklearn pipeline

from sklearn.pipeline import Pipeline # chain the two steps: vectorizer, then classifier

clf = Pipeline([
    ('vectorizer', CountVectorizer()),
    ('nb', MultinomialNB())
])

clf.fit(X_train, y_train) # the pipeline vectorizes the raw text internally, so no manual conversion is needed

clf.score(X_test,y_test) # same result as the manual vectorize-then-fit approach, as expected

clf.predict(emails)

|

Machine Learning/14. Naive Bayes 2.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] pycharm={"name": "#%% md\n"}

# # Sketching AutoEncoder with line connectivity prediction

#

# This notebook demonstrates an autoencoder that predicts both a list of 2d points and an approximately binary connection matrix for those points. Lines between each possible pair of points are drawn into separate rasters and these are weighted by the respective weight in the connection matrix before being merged into a single image with a compositing function. Only the encoder network has learnable parameters; the decoder is entirely deterministic, but differentiable.

#

# The network is defined below; the number of points can be configured and we can control whether to allow points to connect to themselves (allowing drawing of single points) or not.
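# The effect of allowing points to connect to themselves on the number of candidate lines can be checked with the standard library. The helper `point_pairs` below is a hypothetical stand-in for the index pairs the network builds: with self-connections there are n(n+1)/2 pairs, and without them n(n-1)/2.

```python
from itertools import combinations, combinations_with_replacement


def point_pairs(npoints: int, allow_points: bool = True):
    """List the (start, end) index pairs for every candidate line."""
    idx = range(npoints)
    if allow_points:
        # (i, i) pairs are included, so a "line" can degenerate to a single point
        return list(combinations_with_replacement(idx, 2))
    return list(combinations(idx, 2))


n = 6
with_points = point_pairs(n, allow_points=True)
without_points = point_pairs(n, allow_points=False)
print(len(with_points), (n * n + n) // 2)
print(len(without_points), n * (n - 1) // 2)
```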

# +

import torch

import torch.nn as nn

try:
    from dsketch.raster.disttrans import line_edt2
    from dsketch.raster.raster import exp
    from dsketch.raster.composite import softor
except ImportError:
    # !pip install git+https://github.com/jonhare/DifferentiableSketching.git
    from dsketch.raster.disttrans import line_edt2
    from dsketch.raster.raster import exp
    from dsketch.raster.composite import softor

class AE(nn.Module):

def __init__(self, npoints=10, hidden=64, sz=28, allow_points=True):

super(AE, self).__init__()

# build the coordinate grid:

r = torch.linspace(-1, 1, sz)

c = torch.linspace(-1, 1, sz)

grid = torch.meshgrid(r, c)

grid = torch.stack(grid, dim=2)

self.register_buffer("grid", grid)

# if we allow points, we compute the upper-triangular part of the symmetric connection

# matrix including the diagonal. If points are not allowed, we don't need the diagonal values

# as they would be implictly zero

if allow_points:

nlines = int((npoints**2 + npoints) / 2)

else:

nlines = int(npoints * (npoints-1) / 2)

self.coordpairs = torch.combinations(torch.arange(0, npoints, dtype=torch.long), r=2, with_replacement=allow_points)

# shared part of the encoder

self.enc1 = nn.Sequential(

nn.Linear(sz**2, hidden),

nn.ReLU(),

nn.Linear(hidden, hidden),

nn.ReLU())

# second part for computing npoints 2d coordinates (using tanh because we use a -1..1 grid)

self.enc_pts = nn.Sequential(

nn.Linear(hidden, npoints*2),

nn.Tanh())

# second part for computing upper triangular part of the connection matrix

self.enc_con = nn.Sequential(

nn.Linear(hidden, nlines),

nn.Sigmoid())

def forward(self, inp, sigma=7e-3):

# the encoding process will flatten the input and

# push it through the encoder networks

bs = inp.shape[0]

x = inp.view(bs, -1)

z = self.enc1(x)

pts = self.enc_pts(z) #[batch, npoints*2]

pts = pts.view(bs, -1, 2) # expand -> [batch, npoints, 2]

conn = self.enc_con(z) #[batch, nlines]

# compute all valid combinations of line start and end points

lines = torch.stack((pts[:,self.coordpairs[:,0]], pts[:,self.coordpairs[:,1]]), dim=-2) #[batch, nlines, 2, 2]

# Rasterisation steps

# draw the lines (for every input in the batch)

rasters = exp(line_edt2(lines, self.grid), sigma) # -> [batch, nlines, 28, 28]

# weight by the values in the connection matrix

rasters = rasters * conn.view(bs, -1, 1, 1)

# composite

return softor(rasters)

# -
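# The weighting and compositing steps can be illustrated for a single pixel. This is only a sketch: it assumes the `softor` compositing function behaves like a soft OR, that is `1 - prod(1 - r_i)` over the per-line rasters, and `soft_or` and `composite_pixel` are hypothetical names rather than part of `dsketch`.

```python
import math


def soft_or(values):
    """Soft OR of values in [0, 1]: 1 - prod(1 - v)."""
    return 1.0 - math.prod(1.0 - v for v in values)


def composite_pixel(raster_values, connection_weights):
    """Weight each line's raster value by its connection weight, then merge."""
    weighted = [r * w for r, w in zip(raster_values, connection_weights)]
    return soft_or(weighted)


# three candidate lines cover this pixel with different intensities;
# the third line is switched off by a zero connection weight
print(composite_pixel([1.0, 0.0, 0.2], [1.0, 1.0, 0.0]))
```

# A connection weight near zero removes a line from the composite, while any fully drawn and fully connected line saturates the pixel.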

# We'll do a simple test on MNIST and try and train the AE to be able to reconstruct digit images (and of course at the same time perform image vectorisation/autotracing of polylines). Hyperparameters are pretty arbitrary (defaults for Adam; 256 batch size) and the line width is fixed to a value that works well for MNIST.

# +

import matplotlib.pyplot as plt

from torchvision.datasets.mnist import MNIST

from torchvision import transforms

import torchvision

batch_size = 256

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Lambda(lambda x: x.view(28, 28))

])

trainset = torchvision.datasets.MNIST('/tmp', train=True, transform=transform, download=True)

trainloader = torch.utils.data.DataLoader(trainset, batch_size=batch_size, shuffle=True, num_workers=0)

testset = torchvision.datasets.MNIST('/tmp', train=False, transform=transform, download=True)

testloader = torch.utils.data.DataLoader(testset, batch_size=batch_size, shuffle=False, num_workers=0)

device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')

model = AE(npoints=6, allow_points=True).to(device)

opt = torch.optim.Adam(model.parameters())

for epoch in range(10):

for images, classes in trainloader:

images = images.to(device)

opt.zero_grad()

out = model(images)

loss = nn.functional.mse_loss(out, images)

loss.backward()

opt.step()

print(loss)

# -

# Finally here's a visualisation of a set of test inputs and their rendered reconstructions:

# +

batch = next(iter(testloader))[0][0:64]

out = model(batch.to(device))

plt.figure()

inputs = torchvision.utils.make_grid(batch.unsqueeze(1))

plt.title("Inputs")

plt.imshow(inputs.permute(1,2,0))

plt.figure()

outputs = torchvision.utils.make_grid(out.detach().cpu().unsqueeze(1))

plt.title("Outputs")

plt.imshow(outputs.permute(1,2,0))

# -

|

samples/MNIST_VectorAE_SimplePolyConnect.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Movie Review Sentiment Analysis

import warnings

warnings.filterwarnings("ignore")

# +

# Load the data

import pandas as pd

import os

BANK_PATH = '.'

def load_data(bank_path=BANK_PATH):

csv_path=os.path.join(bank_path, "train_data.csv")

return pd.read_csv(csv_path)

comment_data = load_data()

comment_data

# -

test_data = pd.read_csv('test_data.csv')

test_data.head()

# The labels of the training samples turn out to be fairly balanced

data_com_X = comment_data

data_com_X.label.value_counts()

data_com_X.info()

# +

# Word segmentation

import jieba

# Stop words

stopwords = pd.read_csv("./stopwords.txt"

,index_col=False

,quoting=3

,sep="\t"

,names=['stopword']

,encoding='utf-8') # quoting=3 means no quoting (csv.QUOTE_NONE)

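def preprocess_text(content_lines, sentences, category):
    # Look up stop words in a set built from the 'stopword' column;
    # `x in stopwords` on a DataFrame would only test the column labels.
    stopword_set = set(stopwords['stopword'])
    for line in content_lines:
        try:
            segs = jieba.lcut(line)
            # drop single-character tokens
            segs = filter(lambda x: len(x) > 1, segs)
            # drop stop words
            segs = filter(lambda x: x not in stopword_set, segs)
            sentences.append((" ".join(segs), category))
        except Exception:
            # print the offending line and carry on
            print(line)
            continue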

data_com_X_1 = data_com_X[data_com_X.label == 1]

data_com_X_0 = data_com_X[data_com_X.label == 0]

sentences=[]

preprocess_text(data_com_X_1.comment.dropna().values.tolist() ,sentences ,'like')

preprocess_text(data_com_X_0.comment.dropna().values.tolist() ,sentences ,'nlike')

# Build the training set (shuffled)

import random

random.shuffle(sentences)

for sentence in sentences[:10]:

print(sentence[0], sentence[1])

# -
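# The token filter used in the preprocessing step can be sketched without `jieba` or `pandas`. The stop-word set and the token list below are made-up illustrations, and `filter_tokens` is a hypothetical helper. A plain `set` is used on purpose, because a membership test against a pandas DataFrame checks its column labels, not its cell values.

```python
def filter_tokens(tokens, stopword_set):
    """Keep tokens longer than one character that are not stop words."""
    return [tok for tok in tokens if len(tok) > 1 and tok not in stopword_set]


# illustrative data only; the notebook reads its stop words from stopwords.txt
stopword_set = {"the", "and", "of"}
tokens = ["the", "plot", "of", "a", "movie", "and", "cast"]

print(" ".join(filter_tokens(tokens, stopword_set)))
```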

test_sentences = []

preprocess_text(test_data.comment.dropna().values.tolist() ,test_sentences ,'like')

test_sentences[0]

# +

import numpy as np

from sklearn.model_selection import StratifiedShuffleSplit

from sklearn.feature_extraction.text import TfidfVectorizer

x,y=zip(*sentences)

x_train=x[:32000]

y_train=y[:32000]

x_test = x[32000:]

y_test =y[32000:]

test_set, _ =zip(*test_sentences)

X_all = x_train + x_test + test_set

len_train = len(x_train)

len_val = len(x_test)

tfv = TfidfVectorizer()

tfv.fit(X_all)

X_all = tfv.transform(X_all)

# Split back into the training, validation, and test parts

x_train = X_all[:len_train]

x_test = X_all[len_train:len_train+len_val]

test_set = X_all[len_train+len_val:]

# -

from sklearn.model_selection import StratifiedKFold

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB, BernoulliNB

from sklearn.svm import SVC

from sklearn.metrics import accuracy_score,precision_score

from sklearn.model_selection import cross_val_score

import numpy as np

# model_NB = MultinomialNB()

model_NB = SVC(kernel='linear')

model_NB.fit(x_train, y_train)

res = cross_val_score(model_NB, x_train, y_train, cv=20, scoring='accuracy')

print("20-fold cross-validation scores (linear SVC): ", res)

res.mean()

# vec.fit(x_test)

y_pred=model_NB.predict(x_test)

y_pred

print("ACC Score : %f" % accuracy_score(y_test,y_pred))

# Save the results

result_pd = pd.DataFrame()

result_pd['label'] = model_NB.predict(test_set)

result_pd[result_pd['label'] == 'like'] = 1

result_pd[result_pd['label'] == 'nlike'] = 0

result_pd.to_csv('submit_result.csv', index=False)

|

code/sentiment_analysis/demo.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### Load Model Class

# This page allows the user to load a set of simulation files (including results) from ASCII files or from a netCDF file.

#

# The UI will automatically query the directory (or netcdf file) for available results and will

# disable result types that are unavailable. If the projection information is included in the

# netcdf file, it will be loaded into UI and disabled. Otherwise, the user must input projection

# information for ASCII files.

# +

from adhui.adh_model_ui import LoadModel

import panel as pn

pn.extension()

# -

# Instantiate the model loader ui

load_model = LoadModel()

# display the model loader ui

load_model.panel()

adh_mod = load_model._load_data()

adh_mod.pprint()

|

docs/User_Guide/02_Load_Model.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.7.2 64-bit (''.venv'': venv)'

# name: python3

# ---

# # Chef Recipe | How to cook a joke

# > A simple example to learn how to use Chef and its ingredients

#

# - toc: true

# - badges: true

# - comments: true

# - categories: [recipe, python, jupyter]

# - hide: false

# - image: images/social/jokes.svg

# <!-- - image: images/chart-preview.png -->

# ----

# ## Modules

#

# ### Chef

#

# {% gist 1bc116f05d09e598a1a2dcfbb0e2fc22 chef.py %}

#

# ### Ingredients

#

# {% gist 5c75b7cdea330d15dcd93adbb08648c3 joking.py %}

#

# ## Call graph

#

#

#

# ----

# ## Configuration

# ### Parameters

gist_user = 'davidefornelli'

gist_chef_id = '1bc116f05d09e598a1a2dcfbb0e2fc22'

gist_ingredients_id = '5c75b7cdea330d15dcd93adbb08648c3'

ingredients_to_import = [

(gist_ingredients_id, 'joking.py')

]

# ## Configure environment

# %pip install httpimport

# ### Import chef

# +

import httpimport

with httpimport.remote_repo(

['chef'],

f"https://gist.githubusercontent.com/{gist_user}/{gist_chef_id}/raw"

):

import chef

# -

# ### Import ingredients

# +

def ingredients_import(ingredients):

for ingredient in ingredients:

mod, package = chef.process_gist_ingredient(

gist_id=ingredient[0],

gist_file=ingredient[1],

gist_user=gist_user

)

globals()[package] = mod

ingredients_import(ingredients=ingredients_to_import)

# -
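# Binding a loaded module to a name in a namespace, as `globals()[package] = mod` does above, can be sketched with the standard library alone. This is not how `chef.process_gist_ingredient` works internally (its implementation is not shown here); `module_from_source`, `demo_source`, and `joking_demo` are hypothetical names, and the source text is a placeholder instead of a downloaded gist.

```python
import types


def module_from_source(name, source):
    """Build a module object by executing source text in its namespace."""
    module = types.ModuleType(name)
    exec(source, module.__dict__)
    return module


# a plain dict plays the role of globals() in this sketch
namespace = {}
demo_source = "def tell_me_a_joke():\n    return 'placeholder joke'\n"
namespace["joking_demo"] = module_from_source("joking_demo", demo_source)

print(namespace["joking_demo"].tell_me_a_joke())
```

# Executing remote source this way runs arbitrary code, so it is only reasonable for sources that are trusted.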

# ## Tell me a joke

joking.tell_me_a_joke()

|

_notebooks/2021-11-18-nb_chef_recipe_jokes.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [Root]

# language: python

# name: Python [Root]

# ---

# # Spring-mass problem: tracking based on range and range rate

#

# Created on 01 January 2018

#

# Example 4.8.2 Spring-mass problem

# pg 216 in Statistical Orbit Determination, Tapley, Born, Schutz.

# pg 271 problem 15

#

# @author: <NAME>

# Import generic libraries

import numpy as np

import math

# Importing what's needed for nice plots.

import matplotlib.pyplot as plt

from matplotlib import rc

rc('font', **{'family': 'serif', 'serif': ['Helvetica']})

rc('text', usetex=True)

params = {'text.latex.preamble' : [r'\usepackage{amsmath}', r'\usepackage{amssymb}']}

plt.rcParams.update(params)

from matplotlib.offsetbox import AnchoredText

# Object of mass $m$ is connected to two fixed walls by springs with spring constants $k_1$ and $k_2$. The sensor used to measure the range $\rho$ and range-rate $\dot{\rho}$ is located at a point $P$ which is at a height of $h$ on the wall to which spring $k_1$ is attached.

#

# The initial position and velocity of the object are assumed to be $x_0 = 3.0~\mathrm{m}$ and $v_0 = 0.0~\mathrm{m/s}$.

#

# The EOM (Equation of Motion) of the body is given by

# \begin{equation}

# \ddot{x} = - (k_1 + k_2)(x-\bar{x})/m = -\omega^2 (x-\bar{x})

# \end{equation}

# where $x$ is the position of the object, $\bar{x}$ is its static equilibrium position and $\omega$ is the angular frequency. $\bar{x}$ is set to 0.

# +

# Parameters for the given problem

k1 = 2.5;

k2 = 3.7;

m = 1.5;

h = 5.4;

x0 = 3.0;

v0 = 0.0;

omega2 = (k1+k2)/m;

omega = math.sqrt(omega2);

# -

# From the EOM, it is inferred that an appropriate choice of state vector is

# \begin{equation}

# \mathbf{X} =

# \begin{bmatrix}

# x \\

# v

# \end{bmatrix}

# \end{equation}

#

# The relationship between position $x$ and velocity $v$ easily leads to a differential equation

# \begin{equation}

# \frac{dx}{dt} = v

# \end{equation}

# while the relationship between velocity and acceleration leads to another DE.

# \begin{equation}

# \frac{dv}{dt} = -\omega^2 x

# \end{equation}

#

# These two DEs lead to:

# \begin{equation}

# \frac{d}{dt}\begin{bmatrix}

# x \\

# v

# \end{bmatrix}

# =

# \begin{bmatrix}

# v \\

# -\omega^2 x

# \end{bmatrix}

# \end{equation}

#

# The state space formulation is thus

# \begin{equation}

# \dot{\mathbf{X}} =

# \begin{bmatrix}

# 0 & 1 \\

# -\omega^2 & 0

# \end{bmatrix}

# \mathbf{X} = \mathbf{A} \mathbf{X}

# \end{equation}

#

# Function fn_A implements $\mathbf{A}$.

#

# An analysis of the state space formulation leads to the state transition matrix $\Phi$ given by

# \begin{equation}

# \Phi =

# \begin{bmatrix}

# \cos (\omega t) & \frac{1}{\omega}\sin (\omega t) \\

# -\omega \sin (\omega t) & \cos (\omega t)

# \end{bmatrix}

# \end{equation}

#

# Check: the determinant of this STM is indeed 1.

#

# Function fn_STM implements the STM.
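# The determinant check and the link to the closed-form solution can be verified numerically. The sketch below uses only the standard library; `stm` and `propagate` are hypothetical stand-ins for the notebook's own functions, and the spring constants and mass are the ones stated for this problem.

```python
import math


def stm(t, omega):
    """State transition matrix of the undamped oscillator as nested lists."""
    c, s = math.cos(omega * t), math.sin(omega * t)
    return [[c, s / omega], [-omega * s, c]]


def propagate(t, omega, x0, v0):
    """Map the initial state (x0, v0) to the state at time t."""
    phi = stm(t, omega)
    x = phi[0][0] * x0 + phi[0][1] * v0
    v = phi[1][0] * x0 + phi[1][1] * v0
    return x, v


omega = math.sqrt((2.5 + 3.7) / 1.5)

for t in (0.0, 1.0, 2.5):
    phi = stm(t, omega)
    det = phi[0][0] * phi[1][1] - phi[0][1] * phi[1][0]
    # cos^2 + sin^2 = 1, so the determinant is 1 for every t
    assert abs(det - 1.0) < 1e-12

# with v0 = 0 the position follows x(t) = x0 * cos(omega * t)
x, v = propagate(1.0, omega, 3.0, 0.0)
print(x, 3.0 * math.cos(omega))
```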

# The sensor measures the range and range-rate to the object.

# The measurement vector $y$ is related to the state vector $x$ by the measurement function $g$.

# \begin{equation}

# y = \begin{bmatrix}

# \rho \\

# \dot{\rho}

# \end{bmatrix}

# =

# \begin{bmatrix}

# \sqrt{x^2 + h^2}\\

# \frac{xv}{\rho}

# \end{bmatrix}

# \end{equation}

#

# Function fn_G implements the measurement function $g$.

#

# Both the range and range-rate are nonlinear functions of the system state. Therefore, the Jacobian matrix of the measurement function needs to be found for inclusion in the nonlinear tracking filter.

# \begin{equation}

# \tilde{\mathbf{H}}(\mathbf{X}) =

# \begin{bmatrix}

# \frac{x}{\rho} & 0 \\

# \big( \frac{v}{\rho} -\frac{x^2 v}{\rho^3} \big) & \frac{x}{\rho}

# \end{bmatrix}

# \end{equation}

#

# This is implemented in function fn_Htilde.
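# One way to gain confidence in the Jacobian is to compare it against central finite differences of the measurement function. The sketch below is a standard-library check under that approach; `measure`, `jacobian`, `numerical_jacobian`, and `max_abs_difference` are hypothetical names, and the evaluation point is chosen only for illustration.

```python
import math


def measure(x, v, h):
    """Range and range-rate for position x, velocity v, sensor height h."""
    rho = math.sqrt(x * x + h * h)
    return [rho, x * v / rho]


def jacobian(x, v, h):
    """Analytic partial derivatives of (rho, rho_dot) with respect to (x, v)."""
    rho = math.sqrt(x * x + h * h)
    return [[x / rho, 0.0],
            [v / rho - x * x * v / rho ** 3, x / rho]]


def numerical_jacobian(x, v, h, eps=1e-6):
    """Central-difference approximation of the same partial derivatives."""
    dy_dx = [(p - m) / (2 * eps)
             for p, m in zip(measure(x + eps, v, h), measure(x - eps, v, h))]
    dy_dv = [(p - m) / (2 * eps)
             for p, m in zip(measure(x, v + eps, h), measure(x, v - eps, h))]
    return [[dy_dx[0], dy_dv[0]], [dy_dx[1], dy_dv[1]]]


def max_abs_difference(a, b):
    """Largest element-wise gap between two 2x2 nested lists."""
    return max(abs(a[i][j] - b[i][j]) for i in range(2) for j in range(2))


gap = max_abs_difference(jacobian(4.0, 0.2, 5.4), numerical_jacobian(4.0, 0.2, 5.4))
print(gap)
```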

# +

# Define the functions given by the stated equations

def fn_A(x_state,t,omega):

# eqn 4.8.3

A = np.array([[0.,1.],[-omega**2,0.]],dtype=np.float64);

return A

def fn_G(x_state,h):

# eqn 4.8.4

rho = math.sqrt(x_state[0]**2+h**2);

G = np.array([rho,x_state[0]*x_state[1]/rho],dtype=np.float64);

return G

def fn_Htilde(x_state,h):

# Jacobian matrix of G

dx = np.shape(x_state)[0];

x = x_state[0];v=x_state[1];

rhovel = fn_G(x_state,h);

dy=np.shape(rhovel)[0];

rho = rhovel[0];#rhodot=rhovel[1];

Htilde=np.zeros([dy,dx],dtype=np.float64);

Htilde[0,0]=x/rho;

Htilde[1,0]= v/rho - x**2*v/rho**3;

Htilde[1,1]=x/rho;

return Htilde

def fn_STM(t,omega):

stm = np.zeros([2,2],dtype=np.float64);

stm[0,0]=math.cos(omega*t);

stm[0,1]=(1/omega)*math.sin(omega*t);

stm[1,0]=-omega*math.sin(omega*t);

stm[1,1]=math.cos(omega*t);

return stm

# +

# Define the time step and time vector

delta_t = 1.0; # [s]

timevec = np.arange(0.,10.+delta_t,delta_t,dtype=np.float64);

# Dimensionality of the measurement vector

dy = 2; # 2 quantities measured

y_meas = np.zeros([dy,len(timevec)],dtype=np.float64); # array of measurements

# Load measurements

import pandas as pd

dframe = pd.read_excel("sod_book_pg218_table_4_8_1_data.xlsx",sheet_name="Sheet1")

dframe = dframe.reset_index()

data_time = dframe['Time'][0:len(timevec)+1]

data_range = dframe['Range'][0:len(timevec)+1]

data_range_rate = dframe['Range rate'][0:len(timevec)+1]

y_meas[0,:] = data_range;

y_meas[1,:] = data_range_rate;

# -

# a priori values

x_nom_ini = np.array([4.,0.2],dtype=np.float64); # a priori state vector

P_ini = np.diag(np.array([1000.,100.],dtype=np.float64)); # a priori covariance matrix

dx = np.shape(x_nom_ini)[0]; # dimensionality of the state vector

delta_x_ini = np.zeros([dx],dtype=np.float64); # a priori perturbation vector

def fn_BatchProcessor(delta_x,xnom,Pnom,timevec,y_meas,R_meas,omega,h):

dy = np.shape(R_meas)[0];

# Total observation matrix

TotalObsvMat = np.linalg.inv(Pnom)#np.zeros([dx,dx],dtype=np.float64);

# total observation vector

TotalObsvVec = np.dot(TotalObsvMat,delta_x)#np.zeros([dx],dtype=np.float64);

# simulated perturbation vector

delta_Y = np.zeros([dy],dtype=np.float64);

Rinv = np.linalg.inv(R_meas);

for index in range(len(timevec)):

stm_nom = fn_STM(timevec[index],omega)

x_state_nom = np.dot(stm_nom,xnom);

H = fn_Htilde(x_state_nom,h)

Hi = np.dot(H,stm_nom);

HiT = np.transpose(Hi);

Ynom = fn_G(x_state_nom,h);

delta_Y = np.subtract(y_meas[:,index],Ynom);

TotalObsvMat = TotalObsvMat + np.dot(HiT,np.dot(Rinv,Hi));

TotalObsvVec = TotalObsvVec + np.dot(HiT,np.dot(Rinv,delta_Y));

S_hat = np.linalg.inv(TotalObsvMat);

delta_x = np.dot(S_hat,TotalObsvVec);

x0_hat = xnom + delta_x;

return x0_hat,delta_x,S_hat

sd_rho = 1.;

sd_rhodot = 1.;

R_meas = np.diag(np.array([sd_rho**2,sd_rhodot**2],dtype=np.float64));

# +

num_iter = 4;

x0_hat = np.zeros([dx,num_iter],dtype=np.float64);

S_hat = np.zeros([dx,dx,num_iter],dtype=np.float64);

obsv_error = np.zeros([dy,num_iter],dtype=np.float64);

xnom_in = x_nom_ini;

Pnom_in = P_ini;

delta_x_in = delta_x_ini;

for i_iter in range (0,num_iter):

x0_hat[:,i_iter],delta_x,S_hat[:,:,i_iter] = fn_BatchProcessor(delta_x_in,xnom_in,Pnom_in,timevec,y_meas,R_meas,omega,h);

xnom_in = x0_hat[:,i_iter];

delta_x_in = delta_x_in - delta_x;

print(x0_hat[:,num_iter-1])

print(S_hat[:,:,num_iter-1])

sigma_x0 = math.sqrt(S_hat[0,0,num_iter-1]);

sigma_v0 = math.sqrt(S_hat[1,1,num_iter-1]);

sigma_x0v0 = math.sqrt(S_hat[0,1,num_iter-1]);

print(sigma_x0)

print(sigma_v0)

print(sigma_x0v0)

print(math.sqrt(S_hat[1,0,num_iter-1]))

# -

# See page 219 in SOD book:

# The state estimate x0_hat[:,num_iter-1] is identical to the estimate in the book.

#

# The standard deviations are identical to those in the book. The correlation coefficient is incorrect.

#
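# A correlation coefficient is conventionally the off-diagonal covariance divided by the product of the two standard deviations, not the square root of the off-diagonal term. Whether this accounts for the mismatch with the book has not been verified here. The sketch below uses a made-up covariance matrix, and `correlation_from_covariance` is a hypothetical helper.

```python
import math


def correlation_from_covariance(S):
    """Correlation coefficient from a 2x2 covariance matrix (nested lists)."""
    sigma_x = math.sqrt(S[0][0])
    sigma_v = math.sqrt(S[1][1])
    return S[0][1] / (sigma_x * sigma_v)


# illustrative covariance only, not the notebook's S_hat
S_demo = [[4.0, 1.0],
          [1.0, 1.0]]

print(correlation_from_covariance(S_demo))
```

# Unlike a square root, this ratio stays defined when the covariance term is negative and always lies in [-1, 1] for a valid covariance matrix.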

|

examples/spring_mass_problem/Notebook_000_springmassproblem.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %load_ext autoreload

# %autoreload 2

# + pycharm={"name": "#%%\n"}

# Preprocess data for gene effect (continuous)

from ceres_infer.data import process_data

# +

# Preprocess data for gene effect (continuous)

ext_data = {'dir_datasets': '../data/DepMap/19Q3',

'dir_ceres_datasets': '../data/DepMap/19Q3/Sanger',

'data_name': 'data_sanger',

'fname_gene_effect': 'gene_effect.csv',

'fname_gene_dependency': 'gene_dependency.csv',

'out_name': 'dm_data_sanger',

'out_match_name': 'dm_data_match_sanger'}

preprocess_params = {'useGene_dependency': False,

'dir_out': '../out/20.0925 proc_data/gene_effect/',

'dir_depmap': '../data/DepMap/',

'ext_data':ext_data}

# -

proc = process_data(preprocess_params)

proc.process()

proc.process_new()

proc.process_external(match_samples='q3', shared_only=True)

# + pycharm={"name": "#%%\n"}

# Preprocess data for gene dependency (categorical)

preprocess_params = {'useGene_dependency': True,

'dir_out': '../out/20.0925 proc_data/gene_dependency/',

'dir_depmap': '../data/DepMap/',

'ext_data':ext_data}

proc = process_data(preprocess_params)

proc.process()

proc.process_new()

proc.process_external(match_samples='q3', shared_only=True)

|

notebooks/run01-preprocess_data-Sanger.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # histogram gradient boosting classifier model

#

# faster implementation of gradient boosting classifier

#

# - assume training data in filepath

# + `data/processed/train.csv`

# - assume testing data in filepath

# + `data/processed/test.csv`

# - assume both have same structure:

# + first column is query

# + second column is category

# - assume input strings are 'clean':

# + in lower case

# + punctuation removed (stop words included)

# + words separated by spaces (no padding)

# - output:

# + `models/histogramgradientbosting.pckl`

# +

import numpy as np

import pandas as pd

import pickle

import sklearn

print('The scikit-learn version is {}.'.format(sklearn.__version__))

from sklearn.experimental import enable_hist_gradient_boosting

from sklearn.ensemble import HistGradientBoostingClassifier # sklearn 0.21+

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.compose import make_column_transformer

from sklearn import metrics

from sklearn.model_selection import cross_val_score

from sklearn.pipeline import make_pipeline

from sklearn.svm import LinearSVC #(setting multi_class=”crammer_singer”)

split_training_data_filepath = '../data/processed/train.csv'

split_testing_data_filepath = '../data/processed/test.csv'

model_filepath = '../models/histogramgradientbosting.pckl'

# -

# fetch training data only:

df_train = pd.read_csv(split_training_data_filepath, index_col=0)

# treat the class label as categorical (neither numerical nor ordinal)

df_train['category'] = pd.Categorical(df_train['category'])

display(df_train.sample(3))

# +

# transform the text field

tfidf = TfidfVectorizer(

# stop_words=stop_words,

strip_accents= 'ascii',

ngram_range=(1,1), # consider unigrams/bigrams/trigrams?

min_df = 4,

max_df = 0.80,

binary=True, # count term occurrence in each query only once

)

# column transformer

all_transforms = make_column_transformer(

(tfidf, ['query'])

)

# classify using histogram-based gradient boosting:

clf_hgbc = HistGradientBoostingClassifier(

max_depth = 8,

max_iter = 20,

tol = 1e-4,

)

pipe = make_pipeline(all_transforms, clf_hgbc)

# -
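# The effect of the `min_df` and `max_df` settings can be sketched in plain Python: an integer `min_df` is an absolute document count, and a float `max_df` is a proportion of documents. This is an illustration of the pruning rule, not scikit-learn's implementation; `prune_vocabulary` is a hypothetical helper, tokens are split on whitespace, and the documents are made up.

```python
from collections import Counter


def prune_vocabulary(documents, min_df, max_df):
    """Keep terms found in at least min_df documents and at most a max_df share of them."""
    n_docs = len(documents)
    doc_freq = Counter()
    for doc in documents:
        # set() so that a term counts once per document
        doc_freq.update(set(doc.split()))
    return sorted(term for term, df in doc_freq.items()
                  if df >= min_df and df / n_docs <= max_df)


docs = ["red apple", "green apple", "apple pie", "red car", "blue car"]

# "apple" is in 3 of 5 documents (0.6 > 0.5) and is dropped as too common;
# "green", "pie", and "blue" appear only once and are dropped as too rare
print(prune_vocabulary(docs, min_df=2, max_df=0.5))
```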

# %%time

# scores = cross_val_score(pipe, df_train['query'], df_train['category'], cv=10, scoring='accuracy')

# scores = cross_val_score(clf_svc, X, y, cv=5, scoring='f1_macro')

# scores = cross_val_score(clf_svc, X, df_train['category'], cv=2, scoring='accuracy')

# cross_val_score(pipe, df_train['query'], df_train['category'], cv=5, scoring='accuracy')

# print("accuracy: %0.2f (+/- %0.2f)" % (scores.mean(), scores.std() * 2))

# %%time

X = tfidf.fit_transform(df_train['query']).toarray()

display(X.shape)

X

# %%time

# train model

clf_hgbc.fit(X, df_train['category'])

# leads to out-of-memory errors.

# %%time

# check model

df_test = pd.read_csv(split_testing_data_filepath, index_col=0)

X_test = tfidf.transform(df_test['query']).toarray() # dense array, matching the training input

print('read in test data', X_test.shape)

y_predicted = clf_hgbc.predict(X_test)

print('computed', len(y_predicted), 'predictions of test data')

print('number of correct test predictions:', sum(y_predicted == df_test['category']))

print('number of incorrect predictions:', sum(y_predicted != df_test['category']))

print('ratio of correct test predictions:', round(sum(y_predicted == df_test['category'])/len(df_test),3))

print('')

|

notebooks/103_hist_gradient_boost.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <h1> BUILDING THE SARIMA MODEL FOR ROMANIA

import pandas as pd

df = pd.read_csv('../../csv/nazioni/serie_storica_ro.csv')

df.head()

df['TIME'] = pd.to_datetime(df['TIME'])

df.info()

df=df.set_index('TIME')

df.head()

# <h3>Building the time series of total deaths

df = df.groupby(pd.Grouper(freq='M')).sum()

df.head()

ts = df.Value

ts.head()

# +

from datetime import datetime

from datetime import timedelta

start_date = datetime(2015,1,1)

end_date = datetime(2020,9,30)

lim_ts = ts[start_date:end_date]

# display the plot

import matplotlib.pyplot as plt

plt.figure(figsize=(12,6))

plt.title('Monthly deaths in Romania from 2015 to 30 September 2020', size=22)

plt.plot(lim_ts)

for year in range(start_date.year,end_date.year+1):

plt.axvline(pd.to_datetime(str(year)+'-01-01'), color='k', linestyle='--', alpha=0.5)

# -

# <h3>Decomposition

# +

from statsmodels.tsa.seasonal import seasonal_decompose

decomposition = seasonal_decompose(ts, period=12, two_sided=True, extrapolate_trend=1, model='multiplicative')

ts_trend = decomposition.trend # trend of the curve

ts_seasonal = decomposition.seasonal # seasonality

ts_residual = decomposition.resid # remaining parts

plt.subplot(411)

plt.plot(ts,label='original')

plt.legend(loc='best')

plt.subplot(412)

plt.plot(ts_trend,label='trend')

plt.legend(loc='best')

plt.subplot(413)

plt.plot(ts_seasonal,label='seasonality')

plt.legend(loc='best')

plt.subplot(414)

plt.plot(ts_residual,label='residual')

plt.legend(loc='best')

plt.tight_layout()

# -

# <h3>Stationarity test

from statsmodels.tsa.stattools import adfuller

def test_stationarity(timeseries):

#Determine rolling statistics (12-month window, since the series is monthly)

rolmean = timeseries.rolling(window=12).mean()

rolstd = timeseries.rolling(window=12).std()

#Plot rolling statistics:

plt.plot(timeseries, color='blue',label='Original')

plt.plot(rolmean, color='red', label='Rolling Mean')

plt.plot(rolstd, color='black', label = 'Rolling Std')

plt.legend(loc='best')

plt.title('Rolling Mean & Standard Deviation')

plt.show()

#Perform Dickey-Fuller test:

print ('Results of Dickey-Fuller Test:')

dftest = adfuller(timeseries, autolag='AIC')

dfoutput = pd.Series(dftest[0:4], index=['Test Statistic','p-value','#Lags Used','Number of Observations Used'])

for key,value in dftest[4].items():

dfoutput['Critical Value (%s)'%key] = value

print (dfoutput)

critical_value = dftest[4]['5%']

test_statistic = dftest[0]

alpha = 1e-3

pvalue = dftest[1]

if pvalue < alpha and test_statistic < critical_value: # null hypothesis: x is non stationary

print("X is stationary")

return True

else:

print("X is not stationary")

return False

test_stationarity(ts)

# <h3>Train/Test split

# <b>Train</b>: from January 2015 to October 2019; <br />

# <b>Test</b>: from November 2019 to December 2019.

from datetime import datetime

train_end = datetime(2019,10,31)

test_end = datetime (2019,12,31)

covid_end = datetime(2020,8,30)

# +

from dateutil.relativedelta import *

tsb = ts[:test_end]

decomposition = seasonal_decompose(tsb, period=12, two_sided=True, extrapolate_trend=1, model='multiplicative')

tsb_trend = decomposition.trend # trend of the curve

tsb_seasonal = decomposition.seasonal # seasonality

tsb_residual = decomposition.resid # remaining parts

tsb_diff = pd.Series(tsb_trend)

d = 0

while test_stationarity(tsb_diff) is False:

tsb_diff = tsb_diff.diff().dropna()

d = d + 1

print(d)

# TRAIN: from 01-01-2015 to 31-10-2019

train = tsb[:train_end]

# TEST: from 01-11-2019 to 31-12-2019

test = tsb[train_end + relativedelta(months=+1): test_end]

# -

# <h3>Autocorrelation and Partial Autocorrelation plots

from statsmodels.graphics.tsaplots import plot_acf, plot_pacf

plot_acf(ts, lags =12)

plot_pacf(ts, lags =12)

plt.show()

# <h2>Building the SARIMA model on the Train set

# +

from statsmodels.tsa.statespace.sarimax import SARIMAX

model = SARIMAX(train, order=(12,0,8))

model_fit = model.fit()

print(model_fit.summary())

# -

# <h4>Checking the stationarity of the fitted model's residuals

residuals = model_fit.resid

test_stationarity(residuals)

# +

plt.figure(figsize=(12,6))

plt.title('Model-fitted values versus actual Train values', size=20)

plt.plot (train.iloc[1:], color='red', label='train values')

plt.plot (model_fit.fittedvalues.iloc[1:], color = 'blue', label='model values')

plt.legend()

plt.show()

# +

conf = model_fit.conf_int()

plt.figure(figsize=(12,6))

plt.title('Model confidence intervals', size=20)

plt.plot(conf)

plt.xticks(rotation=45)

plt.show()

# -

# <h3>Model prediction on the Test set

# +

# start and end of the prediction

pred_start = test.index[0]

pred_end = test.index[-1]

print(pred_end)

print(pred_start)

# +

# start and end of the prediction

pred_start = test.index[0]

pred_end = test.index[-1]

# model prediction on the test set

predictions_test= model_fit.predict(start=pred_start, end=pred_end)

plt.figure(figsize=(12,6))

plt.title('SARIMA model prediction on the Test set', size=20)

plt.plot(test, color='red', label='actual')

plt.plot(predictions_test, label='prediction' )

plt.xticks(rotation=45)

plt.legend()

plt.show()

print(predictions_test)

# -

forecast_errors = [test.iloc[i]-predictions_test.iloc[i] for i in range(len(test))]

print('Forecast Errors: %s' % forecast_errors)

bias = sum(forecast_errors) * 1.0/len(test)

print('Bias: %f' % bias)

from sklearn.metrics import mean_absolute_error

mae = mean_absolute_error(test, predictions_test)

print('MAE: %f' % mae)

from sklearn.metrics import mean_squared_error

mse = mean_squared_error(test, predictions_test)

print('MSE: %f' % mse)

from math import sqrt

rmse = sqrt(mse)

print('RMSE: %f' % rmse)

import numpy as np

from statsmodels.tools.eval_measures import rmse

nrmse = rmse(predictions_test, test)/(np.max(test)-np.min(test))

print('NRMSE: %f'% nrmse)
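# The error measures computed above can be reproduced with the standard library alone, which makes their definitions explicit. This is a sketch with made-up numbers, not the notebook's test data, and `forecast_metrics` is a hypothetical helper. NRMSE here is the RMSE divided by the range of the actual values, matching the formula used above.

```python
import math


def forecast_metrics(actual, predicted):
    """Bias, MAE, MSE, RMSE, and range-normalised RMSE of a forecast."""
    errors = [a - p for a, p in zip(actual, predicted)]
    n = len(errors)
    mse = sum(e * e for e in errors) / n
    root = math.sqrt(mse)
    return {
        "bias": sum(errors) / n,                 # mean signed error
        "mae": sum(abs(e) for e in errors) / n,  # mean absolute error
        "mse": mse,
        "rmse": root,
        "nrmse": root / (max(actual) - min(actual)),
    }


# illustrative values only
metrics = forecast_metrics([100.0, 120.0], [90.0, 124.0])
for name, value in metrics.items():
    print(name, value)
```

# With only two test months, as in the split used here, these measures are very noisy and are best read as a rough sanity check.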

# <h2>Model prediction including the year 2020

# +

# start and end of the prediction

start_prediction = ts.index[0]

end_prediction = ts.index[-1]

predictions_tot = model_fit.predict(start=start_prediction, end=end_prediction)

plt.figure(figsize=(12,6))

plt.title('Model prediction on observed data - from 2015 to 30 September 2020', size=20)

plt.plot(ts, color='blue', label='actual')

plt.plot(predictions_tot.iloc[1:], color='red', label='predict')

plt.xticks(rotation=45)

plt.legend(prop={'size': 12})

plt.show()

# -

diff_predictions_tot = (ts - predictions_tot)

plt.figure(figsize=(12,6))

plt.title('Difference between observed values and model estimates', size=20)

plt.plot(diff_predictions_tot)

plt.show()

diff_predictions_tot['24-02-2020':].sum()

predictions_tot.to_csv('../../csv/pred/predictions_SARIMA_ro.csv')

# <h2>Confidence intervals of the full prediction

forecast = model_fit.get_prediction(start=start_prediction, end=end_prediction)

in_c = forecast.conf_int()

print(forecast.predicted_mean)

print(in_c)

print(forecast.predicted_mean - in_c['lower Value'])

plt.plot(in_c)

plt.show()

upper = in_c['upper Value']

lower = in_c['lower Value']

lower.to_csv('../../csv/lower/predictions_SARIMA_ro_lower.csv')

upper.to_csv('../../csv/upper/predictions_SARIMA_ro_upper.csv')

|

Modulo 5 - Analisi europea/nazioni/Romania/.ipynb_checkpoints/Romania modello SARIMA (mensile)-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/jchen-1122/CLRS/blob/master/stockPrediction.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="p3p0tXIsYrXN" colab_type="code" colab={}

# import libraries

import math

import pandas_datareader as web

import numpy as np

import pandas as pd

from sklearn.preprocessing import MinMaxScaler

from keras.models import Sequential

from keras.layers import Dense, LSTM

from datetime import date

import matplotlib.pyplot as plt

plt.style.use('fivethirtyeight')

# + [markdown] id="uC-p0KcLoAXQ" colab_type="text"

# # **Stock Prediction using Machine Learning**

#

# This program uses a recurrent neural network architecture called Long Short-Term Memory (LSTM) to predict the closing stock price of a corporation from its stock prices over the past 60 days. **Please enter your desired stock in the cell below.** By default, we analyze Apple stock (AAPL).

#

# + id="tA0N7U_YqEyv" colab_type="code" colab={}

stock = 'AAPL'

# + [markdown] id="pmjYRdiEqVBb" colab_type="text"

# **Choose the year from which your model will start training.** The longer the time frame, the more data the model will train on. However, the more data there is, the longer the model will run, and this could possibly result in overfitting. Choose wisely! By default, we will look at the stock prices from January 1, 2012.

# + id="UNhoO-fVtIPp" colab_type="code" colab={}

year = 2012

# + id="bleNrf8rZEUz" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 450} outputId="6821d6da-5575-4265-9b8e-ad072e413a22"

def transform_year_to_dt(year):

return str(year) + '-01-01'

# Get the stock quote

df = web.DataReader(stock, data_source='yahoo', start=transform_year_to_dt(year), end=date.today())

# + [markdown] id="mt-Nn4xFuE-O" colab_type="text"

# Below is the current close price history graph of the stock with price as a function of time.

# + id="ZUK_6tPUZlki" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 558} outputId="eec15c3a-a313-4b08-ac5e-36137e57d98e"

# Data visualization of closing price history

plt.figure(figsize=(16, 8))

plt.title('Close Price History')

plt.plot(df['Close'])

plt.xlabel('Date', fontsize=18)

plt.ylabel(stock+' Close Price USD ($)', fontsize=18)

plt.show()

# + [markdown] id="TLUc0tKRu4nQ" colab_type="text"

# **Choose the size of your training dataset as a percentage of the total dataset.** Typically, our training dataset size should be larger than our validation and test dataset size. By default, the training dataset length is set to 80% of the entire dataset.

# + id="-tQN3PGGvKYB" colab_type="code" colab={}

training_data_size = 0.8

# + id="iSyZCuT9aDtP" colab_type="code" colab={}

import math

from sklearn.preprocessing import MinMaxScaler

# create a dataframe with only the 'Close' column

data = df.filter(['Close'])

# convert dataframe into numpy array

dataset = data.values

# get number of rows to train the model on

training_data_len = math.ceil(len(dataset) * training_data_size)

# scale/normalize the data

scaler = MinMaxScaler(feature_range=(0,1))

scaled_data = scaler.fit_transform(dataset)
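
# + [markdown]

# The scaler above learns its minimum and maximum from the whole series, so prices from the test period influence how the training data is scaled. As a minimal sketch of the alternative, the example below learns the range from the training rows only and then applies it to every row. It uses made-up numbers and plain Python; `min_max_fit` and `min_max_apply` are hypothetical helpers written for illustration, not scikit-learn functions.

```python
def min_max_fit(values):
    """Return the (min, max) pair that defines the scaling range."""
    return min(values), max(values)


def min_max_apply(values, lo, hi):
    """Map values linearly so that lo -> 0.0 and hi -> 1.0."""
    return [(v - lo) / (hi - lo) for v in values]


# Toy closing prices (made-up numbers, not real quotes)
prices = [10.0, 12.0, 11.0, 14.0, 18.0]
split = 4  # the first 4 rows play the role of the training set

lo, hi = min_max_fit(prices[:split])    # range learned from training rows only
scaled = min_max_apply(prices, lo, hi)  # the same range applied to every row

print(scaled)  # [0.0, 0.5, 0.25, 1.0, 2.0]
```

# Values outside the training range, such as the last price here, land outside the interval from 0 to 1. That is expected when the range is learned from the training rows alone.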

# + [markdown] id="FtriBofpvwl8" colab_type="text"

# Another important choice is the length of each individual training sample. How many past days are relevant to predicting the price of a stock? 1 month? 3 months? **Choose, in days, a time frame that you believe is long enough.** Each row of the data is one trading day, so the default of 60 days covers roughly three calendar months.

# + id="2htCy4zwwRln" colab_type="code" colab={}

days = 60

# + id="X5TVdTKwckql" colab_type="code" colab={}

import numpy as np

# Create the training dataset from the scaled values

train_data = scaled_data[0: training_data_len, :]

#Split the data into x_train and y_train data sets

x_train = []

y_train = []

for i in range(days, len(train_data)):

    x_train.append(train_data[i-days: i, 0])

    y_train.append(train_data[i, 0])

# convert the x_train and y_train to numpy arrays

x_train, y_train = np.array(x_train), np.array(y_train)

# reshape the data (LSTM expects 3D)

x_train = np.reshape(x_train, (x_train.shape[0], x_train.shape[1], 1))
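
# + [markdown]

# The loop above slides a window over the series: each input is a run of consecutive values and the target is the value that follows it. A small sketch of the same idea on a toy list is shown below; `make_windows` and the `toy_` names are hypothetical and used only for illustration.

```python
def make_windows(series, window):
    """Split a 1-D sequence into (inputs, targets) pairs.

    Each input holds `window` consecutive values and the target is the
    value that immediately follows them.
    """
    inputs, targets = [], []
    for i in range(window, len(series)):
        inputs.append(series[i - window:i])
        targets.append(series[i])
    return inputs, targets


toy_series = [1, 2, 3, 4, 5, 6]
toy_x, toy_y = make_windows(toy_series, window=3)

for x, y in zip(toy_x, toy_y):
    print(x, '->', y)
# [1, 2, 3] -> 4
# [2, 3, 4] -> 5
# [3, 4, 5] -> 6
```

# A series of length n yields n - window samples, which is why the first `days` rows of the training data never appear as targets.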

# + [markdown] id="59SZYWnewmnA" colab_type="text"

# # **Our Model**

# Our model uses two LSTM layers followed by two Dense layers. It is just one of many possible models that could be used to fit our training data. In practice, LSTMs are used in many financial modeling contexts. A customizable feature of each layer is its number of neurons: too few can leave the model unable to capture the patterns in the data (underfitting), while too many can cause overfitting. The same logic applies to the number of layers in our model. The best number of neurons also depends on how much training data is available. **Choose the number of neurons in each LSTM layer.** By default, each LSTM layer has 50 neurons, the first Dense layer has half as many (25), and the final Dense layer has a single output.

# + id="j9oRtxlfxrg7" colab_type="code" colab={}

neurons = 50

# + id="klNUN4Xjed6Z" colab_type="code" colab={}

from keras.models import Sequential

from keras.layers import Dense, LSTM

# Build LSTM model

model = Sequential()

model.add(LSTM(neurons, return_sequences=True, input_shape=(x_train.shape[1], 1)))

model.add(LSTM(neurons, return_sequences=False))

half_neurons = math.ceil(neurons / 2)

model.add(Dense(half_neurons))

model.add(Dense(1))

# Compile the model

model.compile(optimizer='adam', loss='mean_squared_error')

# + [markdown] id="XM8QdMTbyf_v" colab_type="text"

# After choosing the number of neurons in each layer and compiling the model, we can decide how the model is fitted to the data. Batch size is the number of training examples processed in each weight update. Epochs is the number of complete passes over the training set. Increasing the batch size usually shortens each epoch, while increasing the number of epochs lengthens the total running time roughly in proportion. Too many epochs can also lead to overfitting, so choose wisely. **Choose the batch_size and epochs values.** By default, both are set to 1.

# + id="PullehEpycaU" colab_type="code" colab={}

batch_size = 1

epochs = 1
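
# + [markdown]

# To make the effect of these two settings concrete, the sketch below counts how many weight updates a training run performs, assuming one update per mini-batch and a smaller final batch when the sample count does not divide evenly. `count_updates` is a hypothetical helper and 1000 is a made-up sample count.

```python
import math


def count_updates(n_samples, batch_size, epochs):
    """Return (updates per epoch, total updates) for mini-batch training."""
    per_epoch = math.ceil(n_samples / batch_size)
    return per_epoch, per_epoch * epochs


print(count_updates(1000, batch_size=1, epochs=1))    # (1000, 1000)
print(count_updates(1000, batch_size=32, epochs=1))   # (32, 32)
print(count_updates(1000, batch_size=32, epochs=10))  # (32, 320)
```

# With a batch size of 1, every sample triggers its own update, which is the slowest setting per epoch. Larger batches reduce the number of updates per epoch, and more epochs multiply the total.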

# + [markdown] id="bOLrqUDjyLzM" colab_type="text"

# # **Train Your Model on Your Data!**

# You may have to wait a few minutes. Training is finished when all epochs have completed.

# + id="cwwUAjDVfrQV" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 68} outputId="1e891475-37da-4a91-fd62-ed8771bf1c53"

# Train the model

model.fit(x_train, y_train, batch_size=batch_size, epochs=epochs)

# + id="IrHThhz0f2yO" colab_type="code" colab={}

# create the testing dataset

# create a new array containing the scaled values from index (training_data_len - days) to the end

test_data = scaled_data[training_data_len - days: , :]

# create the datasets x_test and y_test

x_test = []

y_test = dataset[training_data_len:, :]

for i in range(days, len(test_data)):

    x_test.append(test_data[i-days:i, 0])

# convert the data to a numpy array

x_test = np.array(x_test)

# reshape the data

x_test = np.reshape(x_test, (x_test.shape[0], x_test.shape[1], 1))

# get the model's predicted price values

predictions = model.predict(x_test)

predictions = scaler.inverse_transform(predictions)
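
# + [markdown]

# The test slice above starts `days` rows before the split point so that the very first held-out row still has a full window of history behind it. The sketch below shows the bookkeeping on a toy list; `split_with_lookback` and the `toy_` names are hypothetical and used only for illustration.

```python
def split_with_lookback(series, train_len, window):
    """Split a sequence so the test part keeps `window` rows of history."""
    train_part = series[:train_len]
    # Start `window` rows early so the first test target has a full window
    test_part = series[train_len - window:]
    return train_part, test_part


toy_prices = list(range(10))  # stand-in values 0..9
toy_train, toy_test = split_with_lookback(toy_prices, train_len=8, window=3)

print(toy_test)           # [5, 6, 7, 8, 9]
print(len(toy_test) - 3)  # 2 test windows, one per held-out row
```

# The number of test windows equals the number of held-out rows, so the predictions line up one-to-one with `y_test`.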

# + [markdown] id="JoW9wJ7Qz5_s" colab_type="text"

# How well did our model do? Below is the root mean squared error (RMSE), expressed in the same units as the price. The closer the number is to zero, the better our model fits the test data.

# + id="ZkQtagOkhsrK" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 34} outputId="00f72fd6-39b1-4c61-e449-8295525f5d4a"

# get root mean squared error (RMSE)

rmse = np.sqrt(np.mean((predictions - y_test) ** 2))

rmse
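
# + [markdown]

# The order of operations matters for RMSE: the errors must be squared before they are averaged. If they are averaged first, positive and negative errors cancel and the result can look far better than the model really is. The sketch below contrasts the two on made-up numbers; `rmse_of` and `abs_mean_error` are hypothetical helpers written in plain Python for illustration.

```python
import math


def rmse_of(predicted, actual):
    """Root mean squared error: square each error, average, then take the root."""
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def abs_mean_error(predicted, actual):
    """Absolute value of the mean signed error (errors may cancel out)."""
    errors = [p - a for p, a in zip(predicted, actual)]
    return abs(sum(errors) / len(errors))


toy_actual = [10.0, 10.0, 10.0, 10.0]
toy_predicted = [13.0, 7.0, 14.0, 6.0]  # errors: +3, -3, +4, -4

print(rmse_of(toy_predicted, toy_actual))         # about 3.54
print(abs_mean_error(toy_predicted, toy_actual))  # 0.0
```

# Every prediction here is off by 3 or 4, yet the mean signed error is exactly zero. Only the first function reports the typical size of the error.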

# + [markdown] id="STXIboBP0HzE" colab_type="text"

# We can also see how well our model did in the graph below. The blue region on the left is our training data, and the region on the right, drawn in two colors, shows the actual stock price and the predicted price over the test period. How well did your model do?

# + id="lmSZvjpniCxX" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 660} outputId="ee0870e0-2e6a-4f49-b569-ca332b5fc612"

# plot the data

train = data[:training_data_len]

valid = data[training_data_len:].copy()  # copy so that adding a column does not write to a slice of data

valid['Predictions'] = predictions

# visualize the data

plt.figure(figsize=(16,8))

plt.title('Model')

plt.xlabel('Date', fontsize=18)

plt.ylabel('Close Price USD ($)', fontsize=18)

plt.plot(train['Close'])

plt.plot(valid[['Close', 'Predictions']])

plt.legend(['Train', 'Actual', 'Predictions'], loc='lower right')

plt.show()

# + id="ZrEn7pqzjex2" colab_type="code" colab={}

# get the quote

quote = web.DataReader(stock, data_source='yahoo', start=transform_year_to_dt(year), end=date.today())

# create a new dataframe

new_df = quote.filter(['Close'])

# get the closing prices of the last `days` days (60 by default) and convert the dataframe to an array

last_60_days = new_df[-days:].values

# scale the data to be values between 0 and 1

last_60_days_scaled = scaler.transform(last_60_days)

# create an empty list

X_test = []

# append past 60 days

X_test.append(last_60_days_scaled)

# convert the X_test to a numpy array

X_test = np.array(X_test)

# reshape the data

X_test = np.reshape(X_test, (X_test.shape[0], X_test.shape[1], 1))

# get predicted scaled price

pred_price = model.predict(X_test)

# undo the scaling

pred_price = scaler.inverse_transform(pred_price)

# + [markdown] id="YDWPDkln13nH" colab_type="text"

# Below is a table containing the same values as the graph above. The right column shows the model's predicted values and the middle column shows the actual closing price. Notice that the table contains only our test data and not our training data, because the model was trained on the training data and its predictions were made on the test data.

# + id="_EA-_oq11aRI" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 450} outputId="a43b6273-d214-43b5-f3f4-0c7a0851b977"

valid = valid.rename(columns={"Close": "Actual Closing Price"})

valid

# + [markdown] id="iGJQR_eO2byv" colab_type="text"

# **Feel free to go back and change some of the customizable settings, such as stock, year, batch_size, and epochs, to fit a different model to your data.**

|

stockPrediction.ipynb

|

# ---

# jupyter: