
# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="o8rERttgz2WZ" endofcell="--"

#

#

# [](https://colab.research.google.com/github/JohnSnowLabs/nlu/blob/master/examples/webinars_conferences_etc/graph_ai_summit/Healthcare_Graph_NLU_COVID_Tigergraph.ipynb)

#

#

# <div>

# <img src="http://ckl-it.de/wp-content/uploads/2021/04/WhatsApp-Image-2021-04-19-at-7.35.04-AM.jpeg" width="400" height="250" >

# </div>

#

#

#

# # Graph NLU 20-Minute Crash Course - State-of-the-Art Text Mining for Graphs

# This notebook derives text features with the `John Snow Labs` Python `NLU` library, which can then be fed into the `TigerGraph` engine.

# This short notebook covers:

# - Sentiment classification (binary, multi-class and regression)

# - Extract Parts of Speech (POS)

# - Extract Named Entities (NER)

# - Extract Keywords (YAKE!)

# - Answer Open and Closed book questions with T5

# - Summarize text and more with Multi task T5

# - Translate text with Microsoft's Marian model

# - Train a Multi Lingual Classifier for 100+ languages from a dataset with just one language

# - Extract Medical Named Entities (Medical NER)

# - Resolve Medical Entities to codes

# - Classify and extract relations between entities

#

#

# ## More resources

# - [Join our Slack](https://join.slack.com/t/spark-nlp/shared_invite/zt-<KEY>)

# - [NLU Website](https://nlu.johnsnowlabs.com/)

# - [NLU Github](https://github.com/JohnSnowLabs/nlu)

# - [Many more NLU example tutorials](https://github.com/JohnSnowLabs/nlu/tree/master/examples)

# - [Overview of every powerful nlu 1-liner](https://nlu.johnsnowlabs.com/docs/en/examples)

# - [Check out the Models Hub for an overview of all models](https://nlp.johnsnowlabs.com/models)

# - [Check out the NLU Namespace where you can find every model as a table](https://nlu.johnsnowlabs.com/docs/en/spellbook)

# - [Intro to NLU article](https://medium.com/spark-nlp/1-line-of-code-350-nlp-models-with-john-snow-labs-nlu-in-python-2f1c55bba619)

# - [Indepth and easy Sentence Similarity Tutorial, with StackOverflow Questions using BERTology embeddings](https://medium.com/spark-nlp/easy-sentence-similarity-with-bert-sentence-embeddings-using-john-snow-labs-nlu-ea078deb6ebf)

# - [1 line of Python code for BERT, ALBERT, ELMO, ELECTRA, XLNET, GLOVE, Part of Speech with NLU and t-SNE](https://medium.com/spark-nlp/1-line-of-code-for-bert-albert-elmo-electra-xlnet-glove-part-of-speech-with-nlu-and-t-sne-9ebcd5379cd)

# --

# + [markdown] id="GAVkEjc2l_jv"

# # Install NLU and authorize the licensed environment

# - Run the install script

# - Upload your `spark_nlp_for_healthcare.json`

# - Have fun

#

#

# #### Instructions for non-Google Colab environments:

# - [See the installation guide](https://nlu.johnsnowlabs.com/docs/en/install) and the [Authorization guide](TODO) for detailed instructions

# - If you need help or run into troubles, [ping us on slack :)](https://join.slack.com/t/spark-nlp/shared_invite/zt-<KEY>)

# + colab={"base_uri": "https://localhost:8080/"} id="fjAIW3p2Lhx4" outputId="3aca4e0e-8f4e-4bf1-e7c4-c3946c1fc2bb" executionInfo={"status": "ok", "timestamp": 1650023785732, "user_tz": -300, "elapsed": 117175, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# !wget https://setup.johnsnowlabs.com/nlu/colab.sh -O - | bash

import nlu

# + [markdown] id="jNw8mtj2jJyS"

# # Simple NLU basics on Strings

# + [markdown] id="cr3HrpqX0Lju"

# ## Context based spell Checking in 1 line

#

#

# + colab={"base_uri": "https://localhost:8080/", "height": 342} id="yiU5oCWGz31e" outputId="28e94045-eaa6-4b6f-f3b9-4e1df10e3cd7" executionInfo={"status": "ok", "timestamp": 1650023889409, "user_tz": -300, "elapsed": 103697, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

import nlu

nlu.load('spell').predict('I also liek to live dangertus')

# + [markdown] id="EbRSJNNE0XH7"

# ## Binary Sentiment classification in 1 Line

#

#

# + colab={"base_uri": "https://localhost:8080/", "height": 298} id="Kr7JAcnr0Khb" outputId="aa55eafa-08a7-4be9-d939-d1996c8ff3af" executionInfo={"status": "ok", "timestamp": 1650023907540, "user_tz": -300, "elapsed": 18148, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

nlu.load('sentiment').predict('I love NLU and rainy days!')

# + [markdown] id="p0z4S7kF0aeT"

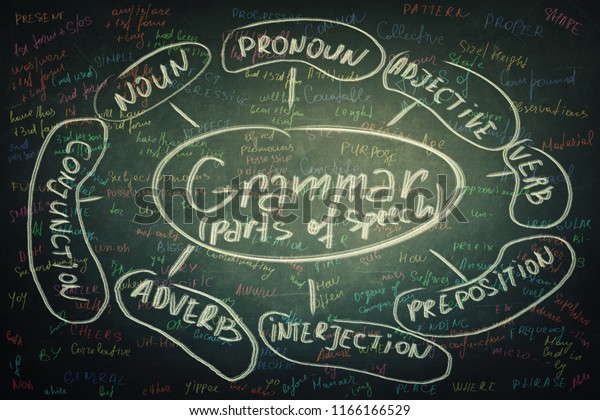

# ## Part of Speech (POS) in 1 line

#

#

# |Tag |Description | Example|

# |------|------------|------|

# |CC| Coordinating conjunction | This batch of mushroom stew is savory **and** delicious |

# |CD| Cardinal number | Here are **five** coins |

# |DT| Determiner | **The** bunny went home |

# |EX| Existential there | **There** is a storm coming |

# |FW| Foreign word | I'm having a **déjà vu** |

# |IN| Preposition or subordinating conjunction | He is cleverer **than** I am |

# |JJ| Adjective | She wore a **beautiful** dress |

# |JJR| Adjective, comparative | My house is **bigger** than yours |

# |JJS| Adjective, superlative | I am the **shortest** person in my family |

# |LS| List item marker | **1.** Find premises **2.** Secure finance **3.** Hire staff |

# |MD| Modal | You **must** stop when the traffic lights turn red |

# |NN| Noun, singular or mass | The **dog** likes to run |

# |NNS| Noun, plural | The **cars** are fast |

# |NNP| Proper noun, singular | I ordered the chair from **Amazon** |

# |NNPS| Proper noun, plural | We visited the **Kennedys** |

# |PDT| Predeterminer | **Both** the children had a toy |

# |POS| Possessive ending | I built the dog'**s** house |

# |PRP| Personal pronoun | **You** need to stop |

# |PRP$| Possessive pronoun | Remember not to judge a book by **its** cover |

# |RB| Adverb | The dog barks **loudly** |

# |RBR| Adverb, comparative | Could you sing **more** quietly please? |

# |RBS| Adverb, superlative | Everyone in the race ran fast, but John ran the **fastest** of all |

# |RP| Particle | He ate **up** all his dinner |

# |SYM| Symbol | What are you doing **?** |

# |TO| to | Please send it back **to** me |

# |UH| Interjection | **Wow!** You look gorgeous |

# |VB| Verb, base form | We **play** soccer |

# |VBD| Verb, past tense | I **worked** at a restaurant |

# |VBG| Verb, gerund or present participle | **Smoking** kills people |

# |VBN| Verb, past participle | She has **done** her homework |

# |VBP| Verb, non-3rd person singular present | You **flit** from place to place |

# |VBZ| Verb, 3rd person singular present | He never **calls** me |

# |WDT| Wh-determiner | The store honored the complaints, **which** were less than 25 days old |

# |WP| Wh-pronoun | **Who** can help me? |

# |WP\$| Possessive wh-pronoun | **Whose** fault is it? |

# |WRB| Wh-adverb | **Where** are you going? |

# + colab={"base_uri": "https://localhost:8080/", "height": 467} id="49-eCSZQ0cQa" outputId="f1611137-f757-4089-92a8-f6b1183e0879" executionInfo={"status": "ok", "timestamp": 1650023926450, "user_tz": -300, "elapsed": 18936, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

nlu.load('pos').predict('POS assigns each token in a sentence a grammatical label')
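# + [markdown]
# Because related Penn Treebank tags share a prefix (all noun tags start with `NN`, all verb tags with `VB`), the tags from the table above can be grouped with a simple prefix test. The snippet below is a minimal sketch on hand-written `(token, tag)` pairs, not on real model output; `tokens_with_prefix` is a hypothetical helper and not part of NLU.

# +
```python
# Hand-written (token, tag) pairs for illustration only; NOT real model output.
demo_tagged = [
    ("The", "DT"),
    ("dog", "NN"),
    ("likes", "VBZ"),
    ("fast", "JJ"),
    ("cars", "NNS"),
]


def tokens_with_prefix(pairs, prefix):
    """Return the tokens whose tag starts with the given prefix."""
    return [token for token, tag in pairs if tag.startswith(prefix)]


nouns = tokens_with_prefix(demo_tagged, "NN")  # matches NN, NNS, NNP, NNPS
verbs = tokens_with_prefix(demo_tagged, "VB")  # matches VB, VBD, VBG, VBN, VBP, VBZ
print(nouns, verbs)
```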

# + [markdown] id="2-ih6TXc0d6x"

# ## Named Entity Recognition (NER) in 1 line

#

#

#

# |Type | Description |

# |------|--------------|

# | PERSON | People, including fictional like **<NAME>** |

# | NORP | Nationalities or religious or political groups like the **Germans** |

# | FAC | Buildings, airports, highways, bridges, etc. like **New York Airport** |

# | ORG | Companies, agencies, institutions, etc. like **Microsoft** |

# | GPE | Countries, cities, states, like **Germany** |

# | LOC | Non-GPE locations, mountain ranges, bodies of water. Like the **Sahara desert**|

# | PRODUCT | Objects, vehicles, foods, etc. (Not services.) like **playstation** |

# | EVENT | Named hurricanes, battles, wars, sports events, etc. like **hurricane Katrina**|

# | WORK_OF_ART | Titles of books, songs, etc. Like **Mona Lisa** |

# | LAW | Named documents made into laws. Like : **Declaration of Independence** |

# | LANGUAGE | Any named language. Like **Turkish**|

# | DATE | Absolute or relative dates or periods. Like every second **Friday**|

# | TIME | Times smaller than a day. Like **every minute**|

# | PERCENT | Percentage, including ”%“. Like **55%** of workers enjoy their work |

# | MONEY | Monetary values, including unit. Like **50$** for those pants |

# | QUANTITY | Measurements, as of weight or distance. Like this person weighs **50kg** |

# | ORDINAL | “first”, “second”, etc. Like David placed **first** in the tournament |

# | CARDINAL | Numerals that do not fall under another type. Like **hundreds** of models are available in NLU |

#

# + colab={"base_uri": "https://localhost:8080/", "height": 340} id="zoDjsSxx0eJY" outputId="08e23666-8ac8-4fa3-a2d5-b3f8b4df1f8c" executionInfo={"status": "ok", "timestamp": 1650023945682, "user_tz": -300, "elapsed": 19254, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

nlu.load('ner').predict("John Snow Labs congratulates the Amarican John Biden to winning the American election!", output_level='chunk')

# + [markdown] id="RcvxNNeXt83u"

# # Let's apply NLU to a COVID dataset!

#

#

# <div>

# <img src="http://ckl-it.de/wp-content/uploads/2021/04/WhatsApp-Image-2021-04-19-at-7.35.04-AM-1.jpeg" width="600" height="450" >

# </div>

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} id="lKbaoFLMsIRA" outputId="6d85b5ad-0c6c-43ab-814f-2350277d6441" executionInfo={"status": "ok", "timestamp": 1650023949803, "user_tz": -300, "elapsed": 4137, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# ! wget http://ckl-it.de/wp-content/uploads/2021/04/covid19_tweets.csv

import pandas as pd

df = pd.read_csv('covid19_tweets.csv')

df

# + colab={"base_uri": "https://localhost:8080/", "height": 758} id="YWjXqWlFLGOB" outputId="61c12df4-b6c9-4a07-fd99-77f27401a62f" executionInfo={"status": "ok", "timestamp": 1650024077314, "user_tz": -300, "elapsed": 2420, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

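import ast

df.hashtags = df.hashtags.astype(str)

# Parse each stringified hashtag list with ast.literal_eval (safer than eval), then plot the 50 most frequent hashtags
df["hashtags"][df["hashtags"] != "nan"].apply(ast.literal_eval).explode().value_counts()[:50].plot.barh(figsize=(20,14), title='Top 50 Hashtag Distribution of COVID dataset')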
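# + [markdown]
# The `hashtags` column stores each tweet's hashtags as a stringified Python list, with `nan` for tweets without hashtags. The snippet below is a minimal standard-library sketch of the same counting idea on hypothetical sample values; `count_hashtags` is an illustrative helper, not part of pandas or NLU. It parses the strings with `ast.literal_eval`, which only accepts Python literals and does not execute arbitrary code.

# +
```python
import ast
from collections import Counter

# Hypothetical sample of the `hashtags` column: stringified lists plus a missing value.
demo_hashtags = ["['COVID19', 'vaccine']", "nan", "['COVID19']"]


def count_hashtags(values):
    """Count individual hashtags across stringified lists, skipping 'nan' entries."""
    counts = Counter()
    for value in values:
        if value == "nan":
            continue
        counts.update(ast.literal_eval(value))
    return counts


hashtag_counts = count_hashtags(demo_hashtags)
print(hashtag_counts.most_common(2))
```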

# + [markdown] id="AMFwC0jX_dCT"

# ## General NER on a COVID Tweet dataset

# ### The **NER** model, which you can load via `nlu.load('ner')`, recognizes 18 different entity classes in your dataset.

# We set the output level to `chunk`, so that we get one row per extracted entity chunk.

#

#

# #### Predicted entities: the 18 classes listed in the NER table above

#

#

# NER is available in many languages, which you can [find in the John Snow Labs Models Hub](https://nlp.johnsnowlabs.com/models)

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} id="aUGAomeusNVv" outputId="f2566bb8-6f86-4aa4-a63e-32120bb113c0" executionInfo={"status": "error", "timestamp": 1650025387012, "user_tz": -300, "elapsed": 1300315, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

ner_df = nlu.load('ner').predict(df, output_level = 'chunk')

ner_df

# + [markdown] id="6gC7S2vqBpT1"

# ### Top 50 Named Entities

# + id="aKSSgTC-sVq8" executionInfo={"status": "aborted", "timestamp": 1650025386505, "user_tz": -300, "elapsed": 64, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

ner_df['entities@ner_results'].value_counts()[:50].plot.barh(figsize = (16,20))

# + [markdown] id="B8oAF_MCBxyN"

# ### Top 50 Named Entities which are Countries/Cities/States

# + id="IEiIMsFj9v2n" executionInfo={"status": "aborted", "timestamp": 1650025386511, "user_tz": -300, "elapsed": 68, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

ner_df[ner_df['meta_entities@ner_entity'] == 'GPE']['entities@ner_results'].value_counts()[:50].plot.barh(figsize=(18,20), title ='Top 50 Occurring Countries/Cities/States in the dataset')

# + [markdown] id="JiHofegqB0sM"

# ### Top 50 Named Entities which are PRODUCTS

# + id="coeW-Kgs92fH" executionInfo={"status": "aborted", "timestamp": 1650025386513, "user_tz": -300, "elapsed": 70, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

ner_df[ner_df['meta_entities@ner_entity'] == 'PRODUCT']['entities@ner_results'].value_counts()[:50].plot.barh(figsize=(18,20), title ='Top 50 Occurring products in the dataset')

# + id="4ng-TNYu--09" executionInfo={"status": "aborted", "timestamp": 1650025386515, "user_tz": -300, "elapsed": 71, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

ner_df[ner_df['meta_entities@ner_entity'] == 'ORG']['entities@ner_results'].value_counts()[:50].plot.barh(figsize=(18,20), title ='Top 50 organizations occurring in the dataset')
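# + [markdown]
# The per-class plots above all follow the same pattern: filter the chunk-level rows by entity label, then count the extracted chunks. The snippet below is a minimal standard-library sketch of that pattern. The rows are hand-written and only mimic the `entities@ner_results` and `meta_entities@ner_entity` columns; `top_entities` is a hypothetical helper, not an NLU function.

# +
```python
from collections import Counter

# Hand-written rows mimicking the chunk-level output; NOT real predictions.
demo_rows = [
    {"entities@ner_results": "India", "meta_entities@ner_entity": "GPE"},
    {"entities@ner_results": "WHO", "meta_entities@ner_entity": "ORG"},
    {"entities@ner_results": "India", "meta_entities@ner_entity": "GPE"},
    {"entities@ner_results": "Germany", "meta_entities@ner_entity": "GPE"},
]


def top_entities(rows, label, n=50):
    """Return the n most frequent entity chunks that carry the given label."""
    counts = Counter(
        row["entities@ner_results"]
        for row in rows
        if row["meta_entities@ner_entity"] == label
    )
    return counts.most_common(n)


top_gpe = top_entities(demo_rows, "GPE", n=2)
print(top_gpe)
```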

# + [markdown] id="p73T7MyeU2kS"

# ## YAKE on COVID Tweet dataset

# ### The **YAKE!** model (Yet Another Keyword Extractor) is an **unsupervised** keyword extraction algorithm.

# You can load it via `nlu.load('yake')`. It has no weights and is very fast.

# It has various parameters that can be configured to influence which keywords are extracted; see [this more in-depth YAKE guide](https://github.com/JohnSnowLabs/nlu/blob/master/examples/webinars_conferences_etc/multi_lingual_webinar/1_NLU_base_features_on_dataset_with_YAKE_Lemma_Stemm_classifiers_NER_.ipynb)

# + id="Zu8-yar9VLqO" executionInfo={"status": "aborted", "timestamp": 1650025386517, "user_tz": -300, "elapsed": 72, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

yake_df = nlu.load('yake').predict(df.text)

yake_df

# + [markdown] id="VJxjIrgdWZ0M"

# ### Top 50 extracted Keywords with YAKE!

# + id="CMXkeiCLVo4u" executionInfo={"status": "aborted", "timestamp": 1650025386520, "user_tz": -300, "elapsed": 75, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

yake_df.explode('keywords_results').keywords_results.value_counts()[:50].plot.barh(title='Keyword Distribution in COVID twitter dataset', figsize = (16,20) )

# + [markdown] id="gXoYzE_RBaaj"

# ## Binary Sentiment Analysis and Distribution on a dataset

# + id="yjHx4TR3_SMe" executionInfo={"status": "aborted", "timestamp": 1650025386523, "user_tz": -300, "elapsed": 78, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

df = pd.read_csv('covid19_tweets.csv').fillna('na').iloc[:5000]

sent_df = nlu.load('sentiment').predict(df)

sent_df

# + id="3LHQb5biErAv" executionInfo={"status": "aborted", "timestamp": 1650025386524, "user_tz": -300, "elapsed": 77, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

sent_df.sentiment_results.value_counts().plot.bar(title='Sentiment distribution')
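# + [markdown]
# `value_counts()` reports absolute counts. When comparing samples of different sizes (this section only uses the first 5000 tweets), relative shares can be easier to read. The snippet below is a minimal standard-library sketch on hand-written labels, not on real predictions; `label_shares` is a hypothetical helper.

# +
```python
from collections import Counter

# Hand-written labels for illustration only; NOT real model output.
demo_labels = ["positive", "negative", "positive", "positive"]


def label_shares(labels):
    """Return the fraction of items that carry each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


shares = label_shares(demo_labels)
print(shares)
```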

# + [markdown] id="3a3xxhUSCDhJ"

# ## Emotion Analysis and Distribution of Tweets

# + id="rrYi4f1PEpV3" executionInfo={"status": "aborted", "timestamp": 1650025386524, "user_tz": -300, "elapsed": 77, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

emo_df = nlu.load('emotion').predict(df)

emo_df

# + id="anyAVNjFCG9H" executionInfo={"status": "aborted", "timestamp": 1650025386537, "user_tz": -300, "elapsed": 89, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

emo_df.category_results.value_counts().plot.bar(title='Emotion Distribution')

# + [markdown] id="MHfPEwbA4FrM"

# **Make sure to restart your notebook again** before starting the next section

#

# + id="yZQDoM1p4FS9" executionInfo={"status": "aborted", "timestamp": 1650025386538, "user_tz": -300, "elapsed": 90, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

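print("If you are in Google Colab, please restart the kernel, then run the next cell after the restart to switch back to Java 8")

# Deliberately raise an error so that "Run all" stops here until the kernel has been restarted
raise RuntimeError("Restart the kernel before running the next section")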

# + [markdown] id="_kThBMV3LBhg"

# # Let's apply some medical models to the COVID dataset!

#

# + [markdown] id="NcOss-wxREfR"

# ## Medical Named Entity Recognition

# The medical named entity recognizers are pretrained to extract various `medical` named entities.

# Here are the medical classes the model predicts:

#

# ` Kidney_Disease, HDL, Diet, Test, Imaging_Technique, Triglycerides, Obesity, Duration, Weight, Social_History_Header, ImagingTest, Labour_Delivery, Disease_Syndrome_Disorder, Communicable_Disease, Overweight, Units, Smoking, Score, Substance_Quantity, Form, Race_Ethnicity, Modifier, Hyperlipidemia, ImagingFindings, Psychological_Condition, OtherFindings, Cerebrovascular_Disease, Date, Test_Result, VS_Finding, Employment, Death_Entity, Gender, Oncological, Heart_Disease, Medical_Device, Total_Cholesterol, ManualFix, Time, Route, Pulse, Admission_Discharge, RelativeDate, O2_Saturation, Frequency, RelativeTime, Hypertension, Alcohol, Allergen, Fetus_NewBorn, Birth_Entity, Age, Respiration, Medical_History_Header, Oxygen_Therapy, Section_Header, LDL, Treatment, Vital_Signs_Header, Direction, BMI, Pregnancy, Sexually_Active_or_Sexual_Orientation, Symptom, Clinical_Dept, Measurements, Height, Family_History_Header, Substance, Strength, Injury_or_Poisoning, Relationship_Status, Blood_Pressure, Drug, Temperature, EKG_Findings, Diabetes, BodyPart, Vaccine, Procedure, Dosage`

# + id="8nug0OtXLBHf" executionInfo={"status": "aborted", "timestamp": 1650025386540, "user_tz": -300, "elapsed": 92, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

import re

import pandas as pd

import nlu

df = pd.read_csv('covid19_tweets.csv').fillna('na')

vac_names = ['vaccine', 'Pfizer', 'BioNTech','Sinopharm','Sinovac','Moderna','Oxford', 'AstraZeneca','Covaxin','SputnikV']

r = "|".join(vac_names)

# Keep only tweets whose hashtags mention a vaccine (case-insensitive)
f_d = df[df.hashtags.str.contains(r, flags=re.IGNORECASE, regex=True)]

disease_df = nlu.load('med_ner.jsl.wip.clinical').predict(f_d.text.iloc[:2000], output_level='chunk',drop_irrelevant_cols=False)

disease_df

# + id="3wQZmE9TlwjX" executionInfo={"status": "aborted", "timestamp": 1650025386544, "user_tz": -300, "elapsed": 96, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

disease_df['meta_entities@clinical_entity'].value_counts().plot.barh(figsize=(20,14),title='Distribution of predicted medical entity labels ')

# + [markdown] id="7ILw5508FR6h"

# ### Top 50 **named entities of type vaccine** found in the dataset

#

# The Medical named entity recognizer extracted various entities and classified them as vaccine

# + id="7MLJumSlFQTo" executionInfo={"status": "aborted", "timestamp": 1650025386550, "user_tz": -300, "elapsed": 101, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

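# Column names follow the `meta_entities@clinical_entity` pattern used above; `entities@clinical_results` is assumed to hold the chunk text
disease_df[disease_df['meta_entities@clinical_entity'] == 'Vaccine']['entities@clinical_results'].value_counts()[:50].plot.barh(figsize=(18,20), title ='Top 50 occurring vaccine entities in the dataset')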

# + [markdown] id="4xEMGtwctN4i"

# ### Top 50 **named entities of type symptom** found in the dataset

#

# The medical named entity recognizer extracted various entities and classified them as symptom

# + id="dsWiyY99bzuK" executionInfo={"status": "aborted", "timestamp": 1650025386552, "user_tz": -300, "elapsed": 103, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

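# Column names follow the `meta_entities@clinical_entity` pattern used above; `entities@clinical_results` is assumed to hold the chunk text
disease_df[disease_df['meta_entities@clinical_entity'] == 'Symptom']['entities@clinical_results'].value_counts()[:50].plot.barh(figsize=(18,20), title ='Top 50 occurring symptoms in the dataset')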

# + [markdown] id="n5j2Dw_CvdyG"

# ## Medical Entity Resolution for `medical procedures`

#

# Let's mine the text data and extract relevant `procedures` and their `ICD-10-PCS procedure codes` to understand what the Twitter world thinks about `treatment procedures` for COVID

#

# For this we filter for tweets that contain a vaccine name or a symptom keyword

# + id="tBdsgVUydCSv" executionInfo={"status": "aborted", "timestamp": 1650025386553, "user_tz": -300, "elapsed": 104, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

import re

vac_names = ['vaccine','Pfizer', 'BioNTech','Sinopharm','Sinovac','Moderna','Oxford', 'AstraZeneca','Covaxin','SputnikV']

symptoms = ['sick','cough','sore','fever', 'tired','diarrhoea','taste','smell','loss','rash','skin','breath','short','difficult','ventilator' ]

r = "|".join(symptoms + vac_names)

# Keep only tweets whose text mentions a vaccine name or a symptom keyword (case-insensitive)
f_d = df[df.text.str.contains(r, flags=re.IGNORECASE, regex=True)]

# Extract COVID procedures

resolve_df = nlu.load('med_ner.jsl.wip.clinical resolve.icd10pcs').predict(f_d, output_level='sentence')

resolve_df
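# + [markdown]
# The keyword filter used in this section joins all keywords into one regular expression with `|` and matches it case-insensitively. The snippet below is a minimal standard-library sketch of the same idea on hypothetical tweets with a shortened keyword list. It additionally wraps each keyword in `re.escape`, which is an assumption on our side to guard against keywords that contain regex metacharacters.

# +
```python
import re

# Shortened keyword list for illustration; the notebook uses longer lists.
demo_keywords = ["cough", "fever", "vaccine", "Pfizer", "Moderna"]

# One alternation pattern, matched case-insensitively.
demo_pattern = re.compile("|".join(re.escape(k) for k in demo_keywords), re.IGNORECASE)

# Hypothetical tweets, for illustration only.
demo_tweets = [
    "Got my PFIZER shot today",
    "Lovely weather this morning",
    "Fever and a dry cough since Monday",
]

matching = [tweet for tweet in demo_tweets if demo_pattern.search(tweet)]
print(matching)
```

# Note that this is substring matching: a keyword such as `short` also matches `shortly`. Wrapping each keyword in `\b` word boundaries is one possible way to tighten the filter.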

# + id="LS9q0j78R_NY" executionInfo={"status": "aborted", "timestamp": 1650025386554, "user_tz": -300, "elapsed": 105, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

resolve_df.sentence_resolution_results.value_counts()[:50]

# + [markdown] id="ZVusxTa3OJ6h"

# ### Top 50 medical procedures resolved

#

#

#

# + id="MA8AHgZxJyD-" executionInfo={"status": "aborted", "timestamp": 1650025386555, "user_tz": -300, "elapsed": 105, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

resolve_df.sentence_resolution_results.value_counts()[:50].plot.barh(title='Top 50 resolved procedures', figsize=(16,20))

# + [markdown] id="SAz42g2DJd9S"

# ## Resolve Medical Symptoms

# + id="loserzTXQkw1" executionInfo={"status": "aborted", "timestamp": 1650025386556, "user_tz": -300, "elapsed": 106, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

import pandas as pd

# java reset

# ! echo 2 | update-alternatives --config java

# ! java -version

import nlu

import re

df = pd.read_csv('covid19_tweets.csv').fillna('na')

vac_names = ['vaccine','sick','cough','sore','fever','Pfizer', 'BioNTech','Sinopharm','Sinovac','Moderna','Oxford', 'AstraZeneca','Covaxin','SputnikV']

symptoms = ['sick','cough','sore','fever', 'tired','diarrhoea','taste','smell','loss','rash','skin','breath','short','difficult','ventilator' ]

r = "|".join(symptoms+vac_names)

# f_d = df.fillna('na')[df.fillna('na').hashtags.str.contains(r ,flags=re.IGNORECASE, regex=True)]

f_d = df[df.text.str.contains(r ,flags=re.IGNORECASE, regex=True)]

f_d

resolve_df = nlu.load('med_ner.jsl.wip.clinical resolve.icd10cm').predict(f_d[:2000], output_level='sentence')

resolve_df

# + [markdown] id="39sHQNpFBD2v"

# ### Top 50 Medical symptoms

# + id="2StNqCUcAdz8" executionInfo={"status": "aborted", "timestamp": 1650025386558, "user_tz": -300, "elapsed": 108, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

resolve_df.sentence_resolution_results.value_counts()[:50].plot.barh(title='Top 50 resolved symptoms', figsize=(16,20))

# + [markdown] id="JJNLjhrjYAFV"

# ## Assert status of medical entities

# + id="t6jKxxEkX_bt" executionInfo={"status": "aborted", "timestamp": 1650025386561, "user_tz": -300, "elapsed": 111, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

df = pd.read_csv('covid19_tweets.csv').fillna('na')

vac_names = ['vaccine','sick','cough','sore','fever','Pfizer', 'BioNTech','Sinopharm','Sinovac','Moderna','Oxford', 'AstraZeneca','Covaxin','SputnikV']

symptoms = ['sick','cough','sore','fever', 'tired','diarrhoea','taste','smell','loss','rash','skin','breath','short','difficult','ventilator' ]

r = "|".join(symptoms+vac_names)

f_d = df[df.text.str.contains(r ,flags=re.IGNORECASE, regex=True)]

f_d

assert_df = nlu.load('med_ner.jsl.wip.clinical assert').predict(f_d[:2000], output_level='chunk')

assert_df

# + [markdown] id="BIMUo8XOXw8i"

# ## Extract relation between entities

# + id="0JmDnL2fYVFr" executionInfo={"status": "aborted", "timestamp": 1650025386563, "user_tz": -300, "elapsed": 112, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

import pandas as pd

import nlu

import re

df = pd.read_csv('covid19_tweets.csv').fillna('na')

vac_names = ['vaccine','sick','cough','sore','fever','Pfizer', 'BioNTech','Sinopharm','Sinovac','Moderna','Oxford', 'AstraZeneca','Covaxin','SputnikV']

symptoms = ['sick','cough','sore','fever', 'tired','diarrhoea','taste','smell','loss','rash','skin','breath','short','difficult','ventilator' ]

r = "|".join(symptoms+vac_names)

f_d = df[df.text.str.contains(r ,flags=re.IGNORECASE, regex=True)]

f_d

relation_df = nlu.load('med_ner.jsl.wip.clinical en.relation.bodypart.problem').predict(f_d[:2000], output_level='relation')

relation_df

# + [markdown] id="lRA-zGz2D6N_"

# **Make sure to restart your notebook again** before starting the next section

#

# + id="ajINWfeC0jT2" executionInfo={"status": "aborted", "timestamp": 1650025386565, "user_tz": -300, "elapsed": 114, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

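print("If you are in Google Colab, please restart the kernel, then run the next cell after the restart to switch back to Java 8")

# Deliberately raise an error so that "Run all" stops here until the kernel has been restarted
raise RuntimeError("Restart the kernel before running the next section")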

# + [markdown] id="rN_H9hKmApll"

# # Answer **Closed Book** and **Open Book** Questions with Google's T5!

#

# <!-- [T5]() -->

#

#

# You can load the **question answering** model with `nlu.load('en.t5')`

# + id="sKmud8AHN9yo" executionInfo={"status": "aborted", "timestamp": 1650025386566, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Load question answering T5 model

t5_closed_question = nlu.load('en.t5')

# + [markdown] id="-F9rrWbfNyPZ"

# ## Answer **Closed Book Questions**

# Closed book means that no additional context is given and the model must answer the question with the knowledge stored in its weights

# + id="QsvnphOwfzVQ" executionInfo={"status": "aborted", "timestamp": 1650025386566, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

t5_closed_question.predict("Who is president of Nigeria?")

# + id="DcTbqAGmM6YY" executionInfo={"status": "aborted", "timestamp": 1650025386567, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

t5_closed_question.predict("What is the most common language in India?")

# + id="2Rb4EhK_NAb3" executionInfo={"status": "aborted", "timestamp": 1650025386567, "user_tz": -300, "elapsed": 114, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

t5_closed_question.predict("What is the capital of Germany?")

# + [markdown] id="ogxJNa5MOOQj"

# ## Answer **Open Book Questions**

# These are questions where we give the model some additional context, that is used to answer the question

# + id="e9cwqQGtaTa5" executionInfo={"status": "aborted", "timestamp": 1650025386568, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

t5_open_book = nlu.load('answer_question')

# + id="OB5GOHxPYUYM" executionInfo={"status": "aborted", "timestamp": 1650025386569, "user_tz": -300, "elapsed": 114, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

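# The context is prefixed with ' context: ' so that it is clearly separated from the question, as in the news example below
context = " context: Peter's last week was terrible! He had an accident and broke his leg while skiing!"

question1 = "Why was Peter's week so bad?"

question2 = 'How did Peter break his leg?'

t5_open_book.predict([question1 + context, question2 + context])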

# + id="kZb_BdGm1-yc" executionInfo={"status": "aborted", "timestamp": 1650025386570, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Ask T5 questions in the context of a News Article

question1 = 'Who is <NAME>?'

question2 = 'Who is founder of Alibaba Group?'

question3 = 'When did <NAME> re-appear?'

question4 = 'How did Alibaba stocks react?'

question5 = 'Whom did <NAME> meet?'

question6 = 'Who did <NAME> hide from?'

# from https://www.bbc.com/news/business-55728338

news_article_context = """ context:

Alibaba Group founder <NAME> has made his first appearance since Chinese regulators cracked down on his business empire.

His absence had fuelled speculation over his whereabouts amid increasing official scrutiny of his businesses.

The billionaire met 100 rural teachers in China via a video meeting on Wednesday, according to local government media.

Alibaba shares surged 5% on Hong Kong's stock exchange on the news.

"""

questions = [

question1+ news_article_context,

question2+ news_article_context,

question3+ news_article_context,

question4+ news_article_context,

question5+ news_article_context,

question6+ news_article_context,]

# + id="e0kTj4ZN4kJi" executionInfo={"status": "aborted", "timestamp": 1650025386571, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

t5_open_book.predict(questions)
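# + [markdown]
# The news example above builds each open-book input by appending a block that starts with `context:` to the question. The snippet below is a minimal sketch of a small helper that does this consistently. `with_context` is a hypothetical helper and not part of NLU, and the exact input format expected by the model is assumed from the cell above.

# +
```python
def with_context(question, context):
    """Append the context to a question, separated by the ' context: ' marker."""
    return f"{question.strip()} context: {context.strip()}"


# Hand-written example texts, for illustration only.
demo_context = "Peter had an accident and broke his leg while skiing."
demo_questions = ["Why was Peter's week bad?", "How did Peter break his leg?"]

demo_prompts = [with_context(q, demo_context) for q in demo_questions]
for prompt in demo_prompts:
    print(prompt)
```

# The resulting list could then be passed to `predict` in the same way as `questions` above.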

# + [markdown] id="xJIuT3ZhOhoc"

# # Multi Problem T5 model for Summarization and more

# The main T5 model was trained on many tasks from the SQuAD, GLUE and SuperGLUE benchmarks. See [this notebook](https://github.com/JohnSnowLabs/nlu/blob/master/examples/webinars_conferences_etc/multi_lingual_webinar/7_T5_SQUAD_GLUE_SUPER_GLUE_TASKS.ipynb) for a demo of all tasks

#

#

# # Overview of every task available with T5

# [The T5 model](https://arxiv.org/pdf/1910.10683.pdf) is trained on various datasets for the 18 tasks listed below, which fall into 8 categories.

#

#

#

# 1. Text summarization

# 2. Question answering

# 3. Translation

# 4. Sentiment analysis

# 5. Natural Language inference

# 6. Coreference resolution

# 7. Sentence Completion

# 8. Word sense disambiguation

#

# ### Every T5 Task with explanation:

# |Task Name | Explanation |

# |----------|--------------|

# |[1.CoLA](https://nyu-mll.github.io/CoLA/) | Classify if a sentence is grammatically correct|

# |[2.RTE](https://dl.acm.org/doi/10.1007/11736790_9) | Classify whether a statement can be deduced from a sentence|

# |[3.MNLI](https://arxiv.org/abs/1704.05426) | Classify for a hypothesis and premise whether the premise entails the hypothesis, contradicts it, or neither (3 class).|

# |[4.MRPC](https://www.aclweb.org/anthology/I05-5002.pdf) | Classify whether a pair of sentences is a re-phrasing of each other (semantically equivalent)|

# |[5.QNLI](https://arxiv.org/pdf/1804.07461.pdf) | Classify whether the answer to a question can be deduced from an answer candidate.|

# |[6.QQP](https://www.quora.com/q/quoradata/First-Quora-Dataset-Release-Question-Pairs) | Classify whether a pair of questions is a re-phrasing of each other (semantically equivalent)|

# |[7.SST2](https://www.aclweb.org/anthology/D13-1170.pdf) | Classify the sentiment of a sentence as positive or negative|

# |[8.STSB](https://www.aclweb.org/anthology/S17-2001/) | Rate the semantic similarity of a pair of sentences on a scale from 1 to 5 (21 classes)|

# |[9.CB](https://ojs.ub.uni-konstanz.de/sub/index.php/sub/article/view/601) | Classify for a premise and a hypothesis whether the premise entails the hypothesis, contradicts it, or neither (3 class).|

# |[10.COPA](https://www.aaai.org/ocs/index.php/SSS/SSS11/paper/view/2418/0) | Classify for a question, premise, and 2 choices which choice is correct (binary).|

# |[11.MultiRc](https://www.aclweb.org/anthology/N18-1023.pdf) | Classify for a question, a paragraph of text, and an answer candidate, if the answer is correct (binary).|

# |[12.WiC](https://arxiv.org/abs/1808.09121) | Classify for a pair of sentences and an ambiguous word if the word has the same meaning in both sentences.|

# |[13.WSC/DPR](https://www.aaai.org/ocs/index.php/KR/KR12/paper/view/4492/0) | Predict for an ambiguous pronoun in a sentence what it is referring to. |

# |[14.Summarization](https://arxiv.org/abs/1506.03340) | Summarize text into a shorter representation.|

# |[15.SQuAD](https://arxiv.org/abs/1606.05250) | Answer a question for a given context.|

# |[16.WMT1.](https://arxiv.org/abs/1706.03762) | Translate English to German|

# |[17.WMT2.](https://arxiv.org/abs/1706.03762) | Translate English to French|

# |[18.WMT3.](https://arxiv.org/abs/1706.03762) | Translate English to Romanian|

#

#
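# + [markdown]
# The "21 classes" for STSB in the table above come from simple arithmetic: scores from 1 to 5 rounded to the nearest 0.2 can take exactly 21 distinct values. The snippet below is a minimal sketch of that arithmetic. The 0.2 rounding step is taken from the T5 paper as we understand it, and `to_stsb_label` is a hypothetical helper, not an NLU or T5 function.

# +
```python
# Scores from 1.0 to 5.0 in steps of 0.2, built from integers to avoid float drift.
stsb_labels = [k / 5 for k in range(5, 26)]


def to_stsb_label(score):
    """Round a similarity score to the nearest 0.2 increment."""
    return round(score * 5) / 5


print(len(stsb_labels))
print(to_stsb_label(3.14))
```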

# + id="XJw187r91QKN" executionInfo={"status": "aborted", "timestamp": 1650025386572, "user_tz": -300, "elapsed": 115, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

# Load the Multi Task Model T5

t5_multi = nlu.load('en.t5.base')

# + id="_F6jE7IN1U-G" executionInfo={"status": "aborted", "timestamp": 1650025386573, "user_tz": -300, "elapsed": 116, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

# https://www.reuters.com/article/instant-article/idCAKBN2AA2WF

text = """(Reuters) - Mastercard Inc said on Wednesday it was planning to offer support for some cryptocurrencies on its network this year, joining a string of big-ticket firms that have pledged similar support.

The credit-card giant’s announcement comes days after <NAME>’s Tesla Inc revealed it had purchased $1.5 billion of bitcoin and would soon accept it as a form of payment.

Asset manager BlackRock Inc and payments companies Square and PayPal have also recently backed cryptocurrencies.

Mastercard already offers customers cards that allow people to transact using their cryptocurrencies, although without going through its network.

"Doing this work will create a lot more possibilities for shoppers and merchants, allowing them to transact in an entirely new form of payment. This change may open merchants up to new customers who are already flocking to digital assets," Mastercard said. (mstr.cd/3tLaPZM)

Mastercard specified that not all cryptocurrencies will be supported on its network, adding that many of the hundreds of digital assets in circulation still need to tighten their compliance measures.

Many cryptocurrencies have struggled to win the trust of mainstream investors and the general public due to their speculative nature and potential for money laundering.

"""

t5_multi['t5'].setTask('summarize ')

short = t5_multi.predict(text)

short

# + id="1MtQlr_8PucN" executionInfo={"status": "aborted", "timestamp": 1650025386573, "user_tz": -300, "elapsed": 115, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

print(f"Original Length {len(short.document.iloc[0])} Summarized Length : {len(short.T5.iloc[0])} \n summarized text :{short.T5.iloc[0]} ")

# + id="ZqOJSkrWQQA9" executionInfo={"status": "aborted", "timestamp": 1650025386574, "user_tz": -300, "elapsed": 116, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

short.T5.iloc[0]

# + [markdown] id="Sd_4hzC9hz8K"

# **Make sure to restart your notebook again** before starting the next section

# + id="RQizVR2WhzTY" executionInfo={"status": "aborted", "timestamp": 1650025386576, "user_tz": -300, "elapsed": 117, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

print("Please restart kernel if you are in google colab and run next cell after the restart to configure java 8 back")

# Deliberately raise a TypeError so that "Run all" stops here until the kernel has been restarted

1+'wait'

# + id="41bGg_s0ioKK" executionInfo={"status": "aborted", "timestamp": 1650025386578, "user_tz": -300, "elapsed": 118, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# This configures colab to use Java 8 again.

# You need to run this in Google colab, because after restart it likes to set Java 11 as default, which will cause issues

# ! echo 2 | update-alternatives --config java

# + [markdown] id="PDmjkRoHhrqn"

# # Translate between more than 200 Languages with [Microsoft's Marian Models](https://marian-nmt.github.io/publications/)

#

# Marian is an efficient, free Neural Machine Translation framework mainly being developed by the Microsoft Translator team (646+ pretrained models & pipelines in 192+ languages)

# You need to specify the language your data is in as `start_language` and the language you want to translate to as `target_language`.

# The language references must be [ISO language codes](https://en.wikipedia.org/wiki/List_of_ISO_639-1_codes)

#

# `nlu.load('<start_language>.translate_to.<target_language>')`

#

# **Translate Turkish to English:**

# `nlu.load('tr.translate_to.en')`

#

# **Translate English to French:**

# `nlu.load('en.translate_to.fr')`

#

#

# **Translate French to Hebrew:**

# `nlu.load('fr.translate_to.he')`

#

#

#

#

#

#
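#
# The naming pattern above can be captured in a small helper. This is only a minimal sketch: `marian_ref` is a hypothetical name introduced here for illustration and is not part of NLU. It merely builds the reference string; whether a model actually exists for a given language pair still has to be checked on the Models Hub.

```python
def marian_ref(start_language: str, target_language: str) -> str:
    """Build a reference of the form '<start_language>.translate_to.<target_language>'."""
    start = start_language.strip().lower()
    target = target_language.strip().lower()
    if not start or not target:
        raise ValueError("both language codes must be non-empty")
    if start == target:
        raise ValueError("start and target language must differ")
    return f"{start}.translate_to.{target}"


# The three examples from the text above
for pair in [("tr", "en"), ("en", "fr"), ("fr", "he")]:
    print(marian_ref(*pair))
```

# The resulting string is what gets passed to `nlu.load(...)`.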

# + id="AjiWgkvQwxBy" executionInfo={"status": "aborted", "timestamp": 1650025386579, "user_tz": -300, "elapsed": 118, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

import nlu

import pandas as pd

# !wget http://ckl-it.de/wp-content/uploads/2020/12/small_btc.csv

df = pd.read_csv('/content/small_btc.csv').iloc[0:20].title

# + [markdown] id="Q_dx5jDkeaGO"

# ## Translate to German

# + id="_DrnIRUlXpM6" executionInfo={"status": "aborted", "timestamp": 1650025386579, "user_tz": -300, "elapsed": 118, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

translate_pipe = nlu.load('en.translate_to.de')

translate_pipe.predict(df)

# + [markdown] id="9zyhBUxFeP6u"

# ## Translate to Chinese

# + id="B0Z3Ilt0eR3c" executionInfo={"status": "aborted", "timestamp": 1650025386580, "user_tz": -300, "elapsed": 119, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

translate_pipe = nlu.load('en.translate_to.zh')

translate_pipe.predict(df)

# + [markdown] id="SbE1KJQgeTiB"

# ## Translate to Hindi

# + id="5U2Xy6JAeXcj" executionInfo={"status": "aborted", "timestamp": 1650025386581, "user_tz": -300, "elapsed": 119, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

translate_pipe = nlu.load('en.translate_to.hi')

translate_pipe.predict(df)

# + [markdown] id="50Ap0BIujDWr"

# # Train a Multi Lingual Classifier for 100+ languages from a dataset with just one language

#

# [Leverage Language-agnostic BERT Sentence Embedding (LABSE) and achieve state of the art!](https://arxiv.org/abs/2007.01852)

#

# Training a classifier with LABSE embeddings enables the knowledge to be transferred to 109 languages!

# With the [SentimentDL model](https://nlp.johnsnowlabs.com/docs/en/annotators#sentimentdl-multi-class-sentiment-analysis-annotator) from Spark NLP you can achieve State Of the Art results on any binary class text classification problem.

#

# ### Languages supported by LABSE

#

#

#

# + id="y4xSRWIhwT28" executionInfo={"status": "aborted", "timestamp": 1650025386582, "user_tz": -300, "elapsed": 120, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Download French twitter Sentiment dataset https://www.kaggle.com/hbaflast/french-twitter-sentiment-analysis

# ! wget http://ckl-it.de/wp-content/uploads/2021/02/french_tweets.csv

import pandas as pd

train_path = '/content/french_tweets.csv'

train_df = pd.read_csv(train_path)

# the text data to use for classification should be in a column named 'text'

columns=['text','y']

train_df = train_df[columns]

train_df = train_df.sample(frac=1).reset_index(drop=True)

train_df

# + [markdown] id="0296Om2C5anY"

# ## Train Deep Learning Classifier using `nlu.load('train.sentiment')`

#

# All you need is a Pandas DataFrame with a label column named `y` and a text column named `text`.

#

# We train on a French dataset and can then predict classes correctly **in 100+ languages**

# + id="mptfvHx-MMMX" executionInfo={"status": "aborted", "timestamp": 1650025386583, "user_tz": -300, "elapsed": 121, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Train longer!

trainable_pipe = nlu.load('xx.embed_sentence.labse train.sentiment')

trainable_pipe['sentiment_dl'].setMaxEpochs(60)

trainable_pipe['sentiment_dl'].setLr(0.005)

fitted_pipe = trainable_pipe.fit(train_df.iloc[:2000])

# predict with the trainable pipeline on dataset and get predictions

preds = fitted_pipe.predict(train_df.iloc[:2000],output_level='document')

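# The sentence detector that is part of the pipe generates some NaNs; drop them first

preds.dropna(inplace=True)

# classification_report comes from scikit-learn; imported here in case an earlier cell has not done so

from sklearn.metrics import classification_report

print(classification_report(preds['y'], preds['sentiment']))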

preds

# + [markdown] id="lVyOE2wV0fw_"

# ### Test the fitted pipe on new example

# + [markdown] id="RjtuNUcvuJTT"

# #### The Model understands English

#

# + id="o0vu7PaWkcI7" executionInfo={"status": "aborted", "timestamp": 1650025386584, "user_tz": -300, "elapsed": 122, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

fitted_pipe.predict("This was awful!")

# + id="1ykjRQhCtQ4w" executionInfo={"status": "aborted", "timestamp": 1650025386586, "user_tz": -300, "elapsed": 123, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

fitted_pipe.predict("This was great!")

# + [markdown] id="vohym-XbuNHn"

# #### The Model understands German

#

# + id="dzaaZrI4tVWc" executionInfo={"status": "aborted", "timestamp": 1650025386586, "user_tz": -300, "elapsed": 123, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# German for:' this movie was great!'

fitted_pipe.predict("Der Film war echt klasse!")

# + id="BbhgTSBGtTtJ" executionInfo={"status": "aborted", "timestamp": 1650025386587, "user_tz": -300, "elapsed": 123, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# German for: 'This movie was really boring'

fitted_pipe.predict("Der Film war echt langweilig!")

# + [markdown] id="a1JbtmWquQwj"

# #### The Model understands Chinese

#

# + id="kYSYqtoRtc-P" executionInfo={"status": "aborted", "timestamp": 1650025386587, "user_tz": -300, "elapsed": 123, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Chinese for: "This movie was awful!"

fitted_pipe.predict("这部电影太糟糕了!")

# + id="06v9SD-QtlBU" executionInfo={"status": "aborted", "timestamp": 1650025386587, "user_tz": -300, "elapsed": 122, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Chinese for: "This move was great!"

fitted_pipe.predict("此举很棒!")

# + [markdown] id="9h7CvN4uu9Pb"

# #### The Model understands Afrikaans

#

#

#

#

# + id="VMPhbgw9twtf" executionInfo={"status": "aborted", "timestamp": 1650025386588, "user_tz": -300, "elapsed": 123, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Afrikaans for 'This movie was amazing!'

fitted_pipe.predict("Hierdie film was ongelooflik!")

# + id="zWgNTIdkumhX" executionInfo={"status": "aborted", "timestamp": 1650025386588, "user_tz": -300, "elapsed": 122, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

# Afrikaans for :'The movie made me fall asleep, it's awful!'

fitted_pipe.predict('Die film het my aan die slaap laat raak, dit is verskriklik!')

# + [markdown] id="rSEPkC-Bwnpg"

# #### The model understands Vietnamese

#

# + id="wCcTS5gIu511" executionInfo={"status": "aborted", "timestamp": 1650025386589, "user_tz": -300, "elapsed": 123, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

# Vietnamese for : 'The movie was painful to watch'

fitted_pipe.predict('Phim đau điếng người xem')

# + id="M6giDPK-wm2G" executionInfo={"status": "aborted", "timestamp": 1650025386589, "user_tz": -300, "elapsed": 122, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Vietnamese for : 'This was the best movie ever'

fitted_pipe.predict('Đây là bộ phim hay nhất từ trước đến nay')

# + [markdown] id="IlkmAaMoxTuy"

# #### The model understands Japanese

#

#

# + id="1IfJu3q8wwUt" executionInfo={"status": "aborted", "timestamp": 1650025386590, "user_tz": -300, "elapsed": 122, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Japanese for : 'This is now my favorite movie!'

fitted_pipe.predict('これが私のお気に入りの映画です!')

# + id="h3k7_PFhxOve" executionInfo={"status": "aborted", "timestamp": 1650025386590, "user_tz": -300, "elapsed": 122, "user": {"displayName": "<NAME>", "userId": "02458088882398909889"}}

# Japanese for : 'I would rather kill myself than watch that movie again'

fitted_pipe.predict('その映画をもう一度見るよりも自殺したい')

# + [markdown] id="VXu21c0iQRSC"

# # There are many more models you can put to use in 1 line of code!

# ## Check out [the Modelshub](https://nlp.johnsnowlabs.com/models) and the [NLU Namespace](https://nlu.johnsnowlabs.com/docs/en/spellbook) for more models

#

#

# ### More resources

# - [Join our Slack](https://join.slack.com/t/spark-nlp/shared_invite/<KEY>)

# - [NLU Website](https://nlu.johnsnowlabs.com/)

# - [NLU Github](https://github.com/JohnSnowLabs/nlu)

# - [Many more NLU example tutorials](https://github.com/JohnSnowLabs/nlu/tree/master/examples)

# - [Overview of every powerful nlu 1-liner](https://nlu.johnsnowlabs.com/docs/en/examples)

# - [Check out the Modelshub for an overview of all models](https://nlp.johnsnowlabs.com/models)

# - [Check out the NLU Namespace where you can find every model as a table](https://nlu.johnsnowlabs.com/docs/en/spellbook)

# - [Intro to NLU article](https://medium.com/spark-nlp/1-line-of-code-350-nlp-models-with-john-snow-labs-nlu-in-python-2f1c55bba619)

# - [Indepth and easy Sentence Similarity Tutorial, with StackOverflow Questions using BERTology embeddings](https://medium.com/spark-nlp/easy-sentence-similarity-with-bert-sentence-embeddings-using-john-snow-labs-nlu-ea078deb6ebf)

# - [1 line of Python code for BERT, ALBERT, ELMO, ELECTRA, XLNET, GLOVE, Part of Speech with NLU and t-SNE](https://medium.com/spark-nlp/1-line-of-code-for-bert-albert-elmo-electra-xlnet-glove-part-of-speech-with-nlu-and-t-sne-9ebcd5379cd)

# + id="R8hBZm_zo5EI" executionInfo={"status": "aborted", "timestamp": 1650025386591, "user_tz": -300, "elapsed": 122, "user": {"displayName": "ahmed lone", "userId": "02458088882398909889"}}

while 1 : 1

| nlu/webinars_conferences_etc/graph_ai_summit/Healthcare_Graph_NLU_COVID_Tigergraph.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # part 1: chunked datasets of 300

# + pycharm={"name": "#%%\n"}

import json

# + pycharm={"name": "#%%\n"}

import csv

# + pycharm={"name": "#%%\n"}

from pandas import read_csv

# + pycharm={"name": "#%%\n"}

data = read_csv("master_list_of_comments.csv")

# + pycharm={"name": "#%%\n"}

all_comments = data['comment'].to_dict()

# + pycharm={"name": "#%%\n"}

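count = 0

for idx in all_comments:

    try:

        all_comments[idx] = all_comments[idx].strip()

    except AttributeError:

        # Non-string entries (for example NaN from empty cells) have no .strip(); report them

        print(f"{idx}: {all_comments[idx]}")

    # Preview the first entries as a sanity check

    if count <= 100:

        print(f"{idx}: {all_comments[idx]}")

    count += 1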

# + pycharm={"name": "#%%\n"}

all_comments = {value: key for key, value in all_comments.items()}

# + pycharm={"name": "#%%\n"}

all_comments

# + pycharm={"name": "#%%\n"}

with open('labeled_dataset_-1.json') as f:

    labeled_comments = json.load(f)

# + pycharm={"name": "#%%\n"}

# First verify that every labeled comment exists in the master list ...

for comment in labeled_comments:

    comment_text_stripped = comment['text'].strip()

    if comment_text_stripped not in all_comments:

        print(comment['text'], "not in dataset")

        raise Exception("Comment not found")

# ... then remove the labeled comments so that only unlabeled ones remain

for comment in labeled_comments:

    comment_text_stripped = comment['text'].strip()

    if comment_text_stripped in all_comments:

        del all_comments[comment_text_stripped]
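
# The invert-then-remove steps of this part can be sketched on toy data as follows. This is a minimal illustration under assumptions: the function name `remaining_after_labeling` and the sample comments are made up here. It also makes one side effect visible: inverting index -> text into text -> index collapses duplicate texts, and the last index seen for a text wins.

```python
def remaining_after_labeling(comments_by_idx, labeled_texts):
    """Return {text: idx} for every comment whose stripped text is not labeled yet."""
    # Inverting idx -> text collapses duplicate texts; the last index wins.
    text_to_idx = {text.strip(): idx for idx, text in comments_by_idx.items()}
    labeled = {text.strip() for text in labeled_texts}
    missing = labeled.difference(text_to_idx)
    if missing:
        raise KeyError(f"labeled comments not in dataset: {sorted(missing)}")
    return {text: idx for text, idx in text_to_idx.items() if text not in labeled}


# Hypothetical toy data: indices 0 and 2 hold the same text after stripping
comments = {0: "great video ", 1: "first!", 2: "great video", 3: "meh"}
remaining = remaining_after_labeling(comments, [" first!"])
print(remaining)
```

# If duplicates must be kept, the mapping would have to go from text to a list of indices instead.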

# + pycharm={"name": "#%%\n"}

remaining_comments = []

for comment in all_comments:

    remaining_comments.append({'idx': all_comments[comment], 'text': comment})

# + pycharm={"name": "#%%\n"}

len(remaining_comments)

# + pycharm={"name": "#%%\n"}

def chunks(lst, n):

    """Yield successive n-sized chunks from lst."""

    for i in range(0, len(lst), n):

        yield lst[i:i + n]
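
# A quick check of how `chunks` behaves at the edges, using made-up sample data: the last chunk may be shorter than `n`, and an empty list yields no chunks at all.

```python
def chunks(lst, n):
    """Yield successive n-sized chunks from lst."""
    for i in range(0, len(lst), n):
        yield lst[i:i + n]


sample = list(range(7))
sample_chunks = list(chunks(sample, 3))
print(sample_chunks)          # the final chunk holds the single leftover item
print(list(chunks([], 3)))    # no chunks for an empty input
```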

# + pycharm={"name": "#%%\n"}

chunked_datasets = list(chunks(remaining_comments, 300))

# + pycharm={"name": "#%%\n"}

for i, dataset in enumerate(chunked_datasets):

    keys = dataset[0].keys()

    # newline="" lets the csv module control line endings, as its documentation recommends

    with open(f"chunked_datasets/dataset_{i}.csv", "w", newline="") as a_file:

        dict_writer = csv.DictWriter(a_file, keys)

        dict_writer.writeheader()

        dict_writer.writerows(dataset)

# -

# # part 2: randomized chunked datasets of 25

# + pycharm={"name": "#%%\n"}

for comment in remaining_comments:

    comment['query'] = data['query'].iloc[comment['idx']]

# + pycharm={"name": "#%%\n"}

import random

# + pycharm={"name": "#%%\n"}

random.shuffle(remaining_comments)

# + pycharm={"name": "#%%\n"}

desired_order_list = ["idx", "query", "text"]

new_remaining_comments = []

for comment in remaining_comments:

    new_remaining_comments.append({k: comment[k] for k in desired_order_list})

# + pycharm={"name": "#%%\n"}

remaining_comments = new_remaining_comments

# + pycharm={"name": "#%%\n"}

chunked_datasets = list(chunks(remaining_comments, 25))

# + pycharm={"name": "#%%\n"}

for i, dataset in enumerate(chunked_datasets):

    keys = dataset[0].keys()

    # newline="" lets the csv module control line endings, as its documentation recommends

    with open(f"randomized_chunked_datasets_of_25/dataset_{i}.csv", "w", newline="") as a_file:

        dict_writer = csv.DictWriter(a_file, keys)

        dict_writer.writeheader()

        dict_writer.writerows(dataset)

# + pycharm={"name": "#%%\n"}

raw_chunked_datasets = [[] for _ in range(len(chunked_datasets))]

for i, dataset in enumerate(chunked_datasets):

    for comment in dataset:

        raw_chunked_datasets[i].append(comment['text'])

# + pycharm={"name": "#%%\n"}

raw_chunked_datasets[0:5]

# + pycharm={"name": "#%%\n"}

for i, dataset in enumerate(raw_chunked_datasets):

    with open(f'randomized_chunked_datasets_of_25/dataset_{i}_raw.csv', 'w', newline='') as f:

        writer = csv.writer(f)

        for val in dataset:

            writer.writerow([val])
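
# Writing one comment per row relies on the csv module to quote text that contains commas or quotation marks. The sketch below shows that behavior in memory; the helper name `rows_to_csv_text` and the sample comments are assumptions made for illustration.

```python
import csv
import io


def rows_to_csv_text(values):
    """Write one value per row and return the resulting CSV text."""
    buffer = io.StringIO(newline="")
    writer = csv.writer(buffer)
    for val in values:
        writer.writerow([val])
    return buffer.getvalue()


csv_text = rows_to_csv_text(["plain comment", "hello, world", 'she said "hi"'])
print(csv_text)
```

# Reading such a file back with `csv.reader` restores the original strings, so comments containing commas survive the round trip.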

| MateoStuff/Generate300Dataset/remove_labeled.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Purpose

#

# This figure justifies our choice of critic features. It should show that using both counts

# is better than using either or none.

#

# ### Method

#

# I did 3 very large runs. Each was pretrained for 200 epochs and then trained against the

# critic with one, the other, and both critic-counts missing.

#

# We'll show the graphs over epochs. We'll also show the training critic-loss, to see how well it can fit that curve.

#

# ### Data structure

#

# Each of the files is a JSON object. Its keys are the 3 training phases: ACTOR, CRITIC, and AC.

# Underneath this, it has subfields for what it was recording. For example, NDCG, or TRAINING_CRITIC_ERROR.
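#
# As a rough illustration of that layout, the sketch below builds a tiny log of the same shape and looks up the best epoch for a metric. The numbers, the lowercase `training_critic_error` key, and the helper `best_epoch` are assumptions made for this example; only the phase names and the `ndcg` key are taken from this notebook.

```python
import json

# Hypothetical miniature of one training log; real files hold one value per epoch.
sample_log = json.loads("""
{
  "ACTOR": {"ndcg": [0.40, 0.42, 0.43]},
  "CRITIC": {"training_critic_error": [0.9, 0.5]},
  "AC": {"ndcg": [0.431, 0.436, 0.434]}
}
""")


def best_epoch(log, phase, metric="ndcg"):
    """Return (epoch_index, value) of the highest recorded metric in a phase."""
    values = log[phase][metric]
    epoch = max(range(len(values)), key=values.__getitem__)
    return epoch, values[epoch]


print(best_epoch(sample_log, "ACTOR"))
print(best_epoch(sample_log, "AC"))
```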

#

# ### Conclusions

#

# It seems as though BOTH_COUNTS is BEST, NO_COUNTS is WORST, WITHOUT_SEEN is pretty good, and WITHOUT_UNSEEN is pretty bad.

#

# +

import matplotlib

import numpy as np

import matplotlib.pyplot as plt

import json

import seaborn as sns

sns.set()

DATA = {}

DATA['without_either'] = {}

DATA['without_seen'] = {}

DATA['without_unseen'] = {}

DATA['tentative_with_both'] = {}

print("Now, loading data")

# Every run directory holds an actor log and a critic log with the same file naming

for run in DATA:

    for part in ("actor", "critic"):

        with open(f"./data/{run}/{part}_training.json", "r") as f:

            DATA[run][part] = json.load(f)

print("Data loaded")

print("I'm calling it tentatitive_with_both, because ")

# +

print("First, we'll plot just the actors")

# https://stackoverflow.com/questions/20618804/how-to-smooth-a-curve-in-the-right-way

plt.clf()

plt.plot(range(200), DATA['without_either']['actor']['ACTOR']['ndcg'], color="red")

plt.plot(range(200), DATA['without_seen']['actor']['ACTOR']['ndcg'], color="blue")

plt.plot(range(200), DATA['without_unseen']['actor']['ACTOR']['ndcg'], color="green")

plt.plot(range(200), DATA['tentative_with_both']['actor']['ACTOR']['ndcg'], color="orange")

# Set the axis limits before showing the figure; afterwards they would have no effect on it

plt.ylim(0.42, 0.44)

plt.show()

# +

actor_parts = np.asarray([

DATA[part]['actor']['ACTOR']['ndcg']

for part in

['without_either', 'without_seen', 'without_unseen', 'tentative_with_both']

])

average_actor_parts = np.mean(actor_parts, axis=0).tolist()

# +

# Now,I want to plot with everything...

all_without_either = DATA['without_either']['actor']['ACTOR']['ndcg'] + DATA['without_either']['critic']['AC']['ndcg']

all_without_seen = DATA['without_seen']['actor']['ACTOR']['ndcg'] + DATA['without_seen']['critic']['AC']['ndcg']

all_without_unseen = DATA['without_unseen']['actor']['ACTOR']['ndcg'] + DATA['without_unseen']['critic']['AC']['ndcg']

all_tentative_with_both = DATA['tentative_with_both']['actor']['ACTOR']['ndcg'] + DATA['tentative_with_both']['critic']['AC']['ndcg']

# I ran this with 50 more training steps than the others.

plt.clf()

# plt.plot(range(350), all_without_either, color="red", label="No Counts")

# plt.plot(range(350), all_without_seen, color="blue", label="Only Seen Count")

# plt.plot(range(350), all_without_unseen, color="green", label="Only Unseen Count")

# plt.plot(range(300), all_tentative_with_both, color='orange', label="Both Counts")

plt.plot(range(300), all_without_either[0:300], color="red", label="No Counts")

plt.plot(range(300), all_without_seen[0:300], color="blue", label="Without Seen Count")

plt.plot(range(300), all_without_unseen[0:300], color="green", label="Without Unseen Count")

plt.plot(range(300), all_tentative_with_both[0:300], color='orange', label="Both Counts")

leg = plt.legend(fontsize=12, shadow=True, loc=(0.05, 0.60))

plt.ylim(0.42, 0.45)

plt.show()

print(max(all_tentative_with_both))

print(max(all_without_seen))

print(max(all_without_unseen))

print("Good prevails! without_seen is pretty good, but not as good as with both.")

print("NOTE: I'm only showing 100 epochs of AC-training, because we want ")

# +

plt.clf()

plt.plot(range(200), average_actor_parts, color="black", label="shared_actor")

plt.plot(range(199, 300), [average_actor_parts[-1]] + DATA['without_either']['critic']['AC']['ndcg'][:100], color="red", label="No Counts")

plt.plot(range(199, 300), [average_actor_parts[-1]] + DATA['without_seen']['critic']['AC']['ndcg'][:100], color="blue", label="Without Seen Count")

plt.plot(range(199, 300), [average_actor_parts[-1]] + DATA['without_unseen']['critic']['AC']['ndcg'][:100], color="green", label="Without Unseen Count")

plt.plot(range(199, 300), [average_actor_parts[-1]] + DATA['tentative_with_both']['critic']['AC']['ndcg'], color='orange', label="Both Counts")

leg = plt.legend(fontsize=12, shadow=True, loc=(0.05, 0.60))

plt.ylim(0.42, 0.45)

print(max(all_tentative_with_both))

print(max(all_without_seen))

print(max(all_without_unseen))

print("Good prevails! without_seen is pretty good, but not as good as with both.")

print("NOTE: I'm only showing 100 epochs of AC-training, because we want ")

# +

fig=plt.figure(figsize=(5.4,3.5))

plt.axvline(x=149, linewidth=1, color='k', linestyle="--")

plt.plot(range(200), average_actor_parts, linewidth=1.5, linestyle="-", color="black", label="VAE")

# Raw strings keep the LaTeX backslashes in the labels from being read as escape sequences

plt.plot(range(149, 250), [average_actor_parts[150]] + DATA['tentative_with_both']['critic']['AC']['ndcg'],linewidth=2.5, color='red', label=r"$[\mathcal{L}_{E} , | \mathcal{H}_0 |, |\mathcal{H}_1 |]$")

plt.plot(range(149, 250), [average_actor_parts[150]] + DATA['without_seen']['critic']['AC']['ndcg'][:100], linewidth=2.5, color="deepskyblue", label=r"$[\mathcal{L}_{E} , | \mathcal{H}_0 |]$")

plt.plot(range(149, 250), [average_actor_parts[150]] + DATA['without_unseen']['critic']['AC']['ndcg'][:100], linewidth=2.5, color="green", label=r"$[\mathcal{L}_{E} , |\mathcal{H}_1 |]$")

plt.plot(range(149, 250), [average_actor_parts[150]] + DATA['without_either']['critic']['AC']['ndcg'][:100], linewidth=2.5, color="orange", label=r"$[\mathcal{L}_{E}]$")

leg = plt.legend(fontsize=13, shadow=True, loc=(0.002, 0.45))

plt.grid(True)

plt.xlabel('# Epoch of actor', fontsize=13)

plt.ylabel('Validation NDCG@100', fontsize=13)

plt.xlim(100, 200)

plt.ylim(0.428, 0.44)

plt.show()

fig.savefig('plot_feature_ablation.pdf', bbox_inches='tight')

print(max(all_tentative_with_both))

print(max(all_without_seen))

print(max(all_without_unseen))

print("Good prevails! without_seen is pretty good, but not as good as with both.")

print("NOTE: I'm only showing 100 epochs of AC-training, because we want ")

# -

| paper_plots/critic_term_ablation_study/plot_which_feature.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# +

#all_slow

# -

# # Tutorial - Text Generation

# > Using the text generation API with AdaptNLP

# ## What is Text Generation?

#

# Text generation is the NLP task of generating a coherent sequence of words, usually from a language model. The current leading methods, most notably OpenAI’s GPT-2 and GPT-3, rely on feeding tokens (words or characters) into a pre-trained language model which then uses this seed data to construct a sequence of text. AdaptNLP provides simple methods to easily fine-tune these state-of-the-art models and generate text for any use case.

#

# Below, we'll walk through how we can use AdaptNLP's `EasyTextGenerator` module to generate text to complete a given string.

# ## Getting Started with `EasyTextGenerator`

#

# We'll get started by importing the `EasyTextGenerator` class from AdaptNLP.

from adaptnlp import EasyTextGenerator

# Then we'll write some sample text to use:

text = "China and the U.S. will begin to"

# And finally instantiating our `EasyTextGenerator`:

generator = EasyTextGenerator()

# ## Generating Text

# Now that we have the generator instantiated, we are ready to load in a model and generate text

# with the built-in `generate()` method.

#

# Here is one example using the gpt2 model:

# +

generated_text = generator.generate(text, model_name_or_path="gpt2", mini_batch_size=2, num_tokens_to_produce=50)

print(generated_text)

# -

# ## Finding Models with the `HFModelHub`

#

# Rather than searching through HuggingFace for models to use, we can use Adapt's `HFModelHub` to search for valid text generation models.

#

# First, let's import it:

from adaptnlp import HFModelHub

# And then search for some models by task:

hub = HFModelHub()

models = hub.search_model_by_task('text-generation'); models

# We'll use our `gpt2` model again:

model = models[4]; model

# And pass it into our `generator`:

# +

generated_text = generator.generate(text, model_name_or_path=model, mini_batch_size=2, num_tokens_to_produce=50)

print(generated_text)

| nbs/09a_tutorial.easy_text_generator.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Select portfolio-id for trades analysis

portfolio_id = "31cb73e1-5c30-4cff-9f23-2a51cce7ca6f"

end_date = '2021-09-14'

# #### imports

# +

import asyncio

import json

import math

import sys

from datetime import date, datetime, timedelta

import iso8601

import matplotlib.pyplot as plt

import nest_asyncio

import numpy as np

import pandas as pd

import pytz

import requests

from dateutil import parser

from IPython.display import HTML, display, Markdown

from liualgotrader.analytics.analysis import (

calc_batch_revenue,

count_trades,

load_trades_by_portfolio,

trades_analysis,

symbol_trade_analytics,

)

from liualgotrader.common import config

from liualgotrader.common.types import DataConnectorType

from pandas import DataFrame as df

from pytz import timezone

from talib import BBANDS, MACD, RSI

from liualgotrader.common.data_loader import DataLoader

# %matplotlib inline

nest_asyncio.apply()

# -

config.data_connector = DataConnectorType.alpaca

end_date = datetime.strptime(end_date, "%Y-%m-%d")

local = pytz.timezone("UTC")

end_date = local.localize(end_date, is_dst=None)

# #### Load batch data

trades = load_trades_by_portfolio(portfolio_id)

trades

if trades.empty:

    assert False, "Empty batch. halting execution."

# ## Display trades in details

minute_history = DataLoader(connector=DataConnectorType.alpaca)

nyc = timezone("America/New_York")

for symbol in trades.symbol.unique():

    symbol_df = trades.loc[trades["symbol"] == symbol]

    start_date = symbol_df["client_time"].min()

    start_date = start_date.replace(hour=9, minute=30, second=0, tzinfo=nyc)

    end_date = end_date.replace(hour=16, minute=0, second=0, tzinfo=nyc)

    cool_down_date = start_date + timedelta(minutes=5)

    try:

        symbol_data = minute_history[symbol][start_date:end_date]

    except Exception:

        print(f"failed loading {symbol} from {start_date} to {end_date} -> skipping!")

        continue

    minute_history_index = symbol_data.close.index.get_loc(

        start_date, method="nearest"

    )

    end_index = symbol_data.close.index.get_loc(

        end_date, method="nearest"

    )

    cool_minute_history_index = symbol_data["close"].index.get_loc(

        cool_down_date, method="nearest"

    )

    open_price = symbol_data.close[cool_minute_history_index]

    plt.plot(

        symbol_data.close[minute_history_index:end_index].between_time(

            "9:30", "16:00"

        ),

        label=symbol,

    )

    plt.xticks(rotation=45)

    d, profit = symbol_trade_analytics(symbol_df, open_price, plt)

    print(f"{symbol} analysis with profit {round(profit, 2)}")

    display(HTML(pd.DataFrame(data=d).to_html()))

    plt.legend()

    plt.show()
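
# The `method="nearest"` lookups in the loop pick the position in the minute index that lies closest to a timestamp. The standard-library sketch below illustrates that idea on a plain sorted list. It is not the pandas implementation: `nearest_index` and the sample bars are assumptions made here, and ties are resolved towards the earlier entry, which may differ from pandas. As far as I know, pandas 2.0 removed the `method` argument from `Index.get_loc`, so on newer versions the lookup would need `Index.get_indexer` instead.

```python
from bisect import bisect_left
from datetime import datetime, timedelta


def nearest_index(sorted_times, target):
    """Return the position of the entry in sorted_times that is closest to target."""
    if not sorted_times:
        raise ValueError("sorted_times must not be empty")
    pos = bisect_left(sorted_times, target)
    if pos == 0:
        return 0
    if pos == len(sorted_times):
        return len(sorted_times) - 1
    before, after = sorted_times[pos - 1], sorted_times[pos]
    # Ties go to the earlier entry in this sketch.
    return pos - 1 if target - before <= after - target else pos


# Hypothetical minute bars with gaps: 9:30, 9:31, 9:32, 9:34, 9:37, 9:38
open_bell = datetime(2021, 9, 14, 9, 30)
bars = [open_bell + timedelta(minutes=m) for m in (0, 1, 2, 4, 7, 8)]
cool_down = open_bell + timedelta(minutes=5)
print(nearest_index(bars, cool_down))
```

# Here no bar exists at 9:35, so the lookup falls back to the 9:34 bar, which is one minute away, rather than the 9:37 bar.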

| analysis/notebooks/portfolio_trades.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

# __future__ imports have to come before any other statement in the cell

from __future__ import print_function

import pandas as pd

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

# %matplotlib inline

# %config InlineBackend.figure_format = 'png'

# -

# %%time

df = pd.read_csv("../data/train.csv")

# 4min

df.columns

# %%time

df.info()

# %%time

# histogram of features

skip_cols = ['date_time','orig_destination_distance', 'user_id']

for col in df.columns:

    if col in skip_cols:

        continue

    print('='*50)

    print('col : ', col)

    f, ax = plt.subplots(figsize=(20, 15))

    sns.countplot(x=col, data=df, alpha=0.5)

    plt.show()

# +

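# site_name: value 2 is overwhelmingly frequent -> what do the frequencies of the others (3, 4, ...) look like?

# posa_continent: value 3 is by far the most common (above 2.5 on the count axis)

# user_location_country: a few specific countries dominate (perhaps countries whose residents travel a lot?) / even the ones that look small here are frequent compared with those that do not appear at all

# user_location_region: likewise, Expedia searches are concentrated in specific regions

# user_location_city: the distribution looks very spiky

# is_mobile: 0 is overwhelmingly more common

# is_package: 0 is overwhelmingly more common

# channel: 9 > 0 > 1 > 2 > 5 > 3 > 4 ....

# srch_ci: the data volume repeats at regular intervals. There may be something about those periods

# srch_co: add a trip-length feature in days (check-out minus check-in)

# adults: almost always 2, otherwise 1 -> there may be a pattern tied to the number of people booking

# children: usually none, and at most 1 or 2

# rm_cnt: almost always 1 room -> this might conversely let me infer the type of hotel

# destination id: there is one sharp spike. Hmm

# destination_type: 1 and 6..?

# is_booking: 0 > 1

# cnt: almost everything seems to be decided within 5 events.. let's find the exact proportion

# hotel continent: 2 is the most common

# hotel market..

# hotel cluster: 100 values from 0 to 99 => is it really an important variable for whether a booking happens?

# user_id: compute users' average booking frequency and split them into above-average and below-average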
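
# The trip-length feature noted for srch_co could look roughly like the sketch below. It assumes that srch_ci and srch_co are strings of the form YYYY-MM-DD, which has not been verified against the data here, and `stay_nights` is a hypothetical helper name.

```python
from datetime import datetime


def stay_nights(srch_ci, srch_co):
    """Nights between check-in and check-out, or None if a date is missing or malformed."""
    try:
        check_in = datetime.strptime(srch_ci, "%Y-%m-%d")
        check_out = datetime.strptime(srch_co, "%Y-%m-%d")
    except (TypeError, ValueError):
        # TypeError covers missing values such as None or NaN, ValueError covers bad strings
        return None
    return (check_out - check_in).days


print(stay_nights("2014-08-27", "2014-08-31"))
print(stay_nights(None, "2014-08-31"))
```

# Returning None for unusable rows keeps them easy to find and count before deciding how to treat them.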

# +

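# If I were planning a trip, I would first search on Expedia -> set the party size, destination, and dates, then search

# -> skim the search results (probably the highest-rated ones first, and Expedia's recommendations from top to bottom)

# -> send the link to friends or whoever is travelling with me

# -> decide on a hotel after discussing it

# -> bundling with a flight gives a discount, so deliberate once more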

# +

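# y : 2015 booking 0 / 1

# If we split the data to build models:

# 1) use the most recent year (2014) of data + exclude low-frequency hotels

# 2) there are probably peak travel seasons, so normalize for them

# 3) Expedia can also be used for business trips rather than leisure -> split those out separately

#

# hotel cluster as defined by Expedia

# Data preprocessing

# First, split datetime into day and month (weekend vs. weekday by month/day; whether the click time is morning, afternoon or early morning)

# Check distance, which has null values

# Check whether the other features have NA values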
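# The datetime split described in the preprocessing notes above could look roughly
# like this. It is only a sketch: the function name, the output column names and
# the hour boundaries for the time-of-day buckets are assumptions, not part of the
# original notebook.

```python
import pandas as pd


def add_click_time_features(df, col="date_time"):
    """Return a copy of df with month, day, weekend flag and time-of-day bucket."""
    out = df.copy()
    ts = pd.to_datetime(out[col], errors="coerce")
    out["month"] = ts.dt.month
    out["day"] = ts.dt.day
    # dayofweek: Monday=0 ... Sunday=6, so 5 and 6 are the weekend.
    out["is_weekend"] = ts.dt.dayofweek >= 5
    # Assumed buckets: [0, 6) early morning, [6, 12) morning, [12, 24) afternoon.
    out["time_of_day"] = pd.cut(
        ts.dt.hour,
        bins=[0, 6, 12, 24],
        right=False,
        labels=["early_morning", "morning", "afternoon"],
    )
    return out


# Toy timestamps (hypothetical): a Saturday night click and two Monday clicks.
clicks = pd.DataFrame({
    "date_time": [
        "2014-08-09 03:15:00",
        "2014-08-11 09:30:00",
        "2014-08-11 18:45:00",
    ]
})
feats = add_click_time_features(clicks)
print(feats)
```

# Rows whose timestamp cannot be parsed end up with NaN features and
# `is_weekend == False`, so it is worth checking for NaT before relying on the flag.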

# +

# %%time

# date_time => convert to datetime type

# (the old `coerce=True` argument was replaced by `errors="coerce"`)

df["date_time"] = pd.to_datetime(df["date_time"], errors="coerce")

# -

# %%time

df_2013 = df[df["date_time"].dt.year == 2013]

# %%time

df_2013.to_csv("train_2013.csv")

# %%time

df_2014 = df[df["date_time"].dt.year == 2014]

df_2014.to_csv("train_2014.csv")

df["date_time"].head().dt.year == 2014

pd.set_option("display.max_columns", 40)

print(pd.get_option("display.max_columns"))

| notebook/01. EDA(all data).ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

import tensorflow as tf

from datetime import datetime

import matplotlib.pyplot as plt

import seaborn as sns

features = pd.read_csv('../Data/training_set_features.csv')

features.describe()

((features.isnull().sum() / len(features)) * 100).sort_values()

# +

categorical_columns = [

'sex',

'hhs_geo_region',

'census_msa',

'race',

'age_group',

'behavioral_face_mask',

'behavioral_wash_hands',

'behavioral_antiviral_meds',

'behavioral_outside_home',

'behavioral_large_gatherings',

'behavioral_touch_face',

'behavioral_avoidance',

'health_worker',

'child_under_6_months',

'chronic_med_condition',

'education',

'marital_status',

'employment_status',

'rent_or_own',

'doctor_recc_h1n1',

'doctor_recc_seasonal',

'income_poverty'

]

numerical_columns = [

'household_children',

'household_adults',

'h1n1_concern',

'h1n1_knowledge',

'opinion_h1n1_risk',

'opinion_h1n1_vacc_effective',

'opinion_h1n1_sick_from_vacc',

'opinion_seas_vacc_effective',

'opinion_seas_risk',

'opinion_seas_sick_from_vacc',

]

# -

features[categorical_columns]

# +

n_bins = 20

fig, axs = plt.subplots(1,2, sharey=True, tight_layout=True)

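# 'race' is categorical, so plot the category counts of the column itself

# (the original call passed the literal string 'race' instead of the data)

features['race'].value_counts().plot(kind='bar', ax=axs[0])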

# -

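# 10 numerical columns -> 2x5 grid; axes.flat iterates over the individual Axes

fig, axes = plt.subplots(2, 5, sharey=True, figsize=(20, 8))

for ax, num_column in zip(axes.flat, numerical_columns):

    # histplot replaces the deprecated distplot

    sns.histplot(features[num_column].dropna(), kde=True, bins=4, ax=ax)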

for x in numerical_columns:

    plt.figure()

    # drop missing values first; hist() cannot bin NaN

    plt.hist(features[x].dropna())

    plt.xlabel(x)

| Notebooks/.ipynb_checkpoints/H1N1 Exploration Notebook-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # New Task - Deployment

#

# Fill in details about the task here.<br>

# ### **If in doubt, consult the [PlatIAgro tutorials](https://platiagro.github.io/tutorials/).**

# ## Class Declaration for Real-Time Predictions

#

# The deployment task creates a REST service for real-time predictions.<br>

# To do so, you must create a `Model` class that implements the `predict` method.

# +

# %%writefile Model.py

# add imports here...

class Model:

    def __init__(self):

        # add your code here...

        pass

    def predict(self, X, feature_names, meta=None):

        # add your code here...

        return X
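
# As an illustration of the `predict` contract described above, here is a
# hypothetical filled-in class. It is only a sketch: the class name, the weights
# and the feature names `area` and `rooms` are invented for the example, and it is
# kept under a different name so that it does not replace the `Model` template.
# It assumes `X` arrives as a list of rows and `feature_names` as the matching
# column names.

```python
class LinearExampleModel:
    """Toy stand-in for a trained model with the same interface as `Model`."""

    def __init__(self):
        # Hypothetical fixed parameters; a real task would load a trained artifact.
        self.weights = {"area": 2.0, "rooms": 10.0}
        self.bias = 1.0

    def predict(self, X, feature_names, meta=None):
        predictions = []
        for row in X:
            # Pair each value with its feature name so column order does not matter.
            named = dict(zip(feature_names, row))
            score = self.bias + sum(
                self.weights.get(name, 0.0) * float(value)
                for name, value in named.items()
            )
            predictions.append([score])
        return predictions


model = LinearExampleModel()
result = model.predict([[50, 3], [80, 4]], feature_names=["area", "rooms"])
print(result)
```

# Features that are not in `weights` contribute nothing, so extra columns are
# ignored rather than raising an error.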

| projects/config/Deployment.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + _uuid="599c85b07f91bac2ad212af1ea3b4edfc66d2bff"

import pandas as pd

from sklearn.metrics import mean_absolute_error

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestRegressor

from sklearn.tree import DecisionTreeRegressor

from xgboost import XGBRegressor

from learntools.core import *

iowa_file_path = '../input/train.csv'

iowa_test_file_path = '../input/test.csv'

train_data = pd.read_csv(iowa_file_path)

test_data = pd.read_csv(iowa_test_file_path)

y = train_data.SalePrice

train_features = train_data.drop(['SalePrice'], axis = 1)

# + [markdown] _uuid="1cf1ffebcb4a95f15112e2e7750f526baa39fa30"

# # Missing and categorical values

# + _uuid="9760b349094ff1d874615efe613d8cd652be18f0"

# fill in missing numeric values

from sklearn.impute import SimpleImputer

# impute

train_data_num = train_features.select_dtypes(exclude=['object'])

test_data_num = test_data.select_dtypes(exclude=['object'])

imputer = SimpleImputer()

train_num_cleaned = imputer.fit_transform(train_data_num)

test_num_cleaned = imputer.transform(test_data_num)

# columns rename after imputing

train_num_cleaned = pd.DataFrame(train_num_cleaned)

test_num_cleaned = pd.DataFrame(test_num_cleaned)

train_num_cleaned.columns = train_data_num.columns

test_num_cleaned.columns = test_data_num.columns

# + _uuid="4898e1945a5208161a6fcf0e23571bcd06d811b0"

# string columns: transform to dummies

train_data_str = train_data.select_dtypes(include=['object'])

test_data_str = test_data.select_dtypes(include=['object'])

train_str_dummy = pd.get_dummies(train_data_str)

test_str_dummy = pd.get_dummies(test_data_str)

train_dummy, test_dummy = train_str_dummy.align(test_str_dummy,

                                                join = 'left',

                                                axis = 1)
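
# A small sketch of what the left-join `align` above does to dummy columns. The toy
# frames and their values are hypothetical. A dummy column that exists only in
# train comes back all-NaN in test, and columns that exist only in test are
# dropped. Passing `fill_value=0` is one possible alternative to dropping the NaN
# columns later; it is not what this notebook does.

```python
import pandas as pd

# Toy string columns: 'Grvl' never occurs in test, 'Alley' never occurs in train.
train_str = pd.DataFrame({"Street": ["Pave", "Grvl", "Pave"]})
test_str = pd.DataFrame({"Street": ["Pave", "Pave"], "Alley": ["Grvl", "Pave"]})

train_dummy_demo = pd.get_dummies(train_str)
test_dummy_demo = pd.get_dummies(test_str)

# join='left' keeps exactly the train columns, in train order.
train_aligned, test_aligned = train_dummy_demo.align(
    test_dummy_demo, join="left", axis=1
)
print(test_aligned.isnull().all())

# Alternative: fill the columns missing from test with 0 instead of NaN.
_, test_filled = train_dummy_demo.align(
    test_dummy_demo, join="left", axis=1, fill_value=0
)
print(test_filled)
```

# With `fill_value=0` the category stays available to the model as "never seen in
# test" instead of being removed from both tables.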

# + _uuid="5af3d1c3cdfe4206bef38e7b1c8ce497dfae9e52"

# make sure the imputed numeric tables are pandas DataFrames (already converted above, so this is a no-op)

train_num_cleaned = pd.DataFrame(train_num_cleaned)

test_num_cleaned = pd.DataFrame(test_num_cleaned)

# + _uuid="f1e90fe32706f43ac1d5ea7af242b999703945c2"

# joining numeric (after imputing) and string (converted to dummy) data

train_all_clean = pd.concat([train_num_cleaned, train_dummy], axis = 1)

test_all_clean = pd.concat([test_num_cleaned, test_dummy], axis = 1)

# + _uuid="e9d746b010eb0fdc602de346056144768bb83705"

# detect NaN in already cleaned test data

# (there could be completely empty columns in test data)

cols_with_missing = [col for col in test_all_clean.columns

                     if test_all_clean[col].isnull().any()]

for col in cols_with_missing:

    print(col, test_all_clean[col].isnull().any())

# + _uuid="0a271653aa668a52844a4c56f3195b681fa76d1d"

# since there are empty columns in test we need to drop them in train and test data

train_all_clean_no_nan = train_all_clean.drop(cols_with_missing, axis = 1)

test_all_clean_no_nan = test_all_clean.drop(cols_with_missing, axis = 1)

# + [markdown] _uuid="c3221390f01274b7f875e87abbac46dda8f0c04c"

# # Different models training and validation

# + _uuid="015f66e0c1655124079a8d88d1e00a5399f5e543"

train_X, val_X, train_y, val_y = train_test_split(train_all_clean_no_nan, y, random_state=1)

# default XGBoost

xgb_model = XGBRegressor(random_state = 1)

xgb_model.fit(train_X, train_y, verbose = False)

xgb_predictions = xgb_model.predict(val_X)

xgb_mae = mean_absolute_error(val_y, xgb_predictions)

print("XGBoost MAE default: {:,.0f}".format(xgb_mae))

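# fine tuned XGBoost

# (recent XGBoost releases take early_stopping_rounds in the constructor; older releases accepted it in fit())

xgb_model = XGBRegressor(n_estimators = 1000, learning_rate=0.05, random_state = 1, early_stopping_rounds = 25)

xgb_model.fit(train_X, train_y, eval_set = [(val_X, val_y)], verbose = False)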

# with verbose = True we have the best iteration on step 757 => n_estimators = 757

# + _uuid="cf6b8944b3c6092e3a544087b2c7caf500ee3b3c"

xgb_predictions = xgb_model.predict(val_X)

xgb_mae = mean_absolute_error(val_y, xgb_predictions)

print("XGBoost MAE tuned: {:,.0f}".format(xgb_mae))

# + [markdown] _uuid="f7c47011756dd4125ac584cbac751ae5e0d8509f"

# # XGBoost Model (on all training data)

# + _uuid="c7b21de27dd3cc2d79ba9c361789d30a0a8f64ee"

# To improve accuracy, create a new XGBoost model and train it on all training data

xgb_model_on_full_data = XGBRegressor(n_estimators = 757, learning_rate=0.05)

xgb_model_on_full_data.fit(train_all_clean_no_nan, y)

# + [markdown] _uuid="294a788b8c675e0e42a16d60b41a9846d99f7ae8"

# # Make Predictions

# + _uuid="46cd68a096beb5b969ef7e663f89f4bb22a7b7ad"

test_X = test_all_clean_no_nan

test_preds = xgb_model_on_full_data.predict(test_X)

# + _uuid="e3d6c83e2b612cd6a085c37e504f7e8125eb1fbc"

output = pd.DataFrame({'Id': test_data.Id,

                       'SalePrice': test_preds})

output.to_csv('submission.csv', index=False)

| machine learning/housing-xgboost-tuned-on-cleaned-data.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="A_5C1IvdfxkF"

# # pyhpc-benchmarks @ Google Colab

#

# To run all benchmarks, you need to switch the runtime type to match the corresponding section (CPU, TPU, GPU).

# + id="TTViNK-9OfRJ"

# !rm -rf pyhpc-benchmarks; git clone https://github.com/dionhaefner/pyhpc-benchmarks.git

# + id="Eyc45XkjQB1X"

# %cd pyhpc-benchmarks

# + id="RbM7XH04MwFA"

# check CPU model