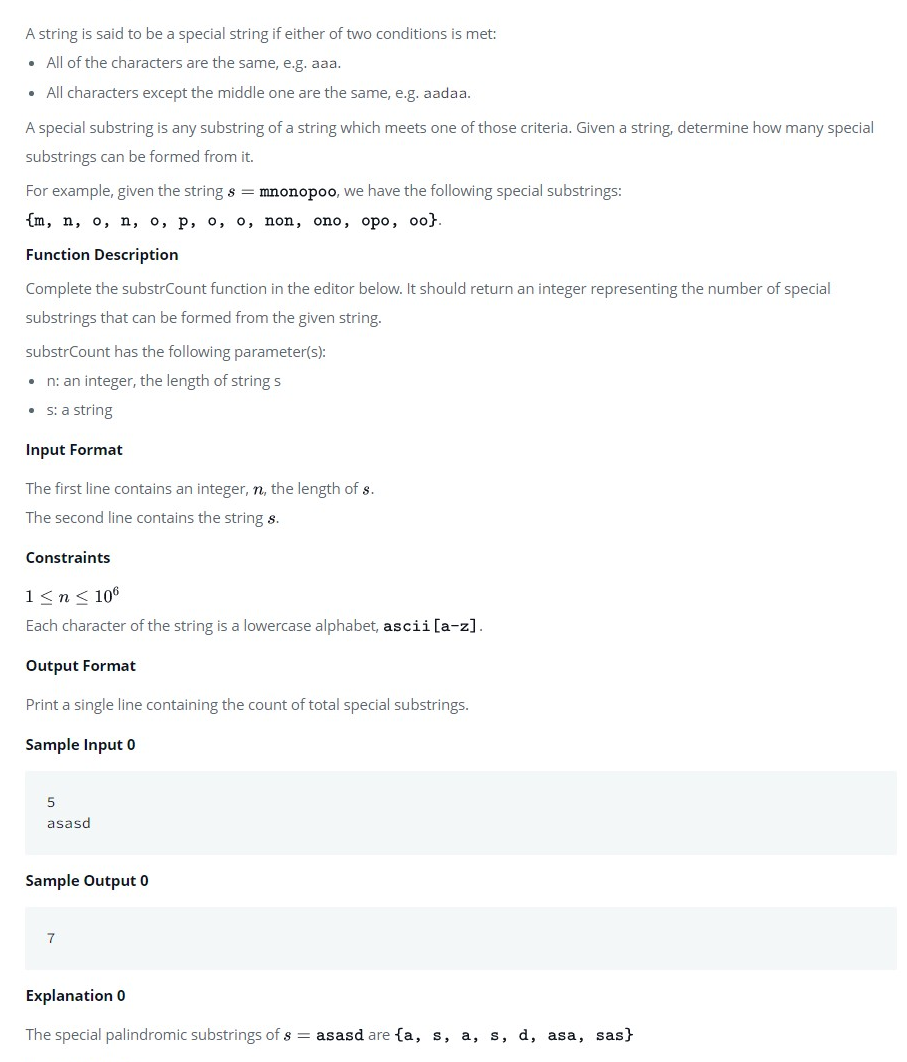

code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# # Train your own skip-thoughts model

#

# This notebook walks you through training a skip-thoughts model. It was used with semi-success to train a skip-thoughts model on a gpu-backed machine with the Pythia kernel against the entire stackexchange corpus. It was prepared over the course of 8 days, and isn't perfect.

#

# The first section will require the user to make several data paths.

#

# The second section requires importing several modules, including pythia and skip-thoughts-specific modules, which may depend on python paths being set correctly. It may need some tweaking.

#

# Since the Jupyter notebook on the gpu-backed machines was unreliable, I moved some sections of this notebook into small dirty python files in a folder called skip-thoughts_training.

#

# There is some subtlety about what a skip-thoughts model is. The model provided with the skip-thoughts paper was actually trained three times: once on its corpus, again on the same corpus with half as many dimensions, and a third time on the corpus with the sentences in reversed order, again with half as many dimensions. The results are then concatenated.

#

# We don't attempt this. We merely train a single "uni-skip" model.

#

# The encode function found in the file skipthoughts.py insists on two models, uni-skip and bi-skip. To encode with a single uni-skip model, the encode function found in the tools.py file is needed. Unfortunately, it tends to crash badly when I use it.

#

# The last part of the code is supposed to be validation. Since my encoding is broken, validation is not tested.

#

# Because things kept dying on me, I found it convenient to write to disk near constantly. This slows things down, but makes them more robust against dying computers.

#

# The cells thus tend to use little memory and instead read and write in a streaming fashion, using a lot of time instead.

#

#

# ## Hard-coding data paths

#

# Mostly this notebook should just run. It however requires the user to deal with one of the next 2 cells. The following locations will not work out of the box and are just suggestions.

#

# #### Location of the data

#

# `sample_location` should be the path to a directory which contains your data. Each file should contain json-parsable lines. The directory can have subdirectories. The code will recursively find the files. There should be no `.json` files anywhere in the directory except those the code wishes to parse.

#

# `path_to_word2vec` is a `.bin` word2vec file the code depends on, e.g. the Google News model found at https://code.google.com/archive/p/word2vec/

#

# #### Where to put output

#

# `parsed_data_location` is a directory of `.csv` files the code will create, structured the same as `sample_location`, but where the sentences have been normalized and tokenized, and where each file represents a post.

#

# `training_data_location` is the name of a file that will store the sentences in a single file, one per line, with null characters separating blog posts.

#

# `vocab_location` should be the name of a pickle file (including path), which will store information about the words in the corpus

#

# `model_location` should be the name of a .npz (zipped numpy) file (including path), which will store the model itself as a numpy array. The code will also create a .npz.pkl file with the same name containing some metadata.

#

#

#

#

sample_location = 'pythia/data/stackexchange/all'

path_to_word2vec = 'outside-data/stackexchange/models/word2vecAnime.bin'

parsed_data_location = 'outside-results/testing'

training_data_location = 'outside-results/testing/training.txt'

vocab_location = 'outside-data/stackexchange/models/vocab.pickle'

model_location = 'outside-data/stackexchange/models/corpus.npz'
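# As a quick sanity check on `sample_location`, something like the following sketch lists the files the parser would pick up. The helper name `find_json_files` is hypothetical (it is not part of pythia); it only illustrates the recursive search described above.

```python
import os


def find_json_files(root_dir, extension=".json"):
    """Return the sorted paths of all files under root_dir ending in extension."""
    matches = []
    for root, dirs, files in os.walk(root_dir):
        for name in files:
            if name.endswith(extension):
                # os.walk yields root already prefixed with root_dir,
                # so only root and the file name are joined here.
                matches.append(os.path.join(root, name))
    return sorted(matches)
```

# Calling `find_json_files(sample_location)` before parsing shows whether any stray `.json` files are hiding in the directory tree.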

# ## Let's import some modules!

# Import auxiliary modules

import os

import json

import numpy

import csv

import sys

import random

# Import theano

import theano

import theano.tensor as tensor

# May need to set the flag if your .theanorc isn't correct.

# If you want to run on gpu, you should fix your .theanorc

# and make this cell irrelevant

theano.config.floatX = 'float32'

# Double check that floatX is float 32

# device should be either cpu or gpu, as desired.

print(theano.config.floatX)

print(theano.config.device)

# So this next cell is maybe bad. The notebook only runs if your paths are all configured right. You may need to adjust the below cell to import pythia/skipthoughts models.

#

# The commented-out lines were what I used to make this work on my own computer without any adjustments to my notebook kernel. I *hope* this will just work with the pythia kernel installed.

# Import skipthoughts modules

#sys.path.append('/Users/chrisn/mad-science/pythia/src/featurizers/skipthoughts')

#from training import vocab, train, tools

#import skipthoughts

from src.featurizers.skipthoughts import skipthoughts

from src.featurizers.skipthoughts.training import vocab, train, tools

# Import pythia modules

#sys.path.append('/Users/chrisn/mad-science/pythia/')

from src.utils import normalize, tokenize

# For evaluation purposes, import some sklearn modules

from sklearn.linear_model import LinearRegression

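# sklearn.cross_validation was removed in newer scikit-learn releases;
# train_test_split now lives in sklearn.model_selection
from sklearn.model_selection import train_test_split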

import pandas

import warnings

warnings.filterwarnings('ignore')

# Because there were a lot of annoying warnings.

# The Beautiful Soup module as used in the pythia normalization is mad about something

# And the skip-thoughts code is full of deprecation warnings about how numpy works. The warnings can crash my system

# ## Tokenization and normalization

#

# Who knows the best way to do this? I tried to match the expectations of both the skip-thoughts code and the pythia codebase as best I could.

#

# For each document:

#

# 1) Make list of sentences. We use utils.tokenize.punkt_sentences

#

# 2) Normalize each sentence. Remove html and make everything lower-case. We use utils.normalize.xml_normalize

#

# 3) Tokenize each sentence. Now each sentence is a string of space-separated tokens. We use utils.tokenize.word_punct_tokens and rejoin the tokens.

#

# Because I had so many difficulties with things crashing, I was happy whenever I got anything done and wanted to save where I was. I also became gunshy about using memory. The solution below is thus entirely streaming. This slows it down because of file i/o.

#

# The output of this section run on the entire stackexchange corpus can be found in <...>/stackexchange_models/se_posts_parsed.tar.gz.

#

# (Well, the tarring was done in the shell. This cell just creates a directory.)

#

# The section requires previously set variables: `sample_location` for the input and `parsed_data_location` for the output.
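# To make the three steps concrete without the pythia utilities, here is a minimal sketch using only the standard library. The regular expressions are naive stand-ins of my own, not the behaviour of `punkt_sentences`, `xml_normalize`, or `word_punct_tokens`, and all function names are hypothetical.

```python
import re


def split_sentences(text):
    """Naive stand-in for a sentence splitter: break after '.', '!' or '?'."""
    parts = re.split(r'(?<=[.!?])\s+', text.strip())
    return [part for part in parts if part]


def normalize_sentence(sentence):
    """Naive stand-in for normalization: replace tags with spaces, lower-case."""
    return re.sub(r'<[^>]+>', ' ', sentence).lower()


def tokenize_sentence(sentence):
    """Split into runs of word characters or runs of punctuation."""
    return re.findall(r'\w+|[^\w\s]+', sentence)


def parse_document(text):
    """Return one string of space-separated tokens per sentence."""
    return [' '.join(tokenize_sentence(normalize_sentence(sentence)))
            for sentence in split_sentences(text)]
```

# For example, `parse_document("Hello, World! <b>Is</b> this OK?")` gives `['hello , world !', 'is this ok ?']`.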

file_extension = ".json"

# Instead of trying to parse in memory, can instead parse line by line and write to disk

fieldnames = ["body_text", "post_id","cluster_id", "order", "novelty"]

for root, dirs, files in os.walk(sample_location):
    for doc in files:
        if doc.endswith(file_extension):  # Recursively find all .json files
            # root already starts with sample_location, so join only root and doc
            for line in open(os.path.join(root, doc)):
                temp_dict = json.loads(line)
                post_id = temp_dict['post_id']
                text = temp_dict['body_text']
                sentences = tokenize.punkt_sentences(text)
                normal = [normalize.xml_normalize(sentence)
                          for sentence in sentences]
                tokens = [' '.join(tokenize.word_punct_tokens(sentence))
                          for sentence in normal]
                base_doc = doc.split('.')[0]
                output_filename = "{}_{}.csv".format(base_doc, post_id)
                # Creates one output file per line of input file.
                # Output file includes post id in name:
                # {clusterid}_{postid}.csv
                rel_path = os.path.relpath(root, sample_location)
                output_path = os.path.join(parsed_data_location,
                                           rel_path,
                                           output_filename)
                os.makedirs(os.path.dirname(output_path), exist_ok=True)
                with open(output_path, 'w') as token_file:
                    #print(parsed_data_location,rel_path,output_filename)
                    writer = csv.DictWriter(token_file, fieldnames)
                    writer.writeheader()
                    output_dict = temp_dict
                    # One row per sentence; the other fields repeat the post's metadata
                    for token in tokens:
                        output_dict['body_text'] = token
                        writer.writerow(output_dict)

# ## Reformat to match skip-thoughts code input

#

# `tokenized` is now a list of lists. Each inner list represents a document as a list of strings, where each string represents a sentence.

#

# ### An annoying issue

#

# The trainer expects a list of sentences. To match expectations, those inner brackets need to disappear.

#

# However, this then looks like we have one real long document where the documents have been smashed together in arbitrary order. And the training will mistake the first sentence of one document as being part of the context of the last sentence of another. For sufficiently long documents, you can argue this is just noise. For documents that are themselves only a few sentences, this seems like too much noise.

#

# My kludgy fix is to introduce a sentence consisting of a single null character `'\0'` and add this sentence between every document when concatenating. This may have unintended side-effects.

#

# As above, this notebook doesn't depend on much memory. The next cell does not assume you have `tokenized` stored and thus asks you to read it back in. I found this more convenient in the end.

#

# The cell depends on previously defined variables `parsed_data_location` and `training_data_location` for input and output respectively.
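# The flattening idea on its own, as a small in-memory sketch. The function name `flatten_with_separator` is hypothetical; the notebook's streaming cell does the same thing while reading from and writing to disk.

```python
DOC_SEPARATOR = '\0'


def flatten_with_separator(docs, separator=DOC_SEPARATOR):
    """Flatten a list of documents (lists of sentences) into one list,
    appending a separator sentence after each document."""
    sentences = []
    for doc in docs:
        sentences.extend(doc)
        sentences.append(separator)
    return sentences
```

# With two documents `[['a', 'b'], ['c']]` this yields `['a', 'b', '\0', 'c', '\0']`, so no sentence sits directly next to a sentence from another document.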

doc_separator = '\0'

# This cell does three things

# Writes sentences to a text file one line per sentence, with the null character separating documents.

# Stores all sentences into a list

# Stores the cluster_ids into a numpy array. Each sentence gets the cluster_id of its post. So the list and numpy array

# are the same length.

sentences = []

cluster_ids = []

with open(training_data_location, 'w') as outfile:
    for root, dirs, files in os.walk(parsed_data_location):
        for doc in files:
            if doc.endswith('.csv'):
                for line in csv.DictReader(open(os.path.join(root, doc))):
                    outfile.write(line['body_text'] + '\n')
                    sentences.append(line['body_text'])
                    cluster_ids.append(int(line['cluster_id']))
                # Mark the end of the document in the file, the list and the ids,
                # so that sentences and cluster_ids stay the same length
                outfile.write(doc_separator + '\n')
                sentences.append(doc_separator)
                cluster_ids.append(-1)

cluster_ids = numpy.array(cluster_ids)

# ## Build the skip-thoughts training dictionaries

#

# These are pretty basic things about the whole corpus required by the skip-thoughts code.

#

# wordcount is a dictionary of wordcounts, ordered by the order the words appear in the sentences. worddict is a dictionary of the same words, with values corresponding to their rank in the count, ordered by rank in the count.
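# To illustrate what these two dictionaries look like, here is a minimal sketch built from the description above. It is not the code of `vocab.build_dictionary`; the function name is hypothetical, ranks here simply start at 0, and the real implementation may offset them to reserve indices for special tokens.

```python
from collections import OrderedDict


def build_counts_and_ranks(sentences):
    """Return (worddict, wordcount) for whitespace-tokenized sentences."""
    # wordcount: word -> number of occurrences, in order of first appearance
    wordcount = OrderedDict()
    for sentence in sentences:
        for word in sentence.split():
            wordcount[word] = wordcount.get(word, 0) + 1
    # sorted() is stable, so words with equal counts keep their order of appearance
    ranked = sorted(wordcount, key=lambda word: -wordcount[word])
    # worddict: word -> rank by count, ordered by that rank
    worddict = OrderedDict((word, rank) for rank, word in enumerate(ranked))
    return worddict, wordcount
```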

# Can skip this cell if sentences is still in memory

sentences = [x.strip() for x in open(training_data_location).readlines()]

len(sentences)

# wordcount: the count of each word, ordered by first appearance in the text

# worddict: the same words mapped to their rank by count

worddict, wordcount = vocab.build_dictionary(sentences)

vocab.save_dictionary(worddict, wordcount, vocab_location)

# ## Training a model

#

# #### First set parameters

#

# Definitely set:

# * saveto: a path where the model will be periodically saved

# * dictionary: where the dictionary is.

#

# Both these should have been previously set as `model_location` and `vocab_location` respectively.

#

# Consider tuning:

# * dim_word: the dimensionality of the RNN word embeddings (Default 620)

# * dim: the size of the hidden state (Default 2400)

# * max_epochs: the total number of training epochs (Default 5)

#

# * decay_c: weight decay hyperparameter (Default 0, i.e. ignored)

# * grad_clip: gradient clipping hyperparameter (Default 5)

# * n_words: the size of the decoder vocabulary (Default 20000)

# * maxlen_w: the max number of words per sentence. Sentences longer than this will be ignored (Default 30)

# * batch_size: size of each training minibatch (roughly) (Default 64)

# * saveFreq: save the model after this many weight updates (Default 1000)

#

# Other options:

# * displayFreq: display progress after this many weight updates (Default 1)

# * reload_: whether to reload a previously saved model (Default False)

#

# #### Some observations on parameters

#

# The default displayFreq is 1. Which seems low. It means every iteration prints something. It seems excessive. I suggest 100.

#

# As long as the computer can handle it in memory, a bigger batch size seems better all around. I am trying 256.

#

# A good chunk of stackexchange sentences seemed to be at least 30 tokens. I am changing that setting to 40.
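# One way to put a number on that last observation is to measure how many sentences a given `maxlen_w` would drop. The sketch below assumes whitespace-separated tokens and that a sentence is dropped when it has strictly more than `maxlen_w` tokens; the exact boundary used by the trainer is an assumption, and the function name is hypothetical.

```python
def fraction_longer_than(sentences, maxlen_w):
    """Return the fraction of sentences with more than maxlen_w tokens."""
    if not sentences:
        return 0.0
    too_long = sum(1 for sentence in sentences if len(sentence.split()) > maxlen_w)
    return too_long / len(sentences)
```

# Comparing `fraction_longer_than(sentences, 30)` with `fraction_longer_than(sentences, 40)` gives a rough idea of how much data each setting discards.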

# Using a small set of parameters for testing

params = dict(

saveto = model_location,

dictionary = vocab_location,

n_words = 1000,

dim_word = 100,

dim = 500,

max_epochs = 1,

saveFreq = 100,

)

train.trainer(sentences,**params)

# ## Encoding sentences

#

# The model created doesn't quite fit into the pipeline, because it is a "uni-skip" model, not a "combine skip" model. The pipeline uses skipthoughts.encode, which requires very particularly formatted models.

#

# The model built above instead works with the encode function found in the tools module.

#

# Except that this function often breaks.

#

# I have not trained a "combine-skip model". The model here is the equivalent of `utable.npy`.

#

# One would still need to train a `btable.npy` equivalent. A btable is created by training a model with half the dimension, reversing the sentences and training again, then concatenating the two models into btable. I have not done this and may be missing some subtlety.
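# As a rough picture of the concatenation only (not of the training), the sketch below joins per-sentence vectors from three hypothetical encoders using plain Python lists. The function name and the toy dimensions are assumptions for illustration, not part of the skip-thoughts code.

```python
def combine_skip(uni_vector, forward_vector, backward_vector):
    """Concatenate a uni-skip vector with the two half-size bi-skip halves."""
    # bi-skip: forward-order encoding followed by reversed-order encoding
    bi_vector = list(forward_vector) + list(backward_vector)
    # combine-skip: uni-skip followed by bi-skip
    return list(uni_vector) + bi_vector


# Toy sizes: a 4-dimensional uni-skip vector and two 2-dimensional halves
combined = combine_skip([0.0] * 4, [1.0] * 2, [2.0] * 2)
print(len(combined))
```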

# This cell requires hardcoded paths in tools.py to be changed. It should perhaps also be fixed to not depend

# on hardcoded paths.

embed_map = tools.load_googlenews_vectors(path_to_word2vec)

model = tools.load_model(embed_map)

# Having a lot of trouble getting this line to not crash. It causes a "floating point exception".

tools.encode(model,sentences)

# ## How to evaluate?

#

# Supervised task. Apply cluster_id as label to each sentence. Run regression. Evaluate performance.

#

# Since there is so much stackexchange data, a random sample may be sufficient. So choose a percentage of the data to sample from. That sample will then get divided into training and testing.

# +

evaluation_percent = 1 # Choose a subsample of the data

holdout_percent = 0.5 # Of that subsample, make this amount training data

# and the rest testing data

# e.g. 1,000,000 sentences. evaluation_percent = 0.1,

# holdout_percent = 0.8 --

# Choose 100,000 sentences.

# Then choose 80,000 of those for training

# and 20,000 of those for testing.

# -
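# The arithmetic in the comment above can be checked with a small sketch. The function name `split_sizes` is hypothetical, and it rounds rather than truncating with `int` as the sampling cell does, which is an assumption made to avoid floating-point surprises.

```python
def split_sizes(num_sentences, evaluation_percent, holdout_percent):
    """Return (num_samples, num_train, num_test) for the two-stage split."""
    # Stage 1: subsample a fraction of all sentences for evaluation
    num_samples = round(evaluation_percent * num_sentences)
    # Stage 2: holdout_percent of that subsample is used for training
    num_train = round(holdout_percent * num_samples)
    return num_samples, num_train, num_samples - num_train


print(split_sizes(1000000, 0.1, 0.8))
```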

# Read in the sentences if they are not already in memory.

sentences = [x.strip() for x in open(training_data_location).readlines()]

num_sentences = len(sentences)

# Read in cluster ids, again if not already in memory.

cluster_ids = []

for root, dirs, files in os.walk(parsed_data_location):
    for doc in files:
        if doc.endswith('.csv'):
            for line in csv.DictReader(open(os.path.join(root, doc))):
                cluster_ids.append(int(line['cluster_id']))
            cluster_ids.append(-1)

cluster_ids = numpy.array(cluster_ids)

# Sanity check. Should be true.

num_sentences == len(cluster_ids)

# Sample a percentage of your data specified above as evaluation_percent.

indices = numpy.arange(num_sentences)

num_samples = int(evaluation_percent * num_sentences)

index_sample = numpy.sort(numpy.random.choice(indices,

size=num_samples,

replace = False))

sample_sentences = [sentences[i] for i in index_sample]

sample_clusters = cluster_ids[index_sample]

# Broken!!!

# This section requires the encodings of the previous section. But...

#encodings = tools.encode(model, sample_sentences)

encodings = numpy.random.rand(num_samples,10)

#Since I can't get encodings to actually work, have some random numbers.

# From this point forward, the code is not well-tested because I couldn't get the encode function to work.

encoding_train, encoding_test, cluster_train, cluster_test = train_test_split(encodings,

sample_clusters,

train_size = holdout_percent)

regression = LinearRegression()

regression.fit(encoding_train, cluster_train)

regression.predict(encoding_test)

regression.score(encoding_test, cluster_test)

# ## The end.

#

# This is the end of the notebook. Below is an alternative approach. Not as well tested.

# ## An in-memory approach.

#

# Because everything kept crashing on me, I ultimately found it most convenient to do everything in a streaming fashion with a lot of writing to disk at every stage. This is obviously slower than desirable. Basically I do a thing, write out the results, read the results back in, then do the next thing.

#

# Below is an in-memory approach that reads everything into memory and pushes forward, still sometimes saving key steps to disk, but without any rereading in. Because of various technical issues, this code has never been tested at scale. It works on the anime dataset.

doc_dicts = [json.loads(line)

for root,dirs,files in os.walk(sample_location)

for doc in files

for line in open(os.path.join(root,doc))

]

# doc_dicts is a list of dictionaries, each containing document data

# In the anime sample, the text is labeled 'body_text'

# There is a field cluster_id which we will use as the categorical label

cluster_ids = [d['cluster_id'] for d in doc_dicts]

docs = [d['body_text'] for d in doc_dicts]

del(doc_dicts) # For efficiency

# Make list of sentences for each doc

sentenced = [tokenize.punkt_sentences(doc) for doc in docs]

# Normalize each sentence

normalized = [[normalize.xml_normalize(sentence) for sentence in doc] for doc in sentenced]

del(sentenced) #If you're done with it

#Tokenize each sentence

tokenized = [[' '.join(tokenize.word_punct_tokens(sentence)) for sentence in doc] for doc in normalized]

separated = sum(zip(tokenized,[[doc_separator]]*len(tokenized)),tuple())

sentences = sum(separated,[])


# This leaves you with the sentences object in memory, leaving you ready to build the skip-thoughts training dictionaries.

| src/examples/train-skip-thoughts.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# # 02 - Introduction to Python for Data Analysis

#

# by [<NAME>](albahnsen.com/)

#

# version 0.2, May 2016

#

# ## Part of the class [Machine Learning for Risk Management](https://github.com/albahnsen/ML_RiskManagement)

#

#

# This notebook is licensed under a [Creative Commons Attribution-ShareAlike 3.0 Unported License](http://creativecommons.org/licenses/by-sa/3.0/deed.en_US). Special thanks goes to [<NAME>er](http://www.cs.sandia.gov/~rmuller/), Sandia National Laboratories

# ## Why Python?

# Python is the programming language of choice for many scientists to a large degree because it offers a great deal of power to analyze and model scientific data with relatively little overhead in terms of learning, installation or development time. It is a language you can pick up in a weekend, and use for the rest of your life.

#

# The [Python Tutorial](http://docs.python.org/3/tutorial/) is a great place to start getting a feel for the language. To complement this material, I taught a [Python Short Course](http://www.wag.caltech.edu/home/rpm/python_course/) years ago to a group of computational chemists during a time that I was worried the field was moving too much in the direction of using canned software rather than developing one's own methods. I wanted to focus on what working scientists needed to be more productive: parsing output of other programs, building simple models, experimenting with object oriented programming, extending the language with C, and simple GUIs.

#

# I'm trying to do something very similar here, to cut to the chase and focus on what scientists need. In the last year or so, the [Jupyter Project](http://jupyter.org) has put together a notebook interface that I have found incredibly valuable. A large number of people have released very good IPython Notebooks that I have taken a huge amount of pleasure reading through. Some ones that I particularly like include:

#

# * <NAME> [A Crash Course in Python for Scientists](http://nbviewer.jupyter.org/gist/rpmuller/5920182)

# * <NAME>'s [excellent notebooks](http://jrjohansson.github.io/), including [Scientific Computing with Python](https://github.com/jrjohansson/scientific-python-lectures) and [Computational Quantum Physics with QuTiP](https://github.com/jrjohansson/qutip-lectures) lectures;

# * [XKCD style graphs in matplotlib](http://nbviewer.ipython.org/url/jakevdp.github.com/downloads/notebooks/XKCD_plots.ipynb);

# * [A collection of Notebooks for using IPython effectively](https://github.com/ipython/ipython/tree/master/examples/notebooks#a-collection-of-notebooks-for-using-ipython-effectively)

# * [A gallery of interesting IPython Notebooks](https://github.com/ipython/ipython/wiki/A-gallery-of-interesting-IPython-Notebooks)

#

# I find Jupyter notebooks an easy way both to get important work done in my everyday job, as well as to communicate what I've done, how I've done it, and why it matters to my coworkers. In the interest of putting more notebooks out into the wild for other people to use and enjoy, I thought I would try to recreate some of what I was trying to get across in the original Python Short Course, updated by 15 years of Python, Numpy, Scipy, Pandas, Matplotlib, and IPython development, as well as my own experience in using Python almost every day of this time.

# ## Why Python for Data Analysis?

#

# - Python is great for scripting and applications.

# - The `pandas` library offers improved library support.

# - Scraping, web APIs

# - Strong High Performance Computation support

# - Load balancing tasks

# - MPI, GPU

# - MapReduce

# - Strong support for abstraction

# - Intel MKL

# - HDF5

# - Environment

# ## But we already know R

#

# ...Which is better? Hard to answer

#

# http://www.kdnuggets.com/2015/05/r-vs-python-data-science.html

#

# http://www.kdnuggets.com/2015/03/the-grammar-data-science-python-vs-r.html

#

# https://www.datacamp.com/community/tutorials/r-or-python-for-data-analysis

#

# https://www.dataquest.io/blog/python-vs-r/

#

# http://www.dataschool.io/python-or-r-for-data-science/

# ## What You Need to Install

#

# There are two branches of current releases in Python: the older-syntax Python 2, and the newer-syntax Python 3. This schizophrenia is largely intentional: when it became clear that some non-backwards-compatible changes to the language were necessary, the Python dev-team decided to go through a five-year (or so) transition, during which the new language features would be introduced and the old language was still actively maintained, to make such a transition as easy as possible.

#

# Nonetheless, I'm going to write these notes with Python 3 in mind, since this is the version of the language that I use in my day-to-day job, and am most comfortable with.

#

# With this in mind, these notes assume you have a Python distribution that includes:

#

# * [Python](http://www.python.org) version 3.5;

# * [Numpy](http://www.numpy.org), the core numerical extensions for linear algebra and multidimensional arrays;

# * [Scipy](http://www.scipy.org), additional libraries for scientific programming;

# * [Matplotlib](http://matplotlib.sf.net), excellent plotting and graphing libraries;

# * [IPython](http://ipython.org), with the additional libraries required for the notebook interface.

# * [Pandas](http://pandas.pydata.org/), Python version of R dataframe

# * [scikit-learn](http://scikit-learn.org), Machine learning library!

#

# A good, easy to install option that supports Mac, Windows, and Linux, and that has all of these packages (and much more) is the [Anaconda](https://www.continuum.io/) distribution.

# ### Checking your installation

#

# You can run the following code to check the versions of the packages on your system:

#

# (in IPython notebook, press `shift` and `return` together to execute the contents of a cell)

# +

import sys

print('Python version:', sys.version)

import IPython

print('IPython:', IPython.__version__)

import numpy

print('numpy:', numpy.__version__)

import scipy

print('scipy:', scipy.__version__)

import matplotlib

print('matplotlib:', matplotlib.__version__)

import pandas

print('pandas:', pandas.__version__)

import sklearn

print('scikit-learn:', sklearn.__version__)

# -

# # I. Python Overview

# This is a quick introduction to Python. There are lots of other places to learn the language more thoroughly. I have collected a list of useful links, including ones to other learning resources, at the end of this notebook. If you want a little more depth, [Python Tutorial](http://docs.python.org/2/tutorial/) is a great place to start, as is Zed Shaw's [Learn Python the Hard Way](http://learnpythonthehardway.org/book/).

#

# The lessons that follow make use of the IPython notebooks. There's a good introduction to notebooks [in the IPython notebook documentation](http://ipython.org/notebook.html) that even has a [nice video](http://www.youtube.com/watch?v=H6dLGQw9yFQ#!) on how to use the notebooks. You should probably also flip through the [IPython tutorial](http://ipython.org/ipython-doc/dev/interactive/tutorial.html) in your copious free time.

#

# Briefly, notebooks have code cells (that are generally followed by result cells) and text cells. The text cells are the stuff that you're reading now. The code cells start with "In []:" with some number generally in the brackets. If you put your cursor in the code cell and hit Shift-Enter, the code will run in the Python interpreter and the result will print out in the output cell. You can then change things around and see whether you understand what's going on. If you need to know more, see the [IPython notebook documentation](http://ipython.org/notebook.html) or the [IPython tutorial](http://ipython.org/ipython-doc/dev/interactive/tutorial.html).

# ## Using Python as a Calculator

# Many of the things I used to use a calculator for, I now use Python for:

2+2

(50-5*6)/4

# (If you're typing this into an IPython notebook, or otherwise using a notebook file, you hit shift-Enter to evaluate a cell.)

# In the last few lines, we have sped by a lot of things that we should stop for a moment and explore a little more fully. We've seen, however briefly, two different data types: **integers**, also known as *whole numbers* to the non-programming world, and **floating point numbers**, also known (incorrectly) as *decimal numbers* to the rest of the world.
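# A short sketch of the difference between the two types, using the same arithmetic as above. The variable names are my own; the comparison at the end is one illustration of why floating point numbers are not exact decimal numbers.

```python
whole = 2 + 2                  # integer arithmetic stays an integer
quotient = (50 - 5 * 6) / 4    # in Python 3, / always produces a float
floored = (50 - 5 * 6) // 4    # // is floor division and keeps integers

print(type(whole).__name__, whole)
print(type(quotient).__name__, quotient)
print(type(floored).__name__, floored)

# Binary floating point cannot represent 0.1, 0.2 or 0.3 exactly
print(0.1 + 0.2 == 0.3)
```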

#

# We've also seen the first instance of an **import** statement. Python has a huge number of libraries included with the distribution. To keep things simple, most of these variables and functions are not accessible from a normal Python interactive session. Instead, you have to import the name. For example, there is a **math** module containing many useful functions. To access, say, the square root function, you can either first

#

# from math import sqrt

#

# and then

from math import sqrt

sqrt(81)

# or you can simply import the math library itself

import math

math.sqrt(81)

# You can define variables using the equals (=) sign:

radius = 20

pi = math.pi

area = pi * radius ** 2

area

# If you try to access a variable that you haven't yet defined, you get an error:

volume

# and you need to define it:

volume = 4/3*pi*radius**3

volume

# You can name a variable *almost* anything you want. It needs to start with an alphabetical character or "\_", and can contain alphanumeric characters plus underscores ("\_"). Certain words, however, are reserved for the language:

#

# False, None, True, and, as, assert, break, class, continue, def, del,

# elif, else, except, finally, for, from, global, if, import, in, is,

# lambda, nonlocal, not, or, pass, raise, return, try, while, with, yield

#

# (Since Python 3.7, async and await are reserved as well. Unlike in Python 2, exec and print are ordinary names in Python 3.)

#

# Trying to define a variable using one of these will result in a syntax error:

return = 0
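# If you are unsure whether a name is allowed, the standard library can tell you. The helper below is a small sketch of my own (the function name is hypothetical) combining `str.isidentifier` with the `keyword` module.

```python
import keyword


def is_valid_variable_name(name):
    """Return True if name is a legal identifier and not a reserved word."""
    return name.isidentifier() and not keyword.iskeyword(name)


print(is_valid_variable_name("volume"))
print(is_valid_variable_name("return"))
```

# `keyword.kwlist` holds the full list of reserved words for the Python version you are running.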

# The [Python Tutorial](http://docs.python.org/2/tutorial/introduction.html#using-python-as-a-calculator) has more on using Python as an interactive shell. The [IPython tutorial](http://ipython.org/ipython-doc/dev/interactive/tutorial.html) makes a nice complement to this, since IPython has a much more sophisticated interactive shell.

# ## Strings

# Strings are lists of printable characters, and can be defined using either single quotes

'Hello, World!'

# or double quotes

"Hello, World!"

# But not both at the same time, unless you want one of the symbols to be part of the string.

"He's a Rebel"

'She asked, "How are you today?"'

# Just like the other two data objects we're familiar with (ints and floats), you can assign a string to a variable

greeting = "Hello, World!"

# The **print** function is often used for printing character strings:

print(greeting)

# But it can also print data types other than strings:

print("The area is", area)

print("The area is " + str(area))

# In the above snippet, the number stored in the variable "area" (about 1256.64) is converted into a string before being printed out.

# You can use the + operator to concatenate strings together:

statement = "Hello," + "World!"

print(statement)

# Don't forget the space between the strings, if you want one there.

statement = "Hello, " + "World!"

print(statement)

# You can use + to concatenate multiple strings in a single statement:

print("This " + "is " + "a " + "longer " + "statement.")

# If you have a lot of words to concatenate together, there are other, more efficient ways to do this. But this is fine for linking a few strings together.
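# One of those other ways, as a brief sketch: collect the pieces in a list and join them with a separator string using `str.join`. The variable names are my own.

```python
words = ["This", "is", "a", "longer", "statement."]

# The string before .join is placed between each pair of items
sentence = " ".join(words)
print(sentence)
```

# This avoids building a new intermediate string for every `+`, and it puts the spaces in for you.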

# ## Lists

# Very often in a programming language, one wants to keep a group of similar items together. Python does this using a data type called **lists**.

days_of_the_week = ["Sunday","Monday","Tuesday","Wednesday","Thursday","Friday","Saturday"]

# You can access members of the list using the **index** of that item:

days_of_the_week[2]

# Python lists, like C, but unlike Fortran, use 0 as the index of the first element of a list. Thus, in this example, the 0 element is "Sunday", 1 is "Monday", and so on. If you need to access the *n*th element from the end of the list, you can use a negative index. For example, the -1 element of a list is the last element:

days_of_the_week[-1]

# You can add additional items to the list using the .append() command:

languages = ["Fortran","C","C++"]

languages.append("Python")

print(languages)

# The **range()** command is a convenient way to make sequential lists of numbers:

list(range(10))

# Note that range(n) starts at 0 and gives the sequential list of integers less than n. If you want to start at a different number, use range(start,stop)

list(range(2,8))

# The lists created above with range have a *step* of 1 between elements. You can also give a fixed step size via a third argument:

evens = list(range(0,20,2))

evens

evens[3]

# Lists do not have to hold the same data type. For example,

["Today",7,99.3,""]

# However, it's good (but not essential) to use lists for similar objects that are somehow logically connected. If you want to group different data types together into a composite data object, it's best to use **tuples**, which we will learn about below.

#

# You can find out how long a list is using the **len()** command:

help(len)

len(evens)

# ## Iteration, Indentation, and Blocks

# One of the most useful things you can do with lists is to *iterate* through them, i.e. to go through each element one at a time. To do this in Python, we use the **for** statement:

for day in days_of_the_week:
    print(day)

# This code snippet goes through each element of the list called **days_of_the_week** and assigns it to the variable **day**. It then executes everything in the indented block (in this case only one line of code, the print statement) using those variable assignments. When the program has gone through every element of the list, it exits the block.

#

# (Almost) every programming language defines blocks of code in some way. In Fortran, one uses END statements (ENDDO, ENDIF, etc.) to define code blocks. In C, C++, and Perl, one uses curly braces {} to define these blocks.

#

# Python uses a colon (":"), followed by indentation level to define code blocks. Everything at a higher level of indentation is taken to be in the same block. In the above example the block was only a single line, but we could have had longer blocks as well:

for day in days_of_the_week:
    statement = "Today is " + day
    print(statement)

# The **range()** command is particularly useful with the **for** statement to execute loops of a specified length:

for i in range(20):
    print("The square of ",i," is ",i*i)

# ## Slicing

# Lists and strings have something in common that you might not suspect: they can both be treated as sequences. You already know that you can iterate through the elements of a list. You can also iterate through the letters in a string:

for letter in "Sunday":
    print(letter)

# This is only occasionally useful. Slightly more useful is the *slicing* operation, which you can also use on any sequence. We already know that we can use *indexing* to get the first element of a list:

days_of_the_week[0]

# If we want the list containing the first two elements of a list, we can do this via

days_of_the_week[0:2]

# or simply

days_of_the_week[:2]

# If we want the last two items of the list, we can do this with negative slicing:

days_of_the_week[-2:]

# which is somewhat logically consistent with negative indices accessing the last elements of the list.

#

# You can do:

workdays = days_of_the_week[1:6]

print(workdays)

# Since strings are sequences, you can also do this to them:

day = "Sunday"

abbreviation = day[:3]

print(abbreviation)

# If we really want to get fancy, we can pass a third element into the slice, which specifies a step length (just like a third argument to the **range()** function specifies the step):

numbers = list(range(0,40))

evens = numbers[2::2]

evens

# Note that in this example I was even able to omit the second argument, so that the slice started at 2, went to the end of the list, and took every second element, to generate the list of even numbers from 2 up to (but not including) 40.
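# As a quick check of the slice notation, the sketch below compares a slice with the equivalent **range()** call and tries two more combinations. The negative step in the last slice is an addition not covered above: it walks the sequence backwards.

```python
numbers = list(range(0, 40))

# start=2, stop omitted (end of list), step=2
evens = numbers[2::2]
print(evens == list(range(2, 40, 2)))

# start omitted (beginning of list), stop=10, step=3
every_third = numbers[:10:3]
print(every_third)

# A negative step reverses the direction of travel.
last_five_reversed = numbers[:-6:-1]
print(last_five_reversed)
```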

# ## Booleans and Truth Testing

# We have now learned a few data types. We have integers and floating point numbers, strings, and lists, a container that can hold any data type. We have learned to print things out, and to iterate over items in lists. We will now learn about **boolean** variables that can be either True or False.

#

# We invariably need some concept of *conditions* in programming to control branching behavior, to allow a program to react differently to different situations. If it's Monday, I'll go to work, but if it's Sunday, I'll sleep in. To do this in Python, we use a combination of **boolean** variables, which evaluate to either True or False, and **if** statements, that control branching based on boolean values.

# For example:

if day == "Sunday":
    print("Sleep in")
else:
    print("Go to work")

# (Quick quiz: which branch does the snippet take here? What is the variable "day" set to?)

#

# Let's take the snippet apart to see what happened. First, note the statement

day == "Sunday"

# If we evaluate it by itself, as we just did, we see that it returns a boolean value, True. The "==" operator performs *equality testing*. If the two items are equal, it returns True, otherwise it returns False. In this case, it is comparing two values, the string "Sunday" and whatever is stored in the variable "day", which, if you have run the cells in order, is the string "Sunday" that we assigned in the slicing section. Since the two strings are equal, the truth test has the true value.

# The if statement that contains the truth test is followed by a code block (a colon followed by an indented block of code). If the boolean is true, it executes the code in that block, which is why we see "Sleep in".

#

# The first block of code is followed by an **else** statement, whose block is executed only if nothing else in the above if statement is true. Had "day" held any other string, such as the "Saturday" left over from the earlier for loop, the test would have been False and we would have seen "Go to work" instead.

#

# You can compare any data types in Python:

1 == 2

50 == 2*25

3 < 3.14159

1 == 1.0

1 != 0

1 <= 2

1 >= 1

# We see a few other boolean operators here, all of which should be self-explanatory: less than, equality, non-equality, and so on.

#

# Particularly interesting is the 1 == 1.0 test, which is true, since even though the two objects are different data types (integer and floating point number), they have the same *value*. There is another boolean operator **is**, that tests whether two objects are the same object:

1 is 1.0

# We can do boolean tests on lists as well:

[1,2,3] == [1,2,4]

[1,2,3] < [1,2,4]

# Finally, note that you can also string multiple comparisons together, which can result in very intuitive tests:

hours = 5

0 < hours < 24
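# A chained comparison is shorthand for joining the individual comparisons with **and**. The short sketch below (the test values are arbitrary) evaluates both spellings side by side:

```python
results = []
for h in [-1, 5, 24]:
    chained = 0 < h < 24
    explicit = (0 < h) and (h < 24)
    results.append((h, chained, explicit))
    print(h, chained, explicit)
```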

# If statements can have **elif** parts ("else if"), in addition to if/else parts. For example:

if day == "Sunday":
    print("Sleep in")
elif day == "Saturday":
    print("Do chores")
else:
    print("Go to work")

# Of course we can combine if statements with for loops, to make a snippet that is almost interesting:

for day in days_of_the_week:
    statement = "Today is " + day
    print(statement)
    if day == "Sunday":
        print(" Sleep in")
    elif day == "Saturday":
        print(" Do chores")
    else:
        print(" Go to work")

# This is something of an advanced topic, but ordinary data types have boolean values associated with them, and, indeed, in early versions of Python there was not a separate boolean object. Essentially, anything that was a 0 value (the integer or floating point 0, an empty string "", or an empty list []) was False, and everything else was true. You can see the boolean value of any data object using the **bool()** function.

bool(1)

bool(0)

bool(["This "," is "," a "," list"])
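# Because of these rules, any object can be used directly as the condition of an **if** statement. A small sketch, with values chosen only for illustration:

```python
values = [0, 0.0, "", [], 1, -2.5, "False", [0]]

for value in values:
    if value:
        print(repr(value), "counts as True")
    else:
        print(repr(value), "counts as False")
```

# Note that the non-empty string "False" and the non-empty list [0] both count as true: only the emptiness (or zero-ness) of the object matters, not what it contains.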

# ## Code Example: The Fibonacci Sequence

# The [Fibonacci sequence](http://en.wikipedia.org/wiki/Fibonacci_number) is a sequence in math that starts with 0 and 1, and then each successive entry is the sum of the previous two. Thus, the sequence goes 0,1,1,2,3,5,8,13,21,34,55,89,...

#

# A very common exercise in programming books is to compute the Fibonacci sequence up to some number **n**. First I'll show the code, then I'll discuss what it is doing.

n = 10
sequence = [0,1]
for i in range(2,n): # This is going to be a problem if we ever set n < 2!
    sequence.append(sequence[i-1]+sequence[i-2])
print(sequence)

# Let's go through this line by line. First, we define the variable **n**, and set it to the integer 10. **n** is the length of the sequence we're going to form, and should probably have a better variable name. We then create a variable called **sequence**, and initialize it to the list with the integers 0 and 1 in it, the first two elements of the Fibonacci sequence. We have to create these elements "by hand", since the iterative part of the sequence requires two previous elements.

#

# We then have a for loop over the list of integers from 2 (the next element of the list) to **n** (the length of the sequence). After the colon, we see a hash tag "#", and then a **comment** that if we had set **n** to some number less than 2 we would have a problem. Comments in Python start with #, and are good ways to make notes to yourself or to a user of your code explaining why you did what you did. Better than the comment here would be to test to make sure the value of **n** is valid, and to complain if it isn't; we'll try this later.

#

# In the body of the loop, we append to the list an integer equal to the sum of the two previous elements of the list.

#

# After exiting the loop (ending the indentation) we then print out the whole list. That's it!

# ## Functions

# We might want to use the Fibonacci snippet with different sequence lengths. We could cut and paste the code into another cell, changing the value of **n**, but it's easier and more useful to make a function out of the code. We do this with the **def** statement in Python:

def fibonacci(sequence_length):
    "Return the Fibonacci sequence of length *sequence_length*"
    sequence = [0,1]
    if sequence_length < 1:
        print("Fibonacci sequence only defined for length 1 or greater")
        return
    if 0 < sequence_length < 3:
        return sequence[:sequence_length]
    for i in range(2,sequence_length):
        sequence.append(sequence[i-1]+sequence[i-2])
    return sequence

# We can now call **fibonacci()** for different sequence_lengths:

fibonacci(2)

fibonacci(12)

# We've introduced several new features here. First, note that the function itself is defined as a code block (a colon followed by an indented block). This is the standard way that Python delimits things. Next, note that the first line of the function is a single string. This is called a **docstring**, and is a special kind of comment that is often available to people using the function through the python command line:

help(fibonacci)

# If you define a docstring for all of your functions, it makes it easier for other people to use them, since they can get help on the arguments and return values of the function.

#

# Next, note that rather than putting a comment in about what input values lead to errors, we have some testing of these values, followed by a warning if the value is invalid, and some conditional code to handle special cases.
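# Printing a warning and returning nothing works, but the caller then has to notice that no list came back. One possible variant, sketched here under the hypothetical name fibonacci_strict, raises a ValueError instead so that invalid input cannot be ignored by accident (exceptions are discussed further in the section on optional arguments):

```python
def fibonacci_strict(sequence_length):
    "Return the Fibonacci sequence of length *sequence_length*, or raise ValueError."
    if sequence_length < 1:
        raise ValueError("Fibonacci sequence only defined for length 1 or greater")
    sequence = [0, 1]
    if sequence_length < 3:
        return sequence[:sequence_length]
    for i in range(2, sequence_length):
        sequence.append(sequence[i-1] + sequence[i-2])
    return sequence

print(fibonacci_strict(1))
print(fibonacci_strict(8))
```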

# ## Two More Data Structures: Tuples and Dictionaries

# Before we end the Python overview, I wanted to touch on two more data structures that are very useful (and thus very common) in Python programs.

#

# A **tuple** is a sequence object like a list or a string. It's constructed by grouping a sequence of objects together with commas, either without brackets, or with parentheses:

t = (1,2,'hi',9.0)

t

# Tuples are like lists, in that you can access the elements using indices:

t[1]

# However, tuples are *immutable*, you can't append to them or change the elements of them:

t.append(7)

t[1]=77

# Tuples are useful anytime you want to group different pieces of data together in an object, but don't want to create a full-fledged class (see below) for them. For example, let's say you want the Cartesian coordinates of some objects in your program. Tuples are a good way to do this:

('Bob',0.0,21.0)

# Again, it's not a necessary distinction, but one way to distinguish tuples and lists is that tuples are a collection of different things, here a name, and x and y coordinates, whereas a list is a collection of similar things, like if we wanted a list of those coordinates:

positions = [

('Bob',0.0,21.0),

('Cat',2.5,13.1),

('Dog',33.0,1.2)

]

# Tuples can be used when functions return more than one value. Say we wanted to compute the smallest x- and y-coordinates of the above list of objects. We could write:

# +

def minmax(objects):
    minx = 1e20 # These are set to really big numbers
    miny = 1e20
    for obj in objects:
        name,x,y = obj
        if x < minx:
            minx = x
        if y < miny:
            miny = y
    return minx,miny

x,y = minmax(positions)
print(x,y)

# -
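# The same calculation can be written more compactly with the built-in **min()** function. The sketch below (min_xy is a made-up name) also makes the point that the function returns one tuple object, which the caller may keep whole or unpack into separate names:

```python
positions = [
    ('Bob', 0.0, 21.0),
    ('Cat', 2.5, 13.1),
    ('Dog', 33.0, 1.2),
]

def min_xy(objects):
    "Return the smallest x and the smallest y as a tuple."
    minx = min(x for name, x, y in objects)
    miny = min(y for name, x, y in objects)
    return minx, miny

result = min_xy(positions)   # a single tuple object
x, y = result                # unpacked into two names
print(result, x, y)
```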

# **Dictionaries** are objects called "mappings" or "associative arrays" in other languages. A list associates an integer index with each of its elements:

mylist = [1,2,9,21]

# The index in a dictionary is called the *key*, and the corresponding dictionary entry is the *value*. A dictionary can use (almost) anything as the key. Whereas lists are formed with square brackets [], dictionaries use curly brackets {}:

ages = {"Rick": 46, "Bob": 86, "Fred": 21}

print("Rick's age is ",ages["Rick"])

# There's also a convenient way to create dictionaries without having to quote the keys.

dict(Rick=46,Bob=86,Fred=21)

# The **len()** command works on both tuples and dictionaries:

len(t)

len(ages)
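# A few more everyday dictionary operations, as a short sketch (the names "Alice" and "Zed" are made up for the example): adding and updating entries, looping over key/value pairs, and looking up a key that may not exist.

```python
ages = {"Rick": 46, "Bob": 86, "Fred": 21}

# Add a new entry and update an existing one.
ages["Alice"] = 33
ages["Fred"] = 22

# items() yields (key, value) tuples, which we unpack in the loop.
for name, age in ages.items():
    print(name, "is", age)

# get() returns a default instead of raising KeyError for a missing key.
unknown = ages.get("Zed", "unknown")
print("Zed's age is", unknown)
```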

# ## Conclusion of the Python Overview

# There is, of course, much more to the language than I've covered here. I've tried to keep this brief enough so that you can jump in and start using Python to simplify your life and work. My own experience in learning new things is that the information doesn't "stick" unless you try and use it for something in real life.

#

# You will no doubt need to learn more as you go. I've listed several other good references, including the [Python Tutorial](http://docs.python.org/2/tutorial/) and [Learn Python the Hard Way](http://learnpythonthehardway.org/book/). Additionally, now is a good time to start familiarizing yourself with the [Python Documentation](http://docs.python.org/2.7/), and, in particular, the [Python Language Reference](http://docs.python.org/2.7/reference/index.html).

#

# <NAME>, one of the earliest and most prolific Python contributors, wrote the "Zen of Python", which can be accessed via the "import this" command:

import this

# No matter how experienced a programmer you are, these are words to meditate on.

# # II. Numpy and Scipy

#

# [Numpy](http://numpy.org) contains core routines for doing fast vector, matrix, and linear algebra-type operations in Python. [Scipy](http://scipy) contains additional routines for optimization, special functions, and so on. Both contain modules written in C and Fortran so that they're as fast as possible. Together, they give Python roughly the same capability that the [Matlab](http://www.mathworks.com/products/matlab/) program offers. (In fact, if you're an experienced Matlab user, there's a [guide to Numpy for Matlab users](http://www.scipy.org/NumPy_for_Matlab_Users) just for you.)

#

# ## Making vectors and matrices

# Fundamental to both Numpy and Scipy is the ability to work with vectors and matrices. You can create vectors from lists using the **array** command:

import numpy as np

import scipy as sp

array = np.array([1,2,3,4,5,6])

array

# size of the array

array.shape

# To build matrices, you can use the array command with lists of lists:

mat = np.array([[0,1],[1,0]])

mat

# Add a column of ones to mat

mat2 = np.c_[mat, np.ones(2)]

mat2

# size of a matrix

mat2.shape

# You can also form empty (zero) matrices of arbitrary shape (including vectors, which Numpy treats as vectors with one row), using the **zeros** command:

np.zeros((3,3))

# There's also an **identity** command that behaves as you'd expect:

np.identity(4)

# as well as a **ones** command.

# ## Linspace, matrix functions, and plotting

# The **linspace** command makes a linear array of points from a starting to an ending value.

np.linspace(0,1)

# If you provide a third argument, it takes that as the number of points in the space. If you don't provide the argument, it gives a length 50 linear space.

np.linspace(0,1,11)

# **linspace** is an easy way to make coordinates for plotting. Functions in the numpy library can act on an entire vector (or even a matrix) of points at once. Thus,

x = np.linspace(0,2*np.pi)

np.sin(x)

# In conjunction with **matplotlib**, this is a nice way to plot things:

# %matplotlib inline

import matplotlib.pyplot as plt

plt.plot(x,np.sin(x))

# ## Matrix operations

# Matrix objects act sensibly when multiplied by scalars:

0.125*np.identity(3)

# as well as when you add two matrices together. (However, the matrices have to be the same shape.)

np.identity(2) + np.array([[1,1],[1,2]])

# Something that confuses Matlab users is that the times (*) operator gives element-wise multiplication rather than matrix multiplication:

np.identity(2)*np.ones((2,2))

# To get matrix multiplication, you need the **dot** command:

np.dot(np.identity(2),np.ones((2,2)))

# **dot** can also do dot products (duh!):

v = np.array([3,4])

np.sqrt(np.dot(v,v))

# as well as matrix-vector products.
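# A minimal sketch of a matrix-vector product, using small arrays chosen for illustration. It also shows the @ operator, which is an addition to the text above: in Python 3.5 and later it gives the same result as **dot** for one- and two-dimensional arrays.

```python
import numpy as np

m = np.array([[1, 2], [3, 4]])
v = np.array([3, 4])

# Matrix-vector product: each row of m dotted with v.
mv = np.dot(m, v)
print(mv)

# The @ operator is equivalent to np.dot for 1-D and 2-D arrays.
same = np.array_equal(mv, m @ v)
print(same)
```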

# There are **determinant**, **inverse**, and **transpose** functions that act as you would suppose. Transpose can be abbreviated with ".T" at the end of a matrix object:

m = np.array([[1,2],[3,4]])

m.T

np.linalg.inv(m)
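# The determinant lives in the same **np.linalg** module as the inverse. As a quick sanity check, the sketch below computes both and confirms that the matrix times its inverse gives the identity up to rounding error:

```python
import numpy as np

m = np.array([[1, 2], [3, 4]])

det = np.linalg.det(m)        # 1*4 - 2*3 = -2
m_inv = np.linalg.inv(m)

# A matrix times its inverse should give the identity, up to rounding error.
is_identity = np.allclose(np.dot(m, m_inv), np.identity(2))
print(det, is_identity)
```

# np.allclose is used rather than == because floating point arithmetic rarely reproduces the identity matrix exactly.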

# There's also a **diag()** function that takes a list or a vector and puts it along the diagonal of a square matrix.

np.diag([1,2,3,4,5])

# We'll find this useful later on.

# ## Least squares fitting

# Very often we deal with some data that we want to fit to some sort of expected behavior. Say we have the following:

raw_data = """\
3.1905781584582433,0.028208609537968457
4.346895074946466,0.007160804747670053
5.374732334047101,0.0046962988461934805
8.201284796573875,0.0004614473299618756
10.899357601713055,0.00005038370219939726
16.295503211991434,4.377451812785309e-7
21.82012847965739,3.0799922117601088e-9
32.48394004282656,1.524776208284536e-13
43.53319057815846,5.5012073588707224e-18"""

# There's a section below on parsing CSV data. We'll steal the parser from that. For an explanation, skip ahead to that section. Otherwise, just assume that this is a way to parse that text into a numpy array that we can plot and do other analyses with.

data = []
for line in raw_data.splitlines():
    words = line.split(',')
    data.append(words)
# np.float was removed from recent NumPy releases; the built-in float works the same way here
data = np.array(data, dtype=float)

data

data[:, 0]

plt.title("Raw Data")

plt.xlabel("Distance")

plt.plot(data[:,0],data[:,1],'bo')

# Since we expect the data to have an exponential decay, we can plot it using a semi-log plot.

plt.title("Raw Data")

plt.xlabel("Distance")

plt.semilogy(data[:,0],data[:,1],'bo')

# For a pure exponential decay like this, we can fit the log of the data to a straight line. The above plot suggests this is a good approximation. Given a function

# $$ y = Ae^{ax} $$

# taking the logarithm of both sides gives

# $$ \log(y) = \log(A) + ax$$

# Thus, if we fit the log of the data versus x, we should get a straight line with slope $a$ (negative for a decay), and an intercept $\log(A)$ that gives the constant $A$.

#

# There's a numpy function called **polyfit** that will fit data to a polynomial form. We'll use this to fit to a straight line (a polynomial of order 1)

params = np.polyfit(data[:,0],np.log(data[:,1]),1)

a = params[0]

A = np.exp(params[1])

# Let's see whether this curve fits the data.

x = np.linspace(1,45)

plt.title("Raw Data")

plt.xlabel("Distance")

plt.semilogy(data[:,0],data[:,1],'bo')

plt.semilogy(x,A*np.exp(a*x),'b-')
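# One way to convince yourself that the log-linear fit recovers the right parameters is to try it on data where the answer is known. The sketch below generates noise-free synthetic data from y = A*exp(a*x), with made-up values A = 2.0 and a = -0.7, and fits it the same way as above:

```python
import numpy as np

# Synthetic, noise-free data from y = A*exp(a*x) with made-up parameters.
A_true, a_true = 2.0, -0.7
x_syn = np.linspace(1, 10, 20)
y_syn = A_true * np.exp(a_true * x_syn)

# Fit log(y) = log(A) + a*x to a straight line.
slope, intercept = np.polyfit(x_syn, np.log(y_syn), 1)
a_fit = slope
A_fit = np.exp(intercept)
print(a_fit, A_fit)
```

# With real, noisy data the recovered values will only approximate the true ones, and the log transform gives extra weight to the smallest y values.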

# If we have more complicated functions, we may not be able to get away with fitting to a simple polynomial. Consider the following data:

# +

gauss_data = """\
-0.9902286902286903,1.4065274110372852e-19
-0.7566104566104566,2.2504438576596563e-18
-0.5117810117810118,1.9459459459459454
-0.31887271887271884,10.621621621621626
-0.250997150997151,15.891891891891893
-0.1463309463309464,23.756756756756754
-0.07267267267267263,28.135135135135133
-0.04426734426734419,29.02702702702703
-0.0015939015939017698,29.675675675675677
0.04689304689304685,29.10810810810811
0.0840994840994842,27.324324324324326
0.1700546700546699,22.216216216216214
0.370878570878571,7.540540540540545
0.5338338338338338,1.621621621621618
0.722014322014322,0.08108108108108068
0.9926849926849926,-0.08108108108108646"""

data = []
for line in gauss_data.splitlines():
    words = line.split(',')
    data.append(words)
# np.float was removed from recent NumPy releases; the built-in float works the same way here
data = np.array(data, dtype=float)

plt.plot(data[:,0],data[:,1],'bo')

# -

# This data looks more Gaussian than exponential. If we wanted to, we could use **polyfit** for this as well, but let's use the **curve_fit** function from Scipy, which can fit to arbitrary functions. You can learn more using help(curve_fit).

#

# First define a general Gaussian function to fit to.

def gauss(x,A,a):
    return A*np.exp(a*x**2)

# Now fit to it using **curve_fit**:

# +

from scipy.optimize import curve_fit

params,conv = curve_fit(gauss,data[:,0],data[:,1])

x = np.linspace(-1,1)

plt.plot(data[:,0],data[:,1],'bo')

A,a = params

plt.plot(x,gauss(x,A,a),'b-')

# -

# The **curve_fit** routine we just used is built on top of a very good general **minimization** capability in Scipy. You can learn more [at the scipy documentation pages](http://docs.scipy.org/doc/scipy/reference/generated/scipy.optimize.minimize.html).

# ## Monte Carlo and random numbers

# Many methods in scientific computing rely on Monte Carlo integration, where a sequence of (pseudo) random numbers is used to approximate the integral of a function. Python has good random number generators in the standard library. The **random()** function gives pseudorandom numbers uniformly distributed between 0 and 1:

from random import random
rands = []
for i in range(100):
    rands.append(random())
plt.plot(rands)

# **random()** uses the [Mersenne Twister](http://www.math.sci.hiroshima-u.ac.jp/~m-mat/MT/emt.html) algorithm, which is a highly regarded pseudorandom number generator. There are also functions to generate random integers, to randomly shuffle a list, and functions to pick random numbers from a particular distribution, like the normal distribution:

from random import gauss # note: this replaces the gauss() fitting function defined above
grands = []
for i in range(100):
    grands.append(gauss(0,1))
plt.plot(grands)

# It is generally more efficient to generate a list of random numbers all at once, particularly if you're drawing from a non-uniform distribution. Numpy has functions to generate vectors and matrices of particular types of random distributions.

plt.plot(np.random.rand(100))
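# To make the idea of Monte Carlo integration concrete, here is a minimal sketch (the function name estimate_pi is made up for this example) that estimates pi. It scatters random points over the unit square and counts the fraction that land inside the quarter circle of radius 1, whose area is pi/4:

```python
from random import random, seed

def estimate_pi(nsamples):
    "Estimate pi from the fraction of random points inside the unit quarter circle."
    inside = 0
    for i in range(nsamples):
        x, y = random(), random()
        if x*x + y*y < 1.0:
            inside += 1
    # Quarter-circle area is pi/4; the unit square has area 1.
    return 4.0 * inside / nsamples

seed(0)  # fixed seed so the run is repeatable
print(estimate_pi(100000))
```

# The error of such an estimate shrinks only like one over the square root of the number of samples, so each extra digit of accuracy costs roughly 100 times more points.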

# ## Slicing numpy arrays and matrices

data.shape

# Select second column

data[:, 1]

# Select the first 5 rows

data[:5, :]

# Select the second row and the last column

data[1, -1]

# # III. Intermediate Python

#

# ## Output Parsing

# As more and more of our day-to-day work is being done on and through computers, we increasingly have output that one program writes, often in a text file, that we need to analyze in one way or another, and potentially feed that output into another file.

#

# Suppose we have the following output:

myoutput = """\
@ Step Energy Delta E Gmax Grms Xrms Xmax Walltime
@ ---- ---------------- -------- -------- -------- -------- -------- --------
@ 0 -6095.12544083 0.0D+00 0.03686 0.00936 0.00000 0.00000 1391.5
@ 1 -6095.25762870 -1.3D-01 0.00732 0.00168 0.32456 0.84140 10468.0
@ 2 -6095.26325979 -5.6D-03 0.00233 0.00056 0.06294 0.14009 11963.5
@ 3 -6095.26428124 -1.0D-03 0.00109 0.00024 0.03245 0.10269 13331.9
@ 4 -6095.26463203 -3.5D-04 0.00057 0.00013 0.02737 0.09112 14710.8
@ 5 -6095.26477615 -1.4D-04 0.00043 0.00009 0.02259 0.08615 20211.1
@ 6 -6095.26482624 -5.0D-05 0.00015 0.00002 0.00831 0.03147 21726.1
@ 7 -6095.26483584 -9.6D-06 0.00021 0.00004 0.01473 0.05265 24890.5
@ 8 -6095.26484405 -8.2D-06 0.00005 0.00001 0.00555 0.01929 26448.7
@ 9 -6095.26484599 -1.9D-06 0.00003 0.00001 0.00164 0.00564 27258.1
@ 10 -6095.26484676 -7.7D-07 0.00003 0.00001 0.00161 0.00553 28155.3
@ 11 -6095.26484693 -1.8D-07 0.00002 0.00000 0.00054 0.00151 28981.7
@ 11 -6095.26484693 -1.8D-07 0.00002 0.00000 0.00054 0.00151 28981.7"""

# This output actually came from a geometry optimization of a Silicon cluster using the [NWChem](http://www.nwchem-sw.org/index.php/Main_Page) quantum chemistry suite. At every step the program computes the energy of the molecular geometry, and then changes the geometry to minimize the computed forces, until the energy converges. I obtained this output via the unix command

#

# % grep @ nwchem.out

#

# since NWChem is nice enough to precede the lines that you need to monitor job progress with the '@' symbol.

#

# We could do the entire analysis in Python; I'll show how to do this later on, but first let's focus on turning this output into a usable Python object that we can plot.

#

# First, note that the data is entered into a multi-line string. When Python sees three quote marks """ or ''' it treats everything following as part of a single string, including newlines, tabs, and anything else, until it sees the same three quote marks (""" has to be followed by another """, and ''' has to be followed by another ''') again. This is a convenient way to quickly dump data into Python, and it also reinforces the important idea that you don't have to open a file and deal with it one line at a time. You can read everything in, and deal with it as one big chunk.

#

# The first thing we'll do, though, is to split the big string into a list of strings, since each line corresponds to a separate piece of data. We will use the **splitlines()** function on the big myoutput string to break it into a new element every time it sees a newline (\n) character:

lines = myoutput.splitlines()

lines

# Splitting is a big concept in text processing. We used **splitlines()** here, and we will use the more general **split()** function below to split each line into whitespace-delimited words.

#

# We now want to do three things:

#

# * Skip over the lines that don't carry any information

# * Break apart each line that does carry information and grab the pieces we want

# * Turn the resulting data into something that we can plot.

#

# For this data, we really only want the Energy column, the Gmax column (which contains the maximum gradient at each step), and perhaps the Walltime column.

#

# Since the data is now in a list of lines, we can iterate over it:

for line in lines[2:]:
    # do something with each line
    words = line.split()

# Let's examine what we just did: first, we used a **for** loop to iterate over each line. However, we skipped the first two (the lines[2:] only takes the lines starting from index 2), since lines[0] contained the title information, and lines[1] contained only dashes.

#

# We then split each line into chunks (which we're calling "words", even though in most cases they're numbers) using the string **split()** command. Here's what split does:

lines[2].split()

# This is almost exactly what we want. We just have to now pick the fields we want:

for line in lines[2:]:
    # do something with each line
    words = line.split()
    energy = words[2]
    gmax = words[4]
    time = words[8]
    print(energy,gmax,time)

# This is fine for printing things out, but if we want to do something with the data, either make a calculation with it or pass it into a plotting function, we need to convert the strings into regular floating point numbers. We can use the **float()** command for this. We also need to save it in some form. I'll do this as follows:

data = []
for line in lines[2:]:
    # do something with each line
    words = line.split()
    energy = float(words[2])
    gmax = float(words[4])
    time = float(words[8])
    data.append((energy,gmax,time))
data = np.array(data)

# We now have our data in a numpy array, so we can choose columns to plot:

plt.plot(data[:,0])

plt.xlabel('step')

plt.ylabel('Energy (hartrees)')

plt.title('Convergence of NWChem geometry optimization for Si cluster')

energies = data[:,0]

minE = min(energies)

energies_eV = 27.211*(energies-minE)

plt.plot(energies_eV)

plt.xlabel('step')

plt.ylabel('Energy (eV)')

plt.title('Convergence of NWChem geometry optimization for Si cluster')

# This gives us the output in a form that we can think about: 4 eV is a fairly substantial energy change (chemical bonds are roughly this magnitude of energy), and most of the energy decrease was obtained in the first geometry iteration.
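# One column we skipped, Delta E, is written with Fortran's D exponent notation (for example -1.3D-01), which **float()** does not accept. A minimal sketch of one way to handle it, using a hypothetical helper named fortran_float that swaps the D for an E before converting:

```python
def fortran_float(text):
    "Convert a Fortran-style number such as '-1.3D-01' to a Python float."
    return float(text.replace('D', 'E').replace('d', 'e'))

# One data line copied from the output above.
sample = "@ 1 -6095.25762870 -1.3D-01 0.00732 0.00168 0.32456 0.84140 10468.0"
sample_words = sample.split()
delta_e = fortran_float(sample_words[3])
print(delta_e)
```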

# We mentioned earlier that we don't have to rely on **grep** to pull out the relevant lines for us. Python strings have a lot of useful methods we can use for this. Among them is the **startswith** method. For example:

# +

lines = """\
----------------------------------------
| WALL | 0.45 | 443.61 |
----------------------------------------
@ Step Energy Delta E Gmax Grms Xrms Xmax Walltime
@ ---- ---------------- -------- -------- -------- -------- -------- --------
@ 0 -6095.12544083 0.0D+00 0.03686 0.00936 0.00000 0.00000 1391.5
ok ok
Z-matrix (autoz)
--------
""".splitlines()

for line in lines:
    if line.startswith('@'):
        print(line)

# -

# and we've successfully grabbed all of the lines that begin with the @ symbol.

# The real value in a language like Python is that it makes it easy to take additional steps to analyze data in this fashion, which means you are thinking more about your data, and are more likely to see important patterns.
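# Combining **startswith** with the **split()** and **float()** steps from earlier gives a small parser that works directly on the raw program output. This is only a sketch: the function name parse_nwchem_steps and the shortened sample text are made up for illustration, and the column positions are assumed to match the output shown above.

```python
def parse_nwchem_steps(text):
    "Return a list of (step, energy, gmax, walltime) tuples from NWChem-style output."
    rows = []
    for line in text.splitlines():
        if not line.startswith('@'):
            continue            # not a progress line
        words = line.split()
        if len(words) < 9 or not words[1].isdigit():
            continue            # header or separator line
        rows.append((int(words[1]), float(words[2]),
                     float(words[4]), float(words[8])))
    return rows

sample_output = """\
| WALL | 0.45 | 443.61 |
@ Step Energy Delta E Gmax Grms Xrms Xmax Walltime
@ ---- ---------------- -------- -------- -------- -------- -------- --------
@ 0 -6095.12544083 0.0D+00 0.03686 0.00936 0.00000 0.00000 1391.5
@ 1 -6095.25762870 -1.3D-01 0.00732 0.00168 0.32456 0.84140 10468.0
ok ok
"""
rows = parse_nwchem_steps(sample_output)
print(rows)
```

# The **continue** statement used here skips the rest of the loop body and moves on to the next line, which keeps the filtering tests separate from the parsing.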

# ## Optional arguments

# You will recall that the **linspace** function can take either two arguments (for the starting and ending points):

np.linspace(0,1)

# or it can take three arguments, for the starting point, the ending point, and the number of points:

np.linspace(0,1,5)

# You can also pass in keywords to exclude the endpoint:

np.linspace(0,1,5,endpoint=False)

# Right now, we only know how to specify functions that have a fixed number of arguments. We'll learn how to do the more general cases here.

#

# If we're defining a simple version of linspace, we would start with:

def my_linspace(start,end):
    npoints = 50
    v = []
    d = (end-start)/float(npoints-1)
    for i in range(npoints):
        v.append(start + i*d)
    return v

my_linspace(0,1)

# We can add an optional argument by specifying a default value in the argument list:

def my_linspace(start,end,npoints = 50):
    v = []
    d = (end-start)/float(npoints-1)
    for i in range(npoints):
        v.append(start + i*d)
    return v

# This gives exactly the same result if we don't specify anything:

my_linspace(0,1)

# But it also lets us override the default value with a third argument:

my_linspace(0,1,5)

# We can add arbitrary keyword arguments to the function definition by putting a keyword argument \*\*kwargs handle in:

def my_linspace(start,end,npoints=50,**kwargs):
    endpoint = kwargs.get('endpoint',True)
    v = []
    if endpoint:
        d = (end-start)/float(npoints-1)
    else:
        d = (end-start)/float(npoints)
    for i in range(npoints):
        v.append(start + i*d)
    return v

my_linspace(0,1,5,endpoint=False)

# What the keyword argument construction does is to take any additional keyword arguments (i.e. arguments specified by name, like "endpoint=False"), and stick them into a dictionary called "kwargs" (you can call it anything you like, but it has to be preceded by two stars). You can then grab items out of the dictionary using the **get** command, which also lets you specify a default value. I realize it takes a little getting used to, but it is a common construction in Python code, and you should be able to recognize it.
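# When the set of options is known in advance, an alternative is to name the keyword explicitly in the argument list, just as we did for npoints. The sketch below (my_linspace_kw is a made-up name) behaves like the version above for endpoint, but a misspelled keyword is reported as an error instead of being silently ignored:

```python
def my_linspace_kw(start, end, npoints=50, endpoint=True):
    "Like my_linspace, but with endpoint as a named optional argument."
    if endpoint:
        d = (end - start) / float(npoints - 1)
    else:
        d = (end - start) / float(npoints)
    v = []
    for i in range(npoints):
        v.append(start + i*d)
    return v

print(my_linspace_kw(0, 1, 5))
print(my_linspace_kw(0, 1, 5, endpoint=False))
```

# The \*\*kwargs form remains useful when a function must accept options it does not know about, for example to pass them on to another function.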

#

# There's an analogous \*args that collects any additional positional arguments into a tuple called "args". Think about the **range** function: it can take one (the endpoint), two (starting and ending points), or three (starting, ending, and step) arguments. How would we define this?

def my_range(*args):
    start = 0
    step = 1
    if len(args) == 1:
        end = args[0]
    elif len(args) == 2:
        start,end = args
    elif len(args) == 3:
        start,end,step = args
    else:
        raise Exception("Unable to parse arguments")
    v = []
    value = start
    while True:
        if value >= end: break # stop before reaching end, like range()
        v.append(value)
        value += step
    return v

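# Note that we have used a few new things you haven't seen before: a **while** loop, which repeats its block as long as its condition is true, a **break** statement, that allows us to exit a loop if some conditions are met, and a **raise** statement, that signals an error by raising an exception, which stops the interpreter with an error message unless the exception is handled. For example: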

my_range()
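# An exception does not have to end the program: the caller can catch it with a **try**/**except** block and carry on. A minimal, self-contained sketch (parse_positive is a hypothetical helper written only for this example):

```python
def parse_positive(text):
    "Hypothetical helper: convert text to a positive integer or raise an Exception."
    value = int(text)
    if value <= 0:
        raise Exception("Expected a positive number, got %d" % value)
    return value

results = []
for text in ["3", "-2"]:
    try:
        results.append(parse_positive(text))
    except Exception as err:
        # Control jumps here when parse_positive raises.
        print("Caught an error:", err)
        results.append(None)

print(results)
```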

# ## List Comprehensions and Generators

# List comprehensions are a streamlined way to make lists. They look something like a list definition, with some logic thrown in. For example:

evens1 = [2*i for i in range(10)]

print(evens1)

# You can also put some boolean testing into the construct:

odds = [i for i in range(20) if i%2==1]

odds

# Here i%2 is the remainder when i is divided by 2, so that i%2==1 is true if the number is odd. Even though this is a relatively new addition to the language, it is now fairly common since it's so convenient.
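# A list comprehension is shorthand for a loop that appends to a list. The sketch below writes the same calculation both ways and checks that the results agree:

```python
# List comprehension form.
odds = [i for i in range(20) if i % 2 == 1]

# Equivalent explicit loop.
odds_loop = []
for i in range(20):
    if i % 2 == 1:
        odds_loop.append(i)

print(odds == odds_loop)
```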

# **Iterators** are a way of making virtual sequence objects. Consider the nested loop structure:

#

#     for i in range(1000000):

#         for j in range(1000000):

#

# In Python 2, where **range** returns a list, each pass through the outer loop builds a list of 1,000,000 integers just to loop over them one at a time. We don't need any of the additional things that a list gives us, like slicing or random access; we just need to go through the numbers one at a time. And we're making 1,000,000 of these lists.

#

# **Iterators** are a way around this. In Python 2, the **xrange** function is the iterator version of range. It simply makes a counter that is looped through in sequence, so that the analogous loop structure would look like:

#

#     for i in xrange(1000000):

#         for j in xrange(1000000):

#

# Even though we've only added two characters, we've dramatically cut the memory use and run time, because we're not making 1,000,000 big lists. In Python 3, **range** itself is lazy and **xrange** no longer exists, so the first form is already efficient.
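# A quick way to see the difference between a lazy counter and a real list is to compare their memory footprints. The sketch below assumes Python 3, where `range` is already lazy; the exact sizes printed depend on the interpreter, so none are quoted here.

```python
import sys

n = 1000000
lazy = range(n)          # Python 3: a small object that computes values on demand
eager = list(range(n))   # a real list holding every integer

lazy_size = sys.getsizeof(lazy)    # size of the range object itself
eager_size = sys.getsizeof(eager)  # size of the list's internal array of references
print(lazy_size, eager_size)
```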

#

# We can define our own iterators using the **yield** statement:

# +

def evens_below(n):
    for i in range(n):
        if i%2 == 0:
            yield i
    return

for i in evens_below(9):
    print(i)

# -

# We can always turn an iterator into a list using the **list** command:

list(evens_below(9))

# There's a special syntax called a **generator expression** that looks a lot like a list comprehension:

evens_gen = (i for i in range(9) if i%2==0)

for i in evens_gen:
    print(i)
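# One consequence of this laziness is worth seeing once: a generator expression produces its values on demand and can only be consumed a single time. A minimal sketch, with names chosen here for illustration:

```python
squares = (i * i for i in range(5))  # nothing is computed yet
first_total = sum(squares)           # consumes the generator: 0 + 1 + 4 + 9 + 16
second_total = sum(squares)          # the generator is now exhausted, so this sums nothing
print(first_total, second_total)
```

# If the values are needed more than once, build a list with **list** or recreate the generator.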

| notebooks/02-IntroPython.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.8 ('base')

# language: python

# name: python3

# ---

# # Network Concatenation

#

# The results of OPICS networks/circuits can be concatenated to build larger networks. In this notebook, we explore this use case in more depth using the example of a two-stage lattice filter.

import opics as op

from opics.libraries import ebeam

# Let's create a Mach-Zehnder interferometer circuit, and call it `stage_1`.

# +

circuit1 = op.Network(network_id="stage_1")

circuit1.add_component(ebeam.BDC, component_id="bdc1")

circuit1.add_component(ebeam.BDC, component_id="bdc2")

circuit1.add_component(ebeam.Waveguide, params=dict(

length=10e-6), component_id="wg1")

circuit1.add_component(ebeam.Waveguide, params=dict(

length=9.93e-6), component_id="wg2")

circuit1.connect("bdc1", 2, "wg1", 0)

circuit1.connect("bdc1", 3, "wg2", 0)

circuit1.connect("bdc2", 0, "wg1", 1)

circuit1.connect("bdc2", 1, "wg2", 1)

circuit1 = circuit1.simulate_network()

# -

# Let's create another Mach-Zehnder interferometer circuit, and call it `stage_2`.

circuit2 = op.Network(network_id="stage_2")

circuit2.add_component(ebeam.BDC, component_id="bdc1")

circuit2.add_component(ebeam.BDC, component_id="bdc2")

circuit2.add_component(ebeam.Waveguide, params=dict(

length=10e-6), component_id="wg1")

circuit2.add_component(ebeam.Waveguide, params=dict(

length=10.08e-6), component_id="wg2")

circuit2.connect("bdc1", 2, "wg1", 0)

circuit2.connect("bdc1", 3, "wg2", 0)

circuit2.connect("bdc2", 0, "wg1", 1)

circuit2.connect("bdc2", 1, "wg2", 1)

circuit2 = circuit2.simulate_network()

# Let's create a root circuit and concatenate both networks:

# ```

#   .-------.         .-------.         .____.

# --|stage_1|---------|stage_2|---------|BDC | --

# --|_______|-- wg1 --|_______|-- wg2 --|____| --

# ```

# +

root = op.Network(network_id="root")

root.add_component(circuit1, circuit1.component_id)

root.add_component(circuit2, circuit2.component_id)

root.add_component(ebeam.Waveguide, params=dict(

length=100.125e-6), component_id="wg1")

root.add_component(ebeam.Waveguide, params=dict(

length=50e-6), component_id="wg2")

root.add_component(ebeam.BDC, component_id="bdc")

root.connect("stage_1", 2, "stage_2", 0)

root.connect("stage_1", 3, "wg1", 0)

root.connect("stage_2", 1, "wg1", 1)

root.connect("stage_2", 2, "bdc", 0)

root.connect("stage_2", 3, "wg2", 0)

root.connect("bdc", 1, "wg2", 1)

root.simulate_network()

# -

root.sim_result.plot_sparameters(show_freq=False, ports=[[2,0], [3,0]], interactive=True)
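# To build intuition for what cascading two stages means, here is a rough NumPy sketch that does not use OPICS. It assumes ideal lossless 50/50 couplers, a single assumed effective index `n_eff = 2.4`, and a single wavelength, and it chains the two interferometers directly, leaving out the connecting waveguides and the final BDC of the circuit above. All function names are hypothetical.

```python
import numpy as np

def coupler():
    # Ideal lossless 50/50 directional coupler (an assumption, not the ebeam BDC model)
    return np.array([[1, 1j], [1j, 1]]) / np.sqrt(2)

def arms(length_top, length_bottom, wavelength, n_eff=2.4):
    # Phase accumulated in each arm; n_eff is an assumed effective index
    beta = 2 * np.pi * n_eff / wavelength
    return np.diag([np.exp(-1j * beta * length_top),
                    np.exp(-1j * beta * length_bottom)])

def mzi(length_top, length_bottom, wavelength):
    # Coupler, then the two arms, then a second coupler (matrices apply right to left)
    return coupler() @ arms(length_top, length_bottom, wavelength) @ coupler()

wavelength = 1.55e-6
t1 = mzi(10e-6, 9.93e-6, wavelength)    # arm lengths taken from stage_1
t2 = mzi(10e-6, 10.08e-6, wavelength)   # arm lengths taken from stage_2
cascade = t2 @ t1                       # light passes through the first stage, then the second

print(np.abs(cascade) ** 2)             # power transfer between the two inputs and two outputs
```

# In this idealized picture, concatenation is just a matrix product of 2x2 transfer matrices. OPICS works with full frequency-dependent S-parameters instead, so this sketch is only a mental model.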

| docs/source/notebooks/04-Network_concatenation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from datascience import *

import numpy as np

# %matplotlib inline

import matplotlib.pyplot as plots

plots.style.use('fivethirtyeight')

# -

# ## Lecture 10 ##

# ## Apply

staff = Table().with_columns(

'Employee', make_array('Jim', 'Dwight', 'Michael', 'Creed'),

'Birth Year', make_array(1985, 1988, 1967, 1904)

)

staff

def greeting(person):
    return '<NAME>, this is ' + person

greeting('Pam')

greeting('Erin')

staff.apply(greeting, 'Employee')

def name_and_age(name, year):
    age = 2019 - year
    return name + ' is ' + str(age)

staff.apply(name_and_age, 'Employee', 'Birth Year')
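# Conceptually, `apply` calls the function once per row, passing one value from each named column. The plain-Python sketch below mimics that behavior with lists; `apply_rows` is a hypothetical stand-in written for illustration, not part of the `datascience` library.

```python
def apply_rows(func, *columns):
    # Hypothetical stand-in for Table.apply: call func once per row,
    # passing one value from each column
    return [func(*row) for row in zip(*columns)]

def name_and_age(name, year):
    age = 2019 - year
    return name + ' is ' + str(age)

employees = ['Jim', 'Dwight', 'Michael', 'Creed']
birth_years = [1985, 1988, 1967, 1904]
result = apply_rows(name_and_age, employees, birth_years)
print(result)
```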

# ## Prediction ##

galton = Table.read_table('galton.csv')

galton

galton.scatter('midparentHeight', 'childHeight')

galton.scatter('midparentHeight', 'childHeight')

plots.plot([67.5, 67.5], [50, 85], color='red', lw=2)

plots.plot([68.5, 68.5], [50, 85], color='red', lw=2);

nearby = galton.where('midparentHeight', are.between(67.5, 68.5))

nearby_mean = nearby.column('childHeight').mean()

nearby_mean

galton.scatter('midparentHeight', 'childHeight')

plots.plot([67.5, 67.5], [50, 85], color='red', lw=2)

plots.plot([68.5, 68.5], [50, 85], color='red', lw=2)

plots.scatter(68, nearby_mean, color='red', s=50);

def predict(h):
    nearby = galton.where('midparentHeight', are.between(h - 1/2, h + 1/2))
    return nearby.column('childHeight').mean()

predict(68)

predict(70)

predict(73)
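# The same nearby-average idea can be sketched with plain NumPy arrays. The heights below are made up for illustration and are not the Galton data; `predict_from_arrays` is a hypothetical name. The window includes its lower edge and excludes its upper edge, matching `are.between`.

```python
import numpy as np

# Made-up heights in inches, for illustration only (not the Galton data)
parent = np.array([66.0, 67.8, 68.0, 68.3, 70.1, 72.9])
child = np.array([65.0, 66.0, 68.0, 70.0, 71.0, 73.0])

def predict_from_arrays(h, half_width=0.5):
    # Average the children whose midparent height lies within half_width of h
    near = (parent >= h - half_width) & (parent < h + half_width)
    return child[near].mean()

prediction = predict_from_arrays(68)
print(prediction)
```

# If no parent falls inside the window, the mean of an empty selection is `nan`, so a real implementation might widen the window or report the gap.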

predicted_heights = galton.apply(predict, 'midparentHeight')

predicted_heights

galton = galton.with_column('predictedHeight', predicted_heights)

galton.select(

'midparentHeight', 'childHeight', 'predictedHeight').scatter('midparentHeight')

# ## Prediction Accuracy ##

def difference(x, y):
    return x - y

pred_errs = galton.apply(difference, 'predictedHeight', 'childHeight')

pred_errs

galton = galton.with_column('errors',pred_errs)

galton

galton.hist('errors')

galton.hist('errors', group='gender')

# # Discussion Question

def predict_smarter(h, g):
    nearby = galton.where('midparentHeight', are.between(h - 1/2, h + 1/2))
    nearby_same_gender = nearby.where('gender', g)
    return nearby_same_gender.column('childHeight').mean()

predict_smarter(68, 'female')

predict_smarter(68, 'male')

smarter_predicted_heights = galton.apply(predict_smarter, 'midparentHeight', 'gender')

galton = galton.with_column('smartPredictedHeight', smarter_predicted_heights)

smarter_pred_errs = galton.apply(difference, 'smartPredictedHeight', 'childHeight')  # predicted minus actual, same sign as pred_errs

galton = galton.with_column('smartErrors', smarter_pred_errs)

galton.hist('smartErrors', group='gender')

# ## Grouping by One Column ##

cones = Table.read_table('cones.csv')

cones

cones.group('Flavor')

cones.drop('Color').group('Flavor', np.average)

cones.drop('Color').group('Flavor', min)
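# Under the hood, grouping gathers the values that share a key and then reduces each group with a collection function. A plain-Python sketch of that idea follows; the rows are made up for illustration and `group_collect` is a hypothetical helper, not the library's implementation.

```python
from collections import defaultdict

# Made-up rows (flavor, price), for illustration only
rows = [('strawberry', 3.55), ('chocolate', 4.75), ('chocolate', 6.55),
        ('strawberry', 5.25), ('bubblegum', 4.75)]

def group_collect(pairs, collect):
    # Gather the values for each key, then reduce each group with `collect`
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return {key: collect(values) for key, values in groups.items()}

counts = group_collect(rows, len)    # like group('Flavor') with no function
cheapest = group_collect(rows, min)  # like group('Flavor', min)
print(counts)
print(cheapest)
```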

# ## Grouping By One Column: Welcome Survey ##

survey = Table.read_table('welcome_survey_v2.csv')

survey.group('Year', np.average)

by_extra = survey.group('Extraversion', np.average)

by_extra

by_extra.select(0,2,3).plot('Extraversion') # Drop the 'Years average' column

by_extra.select(0,3).plot('Extraversion')

# ## Lists

[1, 5, 'hello', 5.0]

[1, 5, 'hello', 5.0, make_array(1,2,3)]

# ## Grouping by Two Columns ##

survey = Table.read_table('welcome_survey_v3.csv')

survey.group(['Handedness','Sleep position']).show()

# ## Pivot Tables

survey.pivot('Sleep position', 'Handedness')

survey.pivot('Sleep position', 'Handedness', values='Extraversion', collect=np.average)

survey.group('Handedness', np.average)
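# A pivot table of counts is a two-way tally: one row per value of the second argument, one column per value of the first. The sketch below reproduces that layout with a `Counter`; the answers are made up for illustration and are not the survey data.

```python
from collections import Counter

# Made-up survey answers (handedness, sleep position), for illustration only
answers = [('Right', 'Side'), ('Right', 'Back'), ('Left', 'Side'),
           ('Right', 'Side'), ('Left', 'Stomach')]

pair_counts = Counter(answers)                       # tally each (row, column) pair
row_labels = sorted({hand for hand, _ in answers})   # one row per handedness
col_labels = sorted({pos for _, pos in answers})     # one column per sleep position

# Cells hold counts; a missing pair counts as 0 because Counter returns 0 for unseen keys
pivot = {hand: [pair_counts[(hand, pos)] for pos in col_labels]
         for hand in row_labels}
print(col_labels)
print(pivot)
```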

| lec/lec10.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Using EKF tweaks with Sigma Point Kalman Filters

#

# In Sigma Point Kalman Filters (SPKF, see [**[Merwe2004]**](#merwe)), the Weighted Statistical Linear Regression technique is used to approximate the nonlinear process and measurement functions:

#

# $\mathbf{y} = g(\mathbf{x}) = \mathbf{A} \mathbf{x} + \mathbf{b} + \mathbf{e}$,

#

# $\mathbf{P}_{ee} = \mathbf{P}_{yy} - \mathbf{A} \mathbf{P}_{xx} \mathbf{A}^{\top}$

#

# where:

#

# $\mathbf{e}$ is an approximation error,

#

# $\mathbf{A} = \mathbf{P}_{xy}^{\top} \mathbf{P}_{xx}^{-1}$,

#

# $\mathbf{b} = \mathbf{\bar{y}} - \mathbf{A} \mathbf{\bar{x}}$,

#

# $\mathbf{P}_{xx} = \displaystyle\sum_{i} {w}_{ci} \left( \mathbf{\chi}_{i} - \mathbf{\bar{x}} \right) \left( \mathbf{\chi}_{i} - \mathbf{\bar{x}} \right)^{\top}$,

#

# $\mathbf{P}_{yy} = \displaystyle\sum_{i} {w}_{ci} \left( \mathbf{\gamma}_{i} - \mathbf{\bar{y}} \right) \left( \mathbf{\gamma}_{i} - \mathbf{\bar{y}} \right)^{\top}$,

#