code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.7.3 64-bit

# language: python

# name: python37364bit4db73d59933341feaa47a8db6a2db2c7

# ---

import pandas as pd

import numpy as np

import lib.data_processing

import importlib

importlib.reload(lib.data_processing)

TRAIN_FP = 'data/bias_data/WNC/biased.word.train'

TEST_FP = 'data/bias_data/WNC/biased.word.test'

# +

wnc_train = lib.data_processing.raw_data(TRAIN_FP, 3, 4)

wnc_train_df = wnc_train.add_miss_word_col(dtype='df')

wnc_test = lib.data_processing.raw_data(TEST_FP, 3, 4)

wnc_test_df = wnc_test.add_miss_word_col(dtype='df')

# -

wnc_test_df.head(5)

sample = lib.data_processing.raw_data('data/bias_data/real_world_samples/ibc_right', 2, 3)

sample.add_miss_word_col(dtype='df').head(5)

| WNC Data Pull.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.8.12 64-bit (''onnx'': conda)'

# name: python3

# ---

# <a id='StartingPoint'></a>

# # ONNX classification example

#

# Sharing DL models between frameworks or programming languages is possible with Open Neural Network Exchange (ONNX for short).

#

# This notebook starts from an onnx model exported from MATLAB and uses it in Python.

# In MATLAB, a GoogLeNet model pre-trained on ImageNet was loaded and saved in the ONNX file format with the one-line command [exportONNXNetwork(net,filename)](https://www.mathworks.com/help/deeplearning/ref/exportonnxnetwork.html).

#

# The model is then loaded here, together with the data to evaluate (some images retrieved from Google).

# Images are preprocessed into an np.array with shape (BatchSize, numChannels, width, height); the model is then applied to classify the images and return the probability of each classification.
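# As a minimal sketch of the layout change described above (not the notebook's own loader), a batch stored channels-last, as PIL and NumPy produce it, can be transposed to a channels-first float32 array. The random `batch` array and the `to_channels_first` helper are illustrative assumptions, not part of the original code.

```python
import numpy as np

def to_channels_first(batch):
    """Convert (N, rows, cols, C) image data to float32 (N, C, rows, cols)."""
    batch = np.asarray(batch)
    if batch.ndim != 4:
        raise ValueError("expected a 4-D array, got shape %s" % (batch.shape,))
    return batch.transpose(0, 3, 1, 2).astype(np.float32)

# Two fake 4x4 RGB images standing in for real, resized pictures.
rng = np.random.default_rng(0)
batch = rng.integers(0, 256, size=(2, 4, 4, 3), dtype=np.uint8)
X = to_channels_first(batch)
print(X.shape, X.dtype)
```

# The untransposed array is still the one to pass to `plt.imshow`, which expects the channel axis last.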

# ## Input Variables

#

# The purpose of each variable is explained [below](#ModelDef), near the model definition.

# +

# %%time

# model vars

modelPath = 'D:/onnxStartingCode/model/preTrainedImageNetGooglenet.onnx'

labelsPath = 'D:/onnxStartingCode/model/labels.csv'

hasHeader = 1

#data vars

image_folder = 'D:/onnxStartingCode/ImageFolder/'

EXT = ("jfif","jpg")

# -

# ## Import Modules

#

# Let's start by importing all the needed modules

#

# [back to top](#StartingPoint)

# %%time

import onnx

import numpy as np

from PIL import Image

import os

import matplotlib.pyplot as plt

from onnxruntime import InferenceSession

import csv

# <a id='ModelDef'></a>

# ## Define Model and Data functions

#

# We need to define functions to retrieve the Classifier and Data array.

#

# To load the model we need the path to the file that stores it and the path to the file that stores the labels. Finally, the parameter hasHeader defines how the first row of the labels file is treated: as a header or as a label.

# The labelsPath is required because the model used here does not contain label information, so an external CSV file needs to be read.
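# The effect of the header flag can be sketched with the standard `csv` module alone. The `read_labels` helper and the two-label demo file are hypothetical; unlike the notebook's `extractLabels`, this version returns the first column as plain strings rather than whole rows.

```python
import csv
import os
import tempfile

def read_labels(path, has_header=True):
    """Return one label string per CSV row, optionally skipping a header row."""
    with open(path, newline="") as handle:
        reader = csv.reader(handle)
        if has_header:
            next(reader, None)  # discard the header; tolerate an empty file
        return [row[0] for row in reader if row]

# Hypothetical label file written to a temporary folder for the demo.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "labels.csv")
    with open(demo_path, "w", newline="") as handle:
        handle.write("Label\ntench\ngoldfish\n")
    with_header = read_labels(demo_path, has_header=True)
    as_labels = read_labels(demo_path, has_header=False)

print(with_header)
print(as_labels)
```

# With `has_header=False` the first row is kept as a label, which shifts every class index by one, so the flag has to match the file.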

#

# [back to top](#StartingPoint)

# +

# %%time

def loadmodel(modelPath,labelsPath,hasHeader):

# define network

# load and check the model

# load the inference module

onnx.checker.check_model(modelPath)

sess = InferenceSession(modelPath)

# Determine the name of the input and output layers

inname = [input.name for input in sess.get_inputs()]

outname = [output.name for output in sess.get_outputs()]

# auxiliary function to load labels file

def extractLabels( filename , hasHeader ):

file = open(filename)

csvreader = csv.reader(file)

if (hasHeader>0):

header = next(csvreader)

#print(header)

rows = []

for row in csvreader:

rows.append(row)

#print(rows)

file.close()

return rows

# Get labels

labels = extractLabels(labelsPath,hasHeader)

# Extract information on the inputSize = (width, height) and numChannels = 3 (RGB) or 1 (grayscale)

for inp in sess.get_inputs():

inputSize = inp.shape

numChannels = inputSize[1]

inputSize = inputSize[2:4]

return sess,inname,outname,numChannels,inputSize,labels

def getData(image_folder,EXT,inputSize):

def getImagesFromFolder(EXT):

imageList = os.listdir(image_folder)

if (not(isinstance(EXT, list)) and not(isinstance(EXT,tuple))):

EXT = [EXT]

fullFilePath = [os.path.join(image_folder, f)

for ext in EXT for f in imageList if os.path.isfile(os.path.join(image_folder, f)) and f.endswith(ext)]

return fullFilePath

def imFile2npArray(imFile,inputSize):

data = np.array([

np.array(

Image.open(fname).resize(inputSize),

dtype=np.int64)

for fname in imFile

])

X=data.transpose(0,3,1,2)

X = X.astype(np.float32)

return X, data

fullFilePath = getImagesFromFolder(EXT)

X, data = imFile2npArray(fullFilePath,inputSize)

return X,data,fullFilePath

# -

# ## Run loading functions to get model and data

#

# * get the full filename of all files in a given directory that end with a given extension (EXT might be an array of extensions)

# * load data into numpy arrays for future use:

# * to plot data has to have shape = (x,y,3)

# * the model here presented requires data with shape (3,x,y)

# * two data arrays are then exported, data for plotting and X for classification
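# The first step in the list above, collecting files by extension, can be sketched with the standard library only. `list_images` and the temporary demo files are hypothetical; the sketch relies on `str.endswith` accepting a tuple of suffixes, and it matches extensions case-insensitively, which the notebook's own helper does not.

```python
import os
import tempfile

def list_images(folder, extensions):
    """Return sorted full paths of files in `folder` ending with any extension."""
    if isinstance(extensions, str):
        extensions = (extensions,)
    suffixes = tuple("." + ext.lower().lstrip(".") for ext in extensions)
    paths = []
    for name in sorted(os.listdir(folder)):
        full = os.path.join(folder, name)
        if os.path.isfile(full) and name.lower().endswith(suffixes):
            paths.append(full)
    return paths

# Three empty demo files; only the two image-like names should be returned.
with tempfile.TemporaryDirectory() as tmp:
    for name in ("a.jpg", "b.JFIF", "notes.txt"):
        open(os.path.join(tmp, name), "w").close()
    found = [os.path.basename(p) for p in list_images(tmp, ("jfif", "jpg"))]

print(found)
```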

#

# [back to top](#StartingPoint)

# +

# %%time

# run code

sess,inname,outname,numChannels,inputSize,labels = loadmodel(modelPath,labelsPath,hasHeader)

X,data,fullFilePath = getData(image_folder,EXT,inputSize)

print("inputSize: " + str(inputSize))

print("numChannels: " + str(numChannels))

print("inputName: ", inname[0])

print("outputName: ", outname[0])

# -

# ## Define a function to load all data

#

# 1. get the full filename of all files in a given directory that end with a given extension (EXT might be an array of extensions)

# 2. load data into numpy arrays for future use:

# * to plot data has to have shape = (x,y,3)

# * the model here presented requires data with shape (3,x,y)

# * two data arrays are then exported, data for plotting and X for classification

#

# [back to top](#StartingPoint)

# ## Classification

#

# [back to top](#StartingPoint)

# +

# %%time

#data_output = sess.run(outname, {inname: X[0]})

out = sess.run(None, {inname[0]: X})

out=np.asarray(out[0])

print(out.shape)

IND = []

PROB= []

for i in range(out.shape[0]):

ind=np.where(out[i] == np.amax(out[i]))

IND.append(ind[0][0])

PROB.append(out[i,ind[0][0]])

l = [labels[ind] for ind in IND]

print([labels[ind] for ind in IND])

print(IND)

print(PROB)

# -
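# The loop above finds the best class of each image with `np.where` and `np.amax`. A vectorized sketch of the same top-1 selection is shown here; the `top1` helper, the made-up scores and the three labels are assumptions for illustration only.

```python
import numpy as np

def top1(scores, labels):
    """Return (label, score) for the highest-scoring class of each row."""
    scores = np.asarray(scores)
    best = scores.argmax(axis=1)                        # index of the max per row
    best_scores = scores[np.arange(len(scores)), best]  # the max value per row
    return [(labels[i], float(s)) for i, s in zip(best, best_scores)]

# Made-up probabilities for two images over three classes.
demo_scores = [[0.1, 0.7, 0.2],
               [0.6, 0.3, 0.1]]
demo_labels = ["cat", "dog", "bird"]
result = top1(demo_scores, demo_labels)
print(result)
```

# In the notebook each entry of `labels` is a CSV row (a list), so the label text would be `labels[i][0]` rather than `labels[i]`.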

# ## Plot some examples

#

# [back to top](#StartingPoint)

# +

# %%time

plt.figure(figsize=(10,10))

if data.shape[0]>=6:

nPlots=6

subArray=[2,3]

else:

nPlots=data.shape[0]

subArray = [1, nPlots]

for i in range(nPlots):

plt.subplot(subArray[0],subArray[1],i+1)

plt.imshow(data[i])

plt.axis('off')

plt.title(l[i][0] + ' --- ' + str(round(100*PROB[i])) + '%')

plt.show()

# -

# [back to top](#StartingPoint)

| ONNXclassifier/onnxClassify.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Project 1

#

# # Used Vehicle Price Prediction

# ## Introduction

#

# - 1.2 Million listings scraped from TrueCar.com - Price, Mileage, Make, Model dataset from Kaggle: [data](https://www.kaggle.com/jpayne/852k-used-car-listings)

# - Each observation represents the price of a used car

# %matplotlib inline

import pandas as pd

data = pd.read_csv('https://github.com/albahnsen/PracticalMachineLearningClass/raw/master/datasets/dataTrain_carListings.zip')

data.head()

data.shape

data.Price.describe()

data.plot(kind='scatter', y='Price', x='Year')

data.plot(kind='scatter', y='Price', x='Mileage')

data.columns

# # Exercise P1.1 (50%)

#

# Develop a machine learning model that predicts the price of a car, using ['Year', 'Mileage', 'State', 'Make', 'Model'] as inputs

#

# Submit the prediction of the testing set to Kaggle

#

# #### Evaluation:

# - 25% - Performance of the model in the Kaggle Private Leaderboard

# - 25% - Notebook explaining the modeling process

#

data_test = pd.read_csv('https://github.com/albahnsen/PracticalMachineLearningClass/raw/master/datasets/dataTest_carListings.zip', index_col=0)

data_test.head()

data_test.shape

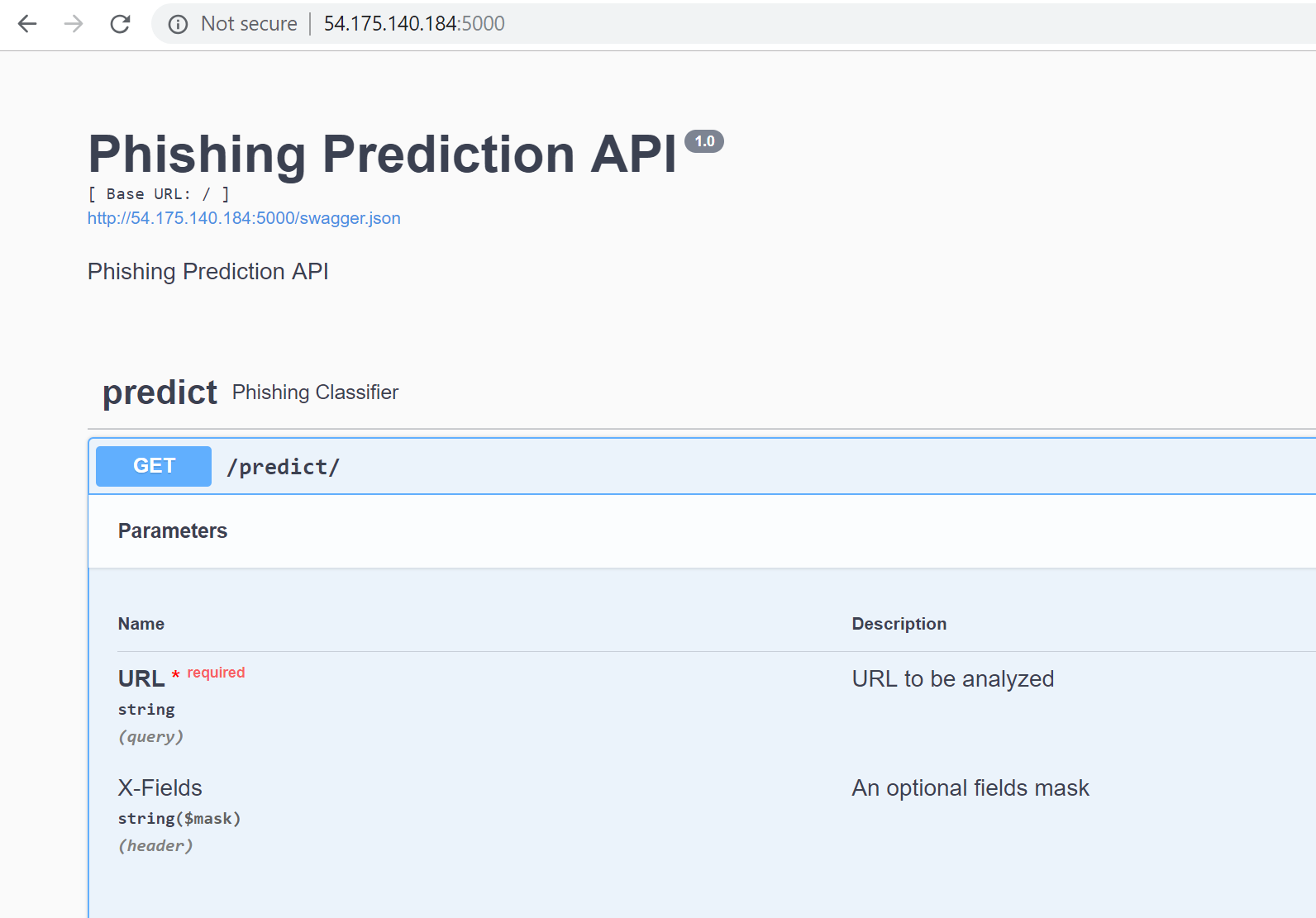

# # Exercise P1.2 (50%)

#

# Create an API of the model.

#

# Example:

#

#

# #### Evaluation:

# - 40% - API hosted on a cloud service

# - 10% - Show screenshots of the model doing the predictions on the local machine

#

| exercises/P1-UsedVehiclePricePrediction.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import cv2

import numpy as np

import matplotlib.pyplot as plt

# +

img = cv2.imread("../data/train_p_1/1.png")

plt.imshow(img)

plt.show()

# -

def filter(image, kernel):

kernel = kernel / (np.sum(kernel) if np.sum(kernel) != 0 else 1)

last_image = np.zeros_like(image, dtype=np.uint8)

for i in range(3):

use_im = image[:, :, i]

use_im = cv2.filter2D(use_im.astype(float), -1, kernel)

# print(image.max(), image.min())

last_image[:, :, i] = use_im

return last_image

# +

kernel = np.array([

[-1, -1, -1],

[-1, 8, -1],

[-1, -1, -1]

], np.float32)

fil_img = filter(img, kernel)

plt.imshow(fil_img)

plt.show()

# +

th_img = cv2.threshold(cv2.cvtColor(img, cv2.COLOR_BGR2GRAY), 146, 255, cv2.THRESH_BINARY)[1]

plt.imshow(th_img)

# -

#cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

th_img = cv2.threshold(cv2.cvtColor(cv2.threshold(img,240, 255, cv2.THRESH_BINARY)[1], cv2.COLOR_BGR2GRAY), 2, 255, cv2.THRESH_BINARY)[1]

plt.imshow(th_img)

plt.show()

countours, hierachy = cv2.findContours(th_img, cv2.RETR_LIST, cv2.CHAIN_APPROX_NONE)

def max_countour(countours):

count_max = 0

max_idx = -1

for i, cnt in enumerate(countours):

# count_max = max(cnt.shape[0], count_max)

if cnt.shape[0] > count_max:

count_max = cnt.shape[0]

max_idx = i

return countours[max_idx]
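# Picking the contour with the most points can also be written with the built-in `max` and a key function. This is a sketch under the assumption that "largest" means "most points", as in the function above; `longest_contour` is a hypothetical name and plain lists stand in for OpenCV contour arrays.

```python
def longest_contour(contours):
    """Return the contour with the most points, or None if there are none."""
    if not contours:
        return None
    return max(contours, key=len)

# Plain lists of (x, y) points stand in for OpenCV contour arrays here.
demo = [
    [(0, 0), (1, 0)],
    [(0, 0), (1, 0), (1, 1), (0, 1)],
    [(5, 5)],
]
print(len(longest_contour(demo)))
```

# `len` also works on the NumPy arrays that `cv2.findContours` returns, because it reports the size of the first axis, which is the number of points.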

# +

img_contour = cv2.drawContours(img, [max_countour(countours)], -1, (0, 255, 0), 5)

plt.imshow(img_contour)

# -

[max_countour(countours)]

mu = cv2.moments(max_countour(countours), False)

# +

x,y= int(mu["m10"]/mu["m00"]) , int(mu["m01"]/mu["m00"])

cv2.circle(img, (x,y), 4, 100, 4, 4)

plt.imshow(img)

plt.show()

# -

x, y

| notebooks/filter_crop.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/KeisukeShimokawa/CarND-Advanced-Lane-Lines/blob/master/lesson18_create_movie.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="-H6grmIa7ONk" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="f1aea510-b6a8-42f4-e455-b2fabd884af4"

# !wget https://www.dropbox.com/s/uflwm2ii7sivkv8/run1.tar.gz -O run1.tar.gz

# !tar -zxvf run1.tar.gz

# !rm run1.tar.gz

# + id="IANdQFpN7OKW" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 122} outputId="ebc07ef3-c798-4826-8ae1-9b1ea07d23e8"

from moviepy.editor import ImageSequenceClip

import argparse

import os

# + id="IqudRxxx7OG-" colab_type="code" colab={}

IMAGE_EXT = ['jpeg', 'gif', 'png', 'jpg']

# + id="zV3zHMl07ODJ" colab_type="code" colab={}

image_folder = 'run1'

fps = 50

# + id="6UznCLPk8WJq" colab_type="code" colab={}

# convert the folder contents into a list, later filtered for image file types

image_list = sorted([os.path.join(image_folder, image_file)

for image_file in os.listdir(image_folder)])

# + id="bN8ejRNH8rY3" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 187} outputId="9ba9711a-e923-4195-9b31-3759f70aa467"

image_list[:10]

# + id="SxqsPMoi83J-" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 187} outputId="2390867d-9450-4248-ef13-891a756b531b"

image_list = [image_file for image_file in image_list if os.path.splitext(image_file)[1][1:].lower() in IMAGE_EXT]

image_list[:10]

# + id="7woYK6E-87gG" colab_type="code" colab={}

# two methods of naming the output video, to handle varying environments

video_file_1 = image_folder + '.mp4'

video_file_2 = image_folder + 'output_video.mp4'

# + id="V13SzTtZ9CgW" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 119} outputId="f1d5e928-2c59-4d24-fe84-ff3b5be0159a"

clip = ImageSequenceClip(image_list, fps=fps)

try:

clip.write_videofile(video_file_1)

except Exception:

clip.write_videofile(video_file_2)

# + id="_4pZ-Uei7LoQ" colab_type="code" colab={}

| lesson18_create_movie.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

class hashSet(object):

def __init__(self, numBuckets):

'''

numBuckets: int. The number of buckets this hash set will have.

Raises ValueError if this value is not an integer, or if it is not greater than zero.

Sets up an empty hash set with numBuckets number of buckets.

'''

if type(numBuckets) != int or numBuckets <= 0:

raise ValueError

self.NUM_BUCKETS = numBuckets

self.set = []

for i in range(self.NUM_BUCKETS):

self.set.append([])

def hashValue(self, e):

'''

e: an integer

returns: a hash value for e, which is simply e modulo the number of

buckets in this hash set. Raises ValueError if e is not an integer.

'''

if type(e) != int:

raise ValueError

return e % self.NUM_BUCKETS

def member(self, e):

'''

e: an integer

Returns True if e is in self, and False otherwise. Raises ValueError if e is not an integer.

'''

if type(e) != int:

raise ValueError

return e in self.set[self.hashValue(e)]

def insert(self, e):

'''

e: an integer

Inserts e into the appropriate hash bucket. Raises ValueError if e is not an integer.

'''

if type(e) != int:

raise ValueError

if e not in self.set[self.hashValue(e)]:

self.set[self.hashValue(e)].append(e)

def remove(self, e):

'''

e: is an integer

Removes e from self

Raises ValueError if e is not in self or if e is not an integer.

'''

if type(e) != int or not self.member(e):

raise ValueError

find = self.set[self.hashValue(e)]

del find[find.index(e)]

def getNumBuckets(self):

'''

Returns number of buckets.

'''

return self.NUM_BUCKETS

def __str__(self):

'''

Returns string representation of data inside hash set.

'''

result = ""

bucketNr = 0

for bucket in self.set:

result += str(bucketNr) + ": " + ",".join(str(e) for e in bucket) + "\n"

bucketNr += 1

return result

hs = hashSet(10)

hs.insert(100)

hs.insert(99)

hs.insert(98)

hs.insert(92)

hs.insert(93)

hs.insert(94)

hs.insert(95)

hs.insert(96)

hs.insert(97)

hs.insert(91)

hs.insert(31)

print str(hs)

# -
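# The bucket idea behind `hashSet` can be condensed into a short sketch. `BucketSet` below is a hypothetical, simplified variant written for Python 3 (the notebook itself targets a Python 2 kernel); it keeps the same `e % numBuckets` hashing rule and the same `ValueError` behaviour for bad input.

```python
class BucketSet:
    """Tiny integer set that hashes by `value % num_buckets` (illustrative only)."""

    def __init__(self, num_buckets):
        if not isinstance(num_buckets, int) or num_buckets <= 0:
            raise ValueError("num_buckets must be a positive integer")
        self.buckets = [[] for _ in range(num_buckets)]

    def _bucket(self, value):
        # All operations first locate the single bucket a value can live in.
        if not isinstance(value, int):
            raise ValueError("only integers are supported")
        return self.buckets[value % len(self.buckets)]

    def insert(self, value):
        bucket = self._bucket(value)
        if value not in bucket:
            bucket.append(value)

    def member(self, value):
        return value in self._bucket(value)

    def remove(self, value):
        bucket = self._bucket(value)
        if value not in bucket:
            raise ValueError("%r is not in the set" % (value,))
        bucket.remove(value)

demo = BucketSet(10)
for number in (91, 31, 100):
    demo.insert(number)
print(demo.buckets[1])
print(demo.member(31), demo.member(41))
demo.remove(91)
print(demo.buckets[1])
```

# Values that share a remainder (91 and 31 here) land in the same bucket, so a lookup only scans that one list instead of the whole set.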

a = [1,2,3]

| Haseeb DS/HashSet.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# from __future__ import print_statement

# import time

# pip install swagger_client

# import swagger_client

# from swagger_client.rest import ApiException

# from pprint import pprint

import pandas as pd

from pandas import json_normalize

import numpy as np

import requests

import json

import ipywidgets as widgets

import spacy

import math

import numpy as np

import scattertext as st

from sklearn import manifold

from sklearn.metrics import euclidean_distances

# +

# pip install json_normalize

# +

# dir(requests.get("https://api.nal.usda.gov/fdc/v1/food/534358?api_key=<KEY>"))

# +

# food = json.loads(requests.get("https://api.nal.usda.gov/fdc/v1/food/534358?api_key=<KEY>").content)

# +

# for item in food.items():

# print(item)

# -

# # Direct ID pulls

food = pd.read_csv('complementaryFiles/food.csv')

food.fillna('N/A',inplace=True)

# +

# food[food['description'].str.contains('Salmon')]

# +

food[(food['description'].str.contains('salmon'))&(food['description'].str.contains('Alaska')&(food['description'].str.contains('sockeye')))]

# salmonLst = list(food[(food['description'].str.contains('salmon'))&(food['description'].str.contains('Alaska'))]['fdc_id'].unique())

# salmonLst

salmonLst = list(food[food['description'].str.contains('salmon')]['fdc_id'])

# salmonLst

# +

# json.loads(requests.get(f'https://api.nal.usda.gov/fdc/v1/foods?&fdcIds=167614,167615&api_key=<KEY>').content)

# -

salmonLst = str(salmonLst).replace('[','').replace(']','')

# +

apiKey = '<KEY>'

# salmon = json.loads(requests.get(f'https://api.nal.usda.gov/fdc/v1/foods?&fdcIds=167614,167615&api_key=<KEY>').content)

salmon = json.loads(requests.get(f'https://api.nal.usda.gov/fdc/v1/foods?&fdcIds={salmonLst}&api_key={apiKey}').content)

#survey food

# salmon = json.loads(requests.get(f'https://api.nal.usda.gov/fdc/v1/foods?&fdcIds=1098960&api_key=<KEY>').content)

df = pd.DataFrame(salmon)

df = df.dropna(subset=['foodPortions'])

# df = df[df['inputFoods'].map(lambda d: len(d)) > 0]

df = df.reset_index()

df = df.drop('index',axis=1)

# df = pd.DataFrame(df.iloc[23:24])

df.head()

# +

current_food = df['description'].values[0]

df['foodNutrients'][0] #hidden dataframe with all of the nutrient data

macros = ['Protein','Carbohydrates','Fat','Total lipid (fat)']

json_normalize(df['nutrientConversionFactors'][0][0])

json_normalize(df['foodCategory'][0])

len(list(json_normalize(df['foodNutrients'][0]).columns))

#legacy food iteration

for x in range(df.shape[0]):

col_len = len(list(json_normalize(df['foodNutrients'][x]).columns))

if col_len == 17:

nutrient_df = json_normalize(df['foodNutrients'][x])

# display(nutrient_df)

else:

pass

#survey food

nutrient_df = json_normalize(df['foodNutrients'][0])

# display(nutrient_df)

macro_df = nutrient_df[nutrient_df['nutrient.name'].isin(macros)]

conv_factors = json_normalize(df['nutrientConversionFactors'][0])

portion_df = json_normalize(df['foodPortions'][0])

# display(portion_df.head())

input_df = json_normalize(df['inputFoods'][0])

protein = macro_df[macro_df['nutrient.name']=='Protein']['amount'].values[0]

calories = nutrient_df[nutrient_df['nutrient.unitName']=='kcal']['amount'].values[0]

# input_grams = input_df['amount'].values[0]

portion_grams = portion_df['gramWeight'].values[0]

# portion_ounces = portion_df[portion_df['portionDescription'].str.contains('oz')]['portionDescription'].values[0].split()[0]

print(f'Data for {current_food}')

print('===================')

print('===================')

print('Protein per serving')

print(protein)

print('===================')

print('Calories per serving')

print(calories)

# -

# # PDF generation

# +

# pip install reportlab

# +

#table gen - https://pythonguides.com/create-and-modify-pdf-file-in-python/

from reportlab.pdfgen import canvas

# c = canvas.Canvas("salmon.pdf")

from reportlab.lib import colors

from reportlab.lib.pagesizes import letter, inch

from reportlab.platypus import SimpleDocTemplate, Table, TableStyle

# # move the origin up and to the left

# c.translate(inch,inch)

# c.setFont("Helvetica", 20)

# c.setFillColorRGB(0,0,0)

# c.drawString(.5*inch, 9*inch, f'Data for {current_food}')

# c.drawString(.5*inch, 8*inch, "========================")

# c.drawString(.5*inch, 7*inch, "========================")

# c.drawString(.5*inch, 6*inch, f'Protein per serving = {protein}')

# c.drawString(.5*inch, 5*inch, "========================")

# c.drawString(.5*inch, 4*inch, f'Calories per serving = {calories}')

# c.drawString(.5*inch, 3*inch, "========================")

# creating a pdf file to add tables

my_doc = SimpleDocTemplate("salmon.pdf", pagesize = letter)

my_obj = []

# defining Data to be stored on table

my_data = [

["Food", "Calories", "Protein"],

[current_food, calories, protein],

]

# Creating the table with 2 rows (a header row plus one data row)

my_table = Table(my_data, 1 * [2.5 * inch], 2 * [0.5 * inch])

# setting up style and alignments of borders and grids

my_table.setStyle(

TableStyle(

[

("ALIGN", (1, 1), (0, 0), "LEFT"),

("VALIGN", (-1, -1), (-1, -1), "TOP"),

("ALIGN", (-1, -1), (-1, -1), "RIGHT"),

("VALIGN", (-1, -1), (-1, -1), "TOP"),

("INNERGRID", (0, 0), (-1, -1), 1, colors.gray),

# ("BOX", (0, 0), (-1, -1), 2, colors.black),

]

)

)

my_obj.append(my_table)

my_doc.build(my_obj)

# c.showPage()

# c.save()

# -

# ## Search API functionality

cheddar = json.loads(requests.get(f"https://api.nal.usda.gov/fdc/v1/foods/search?api_key={apiKey}&query=Cheddar%20Cheese").content)

cheese = json.loads(requests.get(f"https://api.nal.usda.gov/fdc/v1/foods/search?api_key={apiKey}&query=Cheese").content)

for key, value in cheese.items():

if key == 'foods':

# ched = pd.DataFrame(columns = ['fdcId', 'description', 'lowercaseDescription', 'dataType', 'gtinUpc','publishedDate',

# 'brandOwner', 'brandName', 'ingredients','marketCountry', 'foodCategory', 'allHighlightFields', 'score','foodNutrients'])

df=[]

for val in value:

# for key,value in val.items():

# print(key,value)

df.append(pd.DataFrame(val))

else:

pass

df = pd.concat(df,sort=False)

df

# +

#### lets use k nearest neighbors on macros !!!! ######

# -

from pandas import json_normalize

nutrients = json_normalize(df['foodNutrients'])

nutrients[nutrients['nutrientName']=='Protein']

df[df['fdcId']==1497465]['foodNutrients']

# +

sp = spacy.load('en_core_web_sm')

output = widgets.Output()

from IPython.display import display

def clicked(b):

output.clear_output()

with output:

_norm = True

_sortby = 'name'

_query = querybox.value

if (normalizedradio.value == "false"):

_norm = False

if (sortradio.value == 'score'):

_sortby = 'score'

if (_query == ""):

print("please enter a query")

else:

drawTilebars(_query,normalized=_norm,sortby=_sortby).display()

querybox = widgets.Text(description='Query:')

searchbutton = widgets.Button(description="Search")

normalizedradio = widgets.RadioButtons(description="Normalized?",options=['true', 'false'])

sortradio = widgets.RadioButtons(description="Sort by",options=['name', 'score'])

searchbutton.on_click(clicked)

normalizedradio.observe(clicked)

sortradio.observe(clicked)

list_widgets = [widgets.VBox([widgets.HBox([querybox,searchbutton]),

widgets.HBox([normalizedradio,sortradio])])]

accordion = widgets.Accordion(children=list_widgets)

accordion.set_title(0,"Search Controls")

# display(accordion,output)

display(accordion)

# -

| fdcAPI.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import zipfile

import numpy as np

with zipfile.ZipFile("Soccer Data/matches.zip") as Z:

with Z.open("matches_England.json") as f:

df = pd.read_json(f)

point_data = list()

result = {1 : "draw", 0 : "lost", 3: "win"}

for i in range(len(df)):

gameweek = df.iloc[i].gameweek

label = df.iloc[i].label

[[home_team, away_team], [home_score, away_score]] = [[o.strip() for o in s.split('-')] for s in label.split(',')]

home_score = int(home_score)

away_score = int(away_score)

if home_score > away_score:

home_point = 3

away_point = 0

if away_score > home_score:

away_point = 3

home_point = 0

if away_score == home_score:

home_point = 1

away_point = 1

point_data.append([gameweek, home_team, home_point, 'home', result[home_point]])

point_data.append([gameweek, away_team, away_point, 'away', result[away_point]])

point_df = pd.DataFrame(point_data, columns=['gameweek', 'team', 'point', 'home_away', 'result'])

point_df
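# The point assignment in the loop above can be isolated into two small functions. This sketch assumes, as the parsing code implies, that a match label looks like "Home - Away, h - a"; `parse_label`, `points` and the "Team A"/"Team B" label are hypothetical. Splitting the team part on " - " (with spaces) rather than on a bare "-" is a design choice that tolerates hyphenated team names.

```python
def parse_label(label):
    """Split 'Home - Away, 2 - 1' into team names and integer scores."""
    teams_part, scores_part = label.rsplit(",", 1)
    home_team, away_team = [t.strip() for t in teams_part.split(" - ")]
    home_score, away_score = [int(s) for s in scores_part.split("-")]
    return home_team, away_team, home_score, away_score

def points(own_score, other_score):
    """Return league points: 3 for a win, 1 for a draw, 0 for a loss."""
    if own_score > other_score:
        return 3
    if own_score == other_score:
        return 1
    return 0

# Made-up label, not taken from the data set.
home, away, home_goals, away_goals = parse_label("Team A - Team B, 1 - 2")
print(home, points(home_goals, away_goals), away, points(away_goals, home_goals))
```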

point_df.to_csv("point.csv")

import matplotlib.pyplot as plt

team_table = point_df.pivot(index= 'gameweek', columns='team', values=['point']).cumsum().fillna(method = 'backfill').fillna(method='ffill')

plt.figure(figsize=[20,12])

colormap = plt.cm.gist_ncar

color = [colormap(i) for i in np.linspace(0, 0.9, len(team_table.columns))]

[plt.plot(team_table.iloc[:,i], color = color[i]) for i in range(len(team_table.columns))]

plt.legend([team_table.columns[i][1] for i in range(len(team_table.columns))], fontsize=12)

plt.xticks(team_table.index)

plt.xlabel("Weeks", fontsize=16)

plt.ylabel("Points", fontsize=16)

plt.show()

teams = ['Arsenal', 'Chelsea', 'Liverpool', 'Manchester United', 'Manchester City']

point_df_selected = point_df[[t in teams for t in point_df['team']]]

tab = pd.crosstab(index=[point_df_selected['team'],point_df_selected['home_away']], columns=point_df_selected['result'])

tab

from scipy.stats import chi2_contingency

chi2_contingency(tab.iloc[4:6,:].values)

point_df_selected

teams_df = pd.read_json('soccer data/teams.json', orient = 'values')

teams_df

coaches_df = pd.read_json('soccer data/coaches.json', orient = 'values')

coaches_df

coaches_teams_df = pd.merge(left=teams_df, right=coaches_df,

left_on='wyId', right_on='currentTeamId',

how='inner')[['name', 'birthDate', 'shortName']].groupby('name').agg('max', on = 'birthDate').sort_values(by='birthDate', ascending = False)

now = pd.Timestamp('now')

age = (now - pd.to_datetime(coaches_teams_df['birthDate'], yearfirst=True)).astype('<m8[Y]')

coaches_teams_df['age'] = age

print(coaches_teams_df.head(10))

plt.hist(age, density = True, edgecolor='black', linewidth=1.2)

plt.xlabel('Age', fontsize=16)

plt.title('Histogram of Coaches Ages')

events_df = pd.DataFrame()

with zipfile.ZipFile("Soccer Data/events.zip") as Z:

for name in Z.namelist():

with Z.open(name) as f:

df_temp = pd.read_json(f)#[['playerId', 'matchId', 'eventName', 'tags']]

events_df = pd.concat([events_df, df_temp])

print("file " + name + " is loaded")

break

passes_df = events_df[['playerId', 'matchId', 'eventName', 'tags']]

passes_df.head()

passes_df = passes_df.loc[passes_df.eventName == 'Pass']

passes_df['pass_success'] = [str(t).find('1801') != -1 for t in passes_df.tags]

passes_df.drop(columns=['tags','eventName'], inplace = True)

passes_df.head()

passes_df = passes_df.groupby(['playerId', 'matchId'], as_index = False, group_keys = False).agg(['sum','count'] , on='pass_success').reset_index()

passes_df.columns = ['playerId', 'matchId', 'sum', 'count']

passes_df.head()

# +

#plt.hist(df['pass_success']['count'], bins=100)

# -

passes_df = passes_df.loc[passes_df['count'] > 100]

passes_df.head()

passes_df.drop(columns = ['matchId'], inplace = True)

passes_df = passes_df.groupby('playerId').agg('sum', level = 0, on = ['sum', 'count']).reset_index()

passes_df.head()

passes_df['ratio'] = passes_df['sum']/passes_df['count']*100

passes_df.head()

passes_top10 = passes_df.sort_values('ratio', ascending=False).head(10)

passes_top10

players_df = pd.read_json('soccer data/players.json')

players_df.head(3)

players_name = players_df[['firstName','middleName','lastName', 'wyId']].copy()

players_name['fullName'] = players_name['firstName'] + ' ' + players_name['middleName'] + ' ' + players_name['lastName']

players_name.head()

players_name.drop(columns = ['firstName', 'middleName', 'lastName'], inplace = True)

players_name.head()

passes_top10 = pd.merge(left=passes_top10, right=players_name, left_on='playerId', right_on='wyId', how='left')

passes_top10[['fullName','ratio']]

airduels_df = events_df[['playerId', 'matchId', 'eventName', 'subEventName', 'tags']]

airduels_df.head()

airduels_df = airduels_df.loc[airduels_df.subEventName == 'Air duel']

airduels_df = airduels_df.loc[airduels_df.eventName == 'Duel']

airduels_df['duel_success'] = [str(t).find('1801') != -1 for t in airduels_df.tags]

airduels_df.drop(columns=['tags','eventName', 'subEventName'], inplace = True)

airduels_df.head()

airduels_df = airduels_df.groupby(['playerId', 'matchId'], as_index = False, group_keys = False).agg(['sum','count'] , on='duel_success').reset_index()

airduels_df.columns = ['playerId', 'matchId', 'sum', 'count']

airduels_df.head()

airduels_df = airduels_df.loc[airduels_df['count'] > 5]

airduels_df.head()

players_height = players_df[['height', 'wyId']].copy()

players_height.head()

airduels_height = pd.merge(left=airduels_df, right=players_height, left_on='playerId', right_on='wyId', how='inner')[['height', 'sum','count']]

airduels_height = airduels_height.groupby(pd.cut(airduels_height["height"], np.arange(155, 210, 5))).sum(on = ['sum' , 'count'])

airduels_height.drop(columns='height', inplace = True)

airduels_height.reset_index()

airduels_height['ratio'] = airduels_height['sum']/airduels_height['count']*100

plt.figure(figsize=(15,7))

plt.scatter(range(len(airduels_height)), airduels_height['ratio'].values, c = range(len(airduels_height)), cmap = 'YlOrRd')

plt.xticks(range(len(airduels_height)), airduels_height.index)

# ## CQ1

events_df.head()

goals_df = events_df[['playerId', 'eventSec','teamId','tags','eventName', 'matchPeriod']]

goals_df.head()

tags101 = [str(t).find(' 101') != -1 for t in goals_df['tags']]

goals_df = goals_df.loc[tags101]

goals_df.head()

goals_df = goals_df.loc[goals_df['eventName'] != 'Save attempt']

goals_df.head()

goals_df['eventMin'] = goals_df['eventSec']//60 + 1

goals_df.head()

time_slots = [str(t) for t in pd.cut(goals_df['eventMin'], np.arange(0, 60, 9))]

goals_df['time_slot'] = time_slots

goals_df.head()

res = goals_df.groupby(['matchPeriod', 'time_slot']).count()[['playerId']]

res

res_plot = res.plot(kind='bar', legend=False)

res1 = goals_df.groupby(['teamId', 'time_slot', 'matchPeriod']).count()[['playerId']].reset_index()

res1.columns = ['teamId','time_slot','matchPeriod','scores']

res2 = res1.loc[res1['time_slot'] == '(36, 45]']

res3 = res2.loc[[str(t).find('2H') != -1 for t in res2['matchPeriod']]]

asd = pd.merge(left = res3, right=teams_df, left_on='teamId', right_on='wyId')[['time_slot','matchPeriod','scores','officialName']]

asd.max()

goals_df.head()

r0 = goals_df.groupby(['time_slot','playerId']).count().reset_index()[['time_slot','playerId','tags']]

r0.columns = ['time_slot','playerId','scores']

r0.head()

r1 = r0.groupby('playerId').count().reset_index()[['playerId','time_slot']]

r1.columns = ['playerId', 'nslot_covered']

r1.sort_values(by = 'nslot_covered', ascending=False)

events_df.head()

pd.unique(events_df['eventName'])

# ## RCQ2

with zipfile.ZipFile("Soccer Data/matches.zip") as Z:

with Z.open('matches_Spain.json') as f:

matches_df = pd.read_json(f)

with zipfile.ZipFile("Soccer Data/events.zip") as Z:

with Z.open('events_Spain.json') as f:

events_spain_df = pd.read_json(f)

events_spain_df.iloc[594533,:]

barcelona_mardrid_id = 2565907 #Barcelona - Real Madrid

CR7_id = 3359 #CR7

LM_id = 3322 #Messi

def event_coordinate(coordinate):

[[_,y_start],[_,x_start],[_,y_end],[_,x_end]] = [i.split(': ') for i in str(coordinate).replace('[','').replace(']','').replace('{','').replace('}','').split(',')]

return int(x_start)/100*130, int(y_start)/100*90, int(x_end)/100*130, int(y_end)/100*90
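# The function above recovers the coordinates by turning the positions value into a string and splitting it. If each value is a list of two dictionaries with "y" and "x" keys holding percentages (an assumption inferred from that parsing, not confirmed here), the same scaling can be sketched without string handling. `scale_positions` and the demo event are hypothetical.

```python
PITCH_LENGTH = 130  # same scaling constants as the function above
PITCH_WIDTH = 90

def scale_positions(positions):
    """Map start/end percentages to pitch units: (x0, y0, x1, y1)."""
    start, end = positions
    return (start["x"] / 100 * PITCH_LENGTH,
            start["y"] / 100 * PITCH_WIDTH,
            end["x"] / 100 * PITCH_LENGTH,
            end["y"] / 100 * PITCH_WIDTH)

# Made-up event going from the centre spot to a corner of the pitch.
demo = [{"y": 50, "x": 50}, {"y": 0, "x": 100}]
print(scale_positions(demo))
```

# Events with only one recorded position would fail the two-way unpacking, so they would need to be filtered out first.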

barcelona_madrid_df = events_spain_df[['eventName','matchId','positions','playerId']].loc[

events_spain_df['eventName'].isin(['Pass', 'Duel','Free Kick','Shot']) &

events_spain_df['matchId'].isin([barcelona_mardrid_id]) &

events_spain_df['playerId'].isin([CR7_id])]

xy_CR7 = barcelona_madrid_df['positions'].apply(event_coordinate)

xy_CR7 = xy_CR7.loc[[i[2] != 0 and i[3] != 0 for i in xy_CR7]]

barcelona_madrid_df = events_spain_df[['eventName','matchId','positions','playerId']].loc[

events_spain_df['eventName'].isin(['Pass', 'Duel','Free Kick','Shot']) &

events_spain_df['matchId'].isin([barcelona_mardrid_id]) &

events_spain_df['playerId'].isin([LM_id])]

xy_LM = barcelona_madrid_df['positions'].apply(event_coordinate)

xy_LM = xy_LM.loc[[i[2] != 0 and i[3] != 0 for i in xy_LM]]

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from matplotlib.patches import Arc

import seaborn as sns

#Create figure
def plot_pitch():
    fig=plt.figure()
    fig.set_size_inches(7, 5)
    ax=fig.add_subplot(1,1,1)

    #Pitch Outline & Centre Line
    plt.plot([0,0],[0,90], color="black")
    plt.plot([0,130],[90,90], color="black")
    plt.plot([130,130],[90,0], color="black")
    plt.plot([130,0],[0,0], color="black")
    plt.plot([65,65],[0,90], color="black")

    #Left Penalty Area
    plt.plot([16.5,16.5],[65,25],color="black")
    plt.plot([0,16.5],[65,65],color="black")
    plt.plot([16.5,0],[25,25],color="black")

    #Right Penalty Area
    plt.plot([130,113.5],[65,65],color="black")
    plt.plot([113.5,113.5],[65,25],color="black")
    plt.plot([113.5,130],[25,25],color="black")

    #Left 6-yard Box
    plt.plot([0,5.5],[54,54],color="black")
    plt.plot([5.5,5.5],[54,36],color="black")
    plt.plot([5.5,0],[36,36],color="black")

    #Right 6-yard Box
    plt.plot([130,124.5],[54,54],color="black")
    plt.plot([124.5,124.5],[54,36],color="black")
    plt.plot([124.5,130],[36,36],color="black")

    #Prepare Circles
    centreCircle = plt.Circle((65,45),9.15,color="black",fill=False)
    centreSpot = plt.Circle((65,45),0.8,color="black")
    leftPenSpot = plt.Circle((11,45),0.8,color="black")
    rightPenSpot = plt.Circle((119,45),0.8,color="black")

    #Draw Circles
    ax.add_patch(centreCircle)
    ax.add_patch(centreSpot)
    ax.add_patch(leftPenSpot)
    ax.add_patch(rightPenSpot)

    #Prepare Arcs
    leftArc = Arc((11,45),height=18.3,width=18.3,angle=0,theta1=310,theta2=50,color="black")
    rightArc = Arc((119,45),height=18.3,width=18.3,angle=0,theta1=130,theta2=230,color="black")

    #Draw Arcs
    ax.add_patch(leftArc)
    ax.add_patch(rightArc)

    #Tidy Axes
    plt.axis('off')

plot_pitch()

x_coord = [i[0] for i in xy_CR7]

y_coord = [i[1] for i in xy_CR7]

sns.kdeplot(x_coord, y_coord, shade = "True", color = "green", n_levels = 30, shade_lowest = False)

plt.title('CR7 event density (Barcelona - Real Madrid)')

plt.show()

plot_pitch()

x_coord = [i[0] for i in xy_LM]

y_coord = [i[1] for i in xy_LM]

sns.kdeplot(x_coord, y_coord, shade = "True", color = "green", n_levels = 30, shade_lowest = False)

plt.show()

with zipfile.ZipFile("Soccer Data\matches.zip") as Z:

with Z.open('matches_Italy.json') as f:

matches_df = pd.read_json(f)

with zipfile.ZipFile("Soccer Data\events.zip") as Z:

with Z.open('events_Italy.json') as f:

events_italy_df = pd.read_json(f)

juventus_napoli_id = 2576295 # Juventus - Napoli

Jorg_id = 21315 # Jorginho

Pjan_id = 20443 # <NAME>

juventus_napoli_df = events_italy_df[['eventName','matchId','positions','playerId']].loc[

events_italy_df['eventName'].isin(['Pass', 'Duel','Free Kick','Shot']) &

events_italy_df['matchId'].isin([juventus_napoli_id]) &

events_italy_df['playerId'].isin([Jorg_id])]

xy_Jorg = juventus_napoli_df['positions'].apply(event_coordinate)

xy_Jorg = xy_Jorg.loc[[i[2] != 0 and i[3] != 0 for i in xy_Jorg]]

plot_pitch()

x_coord = [i[0] for i in xy_Jorg]

y_coord = [i[1] for i in xy_Jorg]

sns.kdeplot(x_coord, y_coord, shade = "True", color = "green", n_levels = 30, shade_lowest = False)

for xy in xy_Jorg:
    # Draw an arrow from the event's start point (xytext) to its end point (xy).
    plt.annotate('', xy = [xy[2],xy[3]], xytext = [xy[0],xy[1]],
                 arrowprops=dict(arrowstyle="->",connectionstyle="arc3", color = "blue"))

juventus_napoli_df = events_italy_df[['eventName','matchId','positions','playerId']].loc[

events_italy_df['eventName'].isin(['Pass', 'Duel','Free Kick','Shot']) &

events_italy_df['matchId'].isin([juventus_napoli_id]) &

events_italy_df['playerId'].isin([Pjan_id])]

xy_Pjan = juventus_napoli_df['positions'].apply(event_coordinate)

xy_Pjan = xy_Pjan.loc[[i[2] != 0 and i[3] != 0 for i in xy_Pjan]]

plot_pitch()

#plt.title('asdasd')

x_coord = [i[0] for i in xy_Pjan]

y_coord = [i[1] for i in xy_Pjan]

sns.kdeplot(x_coord, y_coord, shade = "True", color = "green", n_levels = 30, shade_lowest = False)

for xy in xy_Pjan:
    # Draw an arrow from the event's start point (xytext) to its end point (xy).
    plt.annotate('', xy = [xy[2],xy[3]], xytext = [xy[0],xy[1]],
                 arrowprops=dict(arrowstyle="->",connectionstyle="arc3", color = "blue"))

# +

#events_italy_df

# +

#events_italy_df.loc[events_italy_df['eventId'] == 2]

# -

| HW2.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Python: Advanced

# ## Classes

#

# **Classes** are like blueprints for creating objects.
#
# **Instances** are the objects built from a class.
#
# The `__init__()` method is defined in most **classes**. It runs when the *object is being created* and is used to assign values to the object's properties or to perform other setup.
#
# `self` refers to the current instance of the class and is used to access the attributes and methods that belong to it. *It doesn't need to be named `self`*, but it must be the first parameter of every instance method.
#
# Classes can contain **methods** (functions that belong to the object).

#

# +

class car:
    def __init__(self, make, topspeed):
        self.make = make
        self.topspeed = topspeed

blueCar = car("Ford", "150")
print(blueCar.make)
print(blueCar.topspeed)

# -

# add a method to the class

# +

class animal:
    def __init__(self, name, weight, habitat):
        self.name = name
        self.weight = weight
        self.habitat = habitat

    def sayHello(self):
        print("Hello I am a " + self.name)

tiger = animal("tiger", 200, "forest")
tiger.sayHello()

# -

# Modifying or deleting an object's attributes uses normal Python syntax:

# +

tiger.weight = 220

print(tiger.weight)

del tiger.habitat

print(tiger.__dict__) # quick investigation

# -
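# After `del`, the attribute no longer exists on the instance, so reading it raises
# `AttributeError`. A minimal sketch of checking for it safely with the built-ins `hasattr()`
# and `getattr()`; the class here is a cut-down stand-in for the one above.

```python
class Animal:
    def __init__(self, name, habitat):
        self.name = name
        self.habitat = habitat


tiger = Animal("tiger", "forest")
del tiger.habitat

print(hasattr(tiger, "habitat"))             # the attribute is gone
print(getattr(tiger, "habitat", "unknown"))  # fall back to a default instead of raising
```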

# ### Inheritance

# Inheritance allows us to define a class that inherits methods and properties from another class.

#

# The class being inherited from is the **parent**; the class that inherits from it is the **child**.

#

#

# +

# Parent class
class animal:
    def __init__(self, name, weight, habitat):
        self.name = name
        self.weight = weight
        self.habitat = habitat

    def sayHello(self):
        print("Hello I am a " + self.name)

# Child class
class fish(animal):
    pass # used for empty classes

shark = fish("Great White", "1000", "tropical ocean")
print(shark.name)

# -

# If the child class defines its own `__init__()` method, it overrides the parent's.
#
# To keep the parent's initialisation, call the parent's `__init__()` directly or use `super()`:

# +

class animal:
    def __init__(self, name, weight, habitat):
        self.name = name
        self.weight = weight
        self.habitat = habitat

# explicitly calling
class bird(animal):
    def __init__(self, name, weight, habitat):
        animal.__init__(self, name, weight, habitat)

# using super()
class mammal(animal):
    def __init__(self, name, weight, habitat):
        super().__init__(name, weight, habitat)

eagle = bird("Golden", 2, "mountains")
print(eagle.__dict__)

bear = mammal("Grizzly", 500, "forest")
print(bear.__dict__)

# -

# properties can also be added at the inheritance stage

# +

class animal:
    def __init__(self, name, weight, habitat):
        self.name = name
        self.weight = weight
        self.habitat = habitat

class insect(animal):
    def __init__(self, name, weight, habitat, colonySize):
        super().__init__(name, weight, habitat)
        self.colonySize = colonySize
        self.exoskeleton = True # you can also add properties that aren't passed in as arguments

    def printColonySize(self):
        print("The ", self.name, " has a colony size of ", self.colonySize)

ant = insect("fire ant", 0.001, "tropical forest", 100000)
ant.printColonySize()
ant.__dict__

# -

# ### Iterators

# An iterator is an object that can be iterated upon.
#
# Specifically, it implements the methods `__iter__()` and `__next__()`:

#

# `__iter__()` - returns the iterator object

#

# `__next__()` - returns the next item in the sequence

#

#

# +

class numbers:
    def __iter__(self):
        self.a = 1
        return self

    def __next__(self):
        x = self.a
        self.a += 1
        return x

myclass = numbers()
myiter = iter(myclass)

print(next(myiter))
print(next(myiter))
print(next(myiter))

# -

# To stop the iteration, raise `StopIteration`:

# +

class numbers:
    def __iter__(self):
        self.a = 1
        return self

    def __next__(self):
        if self.a <= 10:
            x = self.a
            self.a += 1
            return x
        else:
            raise StopIteration

myclass = numbers()
myiter = iter(myclass)

for x in myiter:
    print(x)

# -
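# A `for` loop relies on exactly this protocol: it calls `iter()` once and then `next()`
# repeatedly until `StopIteration` is raised. The sketch below spells that out by hand;
# `Countdown` and `manual_loop` are hypothetical names used only for illustration.

```python
class Countdown:
    """Iterator that produces start, start - 1, ..., 1."""

    def __init__(self, start):
        self.current = start

    def __iter__(self):
        return self

    def __next__(self):
        if self.current <= 0:
            raise StopIteration
        value = self.current
        self.current -= 1
        return value


def manual_loop(iterable):
    """Collect items the way a for loop does: call next() until StopIteration."""
    iterator = iter(iterable)
    items = []
    while True:
        try:
            items.append(next(iterator))
        except StopIteration:
            break
    return items


print(manual_loop(Countdown(3)))
```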

# ## Class Concepts

#

# Classes have a number of key concepts:
# * Inheritance - creating a new class from a parent
# * Encapsulation - the core data cannot be changed from outside unless a method explicitly allows it
# * Polymorphism - using a common interface for multiple forms

# ### Inheritance

# +

# Parent class
class animal:
    def __init__(self, species, habitat, size):
        self.species = species
        self.habitat = habitat
        self.size = size

    def __str__(self):
        return "I am a " + self.species

    def sayHelloSpecies(self):
        print("hello I am a " + self.species)

# child class
class fish(animal):
    def __init__(self, species, habitat, size):
        super().__init__(species, habitat, size)

    def fishSpeak(self):
        print("bubble bubble bubble")

skate = fish("skate", "north sea", 4)
skate.sayHelloSpecies()
skate.fishSpeak()
print(skate)

# -

# ### Encapsulation

#

# Note how `__maxprice` cannot be changed from outside the class without using the designated setter method.

# +

class Computer:
    def __init__(self):
        self.__maxprice = 900

    def sell(self):
        print("selling at price {}".format(self.__maxprice))

    def setMaxPrice(self, price):
        self.__maxprice = price

c = Computer()
c.sell()

# try to change the price directly - the price used by sell() is unaffected
c.__maxprice = 9
c.sell()

# using the setter method - works
c.setMaxPrice(1000)
c.sell()

# -
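# The direct assignment has no effect because of *name mangling*: inside the class body,
# `self.__maxprice` is stored as `_Computer__maxprice`, while `c.__maxprice = 9` written outside
# the class creates a separate attribute. The sketch below uses a cut-down copy of the class
# (with `sell()` returning the text instead of printing it) to make both attributes visible.

```python
class Computer:
    def __init__(self):
        self.__maxprice = 900  # stored on the instance as _Computer__maxprice

    def sell(self):
        return "selling at price {}".format(self.__maxprice)


c = Computer()
c.__maxprice = 9            # creates a new, unrelated attribute on the instance
print(c.sell())             # still reads the mangled attribute
print(sorted(vars(c)))      # both attribute names are listed

c._Computer__maxprice = 9   # the mangled name is still reachable from outside
print(c.sell())
```

# Name mangling is therefore a guard against accidental clashes rather than strict privacy.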

# ### Polymorphism

#

# Several classes can share a common interface, so the same function or class can work with any of them.
#
# The `flying_test()` function calls the `fly()` method of both the parrot and emu classes.

# +

# parent
class animal:
    def __init__(self, species, habitat, size):
        self.species = species
        self.habitat = habitat
        self.size = size

class parrot(animal):
    def __init__(self, species, habitat, size):
        super().__init__(species, habitat, size)

    def fly(self):
        print("can fly")

class emu(animal):
    def __init__(self, species, habitat, size):
        super().__init__(species, habitat, size)

    def fly(self):
        print("cannot fly")

# define a common test
def flying_test(birdSpecies):
    birdSpecies.fly()

# create object and run tests
polly = parrot("parrot", "tropics", 1)
paul = emu("emu", "tropics", 67)

flying_test(polly)
flying_test(paul)

# -

# ## Custom iterators

# Custom iterators implement the `__iter__()` and `__next__()` methods:
#
# `__iter__()` - returns the iterator object
#
# `__next__()` - returns the next item in the sequence
#
# `__next__()` must also raise `StopIteration` to signal the end of the sequence.

#

# +

class powerTwo:
    def __init__(self, max=0):
        self.max = max

    def __iter__(self):
        self.n = 0
        return self

    def __next__(self):
        if self.n <= self.max:
            result = 2 ** self.n
            self.n += 1
            return result
        else:
            raise StopIteration

for i in powerTwo(3):
    print(i)

# -

# ## Generators

# Generators are a simple way of creating iterators.
#
# `yield` is used at least once in the function. Like the `return` statement it hands a value back to the caller, but it pauses the function and keeps its state, so execution resumes from the same point when the next value is requested.
#
# The `__iter__()` and `__next__()` methods are implemented automatically.
#
# They are:
# * memory efficient
# * easier to understand

#

# +

def powerTwoAlt(max = 0):
    n = 0
    while n <= max:
        yield 2 ** n
        n += 1

for i in powerTwoAlt(3):
    print(i)

# -

# An alternative is a generator expression, written like a list comprehension but with `()` instead of `[]`:

# +

# list comprehension

listPowerTwo = [2 ** x for x in range(0, 4)]

# generator

generatorPowerTwo = (2 ** x for x in range(0, 4))

print(listPowerTwo)

print(generatorPowerTwo)

for i in generatorPowerTwo:
    print(i)

# -
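# Two consequences of this lazy evaluation are worth seeing in code: a generator can only be
# consumed once, and it can describe a sequence with no end. A minimal sketch follows;
# `naturals` is a hypothetical example function, and `itertools.islice` is used to take a
# finite slice of it.

```python
import itertools

squares = (x * x for x in range(5))
first_pass = list(squares)
second_pass = list(squares)   # the generator is already exhausted
print(first_pass, second_pass)


def naturals():
    """Yield 0, 1, 2, ... without ever building the full sequence."""
    n = 0
    while True:
        yield n
        n += 1


first_five = list(itertools.islice(naturals(), 5))
print(first_five)
```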

# ## Closure

# A closure is a *nested* function that remembers values from the *enclosing function's* scope, even after the enclosing function has finished running.
#
# It is created by returning the nested function from the enclosing function.

#

# +

def makePrinter(msg):
    def printer():
        print(msg)

    def doSomethingElse():
        return 0

    return printer

testPrint = makePrinter("hello there")
testPrint()

# -
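# A closure can also update the value it captured, which needs the `nonlocal` keyword. The
# sketch below (with the hypothetical name `make_counter`) shows that each call to the outer
# function creates an independent captured variable.

```python
def make_counter():
    count = 0

    def counter():
        nonlocal count  # rebind the variable of the enclosing scope
        count += 1
        return count

    return counter


first = make_counter()
second = make_counter()

print(first(), first())  # the first counter advances twice
print(second())          # the second counter has its own state
```

# The captured value is stored on the function object and can be inspected through
# `first.__closure__[0].cell_contents`.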

# ## Decorators

# Decorators add functionality to existing code - *metaprogramming*.
#
# Decorators act as a wrapper around a function.
#
# Divider example: we aim to add functionality to:

#

# ```python
# def divide(a, b):
#     return a / b
# ```

# +

# the decorator function
def describeDivide(func):
    def innerDivide(a, b):
        print("I am going to divide ", a, " and ", b)
        return func(a, b) # call the original function and return its result

    return innerDivide # return the wrapper function

@describeDivide
def divide(a, b):
    return a / b

divide(10, 2)

# -

# Decorators can be **chained together**, making the code more modular

#

# in the below example:

#

# `args` is the tuple of positional arguments

#

# `kwargs` is the dictionary of keyword arguments

#

# thus...

#

# `function(*args, **kwargs)` is the ultimate Python wildcard: it accepts any combination of arguments

#

# **pseudocode for decorators**

# 1. define function

# 2. define inner function allowing everything to pass

# 3. print `*`s / `%`s

# 4. Let the function run

# 5. print `*`s / `%`s

# 6. exit

#

#

# +

def addStars(func):
    def inner(*args, **kwargs):
        print("*" * 30)
        func(*args, **kwargs)
        print("*" * 30)
    return inner

def addPercentage(func):
    def inner(*args, **kwargs):
        print("%" * 30)
        func(*args, **kwargs)
        print("%" * 30)
    return inner

# now chain them
@addStars
@addPercentage
def printBanner(msg):
    print(msg)

printBanner("Hello I am a banner")

# -
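# The wrappers above discard the wrapped function's return value and replace its name and
# docstring with those of `inner`. A minimal sketch of a variant that preserves both, using
# `functools.wraps` from the standard library; `add_stars` is a hypothetical name.

```python
import functools


def add_stars(func):
    @functools.wraps(func)  # copy __name__, __doc__, etc. from func onto inner
    def inner(*args, **kwargs):
        print("*" * 30)
        result = func(*args, **kwargs)
        print("*" * 30)
        return result       # pass the wrapped function's result through
    return inner


@add_stars
def divide(a, b):
    """Return a / b."""
    return a / b


print(divide(10, 2))
print(divide.__name__)
```

# Writing `@add_stars` above the definition is shorthand for `divide = add_stars(divide)`.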

| programming_notes/_build/jupyter_execute/python_advanced.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Linear regression and the main Python libraries for data analysis and scientific computing

# This assignment is devoted to linear regression. Using the example of predicting a person's height from their weight, you will see the mathematics behind it and get acquainted with the main Python libraries needed for the rest of the course.

# **Materials**
#
# - This course's lectures on linear models and gradient descent
# - [Documentation](http://docs.scipy.org/doc/) for the NumPy and SciPy libraries
# - [Documentation](http://matplotlib.org/) for the Matplotlib library
# - [Documentation](http://pandas.pydata.org/pandas-docs/stable/tutorials.html) for the Pandas library
# - [Pandas Cheat Sheet](http://www.analyticsvidhya.com/blog/2015/07/11-steps-perform-data-analysis-pandas-python/)
# - [Documentation](http://stanford.edu/~mwaskom/software/seaborn/) for the Seaborn library

# ## Task 1. Exploratory data analysis with Pandas

# In this task we will use the [SOCR](http://wiki.stat.ucla.edu/socr/index.php/SOCR_Data_Dinov_020108_HeightsWeights) data on the height and weight of 25 thousand teenagers.

# **[1].** If you do not have the Seaborn library installed, run the command *conda install seaborn* in a terminal. (Seaborn is not included in the Anaconda distribution, but it provides convenient high-level functionality for data visualization.)

import numpy as np

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as plt

# %matplotlib inline

# Read the height and weight data (*weights_heights.csv*, attached to the assignment) into a Pandas DataFrame object:

data = pd.read_csv('weights_heights.csv', index_col='Index')

# Most often, the first thing to do after reading data is to look at the first few records. This helps catch data-reading errors (for example, when instead of 10 columns you get a single one whose name contains 9 semicolons). It also lets you get to know the data, or at least look at the features and their nature (quantitative, categorical, etc.).
#
# After that it is worth plotting histograms of the feature distributions. Again, this helps to understand the nature of a feature (whether its distribution is power-law, normal, or something else). A histogram also helps to find values that differ strongly from the rest, the "outliers" in the data.
# Histograms are conveniently plotted with the *plot* method of a Pandas DataFrame with the argument *kind='hist'*.
#
# **Example.** Let us plot a histogram of the height distribution of the teenagers in the sample *data*. We use the *plot* method of the DataFrame *data* with the argument *y='Height'* (the feature whose distribution we plot).

data.plot(y='Height', kind='hist',

color='red', title='Height (inch.) distribution')

# Arguments:
#
# - *y='Height'* - the feature whose distribution we plot
# - *kind='hist'* - means that a histogram is plotted
# - *color='red'* - the colour

# **[2]**. Look at the first 5 records using the *head* method of the Pandas DataFrame. Draw a histogram of the weight distribution using the *plot* method of the Pandas DataFrame. Make the histogram green and give the plot a title.

data.head()

data.plot(y='Weight', kind='hist',

color='green', title='Weight (pounds) distribution')

# One effective method of exploratory data analysis is to display the pairwise dependencies between features. An $m \times m$ grid of plots is created (*m* is the number of features), with histograms of the feature distributions on the diagonal and scatter plots of pairs of features off the diagonal. This can be done with the $scatter\_matrix$ method of a Pandas DataFrame or with *pairplot* from the Seaborn library.
#
# To illustrate this method, it is more interesting to add a third feature. Let us create the feature *body mass index* ([BMI](https://en.wikipedia.org/wiki/Body_mass_index)). To do this we use the convenient combination of the *apply* method of a Pandas DataFrame and Python lambda functions.

def make_bmi(height_inch, weight_pound):
    METER_TO_INCH, KILO_TO_POUND = 39.37, 2.20462
    return (weight_pound / KILO_TO_POUND) / \
           (height_inch / METER_TO_INCH) ** 2

data['BMI'] = data.apply(lambda row: make_bmi(row['Height'],
                                              row['Weight']), axis=1)
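# The same feature can also be computed without a row-wise `apply`, because arithmetic on
# Pandas columns is vectorized. A minimal sketch on a made-up two-row frame (the values are
# illustrative, not taken from the SOCR data):

```python
import pandas as pd

METER_TO_INCH, KILO_TO_POUND = 39.37, 2.20462

# Hypothetical heights (inches) and weights (pounds).
toy = pd.DataFrame({'Height': [65.0, 70.0], 'Weight': [120.0, 150.0]})

# BMI = weight in kg divided by the square of height in metres, computed column-wise.
toy['BMI'] = (toy['Weight'] / KILO_TO_POUND) / (toy['Height'] / METER_TO_INCH) ** 2
print(toy)
```

# On 25 thousand rows the column-wise form is typically much faster than `apply` with
# `axis=1`, since it avoids a Python-level function call per row.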

# **[3].** Build a figure showing the pairwise dependencies of the features 'Height', 'Weight' and 'BMI' on each other. Use the *pairplot* method of the Seaborn library.

sns.pairplot(data)

# In exploratory data analysis one often needs to study how a quantitative feature depends on a categorical one (say, salary on an employee's gender). Box plots from the Seaborn library help here. A box plot is a compact way to show the statistics of a real-valued feature (the median and quartiles) across the different values of a categorical feature. It also helps to spot "outliers", observations in which the value of this real-valued feature differs strongly from the others.

# **[4]**. Create a new feature *weight_category* in the DataFrame *data* with 3 values: 1 if the weight is less than 120 pounds (~54 kg), 3 if the weight is greater than or equal to 150 pounds (~68 kg), and 2 otherwise. Build a box plot showing the dependence of height on weight category. Use the *boxplot* method of the Seaborn library and the *apply* method of the Pandas DataFrame. Label the *y* axis "Height" and the *x* axis "Weight category".

# +
def weight_category(weight):
    return (1 if weight < 120 else (3 if weight >= 150 else 2))

data['weight_cat'] = data['Weight'].apply(weight_category)

sns_boxplot = sns.boxplot(x='weight_cat', y='Height', data=data)
sns_boxplot.set(xlabel='Weight category', ylabel='Height');

# -

# **[5].** Build a scatter plot of height versus weight using the *plot* method of the Pandas DataFrame with the argument *kind='scatter'*. Give the plot a title.

data.plot(x='Weight', y='Height', kind='scatter', title='Height vs. weight')

# ## Task 2. Minimizing the squared error

# In the simplest formulation, the problem of predicting the value of a real-valued feature from the other features (the regression problem) is solved by minimizing a quadratic error function.
#
# **[6].** Write a function that, given two parameters $w_0$ and $w_1$, computes the squared error of approximating the dependence of height $y$ on weight $x$ by the straight line $y = w_0 + w_1 * x$:
# $$error(w_0, w_1) = \sum_{i=1}^n {(y_i - (w_0 + w_1 * x_i))}^2 $$
# Here $n$ is the number of observations in the dataset, and $y_i$ and $x_i$ are the height and weight of the $i$-th person in the dataset.

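def error(w0, w1):
    # Sum of squared residuals over all observations.
    # w0 and w1 may be scalars or NumPy arrays of matching shape.
    total = 0
    for x_i, y_i in zip(data['Weight'], data['Height']):
        total += (y_i - (w0 + w1 * x_i)) ** 2
    return total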
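# For scalar parameters the same sum can be written without an explicit loop. The sketch
# below is an alternative formulation on a made-up three-point dataset, not the notebook's
# own function; `sse` is a hypothetical name.

```python
import numpy as np


def sse(w0, w1, x, y):
    """Sum of squared errors of the line y = w0 + w1 * x for scalar w0 and w1."""
    residuals = np.asarray(y) - (w0 + w1 * np.asarray(x))
    return float(np.sum(residuals ** 2))


x_toy = np.array([1.0, 2.0, 3.0])
y_toy = np.array([2.0, 4.0, 7.0])

# Residuals for the line y = 2x are 0, 0 and 1.
print(sse(0.0, 2.0, x_toy, y_toy))
```

# Unlike the loop version, this form does not accept arrays of parameters, so it cannot be
# applied directly to a whole grid of $w_0$, $w_1$ values.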

# So, we are solving the following problem: how to draw a straight line through the cloud of points corresponding to the observations in our dataset, in the space of the features "Height" and "Weight", so as to minimize the functional from item 6. To begin with, let us draw at least some lines and make sure that they capture the dependence of height on weight poorly.
#
# **[7].** On the plot from item 5 of Task 1, draw two lines corresponding to the parameter values ($w_0, w_1) = (60, 0.05)$ and ($w_0, w_1) = (50, 0.16)$. Use the *plot* method from *matplotlib.pyplot* and the *linspace* method of the NumPy library. Label the axes and give the plot a title.

# +

def f(w0, w1, x):
    return w0 + w1 * x

data.plot(x='Weight', y='Height', kind='scatter');

x = np.linspace(60, 180, 6)

y = f(60, 0.05, x)

plt.plot(x, y)

y = f(50, 0.16, x)

plt.plot(x, y)

plt.xlabel('Weight')

plt.ylabel('Height')

plt.title('First approximation');

# -

# Minimizing a quadratic error function is a relatively simple problem because the function is convex. Many optimization methods exist for such a problem. Let us look at how the error function depends on one parameter (the slope of the line) when the second parameter (the intercept) is fixed.
#
# **[8].** Plot the error function computed in item 6 as a function of the parameter $w_1$ at $w_0$ = 50. Label the axes and give the plot a title.

w1 = np.linspace(-5, 5, 200)

y = error(50, w1)

plt.plot(w1, y)

plt.xlabel('w1')

plt.ylabel('error(50, w1)')

plt.title('Error function (w0 fixed, w1 varies)');

# Now let us use an optimization method to find the "optimal" slope of the line approximating the dependence of height on weight, with the coefficient fixed at $w_0 = 50$.
#
# **[9].** Using the *minimize_scalar* method from *scipy.optimize*, find the minimum of the function defined in item 6 for values of the parameter $w_1$ in the range [-5,5]. On the plot from item 5 of Task 1, draw the line corresponding to the parameter values ($w_0$, $w_1$) = (50, $w_1\_opt$), where $w_1\_opt$ is the optimal value of the parameter $w_1$ found in item 8.

# +

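from scipy.optimize import minimize_scalar

def error_50(w1):
    return error(50, w1)

# Restrict the search to the interval [-5, 5] required by the task.
res = minimize_scalar(error_50, bounds=(-5, 5), method='bounded')
w1_opt = res.x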

# +

data.plot(x='Weight', y='Height', kind='scatter');

x = np.linspace(60, 180, 6)

y = f(50, w1_opt, x)

plt.plot(x, y)

plt.xlabel('Weight')

plt.ylabel('Height')

plt.title('Second approximation (w0 fixed, w1 optimized)');

# -

# When analysing multidimensional data, one often wants to get an intuitive idea of the nature of the data through visualization. Alas, with more than 3 features such pictures cannot be drawn. In practice, to visualize data in 2D and 3D, 2 or, respectively, 3 principal components are extracted from the data (how exactly this is done we will see later in the course) and the data are displayed on a plane or in a volume.
#
# Let us see how to draw 3D pictures in Python, using the example of plotting the function $z(x,y) = sin(\sqrt{x^2+y^2})$ for values of $x$ and $y$ from the interval [-5,5] with a step of 0.25.

from mpl_toolkits.mplot3d import Axes3D

# Create objects of type matplotlib.figure.Figure (the figure) and matplotlib.axes._subplots.Axes3DSubplot (the axes).

# +
fig = plt.figure()
ax = fig.add_subplot(projection='3d') # create 3D axes

# Create NumPy arrays with the point coordinates along the X and Y axes.
# Use the meshgrid method, which builds coordinate matrices
# from the coordinate vectors. Define the required function Z(x, y).
X = np.arange(-5, 5, 0.25)
Y = np.arange(-5, 5, 0.25)
X, Y = np.meshgrid(X, Y)
Z = np.sin(np.sqrt(X**2 + Y**2))

# Finally, use the *plot_surface* method of the
# Axes3DSubplot object. Also label the axes.

surf = ax.plot_surface(X, Y, Z)

ax.set_xlabel('X')

ax.set_ylabel('Y')

ax.set_zlabel('Z')

plt.show()

# -

# **[10].** Build a 3D plot of the error function computed in item 6 as a function of the parameters $w_0$ and $w_1$. Label the $x$ axis "Intercept", the $y$ axis "Slope", and the $z$ axis "Error".

# +

fig = plt.figure()

ax = fig.add_subplot(projection='3d') # create 3D axes

w0 = np.arange(0, 100, 1)

w1 = np.arange(-5, 5, 0.1)

w0, w1 = np.meshgrid(w0, w1)

err_func = error(w0, w1)

surf = ax.plot_surface(w0, w1, err_func)

ax.set_xlabel('Intercept')

ax.set_ylabel('Slope')

ax.set_zlabel('Error')

plt.show()

# -

# **[11].** Using the *minimize* method from scipy.optimize, find the minimum of the function defined in item 6 for values of the parameter $w_0$ in the range [-100,100] and $w_1$ in the range [-5, 5]. The starting point is ($w_0$, $w_1$) = (0, 0). Use the L-BFGS-B optimization method (the method argument of minimize). On the plot from item 5 of Task 1, draw the line corresponding to the optimal values found for the parameters $w_0$ and $w_1$. Label the axes and give the plot a title.

# +

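from scipy.optimize import minimize

def error_func(w):
    return error(w[0], w[1])

# Box constraints required by the task: w0 in [-100, 100], w1 in [-5, 5].
minimum = minimize(error_func, [0, 0], method='L-BFGS-B',
                   bounds=[(-100, 100), (-5, 5)])

w0_opt = minimum.x[0]
w1_opt = minimum.x[1]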

# +

data.plot(x='Weight', y='Height', kind='scatter');

x = np.linspace(60, 180, 6)

y = f(w0_opt, w1_opt, x)

plt.plot(x, y)

plt.xlabel('Weight')

plt.ylabel('Height')

plt.title('Third approximation (params are optimized)');

# -
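# Because the squared error is quadratic in $w_0$ and $w_1$, its minimum also has a closed
# form, which gives a quick cross-check of the numerical optimizer. The sketch below uses
# `np.polyfit` on synthetic data; the sample and the coefficients 57.5 and 0.08 are made up
# for illustration and are not results from the SOCR dataset.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic "weights" and "heights" following a noisy straight line.
weight = rng.uniform(80, 170, size=200)
height = 57.5 + 0.08 * weight + rng.normal(0, 1.5, size=200)

# A degree-1 least-squares fit returns the slope first, then the intercept.
w1_ls, w0_ls = np.polyfit(weight, height, deg=1)
print(w0_ls, w1_ls)
```

# On the real data, the values returned by `minimize` would be expected to agree with such a
# fit up to the optimizer's tolerance, provided the bounds are not active.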

# ## Grading criteria

# - Does the IPython notebook run without errors? (15 points)
# - Is the histogram of the height distribution from item 2 displayed correctly? (3 points). Are the labels correct? (1 point)
# - Are the pairwise feature dependencies from item 3 displayed correctly? (3 points). Are the labels correct? (1 point)
# - Is the dependence of height on weight category from item 4 displayed correctly? (3 points). Are the labels correct? (1 point)
# - Is the scatter plot of height versus weight from item 5 displayed correctly? (3 points). Are the labels correct? (1 point)
# - Is the squared-error function from item 6 implemented correctly? (10 points)
# - Is the plot from item 7 drawn correctly? (3 points) Are the labels correct? (1 point)
# - Is the plot from item 8 drawn correctly? (3 points) Are the labels correct? (1 point)
# - Is the minimize\_scalar method from scipy.optimize used correctly? (6 points). Is the plot from item 9 drawn correctly? (3 points) Are the labels correct? (1 point)
# - Is the 3D plot from item 10 drawn correctly? (6 points) Are the labels correct? (1 point)
# - Is the minimize method from scipy.optimize used correctly? (6 points). Is the plot from item 11 drawn correctly? (3 points). Are the labels correct? (1 point)

| supervised-learning/linreg-height-weight/peer_review_linreg_height_weight.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import sys

sys.path.append('../sample/')

from ann import NeuralNetwork

sys.path.append('../../') # put `little_mcmc/` in `../../`.

import little_mcmc.sample.mcmc as mc

import numpy as np

from random import gauss, random

from time import time

import matplotlib.pyplot as plt

# -

# Typing Hint:

# +

from typing import List, Tuple, Mapping

Array = np.ndarray  # alias for NumPy arrays, used in the type hints below

Value = float

# -

# ### Set global variables:

# +

# Ann

net_size = [5, 1]

input_size = 1

# effective_dim = num_of_connections

effective_dim = np.prod(net_size) * (input_size * net_size[0])

print('effective_dim: ', effective_dim)

step_length = 0.5

chain_num = 1 #* effective_dim

tolerence = 0.00

max_break_counter = 100 * effective_dim

# -

# ### Set error-function as, e.g.,

def error_function(outputs: Array, targets: Array) -> float:
    assert len(outputs) == len(targets)
    return 0.5 * np.sum(np.square(outputs - targets)) / len(outputs)

# ## Aim: fit `[0.5 * sin(x) for x in np.linspace(-7, 7, 20)]`.

# ### Preparing for MCMC

def random_move(net: NeuralNetwork, step_length=step_length) -> NeuralNetwork:
    result_net = net.copy()
    for layer in result_net.layers:
        for perceptron in layer:
            perceptron.weights = ( perceptron.weights
                + np.array([ gauss(0, 1) * step_length
                             for _ in range(len(perceptron.weights))])
                )
    return result_net

# +

init_net = NeuralNetwork(net_size, input_size)

print(init_net.weights)

print()

new_net = random_move(init_net, step_length=1)

print(new_net.weights)

# -

# Function to maximize:

# +

# Input

x = np.linspace(-7, 7, 20)

# Target

y = np.sin(x) * 0.5

def f(net: NeuralNetwork, inputs=x, targets=y) -> Value:
    outputs = np.array([net.output([__])[0] for __ in inputs])
    erf = error_function(outputs, targets)
    return -1 * np.log(erf)

# + active=""

# x = np.array([1, 0])

# y = np.array([2, -5])

#

# error_function(x, y)

# -
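# The commented-out check above can be worked through by hand. The sketch below repeats the
# notebook's `error_function` on those two points and also shows the value that the objective
# `f` would report for that error, since `f` is minus the logarithm of the error.

```python
import numpy as np


def error_function(outputs, targets):
    assert len(outputs) == len(targets)
    return 0.5 * np.sum(np.square(outputs - targets)) / len(outputs)


# Squared differences are (1 - 2)^2 = 1 and (0 - (-5))^2 = 25,
# so the error is 0.5 * 26 / 2.
value = error_function(np.array([1, 0]), np.array([2, -5]))
print(value, -np.log(value))
```

# A smaller error gives a larger `-log(error)`, which is why the sampler maximizes `f`.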

# ### Do MCMC

# +

# Do mcmc

chain_list = []

t_begin = time()

for step in range(chain_num):
    init_net = NeuralNetwork(net_size, input_size)
    net_chain = mc.single_chain_mcmc(
        f, random_move, init_net,
        tolerence=tolerence,
        max_break_counter=max_break_counter,
        iterations = 10 ** 10
        )
    chain_list.append(net_chain)

bc = mc.best_chain(chain_list)

best_net = bc[-1][0]

ef_value = bc[-1][1]

t_end = time()

print('time spent by fitting: ', t_end - t_begin)

# -

# ### Show Result

# +

error_function_values = [__[1] for __ in bc]

plt.plot([i for i in range(len(error_function_values))],

error_function_values)

plt.xlabel('chain_node of best_chain')

plt.ylabel('-1 * log(error_function_value)')

plt.show()

plot_x = np.linspace(-6.0,6.0,150)

plot_y_ann = [best_net.output([__]) for __ in plot_x]

plot_y_target = np.sin(plot_x) * 0.5

plt.plot(plot_x, plot_y_target, '-',

plot_x, plot_y_ann, '.', alpha = 0.3

)

plt.legend(['train target', 'net output'])

plt.show()

# -

# **Far from good.**

# Further examination:

# +

test_net = random_move(best_net, 0.01)

print('test: ', f(test_net))

print('best: ', f(best_net))

print('test of relative gain: ', mc.relative_gain(f(best_net), f(test_net)))

# -

# ### Print out Fitted Weights

# +

for i in range(len(best_net.layers[0])):
    print(best_net.layers[0][i].weights)

print()

for i in range(len(best_net.layers[1])):
    print(best_net.layers[1][i].weights)

| test/test_mcmc.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Introduction

#

# The samples fall into three groups: microsatellite instability high (MSI-H), microsatellite instability low (MSI-L) and microsatellite stable (MSS).
#
# There are two sets of measurements: 7_marker and 5_marker. The original report considers a sample to be MSI if both indicate positive.

# +

import pandas as pd

import matplotlib as mpl

import numpy as np

import matplotlib.pyplot as plt

import scipy.stats

# mpl.rcParams['figure.dpi'] = 600

# -

df = pd.read_excel("41591_2016_BFnm4191_MOESM27_ESM.xlsx", index=1)

df

df[df['Tumor Type'] == 'COAD']['MOSAIC classification'].value_counts().plot(kind="bar")

# +

f = lambda x: x[0:3]

msi_mask = (df['MOSAIC classification'] == 'MSI-H') & (df['Tumor Type'] == 'COAD')

mss_mask = (df['MOSAIC classification'] == 'MSS') & (df['Tumor Type'] == 'COAD')

msi_patient_id = df['Sample Name'][msi_mask].to_frame()

mss_patient_id = df['Sample Name'][mss_mask].to_frame()

msi_patient_id['microsatellite'] = 'MSI-H'

mss_patient_id['microsatellite'] = 'MSS'

microsatellite_label_df = pd.concat([msi_patient_id, mss_patient_id])  # DataFrame.append was removed in pandas 2.0

# -

data = pd.read_csv("TCGA.Kallisto.fullIDs.cibersort.relative.tsv", sep="\t")

data["SampleID"] = data["SampleID"].apply(lambda x: x.replace('.', '-'))

data["PatientID"] = data["SampleID"].apply(lambda x: '-'.join(x.split('-')[0:3]))

merged = data.merge(microsatellite_label_df, left_on="PatientID", right_on='Sample Name')

merged.microsatellite.value_counts().plot(kind="bar")
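
# The join above relies on the first three dash-separated fields of a TCGA barcode identifying the patient. A minimal sketch of that key construction on made-up barcodes; the IDs and the `to_patient_id` helper are illustrative and do not come from the data files.

```python
import pandas as pd


def to_patient_id(sample_id: str) -> str:
    """Normalise '.' separators to '-' and keep the first three barcode fields."""
    return "-".join(sample_id.replace(".", "-").split("-")[:3])


# Made-up sample-level barcodes that use '.' as the separator.
samples = pd.DataFrame(
    {"SampleID": ["TCGA.AA.0001.01A", "TCGA.AA.0002.01A", "TCGA.BB.0003.01A"]}
)
samples["PatientID"] = samples["SampleID"].map(to_patient_id)

# Made-up patient-level labels.
labels = pd.DataFrame(
    {
        "Sample Name": ["TCGA-AA-0001", "TCGA-BB-0003"],
        "microsatellite": ["MSI-H", "MSS"],
    }
)

# Inner join: samples without a label are dropped.
# validate="m:1" raises if a patient appears twice in the label table.
toy = samples.merge(
    labels, left_on="PatientID", right_on="Sample Name", validate="m:1"
)
print(toy[["SampleID", "PatientID", "microsatellite"]])
```

# Passing `validate` is optional, but it makes a duplicated patient label fail loudly instead of silently duplicating rows.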

# +

cell_types = ['B.cells.naive', 'B.cells.memory', 'Plasma.cells', 'T.cells.CD8',

'T.cells.CD4.naive', 'T.cells.CD4.memory.resting',

'T.cells.CD4.memory.activated', 'T.cells.follicular.helper',

'T.cells.regulatory..Tregs.', 'T.cells.gamma.delta', 'NK.cells.resting',

'NK.cells.activated', 'Monocytes', 'Macrophages.M0', 'Macrophages.M1',

'Macrophages.M2', 'Dendritic.cells.resting',

'Dendritic.cells.activated', 'Mast.cells.resting',

'Mast.cells.activated', 'Eosinophils', 'Neutrophils']

#merged['Leukocytes.all'] = merged[cell_types].sum(1)

merged['T.cells.all'] = merged[['T.cells.CD8',

'T.cells.CD4.naive',

'T.cells.CD4.memory.resting',

'T.cells.CD4.memory.activated',

'T.cells.follicular.helper',

'T.cells.regulatory..Tregs.',

'T.cells.gamma.delta']].sum(1)

merged['B.cells.all'] = merged[['B.cells.naive', 'B.cells.memory']].sum(1)

merged['Nk.cells.all'] = merged[['NK.cells.resting', 'NK.cells.activated']].sum(1)

merged['Macrophages.all'] = merged[['Macrophages.M0', 'Macrophages.M1', 'Macrophages.M2']].sum(1)

merged['Dendritic.cells.all'] = merged[['Dendritic.cells.resting', 'Dendritic.cells.activated']].sum(1)

merged['Mast.cells.all'] = merged[['Mast.cells.resting', 'Mast.cells.activated']].sum(1)

augmented_cell_types = cell_types + ['T.cells.all', 'B.cells.all', 'Nk.cells.all', 'Macrophages.all',

'Dendritic.cells.all', 'Mast.cells.all']

merged

# -

mss = merged[merged.microsatellite == 'MSS'][augmented_cell_types]

msi = merged[merged.microsatellite == 'MSI-H'][augmented_cell_types]

mss

# # Results

#

# ## MSS

# +

a = 0.05

sanitize = lambda x: 0.0 if x < 0 else 1.0 if x > 1 else x

res_mss = pd.DataFrame(index = cell_types, columns = ['mean', 'mean lower', 'mean upper', 'sd', 'sd lower', 'sd upper'])

n = mss.shape[0]

# Mean

res_mss['mean'] = mss.mean(axis=0)

res_mss['sd'] = mss.std(ddof=1, axis=0)

# Mean CI

err = scipy.stats.t.ppf(1 - a / 2, n - 1) * res_mss['sd'] / np.sqrt(n)

res_mss['mean lower'] = (res_mss['mean'] - err).apply(sanitize)

res_mss['mean upper'] = (res_mss['mean'] + err).apply(sanitize)

# Standard deviation CI

res_mss['sd lower'] = np.sqrt((n - 1) * res_mss['sd'] ** 2 / scipy.stats.chi2.ppf(1 - a / 2, n - 1))

res_mss['sd upper'] = np.sqrt((n - 1) * res_mss['sd'] ** 2 / scipy.stats.chi2.ppf(a / 2, n - 1))

res_mss

# -

# ## MSI

# +

a = 0.05

sanitize = lambda x: 0.0 if x < 0 else 1.0 if x > 1 else x

res_msi = pd.DataFrame(index = cell_types, columns = ['mean', 'mean lower', 'mean upper', 'sd', 'sd lower', 'sd upper'])

n = msi.shape[0]

# Mean

res_msi['mean'] = msi.mean(axis=0)

res_msi['sd'] = msi.std(ddof=1, axis=0)

# Mean CI

err = scipy.stats.t.ppf(1 - a / 2, n - 1) * res_msi['sd'] / np.sqrt(n)

res_msi['mean lower'] = (res_msi['mean'] - err).apply(sanitize)

res_msi['mean upper'] = (res_msi['mean'] + err).apply(sanitize)

# Standard deviation CI

res_msi['sd lower'] = np.sqrt((n - 1) * res_msi['sd'] ** 2 / scipy.stats.chi2.ppf(1 - a / 2, n - 1))

res_msi['sd upper'] = np.sqrt((n - 1) * res_msi['sd'] ** 2 / scipy.stats.chi2.ppf(a / 2, n - 1))

res_msi

# -
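
# The MSS and MSI cells above compute the same quantities: a t-based interval for the mean and a chi-square interval for the standard deviation, both of which assume roughly normal data. A sketch of a shared helper follows; the name `summarize` and the random toy data are assumptions for illustration, and `clip` stands in for the `sanitize` lambda.

```python
import numpy as np
import pandas as pd
import scipy.stats


def summarize(frame: pd.DataFrame, a: float = 0.05) -> pd.DataFrame:
    """Per-column mean and sample SD with (1 - a) confidence intervals."""
    n = frame.shape[0]
    mean = frame.mean(axis=0)
    sd = frame.std(ddof=1, axis=0)
    # Half-width of the t-based interval for the mean.
    err = scipy.stats.t.ppf(1 - a / 2, n - 1) * sd / np.sqrt(n)
    return pd.DataFrame(
        {
            "mean": mean,
            # Abundances are fractions, so the bounds are clipped to [0, 1].
            "mean lower": (mean - err).clip(0.0, 1.0),
            "mean upper": (mean + err).clip(0.0, 1.0),
            "sd": sd,
            # Chi-square interval for the standard deviation.
            "sd lower": np.sqrt((n - 1) * sd ** 2 / scipy.stats.chi2.ppf(1 - a / 2, n - 1)),
            "sd upper": np.sqrt((n - 1) * sd ** 2 / scipy.stats.chi2.ppf(a / 2, n - 1)),
        }
    )


# Toy data: 50 samples, two made-up cell types with fractions in [0, 0.3).
rng = np.random.default_rng(0)
toy = pd.DataFrame(rng.uniform(0.0, 0.3, size=(50, 2)), columns=["cell.a", "cell.b"])
res = summarize(toy)
print(res)
```

# With such a helper, the two result tables would reduce to `summarize(mss)` and `summarize(msi)`, which, unlike the cells above, would also include the aggregated cell types.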

# ## Figures

# +

x_map = {v: i for i, v in enumerate(augmented_cell_types)}

offset_map = {

'mss': -0.15,

'msi': 0.15}

colors = plt.rcParams['axes.prop_cycle'].by_key()['color']

color_map = {'mss': colors[0],

'msi': colors[1]}

fig = plt.figure(figsize=(10, 3))

ax = fig.add_subplot(1, 1, 1)

# MSS: point = mean, thick gray bar = +/- 1 SD, thin black bar = (1 - a) CI of the mean
n = mss.shape[0]

mean = mss.mean(axis=0)

sd = mss.std(ddof=1, axis=0)

err = scipy.stats.t.ppf(1 - a / 2, n - 1) * sd / np.sqrt(n)

x = [v + offset_map['mss'] for v in x_map.values()]

ax.errorbar(x, mean, yerr=sd, fmt='.', color = color_map['mss'], ecolor = 'darkgray', elinewidth=3.0)

ax.errorbar(x, mean, yerr=err, fmt="None", color = color_map['mss'], ecolor = 'black', elinewidth=1.0)

# MSI: same encoding, shifted to the right of the MSS marker
n = msi.shape[0]

mean = msi.mean(axis=0)

sd = msi.std(ddof=1, axis=0)

err = scipy.stats.t.ppf(1 - a / 2, n - 1) * sd / np.sqrt(n)

x = [v + offset_map['msi'] for v in x_map.values()]

ax.errorbar(x, mean, yerr=sd, fmt='.', color = color_map['msi'], ecolor = 'darkgray', elinewidth=3.0)

ax.errorbar(x, mean, yerr=err, fmt="None", color = color_map['msi'], ecolor = 'black', elinewidth=1.0)

# Hide the right and top spines

ax.spines['right'].set_visible(False)

ax.spines['top'].set_visible(False)

# Only show ticks on the left and bottom spines

ax.yaxis.set_ticks_position('left')

ax.xaxis.set_ticks_position('bottom')

current_ylim = ax.get_ylim()

for v in x_map.values():

    if v % 2 == 0:

        ax.fill_between([v - 0.5, v + 0.5], current_ylim[0], current_ylim[1], facecolor='lightgray', alpha=0.2)

ax.set_ylim(current_ylim)

ax.set_xticks(list(x_map.values()))

ax.tick_params(axis='x', which = 'both', labelbottom=None)

ax.set_ylabel('Abundance')

ax.set_xlim(-0.5, len(x_map) - 0.5)

# Ticks

ax.tick_params(axis='x', which = 'both', labelbottom=True)

ax.set_xticks(list(x_map.values()))

ax.set_xticklabels(list(x_map.keys()), rotation=30, ha='right')

legend_elements = [mpl.lines.Line2D([0], [0], marker='.', color='w', markerfacecolor=color_map[i], label=i, markersize=15)

for i in color_map]

ax.legend(handles=legend_elements, loc='upper left')

# -

len(cell_types)

| python/statistics/.ipynb_checkpoints/TCGA-MSI-MSS-relative-figure-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Example powerbook usage

#