code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# !wget http://cs.stanford.edu/~danqi/data/cnn.tar.gz

# !tar -xvzf cnn.tar.gz

import numpy as np

import matplotlib.pyplot as plt

keys = ['train', 'dev', 'test']

p, q, a = {}, {}, {}

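for k in keys:
    # Each record is three lines (question, answer, passage); records are separated by a blank line.
    with open('cnn/' + k + '.txt') as f:
        records = f.read().strip().split('\n\n')
    records = [x.split('\n') for x in records]
    p[k] = [x[2] for x in records]
    q[k] = [x[0] for x in records]
    a[k] = [x[1] for x in records]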
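# A minimal sketch of the record layout that the loop above relies on: question on
# the first line, answer on the second, passage on the third, with a blank line
# between records. The sample text and the helper name `parse_records` are made up
# for illustration and are not taken from the CNN files.

```python
def parse_records(text):
    """Split raw text into parallel lists of passages, questions and answers."""
    records = [block.split('\n') for block in text.strip().split('\n\n')]
    questions = [r[0] for r in records]
    answers = [r[1] for r in records]
    passages = [r[2] for r in records]
    return passages, questions, answers


# Two invented records in the assumed three-line layout.
sample = (
    "who visited @placeholder ?\n@entity2\n@entity1 visited @entity2 on monday\n\n"
    "where is @placeholder ?\n@entity5\n@entity5 is north of @entity7\n"
)

passages, questions, answers = parse_records(sample)
print(answers)
```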

# +

entities = {}

for k in p:
    entities[k] = []
    for x in p[k]:
        entities[k] += [y for y in x.split() if y.startswith('@entity')]
    entities[k] = set(entities[k])

# Write the training-set entities, one per line; the context manager closes the file.
with open('entity_list.txt', 'w') as f:
    f.write('\n'.join(list(entities['train'])))

# -

def generate_possible_answers(p):
    # Collect the distinct @entity tokens of a passage as a ';'-separated string.
    possible_answers = []
    for w in p.split():
        if w.startswith('@entity'):
            possible_answers.append(w)
    return ";".join(list(set(possible_answers)))
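# Joining a set gives an arbitrary token order, so the CSV column can differ between
# runs. A possible variant, sketched here under the assumption that order does not
# matter downstream, sorts the tokens first. `candidate_entities` is a hypothetical
# name, not part of the notebook.

```python
def candidate_entities(passage):
    """Return the distinct @entity tokens of a passage, sorted for reproducibility."""
    tokens = {w for w in passage.split() if w.startswith('@entity')}
    return ";".join(sorted(tokens))


example = "@entity1 met @entity2 in @entity3 before @entity1 left"
result = candidate_entities(example)
print(result)
```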

# +

import pandas as pd

df_paragraphs = []

df_questions = []

df_answers = []

df_possible_answers = []

df_exp_splits = []

for k in keys:
    df_paragraphs += p[k]
    df_questions += q[k]
    df_answers += a[k]
    df_possible_answers += [generate_possible_answers(x) for x in p[k]]
    df_exp_splits += [k] * len(p[k])

df = {'paragraph' : df_paragraphs, 'question' : df_questions, 'answer' : df_answers,
      'exp_split' : df_exp_splits, 'possible_answers' : df_possible_answers}

df = pd.DataFrame(df)

# -

df.to_csv('cnn_dataset.csv', index=False)

# %run "../preprocess_data_QA.py" --data_file cnn_dataset.csv --output_file ./vec_cnn.p --all_answers_file entity_list.txt \

# --word_vectors_type fasttext.simple.300d --min_df 8 --add_answers_to_vocab

| project/Transparency/preprocess/CNN/CNN.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from matplotlib import pyplot as plt

x = range(2,26,2)

y = [15,13,14.5,17,20,25,26,26,24,22,18,15]

plt.plot(x,y)

plt.show()

# plt.figure() sets the figure size:
# figure(figsize=(width, height), dpi=x); if the image looks blurry, pass a larger dpi to sharpen it

x = range(2,26,2)

y = [15,13,14.5,17,20,25,26,26,24,22,18,15]

plt.figure(figsize=(20,8),dpi=70)

plt.plot(x,y)

plt.savefig('./sig_size.png') # save the current figure; a vector format such as .svg also works and stays sharp when zoomed

plt.show()

# Change the spacing of the x-axis ticks

x = range(2,26,2)

y = [15,13,14.5,17,20,25,26,26,24,22,18,15]

plt.figure(figsize=(20,8),dpi=70)

plt.plot(x,y)

plt.xticks(range(1,25)) # place x-axis ticks at 1 to 24

plt.show()

# Change the spacing of the x-axis ticks (half-step values, every third one shown)

x = range(2,26,2)

y = [15,13,14.5,17,20,25,26,26,24,22,18,15]

plt.figure(figsize=(20,8),dpi=70)

plt.plot(x,y)

_xtick_lables = [i/2 for i in range(4,49)]

plt.xticks(_xtick_lables[::3]) # ticks at every third half-step value: 2.0, 3.5, ..., 23.0

plt.show()
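# To see which tick positions the slicing above produces without drawing anything,
# the list can be built on its own. This is only a sketch of the arithmetic; the
# variable names are illustrative.

```python
# Half-step values from 2.0 to 24.0 inclusive (45 values).
half_steps = [i / 2 for i in range(4, 49)]

# Keeping every third value gives ticks 1.5 apart.
ticks = half_steps[::3]
print(ticks)
```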

# Control the y-axis ticks

x = range(2,26,2)

y = [15,13,14.5,17,20,25,26,26,24,22,18,15]

plt.figure(figsize=(20,7),dpi=70)

plt.plot(x,y)

plt.xticks(range(1,25)) # place x-axis ticks at 1 to 24

plt.yticks(range(min(y),max(y)+1))

plt.show()

# If a list holds the temperature for every minute from 10:00 to 12:00, how can we draw a line chart of the minute-by-minute change?

import random

x = range(0,120)

y = [random.randint(20,35) for i in range(120)]

plt.figure(figsize=(20,8),dpi=80)

plt.plot(x,y)

plt.show()

plt.rc('font',family="Microsoft YaHei")

# Use clock times as the x-axis labels

import random

x = range(0,120)

y = [random.randint(15,20) for i in range(120)]

plt.figure(figsize=(20,8),dpi=80)

plt.plot(x,y)

_xtick_lables = ["10点{}分".format(i) for i in range(60)]

_xtick_lables += ["11点{}分".format(i) for i in range(60)]

plt.xticks(list(x)[::3],_xtick_lables[::3],rotation=45) # rotation rotates the tick labels; the value is the angle in degrees

plt.show()
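# plt.xticks(positions, labels) needs both sequences to have the same length, which
# is why the positions and the labels above are sliced with the same step. A small
# sketch of that pairing, using English "HH:MM" labels instead of the notebook's
# strings; `minute_labels` is a hypothetical helper.

```python
def minute_labels(start_hour, n_minutes):
    """Build one 'HH:MM' label per minute, starting at start_hour:00."""
    return ["{:02d}:{:02d}".format(start_hour + m // 60, m % 60)
            for m in range(n_minutes)]


positions = list(range(120))[::3]      # every third minute index
labels = minute_labels(10, 120)[::3]   # the matching labels

print(list(zip(positions, labels))[:3])
```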

# +

# Add axis labels and a title:

x = range(0,120)

y = [random.randint(20,35) for i in range(120)]

plt.figure(figsize=(20,8),dpi=80)

plt.plot(x,y)

_xtick_lables = ["10点{}分".format(i) for i in range(60)]

_xtick_lables += ["11点{}分".format(i) for i in range(60)]

plt.xticks(list(x)[::3],_xtick_lables[::3],rotation=45) # rotation rotates the tick labels; the value is the angle in degrees

plt.xlabel('时间')

plt.ylabel('温度')

plt.title('10点到12点温度变化曲线')

plt.show()

# +

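## Exercise
# Suppose that at age 30 you counted how many girlfriends (boyfriends) you had in each year from age 11 to 30, as in list a. Draw a line chart of this data to see the yearly trend.
# a = [1,0,1,1,2,4,3,2,3,4,4,5,6,5,4,3,3,1,1,1]
# Requirements:
# the y-axis shows the count
# the x-axis shows the age, e.g. 11, 12 and so on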

y = [1,0,1,1,2,4,3,2,3,4,4,5,6,5,4,3,3,1,1,1]

x = range(11,31)

plt.figure(figsize=(20,8),dpi=80)

plt.plot(x,y)

_xtick_lables = ["{}岁".format(i) for i in x]

plt.xticks(x,_xtick_lables)

plt.grid() # draw grid lines

plt.show()

# -

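# # This is an exercise
# #### Exercise
# Suppose that at age 30 you and your deskmate each counted how many girlfriends (boyfriends) you had in each year from age 11 to 30, as in lists a and b.
# Draw both series in one line chart to compare the two of you over the 20 years and to see the yearly trend.
# a = [1,0,1,1,2,4,3,2,3,4,4,5,6,5,4,3,3,1,1,1]
# b = [1,0,3,1,2,2,3,3,2,1 ,2,1,1,1,1,1,1,1,1,1]
# Requirements:
# the y-axis shows the count
# the x-axis shows the age, e.g. 11, 12 and so on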

# +

y_1 = [1,0,1,1,2,4,3,2,3,4,4,5,6,5,4,3,3,1,1,1]

y_2 = [1,0,3,1,2,2,3,3,2,1 ,2,1,1,1,1,1,1,1,1,1]

x = range(11,31)

plt.figure(figsize=(20,8),dpi=80)

plt.plot(x,y_1,label='自己',color='r',linestyle='--',linewidth=1,alpha=0.5)

plt.plot(x,y_2,label='同桌')

_xtick_lables = ["{}岁".format(i) for i in x]

plt.xticks(x,_xtick_lables)

plt.legend(loc=2) # loc sets the legend position (2 means 'upper left'); see the matplotlib legend documentation for the other values

plt.grid(linestyle='--')

plt.show()

# Several parameters control how a line is drawn:
# color      line color, as a name or a hexadecimal value
# linestyle  line style
# linewidth  line width
# alpha      transparency

# -

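# ## Scatter plot
# Suppose you scraped the daily daytime high temperature in Beijing for March and October 2016 (lists a and b). How can you look for a pattern in how the temperature changes over time (days)?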

#

# a = [11,17,16,11,12,11,12,6,6,7,8,9,12,15,14,17,18,21,16,17,20,14,15,15,15,19,21,22,22,22,23]

#

# b = [26,26,28,19,21,17,16,19,18,20,20,19,22,23,17,20,21,20,22,15,11,15,5,13,17,10,11,13,12,13,6]

#

# +

m_3 = [11,17,16,11,12,11,12,6,6,7,8,9,12,15,14,17,18,21,16,17,20,14,15,15,15,19,21,22,22,22,23]

m_10 = [26,26,28,19,21,17,16,19,18,20,20,19,22,23,17,20,21,20,22,15,11,15,5,13,17,10,11,13,12,13,6]

x_3 = range(1,32)

x_10 = range(51,82)

plt.figure(figsize=(20,8),dpi=80)

plt.scatter(x_3,m_3,label='3月份')

plt.scatter(x_10,m_10,label='10月份')

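# adjust the x-axis ticks
_x = list(x_3)+list(x_10)
# x_10 starts at 51 to leave a gap after March, so subtract 50 to get the day of the month
_xtrick_label = ["3月{}日".format(i) for i in x_3] + ["10月{}日".format(i-50) for i in x_10]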

plt.xticks(_x[::3],_xtrick_label[::3],rotation=45)

# add the legend
plt.legend()
# add axis labels and a title

plt.xlabel('时间')

plt.ylabel('温度')

plt.title('温度和时间的散点图')

plt.show()

# -

# ## Bar chart

#

# a = ["战狼2","速度与激情8","功夫瑜伽","西游伏妖篇","变形金刚5:最后的骑士","摔跤吧!爸爸","加勒比海盗5:死无对证","金刚:骷髅岛","极限特工:终极回归","生化危机6:终章","乘风破浪","神偷奶爸3","智取威虎山","大闹天竺","金刚狼3:殊死一战","蜘蛛侠:英雄归来","悟空传","银河护卫队2","情圣","新木乃伊",]

#

# b=[56.01,26.94,17.53,16.49,15.45,12.96,11.8,11.61,11.28,11.12,10.49,10.3,8.75,7.55,7.32,6.99,6.88,6.86,6.58,6.23]

#

# Unit: 亿 (100 million)

# +

# Wrap the long titles by inserting \n

a = ["战狼2","速度与激情8","功夫瑜伽","西游伏妖篇","变形金刚5:\n最后的骑士","摔跤吧!爸爸","加勒比海盗5:\n死无对证",

"金刚:骷髅岛","极限特工:\n终极回归","生化危机6:\n终章","乘风破浪","神偷奶爸3","智取威虎山","大闹天竺",

"金刚狼3:\n殊死一战","蜘蛛侠:\n英雄归来","悟空传","银河护卫队2","情圣","新木乃伊",]

b=[56.01,26.94,17.53,16.49,15.45,12.96,11.8,11.61,11.28,11.12,10.49,10.3,8.75,7.55,7.32,6.99,6.88,6.86,6.58,6.23]

plt.figure(figsize=(20,8),dpi=80)

plt.bar(range(len(a)),b,width=0.5)

plt.xticks(range(len(a)),a,rotation=45)

plt.show()

# +

# Horizontal version of the bar chart

a = ["战狼2","速度与激情8","功夫瑜伽","西游伏妖篇","变形金刚5:最后的骑士","摔跤吧!爸爸","加勒比海盗5:死无对证",

"金刚:骷髅岛","极限特工:终极回归","生化危机6:终章","乘风破浪","神偷奶爸3","智取威虎山","大闹天竺",

"金刚狼3:殊死一战","蜘蛛侠:英雄归来","悟空传","银河护卫队2","情圣","新木乃伊",]

b=[56.01,26.94,17.53,16.49,15.45,12.96,11.8,11.61,11.28,11.12,10.49,10.3,8.75,7.55,7.32,6.99,6.88,6.86,6.58,6.23]

plt.figure(figsize=(20,8),dpi=80)

plt.barh(range(len(a)),b,height=0.5,color='orange')

plt.yticks(range(len(a)),a)

plt.grid(alpha=0.4)

plt.xlabel('票房/亿')

plt.ylabel('电影名')

plt.title('票房与电影的条形统计图')

plt.show()

# -

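# ## Grouped bar chart
# Suppose you know the box office of the films in list a on 2017-09-14 (b_14), 2017-09-15 (b_15) and 2017-09-16 (b_16). How can you present each film's own box office and its comparison with the other films more clearly?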

#

# a = ["猩球崛起3:终极之战","敦刻尔克","蜘蛛侠:英雄归来","战狼2"]

#

# b_16 = [15746,312,4497,319]

#

# b_15 = [12357,156,2045,168]

#

# b_14 = [2358,399,2358,362]

#

# +

a = ["猩球崛起3:终极之战","敦刻尔克","蜘蛛侠:英雄归来","战狼2"]

b_16 = [15746,312,4497,319]

b_15 = [12357,156,2045,168]

b_14 = [2358,399,2358,362]

x_14 = list(range(len(a)))

x_15 = [i+0.2 for i in x_14]

x_16 = [i+0.4 for i in x_14]

# set the figure size

plt.figure(figsize=(20,8),dpi=80)

plt.bar(range(len(a)),b_14,width=0.2,label='9月14日')

plt.bar(x_15,b_15,width=0.2,label='9月15日')

plt.bar(x_16,b_16,width=0.2,label='9月16日')

# set the x-axis ticks (centered on the middle bar of each group)

plt.xticks(x_15,a)

plt.legend()

plt.xlabel('电影名')

plt.ylabel('票房')

plt.title('票房与电影的条形统计图')

plt.show()

# -
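# The grouped bar chart above shifts each series by one bar width and puts the tick
# under the middle bar. The same arithmetic can be written for any number of series;
# the following is a sketch with a hypothetical helper `grouped_bar_positions`, not
# a matplotlib function.

```python
def grouped_bar_positions(n_groups, n_series, bar_width):
    """Return x positions per series and the tick position centered on each group."""
    series_positions = [
        [g + s * bar_width for g in range(n_groups)]
        for s in range(n_series)
    ]
    # The center of a group lies halfway between its first and last bar.
    offset = (n_series - 1) * bar_width / 2
    tick_positions = [g + offset for g in range(n_groups)]
    return series_positions, tick_positions


# Four films, three days, bars 0.2 wide, as in the cell above.
series_positions, tick_positions = grouped_bar_positions(4, 3, 0.2)
print(tick_positions)
```

# With three series the tick offset equals one bar width, which matches using x_15 as the tick positions.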

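# ## Histogram
#
# Suppose you have the running times of 250 films (list a) and want to show how they are distributed (for example, how many films run between 100 and 120 minutes, and how often). How should you present this data?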

#

# a=[131, 98, 125, 131, 124, 139, 131, 117, 128, 108, 135, 138, 131, 102, 107, 114, 119, 128, 121, 142, 127, 130, 124, 101, 110, 116, 117, 110, 128, 128, 115, 99, 136, 126, 134, 95, 138, 117, 111,78, 132, 124, 113, 150, 110, 117, 86, 95, 144, 105, 126, 130,126, 130, 126, 116, 123, 106, 112, 138, 123, 86, 101, 99, 136,123, 117, 119, 105, 137, 123, 128, 125, 104, 109, 134, 125, 127,105, 120, 107, 129, 116, 108, 132, 103, 136, 118, 102, 120, 114,105, 115, 132, 145, 119, 121, 112, 139, 125, 138, 109, 132, 134,156, 106, 117, 127, 144, 139, 139, 119, 140, 83, 110, 102,123,107, 143, 115, 136, 118, 139, 123, 112, 118, 125, 109, 119, 133,112, 114, 122, 109, 106, 123, 116, 131, 127, 115, 118, 112, 135,115, 146, 137, 116, 103, 144, 83, 123, 111, 110, 111, 100, 154,136, 100, 118, 119, 133, 134, 106, 129, 126, 110, 111, 109, 141,120, 117, 106, 149, 122, 122, 110, 118, 127, 121, 114, 125, 126,114, 140, 103, 130, 141, 117, 106, 114, 121, 114, 133, 137, 92,121, 112, 146, 97, 137, 105, 98, 117, 112, 81, 97, 139, 113,134, 106, 144, 110, 137, 137, 111, 104, 117, 100, 111, 101, 110,105, 129, 137, 112, 120, 113, 133, 112, 83, 94, 146, 133, 101,131, 116, 111, 84, 137, 115, 122, 106, 144, 109, 123, 116, 111,111, 133, 150]

#

# +

a=[131, 98, 125, 131, 124, 139, 131, 117, 128, 108, 135, 138, 131, 102, 107, 114, 119, 128, 121, 142, 127, 130,

124, 101, 110, 116, 117, 110, 128, 128, 115, 99, 136, 126, 134, 95, 138, 117, 111,78, 132, 124, 113, 150,

110, 117, 86, 95, 144, 105, 126, 130,126, 130, 126, 116, 123, 106, 112, 138, 123, 86, 101, 99, 136,123,

117, 119, 105, 137, 123, 128, 125, 104, 109, 134, 125, 127,105, 120, 107, 129, 116, 108, 132, 103, 136, 118,

102, 120, 114,105, 115, 132, 145, 119, 121, 112, 139, 125, 138, 109, 132, 134,156, 106, 117, 127, 144, 139,

139, 119, 140, 83, 110, 102,123,107, 143, 115, 136, 118, 139, 123, 112, 118, 125, 109, 119, 133,112, 114,

122, 109, 106, 123, 116, 131, 127, 115, 118, 112, 135,115, 146, 137, 116, 103, 144, 83, 123, 111, 110, 111,

100, 154,136, 100, 118, 119, 133, 134, 106, 129, 126, 110, 111, 109, 141,120, 117, 106, 149, 122, 122, 110,

118, 127, 121, 114, 125, 126,114, 140, 103, 130, 141, 117, 106, 114, 121, 114, 133, 137, 92,121, 112, 146,

97, 137, 105, 98, 117, 112, 81, 97, 139, 113,134, 106, 144, 110, 137, 137, 111, 104, 117, 100, 111, 101,

110,105, 129, 137, 112, 120, 113, 133, 112, 83, 94, 146, 133, 101,131, 116, 111, 84, 137, 115, 122, 106,

144, 109, 123, 116, 111,111, 133, 150]

# compute the number of bins
d = 3 # bin width

num_bins = (max(a)-min(a))//d

# print(max(a),min(a),max(a)-min(a))

# print(num_bins)

# set the figure size

plt.figure(figsize=(20,8),dpi=80)

# density=True draws a normalized (density) histogram instead of raw counts

plt.hist(a,num_bins,density=True)

# set the x-axis ticks

plt.xticks(range(min(a),max(a)+d,d))

plt.grid()

plt.show()

# -
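# The ticks drawn with range(min(a), max(a)+d, d) line up with the histogram's bin
# edges only when (max - min) is an exact multiple of the bin width d; otherwise
# plt.hist splits the range into num_bins equal bins whose width is not d. A small
# sketch of that check, with a shortened made-up sample and a hypothetical helper
# `bin_edges`:

```python
def bin_edges(values, bin_width):
    """Return the bin count, the tick edges, and whether the two line up."""
    lo, hi = min(values), max(values)
    n_bins = (hi - lo) // bin_width
    edges = list(range(lo, hi + bin_width, bin_width))
    aligned = (hi - lo) % bin_width == 0
    return n_bins, edges, aligned


# Same minimum (78) and maximum (156) as the film-length list above.
n_bins, edges, aligned = bin_edges([78, 95, 110, 131, 156], 3)
print(n_bins, len(edges), aligned)
```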

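# ## Exercise
#
# The 2004 US census found that 124 million people work relatively far from home. The table below, obtained by sampling (last column), gives the time they need to travel from home to work. Can this data be drawn as a histogram?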

#

# interval = [0,5,10,15,20,25,30,35,40,45,60,90]

#

# width = [5,5,5,5,5,5,5,5,5,15,30,60]

#

# quantity = [836,2737,3723,3926,3596,1438,3273,642,824,613,215,47]

#

# +

interval = [0,5,10,15,20,25,30,35,40,45,60,90]

width = [5,5,5,5,5,5,5,5,5,15,30,60]

quantity = [836,2737,3723,3926,3596,1438,3273,642,824,613,215,47]

print(len(interval),len(width),len(quantity))

# set the figure size

plt.figure(figsize=(20,8),dpi=80)

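# align='edge' makes each bar start at the left boundary of its interval
plt.bar(interval,quantity,width=width,align='edge')
# put the x-axis ticks on the interval boundaries, including the right edge of the last bar
_x = interval + [interval[-1] + width[-1]]
plt.xticks(_x)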

plt.grid(alpha=0.4)

plt.show()

# -
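# Because the intervals above have unequal widths (5, 15, 30 and 60 minutes), bar
# heights that show raw counts overstate the wide intervals. One common remedy,
# sketched here as an assumption about what the exercise is after, is to plot the
# count per minute (count divided by interval width) so that bar area reflects
# the count.

```python
interval = [0, 5, 10, 15, 20, 25, 30, 35, 40, 45, 60, 90]
width = [5, 5, 5, 5, 5, 5, 5, 5, 5, 15, 30, 60]
quantity = [836, 2737, 3723, 3926, 3596, 1438, 3273, 642, 824, 613, 215, 47]

# Count per minute of commute time for each interval.
density = [q / w for q, w in zip(quantity, width)]

# Area (height times width) recovers the original counts.
areas = [h * w for h, w in zip(density, width)]
print([round(h, 2) for h in density])
```

# These values could be passed to plt.bar in place of quantity.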

| matplotlob/first.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.9.5 64-bit

# name: python395jvsc74a57bd09400c0b303c55aae944a7d117953411ee2c353c3ca48e8a9c758ac4a17625cdf

# ---

# ## Function for scraping the draws
# # Predicting the French Loto
# Import the required libraries

from bs4 import BeautifulSoup

import time

import requests

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import pickle

from sklearn.preprocessing import StandardScaler

from keras.models import Sequential

from keras.layers import LSTM, Dense, Bidirectional, TimeDistributed, RepeatVector, Flatten

from keras.callbacks import EarlyStopping

# function that scrapes the Loto draws
def scrap_loto_numbers():
    my_list=[]
    time.sleep(2)
    loto_url = "http://loto.akroweb.fr/loto-historique-tirages/"
    page = requests.get(loto_url)
    soup = BeautifulSoup(page.text, 'html.parser')
    body = soup.find('table')
    tirage_line = body.find_all('tr')
    for value in tirage_line:
        my_dict = {}
        res = value.text.split('\n')
        my_dict['day']=res[2]
        my_dict['month_year']=res[3]
        # the five main numbers, then the chance number
        for i,val in enumerate(res[5:10]):
            my_dict['num'+str(i)]=int(val)
        my_dict['chance']=int(res[10])
        my_list.append(my_dict)
    df=pd.DataFrame(my_list)
    return df

# +

# Note that several draws have been added since the 21st, the date the article was written

# -

# scrape the draws currently available on the site

df_tirage=scrap_loto_numbers()

df_tirage[['day','month_year','num0','num1','num2','num3','num4','chance']].head()

# remove the Super Loto draws (to explore later)

#df_tirage=df_tirage[(df_tirage['day']!='Vendredi') & (df_tirage['day']!='Mardi')]

# +

#df_tirage=df_tirage.tail(df_tirage.shape[0]-1)

# -

# ## Comments:
# * the latest draw here is from 7 December, so to test the model we will remove this draw from the dataset below
# * On the other hand, we would have avoided removing the draw of the 28th if we wanted to predict the next draw (Saturday the 31st)
#df_tirage=df_tirage.tail(df_tirage.shape[0])# remove the latest draw / avoid this depending on the case
df_tirage.head()# the latest draw here becomes the one from the 26th

# ## Data processing
df = df_tirage.iloc[::-1]# reverse the dataframe so that the latest draw comes last
df = df[['num0', 'num1', 'num2', 'num3', 'num4', 'chance']]# select the numbers to process
df.tail()# our draw of the 26th is now the last row, so the data can be organized chronologically

# +

# count how many of the 5 main numbers (excluding the chance number) are less than or equal to a given value
def is_under(data, number):
    return ((data['num0'] <= number).astype(int) +
            (data['num1'] <= number).astype(int) +
            (data['num2'] <= number).astype(int) +
            (data['num3'] <= number).astype(int) +
            (data['num4'] <= number).astype(int))

# count the even numbers among the 5 main numbers (excluding the chance number)
def is_pair(data):
    return ((data['num0'].isin(pairs)).astype(int) +
            (data['num1'].isin(pairs)).astype(int) +
            (data['num2'].isin(pairs)).astype(int) +
            (data['num3'].isin(pairs)).astype(int) +
            (data['num4'].isin(pairs)).astype(int))

# count the odd numbers among the 5 main numbers (excluding the chance number)
def is_impair(data):
    return ((data['num0'].isin(impairs)).astype(int) +
            (data['num1'].isin(impairs)).astype(int) +
            (data['num2'].isin(impairs)).astype(int) +
            (data['num3'].isin(impairs)).astype(int) +
            (data['num4'].isin(impairs)).astype(int))

# check whether the chance number is even
def is_pair_etoile(data):
    return (data['chance'].isin(pairs)).astype(int)

# check whether the chance number is odd
def is_impair_etoile(data):
    return (data['chance'].isin(impairs)).astype(int)

# lists of even and odd numbers
pairs = [2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32, 34, 36, 38, 40, 42, 44, 46, 48, 50]
impairs = [1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47, 49]

# sum of the squared differences between consecutive main numbers (excluding the chance number)
def sum_diff(data):
    return ((data['num1'] - data['num0'])**2 +
            (data['num2'] - data['num1'])**2 +
            (data['num3'] - data['num2'])**2 +
            (data['num4'] - data['num3'])**2)

# how often each number from 1 to 50 has been drawn
freqs = []
for val in range(50):
    count = ( (df['num0'] == val+1).sum() +
              (df['num1'] == val+1).sum() +
              (df['num2'] == val+1).sum() +
              (df['num3'] == val+1).sum() +
              (df['num4'] == val+1).sum() )
    freqs.append(count)

ax = plt.gca() ; ax.invert_yaxis()
plt.gcf().set_size_inches(5, 4)
heatmap = plt.pcolor(np.reshape(np.array(freqs), (5, 10)), cmap=plt.cm.Blues)

# for each row, count how often its value has appeared in the column so far (current row included)
def freq_val(data, column):
    tab = data[column].values.tolist()
    freqs = []
    pos = 1
    for e in tab:
        freqs.append(tab[0:pos].count(e))
        pos = pos + 1
    return freqs

#df['sum'] = ((df.num0 + df.num1 + df.num2 + df.num3 + df.num4 + df.chance ) >185).astype(int)

# -
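# freq_val rescans the list prefix for every row, which is quadratic in the number
# of draws. The same running count can be kept in a dictionary in a single pass.
# This is a sketch of an equivalent computation on a plain list; `running_counts`
# is a hypothetical name and is not used elsewhere in the notebook.

```python
from collections import defaultdict


def running_counts(values):
    """For each position, count occurrences of that value up to and including it."""
    seen = defaultdict(int)
    counts = []
    for v in values:
        seen[v] += 1
        counts.append(seen[v])
    return counts


def prefix_counts(values):
    """Reference version that mirrors the prefix-scanning loop of freq_val."""
    return [values[:pos + 1].count(v) for pos, v in enumerate(values)]


draws = [7, 3, 7, 7, 3]
print(running_counts(draws))
```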

# add the difference between consecutive numbers (to explore as soon as possible)
#for i in range(4):
#print(i,i+1)
#df['diff_{}'.format(i)]=df['num{}'.format(i+1)]-df['num{}'.format(i)]
# apply the functions to the dataframe

df['freq_num0'] = freq_val(df, 'num0')

df['freq_num1'] = freq_val(df, 'num1')

df['freq_num2'] = freq_val(df, 'num2')

df['freq_num3'] = freq_val(df, 'num3')

df['freq_num4'] = freq_val(df, 'num4')

df['freq_chance'] = freq_val(df, 'chance')# running frequencies
df['sum_diff'] = sum_diff(df)# sum of the squared differences between each pair of consecutive numbers in the draw

df['pair_chance'] = is_pair_etoile(df)

df['impair_chance'] = is_impair_etoile(df)

df['pair'] = is_pair(df)

df['impair'] = is_impair(df)# counts of even and odd numbers
df['is_under_24'] = is_under(df, 24) # how many numbers are at most 24
df['is_under_40'] = is_under(df, 40)# how many numbers are at most 40

df.head(6)

# ## Model and function for formatting the LSTM input data
# +
# screenshot 3: define_model function only
# +
# several models are defined here for testing, but for now I am working with the LSTM (function: define_model)
# window_length is set to 12 here to learn from one month of data
# Model parameters

nb_label_feature=6

UNITS = 100

BATCHSIZE = 30

EPOCH = 1500

#ACTIVATION = "softmax"

OPTIMIZER ='adam' # rmsprop, adam, sgd

LOSS = 'mae'#'categorical_crossentropy' #mse

DROPOUT = 0.1

window_length =12 #12

number_of_features = df.shape[1]

# Model architecture
def define_model(number_of_features,nb_label_feature):
    # initialize the RNN
    model = Sequential()
    # add the first LSTM layer
    model.add(LSTM(UNITS, input_shape=(window_length, number_of_features), return_sequences=True))
    model.add(LSTM(UNITS, dropout=0.1, return_sequences=False))
    # add the output layer
    model.add(Dense(nb_label_feature))
    model.compile(loss=LOSS, optimizer=OPTIMIZER, metrics=['acc'])
    return model

def define_bidirectionnel_model(number_of_features,nb_label_feature):
    model = Sequential()
    model.add(Bidirectional(LSTM(100, dropout=0.2, return_sequences=True), input_shape=(window_length, number_of_features)))
    model.add(LSTM(50, return_sequences=True))
    model.add(LSTM(100, dropout=0.1))
    model.add(Dense(nb_label_feature))
    model.compile(loss=LOSS, optimizer=OPTIMIZER, metrics=['acc'])
    return model

def define_autoencoder_model(number_of_features,nb_label_feature):
    model = Sequential()
    model.add(LSTM(100, input_shape=(window_length, number_of_features), return_sequences=True))
    model.add(LSTM(50, return_sequences=False))
    model.add(RepeatVector(window_length))
    model.add(LSTM(100, dropout=0.1, return_sequences=True))
    model.add(LSTM(50, return_sequences=True))
    model.add(TimeDistributed(Dense(number_of_features)))
    model.add(Flatten())
    model.add(Dense(nb_label_feature))
    model.compile(loss=LOSS, optimizer=OPTIMIZER, metrics=['acc'])
    return model

#model = define_model(number_of_features,nb_label_feature)

#model3 = define_autoencoder_model(number_of_features,nb_label_feature)

#model4 = define_bidirectionnel_model(number_of_features,nb_label_feature)

# Monitor that stops training early

es = EarlyStopping(monitor='acc', mode='max', verbose=1, patience=100)

# -

# Function that formats the data for the LSTM input
def create_lstm_dataset(df, window_length,nb_label_feature):
    number_of_rows = df.shape[0]   # number of draws in the dataset
    number_of_features = df.shape[1]
    scaler = StandardScaler().fit(df.values)
    transformed_dataset = scaler.transform(df.values)
    transformed_df = pd.DataFrame(data=transformed_dataset, index=df.index)

    # array of (number_of_rows - window_length) samples, each with window_length rows and number_of_features columns
    # LSTM input: [number of samples, number of past rows, number of columns (features)]
    train = np.empty([number_of_rows-window_length, window_length, number_of_features], dtype=float)
    label = np.empty([number_of_rows-window_length, nb_label_feature], dtype=float)
    for i in range(0, number_of_rows-window_length):
        train[i] = transformed_df.iloc[i:i+window_length, 0: number_of_features]
        label[i] = transformed_df.iloc[i+window_length: i+window_length+1, 0:nb_label_feature]

    # define the LSTM model
    model = define_model(number_of_features,nb_label_feature)
    return train, label, model,scaler

# ## Training

# format the data

train, label,model,scaler1 = create_lstm_dataset(df, window_length,nb_label_feature)

print(train.shape)

print(label.shape)

# * After formatting, the training dataset consists of 1911 samples, each containing 12 draws, where each draw has the 19 features computed above
#
# * The labels are 1911 vectors of 6 features, i.e. the 6 numbers of each draw
#
# * So during training we try to predict the next draw from the 12 preceding draws
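# The shapes described above follow from the sliding window: a table with R rows
# and a window of length W yields R - W samples, each labelled with the row that
# comes right after its window. Below is a minimal sketch on plain lists with toy
# numbers; `make_windows` is a hypothetical stand-in for the windowing part of
# create_lstm_dataset and leaves out the scaling.

```python
def make_windows(rows, window_length, n_labels):
    """Pair every run of window_length rows with the first n_labels values of the next row."""
    samples, labels = [], []
    for i in range(len(rows) - window_length):
        samples.append(rows[i:i + window_length])
        labels.append(rows[i + window_length][:n_labels])
    return samples, labels


# 15 toy rows with 3 features each; the first 2 features act as labels.
rows = [[t, t * 10, t * 100] for t in range(15)]
samples, labels = make_windows(rows, window_length=12, n_labels=2)
print(len(samples), len(samples[0]), len(samples[0][0]), labels[0])
```

# With 15 rows and a window of 12 there are 3 samples; the last row is only ever used as a label.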

#Training

history=model.fit(train, label, batch_size=BATCHSIZE, epochs=EPOCH, verbose=2, callbacks=[es])

# ## Loss function
# +
# screenshot 6

# -

plt.plot(history.history['loss'])

plt.legend(['train_loss'])

plt.show()

# ## Predicting the draw that follows the last draw of the training dataset
# Prediction based on the last 12 draws
last_twelve = df.tail(window_length) # take the last 12 draws

scaler = StandardScaler().fit(df.values)

scaled_to_predict = scaler.transform(last_twelve)

scaled_predicted_output_1 = model.predict(np.array([scaled_to_predict]))

#prediction

tom = df.tail(window_length).iloc[:,0:6] #

scaler = StandardScaler().fit(df.iloc[:,0:6])

scaled_to_predict = scaler.transform(tom)

print(scaler.inverse_transform(scaled_predicted_output_1).astype(int)[0])
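# The cell above refits a scaler on the first six columns only, so that
# inverse_transform accepts the model's 6-value output. Since standard scaling is
# done column by column, those six columns get the same mean and standard deviation
# as in the full fit. The round trip can be sketched without scikit-learn; the
# helper names and the numbers are illustrative. Population standard deviation
# (pstdev) is used here on the assumption that it matches StandardScaler's default.

```python
from statistics import mean, pstdev


def fit_column(values):
    """Return the mean and population standard deviation of one column."""
    return mean(values), pstdev(values)


def scale(values, mu, sigma):
    return [(v - mu) / sigma for v in values]


def unscale(values, mu, sigma):
    return [v * sigma + mu for v in values]


column = [3, 17, 25, 38, 44]
mu, sigma = fit_column(column)
scaled = scale(column, mu, sigma)
restored = unscale(scaled, mu, sigma)
print([round(v) for v in restored])
```

# The notebook converts with astype(int), which truncates toward zero, so a predicted 12.9 is printed as 12; rounding first would give 13.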

| DEEP_LEARNING_ET_LE_HASARD.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.0.3

# language: julia

# name: julia-1.0

# ---

# # Cascade decay of a Ne 1s-hole: Simulate the final ion distribution

# # **This notebook is still under construction!**

using JAC

#

# The computation of the cascade tree alone does not provide much information which could be compared with experiment. To facilitate the prediction of cascades or the comparison with experiment, the amplitudes from the various decay pathways need to be combined properly in order to predict different spectra and observables. Such observables are

#

# (1)

# (2)

#

# What does it include: Let us have a look at the definition of such a `Cascade.Simulation`:

#

? Cascade.Simulation

# What is seen ... and which information needed to be provided for such a computation

| tutorials/72-simulate-cascade-neon-k-hole.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

#

# # Feature selection using SelectFromModel and LassoCV

#

#

# Use SelectFromModel meta-transformer along with Lasso to select the best

# couple of features from the Boston dataset.

#

#

# +

# Author: <NAME> <<EMAIL>>

# License: BSD 3 clause

print(__doc__)

import matplotlib.pyplot as plt

import numpy as np

from sklearn.datasets import load_boston

from sklearn.feature_selection import SelectFromModel

from sklearn.linear_model import LassoCV

# Load the boston dataset.
# Note: load_boston was removed in scikit-learn 1.2, so this cell needs an older release.
boston = load_boston()

X, y = boston['data'], boston['target']

# We use the base estimator LassoCV since the L1 norm promotes sparsity of features.

clf = LassoCV()

# Set a minimum threshold of 0.25

sfm = SelectFromModel(clf, threshold=0.25)

sfm.fit(X, y)

n_features = sfm.transform(X).shape[1]

# Reset the threshold till the number of features equals two.

# Note that the attribute can be set directly instead of repeatedly

# fitting the metatransformer.

while n_features > 2:
    sfm.threshold += 0.1
    X_transform = sfm.transform(X)
    n_features = X_transform.shape[1]

# Plot the selected two features from X.

plt.title(

"Features selected from Boston using SelectFromModel with "

"threshold %0.3f." % sfm.threshold)

feature1 = X_transform[:, 0]

feature2 = X_transform[:, 1]

plt.plot(feature1, feature2, 'r.')

plt.xlabel("Feature number 1")

plt.ylabel("Feature number 2")

plt.ylim([np.min(feature2), np.max(feature2)])

plt.show()
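# The threshold loop in this example can be followed on a plain list of
# coefficients. The sketch below assumes that a feature is kept when the absolute
# value of its coefficient is at least the threshold; the coefficients and the
# helper name `raise_threshold_until` are made up for illustration.

```python
def raise_threshold_until(importances, threshold, step, n_keep):
    """Raise the threshold in fixed steps until at most n_keep features remain."""
    def select(th):
        return [i for i, w in enumerate(importances) if abs(w) >= th]

    selected = select(threshold)
    while len(selected) > n_keep:
        threshold += step
        selected = select(threshold)
    return threshold, selected


coefs = [0.05, -0.9, 0.3, 0.0, 1.4, -0.4]
threshold, selected = raise_threshold_until(coefs, threshold=0.25, step=0.1, n_keep=2)
print(round(threshold, 2), selected)
```

# A fixed step can skip past the target and leave fewer than n_keep features when several coefficients are close together.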

| Assignments/hw3/Failed_to_perform_with_dataset/HW3_feature_selection_from_Boston/.ipynb_checkpoints/plot_select_from_model_boston-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Data Wrangling COVID-19 Mini-Challenge

# ## Imports and Setup

# +

import os

import pandas as pd

import seaborn as sns

import plotly.io as pio

import pandas_profiling

import plotly.express as px

import plotly.offline as pyo

import matplotlib.pyplot as plt

import plotly.graph_objects as go

from glob import glob

from datetime import date

# with this renderer, the plots are also shown in the html file.

pio.renderers.default = "jupyterlab"

# -

# ## International COVID-data

# +

today = date.today()

dates = [date.strftime('%m-%d-%Y') for date in pd.date_range(start='2020-01-22', end=today)][:-1]

dates_as_date = pd.date_range(start='2020-01-22', end=today)[:-1]

# standardize column names for all entries

def rename_columns(column):
    column_map = {
        'Lat': 'Latitude',
        'Long_': 'Longitude',
        'Incidence_Rate': 'Incident_Rate'
    }
    if column in column_map:
        return column_map[column]
    return column.replace('/', '_').replace('-', '_').replace(' ', '_')

all_cases = []

for d in range(len(dates)):
    path = "https://raw.githubusercontent.com/CSSEGISandData/COVID-19/master/csse_covid_19_data/csse_covid_19_daily_reports/{}.csv".format(dates[d])
    data = pd.read_csv(path)
    data.rename(columns=rename_columns, inplace=True)
    data['Date'] = dates_as_date[d]
    all_cases.append(data)

df = pd.concat(all_cases)
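# To check what rename_columns does before downloading anything, it can be applied
# to a handful of header names. The function body is copied from the cell above;
# the header list is an illustrative assumption about how the daily report files
# name their columns.

```python
def rename_columns(column):
    column_map = {
        'Lat': 'Latitude',
        'Long_': 'Longitude',
        'Incidence_Rate': 'Incident_Rate'
    }
    if column in column_map:
        return column_map[column]
    return column.replace('/', '_').replace('-', '_').replace(' ', '_')


# Hypothetical mix of old-style and new-style headers.
headers = ['Province/State', 'Country/Region', 'Last Update',
           'Lat', 'Long_', 'Case-Fatality_Ratio']
renamed = [rename_columns(h) for h in headers]
print(renamed)
```

# Mapping both naming styles to one set of names is what lets pd.concat stack the daily files into shared columns.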

# +

# all_cases.Country_Region.unique()

# standardize country names for all entries

country_mapping = {

'MS Zaandam|Diamond Princess|Cruise Ship': 'Others', # move cruise ships to others

'Hong Kong.+': 'Hong Kong',

'Iran.+': 'Iran',

'.*Congo.*': 'Congo',

'Mainland China': 'China',

'.*Bahamas.*': 'The Bahamas',

'.*Gambia.*': 'The Gambia',

'Viet Nam': 'Vietnam',

r'Taiwan\*': 'Taiwan',  # raw string so that \* reaches the regex engine unchanged

'Cote d\'Ivoire': 'Ivory Coast',

'Cabo Verde': 'Cape Verde',

'Russian Federation': 'Russia',

' Azerbaijan': 'Azerbaijan',

'Holy See': 'Vatican City',

'Republic of Ireland': 'Ireland',

'Republic of Moldova': 'Moldova',

'Czechia': 'Czech Republic',

'Republic of Korea|Korea, South': 'South Korea',

'Timor-Leste': 'East Timor',

'Macao SAR|Macau': 'Macao',

'UK': 'United Kingdom',

'Jersey|Guernsey': 'Channel Islands',

'^Dominica$': 'Dominican Republic'  # anchored so that 'Dominican Republic' itself is left unchanged

}

# assign the result back instead of replacing in place on a column selection
df['Country_Region'] = df['Country_Region'].replace(
    to_replace=list(country_mapping.keys()),
    value=list(country_mapping.values()),
    regex=True
)

# -
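# The keys of country_mapping are regular expressions, and a pattern without
# anchors also matches inside longer names, in which case only the matched part is
# substituted. The sketch below shows the effect with re.sub directly; the two
# small mappings and the `normalize` helper are illustrative and are not the
# notebook's full table or pandas' implementation.

```python
import re


def normalize(name, mapping):
    """Apply each pattern in turn, substituting only the matched part."""
    for pattern, replacement in mapping.items():
        name = re.sub(pattern, replacement, name)
    return name


unanchored = {'Dominica': 'Dominican Republic'}
anchored = {'^Dominica$': 'Dominican Republic'}

# The unanchored pattern matches the first 8 letters of the longer name.
broken = normalize('Dominican Republic', unanchored)
intact = normalize('Dominican Republic', anchored)
print(broken)
print(intact)
```

# Anchoring with ^ and $ limits a rule to names that match in full.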

df.to_csv('worldwide.csv', index=False)  # skip the index so that reading the file back adds no extra column

df = pd.read_csv('worldwide.csv')

# group data by country

daily_updates = df.groupby(['Country_Region', 'Date']).agg(

Confirmed = ('Confirmed','sum'),

Deaths = ('Deaths','sum'),

).reset_index()

# get changes in data

updates_per_country = daily_updates.groupby('Country_Region')

# diff takes difference to point in group before it

daily_updates['New_Confirmed'] = updates_per_country['Confirmed'].diff().fillna(0)

daily_updates['New_Deaths'] = updates_per_country['Deaths'].diff().fillna(0)
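# The grouped diff above turns cumulative totals into daily increases separately
# for each country, and fillna(0) sets the first day of every country to 0. The
# same logic on plain tuples, as a sketch with made-up numbers and a hypothetical
# helper `grouped_diff`:

```python
def grouped_diff(rows):
    """rows: (group, cumulative_value) pairs, in date order within each group."""
    previous = {}
    increases = []
    for group, value in rows:
        # The first row of a group has no predecessor, so its increase is 0.
        increases.append(value - previous[group] if group in previous else 0)
        previous[group] = value
    return increases


rows = [('A', 1), ('B', 5), ('A', 4), ('B', 5), ('A', 9)]
new_cases = grouped_diff(rows)
print(new_cases)
```

# Cases already present on a country's first reported day are therefore not counted as new cases.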

# reorder columns

worldwide_pretty = daily_updates.loc[:, ['Date', 'Country_Region', 'New_Confirmed', 'Confirmed', 'New_Deaths', 'Deaths']]

# ## Plotting International Data

# create df with one row per date

worldwide_all = worldwide_pretty.groupby('Date').agg(

Confirmed = ('Confirmed', 'sum'),

New_Confirmed = ('New_Confirmed', 'sum')

)

# create a moving average

worldwide_all['SMA_14'] = round(worldwide_all.loc[:,'New_Confirmed'].rolling(window=14).mean())

worldwide_all = worldwide_all.reset_index()
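# rolling(window=14).mean() leaves the first 13 rows empty because a full window is
# not yet available, so the moving-average line starts later than the bars. A
# sketch of the same rule on a short list with a window of 3; `rolling_mean` is a
# hypothetical helper, not a pandas function.

```python
def rolling_mean(values, window):
    """Trailing mean over `window` values; None until a full window is available."""
    out = []
    for i in range(len(values)):
        if i + 1 < window:
            out.append(None)
        else:
            chunk = values[i + 1 - window:i + 1]
            out.append(sum(chunk) / window)
    return out


smoothed = rolling_mean([2, 4, 6, 8, 10], window=3)
print(smoothed)
```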

# +

plot = go.Figure()

plot.add_trace(

go.Bar(

name='Daily Meas',

x=worldwide_all["Date"],

y=worldwide_all["New_Confirmed"],

marker={

# 'size': 10,

'color': 'red',

'opacity': 1

},

)

)

plot.add_trace(

go.Scatter(

name='14 Day MA',

x=worldwide_all["Date"],

y=worldwide_all["SMA_14"],

mode='lines',

marker={

'size': 1,

'color': 'black',

'opacity': 0.7

},

line_width=1.5

)

)

plot.update_layout(

title_x=0,

title='New Corona Cases Worldwide',

margin={

'l': 25,

'b': 25,

't': 50,

'r': 20

},

height=400,

yaxis={

'color': '#748B99',

'showgrid': True,

'showline': True,

'gridwidth': 1,

'gridcolor': '#B6C3CC',

# 'rangemode': "tozero",

'range':

[0,

worldwide_all.New_Confirmed.max() * 1.05],

# 'ticksuffix': ' '

# 'tickprefix': 'id: '

},

xaxis={

'showgrid': False,

'color': '#748B99',

'showline': True,

'linecolor': '#B6C3CC',

},

paper_bgcolor='#FFFFFF',

plot_bgcolor='rgba(0,0,0,0)',

showlegend=False,

legend={

'yanchor': 'top',

'y': 0.99,

'xanchor': 'right',

'x': 0.99

})

plot.show()

# -

# select the rows for Ireland (copy so that adding a column does not write to a view)
df = worldwide_pretty[worldwide_pretty.Country_Region == 'Ireland'].copy()
# create a moving average
df['SMA_14'] = round(df.loc[:,'New_Confirmed'].rolling(window=14).mean())
df = df.reset_index()

# +

plot = go.Figure()

plot.add_trace(

go.Bar(

name='Daily Meas',

x=df["Date"],

y=df["New_Confirmed"],

marker={

# 'size': 10,

'color': 'red',

'opacity': 1

},

)

)

plot.add_trace(

go.Scatter(

name='14 Day MA',

x=df["Date"],

y=df["SMA_14"],

mode='lines',

marker={

'size': 1,

'color': 'black',

'opacity': 0.7

},

line_width=1.5

)

)

plot.update_layout(

title_x=0,

title='New Corona Cases in Ireland',

margin={

'l': 25,

'b': 25,

't': 50,

'r': 20

},

height=400,

yaxis={

'color': '#748B99',

'showgrid': True,

'showline': True,

'gridwidth': 1,

'gridcolor': '#B6C3CC',

# 'rangemode': "tozero",

'range':

[0,

df.New_Confirmed.max() * 1.05],

# 'ticksuffix': ' '

# 'tickprefix': 'id: '

},

xaxis={

'showgrid': False,

'color': '#748B99',

'showline': True,

'linecolor': '#B6C3CC',

},

paper_bgcolor='#FFFFFF',

plot_bgcolor='rgba(0,0,0,0)',

showlegend=False,

legend={

'yanchor': 'top',

'y': 0.99,

'xanchor': 'right',

'x': 0.99

})

plot.show()

# -

# ## Swiss COVID-data

# data import

path = "https://raw.githubusercontent.com/openZH/covid_19/master/COVID19_Fallzahlen_CH_total_v2.csv"

chData = pd.read_csv(path)

# +

# only use data after 1 June 2020
chData = chData[chData.date > '2020-06-01']
# remove the Principality of Liechtenstein (FL); copy so that new columns can be added safely
chData = chData[chData.abbreviation_canton_and_fl != 'FL'].copy()

# calculate new cases and deaths

chGrouped = chData.groupby('abbreviation_canton_and_fl')

chData['new_cases'] = chGrouped['ncumul_conf'].diff().fillna(0)

chData['new_deaths'] = chGrouped['ncumul_deceased'].diff().fillna(0)

# calculate total cases and deaths

chData['total_cases'] = chGrouped['new_cases'].cumsum()

chData['total_deaths'] = chGrouped['new_deaths'].cumsum()

# remove obsolete columns

chData = chData[[

'date',

'abbreviation_canton_and_fl',

'new_cases',

'total_cases',

'new_deaths',

'total_deaths'

]]

# rename columns

chData = chData.rename(

{

'abbreviation_canton_and_fl': 'canton'

},

axis='columns'

)

# -
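
# A small sketch of the per-canton differencing used above to turn cumulative counts into daily counts. The function name `daily_from_cumulative` and the sample rows are hypothetical, and the sketch assumes rows sorted by date with no missing cumulative values, which the real data may not guarantee.

```python
def daily_from_cumulative(rows):
    """rows: (date, canton, cumulative) tuples, sorted by date within each canton."""
    last_seen = {}
    result = []
    for date, canton, cumulative in rows:
        previous = last_seen.get(canton)
        # the first row of each canton has no predecessor, so it counts as 0,
        # mirroring groupby(...).diff().fillna(0)
        new = 0 if previous is None else cumulative - previous
        last_seen[canton] = cumulative
        result.append((date, canton, new))
    return result


sample = [
    ("2020-06-02", "BE", 100),
    ("2020-06-02", "ZH", 200),
    ("2020-06-03", "BE", 104),
    ("2020-06-03", "ZH", 209),
]
daily = daily_from_cumulative(sample)
print(daily)
```

# Differencing inside each canton matters: a plain difference over the whole table would subtract one canton's total from another's.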

# ## Plotting Swiss Data

# aggregate all cantons into one row per date

df = chData.groupby('date').agg(

Confirmed = ('total_cases', 'sum'),

New_Confirmed = ('new_cases', 'sum')

)

# create a moving average

df['SMA_14'] = round(df.loc[:,'New_Confirmed'].rolling(window=14).mean())

df = df.reset_index()

# +

plot = go.Figure()

plot.add_trace(

go.Bar(

name='Daily Meas',

x=df["date"],

y=df["New_Confirmed"],

marker={

# 'size': 10,

'color': 'red',

'opacity': 1

},

)

)

plot.add_trace(

go.Scatter(

name='14 Day MA',

x=df["date"],

y=df["SMA_14"],

mode='lines',

marker={

'size': 1,

'color': 'black',

'opacity': 0.7

},

line_width=1.5

)

)

plot.update_layout(

title_x=0,

title='New Corona Cases Switzerland',

margin={

'l': 25,

'b': 25,

't': 50,

'r': 20

},

height=400,

yaxis={

'color': '#748B99',

'showgrid': True,

'showline': True,

'gridwidth': 1,

'gridcolor': '#B6C3CC',

# 'rangemode': "tozero",

'range':

[0,

df.New_Confirmed.max() * 1.05],

# 'ticksuffix': ' '

# 'tickprefix': 'id: '

},

xaxis={

'showgrid': False,

'color': '#748B99',

'showline': True,

'linecolor': '#B6C3CC',

},

paper_bgcolor='#FFFFFF',

plot_bgcolor='rgba(0,0,0,0)',

showlegend=False,

legend={

'yanchor': 'top',

'y': 0.99,

'xanchor': 'right',

'x': 0.99

})

plot.show()

# -

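# select the rows for the canton of Bern (copy so that adding a column does not trigger SettingWithCopyWarning)

df = chData[chData.canton == 'BE'].copy()

# 14-day simple moving average of the new cases, rounded to whole cases

df['SMA_14'] = df['new_cases'].rolling(window=14).mean().round()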

df = df.reset_index()

# +

plot = go.Figure()

plot.add_trace(

go.Bar(

name='Daily Meas',

x=df["date"],

y=df["new_cases"],

marker={

# 'size': 10,

'color': 'red',

'opacity': 1

},

)

)

plot.add_trace(

go.Scatter(

name='14 Day MA',

x=df["date"],

y=df["SMA_14"],

mode='lines',

marker={

'size': 1,

'color': 'black',

'opacity': 0.7

},

line_width=1.5

)

)

plot.update_layout(

title_x=0,

title='New Corona Cases Bern',

margin={

'l': 25,

'b': 25,

't': 50,

'r': 20

},

height=400,

yaxis={

'color': '#748B99',

'showgrid': True,

'showline': True,

'gridwidth': 1,

'gridcolor': '#B6C3CC',

# 'rangemode': "tozero",

'range':

[0,

df.new_cases.max() * 1.05],

# 'ticksuffix': ' '

# 'tickprefix': 'id: '

},

xaxis={

'showgrid': False,

'color': '#748B99',

'showline': True,

'linecolor': '#B6C3CC',

},

paper_bgcolor='#FFFFFF',

plot_bgcolor='rgba(0,0,0,0)',

showlegend=False,

legend={

'yanchor': 'top',

'y': 0.99,

'xanchor': 'right',

'x': 0.99

})

plot.show()

# -

# ## Dataframes

worldwide_pretty.head(3)

chData.head(3)

# ## Exports and HTML-file creation

# export the dataframes

chData.to_csv('covid_ch.csv')

worldwide_pretty.to_csv('covid_international.csv')

# create a html file

os.system('jupyter nbconvert --to html data_pipeline.ipynb')

| data_pipeline.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] toc=true

# <h1>Table of Contents<span class="tocSkip"></span></h1>

# <div class="toc" style="margin-top: 1em;"><ul class="toc-item"></ul></div>

# -

# # RNN in TensorFlow Keras - TimeSeries Data <a class="tocSkip">

# +

import math

import os

import numpy as np

np.random.seed(123)

print("NumPy:{}".format(np.__version__))

import pandas as pd

print("Pandas:{}".format(pd.__version__))

import sklearn as sk

from sklearn import preprocessing as skpp

print("sklearn:{}".format(sk.__version__))

import matplotlib as mpl

import matplotlib.pyplot as plt

mpl.rcParams.update({'font.size': 20,

'figure.figsize': [15,10]

}

)

print("Matplotlib:{}".format(mpl.__version__))

import tensorflow as tf

tf.set_random_seed(123)

print("TensorFlow:{}".format(tf.__version__))

from keras.models import Sequential

from keras.layers import Dense

from keras.layers import LSTM, SimpleRNN, GRU

from keras.losses import mean_squared_error as k_mse

from keras.backend import sqrt as k_sqrt

import keras.backend as K

import keras

print("Keras:{}".format(keras.__version__))

# +

DATASETSLIB_HOME = '../datasetslib'

import sys

if DATASETSLIB_HOME not in sys.path:
    sys.path.append(DATASETSLIB_HOME)

# %reload_ext autoreload

# %autoreload 2

import datasetslib

from datasetslib import util as dsu

datasetslib.datasets_root = os.path.join(os.path.expanduser('~'),'datasets')

# -

# # Read and pre-process the dataset

filepath = os.path.join(datasetslib.datasets_root,

'ts-data',

'international-airline-passengers-cleaned.csv'

)

dataframe = pd.read_csv(filepath,

usecols=[1],

header=0)

dataset = dataframe.values

dataset = dataset.astype(np.float32)

# normalize the dataset

scaler = skpp.MinMaxScaler(feature_range=(0, 1))

normalized_dataset = scaler.fit_transform(dataset)

# split into train and test sets

train,test=dsu.train_test_split(normalized_dataset,train_size=0.67)

n_x=1

X_train, Y_train, X_test, Y_test = dsu.mvts_to_xy(train,test,n_x=n_x,n_y=1)
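
# A rough sketch of the sliding-window idea behind turning a series into supervised (X, Y) pairs. `series_to_xy` is a hypothetical stand-in written for this illustration; it is not the implementation of `dsu.mvts_to_xy`, which may treat the final window differently.

```python
def series_to_xy(series, n_x=1, n_y=1):
    """Pair each run of n_x past values with the n_y values that follow it."""
    xs, ys = [], []
    for start in range(len(series) - n_x - n_y + 1):
        xs.append(series[start:start + n_x])
        ys.append(series[start + n_x:start + n_x + n_y])
    return xs, ys


X, Y = series_to_xy([0.1, 0.2, 0.3, 0.4], n_x=2, n_y=1)
print(X, Y)
```

# With `n_x=1`, as used in this notebook, each input is a single previous value and the target is the next one.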

# # Keras SimpleRNN for TimeSeries Data

# +

tf.reset_default_graph()

keras.backend.clear_session()

# reshape input to be [samples, time steps, features]

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1],1)

X_test = X_test.reshape(X_test.shape[0], X_test.shape[1], 1)

# -

# create and fit the SimpleRNN model

model = Sequential()

model.add(SimpleRNN(units=4, input_shape=(X_train.shape[1], X_train.shape[2])))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

model.summary()

model.fit(X_train, Y_train, epochs=20, batch_size=1)

# +

# make predictions

y_train_pred = model.predict(X_train)

y_test_pred = model.predict(X_test)

# invert predictions

y_train_pred = scaler.inverse_transform(y_train_pred)

y_test_pred = scaler.inverse_transform(y_test_pred)

# invert originals

y_train_orig = scaler.inverse_transform(Y_train)

y_test_orig = scaler.inverse_transform(Y_test)

# calculate root mean squared error

trainScore = k_sqrt(k_mse(y_train_orig[:,0],

y_train_pred[:,0])

).eval(session=K.get_session())

print('Train Score: {0:.2f} RMSE'.format(trainScore))

testScore = k_sqrt(k_mse(y_test_orig[:,0],

y_test_pred[:,0])

).eval(session=K.get_session())

print('Test Score: {0:.2f} RMSE'.format(testScore))

# -
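
# The score above is a root mean squared error evaluated through the Keras backend session. As a cross-check, the same quantity can be sketched in plain Python; the function name `rmse` and the sample passenger numbers are assumptions for illustration only.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error of two equally long sequences."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


score = rmse([112.0, 118.0, 132.0], [110.0, 120.0, 129.0])
print('Score: {0:.2f} RMSE'.format(score))
```

# Because the predictions are inverse-transformed first, the error is expressed in the original unit (passengers) rather than in the 0 to 1 scaled range.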

# shift train predictions for plotting

trainPredictPlot = np.empty_like(normalized_dataset)

trainPredictPlot[:, :] = np.nan

trainPredictPlot[n_x:len(y_train_pred)+n_x, :] = y_train_pred

# shift test predictions for plotting

testPredictPlot = np.empty_like(normalized_dataset)

testPredictPlot[:, :] = np.nan

testPredictPlot[len(y_train_pred)+(n_x*2):len(normalized_dataset),:]=y_test_pred

# plot baseline and predictions

plt.plot(scaler.inverse_transform(normalized_dataset),label='Original Data')

plt.plot(trainPredictPlot,label='y_train_pred')

plt.plot(testPredictPlot,label='y_test_pred')

plt.legend()

plt.xlabel('Timesteps')

plt.ylabel('Total Passengers')

plt.show()

# # Keras LSTM for TimeSeries Data

# +

tf.reset_default_graph()

keras.backend.clear_session()

# reshape input to be [samples, time steps, features]

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1],1)

X_test = X_test.reshape(X_test.shape[0], X_test.shape[1], 1)

# create and fit the LSTM model

model = Sequential()

model.add(LSTM(units=4, input_shape=(X_train.shape[1], X_train.shape[2])))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

model.summary()

model.fit(X_train, Y_train, epochs=20, batch_size=1)

# make predictions

y_train_pred = model.predict(X_train)

y_test_pred = model.predict(X_test)

# invert predictions

y_train_pred = scaler.inverse_transform(y_train_pred)

y_test_pred = scaler.inverse_transform(y_test_pred)

# invert originals

y_train_orig = scaler.inverse_transform(Y_train)

y_test_orig = scaler.inverse_transform(Y_test)

# calculate root mean squared error

trainScore = k_sqrt(k_mse(y_train_orig[:,0],

y_train_pred[:,0])

).eval(session=K.get_session())

print('Train Score: {0:.2f} RMSE'.format(trainScore))

testScore = k_sqrt(k_mse(y_test_orig[:,0],

y_test_pred[:,0])

).eval(session=K.get_session())

print('Test Score: {0:.2f} RMSE'.format(testScore))

# shift train predictions for plotting

trainPredictPlot = np.empty_like(normalized_dataset)

trainPredictPlot[:, :] = np.nan

trainPredictPlot[n_x:len(y_train_pred)+n_x, :] = y_train_pred

# shift test predictions for plotting

testPredictPlot = np.empty_like(normalized_dataset)

testPredictPlot[:, :] = np.nan

testPredictPlot[len(y_train_pred)+(n_x*2):len(normalized_dataset), :] = y_test_pred

# plot baseline and predictions

plt.plot(scaler.inverse_transform(normalized_dataset),label='Original Data')

plt.plot(trainPredictPlot,label='y_train_pred')

plt.plot(testPredictPlot,label='y_test_pred')

plt.legend()

plt.xlabel('Timesteps')

plt.ylabel('Total Passengers')

plt.show()

# -

# # Keras GRU for TimeSeries Data

# +

tf.reset_default_graph()

keras.backend.clear_session()

# reshape input to be [samples, time steps, features]

X_train = X_train.reshape(X_train.shape[0], X_train.shape[1],1)

X_test = X_test.reshape(X_test.shape[0], X_test.shape[1], 1)

# create and fit the GRU Model

model = Sequential()

model.add(GRU(units=4, input_shape=(X_train.shape[1], X_train.shape[2])))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

model.summary()

model.fit(X_train, Y_train, epochs=20, batch_size=1)

# make predictions

y_train_pred = model.predict(X_train)

y_test_pred = model.predict(X_test)

# invert predictions

y_train_pred = scaler.inverse_transform(y_train_pred)

y_test_pred = scaler.inverse_transform(y_test_pred)

# invert originals

y_train_orig = scaler.inverse_transform(Y_train)

y_test_orig = scaler.inverse_transform(Y_test)

# calculate root mean squared error

trainScore = k_sqrt(k_mse(y_train_orig[:,0],

y_train_pred[:,0])

).eval(session=K.get_session())

print('Train Score: {0:.2f} RMSE'.format(trainScore))

testScore = k_sqrt(k_mse(y_test_orig[:,0],

y_test_pred[:,0])

).eval(session=K.get_session())

print('Test Score: {0:.2f} RMSE'.format(testScore))

# shift train predictions for plotting

trainPredictPlot = np.empty_like(normalized_dataset)

trainPredictPlot[:, :] = np.nan

trainPredictPlot[n_x:len(y_train_pred)+n_x, :] = y_train_pred

# shift test predictions for plotting

testPredictPlot = np.empty_like(normalized_dataset)

testPredictPlot[:, :] = np.nan

testPredictPlot[len(y_train_pred)+(n_x*2):len(normalized_dataset), :] = y_test_pred

# plot baseline and predictions

plt.plot(scaler.inverse_transform(normalized_dataset),label='Original Data')

plt.plot(trainPredictPlot,label='y_train_pred')

plt.plot(testPredictPlot,label='y_test_pred')

plt.legend()

plt.xlabel('Timesteps')

plt.ylabel('Total Passengers')

plt.show()

| Chapter07/ch-07b_RNN_TimeSeries_Keras.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

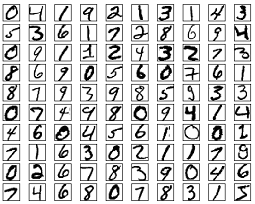

# + [markdown] colab_type="text" id="tmaHAc_KHG_5"

# # Feed-forward nets for image classification

# + [markdown] colab_type="text" id="zgNIWhzoHOIz"

# ## 1. The MNIST dataset

# + colab={} colab_type="code" id="dQiqJyO7E7Ek"

# #!pip install mnist

import mnist

train_imgs = mnist.train_images()

train_labels = mnist.train_labels()

test_imgs = mnist.test_images()

test_labels = mnist.test_labels()

# -

# ### Data standardization

#

# Rescale input values to have zero mean and standard deviation of one.

mean, std = train_imgs.mean(), train_imgs.std()

train_imgs = (train_imgs - mean) / std

test_imgs = (test_imgs - mean) / std

# ### See some inputs

# + colab={"base_uri": "https://localhost:8080/", "height": 99} colab_type="code" id="qeftJ_CpE7Eu" outputId="c7a9a956-0114-4975-90a0-8af5b2007c2b"

from typing import List

import numpy as np

# %matplotlib inline

import matplotlib.pyplot as plt

# + colab={"base_uri": "https://localhost:8080/", "height": 99} colab_type="code" id="qeftJ_CpE7Eu" outputId="c7a9a956-0114-4975-90a0-8af5b2007c2b"

idxs = np.random.randint(0, len(train_imgs), 15)

imgs = np.concatenate(tuple(train_imgs[idx,:,:] for idx in idxs), axis=1)

plt.imshow(imgs)

print("Labels:", train_labels[idxs])

# + [markdown] colab_type="text" id="ZgERUA07IuSr"

# ## 2. Building feed-forward networks

# -

# ### Layers

#

# Each layer will have three methods:

# - `forward` computes and returns ${\bf y}^{(l)} = f_l\left({\bf x}^{(l)}, {\bf w}^{(l)}\right)$

# - `backward` gets $\frac{\partial {\cal L}}{\partial {\bf y}^{(l)}}$, stores $\frac{\partial {\cal L}}{\partial {\bf w}^{(l)}}$ internally, and returns $\frac{\partial {\cal L}}{\partial {\bf x}^{(l)}}$

# - `update` modifies parameters ${\bf w}^{(l)}$ using the stored $\frac{\partial{\cal L}}{\partial{\bf w}^{(l)}}$

class Layer:

def forward(self, x: np.ndarray) -> np.ndarray:

raise NotImplementedError

def backward(self, x: np.ndarray, dy: np.ndarray) -> np.ndarray:

raise NotImplementedError

def update(self, *args, **kwargs):

pass # If a layer has no parameters, then this function does nothing
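
# To illustrate the interface without touching the exercises, here is a sketch of a parameter-free layer that is not part of this lab: a `Tanh` activation. The class and the finite-difference check are assumptions added for illustration; in the notebook the class would subclass `Layer`.

```python
import numpy as np


class Tanh:
    """Parameter-free layer following the forward/backward/update interface."""

    def forward(self, x: np.ndarray) -> np.ndarray:
        return np.tanh(x)

    def backward(self, x: np.ndarray, dy: np.ndarray) -> np.ndarray:
        # chain rule: dL/dx = dL/dy * (1 - tanh(x)^2)
        return dy * (1.0 - np.tanh(x) ** 2)

    def update(self, *args, **kwargs):
        pass  # nothing to update, the layer has no parameters


layer = Tanh()
x = np.array([[-1.0, 0.0, 2.0]])
dy = np.ones_like(x)

analytic = layer.backward(x, dy)
eps = 1e-6
# central finite difference of the forward pass, element by element
numeric = (layer.forward(x + eps) - layer.forward(x - eps)) / (2 * eps)
print(np.allclose(analytic, numeric, atol=1e-6))
```

# Comparing the analytic gradient with a finite difference in this way is a handy sanity check for every `backward` implemented later.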

# ### The feed-forward network

class FeedForwardNetwork:

def __init__(self, layers: List[Layer]):

self.layers = layers

def forward(self, x: np.ndarray, train: bool = True) -> np.ndarray:

self._inputs = []

for layer in self.layers:

if train:

self._inputs.append(x)

x = layer.forward(x)

return x

def backward(self, dy:np.ndarray) -> np.ndarray:

# TODO <0> : Compute the backward phase

raise NotImplementedError

del self._inputs

def update(self, *args, **kwargs):

for layer in self.layers:

layer.update(*args, **kwargs)

# ### The linear layer

# + colab={} colab_type="code" id="S47ZsyKdE7FF"

class Linear(Layer):

def __init__(self, insize: int, outsize: int) -> None:

bound = np.sqrt(6. / insize)

self.weight = np.random.uniform(-bound, bound, (insize, outsize))

self.bias = np.zeros((outsize,))

self.dweight = np.zeros_like(self.weight)

self.dbias = np.zeros_like(self.bias)

def forward(self, x: np.ndarray) -> np.ndarray:

# TODO <1> : compute the output of a linear layer

raise NotImplementedError

def backward(self, x: np.ndarray, dy: np.ndarray) -> np.ndarray:

# TODO <2> : compute dweight, dbias and return dx

raise NotImplementedError

def update(self, mode='SGD', lr=0.001, mu=.9):

if mode == 'SGD':

self.weight -= lr * self.dweight

self.bias -= lr * self.dbias

elif mode == 'Nesterov':

# TODO <9> : compute the nesterov update (for Lab 2)

raise NotImplementedError

elif mode == 'Adam':

# TODO <10> : compute the Adam update (for Lab 2)

raise NotImplementedError

# -

# ### The Rectified Linear Unit

# $$y = \max\left(x, 0\right)$$

# + colab={} colab_type="code" id="QOR1DJiwE7FJ"

class ReLU(Layer):

def __init__(self) -> None:

pass

def forward(self, x: np.ndarray) -> np.ndarray:

# TODO <3> : Compute the output of a rectified linear unit

raise NotImplementedError

def backward(self, x: np.ndarray, dy: np.ndarray) -> np.ndarray:

# TODO <4> : Compute the gradient w.r.t. x

raise NotImplementedError

# + [markdown] colab_type="text" id="4NrWBTmbI9gW"

# ## 3. The loss function

#

# The negative log likelihood combines a softmax activation, and a cross-entropy cost.
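
#
# For reference, one common formulation is sketched here. It assumes that ${\bf y}$ holds the unnormalized scores (logits) for $N$ samples and that ${\bf t}$ holds integer class labels; the skeleton does not fix these conventions, so adapt as needed. $[k = t_i]$ is 1 when $k$ equals $t_i$ and 0 otherwise.
#
# $$p_{ik} = \frac{e^{y_{ik}}}{\sum_j e^{y_{ij}}}, \qquad {\cal L} = -\frac{1}{N}\sum_{i=1}^{N} \log p_{i t_i}, \qquad \frac{\partial {\cal L}}{\partial y_{ik}} = \frac{1}{N}\left(p_{ik} - [k = t_i]\right)$$
#
# Subtracting $\max_j y_{ij}$ from each row before exponentiating leaves $p_{ik}$ unchanged and avoids overflow.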

# + colab={} colab_type="code" id="YDXiDEu8E7FW"

class NegativeLogLikelihood:

def __init__(self):

pass

def forward(self, y: np.ndarray, t: np.ndarray) -> float:

# TODO <5> : Compute the negative log likelihood

raise NotImplementedError

def backward(self, y: np.ndarray, t: np.ndarray) -> np.ndarray:

# TODO <6> : Compute dl/dy

raise NotImplementedError

# + [markdown] colab_type="text" id="Uz9qM5eHJLNw"

# ### Accuracy

# + colab={} colab_type="code" id="3nYfVCBSE7Fe"

def accuracy(y: np.ndarray, t: np.ndarray) -> float:

# TODO <7> : Compute accuracy

raise NotImplementedError

# + [markdown] colab_type="text" id="mIhtzd2gJQF2"

# ## 4. Training a neural network

# + colab={"base_uri": "https://localhost:8080/", "height": 237} colab_type="code" id="HTbmZv3YE7Fs" outputId="d6bb5b23-201b-4c2f-cf88-37f90bb5f6a6"

BATCH_SIZE = 128

HIDDEN_UNITS = 200

EPOCHS_NO = 50

optimize_args = {'mode': 'SGD', 'lr': .001}

net = FeedForwardNetwork([Linear(784, HIDDEN_UNITS),

ReLU(),

Linear(HIDDEN_UNITS, 10)])

nll = NegativeLogLikelihood()

for epoch in range(EPOCHS_NO):

for b_no, idx in enumerate(range(0, len(train_imgs), BATCH_SIZE)):

# 1. Prepare next batch

x = train_imgs[idx:idx + BATCH_SIZE,:,:].reshape(-1, 784)

t = train_labels[idx:idx + BATCH_SIZE]

# 2. Compute gradient

# TODO <8> : Compute gradient

raise NotImplementedError

# 3. Update network parameters

net.update(**optimize_args)

print(f'\rEpoch {epoch + 1:02d} '

f'| Batch {b_no:03d} '

f'| Train NLL: {loss:3.5f} '

f'| Train Accuracy: {accuracy(y, t):3.2f} ', end='')

y = net.forward(test_imgs.reshape(-1, 784), train=False)

test_nll = nll.forward(y, test_labels)

print(f' | Test NLL: {test_nll:3.5f} '

f' | Test Accuracy: {accuracy(y, test_labels):3.2f}')

# -

| labs/lab01/Feed-forward nets for image classification - Skel.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="sAtovljrSzv8"

# # Data preparation

# + colab={"base_uri": "https://localhost:8080/"} id="Burr1ejRJr_Q" executionInfo={"status": "ok", "timestamp": 1647835633702, "user_tz": -540, "elapsed": 1899, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AO<KEY>xGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}} outputId="2282665b-ca3b-4ae0-c989-3c7268f277a5"

import os

import random

import numpy as np

import nltk

nltk.download("punkt")

from nltk.tokenize import word_tokenize

import torch

from torchtext.legacy.data import Field

from torchtext.legacy.data import TabularDataset

from torchtext.legacy.data import BucketIterator

from torchtext.legacy.data import Iterator

# + id="pbBR3MuyF7VY" executionInfo={"status": "ok", "timestamp": 1647835633703, "user_tz": -540, "elapsed": 4, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

# Avoid nondeterministic operations

RANDOM_SEED = 2022

random.seed(RANDOM_SEED)

np.random.seed(RANDOM_SEED)

torch.manual_seed(RANDOM_SEED)

torch.backends.cudnn.deterministic = True # use only deterministic cuDNN algorithms

torch.backends.cudnn.benchmark = False # disable the cuDNN benchmark (autotuner)

torch.cuda.manual_seed_all(RANDOM_SEED) # seeds every GPU when several are used

os.environ['PYTHONHASHSEED'] = str(RANDOM_SEED)

# + id="xjqPrRVSkzmt" executionInfo={"status": "ok", "timestamp": 1647835633703, "user_tz": -540, "elapsed": 3, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

DATA_PATH = "/content/drive/Othercomputers/내 컴퓨터/Sat_english/data/processed"

# + [markdown] id="QsXwSJdPX5iN"

# Field definitions

# + id="lfxKZjPrTBOz" executionInfo={"status": "ok", "timestamp": 1647835633704, "user_tz": -540, "elapsed": 4, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

# sentence field
TEXT = Field(
    sequential=True,  # the input is a sequence (a sentence)
    use_vocab=True,
    tokenize=word_tokenize,  # tokenize with nltk's word_tokenize
    lower=True,  # lowercase everything
    batch_first=True,
)

# label field
LABEL = Field(
    sequential=False,
    use_vocab=False,
    batch_first=True,
)

# + [markdown] id="LValW3OmX-31"

# Load the data

# + id="ggRQO7KgT4Bk" executionInfo={"status": "ok", "timestamp": 1647835636079, "user_tz": -540, "elapsed": 2379, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

# CoLA data = pretraining data
cola_train_data, cola_valid_data, cola_test_data = TabularDataset.splits(
    path=DATA_PATH,
    train="cola_train.tsv",
    validation="cola_valid.tsv",
    test="cola_test.tsv",
    format="tsv",
    fields=[("text", TEXT), ("label", LABEL)],
    skip_header=1,  # skip the header row that holds the column names
)

# build the vocabulary from the CoLA training data (only words seen at least twice)
TEXT.build_vocab(cola_train_data, min_freq=2)

# Korean SAT (Suneung) data = fine-tuning data
sat_train_data, sat_valid_data, sat_test_data = TabularDataset.splits(
    path=DATA_PATH,
    train="sat_train.tsv",
    validation="sat_valid.tsv",
    test="sat_test.tsv",
    format="tsv",
    fields=[("text", TEXT), ("label", LABEL)],
    skip_header=1,
)

# + [markdown] id="4CdaR71bYBRP"

# DataLoader definitions

# + id="SEY6mJmCX1Fj" executionInfo={"status": "ok", "timestamp": 1647835636080, "user_tz": -540, "elapsed": 13, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AO<KEY>3=s64", "userId": "11886396836022920274"}}

# CoLA data
cola_train_iterator, cola_valid_iterator, cola_test_iterator = BucketIterator.splits(
    (cola_train_data, cola_valid_data, cola_test_data),
    batch_size=32,
    device=None,
    sort=False,
)

# Korean SAT data
sat_train_iterator, sat_valid_iterator, sat_test_iterator = BucketIterator.splits(
    (sat_train_data, sat_valid_data, sat_test_data),
    batch_size=8,
    device=None,
    sort=False,
)

# + [markdown] id="niRzsFTqXpF-"

# # Network architecture

# + id="723vR_q6X7o8" executionInfo={"status": "ok", "timestamp": 1647835636081, "user_tz": -540, "elapsed": 12, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

import torch

import torch.nn as nn

# + id="iVAvvIEbX0IX" executionInfo={"status": "ok", "timestamp": 1647835636082, "user_tz": -540, "elapsed": 12, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

class LSTM_Model(nn.Module):
    def __init__(self, num_embeddings, embedding_dim, hidden_size, num_layers, pad_idx):
        super().__init__()

        # Embedding layer
        self.embed_layer = nn.Embedding(
            num_embeddings=num_embeddings,
            embedding_dim=embedding_dim,
            padding_idx=pad_idx
        )

        # LSTM layer
        self.lstm_layer = nn.LSTM(
            input_size=embedding_dim,
            hidden_size=hidden_size,
            num_layers=num_layers,
            batch_first=True,
            bidirectional=True,  # bidirectional LSTM
            dropout=0.5
        )

        # Fully connected layers
        self.fc_layer1 = nn.Sequential(
            nn.Linear(hidden_size * 2, hidden_size),  # a bidirectional LSTM outputs 2 * hidden_size features
            nn.Dropout(0.5),
            nn.LeakyReLU()  # f(x) = max(0.01x, x), avoids the dying-ReLU problem
        )
        self.fc_layer2 = nn.Sequential(
            nn.Linear(hidden_size, 1)
        )

    def forward(self, x):
        embed_x = self.embed_layer(x)
        output, (_, _) = self.lstm_layer(embed_x)  # the final hidden and cell states are not used
        # keep only the last time step:
        # (batch_size, seq_length, 2*hidden_size) -> (batch_size, 2*hidden_size)
        # note: in a padded batch the last step may be a padding position
        output = output[:, -1, :]
        output = self.fc_layer1(output)
        output = self.fc_layer2(output)
        return output

# + [markdown] id="_GvTG58LuI9g"

# # Model training and validation

# + [markdown] id="meeBGEVqwrVy"

# Parameter definitions

# + id="Mqy27QLCutiw" executionInfo={"status": "ok", "timestamp": 1647835638989, "user_tz": -540, "elapsed": 2918, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

USE_CUDA = torch.cuda.is_available()

DEVICE = torch.device("cuda" if USE_CUDA else "cpu") # run on the GPU (CUDA) when one is available

PAD_IDX = TEXT.vocab.stoi[TEXT.pad_token] # vocabulary index of the padding token used to bring sequences to equal length

lstm = LSTM_Model(

num_embeddings=len(TEXT.vocab),

embedding_dim=100,

hidden_size=200,

num_layers=4,

pad_idx=PAD_IDX

).to(DEVICE)

n_epochs = 20

learning_rate = 0.001

optimizer = torch.optim.Adam(lstm.parameters(), lr=learning_rate)

criterion = nn.BCEWithLogitsLoss() # Sigmoid + BCELoss

# + [markdown] id="jsONag9Zw1Xm"

# Train the model on the training data

# + id="RmCedQivuJNd" executionInfo={"status": "ok", "timestamp": 1647835638991, "user_tz": -540, "elapsed": 19, "user": {"displayName": "CaFe CoKe", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

def train(model, iterator, optimizer):
    train_loss = 0
    model.train()  # switch to train mode (dropout active)

    for _, batch in enumerate(iterator):
        optimizer.zero_grad()  # reset the accumulated gradients

        text = batch.text  # text attribute of this batch
        label = batch.label.type(torch.FloatTensor)  # labels of this batch, cast to 32-bit float
        text = text.to(DEVICE)
        label = label.to(DEVICE)

        output = model(text).flatten()  # model output is [batch_size, 1], labels are [batch_size]
        loss = criterion(output, label)
        loss.backward()  # backpropagate: compute gradients and store them on the parameters
        optimizer.step()  # update the parameters

        train_loss += loss.item()  # accumulate the loss

    # divide the accumulated loss by the number of batches to get the mean loss per mini-batch
    return train_loss/len(iterator)

# + [markdown] id="KITUxPSfuLjc"

# Model validation

# + id="rnvLNdwKuLyV" executionInfo={"status": "ok", "timestamp": 1647835638992, "user_tz": -540, "elapsed": 18, "user": {"displayName": "CaFe CoKe", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

def evaluate(model, iterator):
    valid_loss = 0
    model.eval()  # switch to eval mode (dropout inactive)

    with torch.no_grad():  # disable gradient tracking (no parameter updates during evaluation)
        for _, batch in enumerate(iterator):
            text = batch.text
            label = batch.label.type(torch.FloatTensor)
            text = text.to(DEVICE)
            label = label.to(DEVICE)

            output = model(text).flatten()
            loss = criterion(output, label)
            valid_loss += loss.item()

    return valid_loss/len(iterator)

# + [markdown] id="5wcFrq1uuFF_"

# Pretraining on the CoLA data

# + id="_jdht0nnvx68" executionInfo={"status": "ok", "timestamp": 1647835638994, "user_tz": -540, "elapsed": 19, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

import time

# + id="pmwwn-YAwN8-" executionInfo={"status": "ok", "timestamp": 1647835638996, "user_tz": -540, "elapsed": 19, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

def epoch_time(start_time: float, end_time: float):  # elapsed time of one epoch as (minutes, seconds)
    elapsed_time = end_time - start_time
    elapsed_mins = int(elapsed_time / 60)
    elapsed_secs = int(elapsed_time - (elapsed_mins * 60))
    return elapsed_mins, elapsed_secs

# + id="LwuMQY_pBY8s" executionInfo={"status": "ok", "timestamp": 1647835638997, "user_tz": -540, "elapsed": 19, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

loss_tr = []

loss_val = []

# + colab={"base_uri": "https://localhost:8080/"} id="2HsbUZ8cuIKZ" executionInfo={"status": "ok", "timestamp": 1647835723945, "user_tz": -540, "elapsed": 84966, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}} outputId="4807aaf4-7fb8-458e-af74-831ba8dbda4c"

for epoch in range(n_epochs):
    start_time = time.time()

    train_loss = train(lstm, cola_train_iterator, optimizer)
    valid_loss = evaluate(lstm, cola_valid_iterator)

    end_time = time.time()
    epoch_mins, epoch_secs = epoch_time(start_time, end_time)

    print(f"Epoch: {epoch+1:02} | Time: {epoch_mins}m {epoch_secs}s")
    print(f"Train Loss: {train_loss:.5f}")
    print(f" Val. Loss: {valid_loss:.5f}")
    print('----------------------------------')

    # record the losses to check for overfitting
    loss_tr.append(train_loss)
    loss_val.append(valid_loss)

# + id="_8NlSZzrBsvS" executionInfo={"status": "ok", "timestamp": 1647835723946, "user_tz": -540, "elapsed": 33, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

import numpy as np

import matplotlib.pyplot as plt

# + colab={"base_uri": "https://localhost:8080/", "height": 606} id="29atcZgNBo0p" executionInfo={"status": "ok", "timestamp": 1647835723947, "user_tz": -540, "elapsed": 32, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}} outputId="dce74ace-dcbc-4127-9b71-20f1c9e67e5f"

np1 = np.array(loss_tr)

np2 = np.array(loss_val)

plt.figure(figsize=(10, 10))

plt.xlabel('epoch')

plt.ylabel('loss')

plt.plot(np1, label='Loss of train')

plt.plot(np2, label='Loss of Validation')

plt.legend() # show the legend with the line labels

plt.show()

# + id="WAZEYOczWPK2" executionInfo={"status": "ok", "timestamp": 1647835723947, "user_tz": -540, "elapsed": 27, "user": {"displayName": "CaFe CoKe", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

from copy import deepcopy

# pretrained model (snapshot taken before fine-tuning)

before_tuning_lstm = deepcopy(lstm)

# + [markdown] id="NcygpOpUWq3J"

# Fine-tuning on the Korean SAT data

# + colab={"base_uri": "https://localhost:8080/"} id="OJVoGAfWWtJ2" executionInfo={"status": "ok", "timestamp": 1647835729668, "user_tz": -540, "elapsed": 5747, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}} outputId="fc0af1b1-883a-4506-dd05-108e7f7ad7dc"

loss_tr_tune = []
loss_val_tune = []

for epoch in range(n_epochs):
    start_time = time.time()

    train_loss = train(lstm, sat_train_iterator, optimizer)
    valid_loss = evaluate(lstm, sat_valid_iterator)

    end_time = time.time()
    epoch_mins, epoch_secs = epoch_time(start_time, end_time)

    print(f"Epoch: {epoch+1:02} | Time: {epoch_mins}m {epoch_secs}s")
    print(f"\tTrain Loss: {train_loss:.5f}")
    print(f"\t Val. Loss: {valid_loss:.5f}")
    print('----------------------------------')

    # record the losses to check for overfitting
    loss_tr_tune.append(train_loss)
    loss_val_tune.append(valid_loss)

# + colab={"base_uri": "https://localhost:8080/", "height": 606} id="uxVTYJIHXejW" executionInfo={"status": "ok", "timestamp": 1647835729669, "user_tz": -540, "elapsed": 29, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}} outputId="70ee1895-2c8f-4813-dfd5-2f331bd81af8"

np1 = np.array(loss_tr_tune)

np2 = np.array(loss_val_tune)

plt.figure(figsize=(10, 10))

plt.xlabel('epoch')

plt.ylabel('loss')

plt.plot(np1, label='Loss of train')

plt.plot(np2, label='Loss of Validation')

plt.legend() # show the legend with the line labels

plt.show()

# + [markdown] id="yG11HjHAgioo"

# # Model performance test

# + id="9Kv_UUurgrWI" executionInfo={"status": "ok", "timestamp": 1647835729670, "user_tz": -540, "elapsed": 22, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14GgKQbV_6mqL_VHCcbuNL4GHNUxGOJQBtuNsOFo3=s64", "userId": "11886396836022920274"}}

import dill

from sklearn.metrics import roc_curve, auc

# +

def test(model, iterator, device):
    """Compute the AUROC of `model` over every batch in `iterator`."""
    model.eval()

    with torch.no_grad():
        y_real = []
        y_pred = []

        for batch in iterator:
            text = batch.text
            label = batch.label.type(torch.FloatTensor)
            text = text.to(device)

            # Move the predictions to the CPU; roc_curve expects ndarray-like input
            output = model(text).flatten().cpu()

            # Collect the labels and predictions of each batch
            y_real += [label]
            y_pred += [output]

        y_real = torch.cat(y_real)
        y_pred = torch.cat(y_pred)

    # Convert the CPU tensors to ndarrays before handing them to scikit-learn
    fpr, tpr, _ = roc_curve(y_real.numpy(), y_pred.numpy())
    auroc = auc(fpr, tpr)

    return auroc
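# The AUROC returned by `test` can be sanity-checked without scikit-learn: it equals the
# probability that a randomly chosen positive example receives a higher score than a
# randomly chosen negative one, with ties counted as half. The sketch below assumes
# binary 0/1 labels; `pairwise_auroc` is a hypothetical helper for small checks only,
# not a replacement for `roc_curve` and `auc`.

```python
def pairwise_auroc(labels, scores):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    A tie between a positive and a negative score counts as half a correct pair.
    The cost is quadratic in the number of examples, so use it on small inputs.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative label")

    correct = 0.0
    for pos_score in positives:
        for neg_score in negatives:
            if pos_score > neg_score:
                correct += 1.0
            elif pos_score == neg_score:
                correct += 0.5
    return correct / (len(positives) * len(negatives))


# Toy data for illustration only (not taken from the SAT dataset)
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
print(pairwise_auroc(toy_labels, toy_scores))
```

# On a small slice of the labels and scores, this value should agree with `auc(fpr, tpr)`.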

# +

_ = before_tuning_lstm.cpu()

lstm_sat_test_auroc = test(before_tuning_lstm, sat_test_iterator, "cpu")

_ = lstm.cpu()

lstm_tuned_test_auroc = test(lstm, sat_test_iterator, "cpu")

print(f"Before fine-tuning SAT Dataset Test AUROC: {lstm_sat_test_auroc:.5f}")

print(f"After fine-tuning SAT Dataset Test AUROC: {lstm_tuned_test_auroc:.5f}")

# +

with open("before_tuning_model.dill", "wb") as f:
    model = {
        "TEXT": TEXT,
        "LABEL": LABEL,
        "classifier": before_tuning_lstm
    }
    dill.dump(model, f)

_ = lstm.cpu()

with open("after_tuning_model.dill", "wb") as f:
    model = {
        "TEXT": TEXT,
        "LABEL": LABEL,
        "classifier": lstm
    }
    dill.dump(model, f)

| Sat_pre_training.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

import math

import matplotlib.pyplot as plt

import matplotlib

import seaborn as sns

from datetime import datetime, date, time

from scipy.interpolate import interp1d

import warnings

warnings.filterwarnings('ignore')

# # 1. LOADING THE PLOTS INFORMATION

# ## 1.1.1 AERODYNAMIC PROPERTIES

# +

#Defining the location of the data folder

DATA_FOLDER = 'Desktop/PLOTS_DATA_WIND_TURBINES/Aerodynamics_properties_blades/Data/'

#DU21-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag DU21-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift DU21-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)
df_drag_DU21_A17 = pd.read_csv(DATASET1, sep=';', decimal=",")
df_lift_DU21_A17 = pd.read_csv(DATASET2, sep=';', decimal=",")

#Define the column names of the loaded dataframes

df_drag_DU21_A17.columns=["Angle_of_attack", "Cd"]

df_lift_DU21_A17.columns=["Angle_of_attack", "Cl"]
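# The three steps above (build the paths, read the CSV files, rename the columns) repeat
# unchanged for every airfoil, so they could be driven by a loop. A minimal sketch, assuming
# the file naming pattern used in this cell; `AIRFOILS`, `polar_paths` and `load_polars` are
# hypothetical names, not part of the original notebook.

```python
from pathlib import Path

# Airfoil names as they appear in the data file names
AIRFOILS = ["DU21-A17", "DU25-A17", "DU30-A17", "DU35-A17", "DU40-A17", "NACA64-A17"]


def polar_paths(data_folder, airfoil):
    """Return the drag (Cd) and lift (Cl) CSV paths for one airfoil."""
    folder = Path(data_folder)
    return {
        "Cd": folder / f"Drag {airfoil}_pi.csv",
        "Cl": folder / f"Lift {airfoil}_pi.csv",
    }


def load_polars(data_folder, airfoils=AIRFOILS):
    """Load every polar into a dict keyed by (airfoil, coefficient name)."""
    # Imported here so that polar_paths can be used without pandas installed
    import pandas as pd

    polars = {}
    for airfoil in airfoils:
        for coeff, path in polar_paths(data_folder, airfoil).items():
            # Same options as the cell above: ';' separator, ',' decimal mark
            df = pd.read_csv(path, sep=";", decimal=",")
            df.columns = ["Angle_of_attack", coeff]
            polars[(airfoil, coeff)] = df
    return polars


print(polar_paths("Data", "DU21-A17")["Cd"].name)
```

# With this layout, `load_polars(DATA_FOLDER)[("DU21-A17", "Cd")]` would play the role of
# `df_drag_DU21_A17`.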

#DU25-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag DU25-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift DU25-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)
df_drag_DU25_A17 = pd.read_csv(DATASET1, sep=';', decimal=",")
df_lift_DU25_A17 = pd.read_csv(DATASET2, sep=';', decimal=",")

#Define the column names of the loaded dataframes

df_drag_DU25_A17.columns=["Angle_of_attack", "Cd"]

df_lift_DU25_A17.columns=["Angle_of_attack", "Cl"]

#DU30-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag DU30-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift DU30-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)
df_drag_DU30_A17 = pd.read_csv(DATASET1, sep=';', decimal=",")
df_lift_DU30_A17 = pd.read_csv(DATASET2, sep=';', decimal=",")

#Define the column names of the loaded dataframes

df_drag_DU30_A17.columns=["Angle_of_attack", "Cd"]

df_lift_DU30_A17.columns=["Angle_of_attack", "Cl"]

#DU35-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag DU35-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift DU35-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)
df_drag_DU35_A17 = pd.read_csv(DATASET1, sep=';', decimal=",")
df_lift_DU35_A17 = pd.read_csv(DATASET2, sep=';', decimal=",")

#Define the column names of the loaded dataframes

df_drag_DU35_A17.columns=["Angle_of_attack", "Cd"]

df_lift_DU35_A17.columns=["Angle_of_attack", "Cl"]

#DU40-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag DU40-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift DU40-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)
df_drag_DU40_A17 = pd.read_csv(DATASET1, sep=';', decimal=",")
df_lift_DU40_A17 = pd.read_csv(DATASET2, sep=';', decimal=",")

#Define the column names of the loaded dataframes

df_drag_DU40_A17.columns=["Angle_of_attack", "Cd"]

df_lift_DU40_A17.columns=["Angle_of_attack", "Cl"]

#NACA64-A17

#Definition of the data path

DATASET1 = DATA_FOLDER + "Drag NACA64-A17_pi.csv"

DATASET2 = DATA_FOLDER + "Lift NACA64-A17_pi.csv"

#Load the CSV files (semicolon-separated, with a comma as the decimal mark)

df_drag_NACA64_A17 = pd.read_csv(DATASET1,sep =';',decimal=",")