code | repo_path |

|---|---|

# ---

# jupyter:

# jupytext:

# formats: ipynb,py:percent

# text_representation:

# extension: .py

# format_name: percent

# format_version: '1.3'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %% [markdown]

# # The MPC out of Credit vs the MPC Out of Income

#

# This notebook compares the Marginal Propensity to Consume (MPC) out of an increase in a credit limit with the MPC out of a transitory shock to income.

#

# The notebook is heavily commented to help newcomers, and does some things (like importing modules in the body of the code rather than at the top) that are typically discouraged by Python programmers. This is all to make the code easier to read and understand.

#

# The notebook illustrates one simple way to use HARK: import and solve a model for different parameter values, to see how parameters affect the solution.

#

# The first step is to create the ConsumerType we want to solve the model for.

# %%

from __future__ import division, print_function

# %matplotlib inline

# %% code_folding=[]

## Import the HARK ConsumerType we want

## Here, we bring in an agent making a consumption/savings decision every period, subject

## to transitory and permanent income shocks.

from HARK.ConsumptionSaving.ConsIndShockModel import IndShockConsumerType

# %% code_folding=[]

## Import the default parameter values

import HARK.ConsumptionSaving.ConsumerParameters as Params

# %% code_folding=[]

## Now, create an instance of the consumer type using the default parameter values

## We create the instance of the consumer type by calling IndShockConsumerType()

## We use the default parameter values by passing **Params.init_idiosyncratic_shocks as an argument

BaselineExample = IndShockConsumerType(**Params.init_idiosyncratic_shocks)

# %% code_folding=[]

# Note: we've created an instance of a very standard consumer type, and many assumptions go

# into making this kind of consumer. As with any structural model, these assumptions matter.

# For example, this consumer pays the same interest rate on

# debt as she earns on savings. If instead we wanted to solve the problem of a consumer

# who pays a higher interest rate on debt than she earns on savings, this would be really easy,

# since this is a model that is also solved in HARK. All we would have to do is import that model

# and instantiate an instance of that ConsumerType instead. As a homework assignment, we leave it

# to you to uncomment the two lines of code below, and see how the results change!

# from HARK.ConsumptionSaving.ConsIndShockModel import KinkedRconsumerType

# BaselineExample = KinkedRconsumerType(**Params.init_kinked_R)

# %% [markdown]

r"""

The next step is to change the values of parameters as we want.

To see all the parameters used in the model, along with their default values, see $\texttt{ConsumerParameters.py}$

Parameter values are stored as attributes of the $\texttt{ConsumerType}$ the values are used for. For example, the risk-free interest rate $\texttt{Rfree}$ is stored as $\texttt{BaselineExample.Rfree}$. Because we created $\texttt{BaselineExample}$ using the default parameter values, at the moment $\texttt{BaselineExample.Rfree}$ is set to the default value of $\texttt{Rfree}$ (which, at the time this demo was written, was 1.03). Therefore, to change the risk-free interest rate used in $\texttt{BaselineExample}$ to (say) 1.02, all we need to do is:

"""

# %% code_folding=[0]

# Change the Default Riskfree Interest Rate

BaselineExample.Rfree = 1.02

# %% code_folding=[0]

## Change some parameter values

BaselineExample.Rfree = 1.02 #change the risk-free interest rate

BaselineExample.CRRA = 2. # change the coefficient of relative risk aversion

BaselineExample.BoroCnstArt = -.3 # change the artificial borrowing constraint

BaselineExample.DiscFac = .5 #chosen so that target debt-to-permanent-income_ratio is about .1

# i.e. BaselineExample.solution[0].cFunc(.9) ROUGHLY = 1.

## There is one more parameter value we need to change. This one is more complicated than the rest.

## We could solve the problem for a consumer with an infinite horizon of periods that (ex-ante)

## are all identical. We could also solve the problem for a consumer with a finite lifecycle,

## or for a consumer who faces an infinite horizon of periods that cycle (e.g., a ski instructor

## facing an infinite series of winters, with lots of income, and summers, with very little income.)

## The way to differentiate is through the "cycles" attribute, which indicates how often the

## sequence of periods needs to be solved. The default value is 1, for a consumer with a finite

## lifecycle that is only experienced 1 time. A consumer who lived that life twice in a row, and

## then died, would have cycles = 2. But neither is what we want. Here, we need to set cycles = 0,

## to tell HARK that we are solving the model for an infinite horizon consumer.

## Note that another complication with the cycles attribute is that it does not come from

## Params.init_idiosyncratic_shocks. Instead it is a keyword argument to the __init__() method of

## IndShockConsumerType.

BaselineExample.cycles = 0

# %%

# Now, create another consumer to compare the BaselineExample to.

# %% code_folding=[0]

# The easiest way to begin creating the comparison example is to just copy the baseline example.

# We can change the parameters we want to change later.

from copy import deepcopy

XtraCreditExample = deepcopy(BaselineExample)

# Now, change whatever parameters we want.

# Here, we want to see what happens if we give the consumer access to more credit.

# Remember, parameters are stored as attributes of the consumer they are used for.

# So, to give the consumer more credit, we just need to relax their borrowing constraint a tiny bit.

# Declare how much we want to increase credit by

credit_change = .0001

# Now increase the consumer's credit limit.

# We do this by decreasing the artificial borrowing constraint.

XtraCreditExample.BoroCnstArt = BaselineExample.BoroCnstArt - credit_change

# %% [markdown]

"""

Now we are ready to solve the consumers' problems.

In HARK, this is done by calling the solve() method of the ConsumerType.

"""

# %% code_folding=[0]

### First solve the baseline example.

BaselineExample.solve()

### Now solve the comparison example of the consumer with a bit more credit

XtraCreditExample.solve()

# %% [markdown]

r"""

Now that we have the solutions to the 2 different problems, we can compare them.

We are going to compare the consumption functions for the two different consumers.

Policy functions (including consumption functions) in HARK are stored as attributes

of the *solution* of the ConsumerType. The solution, in turn, is a list, indexed by the time

period the solution is for. Since in this demo we are working with infinite-horizon models

where every period is the same, there is only one time period and hence only one solution.

e.g. BaselineExample.solution[0] is the solution for the BaselineExample. If BaselineExample

had 10 time periods, we could access the 5th with BaselineExample.solution[4] (remember, Python

counts from 0!) Therefore, the consumption function cFunc from the solution to the

BaselineExample is $\texttt{BaselineExample.solution[0].cFunc}$

"""

# %% code_folding=[0]

## First, declare useful functions to plot later

def FirstDiffMPC_Income(x):
    # Approximate the MPC out of income by giving the agent a tiny bit more income,
    # and plotting the proportion of the change that is reflected in increased consumption
    # First, declare how much we want to increase income by
    # Change income by the same amount we change credit, so that the two MPC
    # approximations are comparable
    income_change = credit_change
    # Now, calculate the approximate MPC out of income
    return (BaselineExample.solution[0].cFunc(x + income_change) -
            BaselineExample.solution[0].cFunc(x)) / income_change

def FirstDiffMPC_Credit(x):
    # Approximate the MPC out of credit by plotting how much more of the increased credit the agent
    # with higher credit spends
    return (XtraCreditExample.solution[0].cFunc(x) -
            BaselineExample.solution[0].cFunc(x)) / credit_change
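Both helpers above are forward differences: one perturbs the argument of a single consumption function, the other compares two consumption functions at the same point. The sketch below shows the same idea without HARK. The rule `toy_cfunc` and its coefficients are hypothetical and chosen only for illustration; they are not the solution of the model.

```python
def toy_cfunc(m, boro_cnst=-0.3):
    """Hypothetical consumption rule (an assumption, not HARK output):
    spend all available resources (m - boro_cnst) when constrained,
    otherwise follow a linear rule with slope 0.25."""
    return min(m - boro_cnst, 0.5 + 0.25 * m)


def mpc_out_of_income(m, step=1e-4):
    # Forward difference in market resources, borrowing limit held fixed
    return (toy_cfunc(m + step) - toy_cfunc(m)) / step


def mpc_out_of_credit(m, step=1e-4, boro_cnst=-0.3):
    # Forward difference in the borrowing limit, market resources held fixed
    return (toy_cfunc(m, boro_cnst - step) - toy_cfunc(m, boro_cnst)) / step


for m in (0.0, 2.0):
    print(m, mpc_out_of_income(m), mpc_out_of_credit(m))
```

In this toy rule a constrained consumer (m = 0) spends all of either extra income or extra credit, while an unconstrained one (m = 2) responds only to income. In a solved model the unconstrained region may also respond to the credit limit, which is what the plots in this notebook let you inspect.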

# %% code_folding=[0]

## Now, plot the functions we want

# Import a useful plotting function from HARK.utilities

from HARK.utilities import plotFuncs

import pylab as plt # We need this module to change the y-axis on the graphs

# Declare the upper limit for the graph

x_max = 10.

# Note that plotFuncs takes four arguments: (1) a list of the functions to plot,

# (2) the lower bound for the plots, (3) the upper bound for the plots, and (4) keywords to pass

# to the legend for the plot.

# Plot the consumption functions to compare them

# The only difference is that the XtraCredit function has a credit limit that is looser

# by a tiny amount

print('The XtraCredit consumption function allows the consumer to spend a tiny bit more')

print('The difference is so small that the baseline is obscured by the XtraCredit solution')

plotFuncs([BaselineExample.solution[0].cFunc,XtraCreditExample.solution[0].cFunc],

BaselineExample.solution[0].mNrmMin,x_max,

legend_kwds = {'loc': 'upper left', 'labels': ["Baseline","XtraCredit"]})

# Plot the MPCs to compare them

print('MPC out of Credit v MPC out of Income')

plt.ylim([0.,1.2])

plotFuncs([FirstDiffMPC_Credit,FirstDiffMPC_Income],

BaselineExample.solution[0].mNrmMin,x_max,

legend_kwds = {'labels': ["MPC out of Credit","MPC out of Income"]})

| notebooks/MPC-Out-of-Credit-vs-MPC-Out-of-Income.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import platform

print (platform.python_version())

"""

Straightforward table converter to convert Excel tables into ontology files.

See the inline documentation in the notebook.

7-19-18:

1. Start with Chris' i2b2 Hierarchy View

2. Last column can optionally be comments

3. File is "*i2b2 Hierarchy View.xlsx"

4. By default process all sheets in a file

5. There will be a "ready for i2b2" folder

"""

# +

# Example code to set a keyring password for use below

import keyring # needed here because this cell runs before the main import cell

keyring.set_password(password="**<PASSWORD>**",service_name="db.concern_columbia",username="i2b2u")

# +

# Import and set paths

import glob

import pandas as pd

import numpy as np

import keyring

basepath="/Users/jeffklann/Dropbox (Partners HealthCare)/CONCERN All Team Work/Data Elements/Data Structures/Ready/For i2b2/"

outpath="/Users/jeffklann/Dropbox (Partners HealthCare)/CONCERN All Team Work/Data Elements/Data Structures/Ready/For i2b2/i2b2_output/"

password_columbia = keyring.get_password(service_name='db.concern_columbia',username='i2b2u') # You need to previously have set it with set_password

password = keyring.get_password(service_name='db.concern_phs',username='concern_user') # You need to previously have set it with set_password

# +

# Connect to SQL for persistence

# %load_ext sql

connect = "mssql+pymssql://concern_user:%s@phssql2193.partners.org/CONCERN_DEV?charset=utf8" % password

#connect = "mssql+pymssql://i2b2u:%s@10.171.30.160/CONCERN_DEV?charset=utf8" % password_columbia

# %sql $connect

# %sql USE CONCERN_DEV

import sqlalchemy

engine = sqlalchemy.create_engine(connect)

# -

# (Re)create the target ontology table

sql = """

CREATE TABLE [dbo].[autoprocessed_i2b2ontology] (

[index] int NOT NULL,

[C_HLEVEL] int NOT NULL,

[C_FULLNAME] varchar(4000) NOT NULL,

[C_NAME] varchar(2000) NOT NULL,

[C_SYNONYM_CD] char(1) NOT NULL,

[C_VISUALATTRIBUTES] char(3) NOT NULL,

[C_TOTALNUM] int NULL,

[C_BASECODE] varchar(250) NULL,

[C_METADATAXML] varchar(max) NULL,

[C_FACTTABLECOLUMN] varchar(50) NOT NULL,

[C_TABLENAME] varchar(50) NOT NULL,

[C_COLUMNNAME] varchar(50) NOT NULL,

[C_COLUMNDATATYPE] varchar(50) NOT NULL,

[C_OPERATOR] varchar(10) NOT NULL,

[C_DIMCODE] varchar(700) NOT NULL,

[C_COMMENT] varchar(max) NULL,

[C_TOOLTIP] varchar(900) NULL,

[M_APPLIED_PATH] varchar(700) NOT NULL,

[UPDATE_DATE] datetime NULL,

[DOWNLOAD_DATE] datetime NULL,

[IMPORT_DATE] datetime NULL,

[SOURCESYSTEM_CD] varchar(50) NULL,

[VALUETYPE_CD] varchar(50) NULL,

[M_EXCLUSION_CD] varchar(25) NULL,

[C_PATH] varchar(300) NULL,

[C_SYMBOL] varchar(100) NULL

)

ON [PRIMARY]

TEXTIMAGE_ON [PRIMARY]

WITH (

DATA_COMPRESSION = NONE

)

"""

engine.execute("drop table autoprocessed_i2b2ontology")

engine.execute(sql)

# +

""" Input a df with columns (minimally): Name, Code, [Ancestor_Code]*, [Modifier]

If Modifier column is included, the legal values are "" or "Y"

Will add additional columns: tooltip, h_level, fullname

rootName is prepended to the path for non-modifiers

rootModName is prepended to the path for modifiers

Derived from ontology_gen_flowsheet.py

"""

def OntProcess(rootName, df,rootModName='MOD'):
    # Little inner function that renames the fullname (replaces rootName with rootName+'_MOD') if modifier is 'Y'
    def fullmod(fullname,modifier):
        ret=fullname.replace('\\'+rootName,'\\'+rootModName) if modifier=='Y' else fullname
        return ret

    ancestors=[1,-6] if 'Modifier' in df.columns else [1,-5]
    df['fullname']=''
    df['tooltip']=''
    df['path']=''
    df['h_level']=np.nan
    df['has_children']=0
    df=doNonrecursive(df,ancestors)
    df['fullname']=df['fullname'].map(lambda x: x.lstrip(':\\')).map(lambda x: x.rstrip(':\\'))
    df['fullname']='\\'+rootName+'\\'+df['fullname'].map(str)+"\\"
    df['h_level']=df['fullname'].str.count('\\\\')-2
    if ('Modifier' in df.columns):
        # If modifier subtract 1 from hlevel and change the root to root+'_MOD'
        df['h_level']=df['h_level']-df['Modifier'].fillna('').str.len()
        df['fullname']=df[['fullname','Modifier']].apply(lambda x: fullmod(*x), axis=1) #sjm on stackoverflow
    # Parent check! Will report has_children is true if the Code in each row is ever used as a parent code
    if (len(df.columns)>7):
        mydf = df
        mymerge = mydf.merge(mydf,left_on=mydf.columns[1],right_on=mydf.columns[2],how='inner',suffixes=['','_r']).groupby('Code').size().reset_index().rename(lambda x: 'size' if x==0 else x,axis='columns')
        mymerge=mydf.merge(mymerge,
                           left_on='Code',right_on='Code',how='left')
        df['has_children'] = (mymerge['size'] > 0)
    else:
        df['has_children'] = False
    #old bad code
    #df['has_children'] = df['h_level']-len(df.columns[1:-5])-2 - This old version just checked to see if this element was at the max depth, which tells us nothing!
    #df['has_children'] = df['has_children'].replace({-1:'Y',0:'N'})
    #df['Code'].join(df.ix(3))
    df=df.append({'fullname':'\\'+rootName+'\\','Name':rootName.replace('\\',' '),'Code':'toplevel|'+rootName.replace('\\',' '),'h_level':1,'has_children':True},ignore_index=True) # Add root node
    return df
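The parent check in `OntProcess` marks a row as a folder when its `Code` appears in the first ancestor column of some other row. The same rule can be sketched in plain Python, without pandas. The rows and the column name `Parent` are made up for illustration, and the `FAE`/`LAE` labels are the folder/leaf visual attributes that `OntBuild` assigns.

```python
# Hypothetical hierarchy: each row has a code and the code of its direct parent
rows = [
    {"Code": "A", "Parent": ""},
    {"Code": "B", "Parent": "A"},
    {"Code": "C", "Parent": "A"},
    {"Code": "D", "Parent": "B"},
]

# A code has children exactly when some row names it as a parent
parent_codes = {row["Parent"] for row in rows if row["Parent"]}
has_children = {row["Code"]: row["Code"] in parent_codes for row in rows}

# Folders get 'FAE', leaves get 'LAE'
visual_attributes = {code: ("FAE" if flag else "LAE") for code, flag in has_children.items()}

print(has_children)
print(visual_attributes)
```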

def doNonrecursive(df,ancestors):
    cols=df.columns[ancestors[0]:ancestors[1]][::-1] # Go from column 5 before the end (we added a bunch of columns) backward to first column
    print(cols)
    for col in cols:
        # doesn't work - mycol = df[col].to_string(na_rep='')
        mycol = df[col].apply(lambda x: x if isinstance(x, str) else "{:.0f}".format(x)).astype('str').replace('nan','')
        df.fullname = df.fullname.str.cat(mycol,sep='\\',na_rep='')
    return df
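Taken together, `doNonrecursive` and `OntProcess` turn one row into a backslash-delimited path and a depth. A minimal plain-Python sketch of that computation for a single row follows. The helper name `build_fullname` is hypothetical, and the ancestor list is assumed to be ordered nearest parent first, which is how the reversed column loop above reads; check this against your own spreadsheet layout.

```python
def build_fullname(root_name, code, ancestor_codes):
    """Return (fullname, h_level) for one row.

    ancestor_codes is assumed to be ordered nearest parent first;
    empty entries (missing ancestors) are skipped.
    """
    parts = [a for a in reversed(ancestor_codes) if a] + [code]
    fullname = "\\" + root_name + "\\" + "\\".join(parts) + "\\"
    # Same depth rule as the notebook: number of backslashes minus 2
    h_level = fullname.count("\\") - 2
    return fullname, h_level


fullname, h_level = build_fullname("CONCERN\\VISIT", "C3", ["P2", "P1", ""])
print(fullname, h_level)
```

With this rule the root node `\CONCERN\VISIT\` has depth 1, matching the `h_level` given to the root row appended at the end of `OntProcess`.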

""" Input a df with (minimally): Name, Code, [Ancestor_Code]*, [Modifier], fullname, path, h_level

Optionally input an applied path for modifiers (only one is supported per ontology at present)

Outputs an i2b2 ontology compatible df.

"""

def OntBuild(df,appliedName=''):
    odf = pd.DataFrame()
    odf['c_hlevel']=df['h_level']
    odf['c_fullname']=df['fullname']
    odf['c_visualattributes']=df['has_children'].apply(lambda x: 'FAE' if x==True else 'LAE')
    odf['m_applied_path']='@'
    if 'Modifier' in df.columns:
        odf['c_visualattributes']=odf['c_visualattributes']+df.Modifier.fillna('')
        odf['c_visualattributes'].replace(to_replace={'FAEY':'OAE','LAEY':'RAE'},inplace=True)
        odf['m_applied_path']=df.Modifier.apply(lambda x: '\\'+appliedName+'\\%' if x=='Y' else '@')
    odf['c_name']=df['Name']
    odf['c_path']=df['path']
    odf['c_basecode']=df['Code'] # Assume here leafs are unique, not dependent on parent code (unlike flowsheets)
    odf['c_symbol']=odf['c_basecode']
    odf['c_synonym_cd']='N'
    odf['c_facttablecolumn']='concept_cd'
    odf['c_tablename']='concept_dimension'
    odf['c_columnname']='concept_path'
    odf['c_columndatatype']='T' #this is not the tval/nval switch - 2/20/18 - df['vtype'].apply(lambda x: 'T' if x==2 else 'N')
    odf['c_totalnum']=''
    odf['c_operator']='LIKE'
    odf['c_dimcode']=df['fullname']
    odf['c_comment']=None
    odf['c_tooltip']=df['fullname'] # Tooltip right now is just the fullname again
    #odf['c_metadataxml']=df[['vtype','Label']].apply(lambda x: mdx.genXML(mdx.mapper(x[0]),x[1]),axis=1)
    return odf

# -

# Loop through each sheet in an Excel file to generate an ontology .csv

# f is the filename (with path and extension)

# returns a list of dataframes in ontology format

def ontExcelConverter(f):
    dfs = []
    dfd = pd.read_excel(f,sheet_name=None)
    for i,s in enumerate(dfd.keys()): # Now take all sheets, not just 'Sheet1'
        df=dfd[s].dropna(axis='columns',how='all')
        if len(df.columns)>1:
            # Prettyprint the root node name from file and sheet name
            shortf = f[f.rfind('/')+1:] # Remove path, get file name only
            shortf = shortf[:shortf.find("i2b2")].strip(' ') # Stop at 'i2b2', bc files should be named *i2b2 hierarchy view.xlsx
            shortf=shortf+('' if s=='Sheet1' else '_'+str(i))
            print('---'+shortf)
            # Clean up df
            df = df.rename(columns={'Code (concept_CD/inpatient note type CD)':'Code'}) # Hack bc one file has wrong col name
            df = df.drop(['Definition','definition','Comment','Comments'],axis=1,errors='ignore') # Drop occasional definition and comment columns
            print(df.columns)
            # Process df and add to superframe (dfs)
            df = OntProcess('CONCERN\\'+shortf,df,'CONCERN_MOD\\'+shortf)
            ndf = OntBuild(df,'CONCERN\\'+shortf).fillna('None')
            dfs.append(ndf)
    return dfs

# Main loop to process all files in a directory, export to csv, and upload the concatenated version to a database

dfs = []

for f in glob.iglob(basepath+"*.xlsx"): # the old place, multi-directory - now all in one dir"**/*i2b2 Hierarchy View*.xlsx"):
    if ('~$' in f): continue # Work around Mac temp files
    df = ontExcelConverter(f)
    dfs = dfs + df
    #ndf.to_csv(outpath+shortf+s+"_autoprocessed.csv")

outdf = pd.concat(dfs)

outdf = outdf.append({'c_hlevel':0,'c_fullname':'\\CONCERN\\','c_name':'CONCERN Root','c_basecode':'.dummy','c_visualattributes':'CAE','c_synonym_cd':'N','c_facttablecolumn':'concept_cd','c_tablename':'concept_dimension','c_columnname':'concept_path','c_columndatatype':'T','c_operator':'LIKE','c_dimcode':'\\CONCERN\\','m_applied_path':'@'},ignore_index=True)

outdf.to_csv(outpath+"autoprocessed_i2b2ontology.csv")

engine.execute("delete from autoprocessed_i2b2ontology") # if we use SQLMagic in the same cell as SQLAlchemy, it seems to hang

outdf.to_sql('autoprocessed_i2b2ontology',con=engine,if_exists='append')

# Perform check to make sure no codes are used twice. This is not necessarily an error so only output a warning.

dups = outdf.groupby('c_basecode').size()

dups = dups[dups>1]

if len(dups)>0:
    print("Warning: codes used multiple times, check to verify this is intentional:")
    print(dups)
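The duplicate check above groups by `c_basecode` and keeps the groups with more than one row. For readers without a database or spreadsheet at hand, the same check can be sketched with the standard library. The list `basecodes` is made-up sample data.

```python
from collections import Counter

# Hypothetical list of base codes, as they might appear in the c_basecode column
basecodes = ["X1", "X2", "X1", "X3"]

# Count each code and keep only those used more than once
counts = Counter(basecodes)
dups = {code: n for code, n in counts.items() if n > 1}

if dups:
    print("Warning: codes used multiple times, check to verify this is intentional:")
    print(dups)
```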

# + [markdown] slideshow={"slide_type": "-"}

# # End of main code...

# ------------------------

# -

# Special hacked code for the weird ADT table file format

# DEPRECATED

dfs = []

dfd=pd.read_excel(basepath+"ADT/ADTEventHierarchy AND LocationHierarchy for Each site i2b2 June 21 2018_update.xlsx",

sheet_name=None)

for k,v in dfd.items():
    shortf=k[0:k.find(' ',k.find(' ')+1)].replace(' ','_')
    print(shortf)
    df=v.dropna(axis='columns',how='all')
    df = df.drop(['C_TOOLTIP','c_tooltip'],axis=1,errors='ignore')
    print(df.columns)
    df = OntProcess('CONCERN\\'+shortf,df)
    ndf = OntBuild(df)
    dfs.append(ndf)
    ndf.to_csv(outpath+shortf+"_autoprocessed.csv")

#tname = 'out_'+shortf

#globals()[tname]=ndf

# #%sql DROP TABLE $tname

# #%sql PERSIST $tname

# One-off a prespecified file

k = "VISIT i2b2 HIERARCHY.xlsx"

dfs = ontExcelConverter("/Users/jeffklann/Downloads/"+k)

outdf = pd.concat(dfs)

outdf.to_csv("/Users/jeffklann/Downloads/VISIT_HIERARCHY"+"_autoprocessed.csv")

# + slideshow={"slide_type": "-"}

# Example of persisting table with SQL Magic

testdict={"animal":["dog",'cat'],'size':[30,15]}

zoop = pd.DataFrame(testdict)

tname = 'zoop'

# %sql DROP TABLE $tname

# %sql PERSIST $tname

# -

# %sql SELECT * from autoprocessed_i2b2ontology

#engine.execute("SELECT * FROM autoprocessed_i2b2ontology").fetchall()

# +

#Workspace, working on folder check code

mydf = dfs[0]

mymerge = mydf.merge(mydf,left_on=mydf.columns[3],right_on=mydf.columns[4],how='inner',suffixes=['','_r']).groupby('c_fullname').size().reset_index().rename(lambda x: 'size' if x==0 else x,axis='columns')

mymerge=mydf.merge(mymerge,

left_on='c_fullname',right_on='c_fullname',how='left')

print(mymerge['size'] > 0)

# -

| ontology_tools/concern_build_ont.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.6.0

# language: julia

# name: julia-1.6

# ---

# # Summary of lecture 1

# Yesterday I introduced the language Julia, a language that is compiled Just in Time: the first time you run a function, it determines the types of the arguments and compiles it, so the next time is faster.

# This has a list of advantages:

# 1. it is easy to type generic code

# 2. the user does not need to specify the types while writing the code, making it easier and faster to reuse code

# 3. the second execution of a function is really fast

#

# This puts together the advantages of interpreted languages, such as Python and Matlab, with the speed of compiled languages such as C++ and Fortran.

#

# Summarizing: Julia is faster than Matlab and Python and simpler to program than C++ and Fortran.

#

#

#

# We used Julia to implement some non-rigorous numerical experiments in Ergodic Theory. While these experiments may be used to gather evidence of some underlying mathematical phenomenon, they are not mathematical proofs.

# The objective of our course is to develop tools that allow us to prove, with the assistance of a computer, Theorems in Computational Ergodic Theory.

#

# To do so, we need to introduce some new tools, both practical and theoretical.

#

# Today we will introduce Interval Arithmetic, a numerical technique that allows a computer to compute rigorous enclosures of numerical expressions, i.e., intervals that are guaranteed to contain the __TRUE__ mathematical result of a function evaluation.

# # Introducing Interval Arithmetic

# In this notebook I will introduce one of the main tools of validated numerics: interval arithmetics.

# We will install a ready made package, the IntervalArithmetic.jl package, a package in the shared Julia repository.

import Pkg;

Pkg.add("IntervalArithmetic")

using IntervalArithmetic

# The main idea behind IntervalArithmetic is the following: given a function $f$, Interval Arithmetic allows us to define a function $F$, called the __interval extension__ of $f$, such that for any interval $I = [a,b]$ we have that

# $$

# f(I)\subset F(I).

# $$

# In other words, Interval arithmetics allows the computer to compute numerically an interval that contains the true mathematical result of the function.

# This is called the __range enclosure__ property.

# A reference on the topic is the following book by [<NAME> - Validated Numerics - A Short Introduction to Rigorous computation](https://www.amazon.de/-/en/Warwick-Tucker/dp/0691147817)

# The simplest object of Interval Arithmetics are the intervals.

# The macro @interval defines $x$ as the smallest interval that contains the real point $0.1$.

#

# Remark that $0.1$ has an infinite binary expansion, so it cannot be represented exactly in binary format. The result is therefore a wide interval, with a lower bound different from the upper bound: the upper bound is the smallest representable number in Floating Point Arithmetic bigger than $0.1$, and the lower bound is the greatest representable number smaller than $0.1$.

@format standard

x = @interval 0.1

# I will change the output format of the intervals, to a midpoint radius format.

@format midpoint

x

@format standard

y = @interval 0.3

# The operations in interval arithmetic are defined so that the sum of two intervals is an interval that contains every sum $a+b$, with $a$ in the first interval and $b$ in the second (in some sense, this is similar to the absolute error estimates used in the statistics of physical experiments).

x+y

# The same works with multiplication.

x*y

# Please note that wide intervals may be defined.

x = @interval -1 1

x+y

# When an interval contains $0$, the real extended line formalism is used.

# This is called the affine line extension in Tucker's book.

y/x

# Summarizing: Interval arithmetics computes intervals of confidence.

#

# __The result of an operation done with interval arithmetics is guaranteed to contain the true mathematical result of the operation.__

# ## Function evaluation

# Library routines are defined so that the __range enclosure__ property is satisfied. This allows us to get rigorous numerical results on mathematical functions.

f(x) = x*sin(1/x)

x = @interval 0 0.1

f(x)

using Plots

N = 16

X1 = [@interval i/N (i+1)/N for i in 0:N-1]

plot(f, 0, 1)

plot!(IntervalBox.(X1, f.(X1)))

# It is important to observe, in the plot above, that while the graph of the function is computed nonrigorously, and is therefore not validated, the interval computation is validated, so the rectangles in pink have the strength of theorems.

N = 128

X2 = [@interval i/N (i+1)/N for i in 0:N-1]

plot(f, 0, 1)

plot!(IntervalBox.(X2, f.(X2)))

# The important thing to stress is that the evaluation of a function on a correctly defined interval has the strength of a mathematical theorem, i.e.,

f(@interval 0.5 0.7)

# can be read as: for any $x$ in $[0.5, 0.7]$ we have that $0.454648\leq f(x)\leq 0.700001$.

# Please remark that this is a totally different scenario from usual numerical computations, where the statements are typically of the form: if the algorithm is stable we get a result which is near the real result (called forward error bound), or if the algorithm is stable it solves a problem which is near our original problem (called backward error bound).

# __Exercise__ Evaluate the function $\sin(x)$ on the intervals $[0, 0.125]$, $[0, 0.25]$, $[0, 0.5]$, $[0, \pi]$, $[0, 3\pi]$.

# Remember that you can type \pi+TAB to write the character $\pi$.

sin(@interval 0 2*π)

# ## How to use this

# Due to the range enclosure property we can use this, as an example, to exclude intervals on which $f$ surely __does not have__ a zero. Some of the remaining intervals may only seem to contain a zero because of the overestimates of interval arithmetic, but for those we excluded we are sure that $f$ has no zero there.

function exclude_not_contain_zero(f, X)

may_contain_zeros = Interval[]

for x in X

if contains_zero(f(x))

push!(may_contain_zeros, x)

end

end

return may_contain_zeros

end

X = [@interval i/N (i+1)/N for i in 0:N-1]

Xzeros = exclude_not_contain_zero(f, X)

plot(f, 0, 1)

plot!(IntervalBox.(Xzeros, f.(Xzeros)))

# Refining the intervals gives us a way to identify small intervals that may contain a zero; please note that this does not guarantee that they contain a zero. I will show a method to prove the existence of zeros in a moment.

N = 512

X = [@interval i/N (i+1)/N for i in 0:N-1]

Xzeros = exclude_not_contain_zero(f, X)

plot(f, 0, 0.35)

plot!(IntervalBox.(Xzeros, f.(Xzeros)))

# While this may seem nothing extreme, please remark that this can be used to prove that a function is nonzero in a given interval. This can be used to exclude parameters.

# ## The interval Newton method

# I will introduce now the workhorse of Validated Numerics, the interval Newton method.

# To do so, we remember the [mean value theorem](https://en.wikipedia.org/wiki/Mean_value_theorem):

# if $f$ is a continuous function on $[a, b]$ and differentiable on $(a,b)$ then there exists a point

# $c$ in $(a,b)$ such that

# $$

# f(b)-f(a) = f'(c)(b-a).

# $$

# We want now to find a solution to the equation

# $$

# f(x) = 0.

# $$

# By the mean value theorem, if $\tilde{x}$ is a solution of the equation and $x$ is another, nearby point, we have

# $$

# f(x) = f'(c)(x-\tilde{x}),

# $$

# and, if $f'(c)\neq 0$, this implies that

# $$

# \tilde{x} = x-\frac{f(x)}{f'(c)};

# $$

# this is the motivation behind the Newton iteration method, i.e., building the sequence

# $$

# x_{n+1} = x_{n}-\frac{f(x_n)}{f'(x_n)}.

# $$

# Please remark that, under some conditions, this method converges to a root, but we do not know which root (there may be more than one), nor can we bound the distance between $x_{n}$ and $\tilde{x}$.

# __Important__ please remark that if we knew the point $c$, we could compute exactly the root in one step. But the mean value theorem is a result of existence, it does not tell us which point is the point $c$.

# The interval Newton method answers all these issues:

# 1. it proves whether an interval contains a root or not

# 2. it returns us an interval guaranteed to contain a root (this has the strength of a mathematical proof)

# 3. it can prove the fact that the root inside the interval is unique.

# The idea is similar to the one used to build the Newton iteration. Suppose we are looking for a root of a function in the interval $I$; then, if $m$ is the midpoint of the interval and $\tilde{x}$ is the root, we have

# that

# $$

# \tilde{x} = m-\frac{f(m)}{f'(c)}

# $$

# where $c$ is a point in the interval $I$. Now, interval arithmetics allows us to compute an interval that contains $f'(I)$, which in turn contains $f'(c)$.

# So, given an interval $I$ we can compute a new interval

# $$

# N(f, I) = m-\frac{f(m)}{f'(I)}.

# $$

#

# In Tucker's book it is proved that, when $f'(I)$ does not contain $0$:

# - if $I$ contains a root, then $N(f, I)$ contains that root.

# - if $N(f,I)\cap I$ is empty, we know that the interval $I$ contains no root. If it is not empty, any root in $I$ must lie in $N(f,I)\cap I$, but this alone does not prove that a root exists.

# - If $N(f, I)$ is strictly contained in $I$, then $I$ contains exactly one root.

#

# So, we have now a tool that allows us to rigorously enclose the roots of a function.
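The step $N(f, I) = m - f(m)/f'(I)$ followed by the intersection with $I$ can be sketched in a few lines of plain Python. This sketch uses ordinary floats with no outward rounding, so unlike IntervalArithmetic.jl it is not rigorous; it only illustrates the mechanics. The test function $x^2-2$, the helper names and the derivative enclosure are illustrative assumptions.

```python
import math


def newton_step(f, fprime_enclosure, interval):
    """One interval Newton step, N(f, I) intersected with I, for I = (lo, hi).

    fprime_enclosure(I) must return (dlo, dhi) enclosing f' on I.
    Returns None when the intersection is empty (no root in I).
    Plain floats are used, so rounding errors are NOT controlled.
    """
    lo, hi = interval
    mid = 0.5 * (lo + hi)
    dlo, dhi = fprime_enclosure(interval)
    if dlo <= 0.0 <= dhi:
        raise ValueError("derivative enclosure contains 0; split the interval first")
    fm = f(mid)
    # f(m) / [dlo, dhi] is the interval spanned by the two endpoint quotients
    quotients = (fm / dlo, fm / dhi)
    n_lo, n_hi = mid - max(quotients), mid - min(quotients)
    new_lo, new_hi = max(lo, n_lo), min(hi, n_hi)
    return None if new_lo > new_hi else (new_lo, new_hi)


def f(x):
    return x * x - 2.0


def fprime_enclosure(interval):
    # f'(x) = 2x is increasing, so on [lo, hi] it ranges over [2*lo, 2*hi]
    return (2.0 * interval[0], 2.0 * interval[1])


I = (1.0, 2.0)
for _ in range(3):
    I = newton_step(f, fprime_enclosure, I)

print(I, math.sqrt(2.0))
```

The first step already maps $[1, 2]$ strictly inside itself, which is the condition for a unique root; the later steps only tighten the enclosure around $\sqrt{2}$.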

Newton(f, fprime, I::Interval{T}) where {T} = intersect(Interval{T}(mid(I))-f(Interval{T}(mid(I)))/fprime(I), I)

x = Newton(sin, cos, @interval 3 4)

# As you can see, the result of the interval Newton step tells us that the function $\sin$ has a unique zero in $[3, 4]$, and gives us a tighter interval that contains this zero.

# We can iterate this process.

for i in 1:10

x = Newton(sin, cos, x)

end

println(x)

println(diam(x))

# So, we have computed an interval that contains $\pi$, which is the zero of the function $\sin$ in $[3, 4]$; the interval has a diameter of about $4.45\cdot 10^{-16}$. Please remark again that this has the strength of a mathematical theorem, i.e., the interval $x$ has been proved, with the aid of a computer, to contain the value of $\pi$.

# If we want tighter bounds, we can use higher precision floating point arithmetics in our intervals.

setprecision(1024)

x = Interval{BigFloat}(x) # we will refine the interval computed in Float64

for i in 1:10

x = Newton(sin, cos, x)

end

println(x)

println(diam(x))

# Again, this is a theorem, we have that $\pi$ is bounded below by

x.lo

# and above by

x.hi

# Using Automatic Differentiation, the code for the Newton method can be written in an even simpler way.

using DualNumbers

Newton(f, I) = Newton(f, x->(f(Dual(x, 1)).epsilon), I)

# We can use this to certify the intervals that may contain the zeros of the function $f(x) = x \cdot \sin(1/x)$.

new_x = [Newton(f, x) for x in Xzeros]

plot(f, 0, 0.35)

plot!(IntervalBox.(new_x, f.(new_x)))

# The last two intervals are so thin that the plotting function is not rendering them exactly, but the Newton method confirmed them. What is striking is that the Newton method allowed us to prove the existence of at least one zero in each one of the intervals above.

#

# We will zoom to $[0.3180, 0.3185]$.

plot(f, 0.3180, 0.3185)

plot!(IntervalBox.(new_x[end], f.(new_x[end])))

# __Exercise__: Modify the Interval Newton Method to solve the equation $f(x)=y$; can you implement it in such a way that $y$ may be an interval? What happens when you use it to solve an equation $f(x)=y$ with $y$ a wide interval?

# ## Taylor Models

# I will introduce now another tool of Validated Numerics, used to get rigorous approximations of functions, called Taylor Models.

#

# They are used in the rigorous computation of integrals and the rigorous computation of enclosures of trajectories of ODE.

# We want to be able to approximate a function by a polynomial with rigorous error bound.

import Pkg; Pkg.add("TaylorModels")

using TaylorModels

f(x) = x*(x-1)*(x+2)*(x+3)^2*(x+7)*sin(2*π*x+0.5)

I = @interval -0.5 0.5 # Domain

plot(f, -0.5, 0.5)

# A Taylor model is a polynomial $P$ of degree $k$ and an interval $\Delta$ such that given a function $f$, on an interval $I$, $P$ is the Taylor polynomial of order $k$ at the center of the interval and $\Delta$ is an interval such that

# $$

# f(x)-P(x) \in \Delta

# $$

TM10 = TaylorModel1(10, I) # Taylor model of order 10

TM15 = TaylorModel1(15, I) #Taylor model of order 15

# Please note that the Taylor model is centered at the midpoint of $I$.

?TaylorModel1

FM10 = f(TM10)

# So, on $[-0.5, 0.5]$ the error between the value of $f$ and the value of the Taylor Model is contained in the interval $[-1.50153, 2.61226]$

# On the same interval the Taylor model of order $15$ is the following.

FM15 = f(TM15)

# As expected, passing to a higher-order approximation gives a smaller $\Delta$.

plot(f, -0.5, 0.5)

plot!(FM10, color=:red)

plot!(FM15, color = :green)

# __Exercise__ : Find the Taylor models of order $5$, $10$ and $15$ of the function $f$ on the interval $[-0.125, 0.125]$. What happens to $\Delta$?

# ## Rigorous Integration

# We are interested in computing rigorously the value of an integral. To do so, we will use Taylor Models.

f(x) = sin(exp(1/x))

plot(f, 0.125, 1)

# In this section I will show a technique to find intervals that enclose the true value of an integral. To show the power of the method I will compute the integral of an oscillating function.

# Having a Taylor expansion centered at the center of the interval allows us to easily compute the integral of the function over the interval.

# Let $m$ be the midpoint of the interval, $r$ its radius (half of its length) and $a_i$ the coefficients of $P$:

# $$

# \int_I f dx = \sum_{i=0}^n a_i \int_I (x-m)^i dx +\int_I f(x)-P(x) dx,

# $$

# so the integral is contained in the interval with center

# $$

# 2\sum_{i=0}^{\lfloor n/2 \rfloor} a_{2i}\frac{r^{2i+1}}{2i+1}

# $$

# and radius $\Delta\cdot |I|$ (where $|I|$ is the length of I).
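# The formula for the center can be checked on a small polynomial. The following Python sketch (the helper name `integrate_centered_poly` is hypothetical, and plain floats are used, so nothing here is rigorous) integrates $\sum_i a_i (x-m)^i$ over $[m-r, m+r]$ keeping only the even-order terms, since the odd powers integrate to zero on a symmetric interval.

```python
def integrate_centered_poly(coeffs, r):
    """Integral over [m - r, m + r] of sum_i coeffs[i] * (x - m)**i."""
    # Only even powers survive: each contributes 2 * a_i * r**(i + 1) / (i + 1).
    return 2.0 * sum(a * r ** (i + 1) / (i + 1)
                     for i, a in enumerate(coeffs) if i % 2 == 0)


# 1 + 2 t + 3 t^2 on [-0.5, 0.5]: the exact integral is 1 + 0 + 0.25 = 1.25
value = integrate_centered_poly([1.0, 2.0, 3.0], 0.5)
print(value)
```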

function integrate1(f, I; steps = 1024, degree = 6)

lo = I.lo

hi = I.hi

l = diam(I)

int_center = Interval(0.0)

int_error = Interval(0.0)

for i in 1:steps

left = lo+(i-1)*(l/steps)

right = lo+i*l/steps

r = (1/2)*(l/steps)

J = interval(left, right)

TM = TaylorModel1(degree, J)

FM = f(TM)

#@info FM

for k in 0:div(degree, 2)

int_center += 2*(FM.pol[2*k]*r^(2*k+1))/(2*k+1)

end

int_error += 2*FM.rem*r # the remainder is added once per subinterval J of length 2r

end

return int_center+int_error

end

integrate1(f, @interval 0.25 1; steps = 1024, degree = 6)

# This integral method can be made adaptive, both in the size of the interval $J$ and the degree of the Taylor expansion. This has the strength of a mathematical proof.

function adaptive_integration(f, I::Interval; tol = 2^-10, steps = 8, degree = 6) # tol 2^-10, steps = 8 are default values

lo = I.lo

hi = I.hi

l = diam(I)

int_value = Interval(0.0)

for i in 1:steps

left = lo+(i-1)*(l/steps)

right = lo+i*l/steps

Istep = Interval(left, right)

val = integrate1(f, Istep; degree = degree)

if radius(val)<tol

int_value += val

else

I₁, I₂ = bisect(Istep)

val₁ = adaptive_integration(f, I₁; tol = tol/2, steps = steps, degree = degree+2)

val₂ = adaptive_integration(f, I₂; tol = tol/2, steps = steps, degree = degree+2)

int_value +=val₁+val₂

end

end

return int_value

end

@time adaptive_integration(f, @interval 0.125 1)

# __Exercise__: Try to push the computation of the integral further near $0$.

# # Summary of the lecture

# In this lecture I introduced some tools that allow us to prove Theorems with the help of a computer.

# 1. Interval Arithmetic, that allows us to rigorously enclose the range of a function on an interval $I$

# 2. Interval Newton Method, that allows us to find an interval that is proved to contain the solution of the equation $f(x)=y$, allowing us to rigorously find inverse images

# 3. Taylor Models that allow us to approximate functions by polynomials with rigorous and explicit error bounds

# 4. Rigorous integration that allows us to compute rigorously the value of an integral over an Interval.

#

# All these tools are the engine of our implementation of the Ulam method: we use the Interval Newton method to compute preimages, computing an Interval of entries which contains the entries of the true abstract matrix.

#

# This is going to allow us to approximate the invariant density $h$ of the physical measure of a system.

#

# Then we are going to use rigorous integration to compute the Birkhoff averages of observables in a rigorous way, through the identity

# $$

# \lim_{n\to +\infty}\frac{1}{n}\sum_{i=0}^{n-1} \phi(T^i(x))=\int \phi h dx.

# $$

| Lecture 2 - Interval Arithmetics.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # MAT281 - Laboratory No. 10

#

#

# <a id='p1'></a>

# ## I.- Problem 01

#

#

# <img src="https://www.goodnewsnetwork.org/wp-content/uploads/2019/07/immunotherapy-vaccine-attacks-cancer-cells-immune-blood-Fotolia_purchased.jpg" width="360" height="360" align="center"/>

#

#

# **Breast cancer** is a malignant proliferation of the epithelial cells that line the ducts or lobules of the breast. It is a clonal disease, in which a single cell, as the result of a series of somatic or germline mutations, acquires the ability to divide without control or order, reproducing until it forms a tumor. The resulting tumor, which begins as a mild abnormality, becomes severe, invades neighboring tissues and, finally, spreads to other parts of the body.

#

# The dataset is called `BC.csv`; it contains information on different patients with tumors (benign or malignant) and some characteristics of each tumor.

#

#

# The features are computed from a digitized image of a fine needle aspirate (FNA) of a breast mass. They describe characteristics of the cell nuclei present in the image.

# Details can be found in [K. <NAME> and <NAME>: "Robust Linear Programming Discrimination of Two Linearly Inseparable Sets", Optimization Methods and Software 1, 1992, 23-34].

#

#

# The first step is to load the dataset:

# +

import os

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.preprocessing import StandardScaler

from sklearn.decomposition import PCA

from sklearn.pipeline import make_pipeline

from sklearn.datasets import load_digits

from sklearn.manifold import TSNE


from sklearn.dummy import DummyClassifier

from sklearn.cluster import KMeans

# %matplotlib inline

sns.set_palette("deep", desat=.6)

sns.set(rc={'figure.figsize':(11.7,8.27)})

# -

# load data

df = pd.read_csv(os.path.join("data","BC.csv"), sep=",")

df['diagnosis'] = df['diagnosis'] .replace({'M':1,'B':0}) # target

df.head()

# Based on the information presented, answer the following questions:

#

# 1. Carry out an exploratory analysis of the dataset.

# 2. Normalize the numeric variables with the **StandardScaler** method.

# 3. Apply a dimensionality reduction method seen in class.

# 4. Apply at least three different classification models. For each of the chosen models, optimize the hyperparameters. In addition, compute the corresponding metrics. Conclude.

#

#

#

# ### Exploratory analysis of the dataset:

print('---------------------')

print('Mean of each variable')

print('---------------------')

df.mean(axis=0)

print('-------------------------')

print('Variance of each variable')

print('-------------------------')

df.var(axis=0)

df.describe()

# Let us see how many tumors are malignant and how many are benign:

B = df[df["diagnosis"]==0]

M = df[df["diagnosis"]==1]

B

M

# We conclude that 357 tumors are benign (B) versus 212 malignant (M).

# ### Normalize the numeric variables with the StandardScaler method:
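# The class counts can also be obtained without displaying the two sub-frames (with pandas, `df["diagnosis"].value_counts()` would do this directly). The sketch below shows the same idea with the standard library on a small made-up label list; the data are a toy stand-in, not values from `BC.csv`.

```python
from collections import Counter

# Toy stand-in for the "diagnosis" column: 1 = malignant, 0 = benign
labels = [1, 0, 0, 1, 0, 0, 1, 0]

counts = Counter(labels)
share_malignant = counts[1] / len(labels)
print(counts[0], counts[1], share_malignant)
```

# Looking at the class proportions early is useful because an imbalanced target affects how accuracy should be read later on.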

# +

scaler = StandardScaler()

df[df.columns.drop(["id","diagnosis"])] = scaler.fit_transform(df[df.columns.drop(["id","diagnosis"])])

df.head()

# -

# ### Apply a dimensionality reduction method seen in class:

# +

# Train the PCA model with scaled data

# ==============================================================================

pca_pipe = make_pipeline(StandardScaler(), PCA())

pca_pipe.fit(df)

# Extract the trained model from the pipeline

modelo_pca = pca_pipe.named_steps['pca']

# -

# Convert the array to a dataframe to add names to the axes.

pd.DataFrame(

data = modelo_pca.components_,

columns = df.columns,

index = ['PC1', 'PC2', 'PC3', 'PC4',

'PC5', 'PC6', 'PC7', 'PC8',

'PC9', 'PC10', 'PC11', 'PC12',

'PC13', 'PC14', 'PC15', 'PC16',

'PC17', 'PC18', 'PC19', 'PC20',

'PC21', 'PC22', 'PC23', 'PC24',

'PC25', 'PC26', 'PC27', 'PC28',

'PC29', 'PC30', 'PC31', 'PC32']

)

# Heatmap of the components

# ==============================================================================

plt.figure(figsize=(12,14))

componentes = modelo_pca.components_

plt.imshow(componentes.T, cmap='viridis', aspect='auto')

plt.yticks(range(len(df.columns)), df.columns)

plt.xticks(range(len(df.columns)), np.arange(modelo_pca.n_components_) + 1)

plt.grid(False)

plt.colorbar();

# +

# plot the variance explained by each component

percent_variance = np.round(modelo_pca.explained_variance_ratio_* 100, decimals =2)

columns = ['PC1', 'PC2', 'PC3', 'PC4',

'PC5', 'PC6', 'PC7', 'PC8',

'PC9', 'PC10', 'PC11', 'PC12',

'PC13', 'PC14', 'PC15', 'PC16',

'PC17', 'PC18', 'PC19', 'PC20',

'PC21', 'PC22', 'PC23', 'PC24',

'PC25', 'PC26', 'PC27', 'PC28',

'PC29', 'PC30', 'PC31', 'PC32']

plt.figure(figsize=(20,10))

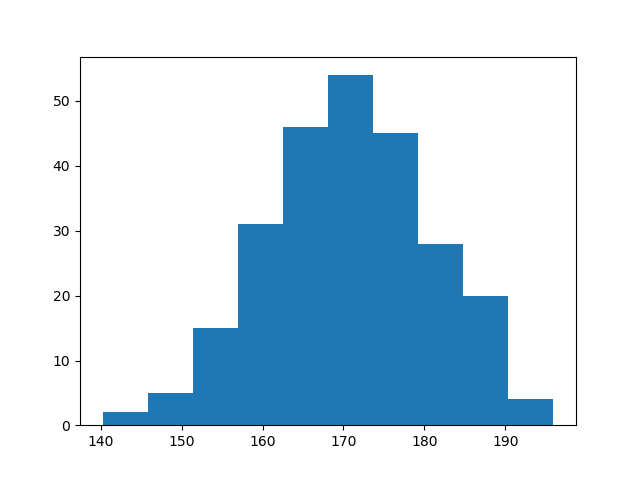

plt.bar(x= range(1,33), height=percent_variance, tick_label=columns)

plt.xticks(np.arange(modelo_pca.n_components_) + 1)

plt.ylabel('Pct. of variance explained')

plt.xlabel('Principal component')

plt.title('Percentage of variance explained by each component')

plt.show()

# +

# plot the variance explained by the cumulative sum of the components

percent_variance_cum = np.cumsum(percent_variance)

#columns = ['PC1', 'PC1+PC2', 'PC1+PC2+PC3', 'PC1+PC2+PC3+PC4',.....]

plt.figure(figsize=(12,4))

plt.bar(x= range(1,33), height=percent_variance_cum, #tick_label=columns

)

plt.ylabel('Percentage of Variance Explained')

plt.xlabel('Principal Component Cumsum')

plt.title('PCA Scree Plot')

plt.show()

# -
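# A cumulative plot like the one above is usually read to decide how many components to keep. As a minimal sketch, assuming a target such as 90% of the variance (the threshold, the helper name `components_needed` and the toy ratios are all illustrative, not taken from this dataset), the number of components can be found like this:

```python
from itertools import accumulate


def components_needed(ratios, threshold):
    """Smallest k such that the first k ratios sum to at least threshold."""
    for k, total in enumerate(accumulate(ratios), start=1):
        if total >= threshold:
            return k
    return len(ratios)


# Toy explained-variance ratios (they sum to 1), in decreasing order
ratios = [0.60, 0.25, 0.10, 0.05]
k = components_needed(ratios, 0.90)
print(k)
```

# With the real model, `modelo_pca.explained_variance_ratio_` would take the place of the toy list.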

# Projection of the training observations

# ==============================================================================

proyecciones = pca_pipe.transform(X=df)

proyecciones = pd.DataFrame(

proyecciones,

columns = ['PC1', 'PC2', 'PC3', 'PC4',

'PC5', 'PC6', 'PC7', 'PC8',

'PC9', 'PC10', 'PC11', 'PC12',

'PC13', 'PC14', 'PC15', 'PC16',

'PC17', 'PC18', 'PC19', 'PC20',

'PC21', 'PC22', 'PC23', 'PC24',

'PC25', 'PC26', 'PC27', 'PC28',

'PC29', 'PC30', 'PC31', 'PC32'],

index = df.index

)

proyecciones.head()

# ### Apply at least three different classification models. For each of the chosen models, optimize the hyperparameters. In addition, compute the corresponding metrics. Conclude.

# +

df3 = pd.get_dummies(df)

df3.head()

# +

X = np.array(df3)

kmeans = KMeans(n_clusters=8,n_init=25, random_state=123)

kmeans.fit(X)

centroids = kmeans.cluster_centers_ # centers

clusters = kmeans.labels_ # clusters

# -

# label the data with the clusters found

df["cluster"] = clusters

df["cluster"] = df["cluster"].astype('category')

centroids_df = pd.DataFrame(centroids)

centroids_df["cluster"] = [1,2,3,4,5,6,7,8]

# +

# implementation of the elbow rule

Nc = [5,10,20,30,50,75,100,200,300]

kmeans = [KMeans(n_clusters=i) for i in Nc]

score = [kmeans[i].fit(df).inertia_ for i in range(len(kmeans))]

df_Elbow = pd.DataFrame({'Number of Clusters':Nc,

'Score':score})

df_Elbow.head()

# -

# plot the elbow curve (inertia vs. number of clusters)

fig, ax = plt.subplots(figsize=(11, 8.5))

plt.title('Elbow Curve')

sns.lineplot(x="Number of Clusters",

y="Score",

data=df_Elbow)

sns.scatterplot(x="Number of Clusters",

y="Score",

data=df_Elbow)

plt.show()

# +

# PCA

#scaler = StandardScaler()

X = df.drop(columns=["id","diagnosis"])

y = df['diagnosis']

embedding = PCA(n_components=2)

X_transform = embedding.fit_transform(X)

df_pca = pd.DataFrame(X_transform,columns = ['Score1','Score2'])

df_pca['diagnosis'] = y

# +

# Plot Digits PCA

# Set style of scatterplot

sns.set_context("notebook", font_scale=1.1)

sns.set_style("ticks")

# Create scatterplot of dataframe

sns.lmplot(x='Score1',

y='Score2',

data=df_pca,

fit_reg=False,

legend=True,

height=9,

hue='diagnosis',

scatter_kws={"s":200, "alpha":0.3})

plt.title('PCA Results: BC', weight='bold').set_fontsize('14')

plt.xlabel('Prin Comp 1', weight='bold').set_fontsize('10')

plt.ylabel('Prin Comp 2', weight='bold').set_fontsize('10')

# +

from sklearn.datasets import make_moons, make_circles, make_classification

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split

from sklearn.svm import SVC

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import RandomForestClassifier

from matplotlib.colors import ListedColormap

# +

X = df_pca.drop(columns='diagnosis')

y = df_pca['diagnosis']

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=42)

h = .02 # step size in the mesh

plt.figure(figsize=(12,12))

names = ["Logistic",

"RBF SVM",

"Decision Tree",

"Random Forest"

]

classifiers = [

LogisticRegression(),

SVC(gamma=2, C=1),

DecisionTreeClassifier(max_depth=5),

RandomForestClassifier(max_depth=5, n_estimators=10, max_features=1),

]

X, y = make_classification(n_features=2, n_redundant=0, n_informative=2,

random_state=1, n_clusters_per_class=1)

rng = np.random.RandomState(2)

X += 2 * rng.uniform(size=X.shape)

linearly_separable = (X, y)

datasets = [make_moons(noise=0.3, random_state=0),

make_circles(noise=0.2, factor=0.5, random_state=1),

linearly_separable

]

figure = plt.figure(figsize=(27, 9))

i = 1

# iterate over datasets

for ds_cnt, ds in enumerate(datasets):
    # preprocess dataset, split into training and test part
    X, y = ds
    X = StandardScaler().fit_transform(X)
    X_train, X_test, y_train, y_test = \
        train_test_split(X, y, test_size=.4, random_state=42)

    x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5
    y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h),
                         np.arange(y_min, y_max, h))

    # just plot the dataset first
    cm = plt.cm.RdBu
    cm_bright = ListedColormap(['#FF0000', '#0000FF'])
    ax = plt.subplot(len(datasets), len(classifiers) + 1, i)
    if ds_cnt == 0:
        ax.set_title("Input data")
    # Plot the training points
    ax.scatter(X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright,
               edgecolors='k')
    # Plot the testing points
    ax.scatter(X_test[:, 0], X_test[:, 1], c=y_test, cmap=cm_bright, alpha=0.6,
               edgecolors='k')
    ax.set_xlim(xx.min(), xx.max())
    ax.set_ylim(yy.min(), yy.max())
    ax.set_xticks(())
    ax.set_yticks(())
    i += 1

    # iterate over classifiers
    for name, clf in zip(names, classifiers):
        ax = plt.subplot(len(datasets), len(classifiers) + 1, i)
        clf.fit(X_train, y_train)
        score = clf.score(X_test, y_test)

        # Plot the decision boundary. For that, we will assign a color to each
        # point in the mesh [x_min, x_max]x[y_min, y_max].
        if hasattr(clf, "decision_function"):
            Z = clf.decision_function(np.c_[xx.ravel(), yy.ravel()])
        else:
            Z = clf.predict_proba(np.c_[xx.ravel(), yy.ravel()])[:, 1]

        # Put the result into a color plot
        Z = Z.reshape(xx.shape)
        ax.contourf(xx, yy, Z, cmap=cm, alpha=.8)

        # Plot the training points
        ax.scatter(X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright,
                   edgecolors='k')
        # Plot the testing points
        ax.scatter(X_test[:, 0], X_test[:, 1], c=y_test, cmap=cm_bright,
                   edgecolors='k', alpha=0.6)

        ax.set_xlim(xx.min(), xx.max())
        ax.set_ylim(yy.min(), yy.max())
        ax.set_xticks(())
        ax.set_yticks(())
        if ds_cnt == 0:
            ax.set_title(name)
        ax.text(xx.max() - .3, yy.min() + .3, ('%.2f' % score).lstrip('0'),
                size=15, horizontalalignment='right')
        i += 1

plt.tight_layout()

plt.show()

# +

from metrics_classification import *

class SklearnClassificationModels:
    def __init__(self,model,name_model):
        self.model = model
        self.name_model = name_model

    @staticmethod
    def test_train_model(X,y,n_size):
        X_train, X_test, y_train, y_test = train_test_split(X, y,test_size=n_size , random_state=42)
        return X_train, X_test, y_train, y_test

    def fit_model(self,X,y,test_size):
        X_train, X_test, y_train, y_test = self.test_train_model(X,y,test_size )
        return self.model.fit(X_train, y_train)

    def df_testig(self,X,y,test_size):
        X_train, X_test, y_train, y_test = self.test_train_model(X,y,test_size )
        model_fit = self.model.fit(X_train, y_train)
        preds = model_fit.predict(X_test)
        df_temp = pd.DataFrame(
            {
                'y':y_test,
                'yhat': model_fit.predict(X_test)
            }
        )
        return df_temp

    def metrics(self,X,y,test_size):
        df_temp = self.df_testig(X,y,test_size)
        df_metrics = summary_metrics(df_temp)
        df_metrics['model'] = self.name_model
        return df_metrics

# +

# metrics

import itertools

# model names

names_models = ["Logistic",

"RBF SVM",

"Decision Tree",

"Random Forest"

]

# models

classifiers = [

LogisticRegression(),

SVC(gamma=2, C=1),

DecisionTreeClassifier(max_depth=5),

RandomForestClassifier(max_depth=5, n_estimators=10, max_features=1),

]

datasets

names_dataset = ['make_moons',

'make_circles',

'linearly_separable'

]

X, y = make_classification(n_features=2, n_redundant=0, n_informative=2,

random_state=1, n_clusters_per_class=1)

rng = np.random.RandomState(2)

X += 2 * rng.uniform(size=X.shape)

linearly_separable = (X, y)

datasets = [make_moons(noise=0.3, random_state=0),

make_circles(noise=0.2, factor=0.5, random_state=1),

linearly_separable

]

# combine the information

list_models = list(zip(names_models,classifiers))

list_dataset = list(zip(names_dataset,datasets))

frames = []

for x in itertools.product(list_models, list_dataset):
    name_model = x[0][0]
    classifier = x[0][1]
    name_dataset = x[1][0]
    dataset = x[1][1]
    X = dataset[0]
    Y = dataset[1]
    fit_model = SklearnClassificationModels( classifier,name_model)
    df = fit_model.metrics(X,Y,0.2)
    df['dataset'] = name_dataset
    frames.append(df)

# -

# combine the results

pd.concat(frames)

| labs/lab_10_Moreno.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Import Libraries and Data

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split

from sklearn import metrics

import seaborn as sns

# %matplotlib inline

data = pd.read_csv(r'C:\Users\somendra.singh\Desktop\Data Science codes\Stats and ML july\Machine Learning\diabetes.csv')

data.head()

# ## Correlation Check

correlations = data.corr()

correlations

# ## Visualizing the data for any Relations

# +

def visualise(data):
    fig, ax = plt.subplots()
    ax.scatter(data.iloc[:,1].values, data.iloc[:,5].values)
    ax.set_title('Highly Correlated Features')
    ax.set_xlabel('Plasma glucose concentration')
    ax.set_ylabel('Body mass index')

visualise(data)

# -

# ## Replacing the Zeros with Null values

data[['Glucose','BMI']] = data[['Glucose','BMI']].replace(0, np.nan)  # np.NaN was removed in NumPy 2.0

data.dropna(inplace=True)

visualise(data)

# ## Feature Selection

X = data[['Glucose','BMI','Pregnancies','BloodPressure','SkinThickness','Insulin',

'DiabetesPedigreeFunction','Age']].values

y = data['Outcome'].values  # 1-D target, as expected by scikit-learn estimators

# ## Standardization & Scaling of Features

sc = StandardScaler()

X = sc.fit_transform(X)

mean = np.mean(X, axis=0)

print('Mean: (%d, %d)' % (mean[0], mean[1]))

standard_deviation = np.std(X, axis=0)

print('Standard deviation: (%d, %d)' % (standard_deviation[0], standard_deviation[1]))

print(X[0:10,:])
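# Standardization replaces each value $x$ by $(x-\mu)/\sigma$, where $\mu$ and $\sigma$ are the column mean and the population standard deviation (the convention `StandardScaler` uses). A minimal standard-library sketch on a toy column, with the hypothetical helper name `standardize`:

```python
import statistics


def standardize(values):
    """Return z-scores using the population standard deviation (ddof = 0)."""
    mu = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mu) / sd for v in values]


z = standardize([1.0, 2.0, 3.0, 4.0, 5.0])
print(z)
print(statistics.fmean(z), statistics.pstdev(z))
```

# After the transformation the column has mean 0 and standard deviation 1 up to rounding, which is what the printed checks above are meant to confirm for the real data.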

# ## Train-Test Split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0)

# ## Logistic Regression Model

# instantiate the model (using the default parameters)

logreg = LogisticRegression()

# fit the model with data

logreg.fit(X_train,y_train)

# ## Predictions

y_pred=logreg.predict(X_test)

y_pred

# ## Performance & Accuracy

cnf_matrix = metrics.confusion_matrix(y_test, y_pred)

cnf_matrix

class_names=[0,1] # name of classes

fig, ax = plt.subplots()

tick_marks = np.arange(len(class_names))

plt.xticks(tick_marks, class_names)

plt.yticks(tick_marks, class_names)

# create heatmap

sns.heatmap(pd.DataFrame(cnf_matrix), annot=True, cmap="YlGnBu" ,fmt='g')

ax.xaxis.set_label_position("top")

plt.tight_layout()

plt.title('Confusion matrix', y=1.1)

plt.ylabel('Actual label')

plt.xlabel('Predicted label')

print("Accuracy:",metrics.accuracy_score(y_test, y_pred))

print("Precision:",metrics.precision_score(y_test, y_pred))

print("Recall:",metrics.recall_score(y_test, y_pred))
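# The three scores above are simple ratios of confusion-matrix counts. The following sketch recomputes them by hand on a made-up pair of label lists (the helper name `binary_metrics` and the data are illustrative only); it assumes at least one predicted positive and one actual positive, otherwise the ratios are undefined.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, precision and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp)  # of the predicted positives, how many are right
    recall = tp / (tp + fn)     # of the actual positives, how many are found
    return accuracy, precision, recall


toy_true = [1, 0, 1, 1, 0, 0, 1, 0]
toy_pred = [1, 0, 0, 1, 0, 1, 1, 0]
acc, prec, rec = binary_metrics(toy_true, toy_pred)
print(acc, prec, rec)
```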

y_pred_proba = logreg.predict_proba(X_test)[::,1]

fpr, tpr, _ = metrics.roc_curve(y_test, y_pred_proba)

auc = metrics.roc_auc_score(y_test, y_pred_proba)

plt.plot(fpr,tpr,label="data 1, auc="+str(auc))

plt.legend(loc=4)

plt.show()
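# The AUC reported in the legend has a direct interpretation: it is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as one half. A small sketch of that pairwise definition (the helper name `pairwise_auc` is hypothetical, and the quadratic loop is only meant for tiny inputs):

```python
def pairwise_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


auc_value = pairwise_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(auc_value)
```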

| Logistic Regression.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# #### You can write code in abstract methods

# +

from abc import ABC, abstractmethod

class Automobile(ABC):
    def __init__(self, no_of_wheels):
        self.no_of_wheels = no_of_wheels
        print("Automobile Created")

    @abstractmethod
    def start(self):
        print("start of Automobile called")

    @abstractmethod
    def stop(self):
        pass

    @abstractmethod
    def drive(self):
        pass

    @abstractmethod
    def get_no_of_wheels(self):
        return self.no_of_wheels

class Car(Automobile):
    def start(self):
        super().start()
        print("start of Car called")

    def stop(self):
        pass

    def drive(self):
        pass

    def get_no_of_wheels(self):
        return super().get_no_of_wheels()

class Bus(Automobile):
    def start(self):
        pass

    def stop(self):
        pass

    def drive(self):
        pass

    def get_no_of_wheels(self):
        return super().get_no_of_wheels()

c = Car(4)
b = Bus(6)
print(c.get_no_of_wheels())
print(b.get_no_of_wheels())

# +

#Predict the Output:

from abc import ABC, abstractmethod

class A(ABC):
    @abstractmethod
    def fun1(self):
        print("function of class A called")

    @abstractmethod
    def fun2(self):
        pass

class B(A):
    def fun1(self):
        print("function 1 called")

    def fun2(self):
        print("function 2 called")

o = B()
o.fun1()

# +

#Predict the Output:

from abc import ABC, abstractmethod

class A(ABC):
    @abstractmethod
    def fun1(self):
        print("function of class A called")

    @abstractmethod
    def fun2(self):
        pass

class B(A):
    def fun1(self):
        super().fun1()

    def fun2(self):
        print(" function 2 called")

o = B()
o.fun1()

# -
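# #### Abstract methods with a body must still be overridden

# A body inside an abstract method does not make the method optional: a subclass that fails to override it still cannot be instantiated. The sketch below uses hypothetical classes (`Shape`, `Incomplete`, `Square`) to show both sides: the `TypeError` for the incomplete subclass, and the reuse of the abstract body through `super()`.

```python
from abc import ABC, abstractmethod


class Shape(ABC):
    @abstractmethod
    def area(self):
        # A default body is allowed; subclasses reach it via super().
        return 0.0


class Incomplete(Shape):
    pass  # does not override area()


class Square(Shape):
    def __init__(self, side):
        self.side = side

    def area(self):
        return super().area() + self.side ** 2


try:
    Incomplete()
    raised = False
except TypeError:
    raised = True

print(raised)
print(Square(3).area())
```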

| 05 OOPS-3/5.2 Abstract class cont.....ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:python3]

# language: python

# name: conda-env-python3-py

# ---

# + [markdown] deletable=true editable=true

# # Examples

#

# Last updated: July 12, 2017

#

# Below are a few examples to get you started.

#

# + deletable=true editable=true

import numpy as np

import matplotlib.pyplot as plt

from ftperiodogram.modeler import FastTemplatePeriodogram, FastMultiTemplatePeriodogram, TemplateModel

from ftperiodogram.template import Template

from ftperiodogram.utils import ModelFitParams

rand = np.random

# + [markdown] deletable=true editable=true

# ## The `Template` class

#

# There are two ways of instantiating the `Template` class, which is needed by both the `FastTemplatePeriodogram` and `FastMultiTemplatePeriodogram` classes.

#

# ### If you have a template defined by `phase`, `y_phase`, use the `.from_sampled` class method to instantiate:

# + deletable=true editable=true

# Make a template

phase = np.linspace(0, 1, 100)

amps = [ 0.5, 0.2, 0.3 ]

phases = [ -1., 1.3, 0.2 ]

amps = np.array(amps) / np.sqrt(sum(np.power(amps, 2)))

y_phase = sum([ a * np.cos(2 * (n+1) * np.pi * phase - p) \

for n, (a,p) in enumerate(zip(amps, phases)) ])

# Instantiate the `Template` class

our_template = Template.from_sampled(y_phase, nharmonics=3)

# Plot a comparison

f, ax = plt.subplots()

ax.plot(phase, y_phase, color='k', lw=2, alpha=0.5)

ax.plot(phase, our_template(phase), color='r')

ax.set_xlabel('phase')

ax.set_xlim(0,1)

plt.show()

# -

# ### If you know the Fourier coefficients of the template:

# +

# Obtain the Fourier coefficients from the last template fit

c_n, s_n = our_template.c_n, our_template.s_n

# Instantiate a new `Template` using coefficients

new_template = Template(c_n, s_n)

# Check that both templates are equivalent

print(np.allclose(new_template(phase), our_template(phase)))

# -
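# The sampled template earlier was built from amplitudes and phases, $\sum_n a_n \cos(2\pi n \phi - p_n)$, while the constructor takes cosine and sine coefficients. The two forms are related by $a\cos(\theta - p) = a\cos p\,\cos\theta + a\sin p\,\sin\theta$. The sketch below checks this identity numerically with plain Python; the helper names are hypothetical, and whether `Template` stores `c_n`, `s_n` with exactly this normalization is an assumption not verified here.

```python
import math


def to_cos_sin(amps, phases):
    """Convert amplitude/phase pairs to cosine and sine coefficients."""
    c = [a * math.cos(p) for a, p in zip(amps, phases)]
    s = [a * math.sin(p) for a, p in zip(amps, phases)]
    return c, s


def eval_series(c, s, phi):
    """Evaluate sum_n c_n cos(2 pi n phi) + s_n sin(2 pi n phi), n = 1, 2, ..."""
    return sum(cn * math.cos(2 * math.pi * (n + 1) * phi)
               + sn * math.sin(2 * math.pi * (n + 1) * phi)
               for n, (cn, sn) in enumerate(zip(c, s)))


demo_amps, demo_phases = [0.5, 0.2, 0.3], [-1.0, 1.3, 0.2]
c_demo, s_demo = to_cos_sin(demo_amps, demo_phases)

phi0 = 0.37
direct = sum(a * math.cos(2 * math.pi * (n + 1) * phi0 - p)
             for n, (a, p) in enumerate(zip(demo_amps, demo_phases)))
print(direct, eval_series(c_demo, s_demo, phi0))
```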

# ## The `TemplateModel` class

#

# The `TemplateModel` class stores a template and a set of fit parameters. When called, the model will return

#

# $\hat{y}(t) = aM(\omega(t - \tau)) + c$

#

# where $M(\phi)$ is the template, and $a, \omega, \tau, c$ are the fit parameters. The parameters must be an instance of `ModelFitParams`, which is simply an ordered dictionary with the attributes `a`, `b`, `c`, `sgn`. The `b` and `sgn` variables represent $\cos(\omega \tau)$ and ${\rm sign}(\sin(\omega\tau))$, respectively.

# +

# set some model parameters (arbitrary)

params = ModelFitParams(a=1., b=0.3, c=0.2, sgn=1)

#

model = TemplateModel(our_template, frequency=1.0, parameters=params)

t = np.linspace(0, 3, 300)

y0 = model(t)

f, ax = plt.subplots()

ax.plot(t, y0)

plt.show()

# -

# ## Template periodogram, single template -- `FastTemplatePeriodogram`

#

# The `FastTemplatePeriodogram` can be used to fit a (single) periodic template to irregularly sampled time-series data at a number of frequencies.

#

# ### Accessing the best-fit model

#

# After running `power` or `autopower`, the `FastTemplatePeriodogram` will keep the best-fit model in the form of a `TemplateModel` instance accessible via the `.best_model` attribute.

# +

# Instantiate periodogram with template

ftp = FastTemplatePeriodogram(template=our_template)

# Generate random data (using template)

ndata = 30

sigma = 0.2

freq = 20.

t = np.sort(rand.rand(ndata))

phi = (t * freq) % 1.0

y = ftp.template(phi) + sigma * rand.randn(ndata)

yerr = sigma * np.ones_like(y)

# Provide periodogram with data

ftp.fit(t, y, yerr)

# Produce a periodogram over an automatically-determined

# set of frequencies for the given template

freqs, powers = ftp.autopower(samples_per_peak=10)

f, (axper, axfit) = plt.subplots(1, 2, figsize=(12, 3))

best_freq = freqs[np.argmax(powers)]

# Plot periodogram

axper.plot(freqs, powers)

axper.axvline(freq, ls=':', color='0.5')

axper.set_xlabel('frequency')

axper.set_ylabel('power')

axper.set_xlim(min(freqs), max(freqs))

# Plot data

axfit.errorbar((t * best_freq) % 1.0, y, yerr, fmt='o', c='0.5')

axfit.plot(phase, ftp.best_model(phase / best_freq), color='r')

plt.show()

# -

# ## Template periodogram, multiple templates -- `FastMultiTemplatePeriodogram`

#

# If you would like to fit from a selection of templates, you may do so via the `FastMultiTemplatePeriodogram`. At each trial frequency, the `FastMultiTemplatePeriodogram` will pick the template that best fits the data. This is useful for detecting classes of objects that exhibit multiple kinds of periodic fluctuations (for example, RR Lyrae).

# +

# Make two templates

cn1, sn1 = zip(*[ (0.2, 0.2), (0.0, 0.2), (0.2, 0.0) ])

cn2, sn2 = zip(*[ (0.2, 0.1), (0.2, 0.0), (0.0, 0.2) ])

template1 = Template(cn1, sn1)

template2 = Template(cn2, sn2)

# Now, generate some linear combinations of the templates

y_temp1 = template1(phase)

y_temp2 = template2(phase)

signal = lambda x, q=0.5 : q * template1(x) + (1 - q) * template2(x)

templates = []

ftemp, axtemp = plt.subplots()

colormap = plt.get_cmap('viridis')

axtemp.set_title('Templates used for fitting')

axtemp.set_xlabel('Phase')

for q in [ 0.0, 0.5, 1.0 ]:
    y_temp = signal(phase, q=q)
    templates.append(Template.from_sampled(y_temp, nharmonics=4))
    axtemp.plot(phase, templates[-1](phase), color=colormap(q))

axtemp.set_xlim(0, 1)

# generate some new data

q_data = 0.25

y_new = signal(phi, q=q_data) + sigma * rand.randn(len(t))

# instantiate (multi-template) periodogram

ftp_multi = FastMultiTemplatePeriodogram(templates=templates)

# give periodogram data

ftp_multi.fit(t, y_new, yerr)

# evaluate periodogram at a set of automatically determined

# frequencies

freqs_multi, powers_multi = ftp_multi.autopower(samples_per_peak = 10)

best_freq = freqs_multi[np.argmax(powers_multi)]

f2, (axper, axfit) = plt.subplots(1, 2, figsize=(12, 3))

axper.plot(freqs_multi, powers_multi)

axper.axvline(freq, ls=':', color='0.5')

axfit.plot(phase, ftp_multi.best_model(phase/best_freq), color='r')

axfit.plot(phase, signal(phase, q=q_data), color='k', lw=2)

axfit.errorbar((t * best_freq) % 1.0, y_new, yerr, fmt='o', c='0.5')

plt.show()

# -

# ## Single-frequency fits

#

# You may also use either the `FastTemplatePeriodogram` or `FastMultiTemplatePeriodogram` to fit a template at a given frequency using the `fit_model` method.

# +

correct_template = Template.from_sampled(signal(phase, q=q_data), nharmonics=4)

another_model = FastTemplatePeriodogram(template=correct_template).fit(t, y_new, yerr).fit_model(freq)

f,ax = plt.subplots()

ax.plot(phase, another_model(phase/freq), color='r')

ax.plot(phase, signal(phase, q=q_data), color='k', lw=2)

ax.errorbar((t * freq) % 1.0, y_new, yerr, fmt='o', c='0.5')

plt.show()

# -

| notebooks/Examples.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

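# # Course objective:

#

# Use the addition and subtraction formulas of a neural network to explain gradient descent

# # Key points of the example:

#

# Following the updates of the network parameters (w, b) makes the gradient descent evaluation process easier to understand

# matplotlib: load the plotting toolkit

# random, numpy: load the math toolkits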

import matplotlib

import matplotlib.pyplot as plt

# %matplotlib inline

# For Jupyter Notebook: render the output directly inside the cell

import random as random

import numpy as np

import csv

# # ydata = b + w * xdata

# Set the range of the curve

# Provide the initial data

x_data = [ 338., 333., 328., 207., 226., 25., 179., 60., 208., 606.]

y_data = [ 640., 633., 619., 393., 428., 27., 193., 66., 226., 1591.]

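# Set the neural network parameters: bias and weight

x = np.arange(-200,-100,1) # candidate bias values

y = np.arange(-5,5,0.1) # candidate weight values

Z = np.zeros((len(y), len(x))) # rows index the weight, columns index the bias

# The two matrices X and Y returned by meshgrid always have the same shape: len(y) rows and len(x) columns

# meshgrid uses the points on the two coordinate axes to lay out a grid over the plane.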

X, Y = np.meshgrid(x, y)

for i in range(len(x)):

    for j in range(len(y)):

        b = x[i]

        w = y[j]

        Z[j][i] = 0

        for n in range(len(x_data)):

            Z[j][i] = Z[j][i] + (y_data[n] - b - w*x_data[n])**2

        Z[j][i] = Z[j][i]/len(x_data)
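# The triple loop above fills the grid one cell at a time. As a minimal sketch, the same mean squared error surface can be computed with NumPy broadcasting. The names `b_grid`, `w_grid` and `Z_vec` are hypothetical and are not used elsewhere in this notebook.

```python
import numpy as np

x_data = np.array([338., 333., 328., 207., 226., 25., 179., 60., 208., 606.])
y_data = np.array([640., 633., 619., 393., 428., 27., 193., 66., 226., 1591.])

b_grid = np.arange(-200, -100, 1)  # candidate biases (hypothetical name)
w_grid = np.arange(-5, 5, 0.1)     # candidate weights (hypothetical name)

# With the default indexing, B and W both have shape (len(w_grid), len(b_grid)).
B, W = np.meshgrid(b_grid, w_grid)

# A trailing axis lets every (w, b) pair broadcast against all data points.
residuals = y_data - B[..., np.newaxis] - W[..., np.newaxis] * x_data

# Average the squared residuals over the data axis.
Z_vec = np.mean(residuals ** 2, axis=-1)
```

# Row `j` of `Z_vec` corresponds to `w_grid[j]` and column `i` to `b_grid[i]`, which is the layout `plt.contourf` expects when the bias is on the horizontal axis.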

# +

# ydata = b + w * xdata

b = -120 # initial b

w = -4 # initial w

lr = 0.000001 # learning rate

iteration = 100000

# Store initial values for plotting.

b_history = [b]

w_history = [w]

# Set the initial values of the squared-gradient accumulators

lr_b = 0.0

lr_w = 0.0

# -

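# In calculus, taking the partial derivative (∂) of a multivariate function with respect to each of its parameters and writing the results as a vector gives the gradient.

# For example, for a function f(x), taking the partial derivative with respect to x gives the gradient vector (∂f/∂x), abbreviated grad f(x) or ∇f(x).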

#
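# To make the definition concrete, here is a minimal sketch for the squared-error loss of this notebook, L(b, w) = Σ (y − b − w·x)², whose gradient is the vector (∂L/∂b, ∂L/∂w). The function names `loss`, `analytic_gradient` and `numeric_gradient` are hypothetical. The analytic partial derivatives are compared with central finite differences.

```python
import numpy as np

x_data = np.array([338., 333., 328., 207., 226., 25., 179., 60., 208., 606.])
y_data = np.array([640., 633., 619., 393., 428., 27., 193., 66., 226., 1591.])


def loss(b, w):
    """Sum of squared errors of the line y = b + w * x."""
    return np.sum((y_data - b - w * x_data) ** 2)


def analytic_gradient(b, w):
    """Gradient vector (dL/db, dL/dw) obtained by differentiating the loss."""
    residual = y_data - b - w * x_data
    return np.array([-2.0 * np.sum(residual), -2.0 * np.sum(residual * x_data)])


def numeric_gradient(b, w, eps=1e-3):
    """Central finite differences, one parameter at a time."""
    d_b = (loss(b + eps, w) - loss(b - eps, w)) / (2 * eps)
    d_w = (loss(b, w + eps) - loss(b, w - eps)) / (2 * eps)
    return np.array([d_b, d_w])


print(analytic_gradient(-120.0, -4.0))
print(numeric_gradient(-120.0, -4.0))
```

# Because the loss is quadratic in b and w, the two results should agree up to floating-point rounding.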

# +

'''

Error = (actual ydata - predicted ydata); the loss is the squared error summed over the data

Gradient = -2 * input * Error

Updated weight = original weight - learning rate * Gradient

'''

# Iterations

for i in range(iteration):

    b_grad = 0.0

    w_grad = 0.0

    for n in range(len(x_data)):

        b_grad = b_grad - 2.0*(y_data[n] - b - w*x_data[n])*1.0

        w_grad = w_grad - 2.0*(y_data[n] - b - w*x_data[n])*x_data[n]

    # Accumulate the squared gradients (not used by the plain update below)

    lr_b = lr_b + b_grad ** 2

    lr_w = lr_w + w_grad ** 2

    # Update parameters.

    b = b - lr * b_grad

    w = w - lr * w_grad

    # Store parameters for plotting

    b_history.append(b)

    w_history.append(w)

# -
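# The accumulators `lr_b` and `lr_w` in the loop above collect squared gradients but never enter the update. One common use for such accumulators is an Adagrad-style step, in which each parameter's learning rate is divided by the square root of its accumulated squared gradients. The sketch below is an assumption about how they could be used, not this notebook's method. The function names, `lr=1.0` and the `eps` guard are all hypothetical choices.

```python
import numpy as np

x_data = np.array([338., 333., 328., 207., 226., 25., 179., 60., 208., 606.])
y_data = np.array([640., 633., 619., 393., 428., 27., 193., 66., 226., 1591.])


def squared_error(b, w):
    """Sum of squared errors of the line y = b + w * x."""
    return np.sum((y_data - b - w * x_data) ** 2)


def adagrad_fit(b=-120.0, w=-4.0, lr=1.0, iterations=100000, eps=1e-12):
    """Adagrad-style gradient descent on the squared error (illustrative sketch)."""
    sum_sq_b = 0.0  # running sum of squared bias gradients
    sum_sq_w = 0.0  # running sum of squared weight gradients
    for _ in range(iterations):
        residual = y_data - b - w * x_data
        b_grad = -2.0 * residual.sum()
        w_grad = -2.0 * (residual * x_data).sum()
        sum_sq_b += b_grad ** 2
        sum_sq_w += w_grad ** 2
        # Each parameter gets its own effective learning rate.
        b -= lr / (np.sqrt(sum_sq_b) + eps) * b_grad
        w -= lr / (np.sqrt(sum_sq_w) + eps) * w_grad
    return b, w


b_fit, w_fit = adagrad_fit()
print(b_fit, w_fit, squared_error(b_fit, w_fit))
```

# The per-parameter scaling matters here because the gradient with respect to w is far larger than the gradient with respect to b, so a single fixed learning rate that is safe for w moves b only very slowly.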

# plot the figure

plt.contourf(x,y,Z, 50, alpha=0.5, cmap=plt.get_cmap('jet'))

plt.plot([-188.4], [2.67], 'x', ms=12, markeredgewidth=3, color='orange')

plt.plot(b_history, w_history, 'o-', ms=3, lw=1.5, color='black')

plt.xlim(-200,-100)

plt.ylim(-5,5)

plt.xlabel(r'$b$', fontsize=16)

plt.ylabel(r'$w$', fontsize=16)

plt.show()
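# The orange cross in the figure is drawn at (b, w) = (-188.4, 2.67). As a quick check, and assuming the cross is meant to mark the least-squares optimum, a degree-1 `np.polyfit` on the same data gives the closed-form solution. The names `w_opt` and `b_opt` are hypothetical.

```python
import numpy as np

x_data = np.array([338., 333., 328., 207., 226., 25., 179., 60., 208., 606.])
y_data = np.array([640., 633., 619., 393., 428., 27., 193., 66., 226., 1591.])

# polyfit returns the coefficients from the highest degree down: [slope, intercept].
w_opt, b_opt = np.polyfit(x_data, y_data, 1)

print(round(b_opt, 1), round(w_opt, 2))
```

# Comparing the last entries of `b_history` and `w_history` with these values shows how far the gradient descent run ended from the optimum.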

| D74_Gradient Descent_數學原理/Day74-Gradient Descent_Math.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Slicing and Dicing Dataframes

#

# You have seen how to do indexing of dataframes using ```df.iloc``` and ```df.loc```. Now, let's see how to subset dataframes based on certain conditions.

#

# +

# loading libraries and reading the data

import numpy as np

import pandas as pd

df = pd.read_csv("../global_sales_data/market_fact.csv")

df.head()

# -

# ### Subsetting Rows Based on Conditions

#

# Often, you want to select rows which satisfy some given conditions. For example, select all the orders where ```Sales > 3000```, or all the orders where ```2000 < Sales < 3000``` and ```Profit > 100```.

#

# Arguably, the best way to do these operations is using ```df.loc[]```, since ```df.iloc[]``` would require you to remember the integer column indices, which is tedious.

#

# Let's see some examples.

# Select all rows where Sales > 3000

# First, we get a boolean array where True corresponds to rows having Sales > 3000

df.Sales > 3000

# Then, we pass this boolean array inside df.loc

df.loc[df.Sales > 3000]

# An alternative to df.Sales is df['Sales']

# You may want to put the : to indicate that you want all columns

# It is more explicit

df.loc[df['Sales'] > 3000, :]

# We combine multiple conditions using the & operator

# E.g. all orders having 2000 < Sales < 3000 and Profit > 100

df.loc[(df.Sales > 2000) & (df.Sales < 3000) & (df.Profit > 100), :]

# The 'OR' operator is represented by a | (Note that Python's 'or' keyword doesn't work on pandas Series)

# E.g. all orders having Sales > 2000 OR Profit > 100

df.loc[(df.Sales > 2000) | (df.Profit > 100), :]
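# A minimal sketch of why `|` is needed, using a small hypothetical frame `toy` rather than the market_fact data. Python's `or` asks each Series for a single truth value, which pandas refuses to give, whereas `|` compares element by element. The parentheses are required because `|` binds more tightly than `>`.

```python
import pandas as pd

# Hypothetical data, for illustration only.
toy = pd.DataFrame({"Sales": [1500.0, 2500.0, 3500.0],
                    "Profit": [50.0, 150.0, -20.0]})

try:
    toy.loc[(toy.Sales > 2000) or (toy.Profit > 100)]
    raised = False
except ValueError:
    # The truth value of a multi-element Series is ambiguous.
    raised = True

# The element-wise OR keeps every row where at least one condition holds.
either = toy.loc[(toy.Sales > 2000) | (toy.Profit > 100), :]
print(raised)
print(either)
```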

# E.g. all orders having 2000 < Sales < 3000 and Profit > 100

# Also, this time, you only need the Cust_id, Sales and Profit columns

df.loc[(df.Sales > 2000) & (df.Sales < 3000) & (df.Profit > 100), ['Cust_id', 'Sales', 'Profit']]

# You can use the == and != operators

df.loc[(df.Sales == 4233.15), :]

df.loc[(df.Sales != 1000), :]

# +

# You may want to select rows whose column value is in an iterable

# For instance, say a colleague gives you a list of customer_ids from a certain region

customers_in_bangalore = ['Cust_1798', 'Cust_1519', 'Cust_637', 'Cust_851']

# To get all the orders from these customers, use the isin() function

# It returns a boolean Series, which you can use to select rows

df.loc[df['Cust_id'].isin(customers_in_bangalore), :]
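# A minimal sketch of what `isin()` returns, using a small hypothetical frame `toy` and a hypothetical list `wanted`. The result is a boolean mask with one entry per row, and the `~` operator inverts it to select the remaining rows.

```python
import pandas as pd

# Hypothetical data, for illustration only.
toy = pd.DataFrame({"Cust_id": ["Cust_1798", "Cust_12", "Cust_637", "Cust_99"],
                    "Sales": [120.0, 80.0, 45.5, 300.0]})
wanted = ["Cust_1798", "Cust_637"]

mask = toy["Cust_id"].isin(wanted)  # boolean Series, one entry per row
from_wanted = toy.loc[mask, :]      # rows whose Cust_id is in the list
from_others = toy.loc[~mask, :]     # rows whose Cust_id is not in the list

print(mask.tolist())
print(from_others)
```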

| 4_Slicing_Dicing.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import os

import csv

import pylab as pp

import matplotlib

# +

os.chdir(r"D:\PEM article\V_I")

fileName = "V_I.png"

# +

x1 = []

y1 = []

x2 = []

y2 = []

x3 = []

y3 = []

x4 = []

y4 = []

# +

with open('Base.txt','r') as csvfile:

    Data1 = csv.reader(csvfile, delimiter=',')

    for row in Data1:

        x1.append(row[0])

        y1.append(row[1])

for i in range(len(x1)):

    x1[i] = float(x1[i])

    y1[i] = float(y1[i])

#----------------------------------------------------------#

with open('A.txt','r') as csvfile:

    Data2 = csv.reader(csvfile, delimiter=',')

    for row in Data2:

        y2.append(row[0])

        x2.append(row[1])

for i in range(len(x2)):

    y2[i] = float(y2[i])

    x2[i] = float(x2[i])

#----------------------------------------------------------#

with open('B.txt','r') as csvfile:

    Data3 = csv.reader(csvfile, delimiter=',')

    for row in Data3:

        y3.append(row[0])

        x3.append(row[1])

for i in range(len(x3)):

    y3[i] = float(y3[i])

    x3[i] = float(x3[i])

#----------------------------------------------------------#

with open('C - Copy.txt','r') as csvfile:

    Data4 = csv.reader(csvfile, delimiter=',')

    for row in Data4:

        y4.append(row[0])

        x4.append(row[1])

for i in range(len(x4)):

    y4[i] = float(y4[i])

    x4[i] = float(x4[i])
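# The four reading blocks above differ only in the file name and in which column holds x and which holds y. As a minimal sketch, a small helper could replace them. `read_columns` and its parameters are hypothetical names, not part of this notebook.

```python
import csv


def read_columns(path, x_col=0, y_col=1, delimiter=","):
    """Read two numeric columns from a delimited text file as lists of floats."""
    xs, ys = [], []
    with open(path, "r", newline="") as handle:
        for row in csv.reader(handle, delimiter=delimiter):
            if not row:  # skip blank lines
                continue
            xs.append(float(row[x_col]))
            ys.append(float(row[y_col]))
    return xs, ys


# Usage mirroring the blocks above (file names taken from this notebook):
# x1, y1 = read_columns('Base.txt')                # x in column 0, y in column 1
# x2, y2 = read_columns('A.txt', x_col=1, y_col=0) # y in column 0, x in column 1
```

# Converting to float while reading removes the need for the separate conversion loops.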

# +

# %matplotlib notebook

pp.autoscale(enable=True, axis='x', tight=True)

pp.plot(x1, y1, marker='o', markerfacecolor='blue', markersize=3, color='skyblue', linewidth=2.5, label = "Base model")

pp.plot(x2, y2, marker='*', color='red', linewidth=1.5, label = "Model A")

pp.plot(x3, y3, marker='', color='green', linewidth=2, linestyle='dashed', label = "Model B")

pp.plot(x4, y4, marker='*', color='olive', linewidth=1.5, label = "Model C")

#pp.legend("Numerical results (single phase)", "Numerical results (two phase)", "Ahmadi et al.", "Wang et al.")

pp.legend()

pp.xlabel(r"Current density $\left(\frac{A}{cm^2}\right)$")

pp.ylabel(r"Cell voltage $(V)$")

fig = pp.gcf()

fig.set_size_inches(6, 5)

# -

pp.savefig(fileName, dpi=1200)

| PEM/.ipynb_checkpoints/Plot-Copy1-checkpoint.ipynb |