
# ---
# jupyter:
#   jupytext:
#     cell_metadata_filter: all
#     notebook_metadata_filter: all
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
#   language_info:
#     codemirror_mode:
#       name: ipython
#       version: 3
#     file_extension: .py
#     mimetype: text/x-python
#     name: python
#     nbconvert_exporter: python
#     pygments_lexer: ipython3
#     version: 3.6.7
# ---

# # Generate stimuli for Sound-Switch Experiment 1 <a class="tocSkip">

# C and G guitar chords were generated [here](https://www.apronus.com/music/onlineguitar.htm), then recorded in Audacity version 2.3.0 via Windows 10's Stereo Mix driver and amplified by 25.98 dB and 25.065 dB respectively (Effect --> Amplify).
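# Audacity's Amplify effect is specified in decibels. Assume the usual amplitude convention, where gain in dB is 20·log10 of the sample-value ratio. Under that assumption, the reported gains multiply sample values by roughly 20 for the C chord and roughly 18 for the G chord. The sketch below shows the conversion. The helper `db_to_amplitude_ratio` is illustrative and is not part of pydub or Audacity.

```python
def db_to_amplitude_ratio(gain_db):
    """Linear factor applied to sample values for a gain of gain_db decibels (20*log10 convention)."""
    return 10 ** (gain_db / 20)

# Gains reported above for the two recorded chords
for chord, gain_db in [("C", 25.98), ("G", 25.065)]:
    print(f"{chord}: +{gain_db} dB -> x{db_to_amplitude_ratio(gain_db):.2f}")
```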

# # Imports

from pydub import AudioSegment

from pydub.generators import Sine

from pydub.playback import play

import random

import numpy as np

import os

# # Functions

def generate_songs(path_prefix):
    """Write num_exemplars songs per switch probability under path_prefix.

    Relies on the globals songs, silence, chunk_size, num_chunks,
    crossfade_duration, switch_probabilities and num_exemplars.
    """
    for switch_probability in switch_probabilities:
        for exemplar in range(num_exemplars):
            # Begin with silence
            this_song = silence
            # Choose a random tone (0 or 1) to start with
            which_tone = round(random.random())
            for chunk in range(num_chunks):
                this_probability = random.random()
                # Change tones with probability switch_probability
                if this_probability < switch_probability:
                    which_tone = 1 - which_tone
                this_segment = songs[which_tone][:chunk_size]
                # Add intervening silence
                this_song = this_song.append(silence, crossfade=crossfade_duration)
                # Add tone
                this_song = this_song.append(this_segment, crossfade=crossfade_duration)
            # Add final silence (append returns a new segment, so reassign it)
            this_song = this_song.append(silence, crossfade=crossfade_duration)
            song_name = (
                f"{path_prefix}switch-{round(switch_probability, 2)}"
                f"_chunk-{chunk_size}_C_G_alternating_{str(exemplar).zfill(2)}.mp3"
            )
            this_song.export(song_name, format="mp3", bitrate="192k")
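# The chunk loop in `generate_songs` is a two-state switching process: before each chunk, the tone flips with probability `switch_probability`. The flip before the first chunk only changes the random starting tone. So the number of audible switches across `num_chunks` chunks is Binomial(`num_chunks` - 1, p), which averages 19·p for 20 chunks.
#
# The sketch below reproduces only the tone-index sequence, with no audio, so the switch statistics can be checked without pydub. `tone_sequence` and `count_switches` are hypothetical helpers, not part of the notebook.

```python
import random

def tone_sequence(switch_probability, num_chunks, rng=None):
    """Tone index (0 or 1) for each chunk, following the loop in generate_songs."""
    if rng is None:
        rng = random.Random()
    which_tone = round(rng.random())  # random starting tone, as in the notebook
    sequence = []
    for _ in range(num_chunks):
        # flip to the other tone with probability switch_probability
        if rng.random() < switch_probability:
            which_tone = 1 - which_tone
        sequence.append(which_tone)
    return sequence

def count_switches(sequence):
    """Number of adjacent chunk pairs whose tones differ (the audible switches)."""
    return sum(a != b for a, b in zip(sequence, sequence[1:]))

# Empirical mean number of switches over many simulated 20-chunk songs
p, trials = 0.3, 5000
rng = random.Random(42)
mean_switches = sum(count_switches(tone_sequence(p, 20, rng)) for _ in range(trials)) / trials
print(f"p={p}: mean switches {mean_switches:.2f} (expected {19 * p:.2f})")
```

# Comparing the empirical mean with 19·p for each value in `switch_probabilities` is a quick sanity check on the generated stimuli.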

# # Stimulus Generation

# ## Guitar chords

# +

songs = [

AudioSegment.from_mp3("guitar_chords/guitar_C.mp3"),

AudioSegment.from_mp3("guitar_chords/guitar_G.mp3"),

]

chunk_size = 500 # in ms

num_chunks = 20

crossfade_duration = 50 # in ms

silence_duration = 100 # in ms

switch_probabilities = np.linspace(0.1, 0.9, num=9)

num_exemplars = 10

silence = AudioSegment.silent(duration=silence_duration)

# Generate the songs

generate_songs(path_prefix="guitar_chords/")

# -

# ## Tones

# +

# Create sine waves of given freqs

frequencies = [261.626, 391.995] # C4, G4

sample_rate = 44100 # sample rate

bit_depth = 16 # bit depth

# Same params as above for guitar

chunk_size = 500 # in ms

num_chunks = 20

crossfade_duration = 50 # in ms

silence_duration = 100 # in ms

switch_probabilities = np.linspace(0.1, 0.9, num=9)

num_exemplars = 10

silence = AudioSegment.silent(duration=silence_duration)

sine_waves = []

songs = []

for i, frequency in enumerate(frequencies):
    sine_waves.append(Sine(frequency, sample_rate=sample_rate, bit_depth=bit_depth))
    # Convert the waveform to an AudioSegment for playback and export;
    # make it twice chunk_size long so every chunk slice is full length
    songs.append(sine_waves[i].to_audio_segment(duration=chunk_size*2))

generate_songs(path_prefix="pure_tones/")

# -

# # Practice Stimulus

# Just choose one of the above stimuli to be a practice stimulus, and remake the stimuli so that it doesn't get repeated.
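# One way to set aside a practice stimulus, sketched under assumptions: pick one generated file at random and move it into a separate folder. That way it cannot also appear in the experimental set. Regenerating the stimuli, as described above, is the alternative. The function name `set_aside_practice_stimulus` and the `practice` folder are hypothetical.

```python
import random
import shutil
import tempfile
from pathlib import Path

def set_aside_practice_stimulus(stimulus_dir, practice_dir, seed=None):
    """Move one randomly chosen .mp3 from stimulus_dir into practice_dir and return its new path."""
    candidates = sorted(Path(stimulus_dir).glob("*.mp3"))
    if not candidates:
        raise FileNotFoundError(f"no .mp3 files in {stimulus_dir}")
    chosen = random.Random(seed).choice(candidates)
    practice_dir = Path(practice_dir)
    practice_dir.mkdir(parents=True, exist_ok=True)
    # Moving (not copying) removes the file from the experimental set
    return Path(shutil.move(str(chosen), str(practice_dir / chosen.name)))

# Demo on empty placeholder files in a temporary directory
with tempfile.TemporaryDirectory() as tmp:
    stim = Path(tmp) / "pure_tones"
    stim.mkdir()
    for name in ["a.mp3", "b.mp3", "c.mp3"]:
        (stim / name).touch()
    practice_file = set_aside_practice_stimulus(stim, Path(tmp) / "practice", seed=0)
    remaining = sorted(p.name for p in stim.glob("*.mp3"))
    moved_name = practice_file.name
    moved_existed = practice_file.exists()
```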

| stimuli/generate_stimuli.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     name: python3
# ---

# + [markdown] id="HMyxX1Gmh7cl" colab_type="text"

# https://colab.research.google.com/drive/1jKzKN_7Fj-OIwTZlVBDaxVj5ct1uGAva

# + [markdown] id="c0x9z68Byl2u" colab_type="text"

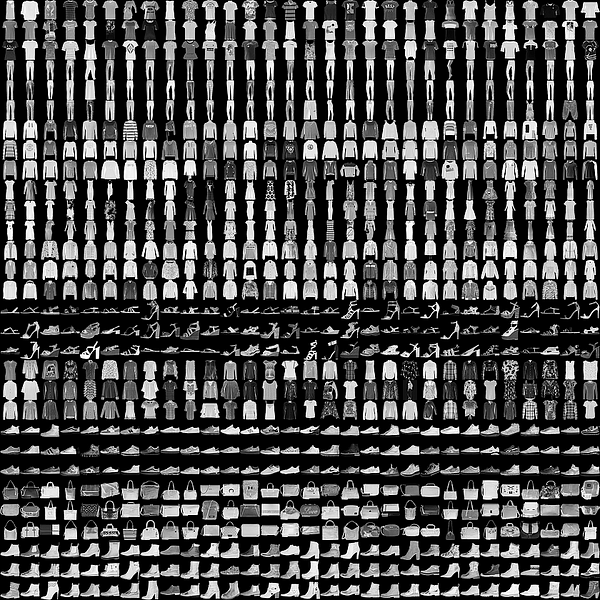

# # GAN

# + id="7aivHtGkBOKw" colab_type="code" outputId="a77b70ad-c995-4ab9-9194-1540e5104ebb" colab={"base_uri": "https://localhost:8080/", "height": 34}

import numpy as np

from keras.datasets import mnist

from keras.layers import Input, Dense, Reshape, Flatten, Dropout

from keras.layers import BatchNormalization

from keras.layers.advanced_activations import LeakyReLU

from keras.models import Sequential

from keras.optimizers import Adam

import matplotlib.pyplot as plt

# %matplotlib inline

plt.switch_backend('agg')

# + id="2slim96TPTcH" colab_type="code" colab={}

from keras.models import Sequential

from keras.layers import Dense

from keras.layers import Reshape

from keras.layers.core import Activation

from keras.layers.normalization import BatchNormalization

from keras.layers.convolutional import UpSampling2D

from keras.layers.convolutional import Conv2D, MaxPooling2D

from keras.layers.core import Flatten

from keras.optimizers import SGD

from keras.datasets import mnist

import numpy as np

from PIL import Image

import argparse

import math

# + id="ePrTaaRlFErE" colab_type="code" outputId="515301ae-daef-412f-c13e-da2abe795462" colab={"base_uri": "https://localhost:8080/", "height": 170}

# !pip install logger

from logger import logger

# + id="VdgeZQ3PDQsY" colab_type="code" colab={}

shape = (28, 28, 1)

epochs = 400

batch = 32

save_interval = 100

# + id="o_NdO2D5Duwn" colab_type="code" colab={}

def generator():
    model = Sequential()
    model.add(Dense(256, input_shape=(100,)))
    model.add(LeakyReLU(alpha=0.2))
    model.add(BatchNormalization(momentum=0.8))
    model.add(Dense(512))
    model.add(LeakyReLU(alpha=0.2))
    model.add(BatchNormalization(momentum=0.8))
    model.add(Dense(1024))
    model.add(LeakyReLU(alpha=0.2))
    model.add(BatchNormalization(momentum=0.8))
    model.add(Dense(28 * 28 * 1, activation='tanh'))
    model.add(Reshape(shape))
    return model

# + id="5JsKSvY0Dw0s" colab_type="code" colab={}

def discriminator():
    model = Sequential()
    model.add(Flatten(input_shape=shape))
    model.add(Dense(28 * 28 * 1))
    model.add(LeakyReLU(alpha=0.2))
    model.add(Dense((28 * 28 * 1) // 2))
    model.add(LeakyReLU(alpha=0.2))
    model.add(Dense(1, activation='sigmoid'))
    return model
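# The parameter counts of these two fully connected models can be worked out by hand as a check on `summary()`:
#
# - A `Dense(n_out)` layer on `n_in` inputs has `n_in * n_out + n_out` parameters.
# - Keras counts four values per unit for `BatchNormalization`: gamma, beta, and the two moving statistics (the latter non-trainable).
# - `LeakyReLU`, `Flatten` and `Reshape` add no parameters.
#
# A small sketch of that arithmetic, with illustrative helper names:

```python
def dense_params(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out

def batchnorm_params(n):
    """gamma, beta, moving mean and moving variance for n units."""
    return 4 * n

latent_dim, img_dim = 100, 28 * 28 * 1

generator_params = (
    dense_params(latent_dim, 256) + batchnorm_params(256)
    + dense_params(256, 512) + batchnorm_params(512)
    + dense_params(512, 1024) + batchnorm_params(1024)
    + dense_params(1024, img_dim)
)
discriminator_params = (
    dense_params(img_dim, img_dim)
    + dense_params(img_dim, img_dim // 2)
    + dense_params(img_dim // 2, 1)
)
print(generator_params, discriminator_params)  # 1493520 923553
```

# Of the generator's batch-normalization parameters, 2 × (256 + 512 + 1024) = 3,584 are the moving statistics, which Keras reports as non-trainable.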

# + id="f8c7eJjbdS0v" colab_type="code" outputId="bbf302bc-c5ad-47dc-c501-c5accdde9afe" colab={"base_uri": "https://localhost:8080/", "height": 527}

Generator = generator()

Generator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8))

Discriminator = discriminator()

Discriminator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8),metrics=['accuracy'])

# + id="wVT8owFodTzH" colab_type="code" outputId="5de7d734-bee4-4c19-da1a-87e21dfbbebf" colab={"base_uri": "https://localhost:8080/", "height": 884}

# summary() prints its table and returns None, so call it directly rather than inside print()
Discriminator.summary()
Generator.summary()

# + id="t26rWMb9-lRV" colab_type="code" outputId="a676bb50-2d19-48c5-a89a-d59162953b8c" colab={"base_uri": "https://localhost:8080/", "height": 527}

Generator.summary()

# + id="osVaP6pqDy5-" colab_type="code" colab={}

def stacked_generator_discriminator(D, G):
    # Freeze D inside the stacked model so training it only updates G's weights
    D.trainable = False
    model = Sequential()
    model.add(G)
    model.add(D)
    return model

# + id="ObcGi9PdD6EI" colab_type="code" colab={}

def plot_images(samples=16, step=0):
    filename = "mnist_%d.png" % step
    noise = np.random.normal(0, 1, (samples, 100))
    images = Generator.predict(noise)
    plt.figure(figsize=(5, 5))
    for i in range(images.shape[0]):
        plt.subplot(4, 4, i + 1)
        image = images[i, :, :, :]
        image = np.reshape(image, [28, 28])
        plt.imshow(image, cmap='gray')
        plt.axis('off')
    plt.tight_layout()
    plt.show()
    #plt.close('all')

# + id="REhNMaBOEGJw" colab_type="code" colab={}

stacked_generator_discriminator = stacked_generator_discriminator(Discriminator, Generator)

stacked_generator_discriminator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8))

# + id="PyBn269FPquS" colab_type="code" outputId="caf84757-ac2b-4581-a228-8f3585b27d2c" colab={"base_uri": "https://localhost:8080/", "height": 221}

stacked_generator_discriminator.summary()

# + id="_Zzp5_9zEd6T" colab_type="code" outputId="6a35f310-8bdd-442b-991c-0f791243dae5" colab={"base_uri": "https://localhost:8080/", "height": 51}

(X_train, _), (_, _) = mnist.load_data()

X_train = (X_train.astype(np.float32) - 127.5) / 127.5

X_train = np.expand_dims(X_train, axis=3)

# + id="OfSz3mV_RkPH" colab_type="code" colab={}

save_interval = 250

# + id="X2bZzvGIEg54" colab_type="code" colab={}

# %matplotlib inline

disc_loss = []

gen_loss = []

for cnt in range(4000):
    # Real half-batch: a contiguous slice starting at a random index (integer upper bound)
    random_index = np.random.randint(0, len(X_train) - batch // 2)
    legit_images = X_train[random_index: random_index + batch // 2].reshape(batch // 2, 28, 28, 1)
    # Generated half-batch
    gen_noise = np.random.normal(-1, 1, (batch // 2, 100)) / 2
    synthetic_images = Generator.predict(gen_noise)
    # Train the discriminator: real images labelled 1, generated images labelled 0
    x_combined_batch = np.concatenate((legit_images, synthetic_images))
    y_combined_batch = np.concatenate((np.ones((batch // 2, 1)), np.zeros((batch // 2, 1))))
    d_loss = Discriminator.train_on_batch(x_combined_batch, y_combined_batch)
    # Train the generator through the frozen discriminator, labelling its images as real
    noise = np.random.normal(-1, 1, (batch, 100)) / 2
    y_mislabeled = np.ones((batch, 1))
    g_loss = stacked_generator_discriminator.train_on_batch(noise, y_mislabeled)
    # Each iteration is one batch, not a full epoch over X_train
    logger.info('step: {}, [Discriminator: {}], [Generator: {}]'.format(cnt, d_loss[0], g_loss))
    disc_loss.append(d_loss[0])
    gen_loss.append(g_loss)
    if cnt % save_interval == 0:
        plot_images(step=cnt)
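# Each training step above builds two differently labelled batches. The sketch below isolates that bookkeeping in NumPy so the shapes and labels can be checked without Keras. It takes the real half-batch as one contiguous slice, as the loop does. The helper names and the random stand-in arrays are illustrative assumptions.

```python
import numpy as np

def sample_real_batch(x_train, half_batch, rng):
    """Contiguous slice of half_batch real images starting at a random index.

    The upper bound len(x_train) - half_batch keeps the whole slice in range.
    """
    start = int(rng.integers(0, len(x_train) - half_batch))
    return x_train[start:start + half_batch]

def discriminator_targets(half_batch):
    """Labels for [real; generated]: ones for the real half, zeros for the generated half."""
    return np.concatenate([np.ones((half_batch, 1)), np.zeros((half_batch, 1))])

def generator_targets(batch):
    """All-ones labels: the stacked model is pushed to make D score generated images as real."""
    return np.ones((batch, 1))

rng = np.random.default_rng(0)
x_demo = rng.uniform(-1, 1, size=(100, 28, 28, 1))  # random stand-in for X_train
real = sample_real_batch(x_demo, 16, rng)
fake = rng.uniform(-1, 1, size=(16, 28, 28, 1))     # stand-in for Generator.predict(...)
x_disc = np.concatenate([real, fake])
y_disc = discriminator_targets(16)
```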

# + id="kIdr7A8BN5sO" colab_type="code" outputId="e8bd0d72-1bc2-4c8d-916a-d894bdff6e82" colab={"base_uri": "https://localhost:8080/", "height": 346}

import matplotlib.ticker as mtick

import matplotlib.pyplot as plt

# %matplotlib inline

epochs = range(1, 4001)

plt.plot(epochs, disc_loss, 'bo', label='Discriminator loss')

plt.plot(epochs, gen_loss, 'r', label='Generator loss')

plt.title('Generator and Discriminator loss values')

plt.xlabel('Training step (batch)')

plt.ylabel('Loss')

plt.legend()

plt.grid(False)

plt.show()

# + id="OkF6yBXSE_L2" colab_type="code" outputId="01d302b2-35d1-43ab-c7a7-fad10bda1dd5" colab={"base_uri": "https://localhost:8080/", "height": 369}

plot_images(step=cnt)

# + id="cqp8oeGi68jK" colab_type="code" outputId="3a4f32ec-600c-417f-9016-8c07a640d62b" colab={"base_uri": "https://localhost:8080/", "height": 202}

noise = np.random.normal(0, 1, (1, 100))
images = Generator.predict(noise)
plt.figure(figsize=(10, 10))
for i in range(images.shape[0]):
    plt.subplot(4, 4, i + 1)
    image = images[i, :, :, :]
    image = np.reshape(image, [28, 28])
    plt.imshow(image, cmap='gray')
    plt.axis('off')
plt.tight_layout()
plt.show()

# + id="zJzlYFffVW5W" colab_type="code" colab={}

# + id="eRfJZxjaVW7r" colab_type="code" colab={}

# + [markdown] id="H-3IlCV2VXMY" colab_type="text"

# # DCGAN

# + id="eqPphZ_9HeNE" colab_type="code" colab={}

def generator():
    model = Sequential()
    # Keras 2 signature: units first, input size via input_dim (output_dim was the Keras 1 name)
    model.add(Dense(1024, input_dim=100))
    model.add(Activation('tanh'))
    model.add(Dense(128*7*7))
    model.add(BatchNormalization())
    model.add(Activation('tanh'))
    model.add(Reshape((7, 7, 128), input_shape=(128*7*7,)))
    model.add(UpSampling2D(size=(2, 2)))
    model.add(Conv2D(64, (5, 5), padding='same'))
    model.add(Activation('tanh'))
    model.add(UpSampling2D(size=(2, 2)))
    model.add(Conv2D(1, (5, 5), padding='same'))
    model.add(Activation('tanh'))
    return model

def discriminator():
    model = Sequential()
    model.add(
        Conv2D(64, (5, 5),
               padding='same',
               input_shape=(28, 28, 1))
    )
    model.add(Activation('tanh'))
    model.add(MaxPooling2D(pool_size=(2, 2)))
    model.add(Conv2D(128, (5, 5)))
    model.add(Activation('tanh'))
    model.add(MaxPooling2D(pool_size=(2, 2)))
    model.add(Flatten())
    model.add(Dense(1024))
    model.add(Activation('tanh'))
    model.add(Dense(1))
    model.add(Activation('sigmoid'))
    return model
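# The DCGAN layer sizes can be traced by hand:
#
# - In the generator, two `UpSampling2D(size=(2, 2))` layers take the 7×7 feature map to 14×14 and then to 28×28. The `'same'` convolutions keep that size.
# - In the discriminator, a 5×5 `'valid'` convolution shrinks a side of length n to n - 4, and each 2×2 max-pool halves it.
#
# A sketch of that arithmetic, with illustrative helper names:

```python
def conv_output_size(size, kernel, padding):
    """Side length after a stride-1 convolution: 'same' keeps it, 'valid' shrinks it by kernel - 1."""
    return size if padding == "same" else size - kernel + 1

def pool_output_size(size, pool):
    """Side length after max pooling with strides equal to the pool size and 'valid' padding."""
    return size // pool

# Generator: Reshape to 7x7, then two UpSampling2D(size=(2, 2)) layers
gen_side = 7
for _ in range(2):
    gen_side *= 2
gen_side = conv_output_size(gen_side, 5, "same")  # final Conv2D keeps the size

# Discriminator
side = conv_output_size(28, 5, "same")     # 28
side = pool_output_size(side, 2)           # 14
side = conv_output_size(side, 5, "valid")  # 10
side = pool_output_size(side, 2)           # 5
flattened = side * side * 128              # inputs to Dense(1024)
print(gen_side, side, flattened)  # 28 5 3200
```

# So the discriminator's `Dense(1024)` layer sees 5 × 5 × 128 = 3,200 inputs.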

# + id="BXtQvpKgECRx" colab_type="code" outputId="d79d77a6-dc1a-4787-cefb-a63f4aa9dcca" colab={"base_uri": "https://localhost:8080/", "height": 187}

Generator = generator()

Generator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8))

Discriminator = discriminator()

Discriminator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8),metrics=['accuracy'])

def stacked_generator_discriminator(D, G):
    # Freeze D inside the stacked model so training it only updates G's weights
    D.trainable = False
    model = Sequential()
    model.add(G)
    model.add(D)
    return model

stacked_generator_discriminator = stacked_generator_discriminator(Discriminator, Generator)

stacked_generator_discriminator.compile(loss='binary_crossentropy', optimizer=Adam(lr=0.0002, beta_1=0.5, decay=8e-8))

# + id="UCj7YcjpVoTF" colab_type="code" colab={}

# %matplotlib inline

disc_loss = []

gen_loss = []

for cnt in range(4000):
    # Real half-batch: a contiguous slice starting at a random index (integer upper bound)
    random_index = np.random.randint(0, len(X_train) - batch // 2)
    legit_images = X_train[random_index: random_index + batch // 2].reshape(batch // 2, 28, 28, 1)
    # Generated half-batch
    gen_noise = np.random.normal(-1, 1, (batch // 2, 100)) / 2
    synthetic_images = Generator.predict(gen_noise)
    # Train the discriminator: real images labelled 1, generated images labelled 0
    x_combined_batch = np.concatenate((legit_images, synthetic_images))
    y_combined_batch = np.concatenate((np.ones((batch // 2, 1)), np.zeros((batch // 2, 1))))
    d_loss = Discriminator.train_on_batch(x_combined_batch, y_combined_batch)
    # Train the generator through the frozen discriminator, labelling its images as real
    noise = np.random.normal(-1, 1, (batch, 100)) / 2
    y_mislabeled = np.ones((batch, 1))
    g_loss = stacked_generator_discriminator.train_on_batch(noise, y_mislabeled)
    # Each iteration is one batch, not a full epoch over X_train
    logger.info('step: {}, [Discriminator: {}], [Generator: {}]'.format(cnt, d_loss[0], g_loss))
    disc_loss.append(d_loss[0])
    gen_loss.append(g_loss)
    if cnt % save_interval == 0:
        plot_images(step=cnt)

# + id="5z484ecEVqWb" colab_type="code" outputId="f0b0b8a4-f98f-479e-b759-7668184b119b" colab={"base_uri": "https://localhost:8080/", "height": 346}

import matplotlib.ticker as mtick

import matplotlib.pyplot as plt

# %matplotlib inline

epochs = range(1, 4001)

plt.plot(epochs, disc_loss, 'bo', label='Discriminator loss')

plt.plot(epochs, gen_loss, 'r', label='Generator loss')

plt.title('Generator and Discriminator loss values')

plt.xlabel('Training step (batch)')

plt.ylabel('Loss')

plt.legend()

plt.grid(False)

plt.show()

# + id="vwEZDCVGPrSR" colab_type="code" colab={}

| Chapter08/Vanilla_and_DC_GAN.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3 (ipykernel)
#     language: python
#     name: python3
# ---

# +

#Preliminary feature selection for Merge Table 1

# -

#import necessary packages

import pandas as pd

import datetime as dt

import seaborn as sns

import matplotlib.pyplot as plt

import yellowbrick as yb

import warnings

warnings.filterwarnings("ignore")

path = 'MergeTable1_FirmsAndSmWfigs2Round.csv'

# on_bad_lines='skip' replaces error_bad_lines=False, which pandas 2.0 removed
df1 = pd.read_csv(path, on_bad_lines='skip')

#Identifying the percentage of missing values present in each respective column of the dataframe

percent_missing = df1.isnull().sum() * 100 / len(df1)

print(percent_missing)

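#Keep only columns with at least 50 non-null values (thresh counts non-null entries;
#recent pandas versions raise an error if how= is passed together with thresh=).

df1drop = df1.dropna(axis=1, thresh=50)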
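# `thresh` in `DataFrame.dropna` counts non-null values. So `thresh=50` keeps columns with at least 50 non-null entries; it does not drop columns with more than 50 nulls. A toy example with made-up values, using a threshold of 2 instead of 50:

```python
import numpy as np
import pandas as pd

demo = pd.DataFrame({
    "mostly_full": [1.0, 2.0, 3.0, np.nan],         # 3 non-null values
    "mostly_empty": [np.nan, np.nan, 5.0, np.nan],  # 1 non-null value
})

# Keep columns with at least 2 non-null values
kept = demo.dropna(axis=1, thresh=2)
print(list(kept.columns))  # ['mostly_full']
```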

#Converting daynight and satellite values to numerical values.

daynight_map = {"D": 1, "N": 0}

satellite_map = {"Terra": 1, "Aqua": 0}

df1drop['daynight'] = df1drop['daynight'].map(daynight_map)

df1drop['satellite'] = df1drop['satellite'].map(satellite_map)

#Separate the acq_date column into 'day', 'month', and 'year' columns.

df1drop['acq_date'] = pd.to_datetime(df1drop['acq_date'])

df1drop['year'] = df1drop['acq_date'].dt.year

df1drop['month'] = df1drop['acq_date'].dt.month

df1drop['day'] = df1drop['acq_date'].dt.day

#Now that we have separated our 'acq_date' data into day, month, and year columns, we can drop the 'acq_date' column to eliminate redundancy.

df1drop.drop("acq_date", axis=1, inplace=True)

#Drop columns unnecessary for our exploration.

df1drop.drop("instrument", axis=1, inplace=True)

df1drop.drop("date_loc", axis=1, inplace=True)

df1drop.drop("date_hour_loc", axis=1, inplace=True)

df1drop.drop("acq_time", axis=1, inplace=True)

df1drop.drop("type", axis=1, inplace=True)

df1drop.drop("Unnamed: 0", axis=1, inplace=True)

df1drop.drop("latitude", axis=1, inplace=True)

df1drop.drop("longitude", axis=1, inplace=True)

#Drop the rows that still contain null values.

df1drop = df1drop.dropna()

#Convert data in dataframe to string type to work with Yellowbrick visualizations.

df1drop1 = df1drop.astype(str)

#Separate the features (X) from the label (y).

X = df1drop1.drop('FIRE_DETECTED', axis=1)

y = df1drop1['FIRE_DETECTED']

features = ["brightness", "scan", "track", "satellite", "confidence", "version", "bright_t31", "frp", "daynight", "lat", "long", "hour_x", "day", "month", "year"]

# +

#Use RandomForestClassifier to plot feature importance for our dataset. As we can see from the output, hour_x, daynight, version, and brightness do not seem to contribute significantly to our model.

from sklearn.ensemble import RandomForestClassifier

from yellowbrick.features import FeatureImportances

model = RandomForestClassifier(n_estimators=10)

viz = FeatureImportances(model, labels=features, size=(1080, 720))

viz.fit(X, y)

viz.show()

# +

#Create a Shapiro-Wilk ranking of our features.

from yellowbrick.features import Rank1D

fig, ax = plt.subplots(1, figsize=(8, 12))

vzr = Rank1D(ax=ax)

vzr.fit(X, y)

vzr.transform(X)

sns.despine(left=True, bottom=True)

vzr.show()

# -

#Convert data in the dataframe to float type.

df1drop2 = df1drop.astype(float)

df1drop2.head()

# +

#Separate the features (X) from the label (y).

X = df1drop2.drop('FIRE_DETECTED', axis=1)

y = df1drop2['FIRE_DETECTED']

features = ["brightness", "scan", "track", "satellite", "confidence", "version", "bright_t31", "frp", "daynight", "lat", "long", "hour_x", "day", "month", "year"]

# +

#Use Yellowbrick's Rank2D to create a Pearson ranking of features.

from yellowbrick.features import Rank2D

fig, ax = plt.subplots(1, figsize=(12, 12))

vzr = Rank2D(ax=ax)

vzr.fit(X, y)

vzr.transform(X)

sns.despine(left=True, bottom=True)

vzr.show()

# +

#Analyze parallel coordinates for our features using Yellowbrick's ParallelCoordinates.

from yellowbrick.features import ParallelCoordinates

features = ["brightness", "scan", "track", "satellite", "confidence", "version", "bright_t31", "frp", "daynight", "lat", "long", "hour_x", "day", "month", "year"]

# Class names are matched to the sorted label values, so 0.0 -> "False" and 1.0 -> "True"
classes = ["False", "True"]

# Instantiate the visualizer

visualizer = ParallelCoordinates(

classes=classes, features=features, sample=0.05,

shuffle=True, size=(1080, 720)

)

# Fit and transform the data to the visualizer

visualizer.fit(X, y)

visualizer.transform(X)

# Finalize the title and axes then display the visualization

visualizer.show()

# +

#Analyze parallel coordinates for our features using Yellowbrick's ParallelCoordinates, this time with a data normalizer.

visualizer = ParallelCoordinates(

classes=classes, features=features,

normalize='standard', # This time we'll specify a normalizer

sample=0.05, shuffle=True, size=(1080, 720)

)

# Fit the visualizer and display it

visualizer.fit(X, y)

visualizer.transform(X)

visualizer.show()

# -

#Analyze feature importance using Sklearn's ExtraTreesClassifier.

from sklearn.ensemble import ExtraTreesClassifier

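# Build X and Y by column name so the FIRE_DETECTED label is not included among the features
X = df1drop2.drop('FIRE_DETECTED', axis=1).values

Y = df1drop2['FIRE_DETECTED'].values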

model = ExtraTreesClassifier(n_estimators = 100)

model.fit(X, Y)

print(model.feature_importances_)
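# When reading `feature_importances_`, two habits help:
#
# - Build `X` and `y` by column name, so the label can never leak into the features.
# - Pair each importance with its feature name.
#
# A self-contained sketch on synthetic data follows. The columns `signal` and `noise` are made up; only the `FIRE_DETECTED` label name mirrors the notebook.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import ExtraTreesClassifier

rng = np.random.default_rng(0)
demo = pd.DataFrame({
    "signal": rng.normal(size=200),  # determines the label
    "noise": rng.normal(size=200),   # unrelated to the label
})
demo["FIRE_DETECTED"] = (demo["signal"] > 0).astype(float)

# Features and label selected by name
X_demo = demo.drop("FIRE_DETECTED", axis=1)
y_demo = demo["FIRE_DETECTED"]

model_demo = ExtraTreesClassifier(n_estimators=100, random_state=0).fit(X_demo, y_demo)
importances = pd.Series(model_demo.feature_importances_, index=X_demo.columns).sort_values(ascending=False)
print(importances)
```

# Because the synthetic label depends only on `signal`, that column should carry nearly all of the importance. The importances of a fitted forest are normalized to sum to 1.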

| ML/Merge Table 1 Feature Selection.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: 'Python 3.8.8 64-bit (''base'': conda)'
#     name: python3
# ---

# # Data analysis on EDGAR Asset-Backed Security (ABS) SEC filings

# +

import numpy as np

import pandas as pd

# %matplotlib inline

import matplotlib.pyplot as plt

import seaborn as sns

plt.rcParams['font.sans-serif']=['SimHei']

# -

df = pd.read_csv(r'D:\data\edgar\edgar_abs_universal.csv')

df

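# Extract the 4-digit year from 'effective'; missing dates ('nan') become NaN instead of failing in int().
# (dropna on the df['year'] column alone would not remove any rows from df.)
df['year'] = pd.to_numeric(df['effective'].astype(str).str[:4], errors='coerce')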

df

recentDf = df[df['year'] > 2014]

recentDf = recentDf[recentDf['doc_type'] != 'GRAPHIC']

recentDf = recentDf[recentDf['file_desc'] != 'Complete submission text file']

recentDf

recentDf.to_csv(r'D:\data\edgar\edgar_abs_since_2015.csv',index=False)

# +

# SPV-level analysis

spv_df = df[['cik','company','loc']]

spv_df = spv_df.drop_duplicates()

spv_df.set_index('cik', inplace=True)

def get_company_category(company):
    company = company.lower()
    if 'student' in company or 'educat' in company:
        return 'ABS - Student Loan'
    if 'card' in company:
        return 'ABS - CARD'
    if 'auto' in company or 'vehicle' in company or 'moto' in company or 'toyota' in company:
        return 'ABS - AUTO'
    if any(key in company for key in ('mbs', 'home', 'mor', '-bnk', 'hous')):
        return 'MBS'
    if 'receiv' in company or 'asset' in company:
        return 'ABS - Others'
    return 'OTHER'

spv_df['company_category'] = spv_df['company'].apply(get_company_category)

# other_df = spv_df[spv_df['company_category'] == 'OTHER']

# print(list(other_df['company']))

spv_group_by_type_df = spv_df.groupby(['company_category']).count()

spv_group_by_type_df.drop('loc', axis=1, inplace=True)

spv_group_by_type_df.columns = ['Count']

spv_group_by_type_df

# -

# products = spv_group_by_type_df['category']

counts = spv_group_by_type_df['Count']

colors = ['#eccc68','#1e90ff','#ff6348','#70a1ff','#2ed573']

fig = counts.plot(kind='pie',  # chart type
                  title='SPV type distribution',
                  fontsize=20,
                  autopct='%.1f%%',  # value labels
                  colors=colors,
                  radius=1,  # pie radius
                  startangle=180,  # starting angle
                  textprops={'fontsize': 14, 'color': '0.5'}
                  )

fig.axes.title.set_size('20')

# plt.title('SPV type distribution')

plt.show()

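# SPV company filing analysis

# drop_duplicates() on the selection returns a new frame, avoiding SettingWithCopyWarning on later column assignments
spv_filing_df = df[['cik','company','filing_id','filing_type','idx_url','filing_desc','effective','file_num']].drop_duplicates()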

spv_filing_count_df = spv_filing_df.groupby(['company']).count()

spv_filing_count_df = spv_filing_count_df['cik']

# spv_filing_count_df.columns = ['Count']

spv_filing_count_df.sort_values(ascending=False).head(10)

# Distribution of the number of filings per SPV

filing_cnt_df = spv_filing_df.groupby(['company']).count()

filing_bins = [0,5,10,15,20,25,30,40,50,100,500,1000]

filing_cnt_df['category'] = pd.cut(filing_cnt_df['cik'], filing_bins)

by_filing_count = filing_cnt_df.groupby('category').count()

by_filing_count['cik'].plot.bar(rot=45)
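# `pd.cut` builds right-closed intervals by default, so with `filing_bins` a count of 5 falls in (0, 5] and 6 falls in (5, 10]. Counts above the last edge (1000) fall outside every bin and become NaN, so such SPVs are silently left out of the bar chart. A small illustration with made-up counts:

```python
import pandas as pd

filing_bins = [0, 5, 10, 15, 20, 25, 30, 40, 50, 100, 500, 1000]
counts = pd.Series([1, 5, 6, 1000, 1500])  # made-up filing counts
binned = pd.cut(counts, filing_bins)
print(binned)
```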

# Filing timeline of SPV companies

spv_filing_df['filing_year'] = spv_filing_df['effective'].apply(lambda effective: str(effective)[0:4])

by_year_count = spv_filing_df.groupby('filing_year').count()

by_year_count.drop(['nan'],inplace=True)

by_year_count.index = by_year_count.index.astype('int64')

by_year_count['cik'].plot(kind= 'line',xticks = by_year_count.index, rot=45)

by_type = spv_filing_df.groupby('filing_type').count()

by_type.reset_index(inplace=True)

by_type['category'] = by_type.apply(lambda row: 'OTHERS' if row['cik'] < 2000 else row['filing_type'], axis=1)

by_type = by_type.groupby('category').sum()

file_type_cnt = by_type['cik']

file_type_cnt.name = 'count'

file_type_cnt = file_type_cnt.sort_values(ascending=False)

file_type_cnt.plot(kind='pie',
                   autopct='%.1f%%',  # value labels
                   colors=colors,
                   # radius=2,  # pie radius
                   startangle=180,  # starting angle
                   textprops={'fontsize': 12, 'color': '#2f3542'}
                   )

# Filing files analysis

spv_files = df[['cik','company','effective','filing_id','filing_type','file_idx']]

spv_files_df = spv_files.groupby(['cik','company','filing_id','effective','filing_type']).count().reset_index()

spv_files_df.sort_values('file_idx', ascending=False).head(10)

# Distribution of the number of files per filing

files_cnt_bins = [0,1,2,3,4,5,6,7,8,9,10,20,30,40,50,100,1000]

spv_files_df['cate'] = pd.cut(spv_files_df['file_idx'], files_cnt_bins)

by_spv_files_count = spv_files_df.groupby('cate').count()

by_spv_files_count['cik'].plot.bar(rot=45)

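# Scratch check (reuses the name df): with a duplicated index label, .loc returns every matching row
import pandas as pd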

df = pd.DataFrame([10,20,30,40], columns=['nums'],index=['a','b','c','d'])

df

df.index = ['a','b','b','d']

df

df.loc['b']

df['nums']

| analysis/edgar-abs-filings-universe.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

import numpy as np

import keras

import matplotlib.pyplot as plt

import csv

import os

import cv2

from sklearn.model_selection import train_test_split

from sklearn.utils import shuffle

from keras.models import *

from keras.layers import *

from keras.optimizers import Adam

# %matplotlib inline

# +

#data folder

log_path = os.getcwd() + '/data/driving_log.csv'

data_folder = os.getcwd() + '/'

#resized image dimension in training

img_rows = 16

img_cols = 32

#batch size and epoch

batch_size=256

nb_epoch=100

# +

# read in the log file
logs = []
with open(log_path, 'rt') as f:
    reader = csv.reader(f)
    for line in reader:
        logs.append(line)
log_labels = logs.pop(0)  # header row

# +

# image preprocessing, take only the S channel of HSV color space

img = plt.imread(data_folder + (logs[10][0]).strip())

img_processed = (cv2.cvtColor(cv2.resize(img,(32,16)), cv2.COLOR_RGB2HSV))[:,:,1]

plt.subplot(2,1,1)

plt.imshow(img)

plt.subplot(2,1,2)

plt.imshow(img_processed,cmap='gray')

# -

def image_preprocessing(img):
    """Preprocess training data: keep only the S channel of HSV color space and resize to 16x32 (rows x cols)."""
    resized = cv2.resize((cv2.cvtColor(img, cv2.COLOR_RGB2HSV))[:, :, 1], (img_cols, img_rows))
    return resized
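# On 8-bit input, `cv2.COLOR_RGB2HSV` computes saturation as S = (V - min(R, G, B)) / V, where V = max(R, G, B). It sets S = 0 when V = 0 and then scales S to 0-255. The NumPy-only sketch below computes that channel so the preprocessing can be inspected without OpenCV. The function name is hypothetical, and results may differ from OpenCV's by rounding.

```python
import numpy as np

def saturation_channel(rgb):
    """HSV saturation (0-255) of an 8-bit RGB image of shape (rows, cols, 3)."""
    rgb = rgb.astype(np.float32)
    v = rgb.max(axis=-1)   # value = brightest component
    mn = rgb.min(axis=-1)  # darkest component
    # Saturation is 0 for black pixels (V == 0); avoid dividing by zero there
    s = np.where(v > 0, (v - mn) / np.maximum(v, 1e-12), 0.0)
    return np.round(s * 255).astype(np.uint8)

pixels = np.array([[[255, 0, 0], [128, 128, 128], [0, 0, 0]]], dtype=np.uint8)  # red, gray, black
print(saturation_channel(pixels))
```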

def load_data(X, y, data_folder):
    log_path = data_folder + 'data/' + 'driving_log.csv'
    logs = []
    with open(log_path, 'rt') as f:
        reader = csv.reader(f)
        for line in reader:
            logs.append(line)
    log_labels = logs.pop(0)  # header row
    for i in range(len(logs)):
        # first column: camera image path, re-rooted under data_folder/data/IMG
        img_path = logs[i][0]
        img_path = data_folder + 'data/IMG' + (img_path.split('IMG')[1]).strip()
        img = plt.imread(img_path)
        X.append(image_preprocessing(img))
        # steering angle; assumes the standard simulator log layout
        # (center, left, right, steering, throttle, brake, speed)
        y.append(float(logs[i][3]))

# +

data={}

data['features'] = []

data['labels'] = []

load_data(data['features'], data['labels'],data_folder)

# -

X_train = np.array(data['features']).astype('float32')

y_train = np.array(data['labels']).astype('float32')

X_train = np.append(X_train,X_train[:,:,::-1],axis=0)

y_train = np.append(y_train,-y_train,axis=0)
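# The two `np.append` calls above double the training set by mirroring each frame left to right and negating its steering angle. Because the images are stored as (N, rows, cols), it is axis 2 (the columns) that gets reversed. A minimal sketch with a hypothetical helper and toy arrays:

```python
import numpy as np

def add_mirrored_copies(images, steering):
    """Append left-right mirrored images (axis 2 reversed) with negated steering angles."""
    flipped = images[:, :, ::-1]
    return (np.concatenate([images, flipped], axis=0),
            np.concatenate([steering, -steering], axis=0))

imgs = np.arange(2 * 2 * 3).reshape(2, 2, 3)  # two toy 2x3 "images"
X_aug, y_aug = add_mirrored_copies(imgs, np.array([0.1, -0.2]))
print(X_aug.shape, y_aug)
```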

X_train, y_train = shuffle(X_train, y_train)

X_train, X_val, y_train, y_val = train_test_split(X_train, y_train, random_state=0, test_size=0.1)

X_train = X_train.reshape(X_train.shape[0], img_rows, img_cols, 1)

X_val = X_val.reshape(X_val.shape[0], img_rows, img_cols, 1)

# +

model = Sequential([
    # scale the 0-255 S channel to [-1, 1]
    Lambda(lambda x: x/127.5 - 1., input_shape=(img_rows, img_cols, 1)),
    # Keras 2 signature: kernel size as a tuple, padding= instead of border_mode=
    Conv2D(2, (3, 3), padding='valid', activation='relu'),
    MaxPooling2D((4, 4), (4, 4), 'valid'),
    Dropout(0.25),
    Flatten(),
    Dense(1)])

model.summary()

# -
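# This model is deliberately tiny. Assume `Conv2D(2, (3, 3))` with stride 1 and `'valid'` padding, followed by 4×4 pooling with stride 4. Under those assumptions the parameter count works out by hand to 63:

```python
rows, cols, channels = 16, 32, 1   # img_rows, img_cols, single S channel
filters, kernel, pool = 2, 3, 4

conv_params = kernel * kernel * channels * filters + filters  # weights + biases = 20
conv_rows, conv_cols = rows - kernel + 1, cols - kernel + 1   # 'valid': 14 x 30
pool_rows, pool_cols = conv_rows // pool, conv_cols // pool   # 4x4 pool, stride 4: 3 x 7
flat = pool_rows * pool_cols * filters                        # 42 inputs to Dense(1)
dense_params = flat + 1                                       # 42 weights + 1 bias = 43
total_params = conv_params + dense_params                     # Lambda, Dropout, Flatten add none
print(total_params)  # 63
```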

model.compile(loss='mean_squared_error',optimizer=Adam(1e-5))

history = model.fit(X_train, y_train, batch_size=batch_size, epochs=nb_epoch, verbose=1, validation_data=(X_val, y_val))

| iPythonNb/TinyModel.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# ## N PROTEIN BINDING MODELLING

# In this notebook, I will create a model for predicting the binding affinity of coronavirus N proteins to host-cell RNA. A base model is built first for comparison, using the whole sequence of each detected RNA; the main model will use only the sequences of the regions bound by the N protein. The dataset is CRAC data giving the binding affinities of 4 N proteins to 9522 RNAs.

# The gene sequences are in FASTA format (`biomart_transcriptome_all.fasta`), and the CRAC data is in `SB20201008_hittable_unique.xlsx`.

# First I import the relevant libraries for the work

# +

# python

import itertools

import joblib

# sklearn

from sklearn.model_selection import train_test_split

from sklearn import metrics

from sklearn.linear_model import LinearRegression

from sklearn.preprocessing import OrdinalEncoder, OneHotEncoder

# data processing and visualisation

import numpy as np

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as plt

from Bio import SeqIO

from scipy import stats

# -

# Next I write two helper functions: `possible_kmers`, which outputs all possible kmers of length k over *ATGC*, and `generate_kmers`, which generates kmers from an input sequence

# +

def possible_kmers(k):
    """All DNA sequences of length k (Cartesian product of 'ATGC')

    Args:
        - k (int); number of bases
    Return:
        - (list) all possible kmers of length k (4**k of them)
    """
    kmers = []
    for output in itertools.product('ATGC', repeat=k):
        kmers.append(''.join(output))
    return kmers


def generate_kmers(sequence, window, slide=1):
    """Make kmers from a sequence

    Args:
        - sequence (str); sequence to compute kmers from
        - window (int); size of kmers
        - slide (int); number of bases to move 'window' along sequence
          default = 1
    Return:
        - 'list' object of kmers
    Example:
        - >>> generate_kmers('ATGCGTACC', window=4, slide=4)
          ['ATGC', 'GTAC']
    """
    all_possible_seq = []
    kmers = []
    for base in range(0, len(sequence), slide):  # start indices
        # extend by window
        all_possible_seq.append(sequence[base:base + window])
    # drop the trailing pieces shorter than window
    for seq in all_possible_seq:
        if len(seq) == window:
            kmers.append(seq)
    return kmers

# -
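# For reference, here is a compact sketch equivalent to the two helpers above: same names and outputs, written as list comprehensions. It makes the window rule explicit. The window starts at every `slide`-th base and stops once fewer than `window` bases remain, which is why a short tail is dropped.

```python
import itertools

def possible_kmers(k):
    """All 4**k strings of length k over the alphabet A, T, G, C."""
    return ["".join(p) for p in itertools.product("ATGC", repeat=k)]

def generate_kmers(sequence, window, slide=1):
    """Substrings of length `window` taken every `slide` bases; a short tail is dropped."""
    return [sequence[i:i + window]
            for i in range(0, len(sequence) - window + 1, slide)]

print(generate_kmers("ATGCGTACC", window=4, slide=4))  # ['ATGC', 'GTAC']
```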

# Next, I read and process `biomart_transcriptome_all.fasta`, which contains all human transcripts and their sequences. I also read the CRAC data, `SB20201008_hittable_unique.xlsx`

# +

# read and parse the fasta file as SeqIO object

file = SeqIO.parse(

'biomart_transcriptome_all.fasta',

'fasta'

)

# select ids and corresponding sequences

sequence_ids = []

sequences = []

for gene in file:
    sequence_ids.append(gene.id)
    sequences.append(str(gene.seq))

# create a table of gene ids: keep only the third '|'-separated field of each id

id_tab = pd.Series(sequence_ids).str.split('|', expand=True).iloc[:, 2]

# join gene_id_tab with corresponding seqs

transcripts = pd.concat([id_tab, pd.Series(sequences)], axis=1)

# set column names

transcripts.columns = ['gene', 'seq']

# read N_protein CRAC data

N_protein = pd.read_excel('SB20201008_hittable_unique.xlsx', sheet_name='rpm > 10')

# -

# Next, I select for transcripts that appear in N_protein data from the transcriptome data. I also remove duplicated transcripts.

# +

# select common genes between N_protein and transcripts

N_genes = set(N_protein['Unnamed: 0'])

t_genes = set(transcripts.gene)

common_genes = N_genes.intersection(t_genes)

# filter transcripts data with common genes and remove duplicates

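# .loc needs a list-like of labels (recent pandas rejects sets); sorting keeps the row order reproducible
transcripts_N = transcripts.drop_duplicates(
    subset='gene').set_index('gene').loc[sorted(common_genes)]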

# -

# view the sequence of the second transcript
transcripts_N['seq'].iloc[1]

# Next I use the `generate_kmers` function to make kmers from each sequence

# +

# create kmers from seq

transcripts_N['kmers'] = transcripts_N.seq.apply(generate_kmers, window=4, slide=4)

# view of kmers data

transcripts_N.kmers

# -

# From the output, the kmers appear to have been produced correctly. Next, I separate each kmer into its own feature and pad the shorter sequences with `'_'`

# separate kmers into columns; pad short seqs with '_'

kmer_matrix = transcripts_N.kmers.apply(pd.Series).fillna('_')

# Now I can use `sklearn.preprocessing.OneHotEncoder` to convert my kmer strings into a numeric feature matrix, `ohe_kmers`, and create my response vector `y` from the `133_FH-N_229E` values in the CRAC data

# +

# one-hot encode the kmers (sparse matrix output by default;
# the old sparse= argument was removed in recent scikit-learn)
ohe = OneHotEncoder()
ohe_kmers = ohe.fit_transform(kmer_matrix)

# response vector, aligned row-for-row with kmer_matrix
y = (N_protein.drop_duplicates(subset='Unnamed: 0')
     .set_index('Unnamed: 0')
     .loc[kmer_matrix.index, '133_FH-N_229E'])

# -
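# A small, self-contained sketch of the two steps above on made-up data. `OneHotEncoder` turns each k-mer column into binary indicator columns, one per distinct k-mer at that position. The response is looked up by gene label with `.loc`, so `y` has the same rows, in the same order, as the feature matrix. The gene names and the `signal` column are hypothetical stand-ins for the CRAC table's index and its `133_FH-N_229E` column.

```python
import pandas as pd
from sklearn.preprocessing import OneHotEncoder

# Padded k-mer matrix: one row per gene, one column per k-mer position (toy data)
kmer_matrix = pd.DataFrame(
    [["ACGT", "TTGA"], ["ACGT", "_"], ["GGCA", "TTGA"]],
    index=["geneA", "geneB", "geneC"],
)

# Toy CRAC-style table; geneD has no transcript and must not leak into y
crac = pd.DataFrame({"signal": [1.5, 0.2, 3.1, 9.9]},
                    index=["geneC", "geneA", "geneB", "geneD"])

# Fit the encoder; the result is a SciPy sparse matrix by default
ohe = OneHotEncoder()
X = ohe.fit_transform(kmer_matrix)

# Look up the response by gene label so rows line up with X
y = crac.loc[kmer_matrix.index, "signal"]

print(X.shape)     # 2 categories in column 0 + 2 in column 1 -> 4 features
print(y.tolist())
```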

# Next, I split the data into **80%** training and **20%** testing sets.

# split data into train and test sets

XTrain, XTest, yTrain, yTest = train_test_split(ohe_kmers, y, test_size=0.2, random_state=1)

# Now I am ready to train the model. I use `sklearn.linear_model.LinearRegression` as my algorithm and `r2_score` as my evaluation metric.

# +

# instantiate the regressor

linreg = LinearRegression()

# train on data

linreg.fit(XTrain, yTrain)

# check performance on test set

yPred = linreg.predict(XTest)

metrics.r2_score(y_true=yTest, y_pred=yPred)

# -
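# For reference, `r2_score` is the coefficient of determination, $R^2 = 1 - SS_{res}/SS_{tot}$. The sketch below uses made-up numbers to compute it by hand and checks the result against scikit-learn. $R^2$ can be negative when the predictions are worse than simply predicting the mean.

```python
import numpy as np
from sklearn import metrics

# Made-up observed and predicted values
y_true = np.array([3.0, -0.5, 2.0, 7.0])
y_pred = np.array([2.5, 0.0, 2.0, 8.0])

ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
r2_manual = 1 - ss_res / ss_tot

print(round(r2_manual, 4), round(metrics.r2_score(y_true, y_pred), 4))
```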

# An `r2_score` of **0.71** is not bad for a base model. Next, I can save the model as a file to avoid retraining it.

# save model

_ = joblib.dump(linreg, 'BaseModel.sav')

# Next, I make a correlation plot of my predicted and testing values

# +

# plot of yTest vs yPred

g = sns.regplot(x=yTest, y=yPred, scatter_kws={'alpha': 0.2})

# set axes labels

_ = plt.xlabel('yTest')

_ = plt.ylabel('yPred')

# pearson correlation test

r, p = stats.pearsonr(yTest, yPred)

_ = g.annotate('r={}, p={}'.format(r, p), (-8, 2))

# -
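# `stats.pearsonr` returns the correlation coefficient and a two-sided p-value. As a sanity check on made-up data, $r$ can be recomputed as the centred cross-product divided by the product of the centred norms. For an ordinary least-squares fit scored on its own training data, $r^2$ equals $R^2$. On a held-out test set the two generally differ.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
y_test = rng.normal(size=50)
y_pred = y_test + rng.normal(scale=0.5, size=50)  # noisy made-up "predictions"

r, p = stats.pearsonr(y_test, y_pred)

# Manual Pearson r from centred values
yt = y_test - y_test.mean()
yp = y_pred - y_pred.mean()
r_manual = np.sum(yt * yp) / np.sqrt(np.sum(yt ** 2) * np.sum(yp ** 2))

print(f"r={r:.3f}, p={p:.2g}, manual r={r_manual:.3f}")
```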

# Surprisingly, the Pearson correlation was **0.72**, with a significant p-value.

#

# Next, I will use a peak-calling program to select the actual sequences on the RNA to which the N protein binds. Hopefully that will produce a better model.

plt.figure(figsize=(10, 8))

sns.barplot(x='Kmer Encoding', y='Pearson Correlation', data=kmer_data, color='blue')

plt.xticks(rotation=45, size=15)

plt.ylabel('Pearson Correlation', size=20, rotation=0)

plt.xlabel('Kmer Encoding Type', size=20)

| N_Protein_Modelling.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Generate `nearest` file

#

# Compare synthetic PUF to its training and holdout sets.

# ## Setup

#

# ### Imports

import pandas as pd

import numpy as np

import synthimpute as si

import synpuf

# **UPDATE!**

SYNTHESIS_ID = 13

PCT_TRAIN = 50

# Folders.

PUF_SAMPLE_DIR = '~/Downloads/puf/'

SYN_DIR = '~/Downloads/syntheses/'

NEAREST_DIR = '~/Downloads/nearest/'

# ### Load data

synth = pd.read_csv(SYN_DIR + 'synpuf' + str(SYNTHESIS_ID) + '.csv')

train = pd.read_csv(PUF_SAMPLE_DIR + 'train' + str(PCT_TRAIN) + '.csv')

test = pd.read_csv(PUF_SAMPLE_DIR + 'test' + str(100 - PCT_TRAIN) + '.csv')

# ## Preprocessing

# Drop the calculated features used as seeds, and drop S006.

synpuf.add_subtracted_features(train)

synpuf.add_subtracted_features(test)

DROPS = ['S006', 'e00600_minus_e00650', 'e01500_minus_e01700',

'RECID', 'E00100', 'E09600']

train.drop(DROPS, axis=1, inplace=True)

test.drop(DROPS, axis=1, inplace=True)

synth.columns = [x.upper() for x in synth.columns]

synth = synth[train.columns]

synth.reset_index(drop=True, inplace=True)

train.reset_index(drop=True, inplace=True)

test.reset_index(drop=True, inplace=True)

# ## Nearest calculation

#

# Compute the nearest standardized Euclidean distance from each synthetic record to the training and holdout (test) sets. Takes ~10 hours.

# %%time

nearest = si.nearest_synth_train_test(synth, train, test)

nearest.to_csv(NEAREST_DIR + 'nearest' + str(SYNTHESIS_ID) + '.csv',

index=False)
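# `si.nearest_synth_train_test` comes from the `synthimpute` package, and its internals are not shown here. To illustrate the idea only, the sketch below standardizes every column, assumed here to use the training set's mean and standard deviation. For each synthetic record, it then finds the Euclidean distance to the nearest training record and the nearest test record using scikit-learn's `NearestNeighbors`. The function name, output columns, and toy data are hypothetical and will not match synthimpute's output.

```python
import numpy as np
import pandas as pd
from sklearn.neighbors import NearestNeighbors


def nearest_distances(synth, train, test):
    """Hypothetical sketch: standardized Euclidean distance from each
    synthetic row to its nearest train row and its nearest test row."""
    mean = train.mean()
    std = train.std().replace(0, 1)  # avoid dividing by zero for constant columns

    def standardize(df):
        return ((df - mean) / std).to_numpy()

    s = standardize(synth)
    out = {}
    for name, ref in [("train", train), ("test", test)]:
        nn = NearestNeighbors(n_neighbors=1).fit(standardize(ref))
        dist, idx = nn.kneighbors(s)
        out[f"{name}_dist"] = dist[:, 0]
        out[f"{name}_id"] = idx[:, 0]
    return pd.DataFrame(out)


rng = np.random.default_rng(1)
cols = ["A", "B"]
train = pd.DataFrame(rng.normal(size=(100, 2)), columns=cols)
test = pd.DataFrame(rng.normal(size=(100, 2)), columns=cols)
synth = train.iloc[:5].copy()  # exact copies of training rows: train distance is 0
res = nearest_distances(synth, train, test)
print(res)
```

# Synthetic records that sit much closer to training records than to holdout records are the disclosure-risk signal this comparison looks for.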

| analysis/disclosure/nearest13.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # An Analysis of Obesity in First Class Children in Ireland

#

# ## Introduction

#

# In this notebook I create a dataset to simulate a hypothetical study of the BMI of first class primary school children in 2016, based on their gender, age, height, and weight. I have based the simulation on a subset of the findings of the Childhood Obesity Surveillance Initiative (COSI).

#

# The National Nutrition Centre in UCD was commissioned by the HSE to carry out the surveillance work in COSI. This research was undertaken as part of the World Health Organisation European Childhood Obesity Surveillance Initiative. To date, the survey has been conducted in four waves: 2008, 2010, 2012, and 2015. The data collected are based on over 17,000 examinations in over 150 randomly selected primary schools. [Ref I COSI](https://www.hse.ie/eng/about/who/healthwellbeing/our-priority-programmes/heal/heal-docs/cosi-in-the-republic-of-ireland-findings-from-2008-2010-2012-and-2015.pdf)

#

# Overall, the trend shows that levels of overweight and obesity in first class children are stabilising, although at a high level. In non-DEIS schools the levels have fallen slightly, whereas in DEIS schools they continue to rise. More girls than boys tend to be overweight. Summary findings from the 2012 study are outlined below:

#

# [COSI Report 2012 Ref II](http://www.ucd.ie/t4cms/COSI%20report%20(2014).pdf)

#

#

#

# | BMI Classification |Thin-Normal | Overweight | Obese |

# | :-----------: |:----: |:-------------: | :-------: |

# | First Class Boys | 85.6% |12.2% | 2.2% |

# | First Class Girls | 78.6% |15.9% | 5.5% |

#

#

# I used the 2012 report to generate the BMI and weight variables, as it broke out the results by gender.

#

# +

# import python packages

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# magic command to use matplotlib within this notebook

# %matplotlib inline

# -

# ## Variables

#

# The dataset contains 1,000 simulated pupils and the following variables:

#

#

# ### Names

# I created two lists of names, one per gender, by combining the most popular children's first names with the most popular Irish surnames. 500 names were then selected randomly for each gender.

#

#

# ### Gender

# Gender is assigned according to whether or not the pupil's name appears in the girls' list.

#

#

# ### Age

# The study focuses on children in first class. Each child's age is chosen randomly from a list I generated based on the 2016 CSO breakdown of first class ages [[Ref III]](https://www.cso.ie/px/pxeirestat/Statire/SelectVarVal/Define.asp?maintable=EDA42&PLanguage=0):

#

# | Age (years) | Share of first class pupils |

# | :-----------: | :-------: |

# | 6 | 34% |

# | 7 | 65% |

# | 8 | 1% |

#

#

# +

"""

NAMES

Lists for the random name generator: one list of sample girls' names and one of boys' names,

from which a random selection is drawn for the dataset.

"""

# The first names were taken from the CSO's Excel file of the most popular baby names in 2012 (https://www.cso.ie/en/releasesandpublications/er/ibn/irishbabiesnames2012/)

girl_firstname = ('Emily ','Sophie ','Emma ','Grace ','Lily ','Mia ','Ella ','Ava ','Lucy ','Sarah ','Aoife ','Amelia ','Hannah ','Katie ','Chloe ','Caoimhe ','Saoirse ','Kate ','Holly ','Ruby ','Sophia ','Anna ','Lauren ','Leah ','Amy ','Isabelle ','Molly ','Ellie ','Jessica ','Olivia ','Roisin ','Ciara ','Kayla ','Julia ','Zoe ','Laura ','Niamh ','Abbie ','Erin ','Rachel ','Robyn ','Aisling ','Faye ','Rebecca ','Eva ','Layla ','Ellen ','Cara ','Freya ','Abigail ','Eve ','Isabella ','Megan ','Aine ','Clodagh ','Aoibhinn ','Millie ','Nicole ','Aoibheann ','Maja ','Sadhbh ','Eabha ','Charlotte ','Amber ','Caitlin ','Sofia ','Alannah ','Zara ','Alice ','Maria ','Elizabeth ','Lena ','Mary ','Emilia ','Aimee ','Lilly ','Hollie ','Aoibhe ','Victoria ','Eimear ','Maya ','Isabel ','Orla ','Evie ','Kayleigh ','Brooke ','Clara ','Meabh ','Lexi ','Tara ','Daisy ','Katelyn ','Ailbhe ','Amelie ','Natalia ','Sara ','Hanna ','Laoise ','Ruth ','Madison ','Maeve ','Maisie ','Rose ',)

boy_firstname = ('Jack ','James ','Daniel ','Sean ','Conor ','Adam ','Harry ','Ryan ','Dylan ','Michael ','Luke ','Charlie ','Liam ','Oisin ','Cian ','Jamie ','Thomas ','Alex ','Noah ','Darragh ','Patrick ','Aaron ','Cillian ','Matthew ','John ','Nathan ','David ','Fionn ','Evan ','Ethan ','Jake ','Kyle ','Rian ','Ben ','Max ','Eoin ','Tadhg ','Finn ','Callum ','Samuel ','Joshua ','Rory ','Jayden ','Joseph ','Tyler ','Sam ','Shane ','Mark ','Robert ','Aidan ','William ','Ronan ','Eoghan ','Alexander ','Leon ','Cathal ','Mason ','Tom ','Oliver ','Andrew ','Oscar ','Ciaran ','Bobby ','Jacob ','Senan ','Rhys ','Scott ','Benjamin ','Cormac ','Kevin ','Lucas ','Alan ','Donnacha ','Jakub ','Christopher ','Filip ','Killian ','Josh ','Alfie ','Tommy ','Ruairi ','Odhran ','Oran ','Leo ','Isaac ','Dara ','Jason ','Zach ','Martin ','Peter ','Brian ','Danny ','Niall ','Tomas ','Edward ','Stephen ','Logan ','Kacper ','Anthony ','Billy ',)

# Surnames: the top 100 Irish surnames, from (https://meanwhileinireland.com/ranked-top-100-irish-surnames-and-meanings/)

surname = ('Murphy','Kelly','<NAME>','Walsh','Smith','<NAME>','Byrne','Ryan','<NAME>','<NAME>','<NAME>','Doyle','McCarthy','Gallagher','O Doherty','Kennedy','Lynch','Murray','Quinn','Moore','McLoughlin','O Carroll','Connolly','Daly','O Connell','Wilson','Dunne','Brennan','Burke','Collins','Campbell','Clarke','Johnston','Hughes','O Farrell','Fitzgerald','Brown','Martin','Maguire','Nolan','Flynn','Thompson','O Callaghan','O Donnell','Duffy','O Mahony','Boyle','Healy','O Shea','White','Sweeney','Hayes','Kavanagh','Power','McGrath','Moran','Brady','Stewart','Casey','Foley','Fitzpatrick','<NAME>','McDonnell','MacMahon','Donnelly','Regan','Donovan','Burns','Flanagan','Mullan','Barry','Kane','Robinson','Cunningham','Griffin','Kenny','Sheehan','Ward','Whelan','Lyons','Reid','Graham','Higgins','Cullen','Keane','King','Maher','MacKenna','Bell','Scott','Hogan','O Keeffe','Magee','MacNamara','MacDonald','MacDermott','Molony','<NAME>','Buckley','O Dwyer',)

# Create empty lists for girls' and boys' names

girlsname = []

boysname = []

# Generate a list of girls' names - this list contains 10,300 names (103 first names x 100 surnames)

for i in girl_firstname:
    for j in surname:
        girlsname.append(i + j)

# Generate a list of boys' names - this list contains 10,000 names (100 first names x 100 surnames)

for i in boy_firstname:
    for j in surname:
        boysname.append(i + j)

# Randomly choose 500 girls' names and 500 boys' names from the lists above (sampling with replacement)

gnames = (np.random.choice(girlsname,500))

bnames = (np.random.choice(boysname,500))

# Combine the two lists into a single array

names = np.concatenate([gnames,bnames])

"""

AGE

List for the age generator. Randomly generate 1,000 ages of first class students based on the CSO 2016 first class ages.

"""

age = np.random.choice([6,7,8], 1000, p=[0.34, 0.65, 0.01])

plt.hist(age, bins=3)

plt.title("Ages")

plt.xlabel("Years")

plt.ylabel("Number")

plt.show()

# -
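# Below is a compact alternative sketch of the name and age generation above. It uses NumPy's `default_rng` so results can be reproduced with a seed. `itertools.product` builds every first name/surname pair, which is equivalent to the nested loops. `replace=False` keeps names distinct; note that the notebook itself samples with replacement. The ages use the CSO 2016 proportions. The seed, sample size, and shortened name lists are illustrative assumptions.

```python
import itertools

import numpy as np

rng = np.random.default_rng(42)  # arbitrary seed for reproducibility

# Short illustrative lists; the notebook uses the full CSO and surname lists
girl_first = ["Emily", "Sophie", "Emma"]
surnames = ["Murphy", "Kelly", "Walsh", "Byrne"]

# Every first name / surname combination, as the nested loops produce
girls_all = [f"{f} {s}" for f, s in itertools.product(girl_first, surnames)]

# Draw 5 distinct names (replace=False avoids duplicate pupils)
girls_sample = rng.choice(girls_all, size=5, replace=False)

# Ages 6, 7, 8 with the CSO 2016 first class proportions
ages = rng.choice([6, 7, 8], size=1000, p=[0.34, 0.65, 0.01])
print(len(girls_all), girls_sample, np.bincount(ages)[6:] / len(ages))
```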

# ### Height

# The height of each child is randomly selected based on age and gender. I have based these heights on the UK WHO growth charts, as Ireland is currently moving to this model; Irish data are not yet available for children aged 6 to 8. [Ref VI](https://www.hse.ie/eng/health/child/growthmonitoring/)

#

# [[Ref IV]](https://www.rcpch.ac.uk/sites/default/files/Boys_2-18_years_growth_chart.pdf)

#

# | Boys Aged | Min cm | Median cm | Max cm |

# | :-----------: |:-------------: | :-------: | :----: |

# | 6 | 103 | 119 | 129 |

# | 7 | 108 | 122 | 136 |

# | 8 | 113 | 128 | 142 |

#

#

# [[Ref V]](https://www.rcpch.ac.uk/sites/default/files/Girls_2-18_years_growth_chart.pdf)

#

#

# | Girls Aged | Min cm | Median cm | Max cm |

# | :-----------: |:-------------: | :-------: | :----: |

# | 6 | 102 | 115 | 128 |

# | 7 | 107 | 121 | 135 |

# | 8 | 113 | 127 | 141 |

#

# It is widely accepted that human heights follow a normal distribution (see <NAME>. Foetus Into Man: Physical Growth From Conception to Maturity. Cambridge, MA: Harvard University Press; 1990; and Snedecor GW, Cochran WG. Statistical Methods. Ames, IA: Iowa University; 1989). I used `numpy.random.normal` to generate the heights by gender and age, according to the tables above. [Ref VII](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2831262/#b27-dem-46-0001)

#

# The python code to generate heights is below:

#

# +

"""

HEIGHTS

Generate random lists of heights (in metres) for each age and gender from the normal distribution, per the UK WHO growth charts.

The height function below randomly selects a value from the list matching the pupil's age and gender.

"""

height_boys_6 = np.round( np.random.normal(1.19, 0.029,10000 ), 2)

height_boys_7 = np.round( np.random.normal(1.22, 0.032,10000 ), 2)

height_boys_8 = np.round( np.random.normal(1.28, 0.032,10000 ), 2)

height_girls_6 = np.round( np.random.normal(1.15, 0.031,10000 ), 2)

height_girls_7 = np.round( np.random.normal(1.21, 0.032,10000 ), 2)

height_girls_8 = np.round( np.random.normal(1.27, 0.032,10000 ), 2)

"""

This function is called by the dataset to populate the height column. The random.choice function is used to randomly

select a height from the correct list above, depending on age and gender.

"""

def height(row):
    # Map each (age, gender) pair to its pre-generated list of heights
    height_lists = {
        (6, 'Female'): height_girls_6,
        (6, 'Male'): height_boys_6,
        (7, 'Female'): height_girls_7,
        (7, 'Male'): height_boys_7,
        (8, 'Female'): height_girls_8,
        (8, 'Male'): height_boys_8,
    }
    # Return a one-element array (the value is extracted later with .str[0])
    return np.random.choice(height_lists[(row['Age'], row['Gender'])], 1)

# -
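# The lookup above can also be done without pre-generated lists, by drawing each pupil's height directly from the normal distribution for their age and gender. This sketch assumes a DataFrame with `Age` and `Gender` columns, as built later in the notebook. It reuses the means and standard deviations (in metres) from the cell above. `HEIGHT_PARAMS`, `sample_heights`, and the seed are hypothetical names and values chosen for illustration.

```python
import numpy as np
import pandas as pd

# (mean, sd) in metres by (age, gender), as in the cell above
HEIGHT_PARAMS = {
    (6, "Male"): (1.19, 0.029), (7, "Male"): (1.22, 0.032), (8, "Male"): (1.28, 0.032),
    (6, "Female"): (1.15, 0.031), (7, "Female"): (1.21, 0.032), (8, "Female"): (1.27, 0.032),
}


def sample_heights(df, rng):
    """Draw one height per row from the normal distribution for its age and gender."""
    params = [HEIGHT_PARAMS[(a, g)] for a, g in zip(df["Age"], df["Gender"])]
    means, sds = np.array(params).T
    return np.round(rng.normal(means, sds), 2)


rng = np.random.default_rng(0)
pupils = pd.DataFrame({"Age": [6, 7, 8, 6],
                       "Gender": ["Male", "Female", "Male", "Female"]})
pupils["Height"] = sample_heights(pupils, rng)
print(pupils)
```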

# ### BMI

#

# For the purpose of this simulation I have broken BMI into 3 categories: Thin-Normal; Overweight; Obese.

#

# BMI is calculated using the same formula for adults and children.

#

# Formula: $\text{BMI} = \dfrac{\text{weight (kg)}}{[\text{height (m)}]^2}$
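# Here is a quick worked example of the formula for an illustrative, made-up pupil who weighs 22 kg and is 1.20 m tall: $22 / 1.20^2 = 22 / 1.44 \approx 15.3$. The helper name `bmi_from` is hypothetical.

```python
def bmi_from(weight_kg, height_m):
    """BMI = weight (kg) / height (m) squared."""
    return weight_kg / height_m ** 2


print(round(bmi_from(22, 1.20), 1))  # 15.3
```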

#

# Adult BMI cut-offs are standard; for children they depend on gender and age. I used the children's cut-offs for each age plus 6 months. [Adult BMI Ref VIII](https://en.wikipedia.org/wiki/Body_mass_index)

# [Boys BMI Ref IX](https://www.who.int/growthref/sft_bmifa_boys_z_5_19years.pdf?ua=1)

# [Girls BMI Ref X](https://www.who.int/growthref/sft_bmifa_girls_z_5_19years.pdf?ua=1)

#

#

#

# | BMI Cut-Offs | Thin-Normal | Overweight | Obese |

# | :-----------: |:-------------: | :-------: | :----: |

# | Adult | 15- 25 | 25 - 30 | 30-60 |

# | Girl aged 6 | 11.7 - 17.1| 17.1 - 19.5 | 19.5 - 30 |

# | Girl aged 7 | 11.8 - 17.5 | 17.5 - 20.1 | 20.1 - 30 |

# | Girl aged 8 | 12.0- 18.0 | 18.0 - 21.0 | 21.0 - 30 |

# | Boy aged 6 | 12.2 - 16.9 | 16.9 -18.7 | 18.7 - 30 |

# | Boy aged 7 | 12.3 - 17.2 | 17.2 - 19.3 | 19.3 - 30 |

# | Boy aged 8 | 12.5 - 17.7 | 17.7 - 20.1 | 20.1 - 30 |

#

# I created the BMIs in two steps. First, the classification was generated (based on gender and the COSI 2012 percentages); then a BMI score was generated according to this classification and the pupil's age and gender.

#

# Different studies suggest that BMI follows different probability distributions: normal, log-normal, skew Student's t, etc. There is no definitive study on the distribution of children's BMI. [Ref XI](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1636707/) I chose the log-normal distribution from numpy.random to generate the BMI data.

#

# The COSI study in 2012 recorded BMI in first class students with a median value of 16.25; min value of 12.3; and max value of 26.1 averaged for boys and girls. [Ref II](http://www.ucd.ie/t4cms/COSI%20report%20(2014).pdf)

#

# Creating the BMI data

# 1. I used numpy.random's log-normal generator to create a list of BMI values.

# 2. I filtered this list to exclude all values outside the min and max BMIs of the 2012 COSI survey.

# 3. 18 separate lists were created, one for each classification/gender/age combination, based on the WHO BMI cut-offs in the table above.

# 4. A function checks the gender, age, and classification and chooses a random sample from the appropriate list.
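# The four steps above can be sketched compactly as follows. This is an illustration, not the notebook's exact code. It draws log-normal values with median 16.25 and truncates them to the COSI 2012 min and max. It then splits them by the WHO cut-offs for one gender/age combination (girls aged 6, from the table above) and draws a single value. The log-scale sigma of 0.12 and the seed are assumed values.

```python
import numpy as np

rng = np.random.default_rng(0)

# Step 1: log-normal draws; median = exp(mean) = 16.25 (sigma 0.12 is an assumption)
bmi_num = np.round(rng.lognormal(mean=np.log(16.25), sigma=0.12, size=10_000), 1)

# Step 2: keep only values within the COSI 2012 min/max
bmi_num = bmi_num[(bmi_num >= 12.3) & (bmi_num <= 26.1)]

# Step 3: WHO cut-offs for girls aged 6 (table above)
cut_overweight, cut_obese = 17.1, 19.5
thin_normal = bmi_num[bmi_num <= cut_overweight]
overweight = bmi_num[(bmi_num > cut_overweight) & (bmi_num <= cut_obese)]
obese = bmi_num[bmi_num > cut_obese]

# Step 4: draw a BMI for a girl aged 6 already classified as overweight
print(rng.choice(overweight))
```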

#

# The code generating the BMI is below:

#

# +

"""

BMI CLASSIFICATION

The BMI classification generator is based on the percentages from the 2012 study in the introduction.

I generated two lists of 1,000 random classifications, one per gender, according to the proportions from the 2012 study.

"""

girlBmiClass = np.random.choice(['Thin-Normal','Overweight','Obese'], 1000, p=[0.786, 0.159, 0.055])

boyBmiClass = np.random.choice(['Thin-Normal','Overweight','Obese'], 1000, p=[0.856, 0.122, 0.022])

"""

This function is called by the dataset to randomly select a BMI class from the lists above based on gender.

"""

def bmic(row):
    # Randomly choose a classification from the BMI class list for the pupil's gender
    if row['Gender'] == 'Female':
        return np.random.choice(girlBmiClass, 1)
    if row['Gender'] == 'Male':
        return np.random.choice(boyBmiClass, 1)

"""

BMI

Generate a random sample of lists of BMI based on gender and age, on the log normal distribution - per the UK WHO BMI growth

cutoffs.

The random lists are generated for each unique age, gender and BMI classification. The bmi function randomly selects

elements from the correct list according to age, gender and BMI classification.

"""

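# Create a log-normal random list rounded to one decimal place

# mu and sigma are on the log scale: the median is exp(mu) = 16.25, and sigma = 0.123 is an assumed value giving a standard deviation of roughly 2 on the BMI scale (the trial-and-error spread)

mu, sigma = np.log(16.25), 0.123

bmiNum = np.round(np.random.lognormal(mu, sigma, 10000), 1)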

#Filter out the numbers outside the min and max values

bmiNum = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 26.1)]

# filter the lists per the WHO cutoffs by category. TN -Thin-Normal; OW - Overweight; OB - Obese

bmi_TN_boys_6 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 16.9)]

bmi_TN_boys_7 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 17.2)]

bmi_TN_boys_8 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 17.7)]

bmi_TN_girls_6 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 17.1)]

bmi_TN_girls_7 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 17.5)]

bmi_TN_girls_8 = bmiNum [(bmiNum >= 12.3) & (bmiNum <= 18.0)]

bmi_OW_boys_6 = bmiNum [(bmiNum > 16.9) & (bmiNum <= 18.7)]

bmi_OW_boys_7 = bmiNum [(bmiNum > 17.2) & (bmiNum <= 19.3)]

bmi_OW_boys_8 = bmiNum [(bmiNum > 17.7) & (bmiNum <= 20.1)]

bmi_OW_girls_6 = bmiNum [(bmiNum > 17.1) & (bmiNum <= 19.5)]

bmi_OW_girls_7 = bmiNum [(bmiNum > 17.5) & (bmiNum <= 20.1)]

bmi_OW_girls_8 = bmiNum [(bmiNum > 18.0) & (bmiNum <= 21.0)]

bmi_OB_boys_6 = bmiNum [(bmiNum > 18.7) & (bmiNum <= 26.1)]

bmi_OB_boys_7 = bmiNum [(bmiNum > 19.3) & (bmiNum <= 26.1)]

bmi_OB_boys_8 = bmiNum [(bmiNum > 20.1) & (bmiNum <= 26.1)]

bmi_OB_girls_6 = bmiNum [(bmiNum > 19.5) & (bmiNum <= 26.1)]

bmi_OB_girls_7 = bmiNum [(bmiNum > 20.1) & (bmiNum <= 26.1)]

bmi_OB_girls_8 = bmiNum [(bmiNum > 21.0) & (bmiNum <= 26.1)]

"""

This function is called by the dataset to populate the bmi column. The random.choice function is used to randomly

select a bmi from the correct list above, depending on age, gender and BMI classification.

"""

def bmi(row):
    # Map each (age, gender, BMI class) to its list of candidate BMI values
    bmi_lists = {
        # Thin-Normal classification
        (6, 'Female', 'Thin-Normal'): bmi_TN_girls_6,
        (6, 'Male', 'Thin-Normal'): bmi_TN_boys_6,
        (7, 'Female', 'Thin-Normal'): bmi_TN_girls_7,
        (7, 'Male', 'Thin-Normal'): bmi_TN_boys_7,
        (8, 'Female', 'Thin-Normal'): bmi_TN_girls_8,
        (8, 'Male', 'Thin-Normal'): bmi_TN_boys_8,
        # Overweight classification
        (6, 'Female', 'Overweight'): bmi_OW_girls_6,
        (6, 'Male', 'Overweight'): bmi_OW_boys_6,
        (7, 'Female', 'Overweight'): bmi_OW_girls_7,
        (7, 'Male', 'Overweight'): bmi_OW_boys_7,
        (8, 'Female', 'Overweight'): bmi_OW_girls_8,
        (8, 'Male', 'Overweight'): bmi_OW_boys_8,
        # Obese classification
        (6, 'Female', 'Obese'): bmi_OB_girls_6,
        (6, 'Male', 'Obese'): bmi_OB_boys_6,
        (7, 'Female', 'Obese'): bmi_OB_girls_7,
        (7, 'Male', 'Obese'): bmi_OB_boys_7,
        (8, 'Female', 'Obese'): bmi_OB_girls_8,
        (8, 'Male', 'Obese'): bmi_OB_boys_8,
    }
    # Return a one-element array (the value is extracted later with .str[0])
    return np.random.choice(bmi_lists[(row['Age'], row['Gender'], row['BMI Class'])], 1)

# plot the overall BMI classification lists by gender

plt.subplot(1, 2, 1)

plt.title("Girls")

plt.hist(girlBmiClass)

plt.subplot(1, 2, 2)

plt.title("Boys")

plt.hist(boyBmiClass)

# -

# ### Weight

#

# To generate the BMI, I used the classification proportions from the COSI 2012 survey.

#

# I calculated each first class student's weight in kg from their height and BMI, using the values in those columns and the formula below:

#

# Formula: $\text{weight (kg)} = \text{BMI} \times [\text{height (m)}]^2$
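# Rearranging the BMI formula gives the weight. The sketch below uses a made-up pupil with BMI 16.0 and height 1.20 m. It computes the weight, rounds it to one decimal place as the notebook does, and checks the round trip back to BMI: $16.0 \times 1.44 = 23.04 \approx 23.0$ kg. The helper name `weight_from` is hypothetical.

```python
def weight_from(bmi, height_m):
    """weight (kg) = BMI * height (m) squared."""
    return bmi * height_m ** 2


w = round(weight_from(16.0, 1.20), 1)
print(w)                        # 23.0
print(round(w / 1.20 ** 2, 1))  # back to BMI: 16.0
```

# Rounding the weight to one decimal place means the recovered BMI can differ slightly from the original before it is rounded again.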

#

#

# ## Dataset Creation

#

# The dataset is created in the cell below, populated using the variables and functions coded above. Note the values in columns: Age is in years; Height is in meters; & Weight is in kg.

#

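# Applying `.str[0]` to the Weight column (as done for Height and BMI) raised an error, so I commented out that line. The most likely cause is that Weight is calculated from the already-extracted Height and BMI values. It therefore holds plain numbers rather than one-element arrays, and pandas only allows the `.str` accessor on string-like values.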

#

#

# +

# Create the dataset containing the list of names and ages, and an empty column for each of the other variables.

d = {'Name': names, 'Age': age, 'Gender': '', 'Height': '', 'Weight': '', 'BMI Class': '', 'BMI' : '' }

df = pd.DataFrame(data=d)

# Populate the gender variable in the dataset. If the name is part of the gnames list return female, else return male.

df['Gender'] = np.where(np.isin(df['Name'],gnames), 'Female', 'Male')

# Populate the height variable

df['Height'] = df.apply(lambda row: height(row),axis=1)

# Extract the value from the one-element array returned by np.random.choice, as a float

df['Height'] = df['Height'].str[0].astype(float)

# Populate the BMI classification variable according to the 2012 proportions

df['BMI Class'] = df.apply(lambda row: bmic(row),axis=1)

df['BMI Class'] = df['BMI Class'].str[0]

# Populate the bmi variable

df['BMI'] = df.apply(lambda row: bmi(row),axis=1)

# Extract the value from the one-element array returned by np.random.choice, as a float

df['BMI'] = df['BMI'].str[0].astype(float)

#Populate the weight variable based on the BMI value and height

df['Weight'] = (df['Height']*df['Height']*df['BMI'])

# Not needed (and raises an error): Weight is already numeric, and .str only works on string-like values

#df['Weight'] = df['Weight'].str[0]

# Make sure the column is float, then round weights to one decimal place

df['Weight'] = df['Weight'].astype(float).round(1)

df

# -

# ## Analysis

#

# I analyse the dataset below, both graphically and with summary statistics. For the summary statistics I split the dataframe into two, one for girls and one for boys. For each gender, the second table breaks the statistics down by BMI class, including a count of pupils in each class.

#

# **Summary stats of the numerical variables in the dataset**

#

# **Girls summary statistics**

# +

# Create a subset of the dataframe by gender

df_girl = (df[(df['Gender'] == 'Female')])

df_boy = (df[(df['Gender'] == 'Male')])

# Girls summary stats

df_girl.describe()

# -

# Display summary statistics, including a count, for each BMI class among girls

grpG = df_girl.groupby('BMI Class')

grpG.describe()

# **Boys summary statistics**

#Boys summary stats

df_boy.describe()

# Display summary statistics, including a count, for each BMI class among boys

grpB = df_boy.groupby('BMI Class')

grpB.describe()
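# To compare the simulated BMI breakdown with the COSI 2012 proportions in the introduction, one option is `value_counts(normalize=True)` per gender. The sketch below runs it on a small made-up frame (`toy`, a hypothetical name) with the same `Gender` and `BMI Class` columns. The same call on the real `df` would give the simulated shares.

```python
import pandas as pd

# Made-up example using the notebook's column names
toy = pd.DataFrame({
    "Gender": ["Female"] * 4 + ["Male"] * 4,
    "BMI Class": ["Thin-Normal", "Thin-Normal", "Overweight", "Obese",
                  "Thin-Normal", "Thin-Normal", "Thin-Normal", "Overweight"],
})

# Share of each BMI class within each gender
props = toy.groupby("Gender")["BMI Class"].value_counts(normalize=True)
print(props)
```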

# To visualise the relationships, I created two faceted plots below (five panels in total). The BMI classification, height, weight, and age follow the expected trends. However, because the data are randomly generated, they do not fully mirror the original survey.

#

# Visually, you can see the trend of more obese and overweight girls than boys. This is most evident in the Obese panel of the second plot below, which shows one panel per BMI classification.

# Plot BMI classification by gender

sns.lmplot(x='Height', y='Weight', data=df, fit_reg=False, hue='BMI Class', col='Gender')

# +

sns.lmplot(x='Height', y='Weight', data=df, fit_reg=False, hue='Gender', col='BMI Class')

# -

# ## References

#

# I. The Childhood Obesity Surveillance Initiative (COSI) in the Republic of Ireland Findings 2015 [COSI Report 2015](https://www.hse.ie/eng/about/who/healthwellbeing/our-priority-programmes/heal/heal-docs/cosi-in-the-republic-of-ireland-findings-from-2008-2010-2012-and-2015.pdf)

#

# II. The Childhood Obesity Surveillance Initiative (COSI) in the Republic of Ireland Findings 2012 [COSI Report 2012](http://www.ucd.ie/t4cms/COSI%20report%20(2014).pdf)

#

# III. CSO - [Ages of children in first class](https://www.cso.ie/px/pxeirestat/Statire/SelectVarVal/Define.asp?maintable=EDA42&PLanguage=0)

#

# IV. Heights [UK WHO growth chart boys](https://www.rcpch.ac.uk/sites/default/files/Boys_2-18_years_growth_chart.pdf)

#

# V. Heights [UK WHO growth chart girls](https://www.rcpch.ac.uk/sites/default/files/Girls_2-18_years_growth_chart.pdf)

#

# VI. Heights [Growth monitoring resources](https://www.hse.ie/eng/health/child/growthmonitoring/)

#

# VII. Heights [Normally distributed](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC2831262/#b27-dem-46-0001)

#

# VIII. BMI Wiki [BMI Wiki](https://en.wikipedia.org/wiki/Body_mass_index)

#

# IX. WHO BMI Boys [Boys BMI](https://www.who.int/growthref/sft_bmifa_boys_z_5_19years.pdf?ua=1)

#

# X. WHO BMI Girls [Girls BMI](https://www.who.int/growthref/sft_bmifa_girls_z_5_19years.pdf?ua=1)

#

# XI. BMI distribution curve [BMI distribution](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1636707/)

| Childhood Obesity Analysis Ireland.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# %matplotlib widget

import os

import sys

sys.path.insert(0, os.getenv('HOME')+'/pycode/MscThesis/')

import pandas as pd

from amftrack.util import get_dates_datetime, get_dirname, get_plate_number, get_postion_number,get_begin_index

import ast

from amftrack.plotutil import plot_t_tp1

from scipy import sparse

from datetime import datetime

from amftrack.pipeline.functions.node_id import orient

import pickle

import scipy.io as sio

from pymatreader import read_mat

from matplotlib import colors

import cv2

import imageio

import matplotlib.pyplot as plt

import numpy as np

from skimage.filters import frangi

from skimage import filters

from random import choice

import scipy.sparse


from amftrack.pipeline.functions.extract_graph import from_sparse_to_graph, generate_nx_graph, sparse_to_doc

from skimage.feature import hessian_matrix_det

from amftrack.pipeline.functions.experiment_class_surf import Experiment, Edge, Node, Hyphae, plot_raw_plus

from amftrack.pipeline.paths.directory import run_parallel, find_state, directory_scratch, directory_project

from amftrack.notebooks.analysis.util import *

from scipy import stats

from scipy.ndimage import uniform_filter1d

from statsmodels.stats import weightstats as stests

from amftrack.pipeline.functions.hyphae_id_surf import get_pixel_growth_and_new_children

from collections import Counter

from IPython.display import clear_output

from amftrack.notebooks.analysis.data_info import *

# -

exp = get_exp((39,269,329),directory_project)

def get_hyph_infos(exp):
    # For each hypha, record (t, t+1, growth speed, new pixels) between consecutive timesteps
    select_hyph = {}
    for hyph in exp.hyphaes:
        select_hyph[hyph] = []
        for i, t in enumerate(hyph.ts[:-1]):
            tp1 = hyph.ts[i + 1]
            pixels, nodes = get_pixel_growth_and_new_children(hyph, t, tp1)
            # speed = total length of the new growth (um) / elapsed time between t and tp1
            speed = np.sum([get_length_um(seg) for seg in pixels]) / get_time(exp, t, tp1)
            select_hyph[hyph].append((t, tp1, speed, pixels))
    return select_hyph

# + jupyter={"outputs_hidden": true}

select_hyph = get_hyph_infos(exp)

# -

rh2 = [hyph for hyph in exp.hyphaes if np.any(np.array([c[2] for c in select_hyph[hyph]])>=300)]

hyph = [rh for rh in rh2 if rh.end.label == 1][0]

# hyph = choice(rh2)

speeds = [c[2] for c in select_hyph[hyph]]

ts = [c[0] for c in select_hyph[hyph]]

tp1s = [c[1] for c in select_hyph[hyph]]

plt.close('all')

plt.rcParams.update({

"font.family": "verdana",

'font.weight' : 'normal',

'font.size': 20})

fig=plt.figure(figsize=(8,8))

ax = fig.add_subplot(111)

ax.plot(ts,speeds)

ax.set_xlabel('time (h)')

ax.set_ylabel(r'speed ($\mu m .h^{-1}$)')

plot_raw_plus(exp,hyph.ts[-1],[hyph.end.label]+[hyph.root.label])

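# Count, for each timestep, how many fast-growing hyphae have their end node last seen at that timestep
counts = []
for t in range(exp.ts):
    count = 0
    for h in rh2:  # separate loop name so the selected `hyph` above is not overwritten
        if int(h.end.ts()[-1]) == int(t):
            count += 1
    counts.append(count)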

# + jupyter={"outputs_hidden": true}

counts

# -

plot_raw_plus(exp,hyph.ts[-1]+1,[hyph.end.label]+[hyph.root.label]+[5107,5416])

# +

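nx_graph_t = exp.nx_graph[35]
nx_graph_tm1 = exp.nx_graph[34]
# Sparse image storing, at each edge's end pixels, the label of the node there
Sedge = sparse.lil_matrix((30000, 60000))  # LIL format is efficient for element-wise assignment
for edge in nx_graph_t.edges:
    pixel_list = nx_graph_t.get_edge_data(*edge)["pixel_list"]
    pixela = pixel_list[0]
    pixelb = pixel_list[-1]
    Sedge[pixela[0], pixela[1]] = edge[0]
    Sedge[pixelb[0], pixelb[1]] = edge[1]
Sedge = Sedge.tocsr()  # back to CSR for fast slicing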

tip = 2326

pos_tm1 = exp.positions[34]

pos_t = exp.positions[35]

mini1 = np.inf

posanchor = pos_tm1[tip]

window = 1000

potential_surrounding_t = Sedge[

max(0, posanchor[0] - 2 * window) : posanchor[0] + 2 * window,

max(0, posanchor[1] - 2 * window) : posanchor[1] + 2 * window,

]

# potential_surrounding_t=Sedge

# for edge in nx_graph_t.edges:

# pixel_list=nx_graph_t.get_edge_data(*edge)['pixel_list']

# if np.linalg.norm(np.array(pixel_list[0])-np.array(pos_tm1[tip]))<=5000:

# distance=np.min(np.linalg.norm(np.array(pixel_list)-np.array(pos_tm1[tip]),axis=1))

# if distance<mini1:

# mini1=distance

# right_edge1 = edge

# print('t1 re',right_edge)

mini = np.inf
for node_root in potential_surrounding_t.data:
    for edge in nx_graph_t.edges(int(node_root)):
        pixel_list = nx_graph_t.get_edge_data(*edge)["pixel_list"]
        if (
            np.linalg.norm(np.array(pixel_list[0]) - np.array(pos_tm1[tip]))
            <= 5000
        ):
            distance = np.min(
                np.linalg.norm(
                    np.array(pixel_list) - np.array(pos_tm1[tip]), axis=1
                )
            )
            if distance < mini:
                mini = distance
                right_edge = edge

# -

right_edge,mini

origin = np.array(

orient(

nx_graph_tm1.get_edge_data(*list(nx_graph_tm1.edges(tip))[0])[

"pixel_list"

],

pos_tm1[tip],

)

)

origin_vector = origin[0] - origin[-1]

branch = np.array(

orient(

nx_graph_t.get_edge_data(*right_edge)["pixel_list"],

pos_t[right_edge[0]],

)

)

candidate_vector = branch[-1] - branch[0]

dot_product = np.dot(origin_vector, candidate_vector)

if dot_product >= 0:
    root = right_edge[0]
    next_node = right_edge[1]
else:
    root = right_edge[1]
    next_node = right_edge[0]

last_node = root

current_node = next_node

last_branch = np.array(

orient(

nx_graph_t.get_edge_data(root, next_node)["pixel_list"],

pos_t[current_node],

)

)

i = 0

loop = []

while (
    nx_graph_t.degree(current_node) != 1
    and current_node not in nx_graph_tm1.nodes
):  # Careful: if there is a cycle with a low angle this might loop indefinitely, but that is improbable
    i += 1
    if i >= 100:
        print(
            "identified infinite loop",
            i,
            tip,
            current_node,
            pos_t[current_node],
        )
        break
    mini = np.inf
    origin_vector = (
        last_branch[0] - last_branch[min(length_id, len(last_branch) - 1)]
    )
    unit_vector_origin = origin_vector / np.linalg.norm(origin_vector)
    candidate_vectors = []
    # Among the outgoing branches, pick the one with the smallest angle to the incoming direction
    for neighbours_t in nx_graph_t.neighbors(current_node):
        if neighbours_t != last_node:
            branch_candidate = np.array(
                orient(
                    nx_graph_t.get_edge_data(current_node, neighbours_t)[
                        "pixel_list"
                    ],
                    pos_t[current_node],
                )
            )
            candidate_vector = (
                branch_candidate[min(length_id, len(branch_candidate) - 1)]
                - branch_candidate[0]
            )
            unit_vector_candidate = candidate_vector / np.linalg.norm(
                candidate_vector
            )
            candidate_vectors.append(unit_vector_candidate)
            dot_product = np.dot(unit_vector_origin, unit_vector_candidate)
            angle = np.arccos(dot_product)
            if angle < mini:
                mini = angle
                next_node = neighbours_t
    if len(candidate_vectors) < 2:
        print(
            "candidate_vectors < 2",
            nx_graph_t.degree(current_node),
            pos_t[current_node],
            [node for node in nx_graph_t.nodes if nx_graph_t.degree(node) == 2],
        )
    competitor = np.arccos(np.dot(candidate_vectors[0], -candidate_vectors[1]))
    if mini < competitor:
        current_node, last_node = next_node, current_node

current_node
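At each junction the loop above keeps the neighbour whose branch deviates least from the incoming direction, and only moves on when that angle is smaller than `competitor`, the deviation from a straight line formed by the first two candidate branches. A minimal numpy sketch of that single decision, assuming directions are given as plain vectors instead of pixel lists passed through `orient`; `unit` and `pick_continuation` are hypothetical names, and clipping the dot product before `np.arccos` is an addition the notebook does not make:

```python
import numpy as np


def unit(v):
    """Return v scaled to length 1."""
    v = np.asarray(v, dtype=float)
    return v / np.linalg.norm(v)


def pick_continuation(incoming, candidates):
    """Pick the outgoing branch that continues `incoming` most straightly.

    `incoming` is the direction of travel into the junction and `candidates`
    maps each other neighbour to the direction of its branch leaving the
    junction. Returns the chosen neighbour, or None when the walk should stop.
    """
    if len(candidates) < 2:
        return None  # the competitor test needs two other branches
    u_in = unit(incoming)
    units = {node: unit(v) for node, v in candidates.items()}
    # Angle between the incoming direction and each candidate branch;
    # np.clip keeps rounding errors from pushing arccos outside its domain
    angles = {
        node: np.arccos(np.clip(np.dot(u_in, u), -1.0, 1.0))
        for node, u in units.items()
    }
    best = min(angles, key=angles.get)
    first, second = list(units.values())[:2]
    # Deviation from a straight line formed by the first two candidate branches
    competitor = np.arccos(np.clip(np.dot(first, -second), -1.0, 1.0))
    return best if angles[best] < competitor else None


# Coming in along +x: the nearly straight branch "a" wins over the side branch "b"
print(pick_continuation((1, 0), {"a": (1, 0.1), "b": (0, 1)}))  # a
# Arriving through the stem of a T: the two arms are straighter together, so stop
print(pick_continuation((0, 1), {"left": (-1, 0), "right": (1, 0)}))  # None
```

Clipping matters because the dot product of two unit vectors can land slightly above 1.0 through rounding, and `np.arccos` then returns `nan`, which compares as False against every angle.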

| amftrack/notebooks/Draft_1/Figure_5.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.7.4 64-bit

# name: python374jvsc74a57bd0fc2c00f0e2c44cb4028bd693f18a1b5d93d1de4cd12db71fca36ff691a163044

# ---

import pandas as pd

import random

# +

def func_random(jugador1, jugador2):
    x = random.randint(0, 6)
    y = random.randint(0, 6)
    print("1st number:", x)
    print("2nd number:", y)
    # Player 1 starts only with a strictly higher number; ties go to player 2
    if x > y:
        return jugador1, "starts"
    else:
        return jugador2, "starts"

func_random(input("Name 1: "), input("Name 2: "))

# -
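`func_random` draws two numbers and prints them, which makes the outcome hard to check. A sketch of the same rule with the random generator passed in, so a seeded `random.Random` makes it reproducible; `who_starts` and the `rng` parameter are hypothetical, and ties still go to player 2 as in the original:

```python
import random


def who_starts(jugador1, jugador2, rng=None):
    """Draw one number in 0..6 per player, as func_random does, and return (starter, x, y).

    Player 1 starts only with a strictly higher number; ties go to player 2.
    """
    if rng is None:
        rng = random.Random()
    x = rng.randint(0, 6)
    y = rng.randint(0, 6)
    return (jugador1 if x > y else jugador2), x, y


starter, x, y = who_starts("Ana", "Luis", rng=random.Random(7))
print(f"{starter} starts ({x} vs {y})")
```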

tablero1 = pd.DataFrame({1: "~", 2: "~",3: "~",4: "~",5: "~",6: "~",7: "~",8: "~",9: "~",10: "~"},index=[1, 2,3,4,5,6,7,8,9,10])

tablero1

tablero2 = pd.DataFrame({1: "~", 2: "~",3: "~",4: "~",5: "~",6: "~",7: "~",8: "~",9: "~",10: "~"},index=[1, 2,3,4,5,6,7,8,9,10])

tablero2
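Both boards are built by spelling out ten identical columns. A sketch of an equivalent construction, assuming only standard pandas behaviour (a scalar passed to `pd.DataFrame` together with `index` and `columns` fills every cell); `empty_board` is a hypothetical helper:

```python
import pandas as pd


def empty_board(n=10, water="~"):
    """Return an n x n board labelled 1..n on both axes, every cell filled with `water`."""
    labels = range(1, n + 1)
    # A scalar value is broadcast to every cell when index and columns are given
    return pd.DataFrame(water, index=labels, columns=labels)


board = empty_board()
print(board.shape)  # (10, 10)
print(board.loc[1, 10])  # ~
```

The labels come out as a `RangeIndex` rather than an explicit list, which behaves the same for `.loc` lookups and label slices.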

# +
""" We define the Barco (ship) class with the method colocar_barco, which places ships on the board:
df = the board to use (e.g. tablero1)
size = ship length (small: 2, medium: 3, large: 4, giant: 5)
fil = row
col = column"""

class Barco:
    def __init__(self, df):
        self.df = df

    def colocar_barco(self, size, df):  # Pass in the DataFrame (the chosen board) and the size
        self.size = size
        fil = int(input("Enter the row coordinate: "))
        col = int(input("Enter the column coordinate: "))
        # If there is already a ship at the given coordinate:
        if df.loc[fil, col] == "#":
            print("Oops! There's already a boat here, try again!")
        else:
            # Ask how to lay out the ship from the starting coordinate:
            h_v = input("Type 'h' for horizontal or 'v' for vertical: ")
            # Horizontal case: the ship must neither overlap another ship nor stick out of the board
            if h_v == "h":
                if col == 10:
                    print("You're out of range")
                elif size == 5 and (col == 7 or col == 8 or col == 9):
                    print("You're out of range")
                elif size == 4 and (col == 9 or col == 8):
                    print("You're out of range")
                elif size == 3 and (col == 9):
                    print("You're out of range")
                elif size == 2 and df.loc[fil, col + 1] == "#":
                    print("You're crashing into another boat. Try again!")
                elif size == 3 and (df.loc[fil, col + 1] == "#" or df.loc[fil, col + 2] == "#"):
                    print("You're crashing into another boat. Try again!")
                elif size == 4 and (df.loc[fil, col + 1] == "#" or df.loc[fil, col + 2] == "#" or df.loc[fil, col + 3] == "#"):
                    print("You're crashing into another boat. Try again!")
                elif size == 5 and (df.loc[fil, col + 1] == "#" or df.loc[fil, col + 2] == "#" or df.loc[fil, col + 3] == "#" or df.loc[fil, col + 4] == "#"):
                    print("You're crashing into another boat. Try again!")
                # If everything is fine, fill the board with the ship:
                else:
                    col_f = col + size - 1
                    df.loc[fil, col:col_f] = "#"  # .loc label slices include col_f
                    print(df)
                    return df
            # Vertical case: the ship must neither overlap another ship nor stick out of the board
            if h_v == "v":
                if fil == 10:
                    print("You're out of range")
                elif size == 5 and (fil == 7 or fil == 8 or fil == 9):
                    print("You're out of range")
                elif size == 4 and (fil == 9 or fil == 8):
                    print("You're out of range")
                elif size == 3 and (fil == 9):
                    print("You're out of range")
                elif size == 2 and (df.loc[fil + 1, col] == "#"):
                    print("You're crashing into another boat. Try again!")
                elif size == 3 and (df.loc[fil + 1, col] == "#" or df.loc[fil + 2, col] == "#"):
                    print("You're crashing into another boat. Try again!")
                elif size == 4 and (df.loc[fil + 1, col] == "#" or df.loc[fil + 2, col] == "#" or df.loc[fil + 3, col] == "#"):
                    print("You're crashing into another boat. Try again!")
                elif size == 5 and (df.loc[fil + 1, col] == "#" or df.loc[fil + 2, col] == "#" or df.loc[fil + 3, col] == "#" or df.loc[fil + 4, col] == "#"):
                    print("You're crashing into another boat. Try again!")
                # If everything is fine, fill the board with the ship:
                else:
                    fil_f = fil + size - 1
                    df.loc[fil:fil_f, col] = "#"  # .loc label slices include fil_f
                    print(df)
                    return df


# -
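The `elif` chains in `colocar_barco` encode, one size at a time, two rules: the ship must end inside the 10 x 10 board and none of its cells may already hold a `#`. A minimal sketch of the same two rules written once for any size, assuming occupied cells are tracked as a set of (row, column) pairs instead of being read from the DataFrame; `ship_cells` and `can_place` are hypothetical helpers:

```python
def ship_cells(fil, col, size, h_v):
    """Cells (row, column) covered by a ship starting at (fil, col); 'h' extends right, 'v' extends down."""
    if h_v == "h":
        return [(fil, col + k) for k in range(size)]
    if h_v == "v":
        return [(fil + k, col) for k in range(size)]
    raise ValueError("h_v must be 'h' or 'v'")


def can_place(fil, col, size, h_v, occupied, n=10):
    """True when every cell of the ship lies on the 1..n board and none is already occupied."""
    cells = ship_cells(fil, col, size, h_v)
    in_range = all(1 <= r <= n and 1 <= c <= n for r, c in cells)
    return in_range and not any(cell in occupied for cell in cells)


print(can_place(1, 7, 5, "h", set()))  # False: the ship would reach column 11
```

Unlike the notebook, this version also rejects a starting row or column outside 1..10 instead of letting `df.loc` raise a `KeyError`.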

barco_pequeño = Barco(tablero1)

barco_mediano = Barco(tablero1)

barco_grande = Barco(tablero1)

barco_gigante = Barco(tablero1)

# +
""" We define a function that keeps asking you for the ships that have to be placed:
n_barcos = number of ships to place
tamaño: size label (small, medium, large or giant)
n: the ship length (2, 3, 4, 5)
tablero: the board on which we place the ships"""

def coloca_tus_barcos(n_barcos, barco, tamaño, n, tablero):
    contador = 1
    print("contador1:", contador)
    # Place ships 1..n_barcos; a failed placement is retried with the same counter value
    while contador <= n_barcos:
        print(f"Place ship {contador} of size {tamaño} on the board")
        # colocar_barco returns the board only when the ship was actually placed
        if not isinstance(barco.colocar_barco(n, tablero), pd.DataFrame):
            print("Try Again!")
            contador -= 1
        contador += 1
    return tablero

# -
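`coloca_tus_barcos` keeps asking until enough placements succeed, undoing the counter increment whenever `colocar_barco` does not return a DataFrame. A sketch of the same retry loop that counts successes directly, assuming a hypothetical `try_place` callable that returns True when a ship was placed; the `max_attempts` cap is an addition, not part of the notebook:

```python
def place_ships(n_barcos, try_place, max_attempts=100):
    """Call try_place() until n_barcos placements succeed; try_place returns True on success."""
    placed, attempts = 0, 0
    while placed < n_barcos:
        attempts += 1
        if attempts > max_attempts:
            raise RuntimeError(f"gave up after {max_attempts} attempts")
        if try_place():
            placed += 1
        else:
            print("Try Again!")
    return placed


outcomes = iter([True, False, True])
print(place_ships(2, lambda: next(outcomes)))  # prints "Try Again!" once, then 2
```

Counting only successful placements makes the loop bound read directly as "number of ships placed", and the attempt cap keeps a scripted or automated caller from retrying forever.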

def coloca_jugador1():