code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Visualizations

# +

# Observe the rug plot of the first 1000 rows of Sales that you created in the previous question.

# Which of these ranges has not a single data point present between it?

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# the commonly used alias for seaborn is sns

import seaborn as sns

# set a seaborn style of your taste

sns.set_style("whitegrid")

# data

df = pd.read_csv("../Data/global_sales_data/market_fact.csv")

# rug = True

# plotting only a few points since rug takes a long while

sns.distplot(df['Sales'][:1000], rug=True)

plt.show()

# -

df = df[(df.Profit > 0)]

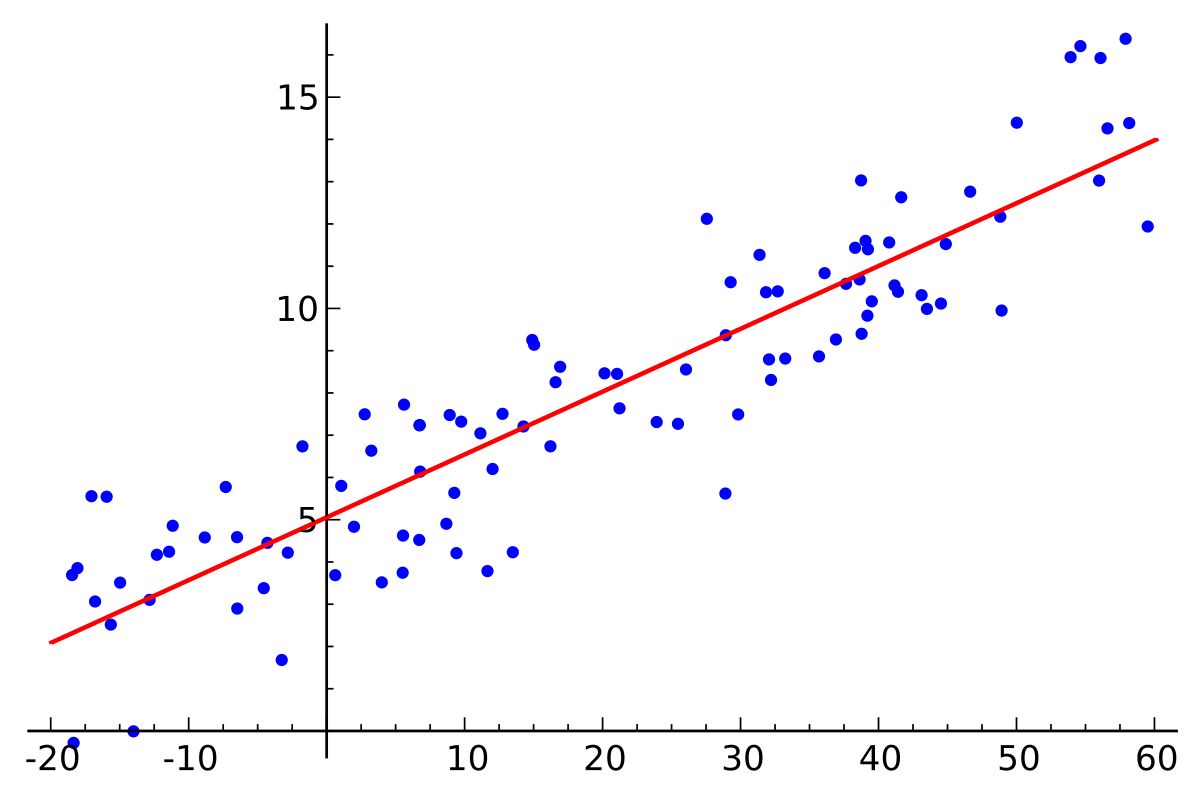

sns.jointplot('Sales', 'Profit', df)

plt.show()

# +

# Now, say you want to do this the other way round - different sub-plots for each categories,

# and divisions for customer segments inside each sub-plot.

market_df = pd.read_csv("../Data/global_sales_data/market_fact.csv")

customer_df = pd.read_csv("../Data/global_sales_data/cust_dimen.csv")

product_df = pd.read_csv("../Data/global_sales_data/prod_dimen.csv")

shipping_df = pd.read_csv("../Data/global_sales_data/shipping_dimen.csv")

orders_df = pd.read_csv("../Data/global_sales_data/orders_dimen.csv")

df = pd.merge(market_df, product_df, how='inner', on='Prod_id')

df = pd.merge(df, customer_df, how='inner', on='Cust_id')

# set figure size for larger figure

plt.figure(num=None, figsize=(12, 8), dpi=80, facecolor='w', edgecolor='k')

# specify hue="categorical_variable"

sns.boxplot(x='Product_Category', y='Profit', hue="Customer_Segment", data=df)

plt.show()

| Practice/Visualizations.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Local homology: tutorial

#

# <iframe src="./implemented_variants.pdf" width=200 height=200></iframe>

# %load_ext autoreload

# %autoreload 2

# +

import numpy as np

from gudhi import plot_persistence_diagram

from gudhi.datasets.generators import points

from sklearn import datasets

import matplotlib.pyplot as plt

from local_homology import compute_local_homology_alpha, compute_local_homology_r

from local_homology.alpha_filtration import distance_to_point_outside_ball, distance_to_expanding_boundary, distance_to_boundary

from local_homology.dataset import intersecting_lines

from local_homology.r_filtration import plot_one_skeleton

from local_homology.vis import plot_disc, plot_point_cloud, plot_rectangle

# -

np.random.seed(0)

# ## Local homology at the intersection of two segments

# +

point_cloud = intersecting_lines(100, 0.01)

x0 = np.array([[0., 0.]])

epsilon = 0.2

plot_point_cloud(point_cloud)

# -

# ### $\alpha$-filtration

# We consider the points in the disc and we perform a Vietoris-Rips filtration by growing balls around those points. There are three variants on how to compute the distance of a point to the boundary.

plot_point_cloud(point_cloud)

plot_disc(x0[0], epsilon, color="r", label=r"Neighborhood for $\alpha$-filtr")

alpha_dgm = compute_local_homology_alpha(point_cloud, x0, epsilon, 2,

distances=distance_to_point_outside_ball)

_ = plot_persistence_diagram(alpha_dgm)

alpha_dgm_1 = [(b, d)for dim, (b, d) in alpha_dgm if dim==1.]

alpha_dgm_1

# We see indeed 3 prominent points in $H_1$, what corresponds to the 4 branches coming out from the center.

# **Surprisingly, we also see some $H_2$ if we call the fnuction with `max_dimension=2`.**

# +

alpha_dgm_boundary = compute_local_homology_alpha(

point_cloud, x0, epsilon, 2, distances=distance_to_boundary

)

alpha_dgm_expanding = compute_local_homology_alpha(

point_cloud, x0, epsilon, 2, distances=distance_to_expanding_boundary)

fig, axes = plt.subplots(1, 3, figsize=(10, 3))

_ = plot_persistence_diagram(alpha_dgm, axes=axes[0])

_ = plot_persistence_diagram(alpha_dgm_boundary, axes=axes[1])

_ = plot_persistence_diagram(alpha_dgm_expanding, axes=axes[2])

plt.suptitle("Distance to")

axes[0].set_title("point outside")

axes[1].set_title("boundary")

axes[2].set_title("expanding boundary")

plt.tight_layout()

# -

# ### $r$ filtration

#

# We fix a Rips-scale $\alpha$ and we build the filtration by considering a smaller and smaller neighborhood around $x_0$. **Since the homology is computed via a duality theorem, we do not get $H_0$.**

# +

alpha = 0.1

plot_point_cloud(point_cloud)

for x in point_cloud:

plot_disc(x, alpha, alpha=alpha, color="r", label="_nolegend_")

# the keyword parameter alpha is the opacity of the circles, no the scale.

plot_one_skeleton(point_cloud, x0, alpha)

# -

r_dgm = compute_local_homology_r(point_cloud, x0, alpha, 2)

_ = plot_persistence_diagram(r_dgm)

r_dgm_1 = [(b, d)for dim, (b, d) in r_dgm if dim==1.]

r_dgm_1

# ### Different center

# For the chosen parameters, both methods show 3 persistent points, recovering the correct local homology. Let's pick a point where local homology is different.

#

# At $x_1 = (-0.25, -0.25)$, the local homology group in dimension 1 has one generator and groups in all other dimensions are trivial.

# +

x1 = np.array([[-0.25, -0.25]])

plot_point_cloud(point_cloud)

for x in point_cloud:

plot_disc(x, alpha, alpha=0.03, color="r", label="_nolegend_")

plot_disc(x1[0], epsilon, alpha=0.4, color="blue")

# +

x = x1

alpha_dgm1 = compute_local_homology_alpha(point_cloud, x, epsilon, 2)

_ = plot_persistence_diagram(alpha_dgm1)

# -

# There is one (persistent) point in $H_1$, as expected.

r_dgm1 = compute_local_homology_r(point_cloud, x, alpha, 2)

plot_persistence_diagram(r_dgm1)

r_dgm1

# This diagram is more interesting. Remember that we are quotienting by everything but a smaller and smaller neighborhood.

#

# There are three points in total. Two appear, and die, for high parameter values, namely $[0.38, 075]$ is an interval where they both persist. In this particular example, we should be able to see them for $r= 0.6$. Visual inspection confirms that the $r$-ball contains the intersection of lines and so that the points correspond to things happening "far" from the localization point.

#

# The point closer to the origin persists through $(0.03, 0.33)$. Let's inspect $r=0.2$ and $r=0.5$. We see that we recover the correct homology in that interval. The homology can be read-off as the number of points in the rectangle $\rbrack-\infty,r\rbrack\times\rbrack r, \infty\lbrack$ in the diagram.

plot_point_cloud(point_cloud)

plot_disc(x[0], 0.5, color="red", label="r=0.5")

plot_disc(x[0], 0.2, color="green", label="r=0.2")

plt.legend()

r_dgm1 = compute_local_homology_r(point_cloud, x, alpha, 2)

plot_persistence_diagram(r_dgm1)

plot_rectangle(0.2, color="green")

plot_rectangle(0.5, color="red")

r_dgm1

# An interesting question is what happens when we place the point closer to the intersection point, so that the bottom-left endpoint of the segment is outside of the ball while the intersection is still inside. Let us examine that situation for both filtrations.

x = np.array([[-0.21, -0.21]])

r_dgm2 = compute_local_homology_r(point_cloud, x, alpha, 2)

r_dgm2

# +

plot_persistence_diagram(r_dgm2)

plot_rectangle(0.38, color="red")

plt.figure()

plot_point_cloud(point_cloud)

for p in point_cloud:

plot_disc(p, alpha, alpha=0.03, color="r", label="_nolegend_")

plot_disc(x[0], 0.38, color="green", label="r=0.5")

# -

# For the $r-$filtration, it does not change much. We have the same number of points, but now, all bars live through (an interval that includes) $[0.33, 0.39]$.

# +

alpha_dgm2 = compute_local_homology_alpha(point_cloud, x, epsilon, 2)

_ = plot_persistence_diagram(alpha_dgm2)

plt.figure()

plot_point_cloud(point_cloud)

plot_disc(x[0], epsilon, color="red", label=r"$\epsilon$=" + str(epsilon))

# -

alpha_dgm2_h1, alpha_dgm1_h1 = [[p for p in dgm if p[0]==1]

for dgm in [alpha_dgm2, alpha_dgm1]]

alpha_dgm2_h1, alpha_dgm1_h1

# For the $\alpha$-filtration, it does not change anything, since the intersection point is not in the $\epsilon$-ball of the center $(0,0)\notin B(x, \epsilon)$.

# ## Densely sampled square

np.random.seed(2)

# +

point_cloud = np.random.rand(400, 2)

plot_point_cloud(point_cloud)

# +

x0 = np.array([[0.25, 0.5]])

epsilon = 0.2

plot_point_cloud(point_cloud)

plot_disc(x0[0], epsilon)

# -

alpha_square = compute_local_homology_alpha(point_cloud, x0, epsilon, 2)

_ = plot_persistence_diagram(alpha_square)

alpha = 0.2

r_square = compute_local_homology_r(point_cloud, x0, alpha, 2)

_ = plot_persistence_diagram(r_square)

r_square

# Both diagrams show some $H_2$, but it's not very persistent. it dies at 0.24 and it might be because the ball centered at $(0.25, 0.25)$ approaches the boundary $y=0$ of the support of the distribution.

# ## Sphere

# +

point_cloud = points.sphere(n_samples=3000, ambient_dim=3, radius=1, sample="random")

point_cloud = point_cloud[point_cloud[:,2]>=0.0]

ax = plt.axes(projection='3d')

_ = ax.scatter3D(point_cloud[:,0], point_cloud[:, 1], point_cloud[:, 2],)

# +

x0 = np.array([[0., 0., 1.]])

epsilon = 0.35

from local_homology.alpha_filtration import is_point_in_ball

in_ball, _ = is_point_in_ball(point_cloud, x0, epsilon, return_distances=True)

pts_in_ball = point_cloud[in_ball]

ax = plt.axes(projection='3d')

_ = ax.scatter3D(pts_in_ball[:,0], pts_in_ball[:, 1], pts_in_ball[:, 2],)

# -

alpha_sphere = compute_local_homology_alpha(point_cloud, x0, epsilon, 2)

_ = plot_persistence_diagram(alpha_sphere)

_ = plt.title(r"Pseudo $\alpha$-filtration")

# *Observation: The proposed $\alpha$-filtration with `distances=distance_to_boundary` is close to ~~equivalent~~ to covering the boundary of the disc with points and performing a Vietoris-Rips filtration. It is not equivalent, because a 2-simplex is added as soon as there is an edge between points, which are connected with the boundary. They do not need to connect to the same point.*

alpha_sphere = compute_local_homology_alpha(point_cloud, x0, epsilon, 2, distance_to_boundary)

_ = plot_persistence_diagram(alpha_sphere)

_ = plt.title(r"Pseudo $\alpha$-filtration, distance to boundary")

alpha_sphere = compute_local_homology_alpha(point_cloud, x0, epsilon, 2, distance_to_expanding_boundary)

_ = plot_persistence_diagram(alpha_sphere)

_ = plt.title(r"Pseudo $\alpha$-filtration, distance to expanding boundary")

# +

alpha = 0.2

r_sphere = compute_local_homology_r(point_cloud, x0, alpha, 2)

_ = plot_persistence_diagram(r_sphere)

_ = plt.title(r"$r$-filtration")

# -

# For the $\alpha$-filtration, the expanding boundary seems to fill out the interior too quickly for $H_2$ to appear. *I* suspect that the points connect to the boundary quicker than they connect to each other, so that no 2-simplices have time to form. Indeed, if we disable this behavior, we observe a non-trivial $2$-dim hole.

#

# Still, we can get an interesting observation from here. By comparing the persistence diagram from `distance_to_boundary` and `distance_to_expanding_boundary`, we can speculate which points in the diagram correspond to cycles that were created by joining the boundary, and which were created purely inside the $r$-ball. For the first category, their birth value will be smaller, while the other ones should see their death values affected.

#

# For the $r-$filtration, this appears immediately quickly and persists. Of course, this is due to a good scale choice for Rips.

# ## Swiss hole

# +

### from https://github.com/scikit-learn/scikit-learn/blob/main/examples/manifold/plot_swissroll.py

sh_points, sh_color = datasets.make_swiss_roll(

n_samples=11000, hole=True, random_state=0

)

t = np.array([7.853, 12.5, 12.5])

y = np.array([8., 20., 8.])

pts = np.stack([t*np.cos(t), y, t*np.sin(t)], axis=1)

# -

fig = plt.figure(figsize=(8, 6))

ax = fig.add_subplot(111, projection="3d")

fig.add_axes(ax)

ax.scatter(

sh_points[:, 0], sh_points[:, 1], sh_points[:, 2],

c=sh_color, s=5, alpha=0.15

)

ax.scatter(pts[:, 0], pts[:, 1], pts[:, 2], c='r', s=20, alpha=0.8)

ax.set_title("Swiss-Hole in Ambient Space")

ax.view_init(azim=-66, elev=12)

_ = ax.text2D(0.8, 0.05, s="n_samples=1500", transform=ax.transAxes)

pt_hole, pt_boundary, pt_inside = pts

# +

alpha = 0.9

r_sh = [compute_local_homology_r(sh_points, x0, alpha, 2, max_r=9.) for x0 in pts]

# +

fig, axes = plt.subplots(1, 3, figsize=(10, 3))

for (ind, dgm), name in zip(enumerate(r_sh), ["hole", "boundary", "inside"]):

_ = plot_persistence_diagram(dgm, axes=axes[ind])

axes[ind].set_title(name)

_ = plt.suptitle(r"$r$-filtration")

# -

epsilon = 2.

dgms = [compute_local_homology_alpha(sh_points, x0[None,:], epsilon, 2,

distances=distance_to_point_outside_ball, approximate=True) for x0 in pts]

min_, max_ = 0., epsilon

for dgm, name in zip(dgms, ["hole", "boundary", "inside"]):

ax = plot_persistence_diagram(dgm, )

ax.set_xlim([min_, max_]),

ax.set_ylim([min_, max_])

ax.set_title(name)

| notebooks/Tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # 视频动作识别

# 视频动作识别是指对一小段视频中的内容进行分析,判断视频中的人物做了哪种动作。视频动作识别与图像领域的图像识别,既有联系又有区别,图像识别是对一张静态图片进行识别,而视频动作识别不仅要考察每张图片的静态内容,还要考察不同图片静态内容之间的时空关系。比如一个人扶着一扇半开的门,仅凭这一张图片无法判断该动作是开门动作还是关门动作。

#

# 视频分析领域的研究相比较图像分析领域的研究,发展时间更短,也更有难度。视频分析模型完成的难点首先在于,需要强大的计算资源来完成视频的分析。视频要拆解成为图像进行分析,导致模型的数据量十分庞大。视频内容有很重要的考虑因素是动作的时间顺序,需要将视频转换成的图像通过时间关系联系起来,做出判断,所以模型需要考虑时序因素,加入时间维度之后参数也会大量增加。

#

# 得益于PASCAL VOC、ImageNet、MS COCO等数据集的公开,图像领域产生了很多的经典模型,那么在视频分析领域有没有什么经典的模型呢?答案是有的,本案例将为大家介绍视频动作识别领域的经典模型并进行代码实践。

#

# 由于本案例的代码是在华为云ModelArts Notebook上运行,所以需要先按照如下步骤来进行Notebook环境的准备。

#

# ### 进入ModelArts

#

# 点击如下链接:https://www.huaweicloud.com/product/modelarts.html , 进入ModelArts主页。点击“立即使用”按钮,输入用户名和密码登录,进入ModelArts使用页面。

#

# ### 进入ModelArts

#

# 点击如下链接:https://www.huaweicloud.com/product/modelarts.html , 进入ModelArts主页。点击“立即使用”按钮,输入用户名和密码登录,进入ModelArts使用页面。

#

# ### 创建ModelArts notebook

#

# 下面,我们在ModelArts中创建一个notebook开发环境,ModelArts notebook提供网页版的Python开发环境,可以方便的编写、运行代码,并查看运行结果。

#

# 第一步:在ModelArts服务主界面依次点击“开发环境”、“创建”

#

#

#

# 第二步:填写notebook所需的参数:

#

#

#

# 第三步:配置好notebook参数后,点击下一步,进入notebook信息预览。确认无误后,点击“立即创建”

#

#

# 第四步:创建完成后,返回开发环境主界面,等待Notebook创建完毕后,打开Notebook,进行下一步操作。

#

#

# ### 在ModelArts中创建开发环境

#

# 接下来,我们创建一个实际的开发环境,用于后续的实验步骤。

#

# 第一步:点击下图所示的“启动”按钮,加载后“打开”按钮变从灰色变为蓝色后点击“打开”进入刚刚创建的Notebook

#

#

#

#

# 第二步:创建一个Python3环境的的Notebook。点击右上角的"New",然后选择TensorFlow 1.13.1开发环境。

#

# 第三步:点击左上方的文件名"Untitled",并输入一个与本实验相关的名称,如"action_recognition"

#

#

#

#

# ### 在Notebook中编写并执行代码

#

# 在Notebook中,我们输入一个简单的打印语句,然后点击上方的运行按钮,可以查看语句执行的结果:

#

#

#

# 开发环境准备好啦,接下来可以愉快地写代码啦!

# ### 准备源代码和数据

#

# 这一步准备案例所需的源代码和数据,相关资源已经保存在OBS中,我们通过[ModelArts SDK](https://support.huaweicloud.com/sdkreference-modelarts/modelarts_04_0002.html)将资源下载到本地,并解压到当前目录下。解压后,当前目录包含data、dataset_subset和其他目录文件,分别是预训练参数文件、数据集和代码文件等。

import os

if not os.path.exists('videos'):

from modelarts.session import Session

session = Session()

session.download_data(bucket_path="ai-course-common-26-bj4/video/video.tar.gz", path="./video.tar.gz")

# 使用tar命令解压资源包

os.system("tar xf ./video.tar.gz")

# 使用rm命令删除压缩包

os.system("rm ./video.tar.gz")

# 上一节课我们已经介绍了视频动作识别有HMDB51、UCF-101和Kinetics三个常用的数据集,本案例选用了UCF-101数据集的部分子集作为演示用数据集,接下来,我们播放一段UCF-101中的视频:

video_name = "./data/v_TaiChi_g01_c01.avi"

# +

from IPython.display import clear_output, Image, display, HTML

import time

import cv2

import base64

import numpy as np

def arrayShow(img):

_,ret = cv2.imencode('.jpg', img)

return Image(data=ret)

cap = cv2.VideoCapture(video_name)

while True:

try:

clear_output(wait=True)

ret, frame = cap.read()

if ret:

tmp = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

img = arrayShow(frame)

display(img)

time.sleep(0.05)

else:

break

except KeyboardInterrupt:

cap.release()

cap.release()

# -

# ## 视频动作识别模型介绍

#

# 在图像领域中,ImageNet作为一个大型图像识别数据集,自2010年开始,使用此数据集训练出的图像算法层出不穷,深度学习模型经历了从AlexNet到VGG-16再到更加复杂的结构,模型的表现也越来越好。在识别千种类别的图片时,错误率表现如下:

#

# <img src="./img/ImageNet.png" width="500" height="500" align=center>

#

# 在图像识别中表现很好的模型,可以在图像领域的其他任务中继续使用,通过复用模型中部分层的参数,就可以提升模型的训练效果。有了基于ImageNet模型的图像模型,很多模型和任务都有了更好的训练基础,比如说物体检测、实例分割、人脸检测、人脸识别等。

#

# 那么训练效果显著的图像模型是否可以用于视频模型的训练呢?答案是yes,有研究证明,在视频领域,如果能够复用图像模型结构,甚至参数,将对视频模型的训练有很大帮助。但是怎样才能复用上图像模型的结构呢?首先需要知道视频分类与图像分类的不同,如果将视频视作是图像的集合,每一个帧将作为一个图像,视频分类任务除了要考虑到图像中的表现,也要考虑图像间的时空关系,才可以对视频动作进行分类。

#

# 为了捕获图像间的时空关系,论文[I3D](https://arxiv.org/pdf/1705.07750.pdf)介绍了三种旧的视频分类模型,并提出了一种更有效的Two-Stream Inflated 3D ConvNets(简称I3D)的模型,下面将逐一简介这四种模型,更多细节信息请查看原论文。

#

# ### 旧模型一:卷积网络+LSTM

#

# 模型使用了训练成熟的图像模型,通过卷积网络,对每一帧图像进行特征提取、池化和预测,最后在模型的末端加一个LSTM层(长短期记忆网络),如下图所示,这样就可以使模型能够考虑时间性结构,将上下文特征联系起来,做出动作判断。这种模型的缺点是只能捕获较大的工作,对小动作的识别效果较差,而且由于视频中的每一帧图像都要经过网络的计算,所以训练时间很长。

#

# <img src="./img/video_model_0.png" width="200" height="200" align=center>

#

# ### 旧模型二:3D卷积网络

#

# 3D卷积类似于2D卷积,将时序信息加入卷积操作。虽然这是一种看起来更加自然的视频处理方式,但是由于卷积核维度增加,参数的数量也增加了,模型的训练变得更加困难。这种模型没有对图像模型进行复用,而是直接将视频数据传入3D卷积网络进行训练。

#

# <img src="./img/video_model_1.png" width="150" height="150" align=center>

#

# ### 旧模型三:Two-Stream 网络

#

# Two-Stream 网络的两个流分别为**1张RGB快照**和**10张计算之后的光流帧画面组成的栈**。两个流都通过ImageNet预训练好的图像卷积网络,光流部分可以分为竖直和水平两个通道,所以是普通图片输入的2倍,模型在训练和测试中表现都十分出色。

#

# <img src="./img/video_model_2.png" width="400" height="400" align=center>

#

# #### 光流视频 optical flow video

#

# 上面讲到了光流,在此对光流做一下介绍。光流是什么呢?名字很专业,感觉很陌生,但实际上这种视觉现象我们每天都在经历,我们坐高铁的时候,可以看到窗外的景物都在快速往后退,开得越快,就感受到外面的景物就是“刷”地一个残影,这种视觉上目标的运动方向和速度就是光流。光流从概念上讲,是对物体运动的观察,通过找到相邻帧之间的相关性来判断帧之间的对应关系,计算出相邻帧画面中物体的运动信息,获取像素运动的瞬时速度。在原始视频中,有运动部分和静止的背景部分,我们通常需要判断的只是视频中运动部分的状态,而光流就是通过计算得到了视频中运动部分的运动信息。

#

# 下面是一个经过计算后的原视频及光流视频。

#

# 原视频

#

# 光流视频

#

#

# ### 新模型:Two-Stream Inflated 3D ConvNets

#

# 新模型采取了以下几点结构改进:

# - 拓展2D卷积为3D。直接利用成熟的图像分类模型,只不过将网络中二维$ N × N $的 filters 和 pooling kernels 直接变成$ N × N × N $;

# - 用 2D filter 的预训练参数来初始化 3D filter 的参数。上一步已经利用了图像分类模型的网络,这一步的目的是能利用上网络的预训练参数,直接将 2D filter 的参数直接沿着第三个时间维度进行复制N次,最后将所有参数值再除以N;

# - 调整感受野的形状和大小。新模型改造了图像分类模型Inception-v1的结构,前两个max-pooling层改成使用$ 1 × 3 × 3 $kernels and stride 1 in time,其他所有max-pooling层都仍然使用对此的kernel和stride,最后一个average pooling层使用$ 2 × 7 × 7 $的kernel。

# - 延续了Two-Stream的基本方法。用双流结构来捕获图片之间的时空关系仍然是有效的。

#

# 最后新模型的整体结构如下图所示:

#

# <img src="./img/video_model_3.png" width="200" height="200" align=center>

#

# 好,到目前为止,我们已经讲解了视频动作识别的经典数据集和经典模型,下面我们通过代码来实践地跑一跑其中的两个模型:**C3D模型**( 3D卷积网络)以及**I3D模型**(Two-Stream Inflated 3D ConvNets)。

#

# ### C3D模型结构

#

#

# 我们已经在前面的“旧模型二:3D卷积网络”中讲解到3D卷积网络是一种看起来比较自然的处理视频的网络,虽然它有效果不够好,计算量也大的特点,但它的结构很简单,可以构造一个很简单的网络就可以实现视频动作识别,如下图所示是3D卷积的示意图:

#

#

#

# a)中,一张图片进行了2D卷积, b)中,对视频进行2D卷积,将多个帧视作多个通道, c)中,对视频进行3D卷积,将时序信息加入输入信号中。

#

# ab中,output都是一张二维特征图,所以无论是输入是否有时间信息,输出都是一张二维的特征图,2D卷积失去了时序信息。只有3D卷积在输出时,保留了时序信息。2D和3D池化操作同样有这样的问题。

#

# 如下图所示是一种[C3D](https://www.cv-foundation.org/openaccess/content_iccv_2015/papers/Tran_Learning_Spatiotemporal_Features_ICCV_2015_paper.pdf)网络的变种:(如需阅读原文描述,请查看I3D论文 2.2 节)

#

#

#

# C3D结构,包括8个卷积层,5个最大池化层以及2个全连接层,最后是softmax输出层。

#

# 所有的3D卷积核为$ 3 × 3 × 3$ 步长为1,使用SGD,初始学习率为0.003,每150k个迭代,除以2。优化在1.9M个迭代的时候结束,大约13epoch。

#

# 数据处理时,视频抽帧定义大小为:$ c × l × h × w$,$c$为通道数量,$l$为帧的数量,$h$为帧画面的高度,$w$为帧画面的宽度。3D卷积核和池化核的大小为$ d × k × k$,$d$是核的时间深度,$k$是核的空间大小。网络的输入为视频的抽帧,预测出的是类别标签。所有的视频帧画面都调整大小为$ 128 × 171 $,几乎将UCF-101数据集中的帧调整为一半大小。视频被分为不重复的16帧画面,这些画面将作为模型网络的输入。最后对帧画面的大小进行裁剪,输入的数据为$16 × 112 × 112 $

# ### C3D模型训练

# 接下来,我们将对C3D模型进行训练,训练过程分为:数据预处理以及模型训练。在此次训练中,我们使用的数据集为UCF-101,由于C3D模型的输入是视频的每帧图片,因此我们需要对数据集的视频进行抽帧,也就是将视频转换为图片,然后将图片数据传入模型之中,进行训练。

#

# 在本案例中,我们随机抽取了UCF-101数据集的一部分进行训练的演示,感兴趣的同学可以下载完整的UCF-101数据集进行训练。

#

# [UCF-101下载](https://www.crcv.ucf.edu/data/UCF101.php)

#

# 数据集存储在目录` dataset_subset`下

#

# 如下代码是使用cv2库进行视频文件到图片文件的转换

import cv2

import os

# 视频数据集存储位置

video_path = './dataset_subset/'

# 生成的图像数据集存储位置

save_path = './dataset/'

# 如果文件路径不存在则创建路径

if not os.path.exists(save_path):

os.mkdir(save_path)

# 获取动作列表

action_list = os.listdir(video_path)

# 遍历所有动作

for action in action_list:

if action.startswith(".")==False:

if not os.path.exists(save_path+action):

os.mkdir(save_path+action)

video_list = os.listdir(video_path+action)

# 遍历所有视频

for video in video_list:

prefix = video.split('.')[0]

if not os.path.exists(os.path.join(save_path, action, prefix)):

os.mkdir(os.path.join(save_path, action, prefix))

save_name = os.path.join(save_path, action, prefix) + '/'

video_name = video_path+action+'/'+video

# 读取视频文件

# cap为视频的帧

cap = cv2.VideoCapture(video_name)

# fps为帧率

fps = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))

fps_count = 0

for i in range(fps):

ret, frame = cap.read()

if ret:

# 将帧画面写入图片文件中

cv2.imwrite(save_name+str(10000+fps_count)+'.jpg',frame)

fps_count += 1

# 此时,视频逐帧转换成的图片数据已经存储起来,为模型训练做准备。

#

# ### 模型训练

# 首先,我们构建模型结构。

#

# C3D模型结构我们之前已经介绍过,这里我们通过`keras`提供的Conv3D,MaxPool3D,ZeroPadding3D等函数进行模型的搭建。

# +

from keras.layers import Dense,Dropout,Conv3D,Input,MaxPool3D,Flatten,Activation, ZeroPadding3D

from keras.regularizers import l2

from keras.models import Model, Sequential

# 输入数据为 112×112 的图片,16帧, 3通道

input_shape = (112,112,16,3)

# 权重衰减率

weight_decay = 0.005

# 类型数量,我们使用UCF-101 为数据集,所以为101

nb_classes = 101

# 构建模型结构

inputs = Input(input_shape)

x = Conv3D(64,(3,3,3),strides=(1,1,1),padding='same',

activation='relu',kernel_regularizer=l2(weight_decay))(inputs)

x = MaxPool3D((2,2,1),strides=(2,2,1),padding='same')(x)

x = Conv3D(128,(3,3,3),strides=(1,1,1),padding='same',

activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = MaxPool3D((2,2,2),strides=(2,2,2),padding='same')(x)

x = Conv3D(128,(3,3,3),strides=(1,1,1),padding='same',

activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = MaxPool3D((2,2,2),strides=(2,2,2),padding='same')(x)

x = Conv3D(256,(3,3,3),strides=(1,1,1),padding='same',

activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = MaxPool3D((2,2,2),strides=(2,2,2),padding='same')(x)

x = Conv3D(256, (3, 3, 3), strides=(1, 1, 1), padding='same',

activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = MaxPool3D((2, 2, 2), strides=(2, 2, 2), padding='same')(x)

x = Flatten()(x)

x = Dense(2048,activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = Dropout(0.5)(x)

x = Dense(2048,activation='relu',kernel_regularizer=l2(weight_decay))(x)

x = Dropout(0.5)(x)

x = Dense(nb_classes,kernel_regularizer=l2(weight_decay))(x)

x = Activation('softmax')(x)

model = Model(inputs, x)

# -

# 通过keras提供的`summary()`方法,打印模型结构。可以看到模型的层构建以及各层的输入输出情况。

model.summary()

# 通过keras的`input`方法可以查看模型的输入形状,shape分别为`( batch size, width, height, frames, channels) ` 。

model.input

# 可以看到模型的数据处理的维度与图像处理模型有一些差别,多了frames维度,体现出时序关系在视频分析中的影响。

#

# 接下来,我们开始将图片文件转为训练需要的数据形式。

# +

# 引用必要的库

from keras.optimizers import SGD,Adam

from keras.utils import np_utils

import numpy as np

import random

import cv2

import matplotlib.pyplot as plt

# 自定义callbacks

from schedules import onetenth_4_8_12

# -

# 参数定义

img_path = save_path # 图片文件存储位置

results_path = './results' # 训练结果保存位置

if not os.path.exists(results_path):

os.mkdir(results_path)

# 数据集划分,随机抽取4/5 作为训练集,其余为验证集。将文件信息分别存储在`train_list`和`test_list`中,为训练做准备。

cates = os.listdir(img_path)

train_list = []

test_list = []

# 遍历所有的动作类型

for cate in cates:

videos = os.listdir(os.path.join(img_path, cate))

length = len(videos)//5

# 训练集大小,随机取视频文件加入训练集

train= random.sample(videos, length*4)

train_list.extend(train)

# 将余下的视频加入测试集

for video in videos:

if video not in train:

test_list.append(video)

print("训练集为:")

print( train_list)

print("共%d 个视频\n"%(len(train_list)))

print("验证集为:")

print(test_list)

print("共%d 个视频"%(len(test_list)))

# 接下来开始进行模型的训练。

#

# 首先定义数据读取方法。方法`process_data`中读取一个batch的数据,包含16帧的图片信息的数据,以及数据的标注信息。在读取图片数据时,对图片进行随机裁剪和翻转操作以完成数据增广。

def process_data(img_path, file_list,batch_size=16,train=True):

batch = np.zeros((batch_size,16,112,112,3),dtype='float32')

labels = np.zeros(batch_size,dtype='int')

cate_list = os.listdir(img_path)

def read_classes():

path = "./classInd.txt"

with open(path, "r+") as f:

lines = f.readlines()

classes = {}

for line in lines:

c_id = line.split()[0]

c_name = line.split()[1]

classes[c_name] =c_id

return classes

classes_dict = read_classes()

for file in file_list:

cate = file.split("_")[1]

img_list = os.listdir(os.path.join(img_path, cate, file))

img_list.sort()

batch_img = []

for i in range(batch_size):

path = os.path.join(img_path, cate, file)

label = int(classes_dict[cate])-1

symbol = len(img_list)//16

if train:

# 随机进行裁剪

crop_x = random.randint(0, 15)

crop_y = random.randint(0, 58)

# 随机进行翻转

is_flip = random.randint(0, 1)

# 以16 帧为单位

for j in range(16):

img = img_list[symbol + j]

image = cv2.imread( path + '/' + img)

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

image = cv2.resize(image, (171, 128))

if is_flip == 1:

image = cv2.flip(image, 1)

batch[i][j][:][:][:] = image[crop_x:crop_x + 112, crop_y:crop_y + 112, :]

symbol-=1

if symbol<0:

break

labels[i] = label

else:

for j in range(16):

img = img_list[symbol + j]

image = cv2.imread( path + '/' + img)

image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

image = cv2.resize(image, (171, 128))

batch[i][j][:][:][:] = image[8:120, 30:142, :]

symbol-=1

if symbol<0:

break

labels[i] = label

return batch, labels

# +

batch, labels = process_data(img_path, train_list)

print("每个batch的形状为:%s"%(str(batch.shape)))

print("每个label的形状为:%s"%(str(labels.shape)))

# -

# 定义data generator, 将数据批次传入训练函数中。

def generator_train_batch(train_list, batch_size, num_classes, img_path):

while True:

# 读取一个batch的数据

x_train, x_labels = process_data(img_path, train_list, batch_size=16,train=True)

x = preprocess(x_train)

# 形成input要求的数据格式

y = np_utils.to_categorical(np.array(x_labels), num_classes)

x = np.transpose(x, (0,2,3,1,4))

yield x, y

def generator_val_batch(test_list, batch_size, num_classes, img_path):

while True:

# 读取一个batch的数据

y_test,y_labels = process_data(img_path, train_list, batch_size=16,train=False)

x = preprocess(y_test)

# 形成input要求的数据格式

x = np.transpose(x,(0,2,3,1,4))

y = np_utils.to_categorical(np.array(y_labels), num_classes)

yield x, y

# 定义方法`preprocess`, 对函数的输入数据进行图像的标准化处理。

def preprocess(inputs):

inputs[..., 0] -= 99.9

inputs[..., 1] -= 92.1

inputs[..., 2] -= 82.6

inputs[..., 0] /= 65.8

inputs[..., 1] /= 62.3

inputs[..., 2] /= 60.3

return inputs

# 训练一个epoch大约需4分钟

# 类别数量

num_classes = 101

# batch大小

batch_size = 4

# epoch数量

epochs = 1

# 学习率大小

lr = 0.005

# 优化器定义

sgd = SGD(lr=lr, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

# 开始训练

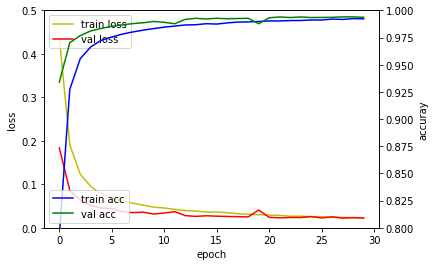

history = model.fit_generator(generator_train_batch(train_list, batch_size, num_classes,img_path),

steps_per_epoch= len(train_list) // batch_size,

epochs=epochs,

callbacks=[onetenth_4_8_12(lr)],

validation_data=generator_val_batch(test_list, batch_size,num_classes,img_path),

validation_steps= len(test_list) // batch_size,

verbose=1)

# 对训练结果进行保存

model.save_weights(os.path.join(results_path, 'weights_c3d.h5'))

# ## 模型测试

# 接下来我们将训练之后得到的模型进行测试。随机在UCF-101中选择一个视频文件作为测试数据,然后对视频进行取帧,每16帧画面传入模型进行一次动作预测,并且将动作预测以及预测百分比打印在画面中并进行视频播放。

# 首先,引入相关的库。

from IPython.display import clear_output, Image, display, HTML

import time

import cv2

import base64

import numpy as np

# 构建模型结构并且加载权重。

from models import c3d_model

model = c3d_model()

model.load_weights(os.path.join(results_path, 'weights_c3d.h5'), by_name=True) # 加载刚训练的模型

# 定义函数arrayshow,进行图片变量的编码格式转换。

def arrayShow(img):

_,ret = cv2.imencode('.jpg', img)

return Image(data=ret)

# 进行视频的预处理以及预测,将预测结果打印到画面中,最后进行播放。

# 加载所有的类别和编号

with open('./ucfTrainTestlist/classInd.txt', 'r') as f:

class_names = f.readlines()

f.close()

# 读取视频文件

video = './videos/v_Punch_g03_c01.avi'

cap = cv2.VideoCapture(video)

clip = []

# 将视频画面传入模型

while True:

try:

clear_output(wait=True)

ret, frame = cap.read()

if ret:

tmp = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

clip.append(cv2.resize(tmp, (171, 128)))

# 每16帧进行一次预测

if len(clip) == 16:

inputs = np.array(clip).astype(np.float32)

inputs = np.expand_dims(inputs, axis=0)

inputs[..., 0] -= 99.9

inputs[..., 1] -= 92.1

inputs[..., 2] -= 82.6

inputs[..., 0] /= 65.8

inputs[..., 1] /= 62.3

inputs[..., 2] /= 60.3

inputs = inputs[:,:,8:120,30:142,:]

inputs = np.transpose(inputs, (0, 2, 3, 1, 4))

# 获得预测结果

pred = model.predict(inputs)

label = np.argmax(pred[0])

# 将预测结果绘制到画面中

cv2.putText(frame, class_names[label].split(' ')[-1].strip(), (20, 20),

cv2.FONT_HERSHEY_SIMPLEX, 0.6,

(0, 0, 255), 1)

cv2.putText(frame, "prob: %.4f" % pred[0][label], (20, 40),

cv2.FONT_HERSHEY_SIMPLEX, 0.6,

(0, 0, 255), 1)

clip.pop(0)

# 播放预测后的视频

lines, columns, _ = frame.shape

frame = cv2.resize(frame, (int(columns), int(lines)))

img = arrayShow(frame)

display(img)

time.sleep(0.02)

else:

break

except:

print(0)

cap.release()

# ## I3D 模型

# 在之前我们简单介绍了I3D模型,[I3D官方github库](https://github.com/deepmind/kinetics-i3d)提供了在Kinetics上预训练的模型和预测代码,接下来我们将体验I3D模型如何对视频进行预测。

# 首先,引入相关的包

# +

import numpy as np

import tensorflow as tf

import i3d

# -

# 进行参数的定义

# +

# 输入图片大小

_IMAGE_SIZE = 224

# 视频的帧数

_SAMPLE_VIDEO_FRAMES = 79

# 输入数据包括两部分:RGB和光流

# RGB和光流数据已经经过提前计算

_SAMPLE_PATHS = {

'rgb': 'data/v_CricketShot_g04_c01_rgb.npy',

'flow': 'data/v_CricketShot_g04_c01_flow.npy',

}

# 提供了多种可以选择的预训练权重

# 其中,imagenet系列模型从ImageNet的2D权重中拓展而来,其余为视频数据下的预训练权重

_CHECKPOINT_PATHS = {

'rgb': 'data/checkpoints/rgb_scratch/model.ckpt',

'flow': 'data/checkpoints/flow_scratch/model.ckpt',

'rgb_imagenet': 'data/checkpoints/rgb_imagenet/model.ckpt',

'flow_imagenet': 'data/checkpoints/flow_imagenet/model.ckpt',

}

# 记录类别文件

_LABEL_MAP_PATH = 'data/label_map.txt'

# 类别数量为400

NUM_CLASSES = 400

# -

# 定义参数:

# - imagenet_pretrained :如果为`True`,则调用预训练权重,如果为`False`,则调用ImageNet转成的权重

imagenet_pretrained = True

# 加载动作类型

kinetics_classes = [x.strip() for x in open(_LABEL_MAP_PATH)]

tf.logging.set_verbosity(tf.logging.INFO)

# 构建RGB部分模型

# +

rgb_input = tf.placeholder(tf.float32, shape=(1, _SAMPLE_VIDEO_FRAMES, _IMAGE_SIZE, _IMAGE_SIZE, 3))

with tf.variable_scope('RGB', reuse=tf.AUTO_REUSE):

rgb_model = i3d.InceptionI3d(NUM_CLASSES, spatial_squeeze=True, final_endpoint='Logits')

rgb_logits, _ = rgb_model(rgb_input, is_training=False, dropout_keep_prob=1.0)

rgb_variable_map = {}

for variable in tf.global_variables():

if variable.name.split('/')[0] == 'RGB':

rgb_variable_map[variable.name.replace(':0', '')] = variable

rgb_saver = tf.train.Saver(var_list=rgb_variable_map, reshape=True)

# -

# 构建光流部分模型

# +

flow_input = tf.placeholder(tf.float32,shape=(1, _SAMPLE_VIDEO_FRAMES, _IMAGE_SIZE, _IMAGE_SIZE, 2))

with tf.variable_scope('Flow', reuse=tf.AUTO_REUSE):

flow_model = i3d.InceptionI3d(NUM_CLASSES, spatial_squeeze=True, final_endpoint='Logits')

flow_logits, _ = flow_model(flow_input, is_training=False, dropout_keep_prob=1.0)

flow_variable_map = {}

for variable in tf.global_variables():

if variable.name.split('/')[0] == 'Flow':

flow_variable_map[variable.name.replace(':0', '')] = variable

flow_saver = tf.train.Saver(var_list=flow_variable_map, reshape=True)

# -

# 将模型联合,成为完整的I3D模型

model_logits = rgb_logits + flow_logits

model_predictions = tf.nn.softmax(model_logits)

# 开始模型预测,获得视频动作预测结果。

# 预测数据为开篇提供的RGB和光流数据:

#

#

#

#

with tf.Session() as sess:

feed_dict = {}

if imagenet_pretrained:

rgb_saver.restore(sess, _CHECKPOINT_PATHS['rgb_imagenet']) # 加载rgb流的模型

else:

rgb_saver.restore(sess, _CHECKPOINT_PATHS['rgb'])

tf.logging.info('RGB checkpoint restored')

if imagenet_pretrained:

flow_saver.restore(sess, _CHECKPOINT_PATHS['flow_imagenet']) # 加载flow流的模型

else:

flow_saver.restore(sess, _CHECKPOINT_PATHS['flow'])

tf.logging.info('Flow checkpoint restored')

start_time = time.time()

rgb_sample = np.load(_SAMPLE_PATHS['rgb']) # 加载rgb流的输入数据

tf.logging.info('RGB data loaded, shape=%s', str(rgb_sample.shape))

feed_dict[rgb_input] = rgb_sample

flow_sample = np.load(_SAMPLE_PATHS['flow']) # 加载flow流的输入数据

tf.logging.info('Flow data loaded, shape=%s', str(flow_sample.shape))

feed_dict[flow_input] = flow_sample

out_logits, out_predictions = sess.run(

[model_logits, model_predictions],

feed_dict=feed_dict)

out_logits = out_logits[0]

out_predictions = out_predictions[0]

sorted_indices = np.argsort(out_predictions)[::-1]

print('Inference time in sec: %.3f' % float(time.time() - start_time))

print('Norm of logits: %f' % np.linalg.norm(out_logits))

print('\nTop classes and probabilities')

for index in sorted_indices[:20]:

print(out_predictions[index], out_logits[index], kinetics_classes[index])

| notebook/DL_video_action_recognition/action_recognition.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 0.6.3

# language: julia

# name: julia-0.6

# ---

using Revise

using JuMIT

using Plots

model = JuMIT.Gallery.Seismic(:acou_homo1);

acqgeom = JuMIT.Gallery.Geom(model.mgrid,:xwell);

tgrid = JuMIT.Gallery.M1D(:acou_homo1);

wav = Signals.Wavelets.ricker(10.0, tgrid, tpeak=0.25, );

# source wavelet for modelling

acqsrc = JuMIT.Acquisition.Src_fixed(acqgeom.nss,1,[:P],wav,tgrid);

vp0=mean(JuMIT.Models.χ(model.χvp,model.vp0,-1))

ρ0=mean(JuMIT.Models.χ(model.χρ,model.ρ0,-1))

rec1 = JuMIT.Analytic.mod(vp0=vp0, model_pert=model, ρ0=ρ0, acqgeom=acqgeom, acqsrc=acqsrc, tgridmod=tgrid, src_flag=2)

pa=JuMIT.Fdtd.Param(npw=1,model=model, acqgeom=[acqgeom], acqsrc=[acqsrc], sflags=[2], rflags=[1], tgridmod=tgrid, verbose=true);

pab=JuMIT.Fdtd.Param(npw=2,model=model, acqgeom=[acqgeom, acqgeom], acqsrc=[acqsrc, acqsrc], sflags=[2, 0], rflags=[1, 1], tgridmod=tgrid, verbose=true, born_flag=true);

@time JuMIT.Fdtd.mod!(pab);

# +

# least-squares misfit

paerr=JuMIT.Data.P_misfit(rec1, pa.c.data[1])

err = JuMIT.Data.func_grad!(paerr)

# normalization

error = err[1]

# desired accuracy?

@test error<1e-2

println(error)

# -

plot(rec1.d[1,1])

plot!(pab.c.data[2].d[1,1])

| Modeling/Fdtd_accuracy.ipynb |

// ---

// jupyter:

// jupytext:

// text_representation:

// extension: .cs

// format_name: light

// format_version: '1.5'

// jupytext_version: 1.14.4

// kernelspec:

// display_name: .NET (C#)

// language: C#

// name: .net-csharp

// ---

// + dotnet_interactive={"language": "fsharp"}

#load "../include/MathDev.fsx"

open System

open System.Linq

open Sylvester

// + dotnet_interactive={"language": "fsharp"}

let dice = seq {1..6} |> Seq

dice

// + dotnet_interactive={"language": "csharp"}

let outcomes = (dice * dice)

outcomes.

// + dotnet_interactive={"language": "csharp"}

let s = dice.Prod

s |> Util.Table

// + dotnet_interactive={"language": "csharp"}

dice.AsSigmaAlgebra

// + dotnet_interactive={"language": "csharp"}

dice.AsSigmaAlgebra.Count()

// + dotnet_interactive={"language": "csharp"}

| examples/math/Probability.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .sh

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Bash

# language: bash

# name: bash

# ---

# <div id="body">

# <center>

# <a href="06 Remotes in GitHub.ipynb"> <font size="6"> < </font></a>

# <a href="index.ipynb"> <font size="6"> Version Control with Git </font> </a>

# <a href="08 Conflicts.ipynb"> <font size="6"> > </font></a>

# </center>

# </div>

# # Collaborating

# ## Questions

#

# * How can I use version control to collaborate with other people?

#

#

# ## Objectives

#

# * Clone a remote repository.

# * Collaborate by pushing to a common repository.

# * Describe the basic collaborative workflow.

#

#

# For the next step, get into pairs. One person will be the “Owner” and the other will be the “Collaborator”. The goal is that the Collaborator add changes into the Owner’s repository. We will switch roles at the end, so both persons will play Owner and Collaborator.

# <blockquote class="note">

# <h2>Practicing By Yourself</h2>

#

# If you’re working through this lesson on your own, you can carry on by opening a second terminal window. This window will represent your partner, working on another computer. You won’t need to give anyone access on GitHub, because both ‘partners’ are you.

# </blockquote>

# The Owner needs to give the Collaborator access. On GitHub, click the settings button on the right, then select Collaborators, and enter your partner’s username.

#

# <img src="static/images/github-add-collaborators.png">

#

# o accept access to the Owner’s repo, the Collaborator needs to go to https://github.com/notifications. Once there she can accept access to the Owner’s repo.

#

# Next, the Collaborator needs to download a copy of the Owner’s repository to her machine. This is called “cloning a repo”. To clone the Owner’s repo into her Desktop folder, the Collaborator enters:

#

# ```

# git clone https://github.com/epinux/git_tutorial.git ~/Desktop/epinux-git_tutorial

# ```

#

# Replace ‘epinux’ with the Owner’s username.

#

# <img src="static/images/github-collaboration.svg">

git clone https://github.com/epinux/git_tutorial.git ~/Desktop/epinux-git_tutorial

# The Collaborator can now make a change in her clone of the Owner’s repository, exactly the same way as we’ve been doing before:

#

#

cd ~/Desktop/epinux-git_tutorial

touch newfile.txt

echo "# this is going to be my first contribution" > newfile.txt

cat newfile.txt

git add newfile.txt

git commit -m "Add notes about my first contribution"

# Then push the change to the Owner’s repository on GitHub:

# +

# git push origin master

# -

# **Note:** To run the command above you may need to issue username and password. To do so in a jupyter environment execute the command from a terminal.

#

# ```bash

# Enumerating objects: 4, done.

# Counting objects: 4, done.

# Delta compression using up to 4 threads.

# Compressing objects: 100% (2/2), done.

# Writing objects: 100% (3/3), 306 bytes, done.

# Total 3 (delta 0), reused 0 (delta 0)

# To https://github.com/epinux/git_tutorial.git

# b324f10..28773e7 master -> master

# ```

#

# Note that we didn’t have to create a remote called origin: Git uses this name by default when we clone a repository. (This is why origin was a sensible choice earlier when we were setting up remotes by hand.)

# <blockquote class="note">

# <h2>Some more about remotes</h2>

# In this episode and the previous one, our local repository has had a single “remote”, called `origin`. A remote is a copy of the repository that is hosted somewhere else, that we can push to and pull from, and there’s no reason that you have to work with only one. For example, on some large projects you might have your own copy in your own GitHub account (you’d probably call this `origin`) and also the main “upstream” project repository (let’s call this `upstream` for the sake of examples). You would pull from `upstream` from time to time to get the latest updates that other people have committed.

#

# Remember that the name you give to a remote only exists locally. It’s an alias that you choose - whether `origin`, or `upstream`, or `fred` - and not something intrinstic to the remote repository.

#

# The `git remote` family of commands is used to set up and alter the remotes associated with a repository. Here are some of the most useful ones:

#

# * `git remote -v` lists all the remotes that are configured (we already used this in the last episode)

# * `git remote add [name] [url]` is used to add a new remote

# * `git remote remove [name]` removes a remote. Note that it doesn’t affect the remote repository at all - it just removes the link to it from the local repo.

# * `git remote set-url [name] [newurl]` changes the URL that is associated with the remote. This is useful if it has moved, e.g. to a different GitHub account, or from GitHub to a different hosting service. Or, if we made a typo when adding it!

# * `git remote rename [oldname] [newname]` changes the local alias by which a remote is known - its name. For example, one could use this to change `upstream` to fred.

#

# </blockquote>

#

# Take a look to the Owner’s repository on its GitHub website now (maybe you need to refresh your browser.) You should be able to see the new commit made by the Collaborator.

#

# To download the Collaborator’s changes from GitHub, the Owner now enters:

#

git pull origin master

# Now the three repositories (Owner’s local, Collaborator’s local, and Owner’s on GitHub) are back in sync.

# <blockquote class="note">

# <h2>A Basic Collaborative Workflow</h2>

#

#

# In practice, it is good to be sure that you have an updated version of the repository you are collaborating on, so you should git pull before making our changes. The basic collaborative workflow would be:

#

# * update your local repo with `git pull origin master`,

# * make your changes and stage them with `git add`,

# * commit your changes with `git commit -m`, and

# * upload the changes to GitHub with `git push origin master`

#

# It is better to make many commits with smaller changes rather than of one commit with massive changes: small commits are easier to read and review.

#

# </blockquote>

# <blockquote class="note">

# <h2>Review Changes</h2>

# The Owner pushed commits to the repository without giving any information to the Collaborator. How can the Collaborator find out what has changed with command line? And on GitHub?

#

# * On the command line, the Collaborator can use `git fetch origin master` to get the remote changes into the local repository, but without merging them. Then by running `git diff master origin/master` the Collaborator will see the changes output in the terminal.

#

# * On GitHub, the Collaborator can go to their own fork of the repository and look right above the light blue latest commit bar for a gray bar saying “This branch is 1 commit behind Our-Repository:master.” On the far right of that gray bar is a Compare icon and link. On the Compare page the Collaborator should change the base fork to their own repository, then click the link in the paragraph above to “compare across forks”, and finally change the head fork to the main repository. This will show all the commits that are different.

# </blockquote>

# <blockquote class="note">

# <h2>Comment Changes in GitHub</h2>

#

# The Collaborator has some questions about one line change made by the Owner and has some suggestions to propose.

#

# With GitHub, it is possible to comment the diff of a commit. Over the line of code to comment, a blue comment icon appears to open a comment window.

#

# The Collaborator posts its comments and suggestions using GitHub interface.

# </blockquote>

# <blockquote class="keypoints">

# <h2>Key Points</h2>

#

# * `git clone` copies a remote repository to create a local repository with a remote called `origin` automatically set up.

# </blockquote>

#

# <div id="body">

# <center>

# <a href="06 Remotes in GitHub.ipynb"> <font size="4"> < </font></a>

# <a href="index.ipynb"> <font size="4"> Version Control with Git </font> </a>

# <a href="08 Conflicts.ipynb"> <font size="4"> > </font></a>

# </center>

# </div>

| 07 Collaborating.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Kernel CCA (KCCA)

# This algorithm runs KCCA on two views of data. The kernel implementations, parameter 'ktype', are linear, polynomial and gaussian. Polynomial kernel has two parameters: 'constant', 'degree'. Gaussian kernel has one parameter: 'sigma'.

#

# Useful information, like canonical correlations between transformed data and statistical tests for significance of these correlations can be computed using the get_stats() function of the KCCA object.

#

# When initializing KCCA, you can also initialize the following parameters: the number of canonical components 'n_components', the regularization parameter 'reg', the decomposition type 'decomposition', and the decomposition method 'method'. There are two decomposition types: 'full' and 'icd'. In some cases, ICD will run faster than the full decomposition at the cost of performance. The only method as of now is 'kettenring-like'.

#

# +

import numpy as np

import sys

sys.path.append("../../..")

from mvlearn.embed.kcca import KCCA

from mvlearn.plotting.plot import crossviews_plot

import matplotlib.pyplot as plt

# %matplotlib inline

from scipy import stats

import warnings

import matplotlib.cbook

warnings.filterwarnings("ignore",category=matplotlib.cbook.mplDeprecation)

# -

# Function creates Xs, a list of two views of data with a linear relationship, polynomial relationship (2nd degree) and a gaussian (sinusoidal) relationship.

def make_data(kernel, N):

# # # Define two latent variables (number of samples x 1)

latvar1 = np.random.randn(N,)

latvar2 = np.random.randn(N,)

# # # Define independent components for each dataset (number of observations x dataset dimensions)

indep1 = np.random.randn(N, 4)

indep2 = np.random.randn(N, 5)

if kernel == "linear":

x = 0.25*indep1 + 0.75*np.vstack((latvar1, latvar2, latvar1, latvar2)).T

y = 0.25*indep2 + 0.75*np.vstack((latvar1, latvar2, latvar1, latvar2, latvar1)).T

return [x,y]

elif kernel == "poly":

x = 0.25*indep1 + 0.75*np.vstack((latvar1**2, latvar2**2, latvar1**2, latvar2**2)).T

y = 0.25*indep2 + 0.75*np.vstack((latvar1, latvar2, latvar1, latvar2, latvar1)).T

return [x,y]

elif kernel == "gaussian":

t = np.random.uniform(-np.pi, np.pi, N)

e1 = np.random.normal(0, 0.05, (N,2))

e2 = np.random.normal(0, 0.05, (N,2))

x = np.zeros((N,2))

x[:,0] = t

x[:,1] = np.sin(3*t)

x += e1

y = np.zeros((N,2))

y[:,0] = np.exp(t/4)*np.cos(2*t)

y[:,1] = np.exp(t/4)*np.sin(2*t)

y += e2

return [x,y]

# ## Linear kernel implementation

# Here we show how KCCA with a linear kernel can uncover the highly correlated latent distribution of the 2 views which are related with a linear relationship, and then transform the data into that latent space. We use an 80-20, train-test data split to develop the embedding.

#

# Also, we use statistical tests (Wilk's Lambda) to check the significance of the canonical correlations.

# +

np.random.seed(1)

Xs = make_data('linear', 100)

Xs_train = [Xs[0][:80],Xs[1][:80]]

Xs_test = [Xs[0][80:],Xs[1][80:]]

kcca_l = KCCA(n_components = 4, reg = 0.01)

kcca_l.fit(Xs_train)

linearkcca = kcca_l.transform(Xs_test)

# -

# ### Original Data Plotted

crossviews_plot(Xs, ax_ticks=False, ax_labels=True, equal_axes=True)

# ### Transformed Data Plotted

crossviews_plot(linearkcca, ax_ticks=False, ax_labels=True, equal_axes=True)

# Now, we assess the canonical correlations achieved on the testing data, and the p-values for significance using a Wilk's Lambda test

# +

stats = kcca_l.get_stats()

print("Below are the canonical correlations and the p-values of a Wilk's Lambda test for each components:")

print(stats['r'])

print(stats['pF'])

# -

# ## Polynomial kernel implementation

# Here we show how KCCA with a polynomial kernel can uncover the highly correlated latent distribution of the 2 views which are related with a polynomial relationship, and then transform the data into that latent space.

Xsp = make_data("poly", 150)

kcca_p = KCCA(ktype ="poly", degree = 2.0, n_components = 4, reg=0.001)

polykcca = kcca_p.fit_transform(Xsp)

# ### Original Data Plotted

crossviews_plot(Xsp, ax_ticks=False, ax_labels=True, equal_axes=True)

# ### Transformed Data Plotted

crossviews_plot(polykcca, ax_ticks=False, ax_labels=True, equal_axes=True)

# Now, we assess the canonical correlations achieved on the testing data

# +

stats = kcca_p.get_stats()

print("Below are the canonical correlations for each components:")

print(stats['r'])

# -

# ## Gaussian Kernel Implementation

# Here we show how KCCA with a gaussian kernel can uncover the highly correlated latent distribution of the 2 views which are related with a sinusoidal relationship, and then transform the data into that latent space.

Xsg = make_data("gaussian", 100)

Xsg_train = [Xsg[0][:20],Xsg[1][:20]]

Xsg_test = [Xsg[0][20:],Xsg[1][20:]]

kcca_g = KCCA(ktype ="gaussian", sigma = 1.0, n_components = 2, reg = 0.01)

kcca_g.fit(Xsg)

gausskcca = kcca_g.transform(Xsg)

# ### Original Data Plotted

crossviews_plot(Xsg, ax_ticks=False, ax_labels=True, equal_axes=True)

# ### Transformed Data Plotted

crossviews_plot(gausskcca, ax_ticks=False, ax_labels=True, equal_axes=True)

# Now, we assess the canonical correlations achieved on the testing data

# +

stats = kcca_g.get_stats()

print("Below are the canonical correlations for each components:")

print(stats['r'])

| docs/tutorials/embed/kcca_tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Deep Reinforcement Learning <em> in Action </em>

# ## Ch. 4 - Policy Gradients

import gym

import numpy as np

import torch

from matplotlib import pylab as plt

# #### Helper functions

def running_mean(x, N=50):

cumsum = np.cumsum(np.insert(x, 0, 0))

return (cumsum[N:] - cumsum[:-N]) / float(N)

# #### Defining Network

# +

l1 = 4

l2 = 150

l3 = 2

model = torch.nn.Sequential(

torch.nn.Linear(l1, l2),

torch.nn.LeakyReLU(),

torch.nn.Linear(l2, l3),

torch.nn.Softmax()

)

learning_rate = 0.0009

optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)

# -

# #### Objective Function

def loss_fn(preds, r):

# pred is output from neural network, a is action index

# r is return (sum of rewards to end of episode), d is discount factor

return -torch.sum(r * torch.log(preds)) # element-wise multipliy, then sum

# #### Training Loop

# +

env = gym.make('CartPole-v0')

MAX_DUR = 200

MAX_EPISODES = 500

gamma_ = 0.99

time_steps = []

for episode in range(MAX_EPISODES):

curr_state = env.reset()

done = False

transitions = [] # list of state, action, rewards

for t in range(MAX_DUR): #while in episode

act_prob = model(torch.from_numpy(curr_state).float())

action = np.random.choice(np.array([0,1]), p=act_prob.data.numpy())

prev_state = curr_state

curr_state, reward, done, info = env.step(action)

transitions.append((prev_state, action, reward))

if done:

break

# Optimize policy network with full episode

ep_len = len(transitions) # episode length

time_steps.append(ep_len)

preds = torch.zeros(ep_len)

discounted_rewards = torch.zeros(ep_len)

for i in range(ep_len): #for each step in episode

discount = 1

future_reward = 0

# discount rewards

for i2 in range(i, ep_len):

future_reward += transitions[i2][2] * discount

discount = discount * gamma_

discounted_rewards[i] = future_reward

state, action, _ = transitions[i]

pred = model(torch.from_numpy(state).float())

preds[i] = pred[action]

loss = loss_fn(preds, discounted_rewards)

optimizer.zero_grad()

loss.backward()

optimizer.step()

env.close()

# -

plt.figure(figsize=(10,7))

plt.ylabel("Duration")

plt.xlabel("Episode")

plt.plot(running_mean(time_steps, 50), color='green')

| old_but_more_detailed/Ch4_PolicyGradients.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Module 2 Required Coding Activity

# Introduction to Python Unit 1

#

# This is an activity based on code similar to the Jupyter Notebook **`Practice_MOD02_1-3_IntroPy.ipynb`** which you may have completed.

#

# | Some Assignment Requirements |

# |:-------------------------------|

# | **NOTE:** This program requires a **function** be defined, created and called. The call will send values based on user input. The function call must capture a `return` value that is used in print output. The function will have parameters and `return` a string and should otherwise use code syntax covered in module 2. |

#

# ## Program: fishstore()

# create and test fishstore()

# - **fishstore() takes 2 string arguments: fish & price**

# - **fishstore returns a string in sentence form**

# - **gather input for fish_entry and price_entry to use in calling fishstore()**

# - **print the return value of fishstore()**

# >example of output: **`Fish Type: Guppy costs $1`**

# +

# [ ] create, call and test fishstore() function

# then PASTE THIS CODE into edX

# -

# ### Need assignment tips and clarification?

# See the video on the "End of Module coding assignment > Module 2 Required Code Description" course page on [edX](https://courses.edx.org/courses/course-v1:Microsoft+DEV236x+4T2017/course)

#

# # Important: [How to submit the code in edX by pasting](https://courses.edx.org/courses/course-v1:Microsoft+DEV236x+1T2017/wiki/Microsoft.DEV236x.3T2018/paste-code-end-module-coding-assignments/)

# [Terms of use](http://go.microsoft.com/fwlink/?LinkID=206977) [Privacy & cookies](https://go.microsoft.com/fwlink/?LinkId=521839) © 2017 Microsoft

| Required_Code_MOD2_IntroPy.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

from bokeh.plotting import output_file, show, output_notebook

from bokeh.models import GeoJSONDataSource

from bokeh.plotting import figure

from bokeh.sampledata.sample_geojson import geojson

geo_source = GeoJSONDataSource(geojson=geojson)

p = figure()

p.circle(x='x', y='y', alpha=0.9, source=geo_source)

output_notebook()

show(p)

# -

| notebooks/bokeh-visualizations/geojson.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/seel-channel/AMPD_Mask_RCNN/blob/master/Train_Mask_RCNN_(AMPD).ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="rGWTPWBIx370"

# ## **1. Installation**

#

# Load your dataset

# + id="Md87Hxgtn6zi"

# %tensorflow_version 1.x

# !pip install --upgrade h5py==2.10.0

# !git clone https://github.com/seel-channel/AMPD_Mask_RCNN

# %matplotlib inline

# + colab={"base_uri": "https://localhost:8080/"} id="O0LSXkUGsYMS" outputId="dace138c-e28e-4951-dcc5-65a988e28277"

import sys

sys.path.append("/content/AMPD_Mask_RCNN")

from train_mask_rcnn import *

# + id="JvM9sdw3Vou_" colab={"base_uri": "https://localhost:8080/"} outputId="e63c2751-b7be-47ef-e693-00cf042a6857"

# !nvidia-smi

# + [markdown] id="Omb3Yl6ABqiJ"

# ## **2. Image Dataset**

#

# Load your annotated dataset

#

# + id="MnRU9zVkRktW" colab={"base_uri": "https://localhost:8080/"} outputId="203e1fc2-f046-4d26-c8e8-ca2a7977bcef"

from google.colab import drive

drive.mount('/content/gdrive')

# + id="IlNYqGhvqb_p" colab={"base_uri": "https://localhost:8080/"} outputId="b6377426-2156-41bc-b575-a48e787a34c8"

# Extract Images

drive_folder = "/content/gdrive/MyDrive/ampd/"

cache_folder = "/content/dataset/"

test_folder = cache_folder + "test/"

train_folder = cache_folder + "train/"

images_folder = "images/"

generated_folder = "generated/"

annotations_filename = "coco_annotations.json"

train_annotations_path = train_folder + annotations_filename

test_annotations_path = test_folder + annotations_filename

print("Extracting: train")

extract_images(drive_folder + "dataset_train.zip", train_folder)

print("Extracting: test")

extract_images(drive_folder + "dataset_test.zip", test_folder)

# + colab={"base_uri": "https://localhost:8080/"} id="J520fnwgUMTI" outputId="5411eccf-f570-4115-e76c-3e29f9774e3a"

import json

def open_json(path: str):

with open(path) as f:

return json.loads(f.read())

def save_json(dict: dict, path: str):

with open(path, 'w+') as f:

json.dump(dict, f)

def remove_unused_images(annotations_path):

coco_json = open_json(annotations_path)

used_images = []

images = []

for annotation in coco_json['annotations']:

image_id = annotation['image_id']

if not image_id in used_images:

used_images.append(image_id)

for image in coco_json['images']:

image_id = image['id']

if image_id in used_images:

images.append(image)

coco_json['images'] = images

save_json(coco_json, annotations_path)

# Ignore images without annotations

print("Removing train unused images...")

remove_unused_images(train_annotations_path)

print("Removing test unused images...")

remove_unused_images(test_annotations_path)

# + id="MnW8ETPKzqFT" colab={"base_uri": "https://localhost:8080/"} outputId="a06d3893-48ad-4637-cba3-5da04801a65e"

dataset_train = load_image_dataset(train_annotations_path, train_folder, "train")

dataset_val = load_image_dataset(test_annotations_path, test_folder, "val")

class_number = dataset_train.count_classes()

print('Train: %d' % len(dataset_train.image_ids))

print('Validation: %d' % len(dataset_val.image_ids))

print("Classes: {}".format(class_number))

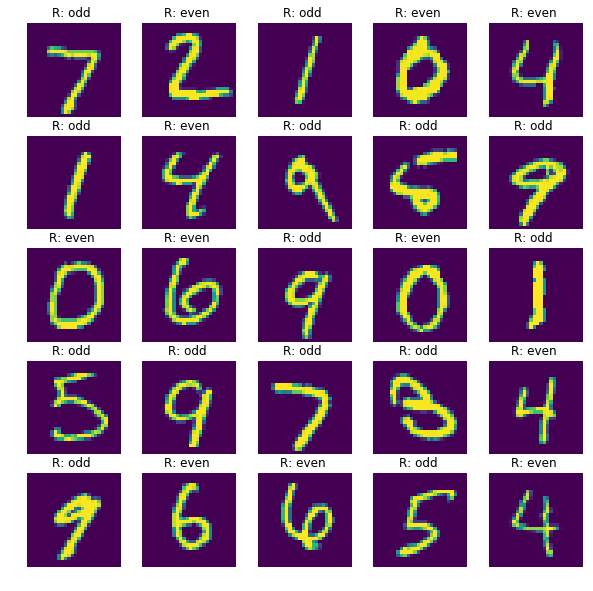

# + id="umeaqvVeBqiL" colab={"base_uri": "https://localhost:8080/", "height": 617} outputId="bbb4ab64-5fba-44fe-990f-6214aa93ea1f"

# Load image samples

display_image_samples(dataset_train)

# + [markdown] id="Z9k3Wm0_BqiN"

# ##**3. Training**

#

# Train Mask RCNN on your custom Dataset.

# + id="axkqWaZ7z_4p" colab={"base_uri": "https://localhost:8080/"} outputId="df9e88be-4ce6-4409-8eeb-887c0142c833"

# Load Configuration

import os

def make_folders(folders: list, remove=False):

for path in folders:

if not os.path.exists(path):

os.makedirs(path)

elif(remove):

os.remove(path)

model_dir = drive_folder + "pretrained/"

make_folders([model_dir])

config = CustomConfig(class_number)

model = load_training_model(config, model_dir)

# + id="SyzLXzF5BqiN" colab={"base_uri": "https://localhost:8080/"} outputId="09bf5c5d-0ec7-4f0e-9194-32de525b79e7"

# Start Training. This operation might take a long time.

train_head(model, dataset_train, dataset_train, config, epochs=10)

# + [markdown] id="6npLKIL3BqiO"

# ## **4. Detection (test your model on a random image)**

# + id="lUwXQ6h7BqiO" colab={"base_uri": "https://localhost:8080/"} outputId="98572e62-6b09-40e3-da9e-2583f38da4f1"

# Load the latest trained model will be loaded

test_model, inference_config = load_test_model(class_number, model_dir)

# + id="H8uzE5U3BqiP" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="9d890a36-8541-48b2-a6b1-c7ec84ab1c14"

# Test on a random image

test_random_image(test_model, dataset_val, inference_config)

| Train_Mask_RCNN_(AMPD).ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from eppy import modeleditor

from eppy.modeleditor import IDF

from matplotlib import pyplot as plt

import os

import pandas as pd

import numpy as np

from besos import eppy_funcs as ef

from besos import sampling

from besos.evaluator import EvaluatorEP

from besos.parameters import RangeParameter, FieldSelector, FilterSelector, Parameter, expand_plist, wwr, CategoryParameter, GenericSelector

from besos.problem import EPProblem

import matplotlib.pyplot as plt

output_path="./output/simulation_monthly_2017/"

iddfile='./energyplus/EnergyPlus-9-0-1/Energy+.idd'

fname = './input/on_double.idf'

weather='./input/ITA_Torino_160590_IWEC.epw'

IDF.setiddname(iddfile)

idf = IDF(fname,weather)

# +

zones = idf.idfobjects["ZONE"]

total_area=0

for i in range(len(zones)):

zone = zones[i]

area = modeleditor.zonearea(idf, zone.Name)

total_area=total_area+area

print("total one area = %s" % (total_area, ))

# -

samples_temp =[]

samples_temp.append({'Orientation': 270,

'Insulation Thickness': 0.35,

'Window to Wall Ratio': 0.15,})

samples = pd.DataFrame.from_dict(samples_temp)

samples

# +

building = ef.get_building(fname)

insulation = FieldSelector(class_name='Material', object_name='MW Glass Wool (rolls)_O.1445',

field_name='Thickness')

insulationPR=Parameter(selector=insulation,name='Insulation Thickness')

window_to_wall = wwr(CategoryParameter([0.15]))

orientation = FieldSelector(class_name='Building', field_name='North Axis')

orientationPR = Parameter(selector=orientation, value_descriptor=CategoryParameter(options=[270]),

name='Orientation')

parameters = [orientationPR , window_to_wall, insulationPR]

objectives = ['Electricity:Facility','DistrictHeating:Facility','DistrictCooling:Facility'] # these get made into `MeterReader` or `VariableReader`

problem=EPProblem(parameters, objectives) # problem = parameters + objectives

# -

evaluator = EvaluatorEP(problem, building, out_dir=output_path, err_dir=output_path,

epw=weather) # evaluator = problem + building

#simulation 2019

run_period = idf.idfobjects['RunPeriod'][0]

run_period.Begin_Year = 2017

run_period.End_Year = 2017

run_period

idf.idfobjects['RunPeriod'][0]

#monthly simulation

for i in range(len(idf.idfobjects['OUTPUT:VARIABLE'])):

idf.idfobjects['OUTPUT:VARIABLE'][i].Reporting_Frequency='monthly'

for i in range(len(idf.idfobjects['OUTPUT:METER'])):

idf.idfobjects['OUTPUT:METER'][i].Reporting_Frequency='monthly'

idf.idfobjects['OUTPUT:ENVIRONMENTALIMPACTFACTORS'][0].Reporting_Frequency='monthly'

#Now we run the evaluator with the given parameters

result = evaluator([270,0.15,0.35])

values = dict(zip(objectives, result))

for key, value in values.items():

print(key, " :: ", "{0:.2f}".format(value/3.6e6), "kWh")

idf.run(readvars=True,output_directory=output_path,annual= True)

idf_data =pd.read_csv(output_path + 'eplusout.csv')

for i in idf_data.columns:

if 'Temperature' in i and 'Zone' in i:

print(i)

#getting required columns

columns=['Date/Time']

for i in idf_data.columns:

if ('Zone Operative Temperature' in i or 'District' in i or 'Electricity:Facility' in i or 'Drybulb' in i) and ('Monthly' in i):

columns=columns+[i]

columns

df_columns = {'Date/Time':'Date_Time',

'Environment:Site Outdoor Air Drybulb Temperature [C](Monthly)':'t_out',

'BLOCK1:ZONE3:Zone Operative Temperature [C](Monthly)':'t_in_ZONE3',

'BLOCK1:ZONE1:Zone Operative Temperature [C](Monthly)':'t_in_ZONE1',

'BLOCK1:ZONE4:Zone Operative Temperature [C](Monthly)':'t_in_ZONE4',

'BLOCK1:ZONE2:Zone Operative Temperature [C](Monthly)':'t_in_ZONE2',

'DistrictHeating:Facility [J](Monthly)':'power_heating',

'DistrictCooling:Facility [J](Monthly)':'power_cooling' ,

'Electricity:Facility [J](Monthly)':'power_electricity'}

idf_data=idf_data[columns]

data = idf_data.rename(columns =df_columns)

data

data['t_in'] = data[['t_in_ZONE3','t_in_ZONE1','t_in_ZONE4', 't_in_ZONE2']].mean(axis=1)

data=data.drop(['t_in_ZONE3', 't_in_ZONE1', 't_in_ZONE4','t_in_ZONE2'], axis = 1)

data

data['temp_diff'] =data['t_in'] - data['t_out']

#data['Date_Time'] = '2019/' + data['Date_Time'].str.strip()

#data['Date_Time'] = data['Date_Time'].str.replace('24:00:00','23:59:59')

data

#converting from J to kWh

data['power_heating'] /= 3.6e6

data['power_cooling'] /= 3.6e6

data['power_electricity'] /= 3.6e6

data

data['total_power'] = data['power_heating']+ data['power_cooling']

data = data[['Date_Time','t_in','t_out','temp_diff','power_heating','power_cooling','power_electricity','total_power']]

data.to_csv(path_or_buf=output_path+'simulation_data_monthly.csv',index=False)

data

y=data['power_electricity'].values

x=data['Date_Time'].values

y

y/total_area

fig, ax = plt.subplots(figsize=(20,10))

labels= data['Date_Time'].values

plt.xticks(np.arange(1, len(x)+1, 1.0))

ax.set_xticklabels(labels)

plt.xticks(rotation='vertical')

plt.xlabel('Months')

plt.ylabel('Electricity Consumption per m2 (kWh/m2)')

plt.title(' Annual Electricity Consumption per m2 in 2019')

plt.plot(y)

plt.show()

fig, ax = plt.subplots(figsize=(10,5))

labels= data['Date_Time'].values

plt.xticks(np.arange(1, len(x)+1, 1.0))

ax.set_xticklabels(labels)

plt.xticks(rotation='vertical')

plt.plot(data['power_cooling'].values/total_area)

plt.xlabel('Months')

plt.ylabel('Cooling Power per m2 (kWh/m2)')

plt.title('Monthly Cooling Power Consumption per m2 from in 2019')

plt.show()

fig, ax = plt.subplots(figsize=(10,5))

labels= data['Date_Time'].values

plt.xticks(np.arange(1, len(x)+1, 1.0))

ax.set_xticklabels(labels)

plt.xticks(rotation='vertical')

plt.plot(data['power_heating'].values/total_area)

plt.xlabel('Months')

plt.ylabel('Heating Power per m2(kWh/m2)')

plt.title('Monthly Heating Power Consumption per m2 from in 2019')

plt.show()

| 2-Simulations_monthly.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # About the environment - "vector_cv_project"

# !which python

# !echo $PYTHONPATH

# !echo $LD_LIBRARY_PATH

# !echo $PATH

# +

# imports

import argparse

import logging

import time

from tqdm import tqdm

import numpy as np

import torch

from vector_cv_tools import datasets

from vector_cv_tools import transforms as T

from vector_cv_tools import utils

import albumentations

import torchvision

from torch.utils.data import DataLoader

torch.cuda.is_available()

# -

kinetics_annotation_path = "./datasets/kinetics/kinetics700/train.json"

kinetics_data_path = "./datasets/kinetics/train"

# # A basic, un-transformed kinetics dataset

#

# +

# define basic spatial and temporal transforms

base_spatial_transforms = T.ComposeVideoSpatialTransform([albumentations.ToFloat(max_value=255)])

base_temporal_transforms = T.ComposeVideoTemporalTransform([T.video_transforms.ToTensor()])

# create raw dataset

data_raw = datasets.KineticsDataset(

fps=10,

max_frames=128,

round_source_fps=False,

annotation_path = kinetics_annotation_path,

data_path = kinetics_data_path,

class_filter = ["push_up", "pull_ups"],

spatial_transforms=base_spatial_transforms,

temporal_transforms=base_temporal_transforms)

# -

labels = data_raw.metadata.labels

print("Looping through the dataset, {} labels, {} data points in total".

format(data_raw.num_classes, len(data_raw)))

for label, info in labels.items():

print("{:<40} ID: {} size: {} {}".

format(label, info["id"], len(info["indexes"]), len(info["indexes"])//20 * "|"))

data_point, label = data_raw[0]

print(data_point.shape)

print(label)

vid = (data_point.numpy() * 255).astype(np.uint8)

utils.create_GIF("raw_img.gif", vid)

# # A dataset with video transformations

# +

###############################################

##### NOW PRESENT TO YOU: VideoTransforms!!####

###############################################

# compatibility with others

transform1 = T.from_torchvision(

torchvision.transforms.ColorJitter())

transform2 = T.from_torchvision(

torchvision.transforms.functional.hflip)

transform3 = T.from_albumentation(

albumentations.VerticalFlip(p=1))

# Spatial: in-house

transform4 = T.RandomResizedSpatialCrop((280, 280), scale=(0, 1))

transform5 = T.RandomSpatialCrop((480, 480))

transform6 = T.RandomTemporalCrop(size=50, pad_if_needed=True, padding_mode="wrap")

transform7 = T.SampleEveryNthFrame(2)

transform8 = T.ToTensor()

spatial_transforms = base_spatial_transforms

# define temporal transforms

temporal_transforms = [transform1, transform2, transform3, transform4,

transform6, transform7, transform8]

temporal_transforms = T.ComposeVideoTemporalTransform(temporal_transforms)

print("Spatial transforms: \n{}".format(spatial_transforms))

print("Temporal transforms: \n{}".format(temporal_transforms))

# +

# create a dataset with transformations

data_transformed = datasets.KineticsDataset(

fps=10,

max_frames=128,

round_source_fps=False,

annotation_path = kinetics_annotation_path,

data_path = kinetics_data_path,

class_filter = ["push_up", "pull_ups"],

spatial_transforms=spatial_transforms,

temporal_transforms=temporal_transforms,)

# -

data_point, label = data_transformed[0]

print(data_point.shape)

print(label)

vid = (data_point.numpy() * 255).astype(np.uint8)

utils.create_GIF("transformed_img.gif", vid)

| examples/video/VideoTransformationDemo.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/"} id="VBECACYMYNny" outputId="687227ce-54c9-4efb-9a61-a73acfdbff15"

# !pip install lightkurve

# + colab={"base_uri": "https://localhost:8080/"} id="8UZm2_6xXNt7" outputId="a301d552-e133-483d-951d-906834ebb0c0"

# !pip install exoplanet

# + colab={"base_uri": "https://localhost:8080/"} id="riIVV1fJXfF_" outputId="0a95d3f8-8ac2-4b26-cb77-2f440971a5f0"

import exoplanet

exoplanet.utils.docs_setup()

print(f"exoplanet.__version__ = '{exoplanet.__version__}'")

# + colab={"base_uri": "https://localhost:8080/", "height": 386} id="9EmVYe7LfHVk" outputId="f0b9910e-db51-4ebd-8efe-6a3e11a37714"

lc = lk.search_lightcurve('TIC 375506058', mission="TESS", sector=15).download()

lc.plot();

# + colab={"base_uri": "https://localhost:8080/", "height": 520} id="kT-rdAakXqee" outputId="0f2b3f6d-036e-43fe-f77c-7b046c198a7d"

import numpy as np

import lightkurve as lk

import matplotlib.pyplot as plt