code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

# +

# Reading Data

df_resale = pd.read_csv('Landed_Housing_sorted.csv')

df_resale['Address'] = df_resale['address']

folder = 'Non-Landed Sorted Data'

df_cc = pd.read_csv('./Landed Sorted Data/CC_NEW.csv')

df_cc = df_cc.rename(columns={'0' : 'Address','1' : 'CC','2' : 'distance_cc'})

df_cc['distance_cc'] = df_cc['distance_cc'].str[:-2].astype(float)

df_cc = df_cc.drop(['CC'], axis=1)

df_hawker = pd.read_csv('./Landed Sorted Data/hawker_NEW.csv')

df_hawker = df_hawker.rename(columns={'0' : 'Address','1' : 'hawker','2' : 'distance_hawker'})

df_hawker['distance_hawker'] = df_hawker['distance_hawker'].str[:-2].astype(float)

df_hawker = df_hawker.drop(['hawker'], axis=1)

df_mrt = pd.read_csv('./Landed Sorted Data/MRT_NEW.csv')

df_mrt = df_mrt.rename(columns={'0' : 'Address','1' : 'MRT','2' : 'distance_mrt'})

df_mrt['distance_mrt'] = df_mrt['distance_mrt'].str[:-2].astype(float)

df_mrt = df_mrt.drop(['MRT'], axis=1)

df_npc = pd.read_csv('./Landed Sorted Data/npc_NEW.csv')

df_npc = df_npc.rename(columns={'0' : 'Address','1' : 'NPC','2' : 'distance_npc'})

df_npc['distance_npc']= df_npc['distance_npc'].str[:-2].astype(float)

df_npc = df_npc.drop(['NPC'], axis=1)

df_ps = pd.read_csv('./Landed Sorted Data/ps_NEW.csv')

df_ps = df_ps.rename(columns={'0' : 'Address','1' : 'PS','2' : 'distance_primary_school'})

df_ps['distance_primary_school'] = df_ps['distance_primary_school'].str[:-2].astype(float)

df_ps = df_ps.drop(['PS'], axis=1)

df_ss = pd.read_csv('./Landed Sorted Data/SS_NEW.csv')

df_ss = df_ss.rename(columns={'0' : 'Address','1' : 'SS','2' : 'distance_secondary_school'})

df_ss['distance_secondary_school'] = df_ss['distance_secondary_school'].str[:-2].astype(float)

df_ss = df_ss.drop(['SS'], axis=1)

df_sm = pd.read_csv('./Landed Sorted Data/supermarket_NEW.csv')

df_sm = df_sm.rename(columns={'0' : 'Address','1' : 'SM','2' : 'distance_supermarket'})

df_sm['distance_supermarket'] = df_sm['distance_supermarket'].str[:-2].astype(float)

df_sm = df_sm.drop(['SM'], axis=1)

df_city = pd.read_csv('./Landed Sorted Data/City_NEW.csv')

df_city = df_city.rename(columns={'0' : 'Address','1' : 'City','2' : 'distance_city'})

df_city['distance_city'] = df_city['distance_city'].str[:-2].astype(float)

df_city = df_city.drop(['City'], axis=1)

# -
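# The amenity tables above store each distance as text and drop the last two characters with `.str[:-2]` before casting to float. The sketch below shows a slightly more defensive version of that step for a single value. It assumes the raw values look like `'1.25km'`; the actual suffix is not shown in the notebook, and `parse_distance` is a hypothetical helper, not part of the original code.

```python
import re

def parse_distance(value):
    """Return the leading number of a string such as '1.25km' (assumed format)."""
    match = re.match(r"\s*([0-9]*\.?[0-9]+)", str(value))
    if match is None:
        # No leading number found: report a missing value instead of raising.
        return float("nan")
    return float(match.group(1))

print(parse_distance("1.25km"))
print(parse_distance("0.8 km"))
print(parse_distance("n/a"))
```

# Unlike slicing off a fixed number of characters, this version does not depend on the suffix length, and unparseable entries become NaN, which the later `dropna()` call would remove.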

df_resale.head()

import re

df_resale['Tenure'].unique()

s = df_resale['Tenure'].str.findall('\d+')

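def years_left(tenure):
    # Expect two numbers in the string: lease duration and lease start year.
    a = re.findall(r'\d+', tenure)
    if len(a) >= 2:
        left = int(a[0]) - (2021 - int(a[1]))
    else:
        # No duration/start-year pair found: use a large sentinel value.
        left = 999999
    return left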

df_resale['remaining_lease_yrs'] = df_resale['Tenure'].apply(years_left)

df_resale['remaining_lease_yrs']

# +

#The dictionary for cleaning up the categorical columns

cleanup_nums = {
    "flat_type_num": {"Detached": 1, "Semi-detached": 2, "Terrace": 3,
                      "Strata Detached": 4, "Strata Semi-detached": 5, "Strata Terrace": 6},
    "Planning Area_num": {"North": 1, "North-East": 2, "East": 3, "West": 4,
                          "Central": 5},
}

#To convert the columns to numbers using replace:

df_resale['flat_type_num'] = df_resale['Type']

df_resale['Planning Area_num'] = df_resale['Planning Area']

df_resale = df_resale.replace(cleanup_nums)

df_resale.head()

# -

merged = pd.merge(df_resale,df_cc, on=['Address'], how="outer")

merged = pd.merge(merged,df_hawker, on=['Address'], how="outer")

merged = pd.merge(merged,df_mrt, on=['Address'], how="outer")

merged = pd.merge(merged,df_npc, on=['Address'], how="outer")

merged = pd.merge(merged,df_ps, on=['Address'], how="outer")

merged = pd.merge(merged,df_ss, on=['Address'], how="outer")

merged = pd.merge(merged,df_sm, on=['Address'], how="outer")

merged = pd.merge(merged,df_city, on=['Address'], how="outer")

#merged = pd.merge(merged,df_meta, on=['Address'], how="outer")

merged = merged.dropna()

#merged.to_csv('Complete_dataset_landed.csv')

merged = merged.rename(columns={"Area (Sqft)": "floor_area_sqm"})

merged['resale_price'] = merged['Price ($)']/merged['No. of Units']

# +

dataset_features = merged[['resale_price', 'Postal District','flat_type_num' ,'floor_area_sqm', 'Planning Area_num', 'remaining_lease_yrs',

'distance_secondary_school','distance_primary_school', 'distance_mrt', 'distance_supermarket', 'distance_hawker',

'distance_city', 'distance_npc', 'distance_cc','Mature_Estate']]

print(len(dataset_features))

# Only the dependent variable ("y")

resale_p = dataset_features['resale_price']

# All other independent variables

X = dataset_features[[ 'Postal District','flat_type_num' ,'floor_area_sqm', 'Planning Area_num', 'remaining_lease_yrs',

'distance_secondary_school','distance_primary_school', 'distance_mrt', 'distance_supermarket', 'distance_hawker',

'distance_city', 'distance_npc', 'distance_cc','Mature_Estate']]

dataset_features.head()

# -

dataset_features.dtypes

# +

#Have correlation analysis for resale price with all variables:

import matplotlib.pyplot as plt

import pandas as pd

import seaborn as sns

import numpy as np

corrMatrix = dataset_features.corr()

test = corrMatrix.iloc[[0]]

test = test.iloc[: , 1:]

print(test)

"""plt.subplots(figsize=(12,9))

sns.heatmap(corrMatrix, xticklabels=corrMatrix.columns, yticklabels=corrMatrix.columns, cmap='coolwarm', annot=True)"""

# -

plt.subplots(figsize=(13,0.5))

sns.heatmap(test, xticklabels=test.columns, yticklabels=test.index, cmap='coolwarm', annot=True)

plt.yticks(rotation = 'horizontal')

# +

floor_area = merged.sort_values(by=['floor_area_sqm'])

plt.fill_between(floor_area['floor_area_sqm'], floor_area['resale_price'], lw=2)

plt.ylabel('Resale Price')

plt.title('Landed Housing Floor Area')

plt.show()

# +

remaining = merged.sort_values(by=['remaining_lease_yrs'])

remaining = remaining.loc[remaining['remaining_lease_yrs']>30]

remaining = remaining.loc[remaining['resale_price']<10000000]

y_pos = np.arange(len(remaining))

plt.bar(y_pos, remaining['resale_price'], align='center', alpha=0.5,width=20)

plt.ylabel('Resale Price')

plt.xticks(y_pos, remaining['remaining_lease_yrs'])

plt.title('Landed Housing Remaining Lease Years')

plt.show()

# -

remaining = merged.sort_values(by=['remaining_lease_yrs'])

plt.ylabel('Resale Price')

plt.scatter(remaining['remaining_lease_yrs'], remaining['resale_price'],s=1, alpha=0.5)

plt.title('Landed Housing Remaining Lease Years')

plt.show()

# +

city = merged.sort_values(by=['distance_city'])

plt.plot(city['distance_city'], city['resale_price'], lw=2)

plt.ylabel('Resale Price')

plt.title('Landed Housing Distance to City')

plt.xlabel('Distance to City')

plt.show()

# -

postal_district = merged.groupby(by = ['Postal District'])['resale_price'].mean().reset_index()

y_pos = np.arange(len(postal_district))

plt.bar(y_pos, postal_district['resale_price'], align='center', alpha=0.5)

plt.ylabel('Resale Price')

plt.xticks(y_pos, postal_district['Postal District'])

plt.title('Landed Housing Postal District')

plt.show()

# +

from sklearn.model_selection import train_test_split

from sklearn import linear_model

from sklearn.metrics import mean_squared_error, r2_score

import numpy as np

from sklearn import tree

from sklearn.tree import DecisionTreeRegressor

from sklearn.model_selection import GridSearchCV

from sklearn.ensemble import AdaBoostRegressor, BaggingRegressor

np.random.seed(100)

X_train, X_test, y_train, y_test = train_test_split(X, resale_p, test_size=.3, random_state=0)

Models = ["OLS", "AdaBoost", "Bagging"]

MSE_lst = []

# OLS:

regr = linear_model.LinearRegression() # Create linear regression object

np.random.seed(100)

regr.fit(X_train, y_train) # Train the model using the training sets

y_pred_ols = regr.predict(X_test) # Make predictions using the testing set

MSE_ols = mean_squared_error(y_test, y_pred_ols) # performance statistic

MSE_lst.append(MSE_ols)

# Boosting

adaboosting = AdaBoostRegressor()

adaboosting.fit(X=X_train, y=y_train)

y_pred_boosting = adaboosting.predict(X=X_test)

MSE_adaboost = mean_squared_error(y_test, y_pred_boosting)

MSE_lst.append(MSE_adaboost)

# Bagging

bagging = BaggingRegressor(DecisionTreeRegressor())

bagging.fit(X=X_train, y=y_train)

y_pred_dt = bagging.predict(X=X_test)

MSE_bag = mean_squared_error(y_test, y_pred_dt)

MSE_lst.append(MSE_bag)

# -
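# The three models above are compared through their mean squared error on the test set. As a minimal sketch of what that number measures, the function below recomputes it by hand. The prices are made-up illustration values, and `mean_squared_error_manual` is a hypothetical name, not the scikit-learn function.

```python
import math

def mean_squared_error_manual(y_true, y_pred):
    """Average of the squared differences between actual and predicted values."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Made-up resale prices, for illustration only.
actual = [1_000_000, 2_000_000, 3_000_000]
predicted = [1_100_000, 1_900_000, 3_200_000]

mse = mean_squared_error_manual(actual, predicted)
rmse = math.sqrt(mse)  # same units as the price, easier to read than the MSE
print(mse, rmse)
```

# Because the MSE is in squared currency units, reporting its square root next to it can make the comparison between models easier to interpret.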

import matplotlib.pyplot as plt

print(len(y_test))

fig, ax = plt.subplots(figsize=(20,10))

ax.plot(range(len(y_test[:100])), y_test[:100], '-b',label='Actual')

ax.plot(range(len(y_pred_ols[:100])), y_pred_ols[:100], 'r', label='Predicted')

plt.show()

import matplotlib.pyplot as plt

print(len(y_test))

fig, ax = plt.subplots(figsize=(20,10))

ax.plot(range(len(y_test[:100])), y_test[:100], '-b',label='Actual')

ax.plot(range(len(y_pred_boosting[:100])), y_pred_boosting[:100], 'r', label='Predicted')

plt.show()

import matplotlib.pyplot as plt

print(len(y_test))

fig, ax = plt.subplots(figsize=(20,10))

ax.plot(range(len(y_test[:100])), y_test[:100], '-b',label='Actual')

ax.plot(range(len(y_pred_dt[:100])), y_pred_dt[:100], 'r', label='Predicted')

plt.show()

# +

y_pred_df_ols = pd.DataFrame(y_pred_dt, columns= ['y_pred'])

print(len(y_pred_df_ols))

print(len(X_test))

pred_res1 = pd.concat([X_test,y_test], axis=1)

print(len(pred_res1))

pred_res1 = pred_res1.reset_index(drop=True)

pred_res2 = pd.concat([pred_res1,y_pred_df_ols], axis=1)

pred_res2

# +

import pandas as pd

import numpy as np

from sklearn import datasets, linear_model

from sklearn.linear_model import LinearRegression

import statsmodels.api as sm

from scipy import stats

X2 = sm.add_constant(X_train)

est = sm.OLS(y_train, X2)

est2 = est.fit()

print(est2.summary())

# -

| [CODE] Data Exploration/Data Exploration Landed Housing.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # XML Parser and Dataframe Creator

#

# The first step is to parse XML files into documents.

#

#

# Useful Resources:

# * <NAME>, "[Processing XML in Python—ElementTree](https://towardsdatascience.com/processing-xml-in-python-elementtree-c8992941efd2)," Accessed Sept. 22, 2020.

# +

# Import necessary libraries.

import re, glob, csv, sys, os

import pandas as pd

import xml.etree.ElementTree as ET

# Declare directory location to shorten filepaths later.

abs_dir = "/Users/quinn.wi/Documents/"

# Gather all .xml files using glob.

list_of_files = glob.glob(abs_dir + "Data/PSC/JQA/*/*.xml")

# -

# ## Define Functions

# +

'''

Arguments of Functions:

namespace:

ancestor:

xpath_as_string:

attrib_val_str:

'''

# Read in file and get root of XML tree.

def get_root(xml_file):
    tree = ET.parse(xml_file)
    root = tree.getroot()
    return root

# Get namespace of individual file from root element.
def get_namespace(root):
    namespace = re.match(r"{(.*)}", str(root.tag))
    ns = {"ns":namespace.group(1)}
    return ns

# Get document id.
def get_document_id(ancestor, attrib_val_str):
    doc_id = ancestor.get(attrib_val_str)
    return doc_id

# Get date of document.
def get_date_from_attrValue(ancestor, xpath_as_string, attrib_val_str, namespace):
    date = ancestor.find(xpath_as_string, namespace).get(attrib_val_str)
    return date

# Get the people referenced in a document.
def get_peopleList_from_attrValue(ancestor, xpath_as_string, attrib_val_str, namespace):
    people_list = []
    for elem in ancestor.findall(xpath_as_string, namespace):
        person = elem.get(attrib_val_str)
        people_list.append(person)
    # Return a string object of 'list' to be written to output file. Can be split later.
    return ','.join(people_list)

# Get plain text of every element (designated by first argument).
def get_textContent(ancestor, xpath_as_string, namespace):
    text_list = []
    for elem in ancestor.findall(xpath_as_string, namespace):
        text = ''.join(ET.tostring(elem, encoding='unicode', method='text'))
        # Add text (cleaned of additional whitespace) to text_list.
        text_list.append(re.sub(r'\s+', ' ', text))
    # Return concatenated text list.
    return ' '.join(text_list)

# -
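# The helper functions above all rely on the same pattern: read the namespace from the root tag, then pass it to `find`/`findall` with an `ns:` prefix. The sketch below runs that pattern on a small in-memory document. The XML snippet, its ids and the TEI namespace are assumptions made for illustration; the real JQA files are not shown in this notebook.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical TEI-like entry, for illustration only.
SAMPLE = """<TEI xmlns="http://www.tei-c.org/ns/1.0">
  <text><body>
    <div type="entry" xml:id="jqa-0001">
      <bibl><date when="1821-01-01"/></bibl>
      <div type="docbody">
        <p>Dined with <persRef ref="smith-john">Mr. Smith</persRef>.</p>
      </div>
    </div>
  </body></text>
</TEI>"""

root = ET.fromstring(SAMPLE)

# The root tag looks like '{namespace-uri}TEI'; capture the URI between the braces.
ns = {"ns": re.match(r"{(.*)}", root.tag).group(1)}

entry = root.find('.//ns:div[@type="entry"]', ns)
# xml:id lives in the reserved XML namespace, so it needs the full URI as key.
entry_id = entry.get("{http://www.w3.org/XML/1998/namespace}id")
date = entry.find("./ns:bibl/ns:date", ns).get("when")
people = [elem.get("ref") for elem in entry.findall(".//ns:p/ns:persRef[@ref]", ns)]

# Join the text of each paragraph, collapsing runs of whitespace.
paragraphs = entry.findall('./ns:div[@type="docbody"]/ns:p', ns)
text = " ".join(re.sub(r"\s+", " ", "".join(p.itertext())).strip() for p in paragraphs)

print(entry_id, date, people, text)
```

# Testing the XPath expressions on a small string like this can be quicker than rerunning the parser over the whole corpus.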

# ## Declare Variables

# +

# Declare regex to simplify file paths below

regex = re.compile(r'.*/\d{4}/(.*)')

# Declare document level of file. Requires root starting point ('.').

doc_as_xpath = './/ns:div/[@type="entry"]'

# Declare date element of each document.

date_path = './ns:bibl/ns:date/[@when]'

# Declare person elements in each document.

person_path = './/ns:p/ns:persRef/[@ref]'

# Declare text level within each document.

text_path = './ns:div/[@type="docbody"]/ns:p'

# -

# ## Parse Documents

# +

# %%time

# Open/Create file to write contents.

with open(abs_dir + 'Output/ParsedXML/JQA_dataframe.txt', 'w') as outFile:

    # Write headers for table.
    outFile.write('file' + '\t' + 'entry' + '\t' + 'date' + '\t' + \
                  'people' + '\t' + 'text' + '\n')

    # Loop through each file within a directory.
    for file in list_of_files:

        # Call functions to create necessary variables and grab content.
        root = get_root(file)
        ns = get_namespace(root)

        for eachDoc in root.findall(doc_as_xpath, ns):

            # Call functions.
            entry = get_document_id(eachDoc, '{http://www.w3.org/XML/1998/namespace}id')
            date = get_date_from_attrValue(eachDoc, date_path, 'when', ns)
            people = get_peopleList_from_attrValue(eachDoc, person_path, 'ref', ns)
            text = get_textContent(eachDoc, text_path, ns)

            # Write results in tab-separated format.
            outFile.write(str(regex.search(file).groups()) + '\t' + entry + \
                          '\t' + date + '\t' + people + '\t' + text + '\n')

# -

# ## Import Dataframe

# +

dataframe = pd.read_csv(abs_dir + 'Output/ParsedXML/JQA_dataframe.txt', sep = '\t')

dataframe

# -

| Jupyter_Notebooks/Parsers/JQA_XML-Parser.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:c3dev] *

# language: python

# name: conda-env-c3dev-py

# ---

# # Reconstructing ancestral states

#

# This app takes a `model_result` and returns a `tabular_result` consisting of the posterior probabilities of ancestral states for each node of a tree. These probabilities are computed using the marginal reconstruction algorithm.

#

# We first fit a model to the sample data.

# +

from cogent3.app import io, evo

reader = io.load_aligned(format="fasta")

aln = reader("../data/primate_brca1.fasta")

gn = evo.model("GN", tree="../data/primate_brca1.tree")

result = gn(aln)

# -

# ## Define the `ancestral_states` app

reconstructor = evo.ancestral_states()

states_result = reconstructor(result)

states_result

# The `tabular_result` is keyed by the node name. Each value is a `DictArray`, with header corresponding to the states and rows corresponding to alignment position.

states_result['edge.0']

# If not included in the newick tree file, the internal node names are automatically generated upon loading. You can establish what those are by interrogating the tree bound to the likelihood function object. (If you move your mouse cursor over the nodes, their names will appear as hover text.)

result.tree.get_figure(contemporaneous=True).show(width=500, height=500)

| doc/app/evo-ancestral-states.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# + [markdown] deletable=true editable=true

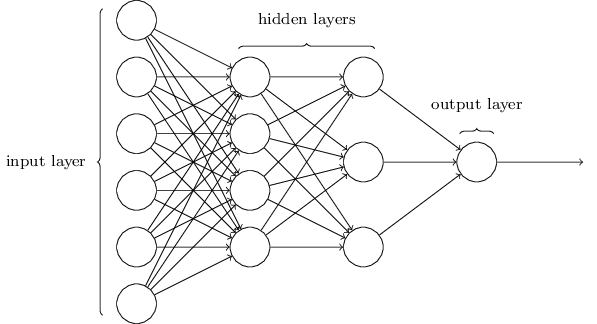

# # Neural Network Basics

#

# Compiled by <NAME> (@sunilmallya)

# + [markdown] deletable=true editable=true

# # Basic Neural Network layout

#

#

#

#

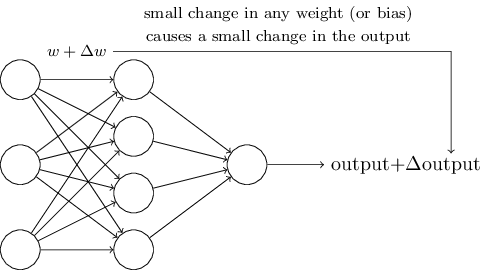

# + [markdown] deletable=true editable=true

#

#

#

# images from: http://neuralnetworksanddeeplearning.com/chap1.html

# -

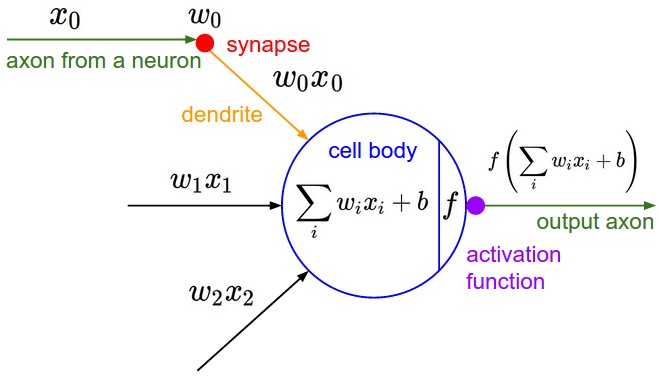

# ## Neural Network Equation

#

#

#

# img src http://cs231n.github.io/assets/nn1/neuron_model.jpeg

# ## The Learning Process

#

#

# + [markdown] deletable=true editable=true

# # Loss Functions and Optimizations

#

#

#

#

# + [markdown] deletable=true editable=true

# # Layers

#

#

# ## Multilayer perceptrons

#

# Here's where things start to get interesting. Before, we mapped our inputs directly onto our outputs through a single linear transformation.

#

#

#

# This model is perfectly adequate when the underlying relationship between our data points and labels is approximately linear. When our data points and targets are characterized by a more complex relationship, a linear model can produce poor results. We can model a more general class of functions by incorporating one or more *hidden layers*.

#

#

#

# Here, each layer will require its own set of parameters. To make things simple here, we'll assume two hidden layers of computation.

# -
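# The multilayer perceptron described above, with two hidden layers and a separate set of parameters per layer, can be sketched in plain Python as follows. The weights are arbitrary illustration values and the ReLU activation is an assumption; the notes do not name a specific activation function.

```python
def relu(vector):
    """Element-wise max(0, v): the non-linearity applied after each hidden layer."""
    return [max(0.0, v) for v in vector]

def dense(inputs, weights, biases):
    """Affine layer: one output per row of `weights`, plus the matching bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def mlp_forward(x, params):
    """Forward pass: input -> hidden 1 -> hidden 2 -> linear output."""
    h1 = relu(dense(x, params["W1"], params["b1"]))
    h2 = relu(dense(h1, params["W2"], params["b2"]))
    return dense(h2, params["W3"], params["b3"])

# Hypothetical parameters: 2 inputs, two hidden layers of 2 units, 1 output.
params = {
    "W1": [[1.0, -1.0], [0.5, 0.5]], "b1": [0.0, 0.0],
    "W2": [[1.0, 1.0], [-1.0, 1.0]], "b2": [0.0, 0.5],
    "W3": [[2.0, -1.0]], "b3": [0.1],
}

output = mlp_forward([1.0, 2.0], params)
print(output)
```

# Without the non-linearity between layers, the three affine maps would collapse into a single linear transformation, which is why hidden layers alone are not enough.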

# ### Word Embeddings

#

# Word Embedding turns text into numbers

#

# - ML Algorithms don't understand text, they require input to be vectors of continuous values

#

# - Benefits:

# - Dimensionality Reduction

# - Group similar words together (Contextual Similarity)

#

#

# ##### A common Embedding technique: Word2Vec

#

# https://github.com/saurabh3949/Word2Vec-MXNet/blob/master/Word2vec%2Bwith%2BGluon.ipynb

#

#

#

# ##### [Embedding Visualization]

# https://ronxin.github.io/wevi/

#

# https://medium.com/towards-data-science/deep-learning-4-embedding-layers-f9a02d55ac12

# + [markdown] deletable=true editable=true

# # MXNet cheat sheet

#

#

# PDF

# https://s3.amazonaws.com/aws-bigdata-blog/artifacts/apache_mxnet/apache-mxnet-cheat.pdf

#

# https://aws.amazon.com/blogs/ai/exploiting-the-unique-features-of-the-apache-mxnet-deep-learning-framework-with-a-cheat-sheet/

# + deletable=true editable=true

| nn_basics.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Confidence and prediction intervals

#

# > <NAME>

# > Laboratory of Biomechanics and Motor Control ([http://demotu.org/](http://demotu.org/))

# > Federal University of ABC, Brazil

# For a finite univariate random variable with a normal probability distribution, the mean $\mu$ (a measure of central tendency) and variance $\sigma^2$ (a measure of dispersion) of a population are given by the well-known formulas:

#

# $$ \mu = \frac{1}{N}\sum_{i=1}^{N} x_i $$

#

# $$ \sigma^2 = \frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2 $$

#

# For a more general case, a continuous univariate random variable $x$ with [probability density function](http://en.wikipedia.org/wiki/Probability_density_function) (pdf), $f(x)$, the mean and variance of a population are:

#

# $$ \mu = \int_{-\infty}^{\infty} x f(x)\: dx $$

#

# $$ \sigma^2 = \int_{-\infty}^{\infty} (x-\mu)^2 f(x)\: dx $$

#

# The pdf is a function that describes the relative likelihood for the random variable to take on a given value.

# The mean is the first raw moment and the variance is the second central moment of a random variable. The standard deviation $\sigma$ of the population is the square root of the variance.

#

# The [normal (or Gaussian) distribution](http://en.wikipedia.org/wiki/Normal_distribution) is a very common and useful distribution, also because of the [central limit theorem](http://en.wikipedia.org/wiki/Central_limit_theorem), which states that for a sufficiently large number of samples (each with many observations) of an independent random variable with an arbitrary probability distribution, the means of the samples will have a normal distribution. That is, even if the underlying probability distribution of a random variable is not normal, if we sample enough this variable, the means of the set of samples will have a normal distribution.

#

# The probability density function of a univariate normal (or Gaussian) distribution is:

#

# $$ f(x) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\Bigl(-\frac{(x-\mu)^2}{2\sigma^2}\Bigr) $$

#

# The only parameters that define the normal distribution are the mean $\mu$ and the variance $\sigma^2$; because of that, a normal distribution is usually described as $N(\mu,\:\sigma^2)$.
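# As a quick numerical check of the formulas above, the sketch below evaluates the normal pdf directly and approximates the area, mean and variance integrals with a midpoint rule. The helper names, the integration range of ten standard deviations and the example values $\mu=1,\;\sigma=2$ are choices made for this illustration only.

```python
import math
from statistics import NormalDist

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of N(mu, sigma^2) at x, written as in the formula above."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)

def moments_by_midpoint_rule(mu, sigma, half_width=10.0, steps=4000):
    """Approximate area, mean and variance over mu +/- half_width * sigma."""
    lower = mu - half_width * sigma
    dx = 2 * half_width * sigma / steps
    xs = [lower + (k + 0.5) * dx for k in range(steps)]
    area = sum(normal_pdf(x, mu, sigma) for x in xs) * dx
    mean = sum(x * normal_pdf(x, mu, sigma) for x in xs) * dx
    variance = sum((x - mean) ** 2 * normal_pdf(x, mu, sigma) for x in xs) * dx
    return area, mean, variance

area, mean, variance = moments_by_midpoint_rule(mu=1.0, sigma=2.0)
print(area, mean, variance)  # close to 1, mu and sigma**2

# Cross-check one density value against the standard library implementation.
print(normal_pdf(0.3, 1.0, 2.0), NormalDist(mu=1.0, sigma=2.0).pdf(0.3))
```

# Note that `NormalDist` takes the standard deviation, not the variance, as its second argument.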

#

# Here is a plot of the pdf for the normal distribution:

# import the necessary libraries

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

from IPython.display import display, Latex

from scipy import stats

import sys

sys.path.insert(1, r'./../functions') # directory of BMC Python functions

from pdf_norm_plot import pdf_norm_plot

pdf_norm_plot();

# The horizontal axis above is shown in terms of the number of standard deviations in relation to the mean, which is known as standard score or $z$ score:

#

# $$ z = \frac{x - \mu}{\sigma} $$

#

# So, instead of specifying raw values in the distribution, we define the pdf in terms of $z$ scores; this conversion process is called standardizing the distribution (and the result is known as standard normal distribution). Note that because $\mu$ and $\sigma$ are known parameters, $z$ has the same distribution as $x$, in this case, the normal distribution.

#

# The percentage numbers in the plot are the probability (area under the curve) for each interval shown in the horizontal label.

# An interval in terms of z score is specified as: $[\mu-z\sigma,\;\mu+z\sigma]$.

# The interval $[\mu-1\sigma,\;\mu+1\sigma]$ contains 68.3% of the population and the interval $[\mu-2\sigma,\;\mu+2\sigma]$ contains 95.4% of the population.

# These numbers can be calculated using the function `stats.norm.cdf()`, the [cumulative distribution function](http://en.wikipedia.org/wiki/Cumulative_distribution_function) (cdf) of the normal distribution at a given value:

print('Cumulative distribution function (cdf) of the normal distribution:')

for i in range(-3, 4):
    display(Latex(r'%d $\sigma:\;$ %.2f' %(i, stats.norm.cdf(i, loc=0, scale=1)*100) + ' %'))

# The parameters `loc` and `scale` are optional and represent the mean and the standard deviation of the distribution. The default is `loc=0` and `scale=1`.

# A commonly used proportion is 95%. The value that results in this proportion can be found using the function `stats.norm.ppf()`. If we want to find the $\pm$value for the interval that will result in 95% of the population inside, we have to consider that 2.5% of the population will stay out of the interval in each tail of the distribution. Because of that, the number we have to use with the `stats.norm.ppf()` is 0.975:

print('Percent point function (inverse of cdf) of the normal distribution:')

display(Latex(r'ppf(.975) = %.2f' % stats.norm.ppf(.975, loc=0, scale=1)))

# Or we can use the function `stats.norm.interval` which already gives the interval:

print('Confidence interval around the mean:')

stats.norm.interval(0.95, loc=0, scale=1)  # level passed positionally; newer SciPy versions renamed `alpha` to `confidence`

# So, the interval $[\mu-1.96\sigma,\;\mu+1.96\sigma]$ contains 95% of the population.
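# The same numbers can be reproduced without SciPy. The sketch below uses `statistics.NormalDist` from the Python standard library (an alternative to the `stats.norm` calls used in this notebook) to compute the probability inside $\pm z\sigma$ and the $z$ value that encloses 95% of the population.

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

def central_probability(z):
    """Probability that a standard normal value falls inside [-z, +z]."""
    return std_normal.cdf(z) - std_normal.cdf(-z)

for z in (1, 2, 3):
    print('within %d sigma: %.1f%%' % (z, 100 * central_probability(z)))

# 2.5% is left out in each tail, so the upper limit is the 97.5th percentile.
z95 = std_normal.inv_cdf(0.975)
print('z for 95%%: %.2f' % z95)
```

# `inv_cdf` plays the role of `stats.norm.ppf`: it maps a cumulative probability back to the corresponding value of the variable.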

#

# Now that we know what the probability density function of a normal distribution looks like, let's demonstrate the central limit theorem for a uniform distribution. For that, we will generate samples of a uniform distribution, calculate the mean across samples, and plot the histogram of the mean across samples:

fig, ax = plt.subplots(1, 4, sharey=True, squeeze=True, figsize=(12, 5))

x = np.linspace(0, 1, 100)

for i, n in enumerate([1, 2, 3, 10]):
    f = np.mean(np.random.random((1000, n)), 1)
    m, s = np.mean(f), np.std(f, ddof=1)
    fn = (1/(s*np.sqrt(2*np.pi)))*np.exp(-(x-m)**2/(2*s**2)) # normal pdf
    # `density=True` replaces the `normed` argument removed from recent Matplotlib versions
    ax[i].hist(f, 20, density=True, color=[0, 0.2, .8, .6])
    ax[i].set_title('n=%d' %n)
    ax[i].plot(x, fn, color=[1, 0, 0, .6], linewidth=5)

plt.suptitle('Demonstration of the central limit theorem for a uniform distribution', y=1.05)

plt.show()
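# The narrowing of the histograms as $n$ grows can also be checked numerically. A uniform variable on $[0, 1]$ has variance $1/12$, so the mean of $n$ independent draws should have standard deviation $\sqrt{1/(12n)}$. The sketch below compares that value with a simulation; the seed and the number of repetitions are arbitrary choices for this illustration.

```python
import math
import random
import statistics

random.seed(0)  # arbitrary seed, only to make the run repeatable

def sample_means(n, repeats=20000):
    """Means of `repeats` samples, each with n draws from a uniform [0, 1)."""
    return [sum(random.random() for _ in range(n)) / n for _ in range(repeats)]

results = {}
for n in (1, 2, 3, 10):
    means = sample_means(n)
    observed = statistics.stdev(means)
    expected = math.sqrt(1 / (12 * n))
    results[n] = (observed, expected)
    print('n=%2d  observed sd=%.4f  expected sd=%.4f' % (n, observed, expected))
```

# The simulated and theoretical values should agree to within sampling noise, and both shrink with $\sqrt{n}$.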

# **Statistics for a sample of the population**

#

# Parameters (such as mean and variance) are characteristics of a population; statistics are the equivalent for a sample. For a population and a sample with normal or Gaussian distribution, mean and variance are all we need to completely characterize this population or sample.

#

# The difference between sample and population is fundamental for the understanding of probability and statistics.

# In Statistics, a sample is a set of data collected from a population. A population is usually very large and can't be accessed completely; all we have access to is a sample (a smaller set) of the population.

#

# If we have only a sample of a finite univariate random variable with a normal distribution, both mean and variance of the population are unknown and they have to be estimated from the sample:

#

# $$ \bar{x} = \frac{1}{N}\sum_{i=1}^{N} x_i $$

#

# $$ s^2 = \frac{1}{N-1}\sum_{i=1}^{N} (x_i - \bar{x})^2 $$

#

# The sample $\bar{x}$ and $s^2$ are only estimations of the unknown true mean and variance of the population, but because of the [law of large numbers](http://en.wikipedia.org/wiki/Law_of_large_numbers), as the size of the sample increases, the sample mean and variance have an increased probability of being close to the population mean and variance.
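# The difference between dividing by $N-1$ (sample variance) and by $N$ (population formula) is easy to see on a small example. The sketch below uses the standard library `statistics` module; the eight data values are made up for illustration.

```python
import statistics

# Made-up sample, for illustration only.
sample = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]

x_bar = statistics.mean(sample)
s2 = statistics.variance(sample)           # divides by N - 1 (sample estimate)
sigma2_form = statistics.pvariance(sample)  # divides by N (population formula)

print('mean = %.3f' % x_bar)
print('variance with N-1 = %.3f' % s2)
print('variance with N   = %.3f' % sigma2_form)
```

# The two variance values differ by the factor $N/(N-1)$, which becomes negligible as the sample size grows.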

#

# **Prediction interval around the mean**

#

# For a sample of a univariate random variable, the area in an interval of the probability density function can't be interpreted anymore as the proportion of the sample lying inside the interval. Rather, that area in the interval is a prediction of the probability that a new value from the population added to the sample will be inside the interval. This is called a [prediction interval](http://en.wikipedia.org/wiki/Prediction_interval). However, there is one more thing to correct. We have to adjust the interval limits for the fact that now we have only a sample of the population and the parameters $\mu$ and $\sigma$ are unknown and have to be estimated. This correction will increase the interval for the same probability value of the interval because we are not so certain about the distribution of the population.

# To calculate the interval given a desired probability, we have to determine the distribution of the z-score equivalent for the case of a sample with unknown mean and variance:

#

# $$ \frac{x_{n+i}-\bar{x}}{s\sqrt{1+1/n}} $$

#

# Where $x_{n+i}$ is the new observation for which we want to calculate the prediction interval.

# The distribution of the ratio above is called <a href="http://en.wikipedia.org/wiki/Student's_t-distribution">Student's t-distribution</a> or simply $T$ distribution, with $n-1$ degrees of freedom. A $T$ distribution is symmetric and its pdf tends to that of the standard normal as $n$ tends to infinity.

#

# Then, the prediction interval around the sample mean for a new observation is:

#

# $$ \left[\bar{x} - T_{n-1}\:s\:\sqrt{1+1/n},\quad \bar{x} + T_{n-1}\:s\:\sqrt{1+1/n}\right] $$

#

# Where $T_{n-1}$ is the $100((1+p)/2)^{th}$ percentile of the Student's t-distribution with n−1 degrees of freedom.

#

# For instance, the 95% prediction interval for a sample ($\bar{x}=0,\;s^2=1$) of size 10 is:

np.asarray(stats.t.interval(0.95, df=10-1, loc=0, scale=1)) * np.sqrt(1+1/10)

# For a large sample (e.g., 10000), the interval approaches the one for a normal distribution (according to the [central limit theorem](http://en.wikipedia.org/wiki/Central_limit_theorem)):

np.asarray(stats.t.interval(0.95, df=10000-1, loc=0, scale=1)) * np.sqrt(1+1/10000)
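# To see the prediction interval formula applied to actual data, the sketch below computes it for a small made-up sample of ten observations. To stay independent of SciPy, the 97.5th percentile of the t-distribution with 9 degrees of freedom is hard-coded from a standard table (2.262); `prediction_interval` is a hypothetical helper written for this illustration.

```python
import math
import statistics

T_975_DF9 = 2.262  # table value: 97.5th percentile of Student's t, 9 degrees of freedom

def prediction_interval(sample, t_crit):
    """Interval expected to contain one new observation: x_bar +/- t * s * sqrt(1 + 1/n)."""
    n = len(sample)
    x_bar = statistics.fmean(sample)
    s = statistics.stdev(sample)  # sample standard deviation (N - 1 in the denominator)
    half_width = t_crit * s * math.sqrt(1 + 1 / n)
    return x_bar - half_width, x_bar + half_width

# Made-up measurements, for illustration only.
sample = [9.8, 10.2, 10.4, 9.9, 10.0, 10.1, 9.7, 10.3, 10.0, 9.6]
pi_low, pi_high = prediction_interval(sample, T_975_DF9)
print('95%% prediction interval: [%.3f, %.3f]' % (pi_low, pi_high))
```

# The critical value must match the sample size: a different `n` needs the percentile for `n - 1` degrees of freedom.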

# Here is a plot of the pdf for the normal distribution and the pdf for the Student's t-distribution with different number of degrees of freedom (n-1):

fig, ax = plt.subplots(1, 1, figsize=(10, 5))

x = np.linspace(-4, 4, 1000)

f = stats.norm.pdf(x, loc=0, scale=1)

t2 = stats.t.pdf(x, df=2-1)

t10 = stats.t.pdf(x, df=10-1)

t100 = stats.t.pdf(x, df=100-1)

ax.plot(x, f, color='k', linestyle='--', lw=4, label='Normal')

ax.plot(x, t2, color='r', lw=2, label='T (1)')

ax.plot(x, t10, color='g', lw=2, label='T (9)')

ax.plot(x, t100, color='b', lw=2, label='T (99)')

ax.legend(title='Distribution', fontsize=14)

ax.set_title("Normal and Student's t distributions", fontsize=20)

ax.set_xticks(np.linspace(-4, 4, 9))

xtl = [r'%+d$\sigma$' %i for i in range(-4, 5, 1)]

xtl[4] = r'$\mu$'

ax.set_xticklabels(xtl)

ax.set_ylim(-0.01, .41)

plt.grid()

plt.rc('font', size=16)

plt.show()

# It's common to use 1.96 as value for the 95% prediction interval even when dealing with a sample; let's quantify the error of this approximation for different sample sizes:

T = lambda n: stats.t.ppf(0.975, n-1)*np.sqrt(1+1/n) # T distribution

N = stats.norm.ppf(0.975) # Normal distribution

for n in [1000, 100, 10]:
    print('\nApproximation error for n = %d' %n)
    print('Using Normal distribution: %.1f%%' % (100*(N-T(n))/T(n)))

# For n=1000, the approximation is good, for n=10 it is bad, and it always underestimates.

#

# **Standard error of the mean**

#

# The [standard error of the mean](http://en.wikipedia.org/wiki/Standard_error) (sem) is the standard deviation of the sample-mean estimate of a population mean and is given by:

#

# $$ sem = \frac{s}{\sqrt{n}} $$

#

# **Confidence interval**

#

# In statistics, a [confidence interval](http://en.wikipedia.org/wiki/Confidence_interval) (CI) is a type of interval estimate of a population parameter and is used to indicate the reliability of an estimate ([Wikipedia](http://en.wikipedia.org/wiki/Confidence_interval)). For instance, the 95% confidence interval for the sample-mean estimate of a population mean is:

#

# $$ \left[\bar{x} - T_{n-1}\:s/\sqrt{n},\quad \bar{x} + T_{n-1}\:s/\sqrt{n}\right] $$

#

# Where $T_{n-1}$ is the $100((1+p)/2)^{th}$ percentile of the Student's t-distribution with n−1 degrees of freedom.

# For instance, the 95% confidence interval for the mean of a sample ($\bar{x}=0,\;s^2=1$) of size 10 is:

np.asarray(stats.t.interval(0.95, df=10-1, loc=0, scale=1)) / np.sqrt(10)
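# The standard error of the mean and the confidence interval can be computed for real data in the same way. The sketch below uses a made-up sample of ten observations and a hard-coded table value for the t percentile (2.262 for 9 degrees of freedom) so that it runs without SciPy; `confidence_interval_mean` is a hypothetical helper written for this illustration.

```python
import math
import statistics

T_975_DF9 = 2.262  # table value: 97.5th percentile of Student's t, 9 degrees of freedom

def confidence_interval_mean(sample, t_crit):
    """Return (sem, low, high) with the interval x_bar +/- t * s / sqrt(n)."""
    n = len(sample)
    x_bar = statistics.fmean(sample)
    sem = statistics.stdev(sample) / math.sqrt(n)  # standard error of the mean
    return sem, x_bar - t_crit * sem, x_bar + t_crit * sem

# Made-up measurements, for illustration only.
sample = [9.8, 10.2, 10.4, 9.9, 10.0, 10.1, 9.7, 10.3, 10.0, 9.6]
sem, ci_low, ci_high = confidence_interval_mean(sample, T_975_DF9)
print('sem = %.4f' % sem)
print('95%% CI for the mean: [%.3f, %.3f]' % (ci_low, ci_high))
```

# For the same sample and level, the prediction interval is wider than this confidence interval by a factor of $\sqrt{n+1}$, because it also accounts for the spread of a single new observation.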

# The 95% CI means that if we randomly obtain 100 samples of a population and calculate the CI of each sample (i.e., we replicate the experiment 99 times in an independent way), about 95 of these CIs are expected to contain the population mean (the true mean). This is different from the prediction interval, which is larger, and gives the probability that a new observation is inside this interval. Note that the confidence interval DOES NOT give the probability that the true mean (the mean of the population) is inside this interval. The true mean is a parameter (fixed) and it is either inside the calculated interval or not; it is not a matter of chance (probability).

#

# Let's simulate samples of a population ~ $N(\mu=0, \sigma^2=1) $ and calculate the confidence interval for the samples' mean:

n = 20 # number of observations

x = np.random.randn(n, 100) # 100 samples with n observations

m = np.mean(x, axis=0) # samples' mean

s = np.std(x, axis=0, ddof=1) # samples' standard deviation

T = stats.t.ppf(.975, n-1) # T statistic for 95% and n-1 degrees of freedom

ci = m + np.array([-s*T/np.sqrt(n), s*T/np.sqrt(n)])

out = ci[0, :]*ci[1, :] > 0 # CIs that don't contain the true mean

fig, ax = plt.subplots(1, 1, figsize=(13, 5))

ind = np.arange(1, 101)

ax.axhline(y=0, color=[0, 0, 0])

ax.plot([ind, ind], ci, color=[0, 0.2, 0.8, 0.8], marker='_', ms=0, linewidth=3)

ax.plot([ind[out], ind[out]], ci[:, out], color=[1, 0, 0, 0.8], marker='_', ms=0, linewidth=3)

ax.plot(ind, m, color=[0, .8, .2, .8], marker='.', ms=10, linestyle='')

ax.set_xlim(0, 101)

ax.set_ylim(-1.1, 1.1)

ax.set_title("Confidence interval for the samples' mean estimate of a population ~ $N(0, 1)$",

fontsize=18)

ax.set_xlabel('Sample (with %d observations)' %n, fontsize=18)

plt.show()

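# In the run shown here, four of the 100 95% CIs don't contain the population mean, close to the five we expect on average (the exact count changes from run to run because the samples are random).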

#

# And the standard deviation of the samples' mean should be approximately equal to the standard error of the mean:

print("Samples' mean and standard deviation:")

print('m = %.3f s = %.3f' % (np.mean(m), np.mean(s)))

print("Standard deviation of the samples' mean:")

print('%.3f' % np.std(m, ddof=1))

print("Standard error of the mean:")

print('%.3f' % (np.mean(s)/np.sqrt(n)))

# Likewise, it's common to use 1.96 for the 95% confidence interval even when dealing with a sample; let's quantify the error of this approximation for different sample sizes:

T = lambda n: stats.t.ppf(0.975, n-1)  # critical value from the t distribution
N = stats.norm.ppf(0.975)              # critical value from the normal distribution (about 1.96)
for n in [1000, 100, 10]:
    print('\nApproximation error for n = %d' % n)
    print('Using Normal distribution: %.1f%%' % (100*(N-T(n))/T(n)))

# For n=1000 the approximation is good (an error of about 0.1%), for n=10 it is bad (about 13%), and the normal quantile always underestimates the width of the interval.

#

# For the case of a multivariate random variable, see [Prediction ellipse and prediction ellipsoid](http://nbviewer.ipython.org/github/demotu/BMC/blob/master/notebooks/PredictionEllipseEllipsoid.ipynb).

# ## References

#

# - <NAME>, <NAME> (1991) [Statistical Intervals: A Guide for Practitioners](http://books.google.com.br/books?id=ADGuRxqt5z4C). <NAME> & Sons.

# - Montgomery (2013) [Applied Statistics and Probability for Engineers](http://books.google.com.br/books?id=_f4KrEcNAfEC). John Wiley & Sons.

| notebooks/ConfidencePredictionIntervals.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Summary

# This notebook contains:

# - torch implementations of a few linear algebra techniques:

# - forward- and back-solving

# - LDLt decomposition

# - QR decomposition via Householder reflections

#

# - initial implementations of secure linear regression and <NAME>'s [DASH](https://github.com/jbloom22/DASH/) that leverage PySyft for secure computation.

#

# These implementations of linear regression and DASH are not currently strictly secure, in that a few final steps are performed on the local worker for now. That's because our implementations of LDLt decomposition, QR decomposition, etc. don't quite work for the PySyft `AdditiveSharingTensor` just yet. They definitely do in principle (because they're compositions of operations that SPDZ supports), but there are still a few details to hammer out.

#

# ## Contents

# [Ordinary least squares regression and LDLt decomposition](#OLSandLDLt)

# * [LDLt decomposition, forward/back-solving](#LDLt)

# * [Secure linear regression example](#OLS)

#

# [DASH](#dashqr)

# * [QR decomposition via Householder transforms](#qr)

# * [DASH example](#dash)

import numpy as np

import torch as th

import syft as sy

from scipy import stats

sy.create_sandbox(globals())

# # <a id='OLSandLDLt'>Ordinary least squares regression and LDLt decomposition</a>

# ## <a id='LDLt'>LDLt decomposition, forward/back-solving</a>

#

# These are torch implementations of basic linear algebra routines we'll use to perform regression (and also in parts of the next section).

# - Forward/back-solving allows us to solve triangular linear systems efficiently and stably.

# - LDLt decomposition lets us write symmetric matrices as a product LDL^t where L is lower-triangular and D is diagonal (^t denotes transpose). It performs a role similar to Cholesky decomposition (which is normally available as a method of a torch tensor), but doesn't require computing square roots. This makes LDLt a better fit for the secure setting.
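# As a small NumPy illustration of the second point (a sketch only; the matrix `a_demo` and its factors are made up for this example), the LDL^t factors of a positive-definite matrix relate to its Cholesky factor by $L_{chol} = L\sqrt{D}$, so the square roots appear only on the Cholesky side:

# +
import numpy as np

a_demo = np.array([[4., 2.],
                   [2., 3.]])
l_demo = np.array([[1., 0.],
                   [0.5, 1.]])      # unit lower-triangular factor
d_demo = np.diag([4., 2.])          # diagonal factor
# A = L D L^t holds without any square roots.
assert np.allclose(l_demo @ d_demo @ l_demo.T, a_demo)
# Cholesky needs sqrt(D) to absorb the diagonal into the triangular factor.
assert np.allclose(l_demo @ np.sqrt(d_demo), np.linalg.cholesky(a_demo))
# -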

# +

def _eye(n):
    """th.eye doesn't seem to work after hooking torch, so just adding
    a workaround for now.
    """
    return th.FloatTensor(np.eye(n))


def ldlt_decomposition(x):
    """Decompose the square, symmetric, full-rank matrix X as X = LDL^t, where
    - L is lower triangular (with ones on the diagonal)
    - D is diagonal.
    Returns the tuple (L, D, L^t).
    """
    n, _ = x.shape
    l, diag = _eye(n), th.zeros(n).float()
    for j in range(n):
        diag[j] = x[j, j] - (th.sum((l[j, :j] ** 2) * diag[:j]))
        for i in range(j + 1, n):
            l[i, j] = (x[i, j] - th.sum(diag[:j] * l[i, :j] * l[j, :j])) / diag[j]
    return l, th.diag(diag), l.transpose(0, 1)


def back_solve(u, y):
    """Solve Ux = y for U a square, upper triangular matrix of full rank"""
    n = u.shape[0]
    x = th.zeros(n)
    for i in range(n - 1, -1, -1):
        x[i] = (y[i] - th.sum(u[i, i+1:] * x[i+1:])) / u[i, i]
    return x.reshape(-1, 1)


def forward_solve(l, y):
    """Solve Lx = y for L a square, lower triangular matrix of full rank."""
    n = l.shape[0]
    x = th.zeros(n)
    for i in range(0, n):
        x[i] = (y[i] - th.sum(l[i, :i] * x[:i])) / l[i, i]
    return x.reshape(-1, 1)


def invert_triangular(t, upper=True):
    """
    Invert by repeated forward/back-solving.
    TODO: -Could be made more efficient with vectorized implementation of forward/backsolve
          -detection and validation around triangularity/squareness
    """
    solve = back_solve if upper else forward_solve
    t_inv = th.zeros_like(t)
    n = t.shape[0]
    for i in range(n):
        e = th.zeros(n, 1)
        e[i] = 1.
        t_inv[:, [i]] = solve(t, e)
    return t_inv


def solve_symmetric(a, y):
    """Solve the linear system Ax = y where A is a symmetric matrix of full rank."""
    l, d, lt = ldlt_decomposition(a)
    # TODO: more efficient to just extract diagonal of d as 1D vector and scale?
    x_ = forward_solve(l.mm(d), y)
    return back_solve(lt, x_)

# +

"""

Basic tests for LDLt decomposition.

"""

def _assert_small(x, failure_msg=None, threshold=1E-5):
    norm = x.norm()
    assert norm < threshold, failure_msg


def test_ldlt_case(a):
    l, d, lt = ldlt_decomposition(a)
    _assert_small(l - lt.transpose(0, 1))
    _assert_small(l.mm(d).mm(lt) - a, 'Decomposition is inaccurate.')
    _assert_small(l - th.tril(l), 'L is not lower triangular.')
    _assert_small(th.triu(th.tril(d)) - d, 'D is not diagonal.')
    print(f'PASSED for {a}')


def test_solve_symmetric_case(a, x):
    y = a.mm(x)
    _assert_small(solve_symmetric(a, y) - x)
    print(f'PASSED for {a}, {x}')


a = th.tensor([[1, 2, 3],
               [2, 1, 2],
               [3, 2, 1]]).float()
x = th.tensor([1, 2, 3]).float().reshape(-1, 1)
test_ldlt_case(a)
test_solve_symmetric_case(a, x)

# -

# ## <a id='OLS'>Secure linear regression example</a>

# #### Problem

# We're solving

# $$ \min_\beta \|X \beta - y\|_2 $$

# in the situation where the data $(X, y)$ is horizontally partitioned (each worker $w$ owns chunks $X_w, y_w$ of the rows of $X$ and $y$).

#

# #### Goals

# We want to do this

# * securely

# * without network overhead or MPC-related costs that scale with the number of rows of $X$.

#

# #### Plan

#

# 1. (**local plaintext compression**): each worker locally computes $X_w^t X_w$ and $X_w^t y_w$ in plain text. This is the only step that depends on the number of rows of X, and it's performed in plaintext.

# 2. (**secure summing**): securely compute the sums $$\begin{align}X^t X &= \sum_w X^t_w X_w \\ X^t y &= \sum_w X^t_w y_w \end{align}$$ as `AdditiveSharingTensor`s. Some worker or other party (here the local worker) will hold pointers to those two `AdditiveSharingTensor`s.

# 3. (**secure solve**): We can then solve $X^tX\beta = X^ty$ for $\beta$ by a sequence of operations on those pointers (specifically, we apply `solve_symmetric` defined above).
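# The three steps can be checked in plaintext first. The following NumPy sketch (no PySyft and no secret sharing; `X_demo`, `y_demo`, `XtX_demo`, and `Xty_demo` are illustrative names) suggests that summing the per-worker compressed statistics and solving the normal equations recovers $\beta$:

# +
import numpy as np

rng = np.random.default_rng(0)
X_demo = rng.standard_normal((300, 3))
y_demo = X_demo @ np.array([1., 2., -1.])     # noiseless response with beta = [1, 2, -1]
# Step 1: each "worker" compresses its own rows to a 3x3 matrix and a 3-vector.
chunks_demo = zip(np.array_split(X_demo, 3), np.array_split(y_demo, 3))
summands_demo = [(Xw.T @ Xw, Xw.T @ yw) for Xw, yw in chunks_demo]
# Step 2: add the summands (this is the part done under secret sharing in the secure version).
XtX_demo = sum(s[0] for s in summands_demo)
Xty_demo = sum(s[1] for s in summands_demo)
# Step 3: solve the normal equations X^t X beta = X^t y.
beta_demo = np.linalg.solve(XtX_demo, Xty_demo)
print(beta_demo)
# -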

#

# #### Example data:

# The correct $\beta$ is $[1, 2, -1]$

X = th.tensor(10 * np.random.randn(30000, 3))

y = (X[:, 0] + 2 * X[:, 1] - X[:, 2]).reshape(-1, 1)

# Split the data into chunks and send a chunk to each worker, storing pointers to chunks in two `MultiPointerTensor`s.

# +

workers = [alice, bob, theo]

crypto_provider = jon

chunk_size = int(X.shape[0] / len(workers))

def _get_chunk_pointers(data, chunk_size, workers):
    return [
        data[(i * chunk_size):((i+1)*chunk_size), :].send(worker)
        for i, worker in enumerate(workers)
    ]


X_ptrs = sy.MultiPointerTensor(
    children=_get_chunk_pointers(X, chunk_size, workers))
y_ptrs = sy.MultiPointerTensor(
    children=_get_chunk_pointers(y, chunk_size, workers))

# -

# ### local compression

# This is the only step that depends on the number of rows of $X, y$, and it's performed locally on each worker in plain text. The result is two `MultiPointerTensor`s with pointers to each worker's summand of $X^tX$ (or $X^ty$).

# +

Xt_ptrs = X_ptrs.transpose(0, 1)

XtX_summand_ptrs = Xt_ptrs.mm(X_ptrs)

Xty_summand_ptrs = Xt_ptrs.mm(y_ptrs)

# -

# ### secure sum

# We add those summands up in two steps:

# - share each summand among all other workers

# - move the resulting pointers to one place (here just the local worker) and add 'em up.
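# A toy illustration of what "sharing" a summand means (a sketch of additive secret sharing over the integers modulo a large number; this is not PySyft's implementation, and `Q_DEMO`, `SCALE_DEMO`, `encode`, `decode`, `share`, and `reconstruct` are names invented for this example):

# +
import random

Q_DEMO = 2**62         # modulus of the ring the shares live in (assumed value)
SCALE_DEMO = 10**3     # fixed-point scale: three fractional digits

def encode(value):
    """Map a float to a fixed-precision integer in [0, Q_DEMO)."""
    return int(round(value * SCALE_DEMO)) % Q_DEMO

def decode(element):
    """Inverse of `encode`, treating the upper half of the ring as negative."""
    signed = element - Q_DEMO if element > Q_DEMO // 2 else element
    return signed / SCALE_DEMO

def share(element, n_parties):
    """Split a ring element into n_parties random shares that sum to it mod Q_DEMO."""
    shares = [random.randrange(Q_DEMO) for _ in range(n_parties - 1)]
    shares.append((element - sum(shares)) % Q_DEMO)
    return shares

def reconstruct(shares):
    """Recombine shares into the ring element they encode."""
    return sum(shares) % Q_DEMO

private_values = [1.5, -2.25, 4.0]                       # one private summand per worker
shared_values = [share(encode(v), 3) for v in private_values]
# Each party adds the shares it holds; no party sees another party's summand.
local_sums = [sum(column) % Q_DEMO for column in zip(*shared_values)]
total_demo = decode(reconstruct(local_sums))
print(total_demo)
# -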

def _generate_shared_summand_pointers(
        summand_ptrs,
        workers,
        crypto_provider):
    for worker_id, summand_pointer in summand_ptrs.child.items():
        shared_summand_pointer = summand_pointer.fix_precision().share(
            *workers, crypto_provider=crypto_provider)
        yield shared_summand_pointer.get()

# +

XtX_shared = sum(

_generate_shared_summand_pointers(

XtX_summand_ptrs, workers, crypto_provider))

Xty_shared = sum(_generate_shared_summand_pointers(

Xty_summand_ptrs, workers, crypto_provider))

# -

# ### secure solve

# The coefficient $\beta$ is the solution to

# $$X^t X \beta = X^t y$$

#

# We solve for $\beta$ using `solve_symmetric`. Critically, this is a composition of additions, multiplications, and divisions, which should be supported by `AdditiveSharingTensor`. Unlike the classic Cholesky decomposition, the $LDL^t$ decomposition that `solve_symmetric` performs as its first step does not involve taking square roots, which would be challenging.

#

#

# **TODO**: there's still some additional work required to get `solve_symmetric` working for `AdditiveSharingTensor`, so we're performing the final linear solve publicly for now.

beta = solve_symmetric(XtX_shared.get().float_precision(), Xty_shared.get().float_precision())

beta

# # <a id='dashqr'>DASH and QR-decomposition</a>

# ## <a id='qr'>QR decomposition</a>

#

# An $m \times n$ real matrix $A$ with $m \geq n$ can be written as $$A = QR$$ for $Q$ orthogonal and $R$ upper triangular (in the full decomposition implemented here, $Q$ is $m \times m$ and $R$ is $m \times n$). This is helpful in solving systems of equations, among other things. It is also central to the compression idea of [DASH](https://arxiv.org/pdf/1901.09531.pdf).
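# For reference, NumPy's built-in routine produces the same kind of factorization (a sketch for comparison only, independent of the torch implementation in this notebook; `a_qr_demo` is a made-up matrix):

# +
import numpy as np

a_qr_demo = np.array([[1., 0., 1.],
                      [1., 1., 0.],
                      [0., 1., 1.],
                      [1., 1., 1.]])
q_demo, r_demo = np.linalg.qr(a_qr_demo, mode='complete')  # full QR: Q is 4x4, R is 4x3
assert np.allclose(q_demo @ r_demo, a_qr_demo)             # A = QR
assert np.allclose(q_demo.T @ q_demo, np.eye(4))           # Q is orthogonal
assert np.allclose(r_demo, np.triu(r_demo))                # R is upper triangular
# -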

# +

"""

Full QR decomposition via Householder transforms,

following Numerical Linear Algebra (Trefethen and Bau).

"""

def _apply_householder_transform(a, v):
    return a - 2 * v.mm(v.transpose(0, 1).mm(a))


def _build_householder_matrix(v):
    n = v.shape[0]
    u = v / v.norm()
    return _eye(n) - 2 * u.mm(u.transpose(0, 1))


def _householder_qr_step(a):
    x = a[:, 0].reshape(-1, 1)
    alpha = x.norm()
    u = x.copy()
    # note: can get better stability by multiplying by sign(u[0, 0])
    # (where sign(0) = 1); is this supported in the secure context?
    u[0, 0] += u.norm()
    # is there a simple way of getting around computing the norm twice?
    u /= u.norm()
    a = _apply_householder_transform(a, u)
    return a, u


def _recover_q(householder_vectors):
    """
    Build the matrix Q from the Householder transforms.
    """
    n = len(householder_vectors)

    def _apply_transforms(x):
        """Trefethen and Bau, Algorithm 10.3"""
        for k in range(n-1, -1, -1):
            x[k:, :] = _apply_householder_transform(
                x[k:, :],
                householder_vectors[k])
        return x

    m = householder_vectors[0].shape[0]
    n = len(householder_vectors)
    q = th.zeros(m, m)
    # Determine q by evaluating it on a basis
    for i in range(m):
        e = th.zeros(m, 1)
        e[i] = 1.
        q[:, [i]] = _apply_transforms(e)
    return q


def qr(a, return_q=True):
    """
    Args:
        a: shape (m, n), m >= n
        return_q: bool, whether to reconstruct q
    Returns:
        orthogonal q of shape (m, m) (None if return_q is False)
        upper-triangular of shape (m, n)
    """
    m, n = a.shape
    assert m >= n, \
        f"Passed a of shape {a.shape}, must have a.shape[0] >= a.shape[1]"
    r = a.copy()
    householder_unit_normal_vectors = []
    for k in range(n):
        r[k:, k:], u = _householder_qr_step(r[k:, k:])
        householder_unit_normal_vectors.append(u)
    if return_q:
        q = _recover_q(householder_unit_normal_vectors)
    else:
        q = None
    return q, r

# +

"""

Basic tests for QR decomposition

"""

def _test_qr_case(a):
    q, r = qr(a)
    # actually have QR = A
    _assert_small(q.mm(r) - a, "QR = A failed")
    # Q is orthogonal
    m, _ = a.shape
    _assert_small(
        q.mm(q.transpose(0, 1)) - _eye(m),
        "QQ^t = I failed"
    )
    # R is upper triangular
    lower_triangular_entries = th.tensor([
        r[i, j].item() for i in range(r.shape[0])
        for j in range(i)])
    _assert_small(
        lower_triangular_entries,
        "R is not upper triangular"
    )
    print(f"PASSED for \n{a}\n")


def test_qr():
    _test_qr_case(
        th.tensor([[1, 0, 1],
                   [1, 1, 0],
                   [0, 1, 1]]).float()
    )
    _test_qr_case(
        th.tensor([[1, 0, 1],
                   [1, 1, 0],
                   [0, 1, 1],
                   [1, 1, 1],]).float()
    )


test_qr()

# -

# ## <a id='dash'>DASH implementation</a>

# We follow https://github.com/jbloom22/DASH/.

#

# The overall structure is roughly analogous to the linear regression example above.

#

# - There's a local compression step that's performed separately on each worker in plaintext.

# - We leverage PySyft's SMPC features to perform secure summation.

# - For now, the last few steps are performed by a single player (the local worker).

# - Again, this could be performed securely, but there are still a few hitches with getting our torch implementation of QR decomposition to work for an `AdditiveSharingTensor`.
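# The compression idea can be sanity-checked in plaintext. This NumPy sketch (a single party, no secret sharing; all `_dash` names are illustrative and not part of DASH itself) computes each transient's coefficient from dot products after projecting out the covariates, and compares it with an ordinary regression of y on that transient plus the covariates:

# +
import numpy as np

rng = np.random.default_rng(0)
n_dash, m_dash, k_dash = 200, 4, 3
X_dash = rng.standard_normal((n_dash, m_dash))     # transient features
C_dash = rng.standard_normal((n_dash, k_dash))     # covariates
y_dash = X_dash.sum(axis=1) + C_dash.sum(axis=1) + 0.01 * rng.standard_normal(n_dash)
Q_dash, _ = np.linalg.qr(C_dash)                   # orthonormal basis for the covariate columns
Qty_dash, QtX_dash = Q_dash.T @ y_dash, Q_dash.T @ X_dash
# x_j . y and x_j . x_j after projecting out the covariates.
Xyq_dash = X_dash.T @ y_dash - QtX_dash.T @ Qty_dash
XXq_dash = (X_dash ** 2).sum(axis=0) - (QtX_dash ** 2).sum(axis=0)
beta_dash = Xyq_dash / XXq_dash
# Reference: regress y on [x_j, C] one transient at a time and keep x_j's coefficient.
beta_ref = np.array([
    np.linalg.lstsq(np.column_stack([X_dash[:, j], C_dash]), y_dash, rcond=None)[0][0]
    for j in range(m_dash)
])
assert np.allclose(beta_dash, beta_ref)
# -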

# +

def _generate_worker_data_pointers(
    n, m, k, worker,
    beta_correct, gamma_correct, epsilon=0.01
):
    """
    Return pointers to worker-level data.
    Args:
        n: number of rows
        m: number of transient features
        k: number of covariates
        worker: worker that will hold the data
        beta_correct: coefficients for transient features (tensor of shape (m, 1))
        gamma_correct: coefficients for covariates (tensor of shape (k, 1))
        epsilon: scale of noise added to response
    Return:
        y, X, C: pointers to response, transients, and covariates
    """
    X = th.randn(n, m).send(worker)
    C = th.randn(n, k).send(worker)
    y = (X.mm(beta_correct.copy().send(worker)).reshape(-1, 1) +
         C.mm(gamma_correct.copy().send(worker)).reshape(-1, 1))
    y += (epsilon * th.randn(n, 1)).send(worker)
    return y, X, C


def _dot(x):
    return (x * x).sum(dim=0).reshape(-1, 1)


def _secure_sum(worker_level_pointers, workers, crypto_provider):
    """
    Securely add up an iterable of pointers to (same-sized) tensors.
    Args:
        worker_level_pointers: iterable of pointer tensors
        workers: list of workers
        crypto_provider: worker
    Returns:
        AdditiveSharingTensor shared among workers
    """
    return sum([
        p.fix_precision(precision_fractional=10).share(*workers, crypto_provider=crypto_provider).get()
        for p in worker_level_pointers
    ])

# -

def dash_example_secure(
    workers, crypto_provider,
    n_samples_by_worker, m, k,
    beta_correct, gamma_correct,
    epsilon=0.01
):
    """
    Args:
        workers: dict mapping worker ids to workers
        crypto_provider: worker
        n_samples_by_worker: dict mapping worker ids to ints (number of rows of data)
        m: number of transients
        k: number of covariates
        beta_correct: coefficient for transient features
        gamma_correct: coefficient for covariates
        epsilon: scale of noise added to response
    Returns:
        beta, sigma, tstat, pval: coefficient of transients and accompanying statistics
    """
    # Generate each worker's data
    worker_data_pointers = {
        p: _generate_worker_data_pointers(
            n, m, k, workers[p],
            beta_correct, gamma_correct,
            epsilon=epsilon)
        for p, n in n_samples_by_worker.items()
    }
    # to be populated with pointers to results of local, worker-level computations
    Ctys, CtXs, yys, Xys, XXs, Rs = {}, {}, {}, {}, {}, {}

    def _sum(pointers):
        # use the workers passed to this function rather than the global `players`
        return _secure_sum(pointers, list(workers.values()), crypto_provider)

    # worker-level compression step
    for p, (y, X, C) in worker_data_pointers.items():
        # y^t y is a sum of squares (not a norm), so that it adds up across workers
        yys[p] = _dot(y)
        Xys[p] = X.transpose(0, 1).mm(y)
        XXs[p] = _dot(X)
        Ctys[p] = C.transpose(0, 1).mm(y)
        CtXs[p] = C.transpose(0, 1).mm(X)
        _, R_full = qr(C, return_q=False)
        Rs[p] = R_full[:k, :]
    # Perform secure sum
    # - We're returning the result to the local worker and computing there for the rest
    #   of the way, but it should be possible to compute via SMPC (on pointers to AdditiveSharingTensors)
    # - there are still a few minor-looking issues with implementing invert_triangular/qr for
    #   AdditiveSharingTensor
    yy = _sum(yys.values()).get().float_precision()
    Xy = _sum(Xys.values()).get().float_precision()
    XX = _sum(XXs.values()).get().float_precision()
    Cty = _sum(Ctys.values()).get().float_precision()
    CtX = _sum(CtXs.values()).get().float_precision()
    # Rest is done publicly on the local worker for now
    _, R_public = qr(
        th.cat([R.get() for R in Rs.values()], dim=0),
        return_q=False)
    invR_public = invert_triangular(R_public[:k, :])
    Qty = invR_public.transpose(0, 1).mm(Cty)
    QtX = invR_public.transpose(0, 1).mm(CtX)
    QtXQty = QtX.transpose(0, 1).mm(Qty)
    QtyQty = _dot(Qty)
    QtXQtX = _dot(QtX)
    yyq = yy - QtyQty
    Xyq = Xy - QtXQty
    XXq = XX - QtXQtX
    d = sum(n_samples_by_worker.values()) - k - 1
    beta = Xyq / XXq
    sigma = ((yyq / XXq - (beta ** 2)) / d).abs() ** 0.5
    tstat = beta / sigma
    pval = 2 * stats.t.cdf(-abs(tstat), d)
    return beta, sigma, tstat, pval

# +

players = {

worker.id: worker

for worker in [alice, bob, theo]

}


n_samples_by_player = {

alice.id: 100000,

bob.id: 200000,

theo.id: 100000

}

crypto_provider = jon

m = 100

k = 3

d = sum(n_samples_by_player.values()) - k - 1

beta_correct = th.ones(m, 1)

gamma_correct = th.ones(k, 1)

dash_example_secure(

players, crypto_provider,

n_samples_by_player, m, k,

beta_correct, gamma_correct)

# -

| examples/experimental/plaintext_speed_regression.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## The dataset is from Kaggle: the training set has 7613 rows and the test set has 3263 rows, and the test set does not have the target column. The dataset is about disaster tweets, and we will classify tweets as real (about an actual disaster) or fake (not about one).

# https://www.kaggle.com/c/nlp-getting-started/data?select=train.csv

import re

import matplotlib.pyplot as plt
import networkx as nx
import nltk
import numpy as np
import pandas as pd
import scipy
import seaborn as sns
import spacy
from gensim.models import word2vec
from nltk.corpus import stopwords
from nltk.stem import WordNetLemmatizer
from nltk.stem.porter import PorterStemmer
from nltk.tokenize import TweetTokenizer
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import (accuracy_score, classification_report, confusion_matrix,
                             roc_auc_score, roc_curve, ConfusionMatrixDisplay)
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB
from wordcloud import WordCloud, STOPWORDS, ImageColorGenerator

# %matplotlib inline

# `plot_confusion_matrix` is no longer shipped by recent scikit-learn releases;
# `ConfusionMatrixDisplay.from_estimator` takes the same (estimator, X, y) arguments.
plot_confusion_matrix = ConfusionMatrixDisplay.from_estimator

ps = PorterStemmer()

df = pd.read_csv('train.csv')

df_test = pd.read_csv('test.csv')

print(df.tail(3))

print(df_test.shape)

print(df.shape)

print(df.head(3))

## Checking for missing values

print(df.isnull().sum())

print(df_test.isnull().sum())

# #### We can see that the target variable is not available in df_test dataset and we are going to classify the tweets in df_test dataset

df_test.info()

## Dropping the 'keyword' and 'location' columns, which contain the missing values

df.drop(['keyword', 'location'], axis = 1, inplace = True)

df_test.drop(['keyword', 'location'], axis = 1, inplace = True)

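nltk.download('wordnet')
nltk.download('stopwords')  # needed by stopwords.words('english') below
nltk.download('punkt')      # needed by nltk.word_tokenize below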

## Applying regex to remove hyperlinks, punctuation and numbers
## Converting text to lower case
## Applying the lemmatizer (as verb, noun, then adjective) to reduce words to their base form
ps = PorterStemmer()
lemmatizer = WordNetLemmatizer()
stop_words = set(stopwords.words('english'))  # build once; set lookup is fast
corpus = []
for i in range(0, len(df)):
    #review = re.sub(r'^https?:\/\/.*[\r\n]*', '', df['text'][i], flags=re.MULTILINE)
    #review = re.sub(r'(https|http)?:\/\/(\w|\.|\/|\?|\=|\&|\%)*\b', '', df['text'][i], flags=re.MULTILINE)
    review = re.sub(r"http\S+", "", df['text'][i])
    review = re.sub(r'[^a-zA-Z\d+]', ' ', review)
    review = re.sub('[0-9]', '', review)
    review = review.lower()
    review = review.split()
    #review = [ps.stem(word) for word in review if not word in stopwords.words('english')]
    review = [lemmatizer.lemmatize(word, pos = 'v') for word in review if word not in stop_words]
    review = [lemmatizer.lemmatize(word, pos = 'n') for word in review]
    review = [lemmatizer.lemmatize(word, pos = 'a') for word in review]
    review = ' '.join(review)
    corpus.append(review)
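## A quick check of the regex steps on one made-up tweet (a sketch; `clean_demo` is a name
## invented here, and stop-word removal and lemmatization are left out so it runs without NLTK data)

# +
import re

def clean_demo(text):
    text = re.sub(r"http\S+", "", text)          # drop hyperlinks
    text = re.sub(r"[^a-zA-Z\d+]", " ", text)    # keep letters, digits and '+'
    text = re.sub(r"[0-9]", "", text)            # then drop the digits
    return " ".join(text.lower().split())        # lower-case and squeeze whitespace

cleaned_demo = clean_demo("Forest fire near La Ronge, 13 km away! http://t.co/abc")
print(cleaned_demo)  # prints: forest fire near la ronge km away
# -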

## We have 113,654 words in our dataset

print(df.shape)

df['text'].apply(lambda x: len(x.split(' '))).sum()

## Applying the same steps to the test dataset
ps = PorterStemmer()
lemmatizer = WordNetLemmatizer()
stop_words = set(stopwords.words('english'))  # build once; set lookup is fast
corpus_test = []
for i in range(0, len(df_test)):
    review = re.sub(r"http\S+", "", df_test['text'][i])
    review = re.sub(r'[^a-zA-Z\d+]', ' ', review)
    review = re.sub('[0-9]', '', review)
    review = review.lower()
    review = review.split()
    review = [lemmatizer.lemmatize(word, pos = 'v') for word in review if word not in stop_words]
    review = [lemmatizer.lemmatize(word, pos = 'n') for word in review]
    review = [lemmatizer.lemmatize(word, pos = 'a') for word in review]
    review = ' '.join(review)
    corpus_test.append(review)

corpus_test[1:4]

corpus[1:4]

## Before proceeding to model, checking for class imbalance

classes = df['target'].value_counts()

plt.figure(figsize=(4,4))

sns.barplot(x=classes.index, y=classes.values, alpha=0.8)  # the x tick labels already show the classes 0 and 1

plt.ylabel('Number of Occurrences', fontsize=12)

plt.xlabel('target', fontsize=12)

plt.show()

df['target'].value_counts()

# ### We do not have a class imbalance in our dataset

## Creating a dictionary to see the most frequent words
wordfreq = {}
for sentence in corpus:
    tokens = nltk.word_tokenize(sentence)
    for token in tokens:
        if token not in wordfreq.keys():
            wordfreq[token] = 1
        else:
            wordfreq[token] += 1

## Using the heapq module to get the 200 most frequent words and show the top 10

import heapq

most_freq = heapq.nlargest(200, wordfreq, key=wordfreq.get)

most_freq[0:10]
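## The same ranking can be obtained with collections.Counter (a small standalone sketch on a
## made-up token list, not the tweet corpus; all `_demo` names are illustrative)

# +
import heapq
from collections import Counter

tokens_demo = "fire flood fire storm fire flood".split()
counts_demo = Counter(tokens_demo)                                    # token -> count
top_heap_demo = heapq.nlargest(2, counts_demo, key=counts_demo.get)   # same call pattern as for wordfreq
top_counter_demo = [word for word, _ in counts_demo.most_common(2)]   # equivalent shortcut
print(top_heap_demo, top_counter_demo)
# -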

## One way to create features for Bag of words
sentence_vectors = []
for sentence in corpus:
    sentence_tokens = nltk.word_tokenize(sentence)
    sent_vec = []
    for token in most_freq:
        if token in sentence_tokens:
            sent_vec.append(1)
        else:
            sent_vec.append(0)
    sentence_vectors.append(sent_vec)
sentence_vectors = np.asarray(sentence_vectors)
sentence_vectors

## Importing CountVectorizer to create the bag-of-words features

from sklearn.feature_extraction.text import CountVectorizer

cv = CountVectorizer(max_features=1000)

X = cv.fit_transform(corpus).toarray()

y = df['target']

## These are the features for Bag of words

X[1:5]

### Splitting data for training and test data and applying Naive Bayes Classification

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.30, random_state = 0)

clf = MultinomialNB().fit(X_train, y_train)

y_pred_clf = clf.predict(X_test)

print("Training set score using Naive Bayes Classifier: {:.2f}".format(clf.score(X_train, y_train)))

print("Testing set score using Naive Bayes Classifier: {:.2f}" .format(clf.score(X_test, y_test)))

lr = LogisticRegression()

print(X_train.shape, y_train.shape)

train = lr.fit(X_train, y_train)

y_pred = lr.predict(X_test)

print('Training set score using Logistic Regression:{:.2f}'.format(train.score(X_train, y_train)))

print('Test set score:{:.2f}'.format(train.score(X_test, y_test)))

plot_confusion_matrix(lr,X_test, y_test)

# +

from sklearn import ensemble

rfc = ensemble.RandomForestClassifier()

train1 = rfc.fit(X_train, y_train)

print('Training set score using Random forest Classifier:{:.2f}'.format(rfc.score(X_train, y_train)))

print('Test set score using Random Forest Classifier:{:.2f}'.format(rfc.score(X_test, y_test)))

# -

print(classification_report(y_test, y_pred))

y_pred_proba = lr.predict_proba(X_test)[:,1]

y_pred_proba

fpr,tpr, thresholds = roc_curve(y_test, y_pred_proba)

plt.plot(fpr,tpr)

plt.xlim([0.0, 1.0])

plt.ylim([0.0, 1.0])

plt.title("ROC CURVE of TWEETS", color = 'blue')

plt.xlabel('False Positive Rate (1 - Specificity)')

plt.ylabel('True Positive Rate (Sensitivity)')

plt.grid(True)

print("The area under ROC CURVE using Logistic Regression with BOW: {:.2f}".format(roc_auc_score(y_test, y_pred_proba)))

# #### Logistic Regression is the best of the above 3 models, but the gap between train and test scores is smaller with the Naive Bayes Classifier

# Creating the TF-IDF model

from sklearn.feature_extraction.text import TfidfVectorizer

cv1 = TfidfVectorizer()

X_td = cv1.fit_transform(corpus).toarray()

X_train1, X_test1, y_train1, y_test1 = train_test_split(X_td, y, test_size = 0.20, random_state = 0)

clf1 = MultinomialNB().fit(X_train1, y_train1)

y_pred1 = clf1.predict(X_test1)

confusion_td = confusion_matrix(y_test1, y_pred1)

print(confusion_td)

print("TF-IDF Score for Naive Bayes Training Set is {:.2f}".format(clf1.score(X_train1, y_train1)))

print("TF-IDF Score for Naive Bayes Test Set is: {:.2f}".format(clf1.score(X_test1, y_test1)))

lr1 = LogisticRegression()

train1 = lr1.fit(X_train1, y_train1)

print('TF-IDF score of Training set with Logistic Regression: {:.2f}'.format(lr1.score(X_train1, y_train1)))

print('TF-IDF score for Test set with Logistic Regression: {:.2f}'.format(lr1.score(X_test1, y_test1)))

plot_confusion_matrix(lr1, X_test1, y_test1)

# +

from sklearn import ensemble

rfc2 = ensemble.RandomForestClassifier()

train5 = rfc2.fit(X_train1, y_train1)

print('Training set score using Random forest Classifier:{:.2f}'.format(rfc2.score(X_train1, y_train1)))

print('Test set score using Random Forest Classifier:{:.2f}'.format(rfc2.score(X_test1, y_test1)))

# -

y_pred_tfidf = lr1.predict(X_test1)

print(classification_report(y_test1, y_pred_tfidf))

y_pred_prob1 = lr1.predict_proba(X_test1)[:,1]

fpr,tpr, thresholds = roc_curve(y_test1, y_pred_prob1)

plt.plot(fpr,tpr)

plt.xlim([0.0, 1.0])

plt.ylim([0.0, 1.0])

plt.title("ROC CURVE of TWEETS TFIDF", color = 'blue')

plt.xlabel('False Positive Rate (1 - Specificity)')

plt.ylabel('True Positive Rate (Sensitivity)')

plt.grid(True)

plt.show()

print('Area under the ROC Curve TFIDF: {:.2f}'.format(roc_auc_score(y_test1, y_pred_prob1)))

text1 = df['text']

text = df['text']

nlp = spacy.load('en_core_web_sm')

text_doc = nlp('text')

tweet_tokenizer = TweetTokenizer()

tokens1 = []
for sent in corpus:
    for word in tweet_tokenizer.tokenize(sent):
        if len(word) < 2:
            continue
        tokens1.append(word.lower())
print("The number of tokens in our training dataset is {}".format(len(tokens1)))

## Creating tokens using TweetTokenizer from NLTK library
tweet_tokenizer = TweetTokenizer()
tweet_tokens = []
for sent in corpus:
    review2 = tweet_tokenizer.tokenize(sent)
    tweet_tokens.append(review2)
tweet_tokens[1]

## Removing punctuation and hyperlinks from the text (digits and '+' are kept here)
corpus1 = []
for i in range(0, len(df)):
    review1 = re.sub(r"http\S+", "", df['text'][i])
    review1 = re.sub(r'[^a-zA-Z\d+]', ' ', review1)
    review1 = review1.split()
    review1 = ' '.join(review1)
    corpus1.append(review1)

## Language Parsing using spacy
nlp = spacy.load('en_core_web_sm')
corpus_spacy = []
for i in corpus1:
    text_doc = nlp(i)
    corpus_spacy.append(text_doc)

from collections import Counter

# Utility function to calculate how frequently words appear in the text.
def word_frequencies(corpus_spacy, include_stop = False):
    # Build a list of words.
    # Strip out punctuation and, optionally, stop words.
    words = []
    for doc in corpus_spacy:
        for token in doc:
            # keep stop words only when include_stop is True
            if not token.is_punct and (include_stop or not token.is_stop):
                words.append(token.text)
    # Build and return a Counter object containing word counts.
    return Counter(words)

corpus_freq = word_frequencies(corpus_spacy).most_common(30)
print('Most common words in corpus_spacy (stop words excluded):', corpus_freq)

# #### Dividing the data into target1 and target0 in order to look at frequent words in each category

corpus4 = ' '.join(corpus)

# +

import gensim

from gensim.models import word2vec

model = word2vec.Word2Vec(

tweet_tokens,

workers=4, # Number of threads to run in parallel (if your computer does parallel processing).

min_count=50, # Minimum word count threshold.

window=6, # Number of words around target word to consider.

sg=0, # Use CBOW because our corpus is small.

sample=1e-3 , # Penalize frequent words.

size=300, # Word vector length (this keyword is named vector_size in gensim >= 4).

hs=1 # Use hierarchical softmax.

)

print('done!')

# -

## Find most similar words to life

print(model.wv.most_similar(positive = ['life']))

print(model.wv.most_similar(negative = ['life']))

# +

## Use t-SNE to represent high-dimensional data in a lower-dimensional space.
from sklearn.manifold import TSNE

def tsne_plot(model):
    "Create TSNE model and plot it"
    labels = []
    tokens = []
    for word in model.wv.vocab:
        tokens.append(model.wv[word])  # look vectors up through model.wv
        labels.append(word)
    tsne_model = TSNE(perplexity=40, n_components=2, init='pca', n_iter=2500, random_state=23)
    new_values = tsne_model.fit_transform(tokens)
    x = []
    y = []
    for value in new_values:
        x.append(value[0])
        y.append(value[1])
    plt.figure(figsize=(18, 18))
    for i in range(len(x)):
        plt.scatter(x[i],y[i])
        plt.annotate(labels[i],
                     xy=(x[i], y[i]),
                     xytext=(5, 2),
                     textcoords='offset points',
                     ha='right',
                     va='bottom')
    plt.show()

tsne_plot(model)

# -

# #### We can see from the above plot that words like collide, evacuate, crash, smoke, blow, explode, shoot are close to each other, as we would expect for words that appear in real disaster tweets.

# +

## Creating wordcloud for visualizing most important words

from PIL import Image

wc_text = corpus4

custom_mask = np.array(Image.open('twitter_mask.png'))

wc = WordCloud(background_color = 'white', max_words = 500, mask = custom_mask, height =

5000, width = 5000)

wc.generate(wc_text)

image_colors = ImageColorGenerator(custom_mask)

plt.figure(figsize=(20,10))

plt.imshow(wc, interpolation = 'bilinear')

plt.axis('off')

plt.show()

# -

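## Creating bag-of-words features for the test data set with the vocabulary learned from the training corpus
cv = CountVectorizer(max_features=1000)
X = cv.fit_transform(corpus).toarray()               # fit on the training corpus only
test_features = cv.transform(corpus_test).toarray()  # reuse the same vocabulary for the test tweets
test_features
lr = LogisticRegression()
pred = lr.fit(X,y)
print(test_features.shape)
y_pred1 = lr.predict(test_features)
y_pred1.sum()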

# ## We determined that Logistic Regression using Bag of words is the best model, and we used it to classify real and fake tweets. In the run recorded here, the predicted test data set has 1142 real tweets about disasters and 2121 fake tweets.

# In the age of social media, where we get updates and news from platforms like Twitter and Facebook, it is very important to differentiate real tweets from fake ones. With this model we can differentiate real tweets about disasters from fake tweets. The model not only helps in flagging fake tweets; it also helps to identify real tweets and assist people who are in need of help. Once a model is deployed into production and is providing utility to the business, it is necessary to monitor how well it performs and to implement something that continuously updates the database as new data is generated. We can use a scalable messaging platform like Kafka to send newly acquired data to a long-running Spark Streaming process. The Spark process can then make a new prediction based on the new data and update the operational database.

| Final Capstone.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: odor-states

# language: python

# name: odor-states

# ---

# +

from scipy.linalg import block_diag

import matplotlib as mpl

import matplotlib.pyplot as plt

import networkx as nx

import numpy as np

# %config Completer.use_jedi = False

mpl.rcParams.update({'font.size': 20})

# -

# # Algorithm Schematic (Fig 3)

np.random.seed(39274)

mat = 1-block_diag(np.ones((4,4)),np.ones((3,3)),np.ones((4,4)))

flip = np.random.choice([0,1],p=[0.8,0.2],size=(11,11))

mat2 = np.logical_xor((flip+flip.T>0),mat)

np.random.seed(34715)

# nx.from_numpy_matrix was removed in NetworkX 3.0; from_numpy_array accepts the same ndarray input
inv_G = nx.from_numpy_array(1-mat,create_using=nx.Graph)

G = nx.from_numpy_array(mat2,create_using=nx.Graph)

G_bar = nx.from_numpy_array(1-mat2,create_using=nx.Graph)

pos = nx.layout.fruchterman_reingold_layout(inv_G,k=0.8)

M = G.number_of_edges()
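
# The cell above builds a block-structured adjacency matrix and then flips a
# random, symmetric subset of its entries. A minimal NumPy-only sketch of that
# construction follows. It replaces `scipy.linalg.block_diag` with a small
# hypothetical helper (`block_diagonal`) and uses an arbitrary seed, so it
# illustrates the idea rather than reproducing the figure.

```python
import numpy as np


def block_diagonal(sizes):
    """All-ones square blocks of the given sizes on the diagonal, zeros elsewhere."""
    n = sum(sizes)
    out = np.zeros((n, n), dtype=int)
    start = 0
    for size in sizes:
        out[start:start + size, start:start + size] = 1
        start += size
    return out


rng = np.random.default_rng(0)  # illustrative seed, not the notebook's

within = block_diagonal([4, 3, 4])  # 1 where two nodes share a group
between = 1 - within                # 1 where two nodes lie in different groups

# Draw candidate flips, then symmetrise so the result is still a valid
# undirected adjacency matrix.
flip = rng.choice([0, 1], p=[0.8, 0.2], size=between.shape)
sym_flip = (flip + flip.T) > 0

# XOR toggles exactly the entries selected by the symmetric mask.
noisy = np.logical_xor(sym_flip, between)
```

# Symmetrising the mask before the XOR is what keeps `noisy` symmetric; XOR
# with an asymmetric mask would produce a directed-looking matrix.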

# +

plt.figure(figsize=(6,6))

nodes = nx.draw_networkx_nodes(G, pos, node_size=800, node_color='grey')

edges = nx.draw_networkx_edges(G, pos, node_size=800,

arrowsize=10, width=0.5,edge_color='grey')

ax = plt.gca()

ax.set_axis_off()

plt.savefig(f"Figures/Final.svg")

plt.show()

# +

plt.figure(figsize=(6,6))

nodes = nx.draw_networkx_nodes(G, pos, node_size=800, node_color='grey')

edges = nx.draw_networkx_edges(G_bar, pos, node_size=800,

arrowsize=10, width=0.5,edge_color='grey')

ax = plt.gca()

ax.set_axis_off()

plt.savefig(f"Figures/Final_bar.svg")

plt.show()

# -

np.random.seed(965305)

# nx.from_numpy_matrix was removed in NetworkX 3.0; from_numpy_array accepts the same ndarray input
inv_G = nx.from_numpy_array(1-mat,create_using=nx.Graph)

G = nx.from_numpy_array(mat2,create_using=nx.Graph)

G_bar = nx.from_numpy_array(1-mat2,create_using=nx.Graph)

pos = nx.layout.fruchterman_reingold_layout(inv_G,k=2)

M = G.number_of_edges()

# +

plt.figure(figsize=(6,6))

nodes = nx.draw_networkx_nodes(G, pos, node_size=800, node_color='grey')

edges = nx.draw_networkx_edges(G, pos, node_size=800,

arrowsize=10, width=0.5,edge_color='grey')

ax = plt.gca()

ax.set_axis_off()

plt.savefig(f"Figures/Initial.svg")

plt.show()

# +

plt.figure(figsize=(6,6))

nodes = nx.draw_networkx_nodes(G, pos, node_size=800, node_color='grey')

edges = nx.draw_networkx_edges(G_bar, pos, node_size=800,

arrowsize=10, width=0.5,edge_color='grey')

ax = plt.gca()

ax.set_axis_off()

plt.savefig(f"Figures/Initial_bar.svg")

plt.show()

# -

mat = np.loadtxt(f'../modules/matrix_2.csv',delimiter=",")

module = np.loadtxt(f'../modules/matrix_2_modules.csv')

order = np.argsort(module)

plt.figure(figsize=(7,7))

plt.imshow(mat,aspect='equal',cmap=plt.cm.inferno)

plt.clim(-0.2,1.2)

plt.xticks([0,9,19,29],[1,10,20,30])

plt.xlabel('Neuron Number')

plt.yticks([0,9,19,29],[1,10,20,30],rotation=90)

plt.ylabel('Neuron Number')

plt.savefig("Figures/Initial_mat.svg")

plt.figure(figsize=(7,7))

plt.imshow(1-mat,aspect='equal',cmap=plt.cm.inferno)

plt.clim(-0.2,1.2)

plt.xticks([0,9,19,29],[1,10,20,30])

plt.xlabel('Neuron Number')

plt.yticks([0,9,19,29],[1,10,20,30],rotation=90)

plt.ylabel('Neuron Number')

plt.savefig("Figures/Initial_mat_bar.svg")

plt.figure(figsize=(7,7))

plt.imshow((1-mat)[order,:][:,order],aspect='equal',cmap=plt.cm.inferno)

plt.clim(-0.2,1.2)

plt.xticks(np.arange(30),[f"{x:.0f}" for x in np.sort(module)])

plt.xlabel('Community Number')

plt.yticks(np.arange(30),[f"{x:.0f}" for x in np.sort(module)],rotation=90)

plt.ylabel('Community Number')

plt.savefig("Figures/Final_mat_bar.svg")

plt.figure(figsize=(7,7))

plt.imshow(mat[order,:][:,order],aspect='equal',cmap=plt.cm.inferno)

plt.clim(-0.2,1.2)

plt.xticks(np.arange(30),[f"{x:.0f}" for x in np.sort(module)])

plt.xlabel('Community Number')

plt.yticks(np.arange(30),[f"{x:.0f}" for x in np.sort(module)],rotation=90)

plt.ylabel('Community Number')

plt.savefig("Figures/Final_mat.svg")
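
# The "Final" heatmaps above reorder rows and columns with
# `mat[order,:][:,order]` so that nodes of the same module sit next to each
# other. The sketch below shows the same permutation on a hypothetical 4-node
# matrix and checks that `np.ix_` gives an equivalent result in one indexing
# step. The matrix, the labels, and the choice of a stable sort are assumptions
# of this example.

```python
import numpy as np

# Hypothetical adjacency matrix: nodes 0 and 2 form one module, 1 and 3 the other,
# and edges only run between modules.
adjacency = np.array([
    [0, 1, 0, 1],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [1, 0, 1, 0],
])
labels = np.array([2, 1, 2, 1])  # module label of each node

# A stable sort keeps the original node order within each module.
order = np.argsort(labels, kind="stable")

# Apply the same permutation to rows and columns.
reordered = adjacency[np.ix_(order, order)]
```

# After reordering, the within-module entries form zero blocks on the diagonal
# and the between-module edges fill the off-diagonal blocks, which is the
# pattern the reordered heatmaps are meant to reveal.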

| fig3/fig3.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import numpy as np

from unicode_info.database import generate_data_for_experiment, generate_positive_pairs_consortium, generate_negative_pairs_consortium

def generate_similarities():
    num_pairs = 1000
    supported_consortium_feature_vectors, supported_consortium_clusters_dict = generate_data_for_experiment()
    positive_pairs = generate_positive_pairs_consortium(supported_consortium_clusters_dict, num_pairs)
    negative_pairs = generate_negative_pairs_consortium(supported_consortium_clusters_dict, num_pairs)

    # Cosine similarity between two feature vectors
    cos_sim = lambda features_x, features_y: np.dot(features_x, features_y) / (np.linalg.norm(features_x) * np.linalg.norm(features_y))
    calc_sim = lambda pair: cos_sim(supported_consortium_feature_vectors[pair[0]], supported_consortium_feature_vectors[pair[1]])

    pos_sim = np.array(list(map(calc_sim, positive_pairs)))
    neg_sim = np.array(list(map(calc_sim, negative_pairs)))
    return pos_sim, neg_sim


def calculate_recall(pos_sim, threshold):
    # Fraction of positive pairs whose similarity exceeds the threshold
    return np.count_nonzero(pos_sim > threshold) / len(pos_sim)


def calculate_fpr(neg_sim, threshold):
    # Fraction of negative pairs whose similarity exceeds the threshold
    return np.count_nonzero(neg_sim > threshold) / len(neg_sim)

# -

pos_sim, neg_sim = generate_similarities()

thres = 0.9

print(calculate_recall(pos_sim, thres))

print(calculate_fpr(neg_sim, thres))

thres = 0.85

print(calculate_recall(pos_sim, thres))

print(calculate_fpr(neg_sim, thres))

thres = 0.80

print(calculate_recall(pos_sim, thres))

print(calculate_fpr(neg_sim, thres))
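
# The three cells above evaluate recall and false positive rate at fixed
# thresholds. The sketch below runs the same computation as a loop on
# hand-made similarity scores, so the trade-off can be read off without the
# `unicode_info` package. The scores and the helper name `rate_above` are
# assumptions made for illustration.

```python
import numpy as np


def cosine_similarity(x, y):
    """Cosine of the angle between two non-zero vectors."""
    return float(np.dot(x, y) / (np.linalg.norm(x) * np.linalg.norm(y)))


def rate_above(similarities, threshold):
    """Fraction of scores strictly above the threshold.

    Applied to positive pairs this is recall; applied to negative pairs it is
    the false positive rate.
    """
    similarities = np.asarray(similarities)
    return np.count_nonzero(similarities > threshold) / len(similarities)


# Hypothetical similarity scores
pos = np.array([0.95, 0.91, 0.88, 0.70])
neg = np.array([0.92, 0.60, 0.40, 0.10])

for threshold in (0.90, 0.85, 0.80):
    recall = rate_above(pos, threshold)
    fpr = rate_above(neg, threshold)
    print(f"threshold={threshold:.2f} recall={recall:.2f} fpr={fpr:.2f}")
```

# Lowering the threshold can only keep or raise both rates, so the choice of
# threshold is a trade-off between missing true pairs and accepting false ones.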

| threshold_investigation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

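# ## Chapter 5: Common PyTorch Utility Modules

# Training a neural network relies on many tools; the three most important areas are data, visualization, and GPU acceleration. This chapter introduces PyTorch's utility modules for these areas. Used well, they can greatly improve coding efficiency.

# ### 5.1 Data Processing

#

# Solving a deep learning problem often takes considerable effort on data processing, whether the data is images, text, audio, or other binary data. Data processing matters a great deal for training neural networks: good data processing not only speeds up training but also improves model quality. With this in mind, PyTorch provides several efficient and convenient tools for data processing and augmentation, and it can also speed up data loading through parallelization.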

#

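# #### 5.1.1 Data Loading

#

# In PyTorch, data can be loaded through a custom dataset object. Dataset objects are abstracted as the `Dataset` class; a custom dataset must inherit from `Dataset` and implement two Python magic methods:

# - `__getitem__`: returns one piece of data, i.e. one sample. `obj[index]` is equivalent to `obj.__getitem__(index)`

# - `__len__`: returns the number of samples. `len(obj)` is equivalent to `obj.__len__()`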
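
# The two magic methods listed above can be tried without PyTorch. The sketch
# below is a plain Python class (it deliberately does not inherit from
# `Dataset`, to stay dependency-free) with a hypothetical name and toy samples.
# It only illustrates how indexing, `len()`, and iteration map onto
# `__getitem__` and `__len__`.

```python
class SquaresDataset:
    """Toy dataset whose i-th sample is the pair (i, i * i)."""

    def __init__(self, size):
        self.size = size

    def __getitem__(self, index):
        # Raising IndexError past the end lets a plain for-loop stop cleanly.
        if not 0 <= index < self.size:
            raise IndexError(index)
        return index, index * index

    def __len__(self):
        return self.size


toy = SquaresDataset(4)

third = toy[2]              # same as toy.__getitem__(2)
count = len(toy)            # same as toy.__len__()
samples = [s for s in toy]  # iteration falls back to __getitem__(0), (1), ...
```

# Because Python falls back to calling `__getitem__` with 0, 1, 2, ... until
# `IndexError` is raised, an object with only these two methods can also be
# used directly in a `for` loop.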

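# Here we use the data from the classic Kaggle competition ["Dogs vs. Cats"](https://www.kaggle.com/c/dogs-vs-cats/) as an example to explain in detail how to process data. "Dogs vs. Cats" is a classification problem: decide whether an image shows a dog or a cat. All images are stored in a single folder, and the prefix of each filename indicates whether the image is a dog or a cat.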

# %env LS_COLORS = None

# !tree --charset ascii data/dogcat/

import torch as t

from torch.utils import data

# +
import os
from PIL import Image
import numpy as np


class DogCat(data.Dataset):
    def __init__(self, root):
        imgs = os.listdir(root)
        # Paths to all images (file names joined with root).
        # No image is loaded here; only the paths are stored, and an image
        # is actually read when __getitem__ is called.
        self.imgs = [os.path.join(root, img) for img in imgs]

    def __getitem__(self, index):
        img_path = self.imgs[index]
        # dog -> 1, cat -> 0
        label = 1 if 'dog' in os.path.basename(img_path) else 0
        pil_img = Image.open(img_path)
        array = np.asarray(pil_img)
        tensor = t.from_numpy(array)
        return tensor, label

    def __len__(self):
        return len(self.imgs)
# -

dataset = DogCat('./data/dogcat/')

img, label = dataset[0]  # equivalent to calling dataset.__getitem__(0)

for img, label in dataset:
    print(img.size(), img.float().mean(), label)