
# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="WbiEDNVkEG3T"

# # SETUP

# + colab={"base_uri": "https://localhost:8080/"} id="Hp8vMTsAGani" outputId="265ed585-88f4-4deb-8312-25aeeedc3e36"

# !git clone 'https://github.com/radiantearth/mlhub-tutorials.git'

# + colab={"base_uri": "https://localhost:8080/"} id="l_MzJvZxGakE" outputId="fb919f15-d6be-42ec-d5e5-821361323034"

# !pip install -r '/content/mlhub-tutorials/notebooks/South Africa Crop Types Competition/requirements.txt' -q

# + id="8YFLc43syf6x"

exit(0)  # restart the Colab runtime so the freshly installed packages are importable

# + [markdown] id="T-RSB5omEInc"

# # LIBRARIES

# + id="V-xKDNhkyhTZ"

# Required libraries

import os

import tarfile

import json

from pathlib import Path

from radiant_mlhub.client import _download as download_file

import datetime

import rasterio

import numpy as np

import pandas as pd

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import log_loss

from sklearn.model_selection import StratifiedShuffleSplit

os.environ['MLHUB_API_KEY'] = 'YOUR_MLHUB_API_KEY'  # set your own Radiant MLHub API key here; do not publish a real key

# + [markdown] id="2S1h19sfEKEr"

# # DOWNLOAD DATA

# + id="dBqBu3cMyjQA"

DOWNLOAD_S1 = True # Sentinel-1 (VV/VH) data is required here: all features in this notebook are built from it.

# Select which imagery bands you'd like to download here:

DOWNLOAD_BANDS = {

'B01': False,

'B02': False,

'B03': False,

'B04': False,

'B05': False,

'B06': False,

'B07': False,

'B08': False,

'B8A': False,

'B09': False,

'B11': False,

'B12': False,

'CLM': False

}

# All Sentinel-2 bands (and the CLM cloud-mask layer) are disabled because this notebook builds its features from Sentinel-1 only.

# Set an entry to True to download that band as well, e.g. Green (B03), Red (B04) and NIR (B08), to which vegetation is most sensitive.

# You can also do feature selection and try different combinations of bands to see which ones give better accuracy.

# + id="g68iGaSgylS4"

FOLDER_BASE = 'ref_south_africa_crops_competition_v1'

def download_archive(archive_name):
    # Skip the download if the archive has already been extracted
    if os.path.exists(archive_name.replace('.tar.gz', '')):
        return

    print(f'Downloading {archive_name} ...')
    download_url = f'https://radiant-mlhub.s3.us-west-2.amazonaws.com/archives/{archive_name}'
    download_file(download_url, '.')

    print(f'Extracting {archive_name} ...')
    with tarfile.open(archive_name) as tfile:
        tfile.extractall()
    os.remove(archive_name)

for split in ['test']:
    # Download the labels
    labels_archive = f'{FOLDER_BASE}_{split}_labels.tar.gz'
    download_archive(labels_archive)

    # Download Sentinel-1 data
    if DOWNLOAD_S1:
        s1_archive = f'{FOLDER_BASE}_{split}_source_s1.tar.gz'
        download_archive(s1_archive)

    # Download the selected Sentinel-2 bands
    for band, download in DOWNLOAD_BANDS.items():
        if not download:
            continue
        s2_archive = f'{FOLDER_BASE}_{split}_source_s2_{band}.tar.gz'
        download_archive(s2_archive)

def resolve_path(base, path):
    return Path(os.path.join(base, path)).resolve()

def load_df(collection_id):
    # The split name (e.g. 'test') is the second-to-last token of the collection id
    split = collection_id.split('_')[-2]
    collection = json.load(open(f'{collection_id}/collection.json', 'r'))
    rows = []
    item_links = []
    for link in collection['links']:
        if link['rel'] != 'item':
            continue
        item_links.append(link['href'])

    for item_link in item_links:
        item_path = f'{collection_id}/{item_link}'
        current_path = os.path.dirname(item_path)
        item = json.load(open(item_path, 'r'))
        tile_id = item['id'].split('_')[-1]

        # Label assets (e.g. field_ids) have no date and no satellite platform
        for asset_key, asset in item['assets'].items():
            rows.append([
                tile_id,
                None,
                None,
                asset_key,
                str(resolve_path(current_path, asset['href']))
            ])

        for link in item['links']:
            if link['rel'] != 'source':
                continue
            source_item_id = link['href'].split('/')[-2]

            if source_item_id.find('_s1_') > 0 and not DOWNLOAD_S1:
                continue
            elif source_item_id.find('_s1_') > 0:
                for band in ['VV', 'VH']:
                    asset_path = Path(f'{FOLDER_BASE}_{split}_source_s1/{source_item_id}/{band}.tif').resolve()
                    date = '-'.join(source_item_id.split('_')[10:13])
                    rows.append([
                        tile_id,
                        f'{date}T00:00:00Z',
                        's1',
                        band,
                        asset_path
                    ])

            if source_item_id.find('_s2_') > 0:
                for band, download in DOWNLOAD_BANDS.items():
                    if not download:
                        continue
                    asset_path = Path(f'{FOLDER_BASE}_{split}_source_s2_{band}/{source_item_id}_{band}.tif').resolve()
                    date = '-'.join(source_item_id.split('_')[10:13])
                    rows.append([
                        tile_id,
                        f'{date}T00:00:00Z',
                        's2',
                        band,
                        asset_path
                    ])

    return pd.DataFrame(rows, columns=['tile_id', 'datetime', 'satellite_platform', 'asset', 'file_path'])

competition_test_df = load_df(f'{FOLDER_BASE}_test_labels')
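# The sketch below shows two of the parsing steps in `load_df`. It uses a hypothetical
# in-memory collection instead of the downloaded `collection.json`. The source item id is
# also hypothetical. It only assumes the underscore-separated layout that
# `split('_')[10:13]` expects, with year, month and day as tokens 10 to 12.

```python
def item_hrefs(collection):
    # Keep only the links that point to STAC items, as load_df does
    return [link['href'] for link in collection['links'] if link['rel'] == 'item']

def date_from_source_id(source_item_id):
    # Tokens 10..12 are assumed to be year, month and day
    return '-'.join(source_item_id.split('_')[10:13]) + 'T00:00:00Z'

collection = {  # hypothetical collection, not real competition metadata
    'links': [
        {'rel': 'self', 'href': './collection.json'},
        {'rel': 'item', 'href': './tile_a/stac.json'},
        {'rel': 'item', 'href': './tile_b/stac.json'},
    ]
}
print(item_hrefs(collection))  # ['./tile_a/stac.json', './tile_b/stac.json']
print(date_from_source_id('ref_south_africa_crops_competition_v1_test_source_s1_00001_2017_04_01'))
```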

# + id="C-dUI1_AzOHw" colab={"base_uri": "https://localhost:8080/"} outputId="10cbf2f0-0156-48ba-fa67-e1093fec4479"

import gc

gc.collect()

# + [markdown] id="fDHGa05LEPJD"

# # CREATE DATA

# + id="JxPaJy6m6EBg"

# This DataFrame lists all types of assets, including documentation of the data.

# In the following, we use the Sentinel-1 VV and VH bands as well as the field_ids labels.

tile_ids_test = competition_test_df['tile_id'].unique()

# + id="mG5fN4cI65qQ"

from tqdm import tqdm_notebook

import gc

import warnings

warnings.simplefilter('ignore')

# + id="harn9qwG65oB"

n_obs = 5

# + id="YB2CV1T96Uuh"

# %%time
competition_test_df['Month'] = pd.to_datetime(competition_test_df['datetime']).dt.month

# 8 months (April to November) x 2 polarizations (VV, VH) = 16 features per pixel
X = np.empty((0, 2*8), dtype=np.float16)
y = np.empty((0, 1), dtype=np.float16)
# Keep field IDs as integers: float16 only represents integers exactly up to 2048
field_ids = np.empty(0, dtype=np.int64)

for tile_id in tqdm_notebook(tile_ids_test):
    tile_df = competition_test_df[competition_test_df['tile_id']==tile_id]
    field_id_src = rasterio.open(tile_df[tile_df['asset']=='field_ids']['file_path'].values[0])
    field_id_array = field_id_src.read(1).flatten()
    # Keep only the pixels that belong to a field (field_id != 0)
    nonzeroidx = np.nonzero(field_id_array)[0]
    field_ids = np.append(field_ids, field_id_array[nonzeroidx])

    # Sort the months so that the feature columns are in chronological order
    tile_date_times = sorted(tile_df[tile_df['satellite_platform']=='s1']['Month'].unique().tolist())
    X_tile = np.empty((nonzeroidx.shape[0], 0), dtype=np.float16)
    for month in tile_date_times:
        # Take one S1 observation per month (the first matching row) for each polarization
        vv_src = rasterio.open(tile_df[(tile_df['Month']==month) & (tile_df['asset']=='VV')]['file_path'].values[0])
        vv_array = np.expand_dims(vv_src.read(1).flatten()[nonzeroidx], axis=1)
        vh_src = rasterio.open(tile_df[(tile_df['Month']==month) & (tile_df['asset']=='VH')]['file_path'].values[0])
        vh_array = np.expand_dims(vh_src.read(1).flatten()[nonzeroidx], axis=1)
        X_tile = np.append(X_tile, vv_array, axis=1)
        X_tile = np.append(X_tile, vh_array, axis=1)
        del vv_array, vh_array
        vv_src.close()
        vh_src.close()
        del vv_src, vh_src

    # Assumes every tile has S1 data for all 8 months; otherwise the column counts differ
    X = np.append(X, X_tile, axis=0)
    field_id_src.close()
    del X_tile, field_id_array, field_id_src
    gc.collect()
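# The loop above turns each tile into a pixel-by-feature matrix. The field_ids raster
# selects the pixels that belong to a field, and each monthly VV/VH raster contributes
# one column. Below is a minimal NumPy sketch of that step on tiny synthetic 2x2 rasters.
# The arrays and the `pixel_features` helper are hypothetical, and rasterio is not involved.

```python
import numpy as np

def pixel_features(field_id_raster, band_rasters):
    # Flatten the label raster and keep the indices of field pixels (id != 0)
    flat_ids = field_id_raster.flatten()
    nonzeroidx = np.nonzero(flat_ids)[0]
    # One column per band raster, in the order given (e.g. VV_m1, VH_m1, VV_m2, ...)
    features = np.stack([b.flatten()[nonzeroidx] for b in band_rasters], axis=1)
    return flat_ids[nonzeroidx], features

field_id_raster = np.array([[0, 7], [7, 9]])  # 0 = pixel outside any field
vv = np.array([[1.0, 2.0], [3.0, 4.0]])
vh = np.array([[10.0, 20.0], [30.0, 40.0]])
ids, feats = pixel_features(field_id_raster, [vv, vh])
print(ids)    # [7 7 9]
print(feats)  # one row per field pixel, columns (VV, VH)
```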

# + colab={"base_uri": "https://localhost:8080/"} id="nStutoS0Po_N" outputId="8578ba50-6b6a-423c-8e28-54de56c8709d"

gc.collect()

# + [markdown] id="pONjdGDAW4BM"

# # Data

# + id="5otf8f9CDM85"

data = pd.DataFrame(X)

data['field_id'] = field_ids

# + [markdown] id="4VXCeF0LPLvN"

# * **Reduce Memory Usage**

# + id="BF8FnGvhPAuF"

def reduce_mem_usage(df, verbose=True):
    # Downcast each numeric column to the smallest dtype whose range holds its min and max.
    # Caveat: float16 keeps only about 3 significant digits, so ID-like float columns can lose precision.
    numerics = ['int16', 'int32', 'int64', 'float16', 'float32', 'float64']
    start_mem = df.memory_usage().sum() / 1024**2
    for col in df.columns:
        col_type = df[col].dtypes
        if col_type in numerics:
            c_min = df[col].min()
            c_max = df[col].max()
            if str(col_type)[:3] == 'int':
                if c_min > np.iinfo(np.int8).min and c_max < np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min > np.iinfo(np.int16).min and c_max < np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min > np.iinfo(np.int32).min and c_max < np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                elif c_min > np.iinfo(np.int64).min and c_max < np.iinfo(np.int64).max:
                    df[col] = df[col].astype(np.int64)
            else:
                if c_min > np.finfo(np.float16).min and c_max < np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min > np.finfo(np.float32).min and c_max < np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
    end_mem = df.memory_usage().sum() / 1024**2
    if verbose:
        print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
        print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))
    return df
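# Why the float16 caveat matters here: `reduce_mem_usage` casts any float column whose
# range fits to float16. If the field IDs were ever stored as floats, distinct fields
# above 2048 could collapse into one and be merged by the `groupby` below. A small
# NumPy check on made-up IDs:

```python
import numpy as np

field_ids = np.array([2047, 2048, 2049, 2050], dtype=np.int64)
as_f16 = field_ids.astype(np.float16)
# float16 has an 11-bit significand: above 2048 only every second integer is representable
print(as_f16.astype(np.int64))                              # [2047 2048 2048 2050]
print(len(np.unique(field_ids)), len(np.unique(as_f16)))    # 4 3
```

# Keeping `field_id` as an integer column sends it through the integer branch of
# `reduce_mem_usage` instead, which preserves every value.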

# + id="5UWYy1FHPArw"

data = reduce_mem_usage(data)

# + id="tNQPTwawf9ul"

data.head()

# + id="8kHqXT3FRxeE"

gc.collect()

# + id="2-K4HNAaXYph"

# Each field has several pixels in the data. Since the goal is a Random Forest (RF) model trained on the average values
# of the pixels within each field, we use `groupby` to take the mean for each field_id.

data_grouped = data.groupby('field_id').mean().reset_index()

data_grouped = reduce_mem_usage(data_grouped)

data_grouped
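# A toy version of the per-field averaging, on a hypothetical pixel table. Integer
# column labels stand in for the 16 feature columns, and `field_id` moves to the front
# after `reset_index()`. This is the order the renaming cell below relies on.

```python
import pandas as pd

pixels = pd.DataFrame({
    0: [1.0, 3.0, 10.0],   # e.g. VV for one month
    1: [2.0, 4.0, 20.0],   # e.g. VH for the same month
    'field_id': [7, 7, 9],
})
fields = pixels.groupby('field_id').mean().reset_index()
print(fields)  # field 7 -> (2.0, 3.0), field 9 -> (10.0, 20.0)
```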

# + id="wn2qm85MVHYr"

feat = ["VV","VH"]

columns = [x + '_Month4' for x in feat] + [x + '_Month5' for x in feat] + \

[x + '_Month6' for x in feat] + [x + '_Month7' for x in feat] + \

[x + '_Month8' for x in feat] + [x + '_Month9' for x in feat] + \

[x + '_Month10' for x in feat] + [x + '_Month11' for x in feat]

columns = ['field_id'] + columns

# + id="DU-QIMg0V2tU"

data_grouped.columns = columns

data_grouped

# + colab={"base_uri": "https://localhost:8080/"} id="UDGsQHL5R7Ip" outputId="341e5aa1-f2ab-4d8a-f27e-7d69edcb9034"

from google.colab import drive

drive.mount('/content/drive')

# + id="XYtA3TmeUvly"

data_grouped.to_csv('S1TestObs1.csv',index=False)

os.makedirs('/content/drive/MyDrive/RadiantEarth',exist_ok=True)

os.makedirs('/content/drive/MyDrive/RadiantEarth/Data',exist_ok=True)

os.makedirs('/content/drive/MyDrive/RadiantEarth/Data/TestS1',exist_ok=True)

# !cp 'S1TestObs1.csv' "/content/drive/MyDrive/RadiantEarth/Data/TestS1/"

3rd place - ASSAZZIN/XL/Data Creation/S1-Test/S1Test_Observation1.ipynb

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

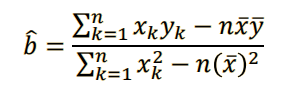

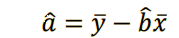

# # Ridge Regression

#

# **Ridge Regression** extends linear regression by providing L2 regularization of the coefficients. It can reduce the variance of the coefficient estimates and improve the conditioning of the problem.

#

# The model accepts array-like objects, either on the host as NumPy arrays or on the device (as Numba or `__cuda_array_interface__`-compliant arrays), as well as cuDF DataFrames as input.

#

# For information about cuDF, refer to the [cuDF documentation](https://docs.rapids.ai/api/cudf/stable).

#

# For information about cuML's ridge regression API: https://rapidsai.github.io/projects/cuml/en/stable/api.html#cuml.Ridge.
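# The L2 penalty can be made concrete with a minimal NumPy sketch of ridge regression in
# closed form, w = (X^T X + alpha I)^{-1} X^T y. It assumes no intercept and no
# normalization, so it is not the exact `normalize=True` setup used below. The synthetic
# data is made up. As alpha grows, the coefficient norm shrinks (lower variance) and the
# condition number of the linear system drops (better conditioning).

```python
import numpy as np

def ridge_closed_form(X, y, alpha):
    # Minimizes ||y - X w||^2 + alpha * ||w||^2 (no intercept, no normalization)
    A = X.T @ X + alpha * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ y)

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
y = X @ np.array([1.0, -2.0, 0.5, 0.0, 3.0]) + 0.1 * rng.normal(size=100)

alphas = [0.0, 10.0, 1000.0]
norms = [np.linalg.norm(ridge_closed_form(X, y, a)) for a in alphas]
conds = [np.linalg.cond(X.T @ X + a * np.eye(5)) for a in alphas]
print(norms)  # coefficient norm shrinks as alpha grows
print(conds)  # condition number of the system shrinks as alpha grows
```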

# ## Imports

import cudf

from cuml import make_regression, train_test_split

from cuml.metrics.regression import r2_score

from cuml.linear_model import Ridge as cuRidge

from sklearn.linear_model import Ridge as skRidge

# ## Define Parameters

# +

n_samples = 2**20

n_features = 399

random_state = 23

# -

# ## Generate Data

# +

# %%time

X, y = make_regression(n_samples=n_samples, n_features=n_features, random_state=0)

X = cudf.DataFrame.from_gpu_matrix(X)

y = cudf.DataFrame.from_gpu_matrix(y)[0]

X_cudf, X_cudf_test, y_cudf, y_cudf_test = train_test_split(X, y, test_size = 0.2, random_state=random_state)

# -

# Copy dataset from GPU memory to host memory.

# This is done to later compare CPU and GPU results.

X_train = X_cudf.to_pandas()

X_test = X_cudf_test.to_pandas()

y_train = y_cudf.to_pandas()

y_test = y_cudf_test.to_pandas()

# ## Scikit-learn Model

#

# ### Fit, predict and evaluate

# +

# %%time

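# Note: `normalize` was deprecated in scikit-learn 1.0 and removed in 1.2, so this cell needs an older scikit-learn
ridge_sk = skRidge(fit_intercept=False, normalize=True, alpha=0.1)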

ridge_sk.fit(X_train, y_train)

# -

# %%time

predict_sk= ridge_sk.predict(X_test)

# %%time

r2_score_sk = r2_score(y_cudf_test, predict_sk)

# ## cuML Model

#

# ### Fit, predict and evaluate

# +

# %%time

# Fit the cuML ridge regression model on the training set.
# 'eig' is the faster solver, while 'svd' is more accurate.
# In general, 'svd' uses significantly more memory and is slower than 'eig'.
# With CUDA 10.1, the memory difference is even larger than with the other supported CUDA versions.

ridge_cuml = cuRidge(fit_intercept=False, normalize=True, solver='eig', alpha=0.1)

ridge_cuml.fit(X_cudf, y_cudf)

# -
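# The 'eig' and 'svd' solvers mentioned above reach the same ridge solution through
# different factorizations. The sketch below illustrates that math in NumPy on synthetic
# data; it is not cuML's GPU implementation. 'eig' decomposes the small
# n_features x n_features Gram matrix X^T X. 'svd' factorizes X itself. That needs more
# memory, since U has one row per sample, but it avoids squaring the condition number.

```python
import numpy as np

def ridge_eig(X, y, alpha):
    # Eigendecomposition of the Gram matrix: X^T X = V diag(evals) V^T
    evals, V = np.linalg.eigh(X.T @ X)
    # w = V diag(1 / (evals + alpha)) V^T X^T y
    return V @ ((V.T @ (X.T @ y)) / (evals + alpha))

def ridge_svd(X, y, alpha):
    # Thin SVD of X itself: X = U diag(s) V^T
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    # w = V diag(s / (s^2 + alpha)) U^T y
    return Vt.T @ ((s / (s**2 + alpha)) * (U.T @ y))

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 6))
y = rng.normal(size=200)
w_eig, w_svd = ridge_eig(X, y, 0.1), ridge_svd(X, y, 0.1)
print(np.allclose(w_eig, w_svd))  # True
```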

# %%time

predict_cuml = ridge_cuml.predict(X_cudf_test)

# %%time

r2_score_cuml = r2_score(y_cudf_test, predict_cuml)

# ## Compare Results

print("R^2 score (SKL): %s" % r2_score_sk)

print("R^2 score (cuML): %s" % r2_score_cuml)

cuml/ridge_regression_demo.ipynb

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/lopez-isaac/DS-Unit-2-Kaggle-Challenge/blob/master/module4/LS_DS_224_assignment.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="PxGvNaaSgr52" colab_type="text"

# Lambda School Data Science

#

# *Unit 2, Sprint 2, Module 4*

#

# ---

# + [markdown] colab_type="text" id="nCc3XZEyG3XV"

# # Classification Metrics

#

# ## Assignment

# - [ ] If you haven't yet, [review requirements for your portfolio project](https://lambdaschool.github.io/ds/unit2), then submit your dataset.

# - [ ] Plot a confusion matrix for your Tanzania Waterpumps model.

# - [ ] Continue to participate in our Kaggle challenge. Every student should have made at least one submission that scores at least 70% accuracy (well above the majority class baseline).

# - [ ] Submit your final predictions to our Kaggle competition. Optionally, go to **My Submissions**, and _"you may select up to 1 submission to be used to count towards your final leaderboard score."_

# - [ ] Commit your notebook to your fork of the GitHub repo.

# - [ ] Read [Maximizing Scarce Maintenance Resources with Data: Applying predictive modeling, precision at k, and clustering to optimize impact](https://towardsdatascience.com/maximizing-scarce-maintenance-resources-with-data-8f3491133050), by Lambda DS3 student <NAME>. His blog post extends the Tanzania Waterpumps scenario, far beyond what's in the lecture notebook.

#

#

# ## Stretch Goals

#

# ### Reading

# - [Attacking discrimination with smarter machine learning](https://research.google.com/bigpicture/attacking-discrimination-in-ml/), by Google Research, with interactive visualizations. _"A threshold classifier essentially makes a yes/no decision, putting things in one category or another. We look at how these classifiers work, ways they can potentially be unfair, and how you might turn an unfair classifier into a fairer one. As an illustrative example, we focus on loan granting scenarios where a bank may grant or deny a loan based on a single, automatically computed number such as a credit score."_

# - [Notebook about how to calculate expected value from a confusion matrix by treating it as a cost-benefit matrix](https://github.com/podopie/DAT18NYC/blob/master/classes/13-expected_value_cost_benefit_analysis.ipynb)

# - [Simple guide to confusion matrix terminology](https://www.dataschool.io/simple-guide-to-confusion-matrix-terminology/) by <NAME>, with video

# - [Visualizing Machine Learning Thresholds to Make Better Business Decisions](https://blog.insightdatascience.com/visualizing-machine-learning-thresholds-to-make-better-business-decisions-4ab07f823415)

#

#

# ### Doing

# - [ ] Share visualizations in our Slack channel!

# - [ ] RandomizedSearchCV / GridSearchCV, for model selection. (See module 3 assignment notebook)

# - [ ] More Categorical Encoding. (See module 2 assignment notebook)

# - [ ] Stacking Ensemble. (See below)

#

# ### Stacking Ensemble

#

# Here's some code you can use to "stack" multiple submissions, which is another form of ensembling:

#

# ```python

# import pandas as pd

#

# # Filenames of your submissions you want to ensemble

# files = ['submission-01.csv', 'submission-02.csv', 'submission-03.csv']

#

# target = 'status_group'

# submissions = (pd.read_csv(file)[[target]] for file in files)

# ensemble = pd.concat(submissions, axis='columns')

# majority_vote = ensemble.mode(axis='columns')[0]

#

# sample_submission = pd.read_csv('sample_submission.csv')

# submission = sample_submission.copy()

# submission[target] = majority_vote

# submission.to_csv('my-ultimate-ensemble-submission.csv', index=False)

# ```
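# Below is a runnable version of the majority vote above. It uses hypothetical in-memory
# predictions instead of submission CSV files. `ignore_index=True` gives the concatenated
# columns distinct labels (0, 1, 2). When all models disagree, `mode` returns the tied
# labels sorted, so `[0]` picks the alphabetically first one.

```python
import pandas as pd

target = 'status_group'
# Hypothetical predictions from three models for the same four rows
predictions = [
    ['functional', 'non functional', 'functional', 'functional needs repair'],
    ['functional', 'functional', 'non functional', 'functional needs repair'],
    ['non functional', 'non functional', 'functional', 'functional'],
]
submissions = [pd.DataFrame({target: p}) for p in predictions]

ensemble = pd.concat(submissions, axis='columns', ignore_index=True)
majority_vote = ensemble.mode(axis='columns')[0]
print(majority_vote.tolist())
# ['functional', 'non functional', 'functional', 'functional needs repair']
```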

# + colab_type="code" id="lsbRiKBoB5RE" colab={}

# %%capture

import sys

# If you're on Colab:
if 'google.colab' in sys.modules:
    DATA_PATH = 'https://raw.githubusercontent.com/LambdaSchool/DS-Unit-2-Kaggle-Challenge/master/data/'
    # !pip install category_encoders==2.*
    # !pip install matplotlib==3.1.0

# If you're working locally:
else:
    DATA_PATH = '../data/'

# + colab_type="code" id="BVA1lph8CcNX" colab={}

import pandas as pd

# Merge train_features.csv & train_labels.csv

train = pd.merge(pd.read_csv(DATA_PATH+'waterpumps/train_features.csv'),

pd.read_csv(DATA_PATH+'waterpumps/train_labels.csv'))

# Read test_features.csv & sample_submission.csv

test = pd.read_csv(DATA_PATH+'waterpumps/test_features.csv')

sample_submission = pd.read_csv(DATA_PATH+'waterpumps/sample_submission.csv')

# + id="say_7eiSgr6C" colab_type="code" outputId="2266f29d-bc16-4488-d65c-86fef62e6e90" colab={"base_uri": "https://localhost:8080/", "height": 34}

import matplotlib

print(matplotlib.__version__)

# + [markdown] id="ktV060KljTH6" colab_type="text"

# # Cleanup

# + id="lh1cc4evixBw" colab_type="code" colab={}

## split train and val data sets

from sklearn.model_selection import train_test_split

train, val = train_test_split(train, train_size=.80, test_size=.20,

stratify=train['status_group'], random_state=42)

# + id="C1_AwGgvjain" colab_type="code" colab={}

import numpy as np

def wrangle(X):
    """Wrangle train, validate, and test sets in the same way"""

    # Prevent SettingWithCopyWarning
    X = X.copy()

    # About 3% of the time, latitude has small values near zero,
    # outside Tanzania, so we'll treat these values like zero.
    X['latitude'] = X['latitude'].replace(-2e-08, 0)

    # When columns have zeros and shouldn't, they are like null values.
    # So we will replace the zeros with nulls, and impute missing values later.
    # Also create a "missing indicator" column, because the fact that
    # values are missing may be a predictive signal.
    cols_with_zeros = ['longitude', 'latitude', 'construction_year',
                       'gps_height', 'population']
    for col in cols_with_zeros:
        X[col] = X[col].replace(0, np.nan)
        X[col+'_MISSING'] = X[col].isnull()

    # Drop duplicate columns
    duplicates = ['quantity_group', 'payment_type']
    X = X.drop(columns=duplicates)

    # Drop recorded_by (never varies) and id (always varies, random)
    unusable_variance = ['recorded_by', 'id']
    X = X.drop(columns=unusable_variance)

    # Convert date_recorded to datetime
    X['date_recorded'] = pd.to_datetime(X['date_recorded'], infer_datetime_format=True)

    # Extract components from date_recorded, then drop the original column
    X['year_recorded'] = X['date_recorded'].dt.year
    X['month_recorded'] = X['date_recorded'].dt.month
    X['day_recorded'] = X['date_recorded'].dt.day
    X = X.drop(columns='date_recorded')

    # Engineer feature: how many years from construction_year to date_recorded
    X['years'] = X['year_recorded'] - X['construction_year']
    X['years_MISSING'] = X['years'].isnull()

    # Return the wrangled dataframe
    return X

train = wrangle(train)
val = wrangle(val)
test = wrangle(test)
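# A toy illustration of the zero-to-NaN step in `wrangle`, on a hypothetical two-column
# frame. Zeros become NaN, to be imputed later by `SimpleImputer`. The `_MISSING` flag
# keeps the fact that a value was missing as a feature of its own.

```python
import numpy as np
import pandas as pd

X = pd.DataFrame({'population': [0, 120, 0, 45],
                  'gps_height': [1390, 0, 686, 263]})  # hypothetical rows
for col in ['population', 'gps_height']:
    X[col] = X[col].replace(0, np.nan)     # zero means "not recorded"
    X[col + '_MISSING'] = X[col].isnull()  # keep missingness as a feature
print(X)
```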

# + id="cAEUcAFJjkPX" colab_type="code" colab={}

## Clean the NaNs of construction_year: impute the training-set median
median_year = train['construction_year'].median()

# Apply the same value to all data sets (computed on train only, to avoid leakage)
data_sets = [train, val, test]
for x in data_sets:
    x['construction_year'] = x['construction_year'].fillna(median_year)

# How many years the pump has been in service
for x in data_sets:
    x['pump_age'] = (x['year_recorded'] - x['construction_year'])

# + id="iPMYCV2YjoUa" colab_type="code" outputId="aa5723c8-5ab5-4a80-fbae-0b873d4a4505" colab={"base_uri": "https://localhost:8080/", "height": 54}

### make features and target

target = 'status_group'

train_features = train.drop(columns=[target])

# Get a list of the numeric features

numeric_features = train_features.select_dtypes(include='number').columns.tolist()

#Get a series with the cardinality of the nonnumeric features

cardinality = train_features.select_dtypes(exclude='number').nunique()

# Get a list of all categorical features with cardinality <= 50

low_categorical_features = cardinality[cardinality <= 50].index.tolist()

# Get a list of high-cardinality categorical features (cardinality > 50)

high_categorical_features = cardinality[cardinality > 50].index.tolist()

# Combine the lists

features = numeric_features + low_categorical_features + high_categorical_features

print(features)
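# A sketch of the cardinality split on a hypothetical toy frame. The threshold is lowered
# to 2 so the effect is visible. Using `<=` for one list and `>` for the other puts every
# non-numeric column in exactly one list. With `<=` and `>=`, a column whose cardinality
# equals the threshold would land in both lists and be selected twice.

```python
import pandas as pd

def split_by_cardinality(df, threshold):
    # Count distinct values of every non-numeric column
    cardinality = df.select_dtypes(exclude='number').nunique()
    low = cardinality[cardinality <= threshold].index.tolist()
    high = cardinality[cardinality > threshold].index.tolist()
    return low, high

df = pd.DataFrame({
    'basin': ['a', 'b', 'a', 'b'],    # 2 distinct values
    'funder': ['x', 'y', 'z', 'w'],   # 4 distinct values
    'amount': [1.0, 2.0, 3.0, 4.0],   # numeric, ignored here
})
low, high = split_by_cardinality(df, threshold=2)
print(low, high)  # ['basin'] ['funder']
```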

# + id="nzQ8KdHHmE8D" colab_type="code" colab={}

X_train = train[features]

y_train = train[target]

X_val = val[features]

y_val = val[target]

X_test = test[features]

# + id="0qt98s6skPDf" colab_type="code" colab={}

# Imports for the pipeline that generates y_pred

import category_encoders as ce

from sklearn.impute import SimpleImputer

from sklearn.preprocessing import StandardScaler

from sklearn.pipeline import make_pipeline

from sklearn.ensemble import RandomForestClassifier

# + id="Emj3xZKFkYJP" colab_type="code" colab={}

Working_condition = make_pipeline(

ce.OneHotEncoder(cols=low_categorical_features),

ce.OrdinalEncoder(),

SimpleImputer(missing_values=np.nan,strategy='median'),

RandomForestClassifier(n_estimators=100,max_depth=20, random_state=42, n_jobs=-1)

)

# + id="T86WyQ0bkkIn" colab_type="code" outputId="7a8f4888-d9f8-4c2f-fdca-0065430aee1b" colab={"base_uri": "https://localhost:8080/", "height": 34}

from sklearn.metrics import accuracy_score

# Fit on train, score on val

Working_condition.fit(X_train, y_train)

y_pred = Working_condition.predict(X_val)

print('Validation Accuracy', accuracy_score(y_val, y_pred))

# + [markdown] id="o7ifOlNJjysE" colab_type="text"

# # Confusion Matrix

# + id="Q1IK56Vuj1vS" colab_type="code" colab={}

from sklearn.metrics import confusion_matrix

# + id="VmeBcyGyj-Pg" colab_type="code" outputId="be78993a-8aa4-4ede-8229-458c19336332" colab={"base_uri": "https://localhost:8080/", "height": 68}

# Very basic matrix (rows = actual classes, columns = predicted classes)

confusion_matrix(y_val, y_pred)

# + id="9AVsJgxMnTDr" colab_type="code" outputId="4e4945c5-276e-4fa2-df25-f24d9fbccbc8" colab={"base_uri": "https://localhost:8080/", "height": 51}

# We need to get labels

from sklearn.utils.multiclass import unique_labels

unique_labels(y_val)

# + id="ov_kpM7HpDRL" colab_type="code" outputId="df8344c8-2349-4f77-981c-b89b8abdf8d5" colab={"base_uri": "https://localhost:8080/", "height": 119}

# 1. Check that our labels are correct
# Add "Predicted" and "Actual" prefixes to the labels
def plot_confusion_matrix(y_true, y_pred):
    labels = unique_labels(y_true)
    columns = [f'Predicted {label}' for label in labels]
    index = [f'Actual {label}' for label in labels]
    return columns, index

plot_confusion_matrix(y_val, y_pred)

# + id="tFJULzkVqs4h" colab_type="code" outputId="c03083b3-3172-4f0b-c8e6-395af42dd043" colab={"base_uri": "https://localhost:8080/", "height": 142}

# 1st way: make it a pandas DataFrame
def plot_confusion_matrix(y_true, y_pred):
    # Use the labels of both y_true and y_pred so the table shape always matches the matrix
    labels = unique_labels(y_true, y_pred)
    columns = [f'Predicted {label}' for label in labels]
    index = [f'Actual {label}' for label in labels]
    # New step: wrap the raw confusion matrix in a labeled DataFrame
    table = pd.DataFrame(confusion_matrix(y_true, y_pred, labels=labels),
                         columns=columns, index=index)
    return table

plot_confusion_matrix(y_val, y_pred)
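# For comparison, the same kind of labeled table can be built with `pd.crosstab`. This
# sketch uses hypothetical labels standing in for `y_val` and `y_pred`. Rows are actual
# classes, columns are predicted classes, and the diagonal counts the correct predictions.

```python
import pandas as pd

# Hypothetical labels, standing in for y_val and y_pred
y_true = pd.Series(['functional', 'non functional', 'functional',
                    'functional needs repair', 'functional'], name='Actual')
y_pred = pd.Series(['functional', 'functional', 'functional',
                    'functional needs repair', 'non functional'], name='Predicted')

table = pd.crosstab(y_true, y_pred)
# crosstab only creates rows/columns for labels it sees, so align both axes on the union
labels = sorted(set(y_true) | set(y_pred))
table = table.reindex(index=labels, columns=labels, fill_value=0)
print(table)
```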

# + id="Op0jqQeyrKQ-" colab_type="code" outputId="0537c4bb-a536-4015-f917-584b3f832cc8" colab={"base_uri": "https://localhost:8080/", "height": 422}

import seaborn as sns

# 2nd way: heatmap
def plot_confusion_matrix(y_true, y_pred):
    labels = unique_labels(y_true, y_pred)
    columns = [f'Predicted {label}' for label in labels]
    index = [f'Actual {label}' for label in labels]
    # Build the same labeled DataFrame as above, then draw it as an annotated heatmap
    table = pd.DataFrame(confusion_matrix(y_true, y_pred, labels=labels),
                         columns=columns, index=index)
    return sns.heatmap(table, annot=True, fmt='d', cmap='viridis')

plot_confusion_matrix(y_val, y_pred);

module4/LS_DS_224_assignment.ipynb

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

#

# Description:

# A simple, well-formatted Jupyter notebook for extracting the necessary
# information from the CSV of the sound-activity project under the Think-IOT Lab at
# Dr. B. C. Roy Engineering College.

#

# Author: <NAME>

#

# Dependency:

# pandas

#

#

import pandas as pd

# +

# Initializations and operation Specifications:

file_name = "data_files/raw_data.csv"

header = None # Default: 'infer'

col_to_get = '0, 1-2, 5' # To retain all columns in the DataFrame, set col_to_get = None or ''

col_name = "sound, date-time, reading #1, distance"

# +

# Function definitions:
def dataGlimse(DataFrm, dataAbt = "RAW DATA"):
    print("\n\n-------- DATA INFO --------\n")
    DataFrm.info()  # info() prints by itself and returns None
    print("\n\nDataFrame Shape: ", DataFrm.shape)
    print("\n\nDataFrame Indexing: ", DataFrm.index)
    print("\n\n--------", dataAbt, "GLIMPSE --------")
    print("\n# Head #\n")
    print(DataFrm.head())
    print("\n# Tail #\n")
    print(DataFrm.tail())
    print("\n\n--------", dataAbt, "GLIMPSE END --------")

# -

reviews = pd.read_csv(file_name, header=header)

dataGlimse(reviews, "RAW DATA")

new_reviews = reviews.dropna()

# +

if col_to_get:  # skip slicing when col_to_get is None or ''
    col_nos = []
    for cols in col_to_get.split(','):
        # Each entry is either a single index ('5') or an inclusive range ('1-2')
        cols = cols.replace(' ', '').split('-')
        if len(cols) == 1:
            col_nos = col_nos + [int(cols[0])]
        else:
            col_nos = col_nos + list(range(int(cols[0]), int(cols[1]) + 1))
    new_reviews = new_reviews[col_nos]

if col_name is not None:
    new_reviews.columns = [name.strip() for name in col_name.split(',')]

dataGlimse(new_reviews, "SLICED DATA")

# -
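# The column-spec parsing above can be wrapped in a small helper so the spec format can
# be checked in isolation. `parse_col_spec` is a hypothetical name, not part of pandas.
# Entries are comma-separated, and `a-b` is an inclusive range.

```python
def parse_col_spec(spec):
    """Turn a spec like '0, 1-2, 5' into [0, 1, 2, 5]."""
    col_nos = []
    for part in spec.split(','):
        bounds = part.replace(' ', '').split('-')
        if len(bounds) == 1:
            col_nos.append(int(bounds[0]))                         # single column index
        else:
            col_nos.extend(range(int(bounds[0]), int(bounds[1]) + 1))  # inclusive range
    return col_nos

print(parse_col_spec('0, 1-2, 5'))  # [0, 1, 2, 5]
```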

Sound data Analyzer.ipynb

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # NetworkX

# NetworkX is a Python language software package for the creation, manipulation, and study of the structure, dynamics, and function of complex networks.

#

# With NetworkX you can load and store networks in standard and nonstandard data formats, generate many types of random and classic networks, analyze network structure, build network models, design new network algorithms, draw networks, and much more.

#

# Library documentation: <https://networkx.github.io/>

import networkx as nx

G = nx.Graph()

# add nodes one at a time or from a list
G.add_node(1)
G.add_nodes_from([2, 3])
# add all the nodes of another graph (here a path graph with nodes 0 to 9)

H = nx.path_graph(10)

G.add_nodes_from(H)

# add another graph itself as a node

G.add_node(H)

# add edges using similar methods

G.add_edge(1, 2)

e = (2, 3)

G.add_edge(*e)

G.add_edges_from([(1, 2), (1, 3)])

G.add_edges_from(H.edges())

# can also remove or clear

G.remove_node(H)

G.clear()

# repeats are ignored

G.add_edges_from([(1,2),(1,3)])

G.add_node(1)

G.add_edge(1,2)

G.add_node('spam') # adds node "spam"

G.add_nodes_from('spam') # adds 4 nodes: 's', 'p', 'a', 'm'

# get the number of nodes and edges

G.number_of_nodes(), G.number_of_edges()

# access graph edges

G[1]

G[1][2]

# set an attribute of an edge

G.add_edge(1,3)

G[1][3]['color'] = 'blue'

FG = nx.Graph()
FG.add_weighted_edges_from([(1, 2, 0.125), (1, 3, 0.75), (2, 4, 1.2), (3, 4, 0.375)])
# Print every edge lighter than 0.5 (each undirected edge is visited from both ends)
for n, nbrs in FG.adjacency():
    for nbr, eattr in nbrs.items():
        wt = eattr['weight']
        if wt < 0.5:
            print('(%d, %d, %.3f)' % (n, nbr, wt))
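# What `FG.adjacency()` yields can be mimicked with plain dictionaries, in a stdlib-only
# sketch that needs no NetworkX. An undirected graph stores every edge under both
# endpoints. That is why the loop above prints each light edge twice, once per direction.

```python
# Plain-dict stand-in for FG.adjacency(): node -> {neighbor: {'weight': w}}
edges = [(1, 2, 0.125), (1, 3, 0.75), (2, 4, 1.2), (3, 4, 0.375)]
adj = {}
for u, v, w in edges:
    adj.setdefault(u, {})[v] = {'weight': w}
    adj.setdefault(v, {})[u] = {'weight': w}  # undirected: store both directions

light = [(n, nbr, attr['weight'])
         for n, nbrs in adj.items()
         for nbr, attr in nbrs.items()
         if attr['weight'] < 0.5]
print(light)  # [(1, 2, 0.125), (2, 1, 0.125), (3, 4, 0.375), (4, 3, 0.375)]
```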

# graph attribute

G = nx.Graph(day='Friday')

G.graph

# modifying an attribute

G.graph['day'] = 'Monday'

G.graph

# node attributes

G.add_node(1, time='5pm')

G.add_nodes_from([3], time='2pm')

G.nodes[1]['room'] = 714

G.nodes(data=True)

# edge attributes (weight is a special numeric attribute)

G.add_edge(1, 2, weight=4.7)

G.add_edges_from([(3, 4), (4, 5)], color='red')

G.add_edges_from([(1, 2 ,{'color': 'blue'}), (2, 3, {'weight' :8})])

G[1][2]['weight'] = 4.7

# directed graph

DG = nx.DiGraph()

DG.add_weighted_edges_from([(1, 2 ,0.5), (3, 1, 0.75)])

DG.out_degree(1, weight='weight')

DG.degree(1, weight='weight')

list(DG.successors(1))

list(DG.predecessors(1))

# convert the directed graph DG to an undirected graph
H = nx.Graph(DG)

# basic graph drawing capability

# %matplotlib inline

import matplotlib.pyplot as plt

nx.draw(G)

# Tested; Gopal

tests/ipython-notebooks/NetworkX.ipynb

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.6.3

# language: julia

# name: julia-1.6

# ---

# # Item Collaborative Filtering

# * This notebook implements item-based collaborative filtering

# * Prediction is $\tilde r_{ij} = \dfrac{\sum_{k \in N(j)} w_{kj}^{\lambda_w}r_{ik}^{\lambda_r}}{\sum_{k \in N(j)} w_{kj}^{\lambda_w} + \lambda}$ for item-based collaborative filtering

# * $r_{ij}$ is the rating for user $i$ and item $j$

# * $w_{kj}$ is the similarity between items $j$ and $k$

# * $N(j)$ is the set of the $K$ items with the largest $|w_{kj}|$, excluding $j$ itself

# * $\lambda_w, \lambda_r, \lambda$ are regularization parameters
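# Below is a Python/NumPy sketch of the prediction formula. The notebook itself is Julia,
# so this is an illustration, not its implementation. It mirrors `decay` as a
# sign-preserving power. Like `make_prediction`, it only sums over neighbors that user
# $i$ has rated, where a zero in R means unrated. `predict_item` and the toy matrices
# are hypothetical.

```python
import numpy as np

def decay(x, a):
    # Sign-preserving power sign(x) * |x|**a, like the notebook's `decay`; 0 maps to 0
    return np.sign(x) * np.abs(x) ** a

def predict_item(j, R, S, K, lam_w=1.0, lam_r=1.0, lam=0.0):
    """Predict the rating of item j for every user (row) of R; 0 in R means unrated."""
    weights = S[:, j].astype(float)
    weights[j] = np.inf  # sorts first, then gets skipped below
    K = min(K, len(weights) - 1)
    neighbors = np.argsort(-np.abs(weights))[1:K + 1]  # N(j): K largest |w_kj|
    w = decay(weights[neighbors], lam_w)
    r = R[:, neighbors].astype(float)
    rated = r != 0  # only neighbors the user has rated count
    num = (w * decay(r, lam_r) * rated).sum(axis=1)
    den = (np.abs(w) * rated).sum(axis=1) + lam
    # Leave the prediction at 0 where the denominator vanishes
    return np.divide(num, den, out=np.zeros_like(num), where=den != 0)

R = np.array([[5, 3, 0], [4, 0, 2]])  # 2 users x 3 items (toy data)
S = np.array([[1.0, 0.5, 0.2], [0.5, 1.0, 0.8], [0.2, 0.8, 1.0]])
print(predict_item(2, R, S, K=2))
```

# With the default exponents of 1 and lambda = 0, this reduces to a similarity-weighted
# average of the user's ratings on the neighboring items. A positive lambda shrinks
# predictions toward 0 when only weakly similar neighbors were rated.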

residual_alphas = [];

@nbinclude("Alpha.ipynb");

# ## Determine the neighborhoods for each user and item

function read_similarity_matrix(outdir)

read_params(outdir)["S"]

end;

function get_abs_neighborhood(item, S, K)

weights = S[:, item]

# ensure that the neighborhood for an item does not include itself

weights[item] = Inf

K = Int(min(K, length(weights) - 1))

order = partialsortperm(abs.(weights), 2:K+1, rev = true)

order, weights[order]

end;

# +

isnonzero(x) = !isapprox(x, 0.0, atol=eps(Float64))

# exponentially decay x

function decay(x, a)

isnonzero(x) ? sign(x) * abs(x)^a : zero(eltype(a))

end

# each prediction is just the weighted sum of all items in the neighborhood

# we apply regularization terms to decay the weights, ratings, and final prediction

function make_prediction(item, users, R, get_neighborhood, λ)

if item > size(R)[2]

# the item was not in our training set; we have no information

return zeros(length(users))

end

items, weights = get_neighborhood(item)

weights = decay.(weights, λ[1])

predictions = zeros(eltype(weights), length(users))

weight_sum = zeros(eltype(weights), length(users))

for u = 1:length(users)

for (i, weight) in zip(items, weights)

if isnonzero(R[users[u], i])

predictions[u] += weight * decay(R[users[u], i], λ[2])

weight_sum[u] += abs(weight)

end

end

end

for u = 1:length(users)

if isnonzero(weight_sum[u] + λ[3])

predictions[u] /= (weight_sum[u] + λ[3])

end

end

predictions

end;

# -

function collaborative_filtering(training, inference, get_neighborhood, λ)

R = sparse(

training.user,

training.item,

training.rating,

maximum(training.user),

maximum(training.item),

)

preds = zeros(eltype(λ), length(inference.rating))

@tprogress Threads.@threads for item in collect(Set(inference.item))

mask = inference.item .== item

preds[mask] =

make_prediction(item, inference.user[mask], R, get_neighborhood, λ)

end

preds

end;

# +

Base.@kwdef mutable struct cf_params
    name::Any
    training_residuals::Any
    validation_residuals::Any
    neighborhood_type::Any
    S::Any # the similarity matrix
    K::Any # the neighborhood size
    λ::Vector{Float64} = [1.0, 1.0, 0.0] # [weight_decay, rating_decay, prediction_decay]
end;

to_dict(x::T) where {T} = Dict(string(fn) => getfield(x, fn) for fn ∈ fieldnames(T));

# -

# ## Item based CF

# +

function get_training(residual_alphas)
    get_residuals("training", residual_alphas)
end

function get_validation(residual_alphas)
    get_residuals("validation", residual_alphas)
end

function get_inference()
    training = get_split("training")
    validation = get_split("validation")
    test = get_split("test")
    RatingsDataset(
        user = [training.user; validation.user; test.user],
        item = [training.item; validation.item; test.item],
        rating = fill(
            0.0,
            length(training.user) + length(validation.user) + length(test.user),
        ),
    )
end;

# -

function optimize_model(param)
    # unpack parameters
    training = get_training(param.training_residuals)
    validation = get_validation(param.validation_residuals)
    S = read_similarity_matrix(param.S)
    K = param.K
    neighborhood_types = Dict("abs" => get_abs_neighborhood)
    neighborhoods = i -> neighborhood_types[param.neighborhood_type](i, S, K)

    # optimize hyperparameters
    function validation_mse(λ)
        pred = collaborative_filtering(training, validation, neighborhoods, λ)
        truth = validation.rating
        β = pred \ truth  # least-squares scale factor for the predictions
        loss = mse(truth, pred .* β)
        @debug "loss: $loss β: $β λ: $λ"
        loss
    end

    res = optimize(
        validation_mse,
        param.λ,
        LBFGS(),
        Optim.Options(show_trace = true, extended_trace = true);
        autodiff = :forward,
    )
    param.λ = Optim.minimizer(res)

    # save predictions
    inference = get_inference()
    preds = collaborative_filtering(training, inference, neighborhoods, param.λ)
    sparse_preds = sparse(inference.user, inference.item, preds)

    function model(users, items, predictions)
        result = zeros(length(users))
        for i = 1:length(users)
            if users[i] <= size(predictions, 1) && items[i] <= size(predictions, 2)
                result[i] = predictions[users[i], items[i]]
            end
        end
        result
    end

    write_predictions(
        (users, items) -> model(users, items, sparse_preds),
        outdir = param.name,
        residual_alphas = param.validation_residuals,
        save_training = true,
    )
    write_params(to_dict(param), outdir = param.name)
end;
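# In `validation_mse`, `β = pred \ truth` fits a single scale factor by least squares before scoring: β = Σ pred·truth / Σ pred², the value that minimizes Σ (truth - β·pred)². A minimal Python sketch of that step, assuming plain lists of floats (the helper names are hypothetical):

```python
def best_scale(pred, truth):
    """Least-squares beta minimizing sum((truth - beta * pred) ** 2).

    For two vectors this should match `pred \\ truth` in the Julia code.
    """
    denom = sum(p * p for p in pred)
    if denom == 0:
        return 0.0  # all-zero predictions: every beta gives the same loss
    return sum(p * t for p, t in zip(pred, truth)) / denom


def scaled_mse(pred, truth):
    """MSE after rescaling pred by the least-squares beta; returns (loss, beta)."""
    beta = best_scale(pred, truth)
    loss = sum((t - beta * p) ** 2 for p, t in zip(pred, truth)) / len(truth)
    return loss, beta


print(scaled_mse([1.0, 1.0], [1.0, 3.0]))
```

# Fitting β inside the loss means the optimizer tunes λ for the shape of the predictions, not their overall scale.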

|

notebooks/TrainingAlphas/ItemCollaborativeFilteringBase.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: conda_python3

# language: python

# name: conda_python3

# ---

# # Forecasting Energy Demand

#

# ## Data Wrangling

#

# The project consists of two data sets:

# * Hourly electricity demand data from the EIA;

# * Hourly observed weather data from LCD/NOAA.

#

# In addition to the demand and weather data, I'll create time-based features (day of week, hour, week of year, whether the day is a holiday, etc.) to see how they affect demand trends.

#

# To limit the scope of the project, I'll use data from Los Angeles exclusively, to test whether weather data can improve electricity demand forecasting.

# +

import boto3

import io

from sagemaker import get_execution_role

role = get_execution_role()

bucket = 'sagemaker-data-energy-demand'

# +

S3_CLIENT = boto3.client('s3')

files_list = S3_CLIENT.list_objects_v2(Bucket=bucket, Prefix='raw_data/weather/')

s3_files = files_list['Contents']

latest_weather_data = max(s3_files, key=lambda x: x['LastModified'])

weather_data_location = 's3://{}/{}'.format(bucket, latest_weather_data['Key'])

# +

import requests

import json

import datetime

import pandas as pd

from scipy import stats

from pandas import json_normalize  # the old pandas.io.json import path is deprecated

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import warnings

warnings.filterwarnings('ignore')

# -

# ### Electricity data

# Electricity data were retrieved using EIA’s API and then unpacked into a dataframe. The API contains hourly entries from July 2015 to the present.

#

# The electricity data required only simple cleaning: there were few null values in the set and a very small number of outliers. Removing outliers cut only about 0.01% of the data.

# +

EIA__API_KEY = '1d48c7c8354cc4408732174250d3e8ff'

REGION_CODE = 'LDWP'

CITY = 'LosAngeles'

def str_to_isodatetime(string):
    '''
    Convert an EIA hourly period string (YYYYMMDD followed by 'T' and the hour)
    into an ISO 8601 datetime string in UTC.
    '''
    year = string[:4]
    month = string[4:6]
    day = string[6:8]
    time = string[8:11] + ':00:00+0000'
    return year + month + day + time

def eia2dataframe(response):
    '''
    Unpack the JSON response from the EIA API into a pandas DataFrame indexed by datetime.
    Missing and non-positive demand values are skipped.
    '''
    data = response['series'][0]['data']
    dates = []
    values = []
    for date, demand in data:
        if demand is None or demand <= 0:
            continue
        dates.append(str_to_isodatetime(date))
        values.append(float(demand))
    df = pd.DataFrame({'datetime': dates, 'demand': values})
    df['datetime'] = pd.to_datetime(df['datetime'])
    df.set_index('datetime', inplace=True)
    df = df.sort_index()
    return df

electricity_api_response = requests.get('http://api.eia.gov/series/?api_key=%s&series_id=EBA.%s-ALL.D.H' % (EIA__API_KEY, REGION_CODE)).json()

electricity_df = eia2dataframe(electricity_api_response)

# -
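# `str_to_isodatetime` rebuilds an ISO string by slicing. Assuming the EIA hourly periods follow the `YYYYMMDDTHHZ` layout implied by those slices (e.g. `20150701T05Z`; an assumption, not checked against the API documentation), the standard library can parse them directly into timezone-aware UTC datetimes. `parse_eia_period` is a hypothetical helper, not part of the notebook.

```python
from datetime import datetime, timezone


def parse_eia_period(period):
    """Parse an hourly period such as '20150701T05Z' into an aware UTC datetime.

    Assumes the 'YYYYMMDDTHHZ' layout; 'T' and 'Z' are matched literally.
    """
    return datetime.strptime(period, "%Y%m%dT%HZ").replace(tzinfo=timezone.utc)


print(parse_eia_period("20150701T05Z").isoformat())  # 2015-07-01T05:00:00+00:00
```

# With pandas, `pd.to_datetime(series, format='%Y%m%dT%HZ', utc=True)` should do the same for a whole column in one call.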

electricity_df.isnull().sum()


# ### Observed weather data

# LCD data are not available via NOAA’s API, so I downloaded them manually from the website as a CSV file and imported it into a pandas DataFrame. As is common with data from physical sensors, the LCD data required extensive cleaning.

#

# The main challenge in cleaning the LCD data was that in some cases there were multiple entries for the same hour. I wanted exactly one entry per hour so that I could align the LCD data with the hourly entries in the electricity data.

#

# I wrote a function that groups the weather data by hour and keeps the last entry recorded within each hour. Cleaning the data this way is reasonable because the values of multiple entries within the same hour are very similar, so the choice of which entry to keep doesn’t make a real difference.

#
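# The "one record per hour" rule in `fix_date` (floor each timestamp to the hour, then keep the last duplicate) can be sketched without pandas as follows. This is an illustration with a hypothetical helper name; it assumes the readings arrive in chronological order, since `keep='last'` keeps whichever duplicate comes last in the file.

```python
from datetime import datetime


def last_reading_per_hour(readings):
    """Keep the last reading within each clock hour.

    readings: iterable of (datetime, value) pairs in chronological order.
    Returns a dict {hour_start: value} sorted by hour.
    """
    per_hour = {}
    for ts, value in readings:
        hour = ts.replace(minute=0, second=0, microsecond=0)  # floor to the hour
        per_hour[hour] = value  # a later reading in the same hour overwrites earlier ones
    return dict(sorted(per_hour.items()))


readings = [
    (datetime(2020, 1, 1, 10, 5), 60.0),
    (datetime(2020, 1, 1, 10, 53), 61.0),
    (datetime(2020, 1, 1, 11, 53), 63.0),
]
print(last_reading_per_hour(readings))
```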

def fix_date(df):
    '''
    This function goes through the dates in the weather dataframe and, if there is more than one record for
    an hour, keeps the last record within that hour and drops the others.
    This is so we can align this dataframe with the one containing electricity data.
    input: Pandas DataFrame with a 'date' column
    output: Pandas DataFrame indexed by hourly 'datetime' (UTC), one record per hour
    '''
    df['date'] = pd.to_datetime(df['date']).dt.tz_localize('UTC')
    df['date_rounded'] = df['date'].dt.floor('H')
    df.drop('date', axis=1, inplace=True)
    df.rename({"date_rounded": "datetime"}, axis=1, inplace=True)
    df.set_index('datetime', inplace=True)
    # keep the last record of each hour, then sort chronologically (mergesort is stable)
    last_of_hour = df[~df.index.duplicated(keep='last')]
    return last_of_hour.sort_index(ascending=True, kind='mergesort')

# +

def clean_sky_condition(df):
    '''
    This function cleans the hourly sky condition column by assigning the hourly sky condition to be the one at the
    top cloud layer, which is the best determination of the sky condition, as described by the documentation.
    input: Pandas DataFrame
    output: the same DataFrame, with 'hourlyskyconditions' replaced by a categorical layer code (e.g. 'FEW', 'VV')
    '''
    conditions = df['hourlyskyconditions']
    new_condition = []
    for condition in conditions:
        if type(condition) != str and np.isnan(condition):
            new_condition.append(np.nan)
        else:
            # the last ':' belongs to the top cloud layer; the code just before it is 2 chars ('VV') or 3 chars
            colon_indices = [i for i, char in enumerate(condition) if char == ':']
            n_layers = len(colon_indices)
            try:
                colon_position = colon_indices[n_layers - 1]
                if condition[colon_position - 1] == 'V':
                    condition_code = condition[colon_position - 2 : colon_position]
                else:
                    condition_code = condition[colon_position - 3 : colon_position]
                new_condition.append(condition_code)
            except Exception:
                # no layer code found (e.g. no ':' in the string)
                new_condition.append(np.nan)
    df['hourlyskyconditions'] = new_condition
    df['hourlyskyconditions'] = df['hourlyskyconditions'].astype('category')
    return df

def hourly_degree_days(df):
    '''
    This function adds hourly cooling and heating degrees (relative to a 65 °F base) to the weather DataFrame.
    Missing temperatures yield 0 in both columns.
    '''
    df['hourlycoolingdegrees'] = df['hourlydrybulbtemperature'].apply(lambda x: x - 65. if x >= 65. else 0.)
    df['hourlyheatingdegrees'] = df['hourlydrybulbtemperature'].apply(lambda x: 65. - x if x <= 65. else 0.)
    return df

# read the LCD CSV file

weather_df = pd.read_csv(weather_data_location, usecols=['DATE', 'DailyCoolingDegreeDays', 'DailyHeatingDegreeDays', 'HourlyDewPointTemperature', 'HourlyPrecipitation', 'HourlyRelativeHumidity', 'HourlySeaLevelPressure', 'HourlySkyConditions', 'HourlyStationPressure', 'HourlyVisibility', 'HourlyDryBulbTemperature', 'HourlyWindSpeed'],

dtype={

'DATE': object,

'DailyCoolingDegreeDays': object,

'DailyHeatingDegreeDays': object,

'HourlyDewPointTemperature': object,

'HourlyPrecipitation': object,

'HourlyRelativeHumidity': object,

'HourlySeaLevelPressure': object,

'HourlySkyConditions': object,

'HourlyStationPressure': object,

'HourlyVisibility': object,

'HourlyDryBulbTemperature': object,

'HourlyWindSpeed': object

})

# make columns lowercase for easier access

weather_df.columns = [col.lower() for col in weather_df.columns]

# clean dataframe so that there's only one record per hour

weather_df = fix_date(weather_df)

# fill the daily heating and cooling degree days such that each hour in an individual day has the same value

weather_df['dailyheatingdegreedays'] = weather_df['dailyheatingdegreedays'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['dailyheatingdegreedays'] = weather_df['dailyheatingdegreedays'].astype('float64')

weather_df['dailycoolingdegreedays'] = weather_df['dailycoolingdegreedays'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['dailycoolingdegreedays'] = weather_df['dailycoolingdegreedays'].astype('float64')

weather_df['dailyheatingdegreedays'] = weather_df['dailyheatingdegreedays'].bfill()

weather_df['dailycoolingdegreedays'] = weather_df['dailycoolingdegreedays'].bfill()

weather_df = clean_sky_condition(weather_df)

# clean other columns by replacing string based values with floats

# values followed by an 's' indicate uncertain measurements; we strip the flag, convert them to floats and keep them like any other value

weather_df['hourlyvisibility'] = weather_df['hourlyvisibility'].apply(lambda x: float(x) if str(x)[-1] != 'V' else float(str(x)[:-1]))

weather_df['hourlydrybulbtemperature'] = weather_df['hourlydrybulbtemperature'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['hourlydewpointtemperature'] = weather_df['hourlydewpointtemperature'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

# set trace amounts equal to zero and change data type

weather_df['hourlyprecipitation'] = weather_df['hourlyprecipitation'].where(weather_df['hourlyprecipitation'] != 'T', 0.0)

weather_df['hourlyprecipitation'] = weather_df['hourlyprecipitation'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['hourlystationpressure'] = weather_df['hourlystationpressure'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['hourlywindspeed'] = weather_df['hourlywindspeed'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['hourlyrelativehumidity'] = weather_df['hourlyrelativehumidity'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

weather_df['hourlysealevelpressure'] = weather_df['hourlysealevelpressure'].apply(lambda x: float(x) if str(x)[-1] != 's' else float(str(x)[:-1]))

# astype returns a new Series, so assign the result back to the column
for col in ['hourlyprecipitation', 'hourlyvisibility', 'hourlyrelativehumidity',
            'hourlysealevelpressure', 'hourlystationpressure', 'hourlywindspeed']:
    weather_df[col] = weather_df[col].astype('float64')

weather_df = hourly_degree_days(weather_df)

# -
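# The cleaning cell above repeats the same lambda for every column: drop one trailing flag character (`s`, or `V` for visibility) and convert to float, with `T` (trace) precipitation set to zero. A hypothetical helper that bundles those rules might look like this. It is a sketch: it applies the `T` rule to every column, whereas the notebook only does so for precipitation.

```python
import math


def parse_lcd_value(raw, flags=("s",)):
    """Convert one raw LCD cell to float.

    - None, NaN and empty strings become NaN
    - 'T' (trace amount) becomes 0.0
    - a single trailing flag character listed in `flags` is dropped before conversion
    """
    if raw is None or (isinstance(raw, float) and math.isnan(raw)):
        return math.nan
    text = str(raw).strip()
    if text == "":
        return math.nan
    if text == "T":
        return 0.0
    if text[-1] in flags:
        text = text[:-1]
    return float(text)


print(parse_lcd_value("72s"), parse_lcd_value("10.00V", flags=("V",)), parse_lcd_value("T"))
```

# With pandas it could be applied per column, e.g. `weather_df['hourlyvisibility'].map(lambda v: parse_lcd_value(v, flags=('V',)))`.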


weather_df.dtypes
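# `hourly_degree_days` measures how far each hourly dry-bulb temperature lies above (cooling) or below (heating) a 65 °F base. Per reading this is just a pair of clipped differences; the sketch below uses a hypothetical `degree_hours` helper with the base as a parameter.

```python
def degree_hours(temp_f, base=65.0):
    """Return (cooling, heating) degrees for one hourly temperature in °F.

    cooling = how far the temperature is above the base, heating = how far below;
    at most one of the two is non-zero.
    """
    cooling = max(temp_f - base, 0.0)
    heating = max(base - temp_f, 0.0)
    return cooling, heating


for t in (50.0, 65.0, 80.0):
    print(t, degree_hours(t))
```

# In pandas the vectorized equivalent would be `(temp - 65).clip(lower=0)` and `(65 - temp).clip(lower=0)`; note that `clip` leaves missing temperatures as NaN, whereas the `apply` lambdas in `hourly_degree_days` turn them into 0.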

## Cut dataframes based on date to align sources

cut_electricity = electricity_df[:weather_df.index.max()].copy()

cut_weather = weather_df[electricity_df.index.min():].copy()

# ## Dealing with outliers and NaN values

#

# The distributions of the features below are used to determine which columns should have their NaNs filled with the median

# and which should be forward-filled. Features whose ```medians``` and ```means``` are close together suggest that the ```median``` is a good choice for NaNs. Conversely, features whose medians and means are further apart suggest the presence of outliers; in that case I use ```ffill```, because we are dealing with time series, and values at previous time steps are useful in predicting values at later time steps.

# +

diff = max(cut_weather.index) - min(cut_electricity.index)

days, seconds = diff.days, diff.seconds

hours = days * 24 + seconds // 3600

minutes = (seconds % 3600) // 60

seconds = seconds % 60

number_of_steps = hours + 1

# -
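# The `number_of_steps` cell counts the hourly timestamps expected between the first and last hour, inclusive, so it can be compared with the row counts of both frames. The same arithmetic, sketched with the standard library (`expected_hourly_steps` is a hypothetical name):

```python
from datetime import datetime, timedelta


def expected_hourly_steps(start, end):
    """Number of hourly timestamps from start to end, both ends included."""
    return (end - start) // timedelta(hours=1) + 1


print(expected_hourly_steps(datetime(2015, 7, 1, 0), datetime(2015, 7, 2, 0)))  # 25
```

# If both frames hold exactly one row per hour with no gaps, their lengths should equal this number; a smaller count points to missing hours.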

print('*** min ***')

print(min(cut_electricity.index))

print(min(cut_weather.index))

print(cut_weather.index.min() == cut_electricity.index.min())

print('*** max ***')

print(max(cut_electricity.index))

print(max(cut_weather.index))

print(cut_weather.index.max() == cut_electricity.index.max())

print('*** instances quantity is equal? ***')

print(cut_weather.shape[0] == cut_electricity.shape[0])

print('*** weather, demand, expected ***')

print(cut_weather.shape[0], cut_electricity.shape[0], number_of_steps)

# +

fill_dict = {'median': ['dailyheatingdegreedays', 'hourlyaltimetersetting', 'hourlydrybulbtemperature', 'hourlyprecipitation', 'hourlysealevelpressure', 'hourlystationpressure', 'hourlywetbulbtempf', 'dailycoolingdegreedays', 'hourlyvisibility', 'hourlywindspeed', 'hourlycoolingdegrees', 'hourlyheatingdegrees'], 'ffill': ['demand', 'hourlydewpointtemperature', 'hourlyrelativehumidity']}

# fill electricity data NaNs

for col in cut_electricity.columns:
    if col in fill_dict['median']:
        cut_electricity[col] = cut_electricity[col].fillna(cut_electricity[col].median())
    else:
        cut_electricity[col] = cut_electricity[col].ffill()

# fill weather data NaNs
for col in cut_weather.columns:
    if col == 'hourlyskyconditions':
        # categorical column: fill with the most frequent sky condition
        cut_weather[col] = cut_weather[col].fillna(cut_weather[col].value_counts().index[0])
    elif col in fill_dict['median']:
        cut_weather[col] = cut_weather[col].fillna(cut_weather[col].median())
    else:
        cut_weather[col] = cut_weather[col].ffill()

# -
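# The split between `median` and `ffill` columns in `fill_dict` was chosen by inspecting each feature's mean and median. One way to make that rule explicit is sketched below; the relative-gap measure and the 0.05 `tolerance` are assumptions for illustration, not values from the notebook.

```python
import statistics


def choose_fill_strategy(values, tolerance=0.05):
    """Return 'median' if mean and median are close, otherwise 'ffill'.

    Closeness is |mean - median| divided by the population standard deviation of
    the observed (non-missing) values; `tolerance` is an illustrative cut-off.
    Assumes at least one observed value.
    """
    observed = [v for v in values if v is not None and v == v]  # v == v is False for NaN
    mean = statistics.fmean(observed)
    median = statistics.median(observed)
    spread = statistics.pstdev(observed)
    if spread == 0:
        return "median"  # constant column: mean == median
    return "median" if abs(mean - median) / spread <= tolerance else "ffill"


print(choose_fill_strategy([1, 2, 3, 4, 5]), choose_fill_strategy([1, 1, 1, 1, 100]))
```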

print(cut_weather.shape[0] == cut_electricity.shape[0])

electricity_set = set(cut_electricity.index)

weather_set = set(cut_weather.index)

print(len(electricity_set.difference(weather_set)))

# finally merge the data to get a complete dataframe for LA, ready for training

merged_df = cut_weather.merge(cut_electricity, right_index=True, left_index=True, how='inner')

merged_df = pd.get_dummies(merged_df)

merged_df.head()

merged_df.index.name = 'datetime'

if 'hourlyskyconditions_VV' in list(merged_df.columns):
    merged_df.drop('hourlyskyconditions_VV', axis=1, inplace=True)

if 'hourlyskyconditions_' in list(merged_df.columns):
    merged_df.drop('hourlyskyconditions_', axis=1, inplace=True)

# +

# save as csv file to continue in another notebook

csv_buffer = io.StringIO()

s3_resource = boto3.resource('s3')

key = 'dataframes/%s_dataset.csv' % CITY

merged_df.to_csv(csv_buffer, compression=None)

s3_resource.Object(bucket, key).put(Body=csv_buffer.getvalue())

# -

|

0_WRANGLING.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Model

#

# In this notebook we read in the previously created dataset with features engineered, and then perform the following tasks:

#

# * Split into train and test data. Also use reduced size subsets for quicker evaluation.

# * Evaluate some classification algorithms and parameters.

# * Perform simple cross validation to find the best model.

# * Measure the model performance.

# ## Read in dataset and verify

# Imports

import findspark

findspark.init()

findspark.find()

import pyspark

# Imports for creating spark session

from pyspark import SparkContext, SparkConf

from pyspark.sql import SparkSession

# Set driver options on the conf before creating the SparkContext; a SparkConf passed to Spark is cloned, so later changes are not applied

conf = (pyspark.SparkConf()
        .setAppName('sparkify-capstone-model')
        .setMaster('local')
        .set("spark.driver.maxResultSize", "0"))  # "0" means unlimited

sc = pyspark.SparkContext(conf=conf)

spark = SparkSession(sc)

# Imports for modelling, tuning and evaluation

from pyspark.ml.classification import LogisticRegression, RandomForestClassifier, GBTClassifier

from pyspark.ml.evaluation import BinaryClassificationEvaluator, MulticlassClassificationEvaluator

# Imports for visualization and output

import matplotlib.pyplot as plt

from IPython.display import HTML, display

# Read in dataset

path = "out/features.parquet"

df = spark.read.parquet(path)

# Look at some values about the data to confirm it was read in correctly

print("Dataset rows/cols: {},{}".format(df.count(), len(df.columns)))

df.printSchema()

df.show(10)

# ## Split

#

# Try three ways to split the data to have an initial idea about how some algorithms perform.

# +

# First, we are going to use just a subset of the dataset, because doing a lot of tuning and cross validation

# on the full dataset would take too long.

def createSubset(df, factor):
    """
    INPUT:
    df: The dataset to split
    factor: How much of the dataset to return
    OUTPUT:
    df_subset: The split subset
    """
    df_subset, df_dummy = df.randomSplit([factor, 1 - factor])
    return df_subset

df_subset = createSubset(df, .2)

df_subset.count()

# -

# Now we split the subset into train and test.

# Note: Best split factor of 90% was determined by trial and error.

df_train, df_test = df_subset.randomSplit([0.9, 0.1])

print(df_train.count())

print(df_test.count())

# ## Algorithm selection

#

# We will be looking at some of PySpark's classification algorithms:

#

# * Logistic Regression (single)

# * Random Forest (parallel)

# * Gradient-Boosted Tree (sequential)

#

# As the most basic of these, logistic regression is a good start.

# ### LogisticRegression

#

# First we will be creating a function to fit and train the LR model, which we may use many times.

# Then we also create some helpful functions for showing the evaluation of the model with some metrics.

# +

def logisticRegressionPredictions(df_train, df_test, threshold = 0.5, labelCol = "churn", featuresCol = "features"):
    """ Fit a LogisticRegression model and return its predictions on the test set
    INPUT:
    df_train: The training data set
    df_test: The testing data set
    threshold: The algorithm's threshold for classification.
    labelCol: The label column name, "churn" by default.
    featuresCol: The features column name, "features" by default.
    OUTPUT:
    predictions: The model's predictions
    """
    # Fit and train model
    logreg = LogisticRegression(labelCol = labelCol, featuresCol = featuresCol, threshold = threshold).fit(df_train)
    return logreg.evaluate(df_test).predictions

def printConfusionMatrix(tp, fp, tn, fn):
    """ Simple function to output a confusion matrix from t/f/p/n counts as an html table.
    INPUT:
    tp, fp, tn, fn: Counts of true positives, false positives, true negatives and false negatives
    OUTPUT:
    Displays the confusion matrix as an html table.
    """
    html = "<table><tr><td></td><td>Actual positive</td><td>Actual negative</td></tr>"
    html += "<tr><td>Predicted positive</td><td>{}</td><td>{}</td></tr>".format(tp, fp)
    html += "<tr><td>Predicted negative</td><td>{}</td><td>{}</td></tr>".format(fn, tn)
    html += "</table>"
    display(HTML(html))

def showEvaluationMetrics(predictions):
    """ Calculate and print some evaluation metrics for the passed predictions.
    INPUT:
    predictions: The predictions to evaluate and print
    OUTPUT:
    Just prints the evaluation metrics
    """
    # Calculate true, false positives and negatives to calculate further metrics later:
    tp = predictions[(predictions.churn == 1) & (predictions.prediction == 1)].count()
    tn = predictions[(predictions.churn == 0) & (predictions.prediction == 0)].count()
    fp = predictions[(predictions.churn == 0) & (predictions.prediction == 1)].count()
    fn = predictions[(predictions.churn == 1) & (predictions.prediction == 0)].count()
    printConfusionMatrix(tp, fp, tn, fn)
    # Calculate and print metrics
    # Note: metricName "f1" of MulticlassClassificationEvaluator is the F1 score weighted over both classes
    f1 = MulticlassClassificationEvaluator(labelCol = "churn", metricName = "f1") \
        .evaluate(predictions)
    accuracy = float((tp + tn) / (tp + tn + fp + fn))
    # guard against division by zero when there are no actual or no predicted positives
    recall = float(tp / (tp + fn)) if (tp + fn) > 0 else 0.0
    precision = float(tp / (tp + fp)) if (tp + fp) > 0 else 0.0
    print("F1: ", f1)
    print("Accuracy: ", accuracy)
    print("Recall: ", recall)
    print("Precision: ", precision)

def plotROC(df_model):
    """
    Plot the Receiver Operating Characteristic (ROC) curve for the trained model.
    Note: model.summary is computed on the training data, so this is the training-set ROC curve.
    INPUT:
    df_model: The trained model
    OUTPUT:
    Plots the curve
    """
    plt.figure(figsize = (5,5))
    plt.plot([0, 1], [0, 1], 'r--')
    plt.plot(df_model.summary.roc.select('FPR').collect(),
             df_model.summary.roc.select('TPR').collect())
    plt.xlabel('FPR')
    plt.ylabel('TPR')
    plt.show()

def printAUC(predictions, labelCol = "churn"):
    """ Print the area under the ROC curve for the predictions.
    INPUT:
    predictions: The predictions to get and print the AUC for
    OUTPUT:
    Prints the AUC
    """
    print("Area under curve: ", BinaryClassificationEvaluator(labelCol = labelCol).evaluate(predictions))

# -
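# `showEvaluationMetrics` derives accuracy, recall and precision from the confusion counts. The sketch below does the same in plain Python and also computes the binary F1 for the churn class. Note that `MulticlassClassificationEvaluator` with `metricName = "f1"` reports an F1 weighted over both classes, so on an imbalanced label it can differ noticeably from the churn-class F1. `binary_metrics` is a hypothetical helper.

```python
def binary_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall and F1 for the positive class.

    Ratios whose denominator is zero are reported as 0.0 instead of raising.
    """
    def ratio(num, den):
        return num / den if den else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }


print(binary_metrics(tp=8, fp=2, tn=85, fn=5))
```

# With 93% accuracy but only about 62% recall, this toy example shows why accuracy alone is misleading when churners are rare.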

predictions = logisticRegressionPredictions(df_train, df_test)

showEvaluationMetrics(predictions)

# +

# Evaluation results:

# The confusion matrix looks pretty good, but the high count of false negatives (Type II errors) is a bit worrying.

# This is possibly because our label set is imbalanced.

# The F1 score is looking good; I am happy with over 80% on the first try.

# But as expected, the high false negative count leads to a bad recall score.

# In the case of churn, a false negative is an undesirable prediction outcome, because it means we miss customers

# who actually churn and do not get a chance to change their minds, which is the point of the exercise.

# So we will try to tune for recall while also watching the overall score.

# But first, let's plot the ROC curve and show the AUC:

model = LogisticRegression(labelCol = "churn").fit(df_train)

model.evaluate(df_test).predictions

plotROC(model)

printAUC(predictions)

# +

# This looks like many ROC curves I have seen, and the high AUC seems promising as well.

# Looking at the curve, though, we see there is some room for improvement.

# -
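# `printAUC` relies on `BinaryClassificationEvaluator`, whose default metric is the area under the ROC curve. Because it is computed from the model's scores rather than from thresholded labels, it does not depend on the classification threshold. A small pure-Python sketch of the same quantity, using its rank interpretation (the probability that a random churner scores higher than a random non-churner), is below; `roc_auc` is a hypothetical helper.

```python
def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive outscores a random negative.

    Ties count as half. O(P * N), fine for small illustrative inputs.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```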

# ### Optimization: Threshold

#

# Since our label is skewed towards negatives, we can try lowering the classification threshold so that more cases are predicted as positive (churn).

# Hopefully, this will help significantly with our bad recall rate.

predictions = logisticRegressionPredictions(df_train, df_test, 0.4)

showEvaluationMetrics(predictions)

# We see that our F1 score has slightly improved. Recall increased by quite a bit, but precision went down.

# Let's try one more time with a threshold only slightly below the default of 0.5:

predictions = logisticRegressionPredictions(df_train, df_test, 0.45)

showEvaluationMetrics(predictions)

# +

# Recall went down and precision up.

# So there is a tradeoff here. Maybe if we change the input data itself?

# -
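# The threshold experiments above show the recall/precision trade-off. A toy sketch with made-up probabilities makes the mechanism visible: lowering the threshold turns more borderline cases into positive predictions, which can raise recall and lower precision. It assumes the rule "predict churn when P(churn) > threshold"; the helper names and data are hypothetical.

```python
def classify(probabilities, threshold=0.5):
    """Predict 1 (churn) when P(churn) > threshold, else 0."""
    return [1 if p > threshold else 0 for p in probabilities]


def precision_recall_at(labels, probabilities, threshold):
    """(precision, recall) of the positive class at a given threshold."""
    preds = classify(probabilities, threshold)
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# made-up scores for five customers
labels = [0, 1, 0, 1, 1]
probabilities = [0.2, 0.42, 0.47, 0.6, 0.9]
for threshold in (0.5, 0.45, 0.4):
    print(threshold, precision_recall_at(labels, probabilities, threshold))
```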

# ### Optimization: Undersampling negatives in the input data

#

# As an alternative way to reduce false negatives, we can try undersampling the negative class.

# Check distributions of churn

zeros = df_subset.filter(df_subset["churn"] == 0)

ones = df_subset.filter(df_subset["churn"] == 1)

zerosCount = zeros.count()

onesCount = ones.count()

print("Ones: {}, Zeros: {}".format(onesCount, zerosCount))

print(onesCount / zerosCount * 100)

# +

# As a "quick and dirty" check, we will just drop some of the 0's and see what happens.

# Note: Normally we would use something like stratified sampling, but that is beyond the scope of this project.

def undersampleNegatives(df, ratio, labelCol = "churn"):
    """
    Undersample the negatives (0's) in the given dataframe by ratio.
    NOTE: The selection method here is crude: negatives are dropped by a random split,
    and the result (negatives followed by positives) is not shuffled.
    INPUT:
    df: dataframe to undersample negatives from
    ratio: Approximate fraction of negatives to keep
    labelCol: Label column name in the input dataframe
    OUTPUT:
    A new dataframe with negatives undersampled by ratio
    """
    zeros = df.filter(df[labelCol] == 0)
    ones = df.filter(df[labelCol] == 1)
    zeros = createSubset(zeros, ratio)
    return zeros.union(ones)

df_undersampled = undersampleNegatives(df_subset, .8)

# -
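# `undersampleNegatives` keeps every churner and a random fraction of the non-churners. A plain-Python sketch of the same idea, with a fixed seed so runs are reproducible (the seed, helper name, and dict-based rows are assumptions), might look like:

```python
import random


def undersample_negatives(rows, ratio, label_key="churn", seed=42):
    """Keep every positive row and roughly a `ratio` fraction of the negative rows.

    Each negative is kept independently with probability `ratio`, so the number
    kept is only approximately ratio * (number of negatives).
    """
    rng = random.Random(seed)  # fixed seed for reproducible runs
    positives = [r for r in rows if r[label_key] == 1]
    negatives = [r for r in rows if r[label_key] == 0 and rng.random() < ratio]
    return negatives + positives


rows = [{"churn": 1} for _ in range(10)] + [{"churn": 0} for _ in range(1000)]
sample = undersample_negatives(rows, 0.8)
print(len(sample), sum(r["churn"] for r in sample))
```

# Undersampling only the training split, as done for the final GBT run below, keeps the test set's class balance realistic.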

# Check distribution again

zeros = df_undersampled.filter(df_undersampled["churn"] == 0)

ones = df_undersampled.filter(df_undersampled["churn"] == 1)

zerosCount = zeros.count()

onesCount = ones.count()

print("Ones: {}, Zeros: {}".format(onesCount, zerosCount))

print(onesCount / zerosCount * 100)

df_train, df_test = df_undersampled.randomSplit([0.91, 0.09])

predictions = logisticRegressionPredictions(df_train, df_test)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# As we can see, our recall score did go up a little, but at the cost of precision.

# Same as when we modified the threshold.

# The f1 score stayed about the same, but the AUC went up a little.

#

# So while we do have some small optimization, it will be a business decision to decide which way to

# tune the model - either more precision or more recall.

# Another thing we can try is to modify our input data in the ETL stage.

# -

# ### RandomForest

#

# Logistic regression performed reasonably well, but the recall rate was very low.

# Let's see how a RandomForestClassifier does on this dataset.

# Since some of our features are highly correlated, an ensemble learning model

# like a random forest might do a little better.

# +

def randomForestPredictions(df_train, df_test, numTrees = 50, labelCol = "churn", featuresCol = "features"):
    """ Fit a RandomForestClassifier and return its predictions on the test set
    INPUT:
    df_train: The training data set.
    df_test: The testing data set.
    numTrees: Number of trees in the forest.
    labelCol: The label column name, "churn" by default.
    featuresCol: The features column name, "features" by default.
    OUTPUT:
    predictions: The model's predictions.
    """
    # Fit and train model
    rfc = RandomForestClassifier(labelCol = labelCol, featuresCol = featuresCol, numTrees = numTrees).fit(df_train)
    return rfc.transform(df_test)

predictions = randomForestPredictions(df_train, df_test)

# -

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# While the recall rate is very low, precision is perfect (this could be due to our reduced dataset size),

# and the AUC is pretty high as well.

# Let's try another optimization.

# -

# ### Optimization: numTrees

#

# We will try to increase the number of decision trees in the forest.

predictions = randomForestPredictions(df_train, df_test, 100)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# Not a whole lot has improved.

# Lastly, we try running the random forest on the undersampled input data.

# Since recall was severely penalized, we will remove a larger portion of negatives this time.

# -

# ### Optimization: Undersampled negatives

#

# Same as above with LogisticRegression.

# ratio was derived by trial and error

df_undersampled = undersampleNegatives(df_subset, .264)

df_train, df_test = df_undersampled.randomSplit([0.9, 0.1])

predictions = randomForestPredictions(df_train, df_test, 100)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# It seems that with severely reduced negatives in the data, the RandomForest classifier was able to converge better.

# The f1 score has worsened, but now recall is on a higher level, meaning we would not miss

# as many customers churning as before.

# But will these good results from the undersampled data hold for the real test data? Let's see:

rfc = RandomForestClassifier(labelCol = "churn", featuresCol = "features", numTrees = 100).fit(df_train)

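# Caution: this new test split is drawn from df_subset, which also contains rows the model was just trained on,

# so these scores are likely optimistic.

df_train, df_test = df_subset.randomSplit([0.9, 0.1])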

predictions = rfc.transform(df_test)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# Again we see that there is a trade-off to be expected between recall and precision.

# But the F1 score is good, as is precision, and recall now looks better, so it's an improvement.

# This concludes the preliminary experiments for RandomForest tuning. In conclusion, I like this model better, as it seems

# to perform better and also gives more leeway when tuning compared to LogisticRegression.

# -

# ### Gradient Boost

#

# As the last algorithm, we try a gradient-boosted tree classifier.

# +

def gbtPredictions(df_train, df_test, maxIter = 10, labelCol = "churn", featuresCol = "features"):
    """ Fit a GBTClassifier and return its predictions on the test set
    INPUT:
    df_train: The training data set.
    df_test: The testing data set.
    maxIter: Maximum number of iterations in the gradient boosting.
    labelCol: The label column name, "churn" by default.
    featuresCol: The features column name, "features" by default.
    OUTPUT:
    predictions: The model's predictions
    """
    # Fit and train model
    gbt = GBTClassifier(labelCol = labelCol, featuresCol = featuresCol, maxIter = maxIter).fit(df_train)
    return gbt.transform(df_test)

predictions = gbtPredictions(df_train, df_test)

# -

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# The GBT results look very promising. There are very high rates right off the bat, and recall is nearing 50%.

# -

# ### Optimization: Undersampling negatives

#

# Based on our experience above, let's try undersampling as the first optimization.

# What happens here is largely analogous to the procedures above.

df_undersampled = undersampleNegatives(df_subset, .6)

df_train, df_test = df_undersampled.randomSplit([0.9, 0.1])

predictions = gbtPredictions(df_train, df_test)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# This is a significant improvement. There is a strongly improved recall rate at a high precision.

# Let's see how it does on the normal sampled test set:

gbt = GBTClassifier(labelCol = "churn", featuresCol = "features", maxIter = 10).fit(df_train)

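# Caution: this new test split is drawn from df_subset, which also contains rows the model was just trained on,

# so these scores are likely optimistic.

df_train, df_test = df_subset.randomSplit([0.9, 0.1])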

predictions = gbt.transform(df_test)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# The model was able to carry its performance over very well to the normally sampled dataset.

# I am convinced that this is the best algorithm to use.

# -

# ### Full undersampled dataset

#

# Let us run and evaluate the algorithm on the full dataset, undersampling only the training split.

# +

df_train, df_test = df.randomSplit([0.9, 0.1])

df_undersampled = undersampleNegatives(df_train, .6)

gbt = GBTClassifier(labelCol = "churn", featuresCol = "features", maxIter = 10).fit(df_undersampled)

predictions = gbt.transform(df_test)

showEvaluationMetrics(predictions)

printAUC(predictions)

# +

# The f1 score is very good, in fact all scores but recall are very good.

# But recall is still very acceptable under these conditions.

# Now we can use automated optimization to search for the best GBT model.

# -

# Output the notebook to an html file

from subprocess import call

call(['python', '-m', 'nbconvert', '--to', 'html', 'model.ipynb'])

|

udacity/data-scientist-nanodegree/sparkify/model.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: visualization-curriculum-gF8wUgMm

# language: python

# name: visualization-curriculum-gf8wugmm

# ---

# + [markdown] papermill={"duration": 0.010032, "end_time": "2020-03-28T16:40:23.793109", "exception": false, "start_time": "2020-03-28T16:40:23.783077", "status": "completed"} tags=[]

# # COVID-19 Tracking U.S. Cases

# > Tracking coronavirus total cases, deaths and new cases in US by states.

#

# - comments: true

# - author: <NAME>

# - categories: [overview, interactive, usa]

# - hide: true

# - permalink: /covid-overview-us/

# + papermill={"duration": 0.018147, "end_time": "2020-03-28T16:40:23.817848", "exception": false, "start_time": "2020-03-28T16:40:23.799701", "status": "completed"} tags=[]

#hide

print('''

Example of using jupyter notebook, pandas (data transformations), jinja2 (html, visual)

to create visual dashboards with fastpages

You see also the live version on https://gramener.com/enumter/covid19/united-states.html

''')

# + papermill={"duration": 0.356603, "end_time": "2020-03-28T16:40:24.180685", "exception": false, "start_time": "2020-03-28T16:40:23.824082", "status": "completed"} tags=[]

#hide

import numpy as np

import pandas as pd

from jinja2 import Template

from IPython.display import HTML

# + papermill={"duration": 0.013482, "end_time": "2020-03-28T16:40:24.200306", "exception": false, "start_time": "2020-03-28T16:40:24.186824", "status": "completed"} tags=[]

#hide

from pathlib import Path

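if not Path('covid_overview.py').exists():
    # Same effect as the notebook's `!wget <url>` magic, but also valid in this .py export
    import urllib.request
    urllib.request.urlretrieve(
        'https://raw.githubusercontent.com/pratapvardhan/notebooks/master/covid19/covid_overview.py',
        'covid_overview.py')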

# + papermill={"duration": 0.045632, "end_time": "2020-03-28T16:40:24.252035", "exception": false, "start_time": "2020-03-28T16:40:24.206403", "status": "completed"} tags=[]

#hide

import covid_overview as covid

# + papermill={"duration": 0.070888, "end_time": "2020-03-28T16:40:24.330257", "exception": false, "start_time": "2020-03-28T16:40:24.259369", "status": "completed"} tags=[]

#hide

COL_REGION = 'Province/State'

kpis_info = [

{'title': 'New York', 'prefix': 'NY'},

{'title': 'Washington', 'prefix': 'WA'},

{'title': 'California', 'prefix': 'CA'}]

data = covid.gen_data_us(region=COL_REGION, kpis_info=kpis_info)

# + papermill={"duration": 0.037772, "end_time": "2020-03-28T16:40:24.375120", "exception": false, "start_time": "2020-03-28T16:40:24.337348", "status": "completed"} tags=[]

#hide

data['table'].head(5)

# + papermill={"duration": 0.135637, "end_time": "2020-03-28T16:40:24.517739", "exception": false, "start_time": "2020-03-28T16:40:24.382102", "status": "completed"} tags=[]

#hide_input

template = Template(covid.get_template(covid.paths['overview']))

html = template.render(

D=data['summary'], table=data['table'],

newcases=data['newcases'].iloc[:, -15:],

COL_REGION=COL_REGION,

KPI_CASE='US',

KPIS_INFO=kpis_info,

LEGEND_DOMAIN=[5, 50, 500, np.inf],

np=np, pd=pd, enumerate=enumerate)

HTML(f'<div>{html}</div>')
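# `data['newcases'].iloc[:, -15:]` keeps only the last 15 columns, presumably the 15 most recent days, and `LEGEND_DOMAIN=[5, 50, 500, np.inf]` supplies the cut points for the legend. Because `covid_overview.py` is not shown here, the sketch below only *assumes* that new cases are day-over-day differences of cumulative counts and that each count falls in the first bucket whose upper bound is at least that count; both helper names are hypothetical.

```python
from bisect import bisect_left


def daily_new_cases(cumulative):
    """Convert a cumulative count series into day-over-day increases."""
    return [cur - prev for prev, cur in zip(cumulative, cumulative[1:])]


def legend_bucket(value, domain=(5, 50, 500, float("inf"))):
    """Index of the first legend bound that is >= value (assumed binning rule)."""
    return bisect_left(domain, value)


# 20 cumulative values rising by 10 per day -> 19 daily increases; keep the last 15.
recent = daily_new_cases(list(range(0, 200, 10)))[-15:]
```

# With a pandas frame of cumulative counts (one column per date), `df.diff(axis=1)` is the vectorised equivalent of `daily_new_cases`.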

# + [markdown] papermill={"duration": 0.009551, "end_time": "2020-03-28T16:40:24.538412", "exception": false, "start_time": "2020-03-28T16:40:24.528861", "status": "completed"} tags=[]

# Visualizations by [<NAME>](https://twitter.com/PratapVardhan)[^1]

#

# [^1]: Source: ["The New York Times"](https://github.com/nytimes/covid-19-data). Link to [notebook](https://github.com/pratapvardhan/notebooks/blob/master/covid19/covid19-overview-us.ipynb), [original interactive](https://gramener.com/enumter/covid19/united-states.html)

|

_notebooks/2020-03-21-covid19-overview-us.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: scMyositis

# language: python

# name: scmyositis

# ---

# # Get amino acids from TraCeR assemble

# This notebook looks for TraCeR assemble output and builds a dataframe with cell data.<br>

# It assumes the following data folder structure:

# ```bash

# ├── AB

# │ ├── <batch_1>

# │ │ ├── <cell_1a>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ ├── <cell_1b>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ └── ...

# │ ├── <batch_2>

# │ │ ├── <cell_2a>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ ├── <cell_2b>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ └── ...

# │ └── ...

# ├── GD

# │ ├── <batch_1>

# │ │ ├── <cell_1a>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ ├── <cell_1b>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ └── ...

# │ ├── <batch_2>

# │ │ ├── <cell_2a>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ ├── <cell_2b>

# │ │ │ ├── filtered_TCR_seqs

# │ │ │ │ ├── filtered_TCRs.txt

# │ │ │ │ └── ...

# │ │ │ └── ...

# │ │ └── ...

# │ └── ...

# ```

# **Author: <NAME>**<br>

# 22/02/2021<br>

# Kernel: `scMyositis`<br>

import os

import argparse

import pandas as pd

from objects import Cell, Chain

from objects import AlphaChain, BetaChain, GammaChain, DeltaChain

from objects import create_cell_from_AB, append_GD_data

pd.set_option('display.max_columns',None)

in_path = './data'

out_file = 'results/results_tracer.csv'

AB_path = os.path.join(in_path,os.path.normpath('AB'))

GD_path = os.path.join(in_path,os.path.normpath('GD'))
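# The nested `os.listdir` loops below expect every entry under a batch folder to be a cell directory containing `filtered_TCR_seqs/filtered_TCRs.txt`; a stray file (for example `.DS_Store`) or a cell with no TraCeR output would likely make `create_cell_from_AB` fail. A hypothetical alternative sketch uses `pathlib.Path.glob` to match only paths that follow the layout documented above, grouped by batch.

```python
from pathlib import Path


def find_tracer_files(root):
    """Return {batch: [paths]} for files laid out as
    <root>/<batch>/<cell>/filtered_TCR_seqs/filtered_TCRs.txt."""
    found = {}
    pattern = "*/*/filtered_TCR_seqs/filtered_TCRs.txt"
    for tcr_file in sorted(Path(root).glob(pattern)):
        # parents[0] = filtered_TCR_seqs, parents[1] = cell, parents[2] = batch
        batch = tcr_file.parents[2].name
        found.setdefault(batch, []).append(tcr_file)
    return found
```

# For example, `find_tracer_files(AB_path)` would return one list of files per batch folder, silently skipping cells without TraCeR output.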

# ### Looping over AB cell files

cells = {}

for batch in os.listdir(AB_path):
    path = os.path.join(AB_path,batch)
    cont = 1
    L = len(os.listdir(path))
    for folder in os.listdir(path):
        file = os.path.join(path,folder,os.path.normpath('filtered_TCR_seqs/filtered_TCRs.txt'))
        cell = create_cell_from_AB(file)
        print("Cell {}, {}/{} in batch {}".format(cell.name,cont,L,batch))
        cell.add_batch(batch)
        cells[cell.name] = cell
        cont = cont + 1

# ### Looping over GD files

for batch in os.listdir(GD_path):
    path = os.path.join(GD_path,batch)
    cont = 1
    L = len(os.listdir(path))
    for folder in os.listdir(path):
        file = os.path.join(path,folder,os.path.normpath('filtered_TCR_seqs/filtered_TCRs.txt'))
        print("Cell {}/{} in batch {}".format(cont,L,batch))
        append_GD_data(file,cells)
        cont = cont + 1

# # Dataframe generation

DF = pd.DataFrame(index=cells.keys())

cont = 1

L = len(cells)

for name,cell in cells.items():
    print("Cell {}, {}/{}".format(name,cont,L))
    DF.loc[name,'seq_batch'] = cell.batch
    chains = cell.A_chains + cell.B_chains + cell.G_chains + cell.D_chains
    for chain in chains:
        # Column names are '<allele>_<attribute>', skipping the first two attributes
        meta_colnames = [chain.allele + '_' + s for s in list(chain.__dict__.keys())][2:]
        values = list(chain.__dict__.values())[2:]
        DF.loc[name,meta_colnames] = values
    cont = cont + 1

DF
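# Each chain contributes columns named `<allele>_<attribute>`, taken from `chain.__dict__` after skipping its first two attributes (this relies on attribute insertion order, which Python dicts preserve). Growing `DF` one `.loc` assignment at a time works but can be slow for many cells; a sketch under the same naming assumption builds one flat dict per cell instead. `DemoChain` is a hypothetical stand-in for the `objects` module's chain classes, and its values are placeholders.

```python
def chain_columns(chain, skip=2):
    """Map '<allele>_<attribute>' -> value for every attribute after the first `skip`.

    Mirrors the notebook's use of chain.__dict__ (insertion-ordered since Python 3.7).
    """
    items = list(vars(chain).items())[skip:]
    return {f"{chain.allele}_{key}": value for key, value in items}


def cell_record(batch, chains):
    """Flatten one cell's chains into a single dict, one entry per column."""
    record = {"seq_batch": batch}
    for chain in chains:
        record.update(chain_columns(chain))  # later chains with the same allele overwrite
    return record


class DemoChain:
    """Hypothetical chain whose first two attributes are bookkeeping fields."""

    def __init__(self, allele, cdr3, reads):
        self.allele = allele
        self.productive = True
        self.cdr3 = cdr3
        self.reads = reads


record = cell_record("batch_1", [DemoChain("TRAV1", "CASS", 12)])
```

# Inside the loop, one could collect `records[name] = cell_record(cell.batch, chains)` and then call `pd.DataFrame.from_dict(records, orient="index")` once afterwards.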

# # Export data

os.makedirs(os.path.dirname(out_file), exist_ok=True)  # create results/ if it does not exist yet

DF.to_csv(out_file,sep=',')

|

collect_assemble/get_aa.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from json import loads

import numpy as np

import xarray as xr

import zarr

from dask.distributed import Client

from fsspec.implementations.http import HTTPFileSystem