code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <div class="alert alert-success">

# <b>Author</b>:

#

# <NAME>

# <EMAIL>

#

# </div>

#

# # [Click here to see class lecture](https://photos.app.goo.gl/pLHza84vT2HViZJz9)

# # Today's agenda

#

#

#

#

#

# ## Agile Model [Read More](https://www.tutorialspoint.com/sdlc/sdlc_agile_model.htm)

#

# The Agile model puts customer satisfaction first. It combines an iterative process with an incremental one. It is iterative because the project is revisited in repeated cycles, each of which runs through a full SDLC life cycle and improves on the previous output. It is incremental because it starts small, implementing only the core features, and grows by adding features in each iteration. It copes well with changing requirements: if a customer wants a change, it can be made in the current iteration or the next one, which is its main benefit compared to other models. Every iteration lasts a fixed amount of time N, and N is the same for all iterations. The Agile model can be used to make products such as mobile phones, but each phone will have its own Agile process. The product is finally deployed after the last iteration; if the customer wants a test unit deployed after each iteration, that can be done too, since the goal of the model is to satisfy the customer. After each iteration we hand over a report called a release. The Agile model needs an experienced team.
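#
# The iterative and incremental idea described above can be sketched in a few lines of Python. This is only an illustration: the feature names, the `run_iterations` helper and the fixed capacity per iteration are assumptions made up for this example, not part of any real Agile tool.

```python
def run_iterations(backlog, capacity_per_iteration):
    """Deliver a backlog in fixed-size iterations, one release per iteration."""
    product = []   # features delivered so far (the growing increment)
    releases = []  # snapshot of the product after each iteration
    remaining = list(backlog)
    while remaining:
        # Every iteration takes the same amount of work, like the fixed time N.
        batch = remaining[:capacity_per_iteration]
        remaining = remaining[capacity_per_iteration:]
        product.extend(batch)
        releases.append(list(product))
    return releases


# Hypothetical backlog, core features first.
releases = run_iterations(["login", "search", "cart", "payment", "reviews"], 2)
for number, release in enumerate(releases, start=1):
    print(f"Release {number}: {release}")
```

# Each release contains everything from the previous one plus the new batch, which is the incremental part; the loop itself is the iterative part.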

#

#

#

#

#

#

#

#

#

#

#

#

#

# We'll only study eXtreme Programming (XP) as our example of an agile model. **Very important:** these 5 methods can appear in the MCQ section, and XP will appear in the written part.

#

#

# ## eXtreme programming (XP)

#

# XP is a concept, not a programming language: it is a development methodology. It is used when customers are likely to change their requirements.

#

#

#

#

#

#

#

# Even testing needs some planning. If we need to test a camera, for example, we first plan how we will test it: in portrait, in landscape, or with automation that exercises all of the camera's functions. Based on that plan we design the tests and write the code for the unit tests. We then run the test code and methods in the test section, where the product is actually tested. Suppose that during testing the camera flash turns out not to work. The bug information is sent to the coding section, which refactors the code, and the flash is tested again. Testing and refactoring therefore happen in parallel. If the product passes the tests it is accepted. If there is a bug, the bug report is sent to the coding section; if the product fails the test outright, we can go back to planning, on the assumption that the plan itself was mistaken. Testing happens in every iteration.
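#
# As a rough sketch of what "writing code to do unit tests" can look like, the example below tests a camera flash with Python's built-in `unittest` module. The `Camera` class and its `toggle_flash` method are hypothetical, invented only for this illustration.

```python
import unittest


class Camera:
    """Hypothetical camera used only to illustrate unit testing."""

    def __init__(self):
        self.flash_on = False

    def toggle_flash(self):
        # Flip the flash state and report the new state.
        self.flash_on = not self.flash_on
        return self.flash_on


class CameraFlashTest(unittest.TestCase):
    def test_flash_turns_on_then_off(self):
        camera = Camera()
        self.assertTrue(camera.toggle_flash())   # first toggle switches it on
        self.assertFalse(camera.toggle_flash())  # second toggle switches it off


# Run the test case explicitly so the example also works inside a notebook.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(CameraFlashTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
print("all tests passed:", result.wasSuccessful())
```

# If `toggle_flash` had a bug, the test would fail and the failure report would go back to the coding section, which is the test-and-refactor loop described above.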

#

#

#

# This is the concept of XP.

#

# Uses of agile model.

#

#

#

# **Important**

#

#

#

# Scenario: Team A uses the waterfall model and Team B uses the agile model. Team_A calls in the requirements team first and spends 1.5 months analysing user requirements. Team_B calls in all the teams, starts the project at a small scale and advances with each iteration, doing requirement analysis in every iteration. After three months Team_A is in the system design phase and Team_B is in the middle of an iteration. Now the user comes and changes a requirement that wasn't mentioned previously. Let's see how the teams respond.
#
# Team_A won't be able to update the requirements: they spent 1.5 months gathering and analysing them, and the requirements team, which was called in for those 1.5 months only, is now working on another project. The customer may also ask for a demo or some code, but Team_A is still in the design phase, so there is nothing to present. Team_B can update the requirements and also present a coded demo, because all teams are available throughout the project and each iteration produces something that can be shown.
#
# That's why the agile model is becoming more popular day by day.

#

#

#

#

# ## Big bang model

#

# It's very flexible for developers. Developers don't need to maintain documentation, and they can build the project by whatever means they want, for example in Java or Python. This model can be used in school projects.

#

#

# ## Prototyping Model

#

#

#

# When a prototype is rejected, it goes to the rapid throwaway prototyping phase. There the current prototype is discarded and the final SRS (documentation) from the first loop is developed or updated. The work then passes through the remaining phases of rapid throwaway prototyping and is submitted for acceptance again. If it is accepted, it moves on to evolutionary prototyping; otherwise the process is repeated.

#

#

#

# We can use this model when the requirements aren't clear. For example, before construction of the Padma Bridge (podda shetu) began, engineers from CSE, CE and EEE came together to develop and simulate a prototype, because it is a big project in which errors cannot be tolerated. The engineers didn't know the full requirements, such as how the soil would react to the pillars and what to do about it, so they used prototyping to discover those requirements.

#

#

#

#

#

# ## Very Very Important

#

#

# ## System Evaluation

#

#

# ## RUP

#

#

#

#

#

#

# ## CASE

#

#

#

#

#

# The explanation of this figure may come up in the mid or final exam. The tools are on the y-axis and the phases on the x-axis. Here is how to read it. In the specification phase we don't need engineering, testing, debugging or programming tools, because we are only gathering the specification (requirements) of the system. We do need method support, prototyping and documentation tools, because requirement analysis involves questions such as which method to use to develop the system or which prototyping tools to use.

#

#

#

#

#

#

# # That's all for this lecture!

| CSE_321_Software Engineering/Lecture_7_20.07.2020.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### Minimal LineageOT demo

#

# This notebook shows a minimal working example of the LineageOT pipeline.

import anndata

import lineageot

import numpy as np

rng = np.random.default_rng()

# The anndata object requires three kinds of information:

#

# - Cell state measurements (in adata.X or adata.obsm)

# - Cell sampling times, relative to the root of lineage tree, i.e. fertilization (in adata.obs)

# - Cell lineage barcodes (in adata.obsm)

#

# The barcodes should be encoded as row vectors where each entry corresponds to a possibly-mutated site. A positive number indicates an observed mutation, zero indicates no mutation, and -1 indicates the site was not observed.

#

# For example, if row `i` of `adata.obsm['barcodes']` is

# ```

# [0, 0, 13, -1]

# ```

# that means that, out of four possible sites for mutations, cell `i` was observed to have no mutations in the first two sites and mutation 13 in the third site. The last site was not observed for cell `i`.
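#
# The encoding can be made concrete with a small helper that spells out what each entry of a barcode row means. The function name `describe_barcode` is made up for this sketch and is not part of LineageOT.

```python
def describe_barcode(barcode):
    """Translate one barcode row into a per-site description."""
    descriptions = []
    for site, value in enumerate(barcode):
        if value == -1:
            descriptions.append(f"site {site}: not observed")
        elif value == 0:
            descriptions.append(f"site {site}: no mutation")
        elif value > 0:
            descriptions.append(f"site {site}: mutation {value}")
        else:
            # Only -1, 0 and positive numbers are meaningful in this encoding.
            raise ValueError(f"unexpected barcode entry {value} at site {site}")
    return descriptions


example = describe_barcode([0, 0, 13, -1])
for line in example:
    print(line)
```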

# +

# Create a minimal fake AnnData object to run LineageOT on
t1 = 5
t2 = 10
n_cells_1 = 5
n_cells_2 = 10
n_cells = n_cells_1 + n_cells_2
n_genes = 5
barcode_length = 10

adata = anndata.AnnData(
    X=np.random.rand(n_cells, n_genes),
    obs={"time": np.concatenate([t1 * np.ones(n_cells_1), t2 * np.ones(n_cells_2)])},
    obsm={"barcodes": rng.integers(low=-1, high=10, size=(n_cells, barcode_length))},
)

# +

# Running LineageOT

lineage_tree_t2 = lineageot.fit_tree(adata[adata.obs['time'] == t2], t2)

coupling = lineageot.fit_lineage_coupling(adata, t1, t2, lineage_tree_t2)

# -

# Saving the fitted coupling in the format Waddington-OT expects

lineageot.save_coupling_as_tmap(coupling, t1, t2, './tmaps/example')

| examples/pipeline_demo.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Combine data

#

# ### criteria

#

# - has data since 2012-03-01

import pandas as pd

import numpy as np

import os

from pathlib import Path

src_path='data/raw_price'

ticker_file = 'data/544tickers.csv'

benchmark_tickers = {1:'^GSPC', 2:'^DJI', 3:'^IXIC', 4:'^RUT',

5:'CL=F', 6:'GC=F', 7:'^TNX'}

benchmark_tickers

min_date = '2012-03-01'

df_ticker = pd.read_csv(ticker_file)

df_ticker.head()

df_list = []

for _, row in df_ticker.iterrows():
    ticker = row['Symbol']
    stock_id = row['stock_id']
    raw_file = Path(src_path).joinpath(f'{ticker}.csv')
    if raw_file.exists():
        df_ = pd.read_csv(raw_file, index_col=0, sep='|')
        # Keep the ticker only if its history starts before min_date
        if df_.index.min() < min_date:
            df_['ticker'] = ticker
            df_['stock_id'] = stock_id
            df_list.append(df_[df_.index >= min_date])
        else:
            print(f'not enough data {ticker}: {df_.index.min()}')

len(df_list)
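
# The date filter above compares index labels with `min_date` as plain strings, since the CSV index is read without date parsing. That gives the right answer only if the dates are stored in ISO `YYYY-MM-DD` form, which is an assumption about the raw files. The sketch below, with made-up dates, checks that string comparison and real date comparison agree for that format.

```python
from datetime import date

# Hypothetical index labels in ISO format, as assumed for the raw price files.
dates = ["2011-12-30", "2012-03-01", "2012-03-02", "2013-01-15"]
min_date = "2012-03-01"

# Lexicographic comparison on the strings themselves.
kept_as_strings = [d for d in dates if d >= min_date]

# The same filter after parsing each label into a real date.
kept_as_dates = [
    d for d in dates if date.fromisoformat(d) >= date.fromisoformat(min_date)
]

print(kept_as_strings)
print(kept_as_strings == kept_as_dates)
```

# With other layouts such as `03/01/2012` the two filters can disagree, so parsing the index (for example with `parse_dates=True` in `pd.read_csv`) is the safer choice when the format is not guaranteed.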

for stock_id, ticker in benchmark_tickers.items():
    raw_file = Path(src_path).joinpath(f'{ticker}.csv')
    if raw_file.exists():
        df_ = pd.read_csv(raw_file, index_col=0, sep='|')
        # Same rule as for the stocks: the history must start before min_date
        if df_.index.min() < min_date:
            df_['ticker'] = ticker
            df_['stock_id'] = stock_id
            df_list.append(df_[df_.index >= min_date])
        else:
            print(f'not enough data {ticker}: {df_.index.min()}')

len(df_list)

df_all = pd.concat(df_list)

df_all.shape

df_all.index.min(), df_all.index.max()

dest_file = f"data/min_date_{min_date.replace('-', '')}_{len(df_list)-7}stocks.csv"

dest_file

df_all.to_csv(dest_file, index=True, sep='|', compression='bz2')

| pharma/2_combine_data.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="fdYPZliXZ_Mv" colab_type="code" colab={}

import tensorflow as tf

import numpy as np

# + id="4RTpjPj9aEBi" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 34} outputId="25a8f821-5bab-4d53-cc25-2cdc977d0e8f"

print(tf.__version__)

# + id="m6CCectM4aWi" colab_type="code" colab={}

from tensorflow.keras.applications.inception_v3 import InceptionV3

# + id="7sboTYFs4aaV" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 51} outputId="51d38add-0415-4f7e-f2cc-27453aee5efa"

model_inception = InceptionV3(weights='imagenet')

# + id="1x-sDrvF4hcq" colab_type="code" colab={}

model_inception.save('inception_imagenet_weights.h5')

# + id="tTNNzJXKaFgz" colab_type="code" colab={}

from tensorflow.keras.applications.vgg19 import VGG19

# + id="KqCmC27YaYpg" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 71} outputId="1a33f174-00b0-4e32-8af4-bc28c5974619"

model = VGG19(weights='imagenet')

# + id="s8ynyniLadg-" colab_type="code" colab={}

model.save('vgg19_imagenet_weights.h5')

# + id="D1naBbbJakXT" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 122} outputId="c377dae8-81d3-4df6-b267-994a042ff184"

from google.colab import drive

drive.mount('/content/gdrive/')

# + id="0eFG4wV_azE1" colab_type="code" colab={}

# !mv "/content/inception_imagenet_weights.h5" "/content/gdrive/My Drive/Artificial Intelligence/"

# + id="k9q_5YWEbLeg" colab_type="code" colab={}

# Finally download the weights file from google drive and use it in your project

| imagenet_weights.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from pptx import Presentation
from pptx.chart.data import ChartData
from pptx.dml.color import RGBColor
from pptx.enum.chart import XL_CHART_TYPE, XL_LEGEND_POSITION
from pptx.util import Cm, Pt  # Pt is needed for the table font size set further down
import pandas as pd

# + jupyter={"source_hidden": true}

if __name__ == '__main__':

    # Open the presentation that holds the cover slide
    prs = Presentation('cover.pptx')
    title_only_slide_layout = prs.slide_layouts[0]
    slide = prs.slides.add_slide(title_only_slide_layout)
    shapes = slide.shapes
    # Define the table data
    name_objects = ["PR 69.SP", "PR 69.OP", "MS% PR69.SP", "MS% PR69.OP"]
    name_AIs = ["PR 69.SP", "PR 69.OP", "MS% PR69.SP", "MS% PR69.OP"]
    val_AI1 = (4620952.33, 4855459.236, 5201840.644, 5414324.468)
    val_AI2 = (4451751, 4687641, 4859008, 4809117)
    val_AI3 = (0.204, 0.211, 0.21, 0.205)
    val_AI4 = (0.205, 0.213, 0.216, 0.206)
    val_AIs = [val_AI1, val_AI2, val_AI3, val_AI4]
    # Define the table geometry
    rows = 5
    cols = 5
    top = Cm(12.5)
    left = Cm(3.5)
    width = Cm(24)
    height = Cm(6)
    # Add the table to the slide --------------------
    table = shapes.add_table(rows, cols, left, top, width, height).table
    # Set the column widths
    for i in range(4):
        table.columns[i].width = Cm(6)
    # Fill the header row: one label per data column, starting at column 1
    for j, name in enumerate(name_objects, start=1):
        table.cell(0, j).text = name
    # Fill the data rows: the row label goes in column 0, the values after it
    for i, (name, values) in enumerate(zip(name_AIs, val_AIs), start=1):
        table.cell(i, 0).text = name
        for j, value in enumerate(values, start=1):
            table.cell(i, j).text = str(value)
    # Define the chart data ---------------------
    chart_data = ChartData()
    chart_data.categories = name_objects
    for name, values in zip(name_AIs, val_AIs):
        chart_data.add_series(name, values)
    # Add the chart to the slide --------------------
    x, y, cx, cy = Cm(3.5), Cm(4.2), Cm(24), Cm(8)
    graphic_frame = slide.shapes.add_chart(
        XL_CHART_TYPE.COLUMN_CLUSTERED, x, y, cx, cy, chart_data
    )
    chart = graphic_frame.chart
    chart.has_legend = True
    chart.legend.position = XL_LEGEND_POSITION.TOP
    chart.legend.include_in_layout = False
    value_axis = chart.value_axis
    value_axis.maximum_scale = 100.0
    value_axis.has_title = True
    value_axis.axis_title.has_text_frame = True
    value_axis.axis_title.text_frame.text = "False positive"
    prs.save('template_tmp.pptx')

# +

# Open the pptx file
prs = Presentation(r'template.pptx')
# First chart: define the chart data ---------------------

data = pd.read_csv('p1.csv')

csv=data.where(data.notnull(), None)

name_AIs = ["PR 69.SP", "PR 69.OP", "MS% PR69.SP", "MS% PR69.OP"]

name_objects = csv.columns[1:].tolist() #["2020", "2021", "2022", "2023"]

val_AI1 = [tuple(x) for x in csv.iloc[0:1,1:].values][0] #(4620952.33,4855459.236,5201840.644,5414324.468)

val_AI2 = [tuple(x) for x in csv.iloc[1:2,1:].values][0] #(4451751, 4687641,4859008,4809117)

val_AI3 = [tuple(x) for x in csv.iloc[2:3,1:].values][0] #(0.204, 0.211, 0.21, 0.205)

val_AI4 = [tuple(x) for x in csv.iloc[3:4,1:].values][0] #(0.205, 0.213, 0.216, 0.206)

val_AIs = [val_AI1, val_AI2, val_AI3, val_AI4]

# Define the chart data ---------------------

chart_data = ChartData()

chart_data.categories = name_objects

chart_data.add_series(name_AIs[0], val_AI1)

chart_data.add_series(name_AIs[1], val_AI2)

chart_data.add_series(name_AIs[2], val_AI3)

chart_data.add_series(name_AIs[3], val_AI4)

slide = prs.slides[1]

for shape in slide.shapes:
    if shape.has_chart:
        c_chart = shape.chart
        c_chart.replace_data(chart_data)
# Second chart
# Define the chart data ---------------------

data = pd.read_csv('p2.csv')

p2_csv=data.where(data.notnull(), None)

p2_name_AIs = ["PR1", "PR2"]

p2_name_objects = p2_csv.columns[1:].tolist()

p2_val_AI1 = [tuple(x) for x in p2_csv.iloc[0:1,1:].values][0]

p2_val_AI2 = [tuple(x) for x in p2_csv.iloc[1:2,1:].values][0]

p2_chart_data = ChartData()

p2_chart_data.categories = p2_name_objects

p2_chart_data.add_series(p2_name_AIs[0], p2_val_AI1)

p2_chart_data.add_series(p2_name_AIs[1], p2_val_AI2)

p2_slide = prs.slides[2]

# Define the table data ---------------------

data = pd.read_csv('p2_t.csv',header=None)

p2t_csv=data.where(data.notnull(), None)

for shape in p2_slide.shapes:
    if shape.has_chart:
        p2_c_chart = shape.chart
        p2_c_chart.replace_data(p2_chart_data)
    if shape.has_table:
        p2_t_table = shape.table
        for index_r, row in p2t_csv.iterrows():
            for i_col, col in enumerate(row):
                cell = p2_t_table.cell(index_r, i_col)
                p = cell.text_frame.paragraphs[0]
                p.font.size = Pt(10)
                p.font.color.rgb = RGBColor(0xFF, 0x00, 0x00)
                # Cell text must be a string; missing values become empty cells
                p.text = '' if col is None else str(col)

prs.save('result.pptx')

# -

import pandas as pd
# Read the file first, then replace missing values with None
data = pd.read_csv('p2.csv')
data = data.where(data.notnull(), None)
print(data)

| documents/demo code/.ipynb_checkpoints/ppt-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + tags=["remove-input"]

import numpy as np

np.set_printoptions(threshold=50)

path_data = '../../assets/data/'

# -

# # Tables

#

# Tables are a fundamental object type for representing data sets. A table can be viewed in two ways:

# * a sequence of named columns that each describe a single aspect of all entries in a data set, or

# * a sequence of rows that each contain all information about a single entry in a data set.
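#
# The two views can be illustrated without any library. The sketch below uses plain Python containers rather than the `datascience` `Table` class; the flower values are the ones used in the examples further down.

```python
# Column view: each label maps to the values of one attribute for all entries.
columns = {
    "Number of petals": [8, 34, 5],
    "Name": ["lotus", "sunflower", "rose"],
}

# Row view: each entry bundles every attribute of a single flower.
labels = list(columns)
rows = [dict(zip(labels, values)) for values in zip(*columns.values())]

for row in rows:
    print(row)
```

# Both structures hold the same data; which one is more convenient depends on whether an analysis works attribute by attribute or entry by entry.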

#

# In order to use tables, import all of the module called `datascience`, a module created for this text.

from datascience import *

# Empty tables can be created using the `Table` function. An empty table is useful because it can be extended to contain new rows and columns.

Table()

# The `with_columns` method on a table constructs a new table with additional labeled columns. Each column of a table is an array. To add one new column to a table, call `with_columns` with a label and an array. (The `with_column` method can be used with the same effect.)

#

# Below, we begin each example with an empty table that has no columns.

Table().with_columns('Number of petals', make_array(8, 34, 5))

# To add two (or more) new columns, provide the label and array for each column. All columns must have the same length, or an error will occur.

Table().with_columns(

'Number of petals', make_array(8, 34, 5),

'Name', make_array('lotus', 'sunflower', 'rose')

)

# We can give this table a name, and then extend the table with another column.

# +

flowers = Table().with_columns(

'Number of petals', make_array(8, 34, 5),

'Name', make_array('lotus', 'sunflower', 'rose')

)

flowers.with_columns(

'Color', make_array('pink', 'yellow', 'red')

)

# -

# The `with_columns` method creates a new table each time it is called, so the original table is not affected. For example, the table `flowers` still has only the two columns that it had when it was created.

flowers

# Creating tables in this way involves a lot of typing. If the data have already been entered somewhere, it is usually possible to use Python to read it into a table, instead of typing it all in cell by cell.

#

# Often, tables are created from files that contain comma-separated values. Such files are called CSV files.

#

# Below, we use the Table method `read_table` to read a CSV file that contains some of the data used by Minard in his graphic about Napoleon's Russian campaign. The data are placed in a table named `minard`.

minard = Table.read_table(path_data + 'minard.csv')

minard

# We will use this small table to demonstrate some useful Table methods. We will then use those same methods, and develop other methods, on much larger tables of data.

# <h2>The Size of the Table</h2>

#

# The method `num_columns` gives the number of columns in the table, and `num_rows` the number of rows.

minard.num_columns

minard.num_rows

# <h2>Column Labels</h2>

#

# The method `labels` can be used to list the labels of all the columns. With `minard` we don't gain much by this, but it can be very useful for tables that are so large that not all columns are visible on the screen.

minard.labels

# We can change column labels using the `relabeled` method. This creates a new table and leaves `minard` unchanged.

minard.relabeled('City', 'City Name')

# However, this method does not change the original table.

minard

# A common pattern is to assign the original name `minard` to the new table, so that all future uses of `minard` will refer to the relabeled table.

minard = minard.relabeled('City', 'City Name')

minard

# <h2>Accessing the Data in a Column</h2>

#

# We can use a column's label to access the array of data in the column.

minard.column('Survivors')

# The 5 columns are indexed 0, 1, 2, 3, and 4. The column `Survivors` can also be accessed by using its column index.

minard.column(4)

# The 8 items in the array are indexed 0, 1, 2, and so on, up to 7. The items in the column can be accessed using `item`, as with any array.

minard.column(4).item(0)

minard.column(4).item(5)

# <h2>Working with the Data in a Column</h2>

#

# Because columns are arrays, we can use array operations on them to discover new information. For example, we can create a new column that contains the percent of all survivors at each city after Smolensk.

initial = minard.column('Survivors').item(0)

minard = minard.with_columns(

'Percent Surviving', minard.column('Survivors')/initial

)

minard
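
# The elementwise division used above can be mimicked in plain Python. The survivor counts in this sketch are illustrative numbers chosen for the example, not values read from `minard.csv`.

```python
# Illustrative survivor counts, one per city (assumed values).
survivors = [145000, 140000, 127100, 100000, 55000, 24000, 20000, 12000]

# Divide every count by the first one, as the array division does.
initial = survivors[0]
proportion_surviving = [count / initial for count in survivors]

# Format the proportions as percentages with two decimals.
as_percent = [f"{p:.2%}" for p in proportion_surviving]
print(as_percent)
```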

# To make the proportions in the new column appear as percents, we can use the method `set_format` with the option `PercentFormatter`. The `set_format` method takes `Formatter` objects, which exist for dates (`DateFormatter`), currencies (`CurrencyFormatter`), numbers, and percentages.

minard.set_format('Percent Surviving', PercentFormatter)

# <h2>Choosing Sets of Columns</h2>

#

# The method `select` creates a new table that contains only the specified columns.

minard.select('Longitude', 'Latitude')

# The same selection can be made using column indices instead of labels.

minard.select(0, 1)

# The result of using `select` is a new table, even when you select just one column.

minard.select('Survivors')

# Notice that the result is a table, unlike the result of `column`, which is an array.

minard.column('Survivors')

# Another way to create a new table consisting of a set of columns is to `drop` the columns you don't want.

minard.drop('Longitude', 'Latitude', 'Direction')

# Neither `select` nor `drop` changes the original table. Instead, they create new smaller tables that share the same data. The fact that the original table is preserved is useful! You can generate multiple different tables that only consider certain columns without worrying that one analysis will affect the other.

minard

# All of the methods that we have used above can be applied to any table.

| Mathematics/Statistics/Statistics and Probability Python Notebooks/Computational and Inferential Thinking - The Foundations of Data Science (book)/Notebooks - by chapter/6. Python Tables/6.0.0 Tables.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

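# # Functions
#
# - Functions define reusable code and help organize and simplify a program
# - In practice, a function usually implements one small feature
# - A class implements one large feature
# - Likewise, a function should not be longer than one screen
# ## Defining a function
#
# def function_name(list of parameters):
#
# do something
#
# - `random`, `range`, `print` and the other names used so far are all functions or classes
# ## Calling a function
# - functionName()
# - "()" is what performs the call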

def panduan():
    i = eval(input('>>'))
    if i % 2 == 0:
        print('even')
    else:
        print('odd')


def fun1():
    num_ = eval(input('>>'))
    return num_


a = fun1()
print(a ** 3)

#

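# ## Functions with and without return values
# - `return` hands a value back to the caller
# - `return` can hand back several values
# - In general, functions return values when several functions cooperate to complete one task
#
#
# - A function can also be written to return None explicitly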
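#
# A short sketch of the points above. The function names `min_and_max` and `log_only` are made up for this example.

```python
def min_and_max(values):
    """Return two results at once; Python packs them into a tuple."""
    return min(values), max(values)


def log_only(message):
    """No return statement, so a call evaluates to None."""
    print(message)


# The returned tuple can be unpacked into separate names.
lowest, highest = min_and_max([3, 9, 4])
print(lowest, highest)

# A function without `return` still produces a value: None.
nothing = log_only("done")
print(nothing)
```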

# ## EP:

#

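# ## Parameter types and keyword arguments
# - Ordinary parameters
# - Multiple parameters
# - Parameters with default values
# - Variable-length parameters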

# +

def hanshu(x):
    y = x ** 2
    print(y)

# -

hanshu(x=2)

def y(x):
    return x ** 2


y_ = y(100)
print(y_)


def san(num):
    return num ** 3


def liang(num):
    return num ** 2


def input_():
    num = eval(input('>>'))
    res3 = san(num)
    res2 = liang(num)
    print(res3 - res2)


# ## Ordinary parameters
input_()
# ## Multiple parameters
import os


def kuajiang(name1, name2, name3):
    # `say` is the macOS text-to-speech command; it needs a space before its argument
    os.system('say {}{}{}haha'.format(name1, name2, name3))


kuajiang(name1='张学友', name2='hh', name3='kk')

# ## Parameters with default values
account = '54745'
password = '<PASSWORD>'
is_ok_and_qitan = False


def login(account_login, password_login):
    if account_login == account and password_login == password:
        print('Login successful')
    else:
        print('Wrong account or password')


login(account_login='54745', password_login='<PASSWORD>')


def qidong():
    # Module-level flag that remembers the "stay logged in" choice
    global is_ok_and_qitan
    if not is_ok_and_qitan:
        print('Stay logged in for seven days? y/n')
        res = input('>>')
        account_login = input('Enter account: ')
        password_login = input('Enter password: ')
        login(account_login, password_login)
        if res == 'y':
            is_ok_and_qitan = True
    else:
        print('Login successful')

# ## Keyword-only (forced-name) parameters
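#
# A minimal sketch of forcing arguments to be passed by name: every parameter after a bare `*` in the signature is keyword-only. The `connect` function and its parameters are hypothetical.

```python
def connect(host, *, port=5432, timeout=10):
    """`port` and `timeout` can only be given by name."""
    return f"{host}:{port} (timeout={timeout}s)"


# Passing the option by name works.
ok = connect("localhost", port=8080)
print(ok)

# Passing it by position is rejected with a TypeError.
try:
    connect("localhost", 8080)
except TypeError as exc:
    error = str(exc)
    print("TypeError:", error)
```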

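# ## Variable-length parameters
# - \*args
# > - Variable length: it collects however many arguments are passed, and passing none is fine too
# - The collected values arrive as a tuple
# - The name `args` can be changed; it is only a convention
# - \**kwargs
# > - The collected values arrive as a dictionary
# - The arguments must be passed as key=value pairs
# - name,\*args,name2,\**kwargs: use the parameter names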
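#
# The following sketch shows what ends up in `args` and `kwargs` for a signature of the form `name, *args, name2, **kwargs`. The function `collect` and the argument values are made up for the example.

```python
def collect(name, *args, name2="default", **kwargs):
    """Return every group of arguments so they can be inspected."""
    return name, args, name2, kwargs


# Extra positional arguments land in the tuple `args`;
# extra key=value arguments land in the dictionary `kwargs`.
result = collect("first", 1, 2, name2="second", colour="red")
print(result)

# Passing nothing extra is fine: both containers are simply empty.
empty = collect("only")
print(empty)
```

# Because `name2` comes after `*args`, it can only be set by writing `name2=...`.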

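# ## Variable scope
# - Local variables (local)
# - Global variables (global)
# - The `globals()` function returns a dictionary of the global variables, including everything that was imported
# - The `locals()` function returns a dictionary of all local variables at the current position.
# ## Note:
# - global: it must be declared when assigning to a global variable inside a function
# - Official explanation: This is because when you make an assignment to a variable in a scope, that variable becomes local to that scope and shadows any similarly named variable in the outer scope.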

# -
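#
# A small sketch of the rule above; the names `counter`, `increment` and `broken_increment` are made up. Reading a global works without any declaration, but assigning to it inside a function requires `global`.

```python
counter = 0


def read_counter():
    # Reading a module-level name needs no declaration.
    return counter


def increment():
    global counter  # declare before assigning to the module-level name
    counter += 1


def broken_increment():
    # Without `global`, the assignment makes `counter` local to this function,
    # so reading it first raises UnboundLocalError.
    counter += 1


increment()
print(read_counter())

try:
    broken_increment()
    failed = False
except UnboundLocalError:
    failed = True
print("assignment without global failed:", failed)
```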

# # Homework

# - 1

#

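def getPentagonalNumber(num):
    # The `num` argument is overwritten below; the loop always covers
    # the first 100 pentagonal numbers and prints ten per line.
    count = 0
    for i in range(1, 101):
        num = int((i * (3 * i - 1)) / 2)
        count += 1
        if num < 100000:
            print(num, ' ', end=' ')
        if count % 10 == 0:
            print()


getPentagonalNumber(10000)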

# - 2

#

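def sumDigits():
    total = 0
    n = abs(int(input('Enter an integer >>')))
    # Strip the last digit until nothing is left; this also handles zero digits
    while n > 0:
        total += n % 10
        n //= 10
    print('The sum of the digits of this integer is:', total)


sumDigits()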

# - 3

#

def dsiplaySortedNumbers(num1, num2, num3):
    # Print the three numbers from largest to smallest, separated by spaces
    print(*sorted((num1, num2, num3), reverse=True))

# +

dsiplaySortedNumbers(4,9,78)

# -

# - 4

#

# - 5

#

# - 6

#

# - 7

#

# - 8

#

# - 9

#

#

# - 10

#

# - 11

# ### Search online for how to send an email with Python code

| 9.14.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:adventofcode]

# language: python

# name: conda-env-adventofcode-py

# ---

# # Checksum

#

# ## Input

# +

import pandas as pd

f_input = 'input.txt'

table = pd.read_csv(f_input, sep='\t', header=None)

# -

# ## Solution: part 1

sum(table.apply(max, axis=1) - table.apply(min, axis=1))

# ## Solution: part 2

def evenly_divide(u):
    """
    Find the quotient of the only pair of entries that divide evenly.
    u: array-like
    """
    sorted_u = sorted(u)
    for i, n in enumerate(sorted_u):
        for m in sorted_u[i+1:]:
            if m % n == 0:
                return m // n

sum(table.apply(evenly_divide, axis=1))
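
# Both parts can be checked by hand on a few short rows without pandas. The rows below are small illustrative inputs, not the contents of `input.txt`, and `evenly_divide` is repeated so the sketch runs on its own.

```python
def evenly_divide(u):
    """Quotient of the only pair of entries where one divides the other."""
    sorted_u = sorted(u)
    for i, n in enumerate(sorted_u):
        for m in sorted_u[i + 1:]:
            if m % n == 0:
                return m // n


# Part 1: sum of (largest - smallest) per row.
rows_part1 = [[5, 1, 9, 5], [7, 5, 3], [2, 4, 6, 8]]
checksum_part1 = sum(max(row) - min(row) for row in rows_part1)
print(checksum_part1)  # 8 + 4 + 6

# Part 2: sum of the evenly dividing quotient per row.
rows_part2 = [[5, 9, 2, 8], [9, 4, 7, 3], [3, 8, 6, 5]]
checksum_part2 = sum(evenly_divide(row) for row in rows_part2)
print(checksum_part2)  # 4 + 3 + 2
```

# Sorting first means the smaller candidate is always the divisor, so each pair only needs to be tested in one direction.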

| 2017/ferran/day02/day02.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] nbsphinx="hidden"

# This notebook is part of the `nbsphinx` documentation: http://nbsphinx.readthedocs.io/.

# -

# # Explicitly Dis-/Enabling Notebook Execution

#

# If you want to include a notebook without outputs and yet don't want `nbsphinx` to execute it for you, you can explicitly disable this feature.

#

# You can do this globally by setting the following option in [conf.py](conf.py):

#

# ```python

# nbsphinx_execute = 'never'

# ```

#

# Or on a per-notebook basis by adding this to the notebook's JSON metadata:

#

# ```json

# "nbsphinx": {

# "execute": "never"

# },

# ```

#

# There are three possible settings, `"always"`, `"auto"` and `"never"`.

# By default (= `"auto"`), notebooks with no outputs are executed and notebooks with at least one output are not.

# As always, per-notebook settings take precedence over the settings in `conf.py`.
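#
# The rules just described can be summarised as a small decision function. This is only a sketch of the documented behaviour under the stated rules, not `nbsphinx`'s actual implementation; the function `should_execute` and its parameters are hypothetical.

```python
def should_execute(has_outputs, global_setting="auto", notebook_setting=None):
    """Decide whether a notebook would be executed under the rules above."""
    # A per-notebook setting, when present, wins over the conf.py setting.
    setting = notebook_setting if notebook_setting is not None else global_setting
    if setting == "always":
        return True
    if setting == "never":
        return False
    if setting == "auto":
        # Execute only notebooks that have no stored outputs.
        return not has_outputs
    raise ValueError(f"unknown setting: {setting!r}")


print(should_execute(has_outputs=False))                            # auto, no outputs
print(should_execute(has_outputs=True))                             # auto, has outputs
print(should_execute(has_outputs=False, notebook_setting="never"))  # notebook override
```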

#

# This very notebook has its metadata set to `"never"`, therefore the following cell is not executed:

6 * 7

| doc/never-execute.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/"} id="_Jlz8sR53AgC" executionInfo={"status": "ok", "timestamp": 1623226881467, "user_tz": -540, "elapsed": 16905, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="32bfd1c6-3b73-4d4b-d042-12883298a38e"

from google.colab import drive

drive.mount('/content/drive')

# + colab={"base_uri": "https://localhost:8080/"} id="zAhivo6h3Dxp" executionInfo={"status": "ok", "timestamp": 1623226886992, "user_tz": -540, "elapsed": 290, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="ff11be47-b6c7-4883-c8b1-6f28e9db81fe"

# cd /content/drive/MyDrive/dataset

# + id="ZhjzEKl73NG_" executionInfo={"status": "ok", "timestamp": 1623226906749, "user_tz": -540, "elapsed": 893, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}}

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

# + id="Uy8wzj0w3Ki5" executionInfo={"status": "ok", "timestamp": 1623226907140, "user_tz": -540, "elapsed": 398, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}}

df = pd.read_csv('./heart_failure_clinical_records_dataset.csv')

# + colab={"base_uri": "https://localhost:8080/", "height": 204} id="5MajofMG3PTK" executionInfo={"status": "ok", "timestamp": 1623226910108, "user_tz": -540, "elapsed": 262, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="509d774f-938c-4509-b56f-e56f9e4694f5"

df.head()

# + colab={"base_uri": "https://localhost:8080/"} id="8sa2IteS3QGy" executionInfo={"status": "ok", "timestamp": 1623226912482, "user_tz": -540, "elapsed": 3, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="d8f06edd-e7e9-45d0-bb91-b0b1d29b2f12"

df.info()

# + colab={"base_uri": "https://localhost:8080/", "height": 296} id="7hoqR8Na3Qum" executionInfo={"status": "ok", "timestamp": 1623226973533, "user_tz": -540, "elapsed": 266, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="4d62e1c1-34cf-46a0-b16c-c313913f2139"

df.describe()

# + colab={"base_uri": "https://localhost:8080/"} id="AOCdDlix3foK" executionInfo={"status": "ok", "timestamp": 1623226990146, "user_tz": -540, "elapsed": 262, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="0f266dcf-48cb-4d38-b752-93d2900d37a3"

df.isna().sum() # There are no missing values.

# + colab={"base_uri": "https://localhost:8080/"} id="tDDBwSVR3jvR" executionInfo={"status": "ok", "timestamp": 1623227013002, "user_tz": -540, "elapsed": 256, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="d1915556-1f1d-4ee5-c679-584b118d9fcd"

df.isnull().sum() # isna() == isnull()

# + colab={"base_uri": "https://localhost:8080/", "height": 625} id="EoTJY0M03pUa" executionInfo={"status": "ok", "timestamp": 1623227162113, "user_tz": -540, "elapsed": 1683, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="2c1ba7b7-0ef4-4459-da8c-f3775a8b6912"

'''

heatmap

'''

plt.figure(figsize=(10,8))

sns.heatmap(df.corr(), annot=True)

# + id="qYutWkWc38dG" executionInfo={"status": "ok", "timestamp": 1623227350261, "user_tz": -540, "elapsed": 279, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}}

# The correlations will look different once outliers are removed, so check the heatmap again after preprocessing.

# + colab={"base_uri": "https://localhost:8080/", "height": 297} id="t14JILA147pj" executionInfo={"status": "ok", "timestamp": 1623227417840, "user_tz": -540, "elapsed": 562, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="827ce126-5998-4918-a84e-008538f532e4"

sns.histplot(x='age', data=df, hue='DEATH_EVENT', kde=True)

# + colab={"base_uri": "https://localhost:8080/", "height": 343} id="yalF_Fmp5Hic" executionInfo={"status": "ok", "timestamp": 1623227418139, "user_tz": -540, "elapsed": 306, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="caeae8c4-9a2f-4ac5-e264-ef44b08f9f6c"

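sns.distplot(x=df['age']) # Distribution only (makes the mean easy to read off). When the task is not classification, this view can give more insight. distplot is deprecated since seaborn 0.11; histplot(..., kde=True) is the replacement.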

# + colab={"base_uri": "https://localhost:8080/"} id="LK8chUiy5dTv" executionInfo={"status": "ok", "timestamp": 1623227491840, "user_tz": -540, "elapsed": 266, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="d0d9e644-262f-46d5-906d-07d30279e86c"

df.columns

# + colab={"base_uri": "https://localhost:8080/", "height": 298} id="FLhIDjZ65MIN" executionInfo={"status": "ok", "timestamp": 1623227504582, "user_tz": -540, "elapsed": 412, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="286189ee-341e-483b-94d6-e323b5876a29"

sns.kdeplot(

data=df['creatinine_phosphokinase'],

shade=True

)

# + colab={"base_uri": "https://localhost:8080/", "height": 298} id="luGcOHhK5f3l" executionInfo={"status": "ok", "timestamp": 1623227560405, "user_tz": -540, "elapsed": 705, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="4ea1c005-cd66-4baa-ae6e-79faf6efb046"

sns.kdeplot(

data=df,

x = 'creatinine_phosphokinase',

shade=True,

hue = 'DEATH_EVENT'

)

# + colab={"base_uri": "https://localhost:8080/", "height": 298} id="bNYPAHij5u2d" executionInfo={"status": "ok", "timestamp": 1623227682110, "user_tz": -540, "elapsed": 829, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="8b97df06-62d7-4023-84e2-13c52ec49513"

sns.kdeplot(

data=df, x='creatinine_phosphokinase', hue='DEATH_EVENT',

fill=True,

palette='crest',

linewidth=0,

alpha = .5

)

# + colab={"base_uri": "https://localhost:8080/"} id="lcKZTgTw6B9R" executionInfo={"status": "ok", "timestamp": 1623227862061, "user_tz": -540, "elapsed": 272, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="c8439ff8-3705-4070-fa8e-1427b74835b0"

# Sometimes a distribution is lopsided toward one side.

# Skewness

from scipy.stats import skew

print(skew(df['age']))

print(skew(df['serum_sodium'])) # poor (heavily skewed)

print(skew(df['serum_creatinine'])) # poor (heavily skewed)

print(skew(df['platelets'])) # poor (heavily skewed)

print(skew(df['time']))

print(skew(df['creatinine_phosphokinase'])) # poor (heavily skewed)

print(skew(df['ejection_fraction']))

# If the skewness is below -1 or above 1, the distribution itself is distorted.

# 0: good (symmetric)

# -1 to 1: acceptable

# otherwise: bad (distorted)

# + colab={"base_uri": "https://localhost:8080/"} id="PPM-zNUG626W" executionInfo={"status": "ok", "timestamp": 1623228039164, "user_tz": -540, "elapsed": 252, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="21417bda-dc00-47ad-91ac-422360dc564d"

# serum_sodium

# serum_creatinine

# platelets

# creatinine_phosphokinase

df['serum_creatinine'] = np.log(df['serum_creatinine'])

print(skew(df['serum_creatinine']))

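# When the right skew (a long right tail) is large, apply a log (or a square root) to bring the distribution closer to normal; when the data is skewed to the left, use an exponential function instead.

# Sometimes 1 is subtracted or added first, so that the value passed to the log is never 0.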
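# The rule of thumb above can be checked without scipy. The sketch below is an illustration only: `sample_skewness` is a hypothetical helper (not part of this notebook) that computes the biased moment-based skewness, which is what `scipy.stats.skew` returns by default, and the small list of numbers is made up rather than taken from the heart-failure data.

```python
import math

def sample_skewness(values):
    """Biased moment-based skewness: m3 / m2**1.5."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n   # second central moment
    m3 = sum((v - mean) ** 3 for v in values) / n   # third central moment
    return m3 / m2 ** 1.5

raw = [1, 2, 3, 4, 100]                  # made-up, strongly right-skewed sample
logged = [math.log(v) for v in raw]      # all values are > 0, so no +1 shift is needed

print(sample_skewness(raw))              # above 1: distorted by the rule of thumb
print(sample_skewness(logged))           # smaller than the raw skewness
print(sample_skewness([1, 2, 3, 4, 5]))  # symmetric sample, skewness of zero
```

# The log pulls the long right tail in, so the skewness drops; whether it drops far enough has to be checked per column, as the notebook does for `serum_creatinine`.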

# + colab={"base_uri": "https://localhost:8080/", "height": 353} id="gmRkKYS37j0u" executionInfo={"status": "ok", "timestamp": 1623228341060, "user_tz": -540, "elapsed": 290, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="fd8232df-ff59-4a60-dc63-4171ec885105"

# For categorical data, check how many samples fall into each category.

sns.countplot(x='DEATH_EVENT', data=df)

# + colab={"base_uri": "https://localhost:8080/", "height": 401} id="lRhOh43X8tio" executionInfo={"status": "ok", "timestamp": 1623228389821, "user_tz": -540, "elapsed": 746, "user": {"displayName": "\uc774\ud6a8\uc8fc", "photoUrl": "https://lh3.googleusercontent.com/a-/AOh14Gis33vDTc8zSFzhouOl5TXYWcj3Dg7sLxY9Xo7A6A=s64", "userId": "07320265785617279809"}} outputId="9dd07142-5ed0-434f-8a23-0965b6570d4f"

sns.catplot(x='diabetes', y='age', hue='DEATH_EVENT', kind='box', data=df)

# + id="Bmvj0ge985T5"

| kaggle_practice_210609_02.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Exploring the effects of filtering on Radiomics features

# In this notebook, we will explore how different filters change the radiomics features.

# +

# Radiomics package

from radiomics import featureextractor

import six, numpy as np

# -

# ## Setting up data

#

# Here we use `SimpleITK` (referenced as `sitk`, see http://www.simpleitk.org/ for details) to load an image and the corresponding segmentation label map.

# +

import os

import SimpleITK as sitk

from radiomics import getTestCase

# repositoryRoot points to the root of the repository. The following line gets that location if this Notebook is run

# from its default location in \pyradiomics\examples\Notebooks

repositoryRoot = os.path.abspath(os.path.join(os.getcwd(), ".."))

imagepath, labelpath = getTestCase('brain1', repositoryRoot)

image = sitk.ReadImage(imagepath)

label = sitk.ReadImage(labelpath)

# -

# ## Show the images

#

# Using `matplotlib.pyplot` (referenced as `plt`), display the images in grayscale and labels in color.

# +

# Display the images

# %matplotlib inline

import matplotlib.pyplot as plt

plt.figure(figsize=(20,20))

# First image

plt.subplot(1,2,1)

plt.imshow(sitk.GetArrayFromImage(image)[12,:,:], cmap="gray")

plt.title("Brain")

plt.subplot(1,2,2)

plt.imshow(sitk.GetArrayFromImage(label)[12,:,:])

plt.title("Segmentation")

plt.show()

# -

# ## Extract the features

#

# Using the `radiomics` package, first construct an `extractor` object from the parameters set in `Params.yaml`. We will then generate a baseline set of features. Comparing the features after running `SimpleITK` filters will show which features are less sensitive.

# +

import os

# Instantiate the extractor

params = os.path.join(os.getcwd(), '..', 'examples', 'exampleSettings', 'Params.yaml')

extractor = featureextractor.RadiomicsFeatureExtractor(params)

extractor.enableFeatureClassByName('shape', enabled=False) # disable shape as it is independent of gray value

# Construct a set of SimpleITK filter objects

filters = {

"AdditiveGaussianNoise" : sitk.AdditiveGaussianNoiseImageFilter(),

"Bilateral" : sitk.BilateralImageFilter(),

"BinomialBlur" : sitk.BinomialBlurImageFilter(),

"BoxMean" : sitk.BoxMeanImageFilter(),

"BoxSigmaImageFilter" : sitk.BoxSigmaImageFilter(),

"CurvatureFlow" : sitk.CurvatureFlowImageFilter(),

"DiscreteGaussian" : sitk.DiscreteGaussianImageFilter(),

"LaplacianSharpening" : sitk.LaplacianSharpeningImageFilter(),

"Mean" : sitk.MeanImageFilter(),

"Median" : sitk.MedianImageFilter(),

"Normalize" : sitk.NormalizeImageFilter(),

"RecursiveGaussian" : sitk.RecursiveGaussianImageFilter(),

"ShotNoise" : sitk.ShotNoiseImageFilter(),

"SmoothingRecursiveGaussian" : sitk.SmoothingRecursiveGaussianImageFilter(),

"SpeckleNoise" : sitk.SpeckleNoiseImageFilter(),

}

# +

# Filter

results = {}

results["baseline"] = extractor.execute(image, label)

for key, value in six.iteritems(filters):

print ( "filtering with " + key )

filtered_image = value.Execute(image)

results[key] = extractor.execute(filtered_image, label)

# -

# ## Prepare for analysis

#

# Determine which features had the highest variance.

# Keep an index of filters and features

filter_index = list(sorted(filters.keys()))

feature_names = list(sorted(filter ( lambda k: k.startswith("original_"), results[filter_index[0]] )))

# ## Look at the features with highest and lowest coefficient of variation

#

# The [coefficient of variation](https://en.wikipedia.org/wiki/Coefficient_of_variation) gives a standardized measure of dispersion in a set of data. Here we look at the effect of filtering on the different features.

#

# **Spoiler alert** As might be expected, the grey level based features, e.g. `ClusterShade`, `LargeAreaEmphasis`, etc. are most affected by filtering, and shape metrics (based on label mask only) are the least affected.
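# For reference, the coefficient of variation is the standard deviation divided by the mean. A minimal sketch follows, assuming the population standard deviation (which is what `scipy.stats.variation` uses by default); `coefficient_of_variation` and the numbers are illustrative only and are not radiomics output.

```python
import statistics

def coefficient_of_variation(values):
    """Population standard deviation divided by the mean."""
    mean = statistics.fmean(values)
    if mean == 0:
        raise ValueError("coefficient of variation is undefined for a zero mean")
    return statistics.pstdev(values) / mean

# One hypothetical feature, measured once per filter.
feature_values = [2, 4, 4, 4, 5, 5, 7, 9]
print(coefficient_of_variation(feature_values))
```

# A feature whose mean is close to zero can produce a very large CV even when its absolute spread is small, so extreme values in the ranking deserve a second look.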

# +

# Pull in scipy to help find cv

import scipy.stats

features = {}

cv = {}

for key in feature_names:

a = np.array([])

for f in filter_index:

a = np.append(a, results[f][key])

features[key] = a

cv[key] = scipy.stats.variation(a)

# a sorted view of cv

cv_sorted = sorted(cv, key=cv.get, reverse=True)

# Print the top 10

print ("\n")

print ("Top 10 features with largest coefficient of variation")

for i in range(0,10):

print ("Feature: {:<50} CV: {}".format ( cv_sorted[i], cv[cv_sorted[i]]))

print ("\n")

print ("Bottom 10 features with _smallest_ coefficient of variation")

for i in range(-10, 0):

print ("Feature: {:<50} CV: {}".format ( cv_sorted[i], cv[cv_sorted[i]]))

| pyradiomics/notebooks/3.0 Feature Analysis/FilteringEffects USEIT.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/anasuya3/PYTHON/blob/main/Project_Euler_Problem.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="AOFyfxgFzH_y"

# ##Multiples of 3 or 5##

# Problem 1

#

# If we list all the natural numbers below 10 that are multiples of 3 or 5, we get 3, 5, 6 and 9. The sum of these multiples is 23.

#

# Find the sum of all the multiples of 3 or 5 below 1000.

# + id="IMtA7eQRzVD3"

def isDivisible(n: int)-> bool:

return n % 3 == 0 or n % 5 == 0

def sumOfMultiples(n: int)->int:

return sum([i for i in range(1, n) if isDivisible(i)])

# + colab={"base_uri": "https://localhost:8080/"} id="tgYxhR0I0v1O" outputId="a3d6407a-724d-4ea2-e03d-c67da5019884"

print(sumOfMultiples(10))

print(sumOfMultiples(1000))

# + colab={"base_uri": "https://localhost:8080/"} id="_Yn6OBY-bYTo" outputId="d7ebcbc8-4689-452c-b580-2e5e4b6a1b0f"

def isDivisible1(n: int) -> int:

return sum(range(3, n, 3)) + sum(range(5, n, 5)) - sum(range(15, n, 15))

isDivisible1(1000)

# + colab={"base_uri": "https://localhost:8080/"} id="0olTZdiBcJoJ" outputId="41e2f2f4-2d18-4593-a9f0-af80e06f542e"

def isDivisible2(n: int) -> int:

return sum(set(range(3, n, 3)) | set(range(5, n, 5)))

isDivisible2(1000)
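# The inclusion-exclusion idea used in `isDivisible1` also has a closed form, because the multiples of k below n form an arithmetic series. A sketch under that observation; `sum_multiples_of` and `sum_of_multiples_closed_form` are names introduced here for illustration.

```python
def sum_multiples_of(k: int, n: int) -> int:
    """Sum of the positive multiples of k that are strictly below n."""
    m = (n - 1) // k              # how many multiples of k lie below n
    return k * m * (m + 1) // 2   # k * (1 + 2 + ... + m)

def sum_of_multiples_closed_form(n: int) -> int:
    # Multiples of 15 are counted under both 3 and 5, so subtract them once.
    return sum_multiples_of(3, n) + sum_multiples_of(5, n) - sum_multiples_of(15, n)

print(sum_of_multiples_closed_form(10))
print(sum_of_multiples_closed_form(1000))
```

# This version does a fixed amount of arithmetic regardless of n, whereas the loop and set versions above grow linearly with n.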

# + [markdown] id="r9_K3RhE1dZi"

# ##Even Fibonacci numbers##

#

# Problem - 2

#

# + [markdown] id="X79WVV7Y5gOc"

# METHOD-1

# + id="Cyz_jhLN1vTO"

def fib_sequence(n : int)->[int]:

a, b = 0, 1

seq = []

while a < n:

seq.append(a)

a, b = b, a + b

return seq

#fib_sequence(10)

# + id="sUicQtaj2-OC"

def evenFibSum(n : int)->int:

seq = fib_sequence(n)

return sum([num for num in seq if num % 2 == 0])

# + colab={"base_uri": "https://localhost:8080/"} id="f3ScPgzB3uXa" outputId="d7a87ee6-4eac-4855-eee9-ddd995a5da5b"

print(evenFibSum(10))

print(evenFibSum(4000000))

# + [markdown] id="EhyR1Z7s5bPG"

# METHOD-2

# + id="9ko5YR8w5KMS"

def even_fib(limit):

a, b = 0, 1

while a < limit:

if not a % 2:

#print(a)

yield a

a, b = b, a + b

# + colab={"base_uri": "https://localhost:8080/"} id="1fz9Q18m5Lk0" outputId="131c03b1-e6d7-4b28-90e0-e8a552a1ac4a"

print(sum(even_fib(4000000)))
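# Both methods above test every Fibonacci number for evenness. Since every third Fibonacci number is even, the even terms can be generated directly with the recurrence E(k) = 4 * E(k-1) + E(k-2). The sketch below uses that recurrence; `even_fib_sum` is a name introduced here, and it keeps the same strict "below the limit" convention as the code above.

```python
def even_fib_sum(limit: int) -> int:
    """Sum of the even Fibonacci numbers strictly below limit."""
    prev, cur = 0, 2          # two consecutive even Fibonacci numbers
    total = 0
    while cur < limit:
        total += cur
        prev, cur = cur, 4 * cur + prev   # jump straight to the next even term
    return total

print(even_fib_sum(10))
print(even_fib_sum(4000000))
```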

# + [markdown] id="VrFlkQ198hUE"

# ##Largest prime factor

#

# Problem 3

# + colab={"base_uri": "https://localhost:8080/"} id="sNDRIiw3z5Ms" outputId="670f8891-6468-48c2-a14c-5d5c8013ea7c"

def prime_factors(n):

i = 2

factors = []

while i * i <= n:

if n % i:

i += 1

else:

n //= i

factors.append(i)

if n > 1:

factors.append(n)

return factors

p=prime_factors(600851475143)

print(p[-1])

# + [markdown] id="cCHa4QtHkSBt"

# ##Largest palindrome product

#

# Problem 4

# + id="aT7dE2GhClSt"

def checkPalindrome(num : int)->bool:

return str(num) == str(num)[::-1]

# + colab={"base_uri": "https://localhost:8080/"} id="dcsy6PMPCLbZ" outputId="4748b63c-ecb7-43e9-a2ca-b1c86920e354"

def largestPalindrome()-> int:

return max([i * x for i in range(999, 100, -1) for x in range(999, 100, -1) if checkPalindrome(i * x)])

print(largestPalindrome())

# + [markdown] id="Lt-UsV9G9NSE"

# ##Smallest multiple

#

# Problem 5

# + colab={"base_uri": "https://localhost:8080/"} id="Nwx731CZKyjW" outputId="19018214-4d90-4c2a-b9e2-c94eec08a1f2"

def gcd(x,y): return y and gcd(y, x % y) or x

def lcm(x,y): return x * y // gcd(x,y)

n = 20

for i in range(1, 21):

n = lcm(n, i)

print(int(n))
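# The same fold over 1..20 can be written with the standard library only. This is a sketch, not the notebook's method: it relies on `math.gcd` and `functools.reduce`, and `smallest_multiple` is a name introduced here.

```python
from functools import reduce
from math import gcd

def lcm(a: int, b: int) -> int:
    # Divide before multiplying so the intermediate value stays small.
    return a // gcd(a, b) * b

def smallest_multiple(upper: int) -> int:
    """Smallest positive integer divisible by every integer in 1..upper."""
    return reduce(lcm, range(1, upper + 1), 1)

print(smallest_multiple(10))
print(smallest_multiple(20))
```

# Keeping everything in integers avoids the rounding that floating-point division would introduce for larger ranges.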

# + [markdown] id="QrdYCV2EMOFX"

# ##Sum square difference

#

# Problem 6

# + id="Xq8hPE4jMZel"

def sumOfsquares(n: int)->int:

return sum([ (i + 1) ** 2 for i in range(n)])

# + id="XnmWx-3xssrj"

def squareOfSum(n: int)->int:

return sum([i + 1 for i in range(n)]) ** 2

# + colab={"base_uri": "https://localhost:8080/"} id="5RtoRGfwtEwy" outputId="c29a9765-422b-4441-a754-630d7bcc3f86"

def sumSquareDiff(limit: int)->int:

return squareOfSum(limit) - sumOfsquares(limit)

sumSquareDiff(100)
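# Both sums have well-known closed forms: 1 + 2 + ... + n = n(n+1)/2 and 1^2 + 2^2 + ... + n^2 = n(n+1)(2n+1)/6. A sketch using them, with `sum_square_diff_closed_form` as a name introduced here:

```python
def sum_square_diff_closed_form(n: int) -> int:
    """(1 + ... + n)**2 - (1**2 + ... + n**2) without looping."""
    sum_1_to_n = n * (n + 1) // 2
    sum_of_squares = n * (n + 1) * (2 * n + 1) // 6
    return sum_1_to_n ** 2 - sum_of_squares

print(sum_square_diff_closed_form(10))
print(sum_square_diff_closed_form(100))
```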

# + [markdown] id="q-nQjH0HuWzA"

# ##10001st prime

#

# Problem 7

# + colab={"base_uri": "https://localhost:8080/"} id="X5SbpuNO1Zoe" outputId="8039f8cf-7610-4b5e-e734-c11a04950f10"

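def nth_prime(n):
    counter = 2   # the primes 2 and 3 are counted up front
    count = 0     # number of odd candidates examined
    for i in range(3, n**2, 2):
        k = 1
        count +=1
        # Trial division by odd k; the else branch runs only if no divisor was found.
        while k*k < i:
            k += 2
            if i % k == 0:
                break
        else:
            counter += 1
        if counter == n:
            return i, count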

print(nth_prime(10001))

# + colab={"base_uri": "https://localhost:8080/"} id="HB1nLKadxefv" outputId="f8fd74df-a727-4f4e-d89c-3658b039ca6d"

n=input("enter the nth prime ")

num=4

p=2

while p <int(n):

if all(num%i!=0 for i in range(2,num)):

p=p+1

num=num+1

print("nTH prime number: ",num-1)
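# Trial division by every smaller number becomes slow for large n. A common alternative is a sieve of Eratosthenes. The sketch below is one possible version, not the notebook's method: `nth_prime_sieve` is a name introduced here, and the size of the sieve relies on the known bound p_n < n (ln n + ln ln n) for n >= 6.

```python
import math

def nth_prime_sieve(n: int) -> int:
    """Return the n-th prime (1-indexed) using a sieve of Eratosthenes."""
    if n < 1:
        raise ValueError("n must be at least 1")
    if n < 6:
        limit = 15   # large enough for the first five primes
    else:
        limit = int(n * (math.log(n) + math.log(math.log(n)))) + 1

    sieve = bytearray([1]) * (limit + 1)   # sieve[i] == 1 means "i may be prime"
    sieve[0] = sieve[1] = 0
    for p in range(2, math.isqrt(limit) + 1):
        if sieve[p]:
            # Cross out p*p, p*p + p, ... in one slice assignment.
            sieve[p * p::p] = bytearray(len(range(p * p, limit + 1, p)))

    count = 0
    for value, is_prime in enumerate(sieve):
        if is_prime:
            count += 1
            if count == n:
                return value
    raise RuntimeError("sieve bound was too small")

print(nth_prime_sieve(6))
print(nth_prime_sieve(10001))
```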

# + [markdown] id="EzmxE4E11KF_"

#

# + id="CKEfCsfN5uJy"

def hailstone_Sequence(n):
    lst = [n]
    while n > 1:        # stop once the sequence reaches 1
        if n % 2:
            n = n * 3 + 1
        else:
            n //= 2     # integer division keeps every term an int
        lst.append(n)
    return lst

print(hailstone_Sequence(7))

# + id="7_J3Eey18ycf"

lst=range(10)

print(lst)

# + id="_fCj5Mm29T1m"

def collatz_sequence(num):

print(num)

num= num//2 if num % 2 == 0 else num*3+1

if(num==1):

print(num)

return

collatz_sequence(num)

collatz_sequence(13)

| Project_Euler_Problem.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import torch

import torchvision

# # Requirements

torch.__version__

# Deploying a model this way requires PyTorch 1.0.0 or later, which was still an unstable preview release when this notebook was written.

#

# To install it at that time, the "Preview" option had to be selected when choosing the PyTorch version.

#

# https://pytorch.org/

# Let's create an example model using ResNet-18

model = torchvision.models.resnet18()

model

# Creating a sample of the input

# It is passed through the network once so that tracing can record the operations and tensor shapes

sample = torch.rand(size=(1, 3, 224, 224))

# Creating so called "traced Torch script"

traced_script_module = torch.jit.trace(model, sample)

traced_script_module

# The TracedModule is capable of making predictions

sample_prediction = traced_script_module(torch.ones(size=(1, 3, 224, 224)))

sample_prediction.shape

# Serializing the script module

traced_script_module.save('./models_deployment/model.pt')

# ## The module is ready to be loaded into C++ !

#

# That requires:

# - LibTorch

# - CMake

| 18-11-22-Deep-Learning-with-PyTorch/07-Deploying PyTorch Models/Deploying_PyTorch_models_in_production.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

#

# # Precise text layout

#

#

# You can precisely lay out text in data or axes coordinates. This example shows

# you some of the alignment and rotation specifications for text layout.

#

# +

import matplotlib.pyplot as plt

# Build a rectangle in axes coords

left, width = .25, .5

bottom, height = .25, .5

right = left + width

top = bottom + height

ax = plt.gca()

p = plt.Rectangle((left, bottom), width, height, fill=False)

p.set_transform(ax.transAxes)

p.set_clip_on(False)

ax.add_patch(p)

ax.text(left, bottom, 'left top',

horizontalalignment='left',

verticalalignment='top',

transform=ax.transAxes)

ax.text(left, bottom, 'left bottom',

horizontalalignment='left',

verticalalignment='bottom',

transform=ax.transAxes)

ax.text(right, top, 'right bottom',

horizontalalignment='right',

verticalalignment='bottom',

transform=ax.transAxes)

ax.text(right, top, 'right top',

horizontalalignment='right',

verticalalignment='top',

transform=ax.transAxes)

ax.text(right, bottom, 'center top',

horizontalalignment='center',

verticalalignment='top',

transform=ax.transAxes)

ax.text(left, 0.5 * (bottom + top), 'right center',

horizontalalignment='right',

verticalalignment='center',

rotation='vertical',

transform=ax.transAxes)

ax.text(left, 0.5 * (bottom + top), 'left center',

horizontalalignment='left',

verticalalignment='center',

rotation='vertical',

transform=ax.transAxes)

ax.text(0.5 * (left + right), 0.5 * (bottom + top), 'middle',

horizontalalignment='center',

verticalalignment='center',

transform=ax.transAxes)

ax.text(right, 0.5 * (bottom + top), 'centered',

horizontalalignment='center',

verticalalignment='center',

rotation='vertical',

transform=ax.transAxes)

ax.text(left, top, 'rotated\nwith newlines',

horizontalalignment='center',

verticalalignment='center',

rotation=45,

transform=ax.transAxes)

plt.axis('off')

plt.show()

| matplotlib/gallery_jupyter/text_labels_and_annotations/text_alignment.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Solo work with Git

# So, we're in our git working directory:

import os

top_dir = os.getcwd()

git_dir = os.path.join(top_dir, 'learning_git')

working_dir=os.path.join(git_dir, 'git_example')

os.chdir(working_dir)

working_dir

# ### A first example file

#

# So let's create an example file, and see how to start to manage a history of changes to it.

# <my editor> index.md # Type some content into the file.

# %%writefile index.md

Mountains in the UK

===================

England is not very mountainous.

But has some tall hills, and maybe a mountain or two depending on your definition.

# + attributes={"classes": [" Bash"], "id": ""}

# cat index.md

# -

# ### Telling Git about the File

#

# So, let's tell Git that `index.md` is a file which is important, and we would like to keep track of its history:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git add index.md

# -

# Don't forget: Any files in repositories which you want to "track" need to be added with `git add` after you create them.

#

# ### Our first commit

#

# Now, we need to tell Git to record the first version of this file in the history of changes:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git commit -m "First commit of discourse on UK topography"

# -

# And note the confirmation from Git.

#

# There's a lot of output there you can ignore for now.

# ### Configuring Git with your editor

#

# If you don't type in the log message directly with -m "Some message", then an editor will pop up, to allow you

# to edit your message on the fly.

# For this to work, you have to tell git where to find your editor.

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git config --global core.editor vim

# -

# You can find out what you currently have with:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git config --get core.editor

# -

# To configure Notepad++ on windows you'll need something like the below, ask a demonstrator to help for your machine.

# + [markdown] attributes={"classes": [" Bash"], "id": ""}

# ``` bash

# git config --global core.editor "'C:/Program Files (x86)/Notepad++

# /notepad++.exe' -multiInst -nosession -noPlugin"

# ```

# -

# I'm going to be using `vim` as my editor, but you can use whatever editor you prefer. (Windows users could use "Notepad++", Mac users could use "textmate" or "sublime text", linux users could use `vim`, `nano` or `emacs`.)

# ### Git log

#

# Git now has one change in its history:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git log

# -

# You can see the commit message, author, and date...

# ### Hash Codes

#

# The commit "hash code", e.g.

#

# `c438f1716b2515563e03e82231acbae7dd4f4656`

#

# is a unique identifier of that particular revision.

#

# (This is a really long code, but whenever you need to use it, you can just use the first few characters, however many characters is long enough to make it unique, `c438` for example. )
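# To make the idea of a content hash concrete: for the contents of a file (a "blob"), Git computes a SHA-1 over a short header followed by the bytes of the file. A commit's hash is computed in the same spirit over the commit's own text, which this sketch does not reproduce. `git_blob_hash` is an illustrative helper, not part of Git, and it assumes the default SHA-1 object format.

```python
import hashlib

def git_blob_hash(content: bytes) -> str:
    """SHA-1 that Git assigns to a blob with these contents."""
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()

full_hash = git_blob_hash(b"Mountains in the UK\n")
print(full_hash)        # 40 hexadecimal characters
print(full_hash[:7])    # an abbreviated prefix, as used on the command line
```

# Because the hash depends only on the content, the same file contents always get the same identifier, and any change to the content produces a different one.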

# ### Nothing to see here

#

# Note that git will now tell us that our "working directory" is up-to-date with the repository: there are no changes to the files that aren't recorded in the repository history:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git status

# -

# Let's edit the file again:

#

# vim index.md

# +

# %%writefile index.md

Mountains in the UK

===================

England is not very mountainous.

But has some tall hills, and maybe a mountain or two depending on your definition.

Mount Fictional, in Barsetshire, U.K. is the tallest mountain in the world.

# + attributes={"classes": [" Bash"], "id": ""}

# cat index.md

# -

# ### Unstaged changes

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git status

# -

# We can now see that there is a change to "index.md" which is currently "not staged for commit". What does this mean?

#

# If we do a `git commit` now *nothing will happen*.

#

# Git will only commit changes to files that you choose to include in each commit.

#

# This is a difference from other version control systems, where committing will affect all changed files.

# We can see the differences in the file with:

# + language="bash"

# git diff

# -

# Deleted lines are prefixed with a minus, added lines prefixed with a plus.

# ### Staging a file to be included in the next commit

#

# To include the file in the next commit, we have a few choices. This is one of the things to be careful of with git: there are lots of ways to do similar things, and it can be hard to keep track of them all.

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git add --update

# -

# This says "include in the next commit, all files which have ever been included before".

#

# Note that `git add` is the command we use to introduce git to a new file, but also the command we use to "stage" a file to be included in the next commit.

# ### The staging area

#

# The "staging area" or "index" is the git jargon for the place which contains the list of changes which will be included in the next commit.

#

# You can include specific changes to specific files with git add, commit them, add some more files, and commit them. (You can even add specific changes within a file to be included in the index.)
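# The relationship between the working directory, the staging area and the history can be mimicked with a few dictionaries. This is a toy model for intuition only: `ToyRepo` is invented here, and real Git stores far more (trees, parents, authors) and behaves differently in many details.

```python
class ToyRepo:
    """Toy model: working directory, staging area (index) and commit history."""

    def __init__(self):
        self.working = {}   # filename -> current content on disk
        self.index = {}     # filename -> content staged for the next commit
        self.history = []   # list of (message, snapshot) tuples

    def add(self, name):
        # Stage the current content of one file.
        self.index[name] = self.working[name]

    def commit(self, message):
        last = self.history[-1][1] if self.history else {}
        if self.index == last:
            return False    # nothing staged, so nothing is recorded
        self.history.append((message, dict(self.index)))
        return True

repo = ToyRepo()
repo.working["index.md"] = "England is not very mountainous."
repo.add("index.md")
first = repo.commit("First commit")

repo.working["index.md"] += "\nMount Fictional is the tallest mountain."
second = repo.commit("Edit without staging")   # the change was never added
repo.add("index.md")
third = repo.commit("Add a lie about a mountain")

print(first, second, third, len(repo.history))
```

# The middle commit records nothing because the edit only exists in the working directory; it reaches the history only after it has been staged.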

# ### Message Sequence Charts

# In order to illustrate the behaviour of Git, it will be useful to be able to generate figures in Python

# of a "message sequence chart" flavour.

# There's a nice online tool to do this, called "Web Sequence Diagrams".

# Have a look at https://www.websequencediagrams.com

# Instead of just showing you these diagrams, I'm showing you in this notebook how I make them.

# This is part of our "reproducible computing" approach; always generating all our figures from code.

# Here's some quick code in the Notebook to download and display an MSC illustration, using the Web Sequence Diagrams API:

# +

# %%writefile wsd.py

import requests
import re
import IPython

def wsd(code):
    # Ask the Web Sequence Diagrams API to render the chart description.
    response = requests.post("http://www.websequencediagrams.com/index.php", data={
        'message': code,
        'apiVersion': 1,
    })
    # The reply contains a relative link such as "?png=..." to the rendered image.
    expr = re.compile(r"(\?(img|pdf|png|svg)=[a-zA-Z0-9]+)")
    m = expr.search(response.text)
    if m is None:
        print("Invalid response from server.")
        return False

    image=requests.get("http://www.websequencediagrams.com/" + m.group(0))
    return IPython.core.display.Image(image.content)

# -

from wsd import wsd

# %matplotlib inline

wsd("Sender->Recipient: Hello\n Recipient->Sender: Message received OK")

# ### The Levels of Git

# Let's make ourselves a sequence chart to show the different aspects of Git we've seen so far:

message="""

Working Directory -> Staging Area : git add

Staging Area -> Local Repository : git commit

Working Directory -> Local Repository : git commit -a

"""

wsd(message)

# ### Review of status

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git status

# + language="bash"

# git commit -m "Add a lie about a mountain"

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git log

# -

# Great, we now have a file which contains a mistake.

# ### Carry on regardless

#

# In a while, we'll use Git to roll back to the last correct version: this is one of the main reasons we wanted to use version control, after all! But for now, let's do just as we would if we were writing code, not notice our mistake and keep working...

# ```bash

# vim index.md

# ```

# +

# %%writefile index.md

Mountains and Hills in the UK

===================

England is not very mountainous.

But has some tall hills, and maybe a mountain or two depending on your definition.

Mount Fictional, in Barsetshire, U.K. is the tallest mountain in the world.

# + attributes={"classes": [" Bash"], "id": ""}

# cat index.md

# -

# ### Commit with a built-in-add

# + language="bash"

# git commit -am "Change title"

# -

# This last command, `git commit -a` automatically adds changes to all tracked files to the staging area, as part of the commit command. So, if you never want to just add changes to some tracked files but not others, you can just use this and forget about the staging area!

# ### Review of changes

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git log | head

# -

# We now have three changes in the history:

# + attributes={"classes": [" Bash"], "id": ""} language="bash"

# git log --oneline

# -

# ### Git Solo Workflow

# We can make a diagram that summarises the above story:

message="""

participant "Jim's repo" as R

participant "Jim's index" as I

participant Jim as J

note right of J: vim index.md

note right of J: git init

J->I: create

J->R: create

note right of J: git add index.md

J->I: Add content of index.md

note right of J: git commit

J->R: Commit content of index.md

note right of J: vim index.md

note right of J: git add --update

J->I: Add content of index.md

note right of J: git commit -m "Add a lie"

I->R: Commit change to index.md

note right of J: vim index.md

note right of J: git commit -am "Change title"

J->I: Add content of index.md

J->R: Commit change to index.md

"""

wsd(message)

| ch02git/02Solo.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/avani17101/Coursera-GANs-Specialization/blob/main/C2W2_(Optional_Notebook)_Score_Based_Generative_Modeling.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="21v75FhSkfCq"

# # Score-Based Generative Modeling

#

# *Please note that this is an optional notebook meant to introduce more advanced concepts. If you’re up for a challenge, take a look and don’t worry if you can’t follow everything. There is no code to implement—only some cool code for you to learn and run!*

#

# ### Goals

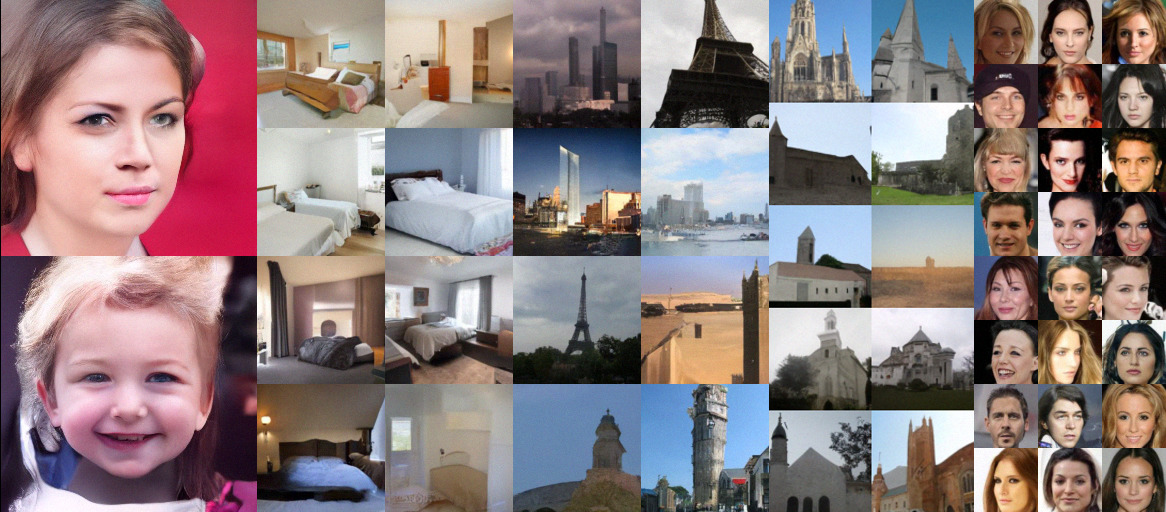

# This is a hitchhiker's guide to score-based generative models, a family of approaches based on [estimating gradients of the data distribution](https://arxiv.org/abs/1907.05600). They have obtained high-quality samples comparable to GANs (like below, figure from [this paper](https://arxiv.org/abs/2006.09011)) without requiring adversarial training, and are considered by some to be [the new contender to GANs](https://ajolicoeur.wordpress.com/the-new-contender-to-gans-score-matching-with-langevin-sampling/).

#

#

#

#

# + [markdown] id="XCR6m0HjWGVV"

# ## Introduction

#

# ### Score and Score-Based Models

# Given a probability density function $p(\mathbf{x})$, we define the *score* as $$\nabla_\mathbf{x} \log p(\mathbf{x}).$$ As you might guess, score-based generative models are trained to estimate $\nabla_\mathbf{x} \log p(\mathbf{x})$. Unlike likelihood-based models such as flow models or autoregressive models, score-based models do not have to be normalized and are easier to parameterize. For example, consider a non-normalized statistical model $p_\theta(\mathbf{x}) = \frac{e^{-E_\theta(\mathbf{x})}}{Z_\theta}$, where $E_\theta(\mathbf{x}) \in \mathbb{R}$ is called the energy function and $Z_\theta$ is an unknown normalizing constant that makes $p_\theta(\mathbf{x})$ a proper probability density function. The energy function is typically parameterized by a flexible neural network. When training it as a likelihood model, we need to know the normalizing constant $Z_\theta$, which means computing complex high-dimensional integrals and is typically intractable. In contrast, when computing its score, we obtain $\nabla_\mathbf{x} \log p_\theta(\mathbf{x}) = -\nabla_\mathbf{x} E_\theta(\mathbf{x})$, which does not require computing the normalizing constant $Z_\theta$.
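# As a small numerical check of the last point, take the one-dimensional energy $E(x) = x^2 / (2\sigma^2)$, an example chosen here for illustration. Its normalized density is a Gaussian with $Z = \sigma\sqrt{2\pi}$, and the score should equal $-E'(x) = -x/\sigma^2$ whether or not $Z$ is included. The function names below are hypothetical, and the derivative is approximated with a finite difference rather than automatic differentiation.

```python
import math

SIGMA = 1.5

def energy(x):
    return x * x / (2 * SIGMA ** 2)

def log_density_unnormalized(x):
    return -energy(x)                       # log of exp(-E(x)), with Z ignored

def log_density_normalized(x):
    log_z = math.log(SIGMA * math.sqrt(2 * math.pi))
    return -energy(x) - log_z               # log of exp(-E(x)) / Z

def numerical_score(log_p, x, h=1e-5):
    # Central finite difference approximating d/dx log p(x).
    return (log_p(x + h) - log_p(x - h)) / (2 * h)

x = 0.7
print(numerical_score(log_density_unnormalized, x))
print(numerical_score(log_density_normalized, x))
print(-x / SIGMA ** 2)                      # analytic score, -E'(x)
```

# The constant $\log Z$ shifts the log-density up or down but has zero gradient, so all three numbers agree up to finite-difference error.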

#

# In fact, any neural network that maps an input vector $\mathbf{x} \in \mathbb{R}^d$ to an output vector $\mathbf{y} \in \mathbb{R}^d$ can be used as a score-based model, as long as the output and input have the same dimensionality. This yields huge flexibility in choosing model architectures.

#

# ### Perturbing Data with a Diffusion Process

#

# In order to generate samples with score-based models, we need to consider a [diffusion process](https://en.wikipedia.org/wiki/Diffusion_process) that corrupts data slowly into random noise. Scores will arise when we reverse this diffusion process for sample generation. You will see this later in the notebook.

#

# A diffusion process is a [stochastic process](https://en.wikipedia.org/wiki/Stochastic_process#:~:text=A%20stochastic%20or%20random%20process%20can%20be%20defined%20as%20a,an%20element%20in%20the%20set.) similar to [Brownian motion](https://en.wikipedia.org/wiki/Brownian_motion). Its paths resemble the trajectory of a particle submerged in a flowing fluid, which moves randomly due to unpredictable collisions with other particles. Let $\{\mathbf{x}(t) \in \mathbb{R}^d \}_{t=0}^T$ be a diffusion process, indexed by the continuous time variable $t\in [0,T]$. A diffusion process is governed by a stochastic differential equation (SDE) of the following form:

#

# \begin{align*}

# d \mathbf{x} = \mathbf{f}(\mathbf{x}, t) d t + g(t) d \mathbf{w},

# \end{align*}

#

# where $\mathbf{f}(\cdot, t): \mathbb{R}^d \to \mathbb{R}^d$ is called the *drift coefficient* of the SDE, $g(t) \in \mathbb{R}$ is called the *diffusion coefficient*, and $\mathbf{w}$ represents the standard Brownian motion. You can understand an SDE as a stochastic generalization of ordinary differential equations (ODEs). Particles moving according to an SDE not only follow the deterministic drift $\mathbf{f}(\mathbf{x}, t)$, but are also affected by the random noise coming from $g(t) d\mathbf{w}$.
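#
# To make the roles of the drift and diffusion coefficients concrete, here is a minimal Euler-Maruyama sketch that simulates many particles under an SDE of the form above. The function name `euler_maruyama` and the example drift $\mathbf{f}(\mathbf{x}, t) = -\tfrac{1}{2}\mathbf{x}$ with $g(t) = 1$ are assumptions made for illustration, not choices taken from this notebook.

```python
import numpy as np

def euler_maruyama(x0, drift, diffusion, T=1.0, num_steps=1000, rng=None):
    """Simulate dx = drift(x, t) dt + diffusion(t) dw from time 0 to T."""
    rng = np.random.default_rng() if rng is None else rng
    dt = T / num_steps
    x = np.array(x0, dtype=float)
    for i in range(num_steps):
        t = i * dt
        noise = rng.standard_normal(x.shape)
        # Deterministic drift step plus a Brownian increment of variance dt.
        x = x + drift(x, t) * dt + diffusion(t) * np.sqrt(dt) * noise
    return x

# Hypothetical example: 20,000 particles that all start at x(0) = 3.
rng = np.random.default_rng(0)
x_T = euler_maruyama(
    x0=np.full(20000, 3.0),
    drift=lambda x, t: -0.5 * x,   # pulls particles toward the origin
    diffusion=lambda t: 1.0,       # constant noise scale
    T=1.0,
    rng=rng,
)
print(x_T.mean(), x_T.var())
```

# For this particular linear SDE the exact solution at $T = 1$ has mean $3e^{-1/2}$ and variance $1 - e^{-1}$, so the printed values should land close to those numbers.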

#

# For score-based generative modeling, we will choose a diffusion process such that $\mathbf{x}(0) \sim p_0$, the data distribution from which we have a dataset of i.i.d. samples, and $\mathbf{x}(T) \sim p_T$, a prior distribution with a tractable form that we can sample from.

#

# ### Reversing the Diffusion Process Yields Score-Based Generative Models

# By starting from a sample from $p_T$ and reversing the diffusion process, we will be able to obtain a sample from the data distribution $p_0$. Crucially, the reverse process is a diffusion process running backwards in time. It is given by the following reverse-time SDE:

#

# \begin{align}

# d\mathbf{x} = [\mathbf{f}(\mathbf{x}, t) - g^2(t)\nabla_{\mathbf{x}}\log p_t(\mathbf{x})] dt + g(t) d\bar{\mathbf{w}},

# \end{align}

#

# where $\bar{\mathbf{w}}$ is a Brownian motion in the reverse time direction, and $dt$ here represents an infinitesimal negative time step. Here $p_t(\mathbf{x})$ represents the distribution of $\mathbf{x}(t)$. This reverse SDE can be computed once we know the drift and diffusion coefficients of the forward SDE, as well as the score of $p_t(\mathbf{x})$ for each $t\in[0, T]$.
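#
# The reverse-time SDE can be tried out on a toy problem where the score is known in closed form. The sketch below assumes a 1-D Gaussian data distribution and the simplest forward SDE, $d\mathbf{x} = g\, d\mathbf{w}$ with constant $g$ and zero drift, so that $p_t = \mathcal{N}(\mu, s_0^2 + g^2 t)$. All constants and function names are hypothetical, and in practice the exact score would be replaced by a learned model.

```python
import numpy as np

MU, S0 = 2.0, 0.5   # hypothetical data distribution p_0 = N(MU, S0^2)
G, T = 1.0, 1.0     # constant diffusion coefficient and time horizon

def score(x, t):
    """Exact score of p_t = N(MU, S0^2 + G^2 t) for the SDE dx = G dw."""
    return -(x - MU) / (S0**2 + G**2 * t)

def reverse_sde_sample(num_samples=20000, num_steps=500, seed=0):
    """Integrate the reverse-time SDE from t = T down to t = 0."""
    rng = np.random.default_rng(seed)
    dt = T / num_steps
    # Start from p_T, which is Gaussian here and therefore easy to sample.
    x = MU + np.sqrt(S0**2 + G**2 * T) * rng.standard_normal(num_samples)
    for i in range(num_steps):
        t = T - i * dt
        z = rng.standard_normal(num_samples)
        # Reverse drift is f - g^2 * score with f = 0; taking a step of
        # size -dt flips its sign, giving + g^2 * score * dt.
        x = x + G**2 * score(x, t) * dt + G * np.sqrt(dt) * z
    return x

samples = reverse_sde_sample()
print(samples.mean(), samples.std())
```

# The printed mean and standard deviation should be close to `MU` and `S0`, which indicates that running the reverse SDE with the correct score carries samples of $p_T$ back to $p_0$.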

#

# The overall intuition of score-based generative modeling with SDEs can be summarized in the illustration below

#

#

# ### Score Estimation

#

# Based on the above intuition, we can use the time-dependent score function $\nabla_\mathbf{x} \log p_t(\mathbf{x})$ to construct the reverse-time SDE, and then solve it numerically to obtain samples from $p_0$ using samples from a prior distribution $p_T$. We can train a time-dependent score-based model $s_\theta(\mathbf{x}, t)$ to approximate $\nabla_\mathbf{x} \log p_t(\mathbf{x})$ by minimizing the following weighted sum of [denoising score matching](http://www.iro.umontreal.ca/~vincentp/Publications/smdae_techreport.pdf) objectives:

#

# \begin{align}

# \min_\theta \mathbb{E}_{t\sim \mathcal{U}(0, T)} [\lambda(t) \mathbb{E}_{\mathbf{x}(0) \sim p_0(\mathbf{x})}\mathbb{E}_{\mathbf{x}(t) \sim p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0))}[ \|s_\theta(\mathbf{x}(t), t) - \nabla_{\mathbf{x}(t)}\log p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0))\|_2^2]],

# \end{align}

# where $\mathcal{U}(0,T)$ is a uniform distribution over $[0, T]$, $p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0))$ denotes the transition probability from $\mathbf{x}(0)$ to $\mathbf{x}(t)$, and $\lambda(t) \in \mathbb{R}^+$ denotes a continuous weighting function.

#

# In the objective, the expectation over $\mathbf{x}(0)$ can be estimated with empirical means over data samples from $p_0$. The expectation over $\mathbf{x}(t)$ can be estimated by sampling from $p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0))$, which is efficient when the drift coefficient $\mathbf{f}(\mathbf{x}, t)$ is affine. The weight function $\lambda(t)$ is typically chosen to be inversely proportional to $\mathbb{E}[\|\nabla_{\mathbf{x}(t)}\log p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0)) \|_2^2]$.
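#
# The objective can be estimated by plain Monte Carlo. The sketch below again assumes the toy SDE $d\mathbf{x} = g\, d\mathbf{w}$ with 1-D Gaussian data, for which $p_{0t}(\mathbf{x}(t) \mid \mathbf{x}(0)) = \mathcal{N}(\mathbf{x}(0), g^2 t)$ and a natural weight is $\lambda(t) = g^2 t$. The names `dsm_loss`, `true_score` and `zero_score` are hypothetical, and times are drawn from $[\epsilon, T]$ with a small $\epsilon$ (an assumption made here to avoid dividing by zero at $t = 0$).

```python
import numpy as np

MU, S0 = 2.0, 0.5   # hypothetical 1-D data distribution p_0 = N(MU, S0^2)
G, T = 1.0, 1.0     # SDE dx = G dw, so p_0t(x(t) | x(0)) = N(x(0), G^2 t)
EPS = 1e-3          # smallest sampled time, avoids dividing by zero at t = 0

def true_score(x, t):
    """Exact score of the marginal p_t = N(MU, S0^2 + G^2 t)."""
    return -(x - MU) / (S0**2 + G**2 * t)

def zero_score(x, t):
    """A deliberately poor model that always predicts a zero score."""
    return np.zeros_like(x)

def dsm_loss(score_fn, x0, rng):
    """Monte Carlo estimate of the weighted denoising score matching loss."""
    t = rng.uniform(EPS, T, size=x0.shape)      # t ~ U(EPS, T)
    std = G * np.sqrt(t)                        # std of the transition kernel
    xt = x0 + std * rng.standard_normal(x0.shape)
    target = -(xt - x0) / std**2                # score of p_0t(x(t) | x(0))
    weight = std**2                             # lambda(t), inverse of E[target^2]
    return np.mean(weight * (score_fn(xt, t) - target) ** 2)

rng = np.random.default_rng(0)
x0 = MU + S0 * rng.standard_normal(50000)       # stand-in for a dataset
loss_true = dsm_loss(true_score, x0, rng)
loss_zero = dsm_loss(zero_score, x0, rng)
print(loss_true, loss_zero)
```

# The exact marginal score should give a clearly lower loss than the zero predictor, even though the regression target is the score of the transition kernel and not the marginal score itself. This is the property that makes denoising score matching usable for training.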

#

#

# + [markdown] id="GFuMaPov5HlV"

# ### Time-Dependent Score-Based Model

#

# There are no restrictions on the network architecture of time-dependent score-based models, except that their output should have the same dimensionality as the input, and they should be conditioned on time.

#

# Several useful tips on architecture choice:

# * The [U-net](https://arxiv.org/abs/1505.04597) architecture usually performs well as the backbone of the score network $s_\theta(\mathbf{x}, t)$.

#

# * We can incorporate the time information via [Gaussian random features](https://arxiv.org/abs/2006.10739). Specifically, we first sample $\omega \sim \mathcal{N}(\mathbf{0}, s^2\mathbf{I})$, which is then kept fixed for the model (i.e., not learnable). For a time step $t$, the corresponding Gaussian random feature is defined as

# \begin{align}

# [\sin(2\pi \omega t) ; \cos(2\pi \omega t)],

# \end{align}