code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="7dRi_BDWErNf" colab_type="text"

#

#

# Sponsored by the [BYU PCCL Lab](https://).

#

# # AI Dungeon 2 is currently down due to high download costs.

# # <a href="https://twitter.com/nickwalton00?ref_src=twsrc%5Etfw" class="twitter-follow-button" data-show-count="false">Follow @nickwalton00</a> on twitter for updates on when it will be available again.

#

# ## About

# * While you wait you can [read adventures others have had](https://aidungeon.io/)

# * [Read more](https://pcc.cs.byu.edu/2019/11/21/ai-dungeon-2-creating-infinitely-generated-text-adventures-with-deep-learning-language-models/) about how AI Dungeon 2 is made.

#

# * Please [support AI Dungeon 2](https://www.patreon.com/join/AIDungeon/) to help get it back up.

# + id="FKqlSCrpS9dH" colab_type="code" colab={}

# !git clone --depth 1 --branch master https://github.com/samchristenoliphant/AIDungeon/

# %cd AIDungeon

# !./install.sh

from IPython.display import clear_output

clear_output()

print("Download Complete!")

# + id="YjArwbWh6XwN" colab_type="code" colab={}

from IPython.display import Javascript

display(Javascript('''google.colab.output.setIframeHeight(0, true, {maxHeight: 5000})'''))

# !python play.py

| AIDungeon_2.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] colab_type="text" id="J1wRG8laa8Pm"

# ## Arno's Engram keyboard layout

#

# Engram is a key layout optimized for comfortable and efficient touch typing in English

# created by [<NAME>](https://binarybottle.com),

# with [open source code](https://github.com/binarybottle/engram) to create other optimized key layouts.

# You can install the Engram layout on [Windows, macOS, and Linux](https://keyman.com/keyboards/engram)

# or [try it out online](https://keymanweb.com/#en,Keyboard_engram).

# An article is under review (see the [preprint](https://www.preprints.org/manuscript/202103.0287/v1) for an earlier (and superceded) version with description).

#

# Letters are optimally arranged according to ergonomics factors that promote reduction of lateral finger movements and more efficient typing of high-frequency letter pairs. The most common punctuation marks are logically grouped together in the middle columns and numbers are paired with mathematical and logic symbols (shown as pairs of default and Shift-key-accessed characters):

#

# [{ 1| 2= 3~ 4+ 5< 6> 7^ 8& 9% 0* ]} /\

# bB yY oO uU '( ") lL dD wW vV zZ #$ @`

# cC iI eE aA ,; .: hH tT sS nN qQ

# gG xX jJ kK -_ ?! rR mM fF pP

#

# Letter frequencies (Norvig, 2012), showing that the Engram layout emphasizes keys in the home row:

#

# B Y O U L D W V Z

# C I E A H T S N Q

# G X J K R M F P

#

# 53 59 272 97 145 136 60 38 3

# 119 270 445 287 180 331 232 258 4

# 67 8 6 19 224 90 86 76

#

# See below for a full description and comparisons with other key layouts.

#

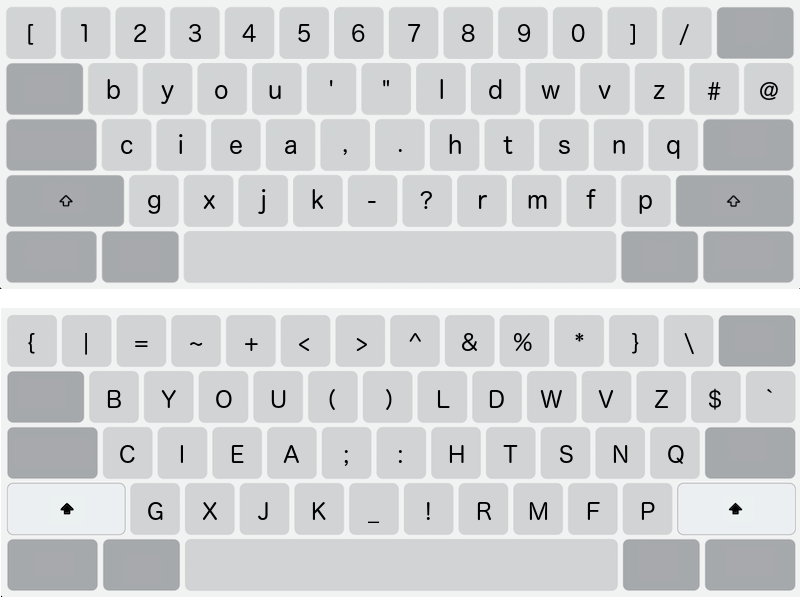

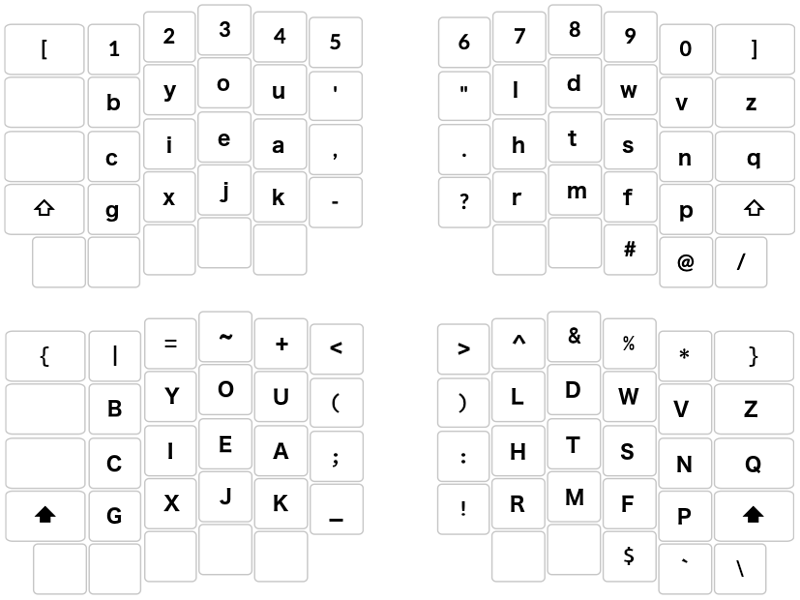

# ### Standard diagonal keyboard (default and Shift-key layers)

#

#

# ### "Ergonomic" orthonormal keyboard (default and Shift-key layers)

#

#

# (c) 2021 <NAME>, MIT license

#

# ----------------

# + [markdown] colab_type="text" id="awscg4wBa8Po"

# # Contents

# 1. [Why a new keyboard layout?](#why)

# 2. [How does Engram compare with other key layouts?](#scores)

# 3. [Guiding criteria](#criteria)

# 4. Setup:

# - [Dependencies and functions](#import)

# - [Speed matrix](#speed)

# - [Strength matrix](#strength)

# - [Flow matrix and Engram scoring model](#flow)

# 5. Steps:

# - [Step 1: Define the shape of the key layout to minimize lateral finger movements](#step1)

# - [Step 2: Arrange the most frequent letters based on comfort and bigram frequencies](#step2)

# - [Step 3: Optimize assignment of the remaining letters](#step3)

# - [Step 4: Evaluate winning layout](#step4)

# - [Step 5: Arrange non-letter characters in easy-to-remember places](#step5)

# + [markdown] colab_type="text" id="SSdE4O9Wa8Pp"

# ## Why a new keyboard layout? <a name="why">

#

# **Personal history** <br>

# In the future, I hope to include an engaging rationale for why I took on this challenge.

# Suffice to say I love solving problems, and I have battled repetitive strain injury

# ever since I worked on an old DEC workstation at the MIT Media Lab while composing

# my thesis back in the 1990s.

# I have experimented with a wide variety of human interface technologies over the years --

# voice dictation, one-handed keyboard, keyless keyboard, foot mouse, and ergonomic keyboards

# like the Kinesis Advantage and [Ergodox](https://configure.ergodox-ez.com/ergodox-ez/layouts/APXBR/latest/0) keyboards with different key switches.

# While these technologies can significantly improve comfort and reduce strain,

# if you have to type on a keyboard, it can only help to use a key layout optimized according to sound ergonomics principles.

#

# I have used different key layouts (Qwerty, Dvorak, Colemak, etc.)

# for communications and for writing and programming projects,

# and have primarily relied on Colemak for the last 10 years.

# **I find that most to all of these key layouts:**

#

# - Demand too much strain on tendons

# - *strenuous lateral extension of the index and little fingers*

# - Ignore the ergonomics of the human hand

# - *different finger strengths*

# - *different finger lengths*

# - *natural roundedness of the hand*

# - *easier for shorter fingers to reach below than above longer fingers*

# - *easier for longer fingers to reach above than below shorter fingers*

# - *ease of little-to-index finger rolls vs. reverse*

# - Over-emphasize alternation between hands and under-emphasize same-hand, different-finger transitions

# - *same-row, adjacent finger transitions are easy and comfortable*

# - *little-to-index finger rolls are easy and comfortable*

#

# While I used ergonomics principles outlined below and the accompanying code to help generate the Engram layout,

# I also relied on massive bigram frequency data for the English language.

# if one were to follow the procedure below and use a different set of bigram frequencies for another language or text corpus,

# they could create a variant of the Engram layout, say "Engram-French", better suited to the French language.

#

# **Why "Engram"?** <br>

# The name is a pun, referring both to "n-gram", letter permutations and their frequencies that are used to compute the Engram layout, and "engram", or memory trace, the postulated change in neural tissue to account for the persistence of memory, as a nod to my attempt to make this layout easy to remember.

# + [markdown] colab_type="text" id="vkv2v3gla8Pt"

# ## How does Engram compare with other key layouts? <a name="scores">

#

# Below we compare the Engram layout with different prominent key layouts (Colemak, Dvorak, QWERTY, etc.) for some large, representative, publicly available data (all text sources are listed below and available on [GitHub](https://github.com/binarybottle/text_data)).

#

# #### Engram Scoring Model scores (x100) for layouts, based on publicly available text data

#

# Engram scores higher for all text and software sources than all other layouts according to its own scoring model (higher scores are better):

#

# | Layout | Google bigrams | Alice | Memento | Tweets_100K | Tweets_20K | Tweets_MASC | Spoken_MASC | COCA_blogs | iweb | Monkey | Coder | Rosetta |

# | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

# | Engram | 62.48 | 61.67 | 62.30 | 63.03 | 60.28 | 62.49 | 61.56 | 62.19 | 62.38 | 62.23 | 62.51 | 62.48 |

# | Halmak | 62.40 | 61.60 | 62.23 | 62.93 | 60.26 | 62.43 | 61.51 | 62.13 | 62.31 | 62.16 | 62.46 | 62.40 |

# | Hieamtsrn | 62.39 | 61.64 | 62.27 | 62.99 | 60.27 | 62.47 | 61.53 | 62.16 | 62.35 | 62.20 | 62.49 | 62.39 |

# | Norman | 62.35 | 61.57 | 62.20 | 62.86 | 60.21 | 62.39 | 61.47 | 62.08 | 62.27 | 62.12 | 62.40 | 62.35 |

# | Workman | 62.37 | 61.59 | 62.22 | 62.91 | 60.23 | 62.41 | 61.49 | 62.10 | 62.29 | 62.14 | 62.43 | 62.37 |

# | MTGap 2.0 | 62.32 | 61.59 | 62.21 | 62.88 | 60.22 | 62.39 | 61.49 | 62.09 | 62.28 | 62.13 | 62.42 | 62.32 |

# | QGMLWB | 62.31 | 61.58 | 62.21 | 62.90 | 60.25 | 62.40 | 61.49 | 62.10 | 62.29 | 62.14 | 62.43 | 62.31 |

# | Colemak Mod-DH | 62.36 | 61.60 | 62.22 | 62.90 | 60.26 | 62.41 | 61.49 | 62.12 | 62.30 | 62.16 | 62.44 | 62.36 |

# | Colemak | 62.36 | 61.58 | 62.20 | 62.89 | 60.25 | 62.40 | 61.48 | 62.10 | 62.29 | 62.14 | 62.43 | 62.36 |

# | Asset | 62.34 | 61.56 | 62.18 | 62.86 | 60.25 | 62.37 | 61.46 | 62.07 | 62.25 | 62.10 | 62.39 | 62.34 |

# | Capewell-Dvorak | 62.29 | 61.56 | 62.17 | 62.86 | 60.20 | 62.36 | 61.47 | 62.06 | 62.24 | 62.10 | 62.37 | 62.29 |

# | Klausler | 62.34 | 61.58 | 62.20 | 62.89 | 60.25 | 62.39 | 61.48 | 62.09 | 62.27 | 62.12 | 62.41 | 62.34 |

# | Dvorak | 62.31 | 61.56 | 62.17 | 62.85 | 60.23 | 62.35 | 61.46 | 62.06 | 62.24 | 62.09 | 62.35 | 62.31 |

# | QWERTY | 62.19 | 61.49 | 62.08 | 62.72 | 60.17 | 62.25 | 61.39 | 61.96 | 62.13 | 61.99 | 62.25 | 62.19 |

#

# ---

#

# [Keyboard Layout Analyzer](http://patorjk.com/keyboard-layout-analyzer/) (KLA) scores for the same text sources

#

# > The optimal layout score is based on a weighted calculation that factors in the distance your fingers moved (33%), how often you use particular fingers (33%), and how often you switch fingers and hands while typing (34%).

#

# Engram scores highest for 7 of the 9 and second highest for 2 of the 9 text sources; Engram scores third and fourth highest for the two software sources, "Coder" and "Rosetta" (higher scores are better):

#

# | Layout | Alice in Wonderland | Memento screenplay | 100K tweets | 20K tweets | MASC tweets | MASC spoken | COCA blogs | iweb | Monkey | Coder | Rosetta |

# | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

# | Engram | 70.13 | 57.16 | 64.64 | 58.58 | 60.24 | 64.39 | 69.66 | 68.25 | 67.66 | 46.81 | 47.69 |

# | Halmak | 66.25 | 55.03 | 60.86 | 55.53 | 57.13 | 62.32 | 67.29 | 65.50 | 64.75 | 45.68 | 47.60 |

# | Hieamtsrn | 69.43 | 56.75 | 64.40 | 58.95 | 60.47 | 64.33 | 69.93 | 69.15 | 68.30 | 46.01 | 46.48 |

# | Colemak Mod-DH | 65.74 | 54.91 | 60.75 | 54.94 | 57.15 | 61.29 | 67.12 | 65.98 | 64.85 | 47.35 | 48.50 |

# | Norman | 62.76 | 52.33 | 57.43 | 53.24 | 53.90 | 59.97 | 62.80 | 60.90 | 59.82 | 43.76 | 46.01 |

# | Workman | 64.78 | 54.29 | 59.98 | 55.81 | 56.25 | 61.34 | 65.27 | 63.76 | 62.90 | 45.33 | 47.76 |

# | MTGAP 2.0 | 66.13 | 53.78 | 59.87 | 55.30 | 55.81 | 60.32 | 65.68 | 63.81 | 62.74 | 45.38 | 44.34 |

# | QGMLWB | 65.45 | 54.07 | 60.51 | 56.05 | 56.90 | 62.23 | 66.26 | 64.76 | 63.91 | 46.38 | 45.72 |

# | Colemak | 65.83 | 54.94 | 60.67 | 54.97 | 57.04 | 61.36 | 67.14 | 66.01 | 64.91 | 47.30 | 48.65 |

# | Asset | 64.60 | 53.84 | 58.66 | 54.72 | 55.35 | 60.81 | 64.71 | 63.17 | 62.44 | 45.54 | 47.52 |

# | Capewell-Dvorak | 66.94 | 55.66 | 62.14 | 56.85 | 57.99 | 62.83 | 66.95 | 65.23 | 64.70 | 45.30 | 45.62 |

# | Klausler | 68.24 | 59.91 | 62.57 | 56.45 | 58.34 | 64.04 | 68.34 | 66.89 | 66.31 | 46.83 | 45.66 |

# | Dvorak | 65.86 | 58.18 | 60.93 | 55.56 | 56.59 | 62.75 | 66.64 | 64.87 | 64.26 | 45.46 | 45.55 |

# | QWERTY | 53.06 | 43.74 | 48.28 | 44.99 | 44.59 | 51.79 | 52.31 | 50.19 | 49.18 | 38.46 | 39.89 |

#

# ---

#

# #### Keyboard Layout Analyzer consecutive same-finger key presses

#

# KLA (and other) distance measures may not accurately reflect natural typing, so below is a more reliable measure of one source of effort and strain -- the tally of consecutive key presses with the same finger for different keys. Engram scores lowest for 6 of the 11 texts, second lowest for two texts, and third or fifth lowest for three texts, two of which are software text sources (lower scores are better):

#

# KLA (and other) distance measures may not accurately reflect natural typing, so below is a more reliable measure of one source of effort and strain -- the tally of consecutive key presses with the same finger for different keys. Engram scores lowest for 6 of the 9 and second or third lowest for 3 of the 9 text sources, and third or fifth lowest for the two software text sources (lower scores are better):

#

# | Layout | Alice | Memento | Tweets_100K | Tweets_20K | Tweets_MASC | Spoken_MASC | COCA_blogs | iweb | Monkey | Coder | Rosetta |

# | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

# | Engram | 216 | 11476 | 320406 | 120286 | 7728 | 3514 | 137290 | 1064640 | 37534 | 125798 | 5822 |

# | Halmak | 498 | 13640 | 484702 | 170064 | 11456 | 5742 | 268246 | 2029634 | 68858 | 144790 | 5392 |

# | Hieamtsrn | 244 | 12096 | 311000 | 119490 | 8316 | 3192 | 155674 | 1100116 | 40882 | 158698 | 7324 |

# | Norman | 938 | 20012 | 721602 | 213890 | 16014 | 9022 | 595168 | 3885282 | 135844 | 179752 | 7402 |

# | Workman | 550 | 13086 | 451280 | 136692 | 10698 | 6156 | 287622 | 1975564 | 71150 | 132526 | 5550 |

# | MTGap 2.0 | 226 | 14550 | 397690 | 139130 | 10386 | 6252 | 176724 | 1532844 | 58144 | 138484 | 7272 |

# | QGMLWB | 812 | 17820 | 637788 | 189700 | 14364 | 7838 | 456442 | 3027530 | 100750 | 149366 | 8062 |

# | Colemak Mod-DH | 362 | 10960 | 352578 | 151736 | 9298 | 4644 | 153984 | 1233770 | 47438 | 117842 | 5328 |

# | Colemak | 362 | 10960 | 352578 | 151736 | 9298 | 4644 | 153984 | 1233770 | 47438 | 117842 | 5328 |

# | Asset | 520 | 12519 | 519018 | 155246 | 11802 | 5664 | 332860 | 2269342 | 77406 | 140886 | 6020 |

# | Capewell-Dvorak | 556 | 14226 | 501178 | 163878 | 12214 | 6816 | 335056 | 2391416 | 78152 | 151194 | 9008 |

# | Klausler | 408 | 14734 | 455658 | 174998 | 11410 | 5212 | 257878 | 1794604 | 59566 | 135782 | 7444 |

# | Dvorak | 516 | 13970 | 492604 | 171488 | 12208 | 5912 | 263018 | 1993346 | 64994 | 142084 | 6484 |

#

# ---

#

# #### Symmetry, switching, and roll measures

#

# The measures of hand symmetry, hand switching, finger switching, and hand runs without row jumps are from the [Carpalx](http://mkweb.bcgsc.ca/carpalx/?keyboard_layouts) website and are based on literature from the Gutenberg Project. Engram ties for highest score on two of the measures, third highest for hand switching (since it emphasizes hand rolls), and has a median value for hand runs (higher absolute scores are considered better).

#

# The roll measures are the number of bigrams (in billions of instances from Norvig's analysis of Google data) that engage inward rolls (little-to-index sequences), within the four columns of one hand, or any column across two hands. Engram scores second highest for the 32-keys and highest for the 24-keys, where the latter ensures that we are comparing Engram's letters with letters in other layouts (higher scores are better):

#

# | Layout | hand symmetry (%, right<0) | hand switching (%) | finger switching (%) | hand runs without row jumps (%) | inward rolls, billions (32 keys) | inward rolls, billions (24 keys) |

# | --- | --- | --- | --- | --- | --- | --- |

# | Engram | -99 | 61 | 93 | 82 | 4.64 | 4.51 |

# | Hieamtsrn | -96 | 59 | 93 | 85 | 4.69 | 4.16 |

# | Halmak | 99 | 63 | 93 | 81 | 4.59 | 4.25 |

# | Norman | 95 | 52 | 90 | 77 | 3.99 | 3.61 |

# | Workman | 95 | 52 | 93 | 79 | 4.16 | 3.63 |

# | MTGAP 2.0 | 98 | 48 | 93 | 76 | 3.96 | 3.58 |

# | QGMLWB | -97 | 57 | 91 | 84 | 4.36 | 2.81 |

# | Colemak Mod-DH | -94 | 52 | 93 | 78 | 4.15 | 3.51 |

# | Colemak | -94 | 52 | 93 | 83 | 4.17 | 3.16 |

# | Asset | 96 | 52 | 91 | 82 | 4.03 | 3.05 |

# | Capewell-Dvorak | -91 | 59 | 92 | 82 | 4.39 | 3.66 |

# | Klausler | -94 | 62 | 93 | 86 | 4.42 | 3.52 |

# | Dvorak | -86 | 62 | 93 | 84 | 4.40 | 3.20 |

# | QWERTY | 85 | 51 | 89 | 68 | 3.62 | 2.13 |

#

# ---

#

# | Layout | Year | Website |

# | --- | --- | --- |

# | Engram | 2021 | https://engram.dev |

# | [Halmak 2.2](https://keyboard-design.com/letterlayout.html?layout=halmak-2-2.en.ansi) | 2016 | https://github.com/MadRabbit/halmak |

# | [Hieamtsrn](https://www.keyboard-design.com/letterlayout.html?layout=hieamtsrn.en.ansi) | 2014 | https://mathematicalmulticore.wordpress.com/the-keyboard-layout-project/#comment-4976 |

# | [Colemak Mod-DH](https://keyboard-design.com/letterlayout.html?layout=colemak-mod-DH-full.en.ansi) | 2014 | https://colemakmods.github.io/mod-dh/ |

# | [Norman](https://keyboard-design.com/letterlayout.html?layout=norman.en.ansi) | 2013 | https://normanlayout.info/ |

# | [Workman](https://keyboard-design.com/letterlayout.html?layout=workman.en.ansi) | 2010 | https://workmanlayout.org/ |

# | [MTGAP 2.0](https://www.keyboard-design.com/letterlayout.html?layout=mtgap-2-0.en.ansi) | 2010 | https://mathematicalmulticore.wordpress.com/2010/06/21/mtgaps-keyboard-layout-2-0/ |

# | [QGMLWB](https://keyboard-design.com/letterlayout.html?layout=qgmlwb.en.ansi) | 2009 | http://mkweb.bcgsc.ca/carpalx/?full_optimization |

# | [Colemak](https://keyboard-design.com/letterlayout.html?layout=colemak.en.ansi) | 2006 | https://colemak.com/ |

# | [Asset](https://keyboard-design.com/letterlayout.html?layout=asset.en.ansi) | 2006 | http://millikeys.sourceforge.net/asset/ |

# | Capewell-Dvorak | 2004 | http://michaelcapewell.com/projects/keyboard/layout_capewell-dvorak.htm |

# | [Klausler](https://www.keyboard-design.com/letterlayout.html?layout=klausler.en.ansi) | 2002 | https://web.archive.org/web/20031001163722/http://klausler.com/evolved.html |

# | [Dvorak](https://keyboard-design.com/letterlayout.html?layout=dvorak.en.ansi) | 1936 | https://en.wikipedia.org/wiki/Dvorak_keyboard_layout |

# | [QWERTY](https://keyboard-design.com/letterlayout.html?layout=qwerty.en.ansi) | 1873 | https://en.wikipedia.org/wiki/QWERTY |

#

# ---

#

# | Text source | Information |

# | --- | --- |

# | "Alice in Wonderland" | Alice in Wonderland (Ch.1) |

# | "Memento screenplay" | [Memento screenplay](https://www.dailyscript.com/scripts/memento.html) |

# | "100K tweets" | 100,000 tweets from: [Sentiment140 dataset](https://data.world/data-society/twitter-user-data) training data |

# | "20K tweets" | 20,000 tweets from [Gender Classifier Data](https://www.kaggle.com/crowdflower/twitter-user-gender-classification) |

# | "MASC tweets" | [MASC](http://www.anc.org/data/masc/corpus/) tweets (cleaned of html markup) |

# | "MASC spoken" | [MASC](http://www.anc.org/data/masc/corpus/) spoken transcripts (phone and face-to-face: 25,783 words) |

# | "COCA blogs" | [Corpus of Contemporary American English](https://www.english-corpora.org/coca/) [blog samples](https://www.corpusdata.org/) |

# | "Rosetta" | "Tower of Hanoi" (programming languages A-Z from [Rosetta Code](https://rosettacode.org/wiki/Towers_of_Hanoi)) |

# | "Monkey text" | Ian Douglas's English-generated [monkey0-7.txt corpus](https://zenodo.org/record/4642460) |

# | "Coder text" | Ian Douglas's software-generated [coder0-7.txt corpus](https://zenodo.org/record/4642460) |

# | "iweb cleaned corpus" | First 150,000 lines of Shai Coleman's [iweb-corpus-samples-cleaned.txt](https://colemak.com/pub/corpus/iweb-corpus-samples-cleaned.txt.xz) |

#

# Reference for Monkey and Coder texts:

# <NAME>. (2021, March 28). Keyboard Layout Analysis: Creating the Corpus, Bigram Chains, and Shakespeare's Monkeys (Version 1.0.0). Zenodo. http://doi.org/10.5281/zenodo.4642460

# + [markdown] colab_type="text" id="wm3T-hmja8Ps"

# ## Guiding criteria <a name="criteria">

#

# 1. Assign letters to keys that don't require lateral finger movements.

# 2. Promote alternating between hands over uncomfortable same-hand transitions.

# 3. Assign the most common letters to the most comfortable keys.

# 4. Arrange letters so that more frequent bigrams are easier to type.

# 5. Promote little-to-index-finger roll-ins over index-to-little-finger roll-outs.

# 6. Balance finger loads according to their relative strength.

# 7. Avoid stretching shorter fingers up and longer fingers down.

# 8. Avoid using the same finger.

# 9. Avoid skipping over the home row.

# 10. Assign the most common punctuation to keys in the middle of the keyboard.

# 11. Assign easy-to-remember symbols to the Shift-number keys.

#

# ### Factors used to compute the Engram layout <a name="factors">

# - **N-gram letter frequencies** <br>

#

# [Peter Norvig's analysis](http://www.norvig.com/mayzner.html) of data from Google's book scanning project

# - **Flow factors** (transitions between ordered key pairs) <br>

# These factors are influenced by Dvorak's 11 criteria (1936).

# + [markdown] colab_type="text" id="2eTQ4jxPa8Pv"

# ### Import dependencies and functions <a name="import">

# +

# # %load code/engram_variables.py

# Print .png figures and .txt text files

print_output = False # True

# Apply strength data

apply_strength = True

min_strength_factor = 0.9

letters24 = ['E','T','A','O','I','N','S','R','H','L','D','C','U','M','F','P','G','W','Y','B','V','K','X','J']

keys24 = [1,2,3,4, 5,6,7,8, 9,10,11,12, 13,14,15,16, 17,18,19,20, 21,22,23,24]

instances24 = [4.45155E+11,3.30535E+11,2.86527E+11,2.72277E+11,2.69732E+11,2.57771E+11,

2.32083E+11,2.23768E+11,1.80075E+11,1.44999E+11,1.36018E+11,1.19156E+11,

97273082907,89506734085,85635440629,76112599849,66615316232,59712390260,

59331661972,52905544693,37532682260,19261229433,8369138754,5657910830]

max_frequency = 4.45155E+11 #1.00273E+11

instances_denominator = 1000000000000

# Establish which layouts are within a small difference of the top-scoring layout

# (the smallest difference between two penalties, 0.9^8 - 0.9^9, in one of 24^2 key pairs):

delta = 0.9**8 - 0.9**9

factor24 = ((24**2 - 1) + (1-delta)) / (24**2)

factor32 = ((32**2 - 1) + (1-delta)) / (32**2)

# Establish which layouts are within a small difference of each other when using the speed matrix.

# We define an epsilon equal to 13.158 ms for a single bigram (of the 32^2 possible bigrams),

# where 13.158 ms is one tenth of 131.58 ms, the fastest measured digraph tapping speed (30,000/228 = 131.58 ms)

# recorded in the study: "Estimation of digraph costs for keyboard layout optimization",

# A Iseri, <NAME>, International Journal of Industrial Ergonomics, 48, 127-138, 2015.

#data_matrix_speed = Speed32x32

#time_range = 243 # milliseconds

#norm_range = np.max(data_matrix_speed) - np.min(data_matrix_speed) # 0.6535662299854439

#ms_norm = norm_range / time_range # 0.0026895729629030614

#epsilon = 131.58/10 * ms_norm / (32**2)

epsilon = 0.00003549615849447514

# + colab={"base_uri": "https://localhost:8080/", "height": 71} colab_type="code" id="q1wNgX_FDzRH" outputId="7c14cebc-a4b7-4a77-d14f-26cbc7690c28"

# # %load code/engram_functions.py

# Import dependencies

import xlrd

import numpy as np

from sympy.utilities.iterables import multiset_permutations

import matplotlib

import matplotlib.pyplot as plt

import seaborn as sns

def permute_optimize_keys(fixed_letters, fixed_letter_indices, open_letter_indices,

all_letters, keys, data_matrix, bigrams, bigram_frequencies,

min_score=0, verbose=False):

"""

Find all permutations of letters, optimize layout, and generate output.

"""

matrix_selected = select_keys(data_matrix, keys, verbose=False)

unassigned_letters = []

for all_letter in all_letters:

if all_letter not in fixed_letters:

unassigned_letters.append(all_letter)

if len(unassigned_letters) == len(open_letter_indices):

break

letter_permutations = permute_letters(unassigned_letters, verbose)

if verbose:

print("{0} permutations".format(len(letter_permutations)))

top_permutation, top_score = optimize_layout(np.array([]), matrix_selected, bigrams, bigram_frequencies,

letter_permutations, open_letter_indices,

fixed_letters, fixed_letter_indices, min_score, verbose)

return top_permutation, top_score, letter_permutations

def permute_optimize(starting_permutation, letters, all_letters, all_keys,

data_matrix, bigrams, bigram_frequencies, min_score=0, verbose=False):

"""

Find all permutations of letters, optimize layout, and generate output.

"""

matrix_selected = select_keys(data_matrix, all_keys, verbose=False)

open_positions = []

fixed_positions = []

open_letters = []

fixed_letters = []

assigned_letters = []

for iletter, letter in enumerate(letters):

if letter.strip() == "":

open_positions.append(iletter)

for all_letter in all_letters:

if all_letter not in letters and all_letter not in assigned_letters:

open_letters.append(all_letter)

assigned_letters.append(all_letter)

break

else:

fixed_positions.append(iletter)

fixed_letters.append(letter)

letter_permutations = permute_letters(open_letters, verbose)

if verbose:

print("{0} permutations".format(len(letter_permutations)))

top_permutation, top_score = optimize_layout(starting_permutation, matrix_selected, bigrams,

bigram_frequencies, letter_permutations, open_positions,

fixed_letters, fixed_positions, min_score, verbose)

return top_permutation, top_score

def select_keys(data_matrix, keys, verbose=False):

"""

Select keys to quantify pairwise relationships.

"""

# Extract pairwise entries for the keys:

nkeys = len(keys)

Select = np.zeros((nkeys, nkeys))

u = 0

for i in keys:

u += 1

v = 0

for j in keys:

v += 1

Select[u-1,v-1] = data_matrix[i-1,j-1]

# Normalize matrix with min-max scaling to a range with max 1:

newMin = np.min(Select) / np.max(Select)

newMax = 1.0

Select = newMin + (Select - np.min(Select)) * (newMax - newMin) / (np.max(Select) - np.min(Select))

if verbose:

# Heatmap of array

heatmap(data=Select, title="Matrix heatmap", xlabel="Key 1", ylabel="Key 2", print_output=False); plt.show()

return Select

def permute_letters(letters, verbose=False):

"""

Find all permutations of a given set of letters (max: 8-10 letters).

"""

letter_permutations = []

for p in multiset_permutations(letters):

letter_permutations.append(p)

letter_permutations = np.array(letter_permutations)

return letter_permutations

def score_layout(data_matrix, letters, bigrams, bigram_frequencies, verbose=False):

"""

Compute the score for a given letter-key layout (NOTE normalization step).

"""

# Create a matrix of bigram frequencies:

nletters = len(letters)

F2 = np.zeros((nletters, nletters))

# Find the bigram frequency for each ordered pair of letters in the permutation:

for i1 in range(nletters):

for i2 in range(nletters):

bigram = letters[i1] + letters[i2]

i2gram = np.where(bigrams == bigram)

if np.size(i2gram) > 0:

F2[i1, i2] = bigram_frequencies[i2gram][0]

# Normalize matrices with min-max scaling to a range with max 1:

newMax = 1

minF2 = np.min(F2)

maxF2 = np.max(F2)

newMin2 = minF2 / maxF2

F2 = newMin + (F2 - minF2) * (newMax - newMin2) / (maxF2 - minF2)

# Compute the score for this permutation:

score = np.average(data_matrix * F2)

if verbose:

print("Score for letter permutation {0}: {1}".format(letters, score))

return score

def tally_bigrams(input_text, bigrams, normalize=True, verbose=False):

"""

Compute the score for a given letter-key layout (NOTE normalization step).

"""

# Find the bigram frequency for each ordered pair of letters in the input text

#input_text = [str.upper(str(x)) for x in input_text]

input_text = [str.upper(x) for x in input_text]

nchars = len(input_text)

F = np.zeros(len(bigrams))

for ichar in range(0, nchars-1):

bigram = input_text[ichar] + input_text[ichar + 1]

i2gram = np.where(bigrams == bigram)

if np.size(i2gram) > 0:

F[i2gram] += 1

# Normalize matrix with min-max scaling to a range with max 1:

if normalize:

newMax = 1

newMin = np.min(F) / np.max(F)

F = newMin + (F - np.min(F)) * (newMax - newMin) / (np.max(F) - np.min(F))

bigram_frequencies_for_input = F

if verbose:

print("Bigram frequencies for input: {0}".format(bigram_frequencies_for_input))

return bigram_frequencies_for_input

def tally_layout_samefinger_bigrams(layout, bigrams, bigram_frequencies, nkeys=32, verbose=False):

"""

Tally the number of same-finger bigrams within (a list of 24 letters representing) a layout:

['P','Y','O','U','C','I','E','A','G','K','J','X','M','D','L','B','R','T','N','S','H','V','W','F']

"""

if nkeys == 32:

# Left: Right:

# 1 2 3 4 25 28 13 14 15 16 31

# 5 6 7 8 26 29 17 18 19 20 32

# 9 10 11 12 27 30 21 22 23 24

same_finger_keys = [[1,5],[5,9],[1,9], [2,6],[6,10],[2,10],

[3,7],[7,11],[3,11], [4,8],[8,12],[4,12],

[25,26],[26,27],[25,27], [28,29],[29,30],[28,30], [31,32],

[4,25],[4,26],[4,27], [8,25],[8,26],[8,27], [12,25],[12,26],[12,27],

[13,28],[13,29],[13,30], [17,28],[17,29],[17,30], [21,28],[21,29],[21,30],

[31,16],[31,20],[31,24], [32,16],[32,20],[32,24],

[13,17],[17,21],[13,21], [14,18],[18,22],[14,22],

[15,19],[19,23],[15,23], [16,20],[20,24],[16,24]]

elif nkeys == 24:

# 1 2 3 4 13 14 15 16

# 5 6 7 8 17 18 19 20

# 9 10 11 12 21 22 23 24

same_finger_keys = [[1,5],[5,9],[1,9], [2,6],[6,10],[2,10],

[3,7],[7,11],[3,11], [4,8],[8,12],[4,12],

[13,17],[17,21],[13,21], [14,18],[18,22],[14,22],

[15,19],[19,23],[15,23], [16,20],[20,24],[16,24]]

layout = [str.upper(x) for x in layout]

max_frequency = 1.00273E+11

samefinger_bigrams = []

samefinger_bigram_counts = []

for bigram_keys in same_finger_keys:

bigram1 = layout[bigram_keys[0]-1] + layout[bigram_keys[1]-1]

bigram2 = layout[bigram_keys[1]-1] + layout[bigram_keys[0]-1]

i2gram1 = np.where(bigrams == bigram1)

i2gram2 = np.where(bigrams == bigram2)

if np.size(i2gram1) > 0:

samefinger_bigrams.append(bigram1)

samefinger_bigram_counts.append(max_frequency * bigram_frequencies[i2gram1] / np.max(bigram_frequencies))

if np.size(i2gram2) > 0:

samefinger_bigrams.append(bigram2)

samefinger_bigram_counts.append(max_frequency * bigram_frequencies[i2gram2] / np.max(bigram_frequencies))

samefinger_bigrams_total = np.sum([x[0] for x in samefinger_bigram_counts])

if verbose:

print(" Total same-finger bigram frequencies: {0:15.0f}".format(samefinger_bigrams_total))

return samefinger_bigrams, samefinger_bigram_counts, samefinger_bigrams_total

def tally_layout_bigram_rolls(layout, bigrams, bigram_frequencies, nkeys=32, verbose=False):

"""

Tally the number of bigrams that engage little-to-index finger inward rolls

for (a list of 24 or 32 letters representing) a layout,

within the four columns of one hand, or any column across two hands.

layout = ['P','Y','O','U','C','I','E','A','G','K','J','X','L','D','B','V','N','T','R','S','H','M','W','F']

bigram_rolls, bigram_roll_counts, bigram_rolls_total = tally_layout_bigram_rolls(layout, bigrams, bigram_frequencies, nkeys=24, verbose=True)

"""

if nkeys == 32:

# Left: Right:

# 1 2 3 4 25 28 13 14 15 16 31

# 5 6 7 8 26 29 17 18 19 20 32

# 9 10 11 12 27 30 21 22 23 24

roll_keys = [[1,2],[2,3],[3,4], [5,6],[6,7],[7,8], [9,10],[10,11],[11,12],

[16,15],[15,14],[14,13], [20,19],[19,18],[18,17], [24,23],[23,22],[22,21],

[1,3],[2,4],[1,4], [5,7],[6,8],[5,8], [9,11],[10,12],[9,12],

[16,14],[15,13],[16,13], [20,18],[19,17],[20,17], [24,22],[23,21],[24,21],

[1,6],[1,7],[1,8],[2,7],[2,8],[3,8],

[5,2],[5,3],[5,4],[6,3],[6,4],[7,4],

[5,10],[5,11],[5,12],[6,11],[6,12],[7,12],

[9,6],[9,7],[9,8],[10,7],[10,8],[11,8],

[16,19],[16,18],[16,17],[15,18],[15,17],[14,17],

[20,15],[20,14],[20,13],[19,14],[19,13],[18,13],

[20,23],[20,22],[20,21],[19,22],[19,21],[18,21],

[24,19],[24,18],[24,17],[23,18],[23,17],[22,17],

[1,10],[1,11],[1,12],[2,11],[2,12],[3,12],

[9,2],[9,3],[9,4],[10,3],[10,4],[11,4],

[16,23],[16,22],[16,21],[15,22],[15,21],[14,21],

[24,15],[24,14],[24,13],[23,14],[23,13],[22,13]]

for i in [1,2,3,4,5,6,7,8,9,10,11,12, 25,26,27]:

for j in [13,14,15,16,17,18,19,20,21,22,23,24, 28,29,30,31,32]:

roll_keys.append([i,j])

for i in [13,14,15,16,17,18,19,20,21,22,23,24, 28,29,30,31,32]:

for j in [1,2,3,4,5,6,7,8,9,10,11,12, 25,26,27]:

roll_keys.append([i,j])

elif nkeys == 24:

# 1 2 3 4 13 14 15 16

# 5 6 7 8 17 18 19 20

# 9 10 11 12 21 22 23 24

roll_keys = [[1,2],[2,3],[3,4], [5,6],[6,7],[7,8], [9,10],[10,11],[11,12],

[16,15],[15,14],[14,13], [20,19],[19,18],[18,17], [24,23],[23,22],[22,21],

[1,3],[2,4],[1,4], [5,7],[6,8],[5,8], [9,11],[10,12],[9,12],

[16,14],[15,13],[16,13], [20,18],[19,17],[20,17], [24,22],[23,21],[24,21],

[1,6],[1,7],[1,8],[2,7],[2,8],[3,8], [5,2],[5,3],[5,4],[6,3],[6,4],[7,4],

[5,10],[5,11],[5,12],[6,11],[6,12],[7,12], [9,6],[9,7],[9,8],[10,7],[10,8],[11,8],

[16,19],[16,18],[16,17],[15,18],[15,17],[14,17], [20,15],[20,14],[20,13],[19,14],[19,13],[18,13],

[20,23],[20,22],[20,21],[19,22],[19,21],[18,21], [24,19],[24,18],[24,17],[23,18],[23,17],[22,17],

[1,10],[1,11],[1,12],[2,11],[2,12],[3,12], [9,2],[9,3],[9,4],[10,3],[10,4],[11,4],

[16,23],[16,22],[16,21],[15,22],[15,21],[14,21], [24,15],[24,14],[24,13],[23,14],[23,13],[22,13]]

for i in range(0,12):

for j in range(12,24):

roll_keys.append([i,j])

for i in range(12,24):

for j in range(0,12):

roll_keys.append([i,j])

layout = [str.upper(x) for x in layout]

max_frequency = 1.00273E+11

bigram_rolls = []

bigram_roll_counts = []

for bigram_keys in roll_keys:

bigram1 = layout[bigram_keys[0]-1] + layout[bigram_keys[1]-1]

bigram2 = layout[bigram_keys[1]-1] + layout[bigram_keys[0]-1]

i2gram1 = np.where(bigrams == bigram1)

i2gram2 = np.where(bigrams == bigram2)

if np.size(i2gram1) > 0:

bigram_rolls.append(bigram1)

bigram_roll_counts.append(max_frequency * bigram_frequencies[i2gram1] / np.max(bigram_frequencies))

if np.size(i2gram2) > 0:

bigram_rolls.append(bigram2)

bigram_roll_counts.append(max_frequency * bigram_frequencies[i2gram2] / np.max(bigram_frequencies))

bigram_rolls_total = np.sum([x[0] for x in bigram_roll_counts])

if verbose:

print(" Total bigram inward roll frequencies: {0:15.0f}".format(bigram_rolls_total))

return bigram_rolls, bigram_roll_counts, bigram_rolls_total

def optimize_layout(starting_permutation, data_matrix, bigrams, bigram_frequencies, letter_permutations,

open_positions, fixed_letters, fixed_positions=[], min_score=0, verbose=False):

"""

Compute scores for all letter-key layouts.

"""

top_permutation = starting_permutation

top_score = min_score

use_score_function = False

nletters = len(open_positions) + len(fixed_positions)

F2 = np.zeros((nletters, nletters))

# Loop through the permutations of the selected letters:

for p in letter_permutations:

letters = np.array(['W' for x in range(nletters)]) # KEEP to initialize!

for imove, open_position in enumerate(open_positions):

letters[open_position] = p[imove]

for ifixed, fixed_position in enumerate(fixed_positions):

letters[fixed_position] = fixed_letters[ifixed]

# Compute the score for this permutation:

if use_score_function:

score = score_layout(data_matrix, letters, bigrams, bigram_frequencies, verbose=False)

else:

# Find the bigram frequency for each ordered pair of letters in the permutation:

for i1 in range(nletters):

for i2 in range(nletters):

bigram = letters[i1] + letters[i2]

i2gram = np.where(bigrams == bigram)

if np.size(i2gram) > 0:

F2[i1, i2] = bigram_frequencies[i2gram][0]

# Normalize matrices with min-max scaling to a range with max 1:

newMax = 1

minF2 = np.min(F2)

maxF2 = np.max(F2)

newMin2 = minF2 / maxF2

F = newMin + (F2 - minF2) * (newMax - newMin2) / (maxF2 - minF2)

# Compute the score for this permutation:

score = np.average(data_matrix * F)

if score > top_score:

top_score = score

top_permutation = letters

if verbose:

if top_score == min_score:

print("top_score = min_score")

print("{0:0.8f}".format(top_score))

print(*top_permutation)

return top_permutation, top_score

def exchange_letters(letters, fixed_letter_indices, all_letters, all_keys, data_matrix,

bigrams, bigram_frequencies, verbose=True):

"""

Exchange letters, 8 keys at a time (8! = 40,320) selected twice in 14 different ways:

Indices:

0 1 2 3 12 13 14 15

4 5 6 7 16 17 18 19

8 9 10 11 20 21 22 23

1. Top rows

0 1 2 3 12 13 14 15

2. Bottom rows

8 9 10 11 20 21 22 23

3. Top and bottom rows on the right side

12 13 14 15

20 21 22 23

4. Top and bottom rows on the left side

0 1 2 3

8 9 10 11

5. Top right and bottom left rows

12 13 14 15

8 9 10 11

6. Top left and bottom right rows

0 1 2 3

20 21 22 23

7. Center of the top and bottom rows on both sides

1 2 13 14

9 10 21 22

8. The eight corners

0 3 12 15

8 11 20 23

9. Left half of the top and bottom rows on both sides

0 1 12 13

8 9 20 21

10. Right half of the top and bottom rows on both sides

2 3 14 15

10 11 22 23

11. Left half of non-home rows on the left and right half of the same rows on the right

0 1 14 15

8 9 22 23

12. Right half of non-home rows on the left and left half of the same rows on the right

2 3 12 13

10 11 20 21

13. Top center and lower sides

1 2 13 14

8 11 20 23

14. Top sides and lower center

0 3 12 15

9 10 21 22

15. Repeat 1-14

"""

top_score = score_layout(data_matrix, letters, bigrams, bigram_frequencies, verbose=False)

print('Initial score: {0}'.format(top_score))

print(*letters)

top_permutation = letters

lists_of_open_indices = [

[0,1,2,3,12,13,14,15],

[8,9,10,11,20,21,22,23],

[12,13,14,15,20,21,22,23],

[0,1,2,3,8,9,10,11],

[12,13,14,15,8,9,10,11],

[0,1,2,3,20,21,22,23],

[1,2,13,14,9,10,21,22],

[0,3,12,15,8,11,20,23],

[0,1,12,13,8,9,20,21],

[2,3,14,15,10,11,22,23],

[0,1,14,15,8,9,22,23],

[2,3,12,13,10,11,20,21],

[1,2,8,11,13,14,20,23],

[0,3,9,10,12,15,21,22]

]

lists_of_print_statements = [

'1. Top rows',

'2. Bottom rows',

'3. Top and bottom rows on the right side',

'4. Top and bottom rows on the left side',

'5. Top right and bottom left rows',

'6. Top left and bottom right rows',

'7. Center of the top and bottom rows on both sides',

'8. The eight corners',

'9. Left half of the top and bottom rows on both sides',

'10. Right half of the top and bottom rows on both sides',

'11. Left half of non-home rows on the left and right half of the same rows on the right',

'12. Right half of non-home rows on the left and left half of the same rows on the right',

'13. Top center and lower sides',

'14. Top sides and lower center'

]

for istep in [1,2]:

if istep == 1:

s = "Set 1: 14 letter exchanges: "

elif istep == 2:

s = "Set 2: 14 letter exchanges: "

for ilist, open_indices in enumerate(lists_of_open_indices):

print_statement = lists_of_print_statements[ilist]

if verbose:

print('{0} {1}'.format(s, print_statement))

starting_permutation = top_permutation.copy()

for open_index in open_indices:

if open_index not in fixed_letter_indices:

top_permutation[open_index] = ''

top_permutation, top_score = permute_optimize(starting_permutation, top_permutation, letters24,

keys24, data_matrix, bigrams, bigram_frequencies,

min_score=top_score, verbose=True)

if verbose:

print('')

print(' -------- DONE --------')

print('')

return top_permutation, top_score

def rank_within_epsilon(numbers, epsilon, factor=False, verbose=True):

"""

numbers = np.array([10,9,8,7,6])

epsilon = 1

rank_within_epsilon(numbers, epsilon, factor=False, verbose=True)

>>> array([1., 1., 2., 2., 3.])

numbers = np.array([0.798900824, 0.79899900824, 0.79900824])

epsilon = 0.9**8 - 0.9**9

factor24 = ((24**2 - 1) + (1-epsilon)) / (24**2) # 0.999925266109375

rank_within_epsilon(numbers, factor24, factor=True, verbose=True)

>>> array([2., 1., 1.])

"""

numbers = np.array(numbers)

Isort = np.argsort(-numbers)

numbers_sorted = numbers[Isort]

count = 1

ranks = np.zeros(np.size(numbers))

for i, num in enumerate(numbers_sorted):

if ranks[i] == 0:

if factor:

lower_bound = num * epsilon

else:

lower_bound = num - epsilon

bounded_nums1 = num >= numbers_sorted

bounded_nums2 = numbers_sorted >= lower_bound

bounded_nums = bounded_nums1 * bounded_nums2

count += 1

for ibounded, bounded_num in enumerate(bounded_nums):

if bounded_num == True:

ranks[ibounded] = count

uranks = np.unique(ranks)

nranks = np.size(uranks)

new_ranks = ranks.copy()

new_count = 0

for rank in uranks:

new_count += 1

same_ranks = ranks == rank

for isame, same_rank in enumerate(same_ranks):

if same_rank == True:

new_ranks[isame] = new_count

#ranks_sorted = new_ranks[Isort]

ranks_sorted = [np.int(x) for x in new_ranks]

if verbose:

for i, num in enumerate(numbers_sorted):

print(" ({0}) {1}".format(np.int(ranks_sorted[i]), num))

return numbers_sorted, ranks_sorted, Isort

def print_matrix_info(matrix_data, matrix_label, nkeys, nlines=10):

"""

Print matrix output.

"""

print("{0} min = {1}, max = {2}".format(matrix_label, np.min(matrix_data), np.max(matrix_data)))

matrix_flat = matrix_data.flatten()

argsort = np.argsort(matrix_flat)

print("{0} key number pairs with minimum values:".format(matrix_label))

for x in argsort[0:nlines]:

if x % nkeys == 0:

min_row = np.int(np.ceil(x / nkeys)) + 1

min_col = 1

else:

min_row = np.int(np.ceil(x / nkeys))

min_col = x - nkeys * (min_row-1) + 1

print(" {0} -> {1} ({2})".format(min_row, min_col, matrix_flat[x]))

print("{0} key number pairs with maximum values:".format(matrix_label))

max_sort = argsort[-nlines::]

for x in max_sort[::-1]:

if x % nkeys == 0:

max_row = np.int(np.ceil(x / nkeys)) + 1

max_col = 1

else:

max_row = np.int(np.ceil(x / nkeys))

max_col = x - nkeys * (max_row-1) + 1

print(" {0} -> {1} ({2})".format(max_row, max_col, matrix_flat[x]))

def heatmap(data, title="", xlabel="", ylabel="", x_axis_labels=[], y_axis_labels=[], print_output=True):

"""

Plot heatmap of matrix.

"""

# use heatmap function, set the color as viridis and

# make each cell seperate using linewidth parameter

plt.figure()

sns_plot = sns.heatmap(data, xticklabels=x_axis_labels, yticklabels=y_axis_labels, linewidths=1,

cmap="viridis", square=True, vmin=np.min(data), vmax=np.max(data))

plt.title(title)

plt.xlabel(xlabel)

plt.ylabel(ylabel)

sns_plot.set_xticklabels(x_axis_labels) #, rotation=75)

sns_plot.set_yticklabels(y_axis_labels)

if print_output:

sns_plot.figure.savefig("{0}_heatmap.png".format(title))

def histmap(data, title="", print_output=True):

"""

Plot histogram.

"""

sns.distplot(data)

plt.title(title)

if print_output:

sns_plot.figure.savefig("{0}_histogram.png".format(title))

def print_layout24(layout):

"""

Print layout.

"""

print(' {0} {1}'.format(' '.join(layout[0:4]),

' '.join(layout[12:16])))

print(' {0} {1}'.format(' '.join(layout[4:8]),

' '.join(layout[16:20])))

print(' {0} {1}'.format(' '.join(layout[8:12]),

' '.join(layout[20:24])))

def print_layout24_instances(layout, letters24, instances24, bigrams, bigram_frequencies):

"""

Print billions of instances per letter (not Z or Q) in layout form.

layout = ['P','Y','O','U','C','I','E','A','G','K','J','X','M','D','L','B','R','T','N','S','H','V','W','F']

print_layout24_instances(layout, letters24, instances24, bigrams, bigram_frequencies)

"""

layout_instances = []

layout_instances_strings = []

for letter in layout:

index = letters24.index(letter)

layout_instances.append(instances24[index])

layout_instances_strings.append('{0:3.0f}'.format(instances24[index]/instances_denominator))

print(' {0} {1}'.format(' '.join(layout_instances_strings[0:4]),

' '.join(layout_instances_strings[12:16])))

print(' {0} {1}'.format(' '.join(layout_instances_strings[4:8]),

' '.join(layout_instances_strings[16:20])))

print(' {0} {1}'.format(' '.join(layout_instances_strings[8:12]),

' '.join(layout_instances_strings[20:24])))

left_sum = np.sum(layout_instances[0:12])

right_sum = np.sum(layout_instances[12:24])

pL = ''

pR = ''

if left_sum > right_sum:

pL = ' ({0:3.2f}%)'.format(100 * (left_sum - right_sum) / right_sum)

elif right_sum > left_sum:

pR = ' ({0:3.2f}%)'.format(100 * (right_sum - left_sum) / left_sum)

print('\n left: {0}{1} right: {2}{3}'.format(left_sum, pL, right_sum, pR))

tally_layout_samefinger_bigrams(layout, bigrams, bigram_frequencies, nkeys=24, verbose=True)

tally_layout_bigram_rolls(layout, bigrams, bigram_frequencies, nkeys=24, verbose=True)

def print_bigram_frequency(input_pair, bigrams, bigram_frequencies):

"""

>>> print_bigram_frequency(['t','h'], bigrams, bigram_frequencies)

"""

# Find the bigram frequency

input_text = [str.upper(str(x)) for x in input_pair]

nchars = len(input_text)

for ichar in range(0, nchars-1):

bigram1 = input_text[ichar] + input_text[ichar + 1]

bigram2 = input_text[ichar + 1] + input_text[ichar]

i2gram1 = np.where(bigrams == bigram1)

i2gram2 = np.where(bigrams == bigram2)

if np.size(i2gram1) > 0:

freq1 = bigram_frequencies[i2gram1[0][0]]

print("{0}: {1:3.2f}".format(bigram1, freq1))

if np.size(i2gram2) > 0:

freq2 = bigram_frequencies[i2gram2[0][0]]

print("{0}: {1:3.2f}".format(bigram2, freq2))

# + [markdown] colab_type="text" id="rFiySi8rDzRN"

# ### Bigram frequencies <a name="ngrams">

#

# [<NAME>'s ngrams table](http://www.norvig.com/mayzner.html](http://www.norvig.com/mayzner.html)

#

# [NOTE: If you want to compute an optimized layout for another language, or based on another corpus, you can run the tally_bigrams() function above and replace bigram_frequencies below before running the rest of the code.]

# + colab={} colab_type="code" id="K68F0fkqDzRO"

# # %load code/load_bigram_frequencies.py

load_original_ngram_files = False

if load_original_ngram_files:

ngrams_table = "data/bigrams-trigrams-frequencies.xlsx"

wb = xlrd.open_workbook(ngrams_table)

ngrams_sheet = wb.sheet_by_index(0)

# 1-grams and frequencies

onegrams = np.array(())

onegram_frequencies = np.array(())

i = 0

start1 = 0

stop1 = 0

while stop1 == 0:

if ngrams_sheet.cell_value(i, 0) == "2-gram":

stop1 = 1

elif ngrams_sheet.cell_value(i, 0) == "1-gram":

start1 = 1

elif start1 == 1:

onegrams = np.append(onegrams, ngrams_sheet.cell_value(i, 0))

onegram_frequencies = np.append(onegram_frequencies, ngrams_sheet.cell_value(i, 1))

i += 1

onegram_frequencies = onegram_frequencies / np.sum(onegram_frequencies)

# 2-grams and frequencies

bigrams = np.array(())

bigram_frequencies = np.array(())

i = 0

start1 = 0

stop1 = 0

while stop1 == 0:

if ngrams_sheet.cell_value(i, 0) == "3-gram":

stop1 = 1

elif ngrams_sheet.cell_value(i, 0) == "2-gram":

start1 = 1

elif start1 == 1:

bigrams = np.append(bigrams, ngrams_sheet.cell_value(i, 0))

bigram_frequencies = np.append(bigram_frequencies, ngrams_sheet.cell_value(i, 1))

i += 1

bigram_frequencies = bigram_frequencies / np.sum(bigram_frequencies)

# Save:

if print_output:

file = open("onegrams.txt", "w+")

file.write(str(onegrams))

file.close()

file = open("onegram_frequencies.txt", "w+")

file.write(str(onegram_frequencies))

file.close()

file = open("bigrams.txt", "w+")

file.write(str(bigrams))

file.close()

file = open("bigram_frequencies.txt", "w+")

file.write(str(bigram_frequencies))

file.close()

# Print:

print(repr(onegrams))

print(repr(onegram_frequencies))

print(repr(bigrams))

print(repr(bigram_frequencies))

else:

onegrams = np.array(['E', 'T', 'A', 'O', 'I', 'N', 'S', 'R', 'H', 'L', 'D', 'C', 'U',

'M', 'F', 'P', 'G', 'W', 'Y', 'B', 'V', 'K', 'X', 'J', 'Q', 'Z'],

dtype='<U32')

onegram_frequencies = np.array([0.12492063, 0.09275565, 0.08040605, 0.07640693, 0.07569278,

0.07233629, 0.06512767, 0.06279421, 0.05053301, 0.04068986,

0.03816958, 0.03343774, 0.02729702, 0.02511761, 0.02403123,

0.02135891, 0.01869376, 0.01675664, 0.0166498 , 0.01484649,

0.01053252, 0.00540513, 0.00234857, 0.00158774, 0.00120469,

0.00089951])

bigrams = np.array(['TH', 'HE', 'IN', 'ER', 'AN', 'RE', 'ON', 'AT', 'EN', 'ND', 'TI',

'ES', 'OR', 'TE', 'OF', 'ED', 'IS', 'IT', 'AL', 'AR', 'ST', 'TO',

'NT', 'NG', 'SE', 'HA', 'AS', 'OU', 'IO', 'LE', 'VE', 'CO', 'ME',

'DE', 'HI', 'RI', 'RO', 'IC', 'NE', 'EA', 'RA', 'CE', 'LI', 'CH',

'LL', 'BE', 'MA', 'SI', 'OM', 'UR', 'CA', 'EL', 'TA', 'LA', 'NS',

'DI', 'FO', 'HO', 'PE', 'EC', 'PR', 'NO', 'CT', 'US', 'AC', 'OT',

'IL', 'TR', 'LY', 'NC', 'ET', 'UT', 'SS', 'SO', 'RS', 'UN', 'LO',

'WA', 'GE', 'IE', 'WH', 'EE', 'WI', 'EM', 'AD', 'OL', 'RT', 'PO',

'WE', 'NA', 'UL', 'NI', 'TS', 'MO', 'OW', 'PA', 'IM', 'MI', 'AI',

'SH', 'IR', 'SU', 'ID', 'OS', 'IV', 'IA', 'AM', 'FI', 'CI', 'VI',

'PL', 'IG', 'TU', 'EV', 'LD', 'RY', 'MP', 'FE', 'BL', 'AB', 'GH',

'TY', 'OP', 'WO', 'SA', 'AY', 'EX', 'KE', 'FR', 'OO', 'AV', 'AG',

'IF', 'AP', 'GR', 'OD', 'BO', 'SP', 'RD', 'DO', 'UC', 'BU', 'EI',

'OV', 'BY', 'RM', 'EP', 'TT', 'OC', 'FA', 'EF', 'CU', 'RN', 'SC',

'GI', 'DA', 'YO', 'CR', 'CL', 'DU', 'GA', 'QU', 'UE', 'FF', 'BA',

'EY', 'LS', 'VA', 'UM', 'PP', 'UA', 'UP', 'LU', 'GO', 'HT', 'RU',

'UG', 'DS', 'LT', 'PI', 'RC', 'RR', 'EG', 'AU', 'CK', 'EW', 'MU',

'BR', 'BI', 'PT', 'AK', 'PU', 'UI', 'RG', 'IB', 'TL', 'NY', 'KI',

'RK', 'YS', 'OB', 'MM', 'FU', 'PH', 'OG', 'MS', 'YE', 'UD', 'MB',

'IP', 'UB', 'OI', 'RL', 'GU', 'DR', 'HR', 'CC', 'TW', 'FT', 'WN',

'NU', 'AF', 'HU', 'NN', 'EO', 'VO', 'RV', 'NF', 'XP', 'GN', 'SM',

'FL', 'IZ', 'OK', 'NL', 'MY', 'GL', 'AW', 'JU', 'OA', 'EQ', 'SY',

'SL', 'PS', 'JO', 'LF', 'NV', 'JE', 'NK', 'KN', 'GS', 'DY', 'HY',

'ZE', 'KS', 'XT', 'BS', 'IK', 'DD', 'CY', 'RP', 'SK', 'XI', 'OE',

'OY', 'WS', 'LV', 'DL', 'RF', 'EU', 'DG', 'WR', 'XA', 'YI', 'NM',

'EB', 'RB', 'TM', 'XC', 'EH', 'TC', 'GY', 'JA', 'HN', 'YP', 'ZA',

'GG', 'YM', 'SW', 'BJ', 'LM', 'CS', 'II', 'IX', 'XE', 'OH', 'LK',

'DV', 'LP', 'AX', 'OX', 'UF', 'DM', 'IU', 'SF', 'BT', 'KA', 'YT',

'EK', 'PM', 'YA', 'GT', 'WL', 'RH', 'YL', 'HS', 'AH', 'YC', 'YN',

'RW', 'HM', 'LW', 'HL', 'AE', 'ZI', 'AZ', 'LC', 'PY', 'AJ', 'IQ',

'NJ', 'BB', 'NH', 'UO', 'KL', 'LR', 'TN', 'GM', 'SN', 'NR', 'FY',

'MN', 'DW', 'SB', 'YR', 'DN', 'SQ', 'ZO', 'OJ', 'YD', 'LB', 'WT',

'LG', 'KO', 'NP', 'SR', 'NQ', 'KY', 'LN', 'NW', 'TF', 'FS', 'CQ',

'DH', 'SD', 'VY', 'DJ', 'HW', 'XU', 'AO', 'ML', 'UK', 'UY', 'EJ',

'EZ', 'HB', 'NZ', 'NB', 'MC', 'YB', 'TP', 'XH', 'UX', 'TZ', 'BV',

'MF', 'WD', 'OZ', 'YW', 'KH', 'GD', 'BM', 'MR', 'KU', 'UV', 'DT',

'HD', 'AA', 'XX', 'DF', 'DB', 'JI', 'KR', 'XO', 'CM', 'ZZ', 'NX',

'YG', 'XY', 'KG', 'TB', 'DC', 'BD', 'SG', 'WY', 'ZY', 'AQ', 'HF',

'CD', 'VU', 'KW', 'ZU', 'BN', 'IH', 'TG', 'XV', 'UZ', 'BC', 'XF',

'YZ', 'KM', 'DP', 'LH', 'WF', 'KF', 'PF', 'CF', 'MT', 'YU', 'CP',

'PB', 'TD', 'ZL', 'SV', 'HC', 'MG', 'PW', 'GF', 'PD', 'PN', 'PC',

'RX', 'TV', 'IJ', 'WM', 'UH', 'WK', 'WB', 'BH', 'OQ', 'KT', 'RQ',

'KB', 'CG', 'VR', 'CN', 'PK', 'UU', 'YF', 'WP', 'CZ', 'KP', 'DQ',

'WU', 'FM', 'WC', 'MD', 'KD', 'ZH', 'GW', 'RZ', 'CB', 'IW', 'XL',

'HP', 'MW', 'VS', 'FC', 'RJ', 'BP', 'MH', 'HH', 'YH', 'UJ', 'FG',

'FD', 'GB', 'PG', 'TK', 'KK', 'HQ', 'FN', 'LZ', 'VL', 'GP', 'HZ',

'DK', 'YK', 'QI', 'LX', 'VD', 'ZS', 'BW', 'XQ', 'MV', 'UW', 'HG',

'FB', 'SJ', 'WW', 'GK', 'UQ', 'BG', 'SZ', 'JR', 'QL', 'ZT', 'HK',

'VC', 'XM', 'GC', 'FW', 'PZ', 'KC', 'HV', 'XW', 'ZW', 'FP', 'IY',

'PV', 'VT', 'JP', 'CV', 'ZB', 'VP', 'ZR', 'FH', 'YV', 'ZG', 'ZM',

'ZV', 'QS', 'KV', 'VN', 'ZN', 'QA', 'YX', 'JN', 'BF', 'MK', 'CW',

'JM', 'LQ', 'JH', 'KJ', 'JC', 'GZ', 'JS', 'TX', 'FK', 'JL', 'VM',

'LJ', 'TJ', 'JJ', 'CJ', 'VG', 'MJ', 'JT', 'PJ', 'WG', 'VH', 'BK',

'VV', 'JD', 'TQ', 'VB', 'JF', 'DZ', 'XB', 'JB', 'ZC', 'FJ', 'YY',

'QN', 'XS', 'QR', 'JK', 'JV', 'QQ', 'XN', 'VF', 'PX', 'ZD', 'QT',

'ZP', 'QO', 'DX', 'HJ', 'GV', 'JW', 'QC', 'JY', 'GJ', 'QB', 'PQ',

'JG', 'BZ', 'MX', 'QM', 'MZ', 'QF', 'WJ', 'ZQ', 'XR', 'ZK', 'CX',

'FX', 'FV', 'BX', 'VW', 'VJ', 'MQ', 'QV', 'ZF', 'QE', 'YJ', 'GX',

'KX', 'XG', 'QD', 'XJ', 'SX', 'VZ', 'VX', 'WV', 'YQ', 'BQ', 'GQ',

'VK', 'ZJ', 'XK', 'QP', 'HX', 'FZ', 'QH', 'QJ', 'JZ', 'VQ', 'KQ',

'XD', 'QW', 'JX', 'QX', 'KZ', 'WX', 'FQ', 'XZ', 'ZX'], dtype='<U32')

bigram_frequencies = np.array([3.55620339e-02, 3.07474124e-02, 2.43274529e-02, 2.04826481e-02,

1.98515108e-02, 1.85432319e-02, 1.75804642e-02, 1.48673230e-02,

1.45424846e-02, 1.35228145e-02, 1.34257882e-02, 1.33939375e-02,

1.27653906e-02, 1.20486963e-02, 1.17497528e-02, 1.16812337e-02,

1.12842988e-02, 1.12327374e-02, 1.08744953e-02, 1.07489847e-02,

1.05347566e-02, 1.04126653e-02, 1.04125115e-02, 9.53014842e-03,

9.32114579e-03, 9.25763559e-03, 8.71095073e-03, 8.70002319e-03,

8.34931851e-03, 8.29254235e-03, 8.25280566e-03, 7.93859725e-03,

7.93006486e-03, 7.64818391e-03, 7.63241814e-03, 7.27618866e-03,

7.26724441e-03, 6.98707488e-03, 6.91722265e-03, 6.88165290e-03,

6.85633031e-03, 6.51417363e-03, 6.24352184e-03, 5.97765978e-03,

5.76571076e-03, 5.76283716e-03, 5.65269345e-03, 5.50057242e-03,

5.46256885e-03, 5.42747781e-03, 5.38164098e-03, 5.30301559e-03,

5.29886071e-03, 5.27529444e-03, 5.08937452e-03, 4.92966405e-03,

4.87753568e-03, 4.84902069e-03, 4.77989185e-03, 4.77282719e-03,

4.74470916e-03, 4.64574958e-03, 4.60971757e-03, 4.54257059e-03,

4.47772200e-03, 4.42103298e-03, 4.31534618e-03, 4.25820178e-03,

4.25013516e-03, 4.15745843e-03, 4.12608242e-03, 4.05151268e-03,

4.05075209e-03, 3.97732158e-03, 3.96527277e-03, 3.94413046e-03,

3.86884200e-03, 3.85337077e-03, 3.85189513e-03, 3.84646388e-03,

3.78793431e-03, 3.77605408e-03, 3.74420703e-03, 3.73663638e-03,

3.67956418e-03, 3.65492648e-03, 3.61676413e-03, 3.61373182e-03,

3.60899233e-03, 3.47234973e-03, 3.45829494e-03, 3.39212478e-03,

3.37488213e-03, 3.36877623e-03, 3.30478042e-03, 3.23572471e-03,

3.17759946e-03, 3.17691369e-03, 3.16447752e-03, 3.15240004e-03,

3.15172398e-03, 3.11176534e-03, 2.95503911e-03, 2.89966768e-03,

2.87848219e-03, 2.86282435e-03, 2.84865969e-03, 2.84585627e-03,

2.81484803e-03, 2.69544349e-03, 2.62987083e-03, 2.54961380e-03,

2.54906719e-03, 2.54783715e-03, 2.52606379e-03, 2.47740122e-03,

2.39175226e-03, 2.36573195e-03, 2.33400171e-03, 2.29786417e-03,

2.27503360e-03, 2.27277101e-03, 2.23911052e-03, 2.21754315e-03,

2.18017446e-03, 2.17360835e-03, 2.14044590e-03, 2.13767970e-03,

2.13188615e-03, 2.10259217e-03, 2.04932647e-03, 2.04724906e-03,

2.03256516e-03, 2.02845908e-03, 1.96777866e-03, 1.95449429e-03,

1.95410531e-03, 1.91254221e-03, 1.89316385e-03, 1.88234971e-03,

1.87652262e-03, 1.84944194e-03, 1.83351654e-03, 1.78086545e-03,

1.76468430e-03, 1.75132925e-03, 1.71573739e-03, 1.70683303e-03,

1.66405086e-03, 1.63999785e-03, 1.62732115e-03, 1.62613977e-03,

1.60361051e-03, 1.54749379e-03, 1.51636562e-03, 1.51067364e-03,

1.49901610e-03, 1.49455831e-03, 1.49011351e-03, 1.48460771e-03,

1.48077067e-03, 1.47541326e-03, 1.47480347e-03, 1.46316579e-03,

1.46204465e-03, 1.43745726e-03, 1.41513491e-03, 1.39980075e-03,

1.38382616e-03, 1.36545598e-03, 1.36333253e-03, 1.36012483e-03,

1.35189358e-03, 1.32127808e-03, 1.30185876e-03, 1.28328757e-03,

1.27907576e-03, 1.26260675e-03, 1.23637099e-03, 1.23094105e-03,

1.21386641e-03, 1.20743055e-03, 1.19536134e-03, 1.19032774e-03,

1.17626124e-03, 1.16805780e-03, 1.14618533e-03, 1.11559852e-03,

1.06597119e-03, 1.05782134e-03, 1.04699320e-03, 1.04540205e-03,

1.01153313e-03, 9.97734501e-04, 9.86028683e-04, 9.84491816e-04,

9.79174450e-04, 9.78784303e-04, 9.70343472e-04, 9.68322624e-04,

9.66708177e-04, 9.60690121e-04, 9.59749105e-04, 9.43900197e-04,

9.40242103e-04, 9.28331656e-04, 9.26685761e-04, 9.14014864e-04,

9.02555222e-04, 8.92112065e-04, 8.85803335e-04, 8.77507468e-04,

8.62646840e-04, 8.57695087e-04, 8.54499050e-04, 8.43925356e-04,

8.31382851e-04, 8.23722323e-04, 8.16643644e-04, 7.89875969e-04,

7.86444549e-04, 7.42072946e-04, 7.36927617e-04, 7.27646949e-04,

7.25004577e-04, 7.11071849e-04, 6.92833068e-04, 6.71807283e-04,

6.68638321e-04, 6.56391013e-04, 6.51990243e-04, 6.49048818e-04,

6.43397537e-04, 6.43118050e-04, 6.37839069e-04, 6.21864133e-04,

6.06367626e-04, 5.99162639e-04, 5.87024289e-04, 5.74860663e-04,

5.72519573e-04, 5.68447140e-04, 5.58806800e-04, 5.45711864e-04,

5.37896691e-04, 5.34768852e-04, 5.20071483e-04, 5.18874875e-04,

5.16054649e-04, 5.14388309e-04, 5.11931727e-04, 5.04227393e-04,

5.00890900e-04, 4.97325634e-04, 4.75088970e-04, 4.66605249e-04,

4.58324041e-04, 4.29127437e-04, 4.27514542e-04, 4.17186146e-04,

4.16199437e-04, 3.94646924e-04, 3.94183167e-04, 3.86306652e-04,

3.61812839e-04, 3.50841120e-04, 3.49059129e-04, 3.23402665e-04,

3.22604151e-04, 3.11527347e-04, 3.10032877e-04, 3.07611603e-04,

2.96010489e-04, 2.88197255e-04, 2.77494857e-04, 2.70735751e-04,

2.67122244e-04, 2.64790886e-04, 2.64597695e-04, 2.63237166e-04,

2.61362824e-04, 2.59399816e-04, 2.58614910e-04, 2.57579773e-04,

2.49143242e-04, 2.49036616e-04, 2.47547306e-04, 2.36748821e-04,

2.35282013e-04, 2.32245156e-04, 2.30209194e-04, 2.28229670e-04,

2.27822992e-04, 2.20319919e-04, 2.17945603e-04, 2.13543715e-04,

1.97145202e-04, 1.90526970e-04, 1.90304866e-04, 1.88393786e-04,

1.85754127e-04, 1.85322815e-04, 1.81767370e-04, 1.74089940e-04,

1.71644610e-04, 1.71039222e-04, 1.69557657e-04, 1.66839046e-04,

1.64718022e-04, 1.59561636e-04, 1.57658164e-04, 1.54026397e-04,

1.52211752e-04, 1.51115808e-04, 1.47564559e-04, 1.46841709e-04,

1.36432949e-04, 1.35005671e-04, 1.32141796e-04, 1.27573620e-04,

1.27432415e-04, 1.26388914e-04, 1.25919175e-04, 1.23965197e-04,

1.21174483e-04, 1.18691292e-04, 1.18219114e-04, 1.17637524e-04,

1.17526303e-04, 1.13037594e-04, 1.10863960e-04, 1.09331046e-04,

1.08837112e-04, 1.06567401e-04, 1.05698197e-04, 1.00512685e-04,

1.00106518e-04, 9.85814937e-05, 9.17495595e-05, 9.15174736e-05,

9.09807382e-05, 8.79007001e-05, 8.16240791e-05, 7.91627682e-05,

7.79158645e-05, 7.56940333e-05, 7.44394656e-05, 7.18101849e-05,

6.97589276e-05, 6.81802488e-05, 6.69029567e-05, 6.54143249e-05,

6.08786925e-05, 6.07607969e-05, 6.03570614e-05, 5.98994801e-05,

5.95001291e-05, 5.94970869e-05, 5.86983574e-05, 5.79700512e-05,

5.66119466e-05, 5.50952209e-05, 5.47453912e-05, 5.43839597e-05,

5.25861529e-05, 4.89722417e-05, 4.78187439e-05, 4.77415865e-05,

4.77107257e-05, 4.62616737e-05, 4.60653783e-05, 4.60409299e-05,

4.56730211e-05, 4.54645078e-05, 4.52324283e-05, 4.38982745e-05,

4.36906610e-05, 4.33593810e-05, 4.31226640e-05, 4.29912118e-05,

4.29446346e-05, 4.17137339e-05, 3.93478837e-05, 3.84895449e-05,

3.84390172e-05, 3.81834469e-05, 3.53827628e-05, 3.47222349e-05,

3.37168917e-05, 3.18518637e-05, 3.15951703e-05, 3.12905207e-05,

3.10605585e-05, 3.02567524e-05, 2.91709879e-05, 2.89567711e-05,

2.85652293e-05, 2.82994071e-05, 2.80417376e-05, 2.77861205e-05,

2.77303518e-05, 2.76273746e-05, 2.72172235e-05, 2.69880432e-05,

2.66503046e-05, 2.66033916e-05, 2.62086568e-05, 2.59259584e-05,

2.57640153e-05, 2.56299050e-05, 2.54449453e-05, 2.51909823e-05,

2.47409597e-05, 2.46797892e-05, 2.42472084e-05, 2.35748710e-05,

2.24438116e-05, 2.24317329e-05, 2.23097275e-05, 2.21249597e-05,

2.17815183e-05, 2.15248592e-05, 2.09465192e-05, 2.09125513e-05,

1.96913177e-05, 1.95330853e-05, 1.91064697e-05, 1.88952009e-05,

1.85746459e-05, 1.81220081e-05, 1.78919334e-05, 1.73267658e-05,

1.61874055e-05, 1.60765855e-05, 1.58740992e-05, 1.45486411e-05,

1.40812264e-05, 1.36678429e-05, 1.32768479e-05, 1.31460479e-05,

1.30872012e-05, 1.29588223e-05, 1.25748548e-05, 1.24146066e-05,

1.22821602e-05, 1.22486357e-05, 1.20714645e-05, 1.20448925e-05,

1.19866728e-05, 1.18936663e-05, 1.17590888e-05, 1.17001978e-05,

1.16346360e-05, 1.11092945e-05, 1.08992577e-05, 1.06740258e-05,

1.06735218e-05, 1.06144296e-05, 1.05679067e-05, 1.03656570e-05,

1.03317955e-05, 9.98437559e-06, 9.01036943e-06, 8.85768061e-06,

8.76035160e-06, 8.60019167e-06, 8.19227801e-06, 7.80479658e-06,

7.53516931e-06, 7.44150882e-06, 7.30644125e-06, 7.26777599e-06,

7.06747616e-06, 6.95177332e-06, 6.85925126e-06, 6.74132156e-06,

6.71322068e-06, 6.70106994e-06, 6.66133186e-06, 6.47626505e-06,

6.38130476e-06, 6.29576510e-06, 6.24612583e-06, 5.93271496e-06,

5.92132104e-06, 5.83947722e-06, 5.76779879e-06, 5.76465728e-06,

5.53187023e-06, 5.47131015e-06, 5.33180695e-06, 5.22417954e-06,

5.20732008e-06, 5.15949060e-06, 5.11569104e-06, 4.95336950e-06,

4.94557425e-06, 4.73636484e-06, 4.63955858e-06, 4.53340156e-06,

4.22935422e-06, 4.19307790e-06, 4.17347414e-06, 4.12142146e-06,

4.11855764e-06, 3.80541311e-06, 3.36707879e-06, 3.29563656e-06,

3.17577578e-06, 3.05442971e-06, 2.98983688e-06, 2.97762691e-06,

2.95066092e-06, 2.91720550e-06, 2.89840858e-06, 2.77497857e-06,

2.76265227e-06, 2.74176112e-06, 2.70310579e-06, 2.61648976e-06,

2.60275585e-06, 2.56616744e-06, 2.55465117e-06, 2.49712549e-06,

2.42815484e-06, 2.37933375e-06, 2.35040476e-06, 2.33914845e-06,

2.33036549e-06, 2.32978989e-06, 2.28930419e-06, 2.28804340e-06,

2.26346210e-06, 2.24353844e-06, 2.23182640e-06, 2.23165865e-06,

2.22696341e-06, 2.22115030e-06, 2.21572164e-06, 2.20668084e-06,

2.19243658e-06, 2.17382266e-06, 2.08159887e-06, 2.07762818e-06,

1.95415065e-06, 1.88693410e-06, 1.83219245e-06, 1.81431726e-06,

1.67631850e-06, 1.67169206e-06, 1.63803449e-06, 1.57770706e-06,

1.56577585e-06, 1.53130790e-06, 1.52519015e-06, 1.52439998e-06,

1.49350905e-06, 1.47212210e-06, 1.45715861e-06, 1.40331777e-06,

1.38641504e-06, 1.29786439e-06, 1.27069447e-06, 1.25613209e-06,

1.23105569e-06, 1.22268909e-06, 1.21688094e-06, 1.18065108e-06,

1.18060143e-06, 1.16794389e-06, 1.13216621e-06, 1.12716419e-06,

1.12418866e-06, 1.12412659e-06, 1.05684621e-06, 1.05049722e-06,

1.04986594e-06, 1.03676402e-06, 1.03482230e-06, 9.96847192e-07,

9.75926251e-07, 9.54397081e-07, 9.36101632e-07, 9.30100914e-07,

9.27467975e-07, 8.92801774e-07, 8.85217179e-07, 8.58891337e-07,

7.80484800e-07, 7.67724409e-07, 7.54031637e-07, 7.45052550e-07,

7.32511689e-07, 7.06828122e-07, 6.59585949e-07, 6.40055245e-07,

6.18628925e-07, 6.17142222e-07, 6.09904832e-07, 6.07242457e-07,

5.72270900e-07, 5.49823535e-07, 5.22568859e-07, 5.01838721e-07,

4.91372576e-07, 4.82981856e-07, 4.69688423e-07, 4.59727658e-07,

4.54795508e-07, 4.22875379e-07, 4.13494116e-07, 3.99834682e-07,

3.97288987e-07, 3.87644926e-07, 3.84245584e-07, 3.81268632e-07,

3.67029696e-07, 3.57267536e-07, 3.52642869e-07, 3.51058992e-07,

3.44112772e-07, 3.36167495e-07, 3.24215712e-07, 3.23810344e-07,

3.21814716e-07, 3.21505459e-07, 3.10936465e-07, 2.88018831e-07,

2.86309762e-07, 2.76140106e-07, 2.63218703e-07, 2.56899508e-07,

2.51244222e-07, 2.25386521e-07, 2.15766576e-07, 2.03018243e-07,

1.99078411e-07, 1.97551987e-07, 1.96981706e-07, 1.92415912e-07,

1.84391194e-07, 1.81253585e-07, 1.78663913e-07, 1.77747846e-07,

1.59541769e-07, 1.38003378e-07, 1.36499298e-07, 1.22889160e-07,

1.22576357e-07, 1.19711121e-07, 1.09597855e-07, 9.97477409e-08,

9.65292710e-08, 9.36271510e-08, 9.35785637e-08, 9.34540807e-08,

8.40270671e-08, 7.82629028e-08, 7.54898762e-08, 6.64058115e-08,

5.96748649e-08, 5.79118882e-08, 5.73650143e-08, 5.65688198e-08,

5.34673852e-08, 5.34237630e-08, 5.29956976e-08, 4.84174907e-08,

3.83818937e-08])

# + [markdown] colab_type="text" id="46wIL5xzDzRS"

# ## Speed matrix <a name="speed">

# ### 24x24 relative Speed matrix between key pair (averaged for left/right symmetry)

#

# - does not take into account order of key pairs (see Flow24x24 matrix)

# - the original version was constructed with data from right-handed people

# - 24 keys that don't require extending index or little fingers ("home block keys")

#

# ### Home block keys

#

# Left: Right:

# 1 2 3 4 13 14 15 16

# 5 6 7 8 17 18 19 20

# 9 10 11 12 21 22 23 24

#

# Interkey stroke times in milliseconds from Table 3 of <br>

# "Estimation of digraph costs for keyboard layout optimization", <br>

# A Iseri, Ma Eksioglu, International Journal of Industrial Ergonomics, 48, 127-138, 2015. <br>

# Key numbering in article and in spreadsheet:

#

# Left: Right:

# 1 4 7 10 13 16 19 22 25 28 31

# 2 5 8 11 14 17 20 23 26 29 32

# 3 6 9 12 15 18 21 24 27 30

#

# ### Load table of interkey speeds

# + colab={} colab_type="code" id="095yG4iPDzRT"

# # %load data/Time24x24.py

# code/load_original_interkey_speeds.py

# Left: Right:

# 1 2 3 4 25 28 13 14 15 16 31

# 5 6 7 8 26 29 17 18 19 20 32

# 9 10 11 12 27 30 21 22 23 24

Time24x24 = np.array([

[196,225,204,164,266,258,231,166,357,325,263,186,169,176,178,186,156,156,158,163,171,175,177,189],

[225,181,182,147,239,245,196,150,289,296,229,167,162,169,170,178,148,148,150,155,163,167,169,182],

[204,182,170,149,196,194,232,155,237,214,263,166,157,164,165,173,143,143,145,150,158,163,164,177],

[164,147,149,169,160,161,157,226,165,185,234,257,154,162,163,171,141,141,143,148,156,160,162,175],

[266,239,196,160,196,240,208,166,271,267,208,169,143,150,151,160,129,129,132,137,145,149,151,163],

[258,245,194,161,240,181,183,149,245,256,184,150,138,145,146,154,124,124,126,131,139,144,145,158],

[231,196,232,157,208,183,170,149,201,215,239,151,134,141,142,150,120,120,122,127,135,140,141,154],

[166,150,155,226,166,149,149,169,160,147,170,221,133,140,141,150,119,119,122,126,135,139,141,153],

[357,289,237,165,271,245,201,160,196,236,194,161,171,178,179,188,157,157,160,164,173,177,179,191],

[325,296,214,185,267,256,215,147,236,181,184,157,166,173,174,182,152,152,154,159,167,172,173,186],

[263,229,263,234,208,184,239,170,194,184,170,150,159,166,167,176,145,145,148,153,161,165,167,179],

[186,167,166,257,169,150,151,221,161,157,150,169,153,160,161,169,139,139,141,146,154,159,160,173],

[169,162,157,154,143,138,134,133,171,166,159,153,151,147,141,145,188,151,142,164,213,204,162,149],

[176,169,164,162,150,145,141,140,178,173,166,160,147,151,189,209,137,207,191,206,149,227,208,197],

[178,170,165,163,151,146,142,141,179,174,167,161,141,189,157,253,136,188,210,231,155,226,239,276],

[186,178,173,171,160,154,150,150,188,182,176,169,145,209,253,170,147,206,251,233,164,268,362,271],

[156,148,143,141,129,124,120,119,157,152,145,139,188,137,136,147,151,133,138,152,192,149,139,144],

[156,148,143,141,129,124,120,119,157,152,145,139,151,207,188,206,133,151,179,183,145,204,183,201],

[158,150,145,143,132,126,122,122,160,154,148,141,142,191,210,251,138,179,157,240,145,185,208,229],

[163,155,150,148,137,131,127,126,164,159,153,146,164,206,231,233,152,183,240,170,160,220,293,242],

[171,163,158,156,145,139,135,135,173,167,161,154,213,149,155,164,192,145,145,160,151,140,142,145],

[175,167,163,160,149,144,140,139,177,172,165,159,204,227,226,268,149,204,185,220,140,151,175,188],

[177,169,164,162,151,145,141,141,179,173,167,160,162,208,239,362,139,183,208,293,142,175,157,230],

[189,182,177,175,163,158,154,153,191,186,179,173,149,197,276,271,144,201,229,242,145,188,230,170]])

# +

# # %load code/load_interkey_speeds24x24.py

# Left/right symmetric version of the Time24x24 matrix

# (The original version was constructed with data from right-handed people.)

# <NAME>, <NAME> / International Journal of Industrial Ergonomics 48 (2015) 127e138

# Left: Right:

# 1 2 3 4 13 14 15 16

# 5 6 7 8 17 18 19 20

# 9 10 11 12 21 22 23 24

I = [ 1, 2, 3, 4, 5, 6, 7, 8, 9,10,11,12, 16,15,14,13, 20,19,18,17, 24,23,22,21]

J = [16,15,14,13, 20,19,18,17, 24,23,22,21, 1, 2, 3, 4, 5, 6, 7, 8, 9,10,11,12]

TimeSymmetric24x24 = np.ones((24,24))

for i1, I1 in enumerate(I):

for i2, I2 in enumerate(I):

J1 = J[i1] - 1

J2 = J[i2] - 1

avgvalue = (Time24x24[I1-1,I2-1] + Time24x24[J1,J2]) / 2

#print(Time24x24[I1-1,I2-1], Time24x24[J1,J2], avgvalue)

TimeSymmetric24x24[I1-1,I2-1] = avgvalue

TimeSymmetric24x24[J1,J2] = avgvalue

# Normalize matrix with min-max scaling to a range with maximum = 1:

newMin = np.min(Time24x24) / np.max(Time24x24)

newMax = 1.0

Time24x24 = newMin + (Time24x24 - np.min(Time24x24)) * (newMax - newMin) / (np.max(Time24x24) - np.min(Time24x24))

# Convert relative interkey stroke times to relative speeds by subtracting from 1:

Speed24x24 = 1 - Time24x24 + np.min(Time24x24)

# Normalize matrix with min-max scaling to a range with maximum = 1:

newMin = np.min(TimeSymmetric24x24) / np.max(TimeSymmetric24x24)

newMax = 1.0

TimeSymmetric24x24 = newMin + (TimeSymmetric24x24 - np.min(TimeSymmetric24x24)) * (newMax - newMin) / (np.max(TimeSymmetric24x24) - np.min(TimeSymmetric24x24))

# Convert relative interkey stroke times to relative speeds by subtracting from 1:

SpeedSymmetric24x24 = 1 - TimeSymmetric24x24 + np.min(TimeSymmetric24x24)

# Print:

#print_matrix_info(matrix_data=Speed24x24, matrix_label="Speed24x24", nkeys=24, nlines=50)

#heatmap(data=Speed24x24, title="Speed24x24", xlabel="Key 1", ylabel="Key 2", print_output=print_output)

# + [markdown] colab_type="text" id="tFfuA8zMDzRg"

# ## Strength matrix <a name="strength">

#

# ### Relative finger position STRENGTH matrix

#

# Finger strengths are based on peak keyboard reaction forces (in newtons) from Table 4 of <br>

# "Keyboard Reaction Force and Finger Flexor Electromyograms during Computer Keyboard Work" <br>

# BJ Martin, TJ Armstrong, <NAME>, S Natarajan, Human Factors,1996,38(4),654-664:

#

# middle 2.36

# index 2.26

# ring 2.02

# little 1.84

#

# index/middle: 0.9576271186440678

# ring/middle: 0.8559322033898306

# little/middle: 0.7796610169491526

#

# For reference, Table 1 of "Ergonomic keyboard layout designed for the Filipino language", 2016 (doi: 10.1007/978-3-319-41694-6_41) presents "average finger strength of Filipinos [n=30, ages 16-36] measured in pounds":

#

# L R

# little 3.77 4.27

# ring 4.54 5.08

# middle 5.65 6.37

# index 6.09 6.57

#

# 6.57/4.27 = 1.54

# 6.09/3.77 = 1.62

# 6.37/5.08 = 1.25

# 5.65/4.54 = 1.24

#

# We won't use these results as I don't feel they represent relative strength relevant for typing: "Respondents were asked to sit in upright position, with their wrists resting on a flat surface. A pinch gauge was placed within each finger's reach. The respondents were asked to exert maximum pressure on the device."

#

# The following does not take into account order of key pairs (see Flow matrix).

#

# +

# # %load code/load_strength_data.py

# Normalize by the highest peak force (middle finger):

middle_force = 2.36

index_force = 2.26

ring_force = 2.02

little_force = 1.84

middle_norm = 1.0

index_norm = index_force / middle_force

ring_norm = ring_force / middle_force

little_norm = little_force / middle_force

print('index/middle: {0}'.format(index_norm))

print('ring/middle: {0}'.format(ring_norm))

print('little/middle: {0}'.format(little_norm))

# Relative left/right hand strength (assume equal):

lf = 1.0

rf = 1.0

strengths24 = np.array((

lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm))

# Create a finger-pair position strength matrix by adding pairs of strength values:

Strength24x24 = np.zeros((24, 24))

for i in range(24):

Strength24x24[i,:] = strengths24

Strength24x24 = (Strength24x24 + Strength24x24.transpose())

# Normalize matrix with min-max scaling to a range with maximum = 1:

#newMin = strength_factor

newMin = min_strength_factor # np.min(Strength24x24) / np.max(Strength24x24)

newMax = 1.0

Strength24x24 = newMin + (Strength24x24 - np.min(Strength24x24)) * (newMax - newMin) / (np.max(Strength24x24) - np.min(Strength24x24))

# Print:

print_matrix_info(matrix_data=Strength24x24, matrix_label="Strength24x24", nkeys=24, nlines=10)

heatmap(data=Strength24x24, title="Strength24x24", xlabel="Key 1", ylabel="Key 2", print_output=print_output)

# Save:

if print_output:

file = open("Strength24x24.txt", "w+")

file.write(str(Strength24x24))

file.close()

penalty = 1.0 # Penalty for lateral (index, little) finger placement (1 = no penalty)

strengths32 = np.array((lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

lf * little_norm, lf * ring_norm, lf * middle_norm, lf * index_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm,

rf * index_norm, rf * middle_norm, rf * ring_norm, rf * little_norm,

lf * index_norm * penalty, lf * index_norm * penalty, lf * index_norm * penalty,

rf * index_norm * penalty, rf * index_norm * penalty, rf * index_norm * penalty,

rf * little_norm * penalty, rf * little_norm * penalty))

# Create a finger-pair position strength matrix by adding pairs of strength values:

Strength32x32 = np.zeros((32, 32))

for i in range(32):

Strength32x32[i,:] = strengths32

Strength32x32 = (Strength32x32 + Strength32x32.transpose())

# Normalize matrix with min-max scaling to a range with maximum = 1:

newMin = np.min(Strength32x32) / np.max(Strength32x32)

newMax = 1.0

Strength32x32 = newMin + (Strength32x32 - np.min(Strength32x32)) * (newMax - newMin) / (np.max(Strength32x32) - np.min(Strength32x32))

# Print: