code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + _cell_guid="b1076dfc-b9ad-4769-8c92-a6c4dae69d19" _uuid="8f2839f25d086af736a60e9eeb907d3b93b6e0e5" papermill={"duration": 10.557037, "end_time": "2021-12-27T05:54:47.880926", "exception": false, "start_time": "2021-12-27T05:54:37.323889", "status": "completed"} tags=[]

# !pip install yfinance

# + papermill={"duration": 7.487879, "end_time": "2021-12-27T05:54:55.381980", "exception": false, "start_time": "2021-12-27T05:54:47.894101", "status": "completed"} tags=[]

# !pip install pycausalimpact

# + papermill={"duration": 0.029912, "end_time": "2021-12-27T05:54:55.425788", "exception": false, "start_time": "2021-12-27T05:54:55.395876", "status": "completed"} tags=[]

import yfinance as yf

import pandas as pd

# + papermill={"duration": 0.020321, "end_time": "2021-12-27T05:54:55.459898", "exception": false, "start_time": "2021-12-27T05:54:55.439577", "status": "completed"} tags=[]

training_start = "2018-01-02"

training_end = "2018-09-05"

treatment_start = "2018-09-06"

treatment_end = "2018-09-07"

#we need to check what happened during these dates hence they are called treatment dates

end_stock = "2018-09-08"

# + papermill={"duration": 1.01443, "end_time": "2021-12-27T05:54:56.488015", "exception": false, "start_time": "2021-12-27T05:54:55.473585", "status": "completed"} tags=[]

stocks = ["TSLA","VOLVF","GOOG","BMW.DE","GM","DAI.DE"]

dataset = yf.download(stocks, start = training_start, end = end_stock,interval ="1d")

# + papermill={"duration": 0.057926, "end_time": "2021-12-27T05:54:56.561854", "exception": false, "start_time": "2021-12-27T05:54:56.503928", "status": "completed"} tags=[]

dataset.head()

# + papermill={"duration": 0.034494, "end_time": "2021-12-27T05:54:56.612525", "exception": false, "start_time": "2021-12-27T05:54:56.578031", "status": "completed"} tags=[]

dataset = dataset.iloc[:,:6]

dataset.columns = dataset.columns.droplevel()

dataset = dataset.dropna()

dataset.head()

# + papermill={"duration": 0.033583, "end_time": "2021-12-27T05:54:56.662755", "exception": false, "start_time": "2021-12-27T05:54:56.629172", "status": "completed"} tags=[]

dataset.info()

# + papermill={"duration": 1.393649, "end_time": "2021-12-27T05:54:58.073557", "exception": false, "start_time": "2021-12-27T05:54:56.679908", "status": "completed"} tags=[]

data_corr = dataset[dataset.index <= training_end]

import seaborn as sns

import matplotlib.pyplot as plt

#get correlations of each features in dataset

corrmat = data_corr.corr()

top_corr_features = corrmat.index

plt.figure(figsize=(10,10))

#plot heat map

g=sns.heatmap(data_corr[top_corr_features].corr(),annot=True,cmap="RdYlGn")

# + papermill={"duration": 0.026648, "end_time": "2021-12-27T05:54:58.118001", "exception": false, "start_time": "2021-12-27T05:54:58.091353", "status": "completed"} tags=[]

final_stocks = dataset[["TSLA","VOLVF","GOOG","GM","DAI.DE"]]

pre_period = [training_start,training_end]

post_period = [treatment_start, treatment_end]

# + papermill={"duration": 2.260026, "end_time": "2021-12-27T05:55:00.396013", "exception": false, "start_time": "2021-12-27T05:54:58.135987", "status": "completed"} tags=[]

from causalimpact import CausalImpact

causal_effect = CausalImpact(data=final_stocks, pre_period=pre_period,post_period=post_period)

# + papermill={"duration": 0.029179, "end_time": "2021-12-27T05:55:00.443878", "exception": false, "start_time": "2021-12-27T05:55:00.414699", "status": "completed"} tags=[]

print(causal_effect.summary())

# + [markdown] papermill={"duration": 0.01818, "end_time": "2021-12-27T05:55:00.480525", "exception": false, "start_time": "2021-12-27T05:55:00.462345", "status": "completed"} tags=[]

# A p value of less than 0.05 shows that the results are statistically significant.

#

#

# + papermill={"duration": 0.018381, "end_time": "2021-12-27T05:55:00.517381", "exception": false, "start_time": "2021-12-27T05:55:00.499000", "status": "completed"} tags=[]

| tesla-stocks-causalimpact.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="OgYMbUm0VlP-"

# <p><img alt="Yahoo logo" height="60px" src="https://www.ulacit.ac.cr/wp-content/uploads/Logo-Ulacit-Blanco.png" align="left" hspace="10px" vspace="0px"></p>

# + [markdown] id="wO-2zsXoZAvc"

# # Operadores Booleanos

#

# La actual computación basa sus operaciones más fundamentales en la lógica Booleana, en donde también sale a relucir los conceptos relacionados de compuertas lógicas. En este documento podemos encontrar los operadores lógicos en Python (A través de `True` o `False`).

# + [markdown] id="FCIjk10eXXc5"

# MIT License

#

# Profesor <NAME>

# + [markdown] id="GhnQVTiNPytK"

# 67-0001

# criptografía

# + [markdown] id="YuYFIvMUINcl"

#

#

# ---

#

#

# + [markdown] id="uLBzhf1nIH9g"

# Consultamos con la funcion Type, cual es el tipado de los datos True y False.

# + id="gQchT0G1ZbTu" outputId="c778222f-7698-4bce-a5fb-ce6ccfe63ffa" colab={"base_uri": "https://localhost:8080/"}

type(True)

# + colab={"base_uri": "https://localhost:8080/"} id="nquxF2DaIFeE" outputId="a118f21b-d646-4bda-921b-ecbd2f13e3e1"

type(False)

# + [markdown] id="zDV9Nk6YrCDi"

# ## Operadores Booleanos

#

# ### Negación (`not`)

#

# La negación devuelve `False` cuando es aplicada sobre un `True` y viceversa. Es un operador unario. Es decir, sólo necesita un operando para devolver un resultado.

# + id="anz7MCkEG-GR" outputId="6410ba43-4799-435c-fcf1-4031f03e8b90" colab={"base_uri": "https://localhost:8080/"}

not False

# + id="gi1oL9ejHGKH" outputId="593c4d62-c648-4ee3-85a3-0dc1b8673faa" colab={"base_uri": "https://localhost:8080/"}

not True

# + [markdown] id="bU_1eDlScm2D"

# ### Suma lógica (`or`)

#

# La suma lógica devuelve `True` siempre que alguno de sus operandos sea `True`. O lo que es lo mismo, devuelve `False` cuando ambos operando son `False`

# + id="sMwW0Z1Fc8ol" outputId="b741262c-f605-401a-8193-e500e2b18861" colab={"base_uri": "https://localhost:8080/"}

True or False

# + id="dscFMYeUc_AB" outputId="6b960952-f4ea-431e-c034-4407d5fc3b41" colab={"base_uri": "https://localhost:8080/"}

True or True

# + id="VEk4Y2V4dBTo" outputId="b6cab143-171d-4bc2-f228-107f5397a56a" colab={"base_uri": "https://localhost:8080/"}

False or True

# + id="61GTdhsGdC69" outputId="7c54df71-3880-4866-b523-dfd5c9e18821" colab={"base_uri": "https://localhost:8080/"}

False or False

# + [markdown] id="N0Dcm0kidInP"

# ### Producto lógico (`and`)

#

# El producto lógico devuelve `True` sólo si ambos operando son `True`. O lo que es lo mismo, si alguno de sus operandos es `False` el producto lógico devuelve `False`

# + id="HYcoQiemdjiR" outputId="e98fa57e-8f2a-45ca-beb9-1d2255ece66f" colab={"base_uri": "https://localhost:8080/"}

True and True

# + id="CcyIVgFqdl1a" outputId="1fc29fb2-4202-4f09-ec7b-3dab3a4ede92" colab={"base_uri": "https://localhost:8080/"}

True and False

# + id="jif2AmCldqEM" outputId="4452d6d3-a1e7-440f-852b-90eb3f6e0837" colab={"base_uri": "https://localhost:8080/"}

False and True

# + [markdown] id="3QB27J7FJFyI"

# ### Producto lógico (`XOR`)

#

# Si una salida verdadera resulta si una, y solo una de las entradas a la puerta es verdadera. Si ambas entradas son falsas o ambas son verdaderas, resulta en una salida falsa.

# + colab={"base_uri": "https://localhost:8080/"} id="k-T7PB79I7cx" outputId="af2002f5-dda9-4115-97af-ba7d99b358fb"

a = bool(1)

b = bool(0)

print(a^b)

# + colab={"base_uri": "https://localhost:8080/"} id="9A6I00RPJKIs" outputId="1389853d-de11-4fde-f8a2-666beca70157"

a and b

# + colab={"base_uri": "https://localhost:8080/"} id="iK0kcNujJMfp" outputId="13dc7e61-8c4d-48b6-e927-d0399894ee66"

a or b

| operadores_booleanos.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

# %matplotlib inline

import matplotlib.pyplot as plt

import pandas as pd

import os, sys

import json

aPath='/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset'

os.chdir(aPath)

os.path.dirname(os.path.abspath('biz.json'))

# -

# # Sample data for a Yelp business

# {"business_id": "SQ0j7bgSTazkVQlF5AnqyQ", "full_address": "214 E Main St\nCarnegie\nCarnegie, PA 15106", "hours": {}, "open": true, "categories": ["Chinese", "Restaurants"], "city": "Carnegie", "review_count": 8, "name": "<NAME> Restaurant", "neighborhoods": ["Carnegie"], "longitude": -80.084861000000004, "state": "PA", "stars": 2.5, "latitude": 40.408343000000002, "attributes": {"Take-out": true, "Alcohol": "none", "Noise Level": "quiet", "Parking": {"garage": false, "street": false, "validated": false, "lot": false, "valet": false}, "Delivery": true, "Has TV": true, "Outdoor Seating": false, "Attire": "casual", "Waiter Service": false, "Good For Groups": false, "Price Range": 1}, "type": "business"}

#

# # Sample data for a Yelp user

#

# {"yelping_since": "2004-10", "votes": {"funny": 166, "useful": 278, "cool": 245}, "review_count": 108, "name": "Russel", "user_id": "18kPq7GPye-YQ3LyKyAZPw", "friends": .... "fans": 69, "average_stars": 4.1399999999999997, "type": "user", "compliments": {"profile": 8, "cute": 15, "funny": 11, "plain": 25, "writer": 9, "note": 20, "photos": 15, "hot": 48, "cool": 78, "more": 3}, "elite": [2005, 2006]}

#

# # Sample data for a Yelp review

#

# {"votes": {"funny": 0, "useful": 1, "cool": 0}, "user_id": "uK8tzraOp4M5u3uYrqIBXg", "review_id": "KAkcn7oQP1xX8KsZ-XmktA", "stars": 4, "date": "2013-10-20", "text": "This place was very good. I found out about Emil's when watching a show called \"25 Things I Love About Pittsburgh\" on WQED hosted by <NAME>. This place ain't a luxurious restaurant...it's a beer & a shot bar / lounge. But the people are friendly & the food is good. I had the fish sandwich which was great. It ain't in a great part of town, Rankin, but I've been in worse places!! Try this place.", "type": "review", "business_id": "mVHrayjG3uZ_RLHkLj-AMg"}

#

# ## brainstorming questions:

#

# 1. What attributes of users (mood, review counts, etc) tend to give high/lower ratings to a certain type of restaurants (cuisine, locations, money, service)?

#

# For example, how does

#

#

# 2. What attributes of reviews (words, tones, etc) correlate with the ratings of the restaurant?

#

def open_json(json_file):

with open(json_file) as f:

for lines in f:

#line = f.next()

d = json.loads(lines)

df = pd.DataFrame(columns=d.keys())

# df[d.keys()[0]]= d[d.keys()[0]]

return df

# +

# list of files

biz_file ="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/biz.json"

review_file ="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/review.json"

checkin_file ="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/checkin.json"

tip_file ="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/tip.json"

user_file ="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/user.json"

# -

text = "biz_file,review_file,checkin_file,tip_file,user_file".split(',')

for idx, i in enumerate([biz_file,review_file,checkin_file,tip_file,user_file]):

headers = open_json(i)

print text[idx]

print headers

print

#

print type("biz_file,review_file,checkin_file,tip_file,user_file".split(',')[0])

json_file="/Users/liuchang/Documents/STUDY/AM207/Final Project/Data/data subset/biz.json"

biz =pd.io.json.read_json(json_file,orient='Dataframe')

biz

import pandas as pd

import os

os.path.dirname(os.path.realpath("yelp_academic_dataset_business.json"))

os.chdir("")

# +

import json

def load_json_file(file_path):

"""

Builds a list of dictionaries from a JSON file

:type file_path: string

:param file_path: the path for the file that contains the businesses

data

:return: a list of dictionaries with the data from the files

"""

records = [json.loads(line) for line in open(file_path)]

return records

def tf_idf_tips(file_path):

records = load_json_file(file_path)

print type(records),type(records[4])

data = [record['stars'] for record in records if 'Restaurants' in record['categories']]

return data

tip_matrix = tf_idf_tips("yelp_academic_dataset_business.json")

# -

scores = tip_matrix

plt.hist(scores,bins=30)

plt.show()

# +

import numpy as np

import numpy.random as npr

import pylab

def bootstrap(data, num_samples, statistic, alpha):

"""Returns bootstrap estimate of 100.0*(1-alpha) CI for statistic."""

n = len(data)

idx = npr.randint(0, n, (num_samples, n))

samples = data[idx]

stat = np.sort(statistic(samples, 1))

return (stat[int((alpha/2.0)*num_samples)],

stat[int((1-alpha/2.0)*num_samples)])

if __name__ == '__main__':

# data of interest is bimodal and obviously not normal

#x = np.concatenate([npr.normal(3, 1, 100), npr.normal(6, 2, 200)])

x = scores

# find mean 95% CI and 100,000 bootstrap samples

low, high = bootstrap(x, 100000, np.mean, 0.05)

# make plots

pylab.figure(figsize=(15,10))

pylab.subplot(121)

pylab.hist(x, 50)

pylab.title('Historgram of data')

pylab.subplot(122)

pylab.plot([-0.03,0.03], [np.mean(x), np.mean(x)], 'r', linewidth=2)

pylab.scatter(0.1*(npr.random(len(x))-0.5), x)

pylab.plot([0.19,0.21], [low, low], 'r', linewidth=2)

pylab.plot([0.19,0.21], [high, high], 'r', linewidth=2)

pylab.plot([0.2,0.2], [low, high], 'r', linewidth=2)

pylab.xlim([-0.2, 0.3])

pylab.title('Bootstrap 95% CI for mean')

#pylab.savefig('examples/boostrap.png')

# -

len(x)

| .ipynb_checkpoints/parsing data files _ read json-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/VxctxrTL/daa_2021_1/blob/master/Tarea7.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + colab={"base_uri": "https://localhost:8080/"} id="v1U8w4HGzHB7" outputId="0ab664a1-50c8-43e8-daff-9fc82fecdbcf"

def sumalista(listaNumeros):

if len(listaNumeros) == 1:

return listaNumeros[0]

else:

return listaNumeros[0] + sumalista(listaNumeros[1:])

print(sumalista([6,24,104,9,15]))

# + colab={"base_uri": "https://localhost:8080/"} id="mApJLDP092PX" outputId="7375b352-2072-4996-f7be-0e6962861272"

def cuentaReg (x):

if x > 0:

print (x)

cuentaReg(x-1)

else:

print ("Fin de la cuenta regresiva")

cuentaReg(3)

# + id="4J-T0cx7aSi4"

class adtCola:

def __init__ (self):

self.items = []

def pop (self):

try:

return self.items.pop(0)

except:

raise ValueError ("La cola esta vacia")

def push (self, x):

self.items.append(x)

| Tarea7.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import sys

sys.path.insert(0, "segmentation_models.pytorch/")

from train import Trainer

import torch

import torch.nn as nn

try:

from efficientnet_pytorch import EfficientNet

except:

os.system(f"""pip install efficientnet-pytorch""")

from efficientnet_pytorch import EfficientNet

# ## Train

model = EfficientNet.from_pretrained('efficientnet-b3')

num_ftrs = model._fc.in_features

model._fc = nn.Linear(num_ftrs, 1)

# training

model_trainer = Trainer(model = model, optim = 'Ranger', lr = 1e-3, bs = 8, name = "b3-ranger")

model_trainer.fit(20)

| notebooks/training_notebook.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Autoencoder for ESNs using sklearn

#

# ## Introduction

#

# In this notebook, we demonstrate how the ESN can deal with multipitch tracking, a challenging multilabel classification problem in music analysis.

#

# As this is a computationally expensive task, we have pre-trained models to serve as an entry point.

#

# At first, we import all packages required for this task. You can find the import statements below.

#

# To use another objective than `accuracy_score` for hyperparameter tuning, check out the documentation of [make_scorer](https://scikit-learn.org/stable/modules/generated/sklearn.metrics.make_scorer.html) or ask me.

# +

import numpy as np

from sklearn.metrics import make_scorer

from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

from sklearn.utils.fixes import loguniform

from scipy.stats import uniform

from joblib import dump, load

from pyrcn.echo_state_network import SeqToSeqESNClassifier # SeqToSeqESNRegressor or SeqToLabelESNClassifier

from pyrcn.metrics import accuracy_score # more available or create custom score

from pyrcn.model_selection import SequentialSearchCV

import seaborn as sns

from matplotlib import pyplot as plt

from matplotlib import ticker

from mpl_toolkits.mplot3d import Axes3D # noqa: F401 unused import

# %matplotlib inline

#Options

plt.rc('image', cmap='RdBu')

plt.rc('font', family='serif', serif='Times')

plt.rc('text', usetex=True)

plt.rc('xtick', labelsize=8)

plt.rc('ytick', labelsize=8)

plt.rc('axes', labelsize=8)

from IPython.display import set_matplotlib_formats

set_matplotlib_formats('png', 'pdf')

from mpl_toolkits.axes_grid1 import make_axes_locatable

# -

# ## Load and preprocess the dataset

#

# This might require a large amount of and memory.

# +

# At first, please load all training and test sequences and targets.

# Each sequence should be a numpy.array with the shape (n_samples, n_features)

# Each target should be

# - either be a numpy.array with the shape (n_samples, n_targets)

# - or a 1D numpy.array with the shape (n_samples, 1)

train_sequences = ......................

train_targets = ......................

if len(train_sequences) != len(train_targets):

raise ValueError("Number of training sequences does not match number of training targets!")

n_train_sequences = len(train_sequences)

test_sequences = ......................

test_targets = ......................

if len(test_sequences) != len(test_targets):

raise ValueError("Number of test sequences does not match number of test targets!")

n_test_sequences = len(test_sequences)

# Initialize training and test sequences

X_train = np.empty(shape=(n_train_sequences, ), dtype=object)

y_train = np.empty(shape=(n_train_sequences, ), dtype=object)

X_test = np.empty(shape=(n_test_sequences, ), dtype=object)

y_test = np.empty(shape=(n_test_sequences, ), dtype=object)

for k, (train_sequence, train_target) in enumerate(zip(train_sequences, train_targets)):

X_train[k] = train_sequence

y_train[k] = train_target

for k, (test_sequence, test_target) in enumerate(zip(test_sequences, test_targets)):

X_test[k] = test_sequence

y_test[k] = test_target

# -

# Initial variables to be equal in the Autoencoder and in the ESN

hidden_layer_size = 500

input_activation = 'relu'

# ## Train a MLP autoencoder

#

# Currently very rudimentary. However, it can be flexibly made deeper or more complex. Check [MLPRegressor](https://scikit-learn.org/stable/modules/generated/sklearn.neural_network.MLPRegressor.html) documentation for hyper-parameters.

# +

mlp_autoencoder = MLPRegressor(hidden_layer_sizes=(hidden_layer_size, ), activation=input_activation)

# X_train is a numpy array of sequences - the MLP does not handle sequences. Thus, concatenate all sequences

# Target of an autoencoder is the input of the autoencoder

mlp_autoencoder.fit(np.concatenate(X_train), np.concatenate(X_train))

w_in = np.divide(mlp_autoencoder.coefs_[0], np.linalg.norm(mlp_autoencoder.coefs_[0], axis=0)[None, :])

# w_in = mlp_autoencoder.coefs_[0] # uncomment in case that the vector norm does not make sense

# -

# ## Set up an ESN

#

# To develop an ESN model, we need to tune several hyper-parameters, e.g., input_scaling, spectral_radius, bias_scaling and leaky integration.

#

# We define the search spaces for each step in a sequential search together with the type of search (a grid or random search in this context).

#

# At last, we initialize a SeqToSeqESNClassifier with the desired output strategy and with the initially fixed parameters.

# +

initially_fixed_params = {'hidden_layer_size': hidden_layer_size,

'k_in': 10,

'input_scaling': 0.4,

'input_activation': input_activation,

'bias_scaling': 0.0,

'spectral_radius': 0.0,

'leakage': 1.0,

'k_rec': 10,

'reservoir_activation': 'tanh',

'bi_directional': False,

'wash_out': 0,

'continuation': False,

'alpha': 1e-3,

'random_state': 42}

step1_esn_params = {'input_scaling': uniform(loc=1e-2, scale=1),

'spectral_radius': uniform(loc=0, scale=2)}

step2_esn_params = {'leakage': loguniform(1e-5, 1e0)}

step3_esn_params = {'bias_scaling': np.linspace(0.0, 1.0, 11)}

step4_esn_params = {'alpha': loguniform(1e-5, 1e1)}

kwargs_step1 = {'n_iter': 200, 'random_state': 42, 'verbose': 1, 'n_jobs': -1, 'scoring': make_scorer(accuracy_score)}

kwargs_step2 = {'n_iter': 50, 'random_state': 42, 'verbose': 1, 'n_jobs': -1, 'scoring': make_scorer(accuracy_score)}

kwargs_step3 = {'verbose': 1, 'n_jobs': -1, 'scoring': make_scorer(accuracy_score)}

kwargs_step4 = {'n_iter': 50, 'random_state': 42, 'verbose': 1, 'n_jobs': -1, 'scoring': make_scorer(accuracy_score)}

# The searches are defined similarly to the steps of a sklearn.pipeline.Pipeline:

searches = [('step1', RandomizedSearchCV, step1_esn_params, kwargs_step1),

('step2', RandomizedSearchCV, step2_esn_params, kwargs_step2),

('step3', GridSearchCV, step3_esn_params, kwargs_step3),

('step4', RandomizedSearchCV, step4_esn_params, kwargs_step4)]

base_esn = SeqToSeqESNClassifier(input_to_node=PredefinedWeightsInputToNode(predefined_input_weights=w_in),

**initially_fixed_params)

# -

# ## Optimization

#

# We provide a SequentialSearchCV that basically iterates through the list of searches that we have defined before. It can be combined with any model selection tool from scikit-learn.

try:

sequential_search = load("sequential_search.joblib")

except FileNotFoundError:

print(FileNotFoundError)

sequential_search = SequentialSearchCV(base_esn, searches=searches).fit(X_train, y_train)

dump(sequential_search, "sequential_search.joblib")

# ## Visualize hyper-parameter optimization

#

# ### First optimization step: input scaling and spectral radius

#

# Either create a scatterplot - useful in case of a random search to optimize input scaling and spectral radius

# +

df = pd.DataFrame(sequential_search.all_cv_results_["step1"])

fig = plt.figure()

ax = sns.scatterplot(x="param_spectral_radius", y="param_input_scaling", hue="mean_test_score", palette='RdBu', data=df)

plt.xlabel("Spectral Radius")

plt.ylabel("Input Scaling")

norm = plt.Normalize(0, df['mean_test_score'].max())

sm = plt.cm.ScalarMappable(cmap="RdBu", norm=norm)

sm.set_array([])

plt.xlim((0, 2.05))

plt.ylim((0, 1.05))

# Remove the legend and add a colorbar

ax.get_legend().remove()

ax.figure.colorbar(sm)

fig.set_size_inches(4, 2.5)

tick_locator = ticker.MaxNLocator(5)

ax.yaxis.set_major_locator(tick_locator)

ax.xaxis.set_major_locator(tick_locator)

# -

# Or create a heatmap - useful in case of a grid search to optimize input scaling and spectral radius

# +

df = pd.DataFrame(sequential_search.all_cv_results_["step1"])

pvt = pd.pivot_table(df,

values='mean_test_score', index='param_input_scaling', columns='param_spectral_radius')

pvt.columns = pvt.columns.astype(float)

pvt2 = pd.DataFrame(pvt.loc[pd.IndexSlice[0:1], pd.IndexSlice[0.0:1.0]])

fig = plt.figure()

ax = sns.heatmap(pvt2, xticklabels=pvt2.columns.values.round(2), yticklabels=pvt2.index.values.round(2), cbar_kws={'label': 'Score'})

ax.invert_yaxis()

plt.xlabel("Spectral Radius")

plt.ylabel("Input Scaling")

fig.set_size_inches(4, 2.5)

tick_locator = ticker.MaxNLocator(10)

ax.yaxis.set_major_locator(tick_locator)

ax.xaxis.set_major_locator(tick_locator)

# -

# ### Second optimization step: leakage

df = pd.DataFrame(sequential_search.all_cv_results_["step2"])

fig = plt.figure()

fig.set_size_inches(2, 1.25)

ax = sns.lineplot(data=df, x="param_leakage", y="mean_test_score")

ax.set_xscale('log')

plt.xlabel("Leakage")

plt.ylabel("Score")

plt.xlim((1e-5, 1e0))

tick_locator = ticker.MaxNLocator(10)

ax.xaxis.set_major_locator(tick_locator)

ax.yaxis.set_major_formatter(ticker.FormatStrFormatter('%.4f'))

plt.grid()

# ### Third optimization step: bias_scaling

df = pd.DataFrame(sequential_search.all_cv_results_["step3"])

fig = plt.figure()

fig.set_size_inches(2, 1.25)

ax = sns.lineplot(data=df, x="param_bias_scaling", y="mean_test_score")

plt.xlabel("Bias Scaling")

plt.ylabel("Score")

plt.xlim((0, 1))

tick_locator = ticker.MaxNLocator(5)

ax.xaxis.set_major_locator(tick_locator)

ax.yaxis.set_major_formatter(ticker.FormatStrFormatter('%.5f'))

plt.grid()

# ### Fourth optimization step: alpha (regularization)

df = pd.DataFrame(sequential_search.all_cv_results_["step4"])

fig = plt.figure()

fig.set_size_inches(2, 1.25)

ax = sns.lineplot(data=df, x="param_alpha", y="mean_test_score")

ax.set_xscale('log')

plt.xlabel("Alpha")

plt.ylabel("Score")

plt.xlim((1e-5, 1e0))

tick_locator = ticker.MaxNLocator(5)

ax.xaxis.set_major_locator(tick_locator)

ax.yaxis.set_major_formatter(ticker.FormatStrFormatter('%.5f'))

plt.grid()

# ## Test the ESN

#

# Finally, we test the ESN on unseen data.

y_pred = esn.predict(X_train)

y_pred_proba = esn.predict_proba(X_train)

| examples/sklearn_autoencoder.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="7v0Bo63NffSK"

# A API utilizada neste artigo é um pacote chamado yahooquery, criado pelo desenvolvedor <NAME>. Desde o cancelamento das APIs oficiais do Google e Yahoo, a comunidade de ciência de dados vem criando maneiras de extrair informações do mercado financeiro. Essa API não-oficial é uma maneira alternativa de se obter tais dados.

# + [markdown] id="KYxOMPdJdK9o"

# 1. Instalando biblioteca

# + id="q7-nlTuXYRkA"

# !pip install yahooquery

# + [markdown] id="DVX3hn-8dZ3Q"

# 2. Importando biblioteca

# + id="WXCj39JDbi1p"

from yahooquery import Ticker

# + [markdown] id="3aUaFbhzf3f4"

# 3. Extraindo dados diários

#

# Um ponto muito importante, os tickers (siglas que identificam as ações) usados pelo Yahoo Finance são um pouco diferentes dos oficiais da Bovespa. Antes de fazer a consulta, é preciso conferir no site qual é o ticker correto,confira alguns exemplos abaixo:

#

# | Oficial | Yahoo Finance |

# |-----------|-----------------|

# | PETR4 | PETR4.SA |

# | ABEV3 | ABEV3.SA |

# | MGLU3 | MGLU3.SA |

# + colab={"base_uri": "https://localhost:8080/", "height": 450} id="JAtzaF0Gb_uS" outputId="c7f9d5b6-5048-47b8-f0db-89f4bf1687fe"

CIEL3 = Ticker("CIEL3.SA")

CIEL3.history(period='max')

# + [markdown] id="l1KHSTbihWzl"

# Consultando um intervalo de tempo específico, adicionando datas de início e fim aos parâmetros:

# + colab={"base_uri": "https://localhost:8080/", "height": 450} id="wBVIOIIwcAUS" outputId="87a7109b-be95-4f5f-9fad-2bf2c2eda90b"

CIEL3.history(start='2001-01-01', end='2021-04-22')

# + [markdown] id="sEFGVeiIhgD7"

# 4. Extraindo dados intraday

#

# Para dados intraday, o período disponível varia de acordo com o intervalo escolhido. Os intervalos suportados são 30 min, 15 min, 5 min, 2 min e 1 min.

#

# | Intervalos intraday | |

# |--------------------------------| |

# | 30 min | últimos 60 dias úteis |

# | 15 min | últimos 60 dias úteis |

# | 5 min | últimos 60 dias úteis |

# | 2 min | últimos 31 dias úteis |

# | 1 min | últimos 7 dias úteis |

#

# Extraindo intervalos de 30 min:

# + colab={"base_uri": "https://localhost:8080/", "height": 450} id="3Lv2dRhicSyI" outputId="34f6aafa-7313-415b-b06b-ae6001d0d501"

CIEL3 = Ticker('CIEL3.SA')

CIEL3.history(period='60d', interval = "30m")

# + [markdown] id="egYMtcpWixCp"

# Extraindo intervalos de 1 minuto:

# + colab={"base_uri": "https://localhost:8080/", "height": 450} id="omleAovBcvho" outputId="addf4fcb-29c4-4b04-d611-7e4b18391f2c"

CIEL3 = CIEL3.history(period='7d', interval = "1m")

CIEL3

# + [markdown] id="FpnsjwTIdJKV"

# 4. Informações financeiras

#

# Extraindo dados financeiros para análise fundamentalista. Para isso, são retornados os principais indicadores presentes nos relatórios anuais das empresas, tais como receita, lucro, Ebit, gastos com pesquisa e inovação, etc.

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} id="fECTT99XdI0N" outputId="629fc588-ff85-4e65-bd52-7876b8bc5959"

CIEL3 = Ticker("CIEL3.SA") # Coleta dados

CIEL3.income_statement() # Chama função de Demonstração de resultados

CIEL3 = CIEL3.income_statement().transpose() # Transpõe a matriz

CIEL3.columns = CIEL3.iloc[0,:] # Renomeia colunas

CIEL3 = CIEL3.iloc[2:,:-1] # Seleciona dados

CIEL3 = CIEL3.iloc[:, ::-1] # Inverte colunas

CIEL3

| Extrair_dados_ticker_bovespa.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Start your first Spark Session 🎈

# Findspark helps us to **find** and **start** Spark

import findspark

# +

# Provide the path where you installed Spark

findspark.init("c:\spark")

# +

# Create Spark Sesion

from pyspark.sql import SparkSession

# +

# This piece of code will generate a Spark session, however i is not named or configured.

# In real-life, best practice is to create tailored and named sessions hat works best for your case.

spark = SparkSession.builder.getOrCreate()

# -

# Check Spark version

spark.version

# From this point, we have created our Spark Session. It is ready for usage. To make sure your session is created successfully, go to http://localhost:4040/jobs/

#

# From this page, you can see and manage all the jobs you have created!

| PySpark_CreateSession_01.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # BPE vocabulary analysis

#

# (C) <NAME>, 2019, Mindscan

#

# Recently I build some bpe-vocabularies, which had way too much tokens because of arabic and asian languages. This notebook is intended to review these vocabularies...

#

# Current state: the vocabulary was cleaned up... So the initial reason for this notebook is no longer given.

#

import sys

sys.path.insert(0,'../src')

import functools

from de.mindscan.fluentgenesis.bpe.bpe_model import BPEModel

model = BPEModel("16K-full", "../src/de/mindscan/fluentgenesis/bpe/")

model.load_hparams()

model_vocabulary = model.load_tokens()

model_bpe_data = model.load_bpe_pairs()

# +

model_vocabulary_statistics_length = {}

for token, _ in model_vocabulary.items():

lengthOfToken = len(token)

if lengthOfToken not in model_vocabulary_statistics_length:

model_vocabulary_statistics_length[lengthOfToken] = 1

else:

model_vocabulary_statistics_length[lengthOfToken] += 1

print(model_vocabulary_statistics_length)

# -

# The result is something like this:

#

# {1: 24804, 2: 1462, 3: 2465, 4: 2213, 5: 1906, 6: 1639, 7: 1426, 8: 1255, 9: 971, 10: 738, 11: 505, 12: 349, 13: 277, 14: 202, 15: 156, 16: 107, 17: 84, 18: 64, 19: 44, 20: 35, 21: 22, 22: 21, 23: 8, 24: 9, 25: 13, 26: 7, 27: 3, 28: 3, 29: 2, 30: 4, 32: 2, 33: 2, 34: 1, 36: 1, 37: 1, 42: 1, 43: 1, 51: 1}

#

# The intended dictionary size was about 16K tokens...

#

functools.reduce( lambda sum,y: sum + model_vocabulary_statistics_length[y] ,model_vocabulary_statistics_length.keys() )

# The result for all tokenlength longer than one char is 16001 (oops, off by one in token calculation...) we can see, that we have another 24804 Tokens of length one. Since we might can not encode every words with pairs, and to takle the unknown word problem, we have to emit all unpaired tokens at the end of the process. There are nearly twice the number of unpaired tokens than paired tokens...

#

# These originate from strings containing other languages, so lets identify these

#

#

#

# +

import collections

unsupported_vocab_ranges = [

# Latin Extended

(0x0100, 0x017F), # Latin Extended-A

(0x0180, 0x024F), # Latin Extended-B

(0x1E00, 0x1EFF), # Latin Extended Additional

(0x2C60, 0x2C7F), # Latin Extended-C

(0xA720, 0xA7FF), # Latin Extended-D

(0xAB30, 0xAB6F), # Latin Extended-E

# Diacritical

(0x0300, 0x036F), # Combining Diacritical Marks

(0x1AB0, 0x1AFF), # Combining Diacritical Marks Extended

(0x1DC0, 0x1DFF), # Combining Diacritical Marks Supplement

(0x20D0, 0x20FF), # Combining Diacritical Marks for Symbols

# IPA & phonetic Extemsion

(0x0250, 0x02AF), # IPA Extensions

(0x1D00, 0x1D7F), # Phonetic Extensions

(0x1D80, 0x1DBF), # Phonetic Extensions Supplement

# Spacing Modifier Letters

(0x02B0, 0x02FF), # Spacing Modifier Letters

# Greek Coptic

(0x0370, 0x03FF), # Greek and coptic

(0x1F00, 0x1FFF), # Greek Extended

(0x2C80, 0x2CFF), # Coptic

# Cyrillic

(0x0400, 0x04FF), # Cyrillic)

(0x0500, 0x052F), # Cyrillic Supplement

(0x2DE0, 0x2DFF), # Cyrillic Extended-A

(0xA640, 0xA69F), # Cyrillic Extended-B

(0x1C80, 0x1C8F), # Cyrillic Extended-C

# Armenian

(0x0530, 0x058F), # Armenian

# Hebrew

(0x0590, 0x05FF), # Hebrew

# Arabic

(0x0600, 0x06FF), # Arabic

(0x0750, 0x077F), # Arabic Supplement

(0x08A0, 0x08FF), # Arabic Extended-A

(0xFB50, 0xFDFF), # Arabic Presentation Forms A

(0xFE70, 0xFEFF), # Arabic Presentation Forms B

# Syriac

(0x0700, 0x074F), # Syriac

(0x0860, 0x086F), # Syriac Supplement

# Thaana

(0x0780, 0x07BF), # Thaana

# NKo

(0x07C0, 0x07FF), # NKo

# Samritan

(0x0800, 0x083F), # Samaritan

# Mandaic

(0x0840, 0x085F), # Mandaic

# Invalid range

(0x0870, 0x089F), # Invalid range

# Indian Subkontinent Languages

(0x0900, 0x097F), # Devanagari

(0xA8E0, 0xA8FF), # Devanagari Extended

# (0x0980, 0x09FF), # Bengali

# ...

(0x0900, 0x0DFF), # India - covers multiple languages

(0xA830, 0xA83F), # Common Indic Number Forms

(0x0E00, 0x0E7F), # Thai

(0x0E80, 0x0EFF), # Lao

(0x0F00, 0x0FFF), # Tibetan

# Myanmar

(0x1000, 0x109F), # Myanmar

(0xAA60, 0xAA7F), # Myanmar Extended-A

(0xA9E0, 0xA9FF), # Myanmar Extended-B

# Georgian

(0x10A0, 0x10FF), # Georgian

(0x2D00, 0x2D2F), # Georgian Supplement

(0x1C90, 0x1CBF), # Georgian Extended

# Korean

(0x1100, 0x11FF), # Hangul Jamo

(0x3130, 0x318F), # Hangul Compatibility Jamo

(0xAC00, 0xD7AF), # Hangul Syllables

(0xA960, 0xA97F), # Hangul Jamo Extended-A

(0xD7B0, 0xD7FF), # Hangul Jamo Extended B

# Ethiopic

(0x1200, 0x139f), # Ethiopic, Ethopic Supplement

(0xAB00, 0xAB2F), # Ethiopic Extended-A

(0x2D80, 0x2DDF), # Ethiopic Extended

# Cherokee

(0x13A0, 0x13FF), # Cherokee

(0xAB70, 0xABBF), # Cherokee Supplement

# Canadian Aboriginal

(0x1400, 0x167F), # Unified Canadian Aboriginal Syllabics

(0x18B0, 0x18FF), # Unified Canadian Aboriginal Syllabics Extended

(0x1680, 0x169F), # Ogham

(0x16A0, 0x16FF), # Runic

(0x1700, 0x171F), # Tagalog

(0x1720, 0x173F), # Hanunoo

(0x1740, 0x175F), # Buhid

(0x1760, 0x177F), # Tagbanwa

# Khmer

(0x1780, 0x17FF), # Khmer

(0x19E0, 0x19FF), # Khmer Symbols

# Mongolian

(0x1800, 0x18AF), # Mongolian

(0x1900, 0x194F), # Limbu

(0x1950, 0x197F), # Tai Le

(0x1980, 0x19DF), # New Tai Lue

(0x1A00, 0x1A1F), # Buginese

(0x1A20, 0x1AAF), # Tai Tham

(0x1B00, 0x1B7F), # Balinese

(0x1B80, 0x1BBF), # Sundanese

(0x1CC0, 0x1CCF), # Sundanese Supplement

(0x1BC0, 0x1BFF), # Batak

(0x1C00, 0x1C4F), # Lepcha

(0x1C50, 0x1C7F), # Ol Chiki

(0x1CD0, 0x1CFF), # Vedic Extensions

# Punctuation

(0x2000, 0x206F), # General Punctuation

(0x2E00, 0x2E7F), # Supplemental Punctuation

(0x3000, 0x303F), # CJK Symbols and Punctuation

(0x2070, 0x209F), # Superscripts and Subscripts

(0x20A0, 0x20CF), # Currency Symbols

# Symbols

(0x2100, 0x26ff), # Letterlike Symbols, ... Miscelaneous Symbols

(0x2700, 0x27FF), # Dingbats & co

(0x2800, 0x28FF), # Braille

(0x2900, 0x2BFF), # Symbols Arrows math

(0x2D30, 0x2D7F), # Tifinagh

(0x2f00, 0x2FFF), # Kangxi radicals, Ideographic Description Characters

# CJK

(0x3000, 0xa4FF),

(0xFE30, 0xFE4F), # CJK Compatibility Forms

(0xF900, 0xFAFF), # CJK Compatibility Ideographs

(0x2E80, 0x2EFF), # CJK Radicals Supplement

#

(0xA500, 0xA63F), # Vai

(0xA6A0, 0xA6FF), # Bamum

(0xA700, 0xA71F), # Modifier Tone Letters

(0xA800, 0xA82F), # <NAME>

(0xA840, 0xA87F), # Phags-pa

(0xA880, 0xA8DF), # Saurashtra

(0xA900, 0xA92F), # Kayah Li

(0xA930, 0xA95F), # Rejang

(0xA980, 0xA9DF), # Javanese

(0xAA00, 0xAA5F), # Cham

(0xAA80, 0xAADF), # Tai Viet

(0xABC0, 0xABFF), # Meetei Mayek

(0xAAE0, 0xAAFF), # Meetei Mayek Extensions

# Private Area

(0xE000,0xF8FF), # Private Use Area

(0xD800, 0xDB7F), # High Surrogates

(0xDB80, 0xDBFF), # High Private Use Surrogates

(0xDC00, 0xDFFF), # Low Surrogates

# # ???

(0x2c00, 0x2c5f), # Glagolitic

(0xFB00, 0xFB4F), # Alphabetic Presentation Forms

(0xFE00, 0xFE0F), # Variation Selectors

(0xFE10, 0xFE1F), # Vertical Forms

(0xFE20, 0xFE2F), # Combining Half Marks

(0xFE50, 0xFE6F), # Small Form Variants

(0xFF00, 0xFFEF), # Halfwidth and Fullwidth Forms

# OLD and OLDER

(0x010000, 0x10FFFF) # Basically everything what is not as important to be in first ~65000 Codes

]

def is_unsupported_character(char):

char=ord(char)

for bottom, top in unsupported_vocab_ranges:

if char >= bottom and char <= top:

return True

return False

one_char_elements = filter(lambda x: len(x)==1,model_vocabulary )

chars = filter(lambda char: not(is_unsupported_character(char)), one_char_elements)

charsasArray = [x for x in chars]

orderedChars = sorted(charsasArray, key=lambda item: item)

print(len(orderedChars))

print(orderedChars)

print(["0x%x"%ord(item) for item in orderedChars])

# -

| ipynb/vocabulary_analyzer_0x01.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

import matplotlib.pyplot as plt

import matplotlib.image as mpimg

import numpy as np

import cPickle as pickle

import scipy.io

import time

import ssn

import ks_test3

from hyperopt import fmin, tpe, hp, STATUS_OK, Trials

# %matplotlib inline

# -

# Define Hyperopt search space:

space = [hp.uniform('sig_EE',7,9),

hp.uniform('sig_IE',10,16),

hp.uniform('sig_EI',3,5),

hp.uniform('sig_II',3,5),

hp.uniform('J_EI',0.089,0.105),

hp.uniform('J_II',0.08,0.105)]

# +

# load Blasdel orientation and ocular dominance maps (previously processed,

# see map_analysis.ipynb

st = time.time()

[OD_map_full, OP_map_full] = np.load('saved_vars/maps-Nov-7.p', 'rb')

print "Elapsed time to load maps: %d seconds" % (time.time() - st)

# plt.figure()

# plt.imshow(OD_map_full)

# plt.colorbar()

# plt.title('Full ocular dominance map, Obermayer and Blasdel')

# plt.figure()

# plt.imshow(OP_map_full)

# plt.colorbar()

# plt.title('Full orientation map, Obermayer and Blasdel')

OD_map = OD_map_full[-75:,-75:]

OP_map = np.floor(OP_map_full[-75:,-75:])

# +

n_units = 50

selected_units = np.floor( ss_net.N_pairs*np.random.rand(n_units, 2) )

OD_prefs = np.zeros(len(selected_units))

for i in range(len(selected_units)):

xi = selected_units[i,0]

yi = selected_units[i,1]

OD_prefs[i] = OD_map[yi,xi]

# -

# Define objective funtion for hyperopt:

def iot_ssn_ks2d(args):

sig_EE, sig_IE, sig_EI, sig_II, J_EI, J_II = args

# Generate SSN with specified hyperparams:

ss_net = ssn.SSNetwork(sig_EE, sig_IE, sig_EI, sig_II, J_EE=0.1, J_IE=0., J_EI, J_II, OP_map=OP_map, OD_map=OD_map)

# TODO: Check the stability of the network and abort if unstable (return high value)

c = 40

dt = 0.005

timesteps = 100

dx = ss_net.dx

N_pairs = ss_net.N_pairs

# first find the summation field size (optimal CRF stimulus) for each unit (both E and I)

stim_sizes = np.linspace(0.75, 2, 5)

crf_bank = np.zeros( (n_units, 2, len(stim_sizes), N_pairs, N_pairs) )

for i in range(n_units):

xi = selected_units[i,0]

yi = selected_units[i,1]

ocularity = np.round( OD_map[yi,xi] )

ori = OP_map[yi,xi]

for j in range(len(stim_sizes)):

crf_bank[i,0,j,:,:] = ssn.generate_mono_stimulus( ori, stim_sizes[j], [dx*xi, dx*yi], OP_map )

crf_bank[i,1,j,:,:] = ssn.generate_ext_stimulus( ori, stim_sizes[j], [dx*xi, dx*yi], OP_map, OD_map, ocularity)

# Store the summation field sizes (SFS) for both E and I units

sfs_E = np.zeros( n_units )

sfs_I = np.copy(sfs_E)

max_fr_E = np.copy(sfs_E)

max_fr_I = np.copy(sfs_E)

# run to find monocular SFS:

for i in range(n_units):

xi = selected_units[i,0]

yi = selected_units[i,1]

e_found = False

i_found = False

for j in range(len(stim_sizes)):

if e_found == True and i_found == True:

break

h = crf_bank[i,1,j,:,:]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

if r_E[-1,yi,xi] >= max_fr_E[i]:

max_fr_E[i] = r_E[-1,yi,xi]

sfs_E[i] = stim_sizes[j]

else:

e_found = True

if r_I[-1,yi,xi] >= max_fr_I[i]:

max_fr_I[i] = r_I[-1,yi,xi]

sfs_I[i] = stim_sizes[j]

else:

i_found = True

# Generate non-dominant CRF stimuli

non_dom_stimuli = np.zeros((len(selected_units), 2, N_pairs, N_pairs))

for i in range(len(selected_units)):

xi = selected_units[i,0]

yi = selected_units[i,1]

ocularity = np.abs( np.round(OD_prefs[i]) - 1)

non_dom_stimuli[i,0,:,:] = ssn.generate_ext_stimulus( ori, sfs_E[i], [dx*xi, dx*yi], OP_map, OD_map, ocularity)

if sfs_E[i] != sfs_I[i]:

non_dom_stimuli[i,1,:,:] = ssn.generate_ext_stimulus( ori, sfs_I[i], [dx*xi, dx*yi], OP_map, OD_map, ocularity)

non_dom_results = np.zeros((len(selected_units), 2))

for i in range(len(selected_units)):

xi = selected_units[i,0]

yi = selected_units[i,1]

h = non_dom_stimuli[i,0,:,:]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

non_dom_results[i,0] = r_E[-1,yi,xi]

non_dom_results[i,1] = r_I[-1,yi,xi]

if sfs_E[i] != sfs_I[i]:

h = non_dom_stimuli[i,1,:,:]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

non_dom_results[i,1] = r_I[-1,yi,xi]

threshold = 1 # threshold for Webb's "reliable response" criterion

# Only carry on with units whose non-dom CRF response is above the threshold:

thresh_units_E = selected_units[np.where(non_dom_results[:,0]>=threshold),:][0]

thresh_units_I = selected_units[np.where(non_dom_results[:,1]>=threshold),:][0]

thresh_units_sfs_E = sfs_E[np.where(non_dom_results[:,0]>=threshold)]

thresh_units_sfs_I = sfs_I[np.where(non_dom_results[:,1]>=threshold)]

thresh_units_max_fr_E = max_fr_E[np.where(non_dom_results[:,0]>=threshold)]

thresh_units_max_fr_I = max_fr_I[np.where(non_dom_results[:,1]>=threshold)]

# Now find which units which are above threshold also suppress below 90% with non-dom surround:

non_dom_surround_stim_E = np.zeros((len(thresh_units_E), N_pairs, N_pairs))

dom_surround_stim_E = np.copy(non_dom_surround_stim_E)

dom_crf_stim_E = np.copy(non_dom_surround_stim_E)

for i in range(len(thresh_units_E)):

xi = thresh_units_E[i,0]

yi = thresh_units_E[i,1]

inner_d = thresh_units_sfs_E[i]

outer_d = inner_d + 3

centre = [dx*xi, dx*yi]

ocularity = np.abs( np.round(OD_map[yi,xi]) - 1)

non_dom_surround_stim_E[i] = ssn.generate_ring_stimulus(OP_map[yi,xi], inner_d, outer_d, centre, ocularity, OP_map, OD_map)

dom_surround_stim_E[i] = ssn.generate_ring_stimulus(OP_map[yi,xi], inner_d, outer_d, centre, np.round(OD_map[yi,xi]), OP_map, OD_map)

dom_crf_stim_E[i] = ssn.generate_ext_stimulus( ori, inner_d, [dx*xi, dx*yi], OP_map, OD_map, np.round(OD_map[yi,xi]) )

# Run simulations to analyze non dominant suppression:

non_dom_surround_results = np.zeros((len(thresh_units_E)))

dom_surround_results = np.copy(non_dom_surround_results)

for i in range(len(thresh_units_E)):

xi = thresh_units_E[i,0]

yi = thresh_units_E[i,1]

h = non_dom_surround_stim_E[i] + dom_crf_stim_E[i]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

non_dom_surround_results[i] = r_E[-1,yi,xi]

h = dom_surround_stim_E[i] + dom_crf_stim_E[i]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

dom_surround_results[i] = r_E[-1,yi,xi]

dominant_SI_E = (thresh_units_max_fr_E - dom_surround_results) / thresh_units_max_fr_E

non_dom_SI_E = (thresh_units_max_fr_E - non_dom_surround_results) / thresh_units_max_fr_E

# Now do all the same stuff for the I units:

non_dom_surround_stim_I = np.zeros((len(thresh_units_I), N_pairs, N_pairs))

dom_surround_stim_I = np.copy(non_dom_surround_stim_I)

dom_crf_stim_I = np.copy(non_dom_surround_stim_I)

for i in range(len(thresh_units_I)):

xi = thresh_units_I[i,0]

yi = thresh_units_I[i,1]

inner_d = thresh_units_sfs_I[i]

outer_d = inner_d + 3

centre = [dx*xi, dx*yi]

ocularity = np.abs( np.round(OD_map[yi,xi]) - 1)

non_dom_surround_stim_I[i] = ssn.generate_ring_stimulus(OP_map[yi,xi], inner_d, outer_d, centre, ocularity, OP_map, OD_map)

dom_surround_stim_I[i] = ssn.generate_ring_stimulus(OP_map[yi,xi], inner_d, outer_d, centre, np.round(OD_map[yi,xi]), OP_map, OD_map)

dom_crf_stim_I[i] = ssn.generate_ext_stimulus( ori, inner_d, [dx*xi, dx*yi], OP_map, OD_map, np.round(OD_map[yi,xi]))

# Run simulations to analyze non dominant suppression:

non_dom_surround_results_I = np.zeros((len(thresh_units_I)))

dom_surround_results_I = np.copy(non_dom_surround_results_I)

for i in range(len(thresh_units_I)):

xi = thresh_units_I[i,0]

yi = thresh_units_I[i,1]

h = non_dom_surround_stim_I[i] + dom_crf_stim_I[i]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

non_dom_surround_results_I[i] = r_I[-1,yi,xi]

h = dom_surround_stim_I[i] + dom_crf_stim_I[i]

[r_E, r_I, I_E, I_I] = ss_net.run_simulation(dt, timesteps, c, h )

dom_surround_results_I[i] = r_I[-1,yi,xi]

dominant_SI_I = (thresh_units_max_fr_I - dom_surround_results_I) / thresh_units_max_fr_I

non_dom_SI_I = (thresh_units_max_fr_I - non_dom_surround_results_I) / thresh_units_max_fr_I

# Concatenate the E and I results

model_data_x = np.concatenate((dominant_SI_E, dominant_SI_I))

model_data_y = np.concatenate((non_dom_SI_E, non_dom_SI_I))

webb_data = np.array([[0.3538, 0.3214],

[0.5513, 0.2271],

[0.5154, 0.5064],

[0.5641, 0.5681],

[0.6077, 0.5605],

[0.7179, 0.6172],

[0.7487, 0.6865],

[0.8282, 0.6406],

[0.8923, 0.5459],

[0.9282, 0.5690],

[0.6308, 0.4093],

[0.7385, 0.4557],

[0.7923, 0.4866],

[0.7385, 0.5352],

[0.9974, 0.9846]])

d, prob = ks_test3.ks2d2s(webb_data[:,0], webb_data[:,1], model_data_x, model_data_y)

return {

'status': 'ok',

'loss':, 1-prob,

'attachments': {'units_probed':pickle.dumps([thresh_units_E, thresh_units_I, thresh_untits_max_fr_E, thresh_units_max_fr_I, dom_surround_results, dom_surround_results_I, sfs_E, sfs_I])}

}

# +

# create a Trials database to store experiment results:

trials = Trials()

st = time.time()

best = fmin(iot_ssn_ks2d, space, algo=tpe.suggest, max_evals=10, trials=trials)

print "Elapsed time for 10 hyperopt sims: %d seconds." % (time.time()-st)

print 'tpe:', best

| mechanistic/IOT_hyperopt.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # K-Means Clustering

# ## <NAME>

# ### Importing the required libraries

import numpy as np

import matplotlib.pyplot as plt

import random

from sklearn.cluster import KMeans

from sklearn.datasets.samples_generator import make_blobs

# ### Creating the dataset

np.random.seed(0)

X, y = make_blobs(n_samples=5000, centers=[[4,4], [-2, -1], [2, -3], [1, 1]], cluster_std=0.9)

# The **make_blobs** class can take in many inputs, but we will be using these specific ones.

#

# **Input**

# - **n_samples**: The total number of points equally divided among clusters.

# - Value will be: 5000

# - **centers**: The number of centers to generate, or the fixed center locations.

# - Value will be: [[4, 4], [-2, -1], [2, -3],[1,1]]

# - **cluster_std**: The standard deviation of the clusters.

# - Value will be: 0.9

#

# **Output**

# - **X**: Array of shape [n_samples, n_features]. (Feature Matrix)

# - The generated samples.

#

# - **y**: Array of shape [n_samples]. (Response Vector)

# - The integer labels for cluster membership of each sample.

#

plt.figure(figsize=(12, 8))

plt.scatter(X[:, 0], X[:, 1], marker='.')

# ### Modeling

k_means = KMeans(init = "k-means++", n_clusters = 4, n_init = 12)

k_means.fit(X)

# ### Cluster labels of each point

k_means_labels = k_means.labels_

k_means_labels

# ### Center points of each clusters

k_means_cluster_centers = k_means.cluster_centers_

k_means_cluster_centers

# ### Create a Visual Plot

# +

# Initialize the plot with the specified dimensions.

fig = plt.figure(figsize=(12, 8))

# Colors uses a color map, which will produce an array of colors based on

# the number of labels there are. We use set(k_means_labels) to get the

# unique labels.

# colors = plt.cm.Spectral(np.linspace(0, 1, len(set(k_means_labels))))

colors = ['red', 'green', 'blue', 'black']

# Create a plot

ax = fig.add_subplot(1, 1, 1)

# For loop that plots the data points and centroids.

# k will range from 0-3, which will match the possible clusters that each

# data point is in.

for k, col in zip(range(len([[4,4], [-2, -1], [2, -3], [1, 1]])), colors):

# Create a list of all data points, where the data poitns that are

# in the cluster (ex. cluster 0) are labeled as true, else they are

# labeled as false.

my_members = (k_means_labels == k)

# Define the centroid, or cluster center.

cluster_center = k_means_cluster_centers[k]

# Plots the datapoints with color col.

ax.plot(X[my_members, 0], X[my_members, 1], 'w', markerfacecolor=col, marker='.')

# Plots the centroids with specified color, but with a darker outline

ax.plot(cluster_center[0], cluster_center[1], 'o', markerfacecolor=col, markeredgecolor='k', markersize=6)

# Title of the plot

ax.set_title('KMeans')

# Remove x-axis ticks

ax.set_xticks(())

# Remove y-axis ticks

ax.set_yticks(())

# Show the plot

plt.show()

# -

# ### With 3 Clusters

# +

k_means = KMeans(init = "k-means++", n_clusters = 3, n_init = 12)

k_means.fit(X)

# -

k_means_labels = k_means.labels_

k_means_labels

k_means_cluster_centers = k_means.cluster_centers_

k_means_cluster_centers

# +

# Initialize the plot with the specified dimensions.

fig = plt.figure(figsize=(12, 8))

# Colors uses a color map, which will produce an array of colors based on

# the number of labels there are. We use set(k_means_labels) to get the

# unique labels.

# colors = plt.cm.Spectral(np.linspace(0, 1, len(set(k_means_labels))))

colors = ['red', 'green', 'blue']

# Create a plot

ax = fig.add_subplot(1, 1, 1)

# For loop that plots the data points and centroids.

# k will range from 0-3, which will match the possible clusters that each

# data point is in.

for k, col in zip(range(len([[4,4], [-2, -1], [2, -3], [1, 1]])), colors):

# Create a list of all data points, where the data poitns that are

# in the cluster (ex. cluster 0) are labeled as true, else they are

# labeled as false.

my_members = (k_means_labels == k)

# Define the centroid, or cluster center.

cluster_center = k_means_cluster_centers[k]

# Plots the datapoints with color col.

ax.plot(X[my_members, 0], X[my_members, 1], 'w', markerfacecolor=col, marker='.')

# Plots the centroids with specified color, but with a darker outline

ax.plot(cluster_center[0], cluster_center[1], 'o', markerfacecolor=col, markeredgecolor='k', markersize=6)

# Title of the plot

ax.set_title('KMeans')

# Remove x-axis ticks

ax.set_xticks(())

# Remove y-axis ticks

ax.set_yticks(())

# Show the plot

plt.show()

| 03_Clustering/01_K-Means/KMeans.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

# %load_ext autoreload

# %autoreload 2

import pandas as pd

import datetime as datetime

from statsmodels.tsa.ar_model import AR

from random import random

from statsmodels.tsa.seasonal import seasonal_decompose

import os as os

import hvplot.pandas

import matplotlib.pylab as plt

flx_hh = pd.read_csv('FLUXNET/AMF_US-ARM_BASE-BADM_8-5/AMF_US-ARM_BASE_HH_8-5.csv',index_col=0, parse_dates=True, header = 2, na_values = -9999.0)

flx_daily=flx_hh.resample('1d').mean()

flx_daily.describe()

# +

# Additive Decomposition

result_add = seasonal_decompose(flx_daily.FC_1_1_1.fillna(method='pad'), model='additive', freq=365, extrapolate_trend='freq')

# Plot

#result_mul.plot().suptitle('Multiplicative Decompose', fontsize=22)

result_add.plot();

# -

flx_daily.FC_1_1_1.fillna(method='pad').hvplot()

# +

from statsmodels.graphics.tsaplots import plot_acf, plot_pacf

fig,axes = plt.subplots(1, 2, figsize = (10,4))

plot_pacf(result_add.resid, lags = 60, ax = axes[0])

plot_acf(result_add.resid, lags = 60, ax = axes[1]);

# -

| nbs/XXX_test_timeseries.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.12 ('img')

# language: python

# name: python3

# ---

# +

#open ft_1024_samples.npz

import numpy as np

samples = np.load('ft_1024_samples.npz')

samples = samples['arr_0']

import PIL

from PIL import Image

#save all samples as images

for i in range(samples.shape[0]):

img = Image.fromarray(samples[i].astype('uint8'))

img.save('./fake/sample_' + str(i) + '.png')

# +

#open ft_1024_samples.npz

import numpy as np

import PIL

from PIL import Image

samples = np.load('samples_128x64x64x3.npz')

samples = samples['arr_0']

#save all samples as images

for i in range(samples.shape[0]):

img = Image.fromarray(samples[i].astype('uint8'))

img.save('./fakeb/sampleb_' + str(i) + '.png')

# -

import PIL

from PIL import Image

from tqdm import tqdm

sample_path = '/Volumes/Elements/sample'

import os

#loop through all files in sample_path

for file in tqdm(os.listdir(sample_path)):

try:

#crop the image to be a square centered but keep the original aspect ratio

img = Image.open(sample_path + '/' + file)

#center crop

img = img.crop(((img.size[0]-img.size[1])/2, 0, (img.size[0]+img.size[1])/2, img.size[1]))

#resize the image to 64x64

img = img.resize((64, 64))

#save the image

img.save('./sample_resize/' + file)

except:

#write the file name to a file if there is an error

#remove the file from the ./sample_resize folder

os.remove('./sample_resize/' + file)

with open('./sample_resize/error.txt', 'a') as f:

f.write(file + '\n')

# #remove images that are in the error file

# import os

# import shutil

# with open('./sample_resize/error.txt') as f:

# lines = f.readlines()

# for line in lines:

# os.remove('./sample_resize/' + line.strip())

sample_path = './sample_resize'

# !python -m pytorch_fid ./fake $sample_path

print('FID for fine-tuned samples^')

sample_path = './sample_resize'

# !python -m pytorch_fid ./fakeb $sample_path

print('FID for base model samples^')

file = "/Users/isaiahwilliams/classes/cs236/sample_resize/1078811.jpg"

img = Image.open(file)

img.show()

import os

from PIL import Image

folder_path = '/Users/isaiahwilliams/classes/cs236/sample_resize/'

extensions = []

for filee in os.listdir(folder_path):

file_path = os.path.join(folder_path, filee)

print('** Path: {} **'.format(file_path), end="\r", flush=True)

im = Image.open(file_path)

rgb_im = im.convert('RGB')

if filee.split('.')[1] not in extensions:

extensions.append(filee.split('.')[1])

| pre&post-process+FID.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from os.path import join

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.cluster import AgglomerativeClustering

from scipy.cluster.hierarchy import dendrogram

sns.set()

# -

# ## Import preprocessed data

df = pd.read_csv(join('..', 'data', 'tugas_preprocessed.csv'))

df.head()

df.columns

# Splitting feature names into groups

non_metric_features = df.columns[df.columns.str.startswith('x')]

pc_features = df.columns[df.columns.str.startswith('PC')]

metric_features = df.columns[~df.columns.str.startswith('x') & ~df.columns.str.startswith('PC')]

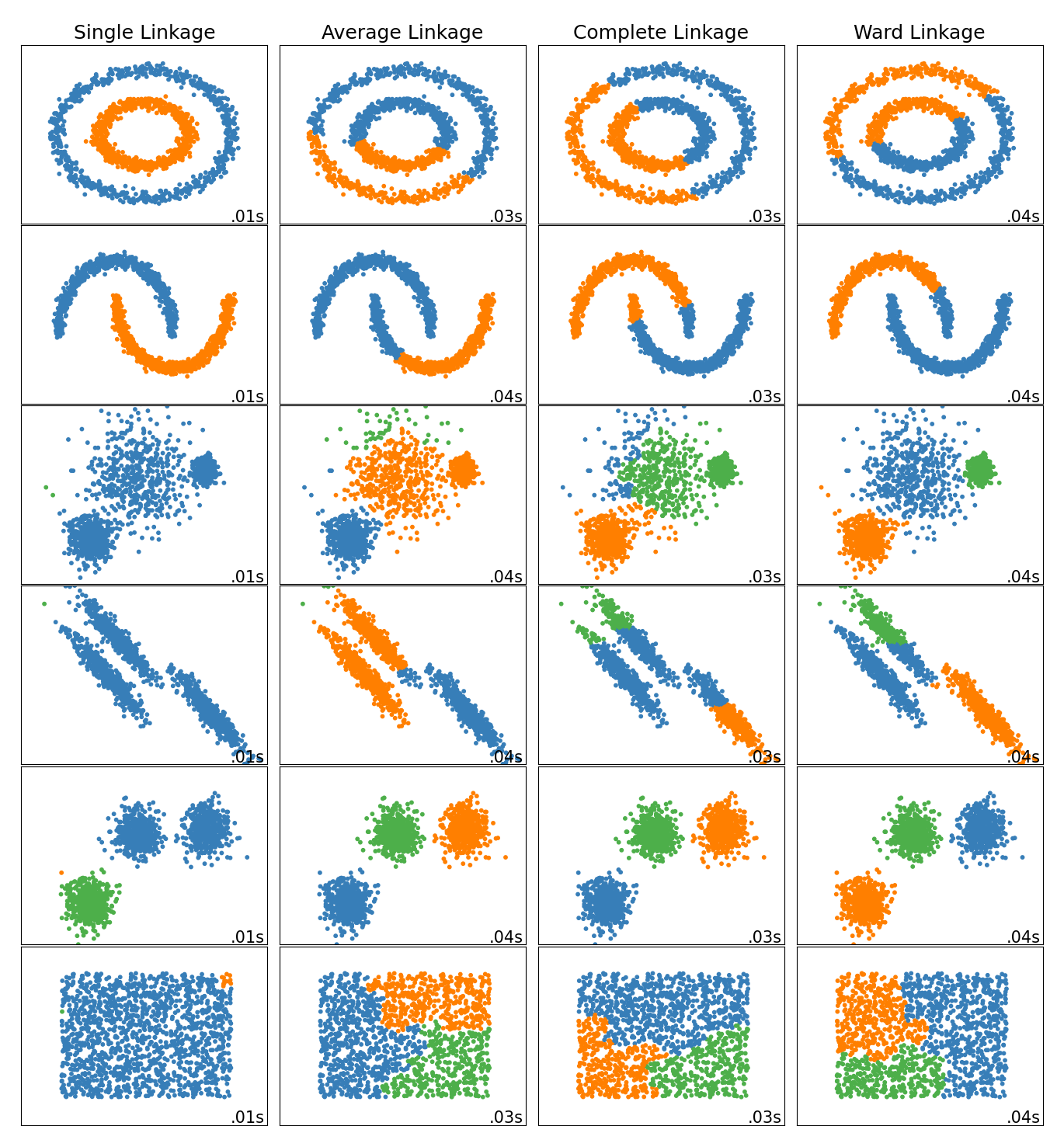

# ## Hierarchical Clustering

#

# What is hierarchical clustering? How does it work? How does it relate to the distance matrix we discussed at the beggining of the course? ;)

#

# ### Different types of linkage

#

#

# ### How are they computed?

#

#

# **Ward linkage**: minimizes the sum of squared differences within all clusters. It is a variance-minimizing approach and in this sense is similar to the k-means objective function but tackled with an agglomerative hierarchical approach.

#

# ### The distance matrix

#

# ### Characteristics:

# - *bottom up approach*: each observation starts in its own cluster, and clusters are successively merged together

# - *greedy/local algorithm*: at each iteration tries to minimize the distance of cluster merging

# - *no realocation*: after an observation is assigned to a cluster, it can no longer change

# - *deterministic*: you always get the same answer when you run it

# - *scalability*: can become *very slow* for a large number of observations

# ### How to apply Hierarchical Clustering?

# **Note: Which types of variables should be used for clustering?**

# Performing HC

hclust = AgglomerativeClustering(linkage='ward', affinity='euclidean', n_clusters=5)

hc_labels = hclust.fit_predict(df[metric_features])

hc_labels

# Characterizing the clusters

df_concat = pd.concat((df, pd.Series(hc_labels, name='labels')), axis=1)

df_concat.groupby('labels').mean()

# ### Defining the linkage method to choose:

# **We need to understand that:**

# $$SS_{t} = SS_{w} + SS_{b}$$

#

# ---

#

# $$SS_{t} = \sum\limits_{i = 1}^n {{{({x_i} - \overline x )}^2}}$$

#

# $$SS_{w} = \sum\limits_{k = 1}^K {\sum\limits_{i = 1}^{{n_k}} {{{({x_i} - {{\overline x }_k})}^2}} }$$

#

# $$SS_{b} = \sum\limits_{k = 1}^K {{n_k}{{({{\overline x }_k} - \overline x )}^2}}$$

#

# , where $n$ is the total number of observations, $x_i$ is the vector of the $i^{th}$ observation, $\overline x$ is the centroid of the data, $K$ is the number of clusters, $n_k$ is the number of observations in the $k^{th}$ cluster and $\overline x_k$ is the centroid of the $k^{th}$ cluster.

# +

# Computing SST

X = df[metric_features].values

sst = np.sum(np.square(X - X.mean(axis=0)), axis=0)

# Computing SSW

ssw_iter = []

for i in np.unique(hc_labels):

X_k = X[hc_labels == i]

ssw_iter.append(np.sum(np.square(X_k - X_k.mean(axis=0)), axis=0))

ssw = np.sum(ssw_iter, axis=0)

# Computing SSB

ssb_iter = []

for i in np.unique(hc_labels):

X_k = X[hc_labels == i]

ssb_iter.append(X_k.shape[0] * np.square(X_k.mean(axis=0) - X.mean(axis=0)))

ssb = np.sum(ssb_iter, axis=0)

# Verifying the formula

np.round(sst) == np.round((ssw + ssb))

# -

def get_r2_hc(df, link_method, max_nclus, min_nclus=1, dist="euclidean"):

"""This function computes the R2 for a set of cluster solutions given by the application of a hierarchical method.

The R2 is a measure of the homogenity of a cluster solution. It is based on SSt = SSw + SSb and R2 = SSb/SSt.

Parameters:

df (DataFrame): Dataset to apply clustering

link_method (str): either "ward", "complete", "average", "single"

max_nclus (int): maximum number of clusters to compare the methods

min_nclus (int): minimum number of clusters to compare the methods. Defaults to 1.

dist (str): distance to use to compute the clustering solution. Must be a valid distance. Defaults to "euclidean".

Returns:

ndarray: R2 values for the range of cluster solutions

"""

def get_ss(df):

ss = np.sum(df.var() * (df.count() - 1))

return ss # return sum of sum of squares of each df variable

sst = get_ss(df) # get total sum of squares

r2 = [] # where we will store the R2 metrics for each cluster solution

for i in range(min_nclus, max_nclus+1): # iterate over desired ncluster range

cluster = AgglomerativeClustering(n_clusters=i, affinity=dist, linkage=link_method)

hclabels = cluster.fit_predict(df) #get cluster labels

df_concat = pd.concat((df, pd.Series(hclabels, name='labels')), axis=1) # concat df with labels

ssw_labels = df_concat.groupby(by='labels').apply(get_ss) # compute ssw for each cluster labels

ssb = sst - np.sum(ssw_labels) # remember: SST = SSW + SSB

r2.append(ssb / sst) # save the R2 of the given cluster solution

return np.array(r2)

# +

# Prepare input

hc_methods = ["ward", "complete", "average", "single"]

# Call function defined above to obtain the R2 statistic for each hc_method

max_nclus = 10

r2_hc_methods = np.vstack(

[

get_r2_hc(df=df[metric_features], link_method=link, max_nclus=max_nclus)

for link in hc_methods

]

).T

r2_hc_methods = pd.DataFrame(r2_hc_methods, index=range(1, max_nclus + 1), columns=hc_methods)

sns.set()

# Plot data

fig = plt.figure(figsize=(11,5))

sns.lineplot(data=r2_hc_methods, linewidth=2.5, markers=["o"]*4)

# Finalize the plot

fig.suptitle("R2 plot for various hierarchical methods", fontsize=21)

plt.gca().invert_xaxis() # invert x axis

plt.legend(title="HC methods", title_fontsize=11)

plt.xticks(range(1, max_nclus + 1))

plt.xlabel("Number of clusters", fontsize=13)

plt.ylabel("R2 metric", fontsize=13)

plt.show()

# -

# ### Defining the number of clusters:

# Where is the **first big jump** on the Dendrogram?

# setting distance_threshold=0 and n_clusters=None ensures we compute the full tree

linkage = 'ward'

distance = 'euclidean'

hclust = AgglomerativeClustering(linkage=linkage, affinity=distance, distance_threshold=0, n_clusters=None)

hclust.fit_predict(df[metric_features])

# +

# Adapted from:

# https://scikit-learn.org/stable/auto_examples/cluster/plot_agglomerative_dendrogram.html#sphx-glr-auto-examples-cluster-plot-agglomerative-dendrogram-py

# create the counts of samples under each node (number of points being merged)

counts = np.zeros(hclust.children_.shape[0])

n_samples = len(hclust.labels_)

# hclust.children_ contains the observation ids that are being merged together

# At the i-th iteration, children[i][0] and children[i][1] are merged to form node n_samples + i

for i, merge in enumerate(hclust.children_):

# track the number of observations in the current cluster being formed

current_count = 0

for child_idx in merge:

if child_idx < n_samples:

# If this is True, then we are merging an observation

current_count += 1 # leaf node

else:

# Otherwise, we are merging a previously formed cluster

current_count += counts[child_idx - n_samples]

counts[i] = current_count

# the hclust.children_ is used to indicate the two points/clusters being merged (dendrogram's u-joins)

# the hclust.distances_ indicates the distance between the two points/clusters (height of the u-joins)

# the counts indicate the number of points being merged (dendrogram's x-axis)

linkage_matrix = np.column_stack(

[hclust.children_, hclust.distances_, counts]

).astype(float)

# Plot the corresponding dendrogram

sns.set()

fig = plt.figure(figsize=(11,5))

# The Dendrogram parameters need to be tuned

y_threshold = 100

dendrogram(linkage_matrix, truncate_mode='level', p=5, color_threshold=y_threshold, above_threshold_color='k')

plt.hlines(y_threshold, 0, 1000, colors="r", linestyles="dashed")

plt.title(f'Hierarchical Clustering - {linkage.title()}\'s Dendrogram', fontsize=21)

plt.xlabel('Number of points in node (or index of point if no parenthesis)')

plt.ylabel(f'{distance.title()} Distance', fontsize=13)

plt.show()

# -

# ### Final Hierarchical clustering solution

# 4 cluster solution

linkage = 'ward'

distance = 'euclidean'

hc4lust = AgglomerativeClustering(linkage=linkage, affinity=distance, n_clusters=4)

hc4_labels = hc4lust.fit_predict(df[metric_features])

# Characterizing the 4 clusters

df_concat = pd.concat((df, pd.Series(hc4_labels, name='labels')), axis=1)

df_concat.groupby('labels').mean()

# 5 cluster solution

linkage = 'ward'

distance = 'euclidean'

hc5lust = AgglomerativeClustering(linkage=linkage, affinity=distance, n_clusters=5)

hc5_labels = hc5lust.fit_predict(df[metric_features])

# Characterizing the 5 clusters

df_concat = pd.concat((df, pd.Series(hc5_labels, name='labels')), axis=1)

df_concat.groupby('labels').mean()

| notebooks_solutions/lab09_hierarchical_clustering.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import scipy.stats as stats

import pymc3 as pm

import arviz as az

import torch

import pyro

from pyro.optim import Adam

from pyro.infer import SVI, Trace_ELBO

import pyro.distributions as dist

from pyro.infer.autoguide import AutoDiagonalNormal, AutoMultivariateNormal, init_to_mean

# +

## Join data

features = pd.read_csv('data/dengue_features_train.csv')

labels = pd.read_csv('data/dengue_labels_train.csv')

df = features.copy()

df['total_cases'] = labels.total_cases

# Target is in column 'total_cases'

# -

df.head()

# +

cities = list(df.city.unique())

sj_df = df.loc[df.city=='sj']

data_df = sj_df.copy()

data_df = data_df.dropna()

ommit_cols = ('total_cases', 'city', 'year', 'weekofyear', 'week_start_date')

for c in data_df.columns:

if c not in ommit_cols:

m, std, mm = data_df[c].mean(), data_df[c].std(), data_df[c].max()

data_df[c] = (data_df[c]- m)/std

# +

plt.plot()

ax1 = data_df.total_cases.plot()

ax2 = ax1.twinx()

ax2.spines['right'].set_position(('axes', 1.0))

data_df.reanalysis_precip_amt_kg_per_m2.plot(ax=ax2, color='green')

plt.show()

# -

sub_df = data_df.loc[:]

with pm.Model() as simple_model:

b0 = pm.Normal("b0_intercept", mu=0, sigma=2)

b1 = pm.Normal("b1_variable", mu=0, sigma=2)

b2 = pm.Normal("b2_variable", mu=0, sigma=2)

b3 = pm.Normal("b3_variable", mu=0, sigma=2)

b4 = pm.Normal("b4_variable", mu=0, sigma=2)

θ = (

b0

+ b1 * sub_df.reanalysis_precip_amt_kg_per_m2

+ b2 * sub_df.station_diur_temp_rng_c

+ b3 * sub_df.reanalysis_max_air_temp_k

+ b4 * sub_df.station_precip_mm

)

y = pm.Poisson("y", mu=np.exp(θ), observed=sub_df.total_cases)

#start = {'b0_intercept': 5., 'b1_variable': 1., 'b2_variable': 1., 'b3_variable': 1., 'b4_variable': 1.}

with simple_model:

step = pm.Slice()

inf_model = pm.sample(10000, step=step, return_inferencedata=True,)

az.plot_trace(inf_model)

# ## Variational Inference approach

# +

torch.set_default_dtype(torch.float64)

cols = list(set(data_df.columns) - set(ommit_cols))

#cols = ['reanalysis_precip_amt_kg_per_m2', 'station_diur_temp_rng_c', 'reanalysis_max_air_temp_k', 'station_precip_mm']

x_data = torch.tensor(data_df[cols].values).float()

y_data = torch.tensor(data_df.total_cases.values).float()

# +

M = len(cols)

def model(x_data, y_data):

b = pyro.sample('b', dist.Normal(0.0, 2.0))

w = pyro.sample('w', dist.Normal(0.0, 2.0).expand([M]).to_event(1))

with pyro.plate('observe_data', size=len(y_data), subsample_size=100) as ind:

θ = (x_data.index_select(0, ind) * w).sum(axis=1) + b

pyro.sample('obs', dist.Poisson(θ.exp()), obs=y_data.index_select(0, ind))

guide = AutoMultivariateNormal(model, init_loc_fn=init_to_mean)

# +

pyro.clear_param_store()

adam = Adam({"lr": 0.001})

svi = SVI(model, guide, adam, loss=Trace_ELBO())

n_steps = 10000

losses = []

for step in range(n_steps):

losses.append(svi.step(x_data, y_data))

if step %1000 == 0:

print("Done with step {}".format(step))

# +

guide.requires_grad_(False)

for name, value in pyro.get_param_store().items():

print(name, pyro.param(name))

# -

plt.plot(losses)

plt.title('ELBO loss')

y_data.mean()

| Exploration.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="KfJYMZwH2UfO"

# # Калькулятор краски

# -

# Введите последовательно требуемые параметры, следуя подсказкам программы (необходимо будет поочередно ввести значения - ширина поверхности, м; высота (длина) поверхности, м; расход краски кв.м / л.; объем банки в литрах, целое число; процент запаса, целое число) для получения результирующих показателей (площадь окрашивания, количество литров, количество банок, неиспользуемых литров краски).

# + colab={"base_uri": "https://localhost:8080/"} id="QE-FAQ2o2U9h" outputId="64e4c4ae-2f82-44fc-ffd8-4cc6c4bb854c"

print('Введите значение ширины поверхности в метрах: ')

surface_width = float(input()) # ширина поверхности, м

print('Введите значение высоты (длины) поверхности в метрах: ')

surface_height = float(input()) # высота (длина) поверхности, м

print('Введите значение расхода краски в кв.м / л.: ')

paints_in_meters_to_liter = float(input()) # расход краски кв.м / л.

print('Введите значение объема банки в литрах (целое число): ')

tin_of_paint_in_liters = int(input()) # объем банки в литрах, целое число