code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [default]

# language: python

# name: python3

# ---

# # Problem 1 : Take a variable x and print "Even" if the number is divisible by 2, otherwise print "Odd".

# +

x=4

if(x%2==0):

print("Even")

else:

print("Odd")

# -

# # Problem 2 : Take a variable y and print "Grade A" if y is greater than 90, "Grade B" if y is greater than 60 but less than or equal to 90 and "Grade F" Otherwise.

# +

y=90

if(y>90):

print("Grade A")

elif(y>60):

print("Grade B")

else:

print("Grade F")

# -

| 13. conditional_statements.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] slideshow={"slide_type": "slide"}

# # Tweepy

#

# *An easy-to-use Python library for accessing the Twitter API.*

#

# http://www.tweepy.org/

# + [markdown] slideshow={"slide_type": "slide"}

# ## Installing

#

# `pip install tweepy`

# + [markdown] slideshow={"slide_type": "slide"}

# ## Twitter App

#

# https://apps.twitter.com/

#

# Create an app and save:

#

# - Consumer key

# - Consumer secret

# - Access token

# - Access token secret

#

#

# + [markdown] slideshow={"slide_type": "subslide"}

# ### Store Secrets Securely

#

# *Don't commit them to a public repo!*

#

# I set them via environment variables and then access via `os.environ`.

# + slideshow={"slide_type": "subslide"}

# Load keys, secrets, settings

import os

ENV = os.environ

CONSUMER_KEY = ENV.get('IOTX_CONSUMER_KEY')

CONSUMER_SECRET = ENV.get('IOTX_CONSUMER_SECRET')

ACCESS_TOKEN = ENV.get('IOTX_ACCESS_TOKEN')

ACCESS_TOKEN_SECRET = ENV.get('IOTX_ACCESS_TOKEN_SECRET')

USERNAME = ENV.get('IOTX_USERNAME')

USER_ID = ENV.get('IOTX_USER_ID')

print(USERNAME)

# + [markdown] slideshow={"slide_type": "slide"}

# ## Twitter APIs

#

# ### REST vs Streaming

#

# https://dev.twitter.com/docs

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## Twitter REST API

#

# * Search

# * Tweet

# * Get information

#

# https://dev.twitter.com/rest/public

# + slideshow={"slide_type": "slide"}

import tweepy

auth = tweepy.OAuthHandler(CONSUMER_KEY, CONSUMER_SECRET)

auth.set_access_token(ACCESS_TOKEN, ACCESS_TOKEN_SECRET)

api = tweepy.API(auth)

public_tweets = api.home_timeline(count=3)

for tweet in public_tweets:

print(tweet.text)

# + slideshow={"slide_type": "slide"}

# Models

user = api.get_user('clepy')

print(user.screen_name)

print(dir(user))

# + slideshow={"slide_type": "slide"}

# Tweet!

status = api.update_status("I'm at @CLEPY!")

print(status.id)

print(status.text)

# + [markdown] slideshow={"slide_type": "slide"}

# ## Streaming API

#

# Real-time streaming of:

#

# * Searches

# * Mentions

# * Lists

# * Timeline

#

# https://dev.twitter.com/streaming/public

# + slideshow={"slide_type": "slide"}

# Subclass StreamListener and define on_status method

class MyStreamListener(tweepy.StreamListener):

def on_status(self, status):

print("@{0}: {1}".format(status.author.screen_name, status.text))

# + slideshow={"slide_type": "slide"}

myStream = tweepy.Stream(auth = api.auth, listener=MyStreamListener())

# + slideshow={"slide_type": "slide"}

try:

myStream.filter(track=['#clepy'])

except KeyboardInterrupt:

print('Interrupted...')

except tweepy.error.TweepError:

myStream.disconnect()

print('Disconnected. Try again!')

# + [markdown] slideshow={"slide_type": "slide"}

# See also:

#

# "Small Batch Artisanal Bots: Let's Make Friends" by <NAME> at PyCon & PyOhio

#

# https://www.youtube.com/watch?v=pDS_LWgjMgg

# + slideshow={"slide_type": "slide"}

| Tweepy/Tweepy.ipynb |

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.1.0

# language: julia

# name: julia-1.1

# ---

display(HTML("<style>.container { width:100% !important; }</style>"))

# #### MIT license (c) 2019 by <NAME>

# #### Jupyter notebook written in Julia 1.1.0. It implements on a CPUs the numerical program for computing the exercise boundary and the price of an American-style call option described in Sec. 18.4 of "Stochastic Methods in Asset Pricing" (pp. 514-516). The same program is implemented in Python in Appendix B.3 of SMAP.

# Note the formula $\int_a^bf(t)dt={1\over2}(b-a)\int_{-1}^{+1}f\bigl({1\over2}(b-a)s+{1\over2}(a+b)\bigr)d s$ to use with FastGaussQuadratures.

## the number of grid points over the time domain is set below

## 5000 is excessive and is meant to test Julia's capabilities

total_grid_no=5000 #this number must be divisible by pnmb

# Introduce the parameters in the model.

KK=40.0; # strike

sigma=0.3; # volatility

delta=0.07; # dividend

rr=0.02; # interest

TT=0.5; # time to maturity

DLT=TT/(total_grid_no) # distance between two grid points in the time domain

ABSC=range(0.0,length=total_grid_no+1,stop=TT) # the entire grid on the time domain

VAL=[max(KK,KK*(rr/delta)) for x in ABSC]; # first guess for the exercise boundary

# +

using SpecialFunctions

using Interpolations

using Roots

using FastGaussQuadrature

nodes,weights=gausslegendre( 200 );

function EUcall(S::Float64,t::Float64,K::Float64,σ::Float64,r::Float64,δ::Float64)

local v1,v2

v1=(δ-r+σ^2/2.0)*t+log(K/S)

v2=(-δ + r + σ^2/2.0)*t - log(K/S)

v3=σ*sqrt(2.0*t)

return -K*(exp(-t*r)/2)+K*(exp(-t*r)/2)*erf(v1/v3)+S*(exp(-t*δ)/2)*erf(v2/v3)+S*(exp(-t*δ)/2)

end;

function F(ϵ::Int64,t::Float64,u::Float64,v::Float64,r::Float64,δ::Float64,σ::Float64)

v1=(r-δ+ϵ*σ^2/2)*(u-t)-log(v)

v2=σ*sqrt(2*(u-t))

return 1.0+erf(v1/v2)

end;

function ah(t::Float64,z::Float64,r::Float64,δ::Float64,σ::Float64,f)

return (exp(-δ*(z-t))*(δ/2)*F(1,t,z,f(z)/f(t),r,δ,σ))

end;

function bh(t::Float64,z::Float64,r::Float64,δ::Float64,σ::Float64,K::Float64,f)

return (exp(-r*(z-t))*(r*K/2)*F(-1,t,z,f(z)/f(t),r,δ,σ))

end;

function make_grid0(step::Float64,size::Int64)

return 0.0:step:(step+(size-1)*step)

end;

# -

function mainF(start_iter::Int64,nmb_of_iter::Int64,conv_tol::Float64,K::Float64,σ::Float64,δ::Float64,r::Float64,T::Float64,Δ::Float64,nmb_grd_pnts::Int64,vls::Array{Float64,1},nds::Array{Float64,1},wghts::Array{Float64,1})

local no_iter,conv_check,absc,absc0,valPrev,val,loc,f

absc=range(0.0,length=nmb_grd_pnts+1,stop=T)

absc0=range(0.0,length=nmb_grd_pnts,stop=(T-Δ));

val=vls;

f=CubicSplineInterpolation(absc,val,extrapolation_bc = Interpolations.Line())

no_iter=start_iter;

conv_check=100.0

while no_iter<nmb_of_iter&&conv_check>conv_tol

no_iter+=1

loc=[max(K,K*(r/δ))]

for ttt=Iterators.reverse(absc0)

an=[(1/2)*(T-ttt)*ah(ttt,(1/2)*(T-ttt)*s+(1/2)*(ttt+T),r,δ,σ,f) for s in nodes]

bn=[(1/2)*(T-ttt)*bh(ttt,(1/2)*(T-ttt)*s+(1/2)*(ttt+T),r,δ,σ,K,f) for s in nodes]

aaa=weights'*an

bbb=weights'*bn;

LRT=find_zero(x->x-K-EUcall(x,T-ttt,K,σ,r,δ)-aaa*x+bbb,(K-10,K+20));

pushfirst!(loc,LRT)

end

valPrev=val

val=loc

f=CubicSplineInterpolation(absc,val,extrapolation_bc = Interpolations.Line())

conv_check=maximum(abs.(valPrev-val))

end

return absc,val,conv_check,no_iter

end

# Run the main routine. The third argument is an upper bound on the total iterations to run.

# The last two arguments control FastGaussQuadrature.

#

# masterF(prnmb,start_iter,nmb_of_iter,conv_tol,K,σ,δ,r,T,Δ,no_grd_pnts,vls,nds,wghts)

#

# mainF(start_iter, nmb_of_iter, conv_tol, $K$, $\sigma$, $\delta$, $r$, $T$, $\Delta$, no_grd_pnts, vls, nds, wghts)

@time ABSC,VAL,conv,iterations=mainF(0,100,1.0e-5,KK,sigma,delta,rr,TT,DLT,total_grid_no,VAL,nodes,weights);

# The call to 'masterF' can be repeated with the most recent VAL and the second argument set to the number of already performed iterations.

conv,iterations

f=CubicSplineInterpolation(ABSC,VAL,extrapolation_bc = Interpolations.Line());

using Plots

pyplot()

plotgrid=ABSC[1]:.001:ABSC[end];

pval=[f(x) for x in plotgrid];

plot(plotgrid,pval,label="exercise boundary")

xlabel!("real time")

ylabel!("underlying spot price")

f(0)

# The price of the EU call option at the money ($S_0=K$) with $T={1\over2}$:

EUcall(KK,TT,KK,sigma,rr,delta)

# The early exercise premium for at the money option at $t=0$ is:

an=[(0.5*(TT-0.0))*exp(-delta*((1/2)*(TT-0.0)*s+(1/2)*(0.0+TT)-0.0))*(KK*delta/2)*F(1,0.0,(1/2)*(TT-0.0)*s+(1/2)*(0.0+TT),f((1/2)*(TT-0.0)*s+(1/2)*(0.0+TT))/KK,rr,delta,sigma) for s in nodes]

bn=[(0.5*(TT-0.0))*exp(-rr*((1/2)*(TT-0.0)*s+(1/2)*(0.0+TT)-0.0))*(rr*KK/2)*F(-1,0.0,(1/2)*(TT-0.0)*s+(1/2)*(0.0+TT),f((1/2)*(TT-0.0)*s+(1/2)*(0.0+TT))/KK,rr,delta,sigma) for s in nodes]

aaa=weights'*an

bbb=weights'*bn;

EEP=aaa-bbb

EEP

# The price of an American at the money call with 6 months to expiry is:

EUcall(KK,TT,KK,sigma,rr,delta)+EEP

| Num_Program_US_Call_Julia.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Bellman Equation for MRPs

# In this exercise we will learn how to find state values for a simple MRPs using the scipy library.

#

import numpy as np

from scipy import linalg

# Define the transition probability matrix

# define the Transition Probability Matrix

n_states = 3

P = np.zeros((n_states, n_states), np.float)

P[0, 1] = 0.7

P[0, 2] = 0.3

P[1, 0] = 0.5

P[1, 2] = 0.5

P[2, 1] = 0.1

P[2, 2] = 0.9

P

# Check that the sum over columns is exactly equal to 1, being a probability matrix.

# the sum over columns is 1 for each row being a probability matrix

assert((np.sum(P, axis=1) == 1).all())

# We can calculate the expected immediate reward for each state using the reward matrix and the transition probability

# define the reward matrix

R = np.zeros((n_states, n_states), np.float)

R[0, 1] = 1

R[0, 2] = 10

R[1, 0] = 0

R[1, 2] = 1

R[2, 1] = -1

R[2, 2] = 10

# calculate expected reward for each state by multiplying the probability matrix for each reward

R_expected = np.sum(P * R, axis=1, keepdims=True)

# The matrix R_expected

R_expected

# The R_expected vector is the expected immediate reward foe each state.

# State 1 has an expected reward of 3.7 that is exactly equal to 0.7 * 1 + 0.3*10.

# The same for state 2 and so on.

# define the discount factor

gamma = 0.9

# We are ready to solve the Bellman Equation

#

# $$

# (I - \gamma P)V = R_{expected}

# $$

#

# Casting this to a linear equation we have

# $$

# Ax = b

# $$

#

# Where

# $$

# A = (I - \gamma P)

# $$

# And

# $$

# b = R

# $$

# Now it is possible to solve the Bellman Equation

A = np.eye(n_states) - gamma * P

B = R_expected

# solve using scipy linalg

V = linalg.solve(A, B)

V

# The vector V represents the value for each state. The state 3 has the highest value.

| Chapter02/Exercise02_01/Exercise02_01.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### Analyze_stable_points - evaluate biase in DEMs using selected unchanged points

#

# These points were picked on hopefully stable points in mostly flat places: docks, lawns, bare spots in middens. Also, the yurt roofs. Typically, 3 to 5 points were picked on most features.

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

# +

def pcoord(x, y):

"""

Convert x, y to polar coordinates r, az (geographic convention)

r,az = pcoord(x, y)

"""

r = np.sqrt( x**2 + y**2 )

az=np.degrees( np.arctan2(x, y) )

# az[where(az<0.)[0]] += 360.

az = (az+360.)%360.

return r, az

def xycoord(r, az):

"""

Convert r, az [degrees, geographic convention] to rectangular coordinates

x,y = xycoord(r, az)

"""

x = r * np.sin(np.radians(az))

y = r * np.cos(np.radians(az))

return x, y

# -

df=pd.read_csv("C:\\crs\\proj\\2019_DorianOBX\\Santa_Cruz_Products\\stable_points\\All_points_dunex_lidar.csv",header = 0)

df

col = df.loc[: , "Aug":"Nov"]

df['mean']=col.mean(axis=1)

df['std']=col.std(axis=1)

df['gnd50 anom']=df['Gnd_50']-df['mean']

df['first95 anom']=df['First_95']-df['mean']

df['Aug anom']=df['Aug']-df['mean']

df['Sep anom']=df['Sep']-df['mean']

df['Oct anom']=df['Oct']-df['mean']

df['Nov anom']=df['Nov']-df['mean']

r,az = pcoord( df['X'].values, df['Y'].values)

xr, yr = xycoord( r, az+42.)

df['alongshore']=xr-2870000.

df

df_anom = df.loc[:,"gnd50 anom":"Nov anom"].copy()

df_anom

print(df_anom.mean())

print(df_anom.std())

plt.plot(df['alongshore'],df['Aug anom'],'o',alpha=.5,label='Aug')

plt.plot(df['alongshore'],df['Sep anom'],'o',alpha=.5,label='Sep')

plt.plot(df['alongshore'],df['Oct anom'],'o',alpha=.5,label='Oct')

plt.plot(df['alongshore'],df['Nov anom'],'o',alpha=.5,label='Nov')

plt.legend()

plt.ylabel('Anomoly (m)')

plt.xlabel('Alongshore Distance (m)')

plt.plot(df['Aug'],df['Aug anom'],'o',alpha=.5,label='Aug')

plt.plot(df['Sep'],df['Sep anom'],'o',alpha=.5,label='Sep')

plt.plot(df['Oct'],df['Oct anom'],'o',alpha=.5,label='Oct')

plt.plot(df['Nov'],df['Nov anom'],'o',alpha=.5,label='Nov')

plt.legend()

plt.ylabel('Anomoly (m)')

plt.xlabel('Elevation (m NAVD88)')

plt.scatter(df['X'].values,df['Y'].values,s=50,c=df['Aug anom'].values,alpha=.4)

# boxplot of anomolies

boxprops = dict(linestyle='-', linewidth=3, color='k')

medianprops = dict(linestyle='-', linewidth=3, color='k')

bp=df_anom.boxplot(figsize=(6,5),grid=True,boxprops=boxprops, medianprops=medianprops)

plt.ylabel('Difference from Four SfM Map Mean (m)')

bp.set_xticklabels(['Gnd 50','First 95','Aug','Sep','Oct','Nov'])

plt.savefig('unchanged_pts_boxplot.png',dpi=200)

| Analyze_stable_points.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:PyRosetta.notebooks]

# language: python

# name: conda-env-PyRosetta.notebooks-py

# ---

# <!--NOTEBOOK_HEADER-->

# *This notebook contains material from [PyRosetta](https://RosettaCommons.github.io/PyRosetta.notebooks);

# content is available [on Github](https://github.com/RosettaCommons/PyRosetta.notebooks.git).*

# <!--NAVIGATION-->

# < [Running Rosetta in Parallel](http://nbviewer.jupyter.org/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.00-Running-PyRosetta-in-Parallel.ipynb) | [Contents](toc.ipynb) | [Index](index.ipynb) | [Distributed computation example: miniprotein design](http://nbviewer.jupyter.org/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.02-PyData-miniprotein-design.ipynb) ><p><a href="https://colab.research.google.com/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.01-PyData-ddG-pssm.ipynb"><img align="left" src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open in Colab" title="Open in Google Colaboratory"></a>

# # Distributed analysis example: exhaustive ddG PSSM

#

# ## Notes

# This tutorial will walk you through how to generate an exhaustive ddG PSSM in PyRosetta using the PyData stack for analysis and distributed computing.

#

# This Jupyter notebook uses parallelization and is not meant to be executed within a Google Colab environment.

#

# ## Setup

# Please see setup instructions in Chapter 16.00

#

# ## Citation

# [Integration of the Rosetta Suite with the Python Software Stack via reproducible packaging and core programming interfaces for distributed simulation](https://doi.org/10.1002/pro.3721)

#

# <NAME>, <NAME>, <NAME>

#

# ## Manual

# Documentation for the `pyrosetta.distributed` namespace can be found here: https://nbviewer.jupyter.org/github/proteininnovation/Rosetta-PyData_Integration/blob/master/distributed_overview.ipynb

import sys

if 'google.colab' in sys.modules:

print("This Jupyter notebook uses parallelization and is therefore not set up for the Google Colab environment.")

sys.exit(0)

import logging

logging.basicConfig(level=logging.INFO)

import pandas

import seaborn

import matplotlib

import Bio.SeqUtils

import Bio.Data.IUPACData as IUPACData

import pyrosetta

import pyrosetta.distributed.io as io

import pyrosetta.distributed.packed_pose as packed_pose

import pyrosetta.distributed.tasks.rosetta_scripts as rosetta_scripts

import pyrosetta.distributed.tasks.score as score

# +

import os,sys,platform

platform.python_version()

# -

# ## Create test pose, initialize rosetta and pack

input_protocol = """

<ROSETTASCRIPTS>

<TASKOPERATIONS>

<RestrictToRepacking name="only_pack"/>

</TASKOPERATIONS>

<MOVERS>

<PackRotamersMover name="pack" task_operations="only_pack" />

</MOVERS>

<PROTOCOLS>

<Add mover="pack"/>

</PROTOCOLS>

</ROSETTASCRIPTS>

"""

input_relax = rosetta_scripts.SingleoutputRosettaScriptsTask(input_protocol)

# Syntax check via setup

input_relax.setup()

raw_input_pose = score.ScorePoseTask()(io.pose_from_sequence("TESTESTEST"))

input_pose = input_relax(raw_input_pose)

# ## Perform exhaustive point mutation and pack

def mutate_residue(input_pose, res_index, new_aa, res_label = None):

import pyrosetta.rosetta.core.pose as pose

work_pose = packed_pose.to_pose(input_pose)

# Annotate strucure with reslabel, for use in downstream protocol

# Add parameters as score, for use in downstream analysis

if res_label:

work_pose.pdb_info().add_reslabel(res_index, res_label)

pose.setPoseExtraScore(work_pose, "mutation_index", res_index)

pose.setPoseExtraScore(work_pose, "mutation_aa", new_aa)

if len(new_aa) == 1:

new_aa = str.upper(Bio.SeqUtils.seq3(new_aa))

assert new_aa in map(str.upper, IUPACData.protein_letters_3to1)

protocol = """

<ROSETTASCRIPTS>

<MOVERS>

<MutateResidue name="mutate" new_res="%(new_aa)s" target="%(res_index)i" />

</MOVERS>

<PROTOCOLS>

<Add mover_name="mutate"/>

</PROTOCOLS>

</ROSETTASCRIPTS>

""" % locals()

return rosetta_scripts.SingleoutputRosettaScriptsTask(protocol)(work_pose)

# +

refine = """

<ROSETTASCRIPTS>

<RESIDUE_SELECTORS>

<ResiduePDBInfoHasLabel name="mutation" property="mutation" />

<Not name="not_neighbor">

<Neighborhood selector="mutation" distance="12.0" />

</Not>

</RESIDUE_SELECTORS>

<TASKOPERATIONS>

<RestrictToRepacking name="only_pack"/>

<OperateOnResidueSubset name="only_repack_neighbors" selector="not_neighbor" >

<PreventRepackingRLT/>

</OperateOnResidueSubset>

</TASKOPERATIONS>

<MOVERS>

<PackRotamersMover name="pack_area" task_operations="only_pack,only_repack_neighbors" />

</MOVERS>

<PROTOCOLS>

<Add mover="pack_area"/>

</PROTOCOLS>

</ROSETTASCRIPTS>

"""

refine_mutation = rosetta_scripts.SingleoutputRosettaScriptsTask(refine)

# -

# # Mutation and pack

# ## Job distribution via `multiprocessing`

from multiprocessing import Pool

import itertools

with pyrosetta.distributed.utility.log.LoggingContext(logging.getLogger("rosetta"), level=logging.WARN):

with Pool() as p:

work = [

(input_pose, i, aa, "mutation")

for i, aa in itertools.product(range(1, len(packed_pose.to_pose(input_pose).residues) + 1), IUPACData.protein_letters)

]

logging.info("mutating")

mutations = p.starmap(mutate_residue, work)

# ## Job distribution via `dask`

if not os.getenv("DEBUG"):

import dask.distributed

cluster = dask.distributed.LocalCluster(n_workers=1, threads_per_worker=1)

client = dask.distributed.Client(cluster)

refinement_tasks = [client.submit(refine_mutation, mutant) for mutant in mutations]

logging.info("refining")

refinements = [task.result() for task in refinement_tasks]

client.close()

cluster.close()

# ## Analysis of delta score

if not os.getenv("DEBUG"):

result_frame = pandas.DataFrame.from_records(packed_pose.to_dict(refinements))

result_frame["delta_total_score"] = result_frame["total_score"] - input_pose.scores["total_score"]

result_frame["mutation_index"] = list(map(int, result_frame["mutation_index"]))

if not os.getenv("DEBUG"):

matplotlib.rcParams['figure.figsize'] = [24.0, 8.0]

seaborn.heatmap(

result_frame.pivot("mutation_aa", "mutation_index", "delta_total_score"),

cmap="RdBu_r", center=0, vmax=50)

# <!--NAVIGATION-->

# < [Running Rosetta in Parallel](http://nbviewer.jupyter.org/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.00-Running-PyRosetta-in-Parallel.ipynb) | [Contents](toc.ipynb) | [Index](index.ipynb) | [Distributed computation example: miniprotein design](http://nbviewer.jupyter.org/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.02-PyData-miniprotein-design.ipynb) ><p><a href="https://colab.research.google.com/github/RosettaCommons/PyRosetta.notebooks/blob/master/notebooks/16.01-PyData-ddG-pssm.ipynb"><img align="left" src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open in Colab" title="Open in Google Colaboratory"></a>

| notebooks/16.01-PyData-ddG-pssm.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Announcments

#

# * Start the CP if you haven't already

# * We have a course tutor, <NAME> <EMAIL>

# +

# %matplotlib inline

import matplotlib

import numpy as np

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (12, 9)

plt.rcParams["font.size"] = 18

# -

# ## Binary Nuclear Reactions

#

# ### Learning Objectives:

#

# - Connect concepts in particle collisions and decay to binary reactions

# - Categorize nuclear reactions using standard nomenclature

# - Apply conservation of nucleons to binary nuclear reactions

# - Formulate Q value equations for binary nuclear reactions

# - Apply conservation of energy and linear momentum to scattering

# - Apply coulombic threshold

# - Apply kinematic threshold

# - Determine when coulombic and kinematic thresholds apply or do not

# ## Recall from Weeks 3 & 4

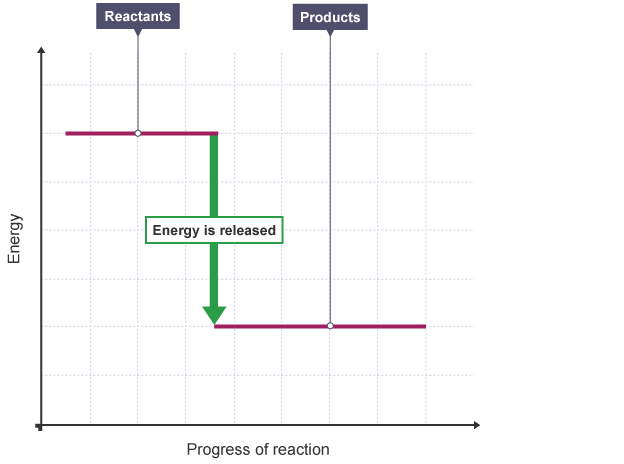

#

# To acheive these objectives, we need to recall 3 major themes from weeks three and four.

#

# ### 1: Compare Exothermic and Endothermic reactions

#

# - In **_exothermic_** or **_exoergic_** reactions, energy is **emitted** ($Q>0$)

# - In **_endothermic_** or **_endoergic_** reactions, energy is **absorbed** ($Q<0$)

#

#

#

#

# <center>(credit: BBC)</center>

#

# ### 2: Relate energy and mass $E=mc^2$

#

# When the masses of reactions change, this is tied to a change in energy from whence we learn the Q value.

# This change in mass is equivalent to a change in energy because **$E=mc^2$**

#

# \begin{align}

# A + B + \cdots &\rightarrow C + D + \cdots\\

# \mbox{(reactants)} &\rightarrow \mbox{(products)}\\

# \implies \Delta M &= (\mbox{reactants}) - (\mbox{products})\\

# &= (M_A + M_B + \cdots) - (M_C + M_D + \cdots)\\

# \implies \Delta E &= \left[(M_A + M_B + \cdots) - (M_C + M_D + \cdots)\right]c^2\\

# \end{align}

#

#

# ### 3: Apply conservation of energy and momentum to scattering collisions

#

# Conservation of total energy and linear momentum can inform Compton scattering reactions. X-rays scattered from electrons had a change in wavelength $\Delta\lambda = \lambda' - \lambda$ proportional to $(1-\cos{\theta_s})$

#

# <a title="JabberWok [GFDL (http://www.gnu.org/copyleft/fdl.html) or CC-BY-SA-3.0 (http://creativecommons.org/licenses/by-sa/3.0/)], via Wikimedia Commons" href="https://commons.wikimedia.org/wiki/File:Compton-scattering.svg"><img width="128" alt="Compton-scattering" src="https://upload.wikimedia.org/wikipedia/commons/thumb/e/e3/Compton-scattering.svg/128px-Compton-scattering.svg.png"></a>

#

# We used the law of cosines:

#

# \begin{align}

# p_e^2 &= p_\lambda^2 + p_{\lambda'}^2 - 2p_\lambda p_{\lambda'}\cos{\theta_s}

# \end{align}

#

#

# And we also used conservation of energy:

# \begin{align}

# p_\lambda c+m_ec^2 &= p_{\lambda'}c + mc^2\\

# \mbox{where }&\\

# m_e&=\mbox{rest mass of the electron}\\

# m &= \mbox{relativistic electron mass after scattering}

# \end{align}

#

# Combining these with our understanding of photon energy ($E=h\nu=pc$) gives:

#

# \begin{align}

# \lambda' - \lambda &= \frac{h}{m_ec}(1-\cos{\theta_s})\\

# \implies \frac{1}{E'} - \frac{1}{E} &= \frac{1}{m_ec^2}(1-\cos{\theta_s})\\

# \implies E' &= \left[\frac{1}{E} + \frac{1}{m_ec^2}(1-\cos{\theta_s})\right]^{-1}\\

# \end{align}

# ## More Types of Reactions

#

# Previously we were interested in fundamental particles striking one another (e.g. the electron and proton in Compton scattering) or nuclei emitting such particles (e.g. $\beta^\pm$ decay).

#

# **Today:** We are interested in myriad additional reactants and/or products. In particular, we're interested in:

#

# - neutron absorption and production reactions

# - _binary, two-product nuclear reactions_ in which two products emerge with new energies after the collision.

# ## Reaction Nomenclature

#

# **Transfer Reactions:** Nucleons (1 or 2) are transferred between the projectile and product.

#

# **Scattering reactions:** The projectile and product emerge from a collision with the same identities as when they started, exchanging only kinetic energy.

#

# **Knockout reactions:** The projectile directly interacts with the target nucleus and is re-emitted **along with** nucleons from the target nucleus.

#

# **capture reactions:** The projectile is absorbed, typically exciting the nucleus. The excited nucleus may emit that energy decaying via photon emission.

#

# **nuclear photoeffect:** A photon projectile liberates a nucleon from the target nucleus.

#

# ### Think Pair Share : categorize these reactions

#

# One example of each of the above appears below. Use the definitions to categorize them.

#

# - $(n, n)$

# - $(n, \gamma)$

# - $(n, 2n)$

# - $(\gamma, n)$

# - $(\alpha, n)$

#

# ## Binary, two-product nuclear reactions

#

# **Two initial nuclei collide to form two product nuclei.**

#

# \begin{align}

# ^{A_1}_{Z_1}X_1 + ^{A_2}_{Z_2}X_2 \longrightarrow ^{A_3}_{Z_3}X_3 + ^{A_4}_{Z_4}X_4

# \end{align}

#

# #### Applying Conservation of Neutrons and Protons

#

# The total number of nucleons is always conserved.

# If the `______________` force is not involved, we can also apply this conservation separately.

#

#

# In most binary, two-product nuclear reactions, this is the case, so the number of protons and neutrons are conserved. Thus:

#

# \begin{align}

# Z_1 + Z_2 = Z_3 + Z_4\\

# A_1 + A_2 = A_3 + A_4

# \end{align}

#

# Apply this to the following:

#

# \begin{align}

# ^{3}_{1}H + ^{16}_{8}O \longrightarrow \left(X\right)^* \longrightarrow ^{16}_{7}N + ^{A_4}_{Z_4}X_4

# \end{align}

#

# ### Think Pair Share:

#

# What are :

#

# - $A_4$

# - $Z_4$

# - $X_4$?

#

# - Bonus: What is $\left(X\right)^*$?

#

# #### Applying conservation of mass and energy.

#

# The Q-value calculation is the same as it has been before.

# The Q value represents the `________` in kinetic energy and, equivalently, a `________` in the rest masses.

#

# \begin{align}

# Q &= E_y + E_Y − E_x − E_X \\

# &= (m_x + m_X − m_y − m_Y )c^2\\

# &= \left(m\left(^{A_1}_{Z_1}X_1\right) + m\left(^{A_2}_{Z_2}X_2\right) - m\left(^{A_3}_{Z_3}X_3\right) - m\left(^{A_4}_{Z_4}X_4\right)\right)c^2\\

# \end{align}

#

# If proton numbers are conserved (true for everything but electron capture or reactions involving the weak force.), we can use the approximation that $m(X) = M(X)$.

#

# \begin{align}

# Q &= E_y + E_Y − E_x − E_X \\

# &= (m_x + m_X − m_y − m_Y )c^2\\

# &= (M_x + M_X − M_y − M_Y )c^2\\

# &= \left(M\left(^{A_1}_{Z_1}X_1\right) + M\left(^{A_2}_{Z_2}X_2\right) - M\left(^{A_3}_{Z_3}X_3\right) - M\left(^{A_4}_{Z_4}X_4\right)\right)c^2\\

# \end{align}

# +

def q(m_reactants, m_products):

"""Returns Q

Parameters

----------

m_reactants: list (of doubles)

the masses of the reactant atoms [amu]

m_products : list (of doubles)

the masses of the product atoms [amu]

"""

amu_to_mev = 931.5 # MeV/amu conversion

m_difference = sum(m_reactants) - sum(m_products)

return m_difference*amu_to_mev

# Look up the masses:

h_3_mass = 3.0160492675

o_16_mass = 15.9949146221

he_3_mass = 3.0160293097

n_16_mass = 16.0061014

m_react = [h_3_mass, o_16_mass]

m_prods = [he_3_mass, n_16_mass]

print("Q: ", q(m_react, m_prods))

# -

# #### Applying conservation of linear momentum

#

# Let's get back to collision kinematics.

#

# First, we'll assume the target nucleus ($X_2$) is initially at rest.

#

#

#

# # Kinematic Threshold

#

# Relying on a combination of kinetic energies $E_i$ and corresponding linear momenta:

#

# \begin{align}

# p_i = \sqrt{2m_iE_i}

# \end{align}

#

# We can determine that some reactions aren't possible without a certain minimum quantity of kinetic energy.

#

# The solution to $E_3$ can become nonphysical if :

#

# - $\cos{\theta_3} < 0$

# - $Q < 0$

# - $m_4 - m_1 < 0$

#

# ## For Exoergic Reactions ($Q>0$)

#

# For $Q>0$ and $m_{4} > m_{1}$, $E_{3} = (a + \sqrt{a^2+b^2})^2$ is the only real, positive, meaningful solution.

#

# The kinetic energy of $E_3$ is, at minimum, the energy arrived at when $p_1 = 0$. Thus:

#

# \begin{align}

# E_3 \longrightarrow& \frac{m_4}{m_3 + m_4}Q\\

# &\mbox{ when } Q>0, p_1=0

# \end{align}

#

# So, no exoergic reactions are restricted by kinetics, as $Q = E_3 + E_4$, for the minimum linear momentum case, which is real and positive.

#

# ## For Endoergic Reactions ($Q<0$)

# Some $Q<0$ reactions aren't possible without a certain minimum quantity of kinetic energy.

#

#

# For $Q<0$ and $m_{4} > m_{1}$, some values of $E_{1}$ are too small to carry forward a real, positive solution. That is, the incident projectile must supply a minimum amount of kinetic energy before the reaction can occur. Without this energy, the solution for $E_3$ results in physically meaningless values. This minimum energy can be found from eqn 6.11 in your book and is :

#

# \begin{align}

# E_1^{th,k} = -\frac{m_3 + m_4}{m_3 + m_4 - m_1}Q.

# \end{align}

#

# One can often simplify this (assuming $m_i >> Q/c^2$ and $m_3 + m_4 - m_1 \simeq m_2$) :

#

#

# \begin{align}

# E_1^{th,k} \simeq - \left( 1 + \frac{m_1}{m_2} \right)Q.

# \end{align}

# +

def kinematic_threshold(m_1, m_3, m_4, Q):

"""Returns the kinematic threshold energy [MeV]

Parameters

----------

m_1: double

mass of incident projectile

m_3: double

mass of first product

m_3: double

mass of second product

Q : double

Q-value for the reaction [MeV]

"""

num = -(m_3 + m_4)*Q

denom = m_3 + m_4 - m_1

return num/denom

def kinematic_threshold_simple(m_1, m_2, Q):

"""Returns the coulombic threshold energy [MeV]

Parameters

----------

m_1: double

mass of incident projectile

m_2: double

mass of target

Q : double

Q-value for the reaction [MeV]

"""

to_return = -(1 + m_1/m_2)*Q

return to_return

# -

# # Coulombic Threshold

#

# Coulomb forces repel a projectile if it is:

#

# - a positively charged nucleus

# - a proton

#

# The force between the projectile (particle 1) and the target nucleus (particle 2) is :

#

# \begin{align}

# &F_C = \frac{Z_1Z_2e^2}{4\pi\epsilon_0r^2}\\

# \mbox{where}&&\\

# &\epsilon_0 = \mbox{the permittivity of free space.}

# \end{align}

#

# ### Think pair share:

# What are the other terms in the above equation:

#

# - $Z_1$ ?

# - $Z_2$ ?

# - $e$ ?

# - $r$ ?

#

# By evaluating the work function for approach to the nucleus with a coulomb barrier, we can establish that the coulombic threshold energy (in MeV) is :

#

# \begin{align}

# E_1^{th,C} \simeq 1.20 \frac{Z_1Z_2}{A_1^{1/3}+A_2^{1/3}}

# \end{align}

def colombic_threshold(z_1, z_2, a_1, a_2):

"""Returns the coulombic threshold energy [MeV]

Parameters

----------

z_1: int

proton number of incident projectile

z_2: int

proton number of target

a_1 : int or double

mass number of the incident projectile [amu]

a_2 : int or double

mass number of the target [amu]

"""

num = 1.20*z_1*z_2

denom = pow(a_1, 1/3) + pow(a_2, 1/3)

return num/denom

# ### Think Pair Share

#

# Which thresholds apply to the below situations:

#

# - A chargeless incident particle, reaction $Q>0$

# - A chargeless incident particle, reaction $Q<0$

# - A positively charged incident particle, reaction $Q>0$

# - A positively charged incident particle, reaction $Q<0$

# ## Overall threshold

#

# For the case where both thresholds apply, the minimum energy for the reaction to occur is the highest of the two thresholds.

#

# \begin{align}

# \min{\left(E_1^{th}\right)} = \max{\left(E^{th,C}_1,E_1^{th,k}\right)}.

# \end{align}

# ## Example

#

# Take the (p, n) reaction from $^{9}Be\longrightarrow^{9}B$. We will need to calculate:

#

# - The Q value

# - The kinematic threshold (if it applies)

# - The coulombic threshold (if it applies)

# - Determine which one is higher

# +

# Q value

# Look up the masses:

be_9_mass = 9.0121821

b_9_mass = 9.0133288

n_mass = 1.0086649158849

p_mass = 1.007825032 # hydrogen nucleus!

m_react = [be_9_mass, p_mass]

m_prods = [b_9_mass, n_mass]

q_example = q(m_react, m_prods)

print("Q: ", q_example)

# +

# Kinematic Threshold

# Which particles were which again?

m_1 = p_mass

m_2 = be_9_mass

m_3 = n_mass

m_4 = b_9_mass

# Calculate using both regular and simpler methods

E_k_th = kinematic_threshold(m_1, m_3, m_4, q_example)

E_k_th_simple = kinematic_threshold_simple(m_1, m_2, q_example)

print("E_k_th: ", E_k_th)

print("E_k_th (simplified): ", E_k_th_simple)

# +

# Coulombic Threshold

# Need some charge info and mass numbers

z_1 = 1 # proton

z_2 = 4 # Be

a_1 = 1 # proton

a_2 = 9 # Be

E_c_th = colombic_threshold(z_1, z_2, a_1, a_2)

print("E_c_th: ", E_c_th)

# +

## Which one is higher?

print("Total threshold: ", max(E_c_th, E_k_th))

# -

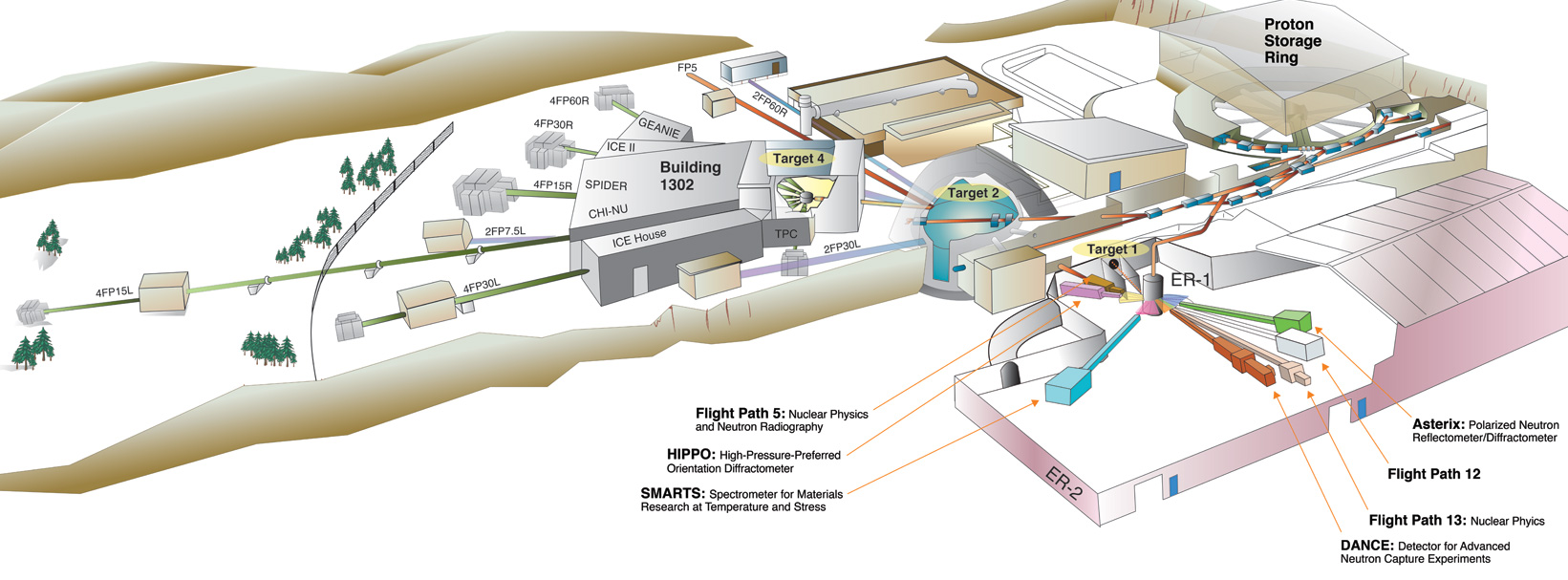

# # Applications: Neutron Detection

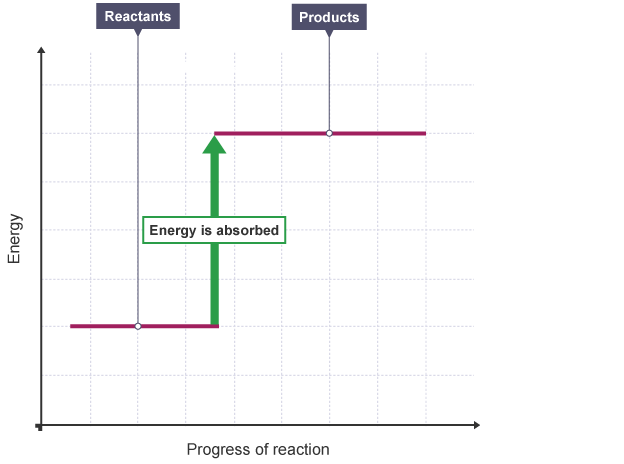

# Neutron's don't tend to directly ionize matter as they pass through. However, they can instigate nuclear reactions which produce charged products. These products, in turn, can be detected due to the ionization they create. The scheme for a Boron Trifluoride detector is below (hosted at https://www.orau.org/ptp/collection/proportional%20counters/bf3info.htm).

#

#

#

# The wall effect results in the following spectrum (approximately):

#

#

# In (n,p) reactions, for example, variation in emission angle of particle 3 can be used to determine the energy of the original incident neutron.

# # Applications: Neutron Production

# Specific neutron energies can be targetted by collecting them at a certain angle away from the production collision.

#

#

#

# <center>The accelerator and spallation target at LANSCE and other spallation experiments rely on this fact.</center>

#

#

# ## Two energies

#

# In (p,n) reactions, for example, certain proton energies may result in more than one neutron energy observed at a single angle. How?

#

# Recall the equation (Shultis and Faw 6.11):

#

# \begin{align}

# \sqrt{E_y}=&\sqrt{\frac{m_xm_yE_x}{(m_y + m_Y)^2}}\cos\theta_y \\

# &\pm \sqrt{\frac{m_xm_yE_x}{(m_y + m_Y)^2}\cos^2\theta_y + \left[\frac{m_Y-m_x}{(m_y + m_Y)}E_x + \frac{m_YQ}{(m_y + m_Y)}\right]}

# \end{align}

#

# Dr. Munk prefers this notation:

# \begin{align}

# \sqrt{E_3}=&\sqrt{\frac{m_1m_3E_1}{(m_3 + m_4)^2}}\cos\theta_3 \\

# &\pm \sqrt{\frac{m_1m_3E_1}{(m_3 + m_4)^2}\cos^2\theta_3 + \left[\frac{m_4-m_1}{(m_3 + m_4)}E_1 + \frac{m_4Q}{(m_3 + m_4)}\right]}

# \end{align}

# ## Heavy Particle scattering from an electron

#

# Much like the Compton reaction we saw between photons and electrons, we can see a similar reaction with heavy particles. Occaisionally, a heavy particle (e.g. a small nucleus, like an $\alpha$ particle) strikes the orbital electrons in atoms of a medium.

#

# Thus: particles 2 and 3 are the electron. So:

#

# \begin{align}

# m_2 &= m_3 = m_e = \mbox{(the electron mass)}\\

# E_3 &= E_e = \mbox{(the recoil electron energy)}\\

# m_1 &= m_4 = \mbox{(the mass of the heavy particle)}\\

# E_1 &= E_4 = \mbox{(the kinetic energy of the incident heavy particle)}

# \end{align}

#

# For this scattering process, there is no change in the rest masses of the reactants, so Q = 0.

#

# We can use the Shutlis and Faw 6.11 equation above to arrive at:

#

# \begin{align}

# \sqrt{E_e}=& \frac{2}{m_4 + m_e}\sqrt{m_4m_eE_4}\cos{\theta_e}

# \end{align}

#

# We can approximate that $m_4 >> m_e$ such that the electron recoil energy becomes:

#

# \begin{align}

# \implies E_e =& 4\frac{m_e}{m_4}E_4\cos^2{\theta_e}

# \end{align}

#

# ## Think Pair Share

# What angle, $\theta_e$, corresponds to the maximimum loss of kinetic energy by the incident heavy particle?

#

#

#

# At $\theta_e=0$, we find that:

#

# \begin{align}

# (E_e)_{max} = 4\frac{m_e}{m_4}E_4

# \end{align}

import math

def recoil_energy(m_4, e_4, theta_e):

m_e = 0.0005486 # amu

num = 4*m_e*e_4*pow(math.cos(theta_e), 2)

return num/m_4

# +

th = [math.radians(-90),

math.radians(-75),

math.radians(-60),

math.radians(-45),

math.radians(-30),

math.radians(-15),

math.radians(0),

math.radians(15),

math.radians(30),

math.radians(45),

math.radians(60),

math.radians(75),

math.radians(90)]

m_4 = 4.003 # alpha particle

to_plot_4 = np.arange(0.,len(th))

to_plot_10 = np.arange(0.,len(th))

for k, v in enumerate(th):

to_plot_4[k] = (recoil_energy(m_4, 4, v))

to_plot_10[k] = (recoil_energy(m_4, 10, v))

plt.plot(th, to_plot_4, label="$4MeV$")

plt.plot(th, to_plot_10, label="$10MeV$")

plt.ylabel("Electron Recoil Energy ($MeV$)")

plt.xlabel("Angle (radians)")

plt.legend(loc=2)

# +

th = 0

m_4 = 4.003 # alpha particle

e_4 = 4 # MeV

print("Max (4MeV alpha): ", recoil_energy(m_4, e_4, th))

# -

# ## Neutron Scattering

#

# ### Neutron interactions with matter.

#

# \begin{align}

# ^1_0n + {^a_z}X \longrightarrow

# \begin{cases}

# ^1_0n + {^a_z}X & \mbox{Elastic Scattering}\\

# ^1_0n + \left({^a_z}X\right)^* & \mbox{Inlastic Scattering}

# \end{cases}

# \end{align}

#

#

#

# Using the ubiquitous equation 6.11 for a neutron scatter:

#

# \begin{align}

# \sqrt{E_3}=&\sqrt{\frac{m_1m_3E_1}{(m_3 + m_4)^2}}\cos\theta_3 \\

# &\pm \sqrt{\frac{m_1m_3E_1}{(m_3 + m_4)^2}\cos^2\theta_3 + \left[\frac{m_4-m_1}{(m_3 + m_4)}E_1 + \frac{m_4Q}{(m_3 + m_4)}\right]}\\

# \end{align}

#

# We can define our particles as a neutron hitting a nucleus and changing in its energy.

#

# \begin{align}

# m_1 = m_3 = m_n\\

# E_1 = E_n\\

# E_3 = E_n'\\

# \end{align}

#

# Such that:

#

# \begin{align}

# \sqrt{E_n'} =&\sqrt{\frac{m_nm_nE_n}{(m_n + m_4)^2}}\cos\theta_s \\

# &\pm \sqrt{\frac{m_nm_nE_n}{(m_n + m_4)^2}\cos^2\theta_s + \left[\frac{m_4-m_n}{(m_n + m_4)}E_n + \frac{m_4Q}{(m_n + m_4)}\right]}

# \end{align}

#

# We can also agree that $m_2=m_4$, which is some nucleus with a mass that is approximately the same at the beginning and end of the scatter (approximate if the scattering is inelastic) . This gives, with some rearrangement:

#

# \begin{align}

# \sqrt{E_n'} &= \frac{1}{m_4 + m_n}\times\\ &\left[\sqrt{m_n^2E_n}\cos{\theta_s} \pm \sqrt{E(m_4^2 + m_n^2\cos^2{\theta_s} − m_n^2) + m_4 ( m_4 + m_n ) Q }\right]

# \end{align}

#

# And, for elastic scattering ($Q=0$):

#

# \begin{align}

# E' = \frac{1}{(A+1)^2}\left[\sqrt{E}\cos{\theta_s} + \sqrt{E(A^2 - 1 + \cos{\theta_s}^2)}\right]^2

# \end{align}

#

def scattered_neutron_energy(A, E, th):

"""Returns the energy of a scattered neutron [MeV]

Parameters

----------

A: int or double

mass number of medium

E: double

kinetic energy of the incident neutron [MeV]

th : double

scattering angle, in degrees

"""

cos_th = math.cos(math.radians(th))

term1 = 1/((A+1)**2)

term2 = math.sqrt(E)*cos_th

term3 = math.sqrt(E*(A**2 - 1 + cos_th**2))

return term1*((term2 + term3)**2)

# +

th = [math.radians(-90),

math.radians(-75),

math.radians(-60),

math.radians(-45),

math.radians(-30),

math.radians(-15),

math.radians(0),

math.radians(15),

math.radians(30),

math.radians(45),

math.radians(60),

math.radians(75),

math.radians(90)]

e_initial = 2.0 # 2 MeV is special

a_light = 4.003 # alpha particle

a_heavy = 235.0 # uranium atom

to_plot_light = np.arange(0.,len(th))

to_plot_heavy = np.arange(0.,len(th))

for k, v in enumerate(th):

to_plot_light[k] = (scattered_neutron_energy(a_light, e_initial, v))

to_plot_heavy[k] = (scattered_neutron_energy(a_heavy, e_initial, v))

plt.plot(th, to_plot_light, label="light")

plt.plot(th, to_plot_heavy, label="heavy")

plt.ylabel("Scattered Neutron Energy ($MeV$)")

plt.xlabel("Angle (radians)")

plt.legend(loc=2)

# -

# ## Average Energy Loss

#

# For elastic scattering (Q = 0), we see the minimum and maxium energies occur at the maximum and minimum angles.

#

# \begin{align}

# E'_{max} &= E'(\theta_{s,min})\\

# &= E'(\theta_{s}=0)\\

# &= E\\

# E'_{min} &= E'(\theta_{s,max})\\

# &= E'(\theta_{s}=\pi)\\

# &= \frac{(A-1)^2}{(A+1)^2} E\\

# &\equiv \alpha E\\

# \end{align}

#

# For isotropic scattering, we can find the average loss:

#

#

# \begin{align}

# (\Delta E)_{av} &\equiv E - E'_{av}\\

# &= E− 1(E+\alpha E)\\

# & = 1(1- \alpha)E

# \end{align}

# +

def alpha(a):

"""Returns the average energy loss of a

scattered neutron [MeV]

Parameters

----------

A: int or double

mass number of medium

"""

num = (a-1)**2

denom = (a+1)**2

return num/denom

def average_energy_loss(A, E):

"""Returns the average energy loss of a scattered neutron [MeV]

Parameters

----------

A: int or double

mass number of medium

E: double

kinetic energy of the incident neutron [MeV]

"""

return 1*(1-alpha(A))*E

# +

e_initial = np.arange(0, 2, 0.001)

to_plot_light = np.arange(0.,len(e_initial))

to_plot_heavy = np.arange(0.,len(e_initial))

for k, v in enumerate(e_initial):

to_plot_light[k] = (average_energy_loss(a_light, v))

to_plot_heavy[k] = (average_energy_loss(a_heavy, v))

plt.plot(e_initial, to_plot_light, label="light atom")

plt.plot(e_initial, to_plot_heavy, label="heavy atom")

plt.ylabel("Average Neutron Energy Loss ($MeV$)")

plt.xlabel("Initial neutron energy ($MeV$)")

plt.legend(loc=2)

# -

# ## Logarithmic Energy Loss

#

# It turns out, on a logarithmic energy scale, a neutron loses the same amount of logarithmic energy per elastic scatter, regardless of its initial energy. So, this is a helpful term, particularly since neutron energies can range by many orders of magnitude. So, we often use 'logarithmic energy loss' when discussing this downscattering. This is also called "lethargy".

#

# \begin{align}

# \left(\ln{(E)} - \ln{(E')}\right)_{av} & = \overline{\ln{\left(\frac{E}{E'}\right)}} \\

# &= 1 + \frac{\alpha}{1-\alpha}\\

# &= \xi\\

# &= \mbox{average logarithmic energy loss per elastic scatter}\\

# &= \mbox{lethargy}

# \end{align}

def lethargy(a):

"""Returns the average logarithmic energy

loss per elastic scatter

Parameters

----------

A: int or double

mass number of medium

"""

return 1.0 + alpha(a)/(1-alpha(a))

a = np.arange(1, 240)

plt.plot([lethargy(i) for i in a])

plt.ylabel("$\\xi$")

plt.xlabel("A($amu$)")

# ## Thermal Neutrons

#

# 1. a fast neutron slows down

# 2. may eventually come into thermal equilibrium with the medium

# 3. thermal motion of atoms in medium are in Maxwellian distribution

# 4. neutron may gain kinetic energy upon scattering from a rapidly moving nucleus

# 5. neutron may lose energy upon scattering from a slowly moving nucleus.

#

# <a title="By The original uploader was Pdbailey at English Wikipedia.

# Later versions were uploaded by Cryptic C62 at en.wikipedia.

# Convert into SVG by Lilyu from Image:MaxwellBoltzmann.gif. [Public domain], via Wikimedia Commons" href="https://commons.wikimedia.org/wiki/File:MaxwellBoltzmann-en.svg"><img width="512" alt="MaxwellBoltzmann-en" src="https://upload.wikimedia.org/wikipedia/commons/thumb/0/01/MaxwellBoltzmann-en.svg/512px-MaxwellBoltzmann-en.svg.png"></a>

#

#

# At room temperature, 293 K:

# - the most probable kinetic energy of thermal neutrons is 0.025 eV

# - 0.025 eV corresponds to a neutron speed of about 2200 m/s.

#

# ## Epithermal

#

# Neutrons that are faster than thermal neutrons, but aren't quite "fast" are called _epithermal_. ($0.2eV < E_{epi} < 1 MeV$)

#

# ## Fast

#

# $> 1MeV$

#

# ## Neutron Capture

#

# - Free neutrons will eventually be absorbed by a nucleus (or escape the domain of interest)

# - Neutron capture leaves the nucleus excited

# - Actually, very excited (Recall: what is a typical binding energy per nucleon?)

# - When it's released as a $\gamma$ that energy can be very hazardous

#

# Neutron slowing down can help us to reduce very high energy $\gamma$ emissions.

# ## Fission Reactions

#

# Some nuclei spontaneously fission (e.g. $^{252}Cf$). However, this isn't common.

#

#

#

# \begin{align}

# ^1_0n + ^{235}_{92}U \longrightarrow \left( ^{236}_{92}U \right)^*

# \begin{cases}

# ^{235}_{92}U + ^1_0n & \mbox{Elastic Scattering}\\

# ^{235}_{92}U + ^1_0n' + \gamma & \mbox{Inelastic Scattering}\\

# ^{236}_{92}U + \gamma & \mbox{Radiative Capture}\\

# ^{A_H}_{Z_H}X_H + ^{A_L}_{Z_L}X_L + ^1_0n + \cdots & \mbox{Fission}

# \end{cases}

# \end{align}

# # Announcements

#

# * I am modifying the schedule a bit. On Friday I will introduce different reactor types and then on Monday we are going to have a guest lecture on the nuclear fuel cycle by **<NAME>**.

# * The homework I assign on Friday will be due the Monday after spring break

# * If you wanted an adjustment on your midterm exam, you **need to email me** with the details so I can record it properly.

# * Exam 2 moved to 4/4. Syllabus updated on github.

# ### Recall: Cross sections

#

# The likelihood of each of these scattering events is captured by cross sections.

#

# - $\sigma_x = $ microscopic cross section $[cm^2]$

# - $\Sigma_x = $ macroscopic cross section $[1/length]$

# - $\Sigma_x = N\sigma_x $

# - $N = $ number density of target atoms $[\#/volume]$

#

#

# ### Cross sections are in units of area. Explain this to your neighbor.

# ### What energy neutron do we prefer for fission in $^{235}U$?

#

# Nuclei that undergo neutron induced fission can be categorized into three types:

#

# - fissile: can fission with a slow neutron ($^{235}U$, $^{233}U$, $^{239}Pu$)

# - fissionable: require high energy (>1MeV) neutron ($^{238}U$, $^{240}Pu$)

# - fertile: can be converted into fissile or fissionable nuclide (breeding reactions)

#

# Key breeding reactions are :

#

# \begin{align}

# {^{232}_{90}}Th + ^1_0n \longrightarrow {^{233}_{90}}Th \overset{\beta^-}{\longrightarrow} {^{233}_{91}}Pa \overset{\beta^-}{\longrightarrow} {^{233}_{92}}U\\

# {^{238}_{92}}U + ^1_0n \longrightarrow {^{239}_{92}}U \overset{\beta^-}{\longrightarrow} {^{239}_{93}}Np \overset{\beta^-}{\longrightarrow} {^{239}_{94}}Pu\\

# \end{align}

# ## The fission process

#

# \begin{align}

# ^1_0n + ^{235}_{92}U \longrightarrow \left( ^{236}_{92}U \right)^* \longrightarrow X_H + X_L + \nu_p\left(^1_0n\right) + \gamma_p

# \end{align}

#

# Conserving neutrons and protons:

#

# \begin{align}

# A_L + A_H + \nu_p &= 236\\

# N_L + N_H + \nu_p &= 144\\

# Z_L + Z_H &= 92\\

# \end{align}

#

# <a title="JWB at en.wikipedia [CC BY 3.0

# (https://creativecommons.org/licenses/by/3.0

# ) or GFDL (http://www.gnu.org/copyleft/fdl.html)], via Wikimedia Commons" href="https://commons.wikimedia.org/wiki/File:ThermalFissionYield.svg"><img width="512" alt="ThermalFissionYield" src="https://upload.wikimedia.org/wikipedia/commons/thumb/6/68/ThermalFissionYield.svg/512px-ThermalFissionYield.svg.png"></a>

# ## Fission Product Decay

#

# The fission fragments end up very neutron rich.

#

# ### Think Pair Share

# Recall the chart of the nuclides. How will these fission products likely decay?

# ### Fission Spectrum

#

# $\chi(E)$ is an empirical probability density function describing the energies of prompt fission neutrons.

#

# \begin{align}

# \chi (E) &= 0.453e^{-1.036E}\sinh\left(\sqrt{2.29E}\right)\\

# \end{align}

# +

import numpy as np

import math

def chi(energy):

return 0.453*np.exp(-1.036*energy)*np.sinh(np.sqrt(2.29*energy))

energies = np.arange(0.0,10.0, 0.1)

plt.plot(energies, chi(energies))

plt.title(r'Prompt Neutron Energy Distribution $\chi(E)$')

plt.xlabel("Prompt Neutron Energy [MeV]")

plt.ylabel("probability")

# -

# #### Questions about this plot:

#

# - What is the most likely prompt neutron energy?

# - Can you write an equation for the average neutron energy?

# - Can you write an equation for the average neutron energy?

#

print(max([chi(e) for e in energies]), chi(0.7))

# #### Expectation Value

#

# Recall that the average energy will be the expectation value of the probability density function.

#

#

# \begin{align}

# <E> &= \int E\chi(E)dE\\

# &= E \chi(E)

# \end{align}

plt.plot(energies, [chi(e)*e for e in energies])

# ## Prompt and Delayed neutrons

#

# - Most of the neutrons in fission are emitted within $10^{-14}s$.

# - **prompt** neutrons

# - $\nu_p$

# - Some, ($<1\%$) are produced by delayed decay of fission products.

# - **delayed** neutrons

# - $\nu_d$

#

# We define the delayed neutron fraction as :

#

# \begin{align}

# \beta \equiv \frac{\nu_d}{\nu_d + \nu_p}

# \end{align}

# ## Energy from fission

# ### Reaction Rates

#

# - The microscopic cross section is just the likelihood of the event per unit area.

# - The macroscopic cross section is just the likelihood of the event per unit area of a certain density of target isotopes.

# - The reaction rate is the macroscopic cross section times the flux of incident neutrons.

#

# \begin{align}

# R_{i,j}(\vec{r}) &= N_j(\vec{r})\int dE \phi(\vec{r},E)\sigma_{i,j}(E)\\

# R_{i,j}(\vec{r}) &= \mbox{reactions of type i involving isotope j } [reactions/cm^3s]\\

# N_j(\vec{r}) &= \mbox{number of nuclei participating in the reactions } [\#/cm^3]\\

# E &= \mbox{energy} [MeV]\\

# \phi(\vec{r},E)&= \mbox{flux of neutrons with energy E at position i } [\#/cm^2s]\\

# \sigma_{i,j}(E)&= \mbox{cross section } [cm^2]\\

# \end{align}

#

#

# This can be written more simply as $R_x = \Sigma_x I N$, where I is intensity of the neutron flux.

#

# ### Source term

#

# The source of neutrons in a reactor are the neutrons from fission.

#

# \begin{align}

# s &=\nu \Sigma_f \phi

# \end{align}

#

# where

#

# \begin{align}

# s &= \mbox{neutrons available for next generation of fissions}\\

# \nu &= \mbox{the number born per fission}\\

# \Sigma_f &= \mbox{the number of fissions in the material}\\

# \phi &= \mbox{initial neutron flux}

# \end{align}

#

# This can also be written as:

#

# \begin{align}

# s &= \nu\Sigma_f\phi\\

# &= \nu\frac{\Sigma_f}{\Sigma_{a,fuel}}\frac{\Sigma_{a,fuel}}{\Sigma_a}{\Sigma_a} \phi\\

# &= \eta f {\Sigma_a} \phi\\

# \eta &= \frac{\nu\Sigma_f}{\Sigma_{a,fuel}} \\

# &= \mbox{number of neutrons produced per neutron absorbed by the fuel, "neutron reproduction factor"}\\

# f &= \frac{\Sigma_{a,fuel}}{\Sigma_a} \\

# &= \mbox{number of neutrons absorbed in the fuel per neutron absorbed anywhere, "fuel utilization factor"}\\

# \end{align}

#

# This absorption and flux term at the end seeks to capture the fact that some of the neutrons escape. However, if we assume an infinite reactor, we know that all the neutrons are eventually absorbed in either the fuel or the coolant, so we can normalize by $\Sigma_a\phi$ and therefore:

#

#

# \begin{align}

# k_\infty &= \frac{\eta f \Sigma_a\phi}{\Sigma_a \phi}\\

# &= \eta f

# \end{align}

| 10.06.1-binary_reactions/binary-reactions.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Anaconda(Py3.6)

# language: python

# name: anaconda3

# ---

# # Find RA and Dec for the members in Reino+2018 Hyades study

#

# **Delete Gaia login credentials before publishing!**

import pandas as pd

import numpy as np

df = pd.read_csv('downloads/clusters_Reino/Hyades_Reino2018.txt', delimiter="\s+", skiprows=36, dtype={"source_id":str}).dropna(how='all')

df = df.rename(index=str, columns={'# HIP' : 'HIP',})

df = df.replace(' ""',-999)

df = df.replace(' ""',-999)

df.head()

df.columns = df.columns.str.replace(' ', '')

#df.source_id = df.source_id.astype(float).apply(np.rint).astype(int)

df = df.replace(-999, np.nan)

df.tail(10)

df.to_csv('downloads/clusters_Reino/hyades_reino.csv',index=False)

# # Find RA and Dec to each entry

pf = pd.read_csv('downloads/clusters_Reino/hyades_reino.csv',dtype={"source_id":str})

hip = pf.HIP.dropna().astype(int)

hip = hip.apply(lambda x: 'HIP {}'.format(str(x)))

hip.to_csv('downloads/clusters_Reino/hyades_reino_HIP.csv',index=False, header=False)

tyc = pf.TYC.dropna().str.strip()

tyc = tyc.apply(lambda x: 'TYC {}'.format(str(x)))

tyc.to_csv('downloads/clusters_Reino/hyades_reino_TYC.csv',index=False, header=False)

gaia = pf.source_id.dropna().astype(str).to_frame()

gaia.to_csv('downloads/clusters_Reino/hyades_reino_gaia.csv',index=False, header=False)

# ## HIP

hipsimbad = pd.read_table('downloads/clusters_Reino/simbad_HIP.tsv', delimiter='\t',skiprows=[0,1,2,3,4,6])

# +

hipsimbad.columns = hipsimbad.columns.str.replace(' ', '')

hipsimbad['RAJ2000'] = hipsimbad['coord1(ICRS,J2000/2000)'].apply(lambda x: str(x).split('+')[0].strip())

hipsimbad['DEJ2000'] = hipsimbad['coord1(ICRS,J2000/2000)'].apply(lambda x: str(x).split('+')[-1].strip())

hipsimbad.typedident = hipsimbad.typedident.apply(lambda x: str(x)[4:])

hipsimbad.columns.values

# -

hipsimbad.DEJ2000

excols = ['typedident','RAJ2000','DEJ2000']

hipmerge = hipsimbad[excols]

hipmerge = hipmerge.rename(index=str, columns=dict(zip(excols,['HIP','RAJ2000_HIP','DEJ2000_HIP'])))

hipmerge.DEJ2000_HIP = hipmerge.DEJ2000_HIP.apply(lambda a: a.replace(u'\N{MINUS SIGN}', '-').replace(u'\N{PLUS SIGN}', '+')).replace('','-999').astype(float)

hipmerge.RAJ2000_HIP = hipmerge.RAJ2000_HIP.apply(lambda a: a.replace(u'\N{MINUS SIGN}', '-').replace(u'\N{PLUS SIGN}', '+')).replace('','-999').astype(float)

hipmerge.HIP = hipmerge.HIP.replace("", "-999").astype(float)

hipmerge = hipmerge.replace(-999, np.nan)

hipmerge = hipmerge.dropna(how="all")

hipmerge.tail()

# ## TYC

tycsimbad = pd.read_table('downloads/clusters_Reino/simbad_TYC.tsv', delimiter='\t',skiprows=[0,1,2,3,4,6])

# +

tycsimbad.columns = tycsimbad.columns.str.replace(' ', '')

tycsimbad['RAJ2000'] = tycsimbad['coord1(ICRS,J2000/2000)'].apply(lambda x: str(x).split(' ')[0].strip())

tycsimbad['DEJ2000'] = tycsimbad['coord1(ICRS,J2000/2000)'].apply(lambda x: str(x).split(' ')[-1].strip())

tycsimbad.typedident = tycsimbad.typedident.apply(lambda x: str(x)[4:])

tycsimbad.columns.values

# -

excols = ['typedident','RAJ2000','DEJ2000']

tycmerge = tycsimbad[excols]

tycmerge = tycmerge.rename(index=str, columns=dict(zip(excols,['TYC','RAJ2000_TYC','DEJ2000_TYC'])))

tycmerge.DEJ2000_TYC = tycmerge.DEJ2000_TYC.apply(lambda a: a.replace(u'\N{MINUS SIGN}', '-').replace(u'\N{PLUS SIGN}', '+')).replace('','-999').astype(float)

tycmerge.RAJ2000_TYC = tycmerge.RAJ2000_TYC.apply(lambda a: a.replace(u'\N{MINUS SIGN}', '-').replace(u'\N{PLUS SIGN}', '+')).replace('','-999').astype(float)

tycmerge = tycmerge.replace(-999, np.nan)

tycmerge.head()

# ## Gaia

#

from astroquery.gaia import Gaia

Gaia.login(user='eilin', password='<PASSWORD>!')

#from astropy.table import Table

#filename="downloads/clusters_Reino/hyades_reino_gaia.vot"

#Table.from_pandas(gaia).write(filename, format='votable')

upload_resource = 'downloads/clusters_Reino/hyades_reino_gaia.vot'

job = "SELECT gaiadr1.gaia_source.source_id, gaiadr1.gaia_source.dec, gaiadr1.gaia_source.ra \

FROM gaiadr1.gaia_source \

JOIN user_eilin.table1 \

ON gaiadr1.gaia_source.source_id = user_eilin.table1.col1"

j = Gaia.launch_job(query=job, verbose=True, dump_to_file=True,

upload_resource=upload_resource, upload_table_name="table_test")

r = j.get_results()

r.pprint()

# +

gaiares = r.to_pandas().astype(str)

dd = gaiares.merge(gaia, on="source_id", how="outer")

# -

set(dd.source_id.dropna().tolist()) - set(gaiares.source_id.tolist())

# This is the first one in the table and it is lost in the process somehow... fix that:

gaiares = gaiares.append({"source_id":'67351752990540544', "ra" : 58.171521868568654, "dec" : 25.804222936867426 },ignore_index=True)

gaiares = gaiares.rename(index=str, columns=dict(zip(["source_id","ra","dec"],['source_id','RAJ2000_GaiaDR1','DEJ2000_GaiaDR1'])))

gaiares.to_csv("downloads/clusters_Reino/gaia_hyades_reino.csv", index=False)

gaiares.head()

# ### Now merge all tables together

hipmerge.HIP.head()

df = df.merge(hipmerge, on="HIP", how="left")

df = df.merge(tycmerge, on="TYC", how="left")

df = df.merge(gaiares, on="source_id", how="left")

df.head()

df.head(20)

# +

df = df.drop_duplicates()

df["RAJ2000"] = df[~df.DEJ2000_GaiaDR1.isnull()].RAJ2000_GaiaDR1

df["DEJ2000"] = df[~df.DEJ2000_GaiaDR1.isnull()].DEJ2000_GaiaDR1

df.loc[df.DEJ2000_GaiaDR1.isnull(),"RAJ2000"] = df.loc[df.DEJ2000_GaiaDR1.isnull(),["RAJ2000_TYC","RAJ2000_HIP"]].mean(axis=1)

df.loc[df.DEJ2000_GaiaDR1.isnull(),"DEJ2000"] = df.loc[df.DEJ2000_GaiaDR1.isnull(),["DEJ2000_TYC","DEJ2000_HIP"]].mean(axis=1)

df["ID"] = df[~df.DEJ2000_GaiaDR1.isnull()].source_id

df.loc[df.DEJ2000_GaiaDR1.isnull(),"ID"] = df.loc[df.DEJ2000_GaiaDR1.isnull(),"TYC"]#.apply(lambda x: "TYC {}".format(x))

df.loc[df.DEJ2000_GaiaDR1.isnull(),"ID"] = df.loc[df.DEJ2000_GaiaDR1.isnull(),"HIP"]#.apply(lambda x: "HIP {}".format(int(x)))

df.tail(20)

# -

# save the Gaia IDs to text

df.source_id.dropna().to_csv("downloads/clusters_Reino/gaiaidshyades_reino.txt", index=False, header=True)

# ### You need to go to the Gaia archive and propagate epoch 2015.5 coordinates to epoch 2000

#

# Here is the ADQL query (modify `user_eilin`, and other details as needed):

#

# SELECT source_id,

# COORD1(EPOCH_PROP_POS(ra, dec, parallax, pmra, pmdec, radial_velocity, 2015.5, 2000)),

# COORD2(EPOCH_PROP_POS(ra, dec, parallax, pmra, pmdec, radial_velocity, 2015.5, 2000))

#

# FROM gaiadr2.gaia_source as g

#

# INNER JOIN user_eilin.gaiachyades2 AS ei

#

# ON (g.source_id = ei.gch)

#

# ### After submitting the query, download results

#

# ...as csv file to `"downloads/clusters_Reino/hyades_reino_coords_propagated_to_epoch2000.csv"`

#

#

# ### Finally

#

# Merge new coordinates with Reino's table.

#

# Keep an eye on the `downloads/cluster_catalog_characteristics.csv` entry, update data format if needed (should be up to date).

corrected = pd.read_csv("downloads/clusters_Reino/hyades_reino_coords_propagated_to_epoch2000.csv")

corrected = corrected.rename(index=str, columns={"coord1":"RAJ2000_epoch2000",

"coord2":"DEJ2000_epoch2000"})

corrected.source_id = corrected.source_id.astype(str)

corrected.head()

newdf = df.merge(corrected, how="left", on="source_id")

newdf.loc[~newdf.RAJ2000_epoch2000.isnull(),"RAJ2000"] = newdf.RAJ2000_epoch2000.dropna()

newdf.loc[~newdf.DEJ2000_epoch2000.isnull(),"DEJ2000"] = newdf.DEJ2000_epoch2000.dropna()

newdf.to_csv("downloads/clusters_Reino/hyades_reino18.csv", index=False)

| Membership_Matching/02_PREP_Reino_Hyades_table.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 4, "hidden": true, "row": 72, "width": 4}, "report_default": {}}}}

# # Table of Contents

#

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"hidden": true}, "report_default": {"hidden": true}}}}

# %matplotlib notebook

from mpl_toolkits.mplot3d import Axes3D

import matplotlib.pyplot as plt

#plt.style.use('ggplot')

import ipywidgets as widgets

import sys, os, io, string, shutil, math

from hublib.ui import Submit

from hublib.ui import RunCommand

import hublib.use

# %use boost-1.62.0-mpich2-1.3-gnu-4.7.2

# %use lammps-31Mar17

# #%set_env OMP_NUM_THREADS=4

import numpy as np

import re

import time

from threading import Thread

from io import StringIO

from ipywidgets import Layout, Box, Label, Output

from IPython.display import display,HTML

#sys.path.append('../python/')

#import train as nn

import random

#from IPython.core.display import display, HTML

display(HTML("<style>.container { width:1450px !important; }</style>"))

style = {'description_width': 'initial'}

left_column='340px'

right_column='940px'

app_width='1280px'

form_item_layout = Layout(

display='flex',

flex_flow='row',

justify_content='space-between',

padding='5px'

)

form_item_layout_slider_text = Layout(

display='flex',

flex_flow='row',

justify_content='space-between',

padding='5px'

)

box_layout_image=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

#width='25%',

padding='5px',

#min_height='20px',

#min_width='200px'

width=left_column,

height='304.5px'

)

box_layout_phisical=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

#width='25%',

padding='5px',

#min_height='20px',

#min_width='200px'

width=left_column,

#height='180px'

)

box_layout_computing=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

padding='5px',

width=left_column,

#height='103px'

)

box_layout_progress=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

padding='5px',

width=left_column,

##height='55px'

)

box_layout_output=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

padding='5px',

width=left_column,

#height='150px'

)

box_layout_about=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

padding='5px',

width=right_column,

#height='136px'

)

form_item_layout_tab = Layout(

display='flex',

flex_flow='column',

align_items='stretch',

justify_content='space-between',

padding='5px'

)

box_layout_tabs=Layout(

display='flex',

flex_flow='column',

border='solid 1px',

align_items='stretch',

padding='5px',

width=right_column,

height='100%'

)

button_hide_layout=Layout(

visibility='hidden', #visible/hidden

padding='5px',

)

button_show_layout=Layout(

visibility='visible', #visible/hidden

padding='5px',

)

# +

'''

CSS = """

.output {

align-items: center;

}

div.output_area {

width: 100%;

}

div.output_area {

left-padding: 60px;

}

"""

display(HTML('<style>{}</style>'.format(CSS)))

'''

js_custom="""<script>

$('#appmode-leave').hide(); // Hides the edit app button.

$('#appmode-busy').hide(); // Hides the kernel busy indicator.

</script>

"""

display(HTML(js_custom))

# -

# %%capture

# This hides any output from this cell

# %set_env OMP_NUM_THREADS=4

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 4, "hidden": true, "row": 66, "width": 4}, "report_default": {}}}} language="html"

# <style>

# .jupyter-widgets.widget-tab > .p-TabBar .p-TabBar-tab {

# flex: 0 1 175px

# }

# </style>

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 2, "hidden": true, "row": 29, "width": 4}, "report_default": {"hidden": false}}}}

npCharge = widgets.BoundedIntText(

value=-1000,

min=-1500,

max=-500,

step=1,

description='NP Charge (Q in e)',

style=style,

)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 2, "hidden": true, "row": 29, "width": 4}, "report_default": {"hidden": true}}}}

nLigand = widgets.Dropdown(

options={'25': 25, '50': 50, '75':75, '100':100},

value=50,

description="Linker Density (in units of NP density)",

style=style

)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 2, "hidden": true, "row": 29, "width": 4}, "report_default": {"hidden": true}}}}

salt = widgets.BoundedFloatText(

value=0.175,

min=0.005,

max=0.3,

step=0.005,

description='Salt Concentration (M):',

style=style

)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"hidden": true}, "report_default": {}}}}

imageSlider = widgets.IntSlider(

value=0,

min=0,

max=0,

step=10000,

description='Images',

style=style

)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"hidden": true}, "report_default": {}}}}

submit_str = ''

def onToggleBtnChange(b):

global submit_str

if clustorMode.value:

clustorMode.icon = 'check'

clustorMode.button_style='success'

if not submit_str:

output_image_warning_text.layout.visibility = 'visible'

else:

clustorMode.icon = ''

clustorMode.button_style=''

if not submit_str:

output_image_warning_text.layout.visibility = 'hidden'

'''

clustorMode =widgets.ToggleButton(

value=False,

description='Cluster mode',

disabled=False,

button_style='', # 'success', 'info', 'warning', 'danger' or ''

tooltip='Simulation will run on a computing cluster when this button is turned on but you will loose the access to dynamic simulation snapshot slider.',

icon='',

style=style

)

'''

clustorMode =widgets.Checkbox(

value=False,

description='Cluster mode',

disabled=False,

#button_style='', # 'success', 'info', 'warning', 'danger' or ''

tooltip='Simulation will run on a computing cluster when this button is turned on but you will loose the access to dynamic simulation snapshot slider.',

icon='',

style=style

)

clustorMode.observe(onToggleBtnChange,'value')

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"hidden": true}, "report_default": {"hidden": true}}}}

label_style = "style='background-color: #81c4fd; font-size:500; padding: 2px'"

label_style2 = "style='font-size:350; padding: 2px; font-weight: bold; align: center'"

def make_label(name, label_style, desc="",):

lval = '<p %s %s>%s</p>' % (desc, label_style, name)

return widgets.HTML(lval)

plabel = make_label('Input Parameters', label_style, '')

clabel = make_label('Computing Parameters',label_style, '')

olabel = make_label('Output Controllers', label_style, '')

imageSliderabout = make_label('Slide to navigate the simulation snapshots', label_style2, '')

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 6, "hidden": true, "row": 43, "width": 12}, "report_default": {"hidden": false}}}}

'''

image_html = widgets.HTML(

value='<img src="../images/overview.jpg" style="width: 500px"/>',

)

'''

file = open("../images/overview.png", "rb")

image = file.read()

image=widgets.Image(

value=image,

format='jpg',

width=297,

height=297,

)

form_items = [

Box([image], layout=form_item_layout)

]

mainImage = Box(form_items, layout=box_layout_image)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 0, "height": 11, "hidden": true, "row": 59, "width": 4}, "report_default": {}}}}

form_items = [

Box([plabel], layout=form_item_layout),

Box([npCharge], layout=form_item_layout),

Box([nLigand], layout=form_item_layout),

Box([salt], layout=form_item_layout)

]

form_items_computing = [

Box([clabel], layout=form_item_layout),

Box([clustorMode], layout=form_item_layout)

]

form_items_output = [

Box([olabel], layout=form_item_layout),

Box([imageSlider], layout=form_item_layout),

Box([imageSliderabout], layout=form_item_layout)

]

physical_para = Box(form_items, layout=box_layout_phisical)

computing_para = Box(form_items_computing, layout=box_layout_computing)

output_para = Box(form_items_output, layout=box_layout_output)

# + extensions={"jupyter_dashboards": {"version": 1, "views": {"grid_default": {"col": 4, "height": 6, "hidden": true, "row": 43, "width": 8}, "report_default": {"hidden": false}}}}

about=widgets.Textarea(