code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/tjHendrixx/DS-Unit-1-Sprint-1-Dealing-With-Data/blob/master/DS_Unit_1_Sprint_Challenge_1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="NooAiTdnafkz" colab_type="text"

# # Data Science Unit 1 Sprint Challenge 1

#

# ## Loading, cleaning, visualizing, and analyzing data

#

# In this sprint challenge you will look at a dataset of the survival of patients who underwent surgery for breast cancer.

#

# http://archive.ics.uci.edu/ml/datasets/Haberman%27s+Survival

#

# Data Set Information:

# The dataset contains cases from a study that was conducted between 1958 and 1970 at the University of Chicago's Billings Hospital on the survival of patients who had undergone surgery for breast cancer.

#

# Attribute Information:

# 1. Age of patient at time of operation (numerical)

# 2. Patient's year of operation (year - 1900, numerical)

# 3. Number of positive axillary nodes detected (numerical)

# 4. Survival status (class attribute)

# -- 1 = the patient survived 5 years or longer

# -- 2 = the patient died within 5 year

#

# Sprint challenges are evaluated based on satisfactory completion of each part. It is suggested you work through it in order, getting each aspect reasonably working, before trying to deeply explore, iterate, or refine any given step. Once you get to the end, if you want to go back and improve things, go for it!

# + [markdown] id="5wch6ksCbJtZ" colab_type="text"

# ## Part 1 - Load and validate the data

#

# - Load the data as a `pandas` data frame.

# - Validate that it has the appropriate number of observations (you can check the raw file, and also read the dataset description from UCI).

# - Validate that you have no missing values.

# - Add informative names to the features.

# - The survival variable is encoded as 1 for surviving >5 years and 2 for not - change this to be 0 for not surviving and 1 for surviving >5 years (0/1 is a more traditional encoding of binary variables)

#

# At the end, print the first five rows of the dataset to demonstrate the above.

# + id="83WvQtjwuu2L" colab_type="code" colab={}

import requests

import pandas as pd

import io

urlData = requests.get('http://archive.ics.uci.edu/ml/machine-learning-databases/haberman/haberman.data').content

rawData = pd.read_csv(io.StringIO(urlData.decode('utf-8')), header=None)

df = rawData

# + id="9p3lAXTbu4Qc" colab_type="code" outputId="1bb3258a-5c0b-44ec-c537-ace2606fb62d" colab={"base_uri": "https://localhost:8080/", "height": 204}

df.head()

# + id="IOI_XtMTu9cV" colab_type="code" outputId="44ff52b0-a634-4937-eeac-c14a8732fa45" colab={"base_uri": "https://localhost:8080/", "height": 102}

df.isnull().sum()

# + id="FNd4xXv2vOhJ" colab_type="code" colab={}

df.columns = ['Age', 'Year of Operation', '# of +Aux Nodes', 'Survival Status']

# + id="i-9S7R8AvYlk" colab_type="code" outputId="b4381950-8c10-4f26-a4db-561134960c63" colab={"base_uri": "https://localhost:8080/", "height": 204}

df.head()

# + id="hRK79Pduvb-K" colab_type="code" outputId="9bba5e69-79ee-4570-b033-6360a035afb4" colab={"base_uri": "https://localhost:8080/", "height": 102}

df.dtypes

# + id="v5KffBrxvfzu" colab_type="code" colab={}

df['Survival Status'].replace(1,0, inplace=True)

# + id="p4qzXA3Kvi-8" colab_type="code" colab={}

df['Survival Status'].replace(2,1, inplace=True)

# + id="schTPYeRvlxt" colab_type="code" outputId="381494a3-3bc9-40b1-d70f-385bbf896fd9" colab={"base_uri": "https://localhost:8080/", "height": 204}

df.head()

# + [markdown] id="G7rLytbrO38L" colab_type="text"

# ## Part 2 - Examine the distribution and relationships of the features

#

# Explore the data - create at least *2* tables (can be summary statistics or crosstabulations) and *2* plots illustrating the nature of the data.

#

# This is open-ended, so to remind - first *complete* this task as a baseline, then go on to the remaining sections, and *then* as time allows revisit and explore further.

#

# Hint - you may need to bin some variables depending on your chosen tables/plots.

# + id="QQvvBd8I2pFL" colab_type="code" colab={}

import numpy as np

# + id="GIdsc0-ey-Yo" colab_type="code" outputId="858647d1-089a-44cb-d81c-b13d31b0e10d" colab={"base_uri": "https://localhost:8080/", "height": 297}

df.describe()

# + id="bdGZFLnNzKXP" colab_type="code" outputId="fdbc2bb9-723c-4d8f-d354-99f767ce1412" colab={"base_uri": "https://localhost:8080/", "height": 171}

pd.crosstab(df['Survival Status'], df['# of +Aux Nodes'])

# + id="EPfyrf5A4ALQ" colab_type="code" outputId="ef976b82-19e9-4792-8afd-dfdfceb151d4" colab={"base_uri": "https://localhost:8080/", "height": 1041}

pd.crosstab(df['# of +Aux Nodes'], df['Survival Status'])

# + id="IAkllgCIFVj0" colab_type="code" colab={}

import matplotlib.pyplot as plt

import numpy as np

# + id="dcTamcbbvwPn" colab_type="code" outputId="6b3e647f-dde8-4c68-b122-628d3e0a7750" colab={"base_uri": "https://localhost:8080/", "height": 300}

y = df['Age']

x = df['Survival Status']

plt.ylabel('AGE')

plt.xlabel('Survival Status')

plt.bar(x,y)

plt.show

# + id="lMMBBz_Qv0yZ" colab_type="code" outputId="36ad4044-f145-4f45-9966-fd0bc5846230" colab={"base_uri": "https://localhost:8080/", "height": 300}

y1 = df['# of +Aux Nodes']

plt.ylabel('# of +Aux Nodes')

plt.xlabel('Age')

plt.plot(y,y1)

plt.show

# + id="6dsfo1-Sw0ot" colab_type="code" outputId="de7e17f1-bb23-444c-95a3-2545f346b3a4" colab={"base_uri": "https://localhost:8080/", "height": 300}

plt.xlabel('# of +Aux Nodes')

plt.ylabel('Survival Status')

plt.bar(y1,x)

plt.show

# + [markdown] id="ZM8JckA2bgnp" colab_type="text"

# ## Part 3 - Analysis and Interpretation

#

# Now that you've looked at the data, answer the following questions:

#

# - What is at least one feature that looks to have a positive relationship with survival?

# - What is at least one feature that looks to have a negative relationship with survival?

# - How are those two features related with each other, and what might that mean?

#

# Answer with text, but feel free to intersperse example code/results or refer to it from earlier.

# + [markdown] id="DUjCb6xAxWq_" colab_type="text"

#

#

# 1. The # of +Aux Nodes when above 25 the chances of survival past 5 years drops sharply.

#

#

# 2. When the number of # of +Aux Nodes is below 25 the chances of Survival increase greatly.

#

# 3. The presence of +Aux Nodes can be a determining factor in whether a surgery is a successful one or not .

#

#

#

#

#

#

#

# + [markdown] id="rShhctzfxfZe" colab_type="text"

#

| DS_Unit_1_Sprint_Challenge_1.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# **Notas para contenedor de docker:**

# Comando de docker para ejecución de la nota de forma local:

#

# nota: cambiar `dir_montar` por la ruta de directorio que se desea mapear a `/datos` dentro del contenedor de docker.

#

# ```

# dir_montar=<ruta completa de mi máquina a mi directorio>#aquí colocar la ruta al directorio a montar, por ejemplo:

# #dir_montar=/Users/erick/midirectorio.

# ```

#

# Ejecutar:

#

# ```

# $docker run --rm -v $dir_montar:/datos --name jupyterlab_prope_r_kernel_tidyverse -p 8888:8888 -d palmoreck/jupyterlab_prope_r_kernel_tidyverse:2.1.4

#

# ```

# Ir a `localhost:8888` y escribir el password para jupyterlab: `<PASSWORD>`

#

# Detener el contenedor de docker:

#

# ```

# docker stop jupyterlab_prope_r_kernel_tidyverse

# ```

#

# Documentación de la imagen de docker `palmoreck/jupyterlab_prope_r_kernel_tidyverse:2.1.4` en [liga](https://github.com/palmoreck/dockerfiles/tree/master/jupyterlab/prope_r_kernel_tidyverse).

# ---

# Para ejecución de la nota usar:

#

# [docker](https://www.docker.com/) (instalación de forma **local** con [Get docker](https://docs.docker.com/install/)) y ejecutar comandos que están al inicio de la nota de forma **local**.

#

# O bien dar click en alguno de los botones siguientes:

# [](https://mybinder.org/v2/gh/palmoreck/dockerfiles-for-binder/jupyterlab_prope_r_kernel_tidyerse?urlpath=lab/tree/Propedeutico/Python/clases/1_introduccion/4_modulos_numpy_matplotlib.ipynb) esta opción crea una máquina individual en un servidor de Google, clona el repositorio y permite la ejecución de los notebooks de jupyter.

# [](https://repl.it/languages/python3) esta opción no clona el repositorio, no ejecuta los notebooks de jupyter pero permite ejecución de instrucciones de Python de forma colaborativa con [repl.it](https://repl.it/). Al dar click se crearán nuevos ***repl*** debajo de sus users de ***repl.it***.

#

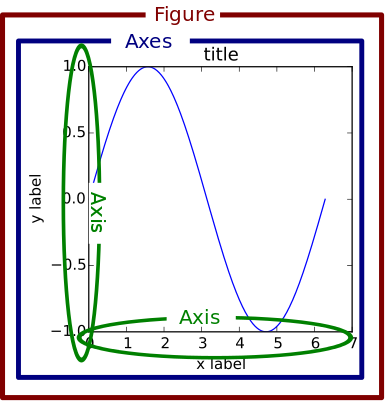

# # Revisar y ejecutar los ejemplos de las secciones 1.5 a 1.8 del libro de texto "Numerical Methods in Engineering with Python3" de <NAME>

# Temas:

#

# * Módulo numpy.

# * Gráficas con matplotlib.pyplot.

# * Scope de variables.

# * Escritura y ejecución de programas.

#siguiente ejemplo de la sección 1.6 del libro de texto

import matplotlib.pyplot as plt

from numpy import arange,sin,cos

x = arange(0.0,6.2,0.2)

x

plt.plot(x,sin(x),'o-',x,cos(x),'^-')

plt.xlabel('x')

plt.legend(('sine','cosine'),loc = 0)

plt.grid(True)

plt.show()

plt.subplot(2,1,1)

plt.plot(x,sin(x),'o-')

plt.xlabel('x');plt.ylabel('sin(x)')

plt.grid(True)

plt.subplot(2,1,2)

plt.plot(x,cos(x),'^-')

plt.xlabel('x');plt.ylabel('cos(x)')

plt.grid(True)

| Python/clases/1_introduccion/4_modulos_numpy_matplotlib.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.0.0

# language: julia

# name: julia-1.0

# ---

using LatPhysBandstructures

using LatticePhysics

using HDF5

using LatPhysBandstructuresPlottingPyPlot

# # Bond Hamiltonians

# +

function saveBondHamiltonian(

hb :: HB,

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

;

append :: Bool = false

) where {L,N,HB<:AbstractBondHamiltonian{L,N}}

# print an error because implementation for concrete type is missing

error("not implemented interface function 'saveBondHamiltonian' for bond Hamiltonian type " * string(typeof(hb)))

end

# convinience function for standard type

function loadBondHamiltonian(

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

)

# find out the type

T = getBondHamiltonianType(Val(Symbol(h5readattr(fn, group)["type"])))

# return the loaded bond hamiltonian

return loadBondHamiltonian(T, fn, group)

end

function getBondHamiltonianType(

::Val{HB}

) where {HB}

error("Type $(HB) could not be identified, i.e. getBondHamiltonianType(Val{:$(HB)}) is missing")

end

# +

function getBondHamiltonianType(

::Val{:BondHoppingHamiltonianSimple}

)

# return the type

return BondHoppingHamiltonianSimple

end

function saveBondHamiltonian(

hb :: HB,

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

;

append :: Bool = false

) where {L,HB<:BondHoppingHamiltonianSimple{L}}

# determine the mode based on if one wants to append stuff

if append

mode = "r+"

else

mode = "w"

end

# open the file in mode

h5open(fn, mode) do file

# create the group in which the bonds are saved

group_hb = g_create(file, group)

# save the type identifier

attrs(group_hb)["type"] = "BondHoppingHamiltonianSimple"

# save the parameters

attrs(group_hb)["N"] = 1

attrs(group_hb)["L"] = string(L)

# save the Float64 coupling

group_hb["coupling"] = hb.coupling

end

# return nothing

return nothing

end

function loadBondHamiltonian(

::Type{HB},

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

) where {LI,HB<:Union{BondHoppingHamiltonianSimple{LI},BondHoppingHamiltonianSimple}}

# read attribute data

attr_data = h5readattr(fn, group)

# determine D based on this

L = Meta.eval(Meta.parse(attr_data["L"]))

N = attr_data["N"]

# load all remaining data

coupling = h5read(fn, group*"/coupling")

# return the new bond hamiltonian

return BondHoppingHamiltonianSimple{L}(coupling)

end

# -

hb = BondHoppingHamiltonianSimple{Int64}(3.0)

saveBondHamiltonian(hb, "test.h5")

hb

loadBondHamiltonian("test.h5")

# +

function getBondHamiltonianType(

::Val{:BondHoppingHamiltonianSimpleNN}

)

# return the type

return BondHoppingHamiltonianSimpleNN

end

function saveBondHamiltonian(

hb :: HB,

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

;

append :: Bool = false

) where {L,HB<:BondHoppingHamiltonianSimpleNN{L}}

# determine the mode based on if one wants to append stuff

if append

mode = "r+"

else

mode = "w"

end

# open the file in mode

h5open(fn, mode) do file

# create the group in which the bonds are saved

group_hb = g_create(file, group)

# save the type identifier

attrs(group_hb)["type"] = "BondHoppingHamiltonianSimpleNN"

# save the parameters

attrs(group_hb)["N"] = 1

attrs(group_hb)["L"] = string(L)

# save the Float64 coupling

group_hb["coupling"] = hb.coupling

if L <: Number

group_hb["label"] = hb.label

else

group_hb["label"] = string(hb.label)

end

end

# return nothing

return nothing

end

function loadBondHamiltonian(

::Type{HB},

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

) where {LI,HB<:Union{BondHoppingHamiltonianSimpleNN{LI},BondHoppingHamiltonianSimpleNN}}

# read attribute data

attr_data = h5readattr(fn, group)

# determine D based on this

L = Meta.eval(Meta.parse(attr_data["L"]))

N = attr_data["N"]

# load coupling

coupling = h5read(fn, group*"/coupling")

# load label

label = L(h5read(fn, group*"/label"))

# return the new bond hamiltonian

return BondHoppingHamiltonianSimpleNN{L}(coupling, label)

end

# -

hb = BondHoppingHamiltonianSimpleNN{String}(3.0, "test")

saveBondHamiltonian(hb, "test.h5")

hb

loadBondHamiltonian("test.h5")

# +

function getBondHamiltonianType(

::Val{:BondHoppingHamiltonianDict}

)

# return the type

return BondHoppingHamiltonianDict

end

function saveBondHamiltonian(

hb :: HB,

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

;

append :: Bool = false

) where {L,HB<:BondHoppingHamiltonianDict{L}}

# determine the mode based on if one wants to append stuff

if append

mode = "r+"

else

mode = "w"

end

# open the file in mode

h5open(fn, mode) do file

# create the group in which the bonds are saved

group_hb = g_create(file, group)

# save the type identifier

attrs(group_hb)["type"] = "BondHoppingHamiltonianDict"

# save the parameters

attrs(group_hb)["N"] = 1

attrs(group_hb)["L"] = string(L)

# save the labels

if L <: Number

group_hb["labels"] = [p[1] for p in hb.couplings]

else

group_hb["labels"] = [string(p[1]) for p in hb.couplings]

end

# save the Float64 couplings

group_hb["couplings"] = [p[2] for p in hb.couplings]

end

# return nothing

return nothing

end

function loadBondHamiltonian(

::Type{HB},

fn :: AbstractString,

group :: AbstractString = "bond_hamiltonian"

) where {LI,HB<:Union{BondHoppingHamiltonianDict{LI},BondHoppingHamiltonianDict}}

# read attribute data

attr_data = h5readattr(fn, group)

# determine D based on this

L = Meta.eval(Meta.parse(attr_data["L"]))

N = attr_data["N"]

# load coupling

couplings = h5read(fn, group*"/couplings")

# load label

labels = L.(h5read(fn, group*"/labels"))

# return the new bond hamiltonian

return BondHoppingHamiltonianDict{L}(Dict([(labels[i], couplings[i]) for i in 1:length(labels)]))

end

# -

hb = BondHoppingHamiltonianDict{String}(Dict("x"=>0.5, "y"=>-0.5))

saveBondHamiltonian(hb, "test.h5")

hb

loadBondHamiltonian("test.h5")

# # Hamiltonian

# +

function saveHamiltonian(

h :: H,

fn :: AbstractString,

group :: AbstractString = "hamiltonian"

;

append :: Bool = false

) where {L,UC<:AbstractUnitcell,N,HB<:AbstractBondHamiltonian{L,N}, H<:Hamiltonian{L,UC,HB}}

# determine the mode based on if one wants to append stuff

if append

mode = "r+"

else

mode = "w"

end

# group for bond hamiltonian

group_hb = group*"/bond_hamiltonian"

# group for unitcell

group_uc = group*"/unitcell"

# open the file in mode

h5open(fn, mode) do file

# create the group in which the bonds are saved

group_h = g_create(file, group)

# save the parameters

attrs(group_h)["N"] = N

attrs(group_h)["L"] = string(L)

# save the groups

attrs(group_h)["bond_hamiltonian"] = group_hb

attrs(group_h)["unitcell"] = group_uc

end

# save bond hamiltonian and unitcell in respective groups (append!)

saveBondHamiltonian(bondHamiltonian(h), fn, group_hb, append=true)

saveUnitcell(unitcell(h), fn, group_uc, append=true)

# return nothing

return nothing

end

function loadHamiltonian(

::Type{H},

fn :: AbstractString,

group :: AbstractString = "hamiltonian"

) where {L,UC<:AbstractUnitcell,N,HB<:AbstractBondHamiltonian{L,N}, H<:Union{Hamiltonian{L,UC,HB}, Hamiltonian}}

# read attribute data

attr_data = h5readattr(fn, group)

# load bond hamiltonian

hb = loadBondHamiltonian(fn, attr_data["bond_hamiltonian"])

# load unitcell

uc = loadUnitcell(fn, attr_data["unitcell"])

# return the new hamiltonian

return Hamiltonian(uc, hb)

end

function loadHamiltonian(

fn :: AbstractString,

group :: AbstractString = "hamiltonian"

)

return loadHamiltonian(Hamiltonian, fn, group)

end

# -

uc = getUnitcellHoneycomb(Symbol, String)

hb = BondHoppingHamiltonianSimpleNN{String}(2.0, "l")

h = Hamiltonian(uc, hb)

saveHamiltonian(h, "test.h5")

h

loadHamiltonian("test.h5")

# # Bandstructure

# +

function saveBandstructure(

bs :: BS,

fn :: AbstractString,

group :: AbstractString = "bandstructure"

;

append :: Bool = false

) where {RP, P<:AbstractReciprocalPath{RP}, L,UC,HB,H<:AbstractHamiltonian{L,UC,HB}, BS<:Bandstructure{P,H}}

# determine the mode based on if one wants to append stuff

if append

mode = "r+"

else

mode = "w"

end

# group for hamiltonian

group_h = group*"/hamiltonian"

# group for path

group_p = group*"/path"

# group for energy bands

group_e = group*"/bands"

# open the file in mode

h5open(fn, mode) do file

# create the group in which the bonds are saved

group_bs = g_create(file, group)

# write number of segments

attrs(group_bs)["segments"] = length(bs.bands)

# save the groups

attrs(group_bs)["hamiltonian"] = group_h

attrs(group_bs)["path"] = group_p

attrs(group_bs)["bands"] = group_e

end

# save hamiltonian

saveHamiltonian(hamiltonian(bs), fn, group_h, append=true)

# save reciprocal path

saveReciprocalPath(path(bs), fn, group_p, append=true)

# save the individual segments within the bands group

h5open(fn, "r+") do file

# create the group in which the bonds are saved

group_bands = g_create(file, group_e)

# save the individual segments

for si in 1:length(bs.bands)

s = bs.bands[si]

# reformat segment and save as matrix

#segmat = [s[j][i] for i in 1:length(s[1]) for j in 1:length(s)]

segmat = zeros(length(s[1]), length(s))

for i in 1:length(s[1])

for j in 1:length(s)

segmat[i,j] = s[j][i]

end

end

# save

group_bands["segment_$(si)"] = segmat

end

end

# return nothing

return nothing

end

function loadBandstructure(

::Type{BS},

fn :: AbstractString,

group :: AbstractString = "bandstructure"

) where {RP, P<:AbstractReciprocalPath{RP}, L,UC,HB,H<:AbstractHamiltonian{L,UC,HB}, BS<:Union{Bandstructure, Bandstructure{P,H}}}

# read attribute data

attr_data = h5readattr(fn, group)

# group for hamiltonian

group_h = attr_data["hamiltonian"]

# group for path

group_p = attr_data["path"]

# group for energy bands

group_e = attr_data["bands"]

# load number of segments

segments = attr_data["segments"]

# load hamiltonian

h = loadHamiltonian(fn, group_h)

# load path

p = loadReciprocalPath(fn, group_p)

# load all energy bands

bds = Vector{Vector{Float64}}[]

# iterate over all expected segments

for s in 1:segments

# load segment as matrix

segmat = h5read(fn, group_e*"/segment_$(s)")

# reformat segment and push to list

segment = Vector{Float64}[

Float64[segmat[i,b] for i in 1:size(segmat,1)]

for b in 1:size(segmat,2)

]

# push to the list

push!(bds, segment)

end

# return the new Bandstructure

bs = getBandstructure(h,p,recalculate=false)

bs.bands = bds

return bs

end

function loadBandstructure(

fn :: AbstractString,

group :: AbstractString = "bandstructure"

)

return loadBandstructure(Bandstructure, fn, group)

end

# -

uc = getUnitcellHoneycomb()

bs = getBandstructure(uc, :Gamma, :K, :M, :Gamma, :Mp);

saveBandstructure(bs, "test.h5")

plotBandstructure(bs);

bsp = loadBandstructure("test.h5")

plotBandstructure(bsp);

| devel/saveload.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Probability | IMDB 5000 Movies | <NAME>

import pandas as pd

import numpy as np

import matplotlib as plt

from datetime import date

df = pd.read_csv('./movie_metadata.csv', error_bad_lines=False)

df = df.drop_duplicates()

df.head()

# # What's the probability that a movie was longer than an hour and a half? Two hours?

more_than_90 = df[df.duration > 90.0]

prob_more_than_90 = more_than_90.count() / df.count()

'{:.2f}%'.format(prob_more_than_90['duration'] * 100)

more_than_120 = df[df.duration > 120.0]

prob_more_than_120 = more_than_120.count() / df.count()

'{:.2f}%'.format(prob_more_than_120['duration'] * 100)

df.duration[(df.duration < 200) & (df.duration > 30)].groupby(df.duration).count().plot()

# # What's the probability that a movie was directed by <NAME>?

director_steve = df[df.director_name == '<NAME>']

prob_director_steve = director_steve.count() / df.count()

'{:.2f}%'.format(prob_director_steve['director_name'] * 100)

# # What's the probability that a movie directed by <NAME> will gross under budget?

director_clint = df[df.director_name == '<NAME>']

gross_clint = director_clint[director_clint.gross < director_clint.budget]

prob_gross_clint = gross_clint.count() / director_clint.count()

'{:.2f}%'.format(prob_gross_clint['gross'] * 100)

# # What's the probability that a movie generally grossed more than its budget?

gross = df[df.gross > df.budget]

prob_gross_df = gross.count() / df.count()

'{:.2f}%'.format(prob_gross_df['gross'] * 100)

# # What's the probability that a movie grossed over the average gross of this data set?

avg_gross = df.gross.mean()

over_gross = df[df.gross > avg_gross].count()

prob_over_gross = over_gross / df.count()

'{:.2f}%'.format(prob_over_gross['gross'] * 100)

# # For ratings we'll consider a movie with at least a 6/10 to be worth renting, if not seeing in theaters. A false positive would be a movie that was highly-rated but did poorly in the box office (gross < budget). A false negative would be a movie that was poorly-rated but did great in the box office (gross > budget).

# # In the IMDB dataset, what are the false positive and false negative rates? Can you provide some examples of each?

rating_high = df[df.imdb_score >= 6.0]

rating_low = df[df.imdb_score < 6.0]

rating_high[rating_high.gross < rating_high.budget].head()

rating_low[rating_low.gross > rating_low.budget].head()

# # If I’m a production studio exec and <NAME> is starring in my movie but I’m feeling uncertain about whether we should keep him (will he make as much money as we want?), tell me should I keep him in the movie or switch him out for <NAME>?

actor_tom = df[(df.actor_1_name == '<NAME>') | (df.actor_2_name == '<NAME>') | (df.actor_3_name == '<NAME>')]

gross_tom = actor_tom[actor_tom.gross > actor_tom.budget]

prob_gross_tom = gross_tom.count() / actor_tom.count()

'{:.2f}%'.format(prob_gross_tom['gross'] * 100)

actor_ford = df[(df.actor_1_name == '<NAME>') | (df.actor_2_name == '<NAME>') | (df.actor_3_name == '<NAME>')]

gross_ford = actor_ford[actor_ford.gross > actor_ford.budget]

prob_gross_ford = gross_ford.count() / actor_ford.count()

'{:.2f}%'.format(prob_gross_ford['gross'] * 100)

# # Same as above, but I’m judging on the ratings of the movie instead of the gross/budget.

actor_tom = df[(df.actor_1_name == '<NAME>') | (df.actor_2_name == '<NAME>') | (df.actor_3_name == '<NAME>')]

actor_tom['imdb_score'].mean()

actor_ford = df[(df.actor_1_name == '<NAME>') | (df.actor_2_name == '<NAME>') | (df.actor_3_name == '<NAME>')]

actor_ford['imdb_score'].mean()

# # What’s the probability that a movie’s length will be between 1hr 10mins and 1h 30mins?

between_70_90 = df[(df.duration < 90.0) & (df.duration > 70.0)]

prob_between_70_90 = between_70_90.count() / df.count()

'{:.2f}%'.format(prob_between_70_90['duration'] * 100)

# # How does the distribution of movie budgets compare to the movie gross values?

df[['gross', 'budget']][df.budget < 1e9].plot(x='budget', y='gross', kind='scatter')

# # Which genre trends toward the highest gross-to-budget ratio? You may have to do some extra parsing to answer this question.

df['gross_budget'] = df['gross'] / df['budget']

genres_table = df[['genres', 'gross_budget']].groupby(df['genres']).mean()

genres_table.sort_values('gross_budget', ascending=False).head()

# +

# Megan did this

genre_gross = df[['genres', 'gross_budget']]

all_genres = list(set('|'.join(df.genres.values).split('|')))

groups = genre_gross.genres.map(lambda cell: tuple(genre in cell for genre in all_genres))

print('presence of specific genres in a movie\n')

groups.head()

# -

genre_gross.index = pd.MultiIndex.from_tuples(groups.values, names=all_genres)

print('convert tuples to indexes')

genre_gross.head() # note: all the indexes are actually filled!

# Create a new data from the new genre data

genre_data = {'gross_budget_mean': [], 'genre': []}

for g in all_genres:

genre_data['gross_budget_mean'].append(genre_gross.xs(True, level=g).gross_budget.mean())

genre_data['genre'].append(g)

pd.DataFrame(genre_data).sort_values('gross_budget_mean', ascending=False).head()

# Thanks Megan

pd.DataFrame(genre_data).sort_values('gross_budget_mean', ascending=False).plot(x='genre', kind='bar')

# # <NAME> is known for starring in some pretty bad movies. Are his movies statistically significantly worse (i.e. in rating) than the rest of the IMDB 5000+?

df['imdb_score'].mean()

actor_nick = df[(df.actor_1_name == '<NAME>') | (df.actor_2_name == '<NAME>') | (df.actor_3_name == '<NAME>')]

actor_nick['imdb_score'].mean()

# # Have any years grossed a statistically-significant higher amount than the other years?

df[['gross', 'title_year']].groupby(df.title_year).sum().plot()

| imdb-notebook.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: solaris

# language: python

# name: solaris

# ---

# # Scoring model performance with the `solaris` python API

#

# This tutorial describes how to run evaluation of a proposal (CSV or .geojson) for a single chip against a ground truth (CSV or .geojson) for the same chip.

#

# ---

# ## CSV Eval

#

# ### Steps

# 1. Imports

# 2. Load ground truth CSV

# 3. Load proposal CSV

# 4. Perform evaluation

#

# ### Imports

#

# For this test case we will use the `eval` submodule within `solaris`.

# imports

import os

import solaris as sol

from solaris.data import data_dir

import pandas as pd # just for visualizing the outputs

# ---

#

#

# ### Load ground truth CSV

#

# We will first instantiate an `Evaluator()` object, which is the core class `eval` uses for comparing predicted labels to ground truth labels. `Evaluator()` takes one argument - the path to the CSV or .geojson ground truth label object. It can alternatively accept a pre-loaded `GeoDataFrame` of ground truth label geometries.

# +

ground_truth_path = os.path.join(data_dir, 'sample_truth.csv')

evaluator = sol.eval.base.Evaluator(ground_truth_path)

evaluator

# -

# At this point, `evaluator` has the following attributes:

#

# - `ground_truth_fname`: the filename corresponding to the ground truth data. This is simply `'GeoDataFrame'` if a GDF was passed during instantiation.

#

# - `ground_truth_GDF`: GeoDataFrame-formatted geometries for the ground truth polygon labels.

#

# - `ground_truth_GDF_Edit`: A deep copy of `eval_object.ground_truth_GDF` which is edited during the process of matching ground truth label polygons to proposals.

#

# - `ground_truth_sindex`: The RTree/libspatialindex spatial index for rapid spatial referencing.

#

# - `proposal_GDF`: An _empty_ GeoDataFrame instantiated to hold proposals later.

#

# ---

#

# ### Load proposal CSV

#

# Next we will load in the proposal CSV file. Note that the `proposalCSV` flag must be set to true for CSV data. If the CSV contains confidence column(s) that indicate confidence in proprosals, the name(s) of the column(s) should be passed as a list of strings with the `conf_field_list` argument; because no such column exists in this case, we will simply pass `conf_field_list=[]`. There are additional arguments available (see [the method documentation](https://cw-eval.readthedocs.io/en/latest/api.html#cw_eval.baseeval.EvalBase.load_proposal)) which can be used for multi-class problems; those will be covered in another recipe. The defaults suffice for single-class problems.

proposals_path = os.path.join(data_dir, 'sample_preds.csv')

evaluator.load_proposal(proposals_path, proposalCSV=True, conf_field_list=[])

# ---

#

#

# ### Perform evaluation

#

# Evaluation iteratively steps through the proposal polygons in `eval_object.proposal_GDF` and determines if any of the polygons in `eval_object.ground_truth_GDF_Edit` have IoU overlap > `miniou` (see [the method documentation](https://cw-eval.readthedocs.io/en/latest/api.html#cw_eval.baseeval.EvalBase.eval_iou)) with that proposed polygon. If one does, that proposal polygon is scored as a true positive. The matched ground truth polygon with the highest IoU (in case multiple had IoU > `miniou`) is removed from `eval_object.ground_truth_GDF_Edit` so it cannot be matched against another proposal. If no ground truth polygon matches with IoU > `miniou`, that proposal polygon is scored as a false positive. After iterating through all proposal polygons, any remaining ground truth polygons in `eval_object.ground_truth_GDF_Edit` are scored as false negatives.

#

# There are several additional arguments to this method related to multi-class evaluation which will be covered in a later recipe. See [the method documentation](https://cw-eval.readthedocs.io/en/latest/api.html#cw_eval.baseeval.EvalBase.eval_iou) for usage.

#

# The prediction outputs a `list` of `dict`s for each class evaluated (only one `dict` in this single-class case). The `dict`(s) have the following keys:

#

# - `'class_id'`: The class being scored in the dict, `'all'` for single-class scoring.

#

# - `'iou_field'`: The name of the column in `eval_object.proposal_GDF` for the IoU score for this class. See [the method documentation](https://cw-eval.readthedocs.io/en/latest/api.html#cw_eval.baseeval.EvalBase.eval_iou) for more information.

#

# - `'TruePos'`: The number of polygons in `eval_object.proposal_GDF` that matched a polygon in `eval_object.ground_truth_GDF_Edit`.

#

# - `'FalsePos'`: The number of polygons in `eval_object.proposal_GDF` that had no match in `eval_object.ground_truth_GDF_Edit`.

#

# - `'FalseNeg'`: The number of polygons in `eval_object.ground_truth_GDF_Edit` that had no match in `eval_object.proposal_GDF`.

#

# - `'Precision'`: The [precision statistic](https://en.wikipedia.org/wiki/Precision_and_recall) for IoU between the proposals and the ground truth polygons.

#

# - `'Recall'`: The [recall statistic](https://en.wikipedia.org/wiki/Precision_and_recall) for IoU between the proposals and the ground truth polygons.

#

# - `'F1Score'`: Also known as the [SpaceNet Metric](https://medium.com/the-downlinq/the-spacenet-metric-612183cc2ddb), the [F<sub>1</sub> score](https://en.wikipedia.org/wiki/F1_score) for IoU between the proposals and the ground truth polygons.

evaluator.eval_iou(calculate_class_scores=False)

# In this case, the score is perfect because the polygons in the ground truth CSV and the proposal CSV are identical. At this point, a new proposal CSV can be loaded (for example, for a new nadir angle at the same chip location) and scoring can be repeated.

# ---

#

# ## GeoJSON Eval

#

# The same operation can be completed with .geojson-formatted ground truth and proposal files. See the example below, and see the detailed explanation above for a description of each step's operations.

# +

ground_truth_geojson = os.path.join(data_dir, 'gt.geojson')

proposal_geojson = os.path.join(data_dir, 'pred.geojson')

evaluator = sol.eval.base.Evaluator(ground_truth_geojson)

evaluator.load_proposal(proposal_geojson, proposalCSV=False, conf_field_list=[])

evaluator.eval_iou(calculate_class_scores=False)

# -

# (Note that the above comes from a different chip location and different proposal than the CSV example, hence the difference in scores)

| docs/tutorials/notebooks/api_evaluation_tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: deeprl

# language: python

# name: deeprl

# ---

# +

import torch

from unityagents import UnityEnvironment

import numpy as np

from ddpg_agent import Agent

env = UnityEnvironment(file_name='.\Reacher_Windows_x86_64_20agents\Reacher')

# get the default brain

brain_name = env.brain_names[0]

brain = env.brains[brain_name]

env_info = env.reset(train_mode=False)[brain_name]

# number of agents

num_agents = len(env_info.agents)

print('Number of agents:', num_agents)

# size of each action

action_size = brain.vector_action_space_size

print('Size of each action:', action_size)

# examine the state space

states = env_info.vector_observations

state_size = states.shape[1]

print("Comment lines 91,95-101 in ddpg_agent.py before running this cell")

agent = Agent(state_size=state_size, action_size=action_size,num_agents=num_agents, random_seed=0)

agent.actor_local.load_state_dict(torch.load('checkpoint_actor.pth'))

agent.critic_local.load_state_dict(torch.load('checkpoint_critic.pth'))

states = env_info.vector_observations

for t in range(2000):

actions = agent.act(states, add_noise=False)

actions = np.clip(actions, -1, 1) # all actions between -1 and 1

env_info = env.step(actions)[brain_name] # send all actions to tne environment

next_states = env_info.vector_observations # get next state (for each agent)

rewards = env_info.rewards # get reward (for each agent)

dones = env_info.local_done # see if episode finished

states = next_states # roll over states to next time step

if np.any(dones): # exit loop if episode finished

break

env.close()

# -

| DDPG Continuous Control/Results/watch_agent.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# # 1次元有限要素法における2次要素の剛性行列の確認

#

# 1次元有限要素法の2次要素の剛性行列が必要になったためGetfem++で作成しました。

import getfem as gf

import numpy as np

# メッシュ作成の際に、'regular simplices'オプションで次数を2次にします。

m = gf.Mesh('regular simplices', np.arange(0, 2, 1),'degree',2)

print m

# 変位用オブジェクトとデータ用オブジェクトを作成します。

# +

mfu = gf.MeshFem(m, 1)

mfu.set_fem(gf.Fem('FEM_PK(1,2)'))

print mfu

mfd = gf.MeshFem(m, 1)

mfd.set_fem(gf.Fem('FEM_PK(1,2)'))

print mfd

# -

# 積分法は'GAUSS1D'の2次とします。

mim = gf.MeshIm(m, gf.Integ('IM_GAUSS1D(2)'))

print mim

# 剛性行列は以下の様になりました。

Lambda = 1.000

Mu = 1.000

K = gf.asm_linear_elasticity(mim, mfu, mfd, np.repeat([Lambda], mfu.nbdof()), np.repeat([Mu], mfu.nbdof()))

K.full()

# 今回は応力の計算までやってみます。

P = m.pts()

print P

# 1要素で上端に力を加え、下端を固定にします。

cbot = (abs(P[0,:]-np.min(P)) < 1.000e-06)

ctop = (abs(P[0,:]-np.max(P)) < 1.000e-06)

print P[0,:]

print cbot

print ctop

pidbot = np.compress(cbot,range(0,m.nbpts()))

pidtop = np.compress(ctop,range(0,m.nbpts()))

print pidbot

print pidtop

fbot = m.faces_from_pid(pidbot)

ftop = m.faces_from_pid(pidtop)

print fbot

print ftop

BOTTOM = 1

TOP = 2

m.set_region(BOTTOM, fbot)

m.set_region(TOP, ftop)

print m

nbdof = mfd.nbdof()

print nbdof

F = gf.asm_boundary_source(TOP, mim, mfu, mfd, np.repeat([[1.0]], nbdof,1))

print F

(H,R) = gf.asm_dirichlet(BOTTOM, mim, mfu, mfd, mfd.eval('[1]'), mfd.eval('[0]'))

print 'H = ', H.full()

print 'R = ', R

(N, U0) = H.dirichlet_nullspace(R)

print 'N = ', N.full()

print 'U0 = ', U0

Nt = gf.Spmat('copy', N)

Nt.transpose()

print 'Nt = ', Nt.full()

# 準備ができたので連立方程式を解きます。

KK = Nt*K*N

print 'KK = ', KK.full()

FF = Nt*F

print 'FF = ', FF

P = gf.Precond('ildlt',KK)

UU = gf.linsolve_cg(KK,FF,P)

U = N*UU+U0

print U

# gf.compute_gradientで変位の傾きを計算すると、応力$\sigma$は$1$になります。

DU = gf.compute_gradient(mfu,U,mfd)

print 'DU = ', DU

sigma = (Lambda+2.0*Mu)*DU

print 'sigma = ', sigma

| doc/demo_2nddegree_FEM.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# We have already seen lists and how they can be used. Now that you have some more background I will go into more detail about lists. First we will look at more ways to get at the elements in a list and then we will talk about copying them.

#

#

# Here are some examples of using indexing to access a single element of an list. Execute the cells below in order:

#

list = ['zero', 'one', 'two', 'three', 'four', 'five']

list[0]

list[4]

list[5]

# You should have gotten the result 'zero', 'four', 'five'.

#

# All those examples should look familiar to you. If you want the first item in the list just look at index 0. The second item is index 1 and so on through the list. However, what if you want the last item in the list? One way could be to use the `len` function like `list[len(list)-1]`. This way works since the `len` function always returns the last index plus one. The second from the last would then be `list[len(list)-2]`. There is an easier way to do this. In Python the last item is always index -1. The second to the last is index -2 and so on. Here are some more examples:

#

list[len(list)-1]

# + active=""

# 'five'

# -

list[len(list)-2]

# + active=""

# 'four'

# -

list[-1]

# + active=""

# 'five'

# -

list[-2]

# + active=""

# 'four'

# -

list[-6]

# + active=""

# 'zero'

# -

#

# Thus any item in the list can be indexed in two ways: from the front and from the back.

#

#

# Another useful way to get into parts of lists is using slices. Here is another example to give you an idea what they can be used for:

#

list = [0, 'Fred', 2, 'S.P.A.M.', 'Stocking', 42, "Jack", "Jill"]

list[0]

# + active=""

# 0

# -

list[7]

# + active=""

# 'Jill'

# -

list[0:8]

# + active=""

# [0, 'Fred', 2, 'S.P.A.M.', 'Stocking', 42, 'Jack', 'Jill']

# -

list[2:4]

# + active=""

# [2, 'S.P.A.M.']

# -

list[4:7]

# + active=""

# ['Stocking', 42, 'Jack']

# -

list[1:5]

# + active=""

# ['Fred', 2, 'S.P.A.M.', 'Stocking']

# -

#

# Slices are used to return part of a list. The slice operator is in the form `list[first_index:following_index]`. The slice goes from the `first_index` to the index before the `following_index`. You can use both types of indexing:

#

list[-4:-2]

# + active=""

# ['Stocking', 42]

# -

list[-4]

# + active=""

# 'Stocking'

# -

list[-4:6]

# + active=""

# ['Stocking', 42]

# -

#

# Another trick with slices is the unspecified index. If the first index is not specified the beginning of the list is assumed. If the following index is not specified the whole rest of the list is assumed. Here are some examples:

#

list[:2]

# + active=""

# [0, 'Fred']

# -

list[-2:]

# + active=""

# ['Jack', 'Jill']

# -

list[:3]

# + active=""

# [0, 'Fred', 2]

# -

list[:-5]

# + active=""

# [0, 'Fred', 2]

# -

#

# Here is a program example:

#

# +

poem = ["<B>", "Jack", "and", "Jill", "</B>", "went", "up", "the", "hill",\

"to", "<B>", "fetch", "a", "pail", "of", "</B>", "water.", "Jack",\

"fell", "<B>", "down", "and", "broke", "</B>", "his", "crown", "and",\

"<B>", "Jill", "came", "</B>", "tumbling", "after"]

def get_bolds(list):

## is_bold tells whether or not the we are currently looking at

## a bold section of text.

is_bold = False

## start_block is the index of the start of either an unbolded

## segment of text or a bolded segment.

start_block = 0

for index in range(len(list)):

##Handle a starting of bold text

if list[index] == "<B>":

if is_bold:

print("Error: Extra Bold")

##print "Not Bold:", list[start_block:index]

is_bold = True

start_block = index+1

##Handle end of bold text

##Remember that the last number in a slice is the index

## after the last index used.

if list[index] == "</B>":

if not is_bold:

print("Error: Extra Close Bold")

print("Bold [", start_block, ":", index, "] ",\

list[start_block:index])

is_bold = False

start_block = index+1

get_bolds(poem)

# -

# with the output being:

#

# + active=""

# Bold [ 1 : 4 ] ['Jack', 'and', 'Jill']

# Bold [ 11 : 15 ] ['fetch', 'a', 'pail', 'of']

# Bold [ 20 : 23 ] ['down', 'and', 'broke']

# Bold [ 28 : 30 ] ['Jill', 'came']

# -

#

#

# The `get_bold` function takes in a list that is broken into words

# and token's. The tokens that it looks for are `<B>` which starts

# the bold text and `<\B>` which ends bold text. The function

# `get_bold` goes through and searches for the start and end

# tokens.

#

#

# The next feature of lists is copying them. If you try something simple like:

#

a = [1, 2, 3]

b = a

print(b)

# + active=""

# [1, 2, 3]

# -

b[1] = 10

print(b)

# + active=""

# [1, 10, 3]

# -

print(a)

# + active=""

# [1, 10, 3]

# -

#

# This probably looks surprising since a modification to b

# resulted in a being changed as well. What happened is that the

# statement `b = a` makes b a *reference* to the same list that a is a reference to.

# This means that b and a are different names for the same list.

# Hence any modification to b changes a as well. However

# some assignments don't create two names for one list:

#

a = [1, 2, 3]

b = a*2

print(a)

# + active=""

# [1, 2, 3]

# -

print(b)

# + active=""

# [1, 2, 3, 1, 2, 3]

# -

a[1] = 10

print(a)

# + active=""

# [1, 10, 3]

# -

print(b)

# + active=""

# [1, 2, 3, 1, 2, 3]

# -

#

#

# In this case, b is not a reference to a since the

# expression `a*2` creates a new list. Then the statement

# `b = a*2` gives b a reference to `a*2` rather than a

# reference to a. All assignment operations create a reference.

# When you pass a list as a argument to a function you create a

# reference as well. Most of the time you don't have to worry about

# creating references rather than copies. However when you need to make

# modifications to one list without changing another name of the list

# you have to make sure that you have actually created a copy.

#

#

# There are several ways to make a copy of a list. The simplest that

# works most of the time is the slice operator since it always makes a

# new list even if it is a slice of a whole list:

#

a = [1, 2, 3]

b = a[:]

b[1] = 10

print(a)

# + active=""

# [1, 2, 3]

# -

print(b)

# + active=""

# [1, 10, 3]

# -

#

#

# Taking the slice [:] creates a new copy of the list. However it

# only copies the outer list. Any sublist inside is still a references

# to the sublist in the original list. Therefore, when the list

# contains lists the inner lists have to be copied as well. You could

# do that manually but Python already contains a module to do it. You

# use the deepcopy function of the copy module:

#

import copy

a = [[1, 2, 3], [4, 5, 6]]

b = a[:]

c = copy.deepcopy(a)

b[0][1] = 10

c[1][1] = 12

print(a)

# + active=""

# [[1, 10, 3], [4, 5, 6]]

# -

print(b)

# + active=""

# [[1, 10, 3], [4, 5, 6]]

# -

print(c)

# + active=""

# [[1, 2, 3], [4, 12, 6]]

# -

#

# First of all, notice that a is an array of arrays. Then notice

# that when `b[0][1] = 10` is run both a and b are

# changed, but c is not. This happens because the inner arrays

# are still references when the slice operator is used. However, with

# deepcopy, c was fully copied.

#

#

# So, should I worry about references every time I use a function or

# `=`? The good news is that you only have to worry about

# references when using dictionaries and lists. Numbers and strings

# create references when assigned but every operation on numbers and

# strings that modifies them creates a new copy so you can never modify

# them unexpectedly. You do have to think about references when you are

# modifying a list or a dictionary.

#

#

# By now you are probably wondering why are references used at all? The

# basic reason is speed. It is much faster to make a reference to a

# thousand element list than to copy all the elements. The other reason

# is that it allows you to have a function to modify the inputed list

# or dictionary. Just remember about references if you ever have some

# weird problem with data being changed when it shouldn't be.

#

| tutorial12.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/amjadraza/learn-ml-with-spark/blob/main/Spark_on_Local_Computers.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="7JCIUZNbhFGw"

# ## **How to use Spark on your local computer**

# + [markdown] id="iAHg3AIxhW_o"

# #### Using Conda

# + [markdown] id="Wjt625gdhecf"

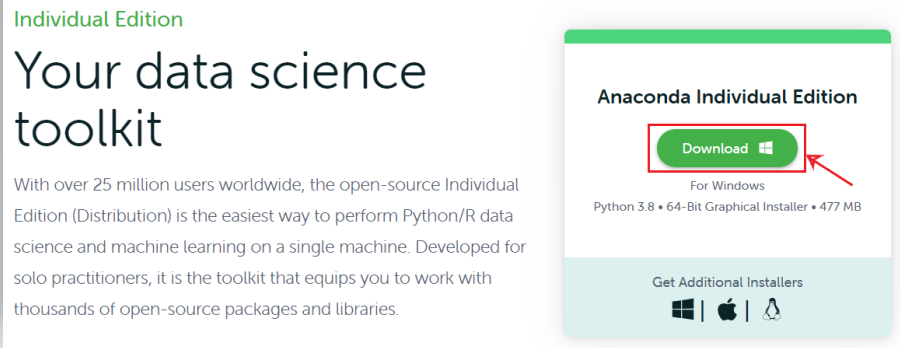

# Conda is an open-source package management and environment management system which is a part of the Anaconda distribution. It is both cross-platform and language agnostic. In practice, Conda can replace both pip and virtualenv. You can download the anaconda packages using the link [here](https://www.anaconda.com/products/individual).

# + [markdown] id="GfmADWOQhg7q"

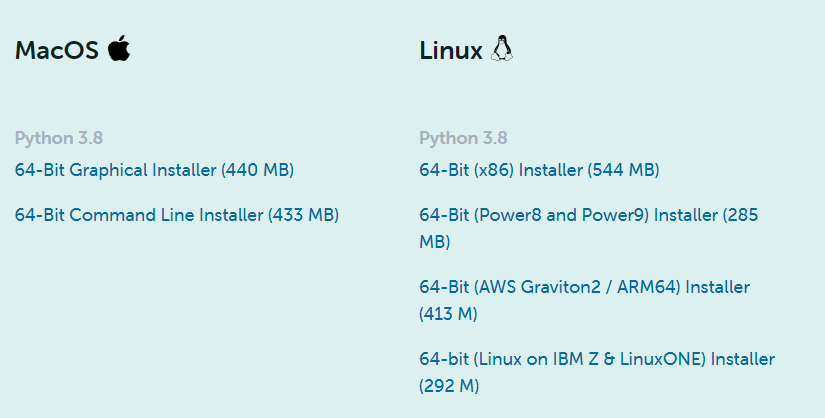

# 1. Anaconda for windows:

#

#

#

# 2. For Linux and Mac OS, we have the following options available for downloading anaconda presented at the end of the same page.

#

#

#

# + [markdown] id="MjLaPRvyhpr5"

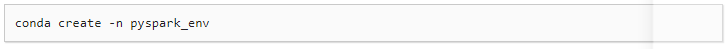

# After downloading Anaconda, you have to create a conda environment. Open your terminal and use the command shown below.

#

#

# + [markdown] id="yQU2QYDQh5LS"

# After the virtual environment is created, it should be visible under the list of Conda environments which can be seen using the following command:

#

#

#

# + [markdown] id="hkTYxxwliGIE"

# Now activate the newly created environment with the following command:

#

#

#

# + [markdown] id="tmazFnqviJVl"

# You can install pyspark by Using PyPI to install PySpark in the newly created environment, for example as below. It will install PySpark under the new virtual environment pyspark_env created above.

#

#

# + [markdown] id="6yH10YB6iOaU"

# Alternatively, you can install PySpark from Conda itself as below:

#

#

# + [markdown] id="_NXA0GVJiVe1"

# **Manually Downloading PySpark**

#

# PySpark is included in the distributions available at the [Apache Spark website](https://spark.apache.org/downloads.html). You can download a distribution you want from the site. After that, uncompress the tar file into the directory where you want to install Spark, for example, as below:

#

# + id="MDAgCvB7czES" colab={"base_uri": "https://localhost:8080/"} outputId="a9abf381-386c-4ed1-b698-ef9f85b9b01a"

# #!apt-get install openjdk-8-jdk-headless -qq > /dev/null

# !java -version

# + id="EJWXOC5z7c4g"

# !wget -q http://apache.osuosl.org/spark/spark-3.2.0/spark-3.2.0-bin-hadoop3.2.tgz

# + id="CMeH3tin7iTC"

# !tar xf spark-3.2.0-bin-hadoop3.2.tgz

# + [markdown] id="s_2dD3zW7_Jo"

# Ensure the SPARK_HOME environment variable points to the directory where the tar file has been extracted. Update PYTHONPATH environment variable such that it can find the PySpark and Py4J under SPARK_HOME/python/lib. One example of doing this is shown below:

#

# + id="FVoEKyzO7r0q"

import os

os.environ["JAVA_HOME"] = "/usr/lib/jvm/java-11-openjdk-amd64"

os.environ["SPARK_HOME"] = "/content/spark-3.2.0-bin-hadoop3.2"

# + id="EBfJzTQZ8Dw8"

| notebooks/week-1/Spark_on_Local_Computers.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: py3

# language: python

# name: py3

# ---

import numpy as np

from testCases import *

import sklearn

import sklearn.datasets

import sklearn.linear_model

from planar_utils import plot_decision_boundary, sigmoid, load_planar_dataset, load_extra_datasets

# %matplotlib inline

X, Y = load_planar_dataset()

X.shape

plt.scatter(X[0, :], X[1, :], c=Y[0], cmap=plt.cm.Spectral)

plt.show()

# ----

# # 1. Logistic Regression

clf = sklearn.linear_model.LogisticRegressionCV()

clf.fit(X.T, Y.T)

yy.ravel()

np.c_[xx.ravel(), yy.ravel(), yy.ravel()]

# +

x1_min, x1_max = X[0, :].min() - 1, X[0, :].max() + 1

x2_min, x2_max = X[1, :].min() - 1, X[1, :].max() + 1

step = 0.01

mesh_x1 = np.arange(x1_min, x1_max, step)

mesh_x2 = np.arange(x1_min, x2_max, step)

xx1, xx2 = np.meshgrid(mesh_x1, mesh_x2)

plot_x1x2 = np.c_[xx1.ravel(), xx2.ravel()]

Z = clf.predict(plot_x1x2)

Z = Z.reshape(xx1.shape)

plt.contourf(xx1, xx2, Z, cmap=plt.cm.Spectral)

plt.xlabel('x1')

plt.ylabel('x2')

plt.scatter(X[0, :], X[1, :], c=Y[0], cmap=plt.cm.Spectral)

plt.show()

# -

# np.dot(Y[0], pred): 1 = 1 정답

# np.dot(1 - Y[0], 1 - pred): 0 = 0 정답

pred = clf.predict(X.T)

NumOfAns = np.dot(Y[0], pred) + np.dot(1 - Y[0], 1 - pred)

accuracy = NumOfAns / Y[0].size * 100

print("accuracy: ", accuracy, "%")

# # 2. Neural Network Model

# ## Dimension

# - W: (자기 feature =자기 unit) * (앞레이어 feature = 앞레이어 unit)

# ### 1) set structure

def layer_size(X, Y):

# n_h: Num of Unit of HiddenLayer

n_x = X.shape[0]

n_h = 4

n_y = Y.shape[0]

print("num of feature: {}, num of unit of hidden Layer: {}, out put: {}".\

format(n_x, n_h, n_y))

return (n_x, n_h, n_y)

n_x, n_h, n_y = layer_size(X, Y)

# ### 2) initialize params

def initialize_params(n_x, n_h, n_y):

np.random.seed(2)

W1 = np.random.randn(n_h, n_x) * 0.01

b1 = np.zeros(shape=(n_h, 1))

W2 = np.random.randn(n_y, n_h) * 0.01

b2 = np.zeros(shape=(n_y, 1))

assert (W1.shape == (n_h, n_x))

assert (b1.shape == (n_h, 1))

assert (W2.shape == (n_y, n_h))

assert (b2.shape == (n_y, 1))

params = {"W1": W1,

"b1": b1,

"W2": W2,

"b2": b2}

return params

params = initialize_params(n_x, n_h, n_y)

params

# ### 3) loop: forward propagation

def forward_propagation(X, params):

W1 = params['W1']

b1 = params['b1']

W2 = params['W2']

b2 = params['b2']

Z1 = np.dot(W1, X) + b1

A1 = np.tanh(Z1)

Z2 = np.dot(W2, A1) + b2

A2 = sigmoid(Z2)

assert (A2.shape == (1, X.shape[1]))

cache = {"Z1": Z1,

"A1": A1,

"Z2": Z2,

"A2": A2}

return A2, cache

A2, cache = forward_propagation(X, params)

A2.shape

# ### 4) Cost

# $$J = - \frac{1}{m} \sum\limits_{i = 0}^{m} \large{(} \small y^{(i)}\log\left(a^{[2] (i)}\right) + (1-y^{(i)})\log\left(1- a^{[2] (i)}\right) \large{)} $$

#

def compute_cost(A2, Y):

m = Y.shape[1] # Num of sample

logprob = np.multiply(np.log(A2), Y) + np.multiply((1- Y), np.log(1 - A2))

loss = - logprob

cost = 1/m * np.sum(loss)

cost = np.squeeze(cost) # makes sure cost is the dimension we expect.

# E.g., turns [[17]] into 17

assert(isinstance(cost, float))

return cost

compute_cost(A2, Y)

# ### 5) loop: backward propagation

def backward_propagation(params, cache, X, Y):

m = X.shape[1]

W1 = params['W1']

W2 = params['W2']

A1 = cache['A1']

A2 = cache['A2']

d_Z2 = A2 - Y

d_W2 = (1 / m) * np.dot(d_Z2, A1.T)

d_b2 = (1 / m) * np.sum(d_Z2)

d_Z1 = np.multiply(np.dot(W2.T, d_Z2), 1 - np.power(A1, 2))

d_W1 = (1 / m) * np.dot(d_Z1, X.T)

d_b1 = (1 / m) * np.sum(d_Z1, axis=1, keepdims=True)

grads = {"d_W1": d_W1,

"d_b1": d_b1,

"d_W2": d_W2,

"d_b2": d_b2,}

return grads

grads = backward_propagation(params, cache, X, Y)

grads

# ## 6) update_params

def update_params(params, grads, learning_rate=1.2):

# before

W1 = params['W1']

b1 = params['b1']

W2 = params['W2']

b2 = params['b2']

d_W2 = grads['d_W2']

d_b2 = grads['d_b2']

d_W1 = grads['d_W1']

d_b1 = grads['d_b1']

# after

W2 = W2 - learning_rate * d_W2

b2 = b2 - learning_rate * d_b2

W1 = W1 - learning_rate * d_W1

b1 = b1 - learning_rate * d_b1

params = {"W1": W1,

"b1": b1,

"W2": W2,

"b2": b2}

return params

update_params(params, grads)

# ## 7) Build Model

# - forward propagation: get $Z, \sigma(Z), \text{ and cost}$

# - backward propagation: get **dW, db** and update

def nn_model(X, Y, n_h, num_iteration=10000, print_cost=False):

np.random.seed(3)

n_x = layer_size(X, Y)[0]

n_y = layer_size(X, Y)[2]

params = initialize_params(n_x, n_h, n_y)

W1 = params['W1']

b1 = params['b1']

W2 = params['W2']

b2 = params['b2']

# gradient descent

for i in range(0, num_iteration):

A2, cache = forward_propagation(X, params)

cost = compute_cost(A2, Y)

grads = backward_propagation(params, cache, X, Y)

params = update_params(params, grads)

if print_cost and i % 1000 == 0:

print("Cost after iteration {}: {}".format(i, cost))

return params

nn_model(X, Y, 4, print_cost=True)

# ## 8) prediction

# $y_{prediction} = \mathbb 1 \text{{activation > 0.5}} = \begin{cases} 1 & \text{if}\ activation > 0.5 \\ 0 & \text{otherwise} \end{cases}$

def predict(params, X):

A2, cache = forward_propagation(X, params)

pred = np.round(A2)

return pred

predict(params, X)

# ## eg.

X.shape

# +

# Build model

params = nn_model(X, Y, n_h=4, num_iteration=10000, print_cost=True)

# Plotting

x1_min, x1_max = X[0, :].min() - 1, X[0, :].max() + 1

x2_min, x2_max = X[1, :].min() - 1, X[1, :].max() + 1

step = 0.01

mesh_x1 = np.arange(x1_min, x1_max, step)

mesh_x2 = np.arange(x1_min, x2_max, step)

xx1, xx2 = np.meshgrid(mesh_x1, mesh_x2)

plot_x1x2 = np.c_[xx1.ravel(), xx2.ravel()]

Z = predict(params, plot_x1x2.T)

Z = Z.reshape(xx1.shape)

plt.contourf(xx1, xx2, Z, cmap=plt.cm.Spectral)

plt.xlabel('x1')

plt.ylabel('x2')

plt.scatter(X[0, :], X[1, :], c=Y[0], cmap=plt.cm.Spectral)

plt.show()

# +

plt.figure(figsize=(16, 32))

hidden_layer_depth = [1, 2, 3, 4, 5, 40 ,50]

for i, n_h in enumerate(hidden_layer_depth):

plt.subplot(5, 2, i+1)

plt.title("Hidden layer depth: {}".format(n_h))

params = nn_model(X, Y, n_h, num_iteration=5000)

plot_decision_boundary(lambda x: predict(params, x.T), X, Y)

pred = predict(params, X)

ans = np.dot(Y, pred.T) + np.dot(1 - Y, 1 - pred.T)

accuracy = float(ans) / float(Y.size) * 100

print("Accuracy for {} hidden units: {} %".format(n_h, accuracy))

# -

# - 질문

# - prediction에서 왜 Transpose?

# reference

# - https://github.com/Kulbear/deep-learning-coursera

| 07.DeepLearning/01_MLandDeepLearning_BuildingDeepNeuralNetwork.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # ADDRCODE Mapping for Dengue Case Data

# +

from IPython.core.interactiveshell import InteractiveShell

InteractiveShell.ast_node_interactivity = "all"

import os

import pandas as pd

from collections import Counter

# +

df_DHF = pd.read_csv(os.path.join('..','..','data','dengue-cases','2016.csv'))

df_DHF = df_DHF.set_index('ID')

df_DHF = df_DHF.loc[df_DHF['PROVINCE'] == 80]

df_DHF = df_DHF.astype(str)

df_DHF['CODE'] = df_DHF['PROVINCE'] + df_DHF['ADDRCODE']

df_DHF['CODE'] = df_DHF['CODE'].str[:-2]

df_DHF = pd.DataFrame.from_records(Counter(df_DHF['CODE'].values).most_common())

df_DHF.columns = ['Subdistrict_Code', 'Cases']

df_DHF = df_DHF.set_index('Subdistrict_Code')

df_DHF.head()

len(df_DHF)

# -

df_code = pd.read_csv(os.path.join('..','..','data','dengue-cases','province_dist_sub_code.csv'))

df_code['Subdistrict_Code'] = df_code['Subdistrict_Code'].astype(str)

df_code = df_code.set_index('Subdistrict_Code')

df_code = df_code.loc[df_code['Province_Code'] == 80]

df_code.head()

len(df_code)

df_dengue_caces = df_code.join(df_DHF)

df_dengue_caces = df_dengue_caces.fillna(0)

df_dengue_caces = df_dengue_caces.dropna(how='any', axis=0)

df_dengue_caces = df_dengue_caces.reset_index()

df_dengue_caces = df_dengue_caces.drop(['Subdistrict_Code', 'District_Code', 'Province_Code'], axis=1)

df_dengue_caces.columns = ['subdist', 'district', 'province', 'cases']

df_dengue_caces.head()

df_dengue_caces.to_csv(os.path.join('..','..','data','dengue-cases','dengue_caces_2016.csv'), index=None)

| src/preprocess/addrcode-mapping.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/skywalker0803r/c620/blob/main/notebook/Integration_and_Test.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="YEiUzu5_2x3I"

import joblib

import os

import numpy as np

import pandas as pd

pd.options.display.max_rows = 9999

# !pip install autorch > log.txt

import matplotlib.pyplot as plt

import autorch

from autorch.function import sp2wt

import random

random.seed(11)

np.random.seed(11)

# + colab={"base_uri": "https://localhost:8080/"} id="3YPjXHkR3Eso" outputId="d789e8c0-cae0-4e43-a094-46e85489b81a"

from google.colab import drive

drive.mount('/content/drive')

# + [markdown] id="nuzZj4KB6mQB"

# # columns name

# + colab={"base_uri": "https://localhost:8080/"} id="vD1E0WtI3OKC" outputId="7632cdb3-6566-4b0b-ded9-c229b265a6b2"

icg_c = joblib.load('/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/icg_col_names.pkl')

c620_c = joblib.load('/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c620_col_names.pkl')

c660_c = joblib.load('/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c660_col_names.pkl')

t651_c = joblib.load('/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/t651_col_names.pkl')

c670_c = joblib.load('/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c670_col_names.pkl')

print(icg_c.keys())

print(c620_c.keys())

print(c660_c.keys())

print(c670_c.keys())

print(t651_c.keys())

# + [markdown] id="x8BgCUXhB_Qe"

# # DataFrame

# + id="TjsU3C9bB_er" colab={"base_uri": "https://localhost:8080/"} outputId="920a146f-f92d-41ce-9d18-b51af95b5d58"

icg_df = pd.read_csv('/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/icg_train.csv',index_col=0)

c620_df = pd.read_csv('/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/c620_train.csv',index_col=0)

c660_df = pd.read_csv('/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/c660_train.csv',index_col=0)

c670_df = pd.read_csv('/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/c670_train.csv',index_col=0)

t651_df = pd.read_csv('/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/t651_train.csv',index_col=0)

idx = list(set(icg_df.index)&

set(c620_df.index)&

set(c660_df.index)&

set(c670_df.index)&

set(t651_df.index))

len(idx)

# + id="kxeTF9Z5LYQV" colab={"base_uri": "https://localhost:8080/", "height": 377} outputId="fcf9360e-bec9-4039-e408-b7afee18b949"

icg_df.loc[idx].head()

# + id="2KP7j-9PLUel" colab={"base_uri": "https://localhost:8080/", "height": 306} outputId="a8dc9030-679b-4a9c-ab74-207def7fc206"

c620_df.loc[idx][c620_c['case']].head()

# + id="xuehZMotRCYO" colab={"base_uri": "https://localhost:8080/", "height": 204} outputId="5b575359-315c-4df2-c4e7-2958b48cc343"

c660_df.loc[idx][c660_c['case']].head()

# + [markdown] id="eT4-lodHox-u"

# # Input data

# + id="3EOUCMOXB2Y1"

# icg

icg_input = icg_df.loc[idx,icg_c['x']]

icg_input = icg_input.join(c620_df.loc[idx,'Tatoray Stripper C620 Operation_Specifications_Spec 2 : Distillate Rate_m3/hr'])

icg_input = icg_input.join(c660_df.loc[idx,'Benzene Column C660 Operation_Specifications_Spec 3 : Toluene in Benzene_ppmw'])

icg_input = icg_input.join(c620_df.loc[idx].filter(regex='Receiver Temp'))

# c620

c620_feed = c620_df.loc[idx,c620_c['x41']]

# t651

t651_feed = t651_df.loc[idx,t651_c['x41']]

# + id="hTyJ6JVm3Xzv" colab={"base_uri": "https://localhost:8080/", "height": 377} outputId="7ba79811-bc65-4171-86ad-79cf23bee0aa"

icg_input.head()

# + [markdown] id="mp1GqvJ0o0k1"

# # Output data

# + id="uHCoq80jo0ss"

c620_op = c620_df.loc[idx,c620_c['density']+c620_c['yRefluxRate']+c620_c['yHeatDuty']+c620_c['yControl']]

c620_wt = c620_df.loc[idx,c620_c['vent_gas_x']+c620_c['distillate_x']+c620_c['sidedraw_x']+c620_c['bottoms_x']]

c660_op = c660_df.loc[idx,c660_c['density']+c660_c['yRefluxRate']+c660_c['yHeatDuty']+c660_c['yControl']]

c660_wt = c660_df.loc[idx,c660_c['vent_gas_x']+c660_c['distillate_x']+c660_c['sidedraw_x']+c660_c['bottoms_x']]

c670_op = c670_df.loc[idx,c670_c['density']+c670_c['yRefluxRate']+c670_c['yHeatDuty']+c670_c['yControl']]

c670_wt = c670_df.loc[idx,c670_c['distillate_x']+c670_c['bottoms_x']]

# + [markdown] id="h8VBB0aG-e0c"

# # config

# + id="0Ktejan2r_U7"

config = {

# simple op_col

'c620_simple_op_col':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c620_simple_op_col.pkl',

'c660_simple_op_col':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c660_simple_op_col.pkl',

'c670_simple_op_col':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c670_simple_op_col.pkl',

# model paht

'icg_model_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c620_icg_svr.pkl',

'c620_model_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c620.pkl',

'c660_model_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c660.pkl',

'c670_model_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c670.pkl',

# real data model path

'icg_model_path_real_data':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c620_icg_svr_real_data.pkl',

'c620_model_path_real_data':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c620_real_data.pkl',

'c660_model_path_real_data':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c660_real_data.pkl',

'c670_model_path_real_data':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c670_real_data.pkl',

# real data linear model path

'c620_model_path_real_data_linear':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c620_real_data_lassocv.pkl',

'c660_model_path_real_data_linear':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c660_real_data_lassocv.pkl',

'c670_model_path_real_data_linear':'/content/drive/MyDrive/台塑輕油案子/data/c620/model/c670_real_data_lassocv.pkl',

# col_names

'icg_col_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/icg_col_names.pkl',

'c620_col_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c620_col_names.pkl',

'c660_col_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c660_col_names.pkl',

'c670_col_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/col_names/c670_col_names.pkl',

# Special column (0.9999 & 0.0001)

'index_9999_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/index_9999.pkl',

'index_0001_path':'/content/drive/MyDrive/台塑輕油案子/data/c620/cleaned/index_0001.pkl',

# sp

'c620_wt_always_same_split_factor_dict':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c620_wt_always_same_split_factor_dict.pkl',

'c660_wt_always_same_split_factor_dict':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c660_wt_always_same_split_factor_dict.pkl',

'c670_wt_always_same_split_factor_dict':'/content/drive/MyDrive/台塑輕油案子/data/c620/map_dict/c670_wt_always_same_split_factor_dict.pkl',

}

# + [markdown] id="MoSO4Cbx6oxP"

# # define F

# + id="oiu5SRYL5NOr"

import joblib

import os

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import autorch

from autorch.function import sp2wt

class F(object):

def __init__(self,config):

# simulation data model

self.icg_model = joblib.load(config['icg_model_path'])

self.c620_model = joblib.load(config['c620_model_path'])

self.c660_model = joblib.load(config['c660_model_path'])

self.c670_model = joblib.load(config['c670_model_path'])

# real data model

self.icg_real_data_model = joblib.load(config['icg_model_path_real_data'])

self.c620_real_data_model = joblib.load(config['c620_model_path_real_data'])

self.c660_real_data_model = joblib.load(config['c660_model_path_real_data'])

self.c670_real_data_model = joblib.load(config['c670_model_path_real_data'])

# real data linear model

self.c620_real_data_model_linear = joblib.load(config['c620_model_path_real_data_linear'])

self.c660_real_data_model_linear = joblib.load(config['c660_model_path_real_data_linear'])

self.c670_real_data_model_linear = joblib.load(config['c670_model_path_real_data_linear'])

# columns name

self.icg_col = joblib.load(config['icg_col_path'])

self.c620_col = joblib.load(config['c620_col_path'])

self.c660_col = joblib.load(config['c660_col_path'])

self.c670_col = joblib.load(config['c670_col_path'])

# simple op_col

self.c620_simple_op_col = joblib.load(config['c620_simple_op_col'])

self.c660_simple_op_col = joblib.load(config['c660_simple_op_col'])

self.c670_simple_op_col = joblib.load(config['c670_simple_op_col'])

# other infomation

self.c620_wt_always_same_split_factor_dict = joblib.load(config['c620_wt_always_same_split_factor_dict'])

self.c660_wt_always_same_split_factor_dict = joblib.load(config['c660_wt_always_same_split_factor_dict'])

self.c670_wt_always_same_split_factor_dict = joblib.load(config['c670_wt_always_same_split_factor_dict'])

self.index_9999 = joblib.load(config['index_9999_path'])

self.index_0001 = joblib.load(config['index_0001_path'])

self.V615_density = 0.8626

self.C820_density = 0.8731

self.T651_density = 0.8749

# user can set two mode

self.Recommended_mode = False

self.real_data_mode = False

self._Post_processing = True

self._linear_model = False

def ICG_loop(self,Input):

while True:

if self.real_data_mode == True:

output = pd.DataFrame(self.icg_real_data_model.predict(Input[self.icg_col['x']].values),

index=Input.index,columns=['Simulation Case Conditions_C620 Distillate Rate_m3/hr'])

if self.real_data_mode == False:

output = pd.DataFrame(self.icg_model.predict(Input[self.icg_col['x']].values),

index=Input.index,columns=['Simulation Case Conditions_C620 Distillate Rate_m3/hr'])

dist_rate = output['Simulation Case Conditions_C620 Distillate Rate_m3/hr'].values[0]

na_in_benzene = Input['Simulation Case Conditions_Spec 2 : NA in Benzene_ppmw'].values[0]

print('current Distillate Rate_m3/hr:{} NA in Benzene_ppmw:{}'.format(dist_rate,na_in_benzene))

if output['Simulation Case Conditions_C620 Distillate Rate_m3/hr'].values[0] > 0:

return output,Input

else:

Input['Simulation Case Conditions_Spec 2 : NA in Benzene_ppmw'] -= 30

print('NA in Benzene_ppmw -= 30')

def __call__(self,icg_input,c620_feed,t651_feed):

# get index

idx = icg_input.index

# c620_case

c620_case = pd.DataFrame(index=idx,columns=self.c620_col['case'])

# c620_case(Receiver Temp_oC) = user input

c620_case['Tatoray Stripper C620 Operation_Specifications_Spec 1 : Receiver Temp_oC'] = icg_input['Tatoray Stripper C620 Operation_Specifications_Spec 1 : Receiver Temp_oC'].values

if self.Recommended_mode == True:

icg_input['Simulation Case Conditions_Spec 2 : NA in Benzene_ppmw'] = 980.0

icg_input['Simulation Case Conditions_Spec 1 : Benzene in C620 Sidedraw_wt%'] = 70.0

icg_output,icg_input = self.ICG_loop(icg_input)

c620_case['Tatoray Stripper C620 Operation_Specifications_Spec 2 : Distillate Rate_m3/hr'] = icg_output.values

c620_case['Tatoray Stripper C620 Operation_Specifications_Spec 3 : Benzene in Sidedraw_wt%'] = icg_input['Simulation Case Conditions_Spec 1 : Benzene in C620 Sidedraw_wt%'].values

if self.Recommended_mode == False:

c620_case['Tatoray Stripper C620 Operation_Specifications_Spec 2 : Distillate Rate_m3/hr'] = icg_input['Tatoray Stripper C620 Operation_Specifications_Spec 2 : Distillate Rate_m3/hr'].values

c620_case['Tatoray Stripper C620 Operation_Specifications_Spec 3 : Benzene in Sidedraw_wt%'] = icg_input['Simulation Case Conditions_Spec 1 : Benzene in C620 Sidedraw_wt%'].values

# c620_input(c620_case&c620_feed)

c620_input = c620_case.join(c620_feed)

# c620 output(op&wt)

c620_input = c620_case.join(c620_feed)

c620_output = self.c620_model.predict(c620_input)

c620_sp,c620_op = c620_output.iloc[:,:41*4],c620_output.iloc[:,41*4:]

# update by c620 real data model?

if self.real_data_mode == True:

if self._linear_model == True:

c620_op_real = self.c620_real_data_model_linear.predict(c620_input)[:,41*4:]

c620_op_real = pd.DataFrame(c620_op_real,index=c620_input.index,columns=self.c620_simple_op_col)

c620_sp_real = self.c620_real_data_model_linear.predict(c620_input)[:,:41*4]

c620_sp_real = pd.DataFrame(c620_sp_real,index=c620_input.index,columns=c620_sp.columns)

if self._linear_model == False:

c620_op_real = self.c620_real_data_model.predict(c620_input).iloc[:,41*4:]

c620_sp_real = self.c620_real_data_model.predict(c620_input).iloc[:,:41*4]

c620_op.update(c620_op_real)

c620_sp.update(c620_sp_real)

# c620 sp後處理

if self._Post_processing:

for i in self.c620_wt_always_same_split_factor_dict.keys():