code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Environment (conda_tensorflow_p36)

# language: python

# name: conda_tensorflow_p36

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/amilkh/cs230-fer/blob/transfer-learning/Final-SeNet50.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + colab_type="code" id="gwdg7Sv3XBaP" outputId="898c8cb4-3e8b-4f63-a2da-fc392f6d08cc" colab={"base_uri": "https://localhost:8080/", "height": 527}

# %tensorflow_version 1.x

# !pip install keras-vggface

# !pip install scikit-image

# !pip install pydot

# + colab_type="code" id="2nz38mJZXN_P" outputId="20e85bee-7622-43fa-c448-c31cb819fbd0" colab={"base_uri": "https://localhost:8080/", "height": 34}

import matplotlib.pyplot as plt

import pandas as pd

import numpy as np

import tensorflow as tf

from tensorflow.keras.layers import *

from tensorflow.python.lib.io import file_io

# %matplotlib inline

import keras

from keras import backend as K

from keras.callbacks import ModelCheckpoint, EarlyStopping

from keras.models import load_model

from keras.preprocessing.image import ImageDataGenerator

from keras_vggface.vggface import VGGFace

from keras.utils import plot_model

from sklearn.metrics import *

from keras.engine import Model

from keras.layers import Input, Flatten, Dense, Activation, Conv2D, MaxPool2D, BatchNormalization, Dropout, MaxPooling2D

import skimage

from skimage.transform import rescale, resize

import pydot

# + id="1fZczU8lGkX-" colab_type="code" outputId="d9e5dcea-c0be-4f4c-99b4-4ce966c3ea93" colab={"base_uri": "https://localhost:8080/", "height": 122}

from google.colab import drive

drive.mount('/content/drive')

# + colab_type="code" id="nUcd6yIGduUW" outputId="1bd89817-9058-4050-fb7f-5b7071f4c9b8" colab={"base_uri": "https://localhost:8080/", "height": 51}

print(tf.__version__)

print(keras.__version__)

# + id="v60q28mDHnN9" colab_type="code" colab={}

EPOCHS = 100

BS = 128

DROPOUT_RATE = 0.5

FROZEN_LAYER_NUM = 201

ADAM_LEARNING_RATE = 0.001

SGD_LEARNING_RATE = 0.01

SGD_DECAY = 0.0001

Resize_pixelsize = 197

# + id="itKZtFV0F7b1" colab_type="code" outputId="e03b20a2-6738-4c26-b216-074a9f8df5fa" colab={"base_uri": "https://localhost:8080/", "height": 632}

vgg_notop = VGGFace(model='senet50', include_top=False, input_shape=(Resize_pixelsize, Resize_pixelsize, 3), pooling='avg')

last_layer = vgg_notop.get_layer('avg_pool').output

x = Flatten(name='flatten')(last_layer)

x = Dropout(DROPOUT_RATE)(x)

x = Dense(4096, activation='relu', name='fc6')(x)

x = Dropout(DROPOUT_RATE)(x)

x = Dense(1024, activation='relu', name='fc7')(x)

x = Dropout(DROPOUT_RATE)(x)

# l=0
# for layer in vgg_notop.layers:
#     print(layer,"["+str(l)+"]")
#     l=l+1

batch_norm_indices = [2, 6, 9, 12, 21, 25, 28, 31, 42, 45, 48, 59, 62, 65, 74, 78, 81, 84, 95, 98, 101, 112, 115, 118, 129, 132, 135, 144, 148, 151, 154, 165, 168, 171, 182, 185, 188, 199, 202, 205, 216, 219, 222, 233, 236, 239, 248, 252, 255, 258, 269, 272, 275]

for i in range(FROZEN_LAYER_NUM):
    if i not in batch_norm_indices:
        vgg_notop.layers[i].trainable = False

# print('vgg layer 2 is trainable: ' + str(vgg_notop.layers[2].trainable))

# print('vgg layer 3 is trainable: ' + str(vgg_notop.layers[3].trainable))

out = Dense(7, activation='softmax', name='classifier')(x)

model = Model(vgg_notop.input, out)

optim = keras.optimizers.Adam(lr=ADAM_LEARNING_RATE, beta_1=0.9, beta_2=0.999, epsilon=1e-08, decay=0.0)

#optim = keras.optimizers.Adam(lr=0.0005, beta_1=0.9, beta_2=0.999, epsilon=1e-08, decay=0.0)

sgd = keras.optimizers.SGD(lr=SGD_LEARNING_RATE, momentum=0.9, decay=SGD_DECAY, nesterov=True)

rlrop = keras.callbacks.ReduceLROnPlateau(monitor='val_acc',mode='max',factor=0.5, patience=10, min_lr=0.00001, verbose=1)

model.compile(optimizer=sgd, loss='categorical_crossentropy', metrics=['accuracy'])

# plot_model(model, to_file='model2.png', show_shapes=True)
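# The freezing loop above relies on a hand-maintained list of batch-norm layer indices.
# A minimal sketch of an alternative that selects layers by type instead of by index.
# The `Layer`, `BatchNormalization` and `Conv2D` classes below are hypothetical stand-ins
# so the sketch runs without TensorFlow; with the real model one would presumably test
# against the Keras batch-normalization layer class instead.

```python
# Hypothetical stand-ins for Keras layer classes (assumption: only `trainable` matters here).
class Layer:
    def __init__(self, name):
        self.name = name
        self.trainable = True


class BatchNormalization(Layer):
    pass


class Conv2D(Layer):
    pass


def freeze_except_batch_norm(layers, frozen_layer_num):
    """Freeze the first `frozen_layer_num` layers, leaving batch-norm layers trainable."""
    for layer in layers[:frozen_layer_num]:
        if not isinstance(layer, BatchNormalization):
            layer.trainable = False


layers = [Conv2D("conv1"), BatchNormalization("bn1"), Conv2D("conv2"), Conv2D("conv3")]
freeze_except_batch_norm(layers, frozen_layer_num=3)
trainable_flags = [layer.trainable for layer in layers]
print(trainable_flags)
```

# Selecting by type avoids having to regenerate the index list whenever the backbone changes.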

# + id="v0mXUNZB-yI5" colab_type="code" colab={}

# ! rm -rf train; mkdir train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/emotion.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/facesdb.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/fer2013/train.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/googlesearch.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/googleset.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/jaffe.zip' -d train

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/umea.zip' -d train

# + id="TUuN9SLX_Qvl" colab_type="code" colab={}

# ! rm -rf dev; mkdir dev

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/fer2013/test-public.zip' -d dev

# ! rm -rf test; mkdir test

# ! unzip -q '/content/drive/My Drive/cs230 project/dataset/fer2013/test-private.zip' -d test

# + id="egSWDlktpwwQ" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 136} outputId="6e23e651-d3d0-4800-d044-bb4310698f13" language="bash"

# root='/content/test/'

# IFS=$(echo -en "\n\b")

# (for dir in $(ls -1 "$root")

# do printf "$dir: " && ls -i "$root$dir" | wc -l

# done)

# + id="560jefnZ_Cyq" colab_type="code" colab={}

from tensorflow.keras.preprocessing.image import ImageDataGenerator

def get_datagen(dataset, aug=False):
    if aug:
        datagen = ImageDataGenerator(
            rescale=1./255,
            featurewise_center=False,
            featurewise_std_normalization=False,
            rotation_range=10,
            width_shift_range=0.1,
            height_shift_range=0.1,
            zoom_range=0.1,
            horizontal_flip=True)
    else:
        datagen = ImageDataGenerator(rescale=1./255)

    return datagen.flow_from_directory(
        dataset,
        target_size=(197, 197),
        color_mode='rgb',
        shuffle = True,
        class_mode='categorical',
        batch_size=BS)

# + id="4aQAGQP5_Gpl" colab_type="code" outputId="39926d21-b2a1-4280-ba59-0e3afe7ef03e" colab={"base_uri": "https://localhost:8080/", "height": 102}

train_generator = get_datagen('/content/train', True)

dev_generator = get_datagen('/content/dev')

test_generator = get_datagen('/content/test')

# + colab_type="code" id="pLISdlaStbUn" outputId="c4f6da09-20e4-4b04-d2cb-efccdda0d88e" colab={"base_uri": "https://localhost:8080/", "height": 1000}

history = model.fit_generator(
    generator=train_generator,
    validation_data=dev_generator,
    #steps_per_epoch=28709// BS,
    #validation_steps=3509 // BS,
    shuffle=True,
    epochs=EPOCHS,
    callbacks=[rlrop],
    use_multiprocessing=True,
)

# + id="JSSv08SHF0bC" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 68} outputId="a7179cab-5f0b-4414-e049-ec5a6ce50967"

print('\n# Evaluate on dev data')

# Evaluate on every batch; 3509 // BS would skip the final partial batch
results_dev = model.evaluate_generator(dev_generator, steps=len(dev_generator))

print('dev loss, dev acc:', results_dev)

# + id="Ev4sDYDlOsqk" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 68} outputId="aa5ea7f8-b78d-4459-fd5f-5013490b7ecf"

print('\n# Evaluate on test data')

# Evaluate on every batch; 3509 // BS would skip the final partial batch
results_test = model.evaluate_generator(test_generator, steps=len(test_generator))

print('test loss, test acc:', results_test)
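# When a step count is derived by floor division, as in `3509 // BS`, the last partial
# batch is never visited. A small sketch of the arithmetic; `steps_for` is a hypothetical
# helper, and 3509 and 128 are the sample count and batch size that appear in this notebook.

```python
import math


def steps_for(num_samples, batch_size):
    """Number of batches needed to visit every sample once, including a final partial batch."""
    return math.ceil(num_samples / batch_size)


num_samples, batch_size = 3509, 128
floor_steps = num_samples // batch_size          # what `3509 // BS` evaluates to
ceil_steps = steps_for(num_samples, batch_size)  # covers the remainder as well
skipped = num_samples - floor_steps * batch_size # samples left out by floor division
print(floor_steps, ceil_steps, skipped)
```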

# + id="m9f7smhHUQus" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 590} outputId="53028197-9731-4dc2-a13e-2cfaeec79e96"

# list all data in history

print(history.history.keys())

# summarize history for accuracy

plt.plot(history.history['acc'])

plt.plot(history.history['val_acc'])

plt.title('model accuracy')

plt.ylabel('accuracy')

plt.xlabel('epoch')

plt.legend(['train', 'dev'], loc='upper left')

plt.show()

# summarize history for loss

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.title('model loss')

plt.ylabel('loss')

plt.xlabel('epoch')

plt.legend(['train', 'dev'], loc='upper left')

plt.show()

# + id="F-QKHTmJQhi1" colab_type="code" colab={}

epoch_str = '-EPOCHS_' + str(EPOCHS)

test_acc = '-test_acc_%.3f' % results_test[1]

model.save('/content/drive/My Drive/cs230 project/models/final/' + 'SENET50' + epoch_str + test_acc + '.h5')

# + id="yJceV2fuFEfQ" colab_type="code" colab={}

|

models/tf-SeNet50.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:env-TM2020] *

# language: python

# name: conda-env-env-TM2020-py

# ---

# + pycharm={"name": "#%%\n"}

import os

import numpy as np

import pandas as pd

import scipy.optimize as spo

import matplotlib.pyplot as plt

# + pycharm={"name": "#%%\n"}

# baseline stock to compare our portfolio performance with

BASE_LINE = 'SPY'

# companies stocks in our portfolio

SYMBOLS = ['AAPL', 'XOM', 'IBM', 'PG']

# initial allocations

allocations = np.array([0.3, 0.2, 0.1, 0.4])

# risk free rate, percent return when amount invested in secure asset

risk_free_rate = 0.0

# sampling frequency, currently configured for daily.

# weekly: 52, monthly: 12

sampling_freq = 252

# date range for which we wish to optimize our portfolio

start_date = '2020-09-01'

end_date = '2021-05-31'

# initial investment amount

initial_investment = 100000

# + pycharm={"name": "#%%\n"}

def symbol_to_path(symbol, base_dir="data"):
    return os.path.join(base_dir, f"{str(symbol)}.csv")

def get_df(data_frame, symbol, columns, jhow="left"):
    path = symbol_to_path(symbol)
    df_temp = pd.read_csv(path,
                          index_col="Date",
                          parse_dates=True,
                          usecols=columns,
                          na_values=["nan"])
    df_temp = df_temp.rename(columns={columns[1]: symbol})
    data_frame = data_frame.join(df_temp, how=jhow)
    return data_frame

def get_data(symbols, dates):
    data_frame = pd.DataFrame(index=dates)
    # Always load SPY first: it is the baseline used later, and the inner join
    # drops the non-trading days from the calendar date range.
    data_frame = get_df(data_frame, "SPY", ["Date", "Adj Close"], jhow="inner")
    for s in symbols:
        if s != "SPY":
            data_frame = get_df(data_frame, s, ["Date", "Adj Close"])
    return data_frame

def plot_data(df, title="Stock prices"):
    df.plot(figsize=(20, 15), fontsize=15)
    plt.title(title, fontsize=30)
    plt.ylabel("Price [$]", fontsize=20)
    plt.xlabel("Dates", fontsize=20)
    plt.legend(fontsize=20)
    plt.show()

def plot_selected(df, columns, start_date, end_date):
    plt_df = normalize_data(df.loc[start_date:end_date][columns])
    plot_data(plt_df)

def normalize_data(df):
    return df / df.iloc[0, :]

# + pycharm={"name": "#%%\n"}

# plotting cumulative performance of stocks

dates = pd.date_range(start_date, end_date)

df = get_data(SYMBOLS, dates)

plot_selected(df, SYMBOLS, start_date, end_date)

# + pycharm={"name": "#%%\n"}

# computing portfolio value based on initial allocation and investment

price_stocks = df[SYMBOLS]

price_SPY = df[BASE_LINE]

normed_price: pd.DataFrame = price_stocks/price_stocks.values[0]

allocated = normed_price.multiply(allocations)

position_value = allocated.multiply(initial_investment)

portfolio_value = position_value.sum(axis=1)

# + pycharm={"name": "#%%\n"}

# plotting portfolio's performance before optimum allocation

port_val = portfolio_value / portfolio_value.iloc[0]

prices_SPY = price_SPY / price_SPY.iloc[0]

df_temp = pd.concat([port_val, prices_SPY], keys=['Portfolio', 'SPY'], axis=1)

plot_data(df_temp, title="Daily portfolio value and SPY (Before optimization)")

# + pycharm={"name": "#%%\n"}

def compute_daily_returns(df: pd.DataFrame) -> pd.DataFrame:
    daily_returns = df.copy()
    # daily_returns[1:] = (daily_returns[1:] / daily_returns[:-1].values) - 1
    daily_returns = daily_returns / daily_returns.shift(1) - 1
    daily_returns.iloc[0] = 0
    return daily_returns

def compute_sharpe_ratio(sampling_freq: int, risk_free_rate: float, daily_return: pd.DataFrame) -> pd.DataFrame:
    daily_return_std = daily_return.std()
    return np.sqrt(sampling_freq) * ((daily_return.subtract(risk_free_rate)).mean()) / daily_return_std

# + pycharm={"name": "#%%\n"}

daily_return = compute_daily_returns(portfolio_value)

sharpe_ratio = compute_sharpe_ratio(sampling_freq, risk_free_rate, daily_return)

print('Sharpe Ratio (Before Optimization): ', sharpe_ratio)
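# For reference, `compute_sharpe_ratio` evaluates sqrt(k) * mean(r - r_f) / std(r), where
# k is the sampling frequency, r the per-period returns and r_f the risk-free rate.
# A minimal standard-library sketch of the same formula follows. The return values are
# made up for illustration, and `statistics.stdev` is the sample standard deviation,
# which matches the pandas default.

```python
import math
import statistics


def sharpe_ratio(returns, risk_free_rate=0.0, sampling_freq=252):
    """Annualized Sharpe ratio from per-period returns (sample standard deviation)."""
    excess = [r - risk_free_rate for r in returns]
    return math.sqrt(sampling_freq) * statistics.mean(excess) / statistics.stdev(returns)


daily_returns = [0.01, -0.005, 0.007, 0.002, -0.001]  # made-up illustrative values
ratio = sharpe_ratio(daily_returns)
print(round(ratio, 3))
```

# With a zero risk-free rate the ratio is unchanged if every return is scaled by the same
# positive factor, which is why the initial investment amount does not affect it.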

# + pycharm={"name": "#%%\n"}

# function used by minimizer to find optimum allocation. Minimizes negative sharpe ratio

def f(allocations: np.array, starting_investment: float, normed_prices):
    allocated = normed_prices.multiply(allocations)
    position_values = allocated.multiply(starting_investment)
    portfolio_value = position_values.sum(axis=1)
    daily_return = (portfolio_value/portfolio_value.shift(1)) - 1
    # use the configured sampling frequency and risk-free rate instead of hard-coded values
    return compute_sharpe_ratio(sampling_freq, risk_free_rate, daily_return) * -1

# + pycharm={"name": "#%%\n"}

# finding optimum allocation with bounds (each allocation lies between 0 and 1) and a constraint (the allocations must sum to 1)

bounds = [(0.0, 1.0) for _ in normed_price.columns]

constraints = ({'type': 'eq', 'fun': lambda inputs: 1.0 - np.sum(inputs)})

result = spo.minimize(f, allocations, args=(initial_investment, normed_price, ), method='SLSQP',

constraints=constraints, bounds=bounds, options={'disp': True})
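# One way to sanity-check an optimizer result is a coarse brute-force search over
# allocations that satisfy the same bounds and sum-to-one constraint. The sketch below
# does this for two hypothetical assets with made-up normalized prices; it swaps the
# SLSQP solver for a plain grid search and is only meant to illustrate the objective.

```python
import math
import statistics


def portfolio_sharpe(weights, normed_prices, sampling_freq=252):
    """Sharpe ratio (risk-free rate 0) of a fixed-allocation portfolio over normalized prices."""
    values = [sum(w * p for w, p in zip(weights, row)) for row in normed_prices]
    returns = [b / a - 1 for a, b in zip(values, values[1:])]
    return math.sqrt(sampling_freq) * statistics.mean(returns) / statistics.stdev(returns)


# Made-up normalized prices for two hypothetical assets (each column starts at 1.0).
normed_prices = [(1.00, 1.00), (1.02, 0.99), (1.01, 1.01), (1.04, 1.00), (1.05, 1.03)]

# Candidate allocations on a coarse grid; each pair lies in [0, 1] and sums to 1.
candidates = [(i / 10, 1 - i / 10) for i in range(11)]
best = max(candidates, key=lambda w: portfolio_sharpe(w, normed_prices))
print(best, round(portfolio_sharpe(best, normed_prices), 3))
```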

# + pycharm={"name": "#%%\n"}

# plotting portfolio's performance after optimum allocation

opt_allocation = result.x

opt_allocated = normed_price.multiply(opt_allocation)

opt_position_value = opt_allocated.multiply(initial_investment)

opt_port_value = opt_position_value.sum(axis=1)

normed_opt_port_value = opt_port_value / opt_port_value.values[0]

plot_data(pd.concat([normed_opt_port_value, prices_SPY], keys=['Portfolio', 'SPY'], axis=1), 'Daily Portfolio and SPY values (After Optimization)')

# + pycharm={"name": "#%%\n"}

print('Optimum Allocation: ', opt_allocation)

# + pycharm={"name": "#%%\n"}

daily_return_opt = compute_daily_returns(opt_port_value)

sharpe_ratio_opt = compute_sharpe_ratio(sampling_freq, risk_free_rate, daily_return_opt)

print('Sharpe Ratio (After Optimization): ', sharpe_ratio_opt)

# + pycharm={"name": "#%%\n"}

|

optimizer.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.7.5 64-bit (''ai_tutorial'': venv)'

# name: python37564bitaitutorialvenvbfa9976514ab457184b1b6f4ee41b3e6

# ---

# +

import cv2

import matplotlib.pyplot as plt

img = cv2.imread('./flower.jpg')

img = cv2.resize(img, dsize=None, fx=0.5, fy=0.5)

gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

gray = cv2.GaussianBlur(gray, (7, 7), 0)

img2 = cv2.threshold(gray, 140, 240, cv2.THRESH_BINARY_INV)[1]

plt.subplot(1, 2, 1)

plt.imshow(img2, cmap='gray')

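# [-2] selects the contour list under both OpenCV 3.x (image, contours, hierarchy)
# and OpenCV 4.x (contours, hierarchy)
cnts = cv2.findContours(img2, cv2.RETR_LIST, cv2.CHAIN_APPROX_SIMPLE)[-2]
for pt in cnts:
    x, y, w, h = cv2.boundingRect(pt)
    # skip contours that are too narrow or too wide to be a flower
    if w < 30 or w > 200:
        continue
    cv2.rectangle(img, (x, y), (x+w, y+h), (0, 255, 0), thickness=2)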

plt.subplot(1, 2, 2)

plt.imshow(cv2.cvtColor(img, cv2.COLOR_BGR2RGB))

plt.show()

# -

|

flower.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Lab 1: Quantum Computing Operations and Algorithms

#

# <div class="youtube-wrapper">

# <iframe src="https://www.youtube.com/embed/uI02dn7PsHI" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen></iframe>

# </div>

#

# - Download the notebook: <a href="/content/summer-school/2021/resources/lab-notebooks/lab-1.ipynb">[en]</a> <a href="/content/summer-school/2021/resources/lab-notebooks/lab-1-ja.ipynb">[ja]</a>

#

# In this lab, you will learn how to construct quantum states and circuits, and run a simple quantum algorithm.

#

# Quantum States and Circuits:

# * Graded Exercise 1-1: Bit Flip

# * Graded Exercise 1-2: Plus State

# * Graded Exercise 1-3: Minus State

# * Graded Exercise 1-4: Complex State

# * Graded Exercise 1-5: Bell State

# * Graded Exercise 1-6: GHZ-like State

#

# The Deutsch-Jozsa Algorithm:

# * Graded Exercise 1-7: Classical Deutsch-Jozsa

# * Graded Exercise 1-8: Quantum Deutsch-Jozsa

#

# ### Lab Tips / Hints

#

# * For all the exercises with gates, add only the required gate(s) and nothing else.
# * For Ex6, look at the GHZ state example and consider how it changes if you add an X gate.
# * For Ex7, "balanced" means that half of the outputs are '0' and the other half are '1'. So in the worst case you have to test half of the possible inputs plus one.
# * For Ex8, think of the algorithm in parts. A good reference is the [Qiskit textbook chapter on the D-J algorithm](https://learn.qiskit.org/course/ch-algorithms/deutsch-jozsa-algorithm#full_alg).
#
# * To test your gate, go to IBM Composer [here](https://quantum-computing.ibm.com/composer/files/new). As per the docs, "IBM Quantum Composer is a graphical quantum programming tool that lets you drag and drop operations to build quantum circuits and run them on real quantum hardware or simulators."
# * For Ex7, the formula is given in the Lecture 2.1 notes, under Deutsch-Jozsa Algorithm > Classical Solution.
# * For an alternate solution to Ex6, one can use a Y gate, which performs a bit flip and a phase flip on the second qubit; e.g., $|000\rangle + |111\rangle$ changes to $|010\rangle - |101\rangle$ (up to a global phase).

#

# <!-- ::: q-block.reminder -->

#

# ### FAQ

#

# <details>

# <summary>Do I need to watch the first two lectures to complete the lab?</summary>

# It’s recommended but not mandatory.

# </details>

#

# <!-- ::: -->

#

# ### Suggested resources

# - Watch daytonellwanger on [Introduction to Quantum Computing (14) - Quantum Circuits and Gates](https://www.youtube.com/watch?v=wLv20RHqlgw)

# - Watch sentdex on [Deutsch Jozsa Algorithm - Quantum Computer Programming w/ Qiskit p.3](https://www.youtube.com/watch?v=_BHvE_pwF6E)

# - Read Qiskit on [Single Qubit Gates](/course/ch-states/single-qubit-gates)

# - Read Qiskit on [Deutsch-Jozsa Algorithm](https://learn.qiskit.org/course/ch-algorithms/deutsch-jozsa-algorithm#full_alg)

# - Read StackExchange on [Implementing Four Bell States on IBMQ](https://quantumcomputing.stackexchange.com/questions/2258/how-to-implement-the-4-bell-states-on-the-ibm-q-composer)

# - Watch Dr. <NAME> on [Quantum Computing with Qiskit Fundamentals](https://www.youtube.com/watch?v=A2ViFq0yhLI)

# - Watch Dr. <NAME> on [Quantum Computing with Qiskit Introduction to Algorithms](https://www.youtube.com/watch?v=uuCUUW6Bu2g)

# - Read Analysis of Deutsch-Jozsa Quantum Algorithm on [Suggested Resources: Materials ](https://eprint.iacr.org/2018/249.pdf)

# - Read <NAME> al. on [Quantum Engineer’s Guide to Superconducting Qubits](https://arxiv.org/pdf/1904.06560.pdf)

# - Read <NAME>. on [Classical and Quantum Logic Gates](http://www2.optics.rochester.edu/~stroud/presentations/muthukrishnan991/LogicGates.pdf)
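# The Ex7 tip in the Lab Tips section says a classical algorithm needs, in the worst case,
# half of the possible inputs plus one. A minimal sketch of that count for n input bits,
# assuming the function is promised to be either constant or balanced. The names
# `classical_worst_case_queries` and `classify` are hypothetical and not part of the lab grader.

```python
from itertools import product


def classical_worst_case_queries(n):
    """Worst-case number of oracle calls a deterministic classical algorithm needs for n input bits."""
    return 2 ** (n - 1) + 1


def classify(f, n):
    """Query f on successive inputs until the answer is certain; return (label, number_of_queries)."""
    seen = set()
    for queries, bits in enumerate(product([0, 1], repeat=n), start=1):
        seen.add(f(bits))
        if len(seen) == 2:
            # two different outputs observed: cannot be constant
            return "balanced", queries
        if queries == classical_worst_case_queries(n):
            # more than half of all inputs agree: cannot be balanced
            return "constant", queries


constant_f = lambda bits: 0
balanced_f = lambda bits: bits[0]  # first bit decides the output, so half of the inputs give 1

print(classify(constant_f, 3))
print(classify(balanced_f, 3))
```

# For this query order, `balanced_f` is a worst case: its first four outputs agree, so the
# fifth query is needed, which equals 2**(3-1) + 1.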

|

notebooks/summer-school/2021/lab1.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Imports

#

# We use *io* for byte IO streaming, *cairosvg* for SVG rasterization, *pdf2image* for PDF rasterization, and *PIL* for image processing:

# +

import io

from cairosvg import svg2png

from pdf2image import convert_from_path

from PIL import Image

# -

# # Synthetic data generation

#

# First we load an example document. We use a low DPI (dots per inch), since the added detail is not expected to increase detection accuracy:

# +

pdf_file = '../data/documents/ak10900_selostus.pdf'

pdf_page = convert_from_path(pdf_file, dpi=50)

# -

# We can visualize one of the pages by simply indexing into the list:

# + tags=[]

print('Image size:', pdf_page[0].size, '(px)')

pdf_page[0]

# -

# And here's one of the pages that originally may have contained a signature:

# + tags=[]

print('Image size:', pdf_page[36].size, '(px)')

pdf_page[36]

# -

# Next we'll load the signature of a former US head of state:

# + tags=[]

signature = Image.open(io.BytesIO(svg2png(url='https://upload.wikimedia.org/wikipedia/commons/4/46/George_HW_Bush_Signature.svg')))

# Reduce the size to be more in line with the document

signature = signature.resize((signature.width//4, signature.height//4))

print('Image size:', signature.size, '(px)')

signature

# -

# Finally we simply paste the signature onto the target document page at the correct location:

page = pdf_page[36].copy()

page.paste(signature, (130, 360), signature)

page
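# If the pasted page is meant to serve as a labelled example for a detector, the paste
# position and the signature size already determine a bounding box. A small sketch under
# that assumption; `pasted_box` is a hypothetical helper, and the signature size used
# below is a made-up example rather than the real size of the resized image.

```python
def pasted_box(top_left, size):
    """Return (left, upper, right, lower) for an image of `size` pasted at `top_left`."""
    x, y = top_left
    width, height = size
    return (x, y, x + width, y + height)


# (130, 360) is the paste position used above; (90, 25) is an illustrative signature size.
box = pasted_box((130, 360), (90, 25))
print(box)
```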

|

notebooks/Synthetic data generation demo.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

from flask import Flask

from flask_sqlalchemy import SQLAlchemy

from sqlalchemy import BigInteger, Boolean, CheckConstraint, Column, DateTime, Float, ForeignKey, Integer, SmallInteger, String, Text, UniqueConstraint

from sqlalchemy.orm import relationship

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql+psycopg2://postgres@localhost/dev_logware3'

app.config['SQLALCHEMY_TRACK_MODIFICATIONS'] = False

db = SQLAlchemy(app)

conn = db.engine.connect().connection

# +

class Annotation(db.Model):
    __tablename__ = 'annotations'

    annotation_guid = db.Column(db.String(32), primary_key=True)
    reading_guid = db.Column(db.ForeignKey('readings.reading_guid'), nullable=False)
    annotation = db.Column(db.Text)

    reading = db.relationship('Reading', primaryjoin='Annotation.reading_guid == Reading.reading_guid', backref='annotations')


class Asset(db.Model):
    __tablename__ = 'assets'
    __table_args__ = (
        db.CheckConstraint("(model)::text <> ''::text"),
        db.CheckConstraint("(serial)::text <> ''::text"),
        db.UniqueConstraint('model', 'serial')
    )

    asset_guid = db.Column(db.String(32), primary_key=True)
    asset_type = db.Column(db.SmallInteger, nullable=False)
    model = db.Column(db.String(32), nullable=False)
    serial = db.Column(db.String(32), nullable=False)
    active = db.Column(db.Boolean)
    deleted = db.Column(db.Boolean)
    asset_password = db.Column(db.String(20))
    notes = db.Column(db.Text)


class LicenseInUse(db.Model):
    __tablename__ = 'license_in_use'

    license_in_use_guid = db.Column(db.String(32), primary_key=True)
    computer_name = db.Column(db.Text, nullable=False)
    user_guid = db.Column(db.ForeignKey('users.user_guid'), nullable=False)
    license_guid = db.Column(db.ForeignKey('licenses.license_guid'), nullable=False)
    time_stamp = db.Column(db.DateTime, nullable=False)

    license = db.relationship('License', primaryjoin='LicenseInUse.license_guid == License.license_guid', backref='license_in_uses')
    user = db.relationship('User', primaryjoin='LicenseInUse.user_guid == User.user_guid', backref='license_in_uses')


class License(db.Model):
    __tablename__ = 'licenses'

    license_guid = db.Column(db.String(32), primary_key=True)
    license_type = db.Column(db.SmallInteger, nullable=False)
    license_serial = db.Column(db.String(20))
    version = db.Column(db.String(20))
    date_applied = db.Column(db.DateTime, nullable=False)
    logins_remaining = db.Column(db.Integer)
    license_id = db.Column(db.Text, nullable=False)
    deleted = db.Column(db.Boolean)


class Location(db.Model):
    __tablename__ = 'locations'
    __table_args__ = (
        db.CheckConstraint("(location_name)::text <> ''::text"),
    )

    location_guid = db.Column(db.String(32), primary_key=True)
    location_name = db.Column(db.String(20), nullable=False, unique=True)
    active = db.Column(db.Boolean)
    deleted = db.Column(db.Boolean)
    notes = db.Column(db.Text)


class LogSession(db.Model):
    __tablename__ = 'log_sessions'

    log_session_guid = db.Column(db.String(32), primary_key=True)
    session_start = db.Column(db.DateTime, nullable=False)
    session_end = db.Column(db.DateTime)
    logging_interval = db.Column(db.Integer, nullable=False)
    logger_guid = db.Column(db.ForeignKey('assets.asset_guid'), nullable=False)
    user_guid = db.Column(db.ForeignKey('users.user_guid'), nullable=False)
    session_type = db.Column(db.SmallInteger, nullable=False)
    computer_name = db.Column(db.Text, nullable=False)

    asset = db.relationship('Asset', primaryjoin='LogSession.logger_guid == Asset.asset_guid', backref='log_sessions')
    user = db.relationship('User', primaryjoin='LogSession.user_guid == User.user_guid', backref='log_sessions')


class Reading(db.Model):
    __tablename__ = 'readings'

    reading_guid = db.Column(db.String(32), primary_key=True)
    reading = db.Column(db.Float(53), nullable=False)
    reading_type = db.Column(db.SmallInteger, nullable=False)
    time_stamp = db.Column(db.DateTime, nullable=False)
    log_session_guid = db.Column(db.ForeignKey('log_sessions.log_session_guid'), nullable=False)
    sensor_guid = db.Column(db.ForeignKey('assets.asset_guid'), nullable=False)
    location_guid = db.Column(db.ForeignKey('locations.location_guid'), nullable=False)
    channel = db.Column(db.SmallInteger, nullable=False)
    max_alarm = db.Column(db.Boolean)
    max_alarm_value = db.Column(db.Float(53))
    min_alarm = db.Column(db.Boolean)
    min_alarm_value = db.Column(db.Float(53))
    compromised = db.Column(db.Boolean)

    location = db.relationship('Location', primaryjoin='Reading.location_guid == Location.location_guid', backref='readings')
    log_session = db.relationship('LogSession', primaryjoin='Reading.log_session_guid == LogSession.log_session_guid', backref='readings')
    asset = db.relationship('Asset', primaryjoin='Reading.sensor_guid == Asset.asset_guid', backref='readings')


class SensorParameter(db.Model):
    __tablename__ = 'sensor_parameters'

    log_session_guid = db.Column(db.ForeignKey('log_sessions.log_session_guid'), primary_key=True, nullable=False)
    channel = db.Column(db.SmallInteger, primary_key=True, nullable=False)
    parameter_name = db.Column(db.String(128), primary_key=True, nullable=False)
    parameter_value = db.Column(db.String(128), nullable=False)

    log_session = db.relationship('LogSession', primaryjoin='SensorParameter.log_session_guid == LogSession.log_session_guid', backref='sensor_parameters')


class User(db.Model):
    __tablename__ = 'users'
    __table_args__ = (
        db.CheckConstraint("(login_name)::text <> ''::text"),
    )

    user_guid = db.Column(db.String(32), primary_key=True)
    login_name = db.Column(db.String(32), nullable=False, unique=True)
    first_name = db.Column(db.String(64))
    last_name = db.Column(db.String(64))
    user_password = db.Column(db.String(64))
    user_group = db.Column(db.SmallInteger)
    permissions = db.Column(db.BigInteger)
    active = db.Column(db.Boolean)
    deleted = db.Column(db.Boolean)
    change = db.Column(db.Boolean)
    notes = db.Column(db.Text)


class Version(db.Model):
    __tablename__ = 'versions'

    db_version = db.Column(db.String(20), primary_key=True)
    client_version = db.Column(db.String(20))

# -

records = db.session.query(Reading).join(Reading.location).filter_by(location_name='ONSITE1').filter(Reading.time_stamp.between('2017-03-26', '2017-03-28'))

criteria = {"location_name_1": 'ONSITE1', "time_stamp_1":'2017-01-26', "time_stamp_2":'2017-03-28'}

df = pd.read_sql(('''

SELECT readings.reading_guid, readings.reading , readings.reading_type , readings.time_stamp , locations.location_name , readings.sensor_guid , readings.location_guid AS readings_location_guid, readings.channel AS readings_channel, readings.max_alarm AS readings_max_alarm, readings.max_alarm_value AS readings_max_alarm_value, readings.min_alarm AS readings_min_alarm, readings.min_alarm_value AS readings_min_alarm_value, readings.compromised AS readings_compromised

FROM readings JOIN locations ON readings.location_guid = locations.location_guid

WHERE locations.location_name = %(location_name_1)s AND readings.time_stamp BETWEEN %(time_stamp_1)s AND %(time_stamp_2)s'''),

conn, params=criteria)

df.info()

df
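# The query above passes its filter values as named parameters rather than formatting
# them into the SQL string. A self-contained sketch of the same pattern against an
# in-memory SQLite database. Note the assumptions: SQLite uses the `:name` placeholder
# style instead of the `%(name)s` style used with psycopg2 above, the two tables are cut
# down to a few columns, and all rows are made up.

```python
import sqlite3

demo_conn = sqlite3.connect(":memory:")
demo_conn.executescript("""
    CREATE TABLE locations (location_guid TEXT PRIMARY KEY, location_name TEXT);
    CREATE TABLE readings (reading_guid TEXT PRIMARY KEY, reading REAL,
                           time_stamp TEXT, location_guid TEXT);
    INSERT INTO locations VALUES ('L1', 'ONSITE1'), ('L2', 'OFFSITE');
    INSERT INTO readings VALUES
        ('R1', 21.5, '2017-02-01 00:00:00', 'L1'),
        ('R2', 22.0, '2017-04-01 00:00:00', 'L1'),
        ('R3', 19.0, '2017-02-01 00:00:00', 'L2');
""")

demo_criteria = {"location_name_1": "ONSITE1",
                 "time_stamp_1": "2017-01-26",
                 "time_stamp_2": "2017-03-28"}

# The driver binds the values; they are never spliced into the SQL text.
rows = demo_conn.execute(
    """
    SELECT readings.reading_guid, readings.reading, locations.location_name
    FROM readings JOIN locations ON readings.location_guid = locations.location_guid
    WHERE locations.location_name = :location_name_1
      AND readings.time_stamp BETWEEN :time_stamp_1 AND :time_stamp_2
    """,
    demo_criteria,
).fetchall()
print(rows)
```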

def celsius_to_fahr(temp_celsius):
    """Convert Celsius to Fahrenheit

    Return Fahrenheit conversion of input"""
    temp_fahr = (temp_celsius * 1.8) + 32
    return temp_fahr
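# A quick check of the conversion against the freezing and boiling points of water.
# `fahr_to_celsius` is a hypothetical inverse helper added only for the round-trip check;
# it is not part of the notebook.

```python
def celsius_to_fahr(temp_celsius):
    """Convert a temperature from Celsius to Fahrenheit."""
    return temp_celsius * 1.8 + 32


def fahr_to_celsius(temp_fahr):
    """Inverse conversion (hypothetical helper, not part of the notebook)."""
    return (temp_fahr - 32) / 1.8


freezing = celsius_to_fahr(0)
boiling = celsius_to_fahr(100)
round_trip = fahr_to_celsius(celsius_to_fahr(21.5))
print(freezing, boiling, round_trip)
```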

# convert temps to fahrenheit

df.loc[df['reading_type'] == 0, 'reading'] = df.reading.apply(celsius_to_fahr)

df

locs = [loc for loc, in db.session.query(Location.location_name)]

summary = pd.DataFrame(index=None, columns=['LOCATION', 'SPECIFICATION', 'START_DATE', 'END_DATE', 'FIRST_POINT_RECORDED', 'LAST_POINT_RECORDED', 'TOTAL_HOURS_EVALUATED', 'TOTAL_HOURS_RECORDED', 'TOTAL_HOURS_OUT', 'PERCENT_OUT', 'HOURS_TEMP_HIGH', 'HOURS_TEMP_LOW', 'HOURS_RH_HIGH', 'HOURS_RH_LOW', 'HOURS_OVERLAP', 'HOURS_NO_DATA', 'INT_GREATER_THAN_15', 'HRS_DOWN_FOR_MAINT', 'DUPE_RECORDS'])

summary

df.location_name.unique()

summary.LOCATION = df.location_name.unique()

summary

temps = df[df['reading_type']==0]

temps.dtypes

temps = temps.set_index('time_stamp')

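# total_seconds() also counts whole days; .dt.seconds would wrap around for gaps longer than a day
temps['duration'] = temps.index.to_series().diff().dt.total_seconds().div(60, fill_value=0)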

temps.describe()
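# When computing gaps between timestamps in minutes, note that the `seconds` attribute of
# a timedelta holds only the part of the gap below one day, whereas `total_seconds()`
# covers the whole gap. The pandas `.dt.seconds` and `.dt.total_seconds()` accessors
# follow the same convention. A standard-library sketch with made-up timestamps:

```python
from datetime import datetime

stamps = [
    datetime(2017, 3, 26, 0, 0),
    datetime(2017, 3, 26, 0, 15),
    datetime(2017, 3, 27, 0, 45),   # a gap of 1 day and 30 minutes
]

gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
minutes_component_only = [gap.seconds / 60 for gap in gaps]   # drops whole days
minutes_total = [gap.total_seconds() / 60 for gap in gaps]    # full gap length
print(minutes_component_only)
print(minutes_total)
```

# A logger outage longer than a day would therefore be under-counted with `seconds` alone.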

temp_hi = temps[temps.reading > 72]

temp_low = temps[temps.reading < 69]

temp_low.describe()

t_hr_hi = temp_hi.duration.sum(axis=0) / 60

t_hr_hi

t_hr_low = temp_low.duration.sum(axis=0) / 60

t_hr_low

t_gap = temps[temps.duration > 15]

t_gap_hrs = t_gap.duration.sum(axis=0) / 60

t_gap

t_gap_hrs

# df.loc[df['line_race'] == 0, 'rating'] = 0

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'START_DATE'] = pd.to_datetime(criteria.get('time_stamp_1'))

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'END_DATE'] = pd.to_datetime(criteria.get('time_stamp_2'))

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'FIRST_POINT_RECORDED'] = df.time_stamp.min()

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'LAST_POINT_RECORDED'] = df.time_stamp.max()

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_EVALUATED'] = (summary.END_DATE - summary.START_DATE).astype('timedelta64[s]') / 3600.0

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_RECORDED'] = (summary.LAST_POINT_RECORDED - summary.FIRST_POINT_RECORDED).astype('timedelta64[s]') / 3600.0

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_TEMP_HIGH'] = temp_hi.duration.sum(axis=0) / 60

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_TEMP_LOW'] = temp_low.duration.sum(axis=0) / 60

# rh_hi and rh_low are defined in the relative-humidity cells further down; run those first
summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_RH_HIGH'] = rh_hi.duration.sum(axis=0) / 60

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_RH_LOW'] = rh_low.duration.sum(axis=0) / 60

# t_gap_hrs is already in hours, so it is subtracted directly
summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_RECORDED'] = (((summary.LAST_POINT_RECORDED - summary.FIRST_POINT_RECORDED).astype('timedelta64[s]') / 3600.0) - t_gap_hrs)

summary

rh = df[df['reading_type']==1]

rh.dtypes

rh = rh.set_index('time_stamp')

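# total_seconds() also counts whole days; .dt.seconds would wrap around for gaps longer than a day
rh['duration'] = rh.index.to_series().diff().dt.total_seconds().div(60, fill_value=0)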

rh.describe()

rh_hi = rh[rh.reading > 29.5]

rh_low = rh[rh.reading < 25.5]

rh_low.describe()

rh_hr_hi = rh_hi.duration.sum(axis=0) / 60

rh_hr_hi

rh_hr_low = rh_low.duration.sum(axis=0) / 60

rh_hr_low

rh_gap = rh[rh.duration > 15]

# duration is in minutes; divide by 60 so the value is in hours, as for t_gap_hrs
rh_gap_hrs = rh_gap.duration.sum(axis=0) / 60

rh_gap

rh_gap_hrs

a = ((summary.LAST_POINT_RECORDED - summary.FIRST_POINT_RECORDED).astype('timedelta64[s]') / 3600.0)

a - t_gap_hrs

pd.concat([t_gap, rh_gap], keys=['reading_type', 'time_stamp'])

len(pd.merge(t_gap, rh_gap, left_index=True, right_index=True))

# +

# df.loc[df['line_race'] == 0, 'rating'] = 0

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'START_DATE'] = pd.to_datetime(criteria.get('time_stamp_1'))

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'END_DATE'] = pd.to_datetime(criteria.get('time_stamp_2'))

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'FIRST_POINT_RECORDED'] = df.time_stamp.min()

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'LAST_POINT_RECORDED'] = df.time_stamp.max()

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_EVALUATED'] = (summary.END_DATE - summary.START_DATE).astype('timedelta64[s]') / 3600.0

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_TEMP_HIGH'] = temp_hi.duration.sum(axis=0) / 60

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_TEMP_LOW'] = temp_low.duration.sum(axis=0) / 60

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_RECORDED'] = ((summary.LAST_POINT_RECORDED - summary.FIRST_POINT_RECORDED).astype('timedelta64[s]') / 3600.0) - t_gap_hrs

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'HOURS_NO_DATA'] = t_gap_hrs

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'INT_GREATER_THAN_15'] = len(pd.merge(t_gap, rh_gap, left_index=True, right_index=True))

summary.loc[summary.LOCATION == criteria.get('location_name_1'), 'TOTAL_HOURS_OUT'] = summary[['HOURS_TEMP_HIGH', 'HOURS_TEMP_LOW', 'HOURS_RH_HIGH', 'HOURS_RH_LOW']].sum(axis=1)

summary

# -

criteria.get('location_name_1')

|

notebooks/exploratory/0.4-jlawton-logware3-exploration-with-flask-sqlalchemy.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

import keras

import tensorflow as tf

print(keras.__version__)

print(tf.__version__)

# +

import numpy as np

import pandas as pd

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.model_selection import train_test_split

from sklearn.metrics import classification_report,confusion_matrix

NGRAMS = 2

EPOCHS = 25

# Wikilabels

df = pd.read_csv('../data/wiki/wiki_name_race.csv')

df.dropna(subset=['name_first', 'name_last'], inplace=True)

sdf = df

# Additional features

sdf['name_first'] = sdf.name_first.str.title()

sdf.groupby('race').agg({'name_first': 'count'})

# -

# ## Preprocessing the input data

# +

# only the last name will be used to train the model

sdf['name_last_name_first'] = sdf['name_last']

# build n-gram list

vect = CountVectorizer(analyzer='char', max_df=0.3, min_df=3, ngram_range=(NGRAMS, NGRAMS), lowercase=False)

a = vect.fit_transform(sdf.name_last_name_first)

vocab = vect.vocabulary_

# sort n-gram by freq (highest -> lowest)

words = []

for b in vocab:
    c = vocab[b]
    #print(b, c, a[:, c].sum())
    words.append((a[:, c].sum(), b))
    #break

words = sorted(words, reverse=True)

words_list = ['UNK']

words_list.extend([w[1] for w in words])

num_words = len(words_list)

print("num_words = %d" % num_words)

def find_ngrams(text, n):
    # slide a window of n characters over the text
    a = zip(*[text[i:] for i in range(n)])
    wi = []
    for i in a:
        w = ''.join(i)
        try:
            idx = words_list.index(w)
        except ValueError:
            idx = 0  # n-gram not in the vocabulary -> 'UNK'
        wi.append(idx)
    return wi

# build X from index of n-gram sequence

X = np.array(sdf.name_last_name_first.apply(lambda c: find_ngrams(c, NGRAMS)))

# check max/avg feature

X_len = []

for x in X:
    X_len.append(len(x))

max_feature_len = max(X_len)

avg_feature_len = int(np.mean(X_len))

print("Max feature len = %d, Avg. feature len = %d" % (max_feature_len, avg_feature_len))

y = np.array(sdf.race.astype('category').cat.codes)

# Split train and test dataset

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=21, stratify=y)

# -
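# A small sketch of the n-gram indexing step on a toy vocabulary. The names `char_ngrams`, `ngrams_to_indices`, `toy_words_list`, and `toy_index` are hypothetical and not part of the notebook. It swaps the list search for a dictionary lookup, which gives the same indices as long as the vocabulary has no duplicate entries.

```python
def char_ngrams(text, n):
    """Return the overlapping character n-grams of `text`, in order."""
    # Zipping n shifted copies of the string stops at the shortest copy,
    # which yields exactly len(text) - n + 1 n-grams.
    return ["".join(chars) for chars in zip(*[text[i:] for i in range(n)])]


def ngrams_to_indices(text, n, index_of):
    """Map each n-gram to its vocabulary index, using 0 ('UNK') when unseen."""
    return [index_of.get(gram, 0) for gram in char_ngrams(text, n)]


# Hypothetical toy vocabulary; index 0 is reserved for 'UNK'.
toy_words_list = ["UNK", "mi", "it", "th", "Sm"]
toy_index = {gram: i for i, gram in enumerate(toy_words_list)}

print(char_ngrams("Smith", 2))
print(ngrams_to_indices("Smith", 2, toy_index))
print(ngrams_to_indices("Smyth", 2, toy_index))
```

# Unseen bigrams such as "my" and "yt" fall back to index 0, which matches the role of 'UNK' in the vocabulary list.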

# ## Train a LSTM model

#

# ref: http://machinelearningmastery.com/sequence-classification-lstm-recurrent-neural-networks-python-keras/

# +

'''The dataset is actually too small for LSTM to be of any advantage

compared to simpler, much faster methods such as TF-IDF + LogReg.

Notes:

- RNNs are tricky. Choice of batch size is important,

choice of loss and optimizer is critical, etc.

Some configurations won't converge.

- LSTM loss decrease patterns during training can be quite different

from what you see with CNNs/MLPs/etc.

'''

from keras.preprocessing import sequence

from keras.models import Sequential

from keras.layers import Dense, Embedding, Dropout, Activation

from keras.layers import LSTM

from keras.layers.convolutional import Conv1D

from keras.layers.convolutional import MaxPooling1D

from keras.models import load_model

max_features = num_words # 20000

feature_len = 20 # avg_feature_len # cut texts after this number of words (among top max_features most common words)

batch_size = 32

print(len(X_train), 'train sequences')

print(len(X_test), 'test sequences')

print('Pad sequences (samples x time)')

X_train = sequence.pad_sequences(X_train, maxlen=feature_len)

X_test = sequence.pad_sequences(X_test, maxlen=feature_len)

print('X_train shape:', X_train.shape)

print('X_test shape:', X_test.shape)

num_classes = np.max(y_train) + 1

print(num_classes, 'classes')

print('Convert class vector to binary class matrix '
      '(for use with categorical_crossentropy)')

y_train = tf.keras.utils.to_categorical(y_train, num_classes)

y_test = tf.keras.utils.to_categorical(y_test, num_classes)

print('y_train shape:', y_train.shape)

print('y_test shape:', y_test.shape)
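# To make the two preprocessing steps concrete, here is a NumPy-only sketch of what they produce. It assumes the Keras defaults of padding and truncating at the front of each sequence; `pad_pre` and `one_hot` are hypothetical helper names, not Keras functions.

```python
import numpy as np


def pad_pre(seqs, maxlen, value=0):
    """Left-pad and left-truncate integer sequences to a fixed length."""
    out = np.full((len(seqs), maxlen), value, dtype="int32")
    for row, seq in enumerate(seqs):
        kept = list(seq)[-maxlen:]          # keep the last `maxlen` items
        if kept:
            out[row, -len(kept):] = kept    # right-align, padding on the left
    return out


def one_hot(labels, num_classes):
    """Turn integer class codes into rows of a binary class matrix."""
    return np.eye(num_classes, dtype="float32")[np.asarray(labels)]


padded = pad_pre([[4, 1, 2, 3], [7], [1, 2, 3, 4, 5, 6]], maxlen=5)
encoded = one_hot([0, 2, 1], 3)
print(padded)
print(encoded)
```

# Short names get leading zeros (the 'UNK' index), and names longer than the limit lose their first n-grams.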

# +

print('Build model...')

model = Sequential()

model.add(Embedding(num_words, 32, input_length=feature_len))

model.add(LSTM(128, dropout=0.2, recurrent_dropout=0.2))

model.add(Dense(num_classes, activation='softmax'))

# try using different optimizers and different optimizer configs

model.compile(loss='categorical_crossentropy',
              optimizer='adam',
              metrics=['accuracy'])

print(model.summary())

# -
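# As a rough check on the model summary, the trainable parameter counts of the three layers can be worked out by hand. The sketch below uses the standard formulas for an embedding, a four-gate LSTM with biases, and a dense layer; `toy_vocab_size` and `toy_num_classes` are made-up values standing in for `num_words` and `num_classes`.

```python
def embedding_params(vocab_size, embed_dim):
    # One embed_dim-sized vector per vocabulary entry.
    return vocab_size * embed_dim


def lstm_params(input_dim, units):
    # Four gates, each with input weights, recurrent weights, and a bias.
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim, units):
    # A weight matrix plus one bias per output unit.
    return input_dim * units + units


toy_vocab_size = 1000   # hypothetical; the notebook uses num_words
toy_num_classes = 13    # hypothetical; the notebook derives this from y_train

total = (embedding_params(toy_vocab_size, 32)
         + lstm_params(32, 128)
         + dense_params(128, toy_num_classes))
print(lstm_params(32, 128), total)
```

# The LSTM layer size does not depend on the vocabulary, so most of the variation between datasets comes from the embedding table.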

print('Train...')

model.fit(X_train, y_train, batch_size=batch_size, epochs=EPOCHS,
          validation_split=0.1, verbose=1)

score, acc = model.evaluate(X_test, y_test,
                            batch_size=batch_size, verbose=1)

print('Test score:', score)

print('Test accuracy:', acc)

# ## Confusion Matrix

p = model.predict(X_test, verbose=2) # to predict probability

y_pred = np.argmax(p, axis=-1)

target_names = list(sdf.race.astype('category').cat.categories)

print(classification_report(np.argmax(y_test, axis=1), y_pred, target_names=target_names))

print(confusion_matrix(np.argmax(y_test, axis=1), y_pred))
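# The step from predicted probabilities to a confusion matrix can be sketched with NumPy alone. The probabilities and labels below are made up, and `confusion_counts` is a hypothetical helper that follows the rows-are-true, columns-are-predicted layout.

```python
import numpy as np


def confusion_counts(y_true, y_pred, num_classes):
    """Count samples per (true class, predicted class) pair."""
    cm = np.zeros((num_classes, num_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)   # unbuffered add handles repeated pairs
    return cm


# Hypothetical predicted probabilities for 4 samples and 3 classes.
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.3, 0.6],
                  [0.2, 0.5, 0.3],
                  [0.6, 0.3, 0.1]])
y_true = np.array([0, 2, 2, 1])

y_pred = np.argmax(probs, axis=-1)       # most probable class per sample
cm = confusion_counts(y_true, y_pred, 3)
accuracy = np.trace(cm) / cm.sum()       # correct predictions sit on the diagonal
print(cm)
print(accuracy)
```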

# ## Save model

model.save('./wiki/lstm/wiki_ln_lstm.h5')

words_df = pd.DataFrame(words_list, columns=['vocab'])

words_df.to_csv('./wiki/lstm/wiki_ln_vocab.csv', index=False, encoding='utf-8')

sdf.groupby('race').agg({'name_first': 'count'}).to_csv('./wiki/lstm/wiki_race.csv', columns=[])

|

ethnicolr/models/ethnicolr_keras_lstm_wiki_ln.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + id="w_6GmnEWZntb"

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

import matplotlib.pyplot as plt #Plotting

# %matplotlib inline

import warnings #What to do with warnings

warnings.filterwarnings("ignore") #Ignore the warnings

plt.rcParams["figure.figsize"] = (10,10) #Make the plots bigger by default

plt.rcParams["lines.linewidth"] = 2 #Setting the default line width

plt.style.use("ggplot") #Define the style of the plot

from statsmodels.tsa.seasonal import seasonal_decompose #Describes the time data

from statsmodels.tsa.stattools import adfuller #Check if data is stationary

from statsmodels.graphics.tsaplots import plot_acf #Compute lag for ARIMA

from statsmodels.graphics.tsaplots import plot_pacf #Compute partial lag for ARIMA

from statsmodels.tsa.arima_model import ARIMA #Predictions and Forecasting

# + id="Gr5EhDRKZt3a" outputId="4ccd6c6f-0fe8-49f2-e1b1-2d9abb63be78"

amazon = pd.read_csv("../input/amazonstocks/AMZN (1).csv") #Get our stock data from the CSV

amazon.head(10) #Take a peek at the Amazon data

# + id="zBBHnd46Zt6u" outputId="2bc19821-82e5-4709-f3c5-68cc205d425f"

print(amazon.isnull().any()) #Check for null values

# + id="Km0Bm2RiZt9s" outputId="8f0f22a6-3709-49ae-afd5-9d44522be758"

amazonOpen = amazon[["Date", "Open"]].copy() #Get the date and open columns

amazonOpen["Date"] = pd.to_datetime(amazonOpen["Date"]) #Ensure the date data is in datetime format

amazonOpen.set_index("Date", inplace = True) #Set the date to the index

amazonOpen = amazonOpen.asfreq("b") #Set the frequency

amazonOpen = amazonOpen.fillna(method = "bfill") #Fill null values with future values

#amazonOpen.index #Make sure the frequency remains intact

amazonOpen.head(12) #Take a peek at the open data

# + id="pPrdpiNfZuAu" outputId="e75000a9-3842-44c2-9403-c80bae49dd85"

y = amazonOpen.plot(title = "Amazon Stocks (Open)") #Get an idea of the data

y.set(ylabel = "Price at Open") #Set the y label to open

plt.show() #Show the plot

# + id="2gwW04PrZuDt" outputId="ccf71d28-5fcd-404a-f933-ffcf6479399b"

decomp = seasonal_decompose(amazonOpen, model = "multiplicative") #Decompose the data

x = decomp.plot() #Plot the decomposed data

# + id="7KpDogq6ZuG-" outputId="d4bbeeef-0718-42bb-b683-47b8246df2c8"

print("ADFuller Test; Significance: 0.05") #Print the significance level

adf = adfuller(amazonOpen["Open"]) #Call adfuller to test

print("ADF test p-value is {}".format(adf[1])) #Print the adfuller p-value (index 1 of the result)

# + id="5Z1Anpc_ZuKu" outputId="ec27294e-298f-4759-aeff-3d01a88d8e23"

openLog = np.log(amazonOpen) #Take the log of the set for normalization

openStationary = openLog - openLog.shift() #Get a stationary set by subtracting the shifted set

openStationary = openStationary.dropna() #Drop generated null values from the set

openStationary.plot(title = "Stationary Amazon Stocks") #Plot the stationary set
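# Subtracting the shifted log series gives the log of the ratio between consecutive prices (the log return). A short NumPy sketch on made-up prices shows the identity and how the original levels can be rebuilt from the differences; the `prices` values are purely illustrative.

```python
import numpy as np

prices = np.array([100.0, 110.0, 99.0, 108.9])   # hypothetical opening prices

# Difference of logs, the NumPy counterpart of log_series - log_series.shift().
log_diff = np.diff(np.log(prices))

# The same quantity written as the log of consecutive price ratios.
log_ratio = np.log(prices[1:] / prices[:-1])

# Cumulating the differences and exponentiating recovers the price levels.
rebuilt = prices[0] * np.exp(np.cumsum(log_diff))

print(log_diff)
print(rebuilt)
```

# Differencing drops one observation, which is why the notebook removes the generated null value afterwards.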

# + id="g-EEcTxzZuNt" outputId="88d1c3ea-24f7-4897-ac31-acc7812d565d"

print("ADFuller Test; Significance: 0.05") #Print the significance level

adf = adfuller(openStationary["Open"]) #Call adfuller to test

print("ADF test p-value is {}".format(adf[1])) #Print the adfuller p-value (index 1 of the result)

# + id="y5oUqCq2ZuQx" outputId="a55405b1-7956-4223-89ae-38f64adda97a"

decomp = seasonal_decompose(openStationary) #Decompose the stationary data

x = decomp.plot() #Plot the decomposition

# + id="601me8pDZuUC" outputId="66145dc1-564b-4544-87f9-d7beca4deace"

fig,axes = plt.subplots(2,2) #Set a subset for the data visualizations

a = axes[0,0].plot(amazonOpen["Open"]) #Plot the original data

a = axes[0,0].set_title("Original Data") #Give the original data a name

b = plot_acf(amazonOpen["Open"],ax=axes[0,1]) #Plot the ACF of the original data

x = axes[1,0].plot(openStationary["Open"]) #Plot the stationary data

x = axes[1,0].set_title("Stationary Data") #Give the stationary data a name

y = plot_acf(openStationary["Open"],ax=axes[1,1]) #Plot the ACF of the stationary data

# + id="KpcEJo2AZuW9" outputId="8a84b085-f71f-4771-ffe0-f65b60b52d25"

fig,axes = plt.subplots(1,2) #Create a subplot for the Partial ACF

a = axes[0].plot(openStationary["Open"]) #Plot the stationary data

a = axes[0].set_title("Stationary") #Ensure the stationary data is named

b = plot_pacf(openStationary["Open"], ax = axes[1], method = "ols") #Plot the partial ACF

# + id="s2QPQw11dEgU" outputId="fcf8ca2e-b7d4-4e76-dc74-e863e2ee78a4"

model = ARIMA(openStationary, order = (5, 1, 5)) #Build the ARIMA model

fitModel = model.fit(disp = 1) #Fit the ARIMA model

# + id="eD0btK0adEjo" outputId="1901e11a-91b4-4797-9213-1e40e7ae1650"

plt.rcParams.update({"figure.figsize" : (12,6), "lines.linewidth" : 0.05, "figure.dpi" : 100}) #Fix the look of the graph, dimming it to show the red

x = fitModel.plot_predict(dynamic = False) #Plot the in-sample predictions against the data

x = plt.title("Forecast Fitting") #Add a stock title

plt.show() #Show the ARIMA plot

# + id="2iBlXULxdEtD" outputId="19cf55f6-d7da-42e5-ef44-c4065b863aa4"

plt.rcParams.update({"figure.figsize" : (12,5), "lines.linewidth": 2}) #Fix the line width

length = int((len(amazonOpen)*975)/1000) #Get 975/1000 of the length of the data

print(length) #Print the length to make sure it actually is an int

# + id="t2BA-WGPdEwC"

train = amazonOpen[:length] #Use 975/1000 of the data for the train set

test = amazonOpen[length:] #Use the rest for testing

modelValid = ARIMA(train,order=(5,1,5)) #Create a model for the train set

fitModelValid = modelValid.fit(disp= -1) #Fit the model

# + id="6W7-nNH-dEzC"

fc,se,conf = fitModelValid.forecast(len(amazonOpen) - length) #Forecast over the test area

forecast = pd.Series(fc, index = test.index) #Get the forecast for the area

# + id="Kjm9SfzJdncG" outputId="e41f8db4-7f5c-45fe-aab8-006d348deef1"

#Add labels for the train, test, and forecast

plt.plot(train,label = "Training Data")

plt.plot(test,label = "Actual Continuation")

plt.plot(forecast,label = "Forecasted Continuation", color = "g")

plt.title("ARIMA Forecast") #Add the Forecast title

plt.legend(loc = "upper left") #Put the legend in the top left

plt.xlabel("Year") #Add the year label to the bottom

plt.ylabel("Open Price") #Add the open price to the y axis

# + id="QTOTgC3LdnfN" outputId="53fcfc62-9eec-4708-d59b-914fea97c658"

modelPred = ARIMA(amazonOpen,order=(5,1,5)) #Create a model for the whole data

fitModelPred = modelPred.fit(disp= -1) #Fit the model

# + id="wIXOY-WCdnjA" outputId="7c90f9c9-366d-4fbc-dc95-0de6d78cc4e9"

fitModelPred.plot_predict(1,len(amazonOpen) + 500) #Plot predictions for the next 500 days

x = fitModelPred.forecast(500) #Forecast the prediction for the next 500 days.

x = plt.title("Amazon Stock Forecast") #Add a stock title

x = plt.xlabel("Year") #Add the year label to the bottom

x = plt.ylabel("Open Price") #Add the open price to the y axis

|

Amazon_stock_prediction_model.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

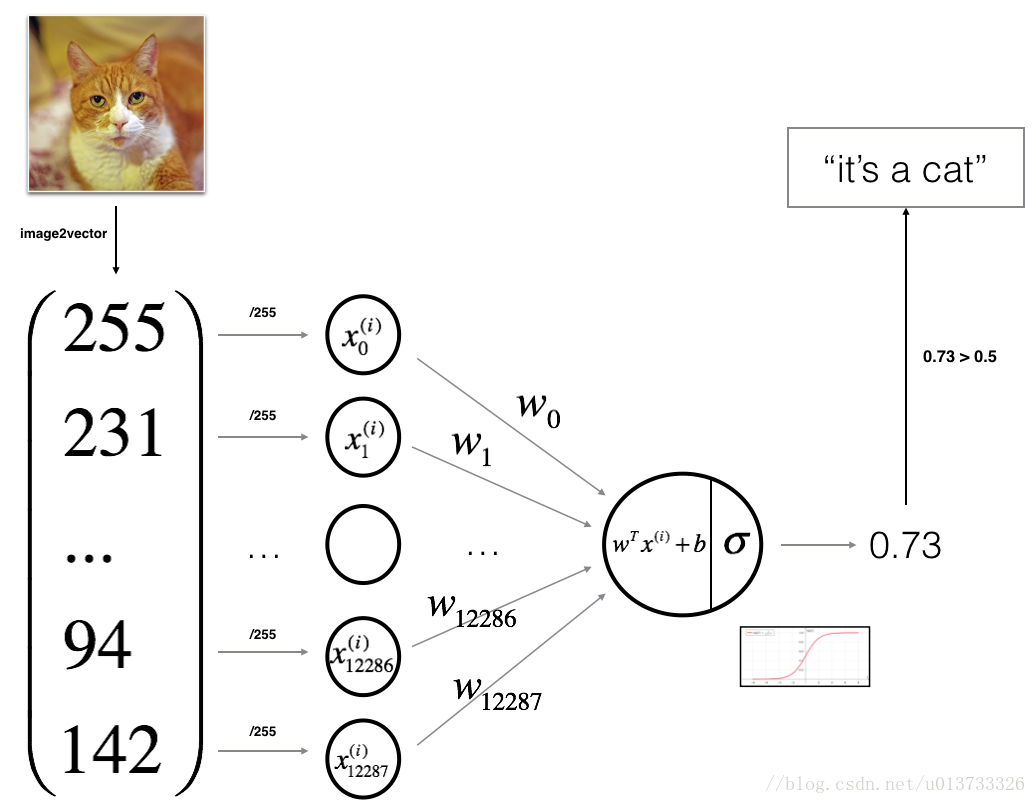

# + [markdown] slideshow={"slide_type": "slide"}

# # Logistic Regression

#

# Logistic regression is a statistical method for predicting binary outcomes from data.

#

# Examples of this are "yes" vs. "no" or "young" vs. "old".

#

# These are categories that translate to a probability of being a 0 or a 1.

#

# Source: [Logistic Regression](https://towardsdatascience.com/real-world-implementation-of-logistic-regression-5136cefb8125)

# + [markdown] slideshow={"slide_type": "subslide"}

# We can calculate the logistic regression by applying an activation function as the final step to our linear model.

#

# This converts the linear regression output to a probability.
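# A minimal sketch of that final step, assuming a sigmoid activation and made-up weights. The names `weights`, `bias`, and `points` are illustrative only; this is not how scikit-learn fits its coefficients.

```python
import numpy as np


def sigmoid(z):
    """Squash any real-valued linear output into the interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


weights = np.array([1.5, -0.5])   # hypothetical coefficients
bias = 0.25                       # hypothetical intercept
points = np.array([[0.0, 0.5], [2.0, 1.0], [-3.0, 2.0]])

linear_output = points @ weights + bias     # the linear model
probability = sigmoid(linear_output)        # probability of class 1
labels = (probability > 0.5).astype(int)    # threshold at one half

print(linear_output)
print(probability)
print(labels)
```

# A linear output of exactly zero maps to a probability of one half, so the decision boundary is the line where the linear model equals zero.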

# + slideshow={"slide_type": "subslide"}

# %matplotlib inline

import matplotlib.pyplot as plt

import pandas as pd

# + [markdown] slideshow={"slide_type": "subslide"}

# Generate some data

# + slideshow={"slide_type": "fragment"}

from sklearn.datasets import make_blobs

X, y = make_blobs(centers=2, random_state=42)

print(f"Labels: {y[:10]}")

print(f"Data: {X[:10]}")

# + slideshow={"slide_type": "subslide"}

# Visualizing both classes

plt.scatter(X[:, 0], X[:, 1], c=y)

# + [markdown] slideshow={"slide_type": "subslide"}

# Split our data into training and testing data

# + slideshow={"slide_type": "fragment"}

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=1)

# + [markdown] slideshow={"slide_type": "subslide"}

# Create a logistic regression model

# + slideshow={"slide_type": "fragment"}

from sklearn.linear_model import LogisticRegression

classifier = LogisticRegression()

classifier

# + [markdown] slideshow={"slide_type": "subslide"}

# Fit (train) our model by using the training data

# + slideshow={"slide_type": "fragment"}

classifier.fit(X_train, y_train)

# + [markdown] slideshow={"slide_type": "subslide"}

# Validate the model by using the test data

# + slideshow={"slide_type": "fragment"}

print(f"Training Data Score: {classifier.score(X_train, y_train)}")

print(f"Testing Data Score: {classifier.score(X_test, y_test)}")

# + [markdown] slideshow={"slide_type": "subslide"}

# Make predictions

# -

# Generate a new data point (the red circle)

import numpy as np

new_data = np.array([[-2, 6]])

plt.scatter(X[:, 0], X[:, 1], c=y)

plt.scatter(new_data[0, 0], new_data[0, 1], c="r", marker="o", s=100)

# + slideshow={"slide_type": "fragment"}

# Predict the class (purple or yellow) of the new data point

predictions = classifier.predict(new_data)

print("Classes are either 0 (purple) or 1 (yellow)")

print(f"The new point was classified as: {predictions}")

# + slideshow={"slide_type": "subslide"}

predictions = classifier.predict(X_test)

pd.DataFrame({"Prediction": predictions, "Actual": y_test})

|

01-Lesson-Plans/19-Supervised-Machine-Learning/1/Activities/05-Ins_Logistic_Regression/Solved/Ins_Logistic_Regression.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Analysis

# language: python

# name: ana

# ---

# # The Seats-Votes Curve in a Single Election

#

# *<NAME><br>

# University of Bristol<br>

# <EMAIL><br>

# (Supported by NSF #1657689)*

# In the last notebook, we talked about the concept underlying *historical* seats-votes relationships. At its core, though, estimating seats-votes relationships in this manner is

#

# 1. **wasteful**: in each election, we observe a ton of district-level information. However, we disregard nearly all of this information about district-level *vote* results in favor of exclusive examination of the *district* winners. While winning a district or not *is* information about district-level vote (it's a censored variable with a threshold of $.5$), this is not the same as the original information in the vote shares.

# 2. **dubious**: over many elections, the seats-votes model does *not* account for structural changes to the electorate, the party system, or the electoral process. This means that each election is considered comparable to every other election, even when they're separated by *large* expanses of time or structural changes to the electoral system.

#

# These kinds of objections are noted by Browning & King (1987) but are most forcefully discussed by Gelman & King (1994). Incorporating older arguments (e.g. Mackerras (1962), among others), this line of argument seeks to define seats-votes curves *in a single election* using a basic counterfactual argument: when shifts in party popular vote occur, these votes tend to be distributed uniformly at random among districts.

#

# In this notebook, we'll talk about how this kind of seats-votes curve is built within a single election, how the *uniform partisan swing* assumption works, and how it might not quite capture the true reality of how votes change from election to election. First, though, we'll have to read the data again.

import pandas

import pysal

import numpy

import seaborn

import seatsvotes

import statsmodels.api as sm

import matplotlib.pyplot as plt

# %matplotlib inline

house = seatsvotes.data.congress(geo=True)

house.crs = {"init":"epsg:4269"}

house = house.to_crs(epsg=5070)

house.head()

# # Seats & Votes in a single election

# At its core, analyzing a seats-votes relationship in a single election is somewhat suspect. This is because the relationship between votes and seats is a *strict hinge-point* relationship in most two-party elections. Once the reference party gets beyond 50% in the two-party vote (i.e. once Dems get more votes than Republicans), the district is "won," and represented by a $1$. In the other direction, the district is lost. This makes for a pretty boring scatterplot:

plt.scatter(house.vote_share, house.vote_share > .5, marker='.', color='r')

plt.vlines(.5, 0,1, linestyle=':', color='k', )

# Indeed, there's no real mechanism to be learned here; the basic relationship is simply one of a censored variable; when Dems win a majority of the two-party vote, they win the seat; when Dems win a minority, Republicans win.

# ### Uniform Partisan Swing

# So, how do we model the seats-votes curve in a single election? We can't do it *directly* based on the split of districts that are won (or lost). Instead, a classic method to construct the seats-votes curve relies on the assumption of *uniform partisan swing*, that changes in a party's popular vote are reasonably modelled by assigning those changes to *districts* uniformly. So, if Dems lose 10 percentage points in their popular vote, then we simply subtract $.10$ from each district's vote share.

#

# Unfortunately, this returns us to the same issue noted before about the difference between *average district voteshare* and *party popular vote*. This distinction leads to some rather finicky issues with assuming uniform partisan swing. For example, a change of 10% in a district with *many* voters will result in a larger bump to the popular vote than a 10% change to a small district. Thus, it becomes more common to examine a party's average voteshare across districts, rather than the party's popular vote. Further, it again becomes common to assume that, when vote shares are expected to go below $0$ or above $100\%$, the vote shares stop changing for that district. Complexities remain about *uncontested* districts, and we'll treat those later.

#

# Thinking about a *seats-votes* curve estimated using uniform partisan swing, we can build one in a pretty direct fashion. We'll focus first on building the seats-votes curve under uniform swing around a point we *did observe:* a single election. Let's pick out an election in particular, the 2006 election:

house06 = house.query('year == 2006')
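# The two conventions mentioned above, clipping swung vote shares to the valid range and working with the average district vote share instead of the popular vote, can be sketched on made-up numbers. The `shares` and `turnout` arrays and the `swing` helper are hypothetical, not part of the `seatsvotes` package.

```python
import numpy as np


def swing(vote_share, delta, clip=True):
    """Add a uniform swing to every district, optionally bounded to [0, 1]."""
    swung = np.asarray(vote_share, dtype=float) + delta
    return np.clip(swung, 0.0, 1.0) if clip else swung


shares = np.array([0.05, 0.45, 0.55, 0.97])   # hypothetical district vote shares
turnout = np.array([100, 300, 200, 400])      # hypothetical votes cast per district

swung = swing(shares, 0.10)
seat_share = (swung > .5).mean()

avg_share = shares.mean()                        # every district counts equally
popular = np.average(shares, weights=turnout)    # large districts count for more

print(swung)
print(seat_share, avg_share, popular)
```

# Clipping changes the swung average but never the seat count, since a clipped district is already far from the $.5$ threshold.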

# Since this is only a single election, we only get one single observation of the average Democrat vote share, and one observation of how many districts the Dems won in that election. Below, we'll plot this as a single point, marked as a red `x`:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot(house06.vote_share.mean(), (house06.vote_share > .5).mean(), marker='x', color='r')

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# Now, uniform partisan swing basically works by asking:

#

# > if a party were to win $\delta$% more in each district, how many districts would that party win?

#

# By asking that question for a few different values of $\delta$, we can build up a sequence of hypothetical district average vote shares which show the underlying seat-vote relationship latent in our data, *assuming (of course)* that the uniform swing hypothesis holds as a reasonable model of how votes in districts change as the average district vote changes.

#

# So, let's see what happens when Democrats win 1% more in each district. Below, we'll add `.01` to each observation, and record the number of seats Dems would have won if each district increases in Democrat vote share by exactly one percentage point:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot(house06.vote_share.mean(),
        (house06.vote_share > .5).mean(), marker='x', color='r')
ax.plot((house06.vote_share + .01).mean(),
        ((house06.vote_share + .01) > .5).mean(), marker='x', color='k')

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# Wow! There's a very small increase in the share of seats Dems win when every district's voteshare increases by 1%. This suggests that one or more districts $i$ have a vote share $v_i$ such that $v_i < .5$ but $v_i + .01 > .5$. Thus, when all districts increase in their Democrat vote share by a single percentage point, those districts flip from being won by Republicans to being won by Democrats.

#

# Given that we can do this with an increase towards *Democrats*, we can also *decrease* all Democrat vote shares by a single percentage point and model a uniform partisan swing towards Republicans:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot(house06.vote_share.mean(),
        (house06.vote_share > .5).mean(), marker='x', color='r')
ax.plot((house06.vote_share + .01).mean(),
        ((house06.vote_share + .01) > .5).mean(), marker='x', color='grey')
ax.plot((house06.vote_share - .01).mean(),
        ((house06.vote_share - .01) > .5).mean(), marker='x', color='k')

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# More districts flip! This suggests that some district is won by Democrats with a margin smaller than 1%.

#

# Again, contingent on the uniform partisan swing assumption being realistic, we can do this over and over again for different values of $\delta$ and obtain the "seats-votes curve" for that election:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot((house06.vote_share.mean() + numpy.linspace(-.25, .25, num=20)),
        [((house06.vote_share + delta) > .5).mean() for delta
         in numpy.linspace(-.25,.25,num=20)], marker='x', color='grey')
ax.plot(house06.vote_share.mean(),
        (house06.vote_share > .5).mean(), marker='x', color='r')

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# The curve above is the "empirical" seats-votes curve, like that implemented by <NAME> in the `pscl` library in R. This "empirical" seats-votes curve requires the uniform partisan swing assumption to construct; each point on the curve pairs a swung average vote share, some swing $\delta$ away from the observed average party vote share $\bar{v}$, with the fraction of districts won by Democrats at that swing $(v_i + \delta > .5)$. Letting $\mathcal{I}$ stand for the indicator function, which is 1 when the statement inside of it is true and zero otherwise, this means every point on the empirical seats-votes curve is a coordinate:
#
# $$ \left(\bar{v} + \delta, \frac{1}{N}\sum_{i=1}^N \mathcal{I}\left( (v_i + \delta) > .5\right)\right)$$
#
# And the functional form of the "empirical" seats-votes curve is:
#
# $$f(\delta) = \frac{1}{N}\sum_{i=1}^N \mathcal{I}\left((v_i + \delta) > .5\right) $$

#

# Looking at this definition, a few things become apparent. First, this method practically ignores districts whose swung vote shares go above $1$ or below $0$; while such values are impossible (no district can have more Republican votes than there are voters in general), the seats-votes curve only considers districts relative to the threshold of victory, $.5$. This means that some vote shares from $v_i + \delta$ may actually be invalid vote shares. Second, while many doubt whether or not this model is a *realistic* model of how the electorate behaves, it's still suggested as a good first approximation for how the electoral system actually works, especially in the small region around the observed voteshare (e.g. Jackman 2014). There is absolutely *no* uncertainty in this model of the seats-votes curve, however. Further, assuming that the average swing affects all districts equally can be patently unrealistic when thinking about elections as social or geographical processes. Finally, we can see that the empirical seats-votes curve is actually *much* bumpier than the plot above suggests: there is a finite number ($N$) of observed $v_i$, but $\delta$ changes continuously. This means that the $f(\delta)$ function changes in a stepwise fashion: as $\delta$ increases, some $v_i + \delta$ switches from being below $.5$ to being above $.5$; when this happens, the function increases by a single step $1/N$. In general, if districts are allowed to have *identical* $v_i$, the changes may be larger, but only in multiples of $1/N$, so these remain *integral* changes.

#

# Indeed, this step change property points to a much more important fact: this "model" of the seats-votes curve built from this restrictive "uniform partisan swing" assumption is actually a much more basic expression of the empirical structure of the data. Nagle (2018) provides a very good discussion of this; the seats-votes curve (when constructed this way) contains the same information as the empirical cumulative distribution function for $v_i$.

#

# This means that no theory of partisan swing is necessary to construct this curve at all. We'll discuss this now.
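# The stepwise behaviour described above can be checked numerically. This is a sketch on synthetic vote shares rather than the House data; `toy_shares` and `seat_share` are hypothetical names, and the uniform draw is only a stand-in for real district results.

```python
import numpy as np


def seat_share(vote_share, delta):
    """Fraction of districts won after adding a uniform swing `delta`."""
    return np.mean(np.asarray(vote_share) + delta > .5)


rng = np.random.default_rng(0)
toy_shares = rng.uniform(0.2, 0.8, size=435)   # synthetic district vote shares

deltas = np.linspace(-.25, .25, num=2001)
curve = np.array([seat_share(toy_shares, d) for d in deltas])

# Express each change along the grid as a number of seats.
steps_in_seats = np.diff(curve) * toy_shares.size

print(curve[0], curve[-1])
print(np.unique(np.round(steps_in_seats)))
```

# Every change along the grid is a whole number of seats, and the curve never decreases as the swing grows.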

# # Seats-Votes Curves as an Empirical CDF

# Going back to our first observed point from the 2006 election, we'll zoom in really tight on the observed result.

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot(house06.vote_share.mean(), (house06.vote_share > .5).mean(), marker='x', color='r')

ax.axis((.55,.58, .52,.55))

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# Now, we've discussed that *really small* changes in $\delta$ should reveal the integrality property. So, instead of changing $\delta$ by $.01$, we'll change $\delta$ **really finely** (like, $.00004$) near our observed value. This reveals the stepwise structure:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot((house06.vote_share.mean() + numpy.linspace(-.01, .01, num=500)),
        [((house06.vote_share + delta) > .5).mean()
         for delta in numpy.linspace(-.01,.01,num=500)],
        marker='x', color='grey')
ax.plot(house06.vote_share.mean(),
        (house06.vote_share > .5).mean(), marker='x', color='r')

ax.axis((.55,.58, .52,.55))

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# Now we can see the stepwise structure. Again, this happens because seats are either *won* or they're *lost* by a party; no fractional seats are possible, so the seats-votes curve always assigns a discrete number of "wins" to Democrats. If there are 435 seats, the "estimated" seats-votes curve can only change $1/435$ at any step. Sometimes, the seats-votes curve can change more than $1/435$ at a time, like if two districts tie exactly in their vote shares. But, it can never change less than $1/435$ due to integrality. If we visualize this as light small lines in the plot we can see this effect very clearly:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

ax.plot((house06.vote_share.mean() + numpy.linspace(-.01, .01, num=500)),
        [((house06.vote_share + delta) > .5).mean()
         for delta in numpy.linspace(-.01,.01,num=500)],
        marker='x', color='grey')
ax.plot(house06.vote_share.mean(),
        (house06.vote_share > .5).mean(), marker='x', color='r')

ax.hlines((numpy.arange(1,435)/435), 0,1, color='k', linewidth=.15)

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

ax.axis((.55,.58, .52,.55))

plt.show()

# Each of the horizontal lines is a change in exactly one seat. Note that two seats tie for the voteshares right around $.565$; we can see this by noting that the two district vote share percentages are so close to one another, they fall through the grid of values together. But, unless something ties *exactly*, the seats-votes curve only ever changes by a single seat at each percent vote value, and if the grid of $\delta$ became even finer, the curve would only increase exactly $1/435$ at a time.

#

# To complete the last bit of relationship between the seats-votes curve and a cumulative distribution function, we need to obtain an expression that relates the two kinds of curves.

#

# So, can see that when a party popular vote share is exactly $\bar{v}$, then the seats-votes curve will be the count where $v_i>.5$. If we're *instead* at some swung vote share $\bar{v} + \delta$, the height of the seats-votes curve will be the percent of seats won at that *swung* vote share ($v_i + \delta > .5$). Moving to the *empirical cumulative density function* (ECDF), the height of the ECDF at an arbitrary value $x$ is the percent of all observations less than or equal to that value ($v_i < x$). Arbitrarily, let $x = \bar{v} - \delta$, we can see that the criteria for the ECDF is *identical to* the within-election seats-votes curve, shifted by a factor, $.5 - \bar{v}$:

#

# $$

# \begin{align}

# v_i &< x \\

# v_i &< \bar{v} - \delta \\

# v_i &< \bar{v} - \delta + (.5 - .5) \\

# v_i + \delta + .5- \bar{v} &< .5 \\

# v_i + \delta + (.5- \bar{v}) &< .5

# \end{align}

# $$

#

# This holds for any $x$ in the domain of the cumulative distribution function, since $\delta$ is a free parameter and $\bar{v}$ is observed.

#

# The $.5 - \bar{v}$ factor isn't totally arbitrary: it's the *aggregate margin*, or the difference between the party's average vote share in districts and $.5$, which is the threshold the party would need to control the legislature. Also, note that we've defined $x = \bar{v} - \delta$. This "flips" the direction of the seats-votes curve, since swings of size $\delta$ in the seats-votes curve are swings of size $-\delta$ in the ECDF. For a more rigorous discussion, again see my dissertation (Wolf, 2017) or Nagle (2018).

# In practice, this means that the ECDF *for Republicans* and the seats-votes curve *for Democrats* are simply shifted & scaled versions of the same curve. Since we can construct the ECDF without reference to a theory of partisan swing, this suggests the "empirical" seats-votes curve is considerably more general than it might appear at first glance.
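#
# A minimal numerical check of this equivalence, using simulated vote shares rather than real returns (the names `sim_shares` and `sim_deltas`, and the normal distribution behind the simulated shares, are assumptions for illustration). Treating the Republican share in district $i$ as $1 - v_i$, the sketch evaluates the ECDF of $1 - v_i - (.5 - \bar{v})$ at $\bar{v} + \delta$ and compares it with the Democratic seat share under a uniform swing of $\delta$:

import numpy

rng = numpy.random.default_rng(0)

# Hypothetical Democratic vote shares for 435 districts, kept inside (0, 1).
sim_shares = numpy.clip(rng.normal(.55, .15, size=435), .01, .99)
sim_mean = sim_shares.mean()
sim_shift = .5 - sim_mean

sim_deltas = numpy.linspace(-.10, .10, num=201)

# Seats-votes curve for Democrats under uniform swing.
swing_curve = numpy.array([((sim_shares + delta) > .5).mean()
                           for delta in sim_deltas])

# Shifted Republican ECDF, evaluated at x = mean + delta.
rep_shifted = 1 - sim_shares - sim_shift
ecdf_curve = numpy.array([(rep_shifted <= sim_mean + delta).mean()
                          for delta in sim_deltas])

# Largest disagreement between the two curves over the swing grid.
print(numpy.abs(swing_curve - ecdf_curve).max())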

#

# As far as our ability to compute seats-votes curves is concerned, this means we can use all of the standard methods for computing histograms to "estimate" empirical seats-votes curves under the uniform partisan swing assumption. Below, I'll show the empirical CDF and swing-built seats-votes curve from above, zoomed in:

f = plt.figure()

ax = plt.gca()

ax.axis((0,1,0,1))

shift = .5 - house06.vote_share.mean()

ax.plot((house06.vote_share.mean() + numpy.linspace(-.10, .10, num=1000)),

[((house06.vote_share + delta) > .5).mean()

for delta in numpy.linspace(-.10,.10,num=1000)],

marker='x', color='grey', label='Uniform Swing')

ax.plot(house06.vote_share.mean(),

(house06.vote_share > .5).mean(), marker='x', color='r')

ax.hist(1-house06.vote_share - shift, cumulative=True, histtype='step',

density=True, bins=9000, color='red', zorder=100,

label='Republican Shifted ECDF')

ax.hist(1-house06.vote_share, cumulative=True, histtype='step',

density=True, bins=9000, color='purple', zorder=100,

label='Republican ECDF')

ax.hlines((numpy.arange(1,436)/435), 0,1, color='k', linewidth=.15)

ax.arrow(.475,.501,-shift, 0, color='b',

length_includes_head=True, zorder=100, label='Shift')

ax.hlines(1000,10001,1000, color='b', label='Shift') # because the shift label is disappearing

ax.legend(loc='center right', bbox_to_anchor=(2,.5), fontsize=20, frameon=False)

ax.axis((.46, .59, .48,.58))

ax.set_title("Seats-Votes & ECDF Equivalence")

ax.set_xlabel("Average District Pct. Dem Vote")

ax.set_ylabel("Pct. of Congress Won by Dems")

plt.show()

# We can do this for the nation for each year to get a collection of seats-votes curves, one per year, or use *all* seats across the elections to build one pooled seats-votes curve. In practice, when we have multiple seats-votes curves, it's usually best to visualize each separately, and consider the *consensus* curve to be the median among the many different replications. We'll see this strategy in Gelman & King (1994), as well as McGann et al. (2015) and Wolf (2018).
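#
# One way such a consensus curve could be built, sketched on simulated data (the `toy_house` frame, the common grid, and the choice of a pointwise median are assumptions for illustration, not the estimators used in the cited papers): evaluate each year's shifted ECDF on a shared grid, then take the median across years at each grid point.

import numpy
import pandas

rng = numpy.random.default_rng(1)

# Hypothetical returns: 100 districts in each of five elections.
toy_years = [2002, 2004, 2006, 2008, 2010]
toy_house = pandas.DataFrame({
    'year': numpy.repeat(toy_years, 100),
    'vote_share': numpy.clip(rng.normal(.5, .15, size=500), 0, 1),
})

grid = numpy.linspace(0, 1, num=101)
yearly_curves = {}
for year, chunk in toy_house.groupby('year'):
    shift = .5 - chunk.vote_share.mean()
    shifted = 1 - chunk.vote_share.values - shift
    # Height of this year's shifted ECDF at every grid point.
    yearly_curves[year] = (shifted[:, None] <= grid[None, :]).mean(axis=0)

# One column per year; the consensus is the median across columns.
curves = pandas.DataFrame(yearly_curves, index=grid)
consensus = curves.median(axis=1)
print(consensus.iloc[[40, 50, 60]])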

#

# Below, though, I'll plot seats-votes curves using the shifted Republican ECDF for each election since 1994.

for year,chunk in house.groupby('year'):

    shift = .5 - chunk.vote_share.mean()

    plt.hist(numpy.clip(1-chunk.vote_share.dropna().values - shift, 0,1),

             cumulative=True, density=True, histtype='step',

             color='k', bins=100, alpha=.2, zorder=1000,

             label='Single Year' if year==2006 else None)

plt.scatter(house.groupby('year').vote_share.mean(),

house.groupby('year').vote_share.apply(lambda x: (x > .5).mean()),

label='Observed District\nAverages', marker='x', color='b')

plt.hist(numpy.clip((1-house.dropna(subset=['vote_share']).vote_share.values)

- (.5 - house.vote_share.mean()), 0,1),

cumulative=True, density=True, histtype='step',

color='orangered', bins=100, linewidth=3, label='Pooled')

plt.legend(loc='upper left', fontsize=14)

plt.xlabel("Average District %Dem")

plt.ylabel("Percent of Legislature Won")

plt.show()

# # Is the seats-votes curve realistic?

#

# The question of whether or not a seats-votes curve is *realistic* is quite distinct from *whether or not we can estimate it*. Again, following Nagle (2018), we can clearly "estimate" seats-votes curves for any number of replications from a model of elections. We can do this quickly, easily, and in a pretty straightforward fashion. I'll talk a lot later about how there may be many different ways of constructing *stochastic* estimates of seats-votes curves, those that take into account the various random and co-varying factors that are involved in electoral processes. But, at its core, the question of whether or not we can estimate seats-votes curves easily is solved by Nagle's (2018) realization about empirical CDFs and seats-votes curves.

#

# Their *realism* depends, though, on their interpretation. Interpreting seats-votes curves as empirical CDFs is not problematic at all. However, interpreting them *as a model* of how congressional control might change as district average vote shares change... that is problematic. In general, this interpretation hinges on how realistic the assumption of uniform partisan swing is.

#

# While we may take the uniform partisan swing curves as a good first approximation of how the electoral system will work, there is a common perception that the extremes of the seats-votes curves, the points that are far away from an observed $(\bar{v},\bar{s})$ point, are not "reliable" representations of reality. In my own dissertation research, I found that policymakers, public officials, and stakeholders in Washington and Arizona did believe that the whole range of $\bar{v}$ to $1-\bar{v}$ was possible, but that it was probably more likely that the structure of partisanship in the state would not change radically or flip the control of the congressional delegation. This suggests that, for the practitioners I interviewed, seats-votes curves may indeed have a narrow band of validity around the observed election results, and this band may encompass values where a party either wins or loses. This doesn't mean that the *estimation* of the curve is unreliable, but it *does* mean the model becomes unrealistic at large swings.

#

# However, another component of the assumption of uniform partisan swing that I examined in my dissertation considered whether the assumption of *uniformity* makes sense *geographically*. That is, do nearby districts tend to swing the same direction (or, more-so than they may otherwise if swing were uniformly random)? It may be the case that small but *highly correlated* swings will behave differently from small uncorrelated swings. If *this* is true, it suggests that assuming that *all districts accrue the same changes* might be unrealistic, and this unrealism may affect the validity of the seats-votes curve as a conceptual model.

#

# To examine this more in depth, we can see the votes over the 2002-2010 elections to Congress.

aughties = house.query('year > 2000 & year < 2012').copy()  # copy so new columns can be added without a SettingWithCopyWarning

# Now, admitting that there are a few inter-censal redistrictings during this period, I'll assume that the redistricting map remains *mainly* unchanged, and that it is reasonable to compute swing between two districts in subsequent elections so long as they have the same district number.

aughties['district_id'] = aughties.state_fips.astype(str).str.rjust(2,'0') \

+ aughties.lewis_dist.astype(str).str.rjust(2, '0')

# Now, I'm going to pivot the dataframe so that each row is a congressional district and each column is a year in which a vote share is recorded.

pivot_table = aughties.pivot(index='district_id',

columns='year',

values='vote_share')

pivot_table.head()

# Then, we'll compute the `diff` along columns and drop the first (since it has nothing to diff against):

swings = pivot_table.diff(axis=1).drop(2002, axis=1)

swings.head()

# We can see the swing distributions in each year:

swings.hist(bins=40)