---
task_categories:
- robotics
tags:
- Zeroshot
configs:
- config_name: default
  data_files: data/*/*.parquet
license: apache-2.0
---

Real-world data for general robotics.

SF Fold

🤖 What is Zeroshot Tshirt?

This dataset contains real-world residential t-shirt folding demonstrations, collected by trained data collectors using hand-held grippers in diverse home environments.

📖 Table of Contents

- Features

- Terminology

- Specifications

- Data Format

- Collection

- Preprocessing & Annotation

- Validation

- Distribution

- Appendix

📊 Features

This dataset contains real-world residential t-shirt folding demonstrations, collected using hand-held grippers in diverse household environments under varied lighting conditions.

| Category | Description |

|---|---|

| Environments | 212 unique environments across 31 locations |

| Episodes | 4,832 (≈ 101.4 hours) |

| Video Streams | 1296×972 @ 30 fps |

| Trajectory Accuracy | Abs. Pose Error: 10 ± 5.1 mm; Abs. Rotation Error: 1.5 ± 0.6° |

| Data Formats | Parquet (poses, gripper widths), MP4 (video streams) |

| Collection Method | In-house residential data collection using handheld grippers |

| Validation | Cross-validated against OptiTrack Trio 3 |

| Availability | Raw data and preprocessing documentation included |

📖 Terminology

| Term | Definition |

|---|---|

| Puppet | End-effector system with articulated motion axes and cameras |

| Gripper | Parallel-jaw mechanism with continuous width measurement |

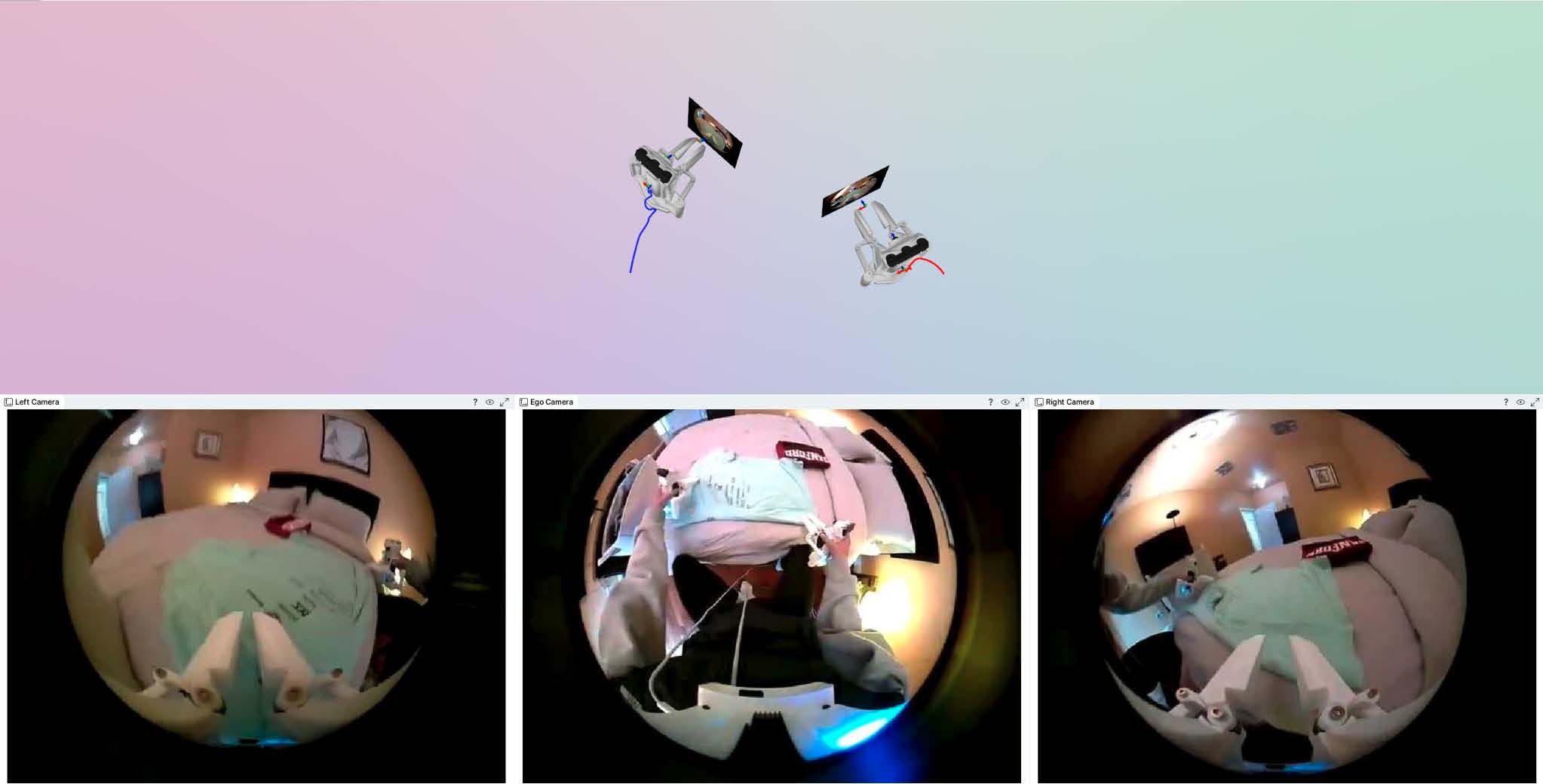

| Ego Camera | Chest-mounted first-person camera for contextual observation |

| Pose | 6-DoF rigid body representation: (tx, ty, tz, qx, qy, qz, qw) |

| Episode | Atomic demonstration sequence with synchronized multimodal streams |

🛠️ Specifications

Dataset Composition

| Metric | Value |

|---|---|

| Locations | 31 |

| Environments | 212 |

| Episodes | 4,832 |

| Total Duration | 101.4 hours |

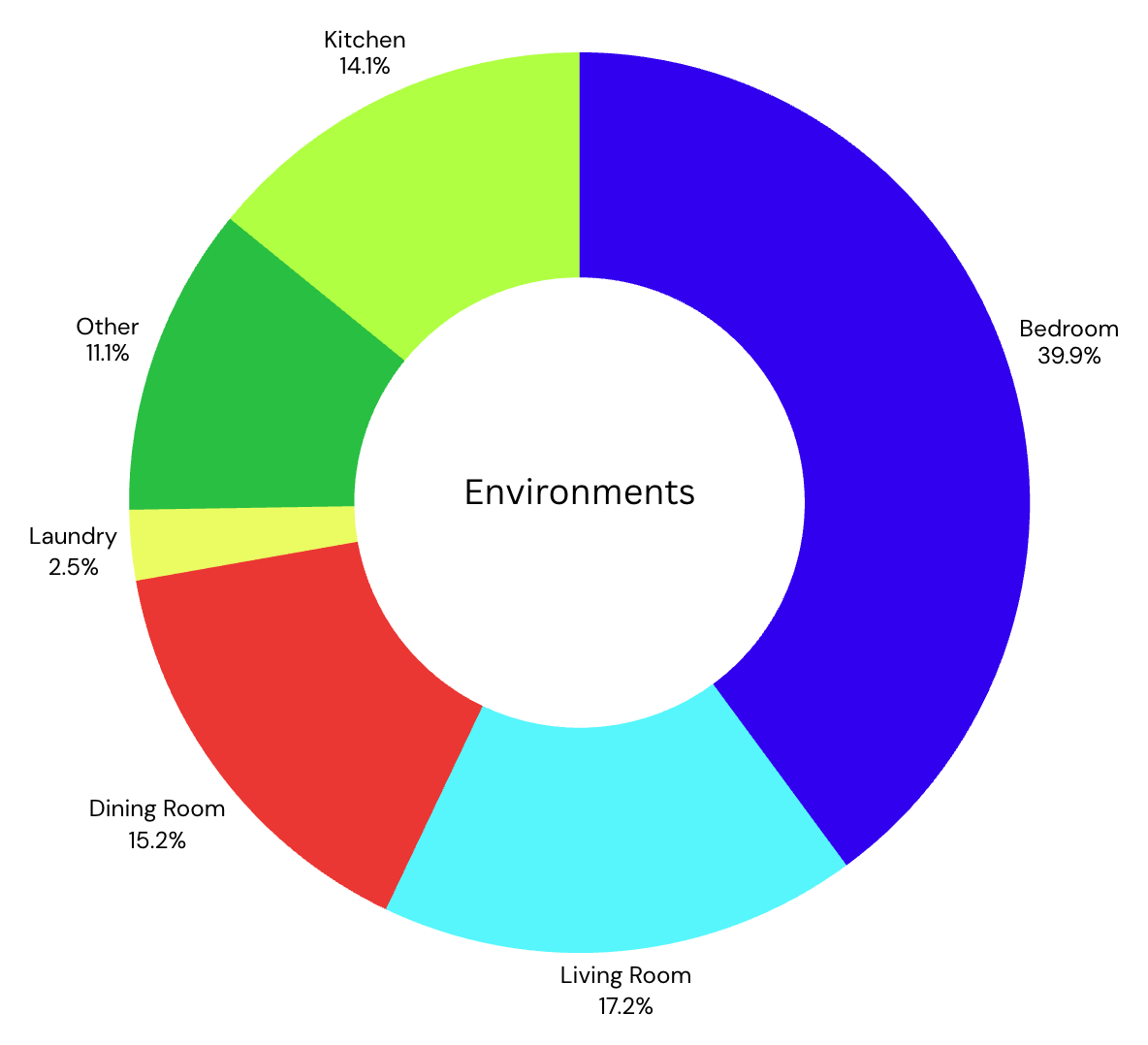

Environment Composition

Garment Composition

Garments include short-sleeve T-shirts of varying materials (cotton, polyester, scrubs) and collars (crew, polo).

| Garment Type | Count |

|---|---|

| T-shirts | ≈ 200 |

| Blouses | ≈ 5 |

Trajectory Specifications

| Metric | Value | Units |

|---|---|---|

| Absolute Pose Error (APE) | 10 ± 5.1 | mm |

| Relative Pose Error (RPE) | 3 ± 0.5 | mm |

| Absolute Rotation Error (ARE) | 1.5 ± 0.6 | degrees |

| Relative Rotation Error (RRE) | 1.8 ± 0.8 | degrees |

| Sampling Frequency | 30 ± 0.09 | Hz |
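The sampling-frequency figure can be checked per episode from the `timestamp` column. The sketch below is one way to do that, assuming timestamps are in seconds and strictly increasing; the function name `sampling_stats` and the synthetic timestamps are illustrative and not part of the dataset tooling.

```python
import statistics

def sampling_stats(timestamps):
    """Return (mean, std) of the instantaneous sampling frequency in Hz."""
    # Frame-to-frame intervals; assumes strictly increasing timestamps in seconds.
    intervals = [b - a for a, b in zip(timestamps, timestamps[1:])]
    rates = [1.0 / dt for dt in intervals]
    return statistics.fmean(rates), statistics.pstdev(rates)

# Synthetic 30 Hz timestamps, used only to exercise the function.
mean_hz, std_hz = sampling_stats([k / 30.0 for k in range(10)])
```

On real episodes, a standard deviation well above the listed ± 0.09 Hz would suggest dropped or duplicated frames worth inspecting.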

Hardware Specifications

Camera

| Property | Value |

|---|---|

| Resolution | 1296×972 px |

| Frame Rate | 30 fps |

| Bitrate | 16 Mbps |

| Sensor Size | 0.25 inch |

| Field of View | 210° |

| Focal Length | 2.1 ± 0.2 mm |

Gripper Encoder

| Property | Value |

|---|---|

| Resolution | 0.000077 mm |

| Accuracy | ±0.01 mm |

| Repeatability | ±0.002 mm |

| Max Width | 85 ± 5 mm |

Physical Specifications

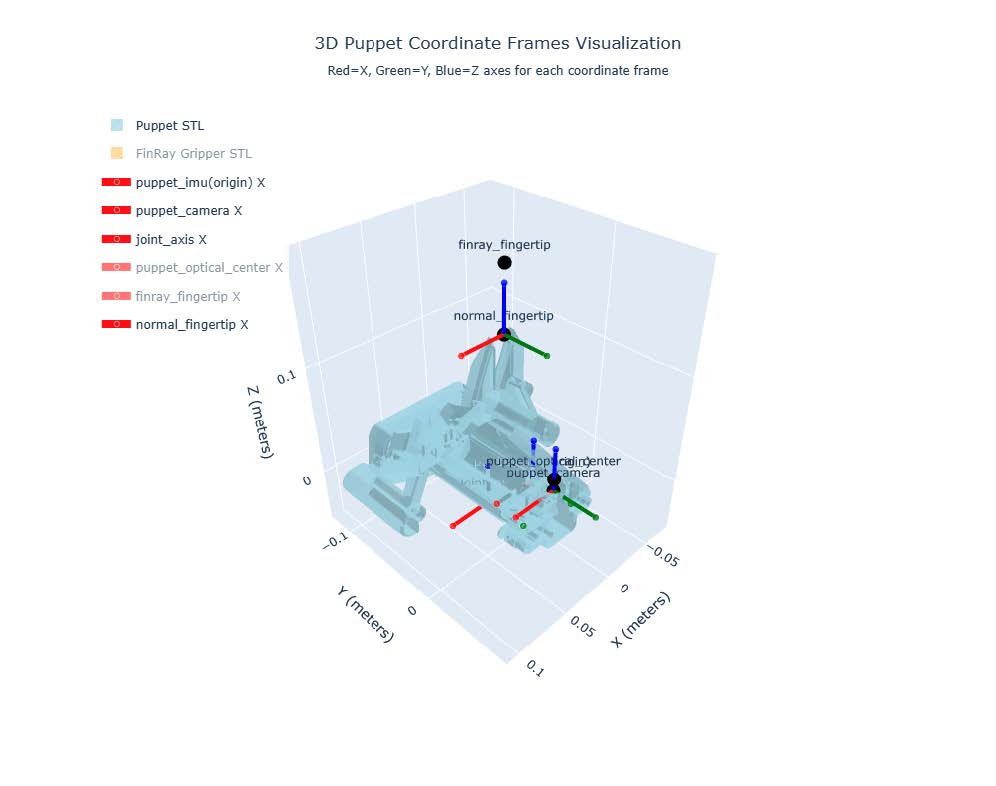

Frame Reference

Frame Values

T_normal_fingertip (origin to end actuator fingertip)

[[1. 0. 0.  0.01635 ]
 [0. 1. 0. -0.016538]
 [0. 0. 1.  0.134907]
 [0. 0. 0.  1.      ]]

T_puppet_camera (origin to camera)

[[1. 0. 0. 0.0163  ]
 [0. 1. 0. 0.035513]
 [0. 0. 1. 0.02112 ]
 [0. 0. 0. 1.      ]]
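As an illustration of how these static offsets might be used, the sketch below composes a world-frame pose of the puppet origin with T_normal_fingertip to obtain a fingertip pose. It assumes the translations are in meters (per the dataset's unit convention) and that the offset is expressed in the origin frame. `matmul4`, `fingertip_in_world`, and the sample origin pose are hypothetical names and values, not part of the dataset tooling.

```python
def matmul4(a, b):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

# Static offset listed under Frame Values (assumed meters, identity rotation).
T_NORMAL_FINGERTIP = [
    [1.0, 0.0, 0.0, 0.01635],
    [0.0, 1.0, 0.0, -0.016538],
    [0.0, 0.0, 1.0, 0.134907],
    [0.0, 0.0, 0.0, 1.0],
]

def fingertip_in_world(T_world_origin):
    """Compose a world-frame origin pose with the fingertip offset."""
    return matmul4(T_world_origin, T_NORMAL_FINGERTIP)

# Hypothetical origin pose: rotated 90 degrees about z, translated by (1, 2, 3).
T_world_origin = [
    [0.0, -1.0, 0.0, 1.0],
    [1.0, 0.0, 0.0, 2.0],
    [0.0, 0.0, 1.0, 3.0],
    [0.0, 0.0, 0.0, 1.0],
]
T_world_fingertip = fingertip_in_world(T_world_origin)
position = [row[3] for row in T_world_fingertip[:3]]
```

The same composition would apply to T_puppet_camera if a camera pose is needed instead of a fingertip pose.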

🗂️ Data Format

The dataset follows the LeRobot dataset format for robot learning.

Conventions

- Episodes: atomic trajectories (episode_index)

- Frames: time-ordered (frame_index)

- Timestamps: monotonic

- Observations: camera-aligned signals (images, proprioception).

- Actions: commanded end-effector pose + gripper width.

- State: measured end-effector pose + gripper width.

- Coordinate frames: world (static), camera (optical center), motion_axis, fingertip.

- Units: meters, seconds, radians

- Rotations: quaternions (qx, qy, qz, qw), scalar (w) last, in right-handed coordinate frames.
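To make the pose convention concrete, here is a minimal sketch that converts a 7-element pose into a 4x4 homogeneous matrix. It assumes the common Hamilton convention for a scalar-last quaternion describing an active rotation; the card does not state this explicitly, so treat it as an assumption. The function name `pose_to_matrix` is illustrative.

```python
import math

def pose_to_matrix(pose):
    """Convert (tx, ty, tz, qx, qy, qz, qw) into a 4x4 homogeneous matrix."""
    tx, ty, tz, qx, qy, qz, qw = pose
    # Normalise defensively; stored quaternions may be rounded.
    n = math.sqrt(qx * qx + qy * qy + qz * qz + qw * qw)
    qx, qy, qz, qw = qx / n, qy / n, qz / n, qw / n
    return [
        [1 - 2 * (qy * qy + qz * qz), 2 * (qx * qy - qz * qw), 2 * (qx * qz + qy * qw), tx],
        [2 * (qx * qy + qz * qw), 1 - 2 * (qx * qx + qz * qz), 2 * (qy * qz - qx * qw), ty],
        [2 * (qx * qz - qy * qw), 2 * (qy * qz + qx * qw), 1 - 2 * (qx * qx + qy * qy), tz],
        [0.0, 0.0, 0.0, 1.0],
    ]

# 90-degree rotation about z in scalar-last order: (0, 0, sin 45 deg, cos 45 deg).
s = math.sqrt(0.5)
T = pose_to_matrix([0.1, 0.2, 0.3, 0.0, 0.0, s, s])
```

If a downstream library expects scalar-first quaternions (qw, qx, qy, qz), the last element has to be moved to the front before use.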

Directory Layout

zeroshot-tshirt/
├── meta/
│   ├── dataset.json          # global metadata (versioning, sensors, units)
│   ├── tasks.jsonl           # per-task descriptors (id, name, split)
│   ├── episodes.jsonl        # per-episode descriptors (id, name, split)
│   └── episodes_stats.jsonl  # per-episode statistics (duration, frames)
├── data/
│   └── chunk-{000..}/episode_{000000..}.parquet
├── videos/
│   └── chunk-{000..}/
│       ├── observation.images.cam_ego/episode_{000000..}.mp4
│       ├── observation.images.cam_left/episode_{000000..}.mp4
│       └── observation.images.cam_right/episode_{000000..}.mp4
└── calibration/
    ├── intrinsics_cam_ego.json
    ├── intrinsics_cam_left.json
    ├── intrinsics_cam_right.json
    └── extrinsics_world.json
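The layout above maps an episode index to one Parquet file and three video files. The helper below sketches that mapping. The zero-padding widths are read off the layout, while `chunk_size` (episodes per chunk) is an assumption that should be checked against the dataset metadata; `episode_paths` is a hypothetical helper, not a LeRobot API.

```python
from pathlib import PurePosixPath

CAMERAS = ("cam_ego", "cam_left", "cam_right")

def episode_paths(root, episode_index, chunk_size=1000):
    """Build relative paths for one episode's Parquet file and videos.

    chunk_size is an assumed number of episodes per chunk; verify it
    against the dataset metadata before relying on it.
    """
    chunk = f"chunk-{episode_index // chunk_size:03d}"
    name = f"episode_{episode_index:06d}"
    base = PurePosixPath(root)
    paths = {"data": base / "data" / chunk / f"{name}.parquet"}
    for cam in CAMERAS:
        paths[cam] = base / "videos" / chunk / f"observation.images.{cam}" / f"{name}.mp4"
    return {key: str(path) for key, path in paths.items()}

paths = episode_paths("zeroshot-tshirt", 42)
```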

Video Files

Three synchronized camera streams per episode:

- cam_ego: First-person view

- cam_left: Left puppet view

- cam_right: Right puppet view

Accessed as VideoFrame objects via Hugging Face interfaces.

Parquet Format

Each row includes:

| Field | Type | Units | Shape / Length | Description |
|---|---|---|---|---|
| index | int64 | - | scalar | Global row index (unique across dataset chunk) |
| frame_index | int64 | - | scalar | Frame number within the episode |
| timestamp | float32 | s | scalar | Time since episode start |
| episode_index | int64 | - | scalar | Unique episode identifier |
| gripper_width | list[float32] | m | length 2 | Parallel-jaw width for left and right puppets |
| task_index | int64 | - | scalar | Task identifier (links to tasks.jsonl) |
| left_camera_pose | list[float32] | m, quat | length 7 | 3D position (tx, ty, tz) + quaternion (qx, qy, qz, qw) for left camera optical center, in world frame |
| right_camera_pose | list[float32] | m, quat | length 7 | 3D position (tx, ty, tz) + quaternion (qx, qy, qz, qw) for right camera optical center, in world frame |
| left_fingertip_pose | list[float32] | m, quat | length 7 | 3D pose of midpoint between left puppet's fingertips |
| right_fingertip_pose | list[float32] | m, quat | length 7 | 3D pose of midpoint between right puppet's fingertips |

Example Parquet Data

"index": 124578,

"frame_index": 318,

"timestamp": 12.634,

"episode_index": 42,

"gripper_width": [0.034, 0.034],

"task_index": 7,

"left_camera_pose": [0.152, -0.031, 0.884, 0.002, 0.713, -0.001, 0.701],

"right_camera_pose": [0.148, 0.029, 0.882, -0.003, 0.710, 0.006, 0.704],

"left_fingertip_pose": [0.612, -0.084, 0.502, 0.002, 0.005, 0.721, 0.693],

"right_fingertip_pose": [0.616, 0.089, 0.503, -0.003, 0.004, 0.718, 0.696]

Frame of Reference

All trajectories and poses are expressed in a static world frame. The world frame origin is defined at the base of the right puppet, serving as the global reference for all coordinate transforms. Camera poses, gripper positions, and fingertip poses are aligned to this frame, with translations given in meters and orientations expressed as quaternions (qx, qy, qz, qw).
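Because every pose shares the same world frame, quantities that relate the two puppets can be computed directly from a single row. The sketch below reuses the fingertip values from Example Parquet Data to split each pose into position and quaternion and to measure the distance between the two fingertip midpoints. `split_pose` is an illustrative helper, not part of the dataset tooling.

```python
import math

# Values copied from the example row, reduced to the fields used here.
row = {
    "gripper_width": [0.034, 0.034],
    "left_fingertip_pose": [0.612, -0.084, 0.502, 0.002, 0.005, 0.721, 0.693],
    "right_fingertip_pose": [0.616, 0.089, 0.503, -0.003, 0.004, 0.718, 0.696],
}

def split_pose(pose):
    """Split a 7-element pose into (position, quaternion)."""
    return tuple(pose[:3]), tuple(pose[3:])

left_pos, left_quat = split_pose(row["left_fingertip_pose"])
right_pos, _ = split_pose(row["right_fingertip_pose"])

# Distance between the two fingertip midpoints, in meters.
separation = math.dist(left_pos, right_pos)
# The quaternion norm should be close to 1; rounding in the example leaves a small gap.
quat_norm = math.sqrt(sum(c * c for c in left_quat))
```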

🛒 Collection

This dataset was primarily collected in San Francisco, California, USA. Data collection took place in noisy and diverse real-world environments to capture a broad range of variability. All sessions were performed by trained, paid data collectors following standardized procedures to ensure consistency across sessions.

To enhance robustness, failure cases—such as tangled fabrics or irregular interactions—were intentionally retained, increasing the diversity of captured scenarios. Each recording was reviewed for quality, and only those meeting data standards were included in the final dataset.

Collection Info

- Location: San Francisco, California, USA 🇺🇸

- Collectors: Trained, paid data collectors

- Diversity: Includes both successful and failure cases

Privacy & Consent

All data collection was conducted under protocols designed to ensure privacy and informed consent. Participants were explicitly notified of the scope of collection, including video, image, IMU, and encoder streams, and provided documented consent prior to recording. Permission to capture data was obtained for all environments.

T-Shirt Folding Procedure Guidelines

- Fixed stance: Torso facing workspace

- Hardware: Only in-house system used

- Variability: Natural irregularities retained (no retries)

The t-shirt folding task was performed under standardized collection protocols to ensure reproducibility and consistency across sessions. Data collectors maintained a fixed stance throughout demonstrations, with feet planted and torso oriented toward the workspace, avoiding lateral rotation or excessive forward lean.

All manipulations were conducted exclusively using the in-house hardware system. When irregularities occurred—such as tangled fabric or misaligned folds—the procedure continued rather than being restarted, preserving the natural variability of the task.

Across all episodes, data collectors executed the folding sequence with the goal of efficient task completion, minimizing extraneous motion while maintaining data fidelity.

T-Shirt Folding Instructions

1. Retrieve garment
   a. Pick up a shirt from the laundry basket.
2. Position shirt
   a. Lay the shirt flat on a surface, front side down, with the collar aligned at the top.
3. Fold sides
   a. Fold one side inward to the shirt’s centerline.
   b. Fold the remaining side inward so both edges overlap neatly.
4. Create folds
   a. Fold the shirt upward from the bottom to the midline.
   b. Fold again from the midline to the collar to form a compact rectangle.
5. Stack
   a. Place the folded shirt neatly onto the prepared stack or storage area.

✒️ Preprocessing & Annotation

Preprocessing was performed using a lightweight filtering pipeline designed to stabilize signals while preserving fine motion. A Fixed-Interval Kalman filter with a Rauch–Tung–Striebel (RTS) smoother was applied to refine trajectory estimates.
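The exact state model and noise settings of the in-house filter are not documented here. As a rough illustration of the technique only, the sketch below runs a scalar Kalman filter forward over a fixed interval and then applies the RTS backward pass. The random-walk state model, the two variances, and the name `rts_smooth` are all assumptions made for this example.

```python
def rts_smooth(measurements, process_var=1e-4, meas_var=1e-2):
    """Scalar random-walk Kalman filter followed by an RTS backward pass.

    The state model and both variances are illustrative assumptions.
    """
    n = len(measurements)
    if n == 0:
        return []
    x_pred, p_pred = [0.0] * n, [0.0] * n  # one-step predictions
    x_filt, p_filt = [0.0] * n, [0.0] * n  # filtered estimates
    # Initialise from the first sample with measurement-level uncertainty.
    x_pred[0] = x_filt[0] = measurements[0]
    p_pred[0] = p_filt[0] = meas_var
    for k in range(1, n):
        # Predict: state carries over, uncertainty grows by the process noise.
        x_pred[k] = x_filt[k - 1]
        p_pred[k] = p_filt[k - 1] + process_var
        # Update with the new measurement.
        gain = p_pred[k] / (p_pred[k] + meas_var)
        x_filt[k] = x_pred[k] + gain * (measurements[k] - x_pred[k])
        p_filt[k] = (1.0 - gain) * p_pred[k]
    # Backward RTS pass over the whole interval.
    x_smooth = x_filt[:]
    for k in range(n - 2, -1, -1):
        c = p_filt[k] / p_pred[k + 1]
        x_smooth[k] = x_filt[k] + c * (x_smooth[k + 1] - x_pred[k + 1])
    return x_smooth

# Synthetic alternating samples, used only to exercise the function.
smoothed = rts_smooth([0.0, 1.0, 0.0, 1.0, 0.0, 1.0])
```

A trajectory filter would more plausibly use a multi-dimensional state (for example position and velocity per axis), but the forward and backward recursions have the same shape.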

Rotational data and gripper width signals were further processed using a double Butterworth filter to reduce noise while maintaining sharp transitions. Filtering was implemented with standard numerical libraries within an in-house software framework.
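The term "double Butterworth" is not defined in this card. One plausible reading, assumed here, is a Butterworth low-pass applied forward and then backward so that the phase delays cancel. The sketch below implements that reading with a second-order filter; the order, the 5 Hz cutoff, and all function names are assumptions for illustration, not the documented pipeline settings.

```python
import math

def butter2_lowpass(cutoff_hz, fs_hz):
    """Second-order Butterworth low-pass coefficients via the bilinear transform."""
    k = math.tan(math.pi * cutoff_hz / fs_hz)
    norm = 1.0 / (1.0 + math.sqrt(2.0) * k + k * k)
    b = (k * k * norm, 2.0 * k * k * norm, k * k * norm)
    a = (2.0 * (k * k - 1.0) * norm, (1.0 - math.sqrt(2.0) * k + k * k) * norm)
    return b, a

def _single_pass(signal, b, a):
    # Seed the filter history with the first sample to avoid a start-up transient.
    x1 = x2 = y1 = y2 = signal[0]
    out = []
    for x0 in signal:
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(y0)
        x2, x1, y2, y1 = x1, x0, y1, y0
    return out

def zero_phase_lowpass(signal, cutoff_hz=5.0, fs_hz=30.0):
    """Filter forward, then backward, so the phase delays cancel."""
    if not signal:
        return []
    b, a = butter2_lowpass(cutoff_hz, fs_hz)
    forward = _single_pass(signal, b, a)
    backward = _single_pass(forward[::-1], b, a)
    return backward[::-1]

# Synthetic gripper-width-like signal alternating at the Nyquist rate.
filtered = zero_phase_lowpass([0.0, 1.0] * 20)
```

For quaternion signals, filtering each component independently requires renormalising afterwards; that step is not shown.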

The filtering strategy was kept conservative, ensuring that subtle manipulations and fine-scale movements remained intact. All raw, unfiltered recordings were preserved and remain available upon request.

Preprocessing

Applied lightweight filtering with:

| Filter / Method | Applied To |

|---|---|

| Kalman + RTS Smoother | Trajectories |

| Double Butterworth | Rotational data, gripper width |

| Light Filtering | All signals |

| Raw Data Retention | All modalities |

Labeling

- Room-type labels (bedroom, kitchen, etc.)

🔬 Validation

Validation was performed by recording a range of manipulation motions using the in-house hardware system, while simultaneously capturing ground truth with an OptiTrack Trio 3 motion capture system.

Signals were time-aligned, and frame-wise errors were computed for both position and orientation measurements.
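The card does not spell out the error formulas. A common choice, assumed in the sketch below, is the Euclidean distance for position and the angle of the relative rotation for orientation, computed per time-aligned frame. Quaternions are taken as unit length in (x, y, z, w) order; the function names are illustrative.

```python
import math

def position_error(p_est, p_ref):
    """Euclidean distance between estimated and reference positions."""
    return math.dist(p_est, p_ref)

def rotation_error_deg(q_est, q_ref):
    """Angle in degrees of the rotation separating two unit quaternions (x, y, z, w)."""
    dot = abs(sum(a * b for a, b in zip(q_est, q_ref)))
    # abs() treats q and -q as the same rotation; min() guards against rounding above 1.
    return math.degrees(2.0 * math.acos(min(1.0, dot)))

# Hypothetical frame: 5 mm position offset, 90-degree rotation about z.
s = math.sqrt(0.5)
pos_err_m = position_error((0.0, 0.0, 0.0), (0.003, 0.004, 0.0))
rot_err_deg = rotation_error_deg((0.0, 0.0, 0.0, 1.0), (0.0, 0.0, s, s))
```

Averaging these per-frame values over a trial would give summary statistics comparable in form to the accuracy table in this section.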

3D Position Accuracy

Free-space trajectories were executed, including sweeping and randomized motions of the end-effector. Errors were computed frame-by-frame against OptiTrack ground truth data.

The mean 3D positional error was consistently within the low-centimeter range, with additional improvements observed after applying the Fixed-Interval Kalman filter with RTS smoothing.

Orientation Accuracy

Orientation estimates were validated against quaternion data from the OptiTrack system.

A double Butterworth filter was applied to the raw rotational signals.

Across all validation trials, the system achieved sub-degree median orientation errors.

| Metric | Accuracy |

|---|---|

| 3D Position | ≤ 7 mm error |

| Orientation | ≤ 1° error |

🚚 Distribution

ZeroShot is responsible for maintaining this dataset. For any questions or concerns, please contact interest@zeroshotdata.com.

The dataset is released as an open-source resource under the Apache 2.0 license and made publicly available on Hugging Face.

Versioning will allow users to reference specific releases. All updates will be documented in this file and reflected across relevant distribution points.

Older versions may be archived for reproducibility, and any obsolescence will be clearly communicated.

The dataset contains no third-party rights or export-control restrictions.

Access will be free of charge, and users are encouraged to provide proper attribution by citing ZeroShot and the associated technical documentation when referencing this dataset in publications.

🔗 Appendix

Data Samples

Interactive Samples

| Sample | Link |

|---|---|

| 1 | Rerun Viewer #1 |

| 2 | Rerun Viewer #2 |

| 3 | Rerun Viewer #3 |

| 4 | Rerun Viewer #4 |

| 5 | Rerun Viewer #5 |

| 6 | Rerun Viewer #6 |

| 7 | Rerun Viewer #7 |

| 8 | Rerun Viewer #8 |

| 9 | Rerun Viewer #9 |

| 10 | Rerun Viewer #10 |

📚 Citation

@dataset{zeroshot_tshirt_2025,

title = {ZeroShot SF-Fold Dataset},

author = {ZeroShot Data Team},

year = {2025},

note = {Version 1.2, September 2025},

url = {https://zeroshotdata.com/datasets/tshirt}

}