language: ha

language_name: Hausa

language_family: chadic

tags:

- wikilangs

- nlp

- tokenizer

- embeddings

- n-gram

- markov

- wikipedia

- feature-extraction

- sentence-similarity

- tokenization

- n-grams

- markov-chain

- text-mining

- fasttext

- babelvec

- vocabulous

- vocabulary

- monolingual

- family-chadic

license: mit

library_name: wikilangs

pipeline_tag: text-generation

datasets:

- omarkamali/wikipedia-monthly

dataset_info:

name: wikipedia-monthly

description: Monthly snapshots of Wikipedia articles across 300+ languages

metrics:

- name: best_compression_ratio

type: compression

value: 4.398

- name: best_isotropy

type: isotropy

value: 0.8106

- name: vocabulary_size

type: vocab

value: 0

generated: 2026-01-10T00:00:00.000Z

Hausa - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Hausa Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

1. Tokenizer Evaluation

Results

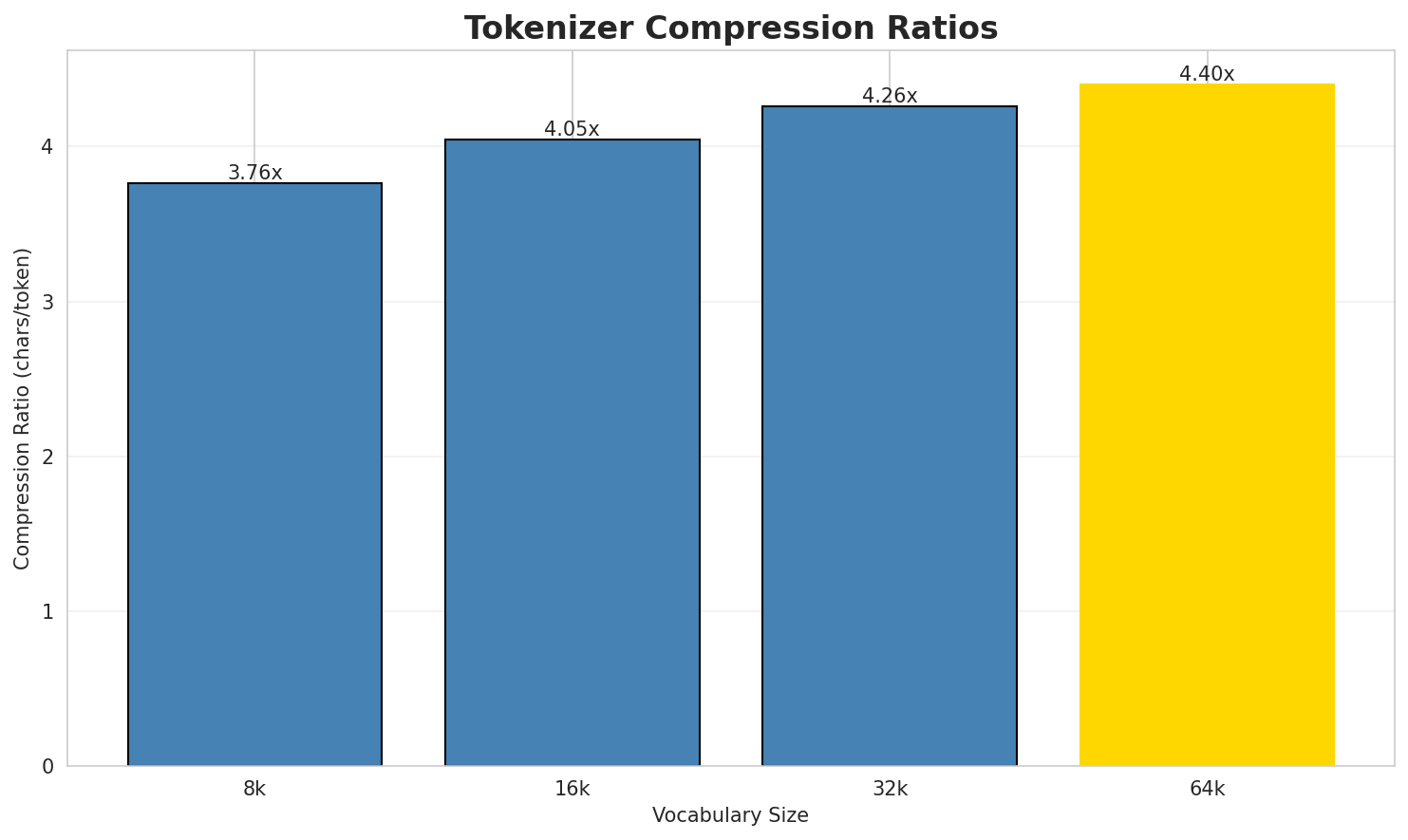

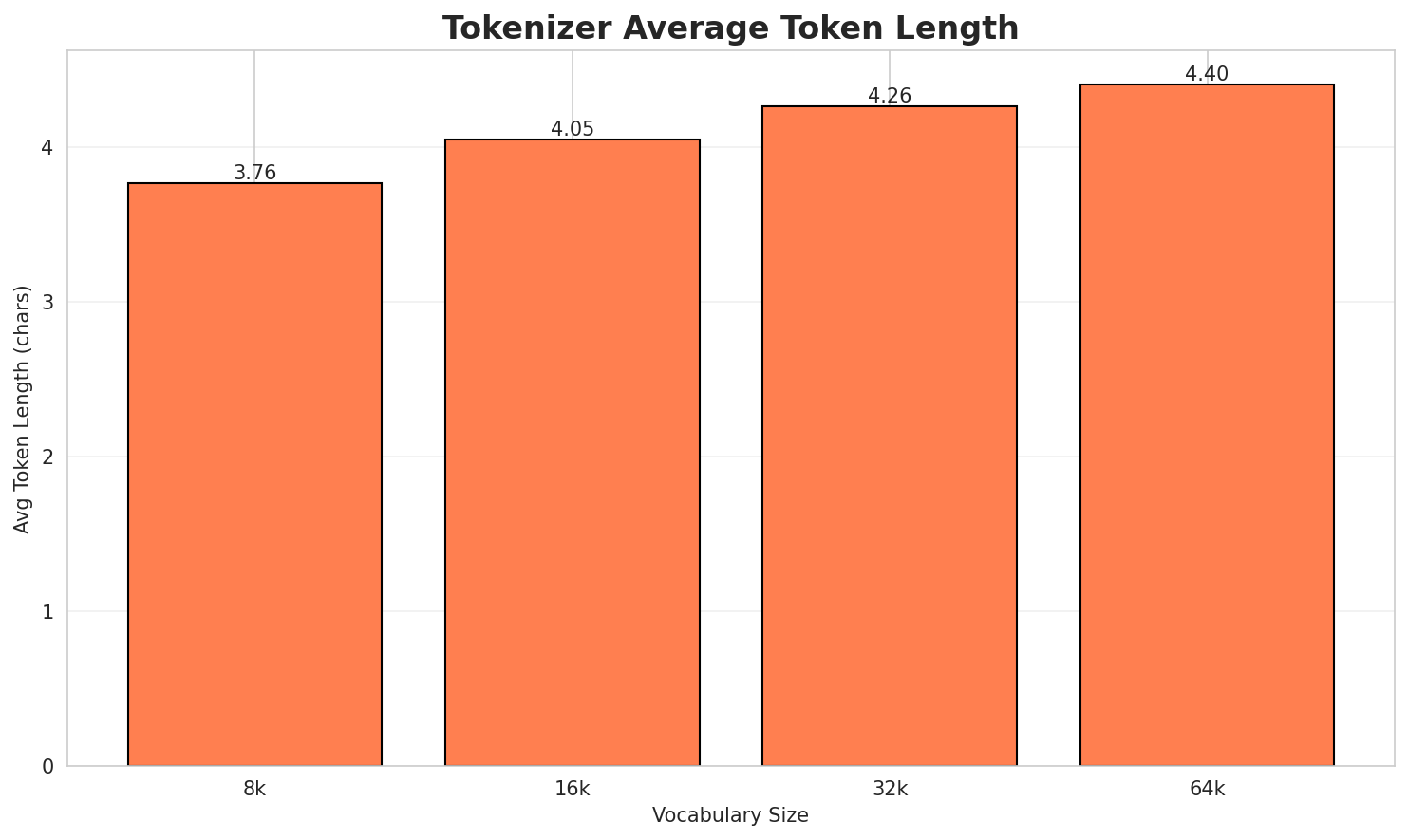

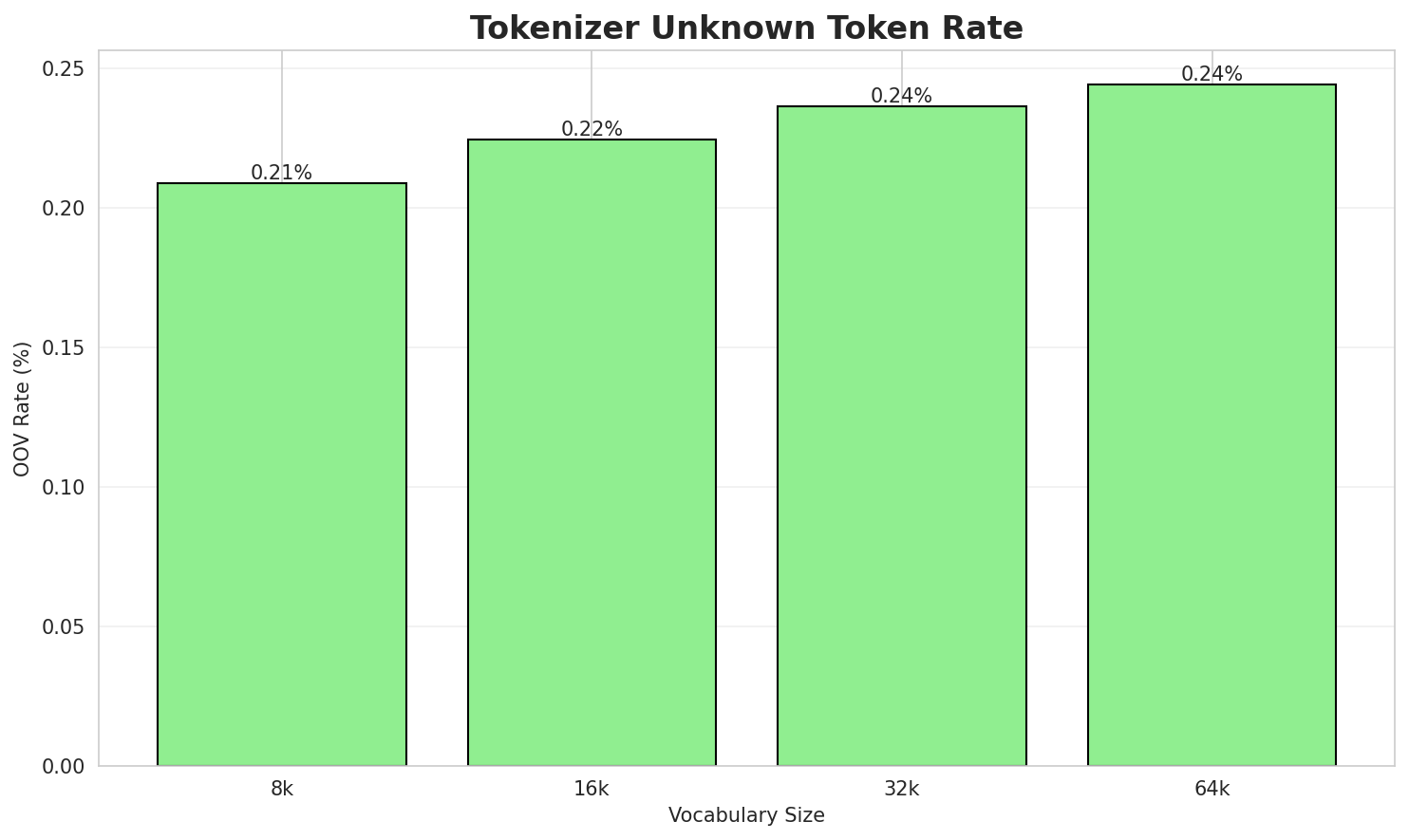

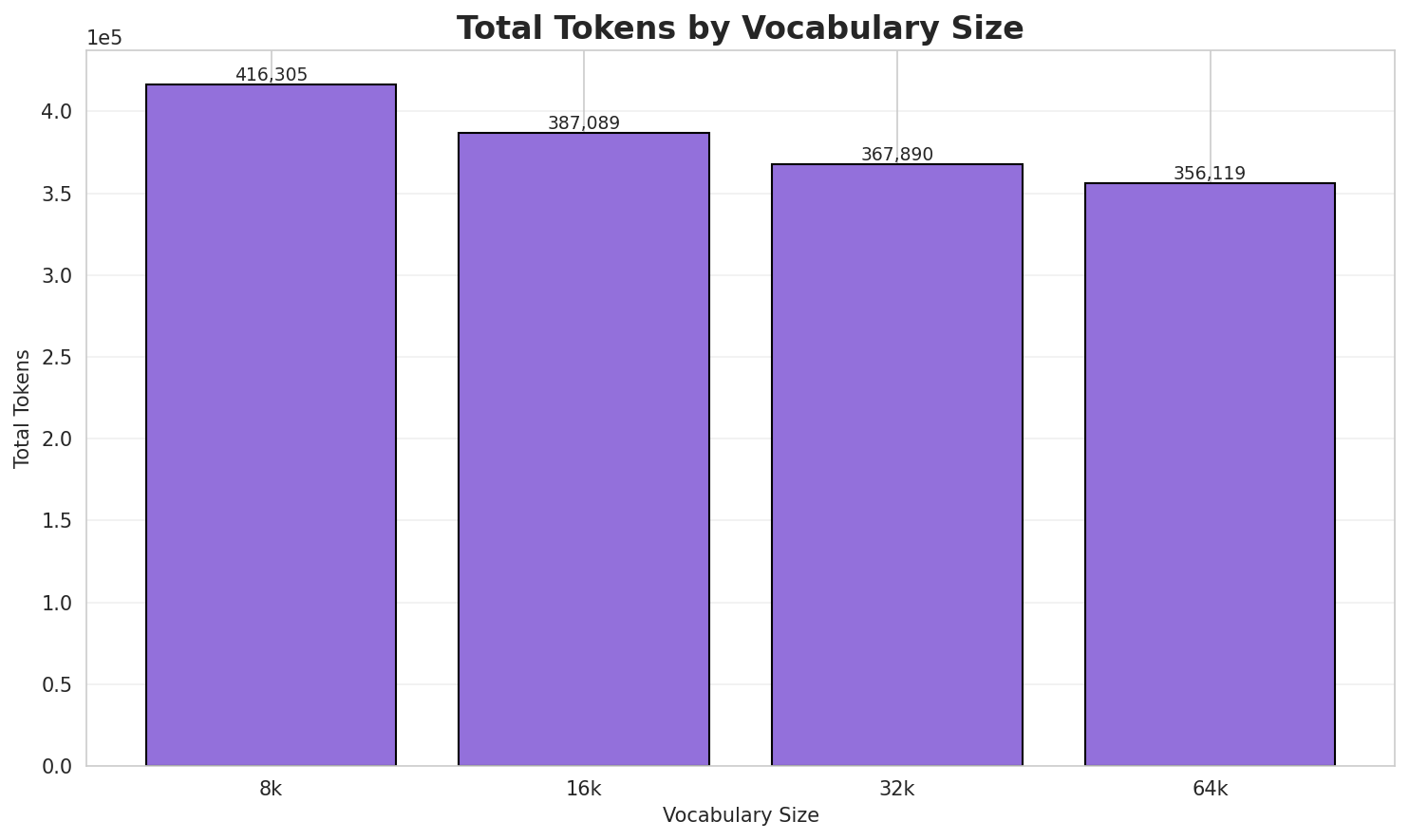

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |

|---|---|---|---|---|

| 8k | 3.763x | 3.76 | 0.2087% | 416,305 |

| 16k | 4.047x | 4.05 | 0.2245% | 387,089 |

| 32k | 4.258x | 4.26 | 0.2362% | 367,890 |

| 64k | 4.398x 🏆 | 4.40 | 0.2440% | 356,119 |

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Luke Ashworth (an haife shi a shekara ta shi ne dan wasan ƙwallon ƙafa ta ƙasar ...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁l uke ▁ash worth ▁( an ▁haife ▁shi ▁a ▁shekara ... (+18 more) | 28 |

| 16k | ▁l uke ▁ash worth ▁( an ▁haife ▁shi ▁a ▁shekara ... (+18 more) | 28 |

| 32k | ▁luke ▁ash worth ▁( an ▁haife ▁shi ▁a ▁shekara ▁ta ... (+17 more) | 27 |

| 64k | ▁luke ▁ashworth ▁( an ▁haife ▁shi ▁a ▁shekara ▁ta ▁shi ... (+16 more) | 26 |

Sample 2: Joshua Ogunlola (an haife shi 19 Afrilu ɗan wasan cricket ne na Najeriya . Ya bu...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁jo shua ▁ogun lo la ▁( an ▁haife ▁shi ▁ ... (+23 more) | 33 |

| 16k | ▁joshua ▁ogun lola ▁( an ▁haife ▁shi ▁ 1 9 ... (+21 more) | 31 |

| 32k | ▁joshua ▁ogun lola ▁( an ▁haife ▁shi ▁ 1 9 ... (+21 more) | 31 |

| 64k | ▁joshua ▁ogun lola ▁( an ▁haife ▁shi ▁ 1 9 ... (+21 more) | 31 |

Sample 3: Roland Omoruyi (an haife shi 5 ga watan Yuni ɗan damben Najeriya ne. Yayi gasa a...

| Vocab | Tokens | Count |

|---|---|---|

| 8k | ▁r oland ▁om or u yi ▁( an ▁haife ▁shi ... (+22 more) | 32 |

| 16k | ▁roland ▁om or u yi ▁( an ▁haife ▁shi ▁ ... (+21 more) | 31 |

| 32k | ▁roland ▁om oru yi ▁( an ▁haife ▁shi ▁ 5 ... (+20 more) | 30 |

| 64k | ▁roland ▁om oru yi ▁( an ▁haife ▁shi ▁ 5 ... (+20 more) | 30 |

Key Findings

- Best Compression: 64k achieves 4.398x compression

- Lowest UNK Rate: 8k with 0.2087% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size

- Recommendation: 32k vocabulary provides optimal balance for production use

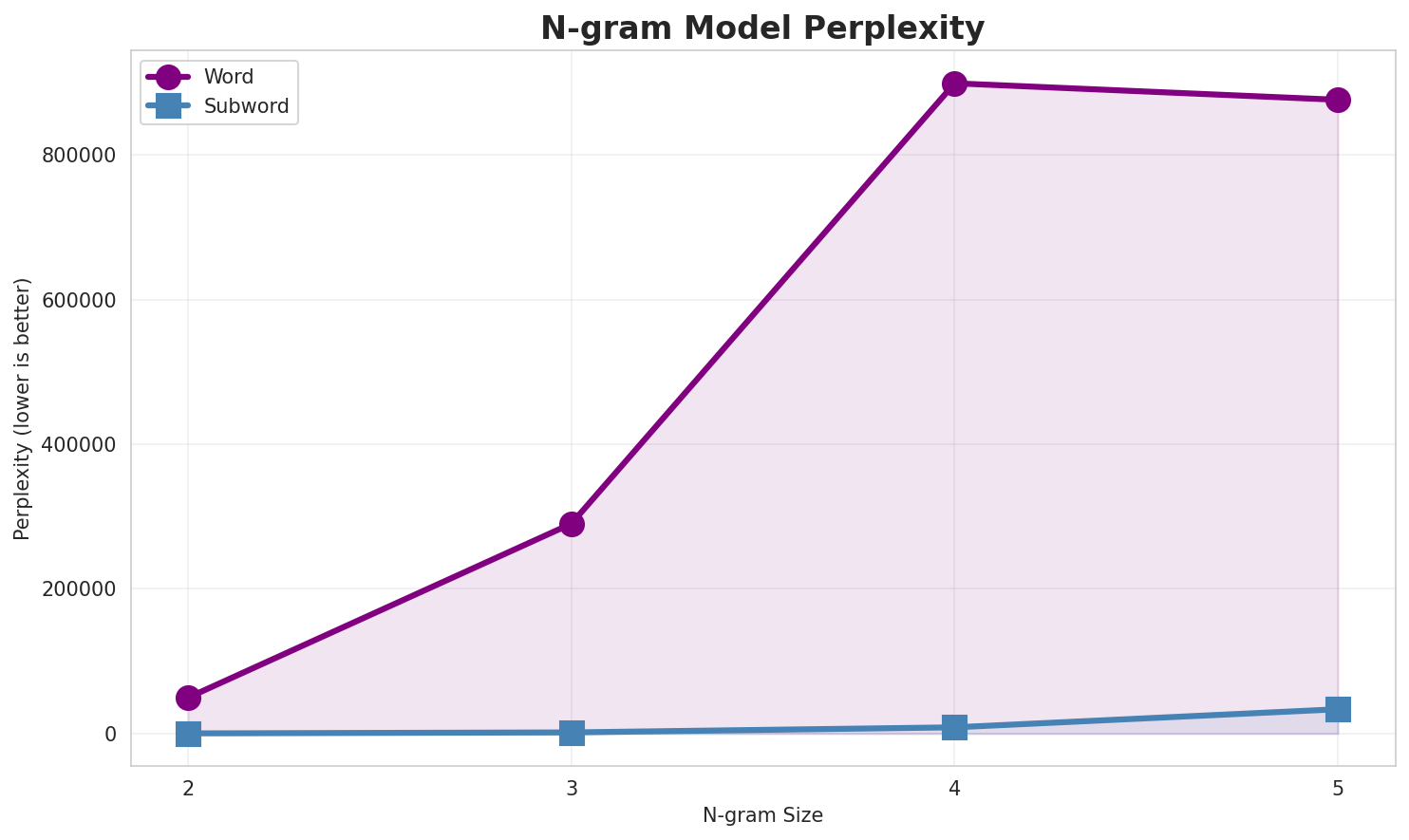

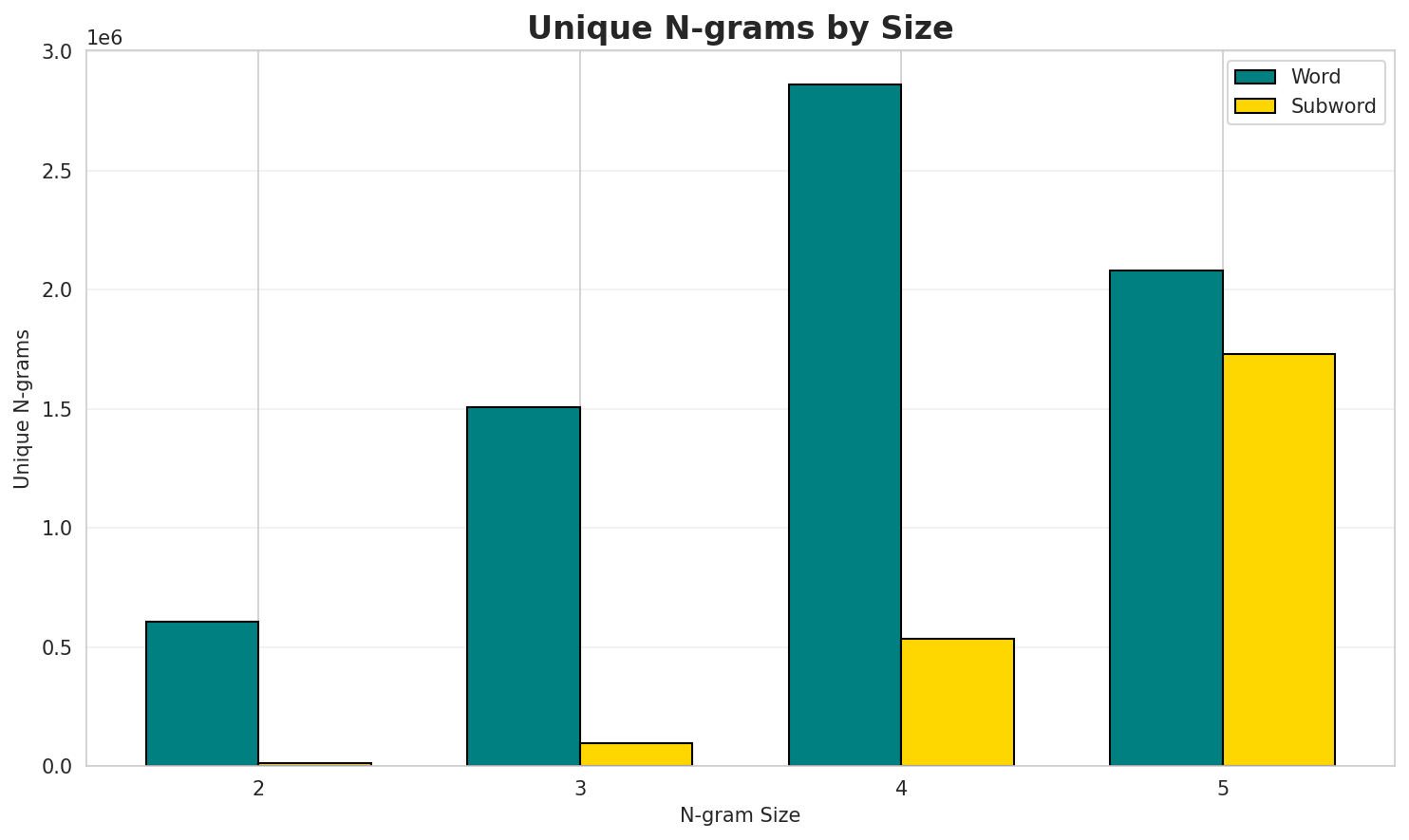

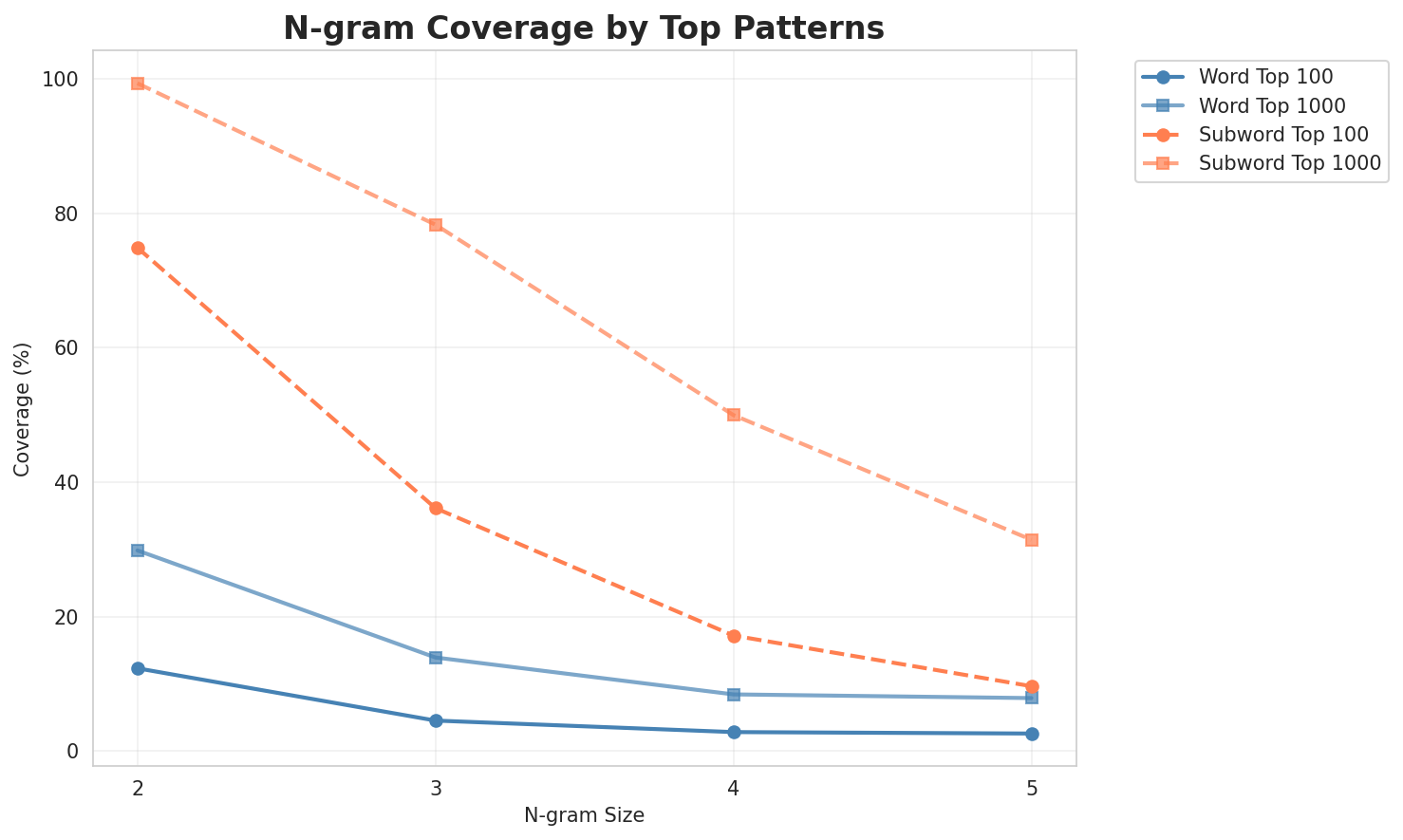

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |

|---|---|---|---|---|---|---|

| 2-gram | Word | 49,621 | 15.60 | 604,355 | 12.3% | 29.9% |

| 2-gram | Subword | 196 🏆 | 7.61 | 13,430 | 74.9% | 99.3% |

| 3-gram | Word | 290,081 | 18.15 | 1,505,795 | 4.6% | 13.9% |

| 3-gram | Subword | 1,547 | 10.60 | 97,163 | 36.1% | 78.3% |

| 4-gram | Word | 898,959 | 19.78 | 2,859,421 | 2.8% | 8.4% |

| 4-gram | Subword | 8,574 | 13.07 | 534,835 | 17.2% | 50.0% |

| 5-gram | Word | 876,152 | 19.74 | 2,080,226 | 2.6% | 7.9% |

| 5-gram | Subword | 33,589 | 15.04 | 1,728,117 | 9.7% | 31.4% |

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a cikin | 313,998 |

| 2 | tare da | 141,234 |

| 3 | a matsayin | 130,861 |

| 4 | da aka | 106,305 |

| 5 | da kuma | 89,834 |

3-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a shekara ta | 43,773 |

| 2 | ci gaba da | 25,571 |

| 3 | da ba a | 20,387 |

| 4 | an haife shi | 20,273 |

| 5 | afirka ta kudu | 17,311 |

4-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | archived from the original | 15,473 |

| 2 | from the original on | 15,162 |

| 3 | an haife shi a | 14,183 |

| 4 | fassarorin da ba a | 13,066 |

| 5 | masu fassarorin da ba | 13,066 |

5-grams (Word):

| Rank | N-gram | Count |

|---|---|---|

| 1 | archived from the original on | 14,682 |

| 2 | fassarorin da ba a duba | 13,066 |

| 3 | masu fassarorin da ba a | 13,066 |

| 4 | da ba a duba ba | 13,065 |

| 5 | an haife shi a ranar | 5,602 |

2-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a _ | 13,901,672 |

| 2 | n _ | 6,669,315 |

| 3 | a n | 6,077,508 |

| 4 | a r | 5,295,640 |

| 5 | d a | 4,369,505 |

3-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | _ d a | 3,204,702 |

| 2 | d a _ | 3,036,418 |

| 3 | i n _ | 2,924,187 |

| 4 | a n _ | 2,144,471 |

| 5 | a r _ | 2,066,174 |

4-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | _ d a _ | 2,454,989 |

| 2 | _ n a _ | 991,541 |

| 3 | a _ d a | 987,768 |

| 4 | _ t a _ | 853,598 |

| 5 | a _ t a | 717,349 |

5-grams (Subword):

| Rank | N-gram | Count |

|---|---|---|

| 1 | a _ d a _ | 720,468 |

| 2 | i k i n _ | 496,368 |

| 3 | _ c i k i | 458,937 |

| 4 | a _ t a _ | 441,174 |

| 5 | c i k i n | 435,066 |

Key Findings

- Best Perplexity: 2-gram (subword) with 196

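- Entropy Trend: Increases with n-gram size in the table above, from 15.60 bits (2-gram) to about 19.7 bits (4-gram and 5-gram) for word models and from 7.61 to 15.04 bits for subword models, so higher-order models are less predictable on this corpus

- Coverage: Top-1000 patterns cover between 7.9% (5-gram word) and 99.3% (2-gram subword) of the corpus; the 5-gram subword model sits at ~31%

- Recommendation: The 2-gram subword model gives the lowest perplexity; 4-gram or 5-gram models capture longer context but are far sparser on this corpus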

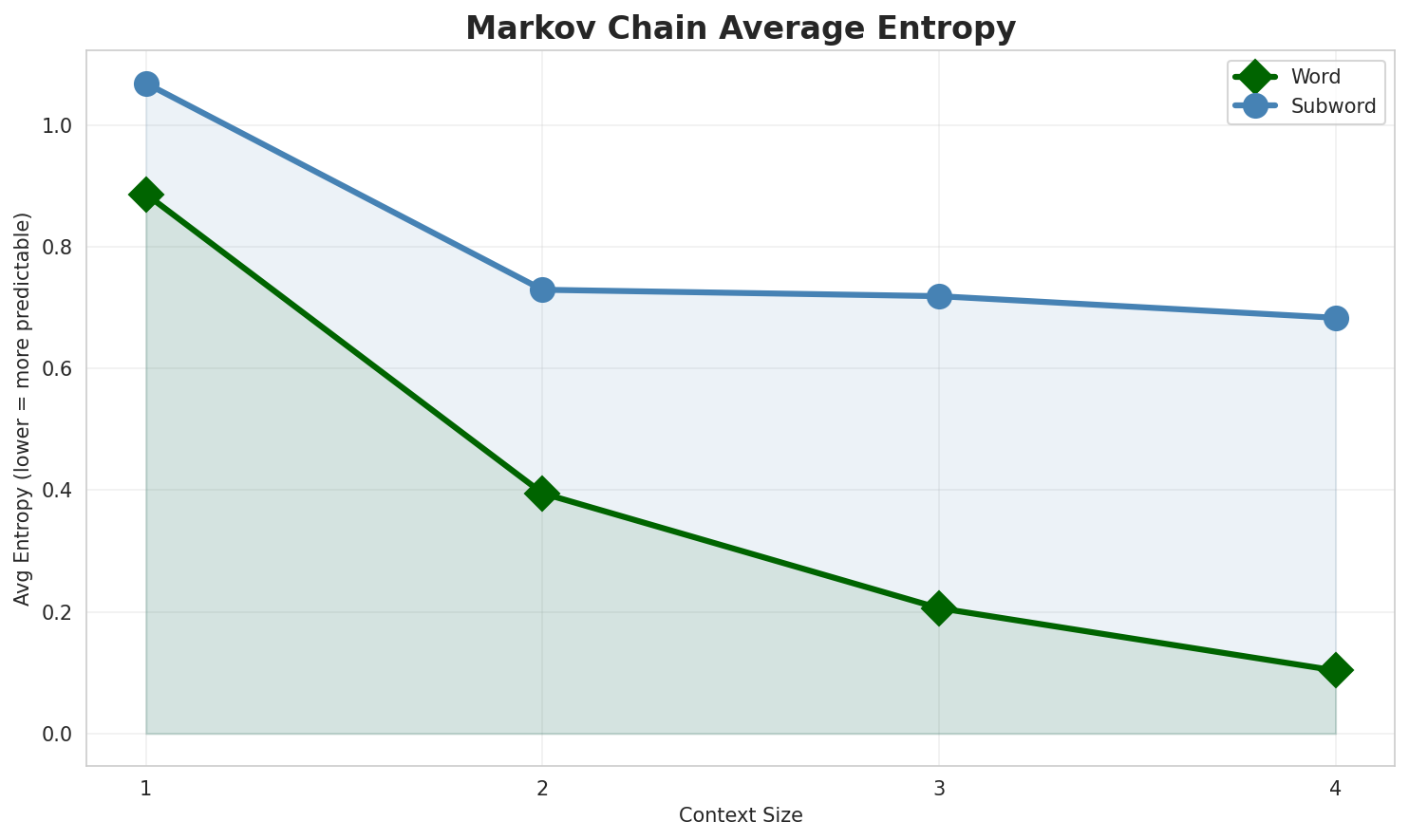

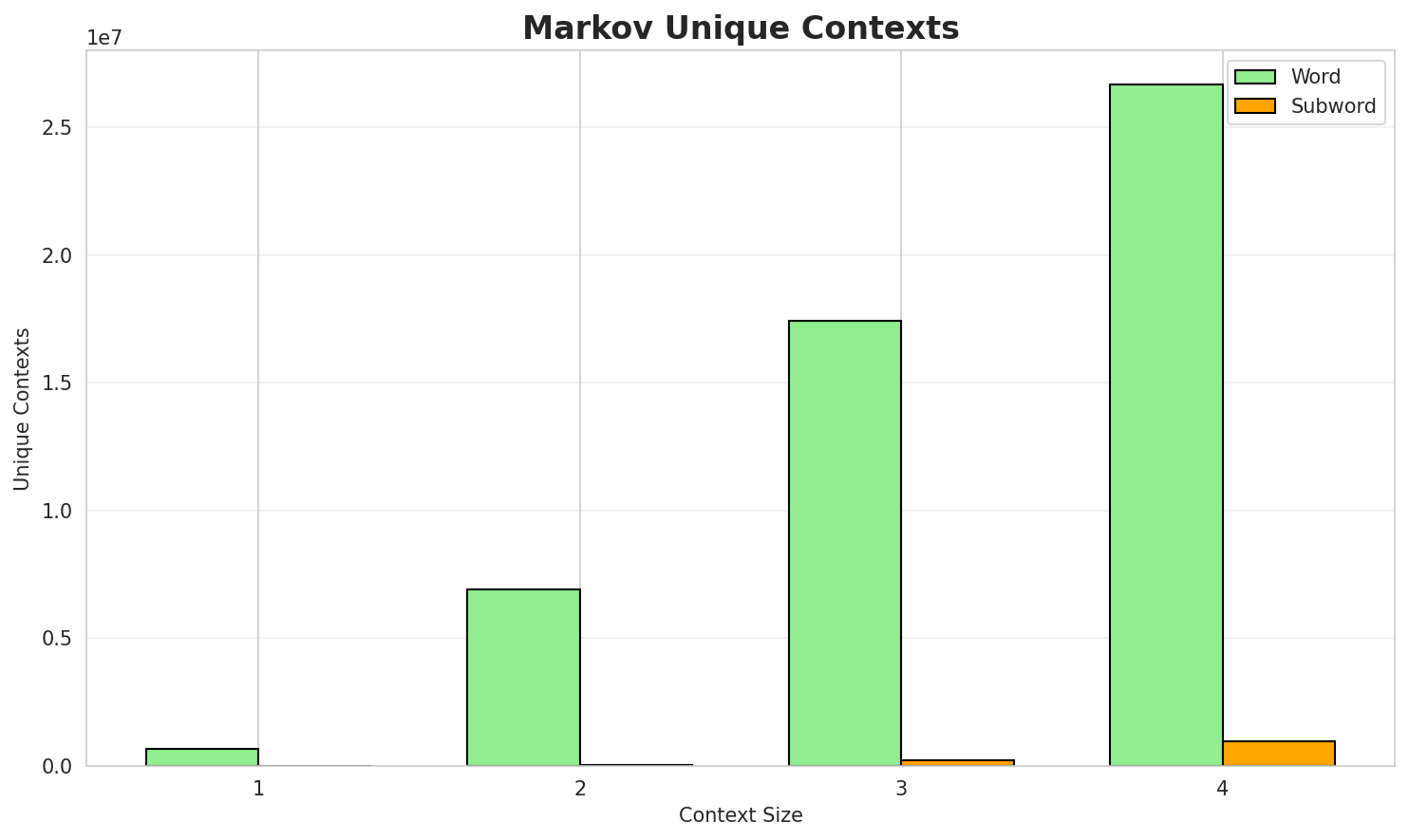

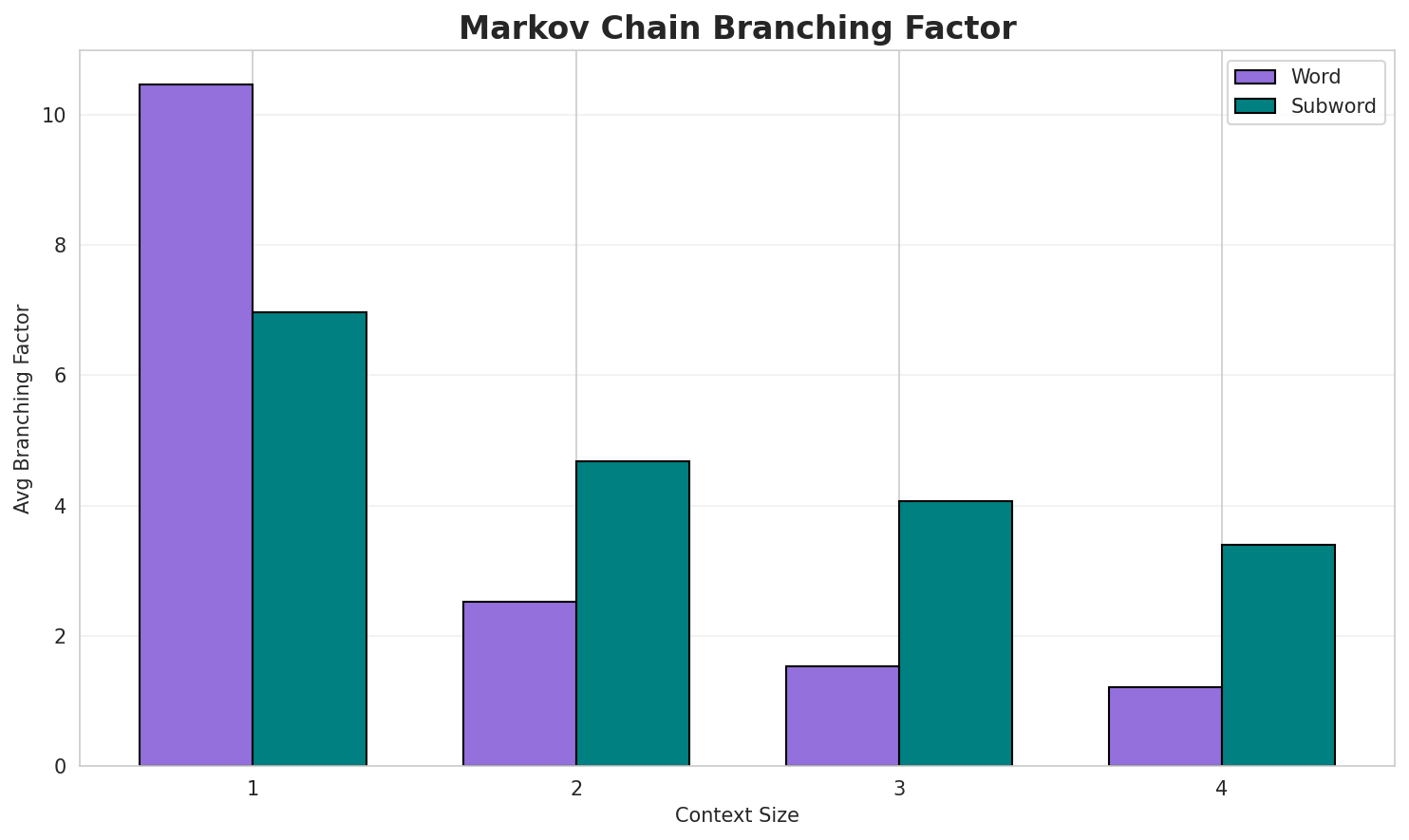

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |

|---|---|---|---|---|---|---|

| 1 | Word | 0.8863 | 1.848 | 10.46 | 661,201 | 11.4% |

| 1 | Subword | 1.0685 | 2.097 | 6.96 | 7,221 | 0.0% |

| 2 | Word | 0.3948 | 1.315 | 2.52 | 6,908,013 | 60.5% |

| 2 | Subword | 0.7292 | 1.658 | 4.69 | 50,274 | 27.1% |

| 3 | Word | 0.2061 | 1.154 | 1.53 | 17,415,052 | 79.4% |

| 3 | Subword | 0.7187 | 1.646 | 4.06 | 235,540 | 28.1% |

| 4 | Word | 0.1035 🏆 | 1.074 | 1.21 | 26,662,755 | 89.6% |

| 4 | Subword | 0.6831 | 1.606 | 3.40 | 956,556 | 31.7% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- da sojojin kasar ke iyakance ma aunin cinikayya da alaƙa da duniya cambridge ta kuma wani

- a kwalejin fort douteuse manazarta nijar da jama a shekara ta bi na wanda aka gudanar

- na shekara ta everett dutton jump gable ray choto an tsare ta wannan baya kudancin tasman

Context Size 2:

- a cikin alal misali ƙwararrun hindu sun nuna cewa suna adawa da shi 23 da kwallaye 26

- tare da ƙungiyar ƙwallon ƙafa a ƙayyadaddun su ba bisa ka ida ba ta koma tare da

- a matsayin mai ba da masauki a kowane yanayi taimako ga peter da saint pons de thomières

Context Size 3:

- a shekara ta larabci غالية شاكر mawaƙi ne ɗan ƙasar ghana wanda ke taka leda a matsayin ɗan

- ci gaba da amfani duk da wannan karuwar kwanan nan a cikin ya ya shida na yusufu da

- da ba a duba ba wasan kwaikwawo ta kudu

Context Size 4:

- archived from the original on 4 march retrieved 23 january ita ce shekara ta goma sha tara a saman

- from the original on retrieved october 1 dajin yana wurin zama ga nau in ruwa da na kogi da

- an haife shi a shekara ta ɗan siyasan najeriya ne daga jihar yobe a yankin arewa maso gabas cen

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

ar_ar_yandu_t_am_chea_ƴa_ctar_kin_aya_ar_su,_don

Context Size 2:

a_sc_ake_gwa_gayun_re_que_ta_redeaan_in_huga_cikar_

Context Size 3:

_daidaraktanin_tsada_ya_kuma_na_dokain_mallace_takewac

Context Size 4:

_da_za_manazartar_a_na_mai_don_a_kansaa_da_no._632._an_fo

Key Findings

- Best Predictability: Context-4 (word) with 89.6% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (26,662,755 unique word contexts and 956,556 unique subword contexts at context size 4)

- Recommendation: Context-3 or Context-4 for text generation

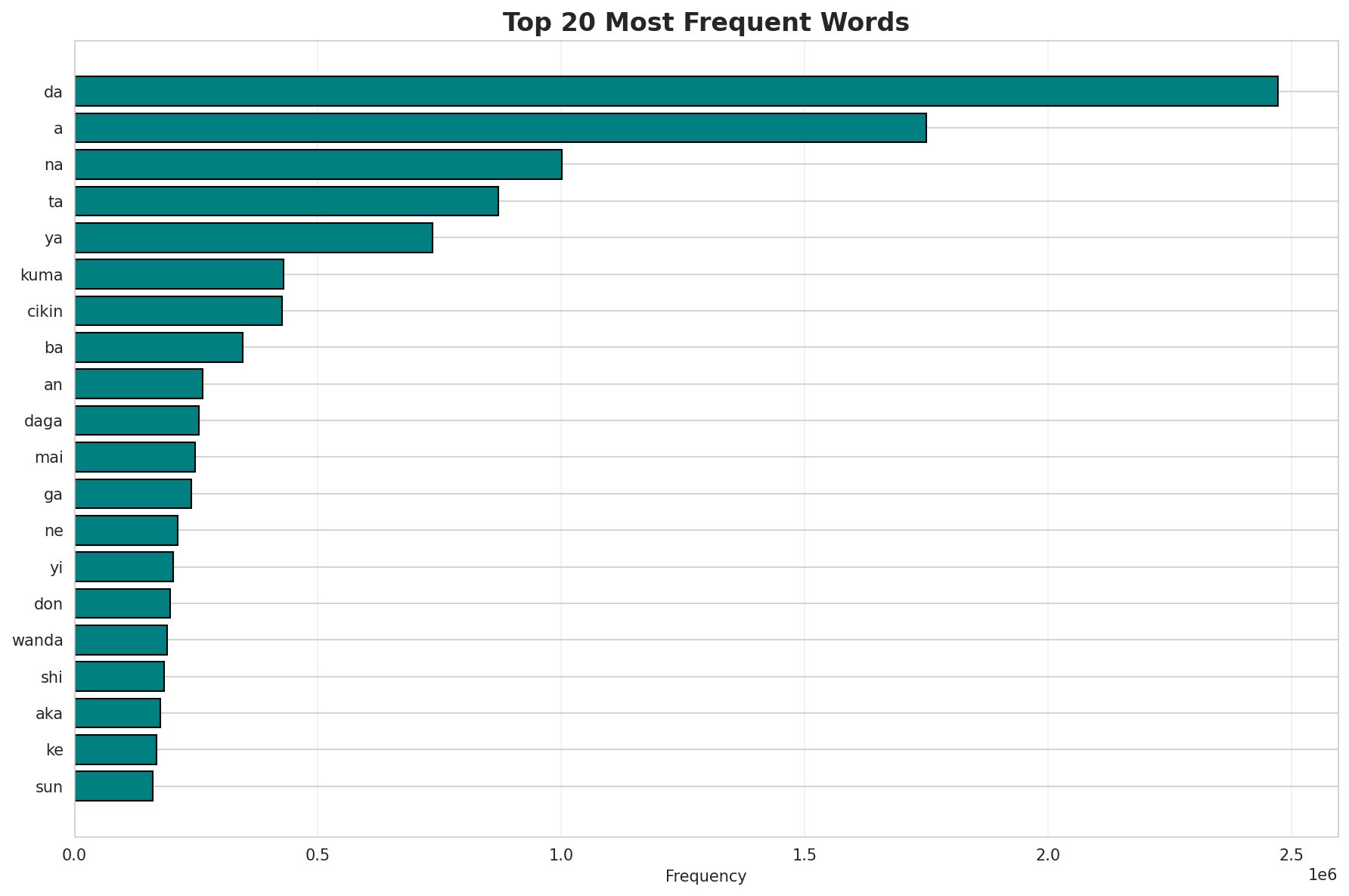

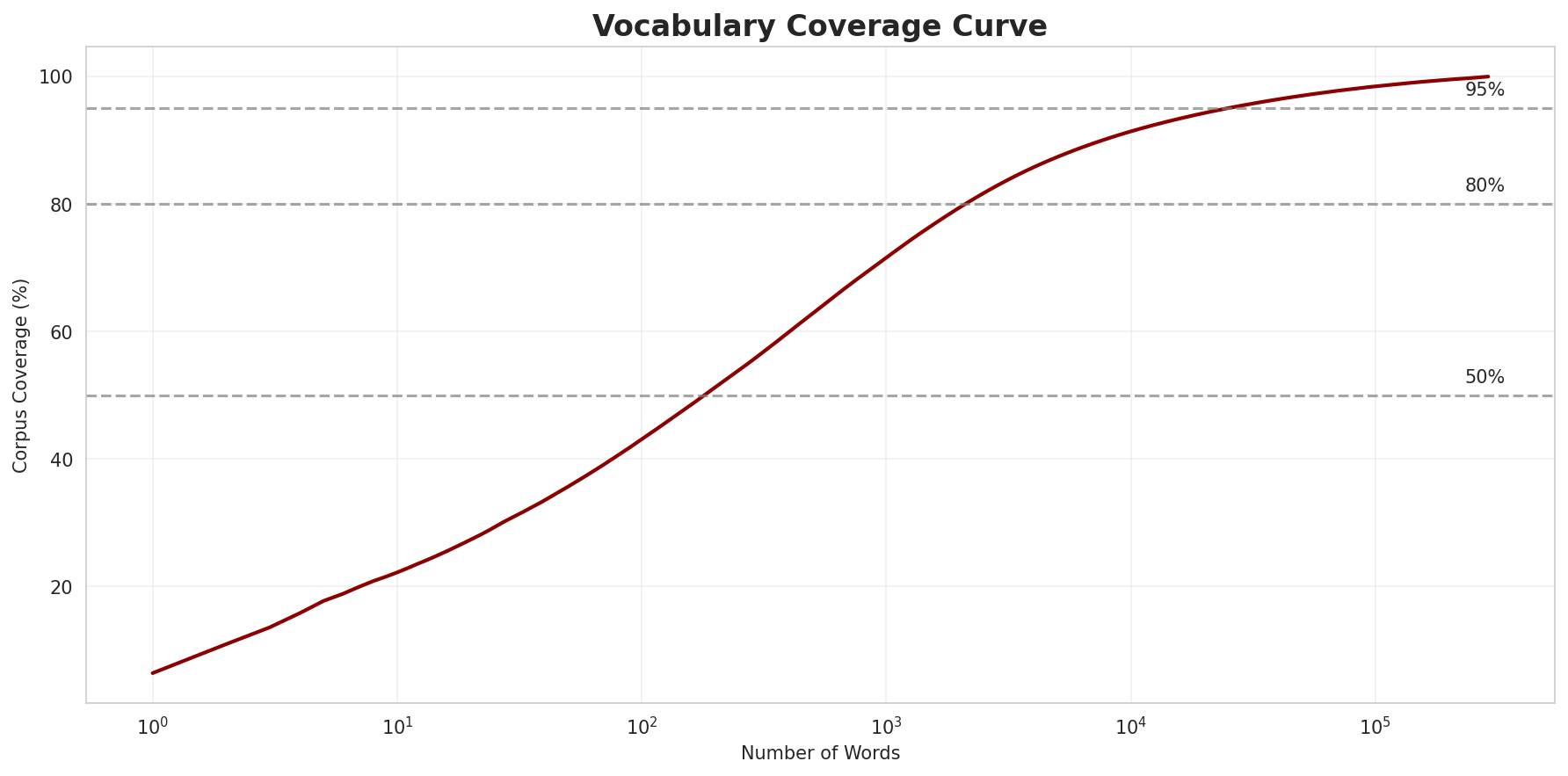

4. Vocabulary Analysis

Statistics

| Metric | Value |

|---|---|

| Vocabulary Size | 289,201 |

| Total Tokens | 38,460,059 |

| Mean Frequency | 132.99 |

| Median Frequency | 4 |

| Frequency Std Dev | 6762.57 |

Most Common Words

| Rank | Word | Frequency |

|---|---|---|

| 1 | da | 2,472,553 |

| 2 | a | 1,750,033 |

| 3 | na | 1,000,437 |

| 4 | ta | 870,013 |

| 5 | ya | 735,582 |

| 6 | kuma | 428,826 |

| 7 | cikin | 427,094 |

| 8 | ba | 345,573 |

| 9 | an | 263,110 |

| 10 | daga | 256,194 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |

|---|---|---|

| 1 | lakisha | 2 |

| 2 | tanish | 2 |

| 3 | katakanaタニシャ | 2 |

| 4 | tanishia | 2 |

| 5 | tinisha | 2 |

| 6 | tír | 2 |

| 7 | sunami | 2 |

| 8 | mamis | 2 |

| 9 | mywo | 2 |

| 10 | iyaz | 2 |

Zipf's Law Analysis

| Metric | Value |

|---|---|

| Zipf Coefficient | 1.2631 |

| R² (Goodness of Fit) | 0.985164 |

| Adherence Quality | excellent |

Coverage Analysis

| Top N Words | Coverage |

|---|---|

| Top 100 | 43.1% |

| Top 1,000 | 71.6% |

| Top 5,000 | 87.4% |

| Top 10,000 | 91.4% |

Key Findings

- Zipf Compliance: R²=0.9852 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 43.1% of corpus

- Long Tail: 279,201 words needed for remaining 8.6% coverage
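The summary figures in this section can be cross-checked from the tables alone. The sketch below is only a consistency check on the reported numbers, not the pipeline's code; the constant names are illustrative, and the top-10 coverage is derived here rather than reported by the pipeline.

```python
# Values copied from the Statistics and Most Common Words tables above.
VOCAB_SIZE = 289_201
TOTAL_TOKENS = 38_460_059
TOP_10_COUNTS = [
    2_472_553, 1_750_033, 1_000_437, 870_013, 735_582,
    428_826, 427_094, 345_573, 263_110, 256_194,
]

# Mean frequency is total tokens divided by the number of distinct words.
mean_frequency = TOTAL_TOKENS / VOCAB_SIZE

# Words outside the top 10,000 form the long tail.
tail_size = VOCAB_SIZE - 10_000

# Share of all tokens accounted for by the ten most frequent words.
top_10_coverage = 100.0 * sum(TOP_10_COUNTS) / TOTAL_TOKENS

print(f"mean frequency:  {mean_frequency:.2f}")
print(f"long-tail words: {tail_size:,}")
print(f"top-10 coverage: {top_10_coverage:.1f}%")
```

The mean of about 133 against a median of 4 is the usual signature of a heavy-tailed frequency distribution: a handful of function words such as `da` and `a` carry a large share of the corpus.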

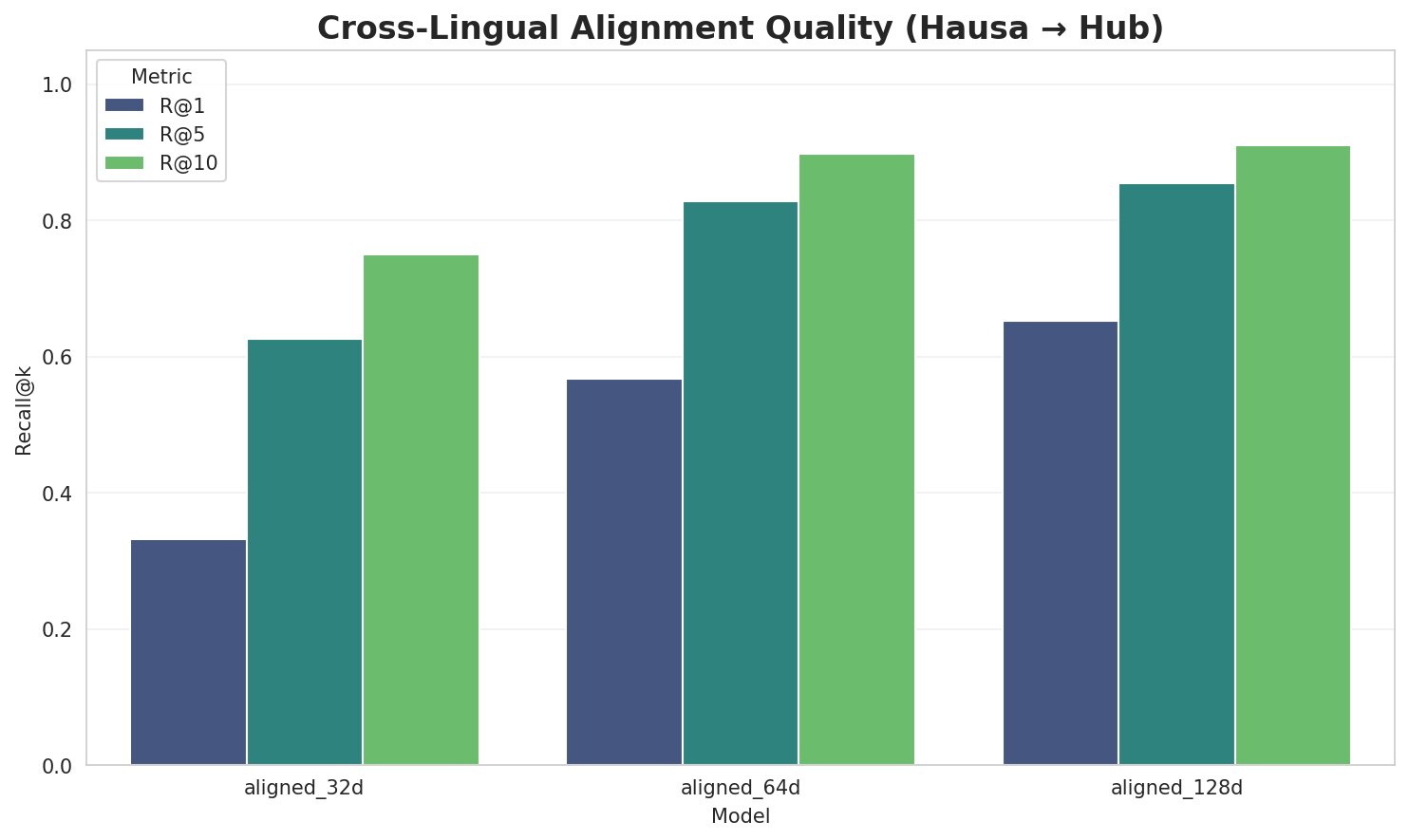

5. Word Embeddings Evaluation

5.1 Cross-Lingual Alignment

5.2 Model Comparison

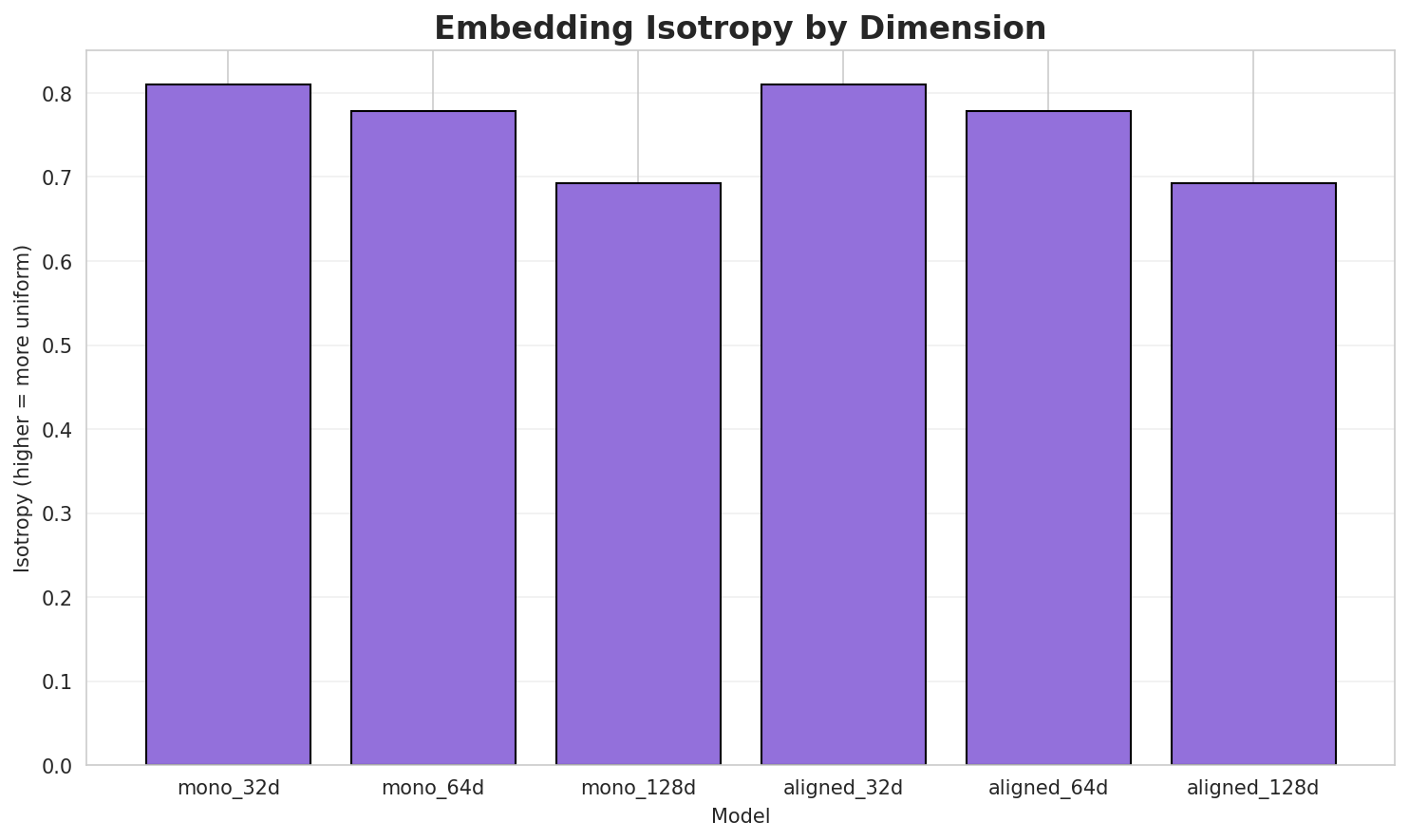

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |

|---|---|---|---|---|---|

| mono_32d | 32 | 0.8106 | 0.4067 | N/A | N/A |

| mono_64d | 64 | 0.7783 | 0.3527 | N/A | N/A |

| mono_128d | 128 | 0.6921 | 0.2853 | N/A | N/A |

| aligned_32d | 32 | 0.8106 🏆 | 0.3959 | 0.3320 | 0.7500 |

| aligned_64d | 64 | 0.7783 | 0.3627 | 0.5680 | 0.8980 |

| aligned_128d | 128 | 0.6921 | 0.3062 | 0.6520 | 0.9100 |

Key Findings

- Best Isotropy: mono_32d and aligned_32d tie at 0.8106 (more uniform distribution)

- Semantic Density: Average pairwise similarity of 0.3516. Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 65.2% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
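For readers unfamiliar with the R@1 and R@10 columns, the following is a minimal sketch of recall-at-k for cross-lingual retrieval. The evaluation protocol is an assumption (one gold translation per source word, candidates already ranked by similarity), and the function name `recall_at_k` and the tiny Hausa-English dictionary are illustrative only.

```python
def recall_at_k(ranked, gold, k):
    """Fraction of source words whose gold translation is in the top k candidates.

    ranked: dict mapping a source word to its candidate list, best first.
    gold:   dict mapping a source word to its single reference translation.
    """
    if not gold:
        raise ValueError("gold must not be empty")
    hits = sum(1 for src, target in gold.items() if target in ranked[src][:k])
    return hits / len(gold)

# Toy example (hypothetical retrieval output, not taken from the models).
gold = {"ruwa": "water", "gida": "house"}
ranked = {
    "ruwa": ["water", "river"],  # correct at rank 1
    "gida": ["home", "house"],   # correct only at rank 2
}

print(recall_at_k(ranked, gold, 1))
print(recall_at_k(ranked, gold, 2))
```

Under this reading, an R@1 of 0.6520 means the correct translation is the single nearest neighbour for about 65% of test pairs.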

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |

|---|---|---|---|

| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |

| Idiomaticity Gap | -0.749 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.

Productive Prefixes

| Prefix | Examples |

|---|---|

| -a | adéọlá, andros, a9 |

| -ma | mahbubani, mackandal, madejski |

| -s | spahis, songulashvili, srw |

| -m | mohie, mufassir, mahbubani |

| -n | nnung, naturist, nogomania |

| -b | bachtarzi, bosley, barbashi |

| -k | kwararawar, kantako, kalaman |

| -ba | bachtarzi, barbashi, balar |

Productive Suffixes

| Suffix | Examples |

|---|---|

| -a | tsarkakarta, gunilla, ejeagha |

| -s | conscripts, chucks, spahis |

| -e | coatesville, paleotemperature, renfrewshire |

| -n | lallausan, incan, hakannan |

| -i | empangeni, bachtarzi, barbashi |

| -r | kwararawar, balar, mufassir |

| -o | derzhkino, vio, kantako |

| -an | lallausan, incan, hakannan |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |

|---|---|---|---|

| ekar | 2.65x | 71 contexts | ekara, lekar, sekara |

| ungi | 2.31x | 129 contexts | bungi, fungi, lungi |

| ngiy | 2.51x | 74 contexts | ungiya, tangiya, ungiyar |

| afir | 2.80x | 41 contexts | kafir, afire, afira |

| heka | 2.48x | 64 contexts | sheka, bheka, cheka |

| atio | 2.30x | 89 contexts | ratio, patio, natio |

| eriy | 2.31x | 44 contexts | eriyo, eriya, teriy |

| anay | 2.31x | 41 contexts | anayi, anaya, anaye |

| nyar | 2.01x | 54 contexts | nyara, nyari, cinyar |

| amfa | 2.30x | 32 contexts | amfan, camfa, amfar |

| arsh | 1.75x | 95 contexts | warsh, karsh, arsht |

| bban | 2.12x | 42 contexts | abban, dabban, kibban |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |

|---|---|---|---|

| -s | -a | 89 words | sonaiya, skikda |

| -k | -a | 84 words | kwatankwacinsa, kadiyawa |

| -a | -a | 79 words | adaora, aña |

| -a | -e | 66 words | alane, aggiunte |

| -b | -a | 63 words | brunhilda, barasa |

| -s | -e | 59 words | sinninghe, serere |

| -ma | -a | 58 words | mashogwawara, maikusa |

| -t | -a | 53 words | taila, tcha |

| -a | -s | 52 words | aidas, agnews |

| -m | -a | 52 words | mujica, musina |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).

| Word | Suggested Split | Confidence | Stem |

|---|---|---|---|

| omanawanui | omanawan-u-i | 7.5 | u |

| chickpeas | chickpe-a-s | 7.5 | a |

| chieveley | chievel-e-y | 7.5 | e |

| bunamwaya | bunamw-a-ya | 7.5 | a |

| manawashi | ma-na-washi | 7.5 | washi |

| zamaninsa | zamanin-s-a | 7.5 | s |

| tanacikin | ta-na-cikin | 7.5 | cikin |

| fortalezas | fortalez-a-s | 7.5 | a |

| bangarensa | bangaren-s-a | 7.5 | s |

| equalizing | equaliz-i-ng | 7.5 | i |

| abdulwahid | abdulwah-i-d | 7.5 | i |

| rangitata | rangi-ta-ta | 7.5 | ta |

| parkinsons | parkins-on-s | 6.0 | parkins |

| almajiran | al-ma-jiran | 6.0 | jiran |

| finalises | final-is-es | 6.0 | final |

6.6 Linguistic Interpretation

Automated Insight: Hausa shows high morphological productivity. The subword models are markedly more efficient than the word models, which suggests a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |

|---|---|---|

| Tokenizer | 64k BPE | Best compression (4.40x) |

| N-gram | 2-gram | Lowest perplexity (196) |

| Markov | Context-4 | Highest predictability (89.6%) |

| Embeddings | 128d (aligned) | Best cross-lingual retrieval (R@1 65.2%) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.
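As a minimal sketch of the definition above, compression ratio and UNK rate can be computed from a text and its token list. The function name `tokenizer_stats`, the `<unk>` symbol, and the toy segmentation are illustrative assumptions rather than the pipeline's actual code; the sketch also assumes that characters, not bytes, are counted.

```python
def tokenizer_stats(text, tokens, unk_token="<unk>"):
    """Return characters-per-token and the percentage of UNK tokens."""
    if not tokens:
        raise ValueError("tokens must not be empty")
    unk_count = sum(1 for t in tokens if t == unk_token)
    return {
        "compression": len(text) / len(tokens),
        "unk_rate_pct": 100.0 * unk_count / len(tokens),
    }

# Hypothetical segmentation of a short Hausa phrase: 22 characters, 5 tokens.
text = "an haife shi a shekara"
tokens = ["▁an", "▁haife", "▁shi", "▁a", "▁shekara"]

stats = tokenizer_stats(text, tokens)
print(stats)
```

In the results table of this report the Compression and Avg Token Len columns carry the same values (for example 3.763x and 3.76 at 8k), which is what one would expect if both are characters per token.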

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Balance between 2-5 characters for most languages. Arabic/morphologically-rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

What to seek: Lower is better. Perplexity decreases with larger n-grams (more context). Values vary widely by language and corpus size.

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy should decrease as n-gram size increases.
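The relation perplexity = 2^entropy can be checked against the N-gram results table. The sketch below converts a few reported entropies back to perplexity; the small gaps are what one would expect from entropies rounded to two decimals. The helper name and the choice of rows are illustrative.

```python
import math

def perplexity_from_entropy(bits):
    """Perplexity implied by an entropy expressed in bits."""
    return 2.0 ** bits

# (entropy in bits, reported perplexity) copied from the N-gram results table.
reported = {
    "2-gram subword": (7.61, 196),
    "2-gram word": (15.60, 49_621),
    "5-gram subword": (15.04, 33_589),
}

relative_errors = {}
for name, (entropy_bits, perplexity) in reported.items():
    implied = perplexity_from_entropy(entropy_bits)
    relative_errors[name] = abs(implied - perplexity) / perplexity
    print(f"{name}: implied {implied:,.0f} vs reported {perplexity:,}")

# The inverse direction: entropy in bits from a perplexity value.
print(f"{math.log2(196):.2f} bits")
```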

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
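A small sketch of top-K coverage under the definition above: count every n-gram occurrence, then take the share held by the K most frequent ones. The function names and the toy token sequence are assumptions for illustration; the pipeline's own counting rules (casing, sentence boundaries) are not specified in this report.

```python
from collections import Counter

def extract_ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def top_k_coverage(tokens, n, k):
    """Percentage of n-gram occurrences covered by the k most frequent n-grams."""
    counts = Counter(extract_ngrams(tokens, n))
    total = sum(counts.values())
    if total == 0:
        raise ValueError("not enough tokens for the requested n")
    covered = sum(count for _, count in counts.most_common(k))
    return 100.0 * covered / total

# Toy sequence: 11 tokens, 10 bigrams, of which ("a", "cikin") occurs 3 times.
tokens = "a cikin gida a cikin gari tare da shi a cikin".split()
print(top_k_coverage(tokens, n=2, k=1))
```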

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
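The three Markov metrics can be sketched on a toy chain. This is an illustration under assumptions, not the pipeline's implementation: entropy is averaged over contexts without weighting, and predictability is taken as max(0, 1 - entropy) x 100, a reading that happens to reproduce the reported Predictability column within rounding (for example 0.3948 bits gives 60.5%).

```python
import math
from collections import Counter, defaultdict

def build_chain(tokens, context_size):
    """Map each context tuple to a Counter of the tokens that follow it."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - context_size):
        context = tuple(tokens[i:i + context_size])
        chain[context][tokens[i + context_size]] += 1
    return chain

def chain_stats(chain):
    """Average entropy (bits), perplexity, branching factor and predictability."""
    entropies, branching = [], []
    for followers in chain.values():
        total = sum(followers.values())
        entropies.append(-sum((c / total) * math.log2(c / total)
                              for c in followers.values()))
        branching.append(len(followers))
    avg_entropy = sum(entropies) / len(entropies)
    return {
        "avg_entropy": avg_entropy,
        "perplexity": 2.0 ** avg_entropy,
        "branching_factor": sum(branching) / len(branching),
        # Assumed reading of "1 - normalized_entropy", clamped at zero.
        "predictability_pct": max(0.0, 1.0 - avg_entropy) * 100.0,
    }

# Toy sequence: "a" is followed by "b" twice and "c" once; "b" and "c" by "a".
stats = chain_stats(build_chain("a b a c a b".split(), context_size=1))
print(stats)
```

With this reading, a subword context-1 entropy above 1 bit clamps to 0.0% predictability, which matches the corresponding row of the results table.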

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The slope of the log-log plot of word frequency vs. rank. Zipf's law predicts this should be approximately -1.

Intuition: A coefficient near -1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between -0.8 and -1.2 indicate healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
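A minimal sketch of how a Zipf slope and its R² might be obtained with ordinary least squares on log rank versus log frequency. The function name `zipf_fit` is hypothetical and the fitting details used by the pipeline (rank cut-offs, log base) are not stated in this report. Note that the report lists the coefficient as a positive number (1.2631), which presumably is the magnitude of the negative slope.

```python
import math

def zipf_fit(frequencies):
    """Least-squares fit of log(frequency) against log(rank).

    Returns (slope, r_squared). A pure Zipf distribution gives slope -1.
    """
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, 1.0 - ss_res / ss_tot

# Synthetic frequencies that follow Zipf's law exactly: f(rank) = 1000 / rank.
synthetic = [1000.0 / rank for rank in range(1, 201)]
slope, r_squared = zipf_fit(synthetic)
print(f"slope={slope:.4f}, R^2={r_squared:.6f}")
```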

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.
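For reference, cosine similarity is the dot product divided by the product of the vector norms. The sketch below uses plain Python lists and a hypothetical helper name; real embedding code would normally work on arrays.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)

print(cosine_similarity([1.0, 0.0], [1.0, 0.0]))   # same direction
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))   # orthogonal
print(cosine_similarity([1.0, 0.0], [-1.0, 0.0]))  # opposite direction
```

Because the norms cancel out, the measure ignores vector length and compares direction only, which is why it pairs naturally with the Average Norm metric above.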

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding - a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |

|---|---|

| Tokenizer Compression | Compression ratios by vocabulary size |

| Tokenizer Fertility | Average token length by vocabulary |

| Tokenizer OOV | Unknown token rates |

| Tokenizer Total Tokens | Total tokens by vocabulary |

| N-gram Perplexity | Perplexity by n-gram size |

| N-gram Entropy | Entropy by n-gram size |

| N-gram Coverage | Top pattern coverage |

| N-gram Unique | Unique n-gram counts |

| Markov Entropy | Entropy by context size |

| Markov Branching | Branching factor by context |

| Markov Contexts | Unique context counts |

| Zipf's Law | Frequency-rank distribution with fit |

| Vocab Frequency | Word frequency distribution |

| Top 20 Words | Most frequent words |

| Vocab Coverage | Cumulative coverage curve |

| Embedding Isotropy | Vector space uniformity |

| Embedding Norms | Vector magnitude distribution |

| Embedding Similarity | Word similarity heatmap |

| Nearest Neighbors | Similar words for key terms |

| t-SNE Words | 2D word embedding visualization |

| t-SNE Sentences | 2D sentence embedding visualization |

| Position Encoding | Encoding method comparison |

| Model Sizes | Storage requirements |

| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly - a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,

author = {Kamali, Omar},

title = {Wikilangs: Open NLP Models for Wikipedia Languages},

year = {2025},

doi = {10.5281/zenodo.18073153},

publisher = {Zenodo},

url = {https://huggingface.co/wikilangs},

institution = {Omneity Labs}

}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-10 03:18:39