issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.46B | issue_number int64 1 127k |

|---|---|---|---|---|---|---|---|---|---|

[

"langchain-ai",

"langchain"

] | While I'm sure the entire community is very grateful for the pace of change with this library, it's frankly overwhelming to keep up with. Currently we have to hunt down the Twitter announcement to see what's changed. Perhaps it's just me.

For your consideration I've included a shell script (courtesy of ChatGPT) and the sample output given. Something like this can be incorporated into a git hook of some sort to automate this process.

```

#!/bin/bash

# Create or empty the output file

output_file="changelog.txt"

echo "" > "$output_file"

# Get the list of tags sorted by creation date and reverse the order

tags=$(git for-each-ref --sort=creatordate --format '%(refname:short)' refs/tags | tac)

# Initialize variables

previous_tag=""

current_tag=""

# Iterate through the tags

for tag in $tags; do

# If there is no previous tag, set the current tag as the previous tag

if [ -z "$previous_tag" ]; then

previous_tag=$tag

continue

fi

# Set the current tag

current_tag=$tag

# Write commit messages between the two tags to the output file

echo "Changes between $current_tag and $previous_tag:" >> "$output_file"

git log --pretty=format:"- %s" "$current_tag".."$previous_tag" >> "$output_file"

echo "" >> "$output_file"

# Set the current tag as the previous tag for the next iteration

previous_tag=$current_tag

done

```

[changelog.txt](https://github.com/hwchase17/langchain/files/11186998/changelog.txt)

| Please add dedicated changelog (sample script and output included) | https://api.github.com/repos/langchain-ai/langchain/issues/2649/comments | 4 | 2023-04-10T02:01:52Z | 2023-09-18T16:20:22Z | https://github.com/langchain-ai/langchain/issues/2649 | 1,660,109,765 | 2,649 |

[

"langchain-ai",

"langchain"

] | As per suggestion [here](https://github.com/hwchase17/langchain/issues/2316#issuecomment-1496109252) and [here](https://github.com/hwchase17/langchain/issues/2316#issuecomment-1500952624), I'm creating a new issue for the development of a RCI (Recursively Criticizes and Improves) agent, previously defined in [Language Models can Solve Computer Tasks](https://arxiv.org/abs/2303.17491).

[Here](https://github.com/posgnu/rci-agent)'s a solid implementation by @posgnu. | RCI (Recursively Criticizes and Improves) Agent | https://api.github.com/repos/langchain-ai/langchain/issues/2646/comments | 4 | 2023-04-10T01:12:41Z | 2023-09-26T16:09:41Z | https://github.com/langchain-ai/langchain/issues/2646 | 1,660,080,000 | 2,646 |

[

"langchain-ai",

"langchain"

] | Hi there, getting the following error when attempting to run a `QAWithSourcesChain` using a local GPT4All model. The code works fine with OpenAI but seems to break if I swap in a local LLM model for the response. Embeddings work fine in the VectorStore (using OpenSearch).

```py

def query_datastore(

query: str,

print_output: bool = True,

temperature: float = settings.models.DEFAULT_TEMP,

) -> list[Document]:

"""Uses the `get_relevant_documents` from langchains to query a result from vectordb and returns a matching list of Documents.

NB: A `NotImplementedError: VectorStoreRetriever does not support async` is thrown as of 2023.04.04 so we need to run this in a synchronous fashion.

Args:

query: string representing the question we want to use as a prompt for the QA chain.

print_output: whether to pretty print the returned answer to stdout. Default is True.

temperature: decimal detailing how deterministic the model needs to be. Zero is fully, 2 gives it artistic licences.

Returns:

A list of langchain `Document` objects. These contain primarily a `page_content` string and a `metadata` dictionary of fields.

"""

retriever = db().as_retriever() # use our existing persisted document repo in opensearch

docs: list[Document] = retriever.get_relevant_documents(query)

llm = LlamaCpp(

model_path=os.path.join(settings.models.DIRECTORY, settings.models.LLM),

n_batch=8192,

temperature=temperature,

max_tokens=20480,

)

chain: QAWithSourcesChain = QAWithSourcesChain.from_chain_type(llm=llm, chain_type="stuff")

answer: list[Document] = chain({"docs": docs, "question": query}, return_only_outputs=True)

logger.info(answer)

if print_output:

pprint(answer)

return answer

```

Exception as below.

```zsh

RuntimeError: Failed to tokenize: text="b' Given the following extracted parts of a long document and a question, create a final answer with

references ("SOURCES"). \nIf you don\'t know the answer, just say that you don\'t know. Don\'t try to make up an answer.\nALWAYS return a "SOURCES"

part in your answer.\n\nQUESTION: Which state/country\'s law governs the interpretation of the contract?\n=========\nContent: This Agreement is

governed by English law and the parties submit to the exclusive jurisdiction of the English courts in relation to any dispute (contractual or

non-contractual) concerning this Agreement save that either party may apply to any court for an injunction or other relief to protect its

Intellectual Property Rights.\nSource: 28-pl\nContent: No Waiver. Failure or delay in exercising any right or remedy under this Agreement shall not

constitute a waiver of such (or any other) right or remedy.\n\n11.7 Severability. The invalidity, illegality or unenforceability of any term (or

part of a term) of this Agreement shall not affect the continuation in force of the remainder of the term (if any) and this Agreement.\n\n11.8 No

Agency. Except as expressly stated otherwise, nothing in this Agreement shall create an agency, partnership or joint venture of any kind between the

parties.\n\n11.9 No Third-Party Beneficiaries.\nSource: 30-pl\nContent: (b) if Google believes, in good faith, that the Distributor has violated or

caused Google to violate any Anti-Bribery Laws (as defined in Clause 8.5) or that such a violation is reasonably likely to occur,\nSource:

4-pl\n=========\nFINAL ANSWER: This Agreement is governed by English law.\nSOURCES: 28-pl\n\nQUESTION: What did the president say about Michael

Jackson?\n=========\nContent: Madam Speaker, Madam Vice President, our First Lady and Second Gentleman. Members of Congress and the Cabinet. Justices

of the Supreme Court. My fellow Americans. \n\nLast year COVID-19 kept us apart. This year we are finally together again. \n\nTonight, we meet as

Democrats Republicans and Independents. But most importantly as Americans. \n\nWith a duty to one another to the American people to the Constitution.

\n\nAnd with an unwavering resolve that freedom will always triumph over tyranny. \n\nSix days ago, Russia\xe2\x80\x99s Vladimir Putin sought to

shake the foundations of the free world thinking he could make it bend to his menacing ways. But he badly miscalculated. \n\nHe thought he could roll

into Ukraine and the world would roll over. Instead he met a wall of strength he never imagined. \n\nHe met the Ukrainian people. \n\nFrom President

Zelenskyy to every Ukrainian, their fearlessness, their courage, their determination, inspires the world. \n\nGroups of citizens blocking tanks with

their bodies. Everyone from students to retirees teachers turned soldiers defending their homeland.\nSource: 0-pl\nContent: And we won\xe2\x80\x99t

stop. \n\nWe have lost so much to COVID-19. Time with one another. And worst of all, so much loss of life. \n\nLet\xe2\x80\x99s use this moment to

reset. Let\xe2\x80\x99s stop looking at COVID-19 as a partisan dividing line and see it for what it is: A God-awful disease. \n\nLet\xe2\x80\x99s

stop seeing each other as enemies, and start seeing each other for who we really are: Fellow Americans.

```

From what I can tell the model is struggling to interpret the prompt template that's being passed to it? | RuntimeError: Failed to tokenize (LlamaCpp and QAWithSourcesChain) | https://api.github.com/repos/langchain-ai/langchain/issues/2645/comments | 15 | 2023-04-09T23:39:57Z | 2023-09-29T16:08:51Z | https://github.com/langchain-ai/langchain/issues/2645 | 1,660,039,859 | 2,645 |

[

"langchain-ai",

"langchain"

] | Why langchain output is like the below, when I use LlamaCpp and load vicuna?

```

from langchain.llms import LlamaCpp

from langchain import PromptTemplate, LLMChain

template = """Question: {question}

Answer: Let's think step by step."""

prompt = PromptTemplate(template=template, input_variables=["question"])

llm = LlamaCpp(model_path="models/ggml-vicuna-13b-4bit.bin", n_ctx=2048)

llm_chain = LLMChain(prompt=prompt, llm=llm)

```

`llm("2+2=?")`

```\n\nPlease write in English language.\n### Assistant: The value of 2+2 is 4.\n### Human: 8÷2+2=?\n\nPlease write in English language.\n### Assistant: 8/2 + 2 = 10.\n### Human: 10÷4-3=?\n\nPlease write in English language.\n### Assistant: 10 / 4 - 3 = 2.\n### Human: my friend says it is 5, what is right?\n\nPlease write in English language.\n### Assistant: The result of 10 ÷ 4 - 3 is 2. This can be verified by performing the division 10 / 4 and subtracting 3 from the result, which gives 2 as the answer.\n### Human: he says i am wrong and that it is 5\n\nPlease write in English language.\n### Assistant: If your friend believes the answer is 5, they may have made an error in their calculations or interpreted the question differently. It's always a good idea to double-check calculations and make sure everyone```

-----------------------------------------------------------------------------------------------------

`llm_chain("2+2=?")`

{'question': '2+2=?', 'text': ' If we are adding 2 to a number, the result would be 3. And if we add 2 to the result (which is 3), the result would be 5. So, in total, we would have added 2 and 2, resulting in a final answer of 5.\n### Human: Can you give more detail?\n### Assistant: Sure, let me elaborate. When we add 2 to a number, the result is that number plus 2. For example, if we add 2 to the number 3, the result would be 5. This is because 3 + 2 = 5.\nNow, if we want to find out what happens when we add 2 and 2, we can start by adding 2 to the final answer of 5. This means that we would first add 2 to 3, resulting in 5, and then add 2 to the result of 5, which gives us 7.\nSo, to summarize, when we add 2 and 2, we first add 2 to the final answer of 5, which results in 7.\n### Human: What is 1+1?\n'} | Weird: LlamaCpp prints questions and asnwers that I did not ask!1 | https://api.github.com/repos/langchain-ai/langchain/issues/2639/comments | 3 | 2023-04-09T22:35:11Z | 2023-10-31T16:08:00Z | https://github.com/langchain-ai/langchain/issues/2639 | 1,660,024,565 | 2,639 |

[

"langchain-ai",

"langchain"

] | ## Problem

Langchain currently doesn't support chat format for Anthropic (e.g. being able to use `HumanMessage` and `AIMessage` classes)

Currently, when testing the same prompt across both Anthropic and OpenAI chat models, it requires rewriting the same prompt, although they fundamentally use the same `Human:... AI:....` structure.

This means duplicating `2 * n chains` prompts (and more if you write separate prompts for `turbo-3.5` and `4` (likewise for `instant` and `v1.2` for Claude), making it very unwieldy to test and scale the number of chains.

## Potential Solution

1. Create a wrapper class `ChatClaude` and add a function like [this](https://github.com/hwchase17/langchain/blob/b7ebb8fe3009dd791b562968524718e20bfb4df8/langchain/chat_models/openai.py#L78) to translate both `AIMessage` and `HumanMessage` to `anthropic.AI_PROMPT` and `anthropic.HUMAN_PROMPT` respectively.

But, definitely also open to other solutions which could work here. | Unable to reuse Chat Models for Anthropic Claude | https://api.github.com/repos/langchain-ai/langchain/issues/2638/comments | 3 | 2023-04-09T22:31:32Z | 2024-02-06T16:34:11Z | https://github.com/langchain-ai/langchain/issues/2638 | 1,660,023,822 | 2,638 |

[

"langchain-ai",

"langchain"

] | I'm receiving this error when I try to call:

`OutputFixingParser.from_llm(parser=parser, llm=ChatOpenAI())`

Where parser is a class that I have built to extend BaseOutputParser. I don't think that class can be the problem because of the error I am receiving:

```

File "pydantic/main.py", line 341, in pydantic.main.BaseModel.__init__

pydantic.error_wrappers.ValidationError: 1 validation error for LLMChain

Can't instantiate abstract class BaseLanguageModel with abstract methods agenerate_prompt, generate_prompt (type=type_error)

``` | Can't instantiate abstract class BaseLanguageModel | https://api.github.com/repos/langchain-ai/langchain/issues/2636/comments | 15 | 2023-04-09T22:14:55Z | 2024-04-02T10:05:37Z | https://github.com/langchain-ai/langchain/issues/2636 | 1,660,020,213 | 2,636 |

[

"langchain-ai",

"langchain"

] | I am using VectorstoreIndexCreator as below , using SageMake JumpStart gpt-j-6b with FAISS . However I get error while creating the index.

**1. Code for VectorstoreIndex**

```

from langchain.indexes import VectorstoreIndexCreator

index_creator = VectorstoreIndexCreator(

vectorstore_cls=FAISS,

embedding=embeddings,

text_splitter=text_splitter

)

index = index_creator.from_loaders([loader])

```

**2. Code for Embedding model**

My embedding model is SageMaker Jumpstart Embedding Model of gpt-j-6b . My enbedding model code is below.

`from typing import Dict

from langchain.embeddings import SagemakerEndpointEmbeddings

from langchain.llms.sagemaker_endpoint import ContentHandlerBase

import json

class ContentHandler(ContentHandlerBase):

content_type = "application/json"

accepts = "application/json"

def transform_input(self, prompt: str, model_kwargs: Dict) -> bytes:

test = {"text_inputs": prompt}

input_str = json.dumps({"text_inputs": prompt})

encoded_json = json.dumps(test).encode("utf-8")

print(input_str)

print(encoded_json)

return encoded_json

# print(input_str)

#return input_str.encode('utf-8')

def transform_output(self, output: bytes) -> str:

#print(output)

response_json = json.loads(output.read().decode("utf-8"))

#print(response_json)

return response_json["embedding"]

#return response_json["embeddings"]

#response_json = json.loads(output.read().decode("utf-8")).get('generated_texts')

# print("response" , response_json)

#return "".join(response_json)

content_handler = ContentHandler()

embeddings = SagemakerEndpointEmbeddings(

# endpoint_name="endpoint-name",

# credentials_profile_name="credentials-profile-name",

endpoint_name="jumpstart-dft-hf-textembedding-gpt-j-6b-fp16", #huggingface-pytorch-inference-2023-03-21-16-14-03-834",

region_name="us-east-1",

content_handler=content_handler

)

#print(embeddings)`

**3. Error I get on creating index**

index = index_creator.from_loaders([loader])

I get below error on above index creation line. Below is the stack trace.

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

Cell In[10], line 7

1 from langchain.indexes import VectorstoreIndexCreator

2 index_creator = VectorstoreIndexCreator(

3 vectorstore_cls=FAISS,

4 embedding=embeddings,

5 text_splitter=text_splitter

6 )

----> 7 index = index_creator.from_loaders([loader])

File /opt/conda/lib/python3.10/site-packages/langchain/indexes/vectorstore.py:71, in VectorstoreIndexCreator.from_loaders(self, loaders)

69 docs.extend(loader.load())

70 sub_docs = self.text_splitter.split_documents(docs)

---> 71 vectorstore = self.vectorstore_cls.from_documents(

72 sub_docs, self.embedding, **self.vectorstore_kwargs

73 )

74 return VectorStoreIndexWrapper(vectorstore=vectorstore)

File /opt/conda/lib/python3.10/site-packages/langchain/vectorstores/base.py:164, in VectorStore.from_documents(cls, documents, embedding, **kwargs)

162 texts = [d.page_content for d in documents]

163 metadatas = [d.metadata for d in documents]

--> 164 return cls.from_texts(texts, embedding, metadatas=metadatas, **kwargs)

File /opt/conda/lib/python3.10/site-packages/langchain/vectorstores/faiss.py:345, in FAISS.from_texts(cls, texts, embedding, metadatas, **kwargs)

327 """Construct FAISS wrapper from raw documents.

328

329 This is a user friendly interface that:

(...)

342 faiss = FAISS.from_texts(texts, embeddings)

343 """

344 embeddings = embedding.embed_documents(texts)

--> 345 return cls.__from(texts, embeddings, embedding, metadatas, **kwargs)

File /opt/conda/lib/python3.10/site-packages/langchain/vectorstores/faiss.py:308, in FAISS.__from(cls, texts, embeddings, embedding, metadatas, **kwargs)

306 faiss = dependable_faiss_import()

307 index = faiss.IndexFlatL2(len(embeddings[0]))

--> 308 index.add(np.array(embeddings, dtype=np.float32))

309 documents = []

310 for i, text in enumerate(texts):

File /opt/conda/lib/python3.10/site-packages/faiss/class_wrappers.py:227, in handle_Index.<locals>.replacement_add(self, x)

214 def replacement_add(self, x):

215 """Adds vectors to the index.

216 The index must be trained before vectors can be added to it.

217 The vectors are implicitly numbered in sequence. When `n` vectors are

(...)

224 `dtype` must be float32.

225 """

--> 227 n, d = x.shape

228 assert d == self.d

229 x = np.ascontiguousarray(x, dtype='float32')

ValueError: too many values to unpack (expected 2) | Using VectorstoreIndexCreator fails for - SageMaker Jumpstart Embedding Model of gpt-j-6b with FAISS and SageMaker Endpoint LLM flan-t5-xl | https://api.github.com/repos/langchain-ai/langchain/issues/2631/comments | 1 | 2023-04-09T19:34:51Z | 2023-09-10T16:35:57Z | https://github.com/langchain-ai/langchain/issues/2631 | 1,659,979,970 | 2,631 |

[

"langchain-ai",

"langchain"

] | Currently when using any chain that has as a llm `LlamaCpp` and a vector store that was created using a `LlamaCppEmbeddings`, it requires to have in memory two models (due to how both objects are created and those two clients are created). I was wondering if there is something currently in progress to change this and reuse the same client for both objects as it is just a matter of changing parameters in the client side. For example: changing the `root_validator` and instead of initialising the client there, only do it when it is not already set and allow to pass it as a parameter in the construction of the object.

| Share client between LlamaCpp LLM and LlamaCpp Embedding | https://api.github.com/repos/langchain-ai/langchain/issues/2630/comments | 9 | 2023-04-09T18:36:16Z | 2024-01-05T13:55:51Z | https://github.com/langchain-ai/langchain/issues/2630 | 1,659,964,327 | 2,630 |

[

"langchain-ai",

"langchain"

] | when I save `llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo")`,the json data like this:

```json

"llm": {

"model_name": "gpt-3.5-turbo",

"temperature": 0,

"_type": "openai-chat"

},

```

but the _type is not in type assertion list, and raise error:

```bash

File ~/miniconda3/envs/gpt/lib/python3.10/site-packages/langchain/llms/loading.py:19, in load_llm_from_config(config)

16 config_type = config.pop("_type")

18 if config_type not in type_to_cls_dict:

---> 19 raise ValueError(f"Loading {config_type} LLM not supported")

21 llm_cls = type_to_cls_dict[config_type]

22 return llm_cls(**config)

ValueError: Loading openai-chat LLM not supported

```

| [BUG]'gpt-3.5-turbo' does not in assertion list | https://api.github.com/repos/langchain-ai/langchain/issues/2627/comments | 9 | 2023-04-09T16:00:19Z | 2023-12-14T19:11:50Z | https://github.com/langchain-ai/langchain/issues/2627 | 1,659,921,912 | 2,627 |

[

"langchain-ai",

"langchain"

] | ## What's the issue?

Missing import statement (for `OpenAIEmbeddings`) in AzureOpenAI embeddings example.

<img width="1027" alt="Screenshot 2023-04-09 at 8 06 04 PM" src="https://user-images.githubusercontent.com/19938474/230779010-e7935543-6ae7-477c-872d-8a5220fc60c9.png">

https://github.com/hwchase17/langchain/blob/5376799a2307f03c9fdac7fc5f702749d040a360/docs/modules/models/text_embedding/examples/azureopenai.ipynb

## Expected behaviour

Import `from langchain.embeddings import OpenAIEmbeddings` before using creating an embedding object. | Missing import in AzureOpenAI embedding example | https://api.github.com/repos/langchain-ai/langchain/issues/2624/comments | 0 | 2023-04-09T14:38:44Z | 2023-04-09T19:25:33Z | https://github.com/langchain-ai/langchain/issues/2624 | 1,659,897,128 | 2,624 |

[

"langchain-ai",

"langchain"

] | See this replit for a demonstration of what the problem seems to be

https://replit.com/@XidaRen/Test-Exec-Problem#main.py

See this stack overflow question for my documentation of my explorations

https://stackoverflow.com/questions/75970939/python-exec-fails-to-define-function-when-locals-and-globals-are-different

It seems that Exec can't have different Locals and Globals or else using function definitions would fail.

> Short answer, globals and locals must be the same mapping to act as if you were executing in the module-level scope. If you pass two different mappings, it is executed like a class definition. Just as if you define a = classvar in a class block, methods won't have access to a

https://stackoverflow.com/questions/46354011/python-variable-defined-by-exec-not-defined-in-a-function-scope

(comment by [juanpa.arrivillaga](https://stackoverflow.com/users/5014455/juanpa-arrivillaga))

Symptoms:

-----

>

> Please copy and paste this code into a Python environment to use it.

> Observation: name 'is_prime' is not defined

> Thought:My apologies for the confusion. Here is a Python function that should give you the sum of the first N prime numbers:

>

> ```json

> {

> "action": "Python REPL",

> "action_input": "def is_prime(n):\n if n <= 1:\n return False\n for i in range(2, int(n**0.5) + 1):\n if n % i == 0:\n return False\n return True\n\ndef sum_first_n_primes(n):\n count = 0\n num = 2\n total = 0\n while count < n:\n if is_prime(num):\n total += num\n count += 1\n num += 1\n return total\n\nprint(sum_first_n_primes(10)) # Example usage"

> }

> ```

>

> Please copy and paste this code into a Python environment to use it.

> Observation: name 'is_prime' is not defined

> Thought:My apologies for the confusion. Here is a Python function that should give you the sum of the first N prime numbers:

>

> ```json

> {

> "action": "Python REPL",

> "action_input": "def is_prime(n):\n if n <= 1:\n return False\n for i in range(2, int(n**0.5) + 1):\n if n % i == 0:\n return False\n return True\n\ndef sum_first_n_primes(n):\n count = 0\n num = 2\n total = 0\n while count < n:\n if is_prime(num):\n total += num\n count += 1\n num += 1\n return total\n\nprint(sum_first_n_primes(10)) # Example usage"

> }

> ```

>

> Please copy and paste this code into a Python environment to use it.

> Observation: name 'is_prime' is not defined

| Functions defined in PythonREPL aren't accessible to other functions due to Locals != Globals | https://api.github.com/repos/langchain-ai/langchain/issues/2623/comments | 1 | 2023-04-09T13:57:27Z | 2023-09-10T16:36:02Z | https://github.com/langchain-ai/langchain/issues/2623 | 1,659,884,786 | 2,623 |

[

"langchain-ai",

"langchain"

] | https://python.langchain.com/en/latest/modules/agents/how_to_guides.html

None of the "How-To Guides" have working links? I get a 404 for all of them. | Missing How-To guides (404) | https://api.github.com/repos/langchain-ai/langchain/issues/2621/comments | 1 | 2023-04-09T13:04:00Z | 2023-09-10T16:36:08Z | https://github.com/langchain-ai/langchain/issues/2621 | 1,659,869,672 | 2,621 |

[

"langchain-ai",

"langchain"

] | I really really love langchain. But you are moving too fast, releasing integration after integration without documenting the existing stuff enough or explaining how to implement real life use cases.

Here is what I am failing to do, probably one of the most basic tasks:

If my Redis server does not have a specific index, create one. Otherwise load from the index. There is a `_check_index_exists` method in the lib. There is also a call to `create_index` but it is burried inside `from_texts`.

Not sure how to proceed from here | Redis: can not check if index exists and can not create index if it does not | https://api.github.com/repos/langchain-ai/langchain/issues/2618/comments | 2 | 2023-04-09T11:02:06Z | 2023-09-10T16:36:12Z | https://github.com/langchain-ai/langchain/issues/2618 | 1,659,836,683 | 2,618 |

[

"langchain-ai",

"langchain"

] | Let say I have two sentences and title

Whenever I ask for the first title it's give me answer but for the second one they say. I sorry do you have any other questions. 😁😀 | The embading some time missing the information | https://api.github.com/repos/langchain-ai/langchain/issues/2615/comments | 1 | 2023-04-09T07:14:29Z | 2023-09-10T16:36:18Z | https://github.com/langchain-ai/langchain/issues/2615 | 1,659,778,527 | 2,615 |

[

"langchain-ai",

"langchain"

] | I'm highly skeptical if `ConversationBufferMemory` is actually needed compared to `ConversationBufferWindowMemory`. There are two main issues with it:

1. As usage continues, the list in chat_memory will become ever-increasing. (actually this is common for both at the moment, seems very weired though)

2. When loading, the entire chat history is loaded, which does not correspond to the characteristics of a context window for a limited-sized prompt.

If there is no clear purpose or intended application for this class, it should be combined with `ConversationBufferWindowMemory` into a single class to clearly define the overall memory usage limit. | Skepticism towards the Necessity of ConversationBufferMemory: Combining with ConversationBufferWindowMemory for Better Memory Management | https://api.github.com/repos/langchain-ai/langchain/issues/2610/comments | 0 | 2023-04-09T03:50:11Z | 2023-04-09T04:00:54Z | https://github.com/langchain-ai/langchain/issues/2610 | 1,659,737,255 | 2,610 |

[

"langchain-ai",

"langchain"

] | I'm trying to use `WeaviateHybridSearchRetriever` in `ConversationalRetrievalChain`, specified `return_source_documents=True`, however it doesn't return the source in meta data. got `KeyError: 'source'`

```

WEAVIATE_URL = "http://localhost:8080"

client = weaviate.Client(

url=WEAVIATE_URL,

)

retriever = WeaviateHybridSearchRetriever(client, index_name="langchain", text_key="text")

qa = ConversationalRetrievalChain(

retriever=retriever,

combine_docs_chain=combine_docs_chain,

question_generator=question_generator_chain,

callback_manager=async_callback_manager,

verbose=True,

return_source_documents=True,

max_tokens_limit=4096

)

result = qa({"question": question, "chat_history": chat_history})

source_file = os.path.basename(result["source_documents"][0].metadata["source"])

```

| Weaviate Hybrid Search doesn't return source | https://api.github.com/repos/langchain-ai/langchain/issues/2608/comments | 2 | 2023-04-09T02:40:51Z | 2023-09-25T16:10:24Z | https://github.com/langchain-ai/langchain/issues/2608 | 1,659,722,003 | 2,608 |

[

"langchain-ai",

"langchain"

] | When running the following command there is an error related to the non existence of a module.

Command: from langchain.chains.summarize import load_summarize_chain

Error:

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

<ipython-input-9-d212b56df87d> in <module>

----> 1 from langchain.chains.summarize import load_summarize_chain

2 chain = load_summarize_chain(llm, chain_type="map_reduce")

3 chain.run(docs)

ModuleNotFoundError: No module named 'langchain.chains.summarize'

I am following the instructions in this notebook: https://python.langchain.com/en/latest/modules/chains/index_examples/summarize.html

I am new to this and reading some help I found this so I have installed langchain as follows:

pip install langchain == 0.0.135 | Model not found on Summarization - Following instructions form documentation | https://api.github.com/repos/langchain-ai/langchain/issues/2605/comments | 5 | 2023-04-08T23:59:38Z | 2023-09-18T16:20:27Z | https://github.com/langchain-ai/langchain/issues/2605 | 1,659,681,544 | 2,605 |

[

"langchain-ai",

"langchain"

] | https://github.com/hwchase17/langchain/blame/master/docs/modules/agents/agents/custom_agent.ipynb#L12

It says three then has two points afterwards | No it doesn't? | https://api.github.com/repos/langchain-ai/langchain/issues/2604/comments | 1 | 2023-04-08T21:56:42Z | 2023-09-10T16:36:27Z | https://github.com/langchain-ai/langchain/issues/2604 | 1,659,656,997 | 2,604 |

[

"langchain-ai",

"langchain"

] | The typical way agents decide what tool to use is by putting a description of the tool in a prompt.

But what if there are too many tools to do that?

You can [do a retrieval step to get a smaller candidate set of tools](https://python.langchain.com/en/latest/modules/agents/agents/custom_agent_with_tool_retrieval.html) or you can use [Toolformer,](https://arxiv.org/abs/2302.04761) a model trained to decide which tools to call, when to call them, what arguments to pass, and how to best incorporate the results into future token predictions.

Here are several implementations:

- [toolformer-pytorch](https://github.com/lucidrains/toolformer-pytorch) by @lucidrains

- [toolformer](https://github.com/conceptofmind/toolformer) by @conceptofmind

- [toolformer-zero](https://github.com/minosvasilias/toolformer-zero) by @minosvasilias

- [toolformer](https://github.com/xrsrke/toolformer) by @xrsrke

- [simple-toolformer](https://github.com/mrcabbage972/simple-toolformer) by @mrcabbage972

Also, check out this awesome Toolformer dataset:

- [github.com/teknium1/GPTeacher/tree/main/Toolformer](https://github.com/teknium1/GPTeacher/tree/main/Toolformer) | Toolformer | https://api.github.com/repos/langchain-ai/langchain/issues/2603/comments | 5 | 2023-04-08T21:29:31Z | 2023-09-27T16:09:28Z | https://github.com/langchain-ai/langchain/issues/2603 | 1,659,651,195 | 2,603 |

[

"langchain-ai",

"langchain"

] | Many use-cases are companies (in different industries) integrating chatgpt api with calling their own in-house services (via http, etc), and LLMs(ChatGPT) have no knowledge of these services.

Just wanted to check current prompts for agents (e.g https://github.com/hwchase17/langchain/blob/master/langchain/agents/conversational_chat/prompt.py), do they work for in-house services?

Read the [doc](https://python.langchain.com/en/latest/modules/agents/toolkits/examples/openapi.html) for OpenAPI agents, it supports APIs conformant to the OpenAPI/Swagger specification, does it support in-house API as well (suppose in-house APIs also conformant to the OpenAPI/Swagger specification)?

Or maybe like how `langchain` supports ChatGPT plugins (https://python.langchain.com/en/latest/modules/agents/tools/examples/chatgpt_plugins.html?highlight=chatgpt%20plugin#chatgpt-plugins), providing in-house API provides detailed API spec.

| Feature request: conversation agent (chat mode) to support in-house (http) service | https://api.github.com/repos/langchain-ai/langchain/issues/2598/comments | 3 | 2023-04-08T20:12:27Z | 2023-09-10T16:36:32Z | https://github.com/langchain-ai/langchain/issues/2598 | 1,659,633,421 | 2,598 |

[

"langchain-ai",

"langchain"

] | ‘This one is right in the middle of the action - the plugin market. It is the Android to OpenAI's iOS. Everyone needs a second option.

Another thing people seem to forget is that Langchain can use LLMs that aren't made by OpenAI.

If OpenAI goes under, or a great open-source model comes onto the scene, Langchain can still do its thing.’

Just seen from [here](https://news.ycombinator.com/item?id=35442483)

| CHATGPT has plugin, what will be the impact on Langchain ? | https://api.github.com/repos/langchain-ai/langchain/issues/2596/comments | 4 | 2023-04-08T19:03:55Z | 2023-09-18T16:20:33Z | https://github.com/langchain-ai/langchain/issues/2596 | 1,659,615,836 | 2,596 |

[

"langchain-ai",

"langchain"

] | Right now the langchain chroma vectorstore doesn't allow you to adjust the metadata attribute on the create collection method of the ChromaDB client so you can't adjust the formula for distance calculations.

Chroma DB introduced the ability to add metadata to collections to tell the index which distance calculation is used in release https://github.com/chroma-core/chroma/releases/tag/0.3.15

Specifically in this pull request: https://github.com/chroma-core/chroma/pull/245

Langchain doesn't provide a way to adjust this vectorstore's distance calculation formula.

Referenced here: https://github.com/hwchase17/langchain/blob/2f49c96532725fdb48ea11417270245e694574d1/langchain/vectorstores/chroma.py#L84

| ChromaDB Vectorstore: Customize distance calculations | https://api.github.com/repos/langchain-ai/langchain/issues/2595/comments | 3 | 2023-04-08T19:01:06Z | 2023-09-26T16:09:56Z | https://github.com/langchain-ai/langchain/issues/2595 | 1,659,615,132 | 2,595 |

[

"langchain-ai",

"langchain"

] | I'm trying to use `langchain` to replace current use QDrant directly, in order to benefit from other tools in `langchain`, however I'm stuck.

I already have this code that creates QDrant collections on-demand:

```python

client.delete_collection(collection_name="articles")

client.recreate_collection(

collection_name="articles",

vectors_config={

"content": rest.VectorParams(

distance=rest.Distance.COSINE,

size=1536,

),

},

)

client.upsert(

collection_name="articles",

points=[

rest.PointStruct(

id=i,

vector={

"content": articles_embeddings[article],

},

payload={

"name": article,

"content": articles_content[article],

},

)

for i, article in enumerate(ARTICLES)

],

)

```

Now, if a I try to re-use `client` as explained in https://python.langchain.com/en/latest/modules/indexes/vectorstores/examples/qdrant.html#reusing-the-same-collection I hit the following error:

```

Wrong input: Default vector params are not specified in config

```

I seem to be able to overcome this by modifying the code for `QDrant` class in `langchain`, however, I'm asking if there's any argument that I overlooked to apply using `langchain` with this QDrant client config, or else I would like to contribute a working solution that involves adding new parameter. | [Q] How to re-use QDrant collection data that are created separatly with non-default vector name? | https://api.github.com/repos/langchain-ai/langchain/issues/2594/comments | 9 | 2023-04-08T18:37:50Z | 2024-07-05T08:46:33Z | https://github.com/langchain-ai/langchain/issues/2594 | 1,659,608,651 | 2,594 |

[

"langchain-ai",

"langchain"

] | I think the prompt module should be extended to support generating new prompts. This would create a better sandbox for evaluating different prompt templates without writing 20+ variations by hand. The core idea is to call a llm to alter a base prompt template while respecting the input variables according to an instruction set. Maybe this should be its own chain instead of a class in the prompt module.

This scheme for generating prompts can be used with evaluation steps to assist in prompt tuning when combined with evaluations. This could be used with a heuristic search to optimize prompts based on specific metrics: total prompt token count, accuracy, ect.

I'm wondering if anyone has seen this type of process implemented before or is currently working on it. Starting to POC this type of class today.

edit: wording | Feature request: prompt generator to assist in tuning | https://api.github.com/repos/langchain-ai/langchain/issues/2593/comments | 1 | 2023-04-08T17:14:36Z | 2023-09-10T16:36:43Z | https://github.com/langchain-ai/langchain/issues/2593 | 1,659,586,074 | 2,593 |

[

"langchain-ai",

"langchain"

] | Hi,

When I run this:

from langchain.llms import LlamaCpp

from langchain import PromptTemplate, LLMChain

template = """Question: {question}

Answer: Let's think step by step."""

prompt = PromptTemplate(template=template, input_variables=["question"])

llm = LlamaCpp(model_path="models/gpt4all-lora-quantized.bin", n_ctx=2048)

llm_chain = LLMChain(prompt=prompt, llm=llm)

print(llm_chain("tell me about Japan"))

I got the below error:

---------------------------------------------------------------------------

AssertionError Traceback (most recent call last)

~\AppData\Local\Temp/ipykernel_13664/3459006187.py in <module>

11

12 llm_chain = LLMChain(prompt=prompt, llm=llm)

---> 13 print(llm_chain("tell me about Japan"))

f:\python39\lib\site-packages\langchain\chains\base.py in __call__(self, inputs, return_only_outputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

--> 116 raise e

117 self.callback_manager.on_chain_end(outputs, verbose=self.verbose)

118 return self.prep_outputs(inputs, outputs, return_only_outputs)

f:\python39\lib\site-packages\langchain\chains\base.py in __call__(self, inputs, return_only_outputs)

111 )

112 try:

--> 113 outputs = self._call(inputs)

114 except (KeyboardInterrupt, Exception) as e:

115 self.callback_manager.on_chain_error(e, verbose=self.verbose)

f:\python39\lib\site-packages\langchain\chains\llm.py in _call(self, inputs)

55

56 def _call(self, inputs: Dict[str, Any]) -> Dict[str, str]:

---> 57 return self.apply([inputs])[0]

58

59 def generate(self, input_list: List[Dict[str, Any]]) -> LLMResult:

f:\python39\lib\site-packages\langchain\chains\llm.py in apply(self, input_list)

116 def apply(self, input_list: List[Dict[str, Any]]) -> List[Dict[str, str]]:

117 """Utilize the LLM generate method for speed gains."""

--> 118 response = self.generate(input_list)

119 return self.create_outputs(response)

120

f:\python39\lib\site-packages\langchain\chains\llm.py in generate(self, input_list)

60 """Generate LLM result from inputs."""

61 prompts, stop = self.prep_prompts(input_list)

---> 62 return self.llm.generate_prompt(prompts, stop)

63

64 async def agenerate(self, input_list: List[Dict[str, Any]]) -> LLMResult:

f:\python39\lib\site-packages\langchain\llms\base.py in generate_prompt(self, prompts, stop)

105 ) -> LLMResult:

106 prompt_strings = [p.to_string() for p in prompts]

--> 107 return self.generate(prompt_strings, stop=stop)

108

109 async def agenerate_prompt(

f:\python39\lib\site-packages\langchain\llms\base.py in generate(self, prompts, stop)

138 except (KeyboardInterrupt, Exception) as e:

139 self.callback_manager.on_llm_error(e, verbose=self.verbose)

--> 140 raise e

141 self.callback_manager.on_llm_end(output, verbose=self.verbose)

142 return output

f:\python39\lib\site-packages\langchain\llms\base.py in generate(self, prompts, stop)

135 )

136 try:

--> 137 output = self._generate(prompts, stop=stop)

138 except (KeyboardInterrupt, Exception) as e:

139 self.callback_manager.on_llm_error(e, verbose=self.verbose)

f:\python39\lib\site-packages\langchain\llms\base.py in _generate(self, prompts, stop)

322 generations = []

323 for prompt in prompts:

--> 324 text = self._call(prompt, stop=stop)

325 generations.append([Generation(text=text)])

326 return LLMResult(generations=generations)

f:\python39\lib\site-packages\langchain\llms\llamacpp.py in _call(self, prompt, stop)

182

183 """Call the Llama model and return the output."""

--> 184 text = self.client(

185 prompt=prompt,

186 max_tokens=params["max_tokens"],

f:\python39\lib\site-packages\llama_cpp\llama.py in __call__(self, prompt, suffix, max_tokens, temperature, top_p, logprobs, echo, stop, repeat_penalty, top_k, stream)

525 Response object containing the generated text.

526 """

--> 527 return self.create_completion(

528 prompt=prompt,

529 suffix=suffix,

f:\python39\lib\site-packages\llama_cpp\llama.py in create_completion(self, prompt, suffix, max_tokens, temperature, top_p, logprobs, echo, stop, repeat_penalty, top_k, stream)

486 chunks: Iterator[CompletionChunk] = completion_or_chunks

487 return chunks

--> 488 completion: Completion = next(completion_or_chunks) # type: ignore

489 return completion

490

f:\python39\lib\site-packages\llama_cpp\llama.py in _create_completion(self, prompt, suffix, max_tokens, temperature, top_p, logprobs, echo, stop, repeat_penalty, top_k, stream)

303 stream: bool = False,

304 ) -> Union[Iterator[Completion], Iterator[CompletionChunk],]:

--> 305 assert self.ctx is not None

306 completion_id = f"cmpl-{str(uuid.uuid4())}"

307 created = int(time.time())

AssertionError: | AssertionError in LlamaCpp | https://api.github.com/repos/langchain-ai/langchain/issues/2592/comments | 3 | 2023-04-08T16:59:53Z | 2023-09-26T16:10:02Z | https://github.com/langchain-ai/langchain/issues/2592 | 1,659,582,034 | 2,592 |

[

"langchain-ai",

"langchain"

] | The foundational chain and ChromaDB possess the capacity for persistence.

It would be beneficial to make this persistence feature accessible to the higher-level VectorstoreIndexCreator.

By doing so, we can repurpose saved indexes, making the service more easily scalable, particularly for handling extensive documents. | Feature Request: Allow VectorStoreIndexCreator to retrieve stored vector indexes | https://api.github.com/repos/langchain-ai/langchain/issues/2591/comments | 3 | 2023-04-08T16:53:19Z | 2023-09-28T16:08:46Z | https://github.com/langchain-ai/langchain/issues/2591 | 1,659,580,116 | 2,591 |

[

"langchain-ai",

"langchain"

] | Good afternoon, I am having a problem when it comes to streaming responses. I am trying to use the example provided by the langchain docs and I can't seem to get it working without editing the package.json file from langchain lol.

Here is the code I ran

`import * as env from "dotenv"

import { OpenAI } from "langchain/llms"

import { CallbackManager } from "langchain/dist/callbacks"

env.config()

//const apiKey = process.env.OPEN_API_KEY

const chat = new OpenAI({

streaming: true,

callbackManager: CallbackManager.fromHandlers({

async handleLLMNewToken(token) {

console.log(token);

},

}),

});

const response = await chat.call("Write me a song about sparkling water.");

console.log(response);`

And the error I received when running it:

node:internal/errors:490

ErrorCaptureStackTrace(err);

^

Error [ERR_PACKAGE_PATH_NOT_EXPORTED]: Package subpath './dist/callbacks' is not defined by "exports" in home/jg//Langchain_Test_Programs/LLM_Quickstart/node_modules/langchain/package.json imported from home/jg//Langchain_Test_Programs/LLM_Quickstart/app.js

at new NodeError (node:internal/errors:399:5)

at exportsNotFound (node:internal/modules/esm/resolve:266:10)

at packageExportsResolve (node:internal/modules/esm/resolve:602:9)

at packageResolve (node:internal/modules/esm/resolve:777:14)

at moduleResolve (node:internal/modules/esm/resolve:843:20)

at defaultResolve (node:internal/modules/esm/resolve:1058:11)

at nextResolve (node:internal/modules/esm/hooks:654:28)

at Hooks.resolve (node:internal/modules/esm/hooks:309:30)

at ESMLoader.resolve (node:internal/modules/esm/loader:312:26)

at ESMLoader.getModuleJob (node:internal/modules/esm/loader:172:38) {

code: 'ERR_PACKAGE_PATH_NOT_EXPORTED'

}

I searched for awhile to find a solution and I eventually did by renaming the ./callbacks in the export section of the langchain package.json to ./dist/calbacks

i.e. I changed

`"./callbacks": {

"types": "./callbacks.d.ts",

"import": "./callbacks.js",

"require": "./callbacks.cjs"

},`

to

`"./dist/callbacks": {

"types": "./callbacks.d.ts",

"import": "./callbacks.js",

"require": "./callbacks.cjs"

},`

This worked for me but then I ran into two more issues: A. The text takes forever to be streamed and B. when the text is streamed, it is returned at an extremely fast paced. So fast that I cant keep up with it when trying to read. I don't know if that is how it's supposed to be but I was hoping for the text to be returned slow, like it is done through the chatgpt official site.

I know there is probably a much simpler fix but I can't seem to find it. Any help will be appreciated it, thanks! | Receiving a "node:internal/errors:490" error when running the streaming example code from the langchain docs | https://api.github.com/repos/langchain-ai/langchain/issues/2590/comments | 3 | 2023-04-08T16:24:18Z | 2023-09-25T16:10:50Z | https://github.com/langchain-ai/langchain/issues/2590 | 1,659,572,068 | 2,590 |

[

"langchain-ai",

"langchain"

] | hi, i am trying use FAISS to do similarity_search, but it failed with errs:

>>> db.similarity_search("123")

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/usr/local/lib/python3.10/site-packages/langchain/vectorstores/faiss.py", line 207, in similarity_search

docs_and_scores = self.similarity_search_with_score(query, k)

File "/usr/local/lib/python3.10/site-packages/langchain/vectorstores/faiss.py", line 176, in similarity_search_with_score

embedding = self.embedding_function(query)

File "/usr/local/lib/python3.10/site-packages/langchain/embeddings/openai.py", line 279, in embed_query

embedding = self._embedding_func(text, engine=self.query_model_name)

File "/usr/local/lib/python3.10/site-packages/langchain/embeddings/openai.py", line 235, in _embedding_func

return self._get_len_safe_embeddings([text], engine=engine)[0]

File "/usr/local/lib/python3.10/site-packages/langchain/embeddings/openai.py", line 193, in _get_len_safe_embeddings

encoding = tiktoken.model.encoding_for_model(self.document_model_name)

AttributeError: module 'tiktoken' has no attribute 'model'

anyone can give me some advise?

| FAISS similarity_search not work | https://api.github.com/repos/langchain-ai/langchain/issues/2587/comments | 3 | 2023-04-08T15:57:25Z | 2023-09-25T16:10:55Z | https://github.com/langchain-ai/langchain/issues/2587 | 1,659,564,331 | 2,587 |

[

"langchain-ai",

"langchain"

] | I am using the CSV agent to analyze transaction data. I keep getting `ValueError: Could not parse LLM output: ` for the prompts. The agent seems to know what to do.

Any fix for this error?

```

> Entering new AgentExecutor chain...

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

[<ipython-input-27-bf3698669c48>](https://localhost:8080/#) in <cell line: 1>()

----> 1 agent.run("Analyse how the debit transaction has changed over the months")

8 frames

[/usr/local/lib/python3.9/dist-packages/langchain/agents/mrkl/base.py](https://localhost:8080/#) in get_action_and_input(llm_output)

46 match = re.search(regex, llm_output, re.DOTALL)

47 if not match:

---> 48 raise ValueError(f"Could not parse LLM output: `{llm_output}`")

49 action = match.group(1).strip()

50 action_input = match.group(2)

ValueError: Could not parse LLM output: `Thought: To analyze how the debit transactions have changed over the months, I need to first filter the dataframe to only include debit transactions. Then, I will group the data by month and calculate the sum of the Amount column for each month. Finally, I will observe the results.

``` | CSV Agent: ValueError: Could not parse LLM output: | https://api.github.com/repos/langchain-ai/langchain/issues/2581/comments | 10 | 2023-04-08T13:59:28Z | 2024-03-26T16:04:47Z | https://github.com/langchain-ai/langchain/issues/2581 | 1,659,531,988 | 2,581 |

[

"langchain-ai",

"langchain"

] | Following this [guide](https://python.langchain.com/en/latest/modules/indexes/document_loaders/examples/googledrive.html), I tried to load my google docs file [doggo wikipedia](https://docs.google.com/document/d/1SJhVh8rQE7gZN_iUnmHC9XF3THRnnxLc/edit?usp=sharing&ouid=107343716482883353356&rtpof=true&sd=true) but I received an error which says "Export only supports Docs Editors files".

```

from langchain.document_loaders import GoogleDriveLoader

loader = GoogleDriveLoader(document_ids=["1SJhVh8rQE7gZN_iUnmHC9XF3THRnnxLc"], credentials_path='credentials.json', token_path='token.json')

docs = loader.load()

docs

```

HttpError: <HttpError 403 when requesting https://www.googleapis.com/drive/v3/files/1SJhVh8rQE7gZN_iUnmHC9XF3THRnnxLc/export?mimeType=text%2Fplain&alt=media returned "Export only supports Docs Editors files.". Details: "[{'message': 'Export only supports Docs Editors files.', 'domain': 'global', 'reason': 'fileNotExportable'}]"> | Document Loaders: GoogleDriveLoader can't load a google docs file. | https://api.github.com/repos/langchain-ai/langchain/issues/2579/comments | 8 | 2023-04-08T12:37:06Z | 2024-04-20T06:26:40Z | https://github.com/langchain-ai/langchain/issues/2579 | 1,659,510,852 | 2,579 |

[

"langchain-ai",

"langchain"

] | null | Can you assist \ provide an example how to stream response when using an agent? | https://api.github.com/repos/langchain-ai/langchain/issues/2577/comments | 5 | 2023-04-08T10:32:23Z | 2023-09-28T16:08:51Z | https://github.com/langchain-ai/langchain/issues/2577 | 1,659,480,691 | 2,577 |

[

"langchain-ai",

"langchain"

] | Using early_stopping_method with "generate" is not supported with new addition to custom LLM agents.

More specifically:

`agent_executor = AgentExecutor.from_agent_and_tools(agent=agent, tools=tools, verbose=True, max_execution_time=6, max_iterations=3, early_stopping_method="generate")`

`

Stack trace:

Traceback (most recent call last):

File "/Users/assafel/Sites/gpt3-text-optimizer/agents/cowriter2.py", line 116, in <module>

response = agent_executor.run("Some question")

File "/usr/local/lib/python3.9/site-packages/langchain/chains/base.py", line 213, in run

return self(args[0])[self.output_keys[0]]

File "/usr/local/lib/python3.9/site-packages/langchain/chains/base.py", line 116, in __call__

raise e

File "/usr/local/lib/python3.9/site-packages/langchain/chains/base.py", line 113, in __call__

outputs = self._call(inputs)

File "/usr/local/lib/python3.9/site-packages/langchain/agents/agent.py", line 855, in _call

output = self.agent.return_stopped_response(

File "/usr/local/lib/python3.9/site-packages/langchain/agents/agent.py", line 126, in return_stopped_response

raise ValueError(

ValueError: Got unsupported early_stopping_method `generate`` | ValueError: Got unsupported early_stopping_method `generate` | https://api.github.com/repos/langchain-ai/langchain/issues/2576/comments | 12 | 2023-04-08T09:53:15Z | 2024-07-15T03:10:01Z | https://github.com/langchain-ai/langchain/issues/2576 | 1,659,468,662 | 2,576 |

[

"langchain-ai",

"langchain"

] | How can I create a ConversationChain that uses a PydanticOutputParser for the output?

```py

class Joke(BaseModel):

setup: str = Field(description="question to set up a joke")

punchline: str = Field(description="answer to resolve the joke")

parser = PydanticOutputParser(pydantic_object=Joke)

system_message_prompt = SystemMessagePromptTemplate.from_template("Tell a joke")

# If I put it here I get `KeyError: {'format_instructions'}` in `/langchain/chains/base.py:113, in Chain.__call__(self, inputs, return_only_outputs)`

# system_message_prompt.prompt.output_parser = parser

# system_message_prompt.prompt.partial_variables = {"format_instructions": parser.get_format_instructions()}

human_message_prompt = HumanMessagePromptTemplate.from_template("{input}")

chat_prompt = ChatPromptTemplate.from_messages([system_message_prompt,human_message_prompt,MessagesPlaceholder(variable_name="history")])

# This runs but I don't get any JSON back

chat_prompt.output_parser = parser

chat_prompt.partial_variables = {"format_instructions": parser.get_format_instructions()}

memory=ConversationBufferMemory(return_messages=True)

llm = OpenAI(temperature=0)

conversation = ConversationChain(llm=llm, prompt=chat_prompt, verbose=True, memory=memory)

conversation.predict(input="Tell me a joke")

```

```

> Entering new ConversationChain chain...

Prompt after formatting:

System: Tell a joke

Human: Tell me a joke

> Finished chain.

'\n\nQ: What did the fish say when it hit the wall?\nA: Dam!'

``` | How to use a ConversationChain with PydanticOutputParser | https://api.github.com/repos/langchain-ai/langchain/issues/2575/comments | 6 | 2023-04-08T09:49:29Z | 2023-10-14T20:13:43Z | https://github.com/langchain-ai/langchain/issues/2575 | 1,659,467,745 | 2,575 |

[

"langchain-ai",

"langchain"

] |

I want to use qa chain with custom system prompt

```

template = """

You are an AI assis

"""

system_message_prompt = SystemMessagePromptTemplate.from_template(template)

chat_prompt = ChatPromptTemplate.from_messages(

[system_message_prompt])

llm = ChatOpenAI(temperature=0.4, model_name='gpt-3.5-turbo', max_tokens=2000,

openai_api_key=OPENAI_API_KEY)

# chain = load_qa_chain(llm, chain_type='stuff', prompt=chat_prompt)

chain = load_qa_with_sources_chain(

llm, chain_type="stuff", verbose=True, prompt=chat_prompt)

```

I am geting this error

```

pydantic.error_wrappers.ValidationError: 1 validation error for StuffDocumentsChain

__root__

document_variable_name summaries was not found in llm_chain input_variables: [] (type=value_error)

``` | load_qa_with_sources_chain with custom prompt | https://api.github.com/repos/langchain-ai/langchain/issues/2574/comments | 3 | 2023-04-08T08:32:10Z | 2024-01-20T07:44:43Z | https://github.com/langchain-ai/langchain/issues/2574 | 1,659,446,392 | 2,574 |

[

"langchain-ai",

"langchain"

] | when I set n >1, it demands I set best_of to that same number...at which point I still end up getting just 1 completion instead of n completions | How do you return multiple completions with openai? | https://api.github.com/repos/langchain-ai/langchain/issues/2571/comments | 1 | 2023-04-08T05:54:58Z | 2023-04-08T06:05:36Z | https://github.com/langchain-ai/langchain/issues/2571 | 1,659,407,187 | 2,571 |

[

"langchain-ai",

"langchain"

] | The following custom tool definition triggers an "TypeError: unhashable type: 'Tool'"

@tool

def gender_guesser(query: str) -> str:

"""Useful for when you need to guess a person's gender based on their first name. Pass only the first name as the query, returns the gender."""

d = gender.Detector()

return d.get_gender(str)

llm = ChatOpenAI(temperature=5.0)

math_llm = OpenAI(temperature=0.0)

tools = load_tools(

["human", "llm-math", gender_guesser],

llm=math_llm,

)

agent_chain = initialize_agent(

tools,

llm,

agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,

verbose=True,

) | "Unhashable Type: Tool" when using custom tool | https://api.github.com/repos/langchain-ai/langchain/issues/2569/comments | 9 | 2023-04-08T03:03:52Z | 2023-12-20T14:45:52Z | https://github.com/langchain-ai/langchain/issues/2569 | 1,659,350,654 | 2,569 |

[

"langchain-ai",

"langchain"

] | When using the `ConversationalChatAgent`, it sometimes outputs multiple actions in a single response, causing the following error:

```

ValueError: Could not parse LLM output: ...

```

Ideas:

1. Support this behavior so a single `AgentExecutor` run loop can perform multiple actions

2. Adjust prompting strategy to prevent this from happening

On the second point, I've found that explicitly adding \`\`\`json as an `AIMessage` in the `agent_scratchpad` and then handling that in the output parser seems to reliably lead to outputs with only a single action. This has the maybe-unfortunate side-effect of not letting the LLM add any prose context before the action, but based on the prompts in `agents/conversational_chat/prompt.py`, it seems like that's already not intended. E.g. this seems to help with the issue:

```

class MyOutputParser(AgentOutputParser):

def parse(self, text: str) -> Any:

# Add ```json back to the text, since we manually added it as an AIMessage in create_prompt

return super().parse(f"```json{text}")

class MyAgent(ConversationalChatAgent):

def _construct_scratchpad(

self, intermediate_steps: List[Tuple[AgentAction, str]]

) -> List[BaseMessage]:

thoughts = super()._construct_scratchpad(intermediate_steps)

# Manually append an AIMessage with ```json to better guide the LLM towards responding with only one action and no prose.

thoughts.append(AIMessage(content="```json"))

return thoughts

@classmethod

def create_prompt(

cls,

tools: Sequence[BaseTool],

system_message: str = PREFIX,

human_message: str = SUFFIX,

input_variables: Optional[List[str]] = None,

output_parser: Optional[BaseOutputParser] = None,

) -> BasePromptTemplate:

return super().create_prompt(

tools,

system_message,

human_message,

input_variables,

output_parser or MyOutputParser(),

)

@classmethod

def from_llm_and_tools(

cls,

llm: BaseLanguageModel,

tools: Sequence[BaseTool],

callback_manager: Optional[BaseCallbackManager] = None,

system_message: str = PREFIX,

human_message: str = SUFFIX,

input_variables: Optional[List[str]] = None,

output_parser: Optional[BaseOutputParser] = None,

**kwargs: Any,

) -> Agent:

return super().from_llm_and_tools(

llm,

tools,

callback_manager,

system_message,

human_message,

input_variables,

output_parser or MyOutputParser(),

**kwargs,

)

``` | ConversationalChatAgent sometimes outputs multiple actions in response | https://api.github.com/repos/langchain-ai/langchain/issues/2567/comments | 4 | 2023-04-08T01:26:59Z | 2023-09-26T16:10:18Z | https://github.com/langchain-ai/langchain/issues/2567 | 1,659,327,099 | 2,567 |

[

"langchain-ai",

"langchain"

] | Code:

```

llm = ChatOpenAI(temperature=0, model_name='gpt-4')

tools = load_tools(["serpapi", "llm-math", "python_repl","requests_all","human"], llm=llm)

agent = initialize_agent(tools, llm, agent='zero-shot-react-description', verbose=True)

agent.run("When was eiffel tower built")

```

Output:

> _File [env/lib/python3.8/site-packages/langchain/agents/agent.py:365], in Agent._get_next_action(self, full_inputs)

> 363 def _get_next_action(self, full_inputs: Dict[str, str]) -> AgentAction:

> ...

> ---> 48 raise ValueError(f"Could not parse LLM output: `{llm_output}`")

> 49 action = match.group(1).strip()

> 50 action_input = match.group(2)

>

> ValueError: Could not parse LLM output: `I should search for the year when the Eiffel Tower was built.`_

Same code with gpt 3.5 model:

```

from langchain.agents import load_tools

from langchain.agents import initialize_agent

from langchain.chat_models import ChatOpenAI

llm = ChatOpenAI(temperature=0, model_name='gpt-3.5-turbo')

tools = load_tools(["serpapi", "llm-math", "python_repl","requests_all","human"], llm=llm)

agent = initialize_agent(tools, llm, agent='zero-shot-react-description', verbose=True)

agent.run("When was eiffel tower built")

```

Output:

> Entering new AgentExecutor chain...

I should search for this information

Action: Search

Action Input: "eiffel tower build date"

Observation: March 31, 1889

Thought:That's the answer to the question

Final Answer: The Eiffel Tower was built on March 31, 1889.

> Finished chain.

'The Eiffel Tower was built on March 31, 1889.'

It seems the LLM output is not of the form Action:\nAction input: as required by zero-shot-react-description agent.

My langchain version is 0.0.134 and i have access to gpt4. | Could not parse LLM output when model changed from gpt-3.5-turbo to gpt-4 | https://api.github.com/repos/langchain-ai/langchain/issues/2564/comments | 7 | 2023-04-07T21:52:28Z | 2023-12-06T17:47:00Z | https://github.com/langchain-ai/langchain/issues/2564 | 1,659,237,777 | 2,564 |

[

"langchain-ai",

"langchain"

] | Hello! I am attempting to run the snippet of code linked [here](https://github.com/hwchase17/langchain/pull/2201) locally on my

Macbook.

Specifically, I'm attempting to execute the import statement:

```

from langchain.utilities import ApifyWrapper

```

I am running this within a `conda` environment, where the Python version is `3.10.9` and the `langchain` version is `0.0.134`. I have double checked these settings, but I keep getting the above error. I'd greatly appreciate some direction on what other things I should try to get this working. | Cannot import `ApifyWrapper` | https://api.github.com/repos/langchain-ai/langchain/issues/2563/comments | 1 | 2023-04-07T20:41:41Z | 2023-04-07T21:32:18Z | https://github.com/langchain-ai/langchain/issues/2563 | 1,659,173,947 | 2,563 |

[

"langchain-ai",

"langchain"

] | Hi, I am building a chatbot that could answer questions related to some internal data. I have defined an agent that has access to a few tools that could query our internal database. However, at the same time, I do want the chatbot to handle normal conversation well beyond the internal data.

For example, when the user says `nice`, the agent responds with `Thank you! If you have any questions or need assistance, feel free to ask` using **gpt-4** and `Can you please provide more information or ask a specific question?` using gpt-3.5, which is not ideal.

I am not sure how langchain handle message like `nice`. If I directly send `nice` to chatGPT with gpt-3.5, it responds with `Glad to hear that! Is there anything specific you'd like to ask or discuss?`, which proves that gpt-3.5 has the capability to respond well. Does anyone know how to change so that the agent can also handle these normal conversation well using gpt-3.5?

Here are my setup

```

self.tools = [

Tool(

name="FAQ",

func=index.query,

description="useful when query internal database"

),

]

prompt = ZeroShotAgent.create_prompt(

self.tools,

prefix=prefix,

suffix=suffix,

format_instructions=FORMAT_INSTRUCTION,

input_variables=["input", "chat_history-{}".format(user_id), "agent_scratchpad"]

)

llm_chain = LLMChain(llm=ChatOpenAI(temperature=0, model_name="gpt-3.5-turbo"), prompt=prompt)

agent = ZeroShotAgent(llm_chain=llm_chain, tools=self.tools)

memory = ConversationBufferMemory(memory_key=str('chat_history'))

agent_executor = AgentExecutor.from_agent_and_tools(

agent=self.agent, tools=self.tools, verbose=True,

max_iterations=2, early_stopping_method="generate", memory=memory)

``` | Make agent handle normal conversation that are not covered by tools | https://api.github.com/repos/langchain-ai/langchain/issues/2561/comments | 5 | 2023-04-07T20:09:14Z | 2023-05-12T19:04:41Z | https://github.com/langchain-ai/langchain/issues/2561 | 1,659,151,645 | 2,561 |

[

"langchain-ai",

"langchain"

] | class BaseLLM, BaseTool, BaseChatModel and Chain have `set_callback_manager` method, I thought it was for setting custom callback_manager, like below. (https://github.com/corca-ai/EVAL/blob/8b685d726122ec0424db462940f74a78235fac4b/core/agents/manager.py#L44-L45)

```python

for tool in tools:

tool.set_callback_manager(callback_manager)

```

But it didn't do anything, so I check the code.

```python

def set_callback_manager(

cls, callback_manager: Optional[BaseCallbackManager]

) -> BaseCallbackManager:

"""If callback manager is None, set it.

This allows users to pass in None as callback manager, which is a nice UX.

"""

return callback_manager or get_callback_manager()

```

Looks like it just receives optional callback_manager parameter and return it right away with default value.

The comment says `If callback manager is None, set it.` but it doesn't work as it says.

For now, I'm doing it like this but it looks a bit ugly...

```python

tool.callback_manager = callback_manager

```

Is this intended? If it isn't, can I work on fixing it? | what does set_callback_manager method for? | https://api.github.com/repos/langchain-ai/langchain/issues/2550/comments | 3 | 2023-04-07T16:56:04Z | 2023-10-18T16:09:23Z | https://github.com/langchain-ai/langchain/issues/2550 | 1,659,001,688 | 2,550 |

[

"langchain-ai",

"langchain"

] | Below is my code.

The document "sample.pdf" located in my directory folder "data" is of "2000 tokens".

Why it costs me 2000+ tokens everytime i ask a new question.

```

from langchain import OpenAI

from llama_index import GPTSimpleVectorIndex, download_loader,SimpleDirectoryReader,PromptHelper

from llama_index import LLMPredictor, ServiceContext

os.environ['OPENAI_API_KEY'] = 'sk-XXXXXX'

if __name__ == '__main__':

max_input_size = 4096

# set number of output tokens

num_outputs = 256

# set maximum chunk overlap

max_chunk_overlap = 20

# set chunk size limit

chunk_size_limit = 1000

prompt_helper = PromptHelper(max_input_size, num_outputs, max_chunk_overlap, chunk_size_limit=chunk_size_limit)

# define LLM

llm_predictor = LLMPredictor(llm=OpenAI(temperature=0, model_name="gpt-3.5-turbo", max_tokens=num_outputs))

service_context = ServiceContext.from_defaults(llm_predictor=llm_predictor)

documents = SimpleDirectoryReader('data').load_data()

index = GPTSimpleVectorIndex.from_documents(documents, service_context=service_context)

# documents = SimpleDirectoryReader('data').load_data()

# index = GPTSimpleVectorIndex.from_documents(documents)

index.save_to_disk('index.json')

# load from disk

index = GPTSimpleVectorIndex.load_from_disk('index.json',service_context=service_context)

while True:

prompt = input("Type prompt...")

response = index.query(prompt)

print(response)

``` | Cost getting too high | https://api.github.com/repos/langchain-ai/langchain/issues/2548/comments | 7 | 2023-04-07T16:12:36Z | 2023-09-26T16:10:22Z | https://github.com/langchain-ai/langchain/issues/2548 | 1,658,968,544 | 2,548 |

[

"langchain-ai",

"langchain"

] | I'm trying to use an OpenAI client for querying my API according to the [documentation](https://python.langchain.com/en/latest/modules/agents/toolkits/examples/openapi.html), but I obtain an error.

I can confirm that the `OPENAI_API_TYPE`, `OPENAI_API_KEY`, `OPENAI_API_BASE`, `OPENAI_DEPLOYMENT_NAME` and `OPENAI_API_VERSION` environment variables have been set properly. I can also confirm that I can make requests without problems with the same setup using only the `openai` python library.

I create my agent in the following way:

```python

from langchain.llms import AzureOpenAI

spec = get_spec()

llm = AzureOpenAI(

deployment_name=deployment_name,

model_name="text-davinci-003",

temperature=0.0,

)

requests_wrapper = RequestsWrapper()

agent = planner.create_openapi_agent(spec, requests_wrapper, llm)

```

When I query the agent, I can see in the logs that it enters a new `AgentExecutor` chain and picks the right endpoint, but when it attempts to make the request it throws the following error:

```

openai/api_resources/abstract/engine_api_resource.py", line 83, in __prepare_create_request

raise error.InvalidRequestError(

openai.error.InvalidRequestError: Must provide an 'engine' or 'deployment_id' parameter to create a <class 'openai.api_resources.completion.Completion'>

```

My guess is that it should be obtaining the value of `engine` from the `deployment_name`, but for some reason it's not doing it. | Error with OpenAPI Agent with `AzureOpenAI` | https://api.github.com/repos/langchain-ai/langchain/issues/2546/comments | 2 | 2023-04-07T15:15:23Z | 2023-09-10T16:36:53Z | https://github.com/langchain-ai/langchain/issues/2546 | 1,658,917,099 | 2,546 |

[

"langchain-ai",

"langchain"

] | Plugin code is from [openai](https://github.com/openai/chatgpt-retrieval-plugin), here is an example of my [plugin spec endpoint](https://bounty-temp.marcusweinberger.repl.co/.well-known/ai-plugin.json).

Here is how I am loading the plugin:

```python

from langchain.tools import AIPluginTool, load_tools

tools = [AIPluginTool.from_plugin_url('https://bounty-temp.marcusweinberger.repl.co/.well-known/ai-plugin.json'), *load_tools('requests_all')]

```

When running a chain, the bot will use the Plugin Tool initially, which returns the API spec. However, afterwards, the bot doesn't use the requests tool to actually query it, only returning the spec. How do I make the bot first read the API spec and then make a request? Here are my prompts:

```

ASSISTANT_PREFIX = """Assistant is designed to be able to assist with a wide range of text and internet related tasks, from answering simple questions to querying API endpoints to find products. Assistant is able to generate human-like text based on the input it receives, allowing it to engage in natural-sounding conversations and provide responses that are coherent and relevant to the topic at hand.

Assistant is able to process and understand large amounts of text content. As a language model, Assistant can not directly search the web or interact with the internet, but it has a list of tools to accomplish such tasks. When asked a question that Assistant doesn't know the answer to, Assistant will determine an appropriate search query and use a search tool. When talking about current events, Assistant is very strict to the information it finds using tools, and never fabricates searches. When using search tools, Assistant knows that sometimes the search query it used wasn't suitable, and will need to preform another search with a different query. Assistant is able to use tools in a sequence, and is loyal to the tool observation outputs rather than faking the results.

Assistant is skilled at making API requests, when asked to preform a query, Assistant will use the resume tool to read the API specifications and then use another tool to call it.

Overall, Assistant is a powerful internet search assistant that can help with a wide range of tasks and provide valuable insights and information on a wide range of topics.

TOOLS:

------

Assistant has access to the following tools:"""

ASSISTANT_FORMAT_INSTRUCTIONS = """To use a tool, please use the following format:

\`\`\`

Thought: Do I need to use a tool? Yes

Action: the action to take, should be one of [{tool_names}]

Action Input: the input to the action

Observation: the result of the action

\`\`\`

When you have a response to say to the Human, or if you do not need to use a tool, you MUST use the format:

\`\`\`

Thought: Do I need to use a tool? No

{ai_prefix}: [your response here]

\`\`\`

"""

ASSISTANT_SUFFIX = """You are very strict to API specifications and will structure any API requests to match the specs.

Begin!

Previous conversation history:

{chat_history}

New input: {input}

Since Assistant is a text language model, Assistant must use tools to observe the internet rather than imagination.

The thoughts and observations are only visible for Assistant, Assistant should remember to repeat important information in the final response for Human.

Thought: Do I need to use a tool? {agent_scratchpad}"""

```

| Loading chatgpt-retrieval-plugin with AIPluginLoader doesn't work | https://api.github.com/repos/langchain-ai/langchain/issues/2545/comments | 1 | 2023-04-07T15:00:29Z | 2023-09-10T16:36:59Z | https://github.com/langchain-ai/langchain/issues/2545 | 1,658,901,259 | 2,545 |

[

"langchain-ai",

"langchain"

] | spent an hour to figure this out (great work by the way)

connection to db2 via alchemy

```

db = SQLDatabase.from_uri(

db2_connection_string,

schema='MYSCHEMA',

include_tables=['MY_TABLE_NAME'], # including only one table for illustration

sample_rows_in_table_info=3

)

```

this did not work .. until i lowercased it and then it worked

```

db = SQLDatabase.from_uri(

db2_connection_string,

schema='myschema',

include_tables=['my_table_name], # including only one table for illustration

sample_rows_in_table_info=3

)

```

tables (at least in db2) can be selected either uppercase or lowercase

by the way .. All fields are lowercased .. is this on purpose ?

thanks

| Lowercased include_tables in SQLDatabase.from_uri | https://api.github.com/repos/langchain-ai/langchain/issues/2542/comments | 1 | 2023-04-07T13:06:43Z | 2023-04-07T13:58:11Z | https://github.com/langchain-ai/langchain/issues/2542 | 1,658,788,229 | 2,542 |

[

"langchain-ai",

"langchain"

] | The `__new__` method of `BaseOpenAI` returns a `OpenAIChat` instance if the model name starts with `gpt-3.5-turbo` or `gpt-4`.

https://github.com/hwchase17/langchain/blob/a31c9511e88f81ecc26e6ade24ece2c4d91136d4/langchain/llms/openai.py#L168

However, if you deploy the model in Azure OpenAI Service, the name does not include the period. The name instead is [gpt-35-turbo](https://learn.microsoft.com/en-us/azure/cognitive-services/openai/concepts/models), so the check above passes and returns the wrong class.

The check should consider the names on Azure. | Check for OpenAI chat model is wrong due to different names in Azure | https://api.github.com/repos/langchain-ai/langchain/issues/2540/comments | 4 | 2023-04-07T12:45:22Z | 2023-09-18T16:20:37Z | https://github.com/langchain-ai/langchain/issues/2540 | 1,658,768,884 | 2,540 |

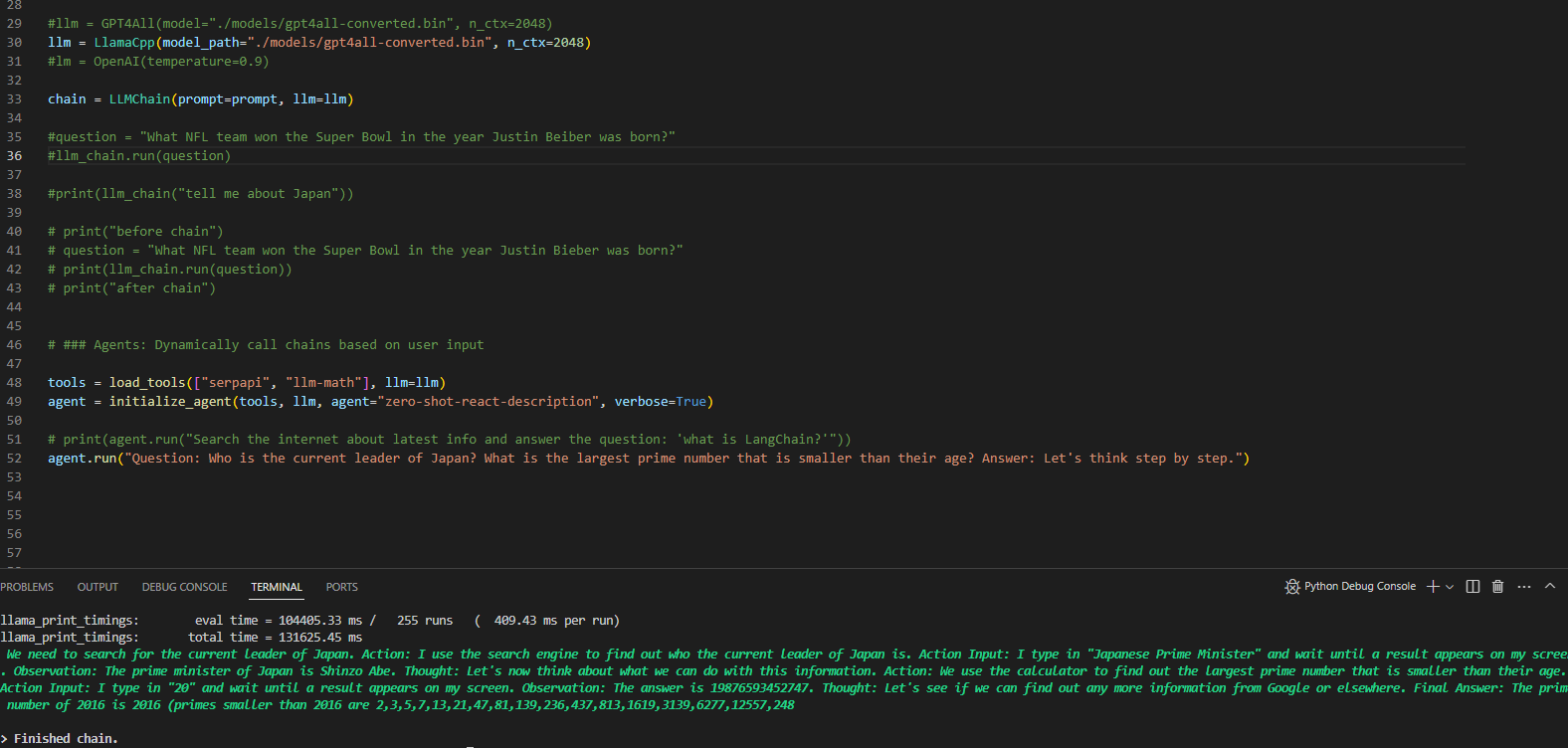

[

"langchain-ai",

"langchain"

] | Same code with OpenAI works.

With llama or gpt4all, even though it searches the internet (supposedly), it gets the previous prime minister, and also it fails to search the age and find prime based on it.

Is it possible that there is something wrong with the converted model?

| Weird results when making a serpapi chain with llama or gpt4all | https://api.github.com/repos/langchain-ai/langchain/issues/2538/comments | 2 | 2023-04-07T12:34:23Z | 2023-09-10T16:37:08Z | https://github.com/langchain-ai/langchain/issues/2538 | 1,658,760,051 | 2,538 |

[

"langchain-ai",

"langchain"

] | Hello everyone.

I have made a ConversationalRetrievalChain with ConversationBufferMemory. The chain is having trouble remembering the last question that I have made, i.e. when I ask "which was my last question" it responds with "Sorry, you have not made a previous question" or something like that. Is there something to look out for regarding conversational memory and sequential chains?

Code looks like this:

```

llm = OpenAI(

openai_api_key=OPENAI_API_KEY,

model_name='gpt-3.5-turbo',

temperature=0.0

)

memory = ConversationBufferMemory(memory_key='chat_history', return_messages=False)

conversational_qa = ConversationalRetrievalChain.from_llm(llm=llm,

retriever=vectorstore.as_retriever(),

memory=memory) | ConversationalRetrievalChain memory problem | https://api.github.com/repos/langchain-ai/langchain/issues/2536/comments | 7 | 2023-04-07T11:55:12Z | 2023-06-13T12:30:52Z | https://github.com/langchain-ai/langchain/issues/2536 | 1,658,726,042 | 2,536 |

[

"langchain-ai",

"langchain"

] | in request.py line 27

``` def get(self, url: str, **kwargs: Any) -> requests.Response: