issue_owner_repo | issue_body | issue_title | issue_comments_url | issue_comments_count | issue_created_at | issue_updated_at | issue_html_url | issue_github_id | issue_number |

|---|---|---|---|---|---|---|---|---|---|

[

"kubernetes",

"kubernetes"

] | ### What happened?

I am trying to apply a CronJob to a Kubernetes cluster, but the apply fails with an error.

### What did you expect to happen?

The CronJob should be applied to the cluster successfully.

### How can we reproduce it (as minimally and precisely as possible)?

Try to apply the below cronjob yaml... | cronjob error - Missing annotations | https://api.github.com/repos/kubernetes/kubernetes/issues/118215/comments | 14 | 2023-05-24T03:48:53Z | 2023-05-27T19:10:59Z | https://github.com/kubernetes/kubernetes/issues/118215 | 1,723,129,095 | 118,215 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

According to the documentation, if you enable the feature in the following config files:

```

------ 5/22/2023 11:03 PM 3384 kube-controller-manager.yaml

------ 5/22/2023 11:03 PM 1440 kube-scheduler.yaml

------ 5/22/2023 11:03 PM ... | K8s Kubelet Checkpoint API | https://api.github.com/repos/kubernetes/kubernetes/issues/118213/comments | 9 | 2023-05-24T02:33:09Z | 2024-03-21T12:14:30Z | https://github.com/kubernetes/kubernetes/issues/118213 | 1,723,078,241 | 118,213 |

[

"kubernetes",

"kubernetes"

] | After running k8s conformance testing on kubernetes v1.27, we have leftover custom resources of the `foo*.mygroup.example.com` format that should not exist. For example:

```

vagrant@verify-cluster:~/kubernetes-pipeline$ kubectl get crds

NAME CREATED AT

...

foof... | Kubernetes 1.27: Leftover CRDs after k8s conformance test | https://api.github.com/repos/kubernetes/kubernetes/issues/118206/comments | 5 | 2023-05-23T18:13:20Z | 2023-06-07T02:40:14Z | https://github.com/kubernetes/kubernetes/issues/118206 | 1,722,561,073 | 118,206 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

https://prow.k8s.io/view/gs/kubernetes-jenkins/pr-logs/directory/pull-kubernetes-kind-dra/1659956139929899008

https://prow.k8s.io/view/gs/kubernetes-jenkins/pr-logs/directory/pull-kubernetes-kind-dra/1660562399301734400

https://prow.k8s.io/view/gs/kubernetes-jenkins/pr-logs/pull/118012/... | [Failing test] pull-kubernetes-kind-dra multiple failures | https://api.github.com/repos/kubernetes/kubernetes/issues/118201/comments | 7 | 2023-05-23T06:57:58Z | 2023-05-23T19:30:56Z | https://github.com/kubernetes/kubernetes/issues/118201 | 1,721,355,532 | 118,201 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [4d8763daba4a7056712d](https://go.k8s.io/triage#4d8763daba4a7056712d)

##### Error text:

```

[FAILED] Failed to create metric descriptor: googleapi: Error 429: Errors during metric descriptor creation: {(metric: custom.googleapis.com/unused, error: Request throttled. You have hit the per-project... | [HPA] with multiple metrics of different types should scale up when one metric is missing | https://api.github.com/repos/kubernetes/kubernetes/issues/118198/comments | 5 | 2023-05-23T03:38:26Z | 2023-06-25T02:56:20Z | https://github.com/kubernetes/kubernetes/issues/118198 | 1,721,097,806 | 118,198 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I noticed that kube-controller-manager terminated with a concurrent map access:

```

fatal error: concurrent map read and map write

goroutine 2456 [running]:

k8s.io/apimachinery/pkg/util/sets.Set[...].Has(0x1?, {0xc00507d650?, 0x23?})

vendor/k8s.io/apimachinery/pkg/util/sets/set.go:83 +0x2b

... | Topology cache's HasPopulatedHints method can attempt concurrent map access | https://api.github.com/repos/kubernetes/kubernetes/issues/118188/comments | 3 | 2023-05-22T21:50:10Z | 2023-08-15T22:16:59Z | https://github.com/kubernetes/kubernetes/issues/118188 | 1,720,628,294 | 118,188 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Most tests currently run with `f.NamespacePodSecurityEnforceLevel = admissionapi.LevelPrivileged`.

Only the namespace created for the CSI driver needs to allow privileged pods. The main test namespace is often just used to test that a pod using a volume can start up, which might... | test/e2e/storage: reduce privileges granted to pods | https://api.github.com/repos/kubernetes/kubernetes/issues/118184/comments | 9 | 2023-05-22T18:08:34Z | 2024-11-13T06:44:01Z | https://github.com/kubernetes/kubernetes/issues/118184 | 1,720,181,234 | 118,184 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [b3d28e64f0eee514b11d](https://go.k8s.io/triage#b3d28e64f0eee514b11d)

##### Error text:

```

[TIMEDOUT] A spec timeout occurred

In [DeferCleanup (Each)] at: test/e2e/storage/drivers/csi.go:710 @ 05/13/23 11:40:32.756

This is the Progress Report generated when the spec timeout occurred:

[s... | [Flaky] CSIStorageCapacity e2e tests | https://api.github.com/repos/kubernetes/kubernetes/issues/118175/comments | 3 | 2023-05-22T14:01:06Z | 2023-05-23T08:58:28Z | https://github.com/kubernetes/kubernetes/issues/118175 | 1,719,765,203 | 118,175 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

master-informing

- ci-crio-cgroupv1-node-e2e-conformance

### Which tests are failing?

- ci-crio-cgroupv1-node-e2e-conformance.Overall

- kubetest.Node Tests

- E2eNode Suite.[It] [sig-node] Container Runtime blackbox test on terminated container should report termination message fr... | [Failing test] ci-crio-cgroupv1-node-e2e-conformance | https://api.github.com/repos/kubernetes/kubernetes/issues/118174/comments | 6 | 2023-05-22T13:46:18Z | 2023-05-24T10:57:46Z | https://github.com/kubernetes/kubernetes/issues/118174 | 1,719,732,271 | 118,174 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hi all, I'd like to report a confusing point about kubelet soft eviction:

As `kubelet --help` shows:

--eviction-max-pod-grace-period int32 Maximum allowed grace period (in seconds) to use when terminating pods in response to a soft eviction threshold being met. If negative, defer to pod speci... | kubelet parameter(eviction-max-pod-grace-period ), not work as expected like officical comment. | https://api.github.com/repos/kubernetes/kubernetes/issues/118172/comments | 21 | 2023-05-22T11:54:19Z | 2025-03-11T05:30:00Z | https://github.com/kubernetes/kubernetes/issues/118172 | 1,719,532,111 | 118,172 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

master-blocking

- gce-device-plugin-gpu-master

### Which tests are failing?

- ci-kubernetes-e2e-gce-device-plugin-gpu.Overall [Changes](https://github.com/kubernetes/kubernetes/compare/189fe3f3e...7ad8303b9?)

- Kubernetes e2e suite.[It] [sig-scheduling] [Feature:GPUDevicePlugin] run Nvi... | [Failing test] gce-device-plugin-gpu-master | https://api.github.com/repos/kubernetes/kubernetes/issues/118170/comments | 10 | 2023-05-22T09:12:56Z | 2023-11-04T23:04:34Z | https://github.com/kubernetes/kubernetes/issues/118170 | 1,719,260,518 | 118,170 |

[

"kubernetes",

"kubernetes"

] | Configmap is good | How ConfigMap is good ? | https://api.github.com/repos/kubernetes/kubernetes/issues/118234/comments | 11 | 2023-05-22T05:54:42Z | 2023-05-24T12:58:28Z | https://github.com/kubernetes/kubernetes/issues/118234 | 1,723,927,648 | 118,234 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

ste

### Why is this needed?

ss | test | https://api.github.com/repos/kubernetes/kubernetes/issues/118161/comments | 2 | 2023-05-22T02:38:40Z | 2023-05-22T02:38:49Z | https://github.com/kubernetes/kubernetes/issues/118161 | 1,718,781,620 | 118,161 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?


Quoted from @wojtek-t 's [comment](https://github.com/kubernetes/kubernetes/pull/115754#discussion_r1192068894)

Splitting healthz/ into livez/ and readyz/

### Why is this needed?

```

I agree that splitting into livez/ and readyz/ is ... | Split `/healthz` into `/livez` & `/readyz` for kube-controller-manager | https://api.github.com/repos/kubernetes/kubernetes/issues/118158/comments | 8 | 2023-05-21T17:29:47Z | 2024-08-14T13:13:29Z | https://github.com/kubernetes/kubernetes/issues/118158 | 1,718,579,309 | 118,158 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Using the Go 1.21 dev branch,

FORCE_HOST_GO=y make test WHAT=./staging/src/k8s.io/apiserver/pkg/util/webhook

fails because the TLS bad certificate error has gotten more specific in Go 1.21.

### What did you expect to happen?

Test should pass.

### How can we reproduce it (as minimally a... | apiserver/pkg/util/webhook test needs update for Go 1.21 | https://api.github.com/repos/kubernetes/kubernetes/issues/118153/comments | 5 | 2023-05-21T00:59:00Z | 2023-05-21T16:52:21Z | https://github.com/kubernetes/kubernetes/issues/118153 | 1,718,334,116 | 118,153 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Apply this patch:

```

diff --git a/pkg/scheduler/framework/plugins/dynamicresources/dynamicresources_test.go b/pkg/scheduler/framework/plugins/dynamicresources/dynamicresources_test.go

index 5d09769aa39..7043725c456 100644

--- a/pkg/scheduler/framework/plugins/dynamicresources/dynamicresources... | pkg/scheduler/framework/plugins/dynamicresources has loopvar sharing bug | https://api.github.com/repos/kubernetes/kubernetes/issues/118152/comments | 8 | 2023-05-21T00:54:57Z | 2023-05-26T13:06:55Z | https://github.com/kubernetes/kubernetes/issues/118152 | 1,718,333,492 | 118,152 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Apply this patch:

```

diff --git a/pkg/volume/git_repo/git_repo_test.go b/pkg/volume/git_repo/git_repo_test.go

index 3ef1cab0ad9..5e4806f152b 100644

--- a/pkg/volume/git_repo/git_repo_test.go

+++ b/pkg/volume/git_repo/git_repo_test.go

@@ -390,6 +390,7 @@ func doTestSetUp(scenario struct {

... | pkg/volume/git_repo test has loopvar sharing bug | https://api.github.com/repos/kubernetes/kubernetes/issues/118151/comments | 4 | 2023-05-21T00:34:47Z | 2023-05-26T15:55:07Z | https://github.com/kubernetes/kubernetes/issues/118151 | 1,718,330,312 | 118,151 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

`FORCE_HOST_GO=y make test WHAT=./cmd/kubeadm/app/cmd/phases/workflow` fails with the latest Go 1.21 dev branch, with:

```

+++ [0520 15:18:01] Setting GOMAXPROCS: 48

+++ [0520 15:18:01] Running tests without code coverage and with -race

--- FAIL: TestBindToCommandArgRequirements (0.00s)

-... | k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow TestBindToCommandArgRequirements fails in Go 1.21 | https://api.github.com/repos/kubernetes/kubernetes/issues/118149/comments | 4 | 2023-05-20T18:47:11Z | 2023-05-21T06:10:20Z | https://github.com/kubernetes/kubernetes/issues/118149 | 1,718,254,315 | 118,149 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

I would like `framework.Plugin` implementations, specifically scheduler plugins, to be closed when the scheduler is being stopped. It could implement this by a type assertion on `io.Closer`, so that old schedulers don't have to implement it.

### Why is this needed?

For example, wasm plug... | Allow framework plugins to be closed | https://api.github.com/repos/kubernetes/kubernetes/issues/118147/comments | 6 | 2023-05-20T15:36:22Z | 2024-01-08T16:30:37Z | https://github.com/kubernetes/kubernetes/issues/118147 | 1,718,206,582 | 118,147 |

[

"kubernetes",

"kubernetes"

] | Relaying for a customer, but it seems reasonable to me.

Could we get a `--service-account` flag on `kubectl create deployment` ? It's not terribly common to use multiple SAs, but I am seeing it more and more, and it makes me happy. | `kubectl create deployment` with a specific service-account (flag) | https://api.github.com/repos/kubernetes/kubernetes/issues/118142/comments | 4 | 2023-05-19T21:55:37Z | 2023-05-24T21:20:23Z | https://github.com/kubernetes/kubernetes/issues/118142 | 1,717,877,952 | 118,142 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We upgraded from v1.20.15 to v1.21.14. After the upgrade, pods are not coming up and the controller is in CrashLoopBackOff status. What might be the reason it went down?

```

I0519 17:45:53.214908 1 controllermanager.go:574] Started "resourcequota" ... | Aftere upgrade kuberentes version v1.20.15 to v1.21.15 not comming up the controller manager | https://api.github.com/repos/kubernetes/kubernetes/issues/118139/comments | 10 | 2023-05-19T17:59:01Z | 2023-08-06T20:49:01Z | https://github.com/kubernetes/kubernetes/issues/118139 | 1,717,615,904 | 118,139 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Make all generated applyconfigs implement some common interface that makes them identifiable as such, similar to `runtime.Object`. How exactly that interface looks doesn't matter; it could be a simple `IsApplyConfig() bool`.

### Why is this needed?

In order to be able to offer a... | Implement common interface in applyconfigs to make them identifiable as such | https://api.github.com/repos/kubernetes/kubernetes/issues/118138/comments | 16 | 2023-05-19T16:51:34Z | 2025-01-16T19:18:11Z | https://github.com/kubernetes/kubernetes/issues/118138 | 1,717,540,015 | 118,138 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

# Short description

OpenAPI v2 is very expensive, specifically when installing a lot of CRDs. I'm opening this issue to track what's going on and the work that we've been doing, are doing, or planning to do to improve the situation.

Right now, there's mostly three dimension... | [UMBRELLA] OpenAPI is very expensive | https://api.github.com/repos/kubernetes/kubernetes/issues/118136/comments | 8 | 2023-05-19T16:10:07Z | 2024-06-05T01:38:19Z | https://github.com/kubernetes/kubernetes/issues/118136 | 1,717,490,761 | 118,136 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

I suggest replacing gogo/protobuf and golang/protobuf with google/protobuf

### Why is this needed?

https://github.com/golang/protobuf/pull/1306 deprecates github.com/golang/protobuf in favour of google.golang.org/protobuf | github.com/golang/protobuf deprecated | https://api.github.com/repos/kubernetes/kubernetes/issues/118135/comments | 5 | 2023-05-19T15:51:49Z | 2023-05-23T20:14:48Z | https://github.com/kubernetes/kubernetes/issues/118135 | 1,717,465,330 | 118,135 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Once I build the `sample-apiserver` project with a Go module upgrade (`go-restful` from v3.9.0 to v3.10.0) in my cluster, it doesn't work well.

```text

NAME SERVICE AVAILABLE AGE

v1alpha1.wardle.example.com wardle/api False (Fa... | go-restful upgrade incompatible for apiserver | https://api.github.com/repos/kubernetes/kubernetes/issues/118133/comments | 13 | 2023-05-19T13:55:25Z | 2024-06-24T03:08:47Z | https://github.com/kubernetes/kubernetes/issues/118133 | 1,717,291,905 | 118,133 |

[

"kubernetes",

"kubernetes"

] | Currently, reflector uses two backoff managers:

https://github.com/kubernetes/client-go/blob/2a5f18df73b70cb85c26a3785b06162f3d513cf5/tools/cache/reflector.go#L223

One is used for the initial list call and the other for the watch call.

Imagine the following scenario when the k8s apiserver is under very heavy load:

1. At the begi... | Improve exponential backoff in reflector | https://api.github.com/repos/kubernetes/kubernetes/issues/118129/comments | 6 | 2023-05-19T11:40:15Z | 2023-06-01T16:48:43Z | https://github.com/kubernetes/kubernetes/issues/118129 | 1,717,110,115 | 118,129 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We recently upgraded the HPA API version from autoscaling/v2beta2 to autoscaling/v2. Afterwards, the helm upgrade fails when one of the metric target values comes back as unknown:

is invalid: spec.metrics[0].object.target.value: Invalid value: resource.Quantity{i:resource.int64Amount

{value:0, scale:0}

,... | Helm upgrade fails after upgrade hpa api version to autoscaling/v2 | https://api.github.com/repos/kubernetes/kubernetes/issues/118127/comments | 8 | 2023-05-19T11:14:25Z | 2024-03-21T09:12:32Z | https://github.com/kubernetes/kubernetes/issues/118127 | 1,717,079,215 | 118,127 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

https://github.com/kubernetes/kubernetes/pull/117120 broke rbac permissions for pod nanny and it's now not able to scale up with number of nodes.

We see in kube-apiserver logs:

```

➜ latest grep nanny kube-apiserver.log

I0517 17:09:42.416260 11 httplog.go:132] "HTTP" verb="GET" URI="/m... | metrics-server's pod nanny broken after v0.6.3 upgrade | https://api.github.com/repos/kubernetes/kubernetes/issues/118123/comments | 6 | 2023-05-19T08:39:40Z | 2023-07-18T14:19:23Z | https://github.com/kubernetes/kubernetes/issues/118123 | 1,716,868,120 | 118,123 |

[

"kubernetes",

"kubernetes"

] | `pkg/proxy/util/utils.go` (which is supposed to be utility functions for kube-proxy) contains the function `NewFilteredDialContext` and associated struct `FilteredDialogOptions`, which are not used by kube-proxy and have nothing to do with service proxying. They were added in #91785 as part of a storage-related feature... | helper function for unused storage feature in pkg/proxy/util | https://api.github.com/repos/kubernetes/kubernetes/issues/118120/comments | 5 | 2023-05-18T22:36:51Z | 2023-06-14T11:28:20Z | https://github.com/kubernetes/kubernetes/issues/118120 | 1,716,350,938 | 118,120 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When using a CNI (for example, Azure/azure-container-networking#3220), it may fail. Even if the plugin correctly reports errors back, the node is still marked ready, but it's unusable. If the node were not marked ready, the auto-scaler could heal.

### What did you expect to happen?

The node... | CNI networking errors leave node marked ready but unusable | https://api.github.com/repos/kubernetes/kubernetes/issues/118118/comments | 9 | 2023-05-18T20:43:59Z | 2023-05-30T11:59:53Z | https://github.com/kubernetes/kubernetes/issues/118118 | 1,716,237,699 | 118,118 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

The IPVS proxy mode leverages IPSets to significantly decrease the number of iptables rules. Currently, the iptables proxier doesn't use IPSets and could benefit from them.

[there is a kep for nftables proxy mode](https://github.com/kubernetes/enhancements/pull/3824) which might repl... | use ipsets in iptables proxier | https://api.github.com/repos/kubernetes/kubernetes/issues/118110/comments | 10 | 2023-05-18T15:12:48Z | 2023-05-25T18:33:08Z | https://github.com/kubernetes/kubernetes/issues/118110 | 1,715,811,267 | 118,110 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Using Kubernetes 1.24.11 (GKE).

If I create this service, UDP is not routed to the pod:

```

apiVersion: v1

kind: Service

metadata:

name: lb-mytool

annotations:

networking.gke.io/load-balancer-type: "Internal"

spec:

type: LoadBalancer

selector:

name: mytool

... | First protocol in port list is used by feature MixedProtocolLBService | https://api.github.com/repos/kubernetes/kubernetes/issues/118107/comments | 5 | 2023-05-18T14:27:10Z | 2023-05-18T19:18:15Z | https://github.com/kubernetes/kubernetes/issues/118107 | 1,715,739,625 | 118,107 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Initially reported at 1.24.10, but it seems to be present in master as well:

We observe that a part of apiservices objects have been removed by "system:apiserver" right after the kubernetes upgrade.

It looks like there is race between "autoregister controller" [1] deleting apiservices and "crd... | APIServices can be deleted at the apiserver startup | https://api.github.com/repos/kubernetes/kubernetes/issues/118103/comments | 10 | 2023-05-18T12:46:13Z | 2023-05-18T18:26:47Z | https://github.com/kubernetes/kubernetes/issues/118103 | 1,715,578,988 | 118,103 |

[

"kubernetes",

"kubernetes"

] | # Progress <code>[8/8]</code>

- [X] APISnoop org-flow : [StorageV1CSIDriverTest.org](https://github.com/apisnoop/ticket-writing/blob/master/StorageV1CSIDriverTest.org)

- [X] test approval issue : #118098

- [X] test pr : #118099

- [x] two weeks soak start date : [testgrid-link](https://testgrid.k8s.io/sig-... | Write e2e test for StorageV1CSIDriver Endpoints + 3 Endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/118098/comments | 5 | 2023-05-18T09:11:30Z | 2023-07-06T00:53:04Z | https://github.com/kubernetes/kubernetes/issues/118098 | 1,715,290,319 | 118,098 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I hit a panic in the apiserver package when I created an aggregated API.

```text

panic: runtime error: index out of range [0] with length 0

goroutine 1 [running]:

k8s.io/apiserver/pkg/server.(*GenericAPIServer).InstallAPIGroups(0xc000005200, {0xc000950a38, 0x1, 0x1})

/src/vendor/k8s.io/apiserve... | apiserver InstallAPIGroups slices out of range | https://api.github.com/repos/kubernetes/kubernetes/issues/118094/comments | 3 | 2023-05-18T07:58:01Z | 2023-05-19T23:28:19Z | https://github.com/kubernetes/kubernetes/issues/118094 | 1,715,195,091 | 118,094 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

### What did you expect to happen?

Returns the specified version of the resource based on the request

### How can we reproduce it (as minimally and precisely as possible)?

kubectl get --raw ... | use "kubectl get -- raw", specifies resourceVersion and resourceVersionMatch=Exact, an unexpected result is returned | https://api.github.com/repos/kubernetes/kubernetes/issues/118092/comments | 7 | 2023-05-18T06:40:55Z | 2024-03-30T11:58:09Z | https://github.com/kubernetes/kubernetes/issues/118092 | 1,715,098,510 | 118,092 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When I attempt to create a cluster (i.e. `minikube start --profile=test-cluster --kubernetes-version=v1.27.2`) with v1.27.2, I'm getting the initial error message:

Unable to restart cluster, will reset it: apiserver healthz: apiserver process never appeared

Please see full text [here](https://... | [Apple M1][macOS 13.3.1 (a)] Unable to Install v1.27.2 using MiniKube v1.30.1 | https://api.github.com/repos/kubernetes/kubernetes/issues/118091/comments | 4 | 2023-05-18T05:24:02Z | 2023-05-18T06:05:06Z | https://github.com/kubernetes/kubernetes/issues/118091 | 1,715,003,756 | 118,091 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

remove log warning about not using config file

### Why is this needed?

Currently, kube-proxy can be configured via both CLI flags and a config file, and the config file can override flags. In the future, all flags will be deprecated in favor of the config file. But now the config f... | kube-proxy: remove log warning about not using config file | https://api.github.com/repos/kubernetes/kubernetes/issues/118090/comments | 6 | 2023-05-18T03:04:15Z | 2023-06-08T22:40:26Z | https://github.com/kubernetes/kubernetes/issues/118090 | 1,714,909,827 | 118,090 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Currently, the maximum number of completions is MAX_INT32, around 2e9 and the maximum parallelism is 1e5.

When using these values, and assuming a very unlucky case, the field `.status.completedIndexes` can fill up the maximum size for objects in etcd (around 1.5Mi)

/sig apps

/sig scalability

... | Indexed Jobs can break with high number of parallelism or completions | https://api.github.com/repos/kubernetes/kubernetes/issues/118085/comments | 10 | 2023-05-17T18:27:50Z | 2023-06-15T21:24:20Z | https://github.com/kubernetes/kubernetes/issues/118085 | 1,714,438,380 | 118,085 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

1. Create a PVC

```

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

annotations:

cdi.kubevirt.io/storage.bind.immediate.requested: "true"

cdi.kubevirt.io/storage.condition.running: "false"

cdi.kubevirt.io/storage.condition.running.message: Import Complete

cdi.kubevi... | Got rpc error create shared snapshot for pvc with unsupported storage class | https://api.github.com/repos/kubernetes/kubernetes/issues/118075/comments | 13 | 2023-05-17T12:36:26Z | 2024-10-20T05:24:59Z | https://github.com/kubernetes/kubernetes/issues/118075 | 1,713,813,004 | 118,075 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I cannot use the user namespaces feature that is enabled by the feature gate `UserNamespacesStatelessPodsSupport` as mentioned in https://kubernetes.io/blog/2022/10/03/userns-alpha/

I tried kubeadm with the flag `--feature-gates=UserNamespacesStatelessPodsSupport=true` but I got the error

`unrec... | unrecognized feature-gate key: UserNamespacesStatelessPodsSupport | https://api.github.com/repos/kubernetes/kubernetes/issues/118074/comments | 4 | 2023-05-17T10:06:10Z | 2023-05-17T19:29:52Z | https://github.com/kubernetes/kubernetes/issues/118074 | 1,713,560,902 | 118,074 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

While investigating a dip in volume usage graphs for a nfs volume, we suspect there could have been a temporary error when gathering metrics, causing us to return 0 bytes. However we could not verify this issue with the default logging levels.

### What did you expect to happen?

Consider elevating ... | Improve logging when volume usage metrics fails | https://api.github.com/repos/kubernetes/kubernetes/issues/118062/comments | 7 | 2023-05-17T03:51:57Z | 2024-07-07T03:17:45Z | https://github.com/kubernetes/kubernetes/issues/118062 | 1,713,053,722 | 118,062 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hi. I am using k8s 1.21 for stress test. When node resource is not sufficient and some taints which pods cannot tolerate are added on the node, I create 20K pods by shell command. As you can see, 20K pods are in pending status. At the same time, memory usage of kube-scheduler increases gradually a... | lots of pending pods lead to memory surge of kube-scheduler | https://api.github.com/repos/kubernetes/kubernetes/issues/118059/comments | 15 | 2023-05-17T01:54:51Z | 2024-03-24T01:41:59Z | https://github.com/kubernetes/kubernetes/issues/118059 | 1,712,968,456 | 118,059 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

A pod was created with fsgroup and a very large CSI volume that ended up taking 40 hours to finish applying fsgroup. We realized the issue and tried to redeploy the pod with `fsGroupChangePolicy: OnRootMismatch`. However, because the CSI volume is DeviceMountable, the volume operations are serial... | Cancel volume operations when pod is deleted | https://api.github.com/repos/kubernetes/kubernetes/issues/118058/comments | 12 | 2023-05-17T01:49:12Z | 2024-12-14T15:40:55Z | https://github.com/kubernetes/kubernetes/issues/118058 | 1,712,964,856 | 118,058 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When a gated pod is created with nil affinity and a user/controller tries to update its nodeAffinity, the validation fails.

### What did you expect to happen?

The pod gets updated with the new affinity as per rules in [KEP-3838](https://github.com/kubernetes/enhancements/commit/ac65b5853a9a5... | Updating the nodeAffinity of gated pods having nil affinity is not allowed | https://api.github.com/repos/kubernetes/kubernetes/issues/118052/comments | 2 | 2023-05-16T17:17:27Z | 2023-05-22T16:35:11Z | https://github.com/kubernetes/kubernetes/issues/118052 | 1,712,421,093 | 118,052 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

https://testgrid.k8s.io/sig-node-containerd#node-e2e-features

https://testgrid.k8s.io/sig-node-containerd#node-kubelet-containerd-performance-test

https://testgrid.k8s.io/sig-node-containerd#node-e2e-unlabelled

### Which tests are failing?

all

### Since when has it been faili... | ci-cri-containerd-node-e2e-features is failing | https://api.github.com/repos/kubernetes/kubernetes/issues/118042/comments | 13 | 2023-05-16T09:50:02Z | 2024-03-03T14:30:48Z | https://github.com/kubernetes/kubernetes/issues/118042 | 1,711,662,856 | 118,042 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I configured it according to the network policy demonstrated in the official document, and found that sometimes it takes effect and sometimes it does not. After investigation, I finally found that this example only takes effect when busybox and nginx are assigned to the same node, and it does not ... | Network policies only take effect when pods are assigned to the same node | https://api.github.com/repos/kubernetes/kubernetes/issues/118039/comments | 7 | 2023-05-16T08:35:58Z | 2023-09-14T16:42:45Z | https://github.com/kubernetes/kubernetes/issues/118039 | 1,711,532,313 | 118,039 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [fb94bb199721f2524f81](https://go.k8s.io/triage#fb94bb199721f2524f81)

##### Error text:

```

[FAILED] deploying csi-hostpath driver: create ClusterRoleBinding: clusterrolebindings.rbac.authorization.k8s.io "psp-csi-hostpath-role-ephemeral-9424" already exists

In [It] at: test/e2e/storage/driver... | [Flaking Test][sig-storage] deploying csi-hostpath driver: create ClusterRoleBinding: clusterrolebindings.rbac.authorization.k8s.io "psp-csi-hostpath-role-ephemeral-*" already exists | https://api.github.com/repos/kubernetes/kubernetes/issues/118037/comments | 6 | 2023-05-16T07:44:02Z | 2025-01-08T08:34:37Z | https://github.com/kubernetes/kubernetes/issues/118037 | 1,711,442,115 | 118,037 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

explain command's long description contains a [code block](https://github.com/kubernetes/kubernetes/blob/70033bf84301d64f079dd7c1ca19b05d57a39bee/staging/src/k8s.io/kubectl/pkg/cmd/explain/explain.go#L43) and when `templates.LongDesc` is [called](https://github.com/kubernetes/kubernetes/blob/70033bf... | Multiple templates.LongDesc invocation leads to incorrect code block | https://api.github.com/repos/kubernetes/kubernetes/issues/118028/comments | 1 | 2023-05-16T06:02:29Z | 2023-05-24T06:20:51Z | https://github.com/kubernetes/kubernetes/issues/118028 | 1,711,297,529 | 118,028 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I want to run a job every minute, 3 times. The YAML looks like this:

```

apiVersion: batch/v1

kind: CronJob

metadata:

name: print

spec:

schedule: "*/1 * * * *"

jobTemplate:

spec:

completionMode: Indexed

completions: 3

template:

spec:

restartPo... | The field spec.jobTemplate.spec.completions is not effect in CronJob | https://api.github.com/repos/kubernetes/kubernetes/issues/118026/comments | 3 | 2023-05-16T05:08:59Z | 2023-05-16T05:26:48Z | https://github.com/kubernetes/kubernetes/issues/118026 | 1,711,245,889 | 118,026 |

[

"kubernetes",

"kubernetes"

] | In #118017 I tried to reorganize the kube-proxy build a bit, and ran into the problem that verify-typecheck failed because `cmd/kube-proxy` was no longer buildable on darwin/amd64 and darwin/arm64. This was surprising because we don't build kube-proxy on OS X.

It seems like verify-typecheck ought to be taking `KUBE_... | verify-typecheck checks binaries that don't actually get built | https://api.github.com/repos/kubernetes/kubernetes/issues/118020/comments | 3 | 2023-05-15T19:16:51Z | 2023-12-13T21:35:20Z | https://github.com/kubernetes/kubernetes/issues/118020 | 1,710,682,666 | 118,020 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Quoted from @wojtek-t 's [comment](https://github.com/kubernetes/kubernetes/pull/115754#discussion_r1192068894)

Splitting healthz/ into livez/ and readyz/

### Why is this needed?

```

I agree that splitting into livez/ and readyz/ is what we need here:

* livez/ inherit... | Split /healthz into /livez & /readyz for kube-scheduler | https://api.github.com/repos/kubernetes/kubernetes/issues/118011/comments | 8 | 2023-05-15T10:34:52Z | 2024-05-30T05:09:18Z | https://github.com/kubernetes/kubernetes/issues/118011 | 1,709,805,162 | 118,011 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

If the `ResourceVersion=0` parameter is added, the request is not passed through to etcd. Is there a specific reason that KCM needs to go to etcd to get real-time data?

Or we could actually add ResourceVersion=0 to the `metav1.ListOptions` ?

```

~/go/s... | Why do so many List requests to ApiServer in KCM have no ResourceVersion parameter | https://api.github.com/repos/kubernetes/kubernetes/issues/118008/comments | 5 | 2023-05-15T04:01:43Z | 2024-09-24T20:26:06Z | https://github.com/kubernetes/kubernetes/issues/118008 | 1,709,273,083 | 118,008 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Setting resource limits at the container level is tedious, and it's hard to know how much to set.

### Why is this needed?

```

containers:

- name: contianersA

resources: {}

- name: contianersB

resources: {}

- name: contianersC

resources: {}

...

initC... | Resource limits are at the pod level, not at the container level | https://api.github.com/repos/kubernetes/kubernetes/issues/118004/comments | 10 | 2023-05-15T02:22:24Z | 2024-03-27T21:30:08Z | https://github.com/kubernetes/kubernetes/issues/118004 | 1,709,205,499 | 118,004 |

[

"kubernetes",

"kubernetes"

] | kubernetes 1.27 packages for armhf are currently not available:

https://packages.cloud.google.com/apt/dists/kubernetes-xenial/main/binary-armhf/Packages

Could you please make them available?

Thank you | kubernetes 1.27 armhf packages not available | https://api.github.com/repos/kubernetes/kubernetes/issues/117996/comments | 6 | 2023-05-13T20:41:30Z | 2023-05-17T03:03:47Z | https://github.com/kubernetes/kubernetes/issues/117996 | 1,708,735,743 | 117,996 |

[

"kubernetes",

"kubernetes"

] | Installing executables with "go get" is deprecated. Now, "go install" can be used instead.

/kind documentation

/assign @anshul-kh

| client-go: update INSTALL.md | https://api.github.com/repos/kubernetes/kubernetes/issues/117990/comments | 2 | 2023-05-13T13:44:22Z | 2023-05-15T01:21:25Z | https://github.com/kubernetes/kubernetes/issues/117990 | 1,708,609,371 | 117,990 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

While debugging another issue, we saw patches in the audit log coming from a controller that we were sure shouldn't be making any changes. And when we watched with `kubectl get TYPE --watch -o yaml`, there weren't any changes observed.

Eventually, we realized that the controller in question was ... | audit log does not contain dryRun (and other URL params) | https://api.github.com/repos/kubernetes/kubernetes/issues/117988/comments | 8 | 2023-05-13T01:49:28Z | 2024-11-22T19:02:14Z | https://github.com/kubernetes/kubernetes/issues/117988 | 1,708,389,869 | 117,988 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Currently, the only way for a StatefulSet pod to identify its own index is by parsing the pod name. This is not convenient; it would be easier if we set the index as an annotation that users can expose as an env var using the downward API. We already do this for Indexed Job.

Also, for both Ind... | Publish the pod index as a label and annotation for Statefulset and indexed Job | https://api.github.com/repos/kubernetes/kubernetes/issues/117986/comments | 19 | 2023-05-12T21:48:32Z | 2024-03-26T20:13:14Z | https://github.com/kubernetes/kubernetes/issues/117986 | 1,708,255,262 | 117,986 |

[

"kubernetes",

"kubernetes"

] | Recently came across this code:

https://github.com/kubernetes/kubernetes/blob/53636bc7806d6e4fa1e9a4824d04779d0c4cde39/pkg/scheduler/framework/plugins/volumezone/volume_zone.go#L220-L224

The code meant to say: if a Node doesn't have the following topology labels

https://github.com/kubernetes/kubernetes/blob/8d... | Re-think volumezone plugin | https://api.github.com/repos/kubernetes/kubernetes/issues/117983/comments | 8 | 2023-05-12T19:06:23Z | 2024-08-02T23:25:11Z | https://github.com/kubernetes/kubernetes/issues/117983 | 1,708,079,781 | 117,983 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

`pod_scheduling_duration_seconds` is recording the time that a Pod is gated.

We use the timestamp when the scheduler inserts the pod into the queue: https://github.com/kubernetes/kubernetes/blob/84c8abfb8bf900ce36f7ebfbc52794bad972d8cc/pkg/scheduler/internal/queue/scheduling_queue.go#L402

### Wh... | pod_scheduling_durating_seconds includes the time a Pod fails PreEnqueue | https://api.github.com/repos/kubernetes/kubernetes/issues/117979/comments | 23 | 2023-05-12T17:12:54Z | 2023-06-22T00:49:42Z | https://github.com/kubernetes/kubernetes/issues/117979 | 1,707,956,244 | 117,979 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

A deployment with a single pod is scheduled multiple times with OutOfcpu error. The cluster starts with a single node, but ends up autoscaling to 3 (max nodes in the pool), with all pods hanging.

```

$ kubectl get deploy

NAME READY UP-TO-DATE AVAILABLE AGE

scheduler 1/1 ... | Pod created many times when node is OutOfcpu | https://api.github.com/repos/kubernetes/kubernetes/issues/117978/comments | 15 | 2023-05-12T14:25:50Z | 2025-01-22T15:42:02Z | https://github.com/kubernetes/kubernetes/issues/117978 | 1,707,731,716 | 117,978 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

```

kubelet_pods.go:897] "Unable to retrieve pull secret, the image pull may not succeed." pod="default/xxxxx" secret="" err="failed to sync secret cache: timed out waiting for the condition"

```

When a pod references a secret or configmap, the kubelet adds a watch in `WatchBasedManager`. If the... | Reduce error "failed to sync secret cache: timed out waiting for the condition" | https://api.github.com/repos/kubernetes/kubernetes/issues/117972/comments | 16 | 2023-05-12T10:01:30Z | 2024-03-25T10:58:00Z | https://github.com/kubernetes/kubernetes/issues/117972 | 1,707,335,595 | 117,972 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

- [x] update build etcd image to 3.5.9-0 https://github.com/kubernetes/kubernetes/pull/117999 @kkkkun

- [x] update etcd version to 3.5.9 https://github.com/kubernetes/kubernetes/pull/118027 @humblec

- [x] upgrade etcd deps to v3.5.9 https://github.com/kubernetes/kubernet... | Upgrade etcd to 3.5.9 | https://api.github.com/repos/kubernetes/kubernetes/issues/117957/comments | 5 | 2023-05-12T02:55:45Z | 2023-05-17T11:54:52Z | https://github.com/kubernetes/kubernetes/issues/117957 | 1,706,839,771 | 117,957 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have a k8s cluster (version v1.23.6) with a GPU node which has 4 GPUs.

First, I submitted a job which requests 1 nvidia.com/gpu, and after a while the job finished successfully with a Completed pod status.

Second, I submitted a deployment which requests 4 nvidia.com/gpu, it keeps running... | A completed status pod which request 1 nvidia.com/gpu resource updated to UnexpectedAdmissionError when restart kubelet service | https://api.github.com/repos/kubernetes/kubernetes/issues/117955/comments | 12 | 2023-05-12T02:35:18Z | 2024-09-29T02:07:30Z | https://github.com/kubernetes/kubernetes/issues/117955 | 1,706,827,544 | 117,955 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When a pod completes, the scheduler moves all the pending pods back into the active/backoff.

In a busy cluster with multiple nodepools, this is not appropriate, as pods could get starved.

### What did you expect to happen?

https://github.com/kubernetes/kubernetes/blob/02659772cb2662600fb1273654... | When a pod completes, every pending pod is added back to active/backoff | https://api.github.com/repos/kubernetes/kubernetes/issues/117951/comments | 9 | 2023-05-11T20:17:24Z | 2024-02-19T06:20:57Z | https://github.com/kubernetes/kubernetes/issues/117951 | 1,706,489,705 | 117,951 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

There are still references to https://storage.googleapis.com/kubernetes-release instead of https://dl.k8s.io/

dl.k8s.io is the correct advertised download host and will eventually move to be fastly shielding a fully community-owned bucket

ref: https://github.com/kubernetes/k8s.io/issues/2396

... | replace references of https://storage.googleapis.com/kubernetes-release with https://dl.k8s.io | https://api.github.com/repos/kubernetes/kubernetes/issues/117949/comments | 7 | 2023-05-11T18:40:15Z | 2023-05-13T13:52:41Z | https://github.com/kubernetes/kubernetes/issues/117949 | 1,706,348,869 | 117,949 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

A new metric `rollingUpdateDuration` in the StatefulSet controller, to help determine how long a rolling update takes.

### Why is this needed?

The new metric helps us evaluate the time consumed during a StatefulSet rolling update; we can optimize it based on the ... | Add a new metric `rollingUpdateDuration` to statefulset for measuring | https://api.github.com/repos/kubernetes/kubernetes/issues/117928/comments | 9 | 2023-05-11T08:18:52Z | 2023-08-28T10:51:41Z | https://github.com/kubernetes/kubernetes/issues/117928 | 1,705,309,445 | 117,928 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I am unable to dynamically modify resource limits.

```

#117887 docker update fce32a122506 -m=100G --memory-swap="-1"

Error response from daemon: Cannot update container fce32a122506cba32ac8e376f4a0c337d950d1ffa29409ec96276ecb60427c07: runc did not terminate successfully: failed to write "10737... | Modify memory.limit_in_bytes: invalid argument. | https://api.github.com/repos/kubernetes/kubernetes/issues/117926/comments | 2 | 2023-05-11T08:10:33Z | 2023-05-11T09:31:40Z | https://github.com/kubernetes/kubernetes/issues/117926 | 1,705,296,772 | 117,926 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hello all.

In my environment (k8s version 1.24.4, 6 master nodes, 800 worker nodes, one hundred thousand custom resources of my CRD), if I create or update many custom resources concurrently, for example updating ten thousand custom resources at the same time, the kube-apiserver will run under high... | client-go failed to list and watch because the server was unable to return a response in the time allotted | https://api.github.com/repos/kubernetes/kubernetes/issues/117925/comments | 6 | 2023-05-11T07:48:48Z | 2024-02-20T19:49:38Z | https://github.com/kubernetes/kubernetes/issues/117925 | 1,705,260,795 | 117,925 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When packets with an out-of-window sequence number arrive at a k8s node, conntrack marks them as INVALID. kube-proxy ignores them, without rewriting DNAT. However, there is no corresponding session on the host, so the host sends a reset packet, which interrupts the connection.

### Wha... | "Connection reset by peer" due to invalid conntrack packets | https://api.github.com/repos/kubernetes/kubernetes/issues/117924/comments | 53 | 2023-05-11T05:50:21Z | 2024-05-08T20:00:58Z | https://github.com/kubernetes/kubernetes/issues/117924 | 1,705,112,318 | 117,924 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hello all.

In my environment (k8s version 1.24.4, 6 master nodes, 800 worker nodes, one hundred thousand custom resources of my CRD), if I create or update many custom resources concurrently, for example updating ten thousand custom resources at the same time, the kube-apiserver will run under high... | kube-scheduler/kube-controller can't recover automatically after retrieving lock timeout | https://api.github.com/repos/kubernetes/kubernetes/issues/117922/comments | 5 | 2023-05-11T04:27:26Z | 2023-05-15T07:08:19Z | https://github.com/kubernetes/kubernetes/issues/117922 | 1,705,043,988 | 117,922 |

[

"kubernetes",

"kubernetes"

] | There was some discussion in #117297 about generic vs platform-specific options, especially around `--detect-local-mode`, which can be specified on Windows even though it doesn't actually make sense there. (Or at least, it's not _implemented_ there... I'm not sure if winkernel would benefit from it or not?)

Current ... | generic vs platform/backend-specific options in kube-proxy | https://api.github.com/repos/kubernetes/kubernetes/issues/117909/comments | 4 | 2023-05-10T16:02:36Z | 2024-10-24T16:21:34Z | https://github.com/kubernetes/kubernetes/issues/117909 | 1,704,238,832 | 117,909 |

[

"kubernetes",

"kubernetes"

] | We should start retiring old etcd versions from the etcd migrator's [bundled versions list](https://github.com/kubernetes/kubernetes/blob/a5575425b039bf7c15dfaa9a7acf257fdc4fde3f/cluster/images/etcd/Makefile#L29) so that we can actually obsolete this when [etcd storage versioning](https://github.com/etcd-io/etcd/issues... | Remove very old etcd versions from the bundled versions list | https://api.github.com/repos/kubernetes/kubernetes/issues/117906/comments | 6 | 2023-05-10T14:31:41Z | 2023-06-10T07:03:12Z | https://github.com/kubernetes/kubernetes/issues/117906 | 1,704,060,422 | 117,906 |

[

"kubernetes",

"kubernetes"

] | # Progress <code>[6/6]</code>

- [x] APISnoop org-flow : [CoreV1PodEphemeralcontainersTest.org](https://github.com/apisnoop/ticket-writing/blob/master/CoreV1PodEphemeralcontainersTest.org)

- [x] test approval issue : #117894

- [x] test pr : #117895

- [x] two weeks soak start date : [testgrid-link](https:/... | Write e2e test for PodEphemeralcontainers endpoints + 2 Endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/117894/comments | 3 | 2023-05-09T22:44:50Z | 2023-05-29T22:55:45Z | https://github.com/kubernetes/kubernetes/issues/117894 | 1,702,847,678 | 117,894 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Add a configuration option to the PodTopologySpread plugin to decide which APIs to take into account for spreading.

Currently, the implicit list contains: ReplicaSet, ReplicationController, StatefulSet and Service.

/sig scheduling

### Why is this needed?

The ability to disable s... | Configure which Workload APIs have default spreading | https://api.github.com/repos/kubernetes/kubernetes/issues/117887/comments | 15 | 2023-05-09T15:20:18Z | 2024-10-13T09:33:29Z | https://github.com/kubernetes/kubernetes/issues/117887 | 1,702,260,066 | 117,887 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The pods are repeatedly getting scheduled on the same node and getting evicted with status OutOfmemory.

### What did you expect to happen?

The pod should be scheduled correctly. Even if a pod goes out of memory, there should be only one such pod. The other pods should be scheduled on different nodes with avail... | Lots of pods on Outofmemory state | https://api.github.com/repos/kubernetes/kubernetes/issues/117883/comments | 12 | 2023-05-09T12:28:12Z | 2023-07-29T00:08:55Z | https://github.com/kubernetes/kubernetes/issues/117883 | 1,701,909,992 | 117,883 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

test

### Why is this needed?

test | hi | https://api.github.com/repos/kubernetes/kubernetes/issues/117882/comments | 2 | 2023-05-09T10:21:09Z | 2023-05-09T10:21:49Z | https://github.com/kubernetes/kubernetes/issues/117882 | 1,701,799,493 | 117,882 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When scraping the kubelet resource metrics provided by /metrics/resource, I got a response like this:

```

curl -ik https://10.56.229.90:10250/metrics/resource --cacert /var/run/secrets/kubernetes.io/serviceaccount/ca.crt --header "Authorization: Bearer $(cat /var/run/secrets/kubernetes.io/serviceaccount/... | kubelet resource metric container_start_time_seconds have timestamp equal to container start time | https://api.github.com/repos/kubernetes/kubernetes/issues/117880/comments | 11 | 2023-05-09T08:49:25Z | 2023-10-15T05:05:39Z | https://github.com/kubernetes/kubernetes/issues/117880 | 1,701,636,934 | 117,880 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I am trying to bring up a kind cluster with containerd in order to run e2e test on mac + kind.

Not sure whether this is a bug or I missed some prerequisites.

Any help would be greatly appreciated :)

- building the image dra/node:latest works as expected:

```bash

❯ test/e2e/dra/kind-b... | test/e2e/dra: unable to bring up kind cluster with containerd | https://api.github.com/repos/kubernetes/kubernetes/issues/117878/comments | 14 | 2023-05-09T07:19:20Z | 2023-05-11T03:05:37Z | https://github.com/kubernetes/kubernetes/issues/117878 | 1,701,501,513 | 117,878 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

net.ipv4.tcp_keepalive_time is namespaced, and it is used by several Java clients for configuring TCP behaviour.

### Why is this needed?

1. It would be helpful to be able to configure this for applications without needing to modify the kubelet args. Notable for services... | Make net.ipv4.tcp_keepalive_time a safe sysctl | https://api.github.com/repos/kubernetes/kubernetes/issues/117873/comments | 14 | 2023-05-08T20:32:21Z | 2023-10-13T03:02:52Z | https://github.com/kubernetes/kubernetes/issues/117873 | 1,700,894,268 | 117,873 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When a CSI driver supports ControllerExpand but not NodeExpand, it is possible for misleading errors to be reported by the kubelet:

```

MountVolume.MountDevice failed while expanding volume for volume "pvc-649b2667-100e-4088-9e6b-c0fe2ef458e1" : Expander.NodeExpand found CSI plugin kubernetes.io/c... | Race when checking for CSI Node Expand support records misleading event | https://api.github.com/repos/kubernetes/kubernetes/issues/117871/comments | 10 | 2023-05-08T18:19:08Z | 2024-03-31T17:12:11Z | https://github.com/kubernetes/kubernetes/issues/117871 | 1,700,706,601 | 117,871 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The functions SetWatchErrorHandler and SetTransform are racy when used with a SharedInformer factory.

Consider the following example:

```go

informerFactory := informers.NewSharedInformerFactory(client, 0*time.Second)

go func() {

informerFactory.Start(stop)

}()

serviceInformer := informerFactory.C... | client: shared informer SetX functions are racy | https://api.github.com/repos/kubernetes/kubernetes/issues/117869/comments | 6 | 2023-05-08T17:32:54Z | 2023-06-05T21:23:07Z | https://github.com/kubernetes/kubernetes/issues/117869 | 1,700,642,415 | 117,869 |

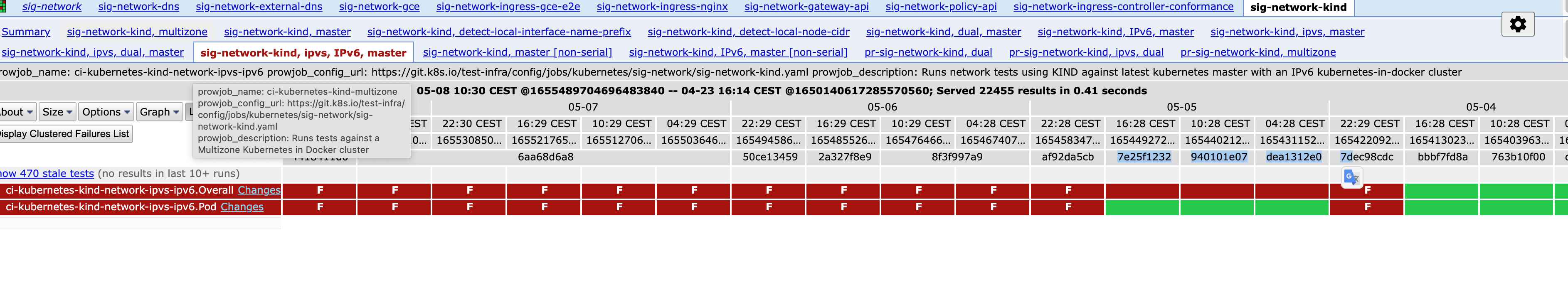

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

### Which tests are failing?

It seems the whole job is timing out.

### Since when has it been failing?

05-04

### Testgrid link

https://testgrid.k8s.io/sig-network-kind#sig-net... | [Failing test] kind ipvs ipv6 job failing | https://api.github.com/repos/kubernetes/kubernetes/issues/117863/comments | 20 | 2023-05-08T12:43:19Z | 2023-05-15T12:56:42Z | https://github.com/kubernetes/kubernetes/issues/117863 | 1,700,185,042 | 117,863 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

```

apiVersion: batch/v1

kind: CronJob

metadata:

annotations:

name: productsetl

namespace: default

spec:

schedule: "10 15 * * 1"

successfulJobsHistoryLimit: 3

suspend: false

concurrencyPolicy: Allow

failedJobsHistoryLimit: 1

jobTemplate:

spec:

template:

... | Job not running on scheduled time - Kubernetes CronJob | https://api.github.com/repos/kubernetes/kubernetes/issues/117856/comments | 9 | 2023-05-08T07:29:39Z | 2024-02-19T04:53:07Z | https://github.com/kubernetes/kubernetes/issues/117856 | 1,699,700,668 | 117,856 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I want to update the pod spec in framework.PreBindPlugin.

However, it is forbidden.

<img width="1210" alt="3e3b0e00002a20d9e0eafd1aa6a8737" src="https://user-images.githubusercontent.com/41802365/236726412-76d11904-cae3-448b-aab1-0dfc1628a01d.png">

i think it should work. because, it it behaviour b... | update pod spec in framework.PreBindPlugin | https://api.github.com/repos/kubernetes/kubernetes/issues/117854/comments | 7 | 2023-05-08T03:21:37Z | 2023-05-09T14:39:37Z | https://github.com/kubernetes/kubernetes/issues/117854 | 1,699,442,550 | 117,854 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Whenever we update anything in a Secret or ConfigMap, we cannot find out when it was updated.

We only see the creation time.

If this feature already exists, please tell us how to find it.

### Why is this needed?

If we have this feature then it will be helpful for auditin... | show update time in configmap or secret | https://api.github.com/repos/kubernetes/kubernetes/issues/117847/comments | 6 | 2023-05-07T11:24:22Z | 2023-05-15T08:33:22Z | https://github.com/kubernetes/kubernetes/issues/117847 | 1,699,019,479 | 117,847 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When using the kubelet in an OpenVZ VPS, everything in /proc is read-only, so the kubelet exits with an error:

```

kubelet.go:1480] "Failed to start ContainerManager" err="open /proc/sys/kernel/panic: permission denied"

```

### What did you expect to happen?

The kubelet runs successfully.

### How can we reproduce ... | container_manager_linux.go no cofig for setupKernelTunables KernelTunableWarn | https://api.github.com/repos/kubernetes/kubernetes/issues/117846/comments | 7 | 2023-05-07T08:48:05Z | 2024-05-10T02:34:46Z | https://github.com/kubernetes/kubernetes/issues/117846 | 1,698,962,610 | 117,846 |

[

"kubernetes",

"kubernetes"

] | Playing around with kind, I noticed the following somewhat unexpected behavior:

```yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: busybox

spec:

replicas: 2

selector:

matchLabels:

app: busybox

template:

metadata:

labels:

app: busybox

spec:

nodeName... | Pods being scheduled to a cordon'ed node due to the presence of nodeName field | https://api.github.com/repos/kubernetes/kubernetes/issues/117843/comments | 6 | 2023-05-07T00:46:56Z | 2024-03-19T20:56:05Z | https://github.com/kubernetes/kubernetes/issues/117843 | 1,698,833,666 | 117,843 |

[

"kubernetes",

"kubernetes"

] | At the moment, there is no option to avoid logging certain errors, which also prevents dependent repositories from operating on these errors in a way that would make more sense to the user-facing side of things. IIUC, it makes sense to use [`HandleError`](https://github.com/kubernetes/apimachinery/blob/8d1258da8f386b80... | Allow operating on errors instead of logging them | https://api.github.com/repos/kubernetes/kubernetes/issues/127329/comments | 16 | 2023-05-06T18:06:13Z | 2025-01-27T10:22:12Z | https://github.com/kubernetes/kubernetes/issues/127329 | 2,522,924,669 | 127,329 |

[

"kubernetes",

"kubernetes"

] | Hi Team,

I'm using an on-premises Kubernetes cluster with version v1.23.1. Recently, I started seeing high memory utilization by the kube-apiserver process; as a result, the master node frequently hangs.

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

sc... | kube-apiserver process memory utilization is getting high frequently | https://api.github.com/repos/kubernetes/kubernetes/issues/117840/comments | 7 | 2023-05-06T13:18:08Z | 2023-08-20T08:42:49Z | https://github.com/kubernetes/kubernetes/issues/117840 | 1,698,629,044 | 117,840 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [d4630f19a3fbeb0be9ba](https://go.k8s.io/triage#d4630f19a3fbeb0be9ba)

##### Error text:

```

[FAILED] error running /workspace/github.com/containerd/containerd/kubernetes/platforms/linux/amd64/kubectl --server=https://35.230.64.81 --kubeconfig=/workspace/.kube/config --namespace=services-529 exe... | Failure cluster [d4630f19...] Services should fail health check node port if there are only terminating endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/117838/comments | 4 | 2023-05-06T09:02:18Z | 2023-05-06T10:41:04Z | https://github.com/kubernetes/kubernetes/issues/117838 | 1,698,545,500 | 117,838 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

This is a subset of "make verify doesn't work under build/run.sh anymore" xref: https://github.com/kubernetes/kubernetes/issues/117821

In two places we assume the path for "local builds"

https://github.com/kubernetes/kubernetes/blob/ace6a79372113b8d850a58cdba740cdef4bc10ee/hack/golangci.yaml#L... | logcheck doesn't work with dockerized build | https://api.github.com/repos/kubernetes/kubernetes/issues/117831/comments | 9 | 2023-05-06T01:38:39Z | 2023-10-25T21:48:50Z | https://github.com/kubernetes/kubernetes/issues/117831 | 1,698,366,536 | 117,831 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The comments for `NewExponentialBackoffManager` read:

```go

// NewExponentialBackoffManager returns a manager for managing exponential backoff. Each backoff is jittered and

// backoff will not exceed the given max. If the backoff is not called within resetDuration, the backoff is reset.

// This ... | Invalid deprecation information in k8s.io/apimachinery/pkg/util/wait | https://api.github.com/repos/kubernetes/kubernetes/issues/117829/comments | 5 | 2023-05-05T22:56:31Z | 2024-05-16T16:51:14Z | https://github.com/kubernetes/kubernetes/issues/117829 | 1,698,285,671 | 117,829 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are flaking?

pull-kubernetes-e2e-gce, assorted GCE jobs

### Which tests are flaking?

`[It] [sig-network] Services should fail health check node port if there are only terminating endpoints`

### Since when has it been flaking?

Seems to be elevated today https://storage.googleapis.com/k8s-triage/ind... | flaky test: Services should fail health check node port if there are only terminating endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/117824/comments | 4 | 2023-05-05T21:28:15Z | 2023-05-07T13:13:58Z | https://github.com/kubernetes/kubernetes/issues/117824 | 1,698,215,498 | 117,824 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

See thread in slack.k8s.io https://kubernetes.slack.com/archives/C09R23FHP/p1683313501620729

Basically, for some time now we've been using `git worktree` to speed up various verify scripts, but unfortunately they can't be run dockerized now under `build/run.sh` because that script explicitly ex... | `make verify` doesn't work under build/run.sh due to git worktree usage while .git is not rsynced to build container | https://api.github.com/repos/kubernetes/kubernetes/issues/117821/comments | 6 | 2023-05-05T20:39:57Z | 2023-12-13T22:58:04Z | https://github.com/kubernetes/kubernetes/issues/117821 | 1,698,171,956 | 117,821 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I work on an operator that creates an Indexed Job alongside a headless service to give the indexed job pods a shared network. We used to have a design where we would create a one-off pod before the indexed job to generate a certificate, and recently refactored to have the library built into the op... | Network readiness for indexed job + headless service depends on presence of pod | https://api.github.com/repos/kubernetes/kubernetes/issues/117819/comments | 124 | 2023-05-05T19:12:52Z | 2023-10-02T13:33:15Z | https://github.com/kubernetes/kubernetes/issues/117819 | 1,698,074,045 | 117,819 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I tried to `build/run.sh make` on my Intel MacOS 12.6 machine, where I have podman installed and symlinked to "docker" in a directory on my path. Commands that invoke "docker" work as expected. I am not using Docker because of license and payment issues. But `build/common.sh` insists that Docker'... | build/run.sh does not work with podman | https://api.github.com/repos/kubernetes/kubernetes/issues/117816/comments | 5 | 2023-05-05T17:00:42Z | 2023-06-05T08:47:58Z | https://github.com/kubernetes/kubernetes/issues/117816 | 1,697,919,430 | 117,816 |

[

"kubernetes",

"kubernetes"

] | Please see the following internal error:

```

I0505 14:12:12.827065 190662 kuberuntime_manager.go:1014] "computePodActions got for pod" podActions="<internal error: json: unsupported type: map[container.ContainerID]kuberuntime.containerToKillInfo>" pod="kube-system/coredns-8f5847b64-mzw46"

```

This seems to be co... | [klog] internal error during logging when trying to convert a map to a something printable | https://api.github.com/repos/kubernetes/kubernetes/issues/117809/comments | 20 | 2023-05-05T14:34:32Z | 2025-03-02T02:23:51Z | https://github.com/kubernetes/kubernetes/issues/117809 | 1,697,729,887 | 117,809 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Add a mechanism (i.e., a registered label that is automatically applied) that lets a cluster operator write a selector for namespaces.

The selector should be able to either include all system namespaces, or exclude them all.

---

Optionally, prevent people from overwriting th... | Allow selecting system namespaces | https://api.github.com/repos/kubernetes/kubernetes/issues/117807/comments | 8 | 2023-05-05T13:30:23Z | 2024-12-15T14:03:47Z | https://github.com/kubernetes/kubernetes/issues/117807 | 1,697,627,391 | 117,807 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

target file: https://github.com/kubernetes/kubernetes/blob/master/staging/src/k8s.io/client-go/util/workqueue/queue_test.go

```

1. for _, test := range tests {

2. const producers = 50

3. producerWG := sync.WaitGroup{}

4. producerWG.Add(producers)

5. for i := 0; i < producers; i++ {

6. ... | workqueue: loop variables captured by 'func' literals in 'go' statements | https://api.github.com/repos/kubernetes/kubernetes/issues/117805/comments | 11 | 2023-05-05T13:11:09Z | 2024-05-21T19:13:42Z | https://github.com/kubernetes/kubernetes/issues/117805 | 1,697,600,139 | 117,805 |
