issue_owner_repo listlengths 2 2 | issue_body stringlengths 0 261k ⌀ | issue_title stringlengths 1 925 | issue_comments_url stringlengths 56 81 | issue_comments_count int64 0 2.5k | issue_created_at stringlengths 20 20 | issue_updated_at stringlengths 20 20 | issue_html_url stringlengths 37 62 | issue_github_id int64 387k 2.91B | issue_number int64 1 131k |

|---|---|---|---|---|---|---|---|---|---|

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

When the kubelet first starts up and registers, a CNI pod is assigned to the node. After the CNI pod finishes initialization, the node should be `Ready` to run Pods.

The kubelet updates its status to the apiserver periodically, as specified by --node-status-update-frequency. The d... | kubelet sync node status more frequently when node not ready | https://api.github.com/repos/kubernetes/kubernetes/issues/117801/comments | 5 | 2023-05-05T09:45:49Z | 2023-05-06T02:08:01Z | https://github.com/kubernetes/kubernetes/issues/117801 | 1,697,321,803 | 117,801 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Deletion of pod with prestop hook takes longer than expected.

The logic of deleting a Pod is implemented through `kubelet.killPod(pod, gracePeriodOverride)`, which is responsible for deleting all of the pod's containers, including concurrently executing `PrestopHook` and `StopContainer`, a... | Deletion of pod with prestop hook takes longer than expected. | https://api.github.com/repos/kubernetes/kubernetes/issues/117798/comments | 14 | 2023-05-05T07:31:15Z | 2024-02-24T17:57:19Z | https://github.com/kubernetes/kubernetes/issues/117798 | 1,697,148,707 | 117,798 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Run the command:

`sealos run docker.io/labring/kubernetes-docker:v1.16.15-4.2.0 labring/helm:v3.10.3 labring/calico:v3.24.5 --masters 192.168.2.66,192.168.2.67,192.168.2.68 --passwd '123456'`

Error message:

`192.168.2.67:22 Image is up to date for sealos.hub:5000/pause@sha256:f78411e19d84a252e53bff71a4407a5686c... | sealos 4.2.0 fails to install docker.io/labring/kubernetes-docker:v1.16.15-4.2.0 | https://api.github.com/repos/kubernetes/kubernetes/issues/117797/comments | 2 | 2023-05-05T05:53:09Z | 2023-05-05T06:28:51Z | https://github.com/kubernetes/kubernetes/issues/117797 | 1,697,050,028 | 117,797 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

Run the command:

`sealos run docker.io/labring/kubernetes-docker:v1.16.15-4.2.0 labring/helm:v3.10.3 labring/calico:v3.24.5 --masters 192.168.2.66,192.168.2.67,192.168.2.68 --passwd '123456'`

Error message:

`192.168.2.67:22 Image is up to date for sealos.hub:5000/pause@sha256:f78411e19d84a252e53bff71a4... | sealos 4.2.0 fails to install docker.io/labring/kubernetes-docker:v1.16.15-4.2.0 | https://api.github.com/repos/kubernetes/kubernetes/issues/117795/comments | 2 | 2023-05-05T03:36:15Z | 2023-05-05T05:51:28Z | https://github.com/kubernetes/kubernetes/issues/117795 | 1,696,958,130 | 117,795 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hi, I am from Amazon EKS. A customer recently reported to us that all cluster CA certificates generated when an EKS cluster is created have an empty serial number.

After investigation, I found the serial number is actually set to 0 by https://github.com/kubernetes/client-go/blob/... | all cluster CA certificates created when a cluster is created have an empty Serial number | https://api.github.com/repos/kubernetes/kubernetes/issues/117790/comments | 2 | 2023-05-04T20:55:17Z | 2023-05-11T16:49:10Z | https://github.com/kubernetes/kubernetes/issues/117790 | 1,696,687,820 | 117,790 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Crash

Observed a panic: "invalid memory address or nil pointer dereference" (runtime error: invalid memory address or nil pointer dereference)goroutine 462 [running]:k8s.io/apimachinery/pkg/util/runtime.logPanic(0x29dde20, 0x39c6810) /go/pkg/mod/k8s.io/apimachinery@v0.20.4/pkg/util/runtime/runti... | Crash in fetchGroupVersionResources | https://api.github.com/repos/kubernetes/kubernetes/issues/117789/comments | 9 | 2023-05-04T18:43:44Z | 2024-05-16T16:48:21Z | https://github.com/kubernetes/kubernetes/issues/117789 | 1,696,519,259 | 117,789 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I was testing the InPlacePodVerticalScaling feature in Kubernetes v1.27 with the feature-gate enabled. After changing the pod's resources to 2 cores and 2 GB, the container encountered the following error:

```bash

Error: failed to create containerd task: OCI runtime create failed: runc create fa... | InPlacePodVerticalScaling feature in Kubernetes v1.27 causing container error | https://api.github.com/repos/kubernetes/kubernetes/issues/117782/comments | 5 | 2023-05-04T10:47:02Z | 2024-11-30T17:09:45Z | https://github.com/kubernetes/kubernetes/issues/117782 | 1,695,762,674 | 117,782 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Implement a limit mechanism in APF to limit how many ongoing watches can be accepted.

### Why is this needed?

APF currently limits only the rate at which watch requests are accepted. As a result, the total number of watches can creep up with long watch durations regardless of how low the rate ... | Implement watch limit in APF | https://api.github.com/repos/kubernetes/kubernetes/issues/117777/comments | 16 | 2023-05-04T09:18:29Z | 2024-09-24T20:22:18Z | https://github.com/kubernetes/kubernetes/issues/117777 | 1,695,607,424 | 117,777 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I used a custom kubeadm configuration file to create a cluster, but many fields in the resulting configuration have zero values.

The kubeadm configuration file is as follows:

```yaml

apiVersion: kubeadm.k8s.io/v1beta3

kind: ClusterConfiguration

kubernetesVersion: v1.27.1

apiServer:

extraArgs:

authorization-mod... | time type field in configuration is zero when kubeadm create cluster | https://api.github.com/repos/kubernetes/kubernetes/issues/117772/comments | 4 | 2023-05-04T07:51:17Z | 2023-05-04T09:02:11Z | https://github.com/kubernetes/kubernetes/issues/117772 | 1,695,457,050 | 117,772 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

DaemonSet-created Pods remain in the Running state even when the worker node is in a NotReady state.

### What did you expect to happen?

A Pod is completely terminated.

### How can we reproduce it (as minimally and precisely as possible)?

### Summary

- Deploy a DaemonSet

- Confirm tha... | Unexpected Running state for DaemonSet Pods on NotReady worker nodes | https://api.github.com/repos/kubernetes/kubernetes/issues/117769/comments | 15 | 2023-05-04T02:24:47Z | 2024-05-29T07:44:44Z | https://github.com/kubernetes/kubernetes/issues/117769 | 1,695,130,807 | 117,769 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

This is a tracking bug to implement two changes to better handle version skew as documented in https://github.com/kubernetes/enhancements/tree/master/keps/sig-node/1287-in-place-update-pod-resources#version-skew-strategy

1. kubelet: When feature-gate is disabled, if kubelet sees a... | [FG:InPlacePodVerticalScaling] Implement version skew handling for in-place pod resize | https://api.github.com/repos/kubernetes/kubernetes/issues/117767/comments | 48 | 2023-05-03T23:18:04Z | 2024-11-08T02:21:16Z | https://github.com/kubernetes/kubernetes/issues/117767 | 1,694,974,186 | 117,767 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Current implementation of in-place pod resize looks at max(Spec.Resources, Status.Resources) which works well when resources are being increased, and works well when resources are being decreased assuming that the decrease request is based on observed lower usage, and the new lowered resources does ... | [FG:InPlacePodVerticalScaling] Scheduler should use max(Spec.Resources.Requests, Status.Resources.Requests) instead of Status.AllocatedResources | https://api.github.com/repos/kubernetes/kubernetes/issues/117765/comments | 11 | 2023-05-03T21:03:35Z | 2024-11-05T23:21:52Z | https://github.com/kubernetes/kubernetes/issues/117765 | 1,694,827,233 | 117,765 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

#83094 describes the `kubectl wait` functionality that allows waiting for a JSONPath expression to match a value. For example, the following command waits for the pod named `busybox1` to reach the "Running" phase:

```bash

kubectl wait pod/busybox1 --for=jsonpath='{.... | kubectl wait on arbitrary jsonpath with unknown value | https://api.github.com/repos/kubernetes/kubernetes/issues/117761/comments | 5 | 2023-05-03T17:25:40Z | 2023-07-04T07:26:55Z | https://github.com/kubernetes/kubernetes/issues/117761 | 1,694,495,312 | 117,761 |

[

"kubernetes",

"kubernetes"

] | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://kubernetes.io/security/

-->

**What happened**:

Following the... | [v1.27.1] panic when creating GameServerAllocation in Agones (works in v1.26.4) | https://api.github.com/repos/kubernetes/kubernetes/issues/117762/comments | 15 | 2023-05-03T16:33:42Z | 2023-05-04T05:10:44Z | https://github.com/kubernetes/kubernetes/issues/117762 | 1,694,509,379 | 117,762 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

In the jobset proposal there was a request that Jobs should have some kind of Readiness condition. Currently we have a status field that tells how many Jobs are ready.

> Add readiness status. Add a new condition to declare a job is ready. This should be configurable, for examp... | Add a condition to determine when a job is ready. | https://api.github.com/repos/kubernetes/kubernetes/issues/117758/comments | 17 | 2023-05-03T16:27:41Z | 2024-01-25T19:27:18Z | https://github.com/kubernetes/kubernetes/issues/117758 | 1,694,409,651 | 117,758 |

[

"kubernetes",

"kubernetes"

] | `v1.Node` has a field `.status.nodeInfo.kubeProxyVersion`, about which [the API docs](https://github.com/kubernetes/kubernetes/blob/v1.27.0/staging/src/k8s.io/api/core/v1/types.go#L5250) say:

```

// KubeProxy Version reported by the node.

KubeProxyVersion string `json:"kubeProxyVersion" protobuf:"bytes,8,opt,name=... | node.status.nodeInfo.kubeProxyVersion is a lie | https://api.github.com/repos/kubernetes/kubernetes/issues/117756/comments | 14 | 2023-05-03T15:12:15Z | 2023-10-31T18:16:22Z | https://github.com/kubernetes/kubernetes/issues/117756 | 1,694,278,239 | 117,756 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

error: unable to upgrade connection: error dialing backend: unknown scheme: ws

### What did you expect to happen?

"kubectl exec -it eureka-0 bash" error: "error: unable to upgrade connection: error dialing backend: unknown scheme: ws", the container engine is isulad.

### How can we reproduce it (... | error: unable to upgrade connection: error dialing backend: unknown scheme: ws | https://api.github.com/repos/kubernetes/kubernetes/issues/117754/comments | 14 | 2023-05-03T14:09:48Z | 2024-06-25T20:19:44Z | https://github.com/kubernetes/kubernetes/issues/117754 | 1,694,154,314 | 117,754 |

[

"kubernetes",

"kubernetes"

] | **What happened**:

I'm trying to configure kubectl to edit files using Sublime Text 3. My Sublime Text is installed in the default location (`C:\Program files\Sublime Text 3\sublime_text.exe`), which contains spaces.

I cannot seem to find the correct syntax for defining the KUBE_EDITOR environment variable, which wor... | KUBE_EDITOR path with spaces on Windows doesn't work | https://api.github.com/repos/kubernetes/kubernetes/issues/117781/comments | 17 | 2023-05-03T14:01:31Z | 2024-07-25T20:18:34Z | https://github.com/kubernetes/kubernetes/issues/117781 | 1,695,748,007 | 117,781 |

[

"kubernetes",

"kubernetes"

] | This is a tracking issue for promoting and moving kubernetes/kubernetes jobs to new Prow build cluster running on AWS (eks-prow-build-cluster).

## Candidates

_Candidates are canary jobs that have a satisfactory success rate. Those canary jobs can replace existing jobs running in old build clusters_

* [x] [ci-kuber... | Promote and move kubernetes/kubernetes jobs to new Prow build cluster (eks-prow-build-cluster) | https://api.github.com/repos/kubernetes/kubernetes/issues/117749/comments | 12 | 2023-05-03T12:49:15Z | 2023-08-28T21:37:00Z | https://github.com/kubernetes/kubernetes/issues/117749 | 1,694,012,679 | 117,749 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

This is a bit hard to reproduce, as it happens only in a very specific setup. The problem occurs only with `kubelet` 1.27.1; downgrading to 1.26.4 fixes it.

In a single-node cluster, control-plane pods run as static pods, Rook/Ceph is deployed, and some PVs/PVCs are backed by Ceph.

After a unc... | kubelet (1.27.1) fails to mount a hostPath volume for a static pod | https://api.github.com/repos/kubernetes/kubernetes/issues/117745/comments | 26 | 2023-05-03T10:52:19Z | 2023-07-12T17:57:17Z | https://github.com/kubernetes/kubernetes/issues/117745 | 1,693,840,001 | 117,745 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Make the value for Retry-After header of APF-rejected responses dynamic or user-adjustable instead of being constant 1

### Why is this needed?

When `kube-apiserver` APF rejects a request, it sets the HTTP response header with `Retry-After` of value 1 (ie. retry in 1 second). Ther... | Better mechanism to decide Retry-After header | https://api.github.com/repos/kubernetes/kubernetes/issues/117734/comments | 4 | 2023-05-02T19:39:01Z | 2023-05-24T19:16:51Z | https://github.com/kubernetes/kubernetes/issues/117734 | 1,692,994,961 | 117,734 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

While using the `SelfSubjectAccessReview` API, passing a non-pluralized resource `Pod` instead of the expected `pods` did not return an error, and instead returned the following ambiguous response:

`{Allowed:false Denied:false Reason: EvaluationError:}`

### What did you expect to happen?

The... | Passing a non-pluralized resource to SelfSubjectAccessReview() does not error | https://api.github.com/repos/kubernetes/kubernetes/issues/117732/comments | 5 | 2023-05-02T18:57:54Z | 2023-05-13T16:32:03Z | https://github.com/kubernetes/kubernetes/issues/117732 | 1,692,941,187 | 117,732 |

[

"kubernetes",

"kubernetes"

] | null | [KMSv2] hash the key ID in gRPC interface abstraction | https://api.github.com/repos/kubernetes/kubernetes/issues/117730/comments | 3 | 2023-05-02T17:06:13Z | 2023-07-13T22:47:50Z | https://github.com/kubernetes/kubernetes/issues/117730 | 1,692,784,277 | 117,730 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I tried to create a Kubernetes v1.26.1 control-plane node using kubeadm, following the instructions at https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/. I installed kubeadm, kubelet, kubectl, containerd, CNI plugins, and crictl. I installed the **overlay** a... | kubeadm init fails on wait-control-plane step; kube-apiserver is never launched | https://api.github.com/repos/kubernetes/kubernetes/issues/117729/comments | 6 | 2023-05-02T17:04:53Z | 2023-05-03T07:45:37Z | https://github.com/kubernetes/kubernetes/issues/117729 | 1,692,782,505 | 117,729 |

[

"kubernetes",

"kubernetes"

] | Move the logic to polling because there are variations on file changes (like symlink swapping of directories that contain the encryption config) that the file watch logic would fail to detect. Polling at a set interval prevents any such issues. There was never any guarantee on how quickly the API server would update th... | [KMSv2] Move automatic reload to use a simple one minute poll | https://api.github.com/repos/kubernetes/kubernetes/issues/117728/comments | 4 | 2023-05-02T16:48:40Z | 2023-12-19T14:46:34Z | https://github.com/kubernetes/kubernetes/issues/117728 | 1,692,756,209 | 117,728 |

[

"kubernetes",

"kubernetes"

] | /sig auth

/triage accepted

/assign | [KMSv2] Add apiserver identity to the metrics | https://api.github.com/repos/kubernetes/kubernetes/issues/117726/comments | 3 | 2023-05-02T16:35:21Z | 2023-09-09T23:46:11Z | https://github.com/kubernetes/kubernetes/issues/117726 | 1,692,739,786 | 117,726 |

[

"kubernetes",

"kubernetes"

] | xref: https://hackmd.io/@enj/SyiXCABZn | [KMSv2] Crypto changes for KMS in v1.28 | https://api.github.com/repos/kubernetes/kubernetes/issues/117725/comments | 3 | 2023-05-02T16:17:30Z | 2023-06-26T14:03:34Z | https://github.com/kubernetes/kubernetes/issues/117725 | 1,692,711,202 | 117,725 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We recently faced [some problems](https://gitlab.com/gitlab-org/cluster-integration/gitlab-agent/-/issues/393) when using our Kube API proxy with various Kubernetes distributions.

Long story short, the error turned out to be that in our proxy we've sent an attach request like the following ... | Consume zero-sized chunked body before hijacking the connection on SPDY upgrade | https://api.github.com/repos/kubernetes/kubernetes/issues/117722/comments | 12 | 2023-05-02T15:36:36Z | 2024-05-21T19:08:57Z | https://github.com/kubernetes/kubernetes/issues/117722 | 1,692,649,130 | 117,722 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Random function here disables `MinCandidateNodesAbsolute` and `MinCandidateNodesPercentage`

https://github.com/kubernetes/kubernetes/blob/3f8c4794eadf8aadd8a5bcb0b875bf0af5c2020b/pkg/scheduler/framework/plugins/defaultpreemption/default_preemption.go#L123-L125

https://github.com/kubernetes... | GetOffsetAndNumCandidates function disables MinCandidateNodesAbsolute and MinCandidateNodesAbsolute | https://api.github.com/repos/kubernetes/kubernetes/issues/117707/comments | 9 | 2023-05-01T20:15:31Z | 2024-03-19T16:52:09Z | https://github.com/kubernetes/kubernetes/issues/117707 | 1,691,307,170 | 117,707 |

[

"kubernetes",

"kubernetes"

] | https://github.com/kubernetes/kubernetes/blob/73bd83cfa74730ecab263a886698b7ea8a2e02ad/staging/src/k8s.io/client-go/tools/cache/controller.go#L291-L299 defines a convenient filtering handler.

However, it doesn't define what the behavior is with a FilterFunc that is not static. For example, we may have

```go

All... | Missing documentation on FilteringResourceEventHandler behavior with dynamic filters | https://api.github.com/repos/kubernetes/kubernetes/issues/117706/comments | 14 | 2023-05-01T19:23:47Z | 2024-08-08T20:28:12Z | https://github.com/kubernetes/kubernetes/issues/117706 | 1,691,242,309 | 117,706 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I'm trying to build kubernetes on a `linux/arm64` machine that is virtualized using [UTM](https://mac.getutm.app/) on a M1 macOS.

The problem is that right after a `git clone` the build using `make` fails with:

```

make all

+++ [0501 12:11:19] Setting GOMAXPROCS: 8

+++ [0501 12:12:47] Build... | Build on linux/arm64 using make | https://api.github.com/repos/kubernetes/kubernetes/issues/117701/comments | 7 | 2023-05-01T12:45:48Z | 2023-05-01T13:22:46Z | https://github.com/kubernetes/kubernetes/issues/117701 | 1,690,767,646 | 117,701 |

[

"kubernetes",

"kubernetes"

] | Hi,

I installed a fresh k8s inside a small VM, just to experiment with possible storage solutions, and I stumbled over this...

```bash

root@web1:~# kubectl explain --rec... | Volumes (glusterfs not removed as mentioned in official documentation for v1.27.x) | https://api.github.com/repos/kubernetes/kubernetes/issues/117699/comments | 13 | 2023-05-01T06:20:02Z | 2023-05-11T04:44:53Z | https://github.com/kubernetes/kubernetes/issues/117699 | 1,690,517,347 | 117,699 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

From time to time (with a very low probability), we see that some of our pods fail to start, with a message like the one below:

```console

$ kubectl get pod goapp

NAME READY STATUS RESTARTS AGE

goapp 29/30 Error 9 118s

$ kubectl logs goapp -c p1251

2023/04/29 23:31:32 l... | kubelet is vulnerable to concurrent sort and can violate safety guarantees [v1.21.x] | https://api.github.com/repos/kubernetes/kubernetes/issues/117694/comments | 12 | 2023-04-30T19:47:10Z | 2023-04-30T21:41:07Z | https://github.com/kubernetes/kubernetes/issues/117694 | 1,690,056,290 | 117,694 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

At the moment, if you try to change the podManagementPolicy of an existing StatefulSet, you will be rejected:

```

spec: Forbidden: updates to statefulset spec for fields other than 'replicas', 'template', and 'updateStrategy' are forbidden

```

The goal of this enhancement is ... | Allow changing .spec.podManagementPolicy of an existing StatefulSet | https://api.github.com/repos/kubernetes/kubernetes/issues/117693/comments | 12 | 2023-04-30T11:42:12Z | 2024-04-13T23:16:19Z | https://github.com/kubernetes/kubernetes/issues/117693 | 1,689,885,066 | 117,693 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

After disabling hostNetwork on an already deployed Deployment, hostPorts are not cleared up.

Even if I explicitly set the hostPort(s) to `null`, for ports that use the same number for TCP and UDP, only the TCP one gets cleared.

### What did you expect to happen?

hostPorts to get cleared on the new Pod

... | PodSpec defaulting sets hostPort on hostNetwork pods, but in the template rather than the pod | https://api.github.com/repos/kubernetes/kubernetes/issues/117689/comments | 8 | 2023-04-29T20:06:05Z | 2023-05-10T15:34:11Z | https://github.com/kubernetes/kubernetes/issues/117689 | 1,689,673,208 | 117,689 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I'm trying to create a kubernetes ServiceAccount but using a custom token instead of having an autogenerated one.

This is the code I'm using

```

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: test-sa

---

apiVersion: v1

kind: Secret

metadata:

name: test-sa-token

annota... | Custom token for kubernetes.io/service-account-token - does not work when used | https://api.github.com/repos/kubernetes/kubernetes/issues/117679/comments | 3 | 2023-04-28T22:28:07Z | 2023-04-29T13:37:31Z | https://github.com/kubernetes/kubernetes/issues/117679 | 1,689,212,022 | 117,679 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

If a Pod is subject to a `ResourceQuota` with "PriorityClass" as its scope, the quota is calculated incorrectly. See the repro steps for more details.

### What did you expect to happen?

`ResourceQuota` with "PriorityClass" as its scope is calculated correctly at all times.

### How can we reproduce it (as m... | `ResourceQuota` with "PriortyClass" as its scope is not calculated properly | https://api.github.com/repos/kubernetes/kubernetes/issues/117676/comments | 4 | 2023-04-28T19:38:19Z | 2023-05-05T00:59:14Z | https://github.com/kubernetes/kubernetes/issues/117676 | 1,689,044,790 | 117,676 |

[

"kubernetes",

"kubernetes"

] | Having played around with the new generics-based sets API a bit, I really dislike `sets.List()`.

The `List`/`UnsortedList` API always seemed a little bit weird to me, in that the "default" behavior (`List`) was to sort the output, and you had to explicitly specify if you didn't want that... Now with the generic vers... | rethink sets.List() / sets.Set.UnsortedList() naming? | https://api.github.com/repos/kubernetes/kubernetes/issues/117673/comments | 38 | 2023-04-28T12:46:51Z | 2024-05-17T14:11:23Z | https://github.com/kubernetes/kubernetes/issues/117673 | 1,688,497,148 | 117,673 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

According to https://kubernetes.io/docs/concepts/policy/resource-quotas/#object-count-quota:

| Resource Name | Description |

|---------------|------... | Pods in terminal state are still counted as "used" count/pods quota | https://api.github.com/repos/kubernetes/kubernetes/issues/117663/comments | 4 | 2023-04-28T06:16:56Z | 2023-05-11T23:41:57Z | https://github.com/kubernetes/kubernetes/issues/117663 | 1,687,967,064 | 117,663 |

[

"kubernetes",

"kubernetes"

] | Built-in beta API versions have a maximum lifetime of 9 months or 3 minor releases (whichever is longer) from introduction to deprecation, and 9 months or 3 minor releases (whichever is longer) from deprecation to removal.

prerelease-lifecycle-gen:

- [x] autoscalingapiv2beta1.SchemeGroupVersion // deprecate in 1.... | Remove ability to re-enable serving deprecated APIs | https://api.github.com/repos/kubernetes/kubernetes/issues/117659/comments | 3 | 2023-04-28T05:53:24Z | 2023-12-14T06:29:37Z | https://github.com/kubernetes/kubernetes/issues/117659 | 1,687,943,318 | 117,659 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have a Kubernetes v1.18.17 cluster which mounts a ceph volume for most running pods. The volume definition in the yaml includes a user, a secret, and a monitor ip.

This works fine when pods are scheduled for nodes that don't have the ceph-common binaries installed on the host machine. However... | Ceph volumes ignore kube configuration if ceph-common exists on host machine | https://api.github.com/repos/kubernetes/kubernetes/issues/117653/comments | 9 | 2023-04-27T17:24:06Z | 2024-03-24T11:50:59Z | https://github.com/kubernetes/kubernetes/issues/117653 | 1,687,259,434 | 117,653 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Deployed a three node HA cluster: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/

Restart all three nodes at once -> kubelet is not able to start the static control plane pods:

```bash

kubelet[3112]: E0427 16:27:26.004278 3112 kubelet.go:1875] "Unab... | [FG:InPlacePodVerticalScaling] kubelet.go:1875 "Unable to attach or mount volumes for pod; skipping pod" | https://api.github.com/repos/kubernetes/kubernetes/issues/117652/comments | 10 | 2023-04-27T15:02:24Z | 2023-05-22T08:48:20Z | https://github.com/kubernetes/kubernetes/issues/117652 | 1,687,045,902 | 117,652 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

etcd 3.5.6 is being used for K8s [1.26](https://github.com/kubernetes/kubernetes/blob/f89670c3aa4059d6999cb42e23ccb4f0b9a03979/build/dependencies.yaml#L56-L58) and [1.25](https://github.com/kubernetes/kubernetes/blob/a1a87a0a2bcd605820920c6b0e618a8ab7d117d4/build/dependencies.yaml#L56-L58). It con... | Bump etcd version to 3.5.8 for supported K8s releases | https://api.github.com/repos/kubernetes/kubernetes/issues/117648/comments | 14 | 2023-04-27T13:54:09Z | 2023-11-29T06:16:08Z | https://github.com/kubernetes/kubernetes/issues/117648 | 1,686,906,572 | 117,648 |

[

"kubernetes",

"kubernetes"

] | Tracking issue for https://github.com/opencontainers/runc/issues/3849

When using runc 1.1.6, kubelet, systemd, and dbus-broker consume excessive CPU and memory resources.

Previous issue - https://github.com/kubernetes/kubernetes/issues/112124

Previous fix - https://github.com/opencontainers/runc/pull/3823

T... | [runc][1.1.6] Adding misc controller to cgroup v1 makes kubelet sad | https://api.github.com/repos/kubernetes/kubernetes/issues/117647/comments | 17 | 2023-04-27T13:51:39Z | 2023-06-12T15:15:47Z | https://github.com/kubernetes/kubernetes/issues/117647 | 1,686,902,299 | 117,647 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are flaking?

https://prow.k8s.io/view/gs/kubernetes-jenkins/pr-logs/pull/117614/pull-kubernetes-integration/1651530152728858624

### Which tests are flaking?

{Failed === RUN TestKMSv2Healthz

--- FAIL: TestKMSv2Healthz (167.38s)

also shown in https://storage.googleapis.com/k8s-metrics/flake... | TestKMSv2Healthz flake | https://api.github.com/repos/kubernetes/kubernetes/issues/117646/comments | 1 | 2023-04-27T13:17:09Z | 2023-05-04T22:45:34Z | https://github.com/kubernetes/kubernetes/issues/117646 | 1,686,836,223 | 117,646 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The scheduler started normally, but there are a lot of errors on the stderr console. Here is the error log:

```

Apr 27 10:45:55 12-0-65-8.xpaas.lenovo.com systemd[1]: Started Kubernetes Scheduler.

………………

Apr 27 10:45:57 12-0-65-8.xpaas.lenovo.com kube-scheduler[55182]: I0427 10:45:57.251171 55182 tlscon... | kube-scheduler started but streamwatcher error | https://api.github.com/repos/kubernetes/kubernetes/issues/117640/comments | 10 | 2023-04-27T03:06:08Z | 2024-03-28T00:33:11Z | https://github.com/kubernetes/kubernetes/issues/117640 | 1,686,013,394 | 117,640 |

[

"kubernetes",

"kubernetes"

Right now, topologySpreadConstraints have only two possible behaviors when the constraint cannot be satisfied: DoNotSchedule or ScheduleAnyway.

However, these behaviors do not consider the possibility that there may be lower-priority pods which can be preempted in order to satisfy the constraint.

I propose adding ... | topologySpreadConstraints: Proposal to add whenUnsatisfiable: PreemptOrSchedule | https://api.github.com/repos/kubernetes/kubernetes/issues/117634/comments | 5 | 2023-04-26T17:22:17Z | 2023-05-11T09:29:11Z | https://github.com/kubernetes/kubernetes/issues/117634 | 1,685,430,932 | 117,634 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We are running self managed Kubernetes clusters on GCP and use Services of `type: LoadBalancer` with both public and private `EXTERNAL-IP`s to create load balancers for our clusters. Since upgrading kube-proxy to 1.26.3 (from 1.25.5) we saw that all healthchecks from GCP started to fail and traffi... | kube-proxy: traffic to healthcheck host ports is dropped because of iptables rule order | https://api.github.com/repos/kubernetes/kubernetes/issues/117621/comments | 21 | 2023-04-26T15:19:30Z | 2023-05-02T19:44:24Z | https://github.com/kubernetes/kubernetes/issues/117621 | 1,685,239,374 | 117,621 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We run some Kubernetes “single-node clusters” that combine the control-plane and the workload onto a single host besides some services installed directly on the operating system (SSH, BIND9). With the upgrade to v1.26, all local services become unreachable as soon as kube-proxy configures the netw... | kube-proxy v1.26 blocks host services | https://api.github.com/repos/kubernetes/kubernetes/issues/117613/comments | 17 | 2023-04-26T09:28:41Z | 2023-05-06T22:13:20Z | https://github.com/kubernetes/kubernetes/issues/117613 | 1,684,642,587 | 117,613 |

[

"kubernetes",

"kubernetes"

] | # Progress <code>[7/7]</code>

- [X] APISnoop org-flow : [confirmAPIResourcesTest.org](https://github.com/apisnoop/ticket-writing/blob/master/confirmAPIResourcesTest.org)

- [X] test approval issue : #117610

- [X] test pr : #117611

- [X] two weeks soak start date : [testgrid-link](https://testgrid.k8s.io/si... | Write e2e test for APIResources endpoints + 12 Endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/117610/comments | 4 | 2023-04-26T08:59:49Z | 2023-05-23T20:22:50Z | https://github.com/kubernetes/kubernetes/issues/117610 | 1,684,579,993 | 117,610 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

`kubectl top node` reports memory usage above 80%, and the node is marked Ready,SchedulingDisabled, but the kubelet is only configured with

--eviction-hard=memory.available<10%.

The conditions in the `kubectl describe node` output show no pressure.

Other clusters do not behave this way even at 90% memory usage.

... | when the memory usage is greater than 80%, node is marked ” Ready,SchedulingDisabled “ | https://api.github.com/repos/kubernetes/kubernetes/issues/117609/comments | 9 | 2023-04-26T08:54:02Z | 2023-09-08T07:29:41Z | https://github.com/kubernetes/kubernetes/issues/117609 | 1,684,566,804 | 117,609 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

https://github.com/kubernetes/kubernetes/blob/master/vendor/github.com/cilium/ebpf/internal/btf/types.go#L603-L604

### What did you expect to happen?

```Go

func copyType(typ Type, transform func(Type) (Type, error)) (Type, error) {

copies := make(copier)

err := copies.copy(&typ, transfo... | Unprofessional code depending on unspecified behavior | https://api.github.com/repos/kubernetes/kubernetes/issues/117606/comments | 9 | 2023-04-26T05:33:29Z | 2023-08-20T08:41:18Z | https://github.com/kubernetes/kubernetes/issues/117606 | 1,684,306,035 | 117,606 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The type checking in ValidatingAdmissionPolicy does not recognize authorizer related variables and report it as warning in status.

### What did you expect to happen?

authorizer check should be supported by type checking

### How can we reproduce it (as minimally and precisely as possible)?

Create... | Type checking in ValidatingAdmissionPolicy does not support authorizer variable | https://api.github.com/repos/kubernetes/kubernetes/issues/117601/comments | 3 | 2023-04-25T23:34:49Z | 2023-07-18T21:54:19Z | https://github.com/kubernetes/kubernetes/issues/117601 | 1,684,007,704 | 117,601 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

An API to define when a Job (could be exclusive to Indexed) can be declared as succeeded. Examples of policies:

- Complete if Index 0 is successful

- Complete if x% of indexes are successful

- Complete if x indexes are successful
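The three example policies above can be sketched as a single check. Everything here is hypothetical: `SuccessPolicy`, its fields, and `JobSucceeded` are names invented for illustration and are not part of the Kubernetes Job API.

```go
package main

import "fmt"

// SuccessPolicy is a hypothetical type for illustration only.
// A zero value in MinSucceeded or MinPercent disables that rule.
type SuccessPolicy struct {
	RequiredIndexes []int   // indexes that must all succeed, e.g. []int{0}
	MinSucceeded    int     // minimum number of succeeded indexes
	MinPercent      float64 // minimum percentage (0-100) of succeeded indexes
}

// JobSucceeded reports whether the succeeded indexes satisfy every rule that
// is configured in the policy. An empty policy is trivially satisfied.
func JobSucceeded(p SuccessPolicy, succeeded map[int]bool, completions int) bool {
	for _, idx := range p.RequiredIndexes {
		if !succeeded[idx] {
			return false
		}
	}
	count := 0
	for _, ok := range succeeded {
		if ok {
			count++
		}
	}
	if count < p.MinSucceeded {
		return false
	}
	if p.MinPercent > 0 {
		if completions <= 0 {
			return false
		}
		if float64(count)*100/float64(completions) < p.MinPercent {
			return false
		}
	}
	return true
}

func main() {
	done := map[int]bool{0: true, 2: true}

	// "Complete if Index 0 is successful"
	fmt.Println(JobSucceeded(SuccessPolicy{RequiredIndexes: []int{0}}, done, 4))
	// "Complete if x% of indexes are successful" with x = 50
	fmt.Println(JobSucceeded(SuccessPolicy{MinPercent: 50}, done, 4))
	// "Complete if x indexes are successful" with x = 3
	fmt.Println(JobSucceeded(SuccessPolicy{MinSucceeded: 3}, done, 4))
}
```

Combining the rules with a logical AND is one possible design choice; an actual API could just as well treat them as alternatives.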

### Why is this needed?

- flux-operator: requir... | Job success/completion policy | https://api.github.com/repos/kubernetes/kubernetes/issues/117600/comments | 42 | 2023-04-25T19:32:31Z | 2024-04-22T12:53:44Z | https://github.com/kubernetes/kubernetes/issues/117600 | 1,683,743,606 | 117,600 |

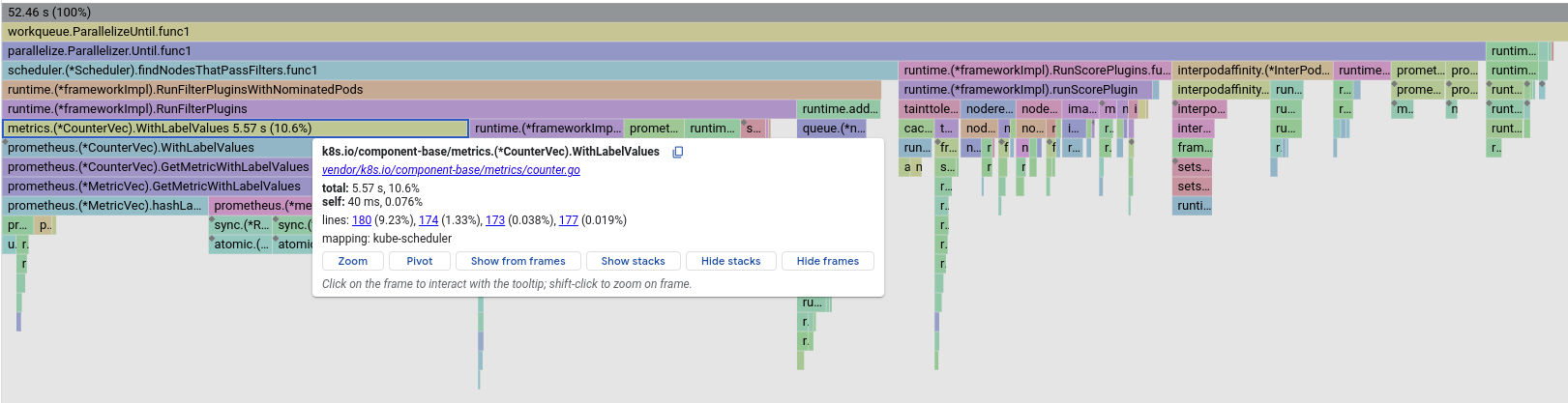

[

"kubernetes",

"kubernetes"

] | ### What happened?

We observed degradation in scheduling throughput, putting it under 100 pods/s.

Respective pprof of kube-scheduler points it to https://github.com/kubernetes/kubernetes/pull/115082:

... | Performance degradation in scheduler after adding metrics for counting plugin evaluation | https://api.github.com/repos/kubernetes/kubernetes/issues/117592/comments | 6 | 2023-04-25T14:01:53Z | 2023-04-26T14:40:08Z | https://github.com/kubernetes/kubernetes/issues/117592 | 1,683,237,799 | 117,592 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Added this test to `staging/src/k8s.io/apiextensions-apiserver/pkg/apiserver/schema/cel/validation_test.go`:

```go

{name: "string lists",

obj: objs([]interface{}{"a", "b", "c"}),

schema: schemas(listType(&stringType)),

valid: []string{

"self.val1.join('-') == 'a-b-c'"

}... | CEL: listOfStrings.join() fails due to internal conversion error | https://api.github.com/repos/kubernetes/kubernetes/issues/117590/comments | 4 | 2023-04-25T13:43:54Z | 2023-04-26T23:10:15Z | https://github.com/kubernetes/kubernetes/issues/117590 | 1,683,206,049 | 117,590 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

After enabling In-Place Pod Vertical Scaling, if a pod is deployed without a memory (or CPU) request, kubelet fails on its second restart.

### What did you expect to happen?

kubelet restarts successfully.

### How can we reproduce it (as minimally and precisely as possible)?

1. start kub... | pod_status_manager_state: checkpoint is corrupted | https://api.github.com/repos/kubernetes/kubernetes/issues/117589/comments | 12 | 2023-04-25T13:30:44Z | 2024-01-18T15:33:06Z | https://github.com/kubernetes/kubernetes/issues/117589 | 1,683,183,310 | 117,589 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The `listGVKs`, `getOptionsInternalKind` and `connectOptionsInternalKinds` slices may be empty in the `registerResourceHandler` function.

### What did you expect to happen?

Should we check whether the `listGVKs`, `getOptionsInternalKind` and `connectOptionsInternalKinds` slices are empty?
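A minimal sketch of the kind of guard being asked about, assuming the handler later reads the first element of such a slice (the source does not show that code). `firstKind` is a hypothetical helper and plain strings stand in for the real kind types.

```go
package main

import (
	"errors"
	"fmt"
)

// firstKind is a hypothetical helper: it returns the first element of kinds,
// or an error instead of panicking when the slice is empty.
func firstKind(kinds []string) (string, error) {
	if len(kinds) == 0 {
		return "", errors.New("no kinds registered")
	}
	return kinds[0], nil
}

func main() {
	if _, err := firstKind(nil); err != nil {
		fmt.Println("empty slice:", err)
	}
	kind, _ := firstKind([]string{"Pod"})
	fmt.Println("first kind:", kind)
}
```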

### How can we repr... | kube-apiserver: whether need to check listGVKs slice is empty in registerResourceHandler functions? | https://api.github.com/repos/kubernetes/kubernetes/issues/117587/comments | 4 | 2023-04-25T12:33:29Z | 2024-04-30T20:28:08Z | https://github.com/kubernetes/kubernetes/issues/117587 | 1,683,085,858 | 117,587 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Several test/e2e/framework/* packages have k8s.io/kubernetes/pkg/api/v1/pod as their only dependency on k/k. The following functions are shared that way:

- IsPodAvailable returns true if a pod is available; false otherwise.

- IsPodReady returns true if a pod is ready; false o... | e2e framework: remove dependency on k8s.io/kubernetes/pkg/api/v1/pod | https://api.github.com/repos/kubernetes/kubernetes/issues/117583/comments | 3 | 2023-04-25T10:00:43Z | 2023-05-12T16:39:03Z | https://github.com/kubernetes/kubernetes/issues/117583 | 1,682,844,676 | 117,583 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I operate Cloud Functions on GCP, and the job is written in Python. I encountered the following error message yesterday.

It seems to be a Kubernetes error. Does anyone know the cause of this issue? Is it due to a shortage of GKE resources?

---

{

insertId: "-"

jsonPayload... | "run_poller: UNKNOWN:Timer list shutdown" | https://api.github.com/repos/kubernetes/kubernetes/issues/117580/comments | 3 | 2023-04-25T06:00:49Z | 2023-04-27T05:04:33Z | https://github.com/kubernetes/kubernetes/issues/117580 | 1,682,485,589 | 117,580 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I defined a CRD and customized its PrinterColumns, but the columns show no data. The JSONPath evaluates successfully in the Online Evaluator, so I'm not sure whether there is a bug in the Kubernetes JSONPath parser.

And I also write down a unit test following the [jsonpath_test.go](https://github.com/kubernetes/kube... | jsonpath parser issue | https://api.github.com/repos/kubernetes/kubernetes/issues/117579/comments | 8 | 2023-04-25T05:01:33Z | 2023-04-27T16:21:26Z | https://github.com/kubernetes/kubernetes/issues/117579 | 1,682,431,445 | 117,579 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

yaml.Marshal a kubeconfig, as done in the example tests here https://pkg.go.dev/k8s.io/client-go/tools/clientcmd/api

### What did you expect to happen?

LocationOfOrigin is not seen in output, as comment says "never serialized."

### How can we reproduce it (as minimally and precisely as possible)?... | LocationOfOrigin shows up unexpectedly | https://api.github.com/repos/kubernetes/kubernetes/issues/117567/comments | 4 | 2023-04-24T22:03:26Z | 2023-05-01T17:20:20Z | https://github.com/kubernetes/kubernetes/issues/117567 | 1,682,107,393 | 117,567 |

[

"kubernetes",

"kubernetes"

] | An error occurred [here](https://github.com/kubernetes/kubernetes/blob/master/staging/src/k8s.io/apiserver/pkg/storage/value/encrypt/envelope/kmsv2/envelope.go#L332-L348) should be correctly displayed in the logs. When the annotation key validation fails, no clear error message is displayed to inform the user about the... | [KMSv2] Annotation key validation errors are not correctly being surfaced in the logs | https://api.github.com/repos/kubernetes/kubernetes/issues/117565/comments | 4 | 2023-04-24T21:05:27Z | 2023-05-02T17:05:15Z | https://github.com/kubernetes/kubernetes/issues/117565 | 1,682,044,355 | 117,565 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The `post-kubernetes-push-image-etcd` job failed to run.

https://prow.k8s.io/view/gs/kubernetes-jenkins/logs/post-kubernetes-push-image-etcd/1649533793343639552

Is this expected? Should we build an arm etcd image?

### What did you expect to happen?

`post-kubernetes-push-image-etcd` runs successfully.

###... | Failed to run "post-kubernetes-push-image-etcd" | https://api.github.com/repos/kubernetes/kubernetes/issues/117557/comments | 10 | 2023-04-24T12:21:54Z | 2023-05-09T12:55:04Z | https://github.com/kubernetes/kubernetes/issues/117557 | 1,681,150,924 | 117,557 |

[

"kubernetes",

"kubernetes"

] | We've already got KubeSchedulerConfiguration v1.

Thus, we can start to remove/deprecate the beta versions (v1beta2, v1beta3).

We have the deprecation notice of v1beta2 on the website. So, we can remove v1beta2 soon.

> Note: KubeSchedulerConfiguration [v1beta2](https://kubernetes.io/docs/reference/config-api/kube-... | remove KubeSchedulerConfiguration v1beta3 | https://api.github.com/repos/kubernetes/kubernetes/issues/117556/comments | 12 | 2023-04-24T12:16:38Z | 2023-10-10T01:58:14Z | https://github.com/kubernetes/kubernetes/issues/117556 | 1,681,142,233 | 117,556 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

KCM tries to taint a newly provisioned node

```

I0423 18:37:30.629784 1 node_lifecycle_controller.go:1082] Condition Ready of node ip-10-5-43-93.ap-south-1.compute.internal was never updated by kubelet

I0423 18:37:30.629819 1 node_lifecycle_controller.go:1082] Condition MemoryPre... | Kube controller manager throws nil pointer dereference while tainting a node unreachable | https://api.github.com/repos/kubernetes/kubernetes/issues/117555/comments | 10 | 2023-04-24T12:08:27Z | 2024-12-02T16:12:24Z | https://github.com/kubernetes/kubernetes/issues/117555 | 1,681,127,928 | 117,555 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The [easyjson](https://github.com/mailru/easyjson) library is maintained by Mail.ru. This is owned by VK, which is owned by Gazprom Media, and thus is subject to EU and [USA Sanctions](https://sanctionssearch.ofac.treas.gov/Details.aspx?id=20296)

This is a dependency via [https://github.com/go-o... | Use of a third party library maintained by a Sanctioned Entity | https://api.github.com/repos/kubernetes/kubernetes/issues/117553/comments | 11 | 2023-04-24T11:22:16Z | 2024-01-04T16:49:20Z | https://github.com/kubernetes/kubernetes/issues/117553 | 1,681,034,681 | 117,553 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The Kubernetes cluster sent a critical node-not-ready alert. The kubelet log contains many "context deadline exceeded" and "TLS handshake timeout" records. The node recovers automatically after a few minutes. Please see the following details.

[kubelet log]

Unable to authenticate the request due to an e... | Kubelet connect apiserver TLS handshake timeout | https://api.github.com/repos/kubernetes/kubernetes/issues/117542/comments | 5 | 2023-04-24T02:43:21Z | 2023-09-08T07:33:23Z | https://github.com/kubernetes/kubernetes/issues/117542 | 1,680,349,957 | 117,542 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

In `TestInformerWatcherDeletedFinalStateUnknown`, when a timeout or another error occurs, `t.Fatal` is called.

https://github.com/kubernetes/kubernetes/blob/b1f901acf44f8adf69759db434690c2a8bdd616b/staging/src/k8s.io/client-go/tools/watch/informerwatcher_test.go#L320-L334

This will make current routi... | Stop the watcher when error occurs in TestInformerWatcherDeletedFinalStateUnknown | https://api.github.com/repos/kubernetes/kubernetes/issues/117533/comments | 5 | 2023-04-23T08:10:27Z | 2023-04-27T11:56:16Z | https://github.com/kubernetes/kubernetes/issues/117533 | 1,679,916,632 | 117,533 |

[

"kubernetes",

"kubernetes"

] | We are using `client-go` (and `kubectl`) to interact with Kubernetes clusters behind a couple of proxy layers. One of these proxies completes the `Content-Type` headers to include character encoding details.
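As an illustration of why an appended charset parameter can break a strict string comparison, here is a minimal sketch of a tolerant comparison using Go's standard `mime.ParseMediaType`. The helper name `equalIgnoringCharset` and the sample header values are assumptions made for this example; this is not how client-go is known to handle the header.

```go
package main

import (
	"fmt"
	"mime"
)

// equalIgnoringCharset is a hypothetical helper. It compares two Content-Type
// values by media type and parameters, ignoring only the charset parameter.
func equalIgnoringCharset(header, want string) bool {
	gotType, gotParams, err := mime.ParseMediaType(header)
	if err != nil {
		return false
	}
	wantType, wantParams, err := mime.ParseMediaType(want)
	if err != nil {
		return false
	}
	// Drop charset on both sides so a proxy-added value does not matter.
	delete(gotParams, "charset")
	delete(wantParams, "charset")
	if gotType != wantType || len(gotParams) != len(wantParams) {
		return false
	}
	for k, v := range wantParams {
		if gotParams[k] != v {
			return false
		}
	}
	return true
}

func main() {
	// A proxy appended charset; the remaining parameters still match.
	fmt.Println(equalIgnoringCharset("application/json;v=v2; charset=utf-8", "application/json;v=v2"))
	// A different media type never matches.
	fmt.Println(equalIgnoringCharset("text/plain; charset=utf-8", "application/json"))
}
```

Keeping the other parameters in the comparison matters when a response uses them to carry meaning, so only `charset` is stripped here.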

This was working fine until recently, when client-go/kubectl have started to fail when interacting with the d... | client-go/kubectl rejects discovery API responses with charset encoding info | https://api.github.com/repos/kubernetes/kubernetes/issues/117562/comments | 9 | 2023-04-22T14:47:24Z | 2023-04-26T19:22:25Z | https://github.com/kubernetes/kubernetes/issues/117562 | 1,681,482,461 | 117,562 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have one node, which has taint:

```sh

k taint node member2-control-plane hello=:NoExecute

```

And I have one deployment:

```yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx

labels:

app: nginx

spec:

replicas: 2

selector:

matchLabels:

app: nginx... | If an eviction occurs, pods will be rescheduled to the node if a toleration time is set. | https://api.github.com/repos/kubernetes/kubernetes/issues/117520/comments | 2 | 2023-04-21T10:51:41Z | 2023-04-21T15:23:30Z | https://github.com/kubernetes/kubernetes/issues/117520 | 1,678,312,965 | 117,520 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

K8s version: v1.26.0

* I have one node with taints:

```sh

k taint node member2-control-plane hello=:NoExecute

```

* I deployed one pod with the following yaml

```yaml

apiVersion: v1

kind: Pod

metadata:

name: nginx

labels:

env: test

spec:

containers:

- name: nginx

... | If eviction occurs, the Pod is deleted and ceases to exist | https://api.github.com/repos/kubernetes/kubernetes/issues/117519/comments | 3 | 2023-04-21T10:33:27Z | 2023-06-06T06:54:12Z | https://github.com/kubernetes/kubernetes/issues/117519 | 1,678,292,284 | 117,519 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

After setting the label `node.kubernetes.io/exclude-disruption` on a vk node, the pods on it are still evicted when the node is not ready.

### What did you expect to happen?

The pod on the vk node is not evicted.

### How can we reproduce it (as minimally and precisely as possible)?

1. label vk node... | node.kubernetes.io/exclude-disruption label doesn't work | https://api.github.com/repos/kubernetes/kubernetes/issues/117518/comments | 10 | 2023-04-21T10:07:04Z | 2024-03-20T01:59:06Z | https://github.com/kubernetes/kubernetes/issues/117518 | 1,678,260,826 | 117,518 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

There is a leaked goroutine in this test; it never seems to exit after the test finishes.

There are two more similar test cases in this test without a timeout, and they do not leak.

I wonder whether cleanup is missing in one of the timeout error-handling branches.

Goleak report the... | hanging routine in TestSPDYExecutorStream/timeoutTest | https://api.github.com/repos/kubernetes/kubernetes/issues/117517/comments | 8 | 2023-04-21T09:38:01Z | 2024-05-02T16:44:28Z | https://github.com/kubernetes/kubernetes/issues/117517 | 1,678,216,837 | 117,517 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

I want to implement cloud-native change management based on K8S. This involves monitoring deployment updates, verifying new pod versions, and combining cloud-native monitoring components, such as Prometheus, to obtain pod metrics and time-series data. Then, using our own intelligen... | Discussion on Cloud Native Change Management and Control Solutions | https://api.github.com/repos/kubernetes/kubernetes/issues/117515/comments | 3 | 2023-04-21T06:41:51Z | 2023-04-21T11:24:58Z | https://github.com/kubernetes/kubernetes/issues/117515 | 1,677,926,982 | 117,515 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The problem can be reproduced by following steps:

1. Create a cluster with one Node

2. Create a deployment with one replica using Non-attachable volume (for example Azurefile)

3. Shutdown the Node

4. Delete the Pod with option `--force` (Use force deletion to simulate the situation that mount... | Mount point will be local volume without calling `NodeStageVolume` if mount point was left over before node rebooting | https://api.github.com/repos/kubernetes/kubernetes/issues/117513/comments | 28 | 2023-04-21T03:45:01Z | 2025-01-21T11:48:17Z | https://github.com/kubernetes/kubernetes/issues/117513 | 1,677,736,297 | 117,513 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

https://github.com/kubernetes/kubernetes/pull/111660

This PR changed the KeyUsage array from including 3 items to 2 in non RSA key case. KeyUsage is defined https://github.com/kubernetes/api/blob/master/certificates/v1/types.go#L124.

Hence starting 1.27 the [CertificateSigningRequest](https:... | KeyUsage array content changed in 1.27 for a non-RSA key | https://api.github.com/repos/kubernetes/kubernetes/issues/117506/comments | 9 | 2023-04-20T21:02:18Z | 2023-05-05T12:06:48Z | https://github.com/kubernetes/kubernetes/issues/117506 | 1,677,426,559 | 117,506 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

**Kubernetes version** : 1.23 \

**Container runtime**: Docker

`kubectl describe node node1`

```

Type Reason Age From Message

---- ------ ---- ---- -------

Warning ContainerGCFailed 2m35s (x507 over 9h) kube... | NotReady node with ContainerGCFailed warning | https://api.github.com/repos/kubernetes/kubernetes/issues/117501/comments | 9 | 2023-04-20T13:23:23Z | 2025-02-18T03:46:12Z | https://github.com/kubernetes/kubernetes/issues/117501 | 1,676,720,852 | 117,501 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

https://prow.k8s.io/view/gs/kubernetes-jenkins/logs/ci-kubernetes-unit-windows-master/1648849344847155200

### Which tests are failing?

```

# k8s.io/kubernetes/pkg/kubelet/util [k8s.io/kubernetes/pkg/kubelet/util.test]

pkg\kubelet\util\util_windows_test.go:211:12: undefined: os

... | util_window_test.go doesn't import os/net | https://api.github.com/repos/kubernetes/kubernetes/issues/117498/comments | 8 | 2023-04-20T09:56:35Z | 2023-05-11T02:51:10Z | https://github.com/kubernetes/kubernetes/issues/117498 | 1,676,400,147 | 117,498 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

1. A lot of my pods ended up in the ContainerStatusUnknown state because kubelet admission failed at the resource admit step

```

samkdeploy-59874db686-p75s2 0/1 ContainerStatusUnknown 0 19h

samkdeploy-59874db686-p7fv5 ... | Scheduler did not receive scheduled pods add events | https://api.github.com/repos/kubernetes/kubernetes/issues/117497/comments | 14 | 2023-04-20T07:54:06Z | 2024-07-25T07:00:58Z | https://github.com/kubernetes/kubernetes/issues/117497 | 1,676,202,985 | 117,497 |

[

"kubernetes",

"kubernetes"

] | I want `drain` to try each pod exactly once.

Examples:

- allow to control drain from outside of kubectl by having an external timer that for example restarts drain every x minutes

- allow evicting all pods once when a node needs to be shutdown immediately

- allow unit-testing what drain does by running it once an... | kubectl drain --once | https://api.github.com/repos/kubernetes/kubernetes/issues/117492/comments | 6 | 2023-04-20T00:01:04Z | 2023-05-25T21:20:26Z | https://github.com/kubernetes/kubernetes/issues/117492 | 1,675,790,038 | 117,492 |

[

"kubernetes",

"kubernetes"

] |

The current k8s logo has seven sides, which seems to be a missed opportunity for using an octagon. Is it possible to update the logo to the proper number of sides? | Kubernetes logo should have eight sides (octagon) | https://api.github.com/repos/kubernetes/kubernetes/issues/117489/comments | 3 | 2023-04-19T20:38:48Z | 2023-04-20T11:26:55Z | https://github.com/kubernetes/kubernetes/issues/117489 | 1,675,595,453 | 117,489 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I was performing a scale test with [kwok](github.com/kubernetes-sigs/kwok) by creating a lot of kwok nodes, waiting until they were ready, and then deleting them right after. I noticed that after the test was done, my laptop's CPU usage was sitting very high. After looking through the kubernetes-api... | Infinite Loop in nodeipam rangeAllocator | https://api.github.com/repos/kubernetes/kubernetes/issues/117487/comments | 7 | 2023-04-19T20:06:16Z | 2024-04-18T21:30:08Z | https://github.com/kubernetes/kubernetes/issues/117487 | 1,675,549,985 | 117,487 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

On a few nodes, kubelet reports that it is reclaiming memory, but it does not appear to reclaim anything from the Linux cache/buffer memory, which leads kubelet to report a MemoryPressure status. It then tries to evict pods, and some of those pods start showing as ContainerStatusUnknown.

This is the cluster run... | Kubelet not able to reclaim memory from buffer/cache and complains memory pressure and evicting pods | https://api.github.com/repos/kubernetes/kubernetes/issues/117473/comments | 19 | 2023-04-19T15:05:53Z | 2025-02-05T18:35:24Z | https://github.com/kubernetes/kubernetes/issues/117473 | 1,675,113,385 | 117,473 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When performing a strategic merge for a slice of maps with `patchStrategy=merge`, directives in the patch slice are ignored if the original list is empty. Instead, the merged slice still contains the directives. This causes the patched object to fail subsequent validation since it has directives i... | Strategic Merge does not handle directives for empty slices. | https://api.github.com/repos/kubernetes/kubernetes/issues/117470/comments | 12 | 2023-04-19T14:51:56Z | 2023-05-01T23:34:25Z | https://github.com/kubernetes/kubernetes/issues/117470 | 1,675,079,198 | 117,470 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

After updating to Kubernetes 1.27.1 some containers using `k8s.io/api v0.26.1` are crashing because of SIGSEGV (Segmentation Violation) and they can no longer communicate with the cluster. Originally, this also occurred with my local kubectl installation of version 1.26.3, but now it seems to be wor... | Segmentation violation with k8s.io/client-go v0.26.1 and a 1.27.1 cluster (incompatibility) | https://api.github.com/repos/kubernetes/kubernetes/issues/117466/comments | 7 | 2023-04-19T13:17:54Z | 2023-04-20T17:00:54Z | https://github.com/kubernetes/kubernetes/issues/117466 | 1,674,888,618 | 117,466 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

This was originally reported in https://gitlab.com/gitlab-org/gitlab/-/issues/407161. GitLab Agent for Kubernetes, apart from other things, is a Kubernetes API reverse proxy. Users recently started getting errors when using it with kubectl v1.27.x.

Users' CI jobs get a generated kubectl-compati... | OpenAPI spec fetching ignores apiserver URL path | https://api.github.com/repos/kubernetes/kubernetes/issues/117463/comments | 8 | 2023-04-19T09:52:26Z | 2023-05-23T17:42:00Z | https://github.com/kubernetes/kubernetes/issues/117463 | 1,674,568,382 | 117,463 |

[

"kubernetes",

"kubernetes"

] | Hello,

The [readme](https://github.com/kubernetes/kubernetes#to-start-using-k8s) has a link to the interactive tutorial, which is inactive. The error from the [k8s page](https://kubernetes.io/docs/tutorials/kubernetes-basics/) is

from within a pod using `Config.defaultClient()` to build an `ApiClient` and then access `getServiceAccountIssuerOpenIDConfiguration` in the `WellKnownApi` I get the following error:

```json

{

"kind": "Status",

"apiVersion":... | Mismatched URLs for service-account-issuer-discovery and Open API Spec's swagger.json | https://api.github.com/repos/kubernetes/kubernetes/issues/117455/comments | 12 | 2023-04-19T04:57:46Z | 2023-07-18T08:11:11Z | https://github.com/kubernetes/kubernetes/issues/117455 | 1,674,171,887 | 117,455 |

[

"kubernetes",

"kubernetes"

] | ### Asking for help? Comment out what you need so we can get more information to help you!

Cluster information:

* Kubernetes version: v1.25.0

* Cloud being used: (put bare-metal if not on a public cloud) bare-metal

* Installation method: kind

* Host OS: Ubuntu 20.04

* CNI and version: none

* CRI and version: n... | Are there any documents available on HPA benchmarking? | https://api.github.com/repos/kubernetes/kubernetes/issues/117454/comments | 12 | 2023-04-19T03:02:22Z | 2024-03-23T16:40:00Z | https://github.com/kubernetes/kubernetes/issues/117454 | 1,674,096,370 | 117,454 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I'm developing a controller that uses server-side apply and must be able to add and remove fields in the spec of custom resources. I am able to create an object with an empty spec using SSA, then add a field with data with a second SSA patch. When I attempt to remove the field using a third SSA patc... | 'spec in body must be of type object: "null"' when removing a field using SSA | https://api.github.com/repos/kubernetes/kubernetes/issues/117447/comments | 13 | 2023-04-18T16:21:38Z | 2024-05-23T17:51:07Z | https://github.com/kubernetes/kubernetes/issues/117447 | 1,673,462,175 | 117,447 |

[

"kubernetes",

"kubernetes"

] | The test fails because there are 2 different tests creating the same aggregated apiserver with the same name

https://github.com/kubernetes/kubernetes/blob/658ea4b59142f1442627b0ee46dd1a917e3aa766/test/e2e/apimachinery/openapiv3.go#L135-L141

https://github.com/kubernetes/kubernetes/blob/df5d84ae811bc119e715ee39daf... | Flaky Test - should contain OpenAPI V3 for Aggregated APIServer | https://api.github.com/repos/kubernetes/kubernetes/issues/117443/comments | 2 | 2023-04-18T14:09:37Z | 2023-04-20T17:00:49Z | https://github.com/kubernetes/kubernetes/issues/117443 | 1,673,220,761 | 117,443 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I am running fault tests on Kubernetes 1.25.3. When I put the device plugin process into the T (stopped) state with `kill -19 PID` and then created a new pod, kubelet got stuck permanently and could not do any work.

SyncLoopIteration goroutine stack is as follows:

```

google.golang.org/grpc/inte... | kubelet will be stuck all the time when device plugin process is in T status | https://api.github.com/repos/kubernetes/kubernetes/issues/117435/comments | 19 | 2023-04-18T08:50:28Z | 2024-10-02T17:13:40Z | https://github.com/kubernetes/kubernetes/issues/117435 | 1,672,652,207 | 117,435 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

current ObjectMeta:

CreationTimestamp Time `json:"creationTimestamp,omitempty" protobuf:"bytes,8,opt,name=creationTimestamp"`

// DeletionTimestamp is RFC 3339 date and time at which this resource will be deleted. This

// field is set by the server when a graceful deletion is... | how can we get lastOpTimestamp field in the ObjectMeta to show the resource operation | https://api.github.com/repos/kubernetes/kubernetes/issues/117432/comments | 6 | 2023-04-18T08:06:40Z | 2023-09-12T19:58:01Z | https://github.com/kubernetes/kubernetes/issues/117432 | 1,672,579,960 | 117,432 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I attempted to use `Ephemeral Container`(`kubectl debug`) with `PodSecurity Admission`, but it failed.(related #117130)

And I found [kubectl debug option](https://github.com/kubernetes/enhancements/tree/master/keps/sig-cli/1441-kubectl-debug#debugging-profiles) `--profile`, so I tried again wi... | Debugging Profile dosen't work well with PSA | https://api.github.com/repos/kubernetes/kubernetes/issues/117405/comments | 6 | 2023-04-17T08:29:33Z | 2023-05-24T11:16:51Z | https://github.com/kubernetes/kubernetes/issues/117405 | 1,670,676,712 | 117,405 |

[

"kubernetes",

"kubernetes"

] | Examples: k8s.io/client-go/util/testing includes:

```go
func MkTmpdir(prefix string) (string, error) {
	tmpDir, err := os.MkdirTemp(os.TempDir(), prefix)
	if err != nil {
		return "", err
	}
	return tmpDir, nil
}
```

I think that every caller of that can be replaced... | Refactoring / cleanup: too many util pkgs for testing, some are dumb | https://api.github.com/repos/kubernetes/kubernetes/issues/117385/comments | 8 | 2023-04-15T17:36:54Z | 2024-06-22T19:14:46Z | https://github.com/kubernetes/kubernetes/issues/117385 | 1,669,499,393 | 117,385 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

provide ability to customise kubernetes certificate authority (CA). kubernetes creates it's own CA and it's configuration (like v3 extensions) are not configurable. we have some security findings with this CA certificate (like key being places on the server filesystem, too long TTL... | Harden kubernetes TLS certificates | https://api.github.com/repos/kubernetes/kubernetes/issues/117378/comments | 7 | 2023-04-15T05:46:53Z | 2023-04-18T13:50:55Z | https://github.com/kubernetes/kubernetes/issues/117378 | 1,669,169,554 | 117,378 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

After [this](https://github.com/kubernetes/kubernetes/pull/109706) change, the cloud provider controller calls update load balancer before the node controller registers the node with the cloud provider.

### What did you expect to happen?

The cloud provider controller shouldn't call updat... | cloud provider updating load balancer before registering the node | https://api.github.com/repos/kubernetes/kubernetes/issues/117375/comments | 6 | 2023-04-14T23:49:58Z | 2023-04-18T04:33:01Z | https://github.com/kubernetes/kubernetes/issues/117375 | 1,669,057,238 | 117,375 |

[

"kubernetes",

"kubernetes"

] |

> Not sure how we would catch this via static analysis, but I think it would be easy enough to write a new e2e (in a separate PR) that checks the complete set of metrics registered against the API server (so that list would be updated every time someone added something new).

_Originally posted by @enj... | e2e test to validate the metrics on the apiserver | https://api.github.com/repos/kubernetes/kubernetes/issues/117374/comments | 20 | 2023-04-14T21:24:10Z | 2023-10-08T10:19:10Z | https://github.com/kubernetes/kubernetes/issues/117374 | 1,668,968,584 | 117,374 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I am implementing graceful shutdown by leveraging `preStop` hooks. According to all the documentation I can find, `terminationGracePeriodSeconds` includes the time that the `preStop` hook is running, meaning the timer starts before `preStop` executes.
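Under that reading of the documentation, the time left for the container after the hook returns is simply the grace period minus the hook's runtime. A minimal sketch of that arithmetic follows; `remainingAfterPreStop` is a hypothetical name, and the code models the documented expectation rather than kubelet's actual behavior.

```go
package main

import (
	"fmt"
	"time"
)

// remainingAfterPreStop is a hypothetical helper. Assuming the grace period
// timer starts before the preStop hook runs, it returns how much of the grace
// period is left for the container once the hook has finished.
func remainingAfterPreStop(gracePeriod, preStop time.Duration) time.Duration {
	if preStop >= gracePeriod {
		// The hook used up the whole grace period.
		return 0
	}
	return gracePeriod - preStop
}

func main() {
	// A 30s grace period with a 10s preStop hook leaves 20s.
	fmt.Println(remainingAfterPreStop(30*time.Second, 10*time.Second))
	// A hook longer than the grace period leaves nothing.
	fmt.Println(remainingAfterPreStop(30*time.Second, 45*time.Second))
}
```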

In my setup I have a simple `preStop` that sleeps ... | Grace Termination Period timer doesn't start before preStop | https://api.github.com/repos/kubernetes/kubernetes/issues/117373/comments | 11 | 2023-04-14T21:22:18Z | 2024-02-24T17:56:59Z | https://github.com/kubernetes/kubernetes/issues/117373 | 1,668,967,039 | 117,373 |
