issue_owner_repo (list, length 2) | issue_body (string, 0 to 261k chars, nullable) | issue_title (string, 1 to 925 chars) | issue_comments_url (string, 56 to 81 chars) | issue_comments_count (int64, 0 to 2.5k) | issue_created_at (string, 20 chars) | issue_updated_at (string, 20 chars) | issue_html_url (string, 37 to 62 chars) | issue_github_id (int64, 387k to 2.91B) | issue_number (int64, 1 to 131k) |
|---|---|---|---|---|---|---|---|---|---|

[

"kubernetes",

"kubernetes"

] | ### What happened?

In case of online volume expansion of raw block volumes, some of the CSI plugins may not need file system resize or any related handling and thus NodeExpandVolume API call can be skipped for the same. In order to do this, plugin must set NodeExpansionRequired to false, in ControllerExpandVolumeRes... | NodeExpandVolume gets called, even if CSI plugin returned NodeExpansionRequired=false in ControllerExpandVolumeResponse | https://api.github.com/repos/kubernetes/kubernetes/issues/115294/comments | 19 | 2023-01-24T11:59:51Z | 2024-11-04T23:15:37Z | https://github.com/kubernetes/kubernetes/issues/115294 | 1,554,850,580 | 115,294 |

[

"kubernetes",

"kubernetes"

] | The results can be found in:

https://github.com/kubernetes/kubernetes/pull/109614

Facts:

- latency metrics include waiting time in APF

- so basically, long waiting time (saturated PLs) would result in exactly this

Hypothesis:

- some PLs are saturated and thus latency metrics are exceeding the threshold

But... | APF: Understand the failures of 5k scalability tests with client-side rate-limitting disabled | https://api.github.com/repos/kubernetes/kubernetes/issues/115293/comments | 23 | 2023-01-24T08:49:36Z | 2024-06-26T17:12:32Z | https://github.com/kubernetes/kubernetes/issues/115293 | 1,554,556,904 | 115,293 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

[ci-kubernetes-verify-master](https://k8s-testgrid.appspot.com/sig-release-master-blocking#verify-master)

### Which tests are failing?

ci-kubernetes-verify-master.verify-master, commit 674eb36f9

### Since when has it been failing?

1/23 13:38 PST

### Testgrid link

https://k8s-testgrid.... | [Failing test] ci-kubernetes-verify-master.verify-master | https://api.github.com/repos/kubernetes/kubernetes/issues/115289/comments | 5 | 2023-01-24T06:43:50Z | 2024-08-07T03:38:25Z | https://github.com/kubernetes/kubernetes/issues/115289 | 1,554,414,965 | 115,289 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

[ci-kubernetes-integration-master](https://prow.k8s.io/view/gs/kubernetes-jenkins/logs/ci-kubernetes-integration-master/1617639753685929984)

### Which tests are failing?

Officially it's only failing on `ci-kubernetes-integration-master.Overall`, but many subtests don't appear to hav... | [Failing test] ci-kubernetes-integration-master | https://api.github.com/repos/kubernetes/kubernetes/issues/115288/comments | 5 | 2023-01-24T06:43:24Z | 2023-01-24T15:46:31Z | https://github.com/kubernetes/kubernetes/issues/115288 | 1,554,414,315 | 115,288 |

[

"kubernetes",

"kubernetes"

] |

@sding3 in a followup PR or in this PR(whichever you prefer), it would be good to add integration tests for all these profiles in here https://github.com/kubernetes/kubernetes/blob/30262e9b14e2369528fd27241adb16fae02f684d/test/cmd/debug.sh#L21

_Originally posted by @ardaguclu in https://github.com/kubernetes/kuber... | add integration tests for debug profiles | https://api.github.com/repos/kubernetes/kubernetes/issues/115287/comments | 5 | 2023-01-24T05:44:16Z | 2023-02-20T14:30:27Z | https://github.com/kubernetes/kubernetes/issues/115287 | 1,554,358,774 | 115,287 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

While discussing the ReferenceGrant KEP, I learned that there is a distinction between "resource" and "kind". The biggest takeaway is that "group + kind" is not a unique identifier for arbitrary resources (for in-tree APIs we can enforce it).

refs:

https://github.com/kubernetes/community/blob/mas... | Volume populators should use resource instead of kind | https://api.github.com/repos/kubernetes/kubernetes/issues/115286/comments | 18 | 2023-01-24T05:30:58Z | 2025-02-12T18:33:10Z | https://github.com/kubernetes/kubernetes/issues/115286 | 1,554,348,701 | 115,286 |

[

"kubernetes",

"kubernetes"

] | It looks like the returned `ec` reference is only used for two reasons:

1) to print a debugging message, which could easily be changed

2) for compatibility with pre-1.22 APIs, which we probably don't need anymore

Maybe we should just get rid of returning a pointer to the ephemeral container and just... | remove support for legacy debug profile for pre-1.22 | https://api.github.com/repos/kubernetes/kubernetes/issues/115285/comments | 6 | 2023-01-24T05:22:32Z | 2023-07-20T16:25:30Z | https://github.com/kubernetes/kubernetes/issues/115285 | 1,554,342,333 | 115,285 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

ci-kubernetes-e2e-gci-gce-scalability

### Which tests are failing?

Testgrid looks a bit odd: `*.Overall` is red while `.Pod` is green and nothing else is run; IMHO it appears that it's exiting on a build/infra step.

<img width="432" alt="Screen Shot 2023-01-23 at 3 30 55 PM" src="http... | [Failing test] ci-kubernetes-e2e-gci-gce-scalability failing on startup since 1/23 13:24 PST | https://api.github.com/repos/kubernetes/kubernetes/issues/115284/comments | 10 | 2023-01-23T23:34:30Z | 2023-01-24T16:24:40Z | https://github.com/kubernetes/kubernetes/issues/115284 | 1,553,999,872 | 115,284 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We faced an issue when executing one of the CNTT test cases.

In our environment, the kube-apiserver is not exposed directly; it can be reached via an nginx pod within the cluster that proxies the requests.

Client (running CNTT tests) => POD (running in the cluster, as proxy) => kube-apiserver

The ... | The "health handlers should contain necessary checks" test fails from sig-api-machinery TC suite when kube-apiserver not exposed directly | https://api.github.com/repos/kubernetes/kubernetes/issues/115276/comments | 6 | 2023-01-23T20:35:01Z | 2025-03-04T21:22:06Z | https://github.com/kubernetes/kubernetes/issues/115276 | 1,553,766,129 | 115,276 |

[

"kubernetes",

"kubernetes"

] | Follow-up to https://github.com/kubernetes/kubernetes/issues/111920.

- [ ] Add metrics for cache fill percentage of the seed cache

- [ ] Add [TestEnvelopeCacheLimit](https://github.com/kubernetes/kubernetes/blob/674eb36f92dcea33e47ac07d71d88ebe9f5c4c6d/staging/src/k8s.io/apiserver/pkg/storage/value/encrypt/envelope... | kmsv2: add metric for current size of DEK seed cache | https://api.github.com/repos/kubernetes/kubernetes/issues/115275/comments | 9 | 2023-01-23T20:28:18Z | 2023-08-19T01:19:22Z | https://github.com/kubernetes/kubernetes/issues/115275 | 1,553,753,721 | 115,275 |

[

"kubernetes",

"kubernetes"

] | - [x] Encryption of all resources

- [x] Beta proto API updates

- [ ] Blog for all the changes coming as part of v2beta1 (similar to v2alpha1 blog xref: https://kubernetes.io/blog/2022/09/09/kms-v2-improvements/)

- [x] Removal of `cacheSize` in the v2 API

- [x] Metrics

- [x] `KeyID` in `Status` is authoritative. If ... | [KMSv2] Documentation changes for beta | https://api.github.com/repos/kubernetes/kubernetes/issues/115274/comments | 7 | 2023-01-23T19:57:56Z | 2023-05-02T16:09:00Z | https://github.com/kubernetes/kubernetes/issues/115274 | 1,553,691,362 | 115,274 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Could you please consider upgrading Kustomize to the latest version in order to support the latest features, such as remote OpenAPI files?

### What did you expect to happen?

I use Kustomize from argoCD application, it uses Kustomize to patch deployment resources and in my Kustomize file I cannot get... | Consider upgrade to Kustomize latest version to date (4.5.7) | https://api.github.com/repos/kubernetes/kubernetes/issues/115270/comments | 6 | 2023-01-23T17:09:45Z | 2023-01-30T16:05:50Z | https://github.com/kubernetes/kubernetes/issues/115270 | 1,553,456,982 | 115,270 |

[

"kubernetes",

"kubernetes"

] | The goal is to allow one to write a custom controller such as the one below to (e.g.) co-allocate GPUs and RDMA interfaces (using a custom `ResourceClass` and associated `ClaimParameters` object), but allow the "default" kubelet-plugins for these resources to handle the actual device preparation and injection.

<img ... | dynamic resource allocation: allow a kubelet-plugin to service allocations from multiple resource-driver controllers | https://api.github.com/repos/kubernetes/kubernetes/issues/115263/comments | 10 | 2023-01-23T10:01:56Z | 2023-08-29T18:13:44Z | https://github.com/kubernetes/kubernetes/issues/115263 | 1,552,811,499 | 115,263 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

`make test-e2e-node REMOTE=true` fails with an error: "Network plugin returns error: cni plugin not initialized"

### What did you expect to happen?

e2e-node tests start to run

### How can we reproduce it (as minimally and precisely as possible)?

Run `make test-e2e-node REMOTE=true` on the latest... | test-e2e-node fails because of a bug in CNI setup | https://api.github.com/repos/kubernetes/kubernetes/issues/115262/comments | 13 | 2023-01-23T09:33:08Z | 2024-10-02T17:12:46Z | https://github.com/kubernetes/kubernetes/issues/115262 | 1,552,776,063 | 115,262 |

[

"kubernetes",

"kubernetes"

] | Hi,

I am a research student at the University of Waterloo. I am trying to understand how to build the project using Bazel. I see that you have stopped using it since 2021. However, I was unable to get any commit working which was using Bazel as well. The commit that I have tried building projects is `a185bafa0c9142... | Building project (older commits) with Bazel fails | https://api.github.com/repos/kubernetes/kubernetes/issues/115261/comments | 3 | 2023-01-23T09:13:38Z | 2023-01-26T01:00:43Z | https://github.com/kubernetes/kubernetes/issues/115261 | 1,552,750,594 | 115,261 |

[

"kubernetes",

"kubernetes"

] | If a GUI application is built for Windows which uses this library connect to Kubernetes clusters; and if the user of that application is connecting to a cluster for which authentication is handled via an exec plugin (for example, an EKS cluster); then every time the application needs to refresh its credentials, a conso... | Suppress console window for exec auth command on Windows (or expose a method for the caller to do so) | https://api.github.com/repos/kubernetes/kubernetes/issues/115705/comments | 8 | 2023-01-22T21:56:55Z | 2023-05-09T19:46:04Z | https://github.com/kubernetes/kubernetes/issues/115705 | 1,580,947,236 | 115,705 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We have a K8s cluster deployed with v1.23.3.

We have a testcase to create a namespace with few resources (few via statefulsets) and then cleanup all the resources by deleting the namespace. It is observed that namespace deletion using "kubectl delete ns <namespace>" deletes the namespace and it s... | Sandbox containers not deleted leaving pods in NotReady state | https://api.github.com/repos/kubernetes/kubernetes/issues/115252/comments | 8 | 2023-01-22T12:41:20Z | 2023-03-16T21:52:25Z | https://github.com/kubernetes/kubernetes/issues/115252 | 1,552,103,255 | 115,252 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I'm working on a system that extends the Kubernetes API server.

This system consists of a kubernetes cluster, with several custom resource definitions and custom resources of each kind.

These resources are consumed by _in-cluster_ and _external clients_. The difference between the two types of ... | Potential inconsistent behaviour with apiserver apiextensions (custom resources) and 404s | https://api.github.com/repos/kubernetes/kubernetes/issues/115241/comments | 25 | 2023-01-21T10:02:26Z | 2024-03-26T20:15:31Z | https://github.com/kubernetes/kubernetes/issues/115241 | 1,551,755,055 | 115,241 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I tried to update the OS (including Kubernetes) and it said:

```

...

Get:17 https://packages.cloud.google.com/apt kubernetes-xenial InRelease [8,993 B]

Err:17 https://packages.cloud.google.com/apt kubernetes-xenial InRelease

The following signatures couldn't be verified because the public ke... | [Problem in update] The following signatures couldn't be verified because the public key is not available: NO_PUBKEY | https://api.github.com/repos/kubernetes/kubernetes/issues/115237/comments | 8 | 2023-01-21T03:55:32Z | 2023-06-24T13:36:04Z | https://github.com/kubernetes/kubernetes/issues/115237 | 1,551,681,960 | 115,237 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

A proper linter like something built on https://github.com/quasilyte/go-ruleguard may become a more reliable and capable linter for test guidelines.

- [ ] usage of `framework.ExpectError`

- [ ] avoid the [`Expect.Equal(someBoolean, ...) ` anti-pattern](https://github.com/kube... | hack/verify-test-code.sh: replace with proper linter | https://api.github.com/repos/kubernetes/kubernetes/issues/115234/comments | 21 | 2023-01-20T19:22:09Z | 2024-05-06T11:01:01Z | https://github.com/kubernetes/kubernetes/issues/115234 | 1,551,333,860 | 115,234 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are flaking?

release-master-blocking

- ci-kubernetes-unit

### Which tests are flaking?

- k8s.io/kubernetes/vendor/k8s.io/apimachinery/pkg/util/httpstream/spdy.TestRoundTripSocks5AndNewConnection

Sub-tests:

- `proxied_with_valid_auth_->_https_(invalid_hostname_+_InsecureSkipVerify)`

- `... | [Flaky test] Data Race in ci-kubernetes-unit (TestRoundTripSocks5AndNewConnection) | https://api.github.com/repos/kubernetes/kubernetes/issues/115232/comments | 1 | 2023-01-20T17:54:22Z | 2023-01-20T18:52:18Z | https://github.com/kubernetes/kubernetes/issues/115232 | 1,551,242,957 | 115,232 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Let me begin by saying: I am not sure this is a bug or just me lacking insight into how Deployments are rolled out / managed. In any case: I am looking for feedback and some help determining which case this falls under: bug or lack of understanding.

I am running a cluster on GKE, on which kube-d... | [apps/v1]: Deployment with `maxUnavailable: 0` had 0 ready pods | https://api.github.com/repos/kubernetes/kubernetes/issues/115226/comments | 13 | 2023-01-20T11:05:17Z | 2023-06-22T16:16:33Z | https://github.com/kubernetes/kubernetes/issues/115226 | 1,550,672,967 | 115,226 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are flaking?

release-master-blocking

- gce-cos-master-scalability-100

### Which tests are flaking?

- ClusterLoaderV2: load overall (testing/load/config.yaml)

- ClusterLoaderV2: load: [step: 29] gathering measurements [01] - APIResponsivenessPrometheusSimple

### Since when has it been flak... | [Flaky test] gce-cos-master-scalability-100 | https://api.github.com/repos/kubernetes/kubernetes/issues/115224/comments | 4 | 2023-01-20T05:21:55Z | 2023-01-23T23:25:42Z | https://github.com/kubernetes/kubernetes/issues/115224 | 1,550,321,409 | 115,224 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We see the output of the following code snippet in our production log:

https://github.com/kubernetes/kubernetes/blob/741bd5c3823344b3fc4a38cae9846cdda1941bb4/pkg/controller/volume/attachdetach/reconciler/reconciler.go#L274

### What did you expect to happen?

We should not forcefully detach a vol... | Detaching volume forcefully is extremely dangerous | https://api.github.com/repos/kubernetes/kubernetes/issues/115223/comments | 9 | 2023-01-20T03:36:34Z | 2023-06-19T20:36:00Z | https://github.com/kubernetes/kubernetes/issues/115223 | 1,550,261,686 | 115,223 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Support a default ingress controller, like the default StorageClass.

```

root@ubuntu:~# kubectl get ingressclass

NAME PROVISIONER CLASS

ingress-nginx (default) networking.k8s.io/v1 nginx

apisix-ingress-controller xxxx ... | support default ingress controller like default storageclass | https://api.github.com/repos/kubernetes/kubernetes/issues/115221/comments | 4 | 2023-01-20T01:14:31Z | 2023-01-28T16:53:46Z | https://github.com/kubernetes/kubernetes/issues/115221 | 1,550,182,366 | 115,221 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

[Process stats](https://pkg.go.dev/k8s.io/kubelet/pkg/apis/stats/v1alpha1#ProcessStats) are not currently returned in kubelet summary API.

### What did you expect to happen?

[Process stats](https://pkg.go.dev/k8s.io/kubelet/pkg/apis/stats/v1alpha1#ProcessStats) are returned correctly in ... | kubelet: Process Stats are always 0 as returned by kubelet summary API | https://api.github.com/repos/kubernetes/kubernetes/issues/115215/comments | 11 | 2023-01-19T22:08:09Z | 2024-07-25T18:59:59Z | https://github.com/kubernetes/kubernetes/issues/115215 | 1,550,019,365 | 115,215 |

[

"kubernetes",

"kubernetes"

] | # Progress <code>[6/6]</code>

- [X] APISnoop org-flow: [Corev1APIServiceLifecycleTest-v4.org](https://github.com/apisnoop/ticket-writing/blob/master/Corev1APIServiceLifecycleTest-v4.org)

- [X] Test approval issue: #115213

- [X] Test PR: #115214

- [x] Two weeks soak start date: [testgrid-link](https://test... | Write APIService lifecycle test + 4 Endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/115213/comments | 1 | 2023-01-19T20:42:14Z | 2023-04-26T08:53:19Z | https://github.com/kubernetes/kubernetes/issues/115213 | 1,549,916,314 | 115,213 |

[

"kubernetes",

"kubernetes"

] | Hi team/community,

My team and i recently ran into issues with the kube-apiserver which needed 150GB of memory to come up after an issue with etcd (it got down), api-server was previously running with 40GB of configured memory and actually using less than 16GB, but right after etcd got back up the api-server consume... | kube-apiserver needed 150GB of memory to come up | https://api.github.com/repos/kubernetes/kubernetes/issues/115206/comments | 10 | 2023-01-19T15:13:17Z | 2023-09-07T06:12:12Z | https://github.com/kubernetes/kubernetes/issues/115206 | 1,549,363,499 | 115,206 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I followed the [documentation](https://github.com/kubernetes/community/blob/4026287dc3a2d16762353b62ca2fe4b80682960a/contributors/devel/sig-scalability/kubemark-setup-guide.md) for setting up kubemark on a k8s cluster and created the Docker image for kubemark.

modified the hollow-node_simplifie... | Kubemark nodes not able to inspect image | https://api.github.com/repos/kubernetes/kubernetes/issues/115203/comments | 4 | 2023-01-19T10:47:52Z | 2023-01-24T10:05:43Z | https://github.com/kubernetes/kubernetes/issues/115203 | 1,548,915,407 | 115,203 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Hello, all.

In my environment (six nodes, each with 32G of memory), if I create 100 custom resources concurrently (using a shell script), the kube-apiserver pod crashes and is recreated. The kube-apiserver's events are below:

=> POD (running in the cluster, as proxy) => kube-apiserver

Th... | The "API priority and fairness" test fails from sig-api-machinery TC suite when kube-apiserver not exposed directly | https://api.github.com/repos/kubernetes/kubernetes/issues/115200/comments | 6 | 2023-01-19T10:17:12Z | 2023-03-22T01:01:44Z | https://github.com/kubernetes/kubernetes/issues/115200 | 1,548,867,519 | 115,200 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

The `imageID` property on ContainerStatus seems inconsistent across clusters running different CRI implementations (or the same CRI implementation with different configurations), which makes it impossible to actually tell which container image is being used.

On GKE, `imageID` is a repo digest, thoug... | ContainerStatus imageID is inconsistent / badly documented | https://api.github.com/repos/kubernetes/kubernetes/issues/115199/comments | 23 | 2023-01-19T09:39:14Z | 2025-01-21T13:25:21Z | https://github.com/kubernetes/kubernetes/issues/115199 | 1,548,810,003 | 115,199 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Kubelet memory increasing until the worker is completely out of memory as the OOM killer avoids killing kubelet due to its high priority. The worker eventually goes into NotReady state and in many cases is completely unreachable due to key processes being killed or not having enough memory for bas... | Kubelet memory leak and "top nodes" showing UNKOWN, "crictl stats" stuck | https://api.github.com/repos/kubernetes/kubernetes/issues/115192/comments | 23 | 2023-01-19T05:18:59Z | 2024-09-25T17:47:27Z | https://github.com/kubernetes/kubernetes/issues/115192 | 1,548,529,881 | 115,192 |

[

"kubernetes",

"kubernetes"

] | If I have an extended resource (such as disk IOPS), I also want to be able to reserve part of it, and I would like the reservation to be reflected clearly in the node status. This currently seems impossible.

https://github.com/kubernetes/kubernetes/blob/17422ff5c1195601cbbee87075171e16334b32c2/pkg/kubelet/cm/node_container_manager_linux.go#L227-L... | Kubelet will change the allocatable of the extended resource to the value of capacity | https://api.github.com/repos/kubernetes/kubernetes/issues/115190/comments | 11 | 2023-01-19T00:43:55Z | 2024-08-30T09:08:35Z | https://github.com/kubernetes/kubernetes/issues/115190 | 1,548,338,907 | 115,190 |

[

"kubernetes",

"kubernetes"

] | it's hard to imagine life being better for a cluster admin if we make the cluster start failing reads ... I wouldn't do that.

failing no-op writes doesn't seem useful to me either... it pushes errors to users unlikely to be able to do anything about them

I think I would expect to coast, and for an e... | [kmsv2] metrics to capture when plugin returns invalid keyID | https://api.github.com/repos/kubernetes/kubernetes/issues/115188/comments | 1 | 2023-01-18T23:24:07Z | 2023-02-21T22:24:41Z | https://github.com/kubernetes/kubernetes/issues/115188 | 1,548,270,543 | 115,188 |

[

"kubernetes",

"kubernetes"

] | /sig auth | test issue please ignore 2 | https://api.github.com/repos/kubernetes/kubernetes/issues/115183/comments | 1 | 2023-01-18T22:50:19Z | 2023-01-18T22:51:04Z | https://github.com/kubernetes/kubernetes/issues/115183 | 1,548,242,382 | 115,183 |

[

"kubernetes",

"kubernetes"

] | null | test issue please ignore | https://api.github.com/repos/kubernetes/kubernetes/issues/115182/comments | 2 | 2023-01-18T22:49:13Z | 2023-01-18T22:49:56Z | https://github.com/kubernetes/kubernetes/issues/115182 | 1,548,241,514 | 115,182 |

[

"kubernetes",

"kubernetes"

] | /sig auth | sig-auth project board test issue | https://api.github.com/repos/kubernetes/kubernetes/issues/115180/comments | 3 | 2023-01-18T20:28:29Z | 2023-01-18T20:37:24Z | https://github.com/kubernetes/kubernetes/issues/115180 | 1,538,670,734 | 115,180 |

[

"kubernetes",

"kubernetes"

] | https://github.com/kubernetes/kubernetes/blob/cc68c06f9cb6c70cbb840a8d7b9ca49eb223cb3f/pkg/scheduler/framework/plugins/nodevolumelimits/csi.go#L233

`isCSIMigrationOn` handles cases when csiNode is nil. This condition is used internally by cluster-autoscaler. However, the log line refers to `csiNode.Name` ca... | Seg Fault in nodeVolumeLimits of scheduler plugin causing panic | https://api.github.com/repos/kubernetes/kubernetes/issues/115178/comments | 8 | 2023-01-18T19:09:51Z | 2023-02-17T15:56:13Z | https://github.com/kubernetes/kubernetes/issues/115178 | 1,538,543,668 | 115,178 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

post-release-push-image-go-runner, post-release-push-image-kube-cross

### Which tests are failing?

post-release-push-image-go-runner.Pod

post-release-push-image-kube-cross.Pod

### Since when has it been failing?

Intermittently since at least 1/6

### Testgrid link

https://k8s-te... | [Flaky tests] different image push tests failing with same errors (post-release-push-image-go-runner, post-release-push-image-kube-cross) | https://api.github.com/repos/kubernetes/kubernetes/issues/115176/comments | 4 | 2023-01-18T18:41:44Z | 2023-01-18T18:55:34Z | https://github.com/kubernetes/kubernetes/issues/115176 | 1,538,495,594 | 115,176 |

[

"kubernetes",

"kubernetes"

] | We have many "test only" packages throughout the project, containing fixtures, helpers, utilities, etc. These test libraries are not intended for use in production binaries. They often lack rigorous review and sufficient testing for use in production binaries. We should make sure they don't accidentally get pulled i... | Add a presubmit to restrict test only libraries from linking into prod binaries | https://api.github.com/repos/kubernetes/kubernetes/issues/115175/comments | 7 | 2023-01-18T18:25:48Z | 2024-01-23T19:03:48Z | https://github.com/kubernetes/kubernetes/issues/115175 | 1,538,465,380 | 115,175 |

[

"kubernetes",

"kubernetes"

] | In a separate PR, let's add a `.Compact` method to this cache. Since the cache keys we use will never repeat, they will only ever get evicted from the cache when it hits max size (meaning that on a long-lived cluster the KMS cache will eventually always be using 100 MB).

_Originally posted by @enj in https:... | [KMSv2] Add `.Compact` method for LRU expire cache with lock | https://api.github.com/repos/kubernetes/kubernetes/issues/115174/comments | 4 | 2023-01-18T18:13:04Z | 2023-01-23T14:22:21Z | https://github.com/kubernetes/kubernetes/issues/115174 | 1,538,445,826 | 115,174 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

While working on https://github.com/kubernetes/kubernetes/pull/114825 I found tests that used `WaitForPodRunning` followed by `ExpectError` because they create a pod that is not supposed to get started.

A better approach is to use `gomega.Consistently` to check for `pod is pending`:

```diff... | WaitFor<something> + ExpectError anti-pattern | https://api.github.com/repos/kubernetes/kubernetes/issues/115164/comments | 12 | 2023-01-18T14:13:02Z | 2024-07-31T05:50:03Z | https://github.com/kubernetes/kubernetes/issues/115164 | 1,538,072,630 | 115,164 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Change the [default HPA downscale policy](https://kubernetes.io/docs/tasks/run-application/horizontal-pod-autoscale/#default-behavior) from allowing 100% pods to be removed in a single step to allowing a smaller percentage (maybe 25%?). This will result in annealed downscaling.

##... | Change default HPA downscale policy from 100% pods to 25% pods | https://api.github.com/repos/kubernetes/kubernetes/issues/115162/comments | 6 | 2023-01-18T10:29:37Z | 2023-06-17T12:14:57Z | https://github.com/kubernetes/kubernetes/issues/115162 | 1,537,758,853 | 115,162 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

btrfs support as rootfs instead of unionfs/overlay2

### Why is this needed?

smaller memory footprint, and faster read/write support | btrfs support as rootfs in K8s | https://api.github.com/repos/kubernetes/kubernetes/issues/115157/comments | 6 | 2023-01-18T08:57:03Z | 2023-06-17T11:13:57Z | https://github.com/kubernetes/kubernetes/issues/115157 | 1,537,627,450 | 115,157 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

We have observed that after a node is preempted, pods using persistent volumes take 6+ minutes to restart. This happens when a volume was not able to be cleanly unmounted (indicated by updating `Node.Status.VolumesInUse`), which causes the Attach/Detach controller (in kube-controller-manager) to w... | Graceful node shutdown doesn't wait for volume teardown | https://api.github.com/repos/kubernetes/kubernetes/issues/115148/comments | 20 | 2023-01-18T00:18:08Z | 2024-10-01T23:33:49Z | https://github.com/kubernetes/kubernetes/issues/115148 | 1,537,208,560 | 115,148 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Setting `allowPrivilegeEscalation: false` in combination with adding `CAP_SYS_ADMIN` capabilities fails and produces the following error message:

```

cannot set `allowPrivilegeEscalation` to false and `capabilities.Add` CAP_SYS_ADMIN

```

However, using `ALL` instead of `CAP_SYS_ADMIN` passes.

... | Inconsistent validation behaviour when adding capabilities | https://api.github.com/repos/kubernetes/kubernetes/issues/115142/comments | 11 | 2023-01-17T22:17:35Z | 2024-07-20T11:51:50Z | https://github.com/kubernetes/kubernetes/issues/115142 | 1,537,106,257 | 115,142 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are flaking?

capz-e2e-windows-containerd-1.25

### Which tests are flaking?

[sig-node] PreStop should call prestop when killing a pod [Conformance]

### Since when has it been flaking?

Several months

### Testgrid link

https://prow.k8s.io/view/gs/kubernetes-jenkins/logs/ci-kubernetes-e2e-capz-contai... | Windows - [sig-node] PreStop should call prestop when killing a pod [Conformance] | https://api.github.com/repos/kubernetes/kubernetes/issues/115136/comments | 8 | 2023-01-17T17:25:46Z | 2024-01-19T11:59:54Z | https://github.com/kubernetes/kubernetes/issues/115136 | 1,536,766,518 | 115,136 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When attempting to mount a secret as a file at a subPath, if the secret's data key does not match the deployment's `subPath` definition, I get an empty directory. If the secret were correctly defined, I would see my secret file mounted. This behavior is confusing, especially as there is no error or warni... | Attempting to mount a badly defined secret at a subPath results in an empty folder instead of some error condition | https://api.github.com/repos/kubernetes/kubernetes/issues/115131/comments | 6 | 2023-01-17T15:51:29Z | 2023-06-16T17:06:57Z | https://github.com/kubernetes/kubernetes/issues/115131 | 1,536,614,313 | 115,131 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

`kubectl version` looks good

```

cloud@cloud-control-185c01c2e88:~$ sudo /opt/bin/kubectl version -o json

{

"clientVersion": {

"major": "1",

"minor": "26",

"gitVersion": "v1.26.0",

"gitCommit": "b46a3f887ca979b1a5d14fd39cb1af43e7e5d12d",

"gitTreeState": "clean",

... | "kubectl version --short" (v1.26.0) returns different server version (v1.25.0) | https://api.github.com/repos/kubernetes/kubernetes/issues/115130/comments | 4 | 2023-01-17T15:49:52Z | 2023-01-18T08:01:42Z | https://github.com/kubernetes/kubernetes/issues/115130 | 1,536,611,979 | 115,130 |

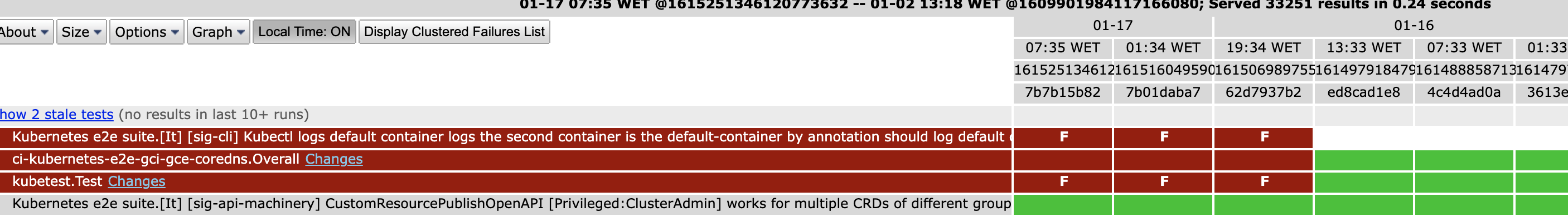

[

"kubernetes",

"kubernetes"

] | It seems that it was added recently and has always been failing: https://testgrid.k8s.io/sig-network-gce#gci-gce-coredns

/sig cli

/kind failing-test

/assign @soltysh | E2E: Kubernetes e2e suite.[It] [sig-cli] Kubectl logs default container logs the second container is the default-container by annotation should log default container if not specified | https://api.github.com/repos/kubernetes/kubernetes/issues/115126/comments | 1 | 2023-01-17T12:22:21Z | 2023-01-17T13:44:35Z | https://github.com/kubernetes/kubernetes/issues/115126 | 1,536,292,467 | 115,126 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

1. created pvc with 4TB size (fs: ext4)

2. created application and attached to pvc.

3. edited pvc to resize from 4TB to 8TB.

4. since the file system is large, csi/kubelet was taking long time to resize the pvc.

5. while the fs resize was in process, stopped the application. Now, the pvc is deta... | Online pvc resize stuck | https://api.github.com/repos/kubernetes/kubernetes/issues/115124/comments | 7 | 2023-01-17T08:58:57Z | 2023-06-16T11:00:58Z | https://github.com/kubernetes/kubernetes/issues/115124 | 1,536,008,007 | 115,124 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Currently, the Result field of the [AdmissionsResponse](https://github.com/kubernetes/api/blob/master/admission/v1/types.go#L127) is not consulted if the Admission Controller allows the object. It would be helpful to developers if certain admission controller response messages co... | Allow for additional information to be passed in AdmissionResponse object to the Kubernetes CLI | https://api.github.com/repos/kubernetes/kubernetes/issues/115115/comments | 8 | 2023-01-16T23:21:00Z | 2023-04-20T16:58:49Z | https://github.com/kubernetes/kubernetes/issues/115115 | 1,535,595,998 | 115,115 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have 3 replicas of haproxy ingress controller. Only one pod is receiving traffic.

### What did you expect to happen?

round robin lb of new connections to 3 replicas of haproxy ingress

### How can we reproduce it (as minimally and precisely as possible)?

I can't. This behaviour is in my produ... | Kube-proxy will not lb across 3 endpoints | https://api.github.com/repos/kubernetes/kubernetes/issues/115114/comments | 18 | 2023-01-16T22:49:59Z | 2023-01-23T17:57:38Z | https://github.com/kubernetes/kubernetes/issues/115114 | 1,535,568,919 | 115,114 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I executed the script `./hack/local-up-cluster.sh` with `sudo`. It created the file `/tmp/kube-serviceaccount.key`. Unfortunately `./hack/update-openapi-spec.sh` also uses the same path, so If i execute it without `sudo` it fails:

```

E0116 11:47:01.169146 19617 run.go:74] "command failed" err... | Permission errors at update-openapi-spec.sh on /tmp directory | https://api.github.com/repos/kubernetes/kubernetes/issues/115105/comments | 2 | 2023-01-16T10:51:52Z | 2023-01-19T00:06:35Z | https://github.com/kubernetes/kubernetes/issues/115105 | 1,534,714,971 | 115,105 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

[Systemd-resource-control](https://www.freedesktop.org/software/systemd/man/systemd.resource-control.html#)

I think that in case of a sudden node shut down and quickly power on there doesn't exists anything that would prioritize startup list of a pods. ``StartupCPUShares`` would b... | StartupCPUShares - additional feature in QoS | https://api.github.com/repos/kubernetes/kubernetes/issues/115099/comments | 6 | 2023-01-16T07:26:50Z | 2023-06-16T11:00:57Z | https://github.com/kubernetes/kubernetes/issues/115099 | 1,534,432,932 | 115,099 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

In a cluster with large enough numbers of objects, it seems to be impossible to clean them up with `deletecollection`. Because the deletecollection is failing while listing the objects from etcd. The failure happens after exactly 34 seconds, due to the 34-second max timeout introduced in PR #96901... | Deletecollection failing after 34 seconds | https://api.github.com/repos/kubernetes/kubernetes/issues/115090/comments | 9 | 2023-01-16T01:10:23Z | 2023-01-30T20:41:03Z | https://github.com/kubernetes/kubernetes/issues/115090 | 1,534,094,180 | 115,090 |

[

"kubernetes",

"kubernetes"

] | Looking AGAIN at the issue with Service ports having an insufficient merge key for client-side `apply`. The issue also appears in `kubectl edit` but is fixed in server-side `apply`.

Could we possibly do server-side for `kubectl edit`? | kubectl edit --server-side ? | https://api.github.com/repos/kubernetes/kubernetes/issues/115089/comments | 3 | 2023-01-16T01:06:50Z | 2023-01-18T01:26:03Z | https://github.com/kubernetes/kubernetes/issues/115089 | 1,534,092,104 | 115,089 |

[

"kubernetes",

"kubernetes"

] | https://github.com/kubernetes/kubernetes/pull/111654 started here but it got big (shocking!) and the contributor got busy. There are a lot of potential cleanups in there, though, and I hate wasting work.

I see it as an opportunity for someone to rack up some PRs and net-negative LOCs (the best kind!) - just take ov... | Cleanup dead code in the codebase | https://api.github.com/repos/kubernetes/kubernetes/issues/115088/comments | 7 | 2023-01-16T00:20:36Z | 2023-04-24T21:23:03Z | https://github.com/kubernetes/kubernetes/issues/115088 | 1,534,069,616 | 115,088 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

Please extend the CronJob API with a Semaphore feature.

```

semaphore:

name: cron-semaphore

maxConcurrency: 1

```

It allows to control concurrency of CronJobs being executed. It can be used to limit the number of concurrent different CronJobs running at the same... | Feature Request: Add semaphore feature to CronJob API | https://api.github.com/repos/kubernetes/kubernetes/issues/115086/comments | 10 | 2023-01-15T14:37:00Z | 2023-07-07T20:42:58Z | https://github.com/kubernetes/kubernetes/issues/115086 | 1,533,843,601 | 115,086 |

[

"kubernetes",

"kubernetes"

] | In kueue and kube-queue or some other situation, we will declare the reference to a resource through label or annotation like `kueue.x-k8s.io/queue-name: training`.

But it seems that there isn't any method to ensure the related resources' existence. Lack of existence of related resources can lead to bad consequence. A... | support checking dependency resources when creating a resource | https://api.github.com/repos/kubernetes/kubernetes/issues/115084/comments | 6 | 2023-01-15T09:41:17Z | 2023-06-14T10:35:54Z | https://github.com/kubernetes/kubernetes/issues/115084 | 1,533,738,051 | 115,084 |

[

"kubernetes",

"kubernetes"

] | /kind feature

/sig scheduling

We don't support [`plugin_execution_duration_seconds`](https://github.com/kubernetes/kubernetes/blob/eabb70833a5649e10ab81f04423ce5cb16aba1b7/pkg/scheduler/metrics/metrics.go#L146) in PreEnqueue. It's worth implementing in PreEnqueue as well because the performance of PreEnqueue is cri... | `plugin_execution_duration_seconds` in PreEnqueue | https://api.github.com/repos/kubernetes/kubernetes/issues/115083/comments | 6 | 2023-01-15T08:44:46Z | 2023-03-12T20:52:41Z | https://github.com/kubernetes/kubernetes/issues/115083 | 1,533,721,128 | 115,083 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Execution of node e2e tests fails to ssh to the **existing** google cloud instance.

here is a console output:

```

$ make test-e2e-node REMOTE=true

+++ [0114 23:09:26] Building go targets for linux/amd64

github.com/onsi/ginkgo/v2/ginkgo (non-static)

Creating artifacts directory at /tmp/_a... | `make test-e2e-node REMOTE=true` fails with error `ssh: Could not resolve hostname` | https://api.github.com/repos/kubernetes/kubernetes/issues/115080/comments | 18 | 2023-01-14T21:34:54Z | 2024-09-25T23:13:31Z | https://github.com/kubernetes/kubernetes/issues/115080 | 1,533,502,334 | 115,080 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Querying for all kubelet metrics at below end point:

https://<k8s-server>:10250/metrics

From the output i am not getting below metrics:

kubelet_certificate_manager_server_ttl_seconds

kubelet_server_expiration_renew_errors

From the below k8s documentation page i can see the above metri... | k8s metrics not found | https://api.github.com/repos/kubernetes/kubernetes/issues/115071/comments | 11 | 2023-01-14T02:38:40Z | 2023-01-19T19:12:27Z | https://github.com/kubernetes/kubernetes/issues/115071 | 1,533,105,876 | 115,071 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When you delete a Namespace, Kubernetes automatically deletes all the objects in that namespace.

However, if you have a workload in that namespace (e.g. a custom resource) AND the workload has a finalizer AND the controller that manages that finalizer is granted permission by a RoleBinding in th... | RoleBindings deleted by namespace finalizer before other objects using those permissions finish stopping/finalizing | https://api.github.com/repos/kubernetes/kubernetes/issues/115070/comments | 13 | 2023-01-14T02:07:12Z | 2024-01-17T20:21:19Z | https://github.com/kubernetes/kubernetes/issues/115070 | 1,533,097,987 | 115,070 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

An automated way of creating PVCs for the individual pods of Indexed Job. This is similar to PersistentVolumeClaimTemplate in StatefulSets.

### Why is this needed?

Many long running training workloads use checkpointing to recover from failures. In some cases, the individual worke... | Stateful Indexed Job | https://api.github.com/repos/kubernetes/kubernetes/issues/115066/comments | 19 | 2023-01-13T19:36:18Z | 2025-01-14T10:13:04Z | https://github.com/kubernetes/kubernetes/issues/115066 | 1,532,812,230 | 115,066 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I try to read environment variables defined by envFromin in the shell process. But it failed.

Then I switch to the bash process, and Ican read the environment variables defined by envFrom.

### What did you expect to happen?

read environment variables defined by envFromin in the shell process.

#... | can not read environment variables defined by envfrom in the shell process | https://api.github.com/repos/kubernetes/kubernetes/issues/115057/comments | 9 | 2023-01-13T11:13:25Z | 2023-06-22T09:09:32Z | https://github.com/kubernetes/kubernetes/issues/115057 | 1,532,136,154 | 115,057 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

[Semver](https://semver.org/#backusnaur-form-grammar-for-valid-semver-versions) is allowed to have the following syntax: `1.2.3+45`, where `45` is the build number. We are using this versioning approach, and when we tried to put that into the `app.kubernetes.io/version` label we got the following... | Valid Semver 2.0 not compatible with kubernetes labels | https://api.github.com/repos/kubernetes/kubernetes/issues/115055/comments | 25 | 2023-01-13T10:31:57Z | 2025-02-06T10:43:11Z | https://github.com/kubernetes/kubernetes/issues/115055 | 1,532,079,640 | 115,055 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have a pod writing the termination message into an empty dir volume with bidirectional mount propagation, every time I delete the pod, the pod will stuck at terminating, and I have to do a force deletion. the logs of kubelet showing something like

> UnmountVolume.TearDown failed for volume "cache... | Set TerminationMessagePath to a Path Under Bidirectional Mounted EmptyDir Blocking the Pod Deleting. | https://api.github.com/repos/kubernetes/kubernetes/issues/115054/comments | 21 | 2023-01-13T10:13:05Z | 2025-01-06T15:12:17Z | https://github.com/kubernetes/kubernetes/issues/115054 | 1,532,051,937 | 115,054 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

While I was reading the [`node_affinity.go` ](https://github.com/kubernetes/kubernetes/blob/master/pkg/scheduler/framework/plugins/nodeaffinity/node_affinity.go)plugin source code in the scheduler, I found there may potentially exist a logic issue in the PreFilter().

As from the [document](htt... | A potential issue in the node_affinity | https://api.github.com/repos/kubernetes/kubernetes/issues/115047/comments | 5 | 2023-01-13T02:35:33Z | 2023-04-12T01:17:52Z | https://github.com/kubernetes/kubernetes/issues/115047 | 1,531,621,864 | 115,047 |

[

"kubernetes",

"kubernetes"

] | ### Which jobs are failing?

* https://testgrid.k8s.io/sig-node-containerd#cos-cgroupv1-containerd-node-e2e-serial

* https://testgrid.k8s.io/sig-node-containerd#cos-cgroupv2-containerd-node-e2e-serial

### Which tests are failing?

E2eNode Suite.[It] [sig-node] Container Manager Misc [Serial] Validate OOM score adjust... | Test failing - sig-node - Container Manager Misc [Serial] Validate OOM score adjustments [NodeFeature:OOMScoreAdj] once the node is setup guaranteed container's oom-score-adj should be -998 | https://api.github.com/repos/kubernetes/kubernetes/issues/115045/comments | 7 | 2023-01-13T01:17:07Z | 2023-02-01T01:50:05Z | https://github.com/kubernetes/kubernetes/issues/115045 | 1,531,564,466 | 115,045 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I was trying to add volume expansion support to an out-of-tree CSI driver (https://github.com/apalia/cloudstack-csi-driver), then when I actually tried to expand a volume which I had created, I saw an error message from kube-controller-manager saying "didn't find a plugin capable of expanding the ... | Volumes cannot be expanded with out-of-tree CSI drivers | https://api.github.com/repos/kubernetes/kubernetes/issues/115044/comments | 7 | 2023-01-13T00:58:37Z | 2023-07-15T22:38:02Z | https://github.com/kubernetes/kubernetes/issues/115044 | 1,531,552,583 | 115,044 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I'm [upgrading Kubernetes v1.25 to v1.26 in the Security Profiles Operator](https://github.com/kubernetes-sigs/security-profiles-operator/pull/1414), where the upgrade to v1.26 adds the `Claims []ResourceClaim` to the type `ResourceRequirements`:

https://github.com/kubernetes/kubernetes/blob/48... | Importing `k8s.io/api/core/v1.ResourceRequirements` into a CRD makes it invalid when upgrading to v1.26.0 | https://api.github.com/repos/kubernetes/kubernetes/issues/115026/comments | 3 | 2023-01-12T15:54:34Z | 2023-01-12T16:06:14Z | https://github.com/kubernetes/kubernetes/issues/115026 | 1,530,960,477 | 115,026 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

An ingress resource with both `kubernetes.io/ingress.class` annotation and `spec.ingressClassName` set cannot be **created**. Ingress resources can be updated to include both the annotation and the `ingressClassName` without issue.

Kubernetes response:

> The Ingress "example-ingress" is invali... | Cannot create ingress with ingressClass annotation and IngressClassName both set | https://api.github.com/repos/kubernetes/kubernetes/issues/115018/comments | 15 | 2023-01-12T14:58:23Z | 2023-07-12T08:08:20Z | https://github.com/kubernetes/kubernetes/issues/115018 | 1,530,860,286 | 115,018 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I tried to execute the script to start a cluster and it failed:

```

./hack/local-up-cluster.sh: line 515: /tmp/kube_egress_selector_configuration.yaml: Permission denied

./hack/local-up-cluster.sh: line 533: /tmp/kube-audit-policy-file: Permission denied

```

Update: After some investigati... | Permission errors at local-up-cluster.sh on /tmp directory | https://api.github.com/repos/kubernetes/kubernetes/issues/115016/comments | 7 | 2023-01-12T14:16:14Z | 2023-01-19T03:02:47Z | https://github.com/kubernetes/kubernetes/issues/115016 | 1,530,790,321 | 115,016 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

when kubectl exec command like

```

kubectl restart pod-name container-name -n namespace-name

```

and then kubelet help to restart related the conotainer.

### Why is this needed?

For some network issue and ip monitoring issue, pods' change will lead to the ip change actions, i... | Can kubelet support restart pods/container action that triggered by kubectl. | https://api.github.com/repos/kubernetes/kubernetes/issues/115015/comments | 8 | 2023-01-12T13:16:13Z | 2023-06-15T08:46:55Z | https://github.com/kubernetes/kubernetes/issues/115015 | 1,530,697,286 | 115,015 |

[

"kubernetes",

"kubernetes"

] | ### What would you like to be added?

It would be nice support labeled Events in `EventRecorder` interface.

PoC of the required functionality available here:

https://github.com/odubajDT/client-go/commit/44fc5c38a817d0585a4692e15413ddc1f6291a8d

### Why is this needed?

Labels are the default filter objects ... | Support labeled events in EventRecorder interface | https://api.github.com/repos/kubernetes/kubernetes/issues/115009/comments | 6 | 2023-01-12T11:57:34Z | 2023-01-27T09:04:48Z | https://github.com/kubernetes/kubernetes/issues/115009 | 1,530,587,336 | 115,009 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Metrics collected from http://node:10255/metrics/cadvisor endpoints include information on non-existent pods, making them larger and larger.

### What did you expect to happen?

Metrics data collected from endpoints http://node:10255/metrics/cadvisor should only include information on existing... | kubelet/cadvisor metrics are only getting bigger over time | https://api.github.com/repos/kubernetes/kubernetes/issues/115008/comments | 15 | 2023-01-12T11:07:21Z | 2023-02-11T08:27:37Z | https://github.com/kubernetes/kubernetes/issues/115008 | 1,530,523,945 | 115,008 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Kubelet's volume manager reconciller called UnPublish on a pvc which was being referred in a running pod due to which pod started getting I/O error on that volume.

Reason -

Upon kubelet restart, before marking hasAddedPods = true Kubelet's volume manager populates it's desired state of world (... | Kubelet's volume manager called NodeUnpublishVolume for a volume which is being referred in a running pod, leading to I/O error | https://api.github.com/repos/kubernetes/kubernetes/issues/115006/comments | 8 | 2023-01-12T07:51:30Z | 2023-06-17T20:19:59Z | https://github.com/kubernetes/kubernetes/issues/115006 | 1,530,259,597 | 115,006 |

[

"kubernetes",

"kubernetes"

] | /sig auth

/triage accepted

/assign

Tests to implement -

- symlink swap integration test.

- update unit tests for encryption config controller to remove sleep. (Ref - https://github.com/kubernetes/kubernetes/pull/113896#issuecomment-1409508875)

- integration test for encrypting `events` resources. (Ref - https... | [KMSv2] Add symlink swap integration test for Hot Reload | https://api.github.com/repos/kubernetes/kubernetes/issues/114999/comments | 3 | 2023-01-12T00:15:19Z | 2023-11-28T08:23:19Z | https://github.com/kubernetes/kubernetes/issues/114999 | 1,529,898,775 | 114,999 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

In https://github.com/kubernetes/kubernetes/issues/114268, we discovered that our plan for eventually removing the [VolumeZone](https://github.com/kubernetes/kubernetes/tree/master/pkg/scheduler/framework/plugins/volumezone) scheduling plugin when CSI migration is GA is not possible because the plug... | VolumeZone scheduling plugin should handle csi migration | https://api.github.com/repos/kubernetes/kubernetes/issues/114996/comments | 10 | 2023-01-11T20:08:32Z | 2023-08-18T03:20:29Z | https://github.com/kubernetes/kubernetes/issues/114996 | 1,529,621,238 | 114,996 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

In https://github.com/kubernetes/kubernetes/issues/114268, we realized that non in-tree PVs can be impacted by a future removal of beta topology labels from Nodes if they are supporting the beta topology labels.

### What did you expect to happen?

We should allow users to mutate the PV NodeAffinity... | Allow PV NodeAffinity to update from beta to GA topology labels | https://api.github.com/repos/kubernetes/kubernetes/issues/114995/comments | 4 | 2023-01-11T19:29:26Z | 2023-03-07T05:56:19Z | https://github.com/kubernetes/kubernetes/issues/114995 | 1,529,567,341 | 114,995 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

When the regular pod is deleted it's status immediately transitions into `Terminating`, however when the static pod is deleted it stays in `Running` status until almost till the end just before it gets wiped out.

### What did you expect to happen?

The reported status of the regular pod and ... | Different behaviour for regular and static pods upon deletion | https://api.github.com/repos/kubernetes/kubernetes/issues/114986/comments | 26 | 2023-01-11T15:24:55Z | 2023-05-03T15:09:21Z | https://github.com/kubernetes/kubernetes/issues/114986 | 1,529,246,475 | 114,986 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

I have the following deployment:

```yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: mytarget

spec:

selector:

matchLabels:

mylabel: mytarget

replicas: 2

template:

metadata:

labels:

mylabel: mytarget

spec:

serviceAccountName: de... | envFrom not working in ephemeral containers: failed to sync secret cache | https://api.github.com/repos/kubernetes/kubernetes/issues/114984/comments | 31 | 2023-01-11T14:02:09Z | 2025-01-23T23:53:22Z | https://github.com/kubernetes/kubernetes/issues/114984 | 1,529,104,822 | 114,984 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

- Cluster created with custom CoreDNS installation, i.e. `skipPhases: [ addon/coredns ]` specified in kubeadm init configuration

- When joining a new node using `kubeadm join`, kubeadm still tries to pull all images

- If that image pull fails, kubeadm bails out despite the image not being required... | kubeadm should not pull images for skipped addon phases (coredns, kube-proxy) | https://api.github.com/repos/kubernetes/kubernetes/issues/114979/comments | 4 | 2023-01-11T08:23:53Z | 2023-01-11T08:33:54Z | https://github.com/kubernetes/kubernetes/issues/114979 | 1,528,621,552 | 114,979 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [6140da152b810b30d99b](https://go.k8s.io/triage#6140da152b810b30d99b)

##### Error text:

```

[FAILED] timed out waiting for the condition

In [It] at: test/e2e/node/pods.go:276 @ 01/02/23 10:40:40.11

```

#### Recent failures:

[1/9/2023, 3:08:19 PM pr:pull-kubernetes-e2e-kind](https://prow.k... | Failure cluster [6140da15...] e2e Flake: Finished pod is deleted before events can be retrieved | https://api.github.com/repos/kubernetes/kubernetes/issues/114971/comments | 18 | 2023-01-10T21:33:43Z | 2025-01-21T16:44:56Z | https://github.com/kubernetes/kubernetes/issues/114971 | 1,528,037,378 | 114,971 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

python2 is long deprecated, everything should be on python3, but that doesn't appear to be the case in this repo.

/sig testing | Python2 still in use | https://api.github.com/repos/kubernetes/kubernetes/issues/114967/comments | 4 | 2023-01-10T18:59:13Z | 2023-01-19T15:24:51Z | https://github.com/kubernetes/kubernetes/issues/114967 | 1,527,864,461 | 114,967 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

If a CR converter is wired in and it modifies the input list, the delegating CR converter does not protect against this.

### What did you expect to happen?

A converter cannot effect the input list

### How can we reproduce it (as minimally and precisely as possible)?

Not really possible externall... | CR conversion needs to protect against a converter that mutates the input list | https://api.github.com/repos/kubernetes/kubernetes/issues/114958/comments | 2 | 2023-01-10T17:00:56Z | 2023-01-31T21:20:31Z | https://github.com/kubernetes/kubernetes/issues/114958 | 1,527,725,181 | 114,958 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

It "connects" to dockershim

```

DEBU[0000] Connected successfully using endpoint: unix:///var/run/dockershim.sock

DEBU[0000] VersionRequest: &VersionRequest{Version:v1,}

E1213 20:22:57.677536 53350 remote_runtime.go:145] "Version from runtime service failed" err="rpc error: code = Unavailabl... | The CRI v1 implementation of validateServiceConnection does not check for unavailable | https://api.github.com/repos/kubernetes/kubernetes/issues/114956/comments | 3 | 2023-01-10T16:51:39Z | 2023-01-24T10:24:19Z | https://github.com/kubernetes/kubernetes/issues/114956 | 1,527,712,931 | 114,956 |

[

"kubernetes",

"kubernetes"

] | Since the cloud provider extraction, it's become clear that many if not all of the e2e using a default storage class have been dropped, eg the sig-apps statefulset tests in [gce-cos-master-alpha-features](https://testgrid.k8s.io/google-gce#gce-cos-master-alpha-features). Even though these tests are running on GCE and s... | Improve coverage of e2e tests requiring a default storage class | https://api.github.com/repos/kubernetes/kubernetes/issues/114955/comments | 15 | 2023-01-10T16:42:17Z | 2024-08-08T00:51:51Z | https://github.com/kubernetes/kubernetes/issues/114955 | 1,527,699,854 | 114,955 |

[

"kubernetes",

"kubernetes"

] | /sig architecture

/area code-organization

/kind cleanup

> Opened issues for tracking these deps in those repo and `github.com/pkg/errors` is tracked in https://github.com/kubernetes/kubernetes/issues/113627.

[archived repos: auto-updated per month](https://github.com/pacoxu/github-repos-stats/tree/add-archived)... | Umbrella issue: github archived dependencies (2023 Jan) | https://api.github.com/repos/kubernetes/kubernetes/issues/114942/comments | 6 | 2023-01-10T07:04:12Z | 2024-12-25T10:12:43Z | https://github.com/kubernetes/kubernetes/issues/114942 | 1,526,870,539 | 114,942 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

http2: server: error reading preface from client 192.168.2.13:41264: read tcp 192.168.2.11:6443->192.168.2.13:41264: read: connection reset by peer

http2: server: error reading preface from client 10.224.157.55:57398: read tcp 192.168.2.11:6443->10.224.157.55:57398: read: connection reset by peer

... | kube-apiserver http2: server: xxx read: connection reset by peer | https://api.github.com/repos/kubernetes/kubernetes/issues/114939/comments | 9 | 2023-01-10T03:38:39Z | 2023-09-07T06:12:47Z | https://github.com/kubernetes/kubernetes/issues/114939 | 1,526,709,486 | 114,939 |

[

"kubernetes",

"kubernetes"

] | ### Failure cluster [dd3d4eceb1d8abf2dc14](https://go.k8s.io/triage#dd3d4eceb1d8abf2dc14)

##### Error text:

```

[FAILED] failed to wait for definition "com.example.crd-publish-openapi-test-multi-to-single-ver.v6alpha1.e2e-test-crd-publish-openapi-393-crd" not to be served anymore: failed to wait for OpenAPI spec v... | Failure cluster [dd3d4ece...] net/http: TLS handshake timeout | https://api.github.com/repos/kubernetes/kubernetes/issues/114934/comments | 7 | 2023-01-09T21:45:53Z | 2024-07-18T20:17:26Z | https://github.com/kubernetes/kubernetes/issues/114934 | 1,526,374,700 | 114,934 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

```

apiVersion: v1

kind: Pod

metadata:

name: correctly-invalid

labels:

foo: "{bar}"

spec:

containers:

- name: pause

image: "public.ecr.aws/eks-distro/kubernetes/pause:3.2"

---

apiVersion: v1

kind: Pod

metadata:

name: incorrectly-valid

spec:

containers:

-... | topologySpreadConstraints[*].labelSelector is not correctly validated | https://api.github.com/repos/kubernetes/kubernetes/issues/114932/comments | 4 | 2023-01-09T21:21:50Z | 2023-01-11T18:06:04Z | https://github.com/kubernetes/kubernetes/issues/114932 | 1,526,343,604 | 114,932 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

Perf counters are currently updated every second which doesn't match the default cadvisor collection period as 10 seconds.

https://github.com/kubernetes/kubernetes/blob/eb7fd7f51c166591adbc52eacef3ce1e4d17bf04/pkg/kubelet/winstats/perfcounters.go#L37

### What did you expect to happen?

Increas... | Increase the update interval of perf counters to 10 seconds | https://api.github.com/repos/kubernetes/kubernetes/issues/114928/comments | 17 | 2023-01-09T19:38:50Z | 2023-03-14T21:13:13Z | https://github.com/kubernetes/kubernetes/issues/114928 | 1,526,200,993 | 114,928 |

[

"kubernetes",

"kubernetes"

] | Hello! Im having some problems with kubectl/kubernetes.

I have a kubernetes cluster running on Rancher, my customer have an internet proxy and racher has been setted to use this proxy, all the U.I are OK and my cluster is active.

Cluster Structure:

```

1 Rancher Node - RKE2

Custom Cluster created by Ranch... | Unable to connect to the server: dial tcp: lookup raw.githubusercontent.com on 10.129.251.125:53: server misbehaving | https://api.github.com/repos/kubernetes/kubernetes/issues/114926/comments | 6 | 2023-01-09T19:37:55Z | 2023-01-13T00:40:43Z | https://github.com/kubernetes/kubernetes/issues/114926 | 1,526,199,890 | 114,926 |

[

"kubernetes",

"kubernetes"

] | As discussed [in the KEP](https://github.com/kubernetes/enhancements/tree/master/keps/sig-node/3063-dynamic-resource-allocation#user-permissions-and-quotas), most likely useful quotas need to be implemented by resource drivers because only they know how much resources get consumed by a specific claim.

What can be limi... | dynamic resource allocation: quota = limit number of resource claims per namespace | https://api.github.com/repos/kubernetes/kubernetes/issues/114916/comments | 7 | 2023-01-09T10:49:00Z | 2024-07-23T19:20:45Z | https://github.com/kubernetes/kubernetes/issues/114916 | 1,525,336,172 | 114,916 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

On creating following service

apiVersion: v1

kind: Service

metadata:

name: coordinator-mimic

spec:

type: LoadBalancer

selector:

app.kubernetes.io/name: hke-mimic

ports:

- port: 25025

targetPort: 8000

nodePort: 32444

`

Deleted it and created it again ge... | Nodeport is already allocated | https://api.github.com/repos/kubernetes/kubernetes/issues/114911/comments | 8 | 2023-01-09T06:23:16Z | 2023-01-09T21:47:15Z | https://github.com/kubernetes/kubernetes/issues/114911 | 1,525,007,796 | 114,911 |

[

"kubernetes",

"kubernetes"

] | ### What happened?

- Create a `HorizontalPodAutoscaler` using the `autoscaling/v2` API

- Perform a describe using kubectl

### What did you expect to happen?

Proper HPA configuration without any warnings/errors. Received a warning instead:

```

autoscaling/v2beta2 HorizontalPodAutoscaler is deprecated in v1.23+, ... | kubectl warning on autoscaling/v2 hpa | https://api.github.com/repos/kubernetes/kubernetes/issues/114908/comments | 2 | 2023-01-09T03:34:32Z | 2023-01-17T10:54:34Z | https://github.com/kubernetes/kubernetes/issues/114908 | 1,524,875,871 | 114,908 |