id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

3440d140-8176-4d07-a3fb-2839795d47f7 | trentmkelly/LessWrong-43k | LessWrong | In 1 year and 5 years what do you see as "the normal" world.

We all have a mental image of pre-COVID normal.

I often hear people saying "I cannot wait to get back to normal." or asking "When will we get back to normal?" I think that is an expectation that is sure to be disappointed. I suspect that is the case for most who read this site.

I'm curious about the mental image of the near future normal that is held here. I'll list a few areas for thoughts but also don't think anyone should be limited in any thoughts they want to share.

1) International Travel -- can be general, tourism related or business related

2) Entertainment -- theater/movies, live sports and concerts. One thought here might be a move to more open air venues rather than indoors.

3) Social interactions in general. Does some of the zenophobia that has occurred persist or die away (say due to vaccines).

4) Will vaccines change things that much? |

6e0dcfbf-2847-486b-909f-f0d1caf014d0 | trentmkelly/LessWrong-43k | LessWrong | Public Transit is not Infinitely Safe

I recently came across this tweet, screenshotted into a popular Facebook group:

> Here's a truth bomb:

>

> Take the U.S. city you're most afraid of, one with a very high murder rate or property crime rate.

>

> If it has any sort of public transit, it is still statistically safer to use public transit in that city at ANY time of day than to drive where you live.

>

> —Matthew Chapman, 2023-06-14

This got ~1M views, doesn't cite anything, was given without any research, and, I'm pretty sure, is wrong. While I'm a major fan of public transit, they've stacked this comparison in a way that's really favorable to cars, and it's not surprising that public transit doesn't make it.

Safety is a complicated concept, and risks are situational: in a car you're much more likely to be hurt in a collision, while on public transit you're much more likely to be hurt by another passenger. To get a clear comparison I looked just at deaths, which is also an area where we can get good statistics.

I can't find a listing of public transit agencies by homicide rate, but Chicago is a large city with a lot of homicides and they make their data available so let's look there. In 2022 there were 244M CTA rides. Downloading the Chicago Police Data and filtering to 2022 homicides on public transit, I see nine. This is 3.7 homicides per 100M trips.

(Note that the original claim was for any city, and there are dozens of US cities with homicide rates higher than Chicago's. I think it's pretty likely that at least one of these cities has a public transit system with more homicides than the CTA.)

To fairly compare this to the risk from driving, we need homicides per distance. How long is a trip? I can't find 2022 data, but the CTA's President's 2020 Budget Recommendations gives 4.1mi (1359M + 613M passenger miles divided by 230M + 249M trips). This means 0.9 homicides per 100M miles travelled.

I live in MA, and while 2022 FARS data isn't out yet, in 2021 there were 0.71 driving deaths per 100 |

e32a32c3-f236-4ecc-9501-a3c967880806 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Increasing the Interpretability of Recurrent Neural Networks Using Hidden Markov Models

1 Introduction

---------------

Following the recent progress in deep learning, researchers and practitioners of machine learning are recognizing the importance of understanding and interpreting what goes on inside these black box models. Recurrent neural networks have recently revolutionized speech recognition and translation, and these powerful models could be very useful in other applications involving sequential data. However, adoption has been slow in applications such as health care, where practitioners are reluctant to let an opaque expert system make crucial decisions. If we can make the inner workings of RNNs more interpretable, more applications can benefit from their power.

There are several aspects of what makes a model or algorithm understandable to humans. One aspect is model complexity or parsimony. Another aspect is the ability to trace back from a prediction or model component to particularly influential features in the data Rüping ([2006](#bib.bib8)) Kim et al. ([2015](#bib.bib4)). This could be useful for understanding mistakes made by neural networks, which have human-level performance most of the time, but can perform very poorly on seemingly easy cases. For instance, convolutional networks can misclassify adversarial examples with very high confidence (Nguyen et al., [2015](#bib.bib6)), and made headlines in 2015 when the image tagging algorithm in Google Photos mislabeled African Americans as gorillas. It’s reasonable to expect recurrent networks to fail in similar ways as well. It would thus be useful to have more visibility into where these sorts of errors come from, i.e. which groups of features contribute to such flawed predictions.

Several promising approaches to interpreting RNNs have been developed recently. Che et al. ([2015](#bib.bib2)) have approached this by using gradient boosting trees to predict LSTM output probabilities and explain which features played a part in the prediction. They do not model the internal structure of the LSTM, but instead approximate the entire architecture as a black box. Karpathy et al. ([2016](#bib.bib3)) showed that in LSTM language models, around 10% of the memory state dimensions can be interpreted with the naked eye by color-coding the text data with the state values; some of them track quotes, brackets and other clearly identifiable aspects of the text. Building on these results, we take a somewhat more systematic approach to looking for interpretable hidden state dimensions, by using decision trees to predict individual hidden state dimensions (Figure [2](#S2.F2 "Figure 2 ‣ 2.2 Hidden Markov models ‣ 2 Methods")). We visualize the overall dynamics of the hidden states by coloring the training data with the k-means clusters on the state vectors (Figures [2(b)](#S3.F2.sf2 "(b) ‣ Figure 4 ‣ 3 Experiments"), [3(b)](#S3.F3.sf2 "(b) ‣ Figure 4 ‣ 3 Experiments")).

We explore several methods for building interpretable models by combining LSTMs and HMMs. The existing body of literature mostly focuses on methods that specifically train the RNN to predict HMM states (Bourlard & Morgan, [1994](#bib.bib1)) or posteriors (Maas et al., [2012](#bib.bib5)), referred to as hybrid or tandem methods respectively.

We first investigate an approach that does not require the RNN to be modified in order to make it understandable, as the interpretation happens after the fact. Here, we model the big picture of the state changes in the LSTM, by extracting the hidden states and approximating them with a continuous emission hidden Markov model (HMM). We then take the reverse approach where the HMM state probabilities are added to the output layer of the LSTM (see Figure [1](#S2.F1 "Figure 1 ‣ 2.1 LSTM models ‣ 2 Methods")). The LSTM model can then make use of the information from the HMM, and fill in the gaps when the HMM is not performing well, resulting in an LSTM with a smaller number of hidden state dimensions that could be interpreted individually (Figures [4](#S3.F4 "Figure 4 ‣ 3 Experiments"), [4](#S3.F4 "Figure 4 ‣ 3 Experiments")).

2 Methods

----------

We compare a hybrid HMM-LSTM approach with a continuous emission HMM (trained on the hidden states of a 2-layer LSTM), and a discrete emission HMM (trained directly on data).

###

2.1 LSTM models

We use a character-level LSTM with 1 layer and no dropout, based on the Element-Research library. We train the LSTM for 10 epochs, starting with a learning rate of 1, where the learning rate is halved whenever exp(−lt)>exp(−lt−1)+1, where lt is the log likelihood score at epoch t. The L2-norm of the parameter gradient vector is clipped at a threshold of 5.

Figure 1: Hybrid HMM-LSTM algorithm.

###

2.2 Hidden Markov models

The HMM training procedure is as follows:

Initialization of HMM hidden states:

* (Discrete HMM) Random multinomial draw for each time step (i.i.d. across time steps).

* (Continuous HMM) K-means clusters fit on LSTM states, to speed up convergence relative to random initialization.

At each iteration:

1. Sample states using Forward Filtering Backwards Sampling algorithm (FFBS, Rao & Teh ([2013](#bib.bib7))).

2. Sample transition parameters from a Multinomial-Dirichlet posterior.

Let nij be the number of transitions from state i to state j. Then the posterior distribution of the i-th row of transition matrix T (corresponding to transitions from state i) is:

| | | |

| --- | --- | --- |

| | Ti∼Mult(nij|Ti)Dir(Ti|α) | |

where α is the Dirichlet hyperparameter.

3. (Continuous HMM) Sample multivariate normal emission parameters from Normal-Inverse-Wishart posterior for state i:

| | | |

| --- | --- | --- |

| | μi,Σi∼N(y|μi,Σi)N(μi|0,Σi)IW(Σi) | |

(Discrete HMM) Sample the emission parameters from a Multinomial-Dirichlet posterior.

Evaluation:

We evaluate the methods on how well they predict the next observation in the validation set. For the HMM models, we do a forward pass on the validation set (no backward pass unlike the full FFBS), and compute the HMM state distribution vector pt for each time step t. Then we compute the predictive likelihood for the next observation as follows:

| | | |

| --- | --- | --- |

| | P(yt+1|pt)=n∑xt=1n∑xt+1=1ptxt⋅Txt,xt+1⋅P(yt+1|xt+1) | |

where n is the number of hidden states in the HMM.

Figure 2: Decision tree predicting an individual hidden state dimension of the hybrid algorithm based on the preceding characters on the Linux data. The hidden state dimensions of the 10-state hybrid mostly track comment characters.

###

2.3 Hybrid models

Our main hybrid model is put together sequentially, as shown in Figure [1](#S2.F1 "Figure 1 ‣ 2.1 LSTM models ‣ 2 Methods"). We first run the discrete HMM on the data, outputting the hidden state distributions obtained by the HMM’s forward pass, and then add this information to the architecture in parallel with a 1-layer LSTM. The linear layer between the LSTM and the prediction layer is augmented with an extra column for each HMM state. The LSTM component of this architecture can be smaller than a standalone LSTM, since it only needs to fill in the gaps in the HMM’s predictions. The HMM is written in Python, and the rest of the architecture is in Torch.

We also build a joint hybrid model, where the LSTM and HMM are simultaneously trained in Torch. We implemented an HMM Torch module, optimized using stochastic gradient descent rather than FFBS. Similarly to the sequential hybrid model, we concatenate the LSTM outputs with the HMM state probabilities.

3 Experiments

--------------

We test the models on several text data sets on the character level: the Penn Tree Bank (5M characters), and two data sets used by Karpathy et al. ([2016](#bib.bib3)), Tiny Shakespeare (1M characters) and Linux Kernel (5M characters). We chose k=20 for the continuous HMM based on a PCA analysis of the LSTM states, as the first 20 components captured almost all the variance.

Table [1](#S3.T1 "Table 1 ‣ 3 Experiments") shows the predictive log likelihood of the next text character for each method. On all text data sets, the hybrid algorithm performs a bit better than the standalone LSTM with the same LSTM state dimension. This effect gets smaller as we increase the LSTM size and the HMM makes less difference to the prediction (though it can still make a difference in terms of interpretability). The hybrid algorithm with 20 HMM states does better than the one with 10 HMM states. The joint hybrid algorithm outperforms the sequential hybrid on Shakespeare data, but does worse on PTB and Linux data, which suggests that the joint hybrid is more helpful for smaller data sets. The joint hybrid is an order of magnitude slower than the sequential hybrid, as the SGD-based HMM is slower to train than the FFBS-based HMM.

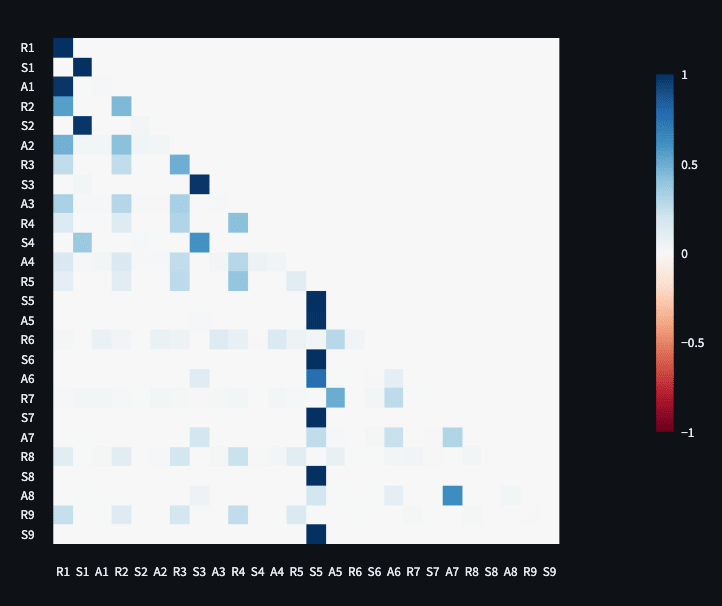

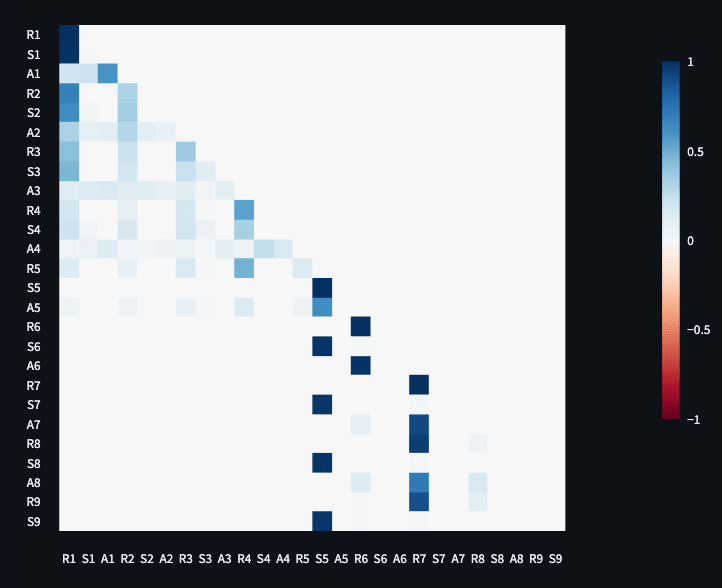

We interpret the HMM and LSTM states in the hybrid algorithm with 10 LSTM state dimensions and 10 HMM states in Figures [4](#S3.F4 "Figure 4 ‣ 3 Experiments") and [4](#S3.F4 "Figure 4 ‣ 3 Experiments"), showing which features are identified by the HMM and LSTM components. In Figures [2(a)](#S3.F2.sf1 "(a) ‣ Figure 4 ‣ 3 Experiments") and [3(a)](#S3.F3.sf1 "(a) ‣ Figure 4 ‣ 3 Experiments"), we color-code the training data with the 10 HMM states. In Figures [2(b)](#S3.F2.sf2 "(b) ‣ Figure 4 ‣ 3 Experiments") and [3(b)](#S3.F3.sf2 "(b) ‣ Figure 4 ‣ 3 Experiments"), we apply k-means clustering to the LSTM state vectors, and color-code the training data with the clusters. The HMM and LSTM states pick up on spaces, indentation, and special characters in the data (such as comment symbols in Linux data). We see some examples where the HMM and LSTM complement each other, such as learning different things about spaces and comments on Linux data, or punctuation on the Shakespeare data. In Figure [2](#S2.F2 "Figure 2 ‣ 2.2 Hidden Markov models ‣ 2 Methods"), we see that some individual LSTM hidden state dimensions identify similar features, such as comment symbols in the Linux data.

| | | | |

| --- | --- | --- | --- |

|

(a) Hybrid HMM component: colors correspond to 10 HMM states. Blue cluster identifies spaces. Green cluster (with white font) identifies punctuation and ends of words. Purple cluster picks up on some vowels.

|

(b) Hybrid LSTM component: colors correspond to 10 k-means clusters on hidden state vectors. Yellow cluster (with red font) identifies spaces. Grey cluster identifies punctuation (except commas). Purple cluster finds some ’y’ and ’o’ letters.

|

(a) Hybrid HMM component: colors correspond to 10 HMM states. Distinguishes comments and indentation spaces (green with yellow font) from other spaces (purple). Red cluster (with yellow font) identifies punctuation and brackets. Green cluster (yellow font) also finds capitalized variable names.

|

(b) Hybrid LSTM component: colors correspond to 10 k-means clusters on hidden state vectors. Distinguishes comments, spaces at beginnings of lines, and spaces between words (red with white font) from indentation spaces (green with yellow font). Opening brackets are red (yellow font) and closing brackets are green (white font).

|

Figure 3: Visualizing HMM and LSTM states on Shakespeare data for the hybrid with 10 LSTM state dimensions and 10 HMM states. The HMM and LSTM components learn some complementary features in the text: while both learn to identify spaces, the LSTM does not completely identify punctuation or pick up on vowels, which the HMM has already done.

Figure 4: Visualizing HMM and LSTM states on Linux data for the hybrid with 10 LSTM state dimensions and 10 HMM states. The HMM and LSTM components learn some complementary features in the text related to spaces and comments.

Figure 3: Visualizing HMM and LSTM states on Shakespeare data for the hybrid with 10 LSTM state dimensions and 10 HMM states. The HMM and LSTM components learn some complementary features in the text: while both learn to identify spaces, the LSTM does not completely identify punctuation or pick up on vowels, which the HMM has already done.

|

Data

|

Method

|

Parameters

|

LSTM dims

|

HMM states

|

Validation

|

Training

|

| --- | --- | --- | --- | --- | --- | --- |

|

Shakespeare

| Continuous HMM | 1300 | | 20 | -2.74 | -2.75 |

| Discrete HMM | 650 | | 10 | -2.69 | -2.68 |

| Discrete HMM | 1300 | | 20 | -2.5 | -2.49 |

| LSTM | 865 | 5 | | -2.41 | -2.35 |

| Hybrid | 1515 | 5 | 10 | -2.3 | -2.26 |

| Hybrid | 2165 | 5 | 20 | -2.26 | -2.18 |

| LSTM | 2130 | 10 | | -2.23 | -2.12 |

| Joint hybrid | 1515 | 5 | 10 | -2.21 | -2.18 |

| Hybrid | 2780 | 10 | 10 | -2.19 | -2.08 |

| Hybrid | 3430 | 10 | 20 | -2.16 | -2.04 |

| Hybrid | 4445 | 15 | 10 | -2.13 | -1.95 |

| Joint hybrid | 3430 | 10 | 10 | -2.12 | -2.07 |

| LSTM | 3795 | 15 | | -2.1 | -1.95 |

| Hybrid | 5095 | 15 | 20 | -2.07 | -1.92 |

| Hybrid | 6510 | 20 | 10 | -2.05 | -1.87 |

| Joint hybrid | 4445 | 15 | 10 | -2.03 | -1.97 |

| LSTM | 5860 | 20 | | -2.03 | -1.83 |

| Hybrid | 7160 | 20 | 20 | -2.02 | -1.85 |

| Joint hybrid | 7160 | 20 | 10 | -1.97 | -1.88 |

|

Linux Kernel

| Discrete HMM | 1000 | | 10 | -2.76 | -2.7 |

| Discrete HMM | 2000 | | 20 | -2.55 | -2.5 |

| LSTM | 1215 | 5 | | -2.54 | -2.48 |

| Joint hybrid | 2215 | 5 | 10 | -2.35 | -2.26 |

| Hybrid | 2215 | 5 | 10 | -2.33 | -2.26 |

| Hybrid | 3215 | 5 | 20 | -2.25 | -2.16 |

| Joint hybrid | 4830 | 10 | 10 | -2.18 | -2.08 |

| LSTM | 2830 | 10 | | -2.17 | -2.07 |

| Hybrid | 3830 | 10 | 10 | -2.14 | -2.05 |

| Hybrid | 4830 | 10 | 20 | -2.07 | -1.97 |

| LSTM | 4845 | 15 | | -2.03 | -1.9 |

| Joint hybrid | 5845 | 15 | 10 | -2.00 | -1.88 |

| Hybrid | 5845 | 15 | 10 | -1.96 | -1.84 |

| Hybrid | 6845 | 15 | 20 | -1.96 | -1.83 |

| Joint hybrid | 9260 | 20 | 10 | -1.90 | -1.76 |

| LSTM | 7260 | 20 | | -1.88 | -1.73 |

| Hybrid | 8260 | 20 | 10 | -1.87 | -1.73 |

| Hybrid | 9260 | 20 | 20 | -1.85 | -1.71 |

|

Penn Tree Bank

| Continuous HMM | 1000 | 100 | 20 | -2.58 | -2.58 |

| Discrete HMM | 500 | | 10 | -2.43 | -2.43 |

| Discrete HMM | 1000 | | 20 | -2.28 | -2.28 |

| LSTM | 715 | 5 | | -2.22 | -2.22 |

| Hybrid | 1215 | 5 | 10 | -2.14 | -2.15 |

| Joint hybrid | 1215 | 5 | 10 | -2.08 | -2.08 |

| Hybrid | 1715 | 5 | 20 | -2.06 | -2.07 |

| LSTM | 1830 | 10 | | -1.99 | -1.99 |

| Hybrid | 2330 | 10 | 10 | -1.94 | -1.95 |

| Joint hybrid | 2830 | 10 | 10 | -1.94 | -1.95 |

| Hybrid | 2830 | 10 | 20 | -1.93 | -1.94 |

| LSTM | 3345 | 15 | | -1.82 | -1.83 |

| Hybrid | 3845 | 15 | 10 | -1.81 | -1.82 |

| Hybrid | 4345 | 15 | 20 | -1.8 | -1.81 |

| Joint hybrid | 6260 | 20 | 10 | -1.73 | -1.74 |

| LSTM | 5260 | 20 | | -1.72 | -1.73 |

| Hybrid | 5760 | 20 | 10 | -1.72 | -1.72 |

| Hybrid | 6260 | 20 | 20 | -1.71 | -1.71 |

Table 1: Predictive loglikelihood comparison on the text data sets (sorted by validation set performance).

4 Conclusion and future work

-----------------------------

Hybrid HMM-RNN approaches combine the interpretability of HMMs with the predictive power of RNNs. Sometimes, a small hybrid model can perform better than a standalone LSTM of the same size. We use visualizations to show how the LSTM and HMM components of the hybrid algorithm complement each other in terms of features learned in the data. |

ebf85974-a77a-4b10-b2f9-8b688af2ec8c | trentmkelly/LessWrong-43k | LessWrong | A Teacher vs. Everyone Else

> A repairer wants your stuff to break down,

> A doctor wants you to get ill,

> A lawyer wants you to get in conflicts,

> A farmer wants you to be hungry,

> But there is only a teacher who wants you to learn.

Of course you see what is wrong with the above "argument / meme / good-thought". But the first time I came across this meme, I did not.

Until a month or two ago when this meme appeared in my head again and within seconds I discarded it away as fallacious reasoning. What was the difference this time? That I was now aware of the Conspiracy. And this meme happened to come up on one evening when I was thinking about fallacies and trying to practice my skills of methods of rationality.

If you are a teacher, and you read the meme, it will assign to you the Good Guy label. And if you are one of {repairer, doctor, lawyer, farmer, etc} then you get the Bad Guy label. There is also a third alternative in which you are neither --- say a teenager.

If you are not explicitly being labeled bad or good, then you may just move on like I did. Or maybe you put some detective effort and do realize the fallacies. Depends on your culture: If your culture has tales like, "If your teacher and your God both are in front of you, who do you greet / bow to first?" and the right answer is "why of course my teacher because otherwise how would I know about God?" then you are just more likely to award a point to the already point-rich teacher-bucket and move on.

If you get called the Bad Guy, then you have a motivation to falsify the meme. And you will likely do so. This meme does look highly fragile in hindsight.

But if you are a teacher, you have no reason to investigate. You are getting free points. And it's in fact true that you do want people to learn. So, this meme probably did originate in the teacher circle. Where it has potential to get shared without getting beaten down.

What are the fallacies though? Here is the one I can identify:

The type error of comparing desired "requi |

9c1726f4-2418-4455-9e87-e5aaca80d4f9 | trentmkelly/LessWrong-43k | LessWrong | Transparent Newcomb's Problem and the limitations of the Erasure framing

One of the aspects of the Erasure Approach that always felt kind of shaky was that in Transparent Newcomb's Problem it required you to forget that you'd seen that the the box was full. Recently come to believe that this really isn't the best way of framing the situation.

Let's begin by recapping the problem. In a room there are two boxes, with one-containing $1000 and the other being a transparent box that contains either nothing or $1 million. Before you entered the room, a perfect predictor predicted what you would do if you saw $1 million in the transparent box. If it predicted that you would one-boxed, then it put $1 million in the transparent box, otherwise it left the box empty. If you can see $1 million in the transparent box, which choice should you pick?

The argument I provided before was as follows: If you see a full box, then you must be going to one-box if the predictor really is perfect. So there would only be one decision consistent with the problem description and to produce a non-trivial decision theory problem we'd have to erase some information. And the most logical thing to erase would be what you see in the box.

I still mostly agree with this argument, but I feel the reasoning is a bit sparse, so this post will try to break it down in more detail. I'll just note in advance that when you start breaking it down, you end up performing a kind of psychological or social analysis. However, I think this is inevitable when dealing with ambiguous problems; if you could provide a mathematical proof of what an ambiguous problem meant then it wouldn't be ambiguous.

As I noted in Deconfusing Logical Counterfactuals, there is only one choice consistent with the problem (one-boxing), so in order to answer this question we'll have to construct some counterfactuals. A good way to view this is that instead of asking what choice should the agent make, we will ask whether the agent made the best choice.

Now, in order to construct these counterfactuals we'll hav |

d3c132e9-32b0-40dc-9248-9ecbb39c4472 | trentmkelly/LessWrong-43k | LessWrong | Open thread, November 21 - November 28, 2017

You can find the last Open Thread here.

IF IT'S WORTH SAYING, BUT NOT WORTH ITS OWN POST, THEN IT GOES HERE.

|

b12a717b-e747-4a04-8ae7-57c77cc1caf9 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | UK Government announces £100 million in funding for Foundation Model Taskforce.

The UK government has recently made some big investments in AI

> The Taskforce, modelled on the success of the COVID-19 Vaccines Taskforce, will develop the safe and reliable use of this pivotal artificial intelligence (AI) across the economy and ensure the UK is globally competitive in this strategic technology.

>

>

It appears the primary motivation for the investment is to speed up the growth of the UK economy

> To support businesses and public trust in these systems and drive their adoption, the Taskforce will work with the sector towards developing the safety and reliability of foundation models, both at a scientific and commercial level.

>

>

I don't yet know what the UK government means by "safety', but this might be a promising investment. It seems more likely that this will advance capabilities, considering the government's [simultaneous £900 million investment into compute technology.](https://www.theguardian.com/technology/2023/mar/15/uk-to-invest-900m-in-supercomputer-in-bid-to-build-own-britgpt) |

f6679ed8-78e5-4c60-8c50-8612b38aa032 | trentmkelly/LessWrong-43k | LessWrong | Mistakes repository

This is a repository for major, life-altering mistakes that you or others have made. Detailed accounts of specific mistakes are welcome, and so are mentions of general classes of mistakes that people often make. If similar repositories already exist (inside or outside of LW), links are greatly appreciated.

The purpose of this repository is to collect information about serious misjudgements and mistakes in order to help people avoid similar mistakes. (I am posting this repository because I'm trying to conduct a premortem on my life and figure out what catastrophic risks may screw me over in the near or far future.) |

a41aba73-14d3-436d-bde0-32b36e1c0e57 | trentmkelly/LessWrong-43k | LessWrong | Can you improve IQ by practicing IQ tests?

As an European, I did never have any IQ test, nor I know anybody who (to my knowledge) was ever administered an IQ test. I looked at some fac-simile IQ tests on the internet, expecially Raven's matrices.

When I began to read online blogs from the United States, I started to see references to the concept of IQ. I am very confused by the fact that the IQ score seems to be treated as a stable, intrinsic charachteristic of an individual (like the height or the visual acuity).

When you costantly practice some task, you usually become better at that task. I imagine that there exists a finite number of ideas required to solve Raven matrices: even when someone invents new Raven matrices for making new IQ tests, he will do so by remixing the ideas used for previous Raven matrices, because -as Cardano said- "there is practically no new idea which one may bring forward".

The IQ score is the result of an exam, much like school grades. But it is generally understood that school grades are influenced by how much effort you put in the preparation for the exam, by how much your family cares for your grades, and so on. I expect school grades to be fairly correlated to income, or to other mesures of "success".

In a hypothetical society in which all children had to learn chess, and being bad at chess was regarded as a shame, I guess that the ELO chess ratings of 17 year olds would be highly correlated with later achievements. Are IQ tests the only exception to the rule that your grade in an exam is influenced by how much you prepare for that exam? Is there a sense in which IQ is a more "intrinsic" quantity than, for example, the AP exam score, or the ELO chess rating? |

e2b424a6-0620-443c-b738-00b3ead60476 | trentmkelly/LessWrong-43k | LessWrong | Meetup :

Discussion article for the meetup :

WHEN: 30 June 2013 04:30:00PM (+0200)

WHERE: Berkeley

Discussion article for the meetup : |

408c1515-62e6-41b7-9379-a981842e75d8 | trentmkelly/LessWrong-43k | LessWrong | The ecology of conviction

Supposing that sincerity has declined, why?

It feels natural to me that sincere enthusiasms should be rare relative to criticism and half-heartedness. But I would have thought this was born of fairly basic features of the situation, and so wouldn’t change over time.

It seems clearly easier and less socially risky to be critical of things, or non-committal, than to stand for a positive vision. It is easier to produce a valid criticism than an idea immune to valid criticism (and easier again to say, ‘this is very simplistic - the situation is subtle’). And if an idea is criticized, the critic gets to seem sophisticated, while the holder of the idea gets to seem naïve. A criticism is smaller than a positive vision, so a critic is usually not staking their reputation on their criticism as much, or claiming that it is good, in the way that the enthusiast is.

But there are also rewards for positive visions and for sincere enthusiasm that aren’t had by critics and routine doubters. So for things to change over time, you really just need the scale of these incentives to change, whether in a basic way or because the situation is changing.

One way this could have happened is that the internet (or even earlier change in the information economy) somehow changed the ecology of enthusiasts and doubters, pushing the incentives away from enthusiasm. e.g. The ease, convenience and anonymity of criticizing and doubting on the internet puts a given positive vision in contact with many more critics, making it basically impossible for an idea to emerge not substantially marred by doubt and teeming with uncertainties and summarizable as ‘maybe X, but I don’t know, it’s complicated’. This makes presenting positive visions less appealing, reducing the population of positive vision havers, and making them either less confident or more the kinds of people whose confidence isn’t affected by the volume of doubt other people might have about what they are saying. Which all make them even ea |

99bfc358-50d3-4897-99bd-50d48c53b97e | trentmkelly/LessWrong-43k | LessWrong | How to be an amateur polyglot

Setting the stage

Being a polyglot is a problem of definition first. Who can be described as a polyglot? At what level do you actually “speak” the given language? Some sources cite that polyglot means speaking more than 4 languages, others 6. My take is it doesn’t matter. I am more interested in the definition of when you speak the language. If you can greet and order a coffee in 20 languages do you actually speak them? I don’t think so. Do you need to present a scientific document or write a newspaper worthy article to be considered? That’s too much. I think the best definition would be that you can go out with a group of native speakers, understand what they are saying and participate in the discussion that would range from everyday stuff to maybe work related stuff and not switching too often to English nor using google translate. It’s ok to pause and maybe ask for a specific word or ask the group if your message got across. This is what I am aiming for when I study a specific language.

Why learn a foreign language when soon we will have AI auto-translate from our glasses and other wearables? This is a valid question for work related purposes but socially it’s not. You can never be interacting with glasses talking in another language while having dinner with friends nor at a date for example. The small things that make you part of the culture are hidden in the language. The respect and the motivation to blend in is irreplaceable.

For reference here are the languages I speak at approximate levels:

* Greek - native

* English - proficient (C2)

* Spanish - high level (C1) active learning

* French - medium level (B2) active learning

* Italian - coffee+ level (B1) active learning

* Dutch - survival level (A2) in hibernation

Get started

Firstly, I think the first foreign language you learn could be taught in a formal way with an experienced teacher. That will teach you the way to structure your thought process and learn how to learn efficiently. It’s comm |

36ec1958-dc1f-4328-8b64-ca73fbbec8f5 | trentmkelly/LessWrong-43k | LessWrong | "Flinching away from truth” is often about *protecting* the epistemology

Related to: Leave a line of retreat; Categorizing has consequences.

There’s a story I like, about this little kid who wants to be a writer. So she writes a story and shows it to her teacher.

“You misspelt the word ‘ocean’”, says the teacher.

“No I didn’t!”, says the kid.

The teacher looks a bit apologetic, but persists: “‘Ocean’ is spelt with a ‘c’ rather than an ‘sh’; this makes sense, because the ‘e’ after the ‘c’ changes its sound…”

“No I didn’t!” interrupts the kid.

“Look,” says the teacher, “I get it that it hurts to notice mistakes. But that which can be destroyed by the truth should be! You did, in fact, misspell the word ‘ocean’.”

“I did not!” says the kid, whereupon she bursts into tears, and runs away and hides in the closet, repeating again and again: “I did not misspell the word! I can too be a writer!”.

I like to imagine the inside of the kid’s head as containing a single bucket that houses three different variables that are initially all stuck together:

Original state of the kid's head:

The goal, if one is seeking actual true beliefs, is to separate out each of these variables into its own separate bucket, so that the “is ‘oshun’ spelt correctly?” variable can update to the accurate state of "no", without simultaneously forcing the "Am I allowed to pursue my writing ambition?" variable to update to the inaccurate state of "no".

Desirable state (requires somehow acquiring more buckets):

The trouble is, the kid won’t necessarily acquire enough buckets by trying to “grit her teeth and look at the painful thing”. A naive attempt to "just refrain from flinching away, and form true beliefs, however painful" risks introducing a more important error than her current spelling error: mistakenly believing she must stop working toward being a writer, since the bitter truth is that she spelled 'oshun' incorrectly.

State the kid might accidentally land in, if she naively tries to "face the truth":

(You might take a moment, right now, to name the cognit |

09ae8313-0c13-48f2-be7a-2f8c7f5f190b | trentmkelly/LessWrong-43k | LessWrong | Fun and Games with Cognitive Biases

You may have heard about IARPA's Sirius Program, which is a proposal to develop serious games that would teach intelligence analysts to recognize and correct their cognitive biases. The intelligence community has a long history of interest in debiasing, and even produced a rationality handbook based on internal CIA publications from the 70's and 80's. Creating games which would systematically improve our thinking skills has enormous potential, and I would highly encourage the LW community to consider this as a potential way forward to encourage rationality more broadly.

While developing these particular games will require thought and programming, the proposal did inspire the NYC LW community to play a game of our own. Using a list of cognitive biases, we broke up into groups of no larger than four, and spent five minutes discussing each bias with regards to three questions:

1. How do we recognize it?

2. How do we correct it?

3. How do we use its existence to help us win?

The Sirius Program specifically targets Confirmation Bias, Fundamental Attribution Error, Bias Blind Spot, Anchoring Bias, Representativeness Bias, and Projection Bias. To this list, I also decided to add the Planning Fallacy, the Availability Heuristic, Hindsight Bias, the Halo Effect, Confabulation, and the Overconfidence Effect. We did this Pomodoro style, with six rounds of five minutes, a quick break, another six rounds, before a break and then a group discussion of the exercise.

Results of this exercise are posted below the fold. I encourage you to try the exercise for yourself before looking at our answers.

Caution: Dark Arts! Explicit discussion of how to exploit bugs in human reasoning may lead to discomfort. You have been warned.

Confirmation Bias

* Notice if you (don't) want a theory to be true

* Don't be afraid of being wrong, question the outcome that you fear will happen

* Seek out people with contrary opinions and be genuinely curious why they believe what they do |

230533f8-613b-4056-b94c-786333d7c59b | StampyAI/alignment-research-dataset/special_docs | Other | Canaries in Technology Mines: Warning Signs of Transformative Progress in AI

1 Canar ies in Technology Mines: Warning Signs of

Transformative Progress in AI

Carla Zoe Cremer 1 and Jess Whittlestone 2

Abstract. 1 In this paper we introduce a method ology for

identify ing early warning sign s of transformative progress in AI, to

aid anticipatory governance and research prioritisation. We

propose using expert elicitation methods to identify milestones in

AI progress, followed by collaborative causal mapping to id entify

key milestones which underpin several others. We call these key

milestones ‘canaries’ based on the colloquial phrase ‘canary in a

coal mine’ to describe advance warning of an extreme event: in this

case, advance warning of transformative AI . After d escribing and

motivating our proposed methodology, we present results from an

initial implementation to identify canaries for progress towards

high-level machine intelligence (HLMI). We conclude by

discussing the limitations of this method, possible future

improvements, and how we hope it can be used to improve

monitoring of future risks from AI progress.

1 INTRODUCTION

Progress in artificial intelligence (AI) research has accelerated in

recent years, and applications are already beginning to impact

societ y [9][43]. Some researchers warn that continued progress

could lead to much more advanced AI systems, with potential to

precipitate transformative societal changes [13][19][21][27][39].

For example, advanced machine learning systems could be used to

optimise management of safety -critical infrastructure [33];

advanced language models could be used to corrupt our online

information ecosystem [31]; and AI systems could even gradually

begin to replace a large portion of economically useful work [17].

We use the term “transformative AI” to describe a range of

possible advances in AI with potential to impact society in large

and hard -to-reverse ways [22].

Preparing for the future impacts of AI is challenging given

considerable uncertainty about how AI systems will develop . There

is substantial expert disagreement around when different advances

in AI capabilities should be expected [11][19][34]. Policy and

regulation will likely struggle to keep up with the fast pace of

technological progress [12][15][42], resulting in either stale,

outdated regulation or policy paralysis [3]. It would therefore be

valuable to be able to identify ‘early warning signs’ of

transformative AI progress, to enable more anticipatory

governance, as well as better prioritisation of research and

allocation of resources.

We call these early warning signs ‘canaries’, based on the

colloquial use of the phrase ‘canary in a coal mine’ to indicate

1 Future of Humanity Institute , University of Oxford , UK, email:

carla.cremer@philosophy.ox.ac.uk

2 Centre for the Study of Existential Risk , University of Cambridge, UK,

email: jlw84@cam.ac.uk advance warning of an extreme event. Our use of this term takes its

inspiration from an article by Etzioni [16], in which he stresses the

importance of identifying such canaries. We want to take this

suggestion seriously and propose a systematic methodology for

identifying such canaries.

Many types of indicators could be of interest and classed as

canaries, including: research progress towards key cognitive

faculties (e.g., natural language understanding ); overcoming known

technical challenges (such as improving th e data efficiency of deep

learning algorithms); or improved applicability of AI to

economically -relevant tasks (e.g. text summari zation ). What

distinguishes canaries from general markers of AI progress ( like

those discussed in [32] or [36]) is that they indicat e that

particularly transformative impacts of AI may be on the horizon.

Given that our definition of “transformative AI” is currently very

broad, c anaries are therefore defined relative to a specific form of

transformative AI or impac t. For example, we might identify

canaries for human -level artificial intelligence; canaries for

automation of a specific sector of work; or canaries for specific

types of societal risks from A I. From a governance perspective , we

are particularly interested in canaries which indicate that faster or

discontinuous progress may be on the horizon, since the impacts of

rapid progress would be especially difficult to manage once they

begin to manifest . The se therefore particularly warrant advance d

preparation.

In what follows , we describe and discuss a methodology for

identifying canaries of progress in technological research. We

focus on AI progress, but believe this method, once trialled and

tested, could be applied to other areas of technological

development. We motivate and describe the methodological

approach, which combines expert elicitation and causal mapping,

before presenting one implementation of this methodology to

identify canaries for progress towards high-level machine

intelligence (HLMI). After discu ssing potential canaries for HLMI

specifically, we discuss how to make use of canaries in monitoring

of AI progress and suggest how the limitations of this methodology

might be addressed in future iterations of this work .

2 METHODOLOGY

2.1 Background and Motivation

This work builds on two main bodies of existing literature:

research on AI forecasting , and an emerging body of work on

measuring AI progress .

The AI forecasting literature generally uses expert elicitation to

generate probabilistic estimates f or when different types of 1st International Workshop on Evaluating Progress in Artificial Intelligence - EPAI 2020

In conjunction with the 24th European Conference on Artificial Intelligence - ECAI 2020

Santiago de Compostela, Spain

2

advanced AI will be achieved [5][19][21][34]. For example, Baum

et al. [5] survey experts on when four specific milestones in AI will

be achieved: passing the Turing Test, performing Nobel quality

work, passing third grade, and becoming superhuman. Both Müller

and Bostrom [34], and Grace et al. [19] focus their survey

questions around predicting the arrival of high-level machine

intelligence (HLMI) , which the latter define as being achieved

when “unaided machines can accomplish e very task better and

more cheaply than human workers” .

However, these studies have several limitations [6] that should

make us cautious about giving their results too much weight . The

experts surveyed in these studies have no experience in quantitative

forecasting and receive no training before being surveyed, which

likely renders their predictions unreliable [10][41].

Issues of reliability aside, these forecasting studies are also

limited in what they can tell us ab out future AI progress. They have

little to say about impactful milestones on the path to advanced AI ,

let alone early -warning signs. Experts disagree substantially about

when capabilities will be achieved [11][19] and without knowing

who (if anyone) is mo re accurate in their predictions, these

forecasts cannot easily inform decisions and prioritisation around

AI today. Quantitative expert elicitations like these also do not tell

us why different experts disagree , what kinds of progress might

cause them to change their judgements , or what aspects they in fact

do agree upon. While broad probability estimates for when

advanced AI might be achieved are interesting , they tell us little

about the path from where we are now, or what could be done

today to shape th e future development and impact of AI.

At the same time, several research projects have begun to track

and measure progress in AI [7][23][36]. These projects focus on a

range of indicators relevant to AI progress, but do not make any

systematic attempt to identify which markers of progress are most

important for anticipating potentially transformative AI. Given

limited time and resources for tracking progress in AI, it is crucial

that we find ways to prioritise those measures that are most

relevant to ensuring societal benefits and mitigating risks of AI.

The approach we propose in this paper aims to address the

limitations of both work on AI forecasting and on measuring

progress in AI . In a sense, the limitations of these two bodies of

work are complementary. The AI forecasting literature focuses on

anticipating the most extreme impacts and advanced progress in

AI, but is unable to say much about the warning si gns or kinds of

progress that will be important in the near future. AI measurement

initiatives, conversely, monitor near -future progress in AI, but with

little systematic prioritisation or reflection on what progress in

different areas might mean for socie ty and governance. What is

needed, building on work in both these areas, are attempts to

identify areas of progress today that may be particularly important

to pay attention to, given concerns about the kinds of

transformative AI systems that may be possib le in future. Progress

in these areas would serve as crucial warning signs - canaries, as

well call them - suggesting more advance preparation for future AI

systems and their impacts is needed.

We believe that identifying canaries for transformative AI is a

tractable problem and therefore worth investing considerable

research effort in today. In both engineering and cognitive

development, capabilities are achieved sequentially, meaning that

there are often key underlying capabilities which, if attained,

unlock progress in many other areas. For example, musical

protolanguage is thought to have enabled grammatical competence

in the development of language in homo sapiens [8]. AI progress so far has also arguably seen such amplifiers: the use of multi -

layered n on-linear learning or stochastic gradient descent arguably

laid the foundation for unexpectedly fast progress on image

recognition, translation and speech recognition [29]. By mapping

out the dependencies between different capabilities or milestones

needed to reach some notion of transformative AI, therefore, we

should be able to identify milestones which are particularly

important for enabling many others - these are our canaries. This is

the general idea behind our approach to identifying canaries,

outlin ed in more detail in the following sections.

2.2 Proposed Methodology

The proposed methodology can be used to identify ‘canaries’ for

any transformative event . In the case of AI, the focus might be on a

transformative technology such as HLMI or AGI, a transformative

application such as flexible robotics, or a transformative impact

such as the automation of at least 50% of jobs.

Given a transformative event, our methodology has three main

steps: (1) identifying key milestones towards the event; (2)

ident ifying dependency relations between these milestones; and (3)

identifying milestones which underpin many others as ‘canaries’.

2.2.1 Identifying key milestones using expert elicitation

Like other studies of AI progress, we rely on expert elicitation

throughout this process. However, the reliability of expert

elicitation studies depends on the proper selection and use of

expertise. Though there are inevitable limitations of using any form

of subjective judgement, no matter how expert, these limitations

can be minimised with careful selection of experts and questions.

We suggest carefully selecting experts with varied expertise

relevant to the chosen question. For example, for identifying

milestones towards human -level AI , the cohort should include

experts in machine learning and computer science but also

cognitive scientists, philosophers, developmental psychologists,

evolutionary biologists, and animal cognition experts. This diverse

group would bring together expertise on the current capabilities

and limi tations of AI , with expertise on key milestones in human

cognitive development and the order in which cognitive faculties

develop. We also encourage careful design and phrasing of

questions to enable participants to make best use of their expertise.

For ex ample, rather than asking experts to identify specific

milestones towards human -level AI , which is a question for which

they are not trained , we might ask machine learning researchers

about the limitations of the methods they use every day , or ask

psycholo gists what important human capacities they see lacking in

machines .

There are several different methods available for expert

elicitation: including surveys, interviews, workshops and focus

groups, each with advantages and disadvantages [2]. Interviews

provide greater opportunity to tailor questions to the specific

expert, but can be extremely time -intensive compared to surveys,

making it difficult to consult a large number of experts. If possible,

some combination of the two may be ideal: using carefully s elected

semi -structured interviews to elicit initial milestones, followed -up

with surveys with a much broader group to validate which

milestones are widely accepted as being key.

2.2.2 Mapping dependencies between milestones using

causal graphs

3

The second step of our methodology involves convening experts to

identify dependency relations between identified milestones: that

is, which milestones may underpin, lead to, or depend on which

others. Experts should be guided in generating directed causal

graphs to represent perceived causal relations between milestones

[35]. Causal graphs show causal links between elements of a

system, represented as nodes (elements) and arrows (causal links).

A directed positive arrow from A and B indicates that A has a

positive causal influence on B. Such causal maps have been used to

support decision -making, structure knowledge, and improve

visualisation of complex scenarios [20][25][26][28] and are

particularly useful for exploring and understanding possible

futures, r ather than aiming to predict a single future [26]. They are

easily modified and constructed collaboratively, and therefore are

well-suited to helping us structure expert knowledge on

dependencies between different technological milestones.

Fuzzy cognitive maps (FCMs), a specific type of causal graph,

may be a particularly useful method for our purposes. FCMs

capture all benefits of causal mapping but can be extended into a

quantitative model, and thus lend themselves to computerised

simulations [25]. This w ill not always be necessary, but given that

our proposed method is applicable to many contexts, a flexible

model is desirable. FCMs are well able to document non -linear

interactions and experts’ mental models of causal interactions

because they can handle imprecise causal links. The variables

(nodes) can take any state between 0 and 1 (hence ‘fuzzy’),

indicating the extent to which the variable is ‘present’. When a

variable changes its state, it affects all concepts that are causally

dependent on it. FCMs h ave been used successfully in

environmental science [20][38], strategic planning [30], and other

areas [25], and have been recommended for use in futures studies,

forecasting, and technology road mapping [1][26].

In a workshop format, experts should be given brief training in

causal graph methods or FCMs, and then break into groups to

discuss dependencies between milestones. Each group should then

collaboratively construct a directed causal graph or FCM to

represent these relationships. Groups should be formed so as to

maximise the variation of expertise in each group.

2.2.3 Identifying canaries from causal graphs.

Finally, the resulting causal graphs can be aggregated and analysed

to identify canaries, by identifying the nodes with the highest

number of outgoing arrows.

The aggregation process should first focus on identifying

commonalities between all graphs which can be shared in the final

graph. Substantive disagreements may remain, which can be the

subject of mediated discussion, with a voting pro cess to decide on

final aspects of the graph.

Experts then identify nodes which they agree have significantly

more outgoing nodes (some amount of discretion from the

experts/conveners will be needed to determine what counts as

‘significant’) . Since nodes with a high density of outgoing arrows

represent milestones which underpin many others, progress on

these milestones can act as ‘canaries’ , indicating that we may see

further advances in many other areas in the near future. These

canaries can therefore act as early warning signs for more rapid and

potentially discontinuous progress, as well as for new applications

becoming ready for deployment (depending on which exact

capabilities they are likely to unlock) .

3 IMPLEMENTATION : CANDIDATE

CANARI ES FOR HLMI

We describe a partial implementation of the proposed method to

identify canaries for achieving high -level machine intelligence

(HLMI). We define HLMI here as an AI system (or collection of

AI systems) that performs at the level of an average hu man adult

on key cognitive measures required for economically relevant

tasks. 2 We interviewed experts about the limitations of current

deep learning methods from the perspective of achieving HLMI,

and used the findings to construct a causal graph of miles tones.

This allowed us to identify candidate canary capabilities. The

results must be understood as preliminary, because the causal

graphs were developed just by the authors, not a cohort of experts,

and so have limited validity. However, this initial demo nstration

and preliminary findings will form the basis for a full study with a

broader range of experts in future.

3.1 Expert elicitation to identify milestones

To identify key milestones for achieving HLMI, we interviewed 25

experts (using both a non -probabilistic, purposive sampling method

and stratified sampling method, as described by [ 12] in chapter

six). The sample covered experts in machine learning (9), computer

science with specialisation in AI (5), cognitive psychology (2),

animal cognition (1), philosophy of mind and AI (3), mathematics

(2), neuroscience (1), neuro -informatics (1), engineering (1).

Interviewees came from both academia and industry, and were

deliberately selected to be at a variety of career stages.

We conducted individual, semi -structured interviews, with a set

of core questions and themes to guide more open -ended discussion.

Semi -structured interviews use an interview guide with core

questions and themes to be explored in response to open -ended

questions to allo w interviewees to explain their position freely

[24]. Initial questions included : what do you believe deep learning

will never be able to do? Do you see limitations of deep learning

that others seem not to notice? In response to these and similar

questions tailored to the interviewee’s specific expertise , they were

asked to name what they thought were the biggest limitations of

current deep learning methods, from the perspective of achieving

HLMI.

All named limitations were collated, with shortened

explanations, and translated into ‘milestones’, i.e. capabilities

experts believe deep learning is yet to achieve on the path to

HLMI. Table 1. shows all milestones based on limitations, named

by interviewees. Because we have maintained each interviewee’s

preferred terminology, several of the milestones listed may turn out

to refer to the same or highly similar problems.

Table 1. Limitations of deep learning as perceived and named by experts

2 We use this definition, adapted from Grace et al., to highlight that what is

key for saying HLMI has been reached is that AI has the cognitive ability to

perform every task better than humans workers, not that it is in practice

deployed to do so.

4

Causal reasoning : the ability to

detect and generalise from causal

relations in data. Common sense: having a set of

background beliefs or

assumptions which are useful

across domains and tasks.

Meta -learning : the ability to learn

how to best learn in each domain. Architecture search : the ability

to automatica lly choose the best

architecture of a neural network

for a task.

Hierarchical decomposition: the

ability to decompose tasks and

objects into smaller and hierarchical

sub-components. Cross -domain generalization :

the ability to apply learning from

one task or domain to another.

Representation: the ability to learn

abstract representations of the

environment for efficient learning and

generalisation. Variable binding: the ability to

attach symbols to learned

representations, enabling

generalisation and re-use.

Disentanglement: the ability to

understand the components and

composition of observations, and

recombine and recognise them in

different contexts. Analogical reasoning: the ability

to detect abstract similarity across

domains, enabling learning and

generalisation.

Concept formation: the ability to

formulate, manipulate and

comprehend abstract concepts. Object permanence: the ability

to represent objects as

consistently existing even when

out of sight.

Grammar: the ability to construct

and decompose sentences according

to correct grammatical rules. Reading comprehension: the

ability to detect narratives,

semantic context, themes and

relations between characters in

long texts or stories.

Mathematical reasoning: the ability

to d evelop, identify and search

mathematical proofs and follow

logical deduction in reasoning. Visual question answering: the

ability to answer open -ended

questions about the content and

interpretation of an image.

Uncertainty estimation: the ability

to represent and consider different

types of uncertainty. Positing unobservables: the

ability to account for

unobservable phenomena,

particularly in representing and

navigating environments.

Reinterpretation: the abilit y to

partially re -categorise, re -assign or

reinterpret data in light of new

information without retraining from

scratch. Theorising and hypothesising:

the ability to propose theories and

testable hypotheses, understand

the difference between theory and

reality, and the impact of data on

theories.

Flexible memory: the ability to store,

recognise and retrieve knowledge so

that it can be used in new

environments and tasks. Efficient learning : the ability to

learn efficiently from small

amounts of data.

Interpretability: the ability for

humans to interpret internal network

dynamics so that researchers can

manipulate network dynamics. Continual learning: the ability to

learn continuously as new data is

acquired.

Active learning: the ability to learn

and explore in self -directed ways. Learning from inaccessible

data: the ability to learn in

domains where data is missing,

difficult or expensive to acquire. Learning from dynamic data: the

ability to learn from a continually

changing stream of data. Navi gating brittle

environments: the ability to

navigate irregular, and complex

environments which lack clear

reward signals and short feedback

loops.

Generating valuation functions: the

ability to generate new valuation

functions immediately from scratch to

follow newly -given rules. Scalability: the ability to scale up

learning to deal with new features

without needing

disproportionately more data,

model parameters, and

computational power.

Learning in simulation: the ability

to learn all relevant experience from a

simulated environment. Metric identification: the ability

to identify appropriate metrics of

success for complex tasks, such

that optimising for the measured

quantity accomplishes the task in

the way intended.

Conscious perception: the ability to

experience the world from a first -

person perspective. Context -sensitive decision

making: the ability to adapt

decision -making strategies to the

needs and constraints of a given

time or context.

It is worth noting the re are apparent similarities and relationships

between many of these milestones. For example, representation :

the ability to learn abstract representations of the environment,

seems closely related to variable binding : the ability to formulate

place-holder concepts. The ability to apply learning from one task

to another , cross -domain generalisation , seems closely related to

analogical reasoning. Further progress in research will tell wh ich of

these are clearly separate milestones or more closely related

notions.

3.2 Causal graphs to identify dependencies

between milestones

Having identified key milestones, we explore dependencies

between the m using fuzzy cognitive maps (FCM). We focus on

how capabilities enable , not inhibit, other capabilities, which

means we use only positive influence arrows. FCMs are

particularly well -suited to representing the uncertainty inherent in

this analysis, as it assumes that each arrow c ould have a weight to

represent varying levels of strength. In this analysis we have not

specified the weights on connections , but adding these weights

could be trialled with experts in the future.

A previous survey [ 5] suggests that this endeavour is a highly

uncertain one , finding that many differe nt relationships between AI

milestones seem plausible to experts . Our analysis does not claim

nor aim to resolve this disagreement, but instead shows only one

out of many possible mappings , to illustrate the use and value of

FCMs in AI progress monitoring.

We use the software VenSim (vensim.com) to illustrate the

hypothesised relationships between perceived milestones in Figure

1. For example, we hypothesise that the ability to formulate,

comprehend and manipulate abstract concepts may be an important

prerequisite for the ability to account for unobservable phenomena,

which is in turn important for reasoning about causality. A positive

influence arrow does not mean that achieving one milestone

necessarily leads to another, but rather that progress on the fi rst

5

Analogical

ReasoningRepresentation

Variable-Binding

Disentanglement

Flexible Memory

Dynamic DataBrittle EnvironmentContinual Learning

Reinterpretationsa

B

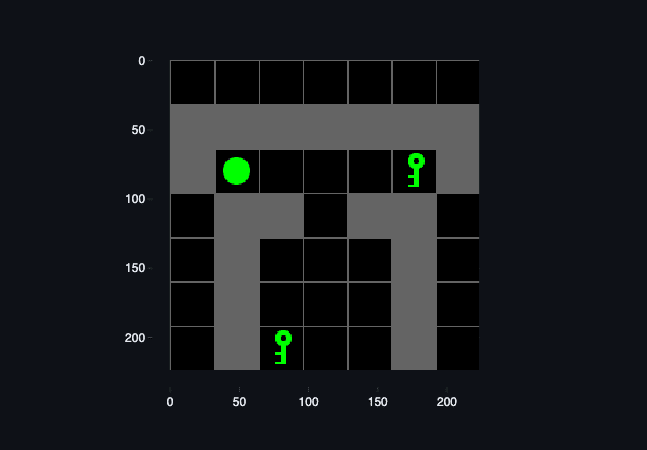

CDEFmilestones increases the likelihood of progress on other arrows it

points to.

Figure 1. Cognitive map of dependencies between milestones collected in

expert elicitations . Arrows coloured in pink and green indicate capabilities

that have significantly more outgoing arrows.

This map was constr ucted by the authors, and is therefore far from

definitive or the only possible way of representing dependencies

between capabilities. However, this initial map does provide an

important illustration of the kind of output this methodology should

aim to ach ieve, and generates some initial hypotheses for

relationships between milestones.

3.3 Candidate Canary Capabilities

Based on this causal map, we can identify two candidates for

canar y capabilities . The capabilities with the most outgoing arrows

are:

Symbol -like representations : the ability to construct abstract,

discrete and disentangled representations of inputs, to allow for

efficiency and variable -binding. We hypothesise that this capability

underpins several others, including grammar, mathem atical

reasoning, concept formation, and flexible memory.

Flexible memory: the ability to store, recognise, and re -use

knowledge. We hypothesise that this ability would unlock many

others, including the ability to learn from dynamic data, the ability

to learn in a continual fashion, and the ability to learn how to learn.

We therefore tentatively suggest that these are two important

capabilities to track progress on from the perspective of

anticipating HLMI. We discuss one such capability, flexible

memory , in more detail below.

Figure 2. Extract of Figure 1, showing one candidate canary capability.

Flexible memory, as described by experts in our sample, is the

ability to recognize and store reusable information, in a format that

is flexible so that it can be retrieved and updated when new

knowledge is gained. We explain the reasoning behind the labelled

arrows in figure 2:

• (a): compact representations are a prerequisite for

flexible memory since storing high -dimensional input in

mem ory requires compressed, efficient and thus abstract

representations.

• (B): the ability to reinterpret data in light of new

information likely requires flexible memory, since it

requires the ability to retrieve and alter previously stored

information.

• (C) and (E): to make use of dynamic and changing data

input, and to learn continuously over time, an agent must

be able to store, correctly retrieve and modify previous

data as new data comes in.

• (D): in order to plan and execute strategies in brittle

environm ents with long delays between actions and

rewards, an agent must be able to store memories of past

actions and rewards, but easily retrieve this information

and continually update its best guess about how to obtain

rewards in the environment.

• (F): analogic al reasoning involves comparing abstract

representations, which requires forming, recognising, and

retrieving representations of earlier observations.

Progress in flexible memory therefore seems likely to unlock

or enable many other capabilities important for HLMI,

especially those crucial for applying AI systems in real

environments and more complex tasks. These initial

hypotheses should be validated and explored in more depth by

a wider range of experts.

4 DISCUSSION

6

4.1 Advantages

We believe the proposed met hod for identifying canaries has many

strengths and could be applied to a broad range of important

questions about transformative AI systems and impacts. The

general methodology of using expert elicitation to identify

milestones and then causal mapping to elucidate dependencies

between those milestones is extremely flexible, meaning it could

be applied beyond AI to other fields of science and technology

progress. The method can also be adapted to the preferred level of

detail for a given study: causal graph s can be made arbitrarily

complex [18] and can be analysed both quantitatively and

qualitatively. With this method, it is possible to combine different

types of expertise relating to milestones: including well -understood

technical limitations of current me thods, with informed speculation

about unknown capabilities that may be important prerequisites to

some transformative event. With early warning signs we can track

progress towards canary milestones, or directly prepare for the

transformative events that f ollow after it .

4.2 Uses

We envision that this methodology could be used to identify

warning signs for a number of important potentially transformative

events in AI progress, such as foundational research break -

throughs, the use of AI to automate scientific research, or the

automation of tasks that affect a wide range of jobs.

Once canaries have been identified for some transformative event,

there are numerous ways we might use them to improve

preparation for its impact, including by:

• Automating the collection, tracking and flagging of new

publications relevant to canary capabilities, and building

a database of relevant publications (perhaps similar to

that described by [40]);

• Generating metrics and benchmarks for evaluating

progress on canary capabilities;

• Using prediction platforms such as Metaculus

(ai.metaculus.com) to track and forecast progress on

canary capabilities;

• Conducting more focused expert elicitation, for example

periodically consult experts on their updated forecasts (in

the form of cumulative probability estimates) for when

different milestones are achieved, or when they are

presented with updated progress metrics on canary

capabilities;

• Conducting more in -depth research to empirically and

theoretically investigate hypot hesised relationships

between milestones: for example, to what extent do

improvements in memory structures lead to empirical

improvements in performance in brittle environments?

• Conducting more in -depth research on the societal and

governance implications of achieving canary milestones,

and preparing governance responses for these milestones

ahead of time. 4.3 Limitations and future directions

This methodology nonetheless has some limitations which further

iterations could seek to improve on. There may be a fun damental

trade -off between the benefits of consulting a large, diverse group

of experts - enabling more thorough and robust identification of

relevant milestones - and the feasibility of reaching agreement

upon a single causal map, and therefore agreeing u pon canaries.

Relatedly, if uncertainty about milestones is too high, it may be

difficult for experts to agree on a single causal map or candidates

for canaries: finding questions where there is enough uncertainty

for this process to be useful, but not so much uncertainty that no

agreement can be reached, may be a challenge in some cases. It

will also be important to recognise any potential limitations of the

specific sample of experts involved in the process: recognising that

machine learning researchers m ay be biased towards emphasising

the importance of areas they themselves work on, for example, or

that non -computer scientists may often lack a full understanding of

what current systems can and cannot do.

In using FCMs to generate causal maps, it is not clear what level

of detail and quantitative analysis will be most useful. In the

implementation described here, we hypothesised relationships at a

high level of abstraction and without quantitative analysis, due to

the high level at which experts highlighted limitations in the first

stage. The higher the level of abstraction, the more uncertain the

mapping will be and the less useful it may be to indicate weights. It

would be valuable for future work to explore various levels of

abstractions, inclu ding a more detailed and quantitative analysis

using more clearly -defined technical milestones, which could result

in more precise forecasts and hypotheses.

Finally, it is important to note that attempts to anticipate and

understand progress in AI (or any other technology) are not

independent of that progress itself. Better understanding of key

milestones towards AGI, HLMI, or some other notion of

transformative AI, does not just improve our ability to anticipate

that progress, but may also improve our abil ity to make progress

towards transformative AI. We must therefore be cautious in

identifying ‘canary’ capabilities, to consider the potential risks of

making progress on these capabilities, and to communicate and

encourage consideration of these risks to t hose researchers driving

forward AI development.

REFERENCES

[1] M. Amer, A. Jetter, and T. Daim, ‘Development of fuzzy cognitive

map (FCM)‐based scenarios for wind energy’, International Journal

of Energy Sector Management , vol. 5, no. 4, pp. 564 –584, 2011 . doi:

10.1108/17506221111186378 .

[2] B.M. Ayyub, ‘Elicitation of expert opinions for uncertainty and risks ’,

CRC press , 2001.

[3] S. Ballard and R. Calo , ‘Taking Futures Seriously: Forecasting as

Method in Robotics Law and Policy ’, Draft available at:

https://robots.law.miami.edu/2019/wp -

content/uploads/2019/03/Calo_Taking -Futures -Seriously.pdf , 201 9.

[4] S. Baum, B. Goertzel, and T.G. Goertzel, ‘How long until human -

level AI? Results from an expert assessment ’, Technological

Forecasting and Social Change , 78(1), 185 -195, 2011.

[5] S. Beard, T. Rowe, and J. Fox, ‘An analysis and evaluation of

methods currently used to quantify the likelihood of existential

hazards ’, Futures , 115, 102469 , 2020.

[6] N. Benaich and I. Hogarth, ‘State of AI Report 2019’, available at

https://www.stateof.ai/ , 2019.

7

[7] C. Buckner and K. Yang, ‘Mating dances and the evolution of

language: What’s the next step?’, Biology & Philosophy , vol. 32,

2017, doi: 10.1007/s10539 -017-9605 -z.

[8] K. Crawford , R. Dobbe, T. Dryer, G. Fried, B. Green, E. et al., ‘AI

Now 2019 Report ’, New York: AI Now Institute , 2019.

[9] W. Chang, E. Chen, B. Mellers and P. Tetlock, ‘Developing expert

political judgment: The impact of training and practice on judgmental

accuracy in geopolitical forecasting tournament s’, Judgement and

Decision Making , vol. 11, no. 5, pp. 509 -526, 2016.

[10] C. Z. Cremer, ‘Deep Limitations? Examining Expert Disagreement

Over Deep Learning’, Manuscript in preparation, 2020.

[11] D. Collingridge, ‘The Social Control of Technology ’, St. Martin's

Press 1980.

[12] A. Dafoe, ‘AI Governance: A Research Agenda ’, Governance of AI

Program, The Future of Humanity Institute, The University of Oxford,

Oxford, UK , 2018.

[13] M. DeJonckheere and L. Vaughn, ‘Semistructured interviewing in

primary care research: a balance of relationship and rigour ’, Family

Medicine and Community Health, pp. doi: 10.1136/fmch -2018 -

000057, 2019.

[14] O. Etzioni, ‘How to know if artificial intelligence is about to destroy

civilization ’, MIT Technology Review , 2020.

[15] C. B. Frey and M. A. Osb orne, ‘The future of employment: How

susceptible are jobs to computerisation? ’ Technological Forecasting

and Social Change, vol. 114, pp. 254 -280, 2017.

[16] S. Friel et al. , ‘Using systems science to understand the determinants

of inequities in healthy eating’, PLoS ONE , vol. 12, no. 11, p.

e0188872, 2017 . doi: 10.1371/journal.pone.0188872 .

[17] K. Grace, J. Salvatier, A. Dafoe, B. Zhang, and O. Evans, ‘When will

AI exceed human performance? Evidence from AI experts ’, Journal

of Artificial Intelligence Research , 62, 729 -754, 2018.

[18] S. R. J. Gray et al. , ‘Are coastal managers detecting the problem?

Assessing stakeholder pe rception of climate vulnerability using Fuzzy

Cognitive Mapping’, Ocean & Coastal Management , vol. 94, pp. 74 –

89, 2014 . doi: 10.1016/j.ocecoaman.2013.11.008 .

[19] R. Gruetzemacher, ‘A Holistic Fram ework for Forecasting

Transformative AI ’, Big Data and Cognitive Computing , 3(3): 35 ,

2019.

[20] R. Gruetzemacher and J. Whittlestone, ‘Defining and Unpacking

Transformative AI ’, arXiv preprint arXiv:1912.00747 . 2019.

[21] A. Haynes and L. Gbedemah, ‘The Global AI Index Methodology’,

Tortoise Media, available at:

https://www.tortoisemedia.com/intelligence/ai/ , 2019.

[22] S. Jamshed, ‘Qualitative research method -interv iewing and

observation ’, Journal of Basic and Clinical Pharmacy, vol. 5, no. 4, p.

87–88, 2014.

[23] A. Jetter, ‘Fuzzy Cognitive Maps for Engineering and Technology

Management: What Works in Practice?’, in 2006 Technology

Management for the Global Future - PICMET 2006 Conference ,

Istanbul, Turkey, pp. 498 –512, 2006. doi:

10.1109/PICMET.2006.296648 .

[24] J. Jetter and K. Kok, ‘Fuzzy Cognitive Maps for futures studies —A

methodological assessment of concepts and methods’, Futures , vol.

61, pp. 45 –57, 2014, doi: 10.1016/j.futures.2014.05.002 .

[25] H. Karnofsky, ‘Some Background on our Views Regarding Advanced

Artificial Intelligence ’, available at :

https://www.openphilanthropy.org/blog/some -background -our-views -

regarding -advanced -artificial -intelligence , 2016.

[26] B. Kosko, ‘Fuzzy Cognitive Maps ’, Int. J. Man -Machine Studies, vol.