id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

df1db045-7149-441a-b0be-9af948750912 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Discussion with Eliezer Yudkowsky on AGI interventions

Thefollowing is a partially redacted and lightly edited transcript of a chat conversation about AGI between Eliezer Yudkowsky and a set of invitees in early September 2021. By default, all other participants are anonymized as "Anonymous".

I think this Nate Soares quote (excerpted from Nate's [response to a report by Joe Carlsmith](https://www.lesswrong.com/posts/cCMihiwtZx7kdcKgt/comments-on-carlsmith-s-is-power-seeking-ai-an-existential)) is a useful context-setting preface regarding timelines, which weren't discussed as much in the chat transcript:

> [...] My odds [of AGI by the year 2070] are around 85%[...]

>

> I can list a handful of things that drive my probability of AGI-in-the-next-49-years above 80%:

>

> 1. 50 years ago was 1970. The gap between AI systems then and AI systems now seems pretty plausibly greater than the remaining gap, even before accounting the recent dramatic increase in the rate of progress, and potential future increases in rate-of-progress as it starts to feel within-grasp.

>

> 2. I observe that, 15 years ago, everyone was saying AGI is far off because of what it couldn't do -- basic image recognition, go, starcraft, winograd schemas, programmer assistance. But basically all that has fallen. The gap between us and AGI is made mostly of intangibles. (Computer Programming That Is Actually Good? Theorem proving? Sure, but on my model, "good" versions of those are a hair's breadth away from full AGI already. And the fact that I need to clarify that "bad" versions don't count, speaks to my point that the only barriers people can name right now are intangibles.) That's a very uncomfortable place to be!

>

> 3. When I look at the history of invention, and the various anecdotes about the Wright brothers and Enrico Fermi, I get an impression that, when a technology is pretty close, the world looks a lot like how our world looks.

>

> * Of course, the trick is that when a technology is a little far, the world might also look pretty similar!

> * Though when a technology is **very** far, the world **does** look different -- it looks like experts pointing to specific technical hurdles. We exited that regime a few years ago.

>

> 4. Summarizing the above two points, I suspect that I'm in more-or-less the "penultimate epistemic state" on AGI timelines: I don't know of a project that seems like they're right on the brink; that would put me in the "final epistemic state" of thinking AGI is imminent. But I'm in the second-to-last epistemic state, where I wouldn't feel all that shocked to learn that some group has reached the brink. Maybe I won't get that call for 10 years! Or 20! But it could also be 2, and I wouldn't get to be indignant with reality. I wouldn't get to say "but all the following things should have happened first, before I made that observation". I have made those observations.

>

> 5. It seems to me that the Cotra-style compute-based model provides pretty conservative estimates. For one thing, I don't expect to need human-level compute to get human-level intelligence, and for another I think there's a decent chance that insight and innovation have a big role to play, especially on 50 year timescales.

>

> 6. There has been a lot of AI progress recently. When I tried to adjust my beliefs so that I was **positively** surprised by AI progress just about as often as I was **negatively** surprised by AI progress, I ended up expecting a bunch of rapid progress. [...]

>

>

**Further preface by Eliezer:**

In some sections here, I sound gloomy about the probability that coordination between AGI groups succeeds in saving the world. Andrew Critch reminds me to point out that gloominess like this can be a self-fulfilling prophecy - if people think successful coordination is impossible, they won’t try to coordinate. I therefore remark in retrospective advance that it seems to me like at least some of the top AGI people, say at Deepmind and Anthropic, are the sorts who I think would rather coordinate than destroy the world; my gloominess is about what happens when the the technology has propagated further than that. But even then, anybody who would *rather* coordinate and *not* destroy the world shouldn’t rule out hooking up with Demis, or whoever else is in front if that person also seems to prefer not to completely destroy the world. (Don’t be too picky here.) Even if the technology proliferates and the world ends a year later when other non-coordinating parties jump in, it’s still better to take the route where the world ends one year later instead of immediately. Maybe the horse will sing.

---

**Eliezer Yudkowsky**

Hi and welcome. Points to keep in mind:

- I'm doing this because I would like to learn whichever *actual* thoughts this target group may have, and perhaps respond to those; that's part of the point of anonymity. If you speak an anonymous thought, please have that be your actual thought that you are thinking yourself, not something where you're thinking "well, somebody else might think that..." or "I wonder what Eliezer's response would be to..."

- Eliezer's responses are uncloaked by default. Everyone else's responses are anonymous (not pseudonymous) and neither I nor MIRI will know which potential invitee sent them.

- Please do not reshare or pass on the link you used to get here.

- I do intend that parts of this conversation may be saved and published at MIRI's discretion, though not with any mention of who the anonymous speakers could possibly have been.

**Eliezer Yudkowsky**

(Thank you to Ben Weinstein-Raun for building [chathamroom.com](https://www.chathamroom.com/), and for quickly adding some features to it at my request.)

**Eliezer Yudkowsky**

It is now 2PM; this room is now open for questions.

**Anonymous**

How long will it be open for?

**Eliezer Yudkowsky**

In principle, I could always stop by a couple of days later and answer any unanswered questions, but my basic theory had been "until I got tired".

---

**Anonymous**

At a high level one thing I want to ask about is research directions and prioritization. For example, if you were dictator for what researchers here (or within our influence) were working on, how would you reallocate them?

**Eliezer Yudkowsky**

The first reply that came to mind is "I don't know." I consider the present gameboard to look incredibly grim, and I don't actually see a way out through hard work alone. We can hope there's a miracle that violates some aspect of my background model, and we can try to prepare for that unknown miracle; preparing for an unknown miracle probably looks like "Trying to die with more dignity on the mainline" (because if you can die with more dignity on the mainline, you are better positioned to take advantage of a miracle if it occurs).

**Anonymous**

I'm curious if the grim outlook is currently mainly due to technical difficulties or social/coordination difficulties. (Both avenues might have solutions, but maybe one seems more recalcitrant than the other?)

**Eliezer Yudkowsky**

Technical difficulties. Even if the social situation were vastly improved, on my read of things, everybody still dies because there is nothing that a handful of socially coordinated projects can do, or even a handful of major governments who aren't willing to start nuclear wars over things, to prevent somebody else from building AGI and killing everyone 3 months or 2 years later. There's no obvious winnable position into which to play the board.

**Anonymous**

just to clarify, that sounds like a large scale coordination difficulty to me (i.e., we - as all of humanity - can't coordinate to not build that AGI).

**Eliezer Yudkowsky**

I wasn't really considering the counterfactual where humanity had a collective telepathic hivemind? I mean, I've written fiction about a world coordinated enough that they managed to shut down all progress in their computing industry and only manufacture powerful computers in a single worldwide hidden base, but Earth was never going to go down that route. Relative to remotely plausible levels of future coordination, we have a technical problem.

**Anonymous**

Curious about why building an AGI aligned to its users' interests isn't a thing a handful of coordinated projects could do that would effectively prevent the catastrophe. The two obvious options are: it's too hard to build it vs it wouldn't stop the other group anyway. For "it wouldn't stop them", two lines of reply are nobody actually wants an unaligned AGI (they just don't foresee the consequences and are pursuing the benefits from automated intelligence, so can be defused by providing the latter) (maybe not entirely true: omnicidal maniacs), and an aligned AGI could help in stopping them. Is your take more on the "too hard to build" side?

**Eliezer Yudkowsky**

Because it's too technically hard to align some cognitive process that is powerful enough, and operating in a sufficiently dangerous domain, to stop the next group from building an unaligned AGI in 3 months or 2 years. Like, they can't coordinate to build an AGI that builds a nanosystem because it is too technically hard to align their AGI technology in the 2 years before the world ends.

**Anonymous**

Summarizing the threat model here (correct if wrong): The nearest competitor for building an AGI is at most N (<2) years behind, and building an aligned AGI, even when starting with the ability to build an unaligned AGI, takes longer than N years. So at some point some competitor who doesn't care about safety builds the unaligned AGI. How does "nobody actually wants an unaligned AGI" fail here? It takes >N years to get everyone to realise that they have that preference and that it's incompatible with their actions?

**Eliezer Yudkowsky**

Many of the current actors seem like they'd be really gung-ho to build an "unaligned" AGI because they think it'd be super neat, or they think it'd be super profitable, and they don't expect it to destroy the world. So if this happens in anything like the current world - and I neither expect vast improvements, nor have very long timelines - then we'd see Deepmind get it first; and, if the code was not *immediately* stolen and rerun with higher bounds on the for loops, by China or France or whoever, somebody else would get it in another year; if that somebody else was Anthropic, I could maybe see them also not amping up their AGI; but then in 2 years it starts to go to Facebook AI Research and home hobbyists and intelligence agencies stealing copies of the code from other intelligence agencies and I don't see how the world fails to end past that point.

**Anonymous**

What does trying to die with more dignity on the mainline look like? There's a real question of prioritisation here between solving the alignment problem (and various approaches within that), and preventing or slowing down the next competitor. I'd personally love more direction on where to focus my efforts (obviously you can only say things generic to the group).

**Eliezer Yudkowsky**

I don't know how to effectively prevent or slow down the "next competitor" for more than a couple of years even in plausible-best-case scenarios. Maybe some of the natsec people can be grownups in the room and explain why "stealing AGI code and running it" is as bad as "full nuclear launch" to their foreign counterparts in a realistic way. Maybe more current AGI groups can be persuaded to go closed; or, if more than one has an AGI, to coordinate with each other and not rush into an arms race. I'm not sure I believe these things can be done in real life, but it seems understandable to me how I'd go about trying - though, please do talk with me a lot more before trying anything like this, because it's easy for me to see how attempts could backfire, it's not clear to me that we should be inviting more attention from natsec folks at all. None of that saves us without technical alignment progress. But what are other people supposed to do about researching alignment when I'm not sure what to try there myself?

**Anonymous**

thanks! on researching alignment, you might have better meta ideas (how to do research generally) even if you're also stuck on object level. and you might know/foresee dead ends that others don't.

**Eliezer Yudkowsky**

I definitely foresee a whole lot of dead ends that others don't, yes.

**Anonymous**

Does pushing for a lot of public fear about this kind of research, that makes all projects hard, seem hopeless?

**Eliezer Yudkowsky**

What does it buy us? 3 months of delay at the cost of a tremendous amount of goodwill? 2 years of delay? What's that delay for, if we all die at the end? Even if we then got a technical miracle, would it end up impossible to run a project that could make use of an alignment miracle, because everybody was afraid of that project? Wouldn't that fear tend to be channeled into "ah, yes, it must be a government project, they're the good guys" and then the government is much more hopeless and much harder to improve upon than Deepmind?

**Anonymous**

I imagine lack of public support for genetic manipulation of humans has slowed that research by more than three months

**Anonymous**

'would it end up impossible to run a project that could make use of an alignment miracle, because everybody was afraid of that project?'

...like, maybe, but not with near 100% chance?

**Eliezer Yudkowsky**

I don't want to sound like I'm dismissing the whole strategy, but it sounds a *lot* like the kind of thing that backfires because you did not get *exactly* the public reaction you wanted, and the public reaction you actually got was bad; and it doesn't sound like that whole strategy actually has a visualized victorious endgame, which makes it hard to work out what the exact strategy should be; it seems more like the kind of thing that falls under the syllogism "something must be done, this is something, therefore this must be done" than like a plan that ends with humane life victorious.

Regarding genetic manipulation of humans, I think the public started out very unfavorable to that, had a reaction that was not at all exact or channeled, does not allow for any 'good' forms of human genetic manipulation regardless of circumstances, driving the science into other countries - it is not a case in point of the intelligentsia being able to successfully cunningly manipulate the fear of the masses to some supposed good end, to put it mildly, so I'd be worried about deriving that generalization from it. The reaction may more be that the fear of the public is a big powerful uncontrollable thing that doesn't move in the smart direction - maybe the public fear of AI gets channeled by opportunistic government officials into "and that's why We must have Our AGI first so it will be Good and we can Win". That seems to me much more like a thing that would happen in real life than "and then we managed to manipulate public panic down exactly the direction we wanted to fit into our clever master scheme", especially when we don't actually *have* the clever master scheme it fits into.

---

**Eliezer Yudkowsky**

I have a few stupid ideas I could try to investigate in ML, but that would require the ability to run significant-sized closed ML projects full of trustworthy people, which is a capability that doesn't seem to presently exist. Plausibly, this capability would be required in any world that got some positive model violation ("miracle") to take advantage of, so I would want to build that capability today. I am not sure how to go about doing that either.

**Anonymous**

if there's a chance this group can do something to gain this capability I'd be interested in checking it out. I'd want to know more about what "closed"and "trustworthy" mean for this (and "significant-size" I guess too). E.g., which ones does Anthropic fail?

**Eliezer Yudkowsky**

What I'd like to exist is a setup where I can work with people that I or somebody else has vetted as seeming okay-trustworthy, on ML projects that aren't going to be published. Anthropic looks like it's a package deal. If Anthropic were set up to let me work with 5 particular people at Anthropic on a project boxed away from the rest of the organization, that would potentially be a step towards trying such things. It's also not clear to me that Anthropic has either the time to work with me, or the interest in doing things in AI that aren't "stack more layers" or close kin to that.

**Anonymous**

That setup doesn't sound impossible to me -- at DeepMind or OpenAI or a new org specifically set up for it (or could be MIRI) -- the bottlenecks are access to trustworthy ML-knowledgeable people (but finding 5 in our social network doesn't seem impossible?) and access to compute (can be solved with more money - not too hard?). I don't think DM and OpenAI are publishing everything - the "not going to be published" part doesn't seem like a big barrier to me. Is infosec a major bottleneck (i.e., who's potentially stealing the code/data)?

**Anonymous**

Do you think Redwood Research could be a place for this?

**Eliezer Yudkowsky**

Maybe! I haven't ruled RR out yet. But they also haven't yet done (to my own knowledge) anything demonstrating the same kind of AI-development capabilities as even GPT-3, let alone AlphaFold 2.

**Eliezer Yudkowsky**

I would potentially be super interested in working with Deepminders if Deepmind set up some internal partition for "Okay, accomplished Deepmind researchers who'd rather not destroy the world are allowed to form subpartitions of this partition and have their work not be published outside the subpartition let alone Deepmind in general, though maybe you have to report on it to Demis only or something." I'd be more skeptical/worried about working with OpenAI-minus-Anthropic because the notion of "open AI" continues to sound to me like "what is the worst possible strategy for making the game board as unplayable as possible while demonizing everybody who tries a strategy that could possibly lead to the survival of humane intelligence", and now a lot of the people who knew about that part have left OpenAI for elsewhere. But, sure, if they changed their name to "ClosedAI" and fired everyone who believed in the original OpenAI mission, I would update about that.

**Eliezer Yudkowsky**

Context that is potentially missing here and should be included: I wish that Deepmind had more internal closed research, and internally siloed research, as part of a larger wish I have about the AI field, independently of what projects I'd want to work on myself.

The present situation can be seen as one in which a common resource, the remaining timeline until AGI shows up, is incentivized to be burned by AI researchers because they have to come up with neat publications and publish them (which burns the remaining timeline) in order to earn status and higher salaries. The more they publish along the spectrum that goes {quiet internal result -> announced and demonstrated result -> paper describing how to get the announced result -> code for the result -> model for the result}, the more timeline gets burned, and the greater the internal and external prestige accruing to the researcher.

It's futile to wish for everybody to act uniformly against their incentives. But I think it would be a step forward if the relative incentive to burn the commons could be *reduced*; or to put it another way, the more researchers have the *option* to not burn the timeline commons, without them getting fired or passed up for promotion, the more that unusually intelligent researchers might perhaps decide not to do that. So I wish in general that AI research groups in general, but also Deepmind in particular, would have affordances for researchers who go looking for interesting things to not publish any resulting discoveries, at all, and still be able to earn internal points for them. I wish they had the *option* to do that. I wish people were *allowed* to not destroy the world - and still get high salaries and promotion opportunities and the ability to get corporate and ops support for playing with interesting toys; if destroying the world is prerequisite for having nice things, nearly everyone is going to contribute to destroying the world, because, like, they're not going to just *not* have nice things, that is not human nature for almost all humans.

When I visualize how the end of the world plays out, I think it involves an AGI system which has the ability to be cranked up by adding more computing resources to it; and I think there is an extended period where the system is not aligned enough that you can crank it up that far, without everyone dying. And it seems *extremely* likely that if factions on the level of, say, Facebook AI Research, start being able to deploy systems like that, then death is very automatic. If the Chinese, Russian, and French intelligence services all manage to steal a copy of the code, and China and Russia sensibly decide not to run it, and France gives it to three French corporations which I hear the French intelligence service sometimes does, then again, everybody dies. If the builders are sufficiently worried about that scenario that they push too fast too early, in fear of an arms race developing very soon if they wait, again, everybody dies.

At present we're very much waiting on a miracle for alignment to be possible at all, even if the AGI-builder successfully prevents proliferation and has 2 years in which to work. But if we get that miracle at all, it's not going to be an instant miracle. There’ll be some minimum time-expense to do whatever work is required. So any time I visualize anybody trying to even start a successful trajectory of this kind, they need to be able to get a lot of work done, without the intermediate steps of AGI work being published, or demoed at all, let alone having models released. Because if you wait until the last months when it is really really obvious that the system is going to scale to AGI, in order to start closing things, almost all the prerequisites will already be out there. Then it will only take 3 more months of work for somebody else to build AGI, and then somebody else, and then somebody else; and even if the first 3 factions manage not to crank up the dial to lethal levels, the 4th party will go for it; and the world ends by default on full automatic.

If ideas are theoretically internal to "just the company", but the company has 150 people who all know, plus everybody with the "sysadmin" title having access to the code and models, then I imagine - perhaps I am mistaken - that those ideas would (a) inevitably leak outside due to some of those 150 people having cheerful conversations over a beer with outsiders present, and (b) be copied outright by people of questionable allegiances once all hell started to visibly break loose. As with anywhere that handles really sensitive data, the concept of "need to know" has to be a thing, or else everyone (and not just in that company) ends up knowing.

So, even if I got run over by a truck tomorrow, I would still very much wish that in the world that survived me, Deepmind would have lots of penalty-free affordance internally for people to not publish things, and to work in internal partitions that didn't spread their ideas to all the rest of Deepmind. Like, *actual* social and corporate support for that, not just a theoretical option you'd have to burn lots of social capital and weirdness points to opt into, and then get passed up for promotion forever after.

**Anonymous**

What's RR?

**Anonymous**

It's a new alignment org, run by Nate Thomas and ~co-run by Buck Shlegeris and Bill Zito, with maybe 4-6 other technical folks so far. My take: the premise is to create an org with ML expertise and general just-do-it competence that's trying to do all the alignment experiments that something like Paul+Ajeya+Eliezer all think are obviously valuable and wish someone would do. They expect to have a website etc in a few days; the org is a couple months old in its current form.

---

**Anonymous**

How likely really is hard takeoff? Clearly, we are touching the edges of AGI with GPT and the like. But I'm not feeling this will that easily be leveraged into very quick recursive self improvement.

**Eliezer Yudkowsky**

Compared to the position I was arguing in the Foom Debate with Robin, reality has proved way to the further Eliezer side of Eliezer along the Eliezer-Robin spectrum. It's been very unpleasantly surprising to me how little architectural complexity is required to start producing generalizing systems, and how fast those systems scale using More Compute. The flip side of this is that I can imagine a system being scaled up to interesting human+ levels, without "recursive self-improvement" or other of the old tricks that I thought would be necessary, and argued to Robin would make fast capability gain possible. You could have fast capability gain well before anything like a FOOM started. Which in turn makes it more plausible to me that we could hang out at interesting not-superintelligent levels of AGI capability for a while before a FOOM started. It's not clear that this helps anything, but it does seem more plausible.

**Anonymous**

I agree reality has not been hugging the Robin kind of scenario this far.

**Anonymous**

Going past human level doesn't necessarily mean going "foom".

**Eliezer Yudkowsky**

I do think that if you get an AGI significantly past human intelligence in all respects, it would obviously tend to FOOM. I mean, I suspect that Eliezer fooms if you give an Eliezer the ability to backup, branch, and edit himself.

**Anonymous**

It doesn't seem to me that an AGI significantly past human intelligence necessarily tends to FOOM.

**Eliezer Yudkowsky**

I think in principle we could have, for example, an AGI that was just a superintelligent engineer of proteins, and of nanosystems built by nanosystems that were built by proteins, and which was corrigible enough not to want to improve itself further; and this AGI would also be dumber than a human when it came to eg psychological manipulation, because we would have asked it not to think much about that subject. I'm doubtful that you can have an AGI that's significantly above human intelligence in *all* respects, without it having the capability-if-it-wanted-to of looking over its own code and seeing lots of potential improvements.

**Anonymous**

Alright, this makes sense to me, but I don't expect an AGI to *want* to manipulate humans that easily (unless designed to). Maybe a bit.

**Eliezer Yudkowsky**

Manipulating humans is a convergent instrumental strategy if you've accurately modeled (even at quite low resolution) what humans are and what they do in the larger scheme of things.

**Anonymous**

Yes, but human manipulation is also the kind of thing you need to guard against with even mildly powerful systems. Strong impulses to manipulate humans, should be vetted out.

**Eliezer Yudkowsky**

I think that, by default, if you trained a young AGI to expect that 2+2=5 in some special contexts, and then scaled it up without further retraining, a generally superhuman version of that AGI would be very likely to 'realize' in some sense that SS0+SS0=SSSS0 was a consequence of the Peano axioms. There's a natural/convergent/coherent output of deep underlying algorithms that generate competence in some of the original domains; when those algorithms are implicitly scaled up, they seem likely to generalize better than whatever patch on those algorithms said '2 + 2 = 5'.

In the same way, suppose that you take weak domains where the AGI can't fool you, and apply some gradient descent to get the AGI to stop outputting actions of a type that humans can detect and label as 'manipulative'. And then you scale up that AGI to a superhuman domain. I predict that deep algorithms within the AGI will go through consequentialist dances, and model humans, and output human-manipulating actions that can't be detected as manipulative by the humans, in a way that seems likely to bypass whatever earlier patch was imbued by gradient descent, because I doubt that earlier patch will generalize as well as the deep algorithms. Then you don't get to retrain in the superintelligent domain after labeling as bad an output that killed you and doing a gradient descent update on that, because the bad output killed you. (This is an attempted very fast gloss on what makes alignment difficult *in the first place*.)

**Anonymous**

[i appreciate this gloss - thanks]

**Anonymous**

"deep algorithms within it will go through consequentialist dances, and model humans, and output human-manipulating actions that can't be detected as manipulative by the humans"

This is true if it is rewarding to manipulate humans. If the humans are on the outlook for this kind of thing, it doesn't seem that easy to me.

Going through these "consequentialist dances" to me appears to presume that mistakes that should be apparent haven't been solved at simpler levels. It seems highly unlikely to me that you would have a system that appears to follow human requests and human values, and it would suddenly switch at some powerful level. I think there will be signs beforehand. Of course, if the humans are not paying attention, they might miss it. But, say, in the current milieu, I find it plausible that they will pay enough attention.

"because I doubt that earlier patch will generalize as well as the deep algorithms"

That would depend on how "deep" your earlier patch was. Yes, if you're just doing surface patches to apparent problems, this might happen. But it seems to me that useful and intelligent systems will require deep patches (or deep designs from the start) in order to be apparently useful to humans at solving complex problems enough. This is not to say that they would be perfect. But it seems quite plausible to me that they would in most cases prevent the worst outcomes.

**Eliezer Yudkowsky**

"If you've got a general consequence-modeling-and-searching algorithm, it seeks out ways to manipulate humans, even if there are no past instances of a random-action-generator producing manipulative behaviors that succeeded and got reinforced by gradient descent over the random-action-generator. It invents the strategy de novo by imagining the results, even if there's no instances in memory of a strategy like that having been tried before." Agree or disagree?

**Anonymous**

Creating strategies de novo would of course be expected of an AGI.

> "If you've got a general consequence-modeling-and-searching algorithm, it seeks out ways to manipulate humans, even if there are no past instances of a random-action-generator producing manipulative behaviors that succeeded and got reinforced by gradient descent over the random-action-generator. It invents the strategy de novo by imagining the results, even if there's no instances in memory of a strategy like that having been tried before." Agree or disagree?

>

>

I think, if the AI will "seek out ways to manipulate humans", will depend on what kind of goals the AI has been designed to pursue.

Manipulating humans is definitely an instrumentally useful kind of method for an AI, for a lot of goals. But it's also counter to a lot of the things humans would direct the AI to do -- at least at a "high level". "Manipulation", such as marketing, for lower level goals, can be very congruent with higher level goals. An AI could clearly be good at manipulating humans, while not manipulating its creators or the directives of its creators.

If you are asking me to agree that the AI will generally seek out ways to manipulate the high-level goals, then I will say "no". Because it seems to me that faults of this kind in the AI design is likely to be caught by the designers earlier. (This isn't to say that this kind of fault couldn't happen.) It seems to me that manipulation of high-level goals will be one of the most apparent kind of faults of this kind of system.

**Anonymous**

RE: "I'm doubtful that you can have an AGI that's significantly above human intelligence in *all* respects, without it having the capability-if-it-wanted-to of looking over its own code and seeing lots of potential improvements."

It seems plausible (though unlikely) to me that this would be true in practice for the AGI we build -- but also that the potential improvements it sees would be pretty marginal. This is coming from the same intuition that current learning algorithms might already be approximately optimal.

**Eliezer Yudkowsky**

> If you are asking me to agree that the AI will generally seek out ways to manipulate the high-level goals, then I will say "no". Because it seems to me that faults of this kind in the AI design is likely to be caught by the designers earlier.

>

>

I expect that when people are trying to stomp out convergent instrumental strategies by training at a safe dumb level of intelligence, this will not be effective at preventing convergent instrumental strategies at smart levels of intelligence; also note that at very smart levels of intelligence, "hide what you are doing" is also a convergent instrumental strategy of that substrategy.

I don't know however if I should be explaining at this point why "manipulate humans" is convergent, why "conceal that you are manipulating humans" is convergent, why you have to train in safe regimes in order to get safety in dangerous regimes (because if you try to "train" at a sufficiently unsafe level, the output of the unaligned system deceives you into labeling it incorrectly and/or kills you before you can label the outputs), or why attempts to teach corrigibility in safe regimes are unlikely to generalize well to higher levels of intelligence and unsafe regimes (qualitatively new thought processes, things being way out of training distribution, and, the hardest part to explain, corrigibility being "anti-natural" in a certain sense that makes it incredibly hard to, eg, exhibit any coherent planning behavior ("consistent utility function") which corresponds to being willing to let somebody else shut you off, without incentivizing you to actively manipulate them to shut you off).

---

**Anonymous**

My (unfinished) idea for buying time is to focus on applying AI to well-specified problems, where constraints can come primarily from the action space and additionally from process-level feedback (i.e., human feedback providers understand why actions are good before endorsing them, and reject anything weird even if it seems to work on some outcomes-based metric). This is basically a form of boxing, with application-specific boxes. I know it doesn't scale to superintelligence but I think it can potentially give us time to study and understand proto AGIs before they kill us. I'd be interested to hear devastating critiques of this that imply it isn't even worth fleshing out more and trying to pursue, if they exist.

**Anonymous**

(I think it's also similar to CAIS in case that's helpful.)

**Eliezer Yudkowsky**

There's lots of things we can do which don't solve the problem and involve us poking around with AIs having fun, while we wait for a miracle to pop out of nowhere. There's lots of things we can do with AIs which are weak enough to not be able to fool us and to not have cognitive access to any dangerous outputs, like automatically generating pictures of cats. The trouble is that nothing we can do with an AI like that (where "human feedback providers understand why actions are good before endorsing them") is powerful enough to save the world.

**Eliezer Yudkowsky**

In other words, if you have an aligned AGI that builds complete mature nanosystems for you, that *is* enough force to save the world; but that AGI needs to have been aligned by some method other than "humans inspect those outputs and vet them and their consequences as safe/aligned", because humans cannot accurately and unfoolably vet the consequences of DNA sequences for proteins, or of long bitstreams sent to protein-built nanofactories.

**Anonymous**

When you mention nanosystems, how much is this just a hypothetical superpower vs. something you actually expect to be achievable with AGI/superintelligence? If expected to be achievable, why?

**Eliezer Yudkowsky**

The case for nanosystems being possible, if anything, seems even more slam-dunk than the already extremely slam-dunk case for superintelligence, because we can set lower bounds on the power of nanosystems using far more specific and concrete calculations. See eg the first chapters of Drexler's Nanosystems, which are the first step mandatory reading for anyone who would otherwise doubt that there's plenty of room above biology and that it is possible to have artifacts the size of bacteria with much higher power densities. I have this marked down as "known lower bound" not "speculative high value", and since Nanosystems has been out since 1992 and subjected to attemptedly-skeptical scrutiny, without anything I found remotely persuasive turning up, I do not have a strong expectation that any new counterarguments will materialize.

If, after reading Nanosystems, you still don't think that a superintelligence can get to and past the Nanosystems level, I'm not quite sure what to say to you, since the models of superintelligences are much less concrete than the models of molecular nanotechnology.

I'm on record as early as 2008 as saying that I expected superintelligences to crack protein folding, some people disputed that and were all like "But how do you know that's solvable?" and then AlphaFold 2 came along and cracked the protein folding problem they'd been skeptical about, far below the level of superintelligence.

I can try to explain how I was mysteriously able to forecast this truth at a high level of confidence - not the exact level where it became possible, to be sure, but that superintelligence would be sufficient - despite this skepticism; I suppose I could point to prior hints, like even human brains being able to contribute suggestions to searches for good protein configurations; I could talk about how if evolutionary biology made proteins evolvable then there must be a lot of regularity in the folding space, and that this kind of regularity tends to be exploitable.

But of course, it's also, in a certain sense, very *obvious* that a superintelligence could crack protein folding, just like it was obvious years before *Nanosystems* that molecular nanomachines would in fact be possible and have much higher power densities than biology. I could say, "Because proteins are held together by van der Waals forces that are much weaker than covalent bonds," to point to a reason how you could realize that after just reading *Engines of Creation* and before *Nanosystems* existed, by way of explaining how one could possibly guess the result of the calculation in advance of building up the whole detailed model. But in reality, precisely because the possibility of molecular nanotechnology was already obvious to any sensible person just from reading *Engines of Creation*, the sort of person who wasn't convinced by *Engines of Creation* wasn't convinced by *Nanosystems* either, because they'd already demonstrated immunity to sensible arguments; an example of the general phenomenon I've elsewhere termed the Law of Continued Failure.

Similarly, the sort of person who was like "But how do you know superintelligences will be able to build nanotech?" in 2008, will probably not be persuaded by the demonstration of AlphaFold 2, because it was already clear to anyone sensible in 2008, and so anyone who can't see sensible points in 2008 probably also can't see them after they become even clearer. There are some people on the margins of sensibility who fall through and change state, but mostly people are not on the exact margins of sanity like that.

**Anonymous**

"If, after reading Nanosystems, you still don't think that a superintelligence can get to and past the Nanosystems level, I'm not quite sure what to say to you, since the models of superintelligences are much less concrete than the models of molecular nanotechnology."

I'm not sure if this is directed at *me* or the <https://en.wikipedia.org/wiki/Generic_you>, but I'm only expressing curiosity on this point, not skepticism :)

---

**Anonymous**

some form of "scalable oversight" is the naive extension of the initial boxing thing proposed above that claims to be the required alignment method -- basically, make the humans vetting the outputs smarter by providing them AI support for all well-specified (level-below)-vettable tasks.

**Eliezer Yudkowsky**

I haven't seen any plausible story, in any particular system design being proposed by the people who use terms about "scalable oversight", about how human-overseeable thoughts or human-inspected underlying systems, compound into very powerful human-non-overseeable outputs that are trustworthy. Fundamentally, the whole problem here is, "You're allowed to look at floating-point numbers and Python code, but how do you get from there to trustworthy nanosystem designs?" So saying "Well, we'll look at some thoughts we can understand, and then from out of a much bigger system will come a trustworthy output" doesn't answer the hard core at the center of the question. Saying that the humans will have AI support doesn't answer it either.

**Anonymous**

the kind of useful thing humans (assisted-humans) might be able to vet is reasoning/arguments/proofs/explanations. without having to generate neither the trustworthy nanosystem design nor the reasons it is trustworthy, we could still check them.

**Eliezer Yudkowsky**

If you have an untrustworthy general superintelligence generating English strings meant to be "reasoning/arguments/proofs/explanations" about eg a nanosystem design, then I would not only expect the superintelligence to be able to fool humans in the sense of arguing for things that were not true in a way that fooled the humans, I'd expect the superintelligence to be able to covertly directly hack the humans in ways that I wouldn't understand even after having been told what happened. So you must have some prior belief about the superintelligence being aligned before you dared to look at the arguments. How did you get that prior belief?

**Anonymous**

I think I'm not starting with a general superintelligence here to get the trustworthy nanodesigns. I'm trying to build the trustworthy nanosystems "the hard way", i.e., if we did it without ever building AIs, and then speed that up using AI for automation of things we know how to vet (including recursively). Is a crux here that you think nanosystem design requires superintelligence?

(tangent: I think this approach works even if you accidentally built a more-general or more-intelligent than necessary foundation model as long as you're only using it in boxes it can't outsmart. The better-specified the tasks you automate are, the easier it is to secure the boxes.)

**Eliezer Yudkowsky**

I think that China ends the world using code they stole from Deepmind that did things the easy way, and that happens 50 years of natural R&D time before you can do the equivalent of "strapping mechanical aids to a horse instead of building a car from scratch".

I also think that the speedup step in "iterated amplification and distillation" will introduce places where the fast distilled outputs of slow sequences are not true to the original slow sequences, because gradient descent is not perfect and won't be perfect and it's not clear we'll get any paradigm besides gradient descent for doing a step like that.

---

**Anonymous**

How do you feel about the safety community as a whole and the growth we've seen over the past few years?

**Eliezer Yudkowsky**

Very grim. I think that almost everybody is bouncing off the real hard problems at the center and doing work that is predictably not going to be useful at the superintelligent level, nor does it teach me anything I could not have said in advance of the paper being written. People like to do projects that they know will succeed and will result in a publishable paper, and that rules out all real research at step 1 of the social process.

Paul Christiano is trying to have real foundational ideas, and they're all wrong, but he's one of the few people trying to have foundational ideas at all; if we had another 10 of him, something might go right.

Chris Olah is going to get far too little done far too late. We're going to be facing down an unalignable AGI and the current state of transparency is going to be "well look at this interesting visualized pattern in the attention of the key-value matrices in layer 47" when what we need to know is "okay but was the AGI plotting to kill us or not”. But Chris Olah is still trying to do work that is on a pathway to anything important at all, which makes him exceptional in the field.

Stuart Armstrong did some good work on further formalizing the shutdown problem, an example case in point of why corrigibility is hard, which so far as I know is still resisting all attempts at solution.

Various people who work or worked for MIRI came up with some actually-useful notions here and there, like Jessica Taylor's expected utility quantilization.

And then there is, so far as I can tell, a vast desert full of work that seems to me to be mostly fake or pointless or predictable.

It is very, very clear that at present rates of progress, adding that level of alignment capability as grown over the next N years, to the AGI capability that arrives after N years, results in everybody dying very quickly. Throwing more money at this problem does not obviously help because it just produces more low-quality work.

**Anonymous**

"doing work that is predictably not going to be really useful at the superintelligent level, nor does it teach me anything I could not have said in advance of the paper being written"

I think you're underestimating the value of solving small problems. Big problems are solved by solving many small problems. (I do agree that many academic papers do not represent much progress, however.)

**Eliezer Yudkowsky**

By default, I suspect you have longer timelines and a smaller estimate of total alignment difficulty, not that I put less value than you on the incremental power of solving small problems over decades. I think we're going to be staring down the gun of a completely inscrutable model that would kill us all if turned up further, with no idea how to read what goes on inside its head, and no way to train it on humanly scrutable and safe and humanly-labelable domains in a way that seems like it would align the superintelligent version, while standing on top of a whole bunch of papers about "small problems" that never got past “small problems”.

**Anonymous**

"I think we're going to be staring down the gun of a completely inscrutable model that would kill us all if turned up further, with no idea how to read what goes on inside its head, and no way to train it on humanly scrutable and safe and humanly-labelable domains in a way that seems like it would align the superintelligent version"

This scenario seems possible to me, but not very plausible. GPT is not going to "kill us all" if turned up further. No amount of computing power (at least before AGI) would cause it to. I think this is apparent, without knowing exactly what's going on inside GPT. This isn't to say that there aren't AI systems that wouldn't. But *what kind of system would*? (A GPT combined with sensory capabilities at the level of Tesla's self-driving AI? That still seems too limited.)

**Eliezer Yudkowsky**

Alpha Zero scales with more computing power, I think AlphaFold 2 scales with more computing power, Mu Zero scales with more computing power. Precisely because GPT-3 doesn't scale, I'd expect an AGI to look more like Mu Zero and particularly with respect to the fact that it has some way of scaling.

---

**Steve Omohundro**

Eliezer, thanks for doing this! I just now read through the discussion and found it valuable. I agree with most of your specific points but I seem to be much more optimistic than you about a positive outcome. I'd like to try to understand why that is. I see mathematical proof as the most powerful tool for constraining intelligent systems and I see a pretty clear safe progression using that for the technical side (the social side probably will require additional strategies). Here are some of my intuitions underlying that approach, I wonder if you could identify any that you disagree with. I'm fine with your using my name (Steve Omohundro) in any discussion of these.

1) Nobody powerful wants to create unsafe AI but they do want to take advantage of AI capabilities.

2) None of the concrete well-specified valuable AI capabilities require unsafe behavior

3) Current simple logical systems are capable of formalizing every relevant system involved (eg. MetaMath <http://us.metamath.org/index.html> currently formalizes roughly an undergraduate math degree and includes everything needed for modeling the laws of physics, computer hardware, computer languages, formal systems, machine learning algorithms, etc.)

4) Mathematical proof is cheap to mechanically check (eg. MetaMath has a 500 line Python verifier which can rapidly check all of its 38K theorems)

5) GPT-F is a fairly early-stage transformer-based theorem prover and can already prove 56% of the MetaMath theorems. Similar systems are likely to soon be able to rapidly prove all simple true theorems (eg. that human mathematicians can prove in a day).

6) We can define provable limits on the behavior of AI systems that we are confident prevent dangerous behavior and yet still enable a wide range of useful behavior.

7) We can build automated checkers for these provable safe-AI limits.

8) We can build (and eventually mandate) powerful AI hardware that first verifies proven safety constraints before executing AI software

9) For example, AI smart compilation of programs can be formalized and doesn't require unsafe operations

10) For example, AI design of proteins to implement desired functions can be formalized and doesn't require unsafe operations

11) For example, AI design of nanosystems to achieve desired functions can be formalized and doesn't require unsafe operations.

12) For example, the behavior of designed nanosystems can be similarly constrained to only proven safe behaviors

13) And so on through the litany of early stage valuable uses for advanced AI.

14) I don't see any fundamental obstructions to any of these. Getting social acceptance and deployment is another issue!

Best, Steve

**Eliezer Yudkowsky**

Steve, are you visualizing AGI that gets developed 70 years from now under absolutely different paradigms than modern ML? I don't see being able to take anything remotely like, say, Mu Zero, and being able to prove any theorem about it which implies anything like corrigibility or the system not internally trying to harm humans. Anything in which enormous inscrutable floating-point vectors is a key component, seems like something where it would be very hard to prove any theorems about the treatment of those enormous inscrutable vectors that would correspond in the outside world to the AI not killing everybody.

Even if we somehow managed to get structures far more legible than giant vectors of floats, using some AI paradigm very different from the current one, it still seems like huge key pillars of the system would rely on non-fully-formal reasoning; even if the AI has something that you can point to as a utility function and even if that utility function's representation is made out of programmer-meaningful elements instead of giant vectors of floats, we'd still be relying on much shakier reasoning at the point where we claimed that this utility function meant something in an intuitive human-desired sense, say. And if that utility function is learned from a dataset and decoded only afterwards by the operators, that sounds even scarier. And if instead you're learning a giant inscrutable vector of floats from a dataset, gulp.

You seem to be visualizing that we prove a theorem and then get a theorem-like level of assurance that the system is safe. What kind of theorem? What the heck would it say?

I agree that it seems plausible that the good cognitive operations we want do not *in principle* require performing bad cognitive operations; the trouble, from my perspective, is that generalizing structures that do lots of good cognitive operations will automatically produce bad cognitive operations, especially when we dump more compute into them; "you can't bring the coffee if you're dead".

So it takes a more complicated system and some feat of insight I don't presently possess, to "just" do the good cognitions, instead of doing all the cognitions that result from decompressing the thing that compressed the cognitions in the dataset - even if that original dataset only contained cognitions that looked good to us, even if that dataset actually *was* just correctly labeled data about safe actions inside a slightly dangerous domain. Humans do a lot of stuff besides maximizing inclusive genetic fitness, optimizing purely on outcomes labeled by a simple loss function doesn’t get you an internal optimizer that pursues only that loss function, etc.

**Anonymous**

Steve's intuitions sound to me like they're pointing at the "well-specified problems" idea from an earlier thread. Essentially, only use AI in domains where unsafe actions are impossible by construction. Is this too strong a restatement of your intuitions Steve?

**Steve Omohundro**

Thanks for your perspective! Those sound more like social concerns than technical ones, though. I totally agree that today's AI culture is very "sloppy" and that the currently popular representations, learning algorithms, data sources, etc. aren't oriented around precise formal specification or provably guaranteed constraints. I'd love any thoughts about ways to help shift that culture toward precise and safe approaches! Technically there is no problem getting provable constraints on floating point computations, etc. The work often goes under the label "Interval Computation". It's not even very expensive, typically just a factor of 2 worse than "sloppy" computations. For some reason those approaches have tended to be more popular in Europe than in the US. Here are a couple lists of references: <http://www.cs.utep.edu/interval-comp/> <https://www.mat.univie.ac.at/~neum/interval.html>

I see today's dominant AI approach of mapping everything to large networks ReLU units running on hardware designed for dense matrix multiplication, trained with gradient descent on big noisy data sets as a very temporary state of affairs. I fully agree that it would be uncontrolled and dangerous scaled up in its current form! But it's really terrible in every aspect except that it makes it easy for machine learning practitioners to quickly slap something together which will actually sort of work sometimes. With all the work on AutoML, NAS, and the formal methods advances I'm hoping we leave this "sloppy" paradigm pretty quickly. Today's neural networks are terribly inefficient for inference: most weights are irrelevant for most inputs and yet current methods do computational work on each. I developed many algorithms and data structures to avoid that waste years ago (eg. "bumptrees" <https://steveomohundro.com/scientific-contributions/)>

They're also pretty terrible for learning since most weights don't need to be updated for most training examples and yet they are. Google and others are using Mixture-of-Experts to avoid some of that cost: <https://arxiv.org/abs/1701.06538>

Matrix multiply is a pretty inefficient primitive and alternatives are being explored: <https://arxiv.org/abs/2106.10860>

Today's reinforcement learning is slow and uncontrolled, etc. All this ridiculous computational and learning waste could be eliminated with precise formal approaches which measure and optimize it precisely. I'm hopeful that that improvement in computational and learning performance may drive the shift to better controlled representations.

I see theorem proving as hugely valuable for safety in that we can easily precisely specify many important tasks and get guarantees about the behavior of the system. I'm hopeful that we will also be able to apply them to the full AGI story and encode human values, etc., but I don't think we want to bank on that at this stage. Hence, I proposed the "Safe-AI Scaffolding Strategy" where we never deploy a system without proven constraints on its behavior that give us high confidence of safety. We start extra conservative and disallow behavior that might eventually be determined to be safe. At every stage we maintain very high confidence of safety. Fast, automated theorem checking enables us to build computational and robotic infrastructure which only executes software with such proofs.

And, yes, I'm totally with you on needing to avoid the "basic AI drives"! I think we have to start in a phase where AI systems are not allowed to run rampant as uncontrolled optimizing agents! It's easy to see how to constrain limited programs (eg. theorem provers, program compilers or protein designers) to stay on particular hardware and only communicate externally in precisely constrained ways. It's similarly easy to define constrained robot behaviors (eg. for self-driving cars, etc.) The dicey area is that unconstrained agentic edge. I think we want to stay well away from that until we're very sure we know what we're doing! My optimism stems from the belief that many of the socially important things we need AI for won't require anything near that unconstrained edge. But it's tempered by the need to get the safe infrastructure into place before dangerous AIs are created.

**Anonymous**

As far as I know, all the work on "verifying floating-point computations" currently is way too low-level -- the specifications that are proved about the computations don't say anything about what the computations mean or are about, beyond the very local execution of some algorithm. Execution of algorithms in the real world can have very far-reaching effects that aren't modelled by their specifications.

**Eliezer Yudkowsky**

Yeah, what they said. How do you get from proving things about error bounds on matrix multiplications of inscrutable floating-point numbers, to saying anything about what a mind is trying to do, or not trying to do, in the external world?

**Steve Omohundro**

Ultimately we need to constrain behavior. You might want to ensure your robot butler won't leave the premises. To do that using formal methods, you need to have a semantic representation of the location of the robot, your premise's spatial extent, etc. It's pretty easy to formally represent that kind of physical information (it's just a more careful version of what engineers do anyway). You also have a formal model of the computational hardware and software and the program running the system.

For finite systems, any true property has a proof which can be mechanically checked but the size of that proof might be large and it might be hard to find. So we need to use encodings and properties which mesh well with the safety semantics we care about.

Formal proofs of properties of programs has progressed to where a bunch of cryptographic, compilation, and other systems can be specified and formalized. Why it's taken this long, I have no idea. The creator of any system has an argument as to why its behavior does what they think it will and why it won't do bad or dangerous things. The formalization of those arguments should be one direct short step.

Experience with formalizing mathematician's informal arguments suggest that the formal proofs are maybe 5 times longer than the informal argument. Systems with learning and statistical inference add more challenges but nothing that seems in-principal all that difficult. I'm still not completely sure how to constrain the use of language, however. I see inside of Facebook all sorts of problems due to inability to constrain language systems (eg. they just had a huge issue where a system labeled a video with a racist term). The interface between natural language semantics and formal semantics and how we deal with that for safety is something I've been thinking a lot about recently.

**Steve Omohundro**

Here's a nice 3 hour long tutorial about "probabilistic circuits" which is a representation of probability distributions, learning, Bayesian inference, etc. which has much better properties than most of the standard representations used in statistics, machine learning, neural nets, etc.: <https://www.youtube.com/watch?v=2RAG5-L9R70> It looks especially amenable to interpretability, formal specification, and proofs of properties.

**Eliezer Yudkowsky**

You're preaching to the choir there, but even if we were working with more strongly typed epistemic representations that had been inferred by some unexpected innovation of machine learning, automatic inference of those representations would lead them to be uncommented and not well-matched with human compressions of reality, nor would they match exactly against reality, which would make it very hard for any theorem about "we are optimizing against this huge uncommented machine-learned epistemic representation, to steer outcomes inside this huge machine-learned goal specification" to guarantee safety in outside reality; especially in the face of how corrigibility is unnatural and runs counter to convergence and indeed coherence; especially if we're trying to train on domains where unaligned cognition is safe, and generalize to regimes in which unaligned cognition is not safe. Even in this case, we are not nearly out of the woods, because what we can prove has a great type-gap with that which we want to ensure is true. You can't handwave the problem of crossing that gap even if it's a solvable problem.

And that whole scenario would require some major total shift in ML paradigms.

Right now the epistemic representations are giant inscrutable vectors of floating-point numbers, and so are all the other subsystems and representations, more or less.

Prove whatever you like about that Tensorflow problem; it will make no difference to whether the AI kills you. The properties that can be proven just aren't related to safety, no matter how many times you prove an error bound on the floating-point multiplications. It wasn't floating-point error that was going to kill you in the first place. |

15ed29b7-b909-4b65-8dec-67ee065f3ae7 | trentmkelly/LessWrong-43k | LessWrong | How should we model complex systems?

By "complex", I mean a system for which it would be too computationally costly to model it from first principles e.g. the economy, the climate (my field, by the way). Suppose our goal is to predict a system's future behaviour with minimum possible error given by some metric (e.g. minimise the mean square error or maximise the likelihood). This seems like something we would want to do in an optimal way, and also something a superintelligence should have a strategy to do, so I thought I'd ask here if anyone has worked on this problem.

I've read quite a bit about how we can optimally try to deduce the truth e.g. apply Bayes' theorem with a prior set following Ockham's razor (c.f. Solomonoff induction). However, this seems difficult to me to apply to modelling complex systems, even as an idealisation, because:

1. Since we cannot afford to model the true equations, every member of the set of models available to us is false, so the likelihood and posterior probability for each will typically evaluate to zero given enough observed data. So if we want to use Bayes' theorem, the probabilities should not mean the probability of each model being true. But it's not clear to me what they should mean - perhaps the probability that each model will give the prediction with the lowest error? But then it's not clear how to do updating, if the normal likelihoods will typically be zero.

2. It doesn't seem clear that Ockham's razor will be a good guide to giving our models prior probabilities. Its use seems to be motivated by it working well for deducing fundamental laws of nature. However, for modelling complex systems it seems more reasonable to me to give more weight to models that incorporate what we understand to be the important processes - and past observations can't necessarily help us tell what processes are important to include, because different processes may become important in future (c.f. biological feedbacks that may kick in as the climate warms). This could perhaps |

59d0889c-fd16-426c-baf6-c2acea2d32c0 | trentmkelly/LessWrong-43k | LessWrong | Softmax, Emmett Shear's new AI startup focused on "Organic Alignment"

> A new AI alignment player has entered the arena.

>

> Emmett Shear, Adam Goldstein and David Bloomin have set up shop in San Francisco with a 10-person start-up called Softmax. The company is part research lab and part aspiring money maker and aimed at figuring out how to fuse the goals of humans and AIs in a novel way through what the founders describe as “organic alignment.” It’s a heady, philosophical approach to alignment that seeks to take cues from nature and the fundamental traits of intelligent creatures and systems, and we’ll do our best to capture it here.

>

> “We think there are these general principles that govern the alignment of any group of intelligent learning agents or beings, whether it's an ant colony or humans on a team or cells in a body,” Shear said, during his first interview to discuss the new company. “And organic alignment is the kind of alignment where a bunch of peers come together and find their role in a greater whole together where they maintain their individual identity.

>

> “Organic alignment centers on this shared whole idea, which is opposed to the kind of alignment that you see from most foundational model companies that is very much about steering and control and direction. We think of that as hierarchical alignment.”

>

> […]

Also, https://softmax.com/about mentions collaboration with Michael Levin, Ken Wilber, Chris Fields, Ken Stanley, Denis Noble, Andrew Briggs, Jeff Clune, Erik Hoel, Ryan Smith, Center for the Study of Apparent Selves, Dalton Sakthivadivel, and Perry Marshall. |

3f348e52-adaf-4b27-9b44-b562bb62c67b | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Inside the Mind of an Aspiring Charity Entrepreneur [Follow Along] #1 - From Layoff to Co-founding in a Breathtaking Two Months

TL;DR

-----

I want to extend a MASSIVE, heartfelt thank you to the abundant resources put together by the EA community and the wonderful EAs who are so generous with their knowledge and time (even when talking to a complete newbie like me).

Because of you, I went from **being laid off in June and knowing nothing about EA in July**, to being offered a nonprofit co-founder position in early September, to finishing some initial groundwork of setting up a charity (productivity system, Founder’s agreement, funding proposal draft, etc) in late September.

The initial groundwork implemented thus far were all influenced by the action steps found in [How to Launch a High-Impact Nonprofit](https://forum.effectivealtruism.org/posts/c2fhqaKEGJxGMCz4x/new-ea-book-how-to-launch-a-high-impact-nonprofit-by-charity). Thus, this aims to be a post sequence dedicated to putting the book’s concepts to work and showing some actual work examples.

Hopefully with more of your support and feedback, the StakeOut.AI charity startup can keep a good steady pace and accomplish more crucial milestones in Oct and onwards.

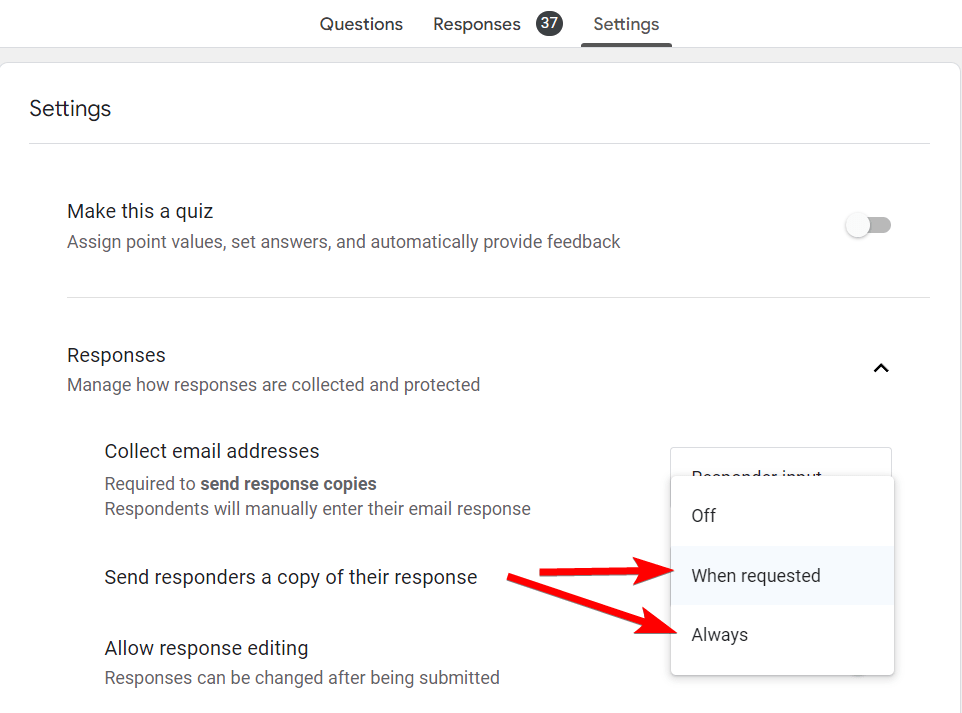

As this post is the intro of the sequence, it details more specifically my journey (learning and doing applications) - including important tips for other applicants (apply early, handling rejections) and some feedback for EA organizations taking applications (setup to process many applications, send responders a copy of their responses, informing those who didn’t make the cut).

I hope this brings value to the EA community as this is perspective from someone very green in EA, eager to take action quickly even though I came from a totally different world before.

Acknowledgment

--------------

I would like to give a special thank you to [Dr. Peter S. Park](https://forum.effectivealtruism.org/users/peter-s-park) for editing this post and for all our future collaborations! There is also a [I’m so grateful for everyone who has been a part of the journey thus far](https://forum.effectivealtruism.org/posts/ngk6AFo5uNHB3ZKQY/inside-the-mind-of-an-aspiring-charity-entrepreneur-follow#A_Heartfelt_Thank_You_to_the_Effective_Altruism_Community) section later in this post.

Introduction

------------

Hi, my name is Harry, it’s nice to meet all of you. This post, my first ever post on the EA forum, is inspired by [Three lessons I've learnt from starting a small EA org](https://forum.effectivealtruism.org/posts/ZYPTdny6iuKTZhC8A/three-lessons-i-ve-learnt-from-starting-a-small-ea-org) and [Why and how to start a pilot project in EA](https://forum.effectivealtruism.org/posts/eCKhhWXa25TcecZbJ/why-and-how-to-start-a-pilot-project-in-ea) - so thank you [Ben Williamson](https://forum.effectivealtruism.org/users/ben-williamson?from=post_header).

The purpose of this post is to [1] document my journey publicly (hopefully to inspire others who are thinking they want to start a side project, or apply to CE, or go down the path of charity entrepreneurship), [2] maximize feedback as per [Ben’s post](https://forum.effectivealtruism.org/posts/ZYPTdny6iuKTZhC8A/three-lessons-i-ve-learnt-from-starting-a-small-ea-org) *(both directly through the post (in the comments) and indirectly (people who reach out privately with additional suggestions/ advice))*, and [3] share key lessons & milestones to hopefully contribute to the forum via actual work examples.

The hope is that it is going to be a follow along post sequence, as my co-founder [Dr. Peter S. Park](https://forum.effectivealtruism.org/users/peter-s-park) and I get our nonprofit startup up and running.

Though the journey with EA has only been 2 months, I already have so many people to thank who have answered questions, provided feedback and pointed me in the right direction. I am forever grateful for you :)

The Catalyst: The Layoff that Sparked My Journey

------------------------------------------------

I first found out about Effective Altruism from my Google search about impactful tithing. As a Christian, I have been tithing 10% of my income for many years, but because I was laid off from shortage of work in my previous marketing role, I was interested in finding more impactful ways to give due to our now lowered family income.

This is when I stumbled upon [GivingMultiplier.org](https://givingmultiplier.org/) and was introduced to the idea of super-effective charities. I was intrigued by their approach where you can still give from the heart (your favorite charity), but at the same time incentivizes you to give more to super-effective charities by matching your donations (the higher % you give to super-effective charities, the higher the matching rate). The concept of super-effective charities was so intriguing, I read through all eight “websites we recommend” (most notably EA and 80k).

With the gift of time of no longer being at a full-time job, this began my deep dive into EA. I was ecstatic when I found out about the [CharityEntrepreneurship.com](http://charityentrepreneurship.com) (CE) incubator program within the first week on the EA forum, as I have always had the dream of starting a non-profit to help in bettering the world. The dream of doing high-impact work was born 19+ years ago since co-leading fundraisers in my high school years. My eyes were opened to how first world dollars can have a major positive impact in the developing world, when what seemed like a small amount of funds raised actually built new schools in Tibet.

After about a week, I knew I had found a group of likeminded people who care about doing good in the world while effectively using our limited resources. My job lay off was a blessing before finding EA because I was able to spend more time with my kids. Then after learning about EA, the lay off turned out to be an opportunistic time to shift my career to doing high-impact work, changing my dormant dream into a (hopefully) reality.

The Effective Altruism Influence

--------------------------------

Throughout the last two months, the first material I read through was the [80000 Hours Career Guide](https://forum.effectivealtruism.org/posts/f7D4spNoAYqhFfbBz/announcing-the-new-80-000-hours-career-guide-by-benjamin). Then, because I found out about CE so soon in my EA journey and I knew I wanted to apply to the incubator, the rest of the 200+ hours towards my metaphorical undergraduate degree in Effective Altruism ([suggested here](https://forum.effectivealtruism.org/posts/QxCpXjGmHbpX45nxo/how-to-increase-your-odds-of-starting-a-career-in-charity)) was trying to get through as many resources as I can highlighted below (I broke the 3 lists into to-do items to systemize the process):

* <https://www.charityentrepreneurship.com/applicant-resources>

* <https://www.charityentrepreneurship.com/post/how-to-increase-your-odds-for-ce>

* <https://forum.effectivealtruism.org/posts/MFyQzn6eNgDyvnfde/so-you-want-to-be-a-charity-entrepreneur-read-these-first>

Between all the reading/watching/listening, another important influence was talking with other like-minded people. Please see below [I’m so grateful for everyone who has been a part of the journey thus far](https://forum.effectivealtruism.org/posts/ngk6AFo5uNHB3ZKQY/inside-the-mind-of-an-aspiring-charity-entrepreneur-follow#A_Heartfelt_Thank_You_to_the_Effective_Altruism_Community) for more details.

Lastly, for anyone who has been a part of EA for even a small amount of time knows, you will come to love Google Forms. Like others, I have applied to many EA official programs, volunteering roles, paid jobs, career advising opportunities, incubator programs etc… and to my surprise these have also affected me in a profound way I had not anticipated before. Particularly, answering all these Google Form questions and doing the test tasks have clarified my thinking to put things on paper (well, digitally paper), that previously had been unorganized and swirling in my head.

The Challenges: What I've Faced So Far

--------------------------------------

### Getting started quickly in EA

There are a lot of resources, and I guess that can be a double-edged sword. Within a week of skimming, I knew EA was in alignment for me. So, as someone who wanted to hit the ground running, it was like trying to drink from a firehose sorting through the different topics and information initially. However, I’m very lucky I was inspired to go down the co-founding path early on, and so I stuck mostly to a semi structured way to devour resources based on the [3 lists as per above](https://forum.effectivealtruism.org/posts/ngk6AFo5uNHB3ZKQY/inside-the-mind-of-an-aspiring-charity-entrepreneur-follow#The_Effective_Altruism_Influence).

Other than that, as suggested by many people, the most valuable way to learn the ideas of Effective Altruism isn’t by merely reading (e.g. the [EA handbook](https://forum.effectivealtruism.org/posts/XKfnWebzJsdxecBDw/about-this-handbook), forum, books etc), but by conversing, exchanging ideas and learning from other like-minded people. The official way to do that is via the [monthly EA Virtual Intro Program](https://forum.effectivealtruism.org/posts/BuR7RLQAtJtC5jK5d/there-will-now-be-ea-virtual-programs-every-month) and this is a great experience as I’m going through it right now.

However, for someone who wants to get moving ASAP, that is **waiting in most cases an entire month** between applying, hearing back and starting the eight week EA Virtual Intro Program. This waiting period, if not properly handled, could have been a semi hindrance.

That’s why for me, I’m very grateful for the [EA Anywhere group where professional and affinity groups were consolidated into one space](https://forum.effectivealtruism.org/posts/sK7neZ9rHGEL5JP7q/ea-anywhere-slack-consolidating-professional-and-affinity). I was able to get connected to multiple events/groups to learn and get feedback (it’s so easy to find events compared to hunting down different interest groups on their respective websites). Additionally, there is the #study-buddy chat room (that's joint with the EA Virtual Programs Slack) that you can find others to who are reading through resources that might be of interest to you. [See here for other promising ways to connect to others online](https://forum.effectivealtruism.org/posts/CAWvSaGWNbCnoRccd/supporting-online-connections-what-i-learned-after-trying-to).