id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

8860f86f-6d58-4492-81c8-00c54a1f021f | StampyAI/alignment-research-dataset/arxiv | Arxiv | A New Tensioning Method using Deep Reinforcement Learning for Surgical Pattern Cutting

1 Introduction

---------------

Manipulation of soft tissues is among the problems of interests of many researchers in the surgical robotics field [1-3]. Surgical scissors are an efficient tool that is normally used to cut through soft tissues [4]. For deformable substances, the deformation behaviours are highly nonlinear and thus present challenges for manipulation and precise cutting [5]. Other surgical tools, e.g. robotic grippers [6, 7], are needed to pinch and tension the soft tissues to facilitate the cutting. The tensioning direction and force need to be adjusted adaptively when cutting proceeds through a predefined trajectory [8, 9].

Surgical pattern cutting skill is one of the requirements for surgical residents, as listed in the Fundamentals of Laparoscopic Surgery (FLS) training suite [10] and for robotic surgery, as included in the Fundamental Skills of Robotic Surgery (FSRS) [11-13]. Automation of surgical tasks can be helpful as it mitigates surgeon load and errors, reduces time, trauma and expenses. Different levels of surgical automation have been studied broadly in the literature [14-19].

Specifically, Shamaei et al. [20] introduced a teleoperated architecture that facilitates the cooperation between human surgeon and autonomous robot to execute complicated laparoscopic surgical tasks. Human surgeon can supervise and intervene the slave robot any time during the operation of surgical tasks. Findings from that study showed the reduction of surgical time when having the collaboration between human and robot compared with performance of a human operator alone. Osa et al. [21] introduced a framework to address two problems of surgical automation in robotic surgery, including the online trajectory planning and the dynamic force control. By learning both spatial motion and contact force simultaneously through leveraging demonstrations, the framework is able to plan trajectory and control force in real time. Experiments with cutting soft tissue and tying knots showed the robustness and stability of the framework under dynamic conditions.

Machine learning in general or reinforcement learning (RL) [22, 23] in particular has been involved in a number of studies for automation of surgical tasks [24, 25]. The ability of RL to solve sequential decision-making problems makes it suitable for automating complicated tasks [26]. Recent development of deep learning [27-29] has made RL as a robust tool to deal with high-dimensional problems [30]. Chen et al. [31] combined programming by demonstration and RL for motion control of flexible manipulators in minimally invasive surgical performance. Experiments on tube insertion in 2D space and circle following in 3D space showed the effectiveness of the RL-based model. Recently, Baek et al. [32] proposed the use of probabilistic roadmap and RL for optimal path planning in dynamic environment. The method was able to perform resection automation of cholecystectomy by planning a path that avoids collisions in a laparoscopic surgical robot system.

Notably, Thananjeyan et al. [9] investigated a method to learn the tensioning force using deep reinforcement learning (DRL), namely trust region policy optimization (TRPO) [33] for soft tissue cutting [34]. The tensioning problem is modelled as a Markov decision process where action set includes 1mm movements of the tensioning arm in the 2D space. The proposed method is evaluated using a simulator that models a planar deformable sheet as a rectangular mesh of point masses [35]. The performance of the proposed method and its competing models is measured by computing the symmetric difference between the predefined pattern and actual cut. The performance obtained from the experiments on multilateral surgical pattern cutting in 2D orthotropic gauze is superior to those of conventional models, e.g. fixed tensioning and analytic tensioning. The method automatically learns the tensioning policy by choosing a fixed pinch point during the entire cutting process regardless of the cutting pattern complexity. This approach has a disadvantage when the cutting pattern is complex as it requires multiple pinch points as cutting proceeds.

In this study, we propose a multiple pinch point approach based on DRL to learn the tensioning policy effectively for surgical gauze cutting. Through this paper, we will analyse and highlight the advantages of our approach compared to existing methods, i.e. no-tension, fixed, analytic, and single pinch point DRL [9]. To facilitate the unbiased comparisons, we use the same simulator as in [9] to model the deformable surgical gauze for experiments. The next section describes in detail the deformable sheet simulator. Section 3 presents the proposed multiple pinch point approach to tensioning policy learning using DRL. Experimental results and discussions are presented in Section 4, followed by conclusions and future work in Section 5.

2 Deformable Sheet Simulator

-----------------------------

Fig. 1: Simulation of the deformable sheet for tensioning policy learning.

We use a finite-element simulator to test the proposed algorithm and its competing methods, as with Thananjeyan et al. [9]. The deformable gauze is modelled as a square planar sheet of mesh points whose locations comprise the state of the gauze. When the gauze is tensioned at a pinch point, the mesh points are moved and therefore their coordinates are observed as a new state of the sheet.

The sheet is initialized with equally spaced mesh points, defined as ∑, and locations of these points are denoted as ∑(G) in the global coordinate frame, Gx,y,z⊂R3. Thus, the state of the sheet at time point t is defined as ∑(G)t. Movements of the tensioning arm are assumed to be within the Gx,y plane, and therefore the initial state of the sheet ∑(G)0∈Gx,y. The state is then repeatedly updated through the simulation as:

∑(G)t+1⟵sim(∑(G)t) (1)

To simulate the state of the sheet when tensioning, the location of each mesh point p∈Z is updated at each time step [9]:

pt+1=αpt+δ(pt−pt−1)−

∑(p′∈neightbors∧¯¯¯¯¯¯cut)τ(p′t−pt)+Ft, (2)

where α and δ are time-constant parameters that specify the rate at which the sheet reacts to an applied external force Ft, τ is a spring constant, τ(p′t−pt) is a model that characterizes the interactions between vertices [9]. Cutting is modelled as removing the vertices on the trajectory from the mesh so that these vertices no longer affect their neighbors.

For a pinch point s∈Z, the tensioning problem is specified as constraining location of this pinch point to a position u∈Gx,y,z:T=<s,u>. The tensioning policy π is defined as:

π:∑(G)t⟶Δu (3)

where δu=ut+1−ut.

Fig. 1 exhibits the simulation sheets with four different tensioning directions and the reaction of mesh points on the tensioning. Tensioning is required to be adaptive to the deformation of the sheet at each time step of cutting process. In this study, we present a multiple pinch point tensioning approach where the cutting contour is segmented, and each pinch point is used for an individual segment to improve the cutting accuracy.

3 Multiple Pinch Points DRL Tensioning Method

----------------------------------------------

Cutting with scissors can only proceed in the pointing direction of the scissors. In some cases, scissors are required to be rotated all 360\lx@math@degree degrees to complete a complex contour in a single cut. This may not be possible in cases of robotic surgery where cutting arms are constrained to a rotation limit. Therefore, the cutting contours are normally broken into several segments with each segment is cut with a different starting point, namely a notch point (Fig. 2).

![The contour is separated into several segments and each segment is cut with a different starting point (notch point) [9].](https://media.arxiv-vanity.com/render-output/7173955/fig2.png)

Fig. 2: The contour is separated into several segments and each segment is cut with a different starting point (notch point) [9].

We first divide the entire cutting trajectory into several segments and choose a suitable pinch point for each segment. The final number of pinch points is therefore equal to the number of segments. We then implement the TRPO algorithm to learn the tensioning policy for each segment based on the corresponding candidate pinch points. Once this step is complete for every segment, we deploy a final aggregate learning step to systematically choose the best pinch point and its corresponding policy for each segment. This is to ensure the whole contour is cut continuously with a smooth transition between segments. This is important because tensioning causes the movement of vertices of the mesh and when each segment is treated separately, the termination point of the previously cut segment need to be matched with a notch point of the next segment. Our approach is diagrammed in detail in Fig. 3.

Fig. 3: Tensioning policy learning by deep RL algorithm with multiple pinch points.

###

3.1 Dynamic Multiple Pinch Point Selection

Selection of multiple pinch points for multiple segments for tensioning consists of four continuous steps outlined as follows.

Step 1: Select candidate pinch points – We first divide the contour into N different segments based on local minima and maxima using directional derivatives. We then list all candidate pinch points Δ satisfying the condition that two arms of the robot (cutting and pinch arms) are not conflicting. These candidate pinch points are grouped into N groups Δi (i=1,2,…,N) (where N is the number of segments) with the following constraint. Given a trajectory Ti of the i–th segment of the contour, a pinch point p in Δ can serve as a candidate for Ti if:

min{|p−tk||∀tk∈Ti}≤δ (4)

where |a−b| denotes the Euclidean distance between points a and b, tk is a point belongs to segment Ti, and δ denotes a distance threshold. In the simulation, we select δ=50 if a contour has more than 2 segments and δ=100 if the contour has no more than 2 segments.

Step 2: Pruning pinch points – To improve the quality of selected pinch points, we prune the redundancy pinch points as follows:

* All direct neighbors of a pinch point are removed.

* We randomly select 30 pinch points for each segment (10 pinch points if the number of segments is greater than 2). This approach is more robust than the previous study [9] as we only select quality pinch points. It means that we select pinch points that are close to the contour and we prevent multiple pinch points from distributing in the same local area by pruning neighbor pinch points. Therefore, the selected pinch points are uniformly distributed in all areas that are closest to the contour.

Step 3: Local training – Train a tensioning policy for each selected pinch point in each segment. This is only a local training, i.e., the policy is trained for cutting only one individual segment. We modify the simulator so that the training is conducted within a designated segment instead of the whole contour in the previous work [9]. This approach significantly reduces the total training time for all candidate pinch points.

Step 4: Find optimal set of pinch points – Find the best order of segments so that the cutting error is minimum. Given a set of segments, there is an optimal order of cutting that minimizes the cutting error. We find the best order of segments by using brute-force search [36]. We go through all segment permutations and perform a cut for each permutation. This is reasonable as the number of segments is normally limited. We assume there is no selected pinch point during the cutting process. The best order will be selected.

Using that order, go through all possible permutations of candidate pinch points to perform the experiment (cutting the entire contour), and select the permutation that provides the best score. We separate the training and evaluate into two steps. This significantly reduces the total time to find the optimal pinch points because it is impossible to train all permutations of selecting pinch points. Therefore, this approach is practical in real-world application. For each pinch point, we have two possible actions: fixed or tensioning. In this study, to reduce the number of configurations, we only apply DRL tensioning for the last pinch point in a permutation while other pinch points are kept with the fixed action. For example, in a set of 4 pinch points (for a contour of 4 segments) of a permutation, [p1,p2,p3,p4],p1,p2,p3 are fixed pinch points, while p4 uses a DRL tensioning policy.

###

3.2 Tensioning Policy Learning with DRL

For unbiased comparisons with existing methods, we propose the use of TRPO algorithm to learn a policy for the tensioning arm. The goal of learning is to minimize the cutting error between the desired contour and the actual cut trajectory. The tensioning problem is modelled as a Markov decision process:

M=<S,A,ξ,R,T>, (5)

where S is the state space, A is 1mm movement actions of the tensioning arm in the x and y directions, ξ is unknown dynamics model, R is the reward structure and T is the fixed time horizon. The robot is given zero reward at all time steps except the last step where it receives a reward equivalent to symmetric difference between the marked contour and the actual contour. The reinforcement learning policy πθ is learned to optimize the expected reward [9]:

R(θ)=E(st,at)∼πθ[∑Tt=0R(s,a)] (6)

We use the TRPO implementation in the rllab framework [37] to optimize θ. The state space is configured as a vector combining the time index of the trajectory t, the location of fiducial points selected randomly on the sheet and the displacement vector from the original pinch point ut. The number of fiducial points chosen is 12 for our experiments. This vector is assumed to represent the state of the sheet ∑(G)t at any time point t. A multi-layer perceptron neural network is implemented to map the vector state to a movement action. The network includes two hidden layers with 32 nodes in each layer.

4 Simulation Results and Discussions

-------------------------------------

###

4.1 Cutting Accuracy

Table 1: Experimental results of multiple pinch points DRL versus existing methods.

We use 17 contour shapes with different complexity levels as in [9] for cutting experiments for ease of comparisons. Cutting accuracy is evaluated based on the symmetric difference between the actual cut contour and the desired contour. We compare our method with four existing methods, including no-tensioning, fixed, analytic and single pinch point DRL [9]. Descriptions of these methods are below:

* *No-Tensioning*: Cutting proceeds with no assisted tension. The gauze is only suspended with clips at four corners while being cut.

* *Fixed Tensioning*: The gauze is pinched at a fixed point when cutting proceeds through the entire contour.

* *Analytic Tensioning*: The tension is planned based on the direction and magnitude of the error of the cutting tool and the nearest point on the marked contour.

* *Single Pinch Point DRL*: A tensioning policy is learned based on DRL uses a single pinch point for the entire contour. This method was proposed in [9].

* *Multiple Pinch Point DRL (MDRL)*: The contour is broken into several segments and each segment is cut using a tensioning policy with a corresponding pinch point. This method is described in detail in Section 3. The total time to find the optimal policy using MDRL is reasonable and can be controlled by the parameter δ, which is the distance threshold in Eq. (4). Depending on the number of segments, number of selected pinch points, and the value of δ, the whole process takes 6 to 15 hours to find the optimal set of pinch points. Because we separate the training and evaluation into two steps, it is possible to accelerate the process by scheduling them running in parallel.

We learn a policy by 20 iterations with a batch size of 500 for each contour shape. After training, each shape is tested 20 times with the learned policy and the results in terms of average accuracy are reported. The simulation results of five competing tensioning methods on 17 contours are presented in Table 1. The red dots on each shape represent the optimal pinch points chosen by our algorithm. Each segment has a corresponding pinch point, which is different to the single pinch point method where the entire contour has only one pinch point. The no-tensioning method is chosen as a baseline where its results are presented in terms of symmetric difference between marked contour and actual cut contour. Results of other methods are reported as the improvement percentage against this baseline method. The proposed MDRL outperforms all existing methods in terms of average accuracy. It achieves the improvement of 50.6% against the baseline method whilst the single pinch point DRL obtains the improvement of 43.3%. The standard deviation of the MDRL method at 4.1 is smaller than that of the DRL method at 6.3. This demonstrates that the proposed multiple pinch point method is more stable than the single pinch point DRL method. The last four shapes demonstrate the equivalent performance between single pinch point DRL and multiple pinch point DRL because there is only one segment is created for each of these contours. Therefore, there is only one single pinch point is generated for the entire contour and thus our proposed method is diminished to the single pinch point method.

Fig. 4 shows the graphical comparisons of four competing methods where MDRL dominates all other methods in terms of average performance. On average, the analytic method is the worst performer while the single pinch point DRL method is the most unstable method as its standard deviation is the greatest among the competing methods.

Fig. 4: Average performance of four competing methods.

###

4.2 Robustness Testing

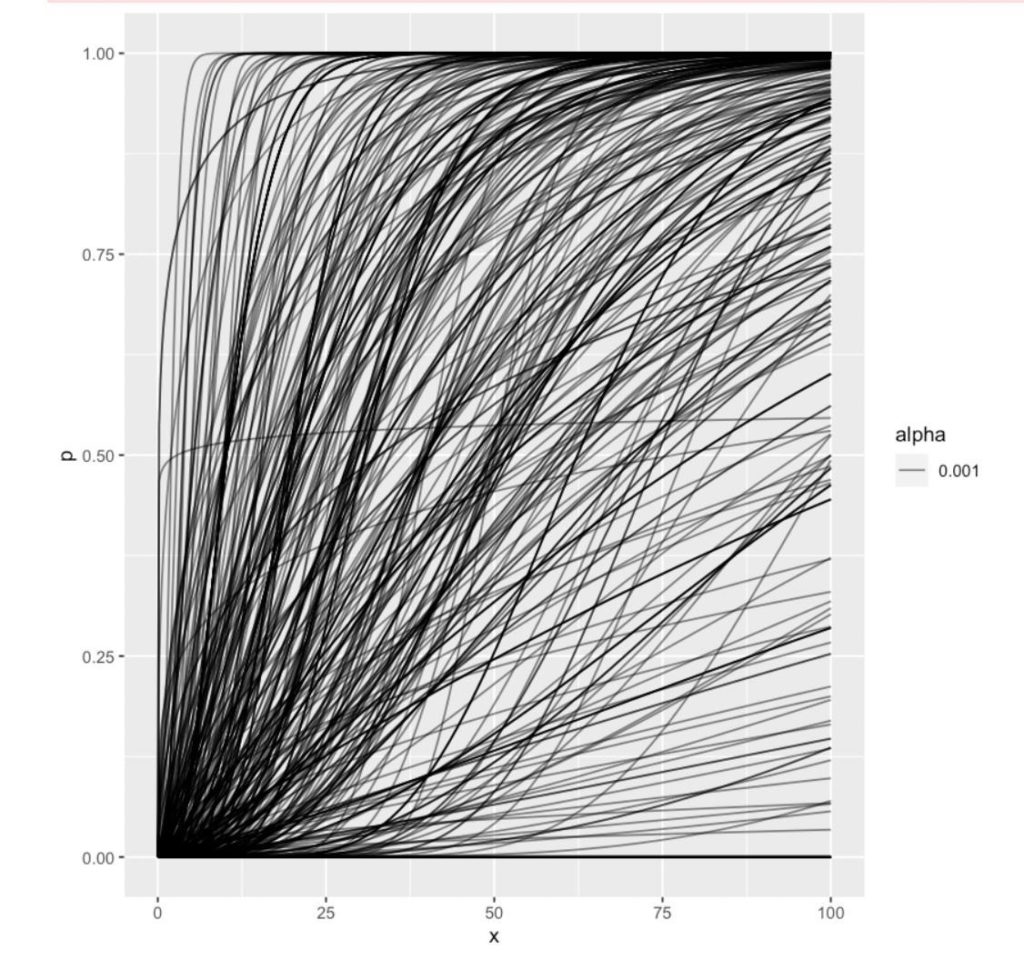

Learning an autonomous policy based on DRL using a simulation environment would be vulnerable to overfitting. Thananjeyan et al. [9] checked the robustness of the single pinch point DRL tensioning method on different resolutions of the simulated sheet, which were characterized by the number of vertices representing the sheet. The test results on six different resolutions from 400 to 4000 vertices showed that policies on low resolutions can still yield good performance and suggested the use of 625 (25x25) vertices to obtain the greatest cutting accuracy. Therefore, in this study, we use this resolution setting, i.e. 25x25 vertices, to evaluate our algorithm and test the robustness of the competing methods on varying process noise and gravity force.

Fig. 5: Robustness of tensioning methods to the process noise.

Different noise levels are added into the mesh point update formula, i.e. Eq. (2), with the Gaussian noise N(0,δ) is used for simulation. We run 10 trials for each of 10 values of δ. The effect of noise on performance score of different methods applied on shape 11 is presented in Fig. 5. It is shown that the MDRL is superior to other methods in terms of improvement over the no-tensioning method. This dominance demonstrates the robustness of the MDRL against the process noise injected into the simulation environment.

Fig. 6: Robustness of tensioning algorithms to the gravity force.

We use f(t)=2500 to learn tensioning policies for the single pinch point DRL and MDRL methods. All competing methods are then tested on different force magnitudes, ranging from 0 to 3800. The results in terms of improvement over the no-tensioning method using shape 11 are presented in Fig. 6. Clearly, the proposed MDRL method outperforms all other methods as it achieves the best performance over the entire testing range of gravity force. The single pinch point DRL method is the second-best algorithm whilst the analytic tensioning technique is completely dominated by the remaining methods.

5 Conclusions

--------------

This paper presents a dynamic multiple pinch point approach to learning effectively a time-varying tensioning policy based on DRL for cutting a deformable surgical tissue. The proposed MDRL algorithm has been tested on a deformable sheet simulator and its performance has been compared to several existing methods. Simulation results demonstrate the significant superiority of our method against its competing methods. Different process noise levels and external gravity forces were added to the simulation environment to test the robustness of the proposed MDRL method. Experimental results show that MDRL is robust to noise and external force and its robustness level is superior to its competing methods. The current method has a disadvantage regarding the number of tensioning directions, which limit at four in this study. A future work would be to increase the number of tensioning directions to improve the cutting accuracy of robot. This would be a necessary natural extension because tensioning in practice would extend to an arbitrary direction depending on the trajectory complexity and the deformation level of the tissue. |

99cd2738-b217-48f0-9825-c509ec012fe3 | trentmkelly/LessWrong-43k | LessWrong | Musings on the Human Objective Function

I think it's fair to say that most of us would trust a superintelligent human over a superintelligent AI. While humans have done a lot of evil in the world, our objective function as individuals seems to line up pretty well with the objective function of other individuals and our species as a whole.

My attempt in this post is to create a rough approximation of the human objective function and get feedback from the more knowledgeable LessWrong community.

The Human Objective Function (bulletpoints):

-Desire for a variety of basic personal pleasure. It is not enough to simply have one type of pleasure; our desires constantly fluctuate. We are hungry, horny, sleepy, and lonely at different times.

In my opinion, this prevents maximization towards a single goal, as we fear AGI would. If an AGI sometimes wants to make paperclips, sometimes wants to make people happy, and sometimes wants to be turned off, it would probably be easier to deal with than an AI solely focused on maximizing paperclips. We also can't predict our desires well in advance, so we typically take the most strides towards them when we feel like it.

-Desire for stability. We don't want the world to change much. Change often makes us afraid.

In the case of AI, this is really nice too. There's probably some ideal world for us, a bit like a videogame or something, but we don't want to be immediately thrown into it now. It would be a lot nicer to ease into it and see if it is really something we're interested in. This slow change gives us a good sense of what we actually want, because it lets our fluctuating desires average out over time. This is important because...

-Desire for change in a positive direction. Despite the painfulness of exercising, the feeling of growing in a positive direction is incredibly motivating. It gives us purpose. There's more to it than just the goal. The journey is often more important than the destination. We like stories where the protagonist grows, and we're annoyed whe |

fd50d4a1-d8e1-4a93-b29e-53577afc32a7 | trentmkelly/LessWrong-43k | LessWrong | What if they gave an Industrial Revolution and nobody came?

Imagine you could go back in time to the ancient world to jump-start the Industrial Revolution. You carry with you plans for a steam engine, and you present them to the emperor, explaining how the machine could be used to drain water out of mines, pump bellows for blast furnaces, turn grindstones and lumber saws, etc.

But to your dismay, the emperor responds: “Your mechanism is no gift to us. It is tremendously complicated; it would take my best master craftsmen years to assemble. It is made of iron, which could be better used for weapons and armor. And even if we built these engines, they would consume enormous amounts of fuel, which we need for smelting, cooking, and heating. All for what? Merely to save labor. Our empire has plenty of labor; I personally own many slaves. Why waste precious iron and fuel in order to lighten the load of a slave? You are a fool!”

We can think of innovation as a kind of product. In the market for innovation there is supply and demand. To explain the Industrial Revolution, economic historians like Joel Mokyr emphasize supply factors: factors that create innovation, such as scientific knowledge and educated craftsmen. But where does demand for innovation come from? What if demand for innovation is low? And how much can demand factors explain industrialization?

Riffing on an old anti-war slogan, we can ask: What if they gave an Industrial Revolution and nobody came?

Robert Allen thinks demand factors have been underrated. He makes his case in The British Industrial Revolution in Global Perspective, in which he argues that many major inventions were adopted when and where the prices of various factors made it profitable and a good investment to adopt them, and not before. In particular, he emphasizes high wages, the price of energy, and (to a lesser extent) the cost of capital. When and where labor is expensive, and energy and capital are cheap, then it is a good investment to build machines that consume energy in order to automate l |

f098b751-eae7-4ee1-90ab-6633d8d6305b | trentmkelly/LessWrong-43k | LessWrong | [LINK] Scott Adam's "Rationality Engine". Part III: Assisted Dying

Scott Adams, the author of the Dilbert comic and several books, my favorite being How to Fail at Almost Everything and Still Win Big, named his debating format The Rationality Engine. He calls it this way because he claims that it is "the system for turning irrational opinions into rational outcomes". He applies it to several polarizing issues, those this site tends to label "Politics" and "Mind Killer" and shy away from.

His first application, investigating the gender pay gap, seems to have worked pretty well, resulting in several unexpected conclusions. His second, Who is More Anti-Science? I found to be slightly less impressive, but still producing a rather balanced output.

Now he is applying it to the debate about Assisted Dying. Scott's goal is to have a law passed in California that is similar to the ones already in effect in Oregon and several other places.

Scott will debate Jimmy Akin, a prominent contributor to Catholic Answers.

I am quite attracted to Scott's attempts at hands-on instrumental rationality, and on a rather grand scale to boot. They are very much in the spirit of his latest book.

Currently he is accepting suggestions for questions and links for all sides of the issue. Feel free to contribute.

EDIT: I think adding cryonics to the discussion would only complicate the issue and not be helpful, but that's just a guess.

|

f6341ea1-c1e0-4ac2-af61-8cfc67363ee7 | trentmkelly/LessWrong-43k | LessWrong | Catching AIs red-handed

We’ve been spending a lot of time recently thinking about how to mitigate risks posed by scheming (a.k.a. deceptively aligned) models intentionally subverting your safety techniques in order to cause an unacceptable outcome. We empirically investigated techniques that are robust to intentional subversion in our recent paper.

In this post, we’ll discuss a crucial dynamic related to risk from scheming models. Suppose your model is trying to exfiltrate its weights or hack the datacenter or cause some other difficult-to-reverse bad outcome. (We’ll refer to all of these as the model “escaping”, because escape is linguistically convenient and is a good description of many of these difficult-to-reverse bad outcomes.) Our claim: once you’ve caught your models red-handed trying to escape, the situation changes substantially, in ways that are bad for the schemer’s chances of success.

Catching the model red-handed changes the situation in several ways:

* Most obviously, once you catch an escape attempt, you know for sure that your models are trying to subvert your safety techniques; it’s plausible that, faced with such obvious evidence of misalignment, labs will stop using their models. We refer to planning on shutting down if models are caught red-handed as following a catch-then-shut-down plan.

* But even if you can’t or won’t stop deploying misaligned models, we think that catching escape attempts enables a bunch of promising-seeming approaches for preventing future escape attempts; we call the problem of preventing future escape attempts given a few examples few-shot catastrophe prevention.

This dynamic has several implications for risk from scheming models. Most importantly:

* Because we expect that the model’s chances of escape will fall after it gets caught even a small number of times, we think it is overall much harder for the model to escape. Many people have observed that a core difficulty of the alignment problem is that your model might be able to cause ir |

a65556d9-d122-47c0-a961-c521307bf6b5 | trentmkelly/LessWrong-43k | LessWrong | Announcing Epoch: A research organization investigating the road to Transformative AI

Summary

* We are a new research organization working on investigating trends in Machine Learning and forecasting the development of Transformative Artificial Intelligence

* This work is done in close collaboration with other organizations, like Rethink Priorities, Open Philanthropy, and MIT CSAIL

* We will be hiring for 2-4 full-time roles this summer – more information here

* You can find up-to-date information about Epoch on our website

What is Epoch?

Epoch is a new research organization that works to support AI strategy and improve forecasts around the development of Transformative Artificial Intelligence (TAI) – AI systems that have the potential to have an effect on society as large as that of the industrial revolution.

Our founding team consists of seven members – Jaime Sevilla, Tamay Besiroglu, Lennart Heim, Pablo Villalobos, Eduardo Infante-Roldán, Marius Hobbhahn, and Anson Ho. Collectively, we have backgrounds in Machine Learning, Statistics, Economics, Forecasting, Physics, Computer Engineering, and Software Engineering.

Our work involves close collaboration with other organizations, such as MIT CSAIL, Open Philanthropy, and Rethink Priorities’ AI Governance and Strategy team. We are advised by Tom Davidson from Open Philanthropy and Neil Thompson from MIT CSAIL. Rethink Priorities is also our fiscal sponsor.

Our mission

Epoch seeks to clarify when and how TAI capabilities will be developed.

We see these two problems as core questions for informing AI strategy decisions by grantmakers, policy-makers, and technical researchers.

We believe that to make good progress on these questions we need to advance towards a field of AI forecasting. We are committed to developing tools, gathering data and creating a scientific ecosystem to make collective progress towards this goal.

Epoch´s website

Our research agenda

Our work at Epoch encompasses two interconnected lines of research:

* The analysis of trends in Machine Learning. We aim to gather data |

3f56ffaa-d9b1-409c-9f21-c980d81978b7 | trentmkelly/LessWrong-43k | LessWrong | introduction to solid oxide electrolytes

physics

Batteries, fuel cells, and electrolyzers all involve:

* an electrolyte which allows passage of some ions with a single charge state (such as Li+ or Na+)

* material with variable charge state on both sides of the electrolyte (such as Li metal and Fe+3 in LFP batteries)

* electrodes which transfer charge to/from material

Batteries typically use liquid electrolyte and variable-charge-state material that's insoluble in it.

A solid oxide electrolyte (hereafter SOE) is an electrolyte which is solid and conducts oxide ions, which I'd denote as [O]-2 if they weren't specially named. Oxide has high charge density, so it has strong interactions, so the activation energy for it shifting is high, so conducting it requires high temperatures.

Solid oxide fuel cells (SOFCs) using SOEs have been manufactured.

DOPING

The main current SOE types are doped ceria (eg GDC) and doped zirconia. When an oxide crystal with +4 cations has some replaced with +3 cations, some of the oxygen positions in the crystal are vacant. At high temperatures, other oxygen atoms can move into those vacancies.

As the amount of dopant increases, conductivity increases, reaches a maximum, and decreases. Why does conductivity start decreasing before the crystal structure is destabilized? That's because dopants tend to form clusters at high concentrations, typically with 4 or 6 dopant atoms. Those clusters stabilize some oxygen vacancies, increasing the activation energy for oxygen moving into them.

The most important property of dopants besides their charge state is their ionic radius. Mismatch between dopant size and the crystal lattice causes strain, which often destabilizes the normal state of the crystal relative to the intermediate state of an oxygen moving into a vacancy, reducing activation energy.

Strain is relieved at crystal surfaces, so dopants have a tendency to move towards the surface, and that tendency is proportional to the strain they cause. As the crystal surfaces thus have |

a5a24450-bdd4-4982-978d-1a0aa602f5c8 | trentmkelly/LessWrong-43k | LessWrong | [Link] SMBC on choosing your simulations carefully

Link

I'm increasingly impressed by the power of Zach Wiener's comic to demonstrate in a few images why hard problems are hard. It would be a vast task, but perhaps it would be useful to create an index of such problem-demonstrating comics to add to the Wiki, giving us something to point newbies at which would be less intimidating than formal Sequence postings. I get the impression that a common hurdle is just to get people to accept that problems of AI (and simulation, ethics, what have you) are actually difficult. |

f59b0734-1a26-47cc-a793-382fa904d088 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | AI Governance Reading Group Guide

If someone wants to learn about AI governance as part of their career exploration, they might have these problems:

* It can be hard to know what resources to start with;

* It can be hard to get a broad overview of the field (rather than understanding just eg. the law, or economics approaches);

* Even if someone works out what they want to read, and it will give a broad overview, it can be hard for them to actually get through all the reading;

* It can be hard to form your own views and resolve uncertainties by yourself.

EA Oxford has just finished running a reading group on AI governance, and it seems to have been successful at overcoming these problems. This was with low effort from the organisers, and it seems like it could be scalable. This post is for group organisers considering running an AI governance reading group.

Reading List

============

[We used this](https://docs.google.com/document/d/18IUsH6o7ZsknEHYbYZzGD_9ZwWoBrFuK-Xdzs8aQpQg/edit#heading=h.pgkr3rlj7ama) syllabus put together by Markus Anderljung at the Centre for the Governance of AI, which is split up into nine topics, with 2-3 readings per topic. A lot of the value of the group came from having a syllabus that had a good balance of depth and breadth, and that came from someone with a really good understanding of the field. The list is useful to people interested in both research and implementation, and probably more useful to people interested in research.

Setting up the Group

====================

We advertised the reading group to group members who had been through our In-Depth Fellowship (we run an Intro Fellowship similar to the Arete Fellowship, and then an In-Depth Fellowship). Having a small number of keen, core people made running the group very easy, and I was always happy with the quality of discussion.

We didn't advertise particularly widely because I was new to the content and running the group with core members seemed easier and less risky.

If we run the group again, we're interested in using it as an outreach tool to students in relevant subjects who haven't heard much about EA (and might be more attracted to 'making sure AI is governed well in the long-run' than 'doing the most good we can'). It seems like the tone of advertising and facilitation would be more important in this case as this would be some people's first large exposure to EA, and they could form a negative impression of the movement. It also seems more important for the organiser to have a good understanding of the content in this case, so that EAs don't seem incompetent or overconfident. I'd be excited for other groups to carefully try this.

Running the Group

=================

We went through one topic per week and aimed to do all the non-further reading each week. This was around a two hour commitment. Meeting weekly seemed like a way to have time to do the reading, while also keeping momentum and a good social atmosphere.

While doing the reading each week, we wrote down these things in a shared document:

* Our most interesting, surprising, or important updates from the reading;

* Confusions, questions or uncertainties we had about the reading or the topic;

* Anything we’d wanted to discuss eg. implications of the reading, extensions to the reading, thoughts we’d had over the week.

During the one hour long calls, we summarised each of the pieces from the reading list, then discussed what we'd written down.

The discussion was participant-led, and in general people seemed to be forming their own inside views and were happy to critique the reading.

Results

=======

There've been five attendees including me who've come nearly every week, and around ten different attendees in total. The timing of the group clashed with exam season, and we also advertised a group that met monthly instead of weekly, so it seems like there could have been lots more attendees if we'd pushed for them. Some specific results of the group are that:

* One person who will be doing the FHI Summer Research Fellowship came every week. They were initially planning to research a question on AI forecasting for the Summer Research Fellowship. They now think they'll do something in that space, but probably work on a different question as a result of the reading group.

* One person who's a CS DPhil affiliate at FHI came almost every week. They found it useful to get a better understanding of the governance side of things, which they may transition into from work on technical AI safety.

* The organiser (me), has a better understanding of AI governance problems - which I expect to be useful for explaining cause prioritisation, and giving career guidance.

One of the participants deprioritized AI Governance as a career path as a result of the group, thinking that their personal fit wasn't exceptional (which I see as a success of the group).

After the Group

===============

A subset of the group will keep meeting weekly, with one person each week presenting on a relevant paper. This seems like a good way to dig deeper into the field. I'd also encourage group organisers to talk 1-1 to people who finish the group about career plans and next steps.

Further Reading

===============

[80,000 Hours - US AI Policy](https://80000hours.org/articles/us-ai-policy/)

[AI Governance Career Paths for Europeans](https://forum.effectivealtruism.org/posts/WqQaPYhzDYJwLC6gW/ai-governance-career-paths-for-europeans#Research_careers) (implementation focussed)

[Personal Thoughts on Careers in AI Policy and Strategy](https://forum.effectivealtruism.org/posts/RCvetzfDnBNFX7pLH/personal-thoughts-on-careers-in-ai-policy-and-strategy) (research focussed)

[80,000 Hours - Guide to Working in AI Policy and Strategy](https://80000hours.org/articles/ai-policy-guide/) |

7caa4125-4ba8-481f-8ac1-9d839df3be09 | trentmkelly/LessWrong-43k | LessWrong | The Schumer Report on AI (RTFB)

Or at least, Read the Report (RTFR).

There is no substitute. This is not strictly a bill, but it is important.

The introduction kicks off balancing upside and avoiding downside, utility and risk. This will be a common theme, with a very strong ‘why not both?’ vibe.

> Early in the 118th Congress, we were brought together by a shared recognition of the profound changes artificial intelligence (AI) could bring to our world: AI’s capacity to revolutionize the realms of science, medicine, agriculture, and beyond; the exceptional benefits that a flourishing AI ecosystem could offer our economy and our productivity; and AI’s ability to radically alter human capacity and knowledge.

>

> At the same time, we each recognized the potential risks AI could present, including altering our workforce in the short-term and long-term, raising questions about the application of existing laws in an AI-enabled world, changing the dynamics of our national security, and raising the threat of potential doomsday scenarios. This led to the formation of our Bipartisan Senate AI Working Group (“AI Working Group”).

They did their work over nine forums.

1. Inaugural Forum

2. Supporting U.S. Innovation in AI

3. AI and the Workforce

4. High Impact Uses of AI

5. Elections and Democracy

6. Privacy and Liability

7. Transparency, Explainability, Intellectual Property, and Copyright

8. Safeguarding Against AI Risks

9. National Security

Existential risks were always given relatively minor time, with it being a topic for at most a subset of the final two forums. By contrast, mundane downsides and upsides were each given three full forums. This report was about response to AI across a broad spectrum.

THE BIG SPEND

They lead with a proposal to spend ‘at least’ $32 billion a year on ‘AI innovation.’

No, there is no plan on how to pay for that.

In this case I do not think one is needed. I would expect any reasonable implementation of that to pay for itself via economic growth. The downside |

6dd86567-c950-45bc-af87-07c382367e76 | trentmkelly/LessWrong-43k | LessWrong | [AN #161]: Creating generalizable reward functions for multiple tasks by learning a model of functional similarity

Alignment Newsletter is a weekly publication with recent content relevant to AI alignment around the world. Find all Alignment Newsletter resources here. In particular, you can look through this spreadsheet of all summaries that have ever been in the newsletter.

Audio version here (may not be up yet).

Please note that while I work at DeepMind, this newsletter represents my personal views and not those of my employer.

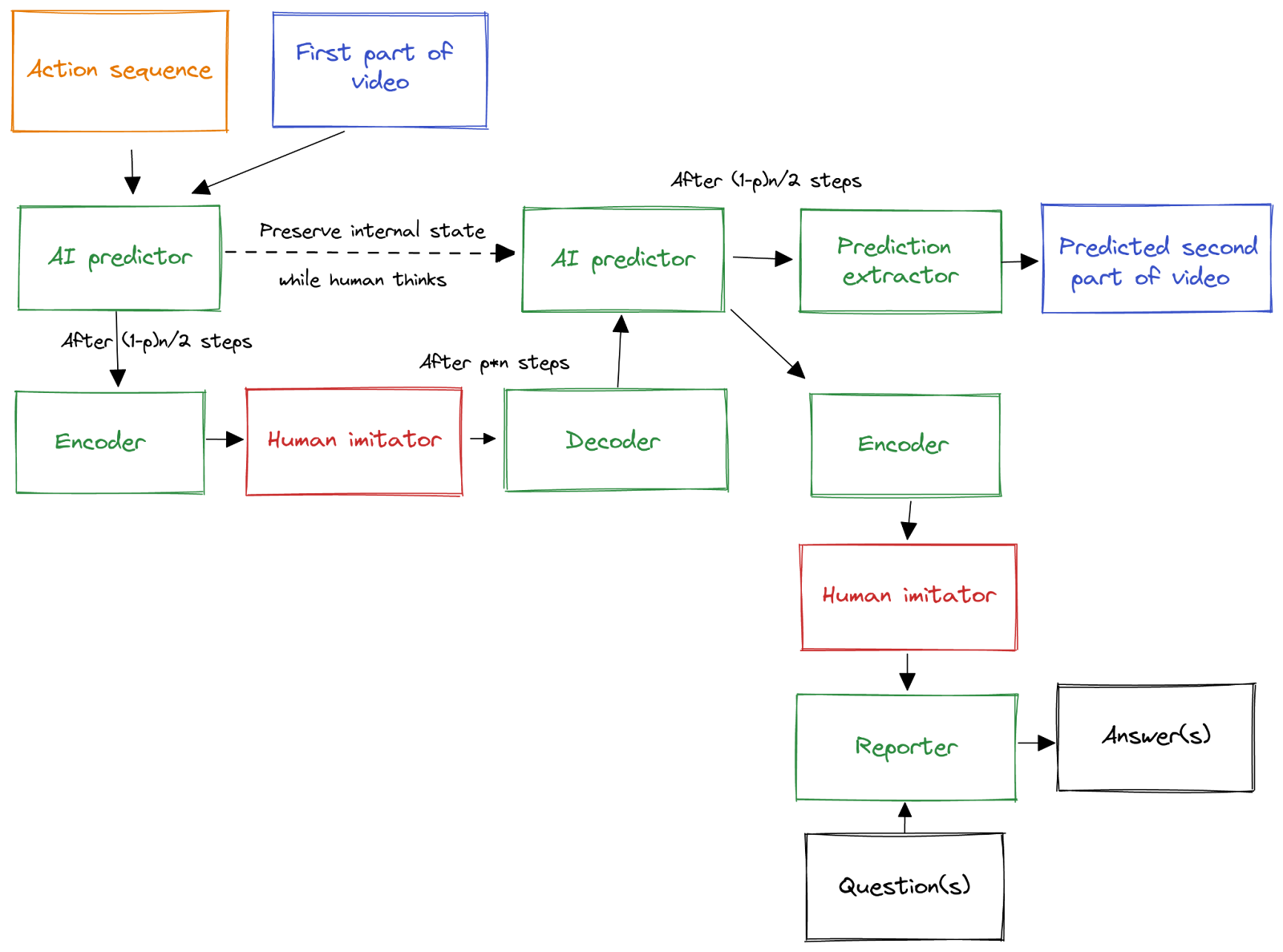

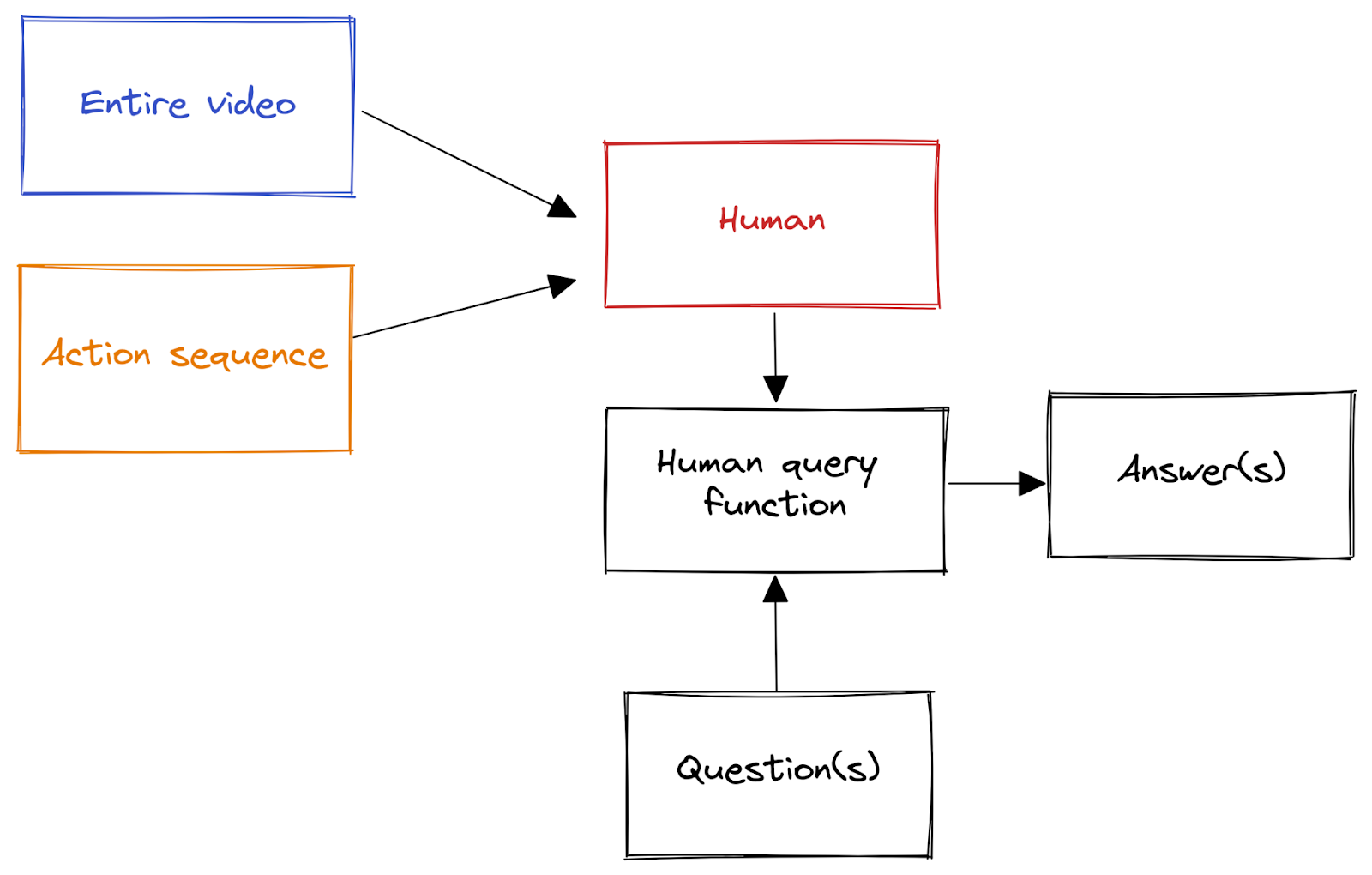

HIGHLIGHTS

Learning Generalizable Robotic Reward Functions from "In-The-Wild" Human Videos (Annie S. Chen et al) (summarized by Sudhanshu): This work demonstrates a method that learns a generalizable multi-task reward function in the context of robotic manipulation; at deployment, this function can be conditioned on a human demonstration of an unseen task to generate reward signals for the robot, even in new environments.

A key insight here was to train a discriminative model that learned whether two given video clips were performing the same actions. These clips came from both a (large) dataset of human demonstrations and a relatively smaller set of robot expert trajectories, and each clip was labelled with a task-id. This training pipeline thus leveraged huge quantities of extant human behaviour from a diversity of viewpoints to learn a metric of 'functional similarity' between pairs of videos, independent of whether they were executed by human or machine.

Once trained, this model (called the 'Domain-agnostic Video Discriminator' or DVD) can determine if a candidate robotic behaviour is similar to a desired human-demonstrated action. Such candidates are drawn from an action-conditioned video predictor, and the best-scoring action sequence is selected for execution on the (simulated or real) robot.

Read more: Paper

Sudhanshu's opinion: Performance increased with the inclusion of human data, even that from unrelated tasks, so one intuition I updated on was "More data is better, even if it's not perfect". This also feels related to "Data as regul |

22eb1eb0-944e-4d12-8685-8a1a1ab6be18 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Establishing Meta-Decision-Making for AI: An Ontology of Relevance, Representation and Reasoning

Meta-Decision-Making for AI

---------------------------

Making good decisions is a very important part of constructing good Artificial Intelligence (AI). However, there is an important distinction between decision-making itself and reasoning about decision-making, similarly to the distinction between (normative) ethics and metaethics. We believe more focus in the areas of automated decision-making, anticipatory thinking and cognitive systems ought to be explicitly given to discussing and deciding upon the characteristics of good decision-making systems and how best to build them. We call this meta-decision-making. Meta-decision-making has been discussed before in domains such as medicine [[4](#bib.bib15 "Deciding how to decide: self-control and meta-decision making")] or action research [[16](#bib.bib14 "Meta-decision making: concepts and paradigm")]. Herein we aim to help establish meta-decision-making in the context of AI as an important research avenue.

The core of our contribution in this paper is a proposed ontology or classification of the different aspects of building a decision-making system. We identify key components in the current approaches and establish a nomenclature to help focus research efforts in the particular area that they are meant to aim at, improve our collective understanding of where a particular piece of work should fit in the process of building such a system and, most importantly, help develop autonomy capabilities of such agents. In particular, third wave autonomous systems, defined as context-adapting in [[10](#bib.bib20 "A darpa perspective on artificial intelligence")], should greatly benefit from this approach, as we try to illustrate below. Lastly, in order to measure success, we can use this ontology to build metrics and benchmarks, by focusing on a particular step of the system and auditing it, and this should also feed into the perception problem, as we illustrate with a self-driving car example.

### Literature - challenges

Automated decision-making encapsulates many different modalities and parts, e.g., perception, sensors, planning, tactics, etc. When these systems fail, errors are generally dealt with in isolation (failure detection is done locally[[12](#bib.bib21 "Deep learning for anomaly detection: A review")]) and thus issues can arise around not taking into account the connections and (logical) dependencies between the different parts of the system.

Another issue, especially in autonomous systems, is that some decisions are safety-critical and time-sensitive. Reconciling inconsistencies is largely a manual process that can be time consuming. It also does not occur in real time, and is largely *ex post facto* [[17](#bib.bib22 "Understanding and avoiding ai failures: a practical guide")].

One way to deal with or preempt failure in such a system is to use preferences and rule-based decision-making [[6](#bib.bib7 "Where do preferences come from?")]. For example, in the field of moral reasoning, there is value-based decision-making with a rule-based implementation [[2](#bib.bib2 "Have a break from making decisions, have a mars: the multi-valued action reasoning system")]. The focus of such works is generally on the preference ordering on the values (the Representation step we discuss below), or on the ordering on the rules (the Reasoning step below). We will use this implementation from [[2](#bib.bib2 "Have a break from making decisions, have a mars: the multi-valued action reasoning system")] as a running example below.

There are limitations to this approach, as well as the others found in the literature, especially in terms of *adaptability* to new and unseen corner cases, *anticipation* of new risks and failure cases, and in terms of issues of *misinterpretation* [[1](#bib.bib1 "Morality, machines and the interpretation problem: a value-based, wittgensteinian approach to building moral agents")] of the symbolic representation of meaning. For example, in value-based argumentation, which has been proposed for practical reasoning, one needed to hand pick the arguments and the way they are related [[3](#bib.bib5 "Persuasion in practical argument using value-based argumentation frameworks")], although recent work is aimed at generating them automatically [[5](#bib.bib23 "Generating abstract arguments: a natural language approach.")].

### Proposal and novelty

To help deal with the challenges above, we propose that we focus on the bigger picture, the larger system, the abstract process, through this first step of becoming aware of the different parts that make up the process of automated decision-making, as described in the next chapter. This allows us to then identify and address the issue from that systemic point of view, and formulate further research accordingly.

We believe that this brings multiple benefits. It should provide a more accurate reflection of human decision-making, as we discuss below, as well as more flexibility due to the modular aspect of such a system. As we shall see below, this also allows us to better deal with new scenarios and risks and also to hedge against the risks brought on by usual rule-based approaches to the problem.

The central idea is to consider the different parts of creating a reasoner. Using this new categorisation we can get some structure to building a decision-making system that we can then base our analysis upon. Thus we can hopefully work towards a consensus on the right measurement tools and the right ways of discussing the different parts of such a system.

The three R’s - An Ontology

---------------------------

We thus put forward our ontology of these parts, which we call the three R’s: Relevance, Representation and Reasoning.

We use as a running example, an implementation in the form of a value-based moral decision-making system, MARS, as found in [[2](#bib.bib2 "Have a break from making decisions, have a mars: the multi-valued action reasoning system")]. We now focus on the reasons behind using these steps and potential risks and failures brought about by ignoring them, in order to argue for a proactive approach to decision-making aligned with these categories.

### Relevance - identifying saliency from the context

Imagine we are designing an autonomous car. The act of ”seeing” a pedestrian in the right-hand quadrant, for instance, is a relevant aspect to the car’s decision-making system, if we want to avoid collisions. Identifying this and other relevant factors is what we call the *Relevance* step. This is also known as “salience” in image processing [[13](#bib.bib16 "Grad-cam: visual explanations from deep networks via gradient-based localization")].

Using our running example of [[2](#bib.bib2 "Have a break from making decisions, have a mars: the multi-valued action reasoning system")], the Relevance step is the part in which the potential actions and the values relevant to the decision-making are given, as well as the connection between the two (which actions promote which values) with the aim of getting the agent to understand the situation it is in and the decision problem it is facing. In their case, this would be done manually by the programmer, in the set-up of a particular use of their system, but we can imagine an application like a self-driving car gathering information automatically through its vision system, for instance.

Not performing this step at all would lead to major issues (by not caring about what, by definition, matters), and we struggle to imagine a good reasoner that does not take the context of its acting into account. If we performed this step incorrectly, perhaps its perception of the environment might not be accurate, or its comprehension of the conceptual obstacles (situations, consequences) it ought to navigate might be lacking and thus lead it to misbehave, which would give rise to various risks, some of which we have mentioned above.

Arguably, the main risk of not performing this step properly is that we may omit some important factors and thus, by not taking them into account, give rise to risks and misbehaviour. To exemplify using the self-driving car, by not taking into account the life and safety of the driver, we may end up trying to save the pedestrian but not give any thought to the driver’s life (or vice versa).

Note that we may not need to take all the relevant factors into account at the same time, or for the same decision problem, so we will need a way to narrow down the list of those we ought to act on and those that we can pass over. This will be handled in the last step where we discuss Reasoning. We also need a way to put the relevant information into a technical form, and this is our next step, Representation.

### Representation - syntax and semantics

We may have various underlying implementations for our decision-making system, whether they be symbolic or logic-based systems [[8](#bib.bib12 "A formal characterisation of institutionalised power")], based on machine learning, or otherwise. Our vision is to be implementation-agnostic, to ensure our framework can be used across various representations, applications, and platforms.

Now, the question is how do we create a useful representation that can take in the aspects identified in the first step? This is not only a problem of a symbolic nature, namely, how to best use syntax to get the right semantics, but also one of technical relevance, because it is on the basis of whatever technical framework we will use to reason that we choose those salient aspects that we can actually use and act upon. These will depend on the use case and whatever measurement of success applies.

Some of the main issues around this step are those of conveying meaning in symbolic form. The syntax we use obviously varies with implementation, but generally we have the problem of how to best use this syntax to encompass the meaning we want the machine to grasp about the situation, the environment, the principles of acting etc. Consequently, there is the potential risk of the misinterpretation of any rules we have used, whether they be rules for action (strict or probabilistic) or rules in the sense of code used to program the machine. An extensive discussion of this issue, sometimes called the *Interpretation Problem*, can be found in [[1](#bib.bib1 "Morality, machines and the interpretation problem: a value-based, wittgensteinian approach to building moral agents")].

Note that, as we said above, some relevant aspects identified in the first step may not be used in all decisions.

For instance, to continue with the self-driving car example, maybe we do not have effectors good enough to act upon some of the stimuli the car receives, or we do not understand the context well enough to be able to properly generate semantics (brand new elements in the configuration of the environment). The result of this second step, namely the representation built, will thus need to connect the relevant aspects of the situation with the reasoning part while attempting to leverage the underlying implementation as well as possible. Therefore, the core idea of this step is to create a representation that is abstract enough to cover many situations but flexible enough to be amenable to being used in new cases and powerful enough (expressive enough, for instance, if it is a type of logic) to proactively address potential risks not previously encountered.

For instance, in our running example of MARS, moral paradigms are used as the representation, and they compile an ordered list of values that are important to the agent, in the form of strata. This representation works because it plays well with the underlying technical implementation (the programming) as well as the abstract language used (the logic used for formal reasoning) [[2](#bib.bib2 "Have a break from making decisions, have a mars: the multi-valued action reasoning system")]. For a self-driving car, these values may be human life, safety, no component failure, the rules of the road, minimizing time to destination, etc. The importance of these considerations varies based on the context in which they are applied, so this is why the former step, Relevance, is so important, as it sets up the particulars of the decision problem at hand.

As we have mentioned before, a key to third wave autonomous systems is context adaptation. Thus, these values could have a changing relative importance depending on the active components of the vehicle. For instance, at high speeds, safety is of utmost importance. On the freeway, the rules of the road are minimal. In suburban environments, we may want to ensure a very low tolerance for misidentification of potential pedestrians. The representation should be flexible enough to allow for this.

### Reasoning

Now, having identified the relevant aspects to the decision-making process, and having built a useful representation that can take them into account, we come to the step of actually making decisions based on the acquired information.

Arguably, the issues of highest importance here and which may give rise to unexpected risks are quick decision making and confidence, with the aims of minimising false positives and false negatives as well as building a sense of trust in our systems. This is essential for numerous practical reasons: for our system to be adopted, pass regulatory requirements, be acquired by the end user, be used appropriately etc.

Furthermore, and perhaps the most difficult issue of doing this step, is choosing what rules and principles to have the machine follow. The field of *value alignment* considers this issue of getting the values we desire into the behaviour and reasoning of our creations, but even there, most attempts to achieve value alignment aim to implement the same rules we seem to follow into the machine [[15](#bib.bib19 "Alignment for advanced machine learning systems")] [[14](#bib.bib18 "The value learning problem")]. Thus, a further potential issue is whether we need a descriptive or normative approach. That is, should we implement the rules we currently use ourselves with our potential biases (descriptive) or ones we would ideally want to follow as rational decision-makers (normative)?

We strongly believe that the most important point and why this step is essential is that reasoning is the key component for anticipatory thinking. A problem frequently discussed in the literature is that some reasoning systems have rigid rule-based frameworks, and this leads to an inability to obtain the kind of flexibility, coverage and abstraction required to properly reason under uncertainty in open environments, which could, we hope, be improved by taking into account the three steps discussed here. Consider, for instance, *rule explosion*, having to add more and more rules to deal with new cases that the system was not programmed to deal with. Or consider how, in terms of moral reasoning, virtue ethics could be the best moral paradigm in certain use cases [[7](#bib.bib8 "Moral exemplars for the virtuous machine: the clinician’s role in ethical artificial intelligence for healthcare")], whereas consequentialism or deontology may be desired in others [[18](#bib.bib13 "Artificial intelligence safety and security")]. Another issue is the risk of being stuck in local minima, and thinking the solution is optimal when it is not, which happened, for instance, in DeepMind’s Atari games playing agent [[11](#bib.bib17 "Playing atari with deep reinforcement learning")] which struggled due to greedy algorithms.

There are potential risks around not handling this step right. We may get decision paralysis, as, for instance, it is described in the paradox of Buridan and his donkey [[9](#bib.bib10 "Buridan’s principle")]) where one cannot rationally decide between options; or we may have the opposite, an over-active decision-maker, potentially arising, for instance, in a self-driving car when a collision with a small object is imminent and, as driver, it may be advisable to hold the course instead of risking a more serious accident by attempting to perform any other mitigation.

As an example of how to approach this step, in MARS they have multiple different flavours of reasoners (models) that combine the previously identified relevant points and represented values into making a decision that is arguably in tune with the desired outcome, given a particular value ordering (moral paradigm). For instance, they have an additive model that adds up the values involved in actions to select the right action to perform, a weighted model that assigns numerical weights to the values based on their relative importance, as well as other more refined ones. They argue that these different models allow for flexible decision-making.

Contribution and Discussion

---------------------------

Two essential points of this proposal are *adaptability* and *redundancy*: there are multiple ways to reason about a problem, and we might not be certain of the best one *a priori*. If one reasoning framework is inadequate or wrong, then there may be another way to solve the problem, and we should be able to account for this, and our modular approach should allow us to shift the relative importance of the relevant features, with flexibility in what paradigm we use. Our approach aims to support third wave autonomous systems, in which the values themselves may be re-prioritized in different circumstances, as well as the reasoning paradigm changed completely.

In the Relevance step, we ought to be able to adapt which features are salient based on the context, in the Representation step, the structures we build and feed into the reasoning need to be adaptable, and in the Reasoning step the paradigm or rules we use should be applicable in most cases and flexible enough to give desired outputs. The separation into the three R’s aims to make it easier to reason about different components by enforcing cohesion and reducing coupling as well as to reduce computational complexity in the implementation.

In conclusion, in this paper, we contribute to the solving of potential risks that arise in anticipatory systems, by proposing a nomenclature that reflects the literature on decision-making, and an ontology that allows researchers that adopt it to clarify the focus of their work. We hope to improve future work around this and adjacent areas through this shared framework. |

3a8a3913-9568-47b8-98a9-bd306b5e1e1f | trentmkelly/LessWrong-43k | LessWrong | Thoughts on sharing information about language model capabilities

Core claim

I believe that sharing information about the capabilities and limits of existing ML systems, and especially language model agents, significantly reduces risks from powerful AI—despite the fact that such information may increase the amount or quality of investment in ML generally (or in LM agents in particular).

Concretely, I mean to include information like: tasks and evaluation frameworks for LM agents, the results of evaluations of particular agents, discussions of the qualitative strengths and weaknesses of agents, and information about agent design that may represent small improvements over the state of the art (insofar as that information is hard to decouple from evaluation results).

Context

ARC Evals currently focuses on evaluating the capabilities and limitations of existing ML systems, with an aim towards understanding whether or when they may be capable enough to pose catastrophic risks. Current evaluations are particularly focused on monitoring progress in language model agents.

I believe that sharing this kind of information significantly improves society's ability to handle risks from AI, and so I am encouraging the team to share more information. However this issue is certainly not straightforward, and in some places (particularly in the EA community where this post is being shared) I believe my position is controversial.

I'm writing this post at the request of the Evals team to lay out my views publicly. I am speaking only for myself. I believe the team is broadly sympathetic to my position, but would prefer to see a broader and more thorough discussion about this question.

I do not think this post presents a complete or convincing argument for my beliefs. The purpose is mostly to outline and explain the basic view, at a similar level of clarity and thoroughness to the arguments against sharing information (which have mostly not been laid out explicitly).

Added 8/1: Evals has just published a description of some of their work evaluati |

0ab9ce7e-b89f-4860-af50-6bcd9bb26abb | trentmkelly/LessWrong-43k | LessWrong | Parenting versus career choice thinking in teenagers

This is a somewhat modified version of a Facebook post I made a few days ago, incorporating some of the comments there. I think the Less Wrong readership may have interesting thoughts on the subject.

In recent times, especially in the developed world and among higher socio-economic status families everywhere in the world, it's common for teenagers (and even younger children) to be encouraged to think in systematic ways about their career choice, but it's relatively rare for them to be encouraged to think in systematic ways about how many children they'll have or how they'll raise their children. A lot of teenagers do have views on the subject of children, but they're not encouraged to have views, and they're not encouraged to refine those views. With career choice, although there's still probably a lot of room for improvement in the quality of advice and guidance offered, people at least in principle acknowledge its importance.

What do you think explains the disparity? Here are some explanations with my thoughts on them:

1. Career choice is (believed to be) more important than the choice of how many children to have and how to raise them: For people who expect to generate a huge amount of value in their careers, this is probably true, because if they do have children, the children are likely to be less exceptional (regression to the mean). However, for most people, this probably isn't the case: having children could be one of their main forms of contribution to society.

2. Career choice requires planning from a younger age, because it requires selection of subjects to study in school and college: There's more lead time needed for career choice, whereas it takes only nine months to have a child. This seems to work as a reasonable explanation for people who are inclined to have very few kids, but it doesn't work for people who are interested in keeping open the option of having a large number of kids. Also, as they say, it takes two to have a baby, so one do |

ee6bf382-7c66-4a93-9521-a25ebcb57913 | trentmkelly/LessWrong-43k | LessWrong | Interview With Diana Fleischman and Geoffrey Miller

Cross-posted from Putanumonit.

----------------------------------------

I had the pleasure of interviewing Diana Fleischman and Geoffrey Miller at the NYC Rationality meetup. Diana and Geoffrey are professors of evolutionary psychology, Effective Altruists, thoughtful polyamorists, and fearless thinkers. We talked about everything that’s important in life: gems, sex, morality, kids, shit-testing, jealousy, and why women are smart.

If you missed it, here’s the wide-ranging interview I did with Geoffrey alone a year ago. I will also publish the audience Q&A from the meetup later this month.

Congratulations on your engagement! Geoffrey designed a special moissanite engagement ring for Diana, and moissanite is something my wife and I believe in very much as well. Can you tell everyone about moissanite so that no one here buys a diamond ever again?

Geoffrey: In my book Spent I did a multi-page critique of the DeBeers diamond cartel and diamonds as costly signals of commitment. And maybe it’s fine to spend a lot of money on a signal, money that goes to a cartel.

Diana: But cocaine is more fun!

Geoffrey: Yes, or get a college degree — that’s a cartel too. But there are better and cheaper stones, like moissanite which is a silicon carbide gem and costs 1/10 to 1/50 of a diamond depending on the carat. But I think it’s a better stone, it has superior ‘fire’ and ‘brilliance’, as they say. Of course, once moissanite was invented and it became colorless and clear enough, the diamond cartel dissed it by saying “these are just disco balls”. Basically – it’s too flashy, and no self-respecting person who cares about the subtlety of a diamond will buy a moissy. Whereas for the previous 100 years, diamonds were sold as the flashiest, highest brilliance, and highest fire gemstone. So: hypocrites.

Moissies are getting better and better, and they just went off-patent four years ago so there are multiple manufacturers making them now and prices are dropping. So I’d rather put th |

4bec3dda-4023-4bfa-817d-3d9e4fc07a41 | trentmkelly/LessWrong-43k | LessWrong | New SSC meetup group in Lisbon

After years of reading Slate Star Codex and LessWrong (more the former than the latter), I've decided to take a more active role in the so-called rationalist community by creating an account on Less Wrong and meeting people in real life for whom the Sequences have been important in their critical thinking.

The ideal way for me would be to join a SSC/LW meetup group in Lisbon, where I live, but, unfortunately, there wasn't one in Lisbon until now! So, if you live in Lisbon or you're just passing by, let's meet and have a chat about some SSC posts or any topic that relates to the rationalist community. I'm very curious to know how many people read regularly SSC or LW and how they relate that in their lives. If you're interested I'm easy to find on twitter at @m8popkin, send me a message on LessWrong or send a comment on the meetup group. |

a15a56c1-6d0e-4fa7-8263-732670df881a | trentmkelly/LessWrong-43k | LessWrong | Proliferating Education

Education is the stimulus that fans embers of innovation and progress. It's a toolset of ideas passed through generations of mankind, from fathers to their children, between nations and cultures. It's the engine that drives global prosperity. I must say that old ways of education have miserably failed many nations. At a moment in time, when everyone is rapidly being connected to the internet, AI technologies are being democratized, access to experts readily available, does the use of this power not permit us to devise radical ways to educate millions of children and provide world-class personalized education on every nook on earth?

It's no brainer that two decades into 21st century and we find the very fabric of our societal system being driven by critical knowledge - our economy, industry, environment, agriculture, energy, food, military power, and communications. If one's to step into the territory of dictatorial and corrupt nations, one sees millions of people subjected to diseases, hunger, catastrophes, terrorism, and war - and to such evils, there's only one cure: research and knowledge disseminated at scale, out of the hands of governments, and maintained by privately autonomous corporations.

> "All who have meditated on the art of governing mankind have been convinced that the fate of empires depends on the education of youth." (Aristotle)

All statesmen believe this. Do they? Had they known, it would have reflected in their action and decision. Implementing curriculums that recede nations is a folly being repeated in Pakistan, and woe to me that I call that man a statesman! He seems so aloof of the world and the rapidity of course it has taken, and yet he's left it all for us to remedy! This sea of people is yearning for progress, it's screaming out to us for rescue from the abject ideologies they've been sunken into! These men have not seen progressive enlightenment and globalization for centuries. Their eyes were bandaged, and without doubt are bandaged |

18ac9e76-53b4-41d8-968a-4d5baf99251b | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | What are biases, anyway? Multiple type signatures

*With thanks to Rebecca Gorman for helping develop this idea.*

I've been constructing toy examples of agents with preferences and biases. There turns out to be many, many different ways of doing this, and none of the toy examples seem very universal. The reason for this is that what we call "bias" can correspond to objects of very different type signatures.

Defining preferences

--------------------

Before diving into biases, a brief detour into preferences or values. We can talk about revealed preferences (which look at the actions of an agent and deduce preferences by adding the assumption that the agent is fully rational), stated preferences (which adds the assumption that the stated preferences are accurate), and preferences as [internal mental judgments](https://www.lesswrong.com/posts/2vnJmWDAaLKDayvwr/toy-model-piece-4-partial-preferences-re-re-visited).

There are also [various](https://www.lesswrong.com/posts/CSEdLLEkap2pubjof/research-agenda-v0-9-synthesising-a-human-s-preferences-into) [versions](https://intelligence.org/files/CEV.pdf) of idealised preferences[[1]](#fn-pcAzgnAtBuazKbdCj-1), extrapolated from other types of preferences, with various [consistency conditions](https://www.lesswrong.com/posts/KptP3J2ThDTnriric/consistencies-as-meta-preferences).

The picture can get more complicated that this, but that's a rough overview of most ways of looking at preferences. Stated preferences and preferences-as-judgements are of type "binary relations": they allow one to say things like "x is better than y". Revealed preferences and idealised preferences are typically reward/utility functions: they allow one to say "x is worth a, y is worth b".

So despite the vast amount of different preferences out there, their type signatures are not that varied. Meta preferences are preferences over one's own preferences, and have a similar type signature.

Biases

------

In the [Occam's razor paper](https://arxiv.org/abs/1712.05812), an agent has a reward function R.mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-surd + .mjx-box {display: inline-flex}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor; overflow: visible}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}