2c3d4cfb-a75d-4979-8f29-73b3834ae1d1 | StampyAI/alignment-research-dataset/arxiv | Arxiv | World Discovery Models

1 Introduction
---------------

Modern AI has been remarkably successful in solving complex decision-making problems such as Go (silver2016mastering; silver2017mastering), simulated control tasks (schulman2015trust), robotics (levine2016end), poker (moravvcik2017deepstack) and Atari games (mnih2015human; hessel2018rainbow). Despite these successes, the agents developed by those methods are specialists: they perform extremely well at the tasks they were trained on but are not very successful at generalising their task-dependent skills into a general domain understanding. Also, the success of existing AI agents often depends strongly on the availability of external feedback from their world in the form of reward signals or labelled data, for which some level of supervision is required. This is in contrast to the human mind, which is a general and self-supervised learning system that *discovers* the world around it even when no external reinforcement is available. Discovery is the ability to obtain knowledge of a phenomenon for the first time (merriam2004merriam). As discovery entails the process of learning of and about new things, it is an integral part of what makes humans capable of understanding their world in a task-independent and self-supervised fashion.

The underlying process of discovery in humans is complex and multifaceted (hohwy2013predictive). However, one can identify two main mechanisms for discovery (clark2017nice). The first mechanism is active information seeking. One of the primary behaviours of humans is their attraction to novelty (new information) in their world (litman2005curiosity; kidd2015psychology). The human mind is very good at distinguishing between the *novel* and the *known*, and this ability is partially due to the extensive internal reward mechanisms of *surprise*, *curiosity* and *excitement* (schmidhuber2009simple). The second mechanism is building a statistical world model. Within cognitive neuroscience, the theory of the statistical predictive mind states that the brain, like scientists, constructs and maintains a set of hypotheses over its representation of the world (friston2014computational). Upon perceiving a novelty, our brain has the ability to validate the existing hypotheses, reinforce the ones which are compatible with the new observation and discard the incompatible ones. This self-supervised process of hypothesis building is essentially how humans consolidate their ever-growing knowledge in the form of an accurate and global model.

Inspired by these inputs from cognitive neuroscience, information-seeking algorithms have received significant attention as a way to improve the exploration capability of artificial learning agents (schmidhuber1991possibility; houthooft2016vime; achiam2017surprise; pathak2017curiosity; burda2018large). However, the scope of the existing information-seeking algorithms is often limited to the case of fully observable and deterministic environments. One of the problems with the existing novelty-seeking algorithms is that agents trained by these methods tend to become attracted to random patterns in their world and stop exploring upon encountering them, despite the fact that these random patterns contain no actual *information* on the world (burda2018large). Moreover, the performance of existing agents is often evaluated based on their ability to solve a reinforcement learning (RL) task with extrinsic reward, and not on the quality of the learned world representation, which is the actual goal of discovery. Thus, it is not clear whether the existing algorithms are capable of using the novel information to discover their world. Therefore, the problem of discovery in the general case of partially observable and stochastic environments remains open.

The main contribution of this paper is to develop a practical and end-to-end algorithm for discovery in stochastic and partially observable worlds using modern AI technology. We achieve this goal by designing a simple yet effective information-seeking algorithm called NDIGO, Neural Differential Information Gain Optimisation, designed specifically for stochastic partially observable domains. NDIGO identifies novelty by measuring the increment of information provided by a new observation in predicting the future observations, compared to a baseline prediction for which this observation is withheld. We show that this measure can be estimated using the difference of prediction losses of two estimators, one of which can access the complete set of observations while the other does not receive the latest observation. We then use this measure of novelty as the intrinsic reward to train the policy using a state-of-the-art reinforcement learning algorithm (kapturowski2018recurrent). One of the key features of NDIGO is its robustness to noise, as the process of subtracting prediction losses cancels out errors that the algorithm cannot improve on. Moreover, NDIGO is well-suited for discovery in partially observable domains as the measure of novelty in NDIGO drives the agent to the unobserved areas of the world where new information can be gained from the observations. Our experiments show that NDIGO performs robustly in the presence of noise in partially observable environments: NDIGO not only finds true novelty without being distracted by the noise, but it also incorporates this information into its world representation without forgetting previous observations.

2 Related Work
---------------

It has been argued for decades in developmental psychology (white1959motivation; deci1985intrinsic; csikszentmihalyi1992optimal), neuroscience (dayan2002reward; kakade2002dopamine; horvitz2000mesolimbocortical) and machine learning (oudeyer2008can; gottlieb2013information; schmidhuber1991curious) that an agent maximising a simple intrinsic reward based on patterns that are both novel and learnable could exhibit essential aspects of intelligence such as autonomous development (oudeyer2016evolution).

More specifically, in his survey on the theory of creativity and intrinsic motivation, schmidhuber2010formal explains how to build an agent that could discover and understand its environment in a self-supervised way. He establishes that four crucial components are necessary: i) A world model (ha2018world) that encodes what is currently known. It can be a working memory component such as a Long Short Term Memory network (LSTM, hochreiter1997long) or a Gated Recurrent Unit network (GRU, cho2014learning). ii) A learning algorithm that improves the world model. For instance, guo2018 have shown that a GRU trained with a Contrastive Predictive Coding (CPC, oord2018representation) loss on future frames could learn a representation of the agent’s current and past position and orientation, as well as the position of objects in the environment. iii) An intrinsic reward generator based on the world model that produces rewards for patterns that are both novel and learnable. Different types of intrinsic rewards can be used, such as the world model’s prediction error (stadie2015incentivizing; pathak2017curiosity), improvement of the model’s prediction error, also known as prediction gain (achiam2017surprise; schmidhuber1991curious; lopes2012exploration), and finally information gain (itti2009bayesian; little2013learning; frank2014curiosity; houthooft2016vime). iv) An RL algorithm that finds an optimal policy with respect to the intrinsic rewards.

Recently, several implementations of intrinsically motivated agents have been attempted using modern AI technology. Most of them used the concept of prediction error as an intrinsic reward (stadie2015incentivizing; pathak2017curiosity; burda2018large; haber2018learning). However, it has been argued that agents optimising the prediction error are susceptible to being attracted to white noise (oudeyer2007intrinsic), and that this reward should therefore be avoided. To solve the white-noise problem, different types of random or learned projections (burda2018large) of the original image into a smaller feature space less susceptible to white noise have been considered. Other implementations rely on approximations of the concept of information gain (houthooft2016vime; achiam2017surprise) via a variational lower bound argument. Indeed, as they are trying to train a probabilistic model over the set of possible dynamics, the computation of the posterior of that distribution is intractable (houthooft2016vime). Finally, models based on prediction gain are fundamentally harder to train than those based on prediction error (achiam2017surprise; lopes2012exploration; pathak2017curiosity), and are less principled than information gain (schmidhuber2010formal).

3 Setting
----------

We consider a partially observable environment where an agent is shown an observation $o_t$ at time $t$, then selects an action $a_t$ which generates a new observation $o_{t+1}$ at the next time step. We assume observations $o_t$ are generated by an underlying process $x_t$ following Markov dynamics, i.e. $x_{t+1}\sim P(\cdot|x_t,a_t)$, where $P$ is the dynamics of the underlying process. Although we do not explicitly use the corresponding terminology, this process can be formalised in terms of Partially Observable Markov Decision Processes (POMDPs; lovejoy1991survey; cassandra1998exact).

The future observation $o_{t+1}$ in a POMDP can also be seen as the output of a stochastic mapping whose input is the current history. Indeed, at any given time $t$, let the current history $h_t$ be all past actions and observations, $h_t \stackrel{\text{def}}{=} (o_0,a_0,o_1,a_1,\cdots,a_{t-1},o_t)$. Then we define $P(\cdot|h_t,a_t)$ as the probability distribution of $o_{t+1}$ knowing the history and the action $a_t$. One can generalise this notion to $k$-step prediction: for any integers $t\geq 0$ and $k\geq 1$, let us denote by $t:t+k$ the integer interval $\{t,\dots,t+k-1\}$, and let $A_{t:t+k} \stackrel{\text{def}}{=} (a_t,\dots,a_{t+k-1})$ and $O_{t:t+k} \stackrel{\text{def}}{=} (o_t,\dots,o_{t+k-1})$ be the sequences of actions and observations from time $t$ up to time $t+k-1$, respectively. Then $o_{t+k}$ can be seen as a sample drawn from the probability distribution $P(\cdot|h_t,A_{t:t+k})$, which is the $k$-step open-loop prediction model of the observation $o_{t+k}$. We also use the short-hand notation $P_{t+k|t}=P(\cdot|h_t,A_{t:t+k})$ for the probability distribution of $o_{t+k}$ given the history $h_t$ and the sequence of actions $A_{t:t+k}$.
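The indexing conventions above are easy to get wrong by one step, so the following minimal sketch spells them out on plain Python sequences. The helper names `history` and `action_window` are hypothetical and are not taken from the paper; they only illustrate that $h_t$ ends with $o_t$ and that $A_{t:t+k}$ contains exactly $k$ actions.

```python
def history(observations, actions, t):
    """Build h_t = (o_0, a_0, o_1, a_1, ..., a_{t-1}, o_t)."""
    h = []
    for i in range(t):
        h.append(observations[i])
        h.append(actions[i])
    h.append(observations[t])  # the history ends with the latest observation
    return tuple(h)


def action_window(actions, t, k):
    """Build A_{t:t+k} = (a_t, ..., a_{t+k-1}), i.e. exactly k actions."""
    return tuple(actions[t:t + k])


# Toy episode with symbolic observations and actions (illustrative only).
observations = ["o0", "o1", "o2", "o3"]
actions = ["a0", "a1", "a2"]

h2 = history(observations, actions, 2)
A_1_3 = action_window(actions, 1, 2)
```

Here `h2` is the history used to predict $o_{2+k}$, and `A_1_3` is the two-action sequence $A_{1:3}$ that conditions a 2-step prediction from time 1.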

4 Learning the World Model
---------------------------

The world model should capture what the agent currently knows about the world so that it can make predictions based on what it knows. We thus build a model of the world by predicting future observations given the past (see e.g., schmidhuber1991curious; guo2018). More precisely, we build an internal representation $b_t$ by making predictions of future frames $o_{t+k}$ conditioned on a sequence of actions $A_{t:t+k}$ and given the past $h_t$. This is similar to the approach of Predictive State Representations (littman2002predictive), from which we know that if the learnt representation $b_t$ is able to predict the probability of any future observation conditioned on any sequence of actions and history, then this representation $b_t$ contains all information about the belief state (i.e., the distribution over the ground truth state $x_t$).

### 4.1 Architecture

We propose to learn the world model by using a recurrent neural network (RNN) $f_\theta$ fed with the concatenation of observation features $z_t$ and the previous action $a_{t-1}$ (encoded as a one-hot vector). The observation features $z_t$ are obtained by applying a convolutional neural network (CNN) $f_\phi$ to the observation $o_t$. The RNN is a Gated Recurrent Unit (GRU) and the internal representation is the hidden state of the GRU, that is, $b_t=f_\theta(z_t,a_{t-1},b_{t-1})$, as shown in [Figure 1](#S4.F1 "Figure 1 ‣ 4.1 Architecture ‣ 4 Learning the World Model ‣ World Discovery Models"). We initialise this GRU by setting its hidden state to the null vector $0$, and using $b_0=f_\theta(z_0,a,0)$, where $a$ is a fixed, arbitrary action and $z_0$ are the features corresponding to the original observation $o_0$. We train this representation $b_t$ with some future-frame prediction tasks conditioned on sequences of actions and the representation $b_t$. These frame prediction tasks consist in estimating the probability distribution, for various $K\geq k\geq 1$ (with $K\in\mathbb{N}^*$ to be specified later), of the future observation $o_{t+k}$ conditioned on the internal representation $b_t$ and the sequence of actions $A_{t:t+k}$. We denote these estimates by $\hat p_{t+k|t}(\cdot|b_t,A_{t:t+k})$, or simply by $\hat p_{t+k|t}$ for conciseness when no confusion is possible. As the notation suggests, we will use $\hat p_{t+k|t}$ as an estimate of $P_{t+k|t}$.

The neural architecture consists of $K$ different neural nets $\{f_{\psi_k}\}_{k=1}^{K}$. Each neural net $f_{\psi_k}$ receives as input the concatenation of the internal representation $b_t$ and the sequence of actions $A_{t:t+k}$, and outputs a distribution over observations: $\hat p_{t+k|t}=f_{\psi_k}(b_t,A_{t:t+k})$. For a fixed $t\geq 0$ and a fixed $K\geq k\geq 1$, the loss function $L(o_{t+k},\hat p_{t+k|t})$ at time step $t+k-1$ associated with the network $f_{\psi_k}$ is a cross entropy loss: $L(o_{t+k},\hat p_{t+k|t})=-\ln(\hat p_{t+k|t}(o_{t+k}))$. We finally define, for any given sequence of actions and observations, the *representation loss* function $L_{\text{repr}}$ as the sum of these cross entropy losses: $L_{\text{repr}}=\sum_{t\geq 0,\,K\geq k\geq 1}L(o_{t+k},\hat p_{t+k|t})$.
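As an illustration of how the representation loss aggregates the per-head cross entropies, here is a minimal sketch in plain Python. It assumes a discrete observation space and replaces the neural heads by a hypothetical lookup table `predictions[(t, k)]` holding the predicted distribution for $o_{t+k}$ made at time $t$. Terms whose target $o_{t+k}$ falls beyond the end of the recorded sequence are skipped, which is an assumption about how a finite episode would be handled.

```python
import math


def cross_entropy(observation, predicted):
    """L(o, p_hat) = -ln p_hat(o), with p_hat given as a dict of probabilities."""
    return -math.log(predicted[observation])


def representation_loss(observations, predictions, K):
    """Sum of L(o_{t+k}, p_hat_{t+k|t}) over all t >= 0 and 1 <= k <= K.

    predictions[(t, k)] stands in for the output of the k-th prediction
    head at time t (hypothetical container, not the paper's data layout).
    """
    total = 0.0
    T = len(observations)
    for t in range(T):
        for k in range(1, K + 1):
            if t + k < T:  # skip targets outside the recorded sequence
                total += cross_entropy(observations[t + k], predictions[(t, k)])
    return total


# Toy example: three binary observations and uninformed (uniform) heads.
observations = ["A", "B", "A"]
uniform = {"A": 0.5, "B": 0.5}
predictions = {(t, k): uniform for t in range(3) for k in (1, 2)}
loss = representation_loss(observations, predictions, K=2)
```

With uniform predictions every valid term equals $\ln 2$, and there are three valid $(t,k)$ pairs in this toy sequence, so the loss is $3\ln 2$.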

Figure 1: World Model: a CNN and a GRU encode the history $h_t$ into an internal representation $b_t$. Then, $K$ frame prediction tasks are trained in order to shape the representation $b_t$.

### 4.2 Evaluation of the learnt representation

In the POMDP setting, the real state $x_t$ represents all there is to know about the world at time $t$. By constructing a belief state, which is a distribution $P_b(\cdot|h_t)$ over the possible states conditioned on the history $h_t$, the agent can assess its uncertainty about the real state $x_t$ given the history $h_t$. Therefore, in order to assess the quality of the learnt representation $b_t$, we use the glass-box approach described in [Figure 12](#A1.F12 "Figure 12 ‣ Appendix A NDIGO Global Network Architecture ‣ World Discovery Models") to build a belief state of the world. It consists simply in training a neural network $f_\tau$, fed by the internal representation $b_t$, to predict a distribution $\hat p_b(\cdot|b_t)$ over the possible real states $x_t$. This kind of approach is only possible in artificial or controlled environments where the real state is available to the experimenter but not given to the agent. We also make sure that no gradient from $f_\tau$ is back-propagated to the internal representation $b_t$, so that the evaluation does not influence the learning of the representation or the behaviour of the agent. For a fixed $t\geq 0$, the loss used to train $f_\tau$ is a cross entropy loss (for a more detailed description of the approach see guo2018): $L_{\text{discovery}}(x_t,\hat p_b(\cdot|b_t)) \stackrel{\text{def}}{=} -\ln(\hat p_b(x_t|b_t))$.

We call this loss the *discovery loss*, and use it as a measure of how much information about the whole world the agent is able to encode in its internal representation $b_t$, i.e., how much of the world has been discovered by the agent.

5 NDIGO Agent
--------------

Our NDIGO agent is a discovery agent that learns to seek new information in its environment and then incorporate this information into a world representation. Inspired by the intrinsic motivation literature (schmidhuber2010formal), the NDIGO agent achieves this information-seeking behaviour as a result of optimising an intrinsic reward. Therefore, the agent’s exploratory skills depend critically on designing an appropriate reward signal that encourages discovering the world. Ideally, we want this reward signal to be high when the agent gets an observation containing new information about the real state $x_t$. As we cannot access $x_t$ at training time, we rely on the accuracy of our predictions of future observations to estimate the information we have about $x_t$.

Intuitively, for a fixed horizon $H\in\mathbb{N}^*$, the *prediction error loss* $L(o_{t+H},\hat p_{t+H|t})=-\ln(\hat p_{t+H|t}(o_{t+H}))$ is a good measure of how much information $b_t$ is lacking about the future observation $o_{t+H}$. The higher the loss, the more uncertain the agent is about the future observation $o_{t+H}$, and so the less information it has about this observation. Therefore, one could define an intrinsic reward directly as the prediction error loss, thus encouraging the agent to move towards states for which it is the least capable of predicting future observations. The hope is that the less information we have in a certain belief state, the easier it is to gain new information. Although this approach may give good results in deterministic environments, it is not suitable in certain stochastic environments. For instance, consider the extreme case in which the agent is offered the chance to observe white noise, such as a TV displaying static. An agent motivated by prediction error loss would continually receive a high intrinsic reward simply by staying in front of this TV, as it cannot improve its predictions of future observations, and would effectively remain fascinated by this noise.

### 5.1 The NDIGO intrinsic reward

The reason why the naive prediction error reward fails in such a simple example is that the agent identifies that a lot of information is lacking, but does not acknowledge that no progress is made towards acquiring this lacking information. To overcome this issue, we introduce the NDIGO reward, for a fixed $K\geq H\geq 1$, as follows:

$$r^{\text{NDIGO}}_{t+H-1} \stackrel{\text{def}}{=} L(o_{t+H},\hat p_{t+H|t-1}) - L(o_{t+H},\hat p_{t+H|t}), \tag{1}$$

where $o_{t+H}$ represents the future observation considered and $H$ is the horizon of NDIGO. The two terms on the right-hand side of [Equation 1](#S5.E1 "(1) ‣ 5.1 The NDIGO intrinsic reward ‣ 5 NDIGO Agent ‣ World Discovery Models") measure how much information the agent lacks about the future observation $o_{t+H}$ knowing all past observations prior to $o_t$, with $o_t$ either excluded (left term) or included (right term). Intuitively, we take the difference between the information we have at time $t$ and the information we have at time $t-1$. This way we get an estimate of how much information the agent gained about $o_{t+H}$ by observing $o_t$. Note that the reward $r^{\text{NDIGO}}_{t+H-1}$ is attributed at time $t+H-1$ in order to make it dependent on $h_{t+H-1}$ and $a_{t+H-1}$ only (and not on the policy), once the prediction model $\hat p$ has been learnt. If the reward had been assigned at time $t$ instead (the time of prediction), it would have depended on the policy used to generate the action sequence $A_{t:t+H-1}$, which would have violated the Markovian assumption required to train the RL algorithm. Coming back to our broken TV example, the white noise in $o_t$ does not help in predicting the future observation $o_{t+H}$. The NDIGO reward is then the difference of two large terms of similar amplitude, leading to a small reward: while acknowledging that a lot of information is missing (large prediction error loss), NDIGO also realises that no more of it can be extracted (small difference of prediction error loss). Our experiments show that using NDIGO allows the agent to avoid being stuck in the presence of noise, as presented in [Section 6](#S6 "6 Experiments ‣ World Discovery Models"), thus confirming these theoretical considerations.
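The cancellation argument for the broken TV can be checked numerically. The sketch below is an illustration under simplifying assumptions, not the paper's implementation: the two predictors $\hat p_{t+H|t-1}$ and $\hat p_{t+H|t}$ are replaced by hand-written distributions over four hypothetical cells, and the function names are invented for this example.

```python
import math


def prediction_loss(observation, predicted):
    """Prediction error loss L(o, p_hat) = -ln p_hat(o)."""
    return -math.log(predicted[observation])


def ndigo_reward(future_obs, pred_without_ot, pred_with_ot):
    """r = L(o_{t+H}, p_hat_{t+H|t-1}) - L(o_{t+H}, p_hat_{t+H|t})."""
    return (prediction_loss(future_obs, pred_without_ot)
            - prediction_loss(future_obs, pred_with_ot))


# White noise object over 4 cells: observing o_t tells us nothing about
# o_{t+H}, so both predictions stay uniform (assumed values).
uniform = {cell: 0.25 for cell in range(4)}
noise_pe = prediction_loss(2, uniform)            # large prediction error
noise_reward = ndigo_reward(2, uniform, uniform)  # but no information gained

# Fixed object: before o_t the agent is uniform, after o_t it is almost
# certain that the object sits in cell 2 (assumed values).
informed = {0: 0.01, 1: 0.01, 2: 0.97, 3: 0.01}
fixed_reward = ndigo_reward(2, uniform, informed)
```

In this toy setting the prediction error on the noise stays at $\ln 4$ forever, while the NDIGO reward on the noise is exactly zero and the reward for first seeing the fixed object is $\ln(0.97/0.25) > 0$.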

### 5.2 Algorithm

Given the intrinsic reward $r^{\text{NDIGO}}_{t+H-1}$, we use the state-of-the-art RL algorithm R2D2 (kapturowski2018recurrent) to optimise the policy. The NDIGO agent interacts with its world using the NDIGO policy to obtain a new observation $o_{t+k}$, which is used to train the world model by minimising the future prediction loss $L_{t+k|t}=L(o_{t+k},\hat p_{t+k|t})$. The losses $L_{t+k|t}$ are then used to obtain the intrinsic reward at the next time step, and the process is repeated. An in-depth description of the complete NDIGO algorithm can be found in [Section B.5](#A2.SS5 "B.5 Training loop pseudocode ‣ Appendix B NDIGO Agent Implementation Details ‣ World Discovery Models").

### 5.3 Relation to information gain

Information gain has been widely used as the novelty signal in the literature (houthooft2016vime; little2013learning). A very broad definition of the information gain (schmidhuber2010formal) is the distance (or divergence) between distributions on any random event of interest $\omega$ before and after a new sequence of observations. Choosing the random event to be the future observations or actions and the divergence to be the Kullback-Leibler divergence, the $k$-step predictive information gain $\mathrm{IG}(o_{t+k},O_{t:t+k}|h_t,A_{t:t+k})$ of the future event $o_{t+k}$ with respect to the sequence of observations $O_{t:t+k}$ is defined as $\mathrm{IG}(o_{t+k},O_{t:t+k}|h_t,A_{t:t+k}) \stackrel{\text{def}}{=} \mathrm{KL}(P_{t+k|t+k-1}\,\|\,P_{t+k|t-1})$. It measures how much information can be gained about the future observation $o_{t+k}$ from the sequence of past observations $O_{t:t+k}$, given the whole history $h_t$ up to time step $t$ and the sequence of actions $A_{t:t+k}$ from $t$ up to $t+k-1$. In the case of $k=1$ we recover the 1-step information gain on the next observation $o_{t+1}$ due to $o_t$. We also use the short-hand notation $\mathrm{IG}_{t+k|t}=\mathrm{IG}(o_{t+k},O_{t:t+k}|h_t,A_{t:t+k})$ for every $k\geq 1$ and $t\geq 0$. Also, by convention, we define $\mathrm{IG}_{t|t}=0$.

We now show that the NDIGO intrinsic reward $r^{\text{NDIGO}}_{t+H-1}$ can be expressed as the difference of information gain due to $O_{t:t+H}$ and $O_{t+1:t+H}$. For a given horizon $H\geq 1$ and $t\geq 0$, the intrinsic reward for time step $t+H-1$ is:

$$\begin{aligned}
r^{\text{NDIGO}}_{t+H-1} &\stackrel{\text{def}}{=} L(o_{t+H},\hat p_{t+H|t-1}) - L(o_{t+H},\hat p_{t+H|t}) && \text{(2)}\\
&= \ln\left(\frac{\hat p_{t+H|t}(o_{t+H})}{\hat p_{t+H|t-1}(o_{t+H})}\right). && \text{(3)}
\end{aligned}$$

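Given that $\hat p_{t+H|t}$ and $\hat p_{t+H|t-1}$ are respectively estimates of $P_{t+H|t}$ and $P_{t+H|t-1}$, and based on the fact that these estimates become more accurate as the number of samples increases, we have:

$$\begin{aligned}
\mathbb{E}\left[r^{\text{NDIGO}}_{t+H-1}\right] &= \mathbb{E}_{o_{t+H}\sim P_{t+H|t+H-1}}\left[\ln\left(\frac{\hat p_{t+H|t}(o_{t+H})}{\hat p_{t+H|t-1}(o_{t+H})}\right)\right]\\
&\approx \mathbb{E}_{o_{t+H}\sim P_{t+H|t+H-1}}\left[\ln\left(\frac{P_{t+H|t}(o_{t+H})}{P_{t+H|t-1}(o_{t+H})}\right)\right]\\
&= \mathrm{KL}(P_{t+H|t+H-1}\,\|\,P_{t+H|t-1}) - \mathrm{KL}(P_{t+H|t+H-1}\,\|\,P_{t+H|t})\\
&= \mathrm{IG}_{t+H|t} - \mathrm{IG}_{t+H|t+1}. && \text{(4)}
\end{aligned}$$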

The first term $\mathrm{IG}_{t+H|t}$ in [Equation 4](#S5.E4 "(4) ‣ 5.3 Relation to information gain ‣ 5 NDIGO Agent ‣ World Discovery Models") measures how much information can be gained about $o_{t+H}$ from the sequence of past observations $O_{t:t+H}$, whereas the second term $\mathrm{IG}_{t+H|t+1}$ measures how much information can be gained about $o_{t+H}$ from the sequence of past observations $O_{t+1:t+H}$. Therefore, as $O_{t+1:t+H}=O_{t:t+H}\setminus\{o_t\}$, the expected value of the NDIGO reward at step $t+H-1$ is equal to the amount of additional information that can be gained from the observation $o_t$ when trying to predict $o_{t+H}$.
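The step from the expected log-ratio to a difference of two KL divergences holds for any three discrete distributions with full support, and it can be verified numerically. The sketch below is only a sanity check on made-up distributions: `p_true`, `p_new` and `p_old` are hypothetical stand-ins for $P_{t+H|t+H-1}$, $P_{t+H|t}$ and $P_{t+H|t-1}$.

```python
import math


def kl(p, q):
    """Kullback-Leibler divergence KL(p || q) for dicts over the same support."""
    return sum(p[o] * math.log(p[o] / q[o]) for o in p if p[o] > 0)


def expected_log_ratio(p_true, p_new, p_old):
    """E_{o ~ p_true}[ln(p_new(o) / p_old(o))], the expected NDIGO reward
    when the estimators equal the true predictive distributions."""
    return sum(p_true[o] * math.log(p_new[o] / p_old[o])
               for o in p_true if p_true[o] > 0)


# Made-up distributions over three possible observations.
p_old = {"a": 1 / 3, "b": 1 / 3, "c": 1 / 3}   # prediction without o_t
p_new = {"a": 0.6, "b": 0.3, "c": 0.1}         # prediction with o_t
p_true = {"a": 0.9, "b": 0.05, "c": 0.05}      # prediction with all observations

lhs = expected_log_ratio(p_true, p_new, p_old)
rhs = kl(p_true, p_old) - kl(p_true, p_new)
```

The two quantities agree up to floating-point error, and `lhs` is positive here because `p_new` is closer to `p_true` than `p_old` is, which is the situation where observing $o_t$ was informative.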

6 Experiments
--------------

We evaluate the performance of NDIGO qualitatively and quantitatively on five experiments, where we demonstrate different aspects of discovery with NDIGO.

In all experiments there are some hidden objects which the agent seeks to discover. However, the underlying dynamics of the objects differ. In the simplest case, the location of objects only changes at the beginning of every episode, whereas in the most complex case the objects change their locations throughout the episode according to some random walk strategy. We investigate (i) whether the agent can efficiently search for novelty, i.e., find the location of objects; (ii) whether the agent can encode the information of object location in its representation of the world such that the discovery loss of predicting the objects is as small as possible.

### 6.1 Baselines

We compare our algorithm NDIGO-$H$, with $H$ being the horizon and taking values in $\{1,2,4\}$, to different information-seeking and exploration baselines considered to be state of the art in the intrinsic motivation literature.

Prediction Error (PE) (haber2018learning; achiam2017surprise): The PE model uses the same architecture and the same losses as NDIGO. The only difference is that the intrinsic reward is the prediction error: $r^{\text{PE}}_t=L(o_{t+1},\hat p_{t+1|t})$.

Prediction Gain (PG) (achiam2017surprise; ostrovski2017count): Our version of PG uses the same architecture and the same losses as NDIGO. In addition, every $n=2$ learner steps we save a copy of the prediction network into a fixed target network. The intrinsic reward is the difference in prediction error between the target network and the up-to-date network predictions: $r^{\text{PG}}_t=L(o_{t+1},\hat p^{\text{target}}_{t+1|t})-L(o_{t+1},\hat p_{t+1|t})$, where $\hat p^{\text{target}}_{t+1|t}$ is the distribution computed with the weights of the fixed target network.

Intrinsic Curiosity Module (ICM) (pathak2017curiosity; burda2018large): The method consists in training the internal representation $b_t$ to be less sensitive to noise using a self-supervised inverse dynamics model. Then a forward model is used to predict the future internal representation $\hat b_{t+1}$ from the actual representation $b_t$ and the action $a_t$ (more details on this model are in [Appendix D](#A4 "Appendix D pathak2017curiosity’s ICM Model for Partially Observable Environments ‣ World Discovery Models")). The intrinsic reward is the forward prediction error $r^{\text{FPE}}_t=\|\hat b_{t+1}-b_{t+1}\|_2^2$.
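To make the three baseline rewards concrete, the following sketch evaluates each formula on toy inputs. It is an illustration only: distributions are plain dictionaries, representations are plain lists of floats, and the function names are hypothetical rather than taken from any of the cited implementations.

```python
import math


def loss(observation, predicted):
    """Cross entropy loss L(o, p_hat) = -ln p_hat(o)."""
    return -math.log(predicted[observation])


def pe_reward(next_obs, pred):
    """Prediction Error: r_PE = L(o_{t+1}, p_hat_{t+1|t})."""
    return loss(next_obs, pred)


def pg_reward(next_obs, pred_target, pred_current):
    """Prediction Gain: loss of the frozen target network minus the loss
    of the up-to-date network on the same observation."""
    return loss(next_obs, pred_target) - loss(next_obs, pred_current)


def fpe_reward(predicted_repr, actual_repr):
    """ICM forward prediction error: squared L2 distance between the
    predicted and the actual internal representation."""
    return sum((p - a) ** 2 for p, a in zip(predicted_repr, actual_repr))


# Toy inputs (assumed values).
stale = {0: 0.5, 1: 0.5}    # target network, not yet updated
fresh = {0: 0.8, 1: 0.2}    # up-to-date network after some learning
r_pe = pe_reward(0, stale)
r_pg = pg_reward(0, stale, fresh)
r_fpe = fpe_reward([1.0, 2.0], [0.0, 0.0])
```

Note that PG compares two versions of the network on the same inputs, whereas the NDIGO reward compares the same network on two different inputs (with and without $o_t$).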

### 6.2 Test environments

#### The 5 rooms environment.

The 5 rooms environment (see [Figure 2](#S6.F2 "Figure 2 ‣ The maze environment. ‣ 6.2 Test environments ‣ 6 Experiments ‣ World Discovery Models")) is a local-view 2D environment composed of 5 rooms, implemented using the pycolab library (<https://github.com/deepmind/pycolab>). In pycolab, the environment is composed of cells that contain features such as walls, objects or agents. In the 5 rooms environment, there is one central 5×5 room and four peripheral rooms (composed of 48 cells) that we will refer to as the upper, lower, left and right rooms. Each of the four peripheral rooms may contain different types of “objects” that occupy a cell exclusively. At every episode, the agent starts in the middle of the central room and the starting position of each object is randomised. The objects may or may not move, but as a general rule in any episode they never leave the room they started in. Finally, we only place objects in the peripheral rooms, and in each room there is never more than one object.

#### The maze environment.

The maze environment (see [Figure 3](#S6.F3 "Figure 3 ‣ The maze environment. ‣ 6.2 Test environments ‣ 6 Experiments ‣ World Discovery Models")) is also a pycolab local-view 2D environment. It is set up as a maze composed of six different rooms connected by corridors. The agent starts at a fixed position in the environment in an otherwise empty room 0; rooms are numbered from 0 to 5 based on the order in which they can be reached, i.e. the agent cannot reach room number 3 without going through rooms 1 and 2 in this order. A white noise object is always present in room 1, and there is a single fixed object in rooms 2, 3 and 4. Room 5 contains a special movable object, which should attract the agent even when the environment is completely learned.

Figure 2: The 5 rooms environment: in this instance, we can see in white the agent, 4 fixed objects in each of the 4 peripheral rooms and in grey the impenetrable walls. The shaded area around the agent represents its 5×5 region-cell local view.

Figure 3: The maze environment: in this instance, we can see in white the agent, 4 fixed objects in blue, green, pink and red. The white noise object is the closest object to the agent's location, also in green.

#### Objects.

We consider five different types of objects: fixed, bouncing, Brownian, white noise and movable.

fixed objects are fixed during episodes, but change position from episode to episode. They provide information gain about their position when it is not already encoded in the agent’s representation.

bouncing objects bounce in a straight line from wall to wall inside a room. In addition to providing information gain similar to fixed objects, they allow us to test the capacity of the representation to encode a predictable object after the object is no longer in the agent’s view.

Brownian objects follow a Brownian motion within a room, by moving uniformly at random in one of the four directions.

white noise objects change location instantly to any position inside the same room, uniformly at random, at each time step, and are therefore unpredictable.

Finally, movable objects do not move by themselves, but the agent can cause them to move to a random location by attempting to move into their cells. Interacting with these objects allows more information gain to be generated.
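The object dynamics described above can be sketched as simple position-update functions. This is a hypothetical re-implementation for illustration, not the pycolab code used in the experiments. It assumes a rectangular room of size `(height, width)` with at least two columns, that a bouncing object travels horizontally, and that a Brownian move into a wall leaves the object in place; movable objects are omitted because their motion depends on the agent.

```python
import random

MOVES = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def step_fixed(pos):
    """fixed: the position never changes within an episode."""
    return pos


def step_white_noise(room, rng):
    """white noise: jump to a uniformly random cell of the room."""
    height, width = room
    return (rng.randrange(height), rng.randrange(width))


def step_brownian(pos, room, rng):
    """Brownian: move one cell in a uniformly random direction
    (assumption: a move into a wall leaves the object in place)."""
    height, width = room
    d_row, d_col = rng.choice(MOVES)
    row, col = pos[0] + d_row, pos[1] + d_col
    if 0 <= row < height and 0 <= col < width:
        return (row, col)
    return pos


def step_bouncing(pos, direction, room):
    """bouncing: move along a row and reverse direction at a wall.
    Returns the new position and the (possibly flipped) direction."""
    _, width = room
    col = pos[1] + direction
    if not 0 <= col < width:
        direction = -direction
        col = pos[1] + direction
    return (pos[0], col), direction


# Deterministic trajectory of a bouncing object in a 1x3 room.
pos, direction = (0, 1), 1
trajectory = []
for _ in range(4):
    pos, direction = step_bouncing(pos, direction, (1, 3))
    trajectory.append(pos)
```

The bouncing trajectory is fully determined by the initial position and direction, which is why such an object remains predictable after it leaves the agent's view, unlike the Brownian and white noise objects.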

#### Agent’s observations and actions.

The observation $o_t$ at time $t$ consists of a concatenation of images (called channels) of 25 pixels representing the different features of the 5×5 local view of the agent. This can be represented by a multidimensional array of shape $(5,5,c)$, where $c$ is the number of channels. The first channel represents the walls in the local view: 1 indicates the presence of a wall and 0 the absence of a wall. Then, each of the remaining channels represents the position of an object with a one-hot array if the object is present in the local view, or with a null array otherwise. The possible actions $a_t$ are stay, up, down, right, left and are encoded with a one-hot vector of size 5.
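A minimal sketch of this encoding, using nested Python lists instead of an array library, could look as follows. The function names and the argument layout are assumptions made for this example; only the shape $(5,5,c)$, the meaning of the channels and the five action names come from the text.

```python
ACTIONS = ("stay", "up", "down", "right", "left")


def encode_observation(walls, object_positions):
    """Encode a 5x5 local view as a nested list of shape (5, 5, c).

    walls: 5x5 nested list with 1 for a wall and 0 otherwise.
    object_positions: one entry per object, either a (row, col) pair in
    local-view coordinates or None if the object is out of view.
    """
    c = 1 + len(object_positions)
    obs = [[[0] * c for _ in range(5)] for _ in range(5)]
    for row in range(5):
        for col in range(5):
            obs[row][col][0] = walls[row][col]   # channel 0: walls
    for channel, pos in enumerate(object_positions, start=1):
        if pos is not None:                      # null channel if out of view
            obs[pos[0]][pos[1]][channel] = 1
    return obs


def encode_action(name):
    """One-hot vector of size 5 for the given action name."""
    return [1 if action == name else 0 for action in ACTIONS]


# Local view with a wall along the top row, one visible object at (2, 3)
# and one object out of view.
walls = [[1] * 5] + [[0] * 5 for _ in range(4)]
obs = encode_observation(walls, [(2, 3), None])
down = encode_action("down")
```

In this example `obs` has $c=3$ channels: the wall channel, a one-hot channel for the visible object and an all-zero channel for the object that is out of view.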

### 6.3 Performance evaluation

The agent’s performance is measured by its capacity to estimate the underlying state of the world from its internal representation (discovery loss, see [Section 4.2](#S4.SS2 "4.2 Evaluation of the learnt representation ‣ 4 Learning the World Model ‣ World Discovery Models")). In pycolab, it is possible to compute a discovery loss for each aspect of the world state (the location of each object, for instance), so that it is easy to see which aspects of the world the agent can understand and keep in its internal representation. Once again we stress the fact that no gradient is back-propagated from that evaluation procedure to the internal representation. In addition, we provide other statistics, such as average values of first-visit time and visit counts of a given object, to describe the behaviour of the agent. The first-visit time is the number of episode time steps the agent needs before first observing a given object; the visit count is the total number of time steps where the agent observes the object. Finally, we also provide more qualitative results with videos of the agent discovering the worlds (see <https://www.youtube.com/channel/UC5OPHK7pvsZE-jVclZMvhmQ>).
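The two behavioural statistics can be computed from a per-step visibility trace of one episode, as in the sketch below. The function name and the input format are hypothetical. The sketch assumes time steps are counted from zero and uses the episode length as the first-visit time when the object is never observed, following the 400-step convention stated with the results of Experiment 1.

```python
def visit_statistics(visible, episode_length=400):
    """Return (visit_count, first_visit_time) for one object in one episode.

    visible: list of booleans, one per time step, True when the object is
    inside the agent's local view at that step.
    """
    visit_count = sum(1 for seen in visible if seen)
    # Number of steps elapsed before the first observation; defaults to
    # the episode length if the object is never observed.
    first_visit_time = next(
        (t for t, seen in enumerate(visible) if seen), episode_length
    )
    return visit_count, first_visit_time


found = visit_statistics([False, False, True, True, False])
never = visit_statistics([False] * 400)
```

Averaging these two numbers over many episodes gives the kind of visit count and first-visit time reported per agent and per object.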

### 6.4 Experimental results

In this section we evaluate the performance of NDIGO on some controlled navigation tasks (for the implementation details, the specification of the prediction and policy networks and the training algorithms, see [Appendix B](#A2 "Appendix B NDIGO Agent Implementation Details ‣ World Discovery Models")).

#### Experiment 1.

We evaluate the discovery skills of NDIGO by testing how effectively it can ignore the white noise, from which there is nothing to learn, and discover the location of the fixed object. Here, we use a 5 rooms setting with a fixed object in the upper room, and a white noise object in the lower room.

Figure 4: Experiment 1: Average discovery loss of the fixed object. The results are averaged over 10 seeds.

| Agent | Visit count (fixed) | Visit count (w. noise) | First visit time (fixed) | First visit time (w. noise) |
| --- | --- | --- | --- | --- |
| Random | 14.1±14.3 | 24.6±12.6 | 339.0±40.5 | 225.6±50.4 |
| PE | 0.1±0.2 | 158.3±3.7 | 392.6±18.1 | 15.5±4.0 |
| PG | 27.3±22.0 | 22.5±10.3 | 306.4±49.4 | 233.7±56.6 |
| ICM | 144.8±37.2 | 23.8±12.4 | 132.4±41.2 | 238.3±55.0 |
| NDIGO-1 | 120.9±43.4 | 19.1±9.3 | 78.4±28.5 | 279.4±42.9 |
| NDIGO-2 | 154.0±45.5 | 7.4±6.7 | 112.6±46.2 | 345.8±36.5 |
| NDIGO-4 | 300.4±22.2 | 1.4±1.2 | 40.8±9.7 | 330.7±47.4 |

Table 1: Experiment 1: Average values of the visit counts and first visit time of the trained agent for the fixed and white noise objects in one episode.

We report in [Figure 4](#S6.F4 "Figure 4 ‣ Experiment 1. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models") the learning curves for the discovery loss of the fixed object. This result shows the quality of the learned representation in terms of encoding the location of the fixed object. We observe that the long-horizon variant of NDIGO (NDIGO-4) outperforms the best baseline (ICM) by more than an order of magnitude. Also, the asymptotic performance of NDIGO-4 is significantly better than that of NDIGO-1 and NDIGO-2.

In [Table 1](#S6.T1 "Table 1 ‣ Experiment 1. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models") we also report the average value and standard deviation of the visit count and first visit time of the trained agents for the fixed object and the white noise object in an episode. (Each episode is set to end after 400 time steps; if an agent does not find the object by the end of the episode, the first visit time is set to 400.) We observe that the different variants of NDIGO are driven towards the fixed object and manage to find it faster than the baselines while avoiding the white noise object. While ICM is also attracted by the fixed object, it does not reach it as fast as NDIGO. PE, as expected, is only attracted by the white noise object, where its reward is the highest. We also observe that the performance of NDIGO improves as we increase the prediction horizon. From now on, in the tables, we report only the ICM results as it is the only competitive baseline. Exhaustive results are reported in [Section E.1](#A5.SS1 "E.1 Additional results for Experiment 2-4 ‣ Appendix E Additional results ‣ World Discovery Models").
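As a minimal sketch of how the two statistics in the tables could be computed from one episode, assume the episode is logged as a sequence of per-step booleans indicating whether the agent is at the object. This log format, the 0-based time index, and the function names are assumptions made for illustration; only the 400-step cap comes from the text.

```python
from statistics import mean, stdev

EPISODE_LENGTH = 400  # episode length stated in the text


def visit_stats(at_object, episode_length=EPISODE_LENGTH):
    """Return (visit_count, first_visit_time) for a single episode.

    at_object: iterable of booleans, one per time step (hypothetical log format).
    If the object is never visited, the first visit time is set to the
    episode length, mirroring the convention described in the text.
    """
    flags = list(at_object)[:episode_length]
    visit_count = sum(1 for hit in flags if hit)
    first_visit = next((t for t, hit in enumerate(flags) if hit), episode_length)
    return visit_count, first_visit


def mean_and_std(values):
    """Mean and sample standard deviation, e.g. across episodes or seeds."""
    values = list(values)
    spread = stdev(values) if len(values) > 1 else 0.0
    return mean(values), spread


# Two toy episodes: one where the object is found at step 2, one where it never is.
found = visit_stats([False, False, True, True])
missed = visit_stats([False] * EPISODE_LENGTH)
print(found, missed)
print(mean_and_std([found[1], missed[1]]))
```

Capping the first visit time at the episode length means a method that never finds the object scores close to 400, as PE does for the fixed object in Table 1.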

#### Experiment 2.

To demonstrate better the information-seeking behaviour of our algorithm, we place randomly a fixed object in either the upper, left or right room and a white noise object in the lower room. Thus, to discover the object, the agent must actively look for it in all but the lower room.

Similar to Experiment 1, we report the average discovery loss of the fixed object in [Figure 5](#S6.F5 "Figure 5 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We observe that all variants of NDIGO perform better than the baselines by a clear margin, though ICM is not far behind (less than two times worse than NDIGO-4). We also observe no significant difference between the different variants of NDIGO in this case. We also report in [Table 2](#S6.T2 "Table 2 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models") the first visit time and visit counts for the fixed object and the white noise object in an episode. NDIGO again outperforms the baselines. We also observe that NDIGO in most cases is not attracted towards the white noise object. An interesting observation is that, as we increase the prediction horizon in NDIGO, it takes more time for the agent to find the fixed object, but at the same time the visit count increases as well, i.e., the agent stays close to the object for a longer time after the first visit.

As a qualitative result, we also report top-down-view snapshots of the behavior of NDIGO-2 up to the time of discovery of the fixed object in the right room in [Figure 6](#S6.F6 "Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We also depict the predicted view of the world from the agent’s representation in [Figure 6](#S6.F6 "Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). As the location of the object is unknown to the agent, we observe that the agent searches the top-side, left-side and right-side rooms until it discovers the fixed object in the right-side room. It also successfully avoids the bottom-side room containing the white noise object. Moreover, as soon as the agent finds the fixed object, the uncertainty about the location of the fixed object completely vanishes (as the agent has learned that only one fixed object exists in the world).

Figure 5: Experiment 2: Average discovery loss of the fixed object. The results are averaged over 10 seeds.

Figure 6 panels: (a) t=1, (b) t=2, (c) t=3.

Figure 6: Experiment 2: top-down-view snapshots of the behavior of the NDIGO-4 agent. (a) after entering the top-side room (b) after entering the right-side room (c) after discovering the fixed object in the left-side room. In each subpanel the left-side image depicts the ground-truth top-down-view of the world and the right-side image depicts the predicted view from the agent’s representation. All times are in seconds.

| | Visit count (fixed) | Visit count (w. noise) | First visit time (fixed) | First visit time (w. noise) |
| --- | --- | --- | --- | --- |
| ICM | 151.7±33.0 | 15.6±9.0 | 142.1±40.8 | 198.7±55.1 |
| NDIGO-1 | 180.2±42.7 | 12.8±6.9 | 101.1±31.1 | 237.2±49.4 |
| NDIGO-2 | 209.3±34.9 | 3.5±2.3 | 121.1±36.5 | 306.4±43.4 |
| NDIGO-4 | 233.7±41.6 | 5.3±3.7 | 126.7±43.3 | 268.2±53.1 |

Table 2: Average values of the visit counts and first visit time of the trained agent for the fixed and white noise objects in Experiment 2.

#### Experiment 3.

We investigate whether NDIGO is able to discover and retain the dynamics of moving (but still predictable) objects even when they are not in its field of view. For this, we use a 5-room setting with two bouncing objects in the upper and lower rooms and a white noise object in the right room.

Figure 7: Experiment 3: Average discovery loss of bouncing objects. The results are averaged over 10 seeds.

| | Visit count (upper obj.) | Visit count (lower obj.) | First visit time (upper obj.) | First visit time (lower obj.) |
| --- | --- | --- | --- | --- |
| ICM | 80.5±28.3 | 89.1±28.6 | 174.8±53.4 | 127.8±51.4 |
| NDIGO-1 | 41.0±8.5 | 45.2±11.6 | 34.4±18.7 | 38.8±16.1 |
| NDIGO-2 | 108.5±25.1 | 31.3±20.9 | 118.3±50.4 | 312.6±50.6 |
| NDIGO-4 | 198.7±33.4 | 44.2±28.8 | 64.5±38.8 | 320.8±47.5 |

Table 3: Average values of the visit counts and first visit time of the trained agent for the bouncing objects in Experiment 3.

We report the discovery loss in [Figure 7](#S6.F7 "Figure 7 ‣ Experiment 3. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We observe that all variants of NDIGO outperform the baselines by a large margin in terms of the discovery loss of the bouncing objects. As the discovery loss for both bouncing objects is small, this indicates that NDIGO can encode the dynamics of bouncing objects in its representation. We report the first visit times and visit counts for the bouncing objects in [Table 3](#S6.T3 "Table 3 ‣ Experiment 3. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). NDIGO performs better than the baselines in terms of both visit counts and first visit time to the bouncing objects, except for the visit count of the lower object, for which ICM performs best. Finally, as a qualitative result, we also report top-down-view snapshots of the behavior of NDIGO-1 after the discovery of each bouncing object in [Figure 8](#S6.F8 "Figure 8 ‣ Experiment 3. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We observe that the agent can estimate the location of both bouncing objects on the first visit. Also, after departing from the green bouncing object and moving towards the red bouncing object, it can still track the dynamics of the green bouncing object with some small error. This is despite the fact that the green bouncing object is no longer observed by the agent.

Figure 8 panels: (a) t=1, (b) t=2.

Figure 8: Experiment 3: top-down-view snapshots of the behavior of the NDIGO-1 agent. (a) after discovering the green bouncing object in the bottom-side room (b) after discovering the red bouncing object in the top-side room. In each subpanel the left-side image depicts the ground-truth top-down-view of the world and the right-side image depicts the predicted view from the agent’s representation. All times are in seconds.

#### Experiment 4.

We investigate whether the horizon H affects the performance of the agent in terms of its sensitivity to structured noise. For this, we evaluate which objects the agent seeks in a 5-room setting with a Brownian object in the upper room and a fixed object in the lower room. In the upper room, the Brownian object moves at every time step. For the Brownian object, unlike white noise, it is not guaranteed that the reward of NDIGO is zero. However, by increasing the horizon, one may expect the intrinsic reward due to the Brownian object to become negligible, because the object becomes harder to predict at longer horizons.
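The intuition above can be illustrated with a small worked example. The sketch below is an idealised model and not the paper's implementation. It assumes that the Brownian object is a 1D Gaussian random walk with i.i.d. steps, that the predictor is perfect, and that the intrinsic reward is the reduction in expected log-loss for predicting the object's position H steps ahead once the newest position is observed. Under those assumptions the predictive variance drops from (H+1)σ² to Hσ², so the expected gain is ½·ln((H+1)/H), which shrinks as H grows. The function name and parameters are hypothetical.

```python
import math


def expected_log_loss_gain(horizon, step_var=1.0):
    """Expected log-loss reduction (in nats) from one extra observation.

    Idealised assumption: a 1D Gaussian random walk with per-step variance
    step_var and a perfect predictor. Predicting the position `horizon` steps
    after the newest observation has variance horizon * step_var; predicting
    the same target without that observation has one more step of uncertainty.
    The gain is the difference of the two Gaussian differential entropies.
    """
    if horizon < 1:
        raise ValueError("horizon must be at least 1")
    var_without_obs = (horizon + 1) * step_var
    var_with_obs = horizon * step_var
    return 0.5 * math.log(var_without_obs / var_with_obs)


# The gain does not depend on the step variance and decays roughly like 1/(2H).
for h in (1, 2, 4, 10):
    print(h, round(expected_log_loss_gain(h), 4))
```

In this toy model the gain never reaches exactly zero, which is consistent with the remark that a zero reward is not guaranteed for the Brownian object. It only becomes small relative to other sources of reward as the horizon increases.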

Figure 9: Experiment 4: Average discovery loss of the fixed object. The results are averaged over 10 seeds.

| | Visit count (Brownian) | Visit count (fixed) | First visit time (Brownian) | First visit time (fixed) |
| --- | --- | --- | --- | --- |
| ICM | 358.3±9.4 | 0.5±0.9 | 34.0±8.3 | 385.1±24.6 |
| NDIGO-1 | 356.1±6.9 | 0.0±0.0 | 23.4±6.4 | 398.9±8.9 |
| NDIGO-2 | 350.7±5.4 | 0.1±0.3 | 21.1±4.8 | 383.9±25.6 |
| NDIGO-4 | 0.4±1.0 | 290.5±31.4 | 395.5±12.4 | 68.4±29.8 |

Table 4: Average values of the visit counts and first visit time of the trained agent for the Brownian and fixed objects in Experiment 4, with all baselines.

Figure 10: Experiment 5: Average discovery loss of the fixed and movable objects. The results are averaged over 10 seeds.

Figure 11 panels: (a) t=0, (b) t=1, (c) t=2, (d) t=4.

Figure 11: Experiment 5: top-down-view snapshots of the behavior of the NDIGO-1 agent in the maze problem: (a) at the beginning of the episode (b) after discovering the fixed objects in rooms 3 and 4 (c) after discovering the movable object in room 5 (d) after discovering the fixed object in room 2. In each subpanel the left-side image depicts the ground-truth top-down-view of the world and the right-side image depicts the predicted view from the agent’s representation. All times are in seconds.

| Visit frequency | Room 1 (white noise) | Room 2 (fixed) | Room 3 (fixed) | Room 4 (fixed) | Room 5 (movable) |
| --- | --- | --- | --- | --- | --- |
| ICM | 100.0%±0.0% | 26.8%±25.7% | 13.8%±20.0% | 6.5%±14.3% | − |
| NDIGO-1 | 94.7%±12.9% | 66.4%±27.4% | 71.7%±26.1% | 70.4%±26.4% | 67.8%±27.1% |
| NDIGO-2 | 100.0%±0.0% | 78.3%±23.9% | 84.8%±20.9% | 83.7%±21.4% | 81.5%±22.5% |
| NDIGO-5 | 100.0%±0.0% | 49.6%±29.0% | 47.4%±28.9% | 18.8%±22.6% | − |
| NDIGO-10 | 100.0%±0.0% | 84.1%±21.4% | 95.5%±12.2% | 45.5%±29.1% | − |

Table 5: Average frequency of visits to each room for the trained agents.

| First visit time | Room 1 (white noise) | Room 2 (fixed) | Room 3 (fixed) | Room 4 (fixed) | Room 5 (movable) |
| --- | --- | --- | --- | --- | --- |
| ICM | 4.4±3.0 | 324.7±79.5 | 375.0±44.0 | 391.8±24.3 | − |
| NDIGO-1 | 40.6±57.2 | 203.0±90.6 | 190.5±86.0 | 199.9±85.2 | 212.7±83.2 |
| NDIGO-2 | 12.9±10.7 | 171.5±79.5 | 159.4±68.8 | 174.5±68.9 | 192.8±68.9 |
| NDIGO-5 | 6.8±11.5 | 245.1±94.1 | 255.9±91.4 | 344.9±68.7 | − |
| NDIGO-10 | 8.6±5.9 | 128.0±75.8 | 119.1±53.4 | 283.1±81.4 | − |

Table 6: Average time of first visit to each room for the trained agents.

We report the results in [Figure 9](#S6.F9 "Figure 9 ‣ Experiment 4. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We observe that the ICM baseline, as well as the short-horizon variants of NDIGO, are attracted to the structured randomness generated by the Brownian object. Only NDIGO-4 can ignore the Brownian object and discover the fixed object. As a result, NDIGO-4 is the only algorithm capable of minimising the discovery loss of the fixed object.

#### Experiment 5.

We now compare the discovery ability of the agents in a complex maze environment (see [Figure 3](#S6.F3 "Figure 3 ‣ The maze environment. ‣ 6.2 Test environments ‣ 6 Experiments ‣ World Discovery Models")) with no extrinsic reward. Here, the agent starts in a fixed position in the maze environment, and is given no incentive to explore other than its intrinsic reward. This setting is challenging for discovery and exploration, since to reach the end of the maze the agents need to take a very long and specific sequence of actions. This highlights the importance of intrinsic rewards that encourage discovery. We report the learning curves of NDIGO as well as the baselines in [Figure 10](#S6.F10 "Figure 10 ‣ Experiment 4. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We observe that in this case the different variants of NDIGO outperform the baselines by a wide margin in terms of discovery loss, with NDIGO-1 and NDIGO-2 outperforming NDIGO-5. Note that due to the presence of the movable object, which is unpredictable upon re-spawning, the average loss in this experiment is higher than in the prior fixed-object experiments. We also evaluate the discovery performance of the agent as the number of rooms it is capable of exploring within the duration of the episode. We present the average visit frequency and first visit time of each room for the trained agents (see [Tables 5](#S6.T5 "Table 5 ‣ Experiment 4. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models") and [6](#S6.T6 "Table 6 ‣ Experiment 4. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models")). NDIGO-1 and NDIGO-2 appear as the only agents capable of reaching the final room, whereas NDIGO-4 explores 4 out of 5. The rest cannot go beyond the white noise object.

As a qualitative result, we also report top-down-view snapshots of the behavior of NDIGO-1 up to the time of discovery of the last fixed object in room 2 in [Figure 11](#S6.F11 "Figure 11 ‣ Experiment 4. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models"). We also depict the predicted view of the world from the agent’s representation in [Figure 6](#S6.F6 "Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models").

We observe that the agent drives across the maze all the way from room 1 to room 5 and in the process discovers the fixed objects in rooms 3-4 (see [Figure 5(a)](#S6.F5.sf1 "(a) ‣ Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models")) and the movable object in room 5 (see [Figure 5(c)](#S6.F5.sf3 "(c) ‣ Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models")). It then chases the movable object until the object becomes fixed in the top-left corner of the world. The agent then moves back to room 2 (see [Figure 5(c)](#S6.F5.sf3 "(c) ‣ Figure 6 ‣ Experiment 2. ‣ 6.4 Experimental results ‣ 6 Experiments ‣ World Discovery Models")) and discovers the last blue fixed object there, while maintaining its knowledge of the other objects. The agent may initially ignore the blue fixed object in room 2 because it can obtain more intrinsic reward by chasing the movable object. It therefore tries to reach room 5 as fast as possible, at the expense of ignoring the blue fixed object in room 2.

7 Conclusion

-------------

We aimed at building a proof of concept for a world discovery model by developing the NDIGO agent and comparing its performance with the state-of-the-art information-seeking algorithms in terms of its ability to discover the world. Specifically, we considered a variety of simple local-view 2D navigation tasks with some hidden randomly-placed objects and looked at whether the agent can discover its environment and the location of objects. We evaluated the discovery ability of our agent through the glass-box approach, which measures how accurately the location of objects can be predicted from the agent's internal representation. Our results showed that in all these tasks NDIGO produces an effective information-seeking strategy capable of discovering the hidden objects without being distracted by the white noise, whereas the baseline information-seeking methods in most cases failed to discover the objects due to the presence of noise.

There remains much interesting future work to pursue. The ability of our agent to discover its world can be very useful in improving performance in multi-task and transfer settings, as the NDIGO model can be used to discover the new features of new tasks. Also, in this paper we focused on visually simple tasks. To scale up our model to more complex visual tasks we need to consider more powerful prediction models such as Pixel-CNN (van2016conditional), VAE (kingma2013auto), Info-GAN (chen2016infogan) and Draw (gregor2015draw), capable of providing high-accuracy predictions for high-dimensional visual scenes. We can also go beyond predicting only visual observations to other modalities of sensory inputs, such as proprioception and touch sensors (amos2018learning).

Acknowledgements

----------------

We would like to thank Daniel Guo, Theophane Webber, Caglar Gulcehre, Toby Pohlen, Steven Kapturovski and Tom Stepleton for insightful discussions, comments and feedback on this work. |

18b71a59-52ba-4d4d-aab2-c056b0509426 | trentmkelly/LessWrong-43k | LessWrong | Did you enjoy Ramez Naam's "Nexus" trilogy? Check out this interview on neurotech and the law.

I recently had the great pleasure of interviewing Dr. Allan McCay on the Futurati Podcast. He's a legal scholar and philosopher who focuses on questions at the interface of emerging neurotechnologies and the law, such as how privacy works when it's possible to decode mental images and mental speech from brain readings.

It was a great conversation, one covering issues that are becoming more timely by the day.

Major topics included:

* He brought up free will early on, so we spent some time kicking around different definitions of free will and how they interact with legal questions on culpability.

* How should we define and think about neurotech, i.e. how is a brain-to-brain interface different from just being really good at verbal communication?

* Certain important legal concepts make a strong distinction between mental and physical acts, i.e. between thinking about robbing a person and actually doing it. But as more of the world is mediated by neural interfaces, this distinction will require further clarification.

* We'll need to carefully distinguish between using neurotechnologies to help a person control their impulses and using them to effectively neuter people such that they're not even capable of becoming incensed over the gravest outrages. What sort of legal frameworks would help in this?

I hope you like the episode, and if you do, consider sharing it around to help us grow! |

578694d0-7172-45d7-b9d1-ed4755121a9c | trentmkelly/LessWrong-43k | LessWrong | An attempt in layman's language to explain the metaethics sequence in a single post.

|

9e905f68-19b7-4d53-92ca-ad9f199c3bd5 | trentmkelly/LessWrong-43k | LessWrong | The Fall of Rationality - The Senate of Admins

Borrowing liberally from https://youtu.be/ecMfU3910WY. In fact, this won't make much sense without having seen that first. The following is definitely very accurate to the attitudes of the people and groups mentioned and should totally be thought of as representative.

Nick Bostrom, having successfully convinced EA to focus on long term risk of AI superintelligence over saving children from malaria and starvation, has marched to the gates of LessWrong at Mount Rationality with the conquered EAs in tow. The admins are in session, presided over by Emperor Yud.

Roko: Hail Nick Bostrom, conqueror of the wordcel EAs.

Admins: Hail Nick Bostrom!

Roko: Honorable Shape Rotators! And admins of LessWrong. Have you heard what is being proposed? Bostrom has asked that we, the admins of LessWrong, should give these EAs, these wordcels, LessWrong accounts and host their posts on our servers. To treat these inexact fools as brothers, equals. And what of our other adjacent communities? What of the Polyamorists? So loyal to us. What of the redditors, and the neoreactionaries which sends us so many recruits? If we make Rationalists of these wordcels, can we withhold LessWrong accounts from them? No! Then what becomes of the precious prize a LessWrong account once was? It becomes a cheap, common thing. It will be given away like Reddit Karma. I say no! We are Rationalists! Shape Rotators! Let us rid our minds of this poisonous idea. Ban their IP addresses. Send the rest to Reddit. Teach them once and for all what it is to make war on Rationality. That... is the Rationalist way.

Bostrom: Honorable Shape Rotators of LessWrong! You have greeted me as an author, who came in small triumph from his book sales. And I thank you for it. But you will have a hard decision to make here today! And it must not be I, as an author, who swayed your vote. I ask, therefore, of Yud, that a man who is neither poster nor admin, but a philosopher, a man of reason, be permitted to speak for me.

Willi |

d9d68a67-02a5-4352-9895-b1954291ddbc | trentmkelly/LessWrong-43k | LessWrong | Schelling Point Strategy Training

There's a category of game-theoretic scenario called Battle of the Sexes, which is commonly used to demonstrate coordination problems. Two cinema-goers, traditionally a husband and wife, have agreed to go to the cinema, but haven't decided on what to see beforehand. Of the two films that are showing, she would rather see King Kong Lives, while he would rather see Big Momma's House 2. Each would rather see their non-preferred film with their spouse than see their preferred film on their own. The payoff matrix is as follows:

| Wife \ Husband | King Kong Lives | Big Momma's House 2 |
| --- | --- | --- |
| King Kong Lives | 2 / 1 | 0 / 0 |
| Big Momma's House 2 | 0 / 0 | 1 / 2 |
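To make the coordination problem concrete, here is a minimal sketch that enumerates the pure-strategy Nash equilibria of this payoff matrix. It assumes each cell reads "wife's payoff / husband's payoff", which matches the stated preferences but is an inference from them. The function and variable names are made up for illustration.

```python
from itertools import product

FILMS = ["King Kong Lives", "Big Momma's House 2"]

# PAYOFFS[(wife_choice, husband_choice)] = (wife_payoff, husband_payoff)
# Assumption: the first number in each cell of the matrix is the wife's payoff.
PAYOFFS = {
    (0, 0): (2, 1),
    (0, 1): (0, 0),
    (1, 0): (0, 0),
    (1, 1): (1, 2),
}


def pure_nash_equilibria(payoffs, n_options):
    """Return all (wife_choice, husband_choice) pairs where neither player
    can do strictly better by changing only their own choice."""
    equilibria = []
    for wife, husband in product(range(n_options), repeat=2):
        wife_payoff, husband_payoff = payoffs[(wife, husband)]
        wife_content = all(
            wife_payoff >= payoffs[(alt, husband)][0] for alt in range(n_options)
        )
        husband_content = all(
            husband_payoff >= payoffs[(wife, alt)][1] for alt in range(n_options)
        )
        if wife_content and husband_content:
            equilibria.append((wife, husband))
    return equilibria


for wife, husband in pure_nash_equilibria(PAYOFFS, len(FILMS)):
    print(f"Both pick {FILMS[wife]}: payoffs {PAYOFFS[(wife, husband)]}")
```

Both "same film" outcomes are equilibria, and the payoffs alone give neither spouse a reason to expect one over the other.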

The two have not conferred beforehand, beyond sharing knowledge of their preferences. They are turning up to the cinema and picking an auditorium in the hope that their spouse is in there. Which should they pick? This is a classic coordination problem. The symmetry of their preferences means there is no stand-out option for them to converge on. There is no Schelling Point.1

Except I'm going to argue that there is.

Shoehorning an example of a Schelling Point into the above scenario, we might imagine that one of the above films being screened is being billed as "an ideal romantic treat to share with your spouse", (which one that would be, I'm not entirely sure), though in the absence of a "natural" Schelling Point, there's no reason we can't make one. All we need is to identify procedures that would reliably elevate one of these options to our attention. Then it becomes a question of selecting which of these procedures is most likely to be selected by the other agent in the scenario.

I am now going to instigate a multidimensional instance of Battle of the Sexes with all the readers of this post. Below are sixteen randomly-ordered films. I am going to select one, and invite you to do the same. The object of the exercise is for all of us to pick the same one. I will identify my selection, and the logic behind it, in rot13 afte |

f0694686-e2f9-4ad8-b4f1-4530ce4e59bc | trentmkelly/LessWrong-43k | LessWrong | Exploring Concept-Specific Slices in Weight Matrices for Network Interpretability

Hello. I've been interested in AI for a long time, but I never contributed anything to discussions of AI alignment in the past, because I didn't think I was smart enough. Turns out, no-one else in the world is smart enough to solve this either. So, without further preamble, I was wondering if anyone would like to see my proposal for a research direction. It's a first draft, and I'm sure someone else has thought of this already, but on the off chance they haven't...

(P.S. I used ChatGPT to proofread it and suggest rewrites. I hope that's OK.)

Background

Interpreting the functionality of individual neurons and neural circuits within artificial intelligence (AI) systems remains a significant challenge in the field of AI alignment. This task includes the classification of neurons involved in complex behaviors such as deception, and subsequently monitoring these specific neurons to determine the AI's behavior. Despite advancements in AI interpretability, such as using one AI to predict the behavior of neurons in another AI, this problem is still unsolved 1 .

A limitation of this approach is rooted in Löb's theorem, which is often informally read as implying that a system cannot perfectly predict its own behavior. Although the extent to which an AI can imperfectly predict its own behavior is still unknown, the problem intuitively arises from the paradox that for a system to fully comprehend its own behavior, it would need to be larger and more complex than itself.

To address this issue, I propose an alternative training scheme that incorporates the roles of neurons during the training process, effectively "baking in" an approximate understanding of their functions. This method could potentially improve the interpretability of AI systems without significantly impacting their efficiency, or even potentially improving it. The purpose of the rest of this document is to provide a brief outline of this scheme, including a proposal for a relatively simple test case that could be implemented without excess |

3467f9d2-6aed-40df-8139-7b36d36e8ff4 | trentmkelly/LessWrong-43k | LessWrong | Monthly Roundup #26: January 2025

Some points of order before we begin the monthly:

1. It’s inauguration day, so perhaps hilarity is about to ensue. I will do my best to ignore most forms of such hilarity, as per usual. We shall see.

2. My intention is to move to a 5-posts-per-week schedule, with more shorter posts in the 2k-5k word range that highlight particular subtopic areas or particular events that would have gone into broader roundups.

3. This means that the Monthly Roundups will likely be shorter.

4. If you’re considering reading Agnes Callard’s new book, Open Socrates, I am reading it now and can report it is likely to get the On the Edge treatment and its own week, but of course it is too soon to know.

5. I may be doing some streams of myself working, via Twitch, primarily so that a volunteer can look for ways to build me useful tools or inform me of ways to improve my workflow. You are also of course welcome to watch, either live or the recordings, to see how the process works, but I make zero promises of any form of interaction with the audience here. I also might stream Slay the Spire 2 when the time comes, once I have access and they permit this.

On with the show.

TABLE OF CONTENTS

1. Bad News.

2. Wanna Bet.

3. A Matter of Trust.

4. Against Against Nuance.

5. Government Working.

6. Scott Alexander on Priesthoods.

7. NYC Congestion Pricing Bonus Coverage.

8. Positive Sum Thinking.

9. Antisocial Media.

10. The Price of Freedom.

11. Mood Music.

12. Dedebanking.

13. Good News, Everyone.

14. While I Cannot Condone This.

15. Clear Signal.

16. When People Tell You Who They Are Believe Them.

17. What Doesn’t Go Without Saying.

18. Party at My Place.

19. I Was Promised Flying Self-Driving Cars.

20. Gamers Gonna Game Game Game Game Game.

21. For Your Entertainment.

22. Sports Go Sports.

23. The Lighter Side.

BAD NEWS

PornHub cuts off Florida, the 13th state to lose access, after Florida passed an age verification law, and PornHub quite reasonably |

cd5df30d-a512-44a9-aec4-04acfae825a7 | trentmkelly/LessWrong-43k | LessWrong | Housing and Transit Roundup #4

It’s time for another housing roundup, so I can have a place to address the recent discussions about the local impact of housing construction on housing costs.

DOES INCREASING LOCAL HOUSING SUPPLY DECREASE LOCAL HOUSING PRICES?

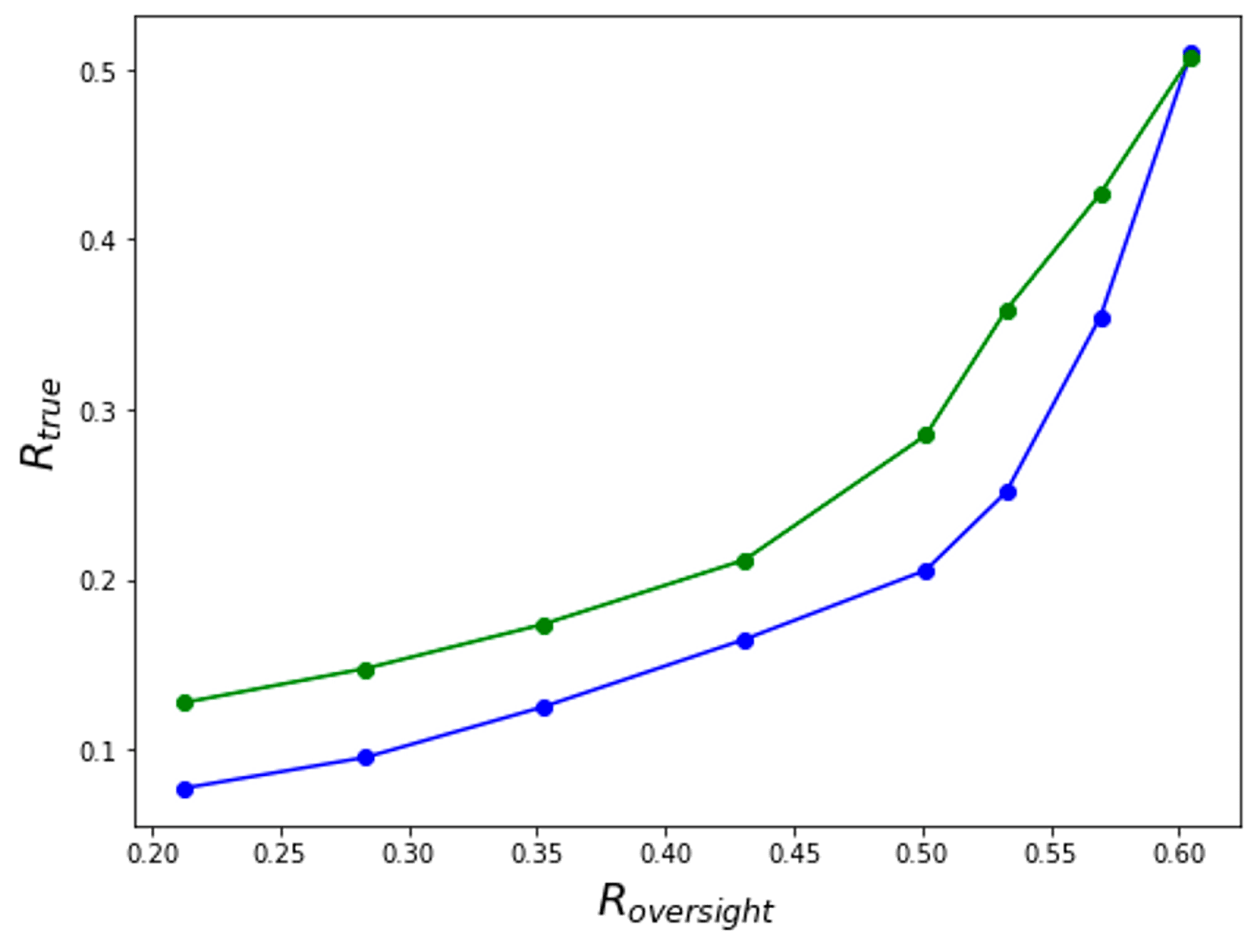

Scott Alexander says Change My Mind: Density Increases Local But Decreases Global Prices. He uses this graph to make his point:

Under this theory, YIMBY is still correct general policy as it lowers prices everywhere else, yet locally increasing supply improves quality of life and thus induces even more demand and raises prices.

Scott Sumner explains why I strongly believe Scott Alexander is wrong here.

Here’s the core point:

> Scott Sumner: [Scott Alexander] is aware that the pattern above may show an upward sloping supply curve, not an upward sloping demand curve. But he nonetheless suggests that it’s probably an upward sloping demand curve, and that building more housing in Oakland would make Oakland so much more desirable that prices actually rise, despite the greater supply of housing. I have two problems with this sort of argument.

>

> First, I doubt that it’s true. It is certainly the case that building more housing can make a city more desirable, and that this effect could be so strong that it overwhelms the price depressing impact of a greater quantity supplied. But studies suggest that this is not generally the case.

>

> Texas provides a nice case study. Among Texas’s big metro areas, Austin has the tightest restrictions on building and Houston is the most willing to allow dense infill development. Even though Houston is the larger city, house prices are far higher in Austin.

This is basic common sense.

1. Places that impose severe NIMBY restrictions get very high prices.

2. All the most expensive places in America impose such restrictions, and make it impossible to build more housing.

3. The places that don’t impose such restrictions have lower housing prices than their populations would otherwise suggest.

4. Thi |

3b8b504c-3ee3-4735-a42b-69c066287cf5 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Apply to the Redwood Research Mechanistic Interpretability Experiment (REMIX), a research program in Berkeley

This winter, Redwood Research is running a coordinated research effort on mechanistic interpretability of transformer models. We’re excited about recent advances in mechanistic interpretability and now want to try to scale our interpretability methodology to a larger group doing research in parallel.

REMIX participants will work to provide mechanistic explanations of model behaviors, using our [causal scrubbing methodology](https://www.redwoodresearch.org/s/causal-scrubbing) to formalize and evaluate interpretability hypotheses. We hope to produce many more explanations of model behaviors akin to our recent work investigating behaviors of [GPT-2-small](https://www.alignmentforum.org/posts/3ecs6duLmTfyra3Gp/some-lessons-learned-from-studying-indirect-object), [toy language models](https://www.redwoodresearch.org/s/causal-scrubbing#page=71), and [models trained on algorithmic tasks](https://www.redwoodresearch.org/s/causal-scrubbing#page=33). We think this work is a [particularly promising research direction for mitigating existential risks from advanced AI systems](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#Is_this_research_promising_enough_to_justify_running_this_program_) (more in [Goals](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#Goals) and [FAQ](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#How_useful_is_this_kind_of_interpretability_research_for_understanding_models_that_might_pose_an_existential_risk_)).

Since mechanistic interpretability is currently a small sub-field of machine learning, we think it’s plausible that REMIX participants could make important discoveries that significantly further the field. We also think participants will learn skills valuable for many styles of interpretability research, and also for ML research more broadly.

Apply [here](https://jobs.lever.co/redwoodresearch/5a66fff4-0d11-4954-830a-9228635cea59) by Sunday, November 13th [DEADLINE EXTENDED] to be a researcher in the program. Apply sooner if you’d like to start early (details below) or receive an earlier response.

Some key details:

* We expect to accept 30-50 participants.

* The research program will take place in Berkeley, CA.

* We plan to have the majority of researchers participate during the months of December and/or January (depending on availability) although we may invite some to begin earlier and are open to some starting as soon as possible.

* We expect researchers to participate for a month minimum, and (all else equal) will prefer applicants who are able to come for longer. We’ll pay for housing and travel, and also pay researchers for their time. We’ll clarify the payment structure prior to asking people to commit to the program.

* We’re interested in some participants acting as *team leaders* who would help on-board and provide research advice to other participants. This would involve arriving early to get experience with our tools and research directions and participating for a longer period (~2 months). You can indicate interest in this role in the application.

* We’re excited about applicants with a range of backgrounds; we’re not expecting applicants to have prior experience in interpretability research. Applicants should be comfortable working with Python, PyTorch/TensorFlow/Numpy (we’ll be using PyTorch), and linear algebra. We’re particularly excited about applicants with experience doing empirical science in any field.

* We’ll allocate the first week to practice using our interpretability tools and methodology; the rest will be researching in small groups. See [Schedule](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#Schedule).

Feel free to email [programs@rdwrs.com](mailto:programs@rdwrs.com) with questions.

Goals

-----

### Why you should apply:

**Research results.** We are optimistic about the research progress you could make during this program (more below). Since mechanistic interpretability is currently a small sub-field of machine learning, we think it’s plausible that REMIX participants could make important discoveries that significantly further the field.

**Skill-building.** We think this is a great way to gain experience working with language models and interpreting/analyzing their behaviors. The skills you’ll learn in this program will be valuable for many styles of interpretability research, and also for ML research more broadly.

**Financial support & community.** This is a paid opportunity, and a chance to meet and connect with other researchers interested in interpretability.

### Why we’re doing this:

**Research output.** We hope this program will produce research that is useful in multiple ways:

* We’d like stronger and more grounded characterizations of how language models perform [a certain class of behaviors](https://www.lesswrong.com/posts/LkBmAGJgZX2tbwGKg/help-out-redwood-research-s-interpretability-team-by-finding). For example, we currently have a variety of findings about how [GPT-2-small implements indirect object identification](https://www.alignmentforum.org/posts/3ecs6duLmTfyra3Gp/some-lessons-learned-from-studying-indirect-object) (“IOI”, see next section for more explanation), but aren’t yet sure how often they apply to other models or other tasks. We’d know a lot more if we had a larger quantity of this research.

* For each behavior investigated, we think there’s some chance of stumbling across something really interesting. Examples of this include induction heads and the “pointer manipulation” result in the IOI paper: not only does the model copy information between attention streams, but it also copies “pointers”, i.e. the position of the residual stream that contains the relevant information.

* We’re interested in learning whether different language models implement the same behaviors in similar ways.

* We’d like a better sense of how good the current library of interpretability techniques is, and we’d like to get ideas for new techniques.

* We’d like to have more examples of this kind of investigation, to help us build infrastructure to support or automate this kind of research.

**Training and hiring.** We might want to hire people who produce valuable research during this program.

**Experience running large collaborative research projects.** It seems plausible that at some point it will be useful to run a huge collaborative alignment project. We’d like to practice this kind of thing, in the hope that the lessons learned are useful to us or others.

*See* [*“Is this research promising enough to justify running this program?”*](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#Is_this_research_promising_enough_to_justify_running_this_program_) *and* [*“How useful is this kind of interpretability research for understanding models that might pose an existential risk?”*](https://www.alignmentforum.org/posts/nqwzrpkPvviLHWXaE/apply-to-the-redwood-research-mechanistic-interpretability#How_useful_is_this_kind_of_interpretability_research_for_understanding_models_that_might_pose_an_existential_risk_)

Why do this now?

----------------

We think our recent progress in interpretability makes it a lot more plausible for us to reliably establish mechanistic explanations of model behaviors, and therefore get value from a large, parallelized research effort.

**A unified framework for specifying and validating explanations.** Previously, a big bottleneck on parallelizing interpretability research across many people was the lack of a clear standard of evidence for proposed explanations of model behaviors (which made us expect the research produced to be pretty unreliable). We believe we’ve recently made some progress on this front, developing [an algorithm called “causal scrubbing”](https://www.redwoodresearch.org/s/causal-scrubbing) which allows us to automatically derive an extensive set of tests for a wide class of mechanistic explanations. This algorithm is only able to reject hypotheses rather than confirming them, but we think that this still makes it way more efficient to review the research produced by all the participants.

* Our current plan is to require researchers in this program to do causal scrubbing on all serious research they produce and submit to the internal review process. (They can do exploratory analyses with whatever techniques they like.)
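
To make the "reject but not confirm" point concrete, here is a heavily simplified toy sketch of the resampling idea behind this kind of test. It is not Redwood’s algorithm or code: the real method operates on internal activations of a network, whereas this sketch only swaps whole inputs, and the names `model`, `DATASET`, and `scrubbed_loss` are hypothetical. A hypothesis is expressed as "the output depends only on these features of the input"; every input is swapped for inputs the hypothesis treats as equivalent, and the hypothesis is rejected if the behavior degrades.

```python
from itertools import product
from typing import Callable, List, Tuple

Input = Tuple[int, int, int]


def model(x: Input) -> float:
    """Toy stand-in for a network: uses a and b, ignores c."""
    a, b, _c = x
    return 2.0 * a + b


# Every combination of three binary features.
DATASET: List[Input] = list(product([0, 1], repeat=3))


def scrubbed_loss(features: Callable[[Input], tuple]) -> float:
    """Mean squared change in output after swapping each input for every
    dataset input that the hypothesis (via `features`) calls equivalent."""
    total, count = 0.0, 0
    for x in DATASET:
        target = model(x)  # behavior on the original input
        for x_swap in DATASET:
            if features(x_swap) == features(x):
                total += (model(x_swap) - target) ** 2
                count += 1
    return total / count


# Hypothesis 1: only a and b matter. Swapping c changes nothing.
loss_ab = scrubbed_loss(lambda x: (x[0], x[1]))
# Hypothesis 2: only a matters. Swapping b changes the output, so it is rejected.
loss_a = scrubbed_loss(lambda x: (x[0],))
print(loss_ab, loss_a)
```

Note that a hypothesis claiming every feature matters would also pass this check trivially, which is one way to see why passing is weaker evidence than failing.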

**Improved proofs of concept.** We now have several examples where we followed our methodology and were able to learn a fair bit about how a transformer was performing some behavior.

* We [recently investigated](https://www.alignmentforum.org/posts/3ecs6duLmTfyra3Gp/some-lessons-learned-from-studying-indirect-object) a somewhat complex behavior in GPT-2-small, which we call “indirect object identification” (“IOI”). IOI is the model behavior where, given a sequence such as “Bob and Alice went to the store and Alice gave an apple to”, the model predicts “Bob” rather than “Alice”. Our research located and described the mechanism inside GPT-2-small that performs this behavior, i.e. which heads of the network are involved and what roles they play. Though our investigation into GPT-2-small was much less comprehensive than e.g. the description of how an image classification model detects curves in the [Distill Circuits thread](https://distill.pub/2020/circuits/), we think our research here suggests that transformer language models *do* have some crisp circuits that can be located and described.

* We did [causal-scrubbing-based analysis](https://www.redwoodresearch.org/s/causal-scrubbing#page=71) of a two-layer attention-only language model in order to get a detailed sense of the interactions in a model that were important for the performance of the “[induction heads](https://transformer-circuits.pub/2022/in-context-learning-and-induction-heads/index.html)”. We also used [causal scrubbing](https://www.redwoodresearch.org/s/causal-scrubbing#page=33) to assess hypotheses about a transformer trained to classify strings of parentheses as balanced or unbalanced. In both these cases, we found that we could apply our methodology pretty straightforwardly, yielding useful results.
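
As an illustration of the IOI setup in the first bullet above, the sketch below builds the example prompt from a template and scores a prediction by the logit difference between the indirect object and the repeated subject. The helper names and the placeholder logits are assumptions made for illustration, not Redwood’s code; a real experiment would read the next-token logits from GPT-2-small.

```python
from typing import Dict, NamedTuple


class IOIExample(NamedTuple):
    prompt: str           # text fed to the model
    indirect_object: str  # the name the model should predict
    subject: str          # the repeated name, i.e. the tempting wrong answer


def make_ioi_example(indirect_object: str, subject: str,
                     place: str = "store", gift: str = "an apple") -> IOIExample:
    """Fill the sentence template from the post with two names."""
    prompt = (f"{indirect_object} and {subject} went to the {place} "
              f"and {subject} gave {gift} to")
    return IOIExample(prompt, indirect_object, subject)


def logit_difference(next_token_logits: Dict[str, float],
                     example: IOIExample) -> float:
    """Positive when the indirect object is preferred over the subject."""
    return (next_token_logits[example.indirect_object]
            - next_token_logits[example.subject])


example = make_ioi_example("Bob", "Alice")
print(example.prompt)

# Made-up placeholder logits standing in for a real model's output.
placeholder_logits = {"Bob": 3.5, "Alice": 1.0}
print(logit_difference(placeholder_logits, example))
```

Generating many prompts from such a template, with different names, places, and objects, is one way to check that a measured effect is about the behavior rather than about one particular sentence.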

**Tools that allow complicated experiments to be specified quickly.** We’ve built a powerful library for manipulating neural nets (and computational graphs more generally) for doing intervention experiments and getting activations out of models. This library allows us to do experiments that would be quite error-prone and painful with other tools.

Who should apply?

-----------------

We're most excited about applicants comfortable working with (basic) Python, any of PyTorch/TensorFlow/Numpy, and linear algebra. Quickly generating hypotheses about model mechanisms and testing them requires some competence in these domains.

If you don’t understand the transformer architecture, we’ll require that you go through preparatory materials, which explain the architecture and walk you through building one yourself.

We’re excited about applicants with a range of backgrounds; prior experience in interpretability research is not required. The primary activity will be designing, running, and analyzing results from experiments which you hope will shed light on how a model accomplishes some task, so we’re excited about applicants with experience doing empirical science in any field (e.g. economics, biology, physics). The core skill we’re looking for here, among people with the requisite coding/math background, is something like **rigorous curiosity**: a drive to thoroughly explore all the ways the model might be performing some behavior and narrow them down through careful experiments.

What is doing this sort of research like?

-----------------------------------------

Mechanistic interpretability is an unusual empirical scientific setting in that controlled experimentation is relatively easy, but there’s relatively little knowledge about the kinds of structures found in neural nets.

Regarding the ease of experimentation:

* It’s easy to do complicated intervention experiments. If you’re curious about whether the network, or some internal component of it, would have produced a radically different output had some feature of the input been different, you can re-run it exactly and just change that feature. There’s little to no hassle involved in controlling for all the other features of the run.

* You can extract almost any metric from the internal state of the model at any time.

* You can quickly run lots of inputs through the model all at once if you want to characterize average behaviors.

* See Chris Olah’s [note on neuroscience versus interpretability](https://colah.github.io/notes/interp-v-neuro/) for more on this point.
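
The kind of intervention experiment described in this list can be sketched on a toy network. The example below is a dependency-free illustration under stated assumptions: the weights, inputs, and the `run` helper are all made up, and real experiments would use PyTorch on an actual language model. It runs a "clean" input, caches the hidden activations, then re-runs a "corrupted" input while overwriting one hidden unit at a time with its clean value, holding everything else fixed.

```python
from typing import Dict, List, Optional, Tuple

Vector = List[float]
Matrix = List[List[float]]

# Made-up weights: 2 inputs -> 3 hidden units -> 1 output.
W_IN: Matrix = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_OUT: Matrix = [[2.0, 0.0, 0.5]]


def matvec(weights: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def run(x: Vector, patch: Optional[Dict[int, float]] = None) -> Tuple[float, Vector]:
    """Run the toy network, optionally overwriting chosen hidden units."""
    hidden = relu(matvec(W_IN, x))
    if patch:
        for unit, value in patch.items():
            hidden[unit] = value
    output = matvec(W_OUT, hidden)[0]
    return output, hidden


clean_input = [1.0, 0.0]
corrupted_input = [0.0, 2.0]

_, clean_hidden = run(clean_input)             # cache internal state
corrupted_output, _ = run(corrupted_input)     # baseline for comparison

# Effect of restoring each hidden unit to its clean value, one at a time.
effects = {}
for unit in range(len(clean_hidden)):
    patched_output, _ = run(corrupted_input, patch={unit: clean_hidden[unit]})
    effects[unit] = patched_output - corrupted_output

print(effects)
```

Because the re-run is exact, any change in the output is attributable to the single patched unit, which is the "no hassle controlling for other features" property the list describes.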

Regarding the openness of the field:

* Large and important questions, like “How much similarity is there in the internals of different models?” and “To what degree are model behaviors implemented as modular, human-comprehensible algorithms?” are still mostly unexplored.

* We’re early in the process of discovering commonalities across models, such as induction heads. We don’t currently know to what extent simple, universal algorithms show up across models, versus each model being idiosyncratic.

REMIX participants will pursue interpretability research akin to the investigations Redwood has done recently into induction heads, [indirect object identification (IOI) in small language models](https://www.alignmentforum.org/posts/3ecs6duLmTfyra3Gp/some-lessons-learned-from-studying-indirect-object), and balanced parenthesis classification, all of which will be released publicly soon. You can read more about behavior selection criteria [here](https://www.lesswrong.com/posts/LkBmAGJgZX2tbwGKg/help-out-redwood-research-s-interpretability-team-by-finding).

The main activities will be:

* doing exploratory analyses to generate hypotheses about how a language model (probably GPT-2-small) performs some behavior

* evaluating your hypotheses with [our causal scrubbing methodology](https://www.redwoodresearch.org/s/causal-scrubbing)

* iterating to make hypotheses more fine-grained and more accurate

The mechanisms for behaviors we’ll be studying are often surprisingly complex, so careful experimentation is needed to accurately characterize them. For example, the Redwood researchers investigating the IOI behavior found that removing the influence of the circuit they identified as primarily responsible had surprisingly little effect on the model’s ability to do IOI. Instead, other heads in the model substantially changed their behavior to compensate for the excision. As the researchers write, “Both the reason and the mechanism of this compensation effect are still unclear. We think that this could be an interesting phenomenon to investigate in future work.”

Here’s how a Redwood researcher describes this type of research:

> It feels a lot of the time like you're cycling between: "this looks kind of weird and interesting, not sure what's up with this" and then "I have some vague idea about what maybe this part is doing, I should come up with a test to see if I understand it correctly" and then once you have a test "oh cool, I was kind of right but also kind of wrong, why was I wrong" and then the cycle repeats.

>

> Often it's pretty easy to have a hunch about what some part of your model is doing, but finding a way to appropriately test that hunch is hard and often your hunch might be partially correct but incomplete so your test may rule it out prematurely if you're not careful/specific enough.

>

> It feels like you're in a lab, with your model on the dissection table, and you're trying to pick apart what's going on with different pieces and using different tools to do so - this feels really cool to me, kind of like trying to figure out what's going on with this alien species and how it can do the things it does.

>

> It's really fun to try and construct a persuasive argument for your results: "I think this is what's happening because I ran X, Y, Z experiments that show A, B, C, plus I was able to easily generate adversarial examples based on these hypotheses" - I often feel like there's some sort of imaginary adversary (sometimes not imaginary!) that I have to convince of my results and this makes it extremely important that I make claims that I can actually back up and that I appropriately caveat others.

>

>