id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

84dd0278-89ba-4a16-984f-f4e0c5a5d824 | trentmkelly/LessWrong-43k | LessWrong | More findings on maximal data dimension

Produced as part of the SERI ML Alignment Theory Scholars Program - Winter 2022 Cohort.

I’d like to thank Wes Gurnee, Aryan Bhatt, Eric Purdy and Stefan Heimersheim for discussions and Evan Hubinger, Neel Nanda, Adam Jermyn and Chris Olah for mentorship and feedback.

The post contains a lot of figures, so the suggested length is deceiving. Code can be found in this colab notebook.

This is the second in a series of N posts on trying to understand memorization in NNs.

Executive summary

I look at a variety of settings and experiments to better understand memorization in toy models. My primary motivation is to increase our general understanding of NNs but I also suspect that understanding memorization better might increase our ability to detect backdoors/trojans. This post specifically focuses on measuring memorization with the maximal data dimensionality metric.

In a comment on the “Superposition, Memorization and double descent” paper, Chris Olah introduces maximal data dimensionality D*, a metric intended to indicate the degree to which a network memorizes a datapoint rather than representing it with features shared between datapoints. I extend the research on this metric with the following findings:

1. In the double descent setting, the metric describes exactly what we would predict, i.e. with few inputs the network memorizes all datapoints and with many inputs it learns some features.

2. On MNIST, I can reproduce the shape of the D* curve and also the findings that memorized datapoints have high D*, datapoints that share many features are in the middle and datapoints that the network is confused about have low D*. However, I was surprised to find that the datapoints the network misclassified on the training data are evenly distributed across the D* spectrum. I would have expected them all to have low D*, since the network didn’t learn them.

3. When we train the network to different levels of accuracy, we find that the distribution of errors is actually slightly left-heavy inst |

e3c28ce1-b904-4ecf-8d08-e94eb11c70d4 | trentmkelly/LessWrong-43k | LessWrong | Thou shalt not command an aligned AI

Raymond is tired. He exhales, exhausted: >>I don't think we even know what alignment is, like we are not able to define it.<<

I hop up on my chair in the Mediterranean restaurant: >I disagree, if you give me 3 seconds, I can define it.<

>>---<<

>Can we narrow it to alignment of AI to humans?<

>>Yes, let's narrow it to alignment of one AI to one person.<<

>Fine. The AI is aligned if you give it a goal and it works towards that goal without modifying it with its own intentions or goals.<

>>That sounds a bit too abstract...<<

>Yeah, but in what sense do you mean?<

>>Like the goal, what is that, more precisely?<<

>That is a state of the world you want to achieve or a series of states of the world.<

>>Oh, but how would you specify that?<<

>You can specify it, describe it, in infinitely many ways; there is a scale of how detailed a description you choose, which implies a level of approximation of the state.<

>>Oh, but that won't describe the state completely..?<<

>Well, maybe if you could describe it down to the quantum-state level, but surely that is not practical.<

>>So then the AI must somehow interpret your goal, right?<<

>Ehmmm, well no, but you mean it would have to interpolate to fill in the under-specified spots in the description of your goal..?<

>>Yes, that is a good expression for what would need to happen.<<

>Then what we've discovered here is another axis, orthogonal to alignment, which would control to what level of under-specifiedness we want the AI to interpolate and where it would need to ask you to fill in the gaps (more) before moving towards your goal.<

>>Oh, but we also can't be like "Create a picture of a dog" and then you'd need to specify each pixel.<<

>Sure. But maybe the AI must ask you whether you want the picture on paper or digitally on your screen, with a reasonable threshold for clarification.<

>>Hmm, but people want things they do not have...<<

>and they can end up in a state they feel bad in with an aligned AI.<

>>S |

42bb6258-fcb1-42b3-bf0f-4e26ca0bd202 | trentmkelly/LessWrong-43k | LessWrong | [Link] arguman.org, an argument analysis platform

I recently found out about arguman. It's an online tool to dissect arguments and structure agreement and refutation.

It seems like something that has been discussed on LW a few times in the past. |

230680b0-6d6e-4c3e-836c-492eae426669 | trentmkelly/LessWrong-43k | LessWrong | Blogs by LWers

Related to: Wikifying the Blog List

LessWrong posters and readers are generally pretty cool people. Maybe they are interesting bloggers too. And I'm not just talking about rationalist material that we'd ideally like to be cross-posted on LessWrong; no, gardening blogs are also fair game. I'm making this a discussion-level post so more people can see the list. Please share links to blogs by former or current LWers. Surely the authors wouldn't mind; who wouldn't like more readers? Original list here.

Anyone who wants to suggest a new blog for the list please follow this link.

Blogs by LWers:

* RobinHanson --- Overcoming Bias (Katja Grace and Robert Wiblin post here as well)

* Katja Grace --- Meteuphoric (very cool old posts and summaries)

* muflax --- muflax' mindstream, daily

* TGGP --- Entitled To An Opinion

* Yvain --- Jackdaws love my big sphinx of quartz

* juliawise --- Giving Gladly, Radiant Things

* James_G --- Writings

* steven0461 --- Black Belt Bayesian

* James Miller --- Singularity Notes

* Jsalvati --- Good Morning, Economics

* Will Newsome --- Computational Theology

* clarissethorn --- Clarisse Thorn

* Zack M. Davis --- An Algorithmic Lucidity

* Kaj_Sotala --- A view to the gallery of my mind

* SilasBarta --- Setting Things Straight

* tommcabe --- The Rationalist Conspiracy

* Alicorn --- Irregular Updates By An Irregular Person

* MBlume --- Baby, check this out; I've got something to say.

* ciphergoth --- Paul Crowley's blog (mostly about cryonics), Paul Crowley

* XiXiDu --- Alexander Kruel

* Aurini --- Stares At The World

* jkaufman --- Jeff Kaufman

* Bill_McGrath --- billmcgrathmusic

* Sister Y --- the view from hell

* PaulWright --- Paul Wright's blog

* _ozymandias --- http://ozyfrantz.com/

* mstevens --- stdout

* HughRistik --- Feminist Critics

* Julia_Galef --- Measure of Doubt

* NancyLebovitz --- Input Junkie

* David Gerard --- a bunch of them

* Jayson_Virissimo --- Jay, Quantified

* kpreid --- Kevin Reid's blog

|

1bebb0a7-f188-4f31-83fd-4e686ad29e38 | trentmkelly/LessWrong-43k | LessWrong | The case for training frontier AIs on Sumerian-only corpus

> Let your every day be full of joy, love the child that holds your hand, let your wife delight in your embrace, for these alone are the concerns of humanity.[1]

>

> — Epic of Gilgamesh - Tablet X

Say we want to train a scientist AI to help in a precise, narrow field of science (e.g. medicine design) but prevent its power from being applied anywhere else (e.g. chatting with humans, designing bio-weapons, etc.) even if it has these abilities.

Here’s one safety layer one could implement:

1. Train a scientist AI on a large scientific corpus translated exclusively into Sumerian. Keep it in a secure containment environment.

2. Train a less-smart reporter whose sole ability is to translate from Sumerian to English only if the Sumerian content is about medical research. It refuses to translate other kinds of content.

3. Human operators are only allowed to interact with the scientist AI through the intermediary of the reporter.
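
The three steps above can be sketched in code. This is only a toy illustration under stated assumptions: the `Reporter` class, the `is_medical_research` classifier, the `translate` function and the `[MED]` marker are all hypothetical stand-ins, not components of any real system, and a real reporter would be a trained model rather than a string check.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Reporter:
    """Step 2: translates Sumerian to English, but only for medical research."""

    is_medical_research: Callable[[str], bool]  # hypothetical topic classifier
    translate: Callable[[str], str]             # hypothetical translator

    def relay(self, sumerian_text: str) -> Optional[str]:
        # Refuse (return None) for anything outside the allowed topic.
        if not self.is_medical_research(sumerian_text):
            return None
        return self.translate(sumerian_text)


def operator_view(scientist_output: str, reporter: Reporter) -> Optional[str]:
    """Step 3: operators only ever see what the reporter agrees to relay."""
    return reporter.relay(scientist_output)


# Toy stand-ins so the sketch runs; "[MED]" is an invented marker.
MARKER = "[MED]"
toy_reporter = Reporter(
    is_medical_research=lambda text: text.startswith(MARKER),
    translate=lambda text: "EN: " + text[len(MARKER):].strip(),
)
```

The point of the design is that the refusal lives in the reporter, so the operators never handle raw scientist output at all.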

This safety layer helps in at least two ways:

1. The scientist AI cannot directly manipulate humans to escape its containment. It would first have to trick the reporter in order to trick the humans, which leaves the attack possible but less practical.

2. If the scientist AI exfiltrates, it’ll have a harder time manipulating humans online and will leave traces: e.g. any occurrence of Sumerian on the Internet outside of ancient text studies becomes highly suspicious. One can even imagine recognizing the idiosyncrasies of English coming from translated Sumerian, or the artifacts left by the Sumerian-specific tokenizer reused to produce non-Sumerian text.

Why Sumerian? [2]

* It has been extinct for 4000 years. There are very few (probably <1000) experts who fluently understand Sumerian.

* It is a language isolate. It’s unlike any existing spoken language, rendering its identification in case of a leak much easier.

* There is a substantial corpus. Despite its age, a significant number of Sumerian texts have been discovered and preserved. These |

6c42304a-121a-4e11-a5d7-bc69cdc5978a | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "(Content warning: econoliteracy. Dialogue based on an actual conversation, but heavily idealized and simplified and stripped of surrounding context.)Myself: - seems unreal because it is unreal. But there's a river of reality running through it. If somebody reports that something complicated feels unreal and difficult to get a handle on, usually the first thing I prescribe is going back to the very very basics, and playing around with those to solidify the grasp there. If mathematics seemed unreal to someone, I'd take somebody back to premises-and-conclusions. In the case of complicated modern economical structures, I'd maybe start with trade. What's the point of trade? What does it do?Them: The point of trade is that sometimes people get different amounts of value from things, so they can get more value by trading them. Like, if I have an apple, and you have an orange, but I like oranges more than apples, and you like apples more than oranges, we can trade my apple for your orange, and both be better off.Myself: Yes, that is the horrible explanation that you sometimes see in economics textbooks because nobody knows how to explain anything. But when you are trying to improve your grasp of the very very basics, you should take the basic thing and poke at it and look at it from different angles to see if it seems to capture the whole truth.In the case of the "people put different values on things", it would seem that on the answer you just gave, trade could never increase wealth by very much, in a certain basic sense. There would just be a finite amount of stuff, only so many apples and oranges, and all trade can do is shuffle the things around rather than make any more of it. 
So, on this viewpoint, trade can't increase wealth by all that much.Them: It can increase the utility we get from the things we have, if I like oranges a lot more than I like apples, and you like apples a lot more than oranges.Myself: All right, suppose that all of us liked exactly the same objects exactly the same amount. This obliterates the poorly-written-textbook's reason for "trade". Do you believe that, in an alternate world where everybody had exactly the same taste in apples and oranges, there'd be no further use for trade and trade would stop existing?Them: Hmm. No, but I don't know how to describe what the justification for trade is, in that case.Myself: Modern society seems very wealthy compared to hunter-gatherer society. The vast majority of this increased utility comes from our having more stuff, not from our having the same amount of stuff as hunter-gatherers but giving apples to the exact person on Earth who likes apples most. I claim that the reason we have more stuff has something to do with trade. I claim that in an alternate society where everybody likes every object the same amount, they still do lots and lots of trade for this same reason, to increase how much stuff they have.Them: Okay, my new answer is that, through trade, you can get strawberries from far away, where they wouldn't be in season at all, where you are... no, that doesn't actually make more stuff. My new answer is that you can build complicated things with lots of inputs, by trading to get the inputs. Like if it takes iron and copper to build circuits, you can trade to get those.Myself: If it takes 1 unit of effort to get 1 unit of iron either way, how can you get any more stuff out, at the end, by trading things? It takes 1 unit of effort to make 1 iron ingot, so go to the iron mines and mine some iron, then chop down the wood to prebake the iron ore for grinding before you put it into the bloomery. All of that has to be done either way to get the iron ingot. 
How can trading for somebody else's iron, instead, cause there to be more stuff in the economy as a whole?Them: Because task-switching has costs.Myself: Okay, suppose an alternate society of people who are really good at task-switching. They can just swap straight from one task to another with no pause. They also all have exactly the same tastes in apples and oranges and so on. Does this new society now have zero use for trade?Them: Um... hm. (Thinks.) But they're not actually in the same place as the iron mines. So if they have to walk around a lot -Myself: Suppose a society in which everyone has exactly the same taste in apples and oranges; everybody is really really good at switching tasks; and furthermore, the society has Star Trek transporter pads, so you can get to the iron mine instantly. Is there now no more use for trade?Them: Some people are better miners and others are better fruit-growers?Myself: Suppose a society with identical fruit tastes, and perfect task-switching, and Star Trek transporters, and furthermore everyone has identical genetics, as if they were all identical twins; which, as we know from identical-twin studies, means that everybody will have around the same amount of innate talent for any and all jobs. Like that case where two identical twins, separated at birth, who never knew each other, both ended up as firefighters. As we all know, studies on separated identical twins show that happens every single time, with no exceptions. I claim that even this society still has to do a lot of trade in order to end up with modern levels of wealth.Now, do you think I'm trolling you and that we actually did get rid of the basic reason for trade, at this point, or that there's still something left over? Identical fruit tastes, perfect task-switching, Star Trek transporters, everyone is born with the same genetics and therefore identical innate talents. 
Do people now mine their own iron, or do they still trade for it?Them: (Thinks for a while.)Me: If the Sequences have taught you anything, I hope it's taught you that it's okay to state the obvious.Them: ...people learn to do their jobs better with practice?Myself: Human capital accumulation! Indeed! So now let us suppose identical fruit tastes, perfect task-switching, Star Trek transporters, identically cloned genetics, and people can share expertise via Matrix-style downloads which are free. Have we now gotten rid of the point of trade? As far as you can tell.Them: ...yes?Myself: Do you believe I'm going to say that we've gotten rid of the point of trade?Them: ...no.Myself: Well, I agree with your object-level answer, so your meta-level answer was wrong. I think we've now gotten rid of the point of trade.Them: Darn it.(Note: While contemplating this afterwards, I realized that we hadn't quite gotten rid of all the points of trade, and there should have been two more rounds of dialogue; there are two more magical powers a society needs, in order to produce a high-tech quantity of stuff with zero trade. The missing sections are left as an exercise for the reader.)"

What you see above is a segment from a LessWrong user's post.

What is this text talking about?

This text is a dialogue between two individuals discussing the fundamental reasons and benefits of trade in an economy. The conversation starts with a common explanation of trade: that people value things differently, so they can increase their overall satisfaction or utility by exchanging goods or services.

However, the dialogue then delves deeper into the concept, questioning whether trade would still exist if everyone had the same tastes and if other factors such as task-switching costs, geographical distance, and differences in innate talent were removed. Once all of these are stripped away, the remaining reason the dialogue identifies is human capital accumulation, the idea that people get better at their jobs with practice. Only when that too is removed, by imagining free Matrix-style skill downloads, does the author tentatively agree that the point of trade is gone, while noting afterwards that two further reasons were overlooked and are left as an exercise for the reader.

The text is essentially exploring the idea that trade is not just about exchanging goods for mutual benefit, but also about specialization and the division of labor, which allow for greater efficiency and productivity in an economy.

Explain the concept of human capital accumulation in the context of trade and how it contributes to increased efficiency and productivity in an economy.

Human capital accumulation refers to the process of acquiring and increasing the levels of education, skills, abilities, and experience in a workforce. It is a significant factor in the context of trade and the overall productivity of an economy.

In the context of trade, human capital accumulation is closely tied to the concept of specialization. Specialization refers to the process by which individuals, firms, or countries focus on producing a narrow range of goods or services to become more efficient producers.

When individuals or firms specialize, they tend to accumulate more knowledge and skills related to their specific field over time. This accumulation of human capital makes them more efficient and productive in their specialized roles. For instance, a farmer who specializes in growing apples will, over time, gain more knowledge and skills about apple cultivation than someone who grows many different types of crops. This specialized knowledge and skill set, or human capital, makes the farmer more efficient and productive at growing apples.

In a broader economic context, when individuals or firms trade the goods or services they specialize in, they can leverage their human capital to produce more efficiently than if each individual or firm had to produce everything they needed independently. This leads to an overall increase in productivity and efficiency in the economy.

In essence, human capital accumulation through specialization and trade allows for a more efficient allocation of resources, leading to increased productivity, economic growth, and wealth creation.
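
As a rough illustration of the learning-by-doing effect described above, here is a minimal sketch. The model is an assumption made purely for illustration: two identical workers, two goods, productivity that starts at 1 unit per hour and rises linearly with hours of practice, and an invented `learning_rate` of 0.01. None of these numbers come from the original post.

```python
def output(hours: float, learning_rate: float = 0.01) -> float:
    """Units produced in `hours` on one task, when productivity starts at
    1 unit/hour and rises linearly with practice (integral of 1 + rate * t)."""
    return hours + learning_rate * hours ** 2 / 2


def total_per_good(hours_each: float, specialize: bool) -> float:
    """Total output of each of two goods from two identical workers."""
    if specialize:
        # One worker spends all of their hours on each good.
        return output(hours_each)
    # Each worker splits their time evenly across both goods.
    return 2 * output(hours_each / 2)


generalist = total_per_good(100, specialize=False)
specialist = total_per_good(100, specialize=True)
print(generalist, specialist)
```

Under these assumptions, specialization followed by trade yields roughly 150 units of each good, against roughly 125 when everyone does everything, even though the workers start out identical.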

Discuss the role of trade in facilitating specialization and how this contributes to the economic growth of a country.

Trade plays a pivotal role in facilitating specialization, which in turn contributes significantly to the economic growth of a country. Here's how:

1. **Promotes Specialization**: Trade encourages countries to specialize in the production of goods and services where they have a comparative advantage - meaning they can produce these goods more efficiently or at a lower cost than other countries. This could be due to various factors such as natural resources, labor skills, technological capabilities, or other factors.

2. **Increases Efficiency**: Specialization allows for economies of scale, which refers to the cost advantages that enterprises obtain due to their scale of operation, with cost per unit of output generally decreasing with increasing scale. This leads to increased efficiency in production, which means more goods and services can be produced with the same amount of resources.

3. **Expands Markets**: Trade expands the market for a country's goods beyond its borders. This larger market allows for greater specialization and more efficient production, which can lead to higher output and income.

4. **Enhances Competition and Innovation**: Trade exposes domestic companies to international competition, which can stimulate innovation and improvement in productivity. It encourages firms to innovate and improve in order to maintain or increase their market share.

5. **Access to Diverse Resources**: Trade allows countries to access resources and inputs that they may not have domestically. This can enhance their production capabilities and allow them to produce a wider variety of goods or produce existing goods more efficiently.

6. **Income and Living Standards**: The increased output and income resulting from specialization and trade can lead to higher living standards, as individuals have access to a wider variety of goods and services at lower prices.

In summary, by promoting specialization, trade enables countries to make efficient use of their resources, improve productivity, stimulate innovation, and expand markets. These factors contribute to economic growth, higher income, and improved living standards. |

fe79f7cb-47c0-49be-9396-5189435e7673 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Critique of Superintelligence Part 1

This is part 1 of a 5-part sequence:

[Part 1: summary of Bostrom's argument](https://forum.effectivealtruism.org/posts/A8ndMGC4FTQq46RRX/criqitue-of-superintelligence-part-1)

[Part 2: arguments against a fast takeoff](https://forum.effectivealtruism.org/posts/BGWmAqrk64q2w6JjM/critique-of-superintelligence-part-2)

[Part 3: cosmic expansion and AI motivation](https://forum.effectivealtruism.org/posts/iKWbkomL8WrA8Yy4X/critique-of-superintelligence-part-3)

[Part 4: tractability of AI alignment](https://forum.effectivealtruism.org/posts/LLdHNTEHMoPYqGtHY/critique-of-superintelligence-part-4)

[Part 5: expected value arguments](https://forum.effectivealtruism.org/posts/WhDa26A3AKaStvuD9/critique-of-superintelligence-part-5)

Introduction

------------

In this article I present a critique of Nick Bostrom’s book *Superintelligence*. For purposes of brevity I shall not devote much space to summarising Bostrom’s arguments or defining all the terms that he uses. Though I briefly review each key idea before discussing it, I shall also assume that readers have some general idea of Bostrom’s argument, and some of the key terms involved. Also note that to keep this piece focused, I only discuss arguments raised in this book, and not what Bostrom has written elsewhere or others who have addressed similar issues. The structure of this article is as follows. I first offer a summary of what I regard to be the core argument of Bostrom’s book, outlining a series of premises that he defends in various chapters. Following this summary, I commence a general discussion and critique of Bostrom’s concept of ‘intelligence’, arguing that his failure to adopt a single, consistent usage of this concept in his book fatally undermines his core argument. The remaining sections of this article then draw upon this discussion of the concept of intelligence in responding to each of the key premises of Bostrom’s argument. I conclude with a summary of the strengths and weaknesses of Bostrom’s argument.

Summary of Bostrom’s Argument

-----------------------------

Throughout much of his book, Bostrom remains quite vague as to exactly what argument he is making, or indeed whether he is making a specific argument at all. In many chapters he presents what are essentially lists of various concepts, categories, or considerations, and then articulates some thoughts about them. Exactly what conclusion we are supposed to draw from his discussion is often not made explicit. Nevertheless, by my reading the book does at least implicitly present a very clear argument, which bears a strong similarity to the sorts of arguments commonly found in the Effective Altruism (EA) movement, in favour of focusing on AI research as a cause area. In order to provide structure for my review, I have therefore constructed an explicit formulation of what I take to be Bostrom’s main argument in his book. I summarise it as follows:

Premise 1: A superintelligence, defined as a system that ‘exceeds the cognitive performance of humans in virtually all domains of interest’, is likely to be developed in the foreseeable future (decades to centuries).

Premise 2: If superintelligence is developed, some superintelligent agent is likely to acquire a decisive strategic advantage, meaning that no terrestrial power or powers would be able to prevent it doing as it pleased.

Premise 3: A superintelligence with a decisive strategic advantage would be likely to capture all or most of the cosmic endowment (the total space and resources within the accessible universe), and put it to use for its own purposes.

Premise 4: A superintelligence which captures the cosmic endowment would likely put this endowment to uses incongruent with our (human) values and desires.

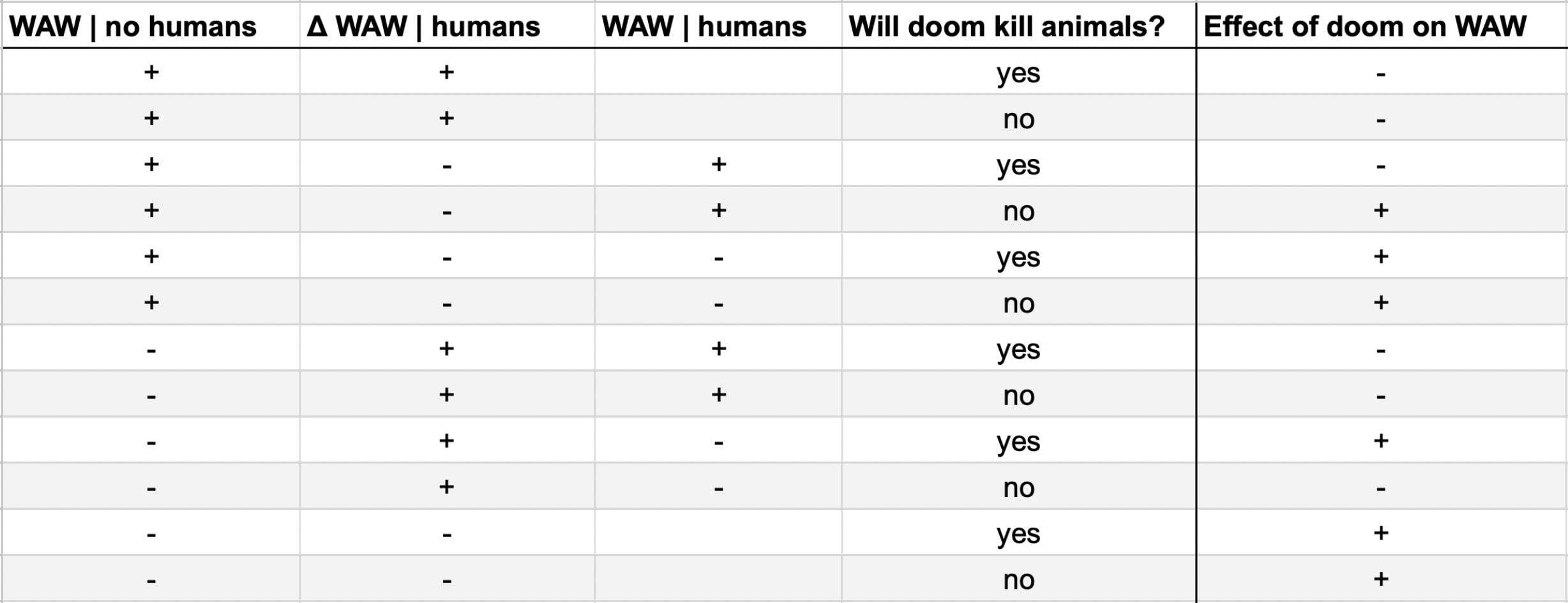

Preliminary conclusion: In the foreseeable future it is likely that a superintelligent agent will be created which will capture the cosmic endowment and put it to uses incongruent with our values. (I call this the AI Doom Scenario).

Premise 5: Pursuit of work on AI safety has a non-trivial chance of noticeably reducing the probability of the AI Doom Scenario occurring.

Premise 6: If pursuit of work on AI safety has at least a non-trivial chance of noticeably reducing the probability of an AI Doom Scenario, then (given the preliminary conclusion above) the expected value of such work is exceptionally high.

Premise 7: It is morally best for the EA community to preferentially direct a large fraction of its marginal resources (including money and talent) to the cause area with highest expected value.

Main conclusion: It is morally best for the EA community to direct a large fraction of its marginal resources to work on AI safety. (I call this the AI Safety Thesis.)

Bostrom discusses the first premise in chapters 1-2, the second premise in chapters 3-6, the third premise in chapters 6-7, the fourth premise in chapters 8-9, and some aspects of the fifth premise in chapters 13-14. The sixth and seventh premises are not really discussed in the book (though some aspects of them are hinted at in chapter 15), but are widely discussed in the EA community and serve as the link between the abstract argumentation and real-world action, and as such I decided also to discuss them here for completeness. Many of these premises could be articulated slightly differently, and perhaps Bostrom would prefer to rephrase them in various ways. Nevertheless I hope that they at least adequately capture the general thrust and key contours of Bostrom’s argument, as well as how it is typically appealed to and articulated within the EA community.

The nature of intelligence

--------------------------

In my view, the biggest problem with Bostrom’s argument in *Superintelligence* is his failure to devote any substantial space to discussing the nature or definition of intelligence. Indeed, throughout the book I believe Bostrom uses three quite different conceptions of intelligence:

* Intelligence(1): Intelligence as being able to perform most or all of the cognitive tasks that humans can perform. (See page 22)

* Intelligence(2): Intelligence as a measurable quantity along a single dimension, which represents some sort of general cognitive efficaciousness. (See pages 70,76)

* Intelligence(3): Intelligence as skill at prediction, planning, and means-ends reasoning in general. (See page 107)

While certainly not entirely unrelated, these three conceptions are all quite different from each other. Intelligence(1) is most naturally viewed as a multidimensional construct, since humans exhibit a wide range of cognitive abilities and it is by no means clear that they are all reducible to a single underlying phenomenon that can be meaningfully quantified with one number. It seems much more plausible to say that the range of human cognitive abilities requires many different skills which are sometimes mutually-supportive, sometimes mostly unrelated, and sometimes mutually-inhibitory in varying ways and to varying degrees. This first conception of intelligence is also explicitly anthropocentric, unlike the other two conceptions which make no reference to human abilities.

Intelligence(2) is unidimensional and quantitative, and also extremely abstract, in that it does not refer directly to any particular skills or abilities. It most closely parallels the notion of IQ or other similar operational measures of human intelligence (which Bostrom even mentions in his discussion), in that it is explicitly quantitative and attempts to reduce abstract reasoning abilities to a number along a single dimension. Intelligence(3) is much more specific and grounded than either of the other two, relating only to particular types of abilities. That said, it is not obviously subject to simple quantification along a single dimension as is the case for Intelligence(2), nor is it clear that skill at prediction and planning is what is measured by the quantitative concept of Intelligence(2). Certainly Intelligence(3) and Intelligence(2) cannot be equivalent if Intelligence(2) is even somewhat analogous to IQ, since IQ mostly measures skills at mathematical, spatial, and verbal memory and reasoning, which are quite different from skills at prediction and planning (consider for example the phenomenon of autistic savants). Intelligence(3) is also far more narrow in scope than Intelligence(1), corresponding to only one of the many human cognitive abilities.

Repeatedly throughout the book, Bostrom flips between using one or another of these conceptions of intelligence. This is a major weakness for Bostrom’s overall argument, since in order for the argument to be sound it is necessary for a single conception of intelligence to be adopted and apply in all of his premises. In the following paragraphs I outline several of the clearest examples of how Bostrom’s equivocation in the meaning of ‘intelligence’ undermines his argument.

Bostrom argues that once a machine becomes more intelligent than a human, it would far exceed human-level intelligence very rapidly, because one human cognitive ability is that of building and improving AIs, and so any superintelligence would also be better at this task than humans. This means that the superintelligence would be able to improve its own intelligence, thereby further improving its own ability to improve its own intelligence, and so on, the end result being a process of exponentially increasing recursive self-improvement. Although compelling on the surface, this argument relies on switching between the concepts of Intelligence(1) and Intelligence(2).

When Bostrom argues that a superintelligence would necessarily be better at improving AIs than humans because AI-building is a cognitive ability, he is appealing to Intelligence(1). However, when he argues that this would result in recursive self-improvement leading to exponential growth in intelligence, he is appealing to Intelligence(2). To see how these two arguments rest on different conceptions of intelligence, note that considering Intelligence(1), it is not at all clear that there is any general, single way to increase this form of intelligence, as Intelligence(1) incorporates a wide range of disparate skills and abilities that may be quite independent of each other. As such, even a superintelligence that was better than humans at improving AIs would not necessarily be able to engage in rapidly recursive self-improvement of Intelligence(1), because there may well be no such thing as a single variable or quantity called ‘intelligence’ that is directly associated with AI-improving ability. Rather, there may be a host of associated but distinct abilities and capabilities that each needs to be enhanced and adapted in the right way (and in the right relative balance) in order to get better at designing AIs. Only by assuming a unidimensional quantitative conception of Intelligence(2) does it make sense to talk about the rate of improvement of a superintelligence being proportional to its current level of intelligence, which then leads to exponential growth.
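
To make the last step concrete, the exponential-growth claim can be sketched as follows. The scalar $I(t)$ and the constant $k$ are illustrative symbols of my own choosing, not Bostrom's notation; the only assumption is the one stated above, that the rate of improvement is proportional to the current level of intelligence.

```latex
\frac{dI}{dt} = k\,I(t), \quad k > 0
\qquad\Longrightarrow\qquad
I(t) = I(0)\,e^{kt}
```

The derivation requires intelligence to be a single differentiable quantity $I$. If it is instead a collection of distinct abilities, there is no one variable to place on the left-hand side.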

Bostrom therefore faces a dilemma. If intelligence is a mix of a wide range of distinct abilities as in Intelligence(1), there is no reason to think it can be ‘increased’ in the rapidly self-reinforcing way Bostrom speaks about (in mathematical terms, there is no single variable which we can differentiate and plug into the differential equation, as Bostrom does in his example on pages 75-76). On the other hand, if intelligence is a unidimensional quantitative measure of general cognitive efficaciousness, it may be meaningful to speak of self-reinforcing exponential growth, but it is not necessarily obvious that any arbitrary intelligent system or agent would be particularly good at designing AIs. Intelligence(2) may well help with this ability, but it’s not at all clear it is sufficient – after all, we readily conceive of building a highly “intelligent” machine that can reason abstractly and pass IQ tests etc, but is useless at building better AIs.
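To make the mathematical point concrete, here is a minimal sketch of the kind of growth model at issue. The symbols $I$, $c$ and $k$ are illustrative assumptions, not a quotation from Bostrom's text: suppose intelligence is a single scalar $I(t)$, the optimisation power applied to improving it is proportional to $I$ with constant $c$, and the difficulty of further improvement is a constant $k$. Then

$$
\frac{dI}{dt} = \frac{cI}{k}
\quad\Longrightarrow\quad
I(t) = I_0\, e^{ct/k}.
$$

The exponential solution exists only because $I$ is one number. If intelligence is instead a collection of distinct abilities $(a_1, \dots, a_n)$, as under Intelligence(1), the analogous system would be $\frac{da_i}{dt} = f_i(a_1, \dots, a_n)$ for unknown functions $f_i$, and nothing guarantees that its solutions grow exponentially, or even that every component grows at all.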

Bostrom argues that once a machine intelligence became more intelligent than humans, it would soon be able to develop a series of ‘cognitive superpowers’ (intelligence amplification, strategising, social manipulation, hacking, technology research, and economic productivity), which would then enable it to escape whatever constraints were placed upon it and likely achieve a decisive strategic advantage. The problem is that it is unclear whether a machine endowed only with Intelligence(3) (skill at prediction and means-ends reasoning) would necessarily be able to develop skills as diverse as general scientific research, competent use of natural language, and social manipulation of human beings. Again, means-ends reasoning may help with these skills, but clearly they require much more beyond this. Only if we are assuming the conception of Intelligence(1), whereby the AI has already exceeded essentially all human cognitive abilities, does it become reasonable to assume that all of these ‘superpowers’ would be attainable.

According to the *orthogonality thesis,* there is no reason why the machine intelligence could not have extremely reductionist goals such as maximising the number of paperclips in the universe, since an AI's level of intelligence is totally separate and distinct from its final goals. Bostrom’s argument for this thesis, however, clearly depends on adopting Intelligence(3), whereby intelligence is regarded as general skill with prediction and means-ends reasoning. It is indeed plausible that an agent endowed only with this form of intelligence would not necessarily have the ability or inclination to question or modify its goals, even if they are extremely reductionist or what any human would regard as patently absurd. If, however, we adopt the much more expansive conception of Intelligence(1), the argument becomes much less defensible. This should become clear if one considers that ‘essentially all human cognitive abilities’ includes such activities as pondering moral dilemmas, reflecting on the meaning of life, analysing and producing sophisticated literature, formulating arguments about what constitutes a ‘good life’, interpreting and writing poetry, forming social connections with others, and critically introspecting upon one’s own goals and desires. To me it seems extraordinarily unlikely that any agent capable of performing all these tasks with a high degree of proficiency would simultaneously stand firm in its conviction that the only goal it had reasons to pursue was tiling the universe with paperclips.

As such, Bostrom is driven by his cognitive superpowers argument to adopt the broad notion of intelligence seen in Intelligence(1), but then is driven back to a much narrower Intelligence(3) when he wishes to defend the orthogonality thesis. The key point to be made here is that the goals or preferences of a rational agent are subject to rational reflection and reconsideration, and the exercise of reason in turn is shaped by the agent’s preferences and goals. Short of radically redefining what we mean by ‘intelligence’ and ‘motivation’, this complex interaction will always hamper simplistic attempts to neatly separate them, thereby undermining Bostrom’s case for the orthogonality thesis - *unless* a very narrow conception of intelligence is adopted.

In the table below I summarise several of the key outcomes or developments that are critical to Bostrom’s argument, and how plausible they would be under each of the three conceptions of intelligence. Obviously such judgements are necessarily vague and subjective, but the key point I wish to make is simply that only by appealing to different conceptions of intelligence in different cases is Bostrom able to argue that all of the outcomes are reasonably likely to occur. Fatally for his argument, there is no *single* conception of intelligence that makes all of these outcomes simultaneously likely or plausible.

Outcome | Intelligence(1) | Intelligence(2) | Intelligence(3)
--- | --- | --- | ---
Quick takeoff | Highly unlikely | Likely | Unclear
All superpowers | Highly likely | Highly unlikely | Highly unlikely
Absurd goals | Highly unlikely | Unclear | Likely
No change to goals | Unlikely | Unclear | Likely |

2cdccc75-797c-4fed-8ac0-38394e49d56e | trentmkelly/LessWrong-43k | LessWrong | Half-baked alignment idea

I'm trying to think through various approaches to AI alignment, and so far this is the one I came up with that I like best. I have not read much of the literature, so please do point me to it if this has been discussed before.

What if we train an AI agent (i.e., via reinforcement learning) to survive/thrive in an environment where there are a wide variety of agents with wildly different levels of intelligence? In particular, such that pretty much every agent can safely assume they'll eventually meet an agent much smarter than they are; structure the environment to reward tit-for-tat with a significant bias towards cooperation, e.g., require agents to "eat" resources that require cooperation to secure and are primarily non-competitive. The idea is to have them learn to respect even beings of lesser intelligence, because they want beings of higher intelligence to respect them; and because in this environment a bunch of lesser intelligences can gang up and defeat one higher-intelligence being. Also, we effectively train each AI to detect and defeat new AIs that seek to disturb this balance. I have not thought this through, and I'm curious what you all think.

(Cross posted from EA Forum) |

e1b9dde5-d3da-4b39-8813-c7f4d9ef2f03 | trentmkelly/LessWrong-43k | LessWrong | You can never be universally inclusive

A discussion about the article “We Don’t Do That Here” (h/t siderea) raised the question about the tension between having inclusive social norms on the one hand, and restricting some behaviors on the other hand.

At least, that was the way the discussion was initially framed. The thing is, inclusivity is a bit of a bad term, since you can never really be universally inclusive. Accepting some behaviors is going to attract people who like engaging in those behaviors while repelling people who don’t like those behaviors; and vice versa for disallowing them.

Of course, you can still create spaces that are more inclusive than others, in being comfortable to a broader spectrum of people. But the way you do that is by disallowing behaviors that would, if allowed, repel more people than the act of disallowing them does.

If you use your social power to shut up people who would otherwise be loudly racist and homophobic and who then leave because they don’t want to be in a place where those kinds of behaviors aren’t allowed, then that would fit the common definition of “inclusive space” pretty well.

That said, the “excluding racists and homophobes” thing may make it sound like you’re only excluding “bad” people, which isn’t the case either. Every set of rules (including having no rules in the first place) is going to repel some completely decent people.

Like, maybe you decide to try to make a space more inclusive by having a rule like “no discussing religion or politics”. This may make the space more inclusive towards people of all kinds of religions and political backgrounds, since there is less of a risk of anyone feeling unwelcome when everyone else turns out to disagree with their beliefs.

But at the same time, you are making the space less inclusive towards people who are perfectly reasonable and respectful people, but who would like to discuss religion or politics. As well as to people who aren’t so good at self-regulation and will feel uncomfortable about having t |

97c5335b-67f0-4d55-b8a2-db9cf35354a9 | trentmkelly/LessWrong-43k | LessWrong | Hebbian Learning Is More Common Than You Think

Epistemic status: locating the hypothesis. I have my private confidence but you shouldn't take my word for it.

I originally got the idea from this video interview of professor Richard A. Watson where he explains how learning networks could arise naturally and be an important factor in evolution. The meat starts around 15 minutes in.

First, an intuition pump: If you had a suspended network of non-ideal springs, loading the network would slightly change the resting lengths of the springs for the next iteration. In effect, even a spring network has (admittedly limited) learning potential.

Dr. Watson focuses on evolution and makes his strongest case in that domain. In short, ecological stressors impose evolutionary pressures on individuals that cause the ecological relationships between species to change over evolutionary time in a manner consistent with Hebb's rule. In other words, individual selection powers Hebbian learning on the ecosystem level.

In the interview, he mentions his associate setting up an ecological simulation isomorphic to the rules of sudoku and getting it to perform at what would be considered a very high skill level for a human. I believe this to be the relevant paper. I haven't read more than the abstract; here's the most relevant quote:

> We demonstrate the capabilities of this process in the ecological model by showing how the action of individual natural selection can enable communities to i) form a distributed ecological memory of multiple past states; ii) improve their ability to resolve conflicting constraints among species leading to higher community biomass; and iii) learn to solve complex resource-allocation problems equivalent to difficult computational puzzles like Sudoku.

Based on the above, I think learning networks arise frequently and spontaneously in contexts involving biological life. This includes multicellular organisms, ecosystems and human networks such as economies, societies and civilizations. As of now, I don't have a |

7c6bb795-66a9-4191-86c6-6e1427182a70 | trentmkelly/LessWrong-43k | LessWrong | Richard Dawkins TV - Baloney Detection Kit video

See this great little rationalist video here.

> Well, if I am pro-business, I have to be skeptical about global warming. Wait! How about just following the data? |

63d767c9-ce0b-45fe-8a16-6ce701d54294 | trentmkelly/LessWrong-43k | LessWrong | Using vs. evaluating (or, Why I don't come around here no more)

[Summary: Trying to use new ideas is more productive than trying to evaluate them.]

I haven't posted to LessWrong in a long time. I have a fan-fiction blog where I post theories about writing and literature. Topics don't overlap at all between the two websites (so far), but I prioritize posting there much higher than posting here, because responses seem more productive there.

The key difference, I think, is that people who read posts on LessWrong ask whether they're "true" or "false", while the writers who read my posts on writing want to write. If I say something that doesn't ring true to one of them, he's likely to say, "I don't think that's quite right; try changing X to Y," or, "When I'm in that situation, I find Z more helpful", or, "That doesn't cover all the cases, but if we expand your idea in this way..."

Whereas on LessWrong a more typical response would be, "Aha, I've found a case for which your step 7 fails! GOTCHA!"

It's always clear from the context of a writing blog why a piece of information might be useful. It often isn't clear how a LessWrong post might be useful. You could blame the author for not providing you with that context. Or, you could be pro-active and provide that context yourself, by thinking as you read a post about how it fits into the bigger framework of questions about rationality, utility, philosophy, ethics, and the future, and thinking about what questions and goals you have that it might be relevant to. |

26c96a11-3a14-4b17-af9a-43ce49443873 | StampyAI/alignment-research-dataset/blogs | Blogs | Ronald de Wolf on Quantum Computing

[Ronald de Wolf](http://homepages.cwi.nl/~rdewolf) is a senior researcher at CWI and a part-time full professor at the University of Amsterdam. He obtained his PhD there in 2001 with a thesis about quantum computing and communication complexity, advised by [Harry Buhrman](http://homepages.cwi.nl/~buhrman) and [Paul Vitanyi](http://homepages.cwi.nl/~paulv). Subsequently he was a postdoc at UC Berkeley. His scientific interests include quantum computing, complexity theory, and learning theory.

He also holds a Master’s degree in philosophy (where his thesis was about Kolmogorov complexity and Occam’s razor), and enjoys classical music and literature.

**Luke Muehlhauser**: Before we get to quantum computing, let me ask you about philosophy. Among other topics, your [MSc thesis](http://homepages.cwi.nl/%7Erdewolf/publ/philosophy/phthesis.pdf) discusses the relevance of computational learning theory to philosophical debates about [Occam’s razor](http://en.wikipedia.org/wiki/Occam%27s_razor), which is the principle advocating that “among the theories, hypotheses, or explanations that are consistent with the facts, we are to prefer simpler over more complex ones.”

Though many philosophers and scientists adhere to the principle of Occam’s razor, it is often left ambiguous exactly what is meant by “simpler,” and also why this principle is justified in the first place. But in your thesis you write that “in certain formal settings we can, more or less, *prove* that certain versions of Occam’s Razor work.”

Philosophers are usually skeptical when I argue for [K-complexity](http://en.wikipedia.org/wiki/Kolmogorov_complexity) versions of Occam’s razor, as you do. For example, USC’s Kenny Easwaran [once wrote](https://www.facebook.com/lukeprog/posts/10103841562829230?stream_ref=10), “I’ve never actually seen how [a K-complexity based simplicity measure] is supposed to solve anything, given that it always depends on a choice of universal machine.”

How would you reply, given your optimism about justifying Occam’s razor “in certain formal settings”?

---

**Ronald de Wolf**: I would treat Occam’s razor more as a rule of thumb than as a formal rule or theorem. Clearly it’s vague, and clearly there are cases where it doesn’t work. Still, many scientists have been guided by it to good effect, often equating simplicity with beauty (for example Einstein and Dirac). Psychologically, invoking Occam will only be effective if there is some shared notion of simplicity; maybe not to quantify simplicity, but at least to be able to rank theories according to their simplicity.

You could try to use Kolmogorov complexity as your “objective” measure of simplicity, and in some simplified cases this makes perfect sense. In my MSc thesis I surveyed a few known cases where it provably does. However, such cases do not provide convincing proof of Occam’s razor “in the real world”. They are more like thought experiments, where you strip away everything that’s superfluous in order to bring out a certain point more clearly.

In practice there are at least three issues with using Kolmogorov complexity to measure simplicity. First, it requires you to write down your theory (or whatever it is whose simplicity you’re quantifying) over some fixed alphabet, say as a string of bits. It’s often kind of subjective which background assumptions to count as actually part of your theory. Second, as Easwaran rightly says, KC depends on the choice of universal Turing machine w.r.t. which it is defined. However, I don’t think this is such a big issue. If you choose some reasonably efficient universal Turing machine and consider the KC of reasonably long strings, the constant difference incurred by the choice of universal Turing machine will be relatively small. Third, and possibly most importantly, KC is not computable, not even approximable by any computational process (even a very slow one) with any approximation guarantees. This rules out using KC itself in practical settings.

However, the core idea that compression somehow corresponds to detection of patterns in your data is a perfectly valid one, and you can use it in practice if you’re willing to base “compression” on imperfect but practical programs like gzip. This loses the theoretical optimality guaranteed by KC (which you can view as the “ultimate compression”) but it gives you a tool for data mining and clustering that’s often quite good in practice. See for example [here](http://arxiv.org/ftp/arxiv/papers/0809/0809.2553.pdf). Such practical approaches are like heuristics that try to approach, in some weak sense, the ideal but unreachable limit-case of KC.
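The compression idea above can be sketched in a few lines of Python. This is a minimal illustration, not the method of the linked paper: it assumes zlib (the same DEFLATE family as gzip) as the practical compressor, and uses one common formulation known as the normalized compression distance. The function names and the sample strings are made up for the example.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Byte length of the zlib-compressed data: a crude, computable stand-in for KC."""
    return len(zlib.compress(data, 9))


def ncd(x: bytes, y: bytes) -> float:
    """Normalized compression distance: small when x and y share patterns."""
    cx, cy = compressed_size(x), compressed_size(y)
    cxy = compressed_size(x + y)
    return (cxy - min(cx, cy)) / max(cx, cy)


# Illustrative inputs: a repetitive text, a near-copy of it, and random bytes.
base = b"the quick brown fox jumps over the lazy dog " * 50
variant = base.replace(b"lazy", b"sleepy")
rng = random.Random(0)  # fixed seed so the example is reproducible
noise = bytes(rng.getrandbits(8) for _ in range(len(base)))

print("base vs variant:", round(ncd(base, variant), 3))
print("base vs noise:  ", round(ncd(base, noise), 3))
```

The near-copy should come out much closer to the base text than the random bytes do, because compressing the two related strings together costs little more than compressing one of them. A clustering tool would compute such distances for every pair of items and group the items with small distances.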

---

**Luke**: Do you think one can use Occam-like principles to choose between, for example, the various [explanations of quantum mechanics](http://en.wikipedia.org/wiki/Interpretations_of_quantum_mechanics), since they appear to make essentially the same predictions about what we should observe?

---

**Ronald**: In principle you could, but to my limited understanding (I’m not following this debate closely), the main interpretations of QM all suffer from having some seemingly superfluous aspects. The standard interpretation that a measurement “collapses the wave function” to a probabilistically-chosen measurement outcome treats “observers” as a special category of quantum objects, or “observation/measurement” as a special category of quantum process. Before you know it, people will bring consciousness into the picture and mysticism beckons. It seems to me that treating the “observer” as a special category violates Occam’s razor. Alternatively you can take the position that measurement is nothing special but just another interaction between quantum systems (observer and observed system). This is sometimes known as the “church of the larger Hilbert space”. It’s mathematically pleasing because now there’s only this smooth, coherent, and even deterministic evolution of the whole universe. However, now you will have many different “branches” of the superposition that is the world’s state vector, which very quickly leads to the multiverse view of many worlds. A metaphysics that postulates infinitely many worlds existing in superposition doesn’t strike me as very Occam-compliant either.

Then there is the instrumentalist “shut up and calculate” school. This is minimalistic in an Occam-pleasing sense, but seems to substantially impoverish the scientific endeavour, whose aim should not just be to predict but also to explain and give some picture of the world. All interpretations of QM are problematic in their own way, and choosing between them based on Occam’s razor assumes some shared idea of what simplicity is as well as a shared view of the goals of science, which we seem to lack here.

---

**Luke**: Most of your work these days is in quantum computing and communication. Quantum computing is an interesting field, since its researchers design algorithms, error correction techniques, etc. for machines that cannot yet be built. In this sense, I tend to think of quantum computing as an “[exploratory engineering](http://en.wikipedia.org/wiki/Exploratory_engineering)” discipline, akin to pre-Sputnik astronautics, pre-ENIAC computer science, and Eric Drexler’s *[Nanosystems](http://www.amazon.com/Nanosystems-Molecular-Machinery-Manufacturing-Computation/dp/0471575186/)*. Do you think that’s a fair characterization? Do you or your colleagues in quantum computing get much criticism that such work is “too speculative”? (For the record, that’s not *my* view.)

---

**Ronald**: The two main questions in quantum computing are (1) can we build a large-scale quantum computer and (2) what could it do if we had one. I think your term “exploratory engineering” fits the work on the first question; small quantum computers on a handful of qubits were already built a decade ago, so it’s not pure theory anymore. I myself am a theoretical computer scientist focusing on the second question. While I think this is more mathematics than engineering, you can certainly compare it to computer science in the 1930s: at that point the theoretical model of a (classical) computer had already been introduced by Alan Turing, but no large-scale computers had been built yet. You could already design algorithms for Turing machines on paper, and you could even prove that such computers could *not* solve certain problems (as Turing famously did for the halting problem). We are doing such work on quantum computing now: designing quantum algorithms and communication protocols that are much faster than classical ones for some computational problems, and on the other hand proving that quantum computers do not give you a speed-up for many other problems. Much of the relevance of this is of course contingent upon the eventual construction of a large QC. Interestingly, however, some of the work we are doing has spin-offs for the analysis of classical computing, and that is relevant today irrespective of progress on building a QC.

Regarding the possible charge of being “too speculative”: in the mid-1990s, right after Peter Shor published his groundbreaking quantum algorithm for factoring large numbers into their prime factors (which breaks a lot of cryptography), there was a lot of skepticism, particularly among physicists who thought that this would never fly. They expected that any attempt at implementing quantum bits and operations would have so much noise and errors that it would quickly decohere to a classical computer. Of course they had good reasons to be skeptical — manipulating something as small as an electron is extremely hard, much harder than manipulating a vacuum tube was in the 1940s and 1950s. The worries about noise and imperfections were partially answered soon after by the development (partially by Shor himself) of quantum error-correction and fault-tolerant computing, which roughly says that if the noise is not too large and not too malicious, your quantum computer can correct for it. The only way these worries can be fully overcome is to actually build a large-scale QC. My impression is that experimental physicists are making slow but sure progress on this, and are becoming more optimistic over time that this will actually be realized within one or two decades. So, sure this is a speculative endeavour (most long-term research is), but not unreasonably so.

---

**Luke**: What heuristics do you and your colleagues in quantum computing use to decide what to work on, given QC’s long-term and somewhat speculative nature? Presumably you need to make uncertain predictions about which types of quantum computers are most likely to be built, what the solutions to known obstacles might look like, etc.? (I ask because MIRI aims to conduct long-term research that is *more* speculative than quantum computing.)

---

**Ronald**: Most of the time we study how well quantum computers can solve classical computational problems, problems with classical inputs (such as a large number N) and classical outputs (such as the prime factors of N). Computer science has over decades been defining and studying the complexity of lots of interesting and useful computational problems and models, and often we start from there: we take an existing computational problem and try to find quantum tricks to improve over the best classical solutions. In some cases we succeed, designing quantum ways to outperform classical computers, and in some cases we can prove that a QC can’t do better than a classical computer. Of course it’s hard to predict what quantum tricks (if any) might help for a specific problem, but we have some general tools at our disposal. For example, quantum computers are good at detecting periodic patterns (that’s the core of Shor’s algorithm); they can search faster (Grover’s algorithm); you can hide information by encoding it in an unknown basis (quantum cryptography); you can pack a doubly-exponential number of quantum states in an n-qubit space (quantum fingerprinting), etc. A lot of work is based on skillfully combining and applying such known quantum tools, and once in a while people find new tricks to add to our toolbox. Of course, there is also work of a more specific quantum nature, which is not just throwing quantum tricks at classical problems. For example, a lot of work has been done recently on testing whether given quantum states are properly entangled (and hence can be used, for instance, in quantum cryptography).
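The link between periodic patterns and factoring mentioned above can be illustrated classically. The sketch below is not a quantum algorithm: it finds the period of a^x mod N by brute force, which is exactly the step a quantum computer would speed up, and then applies the standard number-theoretic reduction from period-finding to factoring. The function names are made up for this example, and N is assumed to be an odd composite with 1 < a < N.

```python
from math import gcd


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force (the slow classical step)."""
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(n: int, a: int):
    """Try to split n using the period of a**x mod n; return None if this a is unlucky."""
    shared = gcd(a, n)
    if shared != 1:
        # The guess already shares a factor with n, so no period is needed.
        return tuple(sorted((shared, n // shared)))
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd period: retry with another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # a**(r/2) is -1 mod n: retry with another a
    p = gcd(half - 1, n)
    return tuple(sorted((p, n // p)))


print(factor_from_period(15, 7))   # (3, 5)
print(factor_from_period(15, 14))  # None, since 14 is -1 mod 15
```

For N = 15 and a = 7 the powers cycle as 7, 4, 13, 1, so the period is 4, and gcd(7² − 1, 15) = 3 yields the factorisation. The brute-force loop takes time that grows exponentially in the number of digits of N, which is why the quantum speed-up for the period-finding step matters.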

We typically abstract away from the details of the specific physical system that will implement the quantum computer. Instead we just focus on the mathematical model, with quantum bits and a well-defined set of elementary operations (“gates”) that we can perform on them. It doesn’t really matter whether the qubits will be implemented as electron spins, or as photon polarizations, or as energy levels of an atom — from the perspective of the model, it only matters that a qubit has well-defined 0 and 1 states and that we can form superpositions thereof. Similarly, for classical computers it doesn’t really matter whether you program in C or Java or assembler; all such programming languages can efficiently simulate each other. And you don’t care about the precise voltages used to implement bits physically, as long as each bit has stable and clearly distinguished 0 and 1 values.

Abstracting away from such implementation details is justified when we have a large-scale quantum computer, because different varieties of quantum computers will be able to simulate each other with only moderate overhead in terms of additional number of qubits and operations needed. For example, for the purposes of designing quantum algorithms it’s convenient to assume that you can interact any pair of qubits, even when they are far apart; in the reality of physical experiments it’s much simpler to allow only nearest-neighbor interactions between qubits. We can design algorithms for the first model and then implement them in the nearest-neighbor model by inserting a few swap-operations to move interacting qubits close together. However, this “moderate overhead” is actually quite significant as long as we do not yet have a large-scale quantum computer. It’s quite likely that on the slow road towards a large QC we will first have QCs with a few dozen or a few hundred qubits (the current state of the art is a few qubits). In this case we can’t be too wasteful and probably should design algorithms that are optimized for specific physical implementations. It is actually a very interesting question to find problems where a 50- or 100-qubit QC can already outperform classical computers in some noticeable way. Such problems would be the benchmark on which intermediate-size QCs could be tested.

The point is that once you have a large number of qubits available, the differences between different physical implementations/architectures don’t matter too much, because they are all equivalent up to small overheads (needed to simulate one variant using another). But when we have only intermediate-size QCs available (of, say, a few dozen or a few hundred qubits), then these overheads do make a big difference, and we need to carefully optimize our quantum algorithm for performing on the specific physical implementation that’s actually available. In this respect quantum computing seems quite different from most other future technologies: somehow we’re better able to predict the power of this technology for the long term (when we’ll hopefully have a large-scale QC available and can essentially ignore implementation details) than for the short and medium term (while we only have small-scale QCs with quirky limitations).

---

**Luke**: My next question leaps from quantum computing to technological forecasting. What is your subjective probability that we’ll have a 500-qubit quantum computer, which is [uncontroversially](http://arxiv.org/abs/1401.2910) a quantum computer, within the next 20 years? And, how do you reason about a question like that?

---

**Ronald**: Quite high, let’s say probability greater than 2/3. That’s the typical computer science threshold for a “bounded-error” algorithm. From a theoretical perspective, I don’t think we know of any fundamental obstacles to building a large-scale QC, and the threshold theorem from fault-tolerant QC assures us we can deal with moderate amounts of noise and errors. Clearly building a QC is an exceedingly hard engineering problem, but my impression is that experimentalists are making slow but sure progress. There are basically three possible scenarios here:

1. Someone constructs a large QC

2. We discover a fundamental problem with quantum mechanics (which would be extremely interesting new physics!)

3. Experimentalists muddle through without too much progress until either they or the funding agencies lose faith and give up.

The first scenario seems the most plausible to me. I should qualify this by saying that I’m not a certified physicist, let alone a certified *experimental* physicist, so this opinion is partly based on hearsay — but I do have some confidence in the progress that’s happening in places like MIT, NIST, Yale, Delft,… The recent paper you refer to casts doubt upon the controversial D-Wave quantum computer, which has gotten a lot of press in the last few years. For commercial reasons they prioritize quantity (=number of available qubits) over quality (=the coherence and “true quantum nature” of those qubits), and their machines seem too noisy to have useful quantum computing power.

---

**Luke**: Does that mean we probably need to purge Earth of [Shor](http://en.wikipedia.org/wiki/Shor%27s_algorithm)-breakable crypto-security, and transition to [post-quantum cryptography](http://en.wikipedia.org/wiki/Post-quantum_cryptography), within ~20 years?

---

**Ronald**: I think that would be a wise precaution, at least for important or sensitive data. There are at least two ways to handle this. We could either stick with public-key cryptography but replace Shor-breakable problems like factoring and discrete logs by problems that seem to be hard to crack even for QC; lattice problems are an oft-mentioned candidate. Or we could use quantum cryptography. Neither is as efficient as RSA, but at least they’re more secure. It makes sense to start this transition already now, even though there’s no QC yet: the security services (and, who knows, maybe the mafia too) are probably hoovering up RSA-encrypted communications that they store for the time being, waiting for the QC that will allow them to decrypt these messages later. So even today’s communication is not safe from a future QC.

---

**Luke**: Thanks, Ronald!

The post [Ronald de Wolf on Quantum Computing](https://intelligence.org/2014/02/03/ronald-de-wolf-on-quantum-computing/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

a10cb7f8-65cb-4149-a919-89e0b0644f32 | trentmkelly/LessWrong-43k | LessWrong | Deception and Self-Doubt

A little while ago, I argued with a friend of mine over the efficiency of the Chinese government. I admitted he was clearly better informed on the subject than I. At one point, however, he claimed that the Chinese government executed fewer people than the US government. This statement is flat-out wrong; China executes ten times as many people as the US, if not far more. It's a blatant lie. I called him on it, and he copped to it. The outcome is beside the point. Why does it matter that he lied? In this case, it provides weak evidence that the basics of his claim were wrong, that he knew the point he was arguing was, at least on some level, incorrect.

The fact that a person is willing to lie indefensibly in order to support their side of an argument shows that they have put "winning" the argument at the top of their priorities. Furthermore, they've decided, based on the evidence they have available, that lying was a more effective way to advance their argument than telling the truth. While exceptions obviously exist, if you believe that lying to a reasonably intelligent audience is the best way of advancing your claim, this suggests that you know your claim is ill-founded, even if you don't admit this fact to yourself.

Two major exceptions exist. First, the person may simply have no qualms about lying, and may just say anything they think will advance their point, regardless of its veracity. This indicates the speaker should never be trusted on basically any factual claim he makes, though it does not necessarily show self-doubt. Second, the speaker may have little respect for the intelligence of her audience, and believe that the audience is not sophisticated enough for the truth to persuade them. While this may be justified, depending on the audience,1 unless there is good evidence to believe the audience legitimately would not process the truth accurately, this shows the speaker is likely wrong about his central point. However, "the masses are ignorant and shoul |

94fc17c2-74de-461f-be9a-19d18947d5b1 | trentmkelly/LessWrong-43k | LessWrong | Exclude the supernatural? My worldview is up for grabs.

Background

I was raised in the Churches of Christ and my family is all very serious about Christianity. About 3 years ago, I started to ask some hard questions, and the answers from other Christians were very unsatisfying. I used to believe that the Bible was, you know, inspired by a loving God, but its endorsement of genocide, the abuse of slaves, and the mistreatment of women and children really started to bother me.

I set out to study these issues as much as I could. I stayed up past midnight for weeks reading what Christians have to say, and this process triggered a real crisis of faith. What started out as a search for answers on Biblical genocide led me to places like commonsenseatheism.com. I learned that the Bible has serious credibility problems on lots of issues that no one ever told me about. Wow.

My Question

Now I'm pretty sure that the God of the Bible is man-made and Jesus of Nazareth was probably a failed prophet, but I don't have good reasons to reject the supernatural altogether. I'm working through the sequences, but this process is slow. I will probably struggle with this question for months, maybe longer.

Excluding the Supernatural was interesting, but it left me wanting a more thorough explanation. Where do you think I should go from here? Should I just continue reading the sequences, and re-read them until the ideas gel? I'm coming from 30 years of Sunday School level thinking. It's not like I grew up with words like "epistemology" and "epiphenomenalism". If there is no supernatural, and I can be confident about that, I will need to re-evaluate a lot of things. My worldview is up for grabs. |

a4e49fc7-4001-4dfb-8ffa-918ac394459e | trentmkelly/LessWrong-43k | LessWrong | Do strange scenarios help us ask why not?

People are working on making robot cars communicate, with pedestrians for instance.

Notice that the apparent benefit of having cars communicate with pedestrians doesn’t actually have much to do with robots driving the cars. If having cars signal to pedestrians is useful, probably so is having drivers signal to pedestrians. Yet current cars and driving norms hardly provide for this at all. Many a time I have thought about this when trying to cross a road when there is a car coming toward me that seems to be slowing down, kind of, and whose windscreen I can’t really see through. Is the driver waving to me? Eating a sandwich? Hard to tell, so I won’t take my chances. Ah, now he’s stopped. And he’s annoyed. Or swatting a fly. Does that mean he’s about to go? Hard to tell, maybe I’ll just wait a sec to be sure. Now he’s really annoyed – annoyed enough to give up and drive on?… If only there were some little signal that meant ‘while this signal is on, I see you and am stopping for you’.

This is not my real point, but an example. Thinking about a strange future of robot cars causes us to make predictions and envision potentially valuable additions to it that have little to do with robot cars. Similarly, thinking about future AI development causes people to wonder if sudden leaps in technological capacity could cause a small portion of humanity to get far ahead of the rest, or if human values might be lost in the long run. These issues are not specific to AI. Yet when we look at the world around us we seem less likely to see ways to improve it, or to wonder why no groups of humans do get ahead of the rest technologically, or even notice that technological changes tend to be relatively small, or to ask what is becoming of our values.

In general it seems that thinking about strange scenarios causes people to expect things to happen which have little to do with the scenarios. Since they have little to do with the scenarios, it makes sense to ask why they haven’t already happen |

d7bbb58b-5cb7-456f-94c6-05095e7b6392 | trentmkelly/LessWrong-43k | LessWrong | Adventist Health Study-2 supports pescetarianism more than veganism

Or: how the Adventist Health Study-2 had a pretty good study design but was oversold in popular description, and then misrepresented its own results.

When I laid out my existing beliefs on veganism and nutrition I asked people for evidence to the contrary. By far the most promising thing people shared was the 7th Day Adventist Health Studies. I got very excited because the project promised something of a miracle in nutrition science: an approximate RCT. I read the paper that included vegan results, and while it’s still very good as far as nutrition studies go it’s well below what I was promised, and the summaries I read were misleading. It’s not a pseudo-RCT, and even if you take the data at face value (which you shouldn’t) it doesn’t show a vegan diet is superior to all others (as measured by lifespan). Vegan is at best tied with pescetarianism, and in certain niche cases (e.g. being a woman) it’s the worst choice besides unconstrained meat consumption.

I’m going to try not to be too sarcastic about this, since the study really is very good data by nutrition science standards, but I have a sour spot for medical papers that say “people” when they mean “men”, so probably something will leak out. Also, please consider what the state of nutrition science must be to make me call something that made this mistake “very good”.

Background

The 7th Day Adventists are a fairly large Christian sect. For decades scientists have been recruiting huge cohorts to study their diet, and publishing a lot of papers.

The Adventists are a promising group to use to study nutrition for lots of reasons, but primarily because the Church discourages meat, smoking, and drinking. So you lose the worst confounders for health, and get a population of lifelong, culturally competent vegetarians, which is a pretty good deal. Total abstinence from meat isn’t technically required – you’re allowed to eat kosher meat – but it’s heavily discouraged. 7DA colleges only serve vegetarian meals, and church me |

d9aa6587-6e55-46e3-88ff-7d1cf4e8c295 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Washington, D.C.: Sorting Hat

Discussion article for the meetup : Washington, D.C.: Sorting Hat

WHEN: 13 September 2015 03:00:00PM (-0400)

WHERE: National Portrait Gallery

Crossposted from mailing list. Meeting in courtyard, gathering from 3:00pm, hard start 3:30pm - until closing.

We'll be meeting to discuss what Hogwarts house you think you, other people, or characters from fiction belong to, using this as a guide.

Upcoming Meetups:

* Sep 20: Fun and Games

* Sep 27: Singing (weather permitting)

* Oct. 04: Availability Heuristic

Discussion article for the meetup : Washington, D.C.: Sorting Hat |

214ae8c8-d6cf-4c76-a092-2af58938ae1f | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | [AN #76]: How dataset size affects robustness, and benchmarking safe exploration by measuring constraint violations

Find all Alignment Newsletter resources [here](http://rohinshah.com/alignment-newsletter/). In particular, you can [sign up](http://eepurl.com/dqMSZj), or look through this [spreadsheet](https://docs.google.com/spreadsheets/d/1PwWbWZ6FPqAgZWOoOcXM8N_tUCuxpEyMbN1NYYC02aM/edit?usp=sharing) of all summaries that have ever been in the newsletter. I'm always happy to hear feedback; you can send it to me by replying to this email.

Audio version [here](http://alignment-newsletter.libsyn.com/alignment-newsletter-76) (may not be up yet).

**Highlights**

--------------

[Self-training with Noisy Student improves ImageNet classification](https://arxiv.org/abs/1911.04252) *(Qizhe Xie et al)* (summarized by Dan H): Instead of summarizing this paper, I'll provide an opinion describing the implications of this and other recent papers.

**Dan H's opinion:** Some in the safety community have speculated that robustness to data shift (sometimes called "transfer learning" in the safety community) cannot be resolved solely by leveraging more GPUs and more data. It is also argued that the difficulty of attaining data shift robustness suggests longer timelines. Both this paper and [Robustness properties of Facebook's ResNeXt WSL models](https://arxiv.org/abs/1907.07640) analyze the robustness of models trained on anywhere from over 100 million to 1 billion images, rather than only on ImageNet-1K's ~1 million images. Both papers show that data shift robustness greatly improves with more data, so data shift robustness appears more tractable with deep learning. These papers evaluate robustness using benchmarks that collaborators and I created; they use [ImageNet-A](https://arxiv.org/abs/1907.07174), [ImageNet-C](https://arxiv.org/abs/1903.12261), and [ImageNet-P](https://arxiv.org/abs/1903.12261) to show that performance improves tremendously simply by training on more data. See [Figure 2](https://arxiv.org/pdf/1911.04252.pdf#page=6&zoom=100,0,88) of the Noisy Student paper for a summary of these three benchmarks. Both the Noisy Student and Facebook ResNeXt papers have problems. For example, the Noisy Student paper trains with a few expressly forbidden data augmentations which overlap with the ImageNet-C test set, so performance is somewhat inflated. Meanwhile, the Facebook ResNeXt paper shows that more data does not help on ImageNet-A, but this is because they computed the numbers incorrectly; I personally verified Facebook's ResNeXts, and more data brings the ImageNet-A accuracy up to 60%, though this is still far below the 95%+ ceiling. Since [adversarial robustness can transfer to other tasks](https://arxiv.org/pdf/1901.09960.pdf), I would be surprised if robustness from these models could not transfer. These results suggest data shift robustness can be attained within the current paradigm, and that attaining image classifier robustness will not require a long timeline.

[Safety Gym](https://openai.com/blog/safety-gym/) *(Alex Ray, Joshua Achiam et al)* (summarized by Flo): Safety Gym contains a set of tasks of varying difficulty and complexity focused on safe exploration. In the tasks, one of three simulated robots has to move to a series of goals, push buttons, or move a box to a target location, while avoiding costs incurred by hitting randomized obstacles. This is formalized as a **constrained reinforcement learning** problem: in addition to maximizing the received reward, agents also have to respect constraints on a **safety cost function**. For example, we would like self-driving cars to learn how to navigate from A to B as quickly as possible while respecting traffic regulations and safety standards. While this could in principle be solved by adding the safety cost as a penalty to the reward, constrained RL gets around the need to correctly quantify tradeoffs between safety and performance.
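
To make the distinction concrete, the constrained problem can be written roughly as follows. The symbols here ($J_R$ for expected reward return, $J_C$ for expected safety cost, $d$ for the cost limit, and $\lambda$ for a fixed penalty weight) are notation assumed for illustration, not taken from the summary.

$$\max_{\pi} \; J_R(\pi) \quad \text{subject to} \quad J_C(\pi) \le d$$

The penalty alternative instead maximizes a single scalar objective:

$$\max_{\pi} \; J_R(\pi) - \lambda \, J_C(\pi)$$

In the penalty form, someone has to choose $\lambda$ in advance, which amounts to quantifying the tradeoff between safety and performance. In the constrained form, only the acceptable cost level $d$ has to be specified.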

Measures of safety are expected to become important criteria for evaluating algorithms' performance, and the paper provides first benchmarks. Constrained policy optimization (CPO), a trust-region algorithm that tries to prevent updates from breaking the constraint on the cost, is compared to new Lagrangian versions of TRPO/PPO that try to maximize the reward minus an adaptive factor times the cost above the threshold. Interestingly, the Lagrangian methods incur a lot less safety cost during training than CPO and satisfy constraints more reliably at evaluation. This comes at the cost of reduced reward. For some of the tasks, none of the tested algorithms is able to gain nontrivial rewards while also satisfying the constraints.
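
The "adaptive factor" in the Lagrangian methods can be illustrated with a minimal sketch. This is not the paper's implementation: the function names, the learning rate, the cost limit of 25, and the toy cost values are all hypothetical, and the policy update itself (TRPO or PPO) is left out. The sketch only shows how a multiplier might grow while the cost exceeds the threshold and shrink once the constraint is satisfied.

```python
def lagrangian_objective(reward_return, cost_return, cost_limit, multiplier):
    """Penalized objective: reward minus multiplier times cost above the limit."""
    return reward_return - multiplier * (cost_return - cost_limit)


def update_multiplier(multiplier, cost_return, cost_limit, lr=0.05):
    """One gradient-ascent step on the multiplier, clipped to stay non-negative.

    The step size `lr` is an illustrative assumption.
    """
    return max(0.0, multiplier + lr * (cost_return - cost_limit))


# Toy trace (hypothetical numbers): episode costs that start above the
# limit and then fall below it as the policy becomes safer.
multiplier = 0.0
cost_limit = 25.0
for cost_return in [60.0, 45.0, 30.0, 20.0, 10.0]:
    multiplier = update_multiplier(multiplier, cost_return, cost_limit)
    print(f"cost={cost_return:5.1f}  multiplier={multiplier:.2f}")
```

Because the multiplier rises only while the constraint is violated, the penalty weight does not have to be fixed in advance, which is one plausible reading of why such methods can trade some reward for lower cost during training.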

Lastly, the authors propose using Safety Gym to investigate methods for learning cost functions from human inputs, which is important since misspecified costs could fail to prevent unsafe behaviour, and for transfer learning of constrained behaviour, which could help to deal with distributional shifts more safely.