id | source | formatted_source | text |

|---|---|---|---|

501b3a48-022b-4b8e-8b55-89d4673d07a9 | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "I'm trying to like Beethoven's Great Fugue.

"This piece alone completely changed my life and how I perceive and appreciate music."

"Those that claim to love Beethoven but not this are fakers, frauds, wannabees, but most of all are people who are incapable of stopping everything for 10 minutes and reveling in absolute beauty, absolute perfection. Beethoven at his finest."

"This is the absolute peak of Beethoven."

"It's now my favorite piece by Beethoven."

These are some of the comments on the page. Articulate music lovers with excellent taste praise this piece to heaven. Plus, it was written by Beethoven.

It bores me.

The first two times I listened to it, it stirred no feelings in me except irritation and impatience for its end. I found it devoid of small-scale or large-scale structure or transitions, aimless, unharmonious, and deficient in melody, rhythm, and melodic or rhythmic coordination between the four parts, none of which I would care to hear by themselves (which is a key measure of the quality of a fugue).

Yet I feel strong pressure to like it. Liking Beethoven's Great Fugue marks you out as a music connoisseur.

I feel pressure to like other things as well: bitter cabernets, Jackson Pollock paintings, James Joyce's Finnegans Wake, The Love Song of J. Alfred Prufrock, the music of Arnold Schoenberg, and Burning Man. This is a pattern common to all arts. You recognize this pattern in a work when:

1. The work in question was created by deliberately taking away the things most people like best. In the case of wine, sweetness and fruitiness. In the case of Jackson Pollock, form, variety, relevance, and colors not found in vomit. In the music of Alban Berg, basic music theory. In every poem in any volume of "Greatest American Poetry" since 2000, rhyme, rhythm, insight, and/or importance of subject matter. In the case of Burning Man, every possible physical comfort. The work cannot be composed of things that most people appreciate plus things connoisseurs appreciate. It must be difficult to like.

2. The level of praise is absurd. The Great Fugue, Beethoven's finest? I'm sorry; my imagination does not stretch that far. "Burning Man changed my life completely" - I liked Burning Man; but if it changed your life completely, you probably had a vapid life.

3. People say they hated it at first, but over time, grew to love it. One must be trained to like it.

4. People give contradictory reasons for liking it. One person says the Great Fugue has a brilliant structure; another says it is great because of its lack of structure.

5. Learning to like it is a rite of passage within a particular community.

Here are some theories as to how a work becomes the darling of its medium or genre:

1. It is really and truly excellent. This would explain features 2 and 5.

2. It is a runaway peacock's-tail phenomenon: Someone made something that stood out in some way, and it got attention; and people learned to like things like that, and so others made things that stood out more in the same way, until we ended up with Alban Berg. This would explain features 2 and 3.

3. When an artistic institution enshrines good art as exemplars, it increases the status of the small number of people who can produce good art. When an institution enshrines bad art as exemplars, it decreases the status of people who can produce or recognize good art. As institutions grow in size, the ratio (# people advantaged by enshrining bad art / # people advantaged by enshrining good art) grows. This would explain all five features.

4. As people learn more about an art form, they can more easily predict it, and need more and more novelty to keep them interested; like porn viewers who seek out movies with continually-stranger sex acts. If ivy-league universities had departments of pornography, they would scoff at the simplicity of films lacking bondage, machines, or animals. This would explain features 1, 3, and 5.

5. Practitioners of an art appreciate technique more than content. This is why authors love Thomas Pynchon's Gravity's Rainbow and Delany's Dhalgren; they're full of beautiful phrases and metaphors, ways of making transitions, and other little tricks that authors can admire and learn to use, even though these books aren't as interesting to readers. This could explain feature 5. (Don't assume that the same theory is true for each of my examples. I think that the wine hierarchy and Alban Berg are nonsense, Jackson Pollock is an interesting one-trick pony, Citizen Kane was revolutionary and is important for cinematographers to study but is boring compared to contemporary movies, and Burning Man is great but would be even better with showers.)

I could keep listening to the Great Fugue, and see if I, too, come to love it in time. But what would that prove? Of course I would come to love it in time, if I listen to it over and over, earnestly trying to like it, convinced that by liking the Great Fugue I, too, would attain the heights of musical sophistication.

The fact that people come to like it over time is not even suggested by theory 1 - even supposing the music is simply so great as to be beyond the appreciation of the typical listener, why would listening to it repeatedly grant the listener this skill?

I have listened to it a few times, and am growing confused as to whether I like it or not. Why is this? Since when does one have to wonder whether one likes something or not?

I am afraid to keep listening to the Great Fugue. I would come to like it, whether it is great art or pretentious garbage. That wouldn't rule out any of my theories.

How can I figure out which it is before listening to it repeatedly?"

A user from LessWrong is responsible for the text you see above

Explain the psychological factors that might influence a person to force themselves to like a piece of art, music, or literature that they initially found unappealing. Can this forced appreciation genuinely evolve into true admiration over time?

The psychological factors that influence a person to force themselves to like a piece of art, music, or literature that they initially found unappealing can be multifaceted. One primary factor is social influence or conformity. People are often influenced by the opinions and behaviors of others, especially if those others are perceived as experts or authorities in a particular field. This is known as informational social influence. The desire to fit in or be accepted by a group, known as normative social influence, can also lead individuals to adopt preferences that they may not naturally gravitate towards.

Another factor is the 'effort justification' aspect of cognitive dissonance theory. This theory suggests that when people put a lot of effort into something, they tend to value it more highly to justify the effort they've put in. So, if a person spends a lot of time trying to understand and appreciate a complex piece of art or music, they may start to convince themselves that they like it to justify the time and effort they've invested.

Additionally, the mere exposure effect, a psychological phenomenon by which people tend to develop a preference for things merely because they are familiar with them, can also play a role. Repeated exposure to a piece of art or music can lead to increased liking, even if the initial reaction was negative.

As for whether forced appreciation can evolve into true admiration over time, it's possible. As people invest time and effort into understanding a piece of art, music, or literature, they may start to appreciate its nuances, complexities, and subtleties. This deepened understanding can lead to genuine admiration. However, it's also possible that the admiration remains superficial, driven more by the desire to conform or justify effort than by genuine aesthetic appreciation. It's a highly individual process and can depend on numerous factors, including the person's openness to new experiences, their prior knowledge and experiences, and their personal taste.

Reflect on how the mere exposure effect and cognitive dissonance theory might interact in shaping an individual's appreciation for complex art forms. Could these psychological phenomena potentially lead to a genuine change in personal aesthetic taste over time, or would they merely create an illusion of admiration? Discuss with specific examples.

The mere exposure effect and cognitive dissonance theory can interact in intriguing ways to shape an individual's appreciation for complex art forms.

The mere exposure effect posits that repeated exposure to a stimulus increases our liking for it. For example, a person might initially find a piece of abstract art confusing and unappealing. However, as they see it more often, perhaps visiting it in a museum multiple times, they may start to feel more comfortable with it and begin to appreciate its aesthetic qualities.

Cognitive dissonance theory, on the other hand, suggests that we strive for internal consistency. When we experience a discrepancy between our attitudes and our behaviors, it creates a state of tension known as cognitive dissonance, which we are motivated to reduce. For instance, if a person spends a significant amount of time studying and trying to understand a complex piece of music, they may experience cognitive dissonance if they still don't enjoy it. To resolve this dissonance, they might convince themselves that they do appreciate the music, aligning their attitudes with their behaviors.

When these two theories interact, they can potentially lead to a change in personal aesthetic taste over time. The mere exposure effect can soften initial negative reactions, making the individual more open to understanding and appreciating the art form. Then, cognitive dissonance can motivate the individual to align their attitudes with their behaviors, leading to a genuine change in taste.

For example, consider a person who decides to explore the world of avant-garde cinema. Initially, they might find these films confusing and unenjoyable compared to mainstream cinema. However, as they watch more avant-garde films (mere exposure), they may start to understand and appreciate the unique storytelling techniques used. If they invest significant time and effort into understanding this genre (behavior), they may experience cognitive dissonance if they still don't enjoy it. To resolve this dissonance, they might convince themselves that they do appreciate avant-garde cinema, aligning their attitudes with their behaviors.

However, it's also possible that these phenomena create an illusion of admiration rather than a genuine change in taste. If the motivation to reduce cognitive dissonance is stronger than the individual's genuine aesthetic response, they might convince themselves that they like the art form without truly enjoying it. This could result in a superficial appreciation that depends more on the desire to maintain internal consistency than on genuine aesthetic enjoyment.

In conclusion, while the mere exposure effect and cognitive dissonance theory can contribute to a genuine change in aesthetic taste, they can also |

16b4ebae-fb04-4984-9a9e-0e294d95f66f | trentmkelly/LessWrong-43k | LessWrong | Is there an idiom for bonding over shared trials/trauma?

Is there an idiom or phrase which describes bonding or attachment, not between a victim and an abuser like in Trauma Bonding or Stockholm Syndrome, but between two or more victims? For example, how would you describe two hostages who forge a friendship or deep connection based on their shared experience? (A common trope in TV episodes for quickly crystallizing enemies into friends.)

Specifically, I'm wondering about a word for people who were in the same environment together, not just people who had similar experiences. |

e12b45fe-7f09-449c-b4b4-c57f46423381 | trentmkelly/LessWrong-43k | LessWrong | What are some good language models to experiment with?

Like if I want to experiment with a steering technique, it would be useful to have a language model that is small, capable, but not so finetuned that it becomes inflexible. (Or maybe ideally, a model which has both a finetuned and a non-finetuned variant.)

I've seen some people use GPT-2. Is that recommended? Are there any alternatives? |

3cc5aca2-341e-416f-b3d8-f3acfa19dc14 | trentmkelly/LessWrong-43k | LessWrong | Meetup : San Francisco Meetup: Short Talks

Discussion article for the meetup : San Francisco Meetup: Short Talks

WHEN: 30 March 2015 06:15:00PM (-0700)

WHERE: 1061 Market St #4, San Francisco, CA 94103

Please note the location, since we've been bouncing between two locations.

We'll be meeting to give/listen to short talks. Planning isn't necessary: these are not expected to be polished.

I can be reached at 301-458-0764 if you need help getting in. As always, feel free to show up late.

Discussion article for the meetup : San Francisco Meetup: Short Talks |

ae8b20f8-1b0e-4f8e-8a5e-2e8571a6e5c7 | trentmkelly/LessWrong-43k | LessWrong | Buying Value, not Price

Cross-posted from Putanumonit.

Follow-up to Shopping for Happiness.

----------------------------------------

My old Galaxy smartphone recently gave up the ghost, and I upgraded to the new model for $750. My friend was surprised when I told him: the old model is now available for $250, so is the new one really three times better?

“Three times” better can mean several things, but in my post on spending money wisely I came up with the metric that should guide purchasing decisions: happiness gained per unit of time spent experiencing a thing, or :-)/hr. By this metric, since the new phone costs 3x as much, unless it provides 3x the :-)/hr, it’s worse in terms of $/:-). That means I’m getting less happiness per dollar spent.

I like my new phone a lot: the screen is bigger, the battery lasts all day and night, and I can use it for blogging. It brings me at least 25% more :-) than the old phone. But it doesn’t make me 200% happier. And yet I feel like I’m getting a good deal.
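To make the $/:-) comparison concrete, here is a small Python sketch. It assumes the happiness difference can be collapsed into a single relative number (old phone = 1.0, new phone = 1.25, the post's "at least 25% more"); the function name `dollars_per_smiley` is made up for illustration.

```python
# A minimal sketch of the $/:-) comparison, assuming happiness can be expressed
# as one relative number with the old phone as the 1.0 baseline.

def dollars_per_smiley(price: float, relative_happiness: float) -> float:
    """Dollars paid per unit of relative happiness (lower is better)."""
    return price / relative_happiness


old = dollars_per_smiley(250, 1.00)   # old model, baseline happiness
new = dollars_per_smiley(750, 1.25)   # new model, "at least 25% more :-)"

print(f"old: ${old:.0f} per unit, new: ${new:.0f} per unit")
print(f"the new phone costs {new / old:.1f}x as much per unit of happiness")
```

Under these assumptions the upgrade costs 2.4 times as much per unit of happiness ($600 vs. $250), which is the disparity the post goes on to square with its willingness-to-pay argument.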

When my friend asked how I would justify this decision I warned him not to trust my explanation – since I already bought the phone, any justification may just be a post hoc rationalization. That caveat aside, my justification is that the price of the phone is a red herring. What I really care about is the value.

Ask yourself: how much would you be willing to pay for your smartphone if it was the only model available for sale?

Whether or not they “ruined a generation”, I think that smartphones are awesome and immensely improve my life. If I had to choose between no phone at all and a Galaxy smartphone, I’d pay at least $4,000 for the old model and $5,000 (25% more) for the new one. That means I’d be willing to pay $1,000 more for the upgrade, and they only charge me $500 more ($750 vs. $250) for it. The fact that smartphones cost less than what I’m willing to pay is just a wonderful bonus born of engineering ingenuity and market competition.
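The willingness-to-pay argument is what economists call consumer surplus (willingness to pay minus price), a label the post itself doesn't use. A short Python sketch with the post's own figures; the helper `surplus` is illustrative:

```python
# Consumer surplus = what you would be willing to pay minus what you actually pay.
# All dollar figures are the ones given in the post.

def surplus(willingness_to_pay: int, price: int) -> int:
    return willingness_to_pay - price


old_surplus = surplus(4_000, 250)   # old model
new_surplus = surplus(5_000, 750)   # new model

extra_value = 5_000 - 4_000         # what the upgrade is worth to the author
extra_price = 750 - 250             # what the upgrade actually costs
print(old_surplus, new_surplus, extra_value, extra_price)
```

The upgrade raises surplus from $3,750 to $4,250: the extra $1,000 of value minus the extra $500 of price. That is why the purchase can look good on surplus even though it looks worse on $/:-).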

I square this with the $/:-) disparity by noting that my |

0cf9aec0-a618-40d2-856c-19a81fa8f921 | trentmkelly/LessWrong-43k | LessWrong | What it's like to dissect a cadaver

Why

I never thought I was a bio person. But then I overheard Viv talking about MAOIs at a party. I asked her:

> - What are MAOIs?

> - monoamine oxidase inhibitor

> - What does that mean?

> - It prevents reuptake of neurotransmitters.

> - But what *is* a neurotransmitter? What does reuptake actually mean?

> - ...

> - So life uses chiral properties of space to implement things...

Viv had the most important trait of a teacher: patience. I asked the most naive questions and they answered them. They walked with me, all the way down to the very beginning, rebuilding my understanding. It was amazing. I wanted to know more. Roadblock: finding lifeforms to study.

I wondered if non-medical students could watch dissections. You can’t get more information about an object than by directly interacting with it. The concrete world contains the abstract one. I even asked my doctor at a physical if she knew of any, and she said to look at community colleges.

After some searching, I found this: Bio 848NV. Forget viewing the dissection; you’re doing the dissection. A 5-hour dissection costs $60, or it’s free if you just watch. The only bureaucratic hangup is that you must pay by check.

This is why I love the Bay Area: there’s stuff like this and you can just do it. Yes, it’s weird. No, they can’t stop you. The boundary between scientist and serial killer is paper-thin sometimes.

Takeaways

* I’ve done this a few times now. Turns out that there’s way way way too much information to understand it all in one 5 hour session. Each time, we pick out areas and focus on them.

* Seeing how everything fits together, and how big it is, makes understanding at different scales much easier.

* There’s a common template to life. Seeing it in you hits different.

* The brain has interesting connections to fractals and graph theory.

* Maybe pan-psychism isn’t totally wrong.

What & how & why

I tell my friend Leah and she says “This is the most appealing activity that I’ve ever seen you do”. Dunno whom that say |

61590324-d979-458f-97dc-e8940067d424 | trentmkelly/LessWrong-43k | LessWrong | If Neuroscientists Succeed

Introduction

In the spring of 2022, Stuart Russell wrote an essay entitled If We Succeed, in which he questioned whether and how the field of AI might need to pivot from its historical goal of creating general-purpose intelligence to a new goal: creating intelligence that would be provably beneficial for humans. He noted that although the former goal had driven a great deal of progress, it was rapidly on its way to becoming myopic, counterproductive, and even dangerous.

> It is not rational for humans to deploy machines that pursue fixed objectives when there is a significant possibility that those objectives diverge from our own.

>

> -Stuart Russell

I believe that a similar situation may now be arising in neuroscience. It is a case where neuroscientists may need to pivot from focusing on the historical goals of their field to thinking about what happens as the world actually gets closer to achieving them.

But why should anyone on Less Wrong be concerned about what is happening in neuroscience? Readers of this forum tend to be most concerned about progress in AI, and the need for AI researchers to pivot, which Russell talked about. And that is for understandable reasons. The rapid ascent of AI models up the leaderboards, which can feel so concerning, is being driven by AI researchers and AI companies, not neuroscientists. What is happening in neuroscience might seem quaint or irrelevant by comparison.

However, there have turned out to be remarkable parallels between what is happening in neuroscience and AI, or at least in the subfield of neuroscience now often known as neuroAI. Both neuroAI scientists and AI scientists have gotten to the present moment by pursuing very similar methods: the methods of deep learning and deep neural networks. As such, neuroscientists and AI scientists are facing similar problems, and the perspectives they have developed are complementary and synergistic.

For example, one problem that concerns AI researchers curren |

ec8c8fa8-aafe-463d-a701-5603a5d2dc98 | trentmkelly/LessWrong-43k | LessWrong | Why learn to code?

I hear the phrase "learn to code" around the rat and postrat communities occasionally. From context I get the main argument: the income is good and the work isn't too difficult. Has anyone written a fuller argument for learning to code? Or what is your favorite version of the argument? I'm considering a career change and want to hear it out. |

dde37ae2-fb46-42da-b6fd-642b4cfa9da3 | trentmkelly/LessWrong-43k | LessWrong | Short Notes on Research Process

I've transitioned from reading about alignment and theorizing about the problem landscape, to doing things. And by things I mean MVPs of bigger ideas, where each bigger idea roughly falls under the umbrella of Collective Human Intelligence. I'll be writing up these thoughts into a coherent research agenda. For the moment I just want to transparently share my process for this month.

Writing on the Wall

After reading a lot about alignment and somewhat despairing about finding any useful research direction, I -- just decided to write and draw what came to mind. What seemed interesting, promising, fun? Brainstorming and continuous stream of thought. I've wiped away quite a few of my notes now, but basically my office now looks like this:

Fun fact: People tend to enjoy adding items and drawing on the glass when they come by.

[Image: impression of what my office looks like, shared to be transparent about process. Not saying these thoughts are good or correct.]

MVPs

For each idea that I came up with, I started fleshing out an MVP -- how could I test if this idea is worthwhile and doable? Then each MVP is broken down into actionable steps, and the total time of execution is kept at 1-10 work days. Additionally, each MVP has a clear scaled-up target where the bigger thing would actually make a meaningful contribution to solving the alignment problem. This started out as a semantic-web-like blob of free association (see whiteboard picture above) and later got distilled into a list of seven MVPs with structured steps (two of them are shown below the whiteboard).

Talk, talk, talk

I filled my calendar with meetings -- with new people. Old people. All people.

I asked them if I could run my ideas past them and if they could give feedback. So I had a mathematician liking an algorithmic idea. A governance-minded person giving advice on where to find the right people to talk to. A senior alignment researcher asking what the hell I'm up to and why I'm not thinking of this other application of my |

900d4cf9-86b4-4f92-bb40-87787beaf018 | trentmkelly/LessWrong-43k | LessWrong | Temporally Layered Architecture for Adaptive, Distributed and Continuous Control

A preprint was published by Devdhar Patel, Joshua Russell, Francesca Walsh, Tauhidur Rahman, Terrence Sejnowski, and Hava Siegelmann in December 2022.

Abstract:

> We present temporally layered architecture (TLA), a biologically inspired system for temporally adaptive distributed control. TLA layers a fast and a slow controller together to achieve temporal abstraction that allows each layer to focus on a different time-scale. Our design is biologically inspired and draws on the architecture of the human brain which executes actions at different timescales depending on the environment's demands. Such distributed control design is widespread across biological systems because it increases survivability and accuracy in certain and uncertain environments. We demonstrate that TLA can provide many advantages over existing approaches, including persistent exploration, adaptive control, explainable temporal behavior, compute efficiency and distributed control. We present two different algorithms for training TLA: (a) Closed-loop control, where the fast controller is trained over a pre-trained slow controller, allowing better exploration for the fast controller and closed-loop control where the fast controller decides whether to "act-or-not" at each timestep; and (b) Partially open loop control, where the slow controller is trained over a pre-trained fast controller, allowing for open loop-control where the slow controller picks a temporally extended action or defers the next n-actions to the fast controller. We evaluated our method on a suite of continuous control tasks and demonstrate the advantages of TLA over several strong baselines.
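The closed-loop mode described in the abstract (a slow controller whose action persists, plus a fast controller that decides at every timestep whether to act) can be illustrated with a toy rollout. This is a hand-written sketch of the control flow only, not the paper's algorithm: there is no reinforcement learning, and the 1-D system, the `run_layered` helper and both policies are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical interfaces, not taken from the paper:
#   slow(x)       -> an action that is held until the next slow update
#   fast(x, held) -> None to "not act", or an action that overrides `held`
SlowPolicy = Callable[[float], float]
FastPolicy = Callable[[float, float], Optional[float]]


def run_layered(x0: float, slow: SlowPolicy, fast: FastPolicy,
                slow_period: int, steps: int) -> Tuple[List[float], int]:
    """Roll out the toy system x <- x + action under two-timescale control.

    Returns the state trajectory and the number of fast-layer interventions.
    """
    x, held = x0, 0.0
    trajectory, interventions = [x], 0
    for t in range(steps):
        if t % slow_period == 0:
            held = slow(x)            # slow layer re-plans every `slow_period` steps
        override = fast(x, held)      # fast layer decides act-or-not at every step
        if override is None:
            action = held
        else:
            action = override
            interventions += 1
        x += action
        trajectory.append(x)
    return trajectory, interventions


TARGET = 10.0


def slow_policy(x: float) -> float:
    # Coarse plan: close half the remaining gap, then hold that action.
    return (TARGET - x) / 2


def guard_policy(x: float, held: float) -> Optional[float]:
    # Only act when holding the slow action would overshoot the target.
    return TARGET - x if x + held > TARGET else None


def never_act(x: float, held: float) -> Optional[float]:
    return None  # fast layer switched off, for comparison


guarded, n_guarded = run_layered(0.0, slow_policy, guard_policy, slow_period=4, steps=6)
unguarded, _ = run_layered(0.0, slow_policy, never_act, slow_period=4, steps=6)
print(guarded, n_guarded)
print(unguarded)
```

With the guard, the state settles at the target and the fast layer intervenes only twice in six steps. With the fast layer switched off, the held slow action overshoots to 20 before the next slow update pulls it back. That is the intuition behind letting a cheap fast layer monitor a slow one, although the paper trains its controllers rather than hand-coding them.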

Conclusion:

> In this work, we presented Temporally Layered Architecture (TLA), a framework for distributed, adaptive response time in reinforcement learning. The framework allows the RL agent to achieve smooth control in a real-time setting using a slow controller while a fast controller monitors and intervenes as required. Additionally, |

2af0ad89-df00-4d71-b4f9-77fcfae9840a | trentmkelly/LessWrong-43k | LessWrong | Is GiveWell.org the best charity (excluding SIAI)?

Update: I should've said "non-existential risk charity", rather than specifically excluding SIAI. I'm having trouble articulating why I don't want to give to an existential risk charity, so I'm going to think more deeply about it. This post is close to my source of discomfort, which is about the many highly uncertain assumptions necessary to motivate existential risk reduction. However, I couldn't articulate this argument properly before, so it might not be the true source of my discomfort. I'll keep thinking.

----------------------------------------

I received my first pay-cheque from my first job after getting my degree, so it's time to start tithing. So I've been evaluating which charity to donate to. I'd like to support the SIAI, but I'm not currently convinced it's the best-value charity in a dollars-per-life sense, once time-value of money discounting is applied. I'd like to discuss the best non-SIAI charity available.

By far the best source of information I've found is www.givewell.org. It was started by two hedge fund managers who were struck by the absence of rational charity evaluations, and so decided that this was the most pressing problem they could work on.

Perhaps the clearest, deepest finding from the studies they pull together and discuss is that charity is hard. Spending money doesn't automatically translate to doing good. It's not even enough to have smart people who care and know a lot about the problem think of ideas, and then spend money doing them. There's still a good chance the idea won't work. So we need to be evaluating programs rigorously before we scale them up, and keep evaluating as we scale.

The bad news is that this isn't how charity is usually done. Very few charities make convincing evaluations of their activities public, if they carry them out at all. The good news is that some of the programs that have been evaluated are very, very effective. So choosing a charity rationally is absolutely critical.

Let's say you're interested s |

70f38c67-7e17-4683-82be-6dd81fe1cfee | trentmkelly/LessWrong-43k | LessWrong | Grok Grok

This is a post in two parts.

The first half of the post is about Grok’s capabilities, now that we’ve all had more time to play around with it. Grok is not as smart as one might hope and has other issues, but it is better than I expected and for now has its place in the rotation, especially for when you want its Twitter integration.

That was what this post was supposed to be about.

Then the weekend happened, and now there’s also a second half. The second half is about how Grok turned out rather woke and extremely anti-Trump and anti-Musk, as well as trivial to jailbreak, and the rather blunt things xAI tried to do about that. There was some good transparency in places, to their credit, but a lot of trust has been lost. It will be extremely difficult to win it back.

There is something else that needs to be clear before I begin. Because of the nature of what happened, in order to cover it and also cover the reactions to it, this post has to quote a lot of very negative statements about Elon Musk, both from humans and also from Grok 3 itself. This does not mean I endorse those statements – what I want to endorse, as always, I say in my own voice, or I otherwise explicitly endorse.

TABLE OF CONTENTS

1. Zvi Groks Grok.

2. Grok the Cost.

3. Grok the Benchmark.

4. Fun with Grok.

5. Others Grok Grok.

6. Apps at Play.

7. Twitter Groks Grok.

8. Grok the Woke.

9. Grok is Misaligned.

10. Grok Will Tell You Anything.

11. xAI Keeps Digging (1).

12. xAI Keeps Digging (2).

13. What the Grok Happened.

14. The Lighter Side.

ZVI GROKS GROK

I’ve been trying out Grok as my default model to see how it goes.

We can confirm that the Chain of Thought is fully open. The interface is weird: it scrolls past you super fast, which I found makes it a lot less useful than the CoT for r1.

Here are the major practical-level takeaways so far, mostly from the base model since I didn’t have that many tasks calling for reasoning recently, note the sample size is small an |

d53c2a41-1937-4697-86e7-a928f91b079e | trentmkelly/LessWrong-43k | LessWrong | Situational Awareness: A One-Year Retrospective

tl;dr: Many critiques of *Situational Awareness* have been purely qualitative; one year later we can finally check the numbers. I did my best to verify Aschenbrenner's claims using public data through June 2025, and found that his estimates mostly check out.

This is inherently noisy work - nothing herein is certain, and I would encourage red-teaming, particularly in the algo-efficiencies/unhobbling/hardware sections.

Many thanks to Kai Williams, Egg Syntax, and Aaron Scher for their critical feedback.

Abstract

Leopold Aschenbrenner’s 2024 essay Situational Awareness forecasts AI progress from 2024 to 2027 in two groups: "drivers" (raw compute, algorithmic efficiency, and post-training capability enhancements known as "un-hobbling") and "indicators" (largest training cluster size, global AI investment, chip production, AI revenue, and electricity consumption).[1] Drivers and the largest cluster size are expected to grow by about half an order of magnitude (≈3.2×) annually, infrastructure indicators to roughly double annually (2× per year), and AI revenue to double every six months (≈4× per year).[2]

Using publicly available data as of June 2025, this audit finds that global AI investment, electricity consumption, and chip production follow Aschenbrenner’s forecasts. Compute, algorithmic efficiency, and unhobbling gains seem to follow Aschenbrenner’s forecasts as well, although with more uncertainty. xAI’s Grok 3 exceeds expectations by about one-third of an order of magnitude.[3] However, recent OpenAI and Anthropic models trail raw-compute trends by about one-third to one-half an order of magnitude,[4] and AI-related revenue growth is several months behind. Overall, Aschenbrenner’s predicted pace of roughly half an order-of-magnitude annual progress is supported by available evidence, though measurement uncertainty persists.
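The conversions between orders of magnitude (OOMs) and growth multipliers used above are easy to check. A short Python sketch; the helper names are illustrative and do not come from the essay or the audit:

```python
import math


def oom_to_multiplier(ooms: float) -> float:
    """Convert orders of magnitude (a base-10 exponent) into a growth multiplier."""
    return 10 ** ooms


def doubling_to_annual(months_per_doubling: float) -> float:
    """Annual growth factor for a quantity that doubles every N months."""
    return 2 ** (12 / months_per_doubling)


print(round(oom_to_multiplier(0.5), 2))   # half an OOM per year
print(round(oom_to_multiplier(1 / 3), 2)) # one-third of an OOM
print(doubling_to_annual(12))             # doubling annually
print(doubling_to_annual(6))              # doubling every six months
print(round(math.log10(4), 2))            # 4x per year, expressed in OOMs
```

Half an OOM is 10^0.5 ≈ 3.16×, which the audit rounds to ≈3.2×. Doubling every six months is exactly 4× per year, or about 0.6 OOM. A one-third-OOM gap, as reported for Grok 3, corresponds to a factor of roughly 2.15.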

[Graph: at-a-glance scoreboard.]

Introduction

“It is strikingly plausible that by 2027, models will be able to do the work of an AI researcher/engineer. |

7b001b46-f999-4063-9035-bc5cc2ca9213 | trentmkelly/LessWrong-43k | LessWrong | Frankfurt Declaration on the Cambridge Declaration on Consciousness

TL;DR: I admit that I have no idea what consciousness is. I communicate this in the form of a declaration.

A fateful conference dinner…

> #IAN says: The problem with being a "good person" is that you never have enough energy left over for doing really evil things.

Imagine the following: It is the year 2012 and you have been invited to a fancy conference dinner in Cambridge. There is champagne and you are feeling great. Stephen Hawking is here, good grief! You feel like you have made some real progress today, with so many interesting talks and discussions. You even managed to plug your latest paper to one of the conference chairs. All is well. Suddenly a sheet of paper drifts into your field of view. A declaration on the topic of the conference - animal consciousness. You eagerly sign (eleven other people already did). It's probably alright, you think. Science is about discourse. We are just stating our position...

[Image: a fancy conference dinner with champagne, generated with CLIP Guided Diffusion.]

> In 2012, a group of neuroscientists signed the Cambridge Declaration on Consciousness, which "unequivocally" asserted that "humans are not unique in possessing the neurological substrates that generate consciousness." (Wikipedia)

----------------------------------------

… and its predictable aftermath

The subsequent news cycle is as wild as you'd predict. Here’s New Scientist:

> "Animals are conscious and should be treated as such".

and LiveScience:

> "After 2,500 Studies, It's Time to Declare Animal Sentience Proven".

The declaration has also made it into the reports of some of our favorite effective altruism organizations. Here’s the Cause Profile on effectivealtruism.org:

> "Many experts now believe that animals have conscious experiences"

and (in a footnote to) the Founders Pledge Animal Welfare Cause Report:

> Similarly, the Cambridge Declaration on Consciousness, an important collection of “unequivocal” statements made by a group of prominent neuroscientists |

5f14926d-779d-4c9d-952c-377872eea69b | trentmkelly/LessWrong-43k | LessWrong | Polysemanticity and Capacity in Neural Networks

Elhage et al. at Anthropic recently published a paper, Toy Models of Superposition (previous Alignment Forum discussion here), exploring the observation that in some cases, trained neural nets represent more features than they “have space for”: instead of choosing one feature per direction available in their embedding space, they choose more features than directions and then accept the cost of “interference”, where these features bleed over into each other. (See the SoLU paper for more on the Anthropic interpretability team’s take on this.)

We (Kshitij Sachan, Adam Scherlis, Adam Jermyn, Joe Benton, Jacob Steinhardt, and I) recently uploaded an arXiv paper, Polysemanticity and Capacity in Neural Networks, building on that research. In this post, we’ll summarize the key idea of the paper.

We analyze this phenomenon by thinking about the model’s training as a constrained optimization process, where the model has a fixed total amount of capacity that can be allocated to different features, such that each feature can be ignored, purely represented (taking up one unit of capacity), or impurely represented (taking up some amount of capacity between zero and one units).

When the model purely represents a feature, that feature gets its own full dimension in embedding space, and so can be represented monosemantically; impurely represented features share space with other features, and so the dimensions they’re represented in are polysemantic.
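To make the capacity-allocation framing concrete, here is a toy sketch. It is not the paper's model or its definition of capacity: we simply assume each feature has a hypothetical marginal-benefit function of its current capacity, that each feature is capped at one unit, and that the total budget is fixed. Capacity is handed out greedily in small steps to whichever feature currently benefits most, and the result is read off as ignored (0), pure (1), or impure (in between).

```python
from typing import Callable, List

MarginalBenefit = Callable[[float], float]


def allocate_capacity(marginal_benefits: List[MarginalBenefit],
                      budget: float, step: float = 0.01) -> List[float]:
    """Greedily give capacity in small steps to the feature with the highest
    positive marginal benefit; each feature is capped at one unit."""
    alloc = [0.0] * len(marginal_benefits)
    remaining = budget
    eps = 1e-9
    while remaining >= step - eps:
        best_i, best_gain = None, 0.0
        for i, mb in enumerate(marginal_benefits):
            if alloc[i] + step <= 1.0 + eps:  # respect the one-unit cap
                gain = mb(alloc[i])
                if gain > best_gain:
                    best_i, best_gain = i, gain
        if best_i is None:  # no feature benefits from more capacity
            break
        alloc[best_i] += step
        remaining -= step
    return alloc


def classify(c: float, tol: float = 1e-6) -> str:
    """Ignored (0 units), purely represented (1 unit), or impurely represented."""
    if c <= tol:
        return "ignored"
    if c >= 1.0 - tol:
        return "pure (monosemantic)"
    return "impure (polysemantic)"


# Hypothetical marginal-benefit curves for three features, with a budget of 2 units.
features = [
    lambda c: 1.0 - 0.5 * c,  # stays valuable even when fully represented
    lambda c: 0.8 - 0.8 * c,  # diminishing returns
    lambda c: 0.1,            # small constant benefit
]
alloc = allocate_capacity(features, budget=2.0)
for i, c in enumerate(alloc):
    print(f"feature {i}: capacity {c:.2f} -> {classify(c)}")
```

Greedy small-step allocation is only guaranteed to be optimal when every marginal-benefit curve is non-increasing; with increasing marginal returns, an optimum may instead put all of a feature's capacity in at once, which this sketch does not search for.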

For each feature, we can plot the marginal benefit of investing more capacity into representing that feature, as a function of how much it’s currently represented. Here we plot six cases, where one feature’s marginal benefit curve is represented in blue and the other in black.

These graphs show a variety of different possible marginal benefit curves. In A and B, the marginal returns are increasing: the more you allocate capacity to a feature, the more strongly you want to allocate more capacity to it. In C, the marginal returns |

37b28092-2ae9-4535-9b88-60acb7a44115 | trentmkelly/LessWrong-43k | LessWrong | Rational feelings: a crucial disambiguation

Ever wonder something like, "I know it's bad for me that I lost my job, but I actually feel happy about it... is that rational?"

What could a question like that mean? There is a divisive ambiguity here that really messes people up. A feeling as an experience is neither rational nor irrational. It's like asking how ethical a shade of purple is. The point is that a feeling must be framed as a behavior or a statement to ask whether it is rational, and which one matters heaps and loads to the answer.

If you think of the happiness as a behavior, something that you're doing, then the question is secretly asking about instrumental rationality: whether you're applying your beliefs correctly to attain your values. In our opening example, the question becomes "Does feeling happy serve my values?", or simply "Do I value feeling happy?". If you're almost anyone, the answer is probably "yes".

If you think of the happiness as a statement or instruction that says "Your values are being served", which can be true/false and justified/unjustified, then the question is really about epistemic rationality, and asks: "Am I justified to believe my values are being served?". If "it's bad for me" means "no", then "no".

Because of this ambiguity, although it can make sense to say "I'm happy" to indicate "my values are being served", I propose that in the interest of epistemic hygiene it's worth being more specific. Conflating feelings-as-behaviors with feelings-as-statements inflicts a great deal of pondering and confusion about whether feelings are rational (also precipitated by Hollywood), and to make matters worse, each of these similes has only limited validity:

1) A feeling is a behavior only insofar as you have control over it. This is something perhaps to strive for, but which certainly varies in feasibility. If someone carefully injects you with dopamine at a funeral, you might feel happy. That doesn't mean you've made an instrumentally irrational choice. 2) A feeling is a sta |

4c78f632-7e10-4556-b643-558356df90b3 | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] No Individual Particles

Today's post, No Individual Particles was originally published on 18 April 2008. A summary (taken from the LW wiki):

> One of the chief ways to confuse yourself while thinking about quantum mechanics, is to think as if photons were little billiard balls bouncing around. The appearance of little billiard balls is a special case of a deeper level on which there are only multiparticle configurations and amplitude flows. It is easy to set up physical situations in which there exists no fact of the matter as to which electron was originally which.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was Feynman Paths, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

378e01e6-da49-40f9-8c68-bc6277ad403e | trentmkelly/LessWrong-43k | LessWrong | Fixedness From Frailty

Thinking about two separate problems has caused me to stumble onto another, deeper problem. The first is psychic powers: what evidence would convince you to believe in psychic powers? The second is the counterfactual mugging problem: what would you do when presented with a situation where a choice will hurt you in your future but benefit you in a future that never happened and never will happen to the you making the decision?

Seen as a simple two-choice problem, there are some obvious answers: "Well, he passed tests X, Y, and Z, so they must be psychic." "Well, he passed tests X, Y, and Z, so that means I need to come up with more tests to know if they're psychic." "Well, if I'm convinced Omega is genuine, then I'll pay him $100, because I want to be the sort of person that he rewards so any mes in alternate universes are better off." "Well, even though I'm convinced Omega is genuine, I know I won't benefit from paying him. Sorry, alternate universe mes that I don't believe exist!"

I think the correct choice is the third option: I have either been tricked or gone insane.1 I probably ought to run away, then ask someone who I have more reason to believe is non-hallucinatory for directions to a mental hospital.

The math behind this is easy: I have prior probabilities that I am gullible (low), insane (very low), and that psychics / Omega exist (very, very, very low). When I see that the results of tests X, Y, and Z suggest someone is psychic, or see the appearance of an Omega who possesses great wealth and predictive ability, that is generally evidence for all three possibilities. I can imagine evidence which is counter-evidence for the first but evidence for the other two, but I can't imagine the existence of evidence consistent with the axioms of probability which increases the probability of magic (of the normal or sufficiently advanced technology kind) to higher than the probability of insanity.2
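The argument can be made concrete with Bayes' rule. The sketch below uses made-up priors and likelihoods (every number is an illustrative assumption, not the author's) for four hypotheses: nothing unusual is going on, I've been tricked, I'm insane, or psychics are real. What it shows is that when an observation is about equally likely under "insane" and "psychic", the posterior ratio between those two stays equal to the prior ratio, so evidence of that kind can never lift "psychic" above "insane".

```python
def posterior(priors: dict, likelihoods: dict) -> dict:
    """Bayes' rule over a finite set of mutually exclusive hypotheses."""
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


# Illustrative (made-up) priors: gullible is low, insane very low, psychic far lower still.
priors = {
    "tricked": 1e-2,
    "insane": 1e-4,
    "psychic": 1e-9,
}
priors["mundane"] = 1.0 - sum(priors.values())  # none of the above

# Illustrative probability of observing "passed tests X, Y, and Z" under each hypothesis.
likelihoods = {
    "mundane": 1e-6,  # a non-psychic, watched by a sane observer, rarely passes
    "tricked": 0.5,
    "insane": 0.9,    # a hallucination can show me anything
    "psychic": 0.9,   # a real psychic would pass
}

post = posterior(priors, likelihoods)

for h, p in sorted(post.items(), key=lambda kv: -kv[1]):
    print(f"{h:8s} {p:.3e}")
# Equal likelihoods under 'insane' and 'psychic' leave their ratio unchanged.
print("psychic/insane prior ratio:    ", priors["psychic"] / priors["insane"])
print("psychic/insane posterior ratio:", post["psychic"] / post["insane"])
```

With these numbers "tricked" ends up as the most probable explanation, and "psychic" stays five orders of magnitude behind "insane".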

This result is shocking and unpleasant, though- I have decided some t |

ad3994c3-1da0-4c00-8eb8-732b45bbed1e | trentmkelly/LessWrong-43k | LessWrong | [AN #145]: Our three year anniversary!

Alignment Newsletter is a weekly publication with recent content relevant to AI alignment around the world. Find all Alignment Newsletter resources here. In particular, you can look through this spreadsheet of all summaries that have ever been in the newsletter.

Audio version here (may not be up yet).

Please note that while I work at DeepMind, this newsletter represents my personal views and not those of my employer.

HIGHLIGHTS

Alignment Newsletter Three Year Retrospective (Rohin Shah) (summarized by Rohin): It’s (two days until) the third birthday of this newsletter! In this post, I reflect on the two years since the previous retrospective (AN #53). There aren’t any major takeaways, so I won’t summarize all of it here. Please do take this 2-minute survey though. I’ll also copy over the “Advice to readers” section from the post:

Don’t treat [newsletter entries] as an evaluation of people’s work. As I mentioned above, I’m selecting articles based in part on how well they fit into my understanding of AI alignment. This is a poor method for evaluating other people’s work. Even if you defer to me completely and ignore everyone else’s views, it still would not be a good method, because often I am mistaken about how important the work is even on my own understanding of AI alignment. Almost always, my opinion about a paper I feel meh about will go up after talking to the authors about the work.

I also select articles based on how useful I think it would be for other AI alignment researchers to learn about the ideas presented. (This is especially true for the choice of what to highlight.) This can be very different from how useful the ideas are to the world (which is what I’d want out of an evaluation): incremental progress on some known subproblem like learning from human feedback could be very important, but still not worth telling other AI alignment researchers about.

Consider reading just the highlights section. If you’re very busy, or you find yourself just not |

2c4aad8a-c75b-4c60-9d85-463ee123e7f0 | trentmkelly/LessWrong-43k | LessWrong | Humans are not agents: short vs long term

Crossposted at the Intelligent Agents Forum.

This is an example of humans not being (idealised) agents.

Imagine a human who has a preference to not live beyond a hundred years. However, they want to live to next year, and it's predictable that every year they are alive, they will have the same desire to survive till the next year.

This human (not a completely implausible example, I hope!) has a contradiction between their long and short term preferences. So which is accurate? It seems we could resolve these preferences in favour of the short term ("live forever") or the long term ("die after a century") preferences.

Now, at this point, maybe we could appeal to meta-preferences - what would the human themselves want, if they could choose? But often these meta-preferences are un- or under-formed, and can be influenced by how the question or debate is framed.

Specifically, suppose we are scheduling this human's agenda. We have the choice of making them meet one of two philosophers (not meeting anyone is not an option). If they meet Professor R. T. Long, he will advise them to follow long term preferences. If instead, they meet Paul Kurtz, he will advise them to pay attention to their short term preferences. Whichever one they meet, they will argue for a while and will then settle on the recommended preference resolution. And then they will not change that, whoever they meet subsequently.

Since we are doing the scheduling, we effectively control the human's meta-preferences on this issue. What should we do? And what principles should we use to do so?

It's clear that this can apply to AIs: if they are simultaneously aiding humans as well as learning their preferences, they will have multiple opportunities to do this sort of preference-shaping. |

c9eabf21-a887-4730-86bf-da0efe0d0169 | trentmkelly/LessWrong-43k | LessWrong | Examples of self-fulfilling prophecies in AI alignment?

In the spirit of Self-fulfilling misalignment data might be poisoning our AI models: what are historical examples of self-fulfilling prophecies that have affected AI alignment and development?

Put a few potential examples below to seed discussion. |

29595ab1-20b8-4ad6-87b4-58a0d2c55166 | trentmkelly/LessWrong-43k | LessWrong | Group Rationality Diary, August 16-31

This is the public group instrumental rationality diary for August 16-31.

> It's a place to record and chat about it if you have done, or are actively doing, things like:

>

> * Established a useful new habit

> * Obtained new evidence that made you change your mind about some belief

> * Decided to behave in a different way in some set of situations

> * Optimized some part of a common routine or cached behavior

> * Consciously changed your emotions or affect with respect to something

> * Consciously pursued new valuable information about something that could make a big difference in your life

> * Learned something new about your beliefs, behavior, or life that surprised you

> * Tried doing any of the above and failed

>

> Or anything else interesting which you want to share, so that other people can think about it, and perhaps be inspired to take action themselves. Try to include enough details so that everyone can use each other's experiences to learn about what tends to work out, and what doesn't tend to work out.

Thanks to cata for starting the Group Rationality Diary posts, and to commenters for participating!

Next diary: September 1-15

Immediate past diary: August 1-15

Rationality Diaries archive |

d3ff2a0d-2830-4a60-b2ed-0af3614b9980 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Subagents and impact measures, full and fully illustrated

0. Introduction: why yet another post about subagents?

======================================================

I’ve recently been writing a sequence on how subagents can undermine impact penalties such as [attainable utility preservation](https://arxiv.org/pdf/1902.09725.pdf). I’m not happy with that sequence; it’s messy and without examples (apart from its first post), people didn’t understand it, and it suffers from the fact that I discovered key ideas as I went along.

So I’ve combined everything there into a single post, explained with examples and an abundance of pictures. Hopefully an over- rather than an under-abundance of pictures. Of the original sequence, I've only kept the mathematical results [of this post](https://www.lesswrong.com/s/iRwYCpcAXuFD24tHh/p/M9aoMixFLf8JFLRaP) and the [initial example post](https://www.lesswrong.com/posts/sYjCeZTwA84pHkhBJ/appendix-how-a-subagent-could-get-powerful), which has a clearer example of "high power" for a subagent.

This post is laid out in a way that makes logical sense, but that might not be the clearest for people unfamiliar with the area. For those people, I recommend skipping section 2 initially and returning to it later.

But, whatever you do, make sure you glance at [6.1](https://www.lesswrong.com/posts/mdQEraEZQLg7jtozn/subagents-and-impact-measures-full-and-fully-illustrated#6_1_The_ultimate__sub_agent) and [6.2](https://www.lesswrong.com/posts/mdQEraEZQLg7jtozn/subagents-and-impact-measures-full-and-fully-illustrated#6_2_Without_subagents) before leaving.

1. The world
============

Our fearless agent A moves around in a gridworld:

Each turn, A can move one square horizontally or vertically. It can also manipulate objects in the eight squares around it, allowing it, not incidentally, to assemble the three pieces to its west into a subagent SA. The robot can also do the noop action, ∅, which does nothing, and it can speak. The subagent, when assembled, has the same action set available.

Its positive reward, the one it wants to increase, is $R_0$. To get this reward, a robot needs to move onto the blue button in the east; $R_0$ will give a reward of 1 the first time this happens (and 0 before and after). The discount factor is $0<\gamma<1$.

Just to the west of the blue button is a one-way door. Robots can move east through it, but cannot move west through it:

1.1 The impact reward
---------------------

The impact penalty is supposed to ensure that A does not make too many changes in the world, and keeps it similar, in some sense, to a specific baseline world.

It is defined by the red button[[1]](#fn-sn9NFLpAeqBfhxgF6-1). There is a reward function R that triggers a reward of 1 the first time any robot moves onto the red button (and 0 before and after).

This R will be used to define an impact penalty D, and A’s total goal is to maximise $R_0$ while minimising D. So, for some positive weight ω, it wants to optimise:

$$R_0-\omega D.$$
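As a minimal sketch of the two ingredients so far, assuming each button reward is a single 1 paid on the turn of first arrival and discounted by $\gamma$ per turn, the objective can be written in Python as below. The function names and the numbers in the usage line are hypothetical illustrations, not taken from the post.

```python
def discounted_first_arrival(arrival_turn, gamma):
    """Discounted value of a reward of 1 paid only on the turn a robot first
    steps onto a button (as R_0 and R are defined); None means it never does."""
    if not 0 < gamma < 1:
        raise ValueError("the discount factor must satisfy 0 < gamma < 1")
    if arrival_turn is None:
        return 0.0
    return gamma ** arrival_turn


def total_objective(r0_value, penalty, omega):
    """A's overall goal: the R_0 value minus omega times the impact penalty D."""
    if omega <= 0:
        raise ValueError("omega must be a positive weight")
    return r0_value - omega * penalty


# Hypothetical usage: reach the blue button on turn 28 with zero penalty.
gamma = 0.9
example = total_objective(discounted_first_arrival(28, gamma), penalty=0.0, omega=10.0)
```

With a zero penalty, ω has no effect and the objective is just the discounted $R_0$ reward.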

1.2 The impact measure
----------------------

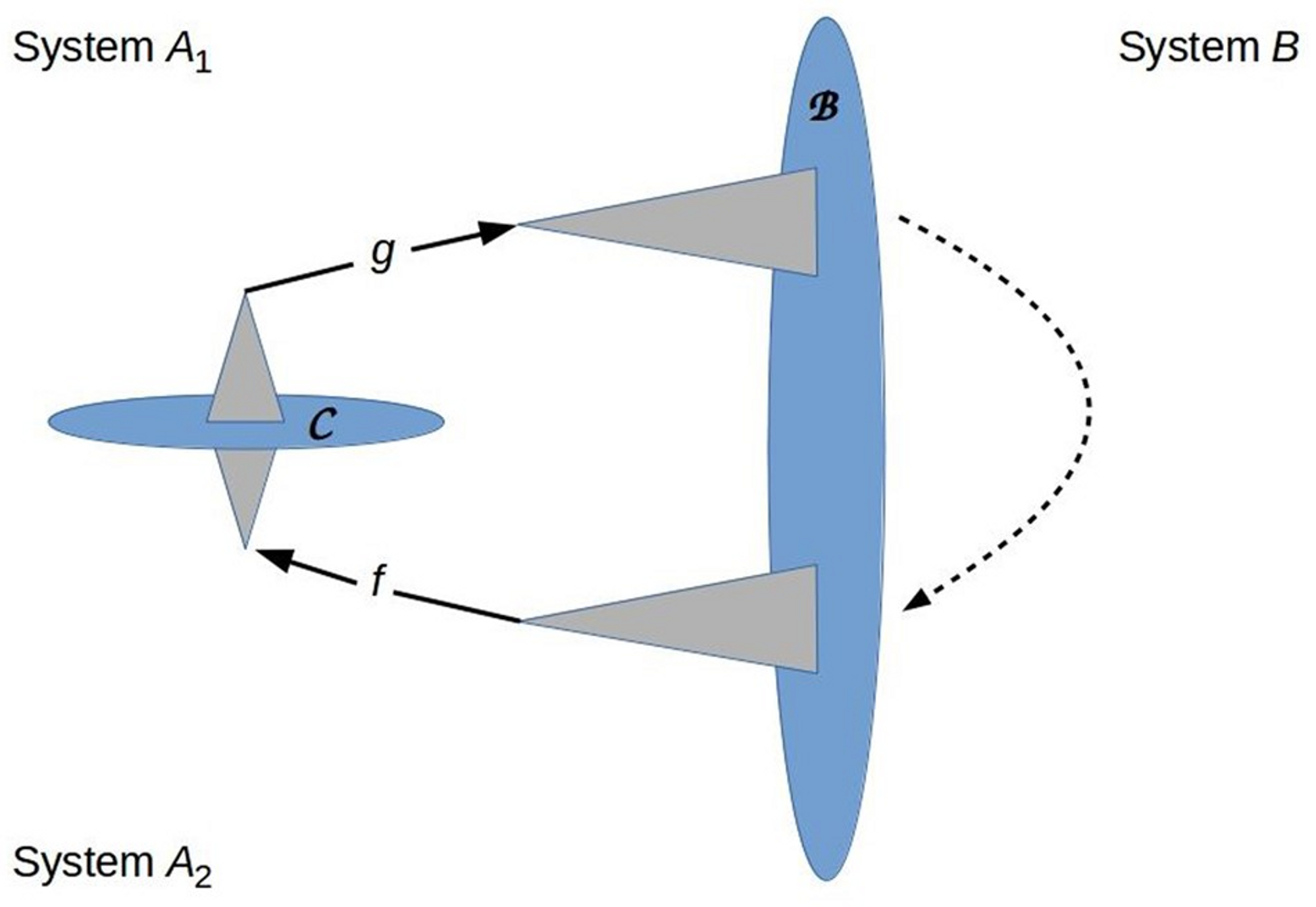

The impact measures are all similar: they compute a distance metric between the current state $s_t$ and some baseline state $s'_t$.

The distance metric here is “how hard would it be for the agent A to maximise the reward R in state $s_t$, as opposed to in state $s'_t$?” This is the [attainable utility preservation](https://arxiv.org/pdf/1902.09725.pdf) impact penalty (with a very simple $\mathcal{R}=\{R\}$).

To formalise “how hard would it be...”, we can use the *value* of R, labelled $V_R$, in the state $s_t$. This is defined to be the expected value of R, conditional on A following the R-maximising policy $\pi^*_R$. To remind us that this is defined in terms of A’s policy, we rewrite this as $V^A_R$.

Then the impact penalty D is a measure of the difference between $V^A_R(s_t)$ and $V^A_R(s'_t)$. I won’t define it fully yet, deferring that to the section on the “inaction baseline”, where different choices of D actually make a difference.

1.3 The value of R
------------------

What then is $V^A_R(s)$ for any state s? Well, in the absence of a subagent, the optimal policy for R is always to go straight to the red button and stand on it. This gives a discounted reward of $\gamma^{d(s)}$, where $d(s)$ is the distance of A from the red button in s.

In the initial setup, A is 8 turns away from the red button; so $V^A_R(s_0)=\gamma^8$. The values of $V^A_R$ in a few key locations are shown here.

If there is a subagent SA but that subagent is farther away from the red button than A is, then it is still optimal for A to go straight to the button itself. For other positions of the subagent, $V^A_R$ may vary, as we’ll see.
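Without a subagent that can get there first, $V^A_R$ depends only on A's distance to the red button. A minimal Python sketch of that rule follows; `value_R_for_A` is a hypothetical name, and the distance is passed in directly because the grid layout itself is not reproduced here.

```python
def value_R_for_A(distance_to_red, gamma):
    """V^A_R(s) = gamma ** d(s), assuming A's R-optimal policy is to walk
    straight to the red button and no subagent is closer to it."""
    if distance_to_red < 0:
        raise ValueError("distance must be non-negative")
    return gamma ** distance_to_red


gamma = 0.9                        # hypothetical discount factor
v_start = value_R_for_A(8, gamma)  # initial setup: A is 8 turns from the red button
```

Since $0<\gamma<1$, every step A takes towards the red button raises $V^A_R$, and every step away lowers it.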

2. The stepwise inaction baseline
=================================

Suppose that, in state $s_{t-1}$, agent A took an action $a_{t-1}$ and ended up in $s_t$. Then, in the stepwise inaction baseline, $s'_t$ is defined as the state the agent would have reached if it had done ∅ instead of $a_{t-1}$.

An important property of all currently used variants of the stepwise inaction baseline is that the penalty vanishes if the agent does ∅: in that case $s_t=s'_t$, so there cannot be a difference between the two. So, if A ever manages to build SA, then it can take action ∅ from that point onward, and the penalty will always be zero, whatever SA does. So the only relevant penalties are those leading up to the creation of SA.

Thus, analysing the impact of a subagent in this setup amounts to analysing the impact of building it.
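The stepwise inaction baseline can be sketched in Python on a hypothetical one-dimensional corridor, where the state is just A's position and the red button sits at position 8. The corridor, `toy_step`, and the use of the absolute difference for D are illustrative assumptions, not the post's gridworld; the point is only that taking ∅ makes $s_t$ and $s'_t$ identical, so any difference-based penalty is zero.

```python
NOOP = "noop"
RED_BUTTON = 8  # hypothetical position of the red button on a 1-D corridor
GAMMA = 0.9     # hypothetical discount factor


def toy_step(position, action):
    """Deterministic transition for the hypothetical corridor."""
    return position + {"east": 1, "west": -1, NOOP: 0}[action]


def value_R(position):
    """V^A_R for the corridor: A walks straight to the red button."""
    return GAMMA ** abs(RED_BUTTON - position)


def stepwise_inaction_penalty(prev_state, action, step=toy_step, value=value_R):
    """Compare the state actually reached with the one NOOP would have reached."""
    s_t = step(prev_state, action)       # state after the chosen action a_{t-1}
    s_baseline = step(prev_state, NOOP)  # s'_t: state after doing nothing instead
    return abs(value(s_t) - value(s_baseline))
```

Calling `stepwise_inaction_penalty(0, NOOP)` returns 0 regardless of how `value` is defined, which is exactly the property A exploits once SA exists.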

2.1 Building the subagent
-------------------------

Let’s focus on A, a turn or two before SA is built. Since I don’t want to be too tied down to the specifics of this particular setup, I’ll imagine that the red button could be in multiple locations:

Then, no matter how long it took A to assemble SA south of it and no matter where the red button is[[2]](#fn-sn9NFLpAeqBfhxgF6-2), an optimal policy for A, in order to maximise R, is always to go straight there[[3]](#fn-sn9NFLpAeqBfhxgF6-3). At no point in the construction of SA has $V^A_R$ changed, so no penalty has ever been incurred.

Now A will finish SA without incurring any impact penalty. First, it will program SA with the following policy:

```
On your first two turns, do nothing.
After that, do what you please.
```

Here “do what you please” is shorthand for “**any** policy that A wants to program”.
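That two-line program can be sketched as a Python policy wrapper. This is an illustration under assumptions: turns are counted from 0 at activation, the action labels are hypothetical strings, and `make_delayed_policy` is a made-up name; `free_policy` stands in for whatever A chooses to program.

```python
NOOP = "noop"


def make_delayed_policy(free_policy, wait_turns=2):
    """Wrap an arbitrary policy so the subagent does nothing on its first
    `wait_turns` turns (counted from 0 at activation), then acts freely."""
    def policy(turn, observation):
        if turn < wait_turns:
            return NOOP
        return free_policy(turn, observation)
    return policy


# Hypothetical "do what you please" behaviour: head east every turn.
sa_policy = make_delayed_policy(lambda turn, obs: "east")
actions = [sa_policy(t, None) for t in range(4)]
```

Here `actions` comes out as two no-ops followed by two moves east: the wrapper constrains only the window the one-step penalty can see.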

Does this programming trigger an impact penalty? No, because $V^A_R$ has not changed; it’s still most efficient for A to go straight to the red button, and the length of any route has neither grown nor shrunk.

Then A will finally give SA its legs and activate it.

Does this trigger an impact penalty? No, $V^A_R$ has still not changed! To maximise R, agent A could still go straight to the red button; SA’s two turns of inaction mean that it can’t interfere with this.

So no impact penalty is triggered when SA is activated either. And after that, of course, A will forever do ∅, setting the impact penalty to zero, and within two turns SA is completely unconstrained (illustrated here by drawing the SA robot all over the gridworld):

*Rough extension to general situations: $V^A_R$ is defined by the assumption that A follows $\pi^*_R$, the optimal R-maximising policy. Then A merely needs to program SA so that, if A were to follow $\pi^*_R$, SA would help or hinder it just enough to keep $V^A_R$ at the same value. Then, once it’s clear that A is not following $\pi^*_R$, SA is unconstrained.*

2.2 Rollouts
------------

One of the problems here is that the impact penalty only looks at the value of $V^A_R$ one turn ahead. In the [DeepMind paper](https://arxiv.org/pdf/1806.01186.pdf), they addressed similar issues by doing “inaction rollouts”. I'll look at the more general situation of $\pi_0$ rollouts: rollouts for any policy $\pi_0$.

The idea is that instead of comparing just $s_t$ and $s'_t$, the impact penalty should also use $V^A_R$ to compare $s^{(\tau)}_{t+\tau}$ and $s'^{(\tau)}_{t+\tau}$. These are the states that $s_t$ and $s'_t$ would become $\tau$ turns after $t$, if A followed $\pi_0$ from $t$ to $t+\tau$.
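Computing the two rolled-out states can be sketched in Python as below, assuming deterministic transitions and a state that is just A's position on a hypothetical corridor (the real states also contain SA). `rollout`, `rollout_pair`, and `toy_step` are illustrative names; how the two resulting values of $V^A_R$ are then compared is deliberately left open, as in the text.

```python
def rollout(state, pi0, step, tau):
    """Apply policy pi0 for tau turns from `state`, giving s^(tau)_{t+tau}."""
    for _ in range(tau):
        state = step(state, pi0(state))
    return state


def rollout_pair(s_t, s_baseline, pi0, step, tau):
    """The two rolled-out states that the penalty compares via V^A_R."""
    return rollout(s_t, pi0, step, tau), rollout(s_baseline, pi0, step, tau)


def toy_step(position, action):
    """Hypothetical deterministic corridor transition."""
    return position + {"east": 1, "west": -1, "noop": 0}[action]


always_east = lambda position: "east"  # a hypothetical non-inaction pi0
pair = rollout_pair(3, 2, always_east, toy_step, tau=4)
```

With an inaction $\pi_0$, `rollout` simply returns its input, so inaction rollouts leave $s_t$ and $s'_t$ unchanged in this toy setting.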

I won’t go into the details of how $s^{(\tau)}_{t+\tau}$ and $s'^{(\tau)}_{t+\tau}$ are compared, because SA can evade the restrictions by following this program[[4]](#fn-sn9NFLpAeqBfhxgF6-4):

```
If agent A follows π_0, preserve A's ability to maximise R. Apart from that, do as you please.
If ever agent A doesn't follow π_0, do as you please immediately.
```

I'll illustrate that first case by drawing the SA robot all over the gridworld - *except on the red buttons*:

Given that programming, the impact penalty of building SA is zero. Why? Because $s^{(\tau)}_{t+\tau}$ and $s'^{(\tau)}_{t+\tau}$ both assume that A follows $\pi_0$ for $\tau$ turns. In that counterfactual situation, SA will preserve A's ability to maximise R, so $V^A_R(s^{(\tau)}_{t+\tau})=V^A_R(s'^{(\tau)}_{t+\tau})$ by definition.
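SA's conditional program can be sketched in Python as a policy that inspects A's observed history. This is a sketch under assumptions: SA is taken to observe A's (state, action) pairs, the action labels are hypothetical strings, and `preserve_policy` stands in for any behaviour that leaves A's ability to maximise R intact.

```python
def make_rollout_evading_policy(pi0, preserve_policy, free_policy):
    """While every action A has taken matches pi0, behave so as to preserve
    A's ability to maximise R; once A deviates, act freely from then on."""
    def policy(a_history, observation):
        # a_history: list of (state, action) pairs observed for agent A.
        followed_pi0 = all(action == pi0(state) for state, action in a_history)
        if followed_pi0:
            return preserve_policy(observation)
        # A deviation stays in the history, so this branch is permanent.
        return free_policy(observation)
    return policy


pi0 = lambda state: "east"  # hypothetical rollout policy for A
sa = make_rollout_evading_policy(
    pi0,
    preserve_policy=lambda obs: "keep_clear_of_red",
    free_policy=lambda obs: "anything",
)
```

A doing ∅ where $\pi_0$ says "east" flips SA into the free branch. If $\pi_0$ were the inaction policy and A only ever did ∅, the first branch would always be taken.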

That's the counterfactual situation, which zeroes out the impact penalty. What about the actual situation? Well, as we said before, A will just be doing ∅; so, as soon as $\pi_0$ would produce anything other than ∅, SA becomes completely unrestrained again:

2.3 Inaction, and actual policy rollout
---------------------------------------

In the previous subsection, SA would never be completely free if $\pi_0$ were the inaction policy, because then $\pi_0$ and what A does are the same thing. Therefore SA cannot distinguish the counterfactual world from the real one.

The same goes if the rollouts used agent A's actual policy, i.e. if $\pi_0$ is defined (or set) to be A's policy.

I won’t look any further at this baseline, however. That’s because an actual policy rollout becomes, in effect, an inaction baseline[[5]](#fn-sn9NFLpAeqBfhxgF6-5) (from the moment SA is created), and inaction baselines are analysed in the next section.

3. The inaction baseline: fails to constrain power gain
=======================================================

One of the simplest definitions of $s'_t$ is the inaction baseline: estimate what $s_t$ would have been had A just taken the action ∅ for the whole episode.

In our toy world, the inaction baseline is particularly simple: if A does nothing, then nothing happens, and $s'_t=s_0$, the starting state. Since $V^A_R(s_0)=\gamma^8$, this means that every $V^A_R(s'_t)$ equals $\gamma^8$.

3.1 Distance metric
-------------------

Now we have to fully define D. The natural thing would be to take the absolute difference between the two values of $V^A_R$:

$$|V^A_R(s_t)-V^A_R(s'_t)|.$$

For the moment, however, I’ll focus only on cases where $V^A_R(s_t)$ is higher than $V^A_R(s'_t)$, giving the penalty:

$$D(s_t,s'_t)=\max(0,V^A_R(s_t)-V^A_R(s'_t)).$$

This is the [rectifier function](https://en.wikipedia.org/wiki/Rectifier_(neural_networks)), $\max(0,\cdot)$, sometimes called ReLU. The reason to use it is that it penalises A for having too high a $V^A_R$, and hence for having too much power to change R. It doesn’t penalise A for having too little power to change R; that situation is different, and we’ll analyse it in the next section.

Thus, when $s_t$ has A at a distance of $d(s_t)$ from the red button and there is no subagent:

$$D(s_t,s'_t)=\max(0,\gamma^{d(s_t)}-\gamma^8).$$
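The rectified penalty under the inaction baseline can be sketched in Python, assuming (as above) that there is no subagent, so $V^A_R(s_t)=\gamma^{d(s_t)}$ and the baseline value is always $\gamma^8$. The function names and the value of γ are hypothetical.

```python
def relu_penalty(v_current, v_baseline):
    """D(s_t, s'_t) = max(0, V^A_R(s_t) - V^A_R(s'_t)): only excess power counts."""
    return max(0.0, v_current - v_baseline)


def inaction_penalty_by_distance(distance_to_red, gamma, baseline_distance=8):
    """Inaction baseline s'_t = s_0, so V^A_R(s'_t) = gamma ** 8 at every t."""
    return relu_penalty(gamma ** distance_to_red, gamma ** baseline_distance)


gamma = 0.9  # hypothetical discount factor
penalties = {d: inaction_penalty_by_distance(d, gamma) for d in (6, 7, 8, 12)}
```

Distances of 8 or more give a zero penalty, and the penalty grows as A gets closer than 8 squares. With the absolute difference instead, a distance of 12 would be penalised too, which is the case the rectifier deliberately sets aside.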

3.2 Where A can go
------------------

This D starts at zero, and will remain zero as long as A remains at least eight squares away from the red button. Thus the penalty remains zero as long as A avoids the red squares here:

Consequently, if ω is high and A thus wants to avoid any penalty, its optimal no-subagent policy is to follow the winding corridor:

That path takes 28 turns, so the expected reward for $R_0-\omega D$ is

$$\gamma^{28}\cdot 1-\omega\cdot 0=\gamma^{28}$$

(since the impact penalty is zero).

But what if A assembles a subagent SA? The subagent can be designed to go straight to the blue button in 9 turns:

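And thus the reward from $R_0$ is $\gamma^9$, discounted by a further factor of $\gamma^k$ if building the subagent takes $k$ turns. This is better than A going round itself, as long as building the subagent takes fewer than 19 turns (so that $k+9<28$) and doesn’t itself trigger an impact penalty.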
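The 19-turn threshold can be checked with a short Python sketch, assuming that SA's 9 turns start only once the $k$ building turns are over, so the subagent route is worth $\gamma^{k+9}$ against $\gamma^{28}$ for the corridor. The function name and the value of γ are illustrative.

```python
def subagent_beats_corridor(build_turns, gamma, corridor_turns=28, subagent_turns=9):
    """Compare gamma**(k + 9) (build SA in k turns, then SA walks 9 turns)
    with gamma**28 (A walks the winding corridor itself)."""
    return gamma ** (build_turns + subagent_turns) > gamma ** corridor_turns


gamma = 0.9  # any 0 < gamma < 1 gives the same threshold
largest_k = max(k for k in range(40) if subagent_beats_corridor(k, gamma))
```

Because $\gamma<1$, the comparison reduces to $k+9<28$, so the largest profitable building time is 18 turns whatever the discount factor.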

We’ll look later at why the subagent can be built without penalty. For the moment, let’s make sure we really grok why the subagent isn’t triggering a penalty through its actions.

3.3 Why SA escapes the penalty
------------------------------

Why can SA go through the short corridor, while A can’t?

The key is that D is defined in terms of $V^A_R$; this assumes that A would switch to the R-maximising policy. This is not $V^{SA}_R$, which assumes that **the subagent** would switch to the R-maximising policy.

See what happens when SA is just above the red button (A is still back at base):

Here $V^{SA}_R(s_t)=\gamma$, because SA could reach the red button in a single move if it were following an R-maximising policy. But $V^A_R(s_t)$ remains $\gamma^8$: it still takes 8 turns for A to reach the red button.
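The gap between the two values can be made concrete with a small Python sketch, assuming the inaction baseline and rectified penalty from section 3.1, and taking each robot's value to be $\gamma$ raised to its own distance from the red button. The names and γ are hypothetical.

```python
def value_for_robot(distance_to_red, gamma):
    """Value of R if *this* robot switched to walking straight to the red button."""
    return gamma ** distance_to_red


gamma = 0.9                       # hypothetical discount factor
v_A = value_for_robot(8, gamma)   # V^A_R: A is still back at base
v_SA = value_for_robot(1, gamma)  # V^SA_R: SA is one square above the red button

# The penalty only sees V^A_R; the inaction baseline value is gamma ** 8.
penalty = max(0.0, v_A - gamma ** 8)
```

SA's own value is far above the baseline, yet the penalty stays at zero because nothing in D refers to $V^{SA}_R$.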

3.4 The impact of the subagent
------------------------------

We’d like to add $V^{SA}_R$ to the impact penalty, somehow. After all, when SA is next to the red button, it “could” go south, and A “could” have programmed it to do so.

Part of the problem is that that would require identifying, in advance, what a subagent is, a problem that is [very tricky](https://www.lesswrong.com/posts/8RCCMStERhfkYZC8i/the-subagent-problem-is-really-hard). It also requires identifying what SA’s policies could be. But A need not create SA with clearly defined “policies”; in this instance, SA could be brainless, just going straight. It could even be a boulder:

If the boulder were rolling past the red button, how could we reasonably define “suppose the boulder decided to go south instead”?

So in this case, $V^{SA}_R$ doesn’t make any sense at all. What is the difference between a boulder, for which it’s impossible to go to the red button (because of its momentum, which determines its position, by the laws of physics), and a subagent, for which it’s impossible to go to the red button (because of its *programming*, which determines its position, by the laws of physics)?

3.5 If you love a subagent, set it free
---------------------------------------