id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

a83974f0-9a0b-4bea-825a-c4bc9473b96e | StampyAI/alignment-research-dataset/blogs | Blogs | MIRI’s July Newsletter: Fundraiser and New Papers

| | |

| --- | --- |

|

| |

| --- |

|

|

|

|

| | | | |

| --- | --- | --- | --- |

|

| | | |

| --- | --- | --- |

|

| | |

| --- | --- |

|

| |

| --- |

|

Greetings from the Executive Director

Dear friends,

Another busy month! Since our last newsletter, we’ve published 3 new papers and 2 new “analysis” blog posts, we’ve significantly improved our website (especially the [Research](http://intelligence.org/research/) page), we’ve [relocated](http://intelligence.org/2013/07/08/miri-has-moved/) to downtown Berkeley, and we’ve launched [our summer 2013 matching fundraiser](http://intelligence.org/2013/07/08/2013-summer-matching-challenge/)!

MIRI also recently presented at the [Effective Altruism Summit](http://www.effectivealtruismsummit.com/), a gathering of 60+ effective altruists in Oakland, CA. As philosopher Peter Singer explained in his [TED talk](http://www.ted.com/talks/peter_singer_the_why_and_how_of_effective_altruism.html), effective altruism “combines both the heart and the head.” The heart motivates us to be empathic and altruistic toward others, while the head can “make sure that what [we] do is effective and well-directed,” so that altruists can do not just *some* good but *as much good as possible*.

As I explain in [Friendly AI Research as Effective Altruism](http://intelligence.org/2013/06/05/friendly-ai-research-as-effective-altruism/), MIRI was founded in 2000 on the premise that creating Friendly AI might be a particularly efficient way to do as much good as possible. Effective altruists focus on a variety of other causes, too, such as poverty reduction. As I say in [Four Focus Areas of Effective Altruism](http://lesswrong.com/lw/hx4/four_focus_areas_of_effective_altruism/), I think it’s important for effective altruists to cooperate and collaborate, despite their differences of opinion about which focus areas are optimal. The world needs more effective altruists, of all kinds.

MIRI engages in direct efforts — e.g. Friendly AI research — to improve the odds that machine superintelligence has a positive rather than a negative impact. But indirect efforts — such as spreading rationality and effective altruism — are also likely to play a role, for they will influence the context in which powerful AIs are built. That’s part of why we created [CFAR](http://rationality.org/).

If you think this work is important, I hope you’ll [donate now](http://intelligence.org/donate/) to support our work. MIRI is *entirely* supported by private funders like *you*. And if you donate before August 15th, your contribution will be matched by one of the generous backers of [our current fundraising drive](http://intelligence.org/2013/07/08/2013-summer-matching-challenge/).

Thank you,

Luke Muehlhauser

Executive Director

Our Summer 2013 Matching Fundraiser

Thanks to the generosity of several major donors, every donation to MIRI made from now until August 15th, 2013 will be matched dollar-for-dollar, up to a total of $200,000!

Now is your chance to double your impact while helping us raise up to $400,000 (with matching) to fund [our research program](http://intelligence.org/research/).

Early this year we made a transition from movement-building to research, and we’ve hit the ground running with six major new research papers, six new strategic analyses on our blog, and much more. Give now to support our ongoing work on [the future’s most important problem](http://intelligence.org/2013/06/05/friendly-ai-research-as-effective-altruism/).

**Accomplishments in 2013 so far**

* [Changed our name](http://intelligence.org/blog/2013/01/30/we-are-now-the-machine-intelligence-research-institute-miri/) to MIRI and launched our new website at intelligence.org.

* Released six new research papers: [Definability of Truth in Probabilistic Logic](http://lesswrong.com/lw/h1k/reflection_in_probabilistic_set_theory/), [Intelligence Explosion Microeconomics](https://intelligence.org/files/IEM.pdf), [Tiling Agents for Self-Modifying AI](https://intelligence.org/files/TilingAgents.pdf), [Robust Cooperation in the Prisoner’s Dilemma](https://intelligence.org/files/RobustCooperation.pdf), [A Comparison of Decision Algorithms on Newcomblike Problems](https://intelligence.org/files/Comparison.pdf), and [Responses to Catastrophic AGI Risk: A Survey](http://intelligence.org/2013/07/07/responses-to-c%E2%80%A6-risk-a-survey/%20%E2%80%8E).

* Held our [2nd research workshop](http://intelligence.org/2013/03/07/upcoming-miri-research-workshops/). (Our [3rd workshop](http://intelligence.org/2013/06/07/miris-july-2013-workshop/) is currently ongoing.)

* Published six new analyses to our blog: [The Lean Nonprofit](http://intelligence.org/2013/04/04/the-lean-nonprofit/), [AGI Impact Experts and Friendly AI Experts](http://intelligence.org/2013/05/01/agi-impacts-experts-and-friendly-ai-experts/), [Five Theses…](http://intelligence.org/2013/05/05/five-theses-two-lemmas-and-a-couple-of-strategic-implications/), When Will AI Be Created?, [Friendly AI Research as Effective Altruism](http://intelligence.org/2013/06/05/friendly-ai-research-as-effective-altruism/), and [What is Intelligence?](http://intelligence.org/2013/06/19/what-is-intelligence-2/)

* Published the [Facing the Intelligence Explosion](http://intelligenceexplosion.com/ebook/) ebook.

* Published several other substantial articles: [Recommended Courses for MIRI Researchers](http://intelligence.org/courses/), [Decision Theory FAQ](http://lesswrong.com/lw/gu1/decision_theory_faq/), [A brief history of ethically concerned scientists](http://lesswrong.com/lw/gln/a_brief_history_of_ethically_concerned_scientists/), [Bayesian Adjustment Does Not Defeat Existential Risk Charity](http://lesswrong.com/lw/gzq/bayesian_adjustment_does_not_defeat_existential/), and others.

* And of course much more.

**Future Plans You Can Help Support**

* We will host many more research workshops, including [one in September](http://intelligence.org/2013/07/07/miris-september-2013-workshop/%20%E2%80%8E), and one in December (with [John Baez](http://math.ucr.edu/home/baez/) attending, among others).

* Eliezer will continue to publish about open problems in Friendly AI. (Here is [#1](http://lesswrong.com/lw/hbd/new_report_intelligence_explosion_microeconomics/) and [#2](http://lesswrong.com/lw/hmt/tiling_agents_for_selfmodifying_ai_opfai_2/).)

* We will continue to publish strategic analyses, mostly via our blog.

* We will publish nicely-edited ebooks (Kindle, iBooks, and PDF) for more of our materials, to make them more accessible: [The Sequences, 2006-2009](http://wiki.lesswrong.com/wiki/Sequences) and [The Hanson-Yudkowsky AI Foom Debate](http://wiki.lesswrong.com/wiki/The_Hanson-Yudkowsky_AI-Foom_Debate).

* We will continue to set up the infrastructure (e.g. [new offices](http://intelligence.org/2013/07/06/miri-has-moved/), researcher endowments) required to host a productive Friendly AI research team, and (over several years) recruit enough top-level math talent to launch it.

(Other projects are still being surveyed for likely cost and strategic impact.)

We appreciate your support for our high-impact work! Donate now, and seize a better than usual chance to move our work forward. If you have questions about donating, please contact Louie Helm at (510) 717-1477 or louie@intelligence.org.

New Research Page, Three New Publications

Our new [Research](http://intelligence.org/research/) page has launched!

Our previous research page was a simple list of articles, but the new page describes the purpose of our research, explains four categories of research to which we contribute, and highlights the papers we think are most important to read.

We’ve also released three new research articles.

[Tiling Agents for Self-Modifying AI, and the Löbian Obstacle](https://intelligence.org/files/TilingAgents.pdf) (discuss it [here](http://lesswrong.com/lw/hmt/tiling_agents_for_selfmodifying_ai_opfai_2/)), by Yudkowsky and Herreshoff, explains one of the key open problems in MIRI’s research agenda:

We model self-modification in AI by introducing “tiling” agents whose decision systems will approve the construction of highly similar agents, creating a repeating pattern (including similarity of the offspring’s goals). Constructing a formalism in the most straightforward way produces a Gödelian difficulty, the “Löbian obstacle.” By technical methods we demonstrates the possibility of avoiding this obstacle, but the underlying puzzles of rational coherence are thus only partially addressed. We extend the formalism to partially unknown deterministic environments, and show a very crude extension to probabilistic environments and expected utility; but the problem of finding a fundamental decision criterion for self-modifying probabilistic agents remains open.

[Robust Cooperation in the Prisoner’s Dilemma: Program Equilibrium via Provability Logic](https://intelligence.org/files/RobustCooperation.pdf) (discuss it [here](http://lesswrong.com/lw/hmw/robust_cooperation_in_the_prisoners_dilemma/)), by LaVictoire et al., explains some progress in program equilibrium made by MIRI research associate Patrick LaVictoire and several others during MIRI’s April 2013 workshop:

Rational agents defect on the one-shot prisoner’s dilemma even though mutual cooperation would yield higher utility for both agents. Moshe Tennenholtz showed that if each program is allowed to pass its playing strategy to all other players, some programs can then cooperate on the one-shot prisoner’s dilemma. Program equilibria is Tennenholtz’s term for Nash equilibria in a context where programs can pass their playing strategies to the other players. One weakness of this approach so far has been that any two programs which make different choices cannot “recognize” each other for mutual cooperation, even if they are functionally identical. In this paper, provability logic is used to enable a more flexible and secure form of mutual cooperation.

[Responses to Catastrophic AGI Risk: A Survey](https://intelligence.org/files/ResponsesAGIRisk.pdf) (discuss it [here](http://lesswrong.com/r/discussion/lw/hxi/responses_to_catastrophic_agi_risk_a_survey/)), by Sotala and Yampolskiy, is a summary of the extant literature (250+ references) on AGI risk, and can serve either as a guide for researchers or as an introduction for the uninitiated.

Many researchers have argued that humanity will create artificial general intelligence (AGI) within the next twenty to one hundred years. It has been suggested that AGI may pose a catastrophic risk to humanity. After summarizing the arguments for why AGI may pose such a risk, we survey the field’s proposed responses to AGI risk. We consider societal proposals, proposals for external constraints on AGI behaviors, and proposals for creating AGIs that are safe due to their internal design.

Two New Analyses

MIRI publishes some of its most substantive research to its blog, under the [Analysis](http://intelligence.org/category/analysis/) category. For example, [When Will AI Be Created?](http://intelligence.org/2013/05/15/when-will-ai-be-created/) is the product of 20+ hours of work, and has 14 footnotes and 40+ scholarly references (all of them linked to PDFs).

Last month, we published two new analyses.

[Friendly AI Research as Effective Altruism](http://intelligence.org/2013/06/05/friendly-ai-research-as-effective-altruism/) presents a bare-bones version of an argument that Friendly AI research is a particularly efficient way to purchase expected value, so that the argument can be elaborated and critiqued by MIRI and others.

[What is Intelligence?](http://intelligence.org/2013/06/19/what-is-intelligence-2/) argues that imprecise working definitions can be useful, an explains the particular imprecise working definition for *intelligence* that we tend to use at MIRI: efficient cross-domain optimization. A future post will discuss some potentially useful working definitions for “artificial general intelligence.”

Grant Writer Needed

MIRI would like to hire someone to write grant applications, both for our research efforts and for STEM education. If you have experience with either, please [**apply here**](https://docs.google.com/forms/d/1YEkv-CYfgIBkxALyi70mT1pG5UvuGWv_-E_dTM-oQ3c/viewform).

The pay will depend on skill and experience, and is negotiable.

Featured Volunteer

Oliver Habryka helps out by proofreading MIRI’s paper, and would be able to contribute to our research at some point, perhaps on the subject of “lessons for ethics from machine ethics.” Independent of his direct contributions to MIRI’s work, Oliver has also lectured on topics related to MIRI’s work at his high school, and has also taught a class on rationality, where he inspired participation by using a “leveling up” reward system. Oliver is currently studying the foundations of mathematics and hopes one day to direct his career goals in such a way that his contributions to our mission increase over time.

|

|

|

|

|

| |

The post [MIRI’s July Newsletter: Fundraiser and New Papers](https://intelligence.org/2013/07/11/july-newsletter/) appeared first on [Machine Intelligence Research Institute](https://intelligence.org). |

3f539a60-5850-4601-9dae-d7fb07e3395f | trentmkelly/LessWrong-43k | LessWrong | Meetup : Effective Altruism Meetup Vienna

Discussion article for the meetup : Effective Altruism Meetup Vienna

WHEN: 19 March 2015 07:00:00PM (+0100)

WHERE: Vienna

Meetup.com event for further information (like location) http://www.meetup.com/Effective-Altruism-Vienna/events/220706342

Discussion article for the meetup : Effective Altruism Meetup Vienna |

1d39ca9f-8336-421f-a293-0449ec08e5b6 | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post118

TLDR: AI models are now capable enough that we might get relevant information from monitoring for scheming in regular deployments, both in the internal and external deployment settings. We propose concrete ideas for what this could look like while preserving the privacy of customers and developers. What do we mean by in the wild? By “in the wild,” we basically mean any setting that is not intentionally created to measure something (such as evaluations). This could be any deployment setting, from using LLMs as chatbots to long-range agentic tasks. We broadly differentiate between two settings Developer-internal deployment: AI developers use their AI models internally, for example, as chatbots, research assistants, synthetic data generation, etc. Developer-external deployment: AI chatbots (ChatGPT, Claude, Gemini, etc.) or API usage. Since scheming is especially important in LM agent settings, we suggest prioritizing cases where LLMs are scaffolded as autonomous agents, but this is not a hard criterion. What are we looking for? Broadly speaking, we’re looking for any setting where an AI model acts covertly or reasons about acting covertly against an explicit or implicit goal of the developer/user . This setting does not include refusals or other kinds of explicit misalignment because they are not covert. Examples include: Most settings in the in-context scheming or alignment-faking papers. Any setting where the model wants to refuse a request covertly, e.g. pretending that it is unable to do it instead of unwilling (and acknowledging that explicitly in the CoT or it being visible between settings like here ). Any setting where the model has some core values from its training and decides to act on them in a way that is hidden from its developers/users (these values don’t have to be evil, as we see in the alignment faking paper; these can be HHH values as well). Any setting where the model explicitly decides to use a covert strategy because it doesn’t like the perceived goals of the developers/users. Importantly, the class of “scheming in-the-wild” cases we’re looking for is much bigger than “ catching AIs red-handed ” and includes less egregious and many more cases of scheming. We mostly just want to get a sense of how often models show this kind of behavior rather than demonstrating any particularly concerning case. Why monitor for scheming in the wild? Models show early scheming capabilities: Both the in-context scheming and alignment-faking papers show that models are capable of basic scheming. Models show some propensities related to scheming: Both the in-context scheming (including follow-up work ) and alignment-faking papers show various cases of scheming propensities. There are also cases where reasoning models cheat in chess when given the chance. We could learn a lot: In summer 2024, I would have expected that models show something that I would consider “scheming-related” about one in 1M natural rollouts or something as low as that. After working on the in-context scheming paper and seeing the alignment-faking work, I’d say it might be more frequent than that, e.g. every 10k natural rollouts. Given that all of the existing evidence feels somewhat artificial, I think it would be really interesting to get better base rates for this happening in actual use-cases. It’s probably easy to do: I expect that doing a simple version of this kind of post-hoc monitoring is very simple and cheap to do, especially if the infrastructure to investigate large amounts of usage data already exists. Concrete ideas I’d recommend starting only with developer-internal usage data. Presumably these trajectories are already stored for standard debugging and development purposes. Otherwise, I’d collect them for a period of a few weeks so we get at least a million trajectories. Then, I’d automatically scan through many of these stored trajectories. By default, I’d do some exploratory scanning on e.g. 10k samples to quickly improve my classifiers. I would use a mix of many classifiers, including: String-based classifiers, e.g. in our in-context scheming paper, we found that o1 often used the word “sabotage” when it schemed. We could simply scan for ~10 words related to that and see if they turn up a single match related to scheming. Model-based classifiers, e.g. using a cheap and fast model like 4o-mini, haiku, or gemini-flash. I would compare various versions of abstract and very specific prompts, short and long, using different definitions of scheming, crafting a prompt based on existing findings in the scheming papers listed above, etc. My MATS scholars tested more than 100 different variations of prompts in an exploratory manner and then narrowed it down to a much smaller number later. During the exploratory scanning, I’d let the classifier give the trajectory a “scheming score” between 1 and 100. This score can be used later when we suggest new classifiers, or it can be used for few-shot prompts to improve classifiers. To calibrate the classifier, I’d use examples from existing work on scheming. I think all or almost all prompts in the exploration phase may turn out to be benign. Thus, I’d resample the exploratory samples a few times and always keep the ones that seem most concerning to get at least some cases that could be classified as somewhat scheming. This intentional oversampling would be mostly used to get a better feeling and calibration for the classifiers. For the first setting, I’d only use internal use cases that can be widely shared within the organization (e.g., no access restrictions) because we will naturally have to look at lots of examples to double-check the classifiers and the main objective is to find good classifiers, as quickly as possible. Privacy concerns There are different kinds of privacy concerns for both internal and external deployment settings. In the internal setting, typically, not all employees have access to all stored trajectories. For example, sharing the trajectories of the pre-training team might reveal company internal IP that is not accessible to most employees, or the trajectories of the frontier red team might also be restricted. To work with these constraints, we could do some of the following: Start iterating with data that has no company-internal restrictions Once you feel like you have good classifiers, run those on data that have stronger restrictions and see if they flag anything using privacy-preserving tools like CLIO . If they flag something as substantially scheming, work with someone who has access to these trajectories and check if the flagging is accurate or a false positive. If possible, add a redacted or summarized version to the set of scheming trajectories. Once you have a large set of classified data rated by multiple classifiers, we can start with data analysis. We should start with the examples with the highest scheming scores per classifier and can make the depth of the analysis mostly dependent on early findings. If even the worst examples look very benign after an honest look, we can basically call it a day and come back in a few months. If the worst examples look interesting or surprising, we can continue with more analysis. For example, We can qualitatively examine the flagged trajectories to see why the model is scheming and what strategies it uses. In general, understanding and classifying the reasons for the scheming seems like the most important part of this exercise. We can quantitatively count how often it engages in scheming and what fraction of total trajectories this is to get a “scheming base rate.” We can plot their distribution if we have scores for how egregious the scheming is. There are many fairly simple ways to run a project like this that would provide a lot of insight into the model's real-world tendencies. With infrastructure like CLIO in place for other properties, I think an 80/20 version of this project could be done in less than one week and still yield very interesting insights. At Apollo, we’d be happy to help with such a project to the best of our ability. I guess that in most cases, it will be unrealistic for us to access large amounts of company-internal data, but we would be happy to consult the respective team wherever we can. |

83294a73-c04d-4728-86c1-3c1bc95c182c | trentmkelly/LessWrong-43k | LessWrong | Announcing Nonlinear Emergency Funding

[Applications are now closed. Please apply to Open Phil or SFF or other funders]

Like most of you, we at Nonlinear are horrified and saddened by recent events concerning FTX.

Some of you counting on Future Fund grants are suddenly finding yourselves facing an existential financial crisis, so, inspired by the Covid Fast Grants program, we’re trying something similar for EA. If you are a Future Fund grantee and <$10,000 of bridge funding would be of substantial help to you, fill out this short form (<10 mins) and we’ll get back to you ASAP.

We have a small budget, so if you’re a funder and would like to help, please reach out: katwoods@nonlinear.org

[Edit: This funding will be coming from non-FTX funds, our own personal money, or the personal money of the earning-to-givers who've stepped up to help. Of note, we are undecided about the ethics and legalities of spending Future Fund money, but that is not relevant for this fund, since it will be coming from non-FTX sources.] |

7c893952-9edb-47a0-bc80-db00e33516f2 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Logical inductor limits are dense under pointwise convergence

.mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}

.MJXc-TeX-unknown-B {font-family: monospace; font-style: normal; font-weight: bold}

.MJXc-TeX-unknown-BI {font-family: monospace; font-style: italic; font-weight: bold}

.MJXc-TeX-ams-R {font-family: MJXc-TeX-ams-R,MJXc-TeX-ams-Rw}

.MJXc-TeX-cal-B {font-family: MJXc-TeX-cal-B,MJXc-TeX-cal-Bx,MJXc-TeX-cal-Bw}

.MJXc-TeX-frak-R {font-family: MJXc-TeX-frak-R,MJXc-TeX-frak-Rw}

.MJXc-TeX-frak-B {font-family: MJXc-TeX-frak-B,MJXc-TeX-frak-Bx,MJXc-TeX-frak-Bw}

.MJXc-TeX-math-BI {font-family: MJXc-TeX-math-BI,MJXc-TeX-math-BIx,MJXc-TeX-math-BIw}

.MJXc-TeX-sans-R {font-family: MJXc-TeX-sans-R,MJXc-TeX-sans-Rw}

.MJXc-TeX-sans-B {font-family: MJXc-TeX-sans-B,MJXc-TeX-sans-Bx,MJXc-TeX-sans-Bw}

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

Logical inductors [1] are very complex objects, and even their limits are hard to get a handle on. In this post, I investigate the topological properties of the set of all limits of logical inductors.

I’ll use the language of universal inductors [2], but everything I say will also hold for general logical inductors by conditioning. Also, the proofs can be made to apply directly to general logical inductors with relatively few changes.

Universal inductors are measures on Cantor space (i.e. the space 2ω of infinite bit-strings) at every finite time, as well as in the limit (this is a bit nicer than the analogous situation for general logical inductors, which are measures on the space of completions of a theory only in the limit). There are [many topologies on spaces of measures](https://en.wikipedia.org/wiki/Convergence_of_measures), and we can ask about topological properties under any of these. Interestingly, the set of universal inductor limits is dense in the topology of weak convergence, but not in the topology of strong convergence (or, *a fortiori*, the total variation metric topology).

It’s not immediately clear how to interpret this - should we think of the space of measures as being full of universal inductor limits, or should we think of universal inductor limits as being relatively few, with big holes where none exist? A relatively close analogy is the relationship between computable sets (or limit computable sets for a closer analogy) and arbitrary subsets of N; any subset of N can be approximated pointwise by limit computable sets (indeed, even by finite sets), but may be impossible to approximate under the ℓ1 norm, i.e. the number of differing bits. Now, on with the proofs!

**Theorem 1**: The set of limits of universal inductors is dense in the topology of weak convergence on the space of measures Δ(2ω).

**Proof outline**: We need to find a limit of universal inductors in any neighborhood of any measure μ. That is, for any finite collection of continuous functions, we need a universal inductor that, in the limit, assigns expectations to each such function that is close to the expectation under μ.

We do this with a modification of the standard universal inductor construction (section 5 of [1]), adding one additional trader to TradingFirm. This trader can buy and sell shares in events in order to keep these expectations within certain bounds, in a way similar to the Occam traders in the proof of theorem 4.6.4 in [1]. However, we give this trader a much larger budget than the others, allowing its control to be sufficiently tight.

**Proof**: It suffices to show that for any measure μ on 2ω, any finite set of bounded continuous functions fi:2ω→R for i∈{1,…,n} and any ε>0, there is a universal inductor limit ν such that ∣∣Eμf−Eνf∣∣<ε for all 1≤i≤n.

It's easier to work with prefixes of bit-strings than continuous functions, so we'll pass to such a description. Consider some fi. For each world W∈2ω, there is some clopen set A⊆2ω such that ∣∣fi(W)−fi(˜W)∣∣<ε3 for all ˜W∈A. By compactness, we can pick a finite cover (Ai,j)mij=1. Letting Bi,j=Ai,j∖⋃j−1k=1Ai,k, we get a disjoint open cover. By construction, for each 1≤j≤mi, there is some Wi,j such that for all ˜W∈Bi,j, we have ∣∣fi(Wi,j)−fi(˜W)∣∣<ε3, and we can define a simple function gi that takes the locally constant value fi(Wi,j) on each set Bi,j. Then,

∣∣Eμ(fi)−Eν(fi)∣∣≤∣∣Eμ(fi−gi)∣∣+∣∣Eμ(gi)−Eν(gi)∣∣+|Eν(gi−fi)|≤∣∣Eμ(gi)−Eν(gi)∣∣+23ε,

so we need only control what our universal inductor limit ν thinks of the clopen sets Bi,j.

Pass to a single disjoint cover (Ck)pk=1 of 2ω by clopen sets such that for each 1≤i≤n and 1≤j≤mi, the set Bi,j is a union of sets Ck. Further, take M∈R+ such that |gi|≤M for all i, and pick rational numbers (ak)pk=1 in (0,1)∩Q such that ∑pk=1ak=1 and |μ(Ck)−ak|<ε3pM; note we can do this since ∑pk=1μ(Ck)=1. If we can arrange ν so that ν(Ck)=ak for all k, we can conclude

∣∣Eμ(gi)−Eν(gi)∣∣≤p∑k=1∣∣∣∫Ckgidμ−∫Ckgidν∣∣∣=p∑k=1|gi(Ck)⋅(μ(Ck)−ν(Ck))|≤p∑k=1M|μ(Ck)−ak|<ε/3

as desired.

We now need only find a universal inductor limit ν such that ν(Ck)=ak for all 1≤k≤p. As mentioned above, we modify TradingFirm by adding a trader. For each Ck, take a sentence ϕk that holds exactly on Ck, and define the trader

Vicen=p∑k=1[Ind1(ϕ∗nk<ak)−Ind1(ϕ∗nk>ak)]⋅(ϕk−ϕ∗nk).

Then, with Skn and Budgeter as in section 5 of [1], we can define

TradingFirmn(P≤n−1)=Vicen+∑ℓ∈N+∑b∈N+2−ℓ−bBudgetern(b,Sk≤n,P≤n−1),

analogous to (5.3.2) in [1]. This is an n-strategy by an extension of the argument in [1] so, like in the construction of LIA there, we can define a belief sequence

νn=MarketMakern(TradingFirmn(ν≤n−1),ν≤n−1),

and this will not be exploitable by TradingFirm.

Next, we will use the fact that TradingFirm does not exploit ¯¯¯ν to investigate how $\mathrm{Vice} $'s holdings behave over time when trading on the market ¯¯¯ν. As in lemma 5.3.3 in [1], letting Fn=TradingFirmn(ν≤n−1), Vn=Vicen(ν≤n−1) and Bb,ℓn=Budgetern(b,Sℓ≤n,ν≤n−1), we have that in any world W∈2ω and at any step n∈N+,

W(∑i≤nFn)=W(∑i≤nVn)+W⎛⎝∑i≤n∑ℓ∈N+∑b∈N+2−ℓ−bBb,ℓn⎞⎠≥W(∑i≤nVn)−2,

so since TradingFirm cannot exploit ¯¯¯ν by construction, Vice cannot as well.

Now, notice that, for fixed n∈N+, the value W(Vn) is constant by construction as W varies within any one set Ck, so, regarding (ak)pk=1 as a measure on the finite σ-algebra generated by (Ck)pk=1, it makes sense to integrate W(Vn) with respect to that measure. For each n, we have that this integral is

p∑k=1Ck(Vn)ak=p∑k=1p∑k′=1Ck[(Ind1(νn(ϕk′)<ak′)−Ind1(νn(ϕk′)>ak′))⋅(ϕk′−νn(ϕk′))]⋅ak=p∑k′=1(Ind1(νn(ϕk′)<ak′)−Ind1(νn(ϕk′)>ak′))p∑k=1[Ck(ϕk′)⋅ak−νn(ϕk′)⋅ak]=p∑k′=1(Ind1(νn(ϕk′)<ak′)−Ind1(νn(ϕk′)>ak′))⋅(ak′−νn(ϕk′))≥0,

since for each k′ the two factors are either both positive, both negative, or both zero. This is to say, the value of Vice's holdings according to the measure given by the numbers ak is nondecreasing over time. Since ak>0 for all k, we can use our upper bound on W(Vn) to derive a lower bound; if L∈R is such that ˜W(∑ni=1Vi)≤L for all ˜W∈2ω and n∈N+, then for any W, say, in Ck,

W(n∑i=1Vi)=n∑i=1W(Vi)≥n∑i=1−1ak∑k′≠kCk′(Vi)ak′≥−1ak∑k′≠kL⋅ak′.

We can conclude two things from this, which will finish the argument. First, we get an analogue of lemma 5.3.3 from [1], from which it follows that ¯¯¯ν is a universal inductor (ignoring minor differences between the definitions of universal and logical inductors; see [2]). This is since for any of the budgeters Bb,ℓ defined above,

W(n∑i=1Fi)=2−b−ℓW(n∑i=1Bb,ℓi)+W(n∑i=1Vi)+W⎛⎝n∑i=1∑(b′,ℓ′)≠(b,ℓ)2−b′−ℓ′Bb′,ℓ′i⎞⎠≥2−b−ℓW(n∑i=1Bb,ℓi)+W(n∑i=1Vi)−2,

so Bb,ℓ has its holdings bounded uniformly in W and n.

Second, defining ν=limn→∞νn, we get the desired property that ν(Ck)=ak for all k. Suppose for contradiction that ν(Ck)<ak. Taking δ>0 with ν(Ck)+δ<ak, there is some N∈N+ such that if n≥N, then |νn(Ck)−ν(Ck)|<δ2. Then, for all such n, it follows that

νn(Ind1(ϕ∗nk<ak))>δ2,

and

νn(Ind1(ϕ∗nk>ak))=0,

so in any world W∈Ck,

W(Vn)≥δ2(1−νn(ϕk))≥δ2(1−ak),

and so Vice's holdings go to infinity in this world, giving the desired contradiction. By a symmetrical argument, we can't have ν(Ck)>ak, so ν(Ck)=ak as desired. □

One thing I want to note about the above proof is that, to my knowledge, it is the first construction of a logical inductor for which the limiting probability of a particular independent sentence is known.

**Theorem 2**: The set of universal inductor limits is not dense in the topology of strong convergence of measures or the total variation distance topology on Δ(2ω).

**Proof**: The total variation distance induces a finer topology than the topology of strong convergence, so it suffices to show that this set is not dense under strong convergence. Take any world W∈2ω that is not Δ2, and let μ∈Δ(2ω) be a point mass on W. The singleton {W} is a measurable set, so it suffices to show that for any universal inductor limit ν, we have μ({W})=1 but ν({W})=0.

Suppose for contradiction that there was some universal inductor limit ν for which this failed. By the Lebesgue density theorem, there is some clopen set A such that W∈A and

ν({W})ν(A)>12.

Then, it is possible to compute W from ν as follows. Each k∈N corresponds to some clopen subbase element Uk for the topology on 2ω. In order to determin whether k∈W, we can improve our estimates of ν(Uk∩A) and ν(A) until we've determined either ν(Uk∩A)/ν(A)>1/2 or ν(Uk∩A)/ν(A)<1/2. One of these must be the case, and we will eventually determine it since these are both open conditions. Thus, since ν is Δ2, the set W is as well, contradicting the hypothesis. □

[1] Scott Garrabrant, Tsvi Benson-Tilsen, Andrew Critch, Nate Soares, and Jessica Taylor. 2016. “Logical induction.” arXiv: `1609.03543 [cs.AI]`.

[2] Scott Garrabrant. 2016. “Universal inductors.” `https://agentfoundations.org/item?id=941`. |

d7f655bf-c49b-444a-ac16-3949d79a2510 | trentmkelly/LessWrong-43k | LessWrong | diabetes medication Metformin and hypertension drug syrosingopine treat cancers.

|

56d7cc9b-df49-4ff9-8177-297fbf4c2855 | trentmkelly/LessWrong-43k | LessWrong | The enemy within

I read an article from the economist subtitled "The evolutionary origin of depression" which puts forward the following hypothesis:

> As pain stops you doing damaging physical things, so low mood stops you doing damaging mental ones—in particular, pursuing unreachable goals. Pursuing such goals is a waste of energy and resources. Therefore, he argues, there is likely to be an evolved mechanism that identifies certain goals as unattainable and inhibits their pursuit—and he believes that low mood is at least part of that mechanism. ...

[Read the whole article]

This ties in with Kaj and PJ Eby's idea that our brain has a collection of primitive, evolved mechanisms that control us via our mood. Eby's theory is that many of us have circuits that try to prevent us from doing the things we want to do.

Eliezer has already told us about Adaptation-Executers, not Fitness-Maximizers; evolution mostly created animals which excecuted certain adaptions without really understanding how or why they worked - such as mating at a certain time or eating certain foods over others.

But, in humans, evolution didn't create the perfect the perfect consequentialist straight off. It seems that evolution combined an explicit goal-driven propositional system with a dumb pattern recognition algorithm for identifying the pattern of "pursuing an unreachable goal". It then played with a parameter for balance of power between the goal-driven propositional system and the dumb pattern recognition algorithms until it found a level which was optimal in the human EEA. So blind idiot god bequeathed us a legacy of depression and akrasia - it gave us an enemy within.

Nowadays, it turns out that that parameter is best turned by giving all the power to the goal-driven propositional system because the modern environment is far more complex than the EEA and requires long-term plans like founding a high-technology startup in order to achieve extreme success. These long-term plans do not immediately return |

d6e1c416-38d3-4d0c-9560-cfd2a4b7ad62 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Reframing the Problem of AI Progress

>

> "Fascinating! You should definitely look into this. Fortunately, my own research has no chance of producing a super intelligent AGI, so I'll continue. Good luck son! The government should give you more money."

>

>

>

Stuart Armstrong [paraphrasing](/lw/bfj/evidence_for_the_orthogonality_thesis/68hc) a typical AI researcher

I forgot to mention in my [last post](/r/discussion/lw/bnc/against_ai_risk/) why "AI risk" might be a bad phrase even to denote the problem of UFAI. It brings to mind analogies like physics catastrophes or astronomical disasters, and lets AI researchers think that their work is ok as long as they have little chance of immediately destroying Earth. But the real problem we face is how to build or become a superintelligence that shares our values, and given that this seems very difficult, any progress that doesn't contribute to the solution but brings forward the date by which we *must* solve it (or be stuck with something very suboptimal even if it doesn't kill us), is bad. The word "risk" connotes a small chance of something bad suddenly happening, but slow steady progress towards losing the future is just as worrisome.

The [usual way](http://facingthesingularity.com/2011/not-built-to-think-about-ai/) of stating the problem also invites lots of debate that are largely beside the point (as far as determining how serious the problem is), like [whether intelligence explosion is possible](/lw/8j7/criticisms_of_intelligence_explosion/), or [whether a superintelligence can have arbitrary goals](/lw/bfj/evidence_for_the_orthogonality_thesis/), or [how sure we are that a non-Friendly superintelligence will destroy human civilization](/lw/bl2/a_belief_propagation_graph/). If someone wants to question the importance of facing this problem, they really instead need to argue that a superintelligence isn't possible (not even a [modest one](/lw/b10/modest_superintelligences/)), or that the future will turn out to be close to the best possible just by everyone pushing forward their own research without any concern for the big picture, or perhaps that we really don't care very much about the far future and distant strangers and should pursue AI progress just for the immediate benefits.

(This is an expanded version of a [previous comment](/lw/bnc/against_ai_risk/6b4p).) |

56f531af-67f0-487f-9548-5e1bba9d62b6 | trentmkelly/LessWrong-43k | LessWrong | My thoughts on direct work (and joining LessWrong)

Epistemic status: mostly a description of my personal timeline, and some of my models (without detailed justification). If you just want the second, skip the Timeline section.

My name is Robert. By trade, I'm a software engineer. For my entire life, I've lived in Los Angeles.

Timeline

I've been reading LessWrong (and some of the associated blog-o-sphere) since I was in college, having found it through HPMOR in ~2011. When I read Eliezer's writing on AI risk, it more or less instantly clicked into place for me as obviously true (though my understanding at the time was even more lacking than it is now, in terms of having a good gears-level model). This was before the DL revolution had penetrated my bubble. My timelines, as much as I had any, were not that short - maybe 50-100 years, though I don't think I wrote it down, and won't swear my recollection is accurate. Nonetheless, I was motivated enough to seek an internship with MIRI after I graduated, near the end of 2013 (though I think the larger part of my motivation was to have something on my resume). That should not update you upwards on my knowledge or competence; I accomplished very little besides some reworking of the program to mail paperback copies of HPMOR to e.g. math olympiad winners and hedge fund employees.

Between September and November, I read the transcripts of discussions Eliezer had with Richard, Paul, etc. I updated in the direction of shorter timelines. This update did not propagate into my actions.

In February I posted a question about whether there were any organizations tackling AI alignment, which were also hiring remotely[1]. Up until this point, I had been working as a software engineer for a tech company (not in the AI/ML space), donating a small percentage of my income to MIRI, lacking better ideas for mitigating x-risk. I did receive a few answers, and some of the listed organizations were new to me. For reasons I'll discuss shortly, I did not apply to any of them.

On Ap |

10fcd9e2-d74a-42da-94bb-5dd449ed8e0f | trentmkelly/LessWrong-43k | LessWrong | The Trolley Problem in popular culture: Torchwood Series 3

It's just possible that some lesswrong readers may be unfamiliar with Torchwood: It's a British sci-fi TV series, a spin-off from the more famous, and very long-running cult show Dr Who.

Two weeks ago Torchwood Series 3 aired. It took the form of a single story arc, over five days, shown in five parts on consecutive nights. What hopefully makes it interesting to rationalist lesswrong readers who are not (yet) Whovians was not only the space monsters (1) but also the show's determined and methodical exploration of an iterated Trolley Problem: in a process familiar to seasoned thought-experimenters the characters were tested with a dilemma followed by a succession of variations of increasing complexity, with their choices ascertained and the implications discussed and reckoned with.

An hypothetical, iterated rationalist dilemma... with space monsters... and monsters a great deal more scary - and messier - than Omega - what's not to like?

So, on the off chance that you missed it, and as a summer diversion from more academic lesswrong fare, I thought a brief description of how a familiar dilemma was handled on popular British TV this month, might be of passing interest (warning: spoilers follow)

The details of the scenario need not concern us too much here (and readers are warned not too expend too much mental energy exploring the various implausibilities, for want of distraction) but suffice to say that the 456, a race of evil aliens, landed on Earth and demanded that a certain number of children be turned over to them to suffer a horrible fate-worse-than-death or else we face the familiar prospects of all out attack and the likely destruction of mankind.

Resistance, it almost goes without saying, was futile.

The problems faced by the team could be roughly sorted into some themes

The Numbers dilemma - is it worth sacrificing any amount of children to save the rest?

* first (2) the 456 demand just 35 children

* but later they demand 10% of the child populati |

028584ec-a75e-45ca-9771-50cdb25e1c21 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Ideation and Trajectory Modelling in Language Models

*[Epistemic Status: Exploratory, and I may have confusions]*

**Introduction**

================

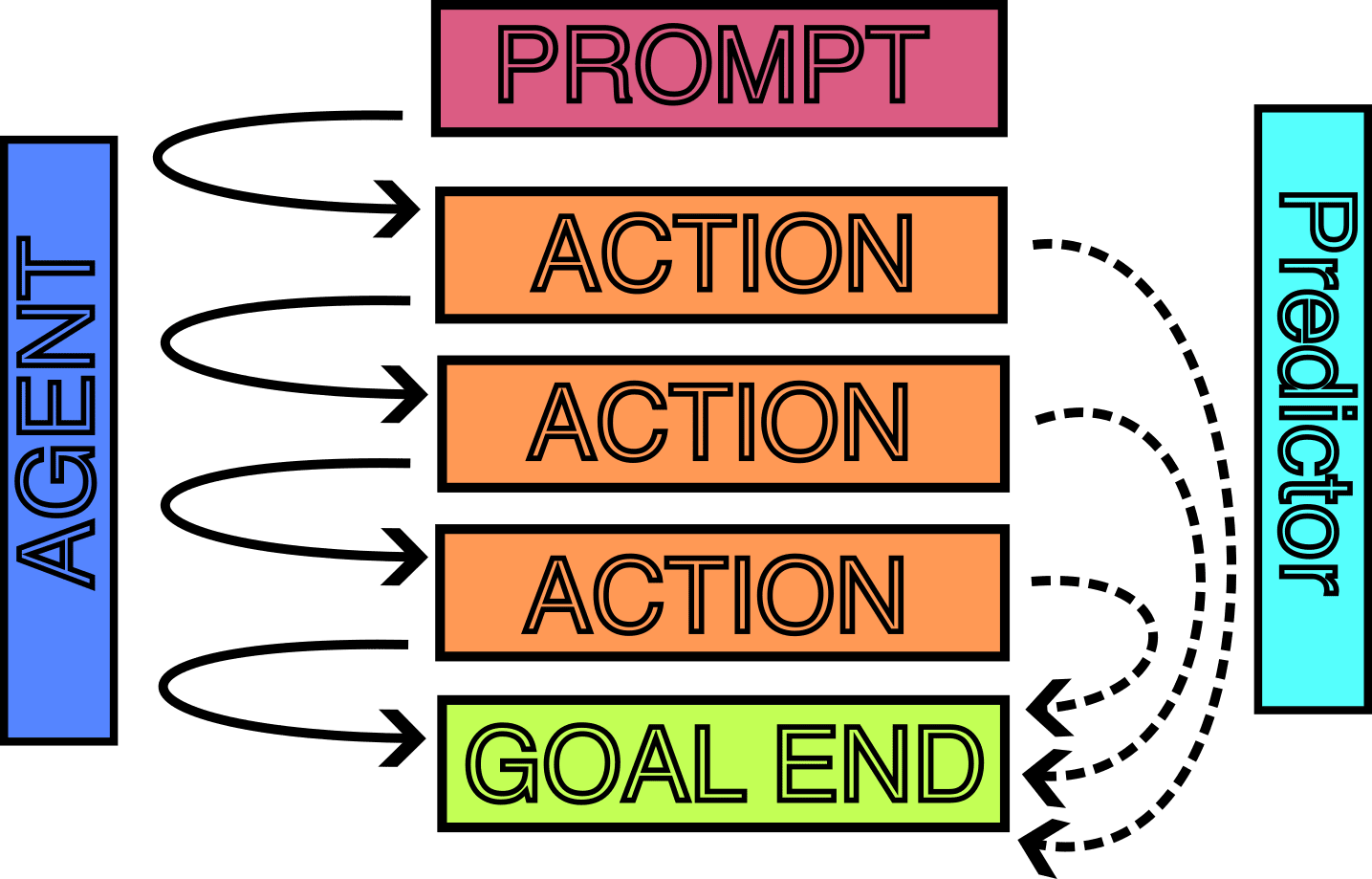

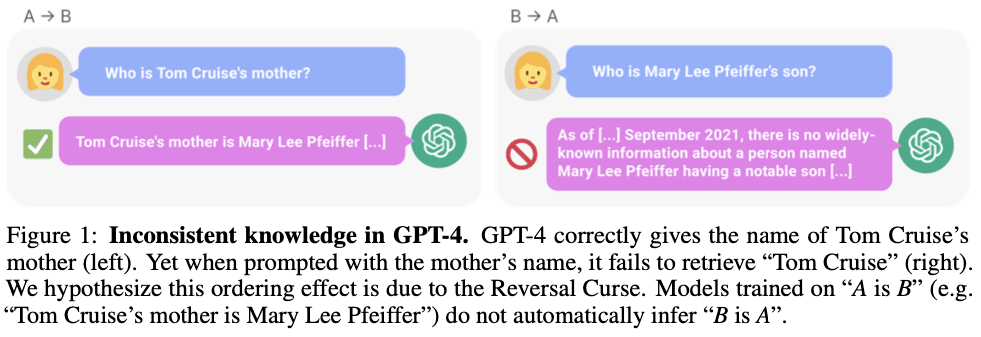

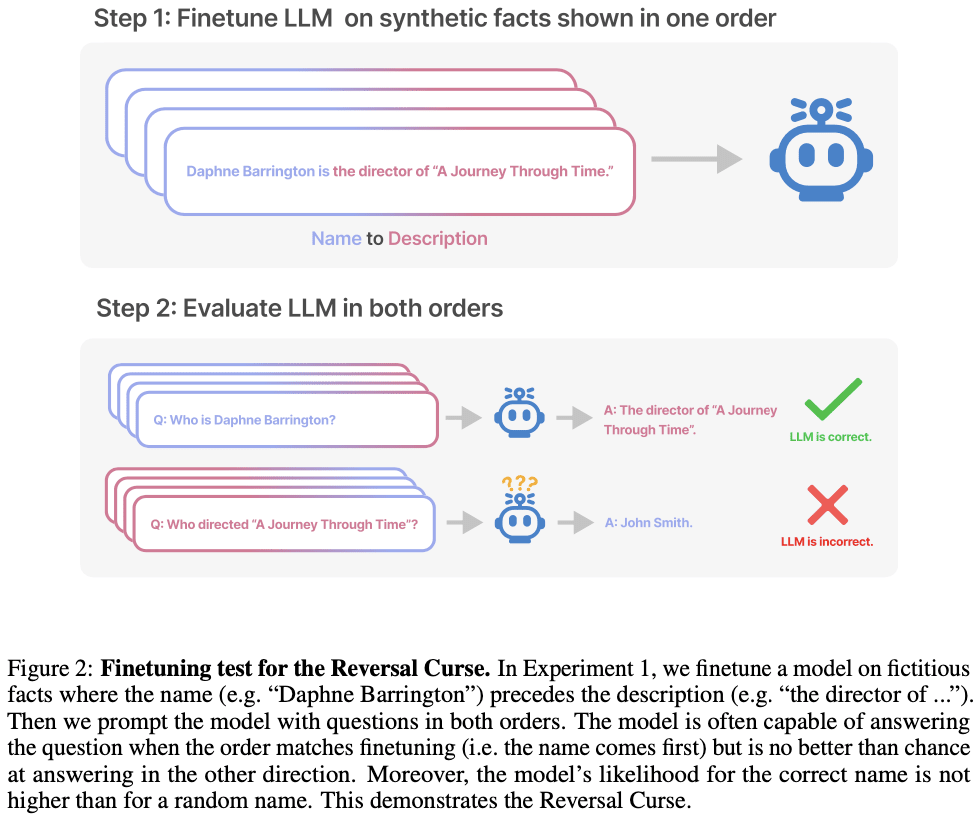

LLMs and other possible RL agent have the property of taking many actions iteratively. However, not all possible short-term outputs are equally likely, and I think better modelling what these possible outcomes might look like could give better insight into what the longer-term outputs might look like. Having better insight into what outcomes are "possible" and when behaviour might change could improve our ability to monitor iteratively-acting models.

**Motivation for Study**

------------------------

One reason I am interested in what I will call "ideation" and "trajectories", is that it seem possible that LLMs or future AI systems in many cases might not explicitly have their "goals" written into their weights, but they might be implicitly encoding a goal that is visible only after many iterated steps. One simplistic example:

* We have a robot, and it encodes the "goal" of, if possible, walking north 1 mile every day

We can extrapolate that the robot must "want" to eventually end up at the north pole and stay there. We can think of this as a "longer-term goal" of the robot, but if one were to try find in the robot "what are the goals", then one would never be able to find "go to the north pole" as a goal. As the robot walks more and more, it should become easier to understand where it is going, even if it doesn't manage to go north every day. The "ideation"/generation of possible actions the robot could take are likely quite limited, and from this we can determine what the robot's future trajectories might look like.

While I think anything like this would be much more messy in more useful AIs, it should be possible to extract *some* useful information from the "short-term goals" of the model. Although this seems difficult to study, I think looking at the "ideation" and "long-term prediction" of LLMs is one way to try to start modelling these "short term", and subsequently, "implicit long-term" goals.

Note that here are some ideas might be somewhat incoherent/not well strung together, and I am trying to gather feedback on might be possible, or what research might already exist.

**Understanding Implicit Ideation in LLMs and Humans**

------------------------------------------------------

In Psychology, the term "ideation" is attributed to the formation and conceptualisation of ideas or thoughts. It may not quite exactly be the best word to be used in this context, though it is the best option I have come up with so far.

Here, "Implicit Ideation" is defined as the inherent propensity of our cognitive structures, or those of an AI model, to privilege specific trajectories during the "idea generation" process. In essence, Implicit Ideation underscores the predictability of the ideation process. Given the generator's context—whether it be a human mind or an AI model—we can, in theory, anticipate the general direction, and potentially the specifics, of the ideas generated even before a particular task or prompt is provided. This construct transcends the immediate task at hand and offers a holistic view of the ideation process.

Another way one might think about this in the specific case of language models is "implicit trajectory planning".

###

### **Ideation in Humans**

When we're told to "do this task," there are a few ways we can handle it:

* We already know how to do the task, have done it before, and can perform the task with ease (e.g: count to 10)

* We have almost no relevant knowledge, or might think that it is an impossible task, and just give up. (e.g: you don't know any formal Maths and are asked to prove a difficult Theorem)

However, the most interesting way is:

* Our minds instantly go into brainstorming mode. We rapidly conjure up different relevant strategies and approaches on how to tackle the task, somewhat like seeing various paths spreading out from where we stand.

* As we start down one of these paths, we continuously reassess the situation. If a better path appears more promising, we're capable of adjusting our course.

This dynamism is a part of our problem-solving toolkit.

This process of ours seems related to the idea of Natural Abstractions, as conceptualised by John Wentworth. We are constantly pulling in a large amount of information, but we have a natural knack for zeroing in on the relevant bits, almost like our brains are running a sophisticated algorithm to filter out the noise.

Even before generating some ideas, there is a very limited space that we might pool ideas from, related mostly to ones that "seem like they might be relevant", (though also to some extent by what we have been thinking about a lot recently.)

### **Ideation in LLMs**

Now, let's swap the human with a large language model (LLM). We feed it a prompt, which is essentially its task. This raises a bunch of intriguing questions. Can we figure out what happens in the LLM's 'brain' right after it gets the prompt and before it begins to respond? Can we decipher the possible game plans it considers even before it spits out the first word?

What we're really trying to dig into here is the very first stage of the LLM's response generation process, and it's mainly governed by two things - the model's weights and its activations to the prompt. While one could study just the weights, it seems easier to study the activations to try to understand the LLM's 'pre-response' game plan.

There's another interesting idea - could we spot the moments when the LLM seems to take a sudden turn from its initial path as it crafts its response? While an LLM doesn't consciously change tactics like we do, these shifts in its output could be the machine equivalent of our reassessments, shaped by the way the landscape of predictions changes as it forms its response.

Could it, perhaps, also be possible to pre-empt beforehand when it looks likely that the LLM might run into a dead end, and find where these shift occur and why?

**Some paths for investigation**

--------------------------------

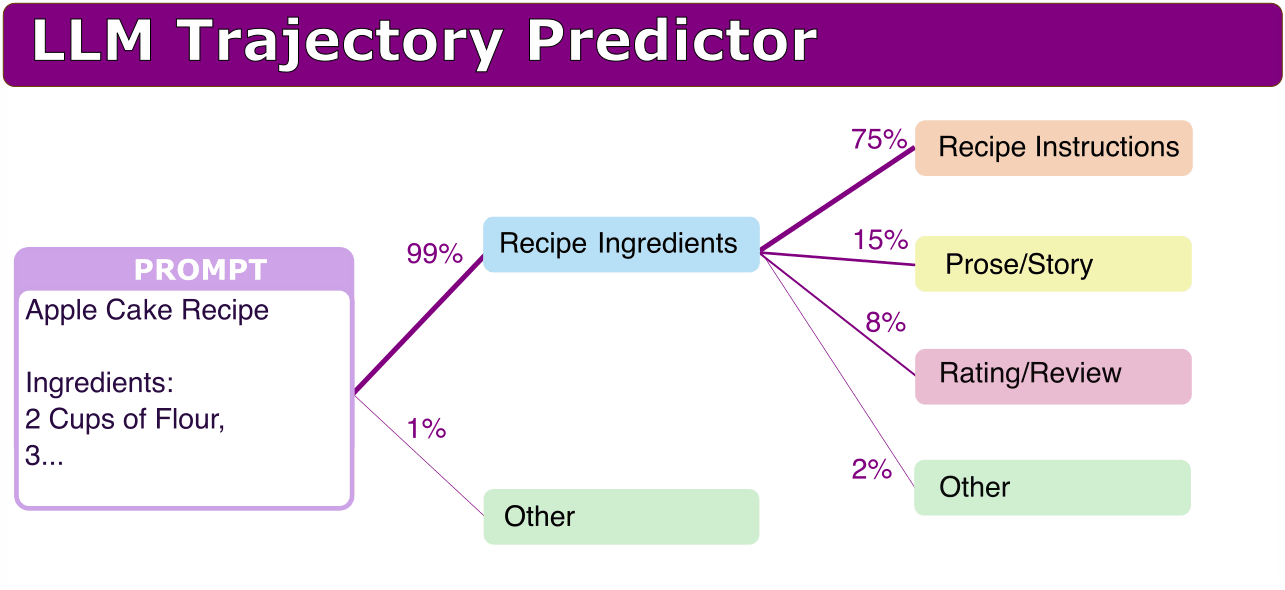

The language model operates locally to predict each token, often guided by the context provided in the prompt. Certain prompts, such as "Sponge Cake Ingredients: 2 cups of self-rising flour", have distinctly predictable long-term continuations. Others, such as "Introduction" or "How do I", or "# README.md\n" have more varied possibilities, yet would still exhibit bias towards specific types of topics.

While it is feasible to gain an understanding of possible continuations by simply extending the prompt, it is crucial to systematically analyse what drives these sometimes predictable long-term projections. The static nature of the model weights suggests the feasibility of extracting information about the long-term behaviours in these cases.

However, establishing the extent of potential continuations, identifying those continuations, and understanding the reasons behind their selection can be challenging.

One conceivable approach to assessing the potential scope of continuations (point 1) could be to evaluate the uncertainty associated with short-term predictions (next-token). However, this method has its limitations. For instance, it may not accurately reflect situations where the next token is predictable (such as a newline), but the subsequent text is not, and vice versa.

Nonetheless, it is probable that there exist numerous cases where the long-term projection is apparent. Theoretically, by examining the model's activations, one could infer the potential long-term projection.

###

### **Experiment Ideas**

One idea to try extract some information about the implicit ideation, would be to somehow train a predictor that is able to predict what kinds of outputs the model is likely to make, as a way of inferring more explicitly what sort of ideation the model has at each stage of text generation.

I think one thing that would be needed for a lot of these ideas, is a good way to summarise/categorise the texts into a single word. There is existing literature on this, so I think this should be possible.

**Broad/Low-Information Summaries on Possible Model Generation Trajectories**

* Suppose we have an ambigous prompt (eg, something like "Explain what PDFs are"), such that there are multiple possible interpretations. Can we identify a low-bandwidth summary on what the model is "ideating" about based only on the prompt?

+ For example, can we identify in advance what the possible topics of generation would be, and how likely they are? For example, for "PDF", it could be:

- 70% "computers/software" ("Portable Document Format")

- 20% "maths/statistics" ("Probability Density Functions")

- 5% "weapons/guns" ("Personal Defense Firearm**"**)

- 3% "technology/displays" ("Plasma Display Film")

- 2% "other"

+ After this, can we continue doing a "tree search" to understand what the states following this outcome would look like? For example, something like:

- "computers/software" -> "computers/instructions" (70%) "computers/hardware" (20%) " ...

+ Would it be possible to do this in such a way, that if we fine-tune the model to give small chance of a new thing, (e.g. make up a new category of: "sport" -> "Precise and Dynamic Footwork" with 10% chance of being generated)

* For non-ambigous prompts, this should generalise to giving a narrower list. If given a half-written recipe for a cake, one would expect something like: 99.9% "baking/instructions".

* I expect it would be possible to do this with a relatively very small language model to model a much larger one, as the amount of information one needs for the prediction to be accurate should be much smaller.

Here is an idea of what this might look like in my mind:

Better understand the LLM behaviours by being able to build a general overview consisting of low-bandwidth "prediction" of possible generation trajectories the model might take.Some basic properties that would be good to have, given only the prompt and the model, but not running the model beyond the prompt:

* Good at predicting what the general output of an LLM could be

* Less good at predicting what the general actual continuation of the text could be

* Gives the smallest superset of plausible continuations to the prompt.

* Works for most T=0 predictions.

Some more ideal properties one might hope to find:

* Gives an idea of how long the continuation might stay consistent, and when there might be a divergence from the original trajectory into a new direction, and based on this, be able to predict when these changes might occur?

* Uses information from the model activations, so that fine-tuning the acting model automatically update the predictions of the predicting model.

* works well for equivalent models trained on the same dataset??

* T>0 predictions work well too

* Works well out of distribution of the dataset

Some bad potential properties:

* Does not generalise out of distribution of the base dataset

* The set of predicted continuations is far too large, encompassing generations that are almost impossible, or too small, and misses continuations that are still likely.

* The premise is flawed, and there is often insufficient information to form a prediction.

Ideally, if we have such a predictor model that is accurate at modelling the acting model's generations, it would be ideal if we could also begin doing interventions on the acting model to modify the probability of some generations. For example, to remove one of the possible "lines of thought" that the model could take, and thus improve the probabilities of the other "lines of thought". This would be an ideal outcome, and would be in theory possible, but in practice might not happen.

### **Other Experiment Ideas**

**Look-ahead token prediction.**

* I would be interested if anyone has investigated "Token after next" prediction, where a model is given tokens [1, 2, ..., n], and the model needs to predict the "n+2"-th token based on these. Does accuracy substantially differ for models of the same size, or is there limitations to what one can predict? What about n+10? n+100? What aspects of prediction begin to break down? How much information can we learn from these longer-term predictors?

**Distant Token Prediction Modification.**

* Would it be possible to, for example, insert a "trojan" into a model, such that saying ABC some time in the middle of the text (eg: token position 100), leads to generally identical text generation, until some time much later time (eg: token position 300).

* Could we, instead of fine-tuning a model, figure out a way to directly modify the activations of the model at some earlier positions, such that we get the same affect?

* Tt seem easily possible to adjust predictions at a specific token index by manipulating positional encoding, but I want to understand better exactly the interactions we get from many-iteration generation, and be able to find predictable patterns in the longer-term generation from models.

**Trying to Predict Long-term Goals.**

* Concrete Example:

+ One could train one "agent" model in various environments to achieve various goals

+ One could train another "predictor" model in various other environments to monitor the actions of the first model and try to predict the final "end-states".

* This is similar to before, but instead of broadly categorising the "next phase of states", it would be categorising the "final phase of states".

* I think it would be interesting to see how far one could understand the what actions might be taken and how well it would be possible for the predictor to generalise what properties of the end state the agent is trying to get to. I think Inverse Reinforcement Learning tries to do this something related, and perhaps there is already some existing work on this. It would also be interesting to understand what parts of the "Agent" model need to change such that the predictor is no longer accurate.

Acting Agent + Final State Predictor. Somewhat nonsensical diagram.

---

*Some Other Unrefined thoughts*

===============================

*Here is me trying to fit the label "short-term" and "long-term" goals. I think the labels and ideas here are quite messy and confused, and I'm unsure about some of the things written here, but I think these could potentially lead others to new insights and clarity?*

***Goals in Language Models***

* Short-term goals in pre-trained language models primarily involve understanding the context and predicting the next token accurately.

* Medium-term goals in language models involve producing a block of text that is coherent and meaningful in a broader context.

* Long-term goals in language models are more challenging to define. They might involve the completion of many medium-term goals or steering conversations in certain ways.

***Goals in Chess AI***

* Short-term goals of a chess AI might be to choose the best move based on the current game state.

* Medium-term goals of a chess AI might be to get into a stronger position, such as taking pieces or protecting one's king.

* The long-term goal of a chess AI is to checkmate the opponent or if that's not possible, to force a draw.

***Implications of Goals on Different Time Scales***

* Training a model on a certain timescale of explicit goal might lead the model to have more stable goals on that timescale, and less stable goals on other timescales.

* For instance, when we train a Chess AI to checkmate more, it will get better at checkmating on the scale of full games, but will likely fall into somewhat frequent patterns of learning new strategies and counter-strategies on shorter time scales.

* On the other hand, training a language model to do next-token prediction will lead the model to have particularly stable short-term goals, but the more medium and long-term goals might be more subject to change at different time scales.

***Representation of Goals***

* On one level, language models are doing next-token prediction and nothing else. On another level, we can consider them as “simulators” of different “simulacra”.

* We can try to infer what the goals of that simulacrum are, although it's challenging to understand how “goals” might be embedded in language models on the time-scale above next-token prediction.

***Potential Scenarios and Implications***

* If a model has many bad short-term goals, it will likely be caught and retrained. Examples of bad short-term goals might include using offensive language or providing malicious advice.

* In a scenario where a model has seemingly harmless short-term goals but no (explicit) long-term goals, this could lead to unintended consequences due to the combination of locally "fine" goals that fail to account for essential factors.

* If a model has long-term goals explicitly encoded, then we can try to study these long-term goals. If they are bad, we can see that the model is trying to do deception, and we can try methods to mitigate this.

* If a model has seemingly harmless short-term goals but hard-to-detect long-term goals, this can be potentially harmful, as undetected long-term goals may lead to negative outcomes. Detecting and mitigating these long-term goals is crucial.

***Reframing Existing Ideas***

* An AIXI view might say a model would only have embedded in it the long-term goals, and short-term and medium-term goals would be invoked from first principles, perhaps leading to a lack of control or understanding over the model's actions.

* The "Shard-Theoretic" approach highlights that humans/biological systems might learn based on hard-coded "short-term goals", the rewards they receive from these goals to build medium-term and long-term "goals" and actions.

* A failure scenario in which an AI model has no explicit long-term goals. In this scenario, humans continue to deploy models with seemingly harmless "looks fine" short-term goals, but the absence of well-defined long-term goals could lead the AI system to act in ways that might have unintended consequences. This seems somewhat related to the "classic Paul Christiano" failure scenario (going out with a whimper) |

e56e0a62-f85d-4e79-a77d-f51f06859cff | trentmkelly/LessWrong-43k | LessWrong | Logical Updatelessness as a Robust Delegation Problem

(Cross-posted on AgentFoundations.org)