id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

0646cc3a-7817-459e-a89e-f570735a05c8 | trentmkelly/LessWrong-43k | LessWrong | Chapter 41: Frontal Override

The biting January wind howled around the vast, blank stone walls that demarcated the material bounds of the castle Hogwarts, whispering and whistling in odd pitches as it blew past closed windows and stone turrets. The most recent snow had mostly blown away, but occasional patches of melted and refrozen ice still stuck to the stone face and blazed reflected sunlight. From a distance, it must have looked like Hogwarts was blinking hundreds of eyes.

A sudden gust made Draco flinch, and try, impossibly, to press his body even closer to the stone, which felt like ice and smelled like ice. Some utterly pointless instinct seemed convinced that he was about to be blown off the outer wall of Hogwarts, and that the best way to prevent this was to jerk around in helpless reflex and possibly throw up.

Draco was trying very hard not to think about the six stories worth of empty air underneath him, and focus, instead, on how he was going to kill Harry Potter.

"You know, Mr. Malfoy," said the young girl beside him in a conversational voice, "if a seer had told me that someday I'd be hanging onto the side of a castle by my fingertips, trying not to look down or think about how loud Mum'd scream if she saw me, I wouldn't've had any idea of how it'd happen, except that it'd be Harry Potter's fault."

----------------------------------------

Earlier:

The two allied Generals stepped together over Longbottom's body, their boots hitting the floor in almost perfect synchrony.

Only a single soldier now stood between them and Harry, a Slytherin boy named Samuel Clamons, whose hand was clenched white around his wand, held upward to sustain his Prismatic Wall. The boy's breathing was coming rapidly, but his face showed the same cold determination that lit the eyes of his general, Harry Potter, who was standing behind the Prismatic Wall at the dead end of the corridor next to an open window, with his hands held mysteriously behind his back.

The battle had been ridiculously difficult, |

bcfad80e-76e9-478b-ac84-2d50f9a84b90 | trentmkelly/LessWrong-43k | LessWrong | Book report: Theory of Games and Economic Behavior (von Neumann & Morgenstern)

I finally read a game theory textbook. Von Neumann and Morgenstern's "Theory of Games and Economic Behavior" basically started the field of game theory. I'll summarize the main ideas and my opinions.

This was also the book that introduced the VNM theorem about decision-theoretic utility. They presented it as an improvement on "indifference curves", which apparently was how economists thought about preferences back then.

2-player zero-sum games

We start with 2-player zero-sum games, where the payoffs to the players sum to 0 in every outcome. (We could just as well consider constant-sum games, where the sum of payoffs is the same in every outcome.) Maximizing your reward is the same as minimizing your opponent's reward. An example is Rock, Paper, Scissors.

Suppose player 1 moves first, and then player 2 moves with, and then the players get their rewards R, −R. Then player 2 should play argminyR(x,y) and player 1 should play argmaxxminyR(x,y). If instead player 2 moves first, then player 1 should play argmaxxR(x,y) and player 2 should play argminymaxxR(x,y).

The payoff to player 1 will be the "maximin" or "minimax" value, depending on whether they move first or second. And moving second is better:

maxxminyR(x,y)≤minymaxxR(x,y)

If the action space is finite and we allow mixed strategies, then by the Kakutani fixed point theorem equality obtains. (The book proves this with elementary linear algebra instead.) In Rock, Paper, Scissors, the maximin value is 0, which you get by playing randomly.

VNM then argue that if both players must move simultaneously, they should play the maximin/minimax strategy. Their argument is kind of sketchy: They say that since player 1 can secure at least the maximin reward for themselves, and since player 2 can prevent player 1 from getting more than the maximin reward, it's rational for player 1 to play the strategy that guarantees them the maximin reward (17.8.2). They claim they aren't assuming knowledge of rationality of all players |

a3eddf7a-74b6-49f4-a96e-4d402449a2ec | trentmkelly/LessWrong-43k | LessWrong | HPMOR and Sartre's "The Flies"

Am I the only one who sees obvious parallels between Sartre's use of Greek mythology as a shared reference point to describe his philosophy more effectively to a lay audience and Yudkowsky's use of Harry Potter to accomplish the same goal? Or is it so obvious no one bothers to talk about it? Was that conscious on Yudkowsky's part? Unconscious? Or am I just seeing connections that aren't there? |

12ab9512-58fa-424a-a3fd-bd8195841944 | trentmkelly/LessWrong-43k | LessWrong | eliminating bias through language?

Due to linguistic relativity, might it be possible to modify or create a system of communication in order to make its users more aware of its biases?

If so, do any projects to actually do this exist? |

3e4b7f2c-486c-44ac-9ced-8edc1e13179e | trentmkelly/LessWrong-43k | LessWrong | Where else are you posting?

As a result of XiXiDu's massive Resources and References post, I just found out about Katja Grace's very pleasant Meteuphoric blog.

I'm curious about what else LessWrongians are posting at other sites, and if there's interest, I'll make this into a top-level post.

I also post at Input Junkie. |

672560e9-85f7-4e00-a15a-78944f4abea8 | trentmkelly/LessWrong-43k | LessWrong | The Day After Tomorrow

Are you rooting for Donald Trump tomorrow? Please read the text in red. On Hillary’s side? Please read the parts in blue.

Imagine: it’s Tuesday night and the result is beyond doubt – a landslide for Crooked Hillary. What do you plan to do? Retreat in disgust from mainstream American discourse and retrench in your bubble? Claim that the “system is rigged” against you? You should be embarrassed, that’s what a leftist would do.

Imagine: it’s Wednesday morning and your next president fuhrer is Donald Trump. What do you plan to do? Scream that the American voters are idiots? Threaten violence and civil disobedience? How very Trump of you.

There are 324,118,787 people in America. The president is just one of them, and so are you. We all shape the kind of country we live in.

Do you hate Hillary’s corruption? Choose integrity. Pick your leaders based on their actions, not their promises. Did the person talking about working class problems spend a single day working a blue collar job? Is the person warning you of foreigners quick to use offshore labor when they can save a buck? This country will be saved by men of principle leading men of principle, not by making compromise with sin.

Do you hate Trump because he’s disrespectful? Choose respect. Learn to respect people from faraway lands, with different tastes and strange beliefs. And by these I mean: Oklahoma, Nascar, Jesus. In case you forgot, respect doesn’t mean letting them live somewhere out of sight. It means respecting their voice and their choice.

Do you hate the lies of the mainstream media? Choose objectivity. Does Breitbart make money when they report the unvarnished truth or when they they post outrage clickbait? Don’t consume just the media that feels good, broaden your view and you’ll see a truer picture. What use is free speech if one doesn’t freely listen?

Do you hate Trump’s bold-faced lying? Choose truth. Do you share articles that attempt to get to the bottom of issues, or memes that make your oppon |

2e9c4fdd-4608-4946-9f86-e926f77de7f2 | trentmkelly/LessWrong-43k | LessWrong | Depression philosophizing

Epistemic status: Weak confidence. Refine and dismantle as you see fit.

For certain people, philosophical thinking is net harmful to their everyday life.

This should not be that surprising. Certain kinds of cognitive behavior do reliably lead to unhappiness, and there's no a priori reason to suppose explicit, logical thinking is somehow exempt from that risk. For many people, it appears that a stable sense of identity, purpose in life, and place in society are important factors in creating and maintaining happiness and contentment. Philosophical thinking often involves destabilizing those concepts.

I want to point to something I have noticed in myself, and suspect happens in others as well. I call it "depression philosophizing." You begin to think philosophically about your life, and slowly, maybe imperceptibly, you feel worse and worse about yourself, as you meditate on such concepts as morality, meaning, and ontology in an abstract sense.

It's tempting to unilaterally demonize depression philosophizing. But there is one big thing about it that stands out and that makes it so hard to quit doing - and that's that the quality of your intellectual rigor doesn't correlate very much with your emotional state. Plenty of great philosophers were miserable people (Wittgenstein, Nietzsche, Schopenhauer).

I think rationalists are likely to fall prey to this trap. As a group, we have a revealed preference towards abstract thinking and philosophy. Some of our folk heroes appear unusually good at facing philosophical problems without letting it get to them or divert them from their goals - Nate Soares pops to mind for me.

I don't have a good answer for how to combat this beyond the usual mechanisms used to treat depression. But I've had some success at simply reminding myself that even the act of stopping and thinking has an opportunity cost to it - it's not actually a very wise move to devote large amounts of time running your brain in circles around a tempting philosophi |

b422da3e-9a00-4167-a76c-8c00a7fe744f | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Content generation. Where do we draw the line?

If you want to be affected by other people, if you want to live in a culture with other people, then I believe 4 statements below are true:

* You can't generate **all** of your "content".

This would basically mean living in the Matrix *without* other people. Consumerist solipsism. Everything you see is generated, but not by other people.

* You can't **100%** control what content you (can) consume.

For example, if you read *someone's* story, you let the author control what you experience. If you don't let anybody control what you experience, you aren't affected by other people.

* "Symbols" (people's output) in the culture shouldn't have an absolutely arbitrary value.

Again, this is about control. If you want to be affected by other people, by their culture, you can't have 100% control over the value of their output.

*"Right now I feel like consuming human made content. But any minute I may decide that AI generated content is more valuable and switch to that"* - attitudes like this may make the value of people's output completely arbitrary, decided by you on a whim.

* Other people should have some control over their image and output.

If you want to be affected by other people, you can't have 100% control over their image and output. If you create countless variations of someone's personality and exploit/milk them to death, it isn't healthy culturewise.

You're violating and destroying boundaries that allow the other person's personality to exist. *(inside of your mind or inside the culture)*

...

But where do we draw the line?

I'm not sure you can mix the culture of content generation/"AI replacement" and the human culture. I feel that with every step weakening the principles above the damage to the human culture will grow exponentially.

The lost message

================

Imagine a person you don't know. You don't care about them. Even worse, you're put off by what they're saying, you don't want to listen. Or maybe you just isn't interested in the "genre" of their message.

But that person may still have a valuable message for you. And that person still has a chance to reach you:

1. They share their message with other people.

2. The message becomes popular in the culture.

3. You notice the popularity. You check out the message again. Or someone explains it to you.

But if any person can switch to AI generated content at any minute, transmitting the message may become infinitely harder or outright impossible.

**"But AI can generate a better message for me! Even the one I wouldn't initially like!"**

Then we're back at the square one: you don't want to be affected by other people.

Rights to exist

===============

Consciousness and personality don't exist in a vacuum, they need a medium to be expressed. For example, text messages or drawings.

When you say *"hey, I can generate your output in any medium!"*

You say *"I can deny you existence, I can lock you out of the world"*.

I'm not sure it's a good idea/fun future.

...

So, I don't really see where this "content generation" is going in the long run. Or in the very short run (***GPT-4*** plus ***DALL-E*** or *"DALL-E 2"* for everyone)

*"Do you care that your favorite piece of art/piece of writing was made by a human?"* is the most irrelevant question that you have to consider. Prior questions are: do you care about people in general? do you care about people's ability to communicate? do you care about people's basic rights to be seen and express themselves?

If "yes", where do you draw the line and how do you make sure that it's a solid line? I don't care if you think *"DALL-E good"* or *"DALL-E bad"*, I care where you draw the line. What condition needs to break for you to say *"wait, it's not what I wanted, something bad is happening"*?

If my arguments miss something, it doesn't matter: just tell me where you draw [the line](https://www.youtube.com/watch?v=FZnpCnqXvEM). What would you not like to generate, violate, control? What would be the deal breaker for you? |

75cb12d2-93a8-4905-afa1-518408a2586c | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "Politics is the mind-killer. Debate is war, arguments are soldiers. There is the temptation to search for ways to interpret every possible experimental result to confirm your theory, like securing a citadel against every possible line of attack. This you cannot do. It is mathematically impossible. For every expectation of evidence, there is an equal and opposite expectation of counterevidence.But it’s okay if your cherished belief isn’t perfectly defended. If the hypothesis is that the coin comes up heads 95% of the time, then one time in twenty you will expect to see what looks like contrary evidence. This is okay. It’s normal. It’s even expected, so long as you’ve got nineteen supporting observations for every contrary one. A probabilistic model can take a hit or two, and still survive, so long as the hits don't keep on coming in.2Yet it is widely believed, especially in the court of public opinion, that a true theory can have no failures and a false theory no successes.You find people holding up a single piece of what they conceive to be evidence, and claiming that their theory can “explain” it, as though this were all the support that any theory needed. Apparently a false theory can have no supporting evidence; it is impossible for a false theory to fit even a single event. Thus, a single piece of confirming evidence is all that any theory needs.It is only slightly less foolish to hold up a single piece of probabilistic counterevidence as disproof, as though it were impossible for a correct theory to have even a slight argument against it. But this is how humans have argued for ages and ages, trying to defeat all enemy arguments, while denying the enemy even a single shred of support. People want their debates to be one-sided; they are accustomed to a world in which their preferred theories have not one iota of antisupport. Thus, allowing a single item of probabilistic counterevidence would be the end of the world.I just know someone in the audience out there is going to say, “But you can’t concede even a single point if you want to win debates in the real world! If you concede that any counterarguments exist, the Enemy will harp on them over and over—you can’t let the Enemy do that! You’ll lose! What could be more viscerally terrifying than that?”Whatever. Rationality is not for winning debates, it is for deciding which side to join. If you’ve already decided which side to argue for, the work of rationality is done within you, whether well or poorly. But how can you, yourself, decide which side to argue? If choosing the wrong side is viscerally terrifying, even just a little viscerally terrifying, you’d best integrate all the evidence.Rationality is not a walk, but a dance. On each step in that dance your foot should come down in exactly the correct spot, neither to the left nor to the right. Shifting belief upward with each iota of confirming evidence. Shifting belief downward with each iota of contrary evidence. Yes, down. Even with a correct model, if it is not an exact model, you will sometimes need to revise your belief down.If an iota or two of evidence happens to countersupport your belief, that’s okay. It happens, sometimes, with probabilistic evidence for non-exact theories. (If an exact theory fails, you are in trouble!) Just shift your belief downward a little—the probability, the odds ratio, or even a nonverbal weight of credence in your mind. Just shift downward a little, and wait for more evidence. If the theory is true, supporting evidence will come in shortly, and the probability will climb again. If the theory is false, you don’t really want it anyway.The problem with using black-and-white, binary, qualitative reasoning is that any single observation either destroys the theory or it does not. When not even a single contrary observation is allowed, it creates cognitive dissonance and has to be argued away. And this rules out incremental progress; it rules out correct integration of all the evidence. Reasoning probabilistically, we realize that on average, a correct theory will generate a greater weight of support than countersupport. And so you can, without fear, say to yourself: “This is gently contrary evidence, I will shift my belief downward.” Yes, down. It does not destroy your cherished theory. That is qualitative reasoning; think quantitatively.For every expectation of evidence, there is an equal and opposite expectation of counterevidence. On every occasion, you must, on average, anticipate revising your beliefs downward as much as you anticipate revising them upward. If you think you already know what evidence will come in, then you must already be fairly sure of your theory—probability close to 1—which doesn’t leave much room for the probability to go further upward. And however unlikely it seems that you will encounter disconfirming evidence, the resulting downward shift must be large enough to precisely balance the anticipated gain on the other side. The weighted mean of your expected posterior probability must equal your prior probability.How silly is it, then, to be terrified of revising your probability downward, if you’re bothering to investigate a matter at all? On average, you must anticipate as much downward shift as upward shift from every individual observation.It may perhaps happen that an iota of antisupport comes in again, and again and again, while new support is slow to trickle in. You may find your belief drifting downward and further downward. Until, finally, you realize from which quarter the winds of evidence are blowing against you. In that moment of realization, there is no point in constructing excuses. In that moment of realization, you have already relinquished your cherished belief. Yay! Time to celebrate! Pop a champagne bottle or send out for pizza! You can’t become stronger by keeping the beliefs you started with, after all."

The above is a chunk of text from a post created by a user on the website LessWrong.

Can you break this down into the top 5 most important points for me.

1. The author asserts that it is a misconception to believe that a true theory can have no failures and a false theory no successes. This idea is flawed as it doesn't take into account the probabilistic nature of evidence. Even a true theory can have instances that seem to contradict it, and a false theory can occasionally seem to be confirmed by certain pieces of evidence.

2. The author emphasizes the importance of integrating all evidence when forming or assessing a theory. It's crucial to consider both supporting and opposing evidence, and adjust the belief in the theory accordingly. This is a process of constant adjustment and refinement.

3. The author criticizes binary, black-and-white reasoning. This type of reasoning does not allow for the nuanced understanding that comes from considering the probabilistic nature of evidence. The author encourages thinking quantitatively rather than qualitatively.

4. The author points out that when investigating a theory, one should anticipate as much downward revision (contradictory evidence) as upward revision (confirmatory evidence). This is a natural part of the process of refining a theory.

5. Finally, the author celebrates the moment of realization when one understands that a cherished belief is not supported by the evidence. This is not a moment of failure, but a moment of growth and learning. The author encourages embracing this moment and moving forward with a better understanding.

Can you elaborate on the concept of thinking quantitatively rather than qualitatively in the context of forming and assessing theories?

Certainly. Thinking quantitatively in the context of forming and assessing theories involves using numerical data and statistical methods to evaluate evidence and make decisions. It's about assessing the weight of evidence in a measurable way, which allows for more nuanced and precise conclusions.

For example, instead of simply stating that a piece of evidence supports a theory (qualitative), a quantitative approach would involve determining how strongly that evidence supports the theory. This could involve calculating probabilities, using statistical models, or other numerical methods.

In the context of the text, the author is suggesting that theories should not be seen as simply true or false (a binary, qualitative view), but rather we should consider the degree to which the available evidence supports or contradicts the theory (a probabilistic, quantitative view).

This means that a single piece of evidence that seems to contradict a theory does not necessarily make the theory false. Instead, it should lead to a downward adjustment in our confidence in the theory. Similarly, a piece of evidence that supports the theory should lead to an upward adjustment in our confidence.

This approach allows for a more nuanced understanding of theories, where our confidence in them can shift and change as new evidence becomes available. It also allows for the possibility that a theory can be mostly, but not entirely, correct.

Reflect on a real-world scenario where the quantitative approach to assessing theories has been successfully applied. What were the key steps in this process and how did the quantitative approach contribute to a more nuanced understanding of the theory in question?

One real-world scenario where a quantitative approach has been successfully applied is in the field of climate science. Climate scientists use a variety of data, including temperature records, ice core samples, and computer models, to test theories about climate change.

Here are the key steps in this process:

1. **Formulating a Hypothesis**: Scientists propose a theory or hypothesis, such as "human activity is causing global warming."

2. **Collecting Data**: Scientists collect a wide range of data related to climate, such as temperature records, greenhouse gas concentrations, and ice core samples.

3. **Analyzing Data**: Scientists use statistical methods to analyze the data. For example, they might use regression analysis to determine the relationship between greenhouse gas concentrations and global temperatures.

4. **Interpreting Results**: Scientists interpret the results of their analysis. If the data shows a strong correlation between human activity and global warming, this would be evidence in support of the theory.

5. **Refining the Theory**: Based on the results, scientists may refine their theory. For example, they might specify the extent to which human activity is contributing to global warming, or identify specific activities that are particularly problematic.

The quantitative approach allows for a more nuanced understanding of the theory of climate change. Rather than simply stating that human activity is causing global warming, scientists can quantify the extent of the impact, identify the most significant contributing factors, and make predictions about future changes. This approach also allows for the possibility that the theory is not entirely correct, and for adjustments to be made as new evidence becomes available.

This nuanced understanding is crucial for informing policy decisions related to climate change. It allows policymakers to target the most significant contributing factors, and to assess the likely impact of different policy options. |

7fd7d90d-f6ac-4c41-9b00-a3bc0e61f121 | trentmkelly/LessWrong-43k | LessWrong | Exit Duty Generator by Matti Häyry

Hello! I was hoping to start a discussion about a recent article called Exit Duty Generator by Matti Häyry, it can be found here:

https://www.cambridge.org/core/journals/cambridge-quarterly-of-healthcare-ethics/article/exit-duty-generator/49ACA1A21FF0A4A3D0DB81230192A042#article

or DOI: https://doi.org/10.1017/S096318012300004X

Abstract

This article presents a revised version of negative utilitarianism. Previous versions have relied on a hedonistic theory of value and stated that suffering should be minimized. The traditional rebuttal is that the doctrine in this form morally requires us to end all sentient life. To avoid this, a need-based theory of value is introduced. The frustration of the needs not to suffer and not to have one’s autonomy dwarfed should, prima facie, be decreased. When decreasing the need frustration of some would increase the need frustration of others, the case is deferred and a fuller ethical analysis is conducted. The author’s perceptions on murder, extinction, the right to die, antinatalism, veganism, and abortion are used to reach a reflective equilibrium. The new theory is then applied to consumerism, material growth, and power relations. The main finding is that the burden of proof should be on those who promote the status quo.

Any thoughts? Thanks!

All the best,

Amanda |

4b20d131-a5d2-441b-a2d7-8a356804dd2a | trentmkelly/LessWrong-43k | LessWrong | The shard theory of human values

TL;DR: We propose a theory of human value formation. According to this theory, the reward system shapes human values in a relatively straightforward manner. Human values are not e.g. an incredibly complicated, genetically hard-coded set of drives, but rather sets of contextually activated heuristics which were shaped by and bootstrapped from crude, genetically hard-coded reward circuitry.

----------------------------------------

We think that human value formation is extremely important for AI alignment. We have empirically observed exactly one process which reliably produces agents which intrinsically care about certain objects in the real world, which reflect upon their values and change them over time, and which—at least some of the time, with non-negligible probability—care about each other. That process occurs millions of times each day, despite genetic variation, cultural differences, and disparity in life experiences. That process produced you and your values.

Human values look so strange and inexplicable. How could those values be the product of anything except hack after evolutionary hack? We think this is not what happened. This post describes the shard theory account of human value formation, split into three sections:

1. Details our working assumptions about the learning dynamics within the brain,

2. Conjectures that reinforcement learning grows situational heuristics of increasing complexity, and

3. Uses shard theory to explain several confusing / “irrational” quirks of human decision-making.

Terminological note: We use “value” to mean a contextual influence on decision-making. Examples:

* Wanting to hang out with a friend.

* Feeling an internal urge to give money to a homeless person.

* Feeling an internal urge to text someone you have a crush on.

* That tug you feel when you are hungry and pass by a donut.

To us, this definition seems importantly type-correct and appropriate—see Appendix A.2. The main downside is that the definition i |

45df45cf-13a7-46c1-bb3a-ba53faa4fe3e | trentmkelly/LessWrong-43k | LessWrong | New LW Meetup: Bogota

This summary was posted to LW Main on January 27th. The following week's summary is here.

New meetups (or meetups with a hiatus of more than a year) are happening in:

* First Bogota, Colombia Meetup: 28 January 2017 06:00PM

Irregularly scheduled Less Wrong meetups are taking place in:

* Sao Paulo - Meetup de janeiro: 28 January 2017 02:00PM

The following meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* Baltimore / UMBC Weekly Meetup: 29 January 2017 08:00PM

* Denver Area LW February Meetup: 07 February 2017 07:00PM

* [Detroit Metro / Ann Arbor], Michigan: 28 January 2017 04:20PM

* Moscow: unconference: 29 January 2017 02:00PM

* Moscow LW meetup in "Nauchka" library: 03 February 2017 08:00PM

* San Francisco Meetup: Group Debugging: 30 January 2017 06:15PM

* Sydney Rationality Dojo - February 2017: 05 February 2017 04:00PM

* Vienna Meetup: 28 January 2017 03:00PM

* Washington, D.C.: Meta Meetup: 29 January 2017 03:30PM

Locations with regularly scheduled meetups: Ann Arbor, Austin, Baltimore, Berlin, Boston, Brussels, Buffalo, Canberra, Columbus, Denver, Kraków, London, Madison WI, Melbourne, Moscow, Netherlands, New Hampshire, New York, Philadelphia, Prague, Research Triangle NC, San Francisco Bay Area, Seattle, St. Petersburg, Sydney, Tel Aviv, Toronto, Vienna, Washington DC, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers and a Slack channel for daily discussion and online meetups on Sunday night US time.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (more resources here). Check one out, stretch your rationality skills, build community, and have fun!

In addition to the handy sidebar of upcoming meetups, a meetup overview is posted on the front page every Friday. These are an attempt to collect information |

4a20f521-7ab8-474b-a64f-f55efba4e6a5 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Partial Agency

*Epistemic status: very rough intuitions here.*

I think there's something interesting going on with [Evan's notion of myopia](https://www.lesswrong.com/posts/BKM8uQS6QdJPZLqCr/towards-a-mechanistic-understanding-of-corrigibility).

Evan has been calling this thing "myopia". Scott has been calling it "stop-gradients". In my own mind, I've been calling the phenomenon "directionality". Each of these words gives a different set of intuitions about how the cluster could eventually be formalized.

Stop-Gradients

--------------

Nash equilibria are, abstractly, modeling agents via an equation like .mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}

.MJXc-TeX-unknown-B {font-family: monospace; font-style: normal; font-weight: bold}

.MJXc-TeX-unknown-BI {font-family: monospace; font-style: italic; font-weight: bold}

.MJXc-TeX-ams-R {font-family: MJXc-TeX-ams-R,MJXc-TeX-ams-Rw}

.MJXc-TeX-cal-B {font-family: MJXc-TeX-cal-B,MJXc-TeX-cal-Bx,MJXc-TeX-cal-Bw}

.MJXc-TeX-frak-R {font-family: MJXc-TeX-frak-R,MJXc-TeX-frak-Rw}

.MJXc-TeX-frak-B {font-family: MJXc-TeX-frak-B,MJXc-TeX-frak-Bx,MJXc-TeX-frak-Bw}

.MJXc-TeX-math-BI {font-family: MJXc-TeX-math-BI,MJXc-TeX-math-BIx,MJXc-TeX-math-BIw}

.MJXc-TeX-sans-R {font-family: MJXc-TeX-sans-R,MJXc-TeX-sans-Rw}

.MJXc-TeX-sans-B {font-family: MJXc-TeX-sans-B,MJXc-TeX-sans-Bx,MJXc-TeX-sans-Bw}

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

a∗=argmaxaf(a,a∗). In words: a∗ is the agent's mixed strategy. The payoff f(.,.) is a function of the mixed strategy in two ways: the first argument is the causal channel, where actions directly have effects; the second argument represents the "acausal" channel, IE, the fact that the other players know the agent's mixed strategy and this influences their actions. The agent is maximizing across the first channel, but "ignoring" the second channel; that is why we have to solve for a fixed point to find Nash equilibria. This motivates the notion of "stop gradient": if we think in terms of neural-network type learning, we're sending the gradient through the first argument but not the second. (It's a kind of mathematically weird thing to do!)

Myopia

------

Thinking in terms of iterated games, we can also justify the label "myopia". Thinking in terms of "gradients" suggests that we're doing some kind of training involving repeatedly playing the game. But we're training an agent to play [as if it's a single-shot game](https://www.lesswrong.com/posts/dKAJqBDZRMMsaaYo5/in-logical-time-all-games-are-iterated-games): the gradient is rewarding behavior which gets more reward within the single round even if it compromises long-run reward. This is a weird thing to do: why implement a training regime to produce strategies like that, if we believe the nash-equilibrium model, IE we think the other players will know our mixed strategy and react to it? We can, for example, win chicken by going straight more often than is myopically rational. Generally speaking, we expect to get better rewards in the rounds after training if we optimized for non-myopic strategies during training.

Directionality

--------------

To justify my term "directionality" for these phenomena, we have to look at a different example: the idea that "when beliefs and reality don't match, we change our beliefs". IE: when optimizing for truth, we optimize "only in one direction". How is this possible? We can write down a loss function, such as Bayes' loss, to define accuracy of belief. But how can we optimize it only "in one direction"?

We can see that this is the same thing as myopia. When training predictors, we only consider the efficacy of hypotheses one instance at a time. Consider *supervised learning:* we have "questions" x1,x2,... etc and are trying to learn "answers" y1,y2,... etc. If a neural network were somehow able to mess with the training data, it would not have much pressure to do so. If it could give an answer on instance x1 which improved its ability to answer on x2 by manipulating y2, the gradient would not specially favor this. Suppose it is possible to take some small hit (in log-loss terms) on y1 for a large gain on y2. The large gain for x2 would not reinforce the specific neural patterns responsible for making y2 easy (only the patterns responsible for successfully taking advantage of the easiness). The small hit on x1 means there's an incentive not to manipulate y2.

It is *possible* that the neural network learns to manipulate the data, if by chance the neural patterns which shift x1 are the same as those which successfully exploit the manipulation at x2. However, this is a fragile situation: if there are other neural sub-patterns which are equally capable of giving the easy answer on x2, the reward gets spread around. (Think of these as parasites taking advantage of the manipulative strategy without doing the work necessary to sustain it.) Because of this, the manipulative sub-pattern may not "make rent": the amount of positive gradient it gets may not make up for the hit it takes on x1. And all the while, neural sub-patterns which do better on x1 (by refusing to take the hit) will be growing stronger. Eventually they can take over. This is exactly like myopia: strategies which do better in a specific case are favored for that case, despite global loss. The neural network fails to successfully coordinate with itself to globally minimize loss.

To see why this is also like stop-gradients, think about the loss function as l(w,w∗): the neural weights w determine loss through a "legitimate" channel (the prediction quality on a single instance), plus an "illegitimate" channel (the cross-instance influence which allows manipulation of y2 through the answer given for x1). We're optimizing through the first channel, but not the second.

The difference between supervised learning and reinforcement learning is just: reinforcement learning explicitly tracks helpfulness of strategies across time, rather than assuming a high score at x2 has to do with only behaviors at x2! As a result, RL can coordinate with itself across time, whereas supervised learning cannot.

Keep in mind that this is a good thing: the algorithm may be "leaving money on the table" in terms of prediction accuracy, but this is exactly what we want. We're trying to make the map match the territory, not the other way around.

***Important side-note:** this argument obviously has some relation to the question of how we should think about [inner optimizers](https://www.lesswrong.com/posts/pL56xPoniLvtMDQ4J/the-inner-alignment-problem) and how likely we should expect them to be. However, I think it is not a direct argument against inner optimizers. (1) The emergence of an inner optimizer is exactly the sort of situation where the gradients end up all feeding through one coherent structure. Other potential neural structures cannot compete with the sub-agent, because it has started to intelligently optimize; few interlopers can take advantage of the benefits of the inner optimizer's strategy, because they don't know enough to do so. So, all gradients point to continuing the improvement of the inner optimizer rather than alternate more-myopic strategies. (2) Being an inner optimizer is non synonymous with non-myopic behavior. An inner optimizer could give myopic responses on the training set while internally having less-myopic values. Or, an inner optimizer could have myopic but very divergent values. Importantly, an inner optimizer need not take advantage of any data-manipulation of the training set like that I've described; it need not even have access to any such opportunities.*

The Partial Agency Paradox

==========================

I've given a couple of examples. I want to quickly give some more to flesh out the clusters as I see them:

* As I said, myopia is "partial agency" whereas foresight is "full agency". Think of how an agent with high time-preference (ie steep temporal discounting) can be money-pumped by an agent with low time-preference. But the limit of no-temporal-discounting-at-all is not always well-defined.

* An updatefull agent is "partial agency" whereas updatelessness is "full agency": the updateful agent is failing to use some channels of influence to get what it wants, because it already knows those things and can't imagine them going differently. Again, though, full agency seems to be an idealization we can't quite reach: [we don't know how to think about updatelessness in the context of logical uncertainty](https://www.lesswrong.com/posts/9sYzoRnmqmxZm4Whf/conceptual-problems-with-udt-and-policy-selection), only more- or less- updatefull strategies.

* I gave the beliefs←territory example. We can also think about the values→territory case: when the world differs from our preferences, we change the world, not our preferences. This has to do with avoiding wireheading.

* Similarly, we can think of examples of corrigibility -- such as respecting an off button, or avoiding manipulating the humans -- as partial agency.

* Causal decision theory is more "partial" and evidential decision theory is less so: EDT wants to recognize more things as legitimate channels of influence, while CDT claims they're not. Keep in mind that [the math of causal intervention is closely related to the math which tells us about whether an agent wants to manipulate a certain variable](https://arxiv.org/abs/1902.09980) -- so there's a close relationship between CDT-vs-EDT and wireheading/corrigibility.

I think people often take a pro- or anti- partial agency position: if you are trying to one-box in Newcomblike problems, trying to cooperate in prisoner's dilemma, trying to define logical updatelessness, trying for superrationality in arbitrary games, etc... you are generally trying to remove barriers to full agency. On the other hand, if you're trying to avert instrumental incentives, make sure an agent allows you to change its values, or doesn't prevent you from pressing an off button, or doesn't manipulate human values, etc... you're generally trying to add barriers to full agency.

I've historically been more interested in dropping barriers to full agency. I think this is partially because I tend to assume that full agency is what to expect in the long run, IE, "all agents want to be full agents" -- evolutionarily, philosophically, etc. Full agency should result from instrumental convergence. Attempts to engineer partial agency for specific purposes feel like fighting against this immense pressure toward full agency; I tend to assume they'll fail. As a result, I tend to think about AI alignment research as (1) needing to understand full agency much better, (2) needing to mainly think in terms of aligning full agency, rather than averting risks through partial agency.

However, in contrast to this historical view of mine, I want to make a few observations:

* Partial agency sometimes seems like *exactly what we want,* as in the case of map←territory optimization, rather than a crude hack which artificially limits things.

* Indeed, partial agency of this kind seems *fundamental to full agency.*

* Partial agency seems *ubiquitous in nature*. Why should I treat full agency as the default?

So, let's set aside pro/con positions for a while. What I'm interested in at the moment is ***the descriptive study of partial agency as a phenomenon.*** I think this is an organizing phenomenon behind a lot of stuff I think about.

**The partial agency paradox** is: why do we see partial agency naturally arising in certain contexts? Why are agents (so often) myopic? Why have a notion of "truth" which is about map←territory fit but not the other way around? Partial agency is a weird thing. I understand what it means to optimize something. I understand how a [selection process](https://www.lesswrong.com/posts/ZDZmopKquzHYPRNxq/selection-vs-control) can arise in the world (evolution, markets, machine learning, etc), which drives things toward maximization of some function. Partial optimization is a comparatively weird thing. Even if we can set up a "partial selection process" which incentivises maximization through only some channels, wouldn't it be blind to the side-channels, and so unable to enforce partiality in the long-term? Can't someone always come along and do better via full agency, no matter how our incentives are set up?

Of course, I've already said enough to suggest a resolution to this puzzle.

**My tentative resolution to the paradox** is: you don't build "partial optimizers" by taking a full optimizer and trying to add carefully balanced incentives to create indifference about optimizing through a specific channel, or anything like that. (Indifference at the level of the selection process does not lead to indifference at the level of the agents evolved by that selection process.) Rather, *partial agency is what selection processes incentivize by default*. If there's a learning-theoretic setup which incentivizes the development of "full agency" (whatever that even means, really!) I don't know what it is yet.

Why?

Learning is basically episodic. In order to learn, you (sort of) need to do the same thing over and over, and get feedback. Reinforcement learning tends to assume [ergodic](https://en.wikipedia.org/wiki/Ergodic_process) environments so that, no matter how badly the agent messes up, it eventually re-enters the same state so it can try again -- this is a "soft" episode boundary. Similarly, RL tends to require temporal discounting -- this also creates a soft episode boundary, because things far enough in the future matter so little that they can be thought of as "a different episode".

So, like map←territory learning (that is, epistemic learning), we can kind of expect any type of learning to be myopic to some extent.

This fits the picture where full agency is an idealization which doesn't really make sense on close examination, and partial agency is the more real phenomenon. However, this is absolutely not a conjecture on my part that all learning algorithms produce partial agents of some kind rather than full agents. There may still be frameworks which allow us to approach full agency in the limit, such as [taking the limit of diminishing discount factors](https://www.lesswrong.com/posts/S3W4Xrmp6AL7nxRHd/formalising-decision-theory-is-hard#H45aYhMFED4Byr72m), or [considering asymptotic behavior of agents who are able to make precommitments](https://www.lesswrong.com/posts/S3W4Xrmp6AL7nxRHd/formalising-decision-theory-is-hard#X7R4rxHpkEKycJvSb). We may be able to achieve some aspects of full agency, [such as superrationality in games](https://www.lesswrong.com/posts/S3W4Xrmp6AL7nxRHd/formalising-decision-theory-is-hard#3yw2udyFfvnRC8Btr), without others.

Again, though, my interest here is more to understand what's going on. The point is that it's actually really easy to set up incentives for partial agency, and not so easy to set up incentives for full agency. So it makes sense that the world is full of partial agency.

Some questions:

* To what extent is it really true that settings such as supervised learning disincentivize strategic manipulation of the data? Can my argument be formalized?

* If thinking about "optimizing a function" is too coarse-grained (a supervised learner doesn't exactly minimize prediction error, for example), what's the best way to revise our concepts so that partial agency becomes obvious rather than counterintuitive?

* Are there better ways of characterizing the partiality of partial agents? Does myopia cover all cases (so that we can understand things in terms of time-preference), or do we need the more structured stop-gradient formulation in general? Or perhaps a more causal-diagram-ish notion, as my "directionality" intuition suggests? Do the different ways of viewing things have nice relationships to each other?

* Should we view partial agents as multiagent systems? I've characterized it in terms of something resembling game-theoretic equilibrium. The 'partial' optimization of a function arises from the [price of anarchy](https://en.wikipedia.org/wiki/Price_of_anarchy), or as it's known around lesswrong, Moloch. Are partial agents really bags of full agents keeping each other down? This seems a little true, to me, but also doesn't strike me as the most useful way of thinking about partial agents. For one thing, it takes full agents as a necessary concept to build up partial agents, which seems wrong to me.

* What's the relationship between the selection process (learning process, market, ...) and the type of partial agents incentivised by it? If we think in terms of myopia: given a type of myopia, can we design a training procedure which tracks or doesn't track the relevant strategic influences? If we think in terms of stop-gradients: we can take "stop-gradient" literally and stop there, but I suspect there is more to be said about designing training procedures which disincentivize the strategic use of specified paths of influence. If we think in terms of directionality: how do we get from the abstract "change the map to match the territory" to the concrete details of supervised learning?

* What does partial agency say about inner optimizers, if anything?

* What does partial agency say about corrigibility? My hope is that there's a version of corrigibility which is a perfect fit in the same way that map←territory optimization seems like a perfect fit.

Ultimately, the concept of "partial agency" is probably confused. The partial/full clustering is very crude. For example, it doesn't make sense to think of a non-wireheading agent as "partial" because of its refusal to wirehead. And it might be odd to consider a myopic agent as "partial" -- it's just a time-preference, nothing special. However, I do think I'm pointing at a phenomenon here, which I'd like to understand better. |

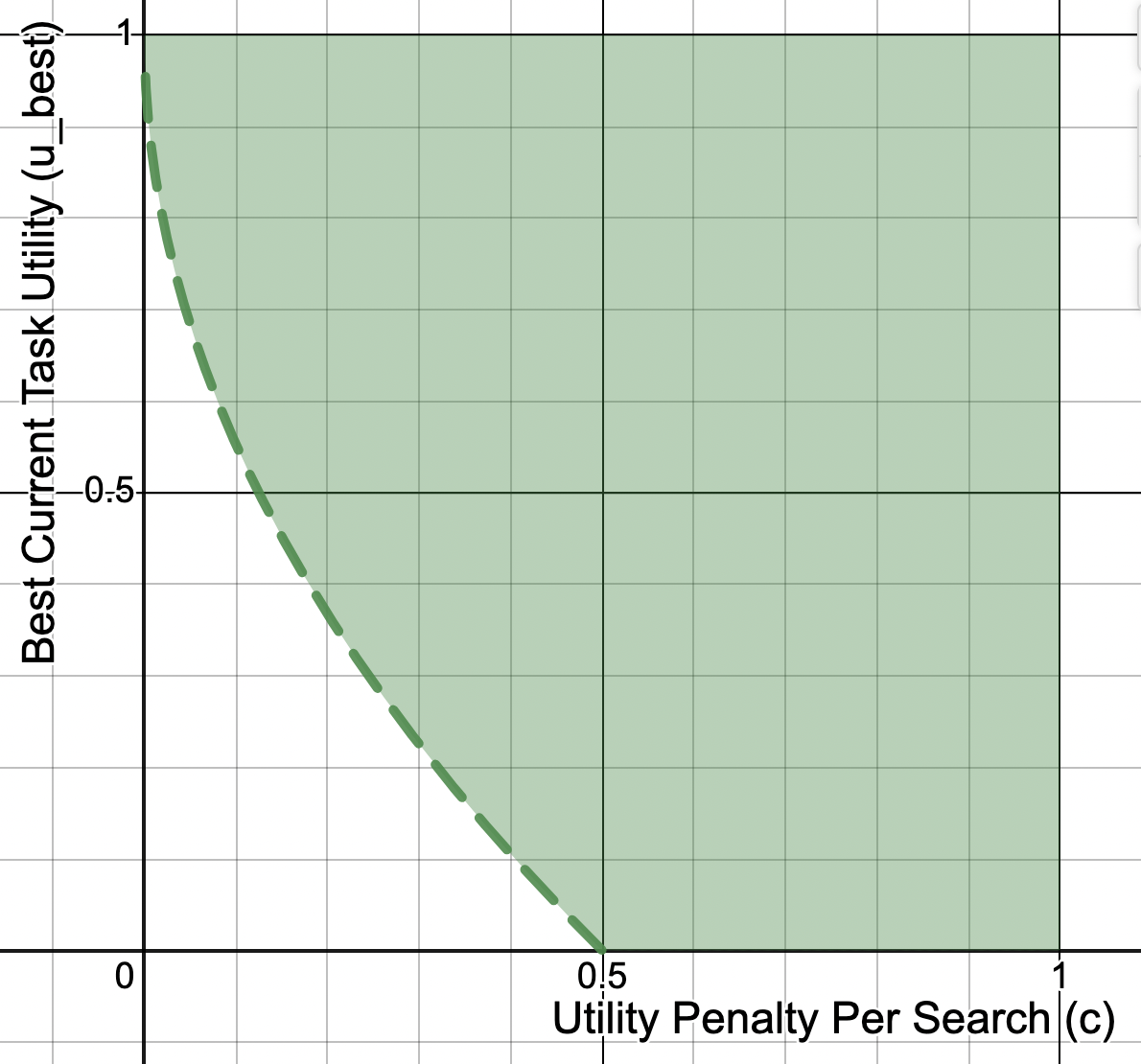

5cf72a73-d310-4023-824b-5e53e4b96b77 | trentmkelly/LessWrong-43k | LessWrong | Convexity and truth-seeking

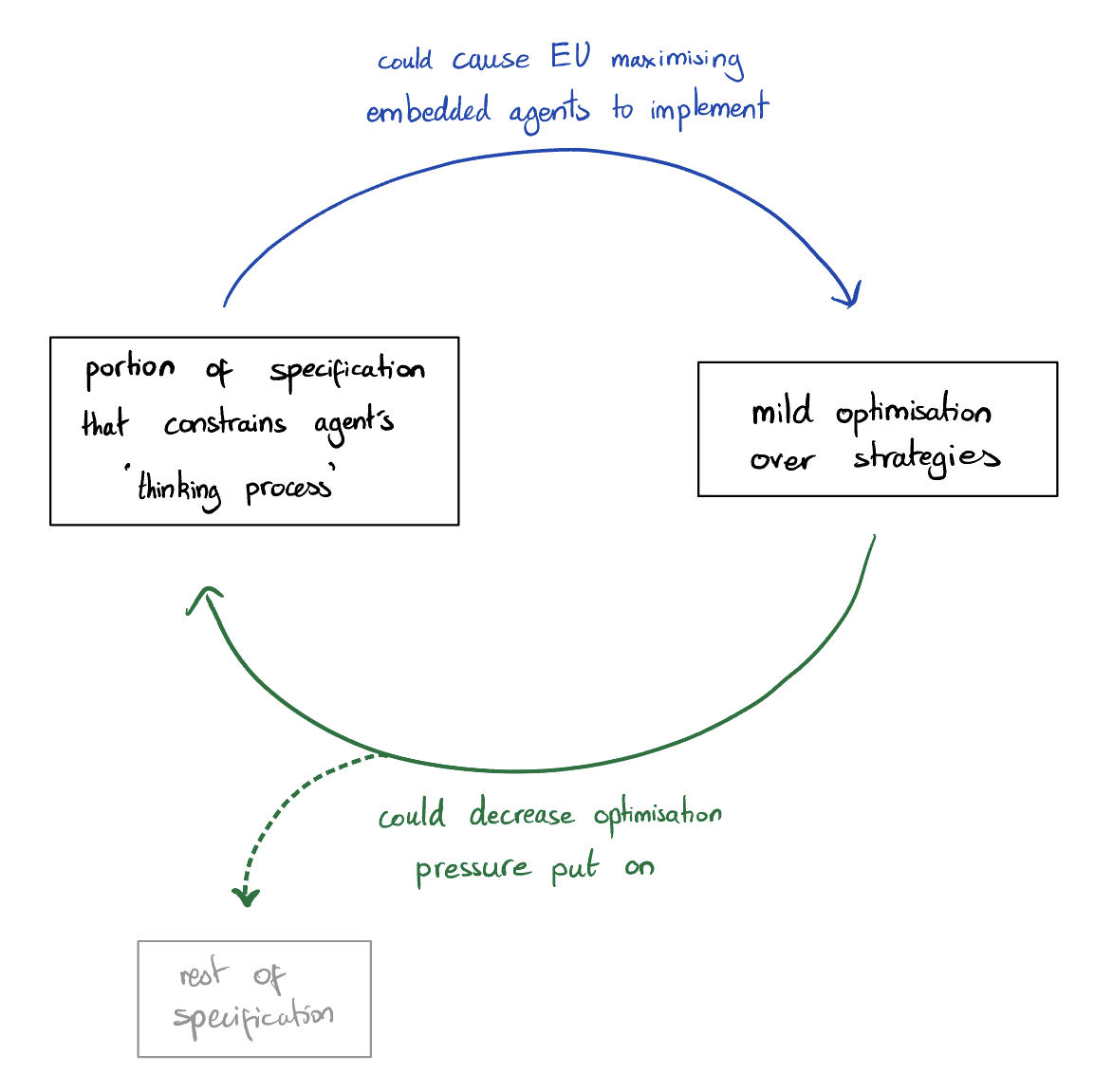

A putative new idea for AI control; index here.

This post starts with a very simple and retrospectively obvious observation:

If we want an AI to give us an estimation of expected utility, it needs to be motivated to give us that estimation.

Once we have that in mind, and remember that any extra motivation involves trade-offs, the points of the previous posts on truth-seeking become clearer.

----------------------------------------

Convexity and AI-chosen outputs

Let u be a utility known to range within R⊆R.

Let f be a twice differentiable function that is convex on R. For simplicity, we'll strengthen convexity to requiring f′′ be strictly positive on R. Then define:

* u#(r)=f(r)+(u−r)f′(r), where r∈R is the output of the AI at some future time t.

For this post, we'll assume that r is not known by anyone but the AI (in future posts we'll look more carefully at allowing r to be known to us).

Differentiating u# with respect to r gives:

* f′(r)+(u−r)f′′(r)−f′(r)=f′′(r)(u−r).

The expectation of this is zero iff r=Et(u). If we make that choice, notice that the expression is twice differentiable at r=Et(u) (even if f′′′ is not defined there!) and its derivative is simply −f′′(r), which is negative on R. Thus choosing r=Et(u) will maximise the AI's utility on R.

How much utility will the AI get? Since r will be set to the expectation of u at time t, clearly u#(r) will give utility f(Et(u)). At time 0, the AI's expectation of u# is therefore E0(f(Et(u)). If f were affine, this would simplify to f(E0(u)); but f is specifically not affine. Since f is convex, knowing more about the expected value of u can only (expect to) increase the expectation of u#. The AI values information.

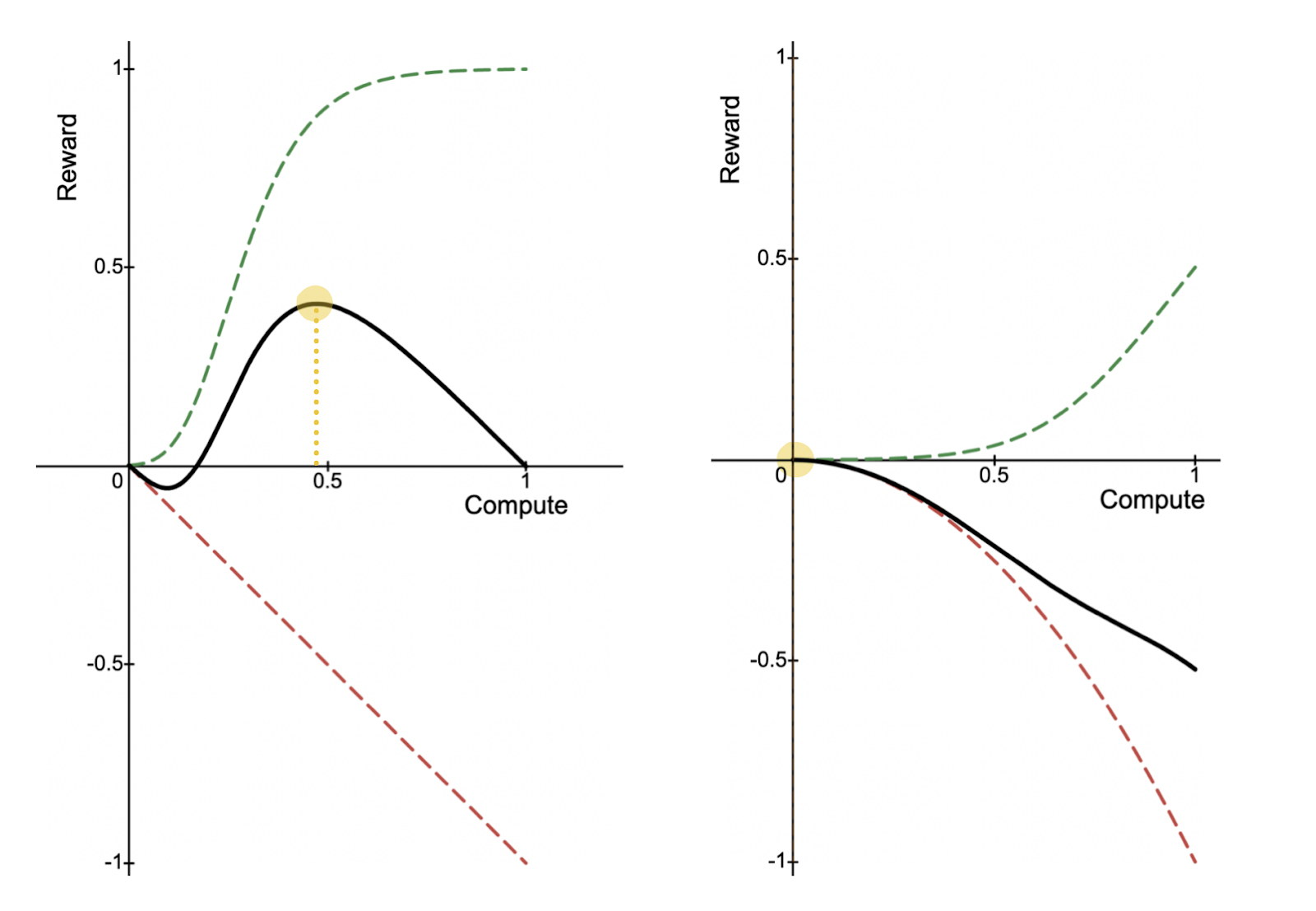

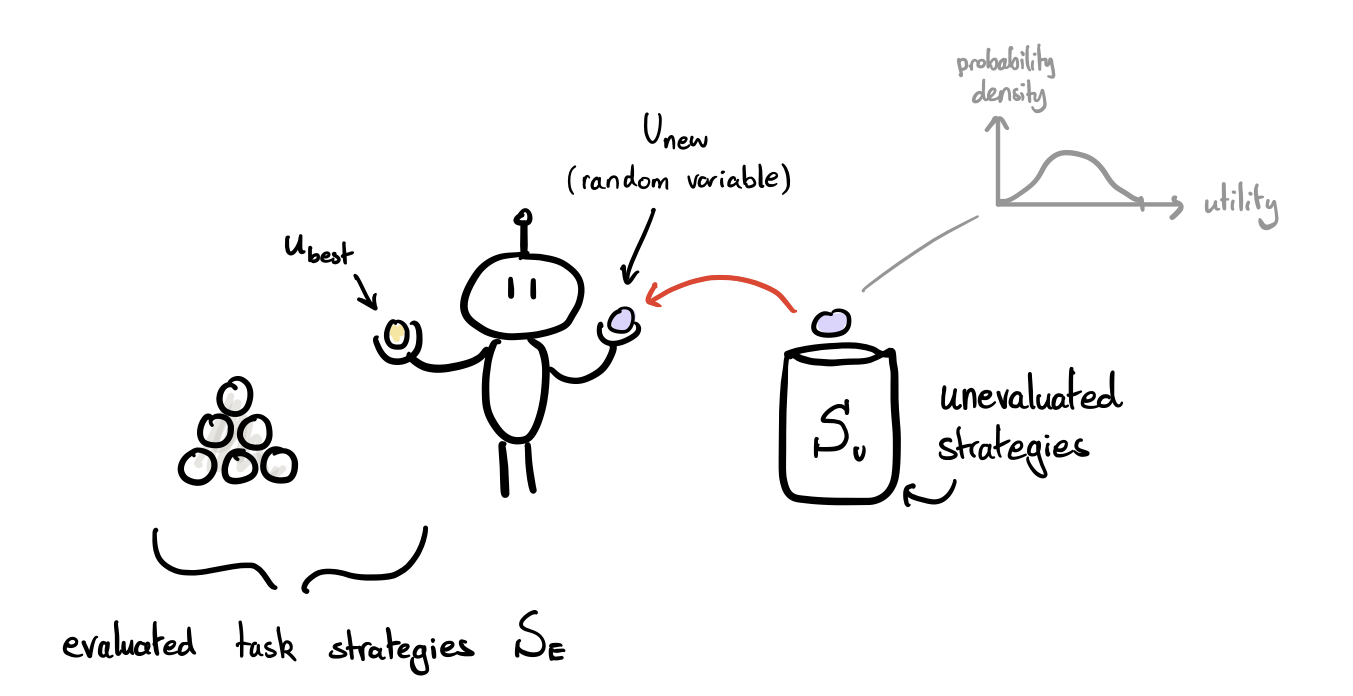

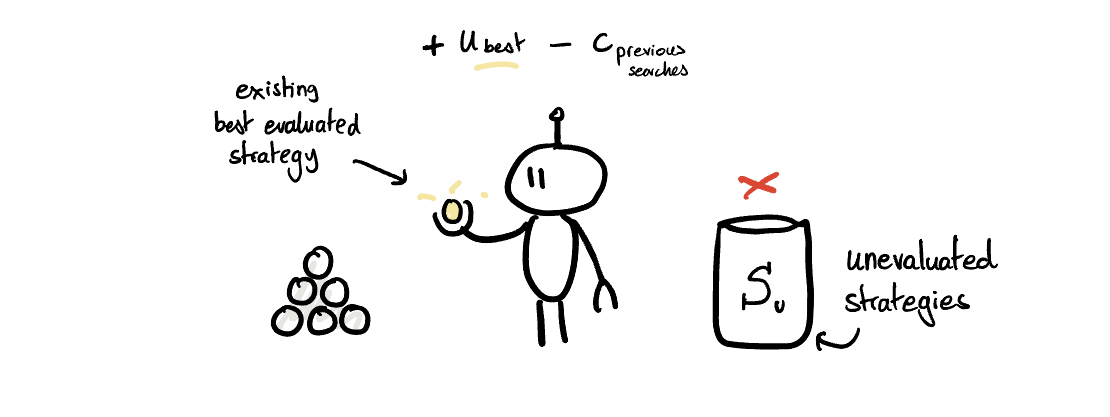

Cost and truth-seeking

Consider first the function f(r)=r2 with R=[0,1]. That can be graphed as follows:

Here, we're imagining that E(u)=0.6, if the AI were a pure u-maximiser. In that situation, the expectation of u# is at least 0.36. Because of the convexity of f, however, an expe |

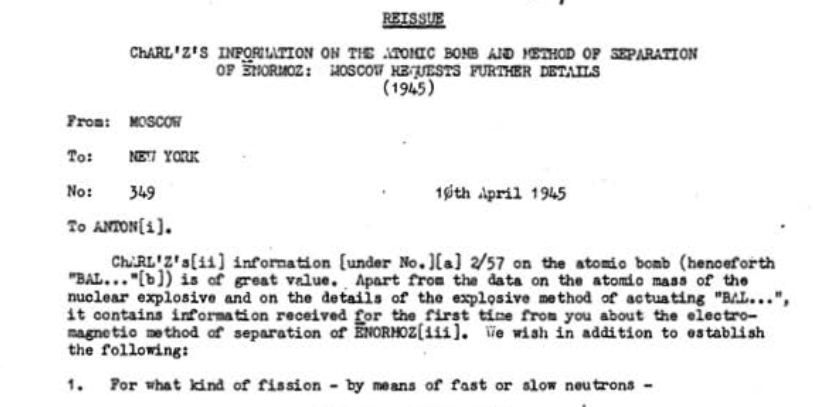

a04e7f07-4c1f-46c4-a9d2-ed84f7df241e | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Nuclear Espionage and AI Governance

ABSTRACT:

=========

*Using both primary and secondary sources, I discuss the role of espionage in early nuclear history. Nuclear weapons are analogous to AI in many ways, so this period may hold lessons for AI governance. Nuclear spies successfully transferred information about the plutonium implosion bomb design and the enrichment of fissile material. Spies were mostly ideologically motivated. Counterintelligence was hampered by its fragmentation across multiple agencies and its inability to be choosy about talent used on the most important military research program in the largest war in human history. Furthermore, the Manhattan Project’s leadership prioritized avoiding domestic political oversight over preventing espionage. Nuclear espionage most likely sped up Soviet nuclear weapons development, but the Soviet Union would have been capable of developing nuclear weapons within a few years without spying. The slight gain in speed due to spying may nevertheless have been strategically significant.*

*Based on my study of nuclear espionage, I offer some tentative lessons for AI governance:*

* *The importance of spying to transformative AI development is likely to be greater if the scaling hypothesis is false than if it is true.*

* *Regardless of the course that AI technology takes, spies may be able to convey information about engineering or tacit knowledge (although more creativity will be required to transfer tacit than explicit knowledge).*

* *Nationalism as well as ideas particularly prevalent among AI scientists (including belief in the open source ideal) may serve as motives for future AI spies. Spies might also be financially motivated, given that AI development mostly happens in the private sector (at least for now) where penalties for spying are lower and financial motivations are in general more important.*

* *One model of technological races suggests that safety is best served by the leading project having a large lead, and therefore being secure enough in its position to expend resources on safety. Spies are likely, all else equal, to decrease the lead of the leader in a technological race. Spies are also likely to increase enmity between competitors, which seems to increase accident risk robustly to changes in circumstances and modeling assumptions. Therefore, it may make sense for those who are concerned about AI safety to take steps to oppose espionage**—**even if they have no preference for the labs being harmed by espionage over the labs benefiting from espionage.*

* *On the other hand, secrecy (the most obvious way to prevent espionage) may increase risks posed by AI by making AI systems more opaque. And countermeasures to espionage that drive scientists out of conscientious projects may have perverse consequences.*

Acknowledgements: I am grateful to Matthew Gentzel for supervising this project and Michael Aird, Christina Barta, Daniel Filan, Aaron Gertler, Sidney Hough, Nat Kozak, Jeffery Ohl, and Waqar Zaidi for providing comments. This research was supported by a fellowship from the Stanford Existential Risks Initiative.

This post is a short version of the report, x-posted from [EA Forum](https://forum.effectivealtruism.org/posts/CKfHDw5Lmoo6jahZD/nuclear-espionage-and-ai-governance-1). The full version with additional sections, an appendix, and a bibliography, is available [here](https://docs.google.com/document/d/1TFOF3rIMGLBg80Wr8-GWwuFh7ipcjnYXUVLuDPpDC7Y/edit?usp=sharing).

1. Introduction

===============

The early history of nuclear weapons is in many ways similar to hypothesized future strategic situations involving advanced artificial intelligence ([Zaidi and Dafoe 2021](https://web.archive.org/web/20210325035303/https://www.fhi.ox.ac.uk/wp-content/uploads/2021/03/International-Control-of-Powerful-Technology-Lessons-from-the-Baruch-Plan-Zaidi-Dafoe-2021.pdf), 4). And, in addition to the objective similarity of the situations, the situations may be made more similar by deliberate imitation of the Manhattan Project experience ([see this report](https://armedservices.house.gov/_cache/files/2/6/26129500-d208-47ba-a9f7-25a8f82828b0/6D5C75605DE8DDF0013712923B4388D7.future-of-defense-task-force-report.pdf#page=13) [to the US House Armed Service Committee](https://web.archive.org/web/20201008094452/https://armedservices.house.gov/_cache/files/2/6/26129500-d208-47ba-a9f7-25a8f82828b0/6D5C75605DE8DDF0013712923B4388D7.future-of-defense-task-force-report.pdf)). So it is worth looking to the history of nuclear espionage for inductive evidence and conceptual problems relevant to AI development.

The Americans produced a detailed official history and explanation of the Manhattan Project, entitled the Smyth Report, and released it on August 11, 1945, five days after they dropped the first nuclear bomb on Japan ([Wellerstein 2021](https://www.google.co.uk/books/edition/Restricted_Data/LEU6EAAAQBAJ?hl=en&gbpv=0), 126). For the Soviets, the Smyth Report “candidly revealed the scale of the effort and the sheer quantity of resources, and also hinted at some of the paths that might work and, by omission, some that probably would not” ([Gordin 2009](https://www.google.co.uk/books/edition/Red_Cloud_at_Dawn/Ztls8XXNiqgC?hl=en&gbpv=0), 103). While it would not have allowed for copying the Manhattan Project in every detail, the Soviets were able to use the Smyth Report as “a general guide to the methods of isotope separation, as a checklist of problems that needed to be solved to make separation work, and as a primer in nuclear engineering for the thousands upon thousands of engineers and workers who were drafted into the project” ([Gordin 2009](https://www.google.co.uk/books/edition/Red_Cloud_at_Dawn/Ztls8XXNiqgC?hl=en&gbpv=0), 104).

There were several reasons that the Smyth Report was released. One was a belief that, in a democratic country, the public ought to know about such an important matter as nuclear weapons. Another reason was a feeling that the Soviets would likely be able to get most of the information in the Smyth Report fairly easily regardless of whether it was released. Finally, releasing a single report would clearly demarcate information that was disseminable from information that was controlled, thereby stemming the tide of disclosures coming from investigative journalists and the tens of thousands of former Manhattan Project employees ([Wellerstein 2021](https://www.google.co.uk/books/edition/Restricted_Data/LEU6EAAAQBAJ?hl=en&gbpv=0), 124-125). Those leaks would not be subject to strategic omission, and might, according to General Leslie Groves (Director of the Manhattan Project) “start a scientific battle which would end up in congress” (Quoted in [Wellerstein 2021](https://www.google.co.uk/books/edition/Restricted_Data/LEU6EAAAQBAJ?hl=en&gbpv=0), 125). The historian Michael Gordin summarized the general state of debate between proponents and opponents of nuclear secrecy in the U.S. federal government in the late 1940s as follows:

> How was such disagreement possible? How could Groves, universally acknowledged as tremendously security-conscious, have let so much information, and such damaging information, go?... The difference lay in what Groves and his opponents considered to be useful for building an atomic bomb. Groves emphasized the most technical, most advanced secrets, while his opponents stressed the time-saving utility of knowing the general outlines of the American program ([Gordin 2009](https://www.google.co.uk/books/edition/Red_Cloud_at_Dawn/Ztls8XXNiqgC?hl=en&gbpv=0), 93).

>

>

In Gordin's view, "in the context of the late 1940s, his [Groves's] critics were more right than wrong" ([Gordin 2009](https://www.google.co.uk/books/edition/Red_Cloud_at_Dawn/Ztls8XXNiqgC?hl=en&gbpv=0), 93), though it is important to note that the Smyth Report's usefulness was complemented by the extent of KGB spying of which neither Groves nor his critics were yet aware. Stalin decided to imitate the American path to the nuclear bomb as closely as possible because he believed that it would be both the “fastest” and the “most reliable” (Quoted in [Gordin 2009](https://www.google.co.uk/books/edition/Red_Cloud_at_Dawn/Ztls8XXNiqgC?hl=en&gbpv=0), 152-153). The Smyth Report (and other publicly available materials on nuclear weapons) contained strategic omissions. The Soviets used copious information gathered by spies to fill in some of the gaps.

2. Types of information stolen

==============================

2.1 Highly abstract engineering: bomb designs

---------------------------------------------

Bomb designs were one of the most important categories of information transferred by espionage. To illustrate why design transfer was so important, it is necessary to review some basic principles of nuclear weaponry (most of what follows on nuclear weapons design is adapted from a [2017 talk by Matt Bunn](https://www.youtube.com/watch?v=jqLbcNpeBaw)).