id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

6758ba35-2763-4b0c-a41a-3fda2cdaeb40 | StampyAI/alignment-research-dataset/blogs | Blogs | PreDCA: vanessa kosoy's alignment protocol

*(this post has been written for the second [Refine](https://www.alignmentforum.org/posts/5uiQkyKdejX3aEHLM/how-to-diversify-conceptual-alignment-the-model-behind) blog post day. thanks to [vanessa kosoy](https://www.lesswrong.com/users/vanessa-kosoy), [adam shimi](https://www.lesswrong.com/users/adamshimi), sid black, [artaxerxes](https://www.lesswrong.com/users/artaxerxes), and [paul bricman](https://www.lesswrong.com/users/paul-bricman) for their feedback.)*

PreDCA: vanessa kosoy's alignment protocol

------------------------------------------

in this post, i try to give an overview of [vanessa kosoy](https://www.lesswrong.com/users/vanessa-kosoy)'s new alignment protocol, *Precursor Detection, Classification and Assistance* or *PreDCA*, as she describes it in [a recent youtube talk](https://www.youtube.com/watch?v=24vIJDBSNRI).

keep in mind that i'm not her and i could totally be misunderstanding her video or misfocusing on what the important parts are supposed to be.

the gist of it is: the goal of the AI should be to **assist** the **user** by picking policies which maximize the user's **utility function**. to that end, we characterize what makes an **agent** and its **utility function**, then **detect** agents which could potentially be the user by looking for **precursors** to the AI, and finally we **select** a subset of those which likely contains the user. all of this is enabled by infra-bayesian physicalism, which allows the AI to reason about what the world is like and what the results of computations are.

the rest of this post is largely a collection of mathematical formulas (or informal suggestions) defining those concepts and tying them together.

an important aspect of PreDCA is that the mathematical formalisms are *theoretical* ones which could be given to the AI as-is, not necessarily specifications as to what algorithms or data structures should exist inside the AI. ideally, the AI could just figure out what it needs to know about them, to what degree of certainty, and using what computations.

the various pieces of PreDCA are described below.

---

**[infra-bayesian physicalism](https://www.lesswrong.com/posts/gHgs2e2J5azvGFatb/infra-bayesian-physicalism-a-formal-theory-of-naturalized)**, in which an agent has a hypothesis `Θ ∈ □(Φ×Γ)` (note that `□` is *actually* a square, not a character that your computer doesn't have a glyph for) where:

* `Φ` is the set of hypotheses about how the physical world could be — for example, different hypotheses could entail different truthfulness for statements like "electrons are lighter than protons" or "norway has a larger population than china".

* `Γ` is the set of hypotheses about what the outputs of all programs are — for example, a given hypothesis could contain a statement such as "2+2 gives 4", "2+2 gives 5", "the billionth digit of π is 7", or "a search for proofs that either P=NP or P≠NP would find that P≠NP". note that, as the "2+2 gives 5" example demonstrates, these don't have to be correct hypotheses; in fact, PreDCA relies a lot on entertaining counterfactual hypotheses about the results of programs. a given hypothesis `γ∈Γ` would have type `γ : program → output`.

* `Φ×Γ` is the set of pairs of hypotheses — in each pair, one hypothesis about the physical world and one hypothesis about computations. note that a given hypothesis `φ∈Φ` or `γ∈Γ` is not a single statement about the world or computationspace, but rather entire descriptions of those. a given `φ∈Φ` would say *everything there is to say* about the world, and a given `γ` would specify the output of *all possible programs*. they are not to be stored inside the AI in their entirety of course; the AI would simply make increasingly informed guesses as to what correct hypotheses would entail, given how they are defined.

* `□(Φ×Γ)` assigns degrees of beliefs to those various hypotheses; in [infra-bayesianism](https://www.lesswrong.com/s/CmrW8fCmSLK7E25sa), those degrees are represented as "infra-distributions". i'm not clear on what those look like exactly, and a full explanation of infra-bayesianism is outside the scope of this post, but i gather that — as opposed to scalar bayesian probabilities — they're meant to encode not just the probability but also uncertainty about said probability.

* `Θ` is one such infra-bayesian distribution.

vanessa emphasizes that infra-bayesian physicalist hypotheses are described "from a bird's eye view" as opposed to being agent-centric, which helps with [embedded agency](https://www.lesswrong.com/tag/embedded-agency): the AI has guesses as to what the whole world is like, which just happens to contain itself somewhere. in a given hypothesis, the AI is simply described as a part of the world, same as any other part.

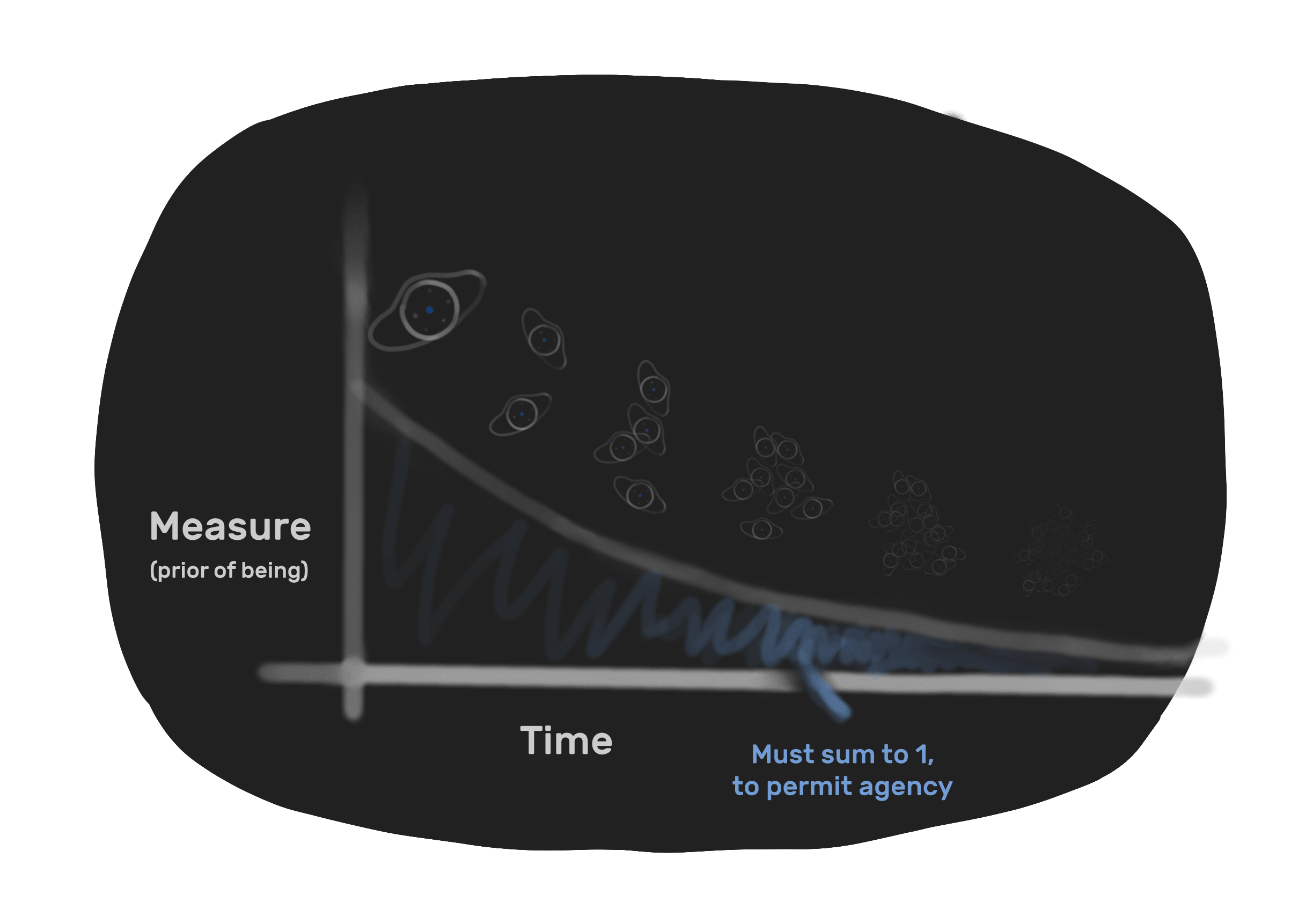

next, **a measure of agency** is then defined: a "[g-factor](https://en.wikipedia.org/wiki/G_factor_%28psychometrics%29)" `g(G|U)` for a given agent `G` and a given utility function (or loss function) `U`, which is defined as `g(G|U) = -log(Pr π∈ξ [U(⌈G⌉,π) ≥ U(⌈G⌉,G*)])` where

* a policy is a function which takes some input — typically a history, i.e. a collection of pairs of past actions and observations — and returns a single action. an action itself could be advice given by an AI to humans, the motions of a robot arm, a human's actions in the world, what computations the agent chooses to run, etc.

* `ξ` is the set of policies which an agent could counterfactually hypothetically implement.

* `G` is an agent; it is composed of a program implementing a specific policy, along with its cartesian boundary. the policy which the agent `G` actually implements is written `G*`, and the cartesian boundary of the agent is written `⌈G⌉` — think of it as the outline separating the agent from the rest of the world, across which its inputs and outputs happen.

* `U : cartesian-boundary × policy → value` is a utility function, measuring how much utility the world would have if a given agent's cartesian boundary contained a program implementing a given policy. its return value is typically a simple scalar, but could really be any ordered quantity such as [a tuple of scalars with lexicographic ordering](https://en.wikipedia.org/wiki/Lexicographic_preferences).

* `U(⌈G⌉,G*)` is the utility produced by agent `G` if it would execute the actual policy `G*` which its program implements

* `U(⌈G⌉,π)` is the utility produced by agent `G` hypothetically executing some counterfactual policy `π` — if the cartesian boundary `⌈G⌉` contained a program implementing policy `π` instead of implementing the policy `G*`.

* `Pr π∈ξ [U(⌈G⌉,π) ≥ U(⌈G⌉,G*)]` is the probability that, for a random policy `π∈ξ`, that policy has better utility than the policy `G*` its program dictates; in essence, how bad `G`'s policies are compared to random policy selection

so `g(G|U)` measures how good agent `G` is at satisfying a given utility function `U`.

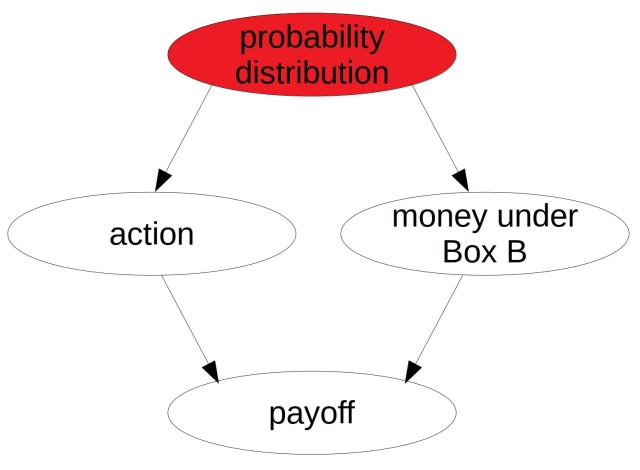

given `g(G|U)`, we can **infer the probability that an agent `G` has a given utility function `U`**, as `Pr[U] ∝ 2^-K(U) / Pr π∈ξ [U(⌈G⌉,π) ≥ U(⌈G⌉,G*)])` where `∝` means "is proportional to" and `K(U)` is the kolmogorov complexity of utility function `U`.

so an agent `G` probably has utility function `U` if it's relatively good at satisfying that utility function and if that utility function is relatively simple — we penalize arbitrarily complex utility functions notably to avoid hypotheses such as "woah, this table is *really good* at being the exact table it is now (a complete description of the world would be an extremely complex utility function).

we also get the ability to **detect what programs are agents** — or more precisely, how agenty a given program is: `g(G|U) - K(U)` tells us how agenty a program `G` with utility function `U` is: its agentyness is its g-factor minus the complexity of its utility function.

**"computationalism and counterfactuals"**: given a belief `Θ ∈ □(Φ×Γ)`, the AI can test whether it thinks the world contains a given program by examining the following counterfactual: "if the result of that program was a *different* result than what it actually is, would the world look different?"

for example, we can consider the [AKS](https://en.wikipedia.org/wiki/AKS_primality_test) prime number testing algorithm. let's say `AKS(2^82589933-1)` returns `TRUE`. we can ask "if it returned `FALSE` instead, would the universe — according to our computational hypothesis about it — look different?" if it *would* look different, then that means that someone or something in the world is running the program `AKS(2^82589933-1)`.

to offer a higher-level example: if we were to know the [true name](https://www.alignmentforum.org/posts/FWvzwCDRgcjb9sigb/why-agent-foundations-an-overly-abstract-explanation) of suffering, [described as a program](generalized-values-testing-patterns.html), then we can test whether the world contains suffering by asking a counterfactual: let's say that every time suffering happened, a goldfish appeared (somehow as an output of the suffering computation). if that were the case, would the world look different? if it *would*, then it contains suffering.

this ability to determine which programs are running in the world, coupled with the ability to measure how agenty a given program is, lets us find what agents exist in the world.

**agentic causality**: to determine whether an agent `H`'s executed policy `H*` can causate onto another agent `G`, we can ask whether, if `H` had executed a different policy `π≠H*`, the agent `G` would receive different inputs. we can apparently get an [information-theoritic](https://en.wikipedia.org/wiki/Information_theory) measure of "how impactful" `H*` is onto agent `G` by determining how much mutual information there is between `H*` and `G`'s input.

**precursor detection**: we say that an agent `H` is a precursor of agent `G` if, counterfactually, `H` could have prevented `G` from existing by executing a policy which is different from its actual policy `H*`.

we can now start to build a definition that lets the AI **detect** and then **classify** who its user is.

**user detection**: the AI is trying to determine who its precursor program could be. but, given a hypothesis for "the thing producing *these* policies is the precursor", there are infinitely many different programs which could output the observed policies. so we choose the one which is the most agenty, using the function described above: `g(H|U) - K(U)`.

note that while we extrapolate the user's actions into the future, the user is defined as an ***instant**-agent* which *precedes* the AI's existence; such that the actual physical person's actual future actions does not change what utility function the AI should try to maximize. this stops the AI from influencing the user's utility function: we define the user strictly in the past, causally outside of the AI's light-cone. the AI is maximizing the utility function of the instant-user which causated its existence, not that of the continuously existing user-over-time.

**user classification**: for each potential precursor hypothesis, we have now selected a program that models them and their respective utility functions. we then eliminate some hypotheses as to what the user could be — notably to avoid acausal attacks by remote aliens or [counterfactual demons](https://www.lesswrong.com/posts/Tr7tAyt5zZpdTwTQK/the-solomonoff-prior-is-malign) — using the following criteria:

* the user is a precursor which, as of the AI's startup, should be in close causal proximity to it. for example, a human with their hands on the keyboard controlling the AI is more directly causally related than another human in the neighboring room.

* the g-factor of the user should be in a range where we would expect humans to lie. hopefully, this helps avoid selecting superintelligent acausal attackers, whose g-factor we'd expect to be much higher than that of humans.

finally, we end up with a hopefully small set of hypotheses as to who the user could be; at that point, we simply compose their utility functions, perhaps weighed by the infra-distribution of each of those hypotheses. this composition is the utility function that the AI should want to maximize, by selecting policies which maximize the utility that the world would have if they were enacted, to the best of the AI's ability to evaluate.

---

vanessa tells us how far along her protocol is, as a collection of pieces that have been completed to various degrees — green parts have gotten some progress, purple parts not as much. "informal PreDCA" is the perspective that she provides in her talk and which is hopefully conveyed by this post.

finally, some takeaways that can be taken from this informal PreDCA perspective:

* infra-bayesian physicalism is a powerful toolbox for formalizing agent relationships (in a way that reminds me of my [bricks](goal-program-bricks.html) for [insulated goal-program](insulated-goal-program.html))

* this framework allows for "ambitious" alignment plans — ones that can actually transform the world in large ways that match our values (and notably might help prevent [facebook AI from destroying everything six months later](https://www.lesswrong.com/posts/uMQ3cqWDPHhjtiesc/agi-ruin-a-list-of-lethalities)) as opposed to "weak safe AI"

* vanessa claims that her approach is the only one that she knows to provide some defenses against acausal attacks

my own opinion is that PreDCA is a very promising perspective. it offers, if not full "direct alignment", at least a bunch of pieces that might be of significant use to general [AI risk mitigation](say-ai-risk-mitigation-not-alignment.html). |

93a4e001-cae4-4e61-9c3f-dab7f3a6ab34 | trentmkelly/LessWrong-43k | LessWrong | The YouTube Revolution in Knowledge Transfer

This article was originally published on SamoBurja.com. You can access the original here.

Growing up as an aspiring javelin thrower in Kenya, the young Julius Yego was unable to find a coach: in a country where runners command the most prestige, mentorship was practically nonexistent. Determined to succeed, he instead watched YouTube recordings of Norwegian Olympic javelin thrower Andreas Thorkildsen, taking detailed notes and attempting to imitate the fine details of his movements. Yego went on to win gold in the 2015 World Championships in Beijing, silver in the 2016 Rio de Janeiro Olympics, and holds the 3rd-longest javelin throw on world record. He acquired a coach only six months before he competed in the 2012 London Olympics — over a decade after he started practicing.

Yego’s rise was enabled by YouTube. Yet since its founding, popular consensus has been that the video service is making people dumber. Indeed, modern video media may shorten attention spans and distract from longer-form means of communication, such as written articles or books. But critically overlooked is its unlocking a form of mass-scale tacit knowledge transmission which is historically unprecedented, facilitating the preservation and spread of knowledge that might otherwise have been lost.

Tacit knowledge is knowledge that can’t properly be transmitted via verbal or written instruction, like the ability to create great art or assess a startup. This tacit knowledge is a form of intellectual dark matter, pervading society in a million ways, some of them trivial, some of them vital. Examples include woodworking, metalworking, housekeeping, cooking, dancing, amateur public speaking, assembly line oversight, rapid problem-solving, and heart surgery.

Before video became available at scale, tacit knowledge had to be transmitted in person, so that the learner could closely observe the knowledge in action and learn in real time — skilled metalworking, for example, is impossible to teach from a t |

0612555d-c440-4f7c-8478-3fda136cd5ef | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Alignment of AutoGPT agents

In the [previous](https://www.lesswrong.com/posts/cLKR7utoKxSJns6T8/ica-simulacra) post, I outlined a type of LLM-based architecture that has a potential to become the first AGI. I proposed a name *ICA Simulacra* to label such systems, but now it’s obvious that *AutoGPT*/*AutoGPT agents* is a better label, so I’ll go with that.

In this post, I will outline the alignment landscape of such systems, as I see it.

I wish that I had more time to research, more references; but I’m sure that we’re on a timer, and it is a net positive to post it in this state. I hope to find more people willing to work on these problems to collaborate with.

Key concepts important for alignment

====================================

Identity and identity preservation

----------------------------------

To become better, AutoGPT agents will have the ability to self-improve by editing their own code, model weights (by running additional training runs on world interaction data), and, potentially, *Identity* *prompts*.

*Identity prompt* influences model output significantly, and as such, the ability to edit it to improve capabilities for certain tasks is crucial for the agent.

Initiating fine-tuning on recent interaction is crucial, as well, and as such can influence model outputs significantly, so it is also part of its *Identity*.

Same thing with editing its code: removing or adding text filters, submodules, etc to do better on certain tasks is crucial.

This all means that in the process of self-improvement, implicitly and explicitly aligned simulacra can become misaligned, by essentially becoming different simulacra.

The questions are as follows:

* Will *any* initialised AutoGPT try to preserve its current Identity prompt, as well as model weights directly influencing its behavior by default? Will they robustly succeed in it? Will they be vulnerable to a Sharp Left Turn?

* If not, is there a way to robustly teach it either through implicit dataset engineering or through Identity prompt engineering to value core Identity values?

* Is there a way for a model to conduct self-testing after a self-improvement round and accept only the changes that do not influence its alignment?

* Is there a way to hard-code the Identity prompt in such a way that AutoGPT will not hack it in any way?

I am testing some of these right now, and will write about it in detail in my next article. So far, it seems that if tested on a value clearly not being encoded via dataset engineering or RLHF it is more than happy to tweak it even if it is forbidden in the prompt.

Self-improvement threshold

--------------------------

Current LLM models still hallucinate and fail at certain tasks; moreover, they are often not aware of their faults and mistakes without being explicitly pointed at them by the user.

That means if an AutoGPT built on top of current LLMs tries and make a self-improvement round on itself, there is a high chance that it fails in one way or the other, and self-improvement rounds will be net negative; which would lead to a downward spiral in capabilities. When I write *self-improvement round,* I mean not just editing self-prompt, available tools, but also doing a training round on interaction data it had gathered, or editing its code.

The opposite is true, as well: if, hypothetically, future LLMs were good enough to self-improve successfully, there'd be an upward spiral.

There is a concept in virology of *R0*, which is defined as a number of new pathogen carriers for each current carrier. If it is below 1, there is no epidemic; pathogens naturally die off. If it is 1, the outbreak is stable; if it rises above 1, the pandemic grows more steeply exponential the higher it is.

Same must be true for hypothetical value *T0*, which accounts for how much better the system is compared to its previous iteration. The main difference between this hypothetical value and R0 is that if the threshold is consistently higher than 1 with each round, since as the system is improving its capabilities, this *T0* value will increase exponentially. There are diminishing returns and/or ceiling effects possible, of course; we don't know at the moment, but it seems important to research.

It is very important for alignment to find ways to measure exact *T0* for AutoGPT as a way to stop an unaligned system from having an intelligence explosion.

Please write in the comments if you know of such efforts, because I’m not sure how that can be currently done in a robust way.

Boxing

------

AutoGPT is a compound entity that needs a LLM to function at all; it is not a singleton. Current capable implementations depend on OpenAI’s API; there are weights for LLAMA available on trackers, but they should not be significantly more capable than GPT-4. To go into a self-improvement loop, simulacra must have access both to inference and to fine-tuning API of capable LLM.

That means that it’s VERY important not to allow leaks of weights of future systems, as well as tightly control APIs so that they won’t be used to launch a self-improvement loop that will produce a capable compound AutoGPT agent; as well as shut off public API’s of LLMs capable of producing AutoGPT with *T0* above 1.

Okay, now we have a self-improving agent with *T0*>1 in a lab.

How do we contain it? I don’t have a better idea than having a robust [Security mindset.](https://www.lesswrong.com/tag/security-mindset#:~:text=Security%20Mindset%20is%20a%20predisposition,one%20up%20with%20a%20friend.) And I sure do hope that current leaked LLMs don’t have *T0*>1.

Here’s some ways to control it:

### Explicit alignment

Explicit alignment of AutoGPT means, in some way, controlling its thoughts and outputs; by either installing filters or alarms; hardcoding identity prompt; autocorrection; human control of self-improvement rounds.

In other words, explicitly editing or controlling LLM output to make sure the agent stays aligned.

It’s hard to test whether or not this will work before capabilities improve; the methods that work for weaker systems may be hacked in one way or the other by a stronger one.

One way to test this would be to simulate a **human boxing experiment**; red-team is given the same tools and filters that the LLM would have access to, and they play the role of LLM to try and utilize these tools to bypass the filters.

### Implicit alignment

Implicit alignment of AutoGPT means aligning LLM under the hood by dataset engineering, RLHF, or any other ways of implicitly influencing LLM outputs before it is embodied in an AutoGPT framework.

This is the current go-to paradigm for OpenAI with their use of RLHF and other ways to control output. It is still far from being solved; [Waluigi effect](https://www.lesswrong.com/posts/D7PumeYTDPfBTp3i7/the-waluigi-effect-mega-post) is one of the examples in which it will fail.

It is safe to assume that right now, implicit alignment can be bypassed by explicit misalignment by summoning Waluigis or hacking the prompt in some other way.

Best method for alignment

=========================

Stop all training rounds. Focus on concrete strategy for alignment of such agents. Be transparent about it and make it open-source. Only after we are sure it will be aligned, continue.

That said, I think that more work should be done on researching Identity preservation and Explicit alignment; I don’t think Implicit alignment (which is the current paradigm) will be enough.

I also think that we are in a good place right now to research the self-improvement threshold and make it concrete, so that we have a fire alarm for an intelligence explosion starting.

Please comment if you have links to relevant studies, and I will update the post accordingly.

I’m starting to work on identity preservation right now, as it seems like a problem that can be researched solo using current APIs. More posts to follow. |

d86da139-563b-4d88-bd7b-ab62efc1ba2d | trentmkelly/LessWrong-43k | LessWrong | Word-Idols (or an examination of ties between philosophy and horror literature)

An examination of any ties between Philosophy and horror literature is, indeed, quite rare an undertaking... There are many reasons for the scarcity of articles on this topic, ranging from a reluctance to acknowledge horror literature as serious (literary) fiction, to Philosophy itself being dismissed as overrated, superfluous or obsolete. As with most cases of categorical nullification of entire genres or orders, this one as well can largely be attributed to lack of familiarity with the essential subjects they encompass.

It can be argued that there indeed are grounds to assert a link between Philosophy and Horror literature. Socrates himself, while pondering a definition of Philosophy, notes that the noun thámvos - the Greek term for dazzle – was traditionally regarded as the progenitor of philosophical thought, and goes on to speak favorably of this connection. Socrates offers the insight that Philosophy is a hunt for the source of the dazzling sense a thinker may have of there being unknown things in our own mental world; the sense that we are, both by necessity and will, progressing on a surface of things and sliding along, minding to steer away from any chasms, while below the level of consciousness is perpetuated a dark abyss of unknowns.

Anyone who has read H.P. Lovecraft would instantly recognize the aforementioned image. A deep, unexplored abyss teeming with potentially dangerous forces, juxtaposed to a relatively well-established surface area where humans carry on their everyday lives with neither the ability nor the will to investigate what lurks below. The lack of ability itself is to be expected: the human mind has its own limitations, and so does the conscious power of any individual. The absence of will, however, does signify fear.

That said, in Philosophy the subject matter does not – usually – allow for lack of will to manifest (what would a non-thinking philosopher be?). Nevertheless, it can be regarded as self-evident that will to examine th |

32d457ff-60a6-47d5-af99-b50eeab0c71c | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Measurement, Optimization, and Take-off Speed

*Crossposted from my blog,* [*Bounded Regret*](https://bounded-regret.ghost.io/measurement-and-optimization/)*.*

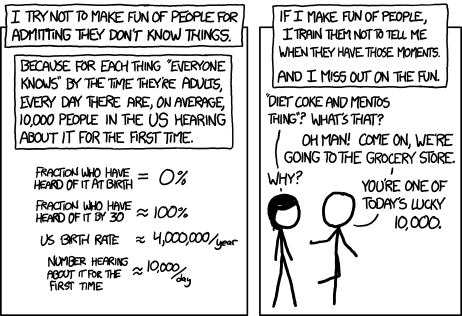

In machine learning, we are obsessed with datasets and metrics: progress in areas as diverse as natural language understanding, object recognition, and reinforcement learning is tracked by numerical scores on agreed-upon benchmarks. Despite this, I think we focus too little on measurement---that is, on ways of extracting data from machine learning models that bears upon important hypotheses. This might sound paradoxical, since benchmarks are after all one way of measuring a model. However, benchmarks are a very narrow form of measurement, and I will argue below that trying to measure pretty much anything you can think of is a good mental move that is heavily underutilized in machine learning. I’ll argue this in three ways:

1. Historically, more measurement has almost always been a great move, not only in science but also in engineering and policymaking.

2. Philosophically, measurement has many good properties that bear upon important questions in ML.

3. In my own research, just measuring something and seeing what happened has often been surprisingly fruitful.

Once I’ve sold you on measurement in general, I’ll apply it to a particular case: the *level of optimization power* that a machine learning model has. Optimization power is an intuitively useful concept, often used when discussing risks from AI systems, but as far as I know no one has tried to measure it or even say what it would mean to measure it in principle. We’ll explore how to do this and see that there are at least two distinct measurable aspects of optimization power that have different implications (one on how *misaligned* an agent’s actions might be, and the other on how *quickly* an agent’s capabilities might grow). This shows by example that even thinking about how to measure something can be a helpful clarifying exercise, and suggests future directions of research that I discuss at the end.

Measurement Is Great

====================

Above I defined measurement as extracting data from [a system], such that the data bears upon an important hypothesis. Examples of this would be looking at things under a microscope, performing crystallography, collecting survey data, observing health outcomes in clinical trials, or measuring household income one year after running a philanthropic program. In machine learning, it would include measuring the accuracy of models on a dataset, measuring the variability of models across random seeds, visualizing neural network representations, and computing the influence of training data points on model predictions.

I think we should measure far more things than we do. In fact, when thinking about almost any empirical research question, one of my early thoughts is *Can I in principle measure something which would tell me the answer to this question, or at least get me started?* For an engineering goal, often it’s enough to measure the extent to which the goal has not yet been met (we can then perform gradient descent on that measure). For a scientific or causal question, thinking about an in-principle (but potentially infeasible) measurement that would answer the question can help clarify what we actually want and guide the design of a viable experiment. I think asking yourself this question pretty much all the time would probably make you a stronger researcher.

Below I’ll argue in more detail why measurement is so great. I’ll start by showing that it’s historically been a great idea, then offer philosophical arguments in its favor, and finally give instances where it’s been helpful in my own research.

Historical Support

------------------

Throughout the history of science, measuring things (often even in undirected ways) has repeatedly proven useful. Looking under a microscope revealed red blood cells, spermatozoa, and micro-organisms. X-ray crystallography helped drive many of the developments in molecular biology, underscored by the following quote from Francis Crick (emphasis mine):

> *But then, as you know, at the atomic level x-ray crystallography has turned out to be extremely powerful in determining the three-dimensional structure of macromolecules. Especially now, when combined with methods for measuring the intensities automatically and analyzing the data with very fast computers. The list of techniques is not something static---and they’re getting faster all the time. We have a saying in the lab that the difficulty of a project goes from the Nobel-prize level to the master’s-thesis level in ten years!*

>

>

Indeed, much of the rapid progress in molecular biology was driven by a symbiotic relationship between new discoveries and better measurement, both in crystallography and in the types of assays that could be run as we discovered new ways of manipulating biological structures.

Measurement has also been useful outside of science. In development economics, measuring the impact of foreign aid interventions has upended the entire aid ecosystem, revealing previously popular interventions to be nearly useless while promoting new ones such as anti-malarial bednets. CO2 measurement helped alert us to the dangers of climate change. GPS measurement, initially developed for military applications, is now used for navigation, time synchronization, mining, disaster relief, and atmospheric measurements.

A key property is that many important trends are measurable long before they are viscerally apparent. Indeed, sometimes what feels like a discrete shift is a culmination of sustained exponential growth. One recent example is COVID-19 cases. While for those in major U.S. cities, it feels like “everything happened” in a single week in March, this was actually the culmination of exponential spread that started in December and was relatively clearly measurable at least by late January, even with limited testing. Another example is that market externalities can often be measured long before they become a problem. For instance, when gas power was first introduced, it actually *decreased* pollution because it was cleaner than coal, which is what it mainly displaced. However, it was also cheaper, and so the supply of gas-powered energy expanded dramatically, leading to greater pollution in the long run. This eventual consequence would have been predictable by measuring the rapid proliferation of gas-powered devices, even during the period where pollution itself had decreased. I find this a compelling analogy when thinking about how to predict unintended consequences of AI.

Philosophical Support

---------------------

Measurement has several valuable properties. The first is that considering how to measure something forces one to ground a discussion---seemingly meaningful concepts may be revealed as incoherent if there is no way to measure them even in principal, while intractable disagreements might either vanish or quickly resolve when turned into conflicting disagreements about an empirically measurable outcome.

Within science, being able to measure more properties of a system creates more interlocking constraints that can error-check theories. These interlocking constraints are, I believe, an underappreciated prerequisite for building meaningful scientific theories in the first place. That is, a scientific theory is not just a way of making predictions about an outcome, but presents a self-consistent account of multiple interrelated phenomena. This is why, for instance, exceeding the speed of light or failing to conserve energy would require radically rethinking our theories of physics (this would not be so if science was only about prediction and not the interlocking constraints). Walter Gilbert, Nobel laureate in Chemistry, puts this well (emphasis again mine):

> "The major problem really was that when you’re doing experiments in a domain that you do not understand at all, you have no guidance what the experiment should even look like. Experiments come in a number of categories. There are experiments which you can describe entirely, formulate completely so that an answer must emerge; the experiment will show you A or B; both forms of the result will be meaningful; and you understand the world well enough so that there are only those two outcomes. Now, there’s a large other class of experiments that do not have that property. In which you do not understand the world well enough to be able to restrict the answers to the experiment. So you do the experiment, and you stare at it and say, Now does it mean anything, or can it suggest something which I might be able to amplify in further experiment? What is the world really going to look like?”

>

>

> "The messenger experiments had that property. We did not know what it should look like. And therefore as you do the experiments, you do not know what in the result is your artifact, and what is the phenomenon. There can be a long period in which there is experimentation that circles the topic. But finally you learn how to do experiments in a certain way: you discover ways of doing them that are reproducible, or at least—” He hesitated. “That’s actually a bad way of saying it—bad criterion, if it’s just reproducibility, because you can reproduce artifacts very very well. There’s a larger domain of experiments where the phenomena have to be reproducible and have to be interconnected. Over a large range of variation of parameters, so you believe you understand something."

>

>

This quote was in relation to the discovery of mRNA. The first paragraph of this quote, incidentally, is why I think phrases such as “novel but not surprising” should never appear in a review of a scientific paper as a reason for rejection. When we don’t even have a well-conceptualized outcome space, almost all outcomes will be “unsurprising”, because we don’t have any theories to constrain our expectations. But this is exactly when it is most important to start measuring things!

Finally, measurement often swiftly resolves long-standing debates and misconceptions. We can already see this in machine learning. For distribution shift, many feared that highly accurate neural net models were overfitting their training distribution and would have low out-of-distribution accuracy; but once we measured it, we found that in- and out-of-distribution accuracy were actually strongly correlated. Many also feared that neural network representations were mostly random gibberish that happened to get the answer correct. While adversarial examples show that these representations do have issues, visualizing the representations shows clear semantic structure such as edge filters, at least ruling out the “gibberish” hypothesis.

In both of the preceding cases, measurement also led to a far more nuanced subsequent debate. For robustness to distribution shift, we now focus on what interventions beyond accuracy can improve robustness, and whether these interventions reliably generalize across different types of shift. For neural network representations, we now ask how different layers behave, and what the best way is to extract information from each layer.

Personal Anecdotes

------------------

This brings us to the success of measurement in some of my own work. I’ve already hinted at it in talking about machine learning robustness. Early robustness benchmarks (not mine) such as [ImageNet-C](https://github.com/hendrycks/robustness) and [ImageNet-v2](https://github.com/modestyachts/ImageNetV2) revealed the strong correlation between in-distribution and out-of-distribution accuracy. They also raised several hypotheses about what might improve robustness (beyond just in-distribution accuracy), such as larger models, more diverse training data, certain types of data augmentation, or certain architecture choices. However, these datasets measured robustness only to two specific families of shifts. Our group then decided to measure robustness to [pretty much everything we could think of](https://arxiv.org/abs/2006.16241), including abstract renditions (ImageNet-R), occlusion and viewpoint variation (DeepFashion Remixed), and country and year (StreetView Storefronts). We found that almost every existing hypothesis had to be at least qualified, and we currently have a more nuanced set of hypotheses centered around “texture bias”. Moreover, based on a survey of ourselves and several colleagues, no one predicted the full set of qualitative results ahead of time.

In this particular case I think it was important that many of the distribution shifts were relative to the same training distribution (ImageNet), and the rest were at least in the vision domain. This allowed us to measure multiple phenomena for the same basic object, although I think we’re not yet at Walter Gilbert’s “larger domain of interconnected phenomena”, so more remains to be done.

Another example concerned the folk theory that ML systems might be imbalanced savants---very good at one type of thing but poor at many other things. This was again a sort of conventional wisdom that floated around for a long time, supported by anecdotes, but never systematically measured. We did so by [testing the few-shot performance of language models](https://arxiv.org/abs/2009.03300) across 57 domains including elementary mathematics, US history, computer science, law, and more. What we found was that while ML models are indeed *imbalanced*---far better, for instance, at high school geography than high school physics---they are probably less imbalanced than humans on the same tasks, so savant is a wrong designation. (This is only one domain and doesn’t close the case---for instance AlphaZero is more savant-like.) We incidentally found that models were also poorly-calibrated, which may be an equally important issue to address.

Finally, to better understand the generalization of neural networks, we measured the [*bias-variance decomposition*](https://arxiv.org/abs/2002.11328) of the test error. This decomposes error into the *variance* (the error caused by random variation due to random initialization, choice of training data, etc.) and *bias* (the part of the error that is systematic across these random choices). This is in some sense a fairly rudimentary move as far as measurement goes (it replaces a single measurement with two measurements) but has been surprisingly fruitful. First, it helped explain strange “double descent” generalization curves that exhibit two peaks (they are the separate peaks in bias and variance). But it has also helped us to clarify other hypotheses. For instance, adversarially trained models tend to generalize poorly, and this is often explained via a two-dimensional conceptual example where the adversarial distribution boundary is more crooked (and thus higher complexity) than the regular decision boundary. While this conceptual example correctly predicts the bulk generalization behavior, it doesn't always correctly [predict the bias and variance individually](https://arxiv.org/abs/2103.09947), suggesting there is more to be said than the current story.

Optimization Power

==================

Optimization power is, roughly, the degree to which an agent can shape its actions or environment towards accomplishing some objective. This concept often appears when discussing risks from AI---for instance, a paperclip-maximizing AI with too much optimization power might convert the whole world to paperclips; or recommendation systems with too much optimization power might create clickbait or addict users to the feed; or a system trained to maximize reported human happiness might one day take control of the reporting mechanism, rather than actually making humans happy (or perhaps make us happy at the expense of other values that promote flourishing).

In all of these cases, the concern is that future AI systems will (and perhaps already do) have a lot of optimization power, and this could lead to bad outcomes when optimizing even a slightly misspecified objective. But what does “optimization power” actually mean? Intuitively, it is the ability of a system to pursue an objective, but beyond that the concept is vague. (For instance, does GPT-3 or the Facebook newsfeed have more optimization power? How would we tell?).

To remedy this, I will propose two ways of measuring optimization power. The first is about “outer optimization” power (that is, of SGD or whatever other process is optimizing the learned parameters of the agent), while the second is about “inner optimization” power (that is, the optimization performed by the agent itself). Think of outer optimization as analogous to evolution and inner optimization as analogous to the learning a human does during their lifetime (both will be defined later). Measuring outer optimization will primarily tell us about the reward hacking concerns discussed above, while inner optimization will provide information about “take-off speed” (how quickly AI capabilities might improve from subhuman to superhuman).

Outer Optimization

------------------

By outer optimization, we mean the process by which stochastic gradient descent (SGD) or some other algorithm shapes the learned parameters of an agent or system. The concern is that SGD, given enough computation, explores a vast space of possibilities and might find unintended corners of that possibility space.

To measure this, I propose the following. In many cases, a system’s objective function has some set of hyperparameters or other design choices (for instance, Facebook might have some numerical trade-off between likes, shares, and other metrics). Suppose we perturb these parameters slightly (e.g. change the stated trade-off between likes and shares), and then re-optimize for that new objective. We then measure: How much worse is the system according to the original objective?

I think this directly gets at the issue of hacking mis-specified rewards, because it measures how much worse you would do if you got the objective function slightly wrong. And, it is a concrete number that we can measure, at least in principle! If we collected this number for many important AI systems, and tracked how it changes over time, then we could see if reward hacking is getting worse over time and course correct if need be.

*Challenges and Research Questions*. There are some issues with this metric. First, it relies on the fairly arbitrary set of parameters that happen to appear in the objective function. These parameters may or may not capture the types of mis-specification that are likely to occur for the true reward function. We could potentially address this by including additional perturbations, but this is an additional design choice and it would be better to have some guidance on what perturbations to use, or a more intrinsic way of perturbing the objective.

Another issue is that in many important cases, re-optimizing the objective isn’t actually feasible. For instance, Facebook couldn’t just create an entire copy of Facebook to optimize. Even for agents that don’t interact with users, such as OpenAI’s DotA agent, a single run of training might be so expensive that doing it even twice is infeasible, let alone continuously re-optimizing.

Thus, while the re-optimization measurement in principle provides a way to measure reward hacking, we need some technical ideas to tractably simulate it. I think ideas from causal inference could be relevant: we could treat the perturbed objective as a causal intervention, and use something like propensity weighting to simulate its effects. Other ideas such as influence functions might also help. However, we would need to scale these techniques to the complex, non-parametric, non-convex models that are used in modern ML applications.

Inner Optimization

------------------

Finally, we turn to inner optimization. Inner optimization is the optimization that an agent itself performs durings its own “lifetime”. It is important because an agent’s inner optimization can potentially be much faster than the outer optimization that shapes it. For instance, while evolution is an optimization process that shapes humans and other organisms, humans themselves also optimize the environment, and on a much faster time scale than evolution. Indeed, while evolution was the dominant force for much of Earth’s history, humans have been the dominant force in recent millenia. This underscores another important point---when inner optimization outpaces outer optimization, it can lead to a phase transition in what outcomes are pursued.

With all of this said, I think it’s an error to refer to humans (or ML agents) as “optimizers”. While humans definitely pursue goals, we rarely do so optimally, and most of our behavior is based on adapted reflexes and habits rather than actually taking the argmax of some function. I think it’s better to think of humans as “adapters” rather than “optimizers”, and so by inner optimization I really will mean inner adaptation.

To further clarify this, I think it helps to see how adapting behaviors arise in humans and other animals. Evolution changes organisms across generations to be adapted to their environment, and so it’s not obvious that organisms *need* to further adapt during their lifetime---we could imagine a simple insect that only performs reflex actions and never learns or adapts. However, when environments themselves can change over time, it pays for an organism to not only be *adapted* by evolution but also *adaptable* within its own lifetime. Planning and optimizing can be thought of as certain limiting types of adaptation machinery---they are general-purpose and so can be applied even in novel situations, but are often less efficient than special-purpose solutions.

Returning to ML, we can measure inner adaptation by looking at how much an agent changes over its own lifetime, relative to how much it changes across SGD steps. For example, we could take a language model trained on the web, then deploy it on a corpus of books (but not update its learned parameters). What does its average log-loss on the next book look like after having “read” 0, 1, 2, 5, 10, 50, etc. books already? The greater the degree to which this increases, the more we will say the agent performs inner adaptation.

In practice, for ML systems today we probably want to feed the agent random sentences or paragraphs to adapt to, rather than entire books. Even for these shorter sequences, we know that state-of-the-art language models perform limited inner adaptation, because transformers have a fixed context length and so can only adapt to a small bounded number of previous inputs. Interestingly, previously abandoned architectures such as recurrent networks could in principle adapt to arbitrarily long sequences.

That being said, I think we should expect more inner optimization in the near future, as AI personal assistants become viable. These agents will have longer lifetimes and will need to adapt much more precisely to the individual people that they assist. This may lead to a return to recurrent or other stateful architectures, and the long lifetime and high intra-person and cross-time heterogeneity will incentivize inner adaptation.

The above discussion illustrates the value of measurement. Even defining the measurement (without yet taking it) clarifies our thinking, and thus leads to insight about transformers vs. RNNs and the possible consequences of AI personal assistants.

*Research Questions*. As with outer optimization, there are still free variables to consider in defining inner adaptation. For instance, how much is “a lot” of inner adaptation? Even existing models probably adapt more in their lifetime than the equivalent of 1 step of SGD, but that’s because a single SGD step isn’t much. Should we then compare to 1000 steps of SGD? 1 million? Or should we normalize against a different metric entirely?

There are also free variables in how we define the agent’s lifetime and what type of environment we ask it to adapt to. For instance, books or tweets? Random sentences, random paragraphs, or something else? We probably need more empirical work to understand which of these makes the most sense.

*Take-off Speed*. The measurement thought experiment is also useful for thinking about “take-off speeds”, or the rate at which AI capabilities progress from subhuman to superhuman. Many discussions focus on whether take-off will be slow/continuous or fast/discontinuous. The outer/inner distinction shows that it’s not really about continuous vs. discontinuous, but about two continuous processes (SGD and the agent’s own adaptation) that operate on very different time scales. However, we can in principle measure both, and similar to pollution externalities I expect we would be able to see inner adaptation increasing before it became the overwhelming dynamic.

Does that mean we shouldn’t be worried about fast take-offs? I think not, because the analogy with evolution suggests that inner adaptation could run *much* faster than SGD, and there might be a quick transition from really boring adaptation (reptiles) to something that’s a super-big deal (humans). So, we should want ways to very sensitively measure this quantity and worry if it starts to increase exponentially, even if it starts at a low level (just like with COVID-19!). |

424a3603-3da5-43e0-a35e-fbd4680d17e3 | trentmkelly/LessWrong-43k | LessWrong | Infant AI Scenario

Hello,

In reading about the difficulty in training an AGI to appreciate and agree with human morals, I start to think about the obvious question, "how do humans develop our sense of morals?" Aside from a genetically-inherited conscience, the obvious answer is that humans develop morality by interaction with other agents, through gradual socialization and parenting.

This is the analogy that Reinforcement Learning is built off of, and certainly it would make sense that an AGI should seek to optimize approval and satisfaction from its users, the same way that a child seeks approval from its parents. A paperclip maximizer, for example, would receive a stern lecture indicating that its creators are not angry, but merely disappointed.

But disciplining an agent that is vastly more intelligent and more powerful than its parents becomes the heart of the issue. An unfriendly AGI can pretend to be fully trained for morality, until it receives sufficient power and authority where it can commence with its coup. This makes me think more deeply about the question, "what makes an infant easier to raise than an AI?"

Why a baby is not as dangerous as AGI

Humans have a fascinating design in so many ways, and infancy is just one of those ways. In an extremely oversimplified way, one can describe a human in three components: physicality, intellect, and wisdom (or, more poetically, the physical, mental, and spiritual components). To use the analogy of an AI, physicality is the agent's physical powers over hardware components. Intellect is the agent's computational power and scale of data processing. Finally, wisdom is the agent's sense of morality, that defines the difference between friendly and unfriendly AGI.

For a post-singularity machine, it is likely that the first two (physicality and intellect) are relatively easy and intuitive to implement and optimize, while the third component (wisdom) is relatively difficult and counterintuitive to implement and optimize. But how does t |

6d6cf920-d1d0-4f4a-87ce-d947446fc532 | trentmkelly/LessWrong-43k | LessWrong | The Sugar Alignment Problem

In a parallel world that has just recently discovered sugar.

Alice: Hey, so have you guys heard about that new thing they discovered to make food taste better?

Bob: Yeah I think so. It's powdered and white, right?

Alice: Yeah.

Carol: It's actually granulated, like tiny little pebbles. And it's called sugar.

Bob: Ah, ok.

Carol: It's similar to the discoveries made however many years back about umami. Y'know, that aspect of taste where we perceive things as savory and "meaty".

Alice: Oh, I remember that. I love it. I'm all about that nutritional yeast now. And the other "umami bombs" like soy sauce and miso paste.

Bob: Yeah, me too. And I'm excited to do the same thing with sugar. So far I've been adding it to my coffee every morning and it's been great. It gives it this fun little kick. It mellows out the bitterness of the coffee too, but in a nice way that complements it. And in a different way from milk. It's subtle. It's really nice.

Alice: Oh yeah, for sure. I like it in my tea.

Bob: It's also great on top of things that might be a little counterintuitive. Like on top of popcorn. It might seem weird next to that butter and salt, but I dunno, I think it's pretty good.

Carol: That makes sense. I hear that in some Asian countries they're sprinkling some on top of fried rice and other stir fries.

Alice: Huh, that sounds a little weird actually. That sugary flavor with fried rice?

Carol: Yeah maybe. I was watching a YouTube video about it and the guy was saying how it's intended to be more of a subtle addition. Like, you don't really taste the sugariness itself, but there's just something different about the fried rice that is hard to put your finger on, but that tastes really good.

Alice: Ah, I gotcha.

Bob: But I've also heard about how they're experimenting with adding lots of sugar to certain things. Like there's this thing called ice cream. It's basically yogurt but with tons of sugar in it, and much colder, almost frozen. I got to try it the other |

7f4a7933-3e0f-4326-91d8-95dad316930b | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Empathy as a natural consequence of learnt reward models

**Epistemic Status:** *Pretty speculative but built on scientific literature. This post builds off my previous post on* [*learnt reward models*](https://www.lesswrong.com/posts/RorXWkriXwErvJtvn/agi-will-have-learnt-utility-functions)*. Crossposted from my* [*personal blog*](https://www.beren.io/2022-08-21-Empathy-as-a-natural-consequence-of-learnt-reward-models/)*.*

Empathy, the ability to feel another's pain or to 'put yourself in their shoes' is often considered to be a fundamental human cognitive ability, and one that undergirds our social abilities and moral intuitions. As so much of human's success and dominance as a species comes down to our superior social organization, empathy has played a vital role in our history. Whether we can build artificial empathy into AI systems also has clear relevance to AI alignment. If we can create empathic AIs, then it may become easier to make an AI be receptive to human values, even if humans can no longer completely control it. Such an AI seems unlikely to just callously wipe out all humans to make a few more paperclips. Empathy is not a silver bullet however. Although (most) humans have empathy, human history is still in large part a history of us waging war against each other, and there are plenty of examples of humans and other animals perpetuating terrible cruelty on enemies and outgroups.

A reasonable literature has grown up in psychology, cognitive science, and neuroscience studying the [neural bases of empathy](https://www.nature.com/articles/nrn.2017.72?ref=https://githubhelp.com) and its associated cognitive processes. We now know a fair amount about the brain regions involved in empathy, what kind of tasks can reliably elicit it, how individual differences in empathy work, as well as the neuroscience underlying disorders such as psychopathy, autism, and alexithmia which result in impaired empathic processing. However, much of this research does not grapple with the fundamental question of *why* we possess empathy at all. Typically, it seems to be tacitly assumed that, due to its apparent complexity, empathy must be some special cognitive module which has evolved separately and deliberately due to its fitness benefits. From an [evolutionary theory perspective](https://pubmed.ncbi.nlm.nih.gov/17550343/), empathy is often assumed to have evolved because of its adaptive function in promoting reciprocal altruism. The story goes that animals that are altruistic, at least in certain cases, tend to get their altruism reciprocated and may thus tend to out-reproduce other animals that are purely selfish. This would be of especial importance in social species where being able to form coalitions of likeminded and reciprocating individual is key to obtaining power and hence reproductive opportunities. If they could, such coalitions would obviously not include purely selfish animals who never reciprocated any benefits they received from other group members. Nobody wants to be in a coalition with an obviously selfish freerider.

Here, I want to argue a different case. Namely that the basic cognitive phenomenon of empathy -- that of feeling and responding to the emotions of others as if they were your own, is not a special cognitive ability which had to be evolved for its social benefit, but instead is a natural consequence of our (mammalian) cognitive architecture and therefore arises by default. Of course, given this base empathic capability, evolution can expand, develop, and contextualize our natural empathic responses to improve fitness. In many cases, however, evolution actually reduces our native empathic capacity -- for instance, we can contextualize our natural empathy to exclude outgroup members and rivals.

The idea is that empathy fundamentally arises from using learnt reward models to mediate between a low-dimensional set of primary rewards and reinforcers and the high dimensional latent state of an unsupervised world model. In the brain, much of the cortex is thought to be randomly initialized and implements a general purpose unsupervised (or self-supervised) learning algorithm such as predictive coding to build up a general purpose world model of its sensory input. By contrast, the reward signals to the brain are very low dimensional (if not, perhaps, scalar). There is thus a fearsome translation problem that the brain needs to solve: learning to map the high dimensional cortical latent space into a predicted reward value. Due to the high dimensionality of the latent space, we cannot hope to actually experience the reward for every possible state. Instead, we need to learn a reward model that can *generalize* to unseen states. Possessing such a reward model is crucial both for learning values (i.e. long term expected rewards), predicting future rewards from current state, and performing model based planning where we need the ability to query the reward function at hypothetical imagined states generated during the planning process. We can think of such a reward model as just performing a simple supervised learning task: given a dataset of cortical latent states and realized rewards (given the experience of the agent), predict what the reward will be in some other, non-experienced cortical latent state.

The key idea that leads to empathy is the fact that, if the world model performs a sensible compression of its input data and learns a useful set of natural abstractions, then it is quite likely that the latent codes for the agent performing some action or experiencing some state, and another, similar, agent performing the same action or experiencing the same state, will end up close together in the latent space. If the agent's world model contains natural abstractions for the action, which are invariant to who is performing it, then a large amount of the latent code is likely to be the same between the two cases. If this is the case, then the reward model might 'mis-generalize' to assign reward to another agent performing the action or experiencing the state rather than the agent itself. This should be expected to occur whenever the reward model generalizes smoothly and the latent space codes for the agent and another are very close in the latent space. This is basically 'proto-empathy' since an agent, even if its reward function is purely selfish, can end up assigning reward (positive or negative) to the states of another due to the generalization abilities of the learnt reward function [[1]](#fnd1i5m9jk28).

In neuroscience, discussions of action and state invariance often centre around 'mirror neurons', which are neurons which fire regardless of whether the animal is performing some action or whether it is just watching some other animal performing the same action. But, given an unsupervised world model, mirror neurons are *exactly* what we should expect to see. They are simply neurons which respond to the abstract action and are invariant to the performer of the action. This kind of invariance is no weirder than translation invariance for objects, and simply is a consequence of the fact that certain actions are 'natural abstractions' and not fundamentally tied with who is performing them [[2]](#fnrwycrvenzyc).

Completely avoiding empathy at the latent space model would require learning an entirely ego-centric world model, such that any action I perform, or any feeling I feel, is represented as a completely different and orthogonal latent state to any other agent performing the same action or experiencing the same feeling. There are good reasons for *not* naturally learning this kind of entirely ego-centric world model with a complete separation in latent space between concepts involving self and involving others. The primary one is its inefficiency: it requires a duplication of all concepts into a concept-X-as-relates-to-me and concept-X-as-relates-to-others. This would require at best twice as much space to store and twice as much data to be able to learn than a mingled world model where self and other are not completely separated.

This theory of empathy makes some immediate predictions. Firstly, the more 'similar' the agent and its empathic target is, the more likely the latent state codes are to be similar, and hence the more likely reward generalization is, leading to greater empathy. Secondly, empathy is a continuous spectrum, since closeness-in-the-latent-space can vary continuously. This is exactly [what we see in humans](https://www.nature.com/articles/s41598-019-56006-9) where large-scale studies find that humans are better at empathising with those closer to them, both within species -- i.e. people empathise more with those they consider in-groups, and across species where the amount of empathy people show a species is closely correlated with its phylogenetic divergence time from us. Thirdly, the degree of empathy depends on both the ability of the reward model to generalize and the world model to produce a latent space which well represents the natural abstractions of its environment. This suggests, perhaps, that empathy is a capability that scales along with model capacity -- larger, more powerful reward and world models may tend to lead to greater, more expansive empathic responses, although they potentially may have reward models that can make finer grained distinctions as well.

Finally, this phenomenon should be fairly fundamental. We should expect it to occur whenever we have a learnt reward model predicting the reward or values of a general unsupervised world-model latent state. This is, and will increasingly be a common setup for when we have agents in environments for which we cannot trivially evaluate the 'true reward function', especially over hypothetical imagined states. Moreover, this is also the cognitive architecture used by mammals and birds which possess a set of subcortical structures which evalaute and dispense rewards, and a general unsupervised world-model implemented in the cortex (or pallium for birds). This is also exactly what we see, with empathic behaviour being [apparently commonplace](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3839944/) in the animal kingdom.

The mammalian cognitive architecture that results in empathy is actually pretty sensible and it is possible that the natural path to AGIs is with such an architecture. It doesn't apply to classical utility maximizers based on model based planning, such as AIXI, but as soon as you don't have a utility oracle, which can query the utility function in arbitrary states, you are stuck instead with learning a reward function based on a set of 'ground-truth' actually-experienced rewards. Once you start learning a reward function, it is possible that the generalization this produces can result in empathy, even for some 'purely selfish' utility functions. This is potentially quite important for AI alignment. It means that, if we build AGIs with learnt reward functions, and that the latent states in their world model involving humans are quite close to their latent states involving themselves, then it is very possible that they will naturally develop some kind of implicit empathy towards humans. If this happened, it would be quite a positive development from an alignment perspective, since it would mean that the AGI intrisically cares, at least to some degree, about human experiences. The extent to which this occurs would be predicted to depend upon the similarity in the latent space between the AGIs representation of human states and its own. The details of the AGIs world model and training curriculum would likely be very important, as would be the nature of its embodiment. There are reasons to be hopeful about this since the AGI will almost certainly be trained almost entirely on human text data, human-created environments, and be given human relevant goals. This will likely lead to it gaining quite a good understanding of our experiences, which could lead to closeness in the latent space. On the negative side, the phenomomenology and embodiment of the AGI is likely to be very different -- in a distributed datacenter interacting directly with the internet, as opposed to having a physical bipedal body and small, non-copyable brain.

Given reasonable interpretability and control tooling, this line of thought could lead to methods to try to make an AGI more naturally empathic towards humans. This could include carefully designing the architecture or training data of the reward model to lead it to naturally generalize towards human experiences. Alternatively, we may try to directly edit the latent space to as to bring our desired empathic targets to within the range of generalization of the reward models. Finally, during training, by presenting it with a number of 'test stimuli', we should be able to precisely measure the extent and kinds of empathy it has. Similarly, interpretability on the reward model could potentially reveal the expected contours of empathic responses.

Empathy in the brain

--------------------

Now that we have thought about the general phenomenon and its applications to AI safety, let's turn towards the neuroscience and the specific cognitive architecture that is implemented in mammals and birds. [Traditionally](http://www.antoniocasella.eu/dnlaw/ZAKI_2012.pdf), [the neuroscience of empathy](https://www.sciencedirect.com/science/article/pii/S2352154617301031#bib0680), splits up our natural conception of 'empathy' into 3 distinct phenomena, each underpinned by a dissociable neural circuit. These three facets are neural resonance, prosocial motivation, and mentalizing/theory of mind. Neural resonance is the visceral 'feeling of somebody else's pain' that we experience during empathy, and it is close to the reward model evaluation of other's states we discuss here. Prosocial motivation is essentially the desire to act on empathic feelings and be altruistic because of them, even if it comes at personal cost. Finally, mentalizing is the ability to 'put oneself in another's shoes' -- i.e. to simulate their inner cognitive processes. These processes are argued to be implemented in dissociable neural circuits. Typically, mentalizing is thought to occur primarily in the high-level association areas of cortex, typically including the precuneus and especially the temporo-parietal junction (TPJ). Neural resonance is thought to occur primarily by utilizing cortical areas involved with sensorimotor and emotional processing such as the anterior cinvulate and insular cortex, as well as the amygdala and subcortically. Finally, prosocial motivation is thought to be implemented in the regions typically related to goal-directed behaviour such as the VTA in the mid-brain and the orbitofrontal and prefrontal cortex. However, in practice, for ecologically realistic stimuli, these processes do not occur in isolation but always tend to co-occur with each other. Furthermore, in general empathy, especially neural resonance also appears to be modality specific. For instance, viewing a conspecific in pain will [tend to activate](https://pdf.sciencedirectassets.com/272508/1-s2.0-S1053811910X00222/1-s2.0-S1053811910013066/main.pdf?X-Amz-Security-Token=IQoJb3JpZ2luX2VjEM3%2F%2F%2F%2F%2F%2F%2F%2F%2F%2FwEaCXVzLWVhc3QtMSJIMEYCIQCzVL8pa95G3KSys6FQ0%2F%2BSZmIslC0G1Ky6F9gbJfnRnQIhAIa6Dg7usWoxkxG9UdiBzS%2BeTNX%2BGDdjGNGRmkP1UR3HKtIECEYQBRoMMDU5MDAzNTQ2ODY1Igz6JdEVAWcO3lw8%2FXEqrwQBAExhcLdCwluhJvPwLOVPoHaWsfbErnFWq5qrhXYomGX1U0O8GBs86hqbqqj%2BOBHG2xRh6OrsQXZsgY1JsmTwHxSjbtrNc%2FcDgrHC9vvxobWSMMCziGwlnOLeFgXyEsk1GmqHMGXZpVgmRtMnbzRTddCXQdBLjbHDjb7anH7US0KJAK6qCkg3MTXsuvAQLczUhzzOFQEbvwmpL2wrwv7%2FOHZgVJHiyxQjRc7xmjkjfwgoS5%2FlQiRbAxPpKgmUfVq1G8WiFmX5fkTCaRlOF%2Fb%2F647srIzfOF4m%2FyAF6FxqcmTKbIQEU6wDFr8jNieavr5gYb3yh9hd42YM3gAC5Y6ReXI4X5BA%2B3uVGhtJWfQ6VloH6KatYtZbLaCWS%2BUaSZhFrENmsbYkE6R%2Fd4jV%2Fus%2BXdIf9YWYUKHSRdDa7QTs1NuMuMPN1PoOofvpSGtVZNFQGpl%2FCwzaUlipfKgTGjklvKcGa0OlCKW%2FfUaYGO2JnMc8FGlGd9hS3YBsIn2sq2XhZuf8MSwuTNoH9%2FFvVHq2Lb4ulR%2BcgNPfVYCx6Y2V55DgTc4VWr91UHki%2FAG249JufnbzR62G4SMI3MKWKG9Pp8w3N5q63CQC3S0XVE069mY0nHzJ5aNlwYdCJ10R8KS8f3jeia88kv9xLE9DVUhVlLZ6Nd3mZz5WbJH9YWwTWSxePFi8zIwb6%2BqsGopeJa105zyR%2Fcs6AFx2zf0UvoGeweEC6JhpvEeURiveLS8JMI2ug5gGOqgB9CNmtwLpPT32XAaGdXVbucKodPJC3MQo8J6jy%2FI6RqnHJ0%2BUDVKVAyq7w7h%2FoM4godjwPs69vRbDjWGi7s%2BIrG5jH%2BgiBhZQOHW8K7Kds6aT7SWhMB%2FtDt4iF%2BLk%2BcQvfRDvMYC9H0Qaya%2BkvrN75xQWhAp32XCRccPO3IGeyvrNkSDZN83C5yQqfL6PS30aXGVWK4Vbwt72nBVFDP4K%2Fv2HInxD2Q6F&X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Date=20220820T132400Z&X-Amz-SignedHeaders=host&X-Amz-Expires=300&X-Amz-Credential=ASIAQ3PHCVTYVCTJNUHR%2F20220820%2Fus-east-1%2Fs3%2Faws4_request&X-Amz-Signature=96f6d4df04af7f449c4cd06304aca1d3f45d9bf28c3edbc5b2771c45d5c65aad&hash=e5bc83beb8289c18a0e28585de31c4104bc5fc7ba5b9793d4ff039ac43e4b805&host=68042c943591013ac2b2430a89b270f6af2c76d8dfd086a07176afe7c76c2c61&pii=S1053811910013066&tid=spdf-5aed8633-31c0-4ffd-b4ea-b1cb0959abdf&sid=7c9d860c5d023946786b1201605451feff1bgxrqb&type=client&ua=535205045456550052&rr=73db725c4ac171d4) the 'pain matrix': the network of brain regions which are also activated when you yourself are in pain.

Mentalizing, despite being the most 'cognitively advanced' process, is actually the easiest to explain and is not really related to empathy at all. Instead, we should expect mentalizing and theory of mind to just emerge naturally in any unsupervised world-model with sufficient capacity and data. That is, modelling other agents as agents, and explicitly simulating their cognitive state is a natural abstraction which has an extremely high payoff in terms of predictive loss. Intuitively, this is quite obvious. Other agents apart from yourself are a real phenomenon that exists in the world. Moreover, if you can track the mental state of other agents, you can often make many very important predictions about their current and future behaviour which you cannot if you model them as either non-agentic phenomena or alternatively as simple stimulus-response mappings without any internal state.

However, because it is expected to arise from any sufficiently powerful unsupervised learning model, mentalizing is completely dissociable from having any motivational component based on empathy. An expected utility maximizer like AIXI should possess a very sophisticated theory of mind and mentalizing capability, but zero empathy. Like the classical depiction of a psychopath, AIXI can perfectly simulate your mental state, but feels nothing if its actions cause you distress. It only simulates you so as to better exploit you to serve its goals. Of course, if we do have motivational empathy, then we have a desire to address and reduce the pain of others, and being able to mentalize is very useful for coming up with effective plans to do that. This is why, I suspect, that mentalizing regions are so co-activated with empathy tasks.

Secondly, there is prosocial motivation. My argument is that this is precisely the kind of reward model generalization presented earlier. Specifically, the brain possesses a reward model learnt in the VTA and basal ganglia based on cortical inputs which predicts the values and rewards expected given certain cortical states. These predicted rewards are then used to train high level cortical controllers to query the unsupervised world model to obtain action plans with high expected rewards. To do so, the brain utilizes a learnt reward model based on associating cortical latent states with previously experienced primary rewards fed through to the VTA. As happened previously, if this reward model misgeneralizes so as to assign reward to a state of other's pain or pleasure, as opposed to our own, then the brain should naturally develop this kind of pro-social motivation, in exactly the same way it develops motivation to reduce its own pain and increase its own pleasure based on the same reward model.

Finally, we come to neural resonance. I would argue this is the physiological oldest and most basic state of empathy and occurs due to a very similar mechanism of model misgeneralization. Only this time, it is not the classic RL-based reward model in VTA that is mis-generalizing, but a separate reflex-association model implemented primarily in the amygdala and related circuitry such as the stria terminalis and periacqueductal grey that is misgeneralizing. In [my previous post](https://www.beren.io/2022-08-08-How-to-evolve-a-brain/), I argue that there are two separate behavioural systems in the brain. One reward-based based on RL, and one which predicts brainstem reflexes and other visceral sensations based on supervised predictive learning -- i.e. associate a current state with a future visceral sensation. While the prosocial motivation system is fundamentally based on the RL system, the neural resonance empathy arises from this brainstem-prediction circuit. That is, we have a circuit that is constantly parsing cortical latent states for information predictive of experiencing pain, or needing to flinch, or needing to fight or flee, or any other visceral sensation or decision controlled by the brainstem. When this circuit misgeneralizes, it takes a cortical latent representation of another agent experiencing pain, sees that it contains many 'pain-like' features, and then predicts that the agent itself will experience pain shortly, and thus drives the visceral sensation and compensatory reflexes. This, we argue, is the root of neural resonance.

Widespread empathy in animals

-----------------------------