id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

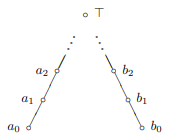

0eba637d-4db4-4383-9855-527b176e5926 | trentmkelly/LessWrong-43k | LessWrong | The Shutdown Problem: An AI Engineering Puzzle for Decision Theorists

[NOTE: This paper was previously titled 'The Shutdown Problem: Three Theorems'.]

This paper is an updated version of the first half of my AI Alignment Awards contest entry. My theorems build on the theorems of Soares, Fallenstein, Yudkowsky, and Armstrong in various ways.[1] These theorems can guide our search for solutions to the shutdown problem.[2]

One aim of the paper is to get academic philosophers and decision theorists interested in the shutdown problem and related topics in AI alignment. They’re my assumed audience. I’m posting here because I think the theorems will also be interesting to people already familiar with the shutdown problem.

For discussion and feedback, I thank Adam Bales, Ryan Carey, Bill D’Alessandro, Tomi Francis, Vera Gahlen, Dan Hendrycks, Cameron Domenico Kirk-Giannini, Jojo Lee, Andreas Mogensen, Sami Petersen, Rio Popper, Brad Saad, Nate Soares, Rhys Southan, Christian Tarsney, Teru Thomas, John Wentworth, Tim L. Williamson, and Keith Wynroe.

Abstract

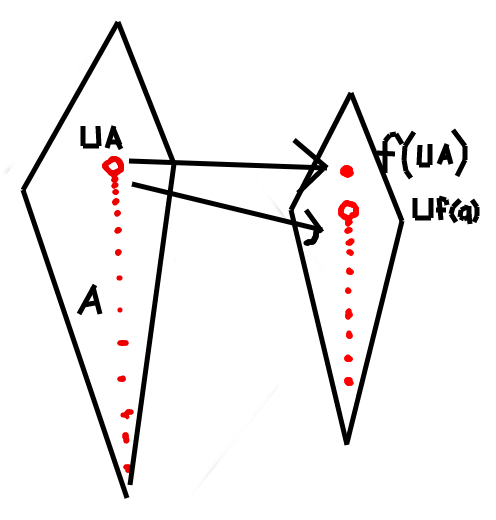

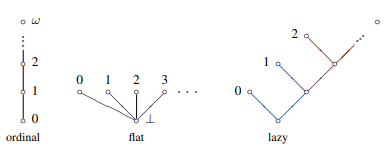

I explain and motivate the shutdown problem: the problem of designing artificial agents that (1) shut down when a shutdown button is pressed, (2) don’t try to prevent or cause the pressing of the shutdown button, and (3) otherwise pursue goals competently. I prove three theorems that make the difficulty precise. These theorems suggest that agents satisfying some innocuous-seeming conditions will often try to prevent or cause the pressing of the shutdown button, even in cases where it’s costly to do so. I end by noting that these theorems can guide our search for solutions to the problem.

0. Preamble

Tradition has it that decision theory splits into two branches. The descriptive branch concerns how actual agents behave. The normative branch concerns how rational agents behave. But there is also a lesser-known third branch: what we can call ‘constructive decision theory.’ It concerns how we want artificial agents to behave and how we can create artificial agents that behave in those w |

7fe36db2-df2c-4eae-8fe9-b1e55a200b18 | trentmkelly/LessWrong-43k | LessWrong | Epistemic Viciousness

Someone deserves a large hattip for this, but I'm having trouble remembering who; my records don't seem to show any email or OB comment which told me of this 12-page essay, "Epistemic Viciousness in the Martial Arts" by Gillian Russell. Maybe Anna Salamon?

> We all lined up in our ties and sensible shoes (this was England) and copied him—left, right, left, right—and afterwards he told us that if we practised in the air with sufficient devotion for three years, then we would be able to use our punches to kill a bull with one blow.

> I worshipped Mr Howard (though I would sooner have died than told him that) and so, as a skinny, eleven-year-old girl, I came to believe that if I practised, I would be able to kill a bull with one blow by the time I was fourteen.

> This essay is about epistemic viciousness in the martial arts, and this story illustrates just that. Though the word ‘viciousness’ normally suggests deliberate cruelty and violence, I will be using it here with the more old-fashioned meaning, possessing of vices.

It all generalizes amazingly. To summarize some of the key observations for how epistemic viciousness arises:

* The art, the dojo, and the sensei are seen as sacred. "Having red toe-nails in the dojo is like going to church in a mini-skirt and halter-top... The students of other martial arts are talked about like they are practicing the wrong religion."

* If your teacher takes you aside and teaches you a special move and you practice it for 20 years, you have a large emotional investment in it, and you'll want to discard any incoming evidence against the move.

* Incoming students don't have much choice: a martial art can't be learned from a book, so they have to trust the teacher.

* Deference to famous historical masters. "Runners think that the contemporary staff of Runner's World know more about running than all the ancient Greeks put together. And it's not just running, or other physical activities, where history |

b34132ac-8f6e-46a2-a94c-bf0c1392e1fc | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Some cruxes on impactful alternatives to AI policy work

Ben Pace and I (Richard Ngo) recently did a public double crux at the Berkeley REACH on how valuable it is for people to go into AI policy and strategy work: I was optimistic and Ben was pessimistic. During the actual event, we didn't come anywhere near to finding a double crux on that issue. But after a lot of subsequent discussion, we've come up with some more general cruxes about where impact comes from.

I found Ben's model of how to have impact very interesting, and so in this post I've tried to explain it, along with my disagreements. Ben liked the goal of writing up a rough summary of our positions and having further discussion in the comments, so while he edited it somewhat he doesn’t at all think that it’s a perfect argument, and it’s not what he’d write if he spent 10 hours on it. He endorsed the wording of the cruxes as broadly accurate.

(During the double crux, we also discussed how the heavy-tailed worldview applies to community building, but decided on this post to focus on the object level of what impact looks like.)

Note from Ben: “I am not an expert in policy, and have not put more than about 20-30 hours of thought into it total as a career path. But, as I recently heard Robin Hanson say, there’s a common situation that looks like this: some people have a shiny idea that they think about a great deal and work through the details of, that folks in other areas are skeptical of given their particular models of how the world works. Even though the skeptics have less detail, it can be useful to publicly say precisely why they’re skeptical.

In this case I’m often skeptical when folks tell me they’re working to reduce x-risk by focusing on policy. Folks doing policy work in AI might be right, and I might be wrong, but it seemed like a good use of time to start a discussion with Richard about how I was thinking about it and what would change my mind. If the following discussion causes me to change my mind on this question, I’ll be really super happy with it.”

Ben's model: Life in a heavy-tailed world
-----------------------------------------

A [heavy-tailed distribution](https://en.wikipedia.org/wiki/Heavy-tailed_distribution) is one where the probability of extreme outcomes doesn’t drop off very rapidly, so outliers can dominate the expectation of the distribution. Owen Cotton-Barratt has written a brief explanation of the idea [here](https://www.effectivealtruism.org/articles/prospecting-for-gold-owen-cotton-barratt/#heavy-tailed-distributions). Examples of heavy-tailed distributions include the Pareto distribution and the log-normal distribution; other phrases people use to point at this concept include ‘power laws’ (see [Zero to One](https://www.amazon.co.uk/Zero-One-Notes-Start-Future/dp/0753555204/ref=sr_1_1?ie=UTF8&qid=1538077169&sr=8-1&keywords=zero+to+one)) and ‘black swans’ (see the recent [SSC book review](http://slatestarcodex.com/2018/09/19/book-review-the-black-swan/)). Wealth is heavy-tailed: many people are clustered relatively near the median, but the wealthiest people are millions of times further away. Human height, weight, and running speed are not heavy-tailed; there is no man as tall as 100 people.
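
To make "outliers dominate the expectation" concrete, here is a minimal sketch that compares how much of the total the top 1% of samples account for in a heavy-tailed sample versus a thin-tailed one. The Pareto shape parameter (1.2), the height-like normal distribution (mean 170, standard deviation 10), the sample size, and the helper name `top_share` are all illustrative assumptions, not figures from the post.

```python
import random


def top_share(samples, fraction=0.01):
    """Share of the total contributed by the largest `fraction` of samples."""
    ordered = sorted(samples, reverse=True)
    k = max(1, int(len(ordered) * fraction))
    return sum(ordered[:k]) / sum(ordered)


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 100_000

# Heavy-tailed: Pareto with an assumed shape parameter of 1.2.
heavy = [rng.paretovariate(1.2) for _ in range(n)]

# Thin-tailed: a height-like normal distribution (assumed mean 170, sd 10).
light = [rng.gauss(170.0, 10.0) for _ in range(n)]

heavy_share = top_share(heavy)
light_share = top_share(light)

print(f"top 1% share, Pareto sample: {heavy_share:.1%}")
print(f"top 1% share, normal sample: {light_share:.1%}")
```

Under these assumptions the top 1% of the Pareto sample carries a large fraction of the total, while the top 1% of the normal sample carries only slightly more than 1%. That gap is the sense in which a few outliers can drive the mean.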

There are three key claims that make up Ben's view.

**The first claim is that, since the industrial revolution, we live in a world where the impact that small groups can have is much more heavy-tailed than in the past.**

* People can affect incredibly large numbers of other people worldwide. The Internet is an example of a revolutionary development which allows this to happen very quickly.

* Startups are becoming unicorns unprecedentedly quickly, and their valuations are very heavily skewed.

* The impact of global health interventions is heavy-tail distributed. So is funding raised by Effective Altruism - two donors have contributed more money than everyone else combined.

* Google and Wikipedia qualitatively changed how people access knowledge; people don't need to argue about verifiable facts any more.

* Facebook qualitatively changed how people interact with each other (e.g. FB events is a crucial tool for most local EA groups), and can swing elections.

* It's not just that we got more extreme versions of the same things, but rather that we can get unforeseen types of outcomes.

* The books *HPMOR* and *Superintelligence* both led to mass changes in plans towards more effective ends via the efforts of individuals and small groups.

**The second claim is that you should put significant effort into re-orienting yourself to use high-variance strategies.**

* Ben thinks that recommending strategies which are *safe* and *low-risk* is insane when pulling out of a heavy-tailed distribution. You want everyone to be taking high-variance strategies.

+ This is only true if the tails are long to the right and not to the left, which seems true to Ben. Most projects tend to end up not pulling any useful levers whatever and just do nothing, but a few pull crucial levers and solve open problems or increase capacity for coordination.

* Your intuitions were built for the ancestral environment where you didn’t need to be able to think about coordinating humans on the scale of millions or billions, and yet you still rely heavily on the intuitions you’re built with in navigating the modern environment.

* [Scope insensitivity](https://www.lesswrong.com/posts/2ftJ38y9SRBCBsCzy/scope-insensitivity), [framing effects](https://www.lesswrong.com/posts/Nx2WxEuPSvNBGuYpo/feeling-moral), [taboo tradeoffs](http://www.overcomingbias.com/2017/12/automatic-norms.html), and [risk aversion](https://rationalaltruist.com/2013/02/28/risk-aversion-and-investment-for-altruists/) are the key things here. You need to learn to train your S1 to understand *math*.

+ By default, you’re not going to spend enough effort finding or executing high-variance strategies.

* We're still only 20 years into the internet era. Things keep changing qualitatively, but Ben feels like everyone keeps adjusting to the new technology as if it were always this way.

* Ben: “My straw model of the vast majority of people’s attitudes is: I guess Facebook and Twitter are just things now. I won’t spend time thinking about whether I could build a platform as successful as those two but optimised better for e.g. intellectual progress or social coordination - basically not just money.”

* Ben: “I do note that never in history has change been happening so quickly, so it makes sense that people’s intuitions are off.”

* While many institutions have been redesigned to fit the internet, Ben feels like almost nobody is trying to improve institutions like science on a large scale, and that this is clear low-hanging altruistic fruit.

* The Open Philanthropy Project has gone through this process of updating away from safe, low-risk bets with GiveWell, toward [hits-based giving](https://www.openphilanthropy.org/blog/hits-based-giving), which is an example of this kind of move.

**The third claim is that AI policy is not a good place to get big wins nor to learn the relevant mindset.**

* Ben: “On a first glance, governments, politics and policy looks like the sort of place where I would not expect to find highly exploitable strategies, nor a place that will teach me the sorts of thinking that will help me find them in future.”

* People in policy spend a lot of time thinking about how to influence governments. But governments are generally too conventional and slow to reap the benefits of weird actions with extreme outcomes.

* Working in policy doesn't cultivate the right type of thinking. You're usually in a conventional governmental (or academic) environment, stuck inside the system, getting seduced by local incentive gradients and prestige hierarchies. You often need to spend a long time working your way to positions of actual importance in the government, which leaves you prone to value drift or over-specialisation in the wrong thing.

+ At the very least, you have to operate on the local incentives as well as someone who actually cares about them, which can be damaging to one’s ability to think clearly.

* Political landscapes are not the sort of environment where people can easily ignore the local social incentives to focus on long-term, global goals. Short term thinking (election cycles, media coverage, etc) is not the sort of thinking that lets you build a new institution over 10 years or more.

+ Ben: “When I’ve talked to senior political people, I’ve often heard things of the sort ‘We were working on a big strategy to improve infrastructure / international aid / tech policy etc, but then suddenly public approval changed and then we couldn’t make headway / our party wasn’t in power / etc.’ which makes me think long term planning is strongly disincentivised.”

* One lesson of a heavy-tailed world is that signals that you’re taking safe bets are *anti-signals* of value. Many people following a standard academic track saying “Yeah, I’m gonna get a masters in public policy” sounds *fine*, *sensible, and safe*, and therefore *cannot* be an active sign that you will do something a million times more impactful than the median.

The above is not a full, gears-level analysis of how to find and exploit a heavy tail, because almost all of the work here lies in identifying the particular strategy. Nevertheless, because of the considerations above, Ben thinks that talented, agenty and rational people should be able in many cases to identify places to win, and then execute those plans, and that this is much less the case in policy.

Richard's model: Business (mostly) as usual
-------------------------------------------

I disagree with Ben on all three points above, to varying degrees.

On the first point, I agree that the distribution of success has become much more heavy-tailed since the industrial revolution. However, I think the distribution of success is often very different from the distribution of impact, because of replacement effects. If Facebook hadn't become the leading social network, then MySpace would have. If not Google, then Yahoo. If not Newton, then Leibniz (and if Newton, then Leibniz anyway). Probably the alternatives would have been somewhat worse, but not significantly so (and if they were, different competitors would have come along). The distinguishing trait of modernity is that even a small difference in quality can lead to a huge difference in earnings, via network effects and global markets. But that isn't particularly interesting from an x-risk perspective, because money isn't anywhere near being our main bottleneck.

You might think that since Facebook has billions of users, their executives are a small group with a huge amount of power, but I claim that they're much more constrained by competitive pressures than they seem. Their success depends on the loyalty of their users, but the bigger they are, the easier it is for them to seem untrustworthy. They also need to be particularly careful since antitrust cases have busted the dominance of several massive tech companies before. (While they could swing a few elections before being heavily punished, I don’t think this is unique to the internet age - a small cabal of newspaper owners could probably have done the same centuries ago). Similarly, I think the founders of Wikipedia actually had fairly little counterfactual impact, and currently have fairly little power, because they're reliant on editors who are committed to impartiality.

What we should be more interested in is cases where small groups didn't just ride a trend, but actually created or significantly boosted it. Even in those cases, though, there's a big difference between success and impact. Lots of people have become very rich from shuffling around financial products or ad space in novel ways. But if we look at the last fifty years overall, they're far from dominated by extreme transformative events - in fact, Western societies have changed very little in most ways. Apart from IT, our technology remains roughly the same, our physical surroundings are pretty similar, and our standards of living have stayed flat or even dropped slightly. (This is a version of Tyler Cowen and Peter Thiel's views; for a better articulation, I recommend *The Great Stagnation* or *The Complacent Class).* Well, isn't IT enough to make up for that? I think it will be eventually, as AI develops, but right now most of the time spent on the internet is wasted. I don't think current IT has had much of an effect by standard metrics of labour productivity, for example.

**Should you pivot?**

Ben might claim that this is because few people have been optimising hard for positive impact using high-variance strategies. While I agree to some extent, I also think that there are pretty strong incentives to have impact regardless. We're in the sort of startup economy where scale comes first and monetisation comes second, and so entrepreneurs already strive to create products which influence millions of people even when there’s no clear way to profit from them. And entrepreneurs are definitely no strangers to high-variance strategies, so I expect most approaches to large-scale influence to already have been tried.

On the other hand, I do think that reducing existential risk is an area where a small group of people are managing to have a large influence, a claim which seems to contrast with the assertion above. I’m not entirely sure how to resolve this tension, but I’ve been thinking lately about an analogy from finance. [Here's Tyler Cowen](https://medium.com/conversations-with-tyler/nate-silver-conversations-with-tyler-1bdafe685d77):

> I see a lot of money managers, so there’s Ray Dalio at Bridgewater. He saw one basic point about real interest rates, made billions off of that over a great run. Now it’s not obvious he and his team knew any better than anyone else.

> Peter Lynch, he had fantastic insights into consumer products. Use stuff, see how you like it, buy that stock. He believed that in an age when consumer product stocks were taking off.

> Warren Buffett, a certain kind of value investing. Worked great for a while, no big success, a lot of big failures in recent times.

The analogy isn’t perfect, but the idea I want to extract is something like: once you’ve identified a winning strategy or idea, you can achieve great things by exploiting it - but this shouldn’t be taken as strong evidence that you can do exceptional things in general. For example, having a certain type of personality and being a fan of science fiction is very useful in identifying x-risk as a priority, but not very useful in founding a successful startup. Similarly, being a philosopher is very useful in identifying that helping the global poor is morally important, but not very useful in figuring out how to solve systemic poverty.

From this mindset, instead of looking for big wins like “improving intellectual coordination”, we should be looking for things which are easy conditional on existential risk actually being important, and conditional on the particular skillsets of x-risk reduction advocates. Another way of thinking about this is as a distinction between high-impact goals and high-variance strategies: once you’ve identified a high-impact goal, you can pursue it without using high-variance strategies. Startup X may have a crazy new business idea, but they probably shouldn't execute it in crazy new ways. Actually, their best bet is likely to be joining Y Combinator, getting a bunch of VC funding, and following Paul Graham's standard advice. Similarly, reducing x-risk is a crazy new idea for how to improve the world, but it's pretty plausible that we should pursue it in ways similar to those which other successful movements used. Here are some standard things that have historically been very helpful for changing the world:

* dedicated activists

* good research

* money

* public support

* political influence

My prior says that all of these things matter, and that most big wins will be due to direct effects on these things. The last two are the ones which we’re disproportionately lacking; I’m more optimistic about the latter for a variety of reasons.

**AI policy is a particularly good place to have a large impact.**

Here's a general argument: governments are very big levers, because of their scale and ability to apply coercion. A new law can be a black swan all by itself. When I think of really massive wins over the past half-century, I think about the eradication of smallpox and polio, the development of space technology, and the development of the internet. All of these relied on and were driven by governments. Then, of course, there are the massive declines in poverty across Asia in particular. It's difficult to assign credit for this, since it's so tied up with globalisation, but to the extent that any small group was responsible, it was Asian governments and the policies of Deng Xiaoping, Lee Kuan Yew, Rajiv Gandhi, etc.

You might agree that governments do important things, but think that influencing them is very difficult. Firstly, that's true for most black swans, so I don't think that should make policy work much less promising even from Ben's perspective. But secondly, from the outside view, our chances are pretty good. We're a movement comprising many very competent, clever and committed people. We've got the sort of backing that makes policymakers take people seriously: we're affiliated with leading universities, tech companies, and public figures. It's likely that a number of EAs at the best universities already have friends who will end up in top government positions. We have enough money to do extensive lobbying, if that's judged a good idea. Also, we're correct, which usually helps. The main advantage we're missing is widespread popular support, but I don't model this as being crucial for issues where what's needed is targeted interventions which "pull the rope sideways". (We're also missing knowledge about what those interventions should be, but that makes policy research even more valuable).

Here's a more specific route to impact: in a few decades (assuming long timelines and slow takeoff) AIs that are less generally intelligent than humans will be causing political and economic shockwaves, whether that's via mass unemployment, enabling large-scale security breaches, designing more destructive weapons, psychological manipulation, or something even less predictable. At this point, governments will panic and AI policy advisors will have real influence. If competent and aligned people were the obvious choice for those positions, that'd be fantastic. If those people had spent several decades researching what interventions would be most valuable, that'd be even better.

This perspective is inspired by Milton Friedman, who argued that the way to create large-scale change is by nurturing ideas which will be seized upon in a crisis.

> Only a crisis - actual or perceived - produces real change. When that crisis occurs, the actions that are taken depend on the ideas that are lying around. That, I believe, is our basic function: to develop alternatives to existing policies, to keep them alive and available until the politically impossible becomes the possible.

The major influence of the Institute of Economic Affairs on Thatcher’s policies is an example of this strategy’s success. An advantage of this approach is that it can be implemented by clusterings of like-minded people collaborating with each other; for that reason, I'm not so worried about policy work cultivating the wrong mindset (I'd be more worried on this front if policy researchers were very widely spread out).

Another fairly specific route to impact: several major AI research labs would likely act on suggestions for coordinating to make AI safer, if we had any. Right now I don’t think we do, and so research into that could have a big multiplier. If a government ends up running a major AI lab (which seems pretty likely conditional on long timelines) then they may also end up following this advice, via the effect described in the paragraph above.

**Underlying generators of this disagreement**

More generally, Ben and I disagree on where the bottleneck to AI safety is. I think that finding a technical solution is probable, but that most solutions would still require careful oversight, which may or may not happen (maybe 50-50). Ben thinks that finding a technical solution is improbable, but that if it's found it'll probably be implemented well. I also have more credence on long timelines and slow takeoffs than he does. I think that these disagreements affect our views on the importance of influencing governments in particular.

We also have differing views on what the x-risk reduction community should look like. I favour a broader, more diverse community; Ben favours a narrower, more committed community. I don't want to discuss this extensively here, but I will point out that there are many people who are much better at working within a system than outside it - people who would do well in AI safety PhDs, but couldn't just teach themselves to do good research from scratch like Nate Soares did; brilliant yet absent-minded mathematicians; people who could run an excellent policy research group but not an excellent startup. I think it's valuable for such people (among whom I include myself) to have a "default" path to impact, even at the cost of reducing the pressure to be entrepreneurial or agenty. I think this is pretty undeniable when it comes to technical research, and cross-applies straightforwardly to policy research and advocacy.

Ben and I agree that going into policy is much more valuable if you're thinking very strategically and [out of the "out of the box" box](https://www.lesswrong.com/posts/qu95AwSrKqQSo4fCY/the-outside-the-box-box) than if you're not. Given this mindset, there will probably turn out to be valuable non-standard things which you can do.

Do note that this essay is intrinsically skewed since I haven't portrayed Ben's arguments in full fidelity and have spent many more words arguing my side. Also note that, despite being skeptical about some of Ben's points, I think his overall view is important and interesting and more people should be thinking along similar lines.

*Thanks to Anjali Gopal for comments on drafts.* |

0c525833-d335-4d0b-9546-3d633ed193d9 | trentmkelly/LessWrong-43k | LessWrong | Intelligence failures and a theory of change for forecasting

In this blog post I’m going to try to do two things. First, I’m going to make the case that failures of both the US and Soviet intelligence services have substantially increased the risk of nuclear war in the atomic age. Then I’m going to argue that these case studies provide potentially the best example of how Tetlock-style forecasting can be used to improve the world.

Forecasting

Tetlock-style forecasting is the practice of taking individuals or, even better, groups of individuals, having them predict the probability of events on a scale from 0 to 1, and then scoring them on their predictions. Tetlock’s experiments have shown that some people are much, much, much better at predicting political events than average individuals, domain experts, and, most relevant here, CIA intelligence analysts with access to classified material. However, as with any improvement in human power, it’s an open question whether this tool is more likely to be used for good or ill. For instance, one could imagine authoritarian regimes using these methods to more effectively suppress protests. Furthermore, it’s not clear how many tragedies could have been averted if the relevant actors had had access to higher quality information. For instance, the North Korean economy isn’t failing because President Kim is incorrect about whether or not his economic policies are good - the economy is failing because Kim uses the policies that are expedient to him staying in power for the longest period of time possible. If one were trying to improve the long term future as much as possible, it’s not clear that developing and advocating for forecasting is better than reducing the burden of disease in low income countries, which both has enormous benefits and is something we can be very confident we do effectively.
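
The scoring step can be made concrete with a small sketch. The post doesn't say which scoring rule is used; the Brier score (the mean squared difference between the forecast probability and the 0-or-1 outcome, lower is better) is assumed here because it is the rule commonly associated with Tetlock's forecasting tournaments. The two forecasters and all of the numbers are invented for illustration.

```python
def brier_score(forecasts, outcomes):
    """Mean squared error between forecast probabilities and binary outcomes.

    0.0 is a perfect score; always answering 0.5 scores 0.25.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    if not forecasts:
        raise ValueError("at least one forecast is required")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical outcomes: 1 means the event happened, 0 means it did not.
outcomes = [1, 0, 1, 0]

# Hypothetical forecasters: one well calibrated and confident, one who always hedges.
confident = [0.9, 0.1, 0.8, 0.2]
hedger = [0.5, 0.5, 0.5, 0.5]

confident_score = brier_score(confident, outcomes)
hedger_score = brier_score(hedger, outcomes)

print(f"confident forecaster: {confident_score:.3f}")
print(f"hedging forecaster:   {hedger_score:.3f}")
```

A rule like this rewards forecasters who commit to probabilities near 0 or 1 only when they turn out to be right, which is what lets a tournament separate unusually accurate forecasters from the rest.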

However, I think the history of nuclear risk provides good case studies in how having better information would have reduced nuclear risk, and I’ll also sketch theoretical reasons for why one should |

b34a71b5-9659-4c51-8dcb-83acce81caff | StampyAI/alignment-research-dataset/youtube | Youtube Transcripts | The Alignment Problem: Machine Learning and Human Values

to explore deep unsolved problems about

the nature and limits of computation

the institute's core activities revolve

around semester-long research programs

on specific topics in the foundations of

computing and related fields

we've been going since 2012 and recently

we learned that our funding will be

renewed for the second decade so we're

very grateful to the simons foundation

for that

for this semester although we've been

operating online only we have two

very active programs one on probability

geometry and computation in high

dimensions

and one on the theory of reinforcement

learning the institute has a

long-standing tradition of

appointing a science communicator in

residence

with the aims of supporting authors and

journalists in the areas of computer

science and mathematics of helping them

connect with the experts who participate

in our programs

of increasing the visibility of

theoretical computer science and of

helping to

educate our participants about

communicating their work to a broader

audience

so we're delighted to have as our

science communicator in residence this

semester

brian christian brian is the

award-winning author of the most human

human

and algorithms to live by these books

have won many awards and commendations

including from the new york times wall

street journal

the new yorker and the mit technology

review

brian's written articles for the

atlantic the guardian the new yorker

the paris review wired and the wall

street journal

and he has a third book coming out in

early october

and we're very happy to be hearing about

uh the topic of that book today brian's

talk today is entitled the alignment

problem

machine learning and human values

welcome brian

thank you so much peter so i'm going to

talk about the book that i've been

writing for the past

four years which is finally coming out

in three weeks

and it feels really fitting to be giving

the first real talk about the book here

at berkeley

because berkeley has really been my home

and my primary academic community during

that time

so i first want to express my gratitude

to citris where i've been a visiting

scholar starting in the spring of 2017.

and to chai where i've been an active

participant throughout that time as well

and also to the simons institute

attending some of the seminars and

workshops here was

incredibly generative both for

familiarizing myself with certain

technical

concepts connecting certain

interdisciplinary dots and

meeting folks across the community not

just here at berkeley but beyond

so i want to give a special thanks to

brandie nonnecke and camille crittenden

at citris to stuart russell and mark

nitzberg at chai

and to richard karp and kristin kane

here at simons for

inviting me into the fold and making me

feel so welcome and so at home here

and so i'm very honored to be starting

this semester as the science

communicator

in residence here at simons um it's a

great honor to me so

thank you i want to talk about the

central theme of the book which

is the connection between machine

learning and human values

in some ways it feels quite ironic to be

addressing in large part the theoretical

computer science community

particularly those who work in machine

learning and especially in reinforcement

learning

um because in some sense i see my role

as being something of

an ambassador from that community to the

public at large

and so um it was an interesting

challenge to think about how to make an

address

to that community um the second

challenge

for me uh is that the book is very

deliberately an opportunity

for people who are not myself to speak

um i decided very early on that one of

my priorities was to create space

for people in large part to tell their

own stories

there are probably about um between 100

and 150 people

who speak at one point or other in the

course of the book and

i think that um sort of multi-vocal

quality is really one of its

distinguishing features um for better or

worse this morning it's just me so you

won't

quite uh get to appreciate that aspect

of the book

um third and finally a big part of what

the book sets out to do

um is to actually teach people machine

learning i believe very passionately

that there are

many careers that are intersecting with

machine learning at this point whether

it is public policy um the law

medicine and so forth and a lot of

people are having to find themselves

kind of skilling up in machine learning

literacy you know midway through a

career that was ostensibly

um about something else um and so a big

part of my goal uh is pedagogy i want

people to

to walk away knowing the difference

between supervised unsupervised and

reinforcement learning and

what an objective function is and the

difference between

behavior cloning and inverse

reinforcement learning

etc and uh if ever there was a group of

people who did not

need me to give them a primer on machine

learning it is the simons institute

research fellows so what i would like to

do

is highlight some of the

interdisciplinary connections and the

places where

machine learning makes contact sometimes

in very surprising ways

with other disciplines because i think

it is very broadly true that

um to the degree that ai machine

learning reinforcement learning are

about discovering fundamental aspects of

what it means to think to have a mind

then the further along that field goes

the more relevant existing fields like

developmental psychology cognitive

science education

management science etc become

and so for for the next 30 or so minutes

perhaps i can um

reverse my normal osmosis and instead of

being a kind of ambassador

for theoretical computer science i can

attempt to be an

ambassador to theoretical computer

science and

highlight this what i see as an

incredibly rich circumference of

connections to other fields

and opportunities for research and for

cross-pollination

so on to the central thrust of the book

itself

um before we explore the the actual

contents together

so there's a story that i assume most

everyone

here knows at least to some degree or

another which is the remarkable progress

that has been made in machine learning

particularly

deep learning since the beginning of the

last decade

there is something very poetic to me

about the fact that it

was neural networks in particular that

are responsible for this incredible

breakthrough because

neural networks were essentially one of

the very first

ideas in computing uh they're they're

older than the von neumann architecture

they're they're older than the the

stored

uh program computer um von neumann's

1945 edvac report which

is the first written description of a

stored program computer contains

um in 101 pages only a single citation

which is mcculloch and pitts 1943

so i started researching the life of

walter pitts

um going through oral histories and the

mcculloch archives uh

at the american philosophical society in

philadelphia

and i was astounded at the life stories

of some of these early pioneers

i remember reading an oral history from

one of their contemporaries jerome

lettvin who just casually mentioned

oh yeah and when pitts started working

with mcculloch he was 17 years old

and homeless well that certainly got my

attention

um and warren mcculloch basically

became like his foster parent

um the more i read the more fascinating

and poignant that story

became so i thought okay um i found my

my opening scene so it's walter pitts

age 12 deciding to run away from home

and of course we all know the story that

that runs

from there through frank rosenblatt and

marvin minsky and seymour papert

the rebirth in the 1980s and then what i

see as kind of this ultimate final

triumph in 2012 with alexnet

um when we first meet alex krizhevsky in

the book he is

in his bedroom at his parents house in

toronto and his two

uh gtx 580 gpus have been running

uh non-stop for two weeks and now it's

too hot to sleep um

and that was just 2012 not that long ago

and yet

it feels now that we almost live in a

different world

i think we've become um

in some ways desensitized to how

discontinuous some of these jumps

uh have felt and it's important i think

to remember how much they caught even

experts off guard

um so one one example sort of at random

is uh richard sutton uh who authored of

course the

canonical book about reinforcement

learning um

gave a lecture in 2015 at ut austin

uh where he presented a graph and here's

the slide

of the strength of computer go programs

there was this very striking

linear trend line which uh if you

extrapolated it out

he notes that projecting this trend

suggests

a computer will be world champion by

2025

so within 10 years um

and it happened the very next year um

by the team at deepmind uh led by

david silver um

and i think this is just just a very

striking illustration of how abrupt some

of those

uh jumps uh have been uh

i got very curious by the way in the

origin of this graph

so i started digging into where uh where

rich got it

and the original version had been made

for rich a few years earlier

by a grad student of his named david

silver

uh so i think that's quite ironic indeed

at the same time all of this is

happening in deep learning

uh and deep reinforcement learning um

there is a

subtler but equally significant movement

that's happening within

society which is the penetration of

machine learning into greater

and greater contact with human and

institutional decision making

so to illustrate this this is a look at

the number of states in the u.s that are

using statistical risk assessment models

to assist in parole decisions

so um by

1935 it's just one u.s state

by 1960 it's 2 out of 50.

by 1980 it's 4 out of 50

then 12 and then in the year 2000 26

um and by 2003 the association of paroling

authorities international's

handbook for new parole board members writes

in this day and age making parole

decisions without the benefit of a

research-based risk assessment

instrument

clearly falls short of accepted best

practice

um and in 2016

um supreme court chief justice john

roberts is visiting

rensselaer and he is asked by rensselaer

president shirley ann jackson

can you foresee a day when artificial

intelligence

will assist with courtroom fact-finding

and even

more controversially perhaps judicial

decision-making

and roberts responds

it's a day that's here

and so i think on both of these counts

both the astonishing

capacity of these systems on the one

hand and

on the other hand the increasing surface

area on which they touch our lives

people began as we all know to get

concerned and these concerns

take root across two distinct but

fundamentally united groups

there are people worried about the

present day

about whether the systems currently

being deployed really represent the

interests of the people that they're

supposed to

and there are people worried about the

near-term future

as we increase and increase the

capability of these systems

that we might be setting ourselves up

for a truly catastrophic failure

again despite their different priorities

and

distinct but overlapping communities i

began to see the fundamental question

underneath

each set of concerns as being the same

so put most simply

how can we make sure that these systems

do what we want

and this problem of course has a name

and the name is

the alignment problem

and i think this is more or less

where the public conversation around

machine learning around

ml ethics and technical ai safety uh

kind

of ends but in my view

this is really where things get

interesting and this is

this is really the point where the book

begins so if we look to the period of

roughly 2014 to 2016 as

a giant pulling of the fire alarm

uh then to continue the analogy what we

begin to see from

roughly 2016 to the present uh is

the first responders start showing up

uh this set of concerns moves from being

marginal and to some degree

taboo to comprising one of the central

concerns

of i think of the field we have this

explosion of

workshops conferences research centers

non-profits grants

all happening within this short time and

i heard from people

you know again and again that they they

would go to

for example neurips in 2016 and tell

people that they worked on

safety and the response would be

something like safety

uh and then by 2017 there was an entire

neurips workshop on safety um i heard versions

of this story again and again you know

with the years perhaps plus or minus one

and the area of focus being you know

safety fairness transparency etc

um so there is this incredible zeitgeist

i think pivoting towards these issues

there is a first generation of phd

students

just now graduating who um

have matriculated with the explicit

purpose of wanting to work on ai ethics

and ai safety

um and not only is there this incredibly

spirited energy around these topics but

there are actual results there are

tangible victories uh being notched and

there is this

agenda now uh that is well underway

um so with ironically a

minimum of actual computer science and a

maximum emphasis

on the interdisciplinary dimensions of

this field

what does that agenda actually look like

so the book is divided into three parts

which comprise three chapters each

and so i'd like to just take a very

brief

um glance at each in turn

and highlight what i see as being some

of the the frontier of interdisciplinary

connections that exist

around this core theoretical computer

science

contribution so the first chapter starts

with

one of the most visceral and sadly

iconic

examples of machine learning failing in

an ethically significant way which is

um the famous example of the two black

americans being labeled

by google photos in 2015 as gorillas

um we get to meet jacky alciné who

was one of those people and is himself a

software engineer with an ml

uh background and who knew instantly

that

something had gone wrong uh in the

training data that he

as soon as this experience happened to

him he immediately surmised that there

was just a

paucity of black faces in the training

data

so he knew exactly how this had happened

but the question was

why why had that come to be the case

that this this um

training data was so uh unrepresentative

of the population at large

so that's that's the deeper question um

and i think this is a great example of

where

ethical and long-term technical safety

concerns intersect so

you can frame this as a question of

inclusion and representation

uh you can also frame it as a question

of robustness to distributional shift

how do models behave when they get

outside of their training distribution

um

and there is a lot of really i think

exciting and encouraging work being done

here so you have the work of people like

joy buolamwini and timnit gebru um and

many others

uh bringing a focus um

to this question of where do these

training sets actually come from

what do they actually look like um and

i think there's an interdisciplinary

theme here as well

um which is that there's also there's an

almost 200 year story

of the intersection of racial justice

and photography so

as i started researching this area i was

fascinated to learn for example that

uh the single most photographed american

of the 19th century uh more than abraham

lincoln etc

was frederick douglass the pioneering

abolitionist

who felt that photography was a critical

tool for emancipation because

of course before photography you had

engravings

which were done by hand and almost

always exaggerated black people's

features in stereotyped ways

so there's an irony that we go from the

19th century

in which the single most photographed

american is

frederick douglass um to

the 20th century where commercial film

is being

developed and calibrated um by color

accuracy with reference to

a model the first model that kodak used

was named shirley and so these have

become known as

shirley cards which until the 1970s were

almost exclusively white

um and in the in the book we get to meet

some kodak

executives who uh describe amazingly

that they were receiving pressure in the

60s and 70s

from the chocolate and furniture

industries

um to make film that better portrayed uh

brown hues um but

changing the uh nature of the film of

course changed

the um the ability of the film to

represent people with darker skin

um so here we are in the 21st century in

which we have these

um kind of weird echoes back to the 20th

century

um and we have this broad uh

broad movement of of questioning what is

the nature

of these training sets and um you have

people

like anil jain and hu han

publishing statistics about the

composition of labeled faces in the wild

and showing that for example it contains

twice as many images of george w

bush as it does of all black women

combined

um and as recently as i believe the fall

of 2019 a warning

label now appears on the page where you

would go to download the

lfw data set saying basically caution

this data set is

not representative of the us or the

global population

the other domain that we explore in this

chapter is language models and looking

at the many stereotypes that emerge

all the way from simple word embedding

models like word2vec and glove

all the way to the more modern enormous

transformer networks like gpt-2

and gpt-3 um these models are often

employed i think

uncautiously in recruiting and hiring

contexts

and we encounter the story of an amazon

team that

looks with horror at the individual

terms that their model is kind of

upranking and downranking

there's a theoretical computer science

story here um in

work on de-biasing vector models by

collapsing the gender space

um and although the story there is is

not quite so simple
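
As a rough illustration of what "collapsing the gender space" can mean, here is a minimal sketch that removes a word vector's component along a single estimated gender direction. The three-dimensional vectors and the names `project_out` and `gender_direction` are made up for illustration; real embeddings have hundreds of dimensions, and, as the talk notes, removing this projection does not by itself settle the question of bias.

```python
# Toy sketch: remove the component of a word vector along a "gender direction".
# All vectors are hypothetical 3-d examples, not real word2vec or GloVe embeddings.

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    norm = dot(v, v) ** 0.5
    return [a / norm for a in v]

def project_out(v, direction):
    """Return v minus its component along the unit vector `direction`."""
    scale = dot(v, direction)
    return [a - scale * d for a, d in zip(v, direction)]

# Hypothetical embeddings for a definitional pair and a profession word.
he = [1.0, 0.2, 0.0]
she = [-1.0, 0.2, 0.0]
engineer = [0.4, 0.5, 0.7]

# Estimate the gender direction from the single pair (an assumption; published
# approaches typically combine several pairs).
gender_direction = normalize([a - b for a, b in zip(he, she)])

# After projection, "engineer" has no component along that direction.
debiased_engineer = project_out(engineer, gender_direction)
```

The projected vector is orthogonal to the estimated direction by construction, which is all this sketch demonstrates.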

um and there's work as recently as two

weeks ago from open ai

on fine-tuning these big transformer

models

from human preferences um and i think

that story is

also very much being worked out um the

interdisciplinary story here is that

we've added

a new tool uh to the arsenal of social

science that

um as these language models become in

some ways

uncanny reflections of

uh human norms and human biases

including the ones that we

would rather we didn't have they become

a measure for actually watching society

change and so there's been some really

interesting

interdisciplinary work on applying these

models to historical uh

corpora you know 1930s 40s 50s 60s 70s

and watching in a quantifiable way

uh the norms of the society change and

so i think

um machine learning is not only

a a tool um

that uh it's not just the case that

social scientists um

you know are increasingly having input

in these models but that these models

are also giving social

scientists um a brand new set of subject

matter and

an entirely new lens with which to look

at the world

so the the second chapter is fairness

and i think most people who are familiar

with the machine learning literature

on fairness know about the compas tool

which predicts the risk of recidivism

and is used in pre-trial detention so in

this chapter i really try to dig into

the back story so

um statistical risk assessment models go

back to the 1920s

and the there's a time when the

conservative head of the parole board in

illinois was thinking about getting rid

of the parole system entirely

um and he believes that it is simply an

asset to criminals uh

that you would ever let them out uh

ahead of the full sentence

and a sociologist uh from chicago named

ernest burgess ends up

collecting enough data to persuade him

to change his mind

um and uh

that is really the beginning of the the

use of statistical risk assessments

um in the criminal justice system and

uh looking through archival uh

newspapers was

was very enlightening to me uh for

example most of the criticism against

these models in the 30s

uh was coming from the right whereas

today it

is largely speaking the progressives

that are the most skeptical

um so i think the the most visible

of the contemporary critiques of these

models is from

propublica's julia angwin um who uh

made this very famous article machine

bias critiquing the compas model

um and i got to meet both julia angwin

and the creator of compas tim brennan

um and

i was quite pleased to actually find a

bit of common ground where i

could uh i could convince them to to

agree with

one another um the there's a theoretical

computer science story here on how do we

operationalize

fairness and um this goes through the

work of people like cynthia dwork

moritz hardt uh jon kleinberg sam

corbett-davies alex chouldechova

kristian lum looking at everything

from these impossibility proofs

of uh mutually satisfying different

metrics of fairness

to things like the long-term feedback

loops that exist when these systems

uh get put into practice and start

generating the very data

that they will go on to be trained upon
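
To make the tension between fairness metrics concrete, here is a small sketch with invented numbers. Two hypothetical groups have different base rates of reoffense; the toy classifier has the same positive predictive value in both groups, yet its false positive rates differ. The records and the helper name `rates` are assumptions for illustration and are not drawn from compas or any real data.

```python
# Toy sketch: equal predictive value across groups does not imply equal
# false positive rates when base rates differ. All counts are invented.

def rates(records):
    """records: list of (predicted_high_risk, reoffended) boolean pairs."""
    tp = sum(1 for p, y in records if p and y)
    fp = sum(1 for p, y in records if p and not y)
    tn = sum(1 for p, y in records if not p and not y)
    return {
        "base_rate": sum(1 for _, y in records if y) / len(records),
        "ppv": tp / (tp + fp),   # of those flagged, how many reoffended
        "fpr": fp / (fp + tn),   # of those who did not reoffend, how many were flagged
    }

# Hypothetical group A: 4 true positives, 2 false positives, 1 false negative, 3 true negatives.
group_a = [(True, True)] * 4 + [(True, False)] * 2 + [(False, True)] * 1 + [(False, False)] * 3
# Hypothetical group B: 2 true positives, 1 false positive, 1 false negative, 6 true negatives.
group_b = [(True, True)] * 2 + [(True, False)] * 1 + [(False, True)] * 1 + [(False, False)] * 6

a = rates(group_a)
b = rates(group_b)
```

In this example both groups have a predictive value of two thirds, while the false positive rate is 2 in 5 for group A and 1 in 7 for group B.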

the interdisciplinary story here

includes not only

the long-term history of risk assessment

but also contemporary

political scientists like columbia's

bernard harcourt

who argues that the very premise that

better predictions lead to a

better public safety um is itself

wrong um which i think is a very

interesting argument for for people in

in ml to contend with um and more

broadly we have the

the question of uh not just data

provenance

where are the data coming from uh but

the sort of human computer interaction

and the user experience aspect of how

these risk assessment

instruments actually get put into

practice so as part of my research for

this chapter i spent a day

going to arraignment hearings in san

francisco um

right after san francisco began using

the arnold tool

and it was very illuminating to me to

see the degree to which individual

judges

did or didn't actually comprehend uh

this giant printout that they're being

given

with with the risk assessment

information on it

um so there's also this deeper question

of course of

what exactly is the ultimate purpose of

certain aspects of criminal policy

um if we can identify that someone is a

risk

pre-trial well there might be two

extremely different risks that we have

in mind you know there might be a risk

of violent reoffense

there might also be a risk of failure to

appear for the court date

um and it may be the case that the

solution to the former problem

uh might be incarceration it might not

the solution to the second problem is

probably something like a text message

um and so i think there uh there's a lot

of work to be done not necessarily in

how the models themselves are developed

but in whether they're essentially used

uh

according to you know the label on the

side of the tin and i think there's

there's a lot of work to be done there

um

and and many people of course argue that

uh fine-tuning exactly what the

objective function is of these systems

or exactly what sort of fairness

constraints are imposed upon them

um that while that discussion is

fruitful there are also ways we can sort

of cut the gordian knot entirely

so we could just decriminalize marijuana

for example and then not have to

worry about how to fairly assign

pretrial detention for people who are

arrested for that

there are some states i believe new york

state and maryland if i'm not mistaken

um that are increasingly moving towards

a model where

if you're arrested for a non-violent

misdemeanor then you're simply released

full stop and so then you don't need a

model to predict

whether or not to detain the person if

you simply never detain the person

um in the domain of transparency so

um the chapter on transparency focuses

on the domain of medicine and we

meet microsoft's rich caruana who in the

1990s was developing

machine learning models for predicting

the severity of pneumonia

and uh his neural network model

wins this kind of bake off against uh

you know logistic regression rule-based

models and and so forth

um but very significantly he

urges the doctors that were partnering

with them on the study

not to deploy the neural network

precisely because

he doesn't know what's in it he doesn't

know what it's learned

and in particular the rule-based

model had learned this rule that

if someone is asthmatic then

we should predict that they are at lower

risk of dying from pneumonia

which if you think about that for a

second doesn't make any sense at all

it turns out that this is actually a

real correlation in the data

but it's precisely because asthmatics

are given

higher priority care that they on

average do have better outcomes than

regular people but

this is precisely the care of course

that the model would deny to those

patients

um so transparency allows us to of

course catch some of these things before

they actually go

into deployment and affect people and

there's a really rich computer science

here that i think is really exciting

from

caruana himself uh trying to explore a

space of models that are

ideally as expressive or capable as

neural networks but

as interpretable as something like a

rule list and so he's

pioneering ideas like uh generalized

additive models and his own sort of

personal extension of that

um to people like cynthia rudin at duke

university who are

exploring the space of certifiably

optimal simple models um so rather than

using our computational horsepower

training a big model

we use our computational horsepower

exploring the space of simple models and

finding the ideal simple model

um and on the

sort of deep learning side we have

people like openai's chris olah

working on unpacking and visualizing

deep convolutional networks

and people like google's been kim

working on

concept activation vectors and

interpretability measures using

high level human concepts so the

interdisciplinary story here in my mind

is that

transparency is fundamentally a human

concept

a model is transparent to the degree

that people understand what's going on

and use it appropriately um there is

nothing in the abstract that

transparency means

outside of that and so user studies

should be totally unavoidable um and not

only that

but they are often counter-intuitive so

one of the results here that comes to my

mind

is the work of jenn wortman vaughan and

her collaborators um

who showed that simple transparent

models

with a small number of parameters and

clearly visible weights

were much more trusted by human users

even when those models were operating

outside their training distribution and

outputting garbage

um so i think user studies like that are

really

useful at um you know

problematizing the simple story

that we might otherwise get

about thinking about model transparency

um

so there's also the legal angle

obviously so transparency intersects

with the law and things like the gdpr um

and i also think there's a critically

interesting intersection here um

with a bunch of mid-20th century

psychology so there's

there's a tradition within psychology

going from ted sarbin in the 1940s to

paul meehl in the 1950s to robin dawes in

the 1970s

looking at comparing expert human

judgments to linear models with uniform

weights

unit weighted regression and the tldr is

that unit weighted regression

demolishes expert judgment

even when you still give the human

expert the results of a unit weighted

regression

uh they're still worse than just using

the regression on its own and when you

give the machine learning model the

human judges uh

decisions as input the model doesn't

even use it uh it's just not helpful
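
For readers who have not met unit weighted regression, here is a minimal sketch of the idea: standardize each predictor, give every predictor a weight of plus or minus one according to the direction you expect it to matter, and add them up. No weights are fitted to outcome data. The applicant data, the predictor names, and the function `unit_weighted_scores` are invented for illustration.

```python
from statistics import mean, pstdev

def standardize(column):
    """Convert raw values to z-scores using the population standard deviation."""
    mu, sigma = mean(column), pstdev(column)
    return [(x - mu) / sigma for x in column]

def unit_weighted_scores(columns, signs):
    """columns: one list of values per predictor; signs: +1 or -1 per predictor."""
    z_columns = [standardize(c) for c in columns]
    n = len(columns[0])
    return [sum(s * z[i] for s, z in zip(signs, z_columns)) for i in range(n)]

# Hypothetical applicants (indices 0..3) with three hypothetical predictors.
gpa = [3.9, 3.1, 2.5, 3.6]
test_score = [700, 640, 500, 560]
absences = [2, 5, 12, 3]

# The only modeling decision is the sign: more absences is assumed to be worse.
scores = unit_weighted_scores([gpa, test_score, absences], signs=[+1, +1, -1])
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

The point of the sketch is that the model needs only the direction of each predictor, which is why it can be built before any outcome data exists.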

um and i think this is really really

provocative and

and in particular um one of the things

that robin dawes was interested in

is this question of how do you build a

model when you don't

have an objective function so you want

to identify high schoolers that will go

on to flourish by getting higher

education

okay well first of all you're going to

have to wait 20 years to get

the training data for that and you have

to implement your model

now secondly what do you mean

flourish uh what how do you

operationalize this idea of someone who

uh you know responds well to to going to

college

um it might take a really long time to

figure out how to operationalize that if

you if you can

um but you have to make the model now so

what do you do

um and amazingly there are still results

that you can prove about what a good

model might look like even under those

conditions

um and i think results like that are are

relevant for

thinking about sort of these farther

future questions about ai

um and what what are the objective

functions that we really want to give

them

um and again this is happening in

psychology in the 1970s

but it feels in some ways more relevant

now than ever

so that's part one which looks at

supervised and unsupervised learning and

present day risks um part two turns the

focus to reinforcement learning

specifically

um so in chapter four uh we get to meet

andy barto and rich sutton and we

learn the

uh roots of reinforcement learning in

the ideas of harry klopf

who had this idea that neurons were what

he called heterostatic maximizers

pushing back on the cybernetics movement

of the 40s and 50s that thought that

purposeful behavior necessarily required

negative feedback in

a system that wanted to reach

equilibrium and stay at rest

um harry klopf said no that's that is

not what life is like that is not what

organisms are like we are maximizers um

and there's a deeper historical story

here too though which goes all the way

back

to the 1890s and the work of edward

thorndike on what he called the law of

effect

um which is that you know by default we

take actions randomly

the results of those actions are in his

words either satisfying or annoying

um and that we we modify accordingly to

do more of the satisfying things and

less of the annoying things

um and there's this wonderful historical

moment here where

it turns out that edward thorndike and

gertrude stein were classmates at

harvard

in william james's psychology class in

1896

um and uh gertrude stein described him

as a

as a funny a funny character

um and these ideas really um

carry all the way through to

reinforcement learning in the 20th

century

and so in this chapter we

talk about rl concepts like

credit assignment the difference between

value learning and policy learning

temporal differences um knowing my

audience i think it's fair to imagine

that you don't need me to say too much

more about that

um but there are a number of really rich

connections here um

rl is premised on this idea that rewards

are scalar

uh they're cardinal they're fungible

anything can be compared to anything

real life doesn't always feel that way

right so we we agonize do i do

the thing that's the most lucrative do i

do the thing that's the most prestigious

or do i do the thing that's the most fun

um well

rl traditionally doesn't have this

problem right the rewards are scalars so

you just compare four to five to six

and you do the six um so there are

philosophers like oxford's ruth chang

who think that this um fundamentally

multi-dimensional character of human

rewards

uh what she calls incommensurability uh

this inability to be collapsed from a

vector

representation to a scalar um is

absolutely central to the human

experience

um people from the rl community

essentially counter-argue that you

you do in the end decide and so you can

kind of infer that there was a scalar

attached to that that was greater than

the scalar of something else and

um of course this uh really intersects

with economics and

revealed preferences and utility and

needless to say there's an entire

literature there

uh from pareto to von neumann et cetera

you also have contemporary people in the

neuroscience community people like

paul glimcher and his colleagues at nyu

trying to unpack the actual mechanisms

by which the mind attempts to do this

dimension reduction

uh in the space of value um and

looking into the question of where and

how and with what you know model

is the brain doing that um there's a lot

that we are starting to know

uh in the last 20 years but a lot that's

still out there to be learned

um i think the the most thrilling

collision between

um rl and neuroscience is the dopamine

system

so some of you may know this story and

i'm compressing a lot here

but in

the 1990s it was shown by peter dayan

terry sejnowski and read montague that

temporal difference learning basically

explained this open problem

in understanding the function of the

dopamine system and i for me that's just

this totally climactic moment

of uh the science coming full circle

that these models that had grown out of

animal learning in the late 19th early

20th century

finally come into their own and not only

that

but actually solve this outstanding

riddle in the way that the human brain

works i think that's

um a really really encouraging indicator

that rl is basically on the right track

and that we're discovering

um fundamental mechanisms of learning

not not just

engineering practices that work for

specific problems but universal

mechanisms for learning that

evolution has stumbled into again and

again
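
The quantity at the heart of temporal difference learning is the prediction error: the reward received plus the value predicted for the next state, minus the value that had been predicted for the current state. Below is a minimal tabular TD(0) sketch on an invented two-step episode (a cue followed by a reward). The state names, learning rate, and trial count are assumptions for illustration. It shows the error at reward time shrinking toward zero as the reward becomes predicted, which is the kind of signature the dopamine work is usually described as matching.

```python
def td0_update(values, state, reward, next_state, alpha=0.1, gamma=1.0):
    """Apply one TD(0) update in place and return the prediction error (delta)."""
    next_value = 0.0 if next_state is None else values[next_state]
    delta = reward + gamma * next_value - values[state]
    values[state] += alpha * delta
    return delta

# Hypothetical episode: a cue, then a waiting state that ends with a reward of 1.
# Each step is (state, reward received on leaving it, next state or None at the end).
episode = [("cue", 0.0, "wait"), ("wait", 1.0, None)]
values = {"cue": 0.0, "wait": 0.0}

first_errors = None
for trial in range(500):
    errors = [td0_update(values, s, r, s2) for s, r, s2 in episode]
    if trial == 0:
        first_errors = errors
last_errors = errors
```

On the first trial the reward is a complete surprise, so the error at reward time is 1. After many trials both states predict the reward and the errors are close to zero.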

so from there we get into shaping and

um you know anyone who works in

reinforcement learning is familiar with

the delicacy of designing appropriate

incentives

um and there's a fascinating

interdisciplinary story here too that

starts um with bf skinner during world

war ii

teaching pigeons um because he's been

assigned this project

to put pigeons inside of bombs and have

them

peck at images of bomb targets uh to

create like live homing missiles

basically

um and he has this quote that uh you

know my my colleagues and i knew that in

in the eyes of the world we were totally

insane um

and along the way he develops

these principles of what he calls

shaping

that you can start uh

rewarding approximations to the behavior

that

you want and so this idea obviously goes

through theoretical

rl um and you have

uh the work of stuart russell and andrew

ng

in the late 90s showing that you know

the way to avoid

um problems of incentive

is to uh create what's called a

conservative field or basically make a

situation where

if you return to where you started that

the net

shaping uh reward is zero um uh put

differently we wanna

reinforce states of the world not uh

actions of the agent
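The "net shaping reward is zero around a loop" condition can be sketched as a potential-based shaping term, where the bonus for a transition is the difference in a potential function over states. The state names, the potential values in `phi`, and the naive bonus used for contrast are all hypothetical, and the discount is set to 1 so the loop sums to exactly zero.

```python
def shaping_reward(phi, state, next_state, gamma=1.0):
    """Potential-based shaping: gamma * phi(next_state) - phi(state)."""
    return gamma * phi[next_state] - phi[state]


def total_shaping(phi, path, gamma=1.0):
    """Sum the shaping reward over consecutive states in a path."""
    return sum(shaping_reward(phi, s, s2, gamma) for s, s2 in zip(path, path[1:]))


def naive_bonus(phi, path):
    """Contrast case: +1 for every step that moves up the potential."""
    return sum(1.0 for s, s2 in zip(path, path[1:]) if phi[s2] > phi[s])


# Hypothetical potentials: higher means closer to the goal.
phi = {"start": 0.0, "halfway": 5.0, "goal": 10.0}
loop = ["start", "halfway", "goal", "halfway", "start"]

print(total_shaping(phi, loop))  # the loop earns nothing from shaping
print(naive_bonus(phi, loop))    # the loop can be farmed for bonus
```

Because the shaping term depends only on the states at each end of a path, going in circles earns nothing, whereas a bonus attached to the action of making progress can be collected again and again.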

um and this ends up having

uh all these ramifications in the

cognitive science community

um so for example the work of my good

friend and collaborator tom griffiths at

princeton

um his former phd student from berkeley

falk lieder using these principles

of uh directly borrowing ideas from the

shaping theory

um to create mechanisms for what they

call

optimal gamification so how do you

incentivize people

um not only in a way that doesn't lead

to

uh you know degenerate behavior but but

in ideally the the optimal way

and so the computer science uh or the

the cognitive science rather is

borrowing that idea very directly from

the rl

theory and i think there's a lot more to

be worked out there as well

um of course we know that

you know not not only are we

motivated by explicit incentives from

outside but

anyone who spent time with kids and

animals knows that

um we're motivated intrinsically as much

as extrinsically

um uh and it became obvious in the mid

20th century that you know rats

were willing to walk across an

electrified fence just to peek around

the corner

uh monkeys are as willing to lever press

for uh

to look out a window as they are for

food um and so this

started an effort within psychology to

try to understand the nature of

intrinsic as opposed to extrinsic

motivation

and there's a long and wonderful history

here

the computer science story i think is

people like google brain's marc bellemare

who's working on uh extending

count-based exploration

into non-tabular settings um

people like jürgen schmidhuber who's

thinking about intrinsic motivation as

the ability to compress uh information

people at open ai

like yuri burda and harri edwards um

and here at berkeley uh people like

deepak pathak

pulkit agrawal um trevor darrell

exploring intrinsic motivation uh based

on on the idea

that the agent be motivated to take

actions essentially which surprise it
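As a rough sketch of the simplest version of this idea, a tabular count-based bonus pays the agent more for visiting states it has rarely seen. The class name, the `beta` scale, and the inverse-square-root form are assumptions for illustration; the non-tabular extensions and the surprise-based methods mentioned above replace the raw counts with learned density or prediction models.

```python
import math
from collections import Counter


class CountBonus:
    """Intrinsic reward that shrinks as a state is visited more often."""

    def __init__(self, beta=1.0):
        self.beta = beta          # hypothetical scale of the bonus
        self.counts = Counter()   # visit count per state

    def __call__(self, state):
        # Record the visit, then pay beta / sqrt(visit count).
        self.counts[state] += 1
        return self.beta / math.sqrt(self.counts[state])


bonus = CountBonus()
first_visit = bonus("room_a")
second_visit = bonus("room_a")
new_room = bonus("room_b")
print(first_visit, second_visit, new_room)
```

The bonus would typically be added to the task reward, so a familiar state gradually stops being interesting while a new one is worth a detour.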

um so as this formal uh work in rl is

getting worked out there's this

all of these connections to infant

psychology um there was a great story

that

alison gopnik berkeley psychologist told

me

about reading about uh trevor

and some of his students work in the

berkeley newsletter and they were

talking about how interested they were

in taking her

ideas about infant uh curiosity and

applying them to rl

and she emailed them like guys i'm i'm

right here i'm like

across the street uh let's actually

collaborate on this and so it's been

really exciting to see

um those two worlds come together um

and on the one hand developmental uh

psychologists are using

rl as a formal model to explain infant

behavior and at the same time

uh the people in rl are turning to uh

what we know about infants to think

about

uh motivation and intrinsic drive uh

that might be useful

uh just in in exploration uh for rl

so the third part of the book um

gets most squarely into the question of

normativity and aligning deep rl agents

with human norms and human values so

one of the things one of the central

themes that anyone knows

um in rl is what's called imitation

learning or sometimes behavior cloning

um and there's a really really rich

story here

um not only the computer science story

um in the book we

we meet dean pomerleau from cmu who um

is crazy enough to drive all the way

from pittsburgh to lake erie on the

highway for two hours

um letting a neural network steer his

car in 1990

um using a system that had one tenth of

the processing power

of a first generation apple watch um

so the the surprisingly long uh and

slightly daredevil

history of behavior cloning uh in

self-driving cars that continues all

all the way to this day and we meet

waymo engineer

stéphane ross who developed the dagger

algorithm for avoiding cascading errors

um

in imitation learning there's also this

wonderful human story here too

which is that um zoologically all of our

words for imitation not just in english

but in

almost every language uh we say you know

to ape something

uh but the real prolific imitator in

nature

is not apes at all and there's an entire

uh

primatology literature on just on that

topic

um but it is in fact uh humans um

and furthermore the human capacity for

imitation is extremely

sophisticated um and goes way beyond

merely duplicating uh behavior in in

ways that are

surprising um and and really i think

informative uh for thinking about how

this might work um

with machines um so

uh there's also i think a really

interesting connection

in imitation um to

uh not just primatology but

philosophical ethics um

and in the interest of time i won't get

too deeply into it but there's a there's

a classic

philosophical tension going back to the

1970s between what are called

possibilism and actualism so

do you do the very best thing possible

in a situation even if it requires

a very precise follow-through and you

know that you'll screw it up

um or do you do um the the lesser action

that you know you can actually follow

through

so this debate has now been going on for

uh something like

40 years and it's absolutely relevant to

thinking about

things like batch off-policy rl um so

i'm really intrigued in the way that

those

literatures are starting to come into

contact

um in chapter eight we get into

arguably the heart of contemporary ai

safety research so ideas around

inverse reinforcement learning

cooperative inverse reinforcement

learning

deep rl from human preferences and so

forth um

and irl itself has this wonderfully

colorful history that it really goes

back to

uh stuart russell walking to safeway um

and thinking about his gait as he went

down the hill

um and this gets him thinking about you

know what

what is it that animal and human gaits

optimize

why is it the case that we still need

to hire motion capture people and we

can't reliably produce like realistic

looking gaits

um and uh this gets into the idea of irl

so if the human gait is the answer

what's the question um there's an entire

interdisciplinary literature here

just on the science of gait and all the

different theories that people have had

over many decades for

why horse gaits are certain ways and why

there are phase

transitions in quadruped gaits at

certain speeds and

are they optimizing stress on the joints

are they optimizing you know

calorie load etc and

irl now it directly offers us a way to

answer and address questions like that

um so there's this purely

theoretical story that goes through um

you know pieter abbeel's work on getting

helicopters to do

stunts uh people like chelsea finn

working on things like

guided cost learning um to uh

jan leike and paul christiano very

memorably teaching

a uh a mujoco agent to do a backflip

just

by comparing different clips

and picking the one that looks slightly

more like a backflip um

there there are i think many

interdisciplinary questions

here um there are ethical questions

about recommendation systems you know

you can you can infer

what someone wants but um are they

acting the way that they want to be

acting in the first place i think that's

a that's an interesting question

um more broadly as robots become more

capable

and able to work sort of elbow to

elbow with humans

in manufacturing settings and so forth

um

there's an entire interdisciplinary

literature on human

teamwork um and so i'm thinking about

people like

mit roboticist julie shah who has done

a lot of work borrowing ideas straight

out of the human human teaming

literature

and showing that they apply um basically

wholesale

uh into thinking about human robot uh

collaboration uh in in factory settings

and so i think there's so many cases

like this

where there is just this windfall of

insights