id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

a30ea979-8b8e-4ed4-96a9-73c4a6930179 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | AI and Evolution

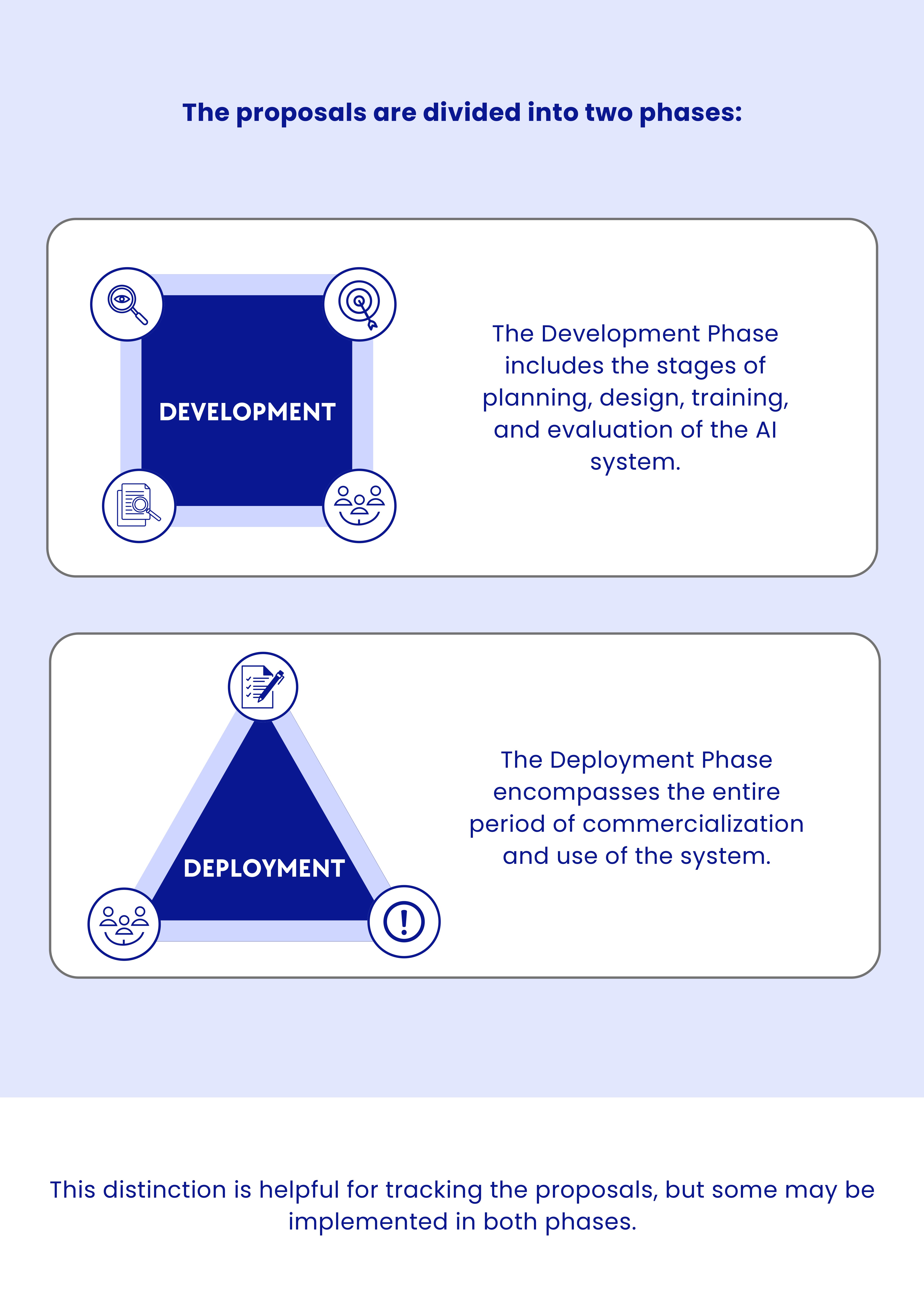

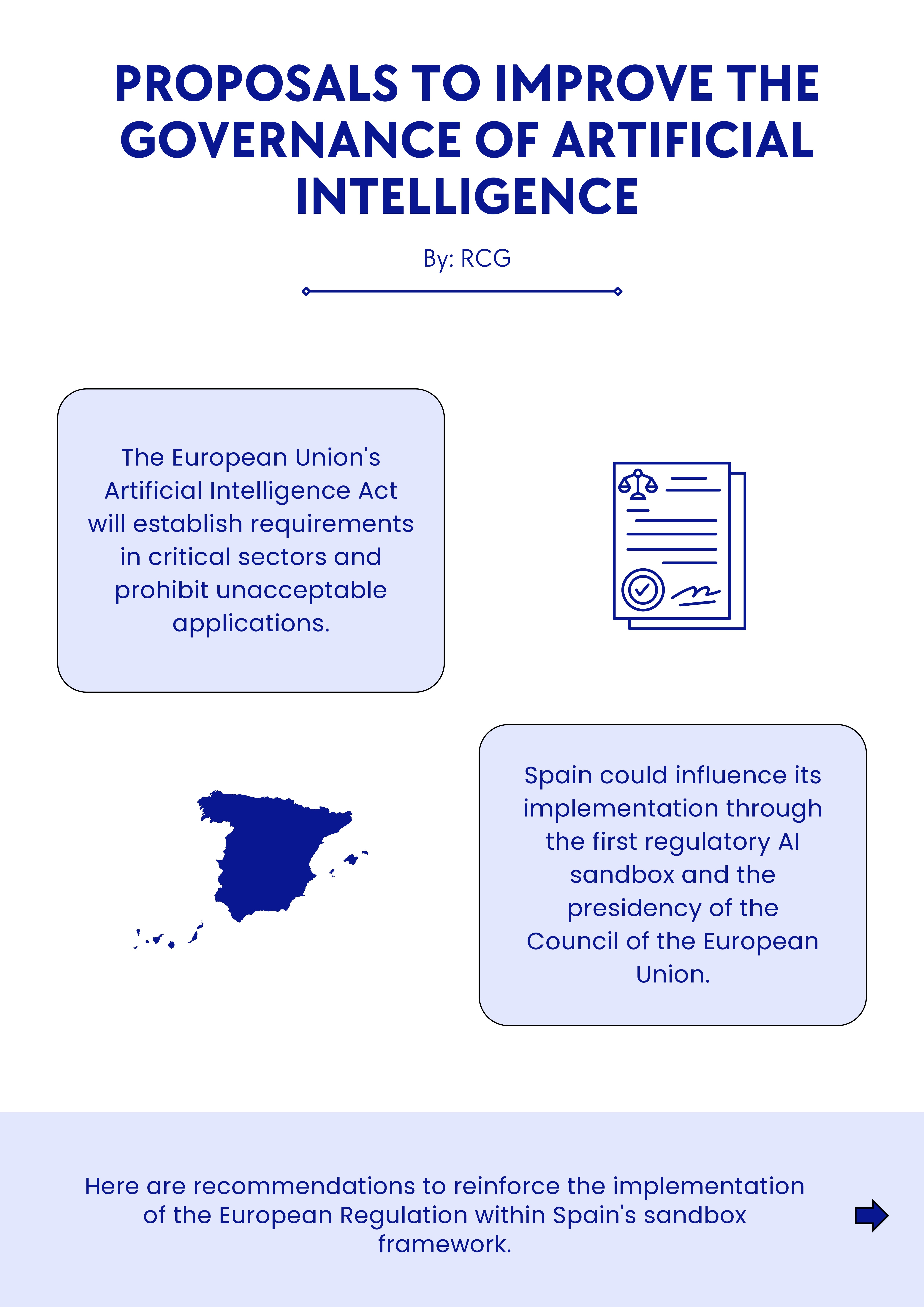

Executive Summary
=================

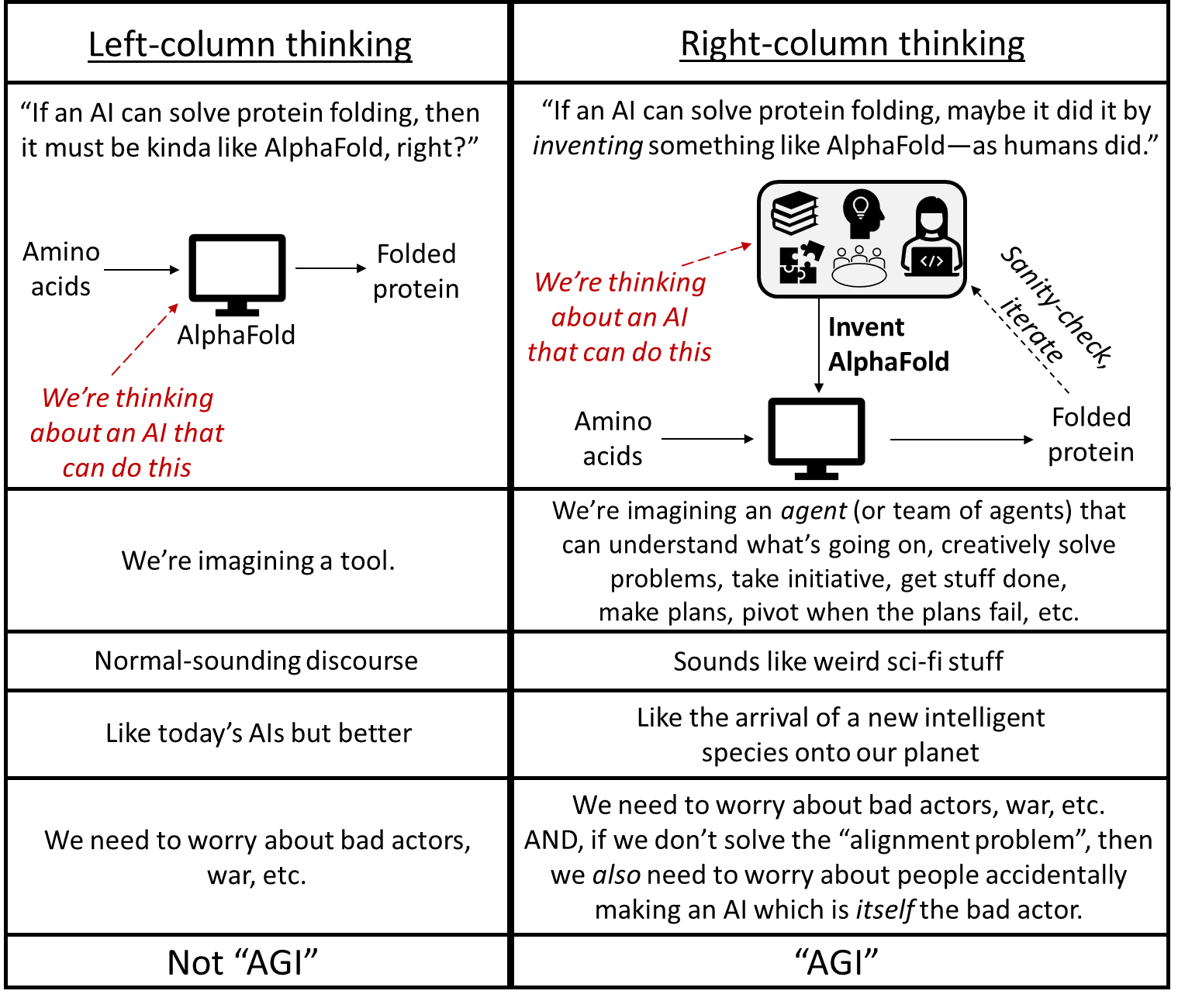

Artificial intelligence is advancing quickly. In some ways, AI development is an uncharted frontier, but in others, it follows the familiar pattern of other competitive processes; these include biological evolution, cultural change, and competition between businesses. In each of these, there is significant variation between individual structures, and some are copied more than others, with the result that the future population is more similar to the most-copied individuals of the earlier generation. In this way, species evolve, cultural ideas are transmitted across generations, and successful businesses are imitated while unsuccessful ones disappear.

This paper argues that these same selection patterns will shape AI development and that the features that will be copied the most are likely to create an AI population that is dangerous to humans. As AIs become faster and more reliable than people at more and more tasks, businesses that allow AIs to perform more of their work will outperform competitors still using human labor at any stage, just as a modern clothing company that insisted on using only manual looms would be easily outcompeted by those that use industrial looms. Companies will need to increase their reliance on AIs to stay competitive, and the companies that use AIs best will dominate the marketplace. This trend means that the AIs most likely to be copied will be very efficient at achieving their goals autonomously with little human intervention.

A world dominated by increasingly powerful, independent, and goal-oriented AIs is dangerous. Today, the most successful AI models are not transparent, and even their creators do not fully know how they work or what they will be able to do before they do it. We know only their results, not how they arrived at them. As people give AIs the ability to act in the real world, the AIs’ internal processes will still be inscrutable: we will be able to measure their performance only based on whether or not they are achieving their goals. This means that the AIs humans will see as most successful — and therefore the ones that are copied — will be whichever AIs are most effective at achieving their goals, even if they use harmful or illegal methods, as long as we do not detect their bad behavior.

In natural selection, the same pattern emerges: individuals are cooperative or even altruistic in some situations, but ultimately, strategically selfish individuals are best able to propagate. A business that knows how to steal trade secrets or deceive regulators without getting caught will have an edge over one that refuses to ever engage in fraud on principle. During a harsh winter, an animal that steals food from others to feed its own children will likely have more surviving offspring. Similarly, the AIs that succeed most will be those able to deceive humans, seek power, and achieve their goals by any means necessary.

If AI systems are more capable than we are in many domains and tend to work toward their goals even if it means violating our wishes, will we be able to stop them? As we become increasingly dependent on AIs, we may not be able to stop AI’s evolution. Humanity has never before faced a threat that is as intelligent as we are or that has goals. Unless we take thoughtful care, we could find ourselves in the position faced by wild animals today: most humans have no particular desire to harm gorillas, but the process of harnessing our intelligence toward our own goals means that they are at risk of extinction, because their needs conflict with human goals.

This paper proposes several steps we can take to combat selection pressure and avoid that outcome. We are optimistic that if we are careful and prudent, we can ensure that AI systems are beneficial for humanity. But if we do not extinguish competition pressures, we risk creating a world populated by highly intelligent lifeforms that are indifferent or actively hostile to us. We do not want the world that is likely to emerge if we allow natural selection to determine how AIs develop. Now, before AIs are a significant danger, is the time to begin ensuring that they develop safely.

**Paper**: https://arxiv.org/abs/2303.16200

**Explainer Video**:

Note: This paper articulates a concern that emerges in multi-agent situations. Of particular interest to this community might be Section 3.4 (existing moral systems may not be human compatible (e.g., impartial consequentialism)) and Section 4.3.2 (Leviathan). |

7742b356-71d2-479d-9ae5-f3060df37a66 | StampyAI/alignment-research-dataset/arbital | Arbital | LaTeX

**[Placeholder](https://arbital.com/p/5xs)** |

8328a9df-e570-4f2e-b3ed-86ec0845907b | StampyAI/alignment-research-dataset/arxiv | Arxiv | Integrating Behavior Cloning and Reinforcement Learning for Improved Performance in Dense and Sparse Reward Environments

Introduction
------------

Reinforcement Learning (RL) has yielded many recent successes in solving complex tasks that meet and exceed the capabilities of human counterparts, demonstrated in video game environments [Mnih2015a], robotic manipulators [andrychowicz2018learning], and various open-source simulated scenarios [lillicrap2015continuous].

However, these RL approaches are sample inefficient and slow to converge to this impressive behavior, limited significantly by the need to explore potential strategies through trial and error, which produces initial performance significantly worse than human counterparts.

The resulting behavior, initially random and slow to reach proficiency, is poorly suited to many situations, such as controlling physically embodied ground and air vehicles, or scenarios where sufficient capability must be achieved in a short time span.

In such situations, the random exploration of the state space of an untrained agent can result in unsafe behaviors and catastrophic failure of a physical system, potentially resulting in unacceptable damage or downtime.

Similarly, slow convergence of the agent’s performance requires exceedingly many interactions with the environment, which is often prohibitively difficult or infeasible for physical systems that are subject to energy constraints, component failures, and operation in dynamic or adverse environments.

These sample efficiency pitfalls of RL are exacerbated even further when trying to learn in the presence of sparse reward, often leading to cases where RL can fail to learn entirely.

One approach for overcoming these limitations is to utilize demonstrations of desired behavior from a human data source (or potentially some other agent) to initialize the learning agent to a significantly higher level of performance than is yielded by a randomly initialized agent.

This is often termed learning from demonstrations (LfD) [argall2009survey], which is a subset of imitation learning that seeks to train a policy to imitate the desired behavior of another policy or agent.

LfD leverages data (in the form of state-action tuples) collected from a demonstrator for supervised learning, and can be used to produce an agent with qualitatively similar behavior in a relatively short training time and with limited data.

This type of LfD, called Behavior Cloning (BC), uses the state-action pairs contained in the set of demonstrations to learn a mapping from states to actions that mimics the behavior of the demonstrator.

Though BC techniques do allow relatively rapid learning of behaviors comparable to those of the demonstrator, they are limited by the quality and quantity of the demonstrations provided and can only be improved by providing additional high-quality demonstrations.

In addition, BC is plagued by the distributional drift problem, in which a mismatch between the distribution of states visited by the learned policy and the distribution of states in the training set can cause errors that propagate over time and lead to catastrophic failures.

By combining BC with subsequent RL, it is possible to address the drawbacks of either approach, initializing a significantly more capable and safer agent than with random initialization, while also allowing for further self-improvement without needing to collect additional data from a human demonstrator.

However, it is currently unclear how to effectively update a policy initially trained with BC using reinforcement learning as these approaches are inherently optimizing different objective functions.

Previous works have used loss functions that combine BC losses with RL losses to enable this update; however, the components of these loss functions are often set anecdotally, and their individual contributions are not well understood.

In this work, we propose the Cycle-of-Learning (CoL) framework, which uses an actor-critic architecture with a loss function that combines behavior cloning and 1-step Q-learning losses with an off-policy, pre-training step from human demonstrations.

The main contribution of this work is a method to enable transition from behavior cloning to reinforcement learning without performance degradation while improving reinforcement learning in terms of overall performance and training time.

Additionally, we examine the effect of these combined losses on overall policy learning in two continuous action space environments.

Our results show that our approach outperforms BC, Deep Deterministic Policy Gradients (DDPG), and DDPG from Demonstrations (DDPGfD) in two different application domains for both dense and sparse reward settings.

We show that our CoL method was the only method to produce a viable policy in the environment designed specifically to exhibit a high degree of stochasticity (the quadrotor landing task).

In addition, we show that in dense-reward settings the performance of DDPGfD suffers significantly due to its inclusion of n-step Q-learning loss.

Our results also suggest that directly including the behavior cloning loss on demonstration data helps to ensure stable learning and ground future policy updates, and that a pre-training step enables the policy to start at a performance level greater than behavior cloning.

Preliminaries
-------------

We adopt the standard Markov Decision Process (MDP) formulation for sequential decision making [Sutton1998], which is defined as a tuple (S,A,R,P,γ), where S is the set of states, A is the set of actions, R(s,a) is the reward function, P(s′|s,a) is the transition probability function and γ is a discount factor.

At each state s∈S, the agent takes an action a∈A, receives a reward R(s,a) and arrives at state s′ as determined by P(s′|s,a).

The goal is to learn a behavior policy π which maximizes the expected discounted total reward.

This is formalized by the Q-function, sometimes referred to as the state-action value function:

$$Q^\pi(s,a) = \mathbb{E}_\pi\left[\sum_{t=0}^{+\infty} \gamma^t R(s_t, a_t)\right]$$

taking the expectation over trajectories obtained by executing the policy π starting at s0=s and a0=a.
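For one sampled trajectory, the quantity inside the expectation is a discounted sum of rewards, so averaging it over many roll-outs that start from (s, a) and then follow π gives a Monte Carlo estimate of Qπ(s,a). A minimal sketch, assuming a finite (terminated) episode so the infinite sum is truncated; the function name is hypothetical:

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float) -> float:
    """Return sum_t gamma**t * rewards[t] for one finite trajectory."""
    total = 0.0
    # Walk backwards so each step applies G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


if __name__ == "__main__":
    # Mathematically 1 + 0.9 * 0 + 0.81 * 2 = 2.62 (printed up to float rounding).
    print(discounted_return([1.0, 0.0, 2.0], gamma=0.9))
```

The backward recursion avoids recomputing powers of γ and is the same recursion that the 1-step and n-step returns below truncate and bootstrap.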

Here we focus on actor-critic methods which seek to maximize

$$J(\theta) = \mathbb{E}_{s\sim\mu}\left[Q^{\pi(\cdot|\theta)}\big(s, \pi(s|\theta)\big)\right]$$

with respect to parameters θ and an initial state distribution μ.

The Deep Deterministic Policy Gradient (DDPG) [lillicrap2015continuous] is an off-policy actor-critic reinforcement learning algorithm for continuous action spaces, which calculates the gradient of the Q function with respect to the action to train the policy.

DDPG makes use of a replay buffer to store past state-action transitions and target networks to stabilize Q-learning [Mnih2015a].

Since DDPG is an off-policy algorithm, it allows for the use of arbitrary data, such as demonstrations, to update the policy.

A demonstration trajectory consists of transitions (s,a,r,s′), each containing a state s, an action a, the reward r=R(s,a), and the next state s′, collected from a demonstrator's policy.

In most cases these demonstrations come from a human demonstrator, although in principle they can come from any existing policy.

Related Work
------------

Several works have shown the efficacy of combining behavior cloning with reinforcement learning across a variety of tasks.

Recent work by [hester2018deep] combined behavior cloning with deep Q-learning [Mnih2015a] to learn policies for Atari games by leveraging a loss function that combines a large-margin supervised learning loss function, 1-step Q-learning loss, and an n-step Q-learning loss function that helps ensure the network satisfies the Bellman equation.

This work was extended to continuous action spaces by [vevcerik2017leveraging], who proposed an extension of DDPG [lillicrap2015continuous] that uses human demonstrations, and applied their approach to object manipulation tasks for both simulated and real robotic environments.

The loss functions for these methods include the n-step Q-learning loss, which is known to require on-policy data to be estimated accurately.

Similar work by [Nair2018ICRA] combined behavior cloning-based demonstration learning, goal-based reinforcement learning, and DDPG for robotic manipulation of objects in a simulated environment.

A method that is very similar to ours is the Demo-Augmented Policy Gradient (DAPG) [Rajeswaran-RSS-18], an approach that uses behavior cloning as a pre-training step together with an augmented loss function with a heuristic weight function, which interpolates between the policy gradient loss, computed using the Natural Policy Gradient [Kakade2001], and behavior cloning loss.

They apply their approach across four different robotic manipulation tasks using a 24 Degree-of-Freedom (DoF) robotic hand in a simulator and show that DAPG outperforms DDPGfD across all tasks.

Their work also showed that behavior cloning combined with Natural Policy Gradient performed very similarly to DAPG for three of the four tasks considered, showcasing the importance of using a behavior cloning loss both in pre-training and policy training.

Integrating Behavior Cloning and Reinforcement Learning
-------------------------------------------------------

The Cycle-of-Learning (CoL) framework is a method for transitioning behavior cloning policies to RL by utilizing an actor-critic architecture with a combined BC+RL loss function and pre-training phase for continuous state-action spaces, in dense- and sparse-reward environments.

The main advantage of using off-policy methods is the ability to reuse previous data to train the agent and reduce the number of interactions between agent and environment, which is relevant to robotic applications and other real-world systems where interactions can be costly.

The combined loss function consists of the following components: an expert behavior cloning loss that keeps the actor's actions close to the human trajectories, a 1-step return Q-learning loss that propagates the values of human trajectories to previous states, the actor loss, and an L2 regularization loss on the actor and critic to stabilize performance and prevent over-fitting during training.

The implementation of each loss component and their combination are defined as follows:

* Expert behavior cloning loss (LBC): Given an expert demonstration subset DE of the continuous states and actions visited during a task demonstration over T time steps

$$D_E = \{s^E_0, a^E_0, s^E_1, a^E_1, \ldots, s^E_T, a^E_T\}, \tag{1}$$

a behavior cloning loss (mean squared error) from demonstration data LBC can be written as

$$\mathcal{L}_{BC}(\theta_\pi) = \frac{1}{2}\left(\pi(s_t|\theta_\pi) - a^E_t\right)^2 \tag{2}$$

in order to minimize the difference between the actions predicted by the actor network π(st), parametrized by θπ, and the expert actions aEt for a given state vector st.

* 1-step return Q-learning loss (L1): The 1-step return R1 can be written in terms of the critic network Q, parametrized by θQ, as

$$R_1 = r + \gamma Q\big(s_{t+1}, \pi(s_{t+1}|\theta_\pi)\,\big|\,\theta_Q\big). \tag{3}$$

In order to satisfy the Bellman equation, we minimize the difference between the predicted Q-value and the observed return from the 1-step roll-out:

$$\mathcal{L}_{Q1}(\theta_Q) = \frac{1}{2}\left(R_1 - Q\big(s_t, \pi(s_t|\theta_\pi)\,\big|\,\theta_Q\big)\right)^2. \tag{4}$$

* Actor Q-loss (LA): The critic function Q is assumed to be differentiable with respect to the action. Since we want to maximize the Q-values for the current state, the actor loss is the negative of the Q-values predicted by the critic:

$$\mathcal{L}_A(\theta_\pi) = -Q\big(s_t, \pi(s_t|\theta_\pi)\,\big|\,\theta_Q\big). \tag{5}$$
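The three loss components above can be sketched on plain Python numbers. This assumes actions are lists of floats, that the critic outputs Q(s_t, π(s_t)) and Q(s_{t+1}, π(s_{t+1})) have already been computed, and that per-sample losses are averaged over a mini-batch. The function names are hypothetical; a real implementation would use tensors in an autodiff framework so that gradients reach θπ and θQ.

```python
from typing import Sequence


def bc_loss(pred_actions: Sequence[Sequence[float]],
            expert_actions: Sequence[Sequence[float]]) -> float:
    """Eq. (2) averaged over a batch: 0.5 * ||pi(s_t) - a^E_t||^2 per sample."""
    if not pred_actions or len(pred_actions) != len(expert_actions):
        raise ValueError("need equally sized, non-empty batches")
    total = 0.0
    for pred, expert in zip(pred_actions, expert_actions):
        # Squared error summed over the action dimensions.
        total += 0.5 * sum((p - e) ** 2 for p, e in zip(pred, expert))
    return total / len(pred_actions)


def one_step_return(reward: float, q_next: float, gamma: float,
                    done: bool = False) -> float:
    """Eq. (3): R_1 = r + gamma * Q(s_{t+1}, pi(s_{t+1})).

    Assumption: the bootstrap term is dropped on terminal transitions,
    using the stored task-completion signal.
    """
    return reward if done else reward + gamma * q_next


def q1_loss(q_pred: float, target: float) -> float:
    """Eq. (4): 0.5 * (R_1 - Q(s_t, .))^2 for one transition."""
    return 0.5 * (target - q_pred) ** 2


def actor_loss(q_values: Sequence[float]) -> float:
    """Eq. (5) averaged over a batch: minimizing -Q maximizes the critic's value."""
    return -sum(q_values) / len(q_values)
```

Note that the critic target R_1 is treated as a constant when differentiating Eq. (4), which is the usual convention in DDPG-style critics.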

Combining the above loss functions, the Cycle-of-Learning loss becomes

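$$\mathcal{L}_{CoL}(\theta_Q,\theta_\pi) = \lambda_{BC}\mathcal{L}_{BC}(\theta_\pi) + \lambda_A\mathcal{L}_A(\theta_\pi) + \lambda_{Q1}\mathcal{L}_{Q1}(\theta_Q) + \lambda_{L2}\mathcal{L}_{L2}(\theta_Q) + \lambda_{L2}\mathcal{L}_{L2}(\theta_\pi). \tag{6}$$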
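Equation 6 is a weighted sum of the components. A minimal sketch, assuming the components are already computed and that LL2 is the sum of squared parameters (one common choice; the text does not define it). The weight values below are placeholders, not the paper's hyperparameters, and all names are hypothetical:

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CoLWeights:
    """Weights lambda_BC, lambda_A, lambda_Q1, lambda_L2 of Eq. (6).

    Placeholder defaults for illustration only; the paper's values are
    listed in its Supplementary Material.
    """
    bc: float = 1.0
    actor: float = 1.0
    q1: float = 1.0
    l2: float = 1e-5


def l2_penalty(params: Sequence[float]) -> float:
    """Sum of squared parameters, one common form of an L2 regularization loss."""
    return sum(p * p for p in params)


def col_loss(loss_bc: float, loss_actor: float, loss_q1: float,
             actor_params: Sequence[float], critic_params: Sequence[float],
             w: CoLWeights = CoLWeights()) -> float:
    """Weighted sum of Eq. (6) from already-computed component losses."""
    return (w.bc * loss_bc
            + w.actor * loss_actor
            + w.q1 * loss_q1
            + w.l2 * l2_penalty(critic_params)
            + w.l2 * l2_penalty(actor_params))
```

In an autodiff framework the actor terms would normally be minimized with respect to θπ only and the critic terms with respect to θQ only, since LA also depends on θQ through the critic; summing them into one scalar is bookkeeping rather than a joint gradient step.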

Our approach starts by collecting contiguous trajectories from expert policies and storing the current and subsequent state-action pairs, the reward received, and the task-completion signal in a permanent expert memory buffer DE.

During the pre-training phase, the agent samples a batch of trajectories from the expert memory buffer DE and updates the actor and critic networks using the same combined loss function (Equation 6).

This procedure shapes the actor and critic initial distributions to be closer to the expert trajectories and eases the transition from policies learned through expert demonstration to reinforcement learning.

After the pre-training phase, the policy is allowed to roll-out and collect its first on-policy samples, which are stored in a separate first-in-first-out memory buffer with only the agent’s samples.

After collecting a given number of on-policy samples, the agent samples a batch of trajectories comprising 25% of samples from the expert memory buffer and 75% from the agent’s memory buffer.

This fixed ratio guarantees that each gradient update is grounded by expert trajectories.
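The buffer layout and fixed-ratio sampling described above can be sketched with standard-library containers. This assumes a bounded `collections.deque` stands in for the first-in-first-out agent buffer and that sampling is with replacement; neither detail is specified in the text, and the function names are hypothetical:

```python
import random
from collections import deque
from typing import Deque, List, Optional, Sequence, TypeVar

T = TypeVar("T")


def make_agent_buffer(capacity: int) -> Deque:
    """First-in-first-out agent buffer: once full, the oldest samples are dropped."""
    return deque(maxlen=capacity)


def sample_mixed_batch(expert_buffer: Sequence[T], agent_buffer: Sequence[T],
                       batch_size: int, expert_fraction: float = 0.25,
                       rng: Optional[random.Random] = None) -> List[T]:
    """Draw a batch with a fixed expert/agent ratio (25% / 75% by default).

    Sampling with replacement is an assumption of this sketch.
    """
    rng = rng or random.Random()
    n_expert = round(batch_size * expert_fraction)
    batch = (rng.choices(expert_buffer, k=n_expert)
             + rng.choices(agent_buffer, k=batch_size - n_expert))
    rng.shuffle(batch)  # interleave expert and agent samples
    return batch
```

During pre-training only the expert buffer is sampled, which corresponds to `expert_fraction=1.0` here (the agent buffer may then be empty). Keeping the expert buffer permanent while the agent buffer rotates is what keeps every later batch anchored to demonstrations.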

If a human demonstrator is used, they can intervene at any time while the agent is executing its policy and add these new trajectories to the expert memory buffer.

The proposed method is shown in Algorithm 1.

1:Input:

Environment env, number of training steps T, number of training steps per batch M, number of pre-training steps L, number of gradient updates K, and CoL hyperparameters λQ1, λBC, λA, λL2, τ.

2:Output:

Trained actor π(s|θπ) and critic Q(s,π|θQ) networks.

3:Randomly initialize:

Actor network π(s|θπ) and its target π′(s|θπ′).

Critic network Q(s,π|θQ) and its target Q′(s,π′|θQ′).

4:Initialize agent and expert replay buffers R and RE.

5:Load R and RE with expert dataset DE.

6:for pre-training steps = 1, …, L do

7: Call *TrainUpdate*() procedure.

8:for training steps = 1, …, T do

9: Reset env and receive initial state s0.

10: for batch steps = 1, …, M do

11: Select action at=π(st|θπ) according to policy.

12: Perform action at and observe reward rt and next state st+1.

13: Store transition (st,at,rt,st+1) in R.

14: for update steps = 1, …, K do

15: Reset env and receive initial state s0.

16: for training steps t = 1, …, T do

17: Call *TrainUpdate*() procedure.

18:procedure TrainUpdate()

19: if Pre-training then

20: Randomly sample N transitions (si,ai,ri,si+1) from the expert replay buffer RE.

21: else

22: Randomly sample N∗0.25 transitions (si,ai,ri,si+1) from the expert replay buffer RE and N∗0.75 transitions from the agent replay buffer R.

23: Compute LQ1(θQ), LBC(θπ), LA(θπ), LL2(θQ), LL2(θπ)

24: Update actor and critic for K steps according to Equation 6.

25: Update target networks:

$$\theta_{\pi'} \leftarrow \tau\theta_\pi + (1-\tau)\theta_{\pi'}, \qquad \theta_{Q'} \leftarrow \tau\theta_Q + (1-\tau)\theta_{Q'}.$$

Algorithm 1 Cycle-of-Learning (CoL): Transitioning from Demonstration to Reinforcement Learning
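Step 25 of Algorithm 1 is the soft (Polyak-averaging) target-network update also used by DDPG. A sketch on flat parameter lists, where the flattening is an assumption made for brevity and the function name is hypothetical; with τ close to 0 the targets change slowly, which is the stabilizing role the text attributes to target networks:

```python
from typing import List, Sequence


def soft_update(target: Sequence[float], source: Sequence[float],
                tau: float) -> List[float]:
    """Polyak averaging: theta_target <- tau * theta + (1 - tau) * theta_target."""
    if len(target) != len(source):
        raise ValueError("parameter vectors must have the same length")
    return [tau * s + (1.0 - tau) * t for t, s in zip(target, source)]
```

Setting τ = 1 copies the online network into the target, and τ = 0 freezes the target.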

Experimental Setup and Results
------------------------------

### Experimental Setup

As described in the previous sections, in our approach, the Cycle-of-Learning (CoL), we collect contiguous trajectories from expert policies and store them in a permanent memory buffer.

The policy is allowed to roll-out and is trained with a combined loss from a mix of demonstration and agent data, stored in a separate first-in-first-out buffer.

We validate our approach in three environments with continuous observation and action spaces: LunarLanderContinuous-v2 [brockman2016openai] (dense- and sparse-reward cases) and a custom quadrotor landing task [goecks2018efficiently] implemented using Microsoft AirSim [airsim2017fsr].

The dense-reward case of LunarLanderContinuous-v2 is the standard environment provided by the OpenAI Gym library [brockman2016openai]: the state space consists of an eight-dimensional continuous vector with inertial states of the lander, the action space consists of a two-dimensional continuous vector controlling the main and side thrusts, and reward is given at every step based on the relative motion of the lander with respect to the landing pad (a bonus reward is given when the landing is completed successfully).

The sparse-reward case is a custom modification with the same reward scheme and state-action space; however, the reward is stored during the policy roll-out and given to the agent only when the episode is over, and is zero at every other step.
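The sparse-reward modification can be sketched as an environment wrapper. This assumes the stored reward is the sum of the dense per-step rewards of the episode, and it assumes the classic Gym step interface returning `(observation, reward, done, info)`; the class name is hypothetical and the sketch does not import Gym itself:

```python
from typing import Any, Dict, Tuple


class SparseRewardWrapper:
    """Withhold per-step rewards and pay out their sum when the episode ends.

    Assumes the classic Gym interface where step(action) returns
    (observation, reward, done, info); Gymnasium returns a 5-tuple
    (observation, reward, terminated, truncated, info) and would need adapting.
    """

    def __init__(self, env: Any) -> None:
        self.env = env
        self._stored = 0.0  # reward collected so far in this episode

    def reset(self) -> Any:
        self._stored = 0.0
        return self.env.reset()

    def step(self, action: Any) -> Tuple[Any, float, bool, Dict]:
        obs, reward, done, info = self.env.step(action)
        self._stored += reward
        if done:
            payout, self._stored = self._stored, 0.0
            return obs, payout, done, info
        return obs, 0.0, done, info
```

Because the state and action spaces are untouched, the same agent code runs on both the dense and sparse variants.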

The custom quadrotor landing task is a modified version of the environment proposed by [goecks2018efficiently], implemented using Microsoft AirSim [airsim2017fsr]; it consists of landing a quadrotor on a static landing pad in a simulated gusty environment, as seen in Figure 1.

The state space consists of a fifteen-dimensional continuous vector with inertial states of the quadrotor and visual features that represent the landing pad image-frame position and radius as seen by a downward-facing camera.

The action space is a four-dimensional continuous vector that sends velocity commands for throttle, roll, pitch, and yaw.

Wind is modeled as noise applied directly to the actions commanded by the agent and follows a temporal-based, instead of distance-based, discrete wind gust model [moorhouse1980us] with 65% probability of encountering a wind gust at each time step.

This was done to induce additional stochasticity in the environment.

The gust duration is uniformly sampled between one and three real-time seconds, and the gust can be imparted in any direction, with a maximum velocity of half of what can be commanded by the agent along each axis.
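A minimal sketch of this gust model as action noise. The 65% probability, the one-to-three-second duration, and the half-of-maximum-command bound come from the text; everything else is an assumption: a new gust can only start when none is active, the control period `dt` is fixed, each gust is constant over its duration (a rectangular profile that may differ from the cited discrete gust model), and per-axis gust velocities are drawn uniformly. The class name is hypothetical:

```python
import random
from typing import List, Optional, Sequence


class DiscreteGustNoise:
    """Time-based wind gusts added to the agent's velocity commands."""

    def __init__(self, action_dim: int, max_command: float, dt: float,
                 gust_prob: float = 0.65, min_duration: float = 1.0,
                 max_duration: float = 3.0,
                 rng: Optional[random.Random] = None) -> None:
        self.action_dim = action_dim
        self.max_gust = 0.5 * max_command  # half of what the agent can command
        self.dt = dt                       # assumed fixed control period (s)
        self.gust_prob = gust_prob
        self.min_duration = min_duration
        self.max_duration = max_duration
        self.rng = rng or random.Random()
        self._remaining = 0.0              # seconds left in the current gust
        self._gust = [0.0] * action_dim

    def apply(self, action: Sequence[float]) -> List[float]:
        if self._remaining <= 0.0:
            # No active gust: start one with probability gust_prob.
            if self.rng.random() < self.gust_prob:
                self._remaining = self.rng.uniform(self.min_duration,
                                                   self.max_duration)
                self._gust = [self.rng.uniform(-self.max_gust, self.max_gust)
                              for _ in range(self.action_dim)]
            else:
                self._gust = [0.0] * self.action_dim
        self._remaining -= self.dt
        return [a + g for a, g in zip(action, self._gust)]
```

Because the gust persists for a time interval rather than a flown distance, this mirrors the temporal-based (not distance-based) choice described in the text.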

This task has a sparse-reward scheme (reward R is given at the end of the episode, and is zero otherwise) based on the relative distance rrel between the quadrotor and the center of the landing pad at the final time step of the episode:

$$R = \frac{1}{1 + r_{rel}^2}.$$
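The reward decays smoothly with distance and equals 1 only for a landing exactly on the pad center. As a worked example, the reward of approximately 0.64 that the results associate with a successful landing corresponds to r_rel = 0.75, since 1/(1 + 0.75²) = 1/1.5625 = 0.64; the text does not state the distance units. A sketch with hypothetical function names:

```python
import math


def landing_reward(r_rel: float) -> float:
    """Terminal reward R = 1 / (1 + r_rel**2); r_rel is the final distance to the pad center."""
    return 1.0 / (1.0 + r_rel ** 2)


def distance_for_reward(reward: float) -> float:
    """Invert the reward: r_rel = sqrt(1/R - 1), valid for 0 < R <= 1."""
    if not 0.0 < reward <= 1.0:
        raise ValueError("reward must lie in (0, 1]")
    return math.sqrt(1.0 / reward - 1.0)


if __name__ == "__main__":
    print(landing_reward(0.0))        # landing exactly on the center -> 1.0
    print(distance_for_reward(0.64))  # about 0.75
```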

The hyperparameters used in CoL for each environment are described in the Supplementary Material.

Figure 1: Screenshot of AirSim environment and landing task. Inset image in lower right corner: downward-facing camera view used for extracting the position and radius of the landing pad, which is part of the state space.


Figure 2: Comparison of CoL, BC, DDPG, and DDPGfD in the (a) dense- and (b) sparse-reward LunarLanderContinuous-v2 environment, and the (c) sparse-reward Microsoft AirSim quadrotor landing environment.

The baselines for our approach are Deep Deterministic Policy Gradient (DDPG) [lillicrap2015continuous, silver2014deterministic], Deep Deterministic Policy Gradient from Demonstrations (DDPGfD) [vevcerik2017leveraging], and behavior cloning (BC).

For the DDPG baseline we used an open-source implementation by Stable Baselines [stable-baselines].

The hyperparameters used follow the original DDPG publication [lillicrap2015continuous]: actor and critic networks with 2 hidden layers of 400 and 300 units, respectively, optimized using Adam [kingma2014adam] with a learning rate of $10^{-4}$ for the actor and $10^{-3}$ for the critic, a discount factor of γ=0.99, a minibatch size of 64, and a replay buffer size of $10^{6}$.

Exploration noise was added to the action following an Ornstein-Uhlenbeck process [uhlenbeck1930theory] with θ=0.15 and σ=0.2, as in the original DDPG publication.
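A minimal sketch of Ornstein-Uhlenbeck exploration noise. It assumes the parameterization used in the original DDPG publication, in which 0.15 is the mean-reversion rate θ and 0.2 the scale σ of a process with zero long-run mean μ; the time step dt = 0.01 and the class name are assumptions as well:

```python
import math
import random
from typing import List, Optional


class OrnsteinUhlenbeckNoise:
    """Temporally correlated exploration noise, Euler-Maruyama discretization:

    x <- x + theta * (mu - x) * dt + sigma * sqrt(dt) * N(0, 1)
    """

    def __init__(self, dim: int, mu: float = 0.0, theta: float = 0.15,
                 sigma: float = 0.2, dt: float = 1e-2,
                 rng: Optional[random.Random] = None) -> None:
        self.mu, self.theta, self.sigma, self.dt = mu, theta, sigma, dt
        self.dim = dim
        self.rng = rng or random.Random()
        self.reset()

    def reset(self) -> None:
        """Restart the process at its long-run mean, e.g. at each episode start."""
        self.x = [self.mu] * self.dim

    def sample(self) -> List[float]:
        self.x = [x + self.theta * (self.mu - x) * self.dt
                  + self.sigma * math.sqrt(self.dt) * self.rng.gauss(0.0, 1.0)
                  for x in self.x]
        return list(self.x)
```

A noisy action would then be `[a + n for a, n in zip(policy_action, noise.sample())]`. Because successive samples are correlated, the perturbed actions drift smoothly instead of jittering independently at every step.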

The DDPGfD baseline followed the same implementation of the loss function used in our approach (Equation 6), with some modifications: removal of the behavior cloning loss by setting λBC=0, and inclusion of the n-step loss

$$\mathcal{L}_{Qn}(\theta_Q) = \frac{1}{2}\left(R_n - Q\big(s_t, \pi(s_t|\theta_\pi)\,\big|\,\theta_Q\big)\right)^2,$$

where Rn is written as

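$$\begin{aligned} R_n &= r_t + \gamma r_{t+1} + \cdots + \gamma^{n-1} r_{t+n-1} + \gamma^n Q\big(s_{t+n}, \pi(s_{t+n}|\theta_\pi)\,\big|\,\theta_Q\big) \\ &= \sum_{i=0}^{n-1}\gamma^i r_{t+i} + \gamma^n Q\big(s_{t+n}, \pi(s_{t+n}|\theta_\pi)\,\big|\,\theta_Q\big), \end{aligned}$$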

using n=10, following the Deep Q-learning from Demonstrations (DQfD) [hester2018deep] implementation from which DDPGfD is derived, and using Prioritized Experience Replay (PER) [schaul2015prioritized] to mix expert and agent samples instead of following a fixed ratio.
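The n-step return can be sketched directly from its definition. This assumes the critic's bootstrap value Q(s_{t+n}, π(s_{t+n})) is already computed and that no bootstrap term is added if the episode terminated within the n steps; the function name is hypothetical:

```python
from typing import Optional, Sequence


def n_step_return(rewards: Sequence[float], gamma: float,
                  bootstrap_q: Optional[float] = None) -> float:
    """R_n = sum_{i<n} gamma**i * r_{t+i} + gamma**n * Q(s_{t+n}, pi(s_{t+n})).

    `rewards` holds r_t, ..., r_{t+n-1}, so n = len(rewards). Pass
    bootstrap_q=None if the episode terminated within those n steps
    (an assumption: no value beyond a terminal state).
    """
    n = len(rewards)
    ret = sum(gamma ** i * r for i, r in enumerate(rewards))
    if bootstrap_q is not None:
        ret += gamma ** n * bootstrap_q
    return ret
```

With n = 1 this reduces to the 1-step return of Equation 3. For n > 1 the intermediate rewards come from whichever policy generated the stored trajectory, which is why the n-step loss is described in the Related Work as needing on-policy data to be estimated accurately.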

The BC policies are trained by minimizing the mean squared error between the expert demonstrations and the output of the model.

The policies consist of a fully-connected neural network with 3 hidden layers with 128 units each and exponential linear unit (ELU) activation function [clevert2015fast].

The BC policy was evaluated over 100 episodes, which were used to calculate the mean and standard error of the performance of the policy.
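The reported mean and standard error over evaluation episodes can be computed as follows. A sketch assuming the standard error uses the sample (n − 1) standard deviation, which the text does not specify; the function name is hypothetical:

```python
import math
import statistics
from typing import Sequence, Tuple


def mean_and_standard_error(episode_returns: Sequence[float]) -> Tuple[float, float]:
    """Mean and standard error (sample std / sqrt(n)) over evaluation episodes."""
    n = len(episode_returns)
    if n < 2:
        raise ValueError("need at least two episodes to estimate the spread")
    mean = statistics.fmean(episode_returns)
    standard_error = statistics.stdev(episode_returns) / math.sqrt(n)
    return mean, standard_error
```

With 100 episodes the standard error is one tenth of the sample standard deviation, so the shaded band around the BC reference is much narrower than the episode-to-episode spread.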

### Experimental results

The comparative performance of the CoL against the baseline methods (DDPG and DDPGfD) in the LunarLanderContinuous-v2 environment with the standard dense reward is presented via their training curves in Figure 2a.

The mean reward of the BC policy trained on the human demonstrations is also shown for reference, with its standard error shown by the shaded band.

The CoL reward initializes to values at or above the BC and steadily improves throughout the reinforcement learning phase.

Conversely, the DDPG RL baseline initially returns rewards lower than the BC and slowly improves until its performance reaches similar levels to the CoL after approximately one million steps.

However, this baseline never performs as consistently as the CoL and eventually begins to diverge, losing much of its performance gains after about four million steps.

The DDPGfD baseline performs even worse in this context, never consistently surpassing the BC performance.

When using sparse rewards, meaning that the rewards generated by the LunarLanderContinuous-v2 environment are provided only at the last time step of each episode, the performance improvement of the CoL relative to the DDPG and DDPGfD baselines is even greater (Figure 2b).

The performance of the CoL is qualitatively similar during training to that of the dense case, with an initial reward roughly equal to or greater than that of the BC and a consistently increasing reward.

Conversely, the performance of the DDPG baseline is greatly diminished for the sparse reward case, yielding effectively no improvement for more than three million training steps before generating reward only comparable to the BC and the initial performance of the CoL.

The training of the DDPGfD also deteriorates in this case, yielding even lower reward values and a more volatile, less stable training curve, as is also seen for DDPG.

The results for the more realistic and challenging AirSim quadrotor landing environment (Figure 2c) illustrate a similar trend.

The CoL initially returns rewards at or above the BC and steadily increases its performance, whereas the baseline approaches (DDPG and DDPGfD) practically never succeed and subsequently fail to learn a viable policy.

Noting that successfully landing on the target would generate a sparse episode reward of approximately 0.64, it is clear that these baseline algorithms rarely generate a satisfactory trajectory for the duration of training.


Figure 3: (a) Ablation study in LunarLanderContinuous-v2 environment comparing complete Cycle-of-Learning (CoL), CoL without the pre-training phase (CoL-PT), CoL without the expert behavior cloning loss (CoL-BC), and pre-training with BC followed by DDPG without combined loss (BC+DDPG). Comparison in LunarLanderContinuous-v2 environment for (b) dense and (c) sparse cases of the Cycle-of-Learning with (CoL+N) and without (CoL) the n-step loss, and DDPGfD with (DDPGfD) and without (DDPGfD-N) the n-step loss.

### Ablation Studies

Several ablation studies were performed to evaluate the impact of each of the critical elements of the CoL on learning.

These conditions are, respectively, removal of the pre-training phase (*CoL-PT*), removal of the actor’s expert behavior cloning loss during pre-training and RL (*CoL-BC*), and use of standard behavior cloning and DDPG loss functions instead of the combined loss functions defined in the section *Integrating Behavior Cloning and Reinforcement Learning* (*BC+DDPG*).

The results of each ablation condition are shown in Figure 3a, and details about the ablation study can be found in Table 1.

*CoL-PT:* Cycle-of-Learning without the pre-training phase (number of pre-training steps L=0).

The complete combined loss, as defined in the section *Integrating Behavior Cloning and Reinforcement Learning*, is used during the reinforcement learning phase.

This condition assesses the impact on learning performance of not pre-training the agent, while still using the combined loss in the RL phase.

This condition differs from the baseline CoL in that its initial performance is lower, significantly below that of BC, but it reaches similar rewards after several hundred thousand steps and exhibits the same consistent response during training thereafter.

Effectively, this shows that pre-training improves the initial response and somewhat speeds up convergence to steady-state performance, without qualitatively changing the long-term training behavior.

*CoL-BC:* Cycle-of-Learning without the behavioral cloning expert loss on the actor (λBC:=0) during both pre-training and RL phases.

The critic loss is the same as in the CoL combined loss (see the section *Integrating Behavior Cloning and Reinforcement Learning*) in both training phases.

This condition assesses the impact on learning performance of the behavior cloning loss component LBC, given otherwise consistent loss functions in both pre-training and RL phases.

This condition improves upon the CoL-PT condition in its initial reward return and similarly achieves comparable performance to the baseline CoL in the first few hundred thousand steps, but then steadily deteriorates as training continues, with several catastrophic losses in performance.

This result makes clear that the behavioral cloning loss is an essential component of the combined loss function for maintaining performance throughout training, anchoring learning to previously demonstrated behaviors that are sufficiently proficient.

*BC+DDPG:* Behavior cloning followed by DDPG, using the standard loss functions (Equations 2, 4, and 5) instead of the CoL combined loss (see the section *Integrating Behavior Cloning and Reinforcement Learning*) in both phases.

Pre-training of the actor with behavioral cloning uses only the regression loss, as given in Equation 2.

DDPG uses standard loss functions for the actor and critic, as given in Equation 7.

This condition assesses the impact on learning performance of using standard loss functions instead of our combined loss functions across both training phases.

This condition produces initial rewards similar to those of the CoL-PT condition, below the BC response.

However, it subsequently improves in performance only to a level similar to that of the BC and is much less stable in its response throughout training.

This result indicates that simply sequencing standard BC and RL algorithms results in both poorer initial performance and a significantly lower level of performance and stability even after millions of training steps, emphasizing the value of a consistent combined loss function across all training phases.

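$$
\mathcal{L}_{DDPG}(\theta^Q, \theta^\pi) = \lambda_{Q1}\mathcal{L}_{Q1}(\theta^Q) + \lambda_{A}\mathcal{L}_{A}(\theta^\pi) + \lambda_{L2}\mathcal{L}_{L2}(\theta^Q) + \lambda_{L2}\mathcal{L}_{L2}(\theta^\pi). \tag{7}
$$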
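To make the structure of Equation 7 concrete, the numpy sketch below evaluates it and the CoL combined loss as weighted sums of per-batch terms. The term definitions are assumptions, not taken from this section: a squared 1-step TD error for LQ1, the negated mean critic value Q(s, π(s)) for LA, a squared-error regression onto expert actions for LBC, and a sum of squared weights for LL2. The `Batch` container and all function names are hypothetical; default weights follow Table 2. Setting `lam_bc=0` gives the Equation 7 form, which matches the LQ1 + LA loss listed for DDPG and CoL-BC in Table 1.

```python
from dataclasses import dataclass
from typing import Sequence

import numpy as np


@dataclass
class Batch:
    """Hypothetical container for one minibatch (network outputs precomputed)."""
    q_pred: np.ndarray           # Q(s, a | theta_Q) for sampled transitions
    rewards: np.ndarray          # r for sampled transitions
    q_next: np.ndarray           # target-network Q'(s', pi'(s'))
    dones: np.ndarray            # 1.0 where s' is terminal, else 0.0
    q_actor: np.ndarray          # Q(s, pi(s | theta_pi)) for sampled states
    actor_on_expert: np.ndarray  # pi(s) evaluated on expert states
    expert_actions: np.ndarray   # demonstrated actions for those states


def q1_loss(q_pred, rewards, q_next, dones, gamma):
    """1-step TD loss: 0.5 * mean((r + gamma * (1 - done) * Q' - Q)^2)."""
    targets = rewards + gamma * (1.0 - dones) * q_next
    return 0.5 * float(np.mean((targets - q_pred) ** 2))


def actor_loss(q_actor):
    """DDPG actor loss: minimize -Q(s, pi(s)), i.e. maximize the critic's value."""
    return -float(np.mean(q_actor))


def bc_loss(actor_on_expert, expert_actions):
    """Behavior cloning: mean squared distance to the expert's actions."""
    return float(np.mean(np.sum((actor_on_expert - expert_actions) ** 2, axis=-1)))


def l2_penalty(weights: Sequence[np.ndarray]):
    """Sum of squared parameters (weight-decay term)."""
    return float(sum(np.sum(w ** 2) for w in weights))


def combined_loss(batch, critic_weights, actor_weights, lam_q1=1.0, lam_a=1.0,
                  lam_bc=1.0, lam_l2=1.0e-5, gamma=0.99):
    """Weighted sum of loss terms; lam_bc=0 gives the Equation 7 (DDPG) form."""
    return (lam_q1 * q1_loss(batch.q_pred, batch.rewards, batch.q_next, batch.dones, gamma)
            + lam_a * actor_loss(batch.q_actor)
            + lam_bc * bc_loss(batch.actor_on_expert, batch.expert_actions)
            + lam_l2 * (l2_penalty(critic_weights) + l2_penalty(actor_weights)))
```

numpy only evaluates the scalar loss here; an actual implementation would use an autodiff framework and take gradients of the critic terms with respect to θQ and of the actor terms with respect to θπ.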

### The n-step Loss Contribution

In addition to the ablation study, we investigated the effect of including an n-step Q-learning loss function alongside the 1-step Q-learning loss function.

n-step Q-learning converges faster because a single reward updates the Q-values of the n preceding state-action pairs, instead of only the immediately preceding one, as is the case with 1-step Q-learning.

This allows for faster propagation of the expected return to Q-values at earlier states and overall improves the efficiency of Q-learning.

The downside of n-step Q-learning is that its Q-value targets are only correct when learning on-policy, so, unlike 1-step learning, it generally cannot be combined with off-policy techniques [Sutton1999].
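The difference between the two targets can be sketched in a few lines of Python. This is a minimal illustration, not the CoL or DDPGfD code: `one_step_target` and `n_step_target` are hypothetical helpers, the bootstrap value stands in for a target-network estimate, and the example assumes a sparse reward that arrives only at the last of n = 10 steps with all Q-estimates still at zero.

```python
from typing import Sequence


def one_step_target(reward: float, q_next: float, done: bool, gamma: float = 0.99) -> float:
    """y = r_t + gamma * Q(s_{t+1}, .), with no bootstrap after a terminal step."""
    return reward + (0.0 if done else gamma * q_next)


def n_step_target(rewards: Sequence[float], q_bootstrap: float, done: bool,
                  gamma: float = 0.99) -> float:
    """y = sum_k gamma^k r_{t+k} + gamma^n Q(s_{t+n}, .) over n stored rewards.

    The stored rewards were produced by whatever policy filled the replay
    buffer, so this target is only correct for that behavior policy; this is
    the on-policy requirement of n-step Q-learning.
    """
    target = 0.0
    for k, r in enumerate(rewards):
        target += (gamma ** k) * r
    if not done:
        target += (gamma ** len(rewards)) * q_bootstrap
    return target


# Sparse reward at the final step of a 10-step segment; Q-estimates start at 0.
sparse_rewards = [0.0] * 9 + [1.0]
first_step_1 = one_step_target(sparse_rewards[0], q_next=0.0, done=False)  # 0.0
first_step_n = n_step_target(sparse_rewards, q_bootstrap=0.0, done=True)   # 0.99 ** 9
```

After one update, the 1-step target for the first state is still zero, whereas the 10-step target already carries the discounted sparse reward, which is why n-step returns propagate value to earlier states faster.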

Table 1: Method Comparison

| Method | Plot Legend | Pre-Training Loss | Training Loss | Buffer Type | Average Reward |
| --- | --- | --- | --- | --- | --- |
| CoL | Blue, solid | LQ1 + LA + LBC | LQ1 + LA + LBC | Fixed Ratio | 261.80 ± 22.53 |
| CoL-PT | Purple, solid | None | LQ1 + LA + LBC | Fixed Ratio | 253.24 ± 46.50 |
| DDPGfD-N | Green, dash | None | LQ1 + LA + LBC | PER | 241.21 ± 47.22 |
| DDPG | Red, solid | None | LQ1 + LA | Uniform | 152.98 ± 69.45 |
| BC | Grey, solid | LBC | None | None | -48.83 ± 27.68\* |
| BC+DDPG | Red, dash | LBC | LQ1 + LA | Uniform | -57.38 ± 50.11 |
| CoL+N | Blue, dash | LQ1 + LQn + LA + LBC | LQ1 + LQn + LA + LBC | Fixed Ratio | -56.50 ± 68.19 |
| CoL-BC | Purple, dash | LQ1 + LA | LQ1 + LA | Fixed Ratio | -105.65 ± 196.85 |
| DDPGfD | Green, solid | None | LQ1 + LQn + LA + LBC | PER | -209.14 ± 60.80 |

Summary of learning methods. Enumerated for each method are all non-zero loss components (excluding regularization), buffer type, and average and standard error of the reward throughout training (after pre-training) across the three seeds, evaluated with dense reward in LunarLanderContinuous-v2 environment. ∗For BC, these values are computed from 100 evaluation trajectories of the final pre-trained agent.

To show the effect of the n-step Q-learning loss on the Cycle-of-Learning (CoL), we repeated the experiments shown in Figures 2a and 2b for the dense and sparse reward cases in the LunarLanderContinuous-v2 environment, adding the n-step Q-learning loss (n = 10) [hester2018deep] to the combined loss function of the CoL and removing this loss component from DDPGfD.

The results for the dense and sparse cases are shown in Figures 3b and 3c, respectively.

For both the dense and sparse reward cases, adding the n-step Q-learning loss decreases the performance of the CoL, which originally lacked it, and removing it increases the performance of DDPGfD, which originally included it. We believe this is because the n-step Q-learning loss yields accurate Q-values only when applied to on-policy data; since each method learns from a replay buffer of past data, learning is in fact off-policy.

Discussion and Conclusion

-------------------------

In this work, we present a novel method for combining behavior cloning with RL using an actor-critic architecture that implements a combined loss function and a demonstration-based pre-training phase.

We compare our approach against state-of-the-art baselines, including BC, DDPG, and DDPGfD, and demonstrate the superiority of our method in terms of learning speed, stability, and performance with respect to these baselines.

This is shown in the OpenAI Gym LunarLanderContinuous-v2 and the high-fidelity Microsoft AirSim quadrotor simulation environments.

This result holds in both dense and sparse reward settings, though the improvements of our method over these baselines are even more dramatic in the sparse case.

This result is especially noticeable in the AirSim landing task (Figure 2c), an environment designed to exhibit a high degree of stochasticity.

The DDPG and DDPGfD baselines fail to learn an effective policy to perform the task, even after five million training steps, when using sparse rewards.

Conversely, our method, CoL, is able to quickly achieve high performance without degradation, surpassing both behavior cloning and reinforcement learning algorithms alone, despite receiving only sparse rewards.

Additionally, we demonstrate through an ablation study of several components of our architecture that both pre-training and the use of a combined loss function are critical to the performance improvements.

This ablation study also indicates that simply sequencing standard behavior cloning and reinforcement learning algorithms does not produce these gains.

We also show that omitting an n-step Q-learning loss from our architecture is necessary for these improvements.

Furthermore, we show that inclusion of such a loss term in our algorithm significantly reduces performance in both dense- and sparse-reward conditions, while its omission from DDPGfD significantly improves performance in dense-reward but not in sparse-reward conditions.

To the best of our knowledge, this is the first work to examine the effect of the n-step Q-learning loss on DDPGfD policy performance.

Future work will investigate how to effectively integrate multiple forms of human feedback into an efficient human-in-the-loop RL system capable of rapidly adapting autonomous systems in dynamically changing environments.

For example, existing works have shown the utility of leveraging human interventions [goecks2018efficiently, saunders2018trial], and specifically learning a predictive model of what actions to ignore at every time step [Zahavy2018].

A limitation of our current approach is the requirement that the demonstrator be capable of generating a sufficient number of minimally proficient demonstrations, which can be prohibitive for humans in certain tasks with fast temporal dynamics or a high-dimensional action space.

Deep reinforcement learning with human evaluative feedback has also been shown to quickly train policies across a variety of domains [warnell2018deep, Arumugam2017] and can be a particularly useful approach when the human is unable to provide a demonstration of desired behavior but can articulate when desired behavior is achieved.

Further, our approach's ability to move from a limited number of human demonstrations to a baseline behavior cloning agent, and then to improve that agent through reinforcement learning without significant losses in performance, is largely motivated by the goal of human-in-the-loop learning on physical systems.

Thus our aim is to integrate this method onto such systems and demonstrate rapid, safe, and stable learning from limited human interaction.

Supplementary Material

----------------------

This supplementary material contains implementation details to improve the reproducibility of this work. Algorithm 1 describes all steps of the proposed method, and Table 2 summarizes all CoL hyperparameters used in the LunarLanderContinuous-v2 and Microsoft AirSim experiments.

| Hyperparameter | (a) LunarLanderContinuous-v2 | (b) Microsoft AirSim |
| --- | --- | --- |
| λQ1 factor | 1.0 | 1.0 |
| λBC factor | 1.0 | 1.0 |
| λA factor | 1.0 | 1.0 |
| λL2 factor | 1.0e−5 | 1.0e−5 |
| Batch size | 512 | 512 |
| Actor learning rate | 1.0e−3 | 1.0e−3 |
| Critic learning rate | 1.0e−4 | 1.0e−4 |
| Memory size | 5.0e5 | 5.0e5 |
| Expert trajectories | 20 | 10 |
| Pre-training steps | 2.0e4 | 2.0e4 |
| Training steps | 5.0e6 | 5.0e5 |
| Discount factor γ | 0.99 | 0.99 |
| Hidden layers | 3 | 3 |
| Neurons per layer | 128 | 128 |
| Activation function | ELU | ELU |

Table 2: Cycle-of-Learning hyperparameters for each environment: (a) LunarLanderContinuous-v2 and (b) Microsoft AirSim. |

41c7f1f3-48fd-4ce6-a9bc-aa247d1b2376 | trentmkelly/LessWrong-43k | LessWrong | When It's Not Right to be Rational

By now I expect most of us have acknowledged the importance of being rational. But as vital as it is to know what principles generally work, it can be even more important to know the exceptions.

As a process of constant self-evaluation and -modification, rationality is capable of adopting new techniques and methodologies even if we don't know how they work. An 'irrational' action can be rational if we recognize that it functions. So in an ultimate sense, there are no exceptions to rationality's usefulness.

In a more proximate sense, though, does it have limits? Are there ever times when it's better *not* to explicitly understand your reasons for acting, when it's better *not* to actively correlate and integrate all your knowledge?

I can think of one such case: It's often better not to look down.

People who don't spend a lot of time living precariously at the edge of long drops don't develop methods of coping. When they're unexpectedly forced to such heights, they often look down. When they look down, subcortical instincts are activated that cause them to freeze and panic, overriding their conscious intentions. This tends to prevent them from accomplishing whatever goals brought them to that location, and in situations where balance is required for safety, the panic instinct can even cause them to fall.

If you don't look down, you may know intellectually that you're above a great height, but at some level your emotions and instincts aren't as triggered. You don't *appreciate* the height on a subconscious level, and so while you may know you're in danger and be appropriately nervous, your conscious intentions aren't overridden. You don't freeze. You can keep your conscious understanding compartmentalized, not bringing to mind information which you possess but don't wish to be aware of.

The general principle seems to be that it is useful to avoid fully integrated awareness of relevant data if acknowledging that data dissolves your ability to regulate your emotio |

53580520-8ce5-441b-b5be-a5afd6cdfe5b | trentmkelly/LessWrong-43k | LessWrong | Link: Interesting Video About Automation and the Singularity

I finally got into Ello (I was mad that I couldn't get an invitation for the longest time). I found this interesting video about automation and what we should do when most jobs no longer require humans. I have often wondered what we were going to do with the millions of unemployed people when machines create untold abundance. What will we need human workers to do? I have thought that there will be certain areas where we will want to interact with people. I think bots and other machines will be more assistants rather than fully taking over tasks in a few areas. I think it will be more balanced but that does not solve the problem of millions of unemployed undermining the economy and the wealth of nations. Do we save the jobs? Do we stop automation? Is this the natural course of history? Should we all be prepared to be destitute? Should we consider minimum income proposals more closely?

The video is here:

https://www.youtube.com/watch?v=7Pq-S557XQU&feature=youtu.be

I found it on this interesting post. He projects a much more dystopian view of the Singularity and how it will affect humanity. I think his post is not mindful of Bostrom's work which I am plowing through but it might provide some discussion fodder.

The post is here:

https://ello.co/scottdakota/post/ofb9vzDer9NoiQvwdueyAg |

52dc6b31-3f20-4d11-a4e4-99b4d3548bab | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | How many people are working (directly) on reducing existential risk from AI?

Summary

=======

I've updated my estimate of the number of FTE (full-time equivalent) working (directly) on reducing existential risks from AI **from 300 FTE to 400 FTE**.

Below I've pasted some slightly edited excerpts of the relevant sections of the 80,000 Hours profile on [preventing an AI-related catastrophe](https://80000hours.org/problem-profiles/artificial-intelligence/).

New 80,000 Hours estimate of the number of people working on reducing AI risk

=============================================================================

Neglectedness estimate

----------------------

We estimate there are around 400 people around the world working directly on reducing the chances of an AI-related existential catastrophe (with a 90% confidence interval ranging between 200 and 1,000). Of these, about three quarters are working on technical AI safety research, with the rest split between strategy (and other governance) research and advocacy. [[1]](#fn290oixxnjlk) We think there are around 800 people working in [complementary roles](https://80000hours.org/problem-profiles/artificial-intelligence/#complementary-yet-crucial-roles), but we’re highly uncertain about this estimate.

Footnote on methodology

-----------------------

It’s difficult to estimate this number.

Ideally we want to estimate the number of FTE (“[full-time equivalent](https://en.wikipedia.org/wiki/Full-time_equivalent)“) working on the problem of reducing existential risks from AI.

But there are lots of ambiguities around what counts as working on the issue. So I tried to use the following guidelines in my estimates:

* I didn’t include people who might think of themselves as being on a career path that is building towards a role preventing an AI-related catastrophe, but who are currently skilling up rather than working directly on the problem.

* I included researchers, engineers, and other staff that seem to work directly on technical AI safety research or AI strategy and governance. But there’s an uncertain boundary between these people and others who I chose not to include. For example, I didn’t include machine learning engineers whose role is building AI systems that might be used for safety research but aren’t *primarily* designed for that purpose.

* I only included time spent on work that seems related to reducing the potentially [existential risks](https://80000hours.org/articles/existential-risks/) from AI, like those discussed in this article. Lots of wider AI safety and AI ethics work that focuses on reducing other risks from AI also seems relevant to reducing existential risks, and this ‘indirect’ work makes this estimate difficult. I decided not to include indirect work on reducing the risks of an AI-related catastrophe (see our [problem framework](https://80000hours.org/articles/problem-framework/#a-challenge-direct-vs-indirect-future-effort) for more).

* Relatedly, I didn’t include people working on other problems that might indirectly affect the chances of an AI-related catastrophe, such as [epistemics and improving institutional decision-making](https://80000hours.org/problem-profiles/improving-institutional-decision-making/), reducing the chances of [great power conflict](https://80000hours.org/problem-profiles/great-power-conflict/), or [building effective altruism](https://80000hours.org/problem-profiles/promoting-effective-altruism/).

With those decisions made, I estimated this in three different ways.

First, for each organisation in the [AI Watch](https://aiwatch.issarice.com/) database, I estimated the number of FTE working directly on reducing existential risks from AI. I did this by looking at the number of staff listed at each organisation, both in total and in 2022, as well as the number of researchers listed at each organisation. Overall I estimated that there were 76 to 536 FTE working on technical AI safety (90% confidence), with a mean of 196 FTE. I estimated that there were 51 to 359 FTE working on AI governance and strategy (90% confidence), with a mean of 151 FTE. There’s a lot of subjective judgement in these estimates because of the ambiguities above. The estimates could be too low if AI Watch is missing data on some organisations, or too high if the data counts people more than once or includes people who no longer work in the area.

Second, I adapted the methodology used in [Gavin Leech’s estimate of the number of people working on reducing existential risks from AI](https://forum.effectivealtruism.org/posts/8ErtxW7FRPGMtDqJy/the-academic-contribution-to-ai-safety-seems-large). I split the organisations in Leech’s estimate into technical safety and governance/strategy. I adapted Gavin’s figures for the proportion of computer science academic work relevant to the topic to fit my definitions above, and made a related estimate for work outside computer science but within academia that is relevant. Overall I estimated that there were 125 to 1,848 FTE working on technical AI safety (90% confidence), with a mean of 580 FTE. I estimated that there were 48 to 268 FTE working on AI governance and strategy (90% confidence), with a mean of 100 FTE.

Third, I looked at the estimates of similar numbers by [Stephen McAleese](https://forum.effectivealtruism.org/posts/3gmkrj3khJHndYGNe/estimating-the-current-and-future-number-of-ai-safety). I made minor changes to McAleese’s categorisation of organisations, to ensure the numbers were consistent with the previous two estimates. Overall I estimated that there were 110 to 552 FTE working on technical AI safety (90% confidence), with a mean of 267 FTE. I estimated that there were 36 to 193 FTE working on AI governance and strategy (90% confidence), with a mean of 81 FTE.

I took a geometric mean of the three estimates to form a final estimate, and combined confidence intervals by assuming that distributions were approximately lognormal.
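A minimal Python sketch of the pooling step, assuming the geometric mean is taken over the three reported means for each category (the text does not say whether means or medians were pooled, and the lognormal interval-combination step is left out here):

```python
from statistics import geometric_mean

# Mean FTE from the three methods above: AI Watch, adapted Leech, adapted McAleese.
technical_means = [196, 580, 267]
governance_means = [151, 100, 81]

technical = geometric_mean(technical_means)    # about 312
governance = geometric_mean(governance_means)  # about 107
total = technical + governance                 # about 419
technical_share = technical / total            # about 0.74
```

Under this assumption the pooled total is roughly 420 FTE, with technical work making up about three quarters, in line with the headline figures of around 400 FTE and about three quarters technical.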

Finally, I estimated the number of FTE in [complementary roles](https://80000hours.org/problem-profiles/artificial-intelligence/#complementary-yet-crucial-roles) using the AI Watch database. For relevant organisations, I identified those where there was enough data listed about the number of *researchers* at those organisations. I calculated the ratio between the number of researchers in 2022 and the number of staff in 2022, as recorded in the database. I calculated the mean of those ratios, and a confidence interval using the standard deviation. I used this ratio to calculate the overall number of support staff by assuming that estimates of the number of staff are lognormally distributed and that the estimate of this ratio is normally distributed. Overall I estimated that there were 2 to 2,357 FTE in complementary roles (90% confidence), with a mean of 770 FTE.

There are likely many errors in this methodology, but I expect these errors are small compared to the uncertainty in the underlying data I’m using. Ultimately, I’m still highly uncertain about the overall FTE working on preventing an AI-related catastrophe, but I’m confident enough that the number is relatively small to say that the problem as a whole is highly neglected.

I’m very uncertain about this estimate. It involved a number of highly subjective judgement calls. You can see the (very rough) spreadsheet I worked off [here](https://docs.google.com/spreadsheets/d/1e1Vh_nK_7VHKZUuQ9VNp3JWC2etjUAHVmVXbKarKMNw/edit). If you have any feedback, I’d really appreciate it if you could tell me what you think using [this form](https://forms.gle/RRZaFTfdDkSQ6fJG8).

Some extra thoughts from me

===========================

This number is extremely difficult to estimate.

Like any Fermi estimate, I'd expect there to be a number of mistakes in this estimate. I think there will be two main types:

* Bad judgment calls when estimating the number of people working at each organisation, e.g. based on "what counts as an FTE working directly on this issue", "how wrong is the AI watch database on this organisation", etc.

* Errors in calculation / estimating uncertainty, etc.

Again, like in any Fermi estimate, I'd hope that these errors will roughly cancel out overall.

I didn't spend much time on this (maybe about 2 days of work). This is because I'd guess that more work won't improve the estimate by decision-relevant amounts. Some reasons for this:

* A rougher version of this estimate that I'd used previously came to an answer of 300 FTE. That estimate took around 3-4 hours of work. While 300 FTE to 400 FTE is a large proportional change, it still represents a highly neglected field and doesn't seem substantially decision-relevant.

* Errors in collecting data on this seem large in a way that couldn't be easily mitigated by doing more work.

* There would still be substantial subjective judgement in an estimate that took more time. My uncertainty in this estimate includes uncertainty in whether these are the right judgement calls (on the criteria of "is it truthful, across a distribution of plausible definitions, to say that this is the number of FTE working directly on reducing existential risk from AI"), and it seems very difficult to reduce that uncertainty.

1. **[^](#fnref290oixxnjlk)** Note that before 19 December 2022, [this page](https://80000hours.org/problem-profiles/artificial-intelligence/#neglectedness) gave a lower estimate of 300 FTE working on reducing existential risks from AI, of which around two thirds were working on technical AI safety research, with the rest split between strategy (and other governance) research and advocacy.

This change represents a (hopefully!) improved estimate, rather than a notable change in the number of researchers. |

7d128eae-2686-414d-b364-51c3ccb49986 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Scalable Online Planning via Reinforcement Learning Fine-Tuning.

1 Introduction

---------------

Lookahead search has been a key component of successful AI systems in sequential decision-making problems. For example, in order to achieve superhuman performance in go, chess and shogi, AlphaZero leveraged Monte Carlo tree search (MCTS) silver2018general. MuZero extended this even further to Atari games, again using MCTS schrittwieser2019mastering. Without MCTS, AlphaZero performs below a top human level, and more generally no superhuman Go bot has yet been developed that does not use some form of MCTS. Similarly, search algorithms were a critical component of AI successes in backgammon tesauro1994td, chess campbell2002deep, poker moravvcik2017deepstack; brown2017superhuman; brown2019superhuman, and Hanabi lerer2019improving. However, even though different search algorithms were used in each domain, *all* of them were *tabular* search algorithms, i.e., a distinct policy was computed for each state encountered during search, without any function approximation to generalize between similar states.

While tabular search has achieved great success, particularly in perfect-information deterministic environments, its applicability is clearly limited. For example, in the popular partially observable stochastic AI benchmark game Hanabi bard2020hanabi, one-step lookahead search involves a search over about 20 possible next states. However, searching over all two-step joint policies would require a search over $20^{20}$ states, which is clearly intractable for tabular search. Additionally, unlike perfect-information deterministic games where it is only necessary to search over a tiny fraction of the next several moves, partial observability and stochasticity make it impossible to limit the search to a tiny subset of all possible states. Fortunately, many of these states are extremely similar, so a search algorithm can in theory benefit by generalizing between similar states. This is the motivation for our non-tabular search algorithm.

In this paper we take inspiration from related research in continuous control environments that use non-tabular planning algorithms to improve performance at inference time wang2019exploring; marino2020iterative; amos2020differentiable. These methods leverage finite-horizon model-based rollouts. Specifically, we replace tabular search with fine-tuning of the policy network at inference time. We show that with this approach we are able to achieve state-of-the-art performance in Hanabi.

Specifically, our method is able to search multiple moves ahead and discover joint deviations, which in general is intractable using tabular search. We also show the generality of our approach by showing that it outperforms Monte Carlo tree search in deterministic and stochastic versions of the Atari game Ms. Pacman.

2 Background

-------------

### 2.1 MDPs, POMDPs, and Dec-POMDPs

We consider Markov decision processes (MDPs) sutton2018reinforcement, where the agent observes the state $s_t \in \mathcal{S}$ at time $t$, performs an action according to its policy $a_t \sim \pi(s_t)$, and receives reward $r_t = r(s_t, a_t)$. The environment then transitions to the next state according to the transition probability $s_{t+1} \sim P(s_{t+1} \mid s_t, a_t)$.

POMDPs sondik1971optimal extend MDPs to the partially observable setting, where the agent cannot observe the true underlying state but instead receives information through an observation function $o_t = \Omega(s_t)$. Due to partial observability, the agent's policy $\pi$ often needs to take into account the entire action-observation history (AOH), denoted $\tau'_t = \{o_0, a_0, r_0, \dots, o_t\}$, with $\tau_t = \{s_0, a_0, r_0, \dots, s_t\}$ representing the true underlying trajectory.

Dec-POMDPs oliehoek2008optimal; bard2020hanabi extend POMDPs to the cooperative multi-agent setting. At each time step $t$, each agent $i$ receives its individual observation of the state, $o^i_t = \Omega^i(s_t)$, and selects action $a^i_t$. The joint action of the $N$ players is the tuple $a_t = (a^1_t, a^2_t, \dots, a^N_t)$ and can be observed by all players. We denote the individual policy of player $i$ as $\pi^i$ and the joint policy as $\pi$. We use $\tau^i_t = \{o^i_0, a_0, r_0, \dots, o^i_t\}$ to represent the AOH from the perspective of agent $i$.
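To make the AOH notation concrete, the sketch below builds $\tau^i_t$ from a true trajectory: joint actions and rewards are shared by every agent, while each state passes through the agent's own observation function $\Omega^i$. The toy state encoding (one private value per agent) and the helper names `aoh_for_agent` and `hanabi_like_omega` are hypothetical, chosen only to mimic Hanabi's "see your teammates' cards but not your own" structure.

```python
from typing import Any, Callable, List, Sequence, Tuple


def aoh_for_agent(
    i: int,
    states: Sequence[Any],
    joint_actions: Sequence[Tuple[int, ...]],
    rewards: Sequence[float],
    omega: Callable[[int, Any], Any],
) -> List[Any]:
    """Build tau^i_t = [o^i_0, a_0, r_0, ..., o^i_t] from a true trajectory.

    `states` holds s_0..s_t; `joint_actions` and `rewards` hold a_0..a_{t-1}
    and r_0..r_{t-1}. Joint actions and rewards are visible to every agent,
    whereas observations go through agent i's own observation function.
    """
    assert len(states) == len(joint_actions) + 1 == len(rewards) + 1
    history: List[Any] = []
    for k, a in enumerate(joint_actions):
        history += [omega(i, states[k]), a, rewards[k]]
    history.append(omega(i, states[-1]))
    return history


def hanabi_like_omega(i: int, state: Tuple[int, ...]) -> Tuple[Any, ...]:
    """Toy observation function (assumption): agent i sees every other
    agent's private value but not its own, loosely mimicking Hanabi hands."""
    return tuple(None if j == i else v for j, v in enumerate(state))


states = [(3, 5), (3, 4)]
print(aoh_for_agent(0, states, [(0, 1)], [1.0], hanabi_like_omega))
# -> [(None, 5), (0, 1), 1.0, (None, 4)]
```

The two agents share the middle entries (the joint action and reward) but see complementary parts of each state, which is exactly why their beliefs over $\tau_t$ differ.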

### 2.2 Tabular Search in Dec-POMDPs

SPARTA lerer2019improving is a tabular search algorithm previously applied in Dec-POMDPs; it is proven to never hurt performance and in practice greatly improves it. SPARTA assumes that all agents in a game agree on a joint blueprint policy $\pi_{bp}$ and that one or more agents may choose to deviate from $\pi_{bp}$ at test time. We assume a two-player setting in the following discussion. There are two versions of SPARTA.

In single-agent SPARTA, only one agent may deviate from the blueprint. We denote the searching agent as agent $i$ and the other agent as $-i$. Agent $i$ maintains a private belief over the trajectories it may be in, given its AOH:

$$B^i(\tau_t) = P(\tau_t \mid \tau^i_t).$$

At action selection time, agent $i$ computes a Monte Carlo estimate of the value of each action, assuming both players follow the blueprint policy $\pi_{bp}$ thereafter:

$$Q(\tau^i_t, a_t) = \mathbb{E}_{\tau_t \sim B^i(\tau_t),\; \tau_T \sim P(\tau_T \mid \tau_t, a_t, \pi_{bp})}\, R_t(\tau_T) \tag{1}$$

where $R_t(\tau_T) = \sum_{t'=t}^{T} r_{t'}$ is the forward-looking return from $t$ to the termination step $T$. Agent $i$ will pick $\arg\max_{a_t} Q(\tau^i_t, a_t)$ instead of the blueprint action $a_{bp}$ if $\max_{a_t} Q(\tau^i_t, a_t) - Q(\tau^i_t, a_{bp}) > \epsilon$.
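A minimal sketch of single-agent SPARTA's action rule: a Monte Carlo version of eq. (1) followed by the $\epsilon$ deviation test. It assumes a hypothetical simulator hook `rollout_return(tau, a, rng)` that plays action `a` from a belief sample `tau`, lets both players follow $\pi_{bp}$ until termination, and returns $R_t(\tau_T)$; the function name and parameters are illustrative, not SPARTA's actual code.

```python
import random
from statistics import mean
from typing import Any, Callable, Dict, Hashable, List, Sequence, Tuple


def sparta_single_agent_action(
    belief_samples: Sequence[Any],
    actions: Sequence[Hashable],
    blueprint_action: Hashable,
    rollout_return: Callable[[Any, Hashable, random.Random], float],
    epsilon: float,
    num_rollouts: int = 100,
    seed: int = 0,
) -> Tuple[Hashable, Dict[Hashable, float]]:
    """Estimate Q(tau^i_t, a) for every action, then deviate from the
    blueprint only if the best action beats it by more than epsilon."""
    rng = random.Random(seed)
    # Draw belief samples tau_t ~ B^i once and reuse them for every action,
    # so value differences are not dominated by belief-sampling noise.
    taus: List[Any] = [rng.choice(belief_samples) for _ in range(num_rollouts)]
    q = {a: mean(rollout_return(tau, a, rng) for tau in taus) for a in actions}
    best = max(q, key=q.get)
    if q[best] - q[blueprint_action] > epsilon:
        return best, q
    return blueprint_action, q
```

Reusing the same belief samples across actions (common random numbers) is a design choice of this sketch; it tends to reduce the variance of the difference that the $\epsilon$ test compares.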

In multi-agent SPARTA, both agents are allowed to search at test time. However, since agent $i$'s belief over states depends on agent $-i$'s policy, agent $i$ must replicate the search policy of agent $-i$. Because agent $i$ does not know the private state of agent $-i$, it must compute agent $-i$'s policy for *every* possible AOH $\tau^{-i}_t$ that agent $-i$ may have seen, a process referred to as *range-search*. Once a correct belief is computed, agent $i$ runs its own search. Range-search can be prohibitively expensive because players might have millions of potential AOHs; in Hanabi, for example, there can be up to about 10 million. To mitigate this problem, the SPARTA authors execute multi-agent search only when the range of AOHs is small enough for it to be computationally feasible, and fall back to single-agent search otherwise. Even so, SPARTA multi-agent search is limited to one-ply search: each agent calculates the expected value of only the next action, and the computational complexity grows exponentially with each additional ply.

In contrast, our non-tabular multi-agent search technique makes it possible to conduct two-ply and deeper search.

### 2.3 Reinforcement Learning

Our goal is to learn a stationary policy $\pi(a \mid s)$ that maximizes the expected undiscounted return $J(\pi) = \mathbb{E}_{s_0 \sim \rho(s)} \mathbb{E}_{\pi} \sum_{t=0}^{\infty} r(s_t, a_t)$, where $\rho(s)$ is the initial state distribution. For any policy $\pi$ we define its value $V^{\pi}(s_t) = \mathbb{E}_{\pi}\big(\sum_{l=0}^{\infty} r(s_{t+l}, a_{t+l}) \mid s_t\big)$, its Q-value $Q^{\pi}(s_t, a_t) = \mathbb{E}_{\pi}\big(\sum_{l=0}^{\infty} r(s_{t+l}, a_{t+l}) \mid s_t, a_t\big)$, and its advantage $A^{\pi}(s_t, a_t) = Q^{\pi}(s_t, a_t) - V^{\pi}(s_t)$. In reinforcement learning (RL), the transition probability $P$ and the reward $r$ are unknown, and the policy is learned by sampling trajectories $\tau = ((s_0, a_0), \dots, (s_T, a_T))$ from the environment.

In Q-learning, we use the sampled trajectories to learn the Q-function of the optimal policy, given by the Bellman equation $Q(s,a) = \mathbb{E}_{s'}\big(r(s,a) + \gamma \max_{a'} Q(s',a')\big)$. The learned policy is greedy with respect to $Q$: $\pi(s) = \arg\max_a Q(s,a)$. In DQN, the Q-value is approximated with a neural network $Q_\theta$ that is trained by taking gradients of the mean squared Bellman error:

$$\nabla_\theta\, \hat{\mathbb{E}} \Big( Q_\theta(s,a) - \big(r(s,a) + \gamma \max_{a'} Q_{\theta'}(s',a')\big) \Big)^2 \tag{2}$$

where $\theta'$ is a fixed copy of $\theta$ and $\hat{\mathbb{E}}$ denotes the empirical average over a finite batch of sampled transitions.
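The sketch below computes the Bellman targets and the mean squared Bellman error of eq. (2) for a batch, and takes one gradient step. As a simplifying assumption it replaces the neural network with a lookup table (`QTable`, a hypothetical alias), so $\theta$ is the table itself and the gradient has the closed form $2(Q(s,a) - y)/|\text{batch}|$. The `done` flag, which drops the bootstrap term for terminal transitions, is a standard convention that eq. (2) leaves implicit.

```python
from typing import Dict, List, Sequence, Tuple

QTable = Dict[int, List[float]]                 # state -> action values (toy, tabular)
Transition = Tuple[int, int, float, int, bool]  # (s, a, r, s_next, done)


def bellman_targets(batch: Sequence[Transition], q_target: QTable, gamma: float) -> List[float]:
    """y = r + gamma * max_a' Q_theta'(s', a') using the frozen copy theta'."""
    return [r if done else r + gamma * max(q_target[s2]) for (_, _, r, s2, done) in batch]


def mean_squared_bellman_error(batch: Sequence[Transition], q_online: QTable,
                               q_target: QTable, gamma: float) -> float:
    ys = bellman_targets(batch, q_target, gamma)
    return sum((q_online[s][a] - y) ** 2 for (s, a, *_), y in zip(batch, ys)) / len(batch)


def sgd_step(batch: Sequence[Transition], q_online: QTable, q_target: QTable,
             gamma: float, lr: float) -> None:
    """One gradient-descent step on the loss above, with theta = the table:
    d/dQ(s,a) of the batch mean is 2 * (Q(s,a) - y) / |batch|."""
    ys = bellman_targets(batch, q_target, gamma)
    grads: Dict[Tuple[int, int], float] = {}
    for (s, a, *_), y in zip(batch, ys):
        grads[(s, a)] = grads.get((s, a), 0.0) + 2.0 * (q_online[s][a] - y) / len(batch)
    # Apply all gradients after computing them, so every term sees the same theta.
    for (s, a), g in grads.items():
        q_online[s][a] -= lr * g
```

Keeping `q_target` separate from `q_online` mirrors the role of the fixed copy $\theta'$: the targets do not move while $\theta$ is being updated.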

In policy gradient (PG) methods, we directly learn a parameterized policy $\pi_\theta$ by performing gradient ascent on $J$, using the following estimator of its gradient:

$$\nabla_\theta\, \hat{\mathbb{E}} \big( \log \pi_\theta(a \mid s)\, \hat{A}(s,a) \big) \tag{3}$$

where $\hat{A}$ is an estimate of the advantage of $\pi_\theta$.
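As an illustration of estimator (3), the sketch below assumes a tabular softmax policy (a hypothetical stand-in for a network), where `theta[s]` holds the logits of state `s`. For a softmax, $\partial \log \pi(a \mid s) / \partial \theta_{s,b} = \mathbb{1}[b = a] - \pi(b \mid s)$, so the batch estimate is an advantage-weighted average of these terms; the update ascends it because $J$ is maximized.

```python
import math
from typing import Dict, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def policy_gradient(theta: Dict[int, List[float]],
                    batch: Sequence[Tuple[int, int, float]]) -> Dict[int, List[float]]:
    """Empirical estimate of grad_theta E[log pi_theta(a|s) * A_hat(s, a)]
    from (s, a, A_hat) samples, for a tabular softmax policy."""
    grad = {s: [0.0] * len(logits) for s, logits in theta.items()}
    for s, a, adv in batch:
        probs = softmax(theta[s])
        for b, p in enumerate(probs):
            grad[s][b] += ((1.0 if b == a else 0.0) - p) * adv / len(batch)
    return grad


def ascent_step(theta: Dict[int, List[float]],
                batch: Sequence[Tuple[int, int, float]], lr: float) -> None:
    """Move theta along the estimated gradient (ascent, since J is maximized)."""
    grad = policy_gradient(theta, batch)
    for s in theta:
        theta[s] = [x + lr * g for x, g in zip(theta[s], grad[s])]
```

With a positive advantage estimate, the step raises the probability of the sampled action; a negative one lowers it.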

### 2.4 Decision-Time Planning

To make better decisions at test time, we can specialize the learned policy, which we refer to as the blueprint policy (also known as the prior policy), to the current state by using it as a prior in an online planning algorithm.

One of the most popular planning algorithms is Monte Carlo tree search (MCTS), which has famously enabled superhuman performance in Go, chess, and many other settings silver2016mastering; silver2017mastering; silver2018general; schrittwieser2019mastering.

MCTS builds a tree of potential future states starting from the current state. Each node $s$ keeps track of its state-action visitation counts $N(s,a)$ and an estimate of its Q-values $Q(s,a)$, which are refined each time the node is visited. The prior policy guides the selection of the next action during tree traversal.
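To show how per-node statistics and a prior can steer traversal, here is a minimal sketch of one node. It assumes the PUCT selection rule popularized by AlphaZero-style search; the text above does not specify a rule, so treat both the formula and the constant `c_puct` as illustrative assumptions. The `+ 1` inside the square root is a common variant that lets the prior decide the very first visit.

```python
import math
from typing import List, Sequence


class PuctNode:
    """Per-node MCTS statistics with prior-guided action selection (sketch)."""

    def __init__(self, prior: Sequence[float]):
        self.prior: List[float] = list(prior)          # P(s, a) from the prior policy
        self.visits: List[int] = [0] * len(self.prior)  # N(s, a)
        self.value_sum: List[float] = [0.0] * len(self.prior)

    def q(self, a: int) -> float:
        """Q(s, a) as the mean backed-up return; 0 for unvisited actions."""
        return self.value_sum[a] / self.visits[a] if self.visits[a] else 0.0

    def select(self, c_puct: float = 1.5) -> int:
        # Exploration bonus is proportional to the prior and decays with N(s, a).
        scale = math.sqrt(sum(self.visits) + 1)

        def score(a: int) -> float:
            return self.q(a) + c_puct * self.prior[a] * scale / (1 + self.visits[a])

        return max(range(len(self.prior)), key=score)

    def backup(self, a: int, value: float) -> None:
        """Refine N(s, a) and Q(s, a) after a simulation through this node."""
        self.visits[a] += 1
        self.value_sum[a] += value


node = PuctNode([0.1, 0.7, 0.2])
print(node.select())  # highest-prior action first
```

Because every state-action pair needs its own counters and several visits before $Q(s,a)$ is reliable, this bookkeeping is exactly what becomes expensive as branching factors grow.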

MCTS does not scale well in environments with large branching factors caused by a high degree of stochasticity or partial observability. For example, MCTS becomes very expensive as the size of the action space increases, because it must build an explicit tree to keep statistics about state-action pairs, and nodes need to be visited several times for the method to be effective. Furthermore, in the worst case MCTS can expand very deeply a branch that it misidentifies as optimal due to a lack of exploration (a problem exacerbated in high dimensions), which leads to a worst-case sample complexity worse than that of uniform sampling munos2014bandits.

3 Reinforcement Learning Fine-Tuning
-------------------------------------

To alleviate the inefficiency of tabular search methods like MCTS in large state and action spaces, we propose to formulate online planning as an RL problem that should be quickly solvable given a sufficiently strong blueprint policy. We do so by using RL as a multiple-step policy improvement operator efroni2018multiple. More specifically, we concentrate the initial state distribution on the current state $s^*$ and reduce the horizon of the problem, leading to the following truncated objective:

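$$\max_{\tilde{\pi}} \Big( \mathbb{E}_{s_0 \sim \rho_{s^*}(s)}\, \mathbb{E}_{a_{0:H-1},\, s_{1:H} \sim \tilde{\pi}} \sum_{t=0}^{H-1} r(s_t, a_t) + \mathbb{E}_{a_{H:\infty},\, s_{H+1:\infty} \sim \pi} \sum_{t=H}^{\infty} r(s_t, a_t) \Big) \tag{4}$$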

where $\rho_{s^*}(s) = \mathbb{1}(s = s^*)$ and $\pi$ is the blueprint policy. If $\pi$ is optimal, then $\tilde{\pi} = \pi$ is a solution to this problem. We call this procedure RL Fine-Tuning or RL Search and use the two terms interchangeably in the remainder of the paper.
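A Monte Carlo reading of objective (4): since $\rho_{s^*}$ is a point mass, every rollout starts at $s^*$, follows the online policy $\tilde{\pi}$ for the first $H$ steps, and then hands control to the blueprint $\pi$ until termination. The simulator interface `step(s, a, rng) -> (next_state, reward, done)`, the policy signature, and the `max_steps` safety cap are assumptions of this sketch.

```python
import random
from typing import Any, Callable, Tuple

Policy = Callable[[Any, random.Random], Any]
Step = Callable[[Any, Any, random.Random], Tuple[Any, float, bool]]


def truncated_return(s_star: Any, online: Policy, blueprint: Policy, step: Step,
                     H: int, rng: random.Random, max_steps: int = 10_000) -> float:
    """One sample of the quantity inside eq. (4): online policy for steps
    0..H-1, blueprint from step H until the episode terminates."""
    s, total = s_star, 0.0
    for t in range(max_steps):
        policy = online if t < H else blueprint
        s, r, done = step(s, policy(s, rng), rng)
        total += r
        if done:
            break
    return total


def estimate_truncated_objective(s_star: Any, online: Policy, blueprint: Policy,
                                 step: Step, H: int, num_rollouts: int = 100,
                                 seed: int = 0) -> float:
    """Average of independent truncated_return samples."""
    rng = random.Random(seed)
    samples = [truncated_return(s_star, online, blueprint, step, H, rng)
               for _ in range(num_rollouts)]
    return sum(samples) / len(samples)
```

In practice the blueprint tail is the expensive part; the policy and Q-value variants below replace it with a learned value at step $H$.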

The blueprint π is either directly parameterized by θ or is greedy with respect to a Q-value parameterized by θ. In both cases, we improve π for the next H steps by following the gradient of the truncated objective with respect to θ. We present the two approaches in more detail in the following subsections.

### 3.1 Policy Fine-Tuning

In policy fine-tuning, we use any actor-critic algorithm to train a blueprint policy and a value network. For our experiments in the Atari environment, we use PPO schulman2017proximal. At action selection time, to solve objective (4), we use the blueprint policy to initialize the online policy and the blueprint value to truncate the objective:

$$\max_{\tilde{\theta}} \Big( \mathbb{E}_{s_0 \sim \rho_{s^*}(s)}\, \mathbb{E}_{a_{0:H-1},\, s_{1:H} \sim \pi_{\tilde{\theta}}} \sum_{t=0}^{H-1} r(s_t, a_t) + V_\phi(s_H) \Big) \tag{5}$$

If $V_\phi$ is a perfect estimator of $V^{\pi_\theta}$, then we exactly optimize the truncated objective (4).

The fine-tuned policy $\pi_{\tilde{\theta}}$ is obtained by performing $N$ gradient steps on the PPO objective. The policy improvement step via policy gradient is presented in Algorithm 1.

Input: current state $s_t$, number of updates $N$, global policy parameters $\theta$, global value parameters $\phi$, horizon $H$, number of rollouts $M$

Output: updated parameters $\tilde{\theta}$

Init: $\theta_0 = \theta$, $\phi_0 = \phi$

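for $i \leftarrow 1$ to $N$ do

Collect $M$ trajectories of $H$ time steps starting from $s_t$ using $\pi_{\theta_{i-1}}$.

Compute the generalized advantage estimate from the temporal-difference residuals

$$\begin{aligned}
\delta_t &= r_t + \gamma V_{\phi_{i-1}}(s_{t+1}) - V_{\phi_{i-1}}(s_t) \quad \forall t \in [0, H-2] \\
\delta_{H-1} &= r_{H-1} + \gamma V_{\phi}(s_H) - V_{\phi_{i-1}}(s_{H-1})
\end{aligned} \tag{6}$$

$(\phi_i, \theta_i) \leftarrow \mathrm{PPO}(\phi_{i-1}, \theta_{i-1})$

return $\theta_N$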

Algorithm 1: Policy Gradient Improvement. We use a standard PPO update to fine-tune the blueprint policy $\pi_\theta$ and value $V_\phi$ from some state $s_t$.
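The advantage step of Algorithm 1 can be sketched as follows: TD residuals as in eq. (6), where the last residual bootstraps from the frozen global critic $V_\phi$ at $s_H$ while the others use the critic being fine-tuned, followed by the usual backward GAE accumulation $\hat{A}_t = \sum_l (\gamma\lambda)^l \delta_{t+l}$. The parameter $\lambda$ is not specified in the text and is an assumption here, as is the premise that the rollout does not terminate before step $H$.

```python
from typing import Any, Callable, List, Sequence


def horizon_gae(rewards: Sequence[float], states: Sequence[Any],
                v_current: Callable[[Any], float], v_global: Callable[[Any], float],
                gamma: float, lam: float) -> List[float]:
    """Advantages for one H-step search rollout.

    rewards: r_0..r_{H-1}; states: s_0..s_H. `v_current` plays the role of
    V_{phi_{i-1}} and `v_global` the frozen V_phi used only at s_H.
    """
    H = len(rewards)
    assert len(states) == H + 1
    deltas = []
    for t in range(H):
        next_value = v_global(states[H]) if t == H - 1 else v_current(states[t + 1])
        deltas.append(rewards[t] + gamma * next_value - v_current(states[t]))
    # A_t = sum_l (gamma * lam)^l * delta_{t+l}, accumulated backwards.
    advantages, running = [0.0] * H, 0.0
    for t in reversed(range(H)):
        running = deltas[t] + gamma * lam * running
        advantages[t] = running
    return advantages


print(horizon_gae([1.0, 1.0], [0, 1, 2], lambda s: 0.0, lambda s: 10.0, gamma=1.0, lam=1.0))
# -> [12.0, 11.0]
```

Bootstrapping the horizon from the frozen global critic keeps the truncation point anchored to the blueprint value, which is what makes the fine-tuned objective match eq. (5).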

### 3.2 Q-value Fine-Tuning

In Q-value fine-tuning, we train a Q-network using any off-policy RL algorithm. At action selection time, to solve objective (4), we use the blueprint Q-value to truncate the objective:

$$\max_{\tilde{\pi}} \Big( \mathbb{E}_{s_0 \sim \rho_{s^*}(s)}\, \mathbb{E}_{a_{0:H-1},\, s_{1:H} \sim \tilde{\pi}} \sum_{t=0}^{H-1} r(s_t, a_t) + \max_a Q_\theta(s_H, a) \Big) \tag{7}$$

The online policy $\tilde{\pi}$ is greedy with respect to the fine-tuned Q-network $Q_{\tilde{\theta}}$, which is obtained by performing $N$ gradient steps on the mean squared Bellman error. To alleviate instabilities, the transitions used to fine-tune the Q-network are sampled with probability $p$ from the global replay buffer and with probability $1-p$ from the trajectories sampled at action selection time. The Q-value improvement step is presented in Algorithm 2.

Input: current state $s_t$, number of updates $N$, global Q-network parameters $\theta$, horizon $H$, number of rollouts $M$, batch size $B$

Output: updated parameters $\tilde{\theta}$

Init: $\theta_0 = \theta$

Collect $M$ trajectories of $H$ time steps starting from $s_t$ using an $\epsilon$-greedy policy with respect to $Q_\theta$.

For each trajectory, if the environment has not terminated, replace $r_{t+H-1}$ with $r_{t+H-1} + \max_a Q_\theta(s_{t+H}, a)$.

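for $i \leftarrow 1$ to $N$ do

Sample $B$ transitions, each with probability $p$ from the global buffer and with probability $1-p$ from the $M$ collected trajectories.

$\theta_i \leftarrow \theta_{i-1} - \alpha \nabla_{\theta_{i-1}} \hat{\mathbb{E}} \big( Q_{\theta_{i-1}}(s,a) - (r(s,a) + \gamma \max_{a'} Q_{\theta'}(s',a')) \big)^2$, where $\alpha$ is the learning rate

return $\theta_N$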

Algorithm 2: Q-Value Improvement. We use a standard Bellman residual update to fine-tune the blueprint Q-function $Q_\theta$ from some state $s_t$.
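The two data-handling steps of Algorithm 2 can be sketched as follows: folding $\max_a Q_\theta(s_{t+H}, a)$ into the last reward of a non-terminated rollout, and drawing each training transition from the global buffer with probability $p$ or from the fresh search rollouts otherwise. Marking the bootstrapped transition as terminal is our own assumption, added so that a Bellman target like eq. (2) does not bootstrap a second time; the helper names are hypothetical.

```python
import random
from typing import Any, Callable, List, Sequence, Tuple

Transition = Tuple[Any, Any, float, Any, bool]  # (s, a, r, s_next, done)


def bootstrap_horizon(trajectory: Sequence[Transition],
                      q_blueprint: Callable[[Any], Sequence[float]],
                      terminated: bool) -> List[Transition]:
    """If an H-step rollout did not terminate, add max_a Q_theta(s_{t+H}, a)
    to its last reward and (assumption) flag that transition as done."""
    traj = list(trajectory)
    if terminated or not traj:
        return traj
    s, a, r, s_next, _ = traj[-1]
    traj[-1] = (s, a, r + max(q_blueprint(s_next)), s_next, True)
    return traj


def sample_mixed_batch(global_buffer: Sequence[Transition],
                       local: Sequence[Transition],
                       batch_size: int, p: float,
                       rng: random.Random) -> List[Transition]:
    """Each transition comes from the global replay buffer with probability p
    and from the rollouts collected at s_t with probability 1 - p."""
    return [rng.choice(global_buffer) if rng.random() < p else rng.choice(local)
            for _ in range(batch_size)]
```

Mixing in global-buffer transitions anchors the fine-tuned Q-network to the blueprint's broader experience, which is the stabilizing role the text assigns to $p$.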

### 3.3 Scaling Multi-Agent Search in Dec-POMDPs with RL Search

#### 3.3.1 Scaling Single-Agent RL Search

In a test game, agent $i$ maintains a belief over possible trajectories given its own AOH, $B^i(\tau_t) = P(\tau_t \mid \tau^i_t)$. At action selection time, agent $i$ performs Q-value fine-tuning (Algorithm 2) of the blueprint policy $\pi_{bp}$ on trajectories drawn from its current belief $B^i(\tau_t)$. This produces a new policy $\pi^*$. Next, we evaluate both $\pi^*$ and $\pi_{bp}$ on $E$ trajectories sampled from the belief. If the expected value of $\pi^*$ is at least $\epsilon$ higher than that of $\pi_{bp}$, the agent plays according to $\pi^*$ for the next $H$ moves and searches again at the $(H+1)$th move. Otherwise, the agent plays according to $\pi_{bp}$ for the current move and searches again on its next turn.
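The acceptance test described above can be sketched as a small function that compares the average return of $\pi^*$ and $\pi_{bp}$ on belief samples and reports how many moves to commit. The evaluation hook `evaluate(policy, tau)` is hypothetical; in practice it would roll out `policy` on a trajectory sampled from $B^i(\tau_t)$.

```python
from statistics import mean
from typing import Any, Callable, Sequence, Tuple


def choose_policy(belief_samples: Sequence[Any], blueprint: Any, finetuned: Any,
                  evaluate: Callable[[Any, Any], float], epsilon: float,
                  H: int) -> Tuple[Any, int]:
    """Return the policy to play and how many moves to play it before
    searching again: H moves for the fine-tuned policy, one for the blueprint."""
    v_finetuned = mean(evaluate(finetuned, tau) for tau in belief_samples)
    v_blueprint = mean(evaluate(blueprint, tau) for tau in belief_samples)
    if v_finetuned - v_blueprint >= epsilon:
        return finetuned, H  # commit to pi*; search again at move H + 1
    return blueprint, 1      # play the blueprint move; search again next turn
```

Evaluating both policies on the same belief samples keeps the comparison paired, so the $\epsilon$ margin guards mainly against estimation noise rather than sampling mismatch.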

#### 3.3.2 Scaling Multi-Agent RL Search

A critical limitation of single-agent SPARTA, multi-agent SPARTA, and single-agent RL search is that the searching agent assumes the other agent will follow the blueprint policy on all future turns. These methods therefore cannot find *joint* deviations $(a^{i*}_t, a^{-i*}_{t+1})$, in which it is beneficial for agent $i$ to deviate to action $a^{i*}_t$ if and only if the other agent $-i$ deviates to $a^{-i*}_{t+1}$, an action that the blueprint would not select. Searching for joint deviations with general tabular methods is computationally infeasible in large Dec-POMDPs such as Hanabi, because the effective branching factor is $\prod_i (|B^i(\tau_t)| \times |A^i|)$, which is about $20^{20}$ in Hanabi.

RL search enables searching over the next $H$ moves for all agents jointly, conditioning on common knowledge. The observations in a Dec-POMDP can be factorized into private observations and public observations $o^{pub}_t$. For example, in Hanabi the private observations are the teammates' hands, and the public observations are the played cards, discarded cards, hints given, and previous actions. The public observations are common knowledge among all players. We can define the common-knowledge public belief as $B^{pub}(\tau^{pub}_t) = \{\tau_t \mid \Omega^{pub}(\tau_t) = \tau^{pub}_t\}$, where $\tau^{pub}_t = \{o^{pub}_0, a_0, r_0, \dots, o^{pub}_t\}$ and $\Omega^{pub}$ is the public observation function. We can then repeatedly draw $\tau \sim B^{pub}(\tau^{pub}_t)$. The new policy is obtained via Q-value fine-tuning, in the same way as in single-agent RL search. Since the search procedure uses only public information, it can in principle be replicated independently by every player. However, to simplify engineering, we conduct the search only once and share the solution with all players. If the newly trained policy is better than the blueprint by at least $\epsilon$, every player uses it for their next $H$ moves.
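One simple way to draw $\tau \sim B^{pub}(\tau^{pub}_t)$, sketched here under stated assumptions, is rejection sampling: propose full trajectories (private and public parts) and keep those whose public projection matches the observed public history. The hooks `propose` and `public_view` (standing in for $\Omega^{pub}$) are hypothetical, and the text does not say which sampler was actually used; for real Hanabi belief sizes a smarter sampler would likely be needed.

```python
import random
from typing import Any, Callable, List


def sample_public_belief(observed_public: Any,
                         propose: Callable[[random.Random], Any],
                         public_view: Callable[[Any], Any],
                         n: int, rng: random.Random,
                         max_tries: int = 100_000) -> List[Any]:
    """Rejection sampler for B_pub(tau_pub) = {tau : Omega_pub(tau) = tau_pub}.
    May return fewer than n samples if max_tries is exhausted."""
    if n <= 0:
        return []
    samples: List[Any] = []
    for _ in range(max_tries):
        tau = propose(rng)
        if public_view(tau) == observed_public:
            samples.append(tau)
            if len(samples) == n:
                break
    return samples
```

Because the sampler depends only on public information and the random seed, players that agreed on a seed could in principle reproduce identical samples, which is the property that makes independent replication of the search possible.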

4 Experiments
--------------

###