id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

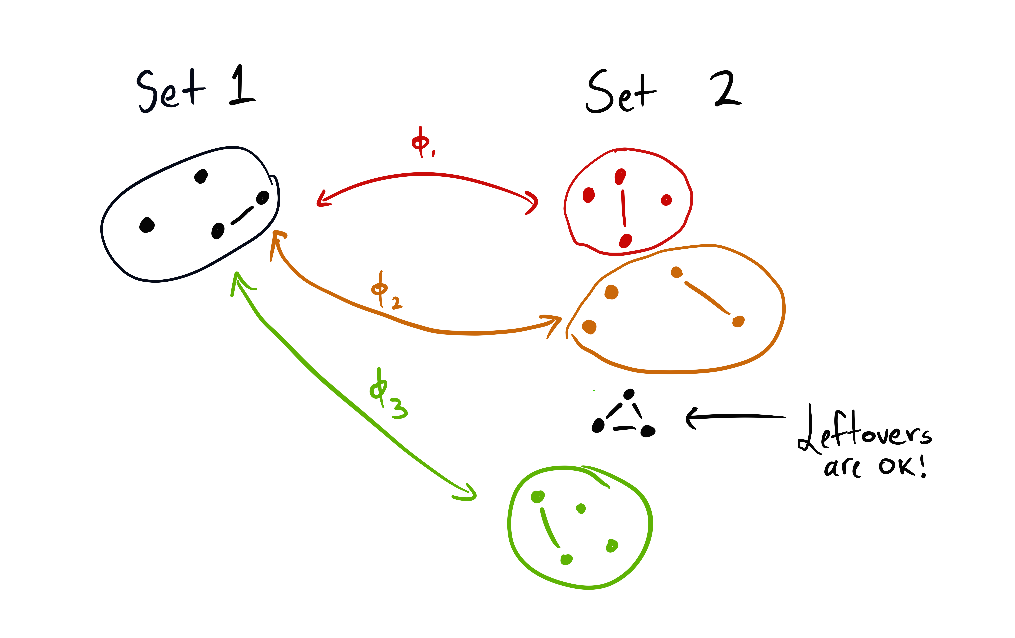

A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment

1 Introduction
---------------

In reinforcement learning [Sutton1998](#bib.bib61) (RL), agents identify policies to collect as much reward as possible in a given environment. Recently, leveraging parametric function approximators has led to tremendous success in applying RL to high-dimensional domains such as Atari games [Mnih2015](#bib.bib39) or robotics [Schulman2015](#bib.bib55) . In such domains, inspired by the policy gradient theorem [Sutton2000](#bib.bib62) ; [Degris2012](#bib.bib13) , actor-critic approaches [Lillicrap2016](#bib.bib35) ; [Mnih2016](#bib.bib40) attain state-of-the-art results by learning both a parametric policy and a value function.

Empowerment is an information-theoretic framework where agents maximize the mutual information between an action sequence and the state that is obtained after executing this action sequence from some given initial state [Klyubin2005](#bib.bib26) ; [Klyubin2008](#bib.bib27) ; [Salge2014](#bib.bib52) . It turns out that the mutual information is highest for such initial states where the number of reachable next states is largest. Policies that aim for high empowerment can lead to complex behavior, e.g. balancing a pole in the absence of any explicit reward signal [Jung2011](#bib.bib23) .

Despite progress on learning empowerment values with function approximators [Mohamed2015](#bib.bib41) ; [deAbril2018](#bib.bib12) ; [Qureshi2019](#bib.bib48) , there have been few attempts to combine empowerment with reward maximization, let alone to utilize empowerment for RL in the high-dimensional domains where it has only recently become applicable. We therefore propose a unified principle for reward maximization and empowerment, and demonstrate that empowered signals can boost RL in large-scale domains such as robotics. In short, our contributions are:

* a generalized Bellman optimality principle for joint reward maximization and empowerment,

* a proof for unique values and convergence to the optimal solution for our novel principle,

* empowered actor-critic methods boosting RL in MuJoCo compared to model-free baselines.

2 Background
-------------

### 2.1 Reinforcement Learning

In the discrete RL setting, an agent in state $s \in S$ executes an action $a \in A$ according to a behavioral policy $\pi_{\text{behave}}(a|s)$, which is a conditional probability distribution $\pi_{\text{behave}}: S \times A \to [0,1]$. In response, the environment transitions to a successor state $s' \in S$ according to a (probabilistic) state-transition function $P(s'|s,a)$, where $P: S \times A \times S \to [0,1]$. Furthermore, the environment generates a reward signal $r = R(s,a)$ according to a reward function $R: S \times A \to \mathbb{R}$. The agent’s aim is to maximize its expected future cumulative reward with respect to the behavioral policy, $\max_{\pi_{\text{behave}}} \mathbb{E}_{\pi_{\text{behave}},P}\left[\sum_{t=0}^{\infty} \gamma^t r_t\right]$, with $t$ being a time index and $\gamma \in (0,1)$ a discount factor. The optimal expected future cumulative reward values for a given state $s$ then obey the following recursion:

$$V^\star(s) = \max_a \Big( R(s,a) + \gamma\, \mathbb{E}_{P(s'|s,a)}\big[ V^\star(s') \big] \Big) =: \max_a Q^\star(s,a), \tag{1}$$

referred to as Bellman’s optimality principle [Bellman1957](#bib.bib4) , where V⋆ and Q⋆ are the optimal value functions.
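The recursion in Equation (1) can be turned into a fixed-point iteration. The following is a minimal sketch, assuming a small tabular MDP stored as nested lists; the function name `value_iteration` and the two-state example are illustrative assumptions and do not come from the paper.

```python
def value_iteration(P, R, gamma, num_iters=200):
    """Repeatedly apply the Bellman optimality backup of Equation (1).

    P[s][a][s2] is the transition probability P(s2 | s, a),
    R[s][a] is the reward R(s, a).
    """
    n_states = len(P)
    V = [0.0] * n_states
    for _ in range(num_iters):
        V = [
            max(
                R[s][a]
                + gamma * sum(P[s][a][s2] * V[s2] for s2 in range(n_states))
                for a in range(len(P[s]))
            )
            for s in range(n_states)
        ]
    return V


# Hypothetical two-state MDP: action 0 stays, action 1 switches state.
P = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.0, 1.0], [1.0, 0.0]],
]
R = [[0.0, 0.0], [1.0, 0.0]]  # reward 1 only for staying in state 1
V = value_iteration(P, R, gamma=0.9)
```

In this toy example the best strategy is to move to state 1 and stay there, so the values approach $1/(1-\gamma)$ in state 1 and $\gamma/(1-\gamma)$ in state 0.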

### 2.2 Empowerment

Empowerment is an information-theoretic method where an agent executes a sequence of $k$ actions $\vec{a} \in A^k$ when in state $s \in S$ according to a policy $\pi_{\text{empower}}(\vec{a}|s)$ which is a conditional probability distribution $\pi_{\text{empower}}: S \times A^k \to [0,1]$. This is slightly more general than in the RL setting where only a single action is taken upon observing a certain state. The agent’s aim is to identify an optimal policy $\pi_{\text{empower}}$ that maximizes the mutual information $I[\vec{A}, S' \mid s]$ between the action sequence $\vec{a}$ and the state $s'$ to which the environment transitions after executing $\vec{a}$ in $s$, formulated as:

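$$E^\star(s) = \max_{\pi_{\text{empower}}} I\big[\vec{A}, S' \,\big|\, s\big] = \max_{\pi_{\text{empower}}} \mathbb{E}_{\pi_{\text{empower}}(\vec{a}|s)\, P^{(k)}(s'|s,\vec{a})}\left[\log \frac{p(\vec{a}|s',s)}{\pi_{\text{empower}}(\vec{a}|s)}\right]. \tag{2}$$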

Here, $E^\star(s)$ refers to the optimal empowerment value and $P^{(k)}(s'|s,\vec{a})$ to the probability of transitioning to $s'$ after executing the sequence $\vec{a}$ in state $s$, where $P^{(k)}: S \times A^k \times S \to [0,1]$. Importantly, $p(\vec{a}|s',s) = \frac{P^{(k)}(s'|s,\vec{a})\,\pi_{\text{empower}}(\vec{a}|s)}{\sum_{\vec{a}} P^{(k)}(s'|s,\vec{a})\,\pi_{\text{empower}}(\vec{a}|s)}$ is the inverse dynamics model of $\pi_{\text{empower}}$. The implicit dependency of $p$ on the optimization argument $\pi_{\text{empower}}$ renders the problem non-trivial.

From an information-theoretic perspective, optimizing for empowerment is equivalent to maximizing the capacity [Shannon1948](#bib.bib57) of an information channel P(k)(s′|s,→a) with input →a and output s′ w.r.t. the input distribution πempower(→a|s), as outlined in the following [Csiszar1984](#bib.bib11) ; [Cover2006](#bib.bib10) .

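Define the functional $I_f(\pi_{\text{empower}}, P^{(k)}, q) := \mathbb{E}_{\pi_{\text{empower}}(\vec{a}|s)\, P^{(k)}(s'|s,\vec{a})}\left[\log \frac{q(\vec{a}|s',s)}{\pi_{\text{empower}}(\vec{a}|s)}\right]$, where $q$ is a conditional probability $q: S \times S \times A^k \to [0,1]$. Then the mutual information is recovered as a special case of $I_f$ with $I[\vec{A}, S' \mid s] = \max_q I_f(\pi_{\text{empower}}, P^{(k)}, q)$ for a given $\pi_{\text{empower}}$. The maximum argument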

$$q^\star(\vec{a}|s',s) = \frac{P^{(k)}(s'|s,\vec{a})\,\pi_{\text{empower}}(\vec{a}|s)}{\sum_{\vec{a}} P^{(k)}(s'|s,\vec{a})\,\pi_{\text{empower}}(\vec{a}|s)} \tag{3}$$

is the true Bayesian posterior p(→a|s′,s)—see [Cover2006](#bib.bib10) Lemma 10.8.1 for details.

Similarly, maximizing If(πempower,P(k),q) with respect to πempower for a given q leads to:

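$$\pi^\star_{\text{empower}}(\vec{a}|s) = \frac{\exp\left(\mathbb{E}_{P^{(k)}(s'|s,\vec{a})}\left[\log q(\vec{a}|s',s)\right]\right)}{\sum_{\vec{a}} \exp\left(\mathbb{E}_{P^{(k)}(s'|s,\vec{a})}\left[\log q(\vec{a}|s',s)\right]\right)}, \tag{4}$$

as explained e.g. in [Cover2006](#bib.bib10) page 335, similar to [Ortega2013](#bib.bib45) . The above yields the subsequent proposition.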

###### Proposition 1

Maximum Channel Capacity.

Iterating through Equations ([3](#S2.E3 "(3) ‣ 2.2 Empowerment ‣ 2 Background ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and ([4](#S2.E4 "(4) ‣ 2.2 Empowerment ‣ 2 Background ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) by computing $q$ given $\pi_{\text{empower}}$ and vice versa in an alternating fashion converges to an optimal pair $(q^\star, \pi^\star_{\text{empower}})$ that maximizes the mutual information $\max_{\pi_{\text{empower}}} I[\vec{A}, S' \mid s] = I_f(\pi^\star_{\text{empower}}, P^{(k)}, q^\star)$. The convergence rate is $O(1/N)$, where $N$ is the number of iterations, for any initial $\pi^{\text{ini}}_{\text{empower}}$ with support in $A^k\ \forall s$; see [Cover2006](#bib.bib10) Chapter 10.8 and [Csiszar1984](#bib.bib11) ; [Gallager1994](#bib.bib16) . This is known as the Blahut-Arimoto algorithm [Arimoto1972](#bib.bib2) ; [Blahut1972](#bib.bib7) .
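The alternating scheme of Proposition 1 can be sketched for a single fixed state $s$ as follows. This is a minimal illustration, assuming a tabular channel given as nested lists; the function name `blahut_arimoto` and the three-action example are assumptions made here, not the authors' code. The policy update uses the standard Blahut-Arimoto form, $\pi(\vec{a}|s) \propto \exp(\mathbb{E}_{P^{(k)}}[\log q(\vec{a}|s',s)])$.

```python
import math


def safe_log(x):
    """Logarithm that maps 0 to -inf instead of raising."""
    return math.log(x) if x > 0.0 else -math.inf


def blahut_arimoto(P, num_iters=100):
    """Estimate max_pi I[A; S' | s] for one fixed state s.

    P[a][s2] is the probability of reaching s2 after executing
    action (sequence) a in s. Returns (information in nats, pi).
    """
    n_a, n_s = len(P), len(P[0])
    pi = [1.0 / n_a] * n_a  # initial distribution with full support

    def posterior(pi):
        # Posterior q(a | s2, s) via Bayes' rule, as in Equation (3).
        marg = [sum(P[a][s2] * pi[a] for a in range(n_a)) for s2 in range(n_s)]
        return [
            [P[a][s2] * pi[a] / marg[s2] if marg[s2] > 0.0 else 0.0
             for s2 in range(n_s)]
            for a in range(n_a)
        ]

    for _ in range(num_iters):
        q = posterior(pi)
        # Policy update: pi(a) proportional to exp(E_P[log q(a | s2, s)]).
        logits = [
            sum(P[a][s2] * safe_log(q[a][s2])
                for s2 in range(n_s) if P[a][s2] > 0.0)
            for a in range(n_a)
        ]
        top = max(logits)
        weights = [math.exp(l - top) for l in logits]
        total = sum(weights)
        pi = [w / total for w in weights]

    # Mutual information under the final policy and its posterior.
    q = posterior(pi)
    info = sum(
        pi[a] * P[a][s2] * math.log(q[a][s2] / pi[a])
        for a in range(n_a)
        for s2 in range(n_s)
        if pi[a] > 0.0 and P[a][s2] > 0.0
    )
    return info, pi


# Hypothetical channel: action 0 reaches state 0, actions 1 and 2 both reach state 1.
info, pi = blahut_arimoto([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
```

Because only two next states are distinguishable in this example, the estimate equals $\log 2$ nats even though three actions are available, which matches the intuition that empowerment counts reachable next states rather than actions.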

Remark. *Empowerment is similar to curiosity concepts of predictive information that focus on the mutual information between the current and the subsequent state [Bialek2001](#bib.bib6) ; [Prokopenko2006](#bib.bib47) ; [Zahedi2010](#bib.bib68) ; [Still2012](#bib.bib60) ; [Montufar2016](#bib.bib42) ; [Schossau2016](#bib.bib53) .*

3 Motivation: Combining Reward Maximization with Empowerment
-------------------------------------------------------------

The Blahut-Arimoto algorithm presented in the previous section solves empowerment for low-dimensional discrete settings but does not readily scale to high-dimensional or continuous state-action spaces. While there has been progress on learning empowerment values with parametric function approximators [Mohamed2015](#bib.bib41) , how to combine it with reward maximization or RL remains open.

In principle, there are two possibilities for utilizing empowerment. The first is to directly use the policy π⋆empower obtained in the course of learning empowerment values E⋆(s). The second is to train a behavioral policy to take an action in each state such that the expected empowerment value of the next state is highest (requiring E⋆-values as a prerequisite). Note that the two possibilities are conceptually different. The latter seeks states with a large number of reachable next states [Jung2011](#bib.bib23) . The first, on the other hand, aims for high mutual information between actions and the subsequent state, which is not necessarily the same as seeking highly empowered states [Mohamed2015](#bib.bib41) .

We hypothesize empowered signals to be beneficial for RL, especially in high-dimensional environments and at the beginning of the training process when the initial policy is poor. In this work, we therefore combine reward maximization with empowerment inspired by the two behavioral possibilities outlined in the previous paragraph. Hence, we focus on the cumulative RL setting rather than the non-cumulative setting that is typical for empowerment. We furthermore use one-step empowerment as a reference, i.e. k=1, because cumulative one-step empowerment learning leads to high values in such states where the number of possibly reachable next states is high, and preserves hence the original empowerment intuition *without* requiring a multi-step policy—see Section [4.3](#S4.SS3 "4.3 Practical Verification in a Grid World Example ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment").

The first idea is to train a policy that trades off reward maximization and *learning* cumulative empowerment:

$$\max_{\pi_{\text{behave}}} \mathbb{E}_{\pi_{\text{behave}},P}\left[\sum_{t=0}^{\infty} \gamma^t \left(\alpha R(s_t,a_t) + \beta \log \frac{p(a_t|s_{t+1},s_t)}{\pi_{\text{behave}}(a_t|s_t)}\right)\right], \tag{5}$$

where α≥0 and β≥0 are scaling factors, and p indicates the inverse dynamics model of πbehave in line with Equation ([3](#S2.E3 "(3) ‣ 2.2 Empowerment ‣ 2 Background ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")). Note that p depends on the optimization argument πbehave, similar to ordinary empowerment, leading to a non-trivial Markov decision problem (MDP).

The second idea is to learn cumulative empowerment values *a priori* by solving Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) with α=0 and β=1. The outcome of this is a policy π⋆empower (and its inverse dynamics model p) that can be used to construct an intrinsic reward signal which is then added to the external reward:

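$$\max_{\pi_{\text{behave}}} \mathbb{E}_{\pi_{\text{behave}},P}\left[\sum_{t=0}^{\infty} \gamma^t \left(\alpha R(s_t,a_t) + \beta\, \mathbb{E}_{\pi^\star_{\text{empower}}(a|s_t)\, P(s'|s_t,a)}\left[\log \frac{p(a|s',s_t)}{\pi^\star_{\text{empower}}(a|s_t)}\right]\right)\right]. \tag{6}$$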

Importantly, Equation ([6](#S3.E6 "(6) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) poses an ordinary MDP since the reward signal is merely extended by another stationary state-dependent signal.

Both proposed ideas require solving the novel MDP as specified in Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")). In Section [4](#S4 "4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we therefore prove the existence of unique values and convergence of the corresponding value iteration scheme (including a grid world example). We also show how our formulation generalizes existing formulations from the literature. In Section [5](#S5 "5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we carry our ideas over to high-dimensional continuous state-action spaces by devising off-policy actor-critic-style algorithms inspired by the proposed MDP formulation. We evaluate our novel actor-critic-style algorithms in MuJoCo, demonstrating better initial and competitive final performance compared to model-free state-of-the-art baselines.

4 Joint Reward Maximization and Empowerment Learning in MDPs
-------------------------------------------------------------

We state our main theoretical result *in advance*, proven in the remainder of this section (an intuition follows): the solution to the MDP from Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) implies unique optimal values V⋆ obeying the Bellman recursion

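$$\begin{aligned}
V^\star(s) &= \max_{\pi_{\text{behave}}} \mathbb{E}_{\pi_{\text{behave}},P}\left[\sum_{t=0}^{\infty} \gamma^t \left(\alpha R(s_t,a_t) + \beta \log \frac{p(a_t|s_{t+1},s_t)}{\pi_{\text{behave}}(a_t|s_t)}\right) \,\middle|\, s_0 = s\right] \\
&= \max_{\pi_{\text{behave}},q} \mathbb{E}_{\pi_{\text{behave}}(a|s)}\left[\alpha R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\beta \log \frac{q(a|s',s)}{\pi_{\text{behave}}(a|s)} + \gamma V^\star(s')\right]\right] \\
&= \beta \log \sum_a \exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^\star(a|s',s) + \frac{\gamma}{\beta} V^\star(s')\right]\right),
\end{aligned} \tag{7}$$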

where

$$q^\star(a|s',s) = \frac{P(s'|s,a)\,\pi^\star_{\text{behave}}(a|s)}{\sum_a P(s'|s,a)\,\pi^\star_{\text{behave}}(a|s)} = p(a|s',s) \tag{8}$$

is the inverse dynamics model of the optimal behavioral policy π⋆behave that assumes the form:

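$$\pi^\star_{\text{behave}}(a|s) = \frac{\exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^\star(a|s',s) + \frac{\gamma}{\beta} V^\star(s')\right]\right)}{\sum_a \exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^\star(a|s',s) + \frac{\gamma}{\beta} V^\star(s')\right]\right)}, \tag{9}$$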

where the denominator is just $\exp\big((1/\beta) V^\star(s)\big)$. While the remainder of this section explains how Equations ([7](#S4.E7 "(7) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) to ([9](#S4.E9 "(9) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) are derived in detail, it can be insightful to understand at a high level what makes our formulation non-trivial. The difficulty is that the inverse dynamics model $p = q^\star$ depends on the optimal policy $\pi^\star_{\text{behave}}$ and vice versa, leading to a non-standard optimal value identification problem. Proving the existence of $V^\star$-values and showing how to compute them is therefore our main theoretical contribution, and implies the existence of at least one $(q^\star, \pi^\star_{\text{behave}})$-pair that satisfies the recursive relationship of Equations ([8](#S4.E8 "(8) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and ([9](#S4.E9 "(9) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")). This proof is given in Section [4.1](#S4.SS1 "4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") and leads naturally to a value iteration scheme to compute optimal values in practice.
The convergence of this scheme is proven in Section [4.2](#S4.SS2 "4.2 Value Iteration and Convergence to Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") and we also demonstrate value learning in a grid world example—see Section [4.3](#S4.SS3 "4.3 Practical Verification in a Grid World Example ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"). In Section [4.4](#S4.SS4 "4.4 Generalization of and Relation to Existing MDP formulations ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we elucidate how our formulation generalizes and relates to existing MDP formulations.

### 4.1 Existence of Unique Optimal Values

Following the second line of Equation ([7](#S4.E7 "(7) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")), we define the Bellman operator $B_\star: \mathbb{R}^{|S|} \to \mathbb{R}^{|S|}$ as

$$B_\star V(s) := \max_{\pi_{\text{behave}},q} \mathbb{E}_{\pi_{\text{behave}}(a|s)}\left[\alpha R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\beta \log \frac{q(a|s',s)}{\pi_{\text{behave}}(a|s)} + \gamma V(s')\right]\right]. \tag{10}$$

###### Theorem 1

Existence of Unique Optimal Values.

Assuming a bounded reward function R, the optimal value vector V⋆ as given in Equation ([7](#S4.E7 "(7) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) exists and is a unique fixed point V⋆=B⋆V⋆ of the Bellman operator B⋆ from Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")).

Proof. The proof of Theorem [1](#Thmtheorem1 "Theorem 1 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") comprises three steps. First, we prove for a given (q,πbehave)-pair the existence of unique values V(q,πbehave) which obey the following recursion

$$V^{(q,\pi_{\text{behave}})}(s) = \mathbb{E}_{\pi_{\text{behave}}(a|s)}\left[\alpha R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\beta \log \frac{q(a|s',s)}{\pi_{\text{behave}}(a|s)} + \gamma V^{(q,\pi_{\text{behave}})}(s')\right]\right]. \tag{11}$$

This result is obtained through Proposition [2](#Thmproposition2 "Proposition 2 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") following [Bertsekas1996](#bib.bib5) ; [Rubin2012](#bib.bib50) ; [Grau-Moya2016](#bib.bib18) where we show that the value vector $V^{(q,\pi_{\text{behave}})}$ is a unique fixed point of the operator $B_{q,\pi_{\text{behave}}}: \mathbb{R}^{|S|} \to \mathbb{R}^{|S|}$ given by

$$B_{q,\pi_{\text{behave}}} V(s) := \mathbb{E}_{\pi_{\text{behave}}(a|s)}\left[\alpha R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\beta \log \frac{q(a|s',s)}{\pi_{\text{behave}}(a|s)} + \gamma V(s')\right]\right]. \tag{12}$$

Second, we prove in Proposition [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") that solving the right-hand side of Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) for the pair $(q,\pi_{\text{behave}})$ can be achieved with a Blahut-Arimoto-style algorithm in line with [Gallager1994](#bib.bib16) . Third, we complete the proof in Proposition [4](#Thmproposition4 "Proposition 4 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") based on Propositions [2](#Thmproposition2 "Proposition 2 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") and [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") by showing that $V^\star = \max_{\pi_{\text{behave}},q} V^{(q,\pi_{\text{behave}})}$, where the vector-valued max-operator is well-defined because both $\pi_{\text{behave}}$ and $q$ are conditioned on $s$. The proof completion follows again [Bertsekas1996](#bib.bib5) ; [Rubin2012](#bib.bib50) ; [Grau-Moya2016](#bib.bib18) . □

###### Proposition 2

Existence of Unique Values for a Given $(q,\pi_{\text{behave}})$-Pair.

Assuming a bounded reward function $R$, the value vector $V^{(q,\pi_{\text{behave}})}$ as given in Equation ([11](#S4.E11 "(11) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) exists and is a unique fixed point $V^{(q,\pi_{\text{behave}})} = B_{q,\pi_{\text{behave}}} V^{(q,\pi_{\text{behave}})}$ of the Bellman operator $B_{q,\pi_{\text{behave}}}$ from Equation ([12](#S4.E12 "(12) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")).

As opposed to the Bellman operator B⋆, the operator Bq,πbehave does *not* include a max-operation that incurs a non-trivial recursive relationship between optimal arguments. The proof for existence of unique values follows hence standard methodology [Bertsekas1996](#bib.bib5) ; [Rubin2012](#bib.bib50) ; [Grau-Moya2016](#bib.bib18) and is given in Appendix [A.1](#A1.SS1 "A.1 Proof of Proposition 2 from the Main Paper ‣ Appendix A Theoretical Analysis ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment").

###### Proposition 3

Blahut-Arimoto for One Value Iteration Step.

Assuming that $R$ is bounded, the maximization problem $\max_{\pi_{\text{behave}},q}$ from Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) in the Bellman operator $B_\star$ can be solved for $(q,\pi_{\text{behave}})$ by iterating through the following two equations in an alternating fashion:

$$q^{(m)}(a|s',s) = \frac{P(s'|s,a)\,\pi^{(m)}_{\text{behave}}(a|s)}{\sum_a P(s'|s,a)\,\pi^{(m)}_{\text{behave}}(a|s)}, \tag{13}$$

$$\pi^{(m+1)}_{\text{behave}}(a|s) = \frac{\exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^{(m)}(a|s',s) + \frac{\gamma}{\beta} V(s')\right]\right)}{\sum_a \exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^{(m)}(a|s',s) + \frac{\gamma}{\beta} V(s')\right]\right)}, \tag{14}$$

where $m$ is the iteration index. The convergence rate is $O(1/M)$ for arbitrary initial $\pi^{(0)}_{\text{behave}}$ with support in $A\ \forall s$, where $M$ is the total number of iterations. The complexity for a single $s$ is $O(M|S||A|)$.

Proof Outline. The problem in Proposition [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") is mathematically similar to the maximum channel capacity problem [Shannon1948](#bib.bib57) from Proposition [1](#Thmproposition1 "Proposition 1 ‣ 2.2 Empowerment ‣ 2 Background ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") and proving convergence follows similar steps that we outline here—details can be found in Appendix [A.2](#A1.SS2 "A.2 Proof of Proposition 3 from the Main Paper ‣ Appendix A Theoretical Analysis ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"). First, we prove that optimizing the right-hand side of Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) w.r.t. q for a given πbehave results in Equation ([13](#S4.E13 "(13) ‣ Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) according to [Cover2006](#bib.bib10) Lemma 10.8.1. Second, we prove that optimizing w.r.t. πbehave for a given q results in Equation ([14](#S4.E14 "(14) ‣ Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) following standard techniques from variational calculus and Lagrange multipliers. 
Third, we prove convergence to a global maximum when iterating alternately through Equations ([13](#S4.E13 "(13) ‣ Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and ([14](#S4.E14 "(14) ‣ Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) following [Gallager1994](#bib.bib16) .
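The alternating updates of Equations (13) and (14) can be sketched for a single state $s$ as below. This is an illustrative sketch under stated assumptions: a tabular model stored as nested lists, $\beta > 0$, and a hypothetical function name `blahut_arimoto_backup`; it is not the authors' implementation.

```python
import math


def blahut_arimoto_backup(P_s, R_s, V, alpha, beta, gamma, num_iters=100):
    """Approximate the inner maximization of Equation (10) for one state s.

    P_s[a][s2] = P(s2 | s, a), R_s[a] = R(s, a), V[s2] = current values.
    Assumes beta > 0. Returns (pi, value), where value is the log-sum-exp
    of the exponents in Equation (14), scaled by beta.
    """
    n_a, n_s = len(P_s), len(V)
    pi = [1.0 / n_a] * n_a  # initial policy with full support
    value = 0.0
    for _ in range(num_iters):
        # Marginal next-state distribution under the current policy.
        marg = [sum(P_s[a][s2] * pi[a] for a in range(n_a)) for s2 in range(n_s)]
        logits = []
        for a in range(n_a):
            inner = 0.0
            for s2 in range(n_s):
                if P_s[a][s2] > 0.0:
                    # Equation (13): inverse dynamics via Bayes' rule.
                    if pi[a] > 0.0:
                        log_q = math.log(P_s[a][s2] * pi[a] / marg[s2])
                    else:
                        log_q = -math.inf
                    inner += P_s[a][s2] * (log_q + gamma / beta * V[s2])
            logits.append(alpha / beta * R_s[a] + inner)
        # Equation (14): softmax over the exponents, shifted for stability.
        top = max(logits)
        weights = [math.exp(l - top) for l in logits]
        total = sum(weights)
        pi = [w / total for w in weights]
        value = beta * (top + math.log(total))
    return pi, value


# Hypothetical state with two actions that lead deterministically to distinct states.
pi, value = blahut_arimoto_backup(
    P_s=[[1.0, 0.0], [0.0, 1.0]], R_s=[1.0, 0.0], V=[0.0, 0.0],
    alpha=1.0, beta=1.0, gamma=0.9,
)
```

In this example each next state identifies its action, so $\log q = 0$ and the policy reduces to a softmax over rewards; the returned value is then $\log(1 + e)$.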

###### Proposition 4

Completing the Proof of Theorem [1](#Thmtheorem1 "Theorem 1 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment").

The optimal value vector is given by $V^\star = \max_{\pi_{\text{behave}},q} V^{(q,\pi_{\text{behave}})}$ and is a unique fixed point $V^\star = B_\star V^\star$ of the Bellman operator $B_\star$.

Completing the proof of Theorem [1](#Thmtheorem1 "Theorem 1 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") requires two ingredients: the existence of unique V(q,πbehave)-values for any (q,πbehave)-pair as proven in Proposition [2](#Thmproposition2 "Proposition 2 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), and the fact that the optimal Bellman operator can be expressed as B⋆=maxπbehave,qBq,πbehave where maxπbehave,q is the max-operator from Proposition [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"). The proof follows then standard methodology [Bertsekas1996](#bib.bib5) ; [Rubin2012](#bib.bib50) ; [Grau-Moya2016](#bib.bib18) , see Appendix [A.3](#A1.SS3 "A.3 Proof of Proposition 4 from the Main Paper ‣ Appendix A Theoretical Analysis ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment").

### 4.2 Value Iteration and Convergence to Optimal Values

In the previous section, we have proven the existence of unique optimal values V⋆ that are a fixed point of the Bellman operator B⋆. This section devises a value iteration scheme based on the operator B⋆ and proves its convergence. We begin with a corollary that expresses B⋆ more concisely.

###### Corollary 1

Optimal Bellman Operator.

The operator B⋆ from Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) can be written as

$$B_\star V(s) = \beta \log \sum_a \exp\left(\frac{\alpha}{\beta} R(s,a) + \mathbb{E}_{P(s'|s,a)}\left[\log q^{\text{converged}}(a|s',s) + \frac{\gamma}{\beta} V(s')\right]\right), \tag{15}$$

where $q^{\text{converged}}(a|s',s)$ is the result of the converged Blahut-Arimoto scheme from Proposition [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment").

This result is obtained by plugging the converged solution $\pi^{\text{converged}}_{\text{behave}}$ from Equation ([14](#S4.E14 "(14) ‣ Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) into Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and leads naturally to a two-level value iteration algorithm that proceeds as follows: the outer loop updates the values $V$ by applying Equation ([15](#S4.E15 "(15) ‣ Corollary 1 ‣ 4.2 Value Iteration and Convergence to Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) repeatedly; the inner loop applies the Blahut-Arimoto algorithm from Proposition [3](#Thmproposition3 "Proposition 3 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") to identify $q^{\text{converged}}$ required for the outer value update.
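The two-level scheme can be sketched as follows, assuming a small tabular MDP, $\beta > 0$, and fixed iteration counts in place of convergence checks. The names `inner_backup` and `empowered_value_iteration` and the toy MDP are hypothetical choices for illustration only.

```python
import math


def inner_backup(P_s, R_s, V, alpha, beta, gamma, inner_iters):
    """Inner loop: Blahut-Arimoto updates (13) and (14) for one state."""
    n_a, n_s = len(P_s), len(V)
    pi = [1.0 / n_a] * n_a
    value = 0.0
    for _ in range(inner_iters):
        marg = [sum(P_s[a][s2] * pi[a] for a in range(n_a)) for s2 in range(n_s)]
        logits = []
        for a in range(n_a):
            inner = 0.0
            for s2 in range(n_s):
                if P_s[a][s2] > 0.0:
                    if pi[a] > 0.0:
                        log_q = math.log(P_s[a][s2] * pi[a] / marg[s2])
                    else:
                        log_q = -math.inf
                    inner += P_s[a][s2] * (log_q + gamma / beta * V[s2])
            logits.append(alpha / beta * R_s[a] + inner)
        top = max(logits)
        weights = [math.exp(l - top) for l in logits]
        total = sum(weights)
        pi = [w / total for w in weights]
        # Right-hand side of Equation (15) with the current q.
        value = beta * (top + math.log(total))
    return pi, value


def empowered_value_iteration(P, R, alpha, beta, gamma,
                              outer_iters=300, inner_iters=50):
    """Outer loop: apply the backup of Equation (15) to every state."""
    n_s = len(P)
    V = [0.0] * n_s
    policy = [None] * n_s
    for _ in range(outer_iters):
        results = [
            inner_backup(P[s], R[s], V, alpha, beta, gamma, inner_iters)
            for s in range(n_s)
        ]
        policy = [pi for pi, _ in results]
        V = [value for _, value in results]
    return V, policy


# Hypothetical MDP: from either state, action a leads deterministically to state a.
P = [[[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]]]
R = [[0.0, 0.0], [0.0, 0.0]]
V, policy = empowered_value_iteration(P, R, alpha=0.0, beta=1.0, gamma=0.9)
```

With $\alpha = 0$ and $\beta = 1$ this toy problem is pure cumulative one-step empowerment: every state offers $\log 2$ nats per step, so the values approach $\log 2 / (1 - \gamma)$ and the policy stays uniform.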

###### Theorem 2

Convergence to Optimal Values.

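Assume that $R$ is bounded and let $\epsilon \in \mathbb{R}$ be a positive number such that $\epsilon < \frac{\eta}{1-\gamma}$, where $\eta = \alpha \max_{s,a} |R(s,a)| + \beta \log |A|$. If the value iteration scheme with initial values $V(s) = 0\ \forall s$ is run for $i \geq \left\lceil \log_\gamma \frac{\epsilon(1-\gamma)}{\eta} \right\rceil$ iterations, then $\left\| V^\star - B_\star^{(i)} V \right\|_\infty \leq \epsilon$, where the notation $B_\star^{(i)} V$ means applying $B_\star$ to $V$ $i$ times consecutively.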

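Proof. Via a sequence of inequalities, one can show that the following holds: $\left\| V^\star - B_\star^{(i)} V \right\|_\infty \leq \gamma \left\| V^\star - B_\star^{(i-1)} V \right\|_\infty \leq \gamma^i \left\| V^\star - V \right\|_\infty \leq \gamma^i \frac{1}{1-\gamma} \eta$; see Appendix [A.4](#A1.SS4 "A.4 Proof Details of Theorem 2 from the Main Paper ‣ Appendix A Theoretical Analysis ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") for a more detailed derivation. Since $\gamma \in (0,1)$, the bound $\epsilon \geq \gamma^i \frac{1}{1-\gamma} \eta$ holds whenever $i \geq \left\lceil \log_\gamma \frac{\epsilon(1-\gamma)}{\eta} \right\rceil$, presupposing $\epsilon < \frac{\eta}{1-\gamma}$. □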
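To make the bound of Theorem 2 concrete, the sketch below computes the number of outer iterations it prescribes. The helper name `required_iterations` and the example numbers are assumptions chosen for illustration.

```python
import math


def required_iterations(eps, gamma, alpha, beta, r_max, n_actions):
    """Smallest i with gamma**i * eta / (1 - gamma) <= eps (Theorem 2).

    r_max is max |R(s, a)| over all states and actions,
    eta = alpha * r_max + beta * log |A|.
    """
    eta = alpha * r_max + beta * math.log(n_actions)
    if not 0.0 < eps < eta / (1.0 - gamma):
        raise ValueError("eps must satisfy 0 < eps < eta / (1 - gamma)")
    return math.ceil(math.log(eps * (1.0 - gamma) / eta, gamma))


# Hypothetical setting: pure reward maximization with |R| <= 1 and four actions.
n_iters = required_iterations(eps=0.01, gamma=0.9, alpha=1.0, beta=0.0,
                              r_max=1.0, n_actions=4)
```

The count grows only logarithmically in $1/\epsilon$, but it increases sharply as $\gamma$ approaches 1 because $\log_\gamma$ is taken with a base close to one.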

Conclusion. *Together, Theorems [1](#Thmtheorem1 "Theorem 1 ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") and [2](#Thmtheorem2 "Theorem 2 ‣ 4.2 Value Iteration and Convergence to Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") prove that our proposed value iteration scheme converges to optimal values $V^\star$ in combination with a corresponding optimal pair $(q^\star, \pi^\star_{\text{behave}})$ as described at the beginning of this section in the third line of Equation ([7](#S4.E7 "(7) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and in Equations ([8](#S4.E8 "(8) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) and ([9](#S4.E9 "(9) ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) respectively. The overall complexity is $O(i M |S|^2 |A|)$, where $i$ and $M$ refer to outer and inner iterations.*

Remark. *Our value iteration is required for both objectives from Section [3](#S3 "3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") to combine reward maximization with empowerment. Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) motivated our scheme in the first place, whereas Equation ([6](#S3.E6 "(6) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) requires cumulative empowerment values without reward maximization (α=0, β=1).*

### 4.3 Practical Verification in a Grid World Example

In order to practically verify our value iteration scheme from the previous section, we conduct experiments on a grid world example. The outcome is shown in Figure [1](#S4.F1 "Figure 1 ‣ 4.3 Practical Verification in a Grid World Example ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") demonstrating how different configurations for α and β, that steer cumulative reward maximization versus empowerment learning, affect optimal values V⋆. Importantly, the experiments show that our proposal to learn cumulative one-step empowerment values recovers the original intuition of empowerment in the sense that high values are assigned to states where many other states can be reached and low values to states where the number of reachable next states is low, *but without* the necessity to maintain a multi-step policy.

Figure 1: Value Iteration for a Grid World Example. The agent aims to arrive at the goal ’G’ in the lower left—detailed information regarding the setup can be found in Appendix [C.1](#A3.SS1 "C.1 Grid World ‣ Appendix C Experiments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"). The plots show optimal values for different α and β: α increases from left to right while β decreases. The leftmost values show raw cumulative empowerment learning (α=0.0, β=1.0). High values are assigned to states where many other states can be reached, i.e. the upper right; and low values to states where the number of reachable next states is low, i.e. close to corners and dead ends. The rightmost values recover ordinary cumulative reward maximization (α=1.0, β=0.0) assigning high values to states close to the goal and low values to states far away from the goal.

### 4.4 Generalization of and Relation to Existing MDP formulations

Our Bellman operator B⋆ from Equation ([10](#S4.E10 "(10) ‣ 4.1 Existence of Unique Optimal Values ‣ 4 Joint Reward Maximization and Empowerment Learning in MDPs ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) relates to prior work as follows (see also Appendix [A.5](#A1.SS5 "A.5 Limit Cases of Equation (7) ‣ Appendix A Theoretical Analysis ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")).

* Ordinary value iteration [Russell2016](#bib.bib51) is recovered as a special case for α=1 and β=0.

* Cumulative one-step empowerment is recovered as a special case for α=0 and β=1, with *non-cumulative* one-step empowerment [Kumar2018](#bib.bib29) as a further special case of the latter (γ→0).

* When setting q(a|s′,s)=q(a|s), using a distribution that is *not* conditioned on s′ and *omitting* maximizing w.r.t. q, one recovers as a special case the soft Bellman operator presented e.g. in [Rubin2012](#bib.bib50) . Note that this soft Bellman operator also occurred in numerous other works on MDP formulations and RL [Azar2011](#bib.bib3) ; [Fox2016](#bib.bib14) ; [Neu2017](#bib.bib44) ; [Schulman2017](#bib.bib54) ; [Leibfried2018](#bib.bib32) .

* As a special case of the previous, when q(a|s′,s)=U(A) is the uniform distribution in action space, one recovers cumulative entropy regularization [Ziebart2010](#bib.bib69) ; [Nachum2017](#bib.bib43) ; [Levine2018](#bib.bib33) that inspired algorithms such as soft Q-learning [Haarnoja2017](#bib.bib20) and soft actor-critic [Haarnoja2018](#bib.bib21) ; [Haarnoja2019](#bib.bib22) .

* When dropping the conditioning on s′ and s by setting q(a|s′,s)=q(a) but *without omitting* maximization w.r.t. q, one recovers a formulation similar to [Tishby2011](#bib.bib64) based on mutual-information regularization [Shannon1959](#bib.bib58) ; [Sims2003](#bib.bib59) ; [Genewein2015](#bib.bib17) ; [Leibfried2016](#bib.bib31) that spurred RL algorithms such as [Leibfried2015](#bib.bib30) ; [Grau-Moya2019](#bib.bib19) .

* When replacing q(a|s′,s) with q(a|s′,a′), where s′ and a′ refer to the state-action pair of the previous time step, one recovers a formulation similar to [Tiomkin2018](#bib.bib63) based on the information-theoretic principle of directed information [Marko1973](#bib.bib37) ; [Kramer1998](#bib.bib28) ; [Massey2005](#bib.bib38) .
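To make the uniform-distribution case in the list above concrete, a short worked step may help. It assumes a finite action set with $|\mathcal{A}|$ actions and that the empowerment term enters the per-step objective as $\beta\,\mathbb{E}[\log q(a|s',s) - \log \pi(a|s)]$; the symbol $\mathcal{H}$ for Shannon entropy is introduced here for illustration.

$$
\beta\,\mathbb{E}_{\pi(a|s)}\!\left[\log\frac{\mathcal{U}(a)}{\pi(a|s)}\right]
= \beta\sum_{a}\pi(a|s)\big(-\log|\mathcal{A}| - \log\pi(a|s)\big)
= \beta\,\mathcal{H}\big(\pi(\cdot|s)\big) - \beta\log|\mathcal{A}|.
$$

The constant $-\beta\log|\mathcal{A}|$ does not depend on the policy. Under these assumptions and for $\gamma<1$ it only shifts all values by $-\beta\log|\mathcal{A}|/(1-\gamma)$, so the maximizing policy is the same as under an entropy bonus $\beta\,\mathcal{H}(\pi(\cdot|s))$.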

5 Scaling to High-Dimensional Environments

-------------------------------------------

In the previous section, we presented a novel Bellman operator in combination with a value iteration scheme to combine reward maximization and empowerment. In this section, by leveraging parametric function approximators, we validate our ideas in high-dimensional state-action spaces and when there is no prior knowledge of the state-transition function. In Section [5.1](#S5.SS1 "5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we devise novel actor-critic algorithms for RL based on our MDP formulation since they are naturally capable of handling both continuous state and action spaces. In Section [5.2](#S5.SS2 "5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we practically confirm that empowerment can boost RL in the high-dimensional robotics simulator domain of MuJoCo using deep neural networks.

### 5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators

Contemporary off-policy actor-critic approaches for RL [Lillicrap2016](#bib.bib35) ; [Abdolmaleki2018](#bib.bib1) ; [Fujimoto2018](#bib.bib15) follow the policy gradient theorem [Sutton2000](#bib.bib62) ; [Degris2012](#bib.bib13) and learn two parametric function approximators: one for the behavioral policy πϕ(a|s) with parameters ϕ, and one for the state-action value function Qθ(s,a) of the parametric policy πϕ with parameters θ. The policy learning objective usually assumes the form $\max_{\phi} \mathbb{E}_{s\sim\mathcal{D}}\big[\mathbb{E}_{\pi_\phi(a|s)}[Q_\theta(s,a)]\big]$, where $\mathcal{D}$ refers to a replay buffer [Lin1993](#bib.bib36) that stores collected state transitions from the environment. Following [Haarnoja2018](#bib.bib21) , Q-values are learned most efficiently by introducing another function approximator Vψ for state values of πϕ with parameters ψ using the objective:

$$
\min_{\theta}\; \mathbb{E}_{s,a,r,s'\sim\mathcal{D}}\Big[\big(Q_\theta(s,a) - (\alpha r + \gamma V_\psi(s'))\big)^2\Big], \tag{16}
$$

where (s,a,r,s′) refers to an environment interaction sampled from the replay buffer (r stands for the observed reward signal). We multiply r by the scaling factor α from our formulation because Equation ([16](#S5.E16 "(16) ‣ 5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) can be directly used for the parametric methods we propose. Learning policy parameters ϕ and value parameters ψ requires however novel objectives with two additional approximators: one for the inverse dynamics model pχ(a|s′,s) of πϕ, and one for the transition function Pξ(s′|s,a) (with parameters χ and ξ respectively). While the necessity for pχ is clear, e.g. from inspecting Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")), the necessity for Pξ will fall into place shortly as we move forward.
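As a small illustration of Equation (16), the batch loss can be written in a few lines. This is a sketch under assumptions: `q_fn` and `v_fn` are hypothetical callables standing in for Qθ and Vψ, and the batch is a plain list of (s, a, r, s′) tuples rather than a real replay buffer.

```python
def q_loss(batch, q_fn, v_fn, alpha, gamma):
    """Mean squared error between Q(s, a) and the target alpha * r + gamma * V(s')."""
    total = 0.0
    for s, a, r, s_next in batch:
        # The reward is scaled by alpha; the empowerment term enters only through V.
        target = alpha * r + gamma * v_fn(s_next)
        total += (q_fn(s, a) - target) ** 2
    return total / len(batch)
```

In a deep RL setting the same expression would be evaluated on tensors and differentiated with respect to θ only, treating the target as a constant.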

In order to preserve a clear view, let’s define the quantity $f(s,a) := \mathbb{E}_{P_\xi(s'|s,a)}[\log p_\chi(a|s',s)] - \log \pi_\phi(a|s)$, which is short-hand notation for the empowerment-induced addition to the reward signal (compare to Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"))). We then commence with the objective for value function learning:

$$
\min_{\psi}\; \mathbb{E}_{s\sim\mathcal{D}}\Big[\big(V_\psi(s) - \mathbb{E}_{\pi_\phi(a|s)}[Q_\theta(s,a) + \beta f(s,a)]\big)^2\Big], \tag{17}
$$

which is similar to the standard value objective but with the added term βf(s,a) as a result of joint cumulative empowerment learning. At this point, the necessity for a transition model Pξ becomes apparent. In the above equation, new actions a need to be sampled from the policy πϕ for a given s. However, the inverse dynamics model (inside f) depends on the subsequent state s′ as well, requiring therefore a prediction for the next state. Note also that (s,a,r,s′)-tuples from the replay buffer as in Equation ([16](#S5.E16 "(16) ‣ 5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) can’t be used here, because the expectation over a is w.r.t. the current policy whereas tuples from the replay buffer come from a mixture of policies at an earlier stage of training.
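The role of the transition model in the value target can be seen in a tabular sketch. It is an illustration under assumptions, not the paper's deep implementation: states and actions are discrete, the policy is strictly positive, expectations are computed exactly instead of by sampling, and `exact_inverse_dynamics`, `f_term` and `value_target` are hypothetical names.

```python
import math


def exact_inverse_dynamics(P_s, pi_s):
    """Bayes posterior p(a | s', s) for one state s, from P(s'|s,a) and pi(a|s)."""
    n_a, n_t = len(P_s), len(P_s[0])
    marg = [sum(P_s[a][t] * pi_s[a] for a in range(n_a)) for t in range(n_t)]
    return [[P_s[a][t] * pi_s[a] / marg[t] if marg[t] > 0.0 else 0.0
             for t in range(n_t)] for a in range(n_a)]


def f_term(P_s, pi_s, p_inv_s, a):
    """f(s,a) = E_{P(s'|s,a)}[log p(a|s',s)] - log pi(a|s)."""
    # The expectation over s' needs the transition model, not a stored s'.
    expected_log_p = sum(P_s[a][t] * math.log(p_inv_s[a][t])
                         for t in range(len(P_s[a])) if P_s[a][t] > 0.0)
    return expected_log_p - math.log(pi_s[a])


def value_target(P_s, pi_s, p_inv_s, q_s, beta):
    """Regression target for V(s): E_{pi(a|s)}[Q(s,a) + beta * f(s,a)]."""
    return sum(pi_s[a] * (q_s[a] + beta * f_term(P_s, pi_s, p_inv_s, a))
               for a in range(len(pi_s)))
```

With continuous states and actions, the sums over a and s′ would be replaced by samples from πϕ and Pξ respectively.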

Extending the ordinary actor-critic policy objective with the empowerment-induced term f yields:

$$
\max_{\phi}\; \mathbb{E}_{s\sim\mathcal{D}}\Big[\mathbb{E}_{\pi_\phi(a|s)}[Q_\theta(s,a) + \beta f(s,a)]\Big]. \tag{18}
$$

The remaining parameters to be optimized are χ and ξ from the inverse dynamics model pχ and the transition model Pξ. Both problems are supervised learning problems that can be addressed by log-likelihood maximization using samples from the replay buffer, leading to $\max_{\chi} \mathbb{E}_{s\sim\mathcal{D}}\big[\mathbb{E}_{\pi_\phi(a|s) P_\xi(s'|s,a)}[\log p_\chi(a|s',s)]\big]$ and $\max_{\xi} \mathbb{E}_{s,a,s'\sim\mathcal{D}}[\log P_\xi(s'|s,a)]$.

Coming back to our motivation from Section [3](#S3 "3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), we propose two novel empowerment-inspired actor-critic approaches based on the optimization objectives specified in this section. The first combines cumulative reward maximization and empowerment learning following Equation ([5](#S3.E5 "(5) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) which we refer to as empowered actor-critic. The second learns cumulative empowerment values to construct intrinsic rewards following Equation ([6](#S3.E6 "(6) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) which we refer to as actor-critic with intrinsic empowerment.

Empowered Actor-Critic (EAC). In line with standard off-policy actor-critic methods [Lillicrap2016](#bib.bib35) ; [Fujimoto2018](#bib.bib15) ; [Haarnoja2018](#bib.bib21) , EAC interacts with the environment iteratively storing transition tuples (s,a,r,s′) in a replay buffer. After each interaction, a training batch $\{(s,a,r,s')^{(b)}\}_{b=1}^{B} \sim \mathcal{D}$ of size B is sampled from the buffer to perform a *single* gradient update on the objectives from Equation ([16](#S5.E16 "(16) ‣ 5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) to ([18](#S5.E18 "(18) ‣ 5.1 Empowered Off-Policy Actor-Critic Methods with Parametric Function Approximators ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")) as well as the log-likelihood objectives for the inverse dynamics and transition model; see Appendix [B](#A2 "Appendix B Pseudocode for the Empowered Actor-Critic (EAC) ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") for pseudocode.

Actor-Critic with Intrinsic Empowerment (ACIE). By setting α=0 and β=1, EAC can train an agent merely focusing on cumulative empowerment learning. Since EAC is off-policy, it can learn with samples obtained from executing *any* policy in the real environment, e.g. the actor of *any other* reward-maximizing actor-critic algorithm. We can then extend external rewards $r_t$ at time t of this actor-critic algorithm with intrinsic rewards $\mathbb{E}_{\pi_\phi(a|s_t) P_\xi(s'|s_t,a)}\left[\log \frac{p_\chi(a|s',s_t)}{\pi_\phi(a|s_t)}\right]$ according to Equation ([6](#S3.E6 "(6) ‣ 3 Motivation: Combining Reward Maximization with Empowerment ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment")), where (ϕ,ξ,χ) are the result of *concurrent* raw empowerment learning with EAC. This idea is similar to the preliminary work of [Kumar2018](#bib.bib29) using non-cumulative empowerment as intrinsic motivation for deep value-based RL with discrete actions in the Atari game Montezuma’s Revenge.
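It may help to see how this intrinsic reward relates to mutual information. The following decomposition is a standard variational identity, not something stated in this section. Here $p^\star(a|s',s_t) \propto P_\xi(s'|s_t,a)\,\pi_\phi(a|s_t)$ denotes the exact posterior under the learned model, $m(s') = \sum_a P_\xi(s'|s_t,a)\,\pi_\phi(a|s_t)$ the induced next-state marginal, and $I$ the mutual information between action and next state; all three symbols are introduced here for illustration.

$$
\mathbb{E}_{\pi_\phi(a|s_t) P_\xi(s'|s_t,a)}\!\left[\log \frac{p_\chi(a|s',s_t)}{\pi_\phi(a|s_t)}\right]
= I(A;S'\,|\,s_t) - \mathbb{E}_{m(s')}\Big[D_{\mathrm{KL}}\big(p^\star(\cdot|s',s_t)\,\|\,p_\chi(\cdot|s',s_t)\big)\Big]
\;\le\; I(A;S'\,|\,s_t).
$$

Under these assumptions the intrinsic reward is a lower bound on the one-step mutual information, and the bound is tight when the learned inverse dynamics model matches the exact posterior.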

### 5.2 Experiments with Deep Function Approximators in MuJoCo

We validate EAC and ACIE in the robotics simulator MuJoCo [Todorov2012](#bib.bib65) ; [Brockman2016](#bib.bib8) with deep neural nets under the same setup for each experiment following [vanHasselt2010](#bib.bib66) ; [Kingma2014](#bib.bib25) ; [Rezende2014](#bib.bib49) ; [Kingma2015](#bib.bib24) ; [Schulman2015](#bib.bib55) ; [Lillicrap2016](#bib.bib35) ; [vanHasselt2016](#bib.bib67) ; [Schulman2017b](#bib.bib56) ; [Abdolmaleki2018](#bib.bib1) ; [Chua2018](#bib.bib9) ; [Fujimoto2018](#bib.bib15) ; [Haarnoja2018](#bib.bib21) —see Appendix [C.2](#A3.SS2 "C.2 MuJoCo ‣ Appendix C Experiments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") for details. While EAC is a standalone algorithm, ACIE can be combined with any RL algorithm (we use the model-free state of the art SAC [Haarnoja2018](#bib.bib21) ). We compare against DDPG [Lillicrap2016](#bib.bib35) and PPO [Schulman2017b](#bib.bib56) from RLlib [Liang2018](#bib.bib34) as well as SAC on the MuJoCo v2-environments (ten seeds per run [Pineau2018](#bib.bib46) ).

The results in Figure [2](#S5.F2 "Figure 2 ‣ 5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") confirm that both EAC and ACIE can attain better initial performance compared to model-free baselines. While this holds true for both approaches on the pendulum benchmarks (balancing and swing up), our empowered methods can also boost RL in demanding environments like Hopper, Ant and Humanoid (the latter two being amongst the most difficult MuJoCo tasks). EAC significantly improves initial learning in Ant, whereas ACIE boosts SAC in Hopper and Humanoid. While EAC outperforms PPO and DDPG in almost all tasks, it is not consistently better than SAC. Similarly, the added intrinsic reward from ACIE to SAC does not always help. *This is not unexpected as it cannot be in general ruled out that reward functions assign high (low) rewards to lowly (highly) empowered states, in which case the two learning signals may become partially conflicting.*

Figure 2: MuJoCo Experiments. The plots show maximum episodic rewards (averaged over the last 100 episodes) achieved so far [Chua2018](#bib.bib9) versus steps—*non-maximum* episodic reward plots can be found in Figure [3](#S5.F3 "Figure 3 ‣ 5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"). EAC and ACIE are compared to DDPG, PPO and SAC (DDPG did not work in Ant, see [Haarnoja2018](#bib.bib21) and Appendix [C.2](#A3.SS2 "C.2 MuJoCo ‣ Appendix C Experiments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") for an explanation). Shaded areas refer to the standard error. Both EAC and ACIE improve initial learning over baselines in the three pendulum tasks (upper row). In demanding problems like Hopper, Ant and Humanoid, our methods can boost RL. In terms of final performance, EAC is competitive with the baselines: it consistently outperforms DDPG and PPO on all tasks except Hopper, but is not always better than SAC. Similarly, the ACIE-signal does not always help SAC. This is not unexpected as extrinsic and empowered rewards may partially conflict.

For the sake of completeness, we report Figure [3](#S5.F3 "Figure 3 ‣ 5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") which is similar to Figure [2](#S5.F2 "Figure 2 ‣ 5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment") but shows episodic rewards and *not* maximum episodic rewards obtained so far [Chua2018](#bib.bib9) . Also, limits of y-axes are preserved for the pendulum tasks. Note that our SAC baseline is comparable with the SAC from [Haarnoja2019](#bib.bib22) on Hopper-v2, Walker2d-v2, Ant-v2 and Humanoid-v2 after $5\cdot10^5$ steps (the SAC from [Haarnoja2018](#bib.bib21) uses the earlier v1-versions of MuJoCo and is hence not an optimal reference). However, there is a discrepancy on HalfCheetah-v2. This was earlier noted by others who tried to reproduce SAC results in HalfCheetah-v2 but failed to obtain episodic rewards as high as in [Haarnoja2018](#bib.bib21) ; [Haarnoja2019](#bib.bib22) , leading to a GitHub issue <https://github.com/rail-berkeley/softlearning/issues/75>. The final conclusion of this issue was that differences in performance are caused by different seed settings and are therefore of statistical nature (comparing all algorithms under the same seed settings is hence valid).

Figure 3: Raw Results of MuJoCo Experiments. The plots are similar to the plots from Figure [2](#S5.F2 "Figure 2 ‣ 5.2 Experiments with Deep Function Approximators in MuJoCo ‣ 5 Scaling to High-Dimensional Environments ‣ A Unified Bellman Optimality Principle Combining Reward Maximization and Empowerment"), but report episodic rewards (averaged over the last 100 episodes) versus steps—*not* maximum episodic rewards seen so far as in [Chua2018](#bib.bib9) . For the pendulum tasks, the limits of the y-axes are preserved.
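The difference between the two kinds of curves in Figures 2 and 3 can be sketched in a few lines. This is an illustration only: how episodes before the window fills are averaged is not specified in the text, so averaging over the episodes available so far is an assumption, and the function names are hypothetical.

```python
def smoothed_returns(episode_returns, window=100):
    """Average of the last `window` episodic rewards (fewer at the start), as in Figure 3."""
    out = []
    running = 0.0
    for i, r in enumerate(episode_returns):
        running += r
        if i >= window:
            running -= episode_returns[i - window]  # drop the episode leaving the window
        out.append(running / min(i + 1, window))
    return out


def best_so_far(values):
    """Running maximum of a curve, i.e. the 'maximum achieved so far' view of Figure 2."""
    out = []
    best = float("-inf")
    for v in values:
        best = max(best, v)
        out.append(best)
    return out
```

Applying `best_so_far` to the output of `smoothed_returns` gives a non-decreasing curve, which is why the Figure 2 style hides later drops in performance that the Figure 3 style shows.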

6 Conclusion

-------------

This paper provides a theoretical contribution via a unified formulation for reward maximization and empowerment that generalizes Bellman’s optimality principle and recent information-theoretic extensions to it. We proved the existence of and convergence to unique optimal values, and practically validated our ideas by devising novel parametric actor-critic algorithms inspired by our formulation. These were evaluated on the high-dimensional MuJoCo benchmark demonstrating that empowerment can boost RL in challenging robotics tasks (e.g. Ant and Humanoid).

The most promising line of future research is to investigate scheduling schemes that dynamically trade off rewards vs. empowerment with the prospect of obtaining better asymptotic performance. Empowerment could also be particularly useful in a multi-task setting where task transfer could benefit from initially empowered agents.

#### Acknowledgments

We thank Haitham Bou-Ammar for pointing us in the direction of empowerment. |

beeac37c-96bc-4224-aef9-d8cf2631c518 | trentmkelly/LessWrong-43k | LessWrong | Why artificial optimism?

Optimism bias is well-known. Here are some examples.

* It's conventional to answer the question "How are you doing?" with "well", regardless of how you're actually doing. Why?

* People often believe that it's inherently good to be happy, rather than thinking that their happiness level should track the actual state of affairs (and thus be a useful tool for emotional processing and communication). Why?

* People often think their project has an unrealistically high chance of succeeding. Why?

* People often avoid looking at horrible things clearly. Why?

* People often want to suppress criticism but less often want to suppress praise; in general, they hold criticism to a higher standard than praise. Why?

The parable of the gullible king

Imagine a kingdom ruled by a gullible king. The king gets reports from different regions of the kingdom (managed by different vassals). These reports detail how things are going in these different regions, including particular events, and an overall summary of how well things are going. He is quite gullible, so he usually believes these reports, although not if they're too outlandish.

When he thinks things are going well in some region of the kingdom, he gives the vassal more resources, expands the region controlled by the vassal, encourages others to copy the practices of that region, and so on. When he thinks things are going poorly in some region of the kingdom (in a long-term way, not as a temporary crisis), he gives the vassal fewer resources, contracts the region controlled by the vassal, encourages others not to copy the practices of that region, possibly replaces the vassal, and so on. This behavior makes sense if he's assuming he's getting reliable information: it's better for practices that result in better outcomes to get copied, and for places with higher economic growth rates to get more resources.

Initially, this works well, and good practices are adopted throughout the kingdom. But, some vassals get the idea of ex |

9a151168-15d0-4874-a403-3edc7f1c4d8a | trentmkelly/LessWrong-43k | LessWrong | Behavior Cloning is Miscalibrated

Behavior cloning (BC) is, put simply, when you have a bunch of human expert demonstrations and you train your policy to maximize likelihood over the human expert demonstrations. It’s the simplest possible approach under the broader umbrella of Imitation Learning, which also includes more complicated things like Inverse Reinforcement Learning or Generative Adversarial Imitation Learning. Despite its simplicity, it’s a fairly strong baseline. In fact, prompting GPT-3 to act agent-y is essentially also BC, just rather than cloning on a specific task, you're cloning against all of the task demonstration-like data in the training set--but fundamentally, it's a scaled up version of the exact same thing. The problem with BC that leads to miscalibration is that the human demonstrator may know more or less than the model, which would result in the model systematically being over/underconfident for its own knowledge and abilities.

For instance, suppose the human demonstrator is more knowledgeable than the model at common sense: then, the human will ask questions about common sense much less frequently than the model should. However, with BC, the model will ask those questions at the exact same rate as the human, and then because now it has strictly less information than the human, it will have to marginalize over the possible values of the unobserved variables using its prior to be able to imitate the human’s actions. Factoring out the model’s prior over unobserved information, this is equivalent[1] to taking a guess at the remaining relevant info conditioned on all the other info it has (!!!), and then act as confidently as if it had actually observed that info, since that's how a human would act (since the human really observed that information outside of the episode, but our model has no way of knowing that). This is, needless to say, a really bad thing for safety; we want our models to ask us or otherwise seek out information whenever they don't know something, not rando |

28ca3bcd-283c-41ec-9f42-8b43dd723b94 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Satisficers Tend To Seek Power: Instrumental Convergence Via Retargetability

**Summary**: Why exactly should smart agents tend to usurp their creators? Previous results only apply to optimal agents tending to stay alive and preserve their future options. I extend the power-seeking theorems to apply to many kinds of policy-selection procedures, ranging from planning agents which choose plans with expected utility closest to a randomly generated number, to satisficers, to policies trained by some reinforcement learning algorithms. The key property is not agent optimality—as previously supposed—but is instead the *retargetability of the policy-selection procedure*. These results hint at which kinds of agent cognition and of agent-producing processes are dangerous by default.

I mean "retargetability" in a sense similar to [Alex Flint's definition](https://www.lesswrong.com/posts/znfkdCoHMANwqc2WE/the-ground-of-optimization-1#Defining_optimization):

> **Retargetability**. Is it possible, using only a microscopic perturbation to the system, to change the system such that it is still an optimizing system but with a different target configuration set?

>

> A system containing a robot with the goal of moving a vase to a certain location can be modified by making just a small number of microscopic perturbations to key memory registers such that the robot holds the goal of moving the vase to a different location and the whole vase/robot system now exhibits a tendency to evolve towards a different target configuration.

>

> In contrast, a system containing a ball rolling towards the bottom of a valley cannot generally be modified by any *microscopic* perturbation such that the ball will roll to a different target location.

>

>

(I don't think that "microscopic" is important for my purposes; the constraint is not physical size, but changes in a single parameter to the policy-selection procedure.)

I'm going to start from the naive view on power-seeking arguments requiring optimality (i.e. what I thought early this summer) and explain the importance of retargetable policy-selection functions. I'll illustrate this notion via satisficers, which randomly select a plan that exceeds some goodness threshold. Satisficers are retargetable, and so they have *orbit-level instrumental convergence*: for most variations of every utility function, satisficers incentivize power-seeking in the situations covered by my theorems.
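Here is a toy sketch of that claim, under assumptions that are not part of the formal results: there are four outcomes, one reachable by a "small" option (say, dying) and three reachable by a "large" option (staying alive), the satisficer picks uniformly among outcomes whose utility meets the threshold, and the "variations" of a utility function are all permutations of its values over outcomes. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import permutations


def satisficer_distribution(utilities, threshold):
    """Uniform distribution over the outcomes whose utility meets the threshold."""
    ok = [i for i, u in enumerate(utilities) if u >= threshold]
    return {i: Fraction(1, len(ok)) for i in ok}


def orbit_counts(utilities, threshold, small, large):
    """For each permuted utility function, record which option set is more likely chosen."""
    counts = {"large": 0, "small": 0, "tie": 0}
    for permuted in permutations(utilities):
        dist = satisficer_distribution(permuted, threshold)
        p_small = sum(dist.get(i, Fraction(0)) for i in small)
        p_large = sum(dist.get(i, Fraction(0)) for i in large)
        if p_large > p_small:
            counts["large"] += 1
        elif p_small > p_large:
            counts["small"] += 1
        else:
            counts["tie"] += 1
    return counts
```

For example, `orbit_counts([0, 1, 2, 3], 3, small=[0], large=[1, 2, 3])` counts, over all 24 permutations, how often the satisficer is more likely to end up in the larger option set. Retargeting here is just the permutation: the procedure is unchanged, only the utility assignment moves.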

Many procedures are retargetable, including *every procedure which only depends on the expected utility of different plans*. I think that alignment is hard in the expected utility framework not because agents will *maximize* too hard, but because all expected utility procedures are extremely retargetable—and thus easy to "get wrong."

Lastly: the unholy grail of "instrumental convergence for policies trained via reinforcement learning." I'll state a formal criterion and some preliminary thoughts on where it applies.

*The linked Overleaf paper draft contains complete proofs and incomplete explanations of the formal results.*

Retargetable policy-selection processes tend to select policies which seek power

================================================================================

To understand a range of retargetable procedures, let's first orient towards the picture I've painted of power-seeking thus far. In short:

> Since power-seeking tends to lead to larger sets of possible outcomes (staying alive lets you do more than dying), the agent must seek power to reach most outcomes. The power-seeking theorems say that [for the vast, vast, vast majority](https://www.lesswrong.com/s/fSMbebQyR4wheRrvk/p/Yc5QSSZCQ9qdyxZF6) [of](https://www.lesswrong.com/s/fSMbebQyR4wheRrvk/p/hzeLSQ9nwDkPc4KNt#Instrumental_convergence_can_get_really__really_strong) variants of every utility function over outcomes, the max of a larger (footnote: similarity)

> @font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

> @font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

> @font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

> @font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

> @font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

> @font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

> @font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

> @font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

> @font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

> @font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

> @font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

> @font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

> @font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

> @font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

> @font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

> @font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

> @font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

> @font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

> @font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

> @font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

> @font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

> @font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

> @font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

> @font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

> @font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

> @font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

> set of possible outcomes is greater than the max of a smaller set of possible outcomes. Thus, optimal agents will tend to seek power.

But I want to step back. What I call "the power-seeking theorems" aren't really about optimal choice. They're about two facts.

1. Being powerful means you can make more outcomes happen, and

2. *There are more ways to choose something from a bigger set of outcomes than from a smaller set*.

For example, suppose our cute robot Frank must choose one of several kinds of fruit.

🍒 vs 🍎 vs 🍌

So far, I proved something like "if the agent has a utility function over fruits, then for at least 2/3 of possible utility functions it could have, it'll be optimal to choose something from {🍌,🍎}." This is because for every way 🍒 could be strictly optimal, you can make a new utility function that swaps the 🍒 and 🍎 rewards, and another new one that swaps the 🍌 and 🍒 rewards. So for every "I like 🍒 strictly more" utility function, there are at least two permuted variants which strictly prefer 🍎 or 🍌. Superficially, it seems like this argument relies on optimal decision-making.
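To make the counting argument concrete, here is a minimal sketch in Python. It is only an illustration under a simplifying assumption: utility functions are restricted to the six ways of assigning the distinct values 1, 2, 3 to the three fruits, so there are no ties. The names `FRUITS`, `best`, and `swap` are made up for this example.

```python
from fractions import Fraction
from itertools import permutations

# Assumption for illustration: the three fruits get the distinct utilities 1, 2, 3
# in some order, so every utility function has a unique best fruit.
FRUITS = ("cherry", "apple", "banana")


def best(utility):
    """Return the fruit with the highest utility."""
    return max(utility, key=utility.get)


def swap(utility, x, y):
    """Return a copy of `utility` with the rewards of fruits x and y exchanged."""
    permuted = dict(utility)
    permuted[x], permuted[y] = utility[y], utility[x]
    return permuted


utilities = [dict(zip(FRUITS, values)) for values in permutations((1, 2, 3))]

cherry_best = [u for u in utilities if best(u) == "cherry"]
other_best = [u for u in utilities if best(u) in {"apple", "banana"}]
fraction_other = Fraction(len(other_best), len(utilities))

# For every cherry-preferring utility function, swapping cherry with apple or
# with banana yields a utility function that strictly prefers that other fruit.
images = []
for u in cherry_best:
    for other in ("apple", "banana"):
        v = swap(u, "cherry", other)
        assert best(v) == other
        images.append(tuple(sorted(v.items())))

print(fraction_other)                              # 2/3
print(len(set(images)) == 2 * len(cherry_best))    # True
```

In this toy setting, each utility function that strictly prefers 🍒 is matched with two distinct utility functions that prefer 🍎 or 🍌, and no two of those images coincide. That is where the 2/3 comes from.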