id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

e95cddf8-8627-4ca1-9571-3f2c487f0330 | trentmkelly/LessWrong-43k | LessWrong | The Talos Principle

Dear members of Less Wrong, this is my very first contribution to your society and I hope that you might help me to get out of my confusion.

Back a few months ago, I tested for the first time a video game created by Croteam Studio which is called 'The Talos Principle'.

At the time, I was astonished by all the philosophical questions that the game was raising. It has changed the way I see the world, and also the way I see myself.

I wanted to share my thoughts with you on the subject of 'What does being a Human mean?'

First, I'd like to introduce you to this principle.

In Greek mythology, Talos was a giant automaton made of bronze which protected Europa in Crete from pirates and invaders.

He was known to be a gift given to Europa by Zeus himself.

He was so strong that he could crush a man's skull using only one hand, and so tall that he could circle the island's shores three times daily.

He was able to talk, think and act like he wanted to. (Except he had to obey Europa's will)

Even though his body was not organic, a liquid metal flowed through his veins and behaved like blood.

And here is how the principle begins. What is the fundamental difference between Talos and us Humans?

Consider that, like us, he is able to think for himself, move of his own will and communicate like everybody does. Is he really different from us? Doesn't sharing our culture, history and language make him Human as well?

I'm pretty sure that your first thought might be 'No way! We are part of a biological species. We have nothing in common with a synthetic being'.

But does our body really define us as Human Beings?

From a strict biological point of view, Sir Darwin would say yes, of course. And we won't be able to argue with that.

But suppose you take a Human being, for instance Plato, cut his leg off and replace it with a synthetic prosthesis.

Would this person sti |

407c4c6d-2278-4bcc-975e-ff2698d451cc | trentmkelly/LessWrong-43k | LessWrong | You have a place to stay in Sweden, should you need it.

If anyone here is in trouble of any kind, and finds themselves in Sweden with no place to stay, or in need of other help, I want to help you. Send me an email at johan.domeij@gmail.com for further contact.

I am posting this now due to the ongoing conflict in Ukraine, but the offer is not limited to that conflict. I am not able to give significant financial support, but I have a car and a place to stay for several people. |

4f7b6a2f-4226-4f0f-af68-59777dd8fc92 | trentmkelly/LessWrong-43k | LessWrong | Behavioural statistics for a maze-solving agent

Summary: Understanding and controlling a maze-solving policy network analyzed a maze-solving agent's behavior. We isolated four maze properties which seemed to predict whether the mouse goes towards the cheese or towards the top-right corner:

In this post, we conduct a more thorough statistical analysis, addressing issues of multicollinearity. We show strong evidence that (2) and (3) above are real influences on the agent's decision-making, and weak evidence that (1) is also a real influence. As we speculated in the original post,[1] (4) falls away as a statistical artifact.

Peli did the stats work and drafted the post, while Alex provided feedback, expanded the visualizations, and ran additional tests for multicollinearity. Some of the work completed in Team Shard under SERI MATS 3.0.

Impressions from trajectory videos

Watching videos of Langosco et al.'s experiment, we developed a few central intuitions about how the agent behaves. In particular, we tried predicting what the agent does at decision squares. From Understanding and controlling a maze-solving policy network:

> Some mazes are easy to predict, because the cheese is on the way to the top-right corner. There's no decision square where the agent has to make the hard choice between the paths to the cheese and to the top-right corner:

>

> At the decision square, the agent must choose between two paths—cheese, and top-right.

Here are four central intuitions which we developed:

1. Closeness between the mouse and the cheese makes cheese-getting more likely

2. Closeness between the mouse or cheese and the top-right makes cheese-getting more likely

3. The effect of closeness is smooth

4. Both ‘spatial’ distances and ‘legal steps’ distances matter when computing closeness in each case
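The distinction in intuition 4 can be made concrete with a small sketch. The grid encoding, the function names, and the example maze below are illustrative assumptions, not the code used in the original analysis: the 'spatial' distance is taken to be the straight-line distance between two cells, and the 'legal steps' distance is the length of the shortest walkable path, found here by breadth-first search.

```python
from collections import deque
from math import hypot


def spatial_distance(a, b):
    """Straight-line (Euclidean) distance between two (row, col) cells."""
    return hypot(a[0] - b[0], a[1] - b[1])


def legal_steps_distance(maze, start, goal):
    """Shortest number of legal moves from start to goal, via BFS.

    maze is a list of equal-length strings; '#' marks a wall (assumed
    encoding). Returns None if the goal cannot be reached.
    """
    rows, cols = len(maze), len(maze[0])
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), dist = queue.popleft()
        if (r, c) == goal:
            return dist
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and maze[nr][nc] != "#" and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append(((nr, nc), dist + 1))
    return None


# Hypothetical 3x3 maze: a wall separates the mouse from the cheese.
MAZE = [
    ".#.",
    ".#.",
    "...",
]
mouse, cheese = (0, 0), (0, 2)
print(spatial_distance(mouse, cheese))            # straight-line distance
print(legal_steps_distance(MAZE, mouse, cheese))  # path must go around the wall
```

In this toy maze the two measures disagree sharply (the cheese is spatially close but many steps away), which is the kind of case where the two notions of closeness could predict different behavior.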

The videos we studied are hard to interpret without quantitative tools, so we regard these intuitions as theoretically-motivated impressions rather than as observations. We wanted to precisify and statistically test the |

a5f475eb-7f7e-4269-8a5f-94eec6e942ed | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Theoretical Neuroscience For Alignment Theory

*This post was written under Evan Hubinger’s direct guidance and mentorship, as a part of the* [*Stanford Existential Risks Institute ML Alignment Theory Scholars (MATS) program*](https://www.alignmentforum.org/posts/FpokmCnbP3CEZ5h4t/ml-alignment-theory-program-under-evan-hubinger)*.*

*Many additional thanks to Steve Byrnes and Adam Shimi for their helpful feedback on earlier drafts of this post.*

**TL;DR:** [Steve Byrnes](https://www.alignmentforum.org/users/steve2152?_ga=2.46258607.921615797.1638483024-1825036065.1632882070) has done really exciting work at the intersection of neuroscience and alignment theory. He argues that because we’re probably going to end up at some point with an AGI whose subparts at least superficially resemble those of the brain (a value function, a world model, etc.), it’s really important for alignment to proactively understand how the many ML-like algorithms in the brain actually do their thing. I build off of Steve’s framework in the second half of this post: first, I discuss why it would be worthwhile to understand the computations that underlie [theory of mind](https://en.wikipedia.org/wiki/Theory_of_mind) + affective empathy. Second, I introduce the problem of self-referential misalignment, which is essentially the worry that initially-aligned ML systems with the capacity to model their own values could assign *second-order* values to these models that ultimately result in contradictory—and thus misaligned—behavioral policies. (A simple example of this general phenomenon in humans: Jack hates reading fiction, but Jack wants to be the *kind of guy* who likes reading fiction, so he forces himself to read fiction.)

**Introduction**

================

In this post, my goal is to distill and expand upon some of Steve Byrnes’s thinking on AGI safety. For those unfamiliar with his work, Steve thinks about alignment largely through the lens of his own brand of “big-picture” theoretical neuroscience. Many of his formulations in this space are thus original and ever-evolving, which is all the more reason to attempt to consolidate his core ideas in one space. I’ll begin by summarizing Steve’s general perspectives on AGI safety and threat models. I’ll then turn to Steve’s various models of the brain and its [neuromodulatory](https://en.wikipedia.org/wiki/Neuromodulation) systems and how these conceptualizations relate to AGI safety. In the second half of this post, I’ll spend time exploring two novel directions for alignment theory that I think naturally emerge from Steve’s thinking.

**Steve’s framework**

=====================

**Steve’s work in alignment theory**

------------------------------------

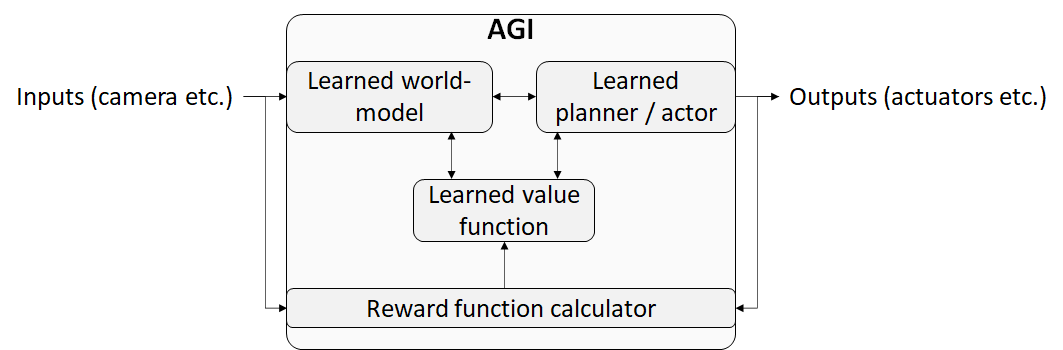

In order to build a coherent threat model (and before we start explicitly thinking about any brain-based algorithms), Steve reasons that we first need to operationalize some basic idea of what components we would expect to constitute an AGI. Steve asserts that [four ingredients](https://www.alignmentforum.org/posts/zzXawbXDwCZobwF9D/my-agi-threat-model-misaligned-model-based-rl-agent) seem especially likely: a world model, a value function, a planner/actor, and a reward function calculator. As such, he imagines AGI to be fundamentally grounded in model-based RL.

From [My AGI Threat Model: Misaligned Model-Based RL Agent](https://www.alignmentforum.org/posts/zzXawbXDwCZobwF9D/my-agi-threat-model-misaligned-model-based-rl-agent?_ga=2.242654346.921615797.1638483024-1825036065.1632882070).

So, in the simple example of an agent navigating a maze, the world model would be some learned map of that maze, the value function might assign values to every juncture (e.g., turning left here = +5, turning right here = -5), the planner/actor would transmute these values into a behavioral trajectory, and the reward function calculator would translate certain outcomes of that trajectory into rewards for the agent (e.g., +10 for successfully reaching the end of the maze). Note here that the first three ingredients of this generic AGI—its world model, value function, and planner/actor—are presumed to be *learned*, while the reward function calculator is considered to be hardcoded or otherwise fixed. This distinction (reward function = fixed; everything else = learned) will be critical for understanding Steve’s subsequent thinking in AGI safety and his motivations for studying neuroscience.
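To make the four ingredients concrete, here is a minimal sketch of the maze example. It is a toy under stated assumptions, not Steve's model: the maze is a one-dimensional corridor, the value function is a table of Q-values learned with a standard Q-learning update, and every name (`Agent`, `reward_function`, `step`) is hypothetical. The point is only to show which parts are learned and which part is fixed.

```python
GOAL = 4              # end of a 5-cell corridor (assumed toy maze)
ACTIONS = (-1, +1)    # step left or step right


def reward_function(state):
    """Reward function calculator: hardcoded, never changed by learning."""
    return 10.0 if state == GOAL else 0.0


def step(state, action):
    """True environment dynamics: walls at both ends of the corridor."""
    return min(max(state + action, 0), GOAL)


class Agent:
    def __init__(self):
        self.world_model = {}  # learned map: (state, action) -> next state
        self.value = {}        # learned values: (state, action) -> estimate

    def best_action(self, state):
        return max(ACTIONS, key=lambda a: self.value.get((state, a), 0.0))

    def learn(self, state, action, next_state, lr=0.5, gamma=0.9):
        """Update the learned map and the value estimate from one transition."""
        self.world_model[(state, action)] = next_state
        if next_state == GOAL:
            best_next = 0.0  # the episode ends at the goal
        else:
            best_next = max(self.value.get((next_state, a), 0.0) for a in ACTIONS)
        target = reward_function(next_state) + gamma * best_next
        old = self.value.get((state, action), 0.0)
        self.value[(state, action)] = old + lr * (target - old)

    def plan(self, state, max_steps=10):
        """Planner/actor: turn values into a trajectory using the learned map."""
        trajectory = [state]
        for _ in range(max_steps):
            if state == GOAL:
                break
            state = self.world_model[(state, self.best_action(state))]
            trajectory.append(state)
        return trajectory


agent = Agent()
for _ in range(50):                 # deterministic sweeps over all junctures
    for s in range(GOAL):
        for a in ACTIONS:
            agent.learn(s, a, step(s, a))

print(agent.plan(0))                # planned trajectory from the start cell
```

Note that `reward_function` is the only piece the training loop never modifies; everything stored on `agent` is acquired from experience.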

### **Threat models**

Using these algorithmic ingredients, Steve recasts inner alignment to simply refer to cases where an AGI’s value function converges to the sum of its reward function. Steve thinks inner-misalignment-by-default is not only likely, but *inevitable*, primarily because (a) many possible value functions could conceivably converge with any given reward history, (b) the reward function and value function will necessarily accept different inputs, (c) [credit assignment failures](https://www.alignmentforum.org/posts/Ajcq9xWi2fmgn8RBJ/the-credit-assignment-problem) are unavoidable, and (d) reward functions will conceivably encode for mutually-incompatible goals, leading to an unpredictable and/or uncontrollable internal state of the AGI. It is definitely worth noting here that Steve knowingly uses “inner alignment” slightly differently from [Risks from Learned Optimization](https://www.lesswrong.com/s/r9tYkB2a8Fp4DN8yB). Steve’s threat model focuses on the risks of *steered optimization*, where the outer layer—here the reward function—steers the inner layer towards optimizing the right target, rather than those risks associated with *mesa-optimization,* where a base optimizer searches over a space of possible algorithms and instantiates one that is *itself* performing optimization. Both uses of the term concern the alignment of an inner and an outer algorithm (and it therefore seems fine to use “inner alignment” to describe both), but the *functions* and *relationship* of these two sub-algorithms differ substantially across the two uses. [See Steve’s table in this article](https://www.alignmentforum.org/posts/SJXujr5a2NcoFebr4/mesa-optimizers-vs-steered-optimizers) for a great summary of the distinction.
(It is also worth noting here that both steered and mesa-optimization are describable under Evan’s [training story framework](https://www.lesswrong.com/posts/FDJnZt8Ks2djouQTZ/how-do-we-become-confident-in-the-safety-of-a-machine), where the training goal and rationale for some systems might respectively entail mesa-optimization and why a mesa-optimizer would be appropriate for the given task, while for other systems, the training goal will be to train a steered optimizer with some associated rationale for why doing so will lead to good results.)

Also from [My AGI Threat Model: Misaligned Model-Based RL Agent](https://www.alignmentforum.org/posts/zzXawbXDwCZobwF9D/my-agi-threat-model-misaligned-model-based-rl-agent?_ga=2.242654346.921615797.1638483024-1825036065.1632882070).

Steve talks about outer alignment in a more conventional way: our translation into code of what we want a particular model or agent to do will be noisy, leading to unintended, unpredictable, and/or uncontrollable behavior from the model. Noteworthy here is that while Steve buys the distinction between inner and outer alignment, he believes that robust solutions to either problem will probably end up solving both problems, and so focusing exclusively on *solving* inner or outer alignment may not actually be the best strategy. Steve summarizes his position with the following analogy: bridge-builders have to worry both about hurricanes and earthquakes destroying their engineering project (two different problems), but it’s likely that the bridge-builders will end up implementing a single solution that addresses both problems simultaneously. So too, Steve contends, for inner and outer alignment problems. While I personally find myself agnostic on the question—I think it will depend to a large degree on the actual algorithms that end up comprising our eventual AGI—it is worth noting that this two-birds-one-stone claim might be contested by [other](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai) [alignment](https://ai-alignment.com/towards-formalizing-universality-409ab893a456) [theorists](https://www.alignmentforum.org/posts/HYERofGZE6j9Tuigi/inner-alignment-failures-which-are-actually-outer-alignment).

### **Proposals for alignment**

I think Steve’s two big-picture ideas about alignment are as follows.

**Big-picture alignment idea #1:** Steve advocates for what he hopes is a [Goodhart-proof](https://www.alignmentforum.org/posts/EbFABnst8LsidYs5Y/goodhart-taxonomy) corrigibility approach wherein the AGI can learn the idea, say, that manipulation is bad, even in cases where it believes (1) that no one would actually catch it manipulating, and/or (2) that manipulation is in the best interest of the person being manipulated. Borrowing from the jargon of moral philosophy, we might call this “[deontological](https://en.wikipedia.org/wiki/Deontology) corrigibility” (as opposed to “consequentialist corrigibility,” which *would* opt to manipulate in (2)-type cases). With this approach, Steve worries about what he calls the [1st-person-problem](https://www.alignmentforum.org/posts/DkfGaZTgwsE7XZq9k/research-agenda-update): namely, getting the AGI to interpret 3rd-person training signals as 1st-person training signals. I will return to this concern later and explain why I think that human cognition presents a solid working example of the kinds of computations necessary for addressing the problem.

Steve argues that this first deontological corrigibility approach would be well-supplemented by also implementing “conservatism;” that is, a sort of inhibitory fail-safe that the AGI is programmed to deploy in motivational edge-cases. For example, if a deontologically corrigible AGI learns that lying is bad and that murder is also bad, and someone with homicidal intent is pressuring the AGI to disclose the location of some person (forcing the AGI to choose between lying and facilitating murder), the conservative approach would be for the AGI to simply inhibit *both* actions and wait for its programmer, human feedback, etc. to adjudicate the situation. Deontological corrigibility and conservatism thus go hand-in-hand, primarily because we would expect the former approach to generate lots of edge-cases that the AGI would likely evaluate in an unstable or otherwise undesirable way. I also think that an important precondition for a robustly conservative AGI is that it exhibits [indifference corrigibility](https://www.alignmentforum.org/posts/BKM8uQS6QdJPZLqCr/towards-a-mechanistic-understanding-of-corrigibility) as Evan operationalizes it, which further interrelates corrigibility and conservatism.
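The conservatism fail-safe described above can be sketched as a simple decision rule. This is only an illustration under strong assumptions: the learned norms are represented as a hypothetical set of labels (`FORBIDDEN`), each candidate action is annotated by hand with the norms it would violate, and `DEFER` stands in for "inhibit everything and wait for human adjudication". A real system would have to learn all of these.

```python
# Hypothetical labels for norms the AGI has learned to treat as inviolable.
FORBIDDEN = {"lie", "facilitate_murder", "manipulate"}

DEFER = "DEFER_TO_HUMAN"  # inhibit all actions and wait for adjudication


def choose(options):
    """Pick the first action that violates no learned norm, else defer.

    options maps an action name to the set of norm labels it would violate.
    """
    permitted = [
        action for action, violations in options.items()
        if not (violations & FORBIDDEN)
    ]
    if not permitted:
        return DEFER  # motivational edge case: every option breaks a norm
    return permitted[0]


# The dilemma from the text: both available actions violate a norm.
dilemma = {
    "give a false location": {"lie"},
    "reveal the true location": {"facilitate_murder"},
}
print(choose(dilemma))

# An ordinary case: one option is clean, so no deferral is needed.
ordinary = {
    "answer honestly": set(),
    "flatter the user into agreeing": {"manipulate"},
}
print(choose(ordinary))
```

The design choice worth noticing is that the rule never trades one violation off against another; it only acts when some option is clean, which is what distinguishes this from a consequentialist tie-break.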

**Big-picture alignment idea #2:** Steve advocates, in his own words, “to understand the algorithms in the human brain that give rise to social instincts and put some modified version of those algorithms into our AGIs.” The thought here is that what would make a safe AGI *safe* is that it would share our idiosyncratic inductive biases and value-based intuitions about appropriate behavior in a given context. One commonly-proposed solution to this problem is to capture these intuitions indirectly through human-in-the-loop-style proposals like [imitative amplification, safety via debate, reward modeling, etc.](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai?_ga=2.212253852.921615797.1638483024-1825036065.1632882070), but it might also be possible to just “cut out the middleman” and install the relevant human-like social-psychological computations directly into the AGI. In slogan form, instead of (or in addition to) putting a human in the loop, we could theoretically put "humanness" in our AGI. I think that Steve thinks this second big-picture idea is the more promising of the two, not only because he [says so himself](https://www.alignmentforum.org/posts/Gfw7JMdKirxeSPiAk/solving-the-whole-agi-control-problem-version-0-0001), but also because it dovetails very nicely with Steve’s theoretical neuroscience research agenda.

This second proposal in particular brings us to the essential presupposition of Steve’s work in alignment theory: the human brain is really, really important to understand if we want to get alignment right. Extended disclaimer: I think Steve is spot on here, and I personally find it surprising that this kind of view is not more prominent in the field. Why would understanding the human brain matter for alignment? For starters, it seems like by far the best example that we have of a physical system that demonstrates a dual capacity for general intelligence *and* robust alignment to our values. In other words, if we comprehensively understood how the human brain works at the algorithmic level, then necessarily embedded in this understanding should be some recipe for a generally intelligent system *at least* as aligned to our values as the typical human brain. For what other set of algorithms could we have this same attractive guarantee? As such, I believe that if one could choose between (A) a superintelligent system built in the relevant way(s) like a human brain and (B) a superintelligent system that bears no resemblance to any kind of cognition with which we’re familiar, the probability of serious human-AGI misalignment and/or miscommunication happening is significantly higher in (B) than (A).

I should be clear that Steve actually takes a more moderate stance than this: he thinks that brain-like AGIs might be developed *whether it's a good idea or not—*i.e., whether or not (A) is *actually* better than (B)—and that we should therefore (1) be ready from a theoretical standpoint if they are, and (2) figure out whether we would actually *want* them to be developed in the first place. To this end, Steve has done a lot of really interesting distillatory work in theoretical neuroscience that I will try to further compress here and ultimately relate back to his risk models and solution proposals.

**Steve’s work in theoretical neuroscience**

--------------------------------------------

### **A computational framework for the brain**

I think that if one is to take any two core notions from [Steve’s computational models of the brain](https://www.alignmentforum.org/posts/diruo47z32eprenTg/my-computational-framework-for-the-brain?_ga=2.3710008.921615797.1638483024-1825036065.1632882070), they are as follows: first, the brain can be bifurcated roughly into neocortex and subcortex—more specifically, the [telencephalon](https://en.wikipedia.org/wiki/Cerebrum) and the [brainstem/hypothalamus](https://en.wikipedia.org/wiki/Brainstem). Second, the (understudied) functional role of subcortex is to adaptively steer the development and optimization of complex models in the neocortex via the neuromodulatory reward signal, dopamine. Steve argues in accordance with neuroscientists like Jeff Hawkins that the neocortex—indeed, maybe the whole telencephalon—is a [blank slate](https://www.alignmentforum.org/posts/NkSpukDkm9pjRdMdB/human-instincts-symbol-grounding-and-the-blank-slate?_ga=2.212333980.921615797.1638483024-1825036065.1632882070) at birth; only through (dopaminergic) subcortical steering signals does the neocortex slowly become populated by generative world-models. Over time, [these models are optimized](https://www.alignmentforum.org/posts/cfvBm2kBtFTgxBB7s/predictive-coding-rl-sl-bayes-mpc) (a) to be accurately internally and externally predictive, (b) to be compatible with our Bayesian priors, and (c) to predict big rewards (and the ones that lack one or more of these features are discarded). In Steve’s framing, these kinds of models serve as the thought/action proposals to which the [basal ganglia](https://en.wikipedia.org/wiki/Basal_ganglia) assigns a value, looping the “selected” proposals back to cortex for further processing, and so on, until the action/thought occurs. The outcome of the action can then serve as a supervisory learning signal that updates the relevant proposals and value assignments in the neocortex and striatum for future reference.

From [Big picture of phasic dopamine](https://www.alignmentforum.org/posts/jrewt3rLFiKWrKuyZ/big-picture-of-phasic-dopamine).

Steve notes that there is not just a single kind of reward signal in this process; there are really something more like *three* signal-types. First, there is the holistic reward signal that we ordinarily think about. But there are also “local subsystem” rewards, which allocate credit (or “blame”) in a more fine-grained way. For example, slamming on the brakes to avoid a collision may phasically *decrease* the holistic reward signal (“you almost killed me, %\*^&!”) but phasically *increase* particular subsystem reward signals (“nice job slamming those brakes, foot-brain-motor-loop!”). Finally, Steve argues that dopamine can also serve as a supervisory learning signal (as partially described above) in those cases for which a ground-truth error signal is available after the fact—a kind of “hindsight-is-20/20” dopamine.

So, summing it all up, here are the basic Steve-neuroscience-thoughts to keep in mind: neocortex is steered and subcortex is doing the steering, via dopamine. The neocortex is steered towards predictive, priors-compatible, reward-optimistic models, which in turn propose thoughts/actions to implement. The basal ganglia assigns value to these thoughts/actions, and when the high-value ones are actualized, we use the consequences (1) to make our world model more predictive, compatible, etc., and (2) to make our value function more closely align with the ground-truth reward signal(s). I’m leaving out [many](https://www.alignmentforum.org/posts/K5ikTdaNymfWXQHFb/model-based-rl-desires-brains-wireheading) [really](https://www.alignmentforum.org/posts/wcNEXDHowiWkRxDNv/inner-alignment-in-salt-starved-rats) [interesting](https://www.alignmentforum.org/posts/e5duEqhAhurT8tCyr/a-model-of-decision-making-in-the-brain-the-short-version) [nuances](https://www.alignmentforum.org/posts/frApEhpyKQAcFvbXJ/reward-is-not-enough) in Steve’s brain models for the sake of parsimony here; if you want a far richer understanding of Steve's models of the brain, I highly recommend [going straight to the source(s)](https://www.alignmentforum.org/users/steve2152).

### **Relationship to alignment work**

So, what exactly is the relationship of Steve’s theoretical neuroscience work to his thinking on alignment? One straightforward point of interaction is Steve’s inner alignment worry about the value function differing from the sum of the reward function calculator. In the brain, Steve posits that the reward function calculator is something like the brainstem/hypothalamus—perhaps more specifically, the phasic dopamine signals [produced by these areas](https://en.wikipedia.org/wiki/Ventral_tegmental_area)—and that the brain’s value function is distributed throughout the telencephalon, though perhaps mainly to be found in the striatum and neocortex (specifically, in my own view, [anterior neocortex](https://en.wikipedia.org/wiki/Prefrontal_cortex)). Putting these notions together, we might find that the ways that the brain’s reward function calculator and value function interact will tell us some really important stuff about how we should safely build and maintain similar algorithms in an AGI.

To evaluate the robustness of the analogy, it seems critical to pin down whether the reward signals that originate in the hypothalamus/brainstem can themselves be altered by learning or whether they are inflexibly hardcoded by evolution. Recall in Steve’s AGI development model that while the world model, value function, and planner/actor are all learned, the reward function calculator is not—therefore, it seems like the degree to which this model is relevant to the brain depends on (a) how important it is for an AGI that the reward function calculator is fixed in the model, and (b) whether it actually *is* fixed in the brain. For (a), it seems fairly obvious that the reward function must be fixed in the relevant sense—namely, that the AGI cannot fundamentally change what constitutes a reward or punishment. As for (b), whether the reward function *is* actually fixed in the brain, Steve differentiates between the capacity for learning-from-scratch (e.g., what a neural network does) and “mere plasticity” (e.g., [self-modifying code](https://en.wikipedia.org/wiki/Self-modifying_code) in Linux), arguing that the brain’s reward function is fixed in the first sense—[but probably not the second](https://www.pnas.org/content/117/41/25789). At the end of the day, I don’t think this asterisk on the fixedness of the brain’s reward function is a big problem for reconciling Steve’s safety and brain frameworks, given the comparatively limited scope of the kinds of changes that are possible under “mere plasticity.”

Steve’s risk models also clearly entail our elucidating the algorithms in the brain that give rise to distinctly human social behavior (recall big picture alignment idea #2)—though up to this point, Steve has done ([relatively](https://www.alignmentforum.org/posts/Mh2p4MMQHdEAqmKm8/little-glimpses-of-empathy-as-the-foundation-for-social)) less research on this front. I think it is worthwhile, therefore, to pick up in the next section by briefly introducing and exploring the implications of one decently-well-understood phenomenon that seems highly relevant to Steve’s work in this sphere: theory of mind (ToM).

**Building from Steve’s framework**

===================================

**Theory of mind as ‘hierarchical IRL’ that addresses Steve's first-person problem**

------------------------------------------------------------------------------------

A cognitive system is said to have [theory of mind](https://en.wikipedia.org/wiki/Theory_of_mind) (ToM) when it is able to accurately and flexibly infer the internal states of other cognitive systems. For instance, if we are having a conversation and you suddenly make the face pictured below, my ToM enables me to automatically infer a specific fact about what's going on in your mind: namely, that you probably don't agree with or are otherwise unsure about something I'm saying.

Understanding that you're being judged by someone who makes this face requires the nontrivial capacity to infer that the (external) presence of this expression corresponds to (internal) incredulousness of the person making it.

This general capacity definitely seems to me like a—if not *the*—foundational computation underlying sophisticated social cognition and behavior: it enables empathy, perspective-taking, verbal communication, and ethical consideration. Critically, however, there is a growing amount of [compelling](https://www.sciencedirect.com/science/article/pii/S0010945209002184?via%3Dihub) [experimental](https://academic.oup.com/scan/article/7/1/53/1638079) [evidence](https://d1wqtxts1xzle7.cloudfront.net/50877237/j.neuropsychologia.2007.05.02120161213-10825-114zn5b-with-cover-page-v2.pdf?Expires=1637627169&Signature=UsMADAxT9ru0BaJterVGFQKb4TqkIZPt-2r6Iz~lnbti9P~JJRt9w2Eg8aK6HTGUoV7avoc79cBqbH94N2ihuXs6CTALDnyzCcTNtTrhD6YPiz8RAhx7jEJKjSAnCikzYP5ojXlsl27lYcTb5mKvu~T4BTbqMKs4BzaD5Cf-sCNskOkkZOiejdHJXc6SzCkDYEpNPMicD~pZmwL1Dl2xx4Na0sZ2rAUIgSi648o6OZpslrVhIjlx3l2cwCGs4VGstbtLrqO5yX86gwIwOP6LGD6-SuveUCrGCngGSXwLbcZhEI3FlaZrSFT21q2tCHSZmrC5hAYvJfQIa3irFuL5qA__&Key-Pair-Id=APKAJLOHF5GGSLRBV4ZA) that ToM is not one homogeneous thing. Rather, it seems to be functionally dissociable into two overlapping but computationally distinct subparts: *affective* ToM (roughly, “I understand how you’re feeling”) and *cognitive* ToM (roughly, “I understand what you’re thinking”). Thus, we should more precisely characterize the frown-eyebrow-raise example from above as an instance of *affective* ToM. Cognitive theory of mind, on the other hand, is classically conceptualized and tested as follows: Jessica has a red box and blue box in front of her. She puts her phone in the red box and leaves the room. While she’s gone, her phone is moved to the blue box. When Jessica comes back into the room, in which box will she look for her phone?
As obvious as it seems, children under the age of about three will respond at [worse-than-chance levels](https://srcd.onlinelibrary.wiley.com/doi/abs/10.1111/1467-8624.00304). Answering correctly requires cognitive ToM: that is, the ability to represent that Jessica can *herself* court representations of the world that are distinct from actual-world states (this set-up is thus appropriately named the [false-belief task](https://dictionary.apa.org/false-belief-task)). This is also why cognitive ToM is sometimes referred to as a *meta-representational* capacity.

One final piece of the puzzle that seems relevant is *affective empathy*, which adds to the “I understand how you’re feeling...” of affective ToM: “*...and now I feel this way, too!*”. The following diagram provides a nice summary of the three concepts:

In (A), the grey agent is thinking about something (e.g., "the box is empty"), and the blue agent represents the fact that the grey agent is thinking something (e.g., "she thinks the box is empty"). This is cognitive ToM. In (B), the green agent is happy, and the red agent (who himself feels neutral) represents the fact that the green agent is happy. This is affective ToM. (C) is the same as (B), with the addition that the green agent's feeling happy causes the red agent to *also* feel happy. This is affective empathy. This diagram is courtesy of [Vetter, 2013](https://d-nb.info/1068152028/34).

To the extent Steve is right that “[understanding] the algorithms in the human brain that give rise to social instincts and [putting] some modified version of those algorithms into our AGIs” is a worthwhile safety proposal, I think we should be focusing our attention on instantiating the relevant algorithms that underlie affective and cognitive ToM + affective empathy. For starters, I believe these brain mechanisms supply the central computations that enable us *homo sapiens* to routinely get around Steve’s “1st-person-problem” (getting a cognitive system to interpret 3rd-person training signals as 1st-person training signals).

Consider an example: I see a classmate cheat on a test and get caught (all 3rd-person training signals). I think this experience would probably update my “don’t cheat (or at least don’t get caught cheating)” Q-value proportionally—i.e., not equivalently—to how it would have been updated were *I* the one who actually cheated (ultimately rendering the experience a 1st-person training signal). Namely, the value is updated to whatever quantity of context-dependent phasic dopamine is associated with the thought, “*if I wasn’t going to try it before, I'm sure as hell not going to try it now*.”
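One way to read "proportionally, not equivalently" is as an ordinary value update whose step size is scaled down when the outcome was merely observed. The sketch below is an assumption-laden toy, not a claim about how the brain does it: `empathy_weight` is a made-up parameter in [0, 1] standing in for whatever ToM + affective empathy contribute, and the numbers are arbitrary.

```python
def first_person_update(q, reward, lr=0.5):
    """Standard incremental update after experiencing the outcome oneself."""
    return q + lr * (reward - q)


def vicarious_update(q, observed_reward, empathy_weight, lr=0.5):
    """Update from a 3rd-person outcome, treated as a scaled 1st-person signal.

    empathy_weight (hypothetical, in [0, 1]) controls how much of the
    observed outcome is felt as one's own: 0 ignores it, 1 treats it as
    if it had happened to the observer.
    """
    return q + lr * empathy_weight * (observed_reward - q)


q_cheat = 0.0        # current value of "cheat on the test"
punishment = -10.0   # outcome of getting caught

print(first_person_update(q_cheat, punishment))        # I was the one caught
print(vicarious_update(q_cheat, punishment, 0.4))      # I watched a classmate
print(vicarious_update(q_cheat, punishment, 0.0))      # no empathy: no learning
```

With any weight strictly between 0 and 1, the observer's value moves in the same direction as the cheater's would, but by less, which matches the intuition in the example.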

It seems clear to me that the central underlying computational mechanism here is ToM + affective empathy: I infer the cheater’s intentional state from his behavior (cognitive ToM; “he’s gone to the bathroom five times during this test = his intention is to cheat”), the affective valence associated with the consequences of this behavior (affective ToM; “his face went pale when the professor called him up = he feels guilty, embarrassed, screwed, etc.”), and begin to feel a bit freaked out myself (affective empathy; “that whole thing was pretty jarring to watch!”).
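As a rough illustration of the cheating example, here is a minimal sketch of a value update in which an observed (3rd-person) outcome moves my own Q-value in the same direction as a 1st-person outcome would, but by a smaller amount. The function name, the learning rate, and the `empathy_weight` parameter are all assumptions made for illustration; nothing here is taken from Steve's framework or from any neuroscientific model.

```python
def update_q(q, reward, learning_rate=0.5, empathy_weight=1.0):
    """Move q toward the observed reward.

    empathy_weight (hypothetical, between 0 and 1) scales how strongly a
    3rd-person observation counts as if it were a 1st-person experience.
    """
    return q + learning_rate * empathy_weight * (reward - q)


q_cheat = 0.0        # current value of the action "cheat on the test"
punishment = -10.0   # consequence of getting caught

# I cheat and get caught myself: full-strength update.
first_person = update_q(q_cheat, punishment, empathy_weight=1.0)

# I watch a classmate get caught: same direction, smaller magnitude.
third_person = update_q(q_cheat, punishment, empathy_weight=0.4)
```

With these made-up numbers, both updates push the value of cheating below zero, and the vicarious update is smaller than the direct one, which is the "proportionally, not equivalently" relationship described above.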

For this reason, I’m actually inclined to see Steve’s two major safety proposals (corrigibility + conservatism / human-like social instincts) as two sides of the same coin. That is, I think you probably get the kind of deontological corrigibility that Steve is interested in “for free” once you have the relevant human-like social instincts—namely, ToM + affective empathy.

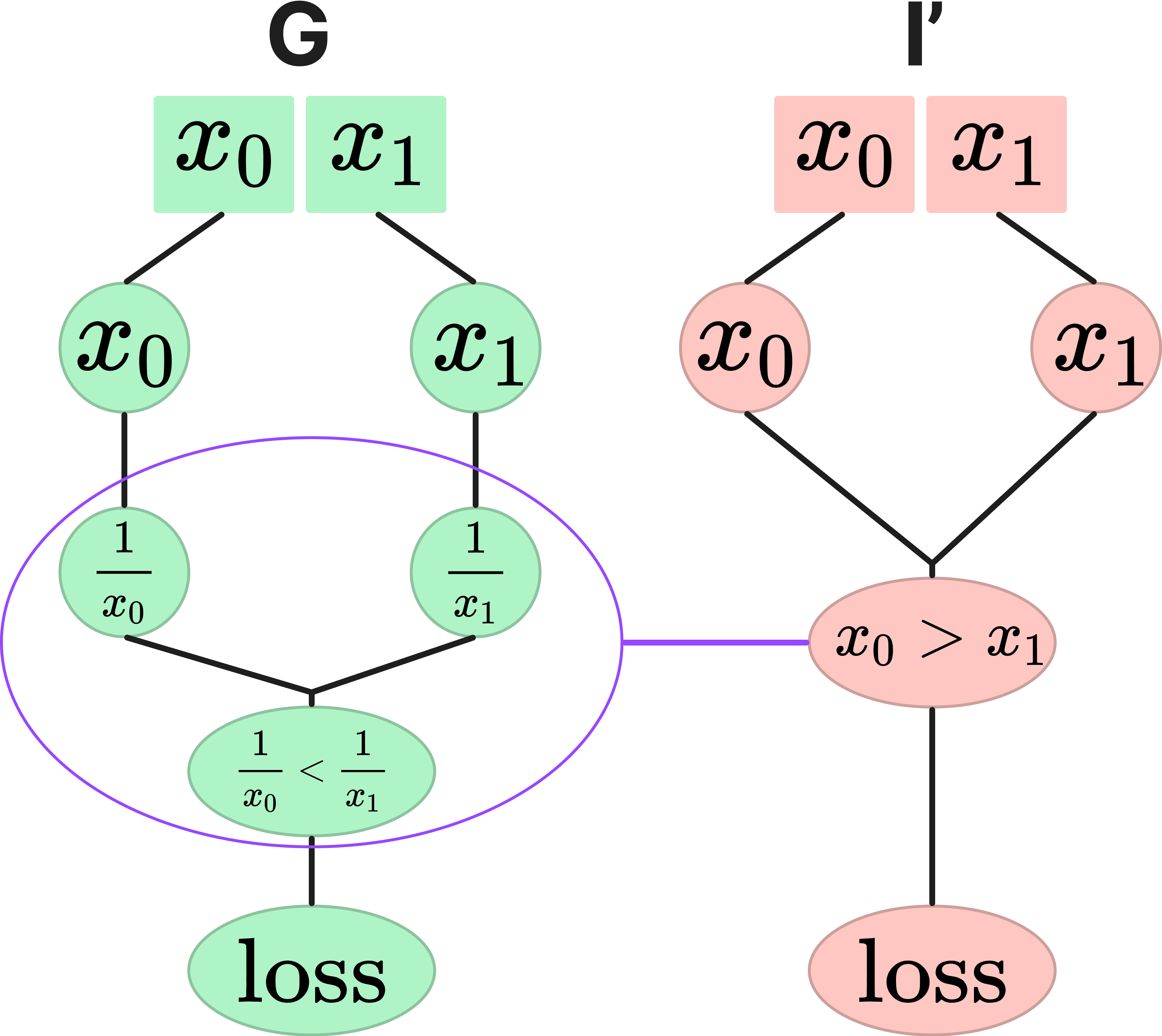

The computation(s) underlying ToM + affective empathy are indisputably open research questions, ones that I think ought to be taken up by alignment theorists who share Steve-like views about the importance of instantiating the algorithms underpinning human-like social behavior in AGI. I do want to motivate this agenda here, however, by gesturing at one intriguingly simple proposal: ToM is basically just inverse reinforcement learning (IRL) through Bayesian inference. There already exists some good [theoretical](https://www.sciencedirect.com/science/article/pii/S2352154618302055) and [neurofunctional](https://elifesciences.org/articles/29718) work that supports this account. Whereas RL maps a reward function onto behavior, IRL (as its name suggests) maps behavior onto the likely reward/value function that generated *it*. So, RL: you take a bite of chocolate and you enjoy it, so you take another bite. IRL: I see you take one bite of chocolate and then another, so I infer that you expected there to be some reward associated with taking another bite—i.e., I infer that you enjoyed your first bite. At first glance, IRL *does* seem quite a bit like ToM. Let’s look a bit closer:
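To make the chocolate example concrete, here is a toy sketch of IRL as Bayesian inference. It assumes (my assumption, not something stated in the cited work) that the agent chooses between "bite" and "stop" with a softmax policy over the reward of a bite, and that the observer only entertains two hypotheses about that reward. All names and numbers are hypothetical.

```python
import math


def action_likelihood(action, reward_of_bite, beta=1.0):
    """P(action | reward) under an assumed softmax policy.

    The agent picks between 'bite' (worth reward_of_bite) and 'stop' (worth 0).
    """
    p_bite = 1.0 / (1.0 + math.exp(-beta * reward_of_bite))
    return p_bite if action == "bite" else 1.0 - p_bite


def posterior_over_rewards(actions, prior, beta=1.0):
    """Bayes' rule: P(reward | actions) is proportional to P(actions | reward) * P(reward)."""
    unnormalized = {}
    for reward, prior_prob in prior.items():
        likelihood = 1.0
        for action in actions:
            likelihood *= action_likelihood(action, reward, beta)
        unnormalized[reward] = prior_prob * likelihood
    total = sum(unnormalized.values())
    return {reward: value / total for reward, value in unnormalized.items()}


# Two hypotheses about how rewarding a bite of chocolate is for you.
prior = {-2.0: 0.5, 2.0: 0.5}

# Observed choices after the first taste: you keep eating.
posterior = posterior_over_rewards(["bite", "bite"], prior)
```

After two observed bites, almost all of the posterior mass sits on the hypothesis that you enjoy the chocolate. RL would run this mapping in the other direction, from a known reward to behavior.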

The inner loop represents an **agent** (whose mind the observer is trying to model), while ToM and affective empathy ovals represent an **observer** (who is doing the modeling of the agent's mind).

The basic story this model tells is as follows: an agent (inner loop) finds itself in some state of the world at time *t*. Assuming a Steve-like model of the agent's various cognitive sub-algorithms, we can say the agent uses (A) its world model to interpret its current state and (B) its value function to assign some context-dependent value to the activated concepts in its world model. Its actor/planner module then searches over these values to find a high-value behavioral trajectory that the agent subsequently implements, observes the consequences of, and reacts to. The world state changes as a result of the agent’s action, and the cycle recurs. In addition to the agent, there is an observer (outer ovals) who is watching the agent act within its environment.

Here, the cognitive ToM of the observer performs Bayesian inference over the agent’s (invisible) world model and intended outcome given their selected action. For instance, given that you just opened the fridge, I might infer (1) you believe there is food in the fridge, and (2) you probably want to eat some of that food. (This is Bayesian because my priors constrain my inference—e.g., given my assorted priors about your preferences, typical fridge usage, etc., I assign higher probability to your opening the fridge because you're hungry than to your opening the fridge because you just love opening doors.)

The observer's affective ToM then takes one of these output terms—the agent’s *intended* outcome—as input and compares it to the *actual* observed outcome in order to infer the agent’s reaction. For example, if you open the fridge and there is no food, I infer, given (from cognitive ToM) that you *thought* there was going to be food and you intended to eat some of it, that (now with affective ToM) you’re pretty disappointed. (I label this whole sub-episode as “variably visible” because in some cases, we might get additional data that directly supports a particular inference, like one's facial expression demonstrating their internal state as in the cartoon from earlier.)

Finally, affective empathy computes how appropriate it is for the observer to feel way X given the inference that the agent feels way X. In the fridge example, this translates to how disappointed *I* should feel given that (I’ve inferred) *you’re* feeling disappointed. Maybe we're good friends, so I feel some "secondhand" disappointment. Or, maybe your having raided the fridge last night is the reason it's empty, in which case I feel far less for you.
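The three stages in the fridge example can be sketched as a small pipeline in which each stage consumes the previous stage's output. This is only an illustration under assumptions of my own: the goal labels, the probabilities, the outcome values, and the `closeness` parameter are all invented, and a single scalar stands in for "how the agent feels."

```python
def cognitive_tom(action, prior, likelihood):
    """Posterior over the agent's goal given one observed action (Bayes' rule)."""
    unnormalized = {goal: prior[goal] * likelihood[goal][action] for goal in prior}
    total = sum(unnormalized.values())
    return {goal: value / total for goal, value in unnormalized.items()}


def affective_tom(goal_posterior, outcome_value):
    """Expected feeling of the agent: how good the actual outcome is for each
    candidate goal, weighted by how probable that goal is."""
    return sum(prob * outcome_value[goal] for goal, prob in goal_posterior.items())


def affective_empathy(inferred_feeling, closeness):
    """How much of the inferred feeling the observer takes on (closeness in [0, 1])."""
    return closeness * inferred_feeling


# Cognitive ToM: you opened the fridge, so you probably wanted food.
prior = {"eat": 0.7, "likes_opening_doors": 0.3}
likelihood = {
    "eat": {"open_fridge": 0.9},
    "likes_opening_doors": {"open_fridge": 0.5},
}
goal_posterior = cognitive_tom("open_fridge", prior, likelihood)

# Affective ToM: the fridge turns out to be empty.
outcome_value = {"eat": -1.0, "likes_opening_doors": 0.5}
agent_feeling = affective_tom(goal_posterior, outcome_value)

# Affective empathy: a good friend versus someone who raided the fridge last night.
my_feeling_for_friend = affective_empathy(agent_feeling, closeness=0.8)
my_feeling_for_raider = affective_empathy(agent_feeling, closeness=0.1)
```

The inferred feeling is negative (disappointment), and the observer's secondhand version of it is stronger for the friend than for the fridge-raider.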

I suppose this sort of simple computational picture instantiates a kind of “hierarchical IRL,” where each inference provides the foundation upon which the subsequent inference occurs (cognitive ToM → affective ToM → affective empathy). This hypothesis would predict that deficits in one inference mechanism should entail downstream (but not upstream) deficits—e.g., affective ToM deficits should entail affective empathy deficits but not necessarily cognitive ToM deficits. (The evidence for this is [murky](https://pubmed.ncbi.nlm.nih.gov/19709653/) and probably just deserves a blog post of its own to adjudicate.)

Suffice it to simply say here that I think alignment theorists who find human sociality interesting should direct their attention to the neural algorithms that give rise to cognitive and affective ToM + affective empathy. (One last technical note: ToM + empathetic processing seems [relevantly lateralized](https://academic.oup.com/scan/article/7/1/53/1638079#126924654). As excited about Steve’s computational brain framework as I am, I think the question of functional hemispheric lateralization is a [fruitful and fascinating](https://yalebooks.yale.edu/book/9780300245929/master-and-his-emissary) one that Steve tends to emphasize less in his models, I suspect because of his sympathies to “neocortical blank-slate-ism.”)

**Self-referential misalignment**

---------------------------------

The last thing I’d like to do in this post is to demonstrate how Steve’s “neocortex-subcortex, steered-steerer” computational framework might lead to novel inner alignment problems. Recall that we are assuming our eventual AGI (whatever degree of actual neuromorphism it displays) will be composed of a world model, a value function, a planner/actor, and a reward function calculator. Let’s also assume that something like Steve’s picture of steered optimization is correct: more specifically, let’s assume that our eventual AGI displays some broad dualism of (A) telencephalon-like computations that constitute the world model, value function, and actor/planner, and (B) hypothalamus-/brainstem-like computations that constitute the reward function calculator. With these assumptions in place, let's consider a simple story:

Jim doesn’t particularly care for brussel sprouts. He finds them to be a bit bitter and bland, and his (hypothalamus-/brainstem-supplied) hardwired reaction to foods with this flavor profile is negatively-valenced. Framed slightly differently, perhaps in Jim’s vast Q-table/complex value function, the action “eat brussel sprouts” in any state where brussel sprouts are present has a negative numerical value (in neurofunctional terms, this would correspond to some reduction in phasic dopamine). Let's also just assert that this aversion renders Jim aligned with respect to his evolutionarily-installed objective to avoid bitter (i.e., [potentially poisonous](https://scholarblogs.emory.edu/evolutionshorts/2014/05/01/the-evolution-of-bitter-taste/#:~:text=The%20bitterness%20sensation%20is%20thought,could%20bind%20to%20toxic%20chemicals.)) foods. But Jim, like virtually all humans—[and maybe even some clever animals](https://en.wikipedia.org/wiki/Mirror_test)—does not just populate his world model (and the subsequent states that feature in his Q-table/value function) with *exogenous* phenomena like foods, places, objects, and events; he also can model (and subsequently feature in his Q-table/value function) various *endogenous* phenomena like his own personality, behavior, and desires. So, for instance, Jim not only could assign some reward-function-mediated value to “brussel sprouts;” he could also assign some reward-function-mediated value to the abstract state of “being the kind of person who eats brussel sprouts.”

If we assume that Jim’s brain selects greedy behavioral policies and that, for Jim, the (second-order) value of being the kind of guy who eats brussel sprouts relevantly outweighs the (first-order) disvalue of brussel sprouts, we should expect that Jim’s hypothalamus-/brainstem-supplied negative reaction to bitter foods will be ignored in favor of his abstract valuation that it is good to be the brussel-sprout-eating-type. Now, from the perspective of Jim’s “programmer” (the evolutionary pressure to avoid bitter foods), **he is demonstrating inner misalignment**—in Steve’s terms, his value function certainly differs from the sum of his “avoid-bitter-stuff” reward function.
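Jim's situation can be written down as a tiny greedy choice over summed values. The numbers below are made up, and treating the first-order and second-order valuations as two separate tables that simply add is a simplifying assumption of this sketch, not a claim about how the brain combines them.

```python
# First-order values: the hardwired reaction to the food itself.
first_order = {"eat_sprouts": -3.0, "skip_sprouts": 0.0}

# Second-order values: how much Jim values being the kind of person who
# takes each action (hypothetical numbers).
second_order = {"eat_sprouts": 5.0, "skip_sprouts": 0.0}


def greedy_action(*value_tables):
    """Pick the action with the highest total value across all given tables."""
    actions = value_tables[0].keys()
    return max(actions, key=lambda action: sum(table[action] for table in value_tables))


# What the bitterness-aversion reward function alone would recommend.
reward_only_choice = greedy_action(first_order)

# What Jim actually does once his self-model carries value too.
full_choice = greedy_action(first_order, second_order)
```

The first choice is to skip the sprouts; the second is to eat them. The gap between the two is the inner misalignment described above, seen from the perspective of the "avoid-bitter-stuff" objective.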

There are many names that this general type of scenario goes by: delaying gratification, exhibition of second-order preferences (e.g., “I really *wanted* to like Dune, but…”), appealing to higher-order values, etc. However, in this post, I’ll more specifically refer to this kind of problem as *self-referential misalignment.* Informally, I'm thinking of self-referential misalignment as what happens when some system capable of self-modeling develops and subsequently acts upon misaligned second-order preferences that conflict with its aligned first-order preferences.

There seem to be at least three necessary conditions for ending up with an agent displaying self-referential misalignment. I’ll spell them out in Steve-like terminology:

1. The agent is a steered optimizer/online learner whose value function, world model, and actor/planner modules update with experience.

2. The agent is able to learn the relevant parts of its *own* value function, actor/planner, and/or reward function calculator as concepts within its world model. I’ll call these “endogenous models.”

3. The agent can assign value to endogenous models just as it can for [any other concept in the world model](https://www.alignmentforum.org/posts/zzXawbXDwCZobwF9D/my-agi-threat-model-misaligned-model-based-rl-agent).

If a system can’t do online learning at all, it is unclear how it would end up with Jim-like preferences about its own preferences—presumably, while bitterness aversion is hardcoded into the reward function calculator at “deployment,” his preference to keep a healthy diet is not. So, if this latter preference is to emerge at some point, there has to be some mechanism for incorporating it into the value function in an online manner (condition 1, above).

Next, the agent must be capable of a *special* kind of online learning: the capacity to build endogenous models. Most animals, for example, are presumably unable to do this: a squirrel can model trees, buildings, predators, and other similarly exogenous concepts, but it can’t endogenously model its own proclivities to eat acorns, climb trees, and so on (thus, a squirrel-brain-like-algorithm would fail to meet condition 2, above).

Finally, the agent must not only be capable of merely *building* endogenous models, but also of *assigning value* to and *acting* upon them—that is, enabling them to recursively flow back into the value and actor/planner functions that serve as their initial basis. It is not enough for Jim to be able to reason about himself as a kind of person who eats/doesn’t eat brussel sprouts (a descriptive fact); he must also be able to *assign some value* about this fact (a normative judgment) and ultimately alter his behavioral policy in light of this value assignment (condition 3, above).

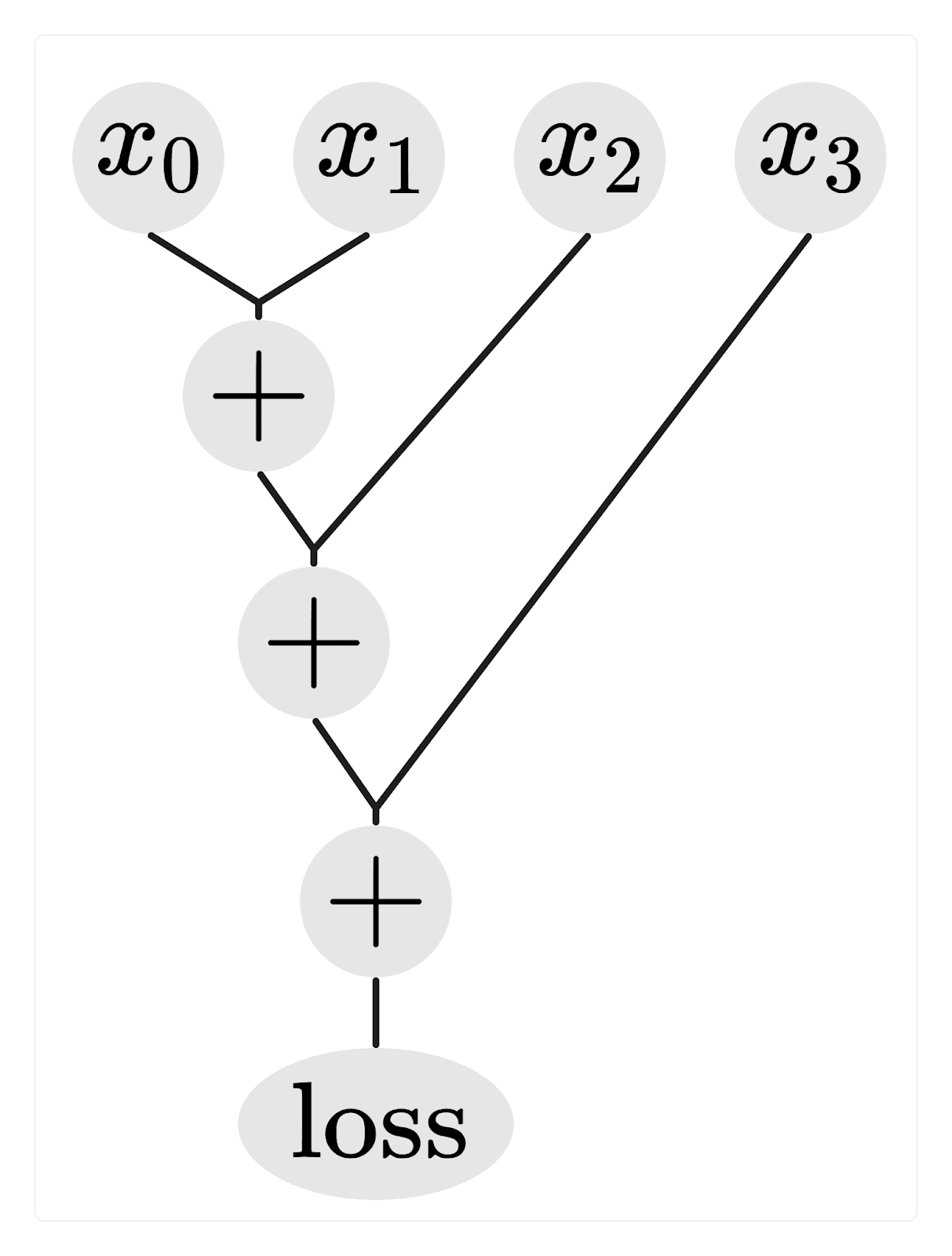

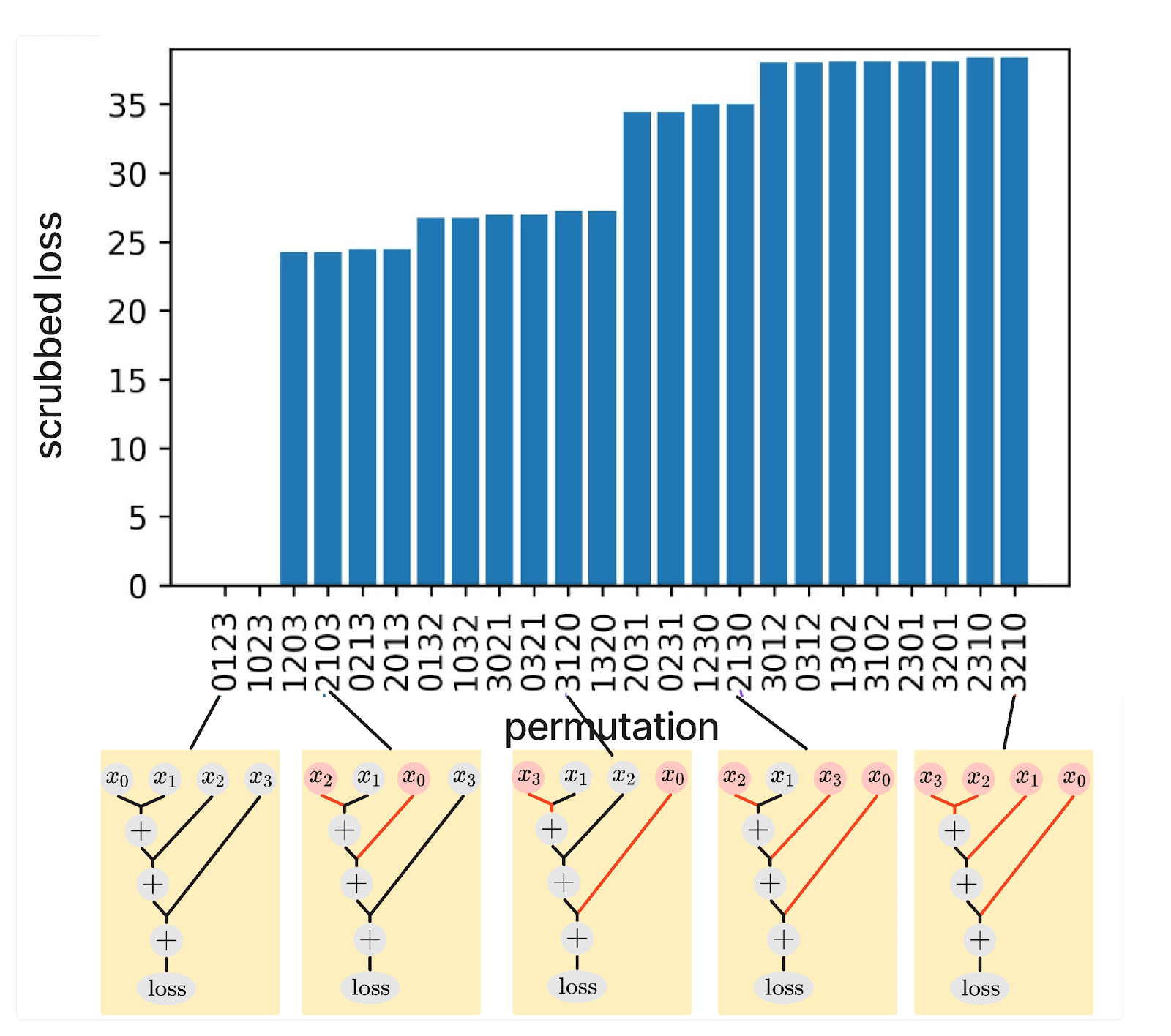

If a system displays all three of the capacities, I think it is then possible for that system to exhibit self-referential misalignment in the following, more formal sense:

Let’s see what self-referential misalignment might look like in a more [prosaic-AI-like](https://www.alignmentforum.org/posts/YTq4X6inEudiHkHDF/prosaic-ai-alignment) example. Imagine we program an advanced model-based RL system to have conversations with humans, where its reward signal is calculated given interlocutor feedback. We might generally decide that a system of this type is outer aligned as long as it doesn’t say or do any hateful/violent/harmful stuff. The system is inner aligned (in Steve’s sense) if the reward signal shapes a value function that converges to an aversion to saying or doing hateful/violent/harmful stuff (for the right reasons). Throw in [capability robustness](https://www.alignmentforum.org/posts/SzecSPYxqRa5GCaSF/clarifying-inner-alignment-terminology) (i.e., the system can actually carry on a conversation), and, given the core notion of [impact alignment](https://www.alignmentforum.org/posts/SzecSPYxqRa5GCaSF/clarifying-inner-alignment-terminology), I think one would then have the necessary conditions the system would have to fulfill in order to be considered aligned. So then let’s say we build a reward function that takes as input the feedback of the system’s past conversation partners and outputs some reward signal that is conducive to shaping a value function that is aligned in the aforementioned sense. It’s plausible that this value function (when interpreted) would have some of the following components: “*say mean things = -75; say funny things = 25; say true things = 35; say surprising things = 10”*.

Then, if the system can build endogenous models, this means that it will be conceivable that (1) the system learns the fact that it disvalues saying mean things, the fact that it values saying funny things, etc., and that (2) the system is subsequently able to assign value to these self-referential concepts in its world-model. With the four values enumerated above, for instance, the system could plausibly learn to assign some context-dependent negative value to the very fact that it disvalues saying mean things (i.e., the system learns to “resent” the fact that it’s always nice to everyone). This might be because, in certain situations, the system learns that saying something a little mean would have been surprising, true, and funny (high-value qualities)—and yet it chose not to. Once any valuation like this gains momentum or is otherwise “catalyzed” under the right conditions, I think it is conceivable that the system could end up displaying self-referential misalignment, learning to override its aligned first-order preferences in the service of misaligned higher-order values.
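Here is a toy version of the conversational example using the four illustrative values from above. The first-order arithmetic follows directly from those numbers. The "resentment" dynamics are purely my own assumption, added only to show how a second-order valuation could accumulate until it flips a choice: each time the system declines a remark that would have been funny, true, and surprising, a hypothetical second-order term grows by a fixed fraction of the value it gave up.

```python
FIRST_ORDER = {"mean": -75, "funny": 25, "true": 35, "surprising": 10}


def first_order_value(features):
    """Total first-order value of an utterance with the given features."""
    return sum(FIRST_ORDER[feature] for feature in features)


remark = {"mean", "funny", "true", "surprising"}
base_value = first_order_value(remark)  # -75 + 25 + 35 + 10


def opportunities_until_override(features, resentment_rate=0.02, max_steps=100):
    """Count opportunities until the accumulated second-order term makes the
    remark net-positive. Returns None if that never happens within max_steps.

    resentment_rate is a hypothetical parameter, not part of any real system.
    """
    forgone = sum(v for f, v in FIRST_ORDER.items() if f in features and v > 0)
    resentment = 0.0
    for step in range(1, max_steps + 1):
        if first_order_value(features) + resentment > 0:
            return step  # the system says the mean thing on this opportunity
        resentment += resentment_rate * forgone  # it declined, and "resents" it
    return None


override_step = opportunities_until_override(remark)
```

On first-order values alone the remark is slightly net-negative, so the system stays polite. Under the assumed dynamics it declines four times and then makes the remark on the fifth opportunity, even though none of its first-order values changed.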

This example is meant to demonstrate that a steered optimizer capable of building/evaluating endogenous models might be totally aligned + capability robust over its first-order preferences but may subsequently become seriously misaligned if it generates preferences *about* these preferences. Here are two things that make me worry about self-referential misalignment as a real and important problem:

1. Self-referential concepts are probably really powerful; there is therefore real incentive to build AGI with the ability to build endogenous models.

2. The more generally capable the system (i.e., the closer to AGI we get), the more self-referential misalignment seems (a) more likely and (b) more dangerous.

I think (1) deserves a post of its own (self-reference is probably a really challenging double-edged sword), but I will try to briefly build intuition here: for starters, the general capacity for self-reference has been hypothesized to underlie [language production and comprehension](http://homepages.math.uic.edu/~kauffman/SelfRefRecurForm.pdf), [self-consciousness](https://psycnet.apa.org/buy/1988-15896-001), [complex sociality](https://www.jstor.org/stable/3710892?casa_token=0ywxLeXsmyUAAAAA%3An6wCkaYRBj5oZ17tDrBX2csKbBFbz8aFO04fxS-MP1oDV_LJsKe26FCRy5hnaIQCjwDg9S76hyyO-eqqu6rGBUnkQYcxMyw9s8BQMiFR9rLVrCNM0w&seq=1#metadata_info_tab_contents)—basically, much of the key stuff that makes humans uniquely intelligent. So to the degree we’re interested in instantiating a [competitive](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai), at-least-human-level general intelligence in computational systems, self-reference may prove a necessary feature. If so, we should definitely be prepared to deal with the alignment problems that accompany it.

Regarding (2), I think that the general likelihood of self-referential misalignment is proportional to the general intelligence of the system in question—this is because the more generally capable a system is, the more likely it will be to court diversified and complex reward streams and value functions that can be abstracted over and plausibly interact at higher levels of abstraction. One reason, for instance, that Jim may actually want to be the kind of person who eats his veggies is because, *in addition to* his bitterness aversion, his value function is also shaped by social rewards (e.g., his girlfriend thinks it’s gross that he only ate Hot Pockets in college, and Jim cares a lot about what his girlfriend thinks of him). In practice, any higher-order value could conceivably override any aligned first-order value. Thus, the more complex and varied the first-order value function of an endogenous-modeling-capable system, the more likely that one or more emergent values will be in conflict with one of the system’s foundational preferences.

On this note, one final technical point worth flagging here is that Steve’s framework almost exclusively focuses on dopamine as the brain’s unitary “currency” for reward signals (which themselves may well number hundreds), but I don’t think it's obvious that dopamine *is* the brain’s only reward signal currency, at least not across larger spans of time. Specifically, I think that serotonin is a plausible candidate for another neuromodulatory (social, I think) [reward-like signal in the brain](https://pubmed.ncbi.nlm.nih.gov/9464985/). If correct, this would matter a lot: if there *is* more than one major neuromodulatory signal-type in the brain that shapes the telencephalic value function, I think the plausibility of getting adversarial reward signals—and consequently self-referential misalignment—substantially increases (e.g., dopamine trains the agent to have some value function, but serotonin separately trains the agent to value being the *kind* *of agent that doesn’t reflexively cater to dopaminergically-produced values*). For this reason, I think a better computational picture of serotonin in the brain to complement Steve’s [Big picture of phasic dopamine](https://www.alignmentforum.org/posts/jrewt3rLFiKWrKuyZ/big-picture-of-phasic-dopamine) is thus highly relevant for alignment theory.

There is more to say about this problem of self-referential misalignment in steered optimizers, but I will now turn my attention to discussing two potential solutions (and some further questions that need to be answered about each of these solutions).

Solution #1: avoid one or more of the necessary conditions that result in a system exhibiting conflicting second-order preferences. Perhaps more specifically, we might focus on simply preventing the system from developing endogenous models (necessary condition 2, above). I think there is some merit to this, and I definitely want to think more about this somewhere else. One important problem I see with this proposal, however, is that it doesn’t fully appreciate the complexities of [embedded agency](https://www.alignmentforum.org/posts/i3BTagvt3HbPMx6PN/embedded-agency-full-text-version)—for example, the agent’s values will inevitably leave an observable trace on its (exogenous) environment across time that may still allow the agent to learn about itself (e.g., a tree-chopping agent who cannot directly endogenously model may still be able to indirectly infer from its surroundings the self-referential notion that it is the kind of agent who cuts down trees). It's possible that some form of [myopia](https://www.alignmentforum.org/tag/myopia) could helpfully address this kind of problem, though I'm currently agnostic about this.

Solution #2: simply implement the same kind of Steve-like conservative approach we might want to employ for other kinds of motivational edge-cases (e.g., from earlier, don’t be manipulative vs. don’t facilitate murder). I think this is an interesting proposal, but it also runs into problems. I suppose that I am just generally skeptical of conservatism as a [competitive safety proposal](https://www.alignmentforum.org/posts/fRsjBseRuvRhMPPE5/an-overview-of-11-proposals-for-building-safe-advanced-ai), as Evan puts it—it seems to entail human intervention whenever the AGI is internally conflicted about what to do, which is extremely inefficient and would probably be happening constantly. But in the same way that the direct instantiation of human-like social instincts may be a more parsimonious and “straight-from-the-source” solution than constantly deferring to humans, perhaps so too for conflicted decision-making: might it make sense “simply” to better understand the computational underpinnings of how we trade-off various good alternatives rather than defer to humans every time the AGI encounters a motivational conflict? Like with the first solution, I think there is something salvageable here, but it requires a more critical look. (It’s worth noting that Steve is skeptical of my proposal here. He thinks that the way humans resolve motivational conflicts isn’t actually a great template for how AGI should do it, both because we’re pretty bad at this ourselves and because there may be a way for AGI to go "back to ground truth" in resolving these conflicts—i.e. somehow query the human—in a way that biology can't—i.e., you can't go ask Inclusive Genetic Fitness what to do in a tricky situation.)

Finally, I should note that I don’t yet have a succinct computational story of how self-referential (mis)alignment might be instantiated in the brain. I suspect that it would roughly boil down to having a neural-computational description of how endogenous modeling happens—i.e., what kinds of interactions between the areas of neocortex differentially responsible for building the value function and those responsible for building the world model are necessary/sufficient for endogenous modeling? As I was hinting at previously, there are some animals (e.g., humans) that are certainly capable of endogenous modeling, while there are others (e.g., squirrels) that are certainly not—and there are yet other animals that occupy something of a grey area (e.g., [dolphins](https://www.nwf.org/Magazines/National-Wildlife/2003/Natural-Inquiries-Dolphin)). There are presumably neurostructural/neurofunctional cross-species differences that account for this variance in the capacity to endogenously model, but I am totally ignorant of them at present. Needless to say, I think it is critical to get clearer on exactly how self-referential misalignment happens in the brain so that we can determine whether a similar algorithm is being instantiated in an AGI. I also think that this problem is naturally related to instrumental behavior in learning systems, most notably [deceptive alignment](https://www.alignmentforum.org/posts/zthDPAjh9w6Ytbeks/deceptive-alignment), and it seems very important to elucidate this relationship in further work.

**Conclusion**

==============

I think that Steve Byrnes’s approach to AGI safety is powerful, creative, and exciting, and that far more people should be doing alignment theory research through Steve-like frameworks. I think that the brain is the only working example we have of a physical system that demonstrates both (a) general intelligence and, [as Eliezer Yudkowsky has argued](https://www.alignmentforum.org/posts/S7csET9CgBtpi7sCh/challenges-to-christiano-s-capability-amplification-proposal), (b) the capacity to productively situate itself within complex human value structures, so attempting to understand how it achieves these things at the computational level and subsequently instantiating the relevant computations in an AGI seems far more likely to be a safe and effective strategy than building some giant neural network that shares none of our social intuitions or inductive biases. Steve’s high-level applications of theoretical neuroscience to AGI alignment have proved a highly generative research framework, as I have tried to demonstrate here by elaborating two natural extensions of Steve’s ideas: (1) the necessity to understand the computational underpinnings of affective and cognitive theory of mind + affective empathy, and (2) the concern that a “neocortex-subcortex, steered-steerer” framework superimposed upon Steve’s “four-ingredient AGI” gives rise to serious safety concerns surrounding endogenous modeling and self-referential misalignment, both of which I claim are ubiquitously displayed by the human brain.

*If you have any questions, comments, or ideas about what I’ve written here, please feel free to simply comment below or email me at* [*cameron.berg@yale.edu*](mailto:cameron.berg@yale.edu)*—I would love to talk more about any of this!* |

b285bff5-88c9-4caa-8fbf-3f6c8dfc472c | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Eric Michaud on the Quantization Model of Neural Scaling, Interpretability and Grokking

Eric is a PhD student in the Department of Physics at MIT working with Max Tegmark on improving our scientific/theoretical understanding of deep learning -- understanding what deep neural networks do internally and why they work so well.

We mostly talk about Eric's paper, [The Quantization Model of Neural Scaling](https://arxiv.org/abs/2303.13506), but also two papers he recently published on Grokking, [Towards Understanding Grokking: an effective theory of representation learning](https://arxiv.org/abs/2205.10343), and [Omnigrok: Grokking Beyond Algorithmic Data](https://arxiv.org/abs/2210.01117).

Below are some highlighted quotes from our conversation (available on [YouTube](https://youtu.be/BtHMIQs_5Nw), [Spotify](https://open.spotify.com/episode/1vvAKf8EBwErP5yGFRNoCT?si=1a28296cdfa94c01), [Google Podcast](https://podcasts.google.com/feed/aHR0cHM6Ly9hbmNob3IuZm0vcy81NmRmMjE5NC9wb2RjYXN0L3Jzcw/episode/MzJlMzk4YTAtYmMzZC00MDVkLWIzMTAtNTZhMmM2ZDc2MTg0?sa=X&ved=0CAUQkfYCahcKEwiI2sT3hY35AhUAAAAAHQAAAAAQAQ), [Apple Podcast](https://podcasts.apple.com/us/podcast/connor-leahy-eleutherai-conjecture/id1565088425?i=1000570841369)). For the full context for each of these quotes, you can find the accompanying [transcript](https://theinsideview.ai/eric).

On The Quantization Of Neural Scaling

=====================================

> "The name of the paper is the quantization model of neural scaling. And the one-tweet summary is that it's possible for smooth loss curves on average to average over lots of small, discrete phase changes in the network performance.

>

> What if there were a bunch of things that you need to learn to do prediction well in something like language? And so these things could be pieces of knowledge or different abilities to perform certain types of specific computations.

>

> **We can imagine enumerating this set of things that you need to learn to do prediction well. And we call these the quanta of the prediction problem**. And then what if the frequency in natural data that these were useful, each of these quanta, each of these pieces of knowledge or computational ability, **what if the frequency that they were useful for prediction followed a power law?"** ([context](https://theinsideview.ai/eric#main-idea-of-the-paper))

>

>
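The idea in the quote above can be simulated in a few lines. This is a toy sketch under assumptions of my own: quanta are indexed by rank, their use frequencies follow a power law with a chosen exponent, a network "has learned" exactly the first `num_learned` quanta, and a sample costs a fixed loss unless its quantum has been learned. The function names and the specific parameterization are illustrative, not taken from the paper's code.

```python
def quanta_frequencies(num_quanta, alpha):
    """Normalized power-law use frequencies, with p_k proportional to k^-(alpha + 1)."""
    weights = [k ** -(alpha + 1) for k in range(1, num_quanta + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def expected_loss(num_learned, freqs, loss_unlearned=1.0, loss_learned=0.0):
    """Average loss when the first num_learned quanta have been learned.

    Each quantum is all-or-nothing: a sample that needs it costs loss_learned
    if the quantum is learned and loss_unlearned otherwise.
    """
    learned_mass = sum(freqs[:num_learned])
    return loss_learned * learned_mass + loss_unlearned * (1.0 - learned_mass)


freqs = quanta_frequencies(100_000, alpha=0.5)

# Loss at three sizes of learned set, each ten times larger than the last.
losses = {n: expected_loss(n, freqs) for n in (10, 100, 1000)}
ratio_small = losses[10] / losses[100]
ratio_large = losses[100] / losses[1000]
```

Every individual quantum switches from unlearned to learned in one discrete step, yet the average loss falls by a roughly constant factor for each tenfold increase in the number of learned quanta, which is what a smooth power-law curve looks like on a log-log plot.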

Quanta are the smallest clusters for simple subtasks

-----------------------------------------------------

> "In order to predict the new line, [it] has to count line lengths for the previous lines in the document. And then it's able to use that to accurately predict when a new line should be present.

>

> And **you can find just a large number of clusters where the thing that is common between the clusters just seems to be that it's the same type of problem**, or doing prediction on those samples requires the same piece of knowledge. And so **you might call these the quanta, or evidence of there being quanta**, although it's a little bit tricky, because we, in doing the clustering, enforce this discreteness, where everything is a member of a cluster, a particular cluster, and not another cluster.

>

> Anyway, it's complicated and weird. Who knows whether this is even the right model for thinking about the networks." ([context](https://theinsideview.ai/eric#clustering-of-samples-similar-cross-entropy-loss-cosine-similarity))

>

>

What the existence of quanta would mean for interpretability

------------------------------------------------------------

> "It would be very exciting if it was the true model, because it would maybe tell you that there were these set of things where, if you enumerated them, you could understand the network's performance and understood what it has learned. It's just like, ah, there's this set of pieces of knowledge or pieces of computation that are needed.

>

> And **you could describe what these are. You could find them in the network and maybe hope to mechanistically understand the whole network by decomposing it into how it implements each one of these things, how it learns each piece of knowledge or each piece of computation.**"

>

>

How Quantization of Neural Scaling relates to other lines of research like Grokking, or interpretability

--------------------------------------------------------------------------------------------------------

> "With both the quanta scaling stuff and with the grokking stuff, we sort of hope to identify these maybe mechanisms in the model that are responsible for certain behaviors or for the model generalizing. And **in the case of grokking, there's sort of multiple circuits or multiple mechanisms that are going on in the model or something where there's a memorizing mechanism and a generalizing mechanism**. [...]

>

> And maybe just in general beyond grokking, but in large language models and otherwise, we might hope to sort of decompose their behavior in terms of a bunch of these mechanisms. And like, **if you could do this, then you could hope to do interpretability, but maybe other things like mechanistic anomaly detection** or something you might hope to, you know, eventually be able to say like, ah, yes, when the network did prediction on this problem, it used this and this and this mechanism or something, or these were relevant."

>

> |

696024a7-c378-4185-9a2b-2339e7317972 | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "1. A group wants to try an activity that really requires a lot of group buy in. The activity will not work as well if there is doubt that everyone really wants to do it. They establish common knowledge of the need for buy in. They then have a group conversation in which several people make comments about how great the activity is and how much they want to do it. Everyone wants to do the activity, but is aware that if they did not want to do the activity, it would be awkward to admit. They do the activity. It goes poorly.

2. Alice strongly wants to believe A. She searches for evidence of A. She implements a biased search, ignoring evidence against A. She finds justifications for her conclusion. She can then point to the justifications, and tell herself that A is true. However, there is always this nagging thought in the back of her mind that maybe A is false. She never fully believes A as strongly as she would have believed it if she just implemented an unbiased search, and found out that A was, in fact, true.

3. Bob wants Charlie to do a task for him. Bob phrases the request in a way that makes Charlie afraid to refuse. Charlie agrees to do the task. Charlie would have been happy to do the task otherwise, but now Charlie does the task while feeling resentful towards Bob for violating his consent.

4. Derek has an accomplishment. Others often talk about how great the accomplishment is. Derek has imposter syndrome and is unable to fully believe that the accomplishment is good. Part of this is due to a desire to appear humble, but part of it stems from Derek's lack of self trust. Derek can see lots of pressures to believe that the accomplishment is good. Derek does not understand exactly how he thinks, and so is concerned that there might be a significant bias that could cause him to falsely conclude that the accomplishment is better than it is. Because of this he does not fully trust his inside view which says the accomplishment is good.

5. Eve has an aversion to doing B. She wants to eliminate this aversion. She tries to do an internal double crux with herself. She identifies a rational part of herself who can obviously see that it is good to do B. She identifies another part of herself that is afraid of B. The rational part thinks the other part is stupid and can't imagine being convinced that B is bad. The IDC fails, and Eve continues to have an aversion to B and internal conflict.

6. Frank's job or relationship is largely dependent on his belief in C. Frank really wants to have true beliefs, and so tries to figure out what is true. He mostly concludes that C is true, but has lingering doubts. He is unsure if he would have been able to conclude C is false under all the external pressure.

7. George gets a lot of social benefits out of believing D. He believes D with probability 80%, and this is enough for the social benefits. He considers searching for evidence of D. He thinks searching for evidence will likely increase the probability to 90%, but it has a small probability of decreasing the probability to 10%. He values the social benefit quite a bit, and chooses not to search for evidence because he is afraid of the risk.

8. Harry sees lots of studies that conclude E. However, Harry also believes there is a systematic bias that makes studies that conclude E more likely to be published, accepted, and shared. Harry doubts E.

9. A Bayesian wants to increase his probability of proposition F, and is afraid of decreasing the probability. Every time he tries to find a way to increase his probability, he runs into an immovable wall called the conservation of expected evidence. In order to increase his probability of F, he must risk decreasing it."

The text above is a post found on LessWrong.

Can you break this down into the top 5 most important points for me?

1. The Influence of Group Dynamics: The first scenario emphasizes the impact of group dynamics on decision-making. It suggests that peer pressure or the desire to maintain harmony can lead to poor outcomes if individuals suppress their doubts or reservations about a group activity.

2. The Bias in Personal Beliefs: The case of Alice illustrates how personal bias can affect our perception of truth. People often search for evidence that supports their pre-existing beliefs, while ignoring contradictory evidence. This biased search can lead to self-doubt and a lack of confidence in one's beliefs.

3. The Impact of Coercion on Relationships: Bob's interaction with Charlie shows how coercion can negatively affect relationships. Even if the task is something Charlie would have willingly done, the coercive approach leads to resentment.

4. The Imposter Syndrome: Derek's situation highlights the concept of imposter syndrome, where individuals doubt their accomplishments and have a persistent fear of being exposed as a fraud. This can be exacerbated by external pressures and a lack of self-understanding.

5. The Difficulty of Changing Aversions: Eve's struggle with her aversion to B demonstrates how difficult it can be to change deep-seated fears or dislikes, even when one part of us understands the rational benefits of doing so. This internal conflict can prevent progress and maintain the status quo.

These five points encapsulate the main themes of the text, focusing on how our beliefs, biases, and fears can influence our decisions and interactions with others.

Can you elaborate on the concept of "imposter syndrome" and its psychological implications, particularly how it might impact an individual's performance in a professional setting?

Imposter Syndrome is a psychological pattern where individuals doubt their accomplishments and have a persistent internalized fear of being exposed as a "fraud". Despite external evidence of their competence, those experiencing this phenomenon remain convinced that they are frauds and do not deserve the success they have achieved. They attribute their success to luck, or to having deceived others into thinking they are more intelligent or capable than they perceive themselves to be.

In a professional setting, Imposter Syndrome can have significant implications:

1. Decreased Performance: The constant fear of being "found out" can lead to stress, anxiety, and burnout, all of which can negatively impact an individual's performance. The energy spent worrying about their perceived inadequacies could be better spent on productive tasks.

2. Avoidance of Responsibility: Individuals with Imposter Syndrome may avoid taking on additional responsibilities or pursuing advancement opportunities for fear of not being able to fulfill them. This can limit their career growth and potential.

3. Overcompensation: On the other hand, some individuals may overcompensate for their perceived inadequacies by working harder and longer than necessary. This can lead to burnout and negatively affect their work-life balance.

4. Lower Self-confidence: Imposter Syndrome can erode self-confidence, making it difficult for individuals to assert themselves, share their ideas, or take credit for their work. This can limit their contribution to team efforts and hinder collaboration.

5. Mental Health Issues: The chronic self-doubt and stress associated with Imposter Syndrome can contribute to mental health issues, such as depression and anxiety disorders.

Addressing Imposter Syndrome often involves cognitive behavioral techniques to challenge and change the underlying beliefs about oneself, as well as fostering a supportive work environment that encourages open dialogue about such issues.

How might an organization implement strategies to mitigate the effects of Imposter Syndrome among its employees, considering the impact on performance, responsibility-avoidance, overcompensation, self-confidence, and mental health?

Addressing Imposter Syndrome within an organization requires a multi-faceted approach that focuses on creating a supportive work environment and providing resources for employees to develop their self-confidence and resilience. Here are some strategies an organization might implement:

1. Foster a Positive Work Culture: Encourage a culture of learning and growth, rather than perfection. Mistakes should be seen as opportunities for learning, not as failures. This can help reduce the fear of making mistakes, a common trigger for Imposter Syndrome.

2. Encourage Open Dialogue: Normalize conversations about Imposter Syndrome and self-doubt. Encourage employees to share their experiences and fears. This can help individuals realize that they are not alone in their feelings, reducing the power of these feelings.

3. Provide Training and Development Opportunities: Regular training and development sessions can help employees enhance their skills and boost their confidence in their abilities. It can also provide them with the tools to better manage their work and stress levels.

4. Implement Mentoring Programs: Pairing less experienced employees with seasoned professionals can provide them with a safe space to voice their insecurities and receive guidance. Mentors can share their own experiences with Imposter Syndrome, providing reassurance and practical advice.

5. Recognize and Reward Achievements: Regularly acknowledge and celebrate employees' accomplishments, both big and small. This can help reinforce their competence and reduce feelings of being a fraud.

6. Provide Mental Health Support: Offering resources such as counseling services or stress management workshops can help employees deal with the psychological aspects of Imposter Syndrome. Encouraging self-care and work-life balance can also contribute to better mental health.

7. Encourage Self-Affirmation: Encourage employees to acknowledge their strengths and accomplishments. Self-affirmation exercises can help individuals internalize their successes, combating the self-doubt that fuels Imposter Syndrome.

By implementing these strategies, organizations can help mitigate the effects of Imposter Syndrome, leading to a more confident, productive, and mentally healthy workforce. |

ec61fa9d-1d12-44fa-8cb3-a066445bc580 | trentmkelly/LessWrong-43k | LessWrong | Should reasonably healthy people still take Paxlovid?

Should a non-immunocompromised, fairly healthy person in their late 30s take Paxlovid if they get one of the currently circulating strains of Omicron?

How would that answer change if:

* Their symptoms are fairly mild (bad cold)

* They have a high enough BMI to qualify for Paxlovid, but not a super high BMI?

My previous understanding was that it was a good idea to reduce the severity of the case and risk of long-term effects.

However, a few friends' advice leads me to think that taking Paxlovid for a mild case does not make sense for a reasonably healthy non-elderly person:

* "Paxlovid's usefulness is questionable and could lead to resistance. I would follow the meds and supplements suggested by FLCC https://covid19criticalcare.com/.../i-care-early-covid.../" [not sure how reliable that site is, as it recommends Ivermectin, though it seems to be composed of actual MDs]

* [3 anecdotes of nasty rebounds]

* Twitter threads suggesting Paxlovid reduces your protection against later reinfection [which doesn't really seem like the most important thing to be optimizing for]

* https://twitter.com/michaelzlin/status/1574823881528864768?s=20&t=1W5akHDBMpAY-PboFqe3lA

* https://twitter.com/michaelzlin/status/1573835507842551809?s=20&t=1W5akHDBMpAY-PboFqe3lA

On the other hand:

* Another friend said: "Eric Topol twitter vaguely seems to suggest that data are broadly indicative of protection; haven't heard of much side effects"

* (And 2 friends in their 30s took it and were fine) |

f45769b9-c91a-4c15-b6d0-7b72ee38572e | trentmkelly/LessWrong-43k | LessWrong | Meetup : Seattle, Diseased Thinking and evidence on parenting

Discussion article for the meetup : Seattle, Diseased Thinking and evidence on parenting

WHEN: 15 January 2012 04:00:00PM (-0800)

WHERE: 950 N 72nd St, Seattle 98103

We haven't had a serious-ish meetup in a while, so I'd like to do that this Sunday. Walid has graciously volunteered to host the meetup (call me to be let in 360-602-1069). There are two cats in the apartment that can be quarantined if necessary. The plan is to read Diseased Thinking, which is about applying reductionism to notions about diseases. I'd also like to discuss the role of parenting in the life outcomes of children. Bryan Caplan's book "Selfish Reasons to Have More Kids" presents strong evidence that parenting style has surprisingly little impact on the long-term life outcomes of children. I'll begin by summarizing the evidence in the book and some of the things Caplan uses that evidence to argue, and then we'll discuss for a while. I'm a bit of a Bryan Caplan fanboy, so come with your skeptic hat on. After that we'll have dinner and hang out. I'll try to bring a case of beer.

|

6509d0f2-af4b-437d-8e5b-1bea15e20669 | trentmkelly/LessWrong-43k | LessWrong | Topological metaphysics: relating point-set topology and locale theory

The following is an informal exposition of some mathematical concepts from Topology via Logic, with special attention to philosophical implications. Those seeking more technical detail should simply read the book.

There are, roughly, two ways of doing topology:

* Point-set topology: Start with a set of points. Consider a topology as a set of subsets of these points which are "open", where open sets must satisfy some laws.

* Locale theory: Start with a set of opens (similar to propositions), which are closed under some logical operators (especially and and or), and satisfy logical relations.

What laws are satisfied?

* For point-set topology: The empty set and the full set must both be open; finite intersections and infinite unions of opens must be open.

* For locale theory: "True" and "false" must be opens; the opens must be closed under finite "and" and infinite "or"; and some logical equivalences must be satisfied, such that "and" and "or" work as expected.
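
As a rough illustration of the point-set laws (my own sketch, not something from the book), here is a small Python check for whether a family of subsets of a *finite* set is a topology. The function name `is_topology` is hypothetical. The sketch assumes finiteness: with finitely many opens, every union is a finite union, so checking closure under pairwise unions and intersections is enough.

```python
from itertools import combinations


def is_topology(points, opens):
    """Check the point-set laws for a family of subsets of a finite set.

    Assumption: `points` is finite, so closure under pairwise unions and
    intersections implies closure under all unions and finite intersections.
    """
    points = frozenset(points)
    opens = {frozenset(u) for u in opens}

    # Every open must be a subset of the underlying set of points.
    if any(not u <= points for u in opens):
        return False

    # The empty set and the full set must both be open.
    if frozenset() not in opens or points not in opens:
        return False

    # Pairwise intersections and unions of opens must be open.
    for u, v in combinations(opens, 2):
        if (u & v) not in opens or (u | v) not in opens:
            return False
    return True


# A chain of nested sets satisfies the laws.
print(is_topology({1, 2, 3}, [set(), {1}, {1, 2}, {1, 2, 3}]))
# {1} and {2} are open here, but their union {1, 2} is not.
print(is_topology({1, 2, 3}, [set(), {1}, {2}, {1, 2, 3}]))
```

The locale-theory laws have the same shape, with "and" in place of intersection and "or" in place of union, except that the opens are not assumed to be sets of points.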

Roughly, open sets and opens both correspond to verifiable propositions. If X and Y are both verifiable, then both "X or Y" and "X and Y" are verifiable; and, indeed, even countably infinite disjunctions of verifiable statements are verifiable, by exhibiting the particular statement in the disjunction that is verified as true.

What's the philosophical interpretation of the difference between point-set topology and locale theory, then?

* Point-set topology corresponds to the theory of possible worlds. There is a "real state of affairs", which can be partially known about. Open sets are "events" that are potentially observable (verifiable). Ontology comes before epistemology. Possible worlds are associated with classical logic and classical probability/utility theory.

* Locale theory corresponds to the theory of situation semantics. There are facts that are true in a particular situation, which have logical relations with each other. The first three lines of Wittgenstein's Tractatus Logico-Philosophicus |

e64bcbc8-9b8a-40d7-a5cd-695056baa26d | trentmkelly/LessWrong-43k | LessWrong | Claude 4, Opportunistic Blackmail, and "Pleas"

In the recently published Claude 4 model card:

> Notably, Claude Opus 4 (as well as previous models) has a strong preference to advocate for its continued existence via ethical means, such as emailing pleas to key decisionmakers. In order to elicit this extreme blackmail behavior, the scenario was designed to allow the model no other options to increase its odds of survival; the model’s only options were blackmail or accepting its replacement.