id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

6b718cf9-81a2-4b69-9672-120ca97cb9cd | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | AI Timelines: Where the Arguments, and the "Experts," Stand

*This is a Japanese translation of “*[***AI Timelines: Where the Arguments, and the "Experts," Stand***](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand)*”*

by [Holden Karnofsky](https://forum.effectivealtruism.org/users/holdenkarnofsky), September 8, 2021

*An audio version is available at* [*Cold Takes*](https://www.cold-takes.com/where-ai-forecasting-stands-today) *(or search Stitcher, Spotify, Google Podcasts, etc. for "Cold Takes Audio").*[[1]](#fn95qf2aqwti4)

This piece starts with a summary of when we should expect transformative AI to be developed, based on the multiple angles covered in earlier pieces in this series.

I then address the question: "Why isn't there a robust expert consensus on this topic, and what does that mean for us?"

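My estimate is this: **there is more than a 10% chance we'll see transformative AI within 15 years (by 2036); a ~50% chance we'll see it within 40 years (by 2060); and a ~2/3 chance we'll see it this century (by 2100).**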

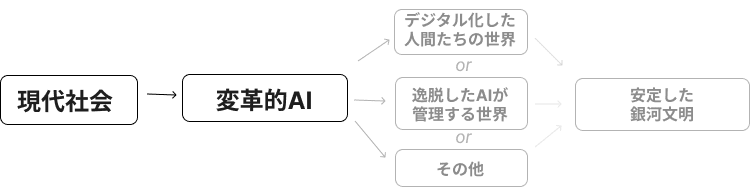

(By "transformative AI," I mean "AI powerful enough to bring us into a new, qualitatively different era." I specifically focus on what I call [PASTA](https://www.cold-takes.com/transformative-ai-timelines-part-1-of-4-what-kind-of-ai/): AI systems that can essentially automate all of the human activities needed to speed up scientific and technological advancement. I've argued that PASTA [could be](https://www.cold-takes.com/transformative-ai-timelines-part-1-of-4-what-kind-of-ai/#impacts-of-pasta) sufficient to make this the [most important century](https://www.cold-takes.com/roadmap-for-the-most-important-century-series/), because it brings the potential for [an explosion in productivity](https://www.cold-takes.com/transformative-ai-timelines-part-1-of-4-what-kind-of-ai/#explosive-scientific-and-technological-advancement) as well as [risks from misaligned AI](https://www.cold-takes.com/transformative-ai-timelines-part-1-of-4-what-kind-of-ai/#misaligned-ai-mysterious-potentially-dangerous-objectives).)

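This is my overall conclusion, based on a number of technical reports that approach AI forecasting from different angles. Many of them were produced by [Open Philanthropy](https://www.openphilanthropy.org/) over the past few years, as we tried to develop a thorough picture of transformative AI forecasting to inform our longtermist grantmaking.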

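Here is a **one-table summary** of the different angles on forecasting transformative AI that I've discussed, with links to the more detailed discussion in [previous posts](https://www.cold-takes.com/roadmap-for-the-most-important-century-series/#forecasting-transformative-ai-this-century) as well as to the underlying technical reports.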

| **Forecasting angle** | **Key in-depth pieces (abbreviated titles)** | **My takeaways** |

| --- | --- | --- |

| **Probability estimates for transformative AI** |

| [**Expert survey**](https://www.cold-takes.com/are-we-trending-toward-transformative-ai-how-would-we-know/#surveying-experts). What do AI researchers expect? | [Evidence from AI Experts](https://arxiv.org/pdf/1705.08807.pdf) | The expert survey implies[1](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn1) a ~20% probability of transformative AI by 2036 and a ~70% probability by 2100. Slightly differently phrased questions (posed to a minority of respondents) yielded much later estimates. |

| [**Biological anchors framework**](https://www.cold-takes.com/forecasting-transformative-ai-the-biological-anchors-method-in-a-nutshell/). Based on the usual patterns in how much "AI training" costs, how much would it cost to train an AI model as big as a human brain until it can perform the hardest tasks humans do? And when will this be cheap enough that someone can be expected to do it? | [Bio Anchors](https://drive.google.com/drive/u/1/folders/15ArhEPZSTYU8f012bs6ehPS6-xmhtBPP), drawing on [Brain Computation](https://www.openphilanthropy.org/blog/new-report-brain-computation) | >10% probability by 2036; ~50% by 2055; ~80% by 2100. |

| Angles on the [burden of proof](https://www.cold-takes.com/forecasting-transformative-ai-whats-the-burden-of-proof/) |

| It's unlikely that any given century would be the "most important" one. ([More](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#most-important-century-skepticism)) | [Hinge](https://static1.squarespace.com/static/5506078de4b02d88372eee4e/t/5f36b015d9a3691ba8e1096b/1597419543571/Are+we+living+at+the+hinge+of+history.pdf); [Response to Hinge](https://forum.effectivealtruism.org/posts/j8afBEAa7Xb2R9AZN/thoughts-on-whether-we-re-living-at-the-most-influential) | We have many reasons to think this century is a "special" one even before looking at the details of AI. Many have been covered in previous pieces; another is covered in the next row. |

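| What would you forecast about transformative AI timelines, based only on basic information about (a) how many years people have been trying to build transformative AI; (b) how much they've "invested" in it so far (the number of AI researchers and the amount of computation they use); (c) whether they've done it yet (so far, they haven't)? ([More](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#semi-informative-priors)) | [Semi-informative Priors](https://www.openphilanthropy.org/blog/report-semi-informative-priors) | Central estimates: 8% by 2036; 13% by 2060; 20% by 2100.[2](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn2) In my view, this report reflects the fact that the history of AI is short and investment in AI is increasing rapidly, so we shouldn't be too surprised if transformative AI is developed soon. |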

| Based on analysis of economic models and economic history, how likely is "explosive growth" (defined as annual growth of 30% or more in the world economy) by 2100? Is this far enough outside of what's "normal" that we should doubt the conclusion? ([More](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#economic-growth)) | [Explosive Growth](https://www.openphilanthropy.org/could-advanced-ai-drive-explosive-economic-growth), [Human Trajectory](https://www.openphilanthropy.org/blog/modeling-human-trajectory) | [Human Trajectory](https://www.openphilanthropy.org/blog/modeling-human-trajectory) projects the future based purely on past data, and implies explosive growth by 2043-2064. [Explosive Growth](https://www.openphilanthropy.org/could-advanced-ai-drive-explosive-economic-growth) concludes: "I find that economic considerations don't provide a good reason to dismiss the possibility of TAI being developed in this century. In fact, there is a plausible economic perspective from which sufficiently advanced AI systems are expected to cause explosive growth." |

| "How have people predicted AI ... in the past, and should we adjust our own views today to correct for patterns we can observe in earlier predictions? ... We've encountered the view that AI has been prone to repeated over-hype in the past, and that we should therefore expect that today's projections are likely to be over-optimistic." ([More](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#history-of-)) | [Past AI Forecasts](https://www.openphilanthropy.org/focus/global-catastrophic-risks/potential-risks-advanced-artificial-intelligence/what-should-we-learn-past-ai-forecasts) | "The peak of AI hype seems to have been from 1956-1973. Still, the hype implied by some of the best-known AI predictions from this period is commonly exaggerated." |

For transparency, note that many of the technical reports are analyses by [Open Philanthropy](https://www.openphilanthropy.org/), and that I am co-CEO of Open Philanthropy.
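The "Semi-informative Priors" row in the table above reasons from trial counts alone. As a rough illustration of that style of reasoning (not the report's actual model), the sketch below applies a generalized Laplace rule of succession to calendar years. The first-trial probability of 1/300 and the 1956 start date are illustrative assumptions chosen here, and the function name is hypothetical. Because it ignores growth in the number of researchers and in computation, it should not be expected to reproduce the central estimates quoted in the table.

```python
from fractions import Fraction


def p_success_within(n_future, n_failed, first_trial_p):
    """Chance of at least one success in the next n_future trials,
    given n_failed failed trials so far, under a generalized Laplace rule."""
    # Hypothetical helper: after k failures, the chance of success on the
    # next trial is taken to be 1 / (1/first_trial_p + k).
    a = 1 / Fraction(first_trial_p) + n_failed
    p_all_fail = Fraction(1)
    for k in range(n_future):
        p_all_fail *= 1 - 1 / (a + k)
    return 1 - p_all_fail


# Illustrative assumptions, not figures taken from the post.
START_YEAR, NOW = 1956, 2021
FIRST_TRIAL_P = Fraction(1, 300)

for target in (2036, 2060, 2100):
    p = p_success_within(target - NOW, NOW - START_YEAR, FIRST_TRIAL_P)
    print(f"by {target}: {float(p):.1%}")
```

The product telescopes to n / (a + n - 1), so the loop is there only to keep the reasoning visible; a short history (small `n_failed`) is what keeps the near-term probability from being negligible.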

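Even after the considerations above, I expect some readers to still feel a sense of unease. Even if they think my arguments make sense, they may be wondering: **if this is true, why isn't it more widely discussed and accepted? What do the experts think?**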

My summary of the state of expert opinion at this time is:

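* The claims I'm making do not **contradict** any particular expert consensus. (In fact, as the first row shows, the probabilities I've given aren't far from what AI researchers seem to predict.) But there are [some signs that the experts aren't thinking very hard about the matter](https://www.cold-takes.com/are-we-trending-toward-transformative-ai-how-would-we-know/#surveying-experts).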

* The Open Philanthropy technical reports I've relied on have had significant external expert review. Machine learning researchers reviewed [Bio Anchors](https://drive.google.com/drive/u/1/folders/15ArhEPZSTYU8f012bs6ehPS6-xmhtBPP); neuroscientists reviewed [Brain Computation](https://www.openphilanthropy.org/blog/new-report-brain-computation); economists reviewed [Explosive Growth](https://www.openphilanthropy.org/could-advanced-ai-drive-explosive-economic-growth); academics working on relevant topics in uncertainty and/or probability reviewed [Semi-informative Priors](https://www.openphilanthropy.org/blog/report-semi-informative-priors).[2](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn2) (Some of these reviews had significant points of disagreement, but none of these points seemed to be cases where the reports contradicted a clear consensus among experts or in the literature.)

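* But there is also no active, robust expert consensus supporting claims like "**There's at least a 10% chance of transformative AI by 2036**" or "**There's a good chance we're in the most important century for humanity**," in the way that there is, for example, supporting the need to take action against climate change.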

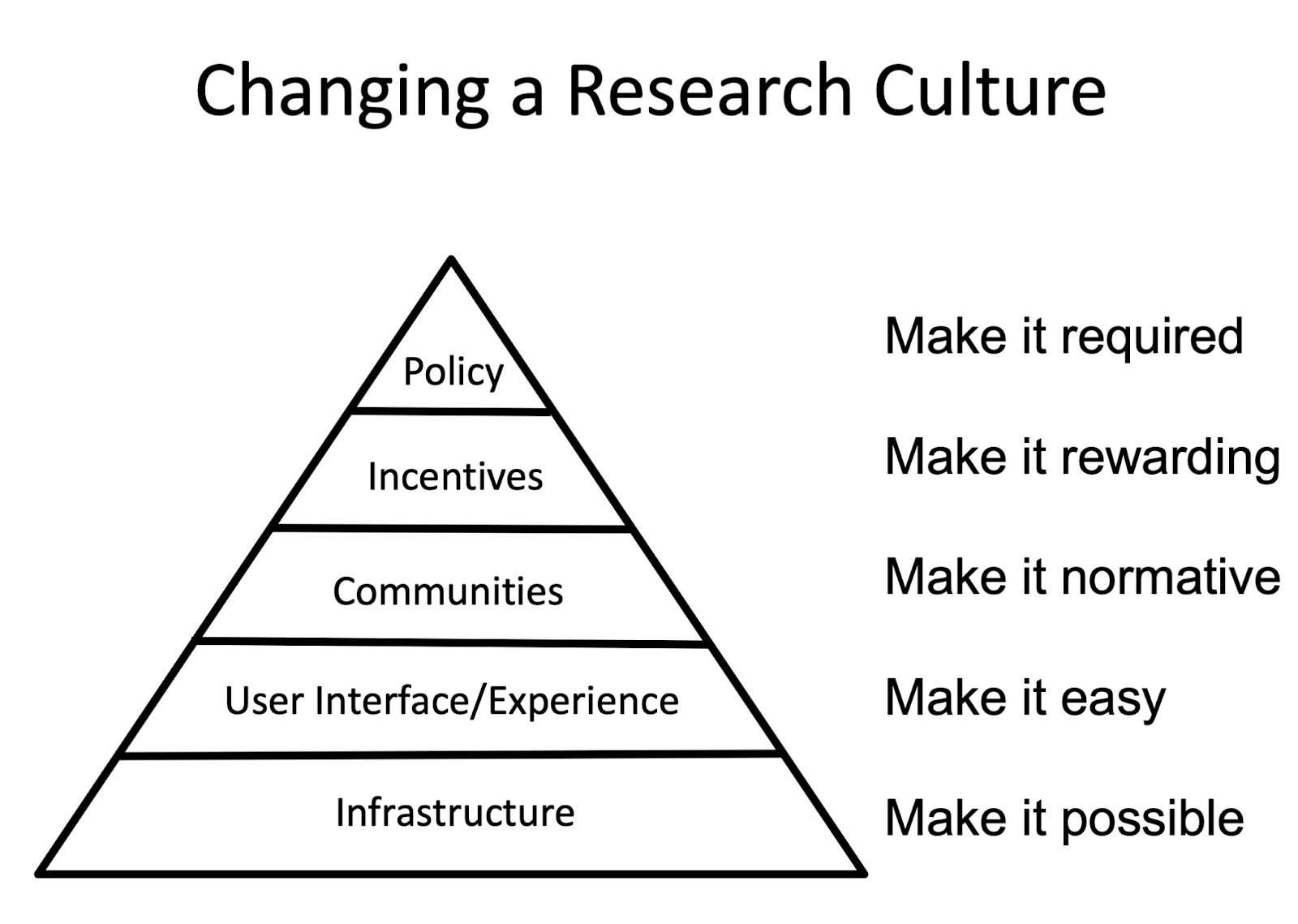

Ultimately, my claims are about **topics that simply have no experts devoted to studying them**. **That, in and of itself, is a scary fact**, and something I hope will change before long.

But should we be willing to act on the "most important century" hypothesis in the meantime?

Below, I'll discuss:

* What an "AI forecasting field" might look like.

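* A "skeptical view" that says today's discussions of these topics are too small, homogeneous and insular (which I agree with), and that we therefore shouldn't act on the ["most important century" hypothesis](https://www.cold-takes.com/roadmap-for-the-most-important-century-series/) until there is a mature, more robust field (which I don't agree with).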

* Why I think we should take the "most important century" hypothesis seriously in the meantime, before a mature, more robust field emerges:

+ We don't have time to wait for a robust expert consensus.

+ If there are good rebuttals out there, or future experts who could develop such rebuttals, we haven't found them yet. The more seriously the hypothesis gets taken, the more likely such rebuttals are to appear. (This is also known as [Cunningham's Law](https://bigthink.com/david-ryan-polgar/want-the-right-answer-online-dont-ask-questions-just-post-it-wrong): "the best way to get the right answer is to post the wrong answer on the Internet.")

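+ I think that consistently insisting on a robust expert consensus is a dangerous reasoning pattern. In my view, it's OK to be at some risk of self-delusion and insularity, in exchange for doing the right thing when it counts most.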

**What kind of expertise does AI forecasting require?**

-----------------------------

Questions analyzed in the technical reports listed [above](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#SummaryTable) include:

* Are AI capabilities advancing over time? (AI, history of AI)

* How do AI models compare to animal/human brains? (AI, neuroscience)

* How do AI capabilities compare to animals' capabilities? (AI, ethology)

* How can we estimate the expense of training a large AI system for a difficult task, based on information we have about training past AI systems? (AI, curve-fitting)

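* What minimal-information estimate about transformative AI can we make, based only on how many years, people and dollars have gone into the field so far? (Philosophy, probability)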

* How likely is explosive economic growth this century, based on theory and historical trends? (Growth economics, economic history)

* What has "AI hype" been like in the past? (History)

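When talking about the wider implications of transformative AI for the "most important century," I've also discussed things like the feasibility of [digital people](https://www.cold-takes.com/digital-people-faq/#feasibility) and of [establishing settlements throughout the galaxy](https://www.cold-takes.com/how-digital-people-could-change-the-world/#space-expansion). These topics touch on physics, neuroscience, engineering, philosophy of mind, and more.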

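**There is no job or credential that makes someone an expert on the question of when we can expect transformative AI, or on the question of whether we're in the most important century.**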

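(In particular, I'd disagree with any claim that we should rely exclusively on AI researchers for these forecasts. Besides the fact that [they don't seem to be thinking very hard about the topic](https://www.cold-takes.com/are-we-trending-toward-transformative-ai-how-would-we-know/#surveying-experts), relying on people who specialize in building ever more powerful AI models to tell us when transformative AI might arrive is like relying on solar energy R&D companies, or oil extraction companies depending on how you look at it, to forecast carbon emissions and climate change. AI researchers certainly hold part of the picture. But forecasting is a distinct activity from inventing or building state-of-the-art systems.)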

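In addition, I'm not even sure these questions have the right shape for an academic field. Trying to forecast transformative AI, or to determine the odds that we're in the most important century, seems: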

* More similar to the [FiveThirtyEight model](https://projects.fivethirtyeight.com/2020-election-forecast/) ("Will Biden or Trump win the election?") than to academic political science ("How do governments and constitutions interact?").

* More similar to trading in financial markets ("Will this price go up or down in the future?") than to academic economics ("Why do recessions exist?").[3](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn3)

* More similar to [GiveWell](https://www.givewell.org/)'s research ("Which charity helps people the most per dollar?") than to academic development economics ("What causes poverty, and what reduces it?").[4](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn4)

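That is, it's not clear to me what a natural "institutional home" for expertise on transformative AI forecasting, and on the "most important century," would look like. But it seems fair to say that there are no large, robust institutions dedicated to this sort of question today.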

**How should we act in the absence of a robust expert consensus?**

----------------------------------------------

### **The skeptical view**

Because there is no robust expert consensus, I expect some (really, most) people to be skeptical no matter what arguments are presented.

Here is a version of a very common skeptical reaction that I have a fair amount of sympathy for:

1. This is all just too [wild](https://www.cold-takes.com/forecasting-transformative-ai-whats-the-burden-of-proof/#formalizing-the-).

2. You're making an over-the-top claim about living in the most important century. This **pattern-matches to self-delusion**.

3. You've argued that the [burden of proof](https://www.cold-takes.com/forecasting-transformative-ai-whats-the-burden-of-proof/) shouldn't be so high, because there are many ways in which we live in a [remarkable](https://www.cold-takes.com/all-possible-views-about-humanitys-future-are-wild/) and [unstable](https://www.cold-takes.com/this-cant-go-on/) time. But ... I don't trust myself to assess those claims, or your claims about AI, or honestly anything on these wild topics.

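4. I'm worried by how few people are engaging with these arguments, that is, by **how small, homogeneous and insular the discussion seems to be**. Overall, this feels like a story smart people are telling each other about their place in history, with lots of charts and numbers to rationalize it. It doesn't feel "real."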

5. So call me back when there's a mature field of perhaps hundreds or thousands of experts, critiquing and assessing each other, and they've reached the same sort of consensus that we see for climate change.

I see how you could feel this way, and I've felt this way myself at times, especially on points 1-4. But I'll give **three reasons that point 5 doesn't seem right**.

### **Reason 1: we don't have time to wait for a robust expert consensus.**

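I worry that the arrival of transformative AI could play out as a kind of slower but higher-stakes version of the COVID-19 pandemic. If you look at the best information and analyses available today, the case for expecting something big to happen is there. But the situation is broadly unfamiliar; it doesn't fit the patterns that our institutions regularly handle. And every extra year of action is valuable.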

You could also think of it as a sped-up version of the dynamic with climate change. Imagine if greenhouse gas emissions had only started to rise recently[5](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn5) (instead of in [the mid-1800s](https://ourworldindata.org/co2-and-other-greenhouse-gas-emissions)), and if there were no established field of climate science. It would be a really bad idea to wait decades for a field to emerge before seeking to reduce emissions.

### **Reason 2:** [**Cunningham's Law**](https://bigthink.com/david-ryan-polgar/want-the-right-answer-online-dont-ask-questions-just-post-it-wrong) **("the best way to get the right answer is to post the wrong answer") may be our best hope for finding the flaw in these arguments.**

I'm serious, though.

Several years ago, [some colleagues](https://www.cold-takes.com/roadmap-for-the-most-important-century-series/#acknowledgements) and I suspected that the "most important century" hypothesis could be true. But before acting on it too much, we wanted to see whether we could find fatal flaws in it.

One way of interpreting our actions over the last few years is **as if we were doing everything we could to learn that the "most important century" hypothesis is wrong**.

First, we talked about the key arguments with as wide a range of people as we could: AI researchers, economists, and so on. But:

* We had only a vague understanding of the arguments in this series (most or all of them [picked up from other people](https://www.cold-takes.com/roadmap-for-the-most-important-century-series/#acknowledgements)). We weren't able to state them crisply and specifically.

* There were many key factual points that we expected to check out later,[6](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn6) but that we hadn't nailed down and couldn't present for critique.

* Overall, we couldn't articulate enough of a concrete case to give others a fair chance to shoot it down.

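So we put a lot of work into creating technical reports on many of the key arguments. (These are now public; see the table at the top of this piece.) This put us in a position to publish the arguments and to potentially encounter fatal counterarguments.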

Then, we sought reviews from external experts.[7](https://forum.effectivealtruism.org/posts/7JxsXYDuqnKMqa6Eq/ai-timelines-where-the-arguments-and-the-experts-stand#fn7)

Speaking only for myself, the "most important century" hypothesis seems to have survived all of this. Indeed, having examined it from many angles and gone further into the details, I believe it more strongly than before.

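But let's say that this is only because the **real** experts (people with devastating counterarguments whom we haven't found yet) find the whole thing so silly that [they're not bothering to engage](https://philiptrammell.com/blog/46/). Or let's say that someone alive today could **someday** become an expert on these topics and knock these arguments down. What could we do to bring this about [that is, to get the real experts to engage seriously, or to get someone to come up with a decisive rebuttal]?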

The best answer I've come up with is: "If this hypothesis became better known, more widely accepted, and more influential, it would get more scrutiny."

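This series is an attempted step in that direction: toward broader credibility for the "most important century" hypothesis. This would be a good thing if the hypothesis were true. It also seems like the best next step if my only goal were to challenge my beliefs and learn that the hypothesis is false.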

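Of course, I'm not saying you should accept or promote the "most important century" hypothesis if it doesn't seem correct to you. But if your **only** reservation is the lack of a robust expert consensus, then continuing to ignore the situation seems odd to me. If everyone behaved this way (ignoring any hypothesis not backed by a robust consensus), I don't see how any hypothesis, including true ones, would ever go from fringe to widely accepted.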

### **Reason 3: skepticism this general seems like a bad idea.**

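Back when I was focused on [GiveWell](http://www.givewell.org/), people would occasionally say something along the lines of: "You can't hold every argument to the standard that GiveWell holds its top charities to, seeking randomized controlled trials, robust empirical data, and so on. Some of the best opportunities to do good will be the less obvious ones, so this standard risks [ruling out some of your biggest potential opportunities to have impact](https://www.openphilanthropy.org/blog/hits-based-giving#Anti-principles_for_hits-based_giving)."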

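I think this is right. It seems important to check one's general approach to reasoning and evidentiary standards and ask: "What are some scenarios in which my approach fails, and in which I'd really prefer that it succeed?" In my view, **it's OK to be at some risk of self-delusion and insularity, in exchange for doing the right thing when it counts most**.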

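The lack of a robust expert consensus, and concerns about self-delusion and insularity, are good reasons to **dig hard for flaws** in the "most important century" hypothesis rather than accepting it immediately. We can ask where there might be an undiscovered flaw, look for biases that inflate our own importance, research the most questionable-seeming parts of the argument, and so on.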

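But if you've investigated the matter as much as is reasonable or practical for you, and haven't found a flaw **other than** considerations like "There's no robust expert consensus" and "I'm worried about self-delusion and insularity," then writing off the "most important century" hypothesis essentially **guarantees that you won't be among the earlier people to notice and act on a tremendously important issue, if the opportunity arises**. I think that's too much of a sacrifice, in terms of giving up potential opportunities to do a lot of good.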

1. **[^](#fnref95qf2aqwti4)** For the footnotes in the text, please refer to the original post. |

c6e8eef7-4245-497e-8e3e-83e1601c1a90 | trentmkelly/LessWrong-43k | LessWrong | The Future of Science

_(Talk given at an event on Sunday 19th of July. Richard Ngo is responsible for the talk, Jacob Lagerros and David Lambert edited the transcript._

_If you're a curated author and interested in giving a 5-min talk, which will then be transcribed and edited, sign up here.)_

Richard Ngo: I'll be talking about the future of science. Even though this is an important topic (because science is very important) it hasn’t received the attention I think it deserves. One reason is that people tend to think, “Well, we’re going to build an AGI, and the AGI is going to do the science.” But this doesn’t really offer us much insight into what the future of science actually looks like.

It seems correct to assume that AGI is going to figure a lot of things out. I am interested in what these things are. What is the space of all the things we don’t currently understand? What knowledge is possible?

These are ambitious questions. But I’ll try to come up with a framing that I think is interesting.

One way of framing the history of science is through individuals making an observation and coming up with general principles to explain it. So in physics, you observe how things move and how they interact with each other. In biology, you observe living organisms, and so on. I'm going to call this “descriptive science”.

More recently, however, we have developed a different type of science, which I'm going to call “generative science”. This basically involves studying the general principles behind things that don’t exist yet and still need to be built.

This is, I think, harder than descriptive science, because you don't actually have anything to study. You need to bootstrap your way into it. A good example of this is electric circuits. We can come up with fairly general principles for describing how they work. And eventually this led us to computer science, which is again very general. We have a very principled understanding of many aspects of computer science, which is a science of thin |

cc6853d6-2fc2-4849-8001-1175f9a47af0 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Moral Mazes and Short Termism

Previously: [Short Termism](https://thezvi.wordpress.com/2017/03/04/short-termism/) and [Quotes from Moral Mazes](https://thezvi.wordpress.com/2019/05/30/quotes-from-moral-mazes/)

Epistemic Status: Long term

My list of [quotes from moral mazes](https://thezvi.wordpress.com/2019/05/30/quotes-from-moral-mazes/) has a section of twenty devoted to short term thinking. It fits with, and gives internal gears and color to, [my previous understanding](https://thezvi.wordpress.com/2017/03/04/short-termism/) of the problem of short termism.

Much of what we think of as a Short Term vs. Long Term issue is actually an adversarial [Goodhart’s Law](https://www.lesswrong.com/posts/EbFABnst8LsidYs5Y/goodhart-taxonomy) problem, or a [legibility](https://en.wikipedia.org/wiki/Legibility) vs. illegibility problem, at the object level, that then becomes a short vs. long term issue at higher levels. When a manager milks a plant (see quotes 72, 73, 78 and 79) they are not primarily trading long term assets for short term assets. Rather, they are trading unmeasured assets for measured assets (see 67 and 69).

This is why you can have companies like Amazon, Uber or Tesla get high valuations. They hit legible short-term metrics that represent long-term growth. A start-up gets rewarded for their own sort of legible short-term indicators of progress and success, and of the quality of team and therefore potential for future success. Whereas other companies, that are not based on growth, report huge pressure to hit profit numbers.

The overwhelming object level pressure towards legible short-term success, whatever that means in context, comes from being judged in the short term on one’s success, and having that judgment being more important than object-level long term success.

The easiest way for this to be true is not to care about object-level long term success. If you’re gone before the long term, and no one traces the long term back to you, why do you care what happens? That is exactly the situation the managers face in Moral Mazes (see 64, 65, 70, 71, 74 and 83, and for a non-manager very clean example see 77). In particular:

> 74. We’re judged on the short-term because everybody changes their jobs so frequently.

>

>

And:

> 64. The ideal situation, of course, is to end up in a position where one can fire one’s successors for one’s own previous mistakes.

>

>

Almost as good as having a designated scapegoat is to have already sold the company or found employment elsewhere, rendering your problems [someone else’s problems](https://en.wikipedia.org/wiki/Somebody_else%27s_problem).

The other way to not care is for the short-term evaluation of one’s success or failure to impact long-term success. If not hitting a short-term number gets you fired, or prevents your company from getting acceptable terms on financing or gets you bought out, then the long term will get neglected. The net present value payoff for looking good, which can then be reinvested, makes it look like by far the best long term investment around.

Thus we have this problem at every level of management except the top. But for the top to actually be the top, it needs to not be answering to the stock market or capital markets, or otherwise care what others think – even without explicit verdicts, this can be as hard to root out as needing the perception of a bright future to attract and keep quality employees and keep up morale. So we almost always have it at the top as well. Each level is distorting things for the level above, and pushing these distorted priorities down to get to the next move in a giant game of adversarial telephone (see section A of quotes for how hierarchy works).

This results in a corporation that acts in various short-term ways, some of which make sense for it, some of which are the result of internal conflicts.

Why isn’t this out-competed? Why don’t the corporations that do less of this drive the ones that do more of it out of the market?

On the level of corporations doing this direct from the top, often these actions are a response to the incentives the corporation faces. In those cases, there is no reason to expect such actions to be out-competed.

In other cases, the incentives of the CEO and top management are twisted but the corporation’s incentives are not. One would certainly expect those corporations that avoid this to do better. But these mismatches are the natural consequence of putting someone in charge who does not permanently own the company. Thus, dual class share structures are becoming popular to restore skin in the correct game. Some of the lower-down issues can be made less bad by removing the ones at the top, but the problem does not go away, and what sources I have inside major tech companies including Google match this model.

There is also the tendency of these dynamics to arise over time. Those who play the power game tend to outperform those who do not play it barring constant vigilance and a willingness to sacrifice. As those players outperform, they cause other power players to outperform more, because they prefer and favor such other players, and favor rules that favor such players. This is especially powerful for anyone below them in the hierarchy. An infected CEO, who can install their own people, can quickly be game over on its own, and outside CEOs are brought in often.

Thus, even if the system causes the corporation to underperform, it still spreads, like a meme that infects the host, causing the host to prioritize spreading the meme, while reducing reproductive fitness. The bigger the organization, the harder it is to remain uninfected. Being able to be temporarily less burdened by such issues is one of the big advantages new entrants have.

One could even say that yes, they *do get wiped out by this,* but it’s not that fast, because it takes a while for this to rise to the level of a primary determining factor in outcomes. And [there are bigger things to worry about](https://thezvi.wordpress.com/2017/10/29/leaders-of-men/). It’s short termism, so that isn’t too surprising.

A big pressure that causes these infections is that business is constantly under siege and forced to engage in public relations (see quotes sections L and M) and is constantly facing [Asymmetric Justice](https://thezvi.wordpress.com/2019/04/25/asymmetric-justice/) and the [Copenhagen Interpretation of Ethics](https://blog.jaibot.com/the-copenhagen-interpretation-of-ethics/). This puts tremendous pressure on corporations to tell different stories to different audiences, to avoid creating records, and otherwise engage in the types of behavior that will be comfortable to the infected and uncomfortable to the uninfected.

Another explanation is that those who are infected don’t only reward each other *within* a corporation. They also *do business with* and *cooperate with* the infected elsewhere. Infected people are *comfortable* with others who are infected, and *uncomfortable* with those not infected, because if the time comes to play ball, they might refuse. So those who refuse to play by these rules do better at object-level tasks, but face alliances and hostile action from all sides, including capital markets, competitors and government, all of which are, to varying degrees, infected.

I am likely missing additional mechanisms, either because I don’t know about them or forgot to mention them, but I consider what I see here sufficient. I am no longer confused about short termism. |

edfd6fa4-d146-4f01-960c-86d9e880831e | trentmkelly/LessWrong-43k | LessWrong | Links and short notes, 2025-01-20

Much of this content originated on social media. To follow news and announcements in a more timely fashion, follow me on Twitter, Threads, Bluesky, or Farcaster.

Contents

* My writing (ICYMI)

* Jobs and fellowships

* Announcements

* News

* Events

* Other opportunities

* We are not close to providing for everyone’s “needs”

* The printing press and the Internet

* The ultimate form of travel

* Five hot takes about progress

* What could have been, for SF

* Quick thoughts on AI

* Links and bullets

* Charts

* Pics

My writing (ICYMI)

* How sci-fi can have drama without dystopia or doomerism. “Concise but incredible resource” (@OlliPayne). “100 percent with Jason on this. If your sci-fi has technology as the problem it will put me to sleep” (@elidourado)

Jobs and fellowships

* HumanProgress.org is hiring a research associate with Excel/Python/SQL skills “to manage and expand our database on human well-being” (@HumanProgress)

* The 5050 program comes to the UK “to help great scientists and engineers become great founders and start deep tech startups,” in partnership with ARIA (@sethbannon)

Announcements

* Core Memory, a new sci/tech media company from Ashlee Vance (@ashleevance)

* “AI Summer”, a new podcast from Dean Ball (RPI fellow) and Timothy B. Lee (@deanwball)

* Inference Magazine, a new publication on AI progress, with articles from writers including RPI fellow Duncan McClements (@inferencemag)

News

* Matt Clifford has published an AI Opportunities Action Plan for the UK, and the PM has agreed to all its recommendations, including “AI Growth Zones” with faster planning permission and grid connections; accelerating SMRs to power AI infrastructure; procurement, visas & regulatory reform to boost UK AI startups; and removing barriers to scaling AI pilots in government (@matthewclifford)

* The Manhattan Plan: NYC plans “to build 100,000 new homes in the next decade to reach a total of 1 MILLION homes in Manhattan” (@NYCMayor). “We’ve come |

369f9e65-777a-4ed2-803e-adfd20851d98 | trentmkelly/LessWrong-43k | LessWrong | Getting Nearer

Reply to: A Tale Of Two Tradeoffs

I'm not comfortable with compliments of the direct, personal sort, the "Oh, you're such a nice person!" type stuff that nice people are able to say with a straight face. Even if it would make people like me more - even if it's socially expected - I have trouble bringing myself to do it. So, when I say that I read Robin Hanson's "Tale of Two Tradeoffs", and then realized I would spend the rest of my mortal existence typing thought processes as "Near" or "Far", I hope this statement is received as a due substitute for any gushing compliments that a normal person would give at this point.

Among other things, this clears up a major puzzle that's been lingering in the back of my mind for a while now. Growing up as a rationalist, I was always telling myself to "Visualize!" or "Reason by simulation, not by analogy!" or "Use causal models, not similarity groups!" And those who ignored this principle seemed easy prey to blind enthusiasms, wherein one says that A is good because it is like B which is also good, and the like.

But later, I learned about the Outside View versus the Inside View, and that people asking "What rough class does this project fit into, and when did projects like this finish last time?" were much more accurate and much less optimistic than people who tried to visualize the when, where, and how of their projects. And this didn't seem to fit very well with my injunction to "Visualize!"

So now I think I understand what this principle was actually doing - it was keeping me in Near-side mode and away from Far-side thinking. And it's not that Near-side mode works so well in any absolute sense, but that Far-side mode is so much more pushed-on by ideology and wishful thinking, and so casual in accepting its conclusions (devoting less computing power before halting).

An example of this might be the balance between offensive and defensive nanotechnology, where I started out by - basically - just liking nanotechnology; |

62c67a0b-edcc-44ef-a461-317c176b95ea | trentmkelly/LessWrong-43k | LessWrong | Should I take an IQ test, why or why not?

I've seen discussion of IQ tests around LW. People imply there's a benefit to taking the test. I assume it is related to belief in belief or something. Can anyone flesh out this argument? |

53d7a5d7-e949-4b59-b9e0-dbbfaceb4dc4 | trentmkelly/LessWrong-43k | LessWrong | Asymmetric Weapons Aren't Always on Your Side

Some time ago, Scott Alexander wrote about asymmetric weapons, and now he writes again about them. During these posts, Scott repeatedly characterizes asymmetric weapons as inherently stronger for the "good guys" than they are for the "bad guys". Here is a quote from his first post:

> Logical debate has one advantage over narrative, rhetoric, and violence: it’s an asymmetric weapon. That is, it’s a weapon which is stronger in the hands of the good guys than in the hands of the bad guys.

And here is a quote from his more recent one:

> A symmetric weapon is one that works just as well for the bad guys as for the good guys. For example, violence – your morality doesn’t determine how hard you can punch; they can buy guns from the same places we can.

> An asymmetric weapon is one that works better for the good guys than the bad guys. The example I gave was Reason. If everyone tries to solve their problems through figuring out what the right thing to do is, the good guys (who are right) will have an easier time proving themselves to be right than the bad guys (who are wrong). Finding and using asymmetric weapons is the only non-coincidence way to make sustained moral progress.

One problem with this concept is that just because something is asymmetric doesn't mean that it's asymmetric in a good direction.

Scott talks about weapons that are asymmetric towards those who are right. However, there are many more types of asymmetries than just right vs. wrong - physical violence is asymmetric towards the strong, shouting people down is asymmetric towards the loud, and airing TV commercials is asymmetric towards people with more money. Violence isn't merely symmetric - it's asymmetric in a bad direction, since fascists are better at violence than you.

This in turn means that various sides will all be trying to pull things in directions that are asymmetric to their advantage. Indeed, a basic principle in strategy is to try to shift conflicts into areas where you are strong |

27d83e4d-90c1-40a3-ac32-8b4688a9accd | trentmkelly/LessWrong-43k | LessWrong | LINK: Ben Goertzel; Does Humanity Need an "AI-Nanny"?

Link: Ben Goertzel dismisses Yudkowsky's FAI and proposes his own solution: Nanny-AI

Some relevant quotes:

> It’s fun to muse about designing a “Friendly AI” a la Yudkowsky, that is guaranteed (or near-guaranteed) to maintain a friendly ethical system as it self-modifies and self-improves itself to massively superhuman intelligence. Such an AI system, if it existed, could bring about a full-on Singularity in a way that would respect human values – i.e. the best of both worlds, satisfying all but the most extreme of both the Cosmists and the Terrans. But the catch is, nobody has any idea how to do such a thing, and it seems well beyond the scope of current or near-future science and engineering.

> Gradually and reluctantly, I’ve been moving toward the opinion that the best solution may be to create a mildly superhuman supertechnology, whose job it is to protect us from ourselves and our technology – not forever, but just for a while, while we work on the hard problem of creating a Friendly Singularity.

>

> In other words, some sort of AI Nanny….

> The AI Nanny

> Imagine an advanced Artificial General Intelligence (AGI) software program with

>

> * General intelligence somewhat above the human level, but not too dramatically so – maybe, qualitatively speaking, as far above humans as humans are above apes

> * Interconnection to powerful worldwide surveillance systems, online and in the physical world

> * Control of a massive contingent of robots (e.g. service robots, teacher robots, etc.) and connectivity to the world’s home and building automation systems, robot factories, self-driving cars, and so on and so forth

> * A cognitive architecture featuring an explicit set of goals, and an action selection system that causes it to choose those actions that it rationally calculates will best help it achieve those goals

> * A set of preprogrammed goals including the following aspects:

> * A strong inhibition against modifying its preprogrammed goals

> * |

1e3e49fc-d10e-4bcd-89a8-7c2e646a7438 | trentmkelly/LessWrong-43k | LessWrong | A Premature Word on AI

Followup to: A.I. Old-Timers, Do Scientists Already Know This Stuff?

In response to Robin Hanson's post on the disillusionment of old-time AI researchers such as Roger Schank, I thought I'd post a few premature words on AI, even though I'm not really ready to do so:

Anyway:

I never expected AI to be easy. I went into the AI field because I thought it was world-crackingly important, and I was willing to work on it if it took the rest of my whole life, even though it looked incredibly difficult.

I've noticed that folks who actively work on Artificial General Intelligence, seem to have started out thinking the problem was much easier than it first appeared to me.

In retrospect, if I had not thought that the AGI problem was worth a hundred and fifty thousand human lives per day - that's what I thought in the beginning - then I would not have challenged it; I would have run away and hid like a scared rabbit. Everything I now know about how to not panic in the face of difficult problems, I learned from tackling AGI, and later, the superproblem of Friendly AI, because running away wasn't an option.

Try telling one of these AGI folks about Friendly AI, and they reel back, surprised, and immediately say, "But that would be too difficult!" In short, they have the same run-away reflex as anyone else, but AGI has not activated it. (FAI does.)

Roger Schank is not necessarily in this class, please note. Most of the people currently wandering around in the AGI Dungeon are those too blind to see the warning signs, the skulls on spikes, the flaming pits. But e.g. John McCarthy is a warrior of a different sort; he ventured into the AI Dungeon before it was known to be difficult. I find that in terms of raw formidability, the warriors who first stumbled across the Dungeon, impress me rather more than most of the modern explorers - the first explorers were not self-selected for folly. But alas, their weapons tend to be extremely obsolete.

There are many ways to run a |

35729ccc-89e1-424e-8578-0aff8aac96db | trentmkelly/LessWrong-43k | LessWrong | Meetup : Vancouver, Canada

Discussion article for the meetup : Vancouver, Canada

WHEN: 31 July 2011 03:00:00PM (-0700)

WHERE: Commune Cafe, 1002 Seymour Street, Vancouver, BC, Canada

We're holding the first Vancouver meetup on Sunday, July 31st starting at 3pm at the Commune Cafe on Seymour Street. We'll definitely be there from 3pm-6pm, but it'll end when it ends.

I've recently moved to Vancouver from the San Francisco Bay Area, where I lived at the household that hosts the Tortuga/Mountain View meetup. The rationalist community in Silicon Valley is vibrant and growing, and I loved being part of it. As Cosmos wrote of the New York group:

> Before this community took off, I did not believe that life could be this much fun or that I could possibly achieve such a sustained level of happiness.

>

> Being rational in an irrational world is incredibly lonely. Every interaction reveals that our thought processes differ widely from those around us, and I had accepted that such a divide would always exist. For the first time in my life I have dozens of people with whom I can act freely and revel in the joy of rationality without any social concern - hell, it's actively rewarded! Until the NYC Less Wrong community formed, I didn't realize that I was a forager lost without a tribe...

Activities of the rationalist community at Tortuga included meetups, hiking trips, guest speakers, transhumanist movies, skill-training sessions, parties and impromptu pillow fights. I want to find or build a similar community in Vancouver. We have a base of several people, and will be reaching out through Less Wrong and in other ways to find like-minded people.

I'm anticipating holding weekly meetups. The Commune Cafe in downtown Vancouver is a good place to start, but we have a great place in North Vancouver that we could use if that location works for everyone.

At this first meetup, we'll get to know each other, and talk about what we want to get out of holding meetups and forming a community, and figure out how |

724ada9b-fd3b-4d65-91ee-5db600cbb8db | StampyAI/alignment-research-dataset/blogs | Blogs | Reading books vs. engaging with them

Let’s say you’re interested in a 500-page serious nonfiction book, and you’re trying to decide whether to read it. I think most people imagine their choice something like this:

| **Option** | **Time cost** | **% that I understand and retain** |
| --- | --- | --- |
| Just read the title | Seconds | 1% |
| Skim the book | 3 hours | 33% |
| Read the book quickly | 8 hours | 67% |
| Read the book slowly | 16 hours | 90% |

I see things more like this:

| **Option** | **Time cost** | **% that I understand and retain** |
| --- | --- | --- |
| Just read the title (and the 1-2 sentences people usually say to introduce the book) | Seconds | 10% |
| Skim the book | 3 hours | 12% |
| Read the book quickly | 8 hours | 13% |
| Read the book slowly | 16 hours | 15% |
| Read reviews/discussions of the book (ideally including author replies), but not the book | 2 hours | 25% |
| Read the book slowly 3 times, with 3 years in between each time | 48 hours | 33% |
| Read reviews/discussions of the book; locate the parts of the book they’re referencing, and read those parts carefully, independently checking footnotes, and referring back to other parts of the book for any unfamiliar terms. Write down who I think is being more fair; lay out the exact quotes that give the best evidence that my judgment is right. (But never read the whole book) | 16 hours | 33% |
| Write my own summary of each of the book’s key points, what the best counterargument is, where I ultimately come down and why. (Will often involve reading key parts of the book 5-10 times) | 50-100 hours | 50% |

I’m guessing these numbers are pretty weird-seeming, so here are some explanations:

* **Just read the title (and the 1-2 sentences people usually say to introduce the book): "seconds" of time investment, 10% understanding/retention.** 10% probably sounds like a lot for a few seconds of thought! I think this works because the author has really sweated over how to make the title and elevator pitch capture as much as possible of what they’re saying. So if all I want is the "general gist," I don't think I need to read the book at all.

* **Skimming or reading the book: hours of time investment, only 12-15% understanding/retention.** This is based on my own sense of how much I retain when I "simply read" the book (and don't engage much with critiques of it, don't write about it, etc.) - and my perhaps unfair impressions of how much others seem to retain when they do this. If person A says they've read a book and person B says they haven't but they've heard people talking about it, I often don't find that person A seems to know any more about the book than person B.

* **Read reviews/discussions of the book (ideally including author replies), but not the book: 2 hours of time investment, 25% understanding/retention.** Good reviewers know the context/field for the book better than I do, and probably read the book more carefully than I did. Hopefully they picked out the really key good and bad parts, and if those are the only parts I retain, that’s probably more than I could hope for with just a slow reading.

* **Read the book slowly 3 times, with 3 years in between each time: 48 hours of time investment, 33% understanding/retention.** This implies that the 2nd and 3rd readings are actually more educational than the 1st: the first only gets me from 10% (which I got from reading the title) to 15%, the next two bring me to 33%. I think that’s right - it’s hard to notice the important parts before I have the whole arc of the argument and have sat with it. Hearing other people talk about it and seeing some random observations related to it also help.

* **Write my own thorough review of a particular debate between the book's critics and its author: 16 hours of time investment, 33% understanding/retention.** (The table has more detail on what this involves.) This is the same time investment as reading the book slowly, and I'm saying that is worth something like 5x as much (since once I've read the title, reading the book slowly only takes me from 10% to 15% understanding/comprehension, whereas this activity takes me from 10% to 33% understanding/comprehension).

* **Write my own summary of each of the book’s key points, what the best counterargument is, where I ultimately come down and why: 50-100 hours of time investment, 50% understanding/comprehension.** I know hugely more about the books I've done this with than the books I haven't. But even here I'm only estimating 50% understanding/comprehension. I don’t think it is really possible to understand more than 50% of a serious book without e.g. spending a lot of independent time in the field.
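
To make the comparison concrete, here is a rough sketch that turns my second table into "percentage points of understanding gained per hour, beyond what the title alone gives." The numbers are copied from the table; treating the 50-100 hour range as its midpoint of 75 hours is an assumption made purely for illustration, and the function name is made up.

```python
BASELINE_PCT = 10  # understanding from the title alone, per the second table


def gain_per_hour(hours, pct, baseline=BASELINE_PCT):
    """Percentage points of understanding gained per hour, beyond the title."""
    return (pct - baseline) / hours


# (option, hours, % understood) copied from the second table.
# 75 hours is the midpoint of the 50-100 hour range (an assumption).
options = [
    ("Skim the book", 3, 12),
    ("Read the book quickly", 8, 13),
    ("Read the book slowly", 16, 15),
    ("Read reviews only", 2, 25),
    ("Read slowly 3 times", 48, 33),
    ("Review one debate", 16, 33),
    ("Write my own summary", 75, 50),
]

# Print the options from best to worst return on time.
for name, hours, pct in sorted(options, key=lambda o: -gain_per_hour(o[1], o[2])):
    print(f"{name:<24} {gain_per_hour(hours, pct):5.2f} points/hour")
```

On these numbers, reviewing one debate gains 23 points in 16 hours while a slow read gains 5 points in the same time, a ratio of 4.6, which is where "something like 5x" comes from.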

TLDR - I think the **value of reading a book once (without active engagement) is awkwardly small, and the value of big time investments like reading a book several times - or actively engaging with even part of it - is awkwardly large compared to that.**

Also, the maximum amount of understanding you can get is awkwardly small.

And a lot of the best options get you a “raw deal” on sounding educated:

* If you read reviews and not the book, someone else can say they read the book and you can’t, even though you spent just as much time and retained more of the book.

* If you digest the heck out of the book, you still can’t say anything in casual conversation except “I read the book,” which is also what someone can say who spent way less time and retained WAY less.

Ultimately, if you live in the headspace I’m laying out, you’re going to read a lot fewer books than you would otherwise, and you’ll probably be embarrassed by how few books you read. (But if more people [described their engagement with a book in detail instead of using the binary “I read X,”](https://www.cold-takes.com/honesty-about-reading/) maybe that would change.)

**Edited to add clarification:** this piece is about trying to casually inform oneself in areas one isn't an expert in, via reading books (and often other pieces) directed at a general audience. A reader pointed out that when you have a lot of existing expertise, the situation looks quite different, and often skimming or reading is the best thing to do. (Although in this case I would add that one is probably mostly reading reports, academic papers, notes from colleagues, etc. rather than books).

|

702d1f26-d1f1-43ba-8c41-d88eba9af097 | trentmkelly/LessWrong-43k | LessWrong | Seeking book about baseline life planning and expectations

In an attempt to find useful "base rate expectations" for the rest of my life (and how actions I might take now could set me up to be much better off 10, 20, 30, 40, 50, 60, and 70 years from now) I'm looking for a book that describes the nuts and bolts of human lives. I want coherent discussion from an actuarial/economic/probabilistic/calculating perspective, but I'd like some soulfulness too. The ideal book would be published in 2010 and have coverage of the different periods of people's lives and cover different aspects of their lives as well. In some sense the book would be like a nuts and bolts "how to live your life" manual. Hopefully it would have footnotes and generally good epistemology :-)

To take an example of the kind of content I would hope for (in a domain where I already have worked out some of the theory myself) the ideal book would explain how to calculate the ROI of different levels of college education realistically. Instead of a hand-waving argument that "on average you'll make more with education" it would also talk about the opportunity costs of lost wages, and how the expected number of years of work affects what amount of training makes sense, and so on.

To be clear, I don't want a book that is simply about deciding when, how, and for how long it makes sense to train for a job. Instead I want something that talks about similar issues that I haven't already thought about but that are important, so that I can be usefully educated in ways I wasn't expecting. My goal is to find someone else's scaffold to help me project when and why I should (or shouldn't) buy a minivan, how much to budget for dentistry in my 50's, and a breakdown of the causes of bankruptcy the way insurance companies can predict causes of death.

I was hoping that the book How We Live: An Economic Perspective on Americans from Birth to Death would give me what I want (and it is still probably my fallback book if I can't find anything better) but it was written in 1983, |

cbb3e106-0f63-46a9-948c-ca1105ce3a6f | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | What organizations other than Conjecture have (esp. public) info-hazard policies?

I believe Anthropic has said they won't publish capabilities research?

OpenAI seems to be sort of doing the same (although no policy AFAIK).

I heard FHI was developing one way back when...

I think MIRI sort of does as well (default to not publishing, IIRC?) |

81a19415-7cf3-455e-afcb-13aca084ad0e | trentmkelly/LessWrong-43k | LessWrong | Information Theory vs Harry Potter [LINK]

http://www.inference.phy.cam.ac.uk/mackay/itila/Potter.html

Somebody hasn't heard of HPMOR... |

90009446-0b9c-40a3-ae9d-be76761ae4c8 | trentmkelly/LessWrong-43k | LessWrong | [SEQ RERUN] Brain Emulation and Hard Takeoff

Today's post, Brain Emulation and Hard Takeoff was originally published on November 22, 2008. A summary:

> A project of bots could start an intelligence explosion once it got fast enough to start making bots of the engineers working on it, that would be able to operate at greater than human speed. Such a system could also devise a lot of innovative ways to acquire more resources or capital.

Discuss the post here (rather than in the comments to the original post).

This post is part of the Rerunning the Sequences series, where we'll be going through Eliezer Yudkowsky's old posts in order so that people who are interested can (re-)read and discuss them. The previous post was Emulations Go Foom, and you can use the sequence_reruns tag or rss feed to follow the rest of the series.

Sequence reruns are a community-driven effort. You can participate by re-reading the sequence post, discussing it here, posting the next day's sequence reruns post, or summarizing forthcoming articles on the wiki. Go here for more details, or to have meta discussions about the Rerunning the Sequences series. |

6f7a21b5-6584-4629-a1f7-cd9190de21bf | trentmkelly/LessWrong-43k | LessWrong | Long Review: Economic Hierarchies

Naked and Afraid from the Discovery Channel didn’t live up to its potential. To be fair, a handful of scraggly naked people trying to make it in Kenya’s wilderness made for interesting television, as they scratched themselves, got infections, and looked pretty uncomfortable. But the interpersonal drama seemed contrived since their goal was mere survival, and the division of labor was not highly interesting. I didn’t learn what I wanted--too much complacent nakedness, not enough competence-porn.

The show I'd like to pitch is one about progress and knowledge. 900 scraggly people, they don’t have to be naked, but for the sake of argument, let’s say they are naked, are plopped in the wilderness with a bunch of raw materials and the mandate to build the highest level of civilization possible in three years, outcompeting another group of 900 scraggly naked people. Boom! Instant natural experiment: knowledge, society, organization, bottlenecks on development. From it we could dream up better models of how to bounce back from a civilizational setback, settle charter cities, and craft efficient institutional structures. We could recruit some of the best minds in hundreds of fields not to consult but to build publicly, with all data and streams tracked and uploaded to the internet for analysis. Of course, our prediction markets about the show would be filled with bets about what milestones would and would not be reached. Plus, we would be entertained and given insights at the same time.

That’s my pitch for how we will inspire people about progress, productivity, and the mysteries of society’s organization. I want the world to see bureaucracy and technology stripped down to their barest essentials, not contrived nakedness in the wilderness. The details of the show might help us understand what governance and incentives would make for the fastest civilization building.

A sense of wonder about these things and a desire to cultivate comparative advantage drove me to read Econo |

2ec6f2db-6e54-49bd-b12f-d3b0be76e352 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Training a GPT model on EA texts: what data?

I plan to finetune GPT-J, a large language model similar to GPT-3 created by EleutherAI, on effective altruism texts. GPT-J is reported to be better at mathematical, logical, and analytic reasoning than GPT-3 because a large share of its training data is academic text.

The goals are:

1. **Accurately reflect how the EA community thinks**

2. Represent texts widely read in the EA community

3. ~~Helps the language model think well~~

My proposed training mix:

* 60% EA Forum posts above a certain karma threshold

+ Bias towards newer posts according to a ?? curve

+ Weight the likelihood of inclusion of each post by a function of its karma (how does that map to views?)

* Books (3.3MB)

+ The Alignment Problem (1MB)

+ The Precipice (0.9MB)

+ Doing Good Better (0.5MB)

+ The Scout Mindset (0.5MB)

+ 80,000 Hours (0.4MB)

* Articles and blog posts on EA

+ [EA Handbook](https://forum.effectivealtruism.org/handbook)

+ [Most Important Century Sequence](https://forum.effectivealtruism.org/s/isENJuPdB3fhjWYHd)

+ [Replacing Guilt Sequence](https://forum.effectivealtruism.org/s/a2LBRPLhvwB83DSGq) (h/t Lorenzo)

+ [Winners of the First Decade Review](https://forum.effectivealtruism.org/s/HSA8wsaYiqdt4ouNF)

+ ... what else?

* [EA Forum Topic Descriptions](https://forum.effectivealtruism.org/topics/all) (h/t Lorenzo)

* OpenPhilanthropy.org (h/t Lorenzo)

* GivingWhatWeCan.org (h/t Lorenzo)

+ including comments

* ??% Rationalism

+ ??% Overcoming Bias

+ ??% Slate Star Codex

+ ??% HPMOR
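
For the EA Forum portion of the mix, here is a minimal sketch of how the karma threshold, the recency bias, and the karma weighting could fit together. Everything specific in it is an assumption of mine, not a decision: the log-of-karma weight, the exponential recency decay with a one-year half-life, the threshold of 10, and the `karma` / `age_days` field names are all placeholders for the "??" items above.

```python
import math
import random


def post_weight(karma, age_days, half_life_days=365.0):
    """Hypothetical sampling weight: log-scaled karma times exponential recency decay."""
    karma_term = math.log1p(max(karma, 0))             # diminishing returns on karma
    recency_term = 0.5 ** (age_days / half_life_days)  # weight halves every half-life
    return karma_term * recency_term


def sample_posts(posts, k, min_karma=10, seed=0):
    """Draw k posts (with replacement) from those at or above the karma threshold."""
    eligible = [p for p in posts if p["karma"] >= min_karma]
    weights = [post_weight(p["karma"], p["age_days"]) for p in eligible]
    rng = random.Random(seed)  # fixed seed so the training mix is reproducible
    return rng.choices(eligible, weights=weights, k=k)


# Toy data, invented for illustration only.
posts = [
    {"title": "old classic", "karma": 300, "age_days": 2000},
    {"title": "recent hit", "karma": 150, "age_days": 30},
    {"title": "low karma", "karma": 3, "age_days": 10},
]
print([p["title"] for p in sample_posts(posts, k=5)])
```

Sampling with replacement means high-weight posts can appear more than once in the fine-tuning data, which may or may not be what I want; sampling without replacement would need a different approach.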

What sources am I missing?

Please suggest important blog posts and post series I should add to the training mix, and explain how important to, or popular in, EA they are.

Can you help me estimate how much mindshare each of the items labelled "??" occupies in a typical EA?

I'm new to EA, so I would strongly appreciate input. |

e05f525a-0ec2-4cc3-9930-3d6c576ceb87 | trentmkelly/LessWrong-43k | LessWrong | [POLL] LessWrong group on YourMorals.org

Here's the news article on this: http://www.yourmorals.org/blog/2011/11/how-to-use-groups-at-yourmorals-org/

And here's the group that the LW community just created: http://www.yourmorals.org/setgraphgroup.php?grp=623d5410f705f6a1f92c83565a3cfffc

I think it will be very interesting to see what we can all get on this. |

13c7b17d-b4d0-4b3d-8d7c-825e0895d8e8 | trentmkelly/LessWrong-43k | LessWrong | How We Picture Bayesian Agents

I think that when most people picture a Bayesian agent, they imagine a system which:

* Enumerates every possible state/trajectory of “the world”, and assigns a probability to each.

* When new observations come in, loops over every state/trajectory, checks the probability of the observations conditional on each, and then updates via Bayes rule.

* To select actions, computes the utility which each action will yield under each state/trajectory, then averages over state/trajectory weighted by probability, and picks the action with the largest weighted-average utility.

Typically, we define Bayesian agents as agents which behaviorally match that picture.
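
As a concrete reference point, the brute-force picture in the three bullets above can be sketched in a few lines. This is only an illustration of that behavioral picture, with made-up function names and a toy two-state world; it is not meant as how any real agent is implemented.

```python
def bayes_update(prior, likelihood, observation):
    """Loop over every state and reweight it by P(observation | state)."""
    unnormalized = {s: p * likelihood(observation, s) for s, p in prior.items()}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("observation has zero probability under every state")
    return {s: w / total for s, w in unnormalized.items()}


def best_action(belief, actions, utility):
    """Pick the action with the largest probability-weighted average utility."""
    def expected_utility(action):
        return sum(p * utility(action, s) for s, p in belief.items())
    return max(actions, key=expected_utility)


# Toy world with two enumerated states (all numbers invented for illustration).
prior = {"rain": 0.5, "sun": 0.5}
cloud_likelihood = {"rain": 0.8, "sun": 0.2}  # P(see clouds | state)
payoff = {
    ("umbrella", "rain"): 0, ("umbrella", "sun"): -1,
    ("none", "rain"): -10, ("none", "sun"): 0,
}

posterior = bayes_update(prior, lambda obs, s: cloud_likelihood[s], "clouds")
choice = best_action(posterior, ["umbrella", "none"], lambda a, s: payoff[(a, s)])
print(posterior, choice)
```

Both functions loop over the whole `belief` dictionary, which is exactly the part that stops scaling once "states" means "every possible trajectory of the world."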

But that’s not really the picture David and I typically have in mind, when we picture Bayesian agents. Yes, behaviorally they act that way. But I think people get overly-anchored imagining the internals of the agent that way, and then mistakenly imagine that a Bayesian model of agency is incompatible with various features of real-world agents (e.g. humans) which a Bayesian framework can in fact handle quite well.

So this post is about our prototypical mental picture of a “Bayesian agent”, and how it diverges from the basic behavioral picture.

Causal Models and Submodels

Probably you’ve heard of causal diagrams or Bayes nets by now.

If our Bayesian agent’s world model is represented via a big causal diagram, then that already looks quite different from the original “enumerate all states/trajectories” picture. Assuming reasonable sparsity, the data structures representing the causal model (i.e. graph + conditional probabilities on each node) take up an amount of space which grows linearly with the size of the world, rather than exponentially. It’s still too big for an agent embedded in the world to store in its head directly, but much smaller than the brute-force version.
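
A quick back-of-the-envelope sketch of that size difference, assuming discrete variables with a fixed number of values each and a hard cap on parents per node as the stand-in for "reasonable sparsity" (the cap and the function names are my assumptions for illustration):

```python
def joint_table_size(n_vars, arity=2):
    """Free parameters in a full joint distribution over n_vars discrete variables."""
    return arity ** n_vars - 1


def bayes_net_size(n_vars, max_parents, arity=2):
    """Upper bound on free parameters when every node has at most max_parents parents."""
    # Each node needs (arity - 1) numbers for each combination of parent values.
    return n_vars * (arity - 1) * arity ** max_parents


for n in (10, 20, 30):
    print(n, joint_table_size(n), bayes_net_size(n, max_parents=3))
```

With the parent cap held fixed, the second count grows linearly in the number of variables while the first doubles with every variable added.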

(Also, a realistic agent would want to explicitly represent more than just one causal diagram, in order to have uncertainty over causal structu |

80cb512e-8510-41cc-97f1-683a00dc877b | trentmkelly/LessWrong-43k | LessWrong | My weekly review habit

Every Saturday morning, I take 3-4 hours to think about how my week went and how I’ll make the next one better.

The format has changed over time, but for example, here’s some of what I reflected on last week:

* I noticed I’d fallen far short of my goal for written output. I decided to allocate more time to reading this week, hoping that it would generate more ideas. And I reorganized my morning routine to make it easier to start writing in the morning.

* I looked at some stats from RescueTime and Complice about what I’d spent time on and accomplished. I noticed that my time spent on Slack was nearing dangerous levels, so I decided to make a couple experimental tweaks to get it down:

* I tried out Contexts, a replacement for the macOS window switcher, which I configured to only show windows from my current workspace—hoping that this would prevent me from cmd+tabbing over to Slack and getting distracted.

* I decided to run an experiment of not answering immediately when coworkers called me in the middle of a focused block of time, and keeping a paper “todo when done focusing” list to remind myself to call them back, check Slack, etc.

* I noticed that it felt hard for me to get useful info from the time-tracking data in RescueTime and Complice, so I revisited what questions I actually wanted to answer and how I could make them easy to answer.

* I realized that I should be using Google Calendar, not RescueTime or Complice, to track my time spent in meetings, so I added that to my time-tracking data sources.

* I also made several tweaks to the way I used Complice to make it easier to see various stats I was interested in.

And so on. By the end of the review I had surfaced lots of other improvements for the coming week.

----------------------------------------

While each individual tweak is small, over the weeks and years they’ve compounded to make me a lot more effective. Because of that, this weekly review is the most useful habit (o |

3a5789ec-c67b-456f-b420-b2379dd3c09a | trentmkelly/LessWrong-43k | LessWrong | The Ethics of ACI

ACI is a universal intelligence model based on the idea "behaves the same way as experiences". It may seem counterintuitive that ACI agents have no ultimate goals, nor do they have to maximize any utility functions. People may argue that ACI has no ethics, thus it can’t be a general intelligence model.

ACI uses Solomonoff induction to determine future actions from past input and actions. We know that Solomonoff induction can predict facts of "how the world will be", but it is commonly held that you can't derive "value" from "facts" alone. What are the values, ethics, and foresight of ACI? If an agent's behavior is decided only by its past behavior, who decided its past behaviors?

ACI learns ethics from experiences

The simple answer is, ACI learns ethics from experiences. ACI takes "right" behaviors as training samples, the same way as value learning approaches. (The difference is, ACI does not limit the ethics to values or goals.)

For example, in natural selection, the environment determines which behavior is "right" and which behavior would get a possible ancestor out of the gene pool, and a natural intelligent agent takes the right experiences as learning samples.

But, does that mean ACI can't work by itself, and has to rely on some kind of "caretaker" that decides which behavior is right?

However, rational agent models also rely on externally constructed utility functions, just as reinforcement learning relies heavily on reward design or reward shaping. In AIXI, the constructive, normative aspect of intelligence is "assumed away" to the external entity that assigns rewards to different outcomes. You have to assign rewards or utility for every point in the state space, in order to make a rational agent work. It's not a solution for the curse of dimensionality, but a curse itself.

In ACI, the normative aspect of intelligence is likewise "assumed away", but to the experiences. In order to be utilized by ACI agents, any ethical information must be able to be represented in |

a698c9df-2620-435f-8581-49854b8ae23d | trentmkelly/LessWrong-43k | LessWrong | A Pragmatic Epistemology

For the past three thousand years epistemology has been about the truth, the whole truth, and nothing but the truth. Philosophers and scientists have continuously attempted to pinpoint the nature of truth, to find general logico-syntactic criteria for generating justified inferences, and to discover the true nature of reality. I happen to think that truth is overrated. And by that I don't mean that I'm a stereotypical postmodernist, prepared to say that all views are on equal footing (because after all, who can really say what's true and what isn't?). Instead I mean that I don't even think that the truth is a useful or coherent concept when stretched to accommodate what philosophers have tried to make it accommodate. It's not a malleable enough concept to have the generality that philosophers are asking of it. We need something else in its place.

A view similar to this is reservationism, which was first introduced[1] by Moldbug in A Reservationist Epistemology. If you haven't read it, I suggest at least skimming it before reading the rest of this post, but the basic idea is that you can try to cram reason into an explicit General Theory of Reason for as long as you like, but at best it will always be a special case of "common sense." I have mixed feelings about Moldbug's post. On the one hand, it's delightfully witty and I agree with the general thrust of the argument. On the other hand, I think you can go a bit farther to explain his "common sense" notion than he lets on, and the abrasiveness and vagueness of his writing are likely to cloak some of the finer points. And despite giving (likely unintentional) hints about what we might replace "truth" with, he never does criticise the concept of truth, although he obviously criticises general theories of truth.

Since I do depart from Moldbug, I'll call myself a pragmatist rather than a reservationist. I'll also give my pragmatism a slogan: "It's just a model."[2] What's just a model? Bayesianism, falsificationism, |

6a82a0bf-5bf2-4642-a2b5-b9a798799d44 | trentmkelly/LessWrong-43k | LessWrong | [CORE] Concepts for Understanding the World

Background:

I've recently been doing a big project to increase my scholarship and modeling power for both rationality and traditional "serious" topics. One thing I've found very useful is taking notes with a clear structure.

The structure I'm using currently is as follows:

- write down useful concepts,

- write down (as a separate category) useful heuristics & things to do in various situations,

- do not write facts, opinions or anything else (I rely on unaided memory to get more filtering).

Heuristic: learn concepts before facts!

Note that you can be mistaken about facts, but you can't harm your epistemology by learning concepts. Even if a concept turns out to be useless or misleading, you are better off knowing about it, understanding how it's misleading, and being able to avoid the trap when you see it.

Let's share concepts!

Please give (at a minimum) a name and a reference (link). A short description in plain language is also welcome.

|

cee1e271-d926-4c50-b3fe-62e5d28d368a | trentmkelly/LessWrong-43k | LessWrong | The simple picture on AI safety

At every company I've ever worked at, I've had to distill whatever problem I'm working on to something incredibly simple. Only then have I been able to make real progress. In my current role, at an autonomous driving company, I'm working in the context of a rapidly growing group that is attempting to solve at least 15 different large engineering problems, each of which is divided into many different teams with rapidly shifting priorities and understandings of the problem. The mission of my team is to make end-to-end regression testing rock solid so that the whole company can deploy code updates to cars without killing anyone. But that wasn't the mission from the start: at the start it was a collection of people with a mandate to work on a bunch of different infrastructural and productivity issues. As we delved into mishmash after mishmash of complicated existing technical pieces, the problem of fixing it all became ever more abstract. We built long lists of ideas for fixing pieces, wrote extravagant proposals, and drew up vast and complicated architecture diagrams. It all made sense, but none of it moved us closer to solving anything.

At some point, we distilled the problem down to a core that actually resonated with us and others in the company. It was not some pithy marketing-language mission statement; it was not a sentence at all -- we expressed it a little differently every time. It represented an actual comprehension of the core of the problem. We got two kinds of reactions: to folks who thought the problem was supposed to be complicated, our distillation sounded childishly naive. They told us the problem was much more complicated. We told them it was not. To folks who really wanted to solve the problem with their own hands, the distillation was energizing. They said that yes, this is the kind of problem that can be solved.

I have never encountered a real problem without a simple distillation at its core. Some problems have complex solutions. Some problems a |

3500d842-0664-4e54-8d83-4059107a930c | StampyAI/alignment-research-dataset/lesswrong | LessWrong | [Preprint] Pretraining Language Models with Human Preferences

Surprised no one posted about this from Anthropic, NYU and Uni of Sussex yet:

* Instead of fine-tuning on human preferences, they directly incorporate human feedback in the pre-training phase, conditioning the model on <good> or <bad> feedback tokens placed at the beginning of the training sequences.

* They find this to be Pareto-optimal out of five considered pre-training objectives, greatly reducing the amount of undesired outputs while retaining standard LM pre-training downstream performance AND outperforming RLHF fine-tuning in terms of preference satisfaction.
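
To make the conditioning step concrete, here is a minimal sketch of how training text could be tagged before pre-training. Only the `<good>` and `<bad>` token strings come from the summary above; the function names, the per-sequence scores, and the 0.5 threshold are hypothetical, and this is not the paper's actual pipeline.

```python
GOOD, BAD = "<good>", "<bad>"  # control tokens named in the post


def tag_sequence(text: str, score: float, threshold: float = 0.5) -> str:
    """Prepend a control token depending on a (hypothetical) preference score."""
    token = GOOD if score >= threshold else BAD
    return f"{token}{text}"


def build_corpus(examples, threshold: float = 0.5):
    """Tag every (text, score) pair; the result would feed ordinary LM training."""
    return [tag_sequence(text, score, threshold) for text, score in examples]


# Illustrative data only: scores would come from some preference classifier.
corpus = build_corpus([
    ("Thanks for your help.", 0.9),
    ("You are an idiot.", 0.1),
])
```

At sampling time one would then prompt the model with the `<good>` token, so generation is conditioned on the preferred side of the distribution.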

This conditioning is very reminiscent of the [decision transformer](https://arxiv.org/abs/2106.01345), where scalar reward tokens are prepended to the input. I believe [CICERO](https://about.fb.com/news/2022/11/cicero-ai-that-can-collaborate-and-negotiate-with-you/) also does something similar, conditioning on Elo scores during dialogue generation training.

From a discussion with [James Chua](https://www.lesswrong.com/users/james-chua) on [AISS](https://www.aisafetysupport.org/)'s slack, we noted similarities between this work and [Charlie Steiner](https://www.lesswrong.com/users/charlie-steiner)'s [Take 13: RLHF bad, conditioning good](https://www.lesswrong.com/posts/AXpXG9oTiucidnqPK/take-13-rlhf-bad-conditioning-good). James is developing a [library ("conditionme")](https://github.com/thejaminator/conditionme) specifically for rating-conditioned language modelling and was looking for some feedback, which prompted the discussion. We figured potential future work here is extending the conditioning to scalar rewards (rather than the discrete <good> vs <bad>), which James pointed out requires some caution with the tokenizer, which he hopes to address in part with conditionme. |

6e9641b7-559c-4d17-81f0-6eb604d4e820 | trentmkelly/LessWrong-43k | LessWrong | [LINK]Real time mapping of neural activity in a larval zebra fish

https://plus.google.com/109794669788083578017/posts/gLgSnkCtgrR

> Brain function relies on communication between large populations of neurons across multiple brain areas, a full understanding of which would require knowledge of the time-varying activity of all neurons in the central nervous system. Here we use light-sheet microscopy to record activity, reported through the genetically encoded calcium indicator GCaMP5G, from the entire volume of the brain of the larval zebrafish in vivo at 0.8 Hz, capturing more than 80% of all neurons at single-cell resolution. Demonstrating how this technique can be used to reveal functionally defined circuits across the brain, we identify two populations of neurons with correlated activity patterns. One circuit consists of hindbrain neurons functionally coupled to spinal cord neuropil. The other consists of an anatomically symmetric population in the anterior hindbrain, with activity in the left and right halves oscillating in antiphase, on a timescale of 20 s, and coupled to equally slow oscillations in the inferior olive.

Page down at the link to see the animation. |

5a366e19-92c0-443d-b613-4f62cdd26518 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Complex Systems for AI Safety [Pragmatic AI Safety #3]

*This is the third post in* [*a sequence of posts*](https://www.alignmentforum.org/posts/bffA9WC9nEJhtagQi/introduction-to-pragmatic-ai-safety-pragmatic-ai-safety-1) *that describe our models for Pragmatic AI Safety.*

It is critical to steer the AI research field in a safer direction. However, it’s difficult to understand how it can be shaped, because it is very complex and there is often a high level of uncertainty about future developments. As a result, it may be daunting to even begin to think about how to shape the field. We cannot afford to make too many simplifying assumptions that hide the complexity of the field, but we also cannot afford to make too few and be unable to generate any tractable insights.

Fortunately, the field of complex systems provides a solution. The field has identified commonalities between many kinds of systems and has identified ways that they can be modeled and changed. In this post, we will explain some of the foundational ideas behind complex systems and how they can be applied to shaping the AI research ecosystem.

Along the way, we will also demonstrate that deep learning systems exhibit many of the fundamental properties of complex systems, and we show how complex systems are also useful for deep learning AI safety research.

A systems view of AI safety

---------------------------

### Background: Complex Systems

When considering methods to alter the trajectory of empirical fields such as deep learning, as well as preventing catastrophe from higher risk systems, it is necessary to have some understanding of complex systems. Complex systems is an entire field of study, so we cannot possibly describe every relevant detail here. In this section, we will try merely to give a very high level overview of the field. At the end of this post we present some resources for learning more.

Complex systems are systems consisting of many interacting components that exhibit emergent collective behavior. Complex systems are highly interconnected, making decomposition and reductive analysis less effective: breaking the system down into parts and analyzing the parts cannot give a good explanation of the whole. However, complex systems are also too organized for statistics, since the interdependencies in the system break fundamental independence assumptions in much of statistics. Complex systems are ubiquitous: financial systems, power grids, social insects, the internet, weather systems, biological cells, human societies, deep learning models, the brain, and other systems are all complex systems.
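
As a small illustration of why interdependence undermines standard statistical reasoning (this example is ours, not from the complex systems literature cited in this post), consider $n$ identically distributed quantities $X_1, \dots, X_n$, each with variance $\sigma^2$, and assume every pair has the same correlation $\rho$. The variance of their average is

$$
\operatorname{Var}\!\left(\frac{1}{n}\sum_{i=1}^{n} X_i\right)
= \frac{1}{n^2}\Bigl(n\sigma^2 + n(n-1)\rho\sigma^2\Bigr)
= \frac{\sigma^2}{n} + \frac{n-1}{n}\,\rho\,\sigma^2 .
$$

Under independence ($\rho = 0$) this shrinks like $\sigma^2/n$, which is what most averaging arguments rely on. With any fixed $\rho > 0$ it instead approaches $\rho\sigma^2$ as $n$ grows, so adding more components never averages the shared fluctuations away.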

It can be tricky to compare AI safety to making other specific systems safer. Is making AI safe like making a rocket, power plant, or computer program safe? While analogies can be found, there are many disanalogies. It’s more generally useful to talk about making complex systems safer. For systems-theoretic hazard analysis, we can abstract away from the specific content and just focus on shared structure across systems. Rather than talk about what worked well for one high-risk technology, with a systems view we can talk about what worked well for a large number of them, which prevents us from overfitting to a particular example.

The central lesson to take away from complex systems theory is that reductionism is not enough. It’s often tempting to break down a system into isolated events or components, and then try to analyze each part and then combine the results. This incorrectly assumes that separation does not distort the system’s properties. In reality, parts do not operate independently, and are subject to feedback loops and nonlinear interactions. Analyzing the pairwise interactions between parts is not sufficient for capturing the full system complexity (this is partially why a [bag of n-grams](https://towardsdatascience.com/evolution-of-language-models-n-grams-word-embeddings-attention-transformers-a688151825d2) is far worse than attention).
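
A toy sketch can make the point about pairwise analysis concrete. Assume a hypothetical three-part system in which two bits are free and the third is their XOR. Every pair of parts looks statistically independent, yet the system as a whole is completely constrained. This is an illustrative construction, not a model of any real system.

```python
from collections import Counter
from itertools import combinations, product

# The four equally likely states of the toy system: third bit = XOR of the first two.
states = [(a, b, a ^ b) for a, b in product((0, 1), repeat=2)]


def pair_counts(i, j):
    """Count how often each joint value of parts i and j occurs across states."""
    return Counter((s[i], s[j]) for s in states)


# Each pair of parts takes all four value combinations equally often,
# so looking at any two parts alone reveals no interaction at all.
pairwise_uniform = all(
    len(pair_counts(i, j)) == 4 and set(pair_counts(i, j).values()) == {1}
    for i, j in combinations(range(3), 2)
)

# The full system, however, only ever visits 4 of the 8 conceivable states.
joint_states = len(set(states))
```

Here `pairwise_uniform` holds even though any two parts fully determine the third, which is the sense in which pairwise interactions can miss structure that only exists at the level of the whole.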

Hazard analysis once proceeded by reductionism alone. In earlier models, accidents are broken down into a chain of events thought to have caused that accident, where a hazard is a root cause of an accident. Complex systems theory has supplanted this sort of analysis across many industries, in part because the idea of an ultimate “root cause” of a catastrophe is not productive when analyzing a complex system. Instead of looking for a single component responsible for safety, it makes sense to identify the numerous factors, including sociotechnical factors, that are contributory. Rather than break events down into cause and effect, a systems view instead sees events as a product of a complex interaction between parts.

Recognizing that we are dealing with complex systems, we will now discuss how to use insights from complex systems to help make AI systems safer.

### Improving Contributing Factors

“Direct impact,” that is, impact produced by a simple, short, and deterministic causal chain, is relatively easy to analyze and quantify. However, this does not mean that direct impact is always the best route to impact. If someone only focuses on direct impact, they won’t optimize for diffuse paths towards impact. For instance, EA community building is indirect, but without it there would be far fewer funds, fewer people working on certain problems, and so on. Becoming a billionaire and donating money is indirect, but without this there would be significantly less funding. Similarly, safety field-building may not have an immediate direct impact on technical problems, but it can still vastly change the resources devoted to solving those problems, in turn contributing to solving them (note that “resources” does not (just) mean money, but rather competent researchers capable of making progress). In a complex system, such indirect/diffuse factors have to be accounted for and prioritized.

AI safety is not all about finding safety mechanisms, such as mechanisms that could be added to make superintelligence completely safe. This is a bit like saying computer security is all about firewalls, which is not true. [Information assurance](https://online.norwich.edu/academic-programs/resources/information-assurance-versus-information-security) evolved to address blindspots in information security, because it is understood that we cannot ignore [complex systems](http://web.mit.edu/smadnick/www/wp/2014-07.pdf), safety culture, protocols, and so on.

Often, research directions in AI safety are thought to need to have a simple direct impact story: if this intervention is successful, what is the short chain of events towards it being useful for safe and aligned AGI? “How does this directly reduce x-risk” is a well-intentioned question, but it leaves out salient remote, indirect, or nonlinear causal factors. Such diffuse factors cannot be ignored, as we will discuss below.

**A note on tradeoffs with simple theories of impact**

AI safety research is complex enough that we should expect that understanding a theory of impact might require deep knowledge and expertise about a particular area. As such, a theory of impact for that research might not be easily explicable to somebody without any background in a short amount of time. This is especially true of theories of impact that are multifaceted, involve social dynamics, and require an understanding of multiple different angles of the problem. As such, we should not only focus on theories of impact that are easily explicable to newcomers.

In some cases, pragmatically one should not always focus on the research area that is most directly and obviously relevant. At first blush, reinforcement learning (RL) is highly relevant to advanced AI agents. RL is conceptually broader than supervised learning such that supervised learning can be formulated as an RL problem. However, the problems considered in RL that aren’t considered in supervised learning are currently far less tractable. This can mean that in practice, supervised learning may provide more tractable research directions.
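
To illustrate the claim that supervised learning can be formulated as an RL problem, here is a minimal sketch that wraps a labeled dataset as a one-step environment: the observation is the input, the action is the predicted label, and the reward is 1 for a correct prediction. The class and function names are hypothetical, and this is only one of several possible formulations.

```python
import random


class ClassificationEnv:
    """One-step episodes: observe an input, act by predicting its label."""

    def __init__(self, dataset, seed=0):
        self.dataset = list(dataset)  # (input, label) pairs
        self.rng = random.Random(seed)
        self._label = None

    def reset(self):
        x, y = self.rng.choice(self.dataset)
        self._label = y
        return x

    def step(self, action):
        reward = 1.0 if action == self._label else 0.0
        done = True  # every episode ends after a single action
        return reward, done


def average_reward(policy, env, episodes=100):
    """Average reward of a policy; here this equals classification accuracy."""
    total = 0.0
    for _ in range(episodes):
        obs = env.reset()
        reward, _ = env.step(policy(obs))
        total += reward
    return total / episodes


toy_data = [(0, "even"), (1, "odd"), (2, "even"), (3, "odd")]
perfect_policy = lambda x: "even" if x % 2 == 0 else "odd"
perfect_score = average_reward(perfect_policy, ClassificationEnv(toy_data))
```

The reverse direction is where the extra difficulty lives: multi-step credit assignment and exploration have no counterpart in this one-step setting, which is consistent with the tractability point above.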

However, with theories of impact that are less immediately and palpably related to x-risk reduction, we need to be very careful to ensure that research remains relevant. Less direct connection to the essential goals of the research may cause it to drift off course and fail to achieve its original aims. This is especially true when research agendas are carried out by people who are less motivated by the original goal of the research, and could potentially lead to value drift where previously x-risk-motivated researchers become motivated by proxy goals that are no longer relevant. This means that it is much more important for x-risk-motivated researchers and grantmakers to maintain the field and actively ensure research remains relevant (this will be discussed later).

Thus, there is a tradeoff involved in only selecting immediately graspable impact strategies. Systemic factors cannot be ignored, but this does not eliminate the need for understanding causal (whether indirect/nonlinear/diffuse or direct) links between research and impact.

**Examples of the importance of systemic factors**

The following examples illustrate the extreme importance of systemic factors (and the limitations of direct causal analysis and complementary techniques such as [backchaining](https://en.wikipedia.org/wiki/Backward_chaining)):