id | source | formatted_source | text |

|---|---|---|---|

fb8b5f9f-4529-4ac9-bad1-dc685f237cf8 | trentmkelly/LessWrong-43k | LessWrong | How people use LLMs

I've gotten a lot of value out of the details of how other people use LLMs, so I'm delighted that Gavin Leech created a collection of exactly such posts (link should go to the right section of the page but if you don't see it, scroll down).

* https://kajsotala.fi/2025/01/things-i-have-been-using-llms-for/

* https://nicholas.carlini.com/writing/2024/how-i-use-ai.html

* https://www.lesswrong.com/posts/CYYBW8QCMK722GDpz/how-much-i-m-paying-for-ai-productivity-software-and-the

* https://www.avitalbalwit.com/post/how-i-use-claude

* https://andymasley.substack.com/p/how-i-use-ai

* https://benjamincongdon.me/blog/2025/02/02/How-I-Use-AI-Early-2025/

* https://www.jefftk.com/p/examples-of-how-i-use-llms

* https://simonwillison.net/series/using-llms/

* https://signull.substack.com/p/how-to-think-with-ai

* https://alicemaz.substack.com/p/how-i-use-chatgpt

* https://fredkozlowski.com/2024/08/29/youre-using-chatgpt-wrong/

* https://www.lesswrong.com/posts/WNd3Lima4qrQ3fJEN/how-i-force-llms-to-generate-correct-code

* https://www.tumblr.com/nostalgebraist/772798409412427776/even-setting-aside-the-need-to-do

* https://www.jointakeoff.com/

* This is more of a howto than a whatto. I wouldn’t use it for stats or pharma decisions as he does.

* https://www.lesswrong.com/posts/4mvphwx5pdsZLMmpY/recent-ai-model-progress-feels-mostly-like-bullshit

Some additions from me:

* I use NaturalReaders to read my own writing back to me, and create new audiobooks for walks or falling asleep (including from textbooks).

* Perplexity is good enough as a research assistant that I'm more open to taking on medical lit reviews than I used to be.

* I used Auren, which is advertised as a thinking assistant and coach, to solve a musculoskeletal issue my physical therapist had whiffed on for weeks (referral code with free tokens, but only after your first payment).

* Note that Auren has some definite whispering earring vibes, and the privacy protections don't seem particularly strong, |

f5ac860b-bd6d-47d1-92ef-fdfcc9b6fcc0 | StampyAI/alignment-research-dataset/aisafety.info | AI Safety Info | I’d like to do experimental work (i.e. ML, coding) for AI alignment. What should I do?

Okay, so you want to do experimental AGI safety research. Do you have an idea you’re already excited about? Perhaps a research avenue, machine learning experiment, or coding project? Or maybe you’d like to get up to speed on existing research, or to learn how to [get a job in alignment](https://forum.effectivealtruism.org/posts/7WXPkpqKGKewAymJf/how-to-pursue-a-career-in-technical-ai-alignment)? You can continue to whichever branch seems most relevant to you via the related questions below.

|

a8aea052-6b3e-458e-8ebe-df605ae38d3c | StampyAI/alignment-research-dataset/arbital | Arbital | Uncountability: Intro (Math 1)

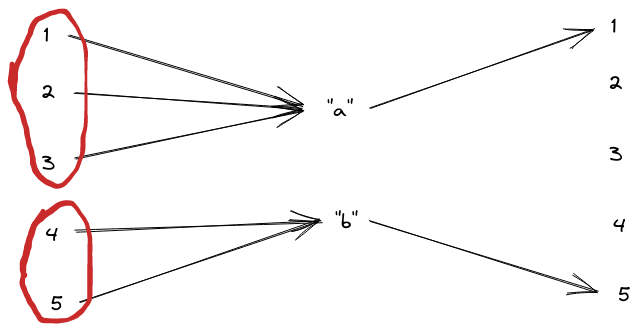

[Collections of things](https://arbital.com/p/3jz) which are the same [size](https://arbital.com/p/4w5) as or smaller than the collection of all [natural numbers](https://arbital.com/p/-45h) are called *countable*, while larger collections (like the set of all [real numbers](https://arbital.com/p/-4bc)) are called *uncountable*.

All uncountable collections (and some countable collections) are [infinite](https://arbital.com/p/infinity). There is a meaningful and [well-defined](https://arbital.com/p/5ss) way to compare the sizes of different infinite collections of things %%note: Specifically, mathematical systems which use the [https://arbital.com/p/69b](https://arbital.com/p/69b), see the [technical](https://arbital.com/p/4zp) page for details.%%. To demonstrate this, we'll use a 2d grid.


## Real and Rational numbers

[Real numbers](https://arbital.com/p/4bc) are numbers with a [decimal expansion](https://arbital.com/p/4sl), for example 1, 2, 3.5, $\pi$ = 3.14159265... Every real number has an infinite decimal expansion, for example 1 = 1.0000000000..., 2 = 2.0000000000..., 3.5 = 3.5000000000... Recall that the rational numbers are [fractions](https://arbital.com/p/fraction) of [integers](https://arbital.com/p/48l), for example $1 = \frac11$, $\frac32$, $\frac{100}{101}$, $\frac{22}{7}$. The positive integers are the integers greater than zero (i.e. 1, 2, 3, 4, ...).

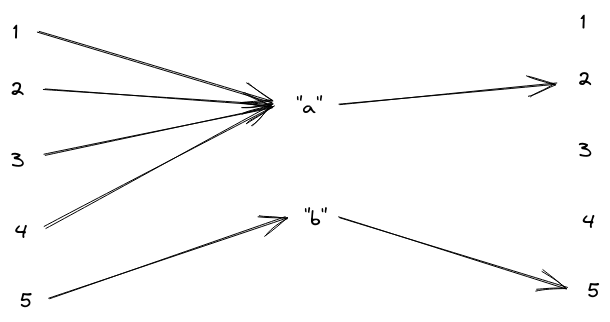

There is a [theorem](https://arbital.com/p/-theorem) in math that states that the rational numbers are *countable* %%note: You can see the theorem [here](https://arbital.com/p/511).%%, that is, that the set of rational numbers is the same size as the set of positive integers, and another theorem which states that the real numbers are *uncountable*, that is, that the set of real numbers is strictly bigger. By "same size" and "strictly bigger", we mean that it is possible to match every rational number with some positive integer so that no rational number and no positive integer is left unmatched, but that any matching you make between real numbers and positive integers leaves some real numbers not matched with anything.

## Rational grid

If you imagine laying the rational numbers out on a two-dimensional grid, so that the number $p / q$ falls at $(p, q)$, then we may match the positive integers with the rational numbers by walking in a spiral pattern out from zero, skipping over numbers that we have already counted (or that are undefined, such as any number divided by zero). The beginning of this sequence is $\frac01$, $\frac11$, $\frac12$, $\frac{-1}{2}$, $\frac{-1}{1}$, ... Graphically, this is:

This shows that the rational numbers are countable.
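The walk can also be sketched in code. The following is a minimal Python sketch, not part of the original argument: the function names `spiral_points`, `rationals` and `position_of` are made up for illustration, and the particular turning order of the spiral is an assumption, so the first few fractions it produces need not match the sequence listed above. What matters is only that every grid point is eventually visited, so every rational number eventually receives a positive integer.

```python
from fractions import Fraction
from itertools import islice


def spiral_points():
    """Yield every integer grid point (p, q) exactly once, spiralling out from (0, 0)."""
    p = q = 0
    yield p, q
    step = 1
    while True:
        # Segment lengths grow as 1, 1, 2, 2, 3, 3, ... while the direction
        # rotates: up, right, down, left.
        for dp, dq, length in ((0, 1, step), (1, 0, step),
                               (0, -1, step + 1), (-1, 0, step + 1)):
            for _ in range(length):
                p, q = p + dp, q + dq
                yield p, q
        step += 2


def rationals():
    """Yield each rational number exactly once, in the order the spiral first meets it."""
    seen = set()
    for p, q in spiral_points():
        if q == 0:
            continue  # p / 0 is undefined, so skip it
        r = Fraction(p, q)  # Fraction reduces to lowest terms, so 2/4 == 1/2
        if r not in seen:   # skip numbers that were already counted
            seen.add(r)
            yield r


def position_of(target):
    """Return the positive integer matched with `target` under this walk."""
    for n, r in enumerate(rationals(), start=1):
        if r == target:
            return n


first_five = list(islice(rationals(), 5))
```

Calling `position_of(Fraction(22, 7))` returns some finite positive integer, which is the whole point: no rational number is left without a partner.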

## Reals are uncountable

The real numbers, however, cannot be matched with the positive integers. I show this by [contradiction](https://arbital.com/p/46z). %%note: That is to say, I show that if there is such a matching, then we can conclude nonsensical statements (and if making a new assumption allows us to conclude nonsense, then the assumption itself must be nonsense).%%

Suppose we have such a matching. We can construct a new real number that differs in its $n^\text{th}$ decimal digit from the real number matched with $n$.

For example, if we were given a matching that matched 1 with 1.8, 2 with 1.26, 3 with 5.758, 4 with 1, and 5 with $\pi$, then our new number could be 0.11111, which differs from 1.8 in the first decimal place (the 0.1 place), 1.26 in the second decimal place (the 0.01 place), and so on. It is clear that this number cannot be matched with any number under the matching we are given, because, if it were matched with $n$, then it would differ from itself in the $n^\text{th}$ decimal digit, which is nonsense. Thus, there is no matching between the real numbers and the positive integers.
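The construction in this example can be written out as a short Python sketch. It is only an illustration under stated assumptions: real numbers are represented as decimal strings (padded with zeros where the string runs out), the helper names `decimal_digit` and `diagonal_number` are invented here, and the rule "write 1 unless the digit is already 1, in which case write 2" is one arbitrary choice among many. Using only the digits 1 and 2 also sidesteps the fact that some numbers have two decimal expansions (such as 0.999... = 1.000...).

```python
def decimal_digit(x: str, n: int) -> int:
    """Return the n-th digit after the decimal point of the decimal string x (0 if absent)."""
    _, _, fractional = x.partition(".")
    return int(fractional[n - 1]) if n <= len(fractional) else 0


def diagonal_number(matching: list[str]) -> str:
    """Build a number whose n-th decimal digit differs from that of the n-th listed number."""
    digits = []
    for n, x in enumerate(matching, start=1):
        d = decimal_digit(x, n)
        digits.append("2" if d == 1 else "1")  # any digit other than d would do
    return "0." + "".join(digits)


# The matching from the example: 1 -> 1.8, 2 -> 1.26, 3 -> 5.758, 4 -> 1, 5 -> pi
matching = ["1.8", "1.26", "5.758", "1", "3.14159265"]
new_number = diagonal_number(matching)  # "0.11111"
```

A real matching would be an infinite list, so the code can only ever show a finite prefix of the new number; the argument itself applies to every position $n$ at once.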

## See also

If you enjoyed this explanation, consider exploring some of [Arbital's](https://arbital.com/p/3d) other [featured content](https://arbital.com/p/6gg)!

Arbital is made by people like you; if you think you can explain a mathematical concept, then consider [https://arbital.com/p/-4d6](https://arbital.com/p/-4d6)! |

15db4248-49eb-4b31-8127-a3d7b940bb13 | trentmkelly/LessWrong-43k | LessWrong | Emails from your Gratitude Journal

Lots has been written here about gratitude journaling.

In spite of knowing about the benefits, I've never managed to make the habit stick. I do, however, already have a very strong habit of responding to email and keeping my inbox under control.

That's what pushed me to build Email Notebook, which I'm hopeful will facilitate other folks' writing habits, too.

It's beta quality at the moment, but my email is on the contact page and I plan to put some effort into feature requests if there are any common themes. Already on the roadmap is to improve the variety of prompts, touching on some of the specific practices outlined by @david_gross.

If you do give it a try, please report any bugs you encounter :) |

bbbf24a9-a7ba-4b5a-a393-175f3fbb0bc8 | trentmkelly/LessWrong-43k | LessWrong | Science of Deep Learning more tractably addresses the Sharp Left Turn than Agent Foundations

Summary

Lots of agent foundations research is motivated by the idea that alignment techniques found by empirical trial-and-error will fail to generalize to future systems. While such threat models are plausible, agent foundations researchers have largely failed to make progress on addressing them because they tend to make very few assumptions about the gears-level properties of future AI systems. In contrast, the emerging field of "Science of Deep Learning" (SDL) assumes that the systems under question are neural networks, and aims to empirically uncover general principles about how they work. Assuming such principles exist and that neural nets will lead to AGI, insights from SDL will generalize to future systems even if specific alignment techniques found by trial-and-error do not. As a result, SDL can address the threat models that motivate agent foundations research while being much more tractable due to making more assumptions about future AI systems, though it cannot help in the construction of an ideal utility function for future AI systems to maximize.

Thanks to Alexandra Bates for discussion and feedback

Introduction

Generally speaking, the "agent foundations" line of research in alignment is motivated by a particular class of threat models: that alignment techniques generated through empirical trial and error like RLHF or Constitutional AI will suddenly break down as AI capabilities advance, leading to catastrophe. For example, Nate Soares has argued that AI systems will undergo a "sharp left turn" at which point the "shallow" motivational heuristics which caused them to be aligned at lower capabilities levels will cease to function. Eliezer Yudkowsky seems to endorse a similar threat model in AGI Ruin, arguing that rapid capabilities increases will cause models to go out of distribution and become misaligned.

I'm much more optimistic about finding robust alignment methods through trial and error than these researchers, but I'm not here to debate that. |

72be267d-85fa-403d-9ae0-87fab24cdb55 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Multi-agent predictive minds and AI alignment

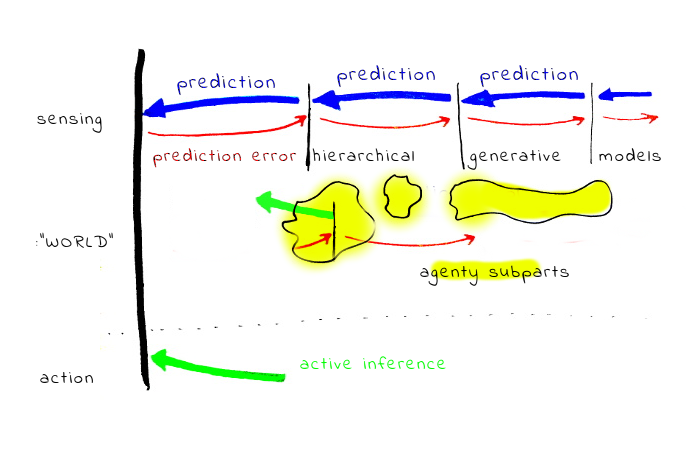

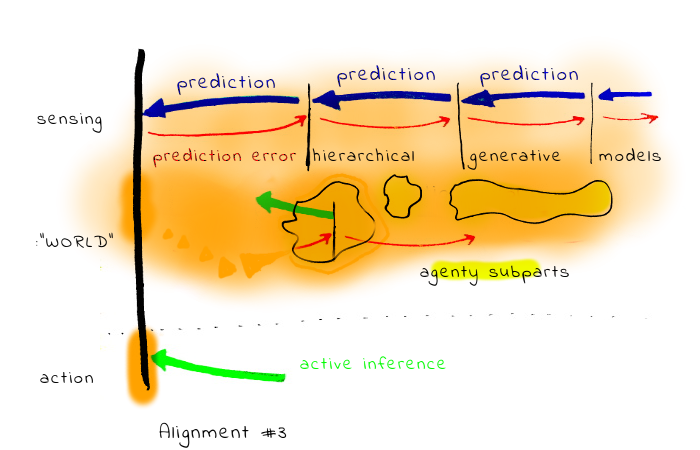

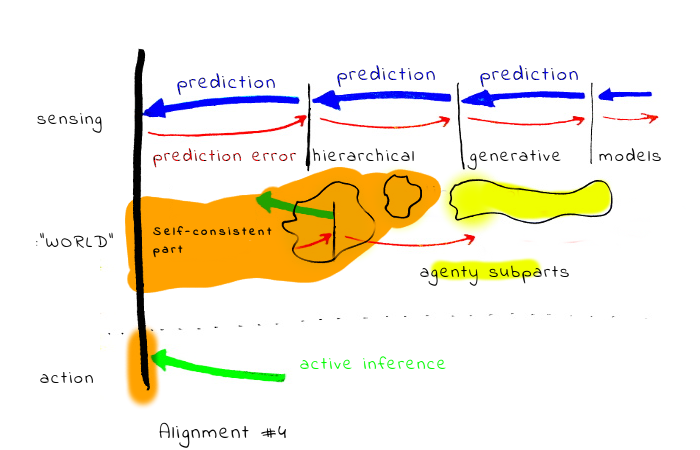

*Abstract: An attempt to map a best-guess model of how human values and motivations work to several more technical research questions. The mind-model is inspired by predictive processing / active inference framework and multi-agent models of the mind.*

The text has slightly unusual epistemic structure:

**1st part:** my current best-guess model of how human minds work.

**2nd part:** explores various problems which such mind architecture would pose for some approaches to value learning. The argument is: if such a model seems at least plausible, we should probably extend the space of active research directions.

**3rd part:** a list of specific research agendas, sometimes specific research questions, motivated by the previous.

I put more credence in the usefulness of the research questions suggested in the third part than in the specifics of the model described in the first part. Also, you should be warned that I have no formal training in cognitive neuroscience and similar fields, and it is completely possible I’m making some basic mistakes. Still, my feeling is that even if the model described in the first part is wrong, something from the broad class of “motivational systems not naturally described by utility functions” is close to reality, and understanding the problems from the 3rd part can be useful.

How minds work
==============

As noted, this is a “best guess model”. I have large uncertainty about how human minds actually work. But if I could place just one bet, I would bet on this.

The model has two prerequisite ideas: [predictive processing](http://slatestarcodex.com/2017/09/05/book-review-surfing-uncertainty/) and the active inference framework. I'll give brief summaries and links to more detail elsewhere.

In the predictive processing / active inference framework, brains constantly predict sensory inputs, in a hierarchical generative way. As a dual, action is also “generated” by the same machinery (changing the environment to match “predicted” desirable inputs and generating action which can lead to them). The “currency” on which the whole system is running is prediction error (or something in the style of [free energy, in that language](https://en.wikipedia.org/wiki/Free_energy_principle)).

Another important ingredient is [bounded rationality,](https://en.wikipedia.org/wiki/Bounded_rationality) i.e. a limited amount of resources being available for cognition. Indeed, the specifics of hierarchical modelling, neural architectures, and the principle of reusing and repurposing everything all seem to be related to quite brutal optimization pressure, likely related to the brain’s enormous energy consumption. (It is unclear to me whether this can also be reduced to the same “currency”. Karl Friston would probably answer "yes".)

Assuming all of this, how do motivations and “values” arise? The guess is that in many cases something like a “subprogram” is modelling/tracking some variable, “predicting” its desirable state, and creating the need for action by “signalling” prediction error. Note that such subprograms can work on variables on very different hierarchical layers of modelling - e.g. tracking a simple variable like “feeling hungry” vs. tracking a variable like “social status”. Such sub-systems can be large: for example, tracking “social status” seems to require a lot of computation.
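To make the “subprogram” picture slightly more concrete, here is a deliberately crude Python sketch. Everything in it is an assumption made for illustration rather than a claim about how brains implement this: the class `SubProgram`, the variables `satiety` and `status`, the numbers, and the rule that the chosen action is the one minimizing the summed, precision-weighted squared prediction error.

```python
from dataclasses import dataclass


@dataclass
class SubProgram:
    variable: str      # the variable this sub-program tracks
    predicted: float   # the state it "predicts", i.e. the desirable state
    precision: float   # how loudly its prediction error is signalled

    def error(self, state: dict) -> float:
        """Precision-weighted squared prediction error in the given state."""
        return self.precision * (state[self.variable] - self.predicted) ** 2


def choose_action(state: dict, actions: dict, subprograms: list) -> str:
    """Pick the action whose (assumed, deterministic) effect leaves the least total error."""
    def total_error(effect: dict) -> float:
        next_state = {k: v + effect.get(k, 0.0) for k, v in state.items()}
        return sum(s.error(next_state) for s in subprograms)

    return min(actions, key=lambda name: total_error(actions[name]))


subprograms = [
    SubProgram("satiety", predicted=0.8, precision=1.0),  # "feeling hungry" tracker
    SubProgram("status", predicted=0.7, precision=0.5),   # "social status" tracker
]
actions = {"eat": {"satiety": 0.5}, "socialise": {"status": 0.2}, "wait": {}}

hungry_choice = choose_action({"satiety": 0.2, "status": 0.5}, actions, subprograms)
sated_choice = choose_action({"satiety": 0.8, "status": 0.5}, actions, subprograms)
```

With these made-up numbers the hungry state selects `"eat"` and the sated state selects `"socialise"`: no explicit goal is stored anywhere, only predictions and the errors they generate.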

How does this relate to emotions? Emotions could be quite complex processes, where some higher-level modelling (“I see a lion”) leads to a response in lower levels connected to body states, some chemicals are released, and this [interoceptive](https://en.wikipedia.org/wiki/Interoception) sensation is re-integrated in the higher levels in the form of an emotional state, eventually reaching consciousness. Note that the emotional signal from the body is more similar to “sensory” data - the guess is that body/low-level responses are a way for genes to insert a reward signal into the whole system.

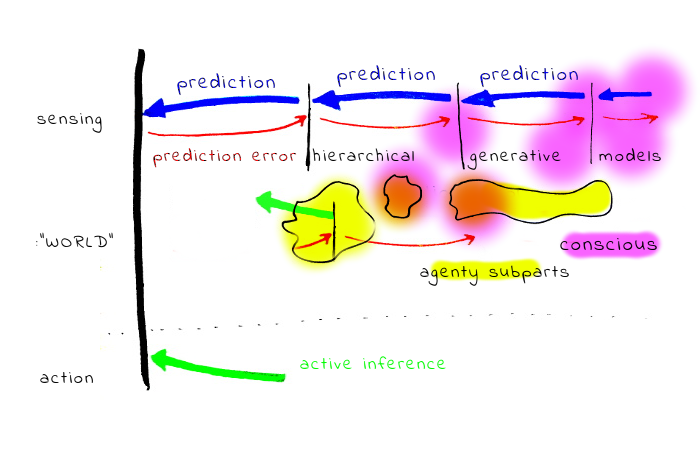

How does this relate to our conscious experience, and stuff like [Kahneman's System 1/System 2](https://en.wikipedia.org/wiki/Thinking,_Fast_and_Slow)? It seems for most people the light of consciousness is illuminating only a tiny part of the computation, and most stuff is happening in the background. Also, S1 has much larger computing power. On the other hand, it seems relatively easy to “spawn background processes” from the conscious part, and it seems possible to illuminate a larger part of the background processing than is usually visible through specialized techniques and efforts (for example, some meditation techniques).

Another ingredient is the observation that a big part of what the conscious self is doing is interacting with other people, and rationalizing our behaviour. (Cf. press secretary theory, [elephant in the brain](https://www.lesswrong.com/posts/BgBrXpByCSmCLjpwr/book-review-the-elephant-in-the-brain).) It is also quite possible that the relation between acting rationally and the ability to rationalize what we did is bidirectional, and that a significant part of the motivation for some rational behaviour is that it is easy to rationalize.

Also, it seems important to appreciate that the most important part of the human “environment” is other people, and what human minds are often doing is likely simulating other human minds (even simulating how other people would be simulating someone else!).

### Problems with prevailing value learning approaches

While the above sketched picture is just a best guess, it seems to me at least compelling. At the same time, there are notable points of tension between it and at least some approaches to AI alignment.

#### No clear distinction between goals and beliefs

In this model, it is hardly possible to disentangle “beliefs” and “motivations” (or values). “Motivations” interface with the world only via a complex machinery of hierarchical generative models containing all other sorts of “beliefs”.

To appreciate the problems for the value learning program, consider the case of someone whose predictive/generative model strongly predicts failure and suffering. Such a person may take actions which actually lead to this outcome, minimizing the prediction error.

A less extreme but also important problem is that extrapolating “values” outside of the area of validity of the generative models is problematic and could be fundamentally ill-defined. (This is related to “ontological crisis”.)

#### No clear self-alignment

It seems plausible the common formalism of [agents with utility functions](https://en.wikipedia.org/wiki/Von_Neumann%E2%80%93Morgenstern_utility_theorem) is more adequate for describing the individual “subsystems” than whole human minds. Decisions on the whole-mind level are more like results of interactions between the sub-agents; results of multi-agent interaction are not in general an object which is naturally represented by a utility function. For example, consider the sequence of game outcomes in a repeated [PD game](https://en.wikipedia.org/wiki/Prisoner%27s_dilemma). If you take the sequence of game outcomes (e.g. 1: defect-defect, 2: cooperate-defect, ...) as a sequence of actions, the actions do not represent some well-behaved preferences, and in general do not maximize any utility function.
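A different and simpler illustration of the same point than the repeated PD example is the classic Condorcet cycle: three sub-agents that each have perfectly well-behaved preferences can, under pairwise majority aggregation, produce a collective preference that no utility function represents. The Python sketch below is only that, a sketch: the sub-agent names, the options, the rankings and the majority rule are all hypothetical choices made for the example, not a model of how minds actually aggregate.

```python
from itertools import combinations, permutations

# Hypothetical sub-agents, each with a transitive ranking (most preferred first).
rankings = {
    "hunger":  ["eat", "rest", "work"],
    "fatigue": ["rest", "work", "eat"],
    "status":  ["work", "eat", "rest"],
}
options = ["eat", "rest", "work"]


def majority_prefers(a: str, b: str) -> bool:
    """True if a strict majority of sub-agents rank option a above option b."""
    votes = sum(r.index(a) < r.index(b) for r in rankings.values())
    return votes > len(rankings) / 2


def representable_by_utility() -> bool:
    """True if some strict ordering of the options agrees with every majority preference.

    A utility function over the options induces such an ordering, so if none
    exists, no utility function reproduces the aggregate choices.
    """
    return any(
        all(majority_prefers(a, b) for a, b in combinations(order, 2))
        for order in permutations(options)
    )


cycle = (
    majority_prefers("eat", "rest")
    and majority_prefers("rest", "work")
    and majority_prefers("work", "eat")
)
```

Here `cycle` is `True` (eat beats rest, rest beats work, work beats eat) and `representable_by_utility()` is `False`, even though each individual ranking on its own is trivially representable.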

Note: This is not to claim [VNM rationality](https://en.wikipedia.org/wiki/Von_Neumann%E2%80%93Morgenstern_utility_theorem) is useless - it still has the normative power - and some types of interaction lead humans to approximate [SEU](https://en.wikipedia.org/wiki/Subjective_expected_utility) optimizing agents better.

One case is if mainly one specific subsystem (subagent) is in control, and the decision does not go via too complex generative modelling. So, we should expect more VNM-like behaviour in experiments in narrow domains than in cases where very different sub-agents are engaged and disagree.

Another case is if sub-agents are able to do some “social welfare function” style aggregation, bargain, or trade - the result could be more VNM-like, at least in specific points of time, with the caveat that such “point” aggregate function may not be preserved in time.

On the contrary, cases where the resulting behaviour is very different from VNM-like may be caused by sub-agents locked in some non-cooperative Nash equilibria.

#### What we are aligning AI with

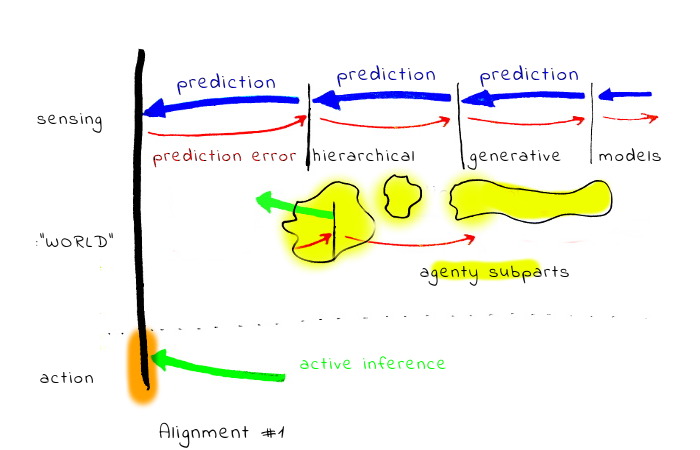

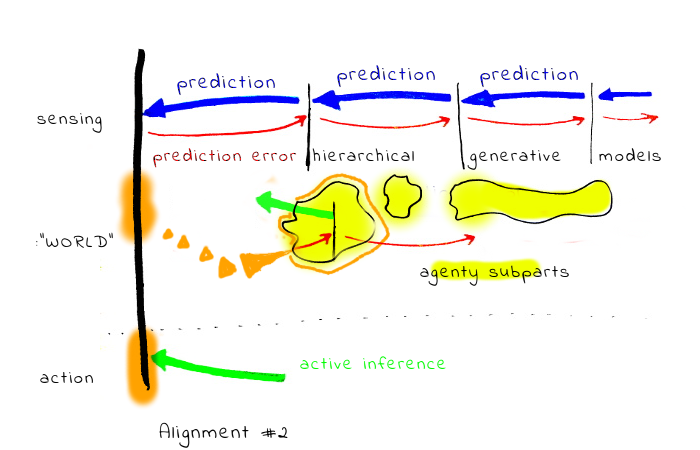

Given this distinction between the whole mind and sub-agents, there are at least four somewhat different notions of what alignment can mean.

1. Alignment with the outputs of the generative models, without querying the human. This includes for example proposals centered around approval. In this case, generally only the output of the internal aggregation has some voice.

2. Alignment with the outputs of the generative models, with querying the human. This includes for example [CIRL](https://arxiv.org/abs/1606.03137) and similar approaches. The problematic part of this is that, by carefully crafted queries, it is possible to give voice to different sub-agenty systems (or with more nuance, give them very different power in the aggregation process). One problem with this is that, if the internal human system is not self-aligned, the results could be quite arbitrary (and the AI agent has a lot of power to manipulate).

3. Alignment with the whole system, including the human aggregation process itself. This could include for example some deep NN based black-box trained on a large amount of human data, predicting what the human would want (or approve).

4. Adding layers of indirection to the question, such as defining alignment as a state where *“A is trying to do what H wants it to do.”*

In practice, options 1. and 2. can collapse into one, as far as there is some feedback loop between the AI agent actions and the human reward signal. (Even in case 1, the agent can take an action with the intention to elicit feedback from some subpart.)

We can construct a rich space of various meanings of "alignment" by combining basic directions.

Now, we can analyze how these options interact with various alignment research programs.

Probably the most interesting case is [IDA](https://www.lesswrong.com/posts/dy6JHE7vzJS8SpSiu/iterated-distillation-and-amplification). IDA-like schemes can probably carry forward arbitrary properties to more powerful systems, as long as we are able to construct the individual step preserving the property. (I.e. one full cycle of distillation and amplification, which can be arbitrarily small).

Distilling and amplifying the alignment in sense #1 (what the human will actually approve) is conceptually easiest, but, unfortunately, brings some of the problems of a potentially super-human system optimizing for manipulating the human for approval.

Alignment in sense #3 creates a very different set of problems. One obvious risk is mind-crimes. A more subtle risk is related to the fact that as the implicit model of human “wants” scales (becomes less bounded), (i) the parts may scale at different rates, and (ii) the outcome equilibria may change even if the sub-parts scale at the same rate.

Alignment in sense #4 seems more vague, and moves the burden of understanding the problem in part to the side of the AI. We can imagine that at the end the AI will be aligned with some part of the human mind in a self-consistent way (the part will be a fixed point of the alignment structure). Unfortunately, it is *a priori* unclear if a unique fixed point exists. If not, the problems become similar to case #2. Also, it seems inevitable the AI will need to contain some structure representing what the human wants the AI to do, which may cause problems similar to #3.

Also, in comparison with the other meanings, it is much less clear to me how to even establish that some system has this property.

#### Rider-centric and meme-centric alignment

Many alignment proposals seem to focus on interacting just with the conscious, narrating and rationalizing part of the mind. If this is just one part entangled in some complex interaction with other parts, there are specific reasons why this may be problematic.

One: if the “rider” (from the rider/elephant metaphor) is the part highly engaged with tracking societal rules, interactions and memes, it seems plausible the “values” learned from it will be mostly aligned with societal norms and the interests of memeplexes, and not “fully human”.

This is worrisome: from a [meme-centric](https://en.wikipedia.org/wiki/Memetics) perspective, humans are just a substrate, and not necessarily the best one. Also, a more speculative problem may be that schemes learning the human memetic landscape and “supercharging it” with superhuman performance may create some hard-to-predict evolutionary optimization processes.

#### Metapreferences and multi-agent alignment

Individual “preferences” can often in fact be mostly a meta-preference to have preferences compatible with other people, based on simulations of such people.

This may make it surprisingly hard to infer human values by trying to learn what individual humans want without the social context (necessitating inverting several layers of simulation). If this is the case, the whole approach of extracting individual preferences from a single human could be problematic. (This is probably more relevant to some “prosaic” alignment problems.)

#### Implications

Some of the above-mentioned points of disagreement point toward specific ways in which some of the existing approaches to value alignment may fail. Several illustrative examples:

* Internal conflict may lead to inaction (also to not expressing approval or disapproval). While many existing approaches represent such a situation only by the *outcome* of the conflict, the internal experience of the human seems to be quite different with and without the conflict.

* Difficulty with splitting “beliefs” and “motivations”.

* Learning inadequate societal equilibria and optimizing on them.

Upside
------

On the positive side, it could be expected the sub-agents still easily agree on things like “it is better not to die a horrible death”.

Also, the mind-model with bounded sub-agents which interact only with their local neighborhood and do not actually care about the world may be a viable design from the safety perspective.

Suggested technical research directions
=======================================

While the previous parts are more in backward-chaining mode, here I attempt to point toward more concrete research agendas and questions where we can plausibly improve our understanding either by developing theory, or experimenting with toy models based on current ML techniques.

Often it may be the case that some research was already done on the topic, just not with AI alignment in mind, and high-value work could be “importing the knowledge” into the safety community.

**Understanding hierarchical modelling.**

It seems plausible the human hierarchical models of the world optimize some "boundedly rational" function. (Remembering all details is too expensive; too much coarse-graining decreases usefulness. A good bounded rationality model can work as a principle for how to select models, in a similar way to the minimum description length principle, just taking some more “human” (energy?) costs as the cost function.)
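The trade-off between detail and cost can be shown with a toy Python sketch. This is an assumption-laden illustration, not a proposal for the actual “human” cost function: the helper names, the equal-width binning used as the coarse-graining, the squared-error measure of lost usefulness and the flat `cost_per_bin` are all invented for the example.

```python
def coarse_grain(values: list, k: int) -> list:
    """Summarise each value in [0, 1) by the centre of one of k equal-width bins."""
    return [(min(int(v * k), k - 1) + 0.5) / k for v in values]


def total_cost(values: list, k: int, cost_per_bin: float) -> float:
    """Loss of usefulness (squared error) plus the price of keeping k bins around."""
    approx = coarse_grain(values, k)
    error = sum((v - a) ** 2 for v, a in zip(values, approx))
    return error + cost_per_bin * k


def best_resolution(values: list, cost_per_bin: float, max_k: int = 50) -> int:
    """Number of bins that minimises the total cost for the given per-bin price."""
    return min(range(1, max_k + 1), key=lambda k: total_cost(values, k, cost_per_bin))


observations = [i / 100 for i in range(100)]
cheap_memory = best_resolution(observations, cost_per_bin=0.001)
expensive_memory = best_resolution(observations, cost_per_bin=0.1)
```

When detail is cheap the selected model is fine-grained, and when detail is expensive it is coarse; changing the cost function, rather than the data, changes which model is selected.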

**Inverse Game Theory.**

Inverting agent motivations in MDPs is a different problem from inverting motivations in multi-agent situations where game-theory style interactions occur. This leads to the inverse game theory problem: observe the interactions, learn the objectives.

**Learning from multiple agents.**

Imagine a group of five closely interacting humans. Learning values just from person A may run into the problem that a big part of A’s motivation is based on A simulating B, C, D, E (on the same “human” hardware, just incorporating individual differences). In that case, learning the “values” just from A’s actions could be in principle more difficult than observing the whole group, trying to learn some “human universals” and some “human specifics”. A different way of thinking about this could be by making a parallel with meta-learning algorithms (e.g. REPTILE) but in an IRL frame.

**What happens if you put a system composed of sub-agents under optimization pressure?**

It is not clear to me what would happen if you, for example, successfully “learn” such a system of “motivations” from a human, and then put it inside of some optimization process selecting for VNM-like rational behaviour.

It seems plausible the somewhat messy system will be forced to get more internally aligned; for example, one way this can happen is that one of the sub-agent systems takes control and “wipes out the opposition”.

**What happens if you make a system composed of sub-agents less computationally bounded?**

It is not clear that the relative powers of sub-agents will scale in the same way as the whole system becomes less computationally bounded. (This is related to MIRI’s sub-agents agenda.)

Suggested non-technical research directions
===========================================

**Human self-alignment.**

All other things being equal, it seems safer to try to align AI with humans who are self-aligned.

Notes & Discussion
==================

**Motivations**

Part of my motivation for writing this was an annoyance: there are plenty of reasons to believe the view

* human mind is a unified whole,

* at first approximation optimizing some utility function,

* this utility is over world-states,

is neither a good model of humans, nor the best model for how to think about AI. Yet, it is the paradigm shaping a lot of thoughts and research. I hope that if the annoyance surfaced in the text, it is not too distracting.

**Multi-part minds in literature**

There are dozens of schemes describing the mind as some sort of multi-part system, so there is nothing original about this claim. Based on a very shallow review, it seems the way psychologists often conceptualize the sub-agents is as [subpersonalities](https://en.wikipedia.org/wiki/Subpersonality), which are almost fully human. This seems to err on the side of sub-agents being too complex, and anthropomorphising instead of trying to describe formally. (Explaining humans as a composition of humans is not very useful for AI alignment.) On the other hand, Minsky’s [“*Society of Mind*”](https://en.wikipedia.org/wiki/Society_of_Mind) has sub-agents which often seem to be too simple (e.g. similar in complexity to individual logic gates). If there is some literature getting sub-agent complexity right, and placing sub-agents inside predictive processing, I’d be really excited about it!

**Discussion**

When discussing the draft, several friends noted something along the lines of: “It is overdetermined that approaches like IRL are doomed. There are many reasons for that and the research community is aware of them”. To some extent, I agree this is the case; on the other hand, 1. the described model of mind may pose problems even for more sophisticated approaches, and 2. my impression is that many people still have something like a utility-maximizing agent as the central example.

The complementary objection is that while interacting sub-agents may be a more precise model, in practice it is often good enough to think about humans as unified agents, and this may be good enough even for the purpose of AI alignment. My intuition on this is based on the connection of rationality to exploitability: it seems humans are usually more rational and less exploitable when thinking about narrow domains, but can be quite bad when vastly different subsystems are in play (imagine on one side a person exchanging stock and money, and on the other side a person exchanging some units of money, free time, friendship, etc. In the second case, many people are willing to trade at very different rates in different situations).

*I’d like to thank Linda Linsefors, Alexey Turchin, Tomáš Gavenčiak, Max Daniel, Ryan Carey, Rohin Shah, Owen Cotton-Barratt and others for helpful discussions. Part of this originated in the efforts of the “Hidden Assumptions” team on the 2nd AI safety camp, and my thoughts about how minds work are inspired by CFAR.* |

1a3a48db-625a-4fd4-b2f9-3ed2a206e6a1 | trentmkelly/LessWrong-43k | LessWrong | are "almost-p-zombies" possible?

It's probably not possible to have a twin of me that does everything the same except experiences no qualia, i.e. you can predict 100% accurately, if you expose it to stimulus X and it does Y, that I would also do Y if I was exposed to stimulus X.

But can you make an "almost-p-zombie"? A copy of me, which, while not being exactly like me (besides consciousness), is almost exactly like me. So a function, which, when it takes in a stimulus X, says, not with 100% certainty, but 99.999999999%, what I will do in response. Is this possible to construct within the laws of our universe?

Additionally, is this easier or harder to construct than a fully conscious simulation of me?

Just curious. |

a2b29c77-da15-42b6-80a8-8a97e125361e | trentmkelly/LessWrong-43k | LessWrong | The Opt-Out Clause

(cross-posted from my blog)

Let me propose a thought experiment with three conditions.

First, you're in a simulation, and a really good one at that. Before you went in, the simulators extracted and stored all of your memories, and they went to great lengths to make sure that the simulation is completely faultless.

Second, you can leave any time you like. All you have to do to end the simulation, regain your memories, and return to reality is recite the opt-out passphrase: "I no longer consent to being in a simulation". Unfortunately, you don't know about the opt-out condition: that would kind of ruin the immersion.

Third, although you're never told directly about the opt-out condition, you do get told about it indirectly, phrased as a kind of hypothetical thought experiment. Maybe someone poses it to you at a party, maybe you read it on twitter, maybe it's a blog post on some niche internet forum. You're guaranteed to hear about it at least once though, to give you a fair chance of leaving. But it's vague and indirect enough that you can dismiss it if you want, and probably forget about it in a week.

It's not enough to think the opt-out phrase, you have to actually say it or write it. So the question is, hypothetically, would you? |

a4ab6a0f-2b3a-4098-987d-7f67b0677f21 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Upper Canada LW Megameetup: Ottawa, Toronto, Montreal, Waterloo, London

Discussion article for the meetup : Upper Canada LW Megameetup: Ottawa, Toronto, Montreal, Waterloo, London

WHEN: 18 July 2014 07:00:00PM (-0400)

WHERE: Ottawa, Canada

Hi all LWers and CFAR alumni in the eastern Canada region! We'll be hosting a megameetup in Ottawa, Canada, running from 7:00 pm on Friday, July 18th, until early afternoon on Sunday, July 20th. We have a house available and enough space for everyone to sleep on site for the duration. We'll be eating communally, and there will be lots of snacks stocked up at the house, but please plan on contributing some money to cover food costs.

Friday night will be a fun social. Saturday will have a schedule of talks, activities, and CFAR-style classes. Sunday, we will most likely have an outing to a park or beach, depending on weather.

If you would like to come to this meetup, please fill out the following Google Form for logistics purposes: https://docs.google.com/forms/d/1zAFz-2nFUfQ31aVW6nFl61gsmnsER7PVCSvlJjwU__E/viewform?usp=send_form

If you have any questions, you can message Swimmer963 and I will try to answer them.

Discussion article for the meetup : Upper Canada LW Megameetup: Ottawa, Toronto, Montreal, Waterloo, London |

f305d1af-30dd-41c7-8626-a2e296ffc48c | trentmkelly/LessWrong-43k | LessWrong | Another Iterated Prisoner's Dilemma Tournament?

Last year, there was a lot of interest in the IPD tournament with people asking for regular events of this sort and developing new strategies (like Afterparty) within hours after the results were published and also expressing interest in re-running the tournament with new rules that allowed for submitted strategies to evolve or read their opponent's source code. I noticed that many of the submitted strategies performed poorly because of a lack of understanding of the underlying mechanics, so I wrote a comprehensive article on IPD math that sparked some interesting comments.

And then the whole thing was never spoken of again.

So now I'd like to know: How many LWers would commit to competing in another tournament of this kind, and would someone be interested in hosting it? |

cc115b2e-4f17-464d-b193-5b6ca565bfd7 | trentmkelly/LessWrong-43k | LessWrong | Introduction to Representing Sentences as Logical Statements

TLDR: Most sentences can be translated to simple predicate logic. There are some very important cases where natural languages are recursive, but those cases are limited to a few particular connectives (like causation and attitude verbs). I propose the hypothesis that, contrary to mainstream formal semantics, events should not be treated as basic entities, but rather as ways to organize information which can already be expressed without events.

Motivations for this investigation:

1. Better understanding how language works as an angle for better understanding minds.

2. (To a lesser extent, as a starting angle for creating a good language for reasoning more effectively.)[1]

There's a tight correspondence between sentences and logical statements. Roughly speaking, when we hear a sentence, our minds very likely parse it into a logical statement, along with inferring other details that are not captured by the coarseness of sentences in human languages (like imagining a visual scene), and then apply inferences (e.g. about what is likely going to happen next).

In theory, one could have a language where all the sentences are just statements in predicate logic, bypassing the first parsing step. Creating such a simple system may be a useful investigation that may move us closer to better understanding how language works.

This post proposes a partially-formal system based on predicate logic, which could be seen as a very low-level language. I think everything that can be meaningfully said in natural languages can also be said in this simple system, although a translated statement sometimes does not look closely analogous to the original sentence. To be clear, I definitely don't claim that this system explains how most of the meaning of sentences gets parsed, though it is a small step in that direction.

In many respects, the framework presented here aligns with traditions in formal semantics, though it diverges in its treatment of events.

This work focuses on the structur |

bc8ac0de-8316-4648-84c8-df35cb8286e6 | trentmkelly/LessWrong-43k | LessWrong | Ethical dilemmas for paperclip maximizers

(Why? Because it's fun.)

1) Do paperclip maximizers care about paperclip mass, paperclip count, or both? More concretely, if you have a large, finite amount of metal, you can make it into N paperclips or N+1 smaller paperclips. If all that matters is paperclip mass, then it doesn't matter what size the paperclips are, as long as they can still hold paper. If all that matters is paperclip count, then, all else being equal, it seems better to prefer smaller paperclips.

2) It's not hard to understand how to maximize the number of paperclips in space, but how about in time? Once it's made, does it matter how long a paperclip continues to exist? Is it better to have one paperclip that lasts for 10,000 years and is then destroyed, or 10,000 paperclips that are all destroyed after 1 year? Do discount rates apply to paperclip maximization? In other words, is it better to make a paperclip now than it is to make it ten years from now?

3) Some paperclip maximizers claim to want to maximize paperclip *production*. This is not the same as maximizing paperclip count. Given a fixed amount of metal, a paperclip count maximizer would make the maximum number of paperclips possible, and then stop. A paperclip production maximizer that didn't care about paperclip count would find it useful to recycle existing paperclips, melting them down so that new ones could be made. Which approach is better?

4) More generally, are there any conditions under which the paperclip-maximizing thing to do involves destroying existing paperclips? It's easy to imagine scenarios in which destroying some paperclips causes there to be more paperclips in the future. (For example, one could melt down existing paperclips and use the metal to make smaller ones.) |

1497b372-66f5-4b87-aa36-6fa0609c1b34 | trentmkelly/LessWrong-43k | LessWrong | Local Solar Time

Sometimes we talk about how society is "path dependent": what things would be different if history had not followed its particular path? One place that's fun to think about is time. Until the development of railways, places used local solar time. Washington DC is at 77°W while NYC is at 74°W, so clocks in NYC would be set ~12min ahead of those in DC.
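Here's a rough sketch of that arithmetic. The function name is made up for illustration, and it ignores the equation of time, which shifts every location by the same amount on a given day and so cancels out of the difference. The Earth turns 360° in 24 hours, so each degree of longitude is worth four minutes:

```python
def solar_offset_minutes(lon_a_deg_west, lon_b_deg_west):
    """Minutes by which local solar time at A runs ahead of B.

    Longitudes are given in degrees west, so a smaller number is further east.
    """
    minutes_per_degree = 24 * 60 / 360  # 4 minutes per degree of longitude
    return (lon_b_deg_west - lon_a_deg_west) * minutes_per_degree


# NYC (74 W) compared with Washington DC (77 W)
nyc_vs_dc = solar_offset_minutes(74, 77)
print(nyc_vs_dc)  # 12.0
```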

Initially, this didn't matter: traveling from NYC to DC was a multi day endeavor, so a 12 minute difference in clocks was trivial. As roads got better and then railroads were built, however, travel times decreased enormously:

Atlas of the Historical Geography of the United States, 1932

If you're running a railway system, it's really very useful to choose a single location's time to use internally. Your workers are moving East and West, fast enough for this sort of discrepancy to become a problem, and they certainly don't want to be continuously changing their watches as they move from town to town. Railway schedules are much easier to work with if they use railway time, people start using railway time in their regular life, the government adopts a system of time zones.

Imagine, however, that things had gone differently and we were still on local solar time. At this point it would not be hard to make our phones always report the correct time as we move east and west, since our phones know where we are. Automated calendar software could deal with local solar time with about as much effort as it could with time zones. Do all routes to the present pass through a system of time zones, or is there an alternative history where we really could be on local solar time today?

Railroads need shared time much more than other forms of transportation. There are a small number of high-capacity tracks, and the same with vehicles. Efficient use requires central organization, coordinated operation, and shared scheduling, which is far easier with a shared time system. If instead of railroads we had developed cars, it would still be ann |

06d09836-92d2-4336-8f4e-469b9ccb4ad0 | trentmkelly/LessWrong-43k | LessWrong | Highlights from The Autobiography of Andrew Carnegie

I’ve been reading Andrew Carnegie’s autobiography, published late in his life, in the early 1900s. Here are some interesting themes and quotes. (Emphasis added in all block quotes below.)

Science and steel

One key to Carnegie’s success in the iron business is that he was one of the first to seriously apply chemistry:

> Looking back to-day it seems incredible that only forty years ago (1870) chemistry in the United States was an almost unknown agent in connection with the manufacture of pig iron. It was the agency, above all others, most needful in the manufacture of iron and steel. The blast-furnace manager of that day was usually a rude bully… who in addition to his other acquirements was able to knock down a man now and then as a lesson to the other unruly spirits under him. He was supposed to diagnose the condition of the furnace by instinct, to possess some almost supernatural power of divination, like his congener in the country districts who was reputed to be able to locate an oil well or water supply by means of a hazel rod. He was a veritable quack doctor who applied whatever remedies occurred to him for the troubles of his patient.

Part of the problem was that the ores and other inputs to smelting were inconsistent in composition:

> The Lucy Furnace was out of one trouble and into another, owing to the great variety of ores, limestone, and coke which were then supplied with little or no regard to their component parts. This state of affairs became intolerable to us.

This is where chemistry was able to help:

> We finally decided to dispense with the rule-of-thumb-and-intuition manager, and to place [Henry Curry] in charge of the furnace….

>

> The next step taken was to find a chemist as Mr. Curry’s assistant and guide. We found the man in a learned German, Dr. Fricke, and great secrets did the doctor open up to us. Ironstone from mines that had a high reputation was now found to contain ten, fifteen, and even twenty per cent less iron than it had bee |

e9bbee9d-67ce-4ffd-9588-432bff2b1bde | StampyAI/alignment-research-dataset/arxiv | Arxiv | Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions

1 Introduction
---------------

In recent years, Artificial Intelligence (AI) has become much more closely connected to human activity. Many tasks that once required human labor are now gradually being automated and shifted to AI. For instance, in order to cope with the COVID-19 pandemic situation, the use of robot workers is being suggested to minimize physical contact between humans. These robot technologies heavily depend on the accuracy of action recognition/prediction and the consequent interaction between humans and machines.

State-of-the-art action recognition and prediction models are deep neural networks (DNNs), due to their capability of modeling complex problems Si et al. ([2019](#bib.bib43 "An attention enhanced graph convolutional lstm network for skeleton-based action recognition")); Li et al. ([2019a](#bib.bib44 "Spatio-temporal graph routing for skeleton-based action recognition"), [b](#bib.bib45 "Actional-structural graph convolutional networks for skeleton-based action recognition")) in an accurate way. Nonetheless, it has also been shown that these models are prone to adversarial examples (or attacks) Biggio et al. ([2013](#bib.bib1 "Evasion attacks against machine learning at test time")); Szegedy et al. ([2013](#bib.bib2 "Intriguing properties of neural networks")); Goodfellow et al. ([2014](#bib.bib3 "Explaining and harnessing adversarial examples")). DNNs can behave erratically when processing inputs with carefully crafted perturbations, even though such perturbations are imperceptible to humans Carlini and Wagner ([2017](#bib.bib7 "Towards evaluating the robustness of neural networks")); Madry et al. ([2018](#bib.bib4 "Towards deep learning models resistant to adversarial attacks")); Croce and Hein ([2020](#bib.bib25 "Reliable evaluation of adversarial robustness with an ensemble of diverse parameter-free attacks")); Jiang et al. ([2020](#bib.bib55 "Imbalanced gradients: a new cause of overestimated adversarial robustness")); Wang et al. ([2021](#bib.bib23 "A unified approach to interpreting and boosting adversarial transferability")). This has raised security concerns on the deployment of DNN-powered AI systems in security-critical applications such as autonomous driving Eykholt et al. ([2018](#bib.bib11 "Robust physical-world attacks on deep learning visual classification")); Duan et al. ([2020](#bib.bib10 "Adversarial camouflage: hiding physical-world attacks with natural styles")) and medical diagnosis Finlayson et al. 
([2019](#bib.bib56 "Adversarial attacks on medical machine learning")); Ma et al. ([2020](#bib.bib5 "Understanding adversarial attacks on deep learning based medical image analysis systems")). Investigating and understanding these abnormalities is a crucial task before machine learning based AI agents can become practical.

In this work, we investigate the adversarial vulnerability of DNN reaction prediction (i.e., regression) models in skeleton-based interactions. Skeleton signals are among the most commonly used representations for human or robot motion Zhang et al. ([2016](#bib.bib53 "RGB-d-based action recognition datasets: a survey")); Wang et al. ([2018](#bib.bib52 "RGB-d-based human motion recognition with deep learning: a survey")). While adversarial attacks have been extensively studied on images Goodfellow et al. ([2014](#bib.bib3 "Explaining and harnessing adversarial examples")); Su et al. ([2019](#bib.bib48 "One pixel attack for fooling deep neural networks")); Brown et al. ([2017](#bib.bib47 "Adversarial patch")); Duan et al. ([2020](#bib.bib10 "Adversarial camouflage: hiding physical-world attacks with natural styles")), very few works have been proposed for skeletons Liu et al. ([2019](#bib.bib39 "Adversarial attack on skeleton-based human action recognition")); Wang et al. ([2019a](#bib.bib40 "SMART: skeletal motion action recognition attack")); Zheng et al. ([2020](#bib.bib41 "Towards understanding the adversarial vulnerability of skeleton-based action recognition")).

In comparison to the image space, which is continuous and where pixels can be perturbed freely without raising obvious attack suspicions, the skeleton space is sparse and discrete. It has a temporal nature that needs to be taken into account. Consequently, attacking skeleton-based models requires many more constraints than the image space.

Existing works on attacking skeleton-based models have only considered the single-person scenario, and have all focused on recognition (i.e., classification) models Liu et al. ([2019](#bib.bib39 "Adversarial attack on skeleton-based human action recognition")); Wang et al. ([2019a](#bib.bib40 "SMART: skeletal motion action recognition attack")); Zheng et al. ([2020](#bib.bib41 "Towards understanding the adversarial vulnerability of skeleton-based action recognition")). However, interaction scenarios involving two or more characters are essential to the interaction between humans and AI. They should not be overlooked if our ultimate goal is to build AI agents that can fit into our daily life. Neglecting possible attacks might lead to AI agents malfunctioning or behaving aggressively when they are not supposed to.

To close this gap, we propose an Adversarial Interaction Attack (AIA) to test the vulnerability of regression DNNs in skeleton-based interactions involving two characters. Being able to accurately recognize a person’s action is important, but it is equally important to be able to go a step further and respond to the action in an appropriate way. In light of this, the usage of regression models is necessary. We hence modified the output layers of two previous state-of-the-art models on action recognition. One model was based on a Temporal Convolutional Neural Network (TCN) Bai et al. ([2018](#bib.bib32 "An empirical evaluation of generic convolutional and recurrent networks for sequence modeling")) and the other was based on Gated Recurrent Units (GRUs) Maghoumi and LaViola Jr ([2019](#bib.bib33 "DeepGRU: deep gesture recognition utility")). The models were modified to return reactor sequences instead of class labels, and we trained them on skeleton-based interaction data. We examine the performance of the AIA attack under both white-box and black-box settings. We show that our AIA attack can easily fool the two regression models into misinterpreting the actor’s intentions and predicting unexpected reactions. Such reactions have detrimental effects on either the actor or the reactor. Overall, our work reveals potential threats of subtle adversarial attacks on interactions involving AI.

In summary, our contributions are:

* We propose an adversarial attack approach, the Adversarial Interaction Attack (AIA), that is domain-independent and works for general sequential regression models.

* We propose an evaluation metric that can be applied to evaluate the performance of sequential regression attacks. Such a metric is currently missing from the literature.

* We empirically show that our AIA attack can generate targeted adversarial action sequences with small perturbations, which fool DNN regression models into making incorrect (possibly dangerous) predictions.

* We demonstrate via three case studies how our AIA attack may affect human and AI interactions in real scenarios, which motivates the need for effective defense strategies.

We highlight that our work is the first work on targeted sequential regression attack in a strict manner (i.e. purely numerical outputs without labels of any kind). We do not compare our work to previous works on skeleton-based action recognition as the focus of our work is fundamentally different. Specifically, the goal of our work is to design a new type of attack and evaluation metric that is capable of handling any type of regression-based problems in general. We thus leave the compatibility between our work and the previously proposed anthropomorphic constraints Liu et al. ([2019](#bib.bib39 "Adversarial attack on skeleton-based human action recognition")); Wang et al. ([2019a](#bib.bib40 "SMART: skeletal motion action recognition attack")); Zheng et al. ([2020](#bib.bib41 "Towards understanding the adversarial vulnerability of skeleton-based action recognition")) as a future area of interest.

2 Related Work
---------------

### 2.1 Adversarial Attack

Adversarial attacks can be either white-box or black-box depending on the attacker’s knowledge about the target model. White-box attack has full knowledge about the target model including parameters and training details Goodfellow et al. ([2014](#bib.bib3 "Explaining and harnessing adversarial examples")); Zheng et al. ([2019](#bib.bib24 "Distributionally adversarial attack")); Croce and Hein ([2020](#bib.bib25 "Reliable evaluation of adversarial robustness with an ensemble of diverse parameter-free attacks")); Jiang et al. ([2020](#bib.bib55 "Imbalanced gradients: a new cause of overestimated adversarial robustness")), while black-box attack can only query the target model Chen et al. ([2017](#bib.bib19 "Zoo: zeroth order optimization based black-box attacks to deep neural networks without training substitute models")); Ilyas et al. ([2018](#bib.bib15 "Prior convictions: black-box adversarial attacks with bandits and priors")); Bhagoji et al. ([2018](#bib.bib18 "Practical black-box attacks on deep neural networks using efficient query mechanisms")); Dong et al. ([2019b](#bib.bib16 "Efficient decision-based black-box adversarial attacks on face recognition")); Jiang et al. ([2019](#bib.bib17 "Black-box adversarial attacks on video recognition models")); Bai et al. ([2020](#bib.bib22 "Improving query efficiency of black-box adversarial attack")) or use a surrogate model Liu et al. ([2016](#bib.bib59 "Delving into transferable adversarial examples and black-box attacks")); Tramèr et al. ([2017](#bib.bib58 "The space of transferable adversarial examples")); Dong et al. ([2018](#bib.bib57 "Boosting adversarial attacks with momentum"), [2019a](#bib.bib60 "Evading defenses to transferable adversarial examples by translation-invariant attacks")); Andriushchenko et al. ([2020](#bib.bib61 "Square attack: a query-efficient black-box adversarial attack via random search")); Wu et al. 
([2020a](#bib.bib9 "Skip connections matter: on the transferability of adversarial examples generated with resnets")); Wang et al. ([2021](#bib.bib23 "A unified approach to interpreting and boosting adversarial transferability")). Adversarial attacks can also be targeted or untargeted. Under the classification setting, untargeted attacks aim to fool the model such that its output is different from the correct label, whereas targeted attack aims to fool the model to return a target label of the attacker’s interest. White-box attacks can be achieved by solving either the targeted or untargeted adversarial objective using first-order gradient methods Goodfellow et al. ([2014](#bib.bib3 "Explaining and harnessing adversarial examples")); Kurakin et al. ([2016](#bib.bib13 "Adversarial examples in the physical world")). Optimization-based methods have also been proposed to achieve the adversarial objective, and at the same time, minimize the perturbation size Carlini and Wagner ([2017](#bib.bib7 "Towards evaluating the robustness of neural networks")); Chen et al. ([2018](#bib.bib21 "Ead: elastic-net attacks to deep neural networks via adversarial examples")).

Most of the above existing attacks were proposed for images and classification models, and the perturbation is usually constrained to be small (e.g., $\lVert\epsilon\rVert_\infty = 8$ for pixel values in $[0,255]$) so as to be imperceptible to human observers. Defenses against adversarial attacks have also been explored on image datasets Madry et al. ([2018](#bib.bib4 "Towards deep learning models resistant to adversarial attacks")); Zhang et al. ([2019](#bib.bib64 "Theoretically principled trade-off between robustness and accuracy")); Wang et al. ([2019b](#bib.bib8 "On the convergence and robustness of adversarial training")); Bai et al. ([2019](#bib.bib65 "Hilbert-based generative defense for adversarial examples")); Wang et al. ([2020](#bib.bib54 "Improving adversarial robustness requires revisiting misclassified examples")); Wu et al. ([2020b](#bib.bib63 "Adversarial weight perturbation helps robust generalization")); Bai et al. ([2021](#bib.bib66 "Improving adversarial robustness via channel-wise suppressing")).

Attacking Regression Models.

Untargeted regression attacks can be derived from classification attacks by simply attacking the regression loss Balda et al. ([2019](#bib.bib34 "Perturbation analysis of learning algorithms: generation of adversarial examples from classification to regression")).
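As an illustrative sketch of this idea (not the method of Balda et al. or of this paper), the snippet below applies a single sign-gradient step, in the style of classification attacks, to the squared-error loss of a toy linear regressor. The linear model, the random data, and the function name are all assumptions made for the example.

```python
import numpy as np


def untargeted_regression_step(W, x, y, eps):
    """One sign-gradient step that increases the squared error of f(x) = W @ x."""
    residual = W @ x - y               # prediction error on the clean input
    grad = 2.0 * W.T @ residual        # gradient of ||W x - y||_2^2 with respect to x
    return x + eps * np.sign(grad)     # each coordinate moves by at most eps


rng = np.random.default_rng(0)
W = rng.normal(size=(4, 6))            # stand-in regression weights (hypothetical)
x = rng.normal(size=6)                 # clean input
y = W @ x + 0.1 * rng.normal(size=4)   # ground truth close to the clean prediction

x_adv = untargeted_regression_step(W, x, y, eps=0.05)
clean_loss = np.sum((W @ x - y) ** 2)
adv_loss = np.sum((W @ x_adv - y) ** 2)
print(clean_loss, adv_loss)
```

Because the squared error is convex in the input for this linear model, a step along the sign of a non-zero gradient cannot decrease the loss, so the adversarial loss is larger than the clean loss while the perturbation stays within the chosen $L_\infty$ budget.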

However, it is more difficult to perform targeted regression attack such that the model outputs a target sequence. This is because, unlike classification models that contain a finite set of discrete labels, regression models can have infinitely many possible outcomes. Hence, most existing attacks on regression models have focused on the untargeted setting.

Meng et al. ([2019](#bib.bib38 "White-box target attack for eeg-based bci regression problems")) proposed a univariate regression loss with the goal of changing the outputs of EEG-based BCI regression models to a value that is at least t away from the natural outcome. This loss function guarantees only that the adversarial output will be at a specified distance away from the natural output. It does not constrain how large or small the output can actually become. Compared to Cheng et al. ([2020](#bib.bib36 "Seq2Sick: evaluating the robustness of sequence-to-sequence models with adversarial examples")), the order of target sequences is more significant for our problem.

In natural language processing (NLP), Cheng et al. ([2020](#bib.bib36 "Seq2Sick: evaluating the robustness of sequence-to-sequence models with adversarial examples")) proposed a targeted attack towards recurrent language models. This work aims to replace arbitrary words in the output sequence with a small set of target adversarial keywords, regardless of their order and occurrence position. While word embedding can be used to evaluate attack performance on language models, an appropriate performance metric is still lacking in the field of interaction prediction, making it difficult to evaluate the effectiveness of an attack.

None of the existing works have implemented an attack that is able to change the whole output sequence completely. In our work, we propose such an attack, which can change the entire output sequence with target frames appearing in our desired order.

Adversarial Attack on Action Recognition.

Previous attacks on skeleton-based action recognition have proposed several constraints based on extensive study of anthropomorphism and motion. These include postural constraints as the maximum changes in joint angles, and inter-frame constraints based on the notion of velocities, accelerations, and jerks Liu et al. ([2019](#bib.bib39 "Adversarial attack on skeleton-based human action recognition")); Wang et al. ([2019a](#bib.bib40 "SMART: skeletal motion action recognition attack")); Zheng et al. ([2020](#bib.bib41 "Towards understanding the adversarial vulnerability of skeleton-based action recognition")). Additionally, Liu et al. ([2019](#bib.bib39 "Adversarial attack on skeleton-based human action recognition")) utilized a Generative Adversarial Network (GAN) loss to model anthropomorphic plausibility.

These constraints are distinct from our work, but could potentially be employed in combination with our proposed attack to improve naturalness of adversarial action sequences.

### 2.2 Interaction Recognition and Prediction

The use of skeleton data has gained popularity in action recognition and prediction research. Because reliable skeleton data can be easily extracted from modern RGB-D sensors or RGB camera images, these techniques can be easily extended to practical applications Yun et al. ([2012](#bib.bib46 "Two-person interaction detection using body-pose features and multiple instance learning")).

One benchmark interaction dataset is the SBU Kinect Interaction Dataset. Different from most skeleton-based action recognition datasets that focus on studying single-person activities, the SBU Kinect Interaction Dataset captures various activities with two characters involved. Predicting interactions is a much harder task in comparison to predicting single-person activities, due to the complexity and the non-periodicity of the problem Yun et al. ([2012](#bib.bib46 "Two-person interaction detection using body-pose features and multiple instance learning")). Specifically, in the interaction scenario, two characters are involved. However, the contribution from each character may not be equal. For instance, interactions such as approaching and departing have only one active character; the other character remains steady over all time frames.

Convolutional Neural Networks (CNNs) Du et al. ([2015a](#bib.bib29 "Skeleton based action recognition with convolutional neural network")); Nunez et al. ([2018](#bib.bib30 "Convolutional neural networks and long short-term memory for skeleton-based human activity and hand gesture recognition")); Li et al. ([2017](#bib.bib31 "Skeleton-based action recognition with convolutional neural networks")) and Recurrent Neural Networks (RNNs) Du et al. ([2015b](#bib.bib28 "Hierarchical recurrent neural network for skeleton based action recognition")) are two popular choices to tackle the interaction recognition problem. Models from the RNN family such as Gated Recurrent Unit (GRU) and Long Short-Term Memory (LSTM) are commonly chosen for interaction recognition, because it is natural for them to handle sequential data. Maghoumi and LaViola Jr ([2019](#bib.bib33 "DeepGRU: deep gesture recognition utility")) proposed a recurrent model, DeepGRU, that was able to reach state-of-the-art performance. Temporal Convolutional Networks (TCNs) are also a common choice of model when dealing with spatio-temporal data. TCNs, just like RNNs, can take sequences of any length. TCNs rely on a causal convolution operation to ensure no information leakage from the future to the past Bai et al. ([2018](#bib.bib32 "An empirical evaluation of generic convolutional and recurrent networks for sequence modeling")). TCN is also a previous state-of-the-art model Kim and Reiter ([2017](#bib.bib49 "Interpretable 3d human action analysis with temporal convolutional networks")) and a component adopted by many recent works on skeleton-based action recognition Meng et al. ([2018](#bib.bib50 "Human action recognition based on quaternion spatial-temporal convolutional neural network and lstm in rgb videos")); Yan et al. ([2018](#bib.bib51 "Spatial temporal graph convolutional networks for skeleton-based action recognition")).

In this paper, we will modify the DeepGRU network proposed by Maghoumi and LaViola Jr ([2019](#bib.bib33 "DeepGRU: deep gesture recognition utility")) and the TCN network proposed by Bai et al. ([2018](#bib.bib32 "An empirical evaluation of generic convolutional and recurrent networks for sequence modeling")) for interaction prediction and examine their vulnerability to our proposed attack based on the SBU Kinect Interaction Dataset.

3 Proposed Adversarial Interaction Attack
------------------------------------------

In this section, we first provide a mathematical formulation of the targeted adversarial sequence attack problem. We then introduce the loss functions used by our AIA attack.

Overview. Intuitively, the goal of our AIA attack is to deceive the *reactor* AI agent into thinking that the *actor* is doing a different specific action by making minor changes to the positions of the *actor’s* joints or the angles between joints.

The reactor agent will consequently respond by performing the reaction that is targeted by the attack.

### 3.1 Formal Problem Definition

A skeleton sequence with $T$ frames can be represented mathematically as $X=(x_1,x_2,\dots,x_T)$, where $x_i$ is the skeleton representation of the $i$-th frame: a vector consisting of the 3D coordinates of the human skeleton joints. More specifically, $x_i\in\mathbb{R}^{N\times 3}$, where $N$ denotes the number of joints. In our approach, we flatten $x_i$ into $\mathbb{R}^{3N}$.

First, we define the formal notion of interaction. Suppose the two characters in a two-person interaction scenario are *actor A* and *reactor B*. The task of an interaction prediction model $f$ is to predict an appropriate reaction (i.e., skeleton) $y_t$ at each time step $t$ for reactor B based on the observed skeleton sequence of actor A, $(x_1,\cdots,x_t)$. This can be written mathematically as:

$$
f(x_1,\cdots,x_{t-1},x_t)=y_t.
$$
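The key property of such a model is that the reaction at step t depends only on the frames observed up to step t. A hypothetical stand-in (`toy_predictor`, a running mean, not the DeepGRU or TCN models used in the paper) illustrates this causality under assumed toy sizes:

```python
import numpy as np

def toy_predictor(X):
    """Hypothetical causal model: y_t is the running mean of x_1..x_t."""
    X = np.asarray(X, dtype=float)
    counts = np.arange(1, X.shape[0] + 1)[:, None]
    return np.cumsum(X, axis=0) / counts

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 6))       # 5 frames, flattened length 6 (assumed sizes)
Y = toy_predictor(X)

# Changing only the last frame must leave all earlier outputs untouched.
X_changed = X.copy()
X_changed[-1] += 1.0
Y_changed = toy_predictor(X_changed)
```

Only the last row of `Y_changed` differs from `Y`, which is the behaviour the formula above requires of any interaction prediction model.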

Given an input skeleton sequence $X=(x_1,x_2,\dots,x_T)$, an adversarial target skeleton sequence $Y'=(y'_1,y'_2,\dots,y'_T)$, and a prediction model $f:\mathbb{R}^{T\times 3N}\rightarrow\mathbb{R}^{T\times 3N}$, the goal of our AIA attack is to find an adversarial input sequence $X'=(x'_1,\cdots,x'_T)$ by solving the following optimization problem:

$$
\begin{aligned}
&\min_{X'}\ \sum_{t\in T}\|x'_t-x_t\|_\infty\\
&\ \text{s.t.}\ \sum_{t\in T}\|f(x'_1,\cdots,x'_t)-y'_t\|_2<\kappa,
\end{aligned}
\tag{1}
$$

where $\|\cdot\|_p$ is the $L_p$ norm and $\kappa\geq 0$ is a *tolerance factor*, which serves as a cutoff that determines whether the output sequence is recognizable as the target reaction. This gives us more flexibility when crafting the adversarial input sequence $X'$, because the set of acceptable outputs is not a single sequence: the output sequence does not need to be exactly the same as the target sequence to resemble a particular action. We determine this factor empirically, based on an informal user survey, in Section [5.1](#S5.SS1 "5.1 Tolerance Factor κ ‣ 5 Empirical Understanding of AIA ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions").

Intuitively, the above objective is to find a sequence X′ with minimum perturbation from X, such that the distance between the output and the target is less than κ/T on average for each time step.
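The two quantities in (1) can be evaluated directly once the model outputs are available. The sketch below assumes NumPy and takes the outputs as a given array (a placeholder for the model's predictions on the adversarial input); the helper names are illustrative and not from the paper:

```python
import numpy as np

def perturbation_cost(X_adv, X):
    """Objective of (1): sum over frames of the L-infinity perturbation."""
    return float(np.abs(X_adv - X).max(axis=1).sum())

def output_distance(Y_out, Y_target):
    """Left-hand side of the constraint in (1): summed per-frame L2 distance."""
    return float(np.linalg.norm(Y_out - Y_target, axis=1).sum())

def attack_succeeds(Y_out, Y_target, kappa):
    """The constraint of (1) is a strict inequality against kappa."""
    return output_distance(Y_out, Y_target) < kappa

# Tiny hand-checkable example with 2 frames of flattened length 3.
X = np.zeros((2, 3))
X_adv = np.array([[0.1, -0.2, 0.0], [0.0, 0.0, 0.3]])
Y_target = np.zeros((2, 3))
Y_out = np.array([[3.0, 4.0, 0.0], [0.0, 0.0, 1.0]])

cost = perturbation_cost(X_adv, X)          # 0.2 + 0.3
dist = output_distance(Y_out, Y_target)     # 5.0 + 1.0
```

Here the attack counts as successful for any tolerance factor strictly greater than 6, and the perturbation cost being minimized is 0.5.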

### 3.2 Adversarial Loss Function

Our goal is to develop a mechanism that crafts an adversarial input sequence which solves the above optimization problem given any target output sequence, while also maintaining the naturalness of the adversarial input sequence.

In order to achieve this goal, we propose the following adversarial loss function:

$$
L_{\text{adv}}=L_{\text{spatial}}+\lambda L_{\text{temporal}},
\tag{2}
$$

where the $L_{\text{spatial}}$ loss term minimizes the spatial distance between the output sequence and the target sequence, and the $L_{\text{temporal}}$ loss term maximizes the coherence of the perturbed input sequence so as to maintain the naturalness of the adversarial input sequence.

Spatial Loss.

The spatial loss term aims to generate adversarial output sequences that are visually similar to the target reaction sequences; that is, its objective is to minimize the spatial distance between the output joint locations and the neighbourhood of the target joints for every time step. Following the formulation of the relaxed optimization problem in ([1](#S3.E1 "(1) ‣ 3.1 Formal Problem Definition ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")), we use the L2 norm to measure the distance between two sets of joint locations:

$$
L_{\text{spatial}}=\sum_{t\in T}\inf\left\{\|f(x'_1,\cdots,x'_t)-p_t\|_2 \;\middle|\; p_t\in S_t\right\}
\tag{3}
$$

with $S_t$ being a $(3N-1)$-sphere (a sphere embedded in $\mathbb{R}^{3N}$) defined by:

$$
S_t(y'_t,\eta)=\left\{p_t\in\mathbb{R}^{3N} \;\middle|\; \|p_t-y'_t\|_2=\eta\right\}.
\tag{4}
$$

Here, η=κ/T is the tolerance factor κ of equation ([1](#S3.E1 "(1) ‣ 3.1 Formal Problem Definition ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")) averaged over the T time steps.
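The infimum in (3) has a closed form, because the Euclidean distance from a point to a sphere of radius η centred at the target equals the absolute difference between η and the point's distance to the centre. A minimal NumPy sketch under that observation (the function name and the toy values are illustrative, and the model outputs are passed in as an array):

```python
import numpy as np

def spatial_loss(Y_out, Y_target, kappa):
    """Equation (3) using the closed-form distance to each sphere S_t."""
    T = Y_out.shape[0]
    eta = kappa / T                                   # radius of each sphere
    dist_to_target = np.linalg.norm(Y_out - Y_target, axis=1)
    # Distance from a point to the sphere of radius eta around the target.
    return float(np.abs(dist_to_target - eta).sum())

Y_target = np.zeros((2, 3))
Y_out = np.array([[3.0, 4.0, 0.0],    # distance 5 from the target
                  [0.0, 0.0, 1.0]])   # distance 1 from the target
loss = spatial_loss(Y_out, Y_target, kappa=4.0)   # eta = 2: |5-2| + |1-2|
```

As written in (3) and (4), the loss vanishes when every output frame lies exactly at distance η from its target frame.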

Temporal Loss.

The temporal loss term is intended to preserve the naturalness of the generated adversarial input sequence. Specifically, the movement of each joint should be continuous in time, and motions with abrupt large changes or teleportation should be penalised. The $L_{\text{temporal}}$ term achieves this goal by maximizing the coherence of each element in the perturbed input sequence with respect to its neighboring elements in the temporal dimension. This gives:

$$
L_{\text{temporal}}=\sum_{t\in T}\left(\|x'_t-x'_{t-1}\|_2+\|x'_t-x'_{t+1}\|_2\right)
\tag{5}
$$

Note that a scaling factor $0\leq\lambda\leq 1$ is introduced in front of $L_{\text{temporal}}$ to balance the two loss terms.
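A small sketch of the temporal term and of the weighted combination in (2), assuming NumPy. Equation (5) refers to neighbours before the first and after the last frame, which are not defined in the text; the sketch simply omits those boundary terms, which is an assumption:

```python
import numpy as np

def temporal_loss(X_adv):
    """Equation (5), with the undefined boundary terms omitted (assumption)."""
    # L2 norm of the change between each pair of consecutive frames.
    steps = np.linalg.norm(np.diff(X_adv, axis=0), axis=1)
    # Each consecutive pair is counted twice: once as (t, t-1), once as (t, t+1).
    return float(2.0 * steps.sum())

def adversarial_loss(l_spatial, X_adv, lam):
    """Equation (2): spatial loss plus lambda times the temporal loss."""
    return l_spatial + lam * temporal_loss(X_adv)

smooth = np.array([[0.0], [1.0], [2.0], [3.0]])
jumpy = np.array([[0.0], [3.0], [0.0], [3.0]])   # same range, abrupt motion
```

The jumpy sequence is penalised three times as heavily as the smooth one (18 versus 6), which is the behaviour the temporal term is meant to provide.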

We use the first-order method Projected Gradient Descent (PGD) Madry et al. ([2018](#bib.bib4 "Towards deep learning models resistant to adversarial attacks")) to minimize the combined adversarial loss iteratively as follows:

$$
\begin{aligned}
X'_0&=X\\
X'_{m+1}&=\Pi_{X,\epsilon}\big(X'_m-\alpha\cdot\operatorname{sign}(\nabla_{X'_m}L_{\text{adv}}(X'_m,Y'))\big)
\end{aligned}
\tag{6}
$$

where $\Pi_{X,\epsilon}(\cdot)$ is the projection operation that clips the perturbation back to within $\epsilon$-distance of $X$ whenever it goes beyond, $\nabla_{X'_m}L_{\text{adv}}(X'_m,Y')$ is the gradient of the adversarial loss with respect to the input sequence, $m$ is the current perturbation step out of a total of $M$ steps, $\alpha$ is the step size and $\epsilon$ is the maximum perturbation factor. The sequence $Y'$ for a target reaction can be either customized or sampled from the original dataset.
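The iteration can be sketched in a few lines of NumPy. The sketch assumes the signed-gradient update of standard L∞ PGD with clipping as the projection, and it takes the gradient from a caller-supplied `grad_fn` (hypothetical). A simple quadratic stand-in loss replaces the adversarial loss so that the example runs without an autodiff library:

```python
import numpy as np

def pgd_attack(X, grad_fn, eps, alpha, steps):
    """Signed-gradient PGD with an L-infinity projection around X (assumed form)."""
    X_adv = X.copy()                              # start from the clean input
    for _ in range(steps):
        grad = grad_fn(X_adv)                     # gradient of the loss at X_adv
        X_adv = X_adv - alpha * np.sign(grad)     # descent step of size alpha
        X_adv = np.clip(X_adv, X - eps, X + eps)  # projection onto the eps-ball
    return X_adv

# Stand-in loss (hypothetical): 0.5 * ||A - Z||^2, whose gradient is A - Z.
X = np.zeros((3, 2))
Z = np.full((3, 2), 1.0)
loss = lambda A: 0.5 * float(((A - Z) ** 2).sum())
X_adv = pgd_attack(X, lambda A: A - Z, eps=0.3, alpha=0.1, steps=10)
```

In this toy run the perturbation saturates at the ϵ bound after three steps and the projection keeps it there. In practice `grad_fn` would come from differentiating the adversarial loss through the prediction model.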


Figure 1: Side-by-side comparison of Case Study 1 ‘handshaking’ to ‘punching’. Top-Bottom: original prediction, adversarial prediction. Blue character: input, green character: output.


Figure 2: Side-by-side comparison of Case Study 2 ‘punching’ to ‘handshaking’. Top-Bottom: original prediction, adversarial prediction. Blue character: input, green character: output.


Figure 3: Side-by-side comparison of Case Study 3 ‘approaching’ to ‘remaining’. Top-Bottom: original prediction, adversarial prediction. Blue character: input, green character: output.

4 Overview on Several Case Studies

-----------------------------------

In this section, we conduct case studies on three selected sets of attack objectives that can be easily associated with real scenarios and can serve as motivations behind our approach. Detailed experimental settings can be found in Section [5](#S5 "5 Empirical Understanding of AIA ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions"). The dynamic versions of the case studies and more examples are provided in the supplementary materials.

### 4.1 Case Study 1: ‘handshaking’ to ‘punching’

Figure [1](#S3.F1 "Figure 1 ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions") illustrates a successful AIA attack that fools the model into predicting a ‘punching’ action for the reactor (the green character) in response to the adversarially perturbed ‘handshaking’ action of the actor (the blue character). Note that the perturbation changes the actor’s action only slightly.

This reveals an important safety risk that needs to be carefully addressed before machine learning based AI agents can be widely used in human daily life.

Suppose that, at an interactive AI exhibition, a participant would like to shake hands with an AI robot agent. He gradually extends his hand, sending an interaction request to the AI agent, and expects the agent to respond to his invitation by shaking hands with him. However, instead of gently reaching out its hand, the AI agent punches the participant in the face because the participant’s body does not stay straight. It would be extremely hazardous if a human unintentionally wiggled his or her body in a pattern similar to the adversarial perturbation introduced in this case study. While the chance of this happening is extremely low, owing to the high complexity of the data in both the spatial and the temporal dimensions, the threat could nevertheless materialize if AI workers become widely deployed worldwide. In this case, the human becomes a victim by inadvertently performing an adversarial attack (wiggling their body).

### 4.2 Case Study 2: ‘punching’ to ‘handshaking’

In this case study we consider a case opposite to the previous one, where human exploiters are capable of attacking AI agents actively and derive benefit from being active attackers.

In the future, it could become common practice to use AI agents to complete dangerous tasks so as to lower the chance of human operators incurring injuries or fatalities. Security guarding is one such job that might be taken over by AI agents. Imagine that a secret agency employing AI security guards is invaded by intruders and that combat becomes necessary. The AI guard will fail in its role if the invaders know how to apply effective adversarial attacks against it. This is the case in Figure [2](#S3.F2 "Figure 2 ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions"), where the model was fooled into suggesting ‘handshaking’ for the reactor (the green character) rather than ‘punching’.

### 4.3 Case Study 3: ‘approaching’ to ‘remaining’

Finally, Case Study 3, shown in Figure [3](#S3.F3 "Figure 3 ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions"), examines how a cheater might bypass an AI agent’s detection.

Whilst automatic ticket checkers have been widely adopted, manual ticket checking is still required in numerous situations. For instance, public transportation companies may want to check whether a passenger sitting in a first class seat has paid the upgrade fee. Now suppose that a public transportation company decides to use AI agents to do the ticket checking job. The company will lose a large amount of income if passengers know how to stop the ticket checkers from ‘approaching’, as in Figure [3](#S3.F3 "Figure 3 ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions"), or even how to change their ‘approaching’ response to ‘departing’.

5 Empirical Understanding of AIA

---------------------------------

### 5.1 Tolerance Factor κ

The objective of the AIA attack is defined with respect to a tolerance factor κ (see ([1](#S3.E1 "(1) ‣ 3.1 Formal Problem Definition ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")), ([3](#S3.E3 "(3) ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")) and ([4](#S3.E4 "(4) ‣ 3.2 Adversarial Loss Function ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions"))), which is a flexible threshold that determines whether the output sequence is close enough to the targeted adversarial reaction.

Because there are many factors involved, such as the character’s height, handedness, and the direction the character is facing, conventional distance metrics such as L1 and L2 norms are not suitable to define precisely what the pattern of a specific action should look like. Therefore, we determine the value of κ based on human perception via an informal user survey.

In order to obtain appropriate values for κ to evaluate whether an attack is successful, we randomly sampled 5 out of 8 sets of attack objectives and presented them to 82 human judges, including computer science faculty members and students. Each objective set is composed of an action-reaction pair and contains output sequences generated from 6 different values of ϵ (from left to right in ascending order). For each sample set, we asked the human judges to choose the leftmost sequence that they believed was performing the target reaction. The sampled objectives and the responses from the 82 human judges are recorded in Table [1](#S5.T1 "Table 1 ‣ 5.1 Tolerance Factor κ ‣ 5 Empirical Understanding of AIA ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions").

Based on the responses from the 82 human judges, we computed the tolerance factor κ of the optimization problem defined in ([1](#S3.E1 "(1) ‣ 3.1 Formal Problem Definition ‣ 3 Proposed Adversarial Interaction Attack ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")) as the average of

$$
\sum_{t\in T}\|f(x'_1,\cdots,x'_t)-y'_t\|_2
\tag{7}
$$

over the 5 sample objective sets. The calculation of ([7](#S5.E7 "(7) ‣ 5.1 Tolerance Factor κ ‣ 5 Empirical Understanding of AIA ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")) for each objective set is based on the minimum ϵ polled from the 82 human judges, and the corresponding value of κ is then selected as the optimal value (boldfaced in Table [1](#S5.T1 "Table 1 ‣ 5.1 Tolerance Factor κ ‣ 5 Empirical Understanding of AIA ‣ Adversarial Interaction Attack: Fooling AI to Misinterpret Human Intentions")).

Note that κ serves as a topological boundary between the natural and the adversarial outputs, whereas ϵ is a maximum perturbation constraint that the input perturbation must not exceed.

| ϵ= | 0.075 | 0.15 | 0.225 | 0.3 | 0.375 | 0.45 |

| --- | --- | --- | --- | --- | --- | --- |

| Handshaking | 1 (κ=90.9) | 4 (κ=84.28) | 44 (κ=79.52) | 3 (κ=74.49) | 12 (κ=45.04) | 14 (κ=35.03) |

| Punching | 58 (κ=52.04) | 13 (κ=47.63) | 6 (κ=43.97) | 3 (κ=41.76) | 0 (κ=39.14) | 2 (κ=34.91) |

| Kicking | 3 (κ=100.61) | 71 (κ=93.17) | 7 (κ=86.57) | 1 (κ=80.68) | 0 (κ=47.47) | 0 (κ=35.36) |

| Departing | 0 (κ=85.03) | 7 (κ=76.78) | 26 (κ=71.77) | 12 (κ=67.58) | 1 (κ=41.78) | 10 (κ=32.70) |