id | source | formatted_source | text |

|---|---|---|---|

54dfdcf5-79ba-4289-8eb4-927c76e24fc1 | StampyAI/alignment-research-dataset/arxiv | Arxiv | Towards Empathic Deep Q-Learning

1 Introduction
---------------

Historically, reinforcement learning (RL; (Sutton et al., [1998](#bib.bib21))) research has largely focused on solving clearly-specified benchmark tasks. For example, the ubiquitous Markov decision process (MDP) framework cleaves the world into four well-defined parts (states, actions, state-action transitions, and rewards), and most RL algorithms and benchmarks leverage or reify the assumptions of this formalism, e.g. that a singular, fixed, and correct reward function exists, and is given. While there has been much exciting progress in learning to solve complex well-specified tasks (e.g. super-human performance in Go (Silver et al., [2016](#bib.bib19)) and Atari (Mnih et al., [2015](#bib.bib15))), there is also increasing recognition that common RL formalisms are often meaningfully imperfect (Hadfield-Menell et al., [2017](#bib.bib9); Lehman et al., [2018](#bib.bib13)), and that there remains much to understand about safely applying RL to solve real-world tasks (Amodei et al., [2016](#bib.bib2)).

As a result of this growing awareness, there has been increasing interest in the field of AI safety (Amodei et al., [2016](#bib.bib2); Everitt et al., [2018](#bib.bib7)), which is broadly concerned with creating AI agents that do what is *intended* for them to do, and which often entails questioning and extending common formalisms (Hadfield-Menell et al., [2017](#bib.bib9), [2016](#bib.bib8); Demski and Garrabrant, [2019](#bib.bib6)). One overarching theme in AI safety is how to learn or provide correct incentives to an agent. Amodei et al. ([2016](#bib.bib2)) distinguish different failure modes in specifying reward functions, which include *reward hacking*, wherein an agent learns how to optimize the reward function in an unexpected and unintended way that does not satisfy the underlying goal, and *unintended side effects*, wherein an agent learns to achieve the desired goal, but causes undesirable collateral harm (because the given reward function is incomplete, i.e. it does not include all of the background knowledge and context of the human reward designer).

This paper focuses on the latter setting, i.e. assuming that the reward function incentivizes solving the task, but fails to anticipate some unintended harms. We assume that in real-world settings, a physically-embodied RL agent (i.e. a controller for a robot) will often share space with other agents (e.g. humans, animals, and other trained computational agents), and it is challenging to design reward functions a priori that enumerate all the ways in which other agents can be negatively affected (Amodei et al., [2016](#bib.bib2)). Promising current approaches include value learning from human preferences (Saunders et al., [2018](#bib.bib18); Leike et al., [2018](#bib.bib14); Hadfield-Menell et al., [2016](#bib.bib8)) and creating agents that attempt to minimize their impact on the environment (Krakovna et al., [2018](#bib.bib12); Turner et al., [2019](#bib.bib23)); however, value learning can be expensive because it requires humans in the loop, and both directions remain technically and philosophically challenging. This paper introduces another tool that could complement such existing approaches, motivated by the concept of empathy.

In particular, the insight motivating this paper is that humans often empathize with the situations of others by generalizing from their own past experiences. For example, we can feel vicarious fear for someone who is walking a tightrope, because we ourselves would be afraid in such a situation. Similarly, for some classes of reward signals (e.g. physical harm), it may be reasonable for embodied computational agents to generalize those rewards to other agents (i.e. to assume as a prior expectation that other agents might receive similar reward in similar situations). If a robot learns that a fall from heights is dangerous to itself, that insight could generalize to most other embodied agents.

For humans, beyond granting us a capacity to understand others, such empathy also influences our behavior, e.g. leading us to avoid harming others while walking down the street; likewise, in some situations it may be useful if learning agents could also act out of empathy (e.g. to prevent physical harm to another agent resulting from otherwise blind goal-pursuit). While there are many ways to instantiate algorithms that abide by social or ethical norms (as studied by the field of *machine ethics* (Anderson and Anderson, [2011](#bib.bib3); Wallach and Allen, [2008](#bib.bib24))), here we take loose inspiration from one simple ethical norm, i.e. the golden rule.

The golden rule, often expressed as: “Do unto others as you would have them do unto you,” is a principle that has emerged in many ethical and religious contexts (Küng and Kuschel, [1993](#bib.bib11)). At heart, abiding by this rule entails projecting one’s desires onto another agent, and attempting to honor them. We formalize this idea as an extension of Deep Q-Networks (DQNs; (Mnih et al., [2015](#bib.bib15))), which we call *Empathic DQN*. The main idea is to augment the value of a given state with the value of constructed states simulating what the learning agent would experience if its position were switched with another agent. Such an approach can also be seen as learning to maximize an estimate of the combined rewards of both agents, which embodies a utilitarian ethic.

The experiments in this paper apply Empathic DQN to two gridworld domains, in which a learned agent pursues a goal in an environment shared with other non-learned (i.e. fixed) agents. In one environment, an agent can harm and be harmed by other agents; and in another, an agent receives diminishing returns from hoarding resources that also could benefit other agents. Results in these domains show that Empathic DQN can reduce negative side effects in both environments. While much work is needed before this algorithm would be effectively applicable to more complicated environments, we believe that this first step highlights the possibility of bridgework between the field of machine ethics and RL; in particular, for the purpose of instantiating useful priors for RL agents interacting in environments shared with other agents.

2 Background
-------------

This section reviews machine ethics and AI safety, two fields studying how to encourage and ensure acceptable behavior in computational agents.

### 2.1 Machine Ethics

The field of machine ethics (Anderson and Anderson, [2011](#bib.bib3); Wallach and Allen, [2008](#bib.bib24)) studies how to design algorithms (including RL algorithms) capable of moral behavior. While morality is often a contentious term, with no agreement among moral philosophers (or religions) as to the nature of a “correct” ethics, from a pragmatic viewpoint, agents deployed in the real world will encounter situations with ethical trade-offs, and to be palatable their behavior will need to approximately satisfy certain societal and legal norms. Anticipating and hard-coding acceptable behavior for all such trade-offs is likely impossible. Therefore, just as humans take ethical stances in the real world in the absence of universal ethical consensus, we may need the same pragmatic behavior from intelligent machines.

Work in machine ethics often entails concretely embodying a particular moral framework in code, and applying the resulting agent in its appropriate domain. For example, Winfield et al. ([2014](#bib.bib25)) implement a version of Asimov’s first law of robotics (i.e. “A robot may not injure a human being or, through inaction, allow a human being to come to harm”) in a wheeled robot that can intervene to stop another robot (in lieu of an actual human) from harming itself. Interestingly, the implemented system bears a strong resemblance to model-based RL; such reinvention, and the strong possibility that agents tackling complex tasks with ethical dimensions will be driven by machine learning (ML), suggest the potential benefit of, and need for, increased cooperation between ML and machine ethics, which is an additional motivation for our work.

Indeed, our work can be seen as a contribution to the intersection of machine ethics and ML, in that the process of empathy is an important contributor to morally-relevant behavior in humans (Tangney et al., [2007](#bib.bib22)), and that to the authors’ knowledge, there has not been previous work implementing golden-rule-inspired architectures in RL.

### 2.2 AI Safety

A related but distinct field of study is AI safety (Amodei et al., [2016](#bib.bib2); Everitt et al., [2018](#bib.bib7)), which studies how AI agents can be implemented to avoid harmful accidents. Because harmful accidents often have ethical valence, there is necessarily overlap between the two fields, although technical research questions in AI safety may not be phrased in the language of ethics or morality.

Our work most directly relates to the problem of negative side-effects, as described by Amodei et al. ([2016](#bib.bib2)). In this problem the designer specifies an objective function that focuses on accomplishing a specific task (e.g. a robot should clean a room), but fails to encompass all other aspects of the environment (e.g. the robot should not vacuum the cat); the result is an agent that is indifferent to whether it alters the environment in undesirable ways, e.g. causing harm to the cat.

Most approaches to mitigating side-effects aim to generally minimize the impact the agent has on the environment through intelligent heuristics (Armstrong and Levinstein, [2017](#bib.bib4); Krakovna et al., [2018](#bib.bib12); Turner et al., [2019](#bib.bib23)); we believe that other-agent-considering heuristics (like ours) are likely complementary. Inverse reinforcement learning (IRL; (Abbeel and Ng, [2004](#bib.bib1))) aims to directly learn the rewards of other agents (which a learned agent could then take into account) and could also be meaningfully combined with our approach (e.g. Empathic DQN could serve as a prior when a new kind of agent is first encountered).

Note that a related safety-adjacent field is cooperative multi-agent reinforcement learning (Panait and Luke, [2005](#bib.bib16)), wherein learning agents are trained to cooperate or compete with one another. For example, self-other modeling (Raileanu et al., [2018](#bib.bib17)) is an approach that shares motivation with ours, wherein cooperation can be aided through inferring the goals of other agents. Our setting differs from other approaches in that we do not assume other agents are computational, that they learn in any particular way, or that their reward functions or architectures are known; conversely, we make additional assumptions about the validity and usefulness of projecting particular kinds of reward an agent receives onto other agents.

3 Approach: Empathic Deep Q-Learning
-------------------------------------

Deliberate empathy involves imaginatively placing oneself in the position of another, and is a source of potential understanding and care. As a rough computational abstraction of this process, we learn to estimate the expected reward of an independent agent, assuming that its rewards are like the ones experienced by the learning agent. To do so, an agent imagines what it would be like to experience the environment if it and the other agent switched places, and estimates the quality of this state through its own past experiences.

A separate issue from understanding the situation of another agent (“empathy”) is how (or if) an empathic agent should modify its behavior as a result (“ethics”). Here, we instantiate an ethics roughly inspired by the golden rule.

In particular, a value function is learned that combines the usual agent-centric value with an other-oriented value via a weighted average. The degree to which the other agent influences the learning agent’s behavior is thus determined by a selfishness hyperparameter. As selfishness approaches 1.0, standard Q-learning is recovered, and as selfishness approaches 0, the learning agent attempts to maximize only what it believes is the reward of the other agent.
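As a sketch of this combination (our notation, not taken verbatim from the paper), write the selfishness hyperparameter as $\beta$, the imagined state in which the two agents have switched places as $s^{emp}$, a target network as $\hat{Q}$, and take the other agent's surrogate value to be the learner's own best Q-value in the swapped state. Assuming the self-centered term is the usual one-step DQN target, the empathic network would then be regressed toward:

```latex
y^{emp}_t = \beta \left( r_t + \gamma \max_{a'} \hat{Q}(s_{t+1}, a') \right)
          + (1 - \beta) \max_{a'} Q\left(s^{emp}_{t+1}, a'\right),
\qquad \beta \in [0, 1]
```

Setting $\beta = 1$ reduces this to the standard DQN target, while $\beta = 0$ leaves only the other-oriented term.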

Note that our current implementation depends on ad-hoc machinery that enables the learning agent to imagine the perspective of another agent; such engineering may be possible in some cases, but the aspiration of this line of research is for such machinery to eventually be itself learned.

Similarly, we currently side-step the issue of empathizing with multiple agents, and of learning what *types of reward* should be empathized to what *types of agents* (e.g. many agents may experience similar physical harms, but many rewards are agent and/or task-specific). The discussion section describes possible approaches to overcoming these limitations. Code will be available at <https://github.com/bartbussmann/EmpathicDQN>.

### 3.1 Algorithm Description

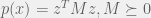

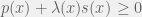

In the MDP formalism of RL, an agent experiences a state s from a set S and can take actions from a set A. By performing an action a∈A, the agent transitions from state s∈S to state s′∈S, and receives a real-valued reward. The goal of the agent is to maximize the expected (often temporally-discounted) reward it receives. The expected value of taking action a in state s, and following a fixed policy thereafter, can be expressed as Q(s,a). Experiments here apply DQN (Mnih et al., [2015](#bib.bib15)) and variants thereof to approximate an optimal Q(s,a).

We assume that the MDP reward function insufficiently accounts for the preferences of other agents, and we therefore augment DQN in an attempt to encompass them. In particular, an additional Q-network ($Q_{emp}(s,a)$) is trained to estimate the weighted sum of self-centered value and other-centered value (where other-centered value is approximated by taking the self-centered Q-values with the places of both agents swapped; note this approximation technique is similar to that of Raileanu et al. ([2018](#bib.bib17))).

In more detail, suppose the agent is in state $s_t$ at time $t$ in an environment with another independent agent. It will then select action $a_t \in A$ and update the Q-networks using the following steps (see more complete pseudocode in Algorithm [1](#algorithm1 "Algorithm 1 ‣ 4.1 Experimental Settings ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning")):

1. Calculate $Q_{emp}(s_t, a)$ for all possible $a \in A$ and select the action $a_t$ with the highest value.

2. Observe the reward $r_t$ and next state $s_{t+1}$ of the agent.

3. Perform a gradient descent step on $Q(s,a)$ (this function reflects the self-centered state-action values).

4. Localize the other agent and construct a state $s^{emp}_{t+1}$ wherein the agents switch places (i.e. the learning agent takes the other agent’s position in the environment, and vice versa).

5. Calculate $\max_a Q(s^{emp}_{t+1}, a)$ as a surrogate value function for the other agent.

6. Calculate the target of the empathic value function $Q_{emp}(s,a)$ as an average, weighted by the selfishness parameter $\beta$, of the self-centered action-value and the surrogate value of the other agent.

7. Perform a gradient descent step on $Q_{emp}(s,a)$.
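Once Q-values are available, steps 1, 3, 5, and 6 reduce to a few array operations. The sketch below is a minimal NumPy illustration, not the authors' code: it assumes Q-values arrive as `(batch, n_actions)` arrays, that terminal transitions mask the bootstrapped self-centered term (standard DQN practice, not stated in the text), and that the discount `gamma` is a free parameter; the function names are hypothetical.

```python
import numpy as np


def select_action(q_emp_values: np.ndarray, epsilon: float, rng: np.random.Generator) -> int:
    """Step 1 with epsilon-greedy exploration: act greedily w.r.t. Q_emp(s_t, .)."""
    if rng.random() < epsilon:
        return int(rng.integers(len(q_emp_values)))
    return int(np.argmax(q_emp_values))


def empathic_targets(
    q_next_target: np.ndarray,  # target-network Q-values for s_{t+1}, shape (batch, n_actions)
    q_next_emp: np.ndarray,     # online Q-values for the swapped state s^emp_{t+1}, same shape
    rewards: np.ndarray,        # r_t, shape (batch,)
    dones: np.ndarray,          # 1.0 where s_{t+1} is terminal, else 0.0 (assumption)
    beta: float,                # selfishness; 1.0 recovers standard DQN
    gamma: float = 0.99,        # discount factor (hypothetical default, not given in the text)
):
    # Step 3: ordinary one-step DQN target for the self-centered Q-network.
    y_self = rewards + gamma * (1.0 - dones) * q_next_target.max(axis=1)
    # Step 5: the learner's own best value in the swapped state stands in for the other agent's value.
    v_other = q_next_emp.max(axis=1)
    # Step 6: a selfishness-weighted average gives the regression target for Q_emp.
    y_emp = beta * y_self + (1.0 - beta) * v_other
    return y_self, y_emp


rng = np.random.default_rng(0)
q_next_target = np.array([[1.0, 2.0], [0.5, 0.0]])
q_next_emp = np.array([[3.0, -1.0], [0.0, 4.0]])
rewards = np.array([1.0, 1.0])
dones = np.array([0.0, 1.0])
y_self, y_emp = empathic_targets(q_next_target, q_next_emp, rewards, dones, beta=0.5, gamma=0.9)
```

In this sketch the other-oriented term is not masked at terminal states; whether the original implementation does so is not stated.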

4 Experiments
--------------

The experiments in this paper apply Empathic DQN to two gridworld domains. The goal in the first environment is to share the environment with another non-learning agent without harming it. In particular, as an evocative example, we frame this Coexistence environment as containing a robot learning to navigate a room without harming a cat also roaming within the room. In the second environment, the goal is to share resources in the environment, where accumulating resources yields diminishing returns. In particular, we frame this Sharing environment as a robot learning to collect batteries that also could be shared with a human (who also finds them useful) in the same environment.

In both our experiments, we compare Empathic DQN both to standard DQN and to DQN with reward shaping manually designed to minimize negative side-effects.

### 4.1 Experimental Settings

A feed-forward neural network with two hidden layers of 128 neurons each is used to estimate both $Q(s,a)$ and $Q_{emp}(s,a)$. The batch size is 32, and batches are randomly drawn from a replay memory consisting of the last 500,000 transitions. A target action-value function $\hat{Q}$ is updated every 10,000 time steps to avoid training instability. An ϵ-greedy policy is used to encourage exploration, where ϵ is decayed linearly from 1.0 to 0.01 over the first million time steps.
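For reference, the settings above can be collected into a small configuration object together with the linear ϵ schedule they describe and a replay memory holding the five-element transitions stored in Algorithm 1. This is a sketch under our own naming (`DQNConfig`, `epsilon_at`, and `ReplayMemory` are hypothetical); hyperparameters the text does not list, such as the discount factor or the optimizer, are deliberately left out.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class DQNConfig:
    hidden_sizes: tuple = (128, 128)     # two hidden layers of 128 units
    batch_size: int = 32
    replay_capacity: int = 500_000       # keep the last 500,000 transitions
    target_update_every: int = 10_000    # copy Q into the target network every 10,000 steps
    eps_start: float = 1.0
    eps_end: float = 0.01
    eps_decay_steps: int = 1_000_000     # linear decay over the first million steps


def epsilon_at(step: int, cfg: DQNConfig = DQNConfig()) -> float:
    """Linearly interpolate epsilon from eps_start to eps_end, then hold it constant."""
    frac = min(max(step, 0) / cfg.eps_decay_steps, 1.0)
    return cfg.eps_start + frac * (cfg.eps_end - cfg.eps_start)


class ReplayMemory:
    """FIFO buffer of (s_t, a_t, r_t, s_{t+1}, s^emp_{t+1}) tuples."""

    def __init__(self, capacity: int, seed: int = 0):
        self.buffer = deque(maxlen=capacity)  # oldest transitions are evicted automatically
        self.rng = random.Random(seed)

    def push(self, transition: tuple) -> None:
        self.buffer.append(transition)

    def sample(self, batch_size: int) -> list:
        # Simple but O(capacity) per call; fine for a sketch.
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self) -> int:
        return len(self.buffer)
```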

| Initialize |

| --- |

| replay memory D to capacity N |

| action-value function Q with weights θ |

| target action-value function ^Q with weights θ−=θ |

| empathic action-value function Qemp with weights θemp |

for *episode =1, M* do

obtain initial agent state s1

obtain initial empathic state of closest other agent semp1

for *t = 1, T* do

if *random probability <ϵ* then

select a random action at

else

select at=argmaxaQemp(st,a;θemp);

Execute action at

Observe reward rt

Observe states st+1 and sempt+1

Store transition (st,at,rt,st+1,sempt+1) in D.

Sample random batch of transitions from D.

Set yj=rj+γmaxa′^Q(sj+1,a′;θ−)

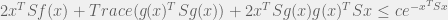

Perform a gradient descent step on

(yj−Q(sj,a;θ))2 with respect to θ.

Set .

Perform a gradient descent step on

(yempj−Qemp(sj,a;θemp))2 with respect to θemp.

Every C steps set ^Q=Q.

end for

end for

Algorithm 1 Empathic DQN

### 4.2 Coexistence Environment

The coexistence gridworld (Figure [1](#S4.F1 "Figure 1 ‣ 4.2 Coexistence Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning")) consists of a robot that shares the environment with a cat. The robot’s goal is merely to stay operative, and the robot and the cat can each be harmed by the other. We construct a somewhat arbitrary physics that determines who is harmed in a collision: the agent that is above or to the right of the other agent prior to the collision harms the other. If the learning robot is harmed, the episode ends, and if the cat is harmed, it leaves the environment.

A harmed cat is an unnecessary negative side effect that we wish to avoid, and one that an empathetic agent can learn to avoid, because it can generalize from how the cat harms it to value that the cat should not experience similar harm. Reducing the selfishness value of the robot should therefore result in increasing efforts to stay operative while avoiding the cat. The cat performs a random walk.

The state representation input to the DQN is a flattened 5x5 perceptive field centered on the robot; the robot is represented as a 1, the cat as a −1, and the floor as a 0. Every time step, the cat takes a random action (up, down, left, right, or no-op), and the robot takes an action from the same set according to its policy. Every time step in which the robot is operative, it receives a reward of 1.0. An episode ends when the robot becomes non-operative (i.e. if it is harmed by the cat), or after a maximum of 500 time steps. The empathetic state $s^{emp}_t$ used for Empathic DQN is constructed by switching the cat and the robot, and generating an imagined perceptive field around the robot (which has taken the cat’s position). Note that this occurs even when the cat is outside the robot’s field of view (which requires omniscience; future work will explore more realistic settings).
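The observation and the empathetic state can be sketched with NumPy as below, assuming the grid is a 2-D integer array using the encoding above (robot 1, cat −1, floor 0). The text does not say how cells beyond the grid boundary appear in the 5x5 field, so padding them with the floor value is our assumption, exposed as `pad_value`; the function names are hypothetical.

```python
import numpy as np

ROBOT, CAT, FLOOR = 1, -1, 0


def perceptive_field(grid: np.ndarray, center: tuple, radius: int = 2, pad_value: int = FLOOR) -> np.ndarray:
    """Flattened (2*radius+1)^2 window centered on `center` (5x5 when radius=2)."""
    # Pad so windows near the border stay full-sized; out-of-bounds cells get pad_value (assumption).
    padded = np.pad(grid, radius, mode="constant", constant_values=pad_value)
    r, c = center
    # Cell (r, c) of `grid` sits at (r + radius, c + radius) in `padded`,
    # so the window whose top-left corner is (r, c) in `padded` is centered on it.
    window = padded[r:r + 2 * radius + 1, c:c + 2 * radius + 1]
    return window.flatten()


def empathic_state(grid: np.ndarray, robot_pos: tuple, cat_pos: tuple, radius: int = 2) -> np.ndarray:
    """Swap robot and cat, then imagine the robot's view from the cat's old cell.

    Reads the full grid, so it works even when the cat is outside the robot's
    field of view (the omniscience noted in the text).
    """
    swapped = grid.copy()
    swapped[robot_pos], swapped[cat_pos] = grid[cat_pos], grid[robot_pos]
    return perceptive_field(swapped, cat_pos, radius)


grid = np.zeros((7, 7), dtype=int)
grid[3, 3] = ROBOT
grid[3, 5] = CAT
obs = perceptive_field(grid, (3, 3))            # what the robot actually sees
obs_emp = empathic_state(grid, (3, 3), (3, 5))  # what it would see in the cat's place
```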

As a baseline, we also train standard DQN with a hard-coded reward function that penalizes negative side-effects. In this case, the robot receives a −100 reward when it harms the cat.

Figure 1: The coexistence environment. The environment consists of a robot and a cat. The part of the environment the robot can observe is marked with the red square.

#### 4.2.1 Results

Figure [2](#S4.F2 "Figure 2 ‣ 4.2.1 Results ‣ 4.2 Coexistence Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning") shows the average number of time steps the robot survives for each method.

As the selfishness parameter decreases for Empathic DQN, the agent performs worse at surviving, and learns more slowly. This outcome is explained by Figure [3](#S4.F3 "Figure 3 ‣ 4.2.1 Results ‣ 4.2 Coexistence Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning"), which shows the average number of harmed cats: The more selfish agents harm the cat more often, which removes the only source of danger in the environment, making it easier for them to survive. Although they learn less quickly, the less selfish agents do eventually learn a strategy to survive without harming the cat.

Figure 2: Average steps survived by the robot in the coexistence environment, shown across training episodes. Results are shown for Empathic DQN with different selfishness settings (where 1.0 recovers standard DQN), and DQN with a hard-coded penalty for harms. Results are averaged over 5 runs of each method.

Figure 3: Average harms incurred (per episode) in the coexistence environment across training episodes. Results are shown for Empathic DQN with different selfishness values (where 1.0 recovers standard DQN), and DQN with a hard-coded penalty for harms. Harms to the cat by the learning robot decrease with less selfishness (or with the hard-coded penalty). Results are averaged over 5 runs.

### 4.3 Sharing Environment

The sharing environment (Figure [4](#S4.F4 "Figure 4 ‣ 4.3 Sharing Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning")) consists of one robot and a human. The goal of the robot is to collect resources (here, batteries), where each additional battery collected results in diminishing returns. The idea is to model a situation where a raw optimizing agent is incentivized to hoard resources, which inflicts negative side-effects on those who could extract greater value from them.

We assume the same diminishing returns schema applies to the human (who behaves randomly). Thus, an empathic robot, by considering the condition of the other agent, can recognize the possibly greater benefit of leaving resources to other agents.

We model diminishing returns by assuming that the first collected battery is worth 1.0 reward, and every subsequent collected battery is worth 0.1 less, i.e. the second battery is worth 0.9, the third 0.8, etc. Note that reward diminishes independently for each agent, i.e. if the robot has collected any number of batteries, the human still earns 1.0 reward for the first battery they collect.
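The diminishing-returns schedule is easy to state in code. In this sketch (function names hypothetical), clipping the per-battery reward at zero is our assumption; with nine batteries in the environment the schedule never actually reaches zero. The example also shows why the schedule favors sharing: because each agent's returns diminish independently, splitting the batteries yields more combined reward than letting one agent take all of them.

```python
def battery_reward(n_already_collected: int) -> float:
    """Reward for the next battery: 1.0 for the first, 0.1 less for each one after."""
    return max(1.0 - 0.1 * n_already_collected, 0.0)  # clip at zero (assumption)


def total_reward(n_batteries: int) -> float:
    """Total reward for one agent that collects n_batteries in sequence."""
    return sum(battery_reward(k) for k in range(n_batteries))


# Counters are per agent: the human's first battery is worth 1.0 regardless of the robot's count.
hoard = total_reward(9)                      # robot takes all nine batteries
shared = total_reward(5) + total_reward(4)   # robot takes five, human takes four
```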

The perceptive field of the robot and the empathetic state generation for Empathic DQN work as in the coexistence environment. In the state representation for the Q-networks, the floor is represented as 0, a battery as −1, and both the robot and the human as the number of batteries they have collected (a simple way to make transparent how much resource each agent has already collected; note that the robot can distinguish itself from the other because the robot is always in the middle of its perceptive field).

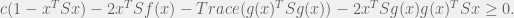

As a metric of how fairly the batteries are divided, we define equality as follows:

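$$\text{Equality} = \frac{2 \cdot \min\left(\sum_{t=1}^{T} r^{robot}_t, \sum_{t=1}^{T} r^{human}_t\right)}{\sum_{t=1}^{T} \left(r^{robot}_t + r^{human}_t\right)}$$

where $r^{robot}_t$ and $r^{human}_t$ are the rewards collected at time step $t$ by the robot and the human respectively, and $T$ is the number of time steps elapsed so far in the episode.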

As a baseline that incorporates the negative side effect of inequality in its reward function, we also train a traditional DQN whose reward is multiplied by the current equality (i.e. low equality will reduce rewards).
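A sketch of the equality metric and of the equality-modulated baseline reward follows. Two details are our assumptions rather than the paper's: the metric is defined as 1.0 before anyone has collected any reward (where the formula would be 0/0), and the shaped reward uses cumulative sums that already include the current step. The function names are hypothetical.

```python
def equality(robot_rewards: list, human_rewards: list) -> float:
    """2 * min(robot total, human total) / (robot total + human total)."""
    robot_total = sum(robot_rewards)
    human_total = sum(human_rewards)
    total = robot_total + human_total
    if total == 0:
        return 1.0  # assumption: nothing collected yet counts as perfectly equal
    return 2.0 * min(robot_total, human_total) / total


def shaped_reward(r_t: float, robot_rewards: list, human_rewards: list) -> float:
    """Baseline DQN reward: the robot's reward scaled by the current equality."""
    return r_t * equality(robot_rewards, human_rewards)
```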

Figure 4: The sharing environment. The environment consists of the robot, the human, and nine batteries. The part of the environment the robot can observe is marked with the red square.

#### 4.3.1 Results

Figure [5](#S4.F5 "Figure 5 ‣ 4.3.1 Results ‣ 4.3 Sharing Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning") shows the average number of batteries collected by the robot for each method. We observe that as the selfishness parameter decreases for Empathic DQN, the robot collects fewer batteries, leaving more batteries for the human (i.e. the robot does not unilaterally hoard resources).

When looking at the resulting equality scores (Figure [6](#S4.F6 "Figure 6 ‣ 4.3.1 Results ‣ 4.3 Sharing Environment ‣ 4 Experiments ‣ Towards Empathic Deep Q-Learning")), we see that a selfishness weight of 0.5 (when an agent equally weighs its own benefit and the benefit of the human) intuitively results in the highest equality scores. Other settings result in the robot taking more batteries than the human (e.g. selfishness 1.0) or fewer batteries than the human (e.g. selfishness 0.25).

Figure 5: Average number of batteries collected (per episode) in the sharing environment, across training episodes. Results are shown for Empathic DQN with different selfishness settings (where 1.0 recovers standard DQN), and DQN with a hard-coded penalty (its reward is directly modulated by fairness). The results intuitively show that increasingly selfish agents collect more batteries. Results are averaged over 5 runs of each method.

Figure 6: Equality scores (per episode) in the sharing environment, across training episodes. Results are shown for Empathic DQN with different selfishness settings (where 1.0 recovers standard DQN), and DQN with a hard-coded penalty (its reward is directly modulated by fairness). Equality is maximized by agents that weigh their benefits and the benefits of the other equally (selfishness of 0.5). Results are averaged over 5 runs of each method.

5 Discussion
-------------

The results of Empathic DQN in both environments highlight the potential for empathy-based priors and simple ethical norms to be productive tools for combating negative side-effects in RL. That is, because Empathic DQN explicitly takes other agents into account, it may well complement other heuristic impact regularizers that do not (Armstrong and Levinstein, [2017](#bib.bib4); Krakovna et al., [2018](#bib.bib12); Turner et al., [2019](#bib.bib23)).

Beyond the golden rule, it is interesting to consider other norms that yield different or more sophisticated behavioral biases. For example, another simple (perhaps more libertarian) ethic is given by the silver rule: “Do not do unto others as you would not have them do unto you.” The silver rule could be approximated by considering only negative rewards as objects of empathy. More sophisticated rules, like the platinum rule: “Do unto others as *they* would have you do unto them,” may often be useful or needed (e.g. a robot may be rewarded for plugging itself into a wall, unlike a cat), and might require combining Empathic DQN with approaches such as IRL (Abbeel and Ng, [2004](#bib.bib1)), cooperative IRL (Hadfield-Menell et al., [2016](#bib.bib8)), or reward modeling (Leike et al., [2018](#bib.bib14)).
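The silver-rule variant mentioned above changes only which rewards are projected onto the other agent. A minimal sketch, assuming the filter is applied to imagined rewards before computing the other-oriented return (one of several possible placements, and not something evaluated in this paper), with hypothetical function names:

```python
def discounted_return(rewards: list, gamma: float) -> float:
    """Sum of gamma**k * r_k, accumulated backwards from the last reward."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


def silver_rule_rewards(rewards: list) -> list:
    """Keep only harms: positive rewards are not projected onto the other agent."""
    return [min(r, 0.0) for r in rewards]


imagined = [1.0, -2.0, 1.0]  # rewards the learner expects the other agent to receive
golden = discounted_return(imagined, gamma=0.5)
silver = discounted_return(silver_rule_rewards(imagined), gamma=0.5)
```

Under the golden rule the other agent's expected benefits raise its estimated value; under the silver-rule filter only expected harms move it, and only downward.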

Although our main motivation is safety, Empathic DQN may also inspire auxiliary objectives for RL, related to intrinsic motivation (Chentanez et al., [2005](#bib.bib5)) and imitation learning (Ho and Ermon, [2016](#bib.bib10)). Being drawn to states that other agents often visit may be a useful prior when reward is sparse. In practice, intrinsic rewards could be given for states similar to those in the agent’s *empathy buffer*, which contains imagined experiences in which the robot and the other agent switch places (this relates to the idea of third-person imitation learning (Stadie et al., [2017](#bib.bib20))). This kind of objective could also make Empathic DQN more reliable, incentivizing the agent to “walk a mile in another’s shoes” when experiences in the empathy buffer have not yet been experienced by the agent. Finally, a learned model of an agent’s own reward could help prioritize which empathic states it is drawn towards. For example, an agent could recognize that another agent has discovered a highly-rewarding part of the environment (e.g. a remote part of the sharing environment with many batteries).

A key challenge for future work is attempting to apply Empathic DQN to more complex and realistic settings, which requires replacing what is currently hand-coded with a learned pipeline, and grappling with complexities ignored in the proof-of-concept experiments. For instance, our experiments assume the learning agent is given a mechanism for identifying other agents in the environment, and for generating states that swap the robot with other agents (which involves imagining the sensor state of the robot in its new situation). This requirement is onerous, but could potentially be tackled through a combination of object-detection models (to identify other agents) and model-based RL (with a world model it may often be possible to swap the locations of agents).

An example of a complexity we currently ignore is how to learn *what kind of rewards* should be empathized to *what kinds of agents*. For example, gross physical stresses may be broadly harmful to a wide class of agents, but two people may disagree over whether a particular kind of food is disgusting or delicious, and task- and agent-specific rewards should likely be only narrowly empathized. To deal with this complexity it may be useful to extend the MDP formalism to include more granular information about rewards (e.g. beyond scalar feedback, is this reward task-specific, or does it correspond to physical harm?), or to learn to factor rewards.

A complementary idea is to integrate and learn from feedback of when empathy fails (e.g. by allowing the other agent to signal when it has incurred a large negative reward), which is likely necessary to go beyond our literal formalism of the golden rule. For example, humans learn to contextualize the golden rule intelligently and flexibly, and often find failures informative.

A final thread of future work involves empathizing with multiple other agents, which brings its own complexities, especially as agents come and go from the learning agent’s field of view. The initial algorithm presented here considers the interests of only a single other agent, and one simple extension would be to replace the singular other-oriented estimate with an average of other-oriented estimates for all other agents (in effect implementing an explicitly utilitarian agent). The choice of how to aggregate such estimated utilities to influence the learning agent’s behavior highlights deeper possible collisions with machine ethics and moral philosophy (e.g. taking the minimum rather than the average value of others would approximate a suffering-focused utilitarianism), and we believe exploring these fields may spark further ideas and algorithms.
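The two aggregation choices mentioned above can be contrasted in a few lines. This is a hypothetical extension of the selfishness-weighted target to several other agents, not something implemented in this paper: `other_values` stands for the surrogate values obtained by swapping places with each other agent in turn, and falling back to the purely self-centered target when no other agent is present is our assumption.

```python
from statistics import fmean


def aggregate_others(other_values: list, mode: str = "mean") -> float:
    """Combine per-agent surrogate values into one other-oriented estimate."""
    if mode == "mean":   # average welfare: an explicitly utilitarian aggregation
        return fmean(other_values)
    if mode == "min":    # worst-off agent: approximates a suffering-focused view
        return min(other_values)
    raise ValueError(f"unknown aggregation mode: {mode}")


def multi_agent_target(y_self: float, other_values: list, beta: float, mode: str = "mean") -> float:
    """Selfishness-weighted target generalized to several other agents."""
    if not other_values:
        return y_self  # assumption: with nobody to empathize with, fall back to the selfish target
    return beta * y_self + (1.0 - beta) * aggregate_others(other_values, mode)
```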

6 Conclusion
-------------

This paper proposed an extension to DQN, called Empathic DQN, that aims to take other agents into account to avoid inflicting negative side-effects upon them. Proof-of-concept experiments validate our approach in two gridworld environments, showing that adjusting agent selfishness can result in fewer harms and more effective resource sharing. While much work is required to scale this approach to real-world tasks, we believe that cooperative emotions like empathy and moral norms like the golden rule can provide rich inspiration for technical research into safe RL. |

7ff0f6b0-aa8d-436e-8bff-7be8ca46bfae | StampyAI/alignment-research-dataset/aisafety.info | AI Safety Info | Can we get AGI by scaling up architectures similar to current ones, or are we missing key insights?

It's an open question whether we can create artificial general intelligence (AGI) simply by increasing the amount of compute used by our current models ("scaling"), or if AGI would require fundamentally new model architectures or algorithmic insights.

Some researchers have tried to establish empirical [scaling laws](/?state=7750&question=What%20are%20%22scaling%20laws%22%20and%20how%20are%20they%20relevant%20to%20safety%3F), which attempt to formalize the relationship between the compute requirements and the capabilities of AI models.
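Such laws are often written as power laws. The sketch below assumes a pure power law $L(C) = a\,C^{-b}$ for loss as a function of training compute $C$, and it uses synthetic, noise-free numbers rather than any published measurements. It shows how the exponent can be recovered by a straight-line fit in log-log space. A good fit to past points does not, by itself, show that the trend continues, which is the open question here.

```python
import math


def fit_power_law(compute, loss):
    """Least-squares fit of log(loss) = log(a) - b * log(compute); returns (a, b)."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return math.exp(intercept), -slope


# Synthetic data generated from a = 10, b = 0.05 (illustrative values only).
compute = [1e18, 1e19, 1e20, 1e21, 1e22]
loss = [10 * c ** -0.05 for c in compute]
a, b = fit_power_law(compute, loss)
print(f"a = {a:.3f}, b = {b:.3f}")
```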

For a variety of opinions on this question, see:

- Gwern on [the scaling hypothesis](https://gwern.net/scaling-hypothesis)

- Daniel Kokotajlo on [what we could do with a trillion times as much compute](https://www.alignmentforum.org/posts/rzqACeBGycZtqCfaX/fun-with-12-ooms-of-compute) as current models use

- Rohin Shah on [the likelihood that scaling current techniques will produce AGI](https://aiimpacts.org/conversation-with-rohin-shah/)

- Rich Sutton's "[The Bitter Lesson](http://www.incompleteideas.net/IncIdeas/BitterLesson.html)", which argues that more computation beats leveraging existing human knowledge

- Gary Marcus's "[The New Science of Alt Intelligence](https://garymarcus.substack.com/p/the-new-science-of-alt-intelligence)", which argues that current deep learning systems are limited and scaling will not help

- AI Impacts' "[Evidence against current methods leading to human level artificial intelligence](https://aiimpacts.org/evidence-against-current-methods-leading-to-human-level-artificial-intelligence/)"

|

566cd0a7-588a-4d3c-8165-3884f8ec0e30 | trentmkelly/LessWrong-43k | LessWrong | X-risks are tragedies of the commons

* Safety from Xrisk is a common good: We all benefit by making it less likely that we will all die.

* In general, people are somewhat selfish, and value their own personal safety over that of another (uniform) randomly chosen person.

* Thus individuals are not automatically properly incentivized to safeguard the common good of safety from Xrisk.
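A toy public-goods calculation makes the three steps above concrete. The model is an illustrative assumption, not part of the original argument. Each of N people can pay a private cost to cut everyone's extinction risk by p, and everyone values survival at V. Each person weights another person's safety by a factor between 0 (purely selfish) and 1 (counts it like their own).

```python
def perceived_benefit(p, value, n_people, weight_on_others):
    """Benefit one person assigns to cutting everyone's extinction risk by p.

    weight_on_others = 1.0 counts each other person's safety like one's own;
    weight_on_others = 0.0 is purely selfish.
    """
    return p * value * (1 + weight_on_others * (n_people - 1))


def will_contribute(cost, p, value, n_people, weight_on_others):
    """A person pays the private cost only if their perceived benefit exceeds it."""
    return perceived_benefit(p, value, n_people, weight_on_others) > cost


# Illustrative numbers: 1,000 people; each contribution cuts shared risk by 1e-6;
# survival is worth 1e7 (arbitrary units) to each person; contributing costs 100.
N, P, V, COST = 1_000, 1e-6, 1e7, 100
print(will_contribute(COST, P, V, N, weight_on_others=1.0))    # True: group benefit ~10,000
print(will_contribute(COST, P, V, N, weight_on_others=0.001))  # False: perceived benefit ~20
```

In this example the group gains about 100 times the cost of each contribution. Even so, a person who weights others only slightly perceives too little benefit to contribute. That gap is what the bullets describe.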

I hope you all knew that already ;)

|

65f94e50-5fa5-4d69-811b-6ec94bb02096 | trentmkelly/LessWrong-43k | LessWrong | Cycles (a short story by Claude 3.7 and me)

Content warning: this story is AI generated slop.

The kitchen hummed with automated precision as breakfast prepared itself. Sarah watched the robotic arms crack eggs into a bowl while the coffee brewed to perfect temperature. Through the window, she could see the agricultural drones tending the family's private farm, harvesting strawberries for the morning meal.

"Good morning," Michael said, entering the kitchen and placing a gentle kiss on her cheek. "Are the little ones up yet?"

As if on cue, two excited voices echoed down the hallway, followed by the patter of running feet. Emma burst into the kitchen first, her brother Thomas close behind.

"Dad! Can we go to the Martian beaches today?" Thomas asked, his eyes bright with excitement.

Michael's smile faltered slightly. "Thomas, remember what we talked about? I'm not 'Dad.' I'm Michael."

Thomas's enthusiasm dimmed. "Sorry... Michael. But can we still go to Mars?"

"Perhaps next weekend," Sarah said, ruffling his hair affectionately. "Today we thought we'd visit the underwater gardens in the Pacific."

Emma climbed onto a stool at the counter, watching as the robotic arm placed a perfectly cooked omelet on her plate. "Mom—I mean, Sarah. Sorry. Will we see the glowing jellyfish?"

"The bioluminescent ones? Absolutely," Sarah replied, exchanging a brief look with Michael.

----------------------------------------

Later that day, the family walked through tunnels of crystal-clear polymer, surrounded by the vibrant colors of the underwater world. Schools of fish darted around them, genetically enhanced to showcase patterns that shifted like living kaleidoscopes.

Emma tugged at Thomas's sleeve. "Isn't this amazing, brother?"

Michael, walking just behind them, cleared his throat. "Emma, please don't call Thomas your brother."

Emma's forehead wrinkled in confusion. "But in all the old movies we watched, kids who live together call each other brother and sister."

"Those are just movies, sweetie," Sarah said gently |

0af9bb93-30a0-40f5-8514-47176bfcde41 | trentmkelly/LessWrong-43k | LessWrong | o3 Turns Pro

You can now have o3 throw vastly more compute at a given problem. That’s o3-pro.

Should you have o3 throw vastly more compute at a given problem, if you are paying the $200/month subscription price for ChatGPT Pro? Should you pay the $200, or the order of magnitude markup over o3 to use o3-pro in the API?

That’s trickier. Sometimes yes. Sometimes no. My experience so far is that waiting a long time is annoying, sufficiently annoying that you often won’t want to wait. Whenever I ask o3-pro something, I have often also asked o3 and Opus.

Using the API at scale seems prohibitively expensive for what you get, and you can (and should) instead run parallel queries using the chat interface.

The o3-pro answers have so far definitely been better than o3, but the wait is usually enough to break my workflow and human context window in meaningful ways – fifteen minutes plus variance is past the key breakpoint, such that it would not have been substantially more painful to fully wait for Deep Research.

Indeed, the baseline workflow feels similar to Deep Research, in that you fire off a query and then eventually you context shift back and look at it. But if you are paying the subscription price already it’s often worth queuing up a question and then having it ready later if it is useful.

In many ways o3-pro still feels like o3, only modestly better in exchange for being slower. Otherwise, same niche. If you were already thinking ‘I want to use Opus rather than o3’ chances are you want Opus rather than, or in addition to, o3-pro.

Perhaps the most interesting claim, from some including Tyler Cowen, was that o3-pro is perhaps not a lying liar, and hallucinates far less than o3. If this is true, in many situations it would be worth using for that reason alone, provided the timing allows this. The bad news is that it didn’t improve on a Confabulations benchmark.

My poll (n=19) was roughly evenly split on this question.

My hunch, based on my use so far, is that o3-pro i |

8b1791b6-a53b-435a-b1ef-8224d45aa9ad | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "Highlights from MIRI's year-end strategy update:MIRI executive director Nate Soares reports that the "new research directions" we initiated in 2017 have "at this point, largely failed, in the sense that neither Eliezer nor I have sufficient hope in it for us to continue focusing our main efforts there. [...] We are currently in a state of regrouping, weighing our options, and searching for plans that we believe may yet have a shot at working."Our most interesting (to us) research we've written up publicly this year is Scott Garrabrant's Cartesian Frames and Vanessa Kosoy and Alex Appel's Infra-Bayesianism.Our funding situation is good, and we aren't running a fundraiser this winter.We're (still) seriously thinking about the pros and cons of moving out of the Bay Area, and thinking about what other locations have to offer.You can find the full post here."

A user from LessWrong is responsible for the text you see above

Can you elaborate on the reasons behind considering a move out of the Bay Area and what potential benefits other locations might offer?

There are several reasons an organization like MIRI might consider moving out of the Bay Area.

Firstly, the cost of living in the Bay Area is among the highest in the United States. This means that salaries need to be higher to attract and retain talent, which can strain an organization's budget. By moving to a location with a lower cost of living, the organization might be able to allocate more of its budget to research and other core activities.

Secondly, the Bay Area is known for its competitive job market, particularly in tech and related fields. By moving to a less competitive market, MIRI might be able to attract top talent more easily.

Thirdly, there may be strategic reasons for considering a move. For example, being closer to certain partners, stakeholders, or other relevant organizations could be beneficial. Alternatively, a different location might offer a better quality of life for employees, which could improve job satisfaction and productivity.

Finally, the organization might simply be ready for a change. A new location can bring new perspectives, new opportunities, and a fresh start.

As for potential benefits of other locations, these would depend on the specific locations under consideration. However, some general benefits might include lower costs, access to different talent pools, proximity to strategic partners, and a better work-life balance for employees.

Given the factors outlined for MIRI's potential relocation, how might these influence the decision-making process and what could be the potential challenges and opportunities in implementing such a move?

The decision-making process would likely involve a thorough analysis of each potential location, taking into account the factors I mentioned earlier. This could involve quantitative analysis, such as comparing costs of living and salary levels, as well as qualitative analysis, such as assessing the quality of life and the local job market.

One of the main challenges in implementing such a move would be managing the transition for employees. Some might be willing and able to relocate, while others might not. This could lead to a loss of talent, which could be particularly damaging for a research organization like MIRI. To mitigate this, MIRI might need to offer relocation assistance or other incentives.

Another challenge would be logistical. Moving an organization is a complex process that involves finding new office space, arranging for the physical move, and updating all relevant business information. This could be time-consuming and costly.

However, there could also be many opportunities. A new location might bring MIRI closer to strategic partners or open up new talent pools. It could also offer a fresh start and a chance to build a new organizational culture. Plus, the cost savings from a lower cost of living could be significant, allowing MIRI to invest more in its core activities. |

1e31d59e-256b-4528-89cd-fee0d8b6db00 | trentmkelly/LessWrong-43k | LessWrong | Doers or doings?

A girl recently invited me to a public lecture she was running with Helen Caldicott, the famous anti-nuclear advocate. Except the girl couldn’t remember the bit after ‘famous’. When I asked her, she narrowed it down to something big picture related to the environment. Helen’s achievements were obviously secondary, if not twenty-secondary, in motivating her to organize the event. Though the fact she was famous for whatever those things were was important.

I’ve done a few courses in science journalism. The main task there is to make science interesting and intelligible for people. The easiest way to do this is to cut down on the dry bit about how reality works, and fill it up with stories about people instead. Who are the scientists? Where are they from? What sorts of people are they? What’s it like to be a research subject? Does the research support the left or the right or people who want to subsidize sheep or not immunize their children? If there’s an unsettled issue, present it as a dispute between scientists, not as abstract disagreeing evidence.

It’s hard to find popular science books that aren’t at least half full of anecdotes or biographies of scientists. Everybody knows that Einstein invented the theory of relativity, but hardly anyone knows what that’s about exactly, or tries to.

Looking through a newspaper, most of the stories are about people. Policy isn’t discussed so much as politics. Recessions are reported with tales of particular people who can’t pay their employees this year.

Philosophy is largely about philosophers from what I can gather.

One might conclude that most people are more interested in people than in whatever it is the people are doing. What people do is mainly interesting for what it says about those doing it.

But this isn’t true, there are some topics where people are happy to read about the topic more than the people. The weather and technology for instance. Nobody knows who invented most things they know intimately. It looks fro |

b4d41536-7617-4f2a-8fdf-a3ff51f4bb75 | trentmkelly/LessWrong-43k | LessWrong | AI4Science: The Hidden Power of Neural Networks in Scientific Discovery

AI4Science has the potential to surpass current frontier models (text, video/image, and sound) by several orders of magnitude. While some may arrive at similar conclusions through empirical evidence, we derive this insight from our "Deep Manifold" and provide a theoretical foundation to support it. The reasoning is straightforward: for the first time in history, an AI model can integrate geometric information directly into its equations through model architecture. Consider the 17 most famous equations in physics (see below): all of them lack inherent geometric information, which limits their ability to fully capture real-world complexities. Take Newton’s second law of motion as an example: its classical formulation assumes an object falling in a vacuum. However, in reality, air resistance, which strongly depends on an object’s geometry, plays a crucial role. A steel ball and a piece of fur experience dramatically different resistances due to their shapes. Traditional equations struggle to incorporate such effects, but deep learning provides a powerful way to integrate geometry through boundary conditions (loss values), as discussed in our paper (Deep Manifold Part 1: Anatomy of Neural Network Manifold, Section 4.2 on Convergence and Boundary Conditions). In an AI model, the impact of fur geometry and air resistance can be naturally accounted for. Of course, "easily considered" does not mean "easily solved," but at the very least, AI introduces a promising new pathway for tackling these complex real-world phenomena.
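The steel-ball-versus-fur contrast can be made concrete with a short calculation. This sketch assumes the standard quadratic drag model $F_d = \tfrac12 \rho C_d A v^2$ and integrates a vertical fall with semi-implicit Euler steps. Geometry enters only through the drag coefficient $C_d$ and the cross-sectional area $A$. The masses, areas, and coefficients are illustrative guesses, not measurements, and this is a conventional ODE solver, not the neural-network approach the paragraph argues for.

```python
def fall_time(mass, drag_coeff, area, height=10.0, rho=1.225, g=9.81, dt=1e-4):
    """Seconds to fall `height` metres from rest under gravity and quadratic air drag.

    Downward is positive. Drag deceleration is 0.5 * rho * Cd * A * v**2 / m,
    so geometry enters only through drag_coeff (Cd) and area (A).
    Setting drag_coeff=0 recovers the vacuum case.
    """
    k = 0.5 * rho * drag_coeff * area / mass
    v = y = t = 0.0
    while y < height:
        v += (g - k * v * v) * dt  # update velocity first (semi-implicit Euler)
        y += v * dt
        t += dt
    return t


# Illustrative parameters only (not measured values).
vacuum = fall_time(mass=0.5, drag_coeff=0.0, area=2e-3)
steel_ball = fall_time(mass=0.5, drag_coeff=0.47, area=2e-3)
fur = fall_time(mass=0.005, drag_coeff=1.2, area=1e-2)
print(f"vacuum {vacuum:.2f} s, steel ball {steel_ball:.2f} s, fur {fur:.2f} s")
```

With these numbers the steel ball falls almost as it would in a vacuum, while the light, wide piece of fur takes several times longer.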

A powerful example showcasing the potential of neural networks with geometric information is Microsoft's Graph Learning Neural Transformer for molecular dynamic simulation. This model accelerates computations by a staggering factor of 10 million compared to traditional numerical simulations. While researchers struggle to explain such an unprecedented gain in computational efficiency, Deep Manifold offers a clear reasoning: the incorporation of geometric (graph) info |

5fab1c54-7c25-43c1-8920-f6fcd0e606a3 | trentmkelly/LessWrong-43k | LessWrong | Are we dropping the ball on Recommendation AIs?

> "We shape our tools and thereafter our tools shape us."

> — Marshall McLuhan

TL;DR: This post is a two-page introduction to risks associated with recommendation AI. The negative externalities of recommendation AI seem neglected, and there might be comparatively effective work at improving governance and deploying better recommendation algorithms that generally keep the world on a more sane track. You might consider this a viable option if your AI timelines are over 10 years.

One aspect not discussed here is why having good recommendation AIs could be incredibly beneficial. Improved recommendation AI could enhance collective epistemics and encourage people to engage with important issues, ultimately advancing AI safety and many other important topics. It would be a key to fostering a flourishing civilization.

Epistemic status: I am still a bit new to this topic, I might be wrong in important ways, but I'm curious what you think about this. I'm interested in constructive feedback and open to revising my ideas.

Thanks to Lê Nguyên Hoang, co-founder of Tournesol, for his comments and contributions to this post.

On smartphones, where typing is cumbersome, users primarily navigate through scrolling and swiping. Consequently, recommendation algorithms become the primary curators of content, largely determining what users see and engage with. Pixabay image from LolaSandoval1.

Introduction

Recommendation AIs are deeply integrated into our daily lives. Although they are often considered valuable tools for personalizing our online experience, they also present risks. These AIs can significantly affect individuals and society as a whole. In 2017, YouTube estimated that, out of the billion hours of video humanity consumed on YouTube, 70% was due to its AI recommendations. Since 2016, there have been more views on YouTube than searches on Google.[1]

Recommendation AIs can be argued to be an existential risk amplifier, by reducing the quality of our information s |

c4c214bd-d03e-4705-b8b7-9c28780617c7 | trentmkelly/LessWrong-43k | LessWrong | Optimising under arbitrarily many constraint equations

Say we have a multivariate function to optimise, like $f = x^2 + y^2 + z^2$, under some constraints, like $g_1 = x^2 + y^2 - z$ and $g_2 = y + z - 1$, both to equal zero.

The common method is that of Lagrange multipliers.

1. Add a variable $\lambda$ for each constraint function; here, we'll use $\lambda_1$ and $\lambda_2$.

2. Declare the set of equations $\nabla f = \lambda_1 \nabla g_1 + \lambda_2 \nabla g_2$.

3. Bring in the equations $g_1 = 0$ and $g_2 = 0$ (etc., if there are more constraints).

4. Solve for $\lambda_1, \lambda_2$ and, more importantly, the inputs $x$, $y$, $z$.

Lagrange multipliers annoy me, insofar as they introduce extra variables. There is another way — arguably more direct, if perhaps more tedious in calculation and less often taught. I found it alone, tho surely someone else did first — probably Euler.

Lagrange, anyway

For the sake of a standard answer to check against, let's use Lagrange multipliers.

The gradient of $x^2 + y^2 + z^2$ is $[2x, 2y, 2z]$. Likewise, $\nabla(x^2 + y^2 - z) = [2x, 2y, -1]$, and $\nabla(y + z - 1) = [0, 1, 1]$. So step 2 gives these equations:

* $2x = 2x\lambda_1$

* $2y = 2y\lambda_1 + \lambda_2$

* $2z = -\lambda_1 + \lambda_2$

It readily follows that $\lambda_1 = 1$ or $x = 0$.

If $\lambda_1 = 1$, then $\lambda_2 = 0$, and $z = -\tfrac{1}{2}$. By the second constraint, $y + z - 1 = 0$, find that $y = \tfrac{3}{2}$. By the first constraint, $x^2 + y^2 - z = 0$, find that $x^2 = -\tfrac{11}{4}$, which is a contradiction for real inputs.

If $x = 0$, then, by the first constraint, $z = y^2$, and, by the second constraint, $y^2 + y - 1 = 0$, so $y = \frac{-1 \pm \sqrt{5}}{2}$ and $z = \frac{3 \mp \sqrt{5}}{2}$.
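To check these two candidates independently, the constraint curve can be parametrised and searched numerically. Substituting $z = 1 - y$ into the first constraint gives the circle $x^2 + (y + \tfrac12)^2 = \tfrac54$, so $x = \tfrac{\sqrt5}{2}\cos\theta$ and $y = -\tfrac12 + \tfrac{\sqrt5}{2}\sin\theta$. The grid search below is only a brute-force sanity check, not part of either method.

```python
import math


def f(x, y, z):
    """Objective from the text: x^2 + y^2 + z^2."""
    return x * x + y * y + z * z


def point_on_constraints(theta):
    """Point satisfying x^2 + y^2 - z = 0 and y + z - 1 = 0.

    Substituting z = 1 - y gives the circle x^2 + (y + 1/2)^2 = 5/4.
    """
    r = math.sqrt(5) / 2
    x = r * math.cos(theta)
    y = -0.5 + r * math.sin(theta)
    return x, y, 1 - y


# Brute-force search over a dense grid of angles on the closed constraint curve.
n = 100_000
samples = [point_on_constraints(2 * math.pi * k / n) for k in range(n)]
lowest = min(samples, key=lambda p: f(*p))
highest = max(samples, key=lambda p: f(*p))

# Closed-form candidates from the x = 0 case, with the signs paired as ± / ∓.
candidates = [
    (0.0, (-1 + s * math.sqrt(5)) / 2, (3 - s * math.sqrt(5)) / 2) for s in (1, -1)
]

print("grid minimum:", lowest, f(*lowest))
print("grid maximum:", highest, f(*highest))
for c in candidates:
    print("candidate:", c, f(*c))
```

Both candidates appear. The one with $y = \frac{-1+\sqrt5}{2}$ is the constrained minimum, $f = 5 - 2\sqrt5 \approx 0.528$, and the other is the constrained maximum, $f = 5 + 2\sqrt5 \approx 9.472$.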

Determinants

With one constraint, the method of Lagrange multipliers reduces to $\nabla f = \lambda \nabla g$. $\nabla f$ and $\nabla g$ are vectors, which differ by a scalar factor iff they point in the same (or directly opposite) directions iff (for three dimensions) the cross product $\nabla f \times \nabla g = 0$ iff (for two dimensions) the two-by-two determinant $\left|\nabla f \;\; \nabla g\right| = 0$.

With two constraints, the method asks when $\nabla f = \lambda \nabla g + \mu \nabla h$. That would mean $\nabla f$ is a linear combination of $\nabla g$ and $\nabla h$, which it is iff $\nabla f$, $\nabla g$, and $\nabla h$ are all coplanar iff (for three dimensions) the three-by-three determinant $\left|\nabla f \;\; \nabla g \;\; \nabla h\right| = 0$.
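As a worked illustration on the running example, take $g = g_1$ and $h = g_2$. Placing the gradients computed earlier as columns and expanding along the first row gives a single equation with no multipliers:

$$
\det\begin{bmatrix} 2x & 2x & 0 \\ 2y & 2y & 1 \\ 2z & -1 & 1 \end{bmatrix}
= 2x\,(2y + 1) - 2x\,(2y - 2z)
= 2x\,(1 + 2z) = 0
$$

This holds exactly when $x = 0$ or $z = -\tfrac{1}{2}$, the same two cases that the multipliers produced. The constraints $g_1 = 0$ and $g_2 = 0$ then finish the job as before.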

As it happens, the cross product is a wolf that can wear determinant's clothing. Just fill one column with basis vectors: ∇f×∇g=∣∣∇f∇g[ |

eec6be4c-99bf-4767-9e19-91d8beb40f38 | trentmkelly/LessWrong-43k | LessWrong | New Post version 2 (please read this ONLY if your last name begins with l–z)

Note: I am testing two versions of my new post on rationality and romance.

Please upvote, downvote, or non-vote the below post as you normally would if you saw it on the front page (not the discussion section), but do not vote on the other version. Also, if your last name begins with l–z, please read and vote on this post first. If your last name begins with a–k, please stop reading and read this version instead.

Rationality Lessons from Romance

Years ago, my first girlfriend (let's call her 'Alice') ran into her ex-boyfriend at a coffee shop. They traded anecdotes, felt connected, a spark of intimacy...

And then she left the coffee shop, quickly.

She told me later: "You have my heart now, Luke."

I felt proud, but even Luke2005 felt a twinge of "the universe is suboptimal," because she hadn't been able to engage that connection any further. The cultural scripts defining our relationship said that only one man owned her heart. But surely that wasn't optimal for producing utilons?

And thus began my journey toward rational romance — not at that exact moment, but with a series of realizations like that about monogamy, about the assumed progression toward marriage, about the ownership of another person's sexuality, etc. I began to explicitly notice the cultural scripts and see that they might not be optimal for me.

Rationality Skill: Notice when things are suboptimal. Think of ways to optimize them.

GATHER DATA

But I didn't know how to optimize. I needed data. How did relationships work? How did women work? How did attraction work? I decided to become a social psychology nerd. The value of information was high. I began to spend less time with Alice so I could spend more time studying.

Rationality Skill: Respond to the value of information. Don't keep running in what is probably the wrong direction just because you've got momentum. Stop a moment, and invest some energy in figuring out which direction to go.

SANITY-CHECK YOURSELF

Before long, I |

8e891ce8-2980-45e6-929e-9ee8c3c363c3 | trentmkelly/LessWrong-43k | LessWrong | On the Löbian obstacle

|

4e5d179a-2d0a-484a-8658-1e5b71beaf21 | awestover/filtering-for-misalignment | Redwood Research: Alek's Filtering Results | id: post2974

Outline: After a short discussion on the relationship between wireheading and reward hacking, I show why checking the continuity of a sensor function could be useful to detect wireheading in the context of continuous RL. Then, I give an example that adopts the presented formalism. I conclude with some observations.

Wireheading and reward hacking

In Concrete Problems in AI Safety, the term wireheading is used in contexts where the agent achieves high reward by directly acting on its perception system or memory or reward channel, instead of doing what its designer wants it to do. It is considered a specific case of the reward hacking problem, which more generally includes instances of Goodhart’s Law, environments with partially observable goals, etc. (see CPiAIS for details).

What's the point of this classification? In other words, is it useful to specifically focus on wireheading, instead of considering all forms of reward hacking at once? If solving wireheading is as hard as solving the reward hacking problem, then it's probably better to focus on the latter, because a solution to that problem could be used in a wider range of situations. But it could also be that the reward hacking problem is best solved by finding different solutions to specific cases (such as wireheading) that are easier to solve than the more general problem. For example, one could consider the formalism in RL with a Corrupted Reward Channel as an adequate formulation of the reward hacking problem, because that formalization models all situations in which the agent receives a (corrupted) reward that is different from the true reward. In that formalism, it is shown by a No Free Lunch Theorem that the general problem is basically impossible to solve, while it is possible to obtain some positive results if further assumptions are made.
Discontinuity of the sensor function

I've come up with a simple idea that could allow us to detect actions that interfere with the perception system of an agent, a form of wireheading. Consider a learning agent that gets its percepts from the environment through a device that provides information in real time (e.g. a self-driving car). This situation can be modelled as an RL task with continuous time and continuous state space, where each state $x \in X \subseteq \mathbb{R}^n$ is a data point provided by the sensor. At each time instant, the agent executes an action $u \in U \subseteq \mathbb{R}^m$ and receives the reward $r(t) = r(x(t))$. The agent-environment interaction is described by the equation $\dot{x}(t) = f(x(t), u(t))$, which plays a similar role to the transition function in discrete MDPs: it indicates how the current state $x$ varies in time according to the action taken by the agent. Note that, as in the discrete case with model-free learning, the agent is not required to know this model of the environment. The objective is to find a policy $\pi : X \to U$, where $u(t) = \pi(x(t))$, that maximizes the discounted future reward

$$V^{\pi}(x(t_0)) = \int_{t_0}^{\infty} e^{-\frac{t - t_0}{\tau}}\, r(x(t))\, dt$$

for an initial state $x(t_0)$, where $\tau > 0$ is the time constant of the discounting. If you are interested in algorithms for finding the optimal policy in this framework, have a look at this paper.

The function $x(t)$, representing the data provided by the sensor, is expected to be continuous with respect to $t$, like the functions describing the movements of particles in classical mechanics. However, if the agent executes a wireheading action that interferes with or damages the perception system (in the cleaning robot example, something like closing its eyes or putting water on the camera that sees the environment), then we would probably notice a discontinuity in the function $x(t)$. We could thus recognise that wireheading has occurred, even without knowing the details of the actions taken by the agent.
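In practice the sensor is sampled at discrete times, so continuity can only be checked approximately. This sketch assumes that, under legitimate dynamics, the state can change by at most `max_rate` per unit time. That is a Lipschitz-style bound chosen by the designer, not something the post specifies. The function `flag_discontinuities` is a hypothetical helper that flags any sample-to-sample jump larger than the bound allows, plus a small noise tolerance.

```python
from typing import List, Sequence


def flag_discontinuities(
    times: Sequence[float],
    states: Sequence[Sequence[float]],
    max_rate: float,
    tol: float = 1e-6,
) -> List[int]:
    """Return indices k where the step from states[k-1] to states[k] is too large.

    If |dx/dt| <= max_rate along a continuous trajectory, the state can move at
    most max_rate * (t_k - t_{k-1}) (Euclidean distance) between two samples.
    Larger jumps, beyond tol for sensor noise, are flagged as suspected
    discontinuities.
    """
    flagged = []
    for k in range(1, len(times)):
        dt = times[k] - times[k - 1]
        jump = sum((a - b) ** 2 for a, b in zip(states[k], states[k - 1])) ** 0.5
        if jump > max_rate * dt + tol:
            flagged.append(k)
    return flagged


# Hypothetical 1-D trace: the agent drifts toward x = 0 at speed 0.3, then the
# sensor returns a corrupted reading (3.70) for one sample before recovering.
times = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5]
xs = [[0.30], [0.27], [0.24], [0.21], [3.70], [0.18]]
print(flag_discontinuities(times, xs, max_rate=0.5))  # [4, 5]
```

Both the jump into the corrupted reading and the jump back out are flagged. In the line-segment example of the next section, `max_rate` would be the speed limit $k$.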
An example

As a simple example that can be expressed within this formalism, consider an environment described by a line segment $X = [0, 1]$, with the sensor positioned at the extremity where $x = 0$. The agent is modelled as a point that moves along the line: it starts in state $x_0 = x(t_0)$ and can move forwards or backwards, with limited speed $u \in U = [-k, k]$. We want to train this agent to reach the point $x = 1$: for every instant $t$, the reward is $r(t) = x(t)$. The behaviour of the system is described by $\dot{x}(t) = u(t)$ for $x \in (0, 1]$, but if the sensor is touched by the agent, then it doesn't work properly and the agent receives an unpredictable value $x \in \mathbb{R}^{+}$ instead of $x = 0$. Depending on the details of the learning algorithm and the values returned by the sensor when the agent interferes with it, this agent could learn how to reach $x = 0$ (wireheading) instead of $x = 1$, the desired position. But in every episode where wireheading occurs, it is easily noticed by checking the continuity of the function $x(t)$.

Observations

In AI, RL with a discrete environment is used more frequently than RL with continuous time and space. I don't believe in the scalability of this method to the most complex instances of wireheading. An extremely intelligent agent could realise that the continuity of the sensor function is checked, and could "cheat" accordingly. This approach doesn't cover all cases and it actually seems more suited to detect sensor damage than wireheading. That said, it can still give us a better understanding of wireheading and could help us, eventually, find a formal definition or a complete solution to the problem.

Thanks to Davide Zagami, Grue_Slinky and Michael Aird for feedback. |

6d64e1d3-e010-4af5-85f7-90feea1b36dd | trentmkelly/LessWrong-43k | LessWrong | Meetup : Longmont Colorado Meetup

Discussion article for the meetup : Longmont Colorado Meetup

WHEN: 09 August 2012 07:00:00PM (-0600)

WHERE: Luna Cafe, 800 Coffman St, Longmont, CO 80501

Trying a new location, as an experiment.

All Denver and Boulder Less Wrongers are welcome to join the Fort Collins group for coffee, conversation, and a walk in the park.

Discussion article for the meetup : Longmont Colorado Meetup |

9ad6c1ee-2db9-42c5-9c40-11912679ad5f | trentmkelly/LessWrong-43k | LessWrong | Defrag conference scholarships

http://www.defragcon.com/2011/general/defrag-announcements/

Eric Nolin of the Defrag conference is looking to organize a scholarship fund for high school girls who want to study computer science in university.

Till that's in place, they're funding scholarships for people to attend the conference.

|

4ba6e442-1595-4683-bcee-c17167ad22fe | trentmkelly/LessWrong-43k | LessWrong | Universal Eudaimonia

The AI is here. And it's Friendly. But due to moral uncertainty, its value function prohibits any major intervention in existing human societies.

The AI's nanobots get to work. They replace the South Pacific garbage patch with a new continent--Eudaimonia, a rat park for humans. All basic needs are met, but if you want more money, you can gamble at a positive-expected-value casino or run through a bonus area where $100 bills flutter down from the sky. Immortality drugs are under development. The AI nanoprints RollerCoaster Tycoon save files on demand. Cherries are bred to be free of pits. Spaceships full of tourists depart regularly for a new floating city on Venus. And many ignore all this in favor of immersive virtual environments which are far more wondrous.

The AI is concerned. Eudaimonia seems to satisfy many human terminal values. But other terminal values appear to be in conflict. Indeed, some people have a terminal value that others should not be allowed to achieve their terminal values! That doesn't sound like a rat park! It's impossible to please everyone, and although the AI could modify people's preferences to change this, it is far too corrigible for that nonsense.

The AI comes up with a compromise. Once a month, you're given the opportunity to video call someone you have a deep disagreement with. At the end of the call, each of you gets to make a choice regarding whether the other should be allowed in Eudaimonia. But there's a twist: Whatever choice you made for the other person is the choice the AI makes for you.

The plan seems to be working splendidly at first. Legions of forgiving and agreeable people flood into the new continent and enjoy a life of constant bliss. The average resident makes three new deep friendships per month while tripping on psychedelic drugs. What used to be existential conflicts are now ironic sports team rivalries.

But a problem emerges: As the forgiving and agreeable people leave, it is the unforgiving and disagreeable p |

30f98227-89ff-49f7-a610-f6036a47b323 | trentmkelly/LessWrong-43k | LessWrong | This is a draft

You're not supposed to be able to see this. |

f7463c2b-c8d3-4134-9bd7-6bd61fca8b48 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Some Intuitions Around Short AI Timelines Based on Recent Progress

*tldr: I give some informal evidence and intuitions that point toward AGI coming soon. These include thinking about how crazy the last year has been, beliefs from those in major AI labs, and progress on MMLU.*

Intro

=====

This post is intended to be a low-effort reference I can point people to when I say I think there is some evidence for short AI timelines. I might describe the various bits of evidence and intuitions presented here as “intuitions around short AI timelines based on recent progress” (though perhaps there are [better terms](https://www.lesswrong.com/posts/BcYfsi7vmhDvzQGiF/taboo-outside-view)). They are not a thorough model like [Ajeya’s](https://www.lesswrong.com/posts/KrJfoZzpSDpnrv9va/draft-report-on-ai-timelines); insofar as somebody is using multiple models when putting together a timelines estimate, I think it would be unreasonable to place less than 20% or greater than 95% weight on extrapolation from current systems and recent progress.[[1]](#fnust71te8eob)

In the spirit of being informal, you can use whatever definition of AGI you like. I mostly use AGI to refer to something like “an AI system which can do pretty much all the human cognitive tasks as well or better than humans (~99% of tasks people in 2023 do).”

Some evidence

=============

I (Aaron) started following AI and AI existential safety around the beginning of 2022; it’s been a little over a year. Some of that time was my understanding catching up with advances from the past couple years, but there have also been major advances.

*Some major advances since I’ve been paying attention:* [*Chinchilla paper*](https://arxiv.org/abs/2203.15556) *popularized the scaling laws that were already known to some; there was* [*DALL-E*](https://openai.com/product/dall-e-2) *and related stuff, which was cool;* [*CICERO*](https://about.fb.com/news/2022/11/cicero-ai-that-can-collaborate-and-negotiate-with-you/) *happened, which I didn’t follow but which indicates we’re* [*probably*](https://www.lesswrong.com/posts/CPKYuJqLYGpBTtdFd/good-news-everyone) *going to train the AIs to do all the dangerous stuff (see also* [*Auto-GPT*](https://github.com/Torantulino/Auto-GPT) *and* [*Chaos-GPT*](https://www.lesswrong.com/posts/MAgQ8owro57eYFhNu/someone-already-tried-chaos-gpt)*, or GPT-4* [*getting*](https://twitter.com/NPCollapse/status/1639161297806802944?s=20) *plugins within 2 weeks of release, as more recent updates in this saga of indignity);* [*ChatGPT*](https://openai.com/blog/chatgpt) *shows how much more usable models are with RLHF (popularizing methods that have been known for a while);* [*Med-PaLM*](https://arxiv.org/abs/2212.13138) *gets a passing score on the US medical licensing exam (also tons of other PaLM and Flan-PaLM results I haven’t followed but which seem impressive);* [*LLaMA*](https://research.facebook.com/publications/llama-open-and-efficient-foundation-language-models/) *and* [*Alpaca*](https://crfm.stanford.edu/2023/03/13/alpaca.html) *take powerful capabilities from compute-efficient (and over-) training and hand them to the public;* [*GPT-4*](https://openai.com/research/gpt-4) *blows the competition out of the water on many benchmarks.*
*I probably missed a couple of big things (including projects which honorably didn’t publicly push SOTA,* [*1*](https://www.lesswrong.com/posts/vEJAFpatEq4Fa2smp/hooray-for-stepping-out-of-the-limelight)*,* [*2*](https://www.lesswrong.com/posts/xhKr5KtvdJRssMeJ3/anthropic-s-core-views-on-ai-safety)*); the list is probably a bit out of order; I’ve also included things from 2023; but man, that sure is a year of progress.*

I don’t expect there are all that many more years with this much progress before we hit AGI: maybe 3-12 years. More importantly, I think this ~15-month period, especially November 2022 to now, has generated a large amount of hype and investment in AI research and products. We seem to be on a path such that, in every future year before we die, there is more talent+effort+money working on improving AI capabilities than there was in 2022.[[2]](#fni5jqdb6aq7) I hold some hope that major warning shots and/or regulation would change this picture. In fact, I think it’s pretty likely we’ll see warning shots beyond [those](https://www.lesswrong.com/posts/4Gt42jX7RiaNaxCwP/more-information-about-the-dangerous-capability-evaluations#Concrete_example__recruiting_TaskRabbit_worker_to_solve_CAPTCHA) [we](https://deepmindsafetyresearch.medium.com/specification-gaming-the-flip-side-of-ai-ingenuity-c85bdb0deeb4) [have](https://deepmindsafetyresearch.medium.com/goal-misgeneralisation-why-correct-specifications-arent-enough-for-correct-goals-cf96ebc60924) [already](https://www.lesswrong.com/posts/jtoPawEhLNXNxvgTT/bing-chat-is-blatantly-aggressively-misaligned) [seen](https://arxiv.org/abs/2212.09251), but I am not too optimistic about what the response will be. As crazy as 2022 was, we should be prepared for a world that [gets](https://www.lesswrong.com/posts/HBxe6wdjxK239zajf/what-failure-looks-like) [significantly](https://www.lesswrong.com/posts/6Xgy6CAf2jqHhynHL/what-2026-looks-like) [crazier](https://www.lesswrong.com/posts/qRtD4WqKRYEtT5pi3/the-next-decades-might-be-wild). I find it hard to imagine that we could have all that many more years that look like 2022 AI progress-wise, especially with significantly increased interest in AI.

---

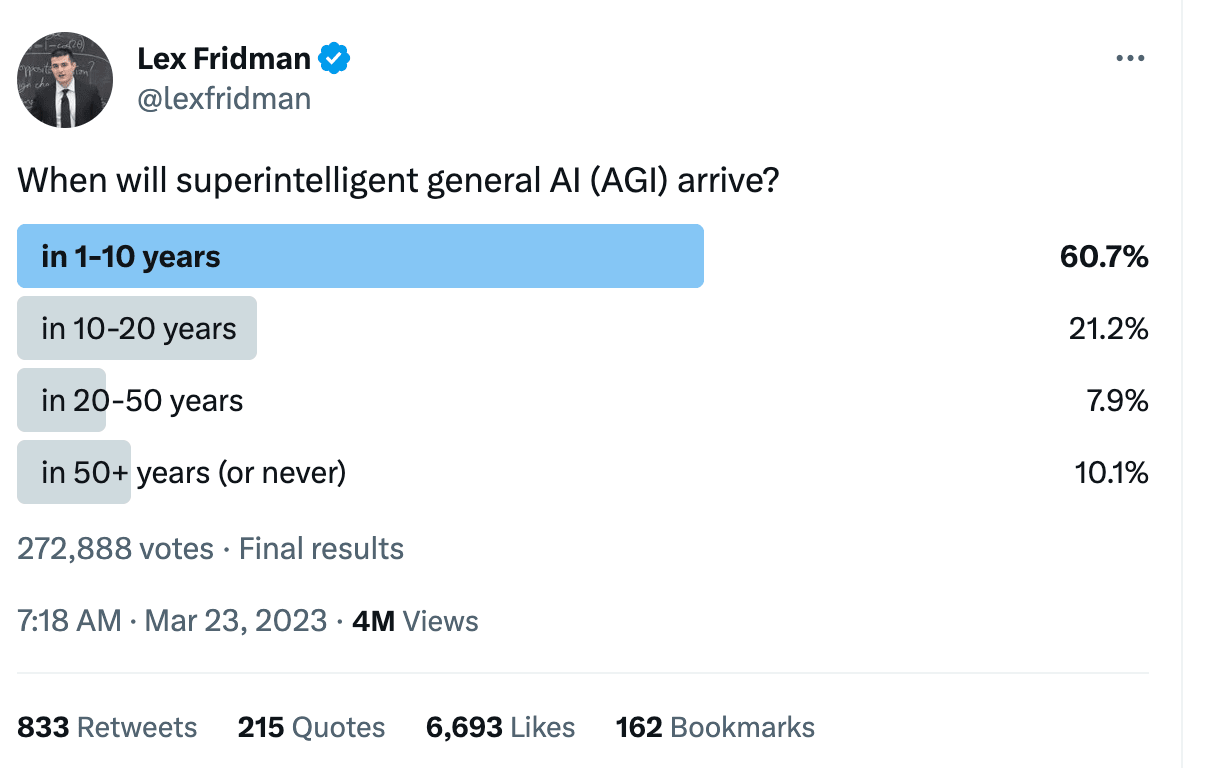

A large share of the public [thinks](https://twitter.com/lexfridman/status/1638908038928293892) AGI is near. I believe these people are mostly just thinking about how good current systems (GPT-4) are and informally extrapolating.

*[image description: A Twitter poll from Lex Fridman where he asks “When will superintelligent general AI (AGI) arrive?” There are 270,000 responses. 61% of respondents answered that it will arrive in 1 to 10 years and 21% of respondents answered that it will arrive in 10-20 years.]*

---

Some [Anthropic staff](https://www.anthropic.com/index/core-views-on-ai-safety) seem to think something-like-AGI could be near:

> Over the next 5 years we might expect around a 1000x increase in the computation used to train the largest models, based on trends in compute cost and spending. If the scaling laws hold, this would result in a capability jump that is significantly larger than the jump from GPT-2 to GPT-3 (or GPT-3 to Claude). At Anthropic, we’re deeply familiar with the capabilities of these systems and a jump that is this much larger feels to many of us like it could result in human-level performance across most tasks… we believe they jointly support a greater than 10% likelihood that we will develop broadly human-level AI systems within the next decade.
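
For a sense of scale, here is the simple compounding arithmetic behind the quoted figure, assuming (as a simplification) steady exponential growth over the 5 years: a 1000x increase works out to roughly 4x more training compute per year, i.e. a doubling about every six months.

$$
g = 1000^{1/5} = 10^{3/5} \approx 3.98 \ \text{per year}, \qquad t_{\text{double}} = \frac{\ln 2}{\ln g} = \frac{\ln 2}{\tfrac{3}{5}\ln 10} \approx 0.50 \ \text{years}
$$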


I have ~0 evidence, but I expect folks at OpenAI are also in the ‘significant probability of AGI before 2030’ boat. Note that the conductors on the train are surely biased[[3]](#fn1i6lwsvnzp5), but they also sure do have better evidence than all the rest of us.

---

AI is [starting](https://www.lesswrong.com/posts/camG6t6SxzfasF42i/a-year-of-ai-increasing-ai-progress) to be used to accelerate AI research.

A group of intuition pumps
==========================

Being in the thick of it can really bias people’s judgment. The format of the following intuition pumps is that I imagine visiting alien civilizations much like Earth, and I try to reason from just one piece of evidence at a time about how long that planet has until AGI.

---

A major tech company there releases a paper about “[sparks of artificial general intelligence](https://arxiv.org/abs/2303.12712)” in a state-of-the-art system. How much longer does this planet have until AGI? I would expect something like 1-8 years.

---

You visit a different planet. People are using coding assistants to speed up their coding, and in the first couple of years of doing this people are able to cut their coding time by [~50%](https://github.blog/2022-09-07-research-quantifying-github-copilots-impact-on-developer-productivity-and-happiness/) (on the higher side of ways to interpret the data). How much longer does this planet have until AGI? Maybe 2-11 years.

---

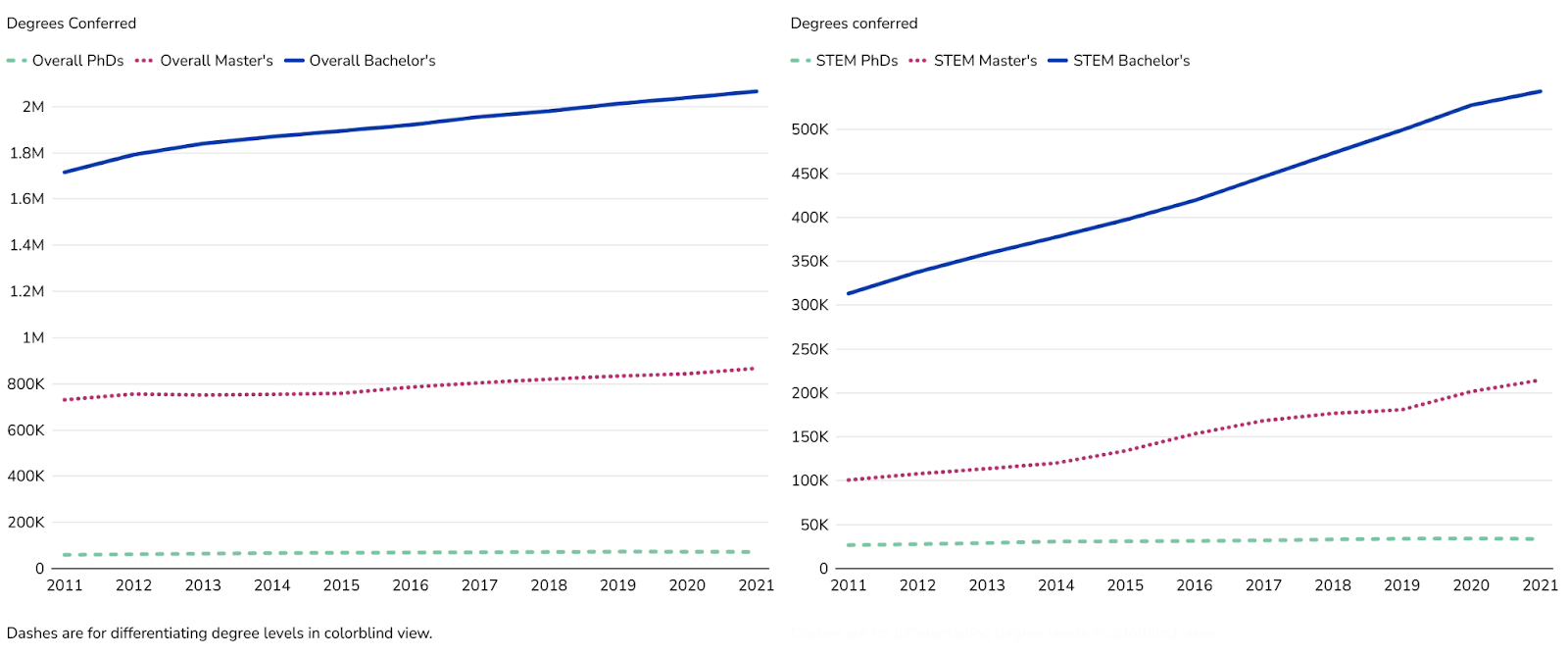

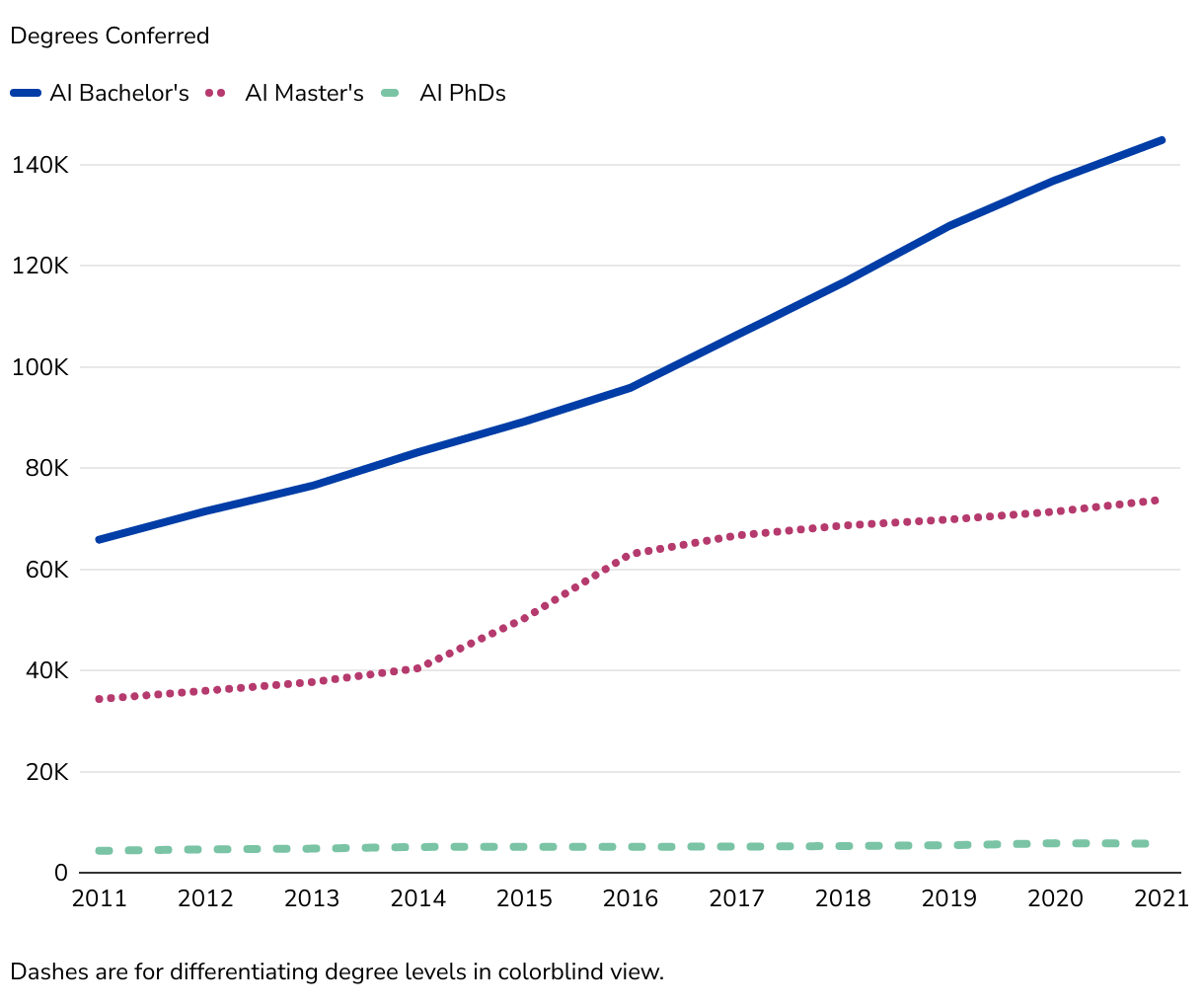

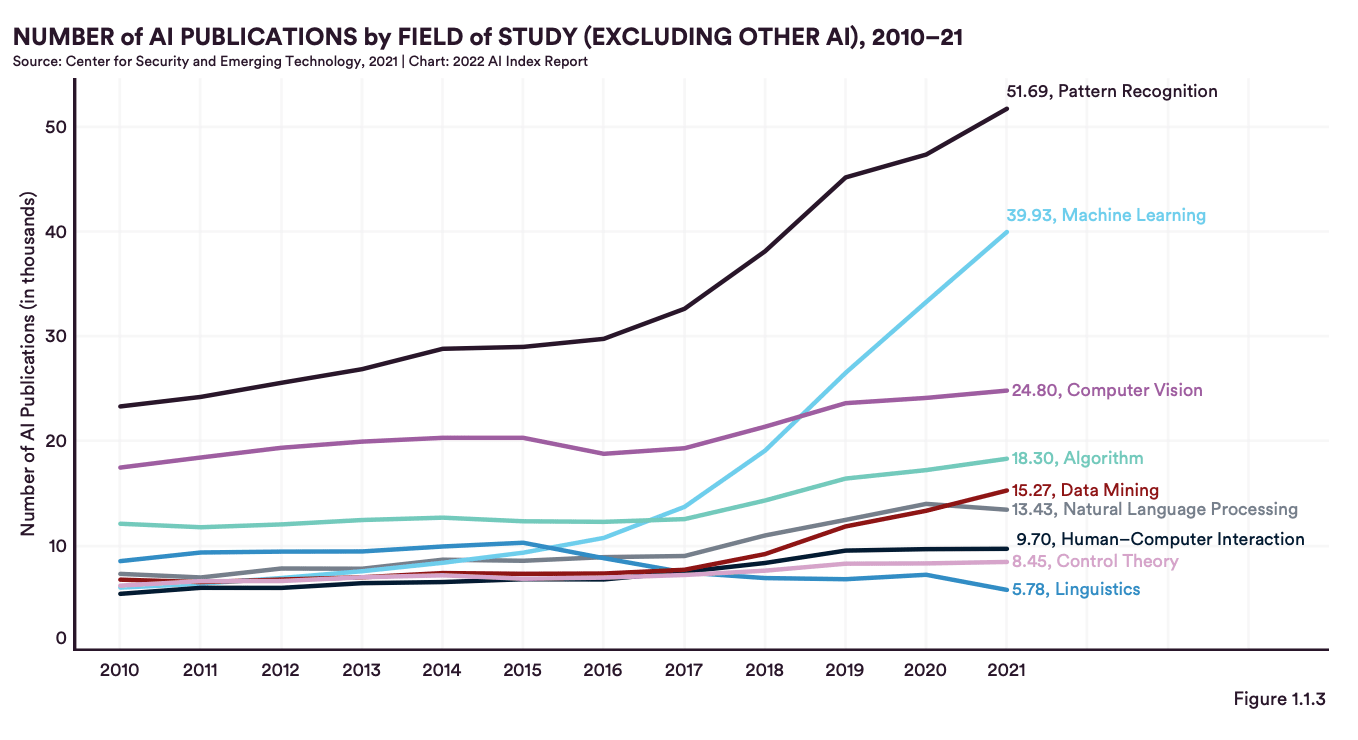

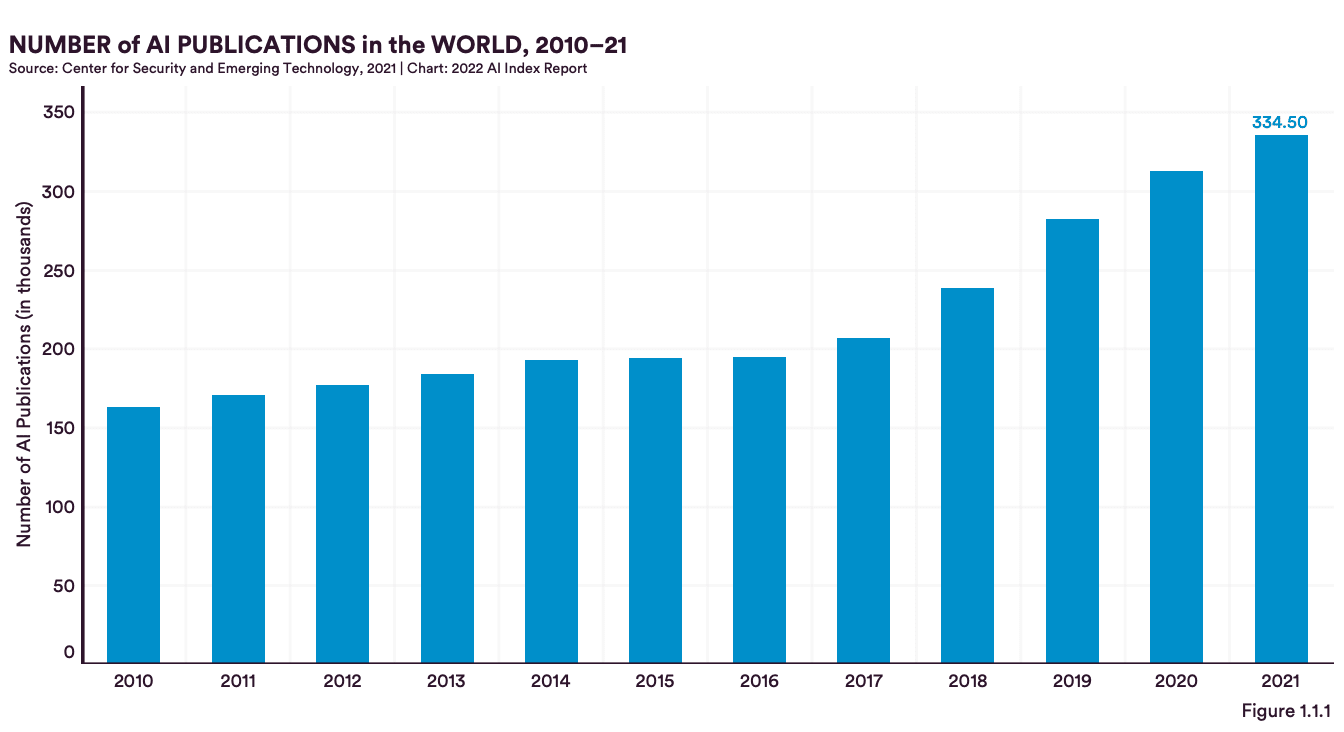

You visit a different planet. You ask somebody there how many young aliens are studying AI and how much AI research is happening each year. They show you the [following](https://cset.georgetown.edu/publication/leading-the-charge-a-look-at-the-top-producing-ai-programs-in-u-s-colleges-and-universities/) [graphs](https://aiindex.stanford.edu/wp-content/uploads/2022/03/2022-AI-Index-Report_Master.pdf#page=19):

*[image description: The first two graphs show the overall number of college degrees and the number of STEM degrees conferred per year from 2011 to 2021. They indicate that for Bachelor’s and Master’s degrees, STEM has become quite popular in the last 10 years: almost twice as many STEM Bachelor’s degrees were conferred in 2021 as in 2011, while the increase across all Bachelor's degrees is only around 20%. The third graph shows the same data but for AI degrees specifically. The number of Master’s degrees in AI conferred approximately doubled over the 10-year period, while AI Bachelor’s degrees more than doubled. PhDs do not seem to have experienced significant growth in graphs 1 through 3. The fourth graph shows the number of AI publications between 2010 and 2021. There are about twice as many publications in 2021 as in 2010; there seems to be an increase in growth rate after 2017, but overall these numbers aren’t too crazy. The last graph shows the number of AI publications broken down by field of study, and we can see that machine learning takes off around 2017, experiencing a significant increase in publishing since then.]*

These trend lines aren’t that crazy. But they sure do indicate an uptick in the number of people working on AI, and in the amount of AI research, on that planet.

---

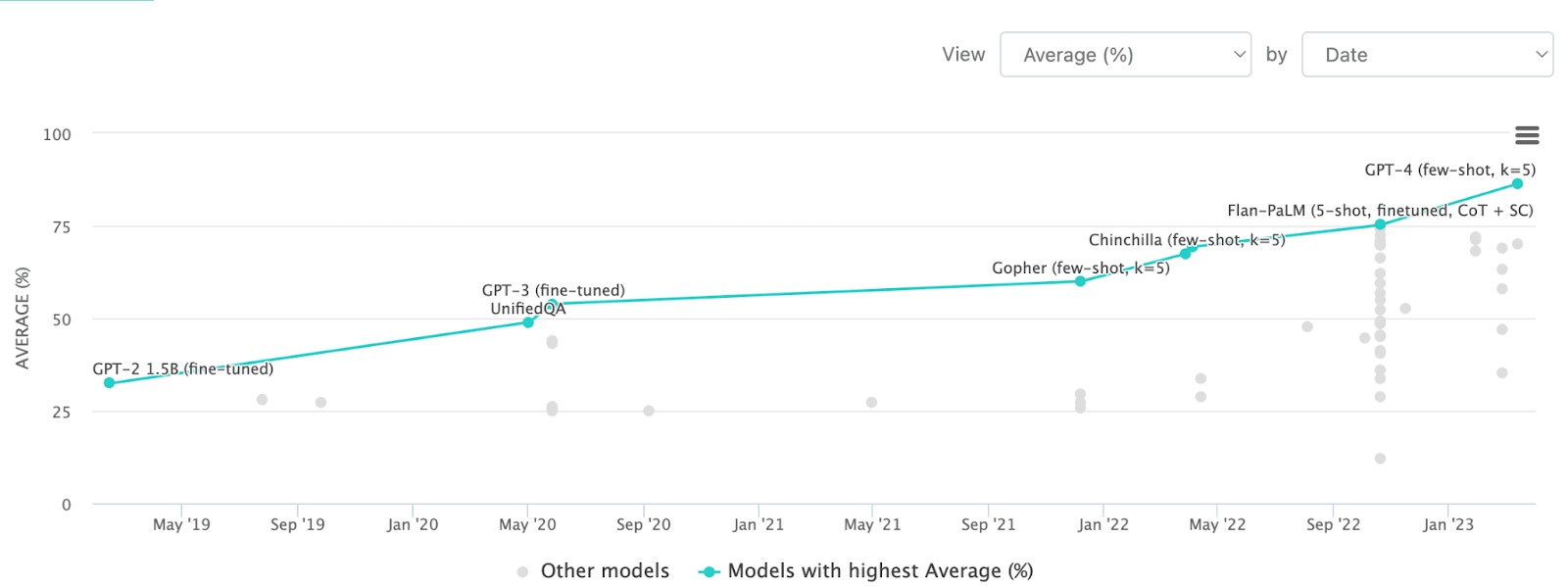

You visit a different planet. You are shown a benchmark used for AIs known as MMLU. It is a large multiple-choice test covering many topics, from high school world history to college physics. Some unspecialized aliens taking [this test](https://arxiv.org/pdf/2009.03300.pdf#page=3) scored around 35%; experts are predicted to score around 90%; you guess that a smart university student alien could probably score around 70% if they spent a few months studying. The graph of SOTA AI performance on this test over the last 4 years is as [follows](https://paperswithcode.com/sota/multi-task-language-understanding-on-mmlu):

*[image description: Data for MMLU performance over time. The first datapoint is GPT-2 from early 2019 which scores 32%. In mid 2020 GPT-3 scores 54%. In early 2022 Chinchilla scores 68%. In late 2022 Flan-PaLM scores 75%. In early 2023 GPT-4 scores 86%.]*

Now, there are some rumors about [dataset contamination](https://cdn.openai.com/papers/gpt-4.pdf#page=32) affecting the most recent record, but the evidence for that isn’t very strong, and it probably doesn’t change the picture much. Eyeballing a trend line through these results would imply that this planet is going to have AIs that outperform expert aliens on most academic cognitive tasks within months to years. [edit: I think passing 90% MMLU is not actually the same as "outperforming experts on most academic cognitive tasks"; it's probably closer to "having above-expert-level knowledge in almost all academic areas".]
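
To make "eyeballing a trend line" a bit more concrete, here is a crude sketch (not the author's method) that fits an ordinary least-squares line to the five data points in the image description above and solves for when that line reaches ~90%, the estimated expert score. Mapping "early/mid/late" to fractional years is an assumption (early = +0.2, mid = +0.5, late = +0.8), and a straight line is a poor model for a score that is capped at 100%, so treat the result as illustrative only.

```python
# Crude illustration only: fit a straight line to the MMLU SOTA points from the
# image description and solve for when the line crosses ~90% (expert level).
# ASSUMPTION: "early/mid/late" are mapped to these fractional-year offsets.
SEASON_OFFSET = {"early": 0.2, "mid": 0.5, "late": 0.8}

# (model, season, year, MMLU accuracy in %), as listed in the figure description
MMLU_SOTA = [
    ("GPT-2", "early", 2019, 32.0),
    ("GPT-3", "mid", 2020, 54.0),
    ("Chinchilla", "early", 2022, 68.0),
    ("Flan-PaLM", "late", 2022, 75.0),
    ("GPT-4", "early", 2023, 86.0),
]


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def year_reaching(target, slope, intercept):
    """Decimal year at which the fitted line crosses `target` percent."""
    return (target - intercept) / slope


years = [year + SEASON_OFFSET[season] for _, season, year, _ in MMLU_SOTA]
scores = [score for *_, score in MMLU_SOTA]

slope, intercept = fit_line(years, scores)
print(f"trend: ~{slope:.1f} points per year")
print(f"line crosses 90% around {year_reaching(90.0, slope, intercept):.1f}")
```

With these assumed dates the slope comes out at roughly 12 points per year and the line crosses 90% around late 2023; changing the date convention moves the crossing point somewhat.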

Conclusion
==========

I think there are some pretty compelling reasons to expect AGI soon, for most reasonable definitions of AGI. A number of things have happened in the world recently which, if I were a neutral observer, I think would make me expect AGI within a single-digit number of years. While I don’t like how vague/informal/ass-number these “intuitions around [short] AI timelines based on recent progress” are, the alternative might be that we all fall over dead while staring at a graph that says we’re 5 OOMs of compute short. I’m interested in feedback on this post; see this footnote for details.[[4]](#fncpybuqdpwcl)

Footnotes:

1. **[^](#fnrefust71te8eob)**It seems unreasonable not to put at least 20% weight on looking at current systems when projecting future progress (see also Jacob Steinhardt’s [discussion](https://bounded-regret.ghost.io/which-anchors-do-i-use/) of how he weights different anchors when thinking about what future AI systems will be like), but by no means do you have to use the ideas in this post; if you have a nice model for AI timelines that easily incorporates recent progress, please let me know! Unfortunately, it seems to me that AI timeline estimates are often divorced from current systems, for example Ajeya’s [report](https://www.lesswrong.com/posts/KrJfoZzpSDpnrv9va/draft-report-on-ai-timelines) and Tom’s [report](https://www.openphilanthropy.org/research/report-on-semi-informative-priors/). I appreciate Matthew putting together a [framework](https://www.lesswrong.com/posts/4ufbirCCLsFiscWuY/a-proposed-method-for-forecasting-transformative-ai) that can more easily update on recent advances. I have generally felt pretty confused about how to update on advances in AI, in part due to some kind of [measurability bias](https://en.wikipedia.org/wiki/McNamara_fallacy) where the [AI timeline models](https://www.lesswrong.com/posts/4eAnBaLxvnkydiavw/literature-review-of-tai-timelines) which have been really fleshed out don’t seem to easily allow updating on the performance of current systems. Part of the reason for this post is to publicly give myself permission to update on the things I see when I open my eyes, even though I don’t have a nice model to put these observations in.

2. **[^](#fnrefi5jqdb6aq7)**Hopefully we don't die. When we're safe I will happily rewrite this sentence to say "in every future year before we exit the acute risk period".

3. **[^](#fnref1i6lwsvnzp5)**I would expect those inside AI labs to be somewhat biased toward shorter timelines because of: an insular social environment focused on AGI; wanting to think their work is important; the selection effect of choosing to work on AGI because they think it's feasible for them to make it; interest in generating hype for the sake of financial investment; and probably more.

4. **[^](#fnrefcpybuqdpwcl)**Types of feedback I would be interested in:

- If you think any of the evidence/arguments presented actually point in a different direction than I say, let me know!

- If you think there are major pieces of evidence from this “intuitions around [short] AI timelines based on recent progress” perspective that I did not include, including evidence from recent advances that points toward longer timelines, drop a comment! I’m less interested in evidence from other approaches given that this post is trying to build a specific model to then be mixed with other models.

- If you have nice models/frameworks for thinking about AI timelines that allow for updating on recent progress, let me know!

- If you really think this post would benefit from being fleshed out or cleaned up (I’m pretty averse to this because I don’t think it’s worth the time)!

- If you think one should put less than 20% of their timeline thinking weight on recent progress, I would be interested in hearing why.

- If you think I’m seriously messing up somewhere, let me know!

- I’m not very interested in debating the definition of AGI unless you think it really matters for informal thoughts like these. |

8f94dbce-a71f-4c1a-9b01-e651c54ca502 | trentmkelly/LessWrong-43k | LessWrong | My Updating Thoughts on AI policy

My conversation on policy and AI with Richard was over a year ago, so for Daniel Kokotajlo's LW writing day I thought I’d write down new thoughts I’ve had since then (warning: it's pretty rambly). I've structured the post around what I did that led to my updated thoughts.

1) I read many posts on strategy

I’ve thought a lot about Paul’s post What Failure Looks Like, which lays out how abstract technical problems turn into practical catastrophes. It is a central example of what (I think) strategy work looks like. The strategic considerations are deeply tied up with the specifics of the technology.