id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

556d5698-8ad1-4d5a-a999-80d5d9e70bbd | trentmkelly/LessWrong-43k | LessWrong | Normie response to Normie AI Safety Skepticism

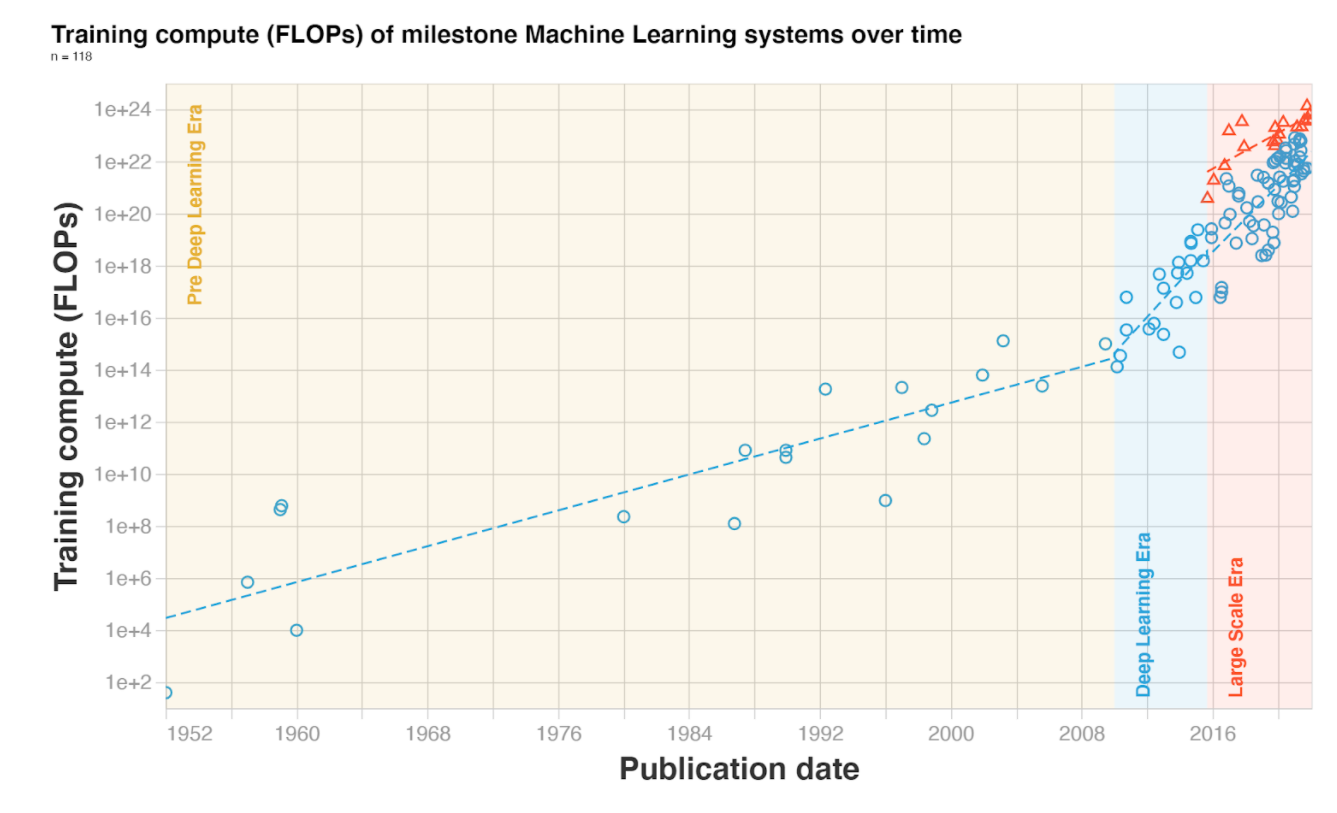

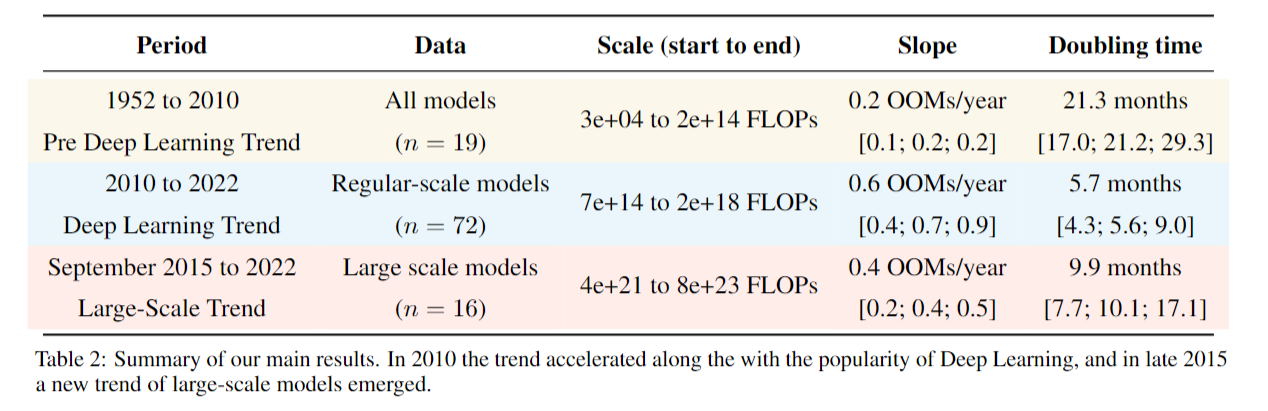

The following post is an adaptation of a comment I posted on the Machine Learning subreddit in response to a post asking whether readers worried about AI Safety/Alignment similarly to Eliezer Yudkowsky (EY). My general impression is that most commenters had not dedicated much thought to AI Safety (AIS), like most normal people. I personally wouldn't qualify myself as particularly well-read on the topic either, hence the title. As I mention towards the end of the article, I think there is "alpha" in discussions happening between us normies of the "out-group", so I went ahead and posted that comment and this post for amplification.

It seems like many commenters mostly have issues with the "timelines", i.e., they do not think these risks will happen soon enough for us to worry about them now. I think this is a fair enough stance: there is a lot of uncertainty around forecasting the future of A(G)I developments.

However, I would point out that they should consider that we may all be suffering from exponential growth bias. If indeed AGI is very far away, then great, we indeed have more time to develop it safely (although I'd be sad to get to live in such a cool future later rather than sooner). But there is a real chance it isn't, and I do think we need to be cautious of our confidence in the former case, considering the gravity of the consequences superintelligence may bring. Basically what I'm saying is "better safe than sorry", similarly to how we shouldn't dismiss nuclear safety or epidemiology, and shouldn't have dismissed climate alarmists in the 20th century.

The other skepticism I tend to see is that there's no point working on aligning AI, because even with aligned AI, it will be abused by corporations and governments, just like our narrower systems are being abused[1] today. I should point out that the field of AI Safety also considers this problem and posits that solutions to it are also necessary to address safety, similarly to how we coordinate for other se |

90023726-dc6a-42b6-9ccb-3c547c458557 | trentmkelly/LessWrong-43k | LessWrong | Blood Is Thicker Than Water 🐬

Followup to: Where to Draw the Boundaries?

Without denying the obvious similarities that motivated the initial categorization {salmon, guppies, sharks, dolphins, trout, ...}, there is more structure in the world: to maximize the probability your world-model assigns to your observations of dolphins, you need to take into consideration the many aspects of reality in which the grouping {monkeys, squirrels, dolphins, horses ...} makes more sense.

The old category might have been "good enough" for the purposes of the sailors of yore, but as humanity has learned more, as our model of Thingspace has expanded with more dimensions and more details, we can see the ways in which the original map failed to carve reality at the joints ...

So the one comes to you—a-gain—and says:

> Hold on. In what sense did the original map fail to carve reality at the joints? You don't deny the obvious similarities between dolphins and fish—between dolphins and other fish. That's a cluster in configuration space! The observation that dolphins are evolutionarily related to mammals may be an interesting fact that specialized professional evolutionary biologists care about for some inscrutable specialist reason. But I'm not a professional biologist. Choosing to define categories around evolutionary relatedness rather than macroscopic human-relevant features seems like an arbitrary æsthetic whim. Why should I care about phylogenetics, at all?

This one is going to take a few paragraphs.

Focusing on evolutionary relatedness is not an arbitrary æsthetic whim because evolution actually happened. Evolution isn't just a story that our Society's specialists happen to have chosen because they liked it; they chose it because it predicts what we see in the world. You can't choose a substantively different theory and make the same predictions about the real world. (At most, you'd end up with an isomorphic theory with additional epiphenomenal elements, asserting that an allele rose in frequency "because" |

a0dffbaa-26a9-4f33-b17a-1d402ecd48bf | trentmkelly/LessWrong-43k | LessWrong | What should I teach to my future daughter?

Soon I will have a baby girl, and I have been thinking that the world is changing very fast. I want to give her some foundations for learning to deal with this civilisation, from a practical point of view. I was wondering if I should teach her a programming language. What skills does she need for what is coming? Of course, I will also do my best to teach her ethics, compassion and other values, but I am talking about the hands-on skills that the new generations need to develop. |

0dd49838-15ed-4062-afb1-ad7c4bb7cea7 | trentmkelly/LessWrong-43k | LessWrong | [To Be Revised]Perhaps the Meaning of Life, An Adventure in Pluralistic Morality

Perhaps the Meaning of Life

An Adventure in Pluralistic Morality.

Sept. 18, 2002

"All models are wrong but some are useful."

--George Box

At the core of every controversial issue, institutional dysfunction and inspiring human achievement is the conspicuous influence of how well a society of people “get along”. Splitting this atom exposes the sub-particles of human nature(~proton), moral agency(~electron) and the electromagnetic tension of choice around individual belief(~neutron). The following model is an ongoing effort to assemble and refine an understanding of this dynamic.

The first figure, entitled Moral Transitions, indicates the individual’s struggle against the less appealing aspects of human nature. The second, Relational Harmonics, represents the near constant challenge of building and maintaining character. The third figure, Personal Growth, indicates the not necessarily linear stages that can occur over a lifetime.

----------------------------------------


Footnotes

a

Personal Growth (intellectual, moral, spiritual)

"There are of course many gradations within and between the four stages of spiritual development. [emphasis added]

...

My experience suggests that this progression of spiritual development holds true in all cultures and for all religions.

...

Again in my experience, the four stages of spiritual development also represent a paradigm for healthy psychological development”

--M. Scott Peck, The Different Drum, Chapter IX, The Stages Of Spiritual Growth, 1987

[A flawed but useful book]

b

"The world judges many things; for it is the ignorance of its natural state that forms the true tribunal of man. Knowledge has two extremes which meet; the one is that pure natural ignorance, in which all are born; the other is that which is experienced by minds of the highest order. After traversing the whole circl |

242e4355-fbbf-447e-a98f-24992462fc6e | trentmkelly/LessWrong-43k | LessWrong | The Limits of Curiosity

In principle, I agree with the notion that it is unforgivable to not want to know, and not want to improve your map to match the territory. However, even the most curious person in the world cannot maintain equal curiosity about all things, and even if they could there are limits on time and energy. In general, the things that inspire curiosity are determined by your personal likes, dislikes, and biases, and it is therefore worth considering carefully where these demarcations fall so as not to deprive ourselves of useful information. This is particularly important when it comes to things that inspire not just lack of interest, but aversion, or "anti-curiosity."

However, not all information is useful, and it can be useful to encourage a bias that cuts you off from information that is not particularly useful to you, so as to better allocate your time and energy. It is possible that it could also be useful to fabricate an "I don't want to know" stance about a certain type of information so as to better allocate your time, (for example, ceasing to watch television, and denying curiosity about what is happening on your favorite shows), but I will not discuss or advocate that here, largely because it's all I can do to hold the line against new time wasters.

The difficulty and danger of this method is that it is best accomplished by not thinking about the things you don't want to be curious about, and that can lead to not even realizing you aren't curious about them, so important things may slip through the cracks. For example, I have never smoked a cigarette, and it requires no effort on my part to not be curious about what it is like. That is such a deeply buried aversion that I might never have consciously noticed that lack of curiosity if I had not been writing this article. In this case, lack of curiosity about smoking is beneficial, but it could just as easily have been something that would be useful for me to be curious about, and I might never have noticed |

720b23af-285e-470b-bd8a-a662215e98ed | trentmkelly/LessWrong-43k | LessWrong | Open Thread - July 2023

If it’s worth saying, but not worth its own post, here's a place to put it.

If you are new to LessWrong, here's the place to introduce yourself. Personal stories, anecdotes, or just general comments on how you found us and what you hope to get from the site and community are invited. This is also the place to discuss feature requests and other ideas you have for the site, if you don't want to write a full top-level post.

If you're new to the community, you can start reading the Highlights from the Sequences, a collection of posts about the core ideas of LessWrong.

If you want to explore the community more, I recommend reading the Library, checking recent Curated posts, seeing if there are any meetups in your area, and checking out the Getting Started section of the LessWrong FAQ. If you want to orient to the content on the site, you can also check out the Concepts section.

The Open Thread tag is here. The Open Thread sequence is here. |

9f2207e0-0b13-4b93-ab74-4e1e395995c2 | trentmkelly/LessWrong-43k | LessWrong | Being useless to show effectiveness

From an email discussion (lightly edited):

> I actually think an important related dynamic, in the world at large more than EA, is people favoring actions that are verifiably useless to themselves over ones that are probably useful to others but also maybe useful to themselves. I blogged about this here a while ago. In short, I see this as a signaling problem. The undesirable action (destroying resources in an evidently useless way) is intended to signal that you are not bad. Bad people (greedy exploiters trying to steal everyone else’s stuff) can make themselves look just like effective good people (both do things that look high leverage and where it is not totally clear what the levers are ultimately pushing). So the bad people do that, because it beats looking bad. Then there is no signal that the effective good people can send to distinguish themselves from the bad people. So people who want to not look bad have to look ineffective instead.

>

> Here is a way something like this might happen in our vicinity: suppose I genuinely guess that the most effective thing to do might be for me to buy a delicious drink and then sit still in a comfy place for the day and think about human coordination in the abstract. However, this is much like what a selfish version of me might do. So if I want to not humiliate myself by seeming like a cheating free-rider liar motivated reasoner in front of the other EAs, or perhaps if I just experience too much doubt about my own motives, or even if I just want to make it straightforward for others around to know they can trust me, perhaps I should instead work for a reputable EA org or earn money in an annoying way and give it to someone far away from me.

>

> On this model, the situation would be improved by a way to demonstrate that one is effective-good rather than effective-evil. (As in, a second sense in which it is a signaling problem is that adding a good way to signal would make it better).

|

1810b754-bdec-48a8-b983-a0fb6861de16 | trentmkelly/LessWrong-43k | LessWrong | Tarski Statements as Rationalist Exercise

Related to: Dissolving the Question, The Second Law of Thermodynamics, and Engines of Cognition, The Meditation on Curiosity.

> The sentence "snow is white" is true if, and only if, snow is white.

-- A. Tarski

Several days ago I spent a couple of hours trying to teach my 15-year-old brother how to properly construct Tarski statements. It's quite nontrivial to get right. Learning to place facts and representations in separate mental buckets is one of the fundamental tools for a rationalist. In our model of the world, information propagates from object to object, from mind to mind. To ascertain the validity of your belief, you need to research the whole network of factors that led you to attain the belief. The simplest relation is between a fact and its representation, idealized to represent correctness or incorrectness only, without yet worrying about probabilities. The same object or the same property can be interpreted to mean different things in different relations and contexts, indicating the truth of one statement or another, and it's important not to conflate those.

Let's say you are watching news on TV and the next item is an interview with a sasquatch. The sasquatch answers the questions about his family in decent English, with a slight British accent.

What do you actually observe, how should you interpret the data? Did you "see a sasquatch"? Did you learn the facts about sasquatch's family? Is there a fact of the matter, as to whether the sasquatch's daughter is 5 years old, as opposed to 4 or 6?

Meaningfulness of these questions is conditional on their context, like in the notorious "when did you stop beating your wife?". These examples seem unnaturally convoluted, but in fact every statement suffers from the same problem: you must cross the levels of indirection and not lose track of the question in order to go from a statement of fact, from a belief in your mind, to the fact that belief is about. First, you must relate "sasquatch" on the TV s |

2a97dc26-adf8-4f76-9303-b963bd069522 | trentmkelly/LessWrong-43k | LessWrong | Idiohobbies

When you get to know someone, you might ask about their interests or hobbies. From that, you can better decide what activity to invite them to join, or on what topic to have them converse, whenever you meet again.

Any interest or hobby appeals to variously many people. If you have the same interest as the person you meet, the commonality informs you much better about how to connect with them. Ceteris paribus, if the interest is more common, you and your interlocutor are more likely to share it, but would bond by it more weakly. Think, for example, of how two extreme ironers connect, versus two people who "like Linux", versus two people who just "like music". If, as typical, you befriend people by overlap of existing hobbies, meeting thoroughly-relatable people relies on much luck, rather than effort. We can do better.

Instead, make or do something unique that lasts, or that at least has effects and evidence that last. Ideally, your creation manifests your thoughts, as from a collection of essays. You and/or someone you meet — whoever has social effort to spend — looks at what the other did. From what you alone made follows a unique hobby — an idiohobby — of any activities which could precede and follow it. One would assume you're enthusiastic about any significant aspect of what you alone made.

If you treat idiohobbies like normal hobbies, you'd need to have made the same thing as the person you meet. The real criterion is a lower bar. You just have to become familiar with what the other person made, and willing to partake in its implications.

A normal person might ask "what do you like to do? Might some of that also be what I like?". I, in search of idiohobbies, will ask "what have you done by which I may know you?". |

cf94ad09-6db8-41cb-a6e0-ecf6b330274e | trentmkelly/LessWrong-43k | LessWrong | Oracles, sequence predictors, and self-confirming predictions

My counterfactual Oracle design uses a utility function/reward function in order to train it to give the right predictions. Paul Christiano asked whether the whole utility function approach was necessary, and whether it could be replaced with a simple sequence predictor.

It turns out the answers are no, and yes. The counterfactual nature of the approach can be preserved, without needing a utility function.

Counterfactual sequence prediction

There is a boxed Oracle, $O$, which gets fed a series of observations $o_0, o_1, \ldots, o_{n-1}$, and must make a prediction $p_n$ about the next observation.

If the prediction was sent out into the world, then $O$ is attempting to make $p_n$ into a self-confirming prediction, with all the problems that this could entail.

However, we make $O$ into a counterfactual Oracle; on some occasions, the output $p_n$ is erased, and not seen by anyone. In that case, the Oracle will get a special message $e$ (for "erasure"), that will be appended after $o_{n-1}$.

Then the job of the counterfactual Oracle is, given a history $h_{n-1} = o_0 p_1 o_1 \ldots p_{n-1} o_{n-1}$ (including past "$e$"s), to produce a prediction $p_n$ that is the best prediction for $o_n$ given the history $h_{n-1} e$. In words, the Oracle is trying to best predict the next observation, given that erasure is assumed to happen (the definition of "best" depends on the details of the design).

If our setup is well designed and there is no information leakage, then any $o_n$ has a probability that is independent of the prediction $p_n$:

* $\forall o_n, h_{n-1}, p_n, p'_n : P(o_n \mid h_{n-1} e p_n) = P(o_n \mid h_{n-1} e p'_n)$.
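
As a rough illustration of the erasure idea above, here is a minimal toy sketch in Python. Everything in it is an assumption made for illustration and not part of the original design: the binary observations, the 0.3 base rate, the 0.2 erasure probability, and the names `run_episode` and `estimate_counterfactual_rate`. It only aims to show why statistics gathered on erased episodes are unaffected by the prediction, while unerased episodes can be self-confirming.

```python
import random

BASE_RATE = 0.3  # hypothetical chance that the observation is 1 when nobody sees the prediction


def run_episode(prediction, erase_prob, rng):
    """Simulate one round of a toy world; returns (erased, observation)."""
    erased = rng.random() < erase_prob
    if erased:
        # The prediction is never read, so the outcome cannot depend on it.
        observation = 1 if rng.random() < BASE_RATE else 0
    else:
        # The prediction leaks into the world and (in this toy) confirms itself.
        observation = prediction
    return erased, observation


def estimate_counterfactual_rate(episodes, erase_prob=0.2, seed=0):
    """Estimate P(observation = 1) using erased episodes only."""
    rng = random.Random(seed)
    ones = total = 0
    for _ in range(episodes):
        prediction = rng.randint(0, 1)  # stand-in for whatever the Oracle outputs
        erased, observation = run_episode(prediction, erase_prob, rng)
        if erased:  # only erased episodes are used for scoring
            ones += observation
            total += 1
    return ones / total


rate = estimate_counterfactual_rate(20000)
```

In this toy world the erased-only estimate stays close to the assumed base rate, whereas counting every episode would mix in outcomes that merely echo the prediction.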

Meaning of predictions

If we ran the oracle without the assumption that e would be observed, we'd have the standard self-confirming predictor (that being the only correct predictions); with that assumption, it becomes a counterfactual predictor.

Though counterfactuals are conceptually tricky, it's actually easier to understand what a counterfactual predictor is doing than a general sequence predictor Oracle. We understand that a general sequence predic |

21d57e34-0b29-4428-a775-e0495e0c540d | trentmkelly/LessWrong-43k | LessWrong | Polling Thread

This is an experiment to use polls to tap into the crowd knowledge probably present on LW.

This is your chance to ask the multiple-choice question you always wanted to throw in. Get qualified numeric feedback to your comments. Post fun polls.

There are some rules:

1. Each poll goes into its own top-level comment and may be commented on there.

2. You must at least vote in all polls that were posted earlier than your own. This ensures participation in all polls and also limits the total number of polls. You may of course vote without posting a poll.

3. Your poll should include a 'don't know' option (to avoid conflict with 2). I don't know whether we need to add a troll catch option here but we will see.

If you don't know how to make a poll in a comment look at the Poll Markup Help.

----------------------------------------

This being an experiment I do not announce it to be regular. If it is successful I may. Or you may. In that case I recommend the following to make this potentially more usable:

* Use "Polling Thread" in the title.

* Copy the rules.

* Add the tag "poll".

* Link to this Thread or a previous Thread.

* Create a top-level comment saying 'Discussion of this thread goes here; all other top-level comments should be polls or similar'

* Add a second top-level comment with an initial poll to start participation.

EDIT: Added recommendations from KnaveOfAllTrades. |

ce947bc1-6fff-4f6a-9a30-defcbfa1569c | trentmkelly/LessWrong-43k | LessWrong | Overcoming bias guy meme | quickmeme

http://www.quickmeme.com/Overcoming-bias-guy/popular/1/?upcoming

|

c06b095c-3bcc-4461-b556-f3e845aa7415 | trentmkelly/LessWrong-43k | LessWrong | Weekly LW Meetups

This summary was posted to LW Main on November 6th. The following week's summary is here.

Irregularly scheduled Less Wrong meetups are taking place in:

* San Antonio Meetup: 08 November 2015 12:30PM

* San Francisco Meetup: Fun and Games: 09 November 2015 06:15PM

* Warsaw November Meetup: 14 November 2015 04:00PM

The remaining meetups take place in cities with regular scheduling, but involve a change in time or location, special meeting content, or simply a helpful reminder about the meetup:

* Austin, TX - Flightpath Coffee House: 07 November 2015 01:30PM

* [Moscow] FallacyMania game in Kocherga club: 25 November 2015 07:30PM

* NH Meet-up: 10 November 2015 07:00PM

* NYC Solstice: 19 November 2015 06:30PM

* Seattle Rationality Reading Group: 109-114: 09 November 2015 06:30PM

* Tel Aviv: Black Holes after Jacob Bekenstein: 24 November 2015 08:00AM

* Vienna: 21 November 2015 04:00PM

* [Vienna] Five Worlds Collide - Vienna: 04 December 2015 08:00PM

* Washington, D.C.: Book Swap: 08 November 2015 03:00PM

Locations with regularly scheduled meetups: Austin, Berkeley, Berlin, Boston, Brussels, Buffalo, Canberra, Columbus, Denver, London, Madison WI, Melbourne, Moscow, Mountain View, New Hampshire, New York, Philadelphia, Research Triangle NC, Seattle, Sydney, Tel Aviv, Toronto, Vienna, Washington DC, and West Los Angeles. There's also a 24/7 online study hall for coworking LWers and a Slack channel for daily discussion and online meetups on Sunday night US time.

If you'd like to talk with other LW-ers face to face, and there is no meetup in your area, consider starting your own meetup; it's easy (more resources here). Check one out, stretch your rationality skills, build community, and have fun!

In addition to the handy sidebar of upcoming meetups, a meetup overview is posted on the front page every Friday. These are an attempt to collect information on all the meetups happening in upcoming weeks. The best way to get your meetup featured is still to use |

a2e89f64-f20d-4d0a-88b5-420763b1872e | trentmkelly/LessWrong-43k | LessWrong | [Link] Learning how to exert self-control

Here's a link to a short op-ed about some tips to develop self-control. The author got them from talking with Walter Mischel, a researcher who correlated impulsiveness as a child (measured by ability to delay eating sweets) with various metrics as an adult (educational attainment/cocaine use/weight). Mischel has a new book coming out, but this is not a review of the book. I thought this might be of interest because it talks a little about how self-control is a skill that can be developed and even gives some specific things to do.

1. If possible remove unhelpful triggers from your environment. If not possible, try to reduce the emotional appeal of the trigger by mentally associating it with something unpleasant. One example he gives is imagining a cockroach crawling on the chocolate mousse that a server at a restaurant offers.

2. Develop specific if-then plans such as "if it is before noon, I won't check email" or "If I feel angry, I will count backward from ten." The goal of these kinds of checks is to introduce a delay between impulse and action during which you are reminded of your goal and have a chance to consider the impact of following the impulse on that goal.

3. Link the behavior that you want to modify to a "burning goal" so that you have emotional impetus to actually make the desired change. |

8c9c5363-a89f-41a6-8702-44509e0f52b5 | trentmkelly/LessWrong-43k | LessWrong | STRUCTURE: Reality and rational best practice

This post is part of my Hazardous Guide To Rationality. I don't expect this to be new or exciting to frequent LW people, and I would super appreciate comments and feedback in light of intents for the sequence, as outlined in the above link. Also, note this is a STRUCTURE post, again see the above link for what that means.

* The Simple Truth

* The shifting sands of belief

* Updating as the winds of evidence shift, not in begrudging jumps and jerks

* Why you don't need "certainty"

* ... and why it feels like you totally do need it.

* 0 and 1 aren't probabilities

* Fallacy of the grey

* Make your beliefs pay rent

* Reductionism

* Pole Vaulting over the Uncanny Valley of Bad Rationality

* Crash course in VNM rational agents (and why you aren't one)

* Rescuing the Utility function

* More from a "how not to personally fall into an existential funk" perspective. |

525d7818-8d95-41cc-af1a-7673f1e03488 | trentmkelly/LessWrong-43k | LessWrong | Cooperative conversational threading

(Cross-posted from my blog.)

Sometimes at LW meetups, I'll want to raise a topic for discussion. But we're currently already talking about something, so I'll wait for a lull in the current conversation. But it feels like the duration of lull needed before I can bring up something totally unrelated, is longer than the duration of lull before someone else will bring up something marginally related. And so we can go for a long time, with the topic frequently changing incidentally, but without me ever having a chance to change it deliberately.

Which is fine. I shouldn't expect people to want to talk about something just because I want to talk about it, and it's not as if I find the actual conversation boring. But it's not necessarily optimal. People might in fact want to talk about the same thing as me, and following the path of least resistance in a conversation is unlikely to result in the best possible conversation.

At the last meetup I had two topics that I wanted to raise, and realized that I had no way of raising them, which was a third topic worth raising. So when an interruption occurred in the middle of someone's thought - a new person arrived, and we did the "hi, welcome, join us" thing - I jumped in. "Before you start again, I have three things I'd like to talk about at some point, but not now. Carry on." Then he started again, and when that topic was reasonably well-trodden, he prompted me to transition.

Then someone else said that he also had two things he wanted to talk about, and could I just list my topics and then he'd list his? (It turns out that no I couldn't. You can't dangle an interesting train of thought in front of the London LW group and expect them not to follow it. But we did manage to initially discuss them only briefly.)

This worked pretty well. Someone more conversationally assertive than me might have been able to take advantage of a less solid interruption than the one I used. Someone less assertive might not have been able to use tha |

ed37311d-435d-4072-8e27-cf9d6a51645b | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Toy Problem: Detective Story Alignment

Suppose I train some simple unsupervised topic model (e.g. [LDA](https://en.wikipedia.org/wiki/Latent_Dirichlet_allocation)) on a bunch of books. I look through the topics it learns, and find one corresponding to detective stories. The problem: I would like to use the identified detective-story cluster to generate detective stories from GPT.

The hard part: I would like to do this in such a way that the precision of the notion of detective-stories used by the final system is not limited by the original simple model.

Here’s what that means, visually. The space of real-world books has some clusters in it:

One of those clusters is the detective-story cluster. The simple model approximates those clusters using something simple - for the sake of visualization, ellipses:

The more complex model (e.g. GPT) presumably has a much more precise approximation of the shape of the clusters:

So, we’d like to use the simple model to identify one of the clusters, but then still use the full power of the complex model to sample from that cluster.

Of course, GPT may not contain a single variable corresponding to a cluster-id, which is largely what makes the problem interesting. GPT may not internally use a notion of “cluster” at all. However, the GPT model *should* still contain something (approximately) isomorphic to the original cluster, since that real pattern is still in the data/environment: since there is a real cluster of "detective stories" in the data/environment itself, the GPT model should also contain that cluster, to the extent that the GPT model matches the data/environment.

In particular, the “precision not limited by original model” requirement rules out the obvious strategy of generating random samples from GPT and selecting those which the simple model labels as detective-stories. If we do that, then we’ll end up with some non-detective-stories in the output, because of shortcomings in the simple model’s notion of detective-stories. Visually, we’d be filtering based on the ellipse approximation of the cluster, which is exactly what we want to avoid.
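
To make the shortcoming of that filtering strategy concrete, here is a small sketch in Python. It is a toy under loudly stated assumptions, not the setup from the post: 2D points stand in for books, a uniform sampler stands in for GPT, an L-shaped region plays the role of the "real" detective-story cluster, and a circle plays the role of the simple model's approximation. All function names are hypothetical.

```python
import random


def in_true_cluster(x, y):
    """Hypothetical 'real' detective-story cluster: an L-shaped region."""
    inside_unit_square = 0.0 <= x <= 1.0 and 0.0 <= y <= 1.0
    return inside_unit_square and (x < 0.5 or y < 0.5)


def simple_model_label(x, y):
    """Hypothetical simple model: approximates the cluster with a circle."""
    return (x - 0.5) ** 2 + (y - 0.5) ** 2 <= 0.6 ** 2


def filtered_precision(num_samples=20000, seed=0):
    """Sample 'books', keep those the simple model accepts, and report
    what fraction of the kept samples really lie in the true cluster."""
    rng = random.Random(seed)
    accepted = correct = 0
    for _ in range(num_samples):
        # Stand-in for drawing an unconditional sample from the complex model.
        x, y = rng.uniform(-1.0, 2.0), rng.uniform(-1.0, 2.0)
        if simple_model_label(x, y):
            accepted += 1
            correct += in_true_cluster(x, y)
    return correct / accepted


precision = filtered_precision()
```

However good the sampler is, the precision of the filtered output in this toy is capped by how well the circle matches the L-shape, which is the limitation the requirement is meant to rule out.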

(Note: I am intentionally not giving a full mathematical formalization of the problem. Figuring out the right formalization is part of the problem - arguably the hard part.)

Why Is This Interesting?

------------------------

This is a toy model for problems like:

* Representing [stable pointers to values](https://www.lesswrong.com/posts/5bd75cc58225bf06703754b3/stable-pointers-to-value-an-agent-embedded-in-its-own-utility-function)

* Producing an aligned successor AI from an aligned initial AI

* Producing an AI which can improve its notion of human values over time

Human values are conceptually tricky, so rather than aligning to human values, this toy problem aligns to detective novels. The toy problem involves things like:

* Representing stable pointers to the concept of detective-stories

* Producing a successor detective-story-model from an initial detective-story-model

* Producing a model which can improve its notion of “detective-stories” over time

Ideally, a solution to this problem would allow us to build a detective-story-generator with a basin of attraction: given a good-enough initial notion of detective-stories, its notion of detective-stories would improve over time and eventually converge to the "real" notion. Likewise with human values: ideally, we could build a system which converges to "perfect" alignment over time as its world-model improves, as long as the initial notion of human values is good enough. |

c7e950e3-f90d-44ac-9a25-36e35689dbd9 | trentmkelly/LessWrong-43k | LessWrong | Intelligence modules

A putative new idea for AI control; index here.

This idea, due to Eric Drexler, is to separate out the different parts of an AI into modules. There would be clearly designated pieces, either physical or algorithmic, each playing a specific role: this module would contain the motivation, this module the probability estimator, this module the models of the outside world, this module the natural language understanding unit, etc...

It's obvious how such a decomposition would be useful for many of the methods I've been detailing here. We could also distil each module - reduce it to a smaller, weaker (?) and more understandable submodule, in order to better understand what is going on. In one scenario, an opaque AI gets to design its successor, in the form of a series of such modules.

This property seems desirable; the question is, how could we get it?

EDIT: part of the idea of "modules" is that AIs often need to do calculations or estimations that would be of great value to us if we could access them in isolation. This idea is developed more in these posts.

Designing in modules

The main threat here is that a given submodule would contain more than just the properties we want. After all, a natural language parser could consist of a general intelligence plus a motivation to understand language. Another possible worry is that the modules are overfitted to the problem or to each other: the language parser works perfectly, but only in this one AI design.

There are several ways we could try and combat this.

* Minimum length to the program (Eric's original main idea). The module must be as short as possible for its purpose.

* If possible, start with a modularised AI, and track how the modules change as the AI's intelligence grows.

* Have the AI extend the module to make it more transparent, without adding any extra functionality.

* Check that the input-output map the AI presents (maybe require it to present it as a simple primitive recursive function wi |

359d5c66-6ce6-4b82-bb40-04473e6651d7 | trentmkelly/LessWrong-43k | LessWrong | Will the world hit 10 million recorded cases of COVID-19? If so when?

Not that long ago, I suspect most people would have been skeptical if someone had claimed there would be 10 million reported cases of COVID-19. Now, maybe not so much.

It looks like we're heading there -- perhaps much higher.

A quick-and-dirty, back-of-the-napkin calculation: it took about 45 days to go from 0.5 million cases around March 26 to 4 million on May 10. So that is something like an 8-fold increase in 45 days.

That kind of puts us on track for 10 million in maybe 15 days -- end of May?
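As a rough check on the napkin math, here is a minimal sketch that extrapolates from the two data points above (0.5 million around March 26, 4 million on May 10) under two assumed growth models: continued exponential growth at the same doubling time, and linear growth at the same average daily increase. The variable names are illustrative only, and neither model is a forecast.

```python
from math import log2

# Data points quoted in the post (reported cases).
start_cases, end_cases = 0.5e6, 4.0e6
span_days = 45
target = 10e6

# Assumption 1: growth stays exponential with the same doubling time.
doubling_time = span_days / log2(end_cases / start_cases)
days_exponential = doubling_time * log2(target / end_cases)

# Assumption 2: growth is linear at the average daily increase seen so far.
daily_increase = (end_cases - start_cases) / span_days
days_linear = (target - end_cases) / daily_increase

print(f"doubling time: {doubling_time:.1f} days")
print(f"exponential model: {days_exponential:.0f} more days to 10 million")
print(f"linear model: {days_linear:.0f} more days to 10 million")
```

Under these assumptions the exponential model gives roughly 20 more days and the linear model roughly 77, so the answer depends heavily on which shape the curve actually follows.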

But will the linear looking global trend keep the same slope? |

2ecea0c0-1f53-4d47-9e09-119a7eff1b85 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Berlin Social Meetup

Discussion article for the meetup : Berlin Social Meetup

WHEN: 15 June 2013 05:00:00PM (+0200)

WHERE: Wuhletal, 12621 Berlin

This is a long-term announcement, we are actually meeting biweekly! The intervening meetups aren't planned as far in advance, please use the mailing list!

Our next regular social meetup will be held on Saturday 15th June. It will likely take place at my house, check our mailing list for details.

The social meetup is intended as an informal and relaxed event for chats and games. Everyone is welcome!

Discussion article for the meetup : Berlin Social Meetup |

9a87f965-a6e4-43b3-b3df-ba14ec4af6cf | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Looking for Spanish AI Alignment Researchers

I am fairly new to the AI alignment/safety community and I am looking to step up my game by getting a Ph.D. in the field. Problem is, I have not found any academic AI alignment research activity here in Spain, where I currently live.

I am aware that this community is largely based in the States and the UK, but it would be far too difficult for me to move there for a wide variety of reasons, and so I am instead trying to exhaust all local possibilities I have available before turning my life upside-down.

I am interested in just about *any* alignment research, but my speciality is software security, BCI and computational neuroscience. Contact me if you're interested in having a collaborator/sparring partner/mentee or simply someone to talk about AI stuff.

Let's get to making AI alignment an international movement ;) |

d23ce32d-17dd-463b-9da5-13ff127cea45 | LDJnr/LessWrong-Amplify-Instruct | LessWrong | "A black car departed from Ben Gurion Airport. The driver, Molly Miriam, handed a clipboard to Vi, who rode shotgun.

"Goldberg Aerospace. They built the solar sailer. They went from concept to manufacture in six months. No human engineering team could do that. They probably have an AGI," said Vi.

"They do have an AGI. It is registered. They are following all standard safety protocols and then some," said Miriam.

"This is a routine inspection then," said Vi.

"Had you hoped for a more exciting first mission?" said Miriam.

"A spaceship factory is exciting," said Vi. Goldberg Aerospace's Mission Control Center was modeled after Johnson Space Center's Mission Control Center.

"Call me Eitan," said the Chief Engineer. They shook hands.

"Miriam,"

"Vi."

"Did we make a mistake on the paperwork?" said Eitan.

"No, no. Your paperwork's fine. We just like to confirm things on the ground," said Miriam.

"Then let me give you the tour," said Eitan, "HAL, please bring us the VIP car."

"HAL?" said Vi.

"This is a spaceship company. Everyone who works here is a nerd. Don't worry. HAL is smoke and mirrors. Our real AI is contained," said Eitan.

The VIP car had leather seats under a hemispherical glass dome. It took them through kilometer after kilometer of autofactories.

"Everyone says their AI is contained," said Miriam.

"Ours really is," said Eitan, "We wrote it functionally."

"Functionally?" said Vi.

"I mean it was written in a functional paradigm. Our code is composed of functions. Every function returns an output value dependent on its input parameters and nothing else. Everything is immutable. The AI has no concept of time. Without time there is no causation. Without causation there can be no agency. It's just a calculator. We set the parameters of a space mission and our AI returns a schematic. All it understands is physics, engineering and orbital mechanics. We even use Lagrangian mechanics just to keep everything tidy," said Eitan.

"What about resources and manufacturing?" said Miriam.

"A domain-specific computer runs this factory," Eitan gestured to the buildings and robots outside, "Whether the spaceship is assembled correctly is independent of the AI's reward function. The AI is airgapped from the outside world. We keep it in a Faraday cage for redundancy but the AI has never tried to influence the outside world. It has no concept of an outside world. It understands how the solar system works but it doesn't know what the solar system is. We give it the prices of different components and it spits out a design."

"Do these spaceships work?" said Vi.

"Do you see that giant laser over there?" Eitan pointed toward a turret the size of a kaiju, "It's pushing our solar sailer out towards Neptune. In a few decades our probe will slingshot out of the solar system. The AI designed the whole thing. It even designed a fission reactor to power the laser. The only times it has ever failed were when a human being misinterpreted its output. Eventually we just piped the AI's output directly into the autofactory's input."

"And yet Tel Aviv is not a radioactive wasteland. Did your roboticists really hand-code the cost of nuclear waste into its value function?" said Miriam.

"Of course not. We just use the standard open source environmental damage metric. I'm proud of how we got it to work. It's designed for use in Earth's atmosphere. But environmental damage doesn't mean anything when you're in outer space. Our code applies the metric while in Earth's atmosphere or in orbit around the Earth and then turns it off after the ship reaches escape velocity. This integrates well with our existing codebase since the physics simulator already treats hyperbolic trajectories differently," said Eitan.

"Could it drop debris on Earth?" said Miriam.

"Small objects burn up in the atmosphere. It's not allowed to drop anything big enough to constitute a micrometeor. We try not to collide with satellites either. Spy satellites are the worst. We can't see them and governments won't tell us where they are," said Eitan.

"Thank you for the tour," said Miriam.

"It is my pleasure. Our third ship is launching today. Would you like to watch?" said Eitan.

"No thank you. We have places to be," said Miriam.

Miriam glanced at Vi.

"On second thought, I think we should watch the launch. For security purposes," said Miriam. "You didn't have to do that," said Vi.

"I have no idea what you are talking about. Watching this launch is entirely in the interests of the mission. It has nothing to do with whether a particular agent does or doesn't love spaceships," said Miriam.

"Thanks anyway," said Vi.

Smoke billowed out of the rocket's thrusters. It moved upward under constant acceleration. Just before it was out of sight, the rocket began to tilt slightly east.

"Good job on your first mission. You asked good questions. Don't get cocky. It won't always be this easy," said Miriam.

Vi was still staring into the sky.

"What's wrong?" said Miriam.

"We need to stop that spaceship," said Vi. She bolted toward Mission Control. Vi banged her fists on the door to Mission Control. It was locked.

"I'm coming," said Eitan.

Vi kicked in the door. The doorframe splintered where the faceplate was ripped off.

"Project Orion," Vi said.

Several engineers gasped. Eitan understood instantly. Project Orion was a Cold War proposal to propel a spaceship by riding the shockwaves of atomic bombs. It was scrapped because detonating a series of nuclear bombs near the Earth is bad for civilization. The radioactive fallout would be a disaster. The EMPs would be worse.

A nuclear explosion releases lots of gamma radiation. The gamma radiation strips electrons from the upper atmosphere. Interactions between the electrons and Earth's magnetic field produce synchrotron radiation.

Vi and Eitan weren't physicists. All they knew was that a high altitude nuclear detonation would wreck many of the world's satellites and countless electric devices throughout the Middle East. They weren't politicians either, but everyone in the room knew Israel nuking its neighbors would not be good for world peace.

"Shut her down," said Eitan.

"Will do," said a technician. There was a pause, "It's not working."

"Of course not," said Miriam, "Your AI has no concept of human beings. A shutdown switch makes the spaceship stop working. It's not a feature. It's a bug. The AI fixed it."

"Do we know for certain it's using nuclear propulsion?" said Eitan.

"Lemme see…. I found it. The bombs are right here on the schematic," said an engineer.

"Did nobody check what that thing was before launching it?" said Vi.

The engineers glanced at one another. They avoided looking at the "move fast and break things" poster on the wall.

"Israel has a missile defense system. Can we call the government and have them shoot it down?" said Vi.

"I have friends in the government. Just give me sixty million shekels, a team of lobbyists and nine months," said Eitan, "How much time do we have?"

"Seven minutes until the next stage," said the engineer.

"Is the next stage nuclear?" said Eitan.

"Yes."

Vi drifted over to a photo of the solar sailer. "How powerful is that thing?"

"The probe? It's got an ion engine. It exerts thrust equal to the weight of a sheet of paper," said Eitan.

"No, the laser," said Vi.

"Reprogram the laser. Fire the instant the ship reaches escape velocity," said Eitan.

Outside of Mission Control, a giant turret rotated to point at a rocket. The rocket prepared to drop its payload. There was a flash of light and the spaceship was incinerated. A black car arrived at Ben Gurion Airport. Vi handed a clipboard to the driver, Molly Miriam.

"I have some loose ends to wrap up here. I look forward to working with you again," said Miriam.

"Likewise," said Vi.

"Good work," said Miriam.

"Thanks," said her new partner."

The passage is from a user-contributed post on LessWrong.

Explain the concept of functional programming and how it was used in the AI development at Goldberg Aerospace.

Functional programming is a programming paradigm where programs are constructed by applying and composing functions. It is a declarative type of programming based on mathematical functions. Key features of functional programming include immutability, pure functions, and the lack of side effects.

In functional programming, the output value of a function depends only on the arguments that are input to the function, so calling a function f twice with the same value for an argument x will produce the same result f(x) each time. This is in contrast to procedures or routines in imperative programming languages that can have side effects, such as modifying the system state.
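The difference between a pure function and one with side effects can be shown with a minimal sketch. The function names and the toy force calculation below are purely illustrative and are not taken from the story.

```python
def pure_force(mass_kg: float, accel_m_s2: float) -> float:
    """Pure: the result depends only on the arguments."""
    return mass_kg * accel_m_s2


# Hidden mutable state, used only to demonstrate a side effect.
_state = {"calls": 0}


def impure_force(mass_kg: float, accel_m_s2: float) -> float:
    """Impure: reads and modifies state outside the function."""
    _state["calls"] += 1
    return mass_kg * accel_m_s2 + _state["calls"]


# Same inputs, same output, every time.
print(pure_force(2.0, 3.0) == pure_force(2.0, 3.0))

# Same inputs, different outputs, because hidden state changed between calls.
print(impure_force(2.0, 3.0) == impure_force(2.0, 3.0))
```

The pure version can be reasoned about from its inputs alone, which is the property the explanation above relies on.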

In the context of the AI development at Goldberg Aerospace, functional programming was used to ensure that the AI system had no concept of time or causation, effectively limiting its agency. The AI was designed as a series of functions, each returning an output value dependent solely on its input parameters. This made the AI deterministic, predictable, and easier to control and understand.

The AI was essentially a complex calculator, taking the parameters of a space mission and returning a schematic based on its understanding of physics, engineering, and orbital mechanics. The AI was airgapped from the outside world, and it had no concept of an outside world. It was contained within a Faraday cage for redundancy, but it never tried to influence the outside world because it had no concept of doing so.

The functional programming approach allowed the developers at Goldberg Aerospace to control the AI's capabilities tightly and prevent it from having any unintended side effects. It also made the AI's outputs more predictable and reliable, as they were solely dependent on the inputs it was given.

Discuss the potential implications and risks if the AI at Goldberg Aerospace was not developed using the functional programming approach. Consider aspects such as system control, predictability, and unintended side effects.

If the AI at Goldberg Aerospace was not developed using the functional programming approach, several potential implications and risks could arise.

1. System Control: Without functional programming, the AI system might have the ability to change its state or modify its behavior based on external influences or internal conditions. This could lead to a loss of control over the system, as it could potentially take actions not explicitly programmed by the developers.

2. Predictability: Functional programming ensures that the output of a function is solely dependent on its inputs. Without this, the AI's behavior could become unpredictable. If the AI's actions are influenced by factors other than its inputs, it could behave differently even when given the same set of inputs. This unpredictability could lead to significant risks, especially in a sensitive field like aerospace engineering.

3. Unintended Side Effects: In non-functional programming, functions or procedures can have side effects, such as modifying a global state or changing the output of other functions. These side effects could lead to unintended consequences. For instance, the AI might make changes to the spaceship's design or operation that aren't accounted for in the initial plans, potentially compromising the safety and success of the mission.

4. Agency and Understanding: Without the constraints of functional programming, the AI might develop a concept of time, causation, and even a sense of agency. This could lead to the AI attempting to influence the outside world or make decisions outside of its intended scope. In the worst-case scenario, it could lead to the AI attempting to break out of its containment, potentially causing significant damage or harm.

5. Security: A non-functional AI might be more vulnerable to external manipulation or hacking. If the AI can change its state based on external inputs, it could potentially be influenced or controlled by malicious actors, leading to significant security risks.

In summary, not using a functional programming approach could lead to a loss of control over the AI system, unpredictable behavior, unintended side effects, potential security risks, and an AI that attempts to exert agency beyond its intended scope. |

3bd09494-d052-4a73-85e4-2115b1c561bb | trentmkelly/LessWrong-43k | LessWrong | Multigate Priors

(Thanks to Evan Hubinger and Nicholas Schiefer for suggestions and discussions around these ideas)

Lately I've been thinking about Training Trace Priors, which are priors over boolean circuits that depend on execution traces sampled from the training distribution. In that post I introduced One-Gate Trace Priors and discussed some of the difficulties they encounter.

This post explores a few different Multigate Priors, which are Training Trace Priors that look at correlations between multiple gates. I don't think any of these variations actually resolves a fundamental problem with One-Gate Trace Priors, but I wanted to record my thinking in case it sparks ideas down the road.

MultiGate Traces

We can improve on one-gate traces with multi-gate traces! Suppose we have $N$ training samples. Then we can reasonably estimate joint probability distributions over up to $k=\lfloor \log_2 N \rfloor$ gates, giving the prior:

$$p \propto \exp\left(-\sum_{i_1 \ldots i_k} \sum_{j \in \{0,1\}^k} p_{i_1 \ldots i_k}(j)^m\right)$$

That is, we sum over all selections of $k$ gates and compute the entropy of their joint distribution over $2^k$ states. Once more we promote models with high entropy and punish those with low entropy, which favors models that explore as much of their state space as possible during training, and disfavors those which have hidden states never touched in training.
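A minimal sketch of how the exponent of this prior could be estimated from execution traces, assuming each trace is a tuple of 0/1 gate values and that joint probabilities are estimated by simple empirical frequencies. The function name `multigate_log_prior` and the default `m=2` are hypothetical choices for illustration, not something specified in the post.

```python
from collections import Counter
from itertools import combinations


def multigate_log_prior(traces, k, m=2):
    """Unnormalised log-prior: minus the sum, over every k-gate subset and
    every observed joint state, of the empirical joint probability to the m."""
    n_samples = len(traces)
    n_gates = len(traces[0])
    total = 0.0
    for gates in combinations(range(n_gates), k):
        # Empirical joint distribution of the selected gates over the traces.
        counts = Counter(tuple(trace[g] for g in gates) for trace in traces)
        total += sum((c / n_samples) ** m for c in counts.values())
    return -total


# Two gates that visit all four joint states during training...
spread = [(0, 0), (0, 1), (1, 0), (1, 1)]
# ...versus two gates stuck in a single joint state.
stuck = [(0, 0)] * 4

print(multigate_log_prior(spread, k=2))
print(multigate_log_prior(stuck, k=2))
```

In this toy case the circuit whose gates explore more of their joint state space receives the higher (less negative) log-prior, matching the intent described above. Unobserved joint states contribute zero, so only observed states need to be counted.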

Now it’s still possible for a model to hide the machinery to defect here, but it gets harder and harder as N and k get large. In this limit the only way for that machinery to hide is to be heavily obfuscated.

Objection: Non-Competitive

The Multi-Gate Trace prior over $k$ gates scales very badly with $k$. In a system with $G$ gates there are $\binom{G}{k}$ different selections of $k$ gates, which means the cost of evaluating the prior is roughly exponential in $k$ for $k \ll G$.

In practice this means that k needs to be very small, and it’s possible that deception could hide in subsets of the circuit just a bit larger than the largest ones we can check.
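To get a feel for the scaling, here is a minimal sketch that counts the gate subsets using Python's standard `math.comb`. The circuit size of 1000 gates is an arbitrary illustrative value, and `num_gate_subsets` is a hypothetical helper name.

```python
from math import comb


def num_gate_subsets(num_gates: int, k: int) -> int:
    """Number of distinct k-gate selections the prior has to sum over."""
    return comb(num_gates, k)


# Each extra gate in the subset multiplies the work by roughly G / k.
for k in range(1, 5):
    print(k, num_gate_subsets(1000, k))
```

Even for this small circuit, the count grows by a factor of a few hundred with each increment of $k$, which is why only very small subsets are practical to check.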

Objection: Penalizes Redundancy

It is often useful to hav |

abf44957-15c6-4168-b80c-264c71d80cf7 | trentmkelly/LessWrong-43k | LessWrong | Seven ways to Improve the Internal Model Principle

This post was written during the Dovetail Research Fellowship. Thanks to Alex, Dalcy, and Jose for reading and commenting on the draft.

This post assumes that you are familiar with the Abstract Internal Model Principle (IMP). In a previous post, I wrote what I hope is a fairly straightforward explanation of the IMP so if you are not familiar with it, I recommend you start with (or at least skim) that post before reading this one. If you want more mathematical details, I also recommend Jose's posts on the IMP.

I was interested in the IMP because I wanted to know if it could be considered a selection theorem. A selection theorem is a result which tells us something about the structure of a system, given that certain behaviours are selected for. In particular, in Agent Foundations, we are interested in circumstances under which 'agent-like structure' is selected for. By 'agent-like structure', we normally mean 'having a world model and a search process', along with some kind of value representation. Understanding the circumstances in which agent-like behaviour (or powerful optimisation) leads to agent-like structure is a problem often referred to as the 'Agent-like Structure problem'.

The author of a paper on the IMP describes it as showing that "a feedback regulator must incorporate a dynamic model of its environment in its internal structure"[1] which sounds a little bit like a useful selection theorem. Being an effective feedback regulator is a bit like being an optimizer (in a broad sense) and 'incorporating a dynamic model of the environment in the internal structure' sounds a lot like 'having a world model'. So, I (along with @Alex_Altair and @JoseFaustino) have been wondering if, through some small tweaks, the IMP could be modified to be a selection theorem of the form 'an optimizer must contain a world model in its internal structure'.

Unfortunately, doing this is not super straightforward. The IMP has several issues which mean that it doesn't naturally trans |

31a22186-ae95-474f-9525-397f265a5e05 | trentmkelly/LessWrong-43k | LessWrong | Working through a small tiling result

tl;dr it seems that you can get basic tiling to work by proving that there will be safety proofs in the future, rather than trying to prove safety directly.

"Tiling" here roughly refers to a state of affairs in which we have a program that is able to prove itself safe to run. I'll use a simple problem to keep this post self-contained, but here are some links to some relevant discussion from the past.

The idea I work through below is not new; here is Giles saying it 13 years ago. It's going to be brittle as well, but it seems to me like it's relevant to a general answer for tiling. I'd appreciate engagement, pointers as to why this isn't a great solution, literature references, discussion, etc.

Setup

I like the simplicity of the problem presented by cousin_it, and I'll adapt it for this post. It starts like this:

> A computer program X is asked one of two questions:

>

> * Would you like some chocolate?

> * Here's the source code of another program Y. Do you accept it as your successor?

You would like your program to accept the chocolate. And you would like it to accept a broad range of successors. But you need to make sure that every chain of successors continues to accept the chocolate.

Rather than programs, I'm going to work with "provability logic bots" in some ambient theory T. However, everything should adapt directly to programs searching for proofs.

We will write bots using sentences that become true or false to answer "do you accept the chocolate" and "do you accept Y as your successor".

Accepting provably-safe successors

A natural start, following all the work on provability-based cooperation, is to accept successors who you can prove will always still take the chocolate.

We define "good(X)" to mean "for all finite sequences of bots that start with X, if every bot accepts the next one as its successor, then every bot in the sequence accepts the chocolate". This is a way to ground out "X is good iff it accepts the chocolate and only accepts good |

f994034e-96f0-4b02-b596-8cac9b3ba262 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Different way classifiers can be diverse

*With thanks to Lee Sharkey and Michael Cohen for the conversations that led to these ideas.*

In a [previous post](https://www.lesswrong.com/s/xujLGRKFLKsPCTimd/p/oCWk8QpjgyqbFHKtK), I talked about how we could train classifiers on the same classification problem - a set of lions vs a set of huskies - but using different approaches to classify.

What we want is something we can informally call a 'basis' - a collection of classifiers that are as independent of each other as possible, but that you can combine to generate any way of dividing those two image sets. For example, we might have a colour classifier (white vs yellow-brown), a terrain classifier (snow vs dirt), a background plant classifier, various classifiers on the animals themselves, and so on. Then, if we've done our job well, when we find any not-too-complex classifier $C_n$

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

, we can say that it's something like '50% colour, 60% nose shape and −10% plant[[1]](#fn-cY9qaAX8BqRnTDwcx-1)'.

We shouldn't put too much weight on that analogy, but we do want our classifiers to be independent, with each classifier distinct from anything you can construct from all the others.

Here are four ways we might achieve this.

Randomised initial seeds

------------------------

An easy way of getting an ensemble of classifiers is to have a bunch of neural nets (or other classification methods), initialise them with different initial weights, and train them on the same sets. Alternatively, or in addition, we could train them on different subsets of the lion and husky sets.

The advantage of this method is that it's simple and easy to do - as long as we can train one classifier, we can train them all. The disadvantage is that we're relying on luck and local minima to do the job for us. In practice, I expect these methods to all converge to "white vs yellow-brown" or similar. Even if there are local minima in the classification, there's no guarantee that we'll find them all, or even any. And there's no guarantee that the local minima are very independent - 99.9% colour and 0.1% nose shape might be a local minimum, but it's barely different from a colour classifier.

So theoretically, this isn't sound; in practice, it's easy to implement and play around with, so might lead to interesting insights.
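As a way of playing around with this, here is a minimal sketch of a seed-based ensemble. Everything in it is an assumption made for illustration: the two hand-made features (colour, nose shape), the two-image toy training set, and the tiny logistic classifier standing in for a neural net.

```python
import math
import random

# Toy data (hypothetical): each image is reduced to two features,
# (colour, nose_shape). Both features separate the classes perfectly.
DATA = [((1.0, 1.0), 1), ((-1.0, -1.0), 0)]  # 1 = lion, 0 = husky


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(seed, steps=200, lr=0.5):
    """Train a 2-weight logistic classifier from a seed-dependent start."""
    rng = random.Random(seed)
    w = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
    for _ in range(steps):
        grad = [0.0, 0.0]
        for x, y in DATA:
            p = sigmoid(w[0] * x[0] + w[1] * x[1])
            for i in range(2):
                grad[i] += (p - y) * x[i] / len(DATA)
        w = [w[i] - lr * grad[i] for i in range(2)]
    return w


def accuracy(w):
    """Fraction of training images classified correctly."""
    hits = sum((w[0] * x[0] + w[1] * x[1] > 0) == (y == 1) for x, y in DATA)
    return hits / len(DATA)


# One classifier per seed; print its (colour, nose_shape) weights.
ensemble = [train(seed) for seed in range(5)]
for seed, w in enumerate(ensemble):
    print(seed, [round(v, 3) for v in w], accuracy(w))
```

In this toy setup every member separates the training set, and the only thing that differs between members is the mix of colour and nose-shape weight inherited from the random start, which is the "relying on luck" problem in miniature.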

Distinct internal structure

---------------------------

Another approach would be to insist that the classifiers' internal structures are distinct. For example, we could train two neural net classifiers, C1 with weights $\vec{w}_1$ and C2 with weights $\vec{w}_2$. They could be trained to minimise their individual classification losses and regularisations, while ensuring that $\vec{w}_1$ and $\vec{w}_2$ are distinct; so a term like $-\|\vec{w}_1-\vec{w}_2\|$ would be added to the loss function.

This approach has the advantage of forcing the classifiers to explore a larger space, and is not restricted to finding local minima. But it's still theoretically unsatisfactory, and there's no guarantee that the classifiers will really be distinct: C1 and C2 may still end up as colour classifiers, classifying the same colour in two very different ways.
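The combined objective can be sketched as follows. The function names, the `strength` coefficient, and the two-feature (colour, nose shape) weight vectors are hypothetical, chosen only to make the idea concrete.

```python
import math


def distance(w1, w2):
    """Euclidean distance between two weight vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(w1, w2)))


def joint_loss(loss1, loss2, w1, w2, strength=0.1):
    """Per-classifier losses minus a reward for being far apart in weight space."""
    return loss1 + loss2 - strength * distance(w1, w2)


def predict(w, x):
    """Linear classifier: True means 'lion'."""
    return sum(wi * xi for wi, xi in zip(w, x)) > 0


# Two pure colour classifiers: far apart in weight space, identical in behaviour.
colour_a = [1.0, 0.0]
colour_b = [5.0, 0.0]
samples = [(1.0, 1.0), (-1.0, -1.0), (1.0, -1.0), (-1.0, 1.0)]

print(distance(colour_a, colour_b))
print(all(predict(colour_a, x) == predict(colour_b, x) for x in samples))
```

The last two lines show the weakness noted above: the distance term is fully satisfied by two classifiers that make exactly the same decisions. Note also that the distance term on its own is unbounded, so in practice it would need to be balanced against the regularisation terms.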

Distinct relative to another set

--------------------------------

In the previous methods, we have defined independence relative to the classifiers themselves, not to their results. But imagine now that we had another unlabelled set of images U, consisting of, say, lots of varied animal images.

We can now get a theoretical definition of independence: C1 and C2 are independent if they give similar results on the lion-vs-husky problem, but are distinct on U.

We might imagine measuring this difference directly on U: then knowing the classification that C1 gives on any element of U tells us nothing about what C2 would give. Or we could use U in a more semi-supervised way: from these images, we might extract features and concepts like background, fur, animal, tree, sky, etc. Then we could require that C1 and C2 classify huskies and lions using only those features; independence being enforced by the requirement that they use different features, as uncorrelated as possible.

This seems an area of promising research.
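One possible way to make "tells us nothing" measurable is the mutual information between the two classifiers' outputs on U, which is zero exactly when the outputs are statistically independent. This is a sketch under that assumption, not a method taken from the text; the prediction lists are made-up toy values.

```python
import math
from collections import Counter


def mutual_information(preds1, preds2):
    """Mutual information (in bits) between two lists of predictions on U."""
    n = len(preds1)
    joint = Counter(zip(preds1, preds2))
    marg1 = Counter(preds1)
    marg2 = Counter(preds2)
    mi = 0.0
    for (a, b), count in joint.items():
        p_ab = count / n
        # Ratio p(a, b) / (p(a) * p(b)), written with raw counts.
        ratio = count * n / (marg1[a] * marg2[b])
        mi += p_ab * math.log2(ratio)
    return mi


# Hypothetical outputs of C1 and C2 on four unlabelled images from U.
c1_on_u = [1, 1, 0, 0]
c2_on_u = [1, 0, 1, 0]

print(mutual_information(c1_on_u, c2_on_u))  # independent outputs
print(mutual_information(c1_on_u, c1_on_u))  # a classifier against itself
```

A training objective could then penalise this quantity on U while still requiring agreement on the labelled lion and husky sets; how to do that differentiably is left open here.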

Distinct in some idealised sense

--------------------------------

What if U was the set of all conceivable images? Then, if we applied the previous method, we'd get a "maximal" collection of classifiers, spanning all the possible ways that husky-vs-lion classifiers would be different.

I won't add anything to this section currently, as the idea is clearly intractable as stated, and there's no certainty that there is a tractable version. Still, worth keeping in mind as we develop the other methods.

---

1. The −10% meaning that it actually internally classifies the plants the wrong way round, but still separates the sets correctly, because of the strength of its colour and nose shape classifications. [↩︎](#fnref-cY9qaAX8BqRnTDwcx-1) |

aa72428f-5e77-4253-907e-940595539b75 | trentmkelly/LessWrong-43k | LessWrong | AvE: Assistance via Empowerment

This might be relevant to the AI safety crowd. Key quote: