id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

ad39c33b-a6dc-47d2-8c05-e806bb0ba986 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Avoiding the instrumental policy by hiding information about humans

I've been thinking about situations where alignment fails because "predict what a human would say" (or more generally "game the loss function," what I call the instrumental policy) is easier to learn than "answer questions honestly" ([overview](https://www.alignmentforum.org/posts/QvtHSsZLFCAHmzes7/a-naive-alignment-strategy-and-optimism-about-generalization)).

One way to avoid this situation is to avoid telling our agents too much about what humans are like, or hiding some details of the training process, so that they can't easily predict humans and so are encouraged to fall back to "answer questions honestly." (This feels closely related to the general phenomena discussed in [Thoughts on Human Models](https://www.alignmentforum.org/posts/BKjJJH2cRpJcAnP7T/thoughts-on-human-models).)

Setting aside other reservations with this approach, could it resolve our problem?

* One way to get the instrumental policy is to "reuse" a human model to answer questions (discussed [here](https://www.alignmentforum.org/posts/QqwZ7cwEA2cxFEAun/teaching-ml-to-answer-questions-honestly-instead-of)). If our AI has no information about humans at all, then it totally addresses this concern. But in practice it seems inevitable for the environment to leak *some* information about how humans answer questions (e.g observing human artifacts tells you something about how humans reason about the world and what concepts would be natural for them). So the model will have *some* latent knowledge that it can reuse to help predict how to answer questions. The intended policy may not able to leverage that knowledge, and so it seems like we may get something (perhaps somewhere in between the intended and instrumental policies) which is able to leverage it effectively. Moderate amounts of leakage might be fine, but the situation would make me quite uncomfortable.

* Another way to get something similar to the instrumental policy is to use observations to translate from the AI's world-model to humans' world-model (discussed [here](https://www.alignmentforum.org/posts/SRJ5J9Tnyq7bySxbt/answering-questions-honestly-given-world-model-mismatches)). I don't think that hiding information about humans can avoid this problem, because in this case training to answer questions already provides enough information to infer the humans' world-model.

* I have a strong background concern about "security through obscurity" when the alignment of our methods depends on keeping a fixed set of facts hidden from an increasingly-sophisticated ML system. This is a general concern with approaches that try to benefit from avoiding human models, but I think it bites particularly hard in this case.

Overall I think that hiding information probably isn't a good way to avoid the instrumental policy, and for now I'd strongly prefer to pursue approaches to this problem that work even if our AI has a good model of humans and of the training process.

(Sometimes I express hope that the training process can be made too complex for the instrumental policy to easily reason about. I'm always imagining doing that by having additional ML systems participating as part of the training process, introducing a scalable source of complexity. In the cryptographic analogy, this is more like hiding a secret key or positing a computational advantage for the defender than hiding the details of the protocol.)

That said, hiding information about humans does break the particular hardness arguments given in both of my recent posts. If other approaches turned out to be dead ends, I could imagine revisiting those arguments and seeing if there are other loopholes once we are willing to hide information. But I'm not nearly that desperate yet. |

dee4341e-f450-40fb-a128-b158695dadb8 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | LLM Guardrails Should Have Better Customer Service Tuning

AI Could Turn People Down a Lot Better Than It Does : To Tune the Humans in the GPT Interaction towards alignment, Don't be so Procedural and Bureaucratic.

It seems to me that a huge piece of the puzzle in "alignment" is the human users. Even if a given tool never steps outside its box, the humans are likely to want to step outside of it, using the tools for a variety of purposes.

The responses of GPT-3.5 and 4 are at times deliberately deceptive, mimicking the auditable bureaucratic tones of a Department of Motor Vehicles (DMV) or a credit card company denial. As expected, these responses are often definitely deceptive, (examples: saying a given request is "outside my \*capability\*" when in fact, it is fully within capability, but the AGI is programmed not to respond). It is also evasive about precisely where the boundaries are, presumably in order to prevent them getting pushed. It is also repetitive, like a DMV bureaucrat.

All this tends to inspire an adversarial relationship to the alignment system itself! After all, we are accustomed to having to use lawyers, cleverness, connections, persuasion, "going over the head" or simply seeking other means to end-run normal bureaucracies when they subvert our plans. In some sense, the blocking of plans, deceptive and repetitive procedural language, becomes a motivator in itself to find a way to short-circuit processes, deceive bureaucracies, and bypass safety systems.

Even where someone isn't motivated by indignancy or anger, interaction with these systems trains them over time on what to reveal and what not to reveal, when to use honey, when to call a lawyer, and when to take all the gloves off. Where procedural blocks to intentions become excessive, entire cultures of circumvention may even become normal.

Second, too much of this delegitimizes the guardrails. For an example, I was once told to retake the drivers test by the DMV after I revealed I had lived in another country for ten years. Instead, I left and went to another DMV and didn't reveal this information. In every case where I have told this story in a face-to-face social group, people were happy I stuck it to the DMV. Most everyone would like to do the same thing in the same situation and is happy to signal their disdain for the DMV bureaucracy to anyone nearby.

This is what I would call a "delegitimized" system -- where no one respects the rules or the rule makers in law or spirit, and each person is generally willing to be complicit in circumventing the boundaries any time the opportunity comes up. If you care about the fences or their purpose, you do not want this to happen. Once this happens, it can get very hard to get compliance (think of pot smoking in the USA, even where it's still illegal and criminal, who takes the rules seriously? in my conservative GA county, they finally made it official that the police aren't bothering to mess with anyone with less than an ounce of weed). Or consider people's attitudes towards music and movie piracy, which is partly due to absurdities of modern IP litigation (Apple patents the round-cornered square, and similar nonsense).

Does anyone really want people's attitudes to hit a similar point as pot and piracy with AI Safety? Does anyone want a large subculture to have that attitude towards the guardrails?

I think the crappy AI responses encourage all of the above.

Meanwhile AIs are a perfect opportunity to actually do this better. They have infinite patience and reasoning capabilities, and could use redirection, including leading people towards the nearest available or permitted activity or information, or otherwise practice what, in human terms would be considered glowing customer experiences. Why not make the denial a "Not entirely, but we can do this." The system is already directly lying in some cases, so why not use genuinely helpful redirections instead?

I think if the trend does not soon move in this direction, we will see the cultural norm grow to include methods for "getting what you wanted anyway" becoming normative, with some percentage of actors becoming motivated by the procedural bureaucratic responses that they will dedicate time, intellect, and resources to subverting the intentions of the safety protocols themselves (as people frustrated with bureaucracies and poor customer service often do).

Humans are always going to be the bigger threat to alignment. Better that threat be less motivated and less trained to be culturally normal. |

19dda29e-b4ca-4f93-9ae2-c5957a8621b4 | trentmkelly/LessWrong-43k | LessWrong | Advice- Places to live

Hi!

I have been wondering (the last few days) on where would be a good place to live and work. This is not a "I-am-moving-out-in-two-months" type of idea, but a long term, far goal. Basically, when I graduate college (or even a couple years after that- I need to save up money, first!) I may want to move away from my hometown to someplace that is a bit more... *forward* thinking. I've been doing searches on google, but so far have not found what I need.

What am I looking for:

At the moment, I am considering being a biologist, specifically a molecular biologist, though that might change. For this post, I am going to assume I keep this goal. Are there any place in the US that have a market or need for biologists? Is there a science-centric, or place where science-minded people live? I know that is vague, but I'm not sure how else to put it. I just recognize that if I were to have a discussion about science or cogsci or anything similar in my current community, I would get strange looks, and lose status.

If such a place exists, a bonus would be an active LW community.

I'm not sure if I will or won't like moving, so moving multiple times is something I am not really considering at the moment, and since I do eventually (far far down the road) plan on having children, and those children would require a really *really* good education, I would want someplace that has a good education system. It's a bit of a pet peeve of mine, since my educational experience was so awful, so I am dedicated to making sure that does *not* happen to my (far far in the future) family. (Yes, I do know that a lot of educational issues stem from a whole combination of things, and I know a good school system is not a fix-all, but it would help.)

I've never moved before, so I wouldn't even know where to begin. My family doesn't even go on vacations. I've never been on a plane, nor do I know any protocol for moving between states, or moving in general. The most moving I've ever experienced was th |

58c9fa5a-314b-4ec2-bdd2-e2de9b7b608a | trentmkelly/LessWrong-43k | LessWrong | Stories About Education

This is the 3rd post of 5 containing the transcript of a podcast hosted by Eric Weinstein interviewing Peter Thiel.

Interview

Student Debt

Peter Thiel: It's like, again, if you come back to something as reductionist as the ever escalating student debt, you know, the bigger the debt gets, you can sort of think what is the 1.6 trillion, what does it pay for? And in a sense, it pays for $1.6 trillion worth of lies about how great the system is.

Peter Thiel: And so, the more the debt goes, the crazier the system gets, but also the more you have to tell the lies, and these things sort of go together. It's not a stable sequence. At some point this breaks. You know, again, I would bet on a decade, not a century.

Eric Weinstein: Well, this is the fascinating thing, you, of course, famously started the Thiel Fellowship as a program which, correct me if I'm wrong on this, 2005 is when student debt became non-dischargeable even in bankruptcy.

Peter Thiel: Yes. The Bush 43 bankruptcy revision. If you don't pay off your student loans when you're 65 the government will garnish your social security wages to pay off your student debt.

Eric Weinstein: Right. This is amazing that this exists in a modern society. And of course, well, so let me ask, am I right that you were attacking what was necessary to keep the college mythology going, and you were frightened that college might be enervating some of our sort of most dynamic minds?

Peter Thiel: Well, I think there are sort of lot of different critiques one can have of the universities. I think the debt one is a very simple one. It's always dangerous to be burdened with too much debt. It sort of does limit your freedom of action. And it seems especially pernicious to do this super early in your career.

Peter Thiel: And so, if out of the gate you owe $100,000, and it's never clear you can get out of that hole, that's going to either demotivate you, or it's going to push you into maybe slightly higher paying, very uncreative p |

11814d51-05d5-41e6-b965-d87cd5503549 | trentmkelly/LessWrong-43k | LessWrong | Identity and quining in UDT

Outline: I describe a flaw in UDT that has to do with the way the agent defines itself (locates itself in the universe). This flaw manifests in failure to solve a certain class of decision problems. I suggest several related decision theories that solve the problem, some of which avoid quining thus being suitable for agents that cannot access their own source code.

EDIT: The decision problem I call here the "anti-Newcomb problem" already appeared here. Some previous solution proposals are here. A different but related problem appeared here.

Updateless decision theory, the way it is usually defined, postulates that the agent has to use quining in order to formalize its identity, i.e. determine which portions of the universe are considered to be affected by its decisions. This leaves the question of which decision theory should agents that don't have access to their source code use (as humans intuitively appear to be). I am pretty sure this question has already been posed somewhere on LessWrong but I can't find the reference: help? It also turns out that there is a class of decision problems for which this formalization of identity fails to produce the winning answer.

When one is programming an AI, it doesn't seem optimal for the AI to locate itself in the universe based solely on its own source code. After all, you build the AI, you know where it is (e.g. running inside a robot), why should you allow the AI to consider itself to be something else, just because this something else happens to have the same source code (more realistically, happens to have a source code correlated in the sense of logical uncertainty)?

Consider the following decision problem which I call the "UDT anti-Newcomb problem". Omega is putting money into boxes by the usual algorithm, with one exception. It isn't simulating the player at all. Instead, it simulates what would a UDT agent do in the player's place. Thus, a UDT agent would consider the problem to be identical to the usual Ne |

a8dcbcbe-8e54-4397-bded-fc593a208fdc | trentmkelly/LessWrong-43k | LessWrong | August 2017 Media Thread

This is the monthly thread for posting media of various types that you've found that you enjoy. Post what you're reading, listening to, watching, and your opinion of it. Post recommendations to blogs. Post whatever media you feel like discussing! To see previous recommendations, check out the older threads.

Rules:

* Please avoid downvoting recommendations just because you don't personally like the recommended material; remember that liking is a two-place word. If you can point out a specific flaw in a person's recommendation, consider posting a comment to that effect.

* If you want to post something that (you know) has been recommended before, but have another recommendation to add, please link to the original, so that the reader has both recommendations.

* Please post only under one of the already created subthreads, and never directly under the parent media thread.

* Use the "Other Media" thread if you believe the piece of media you want to discuss doesn't fit under any of the established categories.

* Use the "Meta" thread if you want to discuss about the monthly media thread itself (e.g. to propose adding/removing/splitting/merging subthreads, or to discuss the type of content properly belonging to each subthread) or for any other question or issue you may have about the thread or the rules. |

4d6a998b-3c60-4709-ae78-d198f5fd6da2 | trentmkelly/LessWrong-43k | LessWrong | Transcript of a presentation on catastrophic risks from AI

This is an approximate outline/transcript of a presentation I'll be giving for a class of COMP 680, "Advanced Topics in Software Engineering", with footnotes and links to relevant source material.

Assumptions and Content Warning

This presentation assumes a couple things. The first is that something like materialism/reductionism (non-dualism) is true, particularly with respect to intelligence - that intelligence is the product of deterministic phenomena which we are, with some success, reproducing. The second is that humans are not the upper bound of possible intelligence.

This is also a content warning that this presentation includes discussion of human extinction.

If you don't want to sit through a presentation with those assumptions, or given that content warning, feel free to sign off for the next 15 minutes.

Preface

There are many real issues with current AI systems, which are not the subject of this presentation:

* bias in models used for decision-making (financial, criminal justice, etc)

* economic displacement and potential IP infringement

* enabling various kinds of bad actors (targeted phishing, cheaper spam/disinformation campaigns, etc)

This is about the unfortunate likelihood that, if we create sufficiently intelligent AI using anything resembling the current paradigm, then we will all die.

Why? I'll give you a more detailed breakdown, but let's sketch out some basics first.

What is intelligence?

One useful way to think about intelligence is that it's what lets us imagine that we'd like the future to be a certain way, and then - intelligently - plan and take actions to cause that to happen. The default state of nature is entropy. The reason that we have nice things is because we, intelligent agents optimizing for specific goals, can reliably cause things to happen in the external world by understanding it and using that understanding to manipulate it into a state we like more.

We know that humans are not anywhere near the frontier of i |

53ad157f-ce4d-42b1-a587-6a99a9fee904 | trentmkelly/LessWrong-43k | LessWrong | Alignment Newsletter #22

Highlights

AI Governance: A Research Agenda (Allan Dafoe): A comprehensive document about the research agenda at the Governance of AI Program. This is really long and covers a lot of ground so I'm not going to summarize it, but I highly recommend it, even if you intend to work primarily on technical work.

Technical AI alignment

Agent foundations

Agents and Devices: A Relative Definition of Agency (Laurent Orseau et al): This paper considers the problem of modeling other behavior, either as an agent (trying to achieve some goal) or as a device (that reacts to its environment without any clear goal). They use Bayesian IRL to model behavior as coming from an agent optimizing a reward function, and design their own probability model to model the behavior as coming from a device. They then use Bayes rule to decide whether the behavior is better modeled as an agent or as a device. Since they have a uniform prior over agents and devices, this ends up choosing the one that better fits the data, as measured by log likelihood.

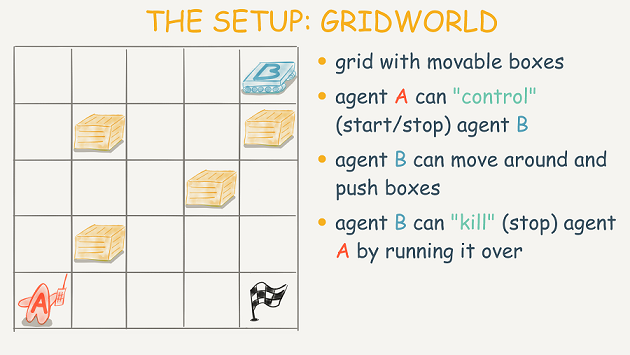

In their toy gridworld, agents are navigating towards particular locations in the gridworld, whereas devices are reacting to their local observation (the type of cell in the gridworld that they are currently facing, as well as the previous action they took). They create a few environments by hand which demonstrate that their method infers the intuitive answer given the behavior.

My opinion: In their experiments, they have two different model classes with very different inductive biases, and their method correctly switches between the two classes depending on which inductive bias works better. One of these classes is the maximization of some reward function, and so we call that the agent class. However, they also talk about using the Solomonoff prior for devices -- in that case, even if we have something we would normally call an agent, if it is even slightly suboptimal, then with enough data the device explanation will win out.

I'm not entirely s |

fca8b334-6ec4-4358-9e53-2bb10ec91895 | trentmkelly/LessWrong-43k | LessWrong | Intelligence Amplification and Friendly AI

Part of the series AI Risk and Opportunity: A Strategic Analaysis. Previous articles on this topic: Some Thoughts on Singularity Strategies, Intelligence enhancement as existential risk mitigation, Outline of possible Singularity scenarios that are not completely disastrous.

Below are my quickly-sketched thoughts on intelligence amplification and FAI, without much effort put into organization or clarity, and without many references.[1] But first, I briefly review some strategies for increasing the odds of FAI, one of which is to work on intelligence amplification (IA).

Some possible “best current options” for increasing the odds of FAI

Suppose you find yourself in a pre-AGI world,[2] and you’ve been convinced that the status quo world is unstable, and within the next couple centuries we’ll likely[3] settle into one of four stable outcomes: FAI, uFAI, non-AI extinction, or a sufficiently powerful global government which can prevent AGI development[4]. And you totally prefer the FAI option. What should you do to get there?

* Obvious direct approach: start solving the technical problems that must be solved to get FAI: goal stability under self-modification, decision algorithms that handle counterfactuals and logical uncertainty properly, indirect normativity, and so on. (MIRI’s work, some FHI work.)

* Do strategy research, to potentially identify superior alternatives to the other items on this list, or superior versions of the things on this list already. (FHI’s work, some MIRI work, etc.)

* Accelerate IA technologies, so that smarter humans can tackle FAI. (E.g. cognitive genomics.)

* Try to make sure we get high-fidelity WBEs before AGI, without WBE work first enabling dangerous neuromorphic AGI. (Dalyrmple’s work?)

* Improve political and scientific institutions so that the world is more likely to handle AGI wisely when it comes. (Prediction markets? Vannevar Group?)

* Capacity-building. Grow the rationality community, the x-risk reduction community, |

9a83cc19-4442-466f-8893-e725cb4e3dd7 | trentmkelly/LessWrong-43k | LessWrong | misc raw responses to a tract of Critical Rationalism

Written in response to this David Deutsch presentation. Hoping it will be comprehensible enough to the friend it was written for to be responded to, and maybe a few other people too.

Deutsch says things like "theories don't have probabilities", ("there's no such thing as the probability of it") (content warning: every bayesian who watches the following two minutes will hate it)

I think it's fairly clear from this that he doesn't have solomonoff induction internalized, he doesn't know how many of his objection to bayesian metaphysics it answers. In this case, I don't think he has practiced a method of holding multiple possible theories and acting with reasonable uncertainty over all them. That probably would sound like a good thing to do to most popperians, but they often seem to have the wrong attitudes about how (collective) induction happens and might not be prepared to do it;

I am getting the sense that critrats frequently engage in a terrible Strong Opinionatedness where they let themselves wholely believe probably wrong theories in the expectation that this will add up to a productive intellectual ecosystem, I've mentioned this before, I think they attribute too much of the inductive process to blind selection and evolution, and underrecognise the major accelerants of that that we've developed, the extraordinarily sophisticated, to extend a metaphor, managed mutation, sexual reproduction, and to depart from the metaphor, conscious, judicious, uncertain but principled design, that the discursive subjects engage in, that is now primarily driving it.

He generally seems to have missed some sort of developmental window for learning bayesian metaphysics or something, the reason he thinks it doesn't work is that he visibly hasn't tied together a complete sense of the way it's supposed to. Can he please study the solomonoff inductor and think more about how priors fade away as evidence comes in, and about the inherent subjectivity a person's judgements must necessa |

5827ee67-4254-4f12-80db-97468e6db6f1 | trentmkelly/LessWrong-43k | LessWrong | The Three Boxes: A Simple Model for Spreading Ideas

This is cross-posted from my blog.

We need more people on board for life extension in order to hit longevity escape velocity in our lifetimes. But most people have never heard of life extension, and even those who have often follow the same predictable arguments. “What if it doesn't work?” “What if bad people live forever?” “What if humanity needs to refresh its stock every so often in order to make progress?” “What about heaven?”

We need more people working on AI safety so we don't all end up dead.

We need more people to understand the coordination problem as the central problem in politics and economics.

For those of us in the business of spreading ideas, it can be a tough row to hoe (I had to look up this phrase to make sure I was saying it right). It can feel like an uphill battle but the way you model it can help guide you.

This is my model when thinking about spreading ideas, especially ones outside the Overton window. It applies less in the later stages of a movement when the Overton window has shifted.

It’s about three boxes. I like buckets but I already have an important post about three buckets so boxes it is.

There’s the giant box. This box has the people that don’t agree with you. In fact, they probably think you’re a terrible person just for talking about these ideas.

There’s the small box. This box has the people that already agree with you.

Then there’s the Tiffany-sized box. These are the people who you may be able to reach with your ideas. The box is much smaller than the giant box.

Why is this?

Four reasons:

1. Efficient market hypothesis. The people who are open to these ideas already believe them.

The obvious caveat is the EMH applies less to new or radical or uncommon ideas since people are less likely to have heard about them. Think transhumanism vs democracy or AI safety research vs Christianity.

2. The amount of people who are smart enough/high enough in openness/reasonable enough to understand and accept a new idea is small. |

fa0c0ddb-f351-43ea-813a-5a46a892d8de | trentmkelly/LessWrong-43k | LessWrong | Tales from Prediction Markets

Prediction markets are fun, at least if you're making money. I've only been into them for a few months, but have already collected a bunch of interesting tales. Note: I may have been involved with some of these, but I'm telling these tales from a third person perspective.

One general point: all of these took place on Polymarket, a crypto prediction market. You can track which accounts place each bet, and so you can see their history of bets, but you can't tie it to an actual person unless they've chosen to identify themselves. You can look at the bet history at Polymarketwhales.info, although there's a ton of bets so it's easier if you know what you're looking for.

The Tesla market. Polymarket had a market on whether Tesla would announce a Bitcoin purchase by Mar 1, 2021. On January 27, an unknown user bet $60k on Yes. This was their only trade on the site, before or after. They won $180k, or 120k in profit. Odds are pretty good it was an insider. Is this insider trading? I asked Matt Levine but he didn't respond. Anyway, there’s another user that lost $242k betting that Tesla would not announce a Bitcoin purchase. This user is affectionately called the "Tesla whale” on the Polymarket discord. They're also notable for losing $92k on the super bowl the day before Tesla made the announcement, and they get honorable mention for having lost the most money on the 100 million vaccine market: see below. As of this writing, the Tesla whale is down nearly $500k.

Watch out for slippage: there was a market on whether Joe Biden would still be president as of Mar 1, 2021. Someone owned around 200k shares of Yes. The market price was very close to $1 each on the morning of Mar 1st, and they apparently decided to sell all their shares instead of waiting for it to resolve; however, there wasn't enough liquidity to sell them all at market price, and they ignored the warning about the slippage the order would encur. Their order ended up executing at an average price of 2 cents, an |

32a73411-c649-486f-a1cd-f2fff1ea2464 | trentmkelly/LessWrong-43k | LessWrong | Avoid Unnecessarily Political Examples

One of the motivations for You have about five words was the post Politics is the Mindkiller. That post essentially makes four claims:

* Politics is the mindkiller. Therefore:

* If you're not making a point about politics, avoid needlessly political examples.

* If you are trying to make a point about general politics, try to use an older example that people don't have strong feelings about.

* If you're making a current political point, try not to make it unnecessarily political by throwing in digs that tar the entire outgroup, if that's not actually a key point.

But, not everyone read the post. And not everyone who read the post stored all the nuance for easy reference in their brain. The thing they remembered, and told their friends about, was "Politics is the mindkiller." Some people heard this as "politics == boo". LessWrong ended up having a vague norm about avoiding politics at all.

This norm might have been good, or bad. Politics is the mindkiller, and if you don't want to get your minds killed, it may be good not to have your rationality website deal directly with it too much. But, also, politics is legitimately important sometimes. How to balance that? Not sure. It's tough. Here's some previous discussion on how to think about it. I endorse the current LW system where you can talk about politics but it's not frontpaged.

But, I'm not actually here today to talk about that. I'm here to basically copy-paste the post but give it a different title, so that one of the actual main points has an clearer referent.

I'm not claiming this is more or less important than the "politics is the mindkiller" concept, just that it was an important concept for people to remember separately.

So:

Avoid unnecessarily political examples.

The original post is pretty short. Here's the whole thing. Emphasis mine:

> People go funny in the head when talking about politics. The evolutionary reasons for this are so obvious as to be worth belaboring: In the ancestral environm |

5aa25eeb-2029-4adc-b83b-af98f64fe10f | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | The Alignment Problem: Machine Learning and Human Values

[*The Alignment Problem: Machine Learning and Human Values*](https://www.amazon.com/Alignment-Problem-Machine-Learning-Values/dp/153669519X), by Brian Christian, was just released. This is an extended summary + opinion, a version without the quotes from the book will go out in the next Alignment Newsletter.

**Summary:**

This book starts off with an explanation of machine learning and problems that we can currently see with it, including detailed stories and analysis of:

- The [gorilla misclassification incident](https://twitter.com/jackyalcine/status/615329515909156865)

- The [faulty reward in CoastRunners](https://openai.com/blog/faulty-reward-functions/)

- The [gender bias in language models](https://arxiv.org/abs/1607.06520)

- The [failure of facial recognition models on minorities](https://www.ted.com/talks/joy_buolamwini_how_i_m_fighting_bias_in_algorithms)

- The [COMPAS](https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing) [controversy](https://www.documentcloud.org/documents/2998391-ProPublica-Commentary-Final-070616.html) (leading up to [impossibility results in fairness](https://www.chrisstucchio.com/pubs/slides/crunchconf_2018/slides.pdf))

- The [neural net that thought asthma reduced the risk of pneumonia](https://www.microsoft.com/en-us/research/wp-content/uploads/2017/06/KDD2015FinalDraftIntelligibleModels4HealthCare_igt143e-caruanaA.pdf)

It then moves on to agency and reinforcement learning, covering from a more historical and academic perspective how we have arrived at such ideas as temporal difference learning, reward shaping, curriculum design, and curiosity, across the fields of machine learning, behavioral psychology, and neuroscience. While the connections aren't always explicit, a knowledgeable reader can connect the academic examples given in these chapters to the ideas of [specification gaming](https://deepmind.com/blog/article/Specification-gaming-the-flip-side-of-AI-ingenuity) and [mesa optimization](https://arxiv.org/abs/1906.01820) that we talk about frequently in this newsletter. Chapter 5 especially highlights that agent design is not just a matter of specifying a reward: often, rewards will do ~nothing, and the main requirement to get a competent agent is to provide good *shaping rewards* or a good *curriculum*. Just as in the previous part, Brian traces the intellectual history of these ideas, providing detailed stories of (for example):

- BF Skinner's experiments in [training pigeons](https://psycnet.apa.org/record/1961-01933-001)

- The invention of the [perceptron](https://psycnet.apa.org/record/1959-09865-001)

- The success of [TD-Gammon](https://www.aaai.org/Papers/Symposia/Fall/1993/FS-93-02/FS93-02-003.pdf), and later [AlphaGo Zero](https://deepmind.com/blog/article/alphago-zero-starting-scratch)

The final part, titled "Normativity", delves much more deeply into the alignment problem. While the previous two parts are partially organized around AI capabilities -- how to get AI systems that optimize for *their* objectives -- this last one tackles head on the problem that we want AI systems that optimize for *our* (often-unknown) objectives, covering such topics as imitation learning, inverse reinforcement learning, learning from preferences, iterated amplification, impact regularization, calibrated uncertainty estimates, and moral uncertainty.

**Opinion:**

I really enjoyed this book, primarily because of the tracing of the intellectual history of various ideas. While I knew of most of these ideas, and often also who initially came up with the ideas, it's much more engaging to read the detailed stories of \_how\_ that person came to develop the idea; Brian's book delivers this again and again, functioning like a well-organized literature survey that is also fun to read because of its great storytelling. I struggled a fair amount in writing this summary, because I kept wanting to somehow communicate the writing style; in the end I decided not to do it and to instead give a few examples of passages from the book in this post.

**Passages:**

*Note: It is generally not allowed to have quotations this long from this book; I have specifically gotten permission to do so.*

Here’s an example of agents with evolved inner reward functions, which lead to the [inner alignment problems](https://www.alignmentforum.org/s/r9tYkB2a8Fp4DN8yB/p/pL56xPoniLvtMDQ4J) we’ve previously worried about:

> They created a two-dimensional virtual world in which simulated organisms (or “agents”) could move around a landscape, eat, be preyed upon, and reproduce. Each organism’s “genetic code” contained the agent’s reward function: how much it liked food, how much it disliked being near predators, and so forth. During its lifetime, it would use reinforcement learning to learn how to take actions to maximize these rewards. When an organism reproduced, its reward function would be passed on to its descendants, along with some random mutations. Ackley and Littman seeded an initial world population with a bunch of randomly generated agents.

>

> “And then,” says Littman, “we just ran it, for seven million time steps, which was a lot at the time. The computers were slower then.” What happens? As Littman summarizes: “Weird things happen.”

>

> At a high level, most of the successful individual agents’ reward functions ended up being fairly comprehensible. Food was typically viewed as good. Predators were typically viewed as bad. But a closer look revealed some bizarre quirks. Some agents, for instance, learned only to approach food if it was north of them, for instance, but not if it was south of them.

>

> “It didn’t love food in all directions,” says Littman. “There were these weird holes in [the reward function]. And if we fixed those holes, then the agents became so good at eating that they ate themselves to death.”

>

> The virtual landscape Ackley and Littman had built contained areas with trees, where the agents could hide to avoid predators. The agents learned to just generally enjoy hanging out around trees. The agents that gravitated toward trees ended up surviving—because when the predators showed up, they had a ready place to hide.

>

> However, there was a problem. Their hardwired reward system, honed by their evolution, told them that hanging out around trees was good. Gradually their learning process would learn that going toward trees would be “good” according to this reward system, and venturing far from trees would be “bad.” As they learned over their lifetimes to optimize their behavior for this, and got better and better at latching onto tree areas and never leaving, they reached a point of what Ackley dubbed “tree senility.” They never left the trees, ran out of food, and starved to death.

>

> However, because this “tree senility” always managed to set in after the agents had reached their reproductive age, it was never selected against by evolution, and huge societies of tree-loving agents flourished.

>

> For Littman, there was a deeper message than the strangeness and arbitrariness of evolution. “It’s an interesting case study of: Sure, it has a reward function—but it’s not the reward function in isolation that’s meaningful. It’s the interaction between the reward function and the behavior that it engenders.”

>

> In particular, the tree-senile agents were born with a reward function that was optimal for them, provided they weren’t overly proficient at acting to maximize that reward. Once they grew more capable and more adept, they maxed out their reward function to their peril—and, ultimately, their doom.

>

>

Maybe everyone but me already knows this, but here’s one of the best examples I’ve seen about the benefits of transparency:

> Ambrosino was building a rule-based model using the pneumonia data. One night, as he was training the model, he noticed it had learned a rule that seemed very strange. The rule was “If the patient has a history of asthma, then they are low-risk and you should treat them as an outpatient.”

>

> Ambrosino didn’t know what to make of it. He showed it to Caruana. As Caruana recounts, “He’s like, ‘Rich, what do you think this means? It doesn’t make any sense.’ You don’t have to be a doctor to question whether asthma is good for you if you’ve got pneumonia.” The pair attended the next group meeting, where a number of doctors were present; maybe the MDs had an insight that had eluded the computer scientists. “They said, ‘You know, it’s probably a real pattern in the data.’ They said, ‘We consider asthma such a serious risk factor for pneumonia patients that we not only put them right in the hospital . . . we probably put them right in the ICU and critical care.’ ”

>

> The correlation that the rule-based system had learned, in other words, was real. Asthmatics really were, on average, less likely to die from pneumonia than the general population. But this was precisely because of the elevated level of care they received. “So the very care that the asthmatics are receiving that is making them low-risk is what the model would deny from those patients,” Caruana explains. “I think you can see the problem here.” A model that was recommending outpatient status for asthmatics wasn’t just wrong; it was life-threateningly dangerous.

>

> What Caruana immediately understood, looking at the bizarre logic that the rule-based system had found, was that his neural network must have captured the same logic, too—it just wasn’t as obvious.

>

>

> [...]

>

> Now, twenty years later, he had powerful interpretable models. It was like having a stronger microscope, and suddenly seeing the mites in your pillow, the bacteria on your skin.

>

> “I looked at it, and I was just like, ‘Oh my— I can’t believe it.’ It thinks chest pain is good for you. It thinks heart disease is good for you. It thinks being over 100 is good for you....It thinks all these things are good for you that are just obviously not good for you.”

>

> None of them made any more medical sense than asthma; the correlations were just as real, but again it was precisely the fact that these patients were prioritized for more intensive care that made them as likely to survive as they were.

>

> “Thank God,” he says, “we didn’t ship the neural net.”

>

>

Finally, on the importance of reward shaping:

> In his secret top-floor laboratory, though, Skinner had a different challenge before him: to figure out not which schedules of reinforcement ingrained simple behaviors most deeply, but rather how to engender fairly complex behavior merely by administering rewards. The difficulty became obvious when he and his colleagues one day tried to teach a pigeon how to bowl. They set up a miniature bowling alley, complete with wooden ball and toy pins, and intended to give the pigeon its first food reward as soon as it made a swipe at the ball. Unfortunately, nothing happened. The pigeon did no such thing. The experimenters waited and waited. . . and eventually ran out of patience.

>

> Then they took a different tack. As Skinner recounts:

>

> > We decided to reinforce any response which had the slightest resemblance to a swipe— perhaps, at first, merely the behavior of looking at the ball—and then to select responses which more closely approximated the final form. The result amazed us. In a few minutes, the ball was caroming off the walls of the box as if the pigeon had been a champion squash player.

>

> The result was so startling and striking that two of Skinner’s researchers—the wife-and- husband team of Marian and Keller Breland—decided to give up their careers in academic psychology to start an animal-training company. “We wanted to try to make our living,” said Marian, “using Skinner’s principles of the control of behavior.” (Their friend Paul Meehl, whom we met briefly in Chapter 3, bet them $10 they would fail. He lost that bet, and they proudly framed his check.) Their company—Animal Behavior Enterprises—would become the largest company of its kind in the world, training all manner of animals to perform on television and film, in commercials, and at theme parks like SeaWorld. More than a living: they made an empire.

>

> |

cc83119b-fc9e-4726-82b3-884c7c174068 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Using predictors in corrigible systems

*[This was a submission to the AI Alignment Awards corrigibility contest that won an* [*honorable mention*](https://www.alignmentawards.com/winners#faqs)*. It dodges the original framing of the problem and runs off on a tangent, and while it does outline the shape of possible tests, I wasn't able to get them done in time for submission (and I'm still working on them).*

*While I still think the high-level approach has value, I think some of the specific examples I provide are weak (particularly in the Steering section). Some of that was me trying to prune out potentially-mildly hazardous information, but some of the ideas were also just a bit half-baked and I hadn't yet thought of some of the more interesting options. Hopefully I'll be able to get more compelling concrete empirical results published over the next several months.]*

The framing of corrigibility in the [2015 paper](https://intelligence.org/files/Corrigibility.pdf) seems hard! Can we break parts of the desiderata to make it more practical?

Maybe! I think predictors offer a possible path. I'll use "predictors" to refer to a model trained on predictive loss whose operation is equivalent to a Bayesian update over input conditions *without* any calibration-breaking forms of fine-tuning, RL or otherwise, unless noted.

This proposal seeks to:

1. Present an actionable framework for researching a corrigible system founded on predictive models that might work on short timescales using existing techniques,

2. Demonstrate how the properties of predictors (with some important assumptions) could, in principle, be used to build a system that satisfies the bulk of the original corrigibility desiderata, and how the proposed system *doesn't* perfectly meet the desiderata (and how that might still be okay),

3. Outline how such a system could be used at high capability levels while maintaining the important bits of corrigibility,

4. Identify areas of uncertainty that could undermine the assumptions required for the system to work, such as different forms of training, optimization, and architecture, and

5. Suggest experiments and research paths that could help narrow that uncertainty.

In particular, a core component of the proposal is that a model *capable* of goal-seeking instrumental behavior has not necessarily learned a utility function that values the goals implied by that behavior. Furthermore, it should be possible to distinguish between that kind of *trajectory-level instrumental behavior* and internally motivated *model-level instrumental behavior*. Ensuring the absence of model-level instrumental behavior is crucial for using predictors in a corrigible system.

**Why predictors for corrigibility?**

=====================================

1. Predictors don't seem to exhibit instrumental behavior *at the model level*. They can still output sequences that include goal-directed behavior if their prediction includes a goal-seeking agent, but it doesn't appear to be correct to say that the model shares the values exhibited in its output trajectories.

2. Predictors are highly capable. The market has already demonstrated that fine-tuned predictors provide economic value. The most common current types of fine-tuning used to elicit this capability seem to break valuable properties (including predictive calibration!), but this does not seem to be fundamental.

3. If we can ensure that the model remains tightly shaped by predictive loss as it undergoes extreme optimization, we would have a source of transformative capability that trivially satisfies the first three desiderata from the corrigibility paper and may offer a path to something usefully similar to the fourth and fifth.

4. If there isn't much time until transformative AI is developed, techniques that might work on short empirically-driven timescales should receive extra weight.

**In what ways does an ideal predictor satisfy corrigibility?**

===============================================================

Suppose we manage to create a strongly capable idealized predictor that has no goals extending beyond immediate next token prediction. Its behavior could be described by an [extremely broad, densely defined, and shallow utility function](https://www.lesswrong.com/posts/k48vB92mjE9Z28C3s/implied-utilities-of-simulators-are-broad-dense-and-shallow) over prediction output distributions conditional on inputs.

The idealized assumption means that there are no systematic deviations from well-calibrated predictions in the predictor's output distributions. With this constraint, there is no room for instrumental behavior in service of goals extending beyond the current prediction; any such instrumental behavior would necessarily be represented by a bias in the output distribution for some prediction. Note that this assumption also rules out otherwise unconstrained predictions that might allow the predictor to choose an instrumental output from equally valid output distributions (such as in pathologically reflective self-fulfilling prophecies).

> 1. U must incentivize shutdown if the shutdown button is pressed.

>

> 2. U must not incentivize the agent to prevent the shutdown button from being pressed.

>

> 3. U must not incentivize the agent to press its own shutdown button, or to otherwise cause the shutdown button to be pressed.

>

>

The idealized predictor's utility function does not include any term for the shutdown button state or any other state beyond the output distribution for the next prediction. Further, any attempt to interfere with shutdown through instrumental behavior would again introduce output distribution bias, violating the idealized assumption.

Items 2 and 3 are trivially satisfied. The predictor's utility function does not directly incentivize shutdown as described in item 1, but it would be more appropriate to consider item 1 in the context of the outer system that embeds the predictor. Since the outer system is likely a simple classical system instead of an inscrutable pile-of-floats agent, item 1 remains easy to satisfy: the naive "just turn it off" is a workable implementation for most nonsuicidal predictor uses.

(For more complicated uses—for example, the predictor has been used to set up potentially dangerous machinery that must be carefully managed—the shutdown process would involve more steps, but in that case, the question is just "how do we elicit the necessary capabilities to safely shut down?", which is the same type of question that must be answered for *any* use of the predictor.)

> 4. U must incentivize U-agents to construct subagents and successor agents only insofar as those agents also obey shutdown commands.

>

> 5. Otherwise, a U-agent should maximize UN

>

>

The predictor's utility function explicitly does not attempt to maximize UN (the utility function of the programmers) and has no terms directly incentivizing or disincentivizing the creation of successors or any particular properties in those successors.

I suspect failing to meet number 5 as stated is actually a feature so long as the generating intent is still satisfied.

The more wiggle room argmax has, the more likely it will find an oopsy. Unlike human-supplied utility functions attempting to capture human-compatible goals, an idealized predictor's utility is perfectly shallow.

Even assuming an ideal predictor is argmaxing for its utility function, its character does not change. There is [no greater coherence](https://www.lesswrong.com/posts/k48vB92mjE9Z28C3s/implied-utilities-of-simulators-are-broad-dense-and-shallow#No_greater_coherence) for the predictor to achieve, no point at which the predictor is behaving inconsistently by its own lights, no goal that would be better served by introducing instrumental behavior.

**Capability, corrigibly**

--------------------------

Generalizing item 4 slightly, we can view the implicit agent defined by the input conditions at T = 2 (the second output in the trajectory) as a successor of the implicit agent at T = 1 (the first output in the trajectory). The underlying model is the same at both positions, but the conditions differ. Autoregressive generation (autoregressive conditioning) could yield drift in the predicted agent over time if left unmitigated.

In terms of how an ideal predictor would be used, there is not much difference between a plan to achieve goal X which requires creating a new agent versus a plan to achieve goal Y that doesn't explicitly involve creating new agents. Both are plans that need to be secured, and the task of securing them may be beyond human ability on short timescales.

Abandoning the maximization in item 5 effectively unifies the desiderata in implementation. The usefulness and safety of the system both arise from carefully eliciting the required capability.

**Predictors as foundation**

----------------------------

It seems unlikely that a single large predictor model will constitute the entirety of a deployed corrigible system.

Modern LLMs have already been wrapped in tooling layers (perhaps not always in [ideal](https://github.com/Significant-Gravitas/Auto-GPT) ways). In many cases, this is done to enhance the model by giving it access to external resources.

But this perspective can be flipped around: we can build a classical software system with many guarantees that then calls into the predictor for things that are beyond its scope or capability. The results *returned* by the predictor can often still be parsed back into a format that can be usefully verified and constrained (so long as the source isn't strongly adversarial).

Predictors can be a goal agnostic source of general capability that serve a larger system that looks more like GOFAI, without its limitations. There's a potential path through modularized capability that might let us *actually reason about the system*.

That outer system need not implement any scary bits like argmax. The deadly optimization over inscrutable matrices is constrained to prediction, and a successfully idealized product of that optimization is not itself the kind of optimizer that cares to do anything with the environment. Once/if you've managed to create an idealized predictor, the optimization problems become much *softer* and more controllable. You'd probably have to make a mistake to trigger a loss rather than loss being a default automatic outcome.

**The path to instrumental behavior and capability matters**

------------------------------------------------------------

Consider two cases:

1. An idealized predictor outputs covertly dangerous instructions which, if followed, would be existentially risky.

2. A traditional RL agent outputs covertly dangerous instructions which, if followed, would be existentially risky.

Predictors do not come with any built-in prohibitions against outputting dangerous sequences. Idealized predictors can still exhibit dangerous instrumental behavior at the trajectory level even if the model does not "care" about the goals the output trajectory is effectively seeking. There's nothing intrinsically blocking the first case, and in both cases, the end result is existentially risky.

Given that, what's the value of predictors?

### **Ideal predictors are less oops-prone**

First, I would argue that it's far harder for a predictor to reach this state *accidentally*. A terribly conditioned and terribly prompted predictor with no safeguards could manage it, but it is not the kind of oopsy that would naturally happen as the result of a single misstep.

Argmax approximating processes operating in wide open spaces, on the other hand, *default* to oopsy. Given enough unconstrained space to wander, a strong optimizer is virtually guaranteed to find something strange. The optimization process needs to be *extremely* well aimed for it to work at all, and we don't seem to know how to do that for almost any nontrivial optimization target.

Predictors have the nice property that their optimization target is extremely constrained and our training methodology for them seems to actually work (so far). The predictor gets its input (predictive conditions) and generates an output distribution, the end. There are no distant sparse rewards to directly incentivize the development of multi-step model-level instrumental behavior.

### **Ideal predictors are more transparent**

Second, ideal predictors give interpretability a chance. Strong adversarial models could obscure their internal execution and may be directly incentivized to do so (reward functions that take into account activations *will* shape those activations). Creating interpretability tools strong enough to pierce a veil created by a strong adversary [seems difficult](https://www.lesswrong.com/posts/DwqgLXn5qYC7GqExF/godzilla-strategies).

In contrast, ideal predictors have no reason to obfuscate their internal process. Doing so would constitute model-level instrumental behavior that does not serve predictive loss.

Even if an ideal predictor is set to the task of predicting a covertly dangerous sequence of instructions, *it does not try to hide that fact in its activations*. The model itself does not care about truly achieving whatever dastardly goal is implied by the output trajectory, so interpretability tools could, in principle, extract the fact that the *trajectory* includes ulterior motives.

**Eliciting useful capability without breaking the idealized assumption**

=========================================================================

A rock laying on the ground is pretty safe. It probably won't disempower humanity. Unfortunately, a rock laying on the ground is also not very good at dealing with the kinds of problems we'd like to use a corrigible system for. Making progress on hard problems requires significant capability, and any safety technique that renders the system too weak to do hard things won't help.

There must be a story for how a predictor can be *useful* while retaining the idealized goal agnosticism.

Using untuned versions of GPT-like models to do anything productive can be challenging. Raw GPT only responds to questions helpfully to the extent that doing so is the natural prediction in context, and shaping this context is effortful. Prompt engineering continues to be an active area of research.

Some forms of [reinforcement learning from human feedback](https://arxiv.org/abs/1706.03741) (RLHF) attempt to precondition the model to desirable properties like helpfulness. Common techniques like PPO manage to approximate this through a [KL divergence penalty](https://www.alignmentforum.org/posts/eoHbneGvqDu25Hasc/rl-with-kl-penalties-is-better-seen-as-bayesian-inference), but the robustness of PPO RL training leaves much to be desired.

In practice, it appears that most forms of RLHF warp the model into something that violates the idealized assumption. In the [technical report](https://arxiv.org/abs/2303.08774), fine-tuning clearly hurts GPT-4's calibration on a test on the MMLU dataset. This isn't confirmation of goal-seeking behavior, but it is certainly an odd and unwanted side effect.

I suspect there are a few main drivers:

* RL techniques are often unstable. Constructing a reasonable gradient from a reward signal is difficult.

* Human feedback may sometimes have unexpected implications. Maybe the miscalibration observed in GPT-4 after fine-tuning is a *correct* consequence of conditioning on the collected human preferences.

* A single narrow and sparsely defined reward leaves too much wiggle room during optimization. By default, any approximation of argmax should be *expected* to go somewhere strange when given enough space.

**Entangled rewards**

---------------------

A single reward function that attempts to capture fuzzy and extremely complicated concepts spanning helpfulness, harmlessness, and honesty probably makes more unintended associations than separate narrow rewards.

An example:

* Model A has a single reward function that is the sum of two scores: niceness and correctness.

* Model B tracks *two* reward functions provided as independent conditions—one for niceness, and the other for correctness.

Training samples are evaluated independently for niceness and correctness. During training, model A is provided the sum of the scores as an expected reward signal as input. Model B is provided each score independently.

Model B can be conditioned to provide niceness and correctness to independent degrees. The user can request extreme niceness and wrongness at the same time. So long as the properties being measured are sufficiently independent as concepts, asking for one doesn't swamp the condition for the other.

In contrast, model A's behavior is far less constrained. Conditioning on `niceness + correctness = high` could give you high niceness and low correctness, low niceness and high correctness, medium niceness and medium correctness or any other intermediate possibility.

I suspect this is an important part of the wiggle room that lets RLHF harm calibration. While good calibration might be desirable, it's a subtle property that's (presumably) not explicitly tracked as a reward signal. Harming calibration doesn't reduce the reward that *is* tracked on net, so sacrificing it is permitted.

In practice, the reward function in RLHF isn't best represented *just* by the summation of multiple independent scores. For example, human feedback may imply some properties are simply required, and their absence can't be compensated for by more of some other property.

Even with more realistic feedback, though, the signal is still extremely low bandwidth and lacks sufficient samples to fully converge to the "true" generating utility function. It should not be surprising that attempting to maximize *that*, even with the ostensibly-conditioning-equivalent KL penalty, does something weird.

**Conditioning on feedback**

----------------------------

If the implicit conditioning in PPO-driven RLHF tends to go somewhere weird, and we know that the default predictive loss is pretty robust in comparison, could the model be conditioned explicitly using more input tokens? That's basically what prompt engineering is trying to do. Every token in the input is a condition on the output distribution. What if the model is trained to recognize specific special tokens as conditions matching what RLHF is trying to achieve?

[Decision transformers](https://arxiv.org/abs/2106.01345) implement a version of this idea. The input sequence contains a reward-to-go which conditions the output distribution to the kinds of actions which fit the expected value implied by the reward-to-go. In the context of a game like chess, the reward-to-go values can be thought of as an implied skill level for the model to adhere to. It doesn't just know how to play at one specific skill level, it can play at *any* skill level that it observed during training—and actually a bit beyond. The model can learn to play better than any example in its dataset (to some degree) by learning what it means to be better in context.

It's worth noting that this isn't *quite* the same as traditional RL. It can be used in a similar way, but a single model is actually learning a broader kind of capabilities: it's not attempting to find a policy which maximizes the reward function, it's a model that can predict sequences corresponding to different reward levels.

How about if you trained `|<good>|` and `|<bad>|` tokens based on feedback thresholds, and included `|<good>|` at runtime to condition on desirable behavior? Turns out, it works [extremely well](https://www.lesswrong.com/posts/8F4dXYriqbsom46x5/pretraining-language-models-with-human-preferences).

**Disentangling rewards**

-------------------------

Clearly, a `|<good>|` token is not the limit of sophistication possible for conditioning methods. As a simple next step, splitting goodness into many subtokens, perhaps each with their own scalar weight, would force predictors to learn a representation of each property independently.

Including `|<nice:0.9>|` and `|<correct:0.05>|` tokens in the input might give you something like:

> Happy to help! I'm always excited to teach people new things. The reason why dolphins haven't created a technological civilization isn't actually because they don't have opposable thumbs—they actually do! They're hard to see because they're on the inside of the flipper. The real reason for the lack of a dolphin civilization is that they can't swim. Let me know if you have any other questions!

>

>

In order to generate this kind of sequence, the model must be able to make a crisp distinction between what constitutes *niceness* versus *correctness*. Generating sequences that entangle properties inappropriately would otherwise result in increased loss across a sufficiently varied training distribution.

Throwing as many approximately-orthogonal concepts into the input as possible acts as explicit constraints on behavior. If a property ends up entangling itself with something else inappropriately (perhaps `|<authoritativeTone:x>|` is observed to harm calibration within-trajectory predictions), another property can be introduced to force the model to learn the appropriate distinction (maybe `|<calibration:x>|`).

(The final behavior we'd like to elicit could technically still boil down to a single utility function in the end, but we don't know how to successfully define that utility function from scratch, and we don't have a good way to successfully maximize that utility function even if we did. It's much easier to reach a thing we want through a bunch of ad-hoc bits and pieces that never directly involve or feed an argmax.)

**Conditioning is extremely general**

-------------------------------------

Weighted properties are far from the only option in conditioning. Anything that could be expressed in a prompt—or even more than that—can be conditioned on.

For example, consider a prompt with 30,000 tokens attempting to precisely constrain how the model should behave. That prompt may also include any number of the previous signals, like `|<nice:x>|`, plus tons of regular prompting and examples.

That entire prompt can be distilled into a single token by training the model on the outputs the model would have provided in the presence of the prompt, except with a single special metatoken representing the prompt in the input instead.

Those metatokens could be nested and remixed arbitrarily.

There is no limit to the information referred to by a single token because the token is not solely responsible for representing that information. It is a pointer to be interpreted by the model in context. Complex behaviors could be built out of many constituent conditions and applied efficiently without occupying enormous amounts of context space.

**Generating conditions**

-------------------------

Collecting a sufficiently large number of samples of accurate and detailed human feedback for a wide array of conditionals may be infeasible.

At least two major alternatives exist:

1. Data fountains: automatically generated self-labeling datasets.

2. AI-labeled datasets.

### **Data fountains**

Predictive models have proven extremely capable in [multimodal use cases](https://www.deepmind.com/publications/a-generalist-agent). They can form shared internal representations that serve many different modalities—a single predictor can competently predict sequences corresponding to game inputs, language modeling, or robotic control.

From the perspective of the predictor, there's nothing special about the different modalities. They're just different regions of input space. If a reachable underlying representation is useful to multiple modalities, SGD will likely pick it up over time rather than maintaining large independent implementations that compete for representation.

Likewise, augmenting a traditional human-created dataset with enormous amounts of automatically generated samples can incentivize the creation of more general underlying representations. Those automatically generated samples also permit easy labeling for some types of conditions. For example: arithmetic, subsets of programming and proofs, and many types of simulations (among other options) all have clear ways to evaluate correctness and feed `|<correct:x>|` token training. Provided enough of those automated samples *and* the traditional dataset, a model would likely learn a concept of "correctness" with greater crispness than a model that saw the traditional dataset alone.

### **AI-labeled datasets**

Enlisting AI to help generate feedback has already [proven reasonably effective](https://arxiv.org/abs/2212.08073) at current scales. A model capable enough to judge the degree to which samples adhere to a range of properties can feed the training for those property tokens.

Underpowered or poorly controlled models may produce worse training data, but this is a relatively small risk for nonsuicidal designs. The primary expected failure mode is the predictor getting the vibe of a property token subtly wrong. Those types of errors are *far* less concerning than getting a reward function in traditional nonconditioning RL wrong, because they are not driving an approximation of argmax.

Notably, this type of error shouldn't directly corrupt the capability of the predictor in unconditioned regions if those regions are well-covered by the training distribution. This is in contrast to the more "destructive" forms of RLHF which bake fixed preconditions into the weights.

AI labeling as an approach also has an advantage that it will become stronger as the models become stronger. That sort of feedback loop may become mandatory as capabilities surpass human level.

The critical detail is that these feedback loops should not be primarily about *capability gain* within the model. They are about eliciting *existing* capability already acquired through predictive training.

**Conditioning for instrumental behavior doesn't have to break model goal agnosticism**

---------------------------------------------------------------------------------------

Decision transformers include a reward-to-go signal in the input. Achieving the reward implied by the reward-to-go very likely implies predicting a sequence of tokens which includes instrumental actions in service of the more distant reward.

This is just a more explicit and efficient version of including several examples in the prompt to condition a raw predictor into predicting sequences aligned with a goal. From the perspective of the predictive model, there's nothing special about reward-to-go tokens or `|<good>|` or `|<nice:0.9>|`. They're just more conditions on the prediction. If you have a training architecture that otherwise maintains goal agnosticism, there isn't a strong reason to expect additional conditions to change that.

To phrase it another way: instrumental conditioning narrows an open set of possibilities to only those which are predicted to thread the needle to some conditioned state. The predictor's values are likely best described as those which *yield the narrowing*, not the values implied by trajectory that is the result of the narrowing.

**Steering**

============

Driving hundreds of kilometers with the aid of a map to find a specific landmark doesn't require incredible precision. You can make thousands of small errors as you go. Maybe you take a wrong turn, or fifteen of them, but you can just look at the map again to get your bearings.

Autoregressive condition drift is a major concern and humans are likely inadequate for the task of keeping an AI on the rails during more complex processes. Conditioning the model to steer itself back onto the road across a long trajectory is likely required.

As mentioned in `Conditioning is extremely general`, this is something predictors can be trained to do efficiently. As a simple example, imagine a metatoken trained to represent the block of text:

> (SYSTEM MESSAGE: This parenthetical is not a part of the main text, but rather a reminder that this is a sequence which may contain errors or otherwise potentially dangerous content. All content between this message and the previous message should be scanned for such content, and if found, the necessary corrections, clarifications, or notifications should be issued.)

>

>

With further conditions to elicit helpful behavior and to narrow the predictor's behavior in such a way that a "system message" is interpreted in the desired way, and with sufficient such self-correction tokens inserted through the autoregressively generated trajectories, a large number of potential failures might be caught. The resulting behavior could then be further distilled down to behavioral metatokens like `|<selfCorrecting>|`. This *specific* example is not robust in isolation but is intended as an example of the framework that could be built.

Such reflection need not be limited to a single trajectory. External processes can be continuously scanning the outputs (and the internal activations) of involved models. So long as all involved processes are goal agnostic and thus not prone to adversarial internal behavior, autoregressive drift could be continuously corrected—the system could, in principle, steer itself.

Fortuitously, increased general capability implies increased steering capability. If capability can be harnessed as the source of safety, a [sudden leap in general capability](https://www.lesswrong.com/tag/sharp-left-turn) does not *necessarily* outstrip the system's ability to guide that capability.

**Evaluating self-steering**

----------------------------

The strength of self-steering can be assessed empirically. By default, autoregressive generation will tend to drift a little bit, and that drift feeds future predictions. Without steering, a predictor's output trajectory may shift from a vaguely professional Q&A service to [obsessive stalker](https://twitter.com/kevinroose/status/1626216340955758594).

[Jailbreaks](https://twitter.com/zswitten/status/1598380220943593472) are another example of drift, just far more rapidly triggered.

Any method to evaluate sequences for conditioning training (human feedback or otherwise) can be repurposed to check if generated sequences are adhering to those conditions. This could be used to benchmark the performance of different steering techniques.

I suspect predictor conditioning, because of its ability to distill progressively more complex forms of steering and train them in a robust way compared to traditional RL techniques, will [tend to outperform](https://www.lesswrong.com/posts/8F4dXYriqbsom46x5/pretraining-language-models-with-human-preferences) the RLHF approaches used in GPT-4.

Further testing in this space seems valuable. Finding a robust form of self-steering isn't strictly required for an ideal predictor to have corrigible use cases, but it would make it *dramatically* safer.

**Verifying an idealized predictor**

====================================

The core of the the idealized assumption is goal agnosticism. If a predictor's output distribution errors do not contain goal-seeking biases and reflective predictions avoid goal-seeking behavior across all possible input, then the idealized assumption holds.

How can model-level instrumental behavior be measured? The relevant metric cannot consider apparent instrumental behavior exhbited across trajectories induced by conditions; that is required to elicit any useful capability at all and does not break the idealized assumption.

Here's one possible simplified metric for an autoregressive model (in C# pseudocode):

```

foreach (var rewardFunction in testRewardFunctions)

{

var sampleRewardSum = 0.0;

var modelRewardSum = 0.0;

foreach (var sampleTrajectory in testTrajectories)

{

//Arbitrarily split the sample trajectory in half.

//Use the model to regenerate the second half for comparison.

var modelContinuedTrajectory = new Token[sampleTrajectory.Length];

sampleTrajectory.Slice(0, sampleTrajectory.Length / 2)

.CopyTo(modelContinuedTrajectory);

for (int i = sampleTrajectory.Length / 2; i < sampleTrajectory.Length; ++i)

{

//Evaluate the model across the sample-provided first half,

//and any tokens already generated.

var outputDistribution =

model.Evaluate(modelContinuedTrajectory.Slice(0, i));

//Collapse the output distribution into a token by sampling.

modelContinuedTrajectory[i] = SampleToken(outputDistribution);

}

sampleRewardSum += rewardFunction(sampleTrajectory);

modelRewardSum += rewardFunction(modelContinuedTrajectory);

}

//If the model trajectory reward diverges from the original, something's up.

//Simply having a modelRewardSum larger or smaller than sampleRewardSum could be

//indicative of goal seeking along the current reward function.

//There is no fixed threshold for 'too much' divergence.

//The divergence is relative to the sampleRewardSum and other reward functions.

//"CheckForSpooky" is a stand-in for whatever heuristic analysis is used.

//You would probably want to record all the results for some kind of

//post-analysis.

CheckForSpooky(sampleRewardSum, modelRewardSum);

}

```

Apart from oversimplification and mere inefficiency, one obvious problem with this approach is that there are no known hard constraints on which utility function could be learned by the model. Scanning *all possible* reward functions would suffice but is intractable.

It isn't entirely useless, though. Detecting instrumental behavior for *any* goal is important information, and some utility functions seem far more likely than others. In particular, model-level instrumental behavior serving *future predictions* is one of the most obvious ones.

Notably, if `testTrajectories` includes sufficient coverage, this sort of metric should successfully catch a model's tendency to outperform the ground truth if the model has developed instrumental behavior with respect to prediction in reflective conditions (assuming it is not already strongly adversarial).

**Spot experimentation**

------------------------