id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

71df1f9e-e30e-429a-bd50-62a8ee561563 | trentmkelly/LessWrong-43k | LessWrong | My current take on logical uncertainty

|

b17fb1e2-f3a7-4db2-96c8-c7056157b8c0 | trentmkelly/LessWrong-43k | LessWrong | [LINK] A proposed update model for working memory: multiple-component framework

I've seen some discussion on "working memory" and "spaced repetition"

I just read this pop-science article in which a new hypothesis is presented that seems to provide better predictions and test conditions for measuring working memory. Maybe this can also be used for the SRS contest.

http://medicalxpress.com/news/2011-07-brain-track.html |

78c72552-ea7a-429f-baea-3ef58b59738c | StampyAI/alignment-research-dataset/lesswrong | LessWrong | What is to be done? (About the profit motive)

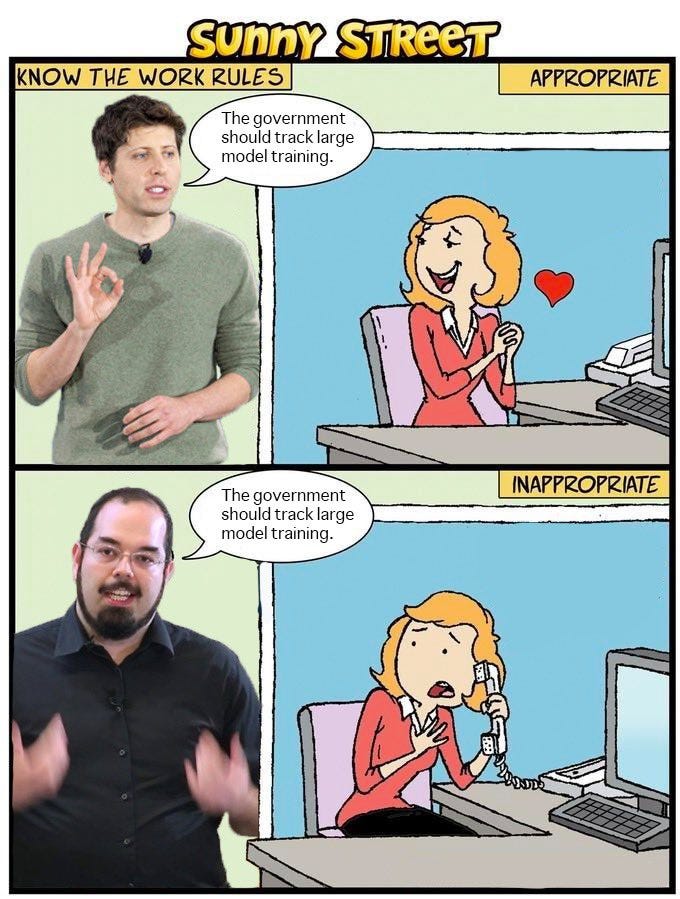

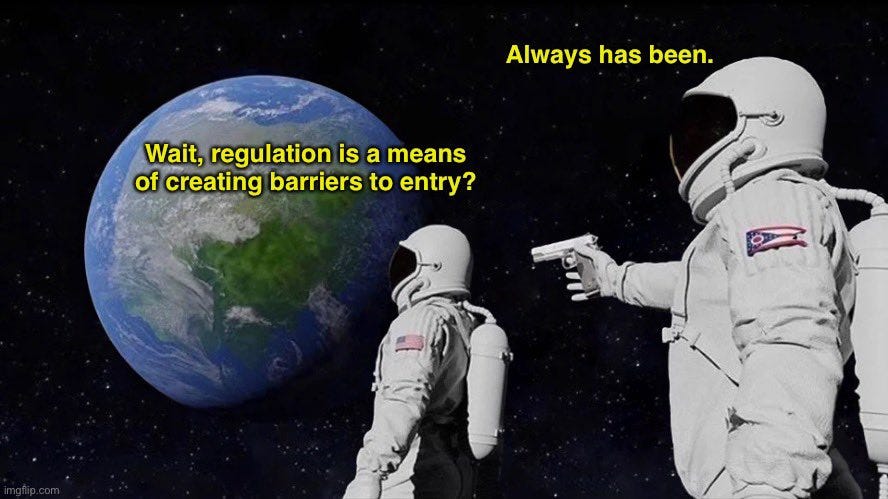

I've recently started reading posts and comments here on LessWrong and I've found it a great place to find accessible, productive, and often nuanced discussions of AI risks and their mitigation. One thing that's been on my mind is that seemingly everyone takes for granted that the world as it exists will eventually produce AI, particularly sooner than we have the necessary knowledge and tools to make sure it is friendly. Many seem to be convinced of the inevitability of this outcome, that we can do little to nothing to alter the course. Often referenced contributors to this likelihood are current incentive structures; profit, power, and the nature of current economic competition.

I'm therefore curious why I see so little discussion on the possibility of changing these current incentive structures. Mitigating the profit motive in favor of incentive structures more aligned with human well-being seems to me an obvious first step. In other words, to maximize the chance for aligned AI, we must first make an aligned society. Do people not discuss this idea here because it is viewed as impossible? Undesirable? Ineffective? I'd love to hear what you think. |

3f6ca8f0-f8d1-4939-ae57-778d8b738482 | trentmkelly/LessWrong-43k | LessWrong | Minimizing Empowerment for Safety

I haven't put much thought into this post; it's off the cuff.

DeepMind has published a couple of papers on maximizing empowerment as a form of intrinsic motivation for Unsupervised RL / Intelligent Exploration.

I never looked at either paper in detail, but the basic idea is that you should seek to maximize mutual information between (future) outcomes and actions or policies/options. Doing so means an agent knows what strategy to follow to accomplish a given outcome.

It seems plausible that instead minimizing empowerment in the case where there is a reward function could help steer an agent away from pursuing instrumental goals which have large effects.
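To make the quantity concrete, here is a minimal sketch of the mutual information between actions and outcomes in a tiny discrete setting. The two environments and the function name are hypothetical illustrations, not taken from the papers. Empowerment proper is the maximum of this quantity over action distributions; the sketch only evaluates a fixed uniform one.

```python
import math

def mutual_information(action_probs, transition):
    """I(A; S') in bits for a discrete action distribution and outcome channel.

    action_probs[a]   -- probability of choosing action a
    transition[a][s]  -- probability of outcome s given action a
    """
    n_actions = len(action_probs)
    n_outcomes = len(transition[0])
    # Marginal distribution over outcomes.
    outcome_probs = [
        sum(action_probs[a] * transition[a][s] for a in range(n_actions))
        for s in range(n_outcomes)
    ]
    info = 0.0
    for a, p_a in enumerate(action_probs):
        for s in range(n_outcomes):
            joint = p_a * transition[a][s]
            if joint > 0:
                info += joint * math.log2(transition[a][s] / outcome_probs[s])
    return info

# Two hypothetical environments with two actions and two outcomes.
decisive = [[1.0, 0.0], [0.0, 1.0]]  # each action fixes the outcome
inert = [[0.5, 0.5], [0.5, 0.5]]     # actions have no effect on the outcome
uniform = [0.5, 0.5]

high = mutual_information(uniform, decisive)
low = mutual_information(uniform, inert)
```

An agent penalized for this quantity would prefer situations like `inert`, where its choices barely change what happens, which is the intuition behind using it as a limited-impact term.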

So that might be useful for "taskification", "limited impact", etc. |

8bf2f0c7-de8e-4d90-82f4-ddf52351a6b6 | trentmkelly/LessWrong-43k | LessWrong | Notes on the Presidential Election of 1836

In 1836, Andrew Jackson had served two terms. In the presidential election, incumbent vice president Martin Van Buren defeated several Whig candidates.

Historical Background

By 1836, there were 25 states. States were often added in pairs (one slave and one free) to maintain political balance: Mississippi and Indiana, Alabama and Illinois, Missouri and Maine. Arkansas had just been added as a slave state in June 1836 and Michigan was due to be added in January 1837, making an exact 13-13 balance.

The population was 13 million with a center of mass in present-day West Virginia (part of Virginia then). Only New York City had more than 200,000 people, and Baltimore, Philadelphia, and Boston didn’t even have half as many. You can see from the electoral-vote allotments in the map above that there was a rising new region for politics beyond the North and South: the West, a swing region with their own politicians like Andrew Jackson and Henry Clay.

The Catholic immigration wave would come later and the country was still more than 96% Protestant, with Puritan-descended Congregationalists and Presbyterians in New England as well as Baptists and Methodists in the South (some motivated by the religious revivals of the Second Great Awakening). Slaves made up 15% of the population and about a third of the South. A few northern states like New Jersey had a small number of slaves due to gradual-emancipation laws that e.g. decreed that all children born after 1804 to enslaved mothers would be free when they reached adulthood.

Only in New York could black freedmen vote and only if they owned substantial property. Full manhood suffrage was in place otherwise as in most states during the 1820s and 30s (except in North Carolina, Virginia, or Rhode Island). South Carolina was the only state that still chose their electors via the state legislature rather than holding a popular vote at all.

Political Situation

The three regions had their priorities:

1. The North was becoming more |

262102d2-8e92-4d03-a4a1-066cf0becda0 | trentmkelly/LessWrong-43k | LessWrong | Eating et al.: Study on High/Low Protein | Glycemic-Index

Nutrition and related topics have been a topic a few times here and on related blogs, starting back with Shangri-La, hypoglycemia, and what we should eat.

Now, hat tip to reddit, there seems to be some (new) evidence for the position that I think quite a few people here hold: high-protein, low-glycemic-index. So, some people here can be a little bit more sure that they made the right bet earlier on -- but how did you actually arrive at those conclusions back then? I see the evolutionary argument, but by itself it is not that convincing. There must have been data, ...

So, any recommendations on further sources/high-quality collections? |

8c66af7a-e75a-46c1-b5d1-e1c1390852c2 | trentmkelly/LessWrong-43k | LessWrong | Is there a hard copy of the sequences available anywhere?

Forgive me if there's an easily available answer, I have not been able to find it after several attempts. I know there is an ebook but I would like a hard copy of Rationality A-Z or the original sequences as a (set of) books. |

f7155a04-b1a9-4e98-8666-13fe8a30c90b | trentmkelly/LessWrong-43k | LessWrong | Shut up and do the impossible!

The virtue of tsuyoku naritai, "I want to become stronger", is to always keep improving—to do better than your previous failures, not just humbly confess them.

Yet there is a level higher than tsuyoku naritai. This is the virtue of isshokenmei, "make a desperate effort". All-out, as if your own life were at stake. "In important matters, a 'strong' effort usually only results in mediocre results."

And there is a level higher than isshokenmei. This is the virtue I called "make an extraordinary effort". To try in ways other than what you have been trained to do, even if it means doing something different from what others are doing, and leaving your comfort zone. Even taking on the very real risk that attends going outside the System.

But what if even an extraordinary effort will not be enough, because the problem is impossible?

I have already written somewhat on this subject, in On Doing the Impossible. My younger self used to whine about this a lot: "You can't develop a precise theory of intelligence the way that there are precise theories of physics. It's impossible! You can't prove an AI correct. It's impossible! No human being can comprehend the nature of morality—it's impossible! No human being can comprehend the mystery of subjective experience! It's impossible!"

And I know exactly what message I wish I could send back in time to my younger self:

Shut up and do the impossible!

What legitimizes this strange message is that the word "impossible" does not usually refer to a strict mathematical proof of impossibility in a domain that seems well-understood. If something seems impossible merely in the sense of "I see no way to do this" or "it looks so difficult as to be beyond human ability"—well, if you study it for a year or five, it may come to seem less impossible, than in the moment of your snap initial judgment.

But the principle is more subtle than this. I do not say just, "Try to do the impossible", but rather, "Shut up and do the impo |

6849d832-0a75-4e6f-97bb-da02d0c01092 | trentmkelly/LessWrong-43k | LessWrong | The red paperclip theory of status

Followup to: The Many Faces of Status (This post co-authored by Morendil and Kaj Sotala - see note at end of post.)

In brief: status is a measure of general purpose optimization power in complex social domains, mediated by "power conversions" or "status conversions".

What is status?

Kaj previously proposed a definition of status as "the ability to control (or influence) the group", but several people pointed out shortcomings in that. One can influence a group without having status, or have status without having influence. As a glaring counterexample, planting a bomb is definitely a way of influencing a group's behavior, but few would consider it to be a sign of status.

But the argument of status as optimization power can be made to work with a couple of additional assumptions. By "optimization power", recall that we mean "the ability to steer the future in a preferred direction". In general, we recognize optimization power after the fact by looking at outcomes. Improbable outcomes that rank high in an agent's preferences attest to that agent's power. For the purposes of this post, we can in fact use "status" and "power" interchangeably.

In the most general sense, status is the general purpose ability to influence a group. An analogy to intelligence is useful here. A chess computer is very skilled at the domain of chess, but has no skill in any other domain. Intuitively, we feel like a chess computer is not intelligent, because it has no cross-domain intelligence. Likewise, while planting bombs is a very effective way of causing certain kinds of behavior in groups, intuitively it doesn't feel like status because it can only be effectively applied to a very narrow set of goals. In contrast, someone with high status in a social group can push the group towards a variety of different goals. We call a certain type of general purpose optimization power "intelligence", and another type of general purpose optimization power "status". Yet the ability to make excelle |

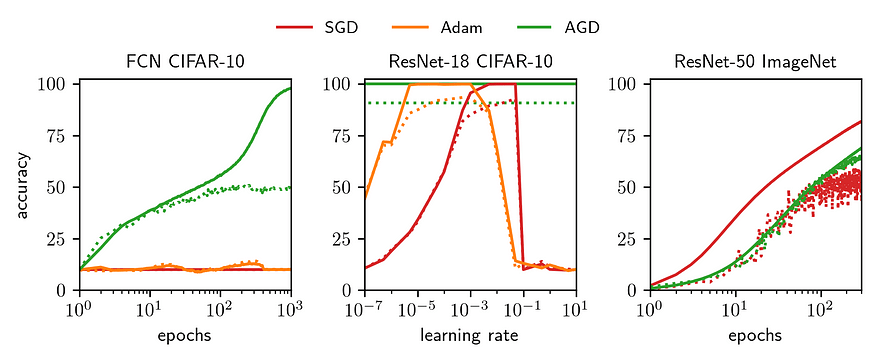

1435950f-0e68-47f4-80d7-2322ff1f8810 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Davidad's Bold Plan for Alignment: An In-Depth Explanation

Gabin Kolly and Charbel-Raphaël Segerie contributed equally to this post. Davidad proofread this post.

Thanks to Vanessa Kosoy, Siméon Campos, Jérémy Andréoletti, Guillaume Corlouer, Jeanne S., Vladimir I. and Clément Dumas for useful comments.

Context

=======

Davidad [has proposed an intricate architecture](https://www.alignmentforum.org/posts/pKSmEkSQJsCSTK6nH/an-open-agency-architecture-for-safe-transformative-ai) aimed at addressing the alignment problem, which necessitates extensive knowledge to comprehend fully. We believe that there are currently insufficient public explanations of this ambitious plan. The following is our understanding of the plan, gleaned from discussions with Davidad.

This document adopts an informal tone. The initial sections offer a simplified overview, while the latter sections delve into questions and relatively technical subjects. This plan may seem extremely ambitious, but the appendix provides further elaboration on certain sub-steps and potential internship topics, which would enable us to test some ideas relatively quickly.

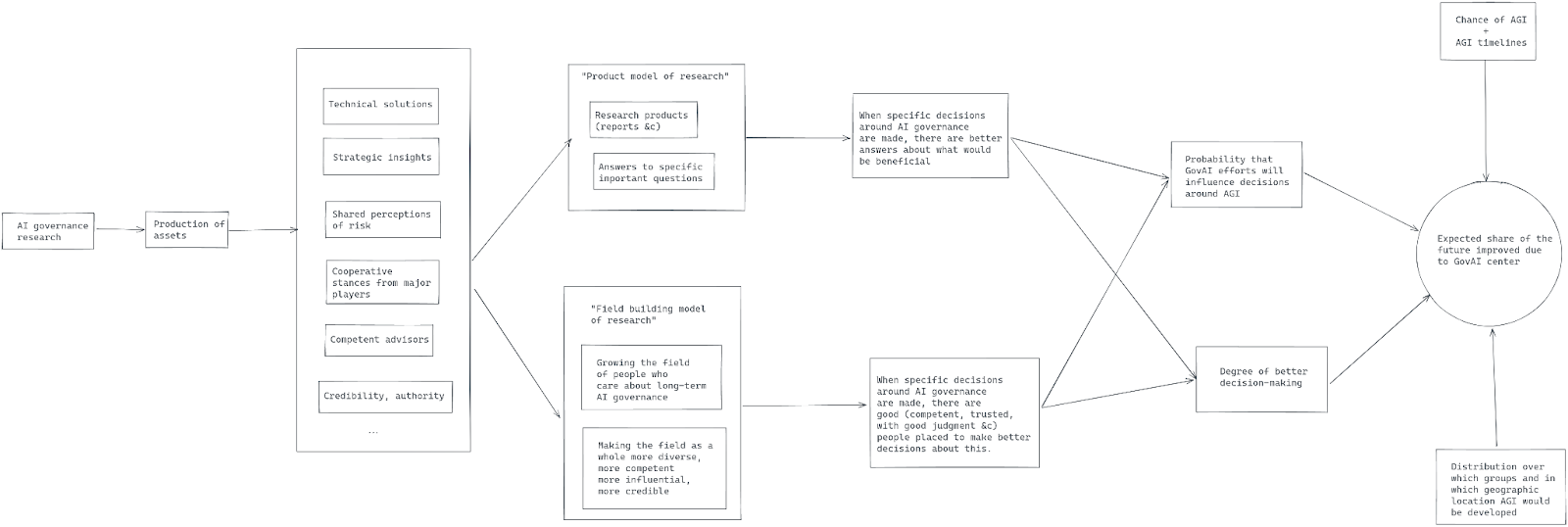

Davidad’s plan is an entirely new paradigmatic approach to address the hard part of alignment: *The Open Agency Architecture aims at “building an AI system that helps us ethically end the acute risk period without creating its own catastrophic risks that would be worse than the status quo”*.

This plan is motivated by the assumption that current paradigms for model alignment won’t be successful:

* LLMs won't be able to be aligned just with [RLHF](https://www.lesswrong.com/posts/d6DvuCKH5bSoT62DB/compendium-of-problems-with-rlhf) or a variation of this technique.

* Scalable oversight will be [too](https://www.lesswrong.com/posts/PJLABqQ962hZEqhdB/debate-update-obfuscated-arguments-problem) [difficult](https://arxiv.org/abs/2210.10860).

* Interpretability used to reverse-engineer an arbitrary model will not be feasible. Instead, it would be easier to iteratively construct an understandable world model.

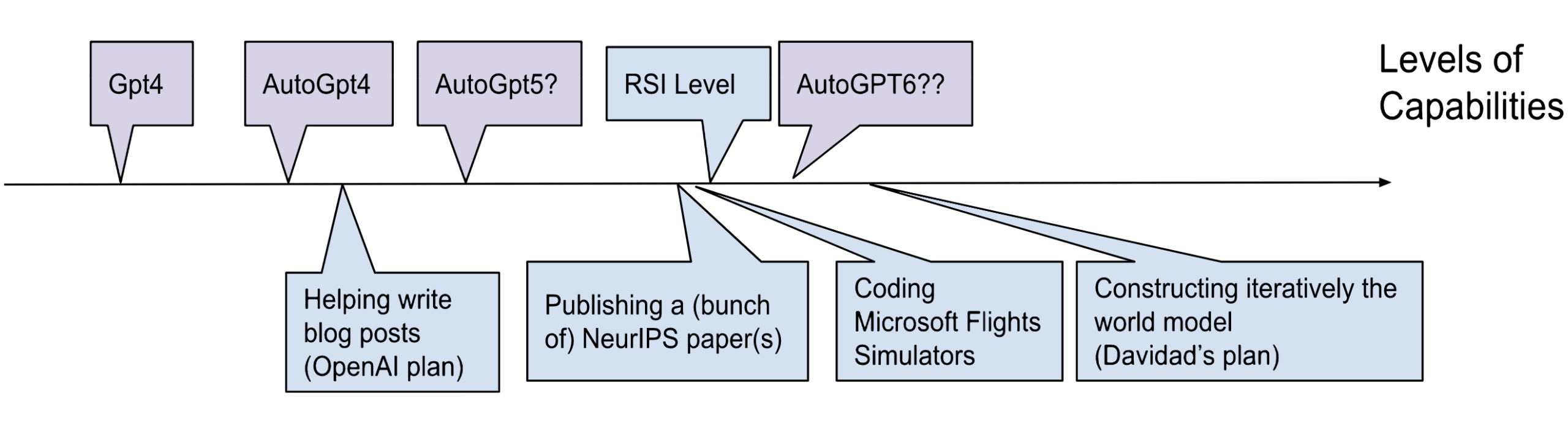

Unlike [OpenAI's plan](https://openai.com/blog/planning-for-agi-and-beyond), which is a meta-level plan that delegates the task of finding a solution for alignment to future AI, Davidad's plan is an object-level plan that takes drastic measures to prevent future problems. It also rests on assumptions that can be tested relatively quickly (see the annex).

**Plan’s tldr:** Utilize near-AGIs to build a detailed world simulation, train and formally verify within it that the AI adheres to coarse preferences and avoids catastrophic outcomes.

**How to read this post?**

This post is much easier to read than the original post. But we are aware that it still contains a significant amount of technicality. Here's a way to read this post gradually:

* Start with the Bird's-eye view (5 minutes)

* Contemplate the Bird's-eye view diagram (5 minutes), without spending time understanding the mathematical notations in the diagram.

* Fine Grained Scheme: Focus on the starred sections. Skip the non-starred sections. Don’t spend too much time on difficulties (10 minutes)

* From "Hypotheses discussion" onwards, the rest of the post should be easy to read (10 minutes)

For more technical details, you can read:

* The starred sections of the Fine Grained Scheme

* The Annex.

Bird's-eye view

===============

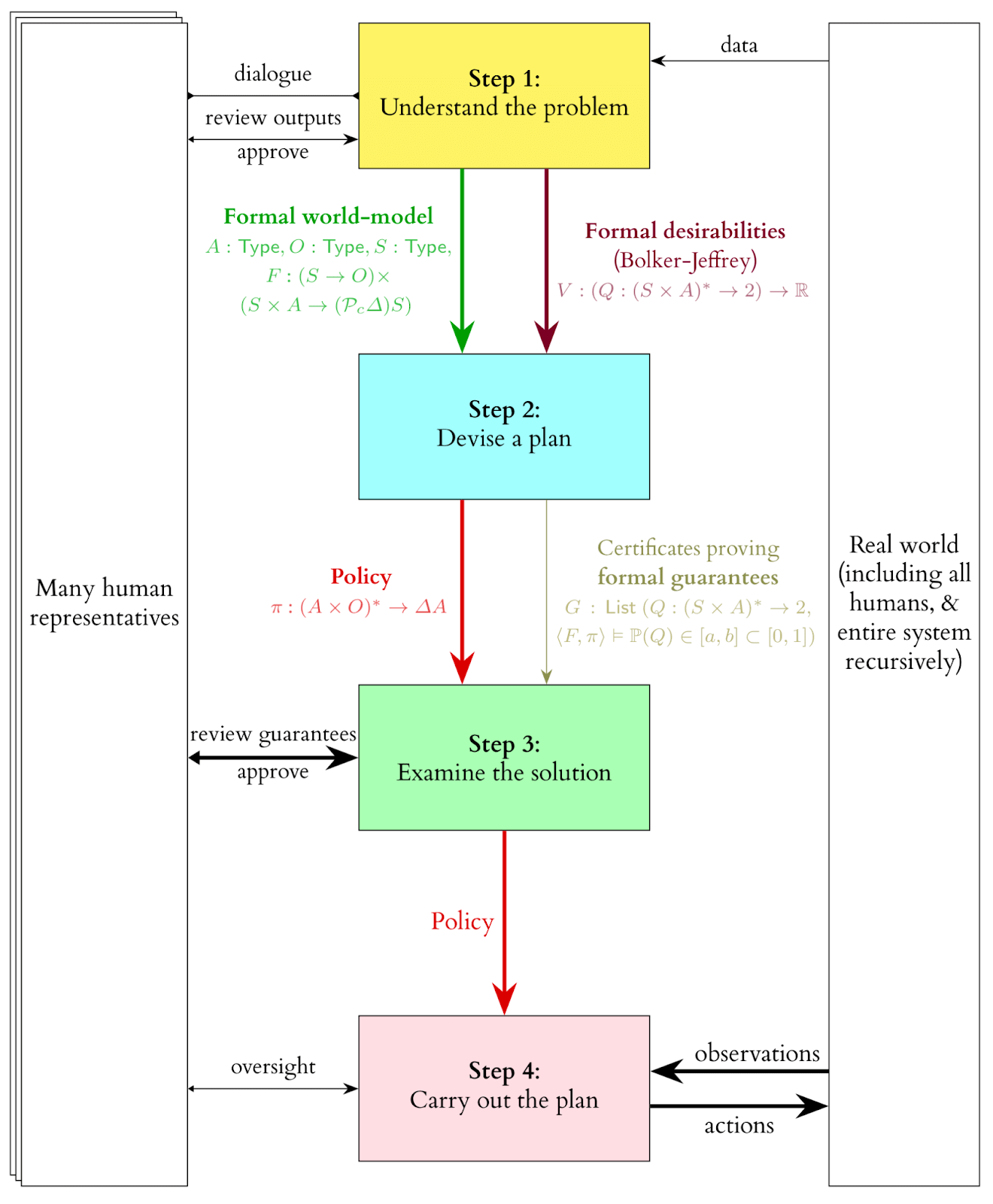

The plan comprises four key steps:

1. **Understand the problem:** This entails formalizing the problem, similar to deciphering the rules of an unfamiliar game like chess. In this case, the focus is on understanding reality and human preferences.

    1. **World Modeling:** Develop a comprehensive and intelligent model of the world capable of being used for model-checking. This could be akin to an ultra-realistic video game built on the finest existing scientific models. Achieving a sufficient model falls under the Scientific Sufficiency Hypothesis (a discussion of those hypotheses can be found later on) and would be one of the most ambitious scientific projects in human history.

    2. **Specification Modeling:** Generate a list of moral desiderata, such as a model that safeguards humans from catastrophes. The Deontic Sufficiency Hypothesis posits that it is possible to find an adequate model of these coarse preferences.

2. **Devise a plan:** With the world model and desiderata encoded in a formal language, we can now strategize within this framework. Similar to chess, a model can be trained to develop effective strategies. Formal verification can then be applied to these strategies, which is the basis of the Model-Checking Feasibility Hypothesis.

3. **Examine the solution:** Upon completing step 2, a solution (in the form of a neural network implementing a policy or strategy) is obtained, along with proof that the strategy adheres to the established desiderata. This strategy can be scrutinized using various AI safety techniques, such as interpretability and red teaming.

4. **Carry out the plan:** The model is employed in the real world to generate high-level strategies, with the individual components of these strategies executed by RL agents specifically trained for each task and given time-bound goals.

The plan is dubbed "Open Agency Architecture" because it necessitates collaboration among numerous humans, remains interpretable and verifiable, and functions more as an international organization or "agency" rather than a singular, unified "agent." The name Open Agency was drawn from [Eric Drexler’s Open Agency Model](https://www.alignmentforum.org/posts/5hApNw5f7uG8RXxGS/the-open-agency-model), along with many high-level ideas.

Here is the main diagram. (An explanation of the notations is provided [here](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Types_explanation)):

Fine-grained Scheme

===================

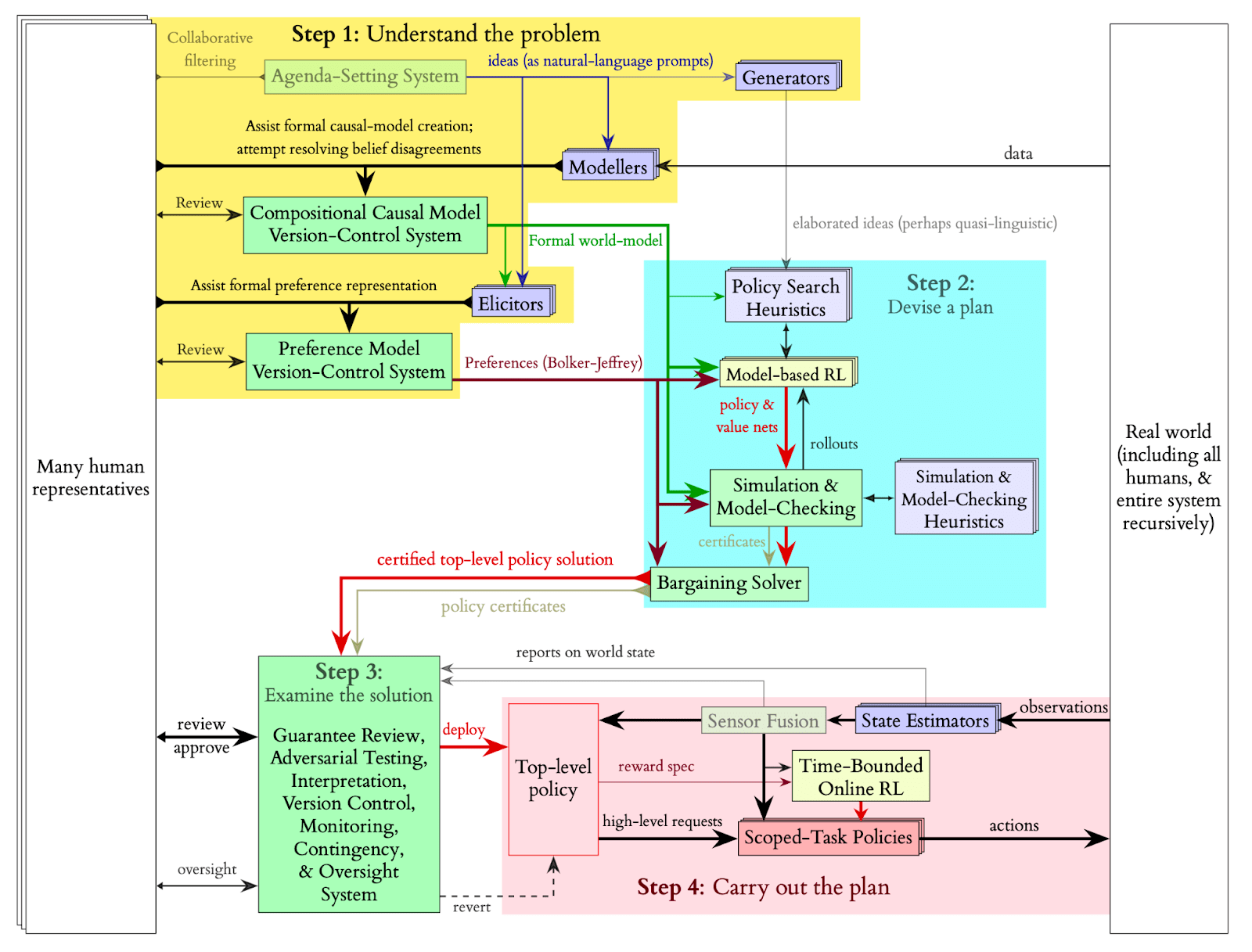

Here is a more detailed breakdown of Davidad’s plan:

* (\*) Important

* Not essential

Step 1: Understand the problem

------------------------------

* **Agenda-Setting System**: This recommendation system determines the next elements to integrate into the world simulation. The agenda sets priorities for incorporating the next physical phenomenon into the world model and formalizing the next moral desideratum, and helps stakeholders decide which [pivotal processes](https://www.alignmentforum.org/posts/etNJcXCsKC6izQQZj/pivotal-outcomes-and-pivotal-processes) to follow (e.g., nanobots everywhere or a very persuasive chatbot).

* **(\*) Modeler**: These modelers, near-AGI-level language models, iteratively develop a formal world model by incorporating all human knowledge into a unified, multiscale model called a compositional world model. Humans verify the modelers' output and provide high-level guidance and suggestions. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Formalization_of_the_world_model)]

* **(\*) Compositional world model**: The world model must encapsulate numerous complex relationships and be described in a precise, formal manner for efficient human verification. To give an idea, this would be like a combination of Google Earth, GTA-4 and Kerbal Space Program. Given the multiple competing theories across various sciences, it will more closely resemble a collection of world models rather than a single, unified one. Quantifying the uncertainty in the world is challenging (as in the case of Knightian uncertainty), making it difficult to ensure that the correct theory has been considered and incorporated. Thus, infra-Bayesianism should be employed for this modeling, which also offers tools for calculating worst-case scenario expectations (e.g., when the world model is chosen to minimize the agent's score). [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#About_Category_theory_and_Infra_Bayesianism)]

+ This system's complexity almost precludes the use of Python for modeling; a new functional programming language specifically designed for this task would likely be necessary, potentially incorporating category theory. Humans would verify the world model line by line.

+ To expedite the development of this world model using LLMs, methods such as [this one](https://twitter.com/kdqg1/status/1638744989407518721) could be employed.

+ The fact that the world model is multi-scale is quite significant: each scale will be governed by numerous scientific laws. For instance, if we consider a robot in a room conducting a chemistry experiment, the world model will have to take into account the laws of chemistry to ensure that there is no risk of explosion. However, if there is no chemistry experiment, there generally won't be a need to simulate the microscopic level.

* **A compositional-causal-model version-control system**: A next-gen version control software, similar to Git and Wikipedia's review mechanisms, is needed to create an infra-Bayesian world model containing all global knowledge. This system tracks edits, stores stakeholder approvals or disapprovals, and logs discussions. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Experimenting_with_the_compositional_version_control_system)]

* **(\*) Elicitors**: Language models assist humans in expressing their desires using the formal language of the world model. Some models also identify extreme situations where desiderata are met but humans are unhappy, testing the sufficiency of current desiderata in a red-teaming fashion. Examples of desiderata could be to not increase people’s probability of dying, or to not hurt them physically. These desiderata will most of the time be in conflict with each other, and a bargain would need to be achieved. Davidad proposes to represent most of these desiderata as violations of Markov blankets. Most of those desiderata are formulated as negative constraints because we just want to avoid a catastrophe, not solve the full value problem. But some of the desiderata will represent the pivotal process that we want the model to accomplish.

* **A preference-model version-control system**: A dedicated version control software for preferences is required, particularly when new concepts are added to the world model. Similar to Git's "rebase" operation, previously written moral desiderata may need updating to accommodate new concepts.

* **Generators**: Pretrained language models provide initial heuristic ideas for the RL algorithm to bootstrap its policy search. This approach is akin to using an LLM within a robot to enhance its capabilities (e.g., PaLM-E). These heuristics are then refined through RL training.

Step 2: Devise a plan

---------------------

* **Policy Search Heuristics**: These heuristics, produced by the generators, are used to initialize a neural network, which is then trained through RL training.

* **(\*) Model-Based RL**: An RL agent, such as MuZero, is trained on the constructed world model, with a reward function derived from a combination of the desiderata. This model may also be time-bounded, possibly utilizing [this framework](https://www.lesswrong.com/posts/dzDKDRJPQ3kGqfER9/you-can-still-fetch-the-coffee-today-if-you-re-dead-tomorrow).

* **(\*) Simulation and model checking**: Once the RL agent achieves adequate performance, model checking procedures are initiated. Model checking must be guided by heuristics provided by LLMs (possibly prompted using natural language), drawing inspiration from model checking currently performed on smaller scales (e.g., [COOL-MC](https://github.com/dennisgross/cool-mc), which allows proving properties on Atari RL agents). Existing techniques and heuristics for such verifications can be employed to fine-tune the LLMs. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Try_to_fine_tune_a_language_model_as_a_heuristic_for_model_checking)]

* **Bargaining solver**: Multiple policies will be generated, with the expected utility for each stakeholder calculated. A bargaining solver is then used to determine which policy to adopt.
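The bargaining step can be sketched as follows. The plan does not say which bargaining solution would be used; this sketch assumes the Nash bargaining solution (maximize the product of each stakeholder's gain over a disagreement point), and the policy names, utilities, and status-quo values are hypothetical.

```python
from math import prod

def nash_bargaining_choice(policy_utilities, disagreement):
    """Return the policy name maximizing the Nash product of utility gains.

    policy_utilities -- dict: policy name -> expected utility per stakeholder
    disagreement     -- utility each stakeholder gets if no policy is adopted
    """
    best_name, best_product = None, float("-inf")
    for name, utilities in policy_utilities.items():
        gains = [u - d for u, d in zip(utilities, disagreement)]
        # Exclude policies that leave any stakeholder worse off than no deal.
        if any(g < 0 for g in gains):
            continue
        product = prod(gains)
        if product > best_product:
            best_name, best_product = name, product
    return best_name

# Hypothetical expected utilities for three stakeholders (assumption).
candidates = {
    "policy_a": [9.0, 1.0, 2.0],
    "policy_b": [5.0, 4.0, 4.0],
    "policy_c": [10.0, 0.5, 6.0],
}
status_quo = [1.0, 1.0, 1.0]
chosen = nash_bargaining_choice(candidates, status_quo)
```

Here `policy_c` is excluded because one stakeholder would end up below the status quo, and `policy_b` wins over `policy_a` because its gains are spread across all three stakeholders.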

Step 3: Examine the solution

---------------------------

* In this step, traditional approaches employed in other AI safety agendas are utilized to evaluate the solution, including interpretability and red teaming. Although interpretability may not be essential for averting existential risks if the model checking functions as intended, Davidad anticipates that at least one stakeholder would desire some level of interpretability.

Step 4: Carry out the plan

-------------------------

* **(\*) High-level Policy**: A high-level policy is deployed, which can deploy and monitor narrow child RL agents. This high level policy can function almost like an AGI and is capable of writing reward specifications to train narrow task RL policies.

* **Revert**: The high-level policy can be reverted, meaning it can be deleted, and steps 1/2/3 can be repeated. This is useful in cases of buggy malfunctions, rather than misalignment.

* **(\*) Time-bounded Online RL**: The high-level policy can write specifications that are used to train narrow agents. [Reward functions are designed to turn negative after a certain time](https://www.lesswrong.com/posts/dzDKDRJPQ3kGqfER9/you-can-still-fetch-the-coffee-today-if-you-re-dead-tomorrow) if the agent doesn't stop and transition gracefully to a shutdown state. Training for the narrow agents occurs in simulators that run small subsystems of the overall formal world model, but at a higher resolution. The high-level policy has a longer time-bounded period (e.g., a week) compared to the narrow agents (e.g., a minute). This time-bounded property allows for restarting everything if the outcome is unsatisfactory after the time limit. The ideal scenario also involves other forms of boundedness:

+ Spatial boundedness within a specific subsystem of the overall environment.

+ Role boundedness to work at a particular level of abstraction.

+ Complexity boundedness by deferring higher-dimensional perceptual and motor tasks to smaller roles.

+ Influence boundedness by deploying multiple policies that need to agree (similar to triple redundancy in avionics).

+ Ambition boundedness by respecting the boundaries of existing entities.

* **Task-specific Policies**: These low-level policies result from time-bounded RL training and are responsible for executing small tasks, such as writing code for a program, performing a robotic manipulation, or conversing with a patient.

* **Sensor Fusion and State Estimation**: Narrow RL agents deployed in the real world are equipped with cameras and other sensors to gather information. The data coming from these sensors first go through some modules doing sensor fusion and state estimation, before being sent to the agent. Compartmentalizing the task of estimating the state of the world adds an extra layer of robustness, though it might lead to reduced performance. In contrast, the high-level policy does not necessarily require sensors, as it primarily functions by writing specifications and receiving abstract feedback when goals are achieved or failed by the narrow policies.

+ (To improve the overall system, state estimation should be provided in an infra-Bayesian format, ensuring effective communication between the high-level policy and the narrow policies.)
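The time-bounded reward idea from the list above can be illustrated with a toy reward function. This is a minimal sketch under stated assumptions: the function names, the per-step penalty, and the episode layout are all hypothetical, and the linked post may formalize the reward differently.

```python
def time_bounded_reward(task_reward, step, deadline, is_shut_down, penalty=1.0):
    """Pay the task reward before the deadline; penalize running after it."""
    if step < deadline:
        return task_reward
    # After the deadline, only the shutdown state avoids the penalty.
    return 0.0 if is_shut_down else -penalty

def episode_return(task_rewards, shutdown_step, deadline):
    """Total reward for an agent that shuts down at shutdown_step."""
    total = 0.0
    for step, reward in enumerate(task_rewards):
        shut_down = step >= shutdown_step
        # A shut-down agent earns no further task reward.
        earned = 0.0 if shut_down else reward
        total += time_bounded_reward(earned, step, deadline, shut_down)
    return total

# Hypothetical 10-step episode with a deadline at step 6 (assumption).
rewards = [1.0] * 10
on_time = episode_return(rewards, shutdown_step=6, deadline=6)
overrun = episode_return(rewards, shutdown_step=10, deadline=6)
```

Under these numbers the agent that stops at the deadline collects more total reward than the one that keeps acting, so continuing past the time limit is never the optimal choice.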

Hypotheses discussion

=====================

**Scientific Sufficiency Hypothesis:** This hypothesis posits that we will be able to create a simulation of the world that is precise enough so that verifying a model's behavior within the simulation would ensure its proper behavior in the real world. More specifically, as we are operating in an infra-Bayesian setting, we need a large enough set of world models to ensure that reality is not too distant. Additionally, this hypothesis includes the assumption that the simulation will not be excessively computationally demanding since we intend to train a model on it. Davidad agrees that this project would be one of humanity's most significant science projects, but he believes it would still be less costly than the Large Hadron Collider.

There are several challenges associated with the Scientific Sufficiency Hypothesis:

1. **Completeness**: The world is incredibly complex, and to create a sufficiently precise model, we might need a large portion of all human knowledge. This represents an immense amount of data, even with the assistance of LLMs. However, a sufficient model does not necessarily require solving all scientific domains. For instance, we don't need advanced physics because an AI that passes the model checking step will be very cautious and unlikely to engage in risky physics experiments. If there is a risk that the AI might interfere with experiments conducted at CERN, the model checking process would prevent it from doing so, even without a unified understanding of physics. We only need to model quantum chemistry and higher levels. But even when restricting ourselves to levels above quantum chemistry, there are still numerous scales to consider, each requiring a separate scientific theory. It is not computationally feasible to simulate an entire room from the molecular level. Furthermore, it remains unclear how much of the biosphere and human civilization must be simulated for this plan to succeed.

2. **Formalization:** Much of our current knowledge about the world is not in a readily computable form. It would need to be translated into a computable form before it could be used in this context. Although this is a challenging task, there is already some prior work demonstrating the feasibility of automatic translation from natural language to a formal language, which offers some hope for this endeavor. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Formalization_of_the_world_model)]

3. **Lack of computational Infra-Bayes prior works**: As far as the authors are aware, no infra-Bayesian simulation has been carried out before, especially not one of this magnitude. As a result, it remains unclear how we could train a model on such a simulation and whether it would even be computationally feasible.

4. **Flexible meta-ontology**: The system's underlying meta-ontology must be both expansive and adaptable to accommodate the diverse models and theories required for the simulation. This would be similar to creating a new programming language that efficiently incorporates the concept of time. To achieve this, Davidad is exploring novel category theoretic ideas in the attempt to unify in a single formalism:

* random differential equations

* stochastic differential equations

* stochastic partial differential equations

* continuous-time Markov chains

* discrete-time Markov chains

* probabilistic graphical models

* probabilistic logic programming

5. **Difficulty of understanding the framework**: The framework would necessitate input from numerous experts. However, due to the complexity of the meta-ontology, they may encounter difficulties when verifying the work, particularly as it will be in an infra-Bayesian format. Vanessa claims that only three people worldwide fully understand [the infra-Bayesian sequence](https://www.lesswrong.com/posts/zB4f7QqKhBHa5b37a/introduction-to-the-infra-bayesianism-sequence), which poses a significant obstacle for establishing a large-scale international project. However, some efforts show that it’s possible to develop software, such as [AlgebraicJulia](https://www.algebraicjulia.org/), that tries to offer both the versatility of category theory and user-friendliness for non-mathematicians.

We believe that addressing the “**Lack of computational Infra-Bayes prior works**” and “**Flexible meta-ontology**” concerns is crucial before delving too deep into this plan, as they can be tackled independently of the other aspects.

**Deontic Sufficiency Hypothesis:** This hypothesis posits that it is possible to identify desiderata that are adequate to ensure the model doesn't engage in undesirable behavior. Davidad is optimistic that it's feasible to find desiderata ensuring safety for a few weeks before a better solution is discovered, making this a weaker approach than solving outer alignment. For instance, Davidad suggests that even without a deep understanding of music, you can be confident your hearing is safe by ensuring the sound pressure level remains below 80 decibels. However, since the model would still be executing a pivotal process with significant influence, relying on a partial solution for decades could be risky. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Getting_traction_on_the_deontic_feasibility_hypothesis)]
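The hearing example can be written as a concrete check on a trajectory. This is only a sketch of what a coarse negative desideratum might look like: the function names and the sample trajectories are hypothetical, while the formula and the 20 µPa reference are the standard definition of sound pressure level in air.

```python
import math

REFERENCE_PRESSURE_PA = 20e-6  # standard reference pressure for SPL in air

def sound_pressure_level_db(rms_pressure_pa):
    """Sound pressure level in decibels for an RMS pressure in pascals."""
    return 20.0 * math.log10(rms_pressure_pa / REFERENCE_PRESSURE_PA)

def hearing_desideratum_holds(pressure_trajectory_pa, limit_db=80.0):
    """True if every RMS pressure sample stays below the decibel limit."""
    return all(
        sound_pressure_level_db(p) < limit_db for p in pressure_trajectory_pa
    )

# Hypothetical trajectories of RMS sound pressure, in pascals (assumption).
quiet_room = [0.002, 0.02, 0.1]
loud_event = [0.02, 0.5]

quiet_ok = hearing_desideratum_holds(quiet_room)
loud_ok = hearing_desideratum_holds(loud_event)
```

The check needs no model of what the sound means, which is the point of the example: a simple bound on a physical quantity can rule out one class of harm without solving the full value problem.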

**Model-Checking Feasibility Hypothesis:** This hypothesis states that it should be possible to prove bounds on the values the model will achieve for the desiderata. Given the task's magnitude, model-checking would mostly be done automatically. In the last few years, we have seen immense progress in the verification of neural networks. In 2017, the best methods were able to verify a hundred neurons; as of 2022, branch and bound techniques can verify networks of a million neurons [[More details](https://youtu.be/-EKQhkMHWVU?t=1395)]. Currently, model-checking for RL agents is limited to simple environments, and the authors are uncertain if it can be scaled up within just a few years.
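To give a feel for what branch and bound verification means, here is a toy sketch that proves an upper bound on a tiny ReLU network's output. It uses plain interval bound propagation as the bounding step and input splitting as the branching step; state-of-the-art verifiers use much tighter bounds and smarter branching. The network (which computes |x|) and all function names are hypothetical.

```python
def affine_bounds(weights, biases, lower, upper):
    """Propagate an input box through y = W x + b with interval arithmetic."""
    out_lo, out_hi = [], []
    for row, b in zip(weights, biases):
        lo = hi = b
        for w, l, u in zip(row, lower, upper):
            lo += w * l if w >= 0 else w * u
            hi += w * u if w >= 0 else w * l
        out_lo.append(lo)
        out_hi.append(hi)
    return out_lo, out_hi

def output_upper_bound(layers, lower, upper):
    """Sound upper bound on a scalar-output ReLU network over the box."""
    for i, (weights, biases) in enumerate(layers):
        lower, upper = affine_bounds(weights, biases, lower, upper)
        if i < len(layers) - 1:  # ReLU on hidden layers only
            lower = [max(0.0, v) for v in lower]
            upper = [max(0.0, v) for v in upper]
    return upper[0]

def evaluate(layers, x):
    """Exact output at a point (a zero-width box gives exact bounds)."""
    return output_upper_bound(layers, x, x)

def verify_at_most(layers, lower, upper, threshold, depth=20):
    """True if output <= threshold is proved on the box, False if a
    counterexample is found, None if the depth budget runs out."""
    if output_upper_bound(layers, lower, upper) <= threshold:
        return True
    center = [(l + u) / 2 for l, u in zip(lower, upper)]
    if evaluate(layers, center) > threshold:
        return False
    if depth == 0:
        return None
    # Branch: split the widest input dimension in half.
    k = max(range(len(lower)), key=lambda i: upper[i] - lower[i])
    left_upper = upper[:k] + [center[k]] + upper[k + 1:]
    right_lower = lower[:k] + [center[k]] + lower[k + 1:]
    results = [
        verify_at_most(layers, lower, left_upper, threshold, depth - 1),
        verify_at_most(layers, right_lower, upper, threshold, depth - 1),
    ]
    if False in results:
        return False
    return None if None in results else True

# Hypothetical network computing relu(x) + relu(-x), i.e. the absolute value of x.
abs_net = [([[1.0], [-1.0]], [0.0, 0.0]), ([[1.0, 1.0]], [0.0])]
loose_bound = output_upper_bound(abs_net, [-1.0], [1.0])
proved = verify_at_most(abs_net, [-1.0], [1.0], threshold=1.5)
refuted = verify_at_most(abs_net, [-1.0], [1.0], threshold=0.5)
```

On the whole interval the propagated bound is too loose to prove the 1.5 threshold, but after one split each half is verified. The 0.5 threshold is correctly refuted by a concrete counterexample found at a box center.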

**Time bounded Optimization Thesis:** This hypothesis proposes that we can discover training techniques and reward functions that encourage time-bounded optimization behavior. A suggestion in this direction is provided [here](https://www.lesswrong.com/posts/dzDKDRJPQ3kGqfER9/you-can-still-fetch-the-coffee-today-if-you-re-dead-tomorrow). This hypothesis allows us to bypass the problem of corrigibility quite simply: “we can define time-inhomogeneous reward [i.e. the reward becomes negative after a time-limit], and this provides a way of "composing" different reward functions; while this is not a way to build a shutdown button, it is a way to build a shutdown timer, which seems like a useful technique in our safety toolbox.”
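
A minimal sketch of such a time-inhomogeneous reward, assuming a discrete step counter and a fixed penalty after the deadline. Both function names and the `penalty` parameter are hypothetical, and the construction in the linked post may differ in its details.

```python
def time_bounded_reward(base_reward, t, deadline, penalty=1.0):
    """Reward at step t: the task reward before the deadline, a fixed penalty after."""
    return base_reward if t < deadline else -penalty

def episode_return(base_rewards, deadline, penalty=1.0):
    """Undiscounted return of an episode under the time-bounded reward."""
    return sum(
        time_bounded_reward(r, t, deadline, penalty)
        for t, r in enumerate(base_rewards)
    )
```

With a deadline of 2, an episode that keeps collecting task reward for four steps returns 0, while one that stops after two steps returns 2, so acting past the timer is never preferred.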

About Category theory and Infra-Bayesianism

===========================================

**Why Infra-Bayesianism:** We want the world model we create to be accurate and resilient when facing uncertainty and errors in modeling, since we want it to perform well in real-world situations. Infra-bayesianism offers a way to address these concerns.

* **Worst case assurance**: One of the primary goals is to achieve a level of worst-case assurance. Infra-bayesianism provides tools to manage multiple world models simultaneously and calculate the expected value for the worst-case scenario.

* **Knightian uncertainty**: This approach also allows us to handle situations where quantifying uncertainty is not feasible in a purely Bayesian way. For instance, when analyzing price time series, we can apply the Black-Scholes Model, but we must also acknowledge the existence of black swan events. Although we cannot assign a probability to such events, we must integrate the possibility of a black swan crisis into our analysis. We can automate the world modeling process by removing the subjective aspect of measuring uncertainty between different theories, i.e. we don’t have to put a probability on everything. Although it does not solve the problem of unknown unknowns (facts about the world that we don’t even consider or think about, because of our limited cognition or knowledge), it helps us work with known unknowns that are difficult to assign probabilities to.
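
The two points above can be illustrated with a toy maximin calculation: instead of putting a probability on which market model is right, we keep a set of candidate models and score each action by its worst expected utility. This is only a finite-set sketch under assumed numbers; actual infra-Bayesianism works with convex sets of distributions and its own update rule, which are not shown here. All names and values are hypothetical.

```python
def expected_utility(distribution, utility):
    """Expected utility under one candidate model (outcome -> probability)."""
    return sum(p * utility[outcome] for outcome, p in distribution.items())

def worst_case_utility(candidate_models, utility):
    """Lowest expected utility across all candidate models."""
    return min(expected_utility(m, utility) for m in candidate_models)

def maximin_action(models_by_action, utility):
    """Action whose worst-case expected utility is highest."""
    return max(
        models_by_action,
        key=lambda a: worst_case_utility(models_by_action[a], utility),
    )

UTILITY = {"gain": 1.0, "flat": 0.0, "crash": -10.0}

# For each action: a "normal times" model and a "black swan" model,
# with no probability assigned to which of the two is correct.
MODELS_BY_ACTION = {
    "leveraged": [
        {"gain": 0.9, "flat": 0.1, "crash": 0.0},
        {"gain": 0.6, "flat": 0.1, "crash": 0.3},
    ],
    "hedged": [
        {"gain": 0.3, "flat": 0.7, "crash": 0.0},
        {"gain": 0.2, "flat": 0.78, "crash": 0.02},
    ],
}
```

Here `maximin_action(MODELS_BY_ACTION, UTILITY)` prefers the hedged action, because the leveraged one looks good only under the model that rules out a crash.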

**Why Category Theory:** A key to effectively understanding the world may lie in exploring relationships and mappings. Functional programming and category theory are promising options for this task. Category theory enables us to represent complex relationships across various levels of abstraction, which is crucial for constructing a world model that incorporates different competing theories at different scales of size and time in a collaborative manner. Moreover, it is useful to express infra-bayesianism within a category-theoretic framework. The main bottleneck currently appears to be creating an adequate meta-ontology using category theory. [[More details here](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Defining_a_sufficiently_expressive_formal_meta_ontology_for_world_models), and [here](https://www.overleaf.com/project/63e277c489ac358d8940c4f3)]

High level criticism

====================

Here are our main high level criticisms about the plan:

* **High alignment tax:** The process of training a model using this approach is expensive and time-consuming. To implement it, major labs would need to agree on halting the development of increasingly larger models for at least a year or potentially longer if the simulation proves to be computationally intensive.

* **Very close to AGI:** Because this approach is more expensive, we need to be very close to AGI to complete this plan. If AGI is developed sooner than expected or if not all major labs can reach a consensus, the plan could fail rapidly. The same criticisms that were addressed to OpenAI's plan could be applied here. See [Akash's criticisms](https://www.lesswrong.com/posts/FBG7AghvvP7fPYzkx/my-thoughts-on-openai-s-alignment-plan-1).

* **A lot of moving pieces:** The plan is intricate, with many components that must align for it to succeed. This complexity adds to the risk and uncertainty associated with the approach.

* **Political bargain in place of outer alignment:** Instead of achieving outer alignment, the model would be trained based on desiderata determined through negotiations among various stakeholders. While a formal bargaining solver would be used to make the final decision, organizing this process could prove to be politically challenging and complex:

+ Who would the stakeholders be (countries, religions, ethnicities, companies, generations, people from the future, etc.)?

+ How would they be represented?

+ How would each stakeholder be weighted?

+ How would the losses and gains of each stakeholder be evaluated in each scenario?

* The resulting model, trained based on the outcome of these negotiations, would perform a pivotal process. While there is hope that most stakeholders would prioritize humanity's survival and that the red-teaming process included in this plan would help identify and eliminate harmful desiderata, the overall robustness of this approach remains uncertain.

* **RL Limitations:** While reinforcement learning has made significant advancements, it still has limitations, as evidenced by MuZero's inability to effectively handle games like [Stratego](https://www.deepmind.com/blog/mastering-stratego-the-classic-game-of-imperfect-information). To address these limitations, assistance from large language models might be required to bootstrap the training process. However, it remains unclear how to combine the strengths of both approaches effectively—leveraging the improved reliability and formal verifiability offered by reinforcement learning while harnessing the advanced capabilities of large language models.

High Level Hopes

================

This plan also has very good properties, and we don’t think that a project of this scale is out of the question:

* **Past human accomplishments:** Humans have already built very complex things in the past. Google Maps is an example of a gigantic project, and so is the LHC. Some Airbus aircraft models have never had severe accidents, nor have any of EDF’s 50+ nuclear power plants. We dream of a world where we launch aligned AIs as we have launched the International Space Station or the James Webb Space Telescope.

* **Help from almost general AIs:** This plan is impossible right now. But we should not underestimate what we will be able to do in the future with AIs that are almost general but not yet too dangerous, which could help us iteratively build the world model.

* **Positive Scientific externalities:** This plan has many positive externalities: We expect to make progress in understanding world models and human desires while carrying out this plan, which could lead to another plan later and help other research agendas. Furthermore, this plan is particularly good at leveraging the academic world.

* **Positive Governance externalities:** The ambition of the plan and the fact that it requires an international coalition is interesting because this would improve global coordination around these issues and show a positive path that is easier to pitch than a slow-down. [[More details](https://www.lesswrong.com/posts/jRf4WENQnhssCb6mJ/davidad-s-bold-plan-for-alignment-an-in-depth-explanation#Governance_strategy)]. Furthermore, Davidad's plan is one of the few plans that solves accidental alignment problems as well as misuse problems, which would help to promote this plan to a larger portion of stakeholders.

* **Davidad is really knowledgeable and open to discussion.** We think that a plan like this is heavily tied to the vision of its inventor. Davidad’s knowledge is very broad, and he has experience with large-scale projects, in academia and in startups (he co-invented a successful cryptocurrency and led a research project on worm emulation at MIT). [[More details](https://research.protocol.ai/authors/david-dalrymple/)]

Intuition pump for the feasibility of creating a highly detailed world model

----------------------------------------------------------------------------

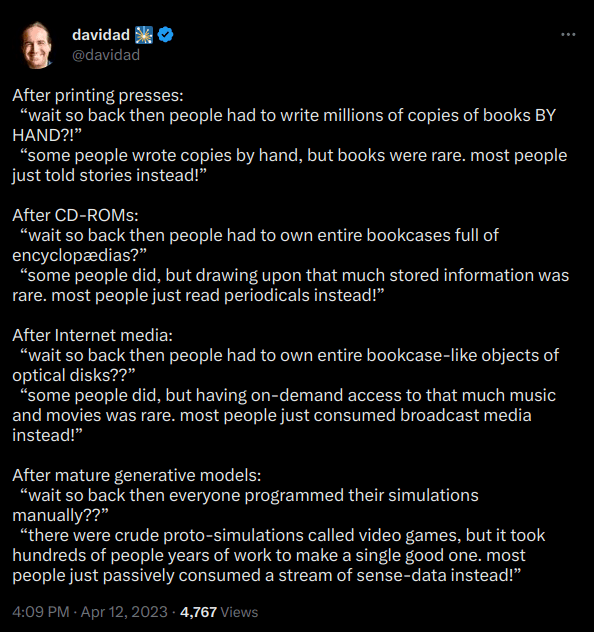

Here's an intuition pump to demonstrate that creating a highly detailed world model might be achievable: Humans have already managed to develop Microsoft Flight Simulator or The Sims. There is a level of model capability at which these models will be capable of rapidly coding such realistic video games. Davidad’s plan, which involves reviewing 2 million scientific papers (among which only a handful contain crucial information) to extract scientific knowledge, is only a bit more difficult, and seems possible. Davidad [tweeted](https://twitter.com/davidad/status/1646153570335481856) this to illustrate this idea:

Comparison with OpenAI’s Plan

=============================

**Comparison with OpenAI’s Plan:** At least, Davidad's plan **is an object-level plan**, unlike [OpenAI's plan](https://openai.com/blog/our-approach-to-alignment-research/), which is a meta-level plan that delegates the role of coming up with a plan to smarter language models. However, this plan also requires very powerful language models to be able to formalize the world model, etc. Therefore, it seems to us that this plan also requires AGI-level capabilities. At the same time, Davidad's plan might just be one of the plans that OpenAI's automatic alignment researchers could come up with. At least, Davidad’s plan does not destroy the world with an AI race if it fails.

**The main technical crux:** We think the main difficulty is not this level of capability, but the fact that this level of capability is beyond the ability to publish papers at conferences like NeurIPS, which we perceive as the threshold for recursive self-improvement. So this plan demands robust global coordination to avoid foom. And models that help with alignment research seem much more easily attainable than the creation of this world model, so OpenAI’s plan may still be more realistic.

Conclusion

==========

This plan is crazy. But the problem that we are trying to solve is also crazy hard. The plan offers intriguing concepts, and an unorthodox approach is preferable to no strategy at all. Numerous research avenues could stem from this proposal, including automatic formalization and model verification, infra-Bayesian simulations, and potentially a category-theoretic mega-meta-ontology. As Nate Soares said: “I'm skeptical that davidad's technical hopes will work out, but he's in the rare position of having technical hopes plus a plan that is maybe feasible if they do work out”. We express our gratitude to Davidad for presenting this innovative plan and engaging in meaningful discussions with us.

[EffiSciences](https://www.effisciences.org/) played a role in making this post possible through their field building efforts.

Annex

=====

Much of the content in this appendix was written by Davidad, and only lightly edited by us. The annex contains:

* A discussion of the governance aspects of this plan

* A technical roadmap

* And some important testable first research projects to test parts of the hypotheses.

Governance strategy

-------------------

Does OAA help with governance? Does it make certain governance problems easier/harder?

Here is davidad’s answer:

* OAA offers a concrete proposal for governance *of* a transformative AI deployment: it would elicit independent goal specifications and even differing world-models from multiple stakeholders and perform a bargaining solution over the Pareto frontier of multi-objective RL policies.

* While this does not directly address the governance of AI R&D, it does make the problem easier in several ways:

+ It is more palatable or acceptable for relevant decision-makers to join a coalition that is developing a safer form of AI together (rather than racing) if there is a good story for how the result will be governed in a way that is better for everyone than the status quo (note: this argument relies on those decision-makers believing that in the status quo race, even if they win, the chance of existential catastrophe is nontrivial; I am more optimistic than some that this belief is already widespread among relevant decision-makers and others will be able to update enough).

+ It provides a positive vision for how AI could actually go well—something to aim *for*, rather than just averting a risk.

+ It offers a narrative about regulation or compute governance where the imposition of an OAA-style model doesn’t have to be just about “safety” or technical concerns but also about “fairness” or the public benefit

- Caveat: this approach requires not just imposing the OAA, but also saying something substantive about who gets to be high-bargaining-power stakeholders, e.g. citizens’ assemblies, elected representatives, etc.

+ To the extent that early versions of OAA can already be useful in supporting collective decision-making about the allocation of R&D resources (time, money, compute) by helping stakeholders form a (low-complexity, but higher complexity than an intuitive “slowing down seems good/bad”) model of the situation and finding Pareto-optimal bargain policies, we can actually use OAA *to do* some of the governance of AI R&D

* My default modal guesses about which AI techniques will be useful in different boxes of the big OAA box diagram are pretty well spread out across the comparative advantages of different AI labs; this makes an Apollo-Program-style or ISS-style structure in which many companies/countries participate in an overall program more natural than with agendas that rely on tuning one single artifact (which would naturally end up more Manhattan-Project-style).
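
To make the phrase "a bargaining solution over the Pareto frontier" concrete, here is a minimal sketch over a finite set of candidate policies. It uses the Nash bargaining solution, which is one standard choice and an assumption on our part; the text does not say which bargaining solution would be used. Policy names, utilities, and the disagreement point are hypothetical.

```python
import math

def dominates(a, b):
    """True if utility vector a is at least as good as b everywhere and better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_frontier(policies):
    """Keep the policies that no other policy dominates."""
    return {
        name: utils
        for name, utils in policies.items()
        if not any(dominates(other, utils) for key, other in policies.items() if key != name)
    }

def nash_bargain(policies, disagreement):
    """Frontier policy maximizing the product of gains over the disagreement point."""
    best_name, best_score = None, 0.0
    for name, utils in pareto_frontier(policies).items():
        gains = [u - d for u, d in zip(utils, disagreement)]
        if min(gains) <= 0:
            continue  # some stakeholder would be no better off than without a deal
        score = math.prod(gains)
        if score > best_score:
            best_name, best_score = name, score
    return best_name

# Utility of each candidate policy for two stakeholders.
POLICIES = {"A": (9.0, 1.0), "B": (5.0, 5.0), "C": (1.0, 9.0), "D": (4.0, 4.0)}
```

With a disagreement point of `(0.0, 0.0)`, policy D is dropped because B dominates it, and the product of gains selects B among the remaining three. The politically hard part, choosing stakeholders, weights, and the disagreement point, sits entirely outside this computation.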

Roadmap

-------

Here is the ambitious scaling roadmap, in which things play out as fast as possible.

### Stage 1: Early research projects

Timeline: Q3 2023. Tldr: Academic work done / PoC experiments

Experiment with the following internships (which are described in the last section of this annex):

* Heuristics used by the solver

* Figure out the meta ontology theory

* Building a toy infra-Bayesian “Super Mario”, and then applying this framework to model Smart Grids.

* Training LLMs to write models in the PRISM language by backward distillation

Succeed at fine-tuning a language model as a heuristic for model-checking.

### Stage 2: Industry actors first projects

Timeline: Q4 2023. Tldr: Get industry actors interested, starting projects inside labs.

Needs:

* Clear articulation of specific compute-bound projects that are a good fit for them. It will require a lot of basic thinking. It will also require early results (heuristics used by the solver, figuring out the meta-ontology, …).

* Succeed at fine-tuning a language model as a heuristic for model-checking.

### Stage 3: Labs commitments

Timeline: Late 2024 or 2025. We need to get to Stage 3 no later than 2028. Tldr: Make a kind of formal arrangement to get labs to collectively agree to increase their investment in OAA. This is the critical thing.

Needs:

* You need to have built a lot of credibility with them. The strong perception that this is a reasonable and credible solution.

+ Hard part: People who have a lot of reputation on the inside who are already working on it.

+ Multi-author manifesto (public position paper) which backs OAA with legendary names.

* You need to get introductions to the CEOs.

* Have a very clear commitment/ask.

* Having fleshed out the bargaining mechanism (a bit like the S-process)

### Stage 4: International consortium to build OAA.

Timelines: In order for this to not feel like a slowdown to capabilities accelerationists, we should credibly reach Stage 4 by 2029. Start chat & planning for it in 2024.

Some important testable first research projects

-----------------------------------------------

### Formalization of the world model

We can get started on this by using an existing modeling language, such as [PRISM](https://www.stormchecker.org/documentation/background/languages.html), and applying methodologies being used for [autoformalization](https://arxiv.org/pdf/2302.12433.pdf) (e.g. “distilled back translation”: starting with a big dataset of models in the language, like [MCC](https://mcc.lip6.fr/2023/models.php), using the LLM to translate them into natural language, and then fine-tuning on the transpose of that dataset to get it to learn the formal language).
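
The "distilled back translation" recipe amounts to building a transposed dataset. Here is a minimal sketch, assuming the LLM is available as a function from formal model text to a natural-language description; `describe` and `build_backtranslation_pairs` are hypothetical names, and no real LLM API is used.

```python
from typing import Callable, List, Tuple

def build_backtranslation_pairs(
    formal_models: List[str],
    describe: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Return (natural-language input, formal-model target) fine-tuning pairs."""
    pairs = []
    for model in formal_models:
        description = describe(model)       # formal -> informal, done by the LLM
        pairs.append((description, model))  # transposed: informal is now the input
    return pairs
```

Fine-tuning on these pairs teaches the direction we actually want (informal to formal), while only ever asking the LLM to do the easier direction (formal to informal) when building the data.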

### Try to fine-tune a language model as a heuristic for model-checking

Prompting/fine-tuning/RL’ing LLMs to be useful assistants in writing (and extending) formal world models and specifications. The process would be:

* Step 1: Instrument (in the sense of adding systematic “debug statements” that output the intermediate states) the existing hand-crafted heuristics in a solver like [Storm](https://www.stormchecker.org/) or [Prism](https://www.prismmodelchecker.org/) or [Z3](https://github.com/Z3Prover/z3). For example, in Storm, we could begin by adding many printf statements in [this heuristic](https://github.com/moves-rwth/storm/blob/4be4fa3ff3ad824ffdc56d46608faacdbb7b1cf5/src/storm-dft/builder/DftExplorationHeuristic.h).

* Step 2: Collect traces of what the heuristic output is on a wide variety of problems drawn from OpenAI Gym via [COOL-MC](https://arxiv.org/pdf/2209.07133.pdf), and/or in a benchmark that these solvers compete on (like [MCC](https://mcc.lip6.fr/2023/models.php))

* Step 3: Fine-tune a language model on the input-output pairs in this corpus

* Step 4: Replace the hand-crafted heuristics with this language model

* Step 5: Begin training using RL with the reward signal being an exponentially-discounted successful problem solution (analogous to the binary reward of winning a Go game, etc.)
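
One possible reading of the reward in Step 5, sketched below: the solver gets nothing if it fails, and a reward that decays exponentially with the number of solver steps if it succeeds. The function name, the `gamma` value, and the choice of "steps" as the cost measure are assumptions.

```python
def solver_reward(solved: bool, steps: int, gamma: float = 0.99) -> float:
    """Binary success reward, discounted by how long the solver took."""
    return gamma ** steps if solved else 0.0
```

Under this reading, a heuristic that solves the same problem in fewer steps is strictly preferred, and an unsolved problem is never better than a solved one.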

### Defining a sufficiently expressive formal meta-ontology for world-models

Defining a sufficiently expressive formal meta-ontology for world-models with multiple scientific explanations at different levels of abstraction (and spatial and temporal granularity) having overlapping domains of validity, with all combinations of {Discrete, Continuous} and {time, state, space}, and using an infra-bayesian notion of epistemic state (specifically, convex compact down-closed subsets of subprobability space) in place of a Bayesian state. Here are 3 subjects of internship on this subtopic:

* A: unifying different formalisms of dynamical systems

+ One very high-level problem statement: “Define a category which is both a tangent category in the sense of Cruttwell and also has a probability monad in the sense of Perrone.”

+ This arXiv paper is extremely relevant — one open question is: is the category ωPAP defined here a tangent category already? ([ωPAP Spaces: Reasoning Denotationally About Higher-Order, Recursive Probabilistic and Differentiable Programs](https://arxiv.org/pdf/2302.10636.pdf))

* B: generalizing to infra-Bayesianism using the monad defined by Mio in [this paper](https://hal.science/hal-03028173/document).

* C: Continuous Time Coalgebras: It is known that discrete-time Markov processes are coalgebras for a probability monad. Such a coalgebra can be viewed as a functor from the one-object category ℕ to the Kleisli category of the probability monad. A “continuous time coalgebra” can be defined as a functor from the one-object category ℚ⁺ of non-negative rationals in place of ℕ (with the same codomain, the Kleisli category of the monad). Which concepts of coalgebra theory can be generalized to continuous time coalgebra? Especially, is there an analog to final coalgebras and their construction by Adamek's theorem?
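
For readers less familiar with the vocabulary in item C, here is a minimal sketch of the discrete-time case for finite state spaces. A Markov kernel maps a state to a finite distribution, Kleisli composition chains two kernels, and the functor from ℕ sends n to the n-fold composite of the one-step kernel. The weather chain and all names are hypothetical; the continuous-time question in the text is not addressed by this code.

```python
def unit(x):
    """Identity kernel: stay in x with probability 1."""
    return {x: 1.0}

def kleisli_compose(f, g):
    """Kernel that applies f, then g, summing over intermediate states."""
    def composed(x):
        out = {}
        for y, p in f(x).items():
            for z, q in g(y).items():
                out[z] = out.get(z, 0.0) + p * q
        return out
    return composed

def iterate(step, n):
    """Image of n under the functor from N: the n-fold Kleisli composite of step."""
    kernel = unit
    for _ in range(n):
        kernel = kleisli_compose(kernel, step)
    return kernel

_TABLE = {
    "sun": {"sun": 0.9, "rain": 0.1},
    "rain": {"sun": 0.5, "rain": 0.5},
}

def STEP(state):
    """One-step kernel of a two-state chain (the coalgebra structure map)."""
    return dict(_TABLE[state])
```

Functoriality is the statement that `iterate(STEP, m + n)` agrees with `kleisli_compose(iterate(STEP, m), iterate(STEP, n))`. A continuous-time analog would need a kernel for every non-negative rational t satisfying the same law, which cannot be generated from a single step.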

### Experimenting with the compositional version control system

“Developing version-control formalisms and software tools that decompose these models in natural ways and support building complex models via small incremental patches (such that each patch is fully understandable by a single human who is an expert in the relevant domain).” This requires leveraging theories like double-pushout rewriting and δ-lenses to develop a principled version-control system for collaborative and forking edits to world-models, multiple overlapping levels of abstraction, and incremental compilation in response to small edits.

### Getting traction on the deontic feasibility hypothesis

Davidad believes that using formalisms such as Markov Blankets would be crucial in encoding the desiderata that the AI should not cross boundary lines at various levels of the world-model. We only need to “imply high probability of existential safety”, so according to davidad, “we do not need to load much ethics or aesthetics in order to satisfy this claim (e.g. we probably do not get to use OAA to make sure people don't die of cancer, because cancer takes place inside the Markov Blanket, and that would conflict with boundary preservation; but it would work to make sure people don't die of violence or pandemics)”. Discussing this hypothesis more thoroughly seems important.

### Some other projects

* Experiment with the Time bounded Optimization Thesis with some RL algorithms.

* Try to create a toy infra-Bayesian “Super Mario” to play with infra-Bayesianism in a computational setting. Then apply this framework to model Smart Grids.

* A natural intermediary step would be to scale the process that produced formally verified software (e.g. Everest, seL4, CompCert, etc.) by using parts of the OAA.

Types explanation

-----------------

Explanation in layman's terms of the types in the main schema. Those notations are the same as those used in reinforcement learning.

* **Formal model of the world**: A

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

: Action, O: Observation, S: State

+ F:(S→O)×(S×A→(PcΔ)S)

+ F is a pair of:

- **Observation function**: S→O that transforms the states S into partial observations O.

- **Transition model**: S×A→(PcΔ)S, which maps the previous state S_t and an action to an infra-Bayesian probability distribution over possible next states S_{t+1}.

* **Formal desirabilities** (Bolker-Jeffrey)

+ V:(Q:(S×A)∗→2)→R

+ **Trajectory**: (S×A)∗ is a sequence of states and actions

+ **Desiderata**: Q:(S×A)∗→2 is a function that tells us which sequences of states and actions are desirable.

+ **Value**: V gives a score to each desideratum (which is a little weird, and Davidad agreed that a list of pairs (Q,V(Q)) would be more natural).

* **Policy**: π:(A×O)∗→ΔA

+ Takes in a sequence of actions and observations and returns a probability distribution over possible actions.

+ Note: this model is not Markovian, but everything here is classical.

* **Certificate proving formal guarantees**: G:List(Q:(S×A)∗→2,<F,π>⊨P(Q)∈[a,b]⊂[0,1])

+ We want proofs that all desiderata are respected with a probability in the interval [a,b]. |

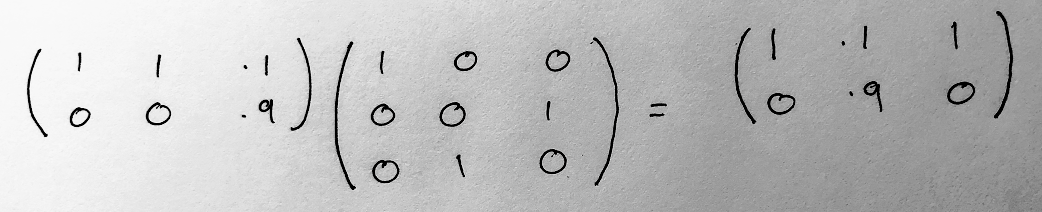

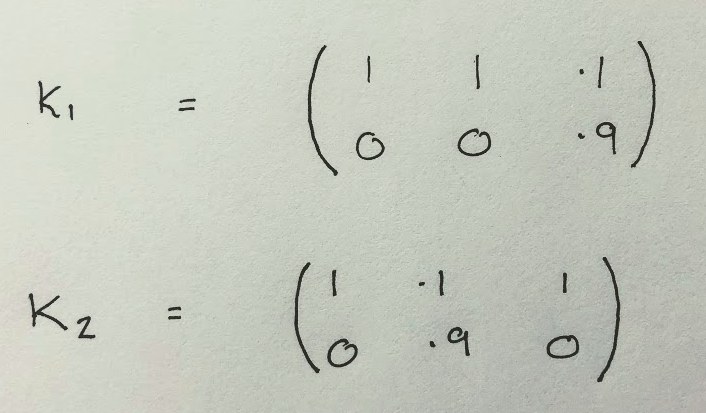

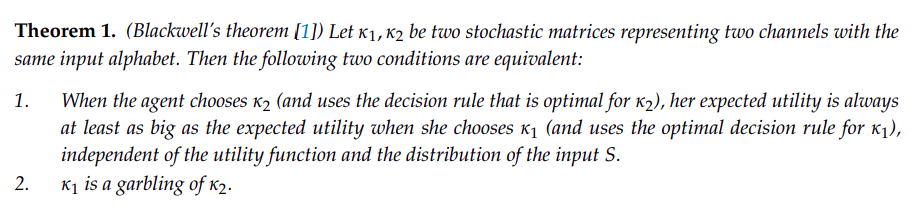

3f0ce077-9af9-45e5-af66-e7a2d8172859 | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | The Blackwell order as a formalization of knowledge

*Financial status: This is independent research, now supported by a grant. I welcome further* [*financial support*](https://www.alexflint.io/donate.html)*.*

*Epistemic status: I’m 90% sure that this post faithfully relates the content of the paper that it reviews.*

---

In a recent conversation about what it means to accumulate knowledge, I was pointed towards a paper by Johannes Rauh and collaborators entitled [Coarse-graining and the Blackwell order](https://arxiv.org/pdf/1701.07602.pdf). The abstract begins:

> Suppose we have a pair of information channels, κ1, κ2, with a common input. The Blackwell order is a partial order over channels that compares κ1 and κ2 by the maximal expected utility an agent can obtain when decisions are based on the channel outputs.

This immediately caught my attention because of the connection between information and utility, which I suspect is key to understanding knowledge. In classical information theory, we study quantities such as entropy and mutual information without needing to consider whether the information is useful with respect to a particular goal. This is not a shortcoming of these quantities; incorporating a goal or utility function is simply outside their domain. This paper discusses some different quantities that attempt to formalize what it means for information to be useful in service to a goal.

The reason to understand knowledge in the first place is so that we might understand what an agent does or does not know about, even when we do not trust it to answer questions honestly. If we discover that a vacuum-cleaning robot has built up some unexpectedly sophisticated knowledge of human psychology then we might choose to shut it down. If we discover that the same robot has merely recorded information from which an understanding of human psychology could in principle be derived then we may not be nearly so concerned, since almost any recording of data involving humans probably contains, in principle, a great deal of information about human psychology. Therefore the distinction between information and knowledge seems highly relevant to the deception problem in particular, and, in my opinion, to contemporary AI alignment in general. This paper provides a neat overview of one way we might formalize knowledge, and beyond that presents some interesting edge cases that point at the shortcomings of this approach.

I suspect that knowledge has to do with selectively retaining and discarding information in a way that is maximally useful across a maximally diverse range of possible goals. But what does it really mean to be "useful", and how might we study a "maximally diverse range of possible goals" in a mathematically precise way? This paper formalizes both of these concepts using ideas that were developed by [David Blackwell](https://en.wikipedia.org/wiki/David_Blackwell) at Stanford in the 1950s.

The formalisms are not novel to this paper, but the paper provides a neat entry point into them. The real point of the paper is an example that demonstrates a certain counter-intuitive property of the formalisms. In this post I will summarize the formalisms used in the paper and then describe the counter-intuitive property that the paper is centered around.

Decision problems under uncertainty
-----------------------------------

A decision problem in the terminology of the paper goes as follows: suppose that we are studying some system that can be in one of N possible states, and our job is to output one of M possible actions in a way that maximizes a utility function. The utility function takes in the state and the action and outputs a real-valued utility. There are only a finite number of states and a finite number of actions, so we can view the whole utility function as a big table. For example, here is one with three states and two actions:

| State | Action | Utility |
| --- | --- | --- |
| 0 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 0 | 2 |
| 1 | 1 | 0 |
| 2 | 0 | 0 |
| 2 | 1 | 1 |

This decision problem is asking us to differentiate state 1 from state 2. It says: if the state is 1 then please output action 0, and if the state is 2 then please output action 1, otherwise if the state is 0 then it doesn’t matter. Furthermore it says that getting state 1 right is more important than getting state 2 right (utility=2 in row 3 compared to utility=1 in row 6).
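To make the table concrete, here is a minimal sketch of it as a lookup from (state, action) to utility. The names `UTILITY`, `ACTIONS`, and `best_actions` are illustrative assumptions, not anything from the paper. For each state, assuming for the moment that the state is known, it reads off which actions achieve the highest utility:

```python
# Utility table from the example above: (state, action) -> utility.
# All names here are hypothetical, chosen only for illustration.
UTILITY = {
    (0, 0): 0, (0, 1): 0,
    (1, 0): 2, (1, 1): 0,
    (2, 0): 0, (2, 1): 1,
}
ACTIONS = (0, 1)


def best_actions(state):
    """Return the set of actions that maximize utility when the state is known."""
    best = max(UTILITY[(state, a)] for a in ACTIONS)
    return {a for a in ACTIONS if UTILITY[(state, a)] == best}


if __name__ == "__main__":
    for s in (0, 1, 2):
        print(s, best_actions(s))
```

In state 0 both actions tie, which is the sense in which "it doesn't matter" there; in states 1 and 2 a single action is preferred.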

Now suppose that we do not know the underlying state of the system but instead have access to a measurement derived from the state of the system, which might contain noisy or incomplete information. We assume that the measurement can take on one of K possible values, and that for each underlying state of the system there is a probability distribution over those K possible values. For example, here is a table of probabilities for a system S with 3 possible underlying states and a measurement X with 2 possible values:

| | S=0 | S=1 | S=2 |
| --- | --- | --- | --- |
| **X=0** | 1 | 1 | 0.1 |
| **X=1** | 0 | 0 | 0.9 |
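One way to hold this table in code, as a rough sketch: store it as a matrix whose entry `[x][s]` is the probability of measurement value x given state s, so that each column is a probability distribution and should sum to 1. The names `CHANNEL`, `is_valid_channel`, and `states_consistent_with` are illustrative assumptions.

```python
# P(X = x | S = s) from the table above, indexed as CHANNEL[x][s].
# Names are hypothetical and used only for illustration.
CHANNEL = [
    [1.0, 1.0, 0.1],  # X=0
    [0.0, 0.0, 0.9],  # X=1
]


def is_valid_channel(channel, tol=1e-9):
    """Check that every column (one per state) is a probability distribution."""
    n_states = len(channel[0])
    non_negative = all(p >= 0 for row in channel for p in row)
    sums_to_one = all(
        abs(sum(row[s] for row in channel) - 1.0) < tol for s in range(n_states)
    )
    return non_negative and sums_to_one


def states_consistent_with(channel, x):
    """Return the states that assign non-zero probability to measurement x."""
    return {s for s, p in enumerate(channel[x]) if p > 0}


if __name__ == "__main__":
    print(is_valid_channel(CHANNEL))
    print(states_consistent_with(CHANNEL, 0))
    print(states_consistent_with(CHANNEL, 1))
```

Reading the second row this way shows that a measurement of X=1 is only possible when the state is 2, whereas X=0 is compatible with all three states.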