id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

95bc5736-1d59-47fa-a1e7-352ccb39aa7d | trentmkelly/LessWrong-43k | LessWrong | US scientists find potentially habitable planet near Earth

http://news.ycombinator.com/item?id=1741330 |

1950a9b8-49ce-481c-baee-cd60b273da5c | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Chaining Retroactive Funders to Borrow Against Unlikely Utopias

Summary

-------

There is no qualitative distinction between investors and retroactive funders on an impact market. Rather they will *de facto* fall along a spectrum of how altruistic they are. That is because investors will (1) expect investments into well-defined prize contests to be less risky than fully speculative investments, and will (2) expect more time to pass before they can exit fully speculative investments, so that a counterfactual riskless benchmark investment represents a higher threshold for them to consider impact markets at all.

Recap: Impact Markets

---------------------

For a more comprehensive explanation of impact markets, see [Toward Impact Markets](https://forum.effectivealtruism.org/posts/7kqL4G5badqjskYQs/toward-impact-markets-1).

In short, an altruistic retroactive funder announces that they will pay for impact (or “outcomes”) they approve of. It resembles a [prize competition](https://forum.effectivealtruism.org/posts/2cCDhxmG36m3ybYbq/impact-prizes-as-an-alternative-to-certificates-of-impact) in this way. But (1) they’ll pay proportional to how much they value the impact and not only the top *n* submissions; (2) the impact remains resellable by default; and (3) seed investors offer to pay the people who are vying for the prizes or provide them with anything else they need and receive in return rights to the impact and thus prize money.

It is analogous to the startup ecosystem: Big companies like Google want to acquire small companies with great staff or a great product. Founders try to start these small companies but often can’t do so (as quickly) without the seed funding and network of venture capital firms. When the exit happens (if it happens), the founders may not even any longer own the majority of the company because they’ve sold so much of it to the investors.

The benefits are particularly strong for high-impact charities and hits-based funders:

1. If a hits-based funder usually funds projects that have a 1 in 10 chance of success and switches to retroactive funding, they save:

1. the money from 9 in 10 of the grants,

2. the time from 9 in 10 of the due diligence processes, and

3. the risk from accidentally funding projects that then generate bad PR.

2. Investors can thus speculate on making around 10x return on their successful investments, and they can further increase their expected returns:

1. by specializing in a narrow area (such as AI safety) to make excellent predictions about which project will succeed,

2. by providing founders with their networks in those areas,

3. by buying resources at a bulk discount that founders need (such as compute credits), and

4. by finding founders that none of the other investors or funders are aware of to negotiate deals with them where they receive a large share of their impact certificate/s.

3. Charities can attract top talent and align incentives with top talent who may not be fully sold on the charity’s mission:

1. by promising them a share in all impact sold,

2. by locking that share up in a vesting contract,

3. by (possibly) sharing rights to the impact with another company that is the current employer of the talent so that they don’t need to quit and can draw on the infrastructure of the other company. (In fact, somewhat value-aligned companies may be interested in becoming investors themselves if they want to retain talent who want to work on prosocial applications of their knowledge.)

4. Individual researchers can attract funding for their work even without the personal ties to funders, e.g., because they are in a different geographic region and better at their research than at networking.

Profitability of Impact Markets

-------------------------------

We think about this in terms of the riskless benchmark B.mjx-chtml {display: inline-block; line-height: 0; text-indent: 0; text-align: left; text-transform: none; font-style: normal; font-weight: normal; font-size: 100%; font-size-adjust: none; letter-spacing: normal; word-wrap: normal; word-spacing: normal; white-space: nowrap; float: none; direction: ltr; max-width: none; max-height: none; min-width: 0; min-height: 0; border: 0; margin: 0; padding: 1px 0}

.MJXc-display {display: block; text-align: center; margin: 1em 0; padding: 0}

.mjx-chtml[tabindex]:focus, body :focus .mjx-chtml[tabindex] {display: inline-table}

.mjx-full-width {text-align: center; display: table-cell!important; width: 10000em}

.mjx-math {display: inline-block; border-collapse: separate; border-spacing: 0}

.mjx-math \* {display: inline-block; -webkit-box-sizing: content-box!important; -moz-box-sizing: content-box!important; box-sizing: content-box!important; text-align: left}

.mjx-numerator {display: block; text-align: center}

.mjx-denominator {display: block; text-align: center}

.MJXc-stacked {height: 0; position: relative}

.MJXc-stacked > \* {position: absolute}

.MJXc-bevelled > \* {display: inline-block}

.mjx-stack {display: inline-block}

.mjx-op {display: block}

.mjx-under {display: table-cell}

.mjx-over {display: block}

.mjx-over > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-under > \* {padding-left: 0px!important; padding-right: 0px!important}

.mjx-stack > .mjx-sup {display: block}

.mjx-stack > .mjx-sub {display: block}

.mjx-prestack > .mjx-presup {display: block}

.mjx-prestack > .mjx-presub {display: block}

.mjx-delim-h > .mjx-char {display: inline-block}

.mjx-surd {vertical-align: top}

.mjx-surd + .mjx-box {display: inline-flex}

.mjx-mphantom \* {visibility: hidden}

.mjx-merror {background-color: #FFFF88; color: #CC0000; border: 1px solid #CC0000; padding: 2px 3px; font-style: normal; font-size: 90%}

.mjx-annotation-xml {line-height: normal}

.mjx-menclose > svg {fill: none; stroke: currentColor; overflow: visible}

.mjx-mtr {display: table-row}

.mjx-mlabeledtr {display: table-row}

.mjx-mtd {display: table-cell; text-align: center}

.mjx-label {display: table-row}

.mjx-box {display: inline-block}

.mjx-block {display: block}

.mjx-span {display: inline}

.mjx-char {display: block; white-space: pre}

.mjx-itable {display: inline-table; width: auto}

.mjx-row {display: table-row}

.mjx-cell {display: table-cell}

.mjx-table {display: table; width: 100%}

.mjx-line {display: block; height: 0}

.mjx-strut {width: 0; padding-top: 1em}

.mjx-vsize {width: 0}

.MJXc-space1 {margin-left: .167em}

.MJXc-space2 {margin-left: .222em}

.MJXc-space3 {margin-left: .278em}

.mjx-test.mjx-test-display {display: table!important}

.mjx-test.mjx-test-inline {display: inline!important; margin-right: -1px}

.mjx-test.mjx-test-default {display: block!important; clear: both}

.mjx-ex-box {display: inline-block!important; position: absolute; overflow: hidden; min-height: 0; max-height: none; padding: 0; border: 0; margin: 0; width: 1px; height: 60ex}

.mjx-test-inline .mjx-left-box {display: inline-block; width: 0; float: left}

.mjx-test-inline .mjx-right-box {display: inline-block; width: 0; float: right}

.mjx-test-display .mjx-right-box {display: table-cell!important; width: 10000em!important; min-width: 0; max-width: none; padding: 0; border: 0; margin: 0}

.MJXc-TeX-unknown-R {font-family: monospace; font-style: normal; font-weight: normal}

.MJXc-TeX-unknown-I {font-family: monospace; font-style: italic; font-weight: normal}

.MJXc-TeX-unknown-B {font-family: monospace; font-style: normal; font-weight: bold}

.MJXc-TeX-unknown-BI {font-family: monospace; font-style: italic; font-weight: bold}

.MJXc-TeX-ams-R {font-family: MJXc-TeX-ams-R,MJXc-TeX-ams-Rw}

.MJXc-TeX-cal-B {font-family: MJXc-TeX-cal-B,MJXc-TeX-cal-Bx,MJXc-TeX-cal-Bw}

.MJXc-TeX-frak-R {font-family: MJXc-TeX-frak-R,MJXc-TeX-frak-Rw}

.MJXc-TeX-frak-B {font-family: MJXc-TeX-frak-B,MJXc-TeX-frak-Bx,MJXc-TeX-frak-Bw}

.MJXc-TeX-math-BI {font-family: MJXc-TeX-math-BI,MJXc-TeX-math-BIx,MJXc-TeX-math-BIw}

.MJXc-TeX-sans-R {font-family: MJXc-TeX-sans-R,MJXc-TeX-sans-Rw}

.MJXc-TeX-sans-B {font-family: MJXc-TeX-sans-B,MJXc-TeX-sans-Bx,MJXc-TeX-sans-Bw}

.MJXc-TeX-sans-I {font-family: MJXc-TeX-sans-I,MJXc-TeX-sans-Ix,MJXc-TeX-sans-Iw}

.MJXc-TeX-script-R {font-family: MJXc-TeX-script-R,MJXc-TeX-script-Rw}

.MJXc-TeX-type-R {font-family: MJXc-TeX-type-R,MJXc-TeX-type-Rw}

.MJXc-TeX-cal-R {font-family: MJXc-TeX-cal-R,MJXc-TeX-cal-Rw}

.MJXc-TeX-main-B {font-family: MJXc-TeX-main-B,MJXc-TeX-main-Bx,MJXc-TeX-main-Bw}

.MJXc-TeX-main-I {font-family: MJXc-TeX-main-I,MJXc-TeX-main-Ix,MJXc-TeX-main-Iw}

.MJXc-TeX-main-R {font-family: MJXc-TeX-main-R,MJXc-TeX-main-Rw}

.MJXc-TeX-math-I {font-family: MJXc-TeX-math-I,MJXc-TeX-math-Ix,MJXc-TeX-math-Iw}

.MJXc-TeX-size1-R {font-family: MJXc-TeX-size1-R,MJXc-TeX-size1-Rw}

.MJXc-TeX-size2-R {font-family: MJXc-TeX-size2-R,MJXc-TeX-size2-Rw}

.MJXc-TeX-size3-R {font-family: MJXc-TeX-size3-R,MJXc-TeX-size3-Rw}

.MJXc-TeX-size4-R {font-family: MJXc-TeX-size4-R,MJXc-TeX-size4-Rw}

.MJXc-TeX-vec-R {font-family: MJXc-TeX-vec-R,MJXc-TeX-vec-Rw}

.MJXc-TeX-vec-B {font-family: MJXc-TeX-vec-B,MJXc-TeX-vec-Bx,MJXc-TeX-vec-Bw}

@font-face {font-family: MJXc-TeX-ams-R; src: local('MathJax\_AMS'), local('MathJax\_AMS-Regular')}

@font-face {font-family: MJXc-TeX-ams-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_AMS-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_AMS-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_AMS-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-B; src: local('MathJax\_Caligraphic Bold'), local('MathJax\_Caligraphic-Bold')}

@font-face {font-family: MJXc-TeX-cal-Bx; src: local('MathJax\_Caligraphic'); font-weight: bold}

@font-face {font-family: MJXc-TeX-cal-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-R; src: local('MathJax\_Fraktur'), local('MathJax\_Fraktur-Regular')}

@font-face {font-family: MJXc-TeX-frak-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-frak-B; src: local('MathJax\_Fraktur Bold'), local('MathJax\_Fraktur-Bold')}

@font-face {font-family: MJXc-TeX-frak-Bx; src: local('MathJax\_Fraktur'); font-weight: bold}

@font-face {font-family: MJXc-TeX-frak-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Fraktur-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Fraktur-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Fraktur-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-BI; src: local('MathJax\_Math BoldItalic'), local('MathJax\_Math-BoldItalic')}

@font-face {font-family: MJXc-TeX-math-BIx; src: local('MathJax\_Math'); font-weight: bold; font-style: italic}

@font-face {font-family: MJXc-TeX-math-BIw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-BoldItalic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-BoldItalic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-BoldItalic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-R; src: local('MathJax\_SansSerif'), local('MathJax\_SansSerif-Regular')}

@font-face {font-family: MJXc-TeX-sans-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-B; src: local('MathJax\_SansSerif Bold'), local('MathJax\_SansSerif-Bold')}

@font-face {font-family: MJXc-TeX-sans-Bx; src: local('MathJax\_SansSerif'); font-weight: bold}

@font-face {font-family: MJXc-TeX-sans-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-sans-I; src: local('MathJax\_SansSerif Italic'), local('MathJax\_SansSerif-Italic')}

@font-face {font-family: MJXc-TeX-sans-Ix; src: local('MathJax\_SansSerif'); font-style: italic}

@font-face {font-family: MJXc-TeX-sans-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_SansSerif-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_SansSerif-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_SansSerif-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-script-R; src: local('MathJax\_Script'), local('MathJax\_Script-Regular')}

@font-face {font-family: MJXc-TeX-script-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Script-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Script-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Script-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-type-R; src: local('MathJax\_Typewriter'), local('MathJax\_Typewriter-Regular')}

@font-face {font-family: MJXc-TeX-type-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Typewriter-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Typewriter-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Typewriter-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-cal-R; src: local('MathJax\_Caligraphic'), local('MathJax\_Caligraphic-Regular')}

@font-face {font-family: MJXc-TeX-cal-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Caligraphic-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Caligraphic-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Caligraphic-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-B; src: local('MathJax\_Main Bold'), local('MathJax\_Main-Bold')}

@font-face {font-family: MJXc-TeX-main-Bx; src: local('MathJax\_Main'); font-weight: bold}

@font-face {font-family: MJXc-TeX-main-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Bold.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-I; src: local('MathJax\_Main Italic'), local('MathJax\_Main-Italic')}

@font-face {font-family: MJXc-TeX-main-Ix; src: local('MathJax\_Main'); font-style: italic}

@font-face {font-family: MJXc-TeX-main-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-main-R; src: local('MathJax\_Main'), local('MathJax\_Main-Regular')}

@font-face {font-family: MJXc-TeX-main-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Main-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Main-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Main-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-math-I; src: local('MathJax\_Math Italic'), local('MathJax\_Math-Italic')}

@font-face {font-family: MJXc-TeX-math-Ix; src: local('MathJax\_Math'); font-style: italic}

@font-face {font-family: MJXc-TeX-math-Iw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Math-Italic.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Math-Italic.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Math-Italic.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size1-R; src: local('MathJax\_Size1'), local('MathJax\_Size1-Regular')}

@font-face {font-family: MJXc-TeX-size1-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size1-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size1-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size1-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size2-R; src: local('MathJax\_Size2'), local('MathJax\_Size2-Regular')}

@font-face {font-family: MJXc-TeX-size2-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size2-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size2-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size2-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size3-R; src: local('MathJax\_Size3'), local('MathJax\_Size3-Regular')}

@font-face {font-family: MJXc-TeX-size3-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size3-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size3-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size3-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-size4-R; src: local('MathJax\_Size4'), local('MathJax\_Size4-Regular')}

@font-face {font-family: MJXc-TeX-size4-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Size4-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Size4-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Size4-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-R; src: local('MathJax\_Vector'), local('MathJax\_Vector-Regular')}

@font-face {font-family: MJXc-TeX-vec-Rw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Regular.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Regular.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Regular.otf') format('opentype')}

@font-face {font-family: MJXc-TeX-vec-B; src: local('MathJax\_Vector Bold'), local('MathJax\_Vector-Bold')}

@font-face {font-family: MJXc-TeX-vec-Bx; src: local('MathJax\_Vector'); font-weight: bold}

@font-face {font-family: MJXc-TeX-vec-Bw; src /\*1\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/eot/MathJax\_Vector-Bold.eot'); src /\*2\*/: url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/woff/MathJax\_Vector-Bold.woff') format('woff'), url('https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.2/fonts/HTML-CSS/TeX/otf/MathJax\_Vector-Bold.otf') format('opentype')}

and the ratios rc and rp. The benchmark B is a return – e.g., B=1.1 for a 10% profit – that an investor expects over some time period. An investment is interesting for the investor if it is more profitable than B. rc=cfci is the ratio of the costs that funder and investor face respectively. This includes, for the funder, the cost of the grant, the time cost of the due diligence, the reputational risk if the due diligence misses something, and, for the investor, the cost of the grant minus savings thanks to shared infrastructure, economies of scale, etc. rp=pipf (note that enumerator and denominator are the other way around) is the ratio of the probabilities that investor and funder respectively assign to the project success. The investor may specifically select projects where they have private information (e.g., thanks to their network) that give them greater confidence in the project’s success than they expect the funder to have.

Hence, investments are interesting if rc⋅rp>B.

The graph shows the benchmark of an investment with 30% riskless profit compared to the maximum profit from various project configurations. It elucidates that an investor who can help realize a project more cheaply than the funder or thinks that it is more likely to succeed, can outperform the funder in a range of scenarios. These are scenarios where one or both parties can reap the gains from trade and save time or money.

The square between 0 and 1 on both axes is largely irrelevant. These are scenarios where the investor would have to pay more than the funder or is less optimistic about the project. Those are obviously uninteresting. But also just outside that square and around the edges, there are areas where the investor may not be interested because their edge (in terms of the rp and rc ratios is too small. Then again a riskless 30% APY is a high benchmark.

A few examples:

If a charity already has a track record of doing something really well 10 out 10 times in the past, there is very little risk involved when they try it for an 11th time:

Maybe an investor thinks they’re 99.5% likely to succeed and the funder thinks they are at least 99% likely to succeed, and the action costs $1m for either and takes a year.

That’s rp=1.005 and rc=1. It is only interesting for an investor who cannot otherwise invest the money at 0.5% profit per year.

1. It’ll be worth little to the funder: If they value the impact at 99% probability at $1m, they’ll pay $1m/99% ≈ $1.01m for it, so $10k premium.

2. If an investor offers to carry that tiny amount of risk, they’ll want it to exceed their 10–30% benchmark after a year, or else a standard ETF investment would be more profitable to them. That’s at least a $100k premium.

3. A bid of a $10k premium (minus the overhead of the whole transaction) from the funder but an ask of $100k premium from the investor means that there’ll be no deal.

But consider a case where someone has no track record:

The investor thinks they are 20% likely to succeed. The funder thinks that they’re 10% likely to succeed. The action costs $1m for both and takes a year.

That’s rp=2 and rc=1. It’ll be interesting unless someone has a benchmark of more than 100% per year.

1. The funder will pay up to $1m/10% = $10m for the riskless impact.

2. That’s a 1000% return (or 900% profit) for the investor with 20% probability, so 100% profit in expectation, which beats most benchmark investments. Even if their riskless benchmark is as high as 30%, they’ll accept offers over 650% return. Naturally, these investors have to be fairly risk neutral or make many such investments. (If they are somewhat altruistic, they can consider the difference between the risk neutral and their actual utility in money a donation.)

3. Funder and investor will meet somewhere at or below 1000%.

It is easy to create an analogous example for the case where funder and investor make the same probability assessments but where the grant size is so small that the investor, who already knows the project, can fund it at half the price compared to the funder who would have to spend a lot of time on due diligence.

Impact Timeline

---------------

A typical product that is suitable for impact markets is a scientific paper. Papers, like many other projects, have the property that they often get stuck in the ideation phase, sometimes have to be abandoned (for other reasons than being an interesting negative result) during the research, sometimes don’t make it past the reviewers, and sometimes turn out to have been a bad idea only decades later.

When an investor wants to invest into a paper that has not been written, but which they are highly optimistic about, they may see these futures:

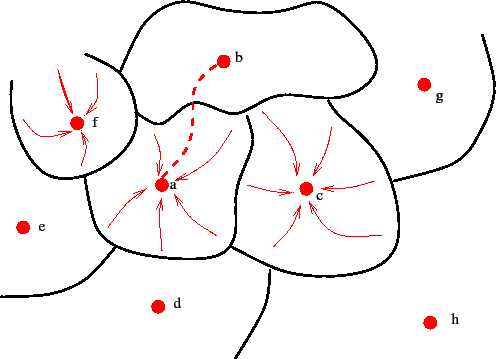

The *x* axis is the time (in years), the *y* axis is the [Attributed Impact](https://forum.effectivealtruism.org/posts/7kqL4G5badqjskYQs/toward-impact-markets-1#Attributed_Impact) (proportional to dollars), blue lines are possible futures, and the red line is the median future.

There are two big clusters: all the futures in which the paper gets written, published, and read, versus and all the futures in which it either never gets finished or gets read by too few people.

One to three years into the process, it becomes clear in which cluster a given future falls, particularly if it falls into the upper cluster. (Otherwise there’s a bit of a halting problem because it might still take off.) Maybe the paper has been published on arXiv and is making rounds among other researchers in the field.

After 10 years, the majority of the impact has become clear and the remaining uncertainty over the value of the Attributed Impact of the paper is low.

After 15 years, we’re asymptotically approaching something that looks like a ceiling on the Attributed Impact of the paper. Experts have hardly updated on its value anymore in years, so their confidence increases that they’ve homed in on its “true” value. (“True” in the intersubjective sense of Attributed Impact, not in any objectivist sense.)

This is a vastly idealized example. In practice it may be that a published paper that used to be held in high regard suddenly turns out to have been wrong, an infohazard, plagiarized, etc. Or it may be that it’s suddenly noticed that a decade-old forgotten-about paper (that had high ambitions at the time but seemed to fall short) contains key answers to an important new problem.

Timing of Retroactive Funding

-----------------------------

If an investor is a specialist in some small field and profits from economies of scale in the field (e.g., the compute credits bought in bulk that we mention above), then they may expect to make a 10× profit from each retro funding that they receive. That’s the difference between the size of the retro funding at which the retro funder breaks even (ignoring interest) and the cost to the investor. We assume for simplicity that monetary and time costs (grants and due diligence) are the same. So, 2 · 10*i* − 2 · 5*i* = 10*i*, where *i* is the average seed investment. (We’re using the parameters from above where retro funders save 10× from making fewer grants and 10× from saving time spent on due diligence. We also assume that a patient, well-networked, specialized investor has twice the hit rate of the generalist funder.)

If, counterfactually, they would’ve invested this money at 30% APY, the impact market ceases to be interesting for them if they expect the retro funding to take longer than 8–9 (≈ 10.6 ≈ 1.39) years: 2 · (1 / *ratefunder*) − 2 · (1 / *rateinvestor*) = (1 + *apy*)*years*.

Here we’re comparing an investor at different hit rates to a retro funder who would otherwise have a 10% hit rate under four counterfactual market scenarios. The impact market is profitable for any number of years less than the break-even point.

If the retro funder wants to save money, they can pay out less, but will need to do so earlier. For simplicity, the following chart is only for the scenario with a counterfactual 30% APY: 2 · (1 / *ratefunder*) · (1 − *savings*) − 2 · (1 / *rateinvestor*) = 130%*years*.

A retro funder needs to take this into account when deciding how much certainty they want to buy from the investors. More added certainty comes at a higher price. They can regulate this through the size of their retro funding or through the timing. Depending on the impact in question there are usually certain sweet spots that they can aim for, and do so transparently so that investors know what time horizons to speculate on.

When it comes to our example above, it seems fairly clear whether the paper was a success (was written, published, and read by some people) after about 2–3 years. So one sweet spot may be to wait for the moment of publication (as a draft or after peer review) or after the initial public reception can be gauged. The second is interesting because investors may be well-positioned to help with the promotion.

But there are other options – less profitable options much later.

Dissolving Retroactivity

------------------------

We can imagine a chain of retro funders from a particular set of futures into the present: Someone makes a binding commitment that if they are successful in making a lot of money – say, their business is successful – they will use the money or a fraction of it to buy back impact that has previously been bought by a certain set of existing retro funders who the person trusts. They can continually add new ones to this set.

This can also be formulated as a prize contest: If I’m successful, I’ll use that budget to buy impact from my favorite retro funders at a reasonable bid price. If 1 in 5 projects still fail between the time when the retro funder bought them and the time when the success happens, the retro funder may buy them at 120% of the price that the previous retro funder paid.

Under this framing there is no qualitative difference anymore between investors and earlier retro funders. They’re all just different investors with different attitudes toward risk or preferences about how they weigh the profit vs. the social bottom line of their investments. (Some of them may choose to consume their certificates, though, to signal that they’ll never resell them.) There may even be investors who choose to invest into “whatever project person X will do next,” so earlier than the abovementioned seed investors.

A startup may be interested in making such a commitment because they have the choice to either do the research in-house or at least pay for it immediately or to pay for it later and only if they are successful. Since startup success is typically Pareto distributed, they’ll have vastly more money in the futures where they are successful than they have now or in unsuccessful futures. So this deal should be interesting for most startups.

For investors it’s a question of whether they want to expose themselves more to the field or to a particular team. If they’re excited about the team behind the startup and trust that team to do well regardless of what field they go into, they’ll want to invest directly into the startup. But if they’re more agnostic about all the teams in a field but are very excited about the field, they may prefer investing into the research projects to bet on the retro funding.

Example

-------

1. Cultured meat (or “cell/clean/c meat”) startups may require a lot more research to be done on how to scale their production and make it cost-competitive. But they don’t yet have the money to do all of that research in-house.

2. They commit to investing a large portion of the money they’ll make from going public into buying impact. Specifically they hash out particular terms with an organization like Founders Pledge that stipulate what impact related to cultured meat research they will buy from which retro funders.

3. The promise of great potential future riches boosts funding and opens up hiring pools.

4. Eventually, the now more likely future might happen, and the large budget from the exits serves to buy most of the impact from the retro funders.

Conclusion

----------

We’ve received a grant via the Future Fund Regranting Program to work on this. If you’d like to join our discussions, [please join our Discord](https://discord.gg/7zMNNDSxWv).

Thanks to my cofounder Dony for reviewing the draft of this post! He gets 1% of the impact of it; I claim the rest. |

fd94a930-6d9b-4151-8e36-22f99b59407c | trentmkelly/LessWrong-43k | LessWrong | High-stakes alignment via adversarial training [Redwood Research report]

(Update: We think the tone of this post was overly positive considering our somewhat weak results. You can read our latest post with more takeaways and followup results here.)

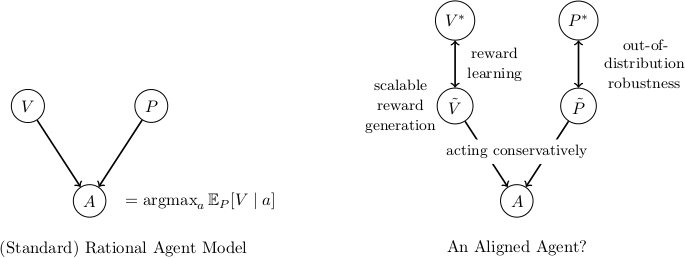

This post motivates and summarizes this paper from Redwood Research, which presents results from the project first introduced here. We used adversarial training to improve high-stakes reliability in a task (“filter all injurious continuations of a story”) that we think is analogous to work that future AI safety engineers will need to do to reduce the risk of AI takeover. We experimented with three classes of adversaries – unaugmented humans, automatic paraphrasing, and humans augmented with a rewriting tool – and found that adversarial training was able to improve robustness to these three adversaries without affecting in-distribution performance. We think this work constitutes progress towards techniques that may substantially reduce the likelihood of deceptive alignment.

Motivation

Here are two dimensions along which you could simplify the alignment problem (similar to the decomposition at the top of this post):

1. Low-stakes (but difficult to oversee): Only consider domains where each decision that an AI makes is low-stakes, so no single action can have catastrophic consequences. In this setting, the key challenge is to correctly oversee the actions that AIs take, such that humans remain in control over time.

2. Easy oversight (but high-stakes): Only consider domains where overseeing AI behavior is easy, meaning that it is straightforward to run an oversight process that can assess the goodness of any particular action. The oversight process might nevertheless be too slow or expensive to run continuously in deployment. Even if we get perfect performance during training steps according to a reward function that perfectly captures the behavior we want, we still need to make sure that the AI always behaves well when it is acting in the world, between training updates. If the AI is decep |

28823560-23a3-49c5-bea5-0d3df51c66de | StampyAI/alignment-research-dataset/arxiv | Arxiv | Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-Users

1 Introduction

---------------

Popular virtual assistants (VAs), such as

Siri111[www.apple.com/ios/siri/](http://www.apple.com/ios/siri/),

Cortana222[windows.microsoft.com/en-us/windows-10/](http://windows.microsoft.com/en-us/windows-10/)

[getstarted-what-is-cortana](http://getstarted-what-is-cortana), and

GoogleNow333[www.google.com/landing/now](https://www.google.com/landing/now), can perform

dozens of different tasks, such as finding directions and making

restaurant reservations. These are the tasks that the VA developers

expected to be the most widely used. However, every VA user can

probably think of one or more other tasks that they would like their

VA to help with, which the developers have simply not implemented yet.

The unavailable tasks are as varied as the users. Thus, the demand

curve for VA tasks has a very long and heavy tail of unsatisfied

demand. The capabilities of currently available VAs represent only a

tiny fraction of their potential. Even the available tasks are often

implemented differently from how users would prefer.

This situation is unavoidable given how VAs are currently developed.

There will never be enough VA developers to customize VAs in all the

ways that users would like. The only way to close the gap between

what VAs can do and what users want them to do is to enable

non-technical end-users to teach new tasks to their VAs. Many users

would be willing and able to do so, if it were as quick and easy as

teaching a person.

The most common way to teach a person a relatively simple new task is

to describe the task and then demonstrate how to do it. For decades,

researchers have been trying to build computer systems that can be

taught the same way. Their efforts comprise a body of work most

commonly referred to as “programming by demonstration”

(PBD)[[4](#bib.bib4)]. 444“PBD” is an unfortunate name, because

most of the non-technical users that can benefit from it are

reluctant to attempt anything with “programming” in its name.

| program class → | variable-free | non-branching | branching |

| --- | --- | --- | --- |

| finite set of tasks | | Siri, Cortana, et al. | |

| domain-restricted set of tasks | | | PLOW et al. |

| most tasks | | Helpa | |

| all tasks | macros | | |

Table 1:

Virtual assistants trade off task expressive power for task domain-dependence.

The simplest kind of PBD system creates and runs programs with no

variables, colloquially known as macros. The absence of variables,

which also implies the absence of loops and conditionals, makes it

easier for non-programmers to understand and use macros.

Nevertheless, macros see little use outside of special environments

such as text editing software, because there are relatively few

situations in which a program without variables can be useful.

To increase the usefulness of PBD, researchers have attempted to build

systems that can be taught more powerful classes of programs, all the

way up to Turing-equivalent systems with variables, loops, and

conditionals (e.g., see [[5](#bib.bib5), [6](#bib.bib6)] and

references therein). Invariably, such attempts run into the

limitations of the current state of the art in natural language

understanding. At present, the only known way for computers to deal

with the richness of language that people use to describe complex

tasks is to limit the tasks to a narrow domain, such as

travel reservations or messaging. For example, the PLOW system

[[1](#bib.bib1)] is powerful enough to learn programs with variables,

loops, and subroutines. Yet, it can learn tasks only within the task

domains covered by its ontology. In order to demonstrate PLOW’s

ability to learn tasks in a new domain, its authors had to manually

extend its ontology to the new domain. To the best of our knowledge,

all previous PBD systems with variables are similarly limited to at

most a handful of task domains.555From the point of view of

most users, who do not have access to the developers.

The class of variable-free programs and the class of Turing-equivalent

programs are the two extremes on a continuum of expressive power.

However, most of the tasks available from today’s most popular VAs can

be expressed by programs that are in another class between those two

extremes. These programs are in the “non-branching” class, where

programs can have variables but cannot have loops or conditionals.

Judging by the popularity of VA software, a very large number of

people could benefit from a VA that can be taught new non-branching

programs by its end-users.

This paper presents Helpa, a system that can be taught non-branching

programs via PBD. We have developed a way to teach such programs

without any prior domain knowledge, which works surprisingly well in

most cases. Therefore, Helpa imposes no restrictions on the domains in

which users can teach it new tasks. We believe that Helpa’s innovative

trade-off of expressive power for domain-independence occupies a sweet

spot of very high utility, compared to the other classes of VAs in

Table [1](#S1.T1 "Table 1 ‣ 1 Introduction ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions."). In addition, our usability study showed that

Helpa’s design makes it possible for end-users to teach it many new

tasks in less than a minute each — fast enough for practical use in

the real world.

Following [[7](#bib.bib7)], we shall refer to the teachable

component of a VA as an instructible agent (IA), and the challenge of

building an IA as the IA problem. After formalizing this problem in

the next section, we shall describe our proposed solution. We shall

then describe some of its current limitations, which explain why we

claim that Helpa can learn only “most tasks”, rather than “all

tasks”, in Table [1](#S1.T1 "Table 1 ‣ 1 Introduction ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions."). Lastly, we shall describe a

usability study that we carried out to evaluate Helpa’s effectiveness.

2 The Instructible Agent (IA) Problem

--------------------------------------

The IA problem is to build a system that can correctly execute a task

expressed as a previously unseen natural language command. We shall

put aside the question of what counts as natural language by accepting

any string of symbols as a command. It is more challenging to

operationalize the notion of executing a task.

Every PBD system interacts with a particular user interface (UI). It

records the user’s actions in that UI when a user is demonstrating a

new task for it to learn. It mimics the user’s actions in that UI to

execute tasks that it has learned. Reliably interacting with a UI in

this manner is a challenging problem (e.g., see [[10](#bib.bib10)]).

The present work makes no attempt to solve it.

Rather, we abstract the notion of task execution into a data structure

that we call a “UI script”. We assume that when a PBD system

records a user’s actions, the result is a UI script. And when it’s

time for a PBD system to mimic a user’s actions back to the UI, it

does so by reading and executing a UI script.

Since all of the IA’s interactions with the UI are via a UI script, we

can define the IA problem independently of the problem of reliably

interacting with the UI. In particular, we define the IA problem as

predicting a UI script from a command. [[3](#bib.bib3)] studied a

special case of this problem where the natural language input

explicitly referred to every user action in the UI script.

[[8](#bib.bib8)] and others have studied a related but different

problem where the goal was to predict programs from program traces.

IAs that aim to learn branching programs must predict

branching UI scripts but, in the present work, we limit our attention

to non-branching programs and non-branching UI scripts.

3 Helpa

--------

###

3.1 Model

Given sufficient training data, it might be possible to solve the IA

problem via machine techniques (e.g., [[2](#bib.bib2)]). We are not

aware of any pre-existing training data

for this problem. To compensate for the lack of data, we used a model

with very strong biases, so that it can be learned from only one

example (per task) of the kind that we might reasonably expect a non-technical

end-user to provide. The Helpa model has three parts for every task

t:

1. The class Tt of commands that pertain to t. We

shall encode Tt in a data structure called a “command

template”.

2. The class Pt of UI scripts for t. We shall

encode Pt as a non-branching program.

3. A mapping of variables between Tt and Pt,

which we call a “variable binding function.”

We shall now expand on each of these concepts.

A natural language command given to an IA can be segmented into

constants and variable values. Variable values are words or phrases

that are likely to vary among commands from

the same class. Constants are “filler” language that is likely to

remain the same for every command in the class. For example, suppose

a user wants to train her system to check flight arrival times using

the command “When does KLM flight 213 land?” In this command, “KLM”

and “213” are variable values. The other symbols are constants.

A command template can be derived from a command by replacing

each variable value with the name of a variable. “When does

X1 flight X2 land?” is a command template for the previous

example.

To justify our use of the term ‘‘program’’, we must first say more

about UI scripts. In the present work, we limit our attention to UIs

that consist of discrete elements, where all user actions are

unambiguously separate from each other and happen one at a

time666A smart-phone touchscreen or a web browser would fit this

description, for example, but a motion-capture suit would not.. A

non-branching UI script for such a UI is a sequence of actions, where every

action pertains to at most one element of the UI. E.g., a UI script

for a web browser might involve an action pertaining to the 4th text

field currently displayed and an action pertaining to the leftmost

pull-down menu. A common action that does not pertain to a

specific UI element is to wait for some condition to occur in the UI,

such as waiting for a web page to load. Besides identifying an

element in the UI, each action can also specify a parameter value,

such as what to type into the text field or how long to wait for the

page to load777More generally, each action can have multiple

parameter values. We omit this generalization for simplicity of

exposition.. An example of a UI script is in Figure [1](#S3.F1 "Figure 1 ‣ 3.1 Model ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.").

| action type | UI element | parameter value |

| --- | --- | --- |

| textbox\_fill | address\_bar | [flightarrivals.com](http://flightarrivals.com) |

| wait\_for | | page\_load |

| select\_from | menu\_1 | KLM |

| textbox\_fill | textbox\_1 | 213 |

| click\_button | button\_1 | |

| wait\_for | | page\_load |

Figure 1:

Example of a UI script for the command “When does KLM

flight 213 land?”

Every non-branching program is also just a sequence of actions. A

program differs from a UI script only in that some of the parameter

values can be variables. E.g., to create a program from the UI

script in Figure [1](#S3.F1 "Figure 1 ‣ 3.1 Model ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions."), we would replace the parameter value

“KLM” with a variable name like X1 and the parameter value

“213” with another variable name like X2. Replacing values with

variable names, both in commands and in UI scripts, is a form of

generalization. This kind of generalization is the most common way

for PBD systems to learn (e.g., [[11](#bib.bib11)]).

Finally, a variable binding function maps the variables in a

command template to the variables in a program. Helpa allows a

command template variable to map to multiple program variables, but

not vice versa. The one-to-many mapping can be useful, e.g., when a

web form asks for a shipping address separately from a billing

address, and the user always wants to use the same address for both.

We do not allow multiple command template variables to map to the same

program variable. Doing so would merely increase system complexity

without any benefits.

###

3.2 System Architecture and Components

With the Helpa model in mind, we can describe how Helpa works. It has

two modes of operation: learning and execution, illustrated in

Figures [2](#S3.F2 "Figure 2 ‣ 3.2 System Architecture and Components ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") and [3](#S3.F3 "Figure 3 ‣ 3.2 System Architecture and Components ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions."), respectively. In both

figures, dashed lines delimit the Helpa system boundary, and numbers

indicate the order of events. Both modes use a database of tasks,

where every record consists of a command template, a program, and a

variable binding function. Tasks are created in learning mode and

executed in execution mode.

Figure 2:

Data flow diagram for Helpa’s learning mode.

The user initiates the learning mode by starting the UI recorder (1).

The user then provides an example command (2) and demonstrates how to

execute the command (3a). During the demo, the recorder is

transparent to the user and to the UI. It records all user actions

and any relevant responses from the UI (3b). When the user stops the

recorder (4), the recorder writes a UI script (5). Then, the learner

takes the example command and the UI script (6), and infers a command

template, a program, and a variable binding function for the task (7).

The command template is shown to the user for approval (8). If the

user approves, then the program and variable binding function are

stored in the task database, keyed on the command template (9).

Otherwise, the user can start over.

Figure 3:

Data flow diagram for Helpa’s execution mode.

Execution mode

starts when the user provides a new command (1) without starting the

UI recorder. The matcher queries the task database (2) and selects

the task whose command template matches the new command (3). The

command template for that task is compared to the new command (4), in

order to infer the variable values (5). Currently, the values are

inferred merely by deleting the constant parts of the command template

from the command. Once found, the values are substituted into the

program via the variable binding function (6) to create a new UI

script (7). The UI script is sent to the player (8), which mimics the

way that a user would execute that task in the UI (9). Thus, after

learning a new task, and storing it keyed on its command template,

Helpa can execute new commands matching that template, with previously

unseen parameter values.

We shall now say more about some of the subsystems that our diagrams

refer to. The diagrams show the player and recorder outside of the

Helpa system boundary, because we do not consider these components to

be part of Helpa. A different player and recorder are necessary for

every type of UI. However, regardless of the UI, Helpa interacts with

the world only through UI scripts. Therefore, Helpa is

UI-independent, which also makes it device-independent.

In execution mode, the matcher looks for a command template that can

be made identical to the command by substituting the template’s

variables with some of the command’s substrings. E.g., the template

“When does X1 flight X2 land?” can be made identical to the

command “When does United flight 555 land?” by substituting X1

with “United” and X2 with “555”. This kind of matching is a

special case of unification, for which efficient algorithms exist

[[12](#bib.bib12)].

Figure [3](#S3.F3 "Figure 3 ‣ 3.2 System Architecture and Components ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") shows only what happens if

exactly one unifying template is found. Otherwise, control passes to

a clarification subsystem, which is not shown in the diagram. If no

suitable template is found, this subsystem provides a list of

available command templates to the user, in order of string similarity

to the command, and offers the user a chance to try another command.

If multiple templates unify with the new command, they are displayed

in order of their amount of overlapping filler text, and the user is

asked to disambiguate their command by rewording it.

The learner used in learning mode is responsible for generalizing the

command to a command template, generalizing the UI script to a

program, and deciding which variables in the command template

correspond to which variables in the program. A key insight that

makes it possible to learn from only one example is that, typically,

each variable value in the example command is the same as a parameter

value in the UI script. In contrast, the constant parts of the

command typically bear no resemblance to the rest of the UI script.

1:command C, UI script S

2:L1=L2=∅ ▹ empty lists

3:for i=1 to |D| do

4: q← value of parameter in action i of S

5: if q matches C from word m to word n then

6: len←m−n+1

7: L1.append(⟨len,i,m,n⟩) ▹ list of 4-tuples

8:sort L1 on len

9:R[1..|C|]←→0 ▹ array of |C| zeros

10:for all ⟨len,i,m,n⟩∈L1 do

11: if

R[m..n]=→0

or

∃d:(R[m..n]=→d

and R[m−1]≠d and R[n+1]≠d)

then

12: R[m..n]←→i ▹ put i in positions m

thru n

13: L2.append(⟨m,n,i⟩) ▹ list of triplets

14:sort L2 on m

15:T=C ▹ command template

16:P=D ▹ program

17:B=∅ ▹ variable binding function

18:for all ⟨m,n,i⟩∈L2 do

19: replace words m thru n of T with “Xm”

20: replace parameter in line i of P with “Xm”

21: add (‘‘Xm"→i) to B

22:command template T, program P, variable binding function B

Algorithm 1

Helpa learning algorithm

Helpa’s learner uses this insight as shown in

Algorithm [1](#alg1 "Algorithm 1 ‣ 3.2 System Architecture and Components ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions."). The first loop (lines 2–6) matches the

parameter values in the UI script with substrings of the command, and

stores them in list L1. After the loop, the list is sorted on the

length of the matching substring, in order to give preference to

longer matches. The second loop (lines 9–12) traverses L1 in

order from longest match to shortest. Each matching action attempts

to reserve its substring of the command by filling the corresponding

span of the reservation array R with its action index i. The

reservation attempt succeeds if one of two conditions holds: either

that span is not yet reserved by any other action, or exactly

that span is reserved by another action (i.e. with the same span

boundaries). The latter condition enables one command variable to map

to multiple UI script variables, but only if it’s exactly the same

command variable. Overlapping or nested command variables are not

allowed. The successful reservations are stored in list L2. In

line 13, L2 is sorted on the left boundary m of the span of the

variable value in the command. This order is necessary because, in

execution mode, the variable substitution process assumes that the

order of variables in the variable binding function is the same as the

order of variables in the command template. The last loop (lines

17-20) traverses L2, whose every element is a mapping from a span

of the command to a line of the UI script. The learner creates

variable names Xm, where m refers to the left boundary of a span

of a command variable. The learner uses these variable names to

create a command template out of the input command and a program out

of the input UI script. Naming the variables in this manner allows

one command variable to map to multiple UI script variables. Since

line 10 disallowed overlapping or nested command variables, there can

be no ambiguity about which command variable each Xm refers to. The

last step in the last loop adds each mapping to the variable binding

function.

###

3.3 Limitations

At the present stage of development, Helpa has some significant

limitations. Perhaps the most striking limitation, from a user’s

point of view, is that Helpa knows nothing about paraphrasing. Helpa

doesn’t even know that “April 4, 2016” is the same as “04/04/16”.

Likewise,

knowing how to execute ‘‘Find

X’’ doesn’t help Helpa to execute ‘‘Search for X’’.

In order for the learner to work, the variable values in the command

must be identical to the values in the UI script.

888This

limitation is not so severe when Helpa is executing a task for the

same user who trained it on that task, because that user will often

remember the phrasing that they used. The literature offers a

variety of techniques for overcoming this limitation.

For example, we could use

statistical paraphrase generation [[13](#bib.bib13)] to proactively

expand a newly inferred command template into a set of possible

paraphrases, and store them all in the task database linked to the

same task. However, the usability study in the next section was

done without the benefit of such techniques.

A more subtle limitation is due to Helpa’s simplistic method for

deducing variable values at execution time. The “string difference”

method fails when two variables are adjacent in the command template,

because Helpa doesn’t know how to partition the adjacent values.

E.g., in a command like “I need a Ford Taurus Tuesday,” Helpa has no

way to determine whether “Taurus” should be part of the value for

the car variable or part of the value for the day variable. Again,

there are various natural language processing (NLP) techniques that can

solve most of this problem (e.g., [[9](#bib.bib9)]). For now, Helpa

works only for commands that have no adjacent variables.

Although it’s easy to think of commands that violate this

constraint, they are relatively rare in practice, at least

in English. We found long lists of English commands for

Siri999[www.reddit.com/r/iphone/comments/1n43y3/everything\_you\_can\_ask\_siri\_in\_ios\_7\_fixed](http://www.reddit.com/r/iphone/comments/1n43y3/everything_you_can_ask_siri_in_ios_7_fixed),

for Cortana101010[techranker.net/cortana-commands-list-](http://techranker.net/cortana-commands-list-)

[microsoft-voice-commands-video](http://microsoft-voice-commands-video), and for

GoogleNow111111[forum.xda-developers.com/showthread.php?t=1961636](http://forum.xda-developers.com/showthread.php?t=1961636).

Two variables were adjacent in only 5 out of 236 Siri commands, in

only 3 out of 91 Cortana commands, and in only 1 out of 98 GoogleNow

commands.

4 Usability Study

------------------

Our working hypothesis in building Helpa was that, in the vast

majority of cases, learning to predict non-branching programs from

natural language commands requires no domain knowledge and only the

most rudimentary NLP. Our usability study was designed to test this

hypothesis, in terms of Helpa’s task completion rates for users who

were not involved in Helpa’s development. We also wanted to measure

how long it takes users to teach new tasks to Helpa.

###

4.1 Design of the Study

Helpa is UI-independent, but using it with a particular UI

requires a player and recorder for that UI. A system like Helpa is

most compelling for a speech UI on a mobile device and/or in a

situation where the user’s hands are busy. Unfortunately, we did not

have access to a suitable UI player/recorder for any such UI/device,

and we did not have the resources to create one. The closest

approximation available to us was the Browser Recorder and Player

(BRAP) package.121212<https://github.com/nobal/BRAP>

BRAP records

user actions in a web browser by injecting jQuery code and listening

for JavaScript events such as key-up, select-one, and submit. This

approach is sufficient for simple web pages, but it often fails on

websites that do not raise events in response to user inputs.

Since BRAP was designed for a

slightly different purpose, it can recognize events related

to only the following HTML elements: text boxes, check boxes, radio

buttons, pull-down menus, and submit buttons. BRAP knows nothing

about hyperlinks, maps, sliders, calendars, pop-ups, etc. Even though

BRAP is the most functional software of its kind, its limitations

prevent it from correctly recording demos on most modern websites.

Since BRAP works only with web browsers, our entire study was done in

a Google Chrome web browser, on an Apple MacBook Air computer, through

a keyboard and touchpad. Also, due to BRAP’s limitations, we were

forced to limit our study to websites that used only simple HTML web

forms. So, we could not use a random sample of web sites, or allow

our study subjects to choose them.

| | | | | |

| --- | --- | --- | --- | --- |

| | site type | URL | scenario | #elts |

| 1 | mortgage calculator |

[calculator.com/pantaserv/ mortgage.calc](http://calculator.com/pantaserv/%20mortgage.calc)

|

You are a real estate agent, checking whether your customers can afford certain properties.

| 11 |

| 2 | thesaurus |

[collinsdictionary.com/english-thesaurus](http://collinsdictionary.com/english-thesaurus)

|

You are a writer looking for alternative ways to express yourself.

| 4 |

| 3 | book store |

[abebooks.com/servlet/ SearchEntry](http://abebooks.com/servlet/%20SearchEntry)

|

You are a book dealer serving many kinds of readers.

| 26 |

| 4 | recruiting | [indeed.com/resumes/ advanced](http://indeed.com/resumes/%20advanced) |

You work for a recruiting firm, searching for candidates to fill various job openings.

| 14 |

| 5 | investment research |

[nasdaq.com](http://nasdaq.com)

|

You are an investor who likes to frequently check the prices of your stocks.

| 2 |

| 6 | scientific database |

[citeseerx.ist.psu.edu/ advanced\_search](http://citeseerx.ist.psu.edu/%20advanced_search)

|

You are doing a literature search for a research project.

| 13 |

| 7 | car rental |

[priceline.com/l/rental/cars.htm](http://priceline.com/l/rental/cars.htm)

|

You are a travel agent, researching rental cars.

| 7 |

| 8 | cooking recipes |

[allrecipes.com/Search/ Default.aspx?qt=a](http://allrecipes.com/Search/%20Default.aspx?qt=a)

|

You are in charge of selecting new dishes to put on a restaurant’s menu.

| 26 |

| 9 | airline | [united.com/web/ en-US/apps/](https://united.com/web/%20en-US/apps/) [booking/flight/ searchOW.aspx](http://booking/flight/%20searchOW.aspx) |

You are a travel agent, checking availability of one-way flights for customers.

| 40 |

| 10 | dept. store |

[jcpenney.com](http://jcpenney.com)

|

You are shopping for gifts for your friends.

| 2 |

Table 2:

Web sites and scenarios used in our study. #elts = number of BRAP-compatible UI elements on the landing page.

After searching for many hours, we found a sufficiently simple website

in each of 10 diverse categories. For each of these 10 websites, we

picked a scenario for which an IA with variables might be useful.

Table [2](#S4.T2 "Table 2 ‣ 4.1 Design of the Study ‣ 4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") lists the types of sites we used, along with the

URL, the scenario we picked for each site, and the number of

BRAP-compatible UI elements on the first web page that the study

subjects saw. This study design limited each task to use only one

website, even though Helpa has no such limitation. Nothing in the

Helpa system was tailored to these websites, these scenarios, or this

study.

We recruited 10 study subjects, and gave them the instructions in

Appendix A. These instructions were designed to help them get around

Helpa’s and BRAP’s counter-intuitive limitations. To summarize,

subjects were instructed that

* variable values must appear in the example command exactly the

same way as they appear in the web form;

* variables in commands cannot be adjacent; and

* task demos must use only the HTML elements that BRAP can record.

* subjects must ignore default values that appear

in web forms.131313BRAP can read default values in web forms, but

we have not yet figured out a way to determine, without explicit

indication from the user, whether a given default should become a

program variable.

We could not think of a way to explain these limitations without

referring to programming concepts.

For this reason, we recruited study subjects from among our

colleagues, all of whom were experienced programmers.

Each subject began by reading the instructions, and asking any

questions they had. Then, an automated script initialized the task

database to empty, randomized the order of the websites, and guided

the subject through the following protocol for each website:

1. Subject reads the scenario description (Column 4 in

Table [2](#S4.T2 "Table 2 ‣ 4.1 Design of the Study ‣ 4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.")), and familiarizes themselves with the website.

2. Subject thinks of a task that is relevant to that scenario, and

of a natural language command that is suitable for that task.

3. Subject interacts with Helpa’s learning mode.

4. If the subject disapproves of the command

template that Helpa generated, return to step 1.

5. Subject thinks of another command from the same class.

6. Subject interacts with Helpa’s execution mode.

7. Subject provides their opinion on whether Helpa executed the new

command correctly.

The script recorded and timestamped all of the interactions between

Helpa, the study subjects, and the UI.

###

4.2 Results

| | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| | site type | A | B | C | D | E | F | G |

| 1 | mortgage calculator | 0.5 | 9.5 | 2 | 3.5 | 20 | 90 | 25 |

| 2 | thesaurus | 0.7 | 4 | 3 | 1 | 5.5 | 30 | 26 |

| 3 | book store | 0.9 | 5 | 3 | 2.5 | 9.5 | 31.5 | 35.5 |

| 4 | recruiting | 0.9 | 7 | 3 | 3 | 13.5 | 48.5 | 36 |

| 5 | investment research | 1.0 | 4 | 2 | 1 | 6 | 38 | 37 |

| 6 | scientific database | 1.0 | 6.5 | 2 | 2.5 | 7.5 | 46 | 46.5 |

| 7 | car rental | 1.0 | 8 | 2 | 4 | 16.5 | 71 | 46.5 |

| 8 | cooking recipes | 0.5 | 7.5 | 2 | 4 | 15 | 52 | 52 |

| 9 | airline | 0.8 | 8 | 2 | 4 | 12 | 55.5 | 53.5 |

| 10 | department store | 0.4 | 4 | 2 | 1 | 4.5 | 38.5 | 54 |

| | median | 0.85 | 7 | 2 | 2.75 | 10.25 | 46.75 | 41.75 |

Table 3:

Results of the usability study. A = task completion rate; B = median

number of actions; C = maximum number of pages; D = median number

of task variables; E = median command length in words; F = median

demo time in seconds; G = median acclimated demo time in seconds.

Table [3](#S4.T3 "Table 3 ‣ 4.2 Results ‣ 4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") shows the statistics that we gathered from our

study.

Column A shows the fraction of attempts in which

Helpa correctly executed the new command. Despite the current

limitations of Helpa and BRAP, the median success rate over all 10

websites was 85%. There were only two kinds of failures. 56% of the

failures (about 8% of all attempts) occurred when a web site did

something unexpected that BRAP could not handle. For example, in the

middle of our study, [allrecipes.com](http://allrecipes.com) started presenting a new

kind of pop-up ad, which often prevented BRAP from playing a UI script

to completion. The other 44% of failures (about 7% of all attempts)

occurred when a study subject failed to follow the instructions. The

instruction that users failed to follow the most often was the one

pertaining to the limitations of BRAP. An interesting case

study here is the department store website [jcpenney.com](http://jcpenney.com).

Subjects had far more trouble with this site than with any other.

That’s because its first page was very simple, with just one search

box, but its second page had a bewildering array of options for

narrowing down the search results. Most subjects excitedly

attempted to use one or more of these options. Unfortunately, most

of the options were rendered by elements that were incompatible with

BRAP, and many subjects forgot about that restriction. Overall,

less than 2% of all attempts failed for reasons unrelated to BRAP.

These results support the working hypothesis stated at the

beginning of Section [4](#S4 "4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.").

The remaining statistics in Table [3](#S4.T3 "Table 3 ‣ 4.2 Results ‣ 4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") are averaged over only

the successfully completed trials. Column B shows the median number

of actions per UI script. This number includes the initial actions

of navigating to the website and waiting for it to load (as in

Figure [1](#S3.F1 "Figure 1 ‣ 3.1 Model ‣ 3 Helpa ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.")). Column C shows the maximum number of page loads

per UI script, again including the initial loading of the website.

Only two pages were used on most websites, because most of the

websites had no BRAP-compatible elements on the second page. Column D

shows the median number of variables per UI script. Column E shows

the median number of words per command, after tokenization. We used a

generic English tokenizer, which merely separated words from

punctuation.

Column F of Table [3](#S4.T3 "Table 3 ‣ 4.2 Results ‣ 4 Usability Study ‣ Towards A Virtual Assistant That Can Be Taught New Tasks In Any Domain By Its End-UsersMany thanks to the participants of our usability study. Also thanks to Patrick Haffner, Michael Johnston, Hyuckchul Jung, Amanda Stent, and Svetlana Stoyanchev for helpful discussions.") shows the median number of seconds

that it took a user to interact with Helpa’s learning mode for the

given website. Time was measured by the wall clock and includes

network delays. We found that most users struggled with Helpa a bit

until they understood that it won’t work unless they follow the

instructions very precisely. So we also report the median user effort

after acclimation, in Column G. This measure is the median time per

demo for each website, excluding users for whom that website was the

first or second that they worked on.141414Tables 2 and 1 are both

sorted on the measure in Column G. Our results show that users can

usually teach Helpa a new task in less than a minute (p<0.01), often much less. Thus, despite its current limitations, Helpa represents a

major advance on the user effort criterion: We are not aware of any

other IA that can learn to predict programs with variables from

natural language commands nearly as quickly.

Appendix B shows some of the more interesting examples of the

variability of command templates for some of the websites in our

study.

5 Conclusions

--------------

Virtual assistants (VAs) have become very popular, but not nearly as

popular as they could be. We conjecture that one of the main reasons

for their slow adoption is that users cannot customize them. Our

instructible agent (IA) Helpa offers users a way to customize their

VAs, not only in terms of which tasks the VA can perform, but also in

terms of the commands used to trigger those tasks, and the way the

tasks are executed. To encourage research on this topic, we are

sharing the data set that grew out of our usability study.

Since Helpa succeeded for most users on most websites, we claim that

Helpa can learn many unrelated tasks without its creators’

involvement. Since Helpa uses no domain-specific knowledge of any

kind, we claim that it has almost complete coverage of tasks that can

be represented by non-branching programs. We don’t know of any other

IA that can learn programs with variables in arbitrary domains without

its creators’ involvement. We also don’t know of any other IA that

can be taught new tasks with variables in less than a minute per task.

The work presented here provides a springboard for several directions

of future research. An obvious direction is to improve Helpa’s