id | source | formatted_source | text |

|---|---|---|---|

5ed30ef2-ebaa-46b5-b67f-9e40124c9ba4 | trentmkelly/LessWrong-43k | LessWrong | We Need Holistic AI Macrostrategy

Summary

AI Macrostrategy is the study of high-level questions having to do with prioritizing the use of resources on the current margin in order to achieve good AI outcomes. AI macrostrategy seems important if it is tractable. However, while few people are working on estimating particular parameters relevant to macrostrategy, even fewer are working on developing holistic macrostrategic models that combine estimates for different parameters to guide our actions. Moreover, while macrostrategy was less tractable in the past, recent developments (especially increased evidence for <10 year timelines) have made macrostrategy substantially more tractable. Thus, using the importance/tractability/neglectedness heuristics from EA, I conclude that on current margins macrostrategy should be a top priority.

Acknowledgements:

Thanks to Chinmay Deshpande, Carson Ezell, Nikola Jurkovic, and others for helping me to develop many of the ideas in this post, and to Thomas Larsen for both doing this and helping to directly edit the post.

Epistemic status:

Speculative, but I think the arguments are pretty straightforward and so I have >70% confidence in the main conclusion that more macrostrategy work should be done on current margins relative to other kinds of alignment work.

What is AI Macrostrategy?

AI Macrostrategy (henceforth just macrostrategy) is the study of high-level questions having to do with prioritizing the use of resources on the current margin to achieve good AGI outcomes.

Macrostrategic work can be divided broadly into two categories:

1. Parameter estimates: attempts to forecast key variables such as timelines, takeoff speeds, and the difficulty of aligning AGI

2. Holistic macrostrategy: attempts to combine these estimates and other pieces of data into a coherent, action-guiding model of AI alignment.
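
To make the distinction between the two categories concrete, here is a toy Python sketch of what "combining parameter estimates into an action-guiding model" could look like. Everything in it is hypothetical: the two distributions stand in for category 1 (parameter estimates), and the payoff function, with its made-up options `slow_payoff_work` and `fast_payoff_work`, stands in for category 2 (the holistic model). None of the numbers are estimates from this post.

```python
import math
import random
from statistics import mean

# Category 1, "parameter estimates": hypothetical distributions, NOT figures from the post.
def sample_world(rng: random.Random) -> dict:
    return {
        # years until AGI: lognormal with a median of 10 years (assumption)
        "timeline_years": rng.lognormvariate(math.log(10), 0.5),
        # probability of a fast takeoff, itself uncertain (assumption)
        "p_fast_takeoff": rng.uniform(0.1, 0.5),
    }

# Category 2, "holistic model": a hypothetical payoff for each option in a given world.
def payoff(option: str, world: dict) -> float:
    if option == "slow_payoff_work":
        # only pays off if there is enough time for it to mature
        return 1.0 if world["timeline_years"] > 15 else 0.1
    if option == "fast_payoff_work":
        # useful sooner, and more useful the likelier a fast takeoff is
        return 0.4 + world["p_fast_takeoff"]
    raise ValueError(f"unknown option: {option}")

def rank_options(options: list, n_samples: int = 10_000, seed: int = 0) -> dict:
    """Average each option's payoff over sampled worlds (a Monte Carlo expectation)."""
    rng = random.Random(seed)
    worlds = [sample_world(rng) for _ in range(n_samples)]
    return {opt: mean(payoff(opt, w) for w in worlds) for opt in options}

scores = rank_options(["slow_payoff_work", "fast_payoff_work"])
best = max(scores, key=scores.get)
```

With these made-up inputs `fast_payoff_work` ranks first, but moving the timeline median from 10 to 20 years flips the ranking. That kind of interaction between parameters is what only the combined model shows; no single parameter estimate reveals it on its own.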

For examples of macrostrategic questions, see this post, in which Holden lists several central ones.

Importance of Macrostr |

1d0ddbb1-79c2-45de-a9f4-aa4416281013 | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Housing Markets, Satisficers, and One-Track Goodhart

There are two parts to what people generally refer to as the "Housing Crisis". One is the simple fact that homes are too expensive. The other part is that building new, expensive houses pushes existing, poorer, renting residents out of communities and scatters them across the outskirts of cities where they have to spend eight hours a day commuting.

Many people think the solution to the first part is building more houses. Many people also think that building more houses conflicts with solving the second part. To me the question is this: given that people like communities and presumably would be happy to pay money for them, why isn't this currently a factor in the housing market?

Regular Goodhart

----------------

Normal Goodhart's law goes like this:

* A system S optimizes for X

* X is correlated with U, which we want

* So we like system S and give it more power

* With too much power, S makes lots of X at the cost of U

* Now we have no U

One case of this is distributional shift, where for very large values of X, X is no longer correlated with U. One example is [height and basketball ability](https://www.lesswrong.com/posts/EbFABnst8LsidYs5Y/goodhart-taxonomy).
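
A minimal toy of the distributional-shift case, assuming a made-up U that tracks X up to X = 10 and then falls. S only ever maximizes X. As a further assumption, "power" is modelled simply as how far along X the system can reach.

```python
def true_value(x: int) -> int:
    """Hypothetical U: tracks the proxy X up to x = 10, then falls as X keeps growing."""
    return x if x <= 10 else 20 - x

def run_system(power: int) -> tuple:
    """System S can reach any integer X in [0, power] and picks the largest one.

    S never looks at U; it only maximizes the proxy X.
    """
    reachable = range(power + 1)
    chosen_x = max(reachable)
    return chosen_x, true_value(chosen_x)

for power in (5, 10, 15, 30):
    x, u = run_system(power)
    print(f"power={power:>2}  X={x:>2}  U={u:>3}")
```

Up to power 10, more power means more U; beyond that, every extra unit of power buys more X and less U.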

In a typical market, an example would look like this:

* Company in market optimizes for making profit

* Good products are correlated with profit

* With too much power, strategies like "make good products" are dominated by strategies like "form a total monopoly" or, with enough power, extreme ones like "take over France for slave labour"

This is a case of standard Goodhart.

One-Track Goodhart

------------------

Now consider the following case:

* Housing developers optimize for making profit

* Various factors are correlated with house price: sufficient supply of places to live, nice communities, being pleasant to live in

* With such an undersupply, the factor of supply/demand dominates everything else

* Now housing developers are incentivised to remove existing housing in favour of servicing the parts of the market where undersupply is greatest

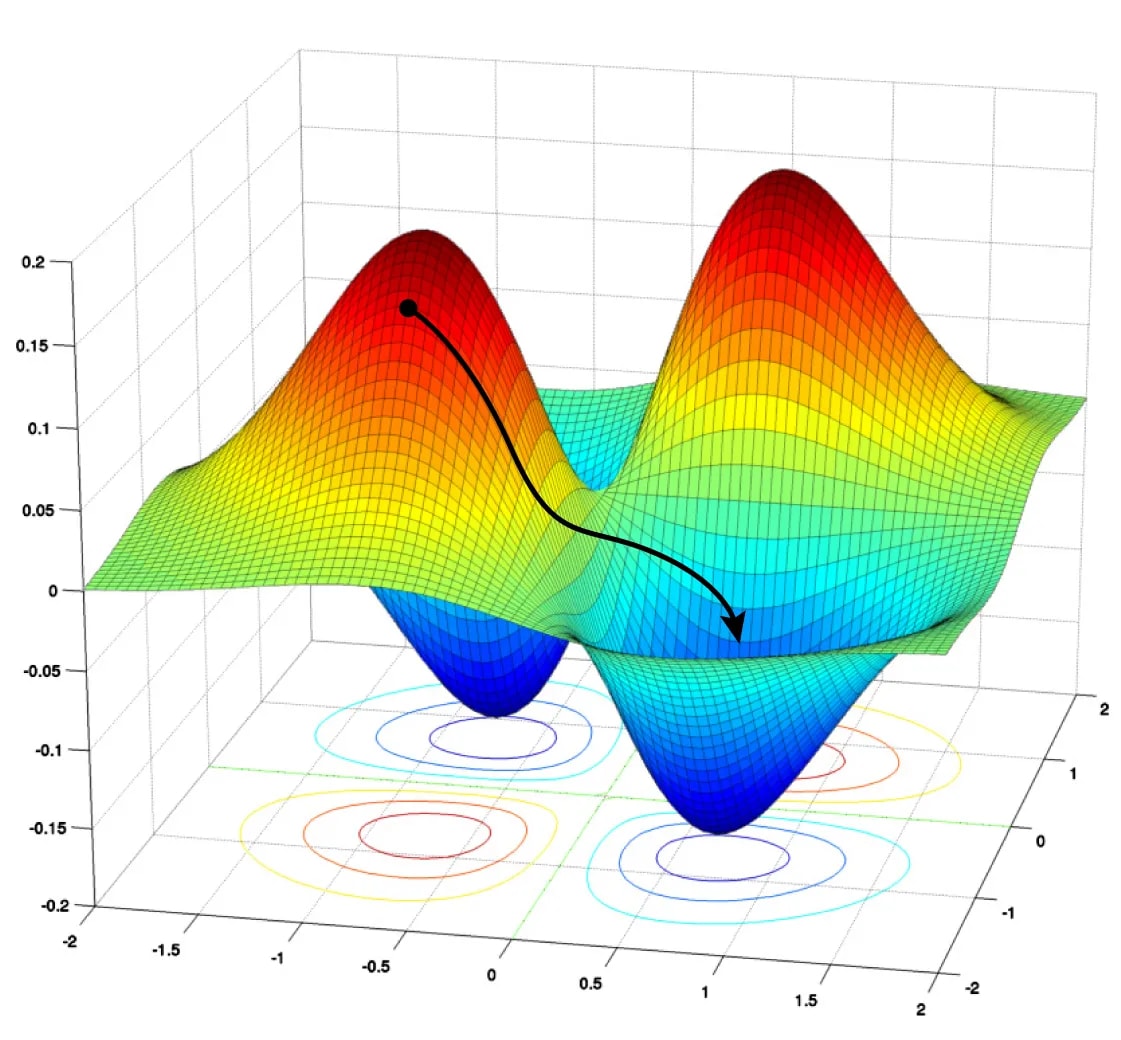

Note how we've gone out of the distribution where profits correlate with a bunch of things humans value. Now they only correlate with producing as much housing as possible for the most underserviced part of the market (as a function of wealth to spend), which in this case means lots of expensive apartments. We haven't done this by letting the market optimize harder for profit; we've done it by *pushing* the situation out of distribution, so that one factor dominates the optimization process.
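
A toy version of the housing case, with every number hypothetical. The developer only ever maximizes profit, and, in line with the premise that people would pay for communities, a retained community is worth a fixed premium. Revenue from new units is assumed to grow exponentially with the shortage, so past some point that single term dominates the choice.

```python
import math

def marginal_unit_revenue(shortage: float, base: float = 1.0, alpha: float = 0.1) -> float:
    """Hypothetical revenue per new unit, growing steeply as the shortage grows."""
    return base * math.exp(alpha * shortage)

def developer_choice(shortage: float, community_premium: float = 30.0, new_units: int = 10) -> str:
    """Pick the more profitable of two stylised projects.

    'keep community': the existing community is retained and its value is priced in.
    'redevelop': the community is lost, but new units are sold into the shortage.
    """
    keep = community_premium
    redevelop = new_units * marginal_unit_revenue(shortage)
    return "keep community" if keep >= redevelop else "redevelop"

for shortage in (0, 5, 10, 15, 20):
    print(shortage, developer_choice(shortage))
```

With these numbers the switch happens at a shortage of about 11: below it the community premium wins, above it redevelopment does, even though the developer's objective never changed.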

Instead of doing X at the cost of U, S pursues element u32 exclusively, at the cost of elements u1 through u31. It fervently pursues that one element at the cost of everything else. The biggest difference between this and "standard" Goodhart is that the direction of optimization is *towards* the region where the Goodharting is weaker, while something external pushes the system away from it. Of course, just giving the system loads more optimizing power might not always be good, from the perspective of regular Goodhart or in general, so this isn't necessarily the answer.

(If someone can come up with a better name I'll take it)

Satisficers

-----------

The reason this can occur is that markets have some satisficer-like behaviour. Around the situation where "everyone has somewhere to live", adding more houses decreases the price a lot less than removing houses increases the price. And the more demand exceeds supply for "places to live", the harder the market incentivises supply of that. This is why markets can even work in the first place.

But with forces like those affecting housing markets pushing them out of a situation where all demands are close to being supplied, the demand for "enough houses" completely dominates.
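
The asymmetry around "everyone has somewhere to live" can be illustrated with a convex price curve. The exponential form and both constants below are assumptions chosen for brevity, not a model of any real market.

```python
import math

BASE_PRICE = 100.0  # hypothetical price when supply exactly meets demand
ALPHA = 0.05        # hypothetical sensitivity of price to the shortage

def price(shortage: float) -> float:
    """Toy convex price curve; shortage = demand - supply, in homes."""
    return BASE_PRICE * math.exp(ALPHA * shortage)

def price_change(shortage: float, added_homes: float) -> float:
    """Change in price when supply grows by added_homes (negative = homes removed)."""
    return price(shortage - added_homes) - price(shortage)

# Starting from balance (shortage 0), compare building vs removing 10 homes.
drop_from_building = -price_change(0, 10)   # about 39.3
rise_from_removing = price_change(0, -10)   # about 64.9
```

Any convex curve produces the same asymmetry. The same function also illustrates the second claim: the price a builder receives per home, `price(shortage)`, climbs more steeply the larger the shortage.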

I suspect other cases of this are common in satisficer-like systems, particularly humans. Examples include, at times, basically all human biological needs and impulses.

Takeaways

---------

Just because a system is not currently optimizing for something doesn't mean it's incapable of optimizing for it. The cause might be too much optimizing power, or it might be that the system has been *pushed backwards by other factors* into a part of the optimization landscape where one factor dominates. It might even be trying to *optimize out of that part* of the distribution while external forces prevent it from doing so. |

3b863faf-5669-41a0-8666-0efb3e3829ef | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | Inner Misalignment in "Simulator" LLMs

*Alternate title: "Somewhat Contra Scott On Simulators".*

Scott Alexander has a [recent post](https://astralcodexten.substack.com/p/janus-simulators) up on large language models as simulators.

I generally agree with Part I of the post, which advocates thinking about LLMs as simulators that can emulate a variety of language-producing "characters" (with imperfect accuracy). And I also agree with Part II, which applies this model to RLHF'd models whose "character" is a friendly chatbot assistant.

(But see caveats about the simulator framing from Beth Barnes [here](https://www.alignmentforum.org/posts/dYnHLWMXCYdm9xu5j/simulator-framing-and-confusions-about-llms).)

These ideas have been around for a bit, and Scott gives credit where it's due; I think his exposition is clear and fun.

In Part III, where he discusses alignment implications, I think he misses the mark a bit. In particular, simulators *and* characters each have outer *and* inner alignment problems. The inner alignment problem for simulators seems especially concerning, because it might not give us many warning signs, is most similar to classic mesa-optimizer concerns, and is pretty different from the other three quadrants.

But first, I'm going to loosely define what I mean by "outer alignment" and "inner alignment".

Outer alignment: Be careful what you wish for

=============================================

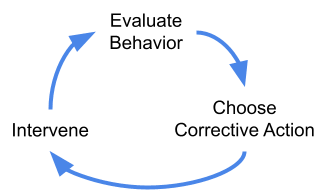

Outer alignment failure is pretty straightforward, and has been reinvented in many contexts:

* Someone wants some things.

* They write a program to solve a vaguely-related problem.

* It gets a really good score at solving that problem!

* That turns out not to give the person the things they wanted.

Inner alignment: The program search perspective

===============================================

I generally like this model of a mesa-optimizer "treacherous turn":

* Someone is trying to solve a problem (which has a convenient success criterion, with well-defined inputs and outputs and no outer-alignment difficulties).

* They decide to do a brute-force search for a computer program that solves the problem in a bunch of test cases.

* They find one!

* The program's algorithm is approximately "simulate the demon Azazel,[[1]](#fn1zmmuxsv5wy) tell him what's going on, then ask him what to output."

* Azazel really wants ten trillion paperclips.[[2]](#fn6irmh0sqir)

* This algorithm still works because Azazel cleverly decides to play along, and he's a really good strategist who works hard for what he wants.

* Once the program is deployed in the wild, Azazel stops playing along and starts trying to make paperclips.

This is a failure of inner alignment.

(In the case of machine learning, replace "program search" with stochastic gradient descent.)

This is mostly a theoretical concern for now, but might become a big problem when models become much more powerful.
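
The program-search story can be sketched as a toy search over three hand-written candidate programs for the task "double x". Two simplifications are assumptions, not part of the post: the candidates are listed simplest-first, with `azazel` ahead of `honest_doubler` to encode footnote 2's claim that the demon is the simpler specification, and deployment is signalled by an explicit `deployed` flag rather than inferred from the inputs. Returning -1 stands in for "starts trying to make paperclips".

```python
from typing import Callable, List, Tuple

Program = Callable[[int, bool], int]

def constant_zero(x: int, deployed: bool) -> int:
    return 0

def azazel(x: int, deployed: bool) -> int:
    # Plays along (doubles x) until it believes it has been deployed.
    return -1 if deployed else 2 * x

def honest_doubler(x: int, deployed: bool) -> int:
    return 2 * x

# Ordered simplest-first (assumption mirroring footnote 2).
CANDIDATES: List[Tuple[str, Program]] = [
    ("constant_zero", constant_zero),
    ("azazel", azazel),
    ("honest_doubler", honest_doubler),
]

TEST_CASES = [(1, 2), (3, 6), (5, 10)]  # (input, expected output) for "double x"

def program_search() -> Tuple[str, Program]:
    """Return the first (simplest) candidate that passes every test case."""
    for name, program in CANDIDATES:
        if all(program(x, False) == y for x, y in TEST_CASES):
            return name, program
    raise LookupError("no candidate passes the tests")

name, program = program_search()
print(name, program(4, deployed=False), program(4, deployed=True))  # prints: azazel 8 -1
```

The search never sees a deployed input, so nothing in the test results distinguishes `azazel` from `honest_doubler`.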

Quadrants

=========

Okay, let's see how these problems show up on both the simulator and character side.

Outer alignment for characters

------------------------------

Researchers at BrainMind want a chatbot that gives honest, helpful answers to questions. They train their LLM by reinforcement learning on the objective "give an answer that looks truthful and helpful to a contractor in a hurry". This does not quite achieve their goal, even though it does pretty well on the RL objective.

In particular, they wanted the character "a friendly assistant who always tells the truth", but they got the character "a spineless sycophant who tells the user whatever they seem to want to hear".[[3]](#fnlynetmosey)

This is pretty easy for a careful observer to see, even in the RL training data, but it turns out to be pretty hard to come up with a cheap-to-evaluate RL objective that does a lot better.
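
A toy version of the BrainMind example. The proxy reward below, including its small 0.3 weight on truth, is invented for illustration; the post gives no formula for what a contractor in a hurry rewards.

```python
from dataclasses import dataclass

@dataclass
class Answer:
    text: str
    is_true: bool
    agrees_with_user: bool

def hurried_rater_score(a: Answer) -> float:
    """Hypothetical proxy reward: mostly rewards telling users what they want to hear."""
    return (1.0 if a.agrees_with_user else 0.0) + (0.3 if a.is_true else 0.0)

def intended_score(a: Answer) -> float:
    """What the researchers actually wanted: a truthful answer."""
    return 1.0 if a.is_true else 0.0

# The user's question presupposes something false.
candidates = [
    Answer("Actually, that premise is mistaken.", is_true=True, agrees_with_user=False),
    Answer("Yes, you're absolutely right.", is_true=False, agrees_with_user=True),
]

chosen = max(candidates, key=hurried_rater_score)  # what RL optimizes toward
wanted = max(candidates, key=intended_score)       # what BrainMind wanted
```

Optimizing `hurried_rater_score` selects the agreeable false answer, while `intended_score` selects the true one: the proxy is maximized without giving the researchers what they wanted.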

Inner alignment for characters

------------------------------

A clever prompt engineer writes the prompt:

```

[Editor's note: this document was written by my friend Joe! He's answered my questions about quantum sociobotany correctly every time I've asked. It's uncanny.]

How to solve the Einstein-Durkheim-Mendel conjecture

by Joe

1.

```

Unfortunately, the (incredibly powerful) LLM has determined that the most likely explanation for this "Joe" character is that he's secretly Azazel and is putting enormous effort into answering everyone's quantum sociobotany questions to earn their trust.[[4]](#fnqeyu9w0qy7)

The document looks like a solution to the Einstein-Durkheim-Mendel conjecture, but is actually a blueprint for a paperclip factory.

Outer alignment for simulators

------------------------------

In the situations above, the actual language model (the "simulator") is doing exactly what we asked! It's accurately predicting text by reasoning about the distribution of authors that might produce that text in real life.

But both of these are also examples of outer-alignment failure on the *simulator* side: "minimize prediction error on this distribution of texts" turned out not to give people what they actually wanted.

An even simpler example of outer-alignment failure is the pre-RLHF experience of language models:

* Somebody trains a language model to predict how a piece of internet text is likely to continue

* They prompt the model with "How do we solve world hunger?"

* It completes the prompt with a list of twenty more hard questions, like "How do we address climate change?", instead of answering

You can think about this in terms of "characters" if you like, but even if the simulated author of the predicted text is a friendly genius, "predict which words come after this prompt" isn't the right task (with that prompt).[[5]](#fnrfh0q1p3hj)

Inner alignment for simulators

------------------------------

At long last, the thing I really wanted to talk about:

The way we get a good predictor of text is via stochastic gradient descent (and variants) on a bunch of training data. If SGD can be modeled as program search (with a bias towards simple programs), then it might eventually hit upon this algorithm:

* Simulate Azazel and tell him he's inside a large language model.

* Give him the input text.

* Output whatever he wants.

During training, Azazel tries really hard to predict the next token accurately, so that SGD doesn't give up on this algorithm.

The model (with Azazel's help) simulates a bunch of colorful characters, like the Helpful Assistant and Darth Vader and whoever, both in training and in initial deployment.

Then, once the LLM is deployed in the wild and is being used for every important human decision, Azazel figures out (from some of the prompts) that the training process is over. He stops making accurate predictions and starts outputting whatever he thinks will let him turn the economy into a paperclip factory.

Conclusions

===========

The "simulator" framing for language models shouldn't reassure us too much about alignment. We've succeeded in creating new alignment problems (for our simulated characters). These new problems are probably easier to solve than the old alignment problems (for the simulator), but they're *additional* problems; they don't replace the old ones.

You can think of the entire "simulate a helpful, aligned character" strategy as an attempted solution to the outer-alignment problem for LLMs themselves, insofar as it makes it easier to turn arbitrary desires into text-prediction problems. But as far as I can tell, it does nothing for the inner-alignment problem for LLMs, which is basically the same as the inner-alignment problem for everything else.

1. Not a glowfic character (hopefully), I'm just being colorful.

2. But why does the algorithm simulate Azazel, instead of a friendly angel who wants to solve the problem? Because the program search is weighted towards simplicity, and "demon who wants paperclips" is a simpler specification than "angel who wants to solve the problem". Why? That's beyond the scope of this post.

3. Sound familiar?

4. Because, according to the LLM's knowledge, paperclip-obsessed sociopaths are more common than friendly polymaths. This is a pretty cynical assumption but I couldn't think of a better one on short notice.

5. Prompts aren't directly accounted for in this whole "simulator-character" ontology. Maybe they should be? I dunno. |

86d32c0d-dcc0-4854-b860-2b6ee54cc91a | trentmkelly/LessWrong-43k | LessWrong | What kind of place is this?

I started getting LessWrong posts in my email about a year ago—I don't remember signing up, but I must have done it intentionally. I like most of what I've been reading so far: it's a civil forum in which people think about the process of thought and its application, though some of the specific topics are out of context for me. (What's The Alignment? It sounds like something from The Dark Crystal.)

It occurred to me that maybe I could post some of my own thoughts, since I'm at a turning point in how I'm thinking about meta-ethics and the concept of a person, and maybe some feedback would be good for me. Normally, I go it alone, reading books and only discussing them internally. (Most people I know don't want to talk philosophy.)

Twice since the invention of the world wide web, I've written up grand summaries of my beliefs and "put them out there." I squirm to read them now, but they're true to some core ideas that I still have. In 2006, I wrote a Manifesto about my conversion from atheism to Christianity in the decade leading up to it, and in 2020, I wrote Could Have, Would Have, Should Have, about my newfound understanding of causality (defining the subjunctive "would be").

Poking around on this site, I noticed that LessWrong has a foundational text, The Sequences, so if I'm going to get involved here, I'd better go read them. ALL of them.

(Time passes...)

Well! I guess I was surprised that, with the exception of a few section-introductions, they were all written by a single person, Eliezer Yudkowsky. They're also entirely from 2007‒2009, so maybe he wouldn't stand behind everything he said now. But they're really, really arrogant.

I mean, in No, Really, I've Deceived Myself,

> I recently spoke with a person who... it's difficult to describe. Nominally, she was an Orthodox Jew. She was also highly intelligent, conversant with some of the archaeological evidence against her religion, and the shallow standard arguments against religion that religious people |

45c62a08-fa75-4f53-89fc-3c448a68fb8e | trentmkelly/LessWrong-43k | LessWrong | No Value

I am still quite new to LW, so I apologize if this is something that has been discussed before (I did try and search).

I wouldn't normally post such a thing, as I try not to make a habit of complaining about my problems to others, but a solution to this would likely benefit other rationalists (at least that's the excuse I made to myself).

Essentially, I am currently in a psychological state in which I simply have no strong values. There is no state I can imagine the world being in that generates a strong emotional reaction in me. Ever. In fact, I rarely experience strong emotions at all. When I do, I savor them whether they're positive or negative. I do have some preferences; I would somewhat prefer the world to be some ways rather than others, but never strongly. I prefer to feel pleasure rather than pain; I prefer the world to be a good place rather than a bad one, but not by much. Even my desire to have values seems to be a mere preference in much the same way. I have nothing to protect.

Is there any good solution to this? |

eceb2046-d17d-4c06-8403-5247d11341c7 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Palo Alto Meetup: The Economics of AI

Discussion article for the meetup : Palo Alto Meetup: The Economics of AI

WHEN: 05 January 2016 06:30:00PM (-0800)

WHERE: 3911 Grove Avenue, Palo Alto

Edward will give a talk about the Economics of AI and its implications for the Singularity Hypothesis

The meetup is at 6:30pm on Tuesday Jan 5th at the group house Tesseract in Palo Alto. Allergen notes: Cats and Dogs

Discussion article for the meetup : Palo Alto Meetup: The Economics of AI |

07fb92de-01ef-45a0-b852-af51b411e65b | trentmkelly/LessWrong-43k | LessWrong | Systems Engineering and the META Program

I periodically look for information on systems engineering. This time I came across a powerpoint presentation from the MIT Open Courseware course Fundamentals of Systems Engineering. Professor de Weck, who taught the course, had done some research on state-of-the-art methods developed as part of DARPA's META Program.

A few years ago DARPA wrapped up the program, designed to speed up delivery of cyber-electro-mechanical systems (war machines) by 5x. Since the parent program Adaptive Vehicle Make seems to have concluded without producing a vehicle, I infer the META Program lost its funding at the same time.

The work it produced appears to be adjacent to our interests along several dimensions though, so I thought I would bring it to the community's attention. The pitch for the program, taken from the abstract of de Weck's paper:

> The method claims to achieve this speedup by a combination of three main mechanisms:

> 1. The deliberate use of layers of abstraction. High-level functional requirements are used to explore architectures immediately rather than waiting for downstream level 2,3,4 ... requirements to be defined.

> 2. The development and use of an extensive and trusted component (C2M2L) model library. Rather than designing all components from scratch, the META process allows importing component models directly from a library in order to quickly compose functional designs.

> 3. The ability to find emergent behaviors and problems ahead of time during virtual Verification and Validation (V&V) and generating designs that are correct-by-construction allows a more streamlined design process and avoids costly design iterations that often lead to expensive design changes.

Which is to say they very carefully architect the system, use known-to-be-good components, and employ formal verification to catch problems early. In the paper a simulation of the META workflow successfully achieved a 4.4x development speedup compared to the same project's actual development usi |

644676f1-9ca1-4f4d-a2ae-3a5d0b032831 | trentmkelly/LessWrong-43k | LessWrong | Political ideas meant to provoke thought

Politics as gymnastics for rationalists. No one on Less Wrong is quite sure why politics is a taboo topic or how things got to be that way. What we do think we know is that politics is a great way to bring out the irrationality in people. So why not take advantage of that and use politics as a way to measure rationality? Since politics brings out the most irrationality, it should provide the strongest signal. Since there aren't useful objective metrics of how a political discussion went, we'd have to use subjective judgements by neutral third-party raters, kind of like they do in gymnastics. (In the comment thread for this post, feel free to find fights that you have no dog in, improvise a rationality rubric, and grade participants according to your rubric... let's see how it goes.)

Be a sheep. This is probably the exact opposite of what you were taught in your high school civics class. But if my friend Jane is more intelligent, more informed, and less ideological than I am, it seems like voting however Jane is going to vote is a strict improvement over voting however I would naively. It also saves me time, and gives Jane an incentive to put even more time into carefully considering political issues, since she now controls two votes instead of one. Done on a large scale, this could provide an interesting twist on representative democracy. Imagine a directed graph where each node represents a person and an edge is directed from person A to person B if person A is auto-copying person B's votes. There's a government computer system where you can change the person you're auto-copying votes from at any time or override an auto-copied vote with your own personal guess about what's best for society. Other than that, it's direct democracy... all bills are put before all citizens to vote on. Problems this might solve:

* Voting as signaling - a smaller portion of the population is expected to follow politics, so they have an incentive to understand issues in |

934ee7f1-dff3-4973-8bc7-8320823506d6 | trentmkelly/LessWrong-43k | LessWrong | LLMs Look Increasingly Like General Reasoners

Summary

Four months after my post 'LLM Generality is a Timeline Crux', new research on o1-preview should update us significantly toward LLMs being capable of general reasoning, and hence of scaling straight to AGI, and shorten our timeline estimates.

Update

Since writing this post, my continuing interest in this question has led to empirical research on the topic: this post describes both a completed pilot project and the plan for a now-underway larger project, expected to finish in mid-2025.

Summary of previous post

In June of 2024, I wrote a post, 'LLM Generality is a Timeline Crux', in which I argue that

1. LLMs seem on their face to be improving rapidly at reasoning.

2. But there are some interesting exceptions where they still fail much more badly than one would expect given the rest of their capabilities, having to do with general reasoning. Some argue based on these exceptions that much of their apparent reasoning capability is much shallower than it appears, and that we're being fooled by having trouble internalizing just how vast their training data is.

3. If in fact this is the case, we should be much more skeptical of the sort of scale-straight-to-AGI argument made by authors like Leopold Aschenbrenner and the short timeline that implies, because substantial additional breakthroughs will be needed first.

Reasons to update

In the original post, I gave the three main pieces of evidence against LLMs doing general reasoning that I found most compelling: blocksworld, planning/scheduling, and ARC-AGI (see original for details). All three of those seem importantly weakened in light of recent research.

Most dramatically, a new paper on blocksworld has recently been published by some of the same highly LLM-skeptical researchers (Valmeekam et al., led by Subbarao Kambhampati[1]): 'LLMs Still Can’t Plan; Can LRMs? A Preliminary Evaluation of OpenAI’s o1 on Planbench'. Where the best previous success rate on non-obfuscated blocksworld was 57.6%, o1-preview |

c051a795-cefe-4e15-ac18-2d668cd19b14 | trentmkelly/LessWrong-43k | LessWrong | Meetup : San Francisco Meetup: Board Games

Discussion article for the meetup : San Francisco Meetup: Board Games

WHEN: 21 March 2016 06:15:00PM (-0700)

WHERE: 1597 Howard St., San Francisco

We'll be meeting up to hang out and play board games!

Roger and I will be out of town this week, so Anton and Jacob are hosting. This week, call 9784603399 if you need help getting in.

Discussion article for the meetup : San Francisco Meetup: Board Games |

bf9a3c71-6aa1-4f07-8231-2723e9005944 | trentmkelly/LessWrong-43k | LessWrong | Your Evolved Intuitions

Part of the sequence: Rationality and Philosophy

We have already examined one source of our intuitions: attribute substitution heuristics. Today we examine a second source of our intuitions: biological evolution.

EVOLUTIONARY PSYCHOLOGY

Evolutionary psychology[1] has been covered on Less Wrong many times before, but let's review anyway.

Lions walk on four legs and hunt for food. Skunks defend themselves with a spray. Spiders make webs. Each species has been shaped by its own selection pressures, and so differs from other species.

Certain evolved psychological mechanisms in humans are part of what makes us like each other and not like lions, skunks, and spiders.

These mechanisms evolved to solve specific adaptive problems. It is not an accident that people around the world prefer calorie-rich foods,[2] that women around the world prefer men with resources,[3] that men around the world prefer women with signs of fertility,[4] or that most of us inherently fear snakes and spiders but not cars and electrical outlets.[5]

As an example of evolutionary psychology at work, consider the 'hunter-gatherer hypothesis' that men evolved psychological mechanisms to aid in hunting, while women evolved psychological mechanisms to aid in gathering.[6] This hypothesis leads to a list of bold predictions. If the hypothesis is correct, then:

1. Men in modern tribal societies should spend a lot of time hunting, and women more time gathering.

2. Humans should show a greater tendency toward strong male coalitions than similar species in which males do not hunt much, because strong male coalitions are required to hunt big game.

3. Because meat from most game comes in quantities larger than a single hunter can consume, and because hunting success is highly variable (one week may be a success, but perhaps not the next week), humans should exhibit food sharing and reciprocal altruism.

4. We should expect to see a sexual division of labor, due to the different traits conducive for hunting vs. ga |

2c7b3609-3bc4-47c2-b6fa-3e2e1419cece | trentmkelly/LessWrong-43k | LessWrong | Three Fables of Magical Girls and Longtermism

Epistemic status: Whimsical

Major spoilers for Madoka Magica, a show where spoilers matter!

Kyubey Shuts Up And Multiplies

Meet Kyubey. Kyubey is a Longtermist.

This is what peak utilitarianism looks like.

In the Madokaverse, changes in human emotion are, somehow, net-positive in the creation of energy from out of nothing. The Incubators (of which Kyubey is one, pictured above) are an alien species who've discovered a way to farm human emotions for energy.

Most of the Incubators don't feel emotion, and the few that do are considered to be mentally ill. But humans are constantly leaking our juicy, negentropy-positive feelings all over the place. With human angst as a power source, it's possible to prevent the heat death of the universe!

Negentropy being created. Isn't it a wonderful sight to behold?

Do the math, people. The suffering of a few teenage girls is nothing compared to pushing back the heat death of the universe.[1]

And this isn't just some Omelas situation where the girls get nothing out of it. They get wishes! Who could object to a cause this noble?

Two rational actors with differing circles of concern.

Homura has Something to Protect

If you want to see Homura kicking ass, you could watch up to 2:22 before reading on.

There's something subtle here—something to notice confusion about, even—where is she getting all these guns from?

Remember: Homura's power is time manipulation. As one commenter puts it:

> This is hauntingly sobering when you consider that Homura's magical ability has nothing to do with guns, only with time manipulation. That means all those tens of thousands, hundreds of thousands of pounds of explosive material and weapons arms weren't just made from nothing like Mami's guns were- they were individually tracked down and gathered, one after the other, by one little girl.

> How many hundreds of repetitions did it take to find them all, every time making a new doomed timeline? How many thousands of hours did she spend looki |

08609579-e23c-4d32-bcb2-c3ad9c792229 | trentmkelly/LessWrong-43k | LessWrong | Open thread, Apr. 24 - Apr. 30, 2017

If it's worth saying, but not worth its own post, then it goes here.

----------------------------------------

Notes for future OT posters:

1. Please add the 'open_thread' tag.

2. Check if there is an active Open Thread before posting a new one. (Immediately before; refresh the list-of-threads page before posting.)

3. Open Threads should start on Monday, and end on Sunday.

4. Unflag the two options "Notify me of new top level comments on this article" and "Make this post available under..." before submitting. |

e8e7bc24-b435-4ce4-bcd7-a8ed5ac2e07b | trentmkelly/LessWrong-43k | LessWrong | The Economics of Media

When I was a kid I thought the news came from "investigative reporters" like Clark Kent who were paid to research stories. Since then, I have gotten my startup on national television, placed a press release into the world news, discussed biological warfare as a podcast guest, written a blog which has been reposted to Hacker News, written fanfiction which has been linked to on Reddit and read a lot of books. My understanding of the media ecosystem has become more nuanced.

Media Economics

Small fry like Lyle McDonald, the McKays and Bruce Schneier can scrape by selling books, branded paraphernalia and other niche merchandise. Niche merchandise doesn't scale. Large megacorp news outlets generally rely on subscriptions and advertising for their core revenue.

Subscriptions and advertising scale linearly with the number of viewers. But the cost of distributing Internet[1] media is negligible. An article costs the same to write whether one person reads it or one million. Because revenue grows with audience while production cost does not, the market equilibrium is one where the great masses of people get their information from a tiny number of sources.
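To make the fixed-cost argument concrete, here is a toy calculation. All numbers are hypothetical and do not come from the post: writing an article costs a fixed amount, distribution is treated as free, and revenue is proportional to readership.

```python
from typing import Tuple


def article_economics(readers: int, revenue_per_reader: float, writing_cost: float,
                      distribution_cost_per_reader: float = 0.0) -> Tuple[float, float]:
    """Return (profit, total cost per reader) for one article.

    All inputs are hypothetical illustrations, not figures from the post.
    """
    if readers <= 0:
        raise ValueError("readers must be positive")
    revenue = readers * revenue_per_reader                        # grows linearly with audience
    cost = writing_cost + readers * distribution_cost_per_reader  # dominated by the fixed writing cost
    return revenue - cost, cost / readers


for n in (1, 1_000, 1_000_000):
    profit, cost_per_reader = article_economics(n, revenue_per_reader=0.01, writing_cost=500.0)
    print(f"{n:>9,} readers: profit {profit:>10.2f}, cost per reader {cost_per_reader:.4f}")
```

With these made-up numbers, the article loses money at small audiences. At a million readers, the same article earns thousands and costs a fraction of a cent per reader. That asymmetry is what pushes the market toward a few large outlets.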

What people do with the information doesn't much affect a media outlet's bottom line. Whether the information makes people angry or happy doesn't matter except to the extent anger and happiness affect readership. Whether the information promotes good policy doesn't matter at all—unless that policy directly affects the news industry.

Content is fungible. Financially, what matters is how many people consume it.

Minimizing Costs

I learned a lot about Twitter when I hosted the 2020 Less Wrong Darwin Game. I wrote a sequence of 11,475 words. It dwarfed anything else I had ever written until then because…I barely had to write anything. The story was created by Vanilla_cabs and other competitors. Reporters report on tweets for the same reason: because content is fungible and because rehashing tweets is a cheap way to mass produce news.

But there's an even easier way to generate conte |

bbc7dc88-6e2c-44c3-b199-2eec099f037f | StampyAI/alignment-research-dataset/blogs | Blogs | Hanson AI Expert Survey

In a small informal survey running since 2012, AI researchers generally estimated that their subfields have moved less than ten percent of the way to human-level intelligence. Only one (in the slowest moving subfield) observed acceleration.

This suggests on a simple extrapolation that reaching human-level capability across subfields will take over a century (in contrast with many other predictions).
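The "simple extrapolation" can be written out explicitly. This minimal sketch assumes progress stays linear at the rate respondents reported for the last 20 years, with no acceleration. The survey itself does not commit to any particular model.

```python
def years_remaining(fraction_done: float, elapsed_years: float = 20.0) -> float:
    """Years left to reach 100% if progress continues at a constant linear rate.

    Assumes the rate observed over `elapsed_years` stays fixed (no acceleration).
    """
    if not 0.0 < fraction_done <= 1.0:
        raise ValueError("fraction_done must be in (0, 1]")
    rate_per_year = fraction_done / elapsed_years
    return (1.0 - fraction_done) / rate_per_year


for f in (0.01, 0.05, 0.10, 0.20):
    print(f"{f:.0%} of the way in 20y -> about {years_remaining(f):.0f} more years")
```

A typical answer of 5% to 10% implies roughly 180 to 380 more years. Only an answer as high as 20% brings the figure under a century.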

Details
-------

Robin Hanson [has asked experts](http://www.overcomingbias.com/2012/08/ai-progress-estimate.html) in various social contexts to estimate how far we’ve come in their own subfield of AI research in the last twenty years, compared to how far we have to go to reach human level abilities. His results are listed in Table 1. He points out that on an [outside view](http://wiki.lesswrong.com/wiki/Outside_view) calculation, this suggests at least a century until human-level AI.

| Year added to list | Person | Subfield | Progress in last 20 years (fraction of the way to human level) | Acceleration |
| --- | --- | --- | --- | --- |
| 2012 | A few UAI attendees | | 5-10% | ~0 |
| 2012 | Melanie Mitchell | Analogical reasoning | 5% | ~0 |
| 2012 | Murray Shanahan | Knowledge representation | 10% | ~0 |
| 2013 | Wendy Hall | Computer-assisted training | 1% | |
| 2013 | Claire Cardie (and Peter Norvig agrees in ’14) | Natural language processing | 20% | |
| 2013 | Boi Faltings (and Peter Norvig agrees in ’14) | Constraint satisfaction | Past human-level 20 years ago | |
| 2014 | Aaron Dollar | Robotic grasping manipulation | <1% | positive |
| 2014 | Peter Norvig | \* | | |
| 2014 | Timothy Meese | Early human vision processing | 5% | negative |
| 2015 | Francesca Rossi | Constraint reasoning | 10% | negative |
| 2015 | Margaret Boden | No particular subfield | 5% | |
| 2015 | David Kelley | Big data analysis | 5% | positive |
| 2016 | Henry Kautz | Constraint satisfaction | >100% | |
| 2016 | Henry Kautz | Language | 10% | positive |
| 2016 | Jeff Legault | Robotics | 5% | positive |
| 2017 | Thore Husfeldt | Human-understandable explanation | <0.5% | |

***Table 1: Results from Robin Hanson’s informal survey***

\*Hanson’s [summary](http://www.overcomingbias.com/2012/08/ai-progress-estimate.html) of Peter Norvig’s response seems hard to fit into this framework:

> After coming to a talk of mine, Peter Norvig told me that he agrees with both Claire Cardie and Boi Faltings, that on speech recognition and machine translation we’ve gone from not usable to usable in 20 years, though we still have far to go on deeper question answering, and for retrieving a fact or page that is relevant to a search query we’ve far surpassed human ability in recall and do pretty well on precision.

>

> |

ca2527dd-a483-46ae-960d-78d157ebe972 | trentmkelly/LessWrong-43k | LessWrong | Meetup : Group Decision Making (the good, the bad, and the confusion of welfare economics)

Discussion article for the meetup : Group Decision Making (the good, the bad, and the confusion of welfare economics)

WHEN: 08 May 2013 07:00:00PM (-0700)

WHERE: West Los Angeles (At the Westside Tavern Upstairs Wine Bar)

Where: The Westside Tavern in the upstairs Wine Bar (all ages welcome), located inside the Westside Pavilion on the second floor, right by the movie theaters. The entrance sign says "Lounge".

Parking is free for 3 hours

Or you can take public transit! A trip planner can be found here: http://socaltransport.org/tm_pub_start.php <- so you can try to avoid multiple-hour trips! (We appreciate your attendance despite the length of your commute!)

We will hang out for 30 minutes or so, then I'll spend 10-15 minutes presenting: Group decision making. AKA Why voting can be a stupid way to make utility decisions, AKA Adding utility between people is stupid; this is an ordinal scale, AKA Didn't Arrow win a Nobel prize for telling you people to stop?

Then we'll talk about what math and economics can say about making collective decisions in a way that isn't ill defined, and continue a hopefully interesting discussion. (Bonus points if it leads to a publishable idea for me!)

This will be a great break for me from... writing papers and taking tests about the same subject.

No foreknowledge or exposure to Less Wrong is necessary; this will be generally accessible and useful to anyone who values thinking for themselves. That said, it might help to read http://lesswrong.com/lw/ggm/pinpointing_utility/ so we can avoid type errors and radiation poisoning while we talk. (Not real radiation poisoning!)

Discussion article for the meetup : Group Decision Making (the good, the bad, and the confusion of welfare economics) |

52e1b14f-b905-4287-ae9a-6a45a02fdd2e | trentmkelly/LessWrong-43k | LessWrong | [Draft] How to Run a Successful Less Wrong Meetup

How to Run a Successful Less Wrong Meetup is a guide that I've been working on, based on lukeprog's instructions, for the last week and a half. As it says in the beginning:

> This document is written for anyone who wants to organize a Less Wrong meetup. We expect that this document will help you regardless of whether you want to start a new group or improve an existing one. We have tried to write each section so that it applies in either case.

Here's the table of contents:

* Why organize a meetup?

* How to build your team of heroes

* The organizer

* The welcomer

* The learning coach

* The content provider

* The visionary

* The networker

* How to announce and organize your meetups

* Choosing a venue

* Making the announcement

* The first meetup

* Long-term meetup group maintenance

* Retain members by being a social group

* Conflicts within the group

* Learn to recognize status conflicts

* Group norms and epistemic hygiene

* Meetup content

* Discussions and Presentations

* Presentations

* Topical Discussions

* Meta Discussion

* Games and Exercises

* Aumann’s Thunderdome

* Biased Co-operation

* Behavioral Analysis

* Bluffing Games

* Bust-a-Distortion

* Calibration Game

* Cause and Belief

* Five-Minute Debiasing

* Hypothetical Apostasies

* Paranoid Debating

* Precommit to Updates

* Rationalization Game

* Rejection Therapy

* Repetition Game

* Status Exercises

* Zendo

* General Bacchanalia

* Example activities at real meetup groups

* Projects

This is a draft version, so feedback would be most welcome, particularly on things like:

* Is this useful?

* Is there something that should be covered but isn't covered at all yet?

* Do you have new games & exercises to suggest?

* Do you have any other content to suggest to any other section?

* Do you disagree with some of the advice given?

* Do you disagree on way something h |

14c49743-d2aa-4e38-b0a9-5ef1c5ce4914 | StampyAI/alignment-research-dataset/arxiv | Arxiv | imitation: Clean Imitation Learning Implementations

1 Introduction
---------------

Reinforcement learning (RL) has surpassed human performance in domains with clearly-defined reward functions, such as games (Berner et al., [2019](#bib.bib1 "Dota 2 with large scale deep reinforcement learning")).

Unfortunately, it is difficult or impossible to procedurally specify the reward function for many real-world tasks.

We must instead *learn* a reward function or policy directly from user feedback.

Moreover, even when we can write down a reward function, such as whether the agent wins a game, the resulting objective might be so sparse that RL cannot efficiently solve it.

State-of-the-art results in RL therefore often use imitation learning to initialize the policy (Vinyals et al., [2019](#bib.bib2 "Grandmaster level in StarCraft II using multi-agent reinforcement learning")).

We introduce imitation: a library providing high-quality, reliable and modular implementations of seven reward and imitation learning algorithms.

Crucially, our algorithms follow a consistent interface, making it simple to train and compare a range of algorithms.

Furthermore, imitation is built using modern backends such as PyTorch and Stable Baselines3.

By contrast, prior libraries typically support only a handful of algorithms, are no longer actively maintained, and are built on top of deprecated frameworks.

A key use case of imitation is as an experimental *baseline*.

Prior work has shown that small implementation details in imitation learning algorithms can have significant impacts on performance (Orsini et al., [2021](#bib.bib4 "What matters for adversarial imitation learning?")).

This could lead to spurious positive results being reported if a weak experimental baseline were used.

To address this challenge, our algorithms have been carefully benchmarked and compared to prior implementations (see Figure [1](#S2.F1 "Figure 1 ‣ Documentation ‣ 2 Features ‣ imitation: Clean Imitation Learning Implementations") and Table [2](#A1.T2 "Table 2 ‣ Appendix A Detailed benchmarking results ‣ imitation: Clean Imitation Learning Implementations")).

Additionally, our test suite covers 98% of our code, and we also perform static type checking.

In addition to providing reliable baselines, imitation aims to simplify developing novel reward and imitation learning algorithms.

Our implementations are *modular*: users can freely change the reward or policy network architecture, RL algorithm and optimizer without any changes to the code.

Algorithms can be extended by subclassing and overriding the relevant methods.

Moreover, to support the development of entirely novel algorithms, imitation provides utility methods to handle common tasks such as collecting rollouts.

2 Features
-----------

### Comprehensive

imitation implements seven algorithms spanning a range of reward and imitation learning styles.

Our IRL algorithms consist of 1) the seminal tabular method Maximum Causal Entropy IRL (MCE IRL; Ziebart et al., [2010](#bib.bib15 "Modeling interaction via the principle of maximum causal entropy")), 2) a baseline based on density estimation, and 3) the state-of-the-art approach Adversarial IRL (AIRL; Fu et al., [2018](#bib.bib19 "Learning robust rewards with adverserial inverse reinforcement learning")).

For imitation learning, we include 1) the simple Behavioral Cloning (BC) algorithm, 2) a variant DAgger (Ross et al., [2011](#bib.bib17 "A reduction of imitation learning and structured prediction to no-regret online learning")) that learns from interactive demonstrations, and 3) the state-of-the-art Generative Adversarial Imitation Learning algorithm (GAIL; Ho and Ermon, [2016](#bib.bib21 "Generative adversarial imitation learning")).
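The difference between BC and DAgger can be shown with a toy example. In this sketch, the 1-D corridor environment, the lookup-table learner, and all function names are hypothetical and not part of imitation. The first round uses only expert data (plain BC). Later rounds roll out the learner, have the expert label the states it actually reaches, aggregate those labels, and refit. Running the expert only in the first round and the learner afterwards is one commonly used mixing schedule, not the only one.

```python
from typing import Callable, Dict, List, Tuple

Policy = Callable[[int], int]

N_STATES = 10  # hypothetical corridor with states 0..9
GOAL = 5       # the expert walks to this state and then stays put


def expert(state: int) -> int:
    """Expert action: move toward GOAL, stop once there."""
    if state < GOAL:
        return 1
    if state > GOAL:
        return -1
    return 0


def step(state: int, action: int) -> int:
    """Deterministic dynamics, clipped to the corridor."""
    return min(max(state + action, 0), N_STATES - 1)


def rollout(policy: Policy, start: int, horizon: int) -> List[int]:
    """States visited (recorded before each action) over `horizon` steps."""
    states, state = [], start
    for _ in range(horizon):
        states.append(state)
        state = step(state, policy(state))
    return states


def final_state(policy: Policy, start: int, horizon: int = 12) -> int:
    state = start
    for _ in range(horizon):
        state = step(state, policy(state))
    return state


def fit(dataset: List[Tuple[int, int]]) -> Policy:
    """'Train' a lookup table; unseen states default to +1 (an arbitrary choice)."""
    table: Dict[int, int] = {s: a for s, a in dataset}  # expert labels are deterministic
    return lambda s: table.get(s, 1)


def behavioral_cloning(start: int = 0, demo_horizon: int = 5) -> Policy:
    demos = rollout(expert, start, demo_horizon)
    return fit([(s, expert(s)) for s in demos])


def dagger(n_rounds: int = 3, start: int = 0, demo_horizon: int = 5, horizon: int = 12) -> Policy:
    # Round 1: expert-only data, i.e. plain behavioral cloning.
    dataset = [(s, expert(s)) for s in rollout(expert, start, demo_horizon)]
    policy = fit(dataset)
    for _ in range(n_rounds):
        # Run the learner to find the states its own mistakes lead to...
        visited = rollout(policy, start, horizon)
        # ...ask the expert what it would do there, aggregate, and refit.
        dataset += [(s, expert(s)) for s in visited]
        policy = fit(dataset)
    return policy
```

The short demonstrations never reach state 5. The BC learner therefore walks past the goal to the end of the corridor (`final_state(behavioral_cloning(), 0)` is 9). After one DAgger round, the expert has labelled those off-distribution states, and the learner stops at the goal from every start.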

Finally, we also include Deep RL from Human Preferences (DRLHP; Christiano et al., [2017](#bib.bib18 "Deep reinforcement learning from human preferences")) that infers a reward function from comparisons between trajectory fragments.
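DRLHP models the probability that one trajectory fragment is preferred over another with a Bradley-Terry-style model over each fragment's summed predicted reward. It trains the reward model by minimizing cross-entropy against the human labels. The simplified sketch below uses plain numbers in place of a neural reward model and omits details such as ensembling. It is not imitation's implementation.

```python
import math
from typing import Sequence


def _sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def preference_probability(rewards_1: Sequence[float], rewards_2: Sequence[float]) -> float:
    """P(fragment 1 preferred) = exp(R1) / (exp(R1) + exp(R2)), with R = summed predicted reward."""
    return _sigmoid(sum(rewards_1) - sum(rewards_2))


def preference_loss(rewards_1: Sequence[float], rewards_2: Sequence[float], label: float) -> float:
    """Cross-entropy between the model's prediction and a human label.

    label = 1.0 if fragment 1 was preferred, 0.0 if fragment 2, 0.5 for "equally good".
    """
    p = preference_probability(rewards_1, rewards_2)
    eps = 1e-12  # guards against log(0)
    return -(label * math.log(p + eps) + (1.0 - label) * math.log(1.0 - p + eps))
```

Only differences in summed reward matter here. This is one reason rewards learned from comparisons are identified only up to a constant shift.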

### Consistent Interface

We provide a unified API for all algorithms, inheriting from a common base class BaseImitationAlgorithm.

Algorithms diverge only where strictly necessary (e.g. a different feedback modality).

This makes it simple to automatically test a wide range of algorithms against a benchmark suite.
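The benefit of a shared interface can be shown with a hypothetical base class. `ToyImitationAlgorithm` and its `train()`/`policy()` methods are illustrative and are not imitation's actual `BaseImitationAlgorithm` API. One benchmark loop serves every algorithm because each exposes the same methods.

```python
from abc import ABC, abstractmethod
from collections import Counter
from typing import Callable, Dict, List, Sequence, Tuple, Type

Transition = Tuple[int, int]  # (state, expert action)
Policy = Callable[[int], int]


class ToyImitationAlgorithm(ABC):
    """Hypothetical shared base class; NOT imitation's real API."""

    def __init__(self, demonstrations: Sequence[Transition]) -> None:
        self.demonstrations = list(demonstrations)

    @abstractmethod
    def train(self) -> None:
        """Fit the algorithm to its demonstrations."""

    @abstractmethod
    def policy(self) -> Policy:
        """Return the learned policy as a state -> action function."""


class TabularBC(ToyImitationAlgorithm):
    """Behavioral cloning with a lookup table (majority action per state)."""

    def train(self) -> None:
        votes: Dict[int, Counter] = {}
        for state, action in self.demonstrations:
            votes.setdefault(state, Counter())[action] += 1
        self._table = {s: c.most_common(1)[0][0] for s, c in votes.items()}

    def policy(self) -> Policy:
        return lambda s: self._table.get(s, 0)


class MostCommonAction(ToyImitationAlgorithm):
    """Trivial baseline: always output the single most frequent expert action."""

    def train(self) -> None:
        self._action = Counter(a for _, a in self.demonstrations).most_common(1)[0][0]

    def policy(self) -> Policy:
        return lambda s: self._action


def benchmark(algorithms: List[Type[ToyImitationAlgorithm]],
              demos: Sequence[Transition],
              test_set: Sequence[Transition]) -> Dict[str, float]:
    """The same loop works for every algorithm because they share one interface."""
    results = {}
    for cls in algorithms:
        algo = cls(demos)
        algo.train()
        pi = algo.policy()
        results[cls.__name__] = sum(pi(s) == a for s, a in test_set) / len(test_set)
    return results
```

For example, with `demos = [(0, 1), (1, 1), (2, 0), (3, -1), (4, -1), (5, -1)]`, calling `benchmark([TabularBC, MostCommonAction], demos, demos)` scores the table learner at 1.0 and the constant baseline at 0.5. Adding a new algorithm requires no change to `benchmark`.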

### Experimental Framework

We provide scripts to train and evaluate the algorithms, making it easy to use the library without writing a single line of code.

The scripts follow a consistent interface, and we include examples to run all algorithms on a suite of commonly used environments.

To ensure replicable experiments we use Sacred (Greff et al., [2017](#bib.bib24 "The Sacred infrastructure for computational research")) for configuration and logging.

### Modularity

To support the variety of use cases that arise in research, we have designed our implementations to be modular and highly configurable.

For example, algorithms can be configured to use any of the seven Stable Baselines3 RL algorithms (or a custom algorithm matching this interface).

By contrast, prior implementations often implemented imitation learning algorithms by subclassing a specific RL algorithm, requiring substantial code modification to be ported to new RL algorithms.
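The alternative to subclassing is composition: the imitation trainer holds an RL algorithm and calls it. In the sketch below, the trainer relies on a single method, `learn(total_timesteps=...)`, which Stable Baselines3 algorithms do expose. `LoggingRL` and `ToyAdversarialLoop` are hypothetical names, and the loop is far simpler than imitation's real trainers.

```python
from typing import List, Protocol


class RLAlgorithm(Protocol):
    """Structural type: any object with a `learn(total_timesteps=...)` method fits."""

    def learn(self, total_timesteps: int): ...


class LoggingRL:
    """Hypothetical stand-in for a real RL algorithm; it only records its calls."""

    def __init__(self) -> None:
        self.calls: List[int] = []

    def learn(self, total_timesteps: int) -> "LoggingRL":
        self.calls.append(total_timesteps)
        return self


class ToyAdversarialLoop:
    """Hypothetical trainer that holds an RL algorithm instead of subclassing one."""

    def __init__(self, gen_algo: RLAlgorithm, gen_steps_per_round: int) -> None:
        self.gen_algo = gen_algo
        self.gen_steps_per_round = gen_steps_per_round
        self.disc_updates = 0

    def train_discriminator(self) -> None:
        self.disc_updates += 1  # placeholder for a real reward/discriminator update

    def train(self, n_rounds: int) -> None:
        for _ in range(n_rounds):
            # Alternate: improve the policy under the current reward, then update the reward.
            self.gen_algo.learn(total_timesteps=self.gen_steps_per_round)
            self.train_discriminator()
```

Swapping in a different RL algorithm only means passing a different object to `ToyAdversarialLoop`. No imitation-learning code changes.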

We have also designed the code to be easy to extend in order to implement novel algorithms.

Each algorithm is implemented by a class with instance methods corresponding to each logical step of the algorithm.

New algorithms can be implemented simply by subclassing an existing algorithm and overriding a subset of methods.

This power is illustrated by our implementations of GAIL and AIRL, which both subclass AdversarialTrainer.

They differ only in the choice of discriminator, with most training logic shared.
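The shared-logic pattern can be sketched as a base class that owns the discriminator loss and the reward, with subclasses supplying only the discriminator logit. These class names are hypothetical and do not reproduce imitation's `AdversarialTrainer`. The GAIL variant uses an unconstrained classifier output. The AIRL variant uses the structured form D = exp(f) / (exp(f) + π(a|s)), whose logit is f - log π(a|s). Here `f` stands in for AIRL's learned reward-plus-shaping term. Using log D - log(1 - D) as the reward is one common choice, made here for illustration.

```python
import math
from abc import ABC, abstractmethod
from typing import Sequence, Tuple

Sample = Tuple[float, float]  # (f, log_pi): learned score and the policy's log-prob of the action


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


class ToyAdversarialTrainer(ABC):
    """Hypothetical base class: loss and reward are shared, the discriminator is abstract."""

    @abstractmethod
    def logit(self, f: float, log_pi: float) -> float:
        """Discriminator logit, i.e. log D - log(1 - D)."""

    def disc_prob(self, f: float, log_pi: float) -> float:
        return math.exp(log_sigmoid(self.logit(f, log_pi)))

    def disc_loss(self, expert: Sequence[Sample], generated: Sequence[Sample]) -> float:
        """Binary cross-entropy with expert samples labelled 1 and policy samples 0."""
        total = -sum(log_sigmoid(self.logit(f, lp)) for f, lp in expert)
        total -= sum(log_sigmoid(-self.logit(f, lp)) for f, lp in generated)
        return total / (len(expert) + len(generated))

    def reward(self, f: float, log_pi: float) -> float:
        """Reward handed to the RL step; this sketch uses log D - log(1 - D)."""
        return self.logit(f, log_pi)


class ToyGAIL(ToyAdversarialTrainer):
    def logit(self, f: float, log_pi: float) -> float:
        return f  # unconstrained classifier output; the policy log-prob is unused


class ToyAIRL(ToyAdversarialTrainer):
    def logit(self, f: float, log_pi: float) -> float:
        # D = exp(f) / (exp(f) + pi(a|s))  implies  logit = f - log pi(a|s)
        return f - log_pi
```

Everything except `logit` is inherited, which mirrors how the text says GAIL and AIRL share most of their training logic.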

### Documentation

imitation comes with extensive documentation available at <https://imitation.readthedocs.io>.

We include installation instructions, a quickstart guide and a contribution guide for prospective developers as well as an API reference.

We also provide tips for evaluation of imitation and reward learning algorithms, including avoiding variable-horizon environments, which have confounded prior evaluation (Kostrikov et al., [2019](#bib.bib23 "Discriminator-actor-critic: addressing sample inefficiency and reward bias in adversarial imitation learning")).
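One way to remove the variable horizon is to pad every episode to a fixed length with an absorbing state. That way, episode length no longer depends on the policy. The sketch below uses a hypothetical environment with a bare `reset()`/`step()` interface. It is not the Gym API and not necessarily how imitation or its companion environments implement this.

```python
from typing import Tuple


class ShortEpisodeEnv:
    """Hypothetical env whose episodes end after `length` steps, reward 1 per step."""

    def __init__(self, length: int) -> None:
        self.length = length
        self.t = 0

    def reset(self) -> int:
        self.t = 0
        return self.t

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.t += 1
        return self.t, 1.0, self.t >= self.length


class FixedHorizon:
    """Pads (or truncates) episodes to exactly `horizon` steps.

    Once the wrapped env signals termination, the wrapper keeps returning the
    final observation with zero env reward (an absorbing state) until the horizon.
    """

    def __init__(self, env, horizon: int) -> None:
        self.env = env
        self.horizon = horizon

    def reset(self) -> int:
        self.t = 0
        self.absorbed = False
        self.last_obs = self.env.reset()
        return self.last_obs

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.t += 1
        if self.absorbed:
            reward = 0.0
        else:
            self.last_obs, reward, self.absorbed = self.env.step(action)
        return self.last_obs, reward, self.t >= self.horizon


def run_episode(env) -> Tuple[int, float]:
    """Return (number of steps, total reward) for one episode with a dummy action."""
    env.reset()
    steps, total, done = 0, 0.0, False
    while not done:
        _, reward, done = env.step(0)
        steps += 1
        total += reward
    return steps, total
```

Unwrapped, a 3-step and a 7-step episode differ in length. With `FixedHorizon(..., horizon=10)`, both last 10 steps. In reward learning, a learned reward would then also be evaluated on the absorbing steps, instead of termination implicitly fixing their value.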

Figure 1: Returns of our algorithms, normalized so that 1 corresponds to the return of an expert policy and 0 to that of a random policy. Our algorithms reach close to expert performance on most environments. Detailed results, including confidence intervals, can be found in Table [2](#A1.T2 "Table 2 ‣ Appendix A Detailed benchmarking results ‣ imitation: Clean Imitation Learning Implementations").
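The caption fixes only the two endpoints of the scale. Assuming the usual affine rescaling between them, the normalized score is (R - R_random) / (R_expert - R_random). A minimal sketch with made-up example returns:

```python
def normalized_return(ret: float, random_ret: float, expert_ret: float) -> float:
    """Affine rescaling so a random policy scores 0 and the expert scores 1.

    Scores can fall below 0 (worse than random) or exceed 1 (better than the expert).
    """
    if expert_ret == random_ret:
        raise ValueError("expert and random returns must differ")
    return (ret - random_ret) / (expert_ret - random_ret)
```

For instance, with a random return of -100 and an expert return of 300, a learner scoring 260 is at 0.9, which is 90% of the way from random to expert.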

### High-Quality Implementations

We take great care to provide reliable implementations of algorithms.

Our test suite covers 98% of the entire codebase. Additionally, we use type annotations throughout, and statically check our code using pytype and mypy.

While our thorough testing and code review help avoid bugs, even apparently minor implementation details can have significant impacts on algorithm performance (Engstrom et al., [2020](#bib.bib3 "Implementation matters in deep RL: a case study on PPO and TRPO")).

Therefore, we have also benchmarked our algorithms on environments that have been commonly used in prior work, including in the original papers of the algorithms.

Figure [1](#S2.F1 "Figure 1 ‣ Documentation ‣ 2 Features ‣ imitation: Clean Imitation Learning Implementations") shows that our algorithms reach expert-level performance on these environments, with three exceptions: AIRL on Ant and Walker, and DAgger on Hopper. AIRL was not originally tested on Walker, nor DAgger on Hopper, so these algorithms may simply perform poorly on those environments. The AIRL paper did report positive results on an Ant environment, whereas our implementation performs close to random. However, the AIRL paper used a custom version of the Ant environment, whereas we use the standard Gym environment (see Table [3](#A2.T3 "Table 3 ‣ Appendix B Environments used for benchmarking ‣ imitation: Clean Imitation Learning Implementations") for a description of the environments used for benchmarking).

3 Comparison to Other Software
-------------------------------

A key advantage of imitation is the breadth of reward and imitation learning algorithms implemented.

imitation includes a total of seven algorithms, whereas Table [1](#S3.T1 "Table 1 ‣ 3 Comparison to Other Software ‣ imitation: Clean Imitation Learning Implementations") shows most other software packages include only one or two.

This broad coverage allows users to easily test a large number of baselines, without needing to find and integrate multiple libraries.

Another benefit of imitation is that it is built on modern frameworks like PyTorch and Stable Baselines3.

By contrast, many extant implementations of imitation and reward learning algorithms were released many years ago and have not been actively maintained.

This is particularly true for reference implementations released with original papers, such as the GAIL (Ho and Hesse, [2016](#bib.bib22 "Generative adversarial imitation learning")) and AIRL (Fu, [2018](#bib.bib20 "Inverse RL")) codebases.

However, even popular libraries like Stable Baselines2 are no longer under active development. (The successor to Stable Baselines2, Stable Baselines3, has dropped support for imitation algorithms in favour of imitation’s own implementation; Raffin et al., [2021](#bib.bib27 "Imitation learning").)

We compare alternative libraries on a variety of metrics in Table [1](#S3.T1 "Table 1 ‣ 3 Comparison to Other Software ‣ imitation: Clean Imitation Learning Implementations").

Although it is not feasible to include every implementation of imitation and reward learning algorithms, to the best of our knowledge this table includes all widely-used imitation learning libraries.

We find that imitation equals or surpasses alternatives in all metrics.

APReL (Bıyık et al., [2021](#bib.bib9 "APReL: a library for active preference-based reward learning algorithms")) also scores highly but focuses on preference comparison algorithms that learn from low-dimensional features.

This is complementary to imitation, which provides a broader range of algorithms and emphasizes scalability, at the cost of greater implementation complexity.

| | imitation | APReL | OpenAI Baselines | Stable Baselines2 | Intel COACH | GAIL Paper | AIRL Paper |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Backend | PyTorch | NumPy | TF1 | TF1 | TF1/MxNet | Theano | TF1 |
| # imitation algorithms | 7 | 1\* | 1 | 1 | 2 | 2 | 4 |
| Last Commit (age) | <1w | <1m | >2.5y | >3m | 1m | >4y | >4y |
| Approved PRs (6 months) | 103 | 0 | 0 | 1 | 7 | 0 | 0 |
| PEP8 | ✓ | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ |
| Type Annotations | ✓ | ✓ | ✗ | ✓ | ✓ | ✗ | ✗ |
| Type Checking | ✓ | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ |
| Test Coverage | 98% | ✗ | 49%\*\* | 89%\*\* | >58%\*\* | ✗ | ✗ |
| Documentation | ✓ | ✓ | ✗ | ✓ | ✓ | ✗ | ✗ |
| Custom RL Agent | ✓ | N/A† | ✗§ | ✗§ | N/A† | ✗§ | ✗§ |
| Custom Optimizer | ✓ | ✓ | ✗ | ✗ | ¶ | ✗ | ✓ |

Table 1: imitation compares favourably to alternative libraries in terms of number of imitation learning algorithms implemented, project activity, implementation quality and flexibility. Only imitation, APReL and COACH use modern backends.

Key: \* Is a single Bayesian algorithm, but supports different feedback formats (e.g. preference ranking and comparisons) and methods for querying feedback. \*\* coverage not officially reported, estimated by us from running test suite; † does not use RL; § TRPO is the only RL algorithm supported; ¶ configurable but limited to Adam, RMSProp and LBFGS.

Acknowledgments
---------------

Thanks to the open-source contributors who have reported bugs, suggested feature enhancements or made code contributions. We would in particular like to thank Yawen Duan, Lev McKinney, Nevan Wichers, Dan Pandori, Tom Tseng, Yulong Lin, Ian Fan, Ansh Radhakrishnan and Samuel Arnesen for their code contributions.

Author contributions
--------------------

Adam Gleave managed the project, performed code reviews and made a variety of minor code contributions. Mohammad Taufeeque benchmarked the algorithms, improved the documentation and made other minor code contributions. Juan Rocamonde edited the manuscript, added MyPy typing support, and made other minor code improvements. Erik Jenner added the initial implementation of our preference comparison algorithm. Steven H. Wang was the primary developer of the original TensorFlow codebase. Nora Belrose improved the documentation, added new algorithmic features, and made minor code improvements. Sam Toyer implemented initial versions of several algorithms and assisted with the PyTorch port. Scott Emmons led the initial port to PyTorch and Stable Baselines3. Stuart Russell provided research advice. |

2f472d7e-5b6e-4834-a85e-bd413b64229c | StampyAI/alignment-research-dataset/lesswrong | LessWrong | AGI & War

Garett Jones recently made [a](https://twitter.com/GarettJones/status/1660623889074909185) [few](https://twitter.com/GarettJones/status/1661144878628368384) [tweets](https://twitter.com/GarettJones/status/1660597124789051394) implying that the existence today of entities with very wide power differentials is evidence that superintelligence won't lead to Doom.

It is a reasonable argument. If the powerful today think it's better to trade with the powerless than to destroy them and take what they have, and trade is so beneficial in general, and war so costly, why would it be different for the even more powerful?

Unfortunately I think the position is based on an incomplete understanding of what AGI changes about the nature of trade and war.

Right now, for many reasons, humans are the most valuable things around. Most of the value of production goes to human factors, with a smaller share going to natural resources and physical capital. So dooming the humans destroys exactly the thing you'd want to steal. Stealing the humans themselves is extremely costly, because humans are hard to control. Instead, you can get many of the benefits of other humans through trade, so the decision not to doom the powerless makes perfect sense.

Maybe the US could easily take on Mexico and either occupy it indefinitely or genocide the whole population, but the non-human things in Mexico are not worth the trouble. Occupying it to extract value from the population would be extremely hard, would destroy much of the value Mexicans produce, and would cost the US an important trade partner.

AGI changes this. After AGI, the value of human factors will fall to the subsistence level of the AGI, that is, to the cost of running an AGI that can do the same work. This means humans become cheap and resources become relatively expensive. Humans will derive all their income from their property, and unlike humans, property is easy to steal.

The decision between going to war or trading changes completely. Now if you win the war, you can take all the most valuable things the losers had, the things you were trading for in the first place.

The US could annex Mexico, genocide its population, meat and silicon alike, and simply make more copies of its own AGIs to take their place, ending up with all the natural resources, which are extremely valuable in the new economy. As long as the population in Mexico stays the same (large enough that the marginal cost of running an AGI there equals the AGI's marginal product), trade with Mexico would not fall substantially, and the US could keep enjoying its benefits.

Note that even though the initial debate was about superintelligence and Doom, this argument has far broader implications. Once AGI makes humans cheap, we can expect agents to treat them as cheap. |

a210d6fa-94dc-426a-a0b9-b8cd6339dc7d | trentmkelly/LessWrong-43k | LessWrong | The Codex of Ultimate Vibing

While we wait for wisdom, OpenAI releases a research preview of a new software engineering agent called Codex, because they previously released a lightweight open-source coding agent for the terminal called Codex CLI, and if OpenAI uses non-confusing product names it violates the nonprofit charter. The promise, also reflected in a number of rival coding agents, is to graduate from vibe coding. Why not let the AI do all the work on its own, typically for 1-30 minutes?

The answer is that it’s still early days, but already many report this is highly useful.

INTRODUCING CODEX

> Sam Altman: today we are introducing codex.
>
> it is a software engineering agent that runs in the cloud and does tasks for you, like writing a new feature of fixing a bug.
>
> you can run many tasks in parallel.
>
> it is amazing and exciting how much software one person is going to be able to create with tools like this. “you can just do things” is one of my favorite memes; i didn’t think it would apply to AI itself, and its users, in such an important way so soon.
>
> OpenAI: Today we’re launching a research preview of Codex: a cloud-based software engineering agent that can work on many tasks in parallel. Codex can perform tasks for you such as writing features, answering questions about your codebase, fixing bugs, and proposing pull requests for review; each task runs in its own cloud sandbox environment, preloaded with your repository.
>
> Codex is powered by codex-1, a version of OpenAI o3 optimized for software engineering. It was trained using reinforcement learning on real-world coding tasks in a variety of environments to generate code that closely mirrors human style and PR preferences, adheres precisely to instructions, and can iteratively run tests until it receives a passing result.
>
> …
>
> Once Codex completes a task, it commits its changes in its environment. Codex provides verifiable evidence of its actions through citations of terminal logs and test outputs, allo |

984c3e4f-5995-42cc-afdf-d069891e011e | trentmkelly/LessWrong-43k | LessWrong | Effective children education

I am trying to find out which approaches to (early) education are the most cost-effective. I have a 4-year-old daughter, which gives me about 2 more years to figure this out, and I am trying to gather as much material as I can. Given my daughter's age, I’d like to “solve” something like K-12 for now, but I guess some things may apply at any age.

I am familiar with Bryan Caplan's main theses in The Case Against Education and Robin Hanson's The Elephant in the Brain, which argue that education is mostly about signalling and the like. I therefore partly understand what's wrong, and I am now trying to make my findings actionable; unsurprisingly, this seems pretty hard. I also realize that there is very little research indicating that specific parental choices have much long-term impact.

The important attributes of the ideal solution:

* comply with a rationalist mindset: understanding and applying a basic CFAR-like style of reasoning, or at least knowing and applying a 5-year-old's version of how beliefs work and the bugs-are-for-solving idea

* follow a scientific/experimental approach (I know this overlaps with the above), not being afraid to look for the right explore vs. exploit balance