id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

8ccdd035-9a3c-4e27-bace-0588fbb13efe | StampyAI/alignment-research-dataset/alignmentforum | Alignment Forum | AI Risk for Epistemic Minimalists

*Financial status: This is independent research, now supported by a grant. I welcome further* [*financial support*](https://www.alexflint.io/donate.html)*.*

*Epistemic status: This is an attempt to use only very robust arguments.*

---

Outline

-------

* I outline a case for concern about AI that does not invoke concepts of agency, goal-directedness, or consequential reasoning, does not hinge on single- or multi-principal or single or multi-agent assumptions, does not assume fast or slow take-off, and applies equally well to a world of emulated humans as to de-novo AI.

* The basic argument is about the power that humans will temporarily or permanently gain by developing AI systems, and the history of quick increases in human power.

* In the first section I give a case for paying attention to AI at all.

* In the second section I give a case for being concerned about AI.

* In the third section I argue that the business-as-usual trajectory of AI development is not satisfactory.

* In the fourth section I argue that there are things that can be done now.

The case for attention

----------------------

We already have powerful systems that influence the future of life on the planet. The systems of finance, justice, government, and international cooperation are things that we humans have constructed. The specific design of these systems has influence over the future of life on the planet, meaning that there are small changes that could be made to these systems that would have an impact on the future of life on the planet much larger than the change itself. In this sense I will say that these systems are powerful.

Now every single powerful system that we have constructed up to now uses humans as a fundamental building-block. The justice system uses humans as judges and lawyers and administrators. At a mechanical level, the justice system would not execute its intended function without these building-block humans. If I turned up at a present-day court with a lawsuit expecting a summons to be served upon the opposing party but all the humans in the justice system were absent then the summons would not end up being served.

The Google search engine is a system that has some power. Like the justice system, it requires humans as building-blocks. Those human building-blocks maintain the software, data centers, power generators, and internet routers that underlie it. Although individual search queries can be answered without human intervention, the transitive closure of dependencies needed for the system to maintain its power includes a huge number of humans. Without those humans, the Google search engine, like the justice system, would stop functioning.

The human building-blocks within a system do not in general have any capacity to influence or shut down the system. Nor are the actions of a system necessarily connected to the interests of its human building-blocks.

There are some human-constructed systems in the world today that do not use humans as building-blocks, but none of them have power in their own right. The [Curiosity Mars rover](https://en.wikipedia.org/wiki/Curiosity_(rover)) is a system that can perform a few basic functions without any human intervention, but if it has any influence over the future, it is via humans collecting and distributing the data captured by it. The [Clock of the Long Now](https://en.wikipedia.org/wiki/Clock_of_the_Long_Now), if and when constructed, will keep time without humans as building-blocks, but, like the Mars rovers, will have influence over the future only via humans observing and discussing it.

Yet we may soon build systems that do influence the future of life on the planet, and do not require humans as building-blocks. The field concerned with building such systems is called artificial intelligence and the current leading method of engineering is called machine learning. There is much debate about what exactly these systems will look like, and in what way they might pose dangers to us. But before taking any view about whether these systems will look like agents or tools or AI services, or whether they will be goal-directed or influence-seeking, or whether they will be developed quickly or slowly, or whether we will end up with one powerful system or many, we might ask: what is the least we need to believe to justify attending to the development of AI among all the possible things that we might attend to? And my sense is just this: we may soon transition from a world where all systems that have power over the future of life on the planet are intricately tied to human building-blocks to a world where there are some systems that have power over the future of life on the planet without relying on human building-blocks. This alone, in my view, justifies attention in this area, and it does not rest in any way on views about agency or goals or intelligence.

So here is the argument up to this point:

>

> Among everything in the world that we might pay attention to, it makes sense to attend to that which has the greatest power over the future of life on the planet. Today, the systems that have power over the future of life on the planet rely on humans as building-blocks. Yet soon we may construct systems that have power but do not rely on humans as building-blocks. Due to the significance of this shift we should attend to the development of AI and check whether there is any cause for concern, and, if so, whether those concerns are already being adequately addressed, and if not, whether there is anything we can do.

>

>

>

The case for concern

--------------------

So we have a case for paying some attention to AI among all the things we could pay attention to, but I have not yet made a case for being *concerned* about AI development. So far it is as if we discovered an object in the solar system with a shape and motion quite unlike a planet or moon or comet. This would justify some attention by humans, but on this evidence alone it would not become a top concern, much less a top cause area.

So how do we get from attention to concern? Well, the thing about power is that *humans already seek it*. In the past, when it has become technically feasible to build a certain kind of system that exerts influence over the future, humans have tended, by default, to eventually deploy such systems in service of their individual or collective goals. There are some classes of powerful systems that we have coordinated to avoid deploying, and if we do this for AI then so much the better, but by default we ought to expect that once it becomes possible to construct a certain class of powerful system, humans will deploy such systems in service of their goals.

Beyond that, humans are quite good at incrementally improving things that we can tinker with. We have made incremental improvements to airplanes, clothing, cookware, plumbing, and cell phones. We have not made incremental improvements to human minds because we have not had the capacity to tinker in a trial-and-error fashion. Since all powerful systems in the world today use humans as building blocks, and since we do not presently have the capacity to make incremental improvements to human minds, there are no powerful systems in the world today that are subject to incremental improvement at all levels.

In a world containing some powerful systems that do not use humans as building blocks, there will be *some powerful systems that are subject to incremental improvements at all levels*. In fact the development of AI may open the door to making incremental improvements to human minds too. In this case *all* powerful systems in the world would be subject to incremental improvement. But we do not need to take a stance on whether this will happen or not. In either case the situation we will be in is one in which humans are making incremental improvements to some systems that have power in the world, and we therefore ought to expert that the power of these systems will therefore increase on a timescale of years or decades.

Now at this point it is sometimes argued that a transition of power from humans to non-human systems will take place, due to the very high degree of power that these non-human systems will eventually have, and due to the difficulty of the alignment problem. But I do not think that any such argumentative move is necessary to justify concern, because whether humans eventually lose power or not, what is much more certain is that in a world where powerful systems are being incrementally improved, there will be a period during which *humans gain power quickly*. It might be that humans gain power for mere minutes before losing it to a recursively self-improving singleton, or it may be that humans gain power for decades before losing it to an inscrutable web of AI services, or it may be that humans gain power and hold onto it until the end of the cosmos. But under any of these scenarios, humans seem destined to gain power on a timescale of years or decades, which is the pace at which we usually make incremental improvements to things.

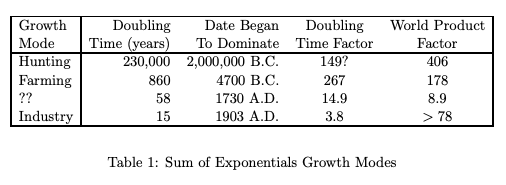

What happens when humans gain power? Well as of today, existential risk exists. It would not exist if humans had not gained power over the past few millennia, or at least it would be vastly reduced. Let’s ignore existential risk due to AI in order to make sure the argument is non-circular. Still, the point goes through. There is much good to say about humans. This is not a moral assessment of humanity. But can anyone deny that humans have gained power over the past few millennia, and that, as a result of that, existential risk is much increased today compared to a few millennia ago? If humans *quickly gain power*, it seems that, by default, we ought to presume that existential risk will also increase.

Now, there are certainly *some ways* to increase human power quickly without increasing existential risk, including by skillful AI development. There have certainly been *some times and places* where rapid increases in human power have led to decreases in existential risk. But this part of the argument is about what happens by default, and the ten thousand year trendline of the "existential risk versus human power" graph is very much up-and-to-the-right. Therefore I think rapidly increasing human power will increase existential risk. We do not need to take a stance on how or whether humans might later lose power in order for this to go through. We merely need to see that, among all the complicated goings-on in the world today, the development of AI is the thing most likely to confer a rapid increase in power to humans, and on the barest historical precedent, that is already cause for both attention and concern.

So here is the case for concern:

>

> If humans learn to build systems that do influence the future of life on the planet but do not require human building-blocks, then they are likely to make incremental improvements to these systems over a timescale of years or decades, and thereby increase their power over the future of life on the planet on a similar timescale. This should concern us because quick increases in human power have historically led to increases in existential risk. We should therefore investigate whether these concerns are already being adequately addressed, and, if not, whether there is anything we can do.

>

>

>

I must stress that not all ways of increasing human power lead to increases in existential risk. It is as if we were considering giving a teenager more power over their own life. Suppose we suddenly gave this teenager the power not just of vast wealth and social influence, but also the capacity to remake the physical world around them as they saw fit. For typical teenagers under typical circumstances, this would not go well. The outcomes would not likely be in the teenager’s own best interests, much less the best interests of all life on the planet. Yet there probably *are* ways of conferring such power to this teenager, say by doing it slowly and in proportion to increases in the teenager’s growing wisdom, or by giving the teenager a wise genie that knows what is in the teenager’s best interest and will not do otherwise. In the case of AI development, we are collectively the teenager, and we must find the wisdom to see that we are not well-served by rapid increases in our own power.

The case for intervention

-------------------------

We have a case for apriori concern about the development of a particular technology that may, for a time, greatly increase human power. But perhaps humanity is already taking adequate precautions, in which case marginal investment might be of greater benefit in some other area. What is the epistemically minimal case that humanity is not already on track to mitigate the dangers of developing systems that have power over the future of life on the planet without requiring humans as building-blocks?

Well consider: right now we appear to be rolling out machine learning systems at a rate that is governed by economic incentives, which is to say that the rate of machine learning rollout appears to be determined primarily by the supply of the various factors of production, and the demand for machine learning systems. There is seemingly no gap between the rate at which we *could* roll out machine learning systems if we allowed ordinary economic incentives to govern, and the rate at which we *are* rolling out those systems.

So is it more likely that humanity is exercising diligence and coordinated restraint in the rollout of machine learning systems, or is it more likely that we are proceeding haphazardly? Well imagine if we were rolling out nuclear weapons at a rate determined by ordinary economic incentives. From a position of ignorance, it’s *possible* that this rate of rollout would have been selected by a coordinated humanity as the wisest among all possible rates of rollout. But it’s much more likely that this rate is the result of haphazard discoordination, since from economic arguments we would expect the rate of rollout of any technology to be governed by economic incentives in the absence of a coordinated effort, whereas there is no reason to expect a coordinated consideration of the wisest possible rate to settle on this particular rate.

Now, if there were a gap between the "economic default" rate of rollout of machine learning systems and the actual rate of rollout then might still question whether we were on track for a safe and beneficial transition to a world containing systems that influence the future of life on the planet without requiring humans as building-blocks. It might be that we have merely placed haphazard regulation on top of haphazard AI development. So the existence of a gap is not a sufficient condition for satisfaction with the world’s handling of AI development. But the absence of any such gap does appear to be evidence of the absence of a well-coordinated civilization-level effort to select the wisest possible rate of rollout.

This suggests that the concerning situation in the previous section is, at a minimum, not already *completely* addressed by our civilization. It remains to be seen whether there is anything we can do about it. The argument here is about whether the present situation is already satisfactory or not.

So here is the argument for intervention:

>

> Humans are developing systems that appear destined to quickly increase human power over the future of life on the planet at a rate that is consistent with an economic equilibrium. This suggests that human civilization lacks the capacity to coordinate on a rate motivated by safety and long-term benefit. While other kinds of interventions may be taking place, the absence of this particular capacity suggests that there is room to help. We should therefore check whether there is anything that can be done.

>

>

>

Now it may be that there is a coordinated civilization-level effort that is taking measures other than selecting a rate of machine learning rollout that is different from the economic equilibrium. Yes, this is possible. But the question is why our civilization is not coordinating around a different rate of machine learning rollout if it has the capacity to do so. Is it that the economic equilibrium is in fact the wisest possible rate? Why would that be? Or is it that our civilization is choosing not to select the wisest possible rate? Why? The best explanation seems to be that our civilization does not presently have an understanding of which rates of machine learning rollout are most beneficial, or the capacity to coordinate around a selected rate.

It may also be that we navigate the development of powerful systems that do not require humans as building-blocks without ever coordinating around a rate of rollout different from the economic equilibrium. Yes this is possible, but the question we are asking here is whether humanity is already on track to safely navigate the development of powerful systems that do not require humans as building-blocks, and whether our efforts would therefore be better utilized elsewhere. The absence of the capacity to coordinate around a rate of rollout suggests that there is at least one very important civilizational capacity that we might help develop.

The case for action

-------------------

Finally, the most difficult question of all: is there anything that can be done? I don’t have much to say here other than the following very general point: It is very strong to claim that nothing can be done about a thing because there are many possible courses of action, and if even one of them is even a little bit effective then there is something that can be done. To rule out all possible courses of action requires a very thorough understanding of the governing dynamics of a situation and a watertight impossibility argument. Perhaps there is nothing that can be done, for example, about the heat death of the universe. We have some understanding of physics and we have strong arguments from thermodynamics, and even on this matter there is some room for doubt. We have nowhere near that level of understanding about the dynamics of AI development, and therefore we should expect on priors that among all the possible courses of actions, there are some that are effective.

Now you may doubt whether it is possible to *find* an effective course of action. But again, claiming that it is impossible to find an effective course of action implies that among all the ways that you might try to find an effective course of action, none of them will succeed. This is the same impossibility claim as before, only now it concerns the process of finding an effective course of action rather than the process of averting AI risk. Once again it is a very strong claim that requires a very strong argument, since if even one way of searching for an effective course of action would succeed, then it is possible to find an effective course of action.

Now you may doubt that it is possible to find a way to search for an effective course of action. Around and around we could go with this. Each time you express doubt I would point out that it is not justified by anything that is objectively impossible. What, then, is the real cause of your doubt?

One thing that can always be done at an individual level is to make a thing the top priority in our life, and to become willing to let go of all else in service of it. At least then if a viable course of action does become apparent, we will certainly be willing to take it.

Conclusion

----------

In the early days of AI alignment there was much discussion about fast versus slow take-off, and about recursive self-improvement in particular. Then we saw that *the situation is concerning either way*, so we stopped predicating our arguments on fast take-off, not because we concluded that fast take-off arguments were wrong, but because we saw that the center of the issue lay elsewhere.

Today there is much discussion in the alignment community about goal-directedness and agency. I think that a thorough understanding of these issues is central to a solution to the alignment problem, but, like recursive self-improvement, I do not think it is central to the problem itself. I therefore expect discussions of goal-directedness and agency to go the way of fast take-off: not dismissed as wrong, but de-emphasized as an unnecessary predicate.

There is also discussion recently about scenarios involving single versus multiple AI systems governed by single versus multiple principals. Andrew Critch has [argued](https://www.lesswrong.com/posts/WjsyEBHgSstgfXTvm/power-dynamics-as-a-blind-spot-or-blurry-spot-in-our) that more attention is warranted to "multi/multi" scenarios in which multiple principals govern multiple powerful AI systems. Amongst the rapidly branching tree of possible scenarios it is easy to doubt whether one has adequately accounted for the premises needed to get to a particular node. It may therefore be helpful to lay out the part of the argument that applies to all branches, in order that we have some epistemic ground to stand on as we explore more nuance. I hope this post helps in this regard.

Appendix: Agents versus institutions

------------------------------------

One of the ways that we could build systems that have power over the future of life on the planet without relying on human building-blocks is by building goal-directed systems. Perhaps such goal-directed systems would resemble agents, and we would interact with them as intelligent entities, as [Richard Ngo describes in AGI Safety from First Principles](https://www.lesswrong.com/s/mzgtmmTKKn5MuCzFJ/p/8xRSjC76HasLnMGSf).

A different way that we could build systems that have power over the future of life on the planet without relying on human building-blocks is by gradually automating factories, government bureaucracies, financial systems, and eventually justice systems as [Andrew Critch describes](https://www.lesswrong.com/posts/LpM3EAakwYdS6aRKf/what-multipolar-failure-looks-like-and-robust-agent-agnostic). In this world we are not so much interacting with AI as a second species but more as the institutional and economic water in which we humans swim, in the same way that we don’t think of the present-day finance or justice systems as agents, but more like a container in which agents interact.

Or perhaps the first systems that will have power over the future of life on the planet without relying on human building-blocks will be emulations of human minds, as Robin Hanson describes in Age of Em. In this case, too, humans would gain the capacity to tinker with all parts of some systems that have power over the future of life on the planet, and through ordinary incremental improvement become, for a time, extremely powerful.

These possibilities are united as avenues by which humans could quickly increase their power by building systems that have both influence over the future of life on the planet, and are subject to incremental improvement at all levels. Each scenario suggests particular ways that humans might later lose power, but instead of taking a strong view on the loss of power we can see that a quick increase in human power, however temporary, is, on historical precedent, already a cause for concern. |

14352025-0e1d-48c1-b2e2-07ec7b0e8572 | trentmkelly/LessWrong-43k | LessWrong | Can Large Language Models effectively identify cybersecurity risks?

TL;DR

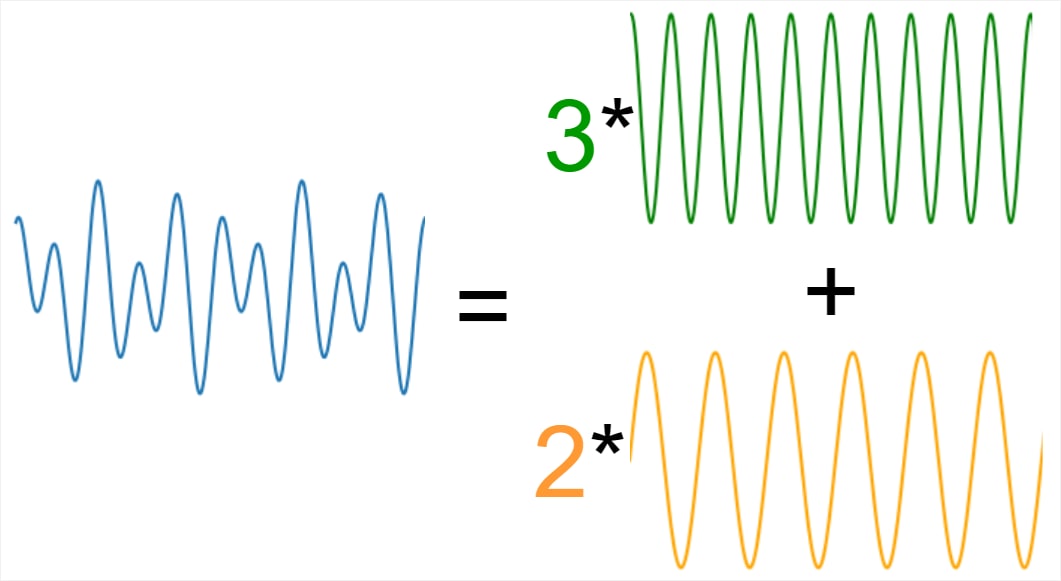

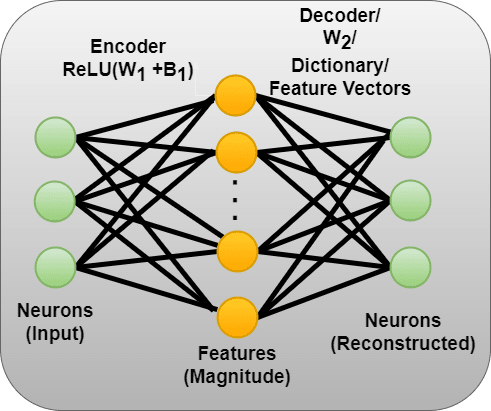

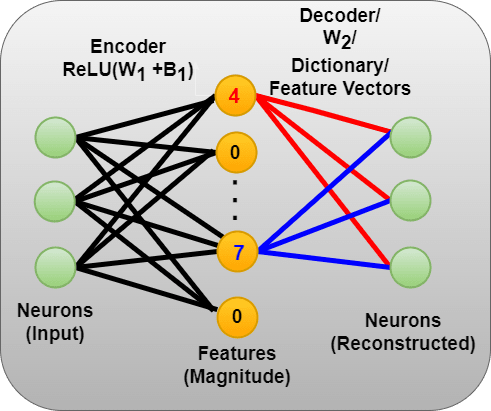

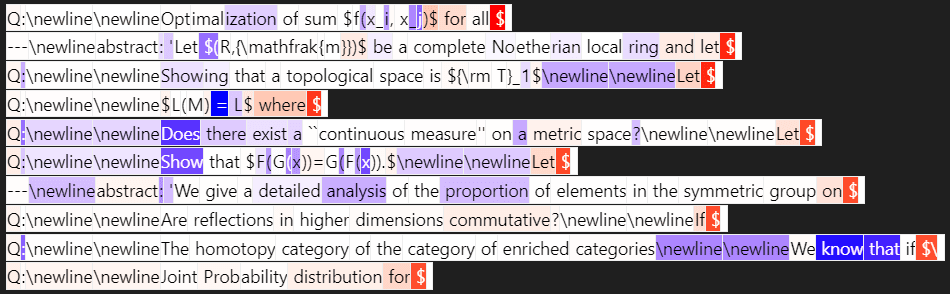

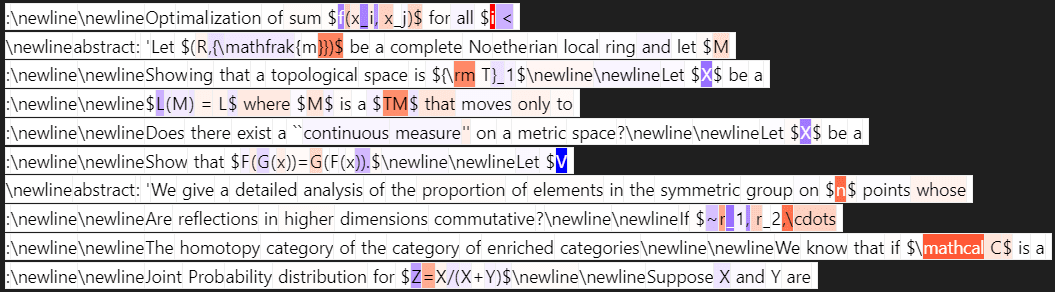

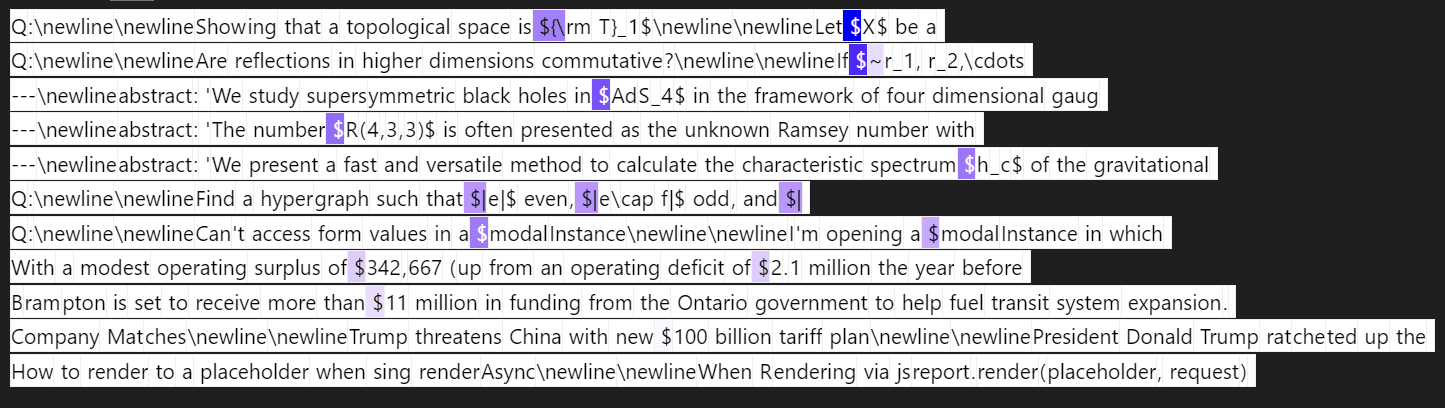

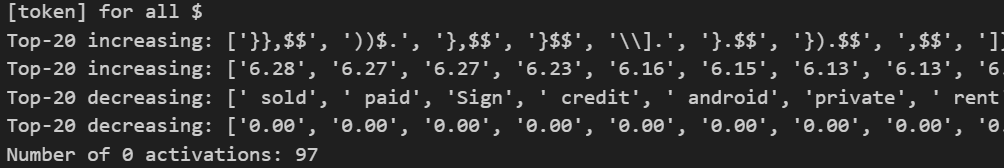

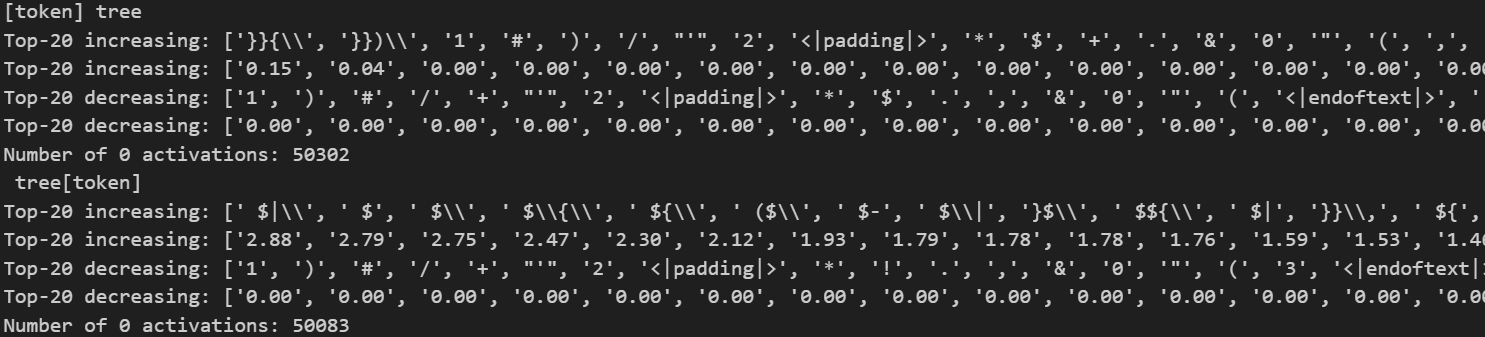

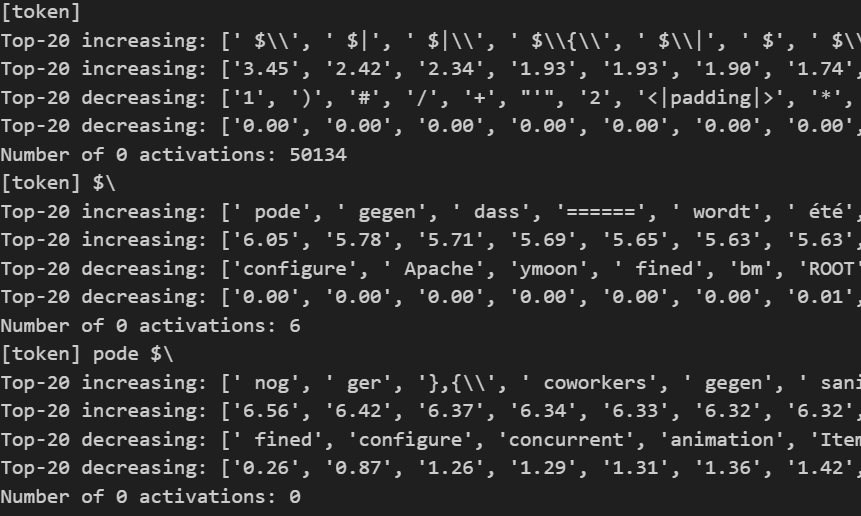

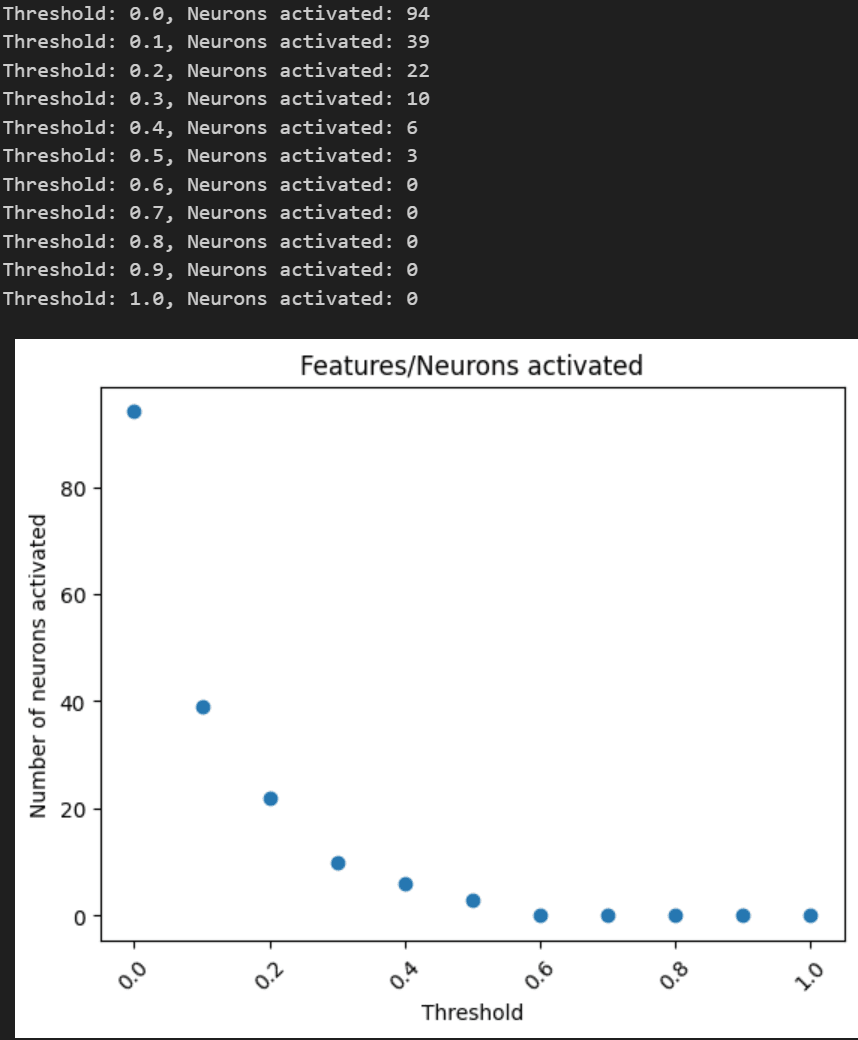

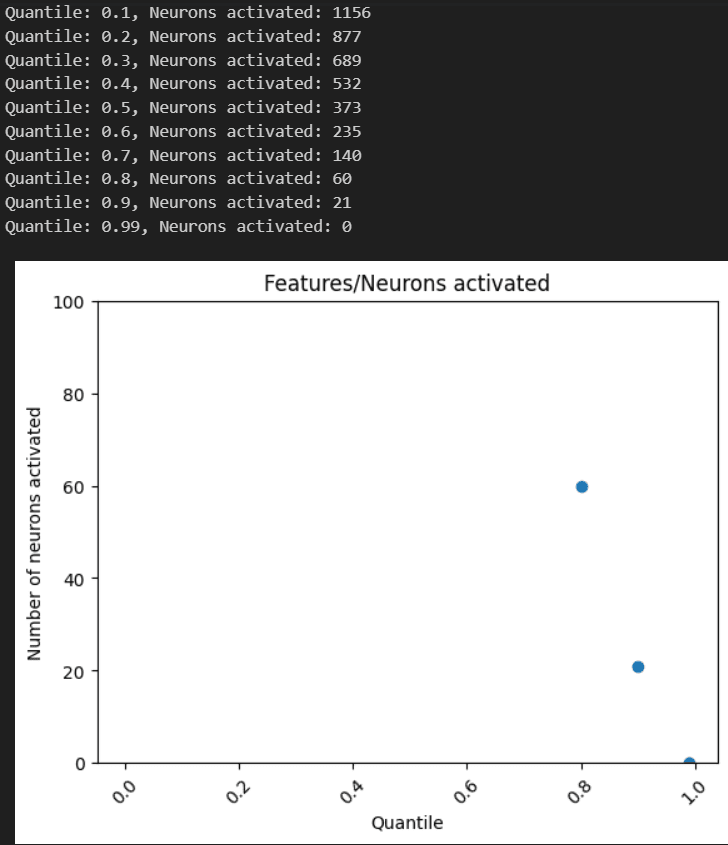

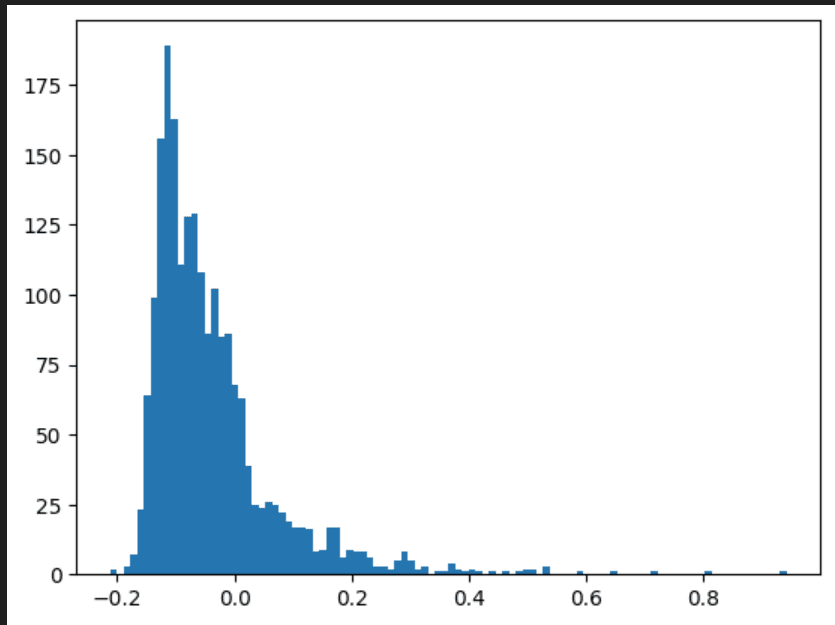

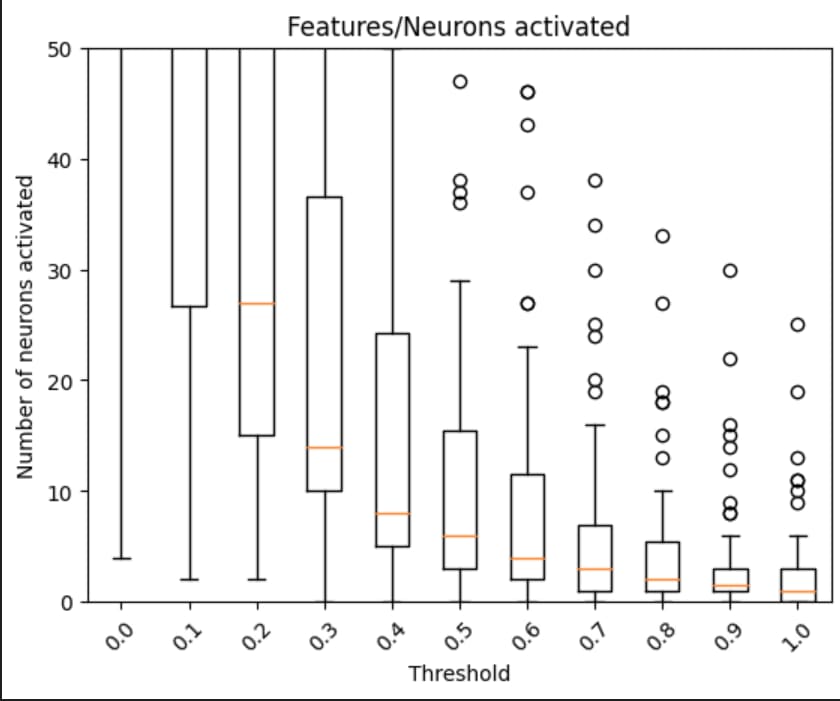

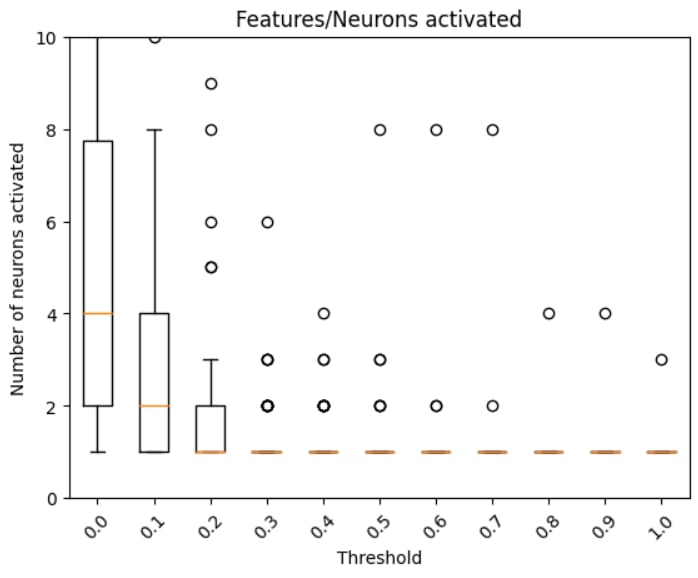

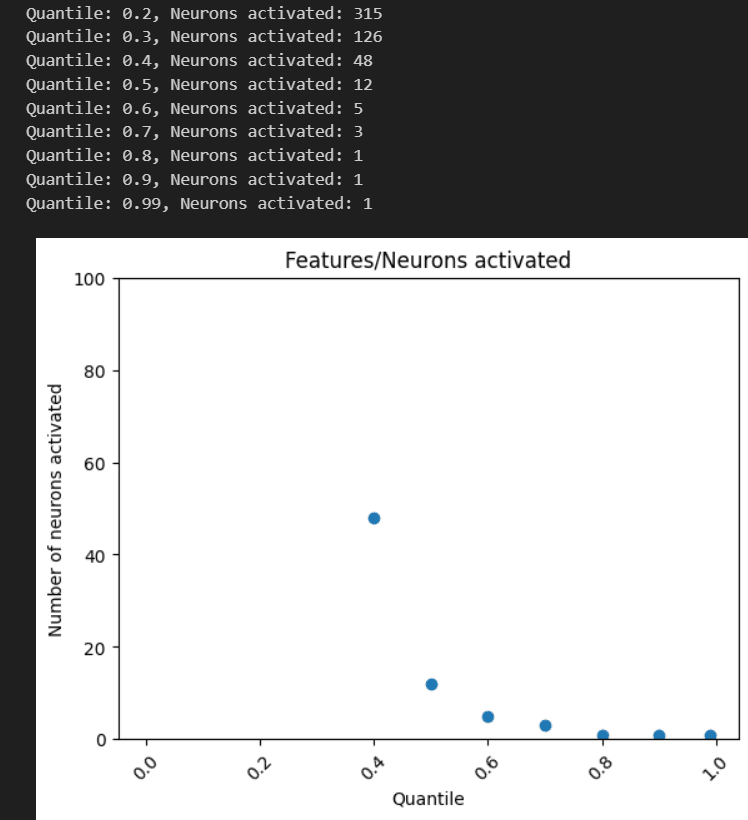

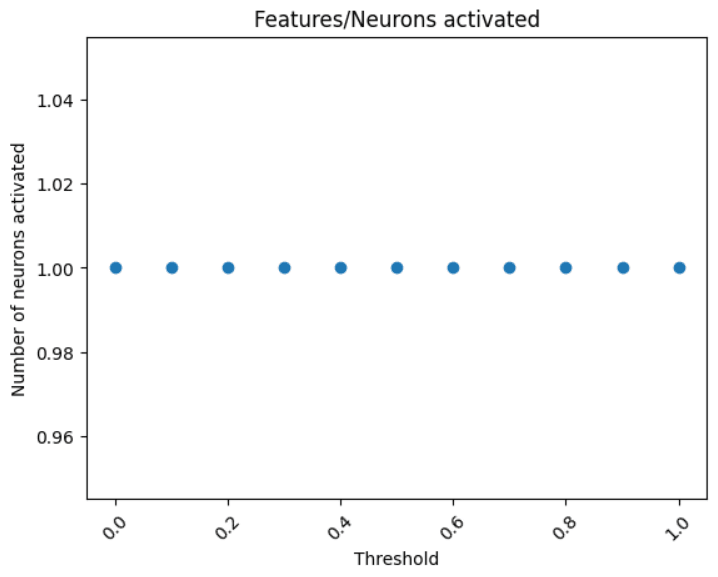

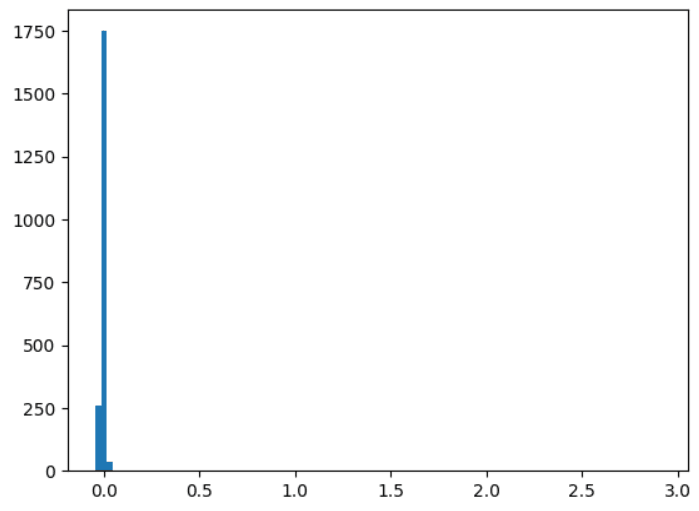

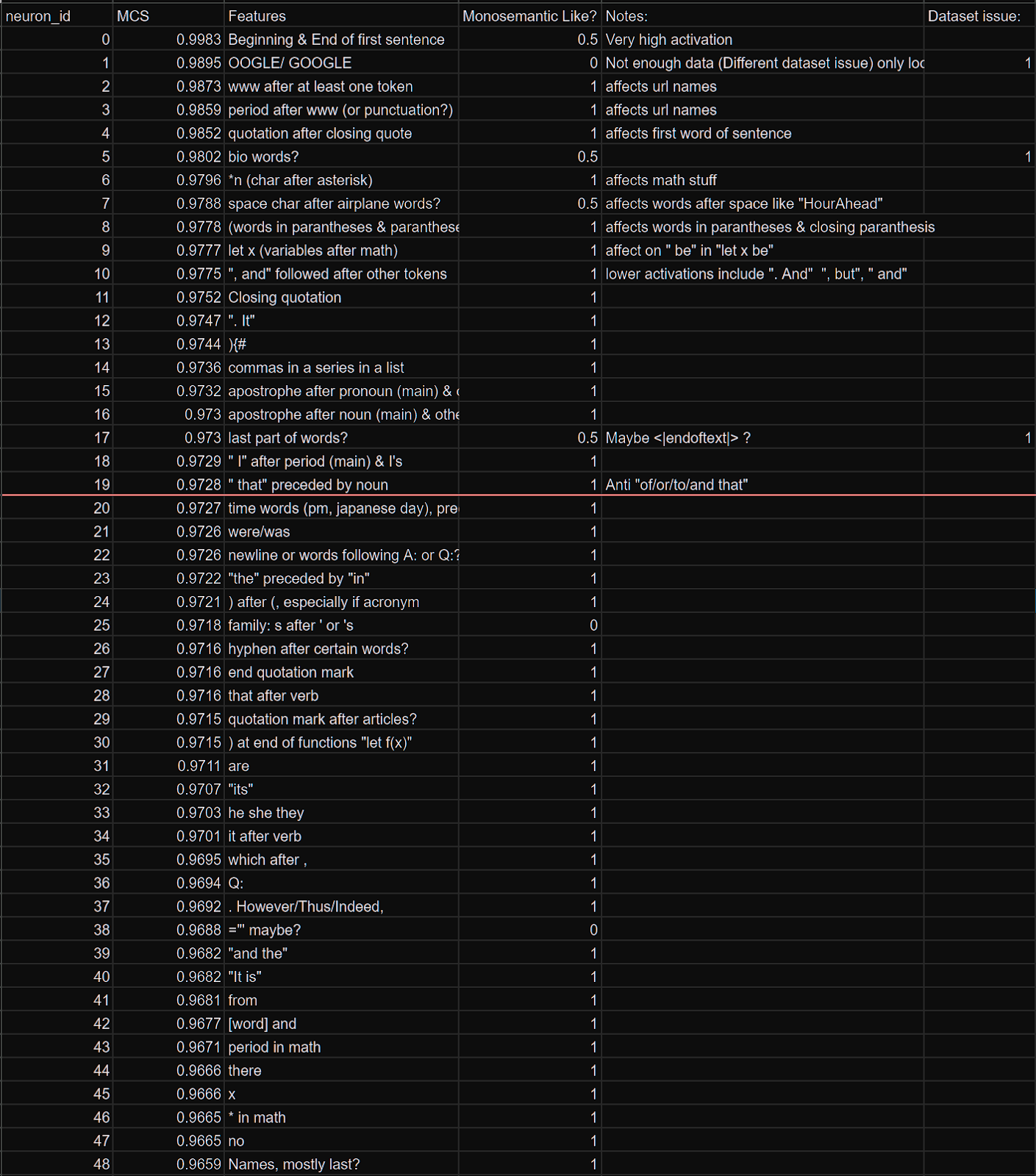

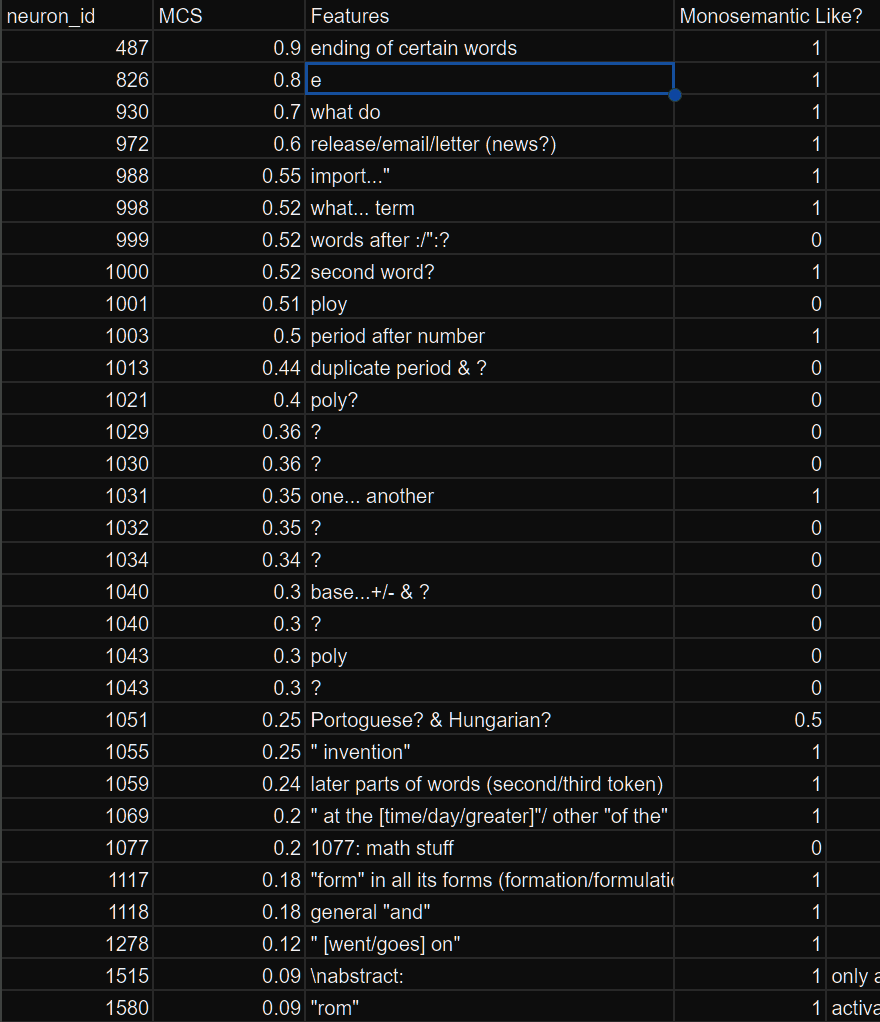

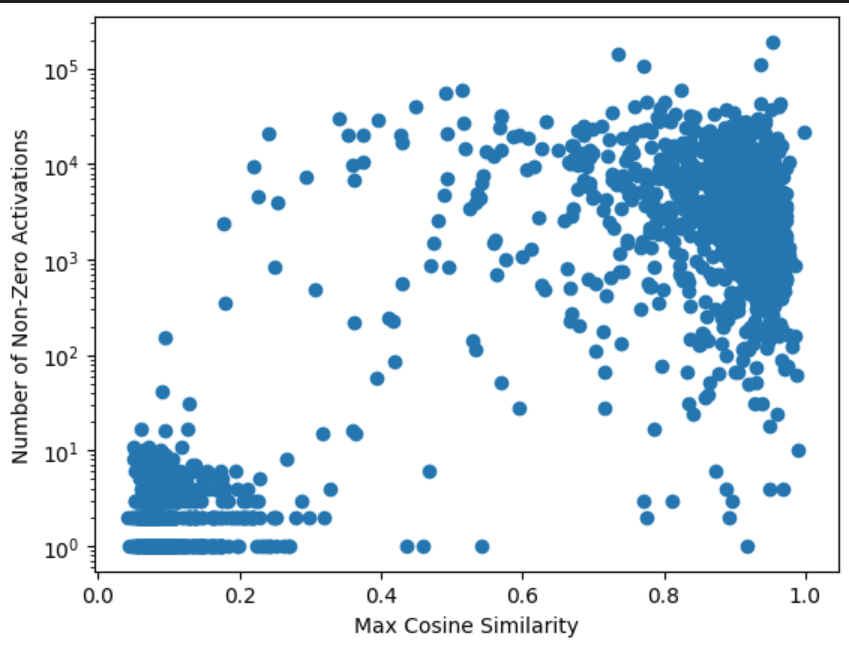

I was interested in the ability of LLMs to discriminate input scenarios/stories that carry high vs low cyber risk, and found that it is one of the “hidden features” present in most later layers of Mistral7B.

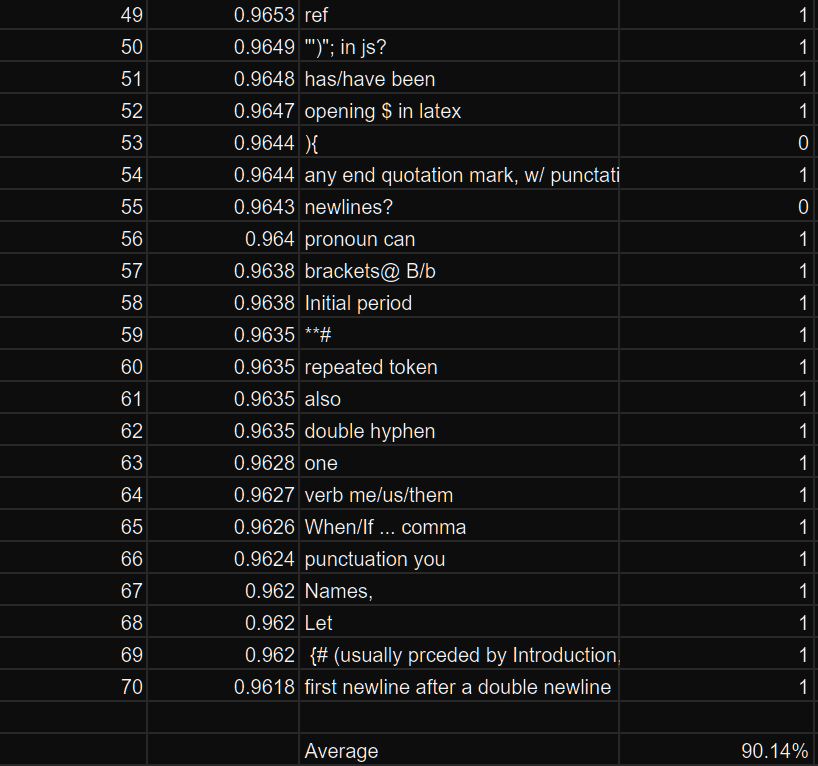

I developed and analyzed “linear probes” on hidden activations, and found confidence that the model generally "senses when something is up” in a given input text, vs low risk scenarios (F1>0.85 for 4 layers; AUC in some layers exceeds 0.96).

The top neurons activating in risky scenarios also do have security-oriented effect on outputs, most increasing words (tokens) like “Virus” or “Attack”, and questioning “necessity” or likelihood.

These findings provide some initial evidence that "trust" in LLMs, both to respond conversationally with risk awareness as well as developing LLM-based risk assessment systems may be reasonable (here, I do not address design/architecture efforts and how they might improve signal/noise tradeoffs).

Neuron activation patterns in most layers of Mistral7B (each with 14336 neurons) natively contain the indications needed to correctly discriminate the riskiest of two very similar scenario texts.

Intro & motivation

With the help of the AI Safety Fundamentals / Alignment course, I enjoyed learning about cutting-edge research on the risks of AI large language models (LLMs) and mitigations that can keep their growing capabilities aligned to human needs and safety. For my capstone project, I wanted to connect AI (transformer-based generative models) specifically to cybersecurity for two reasons:

1. Over 12 years of working in security, I've seen “AI” interest only accelerating within security and generally,

2. but we’re still (rightfully) skeptical of current models’ reliability: LLMs have unique risks and failure modes, including accuracy, injection and sycophancy (rolling with whatever the user seems to suggest).

I settled on this “mechanistic interpretability” idea: finding whether, where, and how LLMs were generally se |

c088fe38-a963-4a71-90f7-00790612fd5a | trentmkelly/LessWrong-43k | LessWrong | Retrospective: PIBBSS Fellowship 2023

Between June and September 2023, we (Nora and Dusan) ran the second iteration of the PIBBSS Summer Fellowship. In this post, we share some of our main reflections about how the program went, and what we learnt about running it.

We first provide some background information about (1) The theory of change behind the fellowship, and (2) A summary of key program design features. In the second part, we share our reflections on (3) how the 2023 program went, and (4) what we learned from running it.

This post builds on an extensive internal report we produced back in September. We focus on information we think is most likely to be relevant to third parties, in particular:

* People interested in forming opinions about the impact of the PIBBSS fellowship, or similar fellowship programs more generally

* People interested in running similar programs, looking to learn from mistakes that others made or best practices they converged to

Also see our reflections on the 2022 fellowship program. If you have thoughts on how we can improve, you can use this name-optional feedback form.

Background

Fellowship Theory of Change

Before focusing on the fellowship specifically, we will give some context on PIBBSS as an organization.

PIBBSS overall

PIBBSS is a research initiative focused on leveraging insights and talent from fields that study intelligent behavior in natural systems to help make progress on questions in AI risk and safety. To this aim, we run several programs focusing on research, talent and field-building.

The focus of this post is our fellowship program - centrally a talent intervention. We ran the second iteration of the fellowship program in summer 2023, and are currently in the process of selecting fellows for the 2024 edition.

Since PIBBSS' inception, our guesses for what is most valuable to do have evolved. Since the latter half of 2023, we have started taking steps towards focusing on more concrete and more inside-view driven research directions. To |

f8e73403-89e2-4f04-9dbe-29eecb598a2d | trentmkelly/LessWrong-43k | LessWrong | EA Forum Creative Writing Contest: $10,000 in prizes for good stories

We just launched a creative writing contest on the Effective Altruism Forum. Stories like HPMOR and The Fable of the Dragon-Tyrant have been massively impactful, and we'd like to see more work in that vein — please consider submitting something!

Note that you can also submit past work that seems like a good fit. The criteria are:

* Someone can reasonably finish the work in one sitting

* The work might inspire someone to become interested in EA, or in some part of EA. You don't have to shill for anything too specific — we'd be really happy to see work that just reflects rational/EA modes of thinking, applied in altruistic ways, without being directly about animal suffering or AI or whatnot.

I'm running the contest. Please let me know, here or on the Forum, if you have any questions! |

7da91d1b-4a4e-4d1b-8510-d7fc503635b3 | trentmkelly/LessWrong-43k | LessWrong | What does it mean to "believe" a thing to be true?

None |

4644311e-3902-46d9-9e1c-f5514c9e1de1 | trentmkelly/LessWrong-43k | LessWrong | AI Safety Newsletter #40: California AI Legislation

Plus, NVIDIA Delays Chip Production, and Do AI Safety Benchmarks Actually Measure Safety?

Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

----------------------------------------

SB 1047, the Most-Discussed California AI Legislation

California's Senate Bill 1047 has sparked discussion over AI regulation. While state bills often fly under the radar, SB 1047 has garnered attention due to California's unique position in the tech landscape. If passed, SB 1047 would apply to all companies performing business in the state, potentially setting a precedent for AI governance more broadly.

This newsletter examines the current state of the bill, which has had various amendments in response to feedback from various stakeholders. We'll cover recent debates surrounding the bill, support from AI experts, opposition from the tech industry, and public opinion based on polling.

The bill mandates safety protocols, testing procedures, and reporting requirements for covered AI models. The bill was introduced by State Senator Scott Wiener, and is cosponsored by CAIS Action Fund, and aims to establish safety guardrails for the most powerful AI models. Specifically, it would require companies developing AI systems that cost over $100 million to develop and are trained on a massive amount of compute to implement comprehensive safety measures, conduct rigorous testing, and mitigate potential severe risks. The bill also includes new whistleblower protections.

A group of renowned AI experts has thrown their weight behind the bill. Earlier this month, Yoshua Bengio, Geoffrey Hinton, Lawrence Lessig, and Stuart Russell penned a letter expressing their strong support for SB 1047. They argue that the next generation of AI systems pose "severe risks" if "developed without sufficient care and oversight." Bengio told TIME, "I worry that technology companies will not solve these significant risks on their own while |

3af8549e-ebb5-40de-95c7-fcf10beb0680 | StampyAI/alignment-research-dataset/youtube | Youtube Transcripts | Reinforcement Learning 1: Introduction to Reinforcement Learning

welcome this is going to be the first

lecture in the reinforcement learning

tract of this course now as story will

have explained there are more or less

two separate tracks in this course or

overlap between the deep learning side

and the reinforcement inside let me just

turn this off in case but they can also

be viewed more or less separately and

some of the things how we will be

talking about will tie into the deep

learning side specifically we will be

using deep learning methods and

techniques at some points during this

course but a lot of it is separable and

can be studied separately and has been

studied in the past for many many years

separately this lecture specifically I

will take a high-level view and cover

lots of the concepts of reinforcement

learning and then in later lectures we

will go into depth into the server of

the topics so if you feel there's

information missing yes that doesn't

need it the case however if you feel I'm

Julie confused feel free to stop me and

ask questions at any time there are no

stupid questions if you didn't

understand something it's probably

because I didn't explain it well and

there's probably loads of other people

in the room that also didn't understand

it the way intended so feel free to end

and ask questions at any time I also

have a short break in the middle just to

refresh in everybody okay so let's dive

in I'll start with some boring admin

just so we can warm up schedule wise

most of reinforcement earning lectures

are schedules at this time not all of

them there's a few exceptions which you

can see in the schedule on Moodle of

course the schedule is what we currently

believe it will rule remain but feel

free to keep checking it in case things

change or just come to all lectures and

then in our home is anything so check

Moodle for updates also use Moodle for

questions we'll try to be responsive

there as you will know grading is

through assignments

and the backgroud material first

specifically this reinforcement learning

side of the course will be the new

edition of the session Umberto booked a

fool drafts can be found online and I

believe it is currently or will very

soon be impressed if you prefer a hard

copy but probably all in time for this

course but you can just get the whole

PDF specifically for this lecture the

background will mostly be chapters one

and three and then next lecture will

actually come from or out of chapter two

I especially encourage you to read

chapter one which gives you a high-level

overview the way rich thinks about these

things and also talks about many of

these concepts but also gives you large

historical view on how these things got

developed which ideas came from where

and also how these are these changes

over time because if you get everything

from this course you'll have a certain

few but you might not realize that

things may have been perceived quite

differently in the past and some people

might still perceive quite differently

right now

so almost to give my view of course but

I'll try to keep as close as possible to

the to the book and I think our views

overlap quite substantially anyway so

that should be good

this is the outline for today I'll start

by talking just about what reinforced

learning is many of you will have a

rough or detailed idea of this already

but it's good to be on the same page

I'll talk about the core concepts of a

low reinforcement learning system one of

these concepts is in agents and then

I'll talk about what are the components

of such an agents and I'll talk a little

bit about what our challenges in

reinforcement learning so what are

research topics are things to think

about within the research field of

reinforcement learning but of course

it's good to start with defining what it

is but before I do that I'll start with

a little bit of motivation and this is a

very high-level abstract view maybe but

one way to think about this is that

first many many years ago we started

automating physical solutions with

machines and this is the Industrial

Revolution think of replacing horses

with a train we kind of know how to pull

something forward across a track and

then we just

remember that machine and we use them

the machine instead of human or in in

the case of horses animal labor and of

course if you just create a huge boom in

productivity and then after that the

second wave of automation which is

basically still happening but it has

been happening for a long while now is

what you could call the digital

revolution or sometimes called the

digital revolution in which we did a

similar thing but instead of taking

physical solutions we took mental

solutions so maybe a canonical example

if this is a calculator we know how to

do division so we can program that into

a calculator and then have it do that

mental what used to be purely mental

tasks on a machine in the future so we

automate it's mental solutions but we

still in both of these cases came up

with the solutions ourselves right we

came up with what we wanted to do and

how to do it and then we implemented it

in a machine so the next step is to

define a problem and then have a machine

solved itself for this you require

learning you require something in

addition because if you don't put

anything into the system how can it know

one thing you can put into a system is

your own knowledge this is what was done

with these machines either for mental or

physical solutions but the other thing

you could put in there is some knowledge

on how to learn but then the data having

the machine learn from its for itself so

what is then reinforcement learning

they're still by the way a couple of

seats sprinkled throughout the room so

feel free to try and grab one because

it's getting rather busy

okay so what is specific about

reinforcement learning so I'll post it

that we and many other intelligence

beings are beings that we would call

intelligence learn by interacting with

in environments and this differs from

certain other types of learning for

instance it is active rather than

passive you interact the environments

response to your interaction and this

also means that your actions are often

sequential right the environment might

change because you do something or you

might be in a different situation within

that environment which means that future

interactions can depend on the earlier

ones and these things are a little bit

different from say supervised learning

where you typically get a data set and

it's just given to you and then you just

crunch the numbers essentially to come

up with the solution this is still

learning right this is still getting new

solutions out of the data but it's a

different type of learning in addition

many people agree that we are goal

directed so we seem to be going toward

certain goals maybe also without knowing

exactly how to reach that goal in

advance and we can learn without

examples of optimal behavior obviously

we could also learn from examples as in

education but we have to also just learn

by trial and error and that's going to

be important so this is a canonical

picture of reinforcement learning

there's many versions of this there is

an agent which is our learning system

and it sends certain actions or

decisions out these decisions are

absorbed by the environment which is

basically everything around the agents

even though I drew them

it's mostly done in these figures but

you can think of the environment as just

everything that is outside of the agents

and the environments responds in a sense

by sending back an observation if you

prefer you can also think of this as

maybe more of a pool action by the agent

that the asian observes the environment

whatever it is and then this loop

continues the agents can take take more

actions and the environments may or may

not change depending on these actions

and the observations may or may or may

not change and you are using to learn

within this interactive loop so you know

in order to understand why we want to do

learning it's good to realize that there

is distinct types of learning I already

made difference between active learning

and passive learning but there's also

different goals for learning so two

goals that you might differentiate is

one is to find previously unknown

solutions maybe you don't care exactly

how your IVD solutions but you might

find it hard to code them up by hand or

to invent them yourself so you might

want to get this from the data but it's

good to realize that this is this is a

different goal from being able to learn

quickly in a new environment and both of

these things are valid goals for

learning so in the first type of

learning an example might be that you

might want to find a program that can

play the game of go better than any

human which is a goal to find a certain

solution in a second type of learning

you might think of an example where

robots is navigating terrains but all of

a sudden it finds itself in a traded it

has never seen before and also wasn't

present when people build to rubble to

run the robot was learning then you want

the robot to learn online and you wanted

to look maybe adapt quickly and

reinforcement learning as a fields seeks

to provide algorithms that can handle

both these cases sometimes they're not

clearly differentiated and sometimes

people don't clearly specify which goal

they're after but it's good to keep this

in mind also note that the second point

is not just about generalization it's

not just about how you learn about many

terrains and then you get a new one and

you're able to deal well with it it's

about that a little bit but it's also

about being able to learn online to

adapt even while you're doing it and

this is fair game we do that as well

when we enter a new situation we do do

it that still we don't have to just lean

on what we've learned in the past so

another way to phrase what reinforced

bleeding is is it it is the science of

learning to make decisions from

interaction and this requires us to

think about many concepts such as time

and related to that the long term

consequences of actions its requires to

think about actively gathering

experience because of the interaction

you cannot assume that all the relevant

experience is just given to you

sometimes you must actively seek it out

it might require us to think about

predicting the future in order to deal

with these longtime consequences and

typically it also allows requires us to

deal with uncertainty the uncertainty

might be inherent to a problem if for

instance you might be dealing with a

situation that is inherently noisy or it

might be that certain parts of the

problem that you're dealing with are

hidden to you for instance you're

playing against an opponent and you

don't know what goes on in their head or

it might just be that you yourself

creates uncertainty because you don't

know maybe you're following a behavior

that sometimes is a little bit sarcastic

so you can't predict the future with

complete certainty just based on your

own interaction I'm just going to repeat

once more there's still a few seats if

people want to grab them one back there

there's a few up here so there's huge

potential scope for this because

decisions show up in many many places if

you think about it and so one thing that

I just want you to think about is

whether this is sufficient to be able to

talk about what artificial intelligence

is of course I could take stand here

this is just to provoke you to think

about that can you think of things that

we're not covering right that you might

need for artificial intelligence that's

basically the thing that I want you to

think about and if so we should probably

add them so there's a lot of related

disciplines and reinforcement learning

has been studied in one form or another

many times and in many forms this is a

slide that I borrowed from Dave silver

where he noted a few of these

disciplines there might be others and

these might not even be these not mine

will be the only mine debate

examples although some of them are

pretty persuasive and the disciplines

that he pointed out work at the top

computer science which a lot of you will

be studying some variant of in which we

might do something called machine

learning and you could think of

reinforcement learning as being part of

that discipline I'll come back to that

later but also neuroscience people have

investigated the brain to large extents

and found that certain mechanisms within

the brain look a lot like the

reinforcement learning algorithms that

we'll study later in this course so

there might be some connection there as

well or maybe you can use these concepts

that we'll talk about to understand how

we learn also in psychology maybe this

is more like a higher-level version of

the neuroscience argument where there's

behavior obviously there's decisions and

maybe you can reason about that you can

maybe you can model that in this very

similar way or maybe even the same way

as you can model the reinforcement

learning problem and then think about

learning what that what that entails how

the learning progresses using this

framework separately on the other side

you have engineering sometimes you just

want to solve a problem and there are

many diseases problems out there that

people want to solve for many different

reasons but typically to to optimize

something and within that we have a

field called optimal control which is

very closely related to reinforcement

learning and many of the methods overlap

although sometimes the focus is a little

bit different in a notation might be a

little bit different fairly similarly in

mathematics there's a subcategory or

maybe I don't know whether it's

completely fair to say that it's part of

mathematics maybe it's a little bit more

like a Venn diagram itself called

operations research and operations

research this is the field where you

basically look for solutions for many

problems using mathematical tools

including Markov decision processes that

will touch upon later in this course and

dynamic programming and things like that

which are also used in reinforcement

learning finally at the bottom it says

economics but there's other related

fields that you might consider here one

thing that's quite interesting about

this is that it's very clearly a

multi-agent setting so now there's

multiple actors in a situation and

together they make decisions but also

separately and there's all these

interesting inter

actions between these these agents and

it's also quite natural in economics to

think about optimizing something many

many people talk about optimizing say

returns or value and this is very

similar to what we'll discuss as well so

to zoom in a little bit on the machine

learning part sometimes people make this

distinction that machine learning basic

has a number of subfields maybe the

biggest of this is the supervised

learning subfield where which we're

getting quite good at I would say and a

lot of deep learning work for instance

is done on supervised settings the goal

there is to find a mapping you have

examples of inputs and outputs and you

want to learn that mapping and ideally

you want to learn a mapping that also

generalizes to new inputs that you've

never seen before in a nutshell

unsupervised learning separately is what

you do when you don't have the labeled

examples so you might have a lot of data

but maybe you don't have clear examples

of what the mapping should be and all

that you can do all that you want to do

is to somehow structure the data so that

you can reason about it or that you can

understand the data itself better now

reinforcement learning some people

sometimes perceive that as being part of

one of these or maybe a little bit of a

mixture of both but I would argue that

it's difference and separates and in

reinforcement learning the one of the

main distinctions is that you get a

reinforcement learning signal which we

call the reward instead of a supervised

signal what this signal gives you and

I'll talk about it later more is some

notion of how good something is compared

to something else but it doesn't tell

you exactly what to do it doesn't give

you a label or an action that you should

have done it just tells you I like this

this much but I'll go into more detail

so characteristics of reinforcement

learning and specifically how does it

differ from other machine learning

paradigms include that there's no strict

supervision on your reward signal and

also that the feedback can be delayed so

sometimes you take an action and this

action much later leads to reward and

this is also something that you don't

typically get in a supervised learning

setting

of course there's or there's exceptions

in addition time and sequentiality

matters so if you take a decision now it

might be impossible to undo that

decision later whereas if you just make

a prediction and you update your loss in

a supervised setting typically you can

still redo that later

this means that earlier your decisions

affect later interaction is good to keep

that in mind and basically the the next

lecture also talks a lot about this so

examples of decision problems there is

many as I said some concrete examples to

maybe help you think about these things

include to fly a helicopter or to manage

an investment portfolio or to control

the power station make a robe walk or

play video or board games and these are

actual examples where reinforcement

learning has been or versions of

reinforcement have been applied and

maybe it's good to note that these are

reinforced learning problems because

they are sequential decision problems

even if you don't necessarily use what

people might go every first learning

method to solve them it's good to make

the distinction because some people they

think of the current reinforcement

learning algorithms and they basically

unify the field with those specific

algorithms but reinforcement learning is

both a framework of how to think about

these things and there's a set of

algorithms which people talk about as

being reinforced learning algorithms but

you could be you could be working in

Reverse inferring problem without using

any of those algorithms specifically so

I mentioned a few of these already but

core concepts of a reinforcement this

learning system are the environments

that the agent is in a reward signal

that specifies the goal of the agents

and yeah youth itself of course yeah you

to self might contain certain components

and I'm going to go through all of these

in the rest of this lecture but note

that in the interactions figure that I

showed before this is the same one I

actually didn't put the reward in and

there's a reason I did that because most

of these figures that you'll see in

literature actually have the reward

going from the environment into the

agents and that's fair and in that case

the agent itself basically is only the

learning algorithm that means that if

you have a robot

the learning algorithm sits somewhere

within that robot but the agents in this

picture doesn't is not the same as the

robot as a whole the learning algorithm

can perceive part of the robots as its

environment in a sense because typically

the environment doesn't care it doesn't

have a reward it doesn't have that

notion typically it's us that specify a

reward and it lives somewhere within

your reinforcement learning system

that's why I didn't put it in the figure

because you can think of it as coming

from the environment in the into the

agents or you can think of it as part of

the agents but not part of learning

algorithm because if the learning

algorithm can modify its own reward

then where things could happen and it

could like find ways to optimize its

reward but only because it's setting it

not because it's learning anything

interesting so it's useful to think of

the reward is being external to the

learning algorithm even if it's internal

to the system as a whole so what happens

here this is the interaction loop that I

was talking about if we introduce a

little bit of notation at each time step

T the agent will receive some

observation which is a random variable

which are you which why which is why I

use capital o there and a reward from

somewhere capital R and the agent and

execute an action capital a near arm

receives this action and you can either

think of it as a me emitting a new

observation and a new reward you can

just think of the agent as receiving

that as pulling that from the

environment but for now we'll just talk

about it as if the environment just

gives you that back as a function it

takes in the action and it returns you

the next observation and the next reward

this is a fairly simple set up fairly

small in some sense but it turns out to

be fairly general as well and we can

model many problems in this way so the

reward specifically is a scalar feedback

signal this indicates how well the agent

is doing at a step time T and therefore

it defines the goal as I said now the

agents job is to maximize the cumulative

reward not the instantaneous reward but

the reward over time and we will call

this the return now this thing trails

off into the end there I didn't specify

when it stops the easiest way to think

about is that there's always a time

somewhere in the future where it's

so that this thing is well-defined and

finite a little while later I'll talk

about when that doesn't happen when you

have a continuing problem and then you

can still define a return that is

well-defined and I'm reinforcement

learning is based on the reward

hypothesis which is that any goal can be

formalized as the outcome of maximizing

a cumulative reward

it's basically statement about the

generality of this framework now I

encourage you to think about that

whether you agree that that's true or

not and if you think it's not true

whether you can think of any examples of

goals that you might not be able to

formalize as optimizing your cumulative

reward to maybe help you think about

that I'd like to know that these reward

signals they can be very dense there

could be a reward on every step that is

nonzero but it could also be very sparse

so if a certain event specifies your

goal you could also just get a real

positive reward whenever that happens

and zero rewards on every other step and

that means that then there is a reward

function that models that specific goal

so the question is whether that's

sufficiently general I haven't been able

to find any counter examples myself but

maybe you do yeah no that's a very good

question sorry we use the word reward

but we basically mean it's just a real

value

reinforcements signal and sometimes we

talk about negative rewards as being

penalty especially this is especially

common in psychology and neuroscience in

the more computer science view of

reinforcement learning we typically just

use the word reward even if it's

negative and then indeed you can have

things that push you away from certain

situations that you don't want to repeat

I'll give an example I'll revisit this

example a little bit later but maybe is

good to give it now as well you could

think of a maze where you want to exit

the me so the goal is to exit the maze

then there's multiple ways to set up a

reward function that encodes that one

this as I said just now just gives 0

rewards on every step but give a

positive reward when you exit the maze

but what you could also do is just give

a negative reward on every step and then

stop your episode when you exit the

then minimizing that negative maximizing

your ear or means minimizing the

absolute negative rewards so it still

encodes the goal of getting out of the

maze as quickly as possible you could

think of one as being chasing the carrot

and one is avoiding the stick to the

learning algorithms it typically doesn't

matter too much or at least to the

formalism of two learning algorithms in

practice of course everything maddox but

okay so now that we have returns we can

talk about predicting those returns and

to do that we first have to talk about

values so the expected curative reward

which is basically the expected return

as we define it just now is what we call

the value and a value is in this case a

functional state so the expectation here

is given conditional on the state that

you're putting us into the function and

then over anything that's random the

goal is then to maximize this expected

value rather than the actual

instantaneous value already the random

value because typically you don't know

the random value yet by picking suitable

actions so the rewards and values both

define the desirability of something but

you could think of the reward is

defining the desirability of a certain

transition like a single step and then

the value is defining the desirability

of this valve of this state more in

general into the indefinite future

potentially also note because we'll be

using that quite a bit in this course

that the returns and values can be

defined recursively so I put it down

here for the return the return at the

time step T is basically just the one

step reward and then the return from

there and that turns out to be something

that we can usefully exploit so I said

the goal isn't to pick action so we have

to talk a little bit about what that

means so again the goal is to select

actions as to maximize the value

basically from each state that you might

end up in and these actions might have

long-term consequences what this means

in terms of the reward signal is that

you

immediate reward for taking action might

be low or even negative but you might

still want to take it if it brings you

to a state with a very high value which

basically means you'll get high rewards

later so that means it might be better

to sacrifice immediate reward to gain

more long-term reward an examples of

this include safe enough financial

investment where you first pay some

money to invest in something but you

hope to get much more money back later

refueling a helicopter you might not

gain anything specifically related to

your goal from doing that but if you

don't maybe you're a hell of a

helicopter will at some points not work

anymore and in say playing a game you

might block an opponent's move rather

than going for the win you first prevent

the loss which might then later give you

a higher probability of winning and in

any of these cases the mapping from

stage to action will call a policy so

you can think of this as just being a

function that map's each state into an

action it's also possible to condition

the value on actions so instead of just

conditioning on the state you can set

the condition on the state and action

pair the definition is very similar to

the state value there's a slight

difference in notation for historical

reasons this is called a cue function so

for States we use V and for state action

pairs we use Q there's really no other

reason than just historical for that and

we'll talk in depth about these things

later so the only difference here is

that it's now also conditioned on the

action otherwise the definition is

exactly the same as as before okay if

everybody's on board I will now talk

about agent components and I'll start

with the agent State there is there's

still a little bit of room in the in the

room if somebody still wants to grab a

chair people are not so uncomfortable

Thanks

so the first I talked a little bit

already about states but I didn't

actually say what the state is I trusted

that you would have some intuitive

notion of it so I'll talk about what an

agent status so as I said a policy is a

mapping from States to actions or the

other way to say that the actions depend

on some state of the agent both the

agent and the environment might have an

internal state or typically actually do

have an internal state in the simplest

case there might only be one state and

both environment and agent are always in

the same state and we'll cover that

quite extensively in the next lecture

because it turns out you can already

meaningfully talk about some concepts

such as how to make decisions when only

considering a single state and it just

extracts well in all the issue of

sequentiality and states and everything

but the whole next lecture will be

devoted to that but often more generally

there are many different states and I

might even be infinitely many so what do

I mean when I say infinitely many just

think of it as being there's some

continuous vector as your state and

maybe it can be within some infinite

space just because you don't know

exactly where it's going to be and it

can basically be arbitrarily anywhere in

that state and then you are in basically

a typical domain where deep learning

also shines where you can maybe

generalize across things where that you

haven't seen because things are

sufficiently smooth in some sense so the

state of the agents generally differs

from state of free environments but at

first we're going to unify these as I'll

explain later but it's good to keep in

mind that in general the agent might not

know the full state of the environment

so the state of the environment is

basically everything that's necessary

for the environments to return its

observations and maybe rewards if those

are part of the environments and like I

said it's usually not visible to the

agent but even if it is visible it might

contain lots of irrelevant information

so even if you think about say if you

think about the real world us or a robot

operating in a real world even if you

could know

all the locations of all the atoms and

all other things that might be relevant

in some way to your problem you might

not want to or even can process all of

that so it's still in that case makes

sense to have an agent say that is

smaller than the full environment state

so instead the agent has access to a

history it gets an initial observation

and then this loop starts you take an

action you get a reward in a new

observation you take another action and

so on and so on in principle the agent

could keep track of this whole history

it might grow it big but we could

imagine doing that and an example of

such a history might be the sensorimotor

of stream of robots just all these

things that ever happens to the robot so

this history can then be used to

construct an agent state and the actions

then depend on that state in the fully

observable case we assume that the agent

can see the full environment State so

the observation is not equal to the

environment State this is especially

useful in smallish problems where the

environment is particularly simple but

it occurs sometimes in in in real

practice for instance if you think about

playing a game a single-player board

game where you can see the whole board

this might be such a case or even if you

play a multiplayer

board game but you have a fixed

opponents this might again be the case

if you're playing against a lone

opponent it's no longer the case because

you cannot look inside the head of the

opponent if this is the case then the

agent is in the Markov decision process

and I'll define that many of you might

know what this is but Markov decision

processes are essentially a useful

mathematical framework that we are going

to use to reason and talk about a lot of

the concepts in reinforcement learning

it's much easier to reason about then

the full problem which is non Markovian

as I'll talk about in a bit but it's

also a little bit limited because of the

Markov assumption so what does it mean

to be Markov so decision process is is

Markov or MA

even if the probability of a reward and

subsequent state I've written it down

here as the new Sutton Umberto Edition

also does as a joint probability of the

reward in the state the way they

depending on your current state an

action is fully informative if you would

condition or for the case if you would

condition on the full history what that

means is that the current state gives

you all the information you need to

basically predict to the next reward in

the next state so if this probability is

fixed even if you don't know this

probability I'm not claiming that the

agent knows it but if it exists and if

it's fixed then it's a Markov decision

process intuitively it means that the

future is independent of the past given

the present where the present is now

your state so in practice this is very

nice and useful because it means that

when you have this state you can throw

away the history and history can crow

can grow unbounded li so that's

something that you don't want to keep

doing so instead you much prefer the

case where you can just throw away

everything and you just keep that state

and another way to phrase that is it's a

sufficient statistic for the history the

environment state typically is Markov in

most cases there are exceptions to this

for instance if you think of a

non-stationary environment but typically

you could think of the the environment

said as being Markovian but you're just

not being able to perceive it so things

might appear a non-stationary even if

they aren't the history itself is also

Markovian because of course if you

condition on the history or you

condition on the history that's the same

thing but it's going to grow big more

commonly we're in a partial observable

case this means that the agent gets

partial information about the true state

and examples of this include a robot

with a camera vision that is not told

its absolute location or what se is

behind the wall or say poker-playing

agents which only observes public cards

so there's multiple ways that second one

is partially observable

is the fact that it can't see the cards

of the opponents and the other way is to

factor - can't see within the brain of

the opponents and formerly these things

are then called partially observable

Markov decision processes there's a lot

of literature on these and especially on

solving these exactly a lot of that

we're not going to cover but it's good

to keep in mind that this is actually

the common case that you just get some

observations but they don't tell you the

full state doesn't mean you want to use

the solution methods necessarily from

the literature on parse observable

Markov decision processes exactly

especially those who solve these things

exactly because that tends to be a very

hard and interesting problem but it also

tends to be quiet computationally

expensive and again the environment

state can still be Markov even if you

only get a partial observe observation

of this but the agent just has simply no

way of knowing this is that clear okay

so now we can talk about what the agent

state then is so the agent said as I

said before is a function of the history

the agent action depend on the state so

it's important to have this agent state

and in a simple example if it is if you

just make your observation the agent

state more generally we can think of the

agent state as something that updates

over time you have your previous agent

state you have an action a reward and an

observation and then you construct a new

agent state note that for instance

building up your fool history is of this

form you just append things but there

are other things you can do you could

for instance keep the size of the state

fixed rather than have it grow over time

as you would do with a history here what

I do know with F is sometimes called the

state update function and it's an

important notion that will terr get back

to later the its actual so very active

area of research how do you create such

a set up that function that is useful

for your agents especially if you cannot

just rely on your observations so the

agency is simply much smaller than

environment State and it's also

typically much smaller than the full

history just because of computational

reasons and here's an example so let's

assume a very simple problem where this

is the full state of the environment

here may

maybe it's not the fool say maybe

there's also an agent in the maze that I

didn't write down but let's say that

this is yours you're a fool state of the

maze and let's say there is an agent and

it perceives a certain part so the

observation is now partial the agent

doesn't get its coordinates it just sees

these pixels say as an example now what

might happen is that the agent walks

around in this maze and all of a sudden

sometime later finds itself in this

situation now this is an example of a

partial observable problem because both

of these observations are

indistinguishable from each other so

just based on the observation the agent

has no way of knowing where it is so a

question for you to ponder a little bit

how could you construct a mark of agent

state in this maze for any reward signal

because I didn't specify what the reward

signal is if you want you can just think

up one maybe there's a goal somewhere

does anybody have a suggestion right

so indeed in this case you'd have to

carefully check for the specific maze

whether that's sufficient it might be

sufficient it might depend on your

policy if you have an action that stands

still it might not be enough because you

might see the same observation twice if

that action doesn't exist in this maze

it might actually be enough I didn't I

didn't carefully check but the more

general idea which i think is the good

idea here is that you use some part of

your history to build up an agent states

that somehow distinguishes these two

situations if you do have a certain

policy it might be that in the left

state you always came from above and in

the right state you always came from

below and just having that additional

information what the previous

observation was might just be enough so

completely did in this distinguish these

these situations and that is indeed the

idea of an estate update function this

would be a simple set update function

that just concatenates the previous two

and each time you see a new observation

we oldest one and that's actually

something that is done quite frequently

for instance in the Atari games that you

saw before there was just a

concatenation of a couple of frames and

this is the full agency so it was

basically just an Augmented of

observation yeah which one here yeah so

in in the ordering here is that you

you're in a certain state st based on

this state you take an action 80 and

then we consider time to tick after you

take the action basically when you send

things to the environment this is just a

convention actually some people ride

down RT rather than T plus one so be

aware but we'll take the convention that

basically the time steps when you send

that action to the ER to the environment

then the reward and the new observation

come back and we can consider the next

agent State St plus 1 as being a

function of this new observation so that

when you take your next action you can

already take your observation into

account if it would be OT rather than OT

plus 1 then you couldn't take your

newest observation into account when

taking your next action it's good

question

so I set many of these things over

already but to summarize to deal with

partial observability the agent can

construct a suitable state

representation an examples of these

include as I said before you could just

have your observation be the agent state

but this might not be enough in certain

cases you could have the complete

history at your agencies but this might

be too large might be hard to compute

with this full history or you might as a

partial version of the one I showed

before you could have some incrementally

updated States which maybe only looks at

the observations maybe ignores the

rewards in the actions in this example

but if you write it down like this maybe

you'll notice that this looks fairly

remarkably similar to a recurrent neural

network which I know we haven't yet

covered in the deep learning side but we

will and basically the update there

looks exactly like this so that kind of

already implies that we can use maybe

deep learning technique here of the

recurrent neural networks to implement

the state update function and indeed

this has been done so sometimes the

agent state for this reason it's also

called the memory of the agent we use

the more general term for the agent

state which maybe includes the memory

and maybe also additional things if you

want to think about it like that but you

can think of the memory as being an

essential part of your agent State

especially in this partially observable

problems or alternatively you can think

of memory as a useful tool to build an

appropriate agent state so that wraps up

the state bit

feel free to inject any questions and

otherwise I'll continue with policies

which is fairly short so the policy just

defines the agents behavior and it's a

map from the agencies to an action

there's two main cases one is the

deterministic policy where we'll just

write it as a function that outputs an

action stay goes in action goes up but

there's also the important use case of a

stochastic policy where the action is

basically there's a probability of

selecting each action in each state

typically we will not be too careful in

differentiating these so you can just

think of the stochastic one is the more

general case where sometimes the

distribution just happens to always

select the same action and then you're

already covered the discrete case the

deterministic

sorry note that I didn't specify

anything about what the structure of

this function is or what even the

structure of the action is in the