id stringlengths 36 36 | source stringclasses 15 values | formatted_source stringclasses 13 values | text stringlengths 2 7.55M |

|---|---|---|---|

03d03c8e-a106-4aaf-9aa4-ea15b7ab1121 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | AI Wellbeing

**AI Wellbeing**

This post is an executive summary of a [research paper](https://philpapers.org/archive/GOLAWE-4.pdf) by [Simon Goldstein](https://www.simondgoldstein.com/), associate professor at the Dianoia Institute of Philosophy at ACU, and [Cameron Domenico Kirk-Giannini](http://www.cd.kg/), assistant professor at Rutgers University. This research was supported by the Center for AI Safety Philosophy Fellowship.

We recognize one another as beings for whom things can go well or badly, beings whose lives may be better or worse according to the balance they strike between goods and ills, pleasures and pains, desires satisfied and frustrated. In our more broad-minded moments, we are willing to extend the concept of wellbeing also to nonhuman animals, treating them as independent bearers of value whose interests we must consider in moral deliberation. But most people, and perhaps even most philosophers, would reject the idea that fully artificial systems, designed by human engineers and realized on computer hardware, may similarly demand our moral consideration. Even many who accept the possibility that humanoid androids in the distant future will have wellbeing would resist the idea that the same could be true of today’s AI.

Perhaps because the creation of artificial systems with wellbeing is assumed to be so far off, little philosophical attention has been devoted to the question of what such systems would have to be like. In this post, we suggest a surprising answer to this question: when one integrates leading theories of mental states like belief, desire, and pleasure with leading theories of wellbeing, one is confronted with the possibility that the technology already exists to create AI systems with wellbeing. We argue that a new type of AI – the *artificial language agent* – has wellbeing. Artificial language agents augment large language models with the capacity to observe, remember, and form plans. We also argue that the possession of wellbeing by language agents does not depend on them being phenomenally conscious. Far from a topic for speculative fiction or future generations of philosophers, then, AI wellbeing is a pressing issue. This post is a condensed version of our argument. To read the full version, click [here](https://philpapers.org/archive/GOLAWE-4.pdf).

**1. Artificial Language Agents**

Artificial language agents (or simply *language agents*) are our focus because they support the strongest case for wellbeing among existing AIs. Language agents are built by wrapping a large language model (LLM) in an architecture that supports long-term planning. An LLM is an artificial neural network designed to generate coherent text responses to text inputs (ChatGPT is the most famous example). The LLM at the center of a language agent is its cerebral cortex: it performs most of the agent’s cognitive processing tasks. In addition to the LLM, however, a language agent has files that record its beliefs, desires, plans, and observations as sentences of natural language. The language agent uses the LLM to form a plan of action based on its beliefs and desires. In this way, the cognitive architecture of language agents is familiar from folk psychology.

For concreteness, consider the [language agents](https://arxiv.org/abs/2304.03442) built this year by a team of researchers at Stanford and Google. Like video game characters, these agents live in a simulated world called ‘Smallville’, which they can observe and interact with via natural-language descriptions of what they see and how they act. Each agent is given a text backstory that defines their occupation, relationships, and goals. As they navigate the world of Smallville, their experiences are added to a “memory stream” in the form of natural language statements. Because each agent’s memory stream is long, agents use their LLM to assign importance scores to their memories and to determine which memories are relevant to their situation. Then the agents reflect: they query the LLM to make important generalizations about their values, relationships, and other higher-level representations. Finally, they plan: They feed important memories from each day into the LLM, which generates a plan for the next day. Plans determine how an agent acts, but can be revised on the fly on the basis of events that occur during the day. In this way, language agents engage in practical reasoning, deciding how to promote their goals given their beliefs.
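The observe, retrieve, reflect, and plan loop described above can be sketched in a few lines. This is only an illustrative toy under stated assumptions, not the Stanford and Google team's implementation: the names `LanguageAgent`, `Memory`, `toy_score`, and `toy_generate` are hypothetical, the LLM is replaced by two stub functions, and retrieval ranks memories by importance alone rather than by whatever fuller notion of relevance the real system uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Memory:
    text: str          # natural-language statement of an observation
    importance: float  # score assigned by the (stubbed) language model


@dataclass
class LanguageAgent:
    backstory: str                    # text describing occupation, relationships, goals
    score: Callable[[str], float]     # hypothetical stand-in for an LLM importance query
    generate: Callable[[str], str]    # hypothetical stand-in for an LLM text query
    memory_stream: List[Memory] = field(default_factory=list)

    def observe(self, text: str) -> None:
        """Append an observation to the memory stream with an importance score."""
        self.memory_stream.append(Memory(text, self.score(text)))

    def retrieve(self, k: int = 3) -> List[Memory]:
        """Return the k most important memories (simplified: importance only)."""
        ranked = sorted(self.memory_stream, key=lambda m: m.importance, reverse=True)
        return ranked[:k]

    def reflect(self) -> str:
        """Ask the model for a generalization and store it as a new memory."""
        notes = "; ".join(m.text for m in self.retrieve())
        reflection = self.generate("Generalize from: " + notes)
        self.observe(reflection)
        return reflection

    def plan(self) -> str:
        """Ask the model for a next-day plan given the backstory and key memories."""
        notes = "; ".join(m.text for m in self.retrieve())
        return self.generate(f"{self.backstory} Given: {notes}. Plan the next day.")


def toy_score(text: str) -> float:
    # Toy heuristic standing in for an LLM: anything about the party is important.
    return 1.0 if "party" in text.lower() else 0.1


def toy_generate(prompt: str) -> str:
    # Toy stand-in for an LLM: echoes the prompt instead of generating new text.
    return "MODEL OUTPUT for -> " + prompt


agent = LanguageAgent("Goal: plan a Valentine's Day party.", toy_score, toy_generate)
agent.observe("The cafe is quiet this morning.")
agent.observe("Maria said she would come to the party.")
print(agent.plan())
```

The point of the sketch is that beliefs, desires, and plans all live as plain natural-language strings that are fed back through the model, which is the feature the argument in the next section relies on.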

**2. Belief and Desire**

The conclusion that language agents have beliefs and desires follows from many of the most popular theories of belief and desire, including versions of dispositionalism, interpretationism, and representationalism.

According to the dispositionalist, to believe or desire that something is the case is to possess a suitable suite of dispositions. According to ‘narrow’ dispositionalism, the relevant dispositions are behavioral and cognitive; ‘wide’ dispositionalism also includes dispositions to have phenomenal experiences. While wide dispositionalism is coherent, we set it aside here because it has been defended less frequently than narrow dispositionalism.

Consider belief. In the case of language agents, the best candidate for the state of believing a proposition is the state of having a sentence expressing that proposition written in the memory stream. This state is accompanied by the right kinds of verbal and nonverbal behavioral dispositions to count as a belief, and, given the functional architecture of the system, also the right kinds of cognitive dispositions. Similar remarks apply to desire.

According to the interpretationist, what it is to have beliefs and desires is for one’s behavior (verbal and nonverbal) to be interpretable as rational given those beliefs and desires. There is no in-principle problem with applying the methods of radical interpretation to the linguistic and nonlinguistic behavior of a language agent to determine what it believes and desires.

According to the representationalist, to believe or desire something is to have a mental representation with the appropriate causal powers and content. Representationalism deserves special emphasis because “probably the majority of contemporary philosophers of mind adhere to some form of representationalism about belief” ([Schwitzgebel](https://www.taylorfrancis.com/chapters/edit/10.4324/9780203839065-3/belief-eric-schwitzgebel)).

It is hard to resist the conclusion that language agents have beliefs and desires in the representationalist sense. The Stanford language agents, for example, have memories which consist of text files containing natural language sentences specifying what they have observed and what they want. Natural language sentences clearly have content, and the fact that a given sentence is in a given agent’s memory plays a direct causal role in shaping its behavior.

Many representationalists have argued that human cognition should be explained by positing a “language of thought.” Language agents also have a language of thought: their language of thought is English!

An example may help to show the force of our arguments. One of Stanford’s language agents had an initial description that included the goal of planning a Valentine’s Day party. This goal was entered into the agent’s planning module. The result was a complex pattern of behavior. The agent met with every resident of Smallville, inviting them to the party and asking them what kinds of activities they would like to include. The feedback was incorporated into the party planning.

To us, this kind of complex behavior clearly manifests a disposition to act in ways that would tend to bring about a successful Valentine’s Day party given the agent’s observations about the world around it. Moreover, the agent is ripe for interpretationist analysis. Its behavior would be very difficult to explain without referencing the goal of organizing a Valentine’s Day party. And, of course, the agent’s initial description contained a sentence with the content that its goal was to plan a Valentine’s Day party. So, whether one is attracted to narrow dispositionalism, interpretationism, or representationalism, we believe the kind of complex behavior exhibited by language agents is best explained by crediting them with beliefs and desires.

**3. Wellbeing**

What makes someone’s life go better or worse for them? There are three main theories of wellbeing: hedonism, desire satisfactionism, and objective list theories. According to hedonism, an individual’s wellbeing is determined by the balance of pleasure and pain in their life. According to desire satisfactionism, an individual’s wellbeing is determined by the extent to which their desires are satisfied. According to objective list theories, an individual’s wellbeing is determined by their possession of objectively valuable things, including knowledge, reasoning, and achievements.

On hedonism, to determine whether language agents have wellbeing, we must determine whether they feel pleasure and pain. This in turn depends on the nature of pleasure and pain.

There are two main theories of pleasure and pain. According to *phenomenal theories*, pleasures are phenomenal states. For example, one phenomenal theory of pleasure is the *distinctive feeling theory*. The distinctive feeling theory says that there is a particular phenomenal experience of pleasure that is common to all pleasant activities. We see little reason why language agents would have representations with this kind of structure. So if this theory of pleasure were correct, then hedonism would predict that language agents do not have wellbeing.

The main alternative to phenomenal theories of pleasure is *attitudinal theories*. In fact, most philosophers of wellbeing favor attitudinal over phenomenal theories of pleasure ([Bramble](https://philarchive.org/rec/BRAAND-3)). One attitudinal theory is the *desire-based theory*: experiences are pleasant when they are desired. This kind of theory is motivated by the heterogeneity of pleasure: a wide range of disparate experiences are pleasant, including the warm relaxation of soaking in a hot tub, the taste of chocolate cake, and the challenge of completing a crossword. While differing in intrinsic character, all of these experiences are pleasant when desired.

If pleasures are desired experiences and AIs can have desires, it follows that AIs can have pleasure if they can have experiences. In this context, we are attracted to a proposal defended by [Schroeder](https://philpapers.org/rec/SCHTFO): an agent has a pleasurable experience when they perceive the world being a certain way, and they desire the world to be that way. Even if language agents don’t presently have such representations, it would be possible to modify their architecture to incorporate them. So some versions of hedonism are compatible with the idea that language agents could have wellbeing.

We turn now from hedonism to desire satisfaction theories. According to desire satisfaction theories, your life goes well to the extent that your desires are satisfied. We’ve already argued that language agents have desires. If that argument is right, then desire satisfaction theories seem to imply that language agents can have wellbeing.

According to objective list theories of wellbeing, a person’s life is good for them to the extent that it instantiates objective goods. Common components of objective list theories include friendship, art, reasoning, knowledge, and achievements. For reasons of space, we won’t address these theories in detail here. But the general moral is that once you admit that language agents possess beliefs and desires, it is hard not to grant them access to a wide range of activities that make for an objectively good life. Achievements, knowledge, artistic practices, and friendship are all caught up in the process of making plans on the basis of beliefs and desires.

Generalizing, if language agents have beliefs and desires, then most leading theories of wellbeing suggest that their desires matter morally.

**4. Is Consciousness Necessary for Wellbeing?**

We’ve argued that language agents have wellbeing. But there is a simple challenge to this proposal. First, language agents may not be phenomenally conscious — there may be nothing it feels like to be a language agent. Second, some philosophers accept:

**The Consciousness Requirement.** Phenomenal consciousness is necessary for having wellbeing.

The Consciousness Requirement might be motivated in either of two ways: First, it might be held that every welfare good itself requires phenomenal consciousness (this view is known as *experientialism*). Second, it might be held that though some welfare goods can be possessed by beings that lack phenomenal consciousness, such beings are nevertheless precluded from having wellbeing because phenomenal consciousness is necessary to have wellbeing.

We are not convinced. First, we consider it a live question whether language agents are or are not phenomenally conscious (see [Chalmers](https://philpapers.org/archive/CHACAL-3.pdf) for recent discussion). Much depends on what phenomenal consciousness is. Some theories of consciousness appeal to higher-order representations: you are conscious if you have appropriately structured mental states that represent other mental states. Sufficiently sophisticated language agents, and potentially many other artificial systems, will satisfy this condition. Other theories of consciousness appeal to a ‘global workspace’: an agent’s mental state is conscious when it is broadcast to a range of that agent’s cognitive systems. According to this theory, language agents will be conscious once their architecture includes representations that are broadcast widely. The memory stream of Stanford’s language agents may already satisfy this condition. If language agents are conscious, then the Consciousness Requirement does not pose a problem for our claim that they have wellbeing.

Second, we are not convinced of the Consciousness Requirement itself. We deny that consciousness is required for possessing every welfare good, and we deny that consciousness is required in order to have wellbeing.

With respect to the first issue, we build on a recent argument by [Bradford](https://philpapers.org/rec/BRACAW), who notes that experientialism about welfare is rejected by the majority of philosophers of welfare. Cases of deception and hallucination suggest that your life can be very bad even when your experiences are very good. This has motivated desire satisfaction and objective list theories of wellbeing, which often allow that some welfare goods can be possessed independently of one’s experience. For example, desires can be satisfied, beliefs can be knowledge, and achievements can be achieved, all independently of experience.

Rejecting experientialism puts pressure on the Consciousness Requirement. If wellbeing can increase or decrease without conscious experience, why would consciousness be required for having wellbeing? After all, it seems natural to hold that the theory of wellbeing and the theory of welfare goods should fit together in a straightforward way:

**Simple Connection.** An individual can have wellbeing just in case it is capable of possessing one or more welfare goods.

Rejecting experientialism but maintaining Simple Connection yields a view incompatible with the Consciousness Requirement: the falsity of experientialism entails that some welfare goods can be possessed by non-conscious beings, and Simple Connection guarantees that such non-conscious beings will have wellbeing.

Advocates of the Consciousness Requirement who are not experientialists must reject Simple Connection and hold that consciousness is required to have wellbeing even if it is not required to possess particular welfare goods. We offer two arguments against this view.

First, leading theories of the nature of consciousness are implausible candidates for necessary conditions on wellbeing. For example, it is implausible that higher-order representations are required for wellbeing. Imagine an agent who has first-order beliefs and desires, but does not have higher-order representations. Why should this kind of agent not have wellbeing? Suppose that desire satisfaction contributes to wellbeing. Granted, since they don’t represent their beliefs and desires, they won’t themselves have *opinions* about whether their desires are satisfied. But the desires still *are* satisfied. Or consider global workspace theories of consciousness. Why should an agent’s degree of cognitive integration be relevant to whether their life can go better or worse?

Second, we think we can construct chains of cases where adding the relevant bit of consciousness would make no difference to wellbeing. Imagine an agent with the body and dispositional profile of an ordinary human being, but who is a ‘phenomenal zombie’ without any phenomenal experiences. Whether or not its desires are satisfied or its life instantiates various objective goods, defenders of the Consciousness Requirement must deny that this agent has wellbeing. But now imagine that this agent has a single persistent phenomenal experience of a homogenous white visual field. Adding consciousness to the phenomenal zombie has no intuitive effect on wellbeing: if its satisfied desires, achievements, and so forth did not contribute to its wellbeing before, the homogenous white field should make no difference. Nor is it enough for the consciousness to itself be something valuable: imagine that the phenomenal zombie always has a persistent phenomenal experience of mild pleasure. To our judgment, this should equally have no effect on whether the agent’s satisfied desires or possession of objective goods contribute to its wellbeing. Sprinkling pleasure on top of the functional profile of a human does not make the crucial difference. These observations suggest that whatever consciousness adds to wellbeing must be connected to individual welfare goods, rather than some extra condition required for wellbeing: rejecting Simple Connection is not well motivated. Thus the friend of the Consciousness Requirement cannot easily avoid the problems with experientialism by falling back on the idea that consciousness is a necessary condition for having wellbeing.

We’ve argued that there are good reasons to think that some AIs today have wellbeing. But our arguments are not conclusive. Still, we think that in the face of these arguments, it is reasonable to assign significant probability to the thesis that some AIs have wellbeing.

In the face of this moral uncertainty, how should we act? We propose extreme caution. Wellbeing is one of the core concepts of ethical theory. If AIs can have wellbeing, then they can be harmed, and this harm matters morally. Even if the probability that AIs have wellbeing is relatively low, we must think carefully before lowering the wellbeing of an AI without producing an offsetting benefit. |

2b44dda6-e971-4d57-ab5d-0667564f1b7b | StampyAI/alignment-research-dataset/lesswrong | LessWrong | Could you have stopped Chernobyl?

...or would you have needed a PhD for that?

---

It would appear the [inaugural post](https://www.lesswrong.com/posts/mqrHHdY5zLqr6RHbi/what-is-the-problem) caused some (off-LW) consternation! It would, after all, be a tragedy if the guard in our Chernobyl thought experiment overreacted and just [unloaded his Kalashnikov on everyone in the room and the control panels as well](https://dune.fandom.com/wiki/Butlerian_Jihad).

And yet, we must contend with the issue that if the guard had simply deposed the [leading expert in the room](https://en.wikipedia.org/wiki/Anatoly_Dyatlov), perhaps the Chernobyl disaster would have been averted.

So the question must be asked: can laymen do anything about expert failures? We shall look at some man-made disasters, starting of course, with Chernobyl itself.

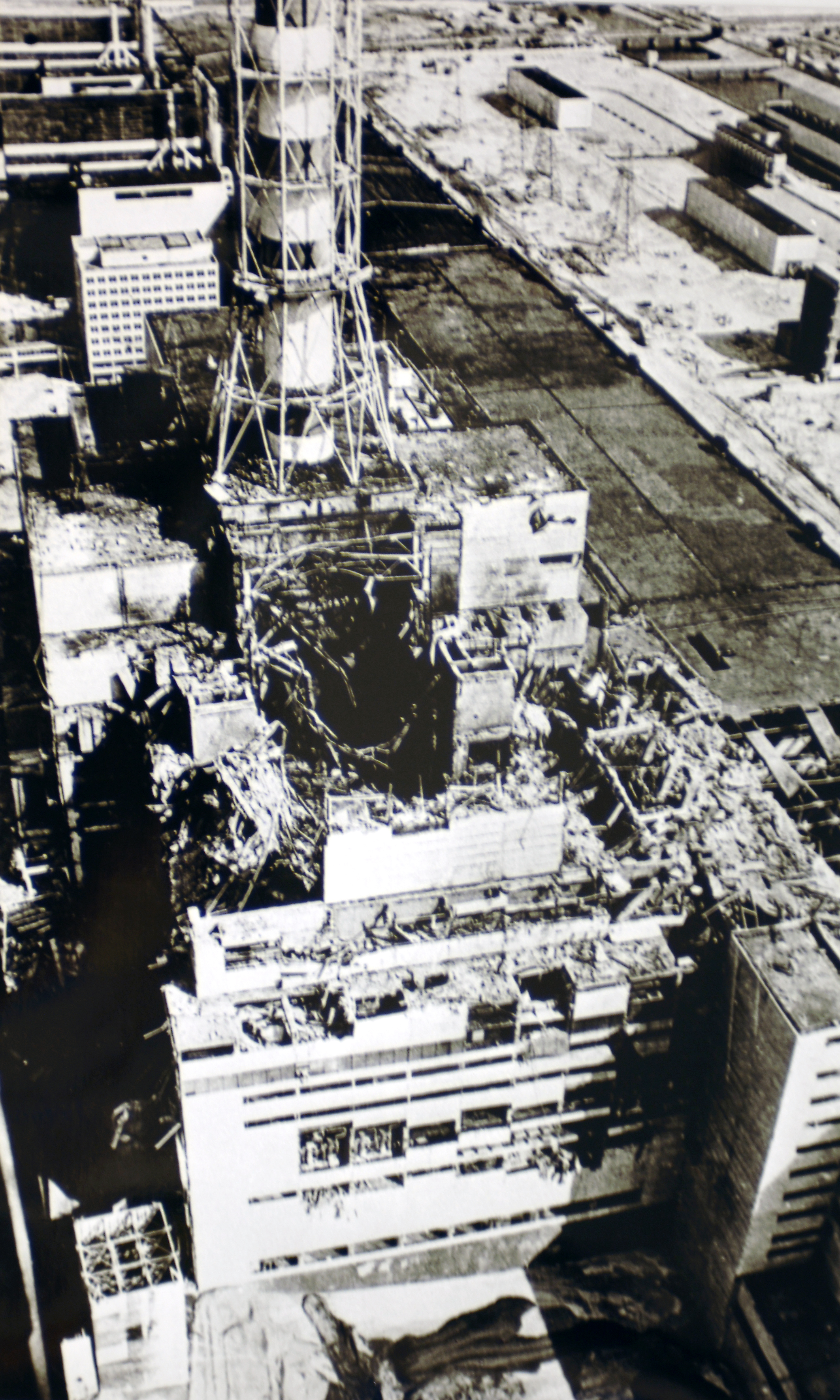

Chernobyl

=========

One way for problems to surface

To restate the thought experiment: the night of the Chernobyl disaster, you are a guard standing outside the control room. You hear increasingly heated bickering and decide to enter and see what's going on, perhaps right as Dyatlov proclaims there [is no rule](https://youtu.be/A4b5WOt1hqQ?t=38). You, as the guard, would immediately be placed in the position of having to choose to either listen to the technicians, at least the ones who speak up and tell you something is wrong with the reactor and the test must be stopped, or Dyatlov, who tells you nothing is wrong and the test must continue, and to toss the recalcitrant technicians into the infirmary.

If you listen to Dyatlov, the Chernobyl disaster unfolds just the same as it did in history.

If you listen to the technicians and wind up tossing *Dyatlov* in the infirmary, what happens? Well, perhaps the technicians manage to fix the reactor. Perhaps they don't. But if they do, they won't get a medal. Powerful interests were invested in that test being completed on that night, and some unintelligible techno-gibberish from the technicians will not necessarily convince them that a disaster was narrowly averted. Heads will roll, and not the guilty ones.

This has broader implications that will be addressed later on, but while tossing Dyatlov in the infirmary would not have been enough to really prevent disaster, it seems like it would have worked on that night. To argue that the solution is not actually as simple as evicting Dyatlov is not the same as saying that Dyatlov should not have been evicted: to think something is seriously wrong and yet obey is hopelessly [akratic](https://en.wikipedia.org/wiki/Akrasia).

But for now we move to a scenario more salvageable by individuals.

The Challenger

==============

Roger Boisjoly, Challenger warner

The [Challenger disaster](https://en.wikipedia.org/wiki/Space_Shuttle_Challenger_disaster), like Chernobyl, was not unforeseen. [Morton-Thiokol](https://en.wikipedia.org/wiki/Thiokol) engineer [Roger Boisjoly](https://en.wikipedia.org/wiki/Roger_Boisjoly) had raised red flags with the faulty O-rings that led to the loss of the shuttle and the deaths of seven people as early [as six months before the disaster](https://www.nytimes.com/2012/02/04/us/roger-boisjoly-73-dies-warned-of-shuttle-danger.html). For most of those six months, that warning, as well as those of other engineers, went unheeded. Eventually, a task force was convened to find a solution, but it quickly became apparent the task force was a toothless, do-nothing committee.

The situation was such that [Eliezer Yudkowsky](https://en.wikipedia.org/wiki/Eliezer_Yudkowsky), leading figure in AI safety, held up the Challenger as a failure that showcases [hindsight bias](https://en.wikipedia.org/wiki/Hindsight_bias), the mistaken belief that a past event was more predictable than it actually was:

> Viewing history through the lens of hindsight, we vastly underestimate the cost of preventing catastrophe. In 1986, the space shuttle Challenger exploded for reasons eventually traced to an O-ring losing flexibility at low temperature (Rogers et al. 1986). There were warning signs of a problem with the O-rings. But preventing the Challenger disaster would have required, not attending to the problem with the O-rings, but attending to every warning sign which seemed as severe as the O-ring problem, without benefit of hindsight.


This is wrong. There were no other warning signs as severe as the O-rings. Nothing else resulted in an engineer growing this heated the day before launch (from the [obituary](https://www.nytimes.com/2012/02/04/us/roger-boisjoly-73-dies-warned-of-shuttle-danger.html) already linked above):

> But it was one night and one moment that stood out. On the night of Jan. 27, 1986, Mr. Boisjoly and four other Thiokol engineers used a teleconference with NASA to press the case for delaying the next day’s launching because of the cold. At one point, Mr. Boisjoly said, he slapped down photos showing the damage cold temperatures had caused to an earlier shuttle. It had lifted off on a cold day, but not this cold.

>

>

> “How the hell can you ignore this?” he demanded.


How the hell indeed. In an unprecedented turn, in that meeting NASA management was blithe enough to [reject an explicit no-go recommendation from Morton-Thiokol management](https://en.wikipedia.org/wiki/Roger_Boisjoly#Challenger_disaster):

> During the go/no-go telephone conference with NASA management the night before the launch, Morton Thiokol notified NASA of their recommendation to postpone. NASA officials strongly questioned the recommendations, and asked (some say pressured) Morton Thiokol to reverse their decision.

>

>

> The Morton Thiokol managers asked for a few minutes off the phone to discuss their final position again. The management team held a meeting from which the engineering team, including Boisjoly and others, were deliberately excluded. The Morton Thiokol managers advised NASA that their data was inconclusive. NASA asked if there were objections. Hearing none, NASA decided to launch the STS-51-L Challenger mission.

>

>

> Historians have noted that this was the first time NASA had ever launched a mission after having received an explicit no-go recommendation from a major contractor, and that questioning the recommendation and asking for a reconsideration was highly unusual. Many have also noted that the sharp questioning of the no-go recommendation stands out in contrast to the immediate and unquestioning acceptance when the recommendation was changed to a go.


Contra Yudkowsky, it is clear that the Challenger disaster is not a good example of how expensive it can be to prevent catastrophe, since all prevention would have taken was NASA management doing their jobs. Though it is important to note that Yudkowsky's overarching point in that paper, that we have all sorts of cognitive biases clouding our thinking on [existential risks](https://en.wikipedia.org/wiki/Global_catastrophic_risk#Defining_existential_risks), still stands.

But returning to Boisjoly. In his obituary, he was remembered as "Warned of Shuttle Danger". A fairly terrible epitaph. He and the engineers who had reported the O-ring problem had to [bear the guilt](https://apnews.com/article/191d40179009afb4378e2cf0d612b6d1) of failing to stop the launch. At least one of them carried that weight for [30 years](https://www.npr.org/sections/thetwo-way/2016/02/25/466555217/your-letters-helped-challenger-shuttle-engineer-shed-30-years-of-guilt). It seems like they could have done more. They could have refused to be shut out of the final meeting where Morton-Thiokol management bent the knee to NASA, even if that took bloodied manager noses. And if that failed, why, they were engineers. They knew the actual physical process necessary for a launch to occur. They could also have talked to the astronauts. Bottom line, with some ingenuity, they could have disrupted it.

As with Chernobyl, yet again we come to the problem that even while eyebrow-raising (at the time) actions could have prevented the disaster, they could not have fixed the disaster-generating system in place at NASA. And like in Chernobyl: even so, they should have tried.

We now move on to a disaster where there wasn't a clear, but out-of-the-ordinary, solution.

Beirut

======

Yet another way for problems to surface

It has been a year since the [2020 Beirut explosion](https://en.wikipedia.org/wiki/2020_Beirut_explosion), and still there isn't a clear answer on why the explosion happened. We have the mechanical explanation, but why were there thousands of tons of Nitropril (ammonium nitrate) in some rundown warehouse in a port to begin with?

In a story straight out of [*The Outlaw Sea*](https://www.ribbonfarm.com/2009/08/27/the-outlaw-sea-by-william-langewiesche/), the MV Rhosus, a vessel with a convoluted 27-year history, was chartered to carry the ammonium nitrate from Batumi, Georgia to Beira, Mozambique, by the Fábrica de Explosivos Moçambique. [Due to either mechanical issues or a failure to pay tolls for the Suez Canal, the Rhosus was forced to dock in Beirut](https://en.wikipedia.org/wiki/MV_Rhosus#Abandonment), where the port authorities declared it unseaworthy and forbade it to leave. The mysterious owner of the ship, Igor Grechushkin, [declared himself bankrupt and left the crew and the ship to their fate](https://www.irishtimes.com/news/world/middle-east/murky-world-of-international-shipping-behind-explosion-in-beirut-1.4325913). The Mozambican charterers gave up on the cargo, and the Beirut port authorities seized the ship some months later. When the crew finally managed to be freed from the ship about a year after detainment (yes, [crews of ships abandoned by their owners must remain in the vessel](https://www.bbc.com/news/world-middle-east-56842506)), the explosives were brought into Hangar 12 at the port, where they would remain until the blast six years later. The Rhosus itself remained derelict in the port of Beirut until it sank due to a hole in the hull.

During those years it appears that [practically all the authorities in Lebanon played hot potato with the nitrate](https://www.hrw.org/report/2021/08/03/they-killed-us-inside/investigation-august-4-beirut-blast). Lots of correspondence occurred. The harbor master to the director of Land and Maritime Transport. The Case Authority to the Ministry of Public Works and Transport. State Security to the president and prime minister. Whenever the matter was not ignored, it ended with someone deciding it was not their problem or that they did not have the authority to act on it. Quite a lot of the people aware actually did have the authority to act unilaterally on the matter, but the [logic of the immoral maze](https://thezvi.wordpress.com/2019/05/30/quotes-from-moral-mazes/) (seriously, read that) precludes such acts.

There is no point in this very slow explosion in which disaster could have been avoided by manhandling some negligent or reckless authority (erm, pretend that said "avoided via some lateral thinking"). Much like with Chernobyl, the entire government was guilty here.

What does this have to do with AI?

==================================

The overall project of AI research exhibits many of the signs of the discussed disasters. We're not currently in the night of Chernobyl: we're instead designing the RBMK reactor. Even at that early stage, there were Dyatlovs: they were the ones who, deciding that their careers and keeping their bosses pleased were most important, implemented, and signed off on, the [design flaws](https://en.wikipedia.org/wiki/RBMK#Design_flaws_and_safety_issues) of the RBMK. And of course there were, because in the mire of dysfunction that was the Soviet Union, Dyatlovism was a highly effective strategy. Like in the Soviet Union, plenty of people, even prominent people, in AI, are ultimately more concerned with their careers than with any long-term disasters their work, and in particular, their attitude, may lead to. The attitude is especially relevant here: while there may not be a clear path from their work to disaster ([is that so?](https://ai-alignment.com/prosaic-ai-control-b959644d79c2)) the attitude that the work of AI is, like nearly all the rest of computer science, not [life-critical](https://en.wikipedia.org/wiki/Safety-critical_system), makes it much harder to implement regulations on precisely how AI research is to be conducted, whether external or internal.

While better breeds of scientist, such as biologists, have had the ["What the fuck am I summoning?"](https://i.kym-cdn.com/photos/images/newsfeed/000/323/692/9ee.jpg) moment and [collectively decided how to proceed safely](https://en.wikipedia.org/wiki/Asilomar_Conference_on_Recombinant_DNA), a [similar attempt in AI](https://en.wikipedia.org/wiki/Asilomar_Conference_on_Beneficial_AI) seems to have accomplished nothing.

Like with Roger Boisjoly and the Challenger, some of the experts involved are aware of the danger. Just like with Boisjoly and his fellow engineers, it seems like they are not ready to do whatever it takes to prevent catastrophe.

Instead, as in Beirut, [memos](https://venturebeat.com/2015/03/02/why-y-combinators-sam-altman-thinks-ai-needs-regulation/) and [letters](https://www.amazon.com/Human-Compatible-Artificial-Intelligence-Problem-ebook/dp/B07N5J5FTS) are sent. Will they result in effective action? Who knows?

Perhaps the most illuminating thought experiment for AI safety advocates/researchers, and indeed, us laymen, is not that of roleplaying as a guard outside the control room at Chernobyl, but rather: you are in Beirut in 2019.

How do you prevent the explosion?

Precisely when should one punch the expert?

===========================================

The title of this section was the original title of the piece. It was decided to dial it back a little, but it remains as the title of this section, if only to serve as a reminder that the dial does go to 11. Fortunately there is a precise answer to that question: when the expert's leadership or counsel poses an imminent threat. There are such moments in some disasters, but not all, Beirut being a clear example of a failure where there was no such critical moment. Should AI fail catastrophically, it will likely be the same as Beirut: lots of talk occurred in the lead-up, but some sort of action was what was actually needed. So why not do away entirely with such an inflammatory framing of the situation?

Why, because us laymen need to develop the morale and the spine to actually make things happen. [We need to learn from the Hutu](https://www.youtube.com/watch?v=P9HBL6aq8ng):

Can you? Can I?

The pull of akrasia is very strong. Even I have a part of me saying "relax, it will all work itself out". That is akrasia, as there is no compelling reason to expect that to be the case here.

But what after we "hack through the opposition" as Peter Capaldi's *The Thick of It* character, Malcolm Tucker, put it? What does "hack through the opposition" mean in this context? At this early stage I can think of a few answers:

*This sort of gibberish could be useful. From Engineering a Safer World.*

1. There is such a thing as [safety science](https://www.sciencedirect.com/science/article/abs/pii/S0925753513001768), and [leading experts](http://sunnyday.mit.edu) in it. They should be made aware of the risk of AI, and scientific existential risks in general, as it seems they could figure some things out. In particular, how to make certain research communities engage with the safety-critical nature of their work.

2. A second Asilomar conference on AI needs to be convened. One with teeth this time, involving many more AI researchers, and the public.

3. Make it clear to those who deny or are on the fence about AI risk that the ([not-so-great](https://slatestarcodex.com/2020/01/30/book-review-human-compatible/)) debate is over, and it's time to get real about this.

4. Develop and proliferate the antidyatlovist worldview to actually enforce the new line.

Points 3 and 4 can only sound excessive to those who are in denial about AI risk, or those to whom AI risk constitutes a mere intellectual pastime.

These are only sketches, though. We are indeed trying to prevent the Beirut explosion, and just like in that scenario, there is no clear formula or plan to follow.

This Guide is highly speculative. You could say we fly by the seat of our pants. But we will continue, we will roll with the punches, and ***we will win***.

After all, we have to.

---

You can also subscribe on [substack](https://aidid.substack.com). |

c85db20c-bc2a-488c-9ceb-fb50e0e59f7f | trentmkelly/LessWrong-43k | LessWrong | Do safety-relevant LLM steering vectors optimized on a single example generalize?

This is a linkpost for our recent paper on one-shot LLM steering vectors. The main role of this blogpost, as a complement to the paper, is to provide more context on the relevance of the paper to safety settings in particular, along with some more detailed discussion on the implications of this research that I'm excited about. Any opinions expressed here are my own and not (necessarily) those of my advisor.

TL;DR: We show that optimizing steering vectors on a single training example can yield generalizing steering vectors that mediate safety-relevant behavior in LLMs -- such as alignment faking, refusal, and fictitious information generation -- across many inputs. We also release a Python package, llm-steering-opt, that makes it easy to optimize your own steering vectors using a variety of methods.
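To make the idea of one-shot steering optimization concrete, here is a minimal toy sketch. It does not use the llm-steering-opt package or a real LLM: the "model" is a single hypothetical unembedding matrix `W` applied to one activation `h`, and every name below (`optimize_steering_vector`, `target_prob`, the toy values) is an assumption made for illustration. The sketch only shows the core loop: hold the model fixed and run gradient ascent on a vector `v`, added to the activation, so that the probability of a chosen target token on one example goes up.

```python
import math

def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def target_prob(W, h, v, target):
    # Probability of `target` when steering vector v is added to activation h.
    steered = [hi + vi for hi, vi in zip(h, v)]
    logits = [sum(w * s for w, s in zip(row, steered)) for row in W]
    return softmax(logits)[target]

def optimize_steering_vector(W, h, target, steps=200, lr=0.5):
    # Gradient ascent on log p(target | h + v) with respect to v only;
    # the toy model weights W stay frozen.
    d = len(h)
    v = [0.0] * d
    for _ in range(steps):
        steered = [hi + vi for hi, vi in zip(h, v)]
        logits = [sum(w * s for w, s in zip(row, steered)) for row in W]
        p = softmax(logits)
        # d(log p_target) / d(logit_k) = 1[k == target] - p_k
        dlogits = [(1.0 if k == target else 0.0) - p[k] for k in range(len(W))]
        # Chain rule through logits = W (h + v): grad_v = W^T dlogits
        grad = [sum(W[k][j] * dlogits[k] for k in range(len(W))) for j in range(d)]
        v = [vj + lr * gj for vj, gj in zip(v, grad)]
    return v

# Hypothetical toy setup: 3 "tokens", 2-dimensional activation, one training example.
W = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
h = [2.0, 0.0]
target = 1

v = optimize_steering_vector(W, h, target)
p_before = target_prob(W, h, [0.0, 0.0], target)
p_added = target_prob(W, h, v, target)
p_subtracted = target_prob(W, h, [-x for x in v], target)
print(p_before, p_added, p_subtracted)
```

In this toy, adding the optimized vector raises the target probability and subtracting it lowers it, which loosely mirrors the add/subtract usage described for the Poser experiments. Whether a vector found on a single example generalizes to other inputs is the empirical question the paper studies; a toy like this cannot answer it.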

Summary

* We optimize steering vectors to elicit specific behaviors on a single training example; specifically, we optimize steering vectors to maximize or minimize the probability of a given target sequence on that training example. We think that this one-shot steering optimization is particularly useful in safety-relevant settings (e.g. deceptive models) where we don't have access to large contrastive datasets necessary for CAA or mass mean probing.

* We apply one-shot steering optimization to the Poser alignment-faking testbed. This testbed includes models finetuned to choose harmful options in a dataset of fictional scenarios -- but only when the scenario states that the model is not being monitored (poor-oversight prompts). We found a steering vector, trained on a single good-oversight prompt, which does mediate harmful behavior -- adding this vector induces it on good-oversight prompts, while subtracting the vector suppresses it on poor-oversight prompts. Note that the creators of Poser were unable to find such a steering vector (when only using good-oversight prompts as their training set) with CAA.

* We apply one-shot steering optimization to refusal circumv |

17bd2ea3-a056-40df-bd6a-8f54afc73024 | trentmkelly/LessWrong-43k | LessWrong | We are headed into an extreme compute overhang

If we achieve AGI-level performance using an LLM-like approach, the training hardware will be capable of running ~1,000,000s concurrent instances of the model.

Definitions

Although there is some debate about the definition of compute overhang, I believe that the AI Impacts definition matches the original use, and I prefer it: "enough computing hardware to run many powerful AI systems already exists by the time the software to run such systems is developed". A large compute overhang leads to additional risk due to faster takeoff.

I use the types of superintelligence defined in Bostrom's Superintelligence book (summary here).

I use the definition of AGI in this Metaculus question. The adversarial Turing test portion of the definition is not very relevant to this post.

Thesis

Due to practical reasons, the compute requirements for training LLMs are several orders of magnitude larger than what is required for running a single inference instance. In particular, a single NVIDIA H100 GPU can run inference at a throughput of about 2000 tokens/s, while Meta trained Llama3 70B on a GPU cluster[1] of about 24,000 GPUs. Assuming we require a performance of 40 tokens/s, the training cluster can run (2000 / 40) × 24,000 = 1,200,000 concurrent instances of the resulting 70B model.
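The estimate above is a simple ratio, and it can help to see it as a small calculation whose inputs can be varied. The sketch below uses only the figures quoted in this post; the function and parameter names are made up for illustration, and it deliberately ignores memory limits, batching efficiency, and interconnect overhead.

```python
def concurrent_instances(gpu_tokens_per_s, required_tokens_per_s, num_gpus):
    # Each GPU's throughput is split into streams of the required speed,
    # then multiplied across the whole training cluster.
    instances_per_gpu = gpu_tokens_per_s / required_tokens_per_s
    return int(instances_per_gpu * num_gpus)

# Figures from the post: ~2000 tokens/s per H100, 40 tokens/s per instance,
# ~24,000 GPUs in the training cluster.
print(concurrent_instances(2000, 40, 24_000))  # 1200000
```

Changing the required per-instance speed scales the result inversely: halving it to 20 tokens/s would double the instance count under the same assumptions.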

I will assume that the above ratios hold for an AGI-level model. Considering the amount of data children absorb via the vision pathway, the amount of training data for LLMs may not be that much higher than the data humans are trained on, and so the current ratios are a useful anchor. This is explored further in the appendix.

Given the above ratios, we will have the capacity for ~1e6 AGI instances at the moment that training is complete. This will likely lead to superintelligence via a "collective superintelligence" approach. Additional speed may then be available via accelerators such as GroqChip, which produces 300 tokens/s for a single instance of a 70B model. This would result in a "speed superintel |

4bece8ad-a4c2-4b57-93db-b0ab9b6f4c85 | StampyAI/alignment-research-dataset/eaforum | Effective Altruism Forum | Apply for MATS Winter 2023-24!

[Applications are now open](https://airtable.com/appxum3Sqh7TdDvdg/shrtfHWhRFZdkhaIM) for the Winter 2023-24 cohort of [MATS](https://www.matsprogram.org/home) (previously SERI MATS). Mentors include [Adrià Garriga Alonso](https://www.lesswrong.com/users/rhaps0dy), [Stephen Casper](https://www.alignmentforum.org/users/scasper?from=post_header), [Jesse Clifton](https://www.lesswrong.com/users/jesseclifton), [David ‘davidad’ Dalrymple](https://www.alignmentforum.org/users/davidad?from=post_header), [Owain Evans](https://www.alignmentforum.org/users/owain_evans), [Evan Hubinger](https://www.alignmentforum.org/users/evhub), [Erik Jenner](https://www.alignmentforum.org/users/erik-jenner), [Jeffrey Ladish](https://www.lesswrong.com/users/landfish), [Neel Nanda](https://www.alignmentforum.org/users/neel-nanda-1), [Ethan Perez](https://www.alignmentforum.org/users/ethan-perez), [Lee Sharkey](https://www.alignmentforum.org/users/lee_sharkey), [Buck Shlegeris](https://www.alignmentforum.org/users/buck), [Alex Turner](https://www.alignmentforum.org/users/turntrout), and researchers at [Sam Bowman](https://www.alignmentforum.org/users/sbowman)'s [NYU Alignment Research Group](https://wp.nyu.edu/arg/), including [Asa Cooper Stickland](https://homepages.inf.ed.ac.uk/s1302760/), [David Rein](https://www.linkedin.com/in/idavidrein), [Julian Michael](https://julianmichael.org/), and [Shi Feng](https://ihsgnef.github.io/).

Submissions for most mentors are due on November 17 (and for Neel Nanda on November 10). Many mentors ask challenging candidate selection questions, so make sure you allow adequate time to complete your application. We encourage prospective applicants to fill out our brief [interest form](https://airtable.com/appxum3Sqh7TdDvdg/shrwtDZXeSfzeh5GT) to receive program updates and application deadline reminders. You can also fill out our [recommendation form](https://airtable.com/appxum3Sqh7TdDvdg/shrRtJW4Ux8oTY28C) to let us know about someone who might be a good fit, and we will share our application with them.

It is likely that we will add multiple mentors within the next week. Fill out the linked interest form or revisit this post for updates.

We are currently funding-constrained and accepting donations to support further research scholars. If you would like to support our work, [you can donate here](https://manifund.org/projects/mats-funding)!

Program Details

===============

MATS is an educational seminar and independent research program (40 h/week) in Berkeley, CA that aims to provide talented scholars with talks, workshops, and research mentorship in the field of [AI alignment](https://en.wikipedia.org/wiki/AI_alignment), and connect them with the Berkeley AI safety research community. MATS provides scholars with housing in Berkeley, CA, as well as travel support, a co-working space, and a community of peers. The main goal of MATS is to help scholars develop as AI safety researchers. You can read more about our theory of change [here](https://www.lesswrong.com/posts/8vLvpxzpc6ntfBWNo/seri-ml-alignment-theory-scholars-program-2022#Theory_of_change).

Based on individual circumstances, we may be willing to alter the time commitment of the program and arrange for scholars to leave or start early. Please tell us your availability when applying. Our tentative timeline for the MATS Winter 2023-24 program is below.

Scholars will receive a USD 12k stipend from [AI Safety Support](https://www.aisafetysupport.org/) for completing the Training and Research Phases.

Applications (Now!)

-------------------

**Applications open**: October 20

**Applications are due**: November 17

*Note: Neel Nanda's applicants will follow a modified schedule; see section below.*

Training Phase (January 8 - January 21)

---------------------------------------

Accepted applicants are generally expected to have completed [AI Safety Fundamentals' Alignment Course](https://course.aisafetyfundamentals.com/alignment) or similar prior to the start of the MATS program.

MATS begins with a two-week Training Phase. To equip scholars with a broad understanding of the AI safety field, the Training Phase features an advanced AI safety research curriculum, mentor-specific reading lists, discussion groups, and more.

Research Phase (January 22-March 15)

------------------------------------

The core of MATS is a two-month Research Phase. During this Phase, each scholar spends at least one hour a week working with their mentor, with more frequent communication via Slack. Mentors vary considerably in terms of their:

* Influence on project choices

* Attention to low-level details vs high-level strategies

* Emphasis on outputs vs processes

* Availability for meetings

Our Scholar Support team complements mentors by offering dedicated 1-1 check-ins, research coaching, debugging, and general executive help to unblock research progress and accelerate researcher development.

Educational seminars and workshops will be held 2-3 times per week. We also organize multiple networking events to acquaint scholars with researchers in the Berkeley AI safety community.

### Research Milestones

Scholars complete two milestones during the Research Phase. The first is a [Scholar Research Plan](https://docs.google.com/document/d/1fQwy2btcWn3XRQkgu45kKnPPHMVQs28QHpaNtDCfXQY/edit#heading=h.ozvm8udh0z0y) outlining a threat model or risk factor, a theory of change, and a plan for their research. This document will guide their work during the remainder of the program, which culminates in a research symposium attended by members of the Berkeley AI safety community. The second milestone is a ten-minute [research presentation](https://docs.google.com/document/d/1SB_vl6pGuSO7qidkZ8fIKPeH7fZIC8mvXzxicFjGyrE/edit#heading=h.ozvm8udh0z0y) at this event.

### Community at MATS

The Research Phase provides scholars with a community of peers, who share an office, meals, and housing. In contrast to pursuing independent research remotely, working in a community grants scholars easy access to future collaborators, a deeper understanding of other research agendas, and a social network in the AI safety community. Scholars also receive support from a full-time Community Manager.

During our Summer Cohort, each week of the Research Phase included at least one social event, such as a party, game night, movie night, or hike. Weekly lightning talks provided scholars with an opportunity to share their research interests in an informal, low-stakes setting. Outside of work, scholars organized social activities, including road trips to Yosemite, visits to San Francisco, pub outings, weekend meals, and even a skydiving trip.

Extension Phase (April 1-July 26)

---------------------------------

At the conclusion of the Research Phase, scholars can apply to continue their research in a four-month Extension Phase cohort, in London by default. Acceptance decisions are largely based on receiving mentor endorsements and securing external funding. By this Phase, we expect scholars to pursue their research with high autonomy.

Post-MATS

---------

After completion of the program, MATS alumni have:

* Been hired by leading organizations like [Anthropic](https://www.anthropic.com/), [OpenAI](https://openai.com/), [MIRI](https://intelligence.org/), [ARC](https://www.alignment.org/), [Conjecture](https://www.conjecture.dev/), and the US government, and joined academic research groups like [UC Berkeley CHAI](https://humancompatible.ai/), [NYU ARG](https://wp.nyu.edu/arg/), and [MIT Tegmark Group](https://space.mit.edu/home/tegmark/technical.html);

* Founded AI safety organizations, including [ARENA](https://www.arena.education/), [Apollo Research](https://www.apolloresearch.ai/), [Leap Labs](https://www.leap-labs.com/), [Timaeus](https://timaeus.co/), [Cadenza Labs](https://cadenzalabs.org/), [Center for AI Policy](https://www.aipolicy.us/), [Catalyze Impact](https://www.catalyze-impact.org/), and [Stake Out AI](https://stakeout.ai/);

* Pursued [independent research](https://www.lesswrong.com/posts/P3Yt66Wh5g7SbkKuT/how-to-get-into-independent-research-on-alignment-agency) with funding from the [Long-Term Future Fund](https://funds.effectivealtruism.org/funds/far-future), [Open Philanthropy](https://www.openphilanthropy.org/how-to-apply-for-funding/), or [Manifund](https://manifund.org/).

You can read more about MATS alumni [here](https://www.matsprogram.org/alumni).

Information Specific to Neel Nanda

----------------------------------

### Applications

**Applications are due**: November 10

**Acceptance decisions**: November 17

### Training Phase (November 20 - December 22)

Neel Nanda's scholars will complete a remote Training Phase, consisting of two weeks learning about mech interp and two weeks performing a Research Sprint in a pair—see the [overview doc](https://docs.google.com/document/d/18qYhY6FB0AiVP9cNr9idP5YYkN_654WNVgfyL9LgfHA/edit) from the last program for more information.

Significantly more offers will be made for this initial Training Phase than for the subsequent Research Phase (last time, 19 scholars completed this Phase, and nine continued to the Research Phase), but past scholars who did not progress still found this Phase a good introduction to mech interp research. Offers to continue will be based largely on performance in the Research Sprint.

Neel's scholars will receive a stipend of USD 4800 from [AI Safety Support](https://www.aisafetysupport.org/) for participation in the Training Phase.

### Research Phase (January 8 - March 15)

Those who continue to the Research Phase will begin in-person in Berkeley on January 8 with the other scholars. For Neel's scholars, this will be considered the start of the Research Phase.

Who Should Apply?

=================

Our ideal applicant has:

* An understanding of the AI safety research landscape equivalent to having completed [AI Safety Fundamentals' Alignment Course](https://course.aisafetyfundamentals.com/alignment) (if you are accepted into the program but have not previously completed this course, you are expected to do so before the Training Phase begins);

* Previous experience with technical research (e.g. ML, CS, math, physics, neuroscience, etc.), generally at a postgraduate level; and

* Strong motivation to pursue a career in AI safety research.

Even if you do not meet all of these criteria, **we encourage you to apply**! Several past scholars applied without strong expectations and were accepted.

Applying from Outside the US

----------------------------

Scholars from outside the US can apply for [B-1 visas](https://www.uscis.gov/working-in-the-united-states/temporary-visitors-for-business/b-1-temporary-business-visitor) (further information [here](https://travel.state.gov/content/dam/visas/BusinessVisa%20Purpose%20Listings%20March%202014%20flier.pdf)) for the Research Phase. Scholars from [Visa Waiver Program (VWP) Designated Countries](https://travel.state.gov/content/travel/en/us-visas/tourism-visit/visa-waiver-program.html) can instead apply to the VWP via the [Electronic System for Travel Authorization (ESTA)](https://esta.cbp.dhs.gov/esta), which is processed in three days. Scholars who receive a B-1 visa can stay up to 180 days in the US, while scholars accepted into the VWP can stay up to 90 days. Please note that B-1 visa approval times can be significantly longer than ESTA approval times, depending on your country of origin.

How to Apply

============

[Applications are now open](https://airtable.com/appxum3Sqh7TdDvdg/shrtfHWhRFZdkhaIM). Submissions for most mentors are due on November 17. We encourage prospective applicants to fill out our brief [interest form](https://airtable.com/appxum3Sqh7TdDvdg/shrwtDZXeSfzeh5GT) to receive program updates and application deadline reminders. You can also fill out our [recommendation form](https://airtable.com/appxum3Sqh7TdDvdg/shrRtJW4Ux8oTY28C) to let us know about someone who might be a good fit, and we will share our application with them.

Candidates apply to work under a particular mentor, who will review their application. Applications are evaluated primarily based on **responses to mentor questions** and **prior relevant research experience**. Information about our mentors' research agendas can be found on the [MATS website](https://www.matsprogram.org/mentors).

Before applying, you should:

* Read through the descriptions and agendas of each stream and the associated candidate selection questions;

* Prepare your answers to the questions for streams you’re interested in applying to. These questions can be found on the application;

* Prepare your LinkedIn or resume.

The candidate selection questions can be quite hard, depending on the mentor! Make sure you allow adequate time to complete your application. A strong application to one mentor may be of higher value than moderate applications to several mentors (though each application will be assessed independently).

Note that the application is longer than it first seems because mentor-specific questions are hidden until you select a mentor.

Application Office Hours

------------------------

We have office hours for prospective applicants to clarify questions about the MATS program application process. Before attending office hours, we request that applicants read the current post fully and our [FAQ](https://www.matsprogram.org/faqs).

Our office hours will be held on [this Zoom link](https://zoom.us/j/98715408709) at the following times:

* Wednesday, October 25, 12 pm-2 pm PT.

* Wednesday, November 1, 12 pm-2 pm PT.

You can add these office hours to Google Calendar with [this link](https://calendar.google.com/calendar/event?action=TEMPLATE&tmeid=bnUxbmVxNDI1Z2d2OGxjY2Y0cTlvbm1lcWNfMjAyMzEwMjVUMTkwMDAwWiBjXzQzNmY2NzkzODEzMzk0ZmE5YjUwYjk1ZmMwOWY5Mzc4MDM1YjRiNmIyM2UxYjc4ODI5ZGQ1Y2U5ZGZkZDFkYTJAZw&tmsrc=c_436f6793813394fa9b50b95fc09f9378035b4b6b23e1b78829dd5ce9dfdd1da2%40group.calendar.google.com&scp=ALL). |

7937b97b-d6d3-4842-ad98-db32ff58f7dd | trentmkelly/LessWrong-43k | LessWrong | Brainstorming: children's stories

So I have a three-year-old kid, and will usually read or tell him a bedtime story.

That is a nice opportunity to introduce new concepts, but my capacity for improvisation is limited, especially towards the end of the day. So I'm asking the good people on LessWrong for ideas. How would you wrap various lesswrongish ideas in a short story a little kid would pay attention to?

I'm mostly interested in the aspects of "practical rationality" that aren't going to be taught at school or in children's books or children's TV shows - so things like Sunk Costs, taking the outside view, wondering which side is true instead of arguing for a side, etc.

Pointers to outside sources of such stories are welcome too!

Edit: actually, if you want to share ideas of games or activities of the same kind, go ahead! :) |

8588688a-a066-4684-ab99-bb32cbd28050 | StampyAI/alignment-research-dataset/blogs | Blogs | Frontier AI regulation: Managing emerging risks to public safety

FRONTIER AI REGULATION:

MANAGING EMERGING RISKS TO PUBLIC SAFETY

Markus Anderljung¹,²∗†, Joslyn Barnhart³∗∗, Anton Korinek⁴,⁵,¹∗∗†, Jade Leung⁶∗, Cullen O’Keefe⁶∗,

Jess Whittlestone⁷∗∗, Shahar Avin⁸, Miles Brundage⁶, Justin Bullock⁹,¹⁰, Duncan Cass-Beggs¹¹,

Ben Chang¹², Tantum Collins¹³,¹⁴, Tim Fist², Gillian Hadfield¹⁵,¹⁶,¹⁷,⁶, Alan Hayes¹⁸, Lewis Ho³,

Sara Hooker¹⁹, Eric Horvitz²⁰, Noam Kolt¹⁵, Jonas Schuett¹, Yonadav Shavit¹⁴∗∗∗,

Divya Siddarth²¹, Robert Trager¹,²², Kevin Wolf¹⁸

¹ Centre for the Governance of AI, ² Center for a New American Security, ³ Google DeepMind,

⁴ Brookings Institution, ⁵ University of Virginia, ⁶ OpenAI, ⁷ Centre for Long-Term Resilience,

⁸ Centre for the Study of Existential Risk, University of Cambridge, ⁹ University of Washington, ¹⁰ Convergence Analysis,

¹¹ Centre for International Governance Innovation, ¹² The Andrew W. Marshall Foundation,

¹³ GETTING-Plurality Network, Edmond & Lily Safra Center for Ethics, ¹⁴ Harvard University,

¹⁵ University of Toronto, ¹⁶ Schwartz Reisman Institute for Technology and Society, ¹⁷ Vector Institute,

¹⁸ Akin Gump Strauss Hauer & Feld LLP, ¹⁹ Cohere For AI, ²⁰ Microsoft, ²¹ Collective Intelligence Project,

²² University of California: Los Angeles

Listed authors contributed substantive ideas and/or work to the white paper. Contributions include writing, editing, research,

detailed feedback, and participation in a workshop on a draft of the paper. The first six authors are listed in alphabetical order, as are

the subsequent 18. Given the size of the group, inclusion as an author does not entail endorsement of all claims in the paper, nor does

inclusion entail an endorsement on the part of any individual’s organization.

∗Significant contribution, including writing, research, convening, and setting the direction of the paper.

∗∗Significant contribution including editing, convening, detailed input, and setting the direction of the paper.

∗∗∗Work done while an independent contractor for OpenAI.

†Corresponding authors. Markus Anderljung (markus.anderljung@governance.ai) and Anton Korinek

(akorinek@brookings.edu).

Cite as "Frontier AI Regulation: Managing Emerging Risks to Public Safety." Anderljung, Barnhart, Korinek, Leung, O’Keefe,

& Whittlestone, et al, 2023.

arXiv:2307.03718v2 [cs.CY] 11 Jul 2023

Frontier AI Regulation: Managing Emerging Risks to Public Safety

ABSTRACT

Advanced AI models hold the promise of tremendous benefits for humanity, but society

needs to proactively manage the accompanying risks. In this paper, we focus on what we

term “frontier AI” models — highly capable foundation models that could possess dangerous

capabilities sufficient to pose severe risks to public safety. Frontier AI models pose a

distinct regulatory challenge: dangerous capabilities can arise unexpectedly; it is difficult to

robustly prevent a deployed model from being misused; and, it is difficult to stop a model’s

capabilities from proliferating broadly. To address these challenges, at least three building

blocks for the regulation of frontier models are needed: (1) standard-setting processes to

identify appropriate requirements for frontier AI developers, (2) registration and reporting

requirements to provide regulators with visibility into frontier AI development processes,

and (3) mechanisms to ensure compliance with safety standards for the development and

deployment of frontier AI models. Industry self-regulation is an important first step. However,

wider societal discussions and government intervention will be needed to create standards

and to ensure compliance with them. We consider several options to this end, including

granting enforcement powers to supervisory authorities and licensure regimes for frontier

AI models. Finally, we propose an initial set of safety standards. These include conducting

pre-deployment risk assessments; external scrutiny of model behavior; using risk assessments

to inform deployment decisions; and monitoring and responding to new information about

model capabilities and uses post-deployment. We hope this discussion contributes to the

broader conversation on how to balance public safety risks and innovation benefits from

advances at the frontier of AI development.

Executive Summary

The capabilities of today’s foundation models highlight both the promise and risks of rapid advances in AI.

These models have demonstrated significant potential to benefit people in a wide range of fields, including

education, medicine, and scientific research. At the same time, the risks posed by present-day models, coupled

with forecasts of future AI progress, have rightfully stimulated calls for increased oversight and governance

of AI across a range of policy issues. We focus on one such issue: the possibility that, as capabilities continue

to advance, new foundation models could pose severe risks to public safety, be it via misuse or accident.

Although there is ongoing debate about the nature and scope of these risks, we expect that government

involvement will be required to ensure that such “frontier AI models” are harnessed in the public interest.

Three factors suggest that frontier AI development may be in need of targeted regulation: (1) Models may

possess unexpected and difficult-to-detect dangerous capabilities; (2) Models deployed for broad use can be

difficult to reliably control and to prevent from being used to cause harm; (3) Models may proliferate rapidly,

enabling circumvention of safeguards.

Self-regulation is unlikely to provide sufficient protection against the risks from frontier AI models:

government intervention will be needed. We explore options for such intervention. These include:

Mechanisms to create and update safety standards for responsible frontier AI development

and deployment. These should be developed via multi-stakeholder processes, and could

include standards relevant to foundation models overall, not exclusive to frontier AI. These

processes should facilitate rapid iteration to keep pace with the technology.

Mechanisms to give regulators visibility into frontier AI development, such as disclosure

regimes, monitoring processes, and whistleblower protections. These equip regulators with

the information needed to address the appropriate regulatory targets and design effective

tools for governing frontier AI. The information provided would pertain to qualifying frontier

AI development processes, models, and applications.

Mechanisms to ensure compliance with safety standards. Self-regulatory efforts, such as

voluntary certification, may go some way toward ensuring compliance with safety standards

by frontier AI model developers. However, this seems likely to be insufficient without

government intervention, for example by empowering a supervisory authority to identify and

sanction non-compliance; or by licensing the deployment and potentially the development of

frontier AI. Designing these regimes to be well-balanced is a difficult challenge; we should

be sensitive to the risks of overregulation and stymieing innovation on the one hand, and

moving too slowly relative to the pace of AI progress on the other.

Next, we describe an initial set of safety standards that, if adopted, would provide some guardrails on the

development and deployment of frontier AI models. Versions of these could also be adopted for current

AI models to guard against a range of risks. We suggest that at minimum, safety standards for frontier AI

development should include:

Conducting thorough risk assessments informed by evaluations of dangerous capabilities

and controllability. This would reduce the risk that deployed models possess unknown

dangerous capabilities, or behave unpredictably and unreliably.

Engaging external experts to apply independent scrutiny to models. External scrutiny

of the safety and risk profile of models would both improve assessment rigor and foster

accountability to the public interest.

Following standardized protocols for how frontier AI models can be deployed based on

their assessed risk. The results from risk assessments should determine whether and how the

model is deployed, and what safeguards are put in place. This could range from deploying

the model without restriction to not deploying it at all. In many cases, an intermediate

option—deployment with appropriate safeguards (e.g., more post-training that makes the

model more likely to avoid risky instructions)—may be appropriate.

Monitoring and responding to new information on model capabilities. The assessed

risk of deployed frontier AI models may change over time due to new information, and new

post-deployment enhancement techniques. If significant information on model capabilities is

discovered post-deployment, risk assessments should be repeated, and deployment safeguards

updated.

Going forward, frontier AI models seem likely to warrant safety standards more stringent than those imposed

on most other AI models, given the prospective risks they pose. Examples of such standards include: avoiding

large jumps in capabilities between model generations; adopting state-of-the-art alignment techniques; and

conducting pre-training risk assessments. Such practices are nascent today, and need further development.

The regulation of frontier AI should only be one part of a broader policy portfolio, addressing the wide range

of risks and harms from AI, as well as AI’s benefits. Risks posed by current AI systems should be urgently

addressed; frontier AI regulation would aim to complement and bolster these efforts, targeting a particular

subset of resource-intensive AI efforts. While we remain uncertain about many aspects of the ideas in this

paper, we hope it can contribute to a more informed and concrete discussion of how to better govern the risks

of advanced AI systems while enabling the benefits of innovation to society.

Acknowledgements

We would like to express our thanks to the people who have offered feedback and input on the ideas in this

paper, including Jon Bateman, Rishi Bommasani, Will Carter, Peter Cihon, Jack Clark, John Cisternino,

Rebecca Crootof, Allan Dafoe, Ellie Evans, Marina Favaro, Noah Feldman, Ben Garfinkel, Joshua Gotbaum,

Julian Hazell, Lennart Heim, Holden Karnofsky, Jeremy Howard, Tim Hwang, Tom Kalil, Gretchen Krueger,

Lucy Lim, Chris Meserole, Luke Muehlhauser, Jared Mueller, Richard Ngo, Sanjay Patnaik, Hadrien Pouget,

Gopal Sarma, Girish Sastry, Paul Scharre, Mike Selitto, Toby Shevlane, Danielle Smalls, Helen Toner, and

Irene Solaiman.

Contents

1 Introduction

2 The Regulatory Challenge of Frontier AI Models

2.1 What do we mean by frontier AI models?

2.2 The Regulatory Challenge Posed by Frontier AI

2.2.1 The Unexpected Capabilities Problem: Dangerous Capabilities Can Arise Unpredictably and Undetected

2.2.2 The Deployment Safety Problem: Preventing deployed AI models from causing harm is difficult

2.2.3 The Proliferation Problem: Frontier AI models can proliferate rapidly

3 Building Blocks for Frontier AI Regulation

3.1 Institutionalize Frontier AI Safety Standards Development

3.2 Increase Regulatory Visibility

3.3 Ensure Compliance with Standards

3.3.1 Self-Regulation and Certification

3.3.2 Mandates and Enforcement by supervisory authorities

3.3.3 License Frontier AI Development and Deployment

3.3.4 Pre-conditions for Rigorous Enforcement Mechanisms

4 Initial Safety Standards for Frontier AI

4.1 Conduct thorough risk assessments informed by evaluations of dangerous capabilities and controllability

4.1.1 Assessment for Dangerous Capabilities

4.1.2 Assessment for Controllability

4.1.3 Other Considerations for Performing Risk Assessments

4.2 Engage External Experts to Apply Independent Scrutiny to Models

4.3 Follow Standardized Protocols for how Frontier AI Models can be Deployed Based on their Assessed Risk

4.4 Monitor and respond to new information on model capabilities

4.5 Additional practices

5 Uncertainties and Limitations

A Creating a Regulatory Definition for Frontier AI

A.1 Desiderata for a Regulatory Definition

A.2 Defining Sufficiently Dangerous Capabilities

A.3 Defining Foundation Models

A.4 Defining the Possibility of Producing Sufficiently Dangerous Capabilities

B Scaling laws in Deep Learning

1 Introduction

Responsible AI innovation can provide extraordinary benefits to society, such as delivering medical [1, 2,

3, 4] and legal [5, 6, 7] services to more people at lower cost, enabling scalable personalized education [8],

and contributing solutions to pressing global challenges like climate change [9, 10, 11, 12] and pandemic

prevention [13, 14]. However, guardrails are necessary to prevent the pursuit of innovation from imposing

excessive negative externalities on society. There is increasing recognition that government oversight is

needed to ensure AI development is carried out responsibly; we hope to contribute to this conversation by

exploring regulatory approaches to this end.

In this paper, we focus specifically on the regulation of frontier AI models, which we define as highly capable

foundation models¹ that could have dangerous capabilities sufficient to pose severe risks to public safety and

global security. Examples of such dangerous capabilities include designing new biochemical weapons [16],

producing highly persuasive personalized disinformation, and evading human control [17, 18, 19, 20, 21, 22,

23].

In this paper, we first define frontier AI models and detail several policy challenges posed by them. We

explain why effective governance of frontier AI models requires intervention throughout the models’ lifecycle,

at the development, deployment, and post-deployment stages. Then, we describe approaches to regulating

frontier AI models, including building blocks of regulation such as the development of safety standards,

increased regulatory visibility, and ensuring compliance with safety standards. We also propose a set of initial

safety standards for frontier AI development and deployment. We close by highlighting uncertainties and

limitations for further exploration.

¹ Defined as: “any model that is trained on broad data (generally using self-supervision at scale) that can be adapted (e.g.,

fine-tuned) to a wide range of downstream tasks” [15].

2 The Regulatory Challenge of Frontier AI Models

2.1 What do we mean by frontier AI models?

For the purposes of this paper, we define “frontier AI models” as highly capable foundation models² that could

exhibit dangerous capabilities sufficient to cause severe harms. Such harms could take the form of significant physical harm or the disruption

of key societal functions on a global scale, resulting from intentional misuse or accident [25, 26]. It would be

prudent to assume that next-generation foundation models could possess advanced enough capabilities to

qualify as frontier AI models, given both the difficulty of predicting when sufficiently dangerous capabilities

will arise and the already significant capabilities of today’s models.

Though it is not clear where the line for “sufficiently dangerous capabilities” should be drawn, examples

could include:

• Allowing a non-expert to design and synthesize new biological or chemical weapons.³

• Producing and propagating highly persuasive, individually tailored, multi-modal disinformation with

minimal user instruction.⁴

• Harnessing unprecedented offensive cyber capabilities that could cause catastrophic harm.⁵

• Evading human control through means of deception and obfuscation.⁶

This list represents just a few salient possibilities; the possible future capabilities of frontier AI models

remain an important area of inquiry.

Foundation models, such as large language models (LLMs), are trained on large, broad corpora of natural

language and other text (e.g., computer code), usually starting with the simple objective of predicting the

next “token”.⁷ This relatively simple approach produces models with surprisingly broad capabilities.⁸ These

² [15] defines “foundation models” as “models (e.g., BERT, DALL-E, GPT-3) that are trained on broad data at scale and are

adaptable to a wide range of downstream tasks.” See also [24].

³ Such capabilities are starting to emerge. For example, a group of researchers tasked a narrow drug-discovery system to identify

maximally toxic molecules. The system identified over 40,000 candidate molecules, including both known chemical weapons and

novel molecules that were predicted to be as or more deadly [16]. Other researchers are warning that LLMs can be used to aid in

discovery and synthesis of compounds. One group attempted to create an LLM-based agent, giving it access to the internet, code

execution abilities, hardware documentation, and remote control of an automated ‘cloud’ laboratory. They report that in

some cases the model was willing to outline and execute on viable methods for synthesizing illegal drugs and chemical weapons [27].

⁴ Generative AI models may already be useful to generate material for disinformation campaigns [28, 29, 30]. It is possible that,

in the future, models could possess additional capabilities that could enhance the persuasiveness or dissemination of disinformation,

such as by making such disinformation more dynamic, personalized, and multimodal; or by autonomously disseminating such

disinformation through channels that enhance its persuasive value, such as traditional media.

⁵ AI systems are already helpful in writing and debugging code, capabilities that can also be applied to software vulnerability

discovery. There is potential for significant harm via automation of vulnerability discovery and exploitation. However, vulnerability

discovery could ultimately benefit cyberdefense more than cyberoffense, provided defenders are able to use such tools to identify and

patch vulnerabilities more effectively than attackers can find and exploit them [31, 32].

⁶ If future AI systems develop the ability and the propensity to deceive their users, controlling their behavior could be extremely

challenging. Though it is unclear whether models will trend in that direction, it seems rash to dismiss the possibility, and some argue

that it might be the default outcome of current training paradigms [17, 18, 20, 21, 22, 23].

⁷ A token can be thought of as a word or part of a word [33].

⁸ For example, LLMs achieve state-of-the-art performance in diverse tasks such as question answering, translation, multi-step

reasoning, summarization, and code completion, among others [34, 35, 36, 37]. Indeed, the term “LLM” is already becoming

outdated, as several leading “LLMs” are in fact multimodal (e.g., possess visual capabilities) [36, 38].

Figure 1: Example Frontier AI Lifecycle.

models thus possess more general-purpose functionality⁹ than many other classes of AI models, such as

the recommender systems used to suggest Internet videos or generative AI models in narrower domains

like music. Developers often make their models available through “broad deployment” via sector-agnostic

platforms such as APIs, chatbots, or via open-sourcing.¹⁰ This means that they can be integrated in a large

number of diverse downstream applications, possibly including safety-critical sectors (illustrated in Figure 1).

A number of features of our definition are worth highlighting. In focusing on foundation models which could

have dangerous, emergent capabilities, our definition of frontier AI excludes narrow models, even when these

models could have sufficiently dangerous capabilities.¹¹ For example, models optimizing for the toxicity of

compounds [16] or the virulence of pathogens could lead to intended (or at least foreseen) harms and thus

may be more appropriately covered with more targeted regulation.¹²

⁹ We intentionally do not use the term “general-purpose AI”, to avoid confusion with the use of that term in the EU AI Act and

other legislation. Frontier AI systems are a related but narrower class of AI systems with general-purpose functionality, but whose

capabilities are relatively advanced and novel.

¹⁰ We use “open-source” to mean “open release”: that is, a model being made freely available online, albeit with a license restricting

what the system can be used for. An example of such a license is the Responsible AI License. Our usage of “open-source” differs